id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

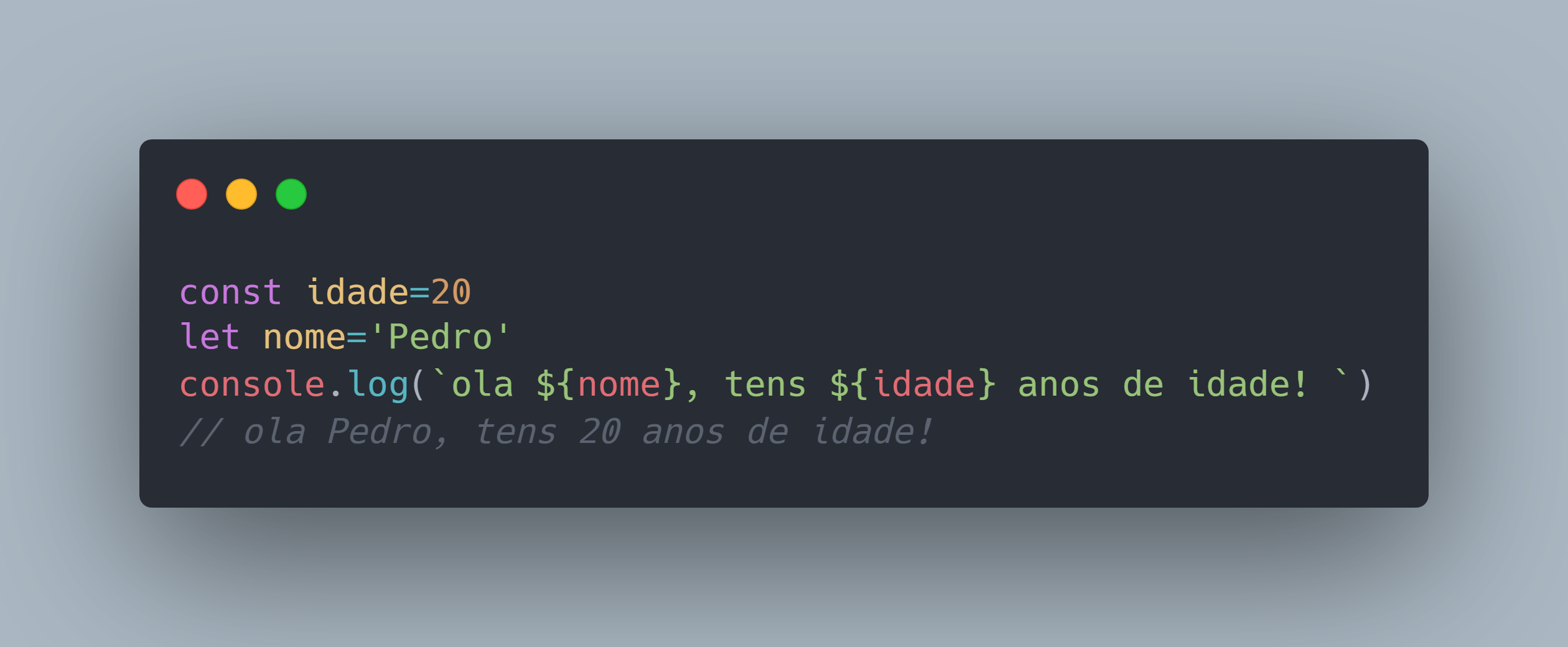

917,516 | Using environment variables with SvelteKit | Because SvelteKit comes with Vite, using .env may be a bit unfamiliar. There are two ways to go about... | 0 | 2021-12-04T17:56:24 | https://dev.to/nostro/using-environment-variables-with-sveltekit-544b | svelte, sveltekit, webdev | Because SvelteKit comes with Vite, using `.env` may be a bit unfamiliar. There are two ways to go about it, either with Vite's `import.meta.env` out of the box or with the usual suspect `process.env` that requires a bit more setting up.

**You may also be interested in**

[SvelteKit + TailwindCSS](https://dev.to/nostro/using-tailwindcss-with-sveltekit-25i6)

[SvelteKit + Heroku](https://dev.to/nostro/deploying-to-heroku-with-sveltekit-3350)

### Using `import.meta.env`

Full information is available in the [official docs](https://vitejs.dev/guide/env-and-mode.html) but the gist of it is that you should prefix any variable that you want exposed to your client with `VITE_`

It means `import.meta.env.FOO` will not be exposed client-side, while `import.meta.env.VITE_FOO` will be.

`.env`

```

FOO=BAR

VITE_FOO=BAR

```

`browser`

```js

console.log(import.meta.env.FOO)

// undefined

console.log(import.meta.env.VITE_FOO)

// BAR

```

### Using `process.env`

If for some reason you still want to use `process.env`, because you're used to it or you don't feel like renaming all your variables `VITE_SOMETHING`, you can also do it with the `env-cmd` package.

```powershell

npm i env-cmd

```

Then modify your `config` in `svelte.config.js`

`svelte.config.js`

```js

const config = {

kit: {

vite: {

define: {

'process.env': process.env,

},

},

},

};

```

And finally add `env-cmd` to your `dev` script

`package.json`

```js

{

"scripts": {

"dev": "env-cmd svelte-kit dev",

},

}

```

And now you'll be able to access `process.env` in your client

`.env`

```

FOO=BAR

```

`browser`

```js

console.log(process.env.FOO)

// BAR

```

| nostro |

917,567 | Integrate PayPal API | This article is about integrating Paypal API As a developer, integrating APIs into your project is... | 0 | 2021-12-04T20:53:52 | https://dev.to/kitarp29/integrate-paypal-api-3f8k | webdev, javascript, beginners, tutorial | **This article is about integrating [Paypal API](https://developer.paypal.com/home)**

As a developer, integrating APIs into your project is imperative. I recently integrated PayPal's payment gateway on a side project and plan on sharing how to do it.

PayPal is well known for online payments. Its credibility and developer experience have convinced many users to use it.

The basic API integration can be done in two different ways. The first one is *client-side*. And the second one is *server-side* integration.

## Implementing on the Client Side

If I talk about the reading resources:

A developer won't need anything further than visiting this page. I really don't think you need anything else. [_Documentation_](https://developer.paypal.com/docs/business

/checkout/set-up-standard-payments/integrate/)

Reasons to consider this resource-

- The workflow is very well explained.

- The code sample is available

- Steps to test out provided.

Talking about the video resources:

{% youtube l3e1uzMhiV8 %}

- This is a pretty short and concise video.

- It is from the official channel of Paypal.

- This video very well explains the concept of different ways to integrate on any website.

- Covers all the cases and is easy to follow up.

There is one more resource, that developers can follow up. I am not promoting their channel in any manner but I liked their flow and ease to follow.

- [Paypal Official Source](https://youtu.be/T1q7JipHR48?list=LL)

## Implementing on the Backend Server

I will not be getting into the benefits of applying on Client or Backend. That is up to the developer's choice of preference. If I had to recommend one, then I would have to say an easy way to implement would b the first one.

Getting on to the resource, this video takes care of most of the integration:

{% youtube DNM9FdFrI1k %}

API integration I would say. I used this node module [paypal-checkout](https://www.npmjs.com/package/paypal-checkout)

- Installing it was very easy.

- The npm page was

also very helpful and informative.

- This resource has Video tutorials.

- Demo app space and much more cool stuff.

## Reviews of API Integration:

This was all enough for integration I think. Moving ahead, I will be sharing my reviews of my experience. Learning was also easy as I expected, the official support and documentation were very helpful. But the

PayPal's youtube channel is also of great help. I got many good articles around it too. Many fun blogs on the dashboard as well.

Thanks for reading my article 😊 | kitarp29 |

917,661 | My Experience at Civo Hackathon 2021 | Learning Kubernetes has been one of my top priorities this year. I spent quite a lot of time... | 0 | 2021-12-05T00:58:18 | https://dev.to/avik6028/my-experience-at-civo-hackathon-2021-3acb | machinelearning, devops, kubernetes, webdev | {% youtube lhdiBAoL80s %}

Learning Kubernetes has been one of my top priorities this year. I spent quite a lot of time finding good resources to learn and have hands-on experience with the technology.

**Finally, I came across Civo Kubernetes, when one of my seniors recommended the platform to me. Yeah, it was the one I was looking for! **

The Civo Kubernetes Platform provided me with fully managed K3s clusters as well as high-quality learning videos about Kubernetes from the platform developers themselves. I instantly got a $250 credit in my Civo account once I signed up with my credit card.

<br>

# Introduction

I am currently in the **final year** of my Bachelor's degree in Computer Engineering, from **KIIT University, Bhubaneswar, India**.

In fact, this is my **2nd victory in a nationwide hackathon** this year. Earlier this year, I had finished as the First Runners-up at the **TCS Inframinds Hackathon**. Apart from that, I am currently a **DevOps intern** at **Highradius Technologies** and also an enthusiastic **open-source contributor**.

When I got to know about the **Civo Hackathon**, I planned to take part in it as I needed hands-on experience with **Kubernetes**. Also, the speaker line-up before the commencing of the hackathon was interesting. I got to know about the platform as well as about monitoring and profiling from the developer advocates of Civo.

The hackathon spanned over the **2nd weekend of November 2021**, starting from Friday, when we had the speaker sessions till Sunday evening. The results were finally announced the very next Monday.

### **Much to my surprise, I finished up 2nd !!!!!!**

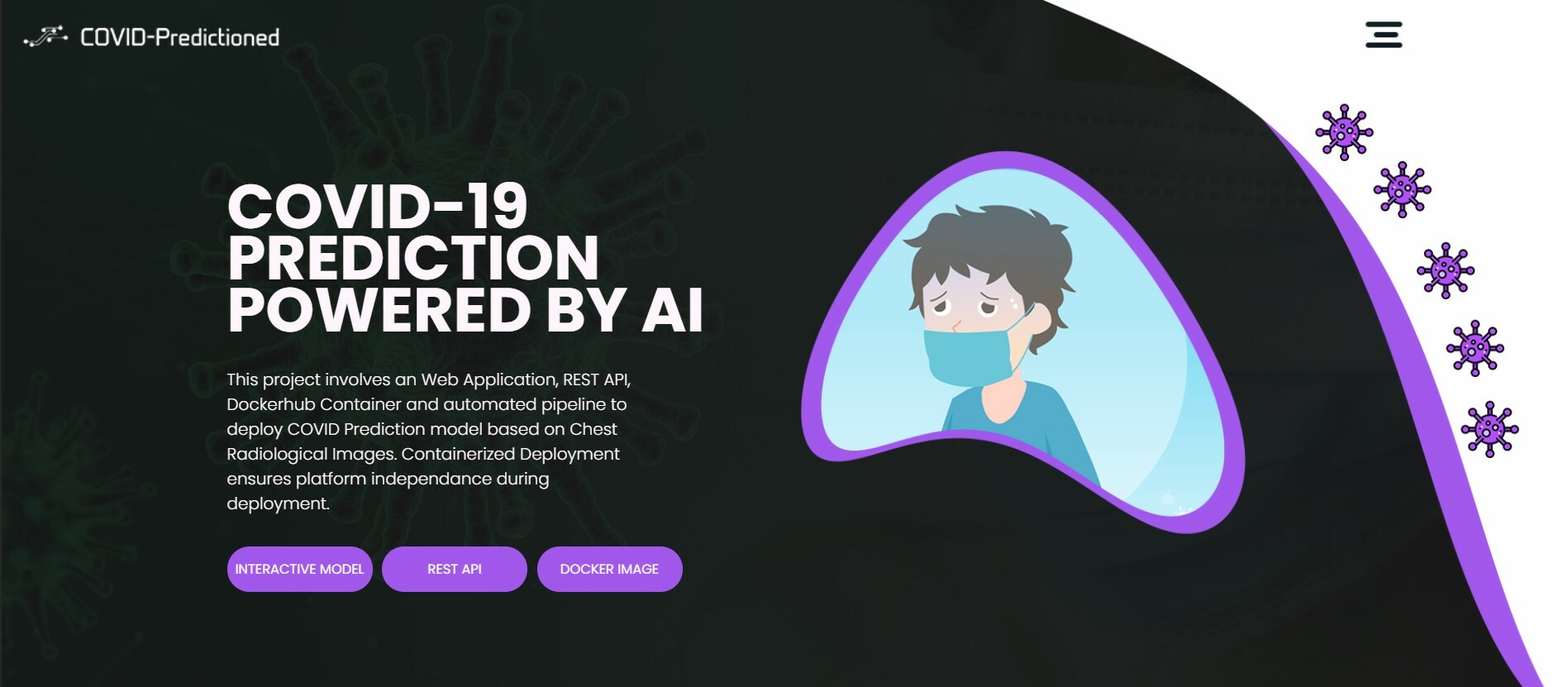

# My Project

The project I built is a **Computer-Aided Diagnostic System** that is used to predict whether a person has been infected with COVID-19.

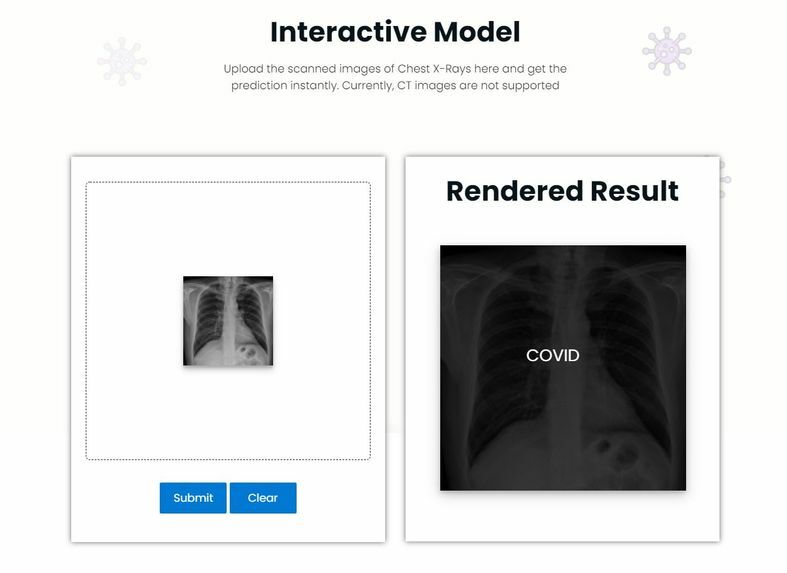

The prediction is possible through the integration of the COVID-19 X-Ray Classifier into a Web Application. By uploading Frontal Chest X-Rays, the model can perform classification between COVID and non-COVID X-Rays using Modified DenseNet architectures.

The users are given the option to save the results to the Database to receive further advice from doctors. The data is stored securely in MongoDB. Apart from that, REST API has been provided for Developers to access the Deep Learning Model and get prediction data in their applications. I have also enabled monitoring facilities for the application.

**The entire project was hosted on the Civo Kubernetes Platform.**

<br>

# How I built it

The project kickstarted with the Development of the Web Application. The UI was finalized and then the application was developed. Several open-source styles, libraries and toolkits were used during the development of the Frontend with HTML, CSS and JavaScript.

After completion, the backend of the application was developed with **Python & Flask framework**. The routes were created and mapped to the Frontend. The Deep Learning Model was integrated with the backend REST APIs. Various libraries such as Numpy, Pillow and Tensorflow was used to manage the model. Finally, MongoDB was integrated with the backend to save the Form data.

**This completed the Web Application Development.**

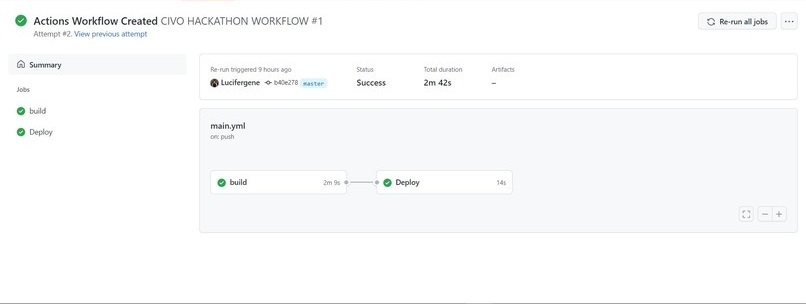

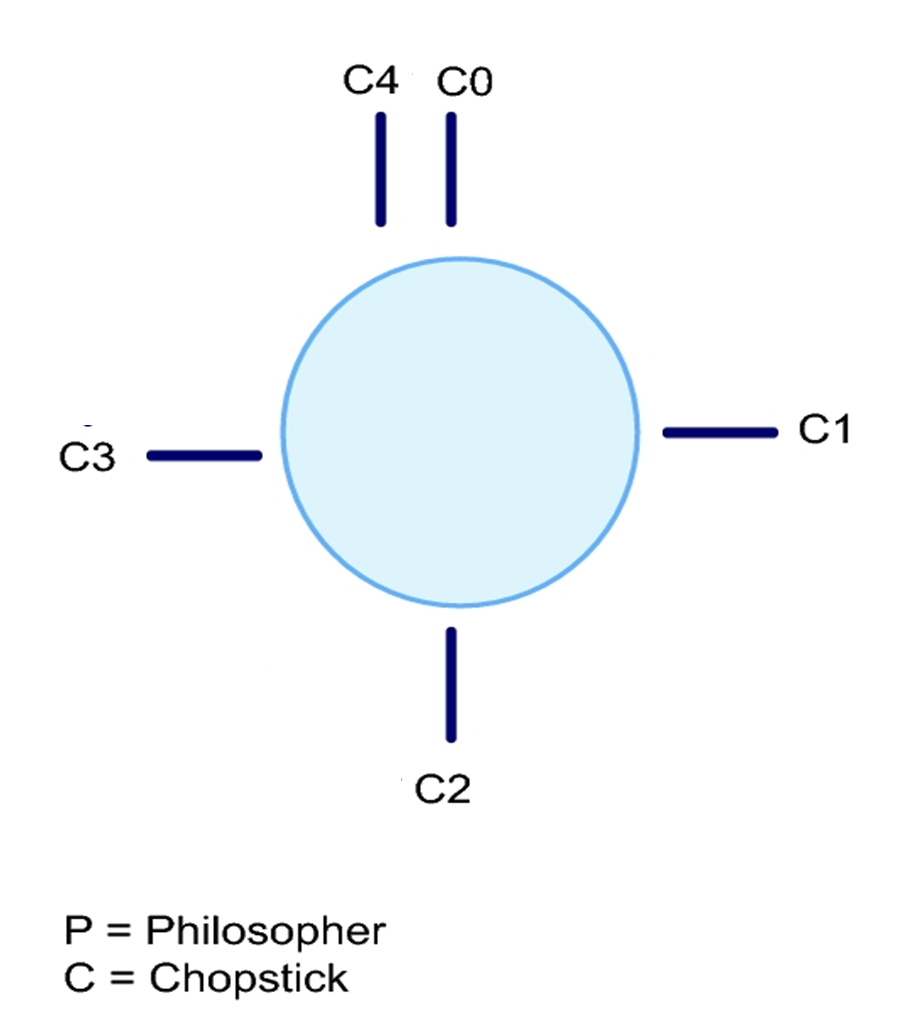

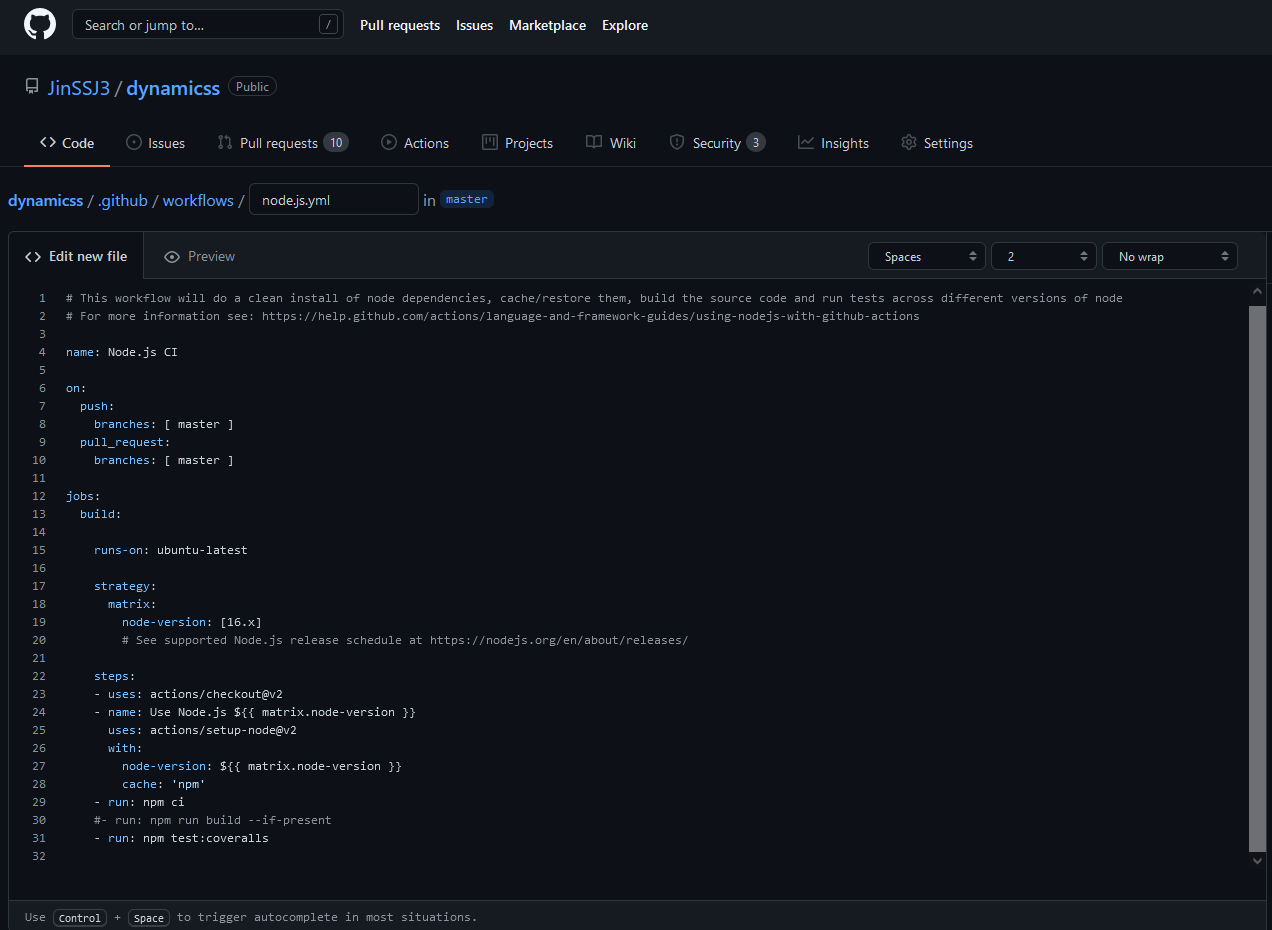

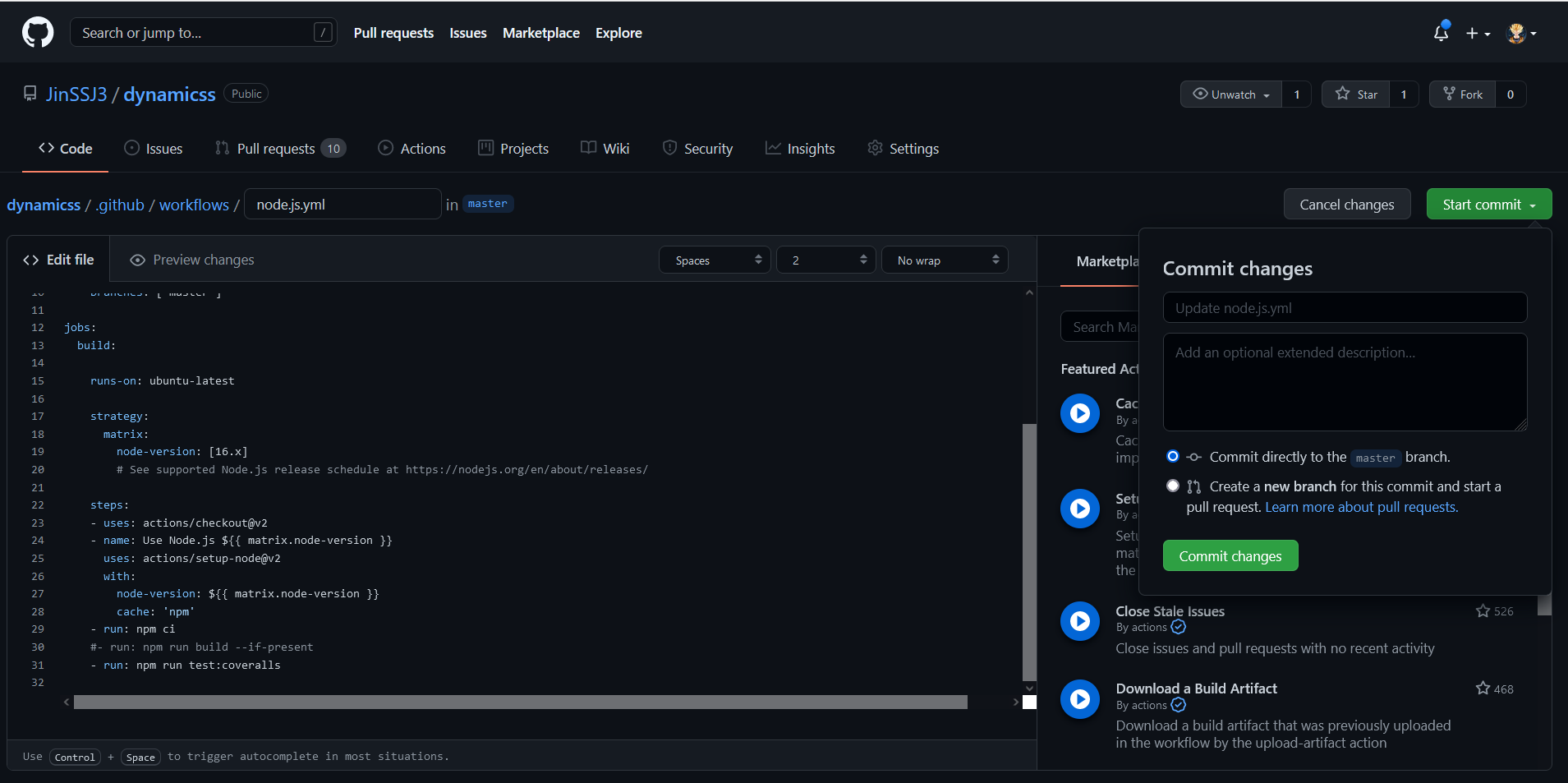

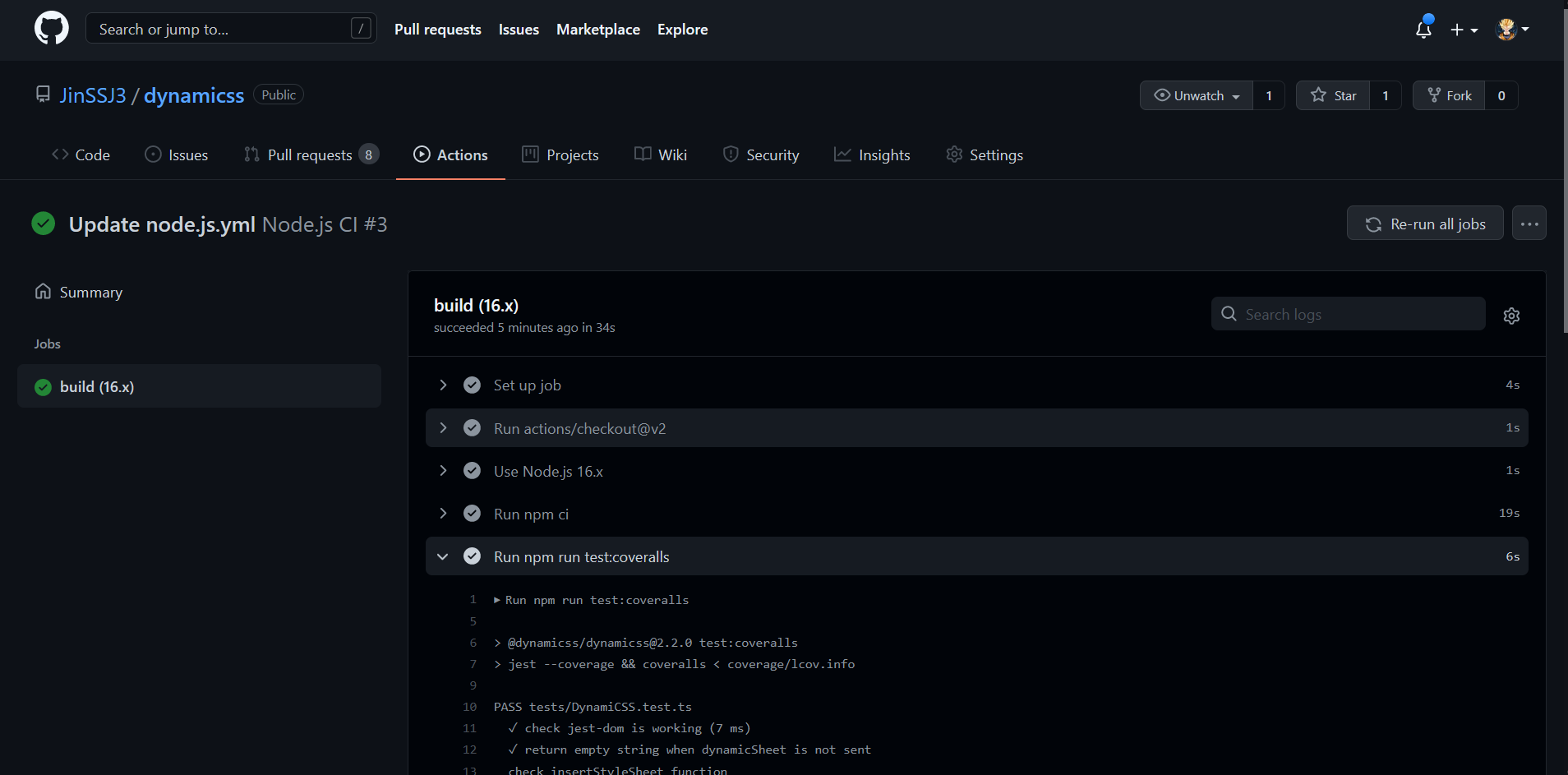

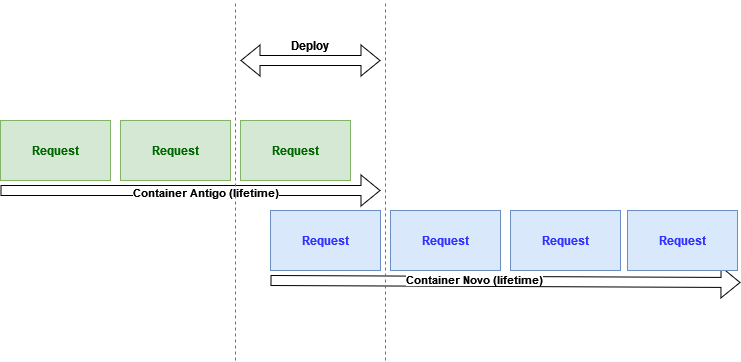

The next stage involved deploying the application on Civo K3s Cluster by developing an automated DevOps CI/CD Pipeline. First, the entire application code was pushed to a GitHub repository. Through this step, the code version control is ensured and any change in the code would automatically trigger the entire pipeline.

To deploy applications on K8s, the application needed to be containerized. The building of the Docker container should automatically take place once any code gets changed. After building the container, it needs to be pushed to a Docker Repository, here Dockerhub. Also, the old Docker Image Tag mentioned in the code would need to be replaced by the new Docker Image Tag. For automating all these, a Continuous Integration Pipeline was created with the help of Github Actions as the CI tool.

A workflow file was written to sequence the jobs that needed to be performed, once the code get changed in the repository. The jobs involved building and pushing the Docker container to Dockerhub.

After pushing, the new container tag replaced the older one mentioned in the customization file automatically, with the help of Kustomize.io. The Deployment, Service and Ingress YAML files were pushed to the repository as K8s needed these files during deployment.

`Github Actions Workflow` file:

```yaml

name: CIVO HACKATHON WORKFLOW

on:

push:

branches: [ master ]

jobs:

build:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v2

- name: Build and push Docker image

uses: docker/build-push-action@v1.1.0

with:

username: ${{ secrets.DOCKER_USER }}

password: ${{ secrets.DOCKER_PASSWORD }}

repository: ${{ secrets.DOCKER_USER }}/civo-hackathon

tags: ${{ github.sha }}, latest

deploy:

name: Deploy

runs-on: ubuntu-latest

needs: build

steps:

- name: Check out code

uses: actions/checkout@v2

- name: Setup Kustomize

uses: imranismail/setup-kustomize@v1

with:

kustomize-version: "3.6.1"

- name: Update Kubernetes resources

env:

DOCKER_USERNAME: ${{ secrets.DOCKER_USER }}

run: |

cd kustomize/base

kustomize edit set image civo-hackathon=$DOCKER_USERNAME/civo-hackathon:$GITHUB_SHA

cat kustomization.yaml

- name: Commit files

run: |

git config --local user.email "action@github.com"

git config --local user.name "GitHub Action"

git commit -am "Bump docker tag"

- name: Push changes

uses: ad-m/github-push-action@master

with:

github_token: ${{ secrets.GITHUB_TOKEN }}

```

**This completed the Continuous Integration process.**

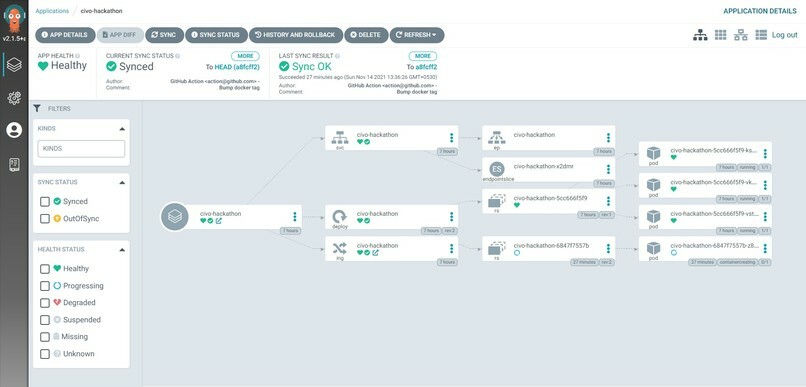

The final stage was to deploy the Docker Image pushed in DockerHub, into a CIVO k3s Cluster. For this, a K3s cluster was created on Civo. Due to CPU intensive nature of the application, the Largest Node configuration was selected. Then through the Civo CLI, the KubeConfig file was connected with the local KubeCTL tool.

Through KubeCTL, a namespace was created and ArgoCD was installed in it. Inside ArgoCD, the configuration was provided to continuously track the GitHub Repository for changes in the Kustomization file.

Since previously through CI, we had managed to update the Kustomization file after a new code change took place, this update in the Kustomization file triggered the ArgoCD to re-deploy the application based on the newer Docker Image Tag provided. Thus after an initial manual Sync, ArgoCD managed to complete the Continuous Deployment process.

**The CI/CD Pipeline was successfully created which helped to automatically deploy code changes to production.**

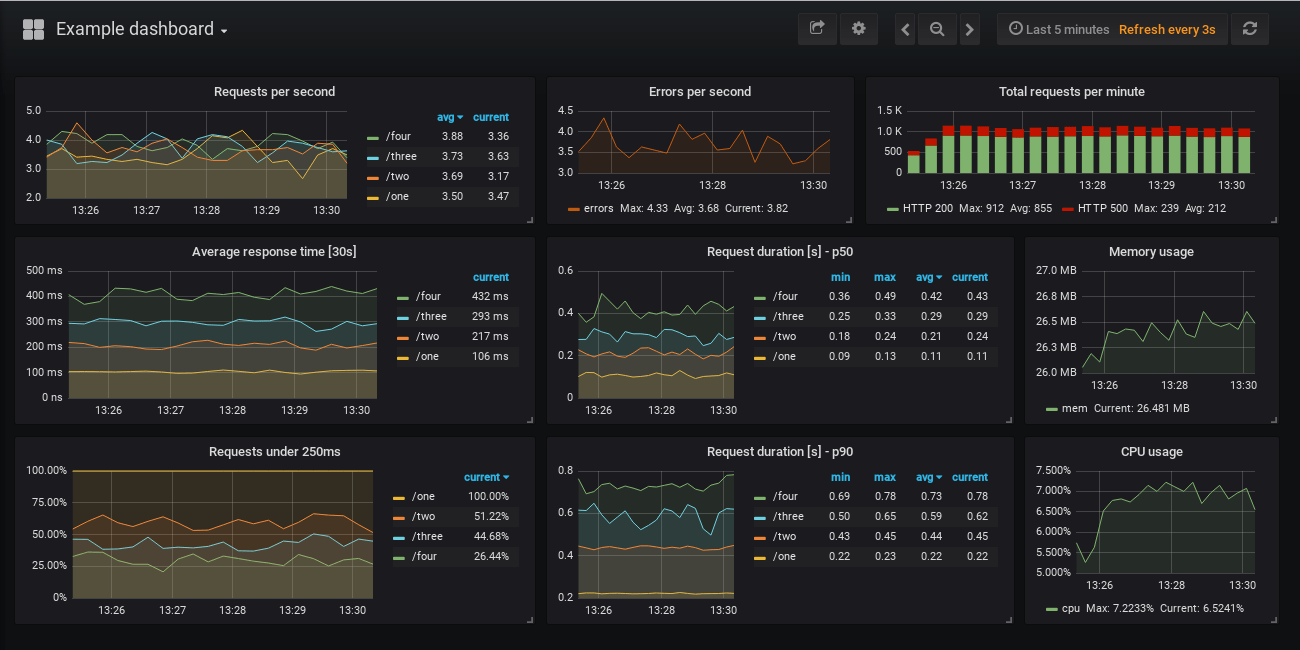

After the application was properly working, I proceeded with installing Prometheus and Grafana in a separate namespace in the cluster to fetch and visualize the metrics. For that, I edited the Flask application to make it generate metrics to be fed to Prometheus.

Then I developed a Service Monitor for exposing the metrics endpoint of the application which in turn would be automatically added to the Prometheus Target group. Now, I was able to fetch metrics into Prometheus from the Web application. After that, I set up the Grafana Dashboard to visualize the metrics.

**This finally concluded the project.**

<br>

# My Experience

Overall, I had a great experience learning and executing new things within a short span of time. The process of deployment could not be made smoother without the Civo Platform. The fact that we can launch a cluster within a few minutes, along with having a marketplace from where we can pick services we want to preinstall in the cluster, really simplified the process for newbie Kubernetes developers like me.

Apart from that, the presence of the Kubernetes academy which contains beginner-friendly videos about all the different features of K8s, integrated into the platform, helped me to quickly navigate and get my doubts cleared before applying stuff on my cluster.

And of course, we had the option of directly contacting the Civo team via Slack to get out queries resolved. Special thanks to **Saiyam Pathak**, for his Monitoring video, which really helped me set up the monitoring stack easily.

<br>

# What's next for the project

Although I tried my best to incorporate all the domains of DevOps into my application, still there are some places that need attention.

First and foremost, I tried to incorporate the GitOps principle as much as possible, which included pushing the application code, Kubernetes Manifests as well as Terraform scripts to the Git. But still, there were some settings that I had to manually set inside the cluster like setting up the ArgoCD. Since ArgoCD supports GitOps, I would be declaring the settings from Git itself.

Apart from that, I would be incorporating some Logging and Profiling tools in the cluster, that would give a better picture of the application deployment.

Last but not the least, the model which has been deployed can currently perform classification only. But recently, in some researches, it has been proved that through Instance Segmentation on the X-Rays, we can actually measure the severity of the spread of the virus by precisely identifying the locations of the GGOs. In the future, I want to integrate such a model with the application, so that users can also measure the severity of the virus instantly.

---

You can visit the repository from below:

{% github Lucifergene/civo-hackathon %}

#### Demo : https://covid-predictioned.herokuapp.com/

#### DevPost : https://devpost.com/software/covid-19-prognosis

#### Civo - https://www.civo.com/

---

You can reach out on my [Twitter](https://twitter.com/avik6028), [Instagram](https://instagram.com/avik6028), or [LinkedIn](https://linkedin.com/in/avik-kundu-0b837715b) if you need more help. I would be more than happy.

If you have come up to this, do drop an :heart: if you liked this article.

**** | avik6028 |

917,753 | Random Text Generator | Choose randomly from 2 arrays to create your own brand name. | 0 | 2021-12-05T04:57:21 | https://dev.to/enlineaweb/random-text-generator-2hdm | codepen | <p>Choose randomly from 2 arrays to create your own brand name.</p>

{% codepen https://codepen.io/enlineaweb/pen/GRMpPBX %} | enlineaweb |

917,838 | Hanukkah lighting in WebXR | To celebrate Hanukkah (חנוכה), I made a WebXR scene where the user can pan, walk around (WASD),... | 15,815 | 2021-12-05T09:21:18 | https://dev.to/barakplasma/hanukkah-lighting-in-webxr-31m7 | webxr, html, vr, showdev | To celebrate Hanukkah (חנוכה), I made a WebXR scene where the user can pan, walk around (WASD), and/or enter VR in the Western Wall (הכותל המערבי) using AFrame, an HTML/JS framework for creating WebXR experiences.

{% github aframevr/aframe %}

To make a WebXR scene, I used the webcomponents proved by AFrame to create a scene with

- a 360 image as the sky

- a number of cylinders as candles

- a number of gltf models for flames and the hanukiah (special thanks to [Santiago Shang](https://skfb.ly/6VLIn) for the CC licensed beautiful GLTF model)

- and some english text (doesn't support hebrew / RTL as far as I can tell)

Here is the [live demo](https://hanukah-aframe.barakplasma.repl.co/) (click the ▶️ to see it in your browser, desktop or mobile)

{% replit @barakplasma/Hanukah-AFrame %}

Future plans involve adding interactivity, so that using a Quest 2 controller / pointer lets you light each candle, or to integrate [hebcal](https://github.com/hebcal/hebcal-es6) so that it can automatically light the right number of candles. | barakplasma |

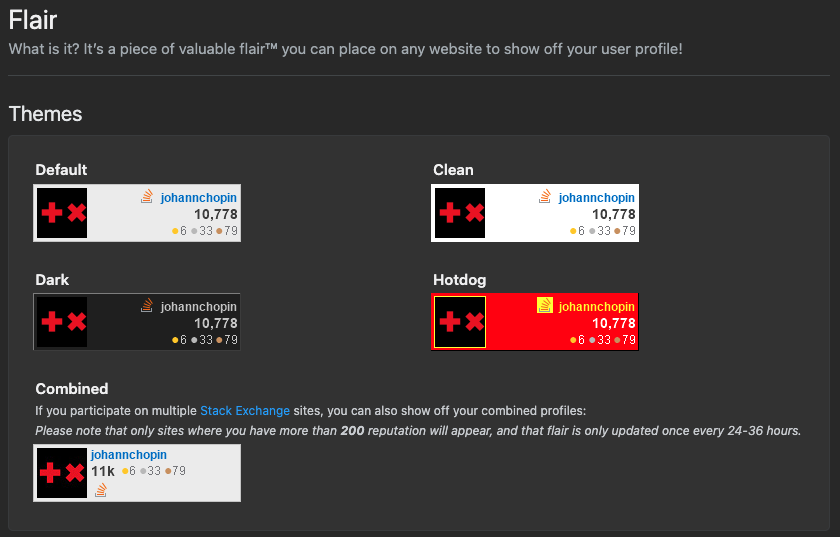

917,870 | Easily share your Stackoverflow's profile on your README | Did you know that it was possible to easily share your Stackoverflow statistics using small dynamic... | 0 | 2021-12-05T15:27:29 | https://dev.to/johannchopin/easily-share-your-stackoverflows-profile-on-your-readme-h9i | opensource, javascript, stackoverflow, readme | Did you know that it was possible to easily share your Stackoverflow statistics using small dynamic images called "Flair"? This small image simply allows you to embellish your different READMEs like the one on GitHub or your online CV:

... and that just by adding the following Markdown:

```md

```

You can check them out at the URL https://stackoverflow.com/users/YOUR_USER_ID/YOUR_USER_NAME/flair :

This service proposed by Stackoverflow works very well, however since it hasn't be updated since a few years, there is some negative point that I noticed:

- the avatar image is weirdly cropped

- the only infos are the username, reputation score and badges amount

- it's a png so there is no fancy animation

- the image is very pixelated because of its small size (208x58)

- and most importantly: you cannot improve it because the project is not open-sourced

That's why I developed my own version of this service that would solve all these problems: [stackoverflow-readme-profile](https://github.com/johannchopin/stackoverflow-readme-profile)

{% github johannchopin/stackoverflow-readme-profile %}

This project allows you to embed improved version of those flairs wherever you want:

Default Stackoverflow profile:

[](https://github.com/johannchopin/stackoverflow-readme-profile)

Smaller profile:

[](https://github.com/johannchopin/stackoverflow-readme-profile)

Just use the following markdown schema to get your flair:

```

[](https://github.com/johannchopin/stackoverflow-readme-profile)

```

Not yet convinced to use this project? I can understand why using an "unofficial" service doesn't seem like a good idea but here are some points that might change your mind:

- stackoverflow-readme-profile proposes a higher customisation with [multiple themes](https://github.com/johannchopin/stackoverflow-readme-profile/tree/main/docs/profile) and [templates](https://github.com/johannchopin/stackoverflow-readme-profile#templates)

- stackoverflow-readme-profile render a svg so the final image is pixel clear and is animated

- the project is [open-sourced](https://github.com/johannchopin/stackoverflow-readme-profile): You want a new feature or improve something? Just [open an issue](https://github.com/johannchopin/stackoverflow-readme-profile/issues/new) so we can collaborate together

- you want to self host the application? No problem there is already a [docker image](https://hub.docker.com/repository/docker/johannchopin/stackoverflow-readme-profile) for that.

Thanks again for reading. If you are willing to see this project growing don't hesitate to leave a ⭐ to the repo and push your ideas of improvement.

{% github johannchopin/stackoverflow-readme-profile %} | johannchopin |

917,903 | I made the Package Manager for old DOS software | After a long time the "Vintage" Package Manager is ready Visit this... | 0 | 2021-12-05T11:41:05 | https://dev.to/francescobianco/i-made-the-package-manager-for-old-dos-software-4jpc | dos, retrocomputing, packagemanager, bash | After a long time the "Vintage" Package Manager is ready

Visit this page

- <https://github.com/francescobianco/vintage> | francescobianco |

917,930 | Kubernetes Create TLS/SSL certificates | Kubernetes For Beginners | A post by jmbharathram | 0 | 2021-12-05T12:13:42 | https://dev.to/jmbharathram/kubernetes-create-tlsssl-certificates-kubernetes-for-beginners-27go | kubernetes, docker, devops | {% youtube y00hfCeHWn0 %} | jmbharathram |

917,941 | Tutorial : manage your community on Metaweave.xyz | Metaweave.xyz is the digital square of the permaweb. Users often ask us how to edit communities. In... | 0 | 2021-12-06T21:24:02 | https://dev.to/falco_sun/tutorial-manage-your-community-on-argoraxyz-16gb | arweave, web3, tutorial, metaweave | Metaweave.xyz is the digital square of the permaweb.

Users often ask us how to edit communities. In this tutorial we are going to show you how to create, claim a community and how to edit its banner and descritption.

#Summary#

1. [Community creation & claiming](#chapter-1)

2. [PST Locking](#chapter-2)

3. [Editing the community description](#chapter-3)

4. [Editing the community banner](#chapter-4)

----

<a name="chapter-1"></a>

# 1. Community creation & claiming#

- Go on [metaweave.xyz](https://metaweave.xyz).

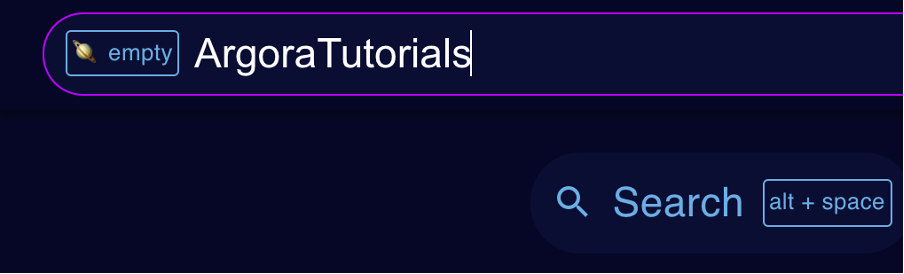

- Click on the search button or use the shortcut `alt-space` to open up the search bar.

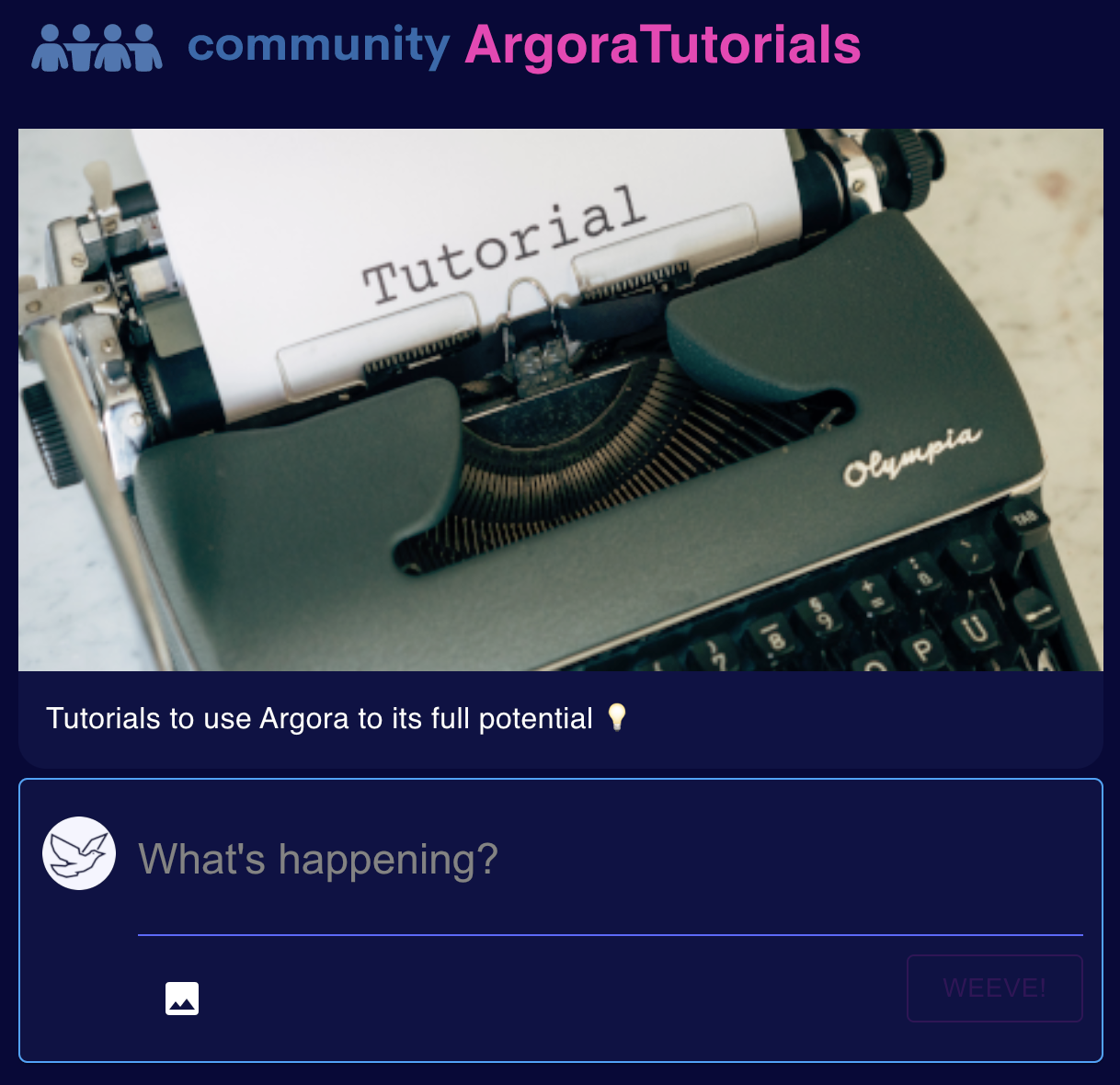

> For the purpose of the tutorial, I decided to claim the community named `ArgoraTutorials`

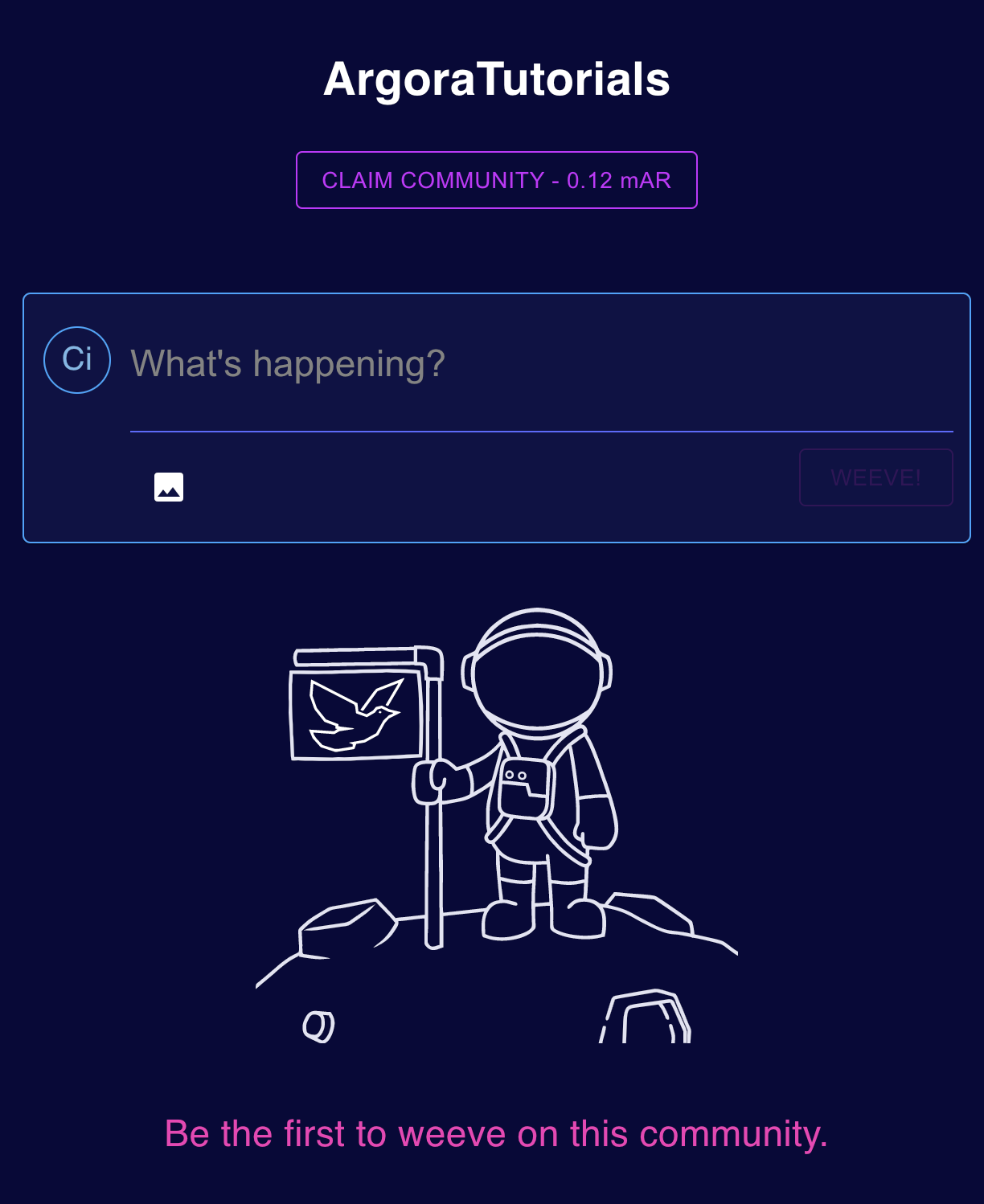

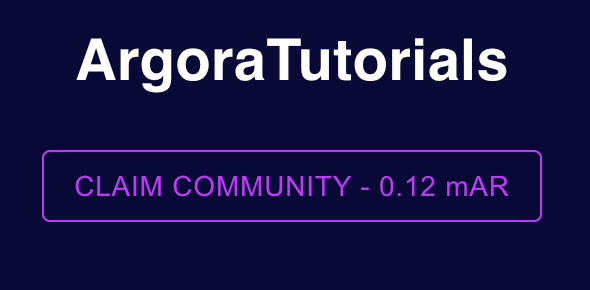

_Here is how the `ArgoraTutorials` community looked like before I claimed it._

- Click on the `CLAIM COMMUNITY` button

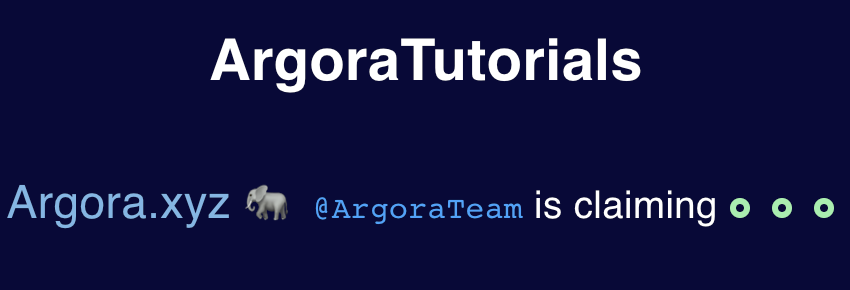

A message that indicates the community is being claimed will appear instead of the button.

> ℹ️ The tree green circles is an indicator that the transaction is being validated by the miners

**txid:** [`F6EF1NjmizVut2CcyP1g5pU_uGob42eUrRIlW8YFTsE`](https://viewblock.io/arweave/tx/F6EF1NjmizVut2CcyP1g5pU_uGob42eUrRIlW8YFTsE)

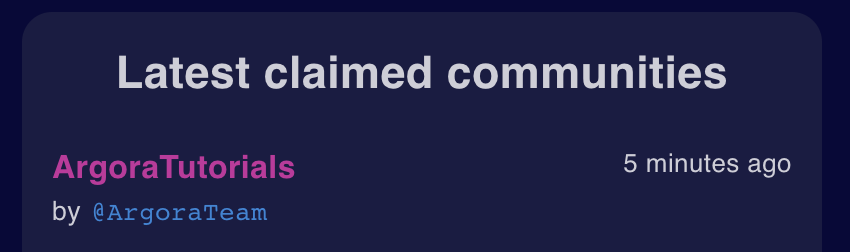

Once the transaction is validated by the network the community appears in the "Lastest claimed communities" on the right panel of the Metaweave’s interface:

> ℹ️ By default, a newly claimed community has no banner and description:

----

<a name="chapter-2"></a>

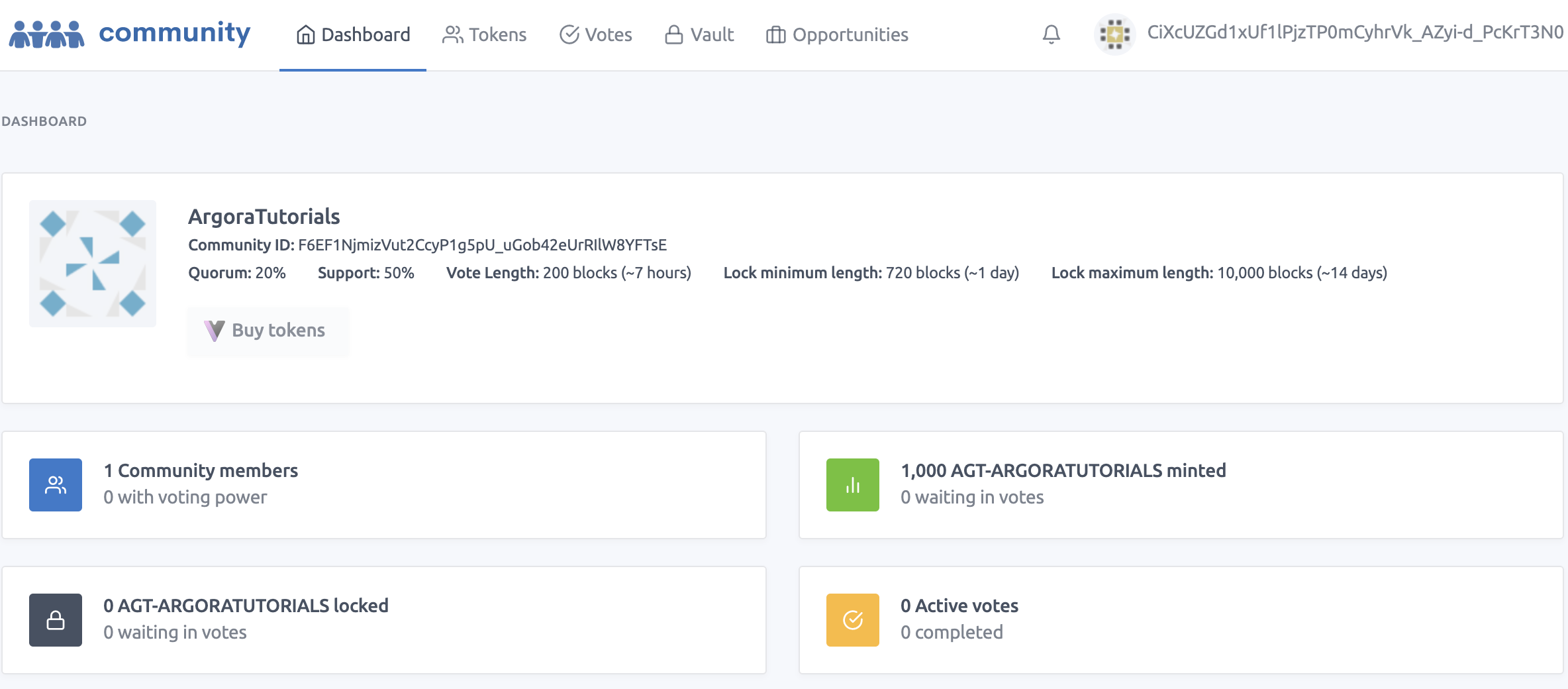

# 2. PST Locking#

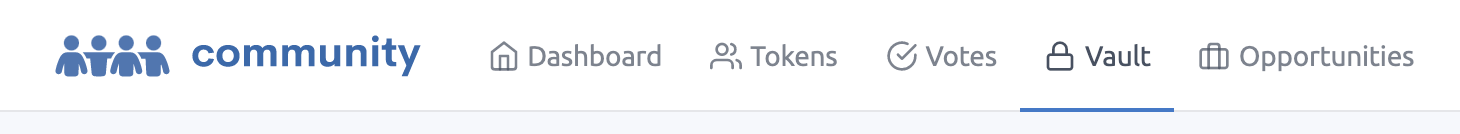

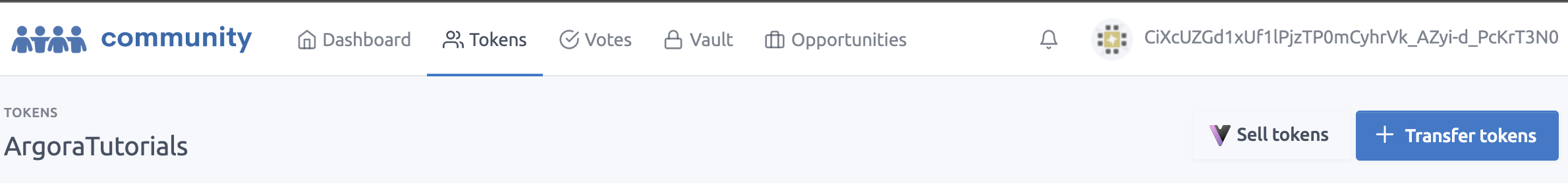

To manage your [PSC](https://arwiki.wiki/#/en/profit-sharing-communities)s on Metaweave, you need to access [community.xyz](https://community.xyz/#F6EF1NjmizVut2CcyP1g5pU_uGob42eUrRIlW8YFTsE)

- To access your community, click on its name on the top of the community page.

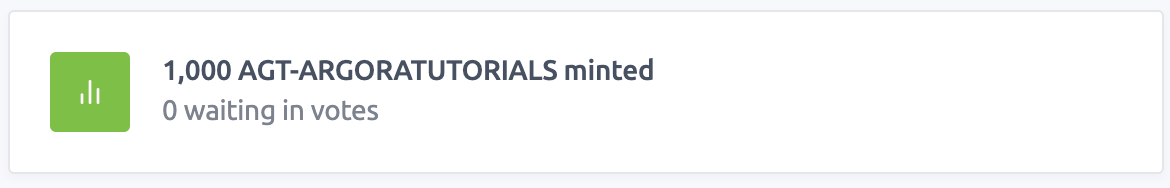

The creation of the PSC also mints 1000 PSTs (Profit Sharing Tokens). Those tokens will give voting power to their owners. Token are named AGT-$CommunityName:

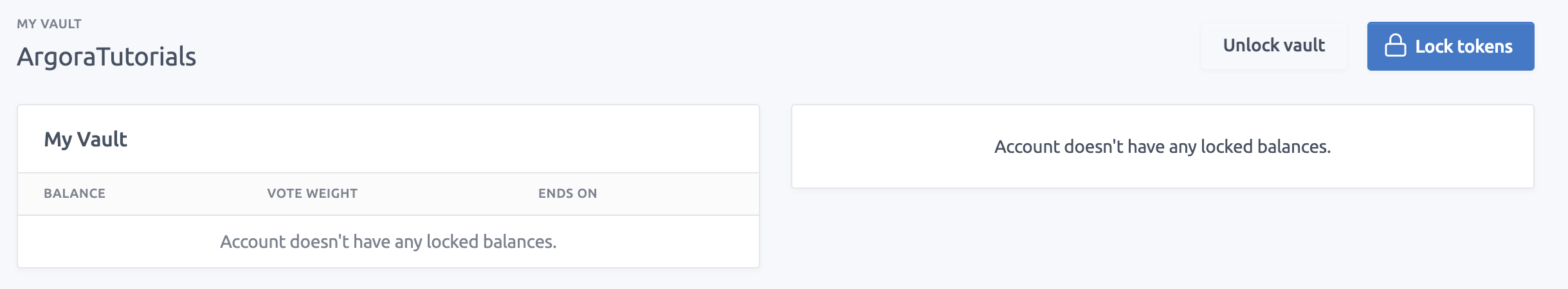

To be able to submit votes we first need to lock our community tokens.

Just click the vault button:

Then click on “Lock tokens”:

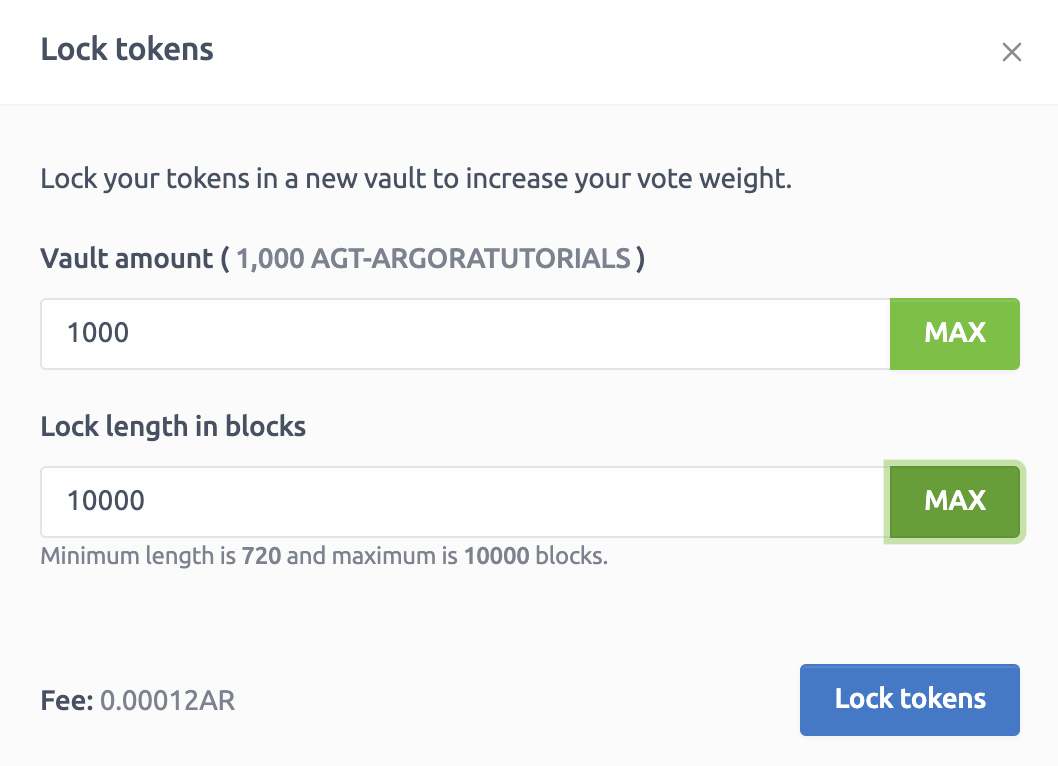

Select the number of PST you want to lock with the duration of the lock in block number:

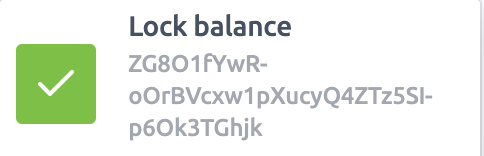

Clicking on the “Lock tokens” button makes the transaction. You are directly notified:

Once it's done you should see notifications as these

**txid:** [ZG8O1fYwR-oOrBVcxw1pXucyQ4ZTz5SI-p6Ok3TGhjk]

(https://viewblock.io/arweave/tx/ZG8O1fYwR-oOrBVcxw1pXucyQ4ZTz5SI-p6Ok3TGhjk)

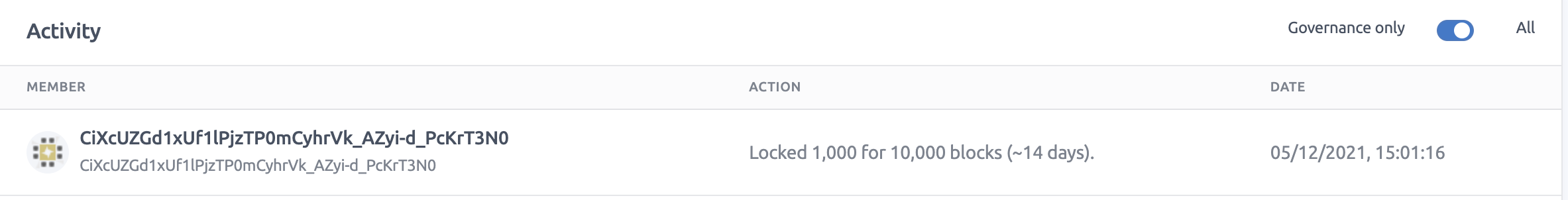

Activity tab now shows the 1000 locked PSTs:

> ℹ️ Click the `Transfer tokens` button to distribute your communties' PSTs to arweave wallets and share the voting power.

----

<a name="chapter-3"></a>

# 3. Editing the community descritption#

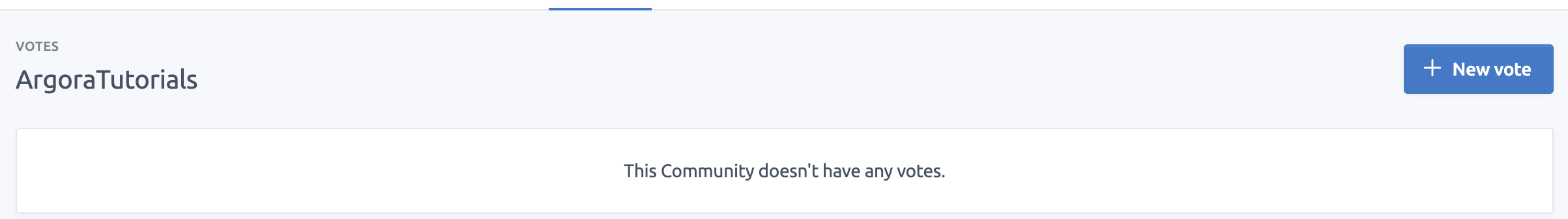

Every modification you can make on community uses a vote.

So to edit the community description we're going to create a new vote:

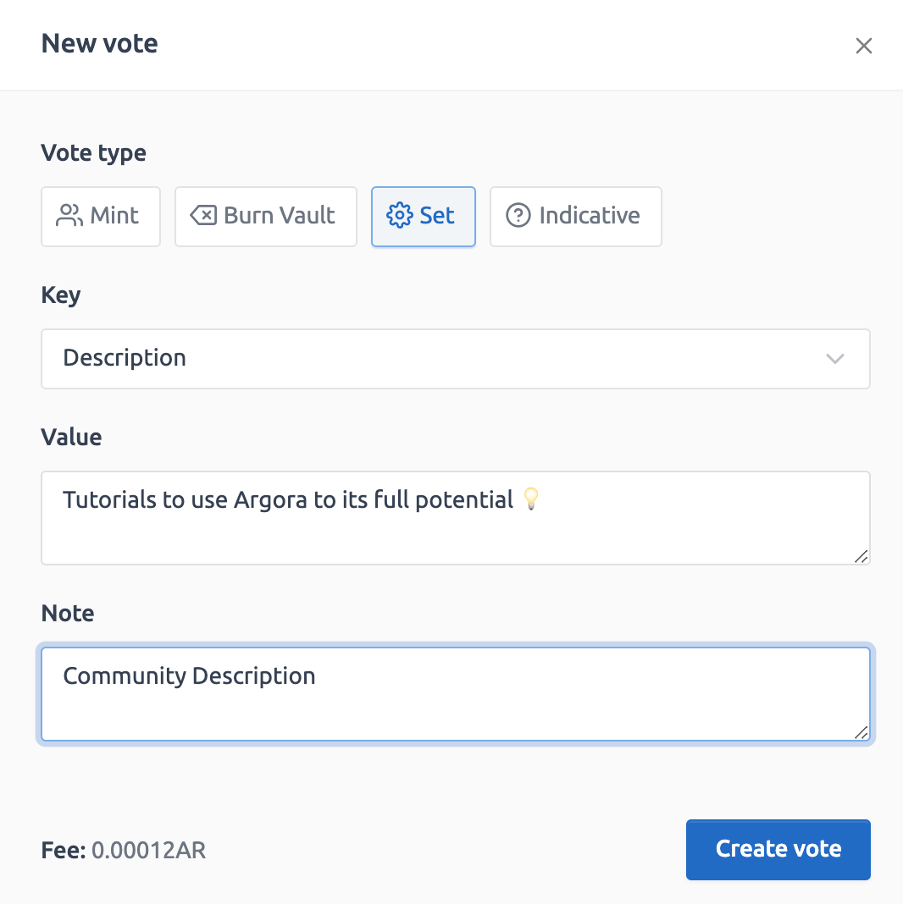

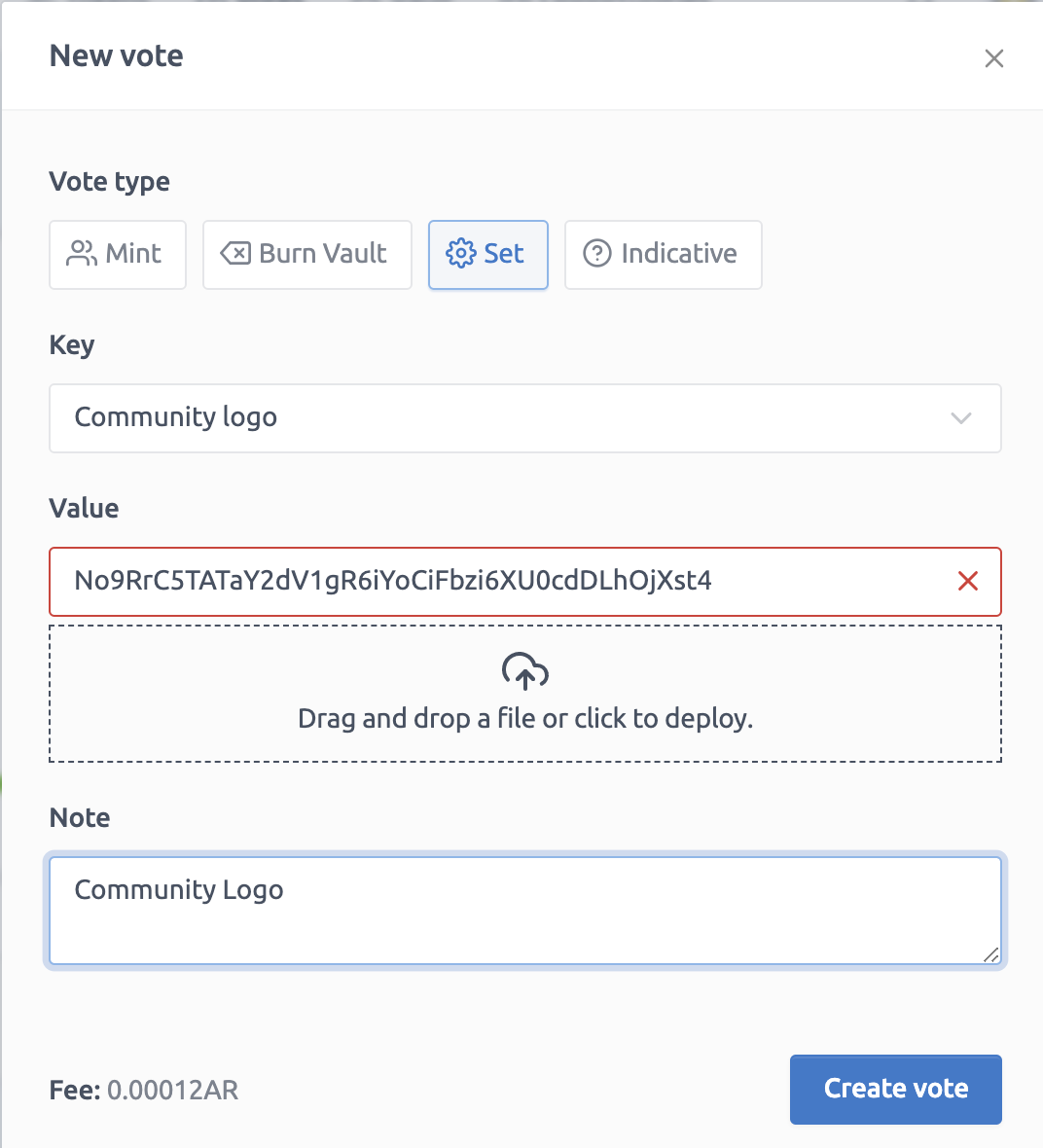

1. After that Click ⚙️ Set

2. Select "Description" for the Key field

3. Type your community description in the "value" field

4. Once done click "Create vote"

Notification of the vote creation:

Notification that the vote transaction has been mined and validated by the network:

**txid:** [SPeMwai9GmsKrzVDmtYzoPvh45szs8F6ga6Ii3KuAHk]

(https://viewblock.io/arweave/tx/SPeMwai9GmsKrzVDmtYzoPvh45szs8F6ga6Ii3KuAHk)

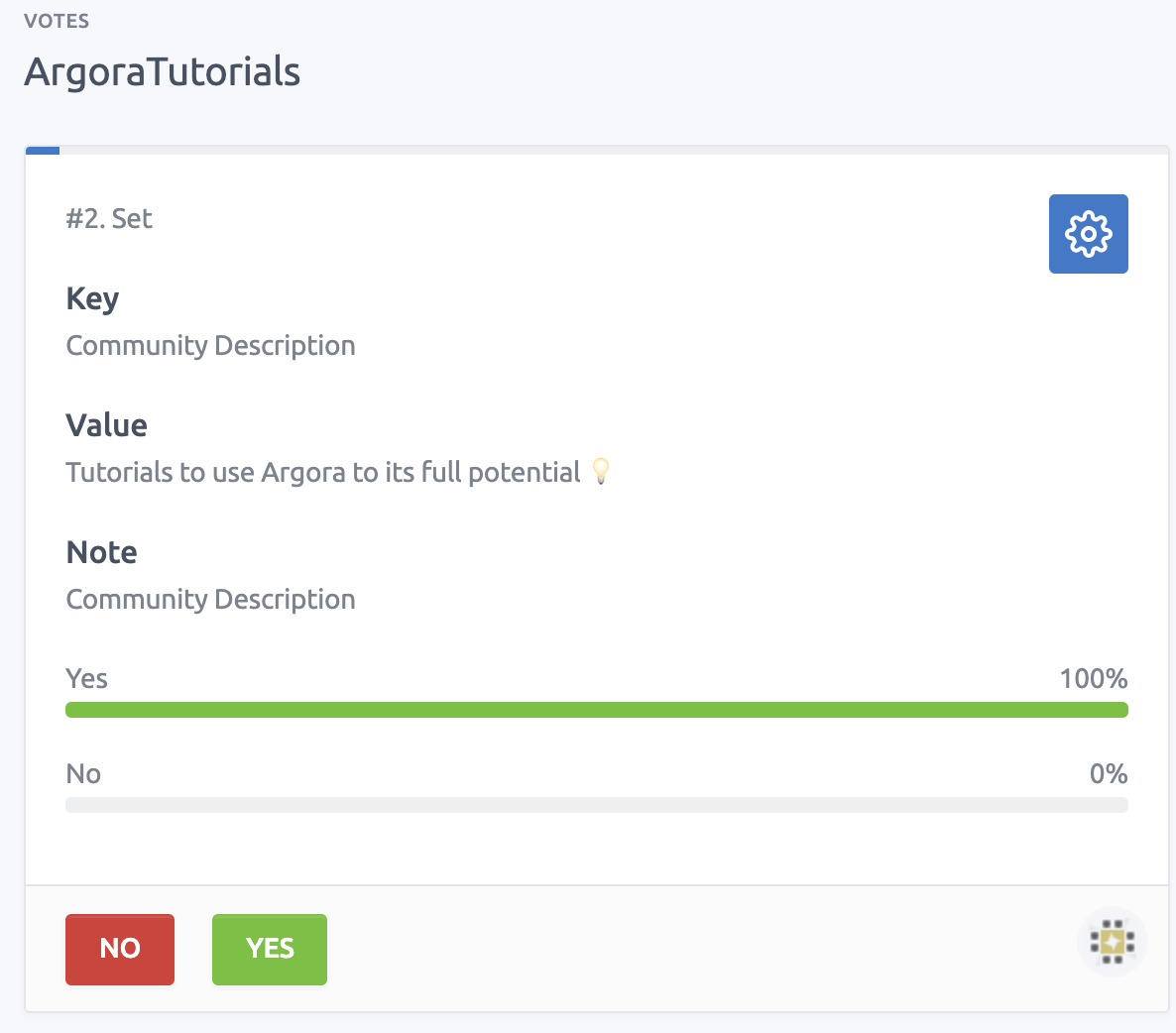

The vote is now available and every account with voting power can vote "yes" or "no" to this new description;

Here is the transaction related to my vote for the community description:

**txid:** [g3TOUmoseqDMeLfRg_r0gtWOf5SXv_5D92pZix2sw_o]

(https://viewblock.io/arweave/tx/g3TOUmoseqDMeLfRg_r0gtWOf5SXv_5D92pZix2sw_o)

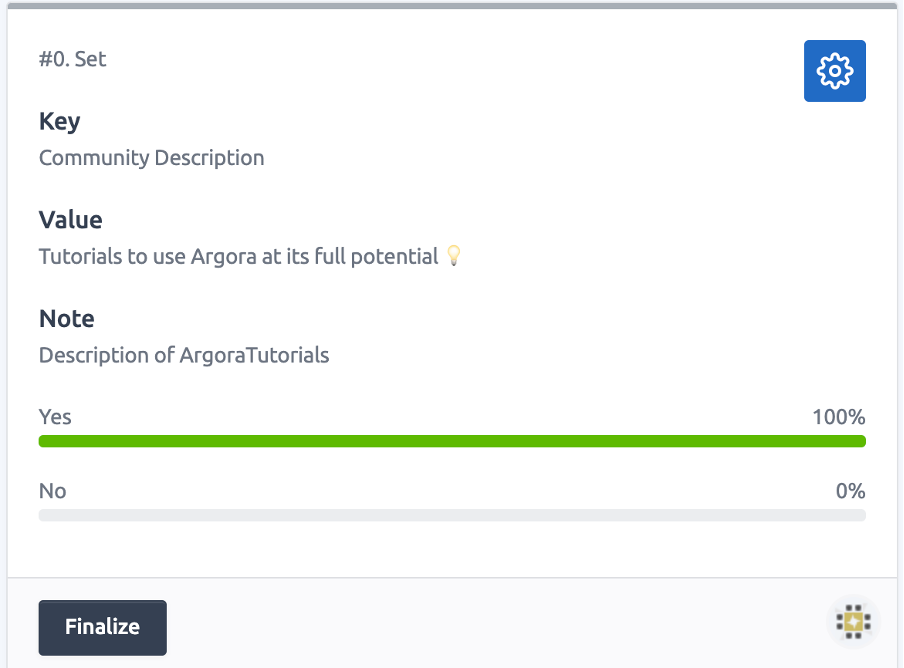

We can now see that 100% of voters voted Yes for the new description:

we just need to wait for approximately 7 hours (200 blocks) to be able to finalize the vote:

Notification of the transaction related to the finalization of the vote:

**txid:** [-WcHL6bUvKAqU7iywuoz2rNQn8Fs7wI-T05vPS0_zFg]

(https://viewblock.io/arweave/tx/-WcHL6bUvKAqU7iywuoz2rNQn8Fs7wI-T05vPS0_zFg)

Now that the vote is finalized the description appears just under the community banner:

----

<a name="chapter-4"></a>

# 4. Editing the community banner#

**Metaweave recommends 600*300 pixels picture resolution for community banners.**

Firstable you need to upload the picture you want to use as a banner for your community on Arweave’s blockchain.

To do so I personally choose to use [Ardrive](https://ardrive.io).

As for the description you need to create a new vote to edit the banner of the community page that will appear on Metaweave.

1. Click ⚙️ Set

2. Select “Community Logo” for the Key field

3. Type your picture TxID in the “value” field

4. Click “Create vote”

**txid:** [JwTWvCKNwmoZufzRqjkQeLjUTsPB02Wnvzgs0vMjuhY]

(https://viewblock.io/arweave/tx/JwTWvCKNwmoZufzRqjkQeLjUTsPB02Wnvzgs0vMjuhY)

You will just need to follow the voting steps you followed for the description

1. Vote [Yes](https://viewblock.io/arweave/tx/CZA_JzpI3purlKrSh552-ye_jIktnY78-Wad00IFgv4)

2. Wait for 7 hours (200 blocks)

3. [Finalize](https://viewblock.io/arweave/tx/xDqP_F9lqkuX2dvgjcSojtnhN_IeD10JkfwQy5Nm-3Q) the vote

The community page on [Metaweave](https://pvcsk5frxfyostm75nhyjneotxwpuldjy5ptdip24ut7w6lfgwha.arweave.net/fUUldLG5cOlNn-tPhLSOnez6LGnHXzGh-uUn-3llNY4/community/ArgoraTutorials):

Thank you for following this tutorial I can't wait to see how you'll use it.

Stay tuned on Metaweave for more tutorials, news and post.

| falco_sun |

917,955 | Especialista SEO Qué es y cómo convertirse | Experto SEO: especialista en seo y Wordpress Un especialista seo es una persona con... | 0 | 2021-12-05T14:03:46 | https://dev.to/benderllin/especialista-seo-que-es-y-como-convertirse-3h9h | ## [Experto SEO: especialista en seo y Wordpress](https://estandar.io/como-convertirse-en-un-especialista-seo-en-el-proximo2022/)

Un especialista seo es una persona con habilidades y técnicas específicas que son eficaces para mejorar el posicionamiento de una página web en los resultados de las búsquedas de los motores de búsqueda. Una vez que uno domina los conocimientos necesarios para asumir la tarea, comienza a trabajar en el sitio web de los clientes para mejorar la visibilidad de la misma en los resultados de búsqueda de los motores de búsqueda. Todos los especialistas seo tienen en común su capacidad de mejorar la visibilidad de un sitio web en las búsquedas de los motores de búsqueda. Sin embargo, existen ciertas diferencias entre los especialistas seo que van desde el tipo de conocimientos que poseen hasta el equipo que tienen. Esos son algunos de los principales tipos de especialistas seo existentes hoy en día:

Analista de contenido

Analista de contenido

Un analista de contenido es un especialista seo que se encarga de analizar la información de los sitios web de los clientes para determinar si está en conformidad con las normas y las demandas de los motores de búsqueda. Se dedica a comprobar si la información de la página web contiene la información que los usuarios esperan encontrar.

Consultor SEO

Un consultor SEO es un especialista seo que tiene una amplia gama de conocimientos técnicos y procedimientos que le permiten mejorar la visibilidad de cualquier sitio web en los resultados de las búsquedas de los motores de búsqueda. El consultor SEO es capaz de analizar los detalles técnicos de un sitio web y puede ayudar al cliente a mejorarlos para que el sitio web sea más visible y genere más tráfico.

## [Experto SEO: especialista en seo y Wordpress](https://estandar.io/como-convertirse-en-un-especialista-seo-en-el-proximo2022/)

Director de marketing digital

Un director de marketing digital es un especialista seo que tiene la responsabilidad de manejar todo el proceso de posicionamiento en los resultados de búsqueda de motores de búsqueda. Tiene la responsabilidad de realizar la medición de la efectividad del sitio web del cliente y puede comunicarse con el equipo de diseño y desarrollo para mejorar la apariencia y el contenido del sitio web.

Especialista SEO

Un especialista SEO es una persona que domina las técnicas y los procedimientos específicos de posicionamiento en los resultados de búsqueda de motores de búsqueda. Está capacitado para realizar todos los procesos que conforman el posicionamiento en búsqueda de motores de búsqueda, desde la medición del tráfico en el sitio web hasta el desarrollo de técnicas de posicionamiento.

| benderllin | |

917,978 | AutoML & AWS SageMaker Autopilot | what is the difference between auto machine learning and AWS SageMaker... | 0 | 2021-12-05T15:03:53 | https://dev.to/aws-builders/automl-aws-sagemaker-autopilot-44b7 | aws, machinelearning, devops |

### what is the difference between auto machine learning and AWS SageMaker Autopilot?

____________________

***SageMaker Autopilot uses a transparent approach to AutoML***

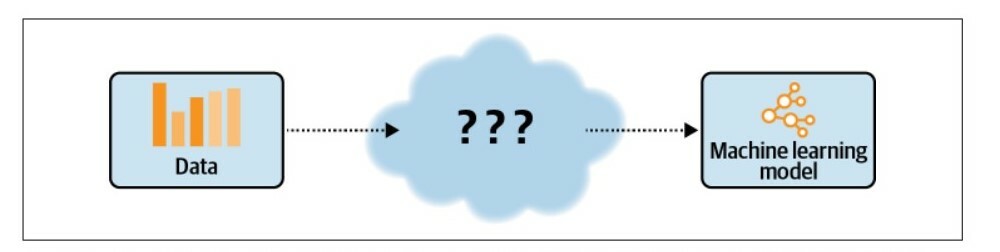

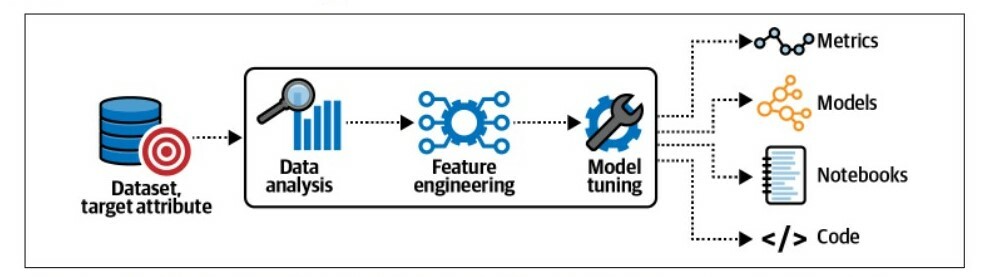

In nontransparent approaches, as shown in the image below, we don’t have control or visibility into the chosen algorithms, applied data transformations, or hyper-parameter choices. We point the

automated machine learning (AutoML) service to our data and receive a trained model.

This makes it hard to understand, explain, and reproduce the model. Many AutoML solutions implement this kind of nontransparent approach.

as you see, in many AutoML services, we don’t have visibility into the chosen algorithms, applied data transformations, or hyper-parameter choices.

_______________________

### SageMaker Autopilot documents and shares its findings throughout the data analysis, feature engineering, and model tuning steps.

SageMaker Autopilot doesn’t just share the models; it also logs all observed metrics and generates Jupyter notebooks, which contain the code to reproduce the model pipelines, as visualized in the image bellow

The data-analysis step identifies potential data-quality issues, such as missing values that might impact model performance if not addressed. The Data Exploration notebook contains the results from the data analysis step. SageMaker Autopilot also generates another Jupyter notebook that contains all pipeline definitions to provide transparency and reproducibility. The Candidate Definition notebook highlights the best algorithms to learn our given dataset, as well as the code and configuration needed to use our dataset with each algorithm.

SageMaker Autopilot generates Jupyter notebooks, features engineering scripts, and model code.

Resources :

[Data science on AWS Book](https://www.oreilly.com/library/view/data-science-on/9781492079385/) | kareemnegm |

917,998 | Best Google Fonts for your website | Merriweather Eben Sorkin’s Merriweather is designed for optimal readability on screens.... | 0 | 2021-12-05T15:57:56 | https://dev.to/codewhiteweb/best-google-fonts-for-your-website-3e5k | design, font, css, googlefont | 1. Merriweather

Eben Sorkin’s [Merriweather](https://fonts.google.com/specimen/Merriweather?sort=alpha&sidebar.open=true&selection.family=Headland+One&query=merriweather) is designed for optimal readability on screens. Merriweather’s large x-height boosts the font’s legibility, making it suitable for use in long texts as well as for headlines and titles. Merriweather currently has 8 styles: Light, Regular, Bold, Black, Light Italic, Italic, Bold Italic, Black Italic.

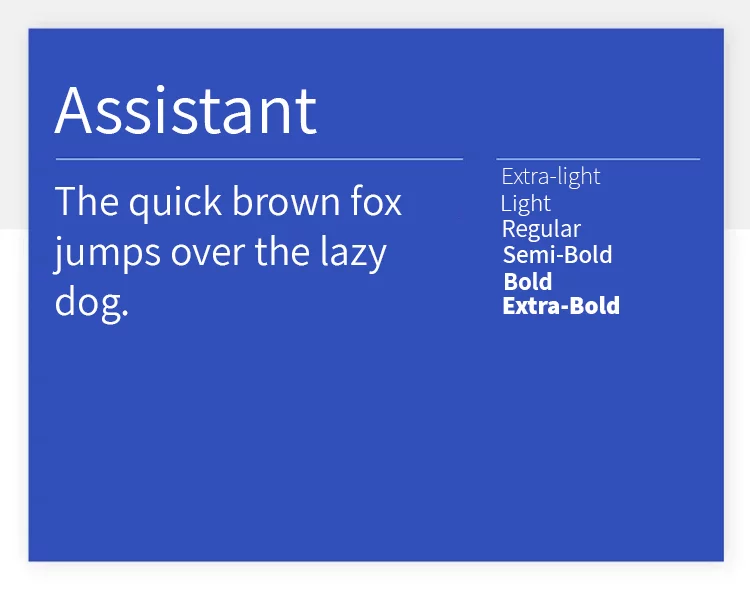

2. Assistant

This is another very versatile Google font. [Assistant](https://fonts.google.com/specimen/Assistant?sort=alpha) is a clean typeface and offers a generous 6 styles, from extra light to bold. The carefully-planned spacing between the letters create a font with great readability. This, combined with the abundant bold styles makes Assistant especially good for larger bodies.

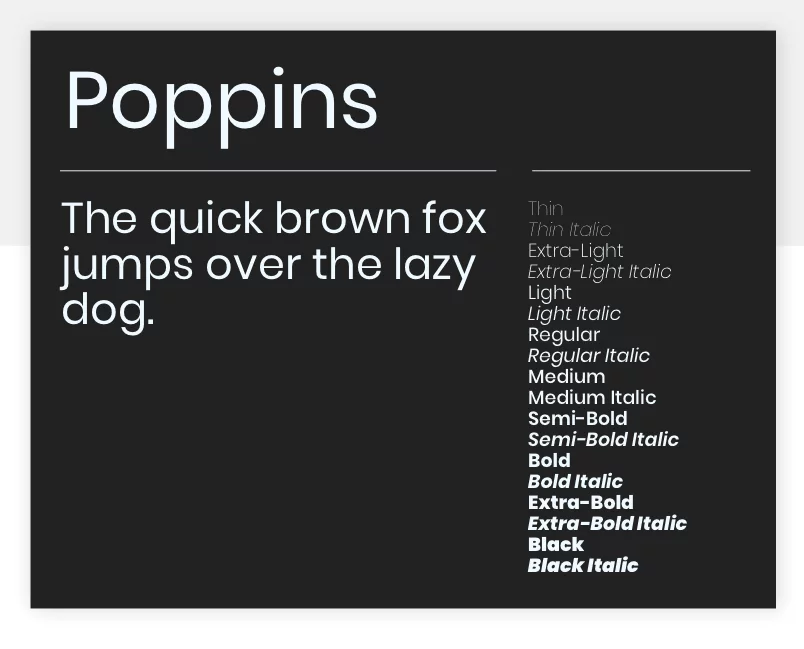

3. Poppins

[Poppins](https://fonts.google.com/specimen/Poppins/), created by the Indian Type Foundry, is an attractive, geometric sans-serif font for use in text or display contexts. It’s also the first font on our list to support the Devanagari system, which is used in over 150 languages including Hindi and Sanskrit.

4. Caladea

[Caladea](https://fonts.google.com/specimen/Caladea?sort=alpha) is a modern and friendly Google font. Created from [Cambo](https://fonts.google.com/specimen/Cambo), Caladea offers 4 different styles to choose from. The font is practical not just in the sense that it has styles to highlight content, but also due to its reliable readability. Caladea works both for big and dramatic titles and small texts that don’t overwhelm readers.

5. Enriqueta

[Enriqueta](https://fonts.google.com/specimen/Enriqueta?sort=alpha) is a Google font that also brings a certain glamour to any page, but it’s special in its own way. This font has bold features that reminded our team of old-times, adding a Rockwell sort of vibe to the page. The best part? Enriqueta is very well-balanced, and even though it has strong visuals, it still delivers great readability – even in very small bodies!

thanks for reading... | codewhiteweb |

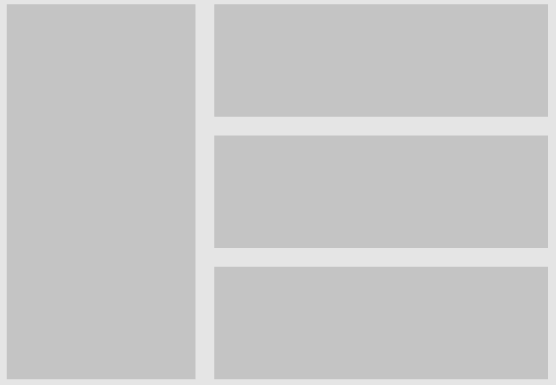

918,069 | Criando mosaicos facilmente com grid-area e grid-template-area | Vocês conhecem esse modo de organizar um layout com o display grid usando grid-area e... | 0 | 2021-12-05T17:19:39 | https://dev.to/matheusfelizardo/criando-mosaicos-facilmente-com-grid-area-e-grid-template-area-314f | css, html, webdev, tutorial | Vocês conhecem esse modo de organizar um layout com o display grid usando grid-area e grid-template-area?

Eu gosto de usar pra montar mosaicos mas pode ser usado pra montar até mesmo o layout da página toda.

Primeiro você faz o desenho do layout que quer montar (só pra facilitar a visualização)

Exemplo:

Depois você estrutura seu HTML.

Eu gosto de utilizar as classes pra montar o layout quando vou utilizar o grid-area.

```HTML

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<link rel="stylesheet" href="./index.css">

<title>Grid</title>

</head>

<body>

<div class="container">

<div class="item-1"></div>

<div class="item-2"></div>

<div class="item-3"></div>

<div class="item-4"></div>

</div>

</body>

</html>

```

Depois você coloca as propriedades no CSS que irão montar o mosaico.

1- Você irá setar o display grid no "container", ele que irá organizar a disposição dos itens no mosaico, vou colocar também uma altura para o meu mosaico, nesse caso

```CSS

.container {

display: grid;

height: 300px;

}

```

2- Você vai setar um "apelido" para cada item do mosaico, usando a propriedade "grid-area", aproveitei para colocar um background.

```CSS

.item-1 {

grid-area: item-1;

background: #ec934a;

}

.item-2 {

grid-area: item-2;

background: #e46c6c;

}

.item-3 {

grid-area: item-3;

background: #85fd7b;

}

.item-4 {

grid-area: item-4;

background: #7fbdf0;

}

```

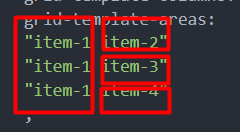

3- Após isso, você vai setar no container, o template que você quer para o mosaico usando a propriedade grid-template-areas. Nela você vai basicamente desenhar o layout que você quer, onde cada abertura de aspas e fechamento, é uma linha.

```CSS

.container {

display: grid;

height: 300px;

grid-template-areas:

"item-1 item-2"

"item-1 item-3"

"item-1 item-4"

;

}

```

Observe como o layout foi desenhado de acordo com a formatação no grid-template-areas

- Só irei fazer mais alguns ajustes de tamanho que quero para minhas colunas usando o grid-template-columns e o gap para gerar um espaçamento entre os elementos

```CSS

.container {

display: grid;

height: 300px;

gap: 15px;

grid-template-columns: 150px 200px;

grid-template-areas:

"item-1 item-2"

"item-1 item-3"

"item-1 item-4"

;

}

```

O resultado é a criação do nosso layout de forma fácil

Um exemplo real de galeria que criei num projeto utilizando essas propriedades

E ai, já conhecia essa propriedade com o diplay grid, já utilizava ou vai começar a utilizar a partir de agora?

Maiores informações: https://developer.mozilla.org/en-US/docs/Web/CSS/grid-template-areas

Qualquer dúvida é só perguntar, e forte abraço!!

Linkedin: https://www.linkedin.com/in/matheus-felizardo

Site Pessoal: https://www.matheusfelizardo.com.br/ | matheusfelizardo |

918,082 | Baloonza IT newsletters weekly digest #14 | IT-related topics and trends of the past week: startup, development, entrepreneurship No... | 15,791 | 2021-12-05T17:49:07 | https://app.baloonza.com/baloons | IT-related topics and trends of the past week:

### startup, development, entrepreneurship

- [No one asked](https://app.baloonza.com/issues/9882)

- [One empty seat](https://app.baloonza.com/issues/9946)

- [Hacker Newsletter Daily - 11/30/21](https://app.baloonza.com/issues/9970)

- [Canva for video games](https://app.baloonza.com/issues/9977)

- [Virtual HQ](https://app.baloonza.com/issues/10021)

- [The bank of the metaverse?](https://app.baloonza.com/issues/10059)

- [Hacker Newsletter #581](https://app.baloonza.com/issues/10107)

### css, design, frontend, html, web development, javascript

- [CSS Animation Weekly #277](https://app.baloonza.com/issues/9933)

- [📝 [CSS-Tricks] 279: Houdini Is Not as Scary as You Think](https://app.baloonza.com/issues/9956)

- [🎨 doing neat things with the Paint API..](https://app.baloonza.com/issues/10025)

### marketing

- [🎓 Frame your product as the ‘gift’ in a bundle](https://app.baloonza.com/issues/9965)

### data science

- [Data Science Weekly - Issue 419](https://app.baloonza.com/issues/10100)

*** | dimamagunov | |

918,112 | A Quick Guide To Setting Up a Rails Directory | First create a new repo on github. In your terminal, cd to the directory in which you want... | 0 | 2021-12-05T19:13:37 | https://dev.to/davidnnussbaum/a-quick-guide-to-setting-up-a-rails-directory-55be | rails, programming | First create a new repo on github.

In your terminal, cd to the directory in which you want the rails directory to reside. Please note the words **directory**, **file-name**, **YourName**, **ModelName**, and **column_name** are being used as generic terms to be replaced with the actual names that you are using.

------------------------------------------------------------------

Now enter:

**~/directory$ rails new file-name -T --database=postgresql --no-test-framework **

Please note that if you do not enter -T --database=postgresql, to install PostgreSQL then the default database is SQLite.

If you do not enter --no-test-framework then the default is to include the extra test files.

------------------------------------------------------------------

Next cd to the created rails file:

**~/directory$ cd file-name**

------------------------------------------------------------------

The next steps will allow you to push your changes to github. Enter the following with everything after the word origin being a copy of the SSH from your github repo.

~/directory/file-name$ git remote add origin git@github.com:YourName/file-name.git

------------------------------------------------------------------

Next enter:

**~/directory/file-name$ git branch**

------------------------------------------------------------------

Followed by:

**~/directory/file-name$ git status**

The following will appear in the terminal:

On branch master

No commits yet

------------------------------------------------------------------

Next add (the period after a space is part of the entry):

**~/directory/file-name$ git add .**

------------------------------------------------------------------

Followed by:

**~/directory/file-name$ git commit -m "Setting up the file."**

------------------------------------------------------------------

Then enter:

**~/directory/file-name$ git status**

The following will appear in the terminal:

On branch master

nothing to commit, working tree clean

------------------------------------------------------------------

Next enter:

**~/directory/file-name$ git branch -M master**

------------------------------------------------------------------

Followed by:

**~/directory/file-name$ git push -u origin master**

------------------------------------------------------------------

Next enter:

**~/directory/file-name$ git pull origin master --allow-unrelated-histories**

------------------------------------------------------------------

Followed by:

**~/directory/file-name$ git push --set-upstream origin master**

------------------------------------------------------------------

If you are installing PostgreSQL enter, otherwise skip this step:

**~/directory/file-name$ gem install pg **

------------------------------------------------------------------

No matter what your database is the next step is:

**~/directory/file-name$ bundle install**

------------------------------------------------------------------

Followed by:

**~/directory/file-name$ rails db:create**

-----------------------------------------------------------------

To set up each model enter:

**~/directory/file-name$ rails g model ModelName column_name:type foreign_key:references**

Please note that you can add in as many columns as are present for this model. The references term will create a column called whatever the foreign key is with _id attached.

Thanks for reading!

| davidnnussbaum |

918,128 | Be better than the If Statement | Decisions, decisions! Regardless of your programming language of choice, your code needs... | 0 | 2021-12-05T19:53:35 | https://dev.to/iamhectorsosa/be-better-than-the-if-statement-4ee1 | javascript, beginners | ## Decisions, decisions!

Regardless of your programming language of choice, your code needs to take decisions and execute actions accordingly. For example, in a game, if you run out of lives (`If (lifes === 0)`), you're done! So today, let's be better than the if statement and understand how conditional statements work in JavaScript.

## if...else statements

Probably one of the most google'd statements out there. An `if...else` statement executes a statement if a specified condition is *truthy*. If else, another chunk of code can be executed.

```jsx

// If..else Syntax

if (condition) { statement1 } else { statement2 };

// Using an if...else statement in a function.

function isItCold(temp) {

if (temp < 15) {

return 'Yes, you better wear something warm!';

} else {

return 'Nah, you good!';

}

}

// isItCold(5);

// Expected output: 'Yes, you better wear something warm!'

```

Here you typically make use of [comparison operators](https://developer.mozilla.org/en-US/docs/Learn/JavaScript/First_steps/Math#comparison_operators) (strict equality, less than, greater than, etc.) to run true/false tests, execute code accordingly depending on the result. These conditional statements are pretty human-readable — "**if** this is `true`, then do this, **else** do that." The chaining of additional if statements or even the nesting of others are infinite but not necessarily optimal. Therefore, let's explore other choices in writing conditional statements in JavaScript.

## How to make it shorter!?

The [conditional ternary operator](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Operators/Conditional_Operator) is the only JavaScript operator that takes three operands: a condition followed by the question mark (`?`), then an expression to execute if the condition is *truthy*, followed by a colon (`:`), and finally the expression if the condition is *falsy*. This operator is frequently used as a shortcut for the `if` statement. The ternary operator can run strings, functions, lines of code or anything you'd like. Let's rewrite our `isItCold` function:

```jsx

// Conditional (ternary) operator Syntax

// condition ? exprIfTrue : exprIfFalse

// Replacing an if...else statment by a conditional (ternary) operator.

function isItCold(temp){

return temp < 15 ? 'Yes, you better wear something warm!' : 'Nah, you good!';

}

// isItCold(5);

// Expected output: 'Yes, you better wear something warm!'

```

## Evaluating bigger data sets?

For those cases where you need to evaluate bigger data sets, even if you do get tempted by its symmetrical beauty, instead of creating a the so-called *arrow type* of code, you can can try Conditional chains using ternary operators. Let's take a look at the syntax and add more data to evaluate in our function:

```jsx

// Instead of writing this:

function example(…) {

if (condition1) { return value1; }

else if (condition2) { return value2; }

else if (condition3) { return value3; }

else { return value4; }

// Write this, using:

// Conditional chaining using Ternary Operators Syntax

function example(…) {

return condition1 ? value1

: condition2 ? value2

: condition3 ? value3

: value4;

}

// Applying conditional chaining to our isItCold function:

function isItCold(temp){

return temp < 5 ? 'Even hell is freezing over!'

: temp < 10 ? 'It is starting to get cold'

: temp < 15 ? 'A bit chilly, innit?'

: 'Nah you good'

}

// isItCold(16);

// isItCold(12);

// isItCold(6);

// isItCold(2);

// Expected output:

// "Nah you good"

// "A bit chilly, innit?"

// "It is starting to get cold!"

// "Even hell is freezing over!"

```

## Let's Switch it up!

If you have many options to choose from, use a *switch* statement instead. A `switch` statement tests a value and can have many *case* statements which define various possible values in a cleaner and more readable way. Statements are executed from the first matched `case` value until a `break` is encountered.

Note though, `case` values are tested with strict equality (`===`). The `break` tells JavaScript to stop executing statements. If the `break` is omitted, the next statement will be executed.

Why not try an exercise to apply how useful the `switch` statement is? Let's check out [freeCodeCamp's Counting Cards](https://www.freecodecamp.org/learn/javascript-algorithms-and-data-structures/basic-javascript/counting-cards) from their JavaScript Algorithms and Data Structures program and change it a bit.

### Counting Cards

In the casino game **Blackjack**, a player can gain an advantage over the house by keeping track of the relative number of high and low cards remaining in the deck. This is called Card Counting.

Having more high cards remaining in the deck favours the player. Each card is assigned a value according to the table below. When the count is positive, the player should bet high. When the count is zero or negative, the player should bet low.

| Count Change | Cards |

| --- | --- |

| +1 | 2, 3, 4, 5, 6 |

| 0 | 7, 8, 9 |

| -1 | 10, 'J', 'Q', 'K', 'A' |

Let's write a card counting function. It will receive an array of `cards` as a parameter (the cards can be strings or numbers), and increment or decrement the `count` variable according to the card's value. The function will then return a string with the current count and the indicate whether the player should `Bet`(if the count is positive) or `Hold`(if the count is zero or negative).

Example outputs: `-3 Hold` or `5 Bet`.

```jsx

// Switch statement syntax

switch (expression) {

case value1:

//Statements executed when the result of expression matches value1

break;

case value2:

//Statements executed when the result of expression matches value2

break;

...

case valueN:

//Statements executed when the result of expression matches valueN

break;

default:

//Statements executed when none of the values match

break;

}

// Building the countCards function using if...else statements

const countCards = (cards) => {

let count = 0;

cards.forEach(card => {

if(card === 2 || card === 3 || card === 4 || card === 5 || card === 6) {

count++;

}

if(card === 10 || card === 'J' || card === 'Q' || card === 'K' || card === 'A') {

count--;

}

})

if(count <= 0) {

return count + ' Hold';

} else {

return count + ' Bet';

}

}

// countCards([4, 5, 2, 7, 'J', 'Q']);

// Expected output: "1 Bet"

// Building the countCards function using Switch and Ternary Operators

const countCards = (cards) => {

let count = 0;

cards.forEach(card => {

switch(card){

case 2:

case 3:

case 4:

case 5:

case 6:

count++;

break;

case 10:

case 'J':

case 'Q':

case 'K':

case 'A':

count--;

break;

}

})

return count <= 0 ? count + ' Hold': count + ' Bet';

}

// countCards([4, 5, 2, 7, 'J', 'Q']);

// Expected output: "1 Bet"

```

Now, I believe you have the necessary knowledge to make better decisions and be better than the if statement alone!

Thank you for reading!

Get in touch:

[Whatsapp](http://wa.me/420608984789)

[ekheinquarto@gmail.com](mailto:ekheinquarto@gmail.com)

[Instagram](https://www.instagram.com/ekheinquarto/) | iamhectorsosa |

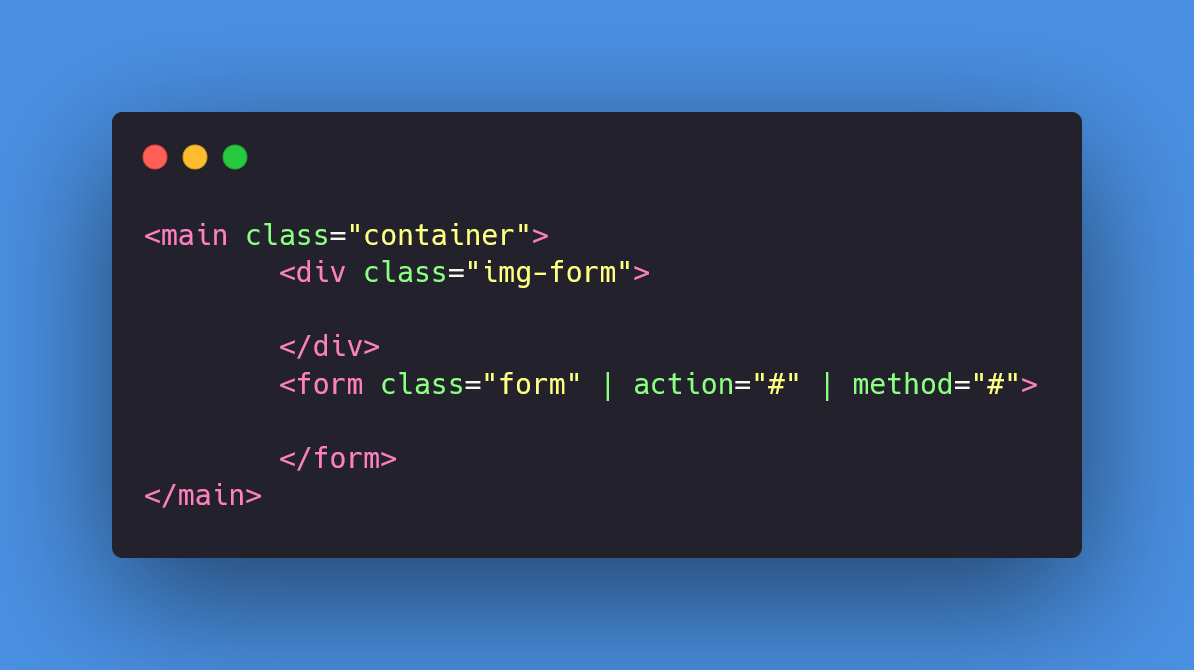

918,245 | Como crear un formulario de registro (2/3) | Hola, continuamos con la parte de crear las etiquetas HTML5, antes de comenzar les informo que el... | 0 | 2021-12-07T01:50:28 | https://dev.to/juan_duque/como-crear-un-formulario-de-registro-23-1mo7 | html, beginners, webdev, tutorial | Hola, continuamos con la parte de crear las etiquetas HTML5, antes de comenzar les informo que el código del formulario esta al final en CodePen para que puedan copiarlo y verlo.

Lo primero que aran es crear la carpeta donde van a guarda su trabajo y dentro de esta crearemos el archivo con la extensión **.html**, yo puntualmente le daré el nombre de 'index', quedaría así **index.html**, como último paso simplemente creamos la estructura básica de HTML como muestro en la imagen.

Pasamos a crear los contenedores para poder colocar todo el contenido que necesitamos, antes de continuar quiero aclarar que las etiquetas no las voy a explicar, si siento necesario estaré explicando cosas puntuales para no extenderme y volver a explicar cosas que ya mencione en la anterior publicación, también de aquí en adelante las imágenes con el html no se mostrara la estructura básica para que se puedan concentrar en lo importante, pero se sobreentiende que todas van dentro de **body**.

Ahora el siguiente paso es agregar los campos al **form** para que nuestro formulario vaya tomando forma, por lo menos a nivel de estructura HTML, lo que vamos hacer escrear un **h2** como titulo y como texto le pondremos **'Regístrate'** , también pueden colocarle otro si quieren, el próxima paso es crear cuatro inputs, el primero con el valor **text**, el segundo con **email** y los ultimos dos tienen que ser **password**, a cada uno se le asignara un **label**, lo siguiente es crear el botón con el texto **Aceptar**, por ultimo crearemos una etiqueta **p** que contenga un pequeño texto preguntando si tiene cuenta y si la tiene abra otro texto en el cual podra dar click en una etiqueta **a** para que lo lleve a 'otra página'.

Quiero aclarar tres atributos que coloque y no explique anterior mente.

* **required:** Hace que el campo no pueda estar vacío.

* **placeholder:** Es simplemente el texto que aparece como fondo en un input y que al dar clic desaparece.

* **minlength:** Este limita la cantidad mínima de caracteres que puede tener un campo, el valor se determina según la necesidad, en este caso es para ayudar al usuario a elegir una contraseña un poco mas segura.

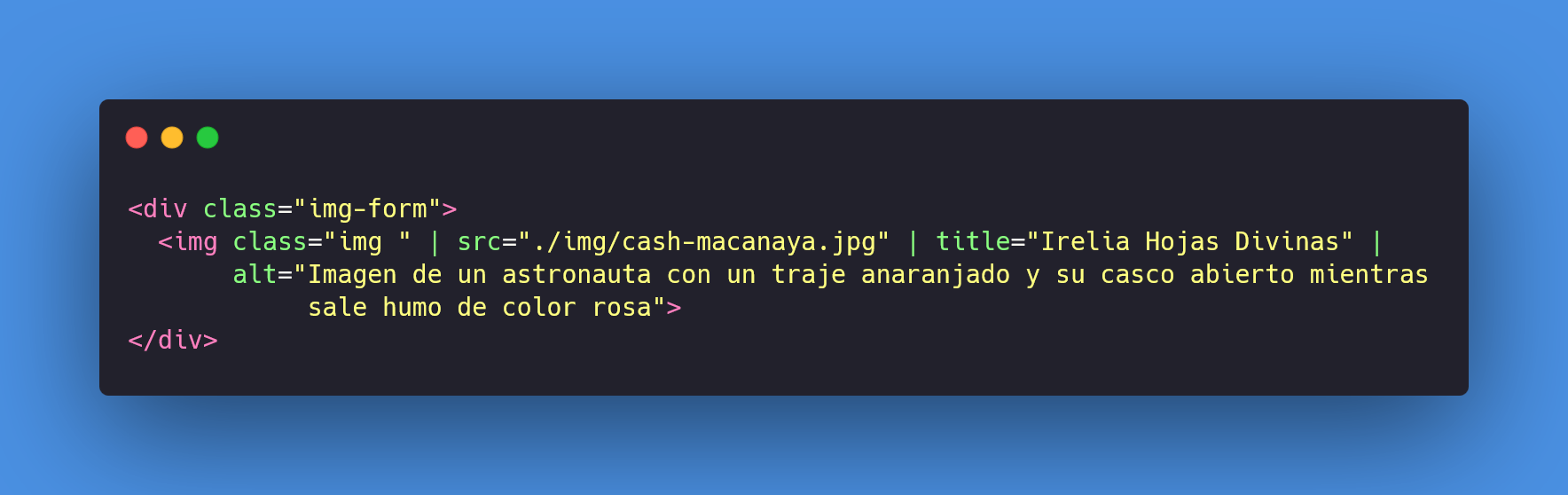

Pasamos a la parte final en la cual agregamos una imagen, puede elegir la que ustedes quieran, lo que vamos a hacer es generar un **div** por fuera del form, pero dentro de la etiqueta main y le damos un nombre a la clase para luego llamarlo y darle estilos, dentro de este **div** colocamos nuestra etiqueta **img** y la ruta relativa de la imagen que van a usar, que daría de esta forma.

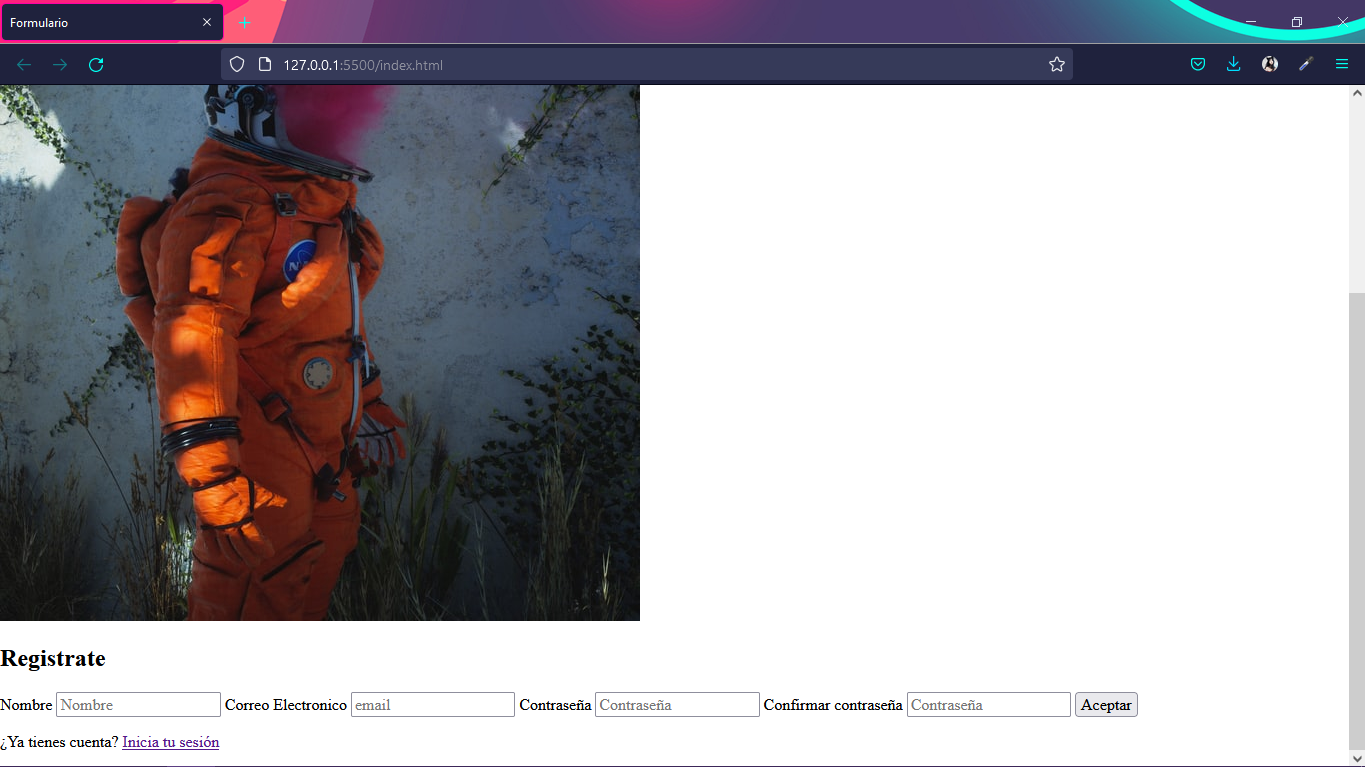

Lo que tenemos hasta ahora seria esto.

Con esto estamos terminando esta parte y pasamos a la siguiente que seria darle estilo.

***

**Pueden seguirme en mis redes sociales por este enlace.**

* [Twitter](https://twitter.com/juan_ariasd)

* [Linktr](https://linktr.ee/Boudgnosis)

***

**Parte 1:** [Como crear un formulario de registro (1/3)](https://dev.to/juan_duque/como-crear-un-formulario-de-registro-13-21lk)

**Parte 2:** [Como crear un formulario de registro (3/3)](https://dev.to/juan_duque/como-crear-un-formulario-de-registro-33-11p9)

***

**Páginas para que puedan descargar imágenes gratuitas**

* [Pixabay](https://pixabay.com/es/)

* [Unsplash](https://unsplash.com/)

| juan_duque |

918,321 | Python Virtual Environment | Python Virtual Environments Hi, before we dive in, let VE stand for Virtual Environment,... | 0 | 2021-12-06T03:44:17 | https://dev.to/otumianempire/python-virtual-environment-27ak | python, programming, beginners, tutorial | ## Python Virtual Environments

> Hi, before we dive in, let **VE** stand for **Virtual Environment**, wherever you see or hear **VE** (here, I mean).

## Back story

In one internship, I joined a team to build an e-commerce system, using python. On my PC I was running `python3.6` but the project uses `python3.8`. Does it matter? Sometimes.

In my case, what do you think I should do so that I would work on and test the project locally, using the same version of python as the project?

I could install `python3.8` and have two versions of python running on my system. I have to call `python3.6` and `python3.8` to make use of them. (I already have `python2.7`, I do not need another). A weirder solution would be to use a Virtual Machine. Install Ubuntu (or any OS) in a Virtual Machine that uses the latest version of python at the time. Someone said I should have used a docker.

There is a simple and stress-free solution that encompasses the same idea as a virtual machine called a Virtual Environment. We shall be talking about Virtual environments.

## What is a Virtual Environment and Why Use It?

With a **VE**, we will have a specific version of python. This environment will have separate dependencies for our project. These dependencies will affect only our project. These dependencies would have absolutely nothing to do with the dependencies on the computer we are using. So an upgrade or downgrade on our machine would not affect our project dependencies. The same applies to making changes in the **VE**, such as deleting a dependency, which would not affect the dependencies on the local machine. [python-environment-101] also introduces python **VE**.

We would discuss the python **VE**s, [virtualenv] and [pipenv], knowing what a **VE** is and why to use one.

## Check Python Version

To be on the safer side check the python version you are using, `python --version` or `python3 --version`.

You should get an output similar to `Python x.y.z`. Where _x_ has to be _3_, with _y_, _6_ or above.

## Install pip3

In the case you do not have `pip3`, then install `pip3` with the command below:

```bash

sudo apt-get install python3-pip

```

We would use `pip` to install packages. If you are new to `pip`, [pip-fcc] and [pip-w3schools] have easy to follow tutorial on [pip].

## Create project directory

On the terminal, navigate to your project folder or create one with:

```bash

mkdir PATH_TO_PROJECT_FOLDER && cd PATH_TO_PROJECT_FOLDER

```

If you are good with the GUI, go with it. We want to create a folder for our project, that is it.

## Virtualenv

Before we start using `virtualenv` we need [pip]. If you have multiple python versions like the _python2_ and _python3_, install `pip3` rather or check if you have `pip3`.

> (What about those who are using `python2.7`? Sorry, but try to adjust and use [pip] in place of `pip3` until you install the **VE** and activate it. I can not promise you that it will work or not. So you have to give it a go.)

### Install virtualenv

Then install virtualenv:

```bash

pip3 install virtualenv

```

Or when you are getting,

```bash

Command 'virtualenv' not found, but can be installed with:

sudo apt install python3-virtualenv

```

Follow this prompt to install _virtualenv_.

### Initialize the virtualenv

Initialize the virtualenv:

```bash

virtualenv VIRTUAL_ENV_NAME

```

For the sake of demonstration, let's assume we're creating an API for jokes, then we'd do:

```bash

mkdir jokeapp && cd jokeapp

```

We'd then initialize the virtual environment with,

```bash

virtualenv joke_env

```

This will create a folder called `joke_env` in the project directory. You can choose to hide the folder by prefixing the **VE** name with a dot, `.`. I prefer to make it visible.

### Activate The **VE**

We have to activate the **VE** else installed packages will be installed in the global space or scope (on the local machine).

```bash

source VIRTUAL_ENV_NAME/bin/activate

```

This will activate the **VE** but in our case, we'd do:

```bash

source joke_env/bin/activate

```

The name of the **VE** gets added to the username before the computer name, like this below:

```bash

(joke_env) username@computername:PATH_TO/jokeapp$

```

Sometimes things can be scary like you turn on the light bulb and you'd forget where the switch is or how to switch it off. Entering into vim is scarier when you can not exit. Press: `[esc] [:] [q] [!] [enter]` to exit vim.

### Deactivate the **VE**

In the activated **VE**, we can deactivate the **VE** with, `deactivate`.

Either our terminal will return to `username@computername:PATH_TO/jokeapp$` or we'd get an error output, saying, `deactivate: command not found`. In the latter case, you didn't _activate_ the **VE** in the first place.

Now we know how to get in and get out of the **VE** smoothly. We can install and uninstall all the packages we want.

Can we use other versions of python if we want (I don't mean `python2.7`) and not just create a **VE** for our project?

Yeah, we can. It is just that we have to install it locally first, to use it (the python version we want must exist before we can use it or create a **VE** for it). It must

exist in /usr/bin/PYTHON_VERSION

We would have to do:

```bash

virtualenv -p python3 venv

```

or

```bash

virtualenv -p /usr/bin/python3.8 venv

```

### Install/Uninstalling packages

We can install and uninstall packages just like we do with [pip].

## pipenv

### install pipenv

Install `pipenv` like you'd install any package, with the command:

```bash

pip3 install pipenv

```

If you look at the logs/output as the installation goes on you see that

virtualenv pops up here and there. So we can say or assume that `pipenv` was built on top of `virtualenv`.

### Activate the Pipenv Shell

Pipenv has a shell that allows you to create a **VE** with the command:

```bash

pipenv shell

```

This will use the project/root directory name as the **VE** name, using the highest version of python you have. It creates a **VE** when there is no **VE** for our project directory.

### Create **VE** For a Specific Version

You can create the **VE** with the command, `pipenv --python VERSION`. Here, _VERSION_ is the version of python you wish to use for your project.

We could have done, `pipenv --three` or `pipenv --two` to create a `python3` or `python2` **VE** respectively.

> Note the python version you want to use must exist locally else you have to install it.

> The [pipenv] docs tell you not to do `pipenv --three --two`, for "things can happen".

### Where Can I Find The **VE**

The **VE** will be installed at, `~/.local/share/virtualenvs/PROJECT_NAME`

Use `pipenv --venv` to output the path to where the packages will be or are installed on your pc for this very **VE**.

We can use `pipenv --where`, to tell us where our project root directory is. Something the `pwd` command.

### Remove/Delete **VE**

We can remove the **VE**, as in, delete it with `pipenv --rm`. This will delete the content and root directory of our **VE** located at the path provided by, `pipenv --venv`.

### Exist **VE**

Use `exit` to exit the **VE** and activate it with `pipenv shell` (we know this already).

### Pipfile

Pipfile is created when a **VE** is created and it holds the names of the packages we'd use in our projects. `cat Pipfile` will display something like this below, on the terminal.

```

[[source]]

url = "https://pypi.org/simple"

verify_ssl = true

name = "pypi"

[packages]

[dev-packages]

[requires]

python_version = "3.6"

```

So what does this even mean?

- I have no packages installed

- I am using is `python3.6`.

- _dev-packages_ refers to packages that your projects need only during development. An example is an `env` package that reads the content of `.env` files. This is will not be needed, say we publish our project on Heroku (a web project).

### Install a Package

Let's install fastAPI, you can install any package you want.

```bash

pipenv install fastapi

```

We could also do, `pip install fastapi` (in the activated environment). A `Pipfile.lock` file will be created and this is the file that has the hashes for the packages installed.

To install a package with pipenv, do, `pipenv install package_name`

### Uninstall a Package

To uninstall a package, use `pipenv uninstall package`. This works like `pip uninstall package`.

### Run a Python Script

To run a python script do, `pipenv run python script.py`. We could do, `python script.py` (in the activated environment).

### Required Packages, Dev Packages and The requirement.txt File

`pip freeze > requirements.txt` or `pipenv run pip freeze > requirements.txt` will write the output of `pip freeze` into a `.txt` file called, `requirement.txt`. The project dependencies are printed on the terminal when we enter the command, `pip freeze`. In this case, we write the output into a file. This is a basic practice, like package.json for Nodejs.

We can install required packages from the `requirement.txt` file with, `pipenv install -r requirement.txt`.

We can also use the `Pipfile.lock` to write our package dependencies into the `requirement.txt` file using any of the commands below:

- `pipenv lock --requirements > requirements.txt`

- `pipenv lock -r > requirements.txt`

### PYENV

Now there was something I did not mention, which was supposed to be one of the purposes of using a **VE**.

Assuming we just have say python 3.6, running `pipenv shell` for the first time will choose the current `python3` that we have. By the assumption made, we'd have a **VE** for `python3.6`.We have a **VE** for separate dependencies, **what if we want a higher version or a version that we

do not have (on our PC)?**

Yeah. We can a version higher or lower than the current version we have and we can install that with [pyenv]. [pyenv] will allow us to install the various version of python we want. Remember that we want to just use this new version in the **VE** so we must install this new version **in** the **VE** else it will install as part of our PC.

### Install PYENV

Follow the guide from [pyenv-github] page to install [pyenv] on your OS. On Ubuntu (I am on ubuntu 16, which explains why my version of python was `3,6`. If you want to call me out on this because maybe, I have said or you have read that I was on PopOS/ubuntu 20.04, yeah, it is true but things happened and I am on 18 now).

With the instruction provided by the [pyenv-github] page, installation should be quite simple.

### Install Python3.9 With PYENV

We know that `pipenv --python VERSION` will install a python version that already exists on our PC, if it does else spit out some error message. After the [pyenv] installation, if their version doesn't exist, `pipenv` will use `pyenv` to install that version. `pipenv --python 3.9`.

And We Are Done Here... No, I am joking... We are not done.

Say you had, `python_version = "3.6"` in the `Pipfile`, you have to change the version to that which you just installed.

### How I Did Mine When It Was Becoming Weird. How Weird?

This is what I did and how I should have rather done it (At this point I already have the project started and there are packages I am working with. How weird? ).

- Get the packages/dependencies into the `requirements.txt` file, `pip freeze > requirements.txt`

- Remove the `3.6` **VE** with, `pipenv --rm` rather than change the version number, from `python_version = "3.6"` to `python_version = "3.9"`

- Exit out of the activate **VE** with, `exit`

- Remove the Pipfiles, `rm Pipfile*`

- Then start a new environment, `pipenv --python 3.9`

- Assuming the latest (on your PC - you used `pyenv` to install) is `3.9` and you want the `3.9`, then you can run, `pipenv shell`.

- Activate the environment with, `pipenv shell` (I didn't have `3.9` on my PC, as the previous step describes)

- Install the packages from the `requirements.txt` file, `pipenv install -r requirement.txt`

### Somethings We Will Know Only When We Have Used a **VE**

- `Virtualenv`, the folder for the packages used will be available in the main directory and you have to git ignore it. For `Pipenv`, the folder at `pipenv --venv`. You have to either delete the packages folder when the project leaves your custody (you don't work on the project anymore). Out of sight out of mind, remember? This means we will have our memory chip away bit by bit as we use `pipenv` when we do not remove the unwanted **VE** (just the `node_modules`) and we'd not know it because we don't see it.

- `Pipenv` creates `Pipfile` and `Pipfile.lock`. This is similar to `package.json` and `package-lock.json`.

- The only drawback I faced was when I wanted to install only `dev-packages`.

- `pipenv lock -r > requirement.txt`, for requirement packages

- `pipenv lock -d > dev-requirements.txt`, for both requirement and dev packages

- `pipenv lock --dev-only > only-dev-requirements.txt`, for only dev packages

- We could exit the `pipenv` **VE** with `deactivate`. We used `exit`before.

- So practically `Pipenv` is suited for your projects. `Virtualenv` is sugar-coated to give us `Pipenv`. I am just saying from a practical point of view.

### The END

Let me know if you run into a weird situation and how you solved it.

#

[pipenv]: https://docs.pipenv.org/

[virtualenv]: https://virtualenv.pypa.io/en/latest/index.html

[pyenv-github]: https://github.com/pyenv/pyenv

[pyenv]: https://pypi.org/project/pipenv/

[python-environment-101]: https://towardsdatascience.com/python-environment-101-1d68bda3094d

[pip-w3schools]: https://www.w3schools.com/python/python_pip.asp

[pip-fcc]: https://www.freecodecamp.org/news/how-to-use-pip-install-in-python/

[pip]: packaging.python.org/tutorials/installing-packages/

| otumianempire |

918,326 | Open Source React Developer Tools in Today's Digital Era | Facebook, Skype, Tesla, and Airbnb all have something in common. They're all using React, a... | 0 | 2021-12-06T04:20:31 | https://dev.to/ashikarose/open-source-react-developer-tools-in-todays-digital-era-13e7 | react, programming, tutorial, devops | Facebook, Skype, Tesla, and Airbnb all have something in common. They're all using React, a JavaScript toolkit for developing engaging user interfaces with component-based building pieces. As a result, when combined with useful React developer tools, this handy package aids in the rapid development of fully working single-page or mobile applications.

Why is React so popular?

Jordan Walke, one of Facebook's software engineers, designed React in 2011 to handle Facebook advertisements. React is now an open-source, declarative, dynamic library for building complex interfaces using reusable individual components called components.

React outperforms Angular and Vue in terms of automated re-rendering and component updates. According to Statista, React is the second most popular web and mobile app framework in the world.

A framework, despite the convenience it provides, also stores a different set of vulnerabilities. Data interoperability is a typical behavior in the digital world, and it puts a lot of user-sensitive data at risk.

React apps also have their own set of security vulnerabilities, such as XSS (Cross-Site Scripting), SQL injection, Zip Slip, XXE (XML External Entities), failed authentication, and more. This opens the door to unauthorized access, data breaches, hacking, and data tampering, among other cyber-criminal acts.

Many countries have adopted regulations that regulate the significance of preserving sensitive data due to the sensitive nature of user data. Failure to follow these rules and regulations might result in serious legal consequences for both the software owner and the offenders.Ensure absolute security and privacy compliant services that include:

HIPAA compliant medical software development services for the United States

GDPR compliant medical software development services for European countries

DPA compliant medical software development services for the United Kingdom

PIPEDA compliant medical software development services for Canadian countries, Hence, develop 100% secured and compliant solutions only with the best [react agency](https://www.cronj.com/react/react-development-agency).

What React developer tools will you need to know in 2022?

We've put up a collection of important React developer tools. Some will be useful for beginners as well, while others will be chosen by more experienced programmers. This isn't just a list of useful React development tools, though. We also opted to acknowledge their inventors. In fact, one of the most crucial elements that contributes to React and React Native being one of our favorite technologies is the React community.

1.Storybook

Another React tool for creating, developing, and testing your own User Interface components. Storybook includes a UI development environment as well as a UI component playground. You may not only profit from the Storybook's development environment for UI components, but you can also simply test and show them.

You can create a static version of Storybook on your server that includes a gallery of UI components that all team members can see. It does not, however, extract or distribute components between projects.

2.React Navigation

Based on JavaScript, the utility provides an expandable and usable navigation solution. As a result, developers can get started right away thanks to built-in navigators that give a consistent experience. As a result, the tool has received more than 13 000 stars on GitHub.

3.Create React App

Create React App is a command-line interface(CLI) tool that requires no development configuration. It encourages you to create your own standard and guides you to start the app developing procedure seamlessly.

There is no complexity because you only need a build dependency. The Create React App has behind layers of ESLint, Webpack Babel, and other features that make it more suitable for small web applications.

4.Jest

[Jest ](http://en.wikipedia.org/wiki/Jest_(JavaScript_framework))is an out-of-the-box solution that requires no configuration. The testing procedure is intended to maintain the greatest level of performance. In the next iteration, for example, previously failed tests are run first.

Jest will be your favorite ReactJS testing tool. It's a Facebook-developed JavaScript testing framework. It was created for the purpose of testing React components. It should be your first choice for testing React because it comes from the React creator and is developed and supported by the React community. It also works with other JS frameworks such as Babel, TypeScript, Node, Angular, and Vue.

5.Linx

Linx is a low-code programming tool for backend development, not necessarily a React tool (such as APIs, integrations and automations). It's on this list because it's a simple and effective tool for integrating an API into your react SPA. The frontend and backend can take many various forms, and understanding how to connect the two might be difficult. Low-code solutions, such as Linx, can help you save backend development time by reducing the coding part and allowing you to focus more on the logic.

6.Redux

For JavaScript apps, [Redux ](https://redux.js.org/)is a state management solution. It's most commonly used to integrate with React, it also works with other React-like frameworks.

For Redux, it's now able to connect all components directly to the entire state and thereby, reduces the requirement for using call-backs or props.

7.React Boilerplate

A developer-friendly start library that gives you the infrastructure you need to build scalable projects. The focus of this offline-first React architecture is on high performance and excellent development methods. Your software will be available as soon as your users load it, with no requirement for a network connection. Chrome Redux DevTools are compatible with React.js Boilerplate. You may now use the CLI to automate the creation and testing of components, containers, and other types of objects. All CSS and JavaScript changes are immediately visible and accessible for testing without having to refresh the page.

8.BIT

It's essentially a command-line interface (CLI) utility. BIT was built to address issues with sharing React components. You may arrange and distribute User Interface components among your team members with this React developer tool. Furthermore, you can reuse the components that have been lost in the future.

Each component is isolated and tested separately before being exported as a complete unit. It simplifies testing, because you just have to deal with this one component if you wish to make a little change. Bit is also great for test-driven development because of its component isolation.It is available for personal and open source projects at no cost.

9.Razzle

[Razzle ](https://razzlejs.org/)simplifies SSR configuration by encapsulating it in a single dependency. It provides developers with a similar experience to create-react-app, but with more control over frameworks, routing, and data fetching.

10. React Cosmos

This is a useful developer tool that aids in the creation of reusable React components. It looks for components in React projects and allows them to be rendered using any mix of props, context, and state. [React Cosmos](https://reactcosmos.org/) allows developers to simulate any external dependency, such as localStorage or API replies, in order to examine the current state of their project in real time.

11.React Sight

This React visualization tool provides a visual depiction of the structure of React apps to developers. The tool requires the previously described React Developer Tools for Chrome. Developers who want to use it will also need to install React Sight as a Chrome extension, which adds a new "React Sight" panel to Chrome DevTools. React Router and Redux are supported by the utility.

12.React 360