id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,022,041 | Netlify makes your entire Project in One Click Action | Well you have completed your javascript project and feeling proud of yourself and want to show your... | 0 | 2022-03-14T15:05:19 | https://dev.to/ojhanidhi036/netlify-makes-your-entire-project-in-one-click-action-3pke | Well you have completed your javascript project and feeling proud of yourself and want to show your hard work with your friends, family etc. Here,the question is what will be the shortest and fastest way to show your project or hard work to anyone with just one click action.

In this blog, you will learn how to publish your javascript project using popular platform-**Netlify**

Before i start, would like to give little description about my javascript project so that you will be able to understand what project is about and after all it's my hardwork which i want to share with you .

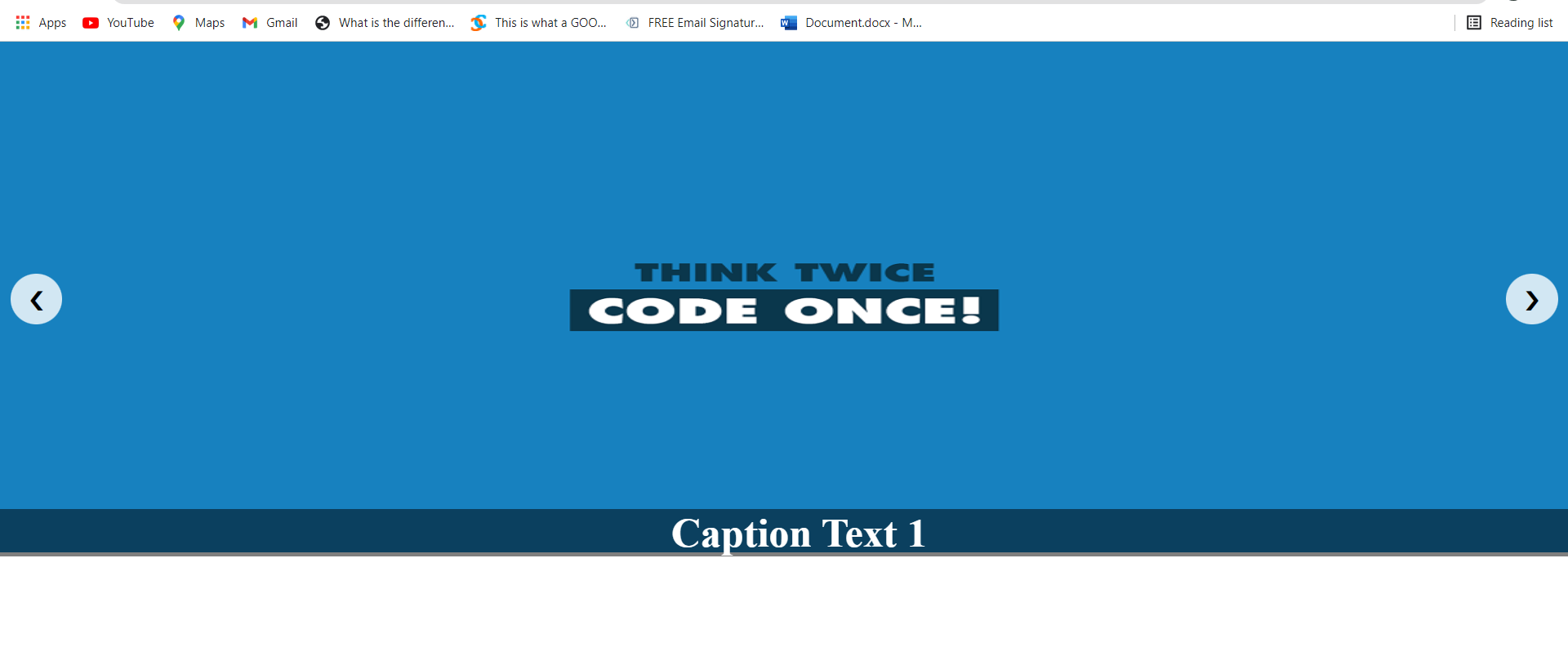

In real-time scenarios, there may be a requirement to put an image slider on the application web page. If the slider requirement is simple and short, building your own slider using HTML and JavaScript can be one of the best ways to achieve it. This will take less time to implement and give no conflicts/errors.

**Just your one click on this link you will be redirect to my project website.**

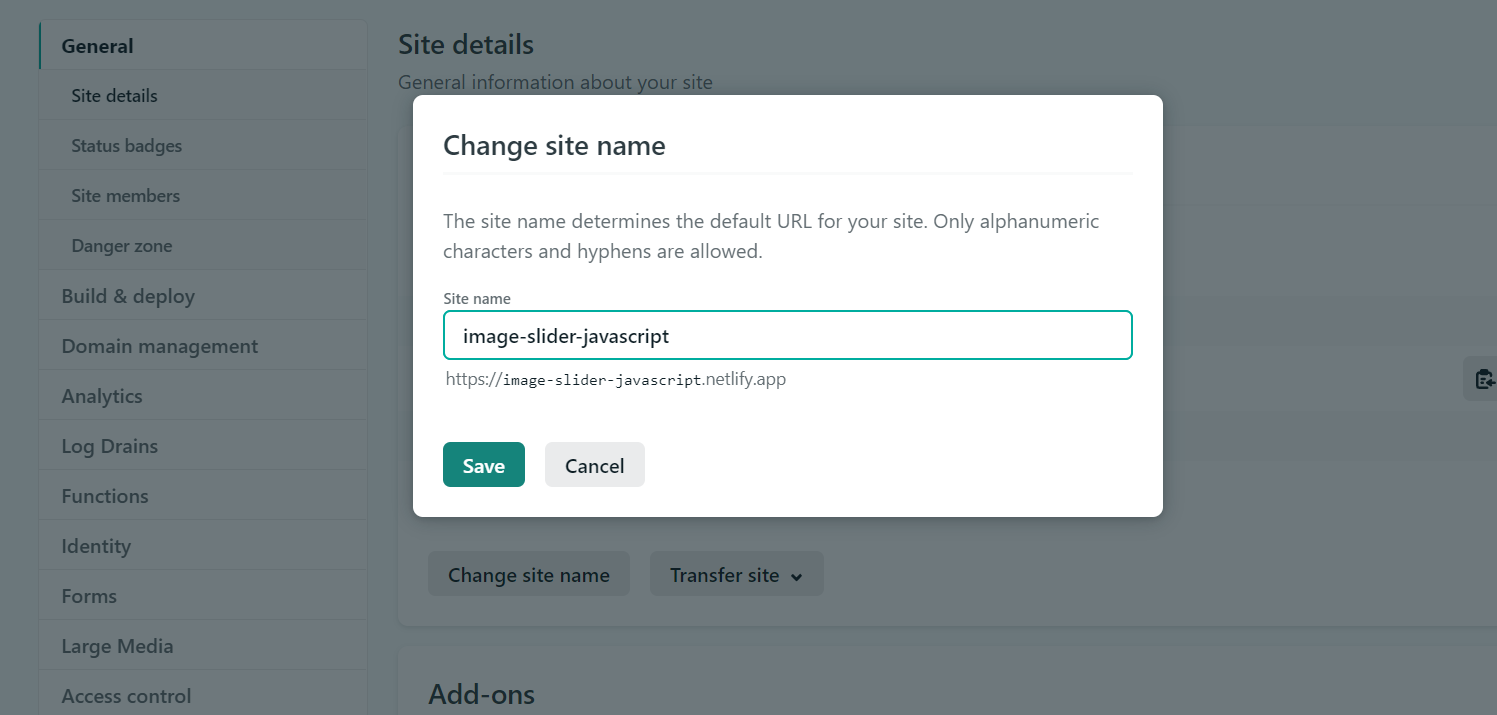

[https://image-slider-javascript.netlify.app/](https://image-slider-javascript.netlify.app/)

Basically just want to mention that this is the way where you can publish your project on netlify and get a link of your project so that you can share with anyone at anytime through link like above the link of my project which i have shared with you .

## How to Publish a Website on Netlify

_The first method we're going to explore is how to publish your website on Netlify._

Netlify is a platform for hosting webistes. It is easy to host sites on Netlify as you don't need to configure it manually- and best of all, it's free. If you haven't signed up for an account, now is a good time to do so. Click on this link and sign up.

[https://www.netlify.com/](https://www.netlify.com/)

Here's the step-by-step process of publishing your website on Netlify:

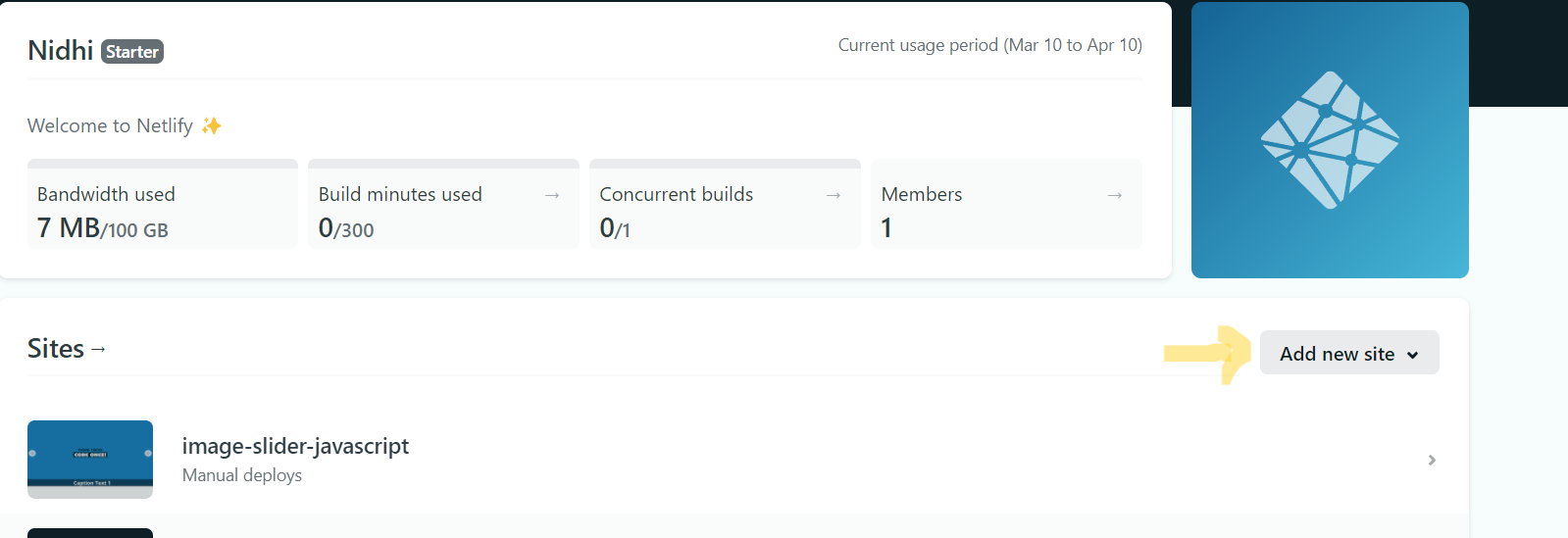

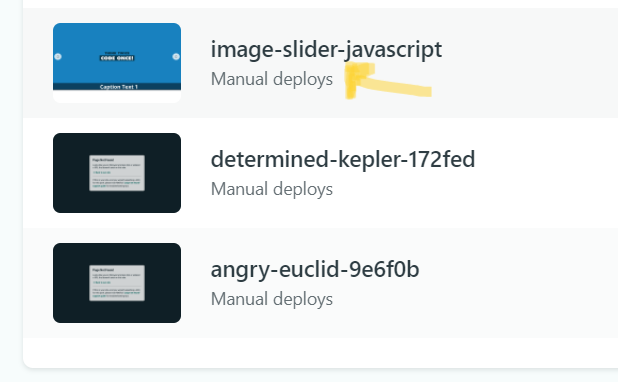

### Step 1: Add your new site

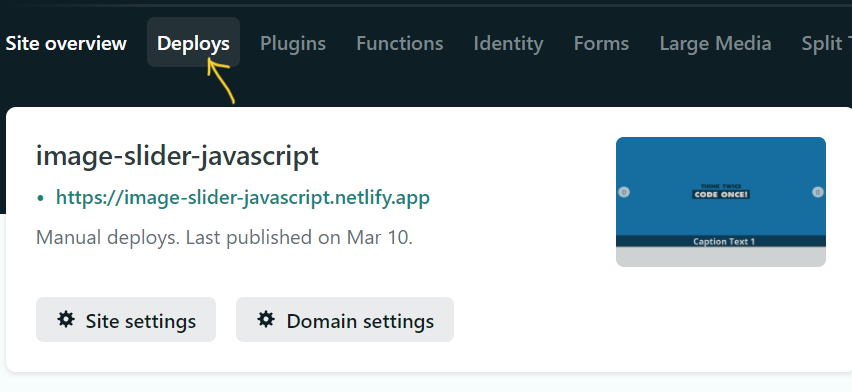

Once you've logged in, it will take you to a home dashboard. Click the **Add new site** button to add your new website to Netlify.

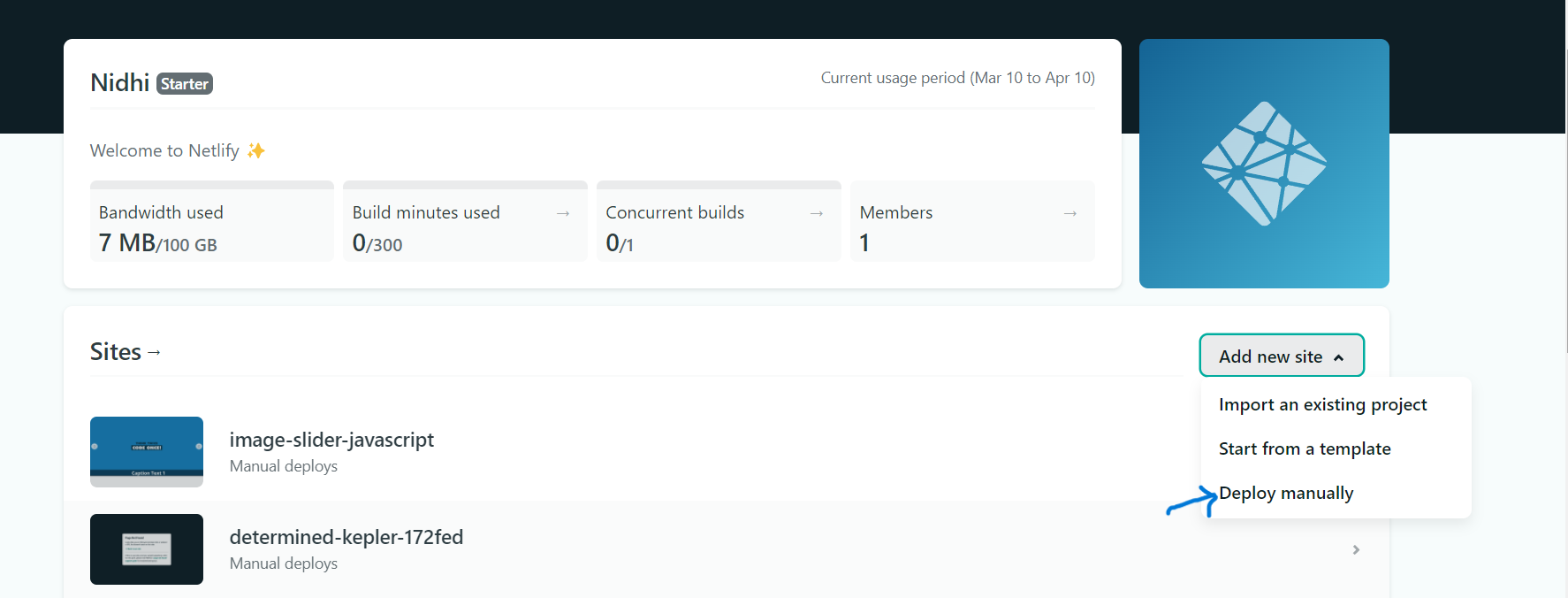

### Step 2: Deploy project manually

After clicking on **Add new site** you will get dropdown list so select **Deploy manually**

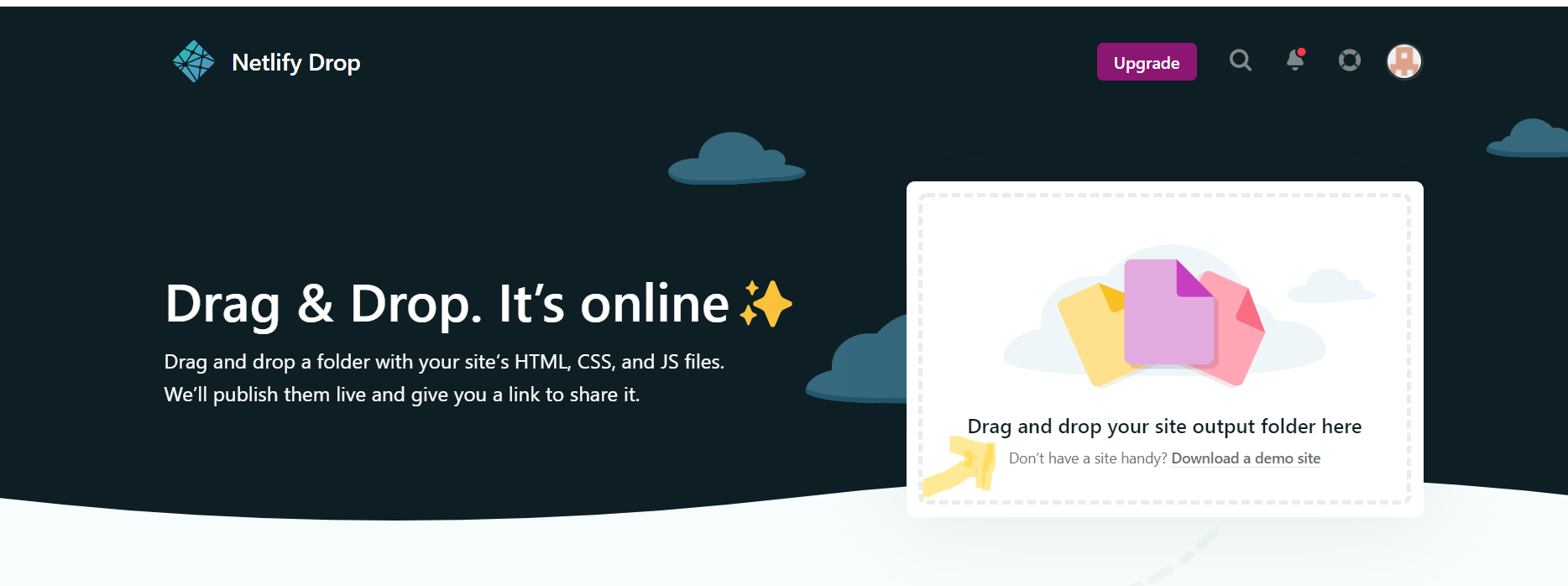

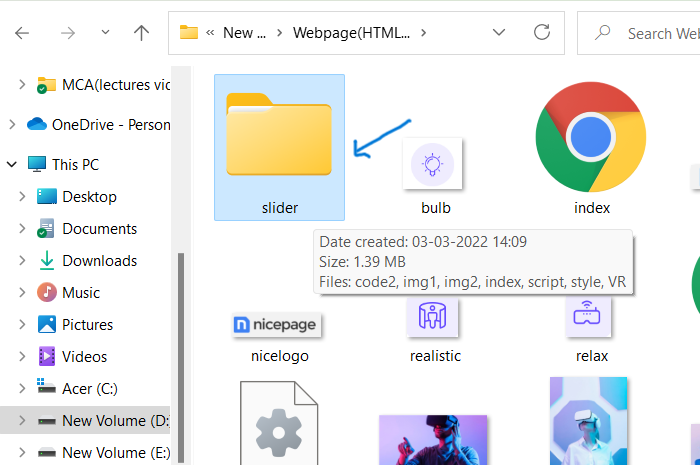

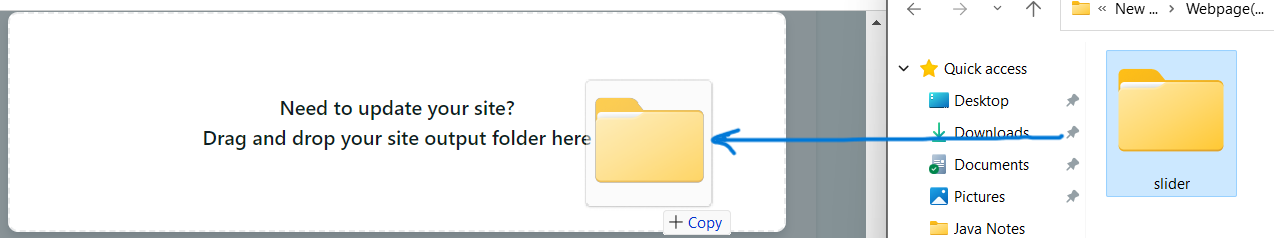

### Step 3:Drag and Drop your site output folder

Once you redirected on this page then just follow your project location and drag your project folder and drop into the deployement box. See here i have followed my project folder location to drag my project to drop in deployment box.

### Step 4: Publish your website

Your website is now ready to publish!

Netlify will do the rest of the work for you, and it will only take less than 10 seconds to complete the process.

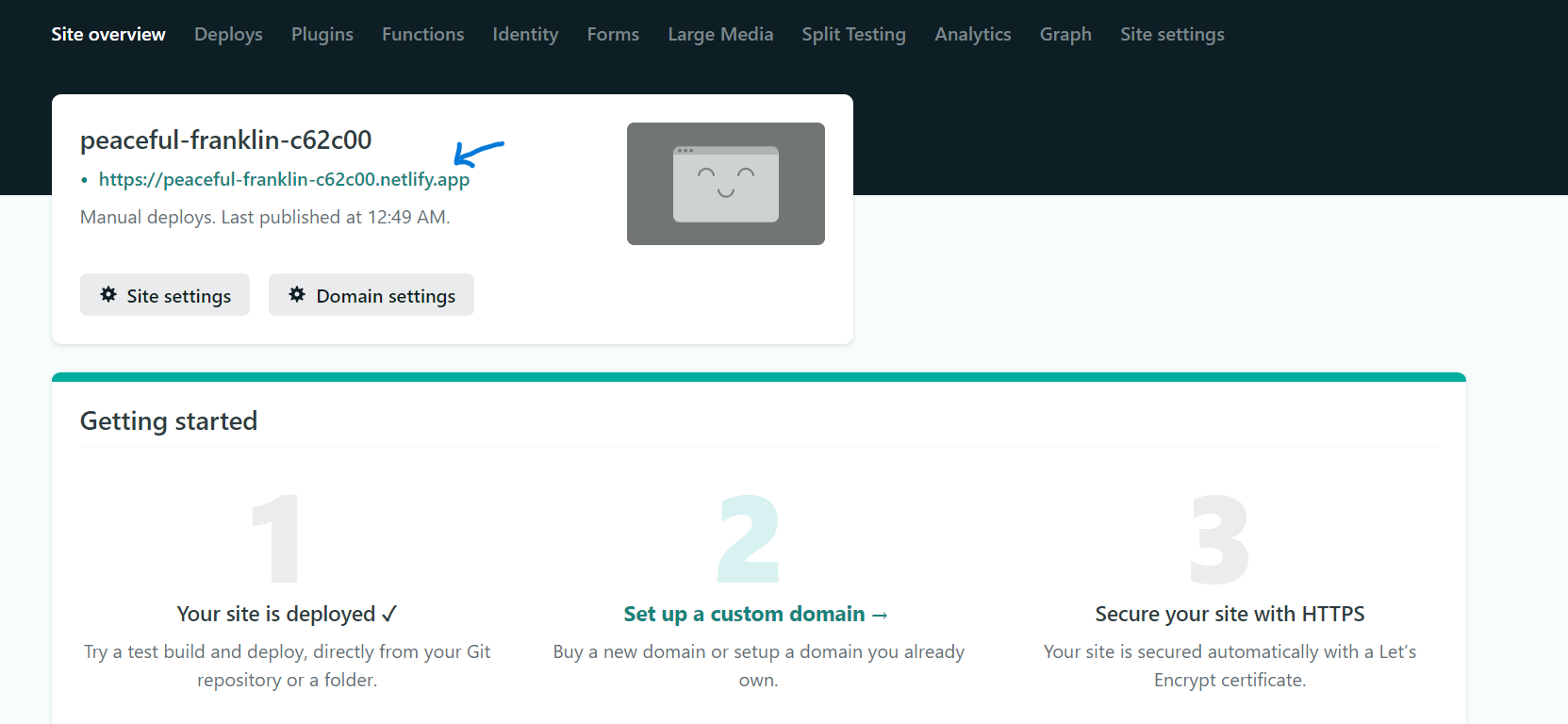

Now you are done! Your new website is published, and you can view it by clicking the green link.

### Step 5:Change site name(optional)

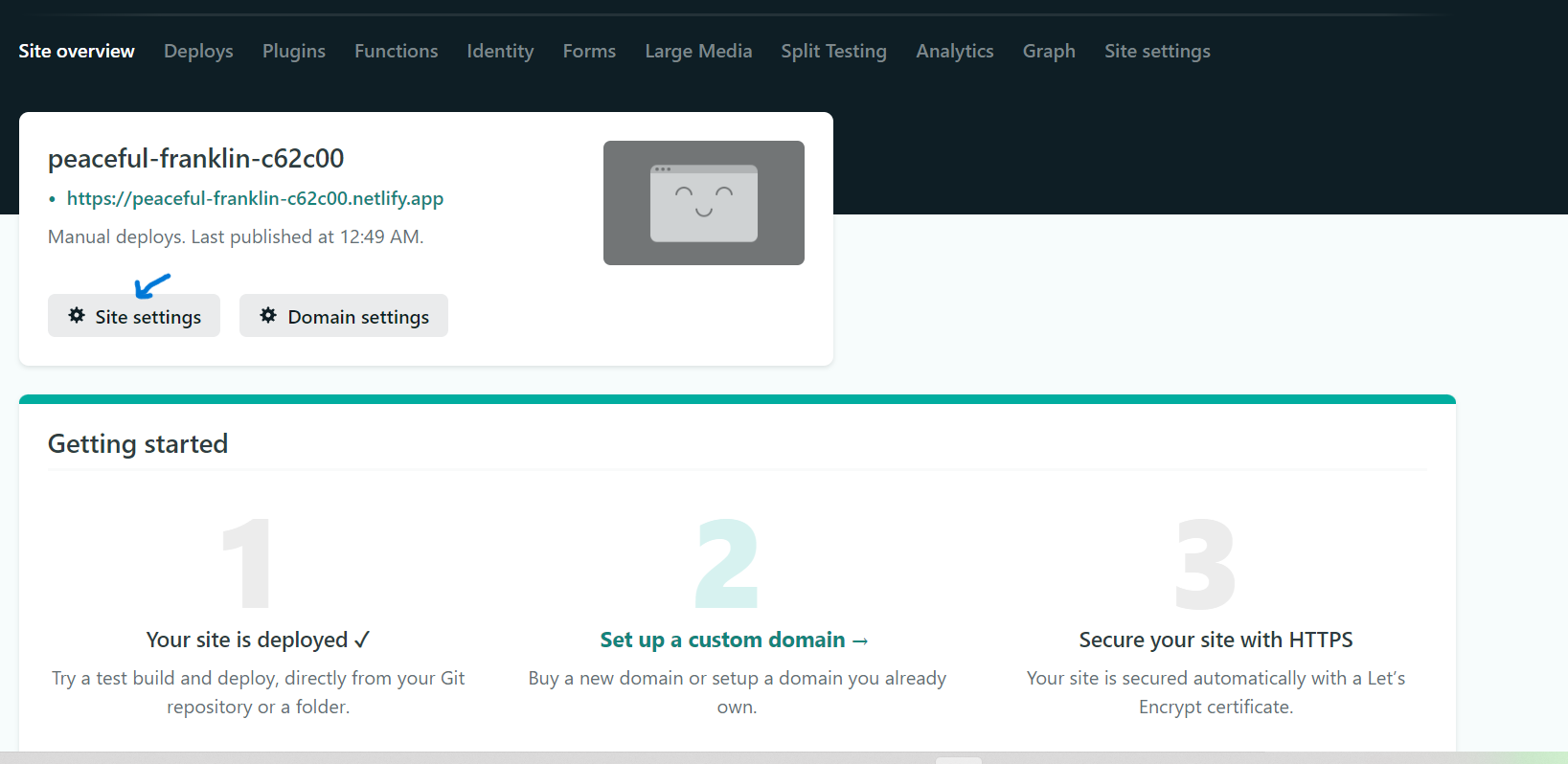

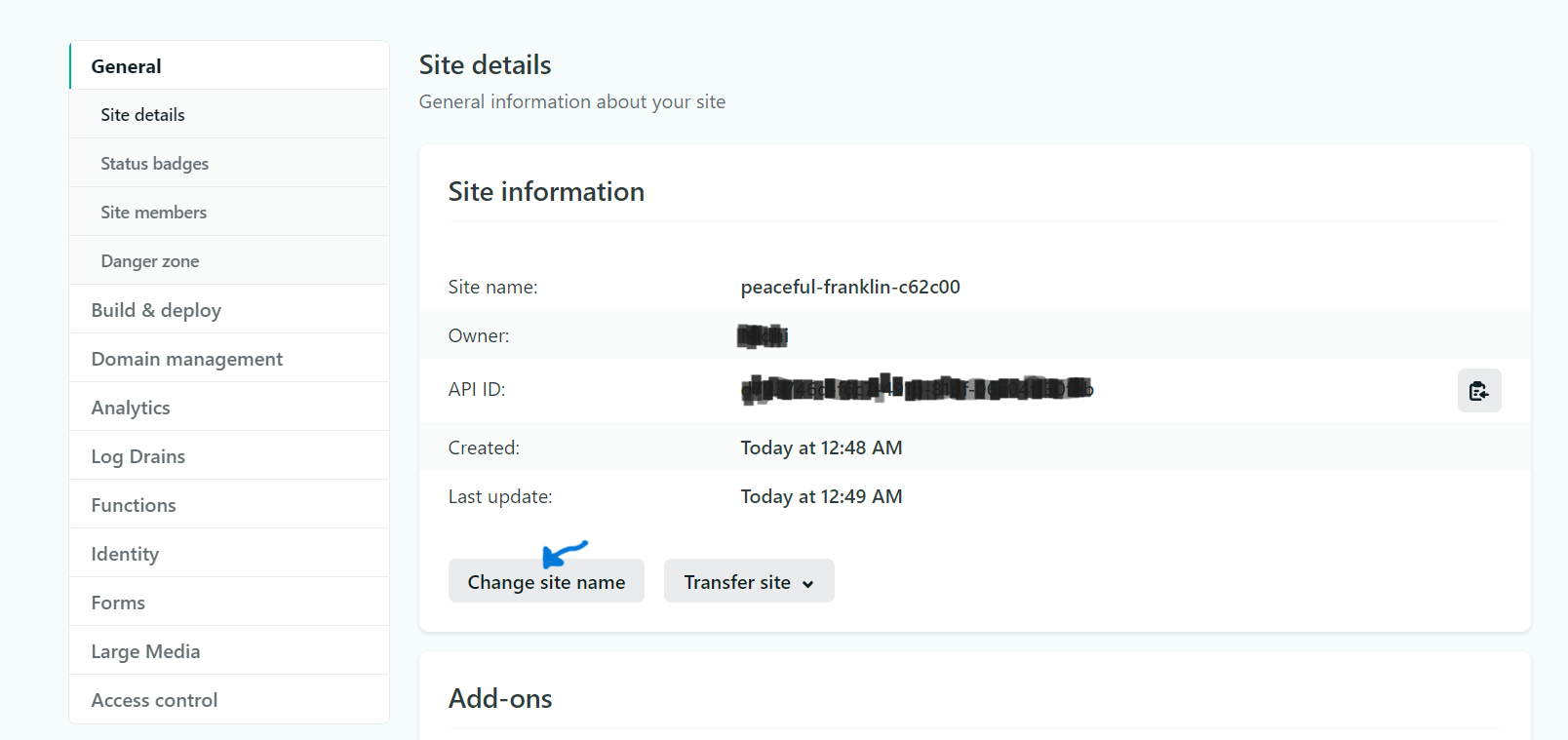

Right now, your URL looks random, but you can edit it by clicking the **Site settings** button and then the **Change site name** button.

Click on **Save** button . Congratulation on publishing your first new website!

## How to update a website manually deployed on Netlify

_The second method we are going to explore is how to deploy project again on the same site on netlify after done some changes in the same project files._

### Step 1: Select the website you have manually deployed

Here, I have selected the website which i have manaully deployed and want to deploy project folder again on the same link after done some changes in the same project.

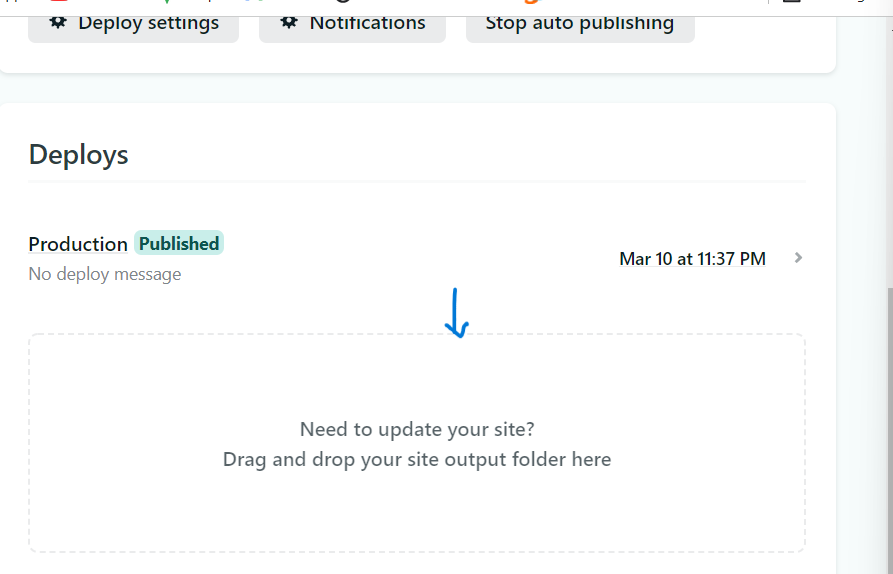

### Step 2: Select the Deploys tab and reupload your project folder

Once there click on Deploys then drag and drop your new website files into the Netlify deployment box.

See in the below image am updating my site through drag and drop. Once dragged and dropped the website will automatically publish your website files and you should see the word "published" in green.

Here if you see the differences of my initially **new site** Vs **updated site**

## Conclusion

I hope you've found this blog helpful. You have learned how to deploy your website manually with Netlify. Now you can go ahead and show the world of your incredible work.

**Follow me for such contents**

[https://twitter.com/89NidhiOjha1](https://twitter.com/89NidhiOjha1)

[https://hashnode.com/@ojhanidhi036](https://hashnode.com/@ojhanidhi036)

| ojhanidhi036 | |

1,023,507 | Angular vs. React : Which Framework is Best For Web Development? | The Angular vs. React comparison has become an enormous task for developers. Every JavaScript... | 0 | 2022-03-15T14:10:32 | https://dev.to/freita_browning/angular-vs-react-which-framework-is-best-for-web-development-49g6 | javascript, programming | The Angular vs. React comparison has become an enormous task for developers. Every JavaScript framework has its capability. Some frameworks are better for large applications, some are better for small, and some are good for both applications. Choosing the best JavaScript frameworks will provide the right direction to a [JavaScript development company](https://www.csschopper.com/javascript-development.shtml) to create eye-catching web applications.

Nowadays, JavaScript is becoming more and more widely used. Hence, web developers use the best JavaScript framework for creating their web applications. When they think of web development, two popular front-end technologies come to their mind: AngularJS and ReactJS.

{% embed https://www.youtube.com/watch?v=8TtAh91CNDM&feature=youtu.be %}

## Know the comparison of Angular vs. React

## 1. Principles of Interaction with DOM treemap

DOM is the document object model that is utilized for executing dynamic changes. It is used for enhancing the speed of JS frameworks.

**AngularJS:**

AngularJS is the front-end JavaScript framework that functions with real DOM. It utilizes directives to bind app data to the attributes of the HTML element. Below some directives are mentioned:

.ng-disabled Directive: It binds AngularJS application data to the disabled attribute of HTML elements.

.ng-show Directive: It displays the HTML element.

More directives are available apart from the above. It executes any work indirectly through abstractions, TemplateRef, and viewContainerRef.

**ReactJS:**

The process of reactJS uses the lightweight copy of real DOM; therefore, the execution of reactJS is dependent on virtual DOM. To check the redrawn nodes, the React-based application compares the two DOM treemaps: the first is a real DOM tree, and the second is a virtual DOM tree.

After that, it functions the operation only on those nodes which need any changes. Thus, improving the performance of the software. But in complicated apps such as animation and complex synchronization, react.js may fail.

## 2. Performance

To load the website faster, performance is the primary concern. Moreover, developers implement a performance review process in most workplaces. JavaScript frameworks enable JavaScript web development services to enhance the performance of your website accordingly.

**AngularJS**

AngularJS depends on a two-way data binding process, making a visitor for every binding to change any track in the DOM. If there are many visitors, it can get slow as the developer makes any transformation, then the visitor needs to change it again.

The digest loop compares the new value against the initial value when any view is updated. Following that, the watcher calculates the actual value. Therefore, your application will perform better since the watcher tracks any changes in the view model, so you no longer have to go back to the previous application.

**ReactJS:**

As we know, ReactJS uses the concept of virtual DOM. Once a developer loads an HTML document, ReactJS makes a lightweight DOM tree from JavaScript objects and saves it on the server. When the user enters new data in the browser field, a new virtual DOM immediately appears, and the user can compare it with the new DOM. The dissimilarities found in these two models rebuild the virtual DOM again. These days, with new HTML, it is possible to do each work on the server, reducing the load time and improving performance. Thus, this approach is better between React vs. Angular.

## 3. Adaptability to work with a team

The development process is a huge task, and we should perform it with a team. Let’s look at which is the best JavaScript framework for working in a team.

**AngularJS:**

This framework was designed according to developer convenience. It uses the MVW architecture, separating the application logic from the given representation to the user interface. It provides a modular solution that can operate simultaneously. Therefore, this framework is beneficial for productive team interaction.

**ReactJS:**

Model-View-Controller, Model-View-View-View-Model, and Model-View-Whatever structures do not exist in this library. Therefore, multiple developers cannot work on the same code simultaneously because the logic and representation are not separated. And, a lot of time is involved in developing any application. As a result, most developers dislike the complicated development process.

**Conclusion**

Both of the frameworks mentioned above excel at something. If you plan on creating large projects, Angular is the right choice for you. For those who don't want to be bound by a framework, React is a perfect choice. You can then select whatever framework you like depending on your project needs. You can [hire a JavaScript developer](https://www.csschopper.com/hire-dedicated-javascript-developer.shtml) with the requisite expertise and experience for further assistance. It will build a competitive web app or website using the most suitable JavaScript framework for your business.

| freita_browning |

1,025,825 | File_get_contents('php //input') not working | Problem: I am unable to understand this problem .. Please suggest me a better solution to solve it... | 0 | 2022-03-17T10:35:07 | https://kodblems.com/14448/file_get_contents-php-input-not-working | restfularchitecture, curl, rest, json | Problem:

I am unable to understand this problem ..

Please suggest me a better solution to solve it ..File_get_contents('php //input') not working

Solution:

First off, I was able to run this code and it worked fine:

--Terminal---------

//I ran this curl request against my own php file:

curl -i -X PUT -d '{"address":"Sunset Boulevard"}' http://localhost/test.php

--PHP--------------

//get the data

$json = file_get_contents("php://input");

//convert the string of data to an array

$data = json_decode($json, true);

//output the array in the response of the curl request

print_r($data);

If that doesn't work, check the console for errors and your php settings:

1. The curl url you used, make sure that url is actually working and

not returning errors.

2. open up another terminal / console window and run tail -f

/path/to/the/php/log/file so you can actually see the output of

these php calls.

3. often people get this error: file_get_contents(file://input): failed

to open stream: no suitable wrapper could be found which can

indicate either a typo of the "file://input" string or the fact that allow_url_fopen is disabled in php (see #5 if unsure)

4. make sure your code is correct, and by that I mean make sure you're

not typing in incorrect arguments and things... stuff that doesn't

necessarily get underlined in netbeans.

5. remember, file_get_contents only works when allow_url_fopen is set

to true in your PHP settings. thats something that is set in

php.ini, but you can also change settings at run time by writing

something along the lines of the following code before the other

code:

ini_set("allow_url_fopen", true); | coderlegi0n |

1,026,410 | Start Implementing your own Typescript Class Decorators | Start Implementing Your Own Typescript Class Decorators Class Decorator What is... | 0 | 2023-09-12T11:57:45 | https://levelup.gitconnected.com/start-implementing-your-own-typescript-class-decorators-84a49f560dea | classdecorator, decorators, typescript, node | ---

title: Start Implementing your own Typescript Class Decorators

published: true

date: 2021-12-23 12:53:42 UTC

tags: classdecorator,decorators,typescript,nodejs

canonical_url: https://levelup.gitconnected.com/start-implementing-your-own-typescript-class-decorators-84a49f560dea

---

### Start Implementing Your Own Typescript Class Decorators

_Class Decorator_

#### What is a Decorator?

It is a structural design pattern that lets you attach new behaviors to objects by placing these objects inside special wrapper objects that contain the behaviors ([reference](https://refactoring.guru/design-patterns/decorator)).

**Typescript Class Decorators Definition:** _The class decorator is applied to the constructor of the class and can be used to observe, modify, or replace a class definition.(_[_reference_](https://www.typescriptlang.org/docs/handbook/decorators.html#class-decorators)_)_

#### What is going on behind the scene?

Your class decorator is actually **a simple function** that is called as a function **at runtime** and it gets _one argument_ and that is **the constructor function of the class**. If _the class decorator_ returns something it is going to be used as the constructor of the class. These things might sound confusing but it’s going to be clear as day after taking a look at some pieces of code.

### Setup

In order to run the Typescript code, we need to compile them using the Typescript compiler.

We need a tsconfig.json file :

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/39e61b1249de30de02c2830a5244d69b/href">https://medium.com/media/39e61b1249de30de02c2830a5244d69b/href</a></iframe>

We have to enable the experimentalDecorators. Also, the target should not be less than ES5.

If you do not want to use a tsconfig file you can pass these options directly:

```

tsc --experimentalDecorators // If you installed tsc globaly

npx tsc --experimentalDecorators // If you installed tsc in your current directory

```

Now by running tsc in the current directory, the typescript files will compile to Javascript files and we can run them using Node.([reference](https://dev.to/pshaddel/start-writing-your-own-typescript-method-decorators-3jm4-temp-slug-1785958))

### Define a Class Decorator

First class decorator we want to create is just going to log a message on the console and tell us that it is running.

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/86a7aa80e3ee4ce7a713f02af9a16a81/href">https://medium.com/media/86a7aa80e3ee4ce7a713f02af9a16a81/href</a></iframe>

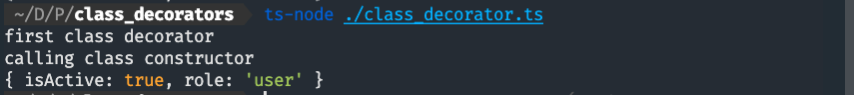

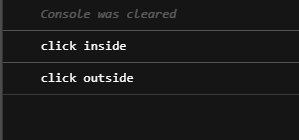

Now if I compile and run this code I will see this on the console:

_Result of running the code_

Here the class decorator was a function that had _constructor_ of the class as its argument and logs something on console easy-peasy. We can see that **before** running the constructor of the class it runs _the class decorator_.

Let’s see an example that we can pass our constructor in the decorator:

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/56031b8ba6d683b3215b01a8a67006d5/href">https://medium.com/media/56031b8ba6d683b3215b01a8a67006d5/href</a></iframe>

In order to make some changes to constructor of the class from _the class decorator_ we have to return a class that **extends** the **base class**.

The Typescript compiler forces us to do this(return a class that extends the base class).

**Why compiler is forcing us do this?** Because otherwise the type we are returning is not compatible with the base class.

If what is happening is still vague you should recall that classes are actually functions(You may find so many helpful comments on that [here](https://stackoverflow.com/questions/11970141/javascript-whats-the-difference-between-a-function-and-a-class)).

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/8149596a93aed43fd64e86da5feb9660/href">https://medium.com/media/8149596a93aed43fd64e86da5feb9660/href</a></iframe>

Now that we know this, consider the class decorator as a higher order function. It is somehow similar to the implementation of [method decorator](https://dev.to/pshaddel/start-writing-your-own-typescript-method-decorators-3jm4-temp-slug-1785958).

**What if we want to use a class decorator on all classes?** That is possible by using Typescript Generics. Take a look at this example:

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/1bca8141f9a41e450fe6431a1746c53f/href">https://medium.com/media/1bca8141f9a41e450fe6431a1746c53f/href</a></iframe>

We can use this class decorator on all classes.

```

<T extends { new (...args: any[]): {} }>

```

This is the definition of generic and if you are wondering why it extends { new (...args: any[]): {} you should know that we expect a constructor function and this is how we can tell the compiler to check that.

Here T is the generic class and we are able to return new constructor by extending it in line 4.

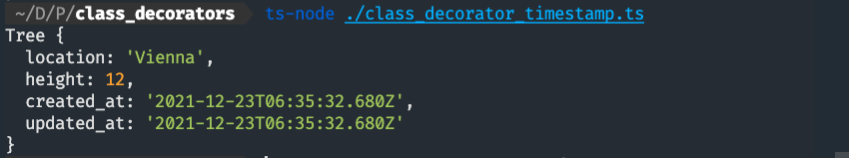

In line 5 and 6 we added two timestamps members to the class. Now each class that wrapped by this decorator has these two timestamps created\_at and updated\_at .

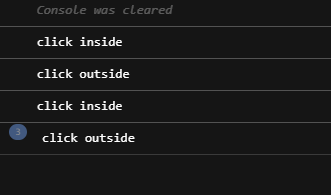

Let’s see the result on console:

_Timestamp Decorator_

As we can see class has two additional members updated\_at and created\_at

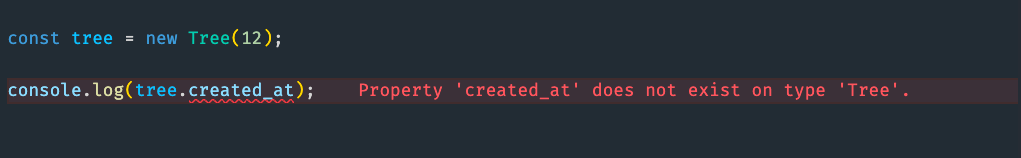

**Can we access these two additional properties?**

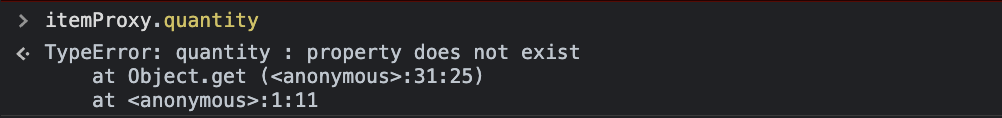

_Calling Additional Properties that added by Decorator_

> _The Decorator does not change the Typescript Type_

The only way to access that property is to tell the compiler to ignore that line. If you consider timestamps example, we usually want to pass them to an ORM or ODM to save a record or document in the database, so there should be no problem even if we do not call them directly. These properties are going to be passed in the object.

Another really useful decorator that is mentioned in official TS Docs is **sealed decorator**.

### Sealed Decorator

When we seal a class we want to prevent more functionalities and properties to be added or removed. Let’s see that in action:

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/615cd03dd8d6e9f73a2173da533cf301/href">https://medium.com/media/615cd03dd8d6e9f73a2173da533cf301/href</a></iframe>

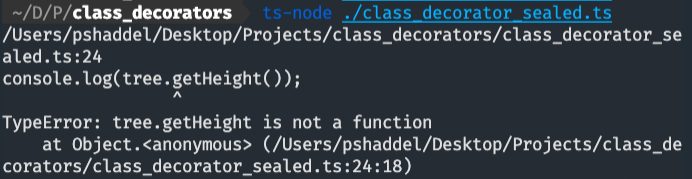

We sealed the constructor and it’s prototype. If we try to change the constructor this way(We had to ignore the compiler to be able to do this):

<iframe src="" width="0" height="0" frameborder="0" scrolling="no"><a href="https://medium.com/media/5f85915def8a4c38978cfd6a3cb7c8e4/href">https://medium.com/media/5f85915def8a4c38978cfd6a3cb7c8e4/href</a></iframe>

That will result in a TypeError:

_Result of changing a class prototype_

If we do not use @sealed decorator then this is going to be the result:

_Calling getHeight without sealed decorator_

### Conclusion

Class decorators are simply a function that runs at runtime and it can change behavior of a class. Using Class Decorators and wrapping other classes can be a little bit tricky in some cases if you want to add some functionalities to them since the decorator does not change the typescript type.

Decorators are widely used in [Angular](https://angular.io) and [NestJS](https://docs.nestjs.com/) since both of these frameworks use OOP paradigm and you can take a look at their documents and the implementation of decorators.

* * * | pshaddel |

1,027,417 | Lessons in AWS Python CDK: 2-Parameterizing Your Stack | TLDR I don't like using the cdk.context.json file. I use a config/<env>.yaml file that... | 17,190 | 2022-03-20T13:40:23 | https://dev.to/aws-builders/lessons-in-aws-python-cdk-2-parameterizing-your-stack-384 | aws, python, beginners, tutorial | {% details TLDR %}

I don't like using the `cdk.context.json` file. I use a `config/<env>.yaml` file that holds environment specific values and omegaconf to parse it.

Say you have dev and prod VPCs that already exist and you want to use the same stack to deploy to both.

Files:

config/dev.yaml

```yaml

vpc:

vpc_id: vpc-1234567890

```

config/prod.yaml

```yaml

vpc:

vpc_id: vpc-9876543210

```

In the stack:

```python

from omegaconf import Omegaconf

deploy_env = "dev" # <-- This could/should be set by your CICD pipeline

conf = OmegaConf.load("config/{0}.yaml".format(deploy_env))

# load pre-existing vpc into variable

vpc = ec2.Vpc.from_lookup(self, conf.vpc.vpc_id, vpc_id=conf.vpc.vpc_id)

```

In typical yaml fashion everything is a dictionary or a list and you can, therefore, navigate and loop. (details below)

{% enddetails %}

{% details About Me %}

My name is Jakob and I am a DevOps Engineer. I used to be a lot of other things as well (Dish Washer, Retail Employee, Camp Counselor, Army Medic, Infectious Disease Researcher), but now I am a DevOps Engineer. I received no formal CS education but I'm not self taught, because I had thousands of instructors who taught me through their tutorials and blog posts. The culture of information sharing within the software engineering community is vital to everyone, especially those like me who didn't have other options. So, as I learn new things I will be documenting them through the eyes of someone learning for the first time, because those are the people most in need of a guide. Happy Learning! And don't be a stranger.

{% enddetails %}

---

Note: I am NOT going to be sourcing and fact checking everything here. This is not an O'Reilly book. The language and descriptions are intended to allow beginners to understand. If you want to be pedantic about details or critique my framing of what something is or how it works feel free to write your own post about it. These posts are intended to be enough to get you started so that you can begin breaking things in new ways on your own!

---

## The Problem ##

One thing that I found some difficulty with when I started using the AWS-CDK was how to handle deploying into multiple pre-existing environments. Of course, the CDK makes it easy to create a stand-alone stack with a new VPC, subnets, buckets, and certificates, but sometimes we have a pre-existing environment we need to deploy into. Or perhaps we want to use variations on the same stack to deploy into multiple environments, eg. smaller instances instances for a development environment.

In Terraform, we might pass in a `dev.tfvars` or `prod.tfvars` file. With the CDK you can use the recommended `cdk.context.json` file and pass context dependent parameters into the stack. But after scanning over the documentation for how to add values to the context file, I decided it was too annoying and I wanted a better way.

## My Solution ##

I have settled on pairing a yaml parsing library called `omegaconf` to make my own `.tfvars`-like parameter file. Let me show you how it works.

### The Setup ###

First, create yourself come configuration files. I made a folder at the root of the project called `/config` where I created yaml files named for each environment, eg. `dev.yaml`, `uat.yaml`, `prod.yaml`. Lets also add a couple things to `dev.yaml`

```yaml

aws:

account: "12345678910"

region: us-east-1

env: Dev

```

Now, throw `omegaconf` into your requirements.txt file and `pip install` it. (you are using a virtual environment right?)

Import it into both the `app.py` file at the root of your project and any stack files you plan on using the parameters.

```python

from omegaconf import Omegaconf

```

---

### Implementation ###

Depending on how you are going to manage deploying to your environments, you are going to load a different config file.

For example, if you are just going to deploy from your local computer, you can set an variable the the deploy environment at the top of your file (so that it is visible and easy to change) and then use that variable to load your config.

```python

1 import aws_cdk as cdk

2 from uber_for_cats.uber_for_cats_stack import UberForCatsStack

3 from omegaconf import Omegaconf

4

5 deploy_env = "dev"

6

7 conf = OmegaConf.load("config/{0}.yaml".format(deploy_env))

8

9 app = cdk.App()

10 UberForCatsStack(app, "UberForCats{0}".format(conf.env),

11 env=cdk.Environment(account=conf.aws.account, region=conf.aws.region),

12 )

13

14 app.synth()

```

On `lines 5-7` the environment is set to dev and the `config/dev.yaml` file is set to `conf`.

If you were using a CICD pipeline to automatically deploy, `deploy_env` could be set based on a pipeline variable and `line 5` could look like this.

```python

5 deploy_env = os.getenv("DEPLOY_ENV")

```

But the result will be the same the values set in the `config/dev.yaml` file will be used and the file will be read as if the strings were there.

```python

1 import aws_cdk as cdk

2 from uber_for_cats.uber_for_cats_stack import UberForCatsStack

3 from omegaconf import Omegaconf

4

5 deploy_env = "dev"

6

7 conf = OmegaConf.load("config/dev.yaml")

8

9 app = cdk.App()

10 UberForCatsStack(app, "UberForCatsDev",

11 env=cdk.Environment(account="12345678910", region="us-east-1"),

12 )

13

14 app.synth()

```

This allows you to set other values in `config/uat.yaml` such as a different account, different sized instances/EBS volumes, autoscaling rules, etc. depending on what is required in each environment.

### Something to Remember ###

Yaml is basically a nested dictionary that can also contain lists. When you see a `-` before something it is a list and therefore iterable. Take, for example, the following.

```yaml

aws:

account: "12345678910"

region: us-east-1

vpc:

vpc_id: vpc-aabbccdd

subnet:

private:

- subnet-65asdf651sadf65

- subnet-c65as1df65f56sa

- subnet-afas65df1a6sdf5

```

Those private subnet IDs are a list and you can iterate over them.

```python

private_subnets = []

for i, subnet in enumerate(conf.vpc.subnet.private):

private_subnets.append(

ec2.Subnet.from_subnet_id(self, "pri{0}".format(i), subnet_id=subnet)

)

```

This would create a list of subnet objects (`ISubnet`) that you can use for the placement of an autoscaling group or EKS cluster. Neat!

Congrats, Those are the basics! You should be able to get started.

---

## Some Additional Tricks ##

While using a yaml file for configuration settings, I ran into some situations that might be worth sharing.

### __Sometimes strings require extra steps__ ###

I am using the CDK to make some EKS clusters. Part of this process is creating node-groups (think autoscaling groups for Kubernetes). I wanted to leverage some spot instances for a portion of our development cluster and part of the process of creating these nodegroups in CDK is specifying the compute size and class. Because this could be different between environments I put it in the config file.

```yaml

node_group:

spot:

min: 1

max: 5

type:

- i_class: BURSTABLE3

i_size: LARGE

- i_class: BURSTABLE3

i_size: XLARGE

- i_class: COMPUTE6_INTEL

i_size: LARGE

- i_class: COMPUTE6_INTEL

i_size: XLARGE

```

But that won't work for setting instance types:

```python

instance_type = ec2.InstanceType.of(

ec2.InstanceClass.conf.node_group.spot.type[0].i_class,

ec2.InstanceSize.conf.node_group.spot.type[0].i_size,

)

```

And, honestly, it isn't very readable either. My workaround was making dictionaries of instance classes and sizes. then using the value in the yaml as the key the the appropriate class/size.

```yaml

node_group:

spot:

min: 1

max: 5

type:

- i_class: t3

i_size: large

- i_class: t3

i_size: xl

- i_class: c6i

i_size: large

- i_class: c6i

i_size: xl

```

```python

ec2_class = {

"t3": ec2.InstanceClass.BURSTABLE3, # max 2xl

"c6i": ec2.InstanceClass.COMPUTE6_INTEL, # min large

}

ec2_size = {

"large": ec2.InstanceSize.LARGE,

"xl": ec2.InstanceSize.XLARGE,

}

```

With the combination of the above, we can now use the string in the yaml as the key to pull in the ec2 objects in the format that the CDK requires. Below I make a list of the specified instance combinations and pass it into the EKS cluster as nodegroup capacity, but you could just as easily specify a list of classes and types and use a python library like `itertools` to make ALL of the combinations in one line. That might sacrifice readability though. So actually, don't do that. But you could...

```python

spot_instance_types = []

for instance_type in node_group.spot.type:

this_type = ec2.InstanceType.of(

ec2_class[instance_type.i_class],

ec2_size[instance_type.i_size],

)

spot_instance_types.append(this_type)

cluster.add_nodegroup_capacity(

"{0}-spot-nodegroup".format(conf.env),

nodegroup_name="{0}-spot-ng".format(conf.env),

capacity_type=eks.CapacityType.SPOT,

min_size=node_group.spot.min,

max_size=node_group.spot.max,

instance_types=spot_instance_types, # <-- list of instance types

disk_size=250,

subnets=ec2.SubnetSelection(subnets=private_subnets), # <-- those subnets from before!

)

```

---

### __Booleans are Useful__ ###

So we are promoting this project out to production and _someone_ doesn't think spot instances are a good idea even though you have diversified your spot pools. Throw a trigger into the config.

```yaml

node_group:

spot:

enabled: False

min: 1

max: 5

```

Then you can run your node creation based on it!

```python

if conf.node_group.spot.enabled:

spot_instance_types = []

for instance_type in node_group.spot.type:

this_type = ec2.InstanceType.of(

ec2_class[instance_type.i_class],

ec2_size[instance_type.i_size],

)

spot_instance_types.append(this_type)

cluster.add_nodegroup_capacity(

"{0}-spot-nodegroup".format(conf.env),

nodegroup_name="{0}-spot-ng".format(conf.env),

capacity_type=eks.CapacityType.SPOT,

min_size=node_group.spot.min,

max_size=node_group.spot.max,

instance_types=spot_instance_types, # <-- list of instance types

disk_size=250,

subnets=ec2.SubnetSelection(subnets=private_subnets), # <-- those subnets from before!

)

```

Beautiful!

| misterjacko |

1,028,241 | How to understand complex coding concepts better using the Feynman Technique | There are other learning techniques available, but this is one of my favorites. The Feynman approach... | 0 | 2022-03-19T22:20:57 | https://cesscode.hashnode.dev/how-to-understand-complex-coding-concepts-better-using-the-feynman-technique | javascript, beginners, webdev, programming | There are other learning techniques available, but this is one of my favorites. The Feynman approach is an excellent way to gain a deeper understanding of a complex topic. It's one of the quickest ways to turn a complex topic into one that you can explain in simple terms to others.

This article will teach you how to break complex coding concepts into the simplest terms.

let's get started 💃

## The Feynman Technique Of Learning

Richard Feynman, a Nobel Prize-winning physicist, created the Feynman method for learning. He enjoyed explaining complex topics in simpler terms. In Feynman's view, the best way to study an idea was to ask hard questions and fully understand it.

For more information about Richard Feynman, see this [article.](https://www.nobelprize.org/prizes/physics/1965/feynman/biographical/)

> "If you want to learn something, read about it. If you want to understand something, write about it. If you want to master something, teach it." - Yogi Bhajan.

Feynman's technique, in a nutshell, is you can't explain something well if you do not know it well yourself. When you try to explain what you know to someone who doesn't know anything about it, you'll notice your flaws. The goal is to communicate what you've learned in a simple way that a child can understand.

## What are the benefits of using the Feynman technique?

Here are a few of the benefits of using Feynman's learning techniques:

- It helps in gaining a thorough understanding of what you're learning. If you're having trouble understanding Javascript loops, try this learning method.

- **Learn new Ideas:** It allows you to learn new things fast, recall what you have learned, and be more productive.

- It helps you become a better teacher. You get better at teaching when you keep sharing your knowledge with others.

- It improves your critical thinking ability. You will be able to reason in an organized manner to explain complex stuff in simpler terms.

## The four steps of the Feynman technique

The Feynman Technique consists of four significant steps:

- Choose a topic you want to learn about.

- Explain it to a 12-year-old.

- Review Your Explanation.

- Simplify.

### Step 1 - Choose a topic you want to learn about

First, you should come up with a subject or topic you would like to learn and then write it at the top of a piece of paper.

For example, if you want to study javascript loops, write it as a heading on a blank piece of paper. As you keep learning about javascript loops, write whatever you know on that piece of paper. Write it so that someone who knows nothing about javascript loops will understand it.

Before moving on to step 2, do more research on Javascript loops or take a practice test to see how good you are.

Check out this article for resources to help you practice [web development](https://cesscode.hashnode.dev/resources-to-help-you-practice-web-development).

Once you have a firm understanding of the topic (Javascript loops), proceed to step 2.

P.S. Paper can be anything you use for writing, such as your phone's notebook app to any other app you use every day.

### Step 2 - Explain it to a 12-year-old

Now that you have a clear understanding of Javascript loops, it's time to explain it to a 12-year-old.

You don't have to look for a 12-year-old to teach. All you have to do is explain loops in the most basic terms possible, such that even a child can understand.

There's a saying that using complex terms to explain a topic mask one's lack of understanding. So your ability to explain loops in the simplest terms possible means you know what you are saying.

I know some of you reading this article are thinking, but what if I don't have somebody to explain what I'm learning?

That's not a problem. You'll use a variety of methods to explain what you've learned, including:

- **Technical writing:** You don't have to be a great writer to start writing. All you have to do is start writing. Platforms like dev.to, hashnode, medium make it easy to share what you learn. So sign up for one of the platforms and begin writing. Also, check out Google's free technical writing [course](https://developers.google.com/tech-writing).

- **Join online communities:** Join online communities to share what you have learned. When you join online developer groups, you'll meet people who share your interests. You will be comfortable sharing and answering questions. A fantastic developer community to join is the Freecodecamp online [forum](https://forum.freecodecamp.org). You can also use social media platforms like YouTube, Tik Tok, Twitter, e.tc.

Another way you can explain what you've learned is by doing it in front of an imaginary audience. Pretend you're teaching a group of 12-year-olds about javascript loops.

Teaching to an imaginary audience might be fun, but they cannot ask questions. This learning method works best when you use a real audience because they can ask questions.

When your audience asks you questions, you get to identify areas in which you need to improve.

### Step 3 - Review Your Explanation

Step 2 will, as stated before, assist you in identifying specific areas where you need to improve.

Review your loop explanation and identify areas where you think it fell short. Now that you know where you fell short, go back over your learning material to understand better. Consider using other learning resources if possible. Study until everything you couldn't explain before is clear to you.

Step 3's goal is to change your areas of weakness into your areas of strength.

### Step 4 - Simplify

As a result of step 3, you now have a better knowledge of javascript loops. Step 4 requires you to practice step 2 again with your new understanding of loops.

Take up your note and simplify every area of loops you couldn't explain before. Rewrite your loops article or give someone else a better explanation.

You can also pretend you're teaching a group of 12-year-olds about javascript loops. If you cannot explain a particular part of the topic, go back to step 3 to understand it better.

This method of learning works best for topics that are tough to understand. It is not an effective learning method if you already understand a concept.

## Conclusion

This learning style is all about understanding a topic to the point where you explain it in your own words. When you describe it, act as though you're educating a child to see how well you know the subject.

Also, keep in mind that you can use this learning method to study any concepts that you find difficult.

Thank you for reading. I hope you enjoyed the article! If you have any questions or a learning strategy you would love to share? Post about it in the comment section. I'll attend to them shortly.

If you found this article helpful, please like and share it 💙.

## Resources

Here are some resources that may be useful to you:

- [Learning From the Feynman Technique](https://medium.com/taking-note/learning-from-the-feynman-technique-5373014ad230)

- [Getting work done with the Pomodoro technique](https://cesscode.hashnode.dev/getting-work-done-with-the-pomodoro-technique)

- [Richard Feynman](https://en.wikipedia.org/wiki/Richard_Feynman)

| cesscode |

1,028,419 | Turn a single brand color into your own Complete Web Color System... in minutes! | How many times have you neglected your app's UI just because you had a deadline, and you needed to... | 0 | 2022-03-20T22:03:36 | https://dev.to/arnelenero/turn-a-single-brand-color-into-your-own-complete-web-color-system-in-minutes-4nkb | javascript, typescript, showdev, webdev | How many times have you neglected your app's UI just because you had a deadline, and you needed to focus on your app's functionality instead?

Color forms an important foundation of every UI. It enables **consistent** expression of your brand/identity and style, and **effective** communication of intent and meaning.

However, it can be quite daunting for developers like you and me to implement a proper color system, especially when this task has to contend with working on actual functionality of our app.

For Web developers, here's where **Simpler Color** could help. I wrote this small library so that we no longer have to sacrifice not having a cohesive, professional UI color system while focusing on the other important stuff.

And all you need is **a single brand color**...

## Easy as 1-2-3

**Step 1:** Install simpler-color

```

npm install simpler-color

```

**Step 2:** Specify your brand color, and it generates the rest of the _base colors_!

```js

import { harmony } from 'simpler-color'

// Generate 5 harmonious base colors from your main brand color!

const baseColors = harmony('#609E3F')

```

(You can also define your own custom base colors if you prefer, or if you already have a set of brand colors)

**Step 3:** Create your _color scheme(s)_ by mapping UI roles to specific colors from the auto-generated palettes

```js

import { colorScheme } from 'simpler-color'

const scheme = colorScheme(

baseColors, // 👈 From these base colors...

// 👇 ...your color palettes are auto-generated

colors => ({

// 👇 which you then map to UI roles.

primaryButton: colors.primary(40),

primaryButtonText: colors.primary(95),

surface: colors.neutral(98),

text: colors.neutral(10),

...etc,

}),

)

// Access various UI colors as `scheme.primaryButton` and so on.

```

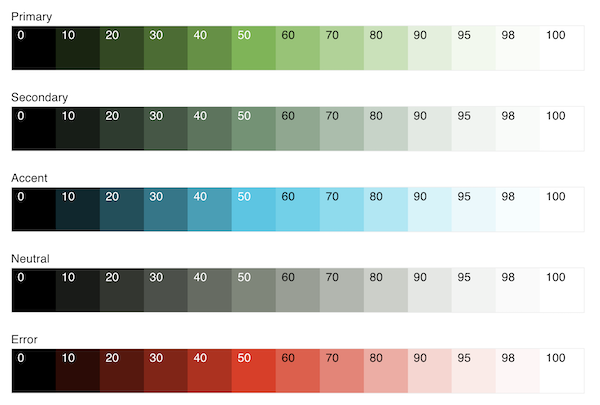

Here's the complete range of colors that our example code generates from a single color value of `#609E3F`:

It's that simple! (Plus the library can do quite a bit more.)

So why don't you give Simpler Color a try. Check it out on GitHub: https://github.com/arnelenero/simpler-color

> Please don't forget to give it a star ⭐️ on GitHub if you like the library, its concept and the simplicity.

If some terms used above sound a bit alien to you, check out the comprehensive README in the link above for more details.

Hope you find this library useful. With proper color, even an early prototype or proof-of-concept app would certainly impress!

| arnelenero |

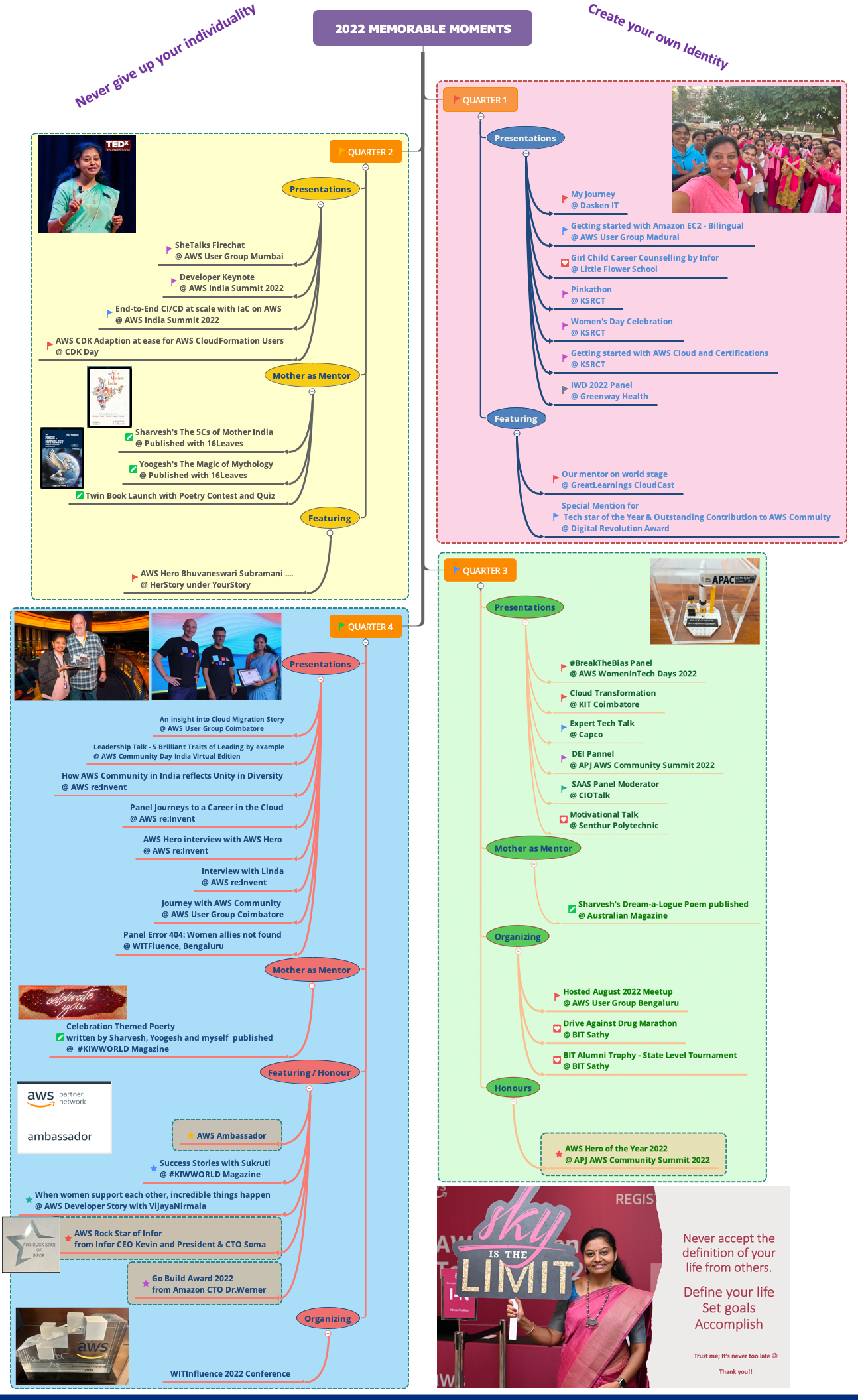

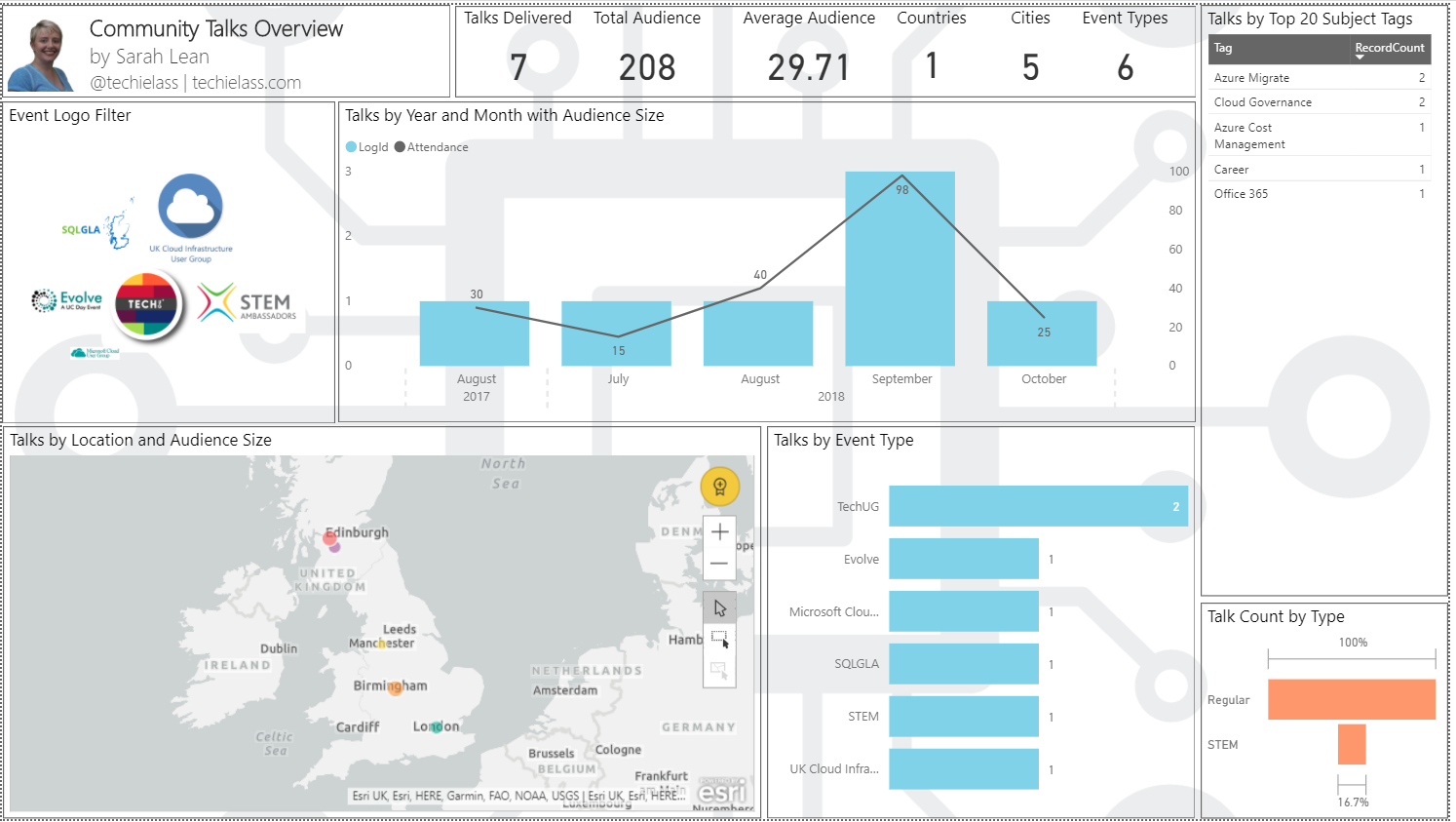

1,028,720 | 2022 Memorable Moments | When I shared my journey 2021, I was wondering can I do any better in future and to my surprise, I... | 0 | 2022-12-28T13:21:00 | https://dev.to/aws-heroes/2022-memorable-moments-32ja | aws, leadership, womenintech, cloud |

When I shared [my journey 2021](https://dev.to/aws-heroes/look-back-and-relish-2021-bn4), I was wondering can I do any better in future and to my surprise, I could go above and beyond my own records in 2022.

Everytime when I post in social media about the presentation in industry conferences, community sessions, or the honorary recognition received,

I wonder, is it really needed ? Am I bragging too much?

But trust me, a WomenInTech made me realize that how invaluable these little posts are to inspire and motivate all those aspiring individuals with burning desire and dream about their career.

> And the storyline is like this..

> A WomenInTech who was awestruck by what Bhuvana could accomplish in 2021 took a screenshot of my 2021 journey infographic and stored it on her phone as a reference.

Six months later, she came and told me about her self-transformation which included

> - The discipline she has brought to her fitness routine and shed several kgs of weight

> - Upskilled in a couple of technologies

> - Become a blogger

> - Speaker at a few external events

> - Furthermore, she advanced in her career

> - Most importantly, the confidence level and energy went up

Yes, she proved it right that `if Bhuvana could do something and I can do much more.`

Thereafter I became #unstoppable to share every little success to those followers / aspirants

Here are my 2022 memorable moments

- Industry Conferences, corporates & Community Events - 19

- Expert / Motivational Talk Educational Institutions - 8

- Hosting / Organizing - 2

- Featuring - article, poem, success story - 6

- Honours / Awards - 5

- Happily published poetry books written by my kids - 2

My suggestion to the #awscommunity, #womenintech, please keep sharing your little progress and accomplishments. You never know it could be a game changer for someone.

When you could inspire so many, its always heart-warming to give the credit to who inspired you. I drew-up inspiration from a good friend and best techie - Runcy Oommen and less he would know this fact because never discussed 😊.

Thanks a lot to AWS User Group Bengaluru, AWS User Group Madurai, Infor, #AWSCommunity, #AWSHeroes, BIT Sathy, KIT, KSRCT, Greenway Health, Dasken IT, Capco, Little Flower school, AWS User Group Madurai, AWS User Group Coimbatore, AWS User Group Mumbai for travelling with me in this journey.

And certainly not challenging myself to set any new record in 2023.

Let me continue to **Learn**, **Unlearn** and **Share** as always.

As we march into a new year, wishing you all loads of success on your way with good health and happiness taking the front seat 💐

| bhuvanas |

1,029,540 | RapidLoad | A post by toyagov | 0 | 2022-03-21T09:54:58 | https://dev.to/toyagov378/rapidload-review-automated-unused-css-removal-up-to-95-1kh7 | toyagov378 | ||

1,029,886 | Diary of a developer #1: Which way to go? | If you are just starting out in the development area, this post can help you get a sense of how to... | 0 | 2022-03-21T16:20:05 | https://dev.to/bielmartin/diary-of-a-developer-1-which-way-to-go-4p3l | If you are just starting out in the development area, this post can help you get a sense of how to proceed, however, if you are already an experienced developer, it is worth sharing a little bit of the path you took taken.

Initially, it is important that you set some goals of your own, **analyze if this is really what you want**, sit all day in front of the computer, analyze demands and propose solutions on top of them, do **not imagine that it will be easy**, but as all the effort is worth it if applied in the right way, when you pass through all these points **you will be in a beautiful area full of possibilities and challenges**.

Below I leave my opinion on what I consider an ideal path for a novice developer to follow, remembering that it is not a rule, but they are important steps for learning and in the search for your first job.

Let's go for points. if you've never had contact with any programming language, don't worry, choose one calmly, always taking into account **your way of coding**, **level of complexity of the language for beginners**, **the job market** and if you already know people who develop and who may give you a boost initially. **But the reality is that it doesn't matter**, python, ruby, java, C#, each one has its peculiarities, but they all exist for common purposes, **they are codes that tell the computer how to behave**, never forget that, the common computer is not intelligent to the point of being highly managed, virtually everything that exists has been coded previously.

Assuming that you have already chosen the most attractive language, now it's time to run, every information you receive in this period is important, **try to develop mini-projects**, calculators, calendars, all this will help even when setting up your portfolio, at the end of this article I will leave some links with projects for beginner developers.

Most development positions follow a pattern, and something I can suggest is: **Be pursuing a degree related to technology**. It's not a rule, but if you're in an academic environment, your chances of getting your first job go up a lot, be it even an internship.

**Paths in development**

Assuming you have some experience in your language, have already developed introductory projects and feel that you are ready for the next steps, then let's see some interesting technologies that add significantly to your curriculum. Below I will cite a few:

1. Understand the **IDE** you are using (I quote VS Code)

2. **Git**

3. Search for a **framework** related to your language and the Area you intend to follow

4. **Docker**

5. Understand what a **stack** is

Now I will briefly detail each topic:

**IDE** - The integrated development environment is a development tool for editing code, accessing a terminal, running a script, debugging and compiling using a single environment.

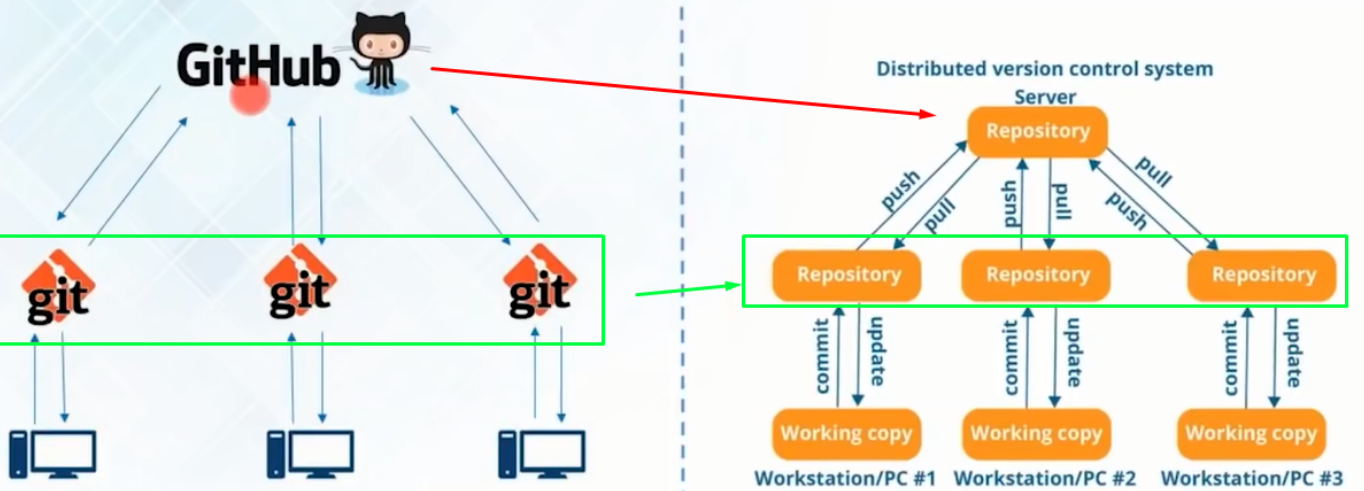

**Git** - These control systems have the function of recording any changes made on top of a code, storing this information and allowing, if necessary, a programmer to revert to previous versions of an application in a simple and fast way.

**Framework** - In programming, a framework is a set of generic codes capable of uniting parts of a development project. It works like a puzzle piece capable of fitting in the most diverse places and connecting all the lines of code in an almost perfect way.

**Docker** - In short, we can say that Docker is an open platform, created with the aim of facilitating the development, deployment and execution of applications in isolated environments. It was specially designed to deliver an application as quickly as possible.

**Stack** - In programming, stack is the name we give to the set of technologies that can be used to develop applications.

At the end of this article I will leave all the references so that you can study and better understand each technology. This was the learning line that I used in my learning flow, of course each language, framework and stack has its particularities, but understanding the concept makes everything easier.

Thank you for reading and I hope I have helped you in the best way. As a bonus I can tell you, if this is your dream, don't give up, you have the ability, the most important thing is to never give up.

References:

- https://www.tiagogouvea.com.br/profissional/projetos-reais-aprender-programacao/

- https://www.alura.com.br/artigos/o-que-e-uma-ide

- https://www.atlassian.com/br/git/tutorials/what-is-git

- https://www.lewagon.com/pt-BR/blog/o-que-e-framework

- https://stack.desenvolvedor.expert/appendix/docker/oquee.html

- https://kenzie.com.br/blog/full-stack-o-que-e/

| bielmartin | |

1,030,436 | RSMQ for golang | RSMQ is the most simple Queue Implementation in the known universe. My own implementation here comes... | 0 | 2022-03-22T22:03:31 | https://dev.to/ebuckley/rsmq-for-golang-2ej5 | go, redis, showdev | RSMQ is the most simple Queue Implementation in the known universe. My own implementation [here](https://github.com/ebuckley/rsmq) comes in at under 500 lines of code. Join me for a short tour of the code, and how it works.

People might be asking why I went to this effort when there are already so many implementations? Educational reasons. Implementing RSMQ is a fine and tightly scoped project that can be completed in a couple days of after work effort. In the day job we have been using a PHP implementation of the library but I wanted to deepen my knowledge of this simple tool by creating an implementation in my favorite language!

If you have Redis already, RSMQ is probably the easiest way to add messaging between different languages, processes and machines!

There are PHP/Python/Javascript/Java/C#/Rust/Go implementations now available for your usage. Want to write your own? No problem! Read this and become convinced that this is a simple and basic implementation task.

## How does RSMQ work?

Create Queues, send Messages, receive them on the diaspora of devices/processes and languages. Queues contain messages. Messages are delivered in a first in-first out basis (think about the queue in line at the McDonloads).

A Message is only delivered to one consumer. A consumer, being the thing that takes the message and finishes the work, deleting the message at the end. There is a time limit for how long a message can be processed for. When the time runs out, the message re-enters the queue and can be picked up by another consumer.

A typical worker implementation will receive a message, do the work, and then delete the message once it has processed successfully. If the worker crashes during the work, the message will be re-entered on the queue automatically, and another consumer will be able to pick it up.

# Implementing our own.

Some Redis knowledge assumed. You should become familiar with the basics. Today we will be using the following data structures.

- Sets (contains the list of queues)

- Hashes (contains the stats for a specific queue, and messages)

- Sorted Sets (Zset)

## Creating a new message Queue

A new queues is simply a unique Hash and a member added to a set.

```go

key := rsmq.ns + ":" + opts.QName + ":Q"

```

A queue is uniquely identified by the key. This is how we reference the queue, send messages to it, pop from it, update attributes about the queue. You will be using similar identifiers to this throughout your code in a lot of places.

```go

_, err = rsmq.cl.HMSet(ctx, key, map[string]interface{}{

"createdby": "ersin",

"vt": 30, // TODO allow this to be set with CreateQueueRequestOptions

"delay": 0,

"maxsize": 65536,

"created": unixMilliseonds,

"modified": unixMilliseconds,

}).Result()

if err != nil {

return fmt.Errorf("CreateQueue: set queue params: %w", err)

}

```

We define a hash for the attributes of the queue, including informative things like the delay in seconds before a message becomes receivable and the maximum size of the message in the queue.

```go

_, err = rsmq.cl.SAdd(ctx, rsmq.ns+":QUEUES", opts.QName).Result()

if err != nil {

return fmt.Errorf("CreateQueue: add queue to QUEUES set: %w", err)

}

```

Finally, we add the name of the queue to a set key. This allows our library to list all queues that are created under a given namespace.

## Sending a message to the queue

Code first, description second. It looks like this!

```go

pipe := rsmq.cl.Pipeline()

pipe.ZAdd(ctx, key, &Redis.Z{

Score: float64(q.TimeSent.Add(sendTime).UnixMilli()),

Member: q.UID,

})

pipe.HSet(ctx, key+":Q", q.UID, opts.Message)

pipe.HIncrBy(ctx, key+":Q", "totalsent", 1)

_, err = pipe.Exec(ctx)

```

Sending a message is a three step process that must happen as Redis 'pipeline'. A pipeline ensures that the actions do not require a round trip between client and server.

1. Add the message ID to the Sorted Set `rsmq.ns + ":" + opts.QName` with a score being the unix milliseconds of when it should send. Later on, we will re-score this message which has the effect of hiding it from other Q consumers.

2. Set the message content as a value on the `rsmq.ns + ":" + opts.QName + ":Q"` hash. The key of the content is the message ID

3. Increment the `totalSent` field of the Q hash used in step (2).

Note that we execute these in a 'pipe', which ensures that these steps happen atomically on the Redis data store, so we can be confident that the 3 commands always happen in sequence.

## ReceiveMessage

Our final operation for this code review is to receive a message from the queue. This step would be called by the workers that do the actual processing.

When a message is received it comes with an explicit deadline set for processing the message. This is known as the 'Visibility Timeout' and it is the number of second in which this message will be exclusively available to the one worker. If the visibility timeout expires, then the message will end up being sent to another worker.

```golang

results, err := rsmq.cl.EvalSha(

ctx,

// this references the embedded LUA procedure

*rsmq.receiveMessageSha1,

// these parameters are presented as KEY to the LUA script

[]string{key, timeSentUnix, timeVisibilityExpiresUnix}).Slice()

```

Only a single line on the Go side of things. We call a Lua script which runs on Redis to do the heavy lifting. An embedded Lua script runs in an atomic an thread-safe way on the datastore. This is how we get the guarantees of only one worker receiving a message at a time.

The following parameters to the Lua:

- KEYS[1]. Is the name of the sorted set containing the Q `rsmq.ns + ":" + opts.QName`

- KEYS[2]. Is the time when a message has been requested from the Q.

- KEYS[3]. Is the time when a message should become visible to other consumers again.

Now let's step through the Lua procedure.

```lua

local msg = Redis.call("ZRANGEBYSCORE", KEYS[1], "-inf", KEYS[2], "LIMIT", "0", "1")

if #msg == 0 then

return {}

end

```

Remember that there is a sorted set scored by timestamp sent for each message in the queue. The above code returns exactly one or zero message that is due for return between the `-inf` score and the current timestamp provided `KEYS[2]`.

```lua

Redis.call("ZADD", KEYS[1], KEYS[3], msg[1])

```

Next we re-score the current message found with the new visibility timeout defined in the `KEYS[3]` parameter. This step is important, because it keeps our queue in the data store. Other workers will be able to pick up this message again if it is not deleted before the visibility time has expired.

```lua

Redis.call("HINCRBY", KEYS[1] .. ":Q", "totalrecv", 1)

```

Now we increment the `totalrecv` counter on the Hash which contains the Q.

The final block of code concerns returning the message.

```lua

local mbody = Redis.call("HGET", KEYS[1] .. ":Q", msg[1])

local rc = Redis.call("HINCRBY", KEYS[1] .. ":Q", msg[1] .. ":rc", 1)

local o = {msg[1], mbody, rc}

if rc==1 then

Redis.call("HSET", KEYS[1] .. ":Q", msg[1] .. ":fr", KEYS[2])

table.insert(o, KEYS[2])

else

local fr = Redis.call("HGET", KEYS[1] .. ":Q", msg[1] .. ":fr")

table.insert(o, fr)

end

return o

```

An HINCRBY is called to increment our count of how many times this message has been received.

To wrap it all up, the message content is returned along with a few additional infomrative attributes.

## Want to implement your own?

My dreams would come true if I inspired you to write something like this for your own learning purposes. It would make my week complete if you left a comment.

All Credit for the original implementation of RSMQ goes to https://github.com/smrchy/rsmq :)

| ebuckley |

1,031,538 | POO - Abstracción | Según una definición que encontré en internet, abstraer algo significa: separar por medio de una... | 0 | 2022-03-23T03:08:24 | https://dev.to/nahuelsegovia/poo-abstraccion-4m0h | poo, oop, python, backend | Según una definición que encontré en internet, abstraer algo significa: separar por medio de una operación intelectual(pensar)

un rasgo o una cualidad(en nuestro caso métodos y atributos) para

analizarlos aisladamente. En palabras más simples aún, sería enfocarnos en eliminar o ignorar detalles de algo para obtener los puntos más relevantes para su funcionamiento.

**¿Pero como nos sirve todo lo de arriba?**

Bien, supongamos nuevamente que tenemos un celular moderno, este va a poder:

- Llamar

- Enviar SMS

- Instalar aplicaciones

- Sacar fotos

- Grabar video

Y para realizar todas estas cosas el celular necesita:

- Batería

- Placa wifi

- Camára de foto y video

- Sistema operativo funcional(Android, iOS)

De esta forma vemos como se puede realizar una abstracción de un celular, así que ahora podemos crear nuestra clase:

```python

class Celular():

def __init__(self, name, wifi_card, battery, camera):

self._name = name

self._battery = battery

self._wifi_card = wifi_card

self._camera = camera

def call(self, number:int): print('Calling'+str(number)+'...')

def send_sms(self, number:int, msg:str): print('Sending '+msg+' to ' +str(number))

def install_app(self, app_name:str): print('Installing '+app_name+' app...')

def take_a_photo(): print('Taking a photo...')

def record_video(): print('Recording video...')

``` | nahuelsegovia |

1,031,870 | ESLint and Prettier configuration for React project | ESLint is a tool for identifying and reporting on patterns found in ECMAScript/JavaScript code, with... | 0 | 2023-03-21T04:53:52 | https://dev.to/tsamaya/eslint-and-prettier-configuration-for-react-project-2gij | react, eslint, prettier |

> ESLint is a tool for identifying and reporting on patterns found in ECMAScript/JavaScript code, with the goal of making code more consistent and avoiding bugs.

> Prettier is an opinionated code formatter. It ensures a consistent style by parsing your code and re-writing it with its rules.

_If you are using VScode, and already have the ESLINT and PRETTIER plugins, as you are goigng to change the configuration as you follow the tutorial, you might have linter/prettier errors displayed, the solution is to restart VSCode._

In your git working folder running the following command will guide you with the ESLint configuration, we are going to use the airbnb style guide:

```bash

npm init @eslint/config

? How would you like to use ESLint? …

To check syntax only

To check syntax and find problems

❯ To check syntax, find problems, and enforce code style

```

Select `To check syntax, find problems, and enforce code style`

```bash

? What type of modules does your project use? …

❯ JavaScript modules (import/export)

CommonJS (require/exports)

None of these

```

For React / React-Native, you probably use import/export!

```bash

? Which framework does your project use? …

❯ React

Vue.js

None of these

```

Use React, but it also works with `Vue.js`.

```bash

? Does your project use TypeScript? › No / Yes

```

up to you. Here I went for No.

```bash

? Where does your code run? … (Press <space> to select, <a> to toggle all, <i> to invert selection)

✔ Browser

✔ Node

```

I tend to use both (even for pure React project)

```bash

? How would you like to define a style for your project? …

❯ Use a popular style guide

Answer questions about your style

```

and now we can choose the style:

```bash

? Which style guide do you want to follow? …

❯ Airbnb: <https://github.com/airbnb/javascript>

Standard: <https://github.com/standard/standard>

Google: <https://github.com/google/eslint-config-google>

XO: <https://github.com/xojs/eslint-config-xo>

```

```bash

? What format do you want your config file to be in? …

❯ JavaScript

YAML

JSON

```

I prefer using an `.eslintrc.js` but up to you if one prefer a different flavour.

```bash

Checking peerDependencies of eslint-config-airbnb@latest

Local ESLint installation not found.

The config that you've selected requires the following dependencies:

eslint-plugin-react@^7.28.0 eslint-config-airbnb@latest eslint@^7.32.0 || ^8.2.0 eslint-plugin-import@^2.25.3 eslint-plugin-jsx-a11y@^6.5.1 eslint-plugin-react-hooks@^4.3.0

? Would you like to install them now? › No / Yes

```

Select yes to install the dependencies. And choose your package manager:

```bash

? Which package manager do you want to use? …

❯ npm

yarn

pnpm

```

```bash

Installing eslint-plugin-react@^7.28.0, eslint-config-airbnb@latest, eslint@^7.32.0 || ^8.2.0, eslint-plugin-import@^2.25.3, eslint-plugin-jsx-a11y@^6.5.1, eslint-plugin-react-hooks@^4.3.0

up to date, audited 208 packages in 1s

85 packages are looking for funding

run `npm fund` for details

found 0 vulnerabilities

Successfully created .eslintrc.js file in XXX/YYY

```

Today is a good day, all packages are safe with 0 vulnerabilities.

Now, your `package.json` file contains these dev dependencies

```json

"devDependencies": {

"eslint": "^8.36.0",

"eslint-config-airbnb": "^19.0.4",

"eslint-plugin-import": "^2.27.5",

"eslint-plugin-jsx-a11y": "^6.7.1",

"eslint-plugin-react": "^7.32.2",

"eslint-plugin-react-hooks": "^4.6.0"

}

```

Now let's have a look at the eslintrc file. It looks like that:

```js

module.exports = {

env: {

browser: true,

es2021: true,

node: true,

},

extends: ['plugin:react/recommended', 'airbnb'],

parserOptions: {

ecmaFeatures: {

jsx: true,

},

ecmaVersion: 'latest',

sourceType: 'module',

},

plugins: ['react'],

rules: {},

}

```

Adding prettier to the configuration

```bash

npm i -D prettier eslint-config-prettier eslint-plugin-prettier

added 5 packages, and audited 213 packages in 4s

86 packages are looking for funding

run `npm fund` for details

found 0 vulnerabilities

```

create a .prettierrc.js file

```js

module.exports = {

singleQuote: true,

}

```

Read more about the other options on prettier [website](https://prettier.io/docs/en/configuration.html).

Time to update the `.eslintrc.js` file, adding the prettier recommended plugin

```js

extends: [

'plugin:react/recommended',

'airbnb',

'plugin:prettier/recommended',

],

```

add two scripts in the `package.json` file

```json

"scripts": {

"lint": "eslint .",

"lint:fix": "eslint -fix ."

},

```

then you will be able to run

```bash

npm run lint

```

just to check your code

```bash

npm run lint:fix

```

to check and automatically fix what can be fixed.

### Pre-commit Hook with lint-staged

```bash

Running lint-staged...

husky - Git hooks installed

husky - created .husky/pre-commit

```

This adds husky to hook the pre-commit and run the lint-staged script added to the project’s `package.json` file

Read more at the [lint-staged](https://github.com/okonet/lint-staged#configuration) repo.

now let's create two files `.eslintignore`, and `.prettierignore` with the same content:

```yml

node_modules/

build/

coverage/

```

You are all set happy coding!

## Resources

- Photo by [Andrea De Santis](https://unsplash.com/@santesson89?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText) on [Unsplash](https://unsplash.com/s/photos/dragon-bridge?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText)

| tsamaya |

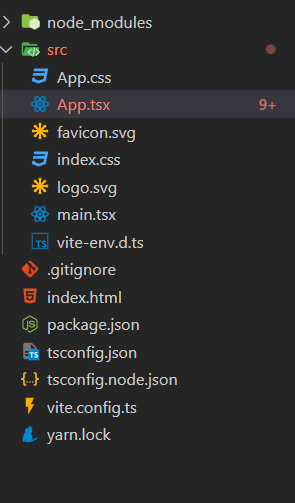

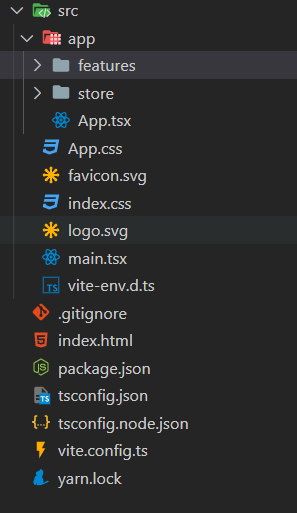

1,032,504 | Introducción react-redux y redux toolkit | Después de haber entendido algunos conceptos de Redux, llegó el momento de utilizarlo como tal 😁. ... | 17,372 | 2022-03-23T23:52:07 | https://dev.to/leobar37/introduccion-react-redux-y-redux-toolkit-4k63 | typescript, react, redux, webdev | Después de haber entendido algunos conceptos de Redux, llegó el momento de utilizarlo como tal 😁.

## Setup

Para hacer el setup de la app usaré [vite](https://vitejs.dev/). Vite es una alternativa a webpack, el cual mejora la experiencia de usuario y es mucho más rápido. Si quieres saber más acerca de los beneficios de vite, puedes visitar el siguiente [artículo](https://radixweb.com/blog/vite-js-latest-front-end-development-tool)

Para crear una aplicación con vite y react, solo es necesario abrir tu consola y poner el siguiente comando.

```sh

yarn create vite redux-tutorial --template react-ts

```

La opción de `--template` le dice a vite con que template inicializar el proyecto, en este caso el de `react-ts` ahora ya se tendrá la siguiente estructura.

Ahora empecemos con las dependencias, como habíamos dicho utilizaremos redux con react, para eso tenemos que instalar el paquete `react-redux`, el cual trae lo necesario para hacer la conexión a redux, además a ellos vamos a instalar `@reduxjs/toolkit` el cual trae algunos superpoderes para redux

```sh

yarn add react-redux @reduxjs/toolkit

```

## ¿Que es redux toolkit?

Redux esta bien, pero era un poco complicado. Actualmente, contamos con [Redux toolkit](https://redux-toolkit.js.org/) él ofrece las siguientes soluciones:

- Simplifica la configuración de redux

- Elimina la necesidad de agregar múltiples paquetes para tener una aplicación escalable.

- Reduce el código repetitivo.

Actualmente no se recomienda usar `react-redux` sin `@reduxjs/toolkit`.

## Preparando el Store

Para empezar a escribir lógica con redux, lo primero que se tiene que hacer es configurar el `Store`. Para eso [Redux toolkit](https://redux-toolkit.js.org/) provee un método que nos ayuda con el procedimiendo, el cual se llama `configureStore`.

```tsx

// store/index.ts

import { configureStore } from "@reduxjs/toolkit";

export const store = configureStore({

reducer: {},

devTools: process.env.NODE_ENV !== "production",

});

export default store;

```

[configureStore](https://redux-toolkit.js.org/api/configureStore)

Ahora ya tenemos él store :) . Al hacer esto `redux-toolkit` ha puesto algunas configuracioens por defecto, las cuales iré comentando conforme avancemos en el ejemplo. En este instante podemos hablar de las [devTools](https://github.com/reduxjs/redux-devtools) las cuales son indispensables para poder depurar la aplicación. En este caso la opción `devtools` se activa solo en producción, también puedes personalizar el funcionamiento, pasando un objeto de [opciones](https://github.com/reduxjs/redux-devtools/blob/main/extension/docs/API/Arguments.md).

**Conexión con React**

Ahora es momento de poner disponible el store hacia React, para eso `react-redux` provee un `Provider` para poner disponible el `Store` en todo el árbol de componentes.

```tsx

import "./App.css";

import { Provider as ReduxProvider } from "react-redux";

import store from "./store";

function App() {

return (

<ReduxProvider store={store}>

<div></div>

</ReduxProvider>

);

}

export default App;

```

## Estructura de archivos en Redux

Ahora que ya se tiene la tienda en el nivel superior de la aplicación, es hora la lógica de nuestra aplicación, en este caso vamos a hacer una agenda de contactos, para poder realizar un CRUD. Antes de continuar necesitamos tener en cuenta algo muy importante, el cual es la estructura de los archivos. Si bien React es una librería muy flexible frente a la estructura de archivos, Redux poner a nuestra disposición una estructura base, para a partir de eso organizar nuestros archivos.

**Pensando en Ducks**

Ducks es una [propuesta](https://github.com/erikras/ducks-modular-redux) que básicamente propone que empaquetemos un conjunto de acciones, reductores, nombres de acciones a una funcionalidad en concreto, llamando a esta agrupación `duck` el cual, tranquilamente, puede empaquetarse y distribuirse como una librería.

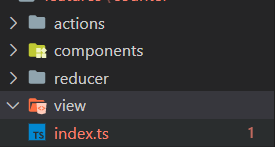

Ahora, teniendo un poco en cuenta los patos 😅, vamos a dividir la aplicación en algo parecido, pero le llamaremos `features`. De esta manera.

**Feature Structure:**

Como se mencionaba en un inicio, el ecosistema de React es muy flexible a la hora de organizar los archivos. Teniendo en cuenta los elementos de redux, al momento de partir una `feature` debemos dividir `actions`, `reducer`, `selectors` esto mejora la organización.

En mi caso inspirado un poco en el siguiente [artículo](https://alexmngn.medium.com/how-to-better-organize-your-react-applications-2fd3ea1920f1), mi estructura es la siguiente.

_View_: Carpeta donde van las vistas que el usuario va a ver en pantalla, generalmente todos los componentes que son utilizando junto con el router de la aplicación. Por ejemplo, si estamos creando una aplicación de inventarios, el listado de estos productos podrían ir en una pantalla `producs/list`.

_Componentes_: Normalmente, se querrá tener una carpeta `components` en general, donde estén ubicados todos aquellos componentes, que **pueden ser usados en cualquier lugar**, una feature puede tener componentes que sean propios de la característica, por ejemplo el listado, de productos.

_actions:_ En esta carpeta irán todas las acciones ligadas a esta característica.

_reducer:_ Cada feature tiene como regla que debe exportar un solo reductor, eso no quiere decir que solo tengamos que concentrar toda lógica en un solo reducer, podemos usar [`combineReducers`](https://redux.js.org/api/combinereducers) para combinar múltiples reducer en unos solo si es que fuese necesario.

Puedes pensar en una `feature` como una mini aplicación dentro de una aplicación, este se encarga de un proceso en concreto, que al final aportará un valor agregado a la aplicación en general.

## Accciones

Las acciones son objetos planos que expresan una intención de cambiar el estado, eso es lo que se mencionó en el artículo anterior. Puedes pensar en una acción como un evento ocurrido en la aplicación, por ejemplo; se agregó un producto, se eliminó un contacto, cargando contactos, todos ellos describen algo que está pasando en al app.

Dicho esto podemos empezar a escribir acciones, las acciones tiene un [estándar](https://github.com/redux-utilities/flux-standard-action) que indican que deben ser así.

```tsx

{

type: 'ADD_TODO',

payload: {

text: 'Do something.'

}

}

```

**Creadores de acciones:**

Normalmente, las acciones puede despacharse de la siguiente manera.

```tsx

store.dispatch({ type: "ITEM_ADDED_TO_CART", payload: 47 });

```

Pero en cierto punto, poner el tipo, cada vez que queramos despachar esta acción, no es muy escalable porque si se quisiera cambiar el tipo de acción, tendría que hacerlo en diferentes archivos y además, se vuelve complicado repetir lo mismo.

Ahí es donde entran loas [creadores de acciones](https://read.reduxbook.com/markdown/part1/04-action-creators.html), que no son nada más que funciones encargadas de crear este objeto, un creador de acción sería el siguiente.

```tsx

function doAddToDoItem(text) {

return { type: "TODO_ADDED", payload: text };

}

```

Entonces, cada vez que se requiera formar esta acción, solo es necesario ejecutar `doAddToDoItem`.

Redux toolkit Simplifica este procedimiento con un utility llamado [`createAction`](https://redux-toolkit.js.org/api/createAction) el cual es una [HOF](https://leobar37.medium.com/hofs-clousures-y-callbacks-para-ser-feliz-en-javascript-fa105ed6ad44)(higher order function) los cuales son funciones que retornan funciones.

```ts

// features/schedule/actions/schedule.actions.ts

import { createAction } from "@reduxjs/toolkit";

export const contactAdded = createAction("CONTACT_ADDED");

```

Ahora `contactAdded` es una función que al ser disparada creará una acción de tipo `CONTACT_ADDED` es importante saber que por [recomendación de redux](https://redux.js.org/style-guide/style-guide#model-actions-as-events-not-setters) las acciones deben ser "Descripciones de eventos que ocurrieron" en lugar expresarlas en presente, como por ejemplo `ADD_CONTACT`.

**Payload:**

Hasta este paso se creó la acción `contactAdded`, pero esto no es suficiente para agregar un contacto, se necesitaría la información de ese contacto. En el caso de typescript `redux toolkit` tiene un [genérico](https://www.typescriptlang.org/docs/handbook/2/generics.html) para poder describir el payload.

```ts

import { createAction } from "@reduxjs/toolkit";

export const contactAdded =

createAction<{ name: string; phone: string }>("CONTACT_ADDED");

```

Listo ahora el primer parámetro(payload) de `contactAdded` estará tipado.

## Reducer

Como se mencionó anteriormente, los [reducers](https://redux.js.org/tutorials/fundamentals/part-3-state-actions-reducers#writing-reducers) son funciones puras que toman el estado actual y la acción para retornar un nuevo estado.

[Redux toolkit](https://redux-toolkit.js.org/api/createreducer) exporta una función llamada `createReducer` la cual facilita la creación de un reducer agregando ciertas características que facilitan el desarrollo.

```ts

import { createReducer } from "@reduxjs/toolkit";

const initalState = {

contacts: [],

};

export type ScheduleState = typeof initalState;

const reducer = createReducer(initalState, (builder) => {});

```

Esta sería la forma de crear un reducer con **Redux toolkit**

**Case:**

Anteriormente vimos, que cuando creamos un reducer dentro de el planteamos un `switch...case` para manejar cada acción.

```ts

const reducer = (state, action) => {

switch (action) {

case "EAT": {

return {

...state,

eatCount: state.eatCount + 1,

};

}

}

};

```

Redux toolkit propone una forma más amigable de hacerlo, mediante un objeto builder, el cual expone una serie de métodos como `addCase` con el cual le recibe como parámetros.

**ActionCreator:** La función generada por `createAction`o una acción como tal.

**Reducer:** El reducer encargado solo de manejar esta acción.

Incorporando la lógica de agregar contacto, tendríamos lo siguiente.

```ts

import { createReducer } from "@reduxjs/toolkit";

import * as scheduleActions from "../actions/schedule.actions";

export interface IContact {

id: number;

name: string;

phone: string;

}

const initalState = {

contacts: [] as IContact[],

};

export type ScheduleState = typeof initalState;

const reducer = createReducer(initalState, (builder) => {

builder.addCase(scheduleActions.contactAdded, (state, action) => {

state.contacts.push({

id: state.contacts.length,