id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

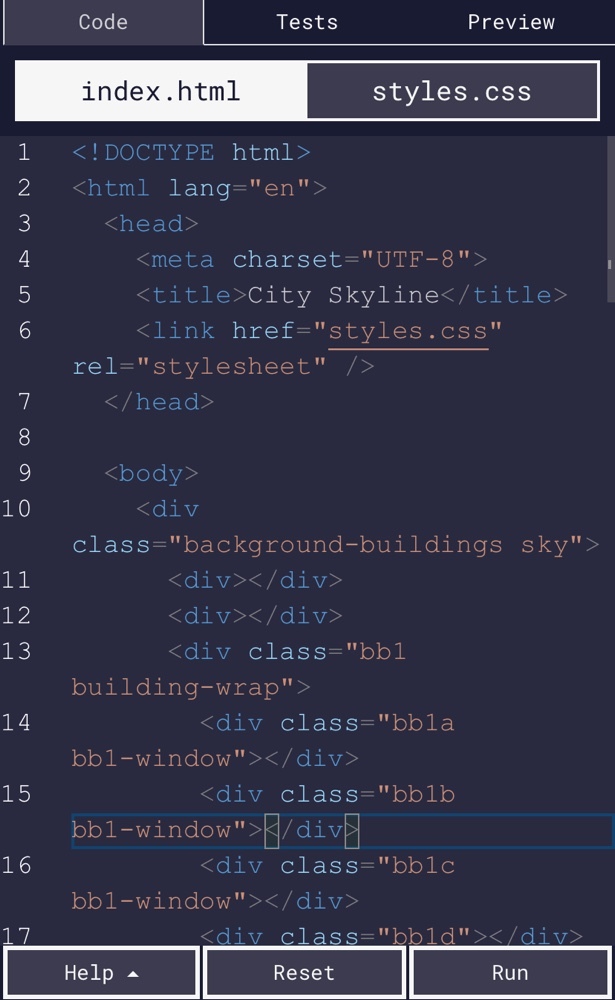

1,157,615 | Day 58-61 of coding complete | 100DaysOfCode #100daysofcodechallenge Day 58-61 complete. Finished building the skyline and... | 0 | 2022-08-02T15:07:00 | https://dev.to/chrisgomad/day-58-61-of-coding-complete-47nl | webdev, beginners, programming, 100daysofcode | #100DaysOfCode #100daysofcodechallenge Day 58-61 complete. Finished building the skyline and learning more about CSS variables. Really cool to see how to manipulate containers and utilize variables. You can use the “:root” selector in CSS to apply a value in all your CSS code.

| chrisgomad |

1,157,915 | Faithful E2E Testing of Nx Preset Generators | This article was originally published on my blog. TLDR: Here's a full working example of a faithful... | 0 | 2022-08-02T22:51:00 | https://dev.to/chiubaka/faithful-e2e-testing-of-nx-preset-generators-m5a | nx, nxpreset, e2e, verdaccio | _This article was originally published on [my blog](https://blog.chiubaka.com/faithful-e2e-testing-of-nx-preset-generators)._

TLDR: Here's a full working example of a faithful E2E test for an Nx preset generator since the default generated E2E harness isn't correct.

Putting it all together, here's a full sample test suite:

```typescript

import {

checkFilesExist,

cleanup,

runNxCommandAsync,

tmpProjPath,

} from "@nrwl/nx-plugin/testing";

import { ChildProcess, execSync, fork } from "node:child_process";

import path from "node:path";

import { getPortPromise as getOpenPort } from "portfinder";

// These tests can take awhile to run. Modify or remove this depending on how long this takes

// on your machine or in your environment.

jest.setTimeout(60_000);

const startVerdaccio = async (port: number): Promise<ChildProcess> => {

const configPath = path.join(__dirname, "../verdaccio.yaml");

return new Promise((resolve, reject) => {

const child = fork(require.resolve("verdaccio/bin/verdaccio"), [

"-c",

configPath,

"-l",

`${port}`,

]);

child.on("message", (message: { verdaccio_started: boolean }) => {

if (message.verdaccio_started) {

resolve(child);

}

});

child.on("error", (error: any) => reject([error]));

child.on("disconnect", (error: any) => reject([error]));

});

};

describe("nx-plugin e2e", () => {

let verdaccioProcess: ChildProcess;

beforeAll(async () => {

cleanup();

const verdaccioPort = await getOpenPort();

verdaccioProcess = await startVerdaccio(verdaccioPort);

const verdaccioUrl = `http://localhost:${verdaccioPort}`;

execSync(`yarn config set registry ${verdaccioUrl}`);

execSync(

`npx npm-cli-login -u chiubaka -p test -e test@chiubaka.com -r ${verdaccioUrl}`,

);

execSync(`npm publish --registry=${verdaccioUrl}`, {

cwd: path.join(__dirname, "../../../dist/packages/nx-plugin"),

});

const destination = path.join(tmpProjPath(), "..");

const workspaceName = path.basename(tmpProjPath());

execSync(

`npm_config_registry=${verdaccioUrl} npx create-nx-workspace ${workspaceName} --preset=@chiubaka/nx-plugin --nxCloud=false`,

{

cwd: destination,

},

);

});

afterAll(async () => {

// `nx reset` kills the daemon, and performs

// some work which can help clean up e2e leftovers

await runNxCommandAsync("reset");

execSync(`yarn config set registry https://registry.yarnpkg.com`);

verdaccioProcess.kill();

});

it("should not create an apps dir", () => {

expect(() => {

checkFilesExist("apps");

}).toThrow();

});

});

```

Be sure that you have `_debug: true` somewhere in your `verdaccio.yml` and that you have `verdaccio`, `verdaccio-auth-memory`, and `verdaccio-memory` installed as `devDependencies`.

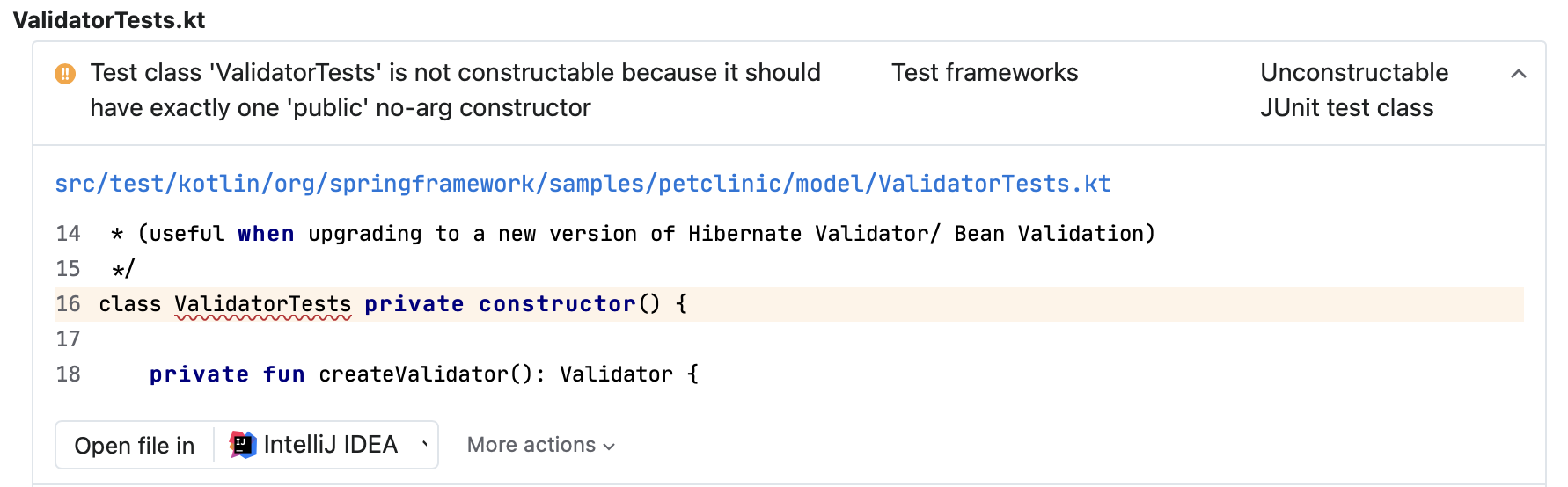

## The Problem

Nx models custom presets as ordinary generators that get treated differently by Nx's internals when generating a workspace. The suggested command for scaffolding a preset generator is the exact same command used for scaffolding generators for other use cases with only the caveat that a preset generator _must be named `preset`_.

Unfortunately, the preset generator use case is sufficiently different from other generator use cases (e.g. generating a project within a pre-existing workspace) that this mental model and a lot of the generated scaffolding turns out to be misleading.

Specifically, I've found that neither the unit nor the E2E testing harnesses for the preset generator are terribly faithful. By "faithful" I mean: does this testing set up accurately reflect the real context my code will run in?

In the case of the default E2E testing harness, the answer is a definite no. Out of the box, this harness is designed to test a generator by running it inside of a pre-existing workspace as if it were a library or app generator, which it's not.

This harness:

1. Generates a new workspace with the `empty` preset.

2. Patches the `package.json` file in the newly generated workspace to install my plugin from the local filesystem.

3. Invokes `nx generate @my-org/my-plugin:preset project` to run the preset generator as a normal generator.

For awhile this _seemed_ fine, but as my preset generator grew more complex, I started noticing places where real workspace generation would fail, but my E2E tests were passing. It didn't take long to realize that this was because my generator gets run differently in production than it does in these E2E tests.

Tests that don't reliably tell me when my code is broken aren't doing their job 🙃!

## Motivation

Lately I've been writing a [custom Nx workspace preset](https://nx.dev/packages/nx-plugin#preset). The goal of a custom preset is to allow customization of the workspace creation process.

I'm hoping to use this to create a batteries-included standardized monorepo generator complete with my preferred configuration for things like linting, testing, CI, and even GitHub project settings.

Unfortunately, this use case isn't very well-documented within Nx. In fact, in a lot of cases the documentation and provided scaffolding for preset generators is, in my humble opinion, seriously misleading. I've had to figure things out by reading through Nx's open source codebase and doing a lot of experimentation.

This article is my attempt at saving someone else all that time and pain :).

## The Solution

It was a little tricky getting a full E2E test for workspace generation itself, but I managed to piece a solution together.

Here's the outline:

1. Start up a [Verdaccio](https://verdaccio.org/) server before running tests.

1. Verdaccio is a lightweight registry that's easy to install and use locally.

2. Authenticate with the Verdaccio registry to allow publishing.

3. Publish the built Nx plugin locally to the Verdaccio registry.

4. Run `create-nx-workspace` with my preset, making sure to hit the local Verdaccio registry to grab the plugin.

### Why Verdaccio?

The challenge in E2E testing a preset generator is that preset generators are usually invoked through `create-nx-workspace` and passed as a package name in the `preset` argument.

Behind the scenes, `create-nx-workspace` resolves the package name from NPM in order to run the preset.

In our tests, we obviously don't want to pull in a real published version of the plugin. We'd like to bundle the current state of the plugin and run the E2E tests against that.

Since `create-nx-workspace` is a CLI, often run through `npx`, we can't use other common local package linking methods like `npm link` or modifying a `package.json` file (because there isn't one yet!). Instead, the strategy is to actually publish the package, but to a local registry that won't affect anything outside of our tests.

Verdaccio fills this role perfectly. By default, it acts as a proxy for NPM, pulling any packages that aren't found in the local registry from the remote registry. This means we can publish our plugin registry and expect Verdaccio to return the development version of our own packages, while still correctly pulling in other dependencies from elsewhere.

### Starting an ephemeral Verdaccio server in tests

One of the trickier parts of getting this E2E harness to work was figuring out how to reliably run an ephemeral Verdaccio server as part of my test set up. As it turns out, the Verdaccio documentation is a bit rough around the edges, as well. There are two relevant pages, one about [End to End Testing](https://verdaccio.org/docs/e2e), which doesn't provide a lot of context and another about the [Node.js API](https://verdaccio.org/docs/verdaccio-programmatically), which is ostensibly not about E2E testing at all.

I was most drawn to the idea of running Verdaccio programmatically using the [module API](https://verdaccio.org/docs/verdaccio-programmatically#using-the-module-api), but had trouble getting this to work. In most cases it seemed that the server would not start up properly and my tests would just hang.

Ultimately the approached that work was [running Verdaccio as a child process using `fork`](https://verdaccio.org/docs/verdaccio-programmatically#using-fork-from-child_process-module). I took cues from the example code in the Verdaccio documentation as well as from this [sample repo](https://github.com/juanpicado/verdaccio-fork) that contains a complete example of this set up.

For my test setup and tear down I ended with something like this:

```typescript

import { ChildProcess, fork } from "node:child_process";

import { getPortPromise as getOpenPort } from "portfinder";

const startVerdaccio = async (port: number): Promise<ChildProcess> => {

const configPath = path.join(__dirname, "../verdaccio.yaml");

return new Promise((resolve, reject) => {

const child = fork(require.resolve("verdaccio/bin/verdaccio"), [

"-c",

configPath,

"-l",

`${port}`,

]);

child.on("message", (message: { verdaccio_started: boolean }) => {

if (message.verdaccio_started) {

resolve(child);

}

});

child.on("error", (error: any) => reject([error]));

child.on("disconnect", (error: any) => reject([error]));

});

};

describe("nx-plugin e2e", () => {

let verdaccioProcess: ChildProcess;

beforeAll(async () => {

const verdaccioPort = await getOpenPort();

verdaccioProcess = await startVerdaccio(verdaccioPort);

});

afterAll(async () => {

verdaccioProcess.kill();

});

});

```

I created a `verdaccio.yaml` file that looks like this:

```yaml

# verdaccio-memory

store:

memory:

limit: 1000

# verdaccio-auth-memory plugin

auth:

# htpasswd:

# file: ./htpasswd

auth-memory:

users:

foo:

name: foo

password: bar

admin:

name: foo

password: bar

# uplinks

uplinks:

npmjs:

url: https://registry.npmjs.org/

verdacciobk:

url: http://localhost:8000/

auth:

type: bearer

token: dsyTcamuhMd8GlsakOhP5A==

packages:

"@*/*":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: npmjs

"react":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: verdacciobk

"**":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: npmjs

# rate limit configuration

rateLimit:

windowMs: 1000

max: 10000

middlewares:

audit:

enabled: true

security:

api:

jwt:

sign:

expiresIn: 1d

logs: { type: file, path: /dev/null, level: info }

i18n:

web: en-US

# try to use verdaccio with child_process:fork

_debug: true

```

Notably, I took this file almost completely from the [`verdaccio-fork` example repo](https://github.com/juanpicado/verdaccio-fork/blob/main/verdaccio.yaml). The only small change I made was to modify `logs` to send all `verdaccio` output to `/dev/null` so it wouldn't clutter my testing output.

Per the Verdaccio docs, `_debug: true` is very important when using Verdaccio in this way, as it's what turns on the ability to listen for the `verdaccio_started` message once the server is ready to go.

### Authenticating with Verdaccio

Next challenge was authenticating with the new Verdaccio server from inside of tests. Initially, I thought I could just run a simple `npm adduser --registry=http://my-local-registry` command. It took a few confusing test failures before I realized that `npm adduser` is an interactive CLI and was failing my tests because it was expecting user input.

The way around this is to use `npm-cli-login` instead. You can either add it as a `devDependency` and invoke it with `npm run npm-cli-login` or just use `npx npm-cli-login`.

Here's the full command to authenticate:

```typescript

import { execSync } from "node:child_process";

import { getPortPromise as getOpenPort } from "portfinder";

const verdaccioPort = await getOpenPort();

const verdaccioUrl = `http://localhost:${verdaccioPort}`;

execSync(

`npx npm-cli-login -u chiubaka -p test -e test@chiubaka.com -r ${verdaccioUrl}`,

);

```

This needs to go in the `beforeAll` setup block of your tests.

### Publishing to Verdaccio

Now that Verdaccio is running and we're authenticated, publishing is easy!

```typescript

import { execSync } from "node:child_process";

import { getPortPromise as getOpenPort } from "portfinder";

const verdaccioPort = await getOpenPort();

const verdaccioUrl = `http://localhost:${verdaccioPort}`;

execSync(`npm publish --registry=${verdaccioUrl}`, {

cwd: path.join(__dirname, "../../../dist/packages/nx-plugin"),

});

```

Where the `cwd` of the `execSync` here needs to be the path to the built version of your plugin, which the `@nrwl/nx-plugin:e2e` executor will ensure is pre-built before running your tests by default.

### Running the workspace generation command

With our plugin package published to the local registry, all that's left is to run the workspace generation command to create a real generated workspace in the E2E testing directory (`tmp` within the Nx plugin workspace by default).

Since we're aiming to be as faithful as possible to the true experience users will have when using our plugin, we'll use the `create-nx-workspace` command to invoke the preset generator.

In order to get the `create-nx-workspace` command to use the local Verdaccio registry, we'll need to run it with `npx` and prefix with the `npm_config_registry=[http://my-local-registry]` environment variable.

Additionally, in order for a lot of the `@nrwl/nx-plugin/testing` utils to work properly, note that your testing workspace needs to be generated in a very specific place. At time of writing, the name of that directory is `proj`, but since that could change without warning it's safest to dynamically determine the name of the testing workspace using `tmpProjPath()`.

Here's what the full command looks like:

```typescript

import { tmpProjPath } from "@nrwl/nx-plugin/testing";

import { execSync } from "node:child_process";

import path from "node:path";

import { getPortPromise as getOpenPort } from "portfinder";

const verdaccioPort = await getOpenPort();

const verdaccioUrl = `http://localhost:${verdaccioPort}`;

const destination = path.join(tmpProjPath(), "..");

const workspaceName = path.basename(tmpProjPath());

execSync(

`npm_config_registry=${verdaccioUrl} npx create-nx-workspace ${workspaceName} --preset=@chiubaka/nx-plugin --nxCloud=false`,

{

cwd: destination,

},

);

```

### Full working solution

Putting it all together, here's a full sample test suite:

```typescript

import {

checkFilesExist,

cleanup,

runNxCommandAsync,

tmpProjPath,

} from "@nrwl/nx-plugin/testing";

import { ChildProcess, execSync, fork } from "node:child_process";

import path from "node:path";

import { getPortPromise as getOpenPort } from "portfinder";

// These tests can take awhile to run. Modify or remove this depending on how long this takes

// on your machine or in your environment.

jest.setTimeout(60_000);

const startVerdaccio = async (port: number): Promise<ChildProcess> => {

const configPath = path.join(__dirname, "../verdaccio.yaml");

return new Promise((resolve, reject) => {

const child = fork(require.resolve("verdaccio/bin/verdaccio"), [

"-c",

configPath,

"-l",

`${port}`,

]);

child.on("message", (message: { verdaccio_started: boolean }) => {

if (message.verdaccio_started) {

resolve(child);

}

});

child.on("error", (error: any) => reject([error]));

child.on("disconnect", (error: any) => reject([error]));

});

};

describe("nx-plugin e2e", () => {

let verdaccioProcess: ChildProcess;

beforeAll(async () => {

cleanup();

const verdaccioPort = await getOpenPort();

verdaccioProcess = await startVerdaccio(verdaccioPort);

const verdaccioUrl = `http://localhost:${verdaccioPort}`;

execSync(`yarn config set registry ${verdaccioUrl}`);

execSync(

`npx npm-cli-login -u chiubaka -p test -e test@chiubaka.com -r ${verdaccioUrl}`,

);

execSync(`npm publish --registry=${verdaccioUrl}`, {

cwd: path.join(__dirname, "../../../dist/packages/nx-plugin"),

});

const destination = path.join(tmpProjPath(), "..");

const workspaceName = path.basename(tmpProjPath());

execSync(

`npm_config_registry=${verdaccioUrl} npx create-nx-workspace ${workspaceName} --preset=@chiubaka/nx-plugin --nxCloud=false`,

{

cwd: destination,

},

);

});

afterAll(async () => {

// `nx reset` kills the daemon, and performs

// some work which can help clean up e2e leftovers

await runNxCommandAsync("reset");

execSync(`yarn config set registry https://registry.yarnpkg.com`);

verdaccioProcess.kill();

});

it("should not create an apps dir", () => {

expect(() => {

checkFilesExist("apps");

}).toThrow();

});

});

```

Be sure to include a `verdaccio.yaml` file that looks something like this:

```yaml

# verdaccio-memory

store:

memory:

limit: 1000

# verdaccio-auth-memory plugin

auth:

# htpasswd:

# file: ./htpasswd

auth-memory:

users:

foo:

name: foo

password: bar

admin:

name: foo

password: bar

# uplinks

uplinks:

npmjs:

url: https://registry.npmjs.org/

verdacciobk:

url: http://localhost:8000/

auth:

type: bearer

token: dsyTcamuhMd8GlsakOhP5A==

packages:

"@*/*":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: npmjs

"react":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: verdacciobk

"**":

access: $all

publish: $authenticated

unpublish: $authenticated

proxy: npmjs

# rate limit configuration

rateLimit:

windowMs: 1000

max: 10000

middlewares:

audit:

enabled: true

security:

api:

jwt:

sign:

expiresIn: 1d

logs: { type: file, path: /dev/null, level: info }

i18n:

web: en-US

# try to use verdaccio with child_process:fork

_debug: true

```

And of course, make sure you've installed `verdaccio`, `verdaccio-auth-memory`, and `verdaccio-memory` to support this config file. | chiubaka |

1,158,033 | Space Invaders Game With Python: (Part2). BULLETS | See Part 1 In the previous part, we saw how to begin using pygame, how to work with classes, and we... | 0 | 2022-08-03T04:44:00 | https://dev.to/munyite001/space-invaders-game-with-python-part2-bullets-5dl0 | programming, python, tutorial, gamedev | See [Part 1](https://dev.to/munyite001/space-invaders-game-in-python-part-1-4kn3)

In the previous part, we saw how to begin using pygame, how to work with classes, and we created the Alien Invasion Game class that was responsible for running the game, we also added a ship image to our game, and added some functionality to allow us to control our ship. In today's tutorial, we will be adding the functionality of bullets, to allow us to fire bullets from our ship. We will create the bullet class, and use sprite to generate bullets.

### Shooting Bullets

At the end of the __init__() method, we’ll update settings.py to include the

values we’ll need for a new Bullet class:

```python

#settings.py

def __init__(self):

--snip--

# Bullet settings

self.bullet_speed = 1.0

self.bullet_width = 3

self.bullet_height = 15

self.bullet_color = (60, 60, 60)

```

These settings create dark gray bullets with a width of 3 pixels and a

height of 15 pixels. The bullets will travel slightly slower than the ship.

#### Creating the bullet class

Now we will create a bullet.py file to store our Bullet class.

```python

#bullet.py

import pygame

from pygame.sprite import Sprite

class Bullet(Sprite):

"""A class to manage bullets fired from the ship"""

def __init__(self, ai_game):

"""Create a bullet object at the ship's current position."""

super().__init__()

self.screen = ai_game.screen

self.settings = ai_game.settings

self.color = self.settings.bullet_color

# Create a bullet rect at (0, 0) and then set correct position.

self.rect = pygame.Rect(0, 0, self.settings.bullet_width,

self.settings.bullet_height)

self.rect.midtop = ai_game.ship.rect.midtop

# Store the bullet's position as a decimal value.

self.y = float(self.rect.y)

```

The Bullet class inherits from Sprite, which we import from the pygame

.sprite module. When you use sprites, you can group related elements in

your game and act on all the grouped elements at once.

To create a bulletinstance, __init__() needs the current instance of AlienInvasion, and we call super() to inherit properly from Sprite. We also set attributes for the screen and settings objects, and for the bullet’s color.

Then, we create the bullet’s rect attribute. The bullet isn’t based on an

image, so we have to build a rect from scratch using the pygame.Rect() class.

This class requires the x- and y-coordinates of the top-left corner of the

rect, and the width and height of the rect. We initialize the rect at (0, 0),

but we’ll move it to the correct location in the next line, because the bullet’s

position depends on the ship’s position. We get the width and height of the

bullet from the values stored in self.settings.

Then, we set the bullet’s midtop attribute to match the ship’s midtop attri

bute. This will make the bullet emerge from the top of the ship, making it

look like the bullet is fired from the ship. We store a decimal value for the

bullet’s y-coordinate so we can make fine adjustments to the bullet’s speed.

Here’s the second part of bullet.py, update() and draw_bullet():

```python

#bullet.py

def update(self):

"""Move the bullet up the screen."""

# Update the decimal position of the bullet.

self.y -= self.settings.bullet_speed

# Update the rect position.

self.rect.y = self.y

def draw_bullet(self):

"""Draw the bullet to the screen."""

pygame.draw.rect(self.screen, self.color, self.rect)

```

The update() method manages the bullet’s position. When a bullet is

fired, it moves up the screen, which corresponds to a decreasing y-coordinate

value. To update the position, we subtract the amount stored in settings

.bullet_speed from self.y. We then use the value of self.y to set the value

of self.rect.y.

The bullet_speed setting allows us to increase the speed of the bullets

as the game progresses or as needed to refine the game’s behavior. Once a

bullet is fired, we never change the value of its x-coordinate, so it will travel

vertically in a straight line even if the ship moves.

When we want to draw a bullet, we call draw_bullet(). The draw.rect()

function fills the part of the screen defined by the bullet’s rect with the

color stored in self.color

#### Storing bullets in a group

Now that we have a Bullet class and the necessary settings defined, we can

write code to fire a bullet each time the player presses the spacebar. We’ll

create a group in AlienInvasion to store all the live bullets so we can man

age the bullets that have already been fired. This group will be an instance

of the pygame.sprite.Group class, which behaves like a list with some extra

functionality that’s helpful when building games. We’ll use this group

to draw bullets to the screen on each pass through the main loop and to

update each bullet’s position.

We’ll create the group in __init__():

```python

#alien_invasion.py

def __init__(self):

--snip--

self.ship = Ship(self)

self.bullets = pygame.sprite.Group()

```

Then we need to update the position of the bullets on each pass

through the while loop:

```python

alien_invasion.py

u

def run_game(self):

"""Start the main loop for the game."""

while True:

self._check_events()

self.ship.update()

self.bullets.update()

self._update_screen()

```

When we call update() on a group, the group automatically calls

update() for each sprite in the group. The line self.bullets.update() calls

bullet.update() for each bullet we place in the group bullets.

#### Firing Bullets

In AlienInvasion, we need to modify _check_keydown_events() to fire a bullet

when the player presses the spacebar. We don’t need to change _check_keyup

_events() because nothing happens when the spacebar is released. We also

need to modify _update_screen() to make sure each bullet is drawn to the

screen before we call flip().

let’s write a new method, _fire_bullet(), to handle the whole process of firing bullets:

```python

# alien_invasion.py

--snip--

from ship import Ship

from bullet import Bullet

class AlienInvasion:

--snip--

def _check_keydown_events(self, event):

--snip--

elif event.key == pygame.K_q:

sys.exit()

elif event.key == pygame.K_SPACE:

self._fire_bullet()

def _check_keyup_events(self, event):

--snip--

def _fire_bullet(self):

"""Create a new bullet and add it to the bullets group."""

new_bullet = Bullet(self)

self.bullets.add(new_bullet)

def _update_screen(self):

"""Update images on the screen, and flip to the new screen."""

self.screen.fill(self.settings.bg_color)

self.ship.blitme()

for bullet in self.bullets.sprites():

bullet.draw_bullet()

--snip--

```

First, we import the Bullet class. Then we call _fire_bullet() when the space

bar is pressed. In _fire_bullet(), we make an instance of Bullet and call it

new_bullet. We then add it to the group bullets using the add() method.

The add() method is similar to append(), but it’s a method that’s written spe

cifically for Pygame groups.

The bullets.sprites() method returns a list of all sprites in the group

bullets. To draw all fired bullets to the screen, we loop through the sprites

in bullets and call draw_bullet() on each one.

When you run alien_invasion.py now, you should be able to move the ship

right and left, and fire as many bullets as you want. The bullets travel up the

screen and disappear when they reach the top. You

can alter the size, color, and speed of the bullets in settings.py.

#### Deleting Old Bullets

At the moment, the bullets disappear when they reach the top, but only

because Pygame can’t draw them above the top of the screen. The bullets

actually continue to exist; their y-coordinate values just grow increasingly

negative. This is a problem, because they continue to consume memory and

processing power. We need to get rid of these old bullets, or the game will slow down from doing so much unnecessary work. To do this, we need to detect when the

bottom value of a bullet’s rect has a value of 0, which indicates the bullet has

passed off the top of the screen:

```python

alien_invasion.py

def run_game(self):

"""Start the main loop for the game."""

while True:

self._check_events()

self.ship.update()

self.bullets.update()

# Get rid of bullets that have disappeared.

for bullet in self.bullets.copy():

if bullet.rect.bottom <= 0:

self.bullets.remove(bullet)

print(len(self.bullets))

self._update_screen()

```

When you use a for loop with a list (or a group in Pygame), Python

expects that the list will stay the same length as long as the loop is run

ning. Because we can’t remove items from a list or group within a for loop,

we have to loop over a copy of the group. We use the copy() method to set

up the for loop, which enables us to modify bullets inside the loop. We

check each bullet to see whether it has disappeared off the top of the screen. If it has, we remove it from bullets. We then insert a print() call to

show how many bullets currently exist in the game and verify that they’re

being deleted when they reach the top of the screen.

If this code works correctly, we can watch the terminal output while fir

ing bullets and see that the number of bullets decreases to zero after each

series of bullets has cleared the top of the screen. After you run the game

and verify that bullets are being deleted properly, remove the print() call. If

you leave it in, the game will slow down significantly because it takes more

time to write output to the terminal than it does to draw graphics to the

game window.

#### Limiting the number of bullets fired at a time

We'll limit the number of bullets fired by the player at any given time in order to make the game a bit more challenging

First, store the number of bullets allowed in settings.py:

```python

#settings.py

# Bullet settings

--snip--

self.bullet_color = (60, 60, 60)

self.bullets_allowed = 3

```

This limits the player to three bullets at a time. We’ll use this setting in

AlienInvasion to check how many bullets exist before creating a new bullet

in _fire_bullet():

```python

#alien_invasion.py

def _fire_bullet(self):

"""Create a new bullet and add it to the bullets group."""

if len(self.bullets) < self.settings.bullets_allowed:

new_bullet = Bullet(self)

self.bullets.add(new_bullet)

```

When the player presses the spacebar, we check the length of bullets.

If len(self.bullets) is less than three, we create a new bullet. But if three

bullets are already active, nothing happens when the spacebar is pressed.

When you run the game now, you should be able to fire bullets only in

groups of three.

#### Creating the _update_bullets() Method

We want to keep the AlienInvasion class reasonably well organized, so now

that we’ve written and checked the bullet management code, we can move

it to a separate method. We’ll create a new method called _update_bullets()

and add it just before _update_screen():

```python

alien_invasion.py

def _update_bullets(self):

"""Update position of bullets and get rid of old bullets."""

# Update bullet positions.

self.bullets.update()

# Get rid of bullets that have disappeared.

for bullet in self.bullets.copy():

if bullet.rect.bottom <= 0:

self.bullets.remove(bullet)

```

The code for _update_bullets() is cut and pasted from run_game(); all

we’ve done here is clarify the comments.

The while loop in run_game() looks simple again:

```python

#alien_invasion.py

while True:

self._check_events()

self.ship.update()

self._update_bullets()

self._update_screen()

```

Now our main loop contains only minimal code, so we can quickly read

the method names and understand what’s happening in the game. The

main loop checks for player input, and then updates the position of the

ship and any bullets that have been fired. We then use the updated posi-

tions to draw a new screen.

Run alien_invasion.py one more time, and make sure you can still fire

bullets without errors.

In the next part, we will be adding aliens to our game.

To access the Full project source code and files, visit the repo on [Github](https://github.com/munyite001/Alien-Invasion-Game-Python).

| munyite001 |

1,158,083 | Carbon language Fibonacci series working example | Carbon language beginner series: Google introduced Carbon programming language recently. Carbon... | 0 | 2022-08-03T06:29:00 | https://dev.to/tipseason/carbon-language-fibonacci-series-working-example-1c2h | programming, beginners, tutorial, opensource | Carbon language beginner series:

Google introduced [Carbon programming language](https://tipseason.com/carbon-language-tutorial-syntax/) recently.

Carbon language is still in early stages and is not yet ready. However just want to explore it around to learn a new language. After setting up carbon language, tried to run fibonacci series example with iteration but didn't work . So tried a recursive example and it worked. Here is the full working example.

If you prefer to have an iterative version using `while` loops + recursion you can check it here : [Carbon language Fibonacci series, print nth Fibonacci number](https://tipseason.com/carbon-language-fibonacci-sequence/)

```

package sample api;

fn Fibonacci(n: i32, a: i32, b: i32) -> i32 {

Print("{0} ", a);

if (n == 0) {

return a;

}

return Fibonacci(n - 1, b, a + b);

}

fn Main() -> i32 {

var n: i32 = 6;

let nthFibNumber : auto = Fibonacci(n, 1, 1);

Print("*****");

Print("(N+1)th fibonacci number : {0}", nthFibNumber);

return nthFibNumber;

}

```

### Understanding the code:

We use a recursive code to calculate nth fibonacci number .

`fib(n) = fib(n-1) + fib(n-2)`

To print the sequence, since `for` loops doesn't work in carbon yet, we will use print nth number using recursion. At each step we will replace the positions of `a` and `b` using `b` and `a+b` and print the nth number in the starting of recursion.

```

fn Fibonacci(n: i32, a: i32, b: i32) -> i32 {

Print("{0} ", a);

if (n == 0) {

return a;

}

return Fibonacci(n - 1, b, a + b);

}

```

Finally we call this in the main method. One thing to note is each time the method returns n+1 th fibonacci number in the recursion. So its easier to print nth fibonacci number too.

```

fn Main() -> i32 {

var n: i32 = 6;

let nthFibNumber : auto = Fibonacci(n, 1, 1);

Print("*****");

Print("(N+1)th fibonacci number : {0}", nthFibNumber);

return nthFibNumber;

}

```

Additional Carbon language Reading:

[Carbon language vs Rust detailed comparison]

(https://tipseason.com/carbon-language-vs-rust/)

[Carbon language memory management](https://tipseason.com/carbon-language-memory-safety/)

This is a part of carbon language series for beginners. Feel free to ask any questions regarding Carbon. | tipseason |

1,158,202 | TypeScript - The Best Way to Use It with React | Why TypeScript? I have another article that explains a lot about TypeScript, what it is... | 0 | 2022-08-03T09:10:00 | https://dev.to/omerwow/how-to-use-typescript-with-react-mn9 | webdev, typescript, react, beginners | ###Why TypeScript?

I have another article that explains a lot about TypeScript, what it is and how and why you should use it.

You're welcome to read about it here: https://dev.to/omerwow/how-i-began-using-typescript-3noe

In a nutshell, the benefits of using TypeScript include:

1. Catching errors early in the development process.

2. Making code easier to understand and maintain.

3. Providing a better development experience, with features like autocompletion and type checking.

###Getting started

To create a new React application with TypeScript, use the following command:

```

npx create-react-app my-app --template typescript

```

That's it, the Create React App CLI will create a new app with TypeScript configured properly and you can get started right away.

If, however, you have an existing React app that you want to convert to TypeScript, you're going to need to do a few extra steps.

Don't worry though, it's pretty simple!

First, install TypeScript and other required packages:

```

npm install --save typescript @types/node @types/react @types/react-dom @types/jest

```

Now, rename all .js files to .tsx files, and make sure to restart your dev server before continuing.

Also, a restart to your code editor / IDE may be needed or helpful as well.

The last thing you're going to need to do is to create a tsconfig.json file.

This file will usually be created for you when creating a new project, but since this an existing project, you're going to need to create it yourself.

In the root folder of your project, just create a new file called tsconfig.json, and paste the following inside it:

```

{

"compilerOptions": {

"target": "es5",

"lib": [

"dom",

"dom.iterable",

"esnext"

],

"allowJs": true,

"skipLibCheck": true,

"esModuleInterop": true,

"allowSyntheticDefaultImports": true,

"strict": true,

"forceConsistentCasingInFileNames": true,

"noFallthroughCasesInSwitch": true,

"module": "esnext",

"moduleResolution": "node",

"resolveJsonModule": true,

"isolatedModules": true,

"noEmit": true,

"jsx": "react-jsx"

},

"include": [

"src"

]

}

```

That's pretty much it.

Be aware that enabling TypeScript in an existing project can "introduce" or uncover some errors.

This is usually not a big deal and may even be pretty helpful and help you solve a few bugs. You're going to need to deal with them before continuing development.

Now that we have a working TypeScript React app, we can start utilizing TypeScript to improve our development.

###Writing .tsx files

We'll start with a simple React component that renders a header. Then we'll use TypeScript to add types and type safety to the component. Finally, we'll compile the TypeScript code to JavaScript and run the app.

First, let's create a simple React component that renders a header:

```

import React from 'react';

const Header = () => {

return (

<header>

<h1>Hello, world!</h1>

</header>

);

};

export default Header;

```

This Header component doesn't do much yet it just renders a header element with the text "Hello, world!" We can write this component in TypeScript or JavaScript. For this example, we'll write it in TypeScript.

### Adding Types with TypeScript

Now that we have a basic React component, let's add some types with TypeScript. We can start by adding types to our props and state:

```

import React from 'react';

interface HeaderProps {

message: string;

}

const Header = (props: HeaderProps) => {

return (

<header>

<h1>{props.message}</h1>

</header>

);

};

export default Header;

```

As you can see, we've added an interface for our props and specified that the message prop is of type string. This way, if we try to pass anything other than a string to the message prop, TypeScript will give us an error.

We can also add types to our state:

```

import React, { useState } from 'react';

const [count, setCount] = useState<number>(0);

const Header = (props: HeaderProps) => {

return (

<header>

<h1>{props.message}</h1>

<button onClick={() => setCount(count + 1)}>

Click me!

</button>

<p>You've clicked the button {count} times.</p>

</header>

);

};

export default Header;

```

As you can see, we've added types for our state and specified that the count state variable is of type number. This way, if we try to set the count state variable to anything other than a number, TypeScript will give us an error.

###Exploring the type safety of React event handlers

One of the benefits of using TypeScript with React is that developers can catch errors in their event handlers. Event handlers are a way to respond to user input in React applications. When an event occurs, such as a user clicking a button, The compiler will check the type of each parameter in the event handler function, and it will also check the return type of the function. If there is a mismatch in either of them, the compiler will throw an error. This means that developers can catch errors in their event handlers before the code runs.

However, there are some potential pitfalls when using TypeScript with React. One pitfall is that it is possible to write code that is valid TypeScript but will not compile because of an error in React. For example, take a look at the following code:

```

class MyComponent extends React.Component {

handleClick(event: MouseEvent) {

// do something

}

}

```

This code will not compile because of an error in React: "handleClick" must be declared as a static method on "MyComponent". However, this code is valid TypeScript, and it will only produce an error when it is compiled with React. This means that developers need to be aware of both TypeScript and React when they are writing their code.

In conclusion, TypeScript is a great way to improve your React code. It can help you catch errors, optimize performance, and make your code more readable. Plus, it's just plain fun to use.

Star our [Github repo](https://bit.ly/3QFgAUf) and join the discussion in our [Discord channel](https://bit.ly/3HQtlYo)!

Test your API for free now at [BLST](https://www.blstsecurity.com/?promo=blst&domain=https://dev.to/How_to_use_TypeScript_with_React)! | omerwow |

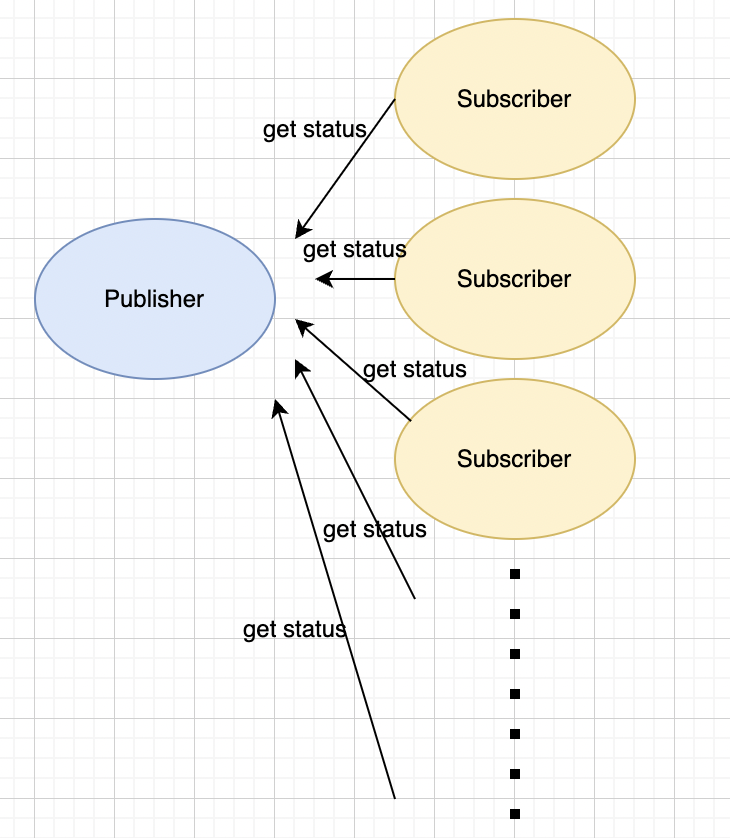

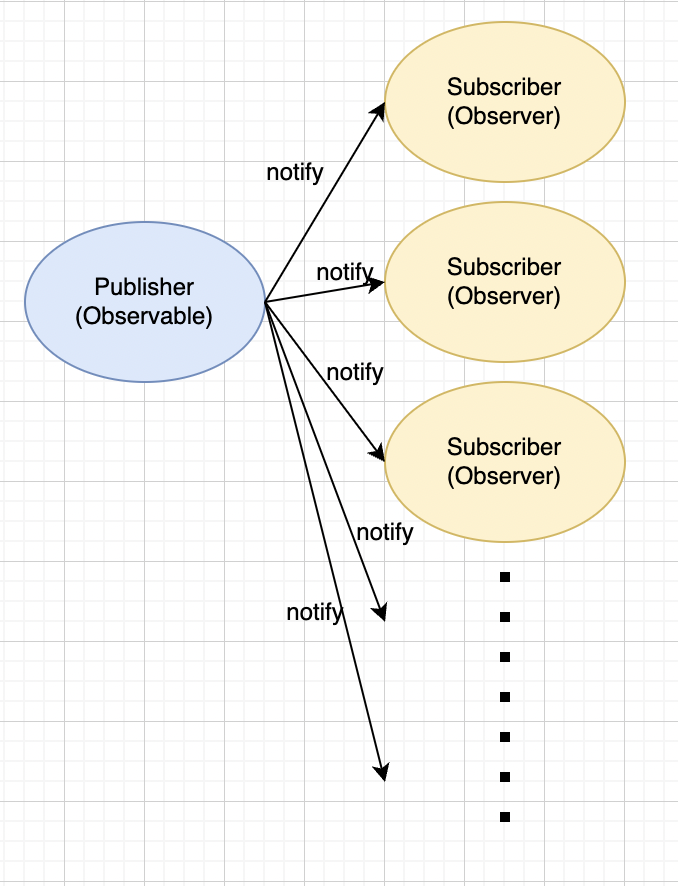

1,158,225 | [Design Pattern] Observer Pattern | The observer pattern is a one to many relationship dependency, and when one object(Observable object)... | 0 | 2022-08-08T07:37:11 | https://dev.to/edindevto/design-pattern-observer-pattern-4ipb | The observer pattern is a one to many relationship dependency, and when one object(Observable object) changes its status, all its dependencies(Observer objects) will be notified and updated accordingly.

**Scenario Problem**

Now we have a publisher object and many subscriber objects, and subscriber objects poll new status from the publisher. However, all these subscribers don't know when the publisher will update a new status, so they just keep polling new status from the publisher(like polling every 1 minute or something).

Now there comes a bad smell... What if there are like 1000 subscribers and each of them tries to get status every 10 seconds!?

As a result, the requesting traffic must explode and obviously there are lots of unnecessary calling... Also, subscribers might not get the most recent status - there is still up to 10 seconds delay before getting the status.

**Solution**

With the observable pattern, every time the publisher(Observable object) has a new status, it notifies all its subscribers. This way we can avoid lots of unnecessary callings, and all subscribers can get the most recent status immediately without delay.

**Implementation**

A very simple class diagram of the pattern is like the following:

[Implementation](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/jmjrve1ll7jz5cgygmyd.png)

The `notify()` method will be like:

```java

public void notify() {

this.status = // Do what ever to get the latest status...;

this.subscribers.stream().foreach((s) => {

s.update(this.status);

});

}

```

| edindevto | |

1,158,231 | Conversational analysis AI tool | Hi there, Hope you are doing well. Me and a few people are starting this project where we will... | 0 | 2022-08-03T10:15:46 | https://dev.to/ayhan_dzhemalov_/conversational-analysis-ai-tool-55hh | Hi there,

Hope you are doing well.

Me and a few people are starting this project where we will develop an AI Conversational analysis to detect visual and tonal markers for sale purposes.

We are looking for people who could jump on board and help us with the creation of this tool and get a working prototype.

Anyone who is interested could DM me.

Regards,

Ayhan | ayhan_dzhemalov_ | |

1,158,311 | How to regain concentration and focus | This article was originally published at INNOQ. No sooner had I started a task, the next thing I... | 0 | 2022-08-03T12:34:00 | https://www.innoq.com/en/blog/wie-ich-meine-konzentration-wiederfand/ | productivity, motivation | This article was originally published at [INNOQ](https://www.innoq.com/en/blog/wie-ich-meine-konzentration-wiederfand/).

No sooner had I started a task, the next thing I knew I was doing something else. I distracted myself by checking my email inbox every so often, and I was addicted to checking what was going on in the world on various news websites, constantly interrupting the original task.

You're perfectly right: This sounds completely insane. Somehow these bad habits had sneaked silently into my life.

>News is to the mind what sugar is to the body.<p>

>Rolf Dobelli, Author and entrepreneur

And then two lightning bolts struck almost simultaneously: a book and a blog post.

I significantly changed my (digital) life as a result and within only two weeks I was able to get more done again, sleep better, and am significantly happier.

The short version: news diet and productive smartphone use.

<figcaption>Lightning strikes</figcaption>

## How my concentration was lost…

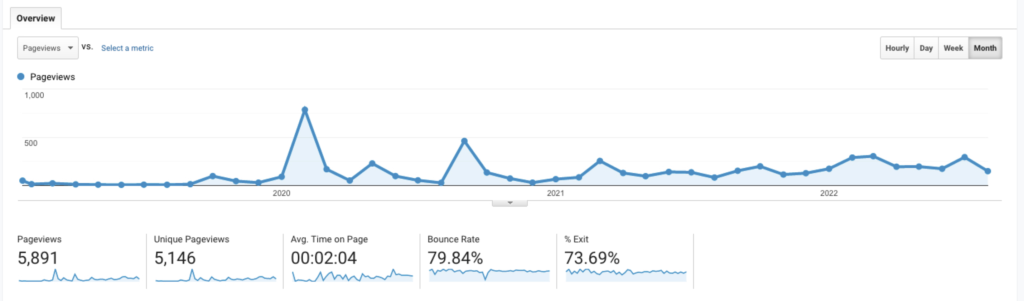

For years, I've been producing _content_ on a fairly regular basis in the form of talks, books, journal articles, blog posts, podcasts, and contributions to open-source projects (To quantify that: [30+ books or book editions](https://www.gernotstarke.de/buecher/) and about a hundred articles in various magazines within the last 20 years - that's still one and a half books and five articles on average per year).

In the last few months, I ran out of steam, which made me personally very discontent. In early 2022 I published the last blog post, and in late 2021 the last articles. For the third time, I postponed the new edition of the arc42-in-Action book ...

I had plenty of ideas, but somehow I couldn't put them down on paper. Unfortunately, ideas alone are not enough: the ideas also have to be made into reality, structured, and written down in comprehensible ways.

Moreover, I was always a dedicated textbook reader, and binge-read a new book every few weeks. That, too, became more and more difficult for me - even though I was genuinely interested in the topics of the unread books on the shelf.

Now, I could chalk it all up to the pandemic, my age (don't ask...), or whatever. But finding bogus excuses is just not my thing.

"You've got to do something about that," I told myself. At first, I tried to increase my productivity and creativity by getting up earlier. No such luck. Staying up later in the evening didn't work either.

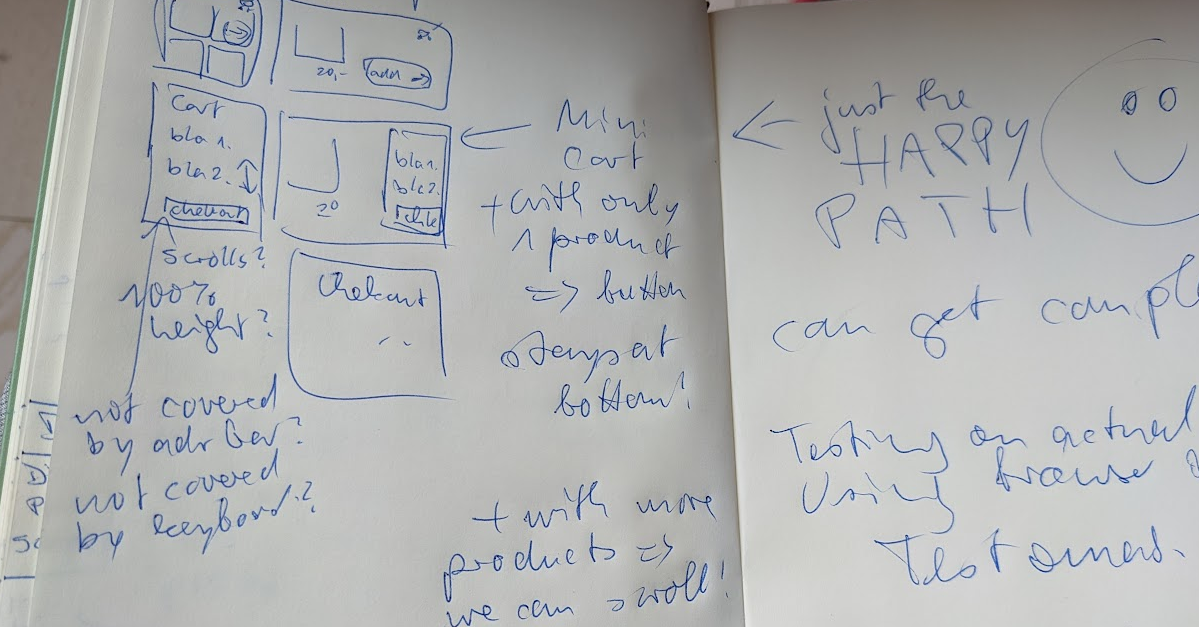

Then I tried what I've recommended (successfully!) countless times in my job as an IT consultant: A systematic analysis of the situation, a self-review.

#### The self-analysis

I tried to analyze my own way of working. I quickly noticed that it was becoming increasingly difficult for me to concentrate on individual tasks: No sooner had I started a task than I was already doing something else in between - or _distracted_ myself with emails or various news websites. Likewise, I sat at preparing a lecture - and checked the INNOQ Slack channels in between. Furthermore, I continued to write on a slide - and took a brief look at my smartphone to see what's been happening on WhatsApp or Signal. And since I was holding the phone in my hand anyway, I could read a few news items on BBC-News there too.

Did you know that smartphone users unlock and look at their phones an average of 80-100 times a day (source: [Cision Newswire](https://www.prnewswire.com/news-releases/americans-check-their-phones-96-times-a-day-300962643.html), several other sources giving similar numbers)? I was one of them.

News websites like [CNN](https://edition.cnn.com/), [WSJ](https://www.wsj.com/), and [BBC](https://www.bbc.com/) had me as a permanent customer.

For me, these context switches already had addiction-like traits.

Even while I was watching a series (yes, I admit that I like to _binge_ on one or two episodes), I would pick up the phone or tablet in between and casually check the news.

Against my better judgment, I also checked my e-mails first thing in the morning before I even drank my first espresso.

And in my inbox, there were always various news summaries, from stock-market news, and international politics up to technology updates.

Unfortunately, all this news has done me more harm than good: For my coaching and consulting engagements, I don't need to know anything about current domestic or foreign politics.

For my software architecture and engineering workshops, neither stock market trends nor the details of international terrorist acts are of any value.

My articles and books deal with software and software engineering, not with climate or politics.

## A self-discovery

Constantly switching between (sophisticated) technical work, the news from around the world, and private communication on personal and professional topics, I would argue, cost me a significant amount of my ability to concentrate.

My brain (apparently) doesn't handle frequent context switches well. Moreover, my brain must have unconsciously perceived distractions as something positive - and, like Pavlov's dog, kept wanting more of them, at increasingly shorter intervals.

<figcaption>A (Pavlovian) dog</figcaption>

I noticed the lack of concentration myself. However, my own fainthearted attempts, with Pomodoro timer and calming sounds, clearly failed.

Effective remedies _from the outside_ had to come first, two major sources helped me:

While travelling to an architecture workshop, I sat on the train, not reading the news for a change, but attempting to read a book.

For some time now, I have been using [Blinkist](https://www.blinkist.com/) to read short summaries of various non-fiction books, to find out for myself whether I is worth for me to read the original of these books in their entirety.

So - I'm sitting on the train and reading one of these [Blinks](https://www.dobelli.com/en/books/), when I'm struck by a mental lightning bolt: I felt so _caught_ by Rolf Dobelli's explanations, caught in my concentration trap, that I spontaneously decided to get serious. Let me quote from this book:

>**News is not good for us:**

>It clouds our mind, distorts our view of what is really important, robs us of time, makes us depressed and paralyzes our willpower.

Rolf Dobelli, Author and entrepreneur

Paralyze my willpower, rob me of time. </p>

That was precisely my problem.

While still sitting on the train, I decided to try a strict break from any news (_news_, not e-mails or other messages) for a while.

However, that wasn't enough: I remembered a blog post I had read a few years ago - about the [productive configuration of smartphones](https://betterhumans.pub/how-to-set-up-your-iphone-for-productivity-focus-and-your-own-longevity-bb27a68cc3d8), which has the promising subtitle _Configure Your iPhone to Work for You, Not Against You_.

I therefore decided to optimize my iPhone for maximum productivity and to reduce or even eliminate distractions.

## Drastic, but effective

1. A (currently very strict) **zero-news-diet**.

2. (Re)configure smartphone for high productivity and minimal distractions.

3. Reduce distractions on tablets and computers.

Add to that slight changes I made to my daily habits: I've resolved (and managed for a few weeks now) to stop staring at my phone in the morning, and to stop using my iPhone at all in the evening after 8 PM at the latest (exception: setting an alarm for the next morning).

## Zero News Diet

During the aforementioned train ride, I cancelled my subscription to my formerly favorite online news portal (the German "Spiegel-Online") and deleted the associated app from my iPhone. I used the initial motivation to cancel additional news subscriptions and deleted the their apps from my smartphone and iPad so that I wouldn't be tempted.

I have resolved **very firmly** not to read any news at all for a while. In other words, a zero news diet. That was really hard for me for two or three days. Yet, that alone was a startling realization for me: I was actually _addicted_ to news and variety... and _cold withdrawal_ is hard with any kind of addiction, as you know.

I purposefully invested the hours gained each day.

As a kind of early reward for my zero news diet, I treated myself to an interesting non-fiction book as a PDF (by the way, the infamous "Thinking, Fast and Slow" by the great Daniel Kahnemann).

The result: I was thrilled to have made good progress without any distractions.

To support my news diet, I instructed my browser (Firefox) to _not_ present me with recommendations for supposedly interesting or important topics on new tabs or windows.

![Firefox preferences: No more news suggestions]()

<figcaption>Firefox preferences: No more news suggestions</figcaption>

## Reconfigure Smartphone

The above quote, "Configure Your iPhone to Work for You, Not Against You," sets the objective:

It's the subtitle of a very long [blog post](https://betterhumans.pub/how-to-set-up-your-iphone-for-productivity-focus-and-your-own-longevity-bb27a68cc3d8) by Tony Stubblebine.

In addition to his many configuration suggestions, Tony also gives various advice for being more attentive and healthy, entirely worth reading in my opinion.

I summarize the things most important for me, which he illustrates in his article with many screenshots and explanations:

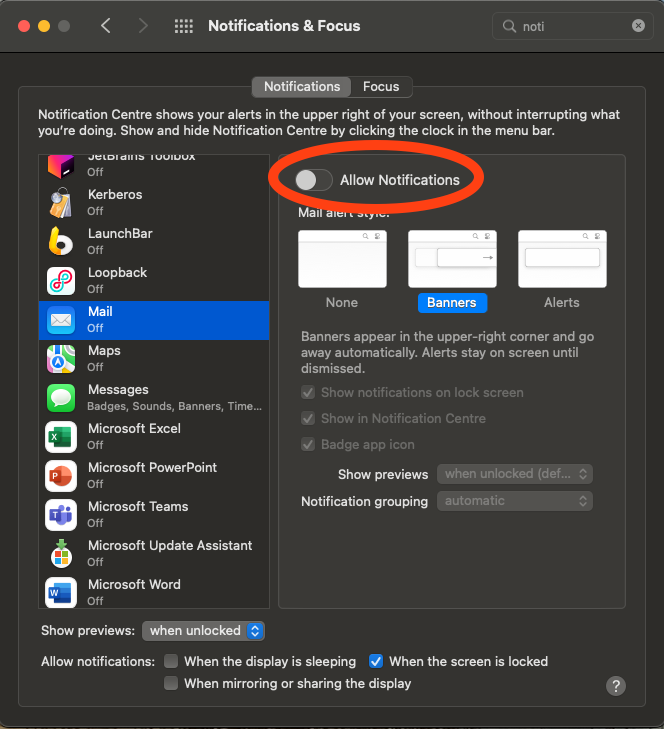

- Turn off (almost) all notifications – so those red dots aren't constantly vying for your attention or making you feel guilty.

- Hide _social media_ apps as well as possible. Facebook, Instagram, Twitter & Co act like drugs. They deserve to be removed from the start/home screen and moved somewhere in the back of your smartphone. I deleted the Twitter app and kept Instagram only because it allows me to catch up a bit with my adult children.

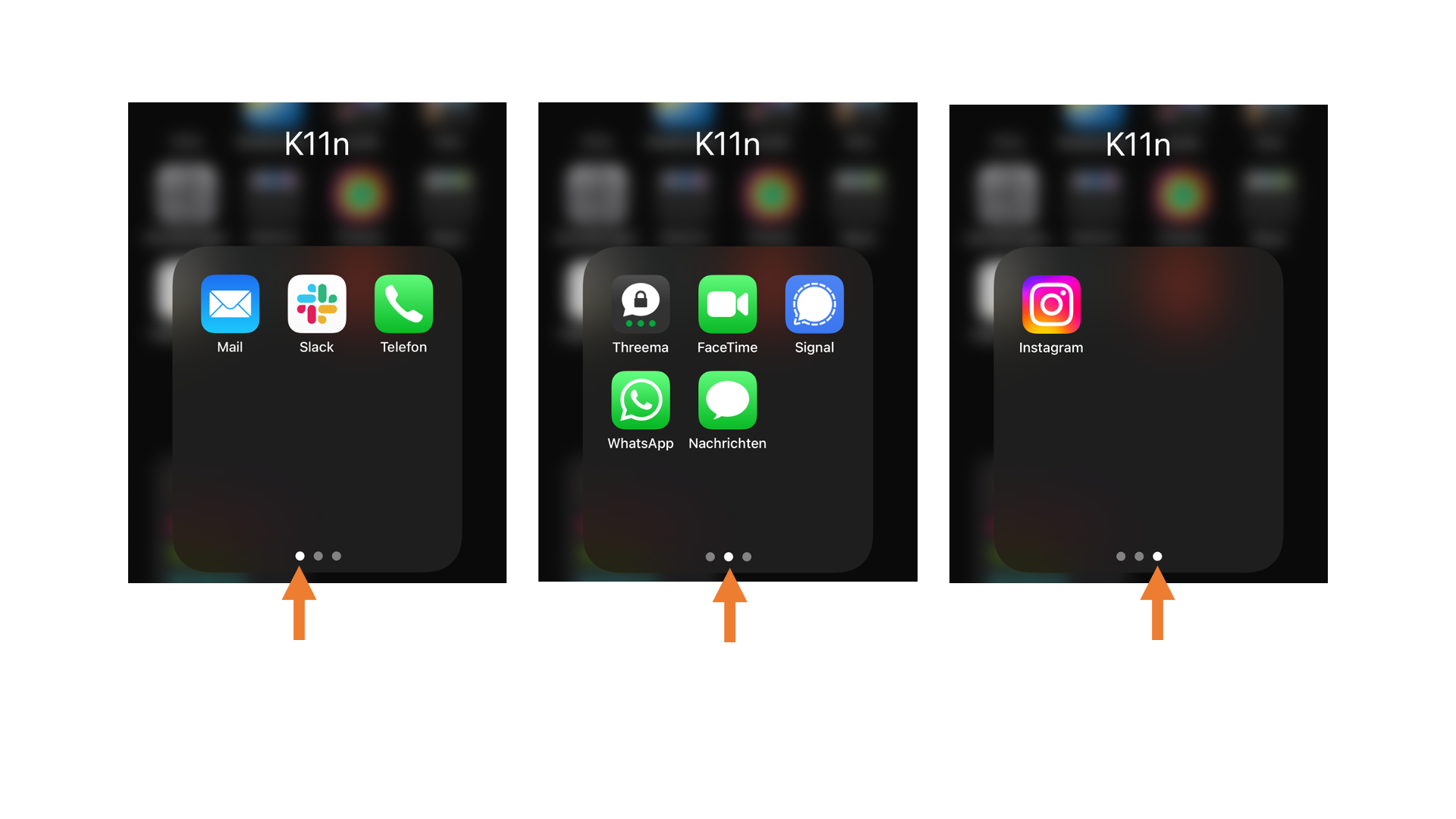

- Messaging apps (email, WhatsApp, Signal and co.) go into a folder, and preferably on the second screen of that folder. For me, it looks like this (K11n stands for communication):

<figcaption>Communication apps – unimportant ones need more “swipe” gestures</figcaption>

- Turn on do-not-disturb-mode, the longer, the better. At least from evening until morning.

- Lifting the phone should **not** unlock it. This setting is called "Display & Brightness/Activate on Lift" on the iPhone - and should definitely be turned off.

- Turn on the screen time widget – this gives me control over all the things I spend my smartphone time on... Meanwhile, I have made a sport out of getting to the smallest value possible.

- Turn on content and app restrictions. I only allow myself 15 minutes a day for email and Instagram.

- Install a swipe keyboard app, so I can text faster on the phone and not have to awkwardly _click_ each letter. I use [Microsoft Swiftkey](https://www.microsoft.com/en-us/swiftkey) (yes correct, a Microsoft app on the iPhone) for this, others do better with Google's [Gboard](https://en.wikipedia.org/wiki/Gboard).

- Wallpapers in muted colors. I chose a completely black home screen, and a reduced color lock screen:

<figcaption>iPhone backgrounds in muted colors</figcaption>

## Reduce distractions on tablets and computers

You will find similar preference settings on your tablet and your computer, respectively. I set those to reduce _notifications_ as much as possible:

<figcaption>Notifications settings, almost all turned off</figcaption>

## In case you fall off the wagon

In case you want to be a bit stricter with yourself, there are various blockers for popular desktop platforms that can prevent access to certain websites or applications for certain times. These can improve your digital self-discipline. I tried [ColdTurkey](https://getcoldturkey.com/) and like it a lot. However, I hope to become disciplined enough to stick to my new habits without this electronic tether… at least in the near future.

## Furthermore...

Besides the aforementioned settings and the zero news diet, I like to listen to _soundscapes_ for (supposedly) better mental focus when working intensively at my desk. I write _supposedly_ because I like this kind of aural background, but can't prove that it helps me in any way. My family, by the way, thinks it's horrible.

If you want to try it out: My two favorites are [Endel](https://endel.io/about) and [brain.fm](https://www.brain.fm/). Works on both smartphones and desktops (but please don’t say I didn’t warn you…).

I still haven't gotten comfortable with Pomodoro timers, they annoy me more than they help.

And finally, thanx to Łukasz, you might want to restrict your YouTube usage with the [unhook](https://unhook.app/) extension. I tried that and immediately liked it. You have to tweak the default settings, though. No more wasted hours due to the addictive YouTube proposition algorithm...

## Conclusion

Why don't you start a self-experiment, and refrain from reading sports, politics, business, and technology news as much as possible for a while? Reduce your smartphone time drastically for one or two weeks, and enjoy the time gained with a good book, a personal conversation or approach a previously abandoned activity that has been left undone so far (due to a perceived lack of time…)

Good luck – and I look forward to your feedback.

## Acknowledgements

Thanks to Jochen Christ, Joachim Praetorius, Jan Seeger and Ben Wolf for reviews and constructive comments. @m and Joy Heron have drastically improved readability and wording.

This article was originally published at [INNOQ](https://www.innoq.com/en/blog/wie-ich-meine-konzentration-wiederfand/), achieved a \#4 rank and more than 300 comments on [Hackernews](https://news.ycombinator.com)

### Image Sources

* [Dog](https://unsplash.com/photos/ngqyo2AYYnE)

* [Lightning](https://unsplash.com/photos/vmvlzJz1lHg)

* [Header image](https://unsplash.com/photos/7KLa-xLbSXA) by Paul Skorupskas

| gernotstarke |

1,158,532 | Answer: Accessing ASP.NET Core DI Container From Static Factory Class | answer re: Accessing ASP.NET Core DI... | 0 | 2022-08-03T17:27:15 | https://dev.to/wahidbitar/answer-accessing-aspnet-core-di-container-from-static-factory-class-931 | {% stackoverflow 55678060 %} | wahidbitar | |

1,158,940 | How to Read Barcode QR Code on the Server Side Using PHP Laravel | If you want to use PHP Laravel framework to build a web barcode and QR code reader, you can implement... | 0 | 2022-08-04T06:49:04 | https://www.dynamsoft.com/codepool/php-laravel-barcode-qr-code-reader.html | php, laravel, webdev, qrcode | If you want to use PHP Laravel framework to build a web barcode and QR code reader, you can implement the code logic either on the client side or on the server side. Dynamsoft provides a variety of SDKs for different platforms: desktop, mobile and web. In this article, we focus on how to leverage the PHP extension built with Dynamsoft C++ Barcode SDK to read barcode and QR code on the server side. If web client side programming is your type, please refer to [https://www.dynamsoft.com/barcode-reader/sdk-javascript/](https://www.dynamsoft.com/barcode-reader/sdk-javascript/).

## PHP Laravel Installation on Windows and Linux

Install [PHP 7.4](https://windows.php.net/download), [Composer](https://getcomposer.org/download/) and [Laravel](https://laravel.com/).

- [PHP 7.4](https://windows.php.net/download)

- Windows

[php-7.4.30-nts-Win32-vc15-x64.zip](https://windows.php.net/downloads/releases/php-7.4.30-nts-Win32-vc15-x64.zip)

- Linux

```bash

sudo apt install php7.4

```

- [Composer](https://getcomposer.org/download/)

- Windows

Run [Composer-Setup.exe](https://getcomposer.org/Composer-Setup.exe)

- Linux

```bash

php -r "copy('https://getcomposer.org/installer', 'composer-setup.php');"

php -r "if (hash_file('sha384', 'composer-setup.php') === '55ce33d7678c5a611085589f1f3ddf8b3c52d662cd01d4ba75c0ee0459970c2200a51f492d557530c71c15d8dba01eae') { echo 'Installer verified'; } else { echo 'Installer corrupt'; unlink('composer-setup.php'); } echo PHP_EOL;"

php composer-setup.php

php -r "unlink('composer-setup.php');"

sudo mv composer.phar /usr/local/bin/composer

```

- Laravel:

```bash

composer global require laravel/installer

```

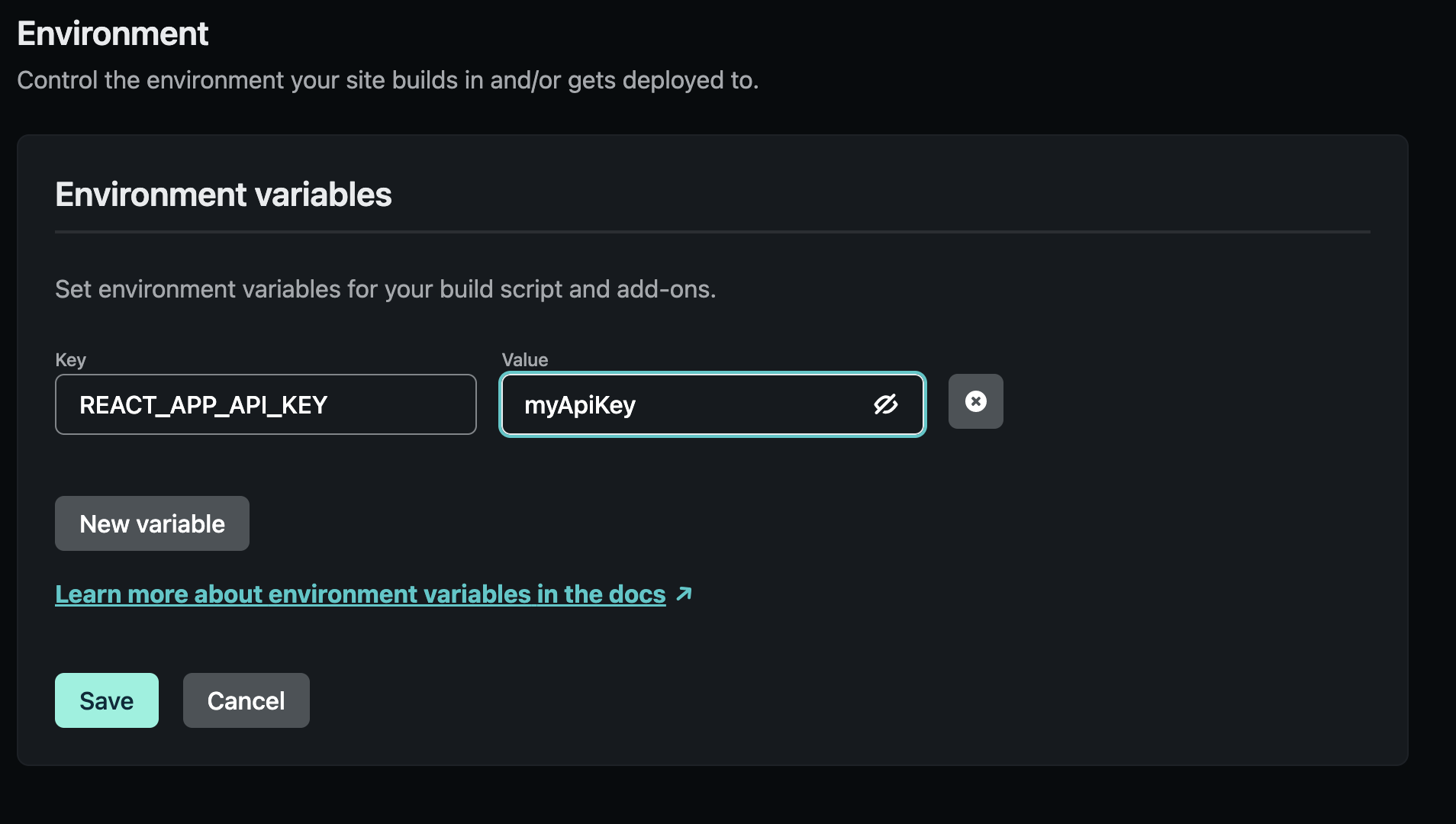

## Steps to Implement Server Side Barcode QR Code Reading Using PHP Laravel

In the following paragraphs, we will guide you through the process of developing a PHP Laravel project that can read barcode and QR code from image files on the server side.

### Step 1: Install the PHP Barcode QR Code Reader Extension

There is no pre-built binary package. To read barcode and QR code in PHP, you need to [build and install the PHP extension](https://github.com/yushulx/php-laravel-barcode-qr-reader/tree/main/ext/dbr) from source code on Windows and Linux.

### Step 2: Scaffold a Laravel Project

Once the extension is installed, you can start a new Laravel project.

```bash

composer create-project laravel/laravel web-barcode-qrcode-reader

```

The above command installs the latest stable version of Laravel. To avoid the compatibility issue, a better way is to specify the Laravel version number.

```bash

php artisan --version

Laravel Framework 8.83.23

composer create-project laravel/laravel:^8.0 web-barcode-qrcode-reader

```

### Step 3: Create a Controller

Laravel [controllers](https://laravel.com/docs/8.x/controllers) handle HTTP requests. We can create a controller to handle the uploaded image files and return the barcode and QR code decoding results.

```bash

php artisan make:controller ImageUploadController

```

The command generates an `ImageUploadController.php` file in the `app/Http/Controllers` directory. Open the file to add the following code:

```php

<?php

namespace App\Http\Controllers;

use Illuminate\Http\Request;

use Validator;

class ImageUploadController extends Controller

{

function __construct() {

DBRInitLicense("DLS2eyJoYW5kc2hha2VDb2RlIjoiMjAwMDAxLTE2NDk4Mjk3OTI2MzUiLCJvcmdhbml6YXRpb25JRCI6IjIwMDAwMSIsInNlc3Npb25QYXNzd29yZCI6IndTcGR6Vm05WDJrcEQ5YUoifQ==");

DBRInitRuntimeSettingsWithString("{\"ImageParameter\":{\"Name\":\"BestCoverage\",\"DeblurLevel\":9,\"ExpectedBarcodesCount\":512,\"ScaleDownThreshold\":100000,\"LocalizationModes\":[{\"Mode\":\"LM_CONNECTED_BLOCKS\"},{\"Mode\":\"LM_SCAN_DIRECTLY\"},{\"Mode\":\"LM_STATISTICS\"},{\"Mode\":\"LM_LINES\"},{\"Mode\":\"LM_STATISTICS_MARKS\"}],\"GrayscaleTransformationModes\":[{\"Mode\":\"GTM_ORIGINAL\"},{\"Mode\":\"GTM_INVERTED\"}]}}");

}

function page()

{

return view('barcode_qr_reader');

}

function upload(Request $request)

{

$validation = Validator::make($request->all(), [

'BarcodeQrImage' => 'required'

]);

if($validation->passes())

{

$image = $request->file('BarcodeQrImage');

$image->move(public_path('images'), $image->getClientOriginalName());

$resultArray = DecodeBarcodeFile(public_path('images/' . $image->getClientOriginalName()), 0x3FF | 0x2000000 | 0x4000000 | 0x8000000 | 0x10000000); // 1D, PDF417, QRCODE, DataMatrix, Aztec Code

if (is_array($resultArray)) {

$resultCount = count($resultArray);

echo "Total count: $resultCount", "\n";

if ($resultCount > 0) {

for ($i = 0; $i < $resultCount; $i++) {

$result = $resultArray[$i];

echo "Barcode format: $result[0], ";

echo "value: $result[1], ";

echo "raw: ", bin2hex($result[2]), "\n";

echo "Localization : ", $result[3], "\n";

}

}

else {

echo 'No barcode found.', "\n";

}

}

return response()->json([

'message' => 'Successfully uploaded the image.'

]);

}

else

{

return response()->json([

'message' => $validation->errors()->all()

]);

}

}

}

```

In `__construct()` method, you initialize the barcode SDK instance by setting a valid license key, which can be obtained from [Dynamsoft customer portal](https://www.dynamsoft.com/customer/license/trialLicense/?product=dbr). Calling `DBRInitRuntimeSettingsWithString()` is optional, because the default settings are suitable for most cases.

The uploaded images are saved to the `public/images` directory. The `DecodeBarcodeFile()` method is used to read barcode and QR code from the image file.

The next step is to create the `barcode_qr_reader` view.

### Step 4: Create a Web View

Create a `barcode_qr_reader.blade.php` file in the `public/resources/views` directory. The file contains the HTML5 code for uploading an image via a form.

```php

<!DOCTYPE html>

<html>

<head>

<title>PHP Laravel Barcode QR Reader</title>

<meta name="_token" content="{{csrf_token()}}" />

</head>

<body>

<H1>PHP Laravel Barcode QR Reader</H1>

<form action="{{ route('image.upload') }}" method="post" enctype="multipart/form-data">

@csrf

Select barcode image:

<input type="file" name="BarcodeQrImage" id="BarcodeQrImage" accept="image/*"><br>

<input type="submit" value="Read Barcode" name="submit">

</form>

<img id="image" />

<script>

var input = document.querySelector('input[type=file]');

input.onchange = function() {

var file = input.files[0];

var fileReader = new FileReader();

fileReader.onload = function(e) {

{

let image = document.getElementById('image');

image.src = e.target.result;

}

}

fileReader.readAsDataURL(file);

}

</script>

</body>

</html>

```

[CSRF Protection](https://laravel.com/docs/8.x/csrf) is required for the form. A convenient way is to use the `@csrf` Blade directive to generate the hidden token input field:

```html

<form action="{{ route('image.upload') }}" method="post" enctype="multipart/form-data">

@csrf

...

</form>

```

As the web page is done, one more step is to add the web routes in `public/routes/web.php`.

```php

Route::get('/barcode_qr_reader', 'App\Http\Controllers\ImageUploadController@page');

Route::post('/barcode_qr_reader/upload', 'App\Http\Controllers\ImageUploadController@upload')->name('image.upload');

```

### Step 5: Run the PHP Laravel Barcode QR Code Reader

Now you can run the PHP Laravel project and visit `http://127.0.0.1:8000/barcode_qr_reader` in your browser.

```bash

php artisan serve

```

## Source Code

[https://github.com/yushulx/php-laravel-barcode-qr-reader](https://github.com/yushulx/php-laravel-barcode-qr-reader) | yushulx |

1,159,175 | There are many variations of passages of Lorem Ipsum available, but the majority have suffered alteration in some form | making it over 2000 years old. Richard McClintock, a Latin professor at Hampden-Sydney College in... | 0 | 2022-08-04T12:56:00 | https://dev.to/kkumargcc/there-are-many-variations-of-passages-of-lorem-ipsum-available-but-the-majority-have-suffered-alteration-in-some-form-by-in-2jmk | javascript, lorem, testing | making it over 2000 years old. Richard McClintock, a Latin professor at Hampden-Sydney College in Virginia, looked up one of the more obscure Latin words, consectetur, from a Lorem Ipsum passage, and going through the cites of the word in classical literature, discovered the undoubtable source. Lorem Ipsum comes from sections 1.10.32 and 1.10.33 of "de Finibus Bonorum et Malorum" (The Extremes of Good and Evil) by Cicero, written in 45 BC. This book is a treatise on the theory of ethics, very popular during the Renaissance. The first line of Lorem Ipsum, "Lorem ipsum dolor sit amet..", comes from a line in section 1.10.32.

The standard chunk of Lorem Ipsum used since the 1500s is reproduced below for those interested. Sections 1.10.32 and 1.10.33 from "de Finibus Bonorum et Malorum" by Cicero are also reproduced in their exact original form, accompanied by English versions from the 1914 translation by H. Rackham.

Where can I get some?

There are many variations of passages of Lorem Ipsum available, but the majority have suffered alteration in some form, by injected humour, or randomised words which don't look even slightly believable. If you are going to use a passage of Lorem Ipsum, you need to be sure there isn't anything embarrassing hidden in the middle of text. All the Lorem Ipsum generators on the Internet tend to repeat predefined chunks as necessary, making this the first true generator on the Internet. It uses a dictionary of over 200 Latin words, combined with a handful of model sentence structures, to generate Lorem Ipsum which looks reasonable. The generated Lorem Ipsum is therefore always free from repetition, injected humour, or non-characteristic | kkumargcc |

1,159,567 | Rust - Struct, Generics | 0 | 2022-08-05T16:51:19 | https://dev.to/deadlock/rust-struct-generics-4k3e | rust, programming | ---

published: true

title: 'Rust - Struct, Generics'

cover_image: 'https://github.com/kodelint/blog-assets/raw/main/images/01-rust-struct.jpg'

description: null

tags: 'rust, programming'

series: null

canonical_url: null

id: 1159567

date: '2022-08-05T16:51:19Z'

---

Let’s talk about the some custom **data types** in **Rust** like `struct` and `emuns` . We all know why need **custom data type**, like any other language regular data type may not suffice the need and hence we have custom data types.

### Structs, How to use them in `Rust` ?

**Structure**, `struct` in short, are very similar to `tuple` . **Tuple** are used to store related items with mixed data type in **Order**. Now if the use case is to have large number of elements and the **Order** is not obvious then it could be difficult to identify them out of Tuples

<img align="left" src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-tuple.png" width="650" height="410" style="float:left; padding-right:20px"/>

```bash

the first element of tuple [a_tuple] is 1

the last element of tuple [a_tuple] is Rust

the first element of tuple [a_tuple] after modification is 6

the last element of tuple [a_tuple] after modification is Rustic

```

So you can see that if number of elements are larger than normal then it becomes very difficult to keep track of the order and that’s when we use **`struct`**.

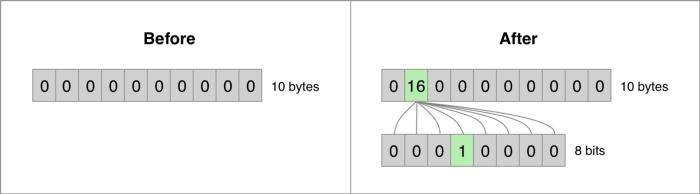

> _**Struct**_ data are usually stored in usually stored in **stack** given it contain **stack** only data types like *numbers*. To store *Struct* in **heap** you have specifically mentioned that. Also, if your _**Struct**_ contain **heap** data types like `String` then it will be stored in **heap** and reference, associated data of the **heap** data will be stored in **stack**. So, when your _**Struct**_ instance goes **out of scope** the associated data in **heap** will be **automatically dropped**.

Defining a `struct` in `Rust` is similar to `Golang`. `Golang` require `type` keyword along side with `struct` keyword

<img align="left" src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-vs-golang-struct.jpeg" width="450" height="310" style="float:left; padding-right:20px"/>

A `struct` is like a `tuple`, which allows you to package together related items with mixed data type, however you don’t need to use the **index** to access the elements, instead you use the field name to access them.

**Struct** also allow us to update an `instance` from another `instance` and the syntax is pretty simple called **update syntax**, which basically tells `complier` if there are missing field in instance should have the same field from previous instance.

```rust

let second_car = Car {

name : String::*from*("Tesla"),

model : String::from("Model 3"),

..new_car,

};

```

**Any update to the first instance after the second instance initialization will not reflect in second instance.**

> If you see then the missing fields are `int` datatype means they live in **stack**, therefore they gets implicitly copied. However, if there were any `String` datatype involved, it would have error time as it violates the **ownership** **rule** of rust . To make that work we need to use explicit **clone** for copying the data from first instance, something like this **`..new_car.clone()`**. To know more about [ownership and borrowing](https://medium.com/p/5ba45c44f986). Also we have to add `trait` as the `Car` datatype doesn’t have the `trait` to `Clone()` data, so we need to derived that at the **struct definition**, something like this `#[derive(Clone)]`.

### Struct has methods

In Rust we can call subroutines, which are **method** for the **struct**. They are pretty much like a **function** and defined using `fn` key word. The difference is between **methods** and **function** is that **method** are always within the context of the `struct` and the first input parameter is alway the `struct` itself.

To define the **method** we need to `impl` key word _(short for implementation)_ followed by the `struct` name

<img src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-struct-methods.png" width="870" height="280" />

### Struct has function too

Rust also allow us to create **associated functions**, they are pretty much like `method`, however they don’t take `&self` as argument. They are mostly used to create initialize the new instance of custom datatype, like a **constructors** in other object oriented languages.

<img src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-associative-function.png" width="870" height="500" />

> There is something call **Struct Tuple**, it is a combination of **Struct** and **Tuple**. In `Rust` **Struct Tuple’s** are defined similarly as **Struct** but they don’t have any named field. It is usually deployed to make a custom type with mixed primary datatypes, however don’t need field to be named. Something like `struct CarFeatures(4, "electric", "falcon doors")`

So this is how the whole code looks like

<img src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-struct-tuple.png" width="870" height="1500" />

```bash

Car Name: Runner, Model: Tesla Model Y, Year: 2022, Price: $70000/-

Price of Tesla Model Y has increased to $75000/-

Car Name: Beast, Model: Tesla Plaid, Year: 2022, Price: $110000/-

Price of Tesla Plaid has increased to $135000/-

```

### Generic Types … Yay!!

Rust is **statically** type language so a defined `struct`, `function` or `method` can only be used for it own defined variable data types. Which mean you might end up maintaining same code body for `struct`, `function` or `method` with different data types. What if we can define `struct`, `function` or `method` in such a way that we can use any data type with it. Enters…..**Generic Type!!**

<img src="https://github.com/kodelint/blog-assets/raw/main/images/01-rust-generics.jpg" width="970" height="1500" />

Above we have struct `Car` with generic type _(denoted using `<..>`)_ `<S,I,T>` which feeds the `type` for struct fields. Similarly, a `impl` block to define `new_car` and `update_price` **methods**. They are also using generic types. Lastly, a function choose with `generics`, used to get confirm for the right choice based on `electric_per_unit` and `gas_per_gallon` price constraints.

> Don’t worry about the `traits` like `std::cmp::PartialOrd` and `std::ops::AddAssign` for now. I will have separate blog explaining them. For now, we need them because `rust` complier doesn’t know what kind of data types `generics` will have to perform **`comparison`** and **`addition`**.

### Box Datatype