id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,225,931 | How to use Tailwind CSS 3 with Headless UI In React | In this section we will install tailwind v3 headless ui react. Headless UI is a set of completely... | 0 | 2022-10-21T06:16:21 | https://larainfo.com/blogs/how-to-use-tailwind-css-3-with-headless-ui-in-react | react, tailwindcss, headlessui, webdev | In this section we will install tailwind v3 headless ui react. Headless UI is a set of completely unstyled, fully accessible UI components, designed to integrate beautifully with Tailwind CSS. it is also provide dropdown menu, lightbox, Switch (Toggle), Dialog (Modal), Popover, Radio Group, Transition, Tabs. So you can easily copy and paste code in you project.

### Tool Use

**Tailwind CSS 3.x**

**[Headless UI](https://headlessui.com/)**

**React JS**

### Install Tailwind CSS v3 In React

Create react project

```react

npx create-react-app react-headlessui

```

move to project folder & run.

```react

cd react-headlessui

npm start

```

Install tailwind v3.

```react

npm install -D tailwindcss postcss autoprefixer

```

Create tailwind config file.

```react

npx tailwindcss init

```

Next, you need to set tailwind config path.

_tailwind.config.js_

```react

module.exports = {

content: [

"./src/**/*.{js,jsx,ts,tsx}",

],

theme: {

extend: {},

},

plugins: [],

}

```

Add the @tailwind directives for each of Tailwind’s layers to your ./src/index.css file.

_index.css_

```css

@tailwind base;

@tailwind components;

@tailwind utilities;

```

_App.js_

```react

function App() {

return (

<div className="container mx-auto mt-4">

<h1 className="text-xl font-bold ">

Setup Tailwind CSS 3 with{' '}

<span className="text-transparent bg-clip-text bg-gradient-to-r from-green-400 via-blue-500 to-purple-600">

Headless UI In React

</span>

</h1>

</div>

);

}

export default App;

```

**Install headless ui**

To get started, install Headless UI via npm or yarn:

```react

# npm

npm install @headlessui/react

# Yarn

yarn add @headlessui/react

```

Now lets test headless ui toggle code.

_App.js_

```react

import { useState } from 'react'

import { Switch } from '@headlessui/react'

export default function App() {

const [enabled, setEnabled] = useState(false)

return (

<div className="container mx-auto mt-20">

<h1 className="text-xl font-bold ">

Tailwind Headless UI {' '}

<span className="text-transparent bg-clip-text bg-gradient-to-r from-green-400 via-blue-500 to-purple-600">

Switch (Toggle)

</span>

</h1>

<div className="ml-28">

<Switch

checked={enabled}

onChange={setEnabled}

className={`${enabled ? 'bg-teal-900' : 'bg-teal-700'}

relative inline-flex flex-shrink-0 h-[38px] w-[74px] border-2 border-transparent rounded-full cursor-pointer transition-colors ease-in-out duration-200 focus:outline-none focus-visible:ring-2 focus-visible:ring-white focus-visible:ring-opacity-75`}

>

<span className="sr-only">Use setting</span>

<span

aria-hidden="true"

className={`${enabled ? 'translate-x-9' : 'translate-x-0'}

pointer-events-none inline-block h-[34px] w-[34px] rounded-full bg-white shadow-lg transform ring-0 transition ease-in-out duration-200`}

/>

</Switch>

</div>

</div>

)

}

```

run project via npm or yarn.

```react

# npm

npm start

# Yarn

yarn start

```

You can use more tailwind headless components in [doc](https://headlessui.com/).

[](https://www.buymeacoffee.com/same6)

### Read Also

👉 [Tailwind CSS Halloween Buttons Tutorial Example](https://larainfo.com/blogs/tailwind-css-halloween-buttons-tutorial-example)

👉 [Tailwind CSS List Style Marker Example](https://larainfo.com/blogs/tailwind-css-list-style-marker-example)

👉 [Create a Google Clone UI using Tailwind CSS](https://larainfo.com/blogs/create-a-google-clone-ui-using-tailwind-css)

👉 [Tailwind CSS Use Custom Fonts Example](https://larainfo.com/blogs/tailwind-css-use-custom-fonts-example)

👉 [Tailwind CSS Line Chart Example](https://larainfo.com/blogs/tailwind-css-line-chart-example)

👉 [Tailwind CSS Gradient Button Example](https://larainfo.com/blogs/tailwind-css-gradient-button-example)

👉 [Tailwind CSS Text Gradient Example](https://larainfo.com/blogs/tailwind-css-text-gradient-example)

👉 [Tailwind CSS Simple POST CRUD UI Example](https://larainfo.com/blogs/tailwind-css-simple-post-crud-ui-example)

👉 [Tailwind CSS Thank You Page Example](https://larainfo.com/blogs/tailwind-css-thank-you-page-example)

👉 [Tailwind CSS 3 Breadcrumb Example](https://larainfo.com/blogs/tailwind-css-3-breadcrumb-example)

👉 [Tailwind CSS 3D Button Example](https://larainfo.com/blogs/tailwind-css-3d-button-example)

👉 [How to Use Custom Colors in Tailwind CSS](https://larainfo.com/blogs/how-to-use-custom-colors-in-tailwind-css)

👉 [How to Use Strike Tag (cut text) in Tailwind CSS](https://larainfo.com/blogs/how-to-use-strike-tag-cut-text-in-tailwind-css)

👉 [Tailwind CSS Headings Typography Examples](https://larainfo.com/blogs/tailwind-css-headings-typography-examples)

👉 [Tailwind CSS Product List Example](https://larainfo.com/blogs/tailwind-css-product-list-example)

👉 [How to Center a Div in Tailwind CSS](https://larainfo.com/blogs/how-to-center-a-div-in-tailwind-css)

| saim_ansari |

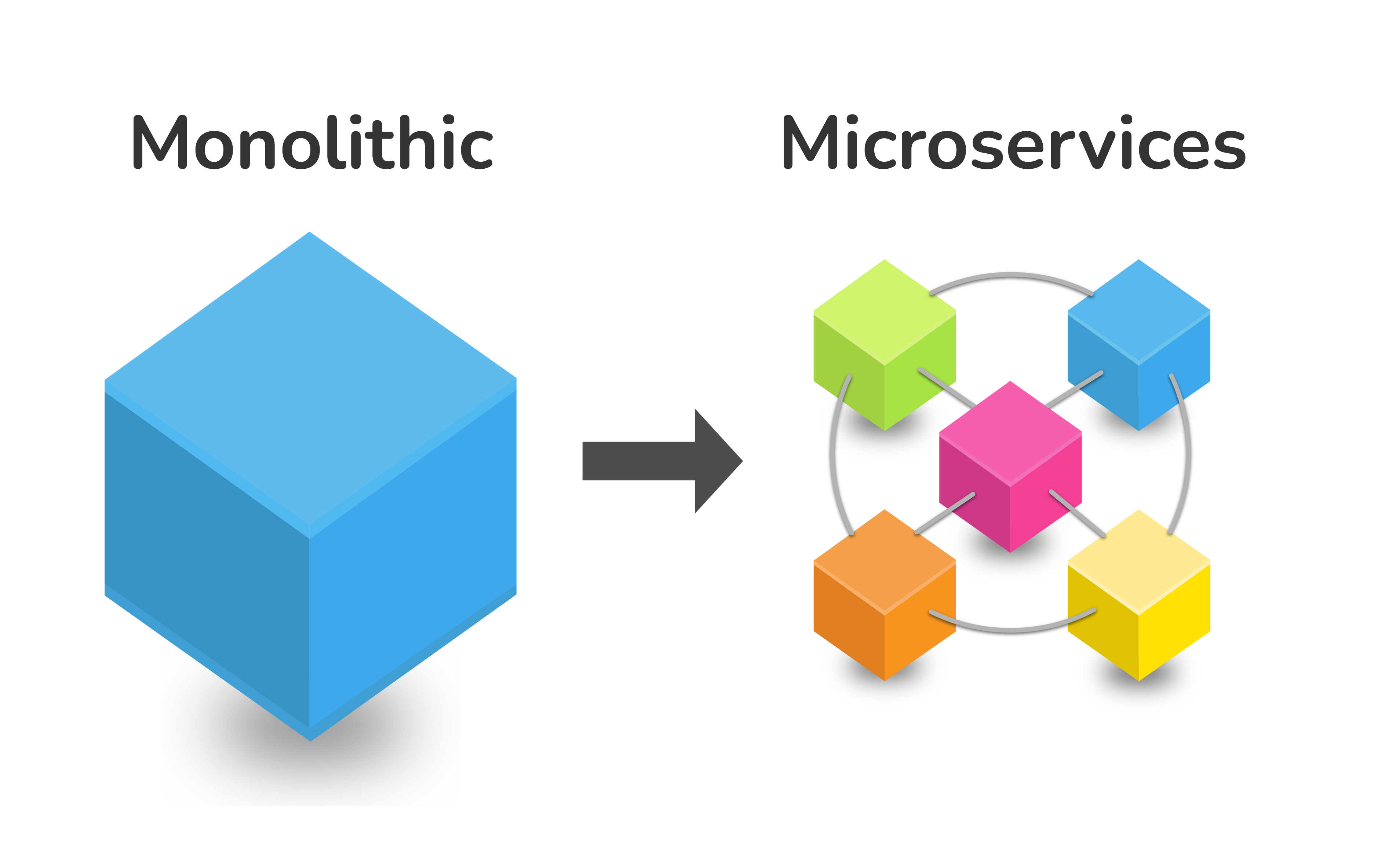

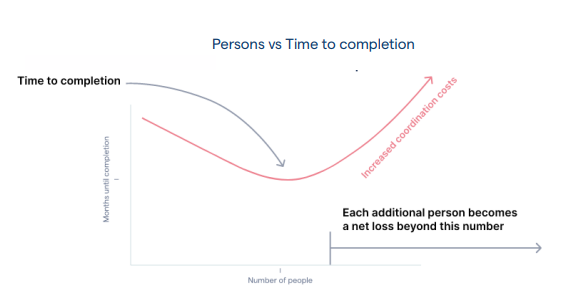

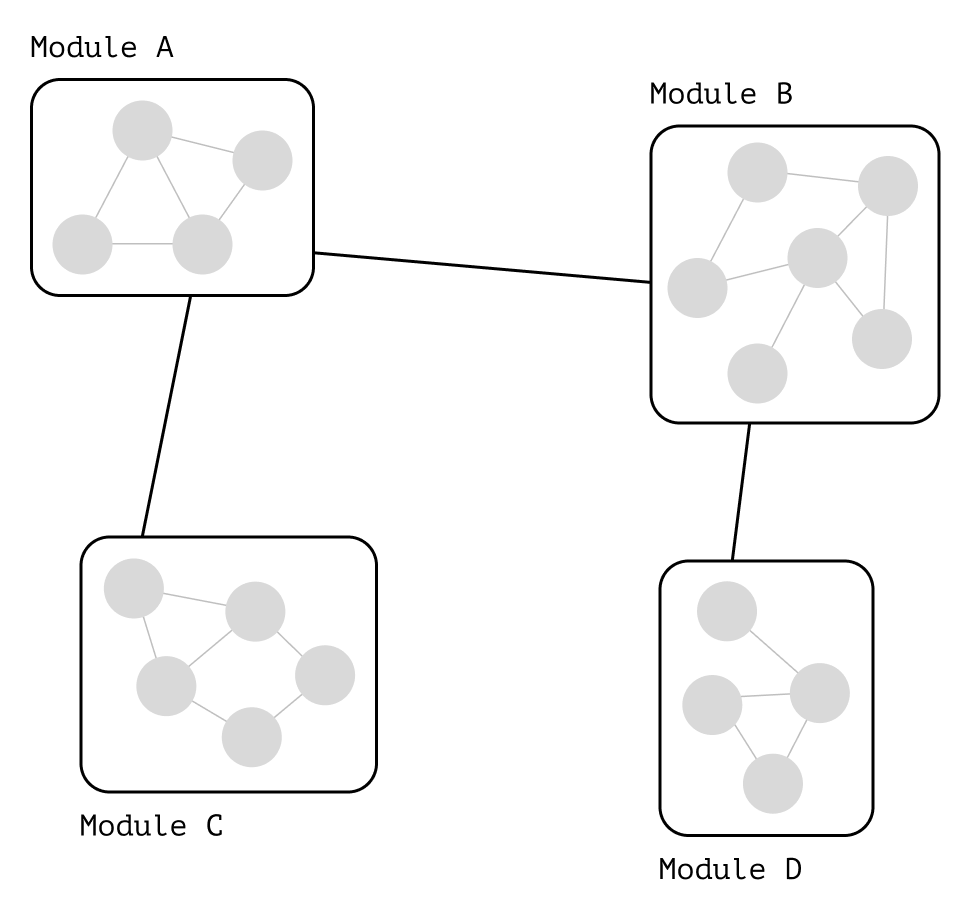

1,225,937 | Microservices | Microservice software architecture is something opposite of monolithic architecture. For small... | 0 | 2022-10-21T06:30:04 | https://dev.to/jungle_sven/microservices-42o | microservices, python, programming, api | Microservice software architecture is something opposite of monolithic architecture. For small projects, monolithic architecture is the most straightforward way to think about its design in general. But any mature project cannot be developed and supported easily if it is a monolith.

The microservice pattern forces developers to divide the project not just into separate modules and classes but into individual tiny apps called microservices. They can be deployed in different data centers, supported by other teams, etc.

Now we will build a simple microservice for our trading software. It will receive our order data via API and save it to the local database. Of course, it is possible to save that data to a local disk. Still, suppose we want to build high-frequency trading software. In that case, we don't want to waste any local resources on logging or statistics, or maybe we want to outsource the development of a feature to a different developers team.

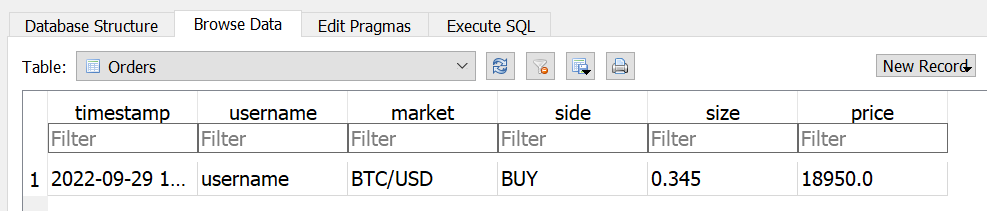

First of all, we will build a basic database connector. We will use the SQLite database for educational purposes. We will create a table of Orders containing six fields: timestamp, username, market, side, size, and price.

Example code:

```plaintext

class Database:

#this is a DB connector

#we will use SQLite in this example for simplicity

def init(self):

#filename and path to the database are hardcoded for simplicity

self.connect_to = 'test.db'

def create_table_orders(self):

#a func to create our database

conn = sqlite3.connect(self.connect_to)

conn.execute('''CREATE TABLE if not exists Orders

(timestamp TEXT NOT NULL,

username TEXT NOT NULL,

market TEXT NOT NULL,

side TEXT NOT NULL,

size FLOAT NOT NULL,

price FLOAT NOT NULL

);''')

conn.close()

```

Database connector will have only one method implemented to save order data to the database.

Example code:

```plaintext

def add_data_orders(self, timestamp, username, market, side, size, price):

#a func to save orders data

conn = sqlite3.connect(self.connect_to)

conn.execute("INSERT INTO Orders (timestamp, username, market, side, size, price) VALUES (?, ?, ?, ?, ?, ?)", (timestamp,

username, market, side, size, price));

conn.commit()

conn.close()

```

Second, we need an API server. Creating a simple API server with the Flask module in less than 30 lines of code is possible. It will be able to receive HTTP POST requests with order data and save it to the database.

Example code:

```plaintext

@app.post("/API/orders")

def save_orders():

if request.is_json:

response = request.get_json()

DB.add_data_orders(response['timestamp'], response['username'], response['market'], response['side'],

response['size'], response['price'])

return response, 201

return {"error": "Request must be JSON"}, 415

```

You can find complete database connector code and API SERVER code on GitHub.

And finally, we need an API connector for our service. Our API connector will use the requests library to make POST HTTP requests to our API server.

Example code:

```plaintext

def generate_request(self, order):

try:

response = requests.post(self.api_url, json=order)

print(response)

except Exception as e:

print('generate_request - Exception', e)

```

You can find the complete API CLIENT code on GitHub.

In ~100 lines of code, we created a database connector, API server, and API client to save order data to the database on the remote server.

The code is available in this [Github repo](https://github.com/Jungle-Sven/efficient_trading_software/blob/main/3_microservices_server.py). | jungle_sven |

1,226,180 | Tailwind CSS Grid System | Author: Abdullah Numan Introduction In this article we look at how to implement... | 0 | 2022-10-21T13:46:38 | https://refine.dev/blog/tailwind-grid/ | tailwindcss, react, webdev, css | **Author: <a target="_blank" href="https://refine.dev/blog/author/abdullah_numan/">Abdullah Numan</a>**

## Introduction

In this article we look at how to implement responsive layouts with CSS Grid using **TailwindCSS Grid** classes.

TailwindCSS, or just Tailwind, is a CSS framework used for rapidly building websites. It comes with a core set of already defined CSS utility classes that can be composed and easily custom configured afterwards to implement any design with respect to responsiveness, layout and themes.

It is possible to build multi column layouts using Tailwind with its Flexbox classes by dividing the width of the container with `w-{fraction}` classes. However, with versions 1.2 and above, we can build highly elaborate layouts with Tailwind's CSS Grid classes.

Steps we'll cover:

- [What is Tailwind Grid System](#what-is-tailwind-grid-system)

- [Basic Tailwind Grid Example](#basic-tailwind-grid-example)

- [Add Grid Formatting Context](#add-grid-formatting-context)

- [Column Numbers](#column-numbers)

- [Grid Gap](#grid-gap)

- [Responsive Column Numbers](#responsive-column-numbers)

- [Size and Placement](#size-and-placement)

- [Setting Size with Span](#setting-size-with-span)

- [Placement](#placement)

- [Grid Rows](#grid-rows)

- [Span Rows](#span-rows)

- [Reorder Regions](#reorder-regions)

- [Customizing TailwindCSS Theme](#customizing-tailwindcss-theme)

## What is Tailwind Grid System

Unlike Twitter's Bootstrap and Foundation, that still use flexbox to implement their 12 column layout under the hood, Tailwind uses CSS Grid to allow us build responsive and highly customizable layouts of any number of columns and rows. It ships with CSS classes that implement a CSS Grid container with names like `grid`, `grid-cols-{n}` and `grid-rows-{n}`, etc. It also comes with Grid child classes that helps us define grid behavior of child elements of the grid with classes like `col-span-{n}`, `row-span-{n}`, and so on.

Tailwind's default configuration allows a maximum of 12 columns on a screen. It can be customized from the `tailwind.config.js` file. Many other options related to Tailwind's CSS Grid classes can be altered according to our taste and needs. In this post, we are going to explore extending the number of columns to 16.

In this post, mostly, we will be playing with responsive application of **Tailwind Grid** classes that allow us to change layouts after a certain breakpoint, such as using `grid-cols-3 md:grid-cols-3`.

I recommend following the documentation for Grid classes starting from [this section](https://tailwindcss.com/docs/grid-template-columns) of TailwindCSS refrences and those that follow.

## Project Goals

We'll implement a simple layout having a navbar, a side content area, a main content area and a footer. It will consist of three columns and five rows.

We'll start from scratch with a set of `div`s that follow the Block Formatting Context or **BFC**, and then gradually cover the concepts related to **Grid Formatting Context** by introducing new classes according to our needs.

## Project Setup

Before we begin though, in order to get things ready, follow these steps:

1. Navigate to a folder of your choice and clone [this repo](https://github.com/anewman15/tailwindcss-grid)

2. Open the cloned repository. It is important that it has the Live Server added and enabled.

3. Install dependencies with ```npx tailwindcss -i ./src/styles.css -o ./dist/styles.css --watch```

4. Start Live Server and navigate to the port number. Or just click on the `Go Live` button at the bottom right corner of the your code editor:

## Basic Tailwind Grid Example

First, we have the following `index.html` document that is linked to the TailwindCSS styles in the `<head>`:

```html

<!-- index.html -->

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<link href="/dist/styles.css" rel="stylesheet">

<title>Document</title>

</head>

<body>

<div class="container m-auto">

<div class="tile bg-teal-500">

<h1 class="tile-marker">ONE</h1>

</div>

<div class="tile bg-amber-500">

<h1 class="tile-marker">TWO</h1>

</div>

<div class="tile bg-yellow-500">

<h1 class="tile-marker">THREE</h1>

</div>

<div class="tile bg-lime-600">

<h1 class="tile-marker">FOUR</h1>

</div>

<div class="tile bg-green-600">

<h1 class="tile-marker">FIVE</h1>

</div>

<div class="tile bg-emerald-500">

<h1 class="tile-marker">SIX</h1>

</div>

<div class="tile bg-teal-500">

<h1 class="tile-marker">SEVEN</h1>

</div>

<div class="tile bg-purple-500">

<h1 class="tile-marker">EIGHT</h1>

</div>

<div class="tile bg-pink-500">

<h1 class="tile-marker">NINE</h1>

</div>

</div>

</body>

</html>

```

## Add Grid Formatting Context

If we introduce `grid` class to the container `div`, nothing happens:

```html

<div class="container m-auto grid">

```

That's because the default `grid` has only one column.

### Column Numbers

Let's add three explicit columns:

```html

<div class="container m-auto grid grid-cols-3">

```

Now, we have all the `div`s flowed into 3 columns according to Grid Formatting Context:

Notice that `grid-cols-3` just divides the screen into 3 equal parts of each `1fr` as with: `grid-template-columns: repeat(3, minmax(0, 1fr));`.

### Grid Gap

Let's add some gap with `gap-{size}` class. We have to set it to the grid container:

```html

<div class="container m-auto grid grid-cols-3 gap-4">

```

Now we have a gap between the children `div`s:

### Responsive Column Numbers

We can add columns at larger breakpoints:

```html

<div class="container m-auto grid grid-cols-3 md:grid-cols-5 lg:grid-cols-8 gap-4">

```

Here, we're using Tailwind's default breakpoints at `md: 768px` and `lg: 1024px`. We can see the number of columns increasing at `768px` and `1024px`:

It's important to notice that Tailwind's responsive classes such as `md` and `lg` do not represent screensizes, but rather mobile-first breakpoints. So `grid-cols-3` lays out 3 columns for all screen sizes, but since column numbers change after `md` we get 5 columns **after** that screen size. And because it changes again after `lg` we get 8. It doesn't change afterwards, so we continue getting 8 columns even in `xl`, `2xl` screen sizes.

## Size and Placement

**Tailwind's CSS Grid** sizing and placing classes gives us more control over how many columns a section should span and where we want to start and end it.

### Setting Size with Span

Below, we place the first `div` as a navbar. We are doing this with `col-span-{n}` for each screen size. We have to make sure it starts at the beginning of the grid and spans the entire screen at each screen size:

```html

<div class="tile bg-teal-500 col-span-3 md:col-span-5 lg:col-span-8">

<h1 class="tile-marker">ONE</h1>

</div>

```

We can refactor the above `div` as:

```html

<div class="tile bg-teal-500 col-span-full">

<h1 class="tile-marker">ONE</h1>

</div>

```

We can also use `col-start-{n}` and `col-end-{n}` to achieve this:

```html

<div class="tile bg-teal-500 col-start-1 col-end-4 md:col-start-1 md:col-end-6 lg:col-start-1 lg:col-end-9">

<h1 class="tile-marker">ONE</h1>

</div>

```

But look at the double responsive classes for at each breakpoint for each of start and end:

```html

md:col-start-1 md:col-end-6 lg:col-start-1 lg:col-end-9

```

They are not very DRY. `col-span-{n}` classes are better for setting the size.

Let's set some more sizes. The width of the second and third `div`s like so:

```html

<div class="tile bg-amber-500 col-span-1 md:col-span-2 lg:col-span-3">

<h1 class="tile-marker">TWO</h1>

</div>

<div class="tile bg-yellow-500 col-span-2 md:col-span-3 lg:col-span-5">

<h1 class="tile-marker">THREE</h1>

</div>

```

### Placement

`col-span-{n}` classes provide limited freedom to place `div`s inside the grid container, whereas `col-start-{n}` and `col-end-{n}` leads to a lot of repetition when repsonsive classes are used.

We can combine start classes with span classes to write more succint code. Start classes can be used to **place** a `div` and span classes can be used to define its width and height.

Let's see an instance of it with the fourth `div`:

```html

<div class="tile bg-lime-600 lg:col-start-4 lg:col-span-2">

<h1 class="tile-marker">FOUR</h1>

</div>

```

As we can see, at `lg` and onward the fourth `div` starts at column four and spans two columns to the right.

OK.

Now if we look closely at the layout, we see that everything is haphazard and it is still not a well set and usable grid to work with - as the last two `div`s seem to be isolated from the rest. This is because, we have applied the half of the equation: only Grid **column** classes. The other half involves the Grid **rows**.

---

## Is your CRUD app overloaded with technical debt?

Meet the headless, React-based solution to build sleek **CRUD** applications. With refine, you can be confident that your codebase will always stay clean and boilerplate-free.

Try [refine](https://github.com/pankod/refine) to rapidly build your next **CRUD** project, whether it's an admin panel, dashboard, internal tool or storefront.

<div>

<a href="https://github.com/pankod/refine">

<img src="https://refine.dev/img/generic_banner.png" alt="refine blog logo" />

</a>

</div>

<br/>

## Grid Rows

If we want to have finer control over the Grid, we need to explicitly set the number of rows, just like we have the columns. We'll do this by introducing `grid-rows-{n}`.

For our grid, we want 5 rows. The number of rows has been defined implicitly so far, but we want to define it explicitly this time. So, we add `grid-rows-5` to our container:

```html

<div class="container m-auto grid grid-cols-3 grid-rows-5 md:grid-cols-5 lg:grid-cols-8 gap-4">

```

Now, we can go ahead and set the last `div` to become a footer. This footer will be placed to the bottom row. To the left side on smaller screens but span the entire bottom row after `md`:

```html

<div class="tile bg-pink-500 row-start-5 md:col-span-full">

<h1 class="tile-marker">NINE</h1>

</div>

```

Here, `row-start-5` tells the footer to be placed to the bottom row. It looks like this:

### Span Rows

We can go further and make it more structured. Let's make the second `div` span the left side of the grid - between the navbar and the footer. We can do this by choosing the second row as the starting point of the `div` and ending at 5:

```html

<div class="tile bg-amber-500 row-start-2 row-end-5 col-span-1 md:col-span-2 lg:col-span-3">

<h1 class="tile-marker">TWO</h1>

</div>

```

We have it shaping into a better website layout:

## Reorder Regions

We can change the order of a region by altering the the value of `n` in `row-start-{n}` and `col-start-{n}`. The second `div` could be an ad section which we want to display at the bottom on smaller screens and at the top on larger screens. Let's set its order at smaller screens first and then change it at `md`:

```html

<div class="tile bg-yellow-500 row-start-4 row-end-5 md:row-start-2 md:row-end-3 col-span-2 md:col-span-3 lg:col-span-5">

<h1 class="tile-marker">THREE</h1>

</div>

```

We have something looking like this:

And the final `index.html` looks like this:

```html

<!-- index.html -->

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<link href="/dist/styles.css" rel="stylesheet">

<title>Document</title>

</head>

<body>

<div class="container m-auto grid grid-cols-3 grid-rows-5 md:grid-cols-5 lg:grid-cols-8 gap-4">

<div class="tile bg-teal-500 col-span-full">

<h1 class="tile-marker">ONE</h1>

</div>

<div class="tile bg-amber-500 row-start-2 row-end-5 col-span-1 md:col-span-2 lg:col-span-3">

<h1 class="tile-marker">TWO</h1>

</div>

<div class="tile bg-yellow-500 row-start-4 row-end-5 md:row-start-2 md:row-end-3 col-span-2 md:col-span-3 lg:col-span-5">

<h1 class="tile-marker">THREE</h1>

</div>

<div class="tile bg-lime-600 lg:col-start-4 lg:col-span-2">

<h1 class="tile-marker">FOUR</h1>

</div>

<div class="tile bg-green-600">

<h1 class="tile-marker">FIVE</h1>

</div>

<div class="tile bg-emerald-500">

<h1 class="tile-marker">SIX</h1>

</div>

<div class="tile bg-teal-500">

<h1 class="tile-marker">SEVEN</h1>

</div>

<div class="tile bg-purple-500">

<h1 class="tile-marker">EIGHT</h1>

</div>

<div class="tile bg-pink-500 row-start-5 md:col-span-full">

<h1 class="tile-marker">NINE</h1>

</div>

</div>

</body>

</html>

```

<br/>

<div>

<a href="https://discord.gg/refine">

<img src="https://refine.dev/img/discord_big_blue.png" alt="discord banner" />

</a>

</div>

## Customizing TailwindCSS Theme

As mentioned above, **TailwindCSS Grid** configuration can be changed according to our needs.

Let's say for some reason we want to set the maximum number of Tailwind's grid columns to be 16. This means we will need to be able to generate all the `grid-cols-{n}` classes starting from `n` 0 to 16. This also means we will need to generate all the `col-start-{n}` and `col-end-{n}` classes.

We can generate these classes by extending the related properties in the `tailwind.config.js` file. For our purposes, the `gridTemplateColumns`, `gridColumnStart` and `gridColumnEnd` properties:

```ts

// tailwin.config.js

module.exports = {

theme: {

extend: {

gridTemplateColumns: {

// Simple 16 column grid

'16': 'repeat(16, minmax(0, 1fr))',

},

gridColumnStart: {

'13': '13',

'14': '14',

'15': '15',

'16': '16',

'17': '17',

},

gridColumnEnd: {

'13': '13',

'14': '14',

'15': '15',

'16': '16',

'17': '17',

}

}

}

}

```

This will make all the relevant grid classes available for us to use.

## Conclusion

In this article, we built a simple responsive layout using **Tailwind's CSS Grid** classes. These classes allow us to set the number of columns and rows explicitly, and help us make the layout responsive by allowing us to reset them at larger breakpoints.

We can also easily alter the size and placement of a given section with **Tailwind Grid**. Reordering of sections at different breakpoints are also commonly done. And perhaps more conveniently, Tailwind allows us to customize many of the properties for generating classes we need so that we can build fluid and responsive layouts according to our individual needs. | necatiozmen |

1,226,379 | GraphQL API Integration for Full-Stack Apps with PostGraphile | In part two of this tutorial series, we’re going to look at the key features of GraphQL and how to... | 0 | 2022-10-24T14:20:01 | https://dev.to/adambiggs/graphql-api-integration-for-full-stack-apps-with-postgraphile-5701 | graphql, api, backend, postgraphile | In part two of this tutorial series, we’re going to look at the key features of GraphQL and how to integrate it with PostGraphile to enhance the back-end of our full-stack application.

In part one, we covered how to approach building a GraphQL API with TypeScript and Node.js as well as the key benefits of this architecture. If you missed it, check out how we set up the project and bootstrapped our code by installing dependencies and configuring our data model.

## What is GraphQL?

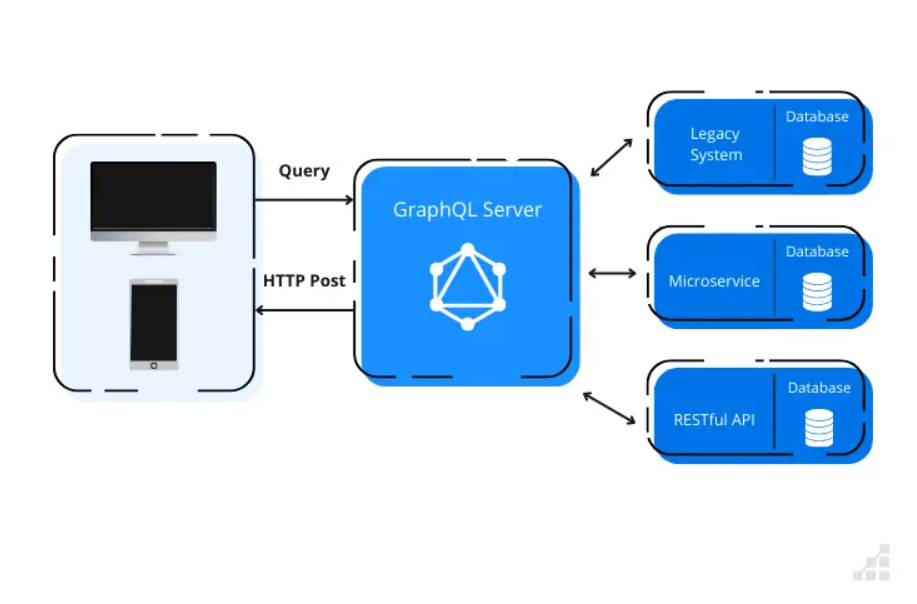

In a nutshell, GraphQL acts as a layer to fetch and mutate data. It’s language-agnostic on both the front and back-end (e.g. JavaScript, Java, C#, Go, PHP, etc.) and serves as a bridge between client and server communications.

The goal of GraphQL is to provide methods for retrieving and modifying data. To provide this function, GraphQL has several operations:

- **Queries**: for performing data fetching operations on the server.

- **Mutations**: analogue to the standard CRUD (Create, Retrieve, Update, Delete) operations, except for the Retrieve (for which Queries are responsible in GraphQL).

- **Subscriptions**: conceptually, subscriptions are like Queries in that it’s utilized to fetch data. It may maintain an active connection to your GraphQL server to enable the server to push live updates for the subscribed clients.

- **Resolvers**: Resolvers are implemented in the back-end as handlers for the lookup logic for the requested resources.

It’s important to mention that GraphQL isn’t a framework/library, nor a database implementation/query language for the DB. Rather, it’s a specification powered by a robust type system called Schema Definition Language (GraphQL SDL) described in its specs. It serves as a mechanism to enforce a well-defined schema that serves like a contract establishing what is and what isn’t allowed.

It’s a wrong assumption to think that GraphQL is a database implementation or a query language tied to any particular database. Although it’s common to see that being translated to DB interactions, it’s possible to use GraphQL even without having any sort of DB (ex., you can set up a GraphQL layer to expose and orchestrate different REST APIs endpoints).

## Using PostGraphile to Integrate with GraphQL

There are a few ways to set up a GraphQL API in Node.js. These options may include:

- Apollo Server

- Hasura

- Prisma

- PostGraphile

Alternatively, you can build your own server with custom resolvers and a schema definition. While there are pros and cons for each method, we’ll use PostGraphile in this tutorial. We’ve made that choice because it provides an instant GraphQL API based on the DB schema.

## What Is PostGraphile?

PostGraphile is a powerful tool that makes it easy to set up a robust GraphQL API relatively quickly. According to the official documentation:

_“PostGraphile automatically detects tables, columns, indexes, relationships, views, types, functions, comments, and more — providing a GraphQL server that is highly intelligent about your data, and that automatically updates itself without restarting when you modify your database.”_

This makes PostGraphile a great option for developers because it enables them to build fast, reliable APIs. Some of the key features that make this possible are:

- Strong PostgreSQL support

- Use of GraphQL best practices

- Simplicity to deploy and scale

- Customizability

- Impressively high performance

- Granular authorisation via RLS

- Open source under MIT license

- Powerful plugin system

## Configuring PostGraphile

There are two ways of integrating PostGraphile into our project: via **PostGraphile CLI** or through a middleware. For this project, we’ll use a middleware.

Now that we have an overview of GraphQL and how **PostGraphile** can be helpful in our demo project, let’s go ahead and install the **PostGraphile** dependency in our project.

```

npm install postgraphile@^4.12.9

```

To use PostGraphile in our application, we need to import it like our other dependencies. The import can be added to the top of the App.ts file:

```

import postgraphile from 'postgraphile'

```

After that, all we need to do to complete the setup is to enhance our App.ts and bootstrap our Express server with PostGraphile Middleware. To do that, replace this code section:

```

/**

* This is our main entry point of our Express server.

* All the routes in our API are going to be here.

**/

const App = () => {

const app = express()

app.use(express.json())

```

With this:

```

const pgUser = '*** INSERT YOUR POSTGRESQL USER HERE ***'

/**

* This is our main entry point of our Express server.

* All the routes in our API are going to be here.

**/

const App = () => {

const app = express()

app.use(express.json())

app.use(postgraphile(`postgresql://${pgUser}@localhost/catalog_db`, 'public', {

watchPg: true,

graphiql: true,

enhanceGraphiql: true,

}))

```

We’re basically configuring the **PostGraphile** middleware in our server.

Now, if you restart the server and hit the http://localhost:8090/graphiql in your browser, you’re going to see some really interesting stuff! We’ll dig into all of that in the next section.

Note: if, when restarting the server, you see:

- _“Failed to setup watch fixtures in Postgres database”_

- _“A serious error occurred when building the initial schema. Exiting because `retryOnInitFail` is not set”_

_Then make sure the user specified in the **const pgUser =** is valid and that you have the admin privileges for changing the Postgres DB Schema._

## GraphQL Playground

GraphQL Playground is a sort of IDE for exploring and interacting with a GraphQL server. It comes with an interactive UI that can run on the browser to where you can build and test queries/mutations and explore the GraphQL schemas.

What you are seeing is an enhanced version of GraphiQL shipped with PostGraphile. While it’s out of the scope of this tutorial to dive deep into the GraphQL playground, we’ll cover some of the key features that it provides.

## GraphQL API Documentation

Our GraphQL Playground can also serve as an API documentation with the powerful schema introspection feature. Let’s take a look.

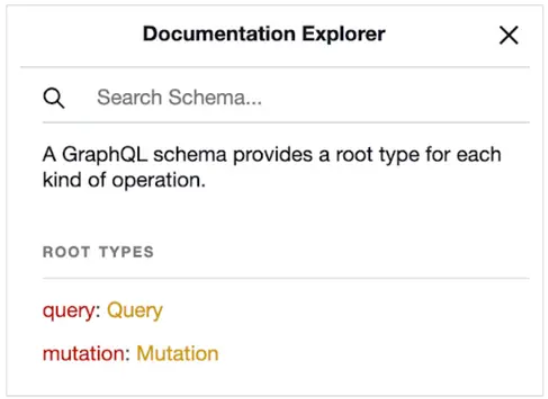

First, in the top right-corner, click on the **“< Docs”** option. This will open the **Documentation Explorer**:

There are two root types enabled: **Query** and **Mutation**. Let’s explore the **Query** type.

If you scroll down, you’ll see many options available to use. In TypeORM, we defined the entities to be added to the PostgreSQL server. The PostGraphile middleware is able to automatically expose these entities to GraphQL, allowing us to access them through GraphiQL.

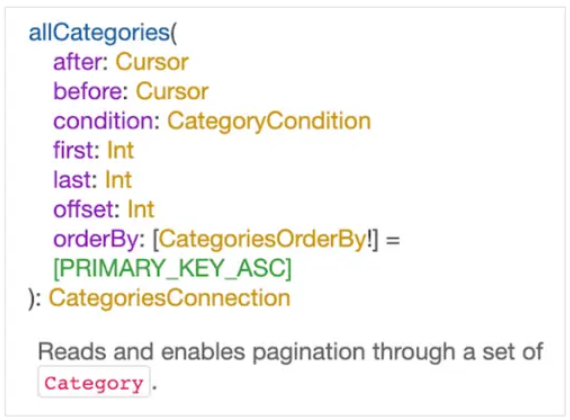

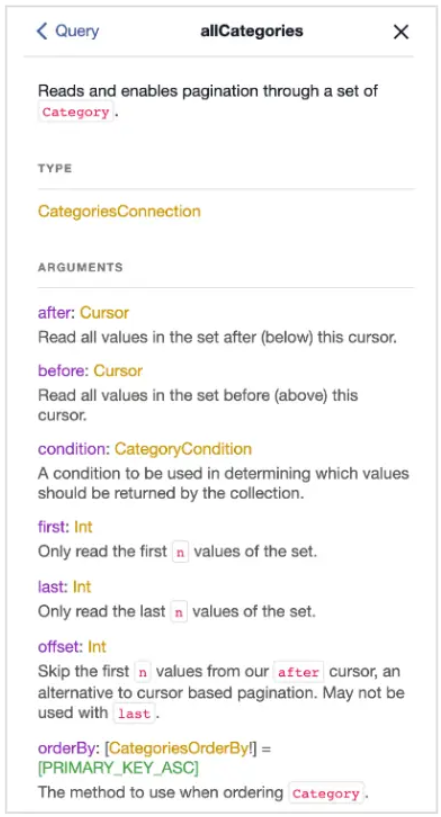

Let’s take a look at the **allCategories** query as an example:

By clicking on the **allCategories** hyperlink, you can see the details of that query:

This window displays the different methods you can use to work with the query results.

Notice that GraphQLsupports Cursor (after, before) and Offset (first/last, offset) based pagination, Ordering and Filters (condition), with all of that supported out of the box!

As for the **Mutation** type, you have access to create, update and delete utilities for all of our entities!

In the following sections, we’ll explore some of the fundamental features of a GraphQL API: writing Queries and Mutations.

_Note: this article is not going to cover all the details of writing **Queries** and **Mutations**. If you’re interested in this, there are some great resources on the official GraphQL Foundation website._

Want to learn more? I would highly recommend this article: [GraphQL API Integration for Full-Stack Apps with PostGraphile [Tutorial Part 2]](https://www.scalablepath.com/full-stack/graphql-and-postgraphile-integration-full-stack-tutorial-part-2) | adambiggs |

1,226,403 | Writing and testing a custom RuboCop cop | Solving a problem is great — but keeping it from coming back is even better. As we resolve issues in... | 0 | 2022-10-21T17:36:44 | https://dev.to/aha/writing-and-testing-a-custom-rubocop-cop-32bc | rails, ruby, rubocop | Solving a problem is great — but keeping it from coming back is even better. As we resolve issues in our code base, we often consider how to keep that classification of issue out of the code base entirely. Sometimes we reach for [RuboCop](https://docs.rubocop.org/rubocop/index.html) to help us police certain patterns. This also helps to document the originating issue and educates teammates on why these patterns are undesirable.

RuboCop is more than just a linter. It is highly extensible and allows you to write [custom cops](https://thoughtbot.com/blog/rubocop-custom-cops-for-custom-needs) to enforce specific behavior. These cops can be used to create better code practices, prevent bad patterns from sneaking into a legacy code base, and provide training for other engineers. But it can be tricky to know [how to create a new cop](https://docs.rubocop.org/rubocop/development.html) and if it will work long-term.

> We can write unit tests to ensure the success of our custom cops, just as we would with any application code.

Let's explore this with an example to show how testing could be done.

## Testing custom cops

With the Aha! engineering team, every model has an `account_id` attribute present and for [security reasons][security], we never want this to be set via mass-assignment. To avoid this, we want to prevent certain attributes from being added to [attr_accessible][attr_accessible].

[attr_accessible]: https://apidock.com/rails/ActiveRecord/Base/attr_accessible/class

[security]: https://brakemanscanner.org/docs/warning_types/mass_assignment/

```ruby

# bad

class Foo

attr_accessible :name, :account_id

end

Foo.create(account_id: 1, name: "foo")

# good

class Foo

attr_accessible :name

end

foo = Foo.new(name: "foo")

foo.account_id = 1

foo.save

```

We have a custom cop that analyzes the arguments to that method and will error if any protected attribute is present. The custom cop we have ends up looking something like this:

```ruby

class RuboCop::Cop::ProtectedAttrAccessibleFields < RuboCop::Cop::Cop

# We can define a list of attributes we want to protect

PROTECTED_ATTRIBUTES = [

:account_id,

].freeze

# We can define an error message that is displayed when an offense is detected.

# This can be helpful to communicate information back to other engineers

ERROR_MESSAGE = <<~ERROR.freeze

Only permit attributes that are safe to be completely user controlled. Typically any *_id field could be problematic.

Instead perform direct assignment of the field after doing a scoped lookup. This is the safest way to handle user input.

Some fields such as #{PROTECTED_ATTRIBUTES.inspect} should never be used as part of attr_accessible.

ERROR

# We want to examine method calls. Particularly those that are calling the attr_accessible method

# and also have arguments we care about

def on_send(node)

if receiver_attr_accessible?(node) && protected_arguments?(node)

# If we do detect an attr_accessible call with arguments we care about, we can record an offense

add_offense(node, message: ERROR_MESSAGE)

end

end

private

def receiver_attr_accessible?(node)

node.method_name == :attr_accessible

end

def protected_arguments?(node)

node.arguments.any? do |argument|

if argument.sym_type? || argument.str_type?

PROTECTED_ATTRIBUTES.include?(argument.value.to_sym)

end

end

end

end

```

This custom cop does the trick. Adding a test for it ensures that it won't break in the future when we update RuboCop or extend the functionality. In order to write a test, we need to understand how the custom cops are set up and run.

## Instantiate a custom cop

`RuboCop::Cop::Cop` inherits from `RuboCop::Cop::Base` and that allows the instantiation without [any arguments](https://github.com/rubocop/rubocop/blob/d8c2cd0d891c9e49f528041d3b0758a6fa480265/lib/rubocop/cop/base.rb#L71). So it turns out this isn't anything special — creating a new instance of our cop is really as simple as: `RuboCop::Cop::ProtectedAttrAccessibleFields.new`

If the cop requires some kind of configuration, it can be passed to the instance via a `RuboCop::Config` object. The `RuboCop::Config` takes two arguments. RuboCop can [provide configuration](https://docs.rubocop.org/rubocop/configuration.html) via YML files. You can use the first argument of `RuboCop::Config` to pass this configuration with various values from the test. The second argument is the path of the loaded YML file, which can be ignored in the tests.

```ruby

config = RuboCop::Config.new({ RuboCop::Cop::ProtectedAttrAccessibleFields.badge.to_s => {} }, "/")

cop = RuboCop::Cop::ProtectedAttrAccessibleFields.new(config)

```

## Process, execute, examine

As it turns out, there is a [method](https://github.com/rubocop/rubocop/blob/d8c2cd0d891c9e49f528041d3b0758a6fa480265/lib/rubocop/cop/base.rb#L238) available, `RuboCop::Cop::Base#parse` , that accepts a string as input and will return something the cop can process.

This allows us to have something like:

```ruby

source = <<~CODE

attr_accessible :account_id

CODE

processed_source = cop.parse(source)

```

There is a class from within RuboCop, `RuboCop::Cop::Commissioner` , that is responsible for taking a [list of cops](https://github.com/rubocop/rubocop/blob/d8c2cd0d891c9e49f528041d3b0758a6fa480265/lib/rubocop/cop/commissioner.rb#L44) and using those to [investigate](https://github.com/rubocop/rubocop/blob/d8c2cd0d891c9e49f528041d3b0758a6fa480265/lib/rubocop/cop/commissioner.rb#L79) the processed source code. In order to run our cop, we can run this method.

```ruby

commissioner = RuboCop::Cop::Commissioner.new([cop])

investigation_report = commissioner.investigate(processed_source)

```

The `RuboCop::Cop::Commissioner#investigate` method will return an instance of [RuboCop::Cop::Commissioner::InvestigationReport](https://github.com/rubocop/rubocop/blob/d8c2cd0d891c9e49f528041d3b0758a6fa480265/lib/rubocop/cop/commissioner.rb#L18) which is a simple struct class that has a list of offenses that have been recorded.

## Put it all together

We end up with a test file that looks something like this:

```ruby

describe RuboCop::Cop::ProtectedAttrAccessibleFields do

let(:config) { RuboCop::Config.new({ described_class.badge.to_s => {} }, "/") }

let(:cop) { described_class.new(config) }

let(:commissioner) { RuboCop::Cop::Commissioner.new([cop]) }

it "records an offense if we use allow account_id as a string" do

source = <<~CODE

attr_accessible :foo, 'account_id'

CODE

investigation_report = commissioner.investigate(cop.parse(source))

expect(investigation_report.offenses).to_not be_blank

expect(investigation_report.offenses.first.message).to eql described_class::ERROR_MESSAGE

end

it "records an offense if we use allow account_id as symbol" do

source = <<~CODE

attr_accessible :foo, :account_id

CODE

investigation_report = commissioner.investigate(cop.parse(source))

expect(investigation_report.offenses).to_not be_blank

expect(investigation_report.offenses.first.message).to eql described_class::ERROR_MESSAGE

end

it "doesn't record an offense if no protected attribute is used" do

source = <<~CODE

attr_accessible :foo

CODE

investigation_report = commissioner.investigate(cop.parse(source))

expect(investigation_report.offenses).to be_blank

end

end

```

Now that we know how to write tests, we can use them as a starting point for building new cops, extending existing cops, and ensuring that things continue to function as our application grows and evolves. These little investments into [project-specific cops](https://evilmartians.com/chronicles/custom-cops-for-rubocop-an-emergency-service-for-your-codebase) can end up being a large investment in the future health of the projects.

**Sign up for a free trial of Aha! Develop**

Aha! Develop is a fully extendable agile development tool. Prioritize the backlog, estimate work, and plan sprints. If you are interested in an integrated [product development](https://www.aha.io/suite-overview) approach, use [Aha! Roadmaps and Aha! Develop together](https://www.aha.io/product/overviewhttps://www.aha.io/product/integrations/develop). Sign up for a [free 30-day trial](https://www.aha.io/trial) or [join a live demo](https://www.aha.io/live-demo) to see why more than 5,000 companies trust our software to build lovable products and be happy doing it. | doliveirakn |

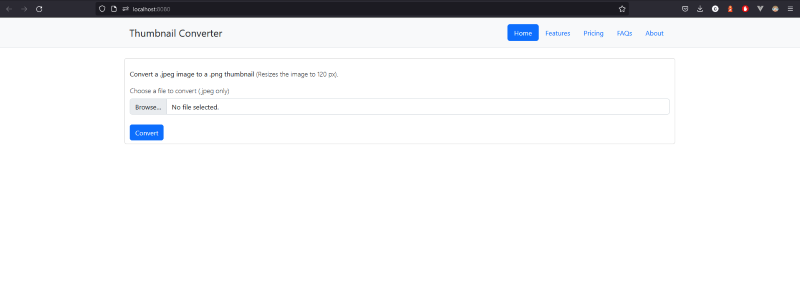

1,275,327 | Free Online PNG to JPG Converters You Can Trust | The PNG file format is great for storing images with transparent backgrounds because it produces... | 0 | 2022-11-28T08:47:57 | https://dev.to/swangden/free-online-png-to-jpg-converters-you-can-trust-3jce | pngtojpg, png, jpg, onlineconverte | The PNG file format is great for storing images with transparent backgrounds because it produces smaller files than the JPEG image format, while still keeping image quality high. However, the PNG format doesn’t support transparency, so if you want to insert a PNG file into another document or build an HTML page, you will need an online PNG to JPG converter. Luckily, we have a great option for you! Read on for more information about the best free online PNG to JPG converters and how they can help you with your projects.

## Free PNG to JPG converters

Toolsable is a website equipped with a wide variety of free online tools including a [PNG to JPG converter](https://www.toolsable.com/en/png-to-jpg) that converts PNG files into JPG. If you have a PNG file that you need to insert into a Microsoft Word document or upload to a website, you will have to convert the file to JPG first, since those programs and websites don’t support the PNG image format. Converting your PNG files to JPG is quite easy and only takes a few seconds.

You can do this either by opening the PNG file in a photo-editing program like Photoshop and then saving it as a JPG, or you can use a [free online PNG to JPG converter](https://www.toolsable.com/en/png-to-jpg). There are several of these online converters available, with the main differences being the quality of the converted images and the number of options you have for customizing the conversion process.

## Why should you convert your PNG files to JPG?

The PNG file format is great for storing images with transparent backgrounds because it produces smaller files than the JPEG image format, while still keeping image quality high. However, the PNG format doesn’t support transparency, so if you want to insert a PNG file into another document or build an HTML page, you will need to convert it to the JPG format first.

There are a few different reasons why you might want to convert your PNG files to JPG. The most common reason is to insert the PNG file into another document or website. This is especially true if you are working in a field that requires you to use Microsoft Office programs a lot since those don’t support the PNG format.

If you want to insert an image into an Office document or website without having to save it as a different file type first, you need to convert it from a PNG to JPG first. Another reason you may want to convert your PNG files to JPG is to make a smaller file. PNG files are great for storing high-quality images with transparent backgrounds, but they produce larger file sizes than JPG images.

## How to convert PNG images to JPG?

If you have a PNG file and want to convert it to JPG, you can do so using an online PNG to JPG converter in toolsable. There are many different [PNG to JPG converters available online](https://www.toolsable.com/en/png-to-jpg), but not all of them are free. You can also use a toolsable if you want to save yourself the hassle of downloading and installing a program on your computer.

There are several key differences between free and paid PNG to JPG converters. First, most free converters let you select the image quality of the JPG file that is created.

You can also control how much the image is compressed, which affects the image quality. Paid PNG to JPG converters usually don’t give you any control over these settings, so the quality of the converted files may not be as high. Another important difference between free and paid PNG to JPG converters is the number of options you have for customizing the conversion process.

Many free converters have a very basic user interface and don’t give you many options for adjusting the conversion process. Paid converters are often more flexible and let you customize the conversion settings.

## Recommend a free online PNG to JPG converter.

There are many [free PNG to JPG converters](https://www.toolsable.com/en/png-to-jpg) available online, but many of them have very limited functionality. If you are looking for a PNG to JPG converter that gives you more control over the conversion process and lets you choose the quality setting for the JPG files you create, you can use the toolsable.

Toolsable - has one of the most popular free PNG to JPG converters available online. It has an easy-to-use interface and provides several different conversion options, including resizing the images before converting them. Toolsable also chooses the quality setting for the JPG files and also has an option to compress the JPG files further to reduce the file size even further.

## Conclusion

A PNG to JPG converter is a program or website that converts PNG files into JPG. If you have a PNG file that you need to insert into a document or website, you will have to convert the file to JPG first, since those programs and sites don’t support the PNG format.

You can do this either by opening the PNG file in a photo-editing program like Photoshop and then saving it as a JPG, or you can use a free online PNG to JPG converter like toolsable.

There are several of these online converters available, with the main differences being the quality of the converted images and the number of options you have for customizing the conversion process. | swangden |

1,226,573 | Um pouco sobre o NGINX pt.1 | O NGINX (Engine X) é um Software Open Source que tem como funcionalidade principal atender... | 0 | 2022-10-24T15:34:51 | https://dev.to/juannunesz/um-pouco-sobre-o-nginx-pt1-3c6l | nginx, devops, cahce, webdev |

O **NGINX** (Engine X) é um Software Open Source que tem como funcionalidade principal atender requisições HTTP na web.

Porém ele não serve somente para isso, não é atoa que ele é o webserver mais utilizado na internet. Além de atender requisições HTTP de forma excepcional existe uma serie de recursos que o torna cada vez mais interessante, sendo elas: proxy reverso, armazenamento em cache, balanceamento de carga, streaming de mídia e muitas vezes utilizado para funcionar como um servidor proxy para e-mail (IMAP, POP3 e SMTP). Mais a frente falo um pouco sobre cada uma dessas funcionalidades do nginx e em quais contextos são mais utilizadas.

## História por trás

O NGINX foi escrito originalmente para resolver a dificuldade que os servidores web existentes enfrentavam em lidar com grandes números (os 10K ) de conexões simultâneas.

Em 2004 seu fundador Igor Sysoev após ver seu uso crescer exponencialmente, decide a abrir o código do projeto e cria a NGINX, Inc. para dar suporte ao desenvolvimento contínuo do NGINX e comercializar o NGINX Plus como um produto comercial para clientes corporativos.

## Diferenças entre Apache e NGINX

O NGINX supera o Apache e alguns outros servidores em benchmarks que medem o desempenho de servidores web, Desde o lançamento do NGINX. No entanto, os sites evoluiram de páginas HTML estáticas para conteúdo dinâmico. O NGINX cresceu junto com ele e agora suporta todos os componentes da Web "moderna", incluindo WebSocket, HTTP/2, gRPC e streaming de vários formatos de vídeo HDS, HLS entre outros.

Além da alta customização do nginx e suporte de novos componentes da web, o que se da a sua alta performace em relação ao apache é a sua arquitetura que dita a forma na qual o webserver atende suas requisições, por sua vez o apache é "process-based server" (arquitetura baseada em processos) em que cada solicitação de conexão é tratada por um único processo. A maneira como geralmente funciona é um processo pai do servidor recebe solicitações de conexão e, quando isso acontece, ele cria (gera) um processo filho para lidar com isso. Quando outra solicitação chega, o processo pai gera um novo processo filho para lidar com a nova solicitação e assim por diante.

Porém, isso tudo acaba gerando um custo de processamento absurdo, pois, quanto mais solicitações e conexões abertas, mais recursos computacionais serão gastos.

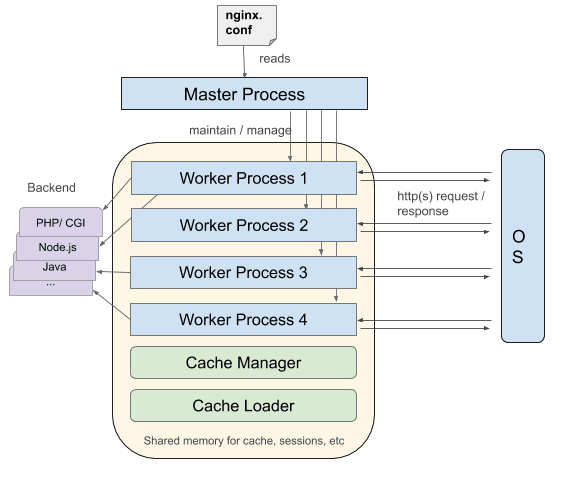

Já no NGINX temos um outro tipo de arquitetura, uma arquitetura assíncrona e “event‑driven architecture” (Arquitetura orientada a eventos).

Significa que threads iguais são comandadas por um process_worker, e cada process_worker contém unidades menores chamadas worker_connections. Esta unidade inteira é responsável de cuidar das solicitações. worker_connections levam as solicitações até um process_worker, que por sua vez as envia para o processo master. Finalmente o processo master fornece o resultado da solicitação.

de forma simples: existe um worker principal e diversos workers menores que recebem requisiçoes, porem cada worker é assincrono e capaz de receber mais de uma requisição, ou seja, enquanto ele está devolvendo um arquivo estático de CSS o mesmo worker já está atendendo uma nova requisição e por ai vai...

Um detalhe: Os workers geralmente são criados de acordo com a quantidade de núcleos da CPU. Porém pode ser "setado" no arquivo de configuração do nginx.

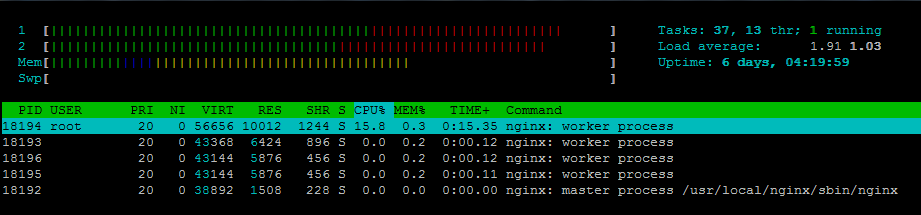

Caso tenha acabado de fazer a instalação do nginx vai estar assim:

```

worker_processes: auto;

```

Desta forma ele está configurado para criar workers de acordo com a quantidade de núcleos da CPU.

Um único worker é capaz "cuidar" de até 1024 solicitações similares.

## Proxy Reverso

Quande se fala de NGINX sempre é citado o famoso Proxy reverso, mas afinal, oque seria isso?

Confesso que quando me explicaram o que era fiquei uns 2 minutos com cara de paisagem tentando entender

Bom, para entendermos o proxy reverso primeiro precisamos elucidar o conceito de proxy. Proxy é um servidor que atua como intermediário entre o usuário e a internet, recebendo as requisições e repassando. Geralmente muito utilizado dentro de empresas para bloquear acesso de sites e outros conteúdos.

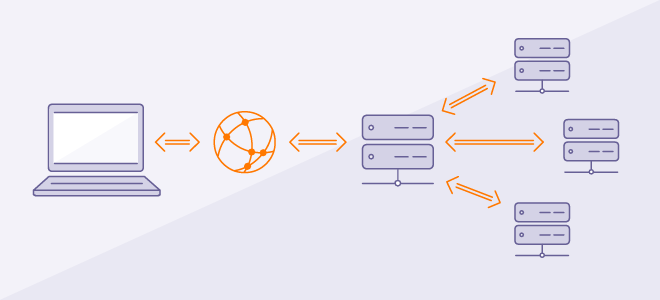

Tendo essa difinição podemos dizer que um proxy reverso é um servidor intermediário que fica ao lado do servidor e não mais ao lado do cliente recebendo as requisições e redirecionando para outros servidores/serviços corretos.

Um exemplo bem simples:

```

server {

listen 80;

server_name localhost;

location / {

root /users/juan/dev/nginx;

index index.html

}

location ~ /.php$ {

proxy_pass http://localhost:8000;

}

}

```

- **server** - é um bloco designado a escrever as configurações de um servidor dentro da sua configuração. Você pode ter vários deles, cada um atendendo em uma porta diferente. Você pode expor um servidor para o mundo e ter outro interno, sem cache, por exemplo, ou até driblando a autenticação, por exemplo.

- **listen** - aqui você define em qual porta seu servidor vai aceitar as conexões.

- **location** - é a diretiva usada para definir as rotas. Elas são bem poderosas. Aceitam expressões regulares, é possível capturar as variáveis e usá-las na configuração. O sistema de location, também, conta com diferentes tipos de match.

- Sem modificador, o match é feito pelo começo da URI.

- *=* é um match exato.

- *~* é um match com expressão regular.

Nesse caso o nginx foi configurado para redirecionar toda requisição que tenha PHP para um serviço especifico, que vai executar a lógica e processar as informações, caso seja uma requisição de algum arquivo estático como uma página em html, o servidor prontamente devolverá sem precisar enviar requisição ao servidor PHP. Assim dando mais dinâmica a todo o processo.

# LoadBalancing

A maioria das vezes uma aplicação em produção tem mais de um servidor para servir ela, isso se dá pois os servidores possuem recursos finitos (CPU,Disco etc.) para atender multiplas requisições. E não é apenas sobre isso. E se o servidor sofrer uma falha de hardware? Ou até alguma falha de rede? Inúmeros motivos podem fazer sua aplicação ficar sem um fallback pra esses casos.

Nesses casos utilizamos o **Upstream** onde denominamos servidores para balancear a aplicação.

```

upstream servicos {

server localhost:8001;

server localhost:8002;

}

server {

listen 8080;

server_name localhost;

location / {

proxy_pass http://servicos;

}

}

```

Como não estamos utilizando algoritimos de balanceamento como Round robin, IP hash etc. O nosso servidor apenas vai intercalar as requisiçõe entre os serviços, ou seja, 50% das requisções vai para o localhost:8001 e 50% para o localhost:8002.

Esse é um exemplo básico, mas pode ser explorado de várias maneiras assim mitigando riscos de ter sua aplicação fora do ar.

# Considerações Finais

Objetivo desse Post é apenas dar uma pincelada sobre o webserver mais rápido do mercado e mostrar algumas caracteristicas que lhe deram esse título. No próximo Post teremos exemplos aplicados e mostrarei algumas outras funcionalidades como: Cache, Stream etc.

| juannunesz |

1,226,828 | JS Polyfills - Part 2 (forEach, keys, Values, Entries) | Github Code: JS Polyfills 4. forEach() Function: forEach(callback, thisArg) Usage:... | 0 | 2022-10-22T09:19:01 | https://dev.to/uttarasriya/js-polyfills-part-2-307d | javascript, webdev, jsitor, jspolyfill | <link rel="canonical" href="https://dev.to/uttarasriya/js-polyfills-part-2-307d"/>

**Github Code:** [JS Polyfills](https://github.com/uttara-sriya/JS-Polyfills)

**4. forEach()**

- <u>Function</u>: `forEach(callback, thisArg)`

- <u>Usage</u>: `arr.forEach((ele, index, arr)=>{...}, this)`

- <u>Description</u>: callback function will execute on every element. But not for uninitialized elements for sparse arrays.

- <u>Polyfill</u>: [forEach](https://jsitor.com/C42UCNGj9)

```javascript

//function returns undefined

Function.prototype.customForEach = function (callback) {

//this should point to array

if(Array.isArray(this)) {

for (let i = 0; i < this.length; i++) {

//check if each element exists

if(typeof this[i] !== 'undefined') {

//callback will take element, index and array as parameters

callback(this[i], i, this);

}

}

}

};

```

**5. Keys()**

- <u>Function</u>: `keys(obj)`

- <u>Usage</u>: `Obj.keys(anyobj)`

- <u>Description</u>: returns array iterator

- <u>Polyfill</u>:[Keys](https://jsitor.com/uvUwSV8D1)

```javascript

//function returns Array Iterator

Array.prototype.customKeys = function () {

let keys = [];

for (let i = 0; i < this.length; i++) {

keys.push(i);

}

// A generator function which returns a generator object( basically follows iterator protocol)

// Why we use here? because the keys return array iterator

// A yield will pause and resume the function (Basically will return the keys one by one until done becomes true)

function* iterator() {

yield* keys;

}

return iterator();

};

```

**6. Values()**

- <u>Function</u>: `values(obj)`

- <u>Usage</u>: `Obj.values(anyobj)`

- <u>Description</u>: returns array iterator

- <u>Polyfill</u>:[Values](https://jsitor.com/kU-55C-wc)

```javascript

//function returns Array Iterator

Array.prototype.customValues = function () {

let values = [];

for (let i = 0; i < this.length; i++) {

values.push(this[i]);

}

// A generator function which returns a generator object( basically follows iterator protocol)

// Why we use here? because the values return array iterator

// A yield will pause and resume the function (Basically will return the values one by one until done becomes true)

function* iterator() {

yield* values;

}

return iterator();

};

```

**7. Entries()**

- <u>Function</u>: `entries(obj)`

- <u>Usage</u>: `Obj.entries(anyobj)`

- <u>Description</u>: returns array iterator

- <u>Polyfill</u>:[Entries](https://jsitor.com/S865hAWaa)

```javascript

//function returns Array Iterator

Array.prototype.customEntries = function () {

let entries = [];

for (let i = 0; i < this.length; i++) {

entries.push(i, this[i]);

}

// A generator function which returns a generator object( basically follows iterator protocol)

// Why we use here? because the entries return array iterator

// A yield will pause and resume the function (Basically will return the entries one by one until done becomes true)

function* iterator() {

yield* entries;

}

return iterator();

};

```

Stay Tuned for Part 3

Keep Learning! | uttarasriya |

1,226,934 | What is the proper length for a podcast? | Podcasts come in a variety of sizes and forms. Podcasts range in length from a minute to several... | 0 | 2022-10-22T11:48:32 | https://dev.to/krishnalal7/what-is-the-proper-length-for-a-podcast-5fmf | podcast |

Podcasts come in a variety of sizes and forms.

Podcasts range in length from a minute to several hours. So, the major question is, what kind of stuff are you going to record?

If your episodes are suitable for bite-sized content, strive for 5 to 10 minutes.

If you wish to speak with a co-host, decide how long each chat will be (and make sure you have something to say, not just meandering gibberish) and keep to that time limit.

Are you putting together a story episode? Many story-based podcasts are around an hour long.

Long-form interviews and in-depth analyses? There are several that last more than an hour - up to 3-4 hours.

What is important is that you have something to say.

Turning on the microphone and making it up as you go is a recipe for disaster.

"Be On, Be Good, Be Gone," my employer pounded into my head early in my radio career.

Be On

Meaning: Be ready to speak up and be intriguing. Have a clear goal in mind and be prepared to deal with anything unexpected that may arise.

Take Care

Meaning: When you open the microphone, your audience expects you to present them with something enjoyable, thrilling, amusing, motivating, or informative that will keep them listening. You must deliver.

Go Away

Meaning: Do not overstay your welcome. When you've completed your mission, get out of dodge.

"Take as much time you need, but be as brief as you can," radio coach Tommy Kramer adds.

Understand Your Audience

As you develop your episodes, you will discover what your audience appreciates and expects from you based on how they react to your content.

Make sure you're surveying your audience, allowing them to contact you via email, and creating a community with your new listeners. It also allows you to ask them questions.

This is the most effective method for determining how to improve and change the length of your episodes.

Know Your Audience

As you develop your episodes, you will discover what your audience appreciates and expects from you based on how they react to your content.

Make sure you're surveying your audience, allowing them to contact you via email, and creating community with your new listeners. It also allows you to interrogate them

This is the most effective technique to modify and adjust the length of your episodes.

Listen to #1 [Malayalam Podcast](https://www.themalayali.in/) from kerala | krishnalal7 |

1,227,020 | Next.js E-commerce StarterKit (2022) | Intro Creating an e-commerce website might look like a simple task, but in fact, it is a... | 0 | 2022-10-22T15:59:58 | https://dev.to/kirillzhirnov/nextjs-e-commerce-starterkit-2022-6ha | ecommerce, nextjs, starterkit, tutorial | ## Intro

Creating an e-commerce website might look like a simple task, but in fact, it is a pretty complicated one: you need to find a Backend, an API, organize product listings on the Frontend, a catalog with hierarchy, filters, and search, a cart and a checkout. And don't forget about image resize. And all these parts should be done in a modern way, e.g. with Next.js and SSG.

## The solution

Ready to use Next.JS E-Commerce Starter kit!

https://github.com/kirill-zhirnov/boundless-nextjs-sample

Demo: https://blank.demos.my-boundless.app/

There are 2 ready to use themes:

https://github.com/kirill-zhirnov/boundless-marsfull-theme/

Demo: https://mars.demos.my-boundless.app/

https://github.com/kirill-zhirnov/boundless-moon-theme

Demo: https://moon.demos.my-boundless.app

The checkout is a standalone component, which can me easily customized: https://github.com/kirill-zhirnov/boundless-checkout-react

Themes use BoundlessCommerce (https://boundless-commerce.com) as a backend. There is an absolutely free tariff plan which covers small business needs.

These Next.JS sites can be easily deployed to Vercel or Netlify. If you want a longread about creating NextJS Ecommerce website - please visit our blog (I don't want to do a copy/paste :)): https://boundless-commerce.com/blog/how-to-create-a-nextjs-e-commerce-website

And if any question - please contact us - we are happy to help!

| kirillzhirnov |

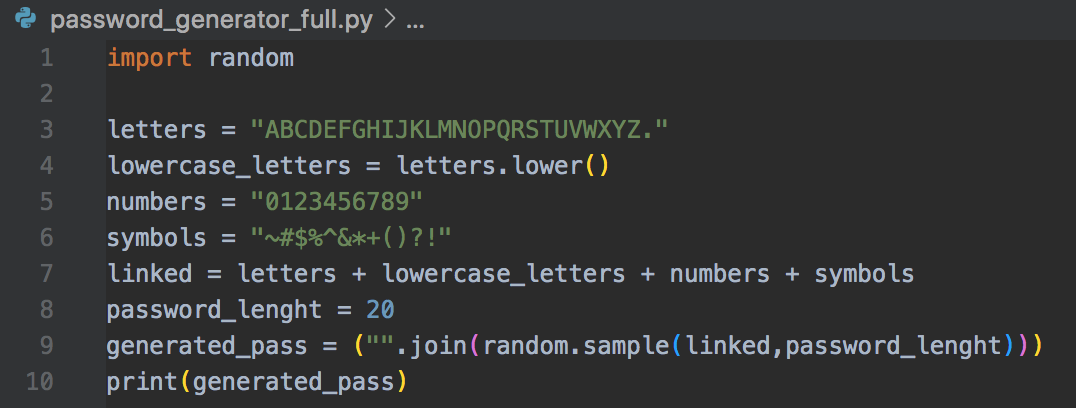

1,227,087 | Simple Password Generator | Here is a simple python generator that use the "Random" module with a random.sample() method. The... | 0 | 2022-10-22T16:30:16 | https://dev.to/sngvfx/simple-password-generator-591m | generator, python | Here is a simple python generator that use the "Random" module with a random.sample() method. The generated password is a combination of upper and lowercase letters, numbers and symbols. To make the password more complex and not easy to hack, the length of the password has been set to 20.

Give it a try, feel free to improve it and let me know!

| sngvfx |

1,227,355 | Awesome way to convert every type to Boolean on JavaScript | Compare between two variables on JavaScript maybe the thing that every developer has done on their... | 0 | 2022-10-23T03:04:35 | https://dev.to/junedang/awesome-way-to-convert-every-type-to-boolean-on-javascript-11ip | javascript, webdev | Compare between two variables on JavaScript maybe the thing that every developer has done on their daily work but there is a cool and faster way that you can impress your colleague on doing convert variable to Boolean.

## Reverse logical

We are all see the used of reverse logical logic (!) in all of our code base. In JavaScript, this symbol will convert every type into Boolean and then reverse the logic of its operation.

## What happen if you use “!!”?

This is tricky now! As I said earlier, JavaScript will cast every operation into Boolean when we attach it with “!” symbol. And when we attach another “!” logical expression here, we are doing the reverse of reverse of the logical operation thus we converting variable into Boolean type without changing variable context.

Some examples when we only use one “!” logical expression:

```JavaScript

!'' // true

!{} // false

!0 // true

!1 // false

![] // false

!undefined // true

!null // true

```

As you can see on above code, the logical of values were converted into Boolean and then be reversed it conditional evaluation.

So here is what happen when we use two “!” logical expressions:

```JavaScript

!!'' // false

!!{} // true

!!0 // false

!!1 // true

!![] // true

!!undefined // false

!!null // false

```

You can see that the values were converted into Boolean but not changing its conditional expression thus we have success convert the value into Boolean and keep it logical.

Thank you for reading and see you in other articles. | junedang |

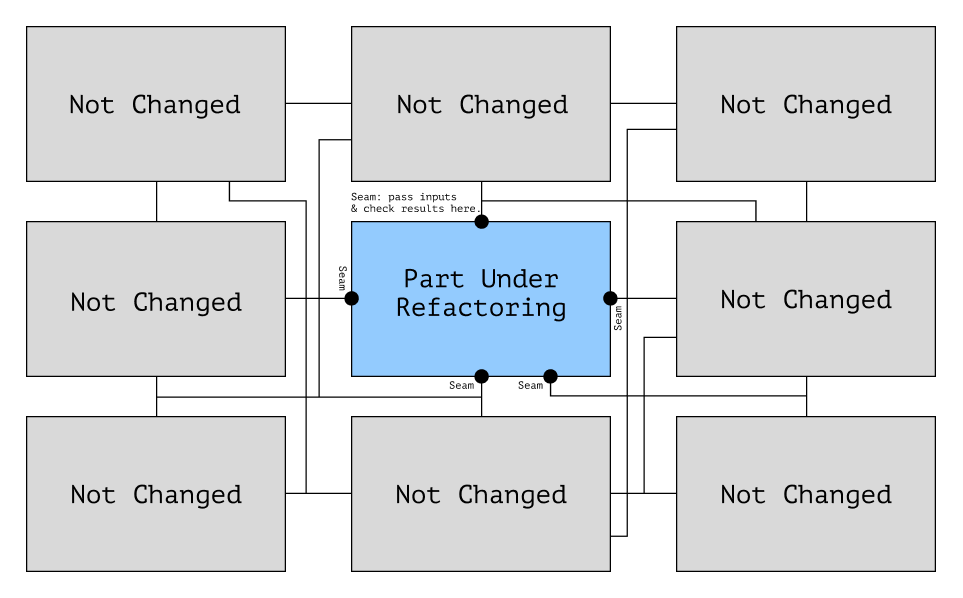

1,227,815 | Consider Anti-corruption Layer when integrate with another system | Motivation You may be worked on projects that deal with third party APIs (APIs related to... | 0 | 2022-10-23T20:16:04 | https://dev.to/smuhammed/consider-anti-corruption-layer-when-integrate-with-another-system-2c55 | programming, bestpractice, adapter, integration | ## Motivation

You may be worked on projects that deal with third party APIs (APIs related to another service provider) or some libraries generated by another companies or you building new system but it deals with a legacy system, when you use or deal with these APIs you have to deal them with special handling in order to keep your code clean and maintainable.

## The main problem is different languages

Mostly these APIs you deal with outside your system are built with different language from that in your system, By different language I don't mean programming language I mean Domain language, Different objects and properties from that used in your system.

If you start directly use this language (Objects and Properties) in the middle of your Domain business logic, you will find that you are make explicit conversion between your system objects and third party objects, also may handle exceptions that may occur from library or handle failure of HTTP requests in the middle of your business logic, and maybe your functionality is very small or trivial but because it uses a third party API it became more complex to read and maintain.

## Meet Anti-Corruption Layer

Anti-corruption layer is one of DDD (Domain Driven Design) principles, name came from that preventing corruption of your business logic that may happen because of integration code, which tells you that if you will deal with any third party API you have to isolate integration code in different layer and any service in your system needs to use this API it should use it from Contract/Interface.

Let's take an example, If you have a Social Insurance System and want to integrate with a third party that provides **Citizen information** based on **national id**, so your system have Insurer class that belongs to your system domain like this

`Class Insurer {

private String firstName;

private String lastName;

private String nationalId;

private Integer age;

// getters and setters

}`

And third party response will be like this

`Class Citizen {

private String fullName;

private String id;

private Date dateOfBirth;

// getters and setters

}`

it's Obvious that 2 different classes and need conversion, **so how our anti-corruption layer will be look like?**

**First we will build the contract/interface as this**

`public interface CitizenSystemIntegration {

public Insurer getInsurer(String nationalId);

}`

**and our contract implementation service will be like**

`class CitizenSystemIntegrationImpl implements CitizenSystemIntegration {

@Override

public Insurer getInsurer(String nationalId) {

// build request object

// call third party api

// convert response to Insurer object

// return

}

}`

Now whenever you need this facility you can create object from this implementation and use it, listing below benefits of this strategy

- Isolating the integration code.

- Reducing code duplication , as we have one place to edit and maintain.

- You can build your exception handling logic in one place.

- Your main system functionality is clear, there is no different object you need to handle, no specific exceptions you have to deal with and your domain service is clear.

- You can add logging functionality

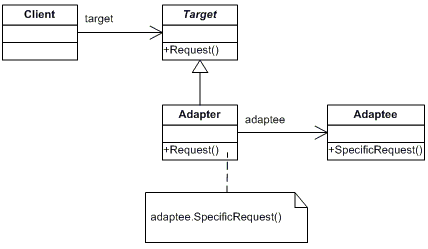

At the end , Anti-corruption layer is built based on same concept of Adapter Design Pattern

## Resources

- [Adapter Pattern](https://refactoring.guru/design-patterns/adapter)

- [Anti-Corruption Layer](https://www.thereformedprogrammer.net/wrapping-your-business-logic-with-anti-corruption-layers-net-core/)

IF YOU LIKED THE POST, THEN YOU CAN SUPPPORT SUCH CONTENT WITH A CUP OF COFFEE, THANKS IN ADVANCE.

<a href="https://www.buymeacoffee.com/samehmuh95" target="_blank"><img src="https://cdn.buymeacoffee.com/buttons/default-orange.png" alt="Buy Me A Coffee" height="41" width="174"></a> | smuhammed |

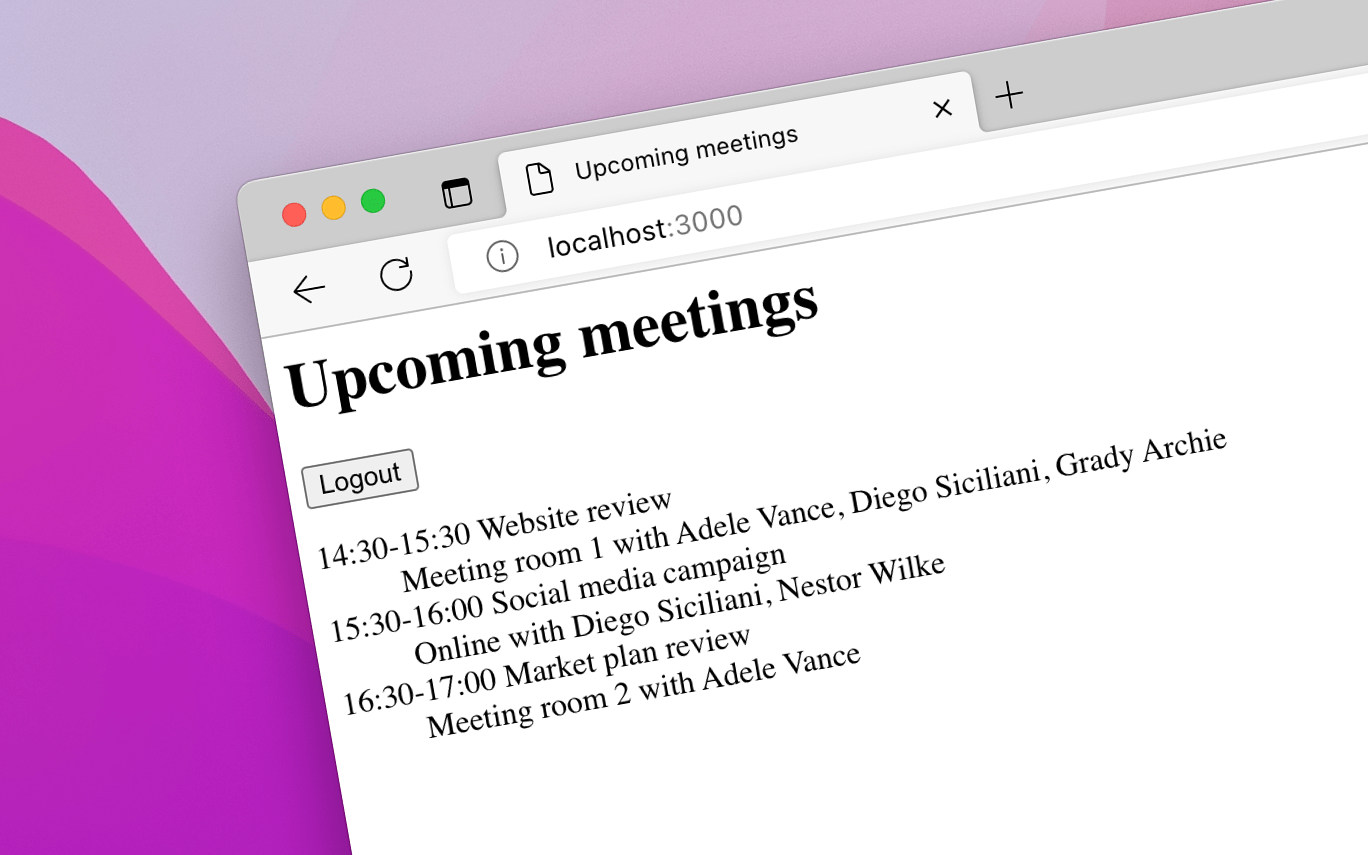

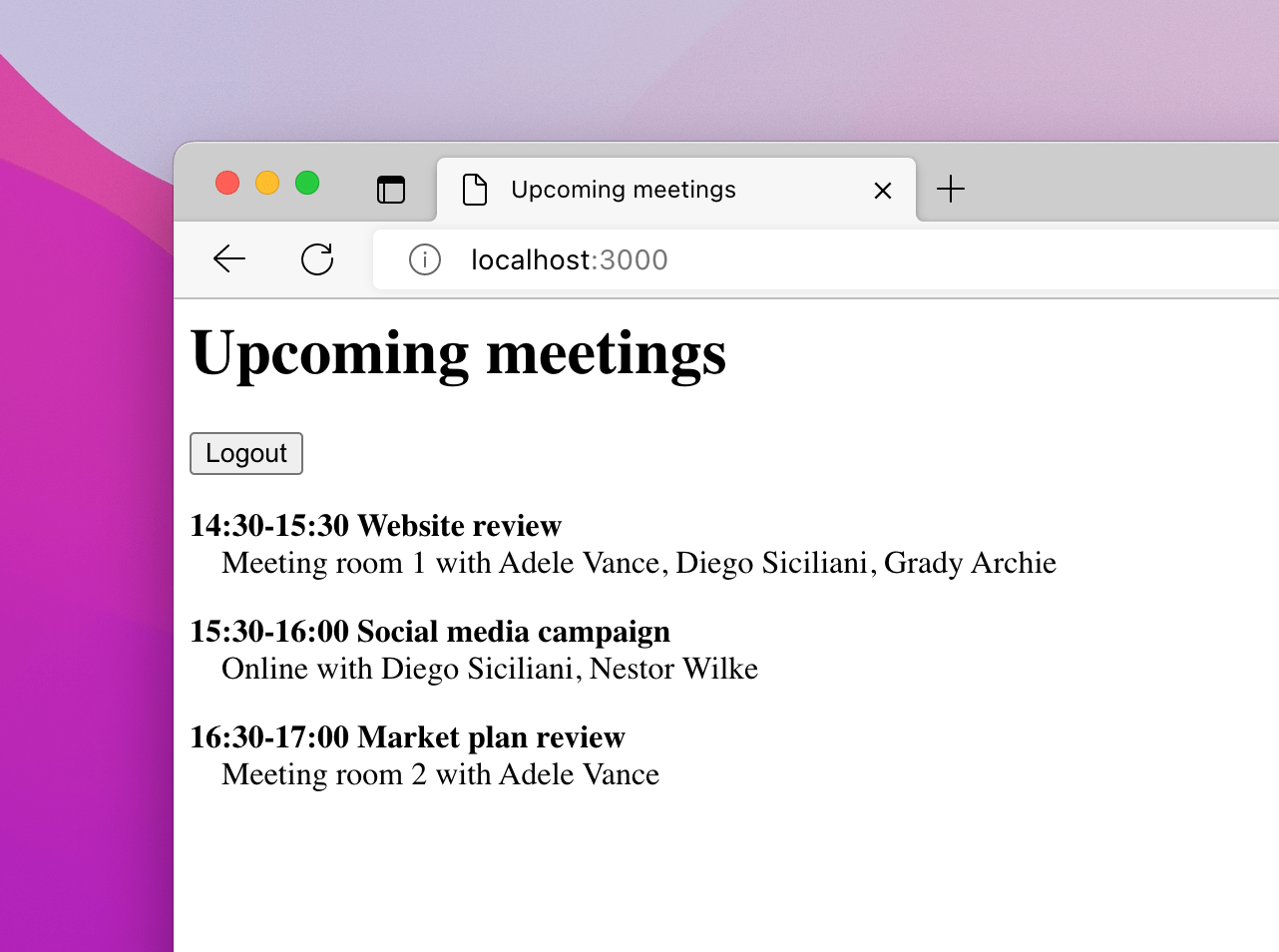

1,227,837 | Processing user input in Bubble Tea with a menu component | In the last tutorial, we did a "hello world" app, and it processed just a bit of user input ("press... | 20,227 | 2022-10-23T21:25:24 | https://dev.to/andyhaskell/processing-user-input-in-bubble-tea-with-a-menu-component-222i | go, codenewbie, 100devs, tui | ---

title: Processing user input in Bubble Tea with a menu component

published: true

description:

tags: #go, #codenewbie, #100devs, #tui

series: Make slick command-line apps with Bubble Tea

---

In the last tutorial, we did a "hello world" app, and it processed just a bit of user input ("press Ctrl+C to exit").

But we didn't really get a feel for actually using user input to change the model's data, and in turn change what we see in the app. So in this tutorial, we're going to create a menu component that lets us move between buttons.

## 📝 Defining our data

The first thing we need for any Bubble Tea component is the data our model is in charge of. If you recall, in our simplePage model, the data was just the text we were displaying:

```go

type simplePage struct { text string }

```

In our menu, what we need to do is:

* Display our options

* Show which option is selected

* Additionally, let the user press the enter to go to another page. But we'll add that in a later tutorial.

* For now, we can still make an onPress function passed in that tells us what we do if the user presses enter.

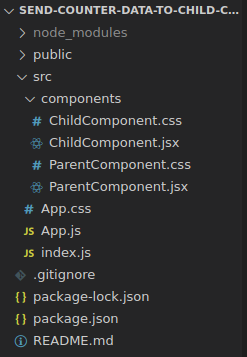

So our model's data will look like this; if you're following along, write this in a file named `menu.go`.

```go

type menu struct {

options []menuItem

selectedIndex int

}

type menuItem struct {

text string

onPress func() tea.Msg

}

```

A menu is made up of menuItems, and each menuItem has text and a function handling pressing enter. In this tutorial we'll just have the app toggle between all-caps and all-lowercase so it's at least doing something.

It returns a `tea.Msg` because that's we're able to change the data in response to this user input. We'll see why in the next section, when we're implementing the `Model` interface.

## 🧋 Implementing the Model interface

If you recall, for us to use our model as a UI component, it needs to implement this interface:

```go

type Model interface {

Init() Cmd

Update(msg Msg) (Model, Cmd)

View() string

}

```

First let's write the Init function.

```go

func (m menu) Init() tea.Cmd { return nil }

```

Again, we still don't have any initial `Cmd` we need to run, so we can just return `nil`.

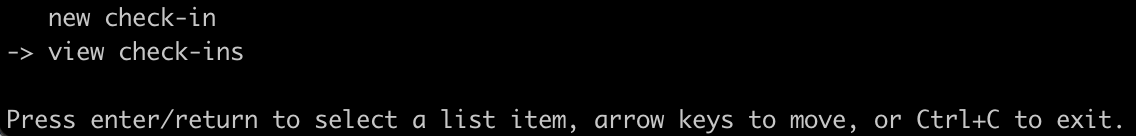

For the `View` function, let's make an old-school menu with an arrow to tell us which item is currently selected.

```go

func (m menu) View() string {

var options []string

for i, o := range m.options {

if i == m.selectedIndex {

options = append(options, fmt.Sprintf("-> %s", o.text))

} else {

options = append(options, fmt.Sprintf(" %s", o.text))

}

}

return fmt.Sprintf(`%s

Press enter/return to select a list item, arrow keys to move, or Ctrl+C to exit.`,

strings.Join(options, "\n"))

}

```

As mentioned in the last tutorial, one of the things that makes Bubble Tea really learnable is that the display for your UI is basically one big string. So in `menu.View` we make a slice of strings where the selected option has an arrow and the non-selected options have leading spaces. Then we join them all together and add our contols to the bottom.

Finally, let's write our Update method to handle user input.

```go

func (m menu) Update(msg tea.Msg) (tea.Model, tea.Cmd) {

switch msg.(type) {

case tea.KeyMsg:

switch msg.(tea.KeyMsg).String() {

case "ctrl+c":

return m, tea.Quit

case "down", "right", "up", "left":

return m.moveCursor(msg.(tea.KeyMsg)), nil

}

}

return m, nil

}

func (m menu) moveCursor(msg tea.KeyMsg) menu {

switch msg.String() {

case "up", "left":

m.selectedIndex--

case "down", "right":

m.selectedIndex++

default:

// do nothing

}

optCount := len(m.options)

m.selectedIndex = (m.selectedIndex + optCount) % optCount

return m

}

```

The `Update` method is the most complex part of this app, so let's break that down.

```go

case "ctrl+c":

return m, tea.Quit

```

Like before, we're handling the `KeyMsg` type, and we're the Ctrl+C keypress to quit the app by returning the Quit cmd.

```go

case "down", "right", "up", "left":

return m.moveCursor(msg.(tea.KeyMsg)), nil

```

For the arrow keys, though, we use a helper function, `moveCursor`, which returns an updated model.

```go

func (m menu) moveCursor(msg tea.KeyMsg) menu {

switch msg.String() {

case "up", "left":

m.selectedIndex--

case "down", "right":

m.selectedIndex++

default:

// do nothing

}

optCount := len(m.options)

m.selectedIndex = (m.selectedIndex + optCount) % optCount

return m

}

```

The up and left KeyMsg strings serve as our "navigate up" keys, and the down and right ones navigate us down, decrementing and incrementing `m.selected`.

Then, we use the mod operator to ensure that `m.selected` is one of the indices of our options.

Finally, with the model updated, `moveCursor` returns the model that in turn is returned by `Update`, and the new model ultimately gets processed by our `View` method.

Before we move on to processing the enter key though, we should see our app run. So let's put our new `menu` component into a `main` function and run it.

```go

func main() {

m := menu{

options: []menuItem{

menuItem{

text: "new check-in",

onPress: func() tea.Msg { return struct{}{} },

},

menuItem{

text: "view check-ins",

onPress: func() tea.Msg { return struct{}{} },

},

},

}

p := tea.NewProgram(m)

if err := p.Start(); err != nil {

panic(err)

}

}

```

For now, onPress is just a no-op that returns an empty struct. Now, let's run our app.

```

go build

./check-ins

```

You should see something like this:

Cool! Now the menu can toggle what's selected! Now let's handle that user input.

## ✅ Handling the enter key and seeing what the tea.Cmd type actually does

So far, we haven't really taken a close look at the `tea.Cmd` type. It's one of the two return values for the `Update` method, but we've only used it so far to exit the app. Let's take a closer look at its type signature.

```go

type Cmd func() tea.Msg

```