id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,783,187 | Kotlin Koans BR: Tipagem inteligente | 🔗 Task Rewrite the provided code using smart casts and the when expression in... | 26,703 | 2024-03-07T10:28:50 | https://dev.to/rsicarelli/kotlin-koans-br-tipagem-inteligente-5b74 | kotlin, braziliandevs | ## 🔗 [Task](https://play.kotlinlang.org/koans/Classes/Smart%20casts/Task.kt)

Rewrite the provided code using [smart casts](https://kotlinlang.org/docs/typecasts.html#smart-casts) and Kotlin's [when](https://kotlinlang.org/docs/control-flow.html#when-expression) expression.

Java

```java

class Java {
    public int eval(Expr expr) {
        if (expr instanceof Num) {
            return ((Num) expr).getValue();
        }
        if (expr instanceof Sum) {
            Sum sum = (Sum) expr;
            return eval(sum.getLeft()) + eval(sum.getRight());
        }
        throw new IllegalArgumentException("Unknown expression");
    }
}

```

<details>

<summary>C#</summary>

```csharp

public interface Expr { }

public class Num : Expr

{

public int Value { get; set; }

}

public class Sum : Expr

{

public Expr Left { get; set; }

public Expr Right { get; set; }

}

public int Eval(Expr expr)

{

if (expr is Num num)

return num.Value;

if (expr is Sum sum)

return Eval(sum.Left) + Eval(sum.Right);

throw new ArgumentException("Unknown expression");

}

```

</details>

<details>

<summary>Dart</summary>

```dart

abstract class Expr {}

class Num implements Expr {

final int value;

Num(this.value);

}

class Sum implements Expr {

final Expr left, right;

Sum(this.left, this.right);

}

int eval(Expr expr) {

if (expr is Num) return expr.value;

if (expr is Sum) return eval(expr.left) + eval(expr.right);

throw ArgumentError('Unknown expression');

}

```

</details>

<details>

<summary>Go</summary>

```go

package main

type Expr interface{}

type Num struct {

Value int

}

type Sum struct {

Left, Right Expr

}

func Eval(expr Expr) int {

switch e := expr.(type) {

case Num:

return e.Value

case Sum:

return Eval(e.Left) + Eval(e.Right)

default:

panic("Unknown expression")

}

}

```

</details>

<details>

<summary>JavaScript</summary>

```javascript

function eval(expr) {

if (expr instanceof Num) {

return expr.value;

}

if (expr instanceof Sum) {

return eval(expr.left) + eval(expr.right);

}

throw new Error("Unknown expression");

}

class Num {

constructor(value) {

this.value = value;

}

}

class Sum {

constructor(left, right) {

this.left = left;

this.right = right;

}

}

```

</details>

<details>

<summary>TypeScript</summary>

```typescript

interface Expr {

}

class Num implements Expr {

constructor(public value: number) {

}

}

class Sum implements Expr {

constructor(public left: Expr, public right: Expr) {

}

}

function eval(expr: Expr): number {

if (expr instanceof Num) return expr.value;

if (expr instanceof Sum) return eval(expr.left) + eval(expr.right);

throw new Error("Unknown expression");

}

```

</details>

<details>

<summary>PHP</summary>

```php

interface Expr {}

class Num implements Expr {

public $value;

function __construct($value) { $this->value = $value; }

}

class Sum implements Expr {

public $left, $right;

function __construct($left, $right) { $this->left = $left; $this->right = $right; }

}

function evalExpr($expr) {

if ($expr instanceof Num) return $expr->value;

if ($expr instanceof Sum) return evalExpr($expr->left) + evalExpr($expr->right);

throw new Exception("Unknown expression");

}

```

</details>

<details>

<summary>Python</summary>

```python

class Expr:
    pass


class Num(Expr):
    def __init__(self, value):
        self.value = value


class Sum(Expr):
    def __init__(self, left, right):
        self.left = left
        self.right = right


def eval_expr(expr):
    if isinstance(expr, Num):
        return expr.value
    if isinstance(expr, Sum):
        return eval_expr(expr.left) + eval_expr(expr.right)
    raise ValueError("Unknown expression")

```

</details>

<details>

<summary>Swift</summary>

```swift

protocol Expr {}

class Num: Expr {

let value: Int

init(_ value: Int) { self.value = value }

}

class Sum: Expr {

let left, right: Expr

init(_ left: Expr, _ right: Expr) { self.left = left; self.right = right }

}

func eval(_ expr: Expr) -> Int {

if let num = expr as? Num {

return num.value

}

if let sum = expr as? Sum {

return eval(sum.left) + eval(sum.right)

}

fatalError("Unknown expression")

}

```

</details>

---

## Use cases

In programming, each data type is represented and operated on differently in memory. Casting is a technique for telling the compiler that a variable should be treated as another type. This makes type-specific operations on that variable possible and keeps it compatible with other parts of the code.

Kotlin has a compiler feature called **smart casts** that tracks type checks (such as those made with the `is` operator) and automatically infers the variable's type when needed.

### Type checking and inference

#### Positive check

When a variable is checked with the `is` operator and the check succeeds, Kotlin immediately treats the variable as that type inside the corresponding block:

```kotlin

class Gato(val emojiGato: String = "🐱")

class Cachorro(val emojiCachoro: String = "🐶")

class Peixe(val emojiPeixe: String = "🐟")

class Pássaro(val emojiPassaro: String = "🐦")

fun falar(animal: Any): String {

return when (animal) {

is Gato -> "Miau ${animal.emojiGato}"

is Cachorro -> "Au au ${animal.emojiCachoro}"

is Peixe -> "Blub blub ${animal.emojiPeixe}"

is Pássaro -> "Pi pi ${animal.emojiPassaro}"

else -> "Não reconhecemos esse animal."

}

}

fun ondeVive(animal: Any) {

if (animal is Gato || animal is Cachorro) {

println("Vive em terra.")

} else if (animal is Peixe) {

println("Vive na água.")

} else if (animal is Pássaro) {

println("Vive no ar e na terra.")

} else {

println("Não reconhecemos esse animal.")

}

}

```

#### Negative check

Placing `!` before the `is` operator (`!is`) lets you react when the variable is not of the expected type:

```kotlin

class Ave(val canto: String)

class Macaco(val grito: String)

class Reptil(val som: String = "Ssssss")

fun documentarSom(animal: Any) {

if (animal !is Ave) return

print("O som da ave é: ${animal.canto}")

}

// Testing the function

val tucano = Ave("Pi-pi-piu")

documentarSom(tucano) // Output: "O som da ave é: Pi-pi-piu"

```

#### Limitations with mutable variables (`var`)

The compiler may refuse to smart cast if it cannot guarantee that the variable's value has not changed between the check and the use:

```kotlin

open class Animal

class Cachorro() : Animal() {

fun alimentar() = Unit

}

var animal: Animal? = Cachorro()

if (animal is Cachorro) {

animal = null

animal.alimentar() // Compilation error: the smart cast to 'Cachorro' no longer applies after the reassignment

}

```

### Smart casts with logical operators

Kotlin goes further and combines smart casts with logical operators such as `&&` and `||`. This avoids explicit conversions and keeps the code cleaner and more readable.

```kotlin

open class Animal(val nome: String, val energia: Int = 100)

class Peixe(nome: String, energia: Int, val habitatPreferido: String) : Animal(nome, energia) {

fun explorar() = "está explorando o habitat $habitatPreferido!"

}

class Passaro(nome: String, energia: Int, val tipoBico: String) : Animal(nome, energia) {

fun bicar() = "está usando seu bico $tipoBico para buscar comida!"

}

fun acaoEspecifica(animal: Animal) {

when {

animal is Peixe && animal.energia > 50 -> {

println("${animal.nome} ${animal.explorar()}")

}

animal is Passaro && animal.tipoBico == "afiado" -> {

println("${animal.nome} ${animal.bicar()}")

}

else -> {

println("${animal.nome} não está realizando uma ação específica no momento.")

}

}

}

// Testing the function

val tilapia = Peixe("Tilápia", 60, "lago de água doce")

val aguia = Passaro("Águia", 80, "afiado")

val canario = Passaro("Canário", 50, "pequeno")

acaoEspecifica(tilapia) // Output: "Tilápia está explorando o habitat lago de água doce!"
acaoEspecifica(aguia) // Output: "Águia está usando seu bico afiado para buscar comida!"
acaoEspecifica(canario) // Output: "Canário não está realizando uma ação específica no momento."

```

### Advantages

- **Clean syntax and readable code**: avoids repeated explicit type conversions, so the code is cleaner and more direct.

- **Type safety**: the compiler applies a smart cast only when it is safe, reducing the chance of conversion errors at runtime.

- **Integration with control flow**: inside conditionals such as `if`, `else`, and `when`, or loops such as `for` and `while`, Kotlin recognizes and narrows the variable's type accordingly, giving direct access to its specific properties without explicit casting.

### Disadvantages

- **Limitations with mutable variables**: with mutable variables, the compiler may not be able to guarantee a smart cast, since the value may have changed between the check and the use.

- **Concurrency**: in multithreaded environments, a variable may be changed by another thread after the check, which is why the compiler does not smart cast mutable properties that other code could modify.

- **Potential confusion with complex logic**: in some conditional logic, the compiler may fail to infer the type even when it seems obvious to the developer.

## Analogy

On hearing the song of a particular bird in the forest, an ornithologist can immediately identify the species without even seeing it. That instant recognition lets the specialist know everything about the bird, from its habits to its habitat.

Smart casts in Kotlin work in a similar way: once the type has been identified, you can use the variable as that specific type with no further checks.

| rsicarelli |

1,783,201 | Want to become a Professional Organiser? | How to become a Professional Organiser? Want to become a Professional Organiser? A Tidy Mind is an... | 0 | 2024-03-07T10:39:48 | https://dev.to/atidymind/want-to-become-a-professional-organiser-2gik | other | [How to become a Professional Organiser](https://www.atidymind.co.uk/franchise-opportunities/)? Want to become a Professional Organiser?

A Tidy Mind is an established brand and a successful professional decluttering and organising business. Because of this, and because we love the job, we want to grow and share the business with others, so we offer Professional Organiser training and mentoring. We’re excited to let you know there are currently UK-wide franchise opportunities – for the right people.

We already have 5 franchisees running thriving businesses that fit their lives.

**The franchise involves an investment and, in return, you run your own version of A Tidy Mind and join our website and our team. If you’re just researching or want to go your own way, you may be interested in ad hoc mentoring from me as the founder of A Tidy Mind. See the ‘mentoring for professional organisers’ page.**

Why buy into a Franchise?

Starting a business from scratch can be difficult, overwhelming and lonely. First, there’s the research stage, then getting the right website, then of course marketing the business and the long road to becoming established. It’s certainly been a learning curve for us, and we want to be able to share that learning so that franchisees can buy into something that has been proven to work. Buying a franchise means you will be given everything you need to successfully run your own version of this business.

What do you stand to earn?

One-to-one decluttering & organising work is charged at £30-£40 per hour (up to £50 per hour in London)

Productivity & budgeting coaching is £40 plus per hour,

PA/VA work is charged at £25 plus per hour,

One off home makeovers start from £250,

Workshops & talks are variably priced.

Your investment

6k Initial franchise fee

10% monthly franchise fee

£117 per year insurance

£160 every 3 years for a waste carrier licence

Working capital to purchase materials, i.e. refuse sacks

Interested? Tick all the boxes? Please email kate@atidymind.co.uk or ring 07961770452. | atidymind |

1,783,207 | 🚀🚀🚀Implementing Redis Functionalities in Node.js Applications 📦 | Introduction This documentation outlines the process of implementing caching using Redis... | 0 | 2024-03-07T10:50:35 | https://dev.to/surajvast1/implementing-redis-functionalities-in-nodejs-applications-45g6 | node, redis, database, coding |

## Introduction

This documentation outlines the process of implementing caching using Redis in a Node.js Express application. Caching data with Redis helps reduce response times and server load by:

- Storing frequently accessed data in memory

- Optimizing data retrieval by avoiding repetitive requests to external sources

## Prerequisites

- Node.js installed on your machine

- Basic understanding of JavaScript and Express.js

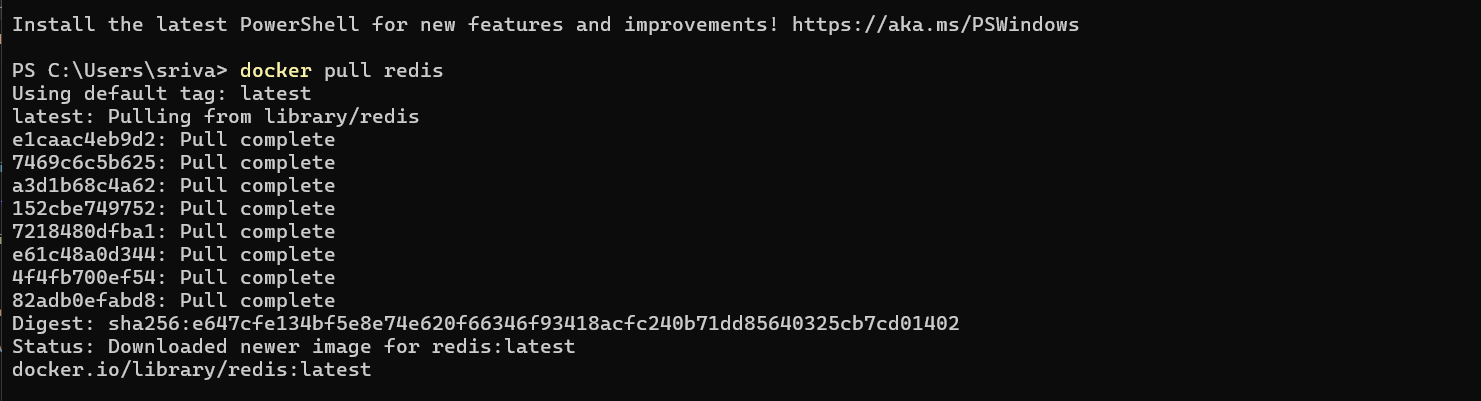

## Redis Docker Installation

To set up Redis using Docker, follow these steps:

1. **Download and Install Docker**:

- Visit the [official Docker website](https://www.docker.com/get-started).

- Download Docker for your operating system and follow the installation instructions provided.

2. **Run Redis Container**: Execute the following command in your terminal to start Redis in a Docker container:

```bash

docker run -d --name redis-stack -p 6379:6379 -p 8001:8001 redis/redis-stack:latest

```

This command pulls the latest Redis image from Docker Hub and runs it in a detached mode (`-d`). It also names the container as `redis-stack`, maps the container's port 6379 to the host port 6379 (for Redis), and maps port 8001 to the RedisInsight UI for monitoring Redis data.

**Verify Installation:**

1. Check if the Redis container is running by executing:

```bash

docker ps

```

2. Access RedisInsight by visiting `http://localhost:8001` in your web browser. You should see the RedisInsight dashboard.

3. Connect to the Redis server using the default connection settings (`localhost:6379`).

4. After verifying that the Redis container is running and accessing RedisInsight, proceed with the following steps to interact with Redis:

a. Run `docker ps` to check the container IDs.

b. Run the following command to access the Redis container shell (replace `<container_id>` with the actual container ID):

```bash

docker exec -it <container_id> bash

```

c. Once inside the container, run the Redis CLI by executing:

```bash

redis-cli

```

You should now be connected to the Redis server and able to interact with it using the Redis CLI.

## Installation

Ensure Redis server is installed and running. Additionally, install required dependencies for your Express application:

```bash

npm install express axios ioredis

```

## Basic Setup

Begin by setting up a basic Express application that listens on a specific port:

```javascript

const express = require("express");
const axios = require("axios");

const app = express();

app.get("/", async (req, res) => {
  const { data } = await axios.get(
    "https://jsonplaceholder.typicode.com/photos"
  );
  return res.json(data);
});

const port = 9000;

app.listen(port, () => {
  console.log(`Server is running on port ${port}`);
});

```

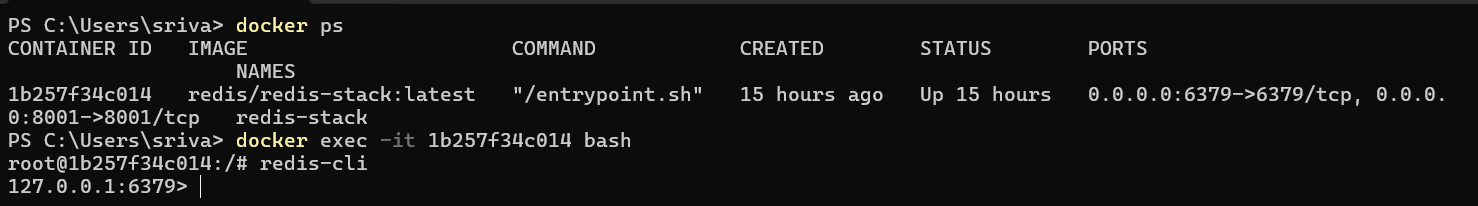

## Problem Statement

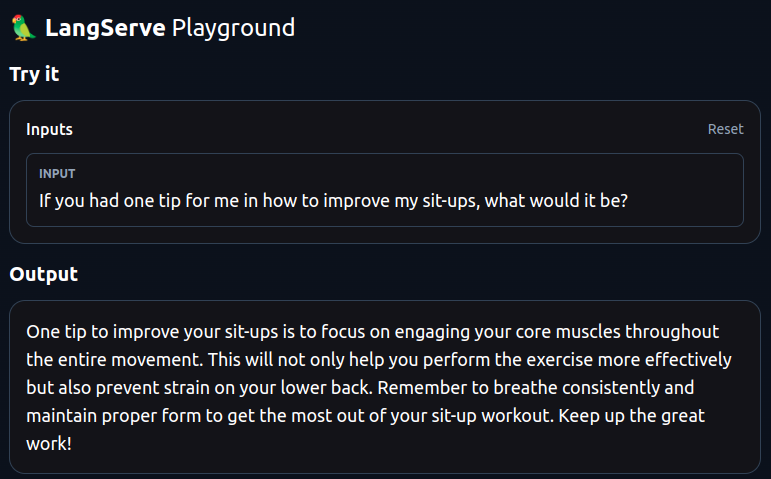

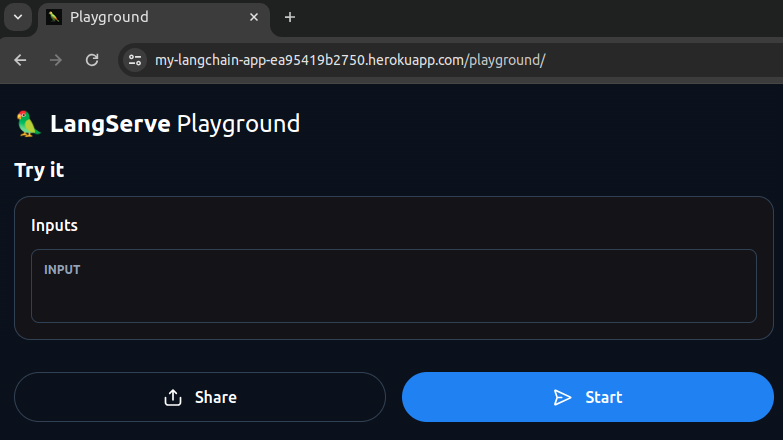

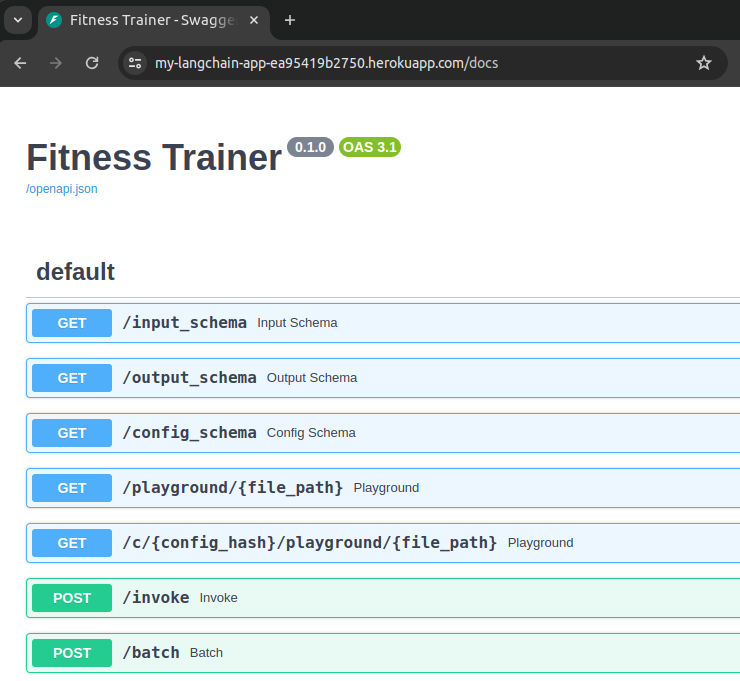

Fetching data directly from external APIs can result in slow response times because of network latency and server load. In the measurement shown here, the request took 364 milliseconds to return the data.
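To reproduce this kind of measurement without a browser or API client, a small timing wrapper is enough. The sketch below is a minimal illustration, not part of the original setup: `timeIt` and `fakeSlowFetch` are hypothetical names, and the 50 ms delay is only a stand-in for a real upstream request.

```javascript
// Hypothetical helper (not part of the original article): times any async
// function and returns both its result and the elapsed milliseconds.
const { performance } = require("node:perf_hooks");

async function timeIt(label, fn) {
  const start = performance.now();
  const result = await fn();
  const elapsedMs = performance.now() - start;
  console.log(`${label} took ${elapsedMs.toFixed(1)} ms`);
  return { result, elapsedMs };
}

// Stand-in for a slow upstream call; replace it with the real request.
const fakeSlowFetch = () =>
  new Promise((resolve) => setTimeout(() => resolve([{ id: 1 }]), 50));

timeIt("uncached fetch", fakeSlowFetch);
```

Wrapping the route's fetch call in a helper like this makes it easy to compare timings before and after caching is added.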

## Solution

Implement caching using Redis to store fetched data temporarily and serve it from the cache for subsequent requests, thereby reducing response times.


## Implementation Steps

1. **Initialize Redis Client**

First, create a Redis client to interact with the Redis server. Create a file named `client.js` and add the following code:

```javascript

const { Redis } = require("ioredis");

const client = new Redis();

module.exports = client;

```

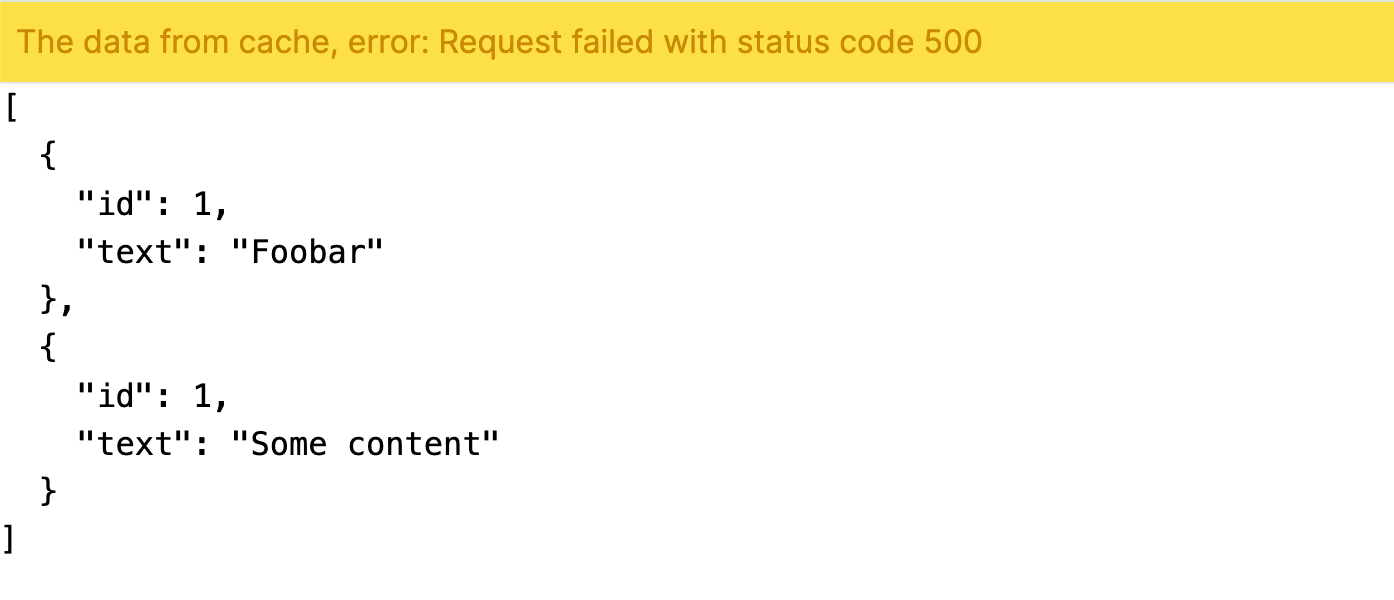

2. **Implement Caching Logic**

Modify your route handler to utilize the Redis client for caching. Update your `server.js` file with the following code:

```javascript

const express = require("express");
const axios = require("axios");
const client = require("./client");

const app = express();

app.get("/", async (req, res) => {
  // Serve from Redis when the key is present.
  const cacheValue = await client.get("to-dos");
  if (cacheValue) {
    console.log("Cached value");
    return res.json(JSON.parse(cacheValue));
  }

  // Cache miss: fetch from the external API.
  const { data } = await axios.get(
    "https://jsonplaceholder.typicode.com/photos"
  );

  // Store the response as a JSON string and let it expire after 30 seconds.
  await client.set("to-dos", JSON.stringify(data));
  await client.expire("to-dos", 30);

  return res.json(data);
});

const port = 9000;

app.listen(port, () => {
  console.log(`Server is running on port ${port}`);
});

```

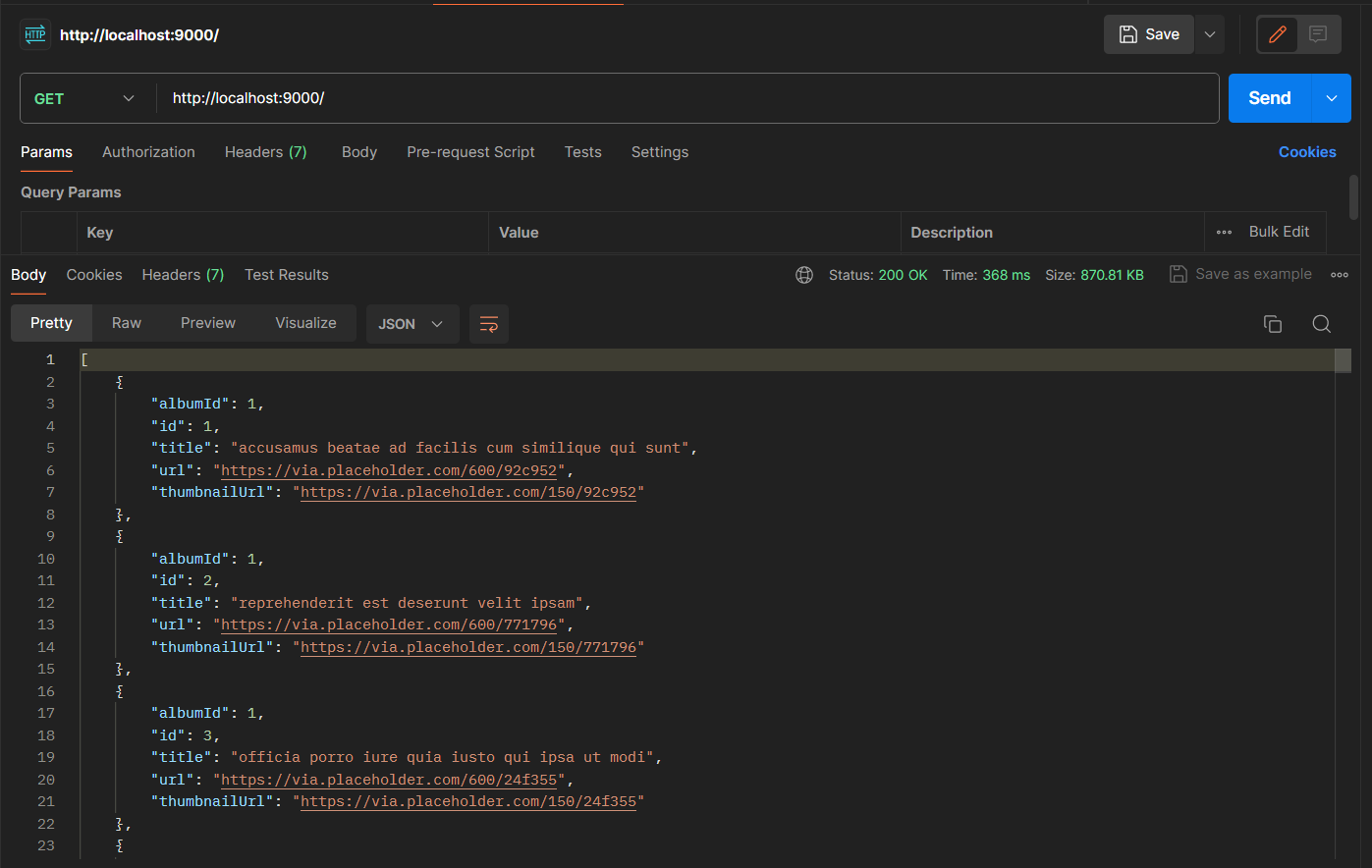

3. **Observation of Data Fetching Time**

After implementing the caching mechanism, you may notice that the initial data fetching time is higher as it involves fetching data from the external API. However, upon subsequent requests, the data fetching time significantly reduces to approximately 37 milliseconds due to the cached data being served from Redis. This demonstrates the effectiveness of caching in reducing response times and improving overall performance.

4. **Expiration and Renewal**

Note that the cached data expires after 30 seconds (`await client.expire("to-dos", 30)`). Upon expiration, the next request will trigger a fresh data fetch from the external API, refreshing the cache with updated data. This ensures that users receive the most up-to-date information while still benefiting from reduced response times during the cache lifespan.
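The steps above follow the cache-aside pattern, which can be factored into a reusable helper. The sketch below is only an illustration under stated assumptions: `getOrSetCache` and `createFakeClient` are hypothetical names, and the fake client is an in-memory stand-in so the example runs without a Redis server. With a real ioredis client, passing `"EX"` to `set` stores the value and its expiry in one command instead of a separate `expire` call.

```javascript
// Sketch of a cache-aside helper. `getOrSetCache` and `createFakeClient` are
// hypothetical names, not part of ioredis or the original article.
async function getOrSetCache(client, key, ttlSeconds, fetchFresh) {
  const cached = await client.get(key);
  if (cached !== null && cached !== undefined) {
    return { data: JSON.parse(cached), fromCache: true };
  }
  const data = await fetchFresh();
  // With ioredis, "EX" sets the value and its expiry in a single command.
  await client.set(key, JSON.stringify(data), "EX", ttlSeconds);
  return { data, fromCache: false };
}

// Minimal in-memory stand-in that mimics get/set with "EX" for demonstration.
function createFakeClient() {
  const store = new Map();
  return {
    async get(key) {
      const entry = store.get(key);
      if (!entry || entry.expiresAt <= Date.now()) return null;
      return entry.value;
    },
    async set(key, value, mode, seconds) {
      const ttlMs = mode === "EX" ? seconds * 1000 : Infinity;
      store.set(key, { value, expiresAt: Date.now() + ttlMs });
      return "OK";
    },
  };
}

async function demo() {
  const client = createFakeClient();
  const fetchPhotos = async () => [{ id: 1, title: "example" }];
  const first = await getOrSetCache(client, "photos", 30, fetchPhotos);
  const second = await getOrSetCache(client, "photos", 30, fetchPhotos);
  console.log(first.fromCache, second.fromCache); // false true
}

demo();
```

Passing the client in as a parameter keeps the helper easy to test, and the same function should work with the client exported from `client.js`.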

## Conclusion

By implementing caching with Redis in your Express application, you can significantly improve performance by reducing response times and server load. Caching commonly accessed data helps minimize latency and enhances the overall user experience, especially for frequently accessed resources. | surajvast1 |

1,783,253 | What Is International Women's Day and Why Do We Celebrate It? | International Women's Day is a special day celebrated around the world to honor the accomplishments... | 0 | 2024-03-07T11:29:09 | https://dev.to/muthu1010/what-is-international-womens-day-and-why-do-we-celebrate-it-4pk5 | womensday, womensdayppttemplate, womensdaypresentations | International Women's Day is a special day celebrated around the world to honor the accomplishments of women in society. It happens every year on March 8th. This day is important because it reminds us to recognize the achievements and progress women have made in various areas like social, economic, cultural, and political fields. It's also a time to think about the work that still needs to be done to achieve gender equality. International Women's Day is a chance to celebrate women's achievements and to raise awareness about the challenges they still face in many parts of the world.

## The Origins of International Women's Day

In the early 1900s, when there were big changes happening in the world, people started talking about a special day for women. This was a time of lots of growth and change, especially in places with a lot of factories and big cities. The first time a 'Women's Day' was celebrated was in the United States in 1909. It was on February 28th, and it was organized by the Socialist Party of America. They wanted to honor the women who had protested in a big strike in New York the year before, in 1908. These women were fighting for better conditions at work.

Then, in 1910, in a city called Copenhagen, there was a meeting of women from many different countries. One woman, Clara Zetkin, who was from Germany and was part of the Socialist group, suggested something big. She said there should be a day for women celebrated all around the world, every year. She thought this day could be used to talk about what women needed and wanted. Everyone at the meeting, which had over 100 women from 17 countries, agreed. And that's how International Women's Day started. It was a way for women to come together and speak out for their rights.

## The Significance of International Women's Day

International Women's Day is a special day celebrated worldwide to honor the progress women have made in society, politics, and economics. It's a time to acknowledge how much has been achieved and recognize the ongoing work needed. This day brings together people from all walks of life, including governments, women's groups, businesses, and charities. Events like talks, rallies, conferences, and marches take place globally, focusing on important issues such as gender equality, women's rights to make choices about their bodies, ending violence against women, and ensuring women have equal economic opportunities. It's a day to raise awareness and show support for women everywhere.

## Why We Celebrate International Women's Day

**Recognition of Women's Achievements**

International Women's Day is a special day to celebrate and recognize the amazing things women have done in our world. It's a time to appreciate the important jobs women have in making our communities, economies, and governments strong. This day lets us honor the women who have led the way in making progress in different areas. Sharing **[presentations about Women's Day](https://www.slideegg.com/womens-day-ppt-template)** helps people learn more and understand why this day is important. It's a chance to appreciate what women have accomplished and work towards making sure everyone has equal opportunities and power.

**Raising Awareness About Gender Inequality**

Despite some progress, gender inequality is still a major issue around the globe. International Women's Day serves as a reminder of the ongoing struggle for gender equality and the importance of supporting the rights of women and girls in all areas of life. We need to keep pushing for change and strive for a future where everyone, regardless of gender, has the same opportunities and rights. It's crucial to stand united, spread awareness, and actively work towards building a fairer and more equal world for everyone.

**Solidarity and Unity**

International Women's Day is a day when women from all around the world join together to celebrate their unity and support for each other. It's a special occasion where we recognize the different experiences and backgrounds of women everywhere. We gather to show our commitment to gender equality and women's rights. This day reminds us of the challenges women face and encourages us to work together to overcome them. No matter where we're from or what we do, on International Women's Day, we stand united as sisters, allies, and advocates for a fairer and more just world for all women.

**Inspiring Action**

This special day reminds us to work together for gender equality. It tells us to help women in every way we can and to fight against unfairness. We should support women's progress and challenge stereotypes that stop them from succeeding. We need to speak out when we see unfair treatment. By working together and spreading the word, we can make a world where everyone, no matter their gender, can do well.

## How to Celebrate International Women's Day

There are many ways to celebrate International Women's Day, from participating in events and rallies to supporting women-owned businesses. Here are a few suggestions:

**Educate Yourself and Others:** Use the day as an opportunity to learn more about **[women's rights](https://en.wikipedia.org/wiki/Women%27s_rights)** issues and educate others. Reading books, watching documentaries, or attending seminars can be a great way to start.

**Support Women-Owned Businesses:** Make an effort to support businesses owned and operated by women. This can help promote economic growth and women's empowerment.

**Advocate for Change:** Use your voice on social media or in your community to advocate for gender equality. Support campaigns and initiatives that aim to address gender-based issues.

**Celebrate Women in Your Life:** Take the time to appreciate and celebrate the women in your life. Acknowledge their achievements, strength, and resilience.

## Conclusion

International Women's Day is a special occasion when we come together to celebrate, reflect, speak out, and make a difference. It's a time to recognize women's achievements, acknowledge the challenges they continue to confront, and strive for a more just world. Observing this day emphasizes the importance of women in building a better future. It serves as a reminder that everyone can contribute to achieving gender equality. By working together, we can make a real difference. Let's continue to advocate for progress and appreciate the remarkable contributions of women everywhere.

| muthu1010 |

1,783,313 | Unleashing the full power of the ESP32-S3 N16R8 with MicroPython | ESP32-S3 N16R8 development boards come with 16MB of flash and 8MB of PSRAM, but if you use the bin file from the MicroPython website,... | 0 | 2024-03-07T12:59:39 | https://dev.to/codemee/rang-micropython-wan-quan-shi-fang-esp32-s3-n16r8-de-wei-li-5d3b | esp32, esp32s3, psram | ESP32-S3 N16R8 development boards come with 16MB of flash and 8MB of PSRAM. However, if you use the bin file from the MicroPython website, you get the following result:

```python

>>> import esp

>>> esp.flash_size()

8388608

>>> import micropython

>>> micropython.mem_info()

stack: 736 out of 15360

GC: total: 64000, used: 16096, free: 47904, max new split: 188416

No. of 1-blocks: 346, 2-blocks: 41, max blk sz: 32, max free sz: 2982

>>> import gc

>>> gc.mem_alloc() + gc.mem_free()

252416

>>>

```

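The flash is only 8MB, and once the system has loaded, memory appears to be only about 252KB. So where did my PSRAM go?

## Freeing up the PSRAM

This is because the firmware comes in two versions:

- [Version without octal (8-line) SPI RAM support](https://micropython.org/resources/firmware/ESP32_GENERIC_S3-20240222-v1.22.2.uf2)

- [Version with octal (8-line) SPI RAM support](https://micropython.org/resources/firmware/ESP32_GENERIC_S3-SPIRAM_OCT-20240222-v1.22.2.uf2)

If you switch to the firmware that supports octal SPI RAM, you get the following result: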

```python

>>> import esp

>>> esp.flash_size()

8388608

>>> import micropython

>>> micropython.mem_info()

stack: 736 out of 15360

GC: total: 64000, used: 15440, free: 48560, max new split: 8257536

No. of 1-blocks: 340, 2-blocks: 40, max blk sz: 32, max free sz: 3023

>>> import gc

>>> gc.mem_alloc() + gc.mem_free()

8321536

```

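As you can see, the flash is still only 8MB, but the available memory has jumped to nearly 8MB, which means the PSRAM is now enabled.

## Freeing up the flash

The last thing to recover is the missing 8MB of flash. This happens mainly because the stock firmware is built for an 8MB flash layout, so the firmware image has to be extended to 16MB. Fortunately, a kind soul has already written a Python tool for this, [mp-image-tool-esp32](https://github.com/glenn20/mp-image-tool-esp32). Install it as follows:

1. Clone the project from GitHub: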

```

git clone https://github.com/glenn20/mp-image-tool-esp32

cd mp-image-tool-esp32

```

2. Install the dependencies:

```

pip install -r requirements.txt

```

The following command resizes the firmware file for a 16MB flash:

```

python .\mp-image-tool-esp32 -f 16M ..\..\firmware\ESP32_GENERIC_S3-SPIRAM_OCT-20240222-v1.22.2.bin

```

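The `-f` option specifies the flash size. The tool produces a file with the same name as the original firmware plus a "-16MB" suffix, and flashing this firmware makes the full 16MB of flash available: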

```python

>>> import esp

>>> esp.flash_size()

16777216

>>> import micropython

>>> micropython.mem_info()

stack: 736 out of 15360

GC: total: 64000, used: 15440, free: 48560, max new split: 8257536

No. of 1-blocks: 340, 2-blocks: 40, max blk sz: 32, max free sz: 3023

>>> import gc

>>> gc.mem_alloc() + gc.mem_free()

8321536

```

Both the flash and the PSRAM are now fully available.

| codemee |

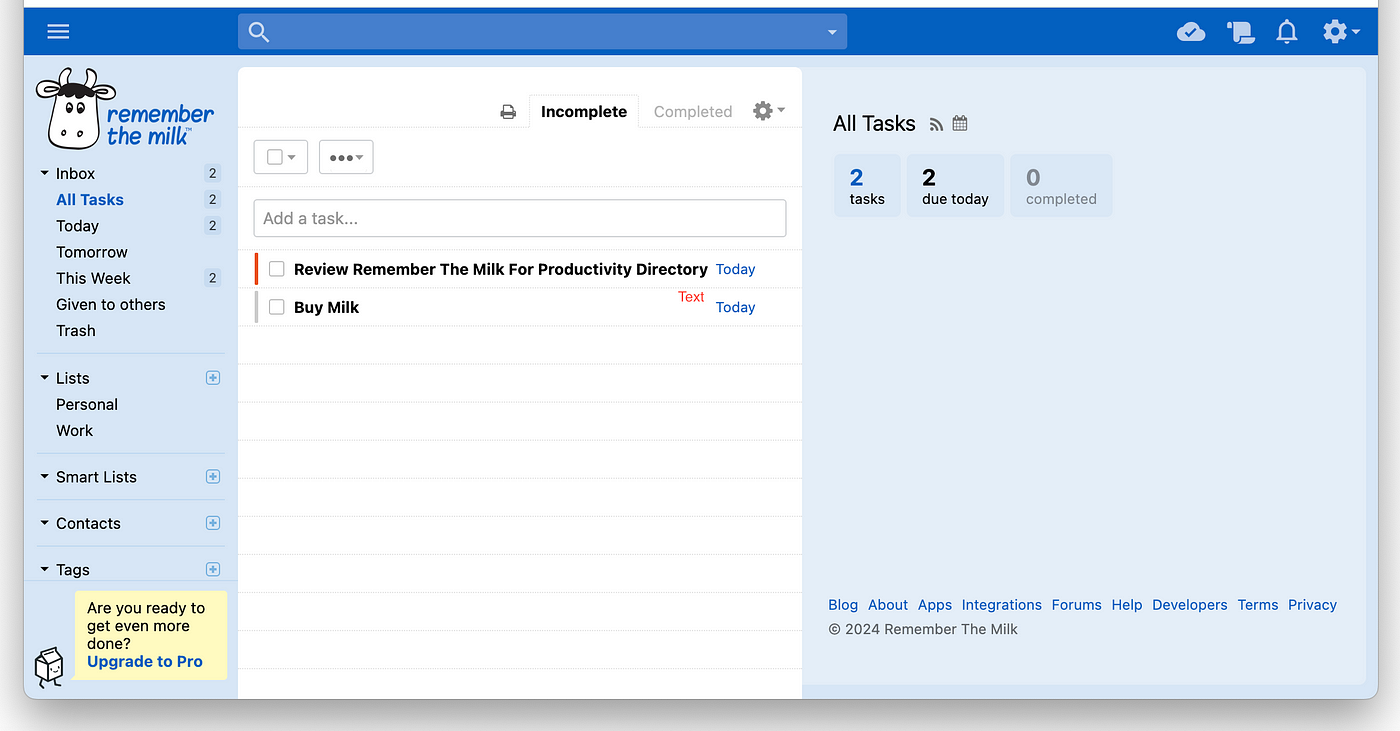

1,783,334 | Remember the Milk Review 2024: Old But Gold | Remember the Milk is one of those applications that I can't remember how many years I've known it,... | 0 | 2024-03-07T13:21:35 | https://blog.productivity.directory/remember-the-milk-review-2024-old-but-gold-67986c201f38 | rememberthemilk, productivity, todolistapp, todolist | [Remember the Milk](https://productivity.directory/remember-the-milk) is one of those applications I have known for more years than I can remember, and it has always been a reminder of simplicity and quality for me. Today I want to give a short review of this old-but-gold application, since the newer generation probably isn't that familiar with it, but those of us who have been around a while remember it well. I spent a few days with it again, on my Mac and on my Android phone.

What is Remember the Milk?
==========================

Remember the Milk is a web and mobile application for managing your tasks and time, essentially a [to-do list](https://productivity.directory/category/to-do-lists). It was first created in 2004 in Australia by two friends and, as you can see, it has been helping us manage our tasks for 20 years now. Oh boy, 20 years!

A Brief Review
==============

I used the web version on my Mac and the Android version on my mobile phone. Honestly, it felt a bit classic. It still does not have proper social network sign-in options like newer software does, and you need to fill out a form to sign up, but the fact that it didn't ask me to verify my email to use an application that's 20 years old is not bad.

In my opinion, it had the following positive and negative features:

Pros:
-----

- Cross-platform availability: Works on web, Mac, Windows, Linux, Android, and iOS.

- Task synchronization: Keeps tasks updated across all devices.

- Intuitive interface: Easy to use, even for beginners.

- Advanced task management: Allows for prioritization, tagging, and deadlines.

- Integration capabilities: Can integrate with other tools and services.

Cons:
-----

- No social media sign-in: Lacks modern login options like social network integration.

- Classic feel: May seem outdated compared to newer applications.

- Initial setup: Requires filling out a signup form, which might be off-putting for some.

- Limited free version: Some advanced features are locked behind a subscription model.

And now, with that said, will I use it for the long term? Honestly, No. Maybe if I had continued to keep my tasks on it, I would have had to do so, but unfortunately not now!

What platforms is it available for?
===================================

Remember the Milk is fundamentally known for its web version and its synchronization capabilities, but there are also versions available for Windows, Linux, and Mac for desktop systems, and Android and iOS versions for mobile phones. The installation is simple, and you can access all the links from the [Remember the Milk page](https://productivity.directory/remember-the-milk) on the [Productivity Directory](https://productivity.directory/).

It was supposed to be brief, and well, we've come to the end of the post. If you still like to experience the old-school feel or enjoy the cute logo of Remember the Milk, it's not a bad idea to give it a try.

For [more modern tools](https://productivity.directory/) and other [alternatives](https://productivity.directory/alternatives/remember-the-milk), you can visit [the Productivity Directory](https://productivity.directory/). | stan8086 |

1,783,341 | Task 2 | Answer 1: Condition | F.name |L.name |mob num |email id |appointment D/T | condition 1: All... | 0 | 2024-03-07T14:01:41 | https://dev.to/karthikaa/task-2-4jjd | Answer 1:

Condition | F.name |L.name |mob num |email id |appointment D/T |

-----------------------------------------------------------------

condition 1: All fields are blank

inputs--> first name- blank

second name - blank

mobile num - blank

email id- blank

appointment date/time - blank

**output---> Display "All fields are required" **

Condition 2: Any one or more field is blank

inputs --> first name- blank

second name- valid name

mobile number- valid name

email id- valid mail id

appointment date/time- blank

**output---> Display "All fields are required"**

Condition 3: Email id is invalid

inputs--> first name- valid name

second name--> valid name

mobile number--> valid mobile number

email id: Invalid email id

appointment date/time - valid date/time

**output---> Display "please enter a valid email"**

condition 4: Phone number is invalid

inputs--> first name - valid name

second name - valid name

mobile number - invalid number

email id - valid email id

appointment date/time - valid date/time

**output---> Display "please enter a valid phone number"**

condition 5: Appointment date/time is not available

inputs--> first name - valid name

second name - valid name

mobile number - valid number

email id - valid email id

appointment date/time - not available

**output---> Display "Please choose another date/time"**

condition 6: All fields are correct and appointment available

inputs--> first name - valid name

second name - valid name

mobile number - valid number

email id - valid email id

appointment date/time - available

**output---> Display "appointment Scheduled successfully"**
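The conditions above can be read as a decision table. The sketch below turns them into one function. The source does not define what counts as "valid", so the email pattern, the 10-digit mobile rule, the order of the checks, and the names `validateAppointment` and `isSlotAvailable` are all assumptions made for illustration.

```javascript
// Hypothetical validator for the appointment form described above.
// Assumptions: a simple email pattern, a 10-digit mobile number,
// and checks performed in the order the conditions are listed.
const EMAIL_PATTERN = /^[^\s@]+@[^\s@]+\.[^\s@]+$/;
const MOBILE_PATTERN = /^\d{10}$/;

function validateAppointment(form, isSlotAvailable) {
  const fields = [
    form.firstName,
    form.lastName,
    form.mobile,
    form.email,
    form.appointment,
  ];

  // Conditions 1 and 2: every field is mandatory.
  if (fields.some((value) => value == null || String(value).trim() === "")) {
    return "All fields are required";
  }
  // Condition 3: email format.
  if (!EMAIL_PATTERN.test(form.email)) {
    return "please enter a valid email";
  }
  // Condition 4: phone number format.
  if (!MOBILE_PATTERN.test(form.mobile)) {
    return "please enter a valid phone number";
  }
  // Next condition: the requested slot must be free.
  if (!isSlotAvailable(form.appointment)) {
    return "Please choose another date/time";
  }
  // Final condition: everything is valid and the slot is available.
  return "appointment Scheduled successfully";
}
```

Passing `isSlotAvailable` in as a function keeps the sketch independent of however the real booking system looks up free slots, and it lets each test case stub availability directly.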

Answer 2:

Scenario 1: Testing the mobile app with different user roles and access levels.

Test steps:

1.1 Log in with a basic user account and verify the features.

1.2 Log in with a premium user account and verify access to all features.

1.3 Log in with an admin user account and verify access to advanced settings and all actions.

Expected Results:

Basic users should have limited access in the application like viewing and accessing basic information and basic functionalities.

Premium users should have access to all features which are available in the mobile app.

Admin users should have access to advanced settings and be able to perform all actions.

Scenario 2 : Testing the Account creation and deletion in the mobile app

Test Steps:

2.1 Create a new account with valid credentials and verify successful account creation.

2.2 Attempt to create an account with a password that does not meet complexity standards and verify appropriate error message.

2.3 Delete the newly created account and verify successful deletion.

Expected Results:

Account creation should be successful with valid credentials, and the user should receive a confirmation email.

If the password does not meet complexity standards, an error message should be displayed, prompting the user to choose a stronger password.

Account deletion should remove the account from the system without any errors.

Scenario 3: Testing App Navigation and Error Handling in the mobile app.

Test Steps:

3.1 Navigate through various screens and functionalities as a basic user, premium user and admin user.

3.2 As each user, attempt to perform an action that user is not authorized to perform.

Expected Results:

Users should be able to navigate through the app seamlessly without any crashes or performance issues.

If a user attempts to perform an unauthorized action, an appropriate error message should be displayed, informing them "sorry you are not authorized to perform this action".

| karthikaa | |

1,783,388 | Innovating Agile User Personas with ChatGPT and DALL-E | Dive into the fusion of AI and agile development to revolutionize user persona creation. Discover how integrating ChatGPT and DALL-E enriches personas with depth and visual empathy, paving the way for more insightful, human-centric product development. | 0 | 2024-03-07T15:00:38 | https://dev.to/dev3l/innovating-agile-user-personas-with-chatgpt-and-dall-e-4kng | ux, agile, ai, chatgpt | ---

title: Innovating Agile User Personas with ChatGPT and DALL-E

published: true

description: Dive into the fusion of AI and agile development to revolutionize user persona creation. Discover how integrating ChatGPT and DALL-E enriches personas with depth and visual empathy, paving the way for more insightful, human-centric product development.

tags: UX, Agile, AI, ChatGPT

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/d1gfuqnxj1yt4iyph5sg.png

---

In agile development, the clarity of our understanding about those who use our products—our users—is crucial. User personas help bridge this gap by sketching out fictional yet realistic profiles of our target audience, based on data and insights we gather. These personas aim to deepen our empathy and align our development efforts with user needs. However, as our understanding of users deepens and evolves, traditional methods of creating personas may not fully capture the nuances we’re discovering. There’s a growing need for more detailed, dynamic personas that better represent the complex nature of real users.

This is where the potential of artificial intelligence, particularly tools like ChatGPT and DALL-E, comes into play. By leveraging these tools, we propose enhancing the traditional persona creation process. ChatGPT can help generate detailed personas based on structured prompts, while DALL-E can add a visual dimension by creating images that represent each persona. This combination aims to enrich persona profiles, making them more relatable and useful for agile teams.

This blog post explores how integrating AI tools like ChatGPT and DALL-E into persona creation can provide agile teams with deeper, more actionable insights. Our goal is to show how these tools can complement existing methods, offering a fresh perspective on understanding and engaging with users.

## The Need for Detailed Personas

Understanding the myriad ways in which users interact with products is a cornerstone of successful agile development. The creation of user personas serves as a pivotal step in this process, attempting to distill the essence of diverse user experiences into comprehensible archetypes. Traditionally, these persona profiles stem from a mix of interviews, surveys, and demographic studies. However, as our products and their ecosystems become increasingly complex, the personas built from these conventional methodologies sometimes lack the depth and individuality needed to fully encapsulate the user experience.

A more detailed and vividly constructed persona can bring a wealth of benefits to the agile process. It allows product teams to tailor their work more precisely to user needs, fostering a more empathetic understanding of the audience. Detailed personas paint a richer picture of the user's daily life, their frustrations, goals, and how they interact with technology. This deeper insight enables agile teams to devise solutions that don't just meet basic requirements but truly resonate with users on a personal level.

Yet, achieving this level of detail and authenticity in personas often presents a significant challenge. Traditional data collection methods can be resource-intensive and may not always capture the subtleties of human behavior and motivation. Furthermore, the rapid pace of change in modern technology and societal trends necessitates a dynamic approach to persona creation—one that can adapt and evolve as quickly as our understanding of the user base does.

This is where the potential for innovation lies. By augmenting the traditional persona creation process with advanced AI tools like ChatGPT for textual data generation and DALL-E for visual representation, we can develop richer, more dynamic personas. These AI-enhanced personas not only provide a broader, more nuanced understanding of the user but also offer agile teams a more vibrant and engaging blueprint to guide their development efforts.

## Crafting Personas with ChatGPT: A Strategy

The evolution of artificial intelligence presents new horizons for agile teams to create more detailed and dynamic user personas. ChatGPT, with its capability to process and generate human-like text, offers a unique opportunity to deepen the persona development process. Here’s a strategic approach to leveraging ChatGPT for crafting enriched personas:

### Define the Scope:

Begin by delineating the boundaries of your project or feature and the intended user base. This step ensures that ChatGPT-generated content remains relevant and targeted. Understanding the product’s objective and potential users helps in forming prompts that elicit meaningful and insightful responses.

### Identify Key Attributes:

To create well-rounded personas, it's essential to explore a range of attributes that paint a comprehensive picture of the user. These may include demographics, professional background, personal goals, pain points, and technology usage patterns. Each attribute sheds light on different aspects of the user's life and preferences, contributing to a fuller understanding and representation.

### Craft Detailed Prompts:

With the key attributes in mind, construct specific prompts for ChatGPT that delve into each aspect. The prompts should be open-ended and designed to encourage detailed responses. For example, asking ChatGPT to describe "a day in the life" of a user, focusing on their interactions with technology, can unearth valuable insights into their behaviors, preferences, and challenges.

### Iterative Refinement:

The process doesn't end with the first set of responses. Use the insights gained from ChatGPT to refine your understanding and ask follow-up questions. This iterative approach allows you to dig deeper into certain aspects of the persona, fleshing out their characteristics and refining their profile.

Utilizing ChatGPT in this structured manner enables agile teams to develop personas that are not only detailed but also deeply rooted in realistic user scenarios and preferences. These personas provide a more solid foundation for empathy-driven development, ensuring that products and features are designed with a keen understanding of the user at their core.

## Real-World Example: Creating a Persona Card

To concretize our strategy, let's apply it to the development of a mobile application designed to promote sustainable living. This app aims to engage young professionals passionate about reducing their environmental impact through features like carbon footprint tracking, sustainable lifestyle tips, and community challenges.

### Scope and Goal:

Our target user is a young professional, early in their career, who is environmentally conscious and seeks practical ways to incorporate sustainable living practices into their daily life.

### Identifying Key Attributes:

For our persona, we'll explore several attributes:

- Age and Name for a personal touch

- Professional Background to understand their daily environment

- Personal Goals related to sustainability

- Pain Points in practicing or learning about sustainable living

- Technology Use indicating their interaction with digital tools

- Motivations for using the app to connect features with user needs

### Crafting Detailed Prompts for ChatGPT:

- "Describe a day in the life of a young professional actively trying to live sustainably but struggling to measure their carbon footprint."

- "What are the top sustainability goals of a young professional, and what barriers do they encounter in achieving these?"

- "Imagine the ideal mobile app for someone interested in sustainability. What features would they find most useful, and why?"

These prompts are designed to generate detailed responses that cover each of the identified attributes, forming a rich, holistic view of our persona.
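For teams that want to keep prompts consistent across personas, the attribute list can drive prompt generation. The following is a minimal sketch under that assumption: `buildPersonaPrompts` is a hypothetical helper, its wording template is invented for illustration, and it only builds the prompt text (it does not call ChatGPT).

```javascript
// Hypothetical helper: turns a scope description and a list of persona
// attributes into one open-ended prompt per attribute.
function buildPersonaPrompts(scope, attributes) {
  return attributes.map(
    (attribute) =>
      `For ${scope}, describe the user's ${attribute} in detail, ` +
      "with concrete examples from their daily life."
  );
}

const prompts = buildPersonaPrompts(
  "a young professional using a sustainable-living mobile app",
  ["personal goals", "pain points", "technology use"]
);

// Print one prompt per line, ready to paste into a chat session.
console.log(prompts.join("\n"));
```

Keeping the template in one place makes the iterative refinement step easier, since a follow-up round only needs a new attribute list.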

### Iterative Refinement & Persona Creation:

Based on the insights provided by ChatGPT, we refine our persona, continuously asking follow-up questions until a vivid user profile emerges. Now, let's introduce our persona:

Persona Card:

```

## Name and Age: Mia Chen, 29

Occupation and Industry: Digital Marketing Specialist,

Clean Energy Sector

### Personal Goals:

- Mia aims to reduce her carbon footprint by 40% in

the next year.

- She’s interested in learning about zero-waste

lifestyles and seeking practical tips to implement them.

### Pain Points:

- Finds it challenging to track and measure her

environmental impact.

- Feels overwhelmed by the volume of information

online, unsure of which practices are truly effective.

### Technology Use:

- Comfortable with tech, prefers apps with

straightforward, actionable insights.

- Values community features, looking to connect with

like-minded individuals.

### Motivations for Using the App:

- Wants a reliable tool for tracking her carbon footprint

and receiving personalized suggestions for improvement.

- Seeks a community feature to share experiences and learn

from others on the same journey.

```

This persona card for Mia Chen not only paints a picture of who she is but also sheds light on her needs and how they connect with the app's features. By using ChatGPT to craft such detailed personas, agile teams can develop user stories that truly resonate with their audience.

## Enriching Personas with Visuals: A DALL-E Demonstration

Upon establishing a detailed persona card for Mia Chen through thoughtful prompts with ChatGPT, we now venture into adding a visual layer to our persona. Visual representations can powerfully enhance the empathy and connection agile teams feel towards their user personas. DALL-E, an AI capable of generating images from textual descriptions, offers an innovative approach to visualize our personas.

### Visualizing Mia Chen:

To create a visual representation of Mia Chen, we would craft a descriptive prompt for DALL-E, encapsulating Mia’s characteristics, style, and essence, without resorting to stereotypes. It's important that the imagery fosters inclusivity and relatability. An example prompt might be:

"Generate an image representing Mia Chen, a 29-year-old digital marketing specialist in the clean energy sector. Mia is environmentally conscious, values simplicity, and embodies an approachable and optimistic outlook on contributing to sustainable living. Reflect these qualities in a headshot that captures her professional yet eco-friendly demeanor."

### Why Visual Representation Matters:

- **Empathy and Relatability:** Seeing a visual representation of personas like Mia can trigger a stronger emotional connection than text alone, making Mia's challenges and goals more tangible to the team.

- **Communication & Collaboration:** Visuals can serve as quick, universally understandable references that enhance communication about user needs and preferences among diverse team members.

- **Inspiration for Design:** A visual persona can also inspire design elements of the product, ensuring that the user interface resonates with the target user's aesthetics and values.

### Integrating Visuals into Agile Processes:

Once Mia’s persona card is accompanied by a DALL-E-generated image, the combined visual and textual persona becomes a powerful tool in agile development. During planning and brainstorming sessions, Mia's image alongside her detailed profile helps keep the team aligned on who they are building for. This alignment ensures that user stories and development efforts are closely tailored to meet the specific needs and preferences of the target users, like Mia, thereby enhancing the overall user experience of the product.

## Reflecting on the Journey Towards Empathy and Innovation

Our exploration into the integration of ChatGPT and DALL-E within agile development processes reveals a landscape ripe with potential for innovation and deeper user connections. The transformation from conventional persona crafting to these AI-enhanced methods marks a significant evolution in our approach to understanding our users. By detailing the lives of personas like Mia Chen through ChatGPT and visually bringing them to life with DALL-E, we commit to a richer, more empathetic engagement with those we aim to serve.

This methodological evolution prompts us to ponder the future of agile development and the broader implications of our work. Enhanced personas don't just empower us to create better products; they challenge us to build bridges to better futures, informed by the nuanced needs and dreams of our users.

### Imagining the Horizon of Human-Centric Innovation

As we stand at this crossroads between human insight and AI innovation, it's crucial to consider what lies ahead. The integration of artificial intelligence into the agile development cycle is not just an enhancement of our tools but a paradigm shift towards greater empathy and understanding in product design.

What narratives can we explore, and what user needs can we fulfill as we continue to innovate with empathy at our core? The potential to reshape product development and design through AI tools like ChatGPT and DALL-E offers us a unique opportunity to deepen our connection to the human experience at the heart of technology.

### A Call to Inventive Compassion

Let's view this exploration not as an endpoint but as a springboard into a future where our tools and methodologies are as dynamic and thoughtful as the users they benefit. The marriage of AI and agile development beckons us to a world where understanding and innovation walk hand in hand.

I invite you, fellow innovators and creators, to venture further into this intersection of technology and empathy. Together, let's dream, design, and develop with an unwavering focus on the people behind the personas, ensuring that every feature, function, and interface we create resonates with the heartbeat of humanity that drives it all. | dev3l |

1,783,515 | Bridging IoT and Cloud: Enhancing Connectivity with Kong's TCPIngress in Kubernetes | By Rajesh Gheware In the rapidly evolving landscape of Internet of Things (IoT) and cloud... | 0 | 2024-03-07T15:43:44 | https://dev.to/rajeshgheware/bridging-iot-and-cloud-enhancing-connectivity-with-kongs-tcpingress-in-kubernetes-4ogj | kubernetes, kong, unigps, iot | ## By Rajesh Gheware

In the rapidly evolving landscape of Internet of Things (IoT) and cloud computing, organizations are constantly seeking efficient ways to bridge these two realms. The IoT space, particularly in applications like GPS-based vehicle tracking systems, demands robust, seamless connectivity to cloud-native applications to process, analyze, and leverage data in real time. UniGPS Solutions, a pioneer in IoT platforms for vehicle tracking, uses a Kubernetes cluster as its cloud-native infrastructure. A key component in ensuring seamless connectivity between IoT devices and cloud services in this setup is Kong's TCPIngress, an integral part of the Kong Ingress Controller.

## **The Role of TCPIngress in IoT-Cloud Connectivity**

Kong's TCPIngress resource is designed to handle TCP traffic, making it an ideal solution for IoT applications that communicate over TCP, such as GPS trackers in vehicles. By enabling TCP traffic management, TCPIngress facilitates direct, efficient communication between IoT devices and the cloud-native applications that process their data. This is crucial for real-time monitoring and analytics of vehicle fleets, as provided by the Spring Boot-based microservices in UniGPS' solution.

### **How TCPIngress Works**

TCPIngress acts as a gateway for TCP traffic, routing it from IoT devices to the appropriate backend services running in a Kubernetes cluster. It leverages Kong's powerful proxying capabilities to ensure that TCP packets are securely and efficiently routed to the correct destination, without the overhead of HTTP protocols. This direct TCP handling is especially beneficial for low-latency, high-throughput scenarios typical in IoT applications.

### **Implementing TCPIngress in UniGPS' Kubernetes Cluster**

To integrate TCPIngress with UniGPS' Kubernetes cluster, we start by deploying the Kong Ingress Controller, which automatically manages Kong's configuration based on Kubernetes resources. Here's a basic example of how to deploy TCPIngress for a GPS tracking application:

```yaml
apiVersion: configuration.konghq.com/v1beta1
kind: TCPIngress
metadata:
  name: gps-tracker-tcpingress
  namespace: unigps
spec:
  rules:
  - port: 5678
    backend:
      serviceName: gps-tracker-service
      servicePort: 5678
```

In this example, `gps-tracker-tcpingress` is a TCPIngress resource that routes TCP traffic on port `5678` to the `gps-tracker-service`. This service then processes the incoming GPS packets from the vehicle tracking devices.

### **Security and Scalability with TCPIngress**

Security is paramount in IoT applications, given the sensitive nature of data like vehicle locations. Kong's TCPIngress supports TLS termination, allowing encrypted communication between IoT devices and the Kubernetes cluster. This ensures that GPS data packets are securely transmitted over the network.

To configure TLS for TCPIngress, you can add a `tls` section to the TCPIngress resource:

```yaml
spec:
  tls:
  - hosts:
    - gps.unigps.io
    secretName: gps-tls-secret
  rules:
  - port: 5678
    backend:
      serviceName: gps-tracker-service
      servicePort: 5678
```

This configuration enables TLS for the TCPIngress, using a Kubernetes secret (`gps-tls-secret`) that contains the TLS certificate for `gps.unigps.io`.

Scalability is another critical factor in IoT-cloud connectivity. Because TCPIngress routes traffic to a Kubernetes Service rather than to individual pods, the backend services can be scaled with load (for example, with a Horizontal Pod Autoscaler) without changing the ingress configuration, so the infrastructure can handle varying volumes of GPS packets from the vehicle fleet.

### **Monitoring and Analytics**

Integrating TCPIngress in the UniGPS platform not only enhances connectivity but also facilitates advanced monitoring and analytics. By leveraging Kong's logging plugins, it's possible to capture detailed metrics about the TCP traffic, such as latency and throughput. This data can be used to monitor the health and performance of the IoT-cloud communication and to derive insights for optimizing vehicle fleet operations.

### **Conclusion**

The integration of IoT devices with cloud-native applications presents unique challenges in terms of connectivity, security, and scalability. Kong's TCPIngress offers a robust solution to these challenges, enabling seamless, secure, and efficient communication between IoT devices and cloud services. By implementing TCPIngress in Kubernetes clusters, organizations like UniGPS can leverage the full potential of their IoT platforms, enhancing real-time vehicle tracking, monitoring, and analytics capabilities. This strategic approach to bridging IoT and cloud not only optimizes operations but also drives innovation and competitive advantage in the IoT space.

In summary, Kong's TCPIngress is a cornerstone in building a future-proof, scalable IoT-cloud infrastructure, empowering businesses to harness the power of their data in unprecedented ways. Through strategic deployment and configuration, TCPIngress paves the way for next-generation IoT applications, making the promise of a truly connected world a reality. | rajeshgheware |

1,783,551 | Blockchain Use Cases and Applications by Industry | Blockchain technology, once synonymous with cryptocurrencies, has evolved into a revolutionary force... | 0 | 2024-03-07T16:20:33 | https://dev.to/matthewcyrus09/blockchain-use-cases-and-applications-by-industry-3ci6 | blockchainusecases | Blockchain technology, once synonymous with cryptocurrencies, has evolved into a revolutionary force with applications across various industries. From Real Estate to Healthcare, Finance, and Global Trade, the decentralized and secure nature of blockchain offers solutions to longstanding challenges. Let's explore the diverse [use cases and applications of blockchain](https://theblockchain.team/tbt-blog/blockchain-use-cases-and-applications-by-industry) across different sectors.

## Introduction

**Defining Blockchain Use Cases**

Blockchain, at its core, is a decentralized and distributed ledger technology. It ensures secure and transparent transactions by creating a chain of blocks linked through cryptographic hashes. This article delves into the practical applications, or use cases, of blockchain technology.
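To make "a chain of blocks linked through cryptographic hashes" concrete, here is a minimal sketch using Node.js's built-in `crypto` module. It is a toy model with no network, consensus, or signatures, and the block layout and the names `hashBlock`, `addBlock`, and `isValid` are assumptions chosen for illustration.

```javascript
const crypto = require("crypto");

const GENESIS_PREVIOUS_HASH = "0".repeat(64);

// Hash a block's contents together with the previous block's hash.
function hashBlock(index, data, previousHash) {
  return crypto
    .createHash("sha256")
    .update(`${index}|${previousHash}|${JSON.stringify(data)}`)
    .digest("hex");
}

// Append a block that points at the hash of the current last block.
function addBlock(chain, data) {
  const index = chain.length;
  const previousHash =
    index === 0 ? GENESIS_PREVIOUS_HASH : chain[index - 1].hash;
  chain.push({ index, data, previousHash, hash: hashBlock(index, data, previousHash) });
  return chain;
}

// A chain is valid when every stored hash matches its contents and every
// block points at the hash of the block before it.
function isValid(chain) {
  return chain.every((block, i) => {
    const expectedPrevious = i === 0 ? GENESIS_PREVIOUS_HASH : chain[i - 1].hash;
    return (
      block.previousHash === expectedPrevious &&
      block.hash === hashBlock(block.index, block.data, block.previousHash)
    );
  });
}

const chain = [];
addBlock(chain, { from: "alice", to: "bob", amount: 5 });
addBlock(chain, { from: "bob", to: "carol", amount: 2 });
console.log(isValid(chain)); // true

chain[0].data.amount = 500; // tamper with an earlier block
console.log(isValid(chain)); // false
```

Changing any earlier block changes its hash, which no longer matches what was stored and what the next block points to. That is the property the use cases below rely on when they describe records as tamper-evident.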

**Significance of Blockchain Technology**

Understanding the importance of blockchain sets the stage for exploring its applications in various industries. The technology's ability to provide transparency, security, and efficiency has led to its widespread adoption.

## Blockchain in Real Estate

**Property Ownership Transparency**

Blockchain ensures a transparent and unalterable record of property ownership, reducing disputes and fraud in real estate transactions.

**Smart Contracts for Real Estate Transactions**

Smart contracts automate and secure real estate deals, minimizing the need for intermediaries and accelerating the transfer of property.

## Blockchain in Finance

**Decentralized Finance (DeFi)**

Blockchain's role in DeFi revolutionizes traditional banking systems, providing decentralized lending, borrowing, and trading platforms.

**Cross-Border Transactions**

Blockchain facilitates faster and more secure cross-border transactions, eliminating delays and reducing transaction costs.

## Blockchain in Healthcare

**Patient Data Security**

Blockchain ensures the secure storage and sharing of patient data, maintaining confidentiality and integrity.

**Drug Traceability and Supply Chain**

Blockchain aids in tracking pharmaceuticals through the supply chain, reducing the risk of counterfeit drugs.

## Blockchain in Supply Chain Management

**Transparency and Traceability**

Blockchain enhances supply chain transparency by recording every transaction, ensuring the traceability of products from manufacturer to consumer.

**Reduction of Fraud and Counterfeiting**

The decentralized nature of blockchain minimizes the risk of fraud and counterfeiting in the supply chain.

## Blockchain in Global Trade and Commerce

**Streamlining International Transactions**

Blockchain simplifies complex international transactions, reducing paperwork and delays.

**Enhancing Trust in Global Trade**

The immutability of blockchain records builds trust among international trading partners, reducing disputes.

## Blockchain in eCommerce

**Secure Online Transactions**

Blockchain secures online transactions, protecting both buyers and sellers from fraud.

**Supply Chain Visibility**

eCommerce businesses leverage blockchain for transparent and traceable supply chain management.

## Blockchain in Insurance

**Improved Claims Processing**

Blockchain expedites claims processing through smart contracts, reducing bureaucracy and delays.

**Fraud Prevention**

The transparent nature of blockchain helps prevent insurance fraud by providing an immutable record of transactions.

## Blockchain in Media and Entertainment

**Copyright Protection**

Blockchain enables the protection of intellectual property rights, preventing unauthorized use of digital content.

**Royalty Tracking**

Artists benefit from blockchain's transparent royalty tracking, ensuring fair compensation for their work.

## Blockchain in Identity Management

**Enhanced Security in Identity Verification**

Blockchain enhances the security of identity verification processes, reducing the risk of identity theft.

**Personal Data Control**

Individuals gain more control over their personal data, deciding who can access and use their information.

## Future Trends in Blockchain Use Cases

**Integration of Artificial Intelligence**

The integration of AI with [blockchain technology](https://theblockchain.team/blockchain-application-development/) opens new possibilities for automation and data analysis.

**Increased Adoption in Government Sectors**

Governments worldwide are exploring blockchain applications for secure record-keeping, voting systems, and more.

## Challenges and Solutions in Blockchain Implementations

**Scalability Issues**

Blockchain faces challenges in scaling to meet the demands of large-scale transactions. Innovations like layer-two solutions aim to address scalability.

**Regulatory Compliance**

Navigating regulatory frameworks remains a challenge; however, increased collaboration between industry and regulators is working towards viable solutions.

## How Businesses Can Leverage Blockchain Technology

**Small and Medium Enterprises (SMEs)**

SMEs benefit from blockchain by streamlining operations, reducing costs, and gaining a competitive edge.

**Large Corporations**

Big enterprises leverage blockchain for enhanced security, transparent operations, and improved efficiency in complex business processes.

## Success Stories: Companies Benefiting from Blockchain Use Cases

**IBM's Food Trust**

IBM's Food Trust uses blockchain to trace the origin and journey of food products, ensuring food safety and quality.

**Everledger in Diamond Tracking**

Everledger utilizes blockchain to track the authenticity and origin of diamonds, preventing the trade of conflict diamonds.

## Conclusion

Blockchain's transformative potential extends across diverse industries, revolutionizing the way we conduct transactions and secure data. As we embrace this technology, addressing challenges and maximizing its benefits will define the future landscape of various sectors. | matthewcyrus09 |

1,783,736 | Frameworks - Analogy | Yesterday, I viewed an AppDev course overview video and an analogy comparing Frameworks to species... | 0 | 2024-03-07T21:01:50 | https://dev.to/sernern/frameworks-analogy-2ob0 | framework | Yesterday, I viewed an AppDev course overview video and an analogy comparing Frameworks to species that share DNA stuck out to me:

"Did you know that humans and chimpanzees share about 98% of their DNA, and it's only 2% that makes us different? Because most of our DNA is just the plumbing of being alive, having a metabolism and being a primate, and then just a little bit makes us distinct and we have exactly the same 98% because we had a common ancestor like, six to 10 million years ago. So similarly, Twitter and Airbnb share much of their code. And really any cloud-based application has the same kind of plumbing. You gotta have a web server, which lets you listen for HTTP requests, you gotta connect to the database. You have to have some rendering engine to render the UI.

Very much the same kind of code for every cloud-based app. And then a little tiny bit makes Twitter distinct from Airbnb. Airbnb has listings, Twitter has tweets. They both have users. Really, that's all that makes them distinct. Most of the plumbing is the same. And in particular, Twitter and Airbnb have a lot of their code is the same because they had a common ancestor." - Professor Raghu Betina

Being a novice to software development, this really helped drive the message home regarding frameworks. No need to reinvent the wheel and all that. | sernern |

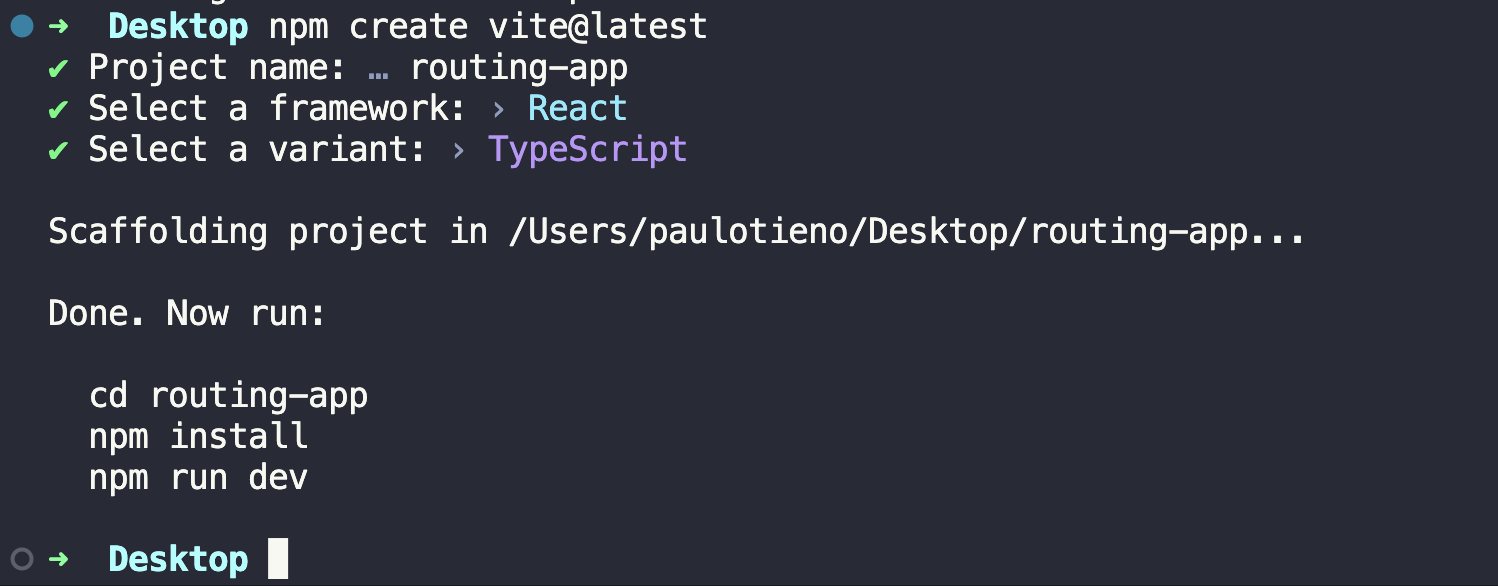

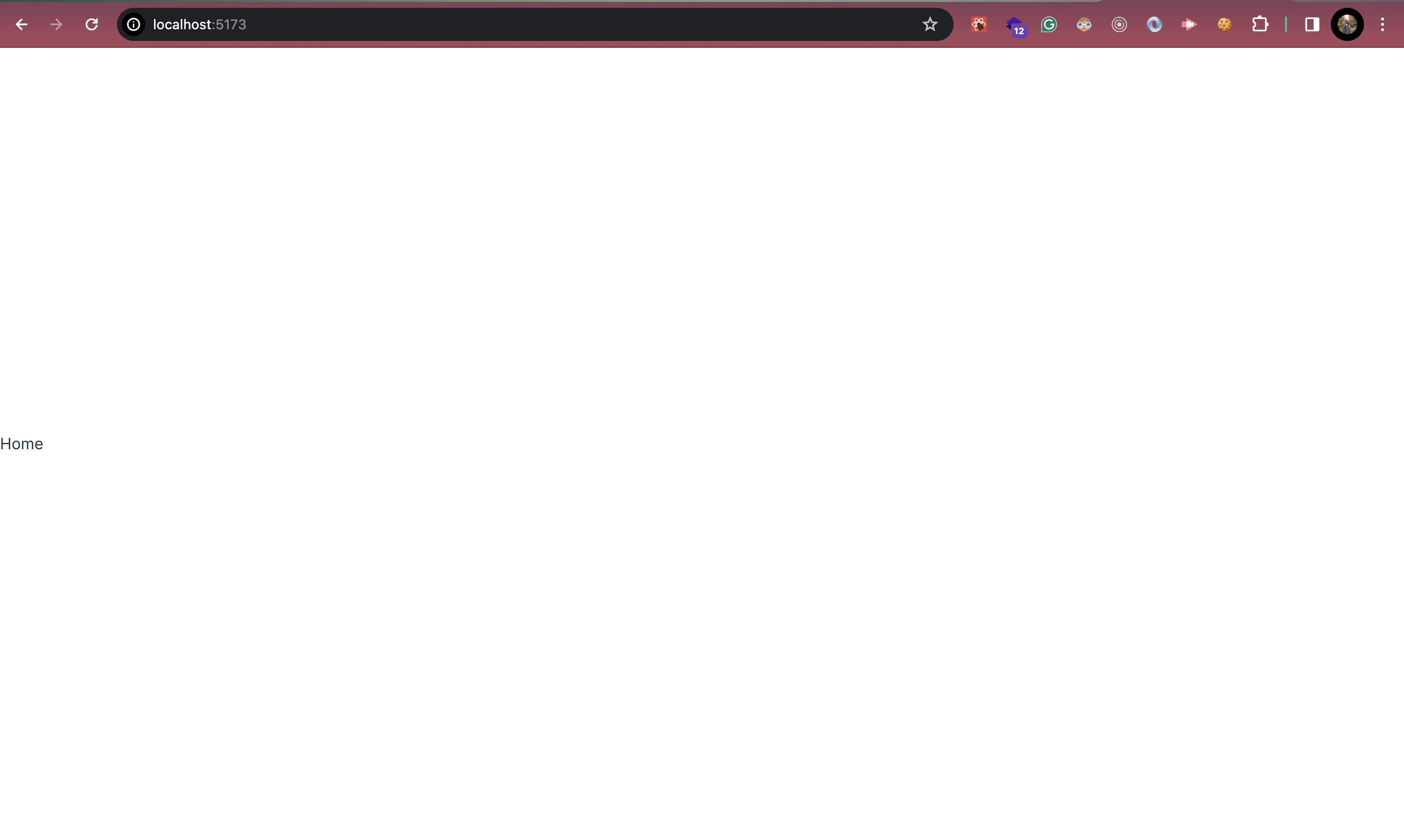

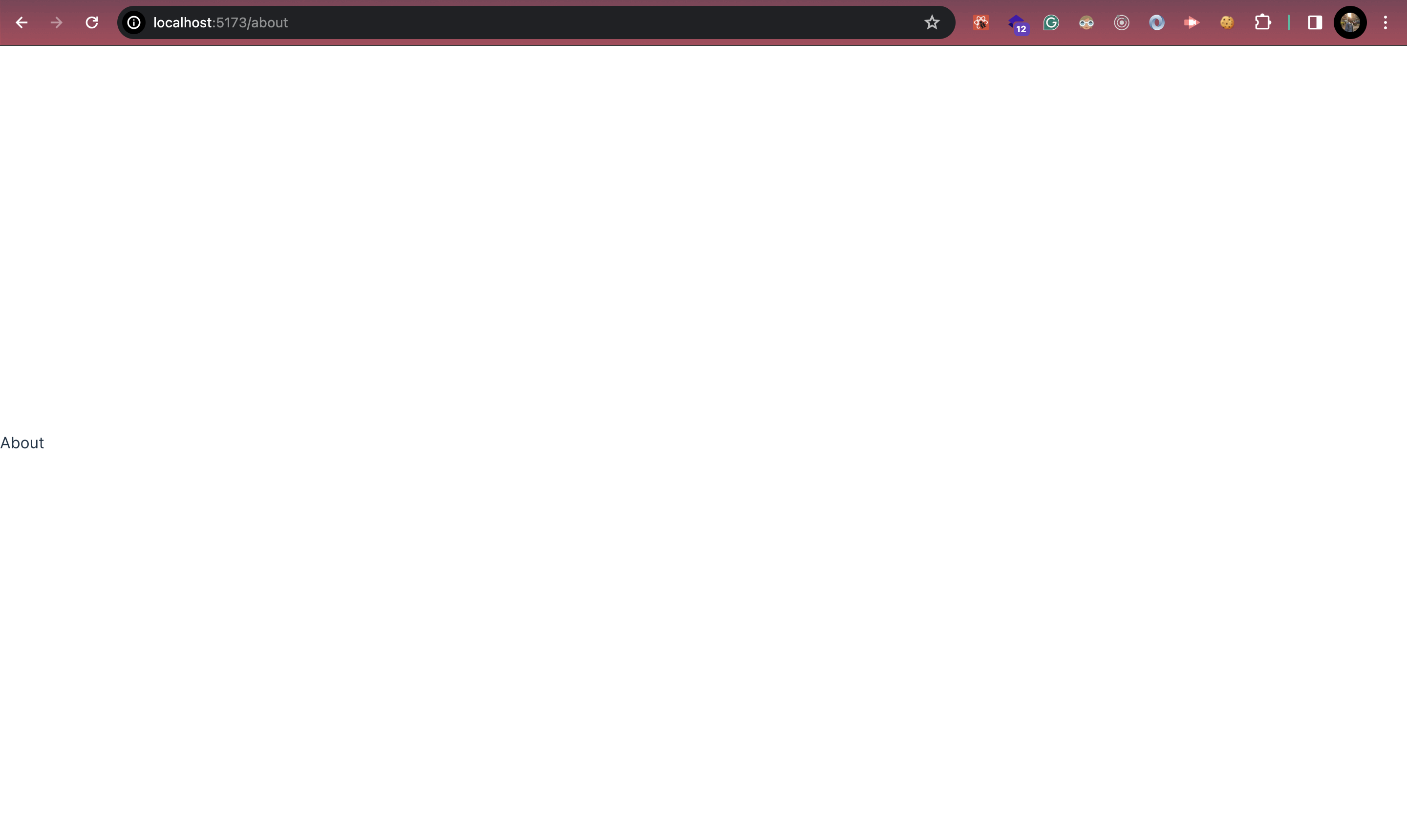

1,784,143 | All about routing in React (Part 1) ft. react-router-dom | Here, in this post we are going to learn about the routing in react applications using... | 0 | 2024-03-08T07:23:29 | https://dev.to/jmilind1234/all-about-routing-in-react-part-1-ft-react-router-dom-2fd1 | react, reactrouterdom, javascript, javascriptlibraries | Here, in this post we are going to learn about the routing in react applications using react-router-dom v6.

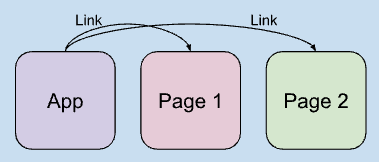

React-router-dom is a powerful library that is used to handle the routing mechanism in the react application. Its special because in addition to routing it also provides the way to achieve client-side-routing instead of server-side-routing.

Before getting to point on how it provides the client-side-routing, lets discuss in short about client-side-routing and server-side-routing.

So basically in old web apps where hyperlinks (to navigate to other pages) were mentioned using anchor tag, used to make the request to the server and server used to send the page data in response. Even if in react we use anchor tags to navigate we can see that as we click on hyperlinks it will make a request to server and server will return the landing page of our app (where a div with id root is placed), and browser will paint on to that page.

Such kind of routing is called server-side-routing.

Now, another comes client-side-routing, where for getting the content of new page (page to which we want to land), webapp will not make a request to the server.

So how and from where that page content will come from? 🤔

Well, in React, when we first hit the domain of our app, all the components are loaded. When we navigate to a page, these already loaded components are simply rendered. Simple!

Those are the two types of routing used across web apps.

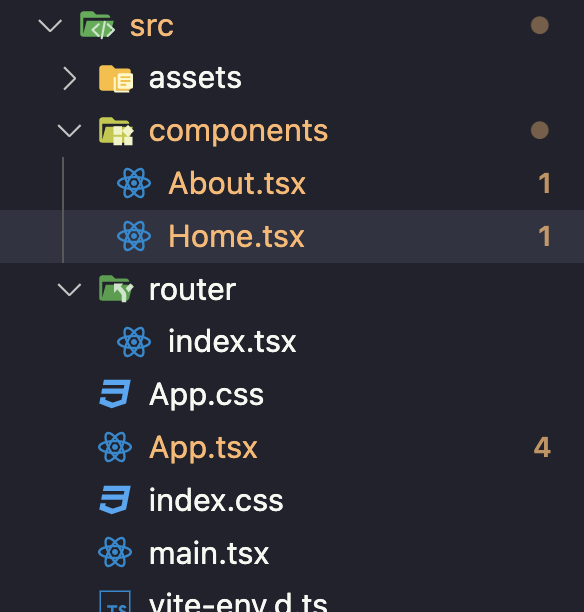

Now, let's discuss how to get started with react-router-dom to handle routing.

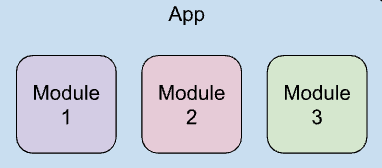

To start with routing, we first need to tell our React app about the routes and what to show for each one.

This is done by creating a routing configuration and handing it to the whole app through a router provider.

```js

import { Outlet, createBrowserRouter, useLocation } from "react-router-dom";

export const appRouter = new createBrowserRouter([

{

path: "/",

element: <AppLayout />,

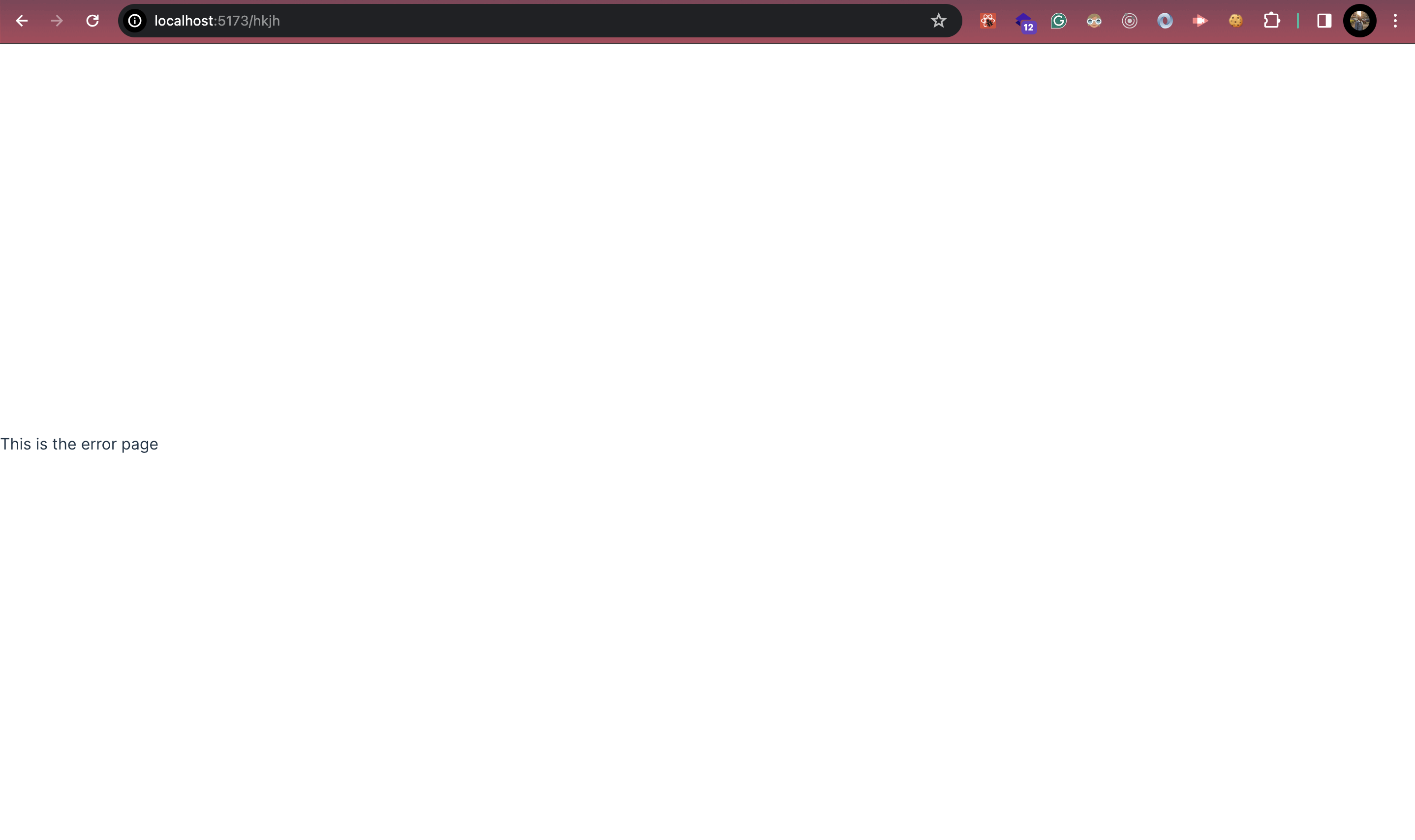

errorElement: <Error />,

},

{

path: "/about",

element: <About />,

},

{

path: "/contact",

element: <Contact />,

},

]);

```

Here we have specified:

1. Number of routes.

2. Path of routes.

3. Component to display on each route.

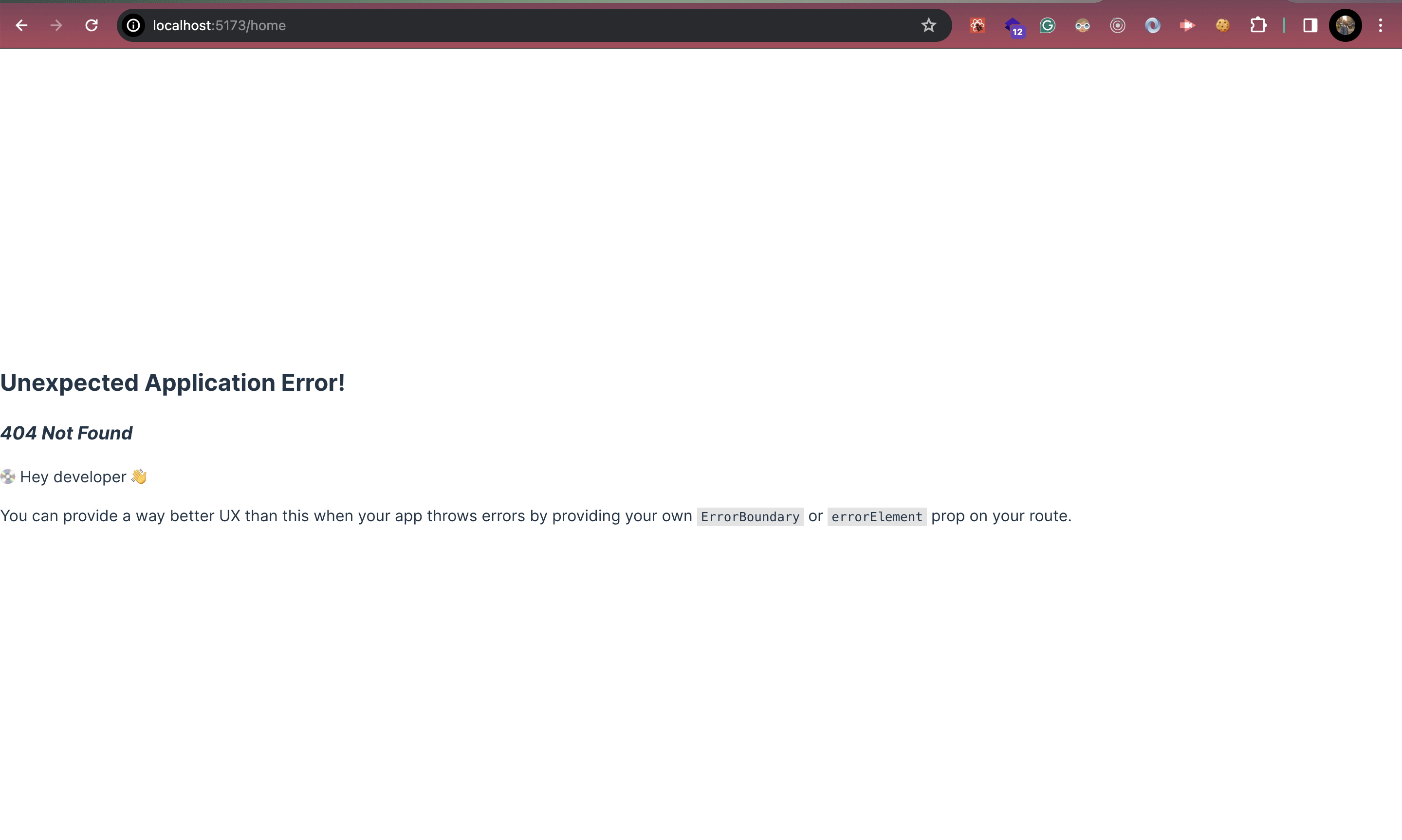

> Remember: if the element for a route is omitted, then `<Outlet/>` is rendered instead, which is null by default (meaning a white screen).

But this is not enough, because our app is still not aware of the paths or routes. To make the app aware of the routes and what to show for each path, we need to render the router provider at the root of the app and pass it our router.

```js
import { RouterProvider } from "react-router-dom";
import ReactDOM from "react-dom/client";
import { appRouter } from "./src/AppLayout";

const root = ReactDOM.createRoot(document.getElementById("root"));

// RouterProvider renders the element of the matching route itself, so
// AppLayout is not passed as a child; it is rendered for the "/" path.
root.render(<RouterProvider router={appRouter} />);
```

AppLayout, the element of the "/" route, is the starting point of our React app.

Now our app is aware about all the routes. 🚀

That covers basic routing. But what if the requested path does not match any route? What do we show, and how do we handle it?

No worries, errorElement will take care of it.

In the next post we will continue with nested routes, dynamic routing, etc. 😊

| jmilind1234 |

1,784,169 | Introduction To OOP: Objects | This article was first published on konadu.dev(Introduction To OOP: Objects) Undoubtedly, two of the... | 0 | 2024-03-08T08:00:57 | https://konadu.dev/introduction-to-oop-objects | programming, oop, beginners, learning | This article was first published on [konadu.dev(Introduction To OOP: Objects)](https://konadu.dev/introduction-to-oop-objects)

Undoubtedly, two of the best-known programming paradigms are object-oriented programming and functional programming. We can debate all day about the best paradigm, but one thing is clear: they all have pros and cons. I can confidently say that FP and OOP are here to stay for now. So, as a software engineer, getting a grasp of FP or OOP concepts is very important. In this blog post, we will look at one key aspect of object-oriented programming: Objects and Classes.

## What is an Object in OOP?

Objects are the building blocks of an OO program. A program that uses OO technology is a collection of objects.

Object-oriented programming encourages combining our data, functions, or methods in a single object. Unlike some programming paradigms, such as procedural programming, where we write functions to mutate the global scope of data that is probably living elsewhere, OOP encourages us to put our data into a single entity called an object and to write methods or functions that work on these data inside our object.

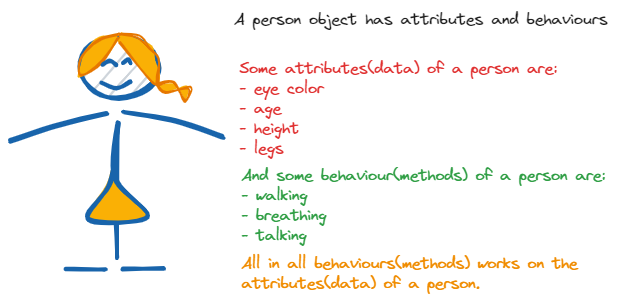

In its basic form, an object can be defined by two major components: attributes and behaviors. And it can represent real-life entities. For example, we can say that a person is an object.

A person has attributes like eye color, age, height, mouth, legs, etc. A person also has behaviors like walking, talking, breathing, etc. **As said earlier, an object is an entity that contains both attributes and behavior.** Based on this, we can confidently say that a person's behavior works on the attributes of a person. For example, a person's walking behavior will work on the attribute of the legs because the leg does the job of walking.

In programming terms, a person's attributes are the data about the person, and a person's behaviors are the person's methods.

Therefore, a person's eye color, age, and height are the data of the person object, and walking, talking, and breathing are its methods or behaviors.
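As a rough illustration of the person example above, here is a minimal Java sketch. The class name `Person`, the `stepsTaken` field, and the method names are assumptions made up for this example; the point is only that the behavior (`walk`) works on the object's own data.

```java
// Hypothetical sketch of the "person as an object" idea.
class Person {
    // Attributes: the data that describes this person.
    private final String eyeColor;
    private final int age;
    private int stepsTaken; // changed by the walk() behavior; starts at 0

    Person(String eyeColor, int age) {
        this.eyeColor = eyeColor;
        this.age = age;
    }

    // Behavior: walking works on the person's own data.
    void walk(int steps) {
        stepsTaken += steps;
    }

    String getEyeColor() { return eyeColor; }
    int getAge() { return age; }
    int getStepsTaken() { return stepsTaken; }
}

class PersonDemo {
    public static void main(String[] args) {
        Person person = new Person("brown", 30);
        person.walk(10);
        person.walk(5);
        System.out.println(person.getStepsTaken()); // prints 15
    }
}
```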

### Object data

The data stored within an object represents the state of the object. In OO programming terminology, this data is called **attributes**.

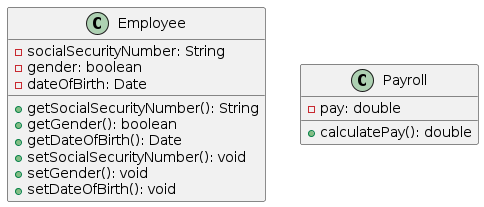

We can create an employee object and give it **attributes** such as a name, Social Security number, date of birth, gender, and phone number. The example below shows an object with these attributes.

```java
public class Employee {
    // Attributes: the data that makes up the state of an Employee object.
    private String name;
    private String socialSecurityNumber;
    private String dateOfBirth;
    private String gender;
    private String phoneNumber;
}
```

### Object behaviors

The behavior of an object represents what the object can do. In procedural languages, the behavior is defined by procedures, functions, and subroutines. In OO programming terminology, these behaviors are contained in methods, and you invoke a method by sending a message to the object. In our employee example, consider that one of the behaviors required of an employee object is to set and return the values of the various attributes. Thus, each attribute would have corresponding methods, such as `setGender()` and `getGender()`. In this case, when another object needs this information, it can send a message to an employee object and ask it what its gender is. The example below shows such a pair of methods for the Social Security number.

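```java
package com.example.chapter_one;

public class Employee {
    // The other attributes from the previous example are omitted here.
    private String socialSecurityNumber;

    // Setter (mutator): stores a new value in the attribute.
    public void setSocialSecurityNumber(String socialSecurityNumber) {
        this.socialSecurityNumber = socialSecurityNumber;
    }

    // Getter (accessor): returns the current value of the attribute.
    public String getSocialSecurityNumber() {
        return socialSecurityNumber;
    }
}
```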

> **Getters and Setters** - The concept of getters and setters supports the concept of data hiding. Because other objects should not directly manipulate data within another object, the getters and setters provide controlled access to an object's data. Getters and setters are sometimes called accessor methods and mutator methods, respectively.

So, let's say we have a `Payroll` object that contains a method called `CalculatePay()` that calculates the pay for a specific employee. Among other information, the Payroll object must obtain the Social Security number of this employee. To get this information, the Payroll object sends a message to the Employee object; that is, it calls the `getSocialSecurityNumber()` method of the Employee object. The Employee object recognizes the message and returns the requested information.
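The Payroll/Employee exchange described above could be sketched roughly as follows. This is only an illustration under stated assumptions: the article does not show `Payroll`, so the `hourlyRate` attribute, the `hoursWorked` parameter, the pay formula, and `getRecordedPay()` are all invented here, and the method is spelled `calculatePay()` to follow the usual Java naming convention.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: hourlyRate, hoursWorked and getRecordedPay are
// invented for illustration and do not come from the article.
class Employee {
    private final String socialSecurityNumber;
    private final int hourlyRate;

    Employee(String socialSecurityNumber, int hourlyRate) {
        this.socialSecurityNumber = socialSecurityNumber;
        this.hourlyRate = hourlyRate;
    }

    String getSocialSecurityNumber() { return socialSecurityNumber; }
    int getHourlyRate() { return hourlyRate; }
}

class Payroll {
    // Pay records keyed by Social Security number.
    private final Map<String, Integer> payBySsn = new HashMap<>();

    int calculatePay(Employee employee, int hoursWorked) {
        // "Sending a message": Payroll asks the Employee for its data
        // instead of reading the private fields directly.
        String ssn = employee.getSocialSecurityNumber();
        int pay = employee.getHourlyRate() * hoursWorked;
        payBySsn.put(ssn, pay);
        return pay;
    }

    // Returns the recorded pay, or null if nothing was recorded for this SSN.
    Integer getRecordedPay(String ssn) {
        return payBySsn.get(ssn);
    }
}

class PayrollDemo {
    public static void main(String[] args) {
        Employee employee = new Employee("000-00-0000", 20);
        Payroll payroll = new Payroll();
        System.out.println(payroll.calculatePay(employee, 40)); // prints 800
    }
}
```

Note that `Payroll` never touches the private fields of `Employee`; everything it learns comes back as the answer to a method call.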

The diagram below is a class diagram representing the Employee/Payroll system we have been talking about. In the next blog post, we will talk more about classes, so stay tuned.

Each class/object diagram is defined by three separate sections: the name itself, the data (attributes), and the behaviors (methods). For example, the Employee class/object diagram's attribute section contains `SocialSecurityNumber`, `Gender`, and `dateOfBirth`, whereas the method section contains the methods that operate on these attributes.

When an object is created, we say that it is instantiated. Thus, if we create three employees, we create three distinct instances of the Employee class. Each object contains its own copy of the attributes and methods.

> **An Implementation Issue** - Be aware that there is not necessarily a physical copy of each method for each object. Rather, each object points to the same implementation. However, this is an issue left up to the compiler/operating platform. From a conceptual level, you can think of objects as being wholly independent and having their own attributes and methods.
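To make the idea of distinct instances concrete, here is a small sketch. It assumes a cut-down `Employee` with a single phone number attribute and placeholder values; changing one instance leaves the other two untouched.

```java
// Hypothetical sketch; only one attribute is kept for brevity.
class Employee {
    private String phoneNumber;

    Employee(String phoneNumber) {
        this.phoneNumber = phoneNumber;
    }

    void setPhoneNumber(String phoneNumber) { this.phoneNumber = phoneNumber; }
    String getPhoneNumber() { return phoneNumber; }
}

class InstanceDemo {
    public static void main(String[] args) {
        // Three instantiations give three independent objects.
        Employee first = new Employee("555-0001");
        Employee second = new Employee("555-0002");
        Employee third = new Employee("555-0003");

        // Only the second object's state changes.
        second.setPhoneNumber("555-0999");

        System.out.println(first.getPhoneNumber());  // prints 555-0001
        System.out.println(second.getPhoneNumber()); // prints 555-0999
        System.out.println(third.getPhoneNumber());  // prints 555-0003
    }
}
```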

## Why objects?

In structured or procedural programming, the data is often separated from the procedures, and the data is often global, so it is easy to modify data that is outside the scope of your code. First, this means that access to data is uncontrolled and unpredictable (that is, multiple functions may have access to the global data). Second, because you have no control over who has access to the data, testing and debugging are much more difficult. Objects address these problems by combining data and behavior into one complete package.

So this means that an object can contain entities such as integers and strings, which are used to represent attributes. It also has methods that represent behaviors.

In an object, methods are used to perform operations on the data and other actions. Perhaps more importantly, you can control access to members of an object (both attributes and methods). This means some members, whether attributes or methods, can be hidden from other objects. For instance, an object called Math might contain two integers called `myInt1` and `myInt2`. Most likely, the Math object also includes the necessary methods to set and retrieve the values of `myInt1` and `myInt2`. It might also have a method called `sum()` to add the two integers together. Don't worry about the code; we will talk about classes in a different post.

```java

public class Math {

// Here, myInt1 and myInt2 are attributes of the Math object. They are private, meaning they are hidden from other objects.

private int myInt1 = 1;

private int myInt2 = 2;

// This is a method called sum(). It performs an operation on the data (myInt1 and myInt2) by adding them together.

int sum() {

return myInt1 + myInt2;

}

// These are methods used to set the values of myInt1 and myInt2. They control access to these attributes.

public void setInt1(int myIntOne) {

myInt1 = myIntOne;

}

public void setInt2(int myIntTwo) {

myInt2 = myIntTwo;

}

// These are methods used to retrieve the values of myInt1 and myInt2. They also control access to these attributes.

public int getInt1() {

return myInt1;

}

public int getInt2() {

return myInt2;

}

}

```

So basically, without the getter and setter methods, `myInt1` and `myInt2` could not be accessed from anywhere in our code except inside the class in which they are declared, because they are private attributes.

> **Data Hiding** - In OO terminology, data are referred to as attributes and behaviors as methods. Restricting access to certain attributes and/or methods is called data hiding.

## Encapsulation

This brings us to our last sub-topic on objects, **encapsulation**. **Encapsulation** is the principle of binding together the data and the functions that manipulate it, and keeping both safe from outside interference and misuse. The data of an object is known as its attributes, and the functions that operate on that data are known as its methods.

By using **encapsulation**, that is, by combining the data and the methods, we can control access to the data in the Math object. Because the integers `myInt1` and `myInt2` are off-limits to outside code, a logically unconnected function cannot manipulate them; only the Math object can do that.
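One practical benefit of this controlled access is that a setter can refuse values that make no sense. The sketch below is an illustration under assumptions: the `Person` class, the rule that an age must not be negative, and the choice of `IllegalArgumentException` are made up for this example and are not part of the Math class above.

```java
// Hypothetical sketch of encapsulation protecting an attribute.
final class Person {
    private int age; // hidden from outside code

    Person(int age) {
        setAge(age);
    }

    // The only way to change age goes through this check.
    void setAge(int age) {
        if (age < 0) {
            throw new IllegalArgumentException("age must not be negative");
        }
        this.age = age;
    }

    int getAge() { return age; }
}

class EncapsulationDemo {
    public static void main(String[] args) {
        Person person = new Person(30);
        try {
            person.setAge(-5); // rejected by the setter
        } catch (IllegalArgumentException e) {
            System.out.println("rejected: " + e.getMessage());
        }
        System.out.println(person.getAge()); // prints 30
    }
}
```

Because `age` is private, outside code cannot bypass the check by assigning to the field directly; such an assignment would not compile.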