id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,768,862 | 사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소 사천출장안마? 사천출장만남 | 사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소 사천출장안마? 사천출장만남 사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소... | 0 | 2024-02-22T08:10:52 | https://dev.to/1rbzwoa2hq/saceonmotelagassikolgeol-line-wag58-saceonoeguginculjang-saceonculjangjeonmuneobso-saceonculjanganma-saceonculjangmannam-12b8 | 사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소 사천출장안마? 사천출장만남

사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소 사천출장안마? 사천출장만남

사천모텔아가씨/콜걸 ☎LINE-wag58☎ 사천외국인출장 사천출장전문업소 사천출장안마? 사천출장만남 | 1rbzwoa2hq | |

1,768,866 | Premium Informatica PR000007 Practice Test- Guaranteed Way Towards Success | Unveil The Strategies And Mindset For Triumph In Informatica PR000007 Practice Test Are you... | 0 | 2024-02-22T08:12:38 | https://dev.to/ameliajohn/premium-informatica-pr000007-practice-test-guaranteed-way-towards-success-3lg7 | <h1 style="text-align: justify;"><strong></strong></h1>

<h2 style="text-align: justify;"><strong>Unveil The Strategies And Mindset For Triumph In Informatica PR000007 Practice Test</strong></h2>

<p style="text-align: justify;">Are you gearing up for the challenge of PR000007 Informatica exams? Look no further – P2PExams is your key to confidence and success. Explore the ins and outs of PR000007 Exam preparation as we delve into proven strategies, effective techniques, and the winning mindset required to ace the PR000007 exams. Read on to discover how P2PExams can guide you towards triumph in your Informatica Certified Professional PR000007 exam journey.</p>

<p style="text-align: center;"><a href="https://www.p2pexams.com/products/pr000007"><img alt="Informatica PR000007" src="https://i.imgur.com/T7F4Dhi.jpg" style="height: 405px; width: 720px;" /></a></p>

<p style="text-align: center;"><strong>To Get Success Visit Here:<a href="https://www.p2pexams.com/products/pr000007">https://www.p2pexams.com/products/pr000007</a></strong></p>

<h2 style="text-align: justify;"><strong>Informatica PR000007 Exam Overview:</strong></h2>

<ul>

<li style="text-align: justify;"><strong>Vendor:</strong> Informatica</li>

<li style="text-align: justify;"><strong>Exam Name:</strong> PowerCenter Data Integration 9.x Administrator Specialist</li>

<li style="text-align: justify;"><strong>Exam Code:</strong> PR000007</li>

<li style="text-align: justify;"><strong>Number Of Questions: </strong>70</li>

<li style="text-align: justify;"><strong>Exam Format:</strong> Multiple Choice Questions (MCQs)</li>

<li style="text-align: justify;"><strong>Exam Language:</strong> English</li>

<li style="text-align: justify;"><strong>Promo Code For Informatica PR000007 Exam Questions: "SAVE25"</strong></li>

</ul>

<h2 style="text-align: justify;"><strong>Effortless Learning With Informatica PR000007 Exam Questions In PDF Format – Anytime, Anywhere</strong></h2>

<p style="text-align: justify;">Embark on a journey of effortless learning with P2PExams' intuitive and accessible ICP PR000007 PDF format learning guide. Our user-friendly Informatica Certified Professional PR000007 exam questions can be easily accessed on your mobile devices and computers, offering flexibility right after purchase. Dive into your ICP PR000007 Exam preparation at your own pace, whether you're at home or on the go.</p>

<h2 style="text-align: justify;"><strong>Sharpen Your Skills With Informatica PR000007 Practice Test Engine</strong></h2>

<p style="text-align: justify;">Mastering ICP PR000007 practice questions is paramount to understanding your strengths and weaknesses. P2PExams introduces an online PR000007 practice test engine tailored to PR000007 exam patterns. Elevate your PR000007 Informatica Exam readiness, outshine the competition and gain a competitive edge through hands-on practice with P2PExams Informatica Certified Professional PR000007 online practice test engine.</p>

<h2 style="text-align: justify;"><strong>Unlock Expert Insights With Real And Verified Informatica PR000007 Exam Questions & Answers</strong></h2>

<p style="text-align: justify;">Enhance your knowledge with expert insights uncovered in P2PExams' real and verified ICP PR000007 exam questions and answers. These authentic Informatica Certified Professional PR000007 questions guide you in understanding the exam format and formulating effective responses. If you seek a comprehensive ICP PR000007 exam prep guide, P2PExams ICP PR000007 practice questions product is your go-to resource.</p>

<h2 style="text-align: justify;"><strong>Free Updated Informatica PR000007 Exam Questions Demo</strong></h2>

<p style="text-align: justify;">Access unlimited updated content and resources with P2PExams PR000007 exam questions preparation. Benefit from free updates for three months post-purchase, ensuring your PR000007 exam preparation aligns with the latest syllabus. Sample the quality of P2PExams Informatica Certified Professional PR000007 exam questions through a complimentary demo. The Informatica PR000007 questions demo offers a sneak peek into meticulously crafted ICP PR000007 exam questions, aiding you in making an informed decision before purchasing.</p>

<p style="text-align: justify;"><strong>Are You Ready To Move Forward? Then Download Now.: <a href="https://www.p2pexams.com/informatica/pdf/pr000007">https://www.p2pexams.com/informatica/pdf/pr000007</a></strong></p>

<h2 style="text-align: justify;"><strong>Approach Informatica PR000007 Exam Questions With Confidence – Backed By A Money-Back Promise</strong></h2>

<p style="text-align: justify;">Secure your Informatica Certified Professional PR000007 exam questions guide with confidence, knowing that P2PExams ICP PR000007 exam questions come with a money-back guarantee. Commit to at least two weeks of preparation using our Informatica PR000007 exam quiz, and if you don't pass your ICP PR000007 Exam as promised, claim your refund per our policy.</p>

<h2 style="text-align: justify;"><strong>Special Offer! Enjoy 25% Special Discount On All Informatica PR000007 Exam Dumps | Use Coupon Code "SAVE25"</strong></h2>

<p style="text-align: justify;">Maximize your PR000007 preparation with an exclusive 25% discount on P2PExams comprehensive PR000007 practice test questions. Act fast to secure this limited-time offer – order your Informatica PR000007 exam questions now and don't miss out on the substantial discount.</p>

<p style="text-align: center;"><a href="https://www.p2pexams.com/products/pr000007"><img alt="Informatica PR000007" src="https://i.imgur.com/v6S6yYL.jpeg" style="height: 327px; width: 720px;" /></a></p>

<h3 style="text-align: justify;"><strong>Informatica PR000007 Exam Search Queries:</strong></h3>

<p style="text-align: justify;">PR000007 Questions | PR000007 Dumps | PR000007 Exam Questions | PR000007 Exam Dumps | PR000007 Practice Questions | PR000007 Practice Dumps | PR000007 Braindumps | PR000007 Test Questions | PR000007 Test Dumps | PR000007 Questions PDF | PR000007 Dumps PDF | Free PR000007 Questions | Free PR000007 Dumps | PR000007 PDF Questions | PR000007 PDF Dumps | Actual PR000007 Questions |</p>

<p style="text-align: justify;"> </p>

| ameliajohn | |

1,768,877 | 태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장 태백홈타이마사지샵 | 태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장 태백홈타이마사지샵 태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장... | 0 | 2024-02-22T08:20:06 | https://dev.to/1rbzwoa2hq/taebaegculjangjeonmun-taebaegculjangagassi-taebaegculjangcuceon-taebaegyeodaesaengculjang-taebaeghomtaimasajisyab-2peb | 태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장 태백홈타이마사지샵

태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장 태백홈타이마사지샵

태백출장전문 ◀상담톡 sx-58▶ 태백출장아가씨 태백출장추천 태백여대생출장 태백홈타이마사지샵 | 1rbzwoa2hq | |

1,768,883 | 강남구출장샵추천 LINE--라인wag58 강남구맛사지 애인대행 강남구한국인출장 강남구와꾸보장 강남구출장안마 | 강남구출장샵추천 LINE--라인wag58〓 강남구맛사지 애인대행 강남구한국인출장 강남구와꾸보장 강남구출장안마 강남구출장샵추천 LINE--라인wag58〓 강남구맛사지 애인대행... | 0 | 2024-02-22T08:26:16 | https://dev.to/1rbzwoa2hq/gangnamguculjangsyabcuceon-line-rainwag58x-gangnamgumassaji-aeindaehaeng-gangnamguhanguginculjang-gangnamguwaggubojang-gangnamguculjanganma-3bfa | 강남구출장샵추천 LINE--라인wag58〓 강남구맛사지 애인대행 강남구한국인출장 강남구와꾸보장 강남구출장안마

강남구출장샵추천 LINE--라인wag58〓 강남구맛사지 애인대행 강남구한국인출장 강남구와꾸보장 강남구출장안마

강남구출장샵추천 LINE--라인wag58〓 강남구맛사지 애인대행 강남구한국인출장 강남구와꾸보장 강남구출장안마 | 1rbzwoa2hq | |

1,768,898 | betvisabetcom | Betvisa - Betvisa Com - Casino - Lo De - No Hu Tang 100k la trang chu chinh thuc, chuyen cung cap moi... | 0 | 2024-02-22T08:44:43 | https://dev.to/betvisabetcom/betvisabetcom-21ge | Betvisa - Betvisa Com - Casino - Lo De - No Hu Tang 100k la trang chu chinh thuc, chuyen cung cap moi tro choi ca cuoc online, dac biet tai nha cai nay con co cac su kien lon nho nhu khung gio vang, thuong bat ngo 888k, chao don nam moi len den 8.888.000 VND.

Dia Chi: 118 Truong Dinh, Hai Ba Trung, Ha Noi, Viet Nam

Email: betvisabetcom@gmail.com

Website: https://betvisabet.com/

Dien Thoai: (+63) 9622372537

#betvisa #betvisa_game #betvisa_bet #betvisa_com #betvisa_app

Social Media:

https://betvisabet.com/

https://betvisabet.com/tai-app-betvisa/

https://betvisabet.com/dang-ky-betvisa/

https://betvisabet.com/nap-tien-momo/

https://betvisabet.com/rut-tien-betvisa/

https://betvisabet.com/ceo-thanh-hien/

https://www.facebook.com/betvisabetcom/

https://twitter.com/betvisabetcom

https://www.youtube.com/channel/UCkwk686Z6f5Qqfqjgxm6iGQ

https://www.pinterest.com/betvisabetcom/

https://social.msdn.microsoft.com/Profile/betvisabetcom

https://social.technet.microsoft.com/Profile/betvisabetcom

https://vimeo.com/betvisabetcom

https://github.com/betvisabetcom

https://community.fabric.microsoft.com/t5/user/viewprofilepage/user-id/673472

https://www.blogger.com/profile/07509188490658730450

https://www.reddit.com/user/betvisabetcom

https://gravatar.com/betvisabetcom

https://talk.plesk.com/members/betvisabetcom.318396/#about

https://soundcloud.com/betvisabetcom

https://medium.com/@betvisabetcom/about

https://www.flickr.com/people/betvisabetcom/

https://www.tumblr.com/betvisabetcom

https://betvisabetcom.wixsite.com/betvisabetcom

https://sites.google.com/view/betvisabetcom/trang-ch%E1%BB%A7

https://www.behance.net/betvisabetcom

https://www.openstreetmap.org/user/betvisabetcom

https://draft.blogger.com/profile/07509188490658730450

https://www.liveinternet.ru/users/betvisabetcom/profile

https://linktr.ee/betvisabetcom

https://www.twitch.tv/betvisabetcom/about

http://tinyurl.com/betvisabetcom

https://ok.ru/betvisabetcom/statuses/157448002763758

https://profile.hatena.ne.jp/betvisabetcom/profile

https://issuu.com/betvisabetcom

https://dribbble.com/betvisabetcom/about

https://form.jotform.com/240110726278047

https://sway.cloud.microsoft/BpGmWtSD6vhOfO7R?ref=Link

https://unsplash.com/fr/@betvisabetcom

https://scholar.google.com/citations?hl=vi&user=tkl26-MAAAAJ

https://www.goodreads.com/user/show/174210430-betvisabetcom

https://www.kickstarter.com/profile/betvisabetcom/about

https://tawk.to/betvisabetcom

https://groups.google.com/g/betvisabetcom

https://webflow.com/@betvisabetcom1

https://podcasters.spotify.com/pod/show/betvisabetcom

https://www.ted.com/profiles/45951460/about

https://disqus.com/by/combetvisabet/about/

https://500px.com/p/betvisabetcom

https://betvisabetcom.blogspot.com/

https://betvisabetcom.weebly.com/

https://betvisabetcom.webflow.io/

https://betvisabetcom.gitbook.io/untitled/

https://betvisabetcom.mystrikingly.com/

https://betvisabetcom.amebaownd.com/posts/51430744

https://betvisabetcom.seesaa.net/article/502042025.html?1705039833

http://betvisabetcom.splashthat.com

http://betvisabetcom.idea.informer.com/

https://betvisabetcom.contently.com/

https://betvisabetcom.amebaownd.com/posts/51430744

https://betvisabetcom.bravesites.com/#builder

https://betvisabetcom.themedia.jp/posts/51430869

https://betvisabetcom.storeinfo.jp/posts/51431437

https://betvisabetcom.theblog.me/posts/51431589

https://betvisabetcom.my.cam/#

https://betvisabetcom.onlc.fr/

https://betvisabetcom.gallery.ru/

https://betvisabetcom.therestaurant.jp/posts/51431817

https://betvisabetcom.wordpress.com/

https://ext-6487563.livejournal.com/profile/

https://betvisabetcom.thinkific.com/courses/your-first-course

https://ko-fi.com/betvisabetcom

https://www.provenexpert.com/betvisabetcom/

https://hub.docker.com/r/betvisabetcom/betvisabetcom

https://independent.academia.edu/betvisabetcom

https://fliphtml5.com/homepage/ylcgn/com-betvisabet/

https://www.quora.com/profile/Betvisabetcom

https://www.evernote.com/shard/s514/sh/f2a1bea5-0cc7-6a01-a682-85ff418e72cf/J35omHuumehbHKmoRC6sAwgJsUN6G8WaGZRsUVVwLcd4Mcyejf_7X-kv0w

https://heylink.me/betvisabetcom/

https://trello.com/u/combetvisabet

https://giphy.com/channel/betvisabetcom

https://www.mixcloud.com/betvisabetcom/

https://orcid.org/0009-0009-8533-6905

https://www.deviantart.com/betvisabetcom

https://vws.vektor-inc.co.jp/forums/users/betvisabetcom

https://codepen.io/betvisabetcom

https://community.cisco.com/t5/user/viewprofilepage/user-id/1663522

https://wellfound.com/u/betvisabetcom-1

https://about.me/betvisabetcom

https://betvisabetcom.peatix.com/

https://sketchfab.com/betvisabetcom

https://gitee.com/betvisabetcom

https://public.tableau.com/app/profile/betvisabetcom/vizzes

https://connect.garmin.com/modern/profile/b27d2643-7f90-4711-9b88-b46e857e2772

https://www.reverbnation.com/artist/betvisabetcom

https://profile.ameba.jp/ameba/betvisabetcom

https://onlyfans.com/betvisabetcom

https://mastodon.social/@betvisabetcom

https://readthedocs.org/projects/betvisabetcom/

https://flipboard.com/@betvisabetcom

https://www.awwwards.com/betvisabetcom/ | betvisabetcom | |

1,768,957 | How AI and DePIN Will Change Web3 | Web3, often referred to as the decentralized web, represents a paradigm shift in how the internet... | 0 | 2024-02-22T10:08:43 | https://dev.to/mayanks01798115/how-ai-and-depin-will-change-web3-36lh | Web3, often referred to as the decentralized web, represents a paradigm shift in how the internet operates, leveraging decentralized technologies like blockchain to create a more transparent, secure, and user-centric online experience. Within the Web3 ecosystem, AI (Artificial Intelligence) and [DePIN](https://tradedog.io/how-ai-and-depin-will-change-web3/) (Decentralized Physical Infrastructure Networks) are poised to play transformative roles, reshaping various aspects of online interaction, security, and innovation. Here's how AI and DePIN will change Web3:

Enhanced Security and Privacy:

DePIN provides a robust foundation for secure and private online interactions by decentralizing key infrastructure components. With its distributed architecture, DePIN reduces the risk of single points of failure and potential security breaches.

AI technologies can further bolster security by analyzing vast amounts of data to identify patterns indicative of malicious activities or vulnerabilities. AI-powered security systems can help detect and mitigate threats in real-time, enhancing overall cybersecurity within the Web3 ecosystem.

Data Ownership and Control:

Web3 aims to empower users by granting them greater control over their data. Through decentralized identity solutions facilitated by DePIN, individuals can maintain ownership of their digital identities and personal information.

AI technologies enable users to leverage their data more effectively, allowing for personalized experiences without sacrificing privacy. By employing techniques like federated learning and homomorphic encryption, AI models can be trained on decentralized data sources without compromising individual privacy.
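
As a rough illustration of the federated learning idea mentioned above, the sketch below averages model weights that were trained separately on each client, weighted by how many samples each client holds, so only weights (not raw data) are shared. The function name `federated_average` and the toy numbers are assumptions made for this example; real deployments add secure aggregation and many other safeguards, and nothing here is tied to a specific Web3 or DePIN library.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Weighted average of per-client weight vectors (FedAvg-style)."""
    if len(client_weights) != len(client_sizes) or not client_weights:
        raise ValueError("need one size per client and at least one client")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    averaged = [0.0] * dim
    for weights, size in zip(client_weights, client_sizes):
        for j in range(dim):
            # Each client contributes in proportion to its share of the data.
            averaged[j] += weights[j] * size / total
    return averaged


# Hypothetical example: two clients with locally trained 2-parameter models.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

The client with three times as much data pulls the averaged weights three times as strongly toward its own values.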

Autonomous Decision Making:

AI algorithms integrated into Web3 platforms can facilitate autonomous decision-making processes, such as smart contract execution and decentralized governance mechanisms.

Through the combination of AI and decentralized technologies, Web3 applications can automate various tasks and processes, reducing the need for intermediaries and enhancing operational efficiency.

Content Curation and Personalization:

AI-driven algorithms can analyze user behavior and preferences to deliver personalized content recommendations and tailored user experiences within the Web3 environment.

By leveraging decentralized content platforms enabled by DePIN, users can access diverse content without centralized control or censorship, fostering greater freedom of expression and information dissemination.

Innovation and Collaboration:

AI and DePIN technologies enable the development of decentralized AI marketplaces and collaborative ecosystems where developers can create, share, and monetize AI models and services.

Web3 facilitates frictionless collaboration and innovation by removing barriers to entry and providing transparent incentive mechanisms through blockchain-based protocols and smart contracts.

In summary, the convergence of AI and DePIN within the Web3 ecosystem promises to redefine the way we interact with the internet, offering enhanced security, privacy, autonomy, and innovation across various domains. As these technologies continue to evolve, they will shape the future of digital interactions and pave the way for a more decentralized and inclusive online environment. | mayanks01798115 | |

1,768,965 | 🎁 Event | Join the NFTScan Redotpay Collaboration Event and win a Redotpay Payment Card! | NFTScan is thrilled to announce its partnership with RedotPay, marking the beginning of a... | 0 | 2024-02-22T10:20:06 | https://dev.to/nft_research/event-join-the-nftscan-x-redotpay-collaboration-event-and-win-a-redotpay-payment-card-43gb | nft | NFTScan is thrilled to announce its partnership with RedotPay, marking the beginning of a multifaceted collaboration in the NFT space. Together, we’re launching the exclusive NFTScan x Redotpay co-branded payment card with customizable covers.

**About Redotpay**

RedotPay is a leading blockchain technology company specializing in crypto wallets and payment solutions. With a focus on innovation and user experience, RedotPay provides secure and efficient payment solutions for users worldwide.

The RedotPay payment card supports various online and offline payment scenarios by automatically converting cryptocurrencies into local fiat currencies. Currently, RedotPay has over 800k users globally who are using RedotPay’s payment cards for their daily expenses. The cards currently support currencies and networks including Bitcoin (BTC), Ethereum (ETH, BSC), USDC (ERC20, TRC20, BSC, ARB), and USDT (ERC20, TRC20, BSC, ARB).

**Event Details**

NFTScan will randomly select 50 participants from the event to receive 50 exclusive NFTScan x Redotpay co-branded cards for free, valued at $10 each. Each card will also include a $5 registration bonus, which can be used upon verification. In addition, winners will have the opportunity to personalize their card covers using their NFTs.

To claim your virtual payment card, use the promo code provided by NFTScan after winning. Activate your card to start enjoying its benefits!

🎈 **How to Participate:**

Follow NFTScan and RedotPay on X (Twitter).

Download the RedotPay App. [Get the APP]

Retweet & like the event tweet.[Post link]

📆 Event Duration:

February 22, 2024, 10:00 AM — March 1, 2024, 10:00 AM (UTC)

📌 **Important Notes:**

1. To qualify for the prize, winners are requested to send a direct message (DM) of the downloaded Redotpay APP page to NFTScan on Twitter to redeem the promo code;

2. Please note that RedotPay does NOT support transactions in the following countries and regions: Croatia, Libya, Guinea-Bissau, Bosnia and Herzegovina, Montenegro, Macedonia, Slovenia, Serbia, North Korea, Iran, Mali, South Sudan, Central African Republic, Yemen, United States, Eritrea, Lebanon, ISIL(Da’esh) — Al Qaida and Taliban, Democratic Republic of Congo, Sudan, Somalia, Iraq, Haiti, Afghanistan, Cuba, Belarus, Mainland China, Myanmar, Burundi, Nicaragua, Syria, Ukraine and Russia, Venezuela, Balkans, Zimbabwe, Ethiopia, Darfur, Syria residents. For more details, please refer to the official website FAQ.

**Contact Us:**

If you have any questions or need assistance, feel free to reach out to us. Don’t miss this fantastic opportunity to participate in the NFTScan x Redotpay custom payment card event and win the custom payment card.

Don’t miss out! Win Now! 🎉

NFTScan is the world’s largest NFT data infrastructure, including a professional NFT explorer and NFT developer platform, supporting the complete amount of NFT data for 20 blockchains including Ethereum, Solana, BNBChain, Arbitrum, Optimism, and other major networks, providing NFT API for developers on various blockchains.

Official Links:

NFTScan: https://nftscan.com

Developer: https://developer.nftscan.com

Twitter: https://twitter.com/nftscan_com

Discord: https://discord.gg/nftscan | nft_research |

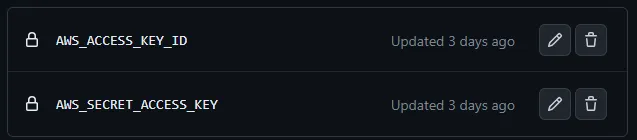

1,768,996 | How to get end user's access-key and secret-access-key w.r.t "amazon s3" in spring boot application ? | How to get end user's access-key and secret-access-key by using account-Id and registered... | 0 | 2024-02-22T10:58:25 | https://dev.to/santhum/how-to-get-end-users-access-key-and-secret-access-key-wrt-amazon-s3-in-spring-boot-application--10np | How to get end user's access-key and secret-access-key by using account-Id and registered email/username w.r.t "amazon s3" in spring boot application ? | santhum | |

1,768,998 | Unlocking SEO Potential: The Power of PPT Submission Sites | Introduction: In the realm of digital marketing, leveraging diverse platforms to enhance Search... | 0 | 2024-02-22T11:00:07 | https://dev.to/seoworld/unlocking-seo-potential-the-power-of-ppt-submission-sites-45df | pptsubmissionsites, pptsites, pptsubmissionsite, pptsubmission |

Introduction:

In the realm of digital marketing, leveraging diverse platforms to enhance Search Engine Optimization (SEO) has become a cornerstone strategy for businesses aiming to boost their online presence. Among the myriad of techniques available, harnessing the potential of PowerPoint (PPT) submission sites has emerged as a dynamic avenue for driving traffic, increasing visibility, and fortifying brand authority. This article delves into the significance of [PPT submission sites](https://www.seoworld.in/top-high-pr-ppt-submission-sites-list/) in the SEO landscape and elucidates how businesses can capitalize on this potent tool to elevate their online footprint.

Understanding PPT Submission Sites:

PPT submission sites serve as repositories where users can upload and share PowerPoint presentations on a myriad of topics ranging from business insights and educational content to creative designs and industry updates. These platforms boast significant domain authority (DA) and are frequented by a diverse audience, presenting an invaluable opportunity for businesses to amplify their reach beyond conventional SEO tactics.

The SEO Benefits of PPT Submission Sites:

- **Enhanced Visibility:** By disseminating informative and visually compelling presentations across reputable [PPT submission sites](https://www.seoworld.in/top-high-pr-ppt-submission-sites-list/) such as SlideShare and AuthorStream, businesses can augment their online visibility and attract a broader audience segment.

- Backlink Opportunities: Each presentation uploaded to these platforms offers the potential to embed backlinks directing viewers back to the company’s website or relevant landing pages. These high-quality backlinks serve as a testament to the website’s credibility and authority in the eyes of search engines, thereby bolstering its ranking in search results.

- Diversification of Content: Incorporating PowerPoint presentations into the content marketing strategy diversifies the content portfolio, catering to varied audience preferences. This multifaceted approach not only fosters engagement but also builds stronger brand recall and customer loyalty.

- Social Sharing Amplification: PPT Sharing sites often integrate social sharing functionalities, enabling users to seamlessly distribute presentations across popular social media platforms. This amplifies the reach of the content, fostering organic sharing and engagement while driving traffic back to the source website.

- Exposure to Niche Audiences: These platforms attract a diverse array of users seeking insights and information on specific topics. By tailoring presentations to cater to niche interests and industry verticals, businesses can effectively target and engage with their desired audience segments, fostering community engagement and thought leadership.

Best Practices for Effective PPT Submission:

Optimize Content: Craft visually appealing presentations that are both informative and aesthetically pleasing, incorporating relevant keywords, titles, and descriptions to optimise searchability.

Strategic Link Placement: Integrate strategically placed hyperlinks within the presentation content to direct traffic back to the website or specific landing pages.

Engagement Enhancement: Encourage audience interaction and engagement by incorporating interactive elements such as polls, quizzes, and calls-to-action (CTAs) within the presentation.

Consistency and Quality: Maintain consistency in branding and messaging across all presentations while adhering to high-quality standards in content creation and design.

Promotion and Distribution: Actively promote and distribute presentations across social media channels, email newsletters, and relevant online communities to maximise visibility and engagement.

Conclusion:

In an increasingly competitive digital landscape, the utilisation of PPT submission sites as a supplementary SEO strategy offers businesses a distinct advantage in bolstering their online presence, driving traffic, and fortifying brand authority. By leveraging these platforms effectively and adhering to best practices, businesses can unlock the full potential of PowerPoint presentations as a dynamic tool for enhancing SEO performance and achieving sustained growth in the digital sphere. | seoworld |

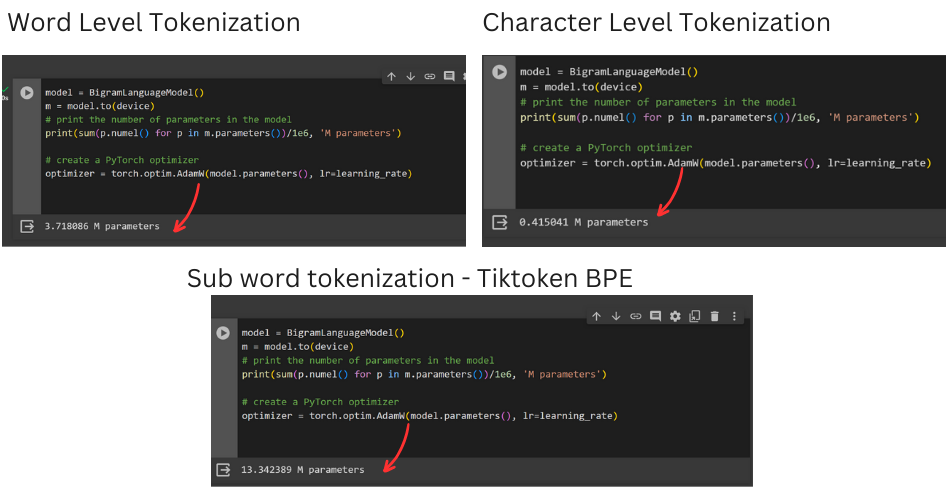

1,769,011 | Character vs. Word Tokenization in NLP: Unveiling the Trade-Offs in Model Size, Parameters, and Compute | In Natural Language Processing, the choice of tokenization method can make or break a model. Join me... | 0 | 2024-02-22T11:09:06 | https://dev.to/kagemanjoroge/character-vs-word-tokenization-in-nlp-unveiling-the-trade-offs-in-model-size-parameters-and-compute-3jjh | ai, machinelearning, python, nlp | In Natural Language Processing, the choice of tokenization method can make or break a model. Join me on a journey to understand the profound impact of character-level, word-level, and subword tokenization on model size, number of parameters, and computational complexity.

<img src="https://dev-to-uploads.s3.amazonaws.com/uploads/articles/dgeq41t7cp99j0n6yqrd.jpg" alt="Alt Text" style={{height:"400px", textAlign:"center", width:"40pc"}} />

**First Things First, What is Tokenization?**

AI operates with numbers, and for deep learning on text, we convert that text into numerical data. Tokenization breaks down textual data into smaller units, or tokens, like words or characters, which can be represented numerically.

**Decoding Tokenization**

There are various techniques in tokenization, such as:

- **Word Tokenization:** Divides text into words, creating a vocabulary of unique terms.

- **Character Tokenization:** Breaks down text into individual characters, useful for specific tasks like morphological analysis.

- **Subword Tokenization:** Splits words into smaller units, capturing morphological information effectively. Examples include the WordPiece tokenizer used by [BERT](https://huggingface.co/docs/transformers/model_doc/bert) and [SentencePiece](https://github.com/google/sentencepiece).

**Character-Level Tokenization**

In character-level tokenization, we create tokens out of the individual characters present in the text. This involves compiling the unique set of characters found in the dataset, from alphanumeric characters to punctuation and other symbols. Because this set is small, the vocabulary is small, and so is the number of vocabulary-dependent model parameters. This lean approach is particularly advantageous with limited datasets, such as those for low-resource languages, where it can capture patterns without overwhelming the model.

```python

# Consider the sentence "I love Python".

# Tokenizing by characters and keeping only the unique ones gives 11 tokens:
# [' ', 'I', 'P', 'e', 'h', 'l', 'n', 'o', 't', 'v', 'y']

sent = "I love Python"

# set() keeps only the unique characters; sorted() makes the order deterministic
tokens = sorted(set(sent))

# Map each character to an integer id, then represent the sentence numerically
char_to_id = {ch: i for i, ch in enumerate(tokens)}

numerical_representation = [char_to_id[ch] for ch in sent]

number_of_tokens = len(tokens)  # vocabulary size: 11

```

**Word-Level Tokenization:**

Word-level tokenization involves breaking down the text into individual words. This process results in a vocabulary composed of unique terms present in the dataset. Unlike character-level tokenization, which deals with individual characters, word-level tokenization operates at a higher linguistic level, capturing the meaning and context of words within the text.

This approach leads to a larger model vocabulary, encompassing the diversity of words used in the dataset. While this richness is beneficial for understanding the semantics of the language, it introduces challenges, particularly when working with extensive datasets. The increased vocabulary size translates to a higher number of model parameters, necessitating careful management to prevent overfitting.

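```py

# Consider the sentence "I love Python"

sent = "I love Python"

# Unique words in a deterministic order: ['I', 'Python', 'love']
tokens = sorted(set(sent.split()))

# Map each word to an integer id, then represent the sentence numerically
word_to_id = {word: i for i, word in enumerate(tokens)}

numerical_representation = [word_to_id[word] for word in sent.split()]  # [0, 2, 1]

```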

However, the trade-off lies in the potential for overfitting, especially when dealing with smaller datasets. Striking a balance between a rich vocabulary and avoiding over-parameterization becomes a critical consideration when employing word-level tokenization in natural language processing tasks.

**Subword Tokenization**

Subword tokenization interpolates between word-based and character-based tokenization.

Instead of treating each whole word as a single building block, subword tokenization breaks down words into smaller, meaningful pieces.

These smaller parts, or subwords, carry meaning on their own and help the computer understand the structure of the word in a more detailed way.

Common words get a slot in the vocabulary, but the tokenizer can fall back to word pieces and individual characters for unknown words.
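
The fallback behaviour described above can be sketched with a toy greedy longest-match tokenizer. This is only an illustration, loosely in the spirit of how WordPiece-style tokenizers split words at inference time; the function `subword_tokenize` and the vocabulary `toy_vocab` are hypothetical and are not taken from any library or from the experiment linked in this post.

```python
from typing import List, Set


def subword_tokenize(word: str, vocab: Set[str]) -> List[str]:
    """Greedy longest-match split; unknown spans fall back to single characters."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate until it is in the vocabulary or one character long.
        while end - start > 1 and word[start:end] not in vocab:
            end -= 1
        pieces.append(word[start:end])
        start = end
    return pieces


# Hypothetical toy vocabulary: one whole common word plus a few word pieces.
toy_vocab = {"love", "token", "ization", "py", "thon"}

common = subword_tokenize("love", toy_vocab)          # whole word is in the vocabulary
rare = subword_tokenize("tokenization", toy_vocab)    # split into known word pieces
unknown = subword_tokenize("xyz", toy_vocab)          # falls back to characters
```

A common word stays whole, a rarer word is covered by pieces, and a string with no known pieces degrades to characters, so nothing is ever out of vocabulary.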

_Let's do a simple experiment to show the impacts of a tokenization method on the model_

[Colab Link](https://colab.research.google.com/drive/1PwAr2Gt0x_8UUXd5ED_nBTJlpdRXeU3V?usp=sharing) to the code

From the experiment I conducted above, the trend is as follows:

- As the number of tokens increases:

- The model size also increases

- Model training and inference become more compute-intensive

- The size of dataset required to achieve high accuracy also increases
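
One way to see why model size grows with the number of tokens is to count the parameters in the token-embedding table, which holds one vector per vocabulary entry. The sketch below is plain arithmetic, not the linked experiment: the vocabulary sizes and the embedding width of 256 are assumed values chosen only for illustration.

```python
def embedding_parameters(vocab_size: int, embedding_dim: int) -> int:
    """Parameters in a token-embedding table: one vector per vocabulary entry."""
    return vocab_size * embedding_dim


# Hypothetical vocabulary sizes; 256 is an assumed embedding width.
EMBEDDING_DIM = 256
vocab_sizes = {"character": 100, "subword": 30_000, "word": 200_000}

for name, size in vocab_sizes.items():
    count = embedding_parameters(size, EMBEDDING_DIM)
    print(f"{name:>9}: {count:,} embedding parameters")
```

A model that predicts tokens usually also has an output layer whose size scales with the vocabulary, so the real gap between the methods tends to be larger than the embedding table alone suggests.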

**Which tokenization method should I use?**

Sub-word tokenization is the industry standard!

Consider subword tokenizers, many of which build on [Byte Pair Encoding (BPE)](https://huggingface.co/learn/nlp-course/chapter6/5?fw=pt), such as:

1. [TikToken](https://github.com/openai/tiktoken)

2. [SentencePiece](https://github.com/google/sentencepiece)

3. WordPiece, as used by [BERT](https://huggingface.co/docs/transformers/model_doc/bert)

Use character-level or word-level tokenization when you have a smaller dataset. | kagemanjoroge |

1,781,497 | Every Neovim, Every Config, All At Once | Neovim is hot 🔥 right now Neovim, a successful fork of Vim, is a text editor that is... | 0 | 2024-03-06T00:26:24 | https://dev.to/hoonweedev/every-neovim-every-config-all-at-once-578p | neovim, config, development | ---

id: Every Neovim, Every Config, All At Once

aliases: []

tags: []

---

## Neovim is hot 🔥 right now

Neovim, a successful fork of Vim, is a text editor that is gaining popularity. I won't link David Heinemeier Hansson's blog post again since everyone has done it already.

I also won't list all the good stuff about Neovim, since you can find them on the official website and tons of blog posts.

## You can build your own IDE, or should I say, your own **IDEs** (plural)

Just by learning Lua, a super-easy language, you can build your own IDE with Neovim. If you're a busy person, you can just copy and paste someone else's config.

You can also find a fully loaded, IDE-level config like:

- [LazyVim](https://www.lazyvim.org/)

- [AstroNvim](https://astronvim.com/)

- [LunarVim](https://www.lunarvim.org/)

- [NvChad](https://nvchad.com/)

All you have to do is clone the repo into your `~/.config/nvim` directory.

```bash

# Backup your current config

mv ~/.config/nvim ~/.config/nvim.bak

# Clone the repo

git clone <remote repo> ~/.config/nvim --depth 1

# Run neovim

nvim

```

But what if you want to use multiple configs at the same time?

The answer is simple. All you need to set is the **`NVIM_APPNAME`** environment variable (available since Neovim 0.9).

### Example: Using LunarVim and NvChad at the same time

Let's say you want to use LunarVim for your web development and NvChad for your data science work.

You should first clone both repos with their custom directory names.

```bash

# Clone LunarVim

$ git clone git@github.com:LunarVim/LunarVim.git ~/.config/lunarvim --depth 1

# Clone NvChad

$ git clone https://github.com/NvChad/NvChad ~/.config/nvchad --depth 1

```

Then, you can set the `NVIM_APPNAME` environment variable to use each config.

```bash

# Run neovim with LunarVim

$ NVIM_APPNAME=lunarvim nvim

# Run neovim with NvChad

$ NVIM_APPNAME=nvchad nvim

```

You can still use the default config by just running `nvim` without setting the `NVIM_APPNAME` environment variable. (This reads the config from `~/.config/nvim`)

```bash

# Run neovim with the default config

$ nvim

```

### Example: Make aliases for each config

You can also make aliases for each config.

```bash

# Add these lines to your .bashrc or .zshrc

alias lunarvim="NVIM_APPNAME=lunarvim nvim"

alias nvchad="NVIM_APPNAME=nvchad nvim"

# Run neovim with LunarVim

$ lunarvim

```

## Conclusion

That's it! You can use multiple Neovim configs at the same time, with just a simple environment variable `NVIM_APPNAME`.

Now, some might say _"Why don't you just use Docker?"_. Well, not everyone is comfortable with Docker, and since Neovim supports this feature out of the box, why not use it?

I hope this tip helps you to use Neovim more effectively. Happy hacking! 🚀

| hoonweedev |

1,769,130 | Dart Basic - Part 1 | Exploring Dart Fundamentals: Variables, Types, Constants, and Operators Dart, with its simplicity... | 26,684 | 2024-02-23T11:44:33 | https://dev.to/sadanandgadwal/dart-basic-part1-p35 | dart, basic, programming, sadanandgadwal | Exploring Dart Fundamentals: Variables, Types, Constants, and Operators

Dart, with its simplicity and power, is a modern programming language that caters to various development needs, from mobile applications to server-side solutions. In this comprehensive guide, we'll explore the foundational concepts of Dart through practical examples.

## 1. Variables and Types:

**1.1 Variables:** Variables store data that can be manipulated and referenced in a program. In Dart, you declare variables using the var, final, or const keywords.

**var**: Declares a variable whose type is inferred by the Dart compiler based on the assigned value. For example:

```

var age = 23; //age as an integer

```

**final**: final variables in Dart are variables whose values cannot be changed once they are initialized.

- They must be initialized before they are used, and once initialized, their values cannot be reassigned.

- Top-level and static final variables are initialized lazily, when they are first accessed; once set, their value remains constant throughout the program's execution.

- Final variables can be initialized with a value at the time of declaration or within constructors.

```

final name = 'Sadanand'; // name is assigned 'Sadanand' and it cannot be changed

```

**const**: const variables are compile-time constants in Dart. They are implicitly final.

- Their values must be known at compile-time.

- Const variables are evaluated and set at compile-time, not runtime.

- They are useful for declaring values that will not change during the execution of the program.

```

const PI = 3.1415; // PI is a compile-time constant

```

**1.2 Types:** Dart is a statically typed language, meaning each variable has a specific data type known at compile-time.

Dart provides several built-in data types:

**Numbers**: Dart supports both integers and floating-point numbers.

```

int age = 30;

double height = 5.11;

```

**Strings**: Used to represent textual data.

```

String name = 'Sadanand';

```

**Booleans**: Represents a true or false value.

```

bool isAdult = true;

```

**Lists**: Ordered collections of objects.

```

List<int> numbers = [1, 2, 3, 4, 5];

```

**Maps**: Collections of key-value pairs (Dart's default map keeps keys in insertion order).

```

Map<String, dynamic> person = {

'name': 'Sadanand',

'age': 23,

'isAdult': true

};

```

## 2. Dynamic:

`dynamic`: Declares a variable that can hold a value of any type, and whose type can change at runtime.

```

dynamic dynamicVariable = 'Sadanand';

dynamicVariable = 23; // Now dynamicVariable is an integer

```

## 3. Common Operators:

Common operators in programming languages are symbols or keywords used to perform various operations on data. Here's a brief explanation of common operators used in programming:

**3.1 Arithmetic Operators:**

`+`: Adds two numbers.

`-`: Subtracts the second number from the first.

`*`: Multiplies two numbers.

`/`: Divides the first number by the second and returns a double.

`~/`: Truncating division; returns an integer result, rounded towards zero.

`%`: Modulus operator; returns the remainder of the division.

**3.2 Relational Operators:**

`>`: Checks if the first operand is greater than the second.

`<`: Checks if the first operand is less than the second.

`>=`: Checks if the first operand is greater than or equal to the second.

`<=`: Checks if the first operand is less than or equal to the second.

**3.3 Equality Operators:**

`==`: Checks if two operands are equal.

`!=`: Checks if two operands are not equal.

**3.4 Logical Operators:**

`&&` (Logical AND): Returns true if both operands are true.

`||` (Logical OR): Returns true if at least one of the operands is true.

```

void operatorExample() {

int x = 23;

int y = 27;

// Arithmetic operators

final add = x + y; // Addition

final sub = x - y; // Subtraction

final mut = x * y; // Multiplication

final div = x / y; // Division

final divwithintegers = y ~/ x; // Truncating Division (returns an integer)

final modulo = x % y; // Modulus (remainder of division)

// Relational operators

final greater = x > y; // Greater than

final notGreater = x < y; // Less than

final greaterthan = x >= y; // Greater than or equal to

final notgreaterthan = x <= y; // Less than or equal to

// Equality operators

final equalTo = x == y; // Equal to

final notEqualTo = x != y; // Not equal to

// Logical operators

final logicalAnd = x > y && y < x; // Logical AND

final logicalOr = x > y || y < x; // Logical OR

// Printing results

print("Addition of two numbers: $add");

print("Subtraction of two numbers: $sub");

print("Multiplication of two numbers: $mut");

print("Division of two numbers: $div");

print("Divide, returning an integer result: $divwithintegers");

print("Remainder of an integer division: $modulo");

print("Greater than: $greater");

print("Less than: $notGreater");

print("Greater than or equal to: $greaterthan");

print("Less than or equal to: $notgreaterthan");

print("Equal to: $equalTo");

print("Not equal to: $notEqualTo");

print("Logical AND: $logicalAnd");

print("Logical OR: $logicalOr");

}

```

These operators are fundamental for performing arithmetic calculations, making comparisons, and evaluating conditions in Dart programs.

**Conclusion:**

Dart's versatility and simplicity make it an excellent choice for developers across various domains. Understanding these fundamental concepts equips you to write efficient Dart code for diverse applications, ensuring clarity, reliability, and performance. Happy coding with Dart!

_Start Coding in Dart Now!_

Head over to [DartPad](https://dartpad.dev) to start coding immediately. DartPad is a user-friendly online editor where you can write, run, and share Dart code without any setup required.

🌟 Stay Connected! 🌟

Hey there, awesome reader! 👋 Want to stay updated with my latest insights? Follow me on social media!

[🐦](https://twitter.com/sadanandgadwal) [📸](https://www.instagram.com/sadanand_gadwal/) [📘](https://www.facebook.com/sadanandgadwal7) [💻](https://github.com/Sadanandgadwal) [🌐](https://sadanandgadwal.me/) [💼](https://www.linkedin.com/in/sadanandgadwal/)

[Sadanand Gadwal](https://dev.to/sadanandgadwal) | sadanandgadwal |

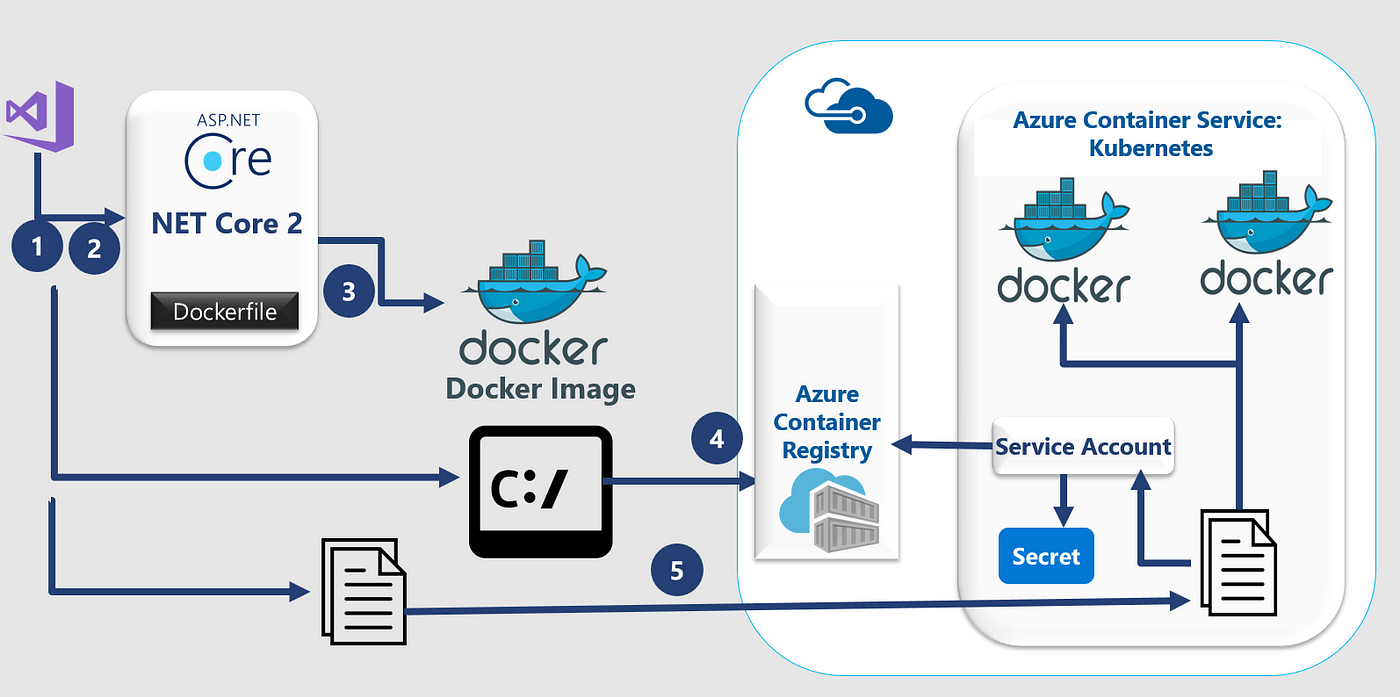

1,769,132 | Build your first Hangfire job .NET8 with PostgreSQL | In this article, I will share a few simple steps you will need to create your first hangfire job... | 0 | 2024-02-22T12:29:29 | https://dev.to/pradeepradyumna/your-first-hangfire-job-fornet8-with-postgresql-30nd | postgres, hangfire, dotnet, dotnetcore | In this article, I will share a few simple steps you will need to create your first hangfire job using .NET 8(the latest at the time of writing this article) with Postgres as the database.

Also, please be aware that in this article I'm not going to explain what Hangfire is, as there are plenty of articles already written by wise authors. :)

Alright, let's get started.

## 1. First step first

We can create either an ASP.NET Core Web App or API (it doesn't really matter which you choose). However, for simplicity, I'm using an API project targeting .NET 8.

## 2. Install NuGet Packages

You'll need to install the following NuGet packages

`Npgsql.EntityFrameworkCore.PostgreSQL`

`Microsoft.EntityFrameworkCore.Design`

`Hangfire.AspNetCore`

`Hangfire.PostgreSql`

That's all you need!

## 3. Create a DbContext

You will need a custom `DbContext` class to run a DB migration

```

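using Microsoft.EntityFrameworkCore;

// Minimal context; it exists only so that EF Core migrations have something to run against.
public class DefaultDbContext : DbContext
{
    public DefaultDbContext(DbContextOptions<DefaultDbContext> options)
        : base(options) { }
}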

```

## 4. Create a DB in Postgres

Just go ahead and create a database called `HangfireSample`

This is all you need to do at the DB level!

## 5. Configuration

Before you run the migration, configure the connection string in `appsettings.json` and update `Program.cs`.

```

"ConnectionStrings": {

"defaultConnection": "Host=localhost;Port=5432;Username=postgres;Password=YOUR_PWD;Database=HangfireSample"

}

```

Add the code below to `Program.cs`

```

builder.Services.AddEntityFrameworkNpgsql().AddDbContext<DefaultDbContext>(options => {

options.UseNpgsql(builder.Configuration.GetConnectionString("defaultConnection"));

});

```

## 6. Run migration

Open the Package Manager Console (or a terminal in the project folder) and run the commands below. They assume the `dotnet-ef` tool is installed.

```

dotnet ef migrations add InitContext

dotnet ef database update

```

This will create the `__EFMigrationsHistory` table under the `public` schema

## 7. Almost done

Now configure the Hangfire service in `Program.cs` with the code below

```

builder.Services.AddHangfire(x =>

x.UsePostgreSqlStorage(builder.Configuration.GetConnectionString("defaultConnection")));

```

And update middleware

```

app.UseHangfireDashboard("/dashboard");

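app.UseHangfireServer(); // Marked obsolete in recent Hangfire versions, which recommend builder.Services.AddHangfireServer() instead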

```

That is all you need to do to create the Hangfire server and storage.

Now, let's create a simple job.

## 8. First Hangfire job

Just copy the code below

```

BackgroundJob.Enqueue(() => Console.WriteLine("My first Hangfire job!"));

```

Now run the solution!

To see your job, visit

[https://localhost:44397/dashboard](https://localhost:44397/dashboard)

And if you go to [https://localhost:44397/dashboard/jobs/succeeded](https://localhost:44397/dashboard/jobs/succeeded)

you'll see the job you just executed.

Also, just so you know, if you check the `HangfireSample` database, you'll see that the Hangfire tables have been created under the `hangfire` schema.

Just for your reference [here](https://github.com/pradeepradyumna/HangfireSample) is the complete working sample of the code I just explained.

I hope it helped you! | pradeepradyumna |

1,769,164 | Dashboard | You Can Start the Power BI Training Courses now in Thane. Analysing and interpreting data using... | 0 | 2024-02-22T12:13:20 | https://dev.to/deepk8989/dashboard-27hm | You Can Start the Power BI Training Courses now in Thane. Analysing and interpreting data using Power BI to derive actionable insights. This may involve creating visualizations, reports, and dashboards. Designing and developing reports and dashboards using Power BI. This involves transforming raw data into a format suitable for analysis. Developing and maintaining data infrastructure to support Power BI reports, ensuring data is accessible and accurate. join now to start the career with Actifyzone center. | deepk8989 | |

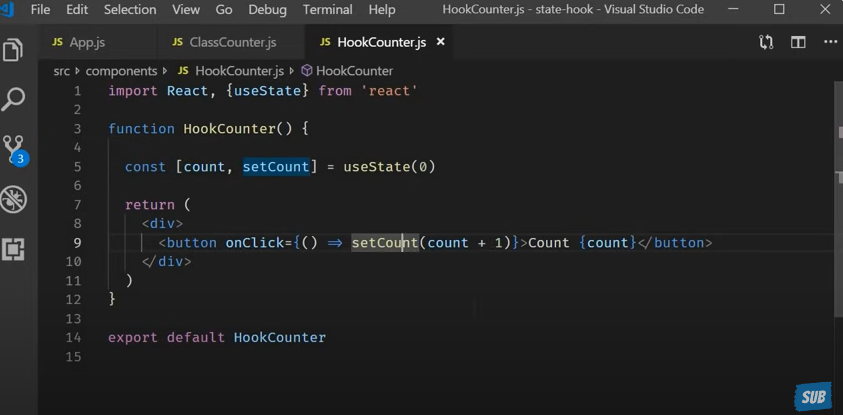

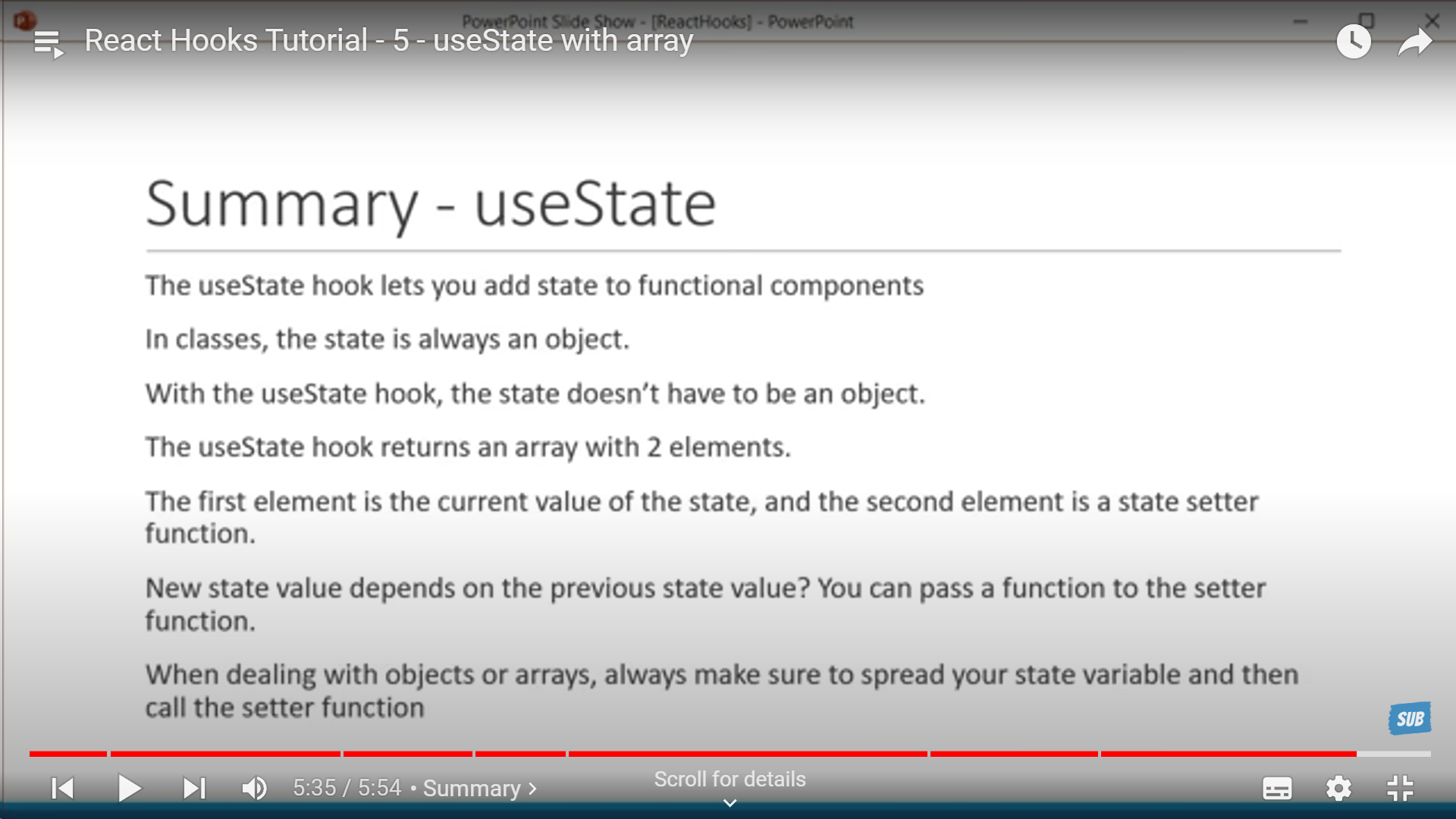

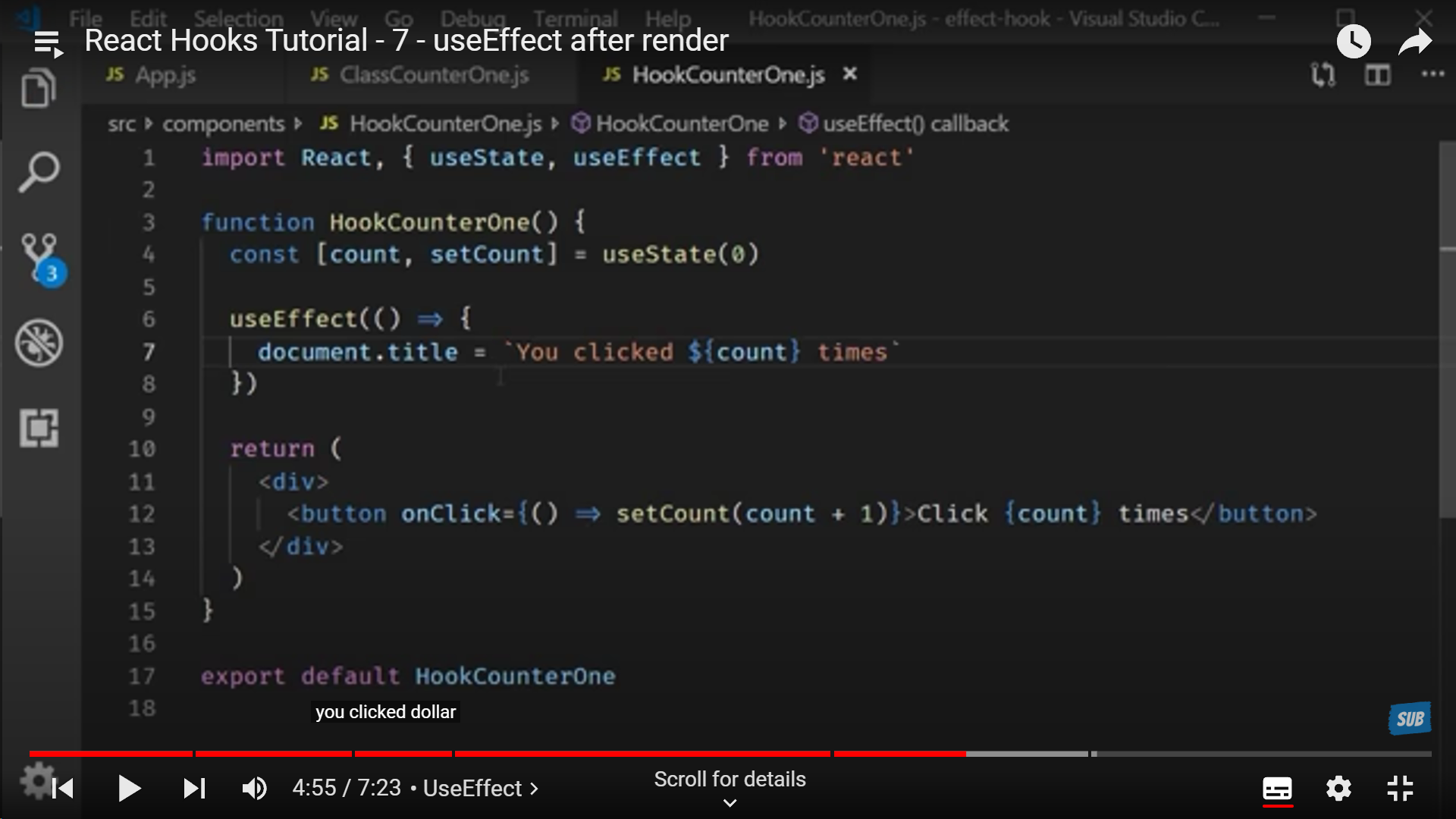

1,769,248 | React Hooks | Hooks Why Hooks Hook Rules Syntax In a hook -> A state variable can be -> A... | 0 | 2024-02-22T14:33:42 | https://dev.to/alamfatima1999/react-hooks-20m0 | _<u>**Hooks**</u>_

**_<u>Why Hooks</u>_**

**Hook Rules**

**Syntax**

In a hook -> A state variable can be ->

1. A number

2. A string

3. Boolean

4. Object

5. Array

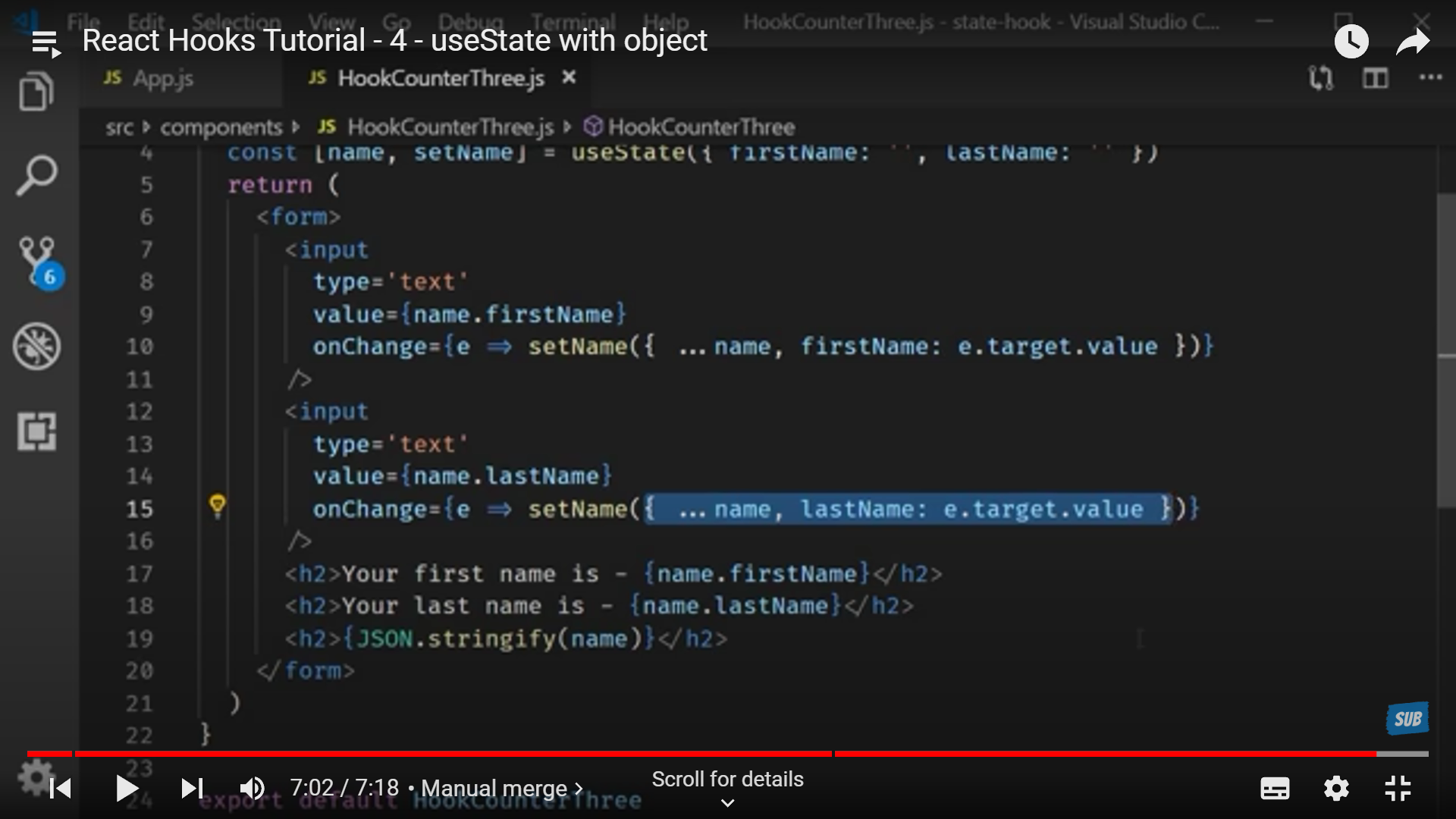

If the state variable is an object and we update only part of it, the `useState` setter does not merge the change into the existing object; it replaces the whole state, so the other fields are lost. To rule that out, we use the spread operator to copy the whole existing object first and then modify the part(s) we want to change.

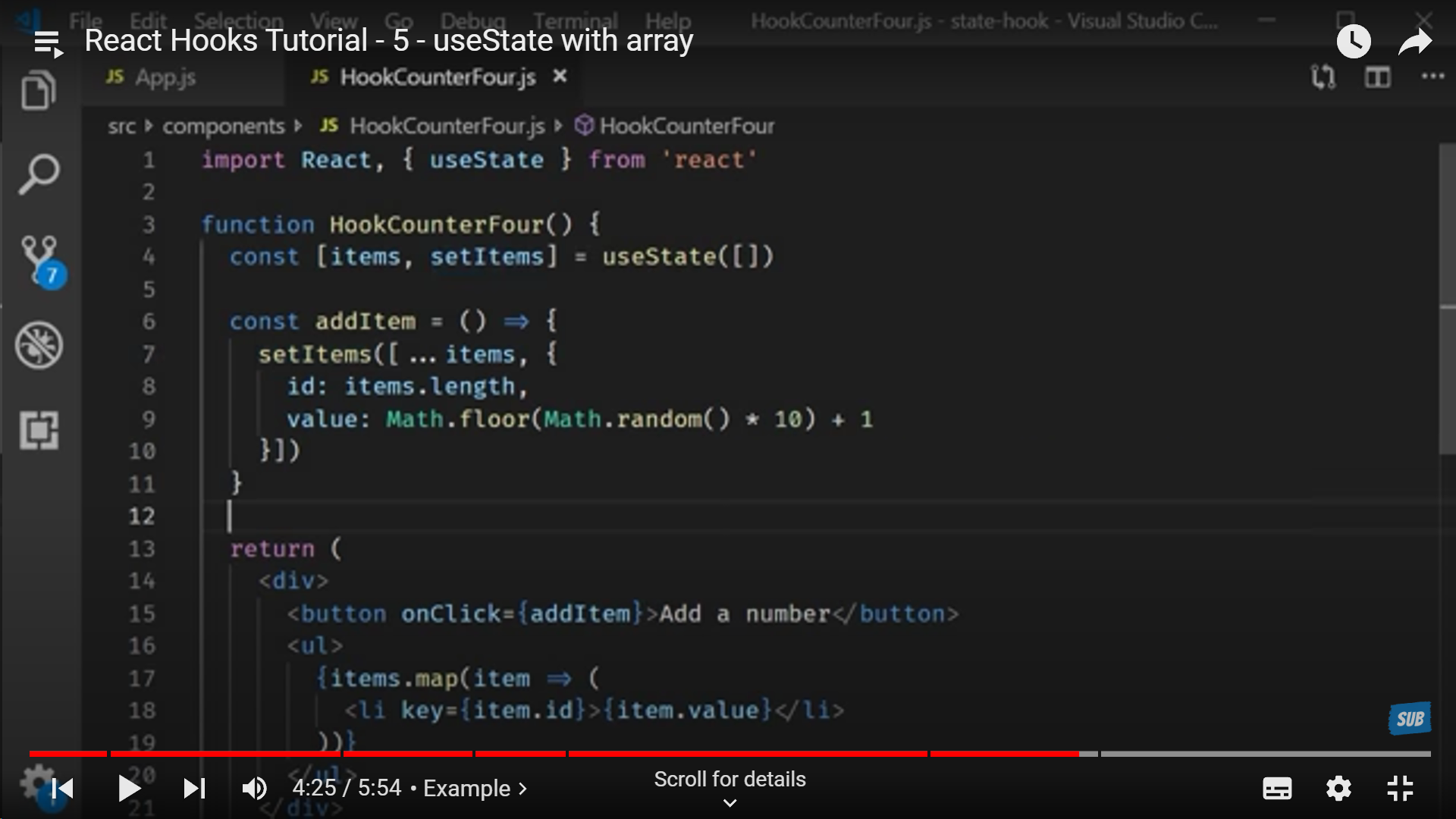

**Hooks with Arrays**

**Things to remember while dealing with hooks**

**useEffect**

`useEffect()` is triggered whenever the component renders, both on the first render and on every update. The function we pass to it is what we want to run at that point; here we have passed an arrow function.

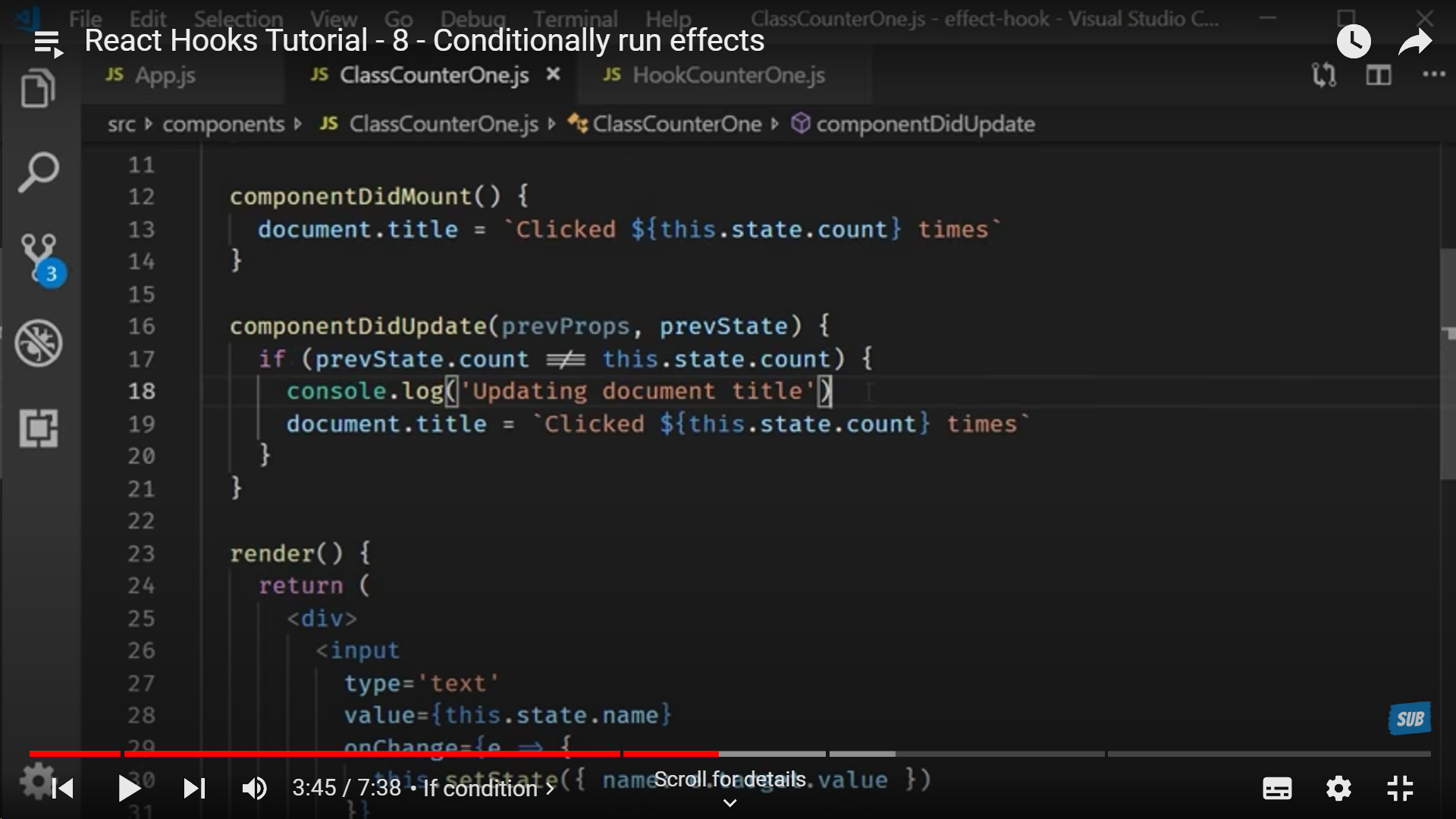

**In a class component**

A simple condition will help us to not re-render unnecessarily.

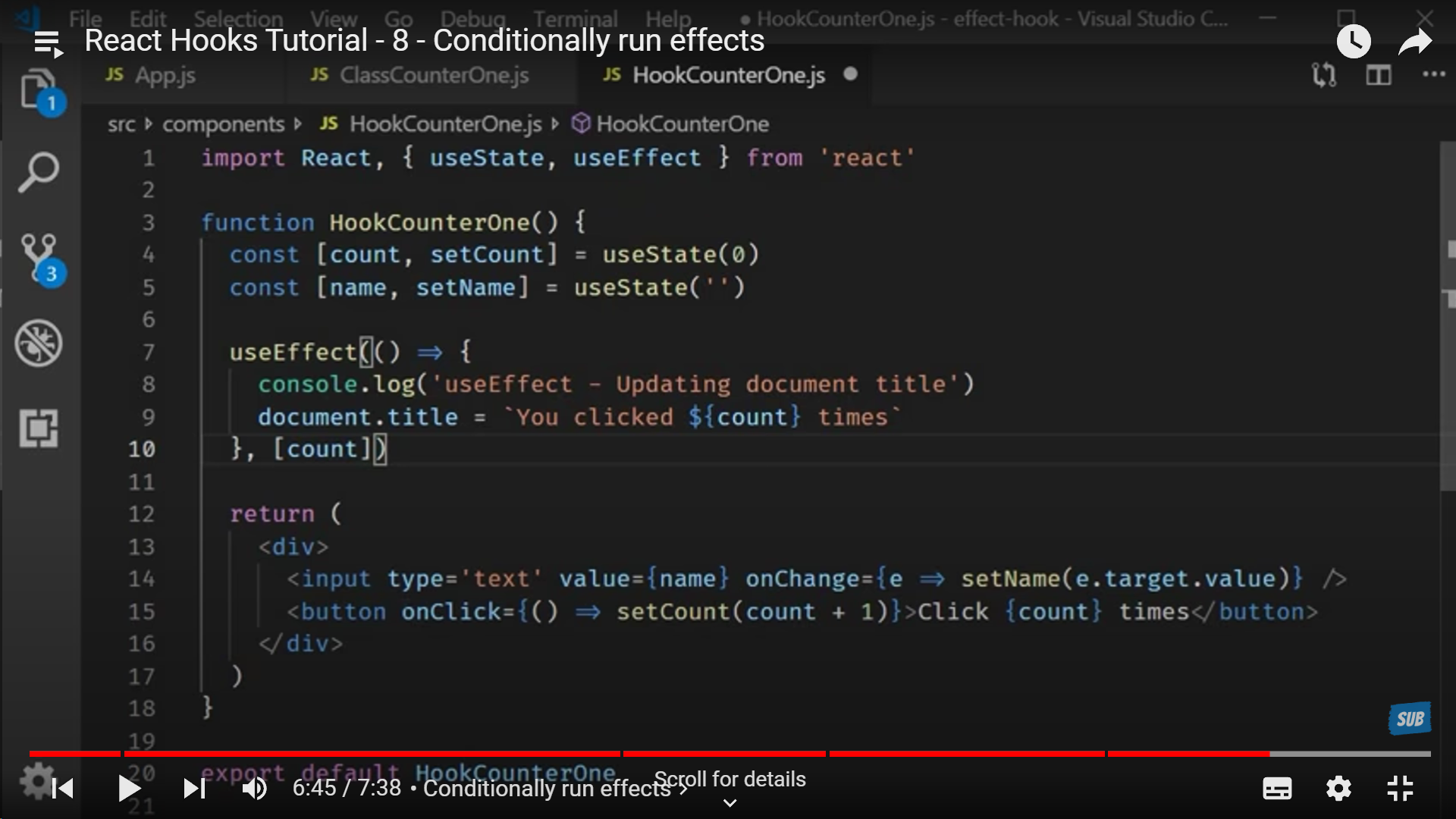

**Similarly with Functional Components**

We can use useEffect() to do the same by adding the state or prop to be checked if it has updated or not if it's not then useEffect() isn't executed.

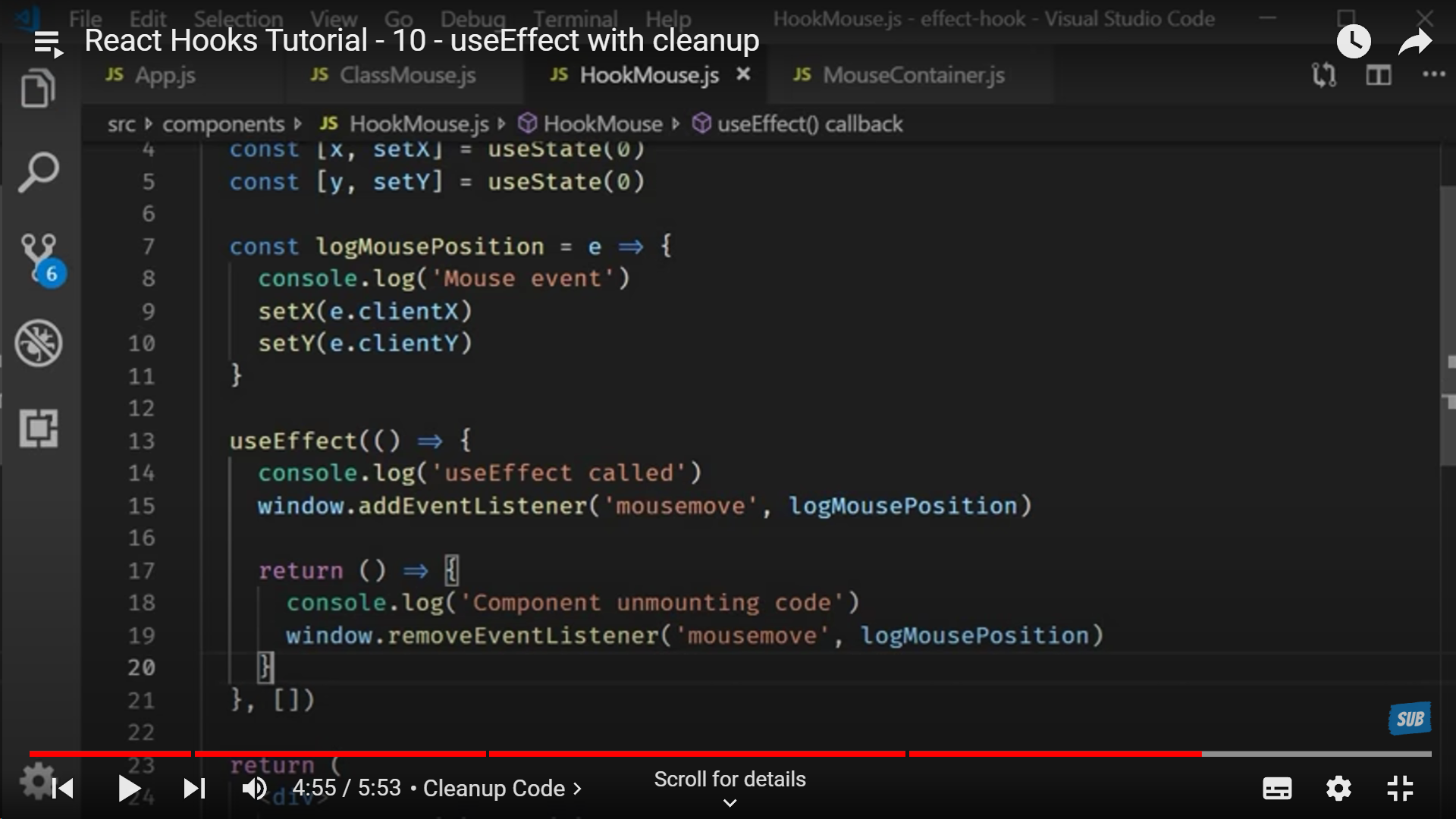

**How to mimic ComponentWillUnmount() in a Functional Component**

With the help of removeEventListener()

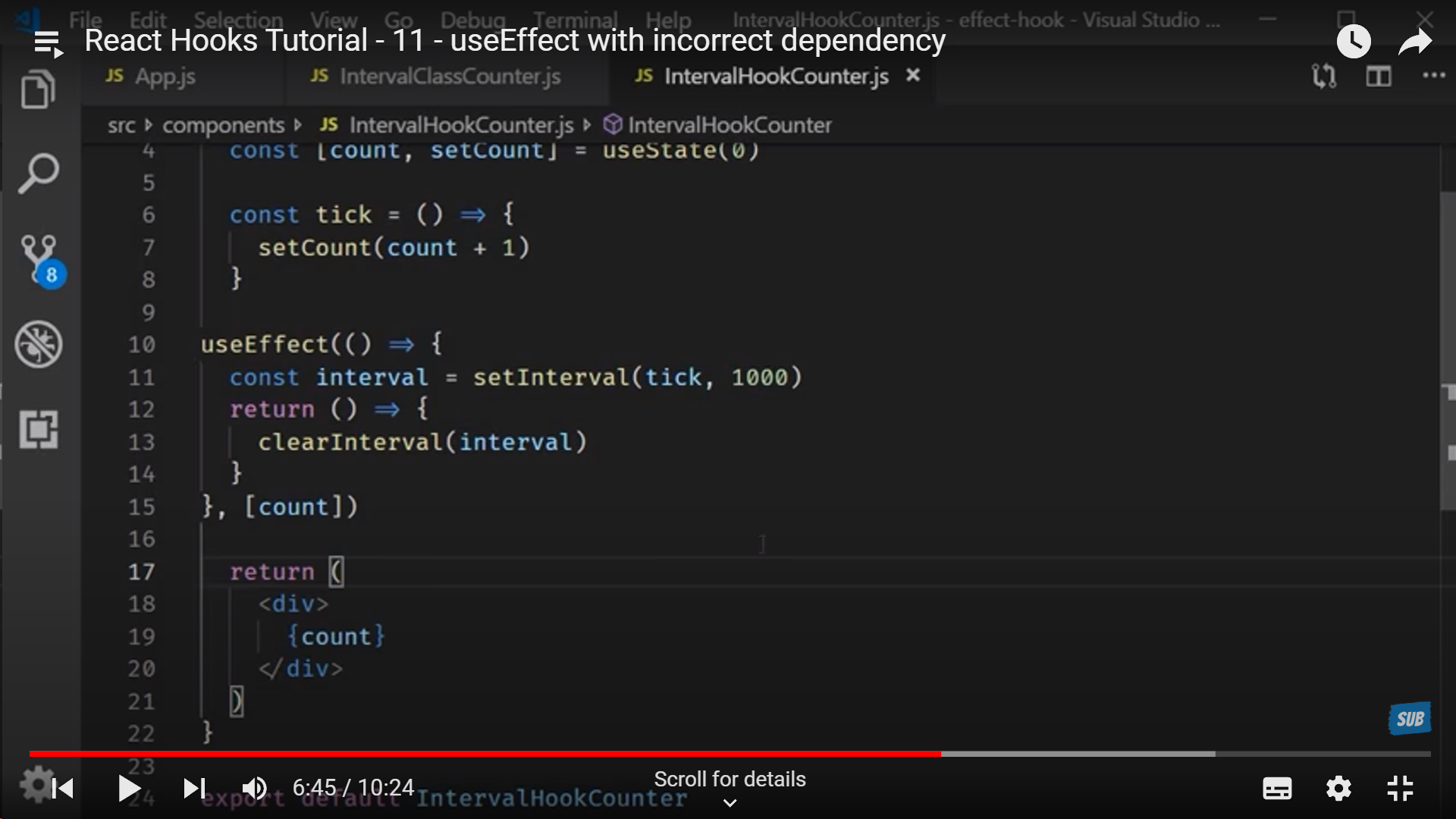

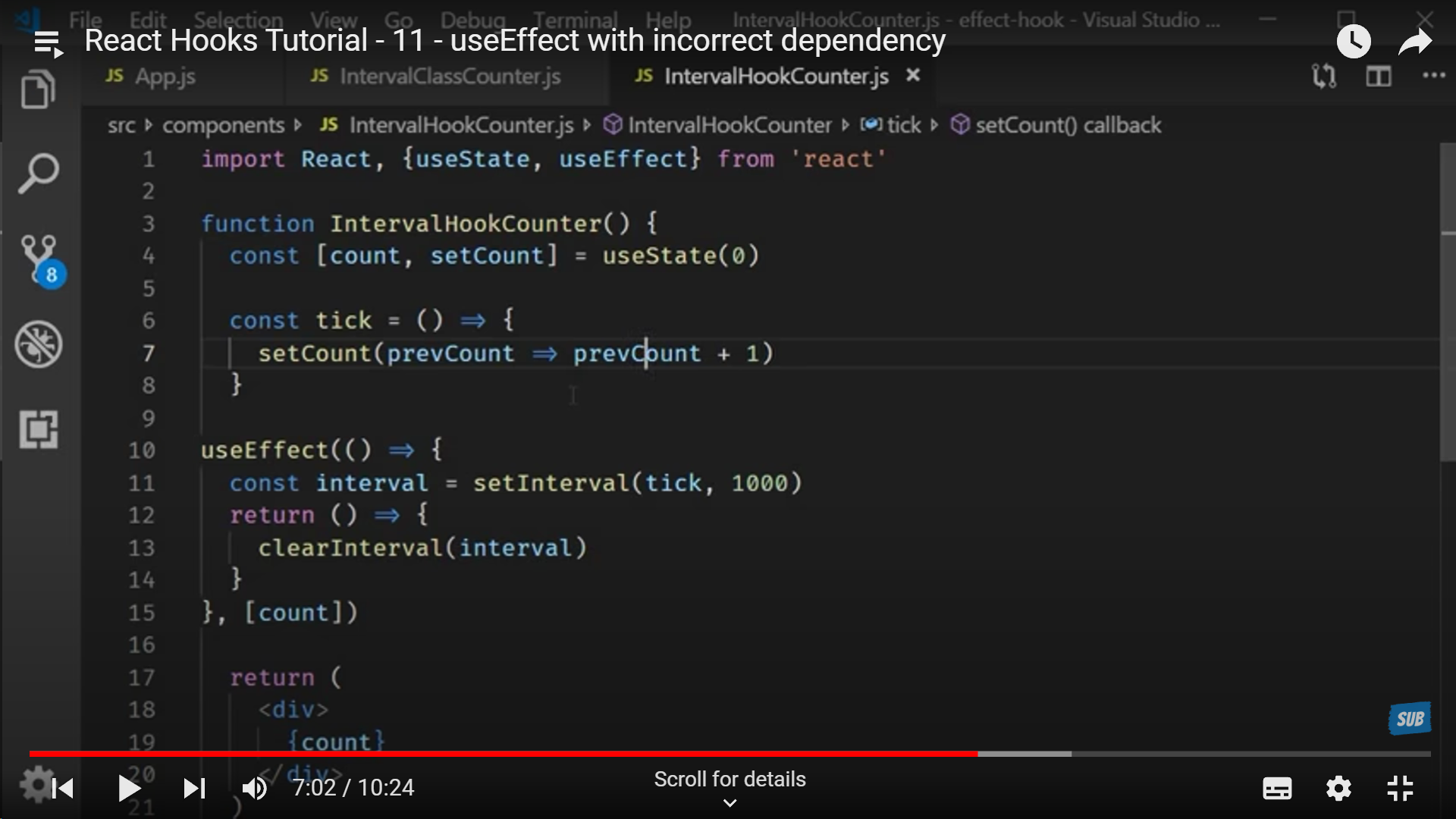

**Tick in Hooks**

The state variable that the effect depends on must be listed in the dependency array (i.e. the array passed as the second argument to `useEffect()`). This is how the effect re-runs when that specific state variable changes.

**OR**

Use the functional form of the state setter, which receives `prevState`, to compute the new state and re-render it on screen.

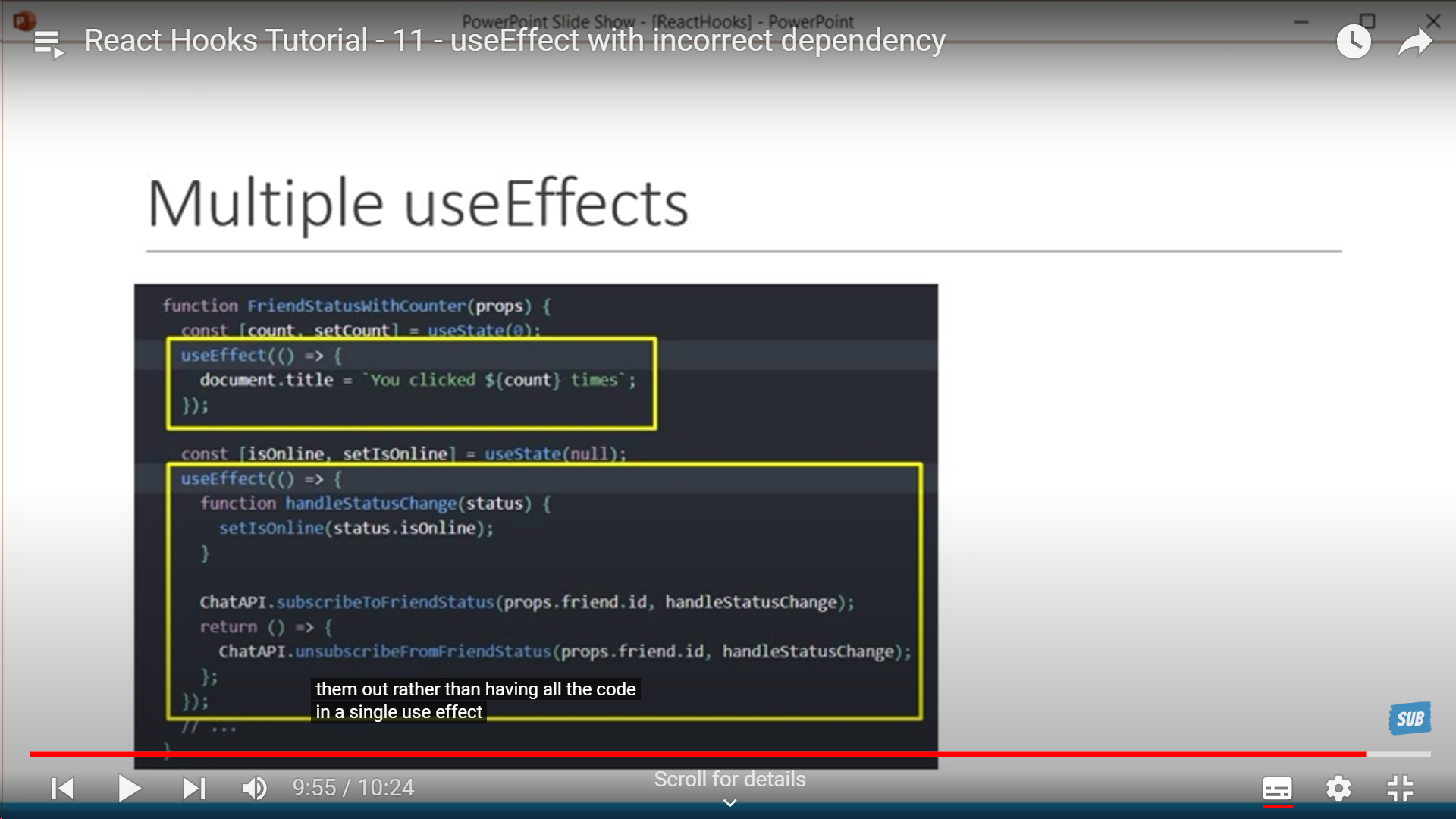

**Multiple useEffect to group the data**

Using multiple `useEffect` calls keeps related logic together: notice how each state variable is declared next to its corresponding `useEffect`. This solves the problem of related code being far apart in class components.

**_<u>Context</u>_**

**Defining context in parent component**

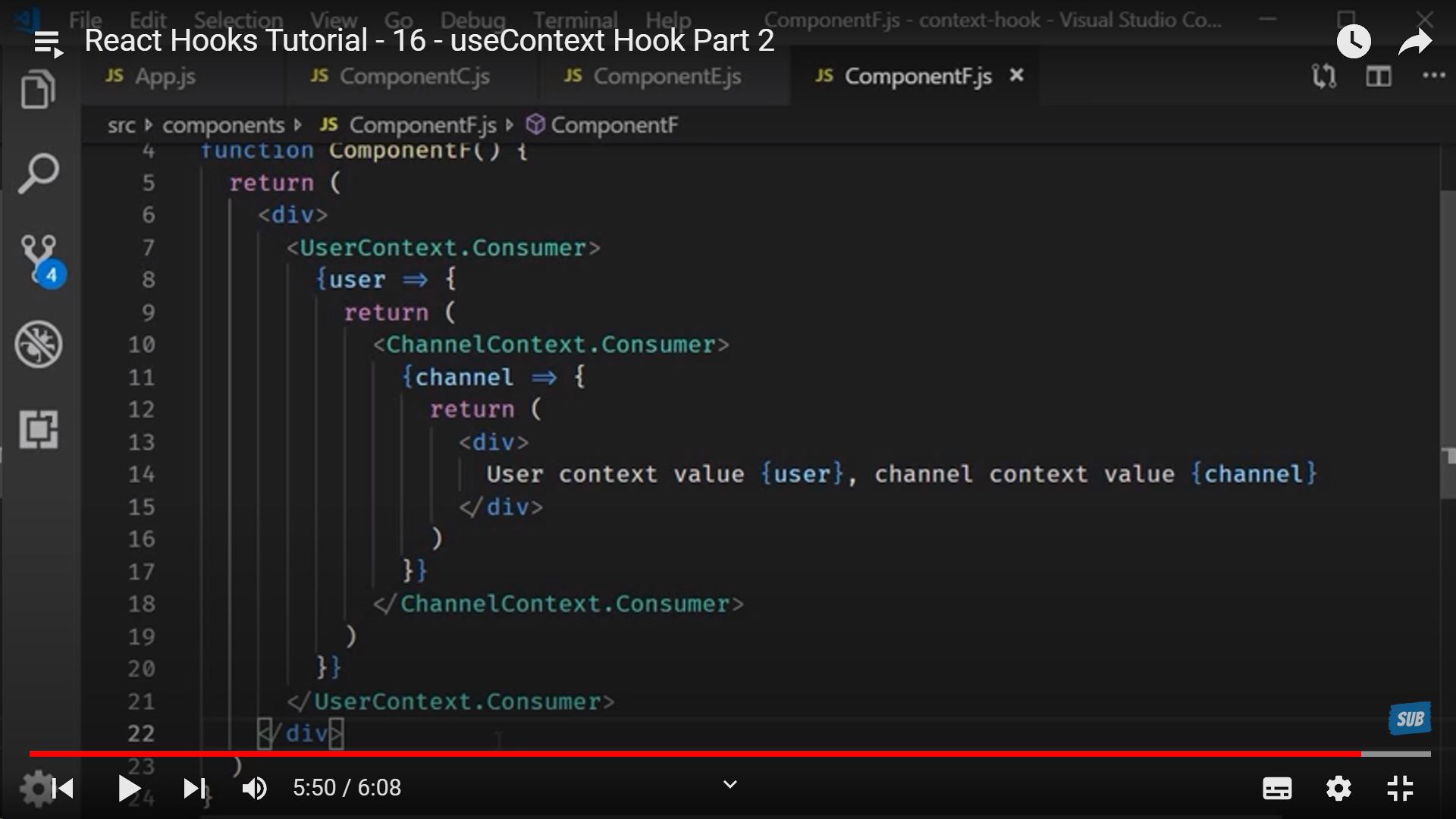

**Using context in child component**

_<u>**Shortcut for using context**</u>_

| alamfatima1999 | |

1,769,456 | affordable web development company | At Websleague, our all-affordable web development services combine quality and economy. Our team... | 0 | 2024-02-22T17:49:49 | https://dev.to/gregoryhomer/affordable-web-development-company-1n0l | At Websleague, our all-[affordable web development services](https://websleagues.com/) combine quality and economy. Our team delivers customised solutions that satisfy your company objectives while staying inside your budget by uniting affordability and information. | gregoryhomer | |

1,769,457 | Gamedev.js Survey’s all questions and answers landed on GitHub | With Gamedev.js Survey 2023 completed in December and the report published in January, I got asked... | 0 | 2024-02-22T17:53:16 | https://enclavegames.com/blog/gamedevjs-survey-github/ | github, results, gamedevjs, surveys | ---

title: Gamedev.js Survey’s all questions and answers landed on GitHub

published: true

date: 2024-02-22 17:29:26 UTC

tags: github,results,gamedevjs,surveys

canonical_url: https://enclavegames.com/blog/gamedevjs-survey-github/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/h3frzguvdt8dk14lfe6m.png

---

With [Gamedev.js Survey 2023](https://gamedevjs.com/survey/2023/) completed in December and the [report published](https://gamedevjs.com/survey/report-on-the-current-state-of-web-game-development-in-2023-is-now-published/) in January, I got asked multiple times about the raw results of this year’s answers and those from the past editions as well, especially from the open questions, and decided to publish all that [on GitHub](https://github.com/GamedevJS/Gamedev.js-Survey/).

You can find all the data we’ve collected (minus timestamps and email addresses) over the years by looking at the dedicated files:

- [answers-2021.csv](https://github.com/GamedevJS/Gamedev.js-Survey/blob/main/answers-2021.csv)

- [answers-2022.csv](https://github.com/GamedevJS/Gamedev.js-Survey/blob/main/answers-2022.csv)

- [answers-2023.csv](https://github.com/GamedevJS/Gamedev.js-Survey/blob/main/answers-2023.csv)

All the questions (2021–2023) were also published for reference, and the given file for the 2024 edition, [questions-2024.md](https://github.com/GamedevJS/Gamedev.js-Survey/blob/main/questions-2024.md), is up — feel free to send a [Pull Request](https://github.com/GamedevJS/Gamedev.js-Survey/pulls) if you have any feedback or updates to it already.

Now with the questions and answers from three consecutive editions, folks could pick up trends like the usage of specific technologies by developers, their tooling preferences, how earning money from building web games has evolved over the years, or even the overall happiness of developers themselves. Another take would be on the open questions like what the devs are struggling with, or what might be their biggest challenges in the coming year.

The **Gamedev.js Survey** officially [joined WebDX Community Group at W3C](https://end3r.com/blog/webdx-gamedevjs-survey/) recently, so there’s hope it will grow even bigger with this year’s edition, which is planned for the second half of 2024. | end3r |

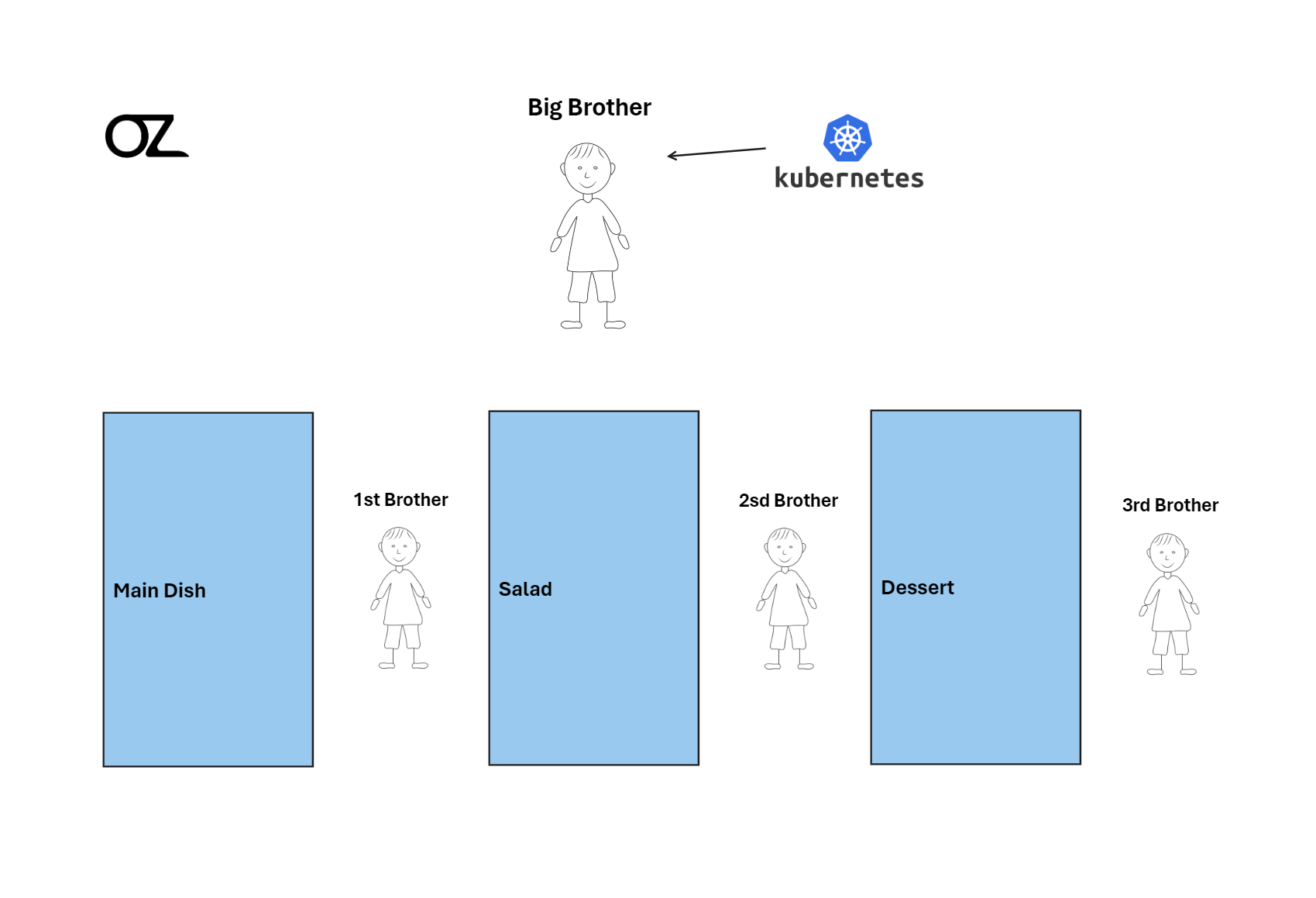

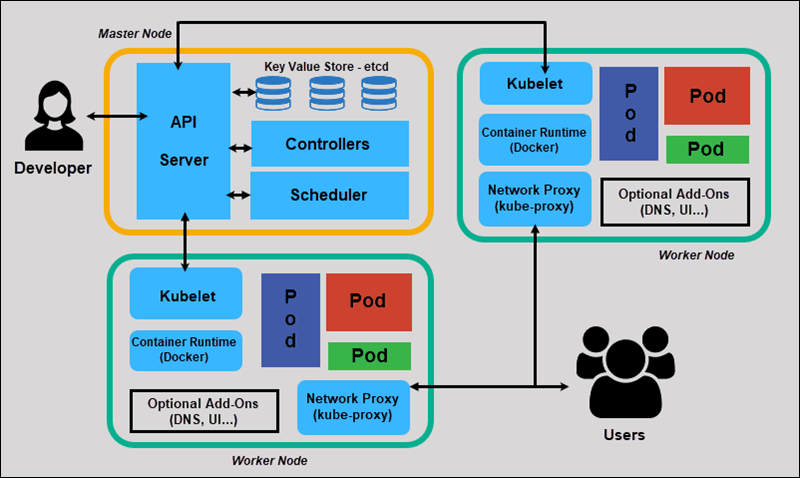

1,769,498 | Explaining Kubernetes In Kitchen | Exciting Announcement! 🎉 Prepare to Indulge Your Appetite for Knowledge! 😁 Join me on a... | 0 | 2024-02-22T19:23:40 | https://dev.to/omar_zenhom/explaining-kubernetes-in-kitchen-4men | kubernetes, devops, deployment, cloudcomputing | **Exciting Announcement! 🎉 Prepare to Indulge Your Appetite for Knowledge! 😁**

Join me on a mouthwatering journey through the world of Kubernetes, where software meets gourmet cuisine! 🍲🚀

Two days ago, my colleagues and I embarked on a challenging project, navigating the intricacies of the **Development stage** with skill and determination. Now, we’re gearing up to transition to the **Deploy stage** using a powerful tool called **Containers**. But wait, there’s more! We’ll be enlisting the help of **Kubernetes**, a software wizard that I’ll unravel for you in the most delicious way possible — through a culinary tale! 👨💻

## Start of the story

Explaining Kubernetes can be as complex as mastering a new recipe. That’s why I’m taking a different approach. In a fun analogy, understanding Kubernetes is like organizing a kitchen to cook a meal. Imagine you and your siblings are culinary prodigies, tasked with creating a gourmet meal to impress your mom. Each sibling has a role, just like Kubernetes assigns tasks to containers. From appetizers to dessert, Kubernetes orchestrates the entire process, ensuring your software runs as smoothly as a well-coordinated kitchen. 👩🍳🍴

But what exactly is Kubernetes? It’s like the master chef in your software kitchen, organizing and overseeing containers (your cooking stations) to ensure everything runs seamlessly. Just as you’d divide cooking tasks among your siblings, Kubernetes divides tasks among containers, managing everything from appetizers to dessert. It scales, balances loads, and automates deployment, making it the ultimate chef in your software kitchen! 👨🍳👨🍳

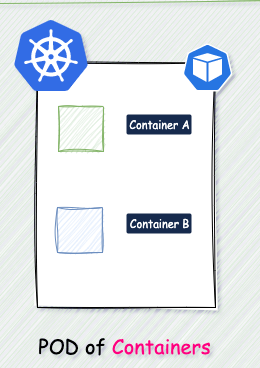

If we dive into the technical definition, Kubernetes is a **“container-orchestration tool,”** a tool for coordinating containers. To simplify, let’s consider it an organizational tool for the chef — you! Each container represents a dish where the food (your application) will be placed. 🍽️🔧

Moving on to **Pods**, which contain all the appetizer dishes served in the meal. Each dish serves a purpose, just like Pods work together to serve your application. They all have a common goal: to be appetizers for the main course. And just like in a meal, each Pod doesn’t interfere with the other because they are focused on their own tasks.

Now, let’s talk about the functions of Kubernetes:

1- **Scaling:** Like a chef adjusting the cooking pace, Kubernetes knows how to organize and run or turn off Pods based on the workload. So, if you’re running late in preparing the food, Kubernetes can speed up your work to finish quickly because the family is eagerly waiting to eat! 😋

2- **Automated deployment:** Kubernetes streamlines the deployment process, saving you time and effort. It’s like having a recipe that you follow to cook 📖👨🍳.

3- **Load balancing:** If there’s a high demand for a dish, Kubernetes can regulate the load by increasing the number of Pods working in that area. For example, if your younger brother is struggling to make dessert, you can clone him and tell your brother with version 2.0 to help your brother with version 1.0!

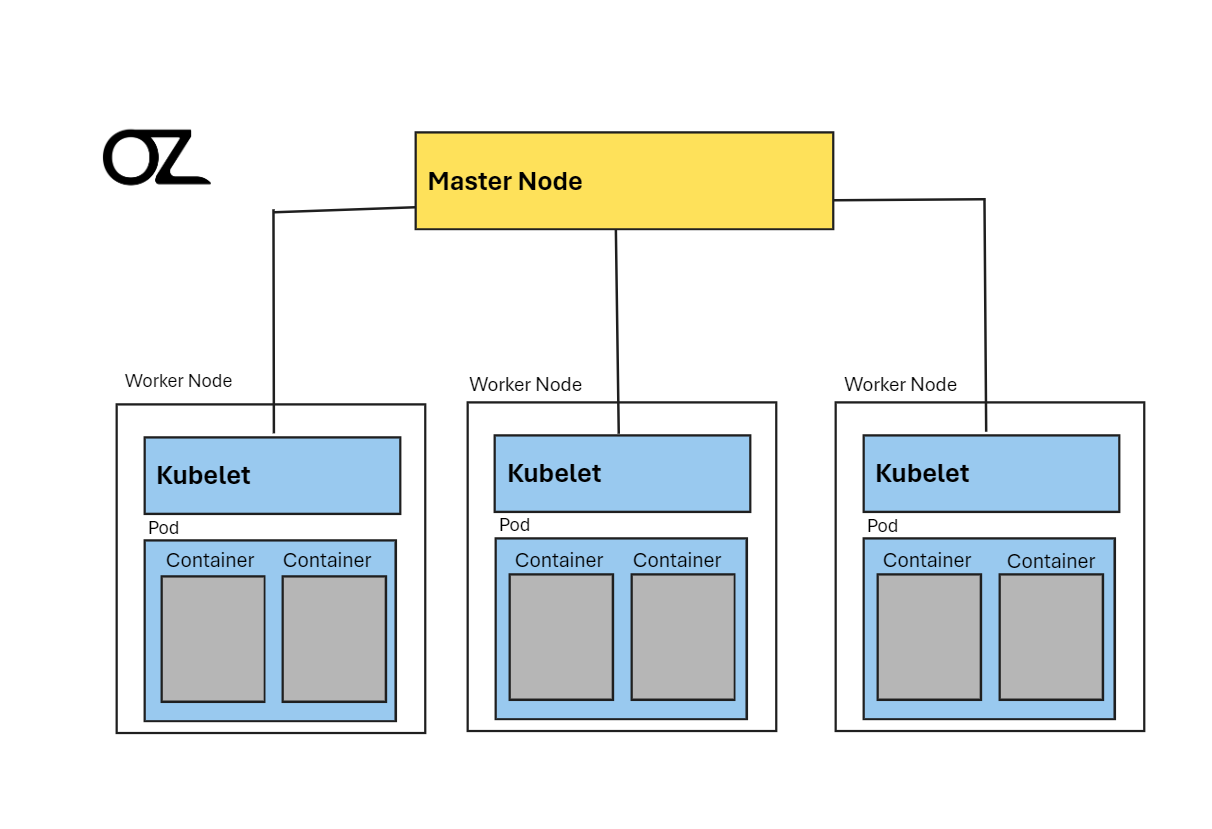

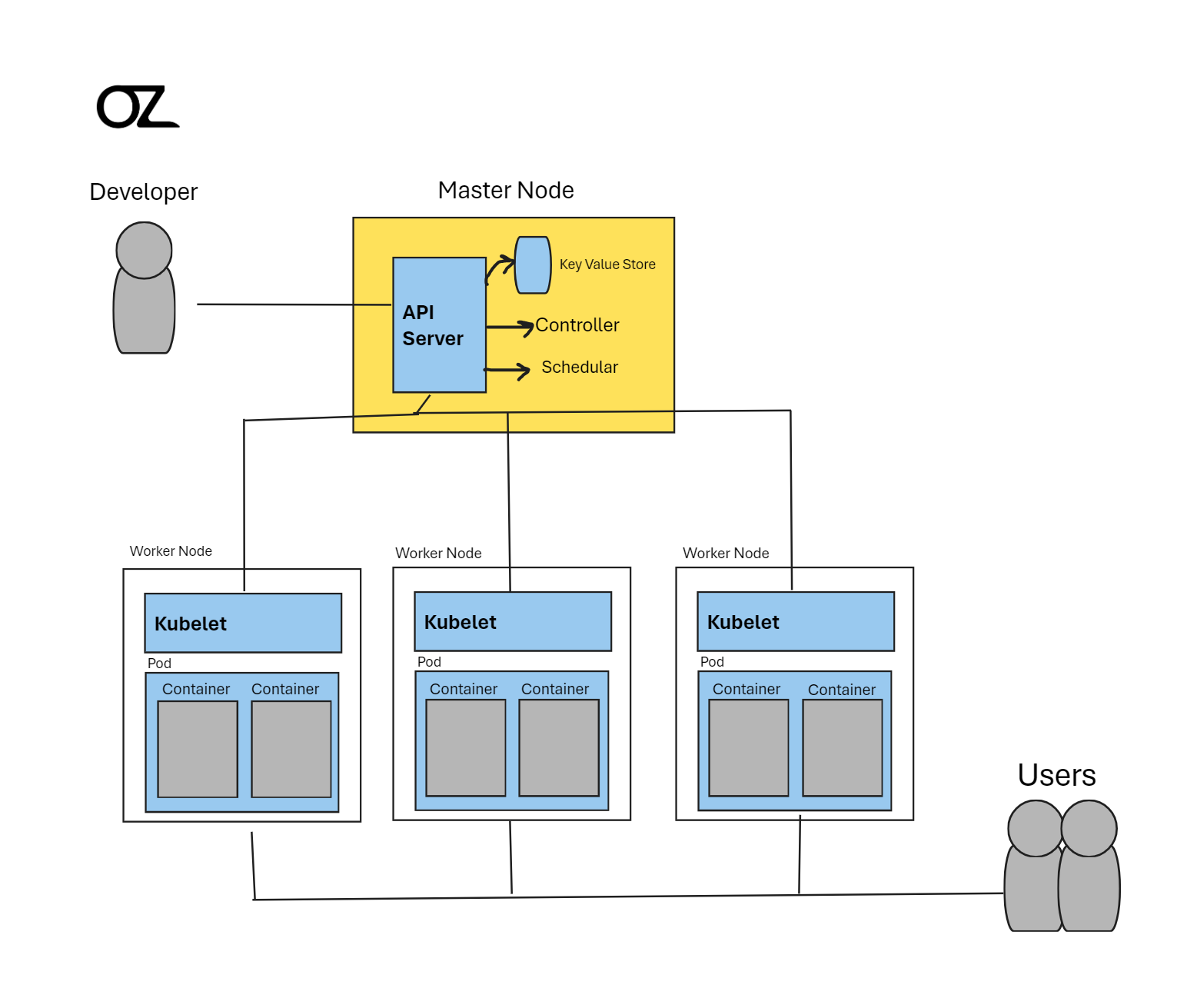

Back to the point, imagine your kitchen as a bustling restaurant, divided into three sections: appetizers, main course, and dessert. Each section represents a **“Worker Node,”** like having three tables in a large kitchen, each dedicated to a specific part of the meal preparation. And guess who’s the head chef? That’s right, you’re the **“Master Node,”** overseeing the entire operation with precision and flair! 🧐

Your job is to ensure that each section works seamlessly, much like Kubernetes supervising containers in your software kitchen. To assist you, there’s a **“kubelet”** at each Worker Node, acting as a diligent supervisor to ensure everything runs smoothly on each Node.

Now, let’s dive into why we use Kubernetes and its benefits. Just as a restaurant manager needs to know why they use certain tools to improve efficiency and customer satisfaction, understanding Kubernetes helps developers manage applications more effectively.

Think of Kubernetes as the big brother in the kitchen, organizing and directing the workflow. If, for instance, the **“mother”** (representing a user) wants to know what’s happening in the kitchen or needs something changed, she talks to the big brother (**Kubernetes**) to get all the details. From a technical standpoint, programmers can gather information about all the pods, nodes, and services, allowing them to make informed decisions about resources and operations.

## Conclusion

In essence, Kubernetes is like a well-oiled kitchen, where everyone has a role, everything is organized, and the result is a delicious meal that satisfies everyone’s cravings! 😋

I’ve served up this explanation like a well-crafted dish, breaking down complex concepts into bite-sized pieces for everyone to enjoy. The table is set, the meal is ready, and now it’s time to dig in and feast on your newfound knowledge! 🎓🍽️

But hey, the kitchen is always open for more culinary adventures! Don’t forget to hit that follow button to join us in the kitchen of software development, where Kubernetes is the key ingredient to success! 🚀.

I’d love to hear your thoughts on this feast of an explanation and whether my quirky drawings added that extra flavor to your learning experience 😂😂😁.

I’ll also provide some sources for those craving more details. Until next time, happy cooking with Kubernetes!

Thank you for reading!

P.S:

You can follow me:

[https://www.linkedin.com/in/omarwaleedzenhom/](https://www.linkedin.com/in/omarwaleedzenhom/)

[https://www.facebook.com/OmarZenho](https://www.facebook.com/OmarZenho)

My Portfolio:

[https://omarzen.github.io/Omar-Zenhom/](https://omarzen.github.io/Omar-Zenhom/)

| omar_zenhom |

1,769,536 | IP Addresses: Digital Connectivity | Title: Understanding IP Addresses: A Comprehensive Guide Introduction The term "IP" stands for... | 0 | 2024-02-22T20:37:16 | https://dev.to/noblepearl/ip-addresses-digital-connectivity-jf4 | beginners, productivity, learning | **Title: Understanding IP Addresses: A Comprehensive Guide**

_Introduction_

The term "IP" stands for "Internet Protocol," a set of rules governing data format for communication over the Internet or a local network. It serves as a unique identifier assigned to each device connected to a computer network, akin to phone numbers for our devices. This article explores the significance, generation, and types of IP addresses, delving into the complexities that define our digital communication landscape.

**IP Address Basics**

An IP address allows devices to connect, enabling communication over the Internet. It plays a crucial role in differentiating computers, routers, and websites. The Internet Assigned Numbers Authority (IANA), a division of the Internet Corporation for Assigned Names and Numbers (ICANN), oversees the allocation of IP addresses. The 32-bit length of an IPv4 address and its format, consisting of four sets of numbers separated by dots, are key elements that lay the foundation for our interconnected digital world.

_Understanding IP Address Components_

The subnet mask and default gateway accompany the IP address, and both are integral to network communication. A subnet mask (also known as a netmask) looks like an IP address and marks which part of an address identifies the network and which part identifies the host. For instance, on a home network whose router is 192.168.1.1, the subnet mask "255.255.255.0" means the first three numbers are the network portion, so every device on that network has an IP address starting with "192.168.1."
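The split between network and host portions can be checked with Python's standard `ipaddress` module. This is a minimal sketch; the `192.168.1.0` network and the two device addresses are illustrative values, not taken from any real setup.

```python
import ipaddress

# A network written as address/netmask; 255.255.255.0 is the same as a /24 prefix.
network = ipaddress.ip_network("192.168.1.0/255.255.255.0")

print(network.prefixlen)      # 24 bits identify the network
print(network.num_addresses)  # 256 addresses share that network portion

# A device is on this network only if its network portion matches.
inside = ipaddress.ip_address("192.168.1.42") in network
outside = ipaddress.ip_address("192.168.2.42") in network
print(inside, outside)        # True False
```

Traffic for an address outside the network, such as the second one here, is what gets handed to the default gateway.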

_IP Address Allocation_

Wireless routers play a crucial role in IP address allocation through DHCP (Dynamic Host Configuration Protocol). The subnet mask aligns with the IP address, determining the network portion and the host portion. Recognizing the class of an IP address, whether A, B, or C, simplifies understanding.

_Transition to IPv6_

With the pool of 32-bit IPv4 addresses exhausted, IPv6 was introduced; its 128-bit addresses provide a vastly larger address space. Additionally, private IP addresses, which are reused inside local networks, conserve public addresses. The default gateway, whose address often ends in ".1," carries traffic beyond the local network.
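To put numbers on the difference in address space, and to show how a private address can be told apart from a public one, here is a small sketch using Python's standard `ipaddress` module. The sample addresses are chosen for illustration only.

```python
import ipaddress

# Size of the whole address space for each protocol version.
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses
ipv6_total = ipaddress.ip_network("::/0").num_addresses
print(ipv4_total)                # 4294967296, i.e. 2**32
print(ipv6_total == 2 ** 128)    # True

# Private ranges are reserved for local networks and are not routed publicly.
SAMPLES = ["192.168.1.10", "10.0.0.5", "8.8.8.8"]
private_flags = {text: ipaddress.ip_address(text).is_private for text in SAMPLES}
for text, is_private in private_flags.items():
    print(text, "private" if is_private else "public")
```

The first two sample addresses fall in reserved private ranges, while the third is a publicly routable address.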

**The Role of IP Addresses in Networking**

_Layer 3: The Network Layer_

IP addresses belong to layer 3 (the network layer) of the OSI model, which corresponds to the internet layer of the TCP/IP model. IP addresses, akin to a language for devices, facilitate communication, allowing computers worldwide to exchange information seamlessly.

_How IP Addresses Work_

IP addresses function behind the scenes, allowing devices to communicate through set guidelines. The assignment of IP addresses, both private and public, occurs based on network locations. Devices may have dynamic or static public IP addresses, with dynamic IPs changing regularly.

**Types of IP Addresses**

_Consumer IP Addresses_

Individuals and businesses possess private and public IP addresses. Private IP addresses are assigned to devices within a network, while the public IP address represents the entire network. Dynamic and static public IP addresses cater to different needs.

_Website IP Addresses_

For website owners, the choice between shared and dedicated IP addresses depends on hosting plans. Shared hosting involves multiple websites on a single server, each with a shared IP address. In contrast, dedicated IP addresses are crucial for businesses hosting their servers.

_Extended Discussion on IP Address Types_

Public IP addresses come in two forms – dynamic and static. Dynamic IP addresses change automatically and regularly, providing cost savings and enhanced security. Static IP addresses remain consistent, crucial for businesses hosting their servers.

**How to Look Up IP Addresses**

Checking your router's public IP address is as simple as searching "What is my IP address?" on Google. Finding private IP addresses varies by platform and can be accessed through system preferences or settings.

_Extended Discussion on IP Address Lookup_

If you need to check the IP addresses of other devices on your network, accessing the router provides a comprehensive list. Navigating to "attached devices" displays all devices recently or currently attached to the network, including their IP addresses.

**Security Concerns and Protection**

_Criminal Exploitation of IP Addresses_

Criminals can exploit IP addresses by tracking online activities, posing risks such as location tracking and network attacks. Awareness of these risks and implementing protective measures, including proxies or Virtual Private Networks (VPNs), is crucial for safeguarding IP addresses.

_Risks Include:_

Downloading illegal content using your IP address

Tracking down your location

Directly attacking your network

Hacking into your device

_Protective Measures:_

IP addresses can be protected and hidden by using a proxy server or a Virtual Private Network (VPN).

VPNs are strongly advised in certain situations to ensure privacy.

_Extended Discussion on IP Address Security_

Understanding the risks associated with IP addresses is paramount. Criminals can track down your IP address through various online activities, posing threats such as network attacks and identity impersonation. Protecting your IP address becomes essential, and measures like proxy servers and VPNs offer a shield against potential cyber threats.

_Conclusion_

In conclusion, IP addresses are fundamental in the digital landscape, serving as identifiers that facilitate seamless communication across networks. Understanding their intricacies, allocation methods, and potential risks is crucial in navigating the digital realm securely. The evolution of IP addresses, from IPv4 to IPv6, showcases the dynamic nature of our digital infrastructure. As we continue to rely on these unique identifiers, staying vigilant about security measures ensures a robust and protected online presence. | noblepearl |

1,769,639 | Have you ping ponged between being an individual contributor and being an engineering manager? | If so, what did you learn? | 0 | 2024-02-22T22:19:58 | https://dev.to/jess/have-you-ping-ponged-between-being-an-individual-contributor-and-being-an-engineering-manager-2jo | discuss, career | If so, what did you learn?

| jess |

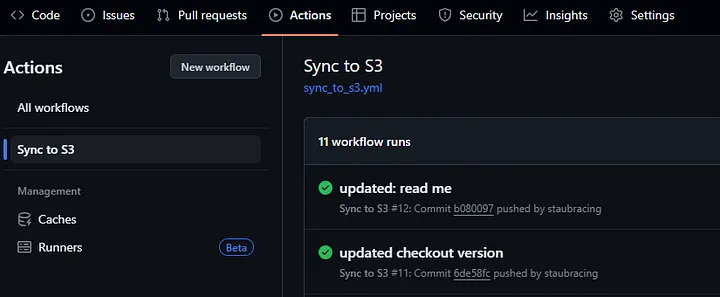

1,769,647 | Introducing a GitHub Action for Interacting with Lagoon | https://github.com/uselagoon/lagoon-action We're excited to announce the release of the Lagoon... | 0 | 2024-03-07T03:47:09 | https://dev.to/uselagoon/introducing-a-github-action-for-interacting-with-lagoon-5a91 | github, action, workflow | [https://github.com/uselagoon/lagoon-action](https://github.com/uselagoon/lagoon-action)

We're excited to announce the release of the Lagoon Action – a GitHub Action that allows you to integrate with Lagoon, making it easy for you to automate your deployment workflows and manage Lagoon environments directly from your GitHub repository.

Currently, the Lagoon Action allows you to:

- Deploy branches and PRs

- Upsert environment variables

Taken together, these two features open up a range of new deployment strategies on Lagoon.

For instance, Lagoon currently responds to only a subset of GitHub webhook options. With the Lagoon Action, you can run your CI processes in GitHub itself and, instead of having a webhook call deploy your environment, choose to deploy only if the CI process passes.

## Getting Started

Getting started with the Lagoon Action is straightforward. [Add the action to your GitHub workflow](https://docs.github.com/en/actions/quickstart), configure the required parameters, and you're ready to automate your Lagoon deployments. [Check out the GitHub repository](https://github.com/uselagoon/lagoon-action) for detailed documentation and examples.

## Requirements

To use the Lagoon CLI Action, ensure you have set up a GitHub Actions secret containing a private SSH key with the necessary permissions added to the Lagoon API. This key will be used for authentication during the deployment process.

We’d love to know if you use this and how it helps your workflow! Hop into the [Lagoon Discord](https://discord.gg/te5hHe95JE) and drop us a line!

And don’t forget our [2024 Community Survey](https://dev.to/uselagoon/2024-community-hours-survey-50m5)! Let us know how we can best serve the Lagoon community.

| alannaburke |

1,769,752 | Symfony Station Communiqué — 16 February 2024. A look at Symfony, Drupal, PHP, Cybersec, and Fediverse News! | This communiqué originally appeared on Symfony Station. Welcome to this week's Symfony Station... | 0 | 2024-02-23T03:02:42 | https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024 | symfony, drupal, php, fediverse | This communiqué [originally appeared on Symfony Station](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024).

Welcome to this week's Symfony Station communiqué. It's your review of the essential news in the Symfony and PHP development communities focusing on protecting democracy. Because open-source equals open societies, peeps. We also cover the cybersecurity world and the Fediverse (more open-source).

We cover a brawl in the Mastodon community this week. And there is good content in all of our categories, so please take your time and enjoy the items most relevant and valuable to you. This is why we publish on Fridays. So you can savor it over your weekend. 😉

Or jump straight to your favorite section via our website.

- [Symfony](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#symfony)

- [PHP](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#php)

- [More Programming](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#more)

- [Fighting for Democracy](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#other)

- [Cybersecurity](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#cybersecurity)

- [Fediverse](https://symfonystation.mobileatom.net/Symfony-Station-Communique-16-February-2024#fediverse)

Once again, thanks go out to Javier Eguiluz and Symfony for sharing [our communiqué](https://symfonystation.mobileatom.net/Symfony-Station-Communique-09-February-2024) in their [Week of Symfony](https://symfony.com/blog/a-week-of-symfony-893-5-11-february-2024).

**My opinions will be in bold. And will often involve cursing. Because humans. And I have plenty of them this week.**

---

## Symfony

As always, we will start with the official news from Symfony.

Highlight -> "This week, Symfony maintained versions focused on fixing bugs and updating the translation of validation messages to many of the supported languages. Meanwhile, the upcoming Symfony 7.1 version improved the parsing/linting methods of ExpressionLanguage and also improved the BinaryFileResponse. Lastly, we published more details about the talks of the upcoming SymfonyLive Paris 2024 conference."

[A Week of Symfony #893 (5-11 February 2024)](https://symfony.com/blog/a-week-of-symfony-893-5-11-february-2024)

SymfonyCasts has:

[This week on SymfonyCasts!](https://5hy9x.r.ag.d.sendibm3.com/mk/mr/sh/1t6AVsd2XFnIGBrRERGJumwddAsNT1/bKFiWCP8K8ZT)

---

## Featured Item

Cory Doctorow opines on a recent study:

[Big Tech disrupted disruption](https://doctorow.medium.com/big-tech-disrupted-disruption-2a57b6178a00)

**More on why these enshittified mofos are the enemy of humanity.**

Stanford Law School has all the gory details:

[Co-opting Disruption](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4713845)

---

### This Week

Filip Horvat explores:

[Mastering the ‘Decorator’ Design Pattern in Symfony](https://medium.com/@fico7489/mastering-the-decorator-design-pattern-in-symfony-b633c345dd77)

[Mastering the ‘Adapter’ Design Pattern in Symfony](https://medium.com/@fico7489/mastering-the-adapter-design-pattern-in-symfony-cb07b157bb34)

[Mastering the ‘Abstract Factory’ Design Pattern in Symfony](https://medium.com/@fico7489/mastering-the-abstract-factory-design-pattern-in-symfony-386c2c95bd9d)

Danil Bifidokk examines:

[Asynchronous state machine with Symfony Workflows](https://dev.to/bifidokk/asynchronous-state-machine-with-symfony-workflows-35jl)

Oliver Davies broadcasts:

[Episode 10: Twig, Symfony and SymfonyCasts with Ryan Weaver](https://www.oliverdavies.uk/podcast/10-ryan-weaver-symfonycast)

**Anything with Ryan involved is awesome.**

Alberto Robles (who may or may not be a robot) shows us:

[How to secure your Symfony Apps with HTTPS](https://bertorobles.medium.com/how-to-secure-your-symfony-apps-with-https-2bf238378633)

Ludo Dev asks:

[Recurring actions? Symfony and RabbitMQ for asynchronous events...](https://en.developpeur-freelance.io/rabbitmq-symfony/)

Vandeth Tho shows us:

[How I build platform that focusing on making Symfony workflow configuration easier with SymFlowBuilder](https://medium.com/@thovandeth/how-i-build-platform-that-focusing-on-making-symfony-workflow-configuration-easier-with-e26a38eea3ed)

### eCommerce

Specbee looks at:

[Driving E-Commerce Revenue Success with Drupal Commerce](https://www.specbee.com/blogs/driving-ecommerce-revenue-success-drupal-commerce)

Dragan Rapić shares:

[Securing Your Shopware 6 Shop](https://levelup.gitconnected.com/securing-your-shopware-6-shop-a10ed49df4fd)

### CMSs

TYPO3 has a case study:

[TYPO3 and DMK Power Digital Transition to Responsive, Accessible City Services](https://typo3.com/customers/case-studies/city-of-leipzig)

And:

[TYPO3 13.0.1, 12.4.11 and 11.5.35 security releases published](https://typo3.org/article/typo3-1301-12411-and-11535-security-releases-published)

Mike Street explores:

[Testing the frontend of a TYPO3 project](https://www.mikestreety.co.uk/blog/testing-the-frontend-of-a-typo3-project/)

**Nice animated logo.**

<br/>

Contao has:

[Contao News](https://contao.org/de/news/contao-5-3-lts-ist-da)

<br/>

Drupal announces:

[Single Sign-On is coming to Drupal.org thanks to Cloud-IAM](https://www.drupal.org/drupalorg/blog/single-sign-on-is-coming-to-drupalorg-thanks-to-cloud-iam)

[It's time to migrate from Drupal 7. Let me show you (how to start)](https://www.drupal.org/drupalorg/blog/its-time-to-migrate-from-drupal-7-let-me-show-you-how-to-start)

[Bounty program extension (for innovative modules and ideas)](https://www.drupal.org/drupalorg/blog/bounty-program-extension-for-innovative-modules-and-ideas)

[Turning Takers into Makers: The enhanced Drupal Certified Partner Program](https://www.drupal.org/association/blog/turning-takers-into-makers-the-enhanced-drupal-certified-partner-program)

**This has set off a brouhaha with independent developers and small agencies. At a minimum, Drupal sucks at naming things.**

Core contributor Gábor Hojtsy has:

[Looking for your input for DrupalCon Portland 2024 initiative highlights](https://www.hojtsy.hu/blog/2024-feb-12/looking-your-input-drupalcon-portland-2024-initiative-highlights)

[Onwards to Drupal 11 - ways to get involved](https://www.hojtsy.hu/blog/2024-feb-12/onwards-drupal-11-ways-get-involved)

**Recognize contributors everywhere and show them some love.**

[Upgraded my blog from Drupal 7 to Drupal 10 in less than 24 hours with the open source Acquia Migrate Accelerate](https://www.hojtsy.hu/blog/2024-feb-09/upgraded-my-blog-drupal-7-drupal-10-less-24-hours-open-source-acquia-migrate)

Quick aside:

**You know what's easier than going from 7 to 10 (which is a pain in the ass)? Moving to [Frontkom's Gutenberg Theme](https://www.drupal.org/project/gutenberg_starter) from DXPR's distribution. In an update from last week, we moved our [Mobile Atom Media site](https://media.mobileatom.net/) over in less than 12 hours, and 90% of that was content updates and custom CSS. :)**

**Note that the Gutenberg Starter Theme is not ready for production even though I am using it that way. There are a few bugs with certain blocks. Obviously, it doesn't have the same capabilities as the WordPress version (they are working on that). Still, if you are comfortable using the code editor rather than the visual one (and write HTML and CSS), you can come close. Plus it is compatible with Layout Builder and Drupal blocks (so you can keep your business logic).**

**Unfortunately, when I tried to create a subtheme, add to regions, etc., I got the white screen of death. So keep that in mind and take them at their word that it is not ready for production yet.**

TrueSummit announces:

[Search Web Components Alpha 2 Release](https://truesummit.dev/blog/search-web-components-alpha-2)

The Drop Times has an interview:

[André Angelantoni Discusses Automated Testing Kit Module](https://www.thedroptimes.com/37084/andre-angelantoni-discusses-automated-testing-kit-module)

ChapterThree has a case study:

[Apigee Kickstart in Action: Powering Financial Services](https://www.chapterthree.com/blog/apigee-kickstart-action-powering-financial-services)

Matt Glaman explores:

[Verifying your Drupal site’s configuration against changes from dependency updates](https://mglaman.medium.com/verifying-your-drupal-sites-configuration-against-changes-from-dependency-updates-385c08b332d9)

Oliver Davies has a few quick takes:

[Symfony conventions making their way to Drupal](https://www.oliverdavies.uk/archive/2024/02/12/symfony-conventions-making-their-way-to-drupal)

**The more, the merrier. And it's why I'm mastering Drupal before putting my big boy pants on and moving up to Symfony.**

[Major version updates are just removing deprecated code](https://www.oliverdavies.uk/archive/2024/02/14/major-version-updates-are-just-removing-deprecated-code)

ImageX shows us:

[How Project Browser Transforms Module Discovery and Installation Experience](https://imagexmedia.com/blog/drupal-project-browser)

DrupalizeMe demonstrates a great new feature:

[New in Drupal 10.2: Create a New Field UI](https://drupalize.me/blog/new-drupal-102-create-new-field-ui)

Golems examines:

[Exploring Drupal's Entity API: Tips and Tricks for Better Site Development](https://gole.ms/guidance/exploring-drupals-entity-api-tips-and-tricks-better-site-development)

### Previous Weeks

Acceseo looks at:

[Import map, simplificando procesos en el desarrollo web](https://www.acceseo.com/import-map-simplificando-procesos-en-el-desarrollo-web.html)

---

## PHP

### This Week

Mohammad Roshandelpoor explores:

[Makefile: Simplifying Command Execution and Automation](https://medium.com/@mohammad.roshandelpoor/makefile-simplifying-command-execution-and-automation-9dbaa6d91ac8)

Matthias Noback announces a:

[New edition for the Rector Book](https://matthiasnoback.nl/2024/02/new-edition-for-the-rector-book/)

**Similar to Matt Glaman's Retrofit project with Drupal 7 migration, I feel that products like Rector don't get the credit they deserve. So, if you work with legacy sites, I would buy the book.**

Camilo Herrea examines:

[Basic route management with PHP and Apache httpd](https://medium.com/winkhosting/basic-route-managament-with-php-and-apache-httpd-98f9126b1384)

**This was informative for a mainly frontend developer.**

Dragan Rapić shares:

[Troubleshooting PHP Errors](https://levelup.gitconnected.com/troubleshooting-php-errors-87f97f6e68d4)

Hamid Rohani looks at: