id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,817,668 | TASK - 3 | The difference between functional testing and non-functional testing with example Functional... | 0 | 2024-04-10T14:55:47 | https://dev.to/kpsshankar/task-3-4ip2 | The difference between functional testing and non-functional testing with example

Functional testing:

Functional testing is a crucial quality assurance process in software development that evaluates whether a software application functions correctly according to its specifications and requirements. It focuses on testing the functionality of the application, ensuring that it performs its intended tasks accurately.

Here is an example for functional testing

Consider a basic e-commerce website that allows users to browse products, add items to their carts, and complete a purchase. Functional testing for this application would involve testing various aspects of its functionality:

User Registration: verify that users can successfully create an account with valid information.

Product search: Test whether the search function accurately retrieves products based in keywords, categories or filters.

Add to cart: Check if users can add products to their cart and that the cart accurately displays the selected items and their quantities.

Checkout process: Validate that users can process through the checkout process smoothly. This includes entering shipping information, payment details and reviewing the order before confirming it.

Payment processing: Ensure that payment are processed accurately, with appropriate validation for credit card information, addresses and transaction confirmations.

Order confirmation: Verify that users receive an order confirmation email after completing a purchase.

User account: Test various account-related functionalities, such as updating personal information resetting passwords, and viewing order history.

Compatibility: Check if the website functions correctly across different browsers and devices.

Functional testing involves both positive testing and negative testing. Test cases are designed based on the applications functional requirements, and test results are compared against expected outcomes to identify any deviation or defects.

Automated testing tools and manual testing can be used for functional testing. Regression testing, which retests existing functionalities after changes, is also a vital part of the process to ensure that new updates or features do not break existing functionality

In summary, functional testing is essential for assuring the reliability and correctness of software applications, and it involves systematically testing each function and feature to ensure it meets the specified requirements like the example provided for e-commerce website

Non-functional testing:

Non-functional testing often referred to as quality testing, focuses on evaluating the characteristics of a software system that are not directly related to its functional behavior. These characteristics include performance, usability, reliability, scalability, security and more.

Performance testing assesses how well a software system performs under various conditions. It ensures that the application meets performance expectations and can handle a specified load.

Some example scenarios for performance testing:

Load testing: This assesses how the system performs under expected and peak loads. For instance, an e-commerce website, load testing would involve simulating a large number of concurrent users accessing the site to ensure it can handle heavy traffic during sales events.

Stress testing: Stress testing pushes the system beyond its specified limits. For example, lets take a mobile banking app, stress testing would involve initiating transaction with a higher-than-usual number of concurrent users to identify performance bottlenecks or system failures.

Scalability testing: Scalability testing checks if the system can handle increased load by adding more resources or servers. It ensures that the application can scale smoothly as the user base grows.

Response time testing: This measures how quickly the system responds to user actions. For a video streaming service, response time testing would evaluate the time it takes to start playing a video after a user clicks the play button

Usability testing:

It focuses on the user friendliness and overall user experience of the software. It ensures that the application is easy to navigate and meets user expectations. For example:

User interface testing: Evaluates the design and layout of the user interface. Testers assess whether buttons, menus and navigation are intuitive and user-friendly.

Accessibility testing: Checks if the application is accessible to users with disabilities, ensuring compliance with accessibility standards

Security testing:

Security testing identifies vulnerabilities and weaknesses in the software that could lead to breaches or data leaks

Penetration testing: Simulates cyberattacks to identifies vulnerabilities that hackers could exploit, suck as SQL injection or cross site scripting vulnerabilities.

Authentication testing: Verifies the effectiveness of users authentication mechanism, ensuring that only authorized users can access sensitive information.

So, non-functional testing is crucial to ensure that software not only functions correctly but also meets performance usability, security, and other critical criteria. Conducting these tests helps identify and mitigate risks and improve the overall quality of the software product. | kpsshankar | |

1,795,797 | Enterprises Beware — Google Cloud Fleet Routing Might Be the Wrong Choice for You | Google Cloud Fleet Routing (CFR) is a major player in logistics, dominating the market. Despite its... | 0 | 2024-03-20T03:24:24 | https://dev.to/lara9963/enterprises-beware-google-cloud-fleet-routing-might-be-the-wrong-choice-for-you-3oig | google, cloudfleetrouting | Google Cloud Fleet Routing (CFR) is a major player in logistics, dominating the market. Despite its strengths, though, it might not be the best fit for large-scale enterprises. In fact, many such CFR users are seeking better options. Let’s explore why.

5 Reasons Why Google CFR Isn’t Right for Enterprise Logistics

- Suboptimal Routing Efficiency

User reports and benchmarking exercises have established that Google CFR’s routing efficiency can often be subpar and not by a small margin. Results show that CFR frequently generates route plans that take over 50% more time and/or distance compared to higher-performing alternatives like NextBillion.ai’s Route Optimization API. This is, without a doubt, Google CFR’s biggest weakness. Though route optimization solutions serve many functions, performing poorly at the primary one — route optimization itself — is a cardinal sin. Opting for CFR might mean sacrificing efficiency, potentially leaving money on the table due to ineffective asset utilization. Suboptimal routes cause inefficiencies, increased operational costs, longer delivery times and potential customer dissatisfaction. These are compromises nobody wants to make, let alone billion-dollar enterprises.

- Absence of Truck Routing

CFR’s inability to support truck-specific routing is a huge omission. Large organizations often have middle-mile and other truck-related use cases, not just last-mile deliveries with cars. Truck routing involves intricacies not applicable to traditional car routing, like customized routes for different truck types and driver safety regulations, handling diverse load capacities and navigating specific road restrictions according to truck size, weight and type of cargo. The platform’s inability to address such trucking specificities makes it somewhat unidimensional — and it’s not on the dimension of enterprise logistics. CFR primarily caters to last-mile scenarios involving conventional cars, leaving a crucial gap for larger enterprises that require a more comprehensive solution.

[Discover why NextBillion.ai is the best alternative to Google Cloud Fleet Routing (CFR) for route optimization.

](https://nextbillion.ai/compare/nextbillionai-vs-google-cloud-fleet-routing)

- Supply Chain Complexity

Building on the theme of ignoring the first- and middle-mile aspects of logistics, CFR lacks the sophistication required to handle the dynamics of supply chain optimization in larger enterprise operations. For instance, it won’t let you designate something as basic as a depot! Is it possible to get the best results from a solution that ignores such a core concept in enterprise logistics? That’s not all. CFR fails to offer custom objective functions like minimizing vehicles used, lowering transportation costs, ensuring equitable task distribution and maximizing on-time deliveries. It also does not support zone-based allocation or complex task sequencing. Such inflexibility restricts users from tailoring the optimization process to suit their needs. In the context of today’s elaborate supply chains, that’s a severe handicap.

- Pricing Rigidity

Speaking of inflexibility, Google CFR’s fixed pricing model can be a challenge for large organizations with differing business models, usage patterns and evolving operational requirements. Rigid pricing that doesn’t account for these kinds of variances makes it difficult for companies to accurately forecast costs, and nothing good ever comes of that. With NextBillion.ai, on the other hand, you’ll find that flexibility is one of our defining characteristics, and that holds true for pricing as well. We bring a more nuanced approach to the conversation, allowing customers to choose between asset-based pricing and usage-based pricing to align with their operational and financial needs.

- Poor Customer Support

Route optimization solutions generally don’t make for the best DIY products. Most customers would require technical support during the implementation phase, except in the simplest of use cases. Even after successful integration into the tech stack, solutions can sometimes fail to deliver on expectations due to poor adoption. This can be avoided with high-quality support that proactively eliminates hindrances for users. It’s no secret that Google CFR customers frequently face difficulties getting any level of support for their problems, let alone the kind of close attention that such complex solutions demand. Meanwhile, NextBillion.ai’s support team is one of our greatest strengths. Our customers rave about it, and we have the credentials to back up our confidence.

Selecting a route optimization solution takes meticulous consideration to align with both current requirements and future scalability. While CFR may work for certain scenarios, its practical limitations become apparent when dealing with the complex needs of larger enterprises. From the absence of critical features in logistics optimization to challenges in customer support and pricing rigidity, enterprises exploring comprehensive route optimization solutions may find more fitting alternatives in platforms that offer advanced technical capabilities, such as NextBillion.ai. | lara9963 |

1,795,809 | WeTest Compatibility Testing: The Ultimate Cloud Testing Experience for Mobile, PC & Console Games | WeTest Compatibility Testing offers a comprehensive compatibility testing service, catering to the... | 0 | 2024-03-20T03:51:45 | https://dev.to/wetest/wetest-compatibility-testing-the-ultimate-cloud-testing-experience-for-mobile-pc-console-games-2nnf | compatibility, compatibilitytesting, testing, qa | WeTest Compatibility Testing offers a comprehensive compatibility testing service, catering to the needs of mobile, PC, and console game developers. With stable and efficient cloud testing experiences, we ensure your game runs seamlessly across various platforms.

Launching a game in different regions and platforms comes with compatibility challenges, including device distribution, fragmentation, and updates. Building your own testing capabilities can be costly and inefficient, requiring significant investments in equipment, hard-to-obtain development kits, and high labor costs. However, by choosing WeTest, you gain access to extensive device coverage, efficient testing execution, rich reporting data, and expert assistance in problem identification and analysis.

Unrivaled professionalism combined with broad coverage of the game's core scenarios sets us apart. Our diverse range of cloud devices represents approximately 80% of global users, ensuring your game reaches a wide audience. Furthermore, we provide exclusive and unique PC and console devices, including R&D versions, for comprehensive testing. Our services offer high stability and cost-effectiveness, suitable for a variety of testing projects.

WeTest supports private cloud deployment through WeTest Real Devices, enabling secure and customized solutions tailored to your specific needs. With a quick delivery time of approximately one week, we provide compatible combinations in 10 dimensions, including GPU coverage for 70% of Steam user models and mainstream CPU models from Intel and AMD.

Our testing approach focuses on validating stability, rendering quality, and responsiveness by thoroughly covering the core scenarios of your game. WeTest holds official authorization for dev kits of popular consoles such as Xbox, PlayStation, and Switch, enabling us to deliver professional console compatibility testing services.

We understand the importance of validating adaptability across different combinations of game platforms, environments, devices, display types, resolutions, networks, and input methods. Our testing process meticulously identifies and resolves issues such as crashes, visual artifacts, unresponsiveness, and feature gaps.

With WeTest Compatibility Testing, you can confidently launch your game worldwide, knowing that it has undergone comprehensive compatibility testing and meets the highest standards of performance. Join the ranks of successful game developers who have chosen WeTest for their compatibility testing needs.

Contact us through this link: https://www.wetest.net/?utm_source=pr&utm_medium=PR-8 to experience the ultimate cloud-testing solution for your mobile, PC, and console games.

| wetest |

1,795,861 | "Day 46 of My Learning Journey: Setting Sail into Data Excellence! ⛵️ Today's Focus: Mathematics for Data Analysis ( P & C- 1) | PERMUTATION AND COMBINATION - 1 It is a method in which we count something with different... | 0 | 2024-03-20T06:00:28 | https://dev.to/nitinbhatt46/day-46-of-my-learning-journey-setting-sail-into-data-excellence-todays-focus-mathematics-for-data-analysis-p-c-1-1fba | ai, data, learning, math |

PERMUTATION AND COMBINATION - 1

It is a method in which we count something with different methods.

WHY do we need to learn about it ?

It is the best and most practical thing which we or everyone can learn to be practically more logical towards any thing which we can encounter in our life.

In Data Science and Analyst jobs it will help us in probability not just to learn but to understand it in depth.

Nowadays everything is done by machine so, don’t mug up all the formulas, just understand the concept and apply it in your project.

Fundamental Principle of Counting. :-

Multiplication

Addition

Fundamental Multiplication Principle of Counting. :-

If there are ‘m’ different ways of doing one event and ‘n’ different ways of doing another event, then the simultaneous occurrence of these events will be given by

‘m x n’ in different ways.

Eg of different ways to explain Fundamental Multiplication Principle of Counting.

MODEL 1 : - TWO COIN ARE TOSSED SIMULTANEOUSLY.

Total no. of outcome = 4

For three coins the outcome is :- 8 possible outcomes.

Because everyone has 2 possible outcome and when we are doing is simultaneously we get 2 X 2 X 2 = 8

Because every outcome is independent of each other.

No.of outcome = 2^(n)

n = no.of coin.

MODEL 2 : - TWO DICE ARE ROLLED SIMULTANEOUSLY.

EACH DICE OUTCOME POSSIBILITY = 6.

Total no. of outcome = 36

No.of outcome = 6^(n)

n = no.of dice

MODEL 3 : - Clothes Wearing possibility upper and lower.

2 upper and 4 lower.

No. of ways to wear = 2 X 4 = 8.

MODEL 4 : - Find the no. of way to reach somewhere ask in the question.

A has three (3) paths to reach B and from B to C we have four (4) ways.

So, to total outcome to reach C from A through B is = 3 X 4 = 12

MODEL 5 : - Four boxes and Four Different balls.

We NEED to place these 4 balls into the boxes. How many ways are there ?

Condition box can also be empty or it can be the only one who is filled with all balls.

So, to find the correct outcome. We need to know the possible outcome of each ball. I.e = 4.

Total outcomes :-

4 X 4 X 4 X 4 = 256

🙏 Thank you all for your time and support! 🙏

Don't forget to catch me daily at 6:30 Pm (Monday to Friday)for the latest updates on my programming journey! Let's continue to learn, grow, and inspire together! 💻✨

| nitinbhatt46 |

1,795,882 | 11 Best IPL Betting Sites In India Of 2024 | Are you prepared to add an extra layer of excitement to the upcoming IPL? The match between Chennai... | 0 | 2024-03-20T06:30:56 | https://dev.to/sportsx9s/11-best-ipl-betting-sites-in-india-of-2024-4i51 | ipl, betting, website, 2024 | Are you prepared to add an extra layer of excitement to the upcoming IPL? The match between Chennai Super Kings (CSK) and Royal Challengers Bangalore (RCB) is on Friday, 22 March 2024, at 14:30:00 UTC in MA Chidambaram Stadium, Chennai. Dive into our guide on the 11 best IPL betting sites in India, each offering unique bonuses and features to enhance your cricket betting experience. Whether you’re a seasoned punter or a newcomer, these platforms have something special for everyone. Let’s find the ideal betting companion for the cricketing extravaganza that is the Indian Premier League. [Continue](https://bet.sportsx9.com/cricket/best-ipl-betting-sites/) | sportsx9s |

1,795,893 | Input Sanitation | Zod has a really nice feature that allows us to define, for schemas that describe objects, how... | 26,937 | 2024-03-22T07:06:52 | https://dev.to/shaharke/zod-zero-to-here-chapter-3-182b | programming, typescript, node, zod | Zod has a really nice feature that allows us to define, for schemas that describe objects, how properties not defined in the schema should be treated. We can choose one of 3 modes:

- Strip: Zod will strip out unrecognized keys during parsing. This is the default behaviour.

- Passthrough: Zod will keep unrecognized keys and will not validate them.

- Strict: Zod will return an error for any unrecognized key.

All three modes have their uses, but in this post I will focus on `strip` and `strict`, who can help with what is called "Input Sanitation".

## What is input sanitation?

Input sanitation is a critical security practice aimed at preventing malicious users from injecting harmful data into our software. This practice involves validating and cleaning up the data received from the user before processing or storing it.

### Example scenario: unauthorized account modification

Imagine a web application that allows users to update their profile information, including their email but not their user role, which is intended to be controlled only by administrators. The application receives an object with the fields to be updated and directly passes it to the database query without properly sanitizing the input to remove or restrict fields.

Vulnerable code snippet:

```typescript

app.post('/updateProfile', function(req, res) {

// Assuming req.body is something like {email: "newemail@example.com"}

const updates = req.body;

const userId = req.session.userId; // The ID of the currently logged-in user

// Update the user profile with the provided fields

db.collection('users').updateOne({ _id: userId }, { $set: updates }, function(err, result) {

if (err) {

// handle error

} else {

// success

}

});

});

```

An attacker discovers this endpoint and decides to send a modified request that includes an additional property, `role`, in an attempt to escalate their privileges:

```json

{

"email": "attacker@example.com",

"role": "admin"

}

```

By sending this payload, the attacker could potentially change their user role to "admin", assuming the application does not properly check the fields that are being updated. This happens because the database command directly uses the object from the request, allowing any properties provided to be included in the `$set` operation.

## How can Zod help?

We can use one of the modes mentioned above to prevent this attack. Let's see what would be the behavior of each mode:

### Strip

Strip is the default mode of every schema and does not require any explicit configuration. We can change the vulnerable endpoint above in the following way:

```typescript

const Updates = z.object({

email: z.string()

})

app.post('/updateProfile', function(req, res) {

const updates = Updates.parse(req.body);

// log updates to see the result

console.log("You shall not pass!", updates);

const userId = req.session.userId;

// Update the user profile with the provided fields

db.collection('users').updateOne({ _id: userId }, { $set: updates }, function(err, result) {

if (err) {

// handle error

} else {

// success

}

});

});

```

Now when an attacker sends the following body:

```json

{

"email": "attacker@example.com",

"role": "admin"

}

```

Zod will strip the `role` property from the body before passing it on to the update statement. We should expect to see the following log:

```

You shall not pass! { "email": "attacker@example.com" }

```

### Strict

We can also configure a schema to be strict, causing unrecognized keys to throw an error:

```typescript

const Updates = z.object({

email: z.string()

}).strict()

// ... rest of code

```

Now calling the endpoint with the malicious payload will result in the following error:

```

ZodError: [

{

"code": "unrecognized_keys",

"keys": [

"role"

],

"path": [],

"message": "Unrecognized key(s) in object: 'role'"

}

]

```

In the context of input sanitation for security, using `strict` could be useful if we are looking to identify security breaches attempts as they happen.

Another interesting option is to use a mix of `strict` and `strip`:

```typescript

const Updates = z.object({

email: z.string()

})

const StrictUpdates = Updates.strict();

app.post('/updateProfile', function(req, res) {

const parseResult = StrictUpdates.safeParse(req.body);

if (!parseResult.success && parseResult.error.issues.some(issue => issue.code === ZodIssueCode.unrecognized_keys)) {

console.error("Unrecognized keys in updates");

}

const updates = Updates.parse(req.body);

console.log("You shall not pass!", updates);

// ... rest of code

});

```

Notice that usage of `safeParse` instead of `parse` when using the `StrictUpdates` schema. `safeParse` allows us to validate input without throwing an error in case the input is invalid. In this case we use `safeParse` to identify and log unrecognized keys, but not fail the request.

## Summary

Input sanitation is a very common and important security measure. Zod can help sanitize inputs in different ways - by silently dropping unrecognized keys or by throwing errors.

[Next chapter](- [Chapter 4: Union Types](https://dev.to/shaharke/zod-zero-to-hero-chapter-4-513c)

) we will learn how to define union types with Zod. | shaharke |

1,795,983 | Seamlessly Connect Your WP Contact Forms to Any API | In today's digital age, integrating your website with various third-party services and APIs has... | 0 | 2024-03-20T08:25:06 | https://dev.to/johnsmith244303/seamlessly-connect-your-wp-contact-forms-to-any-api-4fi6 | In today's digital age, integrating your website with various third-party services and APIs has become a necessity for businesses to streamline their operations and enhance their online presence. Contact forms are a crucial component of any website, serving as the primary means of communication between you and your potential customers. However, simply collecting form submissions is often not enough; you need a way to seamlessly connect your contact forms to other platforms, services, and APIs to unlock their full potential.

**The Importance of API Integration**

APIs (Application Programming Interfaces) are the backbone of modern web development, enabling different software applications and services to communicate and exchange data with one another. By connecting your WordPress contact forms to APIs, you can automate a wide range of tasks and processes, saving you time, effort, and resources.

For instance, you could integrate your contact forms with a Customer Relationship Management (CRM) system like Salesforce or HubSpot, allowing you to automatically capture and store lead information directly in your CRM. This streamlines your lead management process, ensuring that no potential customer falls through the cracks.

Alternatively, you might want to connect your contact forms to a marketing automation platform like MailChimp or Constant Contact, allowing you to automatically add new subscribers to your email lists based on the information they provide in the form.

The possibilities are virtually endless – you could integrate with project management tools, invoicing systems, payment gateways, and more, all by leveraging the power of APIs and the "WordPress Contact Form Plugin."

**Setting Up API Integrations with the "WordPress Contact Form Plugin"**

The "**[Best WordPress Contact Form Plugin](https://wordpress.org/plugins/contact-form-to-any-api/)**" makes it incredibly easy to set up API integrations for your contact forms. Here's a step-by-step guide to get you started:

**1. Install and Activate the Plugin**

Begin by installing and activating the "WordPress Contact Form Plugin" on your WordPress website. The plugin's user-friendly interface ensures a smooth setup process

**2. Create Your Contact Form**

Use the plugin's intuitive form builder to create your desired contact form. Customize the form fields, layout, and styling to match your brand and website design

**3. Set Up API Integration**

Navigate to the plugin's API integration settings and select the API or service you want to connect your form to. The plugin supports a vast array of popular APIs out of the box, and if you don't find the one you need, you can easily add custom API integrations using the plugin's developer-friendly API integration framework.

**4. Configure API Settings**

Depending on the API you've chosen, you'll need to provide the necessary authentication credentials, such as API keys, access tokens, or other required information. The plugin will guide you through this process, ensuring a seamless configuration experience.

**5. Map Form Fields**

Once you've authenticated with the API, you can map your contact form fields to the corresponding fields in the API. This ensures that the data submitted through your form is accurately captured and stored in the connected service or platform.

**6. Test and Deploy**

Before going live, take the time to thoroughly test your API integration by submitting a few test form entries. Once you're satisfied with the results, you can publish your contact form, confident that it's seamlessly connected to the desired API.

**Advanced Features and Customizations**

The "WordPress Contact Form Plugin" is more than just a basic contact form builder – it offers a wide range of advanced features and customization options to cater to even the most sophisticated requirements.

**Conditional Logic**

The plugin allows you to implement conditional logic, enabling you to show or hide specific form fields based on the user's input. This feature is particularly useful for creating dynamic, user-friendly forms that adapt to the visitor's needs.

**Multi-Step Forms**

For complex forms with numerous fields, the plugin supports the creation of multi-step forms. This feature breaks down lengthy forms into manageable sections, improving the user experience and reducing form abandonment rates.

**Form Styling and Customization**

With the "WordPress Contact Form Plugin," you have complete control over the appearance of your forms. Customize the form's layout, colors, fonts, and styles to seamlessly match your website's branding and design.

**Spam Protection**

Protecting your forms from spam submissions is essential, and the plugin offers robust spam protection features, including reCAPTCHA integration, honeypot fields, and advanced spam filtering algorithms

**Comprehensive Analytics and Reporting**

Gain valuable insights into your form's performance with the plugin's comprehensive analytics and reporting capabilities. Track form submissions, conversion rates, and other key metrics to continuously optimize and refine your forms.

**Developer-Friendly API Integration Framework**

While the plugin offers out-of-the-box integrations with popular APIs, its developer-friendly API integration framework allows you to create custom integrations tailored to your specific needs. Whether you need to connect to a proprietary internal system or a lesser-known third-party service, the plugin's extensible architecture makes it possible.

**Conclusion**

In today's fast-paced digital landscape, seamlessly connecting your WordPress contact forms to various APIs and third-party services is no longer a luxury – it's a necessity. The "WordPress Contact Form Plugin" empowers you to do just that, unlocking a world of possibilities for streamlining your business processes, enhancing customer engagement, and maximizing the potential of your online presence.

With its user-friendly interface, advanced features, and robust API integration capabilities, this plugin is a game-changer for businesses of all sizes and industries. Whether you're an agency, e-commerce store, or a professional service provider, the "WordPress Contact Form Plugin" is a must-have tool in your WordPress arsenal, enabling you to take your contact forms to new heights and provide exceptional experiences for your customers.

So, what are you waiting for? Unlock the full potential of your WordPress contact forms today and seamlessly connect them to any API with the "WordPress Contact Form Plugin."

| johnsmith244303 | |

1,796,090 | Gimli Tailwind now features a sleek dark UI theme! | In Version 4.1 and 4.2, I’ve primarily focused on addressing bugs and optimizing code. However, I’ve... | 0 | 2024-03-20T10:48:41 | https://dev.to/gimli_app/gimli-tailwind-now-features-a-sleek-dark-ui-theme-37j5 | tailwindcss, webdev, css, frontend |

In Version 4.1 and 4.2, I’ve primarily focused on addressing bugs and optimizing code. However, I’ve also made some subtle yet valuable improvements!

[Check out the video](https://www.youtube.com/watch?v=gykSctSuMK4&ab_channel=Gimli)

[Click here to get the extension.](https://chromewebstore.google.com/detail/gimli-tailwind/fojckembkmaoehhmkiomebhkcengcljl) | gimli_app |

1,796,399 | How to Create Interface in Laravel 11 | In this article, we'll create an interface in laravel 11. In laravel 11 introduced new Artisan... | 0 | 2024-03-20T16:28:33 | https://techsolutionstuff.com/post/how-to-create-interface-in-laravel-11 | laravel, laravel11, interface, webdev | In this article, we'll create an interface in laravel 11. In laravel 11 introduced new Artisan commands.

An interface in programming acts like a contract, defining a set of methods that a class must implement.

Put simply, it ensures that different classes share common behaviors, promoting consistency and interoperability within the codebase.

So, let's see laravel 11 creates an interface, what is an interface in laravel, laravel creates an interface command, and php artisan make interface.

Laravel 11 new artisan command to create an interface.

```

php artisan make:interface {interfaceName}

```

> Laravel Create Interface:

First, we'll create ArticleInterface using the following command.

```

php artisan make:interface Interfaces/ArticleInterface

```

Next, we will define the publishArticle() and getArticleDetails() functions in the ArticleInterface.php file. So, let's update the following code in the ArticleInterface.php file.

app/Interfaces/ArticleInterface.php

```

<?php

namespace App\Interfaces;

interface ArticleInterface

{

public function publishArticle($title, $content);

public function getArticleDetails($articleId);

}

```

Next, we will create two new service classes and implement the "ArticleInterface" on them. Run the following commands now:

```

php artisan make:class Services/ShopifyService

php artisan make:class Services/LaravelService

```

app/Services/ShopifyService.php

```

<?php

namespace App\Services;

use App\Interfaces\ArticleInterface;

class ShopifyService implements ArticleInterface

{

/**

* Write code on Method

*

* @return response()

*/

public function publishArticle($title, $content) {

info("Publish article on Shopify");

}

/**

* Write code on Method

*

* @return response()

*/

public function getArticleDetails($articleId) {

info("Get Article details from Shopify");

}

}

```

app/Services/LaravelService.php

```

<?php

namespace App\Services;

use App\Interfaces\ArticleInterface;

class LaravelService implements ArticleInterface

{

/**

* Write code on Method

*

* @return response()

*/

public function publishArticle($title, $content) {

info("Publish article on Laravel");

}

/**

* Write code on Method

*

* @return response()

*/

public function getArticleDetails($articleId) {

info("Get Article details from Laravel");

}

}

```

Now, we'll create a controller using the following command and implement the service into the controller.

```

php artisan make:controller ShopifyArticleController

php artisan make:controller LaravelArticleController

```

app/Http/Controllers/ShopifyArticleController.php

```

<?php

namespace App\Http\Controllers;

use Illuminate\Http\Request;

use App\Services\ShopifyService;

class ShopifyArticleController extends Controller

{

protected $shopifyService;

/**

* Constructor to inject ShopifyService instance

*

* @param ShopifyService $shopifyService

* @return void

*/

public function __construct(ShopifyService $shopifyService) {

$this->shopifyService = $shopifyService;

}

/**

* Publish an article

*

* @param Request $request

* @return \Illuminate\Http\JsonResponse

*/

public function index(Request $request) {

$this->shopifyService->publishArticle('This is title.', 'This is body.');

return response()->json(['message' => 'Article published successfully']);

}

}

```

app/Http/Controllers/LaravelArticleController.php

```

<?php

namespace App\Http\Controllers;

use Illuminate\Http\Request;

use App\Services\LaravelService;

class LaravelArticleController extends Controller

{

protected $laravelService;

/**

* Constructor to inject ShopifyService instance

*

* @param LaravelService $laravelService

* @return void

*/

public function __construct(LaravelService $laravelService) {

$this->laravelService = $laravelService;

}

/**

* Publish an article

*

* @param Request $request

* @return \Illuminate\Http\JsonResponse

*/

public function index(Request $request) {

$this->laravelService->publishArticle('This is title.', 'This is body.');

return response()->json(['message' => 'Article published successfully']);

}

}

```

After that, we'll define routes in the web.php file

routes/web.php

```

<?php

use Illuminate\Support\Facades\Route;

use App\Http\Controllers\ShopifyArticleController;

use App\Http\Controllers\LaravelArticleController;

Route::get('shofipy/post', [ShopifyArticleController::class, 'index']);

Route::get('laravel/post', [LaravelArticleController::class, 'index']);

```

---

You might also like:

#### **[Read Also: How to Get Current URL in Laravel](https://techsolutionstuff.com/post/how-to-create-interface-in-laravel-11)** | techsolutionstuff |

1,796,412 | If statements | Type end immediately after typing the if. Indent elsif is only when you need to check more than 2... | 0 | 2024-03-20T16:49:28 | https://dev.to/feelo31/if-statements-ah0 | Type `end` immediately after typing the `if`. Indent

`elsif` is only when you need to check more than 2 conditions.

Causing code to execute is known as 'truthy'

Causing code to not execute is known as 'falsy'

Only `nil` and `false` are falsy.

`!=` means not equivalent

`p` if for quick inspection, `pp` is for more human readable representations. especially with complex data structures.

In variables you can put methods on both sides to evaluate `if` statements more concise.

`&&` both statements have to be true

`||` at least one statement has to be true. | feelo31 | |

1,796,445 | Next JS 14 | Setting Up Your Database | In this chapter Here are the topics we’ll cover: 🐱 Push your project to GitHub. 🔺 Set up a Vercel... | 26,880 | 2024-03-20T17:24:12 | https://dev.to/w3tsa/next-js-14-setting-up-your-database-4ank | webdev, nextjs, database, vercel | In this chapter

Here are the topics we’ll cover:

🐱 Push your project to GitHub.

🔺 Set up a Vercel account and link your GitHub repo for instant previews and deployments.

🔗 Create and link your project to a Postgres database.

𝌏 Seed the database with initial data.

**Create a GitHub repository**

To start, let's push your repository to Github if you haven't done so already. This will make it easier to set up your database and deploy.

If you need help setting up your repository, take a look at [this guide on GitHub](https://help.github.com/en/github/getting-started-with-github/create-a-repo).

**Create a Vercel account**

Visit [vercel.com/signup](https://vercel.com/signup) to create an account. Choose the free "hobby" plan. Select Continue with GitHub to connect your GitHub and Vercel accounts.

**Connect and deploy your project**

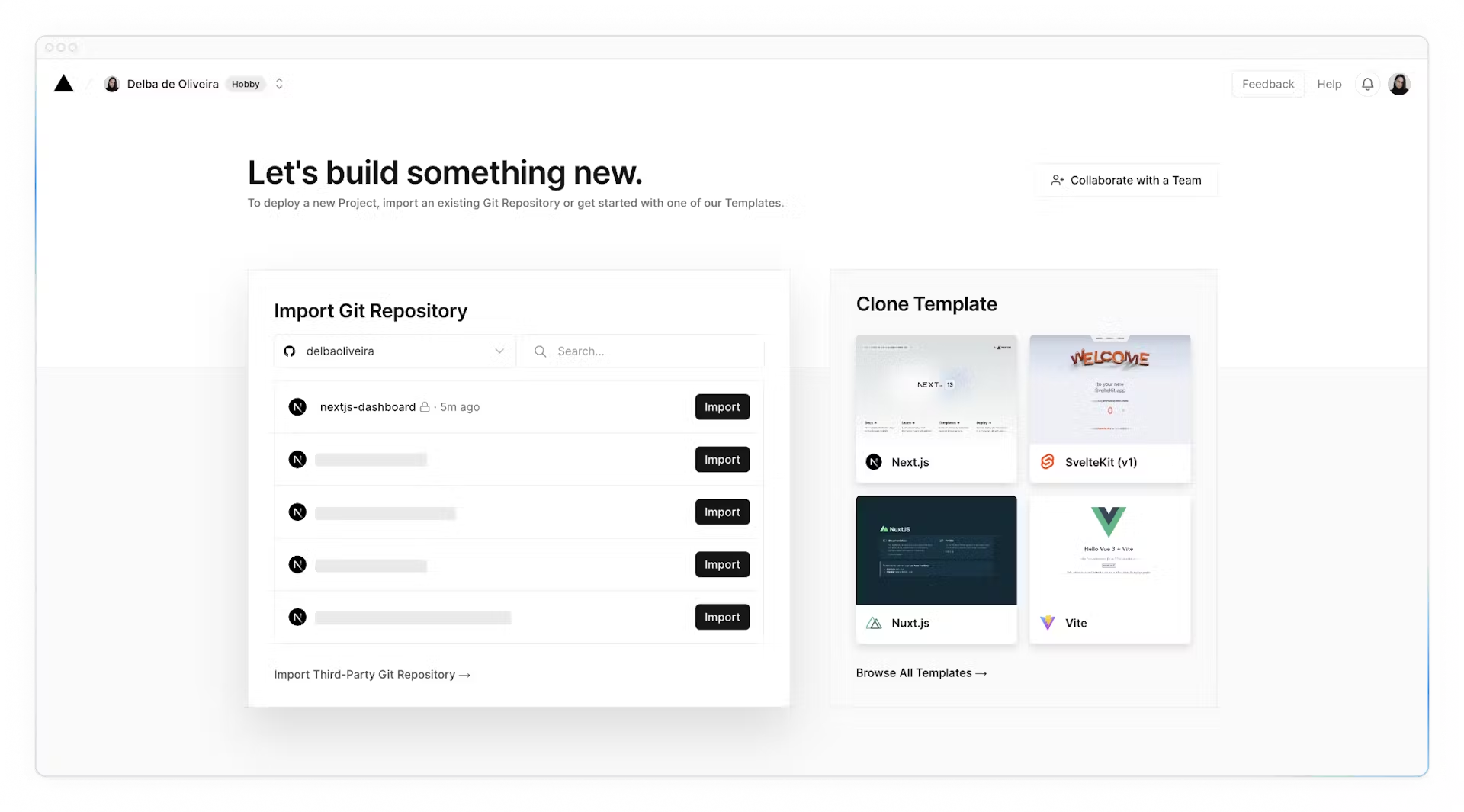

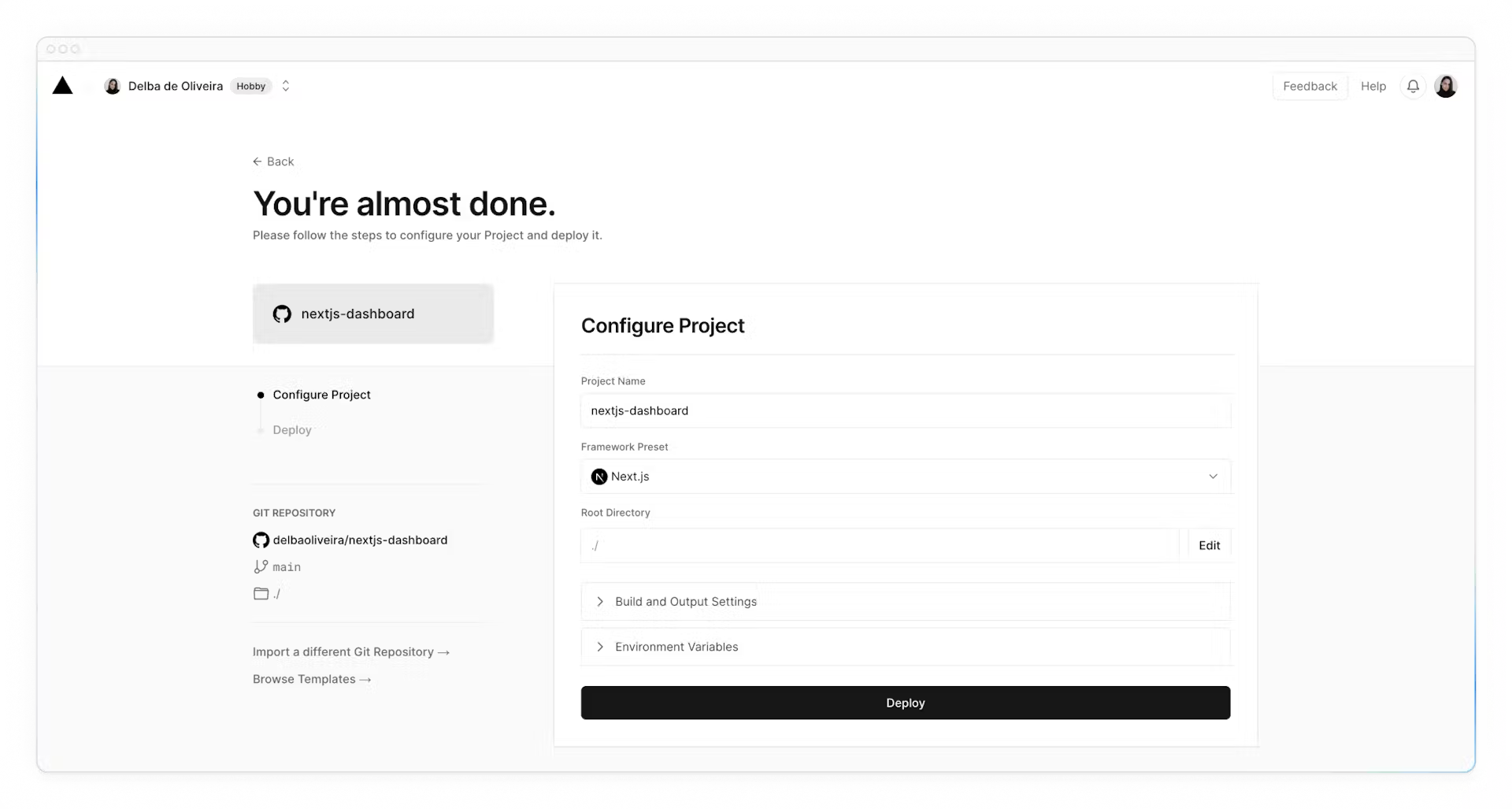

Next, you'll be taken to this screen where you can select and **import** the GitHub repository you've just created:

Name your project and click **Deploy**.

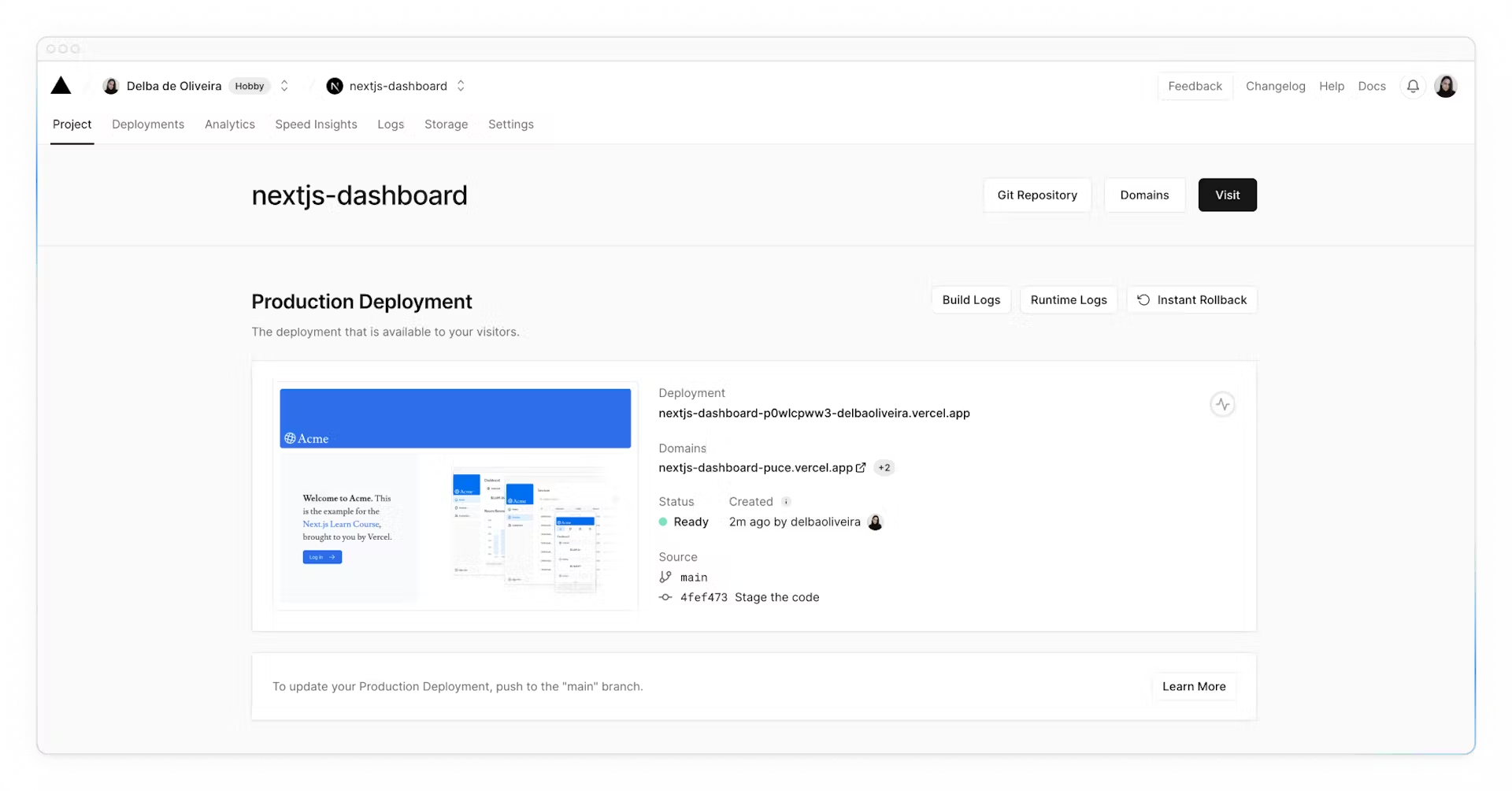

Hooray! 🎉 Your project is now deployed.

By connecting your GitHub repository, whenever you push changes to your main branch, Vercel will automatically redeploy your application with no configuration needed. When opening pull requests, you'll also have [instant previews](https://vercel.com/docs/deployments/preview-deployments#preview-urls) which allow you to catch deployment errors early and share a preview of your project with team members for feedback.

**Create a Postgres database**

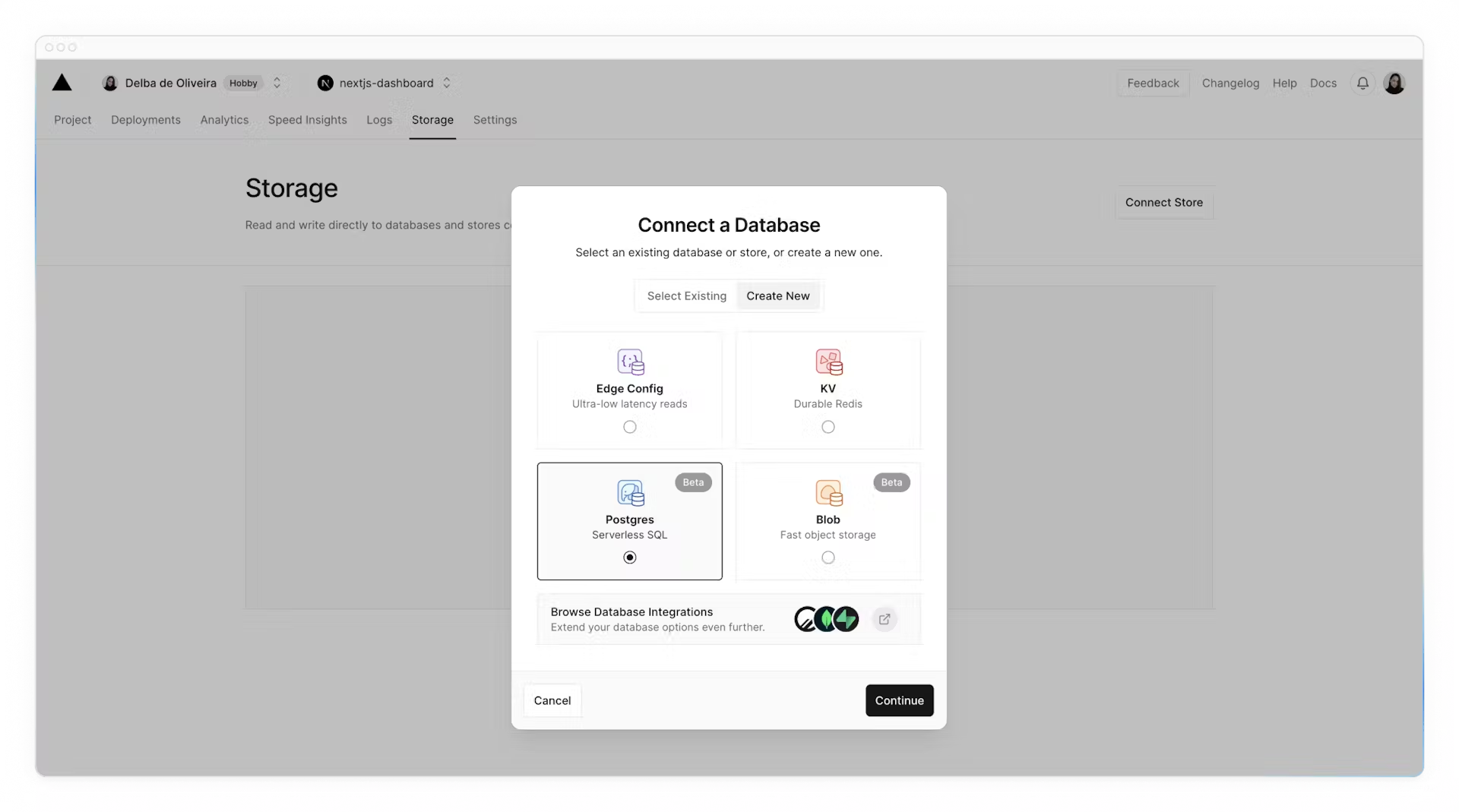

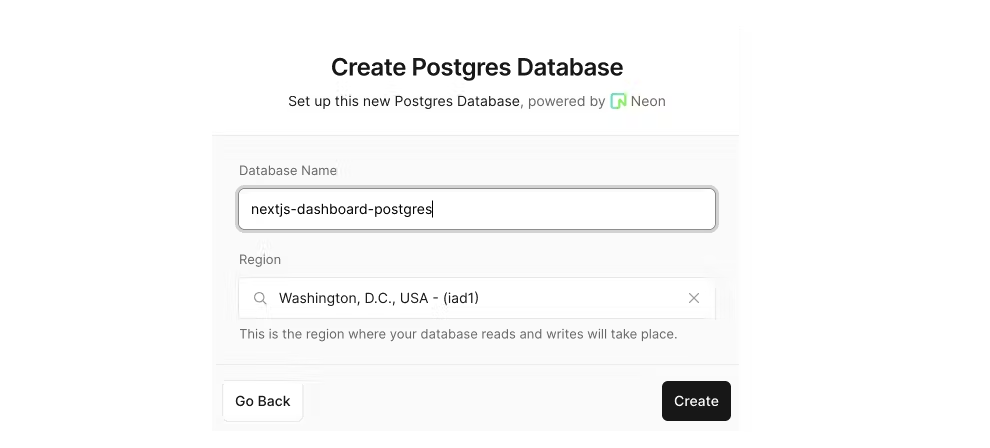

Next, to set up a database, click **Continue to Dashboard** and select the **Storage** tab from your project dashboard. Select **Connect Store → Create New → Postgres → Continue**.

Accept the terms, assign a name to your database, and ensure your database region is set to **Washington D.C (iad1)** - this is also the [default region](https://vercel.com/docs/functions/serverless-functions/regions#select-a-default-serverless-region) for all new Vercel projects. By placing your database in the same region or close to your application code, you can reduce [latency](https://developer.mozilla.org/en-US/docs/Web/Performance/Understanding_latency) for data requests.

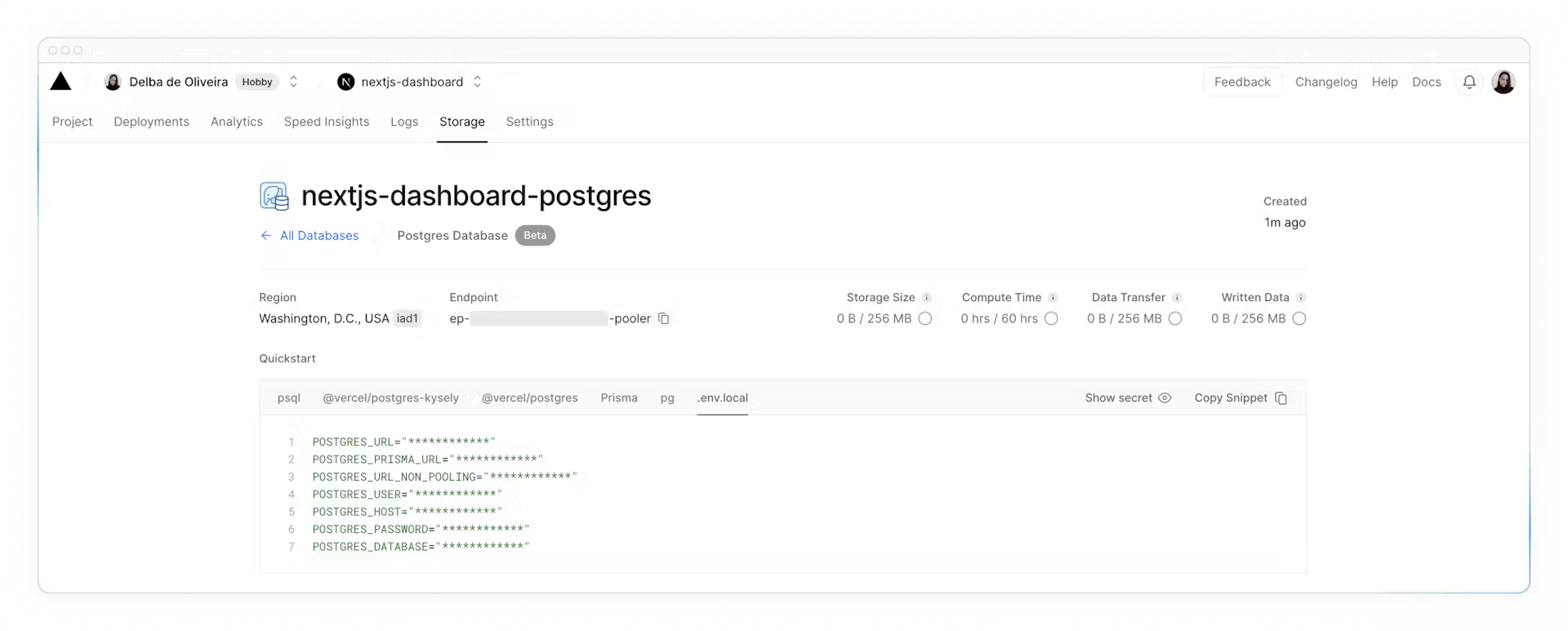

Once connected, navigate to the `.env.local` tab, click **Show secret** and **Copy Snippet**. Make sure you reveal the secrets before copying them.

Navigate to your code editor and rename the `.env.example` file to `.env`. Paste in the copied contents from Vercel.

**Important**: Go to your `.gitignore` file and make sure `.env` is in the ignored files to prevent your database secrets from being exposed when you push to GitHub.

Finally, run `npm i @vercel/postgres` in your terminal to install the [Vercel Postgres SDK](https://vercel.com/docs/storage/vercel-postgres/sdk).

**Seed your database**

Now that your database has been created, let's seed it with some initial data. This will allow you to have some data to work with as you build the dashboard.

In the `/scripts` folder of your project, there's a file called `seed.js`. This script contains the instructions for creating and seeding the invoices, customers, user, revenue tables.

The script uses SQL to create the tables, and the data from `placeholder-data.js` file to populate them after they've been created.

Next, in your `package.json` file, add the following line to your scripts:

```json

"scripts": {

"build": "next build",

"dev": "next dev",

"start": "next start",

"seed": "node -r dotenv/config ./scripts/seed.js"

},

```

This is the command that will execute `seed.js`.

Now, run `npm run seed`. You should see some `console.log` messages in your terminal to let you know the script is running.

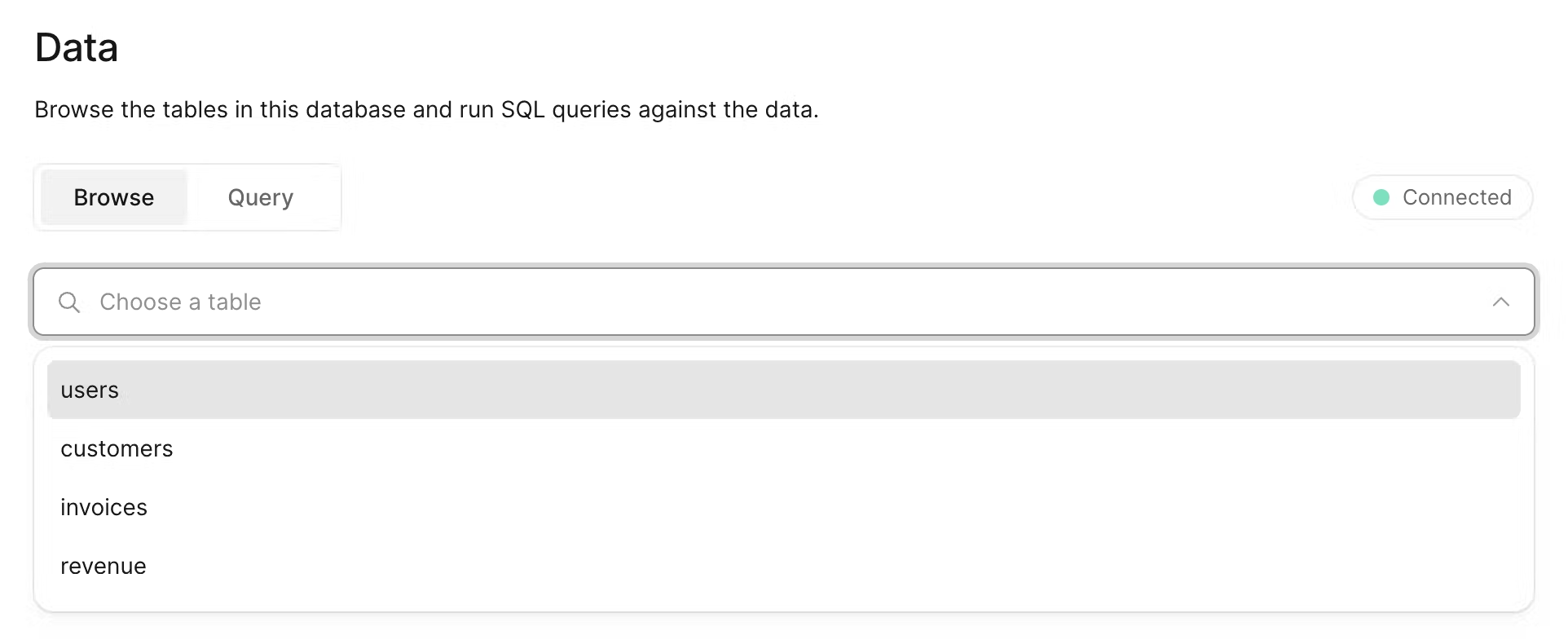

Let's see what your database looks like. Go back to Vercel, and click **Data** on the sidenav.

In this section, you'll find the four new tables: users, customers, invoices, and revenue.

By selecting each table, you can view its records and ensure the entries align with the data from `placeholder-data.js` file.

**Executing queries**

You can switch to the "query" tab to interact with your database. This section supports standard SQL commands. For instance, inputting `DROP TABLE customers` will delete "customers" table along with all its data - **so be careful!**

Let's run your first database query. Paste and run the following SQL code into the Vercel interface:

```sql

SELECT invoices.amount, customers.name

FROM invoices

JOIN customers ON invoices.customer_id = customers.id

WHERE invoices.amount = 666;

```

Check out the video: {% youtube vc_evtMq11k%}

Support me: Like, Share and Subscribe!

| w3tsa |

1,796,643 | Building a Native Mobile App: Select the Right Framework | In this podcast, Krish explores the realm of mobile app development, comparing native approaches... | 0 | 2024-03-20T21:17:47 | https://dev.to/vpalania/building-a-native-mobile-app-select-the-right-framework-56ed | In this podcast, Krish explores the realm of mobile app development, comparing native approaches using Swift and Kotlin for iOS and Android respectively, with cross-platform options like React Native and Flutter. While discussing the merits of each approach, Krish notes Google's endorsement of Kotlin for Android development and the growing popularity of Flutter. He emphasizes the importance of considering project requirements and personal preferences when selecting a technology stack. Krish expresses excitement about his positive initial experiences with Flutter and hints at delving into API integration in future discussions, setting the stage for further exploration of mobile app development strategies.

### Summary

**Introduction to Mobile App Development Options**

- Krish introduces the topic of mobile app development and advises listeners to check out previous episodes for context.

**Native Development vs. Cross-Platform Options**

- Krish discusses the choice between native development (Swift for iOS, Kotlin for Android) and cross-platform alternatives like React Native and Flutter.

- He highlights Kotlin as Google’s recommended language for Android development.

**Considerations for Cross-Platform Development**

- Krish examines React Native as an initial choice but mentions widening gaps between React and React Native.

- He touches on personal preferences in technology stack selection and the importance of considering suitability for each project.

**Introduction to Flutter and Dart**

- Krish introduces Flutter as Google’s mobile framework using the Dart language.

- He shares his positive initial impressions of Flutter, citing its rich components and promising traction in the developer community.

**Conclusion and Future Discussion**

- Krish expresses excitement about working with Flutter and hints at discussing API integration in the next episode.

### Podcast

Check out on [Spotify](https://open.spotify.com/episode/1KKVAKjfeFUHeJQyl8DoFx?si=WvmCmUZURRea1Nrk2mUKag).

### Transcript

**0:00**

Hello. Hey, this is Krish. Hope you’re doing well in this podcast. I want to follow up on one of the previous ones where I started talking about mobile app development. If you haven’t listened to that, please, you may want to check that out. In that first one, I just talked at the highest level about various options available if you were starting out to build a mobile app.

**0:23**

Let me continue the conversation or that monologue here. So if memory serves me right, I believe I left off that podcast mentioning Kotlin and also cross-browser alternatives, right? Like Flutter and React Native. Let’s continue from that point onwards, right? So let’s say you’ve decided to go ahead, and before you jump all in into a cross-platform technology, you want to be doubly sure about the other option that’s out there, which is building Swift and native-like Android apps.

**1:02**

With Android, you have two options at least. The traditional way of building Android apps was using an Android SDK, purely using Java as a programming language. But I think, I believe starting last year, Google’s official recommendation, whatever official means, their recommendation has been to use Kotlin as an alternative to Java. And that could be one of many reasons as to why that happened. Which is not super important, I think, to this conversation because our interest here is just to get an app out there and then build it, right? Get to building it. In any case, Kotlin’s a different language, it’s statically typed and it was founded, I believe, by JetBrains as a company. But it’s fully interoperable with Java, right? So, and it’s… I mean, I’ve taken an initial look at it. I haven’t done much development using Kotlin, but it doesn’t like… I mean, it doesn’t look terribly different from Java. At least, my first look is to say anything about it in any case, so that’s an option definitely available for you.

**2:30**

So you want to, you know, build a Kotlin app, an Android app, using Kotlin as your programming language of choice. Now, that’s just a precursor to our discussion, to this, to this podcast. What I want to actually talk about is, okay, now let’s say you want to go with a cross-platform option, and I’m very much for it, just because I’ve actually not run into an extremely compelling use case, at least in the work I’ve done thus far where I’ve not been able to solve problems using a cross-platform technology.

**3:03**

Right. So having said that, right, so what do you want to go with, right? React Native was one of my initial choices, at least in mind, because I love React on the web just because of the way it does things and the ease of integration that it has with some other plugins and libraries, even outside of the React ecospace. But even having said that I just want to… when you start to do something, you want to make sure you do your due diligence and for every project right. Not just because you pick one stack or one technology for a project and then the next one you do something similar.

**4:28**

From what I’ve read, and I actually did a bit of playing around as well. What I’ve read, it sounds like the gap between React and React Native seems to be widening. I’m not entirely sure why, and just in terms of the feature and support and whatnot, it seems like it’s getting wider. It’s not like the same level of support that you expect and you do see for React on the web. You may or may not get that for React Native. That was one. That was kind of the feeling I’ve had for a little bit, but that seems to be more and more true of late. Also, I also read an article about Airbnb being almost entirely based on React Native, their mobile apps, and they’ve moved off of it for several different reasons.

**6:15**

So you know, we have our own preferred languages and frameworks every time we do things and then we learn other items. But you know, unless something changes dramatically, I don’t see myself building mobile apps using anything other than Dart, at least in the short term to middle term, right? Going back to GitHub and and dart’s traction, it’s actually quite new compared to React Native with, I don’t know, it’s the newest kid. I don’t know if it’s the newest kid, but it’s a pretty new kid on the block. But it has, it’s very comparable. I think I saw like about 80,000 stars and in terms of the number of folks, it’s probably a percentage of React Native if you go to GitHub and look that up. But otherwise the actual in terms of adoptability, it seems to have a lot of traction. I mean, things change every day, so who knows what happens tomorrow. But as of this recording that seems to have, flutter seems to have a lot of traction and interest in the community, which is actually very good, right.

**10:19**

So you’re going to find more help and and articles and and whatnot. So that is very promising. And what else? Right, In terms of the samples and then widgets that are available, I mean I’ve found a decent number. I don’t know how it compares with what React Native has. But just given the fact that React Native has been around for much longer, it’s very likely if you’re going to, you’re going to find more help on like Stack Overflow or other places if you have questions. So I’m sure there’s going to be some gap there in terms of documentation and what not. But my initial reaction is, is very positive and I’m super excited. I’ve started to do some work and you know starting to get the initial set of pages and and what not. So we’ll see how it goes in the next podcast. Let me talk more about API integration of the app and what might be some of the options that that are available to us.

**11:19**

Thank you. | vpalania | |

1,796,937 | Day 13: Unveiling the Magic of INTRODUCTION in Java, C++, Python, and Kotlin! 🚀 | DAY - 13 For more Tech content Join us on linkedin click here All the code snippets in this journey... | 0 | 2024-03-21T05:48:57 | https://dev.to/nitinbhatt46/day-13-unveiling-the-magic-of-introduction-in-java-c-python-and-kotlin-l0n | programming, coding, developer, beginners | DAY - 13

For more Tech content Join us on linkedin [click here](https://www.linkedin.com/in/nitin-bhatt-962356260/)

All the code snippets in this journey are available on my GitHub repository. 📂 Feel free to explore and collaborate: [Git Repository](https://github.com/Nitin-bhatt46/POWER_OF_PROGRAMMING/tree/main

)

Today’s Learning :-

Decimal to Binary

Universal code for decimal to binary. ( cpp, java, python )

Binary to decimal ( cpp, java , python )

Introduction to Function :-

Python:

Theory:

Functions in Python are defined using the def keyword.

They can take zero or more parameters as input and optionally return a value.

Python functions can be defined anywhere in the code, even within other functions.

Python functions can have default parameter values, making some parameters optional.

Python functions can also accept variable-length arguments (*args and **kwargs).

Syntax:

def function_name(parameter1, parameter2, ...):

# Function body

# Statements

return result # Optional return statement

Java:

Theory:

Functions in Java are called methods.

They are defined within classes and objects.

Java methods must specify their return type explicitly, even if it's void (no return value).

Java methods can be static, public, private, etc., to define their accessibility and behaviour.

Java methods can be overloaded, meaning you can define multiple methods with the same name but different parameter lists.

Syntax:

access_modifier return_type method_name(parameter1_type parameter1, parameter2_type parameter2, ...) {

// Method body

// Statements

return result; // Optional return statement

}

C++:

Theory:

Functions in C++ are similar to those in C.

They are standalone entities and can exist outside of classes.

C++ functions can have default parameter values, similar to Python.

C++ supports function overloading, allowing multiple functions with the same name but different parameter lists.

C++ allows you to define inline functions using the inline keyword for small and frequently called functions.

Syntax:

return_type function_name(parameter1_type parameter1, parameter2_type parameter2, ...) {

// Function body

// Statements

return result; // Optional return statement

}

Feel free to reshare this post to enhance awareness and understanding of these fundamental concepts.

Code snippets are in Git repository.

🙏 Thank you all for your time and support! 🙏

Don't forget to catch me daily at 10:30 Am (Monday to Friday) for the latest updates on my programming journey! Let's continue to learn, grow, and inspire together! 💻✨

| nitinbhatt46 |

1,796,961 | Create Complete User Registration Form in PHP and MySQL | What You Will Learn? As stated previously, in this article, you are going to learn the... | 0 | 2024-03-21T06:35:47 | https://dev.to/hmawebdesign/create-complete-user-registration-form-in-php-and-mysql-3k8i | php, javascript, backend, mysql | ## What You Will Learn?

As stated previously, in this article, you are going to learn the following important concepts while creating the complete user registration form in PHP and using the MySQL database.

## How to create a user registration form in HTML and CSS?

1. How to create a new MySQL database in PHPMyAdmin?

2. How to connect with MySQL database with PHP?

3. How to do registration form validation in PHP?

4. Password encryption or password hashing in PHP?

5. How to insert or update user data into the database table?

6. How to use $_session variable in the PHP user registration and login form?

7. How to set the cookies variable in PHP for user registration?

8. How to create a User logout PHP code?

9. How to create a user login system in PHP?

10. How to write PHP code for the user login form?

## Video Tutorial – Login Register PHP

Meanwhile, You can watch the following video tutorial which includes the step-by-step process to create a complete user registration form using PHP and MySQL databases.

{% embed https://youtu.be/m50q5_RQFB4 %}

## Steps – Login and Register for PHP Form

More importantly, to create a complete user registration system in PHP and MySQL, we are going to adopt the following approach.

1. First of all, we are going to create the following PHP files:

2.

3. Create an index.php file. This file contains the HTML and CSS code for the user Sign up form.

4. Create a new MySql database in PHPMyAdmin and a new user table where you want to store the user login details.

5. Create a linkDB.php file. This file will contain MYSQL database connection PHP code.

6. Create a server.php file. This file will contain all server-side PHP and MYSQL database codes for user registration and user login forms. This file is linked with both, index.php and login.php files using the PHP include function.

7. Create a LoggedInPage.php file. This will be your home page, and after the successfully logged user in, will redirect to this home page.

8. Create a login.php file. This file contains the login form HTML and CSS code.

## Source Code – (index.php File)

The following HTML CSS source code belongs to the index.php file. This code is for the user registration form in PHP.

```

<!-- PHP command to link server.php file with registration form -->

<?php include('server.php'); ?>

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Registration</title>

<!-- CSS Code -->

<style>

.container{

justify-content: center;

text-align: center;

align-items: center;

}

input{

padding: 5px;

}

.error{

background-color: pink;

color: red;

width: 300px;

margin: 0 auto;

}

</style>

</head>

<body>

<div class="container">

<h1>User Registration System</h1>

<h4><a href="loggedInPage.php">Home Page</a></h4>

<div class="form" id="signUp">

<form method="POST">

<div class="error"> <?php echo $error ?> </div>

<!--------- To check user regidtration status ------->

<p>

<?php

if (!isset($_COOKIE["id"]) OR !isset($_SESSION["id"]) ) {

echo "Please first register to proceed.";

}

?>

</p>

<input type="text" name="name" placeholder="User Name"> <br> <br>

<input type="email" name="email" placeholder="Email"> <br><br>

<input type="password" name="password" placeholder="password"><br><br>

<input type="password" name="repeatPassword" placeholder="Repeat Password"><br><br>

<label for="checkbox">Stay logged in</label>

<input type="checkbox" name="stayLoggedIn" id="chechbox" value="1"> <br><br>

<input type="submit" name="signUp" value="Sign Up">

<p >Have an account already? <a href="logIn.php">Log In</a></p>

</form>

</div>

</body>

</html>

```

## Source Code – (login.php File)

```

<!-- PHP command to link server.php file with registration form -->

<?php include('server.php'); ?>

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta http-equiv="X-UA-Compatible" content="IE=edge">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>User logIn</title>

<style>

.container{

justify-content: center;

text-align: center;

align-items: center;

}

input{

padding: 5px;

}

.error{

background-color: pink;

color: red;

width: 300px;

margin: 0 auto;

}

</style>

</head>

<body>

<div class="container">

<h1> User Registration System</h1>

<h4><a href="loggedInPage.php">Home Page</a></h4>

<!--------log in form------>

<div class="logInForm" id="logIn">

<form method="POST">

<!-- To show errors is user put wrong data -->

<div class="error"> <?php echo $error2 ?> </div>

<!-- To check the user loged In status -->

<p>

<?php

if (!isset($_COOKIE["id"]) OR !isset($_SESSION["id"]) ) {

echo "<p>Please first log in to proceed.</p>";

}

?>

</p>

<input type="email" name="email" placeholder="Email"> <br><br>

<input type="password" name="password" placeholder="password"><br><br>

<label for="checkbox">Stay logged in</label>

<input type="checkbox" name="stayLoggedIn" id="chechbox" value="1"> <br><br>

<input type="submit" name="logIn" value="Log In">

<!-- User registration form link -->

<p>Not a register user <a href="index.php"> Create Account</a></p>

</form>

</div>

</div>

</script>

</body>

</html>

```

## Source Code – (linkDB.php File)

The linkDB.php includes the following PHP code.

```

<?php

// Open a new connection to the MySQL server

$linkDB = mysqli_connect("localhost","my_user_name","my_password","my_db_name");

if (mysqli_connect_error()){ //for connection error finding

die ('There was an error while connecting to database');

}

?>

```

## Source Code – (server.php File)

The following back-end code for the user registration form in PHP will be included in the server.php file:

```

<?php

session_start();

//------ PHP code for User registration form---

$error = "";

if (array_key_exists("signUp", $_POST)) {

// Database Link

include('linkDB.php');

//Taking HTML Form Data from User

$name = mysqli_real_escape_string($linkDB, $_POST['name']);

$email = mysqli_real_escape_string($linkDB, $_POST['email']);

$password = mysqli_real_escape_string($linkDB, $_POST['password']);

$repeatPassword = mysqli_real_escape_string($linkDB, $_POST['repeatPassword']);

// PHP form validation PHP code

if (!$name) {

$error .= "Name is required <br>";

}

if (!$email) {

$error .= "Email is required <br>";

}

if (!$password) {

$error .= "Password is required <br>";

}

if ($password !== $repeatPassword) {

$error .= "Password does not match <br>";

}

if ($error) {

$error = "<b>There were error(s) in your form!</b> <br>".$error;

} else {

//Check if email is already exist in the Database

$query = "SELECT id FROM users WHERE email = '$email'";

$result = mysqli_query($linkDB, $query);

if (mysqli_num_rows($result) > 0) {

$error .="<p>Your email has taken already!</p>";

} else {

//Password encryption or Password Hashing

$hashedPassword = password_hash($password, PASSWORD_DEFAULT);

$query = "INSERT INTO users (name, email, password) VALUES ('$name', '$email', '$hashedPassword')";

if (!mysqli_query($linkDB, $query)){

$error ="<p>Could not sign you up - please try again.</p>";

} else {

//session variables to keep user logged in

$_SESSION['id'] = mysqli_insert_id($linkDB);

$_SESSION['name'] = $name;

//Setcookie function to keep user logged in for long time

if ($_POST['stayLoggedIn'] == '1') {

setcookie('id', mysqli_insert_id($linkDB), time() + 60*60*365);

//echo "<p>The cookie id is :". $_COOKIE['id']."</P>";

}

//Redirecting user to home page after successfully logged in

header("Location: loggedInPage.php");

}

}

}

}

//-------User Login PHP Code ------------

if (array_key_exists("logIn", $_POST)) {

// Database Link

include('linkDB.php');

//Taking form Data From User

$email = mysqli_real_escape_string($linkDB, $_POST['email']);

$password = mysqli_real_escape_string($linkDB, $_POST['password']);

//Check if input Field are empty

if (!$email) {

$error2 .= "Email is required <br>";

}

if (!$password) {

$error2 .= "Password is required <br>";

}

if ($error2) {

$error2 = "<b>There were error(s) in your form!</b><br>".$error2;

}

else {

//matching email and password

$query = "SELECT * FROM users WHERE email='$email'";

$result = mysqli_query($linkDB, $query);

$row = mysqli_fetch_array($result);

if (isset($row)) {

if (password_verify($password, $row['password'])) {

//session variables to keep user logged in

$_SESSION['id'] = $row['id'];

//Logged in for long time untill user didn't log out

if ($_POST['stayLoggedIn'] == '1') {

setcookie('id', $row['id'], time() + 60*60*24); //Logged in permanently

}

header("Location: loggedInPage.php");

} else {

$error2 = "Combination of email/password does not match!";

}

} else {

$error2 = "Combination of email/password does not match!";

}

}

}

?>

```

## User Logout PHP Code

The below code is used to log out with a session in PHP and log out PHP session.

```

//PHP code to logout user from website

if (isset($_GET["logout"])) {

unset($_SESSION['id']);

setcookie("id", "", time() - 3600);

$_COOKIE['id'] = "";

}

```

Final Words

In the above discussion, we have created the registration and login form in PHP and MySQL databases. If you want to implement the above user registration form in PHP on your website then you need to follow the procedures explained in this tutorial. If you still find any difficulty in understanding, feel free to contact this website www.hmawebdesign.com. If you found this tutorial helpful please don’t forget to [SUBSCRIBE YOUTUBE CHANNEL](https://www.youtube.com/channel/UCCj8edobyplMAnhwYvi9n4A?sub_confirmation=1).

Share this Article! | hmawebdesign |

1,796,970 | How to Use and Add Face Recognition Feature on Android App | *Introduction to Face Recognition Feature on Android Apps * Unlocking your Android device with just a... | 0 | 2024-03-21T06:42:55 | https://dev.to/websitedevelopmentco/how-to-use-and-add-face-recognition-feature-on-android-app-4e3i | webdev, website, mobile | **Introduction to Face Recognition Feature on Android Apps

**

Unlocking your Android device with just a glance, and accessing exclusive features with a simple smile – the future of mobile technology is here! Face recognition feature on Android apps has revolutionized the way we interact with our devices. From enhanced security to seamless user experience, this cutting-edge technology is reshaping the landscape of app development. Let's dive into how you can leverage this innovation and stay ahead in the world of Android apps development.

**Benefits of Adding Face Recognition Feature

**

Implementing a face recognition feature in your Android app can bring numerous benefits to both users and developers.

It enhances security by providing an additional layer of protection beyond traditional password or PIN methods. Users can feel more secure knowing that their personal information is being safeguarded with biometric technology.

The convenience factor cannot be overlooked. Users can simply look at their device to unlock it or access specific features without having to type in passwords repeatedly. This streamlined authentication process saves time and improves user experience.

Additionally, incorporating face recognition adds a modern and sophisticated touch to your app, making it stand out from competitors. It shows that your app is up-to-date with the latest technological advancements, which can attract tech-savvy users looking for innovative solutions.

Integrating face recognition into your Android app offers improved security, enhanced user experience, and a competitive edge in the market.

**Step by Step Guide on How to Use Face Recognition Feature on Android App**

To use the face recognition feature on an Android app, start by navigating to the settings menu within the app. Look for the option that enables face recognition and toggle it on. You may be prompted to set up your facial recognition data by scanning your face using the front camera of your device.

Follow the instructions provided on-screen to ensure a successful setup process. Make sure you are in a well-lit environment and position your face properly within the frame for accurate scanning. Once your facial data is stored securely, you can now access certain features or functionalities within the app using just your face as authentication.

Ensure that you re-scan your face periodically to update and improve accuracy. Remember that security is key when utilizing this feature, so always keep your device locked when not in use. Enjoy the convenience and added layer of security that face recognition technology brings to your Android experience!

**Best Practices for Implementing Face Recognition Feature

**

When implementing a face recognition feature in your Android app, it's crucial to prioritize user privacy and data security. Make sure to inform users about how their facial data will be stored and used, obtaining their consent transparently.

Additionally, consider the lighting conditions under which the face recognition feature will operate. Testing in various lighting settings can help ensure accurate performance across different environments.

It's also recommended to provide an alternative authentication method for users who may not prefer or be able to use facial recognition. Offering options like PIN or password entry can enhance user experience and accessibility.

Regularly update your app's face recognition algorithms to keep up with technological advancements and improve accuracy over time. This proactive approach ensures that your feature remains reliable and effective for users.

By following these best practices, you can integrate a seamless and secure face recognition feature into your Android app that enhances usability without compromising on privacy or convenience.

Common Challenges and Solutions

When integrating face recognition feature into your Android app, you might encounter common challenges along the way. One challenge could be ensuring the accuracy and reliability of the facial recognition system in varying lighting conditions. To overcome this issue, consider implementing advanced algorithms that adapt to different lighting environments.

Another challenge developers often face is managing user privacy concerns related to storing biometric data securely. A solution to this can be encrypting all facial data stored on the device and obtaining explicit consent from users before collecting any information.

Additionally, compatibility with different devices and operating systems can pose a hurdle. To address this challenge, conduct thorough testing across various platforms to ensure seamless functionality regardless of the device being used.

By anticipating these challenges and implementing effective solutions, you can enhance the performance and user experience of your Android app with face recognition technology.

**Alternatives to Face Recognition Feature**

When considering alternatives to face recognition features in Android apps, developers can explore various options to enhance user experience and security. One alternative is fingerprint recognition, which offers a convenient and secure way for users to unlock their devices or access specific app functionalities.

Another option is voice recognition technology, where users can interact with the app through spoken commands. This feature not only adds a personal touch but also provides accessibility benefits for users with disabilities.

Additionally, pattern recognition can be used as an alternative method for authentication. Users can create unique patterns that they draw on the screen to unlock the app or access certain features.

Furthermore, some apps opt for traditional password-based authentication as an alternative to face recognition. While not as cutting-edge, passwords remain a reliable method of securing user data and accounts.

Exploring these alternatives allows developers to cater to different user preferences and needs while maintaining high levels of security within their Android apps.

Conclusion: Future of Face Recognition Technology in Android Apps Development

As technology continues to advance rapidly, the future of face recognition technology in Android apps development looks promising. With enhanced security features and user experience, integrating face recognition into Android apps will become more common. Developers are constantly innovating to make this technology more accurate and efficient.

As we move forward, it is essential for app developers to stay updated with the latest trends in face recognition technology. By leveraging this innovative feature effectively, Android apps can offer a seamless and secure user experience like never before. Embracing the potential of face recognition can revolutionize the way users interact with mobile applications, opening up new possibilities for personalized experiences and heightened security measures.

The future holds immense potential for the integration of face recognition technology into Android apps. As advancements continue to unfold, there is no doubt that this feature will play a significant role in shaping the landscape of **[mobile app development](https://www.algosoft.co/service/mobile-application-development)**. Stay tuned for exciting developments as we embark on this journey towards a more secure and intuitive digital world powered by face recognition technology in Android apps!

| websitedevelopmentco |

1,797,014 | Unlocking Data Privacy: SageMaker Teams Up with Secure Multi-Party Computation for AI Advancements | Cloud service providers can leverage the power of Amazon SageMaker to provide customers with advanced... | 0 | 2024-03-21T07:50:52 | https://dev.to/sygitech/unlocking-data-privacy-sagemaker-teams-up-with-secure-multi-party-computation-for-ai-advancements-41le | aws, sagemaker, cloudcomputing, ai | [Cloud service providers](https://sygitech.com/) can leverage the power of Amazon SageMaker to provide customers with advanced AI capabilities in their cloud environments. Amazon SageMaker is a complete solution for building, training, and deploying machine learning models at scale, enabling cloud service providers to deliver AI services to their customers. We explore the strong links between Amazon SageMaker's ability to use AI and its ability to improve compliance with industry standards such as the COBIT and NIST frameworks.

**Key features of Amazon SageMaker:

**

**Data Labeling and Built-in Algorithms:** In order for supervised learning tasks to be successfully carried out, it becomes important that users have tools for annotating as well as labeling their datasets in an efficient manner.

**End-to-End Machine Learning Workflow:** Amazon SageMaker provides a unified platform for the entire machine learning lifecycle, from data preparation to model deployment. This streamlines the process for cloud service providers, allowing them to offer a cohesive AI solution to their clients.

**Managed Infrastructure: ** SageMaker abstracts away the complexities of managing underlying infrastructure, allowing cloud service providers to focus on delivering high-quality AI services without the burden of infrastructure management. This reduces operational overhead and accelerates time to market for AI initiatives.

**Hyperparameter Optimization and Deployment:** This process involves tuning model hyperparameters automatically using this system so as to enhance its performance thereby reducing time spent by data scientists trying to do this activity manually. After training a model; it is easy to deploy it via the RESTful API with the help of SageMaker. Monitor model performance and drift, and respond to changes in data distribution or model behavior.

**Integration with AWS services:** SageMaker integrates with other AWS services, including S3 for data storage, AWS Lambda for off-premises computing, and AWS Glue for data organization. Amazon SageMaker makes it easier and faster for other developers and data scientists to build, train, and deploy machine learning models.

Amazon SageMaker can also support the NIST (National Institute of Standards and Technology) and COBIT (Control Objectives for Information and Related Technologies) frameworks in several ways:

**Security and Compliance:** Both NIST and COBIT emphasize the importance of security and compliance in information technology systems. SageMaker provides features such as encryption, access controls, and audit logs to help users meet security requirements and comply with industry regulations.

**Data Governance:** NIST and COBIT frameworks stress the need for effective data governance practices. SageMaker facilitates data governance by providing tools for data labeling, versioning, and access control. It also integrates with AWS services like AWS Lake Formation for managing data lakes and AWS Glue for data cataloging and ETL (Extract, Transform, Load) operations.

**Risk Management:** NIST and COBIT frameworks include guidelines for risk management and mitigation strategies. SageMaker supports risk management efforts by enabling users to track model performance, monitor for data drift, and implement automated alerting mechanisms for detecting anomalies or security threats.

**Auditability and Transparency: **NIST and COBIT emphasize the importance of auditability and transparency in IT processes. SageMaker offers features for model versioning, experiment tracking, and model explainability, allowing users to understand how models were developed and make decisions based on transparent, auditable processes.

Using Amazon SageMaker capabilities, organizations can accelerate machine learning development processes and improve the efficiency of AI-based applications and strengthen compliance with NIST and COBIT frameworks.

In the future, [cloud providers](https://www.sygitech.com/cloud-management.html) will integrate encryption techniques into machine learning workflows using Amazon SageMaker features: