id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

825,826 | How to Deploy Your App to Netlify | 1. Push Your Code to Any Version Control Applications The first thing you need to do is... | 0 | 2021-09-16T09:41:06 | https://blog.furkanozbek.com/how-to-deploy-your-app-to-netlify | react, webdev, netlify, 100daysofcode | ## 1. Push Your Code to Any Version Control Applications

The first thing you need to do is push your git repository to any version control application. You can use any one of these;

- Github

- Gitlab

- Bitbucket

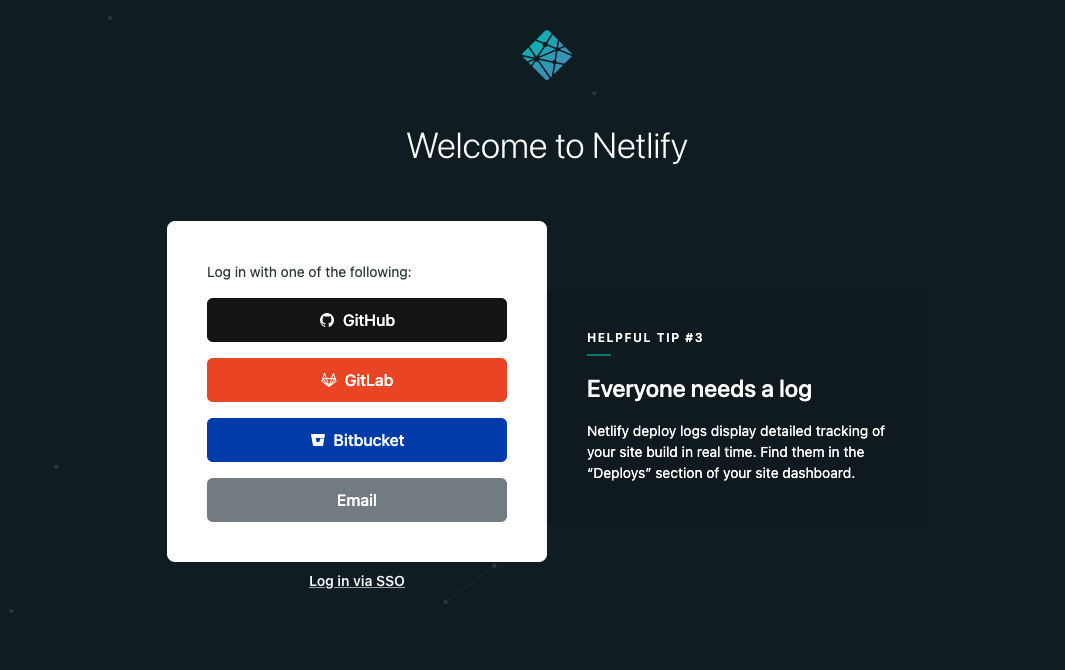

## 2. Register to Netlify

Once you have a repository inside any given application just signup to **Netlify** and connect them.

## 3. Creating New Site

Now, you should be on the team page where you can see your applications which right now you do not have but don't worry we'll add them right now.

In there you can either click "New site from Git" button or you can visit **https://app.netlify.com/start**

**You will see this screen**

You should click the button which you are storing your repository right now. In my case it is Github.

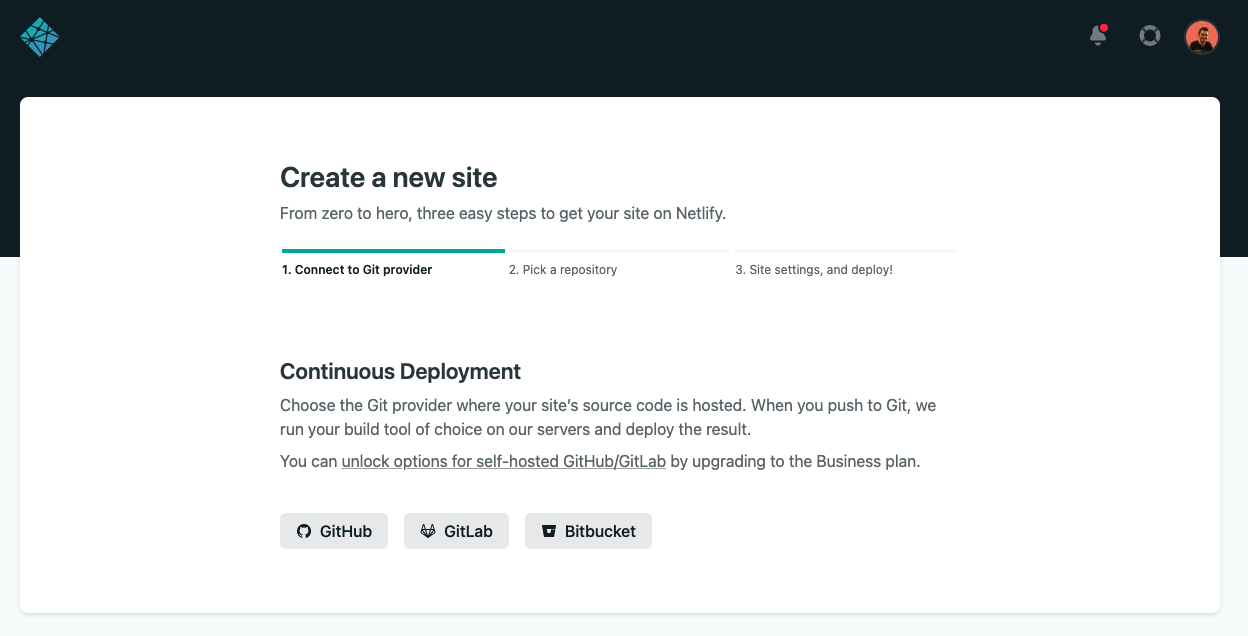

## 4. Connecting Accounts

After that, you should see a connection screen where you will connect your Github account with Netlify.

Or if you are connected you will see a text that says **"Authorized"** which indicates that you already connect your account with Github****

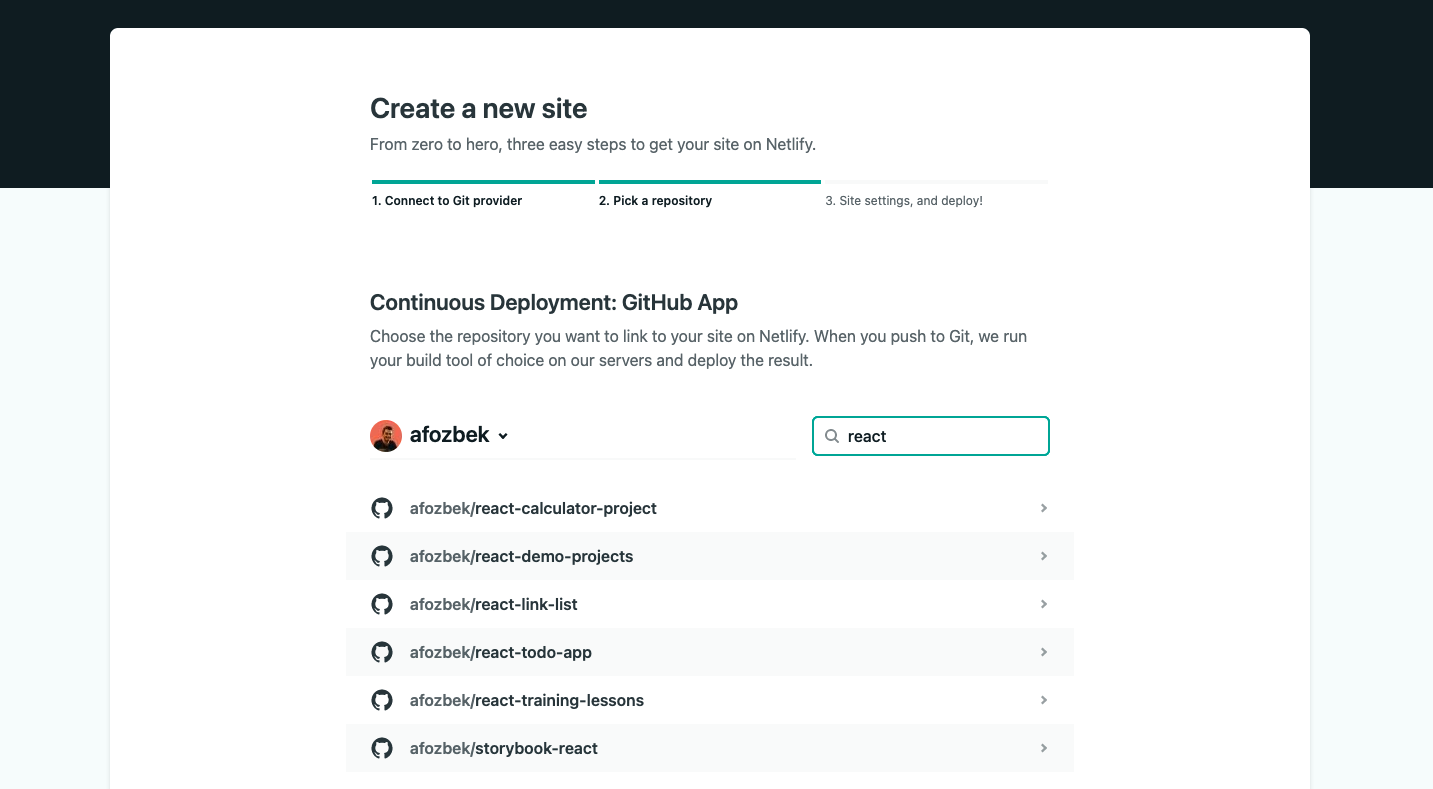

## 5. Choose a project

Now select the project(repository) you want and continue

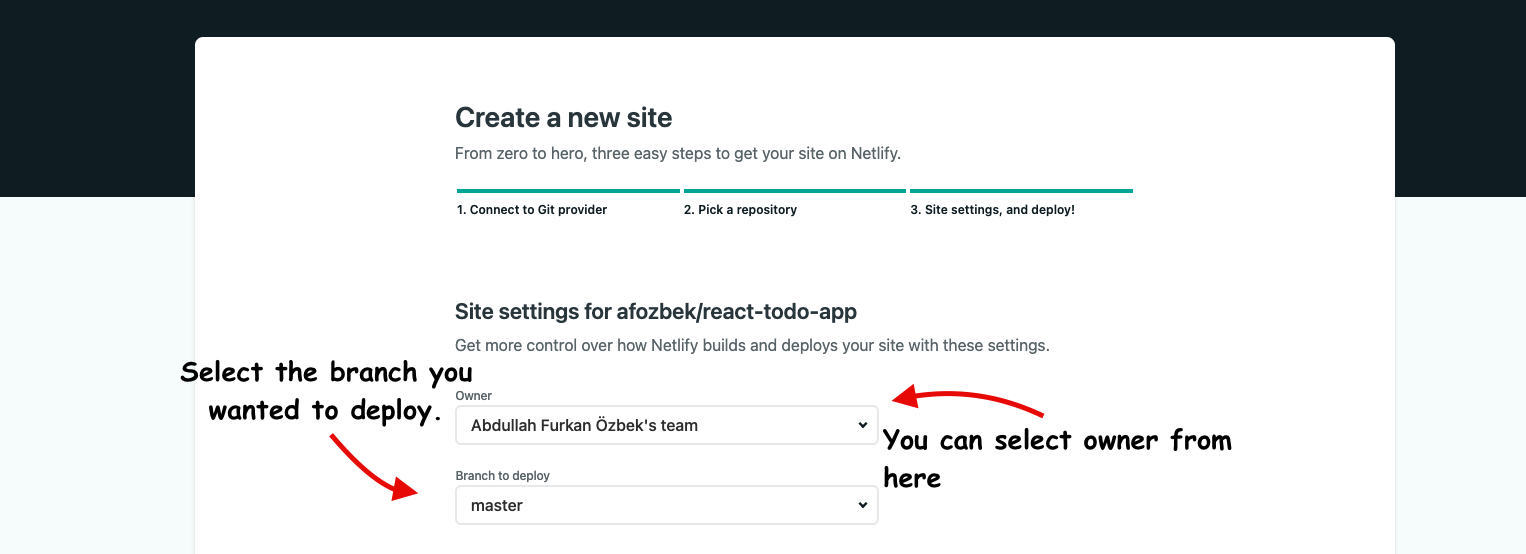

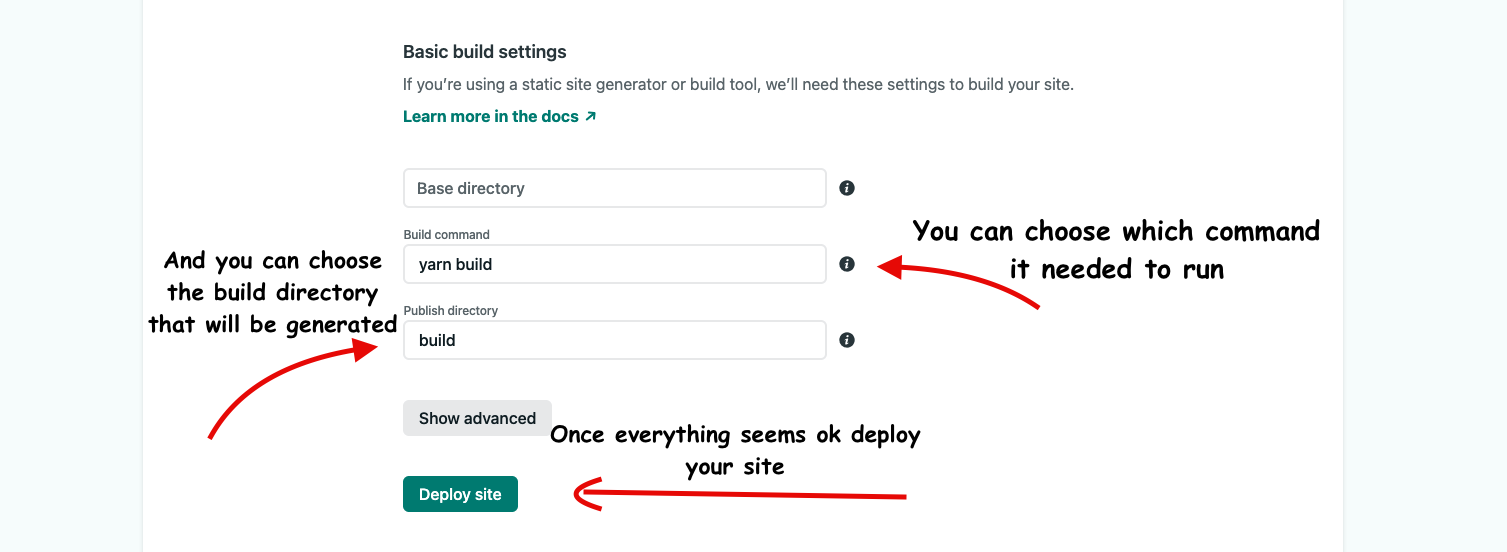

## 6. Choosing Site Settings

Now you can choose the owner of this application and choose which branch to deploy. Netlify will listen to that branch and if any changes published it will rebuild your application and run the tests for you.

You can also choose the build command that is needed to run to generate the build folder. This folder name can be different from framework to framework. So make sure to run on your local first to see the folder name.

And once everything is ok deploy your site.

## 7. Congratulations

Congratz! You deployed your first application to Netlify.

| afozbek |

826,077 | Why Video Call SDKs Should Be Used? | Arvia Video call SDKs can drive up your sales with remote sale practices. Increase your customer... | 0 | 2021-09-16T13:20:05 | https://dev.to/arviatech/why-video-call-sdks-should-be-taken-196g | webrtc, webdev, videochat, livechat | <a href="https://arvia.tech/all-in-one-sdk/">Arvia Video call SDKs</a> can drive up your sales with remote sale practices. Increase your customer engagement rate and decrease your operational costs by utilizing video calls. You can have multiple functions with one simple SDK implementation.

Integrating video call SDKs to your website or your app can provide amazing benefits. The business can use this implementation to drive up sales and customer engagement rates. Also, video calls can be embedded in a mobile app for convenience. SDKs enable easier embedding process by decreasing the overall implementation costs.

<b>Video Call SDK Benefits</b>

Using video SDK might sound intimidating. But the benefits of this remote sales method will worth every effort.

<b>Fast Download and Implementation</b>

Compared to native video apps, video SDK offers a fast implementation. All you need to do is download the SDK and install it on your browser. Since it is a software development kit, the implementation time and costs will be significantly lower than developing a native app. When a business needs a fast video call solution, video call SDKs will be the best choice.

<b>Sell Under Any Condition</b>

The novel coronavirus pandemic has shown us one thing. You need to keep selling under any condition. But you need an alternative channel to your brick-and-mortar stores. This global pandemic enabled many global companies and SMEs to drive up their sales with alternative methods. When you use video selling, your sales team can sell anytime anywhere. They don’t need to be at the office. A good internet connection is all you need to continue your business.

<b>Personalized Videos</b>

Recently, personalized content gained importance in any sector. Customers and leads want to see written and video content that matches their needs. The standardized and one-fits-all approach is all behind. A remote sales tool can give you this personalized content. The videos for remote sales can be created for a specific customer or lead segments. Thus, the engagement rate and the conversion rate will increase significantly.

<b>Support Sales E-Mails with Video Remote Sale</b>

E-mail marketing is still one of the most popular marketing practices, especially among B2B customers. E-mails have a relatively higher opening rate than any other marketing method. But this global pandemic taught all of us an important lesson. You need to differentiate your content to engage with customers even in the hardest times.

When you support your e-mail marketing campaigns with video, you can send special messages to your customer. For example, during the pandemic period, a video can promote your business better. The video-specific to this event can include precautions and hygiene, especially for the service sector.

<b>Decreased Operational Costs</b>

By using the video call SDKs, you can decrease the operational costs of your business. Your sales team no longer needs to have face-to-face meetings with customers in their office. All you need is a video call. This will decrease transportation and other fees.

<b>Engage by Using Webcam</b>

Another way to start a remote sale is by using a webcam to engage with the customers. You will also need a video SDK for this process. However, you might also need some extra properties to live stream.

In this process, the sales team can directly engage with the customers by using their webcam. Instead of face-to-face customer visits, an online visit can be completed within minutes. Thus, your sales team and the customer can save time. Also, the dangers for the disease are minimized by keeping the social distance. When you implement a video sale tool, you can immediately start your remote sale practices.

<b>Support for Multiple Languages</b>

With an integrated subtitle program, you can solve all language problems by using video call SDK integration. Multiple language support is one of the key properties of these tools. You can choose different language subtitles for your videos. Thus, you can easily reach customers living in different countries.

<b>Control the Entire Video Call</b>

These SDKs empower the users by giving control of the conversation. It is possible to mute the video or blur the faces. Also, users can change the background for a more formal look. All of these can be completed with just a few clicks. Your sales team can bring the entire office to their home and connect with your customers and leads. There is no need to leave your home to increase your sales.

<b>Video Call SDK Disadvantages</b>

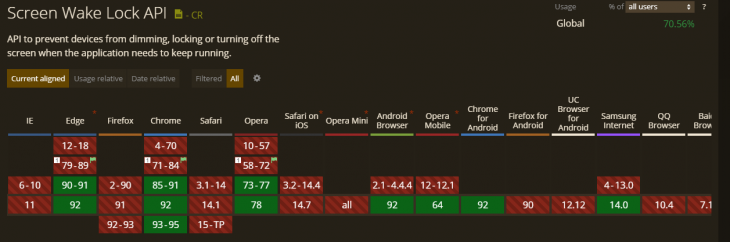

One of the main video call SDK disadvantages is related to browser incompatibility. Since the majority of these SDKs work on a browser, an older browser version might cause an issue in terms of audio and video quality.

In addition to that, these SDKs require a stable internet connection. All users should have a good and high-speed internet connection to benefit from the best capabilities of a video call SDK. Poor internet connection might lead to lags, disconnection issues and low-quality visuals. | arviatech |

826,214 | How And When to use bind, call, and apply in JavaScript | Call call quite simply lets you substitute a this context when you invoke a function. Calling the... | 0 | 2021-09-16T15:42:30 | https://dev.to/abhi784pat/how-and-when-to-use-bind-call-and-apply-in-javascript-2n5l | javascript | Call

call quite simply lets you substitute a this context when you invoke a function. Calling the .call() method on a function, will immediately invoke that function.

let Person = {

name: "Abhishek Patel",

showname : function(){

return this.name;

}

};

let changename = {

name: "Abhishek"

};

Person.showname; // "Abhishek Patel"

Person.showname.call(changename); // "Abhishek"

When to use call

When you are dealing with an array-like object and want to call an array method.

Apply

The apply() method is used to write methods, which can be used on different objects

let name={

firstname:"Abhishek",

lastname:"Patel

}

let printFullname=function(home,state)

{

console.log(this.firstname+" "+this.lastname+"from"+hometown+","+state

}

printFullname.apply(name,"[Sonepur","Bihar"]) //"Abhishek Patel from Sonepur ,Bihar

so in above function "this" will point to "name" object

we can also apply call function in this way

printFullname.apply(name,"Sonepur","Bihar")

When to use apply

A common use case for apply was to call functions that couldn’t be passed an array of arguments. For example, if you had an array of numbers: let nums = [1,2,3] and you wanted to find the smallest number. You couldn’t just call Math.min(nums) you would instead have to call Math.min.apply(null,nums) to get a minimum.

Bind

Bind creates a new function that will force the "this" inside the function to be the parameter passed to bind().

let name={

firstname:"Abhishek",

lastname:"Patel

}

let printFullname=function(home,state)

{

console.log(this.firstname+" "+this.lastname+"from"+hometown+","+state

}

let printmyname=printFullname.bind(name,"Sonepur","Bihar")

printmyname() //"Abhishek Patel from Sonepur ,Bihar

So,bind will force "this" to point at "name object

When to use

Async stuff

A very common front-end use case for bind is for anything that will happen asynchronously. For example in a callback that needs access to the original this context. This is especially handy in component based frameworks like React.

Further Reading

Call()-https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Function/call

Apply()-https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Function/apply

Bind()-https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_objects/Function/bind

| abhi784pat |

829,098 | SAAS Marketing Techniques To Grow Faster In 2021

Your Way To Success | Like every product/service. SaaS products are different. They are intangible. They have intangible... | 0 | 2021-09-17T05:20:01 | https://dev.to/debbiemoran/saas-marketing-techniques-to-grow-faster-in-2021-your-way-to-success-1o8k | saas, saasmarketing, saasmarketingstrategies | Like every product/service. SaaS products are different. They are intangible. They have intangible buyers. They have subscribers like Netflix, who have to pay for their product/ service annually, monthly, or quarterly.

To [increase sales](https://meetanshi.com/blog/magento-2-extensions-to-boost-sales/), they have a few tricks which are by giving annual discounts, showing off customer testimonials, social media promotions, [enhanced customer experience](https://wotnot.io/blog/ecommerce-customer-experience/), demos, [Live chats](https://www.helpcenterapp.com/blog/live-chat-software-benefits/), CTA, set up calls, and Feedback to retain the customer.

Not only this, this industry has its process as it has an intangible audience. So, they have to understand their presentations not through body language but by how they are expressing their views or presenting their questions in an email or call.

Providing Free trials is mostly there in the [SaaS industry] (https://www.peoplehum.com/blog/choosing-the-perfect-saas-partnership-a-checklist). As the audience will get to know what your product is creating value to their biz and how it is helpful in the growth of the biz by saving time/ money.

The free trial should not be too long or short. It should depend upon the complexity of the software.

SAAS Marketing Techniques To Grow Faster In 2021

Your Way To Success

Before knowing the technique one should know what Saas is and how it works. Right?

Like before buying anything we ask for the price of the product.

We all have heard about [SaaS product marketing](https://wotnot.io/blog/top-growth-hacking-strategies-for-saas-companies/)? But how it is different from other marketing channels is the thing to look into. Isn't it?

So, here we will give you the beginners guide For SaaS.

SaaS stands for Software as a service. It is a software licensing tool and a delivery model of a product. This service runs on a subscription basis and is hosted centrally. Like: Microsoft office.

The model involves software delivery by getting access to software with a proper internet connection and a device and removing the time-consuming process of installing and maintaining it.

Example: Netflix, where you chill and watch is an OTT platform which is a SaaS-based product where your service i.e. movies or series. It offers software to [watch licensed videos](https://www.mostlyblogging.com/youtube-shorts-download/) on its platform. It has a zillionth base of subscribers all over the world.

SaaS products are intangible like any tangible product. But attain revenue and profits. They have their selling strategies that differ from company to company.

###What are SaaS conversions?

It is like selling the software that the customer access through an online website or portal which is on a subscription basis which helps the biz and customers to grow their biz with save of time or money or improving their revenues

Since it is a subscription-based price model the customer gets frequent while buying these products as they are part of their growth which is a steady sale of their service.

And also, these products are highly customized. So, you can choose features according to your needs.

But the more expensive your product is, the more stakeholders will be involved which can stretch the process to one month or more. Here are other factors that slow down the SaaS sale cycle :

**1.Complex software:** the more complex the software is, the duration of the cycle will be more. So, in this case, the right prospect should be in the room for the proper and fastened solutions.

**2.New Markets:** if you are selling it to new markets, the cycle may be longer as you’re communicating with them and giving valuable information for the product. But before making them your customer you should educate them properly about the product which will further make them a frequent buyer.

**3.Enterprises:** Selling to these companies would increase the stakeholders but there would be technical and more legal red tapes which would slow down the cycle of sales.

**4.Free Trials:** if offer a free trial, then the length of the trial will naturally add time to the sales cycle. As the customer will utilize the free trial before proceeding with further buying. keeping in mind, the free trial should be short which will give two things: shorten the sales cycle and make the customer buy the service quickly.

The longer the trial, the longer would be the sale cycle which will take time to decide if the customer will buy it or not.

###Fundamentals of SaaS Sales

“Having a repeatable process empowers your sales reps to sell with efficiency and confidence”. The process begins from:

**Prospecting:**Since we are selling it to the tech-savvy audience, we will do [inbound marketing](https://www.yourdigitalresource.com/post/inbound-marketing-strategy-fundamentals) which includes blogs, online posts, email newsletters, whitepapers, etc.

In addition to that, we can do face-to-face interaction with potential buyers which is rare in this SaaS industry.

**Qualifying:** Not all visitors on your website or trial subscribers will buy the product. To know that, there are lead scores which is an automatic way to qualify leads and you will get information and interpret it from your data and assign it with the lead scores from 1 to 10.

Another way is to start a trial is to email them or call them for after-sale service. So, you will get an idea of the status of the buyer.

**Presenting:** Mostly it’s not possible to present in face-to-face interactions in the SaaS industry otherwise we have got it through body language.

Through online mode, presenting would be done through email sequence and frequent follow-ups which will let us know the pain points the way it’s been expressed.

**Handling objectives:** After presenting, prospects have questions and concerns about the product or service. So, you must have known what most of the customers ask and know how to handle the response of the prospect.

**Closing:** At this moment, the prospect becomes your customer. In this stage, we will deliver the final proposal of the product and negotiate with the customer.

**Nurturing:** to retain the SaaS customers excluding customer support which includes training for existing and new customers. Feedback includes reviews, rates, and testimonials.

Part of their happy moments by sending happy birthday, and celebration notes, etc.

###How to increase your SaaS conversions using the right marketing strategy:

**Create strategic trial duration**

Most of the SaaS products have a trial duration. As it is a great way to hook new customers to buy, however, to make it worthwhile, you should plan it strategically.

When the customer has the opportunity to get trials which will help them to know the value of the product in their biz as well as the benefit of your offering.

The trial period should depend on the software. if the software is complex then 14 days or more trials would work. But what if the software is easy to adapt and gives the trial for 30 days?

It would only make the sale cycle long and there would be fewer customers buying the product. As they know every part of your software and will compare with the alternatives of the service. So, it depends on the product.

**Custom/ live setup call**

Many of us don’t know how to set up the software. How did things work? These customers have the priority of how the software will work for them by giving lesser importance to how your products work.

SaaS has the highest conversion from this special technique of Live setup call only. This [CRM](https://close.com/crm/) would help them to achieve their objective related to the product they have taken a trial or purchased.

**Leverage Annual discounts**

Many SaaS products offer annual discounts in exchange for the customer paying the bill all at once in a cheaper amount as compared to paying it every month which attracts customers to attain the service.

While the company gets a sizable influx of cash at a moment and doesn’t get worried about a customer leaving at any moment.

**Automated follow-up**

As you see whenever you log in to the florist website or hospital website. They will ask ‘how may I help you?’ which is an automated way of assisting you.

In the same way, these automated services will help the new customer to assist with the service and product and they have common queries stored which will be solved by the assistant by putting up the questions and shortening the sale cycle.

**Content Marketing**

This is the only way of communication between the buyer and seller. By reading your content, understanding it, and perceiving it makes the buyer come into your contact.

Biz around the globe is using content marketing to reach the audience and generate leads. With consistency, you share the content it will increase its value over time

PPC( pay per click) also generates leads but with valuable content.

So, content marketing is an asset for biz owners while online ads are temporary or rented. Biz around the globe is using content marketing to reach the audience and generate leads. Keeping content quality consistent across [multiple channels](http://litcommerce.com) helps increase brand awareness among your audience.

**Search Engine Optimization (SEO)**

This feature works hand in hand with content marketing by making it discoverable in google, bing, and ranking it in two ways: On-page SEO and off-page SEO.

On-page SEO: This form is under your control. You create content that people search to read. By adding internal links, UI, use of the title, and description.

Off-Page SEO: Mainly, when we hear about SEO, links are the things that click us. But getting the right trusted and authoritative link is what matters! The best way to gain links and shares is by a distribution strategy.

**Google AdWords**

While inbound marketing reduces the spending of Adwords, zillion people are still clicking on the search engine ads per day.

If you don’t want to miss out on these people to generate leads then you should still invest in PPCi.e pay per click

.

PPC is beneficiary as it is scalable, generate leads, and generate the best ROI for your biz

**Pro Tip:** to increases sales, some strategies to take care of is to choose the right model of SaaS, identify the target audience and its value proposition, set prospect qualification criteria, create templates, call scripts, set revenue goals, create a customer support system, and keep track of sales performance metrics that will be effective in rank in the SEO.

| debbiemoran |

829,362 | The OS Data Hub explained: when detail matters | A comprehensive look at what the OS Data Hub is and the APIs that are accessible. Ordnance... | 0 | 2021-09-22T11:36:26 | https://dev.to/charleyglynn/the-os-data-hub-explained-when-detail-matters-5dp3 | ordnancesurvey, webdev, geospatial, tutorial | ###A comprehensive look at what the OS Data Hub is and the APIs that are accessible.

**Ordnance Survey, a data company?**

Fundamentally, Ordnance Survey (OS) is a data company and has been capturing information about Great Britain for almost 230 years. Our original purpose was to create a map in 1801 that would help the military defend and protect the nation. Our aerial imagery techniques helped support surveyors on foot 100 years ago. In the 1940s we were providing an advisory role to international governments on mapping and surveying; and in the 1960s we were mapping government sites.

We’ve developed digital maps of Mars and even used [OS OpenData](https://osdatahub.os.uk/downloads/open?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained) to recreate a map of Great Britain in Minecraft.

[Throughout its history](https://www.ordnancesurvey.co.uk/newsroom/blog/top-10-mapping-moments-in-the-history-of-os?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained), OS has always been at the cutting-edge of location data technology, and its capture and storage. In the early days, surveyors would go out into the wilderness to record waypoints, write them in ledgers and take them back to the office to create an archive of paper maps. However, in the last 50 years, OS has transitioned to capturing and storing this information in digital forms, and as a result, has a huge range of geospatial data about Great Britain that has been included in its data products.

**What is the OS Data Hub?**

[The OS Data Hub](https://osdatahub.os.uk/?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained) is our new platform to serve trusted, authoritative data through new formats to end users. It’s focused on building new efficient ways to access OS data and is a portal for mapping, data, and/or Application Programming Interfaces (APIs).

For those who are not familiar with the term, APIs are services that submit requests to servers and specify the data you want, which is then sent back to you immediately. Think of the restaurant analogy; where you submit your dinner ‘request’ to a server, who will provide this information to the kitchen, who cooks and prepares your order, and then brings your requested order to the table. APIs are essentially important middleware, and programmatically speaking, can reduce technical barriers to entry and the overheads associated with using large, complex datasets.

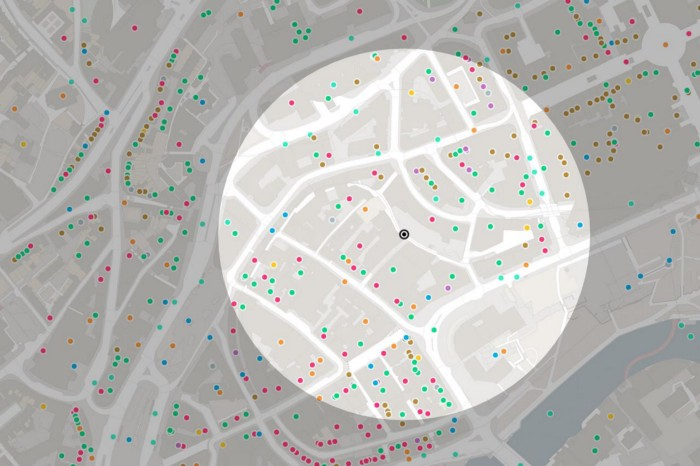

The OS Data Hub delivers the foundational layer to many geospatial applications. OS makes around 20,000 updates to the database every day. This trusted layer of detailed geospatial data can be pulled into many different use cases including data visualisation, geospatial analysis, and creating business insights. Even within the world of academia, OS data is helping to answer new and interesting geospatial theories.

The data is served through OS APIs in either the British National Grid or Web Mercator standards, and is interoperable with different software and mapping libraries. There are various types of online documentation including [code examples](https://labs.os.uk/public/os-data-hub-examples/?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained) where developers can copy and paste, add in their API keys, and start using OS data within minutes.

The OS Data Hub also has an error reporting tool to feedback about the data, so if you identify errors with the location information, you can report this directly to OS.

**The difference between Open and Premium data**

The signup process provides the technical and pricing information to choose between OS OpenData, Premium and Public Sector plan (Public Sector plan users will need to be a PSGA member).

The OS OpenData plan provides free and unlimited usage but with a data limit determined by the detail. The APIs can be used to view and integrate publicly-accessible datasets and to understand the capabilities of the data within the OS Data Hub. Users can also download the datasets to query offline.

The Premium plan provides access to premium OS datasets where the requests and transactions contain a price. The OS Data Hub provides users free premium data (API transactions) up to £1,000 per month and [information around how much each transaction costs](https://osdatahub.os.uk/plans?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained) so you can estimate the cost based on your usage, to support any budgeting.

The OS Data Hub comes with a dashboard to track progress of API usage. API Projects can help you organise your keys (think of servicing different customers or different websites that you are using) and track the overall usage of the applications you are using OS data on, in more sophisticated ways. Within an API Project you can select several APIs and your key is then directly linked to them all.

**OS Data Hub: the API suite**

OS provides APIs in three broad categories; maps, address data, and geographic features.

**OS Maps API** is a raster tile service that serves maps as PNG images, which are assembled in your browser or GIS. There are two ways to access OS Maps API; Open Geospatial Consortium (OGC) standard web map tile service (WMTS) and ZXY, meaning these maps work with almost all geospatial software and every mapping library. OS Maps API styles are designed and developed by OS cartographers with different features and visual hierarchies.

**Using OS APIs for quicker updates**

The OS Maps API is useful for base mapping. For example, the [National Library of Scotland](https://www.ordnancesurvey.co.uk/newsroom/blog/comparing-past-present-new-os-maps-api-layers?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained), which is a repository for historical maps and provides a tool where users can view historical maps and compare them against modern versions. Analysts of The National Library of Scotland originally downloaded large files of OS Maps onto local servers, which meant there could have been gaps of up to two years before updated basemaps were used in their application. Now the analysts have integrated OS Maps API that are connected to OS servers, they are using the most up to date basemaps by only having to change a couple of lines of JavaScript code.

OS Vector Tile API provides an on-demand and quick way to create maps for web and mobile users using OS cartography. The advantage is that vector features are rendered within the browser or client. This means its capable of supporting more interactivity and online experiences and its compatible with common web mapping libraries and increasingly more GIS software. The Vector Tile API is fully customisable not only for individual layers but also individual features.

The Vector Tile API is used with GeoAR.it, an augmented reality company for the environment. It uses vector tiles to create 3D extruded buildings. Every geographic feature can have metadata or attributes that hold relevant details about that feature. Within the Vector Tile API, building footprints have a feature called the building height attribute, a number that represents the height of that building. GeoAR have extruded out the polygons to recreate a 3D model as a city, using the Vector Tile API, to use in this augmented reality environment.

**OS Features API** is used to access the geometries and attribution of OS data. It provides rich geographic vector features in either GeoJSON or GML formats. In addition to the geospatial data (geometries), you receive a set of metadata that is connected to that feature, which are called attributes. In a GIS application, you can examine an attribute table such as address, area, postcodes etc.

The OS Features API provides many data layers, including OS MasterMap Topography Layer. The user specifies the details of the data they need; for example, results that match a building or road, or a spatial query including features that intersect a property. The OS Features API removes overhead of managing and storing the data locally.

**Achieve detailed location analysis without surveying**

Start-up Balkerne uses OS Features API to conduct location intelligence risk analytics for customers. The data provided via the API helps Balkerne achieve an advanced understanding of which areas of their properties are at risk of natural disasters, such as floods or subsidence. They can perform this location risk analysis, without having to send out surveyors of their own to inspect a given area.

**OS Linked Identifiers API**

Across OS and UK government, a number of geospatial databases store data for several purposes, such as UK highways and Land Registry. Each of these databases require a unique ID to look up the correct data using analytical processes. The community and Government have agreed on providing unique reference numbers that can be attached to a property, street, or topographic feature through its lifecycle. These include the Unique Property Reference Number (UPRN), Unique Street Reference Number (USRN) and OS also provides the Topographic identifier (TOID).

The Linked Identifiers API enables correlation between all these reference points.

OS has individual features associated with their datasets. However, within the Land Registry database, they may have one UPRN that is associated with a single structure. The Linked Identifiers API allows you to query a UPRN and it will correlate and send back all the TOIDs connected to that UPRN. There is no geospatial data returned by the Linked Identifiers API, but it is a way to link between several different database that use multiple reference systems.

**OS Names API** is a free-to-use searchable database to help the user find and verify populated places, cities, roads, and postcodes within Great Britain. To find ‘Southampton’ from the API perspective, when sending a request with the string of ‘Southampton’, it will pull out searches related to that request. Information will include where the location is, its size, and the map view will zoom directly to that location. The OS Names API enables forward and reverse geocoding, and links diverse information such as a statistics or descriptions to locations.

**OS Downloads API** automates the discovery and download of OS OpenData. It enables users to work with bigger datasets, even within the capability of hosting them on their own servers, or to do some country-scale analytics. Users are able to request various coverage areas, metadata, and data format depending on the dataset. From October 2021, it will also include OS premium datasets including OS MasterMap Topography Layer and AddressBase Premium.

**OS Places API and OS Match & Cleanse API** are address APIs and contain AddressBase Premium data. Users can use these APIs for forward and reverse geocoding of detailed address data, which includes a UPRN for linking and sharing. The GeoSearch function allows the user to search for addresses using bounding box, radius, and polygon queries. Both address APIs can save the user time, ensure they capture the correct address details at source, and help minimise errors in their own databases.

**Accessing the OS Data Hub**

Any developers, data scientists, or GIS users can access the OS Data Hub using standard protocols for accessing information over the internet and using the standard format for requesting and retrieving data.

For [web developers](https://labs.os.uk/public/os-data-hub-tutorials/web-development/automated-open-data-download?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained), connect with libraries such as Leaflet, OpenLayers, Mapbox GL JS and ArcGIS for JavaScript. All libraries have, native to their functionality, ways to connect to the OS Data Hub in several standard data formats.

For [data scientists](https://labs.os.uk/public/os-data-hub-tutorials/data-science/price-paid-spatial-distribution?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained), use Python and R libraries, including Geopandas and Jupyter Notebook for fetching data over an http request. This is more around structuring those requests so they comply with how the OS Data Hub APIs are expecting to receive the request and will send the data back.

[GIS analysts](https://labs.os.uk/public/os-data-hub-tutorials/gis-applications/3d-flood-modelling?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained) can use OS APIs to pull the basemaps into their workflows and connect to rich geospatial features directly, along with attribution, for the areas you want to analyse. It’s about using the right data, as and when you need it.

Most trends in software development are due to their ability to do something easier, cheaper, and/or more efficiently. The OS Data Hub encompasses 230 years of mapping and geospatial expertise; it has undertaken and managed the technical GIS burden, in addition to the heritage associated with OS, to allow the developer to use and manage geospatial data through simple and easy-to-access APIs.

**For more information visit [osdatahub.co.uk](https://osdatahub.os.uk/?utm_source=devto&utm_campaign=devrel&utm_content=data-hub-explained).** | charleyglynn |

829,382 | Curious Media lab - DocsBarcelona x Garage Stories | Hello! Sharing this amazing #opencall :) At Garage Stories we are preparing a lab along our... | 0 | 2021-09-17T09:28:57 | https://dev.to/garage__stories/curious-media-lab-docsbarcelona-x-garage-stories-3g0d | design, techtalks, career, challenge | Hello! Sharing this amazing #opencall :)

At Garage Stories we are preparing a lab along our colleagues from #DocsBarcelona to encourage you to work on your first emerging media project (VR/AR/AI) for social impact! Open for creators, artists, filmmakers, storytellers and all sorts of creative minds from all walks of life! 🚀🌍

This year we'll be focusing on these themes:

- conservation of economical balance

- injustices and abuse of power

- the legacy of wars and colonialism

We'll be super excited to have you on board! Please feel free to spread the word!

Curious Media 🕵️♀️📹 - https://www.garagestories.org/curiousmedia

18-21 October, 2021 · 4-day virtual workshop · 6pm-9 CET

Cost: 35 €

Applications open now: https://docs.google.com/forms/d/e/1FAIpQLSeiV1IhWuZVvmgRep3DAXOqfCNA6ZC3suHHGnLA-CHgEDdUKw/viewform | garage__stories |

829,411 | How To Sell Online Fitness Programs | Due to the spread of corona virus, many governments have implemented the rule that gyms, yoga studios... | 0 | 2021-09-17T10:33:46 | https://dev.to/praveene27295/how-to-sell-fitness-programs-online-29c2 | video, videostreaming, streaming, fitness | Due to the spread of corona virus, many governments have implemented the rule that gyms, yoga studios and swimming pools will remain closed for the next 12 months. This has drastically affected gym owners, yoga teachers businesses. So at present, many fitness instructors have started their own online fitness studio, started selling fitness programs online and they have been able to reach more people beyond borders. Many people have moved to an alternative solution that is doing a workout at home by watching fitness videos online. Online Fitness programs have become a trend as many have decided to do work out at home themselves.

Online fitness Studios are Video streaming platforms where fitness instructors perform exercises so that users can watch videos to learn exercises it’s a kind of virtual gym. Through an online fitness studio, you can share workouts, training plans, nutritional diet and health tips and exercise courses. This remains an excellent opportunity for fitness instructors to online fitness as this helps in reaching more people virtually literally from any location.

It’s better to start online fitness programs where you need to build a Video platform with help of a video platforms provider so that people can workout videos at any time, anywhere and on any devices like mobile, laptops and TV. With this online fitness studio, you can train more people without the need of hiring an extra trainer, expanding your training facility and spending more time. So let get into the main topic of how to sell online fitness programs

## How to Start Online Fitness Programs in 4 steps

### 1. Choosing the Business Model

There are two business models: TVOD and SVOD. A business model needs to be chosen depending upon the type of business you run.

**Transactional Video on Demand(TVOD)**: It is a Model where users pay money one time to access the video content permanently or even download it. The transactional model is best when you want to teach a particular topic or exercise, workout routine and even workshop. You can sell a single series of videos as a package such as training plans, exercise, nutritional plans and workout tips. Once the customer bought this video content from you they don’t need to buy it again and they access the content for an entire lifetime

**Advantages**:

* Once the Video product is ready you can sell it for an entire lifetime

* You can sell video products on average between $75 and $100

* You can market your online fitness training business in person and also virtually

* You can even use referrals and affiliate groups to boost your business growth

**Disadvantages**

* Once the product is sold to the particular customer you cannot sell the same product to him again, so you need to find new customers every time to sell the product

* Revenue is not recurring and stable

* More money has to spent in marketing

* It takes more time to create a new video course again

**[Subscription Video on Demand (SVOD)](https://dev.to/praveene27295/7-ways-to-reduce-subscription-churn-59ni)**: It is a model where users have to pay a particular fee monthly or yearly to access an entire library of video content an infinite number of times. If they stop paying the fees, the user loses access. It is the best model for fitness instructors as they want to build a client-based online fitness platform. In this model, the trainer adds and updates videos on these platforms so that users learn and stay updated. Trainers keep on adding multiple exercise videos, nutritional and health tips and different courses from time to time to keep users engaged.

**Advantages**

* Income flow is stable

* You can sell video on an average from $12 to $15

* Recurring income as user renew it monthly or yearly

* Customers remain subscribed for a longer time

* It can be easily promoted online and in-person

**Disadvantages**

* It takes time in creating video content regularly

* Initially, it takes some time to make money

### 2. Planning your content

After selecting the business model and in the next step you have to plan your content of what type of online fitness course you are going to offer and how it looks on a client level.

You need to have a clear picture of what your online fitness program is all about. You need to have some plan before you start training. you need to know your goal before creating video content. You need to have some clear idea about

* What am I going to teach

* How am I going to teach

* What are the important things needed

* What do customers expect from us?

Next is the video content you create,it is what your audience engage and experience it.It is the step how you are going to create an online fitness program for :

* Different intensity of workout from easy to difficult phase

* Different experience levels from beginner to professional

* Training plans

* Health tips

* Workout for particular body parts

It also depends upon how to do you make a video

* Solo or in groups

* Live videos or already recorded content

* Will your course follow logical order or not

It is always better to plan your content before starting off your online fitness course

### 3. Building your own Video Platform

Building your own video platform that is a website and App for mobile remains the face of your online fitness program so through this people will come to know more about fitness courses, pricing and what kind of video is suitable for them. Well developed website is the most important thing to do as there are many **[video platform providers](https://www.flicknexs.com/)** at present that build video platforms for fitness instructors. It is always better to choose a Video platform provider that builds you complete video streaming platforms with important features included such as

* Website and Video player Customization tools.

* Analytics dashboard

* CMS

* Video monetization options

* Different payment options and

* White-label video streaming

* Both on-demand and live streaming capability

With a well-built video platform, you are ready to start and grow your Online fitness program

### 4.Setting up the Pricing Plan

Here comes the most important part as Fitness instructors find it difficult to fix a price for online courses. Most fitness instructors might be knowing the fees for real-time gym sessions but when it comes to online it is better to make market research what other fitness instructs are offering. If prices are too high users will look for other platforms and if prices are too low then the value of your online fitness program goes down. It should be fixed in such a way that it is affordable for all types of users. Set a price at a normal range and then increase it based on customer feedback. Consider giving a free trial for the first week for first-time users. Try to fix a higher price for live and personal training when compared to pre-recorded video content. For the Subscription model, you can sell video on an average from $12 to $15. For the Transaction-based model, You can sell video products on average between $75 and $100

### 5.Marketing Online Fitness Programs

Marketing online fitness programs is a most essential part that needs to be done after building your video platforms can be done through social media channels such as Facebook, Instagram and LinkedIn. You can make it through youtube by releasing short videos or trailers so you gain more traffic to your website. Sending out coupons, promo codes to attract an audience. Conducting referral programs making existing customers refer fitness courses to their family and friends. Setting up a newsletter campaign to retarget the audience who have visited our site. Giving out the free trial for a week to give the new users an experience with our new fitness platform

## Wrapping up

Online Fitness Programs are **[Video-based training platforms](https://www.flicknexs.com/)** where you can easily build, grow your business by reaching more people. It helps in easily selling your product to many people beyond borders to make money provided the content is good and engaging.

| praveene27295 |

829,583 | Debounce Function in Javascript 🚀 | let count = 0; const debounce = (func, delay) => { let timer; return function(){ ... | 0 | 2021-09-17T11:59:05 | https://dev.to/cagatayunal/debounce-function-in-javascript-47ha | javascript, functional, webdev, debounce | ```javascript

let count = 0;

const debounce = (func, delay) => {

let timer;

return function(){

clearTimeout(timer);

timer = setTimeout(() => {

func();

}, delay);

}

}

let scrollCount = () => {

console.log(count++);

}

scrollCount = debounce(scrollCount, 500);

window.addEventListener('scroll', scrollCount);

``` | cagatayunal |

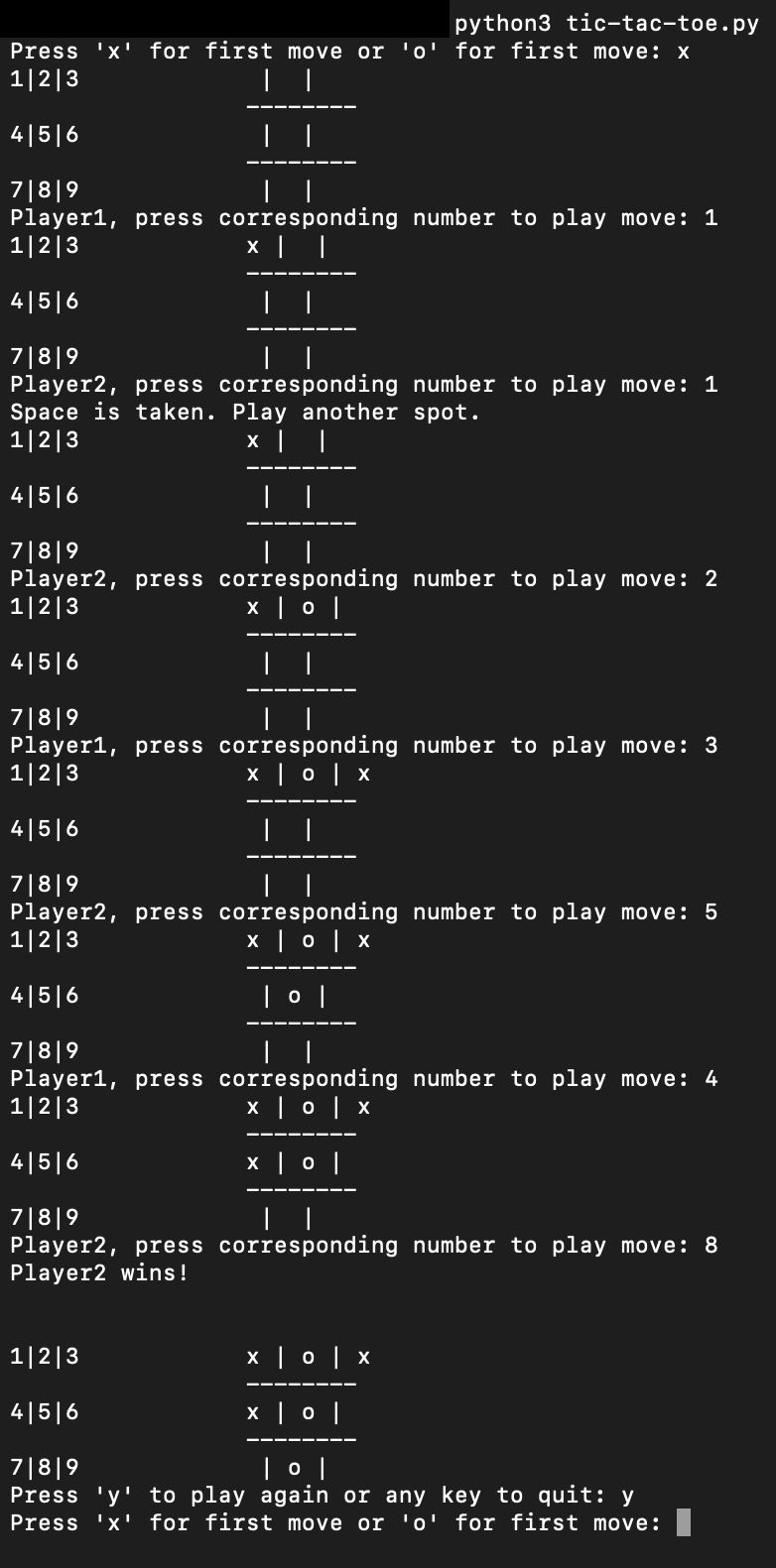

829,593 | html page | how to ctreate the multpage for the dispaly product details page thanks | 0 | 2021-09-17T12:12:21 | https://dev.to/rakes97/html-page-3ji1 | pagehtml | how to ctreate the multpage for the dispaly product details page

thanks | rakes97 |

829,602 | How to make a simple unread notification animation (ex: with a bell icon) | When building notifications UI elements it is very common to point out when a user has new elements... | 0 | 2021-09-17T12:53:39 | https://dev.to/miralo/how-to-make-a-simple-unread-notification-animation-ex-with-a-bell-icon-4e1i | css, animation, fontawesome, csstrick | When building **notifications UI elements** it is very common to point out when a user has **new elements to read**. This is achieved in most cases with an animation, for example if we have a bell icon we're gonna to make it swing every # seconds.

**Today we're gonna to see this**, you can find a finished example [in this pen](https://codepen.io/Miralo/pen/KKqmVMa).

As usual we're gonna use FontAwesome and, in this case, the SVG format of the "fa-bell" icon (remember to read the [license indications](https://fontawesome.com/license)). So, when we have it's markdown on our page we will add this animation (Courtesy of [Animate.css](https://github.com/animate-css/animate.css/blob/main/source/attention_seekers/swing.css)).

---

### The animation (part 1)

```css

svg {

animation: custom-swing 1s;

-webkit-animation: custom-swing 1s;

animation-iteration-count: infinite;

-webkit-animation-iteration-count: infinite;

}

@keyframes custom-swing {

20% {

transform: rotate3d(0, 0, 1, 15deg);

}

40% {

transform: rotate3d(0, 0, 1, -10deg);

}

60% {

transform: rotate3d(0, 0, 1, 5deg);

}

80% {

transform: rotate3d(0, 0, 1, -5deg);

}

to {

transform: rotate3d(0, 0, 1, 0deg);

}

}

```

The main animation is set, but as you can see it's... too invasive 😜: our bell swings "forever" and fast.

**We need to add some "delay" in the main animation between the swings**, so we will use some Math and logic to get around it.

---

### The animation (2 part)

In few words we need do **edit the keyframe in order to "finish" the animation early**, leaving some "space" as a delay.

At the moment our animation last 1 second, but if we want to our bell swing for 1 second and wait "about" 4 seconds before another one we have to set the animation to 5 seconds.

After this, **we have to "divide" and limit the animation timing to complete in about 1/5 of the total**.

```css

svg {

animation: custom-swing 5s;

-webkit-animation: custom-swing 5s;

animation-iteration-count: infinite;

-webkit-animation-iteration-count: infinite;

}

@keyframes custom-swing {

20% {

transform: rotate3d(0, 0, 1, 15deg);

}

40% {

transform: rotate3d(0, 0, 1, -10deg);

}

60% {

transform: rotate3d(0, 0, 1, 5deg);

}

80% {

transform: rotate3d(0, 0, 1, -5deg);

}

to {

transform: rotate3d(0, 0, 1, 0deg);

}

}

```

Here it is, now we have some delay between the swings, and you can play with duration and percentages as you like to adjust the result!

Photo by <a href="https://unsplash.com/@arturorey?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Arturo Rey</a> on <a href="https://unsplash.com/s/photos/bell?utm_source=unsplash&utm_medium=referral&utm_content=creditCopyText">Unsplash</a> | miralo |

829,816 | How a Shy, Introvert Dev, With Anxiety Issues Spoke at 2 Conferences 🇪🇺. | 8 days, 5 countries, 2 conferences - Dealing with anxiety and networking. Hello beautiful people, in... | 0 | 2021-09-17T16:43:21 | https://blog.eleftheriabatsou.com/how-a-shy-introvert-dev-with-anxiety-issues-spoke-at-2-conferences | conference, publicspeaking, introvert, developer | **8 days, 5 countries, 2 conferences - Dealing with anxiety and networking.**

Hello beautiful people, in this article I’ll describe my last 8 days traveling in Europe and attending as a speaker 2 tech conferences. While we progress I'll share what I learned during these days (tech and non-tech news/advice) as well as some tips about networking and being comfortable around new people (which is something I always struggle with, but I am making my best to improve it).

**Notes:**

*1. I used to travel a lot in conferences all over the world, but then covid happened and I stopped! Maybe the old followers/readers, already know that, but as I usually say, I have 2 passions: my job and traveling. So when these 2 things can be combined you can bet I am a happy girl 😊*

*2. Also, I am fully vaccinated and I take seriously all the health regulations. It's not only about my well-being but also about the people around me. I would never jeopardize their health.*

**Let the journey begin...**

P.S. If you are bored to read, watch the [vlog](https://youtu.be/VBlnjW2Lvgs) 😉.

<a href="http://www.youtube.com/watch?feature=player_embedded&v=VBlnjW2Lvgs" target="_blank"><img src="http://img.youtube.com/vi/VBlnjW2Lvgs/0.jpg" alt="IMAGE ALT TEXT HERE" width="240" height="180" border="10" /></a>

# Day 1 - Monday

## ✈ Greece, Germany and Croatia

I woke up so excited for my journey, everything was ready and planned. I had made my preparation through the weekend (airplane check-in, suitcases, and even some of Monday's work), my flight wasn't until midday so I had some time to work and be productive.

The socializing part started as soon as I arrived at the airport. There was an issue with the online check-in so I had to actually speak to a human for my tickets 😳. I know, I know, for some of you that wouldn't be an issue, or it could even be your pleasure, but I have some anxiety issues even on the smallest things...

I asked the man in the check-in "do you need to see my vaccine ce ce cert certif certification?" I was rumbling so hard, I couldn't prefer the word certification in my own language (certification = πιστοποιητικό), the man laugh but he started to chat with me about other things and that actually made me feel better... Maybe we were chatting for about 7-10' until a huge waiting line had created... (oops, sorry travelers 😊).

The next stop was Munich (Germany 🇩🇪) and right after there Zadar (Croatia 🇭🇷). From Zadar, a taxi driver picked me up and drove me to the hotel. The taxi driver didn't know English so we couldn't communicate... As you can probably understand that was not an issue for me😏.

The hotel was located in an area outside of Zadar and it was one of the best luxurious hotels I have ever been to (more about that later). It was about 7 pm when I took a shower and went alone for a dinner at the hotel's restaurant. As I started to eat a young smiley man approached me, "hi, are you also a speaker? are you this girl?" (pointing at my photo on the conference's website) as I knot my head positively the young man showed his photo on the website too, introduced himself and asked me if he could join me. My heart started to beat faster, not because I didn't like the man, but because, you know, I had to speak with another human😳.

I actually spent the next few hours with this person. He was just one year older than me, a CEO of a company, and a creator of a js framework (let's keep some anonymity, and not get into more details, at least for now). 😁

After dinner, I went to my room, worked for a few more hours, and went for sleeping. 😴

*1st day's thoughts💭 : Could humans be actually pleasant?! The guy from the airport check-in made me feel comfortable and the guy from the dinner kept me company and actually became my companion for the next days!!*

# Day 2: Tuesday

## Conference: [Infobip Shift](https://shift.infobip.com/) - Day 1 of 2 - The day of my talk

The next day started with a nice breakfast near the hotel's pool 🏊 🥐 (I'm telling you dear readers, the hotel was amazing!).

I stayed in my room working until midday when it was time to call an uber and reach the conference's location.

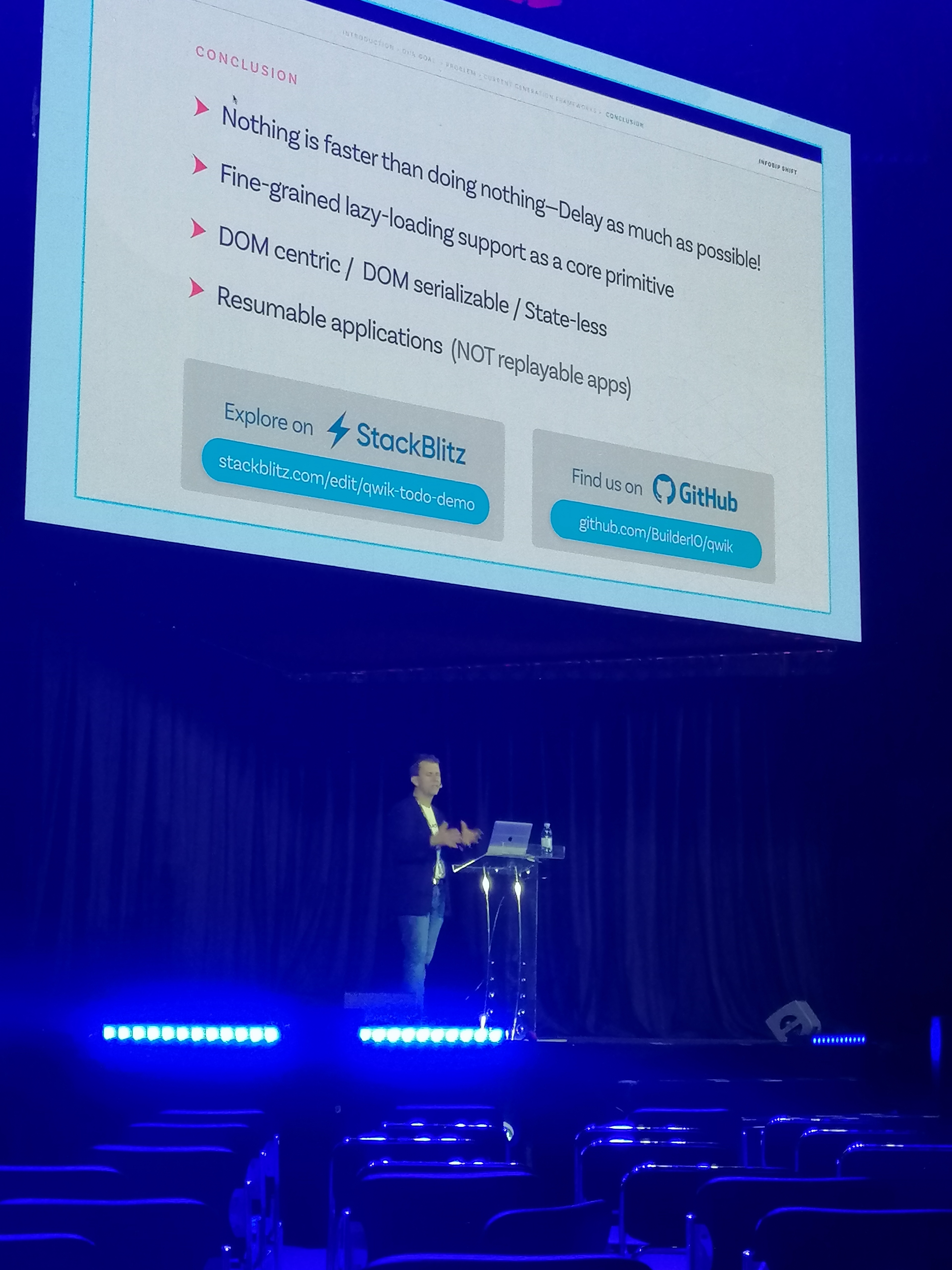

I attended several people's talks and gained some valuable knowledge of tech and coding.

I also gave my talk which was about UX and UI. Everything went great and according to the plan. Weirdly enough when I'm presenting I'm not nervous (I have a trick for that, maybe I could share it on another article... 😊, let me know if you are interested in how to speak to an audience/giving a presentation and not getting a panic attack!! 😁 )

At the venue, I met the dinner-guy who introduced me to his friends/speakers and we became a nice "little" nerd 🤓 and IT gang!! How cool, right?!

At this point, I had checked the conference's website and I knew who were the people that I definitely wanted to meet. These were people like Gift, Ekene, Sébastien, Eduardo, Kent, Nikolas, and more.

Some of those people I was following on social media or I was familiar with their work. Other people seemed very interesting, I loved their talk so I definitely wanted to meet them. As I was surrounded with my cool IT gang it was easier to meet people, they could introduce me to others and if they also didn't know the "others", they were not as anxious as I was (hello extrovert people), so they would just go and speak and I would follow (that's a pretty good approach for meeting new people!)

*2nd day's thoughts💭: Humans have started to seem less terrifying... They like to talk, they like to make jokes and they even want to help you!*

After the conference, I went back to the hotel, worked for a few hours and at night I went for dinner 🍲 in a nice location with my nerd IT gang and we even went for an after-party (come on dear introvert friends, you have to applause me here 👏 👏, I went for both dinner + after party 🎉).

# Day 3: Wednesday

## Conference: [Infobip Shift](https://shift.infobip.com/) - Day 2 of 2 - Networking and meetings

The day started with a nice chilled breakfast ☕ near the pool and continued with work. I only went to the conference venue in the afternoon when I was done with work.

It was time for meetings and networking once again😔.

### 1.The Porche experience 🚙

Let's start with something cool.

**Porche** was one of the sponsors and they wanted a woman in tech to represent them. They liked my talk and my presence and they asked me for some possible collaborations. They took some professional photos with the cars and me (I am waiting for the official photos too... As soon as I get them I’ll share them on Instagram 📸), and they had a professional driver who took me on a fast ride (I can't even describe how insanely beautiful the car and the ride were). For those of you thinking "but where can you drive/run a fast car like Porche safely?", I have an answer: the event was held in Zadar's biggest sports arena. The arena had also a huge parking which was allocated by Porche. Each time only a handful of people could visit at the same time the parking due to safety reasons!

### 2. Being approached by attendees

A few people approached me as they had some questions and queries around my UX and UI talk or they would be interested in potential collaborations. That went well... I only messed up in the end with some emails and phone numbers🤣. See, I m not good with names... And that resulted in sending some messages to the wrong people on WhatsApp!! But I guess these things happen, right?! Or is it only me? (Please say it is not only me, it will make me feel terrible, no kidding😕.)

### 3. The interview

It's not uncommon in a conference to be asked by the organizers to take part in a video that will be used mainly for promoting purposes in their current or upcoming events. Usually, there is someone who will make a few simple questions (hence I call this part "the interview") around the conference and will record the speaker's answers. On the 1st day of the conference the organizer asked me to take part in the video, I said "yes" but then..."hello darkness my old friend" I could not do it... My shyness had returned... So I said "no".

But...the 2nd day, as I was sitting with the cool nerd IT gang and I could watch them doing the interview I decided to give it a chance (that was a nice win 🏆 for my little introvert self), everything went fine!

### 4. The potential collaboration

Since this is work-related I won't get into details. The main point is that I had an online meeting with an "x company" and as we were discussing we realized that on of their developers and I would be at the same conference so now it would be a nice opportunity to meet in real life and discuss some business! That's how I met a fellow developer 😊

### 5. The other speaker, for the other conference

As I mentioned, in the upcoming days I was about to hit another conference in another country. The organizer from the other conference told me about this guy who would be in Croatia and then in Ukraine too (we would be in the same conferences), so I had to meet him! But you know, I'm introvert and shy... How would I do that... At first, I didn't even know who he was...

At the second day of dinner, I asked my cool yet nerdy IT gang if they knew him. Long story short, they found him for me and even the dinner-guy made the introduction as I was too scared for that...

But guess what, it went well! The other-conference-guy was super nice and friendly! And **he's also a writer here at Hashnode**! 😁

Moving on, I had a lovely night with good company 🍻 !

# Day 4: Thursday

## Croatia to Austria 🇦🇹

My day started in the same way. Breakfast 🥐 and work. Later that day I would catch my flight to Vienna, Austria. I reached Vienna and stayed one day there as there was no direct flight to Odesa, Ukraine which was the 2nd conference.

No networking stories this time. I quickly arrived at the hotel. There, I only talked with the hotel receptionist and the host of the hotel's restaurant.

My evening ended with working out and work.

# Day 5: Friday

## Austria to Ukraine 🇺🇦

Another day, another flight 🛬 !

Breakfast in the hotel, a few hours of work, and catching up on a flight!!

This time I landed in Odesa-Ukraine for the conference of **[PyCon](https://pyconodessa.com/en/) Odesa**.

### The "German" organizer

Shall we move on with another **networking story**?!

Did you wonder how a girl from Greece, working occasionally with Javascript and Design, working full time as a community manager at Hashnode, would end up in a Python conference in a little city in Ukraine?

Well, there are 2 points connected to each other. The 1st one is that I simply applied to speak to the conference as I believe my talk is equally important for all kinds of developers... (and that's usually what all speakers do, they apply to conferences...). The 2nd one (and usually the hardest one) is to get an invitation... But... A beautiful sunny morning I received a Twitter DM from an organizer I met a few years ago in Munich, Germany telling me that he is a co-organizer for this conference too and he'd love to have me as a speaker. I specified to him once again that my talk is not related to Python but it would be my pleasure. And just like that, I was in!

Will I have an invitation from the organizer if he didn't know me? I honestly don't know... But I have a suspicion that our old meeting helped.

### Let's go to the accommodation and the venue

The conference venue and the accommodation were in the same location. This is very convenient for the speakers... The only issue was that the location was a bit outside of Odesa and not many taxis were willing to drive you... Thankfully another organizer came and picked me up 😊, he drove me to the venue where I met once again the "German organizer". He introduced me to some lovely girls and fellow speakers (who later became my friends).

I took a few hours to relax and then it was time for dinner and drinks in the city center!

# Day 6: Saturday

## Conference: PyCon Odessa - Day 1 of 2

By now, I m sure you know the drill. Breakfast 🥐 and work! You may be thinking "But Elef, it was Saturday did you have to work?!" and the answer is no... But the thing is I hadn't finished Friday's work and I also wanted to prepare some stuff for Monday that I knew I would be traveling and I wouldn't be able to do.

This time my breakfast wasn't near a pool or at a fancy hotel restaurant but at a terrace with a view of the ocean and the city!

After work, I met my cool gang, a few new speakers and started to attending talks. In the evening, we had a lovely dinner with the crew and the attendees in an open space and we talked about tech and life.

# Day 7: Sunday

## Conference: PyCon Odessa - Day 2 of 2 - The day of my talk

This day started differently... It started with yoga! The speakers and the attendees got together on the building's terrace where we met the yoga instructor and had a lovely short session with her!

We continued with talks, and after lunchtime, it was my turn! I gave once again a talk about UX and UI. I was pleased with it as many people approached me asking for advice.

Since this was the second and last day people were more relaxed and the conference ended up earlier than the previous day.

As a closing party for the organizers and the speakers, we booked ourselves a yacht ⛵ for the afternoon/evening. It was my first time sailing and having so much fun with people that I literally met a couple of days before. Everyone was welcoming and chilled!

*Last day's thoughts💭: Actually those humans who called them shelves developers and advocates are... quite fantastic!*

# Day 8: Monday - Back home 🏠

## Ukraine to Germany to Greece 🇬🇷

As soon as I woke up ☀ , I found the organizers who helped me to find a taxi and get to the airport. At the airport's cafeteria, I did my daily stand-up meeting with the Hashnode team, worked for a few hours, and took my first flight to Berlin. That flight was 2h 30min so I started writing this article!

In Berlin, I had a long layover AND a delay on my flight which actually gave me the time to finish all my work, prepare for the next day, and of course attend Hashnode's Bootcamp!

On the last flight from Berlin to Greece I kept writing this article and I even managed to finish it.

(After writing an article I need some time to review and edit it... Plus in this case, add some photos and edit a video. So although the article was finished on Monday it was published on Thursday.)

# General thoughts 💭

## Could I have created more connections/opportunities?

Of course! You can find opportunities everywhere you just have to know where to look for and be willing to take your risks.

Could I have met more people? Yes.

But on the other hand, I'm glad that I managed to pull this trip of... As a shy, introvert person I'm glad with my progress!

## Thank you... 🙏

At this point I'd like to thank:

- Hashnode for giving me the opportunity to work in a flexible schedule.

- The InfoBip organizers for creating an amazing event.

- The PyCon organizers for creating a truly unique and memorable event.

- All the speakers and the fantastic people who shared freely their knowledge with me (and kept me company).

- And you dear reader. Thank you. I wouldn't be here without your support either on [social](https://instagram.com/elef_in_tech) [media](https://twitter.com/BatsouElef), [YouTube](https://www.youtube.com/c/EleftheriaBatsou), or here at Hashnode! Let me know in the comments if I can do anything to help you too 😊.

Did you actually read the article? If not that's ok, watch the video [here](https://youtu.be/VBlnjW2Lvgs).

<a href="http://www.youtube.com/watch?feature=player_embedded&v=VBlnjW2Lvgs" target="_blank"><img src="http://img.youtube.com/vi/VBlnjW2Lvgs/0.jpg" alt="IMAGE ALT TEXT HERE" width="240" height="180" border="10" /></a>

**************************************

👋Hello, I'm Eleftheria, Community Manager at Hashnode, developer, public speaker, and chocolate lover.

🥰If you liked this post please share.

🍩Would you care about buying me a coffee? You can do it [here](https://www.paypal.com/paypalme/eleftheriabatsou) but If you can't that's ok too!

**************************************

🙏It would be nice to subscribe to my [Youtube](https://www.youtube.com/c/EleftheriaBatsou) channel. It’s free and it helps to create more content.

🌈[Youtube](https://www.youtube.com/c/EleftheriaBatsou) | [Codepen](https://codepen.io/EleftheriaBatsou) | [GitHub](https://github.com/EleftheriaBatsou) | [Twitter](https://twitter.com/BatsouElef) | [Site](http://eleftheriabatsou.com/) | [Instagram](https://www.instagram.com/elef_in_tech) | [LinkedIn](https://www.linkedin.com/in/eleftheriabatsou/)

| eleftheriabatsou |

829,884 | Entendendo o Node.js e seu funcionamento | Introdução Neste artigo, você irá entender o que é o Node.JS, e a sua principal... | 0 | 2021-09-17T23:18:44 | https://dev.to/leonardodesa/entendendo-o-node-js-e-seu-funcionamento-1p9e | node, javascript, programming, tutorial | # Introdução

Neste artigo, você irá entender **o que é o Node.JS**, e a sua principal funcionalidade que é indispensável para qualquer desenvolvedor compreender o funcionamento da linguagem, chamada de **Event Loop**.

# O que é o Node.js

Node.js, ou simplesmente Node, é um software de código aberto baseado no interpretador V8 do Google e que **permite a execução do código javascript do lado do servidor** de forma simples, rápida e performática.

# Interpretador do Node

O Interpretador é um **software especializado que interpreta e executa javascript**. O Node utiliza o Interpretador V8, que tem como proposta acelerar o desempenho de uma aplicação compilando o código Javascript para o formato que a máquina irá entender antes de executá-lo.

# Call Stack

**É uma pilha de eventos**, esses eventos podem ser uma função disparada pelo código. Por isso o event-loop fica monitorando para que, toda vez que uma função for disparada, ele deverá executá-la somente uma coisa por vez.

Vamos ver um exemplo:

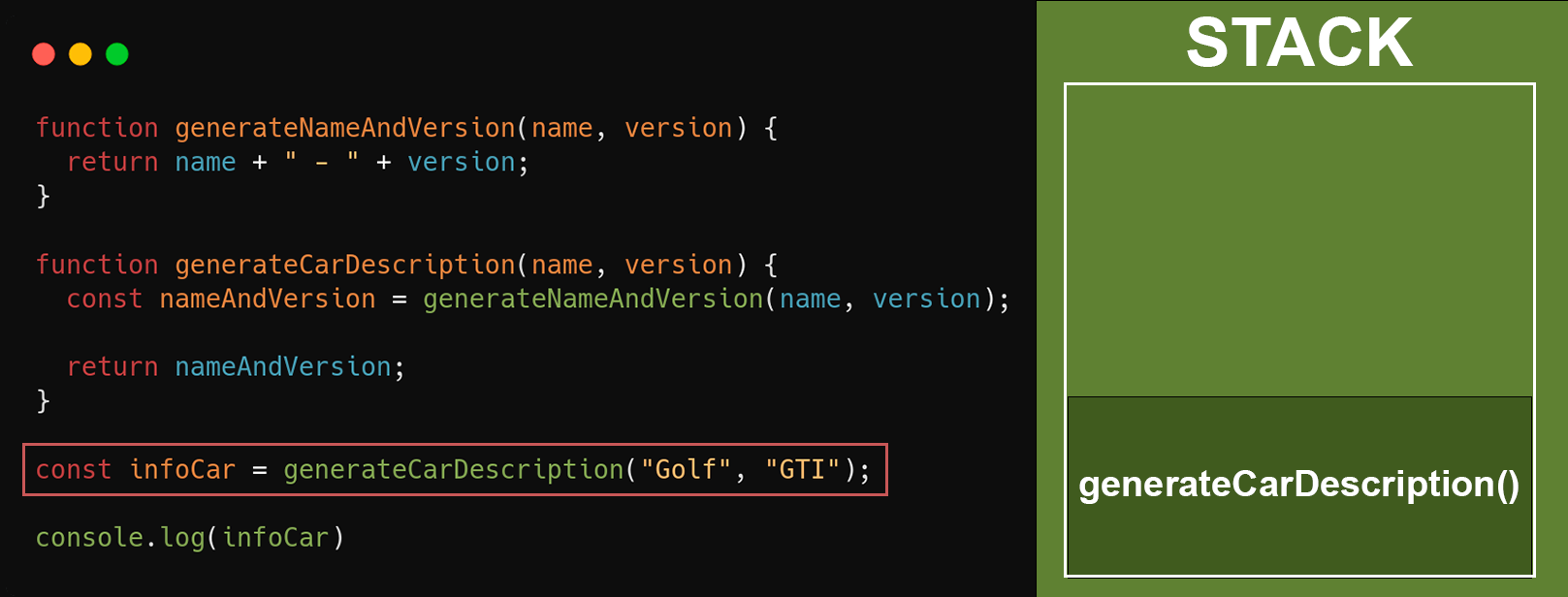

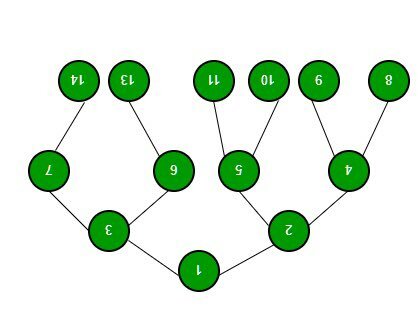

Aqui temos um exemplo bem simples para entendermos como funciona a stack. Basicamente a função **`generateCarDescription`** é chamada recebendo o nome do carro e sua versão, e retorna uma sentença com os parâmetros concatenados. A função **`generateCarDescription`** depende da função **`generateNameAndVersion`**, que é responsável por unir as informações de nome e versão.

Quando a função **`generateCarDescription`** é invocada, ela depende da função **`generateNameAndVersion`** para atribuir o valor do nome e versão do carro na variável **`nameAndVersion`** e, quando ela for chamada, ela será adicionada na stack como no exemplo abaixo:

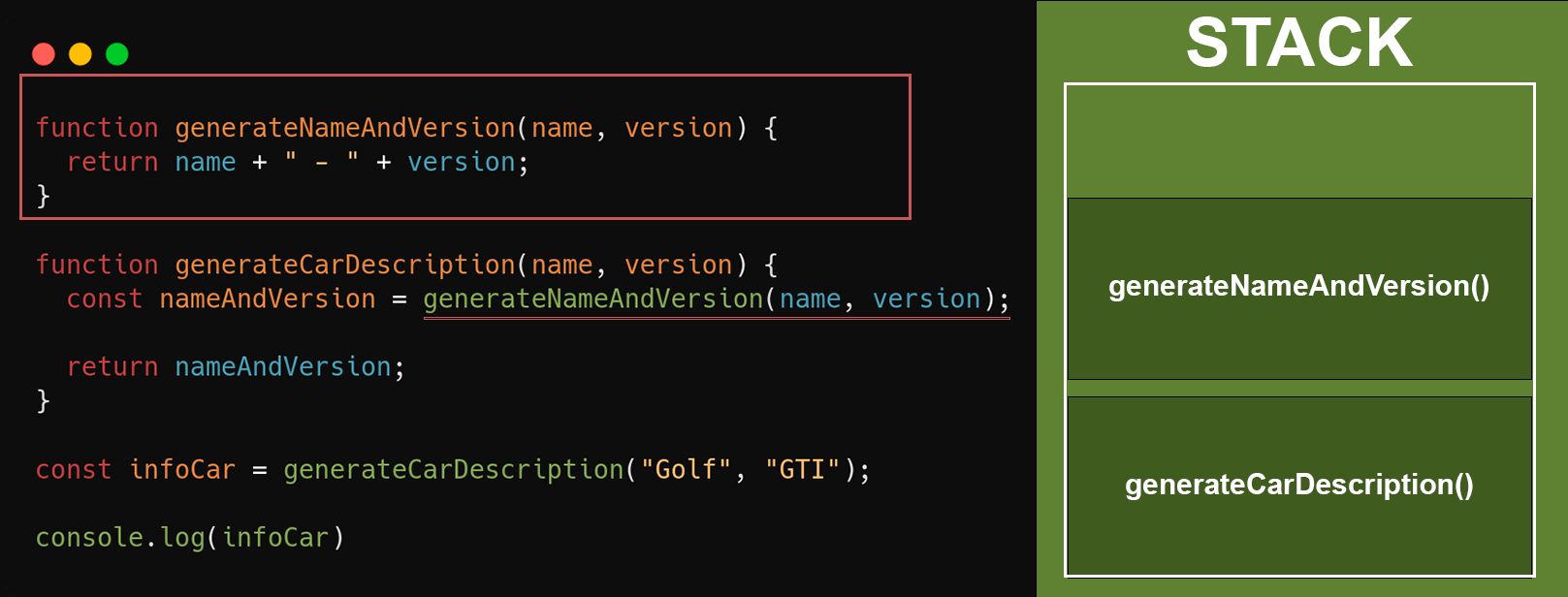

Após a execução da funcão **`generateCarDescription`**, logo em seguida a variável **`nameAndVersion`** irá receber o retorno da função **`generateNameAndVersion`** que foi imediatamente adicionada na stack, até que sua execução finalize e o retorno seja feito. Após o retorno, a stack ficará assim:

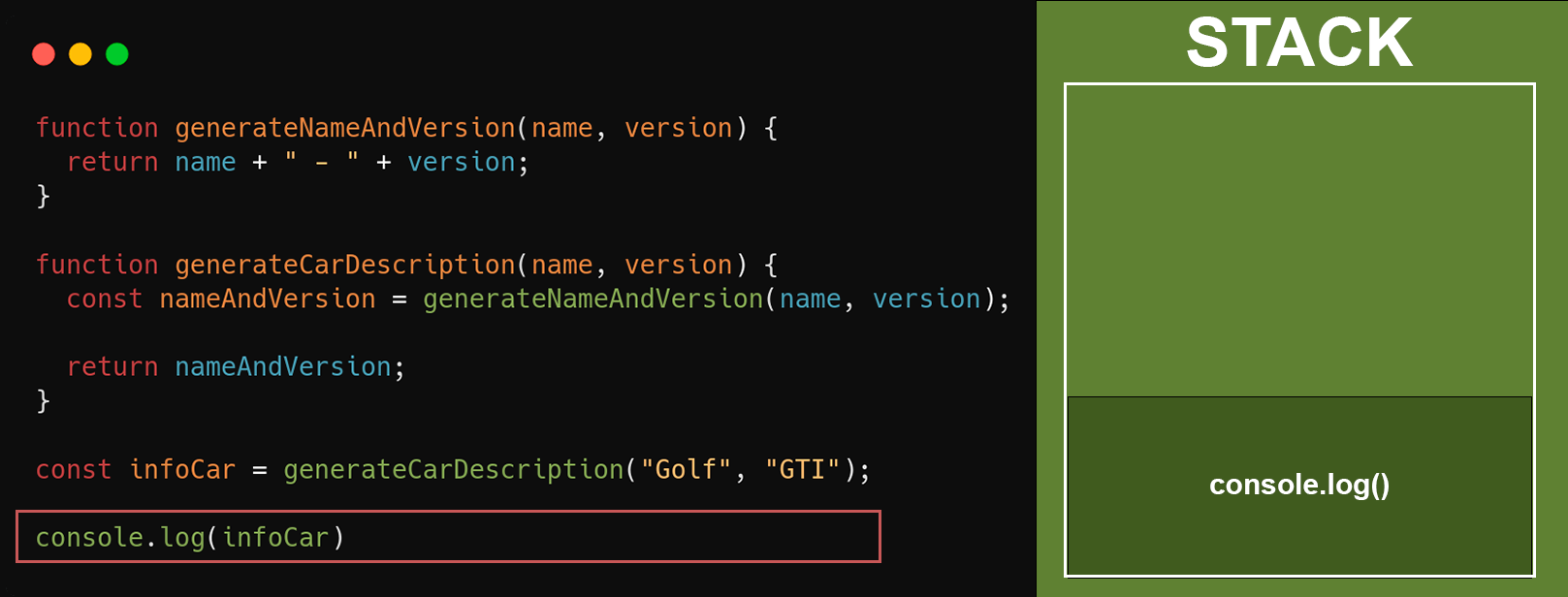

A última etapa será retornar a variável **`nameAndVersion`**, que contém o nome e versão do veículo. Isso não irá alterar em nada na stack. Quando a função `generateCarDescription` terminar, as demais linhas serão executadas. No nosso exemplo será o **`console.log()`** imprimindo a variável **`infoCar`**. E por fim, será adicionado o **`console.log()`** quando tudo acima já estiver sido executado.

Como a **stack só executa uma função por vez**, de acordo com o tamanho da função e o que será processado, isso irá ocupar mais tempo na stack, fazendo com que as próximas chamadas esperem mais tempo para serem executadas.

# I/O - Operação bloqueante e não bloqueante

I/O se refere, principalmente, à interação com o disco do sistema e a integração com a [libuv](https://libuv.org/).

**Operação bloqueante é a execução do código no processo do Node precisa esperar** até que uma operação seja concluída. Isso acontece porque o event loop é incapaz de continuar executando alguma tarefa, enquanto uma operação bloqueante está sendo executada.

**Todos os métodos I/O na biblioteca padrão do Node tem uma versão assíncrona, que, por definição, são não-bloqueantes**, e aceitam funções de callback. Alguns métodos também têm suas versões bloqueantes, que possuem o sufixo **Sync** no nome. Para maiores Informações sobre I/O, acesse: [I/O](https://nodejs.org/pt-br/docs/guides/blocking-vs-non-blocking/)

# Single-Thread

O Node é uma plataforma orientada a eventos, que utiliza o conceito de thread única para gerenciar a stack. Quem é **single thread é o v8 do Google**, responsável por rodar o código do Node, a stack faz parte do v8, ou seja, ela é single thread, que executa uma função de cada vez.

# Multi-Threading

Para trabalhar com operações paralelas, e obter um ganho de desempenho, o Node e sua stack por si só são incapazes de resolver múltiplas operações ao mesmo tempo, por isso, ele conta com uma lib chamada de [libuv](https://libuv.org/), **que é capaz de gerenciar processos em background de I/O assíncrono não bloqueantes**.

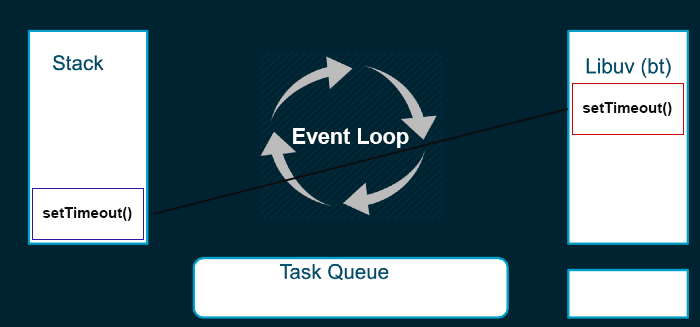

Exemplo de uma função assíncrona sendo executada:

Nesse exemplo, a função **`setTimeout()`** é executada na stack e jogada para uma thread, enquanto ela vai sendo processada e administrada pela libuv. A stack continua executando as próximas funções e, quando terminar o processamento, a função de callback será adicionada na Task Queue para ser processada quando a stack estiver vazia.

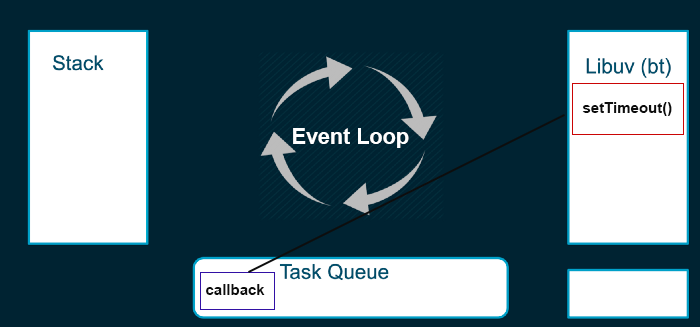

# Task queue

Algumas funções são enviadas para serem executadas em outra thread, permitindo que a stack siga para as próximas funções e não bloqueie nossa aplicação.

Essas funções que são enviadas para outra thread precisam ter um callback, que é uma função que será executada quando a função principal for finalizada.

Os callbacks aguardam a sua vez para serem executados na stack. Enquanto esperam, eles ficam em um lugar chamado de task queue. **Sempre que a thread principal finalizar uma tarefa, o que significa que a stack estará vazia, uma nova tarefa é movida da task queue para a stack, onde será executada**.

Aqui temos um exemplo para facilitar:

**O Event Loop é responsável por buscar essas tarefas de background, e executá-las na stack**.

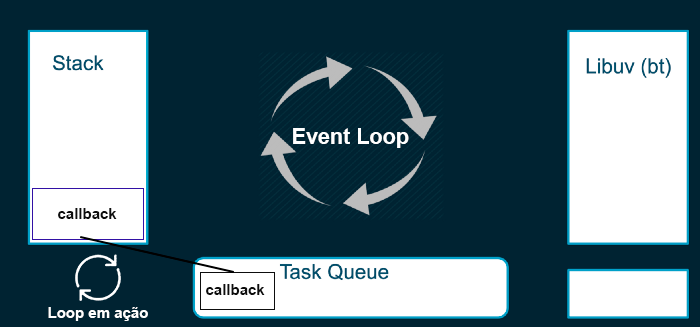

# Micro e macro tasks

**O Event Loop é formado por macro tasks e micro tasks**. As macro tasks que serão enfileiradas no background, e que quando forem processadas, terão um callback dentro da task queue que será chamado quando a stack estiver vazia. dentro de cada ciclo, o **event loop irá executar primeiramente as micro tasks** disponíveis. As micro tasks vão sendo processadas, até que a fila de microtask esteja esgotada, assim que todas as chamadas de micro tasks forem feitas, então no próximo ciclo, o callback da macro task que está na task queue será executado. Ou seja, **dentro de um mesmo ciclo, as micro tasks, serão executadas antes das macro tasks**.

# Macro tasks

Vou citar alguns exemplos de funções que se comportam como **macro tasks**: **`setTimeout`**, **`I/O`** e **`setInterval`**.

# Micro tasks

Alguns exemplos conhecidos de **micro tasks** são as **`promises`** e o **`process.nextTick`**. As micro tasks normalmente são tarefas que devem ser executadas rapidamente após alguma ação, ou realizar algo assíncrono sem a necessidade de inserir uma nova task na task queue.

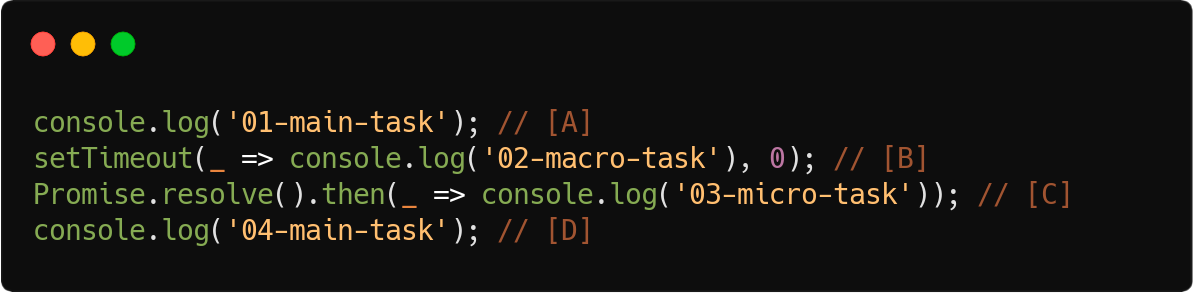

Vamos tentar entender melhor com alguns exemplos, coloquei algumas anotações para facilitar o entendimento:

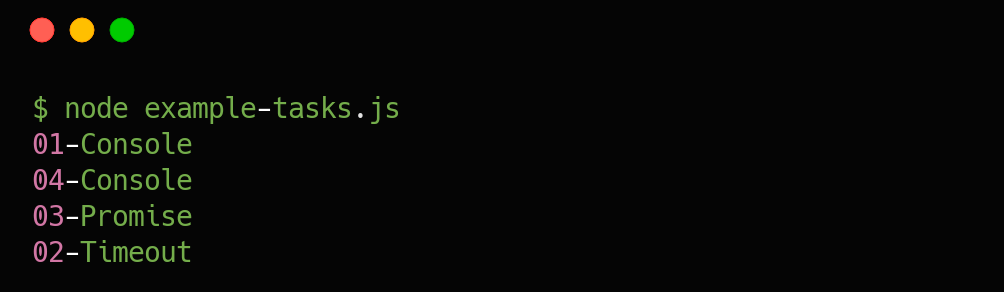

Ao executar o código acima, teremos o seguinte resultado de priorização:

Você deve estar se perguntando o porquê não está sendo executado em ordem, vou tentar explicar com as anotações que fiz no código.

* **[A]**: **Executado diretamente na stack**, dessa forma ele é síncrono, então o restante do código irá aguardar o resultado para ser executado.

* **[B]**: Enfileirado como uma tarefa futura, **prioridade macro task**, será executado apenas no próximo loop.

* **[C]**: Enfileirado como uma tarefa futura, **prioridade micro task**, será executado imediatamente após todas as tarefas/tasks do loop atual e antes do próximo loop.

* **[D]**: **Executado diretamente na stack**, dessa forma ele é síncrono, então o restante do código irá aguardar o resultado para ser executado.

# Conclusão

**Nesse artigo vimos o que é o Node e como ele funciona “por baixo dos panos”**, espero que vocês tenham entendido com clareza e que essa visão ajude vocês a escreverem códigos melhores e de uma maneira que tire maior proveito desse funcionamento. Aconselho também a leitura complementar dos links de referências que irão facilitar o entendimento.

# Links relacionados

https://nodejs.org/pt-br/docs/guides/blocking-vs-non-blocking/

https://imasters.com.br/front-end/node-js-o-que-e-esse-event-loop-afinal

https://fabiojanio.com/2020/03/12/introducao-ao-node-js-single-thread-event-loop-e-mercado/

https://oieduardorabelo.medium.com/javascript-microtasks-e-macrotasks-fac33016de4f

https://www.youtube.com/watch?v=8aGhZQkoFbQ

| leonardodesa |

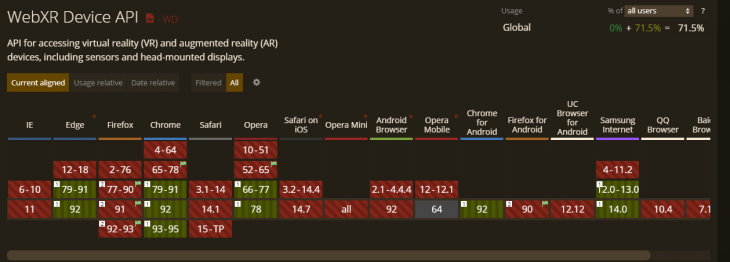

829,906 | 5 web APIs that add mobile functionality to your project | Written by Chimezie Enyinnaya ✏️ As developers, we frequently use APIs (Application Programming... | 0 | 2021-09-17T18:56:26 | https://blog.logrocket.com/5-web-apis-mobile-functionality/ | mobile | **Written by [Chimezie Enyinnaya](https://blog.logrocket.com/author/chimezieenyinnaya/)** ✏️

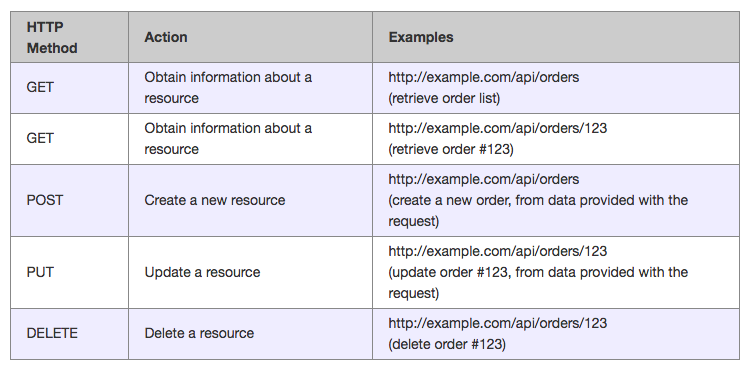

As developers, we frequently use APIs (Application Programming Interfaces) to implement complex functionalities easily or create them to abstract complexity. APIs are what allow services to talk to each other and do things like post a Tweet or display a map.

We can classify web APIs into two categories for building client-side web applications:

* [Browser APIs](https://developer.mozilla.org/en-US/docs/Web/API): These are APIs that interface with JavaScript, allowing developers to implement functionalities easily. APIs such as the DOM, Fetch, Audio and Video, WebGL, Notifications and so much more

* Third-party APIs: You’ve probably used one in your project already. These are APIs that are not built into the browser by default. They're provided by companies such as Google, Facebook, Trello, etc. to allow you to access their functionality via JavaScript and use it on your project

Let's go through some of the popular Web APIs:

* Geolocation API: This API allows access to retrieve location information of the host device

* Document Object Model API: The DOM is the API for HTML documents in the sense that it is the interface between your JavaScript program and the HTML document. The [DOM itself has an extensive list of interfaces](https://developer.mozilla.org/en-US/docs/Web/API/Document_Object_Model) such as the `Document` interface, `Window` interface, and so on

* History API: The History API is abstracted in most of the Router implementations. The API enables you to track and modify the browser's URL and history data, as well as access your browsing history through JavaScript

* Canvas API: The Canvas API allows you to display different visual graphics on the page by using a `<canvas>` element, which is useful for HTML games and charts

* Web Animations API: The Web Animations API enables coordinated visual changes on your page. It combines the pros of CSS transitions/animations and JavaScript-based animations.

In this article, I'll be exploring some of my favorite APIs that provide mobile-friendly functionality. This can include anything from social media shares and clipboard operations to contact, speech, and notifications functionalities.

## 5 mobile functionality web APIs for your next project

So far, we've discussed some common APIs that you've made use of directly or indirectly through JavaScript libraries.

In this section, we'll explore five unique APIs that you might need for your next project. These APIs are important because they bring mobile native functionalities to the web.

### Web Share API

This API helps you implement sharing functionality on your websites. It gives that mobile native-sharing feel. It makes it possible to share text, files, and links to other applications on the device.

The Web Share API is accessible through the `navigator.share` method:

```javascript

if (navigator.share) {

navigator.share({

title: 'Logrocket alert here',

text: 'Check out Logrocket',

url: '<https://logrocket.com/>',

})

.then(() => console.log('Successful share'))

.catch((error) => console.log('Error sharing', error));

}

```