id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

866,754 | Introduction to Nodejs and Express | We are going to learn some back end web development using JavaScript. The framework we are going... | 0 | 2021-10-17T17:46:20 | https://dev.to/moreno8423/introduction-to-nodejs-and-express-4pik | javascript, node, webdev, express |

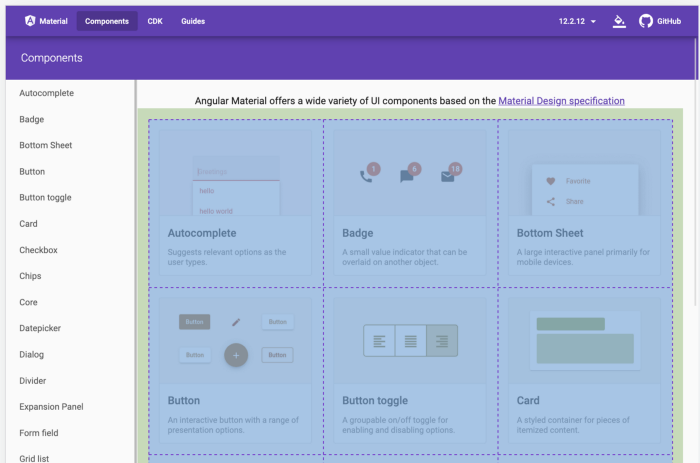

We are going to learn some back end web development using JavaScript. The framework we are going to learn is very useful for back end web development. That framework is Nodejs. We are also going to use the most popular middleware for Nodejs. That middleware is "Express”. In this tutorial we expect you have some <a href="https://moeslink.com/intro-to-javascript-for-web-development/">JavaScript</a> knowledge. Also some <a href="https://moeslink.com/easy-learn-html/">HTML</a> and <a href="https://moeslink.com/intro-to-css/">CSS</a> for templates and styling purposes. At the end of this tutorial you will have a great foundation to start creating your own applications.

###Introduction###

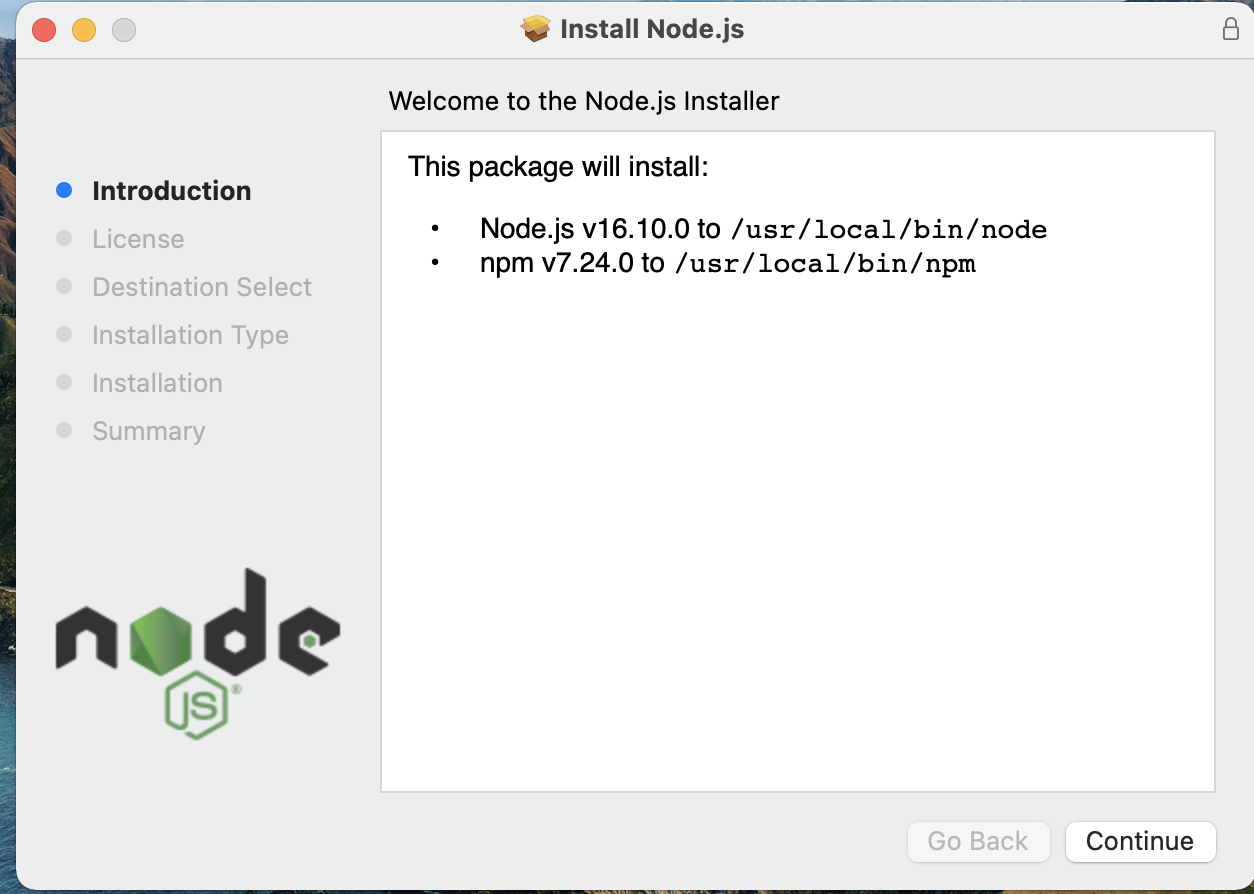

To start we first need to download Nodejs from its website. Then we proceed to install NodeJs. Once the software is installed, "The Node Package Manager" npm will also be installed.

Once we finish the installation we can check the version of the installed softwares in the terminal. We only need to type the command npm -v && node -v to get the result. If your result is similar to the one we show you below; that means you successfuly installed Nodejs and npm . Otherwise you need to check for installation errors.

```shell

node -v && npm -v

v16.9.1

7.21.1

```

###Creating a Simple server###

It's quite easy to create a simple server with Nodejs. As you may already know, you just need the terminal and a text editor. My favorite text editor is <a href="https://code.visualstudio.com/">Visual Studio Code</a>; but you can use your preferred one. The result is going to be the same. Once we choose our text editor we can create a file and name it app.js. After we create the file we can type the example we see below in our file. Then you will get a result of a route and a console log.

``` javascript

const http = require('http');

const hostname = '127.0.0.1';

const port = 3000;

const server = http.createServer((req, res) => {

res.statusCode = 200;

res.setHeader('Content-Type', 'text/plain');

res.send('Hello World!');

});

server.listen(port, hostname, () => {

console.log(`Server running at http://${hostname}:${port}/`);

});

```

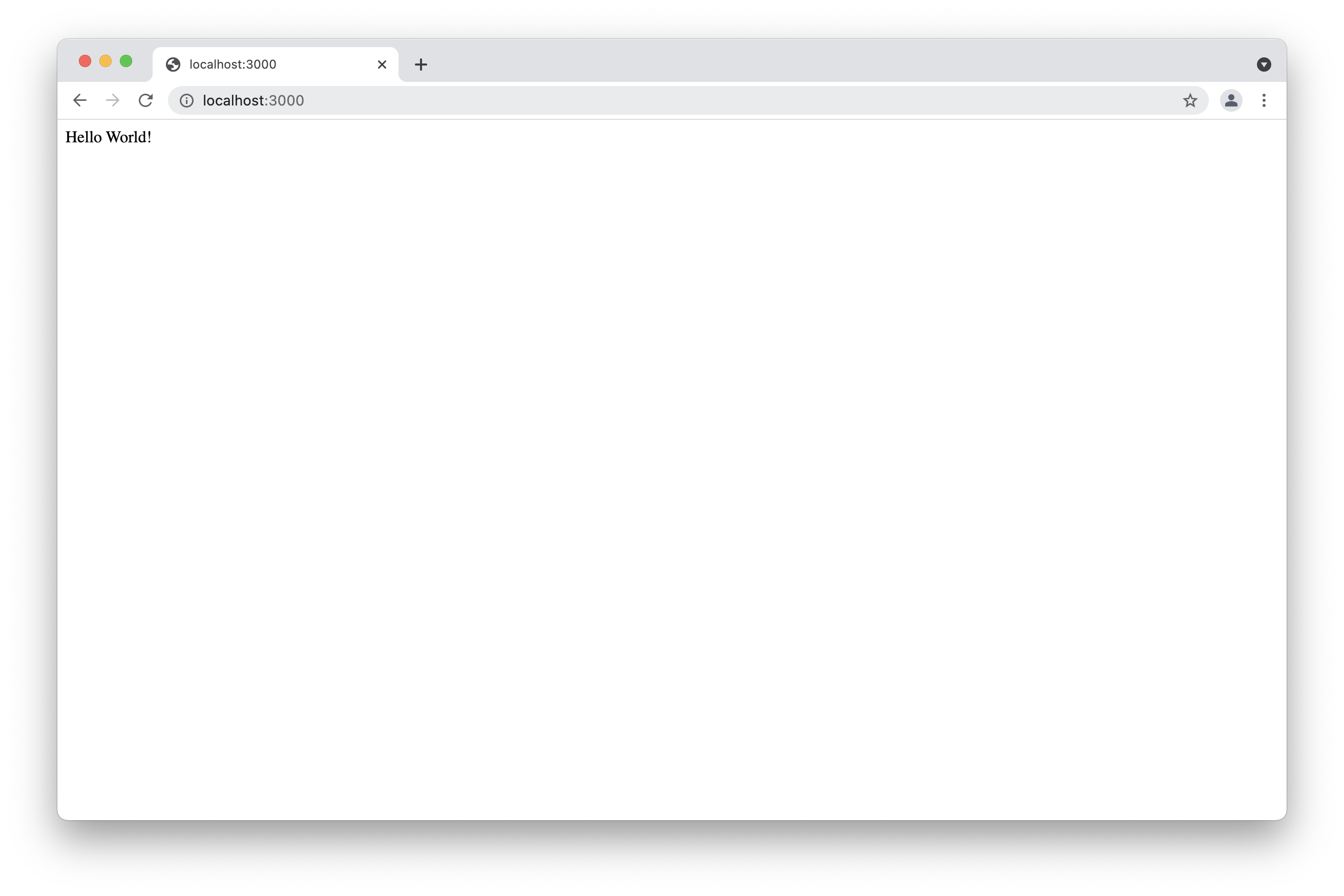

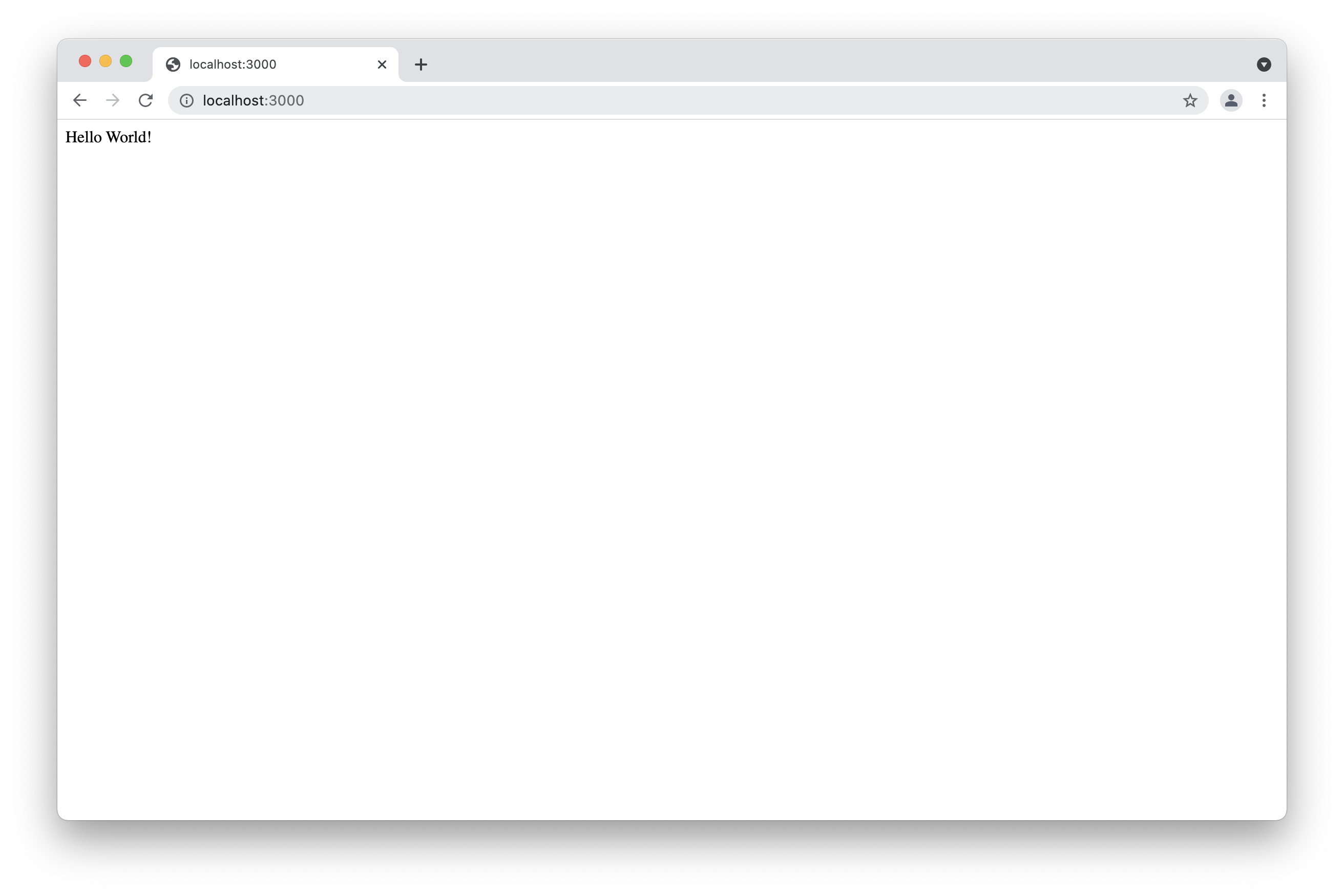

The example above shows how to create a simple server using Nodejs. This example does not use npm. All the used packages are default node packages. As you can see we firstly required the http package to create the route. Then we created a host name and a port. After that we send the status codes and headers. Nodejs Applications listen the port and we get a "Hello World" result in our browser. If you want to see the result, you only need to type in your terminal node app . Then you can go to your favorite browser and type localhost:3000. The result will be similar to the one we show below.

###Introduction to Express###

The example above is the most basic example of a Nodejs server. We can create a lot of things by only using Nodejs; but sometimes it can be tricky. You can see the Nodejs Docs and explore all you can do by only using Nodejs without any additional package. Using only Nodejs can be confusing sometimes. For that reason we have the npm. One of the most popular packages is express, and we are going to learn a lot of express in this tutorial. Let us start learning the express package.

###Installing Express###

When we started this tutorial we set some instructions to install Nodejs and npm. After we installed these softwares we could check their version. In order to install any package we only need to type the command npm install followed by the package name. We can install express as any other package as you can see in the example below.

```shell

npm install express

```

###Creating an Express Application###

To create any Nodejs application using the npm, We first need to create our package.json file. In that package we specify our dependencies and scripts. We can. also specify our dependencies for development as we are going to see below. In order to create the package.json for our application, we need to create a folder with the name of the application we want. We can create the folder in our terminal or manually. After we the folder is created we go to the folder in our terminal and type the command npm init. We can also type the command npm init -y.The example below shows you how the package.json is created.

```shell

npm init

This utility will walk you through creating a package.json file.

It only covers the most common items, and tries to guess sensible defaults.

See `npm help init` for definitive documentation on these fields

and exactly what they do.

Use `npm install <pkg>` afterwards to install a package and

save it as a dependency in the package.json file.

Press ^C at any time to quit.

package name: (sample)

version: (1.0.0)

description:

entry point: (index.js) app.js

test command:

git repository:

keywords:

author:

license: (ISC)

About to write to /Users/moe/webapps/node/sample/package.json:

{

"name": "sample",

"version": "1.0.0",

"description": "",

"main": "app.js",

"scripts": {

"test": "echo \"Error: no test specified\" && exit 1"

},

"author": "",

"license": "ISC"

}

Is this OK? (yes) y

```

In the example above you can see how we create the package.json using npm init. We created it and specify everything in the terminal. In case we want to create everything automatically, and then modify it in our text editor. We only need to type the other command that is npm init -y.

###Modifying our Package###

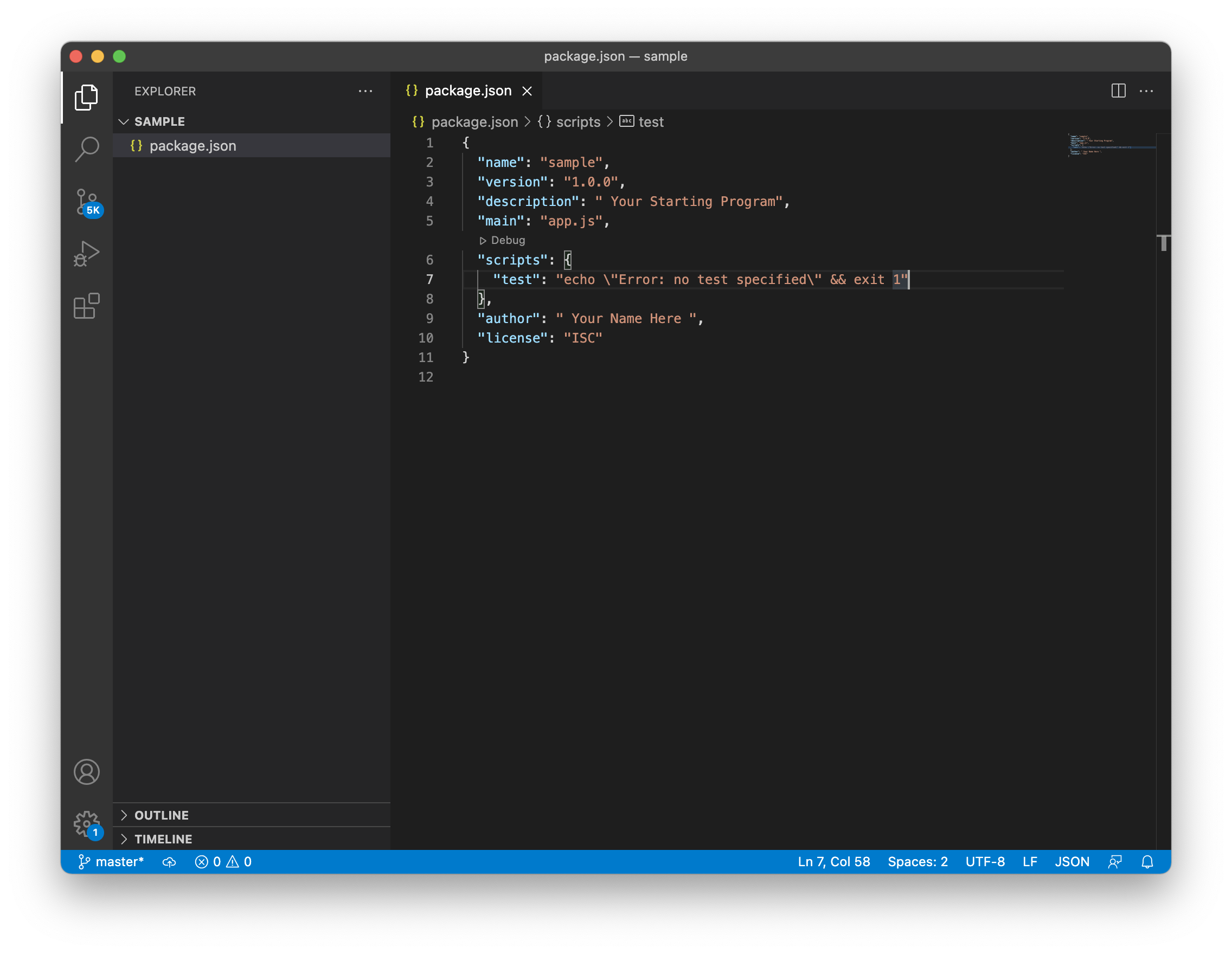

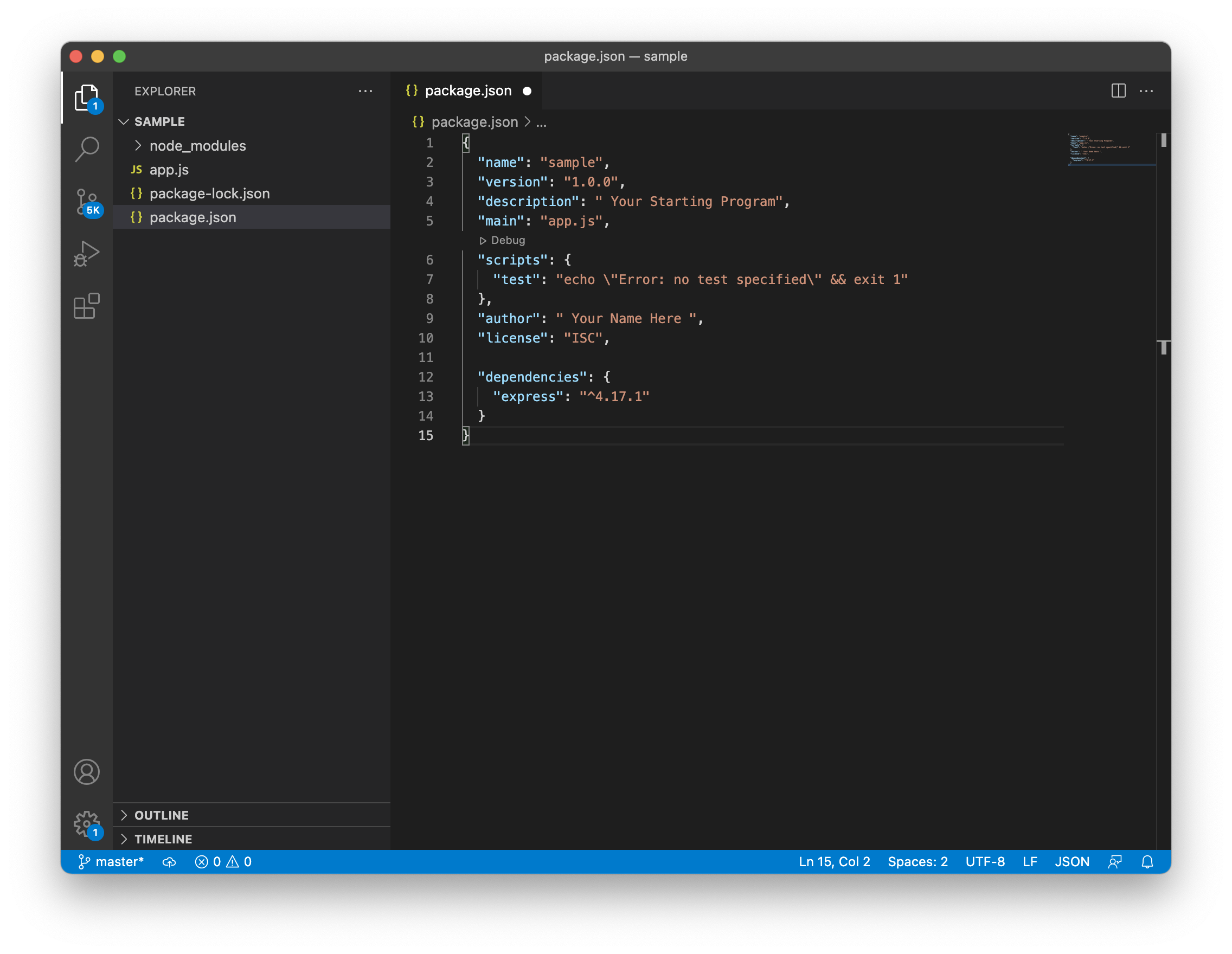

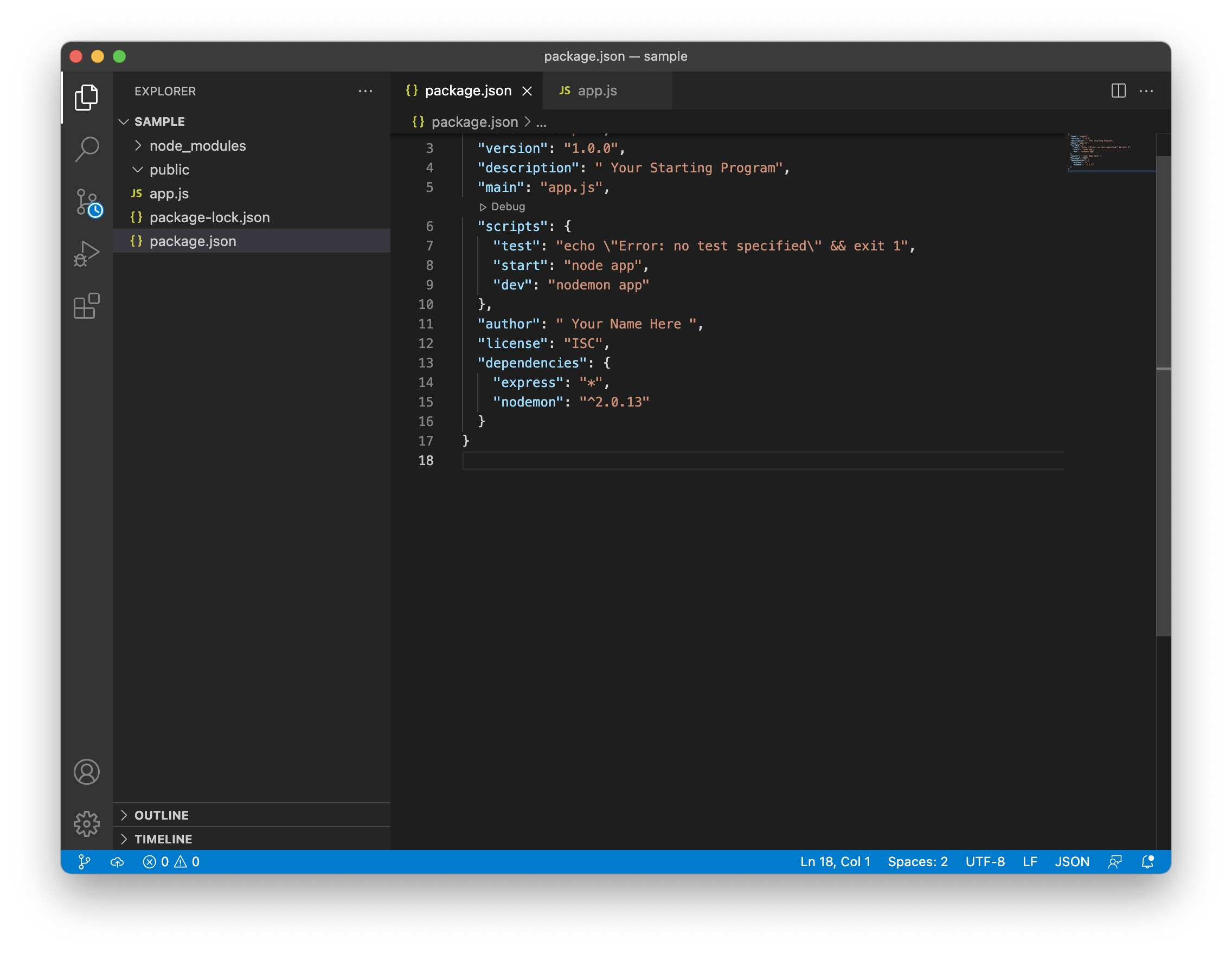

Once we create our package we can modify it using our text editor. No matter if you created it using npm init or npm init -y, the result is similar. You name your application using the name you want. Most times you use the same name we used for our container folder. By default the version is 1.0.0; you are creating a new application. In the description you can add a brief description. The main part is the name of the main file of your application. By default it will be index.js; but I personally name it app.js. You can name it as you want. in the scripts you can type the scripts you are going to use. We are going to explain scripts below. In the author field you can put your name or the name or your company. The image below shows you the package.json using Visual Studio Code.

###Setting Up The Express Application###

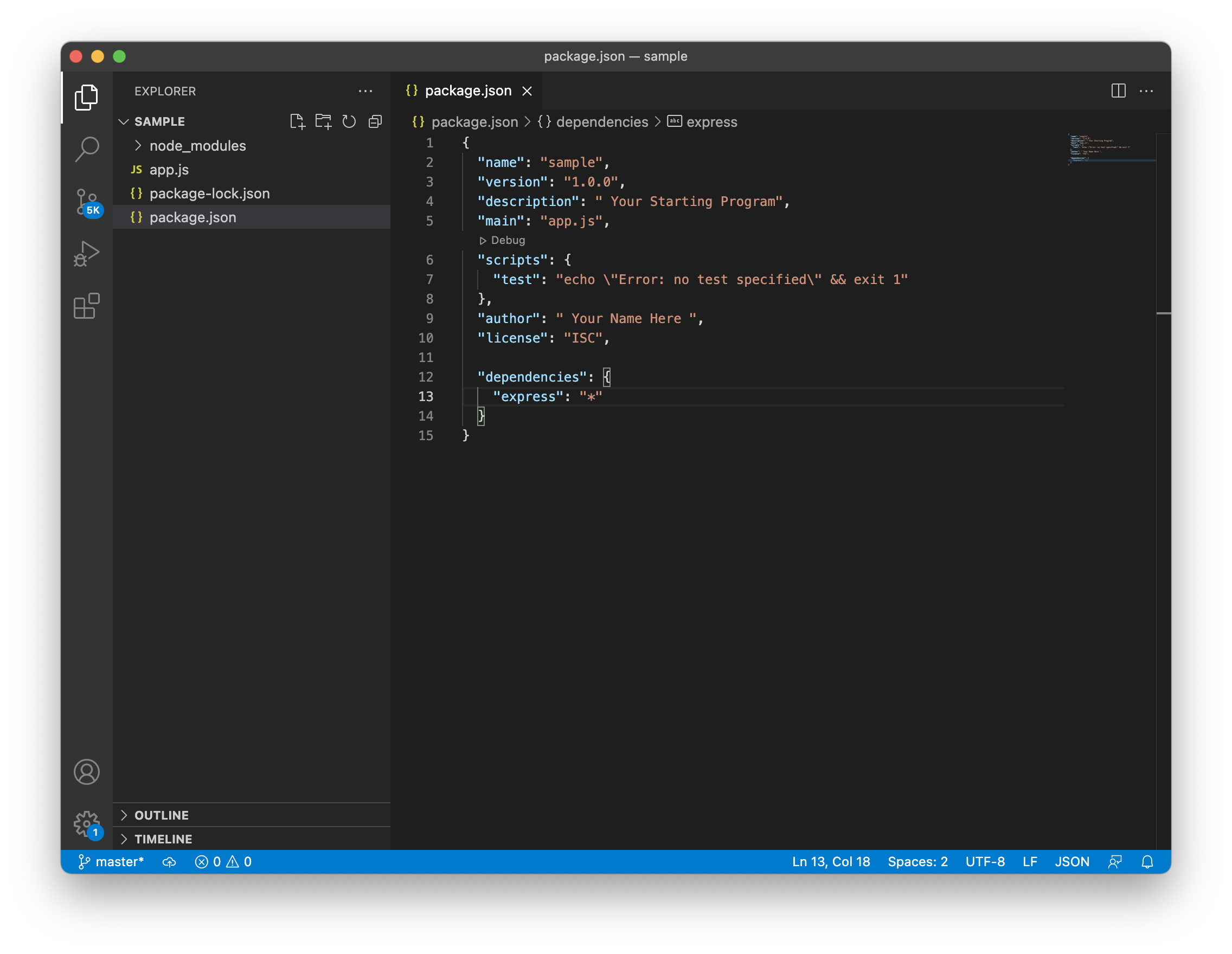

As the package.json specified the name of our application, now we proceed to create it. We said above that default name for any Nodejs application in the package.json is index.js. We can name as we want, and I personally like to name it app.js. In the same folder we create an empty file and name app.js Now we have two files for our application. We also need to install express. We previously showed you how to install express. You only need to go to your terminal and type the command npm install express. That command is going to install the latest version of express in your application. Once express is installed we can check our package.json file and see that another field is added. that field is the dependencies field. and inside is the version of express installed. as we show in the picture below.

```shell

npm install express

```

Once we install the first package in this case express, another file will be generated. This file is the package.lock.json. That package describes the exact tree that was generated. That packages also modifies itself when we install more dependencies. We also can create the dependencies field manually in our text editor. We can specify the dependencies we need. Then we type in our terminal npm install. All dependencies will be installed. In case we don't. know the number version of the dependency and we want the latest one. We can type an asterisk * where the number of the version goes. The latest version of the dependency will be installed. Another image below shows you how to do it.

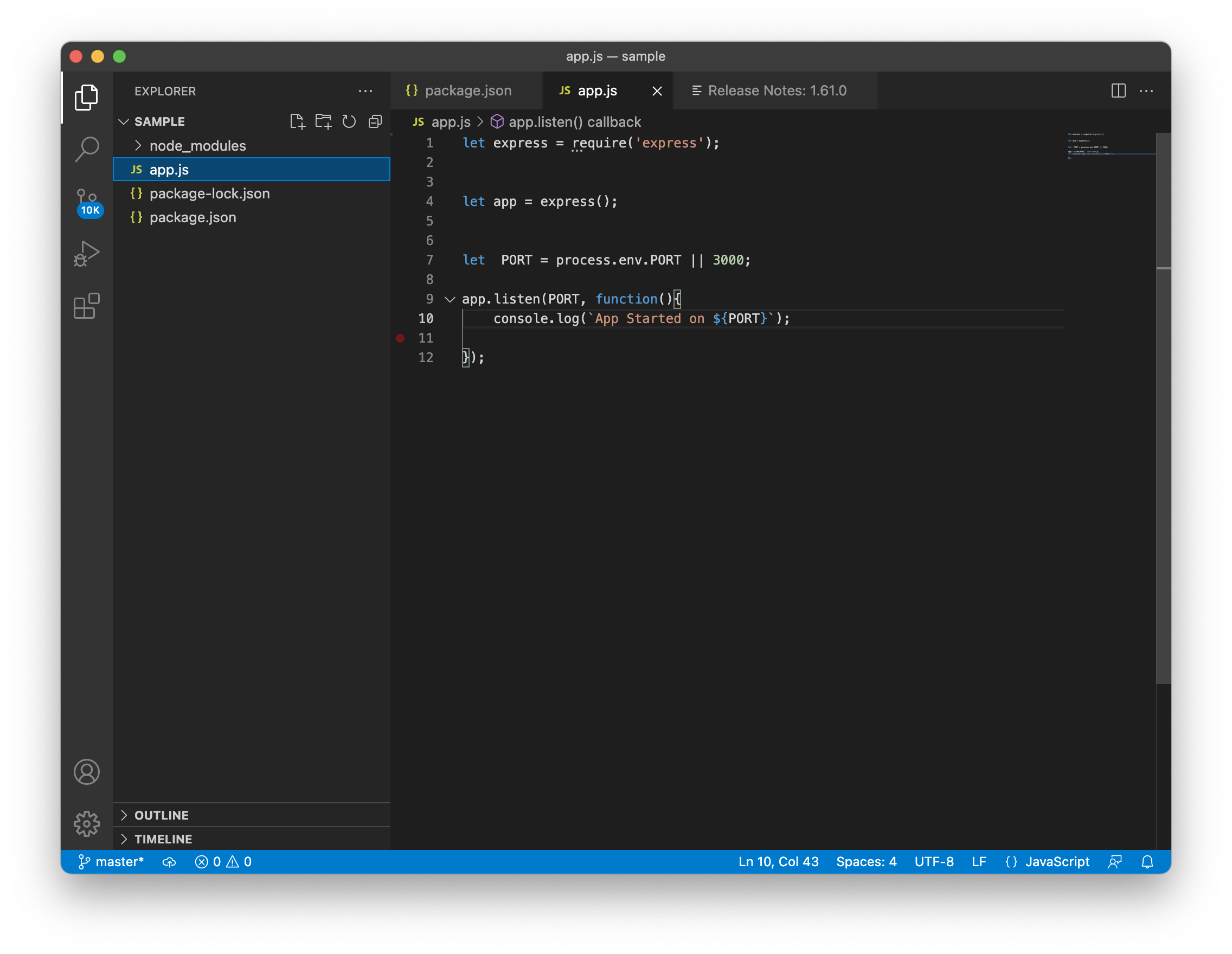

###Creating The Express Application###

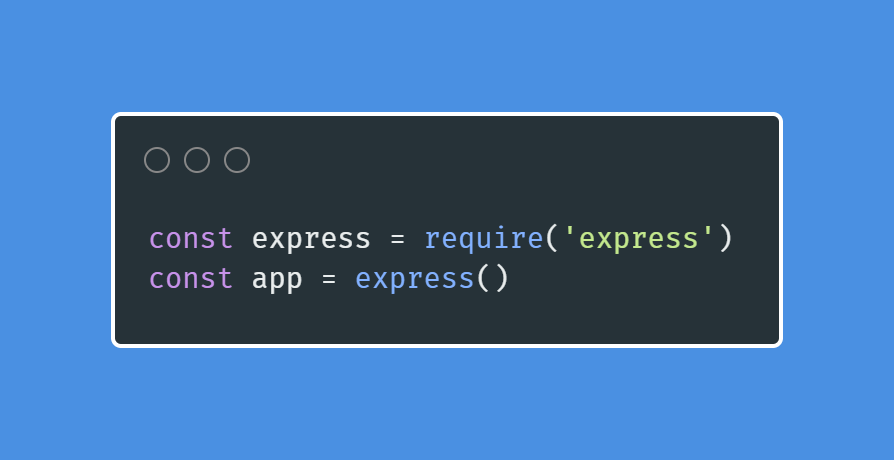

After we set everything up in our package.json file and also create the app.js file we can start creating our application. In the app.js file we start importing our packages. As we only have the express package installed. We proceed to import it or required in our app.js creating a variable that import it. To import or require a package we can use the following syntax.

```javascript

import express from 'express'; //ES6 syntax.

let express = require('express');

// Creating the Variable. That is the most used syntax and the one used in this tutorial.

```

After we create the express variable we can create the app. In order to create the app we only use the express variable that we created above as a function. Then we create a variable called app as the one we show below.

``` javascript

let app = express();

```

###Adding a Port for our new Application###

Once we create a new app the app needs to listen to a port. So we can create a variable called port and assign a port number. For development purposes we assign a number; but in order to deploy our application we use the process environment port. After the post creation we can use the listen function and add another callback function to get some results.The example below shows you how to use the process environment port and the number at the same time using a logic OR (||).

```javascript

let PORT = process.env.PORT || 3000;

app.listen(PORT, function(){

console.log(`App Started on ${PORT}`);

// Using Back ticks we can add the variable name to a string using "${}"

});

```

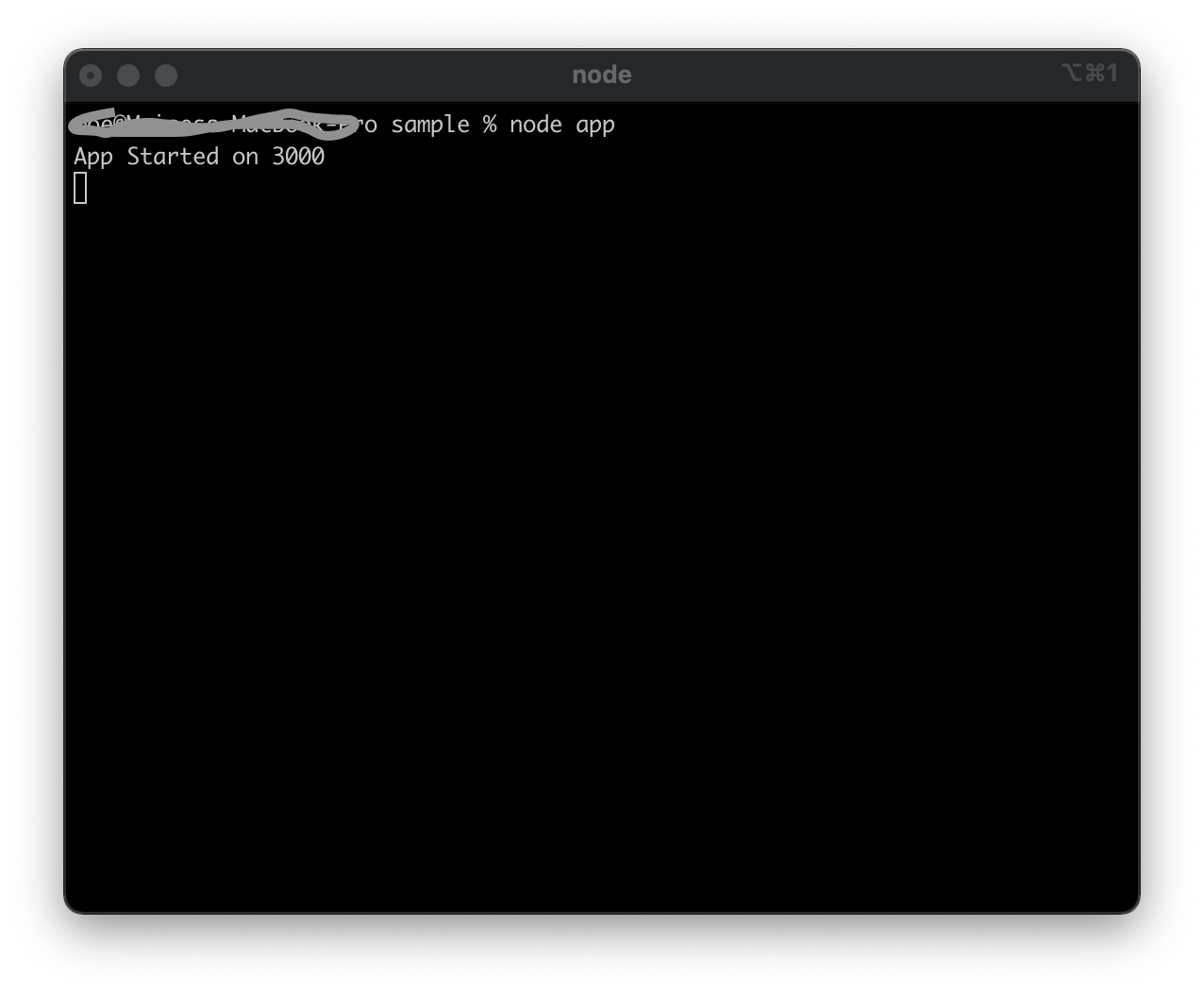

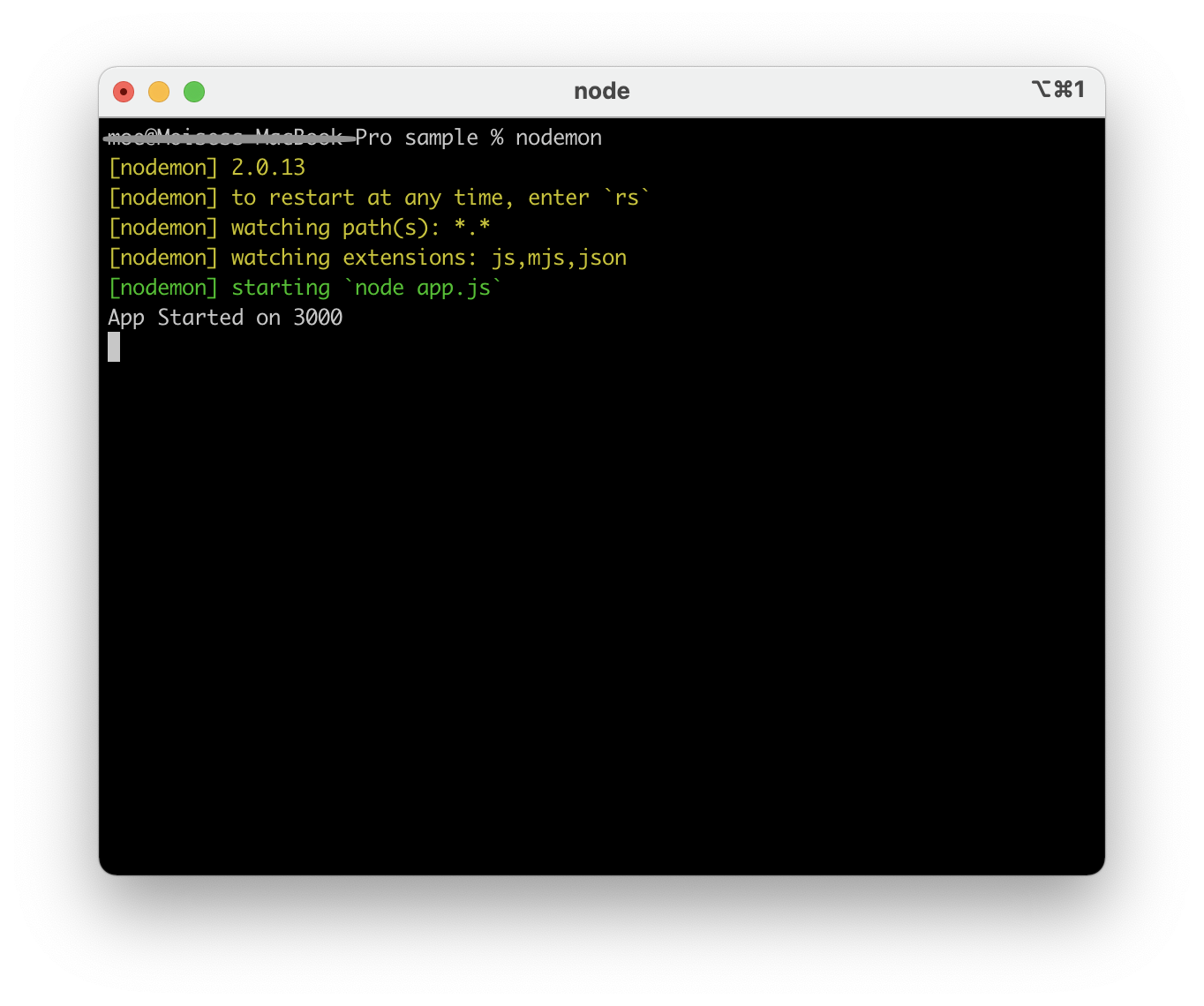

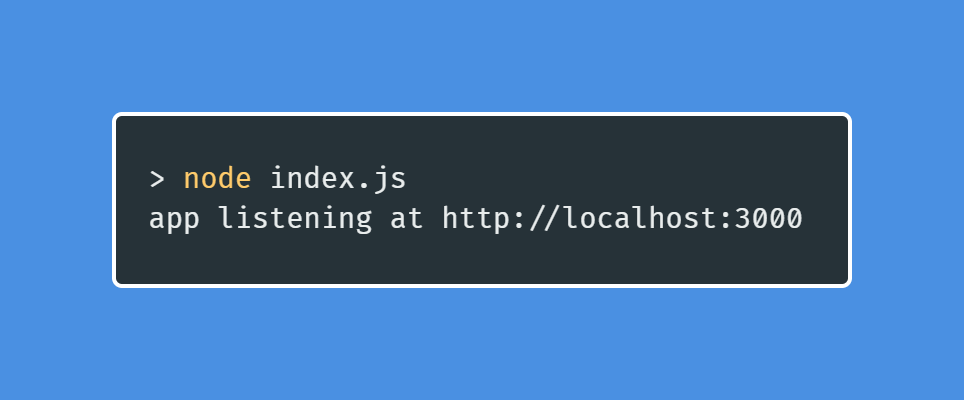

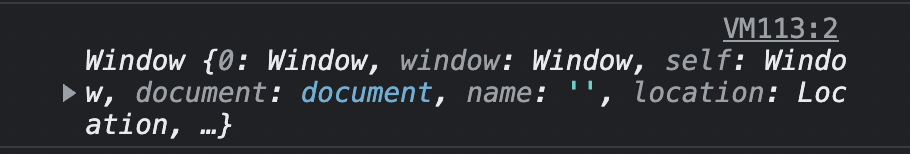

If we followed the same steps we explained here, we created our application. That is a simple application but that is the starting point. In order to run our applications we need to go to our terminal and type node app. Our application will run, and the result will be similar to the one showed in the images below.

###Express Routing###

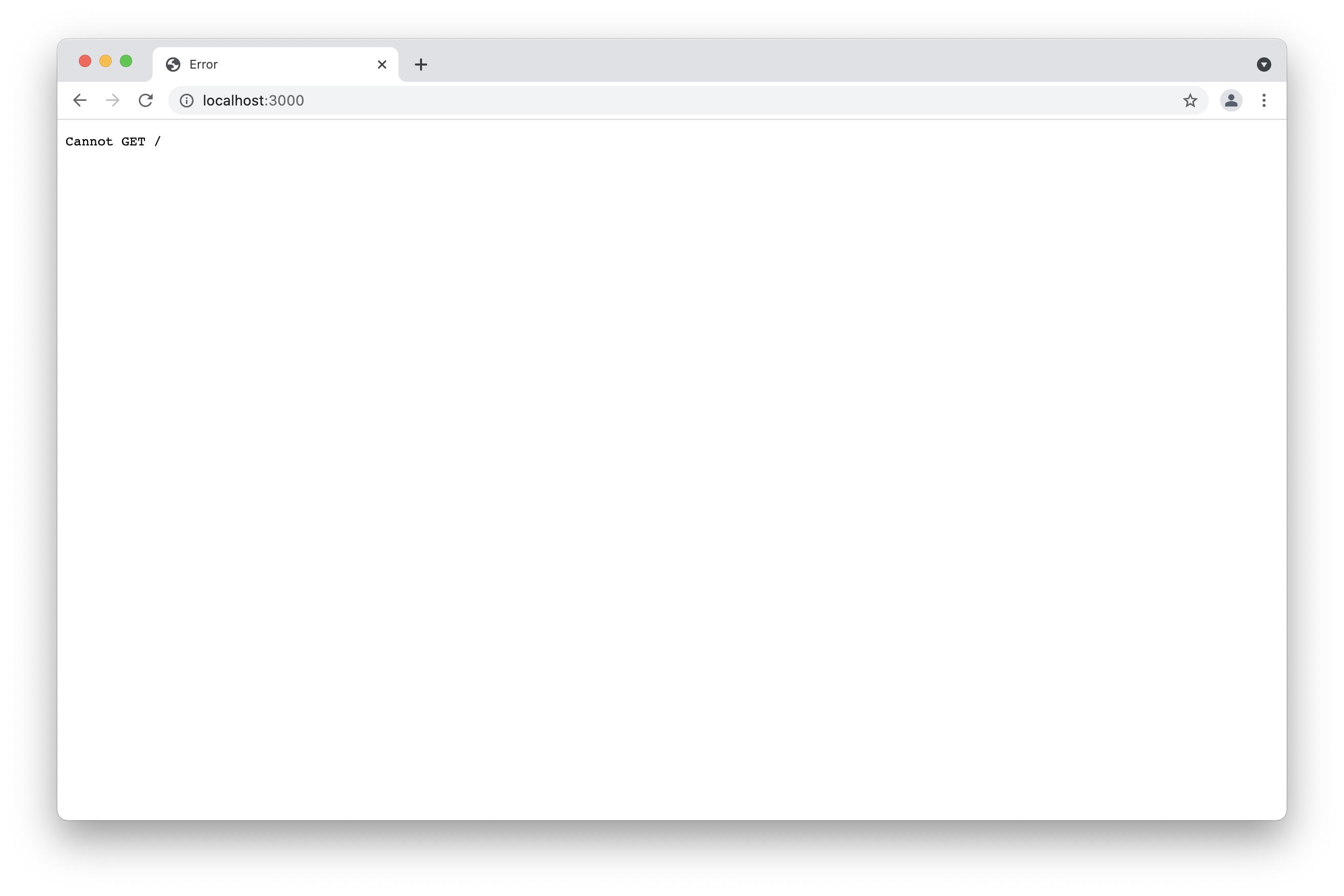

Now that you successfully created your application you can open your browser and go to localhost:3000 and see some results. Well, you can see the application is running; but in the browser you don't have anything. The only result you see in your browser is that the browser cannot get the route. The result is similar to the image below. For that reason we are now going to learn some routing for express applications.

Now it's time to get some routes to our application. In order to add routes we need to apply the HTTP verbs. These verbs are get, post put and delete. In this introduction we are going to use only the get. in a further tutorial we are going to learn how to use all the others. The get method gets the route of the page. the slash symbol( / ) is the home page. We are also going to create some other routes; but let us start with the home route. Most times we start creating routes after the app declaration and before the PORT. If you add the following code to your app you are going to send a Hello World! to the browser.

```javascript

app.get('/', function(req, res){

res.send("Hello World!");

});

```

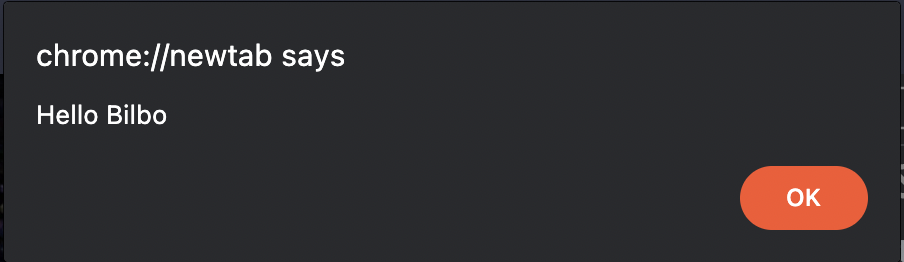

You can see in the code we added above that we are using the get method as a function. Then we add the home route ('/'). After that we created a callback function with the parameters req and res. These parameters are also Nodejs built in functions. The req parameter requires information, and the res parameter can send information to your browser. You are going to learn deeper about these parameters in a further tutorial. If you added the code to your application your result will be similar to the one we show you below.

###The Express static Public folder###

Now you could send some information to your browser; but that is not the result you want in your application. We can create a static folder where we can put our HTML files. In that folder we can also put our CSS and Javascript files. We can name the folder as we want. Most times we name the folder the public folder. To add the public folder to your app you need to add the following code.

```javascript

app.use(express.static('public')); // Using Express static. The name of the folder is public.

```

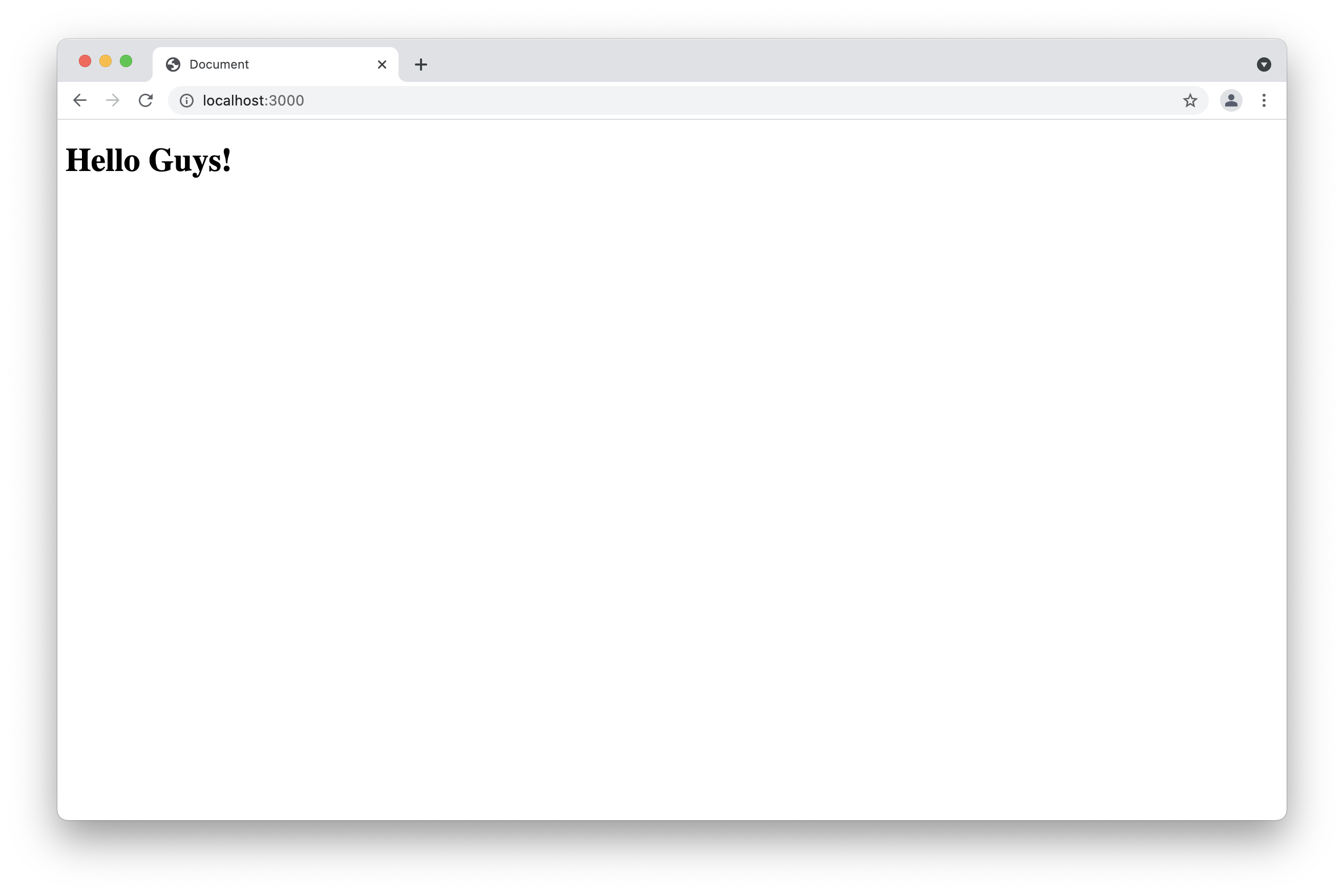

After we create the static folder we can create a file called index.html. By now we are only going to display a "Hello Guys" heading as the result. You can create the html file you want in your html. You only need to name it index.htm so express recognizes the file. now if you reset your application using CTRL+C, and restart it again. Then you go to your browser localhost:3000, and see the new result. ( Make sure you comment out the first route.)

###Installing New Dependencies###

In express applications we can install as many dependencies as we want. We only need to type in our terminal npm install followed by package name. Once we install the package, it will be added to de dependencies in your package.json file. Now let's install one package. In previous examples you saw that you had to reset terminal when you modified your code. This package is going to avoid the need of resetting your application as you add code. This package is<a href="https://nodemon.io"> Nodemon</a>. You only need to type the following command, and your application will be ready.

```shell

npm install nodemon

```

After you installed nodemon, you only need to type nodemon in your terminal in order to use it. Nodemon will reset the application after you add some changes and saved them. In case it does not run on the first try, you need to install the package globally using the following command.

```shell

npm install -g nodemon

OR

sudo npm install -g nodemon

```

###Working with Scripts###

When you first saw your package.json you noted there is a field called scripts. In Nodejs these scripts refer to commands we type in our terminal. They are not like the regular JavaScript scripts. To run any script you created you only need to type the command npm run followed by your script name. When you first create the package.json you can see there is a test script automatically created. Now let us create some scripts for our package.json The first script we are going to create is the start script. Most deployment process environment use the start script by default to start any Nodejs Application. Then we are going to create a dev script in order to use nodemon. The image below shows the modified package.json

```shell

npm start //starts the application

npm run dev // starts the application via nodemon

```

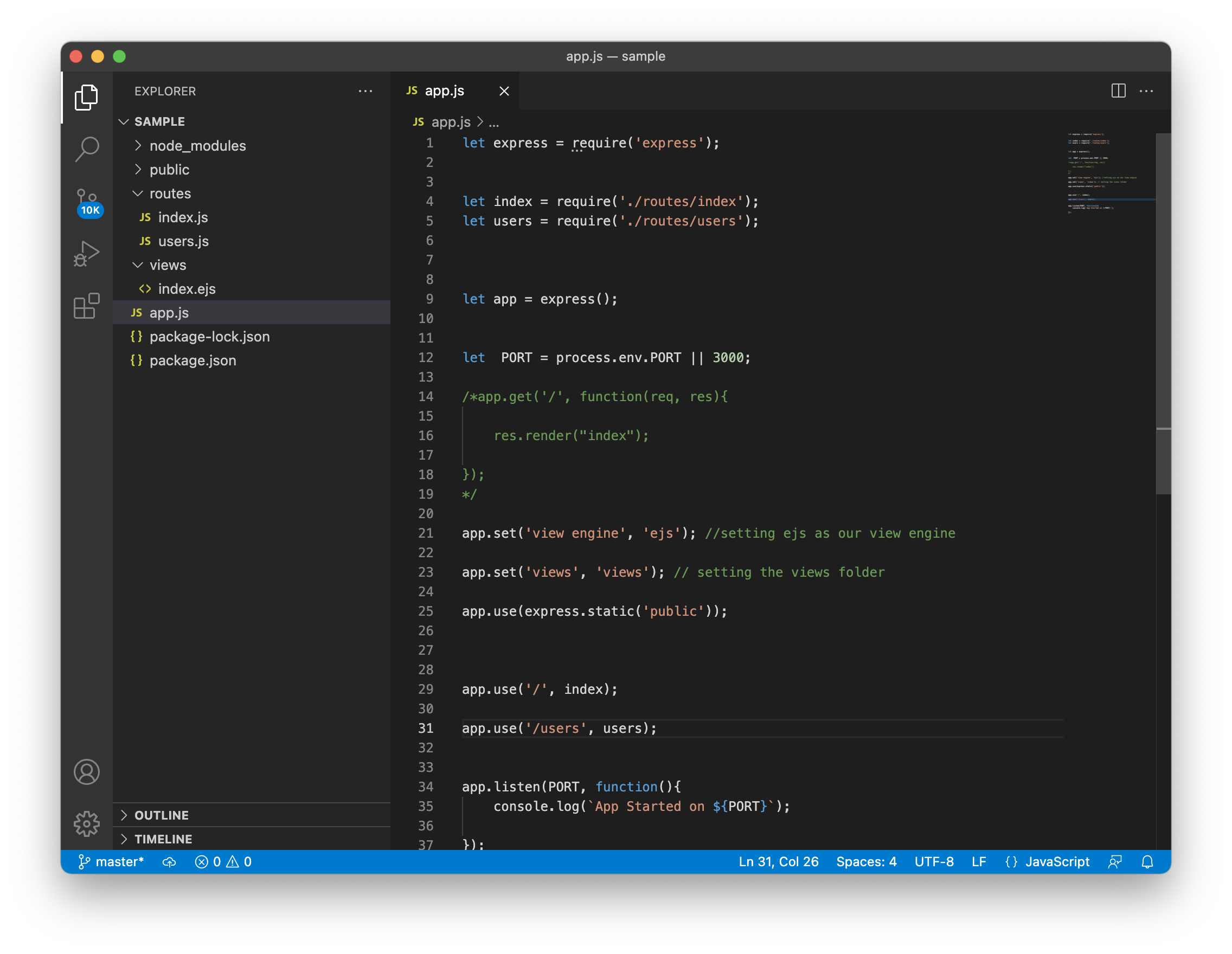

### Express Template Engines###

Besides using plain HTML in the public folder, we can use some view or template engines. That practice is very useful while creating express applications. Express has many view engines such as ejs, jade, handlebars, pug etc. You can see more information about view engines in <a href="https://expressjs.com/en/resources/template-engines.html">their website</a>. My Favorite one is ejs, because that one is similar to HTML. In order to add a view engine to our application, we first proceed to install the package. In this case we install ejs and then set the view engine in our app.js using the app.set() function. After that we can also set a views folder as you can see in the example below.

```shell

npm install ejs

```

``` javascript

app.set('view engine', 'ejs'); //setting ejs as our view engine

app.set('views', 'views'); // setting the views folder

```

After you set the view engine and views folder to your application, you proceed to create the views folder. If you are using a view engine is because you are not going to use the index file that you created in the public folder. You can delete the file and keep the folder for styling and scripts. Now in your views folder you can create a new index file. In that file you use the file extension ejs. The syntax will be similar to HTML. In a further tutorial we are going to show you how to deal with forms and databases using Nodejs and template engines.

###Routes to Views###

The previous section shows how to set a view engine and create the views. In order to see the views in your browser, you need to add routes to your views. That is an easy task in express. When you used the app.get('/') function you also used the res.send() method. In order to display a view from your views folder, you need to change the method to the res.render method. That is going to render the view in your route. The example below shows you how to render a view called index to our home route.

```javascript

app.get('/', function(req, res){

res.render('index');

});

```

###Using the Express Router###

Imagine you have several routes in your app. In express we have pre build router that we can use for these cases. Even if you don't have too many routes, using the router is a good practice. Most times we create another folder called routes for our routes files. After we create the folder we can add as many files as we need. In this case we are creating a file called index.js. In this file we are going to use the pre built express router. Then we can export the file to our app.js file. The example below shows you how to use the express router.

```

let express = require('express');

let router = express.Router();

router.get('/', function(req, res){

res.render('index');

});

module.exports = router;

```

Now we can import the route in our app.js file. We need to make sure we delete the route we had before to avoid errors in our applications. Below you can see how to add the route to the app.js

```javascript

let index = require('./routes/index'); // importing the route from the routes folder

app.use('/', index); Implementing our route with the use() function.

```

As we said before you can use as many routes as you want. We can create another file in our routes folder similar to the one that we created before. We are going to call this file users.js. Then we can import it to our app.js as we can see the other example below.

```javascript

let users = require('./routes/users'); // Importing the users route

app.use('/users', users); // Adding the route to our application.

```

If we add all the changes to the app.js file; the file will be similar to the one we show you in the following image.

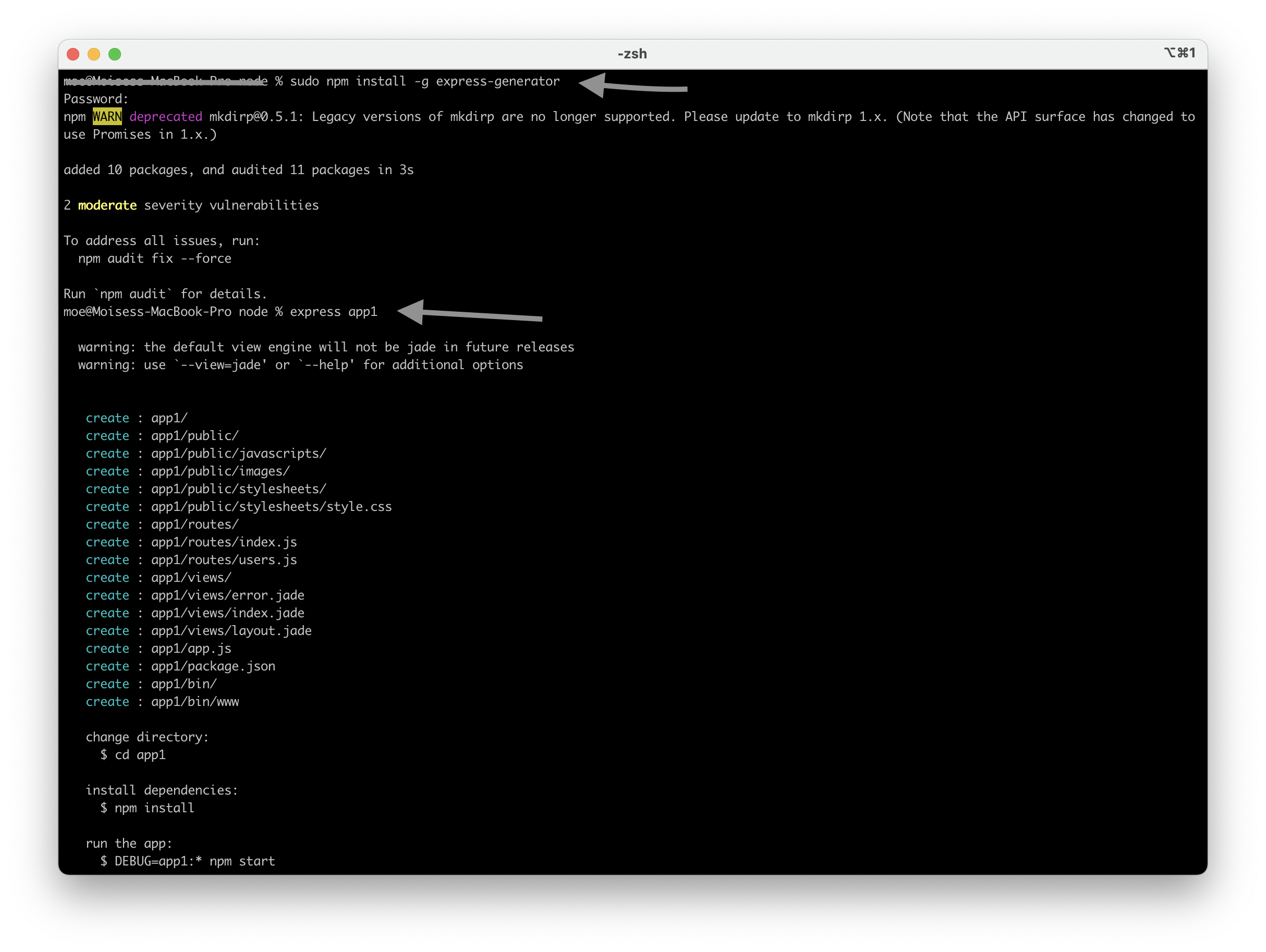

###Express Generator###

There is a node package called express-generator. That package serves as a shortcut to create an express application. That package will create the package.json, the app.js , and many other required files. It will also add a view engine though we need to install all the dependencies using the npm install command. To use the express generator we first proceed to install it using npm install express-generator. Now we can create an application using express as a command as express app1. Then we install dependencies as we stated before. The application generated with express generator listen port 3000 by default. the image below shows you how to use the express generator.

###Conclusion###

This tutorial is an introduction to Nodejs and Express. We focused more on express because learning a package is a great way to start learning Nodejs and more of its packages. Express is a complete package that we can use to create any application. This is only an introduction to Nodejs and Express. At this time we are working on some others more advance tutorials. Thank you for reading our tutorials, and we hope you learn a lot in this tutorial.

You can find more information about Nodejs in their <a href="https://nodejs.org/en/docs/">Documentation</a>. Also you can find information about express in the <a href="https://expressjs.com/">Express Website</a>. The <a href="https://developer.mozilla.org/en-US/docs/Learn/Server-side/Express_Nodejs">Express MDN </a> also

More at <a href="https://moeslink.com">Moe's Link </a>

| moreno8423 |

902,464 | Kubuntu Focus M2 Linux Laptop Review | Typically a Linux machine is a mostly do-it-yourself (DIY) endeavor. You’re usually on your own... | 0 | 2021-11-18T23:39:15 | https://techuplife.com/kubuntu-focus-m2-review/ | linux, ubuntu | Typically a Linux machine is a mostly do-it-yourself (DIY) endeavor. You’re usually on your own installing an operating system (OS), regardless of whether you’re using a pre-built machine or custom build. However, there are plenty of off-the-shelf Linux laptops and desktops available. The Kubuntu Focus is an outstanding Linux laptop. It comes with the Ubuntu-based Kubuntu Linux distribution (distro) pre-loaded, boasts an impressive spec sheet, and exceptional build quality. This combination makes is a great choice for programmers, gamers, and anyone switching from Windows or macOS to Linux. But is the [Kubuntu Focus M2](https://kfocus.org/) right for you? Find out in my hands-on review!

What is the Kubuntu Focus M2 – Kubuntu Focus Laptop Overview

------------------------------------------------------------

The [Kubuntu Focus M2](https://kfocus.org/) is a Linux laptop. It ships with the Kubuntu Linux distro pre-installed and, as such, runs Ubuntu with the KDE Plasma Desktop rather than vanilla Ubuntu’s GNOME desktop environment. There’s a 15.6-inch 1080p full high-definition (FHD) 144Hz display.

Under the hood, you’ll find an Intel 11th gen 8-core processor. You can outfit the Kubuntu Focus M2 gen 3 with up to 64GB of 3200MHz RAM, as high as 4TB of NVMe [SSD](https://techuplife.com/what-is-an-ssd/) storage, and up to an NVIDIA GeForce RTX 3080 with a whopping 16GB of GDDR6 video RAM (vRAM).

On the networking side, the Focus M2 sports dual-band 5GHz ac/a/b/g/n/ax Wi-Fi 6, Gigabit Ethernet, and Bluetooth 5.2. Inputs/outputs include a USB 3.0 Type-A, two USB 3.2 Type-A hosts, a 3.5mm audio jack, 3.5mm mic input, HDMI 2.0 port, 40Gbps Thunderbolt USB-C jack, and DisplayPort 1.4.

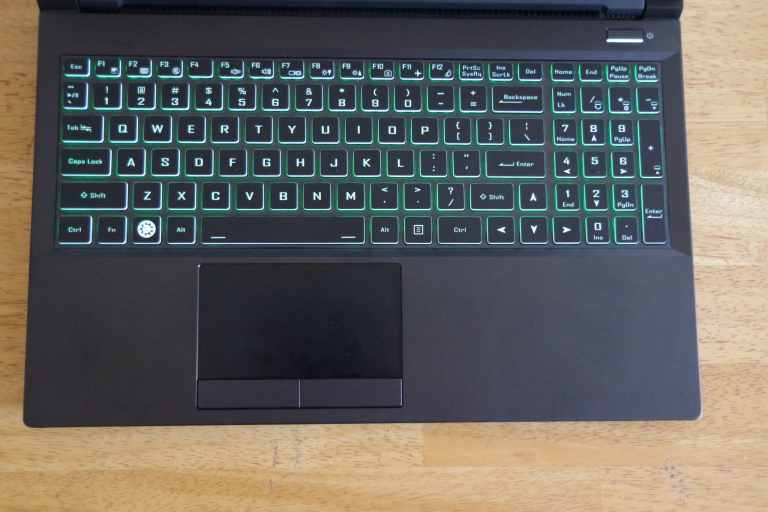

There’s a sturdy aluminum chassis. Its keyboard features RGB backlighting with over 65,000 different color combinations and 4mm keyboard travel. Pricing starts at $1,945 USD for the Kubuntu Focus M2 gen 3 which gets you an Intel Core i7 11800H, NVIDIA RTX 3060, 16GB of RAM, and 250GB NVMe SSD. You can max the Focus M2 out with the Beast preset which comes with an Intel Core i7 11800H CPU, NVIDIA RTX 3080, 4TB NVMe storage total (2x 2TB NVMe SSDs), and 64GB of RAM.

Kubuntu Focus M2 Specs

----------------------

* CPU: Intel Core i7 11800H

* GPU: Up to NVIDIA GeForce RTX 3080 (choice of GeForce RTX 3060, 3070, 3080)

* RAM: Up to 64GB 3200MHz dual-channel RAM (base 16GB)

* 15.6-inch 1080p 144Hz display

* Aluminium chassis

* RGB backlit keyboard with over 65,000 color combinations

* I/O: 1 x USB 3.0 Type-A, 2 x USB 3.2 Type-A hosts, 1 x 3.5mm audio jack, 1 x 3.5mm mic input, 1 x HDMI 2.0 port, 1 x 40Gbps Thunderbolt USB-C jack, 1 x DisplayPort 1.4

* Networking/communication: Dual-band 5GHz ac/a/b/g/n/ax Wi-Fi 6, Gigabit Ethernet, Bluetooth 5.2, Realtek RTL8168 Gigabit Ethernet jack

[Buy from Kubuntu](https://kfocus.org/)

Kubuntu Focus M2 Review Verdict

-------------------------------

The Kubuntu Focus M2 is an outstanding Linux laptop. I liked that it came with Kubuntu Linux preloaded. Although it’s easy enough to install most Ubuntu and Debian-based Linux distros yourself, I appreciated the ability to fire up the Focus M2 and begin using it right away. Kubuntu is a real treat to use, and several useful apps including the Google Chrome web browser and Steam video game client come preinstalled.

Build quality is excellent. I found the all-metal chassis sturdy and durable. The lid is emblazoned with the Kubuntu logo, and the keyboard includes a super key sporting the Kubuntu logo as well. Keyboard travel at 4mm is great. Writing and editing felt natural. The RGB backlit keyboard was gorgeous, and I enjoyed setting custom colors as well as brightness.

The 1080p FHD 144Hz IPS display boasts fantastic color accuracy along with good viewing angles. Everything from gaming and watching movies to general web browsing looks lovely. My review unit came with an Intel Core i7 11800H CPU, NVIDIA GeForce RTX 3070 GPU, and 16GB of RAM.

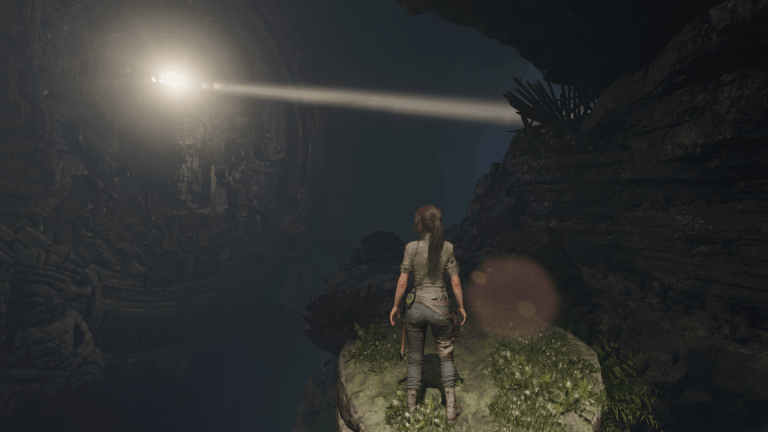

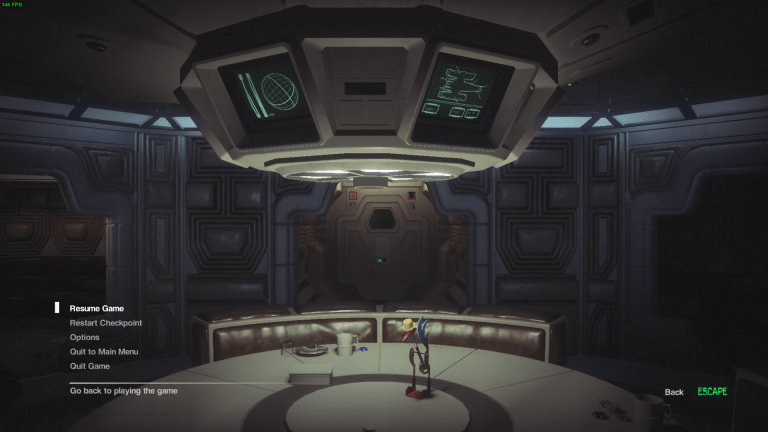

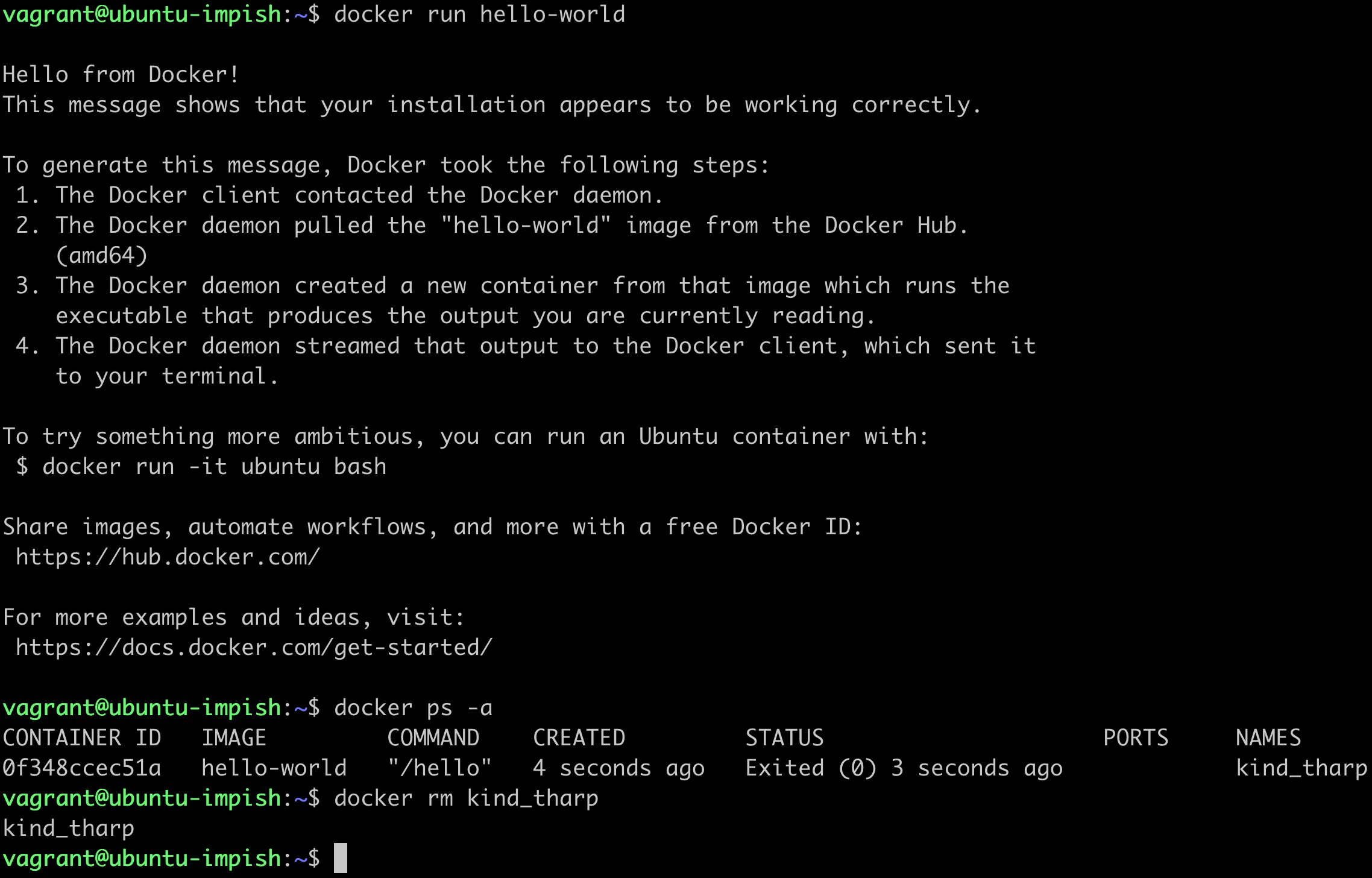

In my testing, gaming was buttery smooth for titles such as _Rise of the Tomb Raider_, _Shadow of the Tomb Raider_, and _Alien: Isolation_. Similarly, spinning up Docker instances was blisteringly fast. Therefore, the Kubuntu Focus M2 is a versatile machine that should satisfy a variety of users including gamers, programmers, and system administrators (sysadmins).

I was impressed with the array of different ports. With three USB ports, HDMI and DisplayPort, 3.5mm headphone/mic jacks, Thunderbolt USB-C, and Gigabit Ethernet, there’s generous connectivity. And Wi-Fi 6 alongside Bluetooth 5.2 offer fast wireless networking. The well-ventilated bottom hatch is easy to remove for simple upgrades, a nice touch in an age where laptops are becoming increasingly difficult to open up.

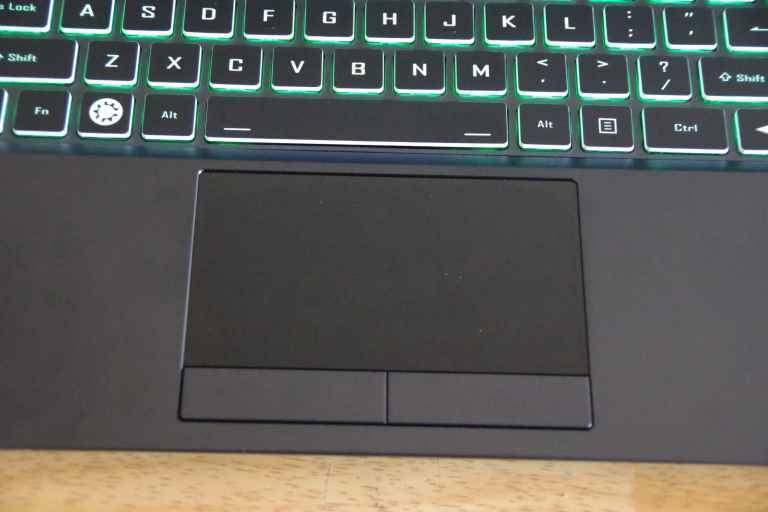

Unfortunately, the metal chassis is a bit of a fingerprint magnet. Additionally, I found the trackpad somewhat small and tough. Since the touchpad isn’t clickable, it took some getting used to. Under full load, the fans do kick in and are slightly noticeable, though this is common to pretty much all gaming laptops. While the Kubuntu Focus does feature switchable graphics, changing between the dedicated GPU and integrated GPU requires restarting the desktop environment. Though that’s a minor convenience, it’s not the end of the world.

Ultimately, the Kubuntu Focus M2 is a superb [Linux](https://techuplife.com/how-to-free-up-more-space-in-boot-on-ubuntu/) laptop. Regardless of whether I was gaming, programming, writing, or simply browsing the web, the Focus was more than up to the task. A few minor quirks don’t detract from the overall excellence of the M2, making it arguably the best Linux laptop on the market.

Kubuntu Focus M2 – Design and Build

-----------------------------------

The Kubuntu Focus M2 features a solidly built aluminum chassis. Its lid includes the Kubuntu logo. Around front, you’ll find a set of status indicator LED lights.

The right-hand side of the laptop includes a pair of USB 3.2 Type-A ports and a Gigabit Ethernet LAN jack.

In back, the Focus M2 sports HDMI 2.0 and DisplayPort 1.4 video outputs. There’s also a barrel jack power connector.

A Kensington lock port, USB 3.0 Type-A, 3.5mm headphone out jack, and 3.5mm mic input grace the left-hand side of the unit.

Underneath, there’s a well-ventilated grille that covers the RAM, NVMe SSD, and cooling fans.

The keyboard boasts great key travel of 4mm. Its RGB led backlighting can easily be customized using a hotkey.

And the keypad is pretty sizable, though not nearly as big as the touchpad on my Razer Blade 15 or Apple’s MacBook Pro.

Construction is stellar all-around. The metal chassis is sturdy, there’s little to no flex to the screen, the keyboard is comfortable to type on, its bottom panel is well-ventilated, and you’ll find ample connection ports.

Kubuntu Focus M2 Gaming Performance

-----------------------------------

Gaming performance is extremely impressive. On my NVIDIA GeForce RTX 3070-equipped system, I clocked an average of around 160 frames per second (FPS) in _Shadow of the Tomb Raider_ at the highest preset in 1080p.

In _Rise of the Tomb Raider_, I averaged about 150 FPS in the highest preset. Visuals were buttery smooth.

Playing _Alien Isolation_ maxed out on ultra, I averaged around 140 frames per second. The Kubuntu Focus churned out gorgeous eyecandy, replicating a lifelike recreation of the Nostromo.

Your experience will vary depending on which GPU you select – the RTX 3060, 3070, or 3080. While the 3080 can handle 4K on ultra or high for most modern AAA titles, the RTX 3070 is best suited to 1080p or 1440p. For Linux gaming, the Kubuntu Focus M2 is a great choice.

**Kubuntu Focus M2 gaming benchmarks:**

* _Alien Isolation_ – 144 FPS average, 1080p ultra

* _Shadow of the Tomb Raider_ – 160 FPS average, 1080p highest preset

* _Rise of the Tomb Raider_– 150 FPS, 1080p average, highest preset

Kubuntu Focus M2 – Software Experience

--------------------------------------

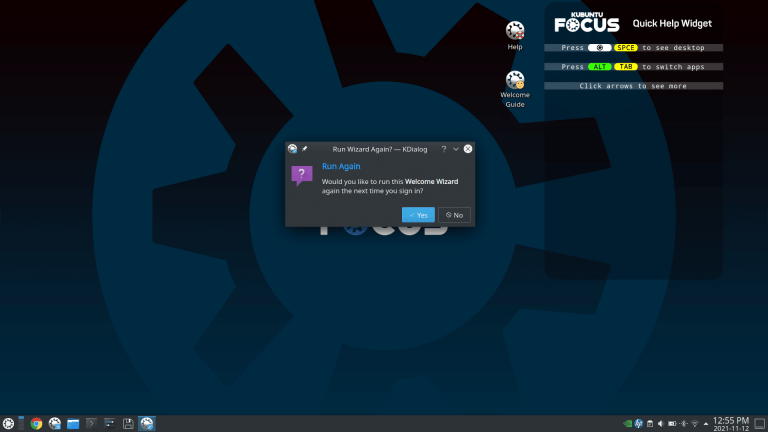

While the Focus M2 delivers wonderful hardware, it’s the software experience that makes this an incredible Linux laptop. Ubuntu and its derivatives tend to be fairly user-friendly on the whole. But Kubuntu takes this to an entirely new level. When you first boot into Kubuntu, there’s a helpful software setup wizard which runs. It walks you through some initial configuration including changing your password, choosing an avatar, and enabling various optional software packages. For instance, Kubuntu prompts you to turn on Dropbox, enable InSync, and turn on the open-source password manager KeePassXC.

Moreover, there’s a helpful list of recommended applications. You can launch this with ease from the taskbar. Suggested [software options](https://techuplife.com/best-linux-media-server-software-options/) include Steam, Kubernetes, Lutris (for playing Windows games with WINE), JetBrains Toolbox, the LibreOffice suite, and more. I appreciated the software wizard and recommended apps list. Although most Linux enthusiasts should have no issue installing different apps, these features make the Kubuntu Focus M2 great for newcomers switching from Windows or macOS to Linux.

Since Kubuntu utilizes the DPKG package manager, downloading apps is pretty fast. The KDE Plasma desktop is an excellent, visually pleasing graphical user interface (GUI). KDE looks similar to Windows for a familiar experience that should ease the transition from a non-Linux OS. The KDE Plasma desktop environment uses few system resources for a snappy experience. Loading up apps and switching windows was seamless.

Privacy Features on the Kubuntu Focus M2

----------------------------------------

The Kubuntu Focus M2 touts a slew of safety and security features. You’ll find full-disk encryption, optional pre-configured YubiKey 5 NFC two-factor authentication (2FA), and a BIOS Trusted Platform Module (TPM) 2.0 disable option. Its YubiKey 5 NFC 2FA support lets you enable hardware-based authentication for bolstered security. There’s even KeePassXC open-source password manager compatibility.

Outside of its robust software security features, the Focus M2 packs hardware security goodies as well. There’s a Kensington lock port for securing your Linux laptop to a desk. And its HD webcam has a physical privacy shutter cover.

**Privacy features:**

* Full-disk encryption

* YubiKey 5 NFC 2FA

* Disable BIOS TPM 2.0

* Kensington lock

* Physical webcam privacy cover

Should You Get the Kubuntu Focus M2 – Who is the Kubuntu Focus M2 For?

----------------------------------------------------------------------

So now on to the real question: Should you get the Kubuntu Focus M2? As a versatile Linux laptop, the Focus is a compelling choice for all sorts of users. Because of its NVIDIA GPU options, the Focus M2 is great for gamers, designers, and video editors. Steam comes preinstalled for gaming. Under the curated apps list, you’ll find Blender for 3D modeling as well as Lutris for running Windows games on Linux with WINE.

Since Linux is an [extremely popular environment for programmers and developers](https://dzone.com/articles/best-linux-distros-for-developers). As such, the Focus M2 is a fantastic choice for devs. Spinning up Docker containers is blisteringly fast, with generally under two-minute install times. That’s mostly because the Focus M2 runs Linux natively, so it eliminates any filesystem emulation layers like you’ll find on macOS or Windows. And there’s a whole host of different programming tools available including Kubernetes, JetBrains Toolbox, IDEs ([integrated development environments](https://dzone.com/articles/best-raspberry-pi-ides)) like IntelliJ or PyCharm, VScode, and more.

Furthermore, with its extremely user-friendly Linux OS in Kubuntu, the Focus M2 is a solid choice for anyone switching from Windows or macOS. Although it’s a full-fledged Linux distro, Kubuntu lets you install apps, run updates, and customize your system without needing to touch the command line much.

**Who is the Kubuntu Focus M2 for:**

* Gamers

* Developers/programmers

* Designers/animators/3D modelers

* Anyone switching from macOS or Windows to Linux

Kubuntu Focus M2 Review – Final Thoughts

----------------------------------------

There’s a lot to love about the Kubuntu Focus M2 third-generation. It offers a spectacular, user-friendly experience that’s suitable for everyone including gamers, programmers, sysadmins, and designers. The pre-loaded Kubuntu Linux OS is easy to use and great for anyone switching from macOS or Windows to Linux. Gaming performance is superb, and many apps for development like Docker benefit from the native Linux environment. With Linux preloaded, the Focus M2 is ready to use out-of-the-box. You can outfit the Focus with outstanding hardware all the way up to an NVIDIA RTX 3080, 4TB of NVMe SSD storage, and 64GB of RAM.

The touchpad is a bit stiff, though I eventually got used to it. While the Focus includes switchable graphics, you’ll have to restart the desktop environment in order to toggle between the high-performance GPU and energy-efficient integrated graphics chip. Although that’s not the end of the world, it’s somewhat cumbersome. Admittedly, the price (beginning at $1,945) is a bit high. However, it’s about in line with premium laptops. For instance, the RTX 30-series Razer Blade 15 starts around $1,700 while the MacBook Pro 14-inch normally starts at $1,999. Thus, the Focus M2’s price tag is aligned with the competition. And for the unparalleled experience of a pre-installed Linux laptop, it’s well worth the cost.

In the end, the Kubuntu Focus is an extraordinary piece of kit. It should satisfy a variety of different needs and makes getting started a breeze. Whether you’re a hardcore gamer, sysadmin, developer, designer, or merely a curious new Linux user, the Kubuntu Focus M2 is a spectacular machine, and arguably the best Linux laptop on the planet.

**Your turn: Which Linux laptops are you using, and what Linux distros are you running?** | mitchellclong |

866,768 | How to make a simple slider component in React | A very common component to use in forms or to receive numeric input with a non-arbitrary range is... | 0 | 2021-10-17T17:12:10 | https://relatablecode.com/how-to-make-a-simple-slider-component-in-react/ | react, slider | ---

title: How to make a simple slider component in React

published: true

date: 2021-10-17 16:53:56 UTC

tags: react,slider

canonical_url: https://relatablecode.com/how-to-make-a-simple-slider-component-in-react/

---

A very common component to use in forms or to receive numeric input with a non-arbitrary range is to use a slider component. However, this can easily be done with some basic HTML and CSS whilst adding some react-y props.

The basic usage can be implemented with a simple HTML Input. Something that is not readily apparent is that an input can be of various types. One of these is of type range.

This is how it looks:

<figcaption>HTML Input type range</figcaption>

Code:

```

<input type="range" />

```

However, while this may fulfill the slider component necessity there are a few things that limit it. We should add some styling and make it so the values of the min and max.

### Styling

Let’s dig into the CSS

```

input[type='range'] {

-webkit-appearance: none;

height: 7px;

background: grey;

border-radius: 5px;

background-image: linear-gradient(#D46A6A, #D46A6A);

background-repeat: no-repeat;

}

```

<figcaption>Bar Styled</figcaption>

This first bit of styling is to target the bar targeting the color and shape:

However it still looks a bit awkward with the circle being of a different color, we can target this portion with a selector: -webkit-slider-thumb

```

input[type='range']::-webkit-slider-thumb {

-webkit-appearance: none;

height: 20px;

width: 20px;

border-radius: 50%;

background: #D46A6A;

cursor: pointer;

box-shadow: 0 0 2px 0 #555;

}

```

<figcaption>Thumb Styled</figcaption>

Simple enough we just make the circle a bit bigger and change the color to match the rest of the component.

However its not very clear what part of the bar is being filled, so let’s change that by changing the track of the slider:

```

input[type="range"]::-webkit-slider-runnable-track {

-webkit-appearance: none;

box-shadow: none;

border: none;

background: transparent;

}

```

But, we also need a way to calculator the current background size of the bar:

```

const [value, setValue] = useState(0);

const MAX = 10;

const getBackgroundSize = () => {

return { backgroundSize: `${(value * 100) / MAX}% 100%` }; };

<input type="range"

min="0"

max={MAX}

onChange={(e) => setValue(e.target.value)}

style={getBackgroundSize()} value={value}

/>

```

Let’s break down a few holes here. When an input is of type you have access to several different properties, two of which are min and max which set the two different ends of the slider.

Essentially we’re just calculating the percentage size of the background color based on the value and possible max value.

<figcaption>Styled Background</figcaption>

### Active Styling

With the bar styled let’s focus on some UX enhancement, with some pseudo-classes we can make the thumb look a bit prettier to the user:

```

input[type="range"]::-webkit-slider-thumb:hover {

box-shadow: #d46a6a50 0px 0px 0px 8px;

}

input[type="range"]::-webkit-slider-thumb:active {

box-shadow: #d46a6a50 0px 0px 0px 11px;

transition: box-shadow 350ms cubic-bezier(0.4, 0, 0.2, 1) 0ms, left 350ms cubic-bezier(0.4, 0, 0.2, 1) 0ms, bottom 350ms cubic-bezier(0.4, 0, 0.2, 1) 0ms;

}

```

As well as adding some transition styling to the thumb itself:

```

input[type="range"]::-webkit-slider-thumb {

// ...other styles;

transition: background 0.3s ease-in-out;

}

```

<img width="100%" style="width:100%" src="https://media.giphy.com/media/tWbIlHfDf4fXYvXHPk/giphy.gif">

And that’s it! Here is a fully working example:

[](https://codesandbox.io/s/react-slider-k868o?fontsize=14&hidenavigation=1&theme=dark)

Do you have a preferred slider component? Let me know in the comments below.

Find more articles at [Relatable Code](https://relatablecode.com)

_Originally published at_ [_https://relatablecode.com_](https://relatablecode.com/how-to-make-a-simple-slider-component-in-react/) _on October 17, 2021._ | diballesteros |

866,944 | Streaming Ethereum Blocks Into bSQL Using Infura and Python | Overview Blockchain data is secure and tamper proof, but when working with blockchain data... | 0 | 2021-11-10T02:05:54 | https://dev.to/cassidymountjoy/streaming-ethereum-blocks-into-bsql-using-infura-and-python-3dl4 | blockchain, python, database, web3 | ##Overview

Blockchain data is secure and tamper proof, but when working with blockchain data in a more traditional environment, let's say a conventional database, it becomes harder to extend these guarantees. Can data be trusted after it has left the blockchain?

By using a less traditional form of DBMS, an immutable database, we can:

- Verify that data hasn't been illicitly changed

- Track all changes made to the system

- Easily access old versions of the system

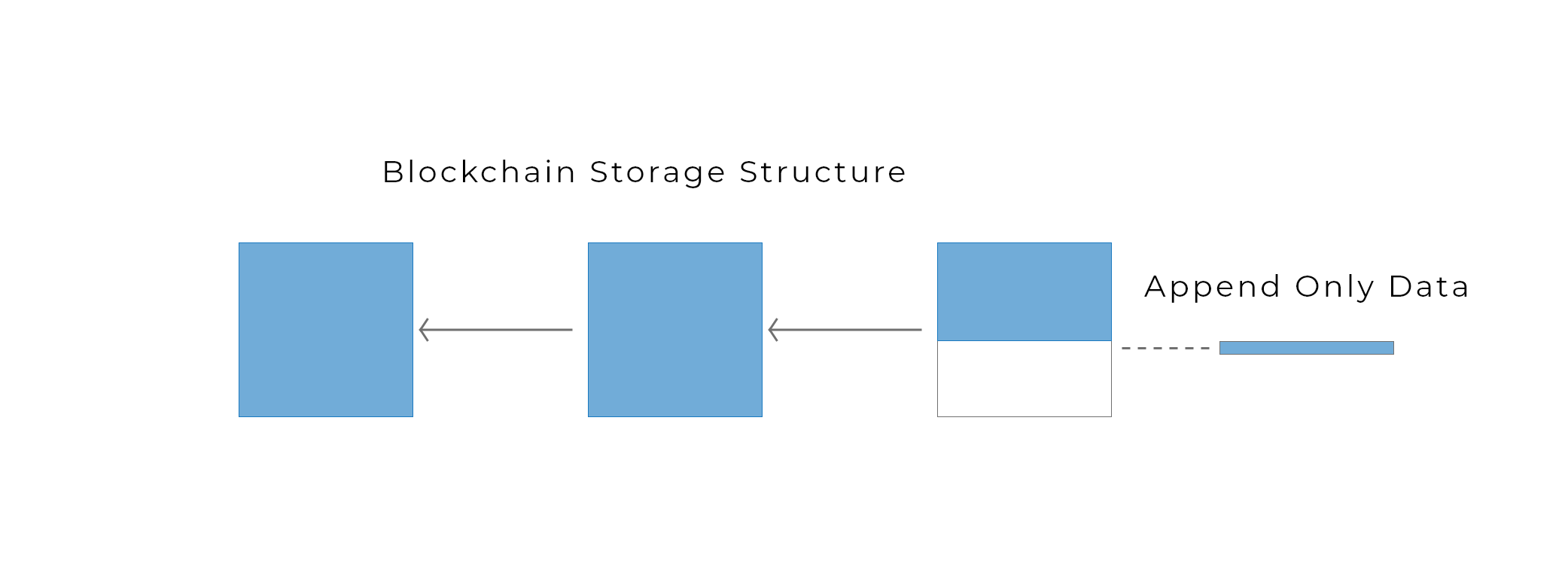

In this tutorial I will be using [Blockpoint's](https://blockpointdb.com/) [bSQL](https://bsql.org/docs/overview), because it's immutable, relational, structured, and has a rich language. The bSQL storage structure is actually very similar to that of a blockchain in that data pages are hashed and linked together. Data is added, never deleted:

---

#Let's Stream Some Ethereum Blocks

We will be using an Infura free trial to access the Ethereum network, Python to filter for new blocks, and bSQL to store blockchain data. In order to do so we will:

- Sign up for a free Infura account and obtain an Ethereum endpoint.

- Create a bSQL account and deploy a free instance.

- Write a python script and start streaming Ethereum blocks to the database.

At any time you can reference the public [repo](https://github.com/blockpointSystems/bsql_eth)

---

##Setting up Infura

Register for a free Infura account [here](https://infura.io/). This will give you access to 100,000 Requests/Day, which is plenty given that this is just a demonstration.

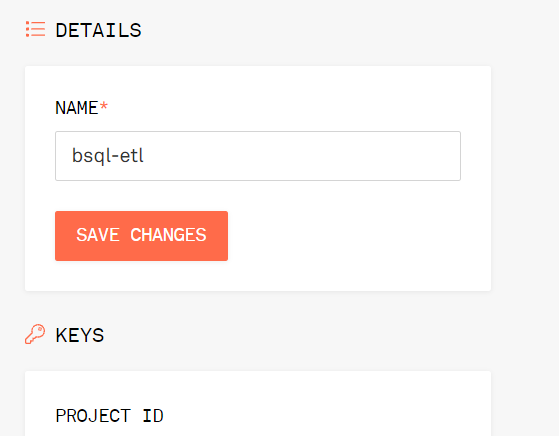

Once you've set up your Infura account, you can access your project ID, it will be needed to connect to Infura using an endpoint and can be found under your project name.

The endpoint for accessing the data will resemble the following:

`https://mainnet.infura.io/v3/your_project_id`

We will use this endpoint when we set up our python application.

---

##Deploying a bSQL instance

The next step is to set up our bSQL instance by:

- Deploying a database using the Blockpoint Portal

- Opening the instance in the IDE

- Creating a database and a blockchain

In order to create a bSQL account you will need an a unique access token, you can get your access token by messaging me directly or joining the [slack](https://join.slack.com/t/bsqlcommunity/shared_invite/zt-169oaigpu-R3W1D5_vc6OyNnKy4thNoA), it's free!

1.) The tutorial for creating an account and deploying your first instance can be found [here](https://www.youtube.com/watch?v=Em8b5qXcQew&list=PLdRS5wHN77gWuG71IvaH4pX7IdfZYGd06). Once completed, a new instance should appear on the blockpoint portal home page.

2.) Once created, navigate to the home page. To open the IDE, click "Open in IDE" and, when prompted, provide your database credentials.

3.) Finally we are going to run a few bSQL commands to finish our set up.

**a.** Create a new database called "eth" by running `CREATE

DATABASE eth;`

**b.** Interact with the newly created database by running `USE

eth;`

Next, we are going to want to configure a single blockchain for capturing Ethereum data. A blockchain is a structured container for storing data in bSQL. Once data has been added to the system, it cannot be removed. For a more comprehensive overview on the blockchain structure read the documentation [here](https://bsql.org/docs/blockchain-overview).

For the sake of keeping this tutorial simple, we are going to use a single *blockchain* called **blocks** to track new blocks added to the Ethereum network. Using a *historical blockchain* we can enforce immutability and check data integrity. Deploy the blockchain by running the following command in the IDE.

```

CREATE BLOCKCHAIN blocks HISTORICAL (

time TIMESTAMP,

number UINT64,

hash STRING SIZE=66,

parent_hash STRING SIZE=66,

nonce STRING SIZE=42,

sha3_uncles STRING SIZE=66,

logs_bloom STRING PACKED SIZE=18000,

transactions_root STRING SIZE=66,

state_root STRING SIZE=66,

receipts_root STRING SIZE=66,

miner STRING SIZE=42,

difficulty FLOAT64,

size_of_block INT64,

extra_data STRING PACKED,

gas_limit INT64,

transaction_count INT64,

base_fee_per_gas INT64

);

```

Congrats on building your first bSQL blockchain! Now let's start adding data.

---

## Setting up your python application.

In order to set up your python applet you will need the following:

- Python downloaded and installed on your computer, I'm using python 3.9.

- A python IDE, I'm using [Pycharm](https://www.jetbrains.com/pycharm/)

- [web3](https://web3py.readthedocs.io/en/stable/) for python

- The bSQL [python bSQL database driver](https://bsql.org/docs/connecting/python-odbc)

Once the follow criteria are met, you can set up a main.py file in your project directory. The code is on [Github](https://github.com/blockpointSystems/bsql_ethk) although it will not work until the above criteria is met and you have deployed an instance.

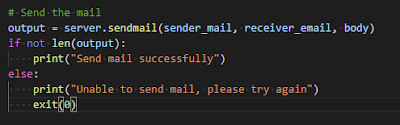

## Connecting to Infura and bSQL

The first step in our code is to define our connections. You will need to fill out the following fields:

- your Infura project id

- your bSQL username, password and public IP address

```python

infru = "https://mainnet.infura.io/v3/your_project_id" #change me

web3 = Web3(Web3.HTTPProvider(infru))

conn = mdb_bp.driver.connect(

username="your username", #change me

password="your password", #change me

connection_protocol="tcp",

server_address="server address", #change me

server_port=5461,

database_name="eth",

parameters={"interpolateParams": True},

)

```

## Defining our main method

The main method defines a filter for the latest Ethereum block and passes it into our loop that we will define next.

```python

def main():

block_filter = web3.eth.filter('latest')

loop = asyncio.get_event_loop()

try:

loop.run_until_complete(

asyncio.gather(

log_loop(block_filter, 2)))

finally:

# close loop to free up system resources

loop.close()

if __name__ == '__main__':

main()

```

## Defining our Loop

Our loop sleeps for a desired interval, then attempts to pull new entries from the event filter. Every time a new entry is received the event is handled in the `handle_event` function.

```python

async def log_loop(event_filter, poll_interval):

while True:

for PairCreated in event_filter.get_new_entries():

handle_event(web3.eth.get_block(PairCreated))

await asyncio.sleep(poll_interval)

```

## Defining our Event Handling

Time for the database call. Every time a new block is added to the chain, we print to the console and send an insertion statement to the database, inserting block data into **blocks**.

```python

def handle_event(block):

print(block['number'])

conn.exec("INSERT blocks VALUES (" +

"\"" + str(datetime.datetime.utcfromtimestamp(block['timestamp'])) + "\"," +

str(block['number']) + "," +

"\"" + str(block['hash'].hex()) + "\"," +

"\"" + str(block['parentHash'].hex()) + "\"," +

"\"" + str(block['nonce'].hex()) + "\"," +

"\"" + str(block['sha3Uncles'].hex()) + "\"," +

"\"" + str(block['logsBloom'].hex()) + "\"," +

"\"" + str(block['transactionsRoot'].hex()) + "\"," +

"\"" + str(block['stateRoot'].hex()) + "\"," +

"\"" + str(block['receiptsRoot'].hex()) + "\"," +

"\"" + str(block['miner']) + "\"," +

str(block['difficulty']) + "," +

str(block['size']) + "," +

"\"" + str(block['extraData'].hex()) + "\"," +

str(block['gasLimit']) + "," +

str(len(block['transactions'])) + "," +

str(block['baseFeePerGas']) + ")")

```

And that's all the code needed, so give that baby a run.

## Putting it all together

After letting my program run for about an hour, I stopped my script and started to do a little data exploration.

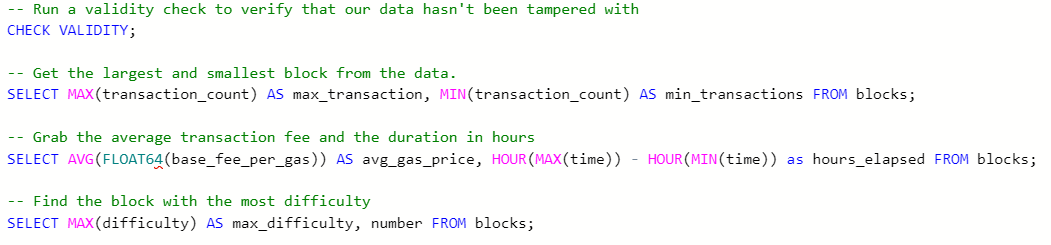

I ran a few queries in the bSQL portal and included them in the [repo](https://github.com/blockpointSystems/bsql_eth/blob/master/example_queries.bsql). You can load this file into the bSQL IDE or write your own queries.

Here's what I came up with:

##Conclusion

There you have it. A fun little script for Ethereum data. There is definitely more to explore when it comes to how the data is pulled and even more queries to write.

Like always, please comment your feedback or any questions you may have.

| cassidymountjoy |

866,985 | ASP.NET Core 6: Minimal APIs y Carter | Introducción El mundo de .NET 6 está a la vuelta de la esquina (al día de hoy está en RC... | 0 | 2021-10-17T21:42:48 | https://dev.to/isaacojeda/aspnet-core-6-minimal-apis-y-carter-3f8k | minimalapis, dotnet, csharp, carter | # Introducción

El mundo de .NET 6 está a la vuelta de la esquina (al día de hoy está en RC 2) y no solo yo estoy emocionado con estos cambios que vienen, si no también el equipo de Carter ya se ha encargado de no quedarse atrás y aprovechar lo nuevo que se viene.

[Carter](https://github.com/CarterCommunity/Carter) en versiones anteriores a .NET 6, nos ofrecían esta forma elegante de modularizar nuestras APIs y olvidarnos por siempre de los controladores y tener una forma simple de crear endpoints. Ahora esto ya lo hace las Minimal APIs pero eso significa que Carter esté muerto.

Para conocer más sobre Minimal APIs podemos ver este [gist](https://www.notion.so/ff1addd02d239d2d26f4648a06158727) de David Flow que simplemente es lo que necesitas para entender todo sobre Minimal APIs.

Otra forma de aprender sobre Minimal APIs es este [repositorio](https://github.com/DamianEdwards/MinimalApiPlayground) de Damian Edwards donde explora toda las funcionalidades con las que se cuenta. Si quieren aprender muy bien Minimal APIs les recomiendo esos dos enlaces.

Te recomiendo seguir este post viendo el código en [GitHub](https://github.com/isaacOjeda/DevToPosts/tree/main/MinimalApis) para una mejor comprensión.

# Carter

Lo que exploraremos en este post es la creación de Minimal APIs con carter con un ejemplo sencillo de catálogo de Productos (as usual).

## ¿Por qué usar Carter?

Que usemos Minimal APIs no significa que nuestra aplicación tiene que ser pequeña. Minimal APIs nace para tener una introducción sencilla a .NET y empezar sin tanto **boilerplate** y **ceremony**.

Carter nos da la posibilidad de modularizar nuestra API de una forma efectiva, utilizando una [arquitectura vertical](https://jimmybogard.com/vertical-slice-architecture/) donde todo nuestro código está partido en **Features,** aunque esto es meramente opcional y no será tema de este post.

> Nota 👀: Muchos de los features que exploraremos en este post no son propias de carter sino de Minimal APIs.

>

También Carter ya nos incluye [FluentValidation](https://github.com/FluentValidation/FluentValidation), que es algo muy importante porque el `ModelState` en Minimal APIs no está disponible. Así que necesitamos alguna forma de validar nuestros modelos. Damian Edwards tiene una [librería](https://github.com/DamianEdwards/MiniValidation) que hace validaciones con Data Annotations, que es un approach válido también, aunque prefiero no decorar los modelos con atributos (y más en este ejemplo que estamos usando directamente los Domain Objects y no DTOs).

## Carter en ASP.NET Core 6

Comenzaremos desde 0, creando un proyecto web vacío con .NET 6 instalado previamente (versión RC 2 para este post).

```bash

dotnet new web -o MinimalApis

```

Y posteriormente instalaremos los siguientes paquetes que incluyen carter y varios de EF Core (revisa mi [GitHub](https://github.com/isaacOjeda/DevToPosts/tree/main/MinimalApis) para ver el ejemplo completo para que no te queden dudas)

```xml

<PackageReference Include="Carter" Version="6.0.0-pre2" />

<PackageReference Include="Microsoft.EntityFrameworkCore" Version="6.0.0-rc.2.21480.5" />

<PackageReference Include="Microsoft.EntityFrameworkCore.InMemory" Version="6.0.0-rc.2.21480.5" />

<PackageReference Include="Swashbuckle.AspNetCore" Version="6.2.3" />

```

> Nota 👀: Recuerda que estamos usando versiones preview y RC de estos paquetes NuGet. Si no te aparecen, hay que decirle a NuGet que incluya paquetes en **pre-release** y si estás leyendo esto después de noviembre, probablemente ya estarás usando las versiones finales.

>

### In Memory Database

Para este ejemplo usaremos la siguiente base de datos con Entity Framework Core que mas adelante la configuraremos para que solo sea en memoria (para fines prácticos).

```csharp

namespace MinimalApis.Api.Entities;

public class Product

{

public Product(string description, double price)

{

Description = description;

Price = price;

}

public int ProductId { get; set; }

public string Description { get; set; }

public double Price { get; set; }

}

```

```csharp

using Microsoft.EntityFrameworkCore;

using MinimalApis.Api.Entities;

namespace MinimalApis.Api.Persistence;

public class MyDbContext : DbContext

{

public MyDbContext(DbContextOptions<MyDbContext> options) : base(options) {}

public DbSet<Product> Products => Set<Product>();

}

```

> Nota 👀: Los nullable reference types han llegado ya por default y es necesario asegurarnos que cualquier tipo de dato por referencia no sea nulo. Esto nos ayudará a que por fin (al menos tratar) eliminemos los `NullReferenceExceptions` que siempre aparecen cuando menos te lo esperas.

>

`Products` lo he guardado en una carpeta **/Entities** y el contexto en **/Persistence**. Realmente esto puede variar según el estilo de cada quien, así que no es relevante por ahora.

### Products Module

Como mencioné antes, Carter nos pide crear módulos en lugar de Controladores. Por lo que guardaré esté módulo dentro de una carpeta **Features/Products**.

La intención de no poner todo junto es poder separar cada Feature según sus componentes relevantes (como Mappers, Validations, Servicios, etc).

```csharp

namespace MinimalApis.Api.Features.Products;

public class ProductsModule : ICarterModule

{

public void AddRoutes(IEndpointRouteBuilder app)

{

// Los endpoints van aquí

}

}

```

Para registrar Endpoints en Minimal APIs tenemos disponible todos los verbos HTTP como lo solemos hacer en Web API (Ejem. `[HttpPost]`, `[HttpGet]`, etc).

Ejemplo.

```csharp

app.MapGet("api/products", () =>

{

// Consultar productos

});

```

Aquí estamos registrando la ruta **api/products** con el verbo `HttpGet`.

### Dependency Injection

Esta es una de las partes un poco extrañas, pero para resolver dependencias (en este caso nuestro `DbContext`) utilizamos los parámetros de la función del Endpoint en lugar del constructor como lo hacíamos antes.

```csharp

app.MapGet("api/products", (MyDbContext context) =>

{

var products = await context.Products

.ToListAsync();

return Results.Ok(products);

});

```

Eso significa que en los parámetros de nuestra función se resuelven dependencias de distintos lados. Como por ejemplo (como ya vimos aquí) del `IServiceCollection` pero también de los datos que se reciben (ejemplo del Body, Headers, Query strings, etc).

Ocurre Model Binding de los datos recibidos por el cliente y también se puede resolver cualquier dependencia desde ahí. Si revisamos los links de Minimal APIs que he puesto más arriba podremos aprender más sobre el tema (ya que para todos es nuevo 😅).

Una versión mejorada y como hice este ejemplo, queda de la siguiente manera.

```csharp

public void AddRoute(IEndpointRouteBuilder app)

{

app.MapGet("api/products", GetProducts)

.Produces<List<Product>>();

}

private static async Task<IResult> GetProducts(MyDbContext context)

{

var products = await context.Products

.ToListAsync();

return Results.Ok(products);

}

```

Aquí ya estamos agregando metadatos al endpoint (con `.Produces<List<Product>>()`) para que librerías como Swagger sepa interpretar y documentar nuestra API.

La clase `Results` cuenta con las posibles respuestas que podemos regresar, así como sucedía con los controladores (que regresábamos un `IActionResult`) aquí regresamos un `IResult` (algo muy similar).

### Problem Details y Validaciones

Una de las cosas que también me gustó de Carter es que ya incluye **FluentValidation**. Y aunque no es algo automático como se podría configurar con MediatR o ASP.NET Web API, es muy sencillo.

Dentro de **Features/Products** puse el siguiente `AbstractValidator`.

```csharp

using FluentValidation;

using MinimalApis.Api.Entities;

namespace MinimalApis.Api.Features.Products

{

public class ProductValidator : AbstractValidator<Product>

{

public ProductValidator()

{

RuleFor(q => q.Description).NotEmpty();

RuleFor(q => q.Price).NotNull();

}

}

}

```

Simplemente estamos estableciendo que los campos son obligatorios, pero aquí se pueden configurar muchas validaciones y es demasiado flexible (en comparación a los `DataAnnotations`).

Nuestro endpoint de creación queda así:

```csharp

using Carter;

using Carter.ModelBinding;

// ... código omitido

public void AddRoutes(IEndpointRouteBuilder app)

{

// ... código omitido

app.MapPost("api/products", CreateProduct)

.Produces<Product>(StatusCodes.Status201Created)

.ProducesValidationProblem()

}

private static async Task<IResult> CreateProduct(HttpRequest req, Product product, MyDbContext context)

{

var result = req.Validate(product);

if (!result.IsValid)

{

return Results.ValidationProblem(result.ToValidationProblems());

}

context.Products.Add(product);

await context.SaveChangesAsync();

return Results.Created($"api/products/{product.ProductId}", product);

}

```

Estamos inyectando 3 cosas en esta función:

- `HttpRequest`. El request actual del `HttpContext` del endpoint

- `Product`. Los datos recibidos en el `[FromBody]` del `[HttpPost]` que viene siendo el entity a crear

- `MyDbContext`. DbContext de EF Core

> Nota 👀: Si el método `Validate` no te aparece, probablemente es por namespaces faltantes.

>

Este endpoint tiene 2 posibles respuestas: un 201 o un 400. Aunque no se está obligado especificarlo, pero con los métodos Produces se especifica eso para que Swagger conozca mejor la API.

Un ejemplo de respuesta de error de validación.

```json

{

"type": "https://tools.ietf.org/html/rfc7231#section-6.5.1",

"title": "One or more validation errors occurred.",

"status": 400,

"errors": {

"Description": [

"'Description' no debería estar vacío."

]

}

}

```

Este formato es un estandar para APIs llamado [Problem Details](https://datatracker.ietf.org/doc/html/rfc7807), este también se usa en los Api Controllers al usar el atributo `[ApiController]` (por si no sabías 😅).

Ejemplo de respuesta exitosa.

```json

{

"productId": 5,

"description": "Product description here.",

"price": 2499.99

}

```

El método `ToValidationProblems` es una extensión de `ValidationResult` de **FluentValidation** para convertirlo en un diccionario agrupado según las propiedades que validaron.

```csharp

using FluentValidation.Results;

namespace MinimalApis.Api.Extensions;

public static class GeneralExtensions

{

public static Dictionary<string, string[]> ToValidationProblems(this ValidationResult result) =>

result.Errors

.GroupBy(e => e.PropertyName, e => e.ErrorMessage)

.ToDictionary(failureGroup => failureGroup.Key, failureGroup => failureGroup.ToArray());

}

```

### Products Module completo

Creo que ya expliqué lo relevante en estos 2 endpoints. Así que `ProductsModule` queda de la siguiente manera.

```csharp

using Carter;

using Carter.ModelBinding;

using Microsoft.EntityFrameworkCore;

using MinimalApis.Api.Entities;

using MinimalApis.Api.Extensions;

using MinimalApis.Api.Persistence;

namespace MinimalApis.Api.Features.Products;

public class ProductsModule : ICarterModule

{

public void AddRoutes(IEndpointRouteBuilder app)

{

app.MapGet("api/products", GetProducts)

.Produces<List<Product>>();

app.MapGet("api/products/{productId}", GetProduct)

.Produces<Product>()

.Produces(StatusCodes.Status404NotFound);

app.MapPost("api/products", CreateProduct)

.Produces<Product>(StatusCodes.Status201Created)

.ProducesValidationProblem();

app.MapPut("api/products/{productId}", UpdateProduct)

.Produces(StatusCodes.Status204NoContent)

.ProducesProblem(StatusCodes.Status404NotFound)

.ProducesValidationProblem();

app.MapDelete("api/products/{productId}", DeleteProduct)

.Produces(StatusCodes.Status204NoContent)

.ProducesProblem(StatusCodes.Status404NotFound);

}

private static async Task<IResult> GetProducts(MyDbContext context)

{

var products = await context.Products

.ToListAsync();

return Results.Ok(products);

}

private static async Task<IResult> GetProduct(int productId, MyDbContext context)

{

var product = await context.Products.FindAsync(productId);

if (product is null)

{

return Results.NotFound();

}

return Results.Ok(product);

}

private static async Task<IResult> CreateProduct(HttpRequest req, Product product, MyDbContext context)

{

var result = req.Validate(product);

if (!result.IsValid)

{

return Results.ValidationProblem(result.ToValidationProblems());

}

context.Products.Add(product);

await context.SaveChangesAsync();

return Results.Created($"api/products/{product.ProductId}", product);

}

private static async Task<IResult> UpdateProduct(

HttpRequest request, MyDbContext context, int productId, Product product)

{

var result = request.Validate(product);

if (!result.IsValid)

{

return Results.ValidationProblem(result.ToValidationProblems());

}

var exists = await context.Products.AnyAsync(q => q.ProductId == productId);

if (!exists)

{

return Results.Problem(

detail: $"El producto con ID {productId} no existe",

statusCode: StatusCodes.Status404NotFound);

}

context.Entry(product).State = EntityState.Modified;

await context.SaveChangesAsync();

return Results.NoContent();

}

private static async Task<IResult> DeleteProduct(int productId, MyDbContext context)

{

var product = await context.Products.FirstOrDefaultAsync(q => q.ProductId == productId);

if (product is null)

{

return Results.Problem(

detail: $"El producto con ID {productId} no existe",

statusCode: StatusCodes.Status404NotFound);

}

context.Remove(product);

await context.SaveChangesAsync();

return Results.NoContent();

}

}

```

## Extensiones (IServiceCollection y WebApplication)

Para dejar un Program.cs más limpio, siempre suelo usar extensiones para agregar la configuración de los middlewares y dependencias.

```csharp

using Microsoft.EntityFrameworkCore;

using Microsoft.OpenApi.Models;

using MinimalApis.Api.Persistence;

namespace MinimalApis.Api.Extensions;

public static class ServiceCollectionExtensions

{

public static IServiceCollection AddSwagger(this IServiceCollection services)

{

services.AddEndpointsApiExplorer();

services.AddSwaggerGen(c =>

{

c.SwaggerDoc("v1", new OpenApiInfo()

{

Description = "Minimal API Demo",

Title = "Minimal API Demo",

Version = "v1",

Contact = new OpenApiContact()

{

Name = "Isaac Ojeda",

Url = new Uri("https://github.com/isaacOjeda")

}

});

});

return services;

}

public static IServiceCollection AddPersistence(this IServiceCollection services)

{

services.AddDbContext<MyDbContext>(options =>

options.UseInMemoryDatabase(nameof(MyDbContext)));

return services;

}

}

```

Aquí simplemente estamos creando 2 métodos de extensión para configurar Swagger y el DbContext (en este caso, en memoria).

```csharp

namespace MinimalApis.Api.Extensions;

public static class WebApplicationExtensions

{

public static WebApplication MapSwagger(this WebApplication app)

{

app.UseSwagger();

app.UseSwaggerUI(c =>

{

c.SwaggerEndpoint("/swagger/v1/swagger.json", "API");

c.RoutePrefix = "api";

});

return app;

}

}

```

Aquí también configuramos Swagger pero ahora su middleware para que tengamos un endpoint de exploración (el json) y la UI para hacer pruebas.

## Integración de todo en Program

Ya tenemos todo listo para por fin correr esta API hecha con Carter

```csharp

using Carter;

using MinimalApis.Api.Extensions;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddSwagger();

builder.Services.AddPersistence();

builder.Services.AddCarter();

var app = builder.Build();

app.MapSwagger();

app.MapCarter();

app.Run();

```

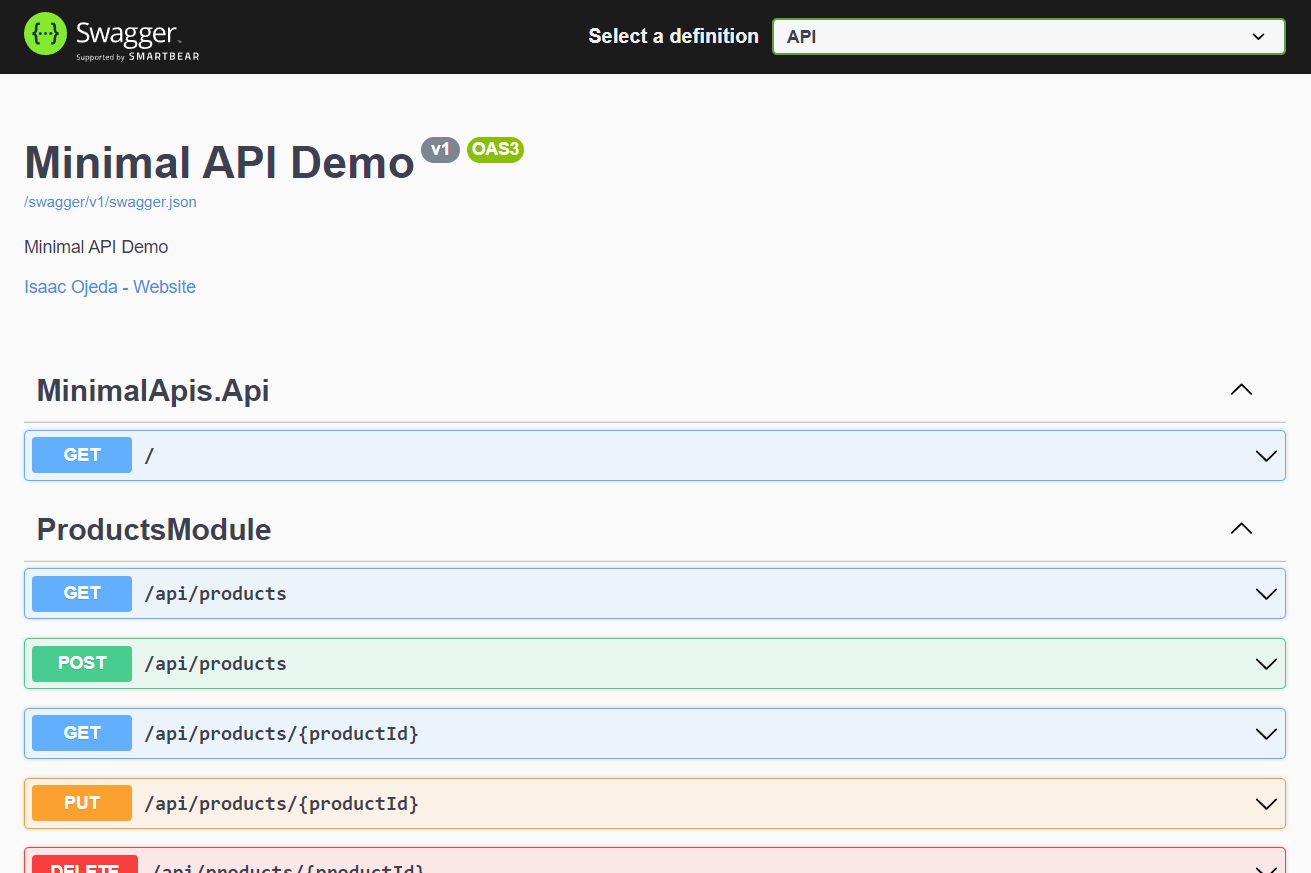

## Probando Swagger

Si ejecutamos `dotnet run` ya podemos explorar la API con Swagger ( o postman si lo prefieres)

# Conclusión

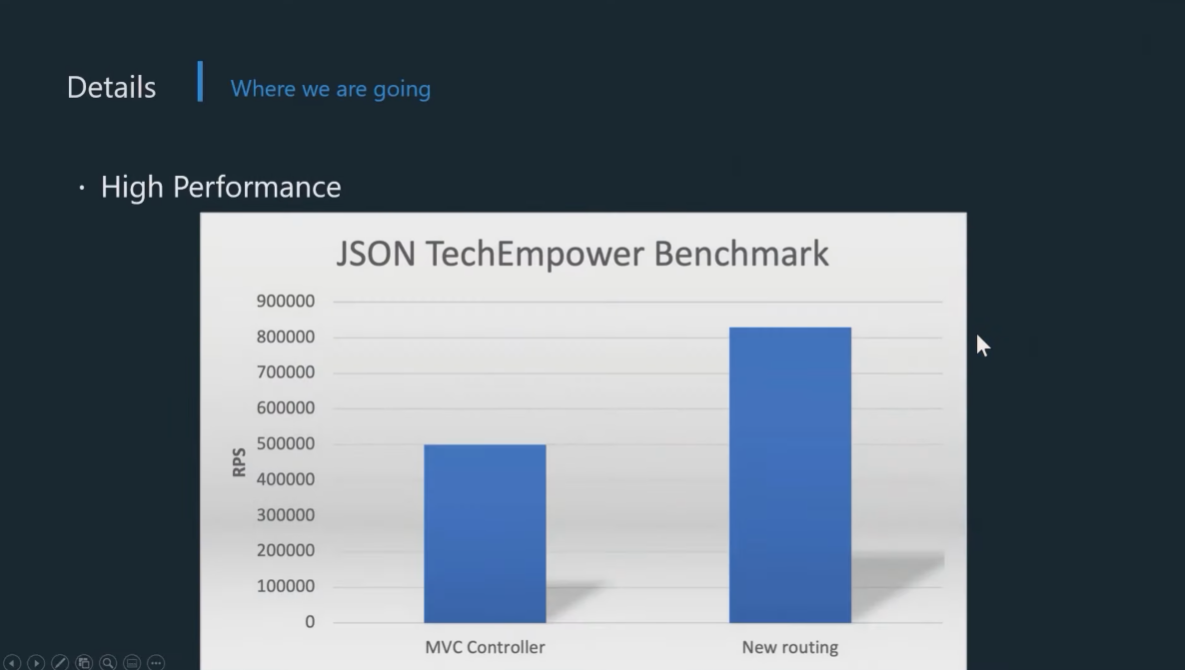

Las Minimal APIs es algo que se necesitaba en .NET. Lenguajes como node con express manejaban este concepto desde siempre, pero poderlo hacer con .NET y toda su plataforma, es perfecto.

Carter existe desde versiones anteriores de .NET Core y buscaba simplificar esto, y su antecessor Nancy.Fx lo ha hecho desde siempre. Hay gente que odia los Controllers 😅.

Es cuestión de gustos, tal vez no todos empiecen a utilizar este approach, pero una buena razón es que por ahora es más rápido.

En un futuro tal vez se construyan más capas sobre Minimal APIs (como carter lo hace) y se vuelvan estandard en los templates que el mismo dotnet ofrecerá, no sé, puede pasar.

Espero que te haya gustado 🖖🏽

Code4Fun 🤓 | isaacojeda |

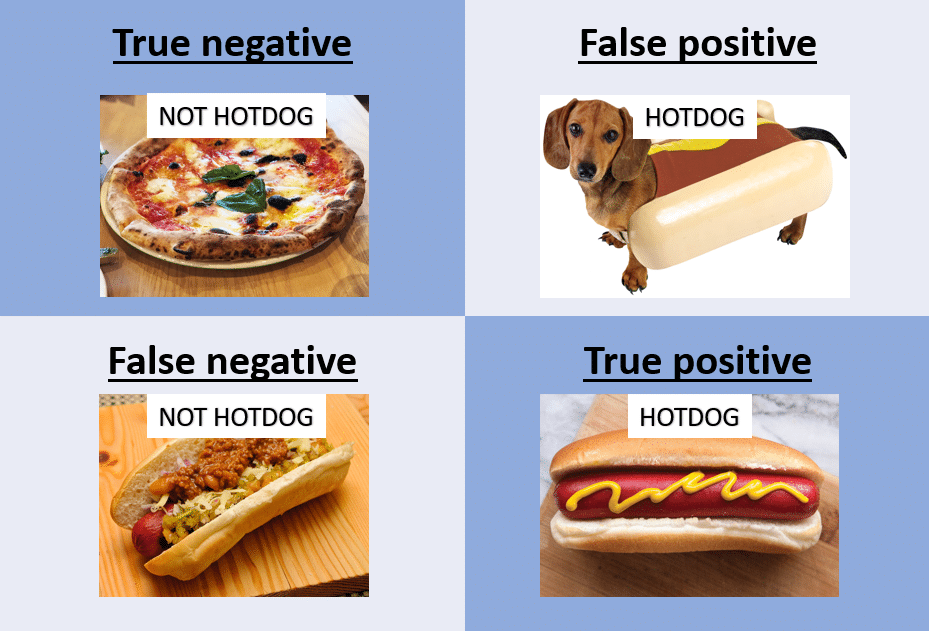

867,079 | The definitive guide to Accuracy, Precision, and Recall for product developers | TLDR Accuracy tells you how many times the ML model was correct overall. Precision is how... | 0 | 2021-11-29T21:21:28 | https://dev.to/mage_ai/the-definitive-guide-to-accuracy-precision-and-recall-for-product-developers-4ahg | productdevelopment, machinelearning, ai, programming | ### TLDR

Accuracy tells you how many times the ML model was correct overall. Precision is how good the model is at predicting a specific category. Recall tells you how many times the model was able to detect a specific category.

### Outline

1. Introduction

2. Accuracy

3. Precision

4. Recall

5. How to determine if your model is doing well

6. When to use precision or recall

7. Conclusion

## Introduction

Most machine learning (ML) problems fit into 2 groups: [classification and regression](https://m.mage.ai/roadmap-to-ai-ml-sorcerer-supreme-2fc4bdbe89bf). The main metrics used to assess performance of classification models are accuracy, precision, and recall.

<center>_Source: Final Fantasy_</center>

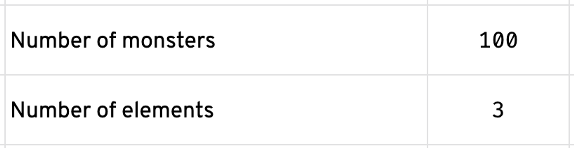

To demonstrate each of these metrics, we’ll use the following example: We’re a mage on a quest to save the villagers from a horde of monsters. There are 100 monsters attacking the village. We’re going to use machine learning to predict what element each monster is weak against. There are 3 possible elements that monsters are weak against: fire, water, and wind.

## Accuracy

After summoning our machine learning model, we approach the village and begin the battle. After hours of intense combat, we are victorious and the villagers are all saved. We sit down by the fire, have a few refreshments (e.g. boba from the local village tea shop), and review how the battle unfolded.

<center>_Magic vs Monsters_</center>

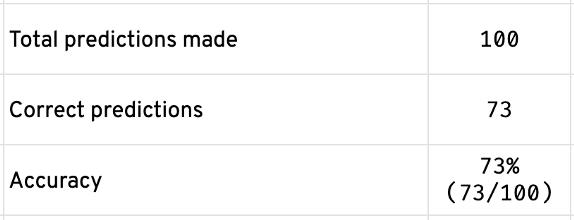

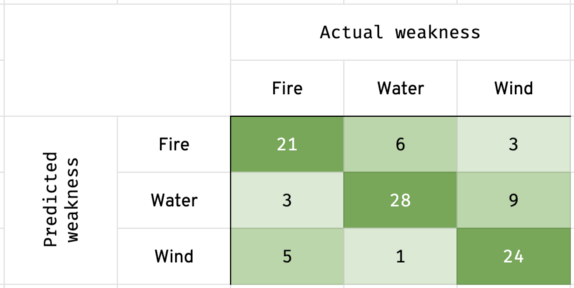

For every monster we fought, our model predicted an element for us to use that would make the monster the weakest possible. Out of 100 predictions, our model predicted the correct element 73 times. Therefore, the accuracy of our model is 73%; 73 correct predictions out of 100 predictions made.

## Precision

Measuring our model’s accuracy told us overall how many times we were correct when predicting a monster’s weakness. However, if we want to know how many times we were correct when predicting a monster was weak against fire, we need to use the precision metric.

<center>_Source: Pokemon_</center>

Let’s say we predicted 30 monsters were weak against fire. At the end of the battle, we saw that of those 30 monsters, 6 of them were actually weak against water and 3 of them were actually weak against wind. That means our precision for predicting fire is 70% (21/30). In other words, we predicted 21 fire weaknesses correctly out of 30 fire predictions.

<center>_Source: Naruto_</center>

After further review of the battle, we predicted 40 monsters were weak against water and 30 monsters were weak against wind. Out of the 40 monsters who we predicted were weak against water, we were correct 28 times resulting in 70% precision (28/40). Out of the 30 monsters who we predicted were weak against wind, we were correct 24 times resulting in 80% precision (24/30).

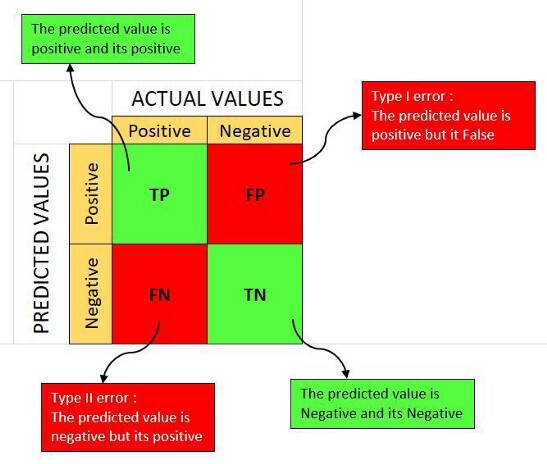

The following chart is called a confusion matrix:

The left y-axis is what was predicted. The top x-axis is what the monster was truly weak against. Look at row 1 and column 2. It’s saying that out of the 30 monsters (21 + 6 + 3) that the model predicted a fire weakness for, 6 of those monsters were actually weak against water.

For more information on how to read a confusion matrix, check out this [article](https://www.dataschool.io/simple-guide-to-confusion-matrix-terminology/). Here are 2 helpful images:

<center>_Source: Anuganti Suresh_</center>

<center>_Source: NillsF blog_</center>

## Recall

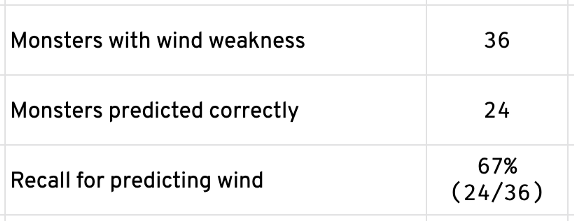

Now that we know how many times our model predicted correctly overall and how good our model is at predicting a specific weakness (e.g. fire, water, wind), it’s time to find out how good our model is at finding monsters with a particular weakness.

<center>_Source: Avatar: The Last Airbender_</center>

For all the monsters who were actually weak against wind (there are 36 of them), how many did our model find out? Our model found 24 monsters who were weak against wind. That means our model has 67% recall when predicting monsters who are actually weak against wind.

## How to determine if your model is doing well

Before evaluating how good a model is doing, we must establish a baseline score. A baseline score is a value that our model should perform better than. For example, if we have a baseline score of 60% accuracy and our model achieved an accuracy greater than that (e.g. 61%), then the model is good enough to use in real life scenarios (e.g. production environment).

There are many ways to establish a baseline score for your model. In an upcoming tutorial, we’ll show you multiple other methods that can be used.

For our monster weakness prediction model, we’ll use a baseline score method called mode category. This method takes the most abundant weakness category and divides that by the number of predictions. Specifically, more monsters are weak against wind than any other element (only 29 monsters are weak against fire and only 35 monsters are weak against water).

Our baseline accuracy score will be 36% (e.g. 36 monsters weak against wind divided by 100 monsters in total).

<center>_Source: Borat_</center>

Our model had a 73% accuracy score. That is well above the baseline score. We can conclude that our model is performing well.

*Note: you may want to have different baseline scores and use different metrics other than accuracy (e.g. precision, recall, etc) to assess whether your model performed well or not. Depending on your use case, precision or recall may be more important.*

## When to use precision or recall

On our quest to save the village from monsters, our goal was to defeat all the monsters. If we can predict their weakness, we have a higher chance of victory. Therefore, we want a model with the highest accuracy; in other words, a model with the highest chance of predicting the weakness correctly.

However, there are scenarios where you may want a model with higher precision. For example, you may have a fire spell that drains a lot of your energy and you want to use it sparingly. In that case, you’ll want a model that has high precision when predicting a monster is weak against fire. That way, when you use your fire spell, you know with high certainty that the monster is weak against it.

<center>_Source: Lifehack_</center>

The scenario in which you want to choose a model with high recall is when the predicted outcome leads to a critical decision. For example, let’s say we had a model that predicts whether a villager has been poisoned by a monster and needs immediate medical treatment. We want a model that can find every villager that is poisoned even if it incorrectly labels a healthy villager as needing medical treatment. The reason why we want this model to have high recall is because it’s better to give a healthy person unnecessary treatment than to accidentally pass over someone who was poisoned and needs treatment. In other words, better safe than sorry.

## Conclusion

<center>_Accuracy, precision, and recall matters_</center>

Accuracy, precision, and recall are used to measure the performance of a classification machine learning model (there are other metrics for regression models, [read more here](https://m.mage.ai/roadmap-to-ai-ml-sorcerer-supreme-2fc4bdbe89bf)). The metrics alone aren’t enough to determine if your model is usable in real life scenarios. You must establish a baseline score and compare your model’s performance against that baseline score. In a future tutorial, we’ll show you how to assess a regression machine learning model; a model predicting a continuous numerical value (e.g. housing prices, forecasting sales, customer lifetime value, etc). | mage_ai |

867,106 | Completed Hacktoberfest 2021! | I'm glad to say I'm in First 50,000 Participants to earn a T-shirt from Hacktoberfest and also got... | 0 | 2021-10-18T02:32:13 | https://dev.to/saugatrimal/completed-hacktoberfest-2021-1pa9 | hacktoberfest, opensource, github, swags | I'm glad to say I'm in First 50,000 Participants to earn a T-shirt

from Hacktoberfest and also got Hacktoberfest Exclusive badge from Dev.to

Thank You everyone who supports me for this event

| saugatrimal |

867,318 | A malicious user gaining access to your apps can be catastrophic. Here's how a secure SSO could help. | In any enterprise, it is a given that employees will come and go, and many will switch roles within... | 0 | 2021-10-18T07:11:39 | https://dev.to/cloudnowtech/a-malicious-user-gaining-access-to-your-apps-can-be-catastrophic-heres-how-a-secure-sso-could-help-145f | In any enterprise, it is a given that employees will come and go, and many will switch roles within the organization as well. At the same time, the same is true for the applications that the company uses - new apps will be deployed, old ones will be retired, and changes are constant.

What this means is a continuous churn - in identity management for users, and service providers, by means of the SaaS applications in use. Ensuring data and app security across the organization depends heavily on ensuring secure communication between your identity provider and service providers.

Deploying a robust [Single Sign-On (SSO)] (https://www.akku.work/product/single-sign-on.html) solution represents the best answer to this challenge. An SSO allows an enterprise to manage the identities of employees in one place, and delegate access and privileges from there.

Most SaaS providers support [SSO] (https://www.akku.work/product/single-sign-on.html) integration as it is the most efficient route to centralized identity and access management. The [SSO] (https://www.akku.work/product/single-sign-on.html) authentication method also enables users to securely access multiple apps and websites with a single set of credentials, which reduces issues like password fatigue, which boosts security, lowers IT help desk load, and increases organizational efficiency.

**How SSO works**

To get your [SSO] (https://www.akku.work/product/single-sign-on.html) in place, you need to find the right identity provider. The identity provider is essentially a service that securely stores and manages digital identities. An [SSO] (https://www.akku.work/product/single-sign-on.html) works based on a trust relationship between the app and the identity provider.

Organizations establish a trust relationship between an identity provider and their service providers to allow their employees or users to then connect with the resources they need. Such a trust relationship is established by exchanging digital certificates and metadata. The certificate carries secure tokens which contain identity information like email address and password, to authenticate that the request has come from a trusted source and to verify identity.

Although [SSO] (https://www.akku.work/product/single-sign-on.html) can work with as many apps as the organization wants, each must be configured with a unique trust relationship.

**How the Service Provider-Identity Provider relationship works**