id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

761,065 | Game Dev Digest — Issue #103 - Making Things Better | Issue #103 - Making Things Better This article was originally published on... | 4,330 | 2021-07-16T12:20:47 | https://gamedevdigest.com/digests/issue-103-making-things-better.html | gamedev, unity3d, csharp, news | ---

title: Game Dev Digest — Issue #103 - Making Things Better

published: true

date: 2021-07-16 12:20:47 UTC

tags: gamedev,unity,csharp,news

canonical_url: https://gamedevdigest.com/digests/issue-103-making-things-better.html

series: Game Dev Digest - The Newsletter About Unity Game Dev

---

### Issue #103 - Making Things Better

*This article was originally published on [GameDevDigest.com](https://gamedevdigest.com/digests/issue-103-making-things-better.html)*

Short but sweet this week. Some focus on optimization, animation, meshes, shading and more. Enjoy!

---

[**Optimize your mobile game performance: Get expert tips on physics, UI, and audio settings**](https://blog.unity.com/technology/optimize-your-mobile-game-performance-get-expert-tips-on-physics-ui-and-audio-settings) - In this second installment in this series, we’re zooming in on how to improve performance with the UI, physics, and audio settings.

[_Unity_](https://blog.unity.com/technology/optimize-your-mobile-game-performance-get-expert-tips-on-physics-ui-and-audio-settings)

[**Unity Event systems interfaces for Customized GUI behavior**](https://indiewatch.net/2021/02/04/unity-event-systems-interfaces-for-customized-gui-behavior/) - A tutorial for boosting your creativity with the Unity Event Systems Library.

[_indiewatch.net_](https://indiewatch.net/2021/02/04/unity-event-systems-interfaces-for-customized-gui-behavior/)

[**Mesh Deformation in Unity**](https://bronsonzgeb.com/index.php/2021/07/10/mesh-deformation-in-unity/) - In this article, I explore mesh deformation using a custom vertex shader.

[_Bronson Zgeb_](https://bronsonzgeb.com/index.php/2021/07/10/mesh-deformation-in-unity/)

[**ML-Agents plays DodgeBall**](https://blog.unity.com/technology/ml-agents-plays-dodgeball) - Today, we are excited to share a new environment to further demonstrate what ML-Agents can do. DodgeBall is a competitive team vs team shooter-like environment where agents compete in rounds of Elimination or Capture the Flag. The environment is open-source, so be sure to check out the repo.

[_Unity_](https://blog.unity.com/technology/ml-agents-plays-dodgeball)

[**Unity 2021.2.0 Beta 4**](https://unity3d.com/unity/beta/2021.2.0b4) - Unity 2021.2.0 Beta 4 has been released.

[_Unity_](https://unity3d.com/unity/beta/2021.2.0b4)

## Videos

[](https://www.youtube.com/watch?v=lUmRJRrZfGc)

[**Making a Zelda-style Cel Shading Effect in Unity Shader Graph**](https://www.youtube.com/watch?v=lUmRJRrZfGc) - Tons of games use a stylised cel-shading art style to help them stand out graphically, including hits like Zelda: Breath of the Wild, Persona 5 and Okami. In this tutorial, we'll unlock the secrets of cel-shading in Shader Graph by using our own custom lighting and end up with an effect that supports multiple lights!

[_Daniel Ilett_](https://www.youtube.com/watch?v=lUmRJRrZfGc)

[**How to create an AI Bot Race Car Controller in Unity tutorial Part 2 - Avoidance**](https://www.youtube.com/watch?v=5SJ6AAI6Wcs) - This is the 2nd part of our Car AI in Unity from scratch.

[_Pretty Fly Games_](https://www.youtube.com/watch?v=5SJ6AAI6Wcs)

[**Changing Action Maps with Unity's "New" Input System**](https://www.youtube.com/watch?v=T8fG0D2_V5M) - Changing actions maps with Unity's new input system is easy and allows precise control of which inputs are being listened to - switching between a 3rd person controller, vehicle controller or UI buttons. An input manager can provide a static instance of an input action asset as well as centralized control of toggling action maps making implementation easy and error-free.

[_One Wheel Studio_](https://www.youtube.com/watch?v=T8fG0D2_V5M)

[**First Person Controller - Slope Sliding (EP06) [Unity Tutorial]**](https://www.youtube.com/watch?v=jIsHe9ARE70) - Hey guy! In this next instalment of the first person controller series we're going to take a look at fixing one of the flaws in the Unity Character Controller by adding sliding t slope limit parameter. That meaning, if we're on or above the character controllers slope limit, we wont be able to bunny hop or jump our way up it, instead we'll just slide on back down it!

[_Comp-3 Interactive_](https://www.youtube.com/watch?v=jIsHe9ARE70)

[**Animation Retargeting (Unity Tutorial)**](https://www.youtube.com/watch?v=fNgPkuMgWFg) - In this Unity game development tutorial we're going look at how we can make use of animation retargeting in Unity, to use the same animations between different characters.

[_Ketra Games_](https://www.youtube.com/watch?v=fNgPkuMgWFg)

[**DConf 2017 Day 2 Keynote: Things that Matter -- Scott Meyers**](https://www.youtube.com/watch?v=3WBaY61c9sE) - In the 45+ years since Scott Meyers wrote his first program, he’s played many roles: programmer, user, educator, researcher, consultant. Different roles beget different perspectives on software development, and so many perspectives over so much time have led Scott to strong views about the things that really matter. In this presentation, he’ll share what he believes is especially important in software and software development, and he’ll try to convince you to embrace the same ideas he does.

[_The D Language Foundation_](https://www.youtube.com/watch?v=3WBaY61c9sE)

[**How to make a Better Health Bar in Unity : Chip Away Tutorial**](https://www.youtube.com/watch?v=CFASjEuhyf4) - In this Video we will create a better looking health bar for use in your game!

[_Natty Creations_](https://www.youtube.com/watch?v=CFASjEuhyf4)

[**Unity 2D: One Way Platforms**](https://www.youtube.com/watch?v=3Tb-__P_UvU) - Learn how to create 2D one-way platforms in Unity 2020.

[_Root Games_](https://www.youtube.com/watch?v=3Tb-__P_UvU)

[**How to Implement Enemy Abilities/Skills Part 1 | AI Series Part 21 | Unity Tutorial**](https://www.youtube.com/watch?v=faNV-hWu07o) - In this tutorial I show how to set up the foundation for enemies using abilities or skills and implement a basic gap-closing skill - Jump where the enemy AI will jump to the player's location then resume following.

[_LlamAcademy_](https://www.youtube.com/watch?v=faNV-hWu07o)

## Assets

[](https://assetstore.unity.com/summer-sale?aid=1011l8NVc)

[**Smash Hit Summer - Asset Store Sale**](https://assetstore.unity.com/summer-sale?aid=1011l8NVc) - Over the next seven weeks, Unity is launching a series of weekly sales, each with a unique game development theme. Each week, approximately 150 assets will be on sale for 50% off and the assets included will all be great selections to use when making a game highlighted in the weekly theme.

The top assets from one week will carry over into the next week and new assets will be added. The final two weeks of the sale will feature the top assets from the first five weeks, plus an additional set of our most popular assets.

* Week 1: Low-Poly Game - June 22 - June 28

* Week 2: 2D Game - June 29 - July 5

* Week 3: 3D Game - July 6 - July 12

* [Week 4: Fantasy Game - July 13 - July 19](https://assetstore.unity.com/summer-sale?aid=1011l8NVc)

* Week 5: Sci-Fi Game- July 20 - July 26

* Week 6: Dream Project - July 27 - August 9

[_Unity_](https://assetstore.unity.com/summer-sale?aid=1011l8NVc) **Affiliate**

[**Harmony**](https://github.com/pardeike/Harmony) - A library for patching, replacing and decorating .NET and Mono methods during runtime.

[_Andreas Pardeike_](https://github.com/pardeike/Harmony) *Open Source*

[**BMesh for Unity**](https://github.com/eliemichel/BMeshUnity) - This Unity package is a library to make runtime procedural mesh generation as flexible as possible. The mesh structure is similar to the one used in Blender, and a mechanism for adding arbitrary attributes to vertices/edges/loops/faces is available, for instance for people used to Houdini's wrangle nodes.

[_eliemichel_](https://github.com/eliemichel/BMeshUnity) *Open Source*

[**UniMob**](https://github.com/codewriter-packages/UniMob) - Reactive state management for Unity. UniMob is a library that makes state management simple and scalable by transparently applying functional reactive programming. _[also check out [UniMob.UI](https://github.com/codewriter-packages/UniMob.UI)]_

[_codewriter-packages_](https://github.com/codewriter-packages/UniMob) *Open Source*

[**Unity Sparse Voxel Octrees**](https://github.com/BudgetToaster/unity-sparse-voxel-octrees) - A Unity-based method of rendering voxels using Sparse Voxel Octrees as seen in Nvidia's paper: "Efficient Sparse Voxel Octrees – Analysis, Extensions, and Implementation".

[_BudgetToaster_](https://github.com/BudgetToaster/unity-sparse-voxel-octrees) *Open Source*

[**DevLogger**](https://github.com/TheWizardsCode/DevLogger) - DevLogger is a Unity Plugin that helps you keep a DevLog while working on your project.

[_TheWizardsCode_](https://github.com/TheWizardsCode/DevLogger) *Open Source*

[**WooshiiAttributes**](https://github.com/WooshiiDev/WooshiiAttributes) - A growing collection of flexible, powerful Unity attributes.

[_WooshiiDev_](https://github.com/WooshiiDev/WooshiiAttributes) *Open Source*

[**UIElements**](https://github.com/plyoung/UIElements) - Various scripts related to Unity UI Toolkit (UIElements). These are not all plug-and-play but should serve as examples you can adopt for your own needs.

[_plyoung_](https://github.com/plyoung/UIElements) *Open Source*

[**Sound Effects and Audio Bundle**](https://www.humblebundle.com/software/music-sound-effects-for-games-files-content?partner=unity3dreport) - Visuals start a game, great sound finishes them! When creating your next video or game, don’t forget one of the most important senses for immersion; sound. Make whatever you create magic with this bundle of spectacular music and sound effects. Plus, your purchase will support The Michael J. Fox Foundation for Parkinson's Research, WWF, and a charity of your choice!

[_Humble Bundle_](https://www.humblebundle.com/software/music-sound-effects-for-games-files-content?partner=unity3dreport) **Affiliate**

[**Game Dev Arts MegaPack**](https://www.humblebundle.com/software/game-dev-arts-megapack-software?partner=unity3dreport) - Make your game truly magic with these awesome developer assets! Want to add that special spark to your next game creation? Pick up the Game Dev Arts Megapack and enjoy software that puts a wealth of icons, assets, UI frames, characters, and much more at your fingertips! Plus, your purchase will support Cancer Research UK, Save the Children, & a charity of your choice!

[_Humble Bundle_](https://www.humblebundle.com/software/game-dev-arts-megapack-software?partner=unity3dreport) **Affiliate**

## Spotlight

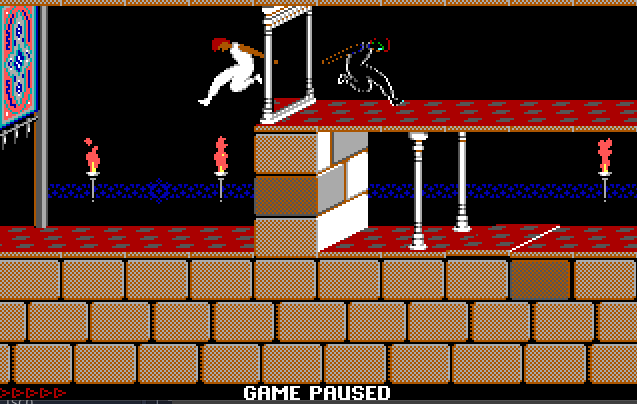

[](https://store.steampowered.com/app/1605320/Unusual_Findings/)

[**Unusual Findings**](https://store.steampowered.com/app/1605320/Unusual_Findings/) - It’s the 80s and the Christmas is coming, Vinny, Nick and Tony are young and their new cable signal descrambler just arrived. That same night while trying to decrypt a pay per view adult channel, they pick up the distress signal of an alien spaceship crash-landing in the woods near their town… Things only get weirder as they realize that the towering alien is killing very specific members of their community!

Explore the world oozing with nostalgia, check the Video Buster Store for clues, challenge other kids at the Laser Llamas Arcades, go learn a new trick at The Emerald Sword comic store, try to get along with the punk looking Lost Boys at their hideout or even dare to ask THE BULL, the quintessential 80s Action hero, for some help!

Follow the story that pays tribute to 80s classics like The Goonies, The Explorers, Monster Squad, The Lost Boys, They Live, Terminator, and Aliens among others and a gameplay that combines mechanics of Point and Clicks masterpieces like Full Throttle with its own unique twist and more.

_[You can [follow development](https://twitter.com/EpicLLamaGames) on Twitter]_

[_Epic Lama_](https://store.steampowered.com/app/1605320/Unusual_Findings/)

---

You can subscribe to the free weekly newsletter on [GameDevDigest.com](https://gamedevdigest.com)

This post includes affiliate links; I may receive compensation if you purchase products or services from the different links provided in this article.

| gamedevdigest |

761,069 | Tailwind Buttons | Responsive buttons built with Tailwind. Buttons provide predefined styles for multiple button types:... | 0 | 2021-10-13T10:06:46 | https://dev.to/keepcoding/tailwind-buttons-2po | tailwindcss, webdev | Responsive buttons built with Tailwind. Buttons provide predefined styles for multiple button types: outline, rounded, social, floating, fixed, tags, etc.

______

## Installation

#### Quick Start

In order to start using Tailwind simply download our starter.

[DOWNLOAD ZIP STARTER](http://tailwind-elements.com/tw-starter.zip)

Tailwind Elements does not change or add any CSS to the already one from TailwindCSS.

You can directly copy our components into your Tailwind design and they will work straight away.

In some dynamic components (like dropdowns or modals) we add Font Awesome icons and custom JavaScript. However, they do not require any additional installation, all the necessary code is always included in the example and copied to any Tailwind project - it will work.

_______

#### MDB GO

{% youtube RAhugF8NOBs %}

_______

## Customization

##### Regular button

###### HTML

```html

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-xs px-4 py-2 rounded shadow hover:shadow-md outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

Small

</button>

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-sm px-6 py-3 rounded shadow hover:shadow-lg outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

Medium

</button>

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-base px-8 py-3 rounded shadow-md hover:shadow-lg outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

Large

</button>

```

##### Regular button with icon

###### HTML

```html

<!-- Required font awesome -->

<link rel="stylesheet" href="https://use.fontawesome.com/releases/v5.11.2/css/all.css" />

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-xs px-4 py-2 rounded shadow hover:shadow-md outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

<i class="fas fa-gem"></i> Small

</button>

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-sm px-6 py-3 rounded shadow hover:shadow-lg outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

<i class="fas fa-gem"></i> Regular

</button>

<button

class="bg-purple-500 text-white active:bg-purple-600 font-bold uppercase text-base px-8 py-3 rounded shadow-md hover:shadow-lg outline-none focus:outline-none mr-1 mb-1 ease-linear transition-all duration-150"

type="button">

<i class="fas fa-gem"></i> Large

</button>

```

#### You can see more customization examples on the [📄 Buttons documentation page](https://tailwind-elements.com/docs/standard/components/buttons/)

______

## Crucial Resources

Here are the resources that we have prepared to help you work with this component:

1. Read [📄 Buttons documentation page](https://tailwind-elements.com/docs/standard/components/buttons/) <-- start here

2. In to get the most out of your project, you should also get acquainted with other Components options related to Buttons. See the section below to find the list of them.

3. After finishing the project you can publish it with CLI in order to receive [💽 Free hosting (beta)](https://mdbootstrap.com/docs/standard/cli/)

-----

## Related Components options & features

- [Accordion](https://tailwind-elements.com/docs/standard/components/accordion/)

- [Alerts](https://tailwind-elements.com/docs/standard/components/alerts/)

- [Badges](https://tailwind-elements.com/docs/standard/components/badges/)

- [Button group](https://tailwind-elements.com/docs/standard/components/button-group/)

- [Cards](https://tailwind-elements.com/docs/standard/components/cards/)

- [Carousel](https://tailwind-elements.com/docs/standard/components/carousel/)

- [Charts](https://tailwind-elements.com/docs/standard/components/charts/)

- [Chips](https://tailwind-elements.com/docs/standard/components/chips/)

- [Dropdown](https://tailwind-elements.com/docs/standard/components/dropdown/)

- [Gallery](https://tailwind-elements.com/docs/standard/components/gallery/)

- [Headings](https://tailwind-elements.com/docs/standard/components/headings/)

- [Images](https://tailwind-elements.com/docs/standard/components/images/)

- [List group](https://tailwind-elements.com/docs/standard/components/listgroup/)

- [Modal](https://tailwind-elements.com/docs/standard/components/modal/)

- [Paragraphs](https://tailwind-elements.com/docs/standard/components/Paragraphs/)

- [Popover](https://tailwind-elements.com/docs/standard/components/popover/)

- [Progress](https://tailwind-elements.com/docs/standard/components/progress/)

- [Rating](https://tailwind-elements.com/docs/standard/components/rating/)

- [Spinners](https://tailwind-elements.com/docs/standard/components/spinners/)

- [Stepper](https://tailwind-elements.com/docs/standard/components/stepper/)

- [Tables](https://tailwind-elements.com/docs/standard/components/tables/)

- [Template](https://tailwind-elements.com/docs/standard/components/template/)

- [Toast](https://tailwind-elements.com/docs/standard/components/toast/)

- [Tooltip](https://tailwind-elements.com/docs/standard/components/tooltip/)

-----

## Additional resources

Learn web development with our **learning roadmap**:

**[:mortar_board: Start Learning](https://mdbootstrap.com/docs/standard/getting-started/)**

Join our mailing list & receive **exclusive resources** for developers

**[:gift: Get gifts](https://mdbootstrap.com/newsletter/)**

Join our private FB group for **inspiration & community experience**

**[👨👩👧👦 Ask to join](https://www.facebook.com/groups/682245759188413)**

**Support creation of open-source packages** with a STAR on GitHub

[](https://github.com/dawidadach/Tailwind-Elements/)

| keepcoding |

761,269 | Why Astro matters | Next, Nuxt, Gatsby, SvelteKit ... there's been an explosion of frontend application frameworks lately. It's never been a more delightful experience to spin up a new project. What's the point of difference with this one? Why does it 'matter' so much? | 0 | 2021-07-16T16:12:15 | https://dev.to/endymion1818/why-astro-matters-55nj | javascript, react, vue, svelte | ---

title: Why Astro matters

published: true

description: "Next, Nuxt, Gatsby, SvelteKit ... there's been an explosion of frontend application frameworks lately. It's never been a more delightful experience to spin up a new project. What's the point of difference with this one? Why does it 'matter' so much?"

tags: javascript, react, vue, svelte

---

**Next, Nuxt, Gatsby, SvelteKit ... there's been an explosion of frontend application frameworks lately. I've tried many (but not all) of them, and I've got to say, it's never been a more delightful experience to spin up a new project. So much so, that I've got hundreds of unfinished ones lying around everywhere.**

Recently, [Astro](https://astro.build), another new frontend application framework, launched itself on the unsuspecting JavaScript public.

Whilst many of us may have been tempted to say "oh no not another one", this framework really stood out to me.

What's the point of difference with this one? Why does it "matter" so much? Well, consider this:

## 1. Frontend can be one happy family again

Astro could be considered the first frontend "meta framework".

What's one of those then? It's a "set of core interfaces for common services and highly extensible backbone for integrating components [this is already Java thing by the way](https://www.igi-global.com/chapter/java-web-application-frameworks/16864).

Astro is essentially a "bring your own frontend" approach to modern web frameworks. You can use whatever framework (oh, ok "library" then) you know and love, and still spin up a performant app that you can host almost anywhere.

Think about the potential here. Astro could be the place the frontend finally comes together. It no longer matters (as much) what framework you use. Use them all if you like 🤷♂️.

Love Vue? You can love Astro. React? Same. Svelte? You'll find no argument from Astro, because Astro is the glue that underpins how we build websites and applications.

Great, innit? It'll probably never happen but I can dream, can't I?

## 2. Astro pushes the boundaries for every javascript framework*

(* oh, ok library then)

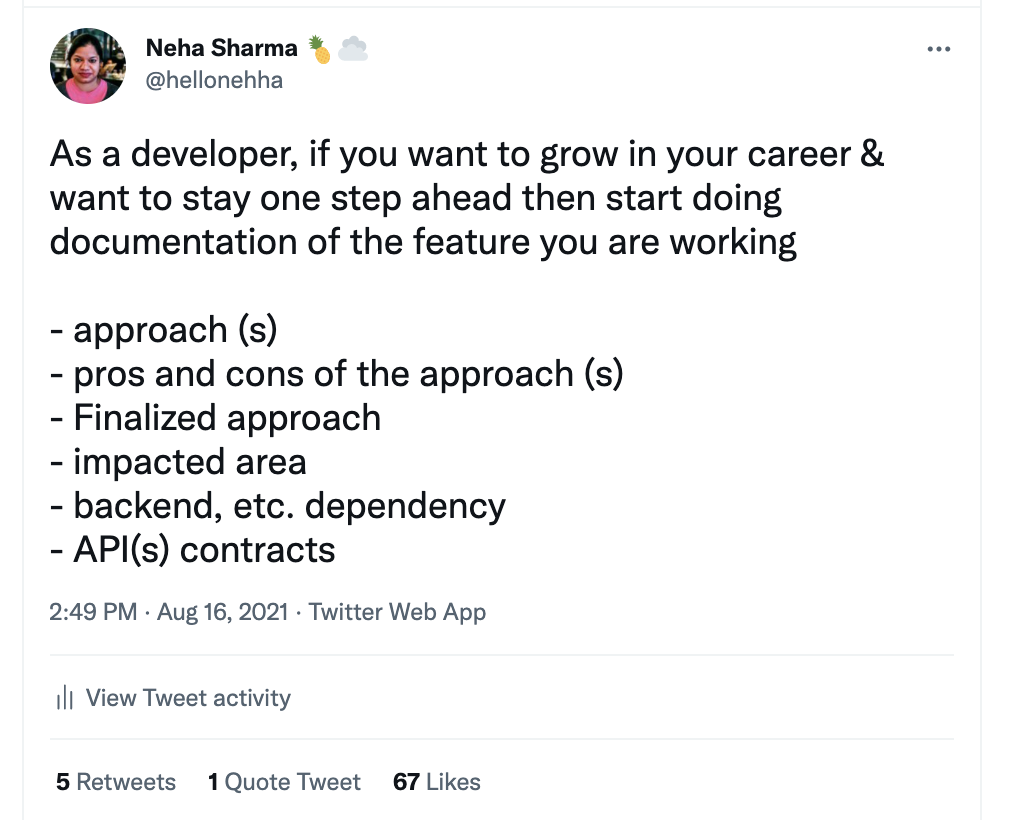

Take a look at this tweet from Evan You, the creator of Vue:

<blockquote class="twitter-tweet"><p lang="en" dir="ltr">I just tried this in a Vite SSRed app and this approach totally works... a plugin can simply remove the script tags for the actual bundle and let petite-vue "sprinkle" the parts.<br><br>aka "Island Architecture" 🏝️ <a href="https://t.co/Oe9KRvFsrd">https://t.co/Oe9KRvFsrd</a> <a href="https://t.co/KV7SvCwyn8">pic.twitter.com/KV7SvCwyn8</a></p>— Evan You (@youyuxi) <a href="https://twitter.com/youyuxi/status/1411405615369539590?ref_src=twsrc%5Etfw">July 3, 2021</a></blockquote> <script async src="https://platform.twitter.com/widgets.js" charset="utf-8"></script>

Is it a coincidence that Vue now can do a similar thing to Astro? did Astro get Evan to start thinking more about this problem? Could the same be said for the other frameworks too?

[Better hydration is something I've been wanting ever since the present generation of frontend application frameworks came out](https://deliciousreverie.co.uk/post/towards-better-rehydration/).

I know the React team have been working on it for a long time. [I even opened (very prematurely it turns out!) this issue on the GatsbyJS repo around 2 years ago](https://github.com/gatsbyjs/gatsby/issues/17993).

React 18's hydration prioritisation is a good step forward, however the whole DOM tree still need to be hydrated. Won't it be great when we need only attach JavaScript generated elements to the DOM when components really need them?!

It would be wonderful to think that partial rehydration could be everywhere, it would certainly level the playing field and even things up a lot [for the next 1 billion web users](https://gomakethings.com/progressive-enhancement-and-the-next-billion-web-users/).

## Check out Astro

If you care about performance (you care right?) please check out this gamechanger. I'm so excited for the potential here.

https://astro.build | endymion1818 |

761,280 | .NET 6: The MOST promising FEATURES 🔥 | Microsoft is putting the batteries and already presents .NET 6 Preview 5. According to what they tell... | 0 | 2021-07-20T15:38:00 | https://www.bytehide.com/blog/dotnet-6-is-here-the-features-that-will-blow-your-mind/ | dotnet, netcore, csharp, dotnet6 | **Microsoft** is putting the batteries and already presents .NET 6 Preview 5. According to what they tell us, they are already in the second half of the **.NET 6** version and they are beginning to teach new features that promise a lot. 🤗

---

## What is .NET? What is it for?

What is .NET in general, is a _Microsoft_ platform to develop Software, covering many operating systems such as **Windows**, **iOS**, **Linux**, **Android** … The main idea of .NET is to be able to develop applications independent of the physical architecture or the operating system in the one that was going to be executed.

Its main advantages are:

* **Decreased** development time

* Use of **predesigned functionalities**

* **Reduction** of development and maintenance cost

* **Simplification** of maintenance

> Okay, I understand. And what is .NET 6? _🤔_

.NET 6 is the new version of .NET _(currently .NET 5)_ that Microsoft promises to release on **November 9** of this year. It brings many improvements and new features compared to .NET 5.

---

## What are .NET 6 new features?

If we speak in general, we can speak from .NET MAUI, through the new implementation of the 64-bit architecture, to Visual Studio 2022 and its function more... 😎 But we are going to explain the most important ones and the ones you should know.

---

### NuGet package validation

Package validation tools will allow **NuGet** library developers to validate that their packages are consistent and well-formed.

Its main characteristics are:

* Validate that there are **no important change**s in the versions

* Validate that the package **has the same set of public APIs** for all specific runtime implementations.

* Determine **applicability gaps in the target framework** or in the execution time.

---

### Workload Enhancements in the .NET SDK

Microsoft reports that it has added new workload commands in .NET 6 to improve administration:

* `dotnet workload search` List the workloads available to install.

* `dotnet workload unistall` Removes the specified workload if you no longer need a workload. It is also a good option to save space.

* `dotnet workload repair` Reinstall all previously installed workloads.

---

### Crossgen2

[Crossgen](https://github.com/dotnet/runtime/blob/main/docs/workflow/building/coreclr/crossgen.md) allows **IL** precompiling into native code as a publishing step. Pre-compiling is primarily beneficial to improve startup. **Crossgen2** is a scratch implementation that is already proving to be a superior platform for code generation innovation.

Here we can see how to enable the pre-compilation with Crossgen2 from the _MSBuild_ properties:

```csharp

<!-- Enable pre-compiling native code (in ready-to-run format) with crossgen2 -->

<PublishReadyToRun>true</PublishReadyToRun>

<!-- Enable generating a composite R2R image -->

<PublishReadyToRunComposite>true</PublishReadyToRunComposite>

```

---

### Windows Forms: The default font

Already with .NET 6 you can set a default font for an `Application.SetDefaultFont`application. Also the pattern it uses is similar to setting high dpi or visual styles. An example:

```csharp

class Program

{

\[STAThread\]

static void Main()

{

Application.SetHighDpiMode(HighDpiMode.SystemAware);

Application.EnableVisualStyles();

Application.SetCompatibleTextRenderingDefault(false);\+

Application.SetDefaultFont(new Font(new FontFamily("Microsoft Sans Serif"), 8f));Application.Run(new Form1());

}

}

```

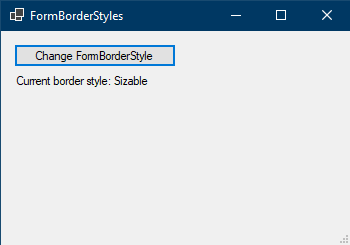

Here are **2 examples** after you set the default font.

Microsoft Sans Serif, 8pt:

Chiller, 12pt:

---

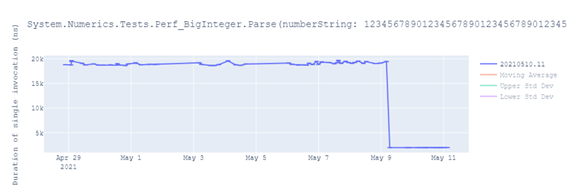

### Performance increase in the BigInteger library

BigIntegers parsing of decimal and hexadecimal strings has been improved. In the following photo you can see improvements of up to **89%**:

---

### SSL 3 support

The .NET crypto APIs support the use of **OpenSSL 3** as the preferred native crypto provider on _Linux_. .NET 6 will use **OpenSSL 3** if available. Otherwise, it will use **OpenSSL 1.x**.

---

### IOS CPU Sampling (SpeedScope)

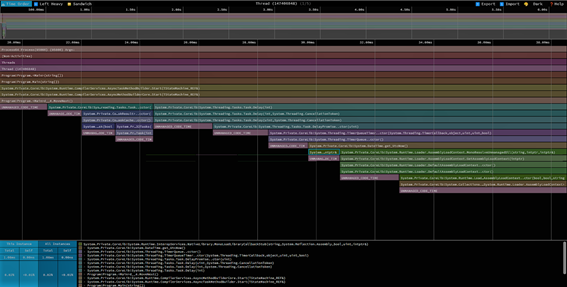

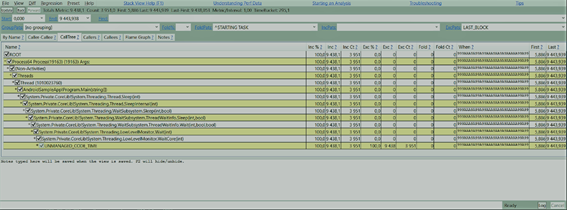

The graph below shows part of an **iOS** startup _CPU_ sampling session seen in **SpeedScope**:

---

### Android CPU Sampling (PerfView)

The following image shows the **Android** _CPU_ sampling seen in **PerfView**:

---

## .NET 6 Conclusion

This .NET 6 Preview 5 is perhaps the biggest so far of all the ones that have been released in terms of quantity and quality of features. Right now you can try .NET 6 by downloading it from the **Official Web of .NET 6.**

As Microsoft says…

> The future has arrived

With all the tools already announced and all that remain to be shown and released, development will become a much easier, more optimized and more productive task, highly improving the developer experience in their current and future projects.

We would like to know your opinion. Sound good features? Anything you would like them to take out? Leave it in the comments so we can talk about it.😊 | bytehide |

761,289 | Princípio da Responsabilidade Única (SRP) | Neste artigo vou explicar como funciona o Princípio da Responsabilidade Única (SRP) do SOLID e... | 13,664 | 2021-07-16T16:55:06 | https://felipecesar.dev/princ%C3%ADpio-da-responsabilidade-%C3%BAnica-srp | braziliandevs, javascript, programming, architecture | Neste artigo vou explicar como funciona o **Princípio da Responsabilidade Única (SRP)** do **SOLID** e mostrar como aplicá-lo em JavaScript.

## O que é SOLID?

SOLID é um acrônimo de 5 princípios da programação orientada a objetos, são eles:

**[S]**ingle Responsability Principle

**[O]**pen/Closed Principle

**[L]**iskov Substitution Principle

**[I]**nterface Segregation Principle

**[D]**ependency Inversion Principle

Com a aplicação destes princípios podemos obter alguns benefícios, como códigos mais fáceis de manter, adaptar, testar, etc. Além de evitar possíveis problemas como códigos desestruturados, frágeis e duplicados.

## O primeiro princípio

O primeiro princípio do SOLID é o da responsabilidade única ou **SRP**, este princípio define que uma classe deve possuir apenas uma responsabilidade que deve estar totalmente encapsulada dentro dela.

Sua definição formal diz:

> Uma classe deve ter **um, e apenas um**, motivo para ser modificada.

Se uma classe só deve ter um motivo para ser modificada, certamente ela só deve ter uma única responsabilidade.

## Exemplo de violação

Vamos supor que precisamos criar uma classe que faça requisições ao servidor e valide os erros para apresenta-los como um alerta na tela, essa é uma classe que possui mais de uma responsabilidade e claramente viola o SRP. Abaixo segue um exemplo desta classe onde importamos o [SweetAlert2](https://sweetalert2.github.io/) para exibir os alertas de erro:

```javascript

import Swal from 'sweetalert2';

export class HttpClient {

get(url) {

return fetch(url, {

headers: {

Accept: 'application/json'

}

}).then(response => {

if (response.ok) {

return response.json();

} else {

if (response.status == 401) {

Swal({

title: 'Não autorizado',

type: 'error'

});

} else if (response.status == 404) {

Swal({

title: 'Não encontrado',

type: 'warning'

});

} else if (response.status == 500) {

Swal({

title: 'Erro do Servidor Interno',

type: 'error'

});

} else {

Swal({

title: 'Erro desconhecido',

type: 'info'

});

}

}

});

}

}

```

## Resolvendo a violação do SRP

Nesse caso como decidimos importar uma lib para validar o erro antes de que ele seja exibido, podemos separar as responsabilidades em duas classes:

```javascript

import Swal from 'sweetalert2';

export default class ErrorHandler {

static handle(response) {

if (response.status == 401) {

Swal({

title: 'Não autorizado',

type: 'error'

});

} else if (response.status == 404) {

Swal({

title: 'Não encontrado',

type: 'warning'

});

} else if (response.status == 500) {

Swal({

title: 'Erro do Servidor Interno',

type: 'error'

});

} else {

Swal({

title: 'Erro desconhecido',

type: 'info'

});

}

}

}

```

```javascript

import ErrorHandler from './error-handler';

export default class HttpClient {

get(url) {

return fetch(url, {

headers: {

Accept: 'application/json'

}

}).then(response => {

if (response.ok) {

return response.json();

} else {

ErrorHandler.handle(response);

}

});

}

}

```

Dessa forma conseguimos corrigir a violação, dividindo as responsabilidades em classes diferentes.

## Conclusão

Esse é o tipo de princípio que toda aplicação orientada a objetos deve ter, aplicando ele conseguimos criar classes mais coesas e com um acoplamento mais baixo.

Espero que tenham gostado, se tiverem duvidas ou sugestões podem comentar. Abraço! | felipecesr |

761,429 | Tips for creating a good post | [Clique aqui para ler em português] TITLE Write an attention-grabbing headline that will... | 0 | 2021-07-16T19:25:44 | https://dev.to/walternascimentobarroso/tips-for-creating-a-good-post-2bd6 | post, content, tips, article | [[Clique aqui para ler em português]](https://medium.com/walternascimentobarroso-pt/dicas-para-criar-um-bom-post-a5c69143f3d)

## TITLE

Write an attention-grabbing headline that will draw an audience to your text.

## IMAGES

Use an image to reflect what your post means.

some sites with free images:

https://stocksnap.io/

https://www.pexels.com/

Website that searches for free images on other websites https://farejadordeimagens.com.br/

Site to change image dimension

https://www.easy-resize.com/

Website to reduce image weight

https://compressor.io/

## TABLES

Whenever possible, adjust your content in tables, so the information is more organized, by comparison tables.

To help assemble the tables, use the website

https://ozh.github.io/ascii-tables/

## CODES

Whenever you need to put in some code that is too extensive, use some of the tools below:

https://carbon.now.sh/

https://codepen.io/

https://gist.github.com/

## TAGS

Always add tags to your post, so it’s easier to find

## EMOJIS

To convey more feelings and expressions in your posts, use

emojis

http://emojipedia.org/

http://www.iemoji.com/

***

## Thanks for reading!

If you have any questions, complaints or tips, you can leave them here in the comments. I will be happy to answer!

😊😊See you! 😊😊 | walternascimentobarroso |

761,498 | Creando Arte CSS Accesible | El arte y los dibujos CSS no son accesibles por defecto, pero con las técnicas modernas de HTML y CSS, se pueden hacer más cercanos a todo el mundo. | 0 | 2021-07-16T23:13:04 | https://alvaromontoro.com/blog/67979/creating-accessible-css-art#2 | css, a11y, html, spanish | ---

title: Creando Arte CSS Accesible

published: true

description: El arte y los dibujos CSS no son accesibles por defecto, pero con las técnicas modernas de HTML y CSS, se pueden hacer más cercanos a todo el mundo.

tags: css, a11y, html, spanish

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/mduo5hrwmec3ewnhda5l.jpg

canonical_url: https://alvaromontoro.com/blog/67979/creating-accessible-css-art#2

---

> For an English version of this article, visit this other [DEV post](https://dev.to/alvaromontoro/creating-more-accessible-css-art-179n)

El arte y los dibujos CSS ha existido básicamente desde que se creo CSS. Son una manera perfecta de practicar y aprender y son interesantes como retos de programación. Pero tienen un gran problema: el arte CSS no es accesible.

En este post no vamos a ver cómo crear dibujos e ilustraciones en CSS (hay muchos de esos posts). En lugar de eso, nos centraremos en consejos y buenas prácticas para hacer que los dibujos CSS sean más accesibles para todos.

Después de aplicar estas técnicas, tus imágenes en CSS se llevarán mejor con los lectores de pantalla, personas con necesidades específicos de colores, gente con trastornos vestibulares o vértigo... y todo sin impactar negativamente tu arte o tu creatividad. Todos ganan.

Para ver un ejemplo con los consejos de este artículo, [visita esta ilustración CSS](https://codepen.io/alvaromontoro/pen/WNwrPmW). Y sin más preámbulos, vamos a ver las recomendaciones:

1. Identifica el dibujo CSS como una imagen

2. Añade texto alternativo

3. Elige entre dibujar a la perfección o responsivamente

4. Usa elementos HTML semánticos

5. Precaución con las animaciones

6. Respeta las elecciones del colores

7. Especifica la relación de aspecto

## Identifica el dibujo CSS como una imagen

Una de las cosas más importantes es identificar tu arte CSS como una image. Eso se puede hacer añadiendo un [rol ARIA de `img`](https://developer.mozilla.org/en-US/docs/Web/Accessibility/ARIA/Roles/Role_Img) al contenedor principal del dibujo:

```html

<div role="img">

<!-- HTML de la imagen -->

</div>

```

Al añadir este rol ARIA, las tecnologías de asistencia anunciarán que el contenedor del arte CSS es una imagen cuando lleguen a él.

## Añade texto alternativo

Ahora que los lectores de pantalla anuncian el arte CSS como una imagen, es important proveer una descripción o texto alternativo tal y como lo tendría una imagen normal. Algo equivalente al [atributo `alt`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/img#attr-alt).

Podemos lograr esto al añadir el [atributo `aria-label`](https://developer.mozilla.org/en-US/docs/Web/Accessibility/ARIA/ARIA_Techniques/Using_the_aria-label_attribute) al dibujo y añadiendo el texto alternativo ahí:

```html

<div aria-label="El texto alternativo iría aquí">

<!-- HTML de la imagen -->

</div>

```

Esto funcionará pero, en el caso improbable (pero posible) de que el CSS no se cargue correctamente, puede no ser suficiente para hacer lo que queremos. Por eso prefiero usar mejor el [atributo `aria-labelledby`](https://developer.mozilla.org/en-US/docs/Web/Accessibility/ARIA/ARIA_Techniques/Using_the_aria-labelledby_attribute):

```html

<div aria-labelledby="alt-imagen">

<div id="alt-imagen">El texto alternativo va aquí</div>

<!-- HTML de la imagen -->

</div>

```

Esta solución incluye la etiqueta como texto, lo que permite esconderla usando cualquier técnica accesible. Hay muchas posibilidades para ello. Una sencilla se puede encontrar en la [página web de The A11Y Project](https://www.a11yproject.com/posts/2013-01-11-how-to-hide-content/):

```css

#alt-imagen {

clip: rect(0 0 0 0);

clip-path: inset(50%);

height: 1px;

overflow: hidden;

position: absolute;

white-space: nowrap;

width: 1px;

}

```

De este modo, si el CSS no se carga, el texto alternativo se mostrará parecido a como se mostraría el texto alternativo de un `<img />` nativo si la imagen no se carga correctamente en la página.

## Elige entre dibujar a la perfección o responsivamente

Algo **a tener en cuenta antes incluso de empezar a codificar nada**: ¿queremos que el dibujo sea _pixel-perfect_? ¿O queremos que sea algo responsivo y que cambie de tamaño (al coste de que igual no se ve del todo bien)? Por supuesto, las respuesta tendrán consecuencias y decidirán sobre cómo debemos crear el arte CSS.

Si queremos alcanzar resultados perfectos, deberíamos usar [unidades absolutas de medida](https://developer.mozilla.org/en-US/docs/Learn/CSS/Building_blocks/Values_and_units#absolute_length_units) como `px`, `cm`, `pt`, etc. De este modo nuestra imagen tendrá un tamaño fijo y no escalará del todo bien (aunque mejor que las imágenes raster ya que lo nuestro será un dibujo vectorial), pero podremos usar cualquier propiedad y valor CSS.

Por otro lado, utilizaremos [unidades relativas de medida](https://developer.mozilla.org/en-US/docs/Learn/CSS/Building_blocks/Values_and_units#relative_length_units) como `%`, `vmin`, `em`, etc. si queremos que sea escalable.

Para que sea responsivo debemos tener en cuenta otras consideraciones:

- El resultado será escalable y responsivo... o al menos, tendrá la capacidad de serlo.

- Tendremos que tener cuidado con algunas propiedades CSS que no funcionan bien con algunas unidades relativas (p.e. [`box-shadow`](https://developer.mozilla.org/en-US/docs/Web/CSS/box-shadow) o [`border`](https://developer.mozilla.org/en-US/docs/Web/CSS/border-width)).

- Debemos evitar valores CSS que no usan unidades relativas (p.e. [`clip-path`](https://developer.mozilla.org/en-US/docs/Web/CSS/clip-path) puede tener valores relativos con `polygon()` pero no con `path()`).

No hay nada malo con uno u otro enfoque. Para dibujos artísticos seguramente optemos por escalabilidad, mientras que para cosas más _prácticas_ como iconos o fondos, optar por algo más pixel perfecto será conveniente.

## Usa elementos HTML semánticos

HTML5 provee muchos elementos semánticos. No hace falta usar `<div>` para todas las partes de nuestro dibujo y especialmente no para el contenedor principal. La pregunta debería ser "¿qué elemento HTML debemos usar?"

> Nota del autor: comprendo que mi elección de elementos semánticos puede ser discutible. Intentaré ser lo más neutral/objetivo posible.

Hay un par de elementos que parecen ideales en este caso: [`<article>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/article) y [`<figure>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/figure)/[`<figcaption>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/figcaption). Mientras que el segundo parece una elección obvia, el primero tiene algunas ventajas semánticas, como veremos pronto.

Por un lado, tenemos `<figure>`. Un contenido autónomo con un subtítulo opcional (`<figcaption>`) que puede usarse para el texto alternativo:

```html

<figure aria-labelledby="alt-imagen">

<figcaption id="alt-imagen">Aquí va el texto alternativo</figcaption>

<!-- HTML de la imagen -->

</figure>

```

Esta elección parece venir como un guante para lo que queremos, pero no debemos olvidar `<article>`: una composición completa, autónoma e independiente, con la intención de ser reusada y distribuida. ¡Precisamente lo que es el dibujo CSS!

```html

<article>

<!-- HTML de la imagen -->

</article>

```

¿Qué elemento HTML semántico elegir? Eso dependerá del autor y de cómo quiere que su arte CSS sea presentado a los usuarios.

Alguna gente ha mencionado que no puede haber un `<article>` dentro de otro `<article>`, pero eso no es correcto. [Un artículo puede contener otro artículo](https://html.spec.whatwg.org/multipage/sections.html#the-article-element). Cuando hay artículos anidados, el artículo interior debe estar relacionado con el artículo exterior.

El uso de `<article>` da mucho juego también porque permite una incorporación más natural de otros elementos HTML semánticos como encabezados para el título/texto alternativo de la ilustración, [`<address>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/address) para el contacto del autor, o [`<time>`](https://developer.mozilla.org/en-US/docs/Web/HTML/Element/time) para la fecha de publicación:

```html

<article aria-labelledby="image-alt"

aria-describedby="image-info">

<!-- Todo el `<header>` se escondería de forma accesible -->

<header>

<h2 id="image-alt">Título/Texto alternativo</h2>

<p id="image-info">

<address>

Creado por

<a href="https://twitter.com/alvaro_montoro">

Alvaro Montoro

</a>

</address>

el

<time datetime="2021-06-10">10 de Junio de 2021</time>

</p>

</header>

<!-- HTML de la imagen -->

</article>

```

## Precaución con las animaciones

Algunas veces los dibujos CSS incluyen algún tipo de animación: un perro que mueve la cola, una persona moviéndose y parpadeando, un avión que vuela y da vueltas...

Cuando añadimos animaciones a nuestro arte CSS, debemos tener en cuenta que no a todo el mundo le gusta ver las animaciones o que pueden sufrir de algún trastorno que se vea acentuado por ellas (p.e. [los trastornos vestibulares o el vértigo](https://css-tricks.com/introduction-reduced-motion-media-query/)).

Tenemos que proporcionar algún modo de para las animaciones o reemplazarlas con algo diferente. Para nuestra suerte, CSS ofrece la [media query `prefers-reduced-motion`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-reduced-motion) que permite a los desarrolladores hacer precisamente eso.

Por ejemplo, podemos deshabilitar una animación haciendo algo como esto (suponiendo que la clase "animado" está en todos los elementos que se animan):

```css

@media (prefers-reduced-motion) {

.animado {

animation: none;

}

}

```

También tenemos que tener en cuenta que no todas las animaciones son iguales y no todas causarán problemas. **En lugar de cancelar todas las animaciones, podemos plantearnos cambiarlas por otras más apropiadas** o ajustando los tiempos para que no sean tan bruscas.

## Respeta las elecciones del colores

Algunos sistemas operativos permiten a los usuarios seleccionar opciones de accesibilidad y, en algunos casos, CSS puede identificar esos valores usando algunas media queries y propiedades.

Algunos de los métodos que vamos a comentario [no están soportados por los navegadores](https://caniuse.com/?search=forced-colors), pero pueden usarse para mejorar nuestro arte CSS una vez que el soporte esté más extendido.

Las media queries son:

- [`prefers-contrast`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-contrast): para indicar si el usuario quiere un contraste más alto o más bajo.

- [`prefers-color-scheme`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-color-scheme): para detectar si el usuario prefiere un tema oscuro o claro (muy común para mostrar el modo noche/día.)

- [`forced colors`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/forced-colors): especifica si un usuario ha elegido una paleta de colores limitada.

```css

@media (prefers-contrast: more) {

/* more: contrastes más altos, bordes, sin transparencias... */

/* less: contrastes de color más bajos */

}

@media (prefers-colors-scheme: dark) {

/* dark: dibujos con más contraste frente a fondos oscuros */

/* light: dibujos con más contraste frente a fondos claros */

}

@media (forced-colors: active) {

/* sobreescribir propiedades como box-shadow, añadir bordes, etc. */

}

```

El uso de [propiedades personalizadas en CSS](https://developer.mozilla.org/en-US/docs/Web/CSS/Using_CSS_custom_properties) (también llamadas variables CSS) hará más fácil el uso de esas media queries ya que sólo habrá que redefinir los valores de las variables.

En casos más extremos, puede que queremos evitar algunas propiedades CSS. Por ejemplo, el valor de `box-shadow` se fuerza a `none` en el modo de colores forzados, y tendríamos que buscar alternativas.

## Especifica la relación de aspecto

Una propiedad más reciente que puede ser útil para crear arte CSS responsivo es [`aspect-ratio`](https://developer.mozilla.org/en-US/docs/Web/CSS/aspect-ratio). Con ella podemos definir la relación de aspecto preferida para la imagen, que el navegador usará para calcular el tamaño y aplicarlo automáticamente:

```css

/* Este dibujo CSS será cuadrado */

#miArteCSS {

aspect-ratio: 1 / 1;

}

/* Este dibujo CSS será el doble de alto que de ancho */

#miArteCSS {

aspect-ratio: 1 / 2;

}

```

Esto es algo muy conveniente para los desarrolladores, pero también tiene un impacto en la accesibilidad porque asegura que nuestros dibujos CSS no se verán estirados o deformados. Y tiene un [buen soporte](https://caniuse.com/?search=aspect-ratio) por parte de los navegadores.

----

Este artículo es una extensión de la presentación que hice durante un meetup de SydCSS. Puedes encontrar el [video en Youtube](https://www.youtube.com/watch?v=bJRETGarbqE&t=2460s).

| alvaromontoro |

761,689 | JS Quiz: Practise Arithmetic Skills | Check out this website I created, where you can practice basic addition, subtraction, multiplication... | 0 | 2021-07-17T05:39:21 | https://dev.to/swatitr06172888/js-quiz-practise-arithmetic-skills-ngh | javascript, beginners, html, css | Check out this website I created, where you can practice basic addition, subtraction, multiplication and division. It is made using pure HTML, CSS and JavaScript. Any suggestions for improvement are most welcome.

Check out the main website here:

[Click to visit sankhya: website to practise mental maths](https://swati-gwc.github.io/sankhya)

Below was the first version of the above website.

{% codepen https://codepen.io/swati_gwc/pen/YzNxdGE %} | swatitr06172888 |

761,767 | Create LANDING PAGE with 3-D Effect | In this article I am going to show you how to add 3-D effects to a Landing Page using HTML, CSS and... | 0 | 2021-07-17T07:50:41 | https://dev.to/rajshreevats/create-landing-page-with-3-d-effect-4136 | html, javascript, css, tutorial | In this article I am going to show you how to add 3-D effects to a Landing Page using __HTML, CSS and JavaScript__. You can add 3-D effects to give your website a _bold navigation_ and also show off your skills of better usability standards. _Unique and beautiful_ effects are what can easily make people stop and take notice, increasing the time they spend on the site.

##Step 1: Create HTML File

This is a basic layout of a landing page, further we are going to add effects using _CSS and JavaScript_.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8" />

<meta name="viewport" content="width=device-width, initial-scale=1.0" />

<title>3D Navbar Animation</title>

<link rel="stylesheet" href="style.css" />

</head>

<body>

<div class="container">

<div class="navbar">

<div class="menu">

<h3 class="logo">Rajshree<span>Vats</span></h3>

<div class="hamburger-menu">

<div class="bar"></div>

</div>

</div>

</div>

<div class="main-container">

<div class="main">

<header>

<div class="overlay">

<div class="inner">

<h2 class="title"> Imagination creates reality</h2>

<p>

Hello, This is a sample landing page with 3-D navigation bar.

Created using HTML,CSS and JAVASCRIPT. I hope you like it.

</p>

<button class="btn">Read more</button>

</div>

</div>

</header>

</div>

```

##Step 2: Styling Layout and Hamburger menu

This will style our html elements.

```css

* {

padding: 0;

margin: 0;

box-sizing: border-box;

}

body,

button {

font-family: "Poppins", sans-serif;

}

.container {

min-height: 100vh;

width: 100%;

background-image: url(bg2.png);

overflow-x: hidden;

transform-style: preserve-3d;

}

.navbar {

position: fixed;

top: 0;

left: 0;

width: 100%;

z-index: 10;

height: 3rem;

}

.menu {

max-width: 72rem;

width: 100%;

margin: 0 auto;

padding: 0 2rem;

display: flex;

justify-content: space-between;

align-items: center;

color: #fff;

}

.logo {

font-size: 1.1rem;

font-weight: 600;

text-transform: uppercase;

letter-spacing: 2px;

line-height: 4rem;

}

.logo span {

font-weight: 300;

}

.hamburger-menu {

height: 4rem;

width: 3rem;

cursor: pointer;

display: flex;

align-items: center;

justify-content: flex-end;

}

.bar {

width: 1.9rem;

height: 1.5px;

border-radius: 2px;

background-color: #eee;

transition: 0.5s;

position: relative;

}

.bar:before,

.bar:after {

content: "";

position: absolute;

width: inherit;

height: inherit;

background-color: #eee;

transition: 0.5s;

}

.bar:before {

transform: translateY(-9px);

}

.bar:after {

transform: translateY(9px);

}

.main {

position: relative;

width: 100%;

left: 0;

z-index: 5;

overflow: hidden;

transform-origin: left;

transform-style: preserve-3d;

transition: 0.5s;

}

header {

min-height: 100vh;

width: 100%;

background: url("bg1.png") no-repeat top center / cover;

position: relative;

}

.overlay {

position: absolute;

width: 100%;

height: 100%;

top: 0;

left: 0;

display: flex;

justify-content: center;

align-items: center;

}

.inner {

max-width: 35rem;

text-align: center;

color: #fff;

padding: 0 2rem;

}

.title {

font-size: 2rem;

}

.btn {

margin-top: 1rem;

padding: 0.6rem 1.8rem;

background-color: #1179e7;

border: none;

border-radius: 25px;

color: #fff;

text-transform: uppercase;

cursor: pointer;

text-decoration: none;

}

```

##Step 3 : The Javascript Code

To add __animation__, we need to add a __class__ called __active__ to the container element and remove it every time _hamburger menu_ is clicked on.

```javascript

const hamburger_menu = document.querySelector(".hamburger-menu");

const container = document.querySelector(".container");

hamburger_menu.addEventListener("click", () => {

container.classList.toggle("active");

});

```

##Step 4: Styling __active__ class

After this Let's style the container element and add animation using css -

```css

.container.active .bar {

transform: rotate(360deg);

background-color: transparent;

}

.container.active .bar:before {

transform: translateY(0) rotate(45deg);

}

.container.active .bar:after {

transform: translateY(0) rotate(-45deg);

}

.container.active .main {

animation: main-animation 0.5s ease;

cursor: pointer;

transform: perspective(1300px) rotateY(20deg) translateZ(310px) scale(0.5);

}

@keyframes main-animation {

from {

transform: translate(0);

}

to {

transform: perspective(1300px) rotateY(20deg) translateZ(310px) scale(0.5);

}

}

```

We are done with the 3-D transformation.

####Now, add shadow effect to the image

```css

.shadow {

position: absolute;

width: 100%;

height: 100vh;

top: 0;

left: 0;

transform-style: preserve-3d;

transform-origin: left;

transition: 0.5s;

background-color: white;

}

.shadow.one {

z-index: -1;

opacity: 0.15;

}

.shadow.two {

z-index: -2;

opacity: 0.1;

}

.container.active .shadow.one {

animation: shadow-one 0.6s ease-out;

transform: perspective(1300px) rotateY(20deg) translateZ(215px) scale(0.5);

}

@keyframes shadow-one {

0% {

transform: translate(0);

}

5% {

transform: perspective(1300px) rotateY(20deg) translateZ(310px) scale(0.5);

}

100% {

transform: perspective(1300px) rotateY(20deg) translateZ(215px) scale(0.5);

}

}

.container.active .shadow.two {

animation: shadow-two 0.6s ease-out;

transform: perspective(1300px) rotateY(20deg) translateZ(120px) scale(0.5);

}

@keyframes shadow-two {

0% {

transform: translate(0);

}

20% {

transform: perspective(1300px) rotateY(20deg) translateZ(310px) scale(0.5);

}

100% {

transform: perspective(1300px) rotateY(20deg) translateZ(120px) scale(0.5);

}

}

.container.active .main:hover + .shadow.one {

transform: perspective(1300px) rotateY(20deg) translateZ(230px) scale(0.5);

}

.container.active .main:hover {

transform: perspective(1300px) rotateY(20deg) translateZ(340px) scale(0.5);

}

```

##Step 5: Adding and styling Menu links

```html

<div class="links">

<ul>

<li>

<a href="#" style="--i: 0.05s;">Home</a>

<span> </span>

</li>

<li>

<a href="#" style="--i: 0.1s;">Services</a>

<span> </span>

</li>

<li>

<a href="#" style="--i: 0.15s;">Portfolio</a>

<span> </span>

</li>

<li>

<a href="#" style="--i: 0.2s;">Testimonials</a>

<span> </span>

</li>

<li>

<a href="#" style="--i: 0.25s;"> About</a>

<span> </span>

</li>

<li>

<a href="#" style="--i: 0.3s;"> Contact</a>

<span> </span>

</li>

</ul>

</div>

</div>

```

####Let's __style__ links

```css

.links {

position: absolute;

width: 20%;

right: 2em;

top: 0;

height: 100vh;

z-index: 2;

display: flex;

justify-content: center;

align-items: center;

}

ul {

list-style: none;

}

ul li a .fa{

font-size: 40px;

color:black;

line-height: 80px;

transition: 0.5s;

padding-right: 14px;

}

ul li a{

text-decoration: none;

display:absolute;

display: block;

width:160px;

height:60px;

background:#609aca;

text-align:centre;

padding-left: 20px;

transform: rotate(0deg) skewX(25deg) translate(0,0);

transition: 0.5s;

box-shadow: -20px 20px 10px rgba(0,0,0,3);

}

ul li a:before {

content: '';

position: absolute;

top: 10px;

left: -20px;

height: 100%;

width:20px;

background:#b1b1b1;

transform:0.5s;

transform:rotate(0deg) skewY(-45deg);

}

ul li a:before {

content: '';

position: absolute;

top: 6px;

left: -10px;

height: 100%;

width:10px;

background:#dbe7f0;

transform:0.5s;

transform:rotate(0deg) skewY(-45deg);

}

ul li a:after {

content: '';

position: absolute;

bottom: -10px;

left: -6px;

height: 10px;

width:100%;

background:#dbe7f0;

transform:0.5s;

transform:rotate(0deg) skewX(-45deg);

}

ul li a:hover {

transform:rotate(-30deg) skew(25deg) translate(20px,-15px);

box-shadow: -50px 50px 50px rgba(0,0,0,0);

}

ul li a:hover .fa {

color: #fff;

}

ul li:hover span{

color: #fff;

}

ul li:hover a{

background: #9b154c;

}

ul li:hover a:before{

background: #7B153F;

}

ul li:hover a:after{

background: #9b154c;

}

.links a {

text-decoration: none;

color: black;

padding: 0.7rem 0;

display: flex;

display: block;

font-size: 1.2rem;

font-weight: 200;

text-transform: uppercase;

letter-spacing: 0px;

transition: 0.3s;

opacity: 0;

transform: translateY(10px);

animation: hide 0.5s forwards ease;

}

.links a:hover {

color: #fff;

}

.container.active .links a {

animation: appear 0.5s forwards ease var(--i);

}

```

View the Final Version of this page [here](https://rajshreevats.github.io/3-d-navigation-bar.github.io/)

Hopefully this tutorial was fun and worth your while. Get the full Source Code [here](https://github.com/RajshreeVats/3-d-navigation-bar.github.io) on my [Github](https://github.com/RajshreeVats) __Repo__.

Let's Connect on [LinkedIn](https://www.linkedin.com/in/rajshree-vatsa-6493371b8/) or [Twitter](https://twitter.com/RajshreeVatsa).

| rajshreevats |

761,845 | chemistry tutor | In addition to one-to-one organic chemistry tutoring, I extend my support to the interested students... | 0 | 2021-07-17T10:28:55 | https://dev.to/scopex70932047/chemistry-tutor-2eom | organicchemistrytutor | In addition to one-to-one organic chemistry tutoring, I extend my support to the interested students who are in the need of short and crisp learning material for any organic chemistry topics.

Every year I tutor at least a hundred organic chemistry students from all over the country. Also, I taught organic chemistry topics at the graduate level for other country students who are residing in the USA. Some of the students are from Colorado State University, Georgetown University, Allen University, Augustana University, and few community colleges in the US.

| scopex70932047 |

762,272 | Getting Started with Lodash in JavaScript | Why use lodash It reduces the lines of code significantly Supports common operations... | 0 | 2021-07-17T23:52:12 | https://www.realpythonproject.com/getting-started-with-lodash-in-javascript/ | javascript, programming, codenewbie, computerscience | ### Why use lodash

- It reduces the lines of code significantly

- Supports common operations done on Objects and Arrays

- Supports common operations on strings

- Supports generic functions

- Trusted by other developers. It has [50k+ ⭐️](https://github.com/lodash/lodash) on GitHub

- Well Documented

- You don't need to learn any new syntax or concepts or anything. It uses plain old JavaScript.

### Install lodash

```npm install lodash```

### Accessing values in deeply nested objects

When dealing with API responses, more often than not, the data you'd like to access will be deeply nested.

Consider the following example.

This is a sample response from the [JSON API](https://jsonapi.org/examples/)

If we want to access the title, we could do something like this

This works fine but we made a big assumption:

>> **'deepObject' , 'data' , 'attributes' ,'title' are all defined**.

However, it is possible that any of them might be undefined. This would throw an error. If **'attributes'** is empty or undefined, then **'attributes.title'** would not exist.

Lodash's get function can be used to handle the error gracefully. Below is the syntax

```javascript

_.get(object, path, [defaultValue])

```

In the second console statement, we try to access the element at index 3 in data but this doesn't exist. Therefore 'Value doesn't exist' is printed in the console.

In the third console statement, we try to print the value for **'title'** in **'data[0]'** but **'data[0]'** doesn't have any attribute called **'title'**. Similar to the above case, 'Value doesn't exist' is printed in the console.

### Adding attributes to deeply nested Objects

We will work with the same object we were working with earlier.

If we want to add a new key-value pair for subtitle inside 'attributes', we could do something like this

Again, we made a similar assumption that the entire path is defined. However, if any part of the path is undefined, it will throw an error.

We can use Lodash's set function to handle this error gracefully. Below is the syntax

```javascript

_.set(object, path, value)

```

If the path doesn't exist, it will create the path.

set is an in-place function, i.e it updates the input object. Our new object is below

The second set operation added 3 elements (2 empty elements) to the **'data'** array while the third set operation added an attribute **'subtitle'** to **'data[0]'**

### Check if a path exists

We can use the has function to check if a path exists in an object. Below is the syntax

```javascript

_.has(object, path)

```

### Invert Keys an Values of an object

Lodash's invert function will invert the keys and values. Below is the syntax

```javascript

_.invert(object)

```

### Create an object from another object

If you have a object and want to create an object with some of the keys from the original object, you can use Lodash's pick function. It doesn't add the key and value directly, if the path provided is nested, it will recreate the path as well. If you are confused, refer to the example below

Below is the syntax

```javascript

_.pick(object, [paths])

```

Let's work with the [JSON API](https://jsonapi.org/examples/) response again.

Instead of directly adding title directly, it recreate the path 'data[0].attributes.title'.

### Deep Copy of an Object

The cloneDeep function creates a deep copy of an object

As you can see, the original object remains unchanged.

### Compare Object irrespective of the order of the keys

### Some Set operations on Arrays

#### Find the elements in array1 but not in array2

#### Find Common elements in two arrays

#### Find the difference between two arrays

### Zip

This is similar to the zip function in Python.

### Get unique elements of an array

Lodash has a bunch of other useful functions, refer to their [documentation](https://lodash.com/docs/4.17.15) for more | rahulbanerjee99 |

773,571 | How to only run a job on a pull request in CircleCI | I wanted a thing to only happen when a pull request is opened. I also wanted to do some cleanup when... | 0 | 2021-07-27T22:00:52 | https://ruarfff.com/circleci-pr-only/ | circleci, pipelines | I wanted a thing to only happen when a pull request is opened. I also wanted to do some cleanup when the pull request is closed. In my last place we used GitHub actions and this was super easy.

Now I am using [CircleCI](https://circleci.com/) and this wasn't so easy.

In this post we will look at how to only run a job on a pull request in CircleCI. There is one major caveat. We also need a way to trigger the job on a pull request. We will look at how to do this with the [CircleCI web api](https://circleci.com/docs/api/v2/).

## Conditionally run a job

There are a few options you can use to only run a job on a pull request in CircleCI. There is the option to

[only ever build on a pull request](https://discuss.circleci.com/t/only-build-pull-requests-not-every-branch/200) but this is all or nothing

i.e. you can never run a build on a branch without opening a pull request.

Another option is, within a job, you can inspect the [environment variables](https://circleci.com/docs/2.0/env-vars/) to see if there is a pull request number like so:

```bash

if [ "${CIRCLE_PULL_REQUEST##*/}" != "" ];then

echo "Is a pull request"

fi

```

This is OK but it would be nice to conditionally run a whole job instead. It is not possible to read environment variables when the pipeline is loaded. It is only possible when a job is run.

To work around this we can use the [circleci/continuation](https://circleci.com/developer/orbs/orb/circleci/continuation) orb.

If you are trying this out, make sure to update your project settings in **Advanced Settings -> Enable dynamic config using setup workflows**.

CircleCI expects all your configuration in one file called `.circleci/config.yml`. The continuation orb takes over as the entry point giving you access to the environment variables and then runs the pipeline using whatever configuration you tell it to.

It's a little bit weird but it works.

This is an example of using the continuation orb to conditionally run a job only on a pull request.

`.circleci/config.yml`

```yaml

setup: true

version: 2.1

orbs:

continuation: circleci/continuation@0.2.0

workflows:

setup:

jobs:

- continuation/continue:

configuration_path: ".circleci/main.yml"

parameters: /home/circleci/params.json

pre-steps:

- run:

command: |

if [ -z "${CIRCLE_PULL_REQUEST##*/}" ]

then

IS_PR=false

else

IS_PR=true

fi

echo '{ "is_pr": '$IS_PR' }' >> /home/circleci/params.json

```

Note, we mentioning PR here but you could do more or less anything to configure your pipeline there. `/home/circleci/params.json` is written to and specified with `parameters: /home/circleci/params.json`.

`.circleci/main.yml`

```yaml

version: 2.1

parameters:

is_pr:

type: boolean

default: false

jobs:

do_something:

docker:

- image: cimg/base:2021.04

steps:

- run:

name: something

command: echo 'You get the picture'

workflows:

version: 2

whence-pr:

when: << pipeline.parameters.is_pr >>

jobs:

- do_something:

name: something

```

We called the file `main.yml` here but it could be any file. You just need to specify it in the parameter called `configuration_path`. [This post](https://circleci.com/blog/building-cicd-pipelines-using-dynamic-config/) also shows another way to generate the configuration on the fly.

Now we have passed the `is_pr` parameter to the pipeline. We can conditionally run things using `when: << pipeline.parameters.is_pr >>`.

There is one major issue with this approach. Our build may have run before a PR (pull request) was ever opened. Opening a PR will not trigger a build in CircleCI.

## Triggering CircleCI pipeline when a pull request is opened

First thing you must do is grab a [CircleCi API token](https://circleci.com/docs/2.0/managing-api-tokens/). A [personal API token](https://app.circleci.com/settings/user/tokens) will do for this example.

You can trigger a pipeline run like so:

```bash

SCM=github

ORG=your-org-here

PROJECT=your-project-here

CIRCLE_BRANCH=a-derived-branch

curl -X POST \

-H "Circle-Token: ${CIRCLE_TOKEN}" \

-H 'Content-Type: application/json' \

-H 'Accept: application/json' \

-d "{\"branch\":\"${CIRCLE_BRANCH}\"}" \

https://circleci.com/api/v2/project/${SCM}/${ORG}/${PROJECT}/pipeline

```

Hopefully it's clear what values you need to change there. How you will run this bit depends on what tools you have available to you. I was using GitHub and even though we use CircleCI, there are enough free [GitHub Action](https://github.com/features/actions) minutes for me to setup an action like this:

`.github/workflows/pr.yml`

```yaml

name: Trigger Build on PR

on:

pull_request:

types: [opened, reopened]

jobs:

trigger-build:

runs-on: ubuntu-latest

steps:

- name: Trigger CircleCI

env:

CIRCLE_BRANCH: ${{ github.head_ref }}

CIRCLE_TOKEN: ${{ secrets.CIRCLE_TOKEN }}

ORG: your-org-here

PROJECT: your-project-here

run: |

curl -X POST \

-H "Circle-Token: ${CIRCLE_TOKEN}" \

-H 'Content-Type: application/json' \

-H 'Accept: application/json' \

-d "{\"branch\":\"${CIRCLE_BRANCH}\"}" \

https://circleci.com/api/v2/project/github/${ORG}/${PROJECT}/pipeline

```

This feels like an incredible hack but it works.

## A side note on doing something when a PR is merged

This has nothing to do with CircleCI but if you happen to have access to GitHub actions this might be useful.

`.github/workflows/pr-closed.yml`

```yaml

name: On PR Closed

on:

pull_request:

types: [closed]

jobs:

on-pr-closed:

runs-on: ubuntu-latest

steps:

- name: Print PR number

env:

PR_NUMBER: ${{ github.event.number }}

run: |

echo "${PR_NUMBER}"

```

| ruarfff |

762,584 | A look at PostgreSQL migrations in Node | Data migrations are a common operation for any application with a persistence layer. In this post we... | 0 | 2021-07-27T19:12:27 | https://www.antoniovdlc.me/a-look-at-postgresql-migrations-in-node/ | node, postgres | ---

title: A look at PostgreSQL migrations in Node

published: true

description:

tags: node, postgresql

cover_image: https://images.unsplash.com/photo-1520792699872-64c6ab4e4c3e?ixlib=rb-1.2.1&ixid=MnwxMjA3fDB8MHxwaG90by1wYWdlfHx8fGVufDB8fHx8&auto=format&fit=crop&w=1350&q=80

canonical_url: https://www.antoniovdlc.me/a-look-at-postgresql-migrations-in-node/

---

Data migrations are a common operation for any application with a persistence layer. In this post we will look at how to set a simple data migration on a PostgreSQL database in a Node back-end.

There are already existing libraries that provide the needed level of abstraction to perform data migrations with the above stack, such as [node-pg-migrate](https://www.npmjs.com/package/node-pg-migrate), [postgres-migrations](https://www.npmjs.com/package/postgres-migrations) or [db-migrate](https://www.npmjs.com/package/db-migrate). If you need to run migrations in production, I would encourage using any of those instead of coming up with your own solution.

Yet, let's look at what it takes to build such a library!

---

## Keeping track of migrations

At its core, migrations are just SQL files being executed at most once in a certain order. It is primordial that migrations are only ever run once, and exactly once. To achieve that, we need to keep track of which migrations have already been run when triggering a migration.

As we are already using a persistence layer, one straightforward approach is to use that same persistence layer to keep track of the migrations that have been run. In our case, we can create a `migrations` table in PostgreSQL, which will be updated on every migration run ... and, of course, we will be setting that up using a migration!

`00000001-init-migrations.sql`

```sql

-- Migrations

-- This table will keep a list of migrations that have been run on this database.

--

CREATE TABLE IF NOT EXISTS migrations (

id UUID PRIMARY KEY DEFAULT gen_random_uuid(),

file TEXT,

created_at TIMESTAMP DEFAULT NOW()

);

```

Some migrations might need to run on certain assumptions (for example, that a table exists). For those cases, we enforce an order for migrations to run. As such, we prefix all migration files with 8 digits.

---

## Writing migrations

As we ideally want to get a working end-to-end system from scratch, the next logical step is to create the needed tables for our application. For example:

`00000002-init-basic-schema.sql`

```sql

-- Users

-- This table will hold the users' information.

CREATE TABLE IF NOT EXISTS users (

id UUID PRIMARY KEY DEFAULT gen_random_uuid(),

email TEXT,

created_at TIMESTAMP DEFAULT NOW()

);

-- Settings

-- This table holds all users' settings

CREATE TABLE IF NOT EXISTS settings (

id UUID PRIMARY KEY DEFAULT gen_random_uuid(),

lang TEXT,

currency TEXT,

user_id UUID REFERENCES users (id),

created_at TIMESTAMP DEFAULT NOW()

);

...

```

With this setup, migrations are just SQL files doing any sort of operation like creating tables, inserting data, deleting columns, adding indexes etc...

`00000003-add-test-data.sql`

```sql

CREATE TABLE IF NOT EXISTS test (

name TEXT

);

INSERT INTO test (name) VALUES ('bonjour'), ('hola'), ('nihao');

```

You might have noticed that we are not supporting "down" migrations. This is by design, as "down" migrations can be thought of as "up" migrations negating a previous migration. In that sense, they are just simply migrations too.

---

## Running migrations

Now for the trickiest part of this exercise, let's see how to run those migration files!

For this section, we will assume we have implemented a similar `getClient()` method as described in https://node-postgres.com/guides/project-structure using [pg](https://www.npmjs.com/package/pg).

The first thing we want to do, is check if there are any outstanding migrations to be run, and if so, read the content of those migrations. To do so, we will introduce a utility function:

```js

async function getOutstandingMigrations(migrations = []) {

const files = await promisify(fs.readdir)(__dirname);

const sql = await Promise.all(

files

.filter((file) => file.split(".")[1] === "sql")

.filter((file) => !migrations.includes(file))

.map(async (file) => ({

file,

query: await promisify(fs.readFile)(`${__dirname}/${file}`, {

encoding: "utf-8",

}),

}))

);

return sql;

}

```

In a nutshell, what this function does is read all files in the current directory and filter out files that don't contain SQL and previously ran migrations. Finally, it reads the content of those files. Note that we are using promisified a few `fs` utility functions to increase the efficiency of this function.

We can now use that function to get all outstanding migrations (i.e. migrations that haven't run yet against the current database) in our `migrate()` function like follows:

```js

async function migrate() {

...

// Check previous migrations

let existingMigrations = [];

try {

let result = await client.query("SELECT * FROM migrations");

existingMigrations = result.rows.map(r => r.file)

} catch {

console.warn("First migration");

}