id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

929,929 | Clean Code in C# Part 3 Comments | Introduction According to uncle bob comments should be avoided at all costs. Well written... | 16,214 | 2021-12-18T15:14:54 | https://dev.to/caiocesar/clean-code-in-c-part-3-comments-17p | csharp, cleancode, programming, comment | ---

series: Clean Code in C#

---

#Introduction

According to Uncle Bob, comments should be avoided at all costs. Well-written code should be written in a way that is easy for other developers to understand. If developers follow the rules for writing clean methods described in [Part 2 Methods](https://dev.to/caiosousa/clean-code-in-c-part-2-methods-58mb), avoiding comments makes even more sense.

#Explaining Code

Developers sometimes write logic and try to explain the code through comments. In most cases these comments are unnecessary, as shown in the code below.

```csharp

// Verify if user has access to every module.

if ((user.Type == ADMINISTRATOR || user.Type == MANAGER) &&

user.IsActive) { }

```

```csharp

if (user.HasAccessToWholeModule) { }

```

Both versions of the code above do the same thing. The second is a much cleaner approach that doesn't need a comment to explain its purpose. Comments can also become obsolete if programmers don't update them as the code evolves.

#Useful Comments

In some cases it can be useful to write comments, but keep in mind that code that needs no comment is better than code that relies on one. Consider the following code:

```csharp

services.AddQuartz(q =>

{

q.UseMicrosoftDependencyInjectionScopedJobFactory();

var jobKey = new JobKey(JOB_NAME);

q.AddJob<HelloWorldJob>(opts => opts.WithIdentity(jobKey));

q.AddTrigger(opts =>

opts.ForJob(jobKey)

.WithIdentity(JOB_TRIGGER_NAME)

.WithCronSchedule("0/5 * * * * ?")); // run every 5 seconds

});

```

The code above is an example of configuring the open source job scheduling library [Quartz.NET](https://www.quartz-scheduler.net). It uses [cron](https://en.wikipedia.org/wiki/Cron) expressions to define schedules, and in this case it makes sense to add a comment that interprets the schedule.

Comments might also be appropriate in other cases, such as:

- Important functionality.

- TODOs explaining obsolete or pending items in a method.

- Warnings about long-running processes.

#Excessive Comments

I often run into code with excessive comments. Programmers might think the code looks elegant with these comments, but they are redundant and unnecessary. The code below is an example of excessive comments:

```csharp

/// <summary>

/// User Class

/// </summary>

public class User

{

/// <summary>

/// User Type

/// </summary>

public string Type { get; set; }

/// <summary>

/// Verifies if user is active

/// </summary>

public bool IsActive { get; set; }

}

```

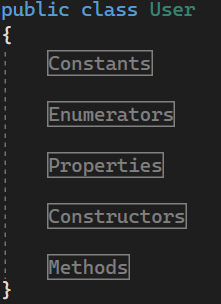

#Regions

Regions in C# are sometimes used inside a method to "improve code readability". Regions inside methods actually increase the size of the method and should be avoided at all costs. Regions can be used outside of methods to organize code, but that should be avoided too. In some cases, where classes contain hundreds or thousands of lines of code, it could make sense to have regions as shown in the following figure:

#Conclusion

There are many other examples of comments to avoid that were not explored here. The main idea to take away, if you got to this point, is to avoid commenting code whenever possible. I find that having concise external documentation about the system, requirements and architecture can also prevent many unnecessary comments in the code.

#References

1. Clean Code: A Handbook of Agile Software Craftsmanship by Robert C. Martin.

2. [Quartz](https://www.quartz-scheduler.net)

| caiocesar |

929,947 | What should I test? | You might often hear developers say that you should write tests. Great, let’s go! But what should you... | 0 | 2021-12-18T15:28:11 | https://www.csrhymes.com/2021/12/18/what-hould-i-test.html | testing, php, javascript, webdev | ---

title: What should I test?

published: true

date: 2021-12-18 09:00:07 UTC

tags: Testing,PHP,JavaScript,webdev

canonical_url: https://www.csrhymes.com/2021/12/18/what-hould-i-test.html

cover_image: https://www.csrhymes.com/img/what-should-i-test.jpg

---

You might often hear developers say that you should write tests. Great, let’s go! But what should you test and where do you start? I don’t know all the answers, but here are some tips for getting started.

I recently started working on an existing application, which was very different to what I had been working on previously. When I write a brand new application, one of the first things I do is get the test tool up and running and start writing tests alongside the application code. I would often start by writing some smaller unit tests and then feature or end-to-end tests to ensure the process worked as a whole.

When you start working on an existing project that has little test coverage it’s not so easy to figure out where to start with your tests. It can seem a very daunting task as there is so much existing code and features to test.

So what should you do? Just ignore tests and carry on coding?

The truth is you probably won’t get the opportunity to spend weeks solely writing tests as you still need to work on new features and produce visible output to your customer.

## Start small

Some tests are better than none at all.

Sometimes the smallest, simplest test is the most useful test. This tweet by Nuno Maduro says it all. Write a simple test that “ensures your application boots” and it “ensures your homepage can be loaded”.

> Does your [@laravelphp](https://twitter.com/laravelphp?ref_src=twsrc%5Etfw) application have 0 tests? Here is one test you can easily add to get started. It's probably the most important test — in web projects — and it has an enormous value.

>

> ✓ Ensures your application boots. ✅

> ✓ Ensures the home page can be loaded. 💨 [pic.twitter.com/BclTaFcig8](https://t.co/BclTaFcig8)

>

> — Nuno Maduro (@enunomaduro) [December 9, 2021](https://twitter.com/enunomaduro/status/1468901807585955848?ref_src=twsrc%5Etfw)

<script async="" src="https://platform.twitter.com/widgets.js" charset="utf-8"></script>

## Feature tests

So we have proven that the application boots, but should we write feature or unit tests next? In my opinion it is better to start writing feature (or end-to-end) tests rather than unit tests. If you inherit an application that has little or no test coverage, then by writing feature tests you can quickly cover more of your code with fewer tests.

Even the best documentation can’t provide you with the level of detail that the code is written at. It may describe a feature at a high level, such as “Users can log in with an email address and a password”, so you should be able to write a feature test that calls the login endpoint, passes in the email address and password, then asserts that the user is logged in successfully.

## Unit tests

Hopefully all the tests will pass, but if they don’t then this will prompt you to dig into the code a bit deeper. This will allow you to learn more about specific functions, which you can then write unit tests for to prove they are doing what they are supposed to be doing.

It’s actually very difficult to write a unit test without understanding the code in detail. When you start working on an application you won’t have that in-depth knowledge of what each method does. In fact, you won’t even know what the method names are without spending time digging through the code.

You will gain this knowledge over time, but you may not remember it all, so this is where writing unit tests will help you out, acting as a kind of documentation for your code. It will allow you to construct scenarios that your code should handle.

Building on the example of users logging in, you could write a specific unit test that asserts the user has entered a valid email address in the log in form, otherwise a validation error should be thrown.

## Happy path

Start by writing tests for the happy path.

The happy path assumes that everything has gone as you expect and the user has entered the correct information and the process completes from start to finish. For example, the user entered their email address in the email field, instead of entering it in the password field, and they successfully logged in.

You may say, what value is there in testing this? We know it works as our application is up and running and people are quite happily using it. This is true, but code won’t stay the same forever and at some point you may add a new feature, such as allowing logins with social media accounts, and you want to write this happy path test to ensure that existing users will still be able to log in as they did before.

Sometimes everyone is so excited about testing the new feature, that the existing functionality can be overlooked. Testing existing functionality is also known as regression testing.

> Regression testing (rarely, non-regression testing) is re-running functional and non-functional tests to ensure that previously developed and tested software still performs after a change.

[https://en.wikipedia.org/wiki/Regression\_testing](https://en.wikipedia.org/wiki/Regression_testing)

## Write tests for bugfixes

It’s tempting to jump straight into a bug, fix the code and then move on. Instead, take some time to write a test that replicates the bug. This will allow you to prove that the bug does in fact exist and that you know what triggers it. Once you have established this, you can then work on the fix and use your test to prove whether the bug has been resolved or not.

Having a test for a bug also saves a lot of effort as you can run the automated test with the specific scenario over and over again, without having to manually set up the database or visit a specific screen and perform a specific action to replicate it.

## Tests for new features

If you have to develop a new feature then this is a great time to write tests for it. One way of ensuring that tests will definitely be written for this new feature is to use Test Driven Development (TDD). TDD encourages you to write the tests first and then write the code that makes the tests pass.

TDD may not be everyone’s cup of tea, but I recommend trying it out and seeing how you get on. I often find that it makes you think about what you are trying to accomplish and you can end up with a different solution than if you were to just build it as you go.

## Write tests when you update packages

Quite often a new major version of the framework you are using will be released. Rather than jumping straight in and updating the framework, ask for a bit more time to write some more tests to cover the areas that will be specifically affected by the upgrade.

This will allow you to have confidence it worked before the upgrade, and that the issues are caused specifically by the upgrade and not an existing issue. I have spent many hours debugging an issue that I was sure was caused by updating a package, only to eventually realise it had nothing to do with the upgrade.

If the tests fail after the upgrade then it will provide you with a checklist of what you need to work on to make the upgrade work.

## Start small, build test coverage over time

These are some of the ideas for how you can build up test coverage over time. Eventually you will realise that tests are there to help you and you will have more confidence in your application.

The next developer that inherits your application will thank you for the tests too!

[Photo](https://stocksnap.io/photo/goldengatebridge-sanfrancisco-II0IJP2AC7) by [Burst](https://stocksnap.io/author/burstshopify) on [StockSnap](https://stocksnap.io) | chrisrhymes |

930,027 | Create simple calculator in javascript | A simple calculator in javascript and html. This is the basic simple calculator in javascript which... | 0 | 2021-12-18T16:58:02 | https://dev.to/shine18/create-simple-calculator-in-javascript-1j5i | javascript, beginners, programming, tutorial | A simple calculator in javascript and html. This is the basic simple calculator in javascript which is made using a simple class in javascript. It also demonstrates how you can create a class for simple tasks and instantiate it.

**HTML**

```

<div id="calc">

<input type="number" id="num1" placeholder="Enter a number"/>

<select id="operator" >

<option value="+">+</option>

<option value="-">-</option>

<option value="*">*</option>

<option value="/">/</option>

</select>

<input type="number" id="num2" placeholder="Enter a number" />

<button id="calc">=</button>

<input type="number" id="result" placeholder="Result" />

</div>

```

**Javascript**

```

class Calculator{

// Properties

num1

num2

result

num1Input

num2Input

resultInput

operatorSelect

// member functions

constructor(){

// initialization of the calculator

}

setEvents(){

// set events on button

}

add(){

// add numbers

}

subtract(){

// subtract numbers

}

multiply(){

// multiply numbers

}

divide(){

// divide numbers

}

output(){

// output the results

}

}

```
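To give an idea of how the arithmetic stubs above might be filled in, here is a DOM-free sketch. It is not the code from the linked tutorial: the `CalculatorCore` name, the `calculate` method and the divide-by-zero handling are assumptions made for this example.

```javascript
// A DOM-free sketch of the arithmetic part of the calculator.
class CalculatorCore {
  constructor(num1, num2) {
    // Values read from <input> elements arrive as strings, so convert them.
    this.num1 = Number(num1);
    this.num2 = Number(num2);
  }

  add() { return this.num1 + this.num2; }
  subtract() { return this.num1 - this.num2; }
  multiply() { return this.num1 * this.num2; }

  divide() {
    if (this.num2 === 0) throw new RangeError('Cannot divide by zero');
    return this.num1 / this.num2;
  }

  // Map the value of the operator <select> to the matching method.
  calculate(operator) {
    switch (operator) {
      case '+': return this.add();
      case '-': return this.subtract();
      case '*': return this.multiply();
      case '/': return this.divide();
      default: throw new Error(`Unknown operator: ${operator}`);
    }
  }
}

const calc = new CalculatorCore('6', '3');
console.log(calc.calculate('+')); // 9
console.log(calc.calculate('/')); // 2
```

Keeping the arithmetic separate from the DOM code makes the class easy to test without a browser.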

For a detailed tutorial on this simple JavaScript calculator, please follow this link:

https://10code.dev/javascript/a-simple-calculator-in-vanilla-javascript/ | shine18 |

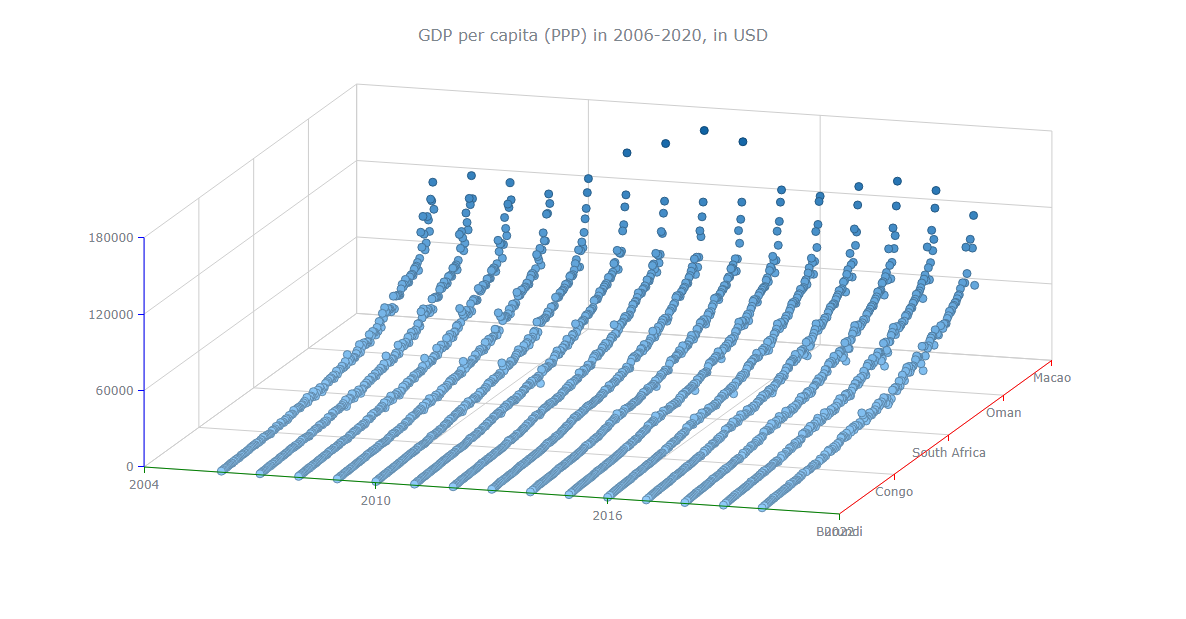

930,039 | How to Create a 3D Surface Chart in JavaScript | 🌈 Creating an embeddable interactive 3D Surface Plot is not as tricky as it may seem. A new tutorial... | 0 | 2021-12-18T17:19:57 | https://www.anychart.com/blog/2021/12/15/surface-chart-javascript/ | javascript, webdev, datascience, programming | _🌈 Creating an embeddable interactive 3D Surface Plot is not as tricky as it may seem. A new tutorial on Hongkiat demonstrates a way that must be easy even for beginners! 🔥_

_🙋 Learn how to build a compelling surface chart using JavaScript in four quick steps and then customize it to your liking in just a few more lines of code. For an illustrative example, 15 years of GDP data for 195 countries are being visualized along the article — have fun exploring the final diagram, too!_

[Data visualization](https://www.anychart.com/blog/2018/11/20/data-visualization-definition-history-examples/) is a must-have skill today with ever-growing data and the need to analyze as well as present that data. You will definitely come across data charts whether you are in the technology industry or not and therefore, it is a good idea to learn how to build visualizations.

I will show you here that building charts is not very tough and with the right tools, you can start creating interactive, interesting visualizations in little time and effort!

In this step-by-step tutorial, I will demonstrate how to represent GDP values of various countries for the past 15 years on a beautiful interactive 3D surface chart using a JavaScript library for data visualization.

The surface plot looks quite complex, but I will show you how straightforward it is to make a compelling and fully functional one.

## What’s a 3D Surface Chart?

A 3D surface chart plots three dimensions of data on the x, y, and z axes with two of the variables being independent (displayed along the horizontal axes) and one being dependent on the other two (shown on the vertical axis).

In this tutorial, I will be plotting countries and years as the independent variables and GDP values as the dependent variable.

## JavaScript Charting Libraries

Currently, there are a lot of good [JS charting libraries](https://en.wikipedia.org/wiki/Comparison_of_JavaScript_charting_libraries), all of them having some pros and cons. You can choose which one to use based on your specific requirements and the best part is that the process of building visualizations is very similar for all of the libraries. So, you can start learning with any of the libraries and extend your knowledge to another library as well.

For this tutorial, I am going to use the [AnyChart](https://www.anychart.com/) JavaScript charting library which is likely a good choice for beginners. It has tons of [examples](https://www.anychart.com/products/anychart/gallery/) along with extensive [documentation](https://docs.anychart.com/) that is really useful when starting out.

Also, AnyChart is quite easy to use and flexible with loads of customization options. And what’s especially important to many — it is free for personal, educational, and other non-commercial use.

## Building Basic 3D Surface Plot Using a JS Library

It is an advantage, of course, if you have background knowledge of HTML, CSS, and JavaScript. But don’t get overwhelmed even if you are a complete beginner. I will walk you through each line of the code, and once you understand what is happening, it should get easier to grasp.

There are four general steps to create a 3D surface plot or basically any chart with a JS library, and as mentioned earlier, these steps remain alike irrespective of the library you use.

- Create an HTML page to display your chart.

- Include the required JavaScript files.

- Prepare and connect your data.

- Write the JS code for the chart.

### Step 1 — Create a basic HTML page

The initial step is to have a blank HTML page that will hold the chart. I will add a block element with a unique id to the page. I will use the id to reference the `<div>` later.

I will now specify the height and width of the `<div>` as 100% for both in the `<style>` block of the page. This will render the chart on the full page. You can specify the height and width according to your preference.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>JavaScript Surface Chart</title>

<style type="text/css">

html, body, #container {

width: 100%;

height: 100%;

margin: 0;

padding: 0;

}

</style>

</head>

<body>

<div id="container"></div>

</body>

</html>

```

### Step 2 — Add the necessary scripts

When you are using a JavaScript library, you need to add the scripts specific to the chart that you are building and the library that you are using.

Here, I am using AnyChart so I need to add the corresponding scripts for the surface plot from [its CDN](https://cdn.anychart.com/) (Content Delivery Network) which is basically where all the scripts can be found.

So, I will include AnyChart’s [Core](https://docs.anychart.com/Quick_Start/Modules#core) and [Surface](https://docs.anychart.com/Quick_Start/Modules#surface) modules for this chart.

Just to remind you, include all these script files in the `<head>` section of your HTML page.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>JavaScript Surface Chart</title>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-core.min.js"></script>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-surface.min.js"></script>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-data-adapter.min.js"></script>

<style type="text/css">

html, body, #container {

width: 100%; height: 100%; margin: 0; padding: 0;

}

</style>

</head>

<body>

<div id="container"></div>

<script>

// All the code for the JS Surface Chart will come here

</script>

</body>

</html>

```

### Step 3 — Include the data

The data I decided to visualize in a 3D surface plot comes from the World Bank Open Data website that gives me the GDP (PPP based) data for all the countries in a CSV file.

It is easier to create a chart if the data is in the format that the chart expects and how you want to show the data. So I pre-processed the data accordingly. You can go through my JSON data file [here](https://gist.githubusercontent.com/shacheeswadia/b0d6b34a1910359e0e1a8fc0c84019a6/raw/4ab92ca6361f1bc9875d2854e2e1271bc991f86b/surfaceAreaData.json).

To load the data from the JSON file, I will add the [Data Adapter](https://docs.anychart.com/Quick_Start/Modules#data_adapter) module of AnyChart and use the `loadJsonFile` method inside the `<script>` tag in the body of the HTML page.

The three preparatory steps are done so get ready to write some code!

### Step 4 — Write the code to draw the chart

The first thing I will do is ensure that all the code is executed only after the page is fully loaded. To do that, I will enclose the entire code within the `anychart.onDocumentReady()` function.

Then, I will work with the data that is loaded from the JSON file. Even though I have already pre-processed the data, I will need to further process it for plotting the 3D surface chart. Basically, I will create an array that holds the y and z axes data according to the sequence of the x axis data.

```javascript

anychart.onDocumentReady(function () {

anychart.data.loadJsonFile(

'https://gist.githubusercontent.com/shacheeswadia/b0d6b34a1910359e0e1a8fc0c84019a6/raw/4ab92ca6361f1bc9875d2854e2e1271bc991f86b/surfaceAreaData.json',

function (data) {

// processing of the data

var result = [];

for (var x = 0; x < data.x.length; x++) {

for (var y = 0; y < data.y.length; y++) {

result.push([x, data.y.sort()[y], data.z[x][y]]);

}

}

}

);

});

```
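To see what this processing step produces, here is a small sketch run against made-up sample data. The category names and values are placeholders rather than the real World Bank figures, and the `{ x, y, z }` shape is inferred from the loop above. The sketch also sorts the years once, on a copy, instead of calling `sort()` on every iteration; it assumes the years are already stored in ascending order so that they stay aligned with the columns of `z`.

```javascript
// Made-up sample in the same { x, y, z } shape the loop above expects.
const data = {
  x: ['Country A', 'Country B'], // placeholder category names
  y: [2019, 2020],               // years
  z: [[100, 110], [200, 220]],   // z[i][j] = value for x[i] in year y[j]
};

function toSurfacePoints(data) {
  const years = [...data.y].sort((a, b) => a - b); // sort once, on a copy
  const result = [];
  for (let x = 0; x < data.x.length; x++) {
    for (let y = 0; y < years.length; y++) {
      // [index of the category, year, value]
      result.push([x, years[y], data.z[x][y]]);
    }
  }
  return result;
}

console.log(toSurfacePoints(data));
// [ [ 0, 2019, 100 ], [ 0, 2020, 110 ], [ 1, 2019, 200 ], [ 1, 2020, 220 ] ]
```

Each entry is one point on the surface: the x value is only an index, which is why the axis labels have to be mapped back to names separately.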

Now, I will create the surface chart and set the markers based on the data array just created.

Next, I will need to set the x axis labels from the loaded data because the array that I created contains only a sequence and not the country names. I will also specify the maximum for the x scale.

```javascript

// create surface chart

var chart = anychart.surface();

// enable markers and set data for them

chart.markers().enabled(true).data(result);

// set x axis labels format

chart

.xAxis()

.labels()

.format(function () {

return data.x[Math.round(this.value)];

});

// set x axis scale maximum

chart.xScale().maximum(data.x.length - 1);

```
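The label formatter is the least obvious part, so here is the same lookup written as a plain function, independent of the charting library. The `names` array is a placeholder standing in for `data.x`, and `labelForTick` is a name made up for this sketch.

```javascript
// Placeholder category names standing in for data.x.
const names = ['Country A', 'Country B', 'Country C'];

// Round the numeric tick position to the nearest index and look up the name.
// Positions with no matching name produce an empty label.
function labelForTick(value) {
  return names[Math.round(value)] ?? '';
}

console.log(labelForTick(0));   // Country A
console.log(labelForTick(1.2)); // Country B
```

Rounding matters because the axis ticks are numeric and are not guaranteed to fall exactly on whole indexes.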

I will now give my chart a title and a bit of padding on all the sides. Lastly, I will reference the `<div>` created in step one and draw the chart.

```javascript

// set chart paddings

chart.padding(25, 50, 75, 50);

// set chart title

chart.title('GDP per capita (PPP) in 2006-2020, in USD');

// set container id for the chart

chart.container('container');

// initiate chart drawing

chart.draw();

```

Voila! A basic functional 3D surface plot is ready!

You can have a look at this basic version of a JavaScript 3D surface plot on [AnyChart Playground](https://playground.anychart.com/R1Mq06kP/) or check out the code right here.

```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>JavaScript Surface Chart</title>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-core.min.js"></script>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-surface.min.js"></script>

<script src="https://cdn.anychart.com/releases/8.10.0/js/anychart-data-adapter.min.js"></script>

<style type="text/css">

html, body, #container {

width: 100%; height: 100%; margin: 0; padding: 0;

}

</style>

</head>

<body>

<div id="container"></div>

<script>

anychart.onDocumentReady(function () {

anychart.data.loadJsonFile(

'https://gist.githubusercontent.com/shacheeswadia/b0d6b34a1910359e0e1a8fc0c84019a6/raw/4ab92ca6361f1bc9875d2854e2e1271bc991f86b/surfaceAreaData.json',

function (data) {

// processing of the data

var result = [];

for (var x = 0; x < data.x.length; x++) {

for (var y = 0; y < data.y.length; y++) {

result.push([x, data.y.sort()[y], data.z[x][y]]);

}

}

// create surface chart

var chart = anychart.surface();

// enable markers and set data for them

chart.markers().enabled(true).data(result);

// set x axis labels format

chart

.xAxis()

.labels()

.format(function () {

return data.x[Math.round(this.value)];

});

// set x axis scale maximum

chart.xScale().maximum(data.x.length - 1);

// set chart paddings

chart.padding(25, 50, 75, 50);

// set chart title

chart.title('GDP per capita (PPP) in 2006-2020, in USD');

// set container id for the chart

chart.container('container');

// initiate chart drawing

chart.draw();

}

);

});

</script>

</body>

</html>

```

## Customizing the JS Surface Chart

One of the best parts of using any JS charting library is that you need to write a very minimal amount of code to get a working version of the chart implemented. Moreover, most of the libraries provide options to customize the chart to make it more personalized and informative.

Let me show you how to enhance the JS 3D surface chart to make it more intuitive and aesthetically better:

- Improve the look and feel of all the axes:

Modify the basic settings of the axes

Modify the labels of the axes

Modify the stroke of the axes

- Add a color palette

- Enhance the tooltip

**FOR A WALKTHROUGH OF THESE JS SURFACE CHART CUSTOMIZATIONS, [CONTINUE READING HERE](https://www.anychart.com/blog/2021/12/15/surface-chart-javascript/)**. | andreykh |

930,090 | Day 2 of #100DaysOfCode, google-clone flex layout | Introduction it's me again with another report from the second day of 100 days of code... | 0 | 2021-12-18T20:05:30 | https://dev.to/th3realad/day-2-of-100daysofcode-google-clone-flex-layout-od1 | webdev, beginners, css, codenewbie | ##Introduction

It's me again with another report, this time from the second day of the 100 Days of Code challenge. I didn't post yesterday. I know, I know: what's the point of this challenge if you can skip days? To clarify, the rules allow you to skip a day as long as you skip just one day and no more. I had a pretty busy schedule yesterday, which I'll sum up in the next section. I was busy with school and couldn't squeeze in any time for the challenge, mainly because I had French classes to prepare for and had to go to sleep earlier than I'm used to in order to attend them.

##What i did yesterday

######preparing for the nodejs event

In the morning I had to read up a bit in the Node.js docs. As I mentioned in earlier posts, we're studying JavaScript, starting with the server side before moving back to the front end to study a bit of React and get hands-on practice with both.

######the NodeJs event

In the evening the Node.js event started. It was hosted by one of our classmates, a 19-year-old from a city not far from mine who has six years of mostly self-taught JavaScript experience. He gave us newbies an introduction to Node.js: how it works behind the scenes and how it came into existence. I was fascinated by the event loop that Node uses and by the libuv library that's provided along with the V8 engine. I had read up on Node beforehand, but someone with hands-on experience can show you things that would take forever to grasp on the self-taught path. This only motivated me more; as they say, the more you know, the more you realize you don't know.

##Today(Day2 of the challenge)

Today, just like day 1, was all about flexbox. I did feel proud of myself going through the CSS exercises. It's still pretty frustrating when you feel like you've hit a dead end: an hour or two has passed and I'm still not able to complete the first few assignments. I might take a break or go for a walk, clear my mind, come back with a fresh perspective and try again, only to find it's still not quite it. A couple of breaks later, I hit the jackpot: I was able to replicate a Google-like homepage. To be specific, it's the flex layout exercise from the CSS exercises on The Odin Project; you can find the repo [here.](https://github.com/TheOdinProject/css-exercises)

######my progress

I can't really gauge where I stand just yet. I do feel like I'm progressing, but pretty slowly, and having school and a busy schedule makes it a little bit harder. But it gets easier. You just have to do it every day; that's the hard part. I will keep posting daily updates and try to keep the streak going.

######what else am i doing right now

I'm also taking the CS50 course to understand technology a little better. Even though I have been around computers for most of my life, I still feel like there are a lot of levels of abstraction that I have to uncover, and I'm planning on doing that slowly but surely. I don't do much else during my days, simply because school takes most of my time and the rest goes to gathering more information or following the TOP (The Odin Project) curriculum. I'm satisfied with the results of the effort and time I've put in lately.

##Conclusion

I do apologize for the delay, but I will try to be on time on most days of this challenge, and hopefully look back and remember this journey. I wish you good luck whether you are at the beginning of your path, the end, or somewhere in the middle. I've only been here a few days and have already noticed the great support from the community, and I'm excited to grow and give back in my own way. Until the next read, thank you for taking the time to read this article. You can find me or contact me directly [here.](https://twitter.com/AdnaneBouthir) Happy Coding ! | th3realad |

930,136 | Generators and yield in python 🤓 | Generators Generators are functions that can be stopped while running and then resumed.... | 0 | 2021-12-18T20:48:38 | https://dev.to/00000/generators-and-yield-in-python-29do | ## Generators

Generators are functions that can be paused while running and then resumed. These functions return an object that can be iterated over. Unlike lists, generators are lazy: each value is computed only at the moment it is needed. This makes memory usage more efficient when working with large data sets.

## Differences between generators and normal Python functions:

- To get the next value from a generator, we use the built-in `next()` function.

- In normal functions we use `return` to return values, but in a generator we use `yield` (the `yield` statement pauses the function and saves its state so that it can later continue from where it left off).

- Generators are also iterators, meaning that they implement the `__iter__` and `__next__` methods.

## Example of generator

In the following code we have a generator function that takes a list as an argument and yields the running total of its elements:

```

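def myGenerator(l):
    # Yield the running total after adding each element.
    total = 0
    for n in l:
        total += n
        yield total

mygenerator = myGenerator([15, 10, 7])
print(mygenerator)

# Each call to next() resumes the function until the next yield.
print(next(mygenerator))
print(next(mygenerator))
print(next(mygenerator))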

Output:

`<generator object myGenerator at 0x0000026B84B6C150>

15

25

32`

We see that a new value is returned on each call to `next()`. If you call `next()` once more after the generator is exhausted, Python raises a `StopIteration` exception:

`Traceback (most recent call last):

File "generators.py", line 13, in <module>

print(next(mygenerator))

StopIteration`

Do not forget to like!

Bye until the next post👋😘 | 00000 | |

930,144 | What are the first 10 apps you install on a new computer? | I recently bought a new MacBook and don't know where to start. I learned how to code on a used... | 0 | 2021-12-18T21:33:53 | https://dev.to/jasterix/what-are-the-first-10-apps-you-install-on-a-new-computer-2gik | help, productivity, watercooler, webdev | I recently bought a new MacBook and don't know where to start. I learned how to code on a used MacBook Air and installed everything from any list of apps I found online.

But after random keys stopped working, it felt like a good opportunity to put that old thing away and get more comfortable working in a Windows environment.

Instead, I just found myself writing less code because logging onto my Surface felt like work.

But now that I've bought a new MacBook, I don't really know how to kick things off again.

I installed the basics:

1. VS Code

2. Git

3. Bear and RemNote

4. Top Notch

But after that, I'm drawing a blank.

My old laptop was decked out so much it was running out of space. But this new one is precious. It deserves only the finest apps. This is why I'm seeking some inspiration from the Dev community.

### What are your top 10 apps for a fresh MacBook?

If you had to choose only 10 apps, what are your fave apps for coding, productivity, or anything else that brings you joy? Nothing questionable or that's impossible to remove down the line.

Updating the list as I rediscover some old faves:

1. VS Code

2. Git

3. Bear / RemNote / Tab Notes

4. Top Notch

5. Node

6. Postman

7. Homebrew

8. Chrome

9. Discord / Slack

Photo by Ron Lach from Pexels | jasterix |

930,251 | How I Created a Crowdfunding Platform with Web3 & Micro-Frontends | Over the past few months, I was learning about Micro-Frontend & Web3 Technologies. As the best... | 0 | 2021-12-19T06:32:06 | https://dev.to/ruppysuppy/how-i-created-a-crowdfunding-platform-with-web3-micro-frontends-3pb2 | webdev, typescript, webpack, web3 | Over the past few months, I was learning about **Micro-Frontend** & **Web3 Technologies**. Since the best way to learn is to _try things hands-on_, I built a small side project to test my understanding of the topics.

The aim of the project was to create a **Crowdfunding** Platform based on the **Ethereum Blockchain** utilizing **Micro-Frontend Architecture**

You can dive into the source code here

{% github https://github.com/ruppysuppy/Crypto-Crowdfund no-readme %}

And the website here: https://crypto-crowdfund.web.app/

**NOTE:** You need a **[MetaMask](https://metamask.io/) Wallet** to interact with the blockchain

# Smart Contract

You can interact with the **Ethereum Blockchain** using **Smart Contracts**, so let's create one as per our requirement. The **Contracts** used in the project are available [here](https://github.com/ruppysuppy/Crypto-Crowdfund/blob/main/packages/smart-contract/contracts/Campaign.sol)

Making changes to a deployed **Smart Contract** is not possible; you have to _re-deploy_ the contract, which costs **Ethereum coins** as a **Gas Fee**. So it is essential that you [extensively test](https://github.com/ruppysuppy/Crypto-Crowdfund/tree/main/packages/smart-contract/test) the contract before deploying

Since storing data in a **Smart Contract** incurs a **Gas Fee** too, only the essential data, such as the **Campaign Manager**, the **Votes for a Transaction Request**, etc., is stored on the **Blockchain**.

### Potential Improvements

1. Split the **Campaign Factory** and the **Campaign** into separate files containing only the given **Smart Contract**

2. Add the **Manager functionality** as a separate **Smart Contract** and add it to the **Campaign** using inheritance

# Micro-Frontend

With the **Smart Contract** out of the way, let's focus on the **Micro-Frontend**

**Micro-Frontend architecture** is a design approach in which a Frontend app is decomposed into **individual, independent “micro-apps”** working _loosely together_.

> Was **Micro-Frontend Architecture** essential for this project?

The answer to that is a definite **NO**. Then why did I use it? _Just to put what I learned to practice_. **Micro-Frontend Architecture** is useful only when working with _large teams_, _where the role of each team is to work on only a small sub-section of the project_

The **Micro-Frontend** was implemented using **Webpack's Module Federation Plugin**. If you want to learn how to implement **Micro-Frontends** from scratch, you are highly encouraged to check out [this article](https://dev.to/ruppysuppy/micro-frontends-the-next-gen-way-to-build-web-apps-16da)

The **Webpack** config for each of the sub-apps and the container follows the same pattern.

1. Make a `common config` file with the shared **config** for **dev** & **prod** build, for example:

```js

// imports ...

module.exports = {

// all shared config ...

};

```

2. Make a `dev config` and merge it with the `common config`

```js

const { merge } = require('webpack-merge');

// other imports (including the shared `commonConfig`) ...

const devConfig = {
  // all development config ...
};

module.exports = merge(commonConfig, devConfig);

```

3. Finally make a `prod config` and merge it with the `common config`

```js

const { merge } = require('webpack-merge');

// other imports (including the shared `commonConfig`) ...

const prodConfig = {
  // all production config ...
};

module.exports = merge(commonConfig, prodConfig);

```

You can check out all the configuration files [here](https://github.com/ruppysuppy/Crypto-Crowdfund/search?l=JavaScript&q=webpack)
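To make the **Module Federation** part more concrete, here is a rough sketch of what the plugin section of a sub-app's shared config could look like. The names `marketing`, `./MarketingApp` and `./src/bootstrap` are assumptions made up for illustration and may not match the files in the repository.

```javascript
// Sketch only: the remote name, exposed key and file path are hypothetical.
const { ModuleFederationPlugin } = require("webpack").container;

module.exports = {
  plugins: [
    new ModuleFederationPlugin({
      // Name the container uses to refer to this sub-app
      name: "marketing",
      // Manifest file the container downloads at runtime
      filename: "remoteEntry.js",
      // Modules this sub-app makes available to the container
      exposes: {
        "./MarketingApp": "./src/bootstrap",
      },
      // Libraries that should be loaded once and shared between apps
      shared: ["react", "react-dom"],
    }),
  ],
};
```

The container would then declare the sub-app under `remotes`, pointing at the URL where that sub-app's `remoteEntry.js` is served.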

**NOTE:** In the project all the **sub-apps** use **React**, but you can very well use any other _library_ or _framework_ in any of them

## Marketing

Let's start with the simplest **sub-app**. The [Marketing sub-app](https://github.com/ruppysuppy/Crypto-Crowdfund/tree/main/packages/marketing) is only responsible for rendering the _home_, _about_, _faq_, _terms-and-conditions_, _privacy-policy_, and _disclaimer_ pages, and doesn't have much functionality apart from this.

Because **Micro-Frontend sub-apps** should only expose generic functions to avoid _library_ or _framework_ dependency between the **sub-apps** and the **container**, the **Marketing sub-app** exposes a `mount` function which takes simple objects as _params_. It has the following signature:

```ts

type Mount = (
  mountPoint: HTMLElement,
  options: {
    defaultHistory?: History | MemoryHistory;
    initialPath?: string;
    isAuthenticated?: boolean;
    routes: {
      HOME: string;
      ABOUT: string;
      FAQ: string;
      TERMS_AND_CONDITIONS: string;
      PRIVACY_POLICY: string;
      DISCLAIMER: string;
      CAMPAIGNS: string;
      SIGN_IN: string;
    };
    // Called by the sub-app whenever it navigates
    onNavigate?: (location: { pathname: string }) => void;
  },
) => {
  // Called by the container whenever it navigates
  onParentNavigate: (location: { pathname: string }) => void;
};

```

Using the `mount` function, the container can mount the **sub-app** as per requirement. `defaultHistory`, `onNavigate` and `onParentNavigate` are used to keep both the container and the **sub-app** in sync and avoid some nasty bugs.
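The syncing idea can be sketched without any framework. The snippet below is a simplified illustration, not the project's code: `createTinyHistory` is a hypothetical stand-in for the `history` package, and the "rendering" just writes a string into the mount point.

```javascript
// Hypothetical in-memory history, standing in for the `history` package.
function createTinyHistory(initialPath) {
  let pathname = initialPath;
  const listeners = [];
  return {
    get location() {
      return { pathname };
    },
    push(nextPathname) {
      pathname = nextPathname;
      listeners.forEach((listener) => listener({ pathname }));
    },
    listen(listener) {
      listeners.push(listener);
    },
  };
}

// Sketch of a framework-agnostic `mount`: plain objects in, plain callbacks out.
function mount(mountPoint, { initialPath = "/", onNavigate } = {}) {
  const history = createTinyHistory(initialPath);
  const render = ({ pathname }) => {
    mountPoint.textContent = `marketing @ ${pathname}`;
  };
  history.listen(render);
  // Tell the container whenever the sub-app navigates.
  if (onNavigate) history.listen(onNavigate);
  render(history.location);

  return {
    // Called by the container when *it* navigates.
    onParentNavigate({ pathname: nextPathname }) {
      // Only push when the path differs; otherwise the two sides
      // would keep notifying each other in a loop.
      if (history.location.pathname !== nextPathname) {
        history.push(nextPathname);
      }
    },
  };
}

// Usage: a plain object stands in for a DOM node here.
const container = { textContent: "" };
const visited = [];
const { onParentNavigate } = mount(container, {
  onNavigate: ({ pathname }) => visited.push(pathname),
});
onParentNavigate({ pathname: "/about" });
console.log(container.textContent);
```

The path comparison inside `onParentNavigate` is the kind of guard that prevents the "nasty bugs" mentioned above, since each side reacts to the other's navigation events.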

## Auth

Next up is the [Auth sub-app](https://github.com/ruppysuppy/Crypto-Crowdfund/tree/main/packages/auth). It uses **Firebase** authentication to **sign-in** and **sign-up** users and grants them the required permissions.

It works similarly to the **Marketing sub-app**, exposing a `mount` function with a similar set of _params_ and handling the _sign-in_ and _sign-up_ pages

### Potential Improvements

- Use a method to **sign-in** only using the [MetaMask](https://metamask.io/) account, removing the need to **sign-in** in two places to get full access to the application

## Blockchain

Finally, we are at the most difficult to understand **sub-app** of all. The [Blockchain sub-app](https://github.com/ruppysuppy/Crypto-Crowdfund/tree/main/packages/blockchain) is the meat of the project, enabling users to interact with the **backend** & the **blockchain** (to interact with the **blockchain**, you need the [MetaMask](https://metamask.io/) extension in your browser).

It handles the _account_, _campaign_, _campaigns_ and _create-campaign_ pages.

As mentioned previously, _only the essential data is stored on the contracts_; the rest is saved on **Cloud Firestore**. The campaign data is first fetched from the contract addresses residing on the **Blockchain**, and then the data from **Firestore** is merged in to generate the complete data for a given **Campaign**. Only the creator of the **Campaign** can modify the data on **Firestore** or create a **Transaction Request** to spend the available funds, which the Contributors can approve.
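As a rough illustration of that merge step, the sketch below combines on-chain records with off-chain documents keyed by contract address. All field names and values here are hypothetical and are not taken from the project's schema.

```javascript
// Hypothetical shapes: field names are illustrative, not the project's schema.
const onChainCampaigns = [
  { address: "0xabc", manager: "0x111", balanceWei: "5000" },
  { address: "0xdef", manager: "0x222", balanceWei: "0" },
];
const firestoreDocs = {
  "0xabc": { title: "Solar school", description: "Panels for a village school" },
};

// Merge each on-chain record with its off-chain document, keyed by address.
function mergeCampaignData(chainRecords, offChainDocs) {
  return chainRecords.map((record) => ({
    ...(offChainDocs[record.address] || {}),
    ...record, // on-chain values win if a field exists in both places
  }));
}

const campaigns = mergeCampaignData(onChainCampaigns, firestoreDocs);
console.log(campaigns);
```

Letting the on-chain values take precedence is a design assumption in this sketch: the blockchain is treated as the source of truth, and the off-chain document only adds descriptive fields.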

### Potential Improvements

- Using _image uploads_ in place of _add url to image_ for both the cover image and the user profile picture (skipped it as the main focus was on integrating **web3** & **micro-frontend**)

## Container

The [Container](https://github.com/ruppysuppy/Crypto-Crowdfund/tree/main/packages/container) is responsible for _condensing all the **sub-apps** into a single application_ and _controlling what is displayed on the screen_.

### Potential Improvements

- Improved **UI/UX**

# Wrapping Up

It's finally over...

This article presented a _brief overview_ of the project. You can always dive into the **source code** and _check out the project line by line_

{% github https://github.com/ruppysuppy/Crypto-Crowdfund no-readme %}

**Happy Developing!**

Finding **personal finance** too intimidating? Check out my **Instagram** to become a [**Dollar Ninja**](https://www.instagram.com/the.dollar.ninja/)

# Thanks for reading

Need a **Top Rated Front-End Development Freelancer** to chop away your development woes? Contact me on [Upwork](https://www.upwork.com/o/profiles/users/~01c12e516ee1d35044/)

Want to see what I am working on? Check out my [Personal Website](https://tapajyoti-bose.vercel.app) and [GitHub](https://github.com/ruppysuppy)

Want to connect? Reach out to me on [LinkedIn](https://www.linkedin.com/in/tapajyoti-bose/)

I am a freelancer who will start off as a **Digital Nomad** in mid-2022. Want to catch the journey? Follow me on [Instagram](https://www.instagram.com/tapajyotib/)

Follow my blogs for **Weekly new Tidbits** on [Dev](https://dev.to/ruppysuppy)

**FAQ**

These are a few commonly asked questions I get. So, I hope this **FAQ** section solves your issues.

1. **I am a beginner, how should I learn Front-End Web Dev?**

Look into the following articles:

1. [Front End Development Roadmap](https://dev.to/ruppysuppy/front-end-developer-roadmap-zero-to-hero-4pkf)

2. [Front End Project Ideas](https://dev.to/ruppysuppy/5-projects-to-master-front-end-development-57p)

2. **Would you mentor me?**

Sorry, I am already under a lot of workload and would not have the time to mentor anyone.

3. **Would you like to collaborate on our site?**

As mentioned in the _previous question_, I am in a time crunch, so I would have to pass on such opportunities. | ruppysuppy |

932,500 | MailerLite Review - E-Mail-Marketing und Automatisierung | Wenn du als kleines Unternehmen eine E-Mail-Marketingkampagne starten willst, musst du gut über die... | 0 | 2021-12-21T19:18:43 | https://bloggerpilot.com/mailerlite/ | onlinemarketing, newsletter | ---

title: MailerLite Review - E-Mail-Marketing und Automatisierung

published: true

date: 2021-12-21 17:50:49 UTC

tags: OnlineMarketing,newsletter

canonical_url: https://bloggerpilot.com/mailerlite/

---

If you are a small business looking to launch an email marketing campaign, you need to be well informed about the available options. There are many email service providers, and while many of them are good, plenty of them are less than ideal. One option is MailerLite, a company that offers a range of email services and products, including a free email marketing tool called Mailerlite.

The original article appeared on [MailerLite Review - E-Mail-Marketing und Automatisierung](https://bloggerpilot.com/mailerlite/).

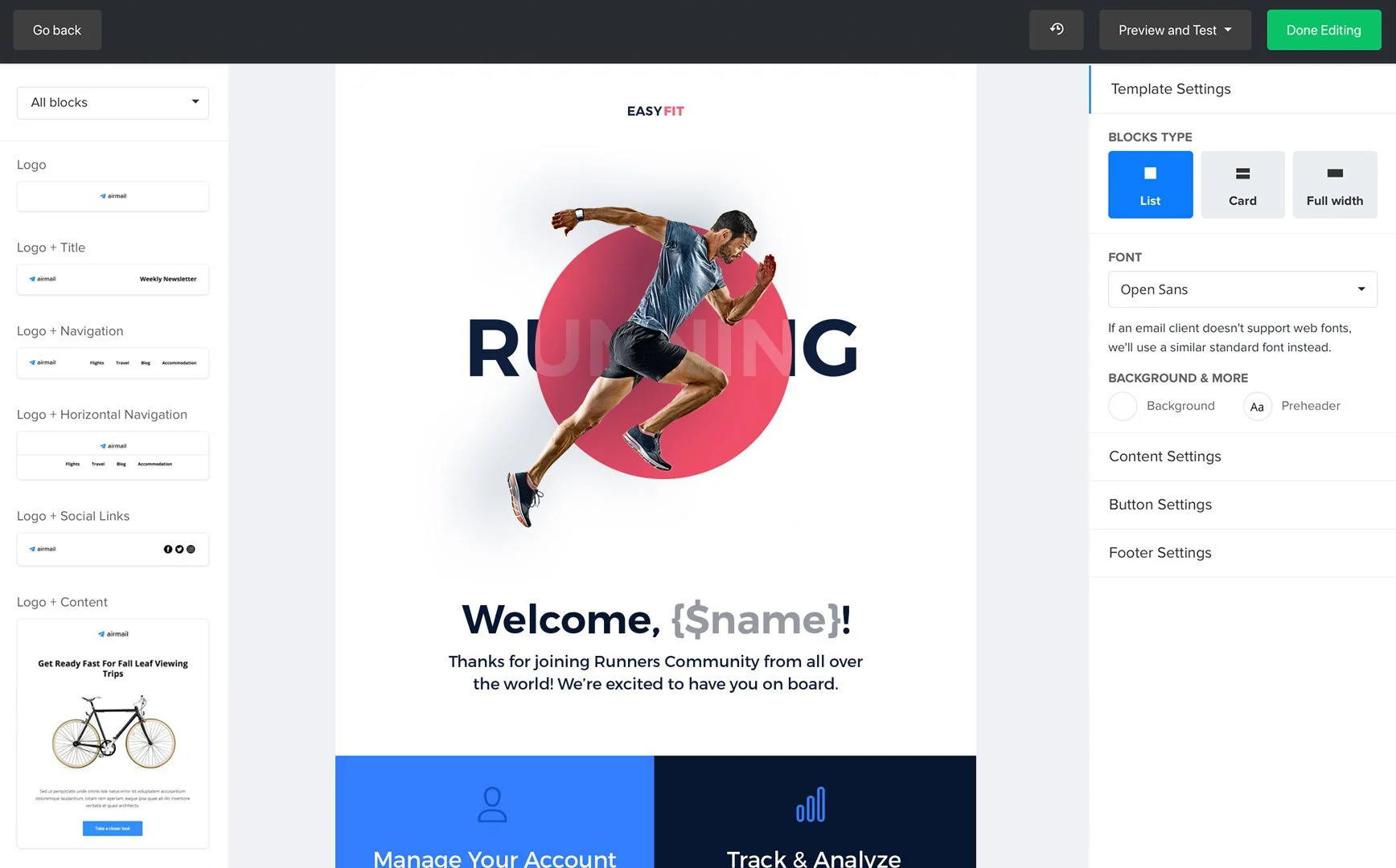

MailerLite is a production tool for sending newsletters, reports, and other business documents by email. With it you can send the documents you create using different templates, email addresses, and subject lines. In this post we introduce some of the different pricing options. MailerLite is a free, lightweight, self-hosted email program written in Java. It is designed so that you do not have to install or use a mail server. It can be used on the web or on the desktop. It has a simple user interface without extra features.

MailerLite is a new way of sending email that we have been working on for the past 7 months. The goal is to make sending an email as easy as sending a picture. At the moment, MailerLite uses the same email account you already use. In the future we plan to support sending from Google Mail, Outlook.com, iCloud, and more. The following text explains the process and the settings needed to get MailerLite up and running.

| j0e |

932,977 | React core concepts | JSX JSX is a JavaScript syntax extension that provides a way to structure component rendering using... | 0 | 2021-12-22T07:03:39 | https://dev.to/saddaul_siam/react-core-concepts-5b8d | javascript, react, reactcoreconcepts | **JSX**

JSX is a JavaScript syntax extension that provides a way to structure component rendering using syntax familiar to many developers. It is similar in appearance to HTML.
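Under the hood, JSX is compiled into ordinary function calls. The sketch below uses a toy `createElement` function as a stand-in for `React.createElement` (it is not React's real implementation) to show roughly what a JSX tag turns into.

```javascript
// Toy stand-in for React.createElement (hypothetical, for illustration only).
function createElement(type, props, ...children) {
  return { type, props: { ...(props || {}), children } };
}

// The JSX  <h1 className="title">Hello, {name}</h1>
// is compiled to roughly this call:
const name = "Ada";
const element = createElement("h1", { className: "title" }, "Hello, ", name);

console.log(JSON.stringify(element));
```

The result is just a plain object describing what should be rendered, which is why JSX can be stored in variables, passed to functions, and returned from components.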

**PropTypes**

PropTypes is React's mechanism for adding runtime type checking to components (since React 15.5 it ships as the separate `prop-types` package). React components use a special property named `propTypes` to set up type checking. When props are passed to a React component, they are checked against the type definitions configured in the `propTypes` property.

**State and props**

In a React component, props are variables passed to it by its parent component. State, on the other hand, is also a variable, but it is initialized and managed by the component itself. State can be initialized from props, and in a class component any method can read the props through `this.props`.

**Component Lifecycle**

React components have a lifecycle with three phases: Mounting (`componentDidMount`) -> Updating (`componentDidUpdate`) -> Unmounting (`componentWillUnmount`).

**How React Hook works, send state via props, props vs state**

`useState` and `useEffect` are examples of Hooks in React.

A component that uses state is called a stateful component; a component that doesn’t hold any state is called a stateless (or presentational) component.

Difference between state and props (important for interviews): the main difference is that props are read-only, while state can be changed.

**Custom hooks**

Custom Hooks let you extract component logic into reusable functions.

**Context API**

Normally, when we pass data from one component down through several layers of components via props, it is called prop drilling. When we have to send data down through 4 or 5 components, we can use the Context API instead. With the Context API we don't have to pass props through every level; we can read the data wherever we need it.

**Virtual DOM and diffing- algorithm**

When we build a React app, React creates a virtual DOM internally by combining all the components and renders it to the browser DOM, which the browser then displays. If the user does something in the UI that requires an update, React builds a new virtual DOM, compares it with the old one using its diffing algorithm, and applies only the changed parts to the browser DOM. This is significantly faster than re-rendering everything. | saddaul_siam |

934,938 | Build an Offline-First React Native Mobile App with Expo and Realm | In this post we'll build, step by step, a simple React Native Mobile App for iOS and Android using Expo and Realm. The App will use Realm Sync to store data in a MongoDB Atlas Database, will Sync automatically between devices and will work offline. | 0 | 2021-12-23T16:00:21 | https://www.mongodb.com/developer/how-to/build-offline-first-react-native-mobile-app-with-expo-and-realm/ | expo, reactnative, realm, mobile | ---

title: Build an Offline-First React Native Mobile App with Expo and Realm

published: true

description: In this post we'll build, step by step, a simple React Native Mobile App for iOS and Android using Expo and Realm. The App will use Realm Sync to store data in a MongoDB Atlas Database, will Sync automatically between devices and will work offline.

tags: expo, reactnative, realm, mobile

canonical_url: https://www.mongodb.com/developer/how-to/build-offline-first-react-native-mobile-app-with-expo-and-realm/

cover_image: https://mongodb-devhub-cms.s3.us-west-1.amazonaws.com/Twitter_Realm_expo_aef7185261.png

---

## Introduction

Building Mobile Apps that work offline and sync between different devices is not an easy task. You have to write code to detect when you’re offline, save data locally, detect when you’re back online, compare your local copy of data with that in the server, send and receive data, parse JSON, etc.

It’s a time consuming process that’s needed, but that appears over and over in every single mobile app. You end up solving the same problem for each new project you write. And it’s worse if you want to run your app in iOS and Android. This means redoing everything twice, with two completely different code bases, different threading libraries, frameworks, databases, etc.

To help with offline data management and syncing between different devices, running different OSes, we can use MongoDB’s [Realm](https://realm.io/). To create a single code base that works well in both platforms we can use React Native. And the simplest way to create React Native Apps is using [Expo](https://expo.io/).

### React Native Apps

The [React Native Project](https://reactnative.dev/) allows you to create iOS and Android apps using [React](https://reactnative.dev/docs/intro-react), _“a best-in-class JavaScript library for building user interfaces”_. So if you’re an experienced Web developer who already knows React, using React Native will be the natural next step to create native Mobile Apps.

But even if you’re a native mobile developer with some experience using SwiftUI in iOS or Compose in Android, you’ll find lots of similarities here.

### Expo and React Native

Expo is a set of tools built around React Native. Using Expo you can create React Native Apps quickly and easily. For that, we need to install Expo using Node.js package manager [`npm`](https://docs.npmjs.com/cli/v8/configuring-npm/install):

```

npm install --global expo-cli

```

This will install `expo-cli` globally so we can call it from anywhere in our system. In case we need to update Expo we’ll use that very same command. __For this tutorial we’ll need the latest version of Expo, that’s been updated to support Realm__. You can find all the new features and changes in the [Expo SDK 44 announcement blog post](https://blog.expo.dev/expo-sdk-44-beta-is-now-available-75d9751b0a18).

To ensure you have the latest Expo version run:

```

expo --version

```

This should return at least `5.0.1`. If not, run `npm install --global expo-cli` again.

## Prerequisites

Now that we have the latest Expo installed, let’s check out that we have everything we need to develop our application:

* [Xcode 13](https://apps.apple.com/us/app/xcode/id497799835), including Command Line Tools, if we want to develop an iOS version. We’ll also need a macOS computer running at least macOS 11/Big Sur in order to run Xcode.

* [Android Studio](https://developer.android.com/studio), to develop for Android and at least one Android Emulator ready to test our apps.

* Any code editor. I’ll be using [Visual Studio Code](https://code.visualstudio.com/) as it has plugins to help with React Native Development, but you can use any other editor.

* Make sure you have the latest version of yarn by running `npm install -g yarn`

* Make sure you are NOT on the latest version of node, however, or you will see errors about unsupported digital envelope routines. You need the LTS version instead. Get the latest LTS version number from https://nodejs.org/ and then run:

```

nvm install 16.13.1 # swap for latest LTS version

```

If you don’t have Xcode or Android Studio, and need to build without installing anything locally you can also try [Expo Application Services](https://expo.dev/eas), a cloud-based building service that allows you to build your Expo Apps remotely.

### MongoDB Atlas and Realm App

Our App will store data in a cloud-backed MongoDB Atlas cluster. So we need to [create a free MongoDB account](https://cloud.mongodb.com/) and [set up a cluster](https://docs.atlas.mongodb.com/tutorial/create-new-cluster/). For this tutorial, a Free-forever, M0 cluster will be enough.

Once we have our cluster created we can go ahead and create a Realm App. The Realm App will sync our data from mobile into a MongoDB Atlas database, although it has many other uses: manages authentication, can run serverless functions, host static sites, etc. [Just follow this quick tutorial](https://docs.mongodb.com/realm/manage-apps/create/create-with-realm-ui/#std-label-create-a-realm-app) (select the React Native template) but don’t download any code, as we’re going to use Expo to create our app from scratch. That will configure our Realm App correctly to use Sync and set it into Development Mode.

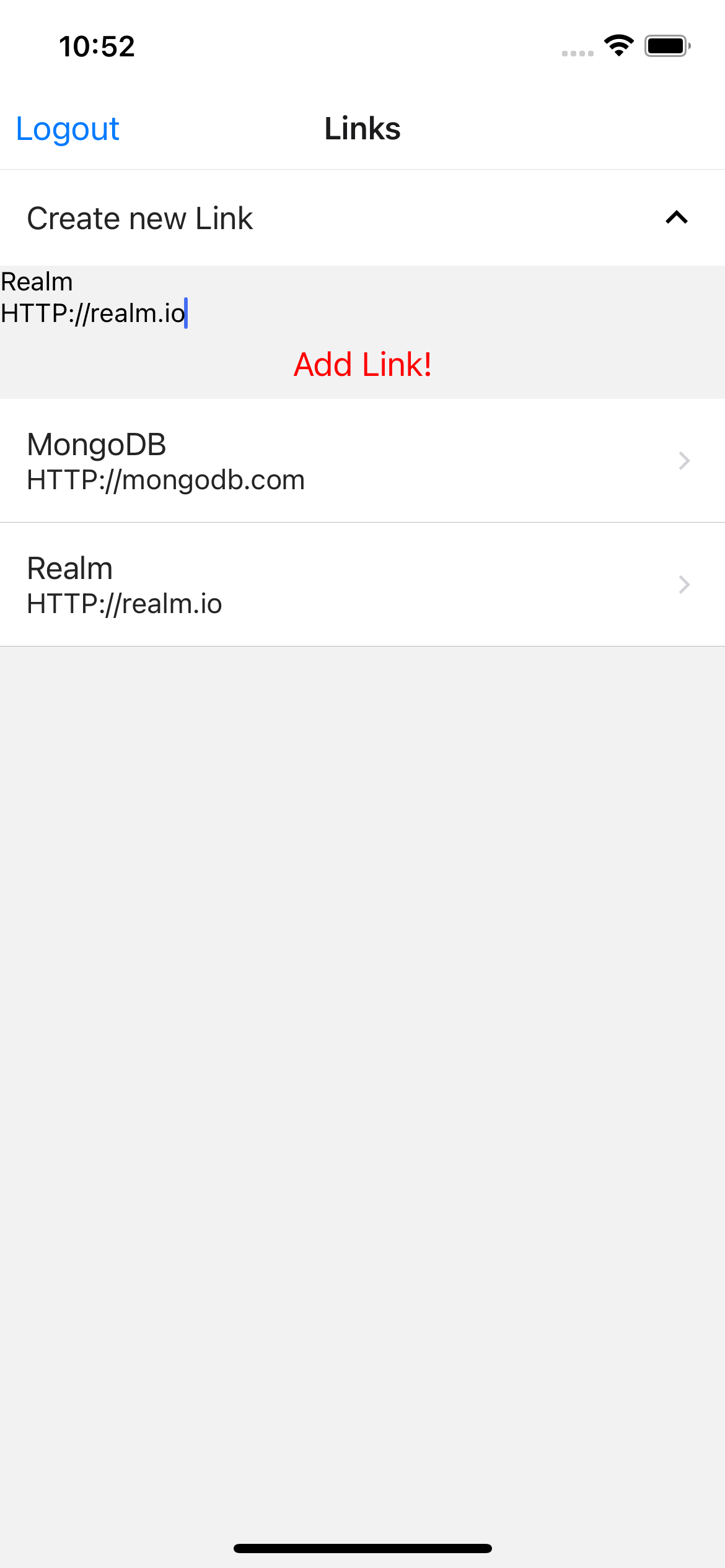

## Read It Later - Maybe

Now we can go ahead and create our app, a small “read it later” kind of app to store web links we save for later reading. As sometimes we never get back to those links I’ll call it Read It Later - _Maybe_.

You can always [clone the repo](https://github.com/mongodb-developer/read-it-later-maybe) and follow along.

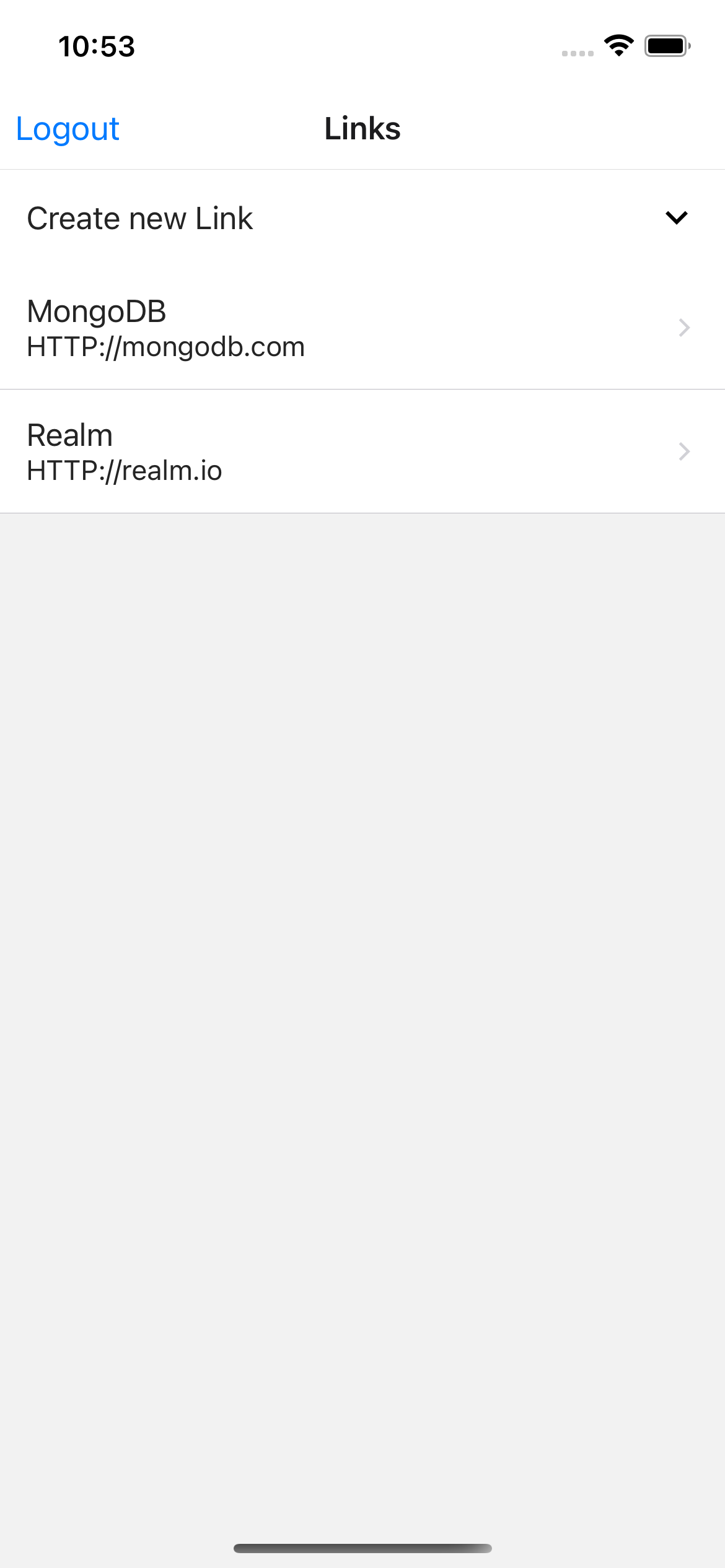

_Screenshots: Login, Adding a Link, All Links, Deleting a Link._

### Install Expo and create the App

We’ll use Expo to create our app using `expo init read-later-maybe`. This will ask us which template we want to use for our app. Using up and down cursors we can select the desired template, in this case, from the Managed Workflows we will choose the `blank` one, that uses JavaScript. This will create a `read-later-maybe` directory for us containing all the files we need to get started.

To start our app, just enter that directory and start the React Native Metro Server using `yarn start`. This will tell Expo to install any dependencies and start the Metro Server.

```bash

cd read-later-maybe

yarn start

```

This will open our default browser, with the Expo Developer Tools at [http://localhost:19002/](http://localhost:19002/). If your browser doesn't automatically open, press `d` to open Developer Tools in the browser. From this web page we can:

* Start our app in the iOS Simulator

* Start our app in the Android Emulator

* Run it in a Web browser (if our app is designed to do that)

* Change the connection method to the Developer Tools Server

* Get a link to our app. (More on this later when we talk about Expo Go)

We can also do the same using the developer menu that’s opened in the console, so it’s up to you to use the browser and your mouse or your Terminal and the keyboard.

## Running our iOS App

To start the iOS App in the Simulator, we can either click “Start our app in the iOS Simulator” on Expo Developer Tools or type `i` in the console, as starting expo leaves us with the same interface we have in the browser, replicated in the console. We can also directly run the iOS app in Simulator by typing `yarn ios` if we don’t want to open the development server.

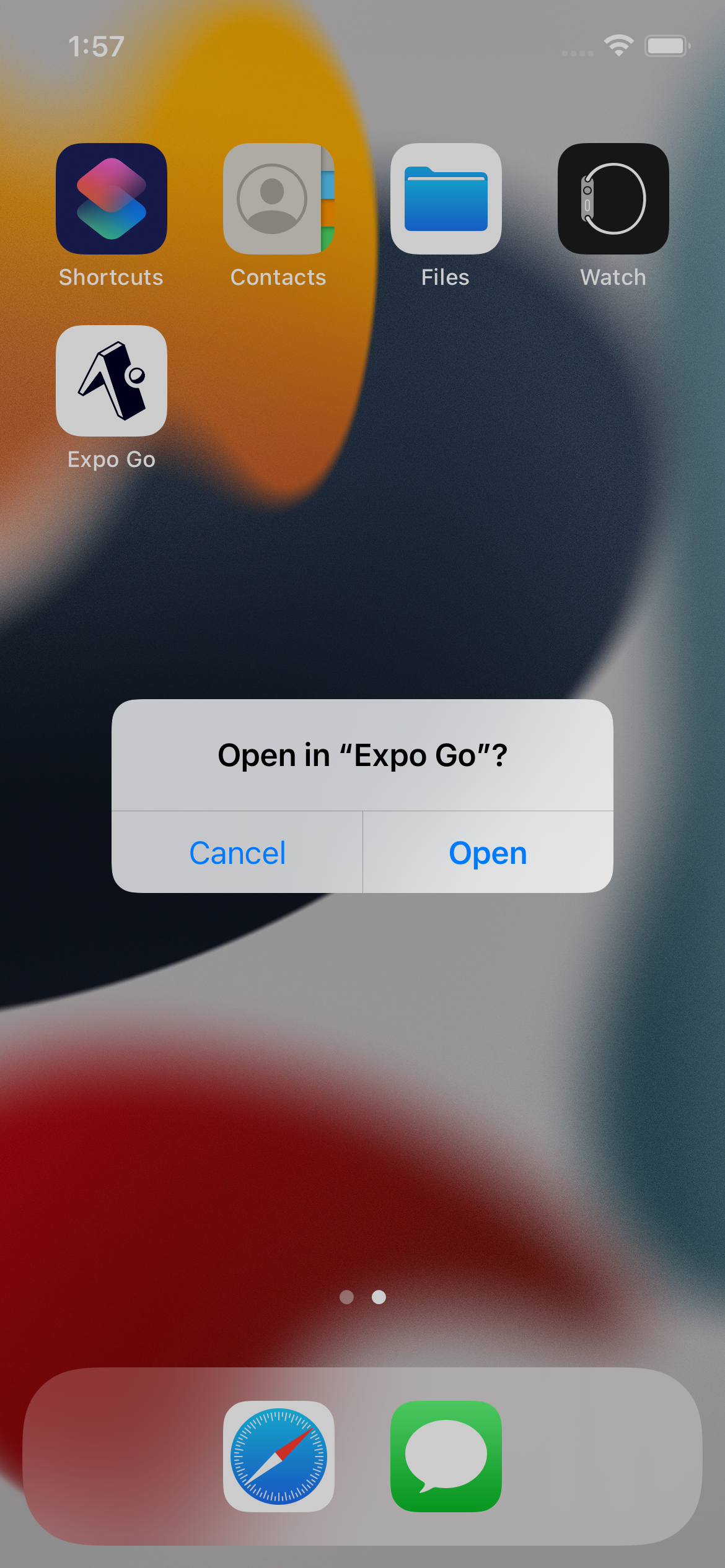

### Expo Go

The first time we run our app Expo will install Expo Go. This is a native application (both for iOS and Android) that will take our JavaScript and other resources bundled by Metro and run it in our devices (real or simulated/emulated). Once run in Expo Go, we can make changes to our JavaScript code and Expo will take care of updating our app on the fly, no reload needed.

_Screenshots: Open Expo Go, 1st time Expo Go greeting, Debug menu._

Expo Go apps have a nice debugging menu that can be opened pressing “m” in the Expo Developer console.

### Structure of our App

Now our app is working, but it only shows a simple message: “Open up App.js to start working on your app!”. So we’ll open the app using our code editor. These are the main files and folders we have so far:

```

.
├── .expo-shared
│   └── assets.json
├── assets
│   ├── adaptive-icon.png
│   ├── favicon.png
│   ├── icon.png
│   └── splash.png
├── .gitignore
├── App.js
├── app.json
├── babel.config.js
├── package.json
└── yarn.lock

```

The main three files here are:

* `package.json`, where we can check / add / delete our app’s dependencies

* `app.json`: configuration file for our app

* `App.js`: the starting point for our JavaScript code

These changes can be found in tag `step-0` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe).

## Let’s add some navigation

Our App will have a Login / Register Screen and then will show the list of Links for that particular User. We’ll navigate from the Login Screen to the list of Links and when we decide to Log Out our app we’ll navigate back to the Login / Register Screen. So first we need to add the React Native Navigation Libraries, and the gesture handler (for swipe & touch detection, etc). Enter the following commands in the Terminal:

```bash

expo install @react-navigation/native

expo install @react-navigation/stack

expo install react-native-gesture-handler

expo install react-native-safe-area-context

expo install react-native-elements

```

These changes can be found in tag `step-1` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe).

Now, we’ll create a mostly empty LoginView in `views/LoginView.js` (the `views` directory does not exist yet, we need to create it first) containing:

```javascript

import React from "react";
import { View, Text, TextInput, Button, Alert } from "react-native";

export function LoginView({ navigation }) {
  return (
    <View>
      <Text>Sign Up or Sign In:</Text>
      <View>
        <TextInput
          placeholder="email"
          autoCapitalize="none"
        />
      </View>
      <View>
        <TextInput
          placeholder="password"
          secureTextEntry
        />
      </View>
      <Button title="Sign In" />
      <Button title="Sign Up" />
    </View>
  );
}

```

This is just the placeholder for our Login screen. We open it from App.js. Change the `App` function to:

```javascript

export default function App() {
  return (
    <NavigationContainer>
      <Stack.Navigator>
        <Stack.Screen
          name="Login View"
          component={LoginView}
          options={{ title: "Read it Later - Maybe" }}
        />
      </Stack.Navigator>
    </NavigationContainer>
  );
}

```

And add required `imports` to the top of the file, below the existing `import` lines.

```javascript

import { NavigationContainer } from "@react-navigation/native";

import { createStackNavigator } from "@react-navigation/stack";

import { LoginView } from './views/LoginView';

const Stack = createStackNavigator();

```

All these changes can be found in tag `step-2` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-1...step-2).

## Adding the Realm Library

### Installing Realm

To add our [Realm library](https://docs.mongodb.com/realm/sdk/react-native/) to the project we’ll type in the Terminal:

```bash

expo install realm

```

This will add Realm as a dependency in our React Native Project. Now we can also create a file that will hold the Realm initialization code, we’ll call it `RealmApp.js` and place it in the root of the directory, alongside `App.js`.

```javascript

import Realm from "realm";

const app = new Realm.App({id: "your-realm-app-id-here"});

export default app;

```

We need to add a Realm App ID to our code. [Here](https://docs.mongodb.com/realm/get-started/find-your-project-or-app-id/#find-a-realm-application-id) are instructions on how to do so. In short, a Mobile Realm-powered App will use a local database to save changes and will connect to a MongoDB Atlas Database using a Realm App that we create in the cloud. We have Realm as a library in our Mobile App, doing all the heavy lifting (sync, offline, etc.) for our React Native app, and a _Realm App in the cloud_ that connects to MongoDB Atlas, acting as our backend. This way, if we go offline we’ll be using our local database on device and when online, all changes will propagate in both directions.
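To picture what "offline-first" means, here is a deliberately tiny sketch of the idea in plain JavaScript. It is not how Realm is implemented and it does not use the Realm API: `OfflineFirstStore` and `sendToServer` are hypothetical names, and the sketch only covers the upload direction, while Realm Sync also pulls remote changes and resolves conflicts.

```javascript
// Hypothetical sketch: a local store that queues writes while offline.
class OfflineFirstStore {
  constructor(sendToServer) {
    this.sendToServer = sendToServer; // function that uploads one change
    this.local = new Map(); // local copy, always readable
    this.pending = []; // changes not yet uploaded
    this.online = false;
  }

  write(id, value) {
    this.local.set(id, value); // save locally first
    this.pending.push({ id, value });
    if (this.online) this.flush();
  }

  setOnline(online) {
    this.online = online;
    if (online) this.flush();
  }

  flush() {
    // Upload queued changes in the order they were made.
    while (this.pending.length > 0) {
      this.sendToServer(this.pending.shift());
    }
  }
}

const uploaded = [];
const store = new OfflineFirstStore((change) => uploaded.push(change));
store.write("link-1", { url: "https://realm.io/" }); // offline: queued only
store.setOnline(true); // back online: queue is flushed
console.log(uploaded.length);
```

With Realm we get this behaviour (plus the download direction and conflict handling) from the library, so our app code only reads and writes local objects.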

All these changes can be found in tag `step-3` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-2...step-3).

>

> __Update 24 January 2022__

>

> A simpler way to create a React Native App that uses Expo & Realm is just to create it using a template.

> For JavaScript based apps:

> `npx expo-cli init ReactRealmJsTemplateApp -t @realm/expo-template-js`

>

> For TypeScript based apps:

> `npx create-react-native-app ReactRealmTsTemplateApp -t with-realm`

>

## Auth Provider

All Realm related code to register a new user, log in and log out is inside a Provider. This way we can provide all descendants of this Provider with a context that will hold a logged in user. All this code is in `providers/AuthProvider.js`. You’ll need to create the `providers` folder and then add `AuthProvider.js` to it.

Realm not only stores data offline, syncs across multiple devices and stores all your data in a MongoDB Atlas Database, but can also run Serverless Functions, host static html sites or [authenticate using multiple providers](https://docs.mongodb.com/realm/authentication/providers/). In this case we’ll use the simpler email/password authentication.

We create the context with:

```javascript

const AuthContext = React.createContext(null);

```

The SignIn code is asynchronous:

```javascript

const signIn = async (email, password) => {
  const creds = Realm.Credentials.emailPassword(email, password);
  const newUser = await app.logIn(creds);
  setUser(newUser);
};

```

As is the code to register a new user:

```javascript

const signUp = async (email, password) => {
  await app.emailPasswordAuth.registerUser({ email, password });
};

```

To log out, we first check that we’re actually logged in and, if so, call `logOut`:

```javascript

const signOut = () => {
  if (user == null) {
    console.warn("Not logged in, can't log out!");
    return;
  }
  user.logOut();
  setUser(null);
};

```

All these changes can be found in tag `step-4` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-3...step-4).

### Login / Register code

Take a moment to have a look at the styles we have for the app in the `stylesheet.js` file, then modify the styles to your heart’s content.

Now, for Login and Sign Up we’ll add a couple of state variables to our `LoginView` in `views/LoginView.js`. We’ll use these to read both email and password from our interface.

Place the following code inside `export function LoginView({ navigation }) {` (and import `useState` from `react` at the top of the file):

```javascript

const [email, setEmail] = useState("");

const [password, setPassword] = useState("");

```

Then, we’ll add the UI code for Login and Sign up. Here we use `signIn` and `signUp` from our `AuthProvider`.

```javascript

const onPressSignIn = async () => {
  console.log("Trying sign in with user: " + email);
  try {
    await signIn(email, password);
  } catch (error) {
    const errorMessage = `Failed to sign in: ${error.message}`;
    console.error(errorMessage);
    Alert.alert(errorMessage);
  }
};

const onPressSignUp = async () => {
  console.log("Trying signup with user: " + email);
  try {
    await signUp(email, password);
    // Await the sign-in as well, so a failure here is caught below
    await signIn(email, password);
  } catch (error) {
    const errorMessage = `Failed to sign up: ${error.message}`;
    console.error(errorMessage);
    Alert.alert(errorMessage);
  }
};

```

All changes can be found in [`step-5`](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-4...step-5).

## Prebuilding our Expo App

On save we’ll find this error:

```

Error: Missing Realm constructor. Did you run "pod install"? Please see https://realm.io/docs/react-native/latest/#missing-realm-constructor for troubleshooting

```

Right now, Realm is not compatible with [Expo Managed Workflows](https://docs.expo.dev/introduction/managed-vs-bare/#managed-workflow). In a managed Workflow Expo hides all iOS and Android native details from the JavaScript/React developer so they can concentrate on writing React code. Here, we need to [prebuild](https://github.com/expo/fyi/blob/main/prebuilding.md) our App, which will mean that we lose the nice Expo Go App that allows us to load our app using a QR code.

The Expo Team is working hard on improving the compatibility with Realm, as is our React Native SDK team, who are currently working on improving the compatibility with Expo, supporting the Hermes JavaScript Engine and expo-dev-client. Watch this space for all these exciting announcements!

So to run our app in iOS we’ll do:

```

expo run:ios

```

We need to provide a Bundle Identifier for our iOS app. In this case we’ll use `com.realm.read-later-maybe`.

This will install all needed JavaScript libraries using `yarn`, then install all native libraries using CocoaPods, and finally will compile and run our app. To run on Android we’ll do:

```

expo run:android

```

## Navigation completed

Now we can register and log in to our app. Our `App.js` file now looks like this:

```javascript

export default function App() {

return (

<AuthProvider>

<NavigationContainer>

<Stack.Navigator>

<Stack.Screen

name="Welcome View"

component={LoginView}

options={{ title: "Read it Later - Maybe" }}

/>

</Stack.Navigator>

</NavigationContainer>

</AuthProvider>

);

}

```

We have an `AuthProvider` that provides the logged-in user to all its descendants. Inside it is a Navigation Container with a single screen, the Login View. But we need two screens: our “Login View”, with the UI to log in or register, and a “Links Screen”, which will show all our links.

So let’s create our LinksView screen:

```javascript

import React, { useState, useEffect } from "react";

import { Text } from "react-native";

export function LinksView() {

return (

<Text>Links go here</Text>

);

}

```

Right now it only shows a simple message, “Links go here”, as you can check in [`step-6`](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-5...step-6).

## Log out

We can register and log in, but we also need to log out of our app. To do so, we’ll add a Nav Bar item to our Links Screen, so instead of having “Back” we’ll have a logout button that closes our Realm, calls logout and pops out our Screen from the navigation, so we go back to the Welcome Screen.

In our `LinksView` screen we’ll add:

```javascript

React.useLayoutEffect(() => {

navigation.setOptions({

headerBackTitle: "Log out",

headerLeft: () => <Logout closeRealm={closeRealm} />

});

}, [navigation]);

```

Here we use a `components/Logout` component that has a button. This button will call `signOut` from our `AuthProvider`. You’ll need to add the `components` folder.

```javascript

return (

<Button

title="Log Out"

onPress={() => {

Alert.alert("Log Out", null, [

{

text: "Yes, Log Out",

style: "destructive",

onPress: () => {

navigation.popToTop();

closeRealm();

signOut();

},

},

{ text: "Cancel", style: "cancel" },

]);

}}

/>

);

```

Nice! Now we have Login, Logout and Register! You can follow along in [`step-7`](https://github.com/mongodb-developer/read-it-later-maybe/compare/step-6..step-7).

## Links

### CRUD

We want to store Links to read later, so we’ll start by defining what our Link class will look like. We’ll store a name and a URL for each link. We also need an `id` and a `partition` field, so that we avoid pulling all Links for all users and instead sync only the Links for the logged-in user. These changes are in `schemas.js`.

```javascript

class Link {

constructor({

name,

url,

partition,

id = new ObjectId(),

}) {

this._partition = partition;

this._id = id;

this.name = name;

this.url = url;

}

static schema = {

name: 'Link',

properties: {

_id: 'objectId',

_partition: 'string',

name: 'string',

url: 'string',

},

primaryKey: '_id',

};

}

```

You can get these changes in `step-8` of the [repo](https://github.com/mongodb-developer/read-it-later-maybe).
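To make the role of the `_partition` field more concrete, here is a small in-memory sketch. It only illustrates the idea of scoping Links to one user by partition value and is not how Realm Sync is implemented. The sample data and the `linksForPartition` helper are hypothetical.

```javascript
// In-memory stand-in for Link documents that belong to different users.
const allLinks = [
  { _id: 1, _partition: "user-1", name: "Realm docs", url: "https://docs.mongodb.com/realm/" },
  { _id: 2, _partition: "user-2", name: "Expo docs", url: "https://docs.expo.dev/" },
  { _id: 3, _partition: "user-1", name: "React Native", url: "https://reactnative.dev/" },
];

// Hypothetical helper: keep only the Links whose partition matches the given value.
function linksForPartition(links, partitionValue) {
  return links.filter((link) => link._partition === partitionValue);
}

const mine = linksForPartition(allLinks, "user-1");
console.log(mine.map((link) => link.name)); // [ 'Realm docs', 'React Native' ]
```

Using the user id as the partition value, as the tutorial does, means each device only ever deals with the Links of the user who is logged in.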

And now, we need to code all the CRUD methods. For that, we’ll go ahead and create a `LinksProvider` that will fetch Links and delete them. But first, we need to open a Realm to read the Links for this particular user:

```javascript

Realm.open(config).then((realm) => {

realmRef.current = realm;

const syncLinks = realm.objects("Link");

let sortedLinks = syncLinks.sorted("name");

setLinks([...sortedLinks]);

// we observe changes on the Links, in case Sync informs us of changes

// started in other devices (or the cloud)

sortedLinks.addListener(() => {

console.log("Got new data!");

setLinks([...sortedLinks]);

});

});

```
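The Logout button shown earlier receives a `closeRealm` function that is not listed in this section. A minimal sketch of what such a function could look like follows, assuming the Realm instance is kept in a ref as above. `realm.close()` is the SDK call; passing the ref and the state setter in as parameters is a hypothetical choice made here so the sketch can run on its own with stand-in objects.

```javascript
// Hypothetical helper: close the Realm held in a ref and clear the links state.
function closeRealm(realmRef, setLinks) {
  const realm = realmRef.current;
  if (realm) {
    realm.close(); // release the Realm instance
    realmRef.current = null; // make sure nobody reuses the closed instance
    setLinks([]); // drop any Links still held in component state
  }
}

// Usage with stand-ins for the ref, the Realm instance and the state setter.
let links = ["a link"];
let closed = false;
const fakeRealmRef = { current: { close: () => { closed = true; } } };

closeRealm(fakeRealmRef, (next) => { links = next; });
console.log(closed, links.length, fakeRealmRef.current); // true 0 null
```

Calling the helper a second time is harmless, because the ref is already `null` and the body is skipped.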

To add a new Link we’ll use this function, which calls [`realm.write`](https://docs.mongodb.com/realm-sdks/js/latest/Realm.html#write) to add a new Link. The write will also be [observed by the above listener](https://docs.mongodb.com/realm/sdk/react-native/examples/use-change-listeners-in-components/), triggering a UI refresh.

```javascript

const createLink = (newLinkName, newLinkURL) => {

const realm = realmRef.current;

realm.write(() => {

// Create a new link in the same partition -- that is, using the same user id.

realm.create(

"Link",

new Link({

name: newLinkName || "New Link",

url: newLinkURL || "http://",

partition: user.id,

})

);

});

};

```

Finally, to delete Links we’ll use [`realm.delete`](https://docs.mongodb.com/realm-sdks/js/latest/Realm.html#delete).

```javascript

const deleteLink = (link) => {

const realm = realmRef.current;

realm.write(() => {

realm.delete(link);

// after deleting, we get the Links again and update them

setLinks([...realm.objects("Link").sorted("name")]);

});

};

```
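Both the listener and `deleteLink` pass `[...]` copies to `setLinks` rather than the query result itself. A plausible reason is that Realm query results are live collections, while React decides whether state changed by comparing references. The plain-JavaScript sketch below shows the difference between a live collection and a spread copy. The array is only a stand-in for a Realm result.

```javascript
// Stand-in for a live collection that keeps changing after we read it.
const liveResults = ["Expo", "Realm"];

// Spreading creates a new array with the items present at this moment.
const snapshot = [...liveResults];

liveResults.push("React Native");

console.log(snapshot.length); // 2
console.log(liveResults.length); // 3
// A new array is a different reference, which is what a state setter compares.
console.log(Object.is(snapshot, liveResults)); // false
```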

### Showing Links

Our `LinksView` will `map` over the `links` array of `Link` objects we get from `LinksProvider` and render a simple list of views showing the name and URL of each Link. We do that using:

```javascript

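<ScrollView>
  {links.map((link, index) => (
    // One list item per Link; the index serves as the key for brevity.
    <ListItem key={index} bottomDivider>
      <ListItem.Content>
        <ListItem.Title>{link.name}</ListItem.Title>
        <ListItem.Subtitle>{link.url}</ListItem.Subtitle>
      </ListItem.Content>
      <ListItem.Chevron />
    </ListItem>
  ))}
</ScrollView>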

```

### UI for deleting Links

Since we want to be able to delete links, we’ll use a right-to-left swipe gesture to reveal a button that deletes that Link:

```javascript

<ListItem.Swipeable

onPress={() => onClickLink(link)}

bottomDivider

key={index}

rightContent={

<Button

title="Delete"

onPress={() => deleteLink(link)}

/>

}

>

```

We get `deleteLink` from the `useLinks` hook in `LinksProvider`:

```javascript

const { links, createLink, deleteLink } = useLinks();

```

### UI for adding Links

We’ll have a [TextInput](https://reactnative.dev/docs/textinput) for entering name and URL, and a button to add a new Link directly at the top of the List of Links. We’ll use an accordion to show/hide this part of the UI:

```javascript

<ListItem.Accordion

content={

<ListItem.Content>

<ListItem.Title>Create new Link</ListItem.Title>

</ListItem.Content>

}

isExpanded={expanded}

onPress={() => {

setExpanded(!expanded);

}}

>

{

<>

<TextInput

style={styles.input}

onChangeText={setLinkDescription}

placeholder="Description"

value={linkDescription}

/>

<TextInput

style={styles.input}

onChangeText={setlinkURL}

placeholder="URL"

value={linkURL}

/>

<Button

title='Click!'

color='red'

onPress={ () => { createLink(linkDescription, linkURL); }}

/>

</>

}

</ListItem.Accordion>

```

## Adding Links in the main App

Finally, we’ll wrap the new `LinksView` in our `LinksProvider` in `App.js`:

```javascript

<Stack.Screen name="Links">

{() => {

return (

<LinksProvider>

<LinksView />

</LinksProvider>

);

}}

</Stack.Screen>

```

## The final App

Wow! That was a lot, but now we have a React Native app that runs from the same code base on both iOS and Android and stores its data in a MongoDB Atlas database in the cloud thanks to Realm Sync. What’s more, any change made on one device syncs to all other devices where the same user is logged in. But the best part is that Realm Sync works even when offline!

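| Syncing iOS and Android | Offline Syncing! |
| :-------------: | :----------: |
| ![Animation showing how adding a Link in an iOS Simulator appears in an Android Emulator. After that, deleting on Android makes data disappear also in iOS.](https://mongodb-devhub-cms.s3.us-west-1.amazonaws.com/Sync_i_OS_Android_0fab982dd8.gif) | ![Setting Airplane mode in Android and then adding a new Link adds only in Android. When the Android emulator is back online it syncs with iOS.](https://mongodb-devhub-cms.s3.us-west-1.amazonaws.com/Offline_Sync_e0eba4f863.gif) |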

## Recap

In this tutorial we’ve seen how to build a simple React Native application using Expo that takes advantage of Realm Sync for its offline and syncing capabilities. This app is a prebuilt app because, right now, Managed Expo Workflows won’t work with Realm (yet, read more below). But you still get all the simplicity of use that Expo gives you, all the Expo libraries, and EAS, which lets you build your app in the cloud without having to install Xcode or Android Studio.

The Realm SDK team is working hard to make Realm fully compatible with Hermes. Once we release an update to the Realm React Native SDK that is compatible with Hermes, we’ll publish a new post updating this app. We’re also working to finish an [Expo Custom Development Client](https://blog.expo.dev/introducing-custom-development-clients-5a2c79a9ddf8). This will be our own Realm Expo Development Client, which will replace Expo Go while developing with Realm. Expect an announcement when that is approved!

All the code for this tutorial can be found [in this repo](https://github.com/mongodb-developer/read-it-later-maybe).

| dfreniche |

935,660 | Difference between export as class and object in javascript ? | Hello Devs, Here I am going to share what I learn from my fellow colleague while working on react... | 0 | 2021-12-24T11:37:33 | https://www.internetkatta.com/difference-between-export-as-class-and-object-in-javascript | Hello Devs,

Here I am going to share what I learned from a colleague while working on a React application. I usually start using whatever concept I learned most recently (I keep what I learn like arrows in a quiver and pick one depending on the situation) and then implement whatever requirement comes along. But sometimes that concept is not the most useful or the best one to use. This is what I learned.

A long time ago I learned to use a singleton class for third-party initialization code, and I have used that class-based pattern many times since. My colleague pointed out one problem: everything in JavaScript is object based, so even a class ends up as an object in the end. If the same thing can be done with a plain object, that approach is simpler and takes fewer lines of code. So in cases like this we should export an object instead of a class.
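To make the "everything is object based" point concrete, here is a small sketch that can be run in Node. The `Greeter` class and the `greeter` object are hypothetical names used only for illustration.

```javascript
// A class with a single method.
class Greeter {
  greet() {
    return "hello";
  }
}

// The same behaviour written as a plain object.
const greeter = {
  greet() {
    return "hello";
  },
};

console.log(typeof Greeter); // "function": a class is a constructor function
console.log(typeof Greeter.prototype.greet); // "function": methods live on the prototype object
console.log(typeof new Greeter()); // "object": instances are plain objects
console.log(new Greeter().greet() === greeter.greet()); // true
```

Both versions give the caller an object with a `greet` method. The class only adds the step of constructing it.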

Let me explain how to export as a class and object.

First, see how to export as a class or singleton class:

The sample code below is a singleton class, exported as the default export.

```

// ExampleClass.js

export default class ExampleClass {

constructor() {

if (this.constructor.instance) {

return this.constructor.instance;

}

this.constructor.instance = this;

}

/**

* @name ExampleClass#setData

* Role of this function is to set data

* @param {Object} data

*/

setData(data) {