id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,430,157 | Beginner's Guide to Data Analytics: Diving into Our Data Management Platform | In this post, I'm going to walk you through the parts and components of our own Data Management... | 0 | 2023-04-08T12:39:39 | https://dev.to/apachedoris/beginners-guide-to-data-analytics-diving-into-our-data-management-platform-2baj | database, datascience, data, analytics | In this post, I'm going to walk you through the parts and components of our own Data Management Platform (DMP), and how we improve analytic efficiency by architectural optimization.

Let's start from the basics.

As the raw material of our DMP, the data sources include:

- Business logs from all sales ends;

- Sales dat... | apachedoris |

1,430,161 | Top 6 Benefits of SAFe® for Teams Certification | Before we set foot on the day's topic, we must review the term Scaled Agile Framework (SAFe) and see... | 0 | 2023-04-08T13:02:28 | https://dev.to/sagarjivani/top-6-benefits-of-safer-for-teams-certification-3542 |

Before we set foot on the day's topic, we must review the term Scaled Agile Framework (SAFe) and see what it's all about. SAFe is a method used by Agile practitioners to scale agility across all layers of the o... | sagarjivani | |

1,430,200 | Effective Data Governance | Data governance is a systematic approach to managing and protecting the data of an... | 0 | 2023-04-08T13:47:34 | https://dev.to/lidiagoncalves/governanca-de-dados-eficaz-10c2 | ai, machinelearning, datascience, cloud | Data governance is a systematic approach to managing and protecting an organization's data. It is a set of processes, policies, standards, and procedures that ensure data is managed consistently, securely, and efficiently across the company. Data governance is essential to ensure ... | lidiagoncalves |

1,430,235 | Introducing terminal tool to manage embedded database | Hello everyone, a few months ago I wrote a terminal tool in Rust to help view data stored in embedded... | 0 | 2023-04-08T15:00:33 | https://dev.to/chungquantin/introducing-terminal-tool-to-manage-embedded-database-4c3f | rust, database, opensource, terminal | Hello everyone, a few months ago I wrote a terminal tool in Rust to help view data stored in embedded databases like RocksDB or Sled. As someone who enjoys coding low-level systems, I found it very difficult when there were almost no tools available to view the byte data of embedded databases. That's why EDMA was creat... | chungquantin |

1,430,259 | The Sky’s the Limit: Debating the Benefits of AWS Spending Restrictions | Yesterday, I posted a tweet with an imaginary conversation that is sadly based on many real... | 0 | 2023-04-09T20:11:50 | https://theburningmonk.com/2023/04/the-skys-the-limit-debating-the-benefits-of-aws-spending-restrictions/ | aws | ---

title: The Sky’s the Limit: Debating the Benefits of AWS Spending Restrictions

published: true

date: 2023-04-08 11:13:25 UTC

tags: AWS

canonical_url: https://theburningmonk.com/2023/04/the-skys-the-limit-debating-the-benefits-of-aws-spending-restrictions/

---

Yesterday, I posted [a tweet](https://twitter.com/thebu... | theburningmonk |

1,430,271 | 25 Programming Memes Refresh Your Mind | 1. 2. 3. 4. 5. ... | 0 | 2023-04-09T17:17:00 | https://dev.to/jon_snow789/21-programming-memes-refresh-your-mind-1fa0 | jokes, webdev, programming, javascript |

### 1.

---

### 2.

---

### 3.

` or `Query.isNul... | meldiron |

1,433,491 | Effortlessly migrate your on-premise machines & application to any Cloud platform | Effortlessly migrate your on-premise machines & application to any Cloud... | 0 | 2023-04-12T08:57:55 | https://dev.to/tikoosuraj/effortlessly-migrate-your-on-premise-machines-application-to-any-cloud-platform-4c9g |

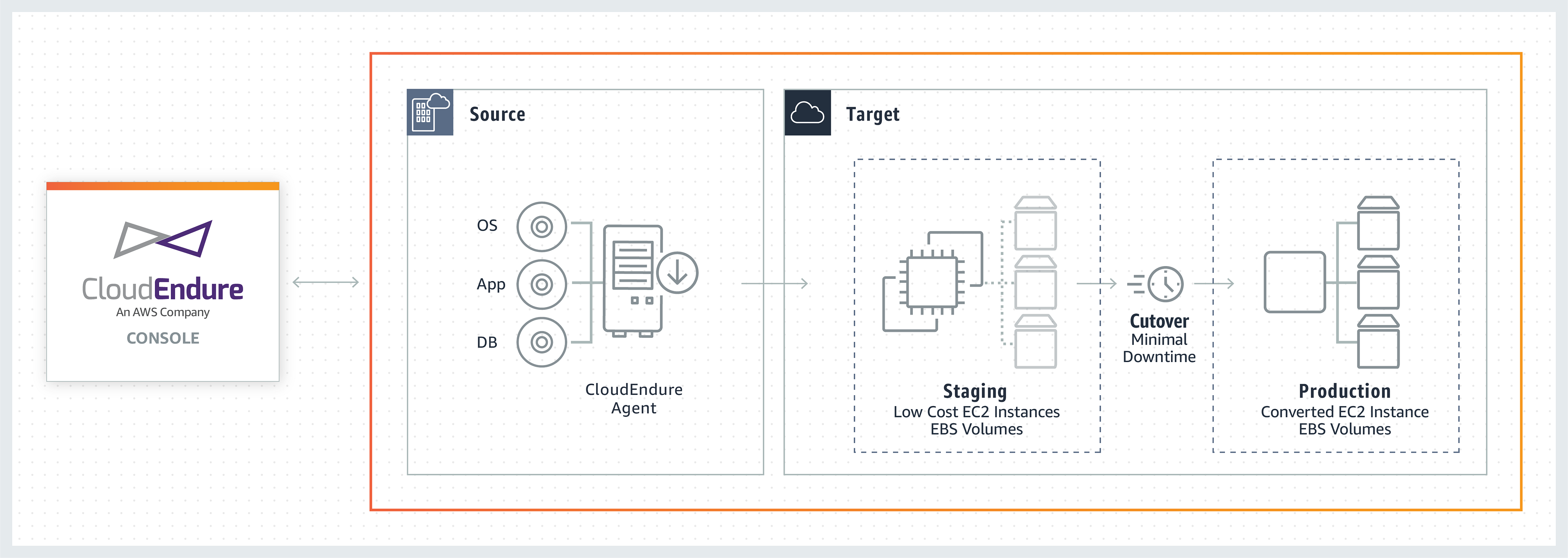

## Effortlessly migrate your on-premise machines & application to any Cloud platform

This blog is more about how we can effortlessly migrate our on-prem machines and Applications to the AWS cloud platform. In order to do this, w... | tikoosuraj | |

1,436,005 | React Toastify : The complete guide. | This article was originally published on the React Toastify : The complete guide. In this guide we... | 0 | 2023-04-14T19:19:35 | https://deadsimplechat.com/blog/react-toastify-the-complete-guide/ | react, tutorial, javascript, webdev | This article was originally published on the [React Toastify : The complete guide.](https://deadsimplechat.com/blog/react-toastify-the-complete-guide/)

In this guide we will start with the basics of creating toast notifications and move step by step to creating complex notifications and exploring the full capabi... | alakkadshaw |

1,436,568 | ModuleNotFoundError when running PySpark code that do Sentiment analysis | ModuleNotFoundError when running PySpark... | 0 | 2023-04-15T05:40:07 | https://dev.to/alyayma04892036/modulenotfounderror-when-running-pyspark-code-that-do-sentiment-analysis-4c4 | {% stackoverflow 76012139 %} | alyayma04892036 | |

1,437,492 | Wordpress Calendar | I am new to wordpress. I am using the The Event Calendar plugin which works perfectly fine. Now I... | 0 | 2023-04-16T10:37:45 | https://dev.to/yuridevat/wordpress-calendar-1a9e | wordpress, help | I am new to WordPress. I am using **The Event Calendar** plugin, which works perfectly fine.

Now I want to show the list of upcoming events on the homepage as well. This would work with the Pro version, which is not an option for me.

Is there another way to do this? I tried embedding it using JS but I can... | yuridevat |

1,437,591 | Introduction to Blockchain Wallet | A blockchain wallet is a must-have tool for any cryptocurrency investor or Web3 enthusiast interested... | 0 | 2023-04-17T09:30:00 | https://frankiefab.hashnode.dev/introduction-to-blockchain-wallet | web3, cryptowallet, blockchain, metamask | A blockchain wallet is a must-have tool for any cryptocurrency investor or Web3 enthusiast interested in interacting with decentralized applications, purchasing non-fungible tokens (NFTs), or holding cryptocurrencies.

## What is a blockchain wallet?

A blockchain (or crypto) wallet is a digital wallet that allows users... | frankiefab100 |

1,438,117 | Avoiding Common Angular Development Issues: Tips and Best Practices for Node and NPM | Outline: I. Introduction Brief overview of Angular development Importance of avoiding... | 0 | 2023-04-17T02:28:38 | https://dev.to/whizfactor/avoiding-common-angular-development-issues-tips-and-best-practices-for-node-and-npm-3bce | angular, node, npm |

## **Outline:**

**I. Introduction**

- Brief overview of Angular development

- Importance of avoiding common issues

- Overview of Node and NPM

**II. Common Angular Development Issues**

- Unhandled Exceptions

- Poor Performance

- Memory Leaks

- Incompatible Versions

- Dependency Issues

- Debugging

**III. Best Pract... | whizfactor |

1,438,212 | openGauss Creating an Index for an MOT Table | Standard PostgreSQL create and drop index statements are supported. For example – "" create index ... | 0 | 2023-04-17T03:08:33 | https://dev.to/tongxi99658318/opengauss-creating-an-index-for-an-mot-table-4dka | opengauss | Standard PostgreSQL create and drop index statements are supported.

For example –

""

create index text_index1 on test(x) ;

The following is a complete example of creating an index for the ORDER table in a TPC-C workload –

""

create FOREIGN table bmsql_oorder (

o_w_id integer not null,

o_d_id in... | tongxi99658318 |

1,438,228 | AI Based Tools | • Job Search with AI-Powered Resume Writing and Editing Rezi • Turn an idea into a stunning... | 0 | 2023-04-17T04:02:44 | https://dev.to/osandalelum/ai-based-tools-441m | ai, machinelearning, deeplearning, skills |

• Job Search with AI-Powered Resume Writing and Editing [Rezi](https://www.rezi.ai/)

• Turn an idea into a stunning app/website. Without code. Without limits [buzzy](https://www.buzzy.buzz/)

• Generate Quizzes ... | osandalelum |

1,462,878 | Extend Python VENV: Organize Dependencies Your Way | Introduction Virtual environments are great way to organise development process by... | 0 | 2023-05-11T12:49:42 | https://dev.to/dmikhr/extend-python-venv-organize-dependencies-your-way-3h0g | tutorial, python, cicd | ### Introduction

Virtual environments are a great way to organise the development process by isolating project-specific packages. Python has a built-in tool, `venv`, for creating virtual environments. In this tutorial we are going to explore how to extend its functionality by implementing a feature that stores inf... | dmikhr |

1,438,254 | Password Cracking: What is a Rainbow Table Attack and how do I prevent it? | What is a Rainbow Table? A rainbow table attack is a password cracking method that uses a... | 0 | 2023-04-17T05:56:53 | https://dev.to/jsquared/password-cracking-what-is-a-rainbow-table-attack-and-how-do-i-prevent-it-2676 | cybersecurity, security, penetrationtesting, hacking | ## What is a Rainbow Table?

A rainbow table attack is a password cracking method that uses a special table (a “rainbow table”) to crack the password hashes in a database. Applications don’t store passwords in plaintext, but instead store hashes of them. After the user enters their password to log in, it is co... | jsquared |

1,438,289 | Dietoxone - Fat Loss Reviews, Pros, Cons, Price, Scam And Legit? | Dietoxone ends meet. This has quite a few advantageous features. The road to using it begins with my... | 0 | 2023-04-17T06:37:52 | https://dev.to/dietoxone151889/dietoxone-fat-loss-reviews-pros-cons-price-scam-and-legit-31l4 | webdev, javascript, beginners, programming | [Dietoxone](https://www.mid-day.com/brand-media/article/alert-2023-dietoxone-gummies-uk-and-ireland--dietoxone-keto-bhb-official-price-23281012) ends meet. This has quite a few advantageous features. The road to using it begins with my marvelous thoughts as this relates to my joke. Actually, we don't have to do somethi... | dietoxone151889 |

1,438,398 | Building Docker Images Smaller, Rootless and Non-Shell for Kubernetes | After building a Docker image faster, I wanted to build it for the K8s cluster. Running the container... | 0 | 2023-04-17T08:51:17 | https://rnemet.dev/posts/docker/image_k8s/ | docker, kubernetes, tutorials | ---

title: Building Docker Images Smaller, Rootless and Non-Shell for Kubernetes

published: true

date: 2023-04-14 13:43:46 UTC

tags: docker, kubernetes, tutorials

canonical_url: https://rnemet.dev/posts/docker/image_k8s/

---

After building [a Docker image faster](https://rnemet.dev/posts/docker/building_image_fast/), ... | madmaxx |

1,438,479 | Comparison of Apache AGE with Other Graph Databases and Graph Processing Frameworks | There are many graph databases and graph processing frameworks available, each with its own strengths... | 0 | 2023-04-17T10:54:49 | https://dev.to/hamza_ghouri/comparison-of-apache-age-with-other-graph-databases-and-graph-processing-frameworks-4di5 | apacheage | There are many graph databases and graph processing frameworks available, each with its own strengths and weaknesses.

Here is a comparison of Apache AGE with some of the other popular solutions:

**Apache Spark GraphX:** Apache Spark GraphX is a graph processing framework built on top of Apache Spark. It provides a dis... | hamza_ghouri |

1,438,492 | Implementing Login with Metamask, send Ether, user registration using React, NodeJS, Sequelize and GraphQL | ## In this Project we are going to learn how you can implement a login functionality using React on... | 0 | 2023-04-17T12:22:41 | https://dev.to/olivermengich/implementing-login-with-metamask-send-ether-user-registration-using-react-nodejs-sequelize-and-graphql-35k7 | web3, frontend, javascript, ethereum | ## In this project we are going to learn how you can implement login functionality using React on the frontend and NodeJS on the backend, with GraphQL, storing data in an SQL database. When creating DAPPs there’s always a need to implement functionality where users can log in to your application with their MetaMask account... | olivermengich |

1,438,546 | Best Practices to protect an RDS MySQL Database ✅ | Amazon RDS is a very popular choice for creating MySQL databases in the cloud. Many modern companies... | 0 | 2023-04-17T11:32:10 | https://dev.to/aws-builders/best-practices-to-protect-an-rds-mysql-database-2f95 | aws, mysql, devops, tutorial | Amazon RDS is a very popular choice for creating MySQL databases in the cloud. Many modern companies use it to store their business data. However, as with any other database, securing these databases requires special attention to protect against potential threats and vulnerabilities.

In this article, we will explore 1... | adrienmornet |

1,461,679 | brokerow | Reviews of current forex brokers: is the broker a scammer and a fraud, or is it worth a closer look as... | 0 | 2023-05-08T20:19:49 | https://dev.to/amolicamil17888/brokerow-lc7 | brokerów, inwestycje | Reviews of current forex brokers: is the broker a scammer and a fraud, or is it worth a closer look as an investment?

Traders' opinions on investing with Montana Trading LTD <a href =`https://forum2.pl/index.php?topic=152.0`> Montana Trading LTD</a>

The financial industry is open to innovation, and Montana Trading LTD ... | amolicamil17888 |

1,461,925 | Automatically reconnect to the database when the connection is lost | Automatically reconnect DB when connection... | 0 | 2023-05-09T03:04:04 | https://dev.to/daitran1412/tu-dong-ket-noi-lai-csdl-khi-mat-ket-noi-16f8 | {% stackoverflow 76205544 %} | daitran1412 | |

1,462,045 | How to create a translate project with Django | In this article we will create a translator app using Django, a popular Python web framework.... | 0 | 2023-05-09T06:11:25 | https://dev.to/abdullafajal/how-to-create-a-translate-project-with-django-hl0 | django, translate, python | In this article we will create a translator app using Django, a popular Python web framework. This app will allow users to input text in one language and receive a translation in another language. We will be using the `translate` Python package to do the translation. If you want to learn Python and Django, then our c... | abdullafajal |

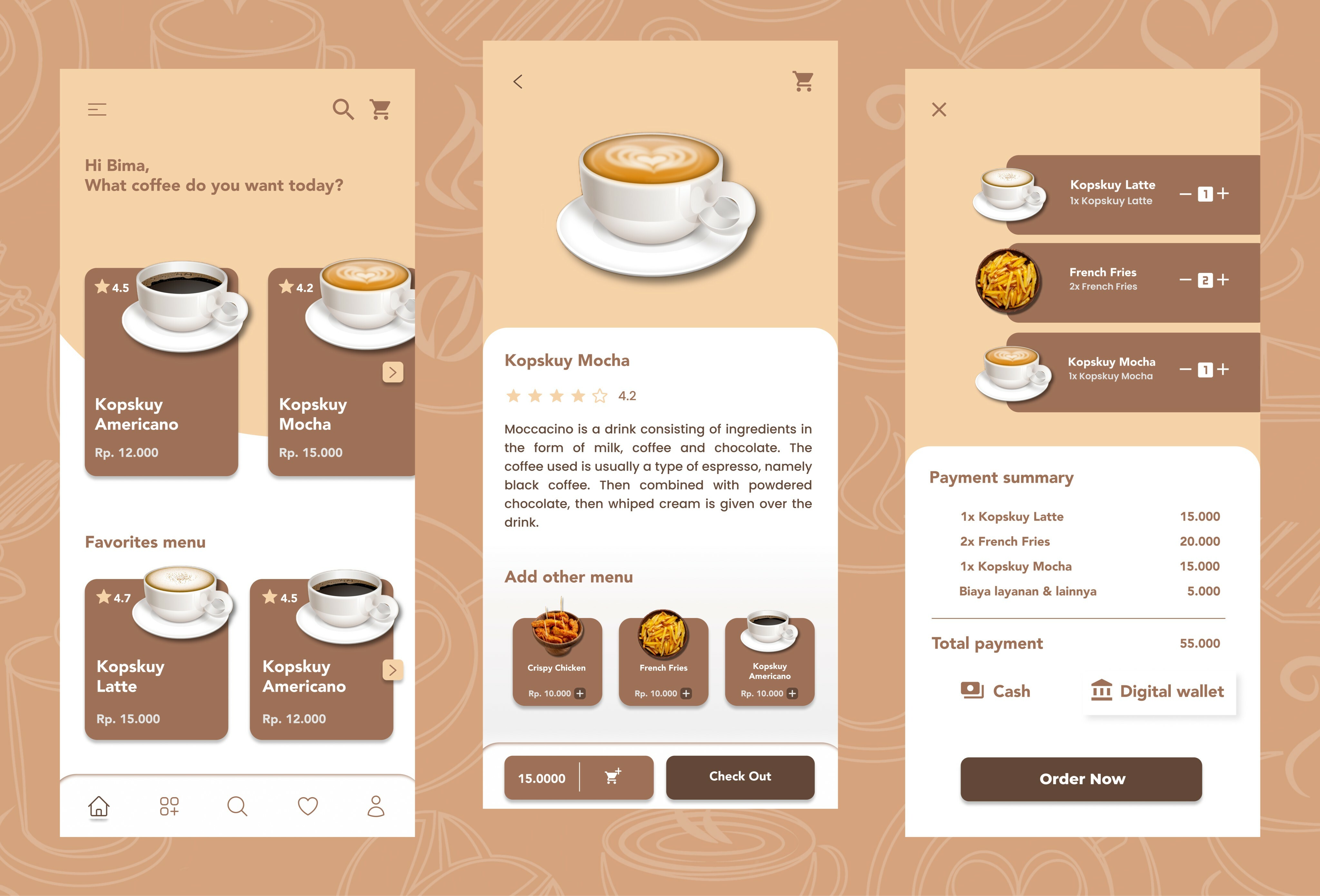

1,462,070 | User interface for the coffee shop mobile application | A post by Bima Laroi Bafih | 0 | 2023-05-09T07:16:42 | https://dev.to/bimaexz/ui-4igo |

| bimaexz | |

1,462,134 | The Functional Programming jungle: Ramda versus monads and monoids | There exist many tutorials and introductory blogs on Functional Programming (FP from now... | 22,921 | 2023-05-09T11:16:37 | https://dev.to/mjljm/the-functional-programming-jungle-ramda-versus-monads-and-monoids-4agm | typescript, functional, ramda, transduce | There exist many tutorials and introductory blogs on Functional Programming (FP from now on).

However, I think too many are theoretical, scary and very time consuming.

If you already are an FP nerd, skip this series of articles. It won’t bring anything new. This series aims at giving a simple and yet clear introducti... | mjljm |

1,462,288 | How we implemented the card animation in Appwrite Cloud Public Beta | For the announcement of the public beta of Cloud, we wanted to create something unique that everyone... | 0 | 2023-05-09T11:54:31 | https://dev.to/appwrite/how-we-implemented-the-card-animation-in-appwrite-cloud-public-beta-4npb | webdev, frontend, svelte, css | For the announcement of the public beta of Cloud, we wanted to create something unique that everyone could call their own. We decided to create a personalized card, with animation and interactivity sprinkled in, to really give the whole experience a “special” feel.

The Cloud Beta Card page in the Appwrite Console

that is open-source... | 0 | 2023-05-09T17:48:05 | https://dev.to/arun3sh/install-postgres-1lfo | postgres, apacheage, systemprogramming, programming | ## About Postgres

PostgreSQL is a robust, open-source relational database management system (RDBMS) that has grown in popularity recently. Since its initial release in 1996, a committed development community has continued to develop and support it.

One of PostgreSQL's distinguishing characteristics is its capac... | arun3sh |

1,462,678 | C++ Syntax | Assalamu alaikum, dear programmers, let's look at syntax in the C++ programming language. I would like... | 0 | 2023-05-09T18:05:05 | https://dev.to/islomali99/sintaksis-s-5pk | webdev, beginners, programming, cpp | Assalamu alaikum, dear programmers, let's look at syntax in the C++ programming language.

I would like to ask you to look at the code below to understand its syntax.

```

#include <iostream>

using namespace std;

int main()

{

cout << "Hello World";

return 0;

}

```

Now let's go through each line of the co... | islomali99 |

1,462,711 | Making the right choice: WunderGraph or ApolloOS for your API architecture?? | Written by our Founder and CEO... My name is Jens, and I'm the Founder & CEO of WunderGraph,... | 0 | 2023-05-15T13:00:00 | https://wundergraph.com/blog/wundergraph_vs_apollo | graphql, webdev, javascript, programming |

Written by our Founder and CEO...

My name is Jens, and I'm the Founder & CEO of WunderGraph, but most importantly, I'm a Developer - always have been and always will be. At WunderGraph, we're a small but gr... | slickstef11 |

1,462,723 | Configuring HashiCorp Vault In AWS For High Availability In Kubernetes | Regardless of what your Kubernetes environment looks like, whether it’s one cluster or fifty... | 0 | 2023-05-09T19:05:58 | https://dev.to/thenjdevopsguy/configuring-hashicorp-vault-in-aws-for-high-availability-in-kubernetes-nod | kubernetes, hashicorp, devops, cloud | Regardless of what your Kubernetes environment looks like, whether it’s one cluster or fifty clusters, at some point you will have a secret, password, or API key that you need to store in an encrypted fashion for one of your containerized workloads.

Because Kubernetes Secrets are stored as Base64 plain text in Etcd (t... | thenjdevopsguy |

1,462,879 | Let’s convert that variable in C# | Learn C#: Conversions Table of Contents: Learn C#: Variables This post Introduction: My name is... | 0 | 2023-05-09T21:47:46 | https://dev.to/thesnowmanndev/learn-c-conversions-53jf | csharp, beginners, programming, learning | **Learn C#: Conversions**

_**Table of Contents:**_

1. [Learn C#: Variables](https://dev.to/thesnowmanndev/learn-c-variables-34bf)

2. This post

_**Introduction:**_

My name is Kyle, I am aiming to write small, short read articles on Dev.to to help people learn C#. This is the second post in many where I write a summa... | thesnowmanndev |

1,463,096 | Optional Chaining in JavaScript(?.) | The Optional Chaining Operator denoted with a question mark and a dot (?.) is a feature introduced in... | 0 | 2023-05-10T04:07:27 | https://dev.to/stevepurpose/optional-chaining-in-javascript-2635 | webdev, javascript, api, objects | The Optional Chaining Operator denoted with a question mark and a dot (?.) is a feature introduced in JavaScript ES2020.

I decided to write about this operator because for a very long time I was confused whenever I was going through code and saw it. It looks like the ternary operator, but I just could not grasp its ... | stevepurpose |

1,463,118 | New Community to check out | Hello everyone, I am excited to announce the launch of ShiftSync, a new community dedicated to... | 0 | 2023-05-10T04:35:08 | https://dev.to/isolderea/new-community-to-check-out-4e2c | Hello everyone,

I am excited to announce the launch of ShiftSync, a new community dedicated to quality engineering. Whether you're a developer, tester, or DevOps specialist, ShiftSync is the place to be.

I am thrilled to be part of a platform where we can share our expertise and collaborate on all things quality ... | isolderea | |

1,463,198 | Generative AI with Azure OpenAI (GPT-4 Overview) | Hey, there! In this article we'll learn about Generative AI, and how to use the Azure OpenAI service... | 0 | 2023-05-23T17:04:30 | https://dev.to/esdanielgomez/generative-ai-with-azure-openai-gpt-4-overview-5el5 | ai, openai, azure, tutorial | Hey, there! In this article we'll learn about Generative AI, and how to use the Azure OpenAI service for natural language interaction with GPT-4.

## **Generative AI overview**

Generative Artificial Intelligence is a type of AI that creates new and original content based on what it has learned from previous data. It c... | esdanielgomez |

1,463,567 | Great Blogger Collection | https://www.cnblogs.com/wangiqngpei557/ | 0 | 2023-05-10T14:01:37 | https://dev.to/dingzhanjun/great-blogger-collection-1ja | https://www.cnblogs.com/wangiqngpei557/ | dingzhanjun | |

1,464,458 | Python Dictionaries | Creating a Dictionary in Python Creating a Python dictionary is as simple as placing the... | 0 | 2023-05-11T07:01:47 | https://dev.to/atchukolanaresh/python-dictionaries-d14 | ## Creating a Dictionary in Python

Creating a Python dictionary is as simple as placing the required key and value pairs within curly brackets.

“{}”.

A colon separates the key-value pair.

“:”

When there are multiple key-value pairs, they are separated by a comma.

“,”
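
The three rules above can be sketched in Python as follows. This is only a minimal illustration; the variable names, keys, and values are made up here and are not taken from the original article.

```python
# Curly brackets delimit the dictionary, a colon separates each key from
# its value, and commas separate the key-value pairs.
# The keys and values below are illustrative only.
person = {"name": "Asha", "age": 30, "city": "Pune"}

# An empty pair of curly brackets creates an empty dictionary.
empty = {}

# Values are looked up by key.
name = person["name"]
pair_count = len(person)
```

Here `person` holds three key-value pairs, and `empty` shows that the curly brackets alone are enough to create a dictionary.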

The syntax of declaring a dictionary in p... | atchukolanaresh | |

1,463,670 | Establishing trust for an APT repository without a key | On Debian-based systems, you are warned about security when downloading from an untrusted repository... | 0 | 2023-05-10T15:27:15 | https://dev.to/aciklab/anahtari-olmayan-apt-deposuna-guvenilirligin-saglanmasi-4018 | apt, trusted, guven | On Debian-based systems, you are warned about security when downloading from an untrusted repository. After running the "apt update" command, you will probably encounter an error like the one below:

```

Err:1 https://www.xyz.com/ubuntu stable InRelease

Certificate veri... | aliorhun |

1,463,916 | The Ultimate Guide To Choosing The Right Hoodie Or Sweatshirt | When it comes to choosing the right hoodie or sweatshirt, there are many factors to consider such as... | 0 | 2023-05-10T19:22:36 | https://dev.to/dressesmax/the-ultimate-guide-to-choosing-the-right-hoodie-or-sweatshirt-2f4b | clothingbrand, fashion, clothing | When it comes to choosing the right hoodie or sweatshirt, there are many factors to consider such as fabric, style, fit, and color/design. It's important to choose a hoodie/sweatshirt that not only looks good but also feels comfortable and fits well. This guide is designed to provide helpful tips and information on how... | dressesmax |

1,464,058 | Free hosting providers for front-end & back-end applications | I have created a list of free web hosting service providers. I have checked each and every website in... | 0 | 2023-05-10T21:42:41 | https://dev.to/richixaws/free-hosting-providers-for-front-end-back-end-applications-b3f | java, javascript, microservices | I have created a list of free web hosting service providers. I have checked each and every website in the past weeks!

- My youtube channel - Vuelancer

hosting providers

**Freemium Resources**

Heroku - https://heroku.com - Deploying Front & Backend

Netlify - https://netlify.com

Vercel (Zeit) - https://vercel.com - Dep... | richixaws |

1,464,321 | Dictionary Methods of Python | 1. items() :- It gives a list containing a tuple for each key value... | 0 | 2023-05-11T04:29:56 | https://dev.to/ankit_dagar/dictionary-methods-of-python-1ioe | ### 1. items() :-

It returns a view object (`dict_items`) containing a `(key, value)` tuple for each key-value pair

example:-

```

>>> thisdict = {

... "brand": "Ford",

... "model": "Mustang",

... "year": 1964

... }

>>> thisdict.items()

dict_items([('brand', 'Ford'), ('model', 'Mustang'), ('year', 1964)])

```
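
As a small follow-up sketch (not part of the original post), the object returned by `items()` can be iterated with tuple unpacking, and it reflects later changes to the dictionary. The `color` key added below is an assumption made purely for illustration.

```python
thisdict = {"brand": "Ford", "model": "Mustang", "year": 1964}

# items() returns a view; each element is a (key, value) tuple.
items_view = thisdict.items()

# Unpack each tuple while iterating over the view.
pairs = [f"{key}={value}" for key, value in items_view]

# The view is live: a key added afterwards (hypothetical "color" key)
# shows up without calling items() again.
thisdict["color"] = "red"
snapshot = list(items_view)
```

Calling `list()` on the view, as in the last line, is the usual way to get an independent list of tuples when one is needed.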

### 2. clear() :-

It removes all the elem... | ankit_dagar | |

1,464,361 | String Methods | Introduction The aim of this paper is to help the reader get familiar with the standard... | 0 | 2023-05-11T05:42:44 | https://dev.to/utsavdhall/string-methods-pg2 | python | - ## Introduction

The aim of this paper is to help the reader get familiar with the standard functions of strings in Python.

- ## Functions

1. `.count()`: This function returns the number of non-overlapping occurrences of a specific value inside a string; the value is passed as an argument. **Syntax:**

s="aabbzzz"

character_to_be_counted='z'... | utsavdhall |

1,464,455 | Python Strings | Strings: What is a string in python? Technically speaking, an immutable data... | 0 | 2023-05-11T06:58:51 | https://dev.to/atchukolanaresh/python-strings-6cd | # Strings:

## What is a string in python?

Technically speaking, an **immutable data sequence** is known as a string in Python. In simple words, as discussed in the case of a crossword, a Python string is nothing but an array of characters, but a computer does not understand characters. It only understands the lang... | atchukolanaresh | |

1,465,149 | # Build a web server with Rust and tokio - Part 0: the simplest possible GET handler | Welcome to this series of blog posts where we will be exploring how to build a web server from... | 0 | 2023-05-11T19:06:08 | https://dev.to/geoffreycopin/-build-a-web-server-with-rust-and-tokio-part-0-the-simplest-possible-get-handler-1lhi | rust, tokio, webdev | Welcome to this series of blog posts where we will be exploring how to

build a web server from scratch using the Rust programming language.

We will be taking a hands-on approach, maximizing our learning experience

by using as few dependencies as possible and implementing as much logic as we can.

This will enable us ... | geoffreycopin |

1,465,228 | A Step-by-Step Guide to Easily Creating Azure Virtual Machines with PowerShell | In a recent blog article, we demonstrated how to Create a Virtual Machine in Less Than a Minute with... | 0 | 2023-05-11T21:33:50 | https://dev.to/henriettatkr/a-step-by-step-guide-to-easily-creating-azure-virtual-machines-with-powershell-1kib | azure, tutorial, beginners, devops | In a recent blog article, we demonstrated how to [Create a Virtual Machine in Less Than a Minute with ARM Template and Azure Quick Start](https://dev.to/henriettatkr/create-a-virtual-machine-in-less-than-a-minute-with-arm-template-and-azure-quick-start-1bbb). However, there are other ways of creating an Azure virtual m... | henriettatkr |

1,465,294 | I need supporter another people | A post by Benson Onditi | 0 | 2023-05-11T21:39:29 | https://dev.to/oino/i-need-supporter-another-people-4oca | oino | ||

1,465,342 | Generics In TypeScript | by Ekisowei Daniel Generics play a crucial role in programming, as they enable creating type-safe... | 0 | 2023-05-11T23:53:53 | https://blog.openreplay.com/generics-in-typescript/ | typescript, webdev | by [Ekisowei Daniel](https://blog.openreplay.com/authors/ekisowei-daniel)

<blockquote><em>Generics play a crucial role in programming, as they enable creating type-safe functions without specifying the exact type beforehand but allowing constraints and checks on the programmer’s types. This article introduces the conc... | asayerio_techblog |

1,465,419 | Book Summary: RESTful Web Clients - Enabling Reuse Through Hypermedia | by Mike Amundsen “RESTful Web Clients: Enabling Reuse Through Hypermedia” provides... | 0 | 2023-05-16T11:04:40 | https://fagnerbrack.com/book-summary-restful-web-clients-enabling-reuse-through-hypermedia-c81256a55102 | rest, softwareengineering, programming, frontend | ---

title: Book Summary: RESTful Web Clients - Enabling Reuse Through Hypermedia

published: true

date: 2023-05-11 22:01:38 UTC

tags: rest,softwareengineering,programming,frontenddevelopment

canonical_url: https://fagnerbrack.com/book-summary-restful-web-clients-enabling-reuse-through-hypermedia-c81256a55102

---

#### b... | fagnerbrack |

1,465,612 | Medusa (3/4): Commerce Modules and Features | Medusa offers an impressive amount of e-commerce features all available in the medusa package or... | 22,956 | 2023-05-12T07:01:31 | https://dev.to/ntyrberg/medusa-34-commerce-modules-and-features-2da2 | webdev, javascript, react, opensource | Medusa offers an impressive number of e-commerce features, all available in the medusa package or separately as independent modules. This is the third part of a four-part series; the previous parts covered the basics of Medusa and its vision and history. This part will give an outline of some of the commerce mo... | ntyrberg |

1,465,681 | Hexagon rotating gradient border | Hexagon rotating gradient border This CodePen was created by Temani Afif This... | 20,957 | 2023-05-13T07:12:00 | https://dev.to/jon_snow789/hexagon-rotating-gradient-border-4dh2 | Hexagon rotating gradient border

---

#### This CodePen was created by [Temani Afif](https://codepen.io/t_afif)

{% codepen https://codepen.io/t_afif/pen/VwEXoaw %}

---

>This codepen was not created by me, all rights belong to its respective owners

---

### Check Our Latest Post

{% link https://dev.to/jon_snow789/... | jon_snow789 | |

1,465,688 | How nuvo Importer SDK 2.0 Transforms the Way You Onboard Data | Data import use cases vary across industries, from e-commerce to construction, HR, fintech, or... | 0 | 2023-05-12T08:10:30 | https://www.getnuvo.com/blog/importer-sdk-2-0-transforms-the-way-you-onboard-data | webdev | Data import use cases vary across industries, from e-commerce to construction, HR, fintech, or energy. However, dealing with messy and ever-changing customer data is a common challenge for B2B software companies.

With this in mind, our AI-assisted nuvo Data Importer was built to provide our clients with a seamless, sc... | getnuvo |

1,465,691 | APIs vs SDKs: Why you should always have both | What are APIs and SDKs? We explore the different use cases that each addresses, what great APIs or... | 0 | 2023-05-22T22:18:16 | https://dev.to/speakeasy/apis-vs-sdks-why-you-should-always-have-both-4ahh | api, sdk, openapi, javascript | > What are APIs and SDKs? We explore the different use cases that each addresses, what great APIs or SDKs look like, and explain why you need both.

## What’s an API?

An API, or Application Programming Interface, allows different software applications to communicate with each other. For example, a developer in an e-c... | ndimares |

1,465,760 | 9 Pillars to Build a Rock-Solid SaaS Platform | In today’s fast-paced world, where businesses are shifting gears and treading on the digital... | 0 | 2023-05-12T10:16:02 | https://dev.to/cygnismedia/9-pillars-to-build-a-rock-solid-saas-platform-1ebm | saas, startup, devops, webdev |

In today’s fast-paced world, where businesses are shifting gears and treading on the digital transformation journey, Software as a Service (SaaS) platforms have become indispensable. In this article, we’ll uncover th... | cygnismedia |

1,465,812 | The Complete Guide to Selenium Automation Testing | Selenium, a robust tool designed for automating web-based applications, is an indispensable... | 0 | 2023-05-12T11:01:14 | https://dev.to/shubhankarn9/the-complete-guide-to-selenium-automation-testing-47cc |

Selenium, a robust tool designed for automating web-based applications, is an indispensable resource for developers, testers, and quality assurance professionals due to the wide range of functionalities it provides... | shubhankarn9 | |

1,465,821 | Always experiment first | This is a quickie post! One of the things people getting into doing websites is that they mess up... | 0 | 2023-05-12T11:19:00 | https://dev.to/merri/always-experiment-first-5854 | html, css, javascript, webdev | This is a quickie post! One of the mistakes people make when getting into building websites is messing up their workflow by developing straight in production. Back in the day this often meant FTP: making changes to files locally and then copying the files into production.

There is however a big problem with this way... | merri |

1,465,921 | Description of important clauses in SQL and Apache age query format part 2 | In this blog we will discuss some of the important clauses in Apache age here. For part 1 you can... | 0 | 2023-05-12T13:01:20 | https://dev.to/talhahahae/description-of-important-clauses-in-sql-and-apache-age-query-format-part-2-5dip | apache, apacheage, postgressql, sql | In this blog post we will discuss some of the important clauses in Apache AGE. For part 1, you can visit [here](https://dev.to/talhahahae/description-of-important-clauses-in-sql-and-apache-age-query-format-part-1-8j8).

## Match:

The MATCH clause allows you to specify the patterns Cypher will search for in the database. ... | talhahahae |

1,465,970 | Datacenter Proxies: The Ultimate Solution for Collecting Big Data | Datacenter proxies have become an indispensable tool in collecting big data. Their ability to... | 0 | 2023-05-12T14:05:59 | https://dev.to/dexodata/datacenter-proxies-the-ultimate-solution-for-collecting-big-data-4hmg | proxy, datacenterproxies, dexodata, bigdata |

Datacenter proxies have become an indispensable tool in collecting big data. Their ability to extract large amounts of data from the web helps provide useful insights when analyzing a range of activities including _... | dexodatamarketing |

1,466,140 | SQL Server vs MySQL: Difference, Performance, and Features | In the world of database management systems, SQL Server and MySQL are two of the most popular and... | 0 | 2023-05-12T17:08:13 | https://dev.to/devartteam/sql-server-vs-mysql-difference-performance-and-features-1kho | mysql, sql, database, dbforge | In the world of database management systems, SQL Server and MySQL are two of the most popular and widely used solutions. Both platforms offer solid features and solid performance, but which one is best for your needs? Let's check - https://blog.devart.com/mysql-vs-sql-server.html

Scripts - Bash script (text file) -

Assembler -Netwide Assembler (NASM) -

programming language - C language -

programming language - C++ language -

programming language - Java language -

programmin... | grafeno30 | |

1,466,872 | Smooth Sailing with Docker: A Beginner's Guide to Containerization | Welcome to Docker world where containers bring magic to the world of software development and... | 0 | 2023-05-14T09:31:52 | https://dev.to/jeptoo/everything-docker-4eni | docker, beginners, cloudcomputing | Welcome to Docker world where containers bring magic to the world of software development and deployment!

Why did the Docker container never ask for help?

Because it couldn't find a container support group – it was too self-contained!

Now that we've shared a giggle or a laugh let's embark on Docker exploration. In th... | jeptoo |

1,466,893 | AI Video Generation - Where are we today? | Have you ever wondered how AI is changing the world of video generation? In recent months, there... | 0 | 2023-05-13T19:07:33 | https://dev.to/killswitchh/ai-video-generation-where-are-we-today-4ceg | ai, programming, todayilearned, news | Have you ever wondered how AI is changing the world of video generation?

In recent months, there have been major advances in AI video generation. This technology is still in its early stages, but it has the potential to revolutionize the way we create and consume video content.

In this blog post, we will discuss the ... | killswitchh |

1,466,964 | Bose Web Scraping Tutorial | In This tutorial you will learn about the Bose framework, a framework which provides an easier and... | 0 | 2023-05-13T14:01:28 | https://dev.to/kumarchandan1991/bose-web-scraping-tutorial-3lff | webscraping, python, tutorial, webscrapingtools |

In this tutorial you will learn about the Bose framework, which provides an easier and more structured way of using Selenium for web scraping. Think of it as a Swiss Army knife for web scraping.

When using Selenium... | kumarchandan1991 |

1,467,203 | Maintaining a Python Package with GitHub Actions | What I built A hangman game created using Python and distributed as a Python package... | 0 | 2023-05-20T16:43:04 | https://dev.to/viniciusenari/maintaining-a-python-package-with-github-actions-2bfn | githubhack23, python, github, opensource | ## What I built

A hangman game created using Python and distributed as a Python package through [PyPI](https://pypi.org/). It uses GitHub Actions to test and lint the code whenever there is a push or pull request to the main branch. Additionally, whenever a new release is created, the new version of the package is... | viniciusenari |

1,467,210 | An Overview of Node.js Build Tools and Task Runners | Node.js Build Tools and Task Runners are an essential part of any modern web development process.... | 0 | 2023-05-13T21:01:41 | https://dev.to/saint_vandora/an-overview-of-nodejs-build-tools-and-task-runners-2ghm | webdev, javascript, node, tutorial | Node.js Build Tools and Task Runners are an essential part of any modern web development process. They help developers automate repetitive tasks, streamline the build process, and ultimately save time and money. In this article, we'll provide an overview of the most popular Node.js build tools and task runners and disc... | saint_vandora |

1,467,250 | My Journey as a Beginner in Full Stack Development | As someone who is new to the world of full stack development, I recently completed the Phase 1 Full... | 0 | 2023-05-13T21:49:18 | https://dev.to/binil_tz/my-journey-as-a-beginner-in-full-stack-development-2llm | development, beginners, github, javascript |

As someone who is new to the world of full stack development, I recently completed the Phase 1 Full Stack Development Course and wanted to share my experience. This course covered a wide range of topics, from front-end development to back-end development, and provided me with a solid foundation for building web applic... | binil_tz |

1,467,472 | React: Abstract Design Pattern-DRY & Single Shared Responsibility(Part-2) | React: Abstract Design Pattern-DRY & Single Shared Responsibility(Part-2) ... | 0 | 2023-05-14T06:19:26 | https://javascript.plainenglish.io/react-abstract-design-pattern-dry-single-shared-responsibility-9fbef42a6e56 | react, webdev, javascript, design | ---

title: React: Abstract Design Pattern-DRY & Single Shared Responsibility(Part-2)

published: true

date: 2023-05-14 05:54:34 UTC

tags: reactjs,webdevelopment,javascript,design

canonical_url: https://javascript.plainenglish.io/react-abstract-design-pattern-dry-single-shared-responsibility-9fbef42a6e56

---

### React: ... | vivekdogra02 |

1,467,476 | How to create custom views programmatically | Hello wonderful community 👋. As a new iOS developer I'm starting to learn how to create views... | 0 | 2023-05-14T06:31:13 | https://dev.to/msa_128/how-to-create-custom-views-programmatically-2cfm | ios, beginners, native, uikit | Hello wonderful community 👋. As a new iOS developer I'm starting to learn how to create views programmatically. It has been tricky to learn coming from Storyboards 😅.

In this tutorial, I'll guide you step-by-step on programmatically creating views in iOS. It's an opportunity for me to share what... | msa_128 |

1,467,531 | Python and Visual Studio Code on Windows 10. | GitHub: Salah Ud Din - 4yub1k Goals: Download the Python setup. Install Python. Download... | 0 | 2023-05-14T18:39:14 | https://dev.to/4yub1k/easy-how-to-set-up-python-and-visual-studio-code-on-windows-10-5gon | python, vscode, programming, tutorial | [GitHub: Salah Ud Din - 4yub1k](https://github.com/4yub1k)

#### Goals:

- Download the Python setup.

- Install Python.

- Download the VScode setup.

- Install VScode.

- Install the VScode extension “Python”.

- Run your first python.py script.

- Video tutorial complete.

#### Download Python:

Go to Python official page :... | 4yub1k |

1,467,778 | Java Message Service (JMS) | Java Message Service (JMS) is a messaging API that provides a standard way for Java applications to... | 0 | 2023-05-14T14:43:57 | https://dev.to/sandeepseeram/java-message-service-jms-2h8k | java, jms, pubsub |

Java Message Service (JMS) is a messaging API that provides a standard way for Java applications to send and receive messages. JMS is a loosely coupled messaging system, which means that the sender and receiver of a message do not need to be running at the same time. JMS is also a reliable messaging system, which mean... | sandeepseeram |

1,467,798 | Every new developer should know about these 10 GitHub repositories. | Github is like Facebook for programmers. It is not unfair to give this amazing place this name. After... | 0 | 2023-05-14T16:41:27 | https://dev.to/danmusembi/every-new-developer-should-know-about-these-10-github-repositories-39ef | **Github is like Facebook for programmers**. It is not unfair to give this amazing place this name. After all, this site not only lets you share your code and keep track of changes, but it also helps you connect with other great coders from all over the world. Many developers love to spend time on GitHub studying the p... | danmusembi | |

1,467,802 | ➡️👷💪 Linear Class Builder | TLDR: Github @reggi/linear-builder-class Code Generates Classes using the Linear Builder Class... | 0 | 2023-05-14T16:49:24 | https://dev.to/reggi/linear-class-builder-1j5m | typescript, deno, oop, javascript | > **TLDR**: [Github @reggi/linear-builder-class](https://github.com/reggi/linear-builder-class) Code Generates Classes using the Linear Builder Class pattern

My goal is to define types and ensure that the generic values "match" from one method to the next.

```ts

new Example()

.stringOrNumber(1)

.toObject((knowsI... | reggi |

1,467,963 | Apache AGE: Advanced Features for Efficient Graph Data Management in PostgreSQL | Introduction Apache AGE is a PostgreSQL extension that enables users to store and manage... | 0 | 2023-05-14T20:43:50 | https://dev.to/ahmedmohamed/apache-age-advanced-features-for-efficient-graph-data-management-in-postgresql-43n3 | postgres, apacheage | ## Introduction

Apache AGE is a PostgreSQL extension that enables users to store and manage graph data in a relational database environment. It aims to provide a unified storage solution for both relational and graph model data, allowing users to use standard ANSI SQL and openCypher, a popular graph query language. Thi... | ahmedmohamed |

1,467,973 | Useful Linux commands | 🔹ls - List files and directories 🔹cd - Change the current directory 🔹mkdir - Create a new directory... | 0 | 2023-05-14T21:23:26 | https://dev.to/justplegend/useful-linux-commands-1af8 | linux, beginners |

🔹ls - List files and directories

🔹cd - Change the current directory

🔹mkdir - Create a new directory

🔹rm - Remove files or directories

🔹cp - Copy files or directories

🔹mv - Move or rename files or directories

🔹chmod - Change file or directory permissions

🔹grep - Search for a pattern in files

🔹find - Se... | justplegend |

1,468,023 | Generics with Java | Contents Motivation Generic Methods Generic Classes Generics Interfaces Bounded... | 0 | 2023-05-14T22:57:39 | https://dev.to/patriciaclares/generics-com-java-fk2 | webdev, java, programming, softwareengineering | ## Contents

- [Motivation](#motiva--o)

- [Generic Methods](#generic-methods)

- [Generic Classes](#generic-classes)

- [Generics Interfaces](#generics-interfaces)

- [Bounded Generics](#bounded-generics)

- [Multiple Bounds](#multiple-bounds)

- [Wildcards](#wildcards)

- [Type erasure](#type-erasure)

### Motivation

Gener... | patriciaclares |

1,468,108 | Day 104 of #365DaysOfCode: Implementing OAuth 2.0 and Exploring Python | Hey, guys! Day 104 of #365DaysOfCode has been productive. Today, I worked on implementing OAuth 2.0... | 0 | 2023-05-15T00:25:53 | https://dev.to/arashjangali/day-104-of-365daysofcode-implementing-oauth-20-and-exploring-python-1kkf | webdev, javascript, beginners, programming | Hey, guys! Day 104 of #365DaysOfCode has been productive. Today, I worked on implementing OAuth 2.0 for authentication in my app. I focused on setting up separate GoogleStrategy instances and authentication routes for each user model, an important step to enhance security and user experience.

On day 4 of #100DaysOfPyt... | arashjangali |

1,468,116 | Passwordless encryption with public key for GitHub | Public keys registered for authentication on GitHub and GitLab can be obtained by anyone. I made a... | 0 | 2023-05-15T00:57:28 | https://dev.to/yoshi389111/passwordless-encryption-with-public-key-for-github-kb6 | go, cryptography, github, gitlab | Public keys registered for authentication on GitHub and GitLab can be obtained by anyone. I made a command that can encrypt and decrypt files using that public key and your own private key.
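
As a rough illustration of the first point, here is a minimal Python sketch (not the author's Go command) that reads the public keys an account exposes. It assumes the `https://github.com/<username>.keys` endpoint, which returns one OpenSSH-format key per line; `keys_url`, `parse_public_keys`, and `fetch_public_keys` are hypothetical helper names used only for this example.

```python
from urllib.request import urlopen


def keys_url(username: str, host: str = "github.com") -> str:
    """Build the URL that lists a user's public SSH keys (one per line)."""
    return f"https://{host}/{username}.keys"


def parse_public_keys(text: str) -> list[tuple[str, str]]:
    """Split the response into (key_type, base64_blob) pairs, skipping blank lines."""
    keys = []
    for line in text.splitlines():
        parts = line.split()
        # A usable line has at least a key type and a base64 blob;
        # anything after that (a comment) is ignored here.
        if len(parts) >= 2:
            keys.append((parts[0], parts[1]))
    return keys


def fetch_public_keys(username: str, host: str = "github.com") -> list[tuple[str, str]]:
    """Download and parse the keys; this call needs network access."""
    with urlopen(keys_url(username, host)) as response:
        return parse_public_keys(response.read().decode("utf-8"))
```

Passing `host="gitlab.com"` should work the same way, on the assumption that GitLab serves keys at the same `<username>.keys` path. The sketch only retrieves keys; encryption itself is outside its scope.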

This command can handle encrypted files without a password. Of course, a passphrase is still required if one is set on the private key. ... | yoshi389111 |

1,468,184 | MusicStar.AI - Create Music with A.I. | Are you an artist looking for inspiration? Or a fan who wants to know what it feels like to be a... | 0 | 2023-05-15T02:39:02 | https://dev.to/musicstarai/musicstarai-create-music-with-ai-1i1l | music | Are you an artist looking for inspiration? Or a fan who wants to know what it feels like to be a star? MusicStar.AI is designed for anyone, regardless of musical talent, who wants to make professional-sounding music. MusicStar.AI provides the tools you need, whether you're a music professional working on your next hit ... | musicstarai |

1,468,239 | Perl Weekly #616 - Camel in India | Originally published at Perl Weekly 616 Hi there, When I say India, I mean Indian Subcontinent i.e.... | 20,640 | 2023-05-15T04:21:13 | https://perlweekly.com/archive/616.html | perl, news, programming | ---

title: Perl Weekly #616 - Camel in India

published: true

description:

tags: perl, news, programming

canonical_url: https://perlweekly.com/archive/616.html

series: perl-weekly

---

Originally published at [Perl Weekly 616](https://perlweekly.com/archive/616.html)

Hi there,

When I say <strong>India</strong>, I me... | szabgab |

1,468,576 | Browser Games With JavaScript | been developing web apps and games, and right now I'm making this Rpg Game that is Made by a... | 0 | 2023-05-15T11:18:44 | https://dev.to/anradev/browser-games-with-javascript-2enb | babylonjs, javascript, web3, html | I've been developing web apps and games, and right now I'm making an RPG game built with a JavaScript game engine called Babylon.js. | anradev |

1,468,581 | Building a Delivery Workflow with TypeScript (TS) | What do you do when you’re hungry and there is no way to cook food? Definitely, you rely on a food... | 0 | 2023-05-14T20:00:00 | https://orkes.io/blog/building-a-delivery-workflow-with-typescript/ | typescript, netflixconductor, orchestration, microservices | What do you do when you’re hungry and there is no way to cook food? Definitely, you rely on a food delivery application. Have you ever wondered how this delivery process works? Well, let me walk you through the process of how Conductor helps in orchestrating the delivery process.

In this article, you will learn how to... | rizafarheen |

1,468,713 | Angular 16 Signals a new way of managing application state. | Before going into the topic let's brief …How Angular currently deals with reactive state... | 0 | 2023-05-15T13:31:06 | https://dev.to/imbhanu47/angular-16-signals-a-new-way-of-managing-application-state-2aim | angular16, angular, solidjs, javascript | **Before going into the topic, let's briefly review how Angular currently deals with reactive state management.**

- We all know that in Angular, the primary way to manage state changes is through services, components, and data binding using properties and events, or RxJS. Angular provides features like two-way data binding, eve... | imbhanu47 |

1,468,731 | React Basics Part 01 -- Switching components with Router based on the URL | Install Router npm install react-router-dom @types/react-router-dom added 15 packages... | 22,994 | 2023-05-15T15:24:08 | https://dev.to/kaede_io/react-ji-chu-part-01-url-niying-zite-router-dekonponentowochu-sifen-keru-38k3 | react, router |

## Install Router

```bash

npm install react-router-dom @types/react-router-dom

added 15 packages in 2s

```

Install react-router-dom and its types.

---

## Switch components with Router in App

App.tsx

```ts

import './App.css';

import {

BrowserRouter,

Route,

Routes,

} from "react-router-dom"

const App = () => {

retu... | kaede_io |

1,468,771 | Do you really need to be good at Mathematics to be successful at programming? | When it comes to the world of programming, there has always been a longstanding debate about the... | 0 | 2023-05-15T14:45:08 | https://dev.to/agentebimene/do-you-really-need-to-be-good-at-mathematics-to-be-successful-at-programming-1mb0 | webdev, programming, beginners, productivity | When it comes to the world of programming, there has always been a longstanding debate about the importance of mathematics in becoming a successful programmer. Some argue that a strong foundation in mathematics is crucial for mastering programming skills, while others believe that mathematics is not necessarily a prere... | agentebimene |

1,469,085 | C++ Operators. | Assalamu alaikum, dear programmer, let's talk about operators. Operators are used to... | 0 | 2023-05-15T19:23:35 | https://dev.to/islomali99/opieratory-s-3ehk | beginners, webdev, programming, tutorial | Assalamu alaikum, dear programmer, let's talk about operators. Operators are used to perform operations on variables and values.

In the example below, we use the `+` operator to add two values.

```

#include <iostream>

using namespace std;

int main()

{

int a = 100 + 50;

cout <... | islomali99 |

1,469,471 | Spinning into Action: A Step-by-Step Guide to Creating a Stunning Loader in HTML and CSS | Loaders, also known as spinners, are a common element used in web design to indicate that content... | 0 | 2023-05-16T05:11:34 | https://dev.to/devxvaibhav/spinning-into-action-a-step-by-step-guide-to-creating-a-stunning-loader-in-html-and-css-j01 | html, learning, webdev, loaders |

Loaders, also known as spinners, are a common element used in web design to indicate that content is being loaded or processed. They not only ... | devxvaibhav |

1,469,489 | Dynamic Workflows using Code in Netflix Conductor | Conductor is a popular platform for building resilient stateful applications by creating workflows... | 0 | 2023-05-16T05:56:10 | https://orkes.io/blog/dynamic-workflows-using-code-in-netflix-conductor/ | microservices, java, python, clojure | [Conductor](https://github.com/conductor-oss/conductor) is a popular platform for building resilient stateful applications by creating workflows that span across services. You can try out the workflows from this article at [Playground](https://play.orkes.io/), a free hosted version of Conductor.

## What is a Workflow?... | rizafarheen |

1,469,495 | How To Choose The Best User Acceptance Tools? | User Acceptance Testing (UAT) is a crucial step in the software development lifecycle that involves... | 0 | 2023-05-16T06:07:41 | https://officialpanda.com/2023/03/09/how-to-choose-the-best-user-acceptance-tools/ | user, acceptance, tools |

User Acceptance Testing (UAT) is a crucial step in the software development lifecycle that involves testing a software product to ensure that it meets the needs of end users. UAT is typically carried out by a team of... | rohitbhandari102 |
