id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,469,592 | Navigating Web Analytics: Tracing the Evolution and Introducing Zaraz | Originally posted here:... | 0 | 2023-05-16T07:25:01 | https://dev.to/imtiaz101325/navigating-web-analytics-tracing-the-evolution-and-introducing-zaraz-4o4o | zaraz, cloudflare, analytics, seo | Originally posted here: https://imtiaz101325.notion.site/Navigating-Web-Analytics-Tracing-the-Evolution-and-Introducing-Zaraz-d472aad2e60547c89bf13066e220170e

The digital realm is a fast-paced, evolving landscape. Significant contributors to this evolution include third-party utilities, Tag Management Systems (TMS), a... | imtiaz101325 |

1,469,913 | How Top Enterprises Embrace Blockchain Technology to Improve Their Business Operation | Here are the top enterprises implementing blockchain into their businesses to bring more efficiency... | 0 | 2023-05-16T13:32:49 | https://dev.to/cooper_91/how-top-enterprises-embrace-blockchain-technology-to-improve-their-business-operation-p2c | blockchain, javascript, programming | Here are the top enterprises implementing blockchain into their businesses to bring more efficiency to their business operations.

**1. Walmart: Product Tracking**

Retail giant Walmart is one of the multinationals that has taken the lead in Blockchain implementation. The company is leveraging the digital ledger technol... | cooper_91 |

1,470,080 | Launch Alert! Wowen Modular Blockchain Network - Unleash the Power of Consensus Choice 🚀⛓️💻 | Welcome to Wowen, the world's first modular blockchain network tailored for developers seeking... | 0 | 2023-05-16T15:55:07 | https://dev.to/wowen_network/launch-alert-wowen-modular-blockchain-network-unleash-the-power-of-consensus-choice-4d8k | ethereum, blockchain, polygon, binance | **Welcome to Wowen, the world's first modular blockchain network tailored for developers seeking ultimate control and flexibility.** Hailing from Switzerland, Wowen revolutionizes the Web3 space by allowing developers to select their preferred consensus mechanism for each transaction.

Unleash your blockchain's potenti... | wowen_network |

1,470,471 | Getting started using Google APIs: Workspace & OAuth client IDs (2/3) | Introduction Are you a developer but a complete beginner using Google APIs? This series is... | 25,403 | 2023-05-16T21:58:52 | https://dev.to/googleworkspace/getting-started-using-google-apis-workspace-23-3ch8 | python, googlecloud, node, googleapi | <!-- Getting started using Google APIs: Workspace (Part 1/3) -->

## Introduction

Are you a developer but a complete beginner using Google APIs? This series is for you because I'm showing you how to get started from scratch. Each API family differs from others, so while it would be _great_ if the UX (user experience) was... | wescpy |

1,470,501 | What's Your Wildest Unfulfilled Coding Project Idea? | Got any coding project ideas that are totally out of the box? Share your wildest and most... | 22,092 | 2023-05-17T07:00:00 | https://dev.to/codenewbieteam/whats-your-wildest-unfulfilled-coding-project-idea-kog | discuss, beginners, codenewbie | Got any coding project ideas that are totally out of the box? Share your wildest and most unconventional project concept that you've been itching to bring to life but haven't had the chance yet. Let's inspire each other with our creative and unconventional ideas!

---

Follow the [CodeNewbie Org](https://dev.to/codenewb... | ben |

1,470,510 | The Next Language Evolution | Let's talk about language and how it's linked to the future of Software Engineers and Programmers.... | 0 | 2023-05-16T23:31:58 | https://dev.to/btfranklin/the-next-language-evolution-3afo | language, future, engineering, ai | Let's talk about language and how it's linked to the future of Software Engineers and Programmers. Now, these terms—Software Engineer, Programmer, Software Developer, Coder—often get used interchangeably. But I believe we're going to see more differences between them soon.

The best way to understand this is by looking... | btfranklin |

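1,470,577 | "Filler" in Rust | Compared with Python's conciseness, Rust has a lot of filler 1. let... | 0 | 2023-05-17T01:50:48 | https://dev.to/dragon72463399/rustzhong-de-fei-hua--l6b | rust, python | ### Compared with Python's conciseness, Rust has a lot of filler

---

- 1. `let`: this keyword is used when defining a variable, whereas Python has nothing like it. It does not really need to exist; everyone understands what is meant, so why add an extra word? It feels redundant.

```

let x = 5;

```

- 2. `;`: every line of code ends with this terminator. JS has the same style, and probably many other languages do too, but there is no real need for it; those who know, know. It feels redundant.

```

let x: i32 = 42;

```

- 3. `: <type>`: declares a variable's type. This one is fine: it is not mandatory, so you can write it or leave it out. It goes between the variable name and the assignment when defining a variable. If you omit it, the compiler infers the type automatically, so it still feels unnecessary to write; those who know, know, and you can just let the compiler handle it... | dragon72463399 |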

1,470,663 | 04.01 - Redux and Redux Saga | App preview: Project files: src/index.html <!DOCTYPE html> <html... | 20,220 | 2023-05-17T04:52:18 | https://dev.to/adriangheo/0401-redux-and-redux-saga-14c1 | vscode | App preview:

Project files:

---------------------------------------------------------... | adriangheo |

1,470,714 | Zero Trust Network for Microservices with Istio | Security was mostly perimeter-based while building monolithic applications. This means securing the... | 0 | 2023-05-17T07:43:57 | https://imesh.ai/blog/zero-trust-network-for-microservices-with-istio/ | istio, kubernetes, microservices, zerotrust | ---

title: Zero Trust Network for Microservices with Istio

published: true

date: 2023-03-24 07:41:06 UTC

tags: istio,Kubernetes,microservices,zerotrust

canonical_url: https://imesh.ai/blog/zero-trust-network-for-microservices-with-istio/

---

Security was mostly perimeter-based while building monolithic applications. T... | 0anas0 |

1,470,728 | Create Portfolio Website Using HTML and CSS (Source Code) | Well, today I’ll be making a visually delicious Portfolio Website Using HTML and CSS, with source code. In... | 0 | 2023-05-17T06:09:14 | https://dev.to/cwrcode/create-portfolio-website-using-html-and-css-source-code-5d5c | Well, today I’ll be making a visually delicious **[Portfolio Website](https://www.codewithrandom.com/2023/03/14/simple-portfolio-website-using-html-css-portfolio-website-source-code/)** using HTML and CSS, with source code. In this article, you get the complete code and an explanation of the portfolio website using HTML and ... | cwrcode | |

1,474,655 | "this" in JavaScript and "self" in Python; Any Difference? | We use this in JavaScript and self in Python. Are they the same? This question was haunting me for some... | 0 | 2023-05-21T00:01:29 | https://dev.to/ibtesum/this-in-javascript-and-self-in-python-any-difference-38fm | javascript, python, programming | We use `this` in JavaScript and `self` in Python. Are they the same? This question was haunting me for some time. Finally, after some digging, I came up with an answer. Hope you will love it.

Personally I love examples. So without further ado, let's jump into some examples.

Here is some JS code:

```js

function Love(part... | ibtesum |

1,479,400 | How to publish a React Component without even including React? | React is a JavaScript library which is used to build attractive User-Interfaces (UIs). Now, most of... | 0 | 2023-05-25T16:44:05 | https://dev.to/aryan_shourie/how-to-publish-a-react-component-without-even-including-react-3kg1 | react, npm, javascript, webdev | React is a **JavaScript library** which is used to build attractive **User-Interfaces** (UIs). Now, most of us know what React is and we also know that to publish a React Component, it is necessary to include the React library.

But what if I told you that it is possible to publish a React Component without even incl... | aryan_shourie |

1,482,228 | Host your Automation Allure Report on GitHub Pages with GitHub Actions. | We all have heard of or used Allure Report in our day-to-day life. Additionally, GitHub Actions and... | 0 | 2023-05-27T05:07:52 | https://dev.to/sadia/host-your-automation-allure-report-on-github-pages-with-github-actions-56a | githubactions, githubpage, automation, testing | We all have heard of or used Allure Report in our day-to-day life. Additionally, GitHub Actions and GitHub Pages are also commonly used by many people in their daily lives. We will integrate all of them and learn something new. First of all, let's recap what these three are.

## Allure Report

An Allure report is an open-... | sadia |

1,482,722 | Crafting Microservices with NodeJS - Or How to Build a Servant from Scratch | Crafting Microservices with NodeJS - Or How to Build a Servant from Scratch If you're... | 0 | 2023-05-27T08:14:05 | https://dev.to/shubhamt619/crafting-microservices-with-nodejs-or-how-to-build-a-servant-from-scratch-1hkk | microservices, node, webdev, beginners | # Crafting Microservices with NodeJS - Or How to Build a Servant from Scratch

If you're reading this article, I presume that you've been smitten by the microservices charm and you're brave enough to tinker with it. Kudos, my friend. For those who just stumbled upon this article and are wondering, "What the heck is a m... | shubhamt619 |

1,483,016 | Easy React data fetching with the new `use()` hook | React.use() is still an unstable API. For more information check out the support for promises React... | 0 | 2023-05-27T15:38:21 | https://chiubaca.com/easy-react-data-fetching-with-use-16jg | react, webdev, nextjs | > `React.use()` is still an unstable API. For more information check out the [support for promises React RFC](https://github.com/acdlite/rfcs/blob/first-class-promises/text/0000-first-class-support-for-promises.md) . At the time of writing this, you can only test this API in Next.js 13.

OK with that disclaimer out the... | chiubaca |

1,483,172 | Promises with async and await | There are two ways to handle promises in JavaScript; one is with then, which I already explained in a... | 0 | 2023-05-27T20:20:55 | https://dev.to/ulisesserranop/promesas-con-async-y-await-53gc | javascript, programming, webdev, spanish | There are two ways to handle promises in JavaScript. One is with `then`, which I explained in a previous article. The other, which I explain below, is `async` and `await`. This example makes a request to a fake API.
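
The original example is not included in this excerpt, so the following is only a minimal sketch of the idea. `fetchFakeUser` and `getUserName` are hypothetical names, and the "fake API" is simulated with a timer rather than a real network request.

```javascript
// Hypothetical stand-in for a fake API (an assumption, not the author's code):
// resolves with a user object after a short delay, or rejects for an invalid id.
function fetchFakeUser(id) {
  return new Promise((resolve, reject) => {
    setTimeout(() => {
      if (id > 0) {
        resolve({ id, name: `User ${id}` });
      } else {
        reject(new Error("Invalid id"));
      }
    }, 10);
  });
}

// async/await version: the code reads top to bottom,
// and a rejected promise is handled with try/catch.
async function getUserName(id) {
  try {
    const user = await fetchFakeUser(id);
    return user.name;
  } catch (error) {
    return `Request failed: ${error.message}`;
  }
}

getUserName(1).then((name) => console.log(name));
getUserName(0).then((message) => console.log(message));
```

An `async` function always returns a promise, which is why the two calls at the end can still be consumed with `then`.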

Lists are one of the most commonly used data structures in Python. They are used to store collections of data, such as a list of numbers or a list of names. In this article, we will explore the basics of lists in Pyt... | bansikah |

1,483,619 | Angular Universal vs React Server-Side Rendering: Which One Should You Choose? | Discover the benefits and drawbacks of Angular Universal and React Server-Side Rendering for boosting performance and SEO compatibility in modern web development. | 0 | 2023-05-28T11:20:01 | https://angulardive.com/blog/angular-universal-vs-react-server-side-rendering-which-one-should-you-choose/ | ## Angular Universal vs React Server-Side Rendering: Which One Should You Choose?

Server-Side Rendering (SSR) is an important technique for modern web development. With the rise of Single Page Applications (SPAs), SSR can help boost the performance of these applications, particularly in terms of search engine optimi... | josematoswork | |

1,483,739 | The Power of Rust in Automotive Software Development | The automotive industry is experiencing a significant transformation driven by the integration of... | 0 | 2023-05-30T06:37:48 | https://blog.chetanmittaldev.com/the-power-of-rust-in-automotive-software-development | ---

title: The Power of Rust in Automotive Software Development

published: true

date: 2023-05-28 09:10:39 UTC

tags:

canonical_url: https://blog.chetanmittaldev.com/the-power-of-rust-in-automotive-software-development

---

? | When I started my career as a developer, I was flooded with many articles about best practices, and... | 0 | 2023-05-29T15:57:45 | https://dev.to/yohantsn/what-do-you-think-about-tdd-test-driven-devolepment-3bib | testing, programming, development, discuss | When I started my career as a developer, I was flooded with many articles about best practices, and one, in particular, caught my attention: the TDD.

In my case, I used it a lot when projects were small and new, but now that I work on larger legacy code, I can't use this concept.

My reflection here is around the real gain,... | yohantsn |

1,485,231 | Free Online JavaScript Course with 10 Hours of Learning | Explore the free programming course “JavaScript do Zero” offered by Trybe! If you are... | 0 | 2023-05-30T02:01:52 | https://guiadeti.com.br/curso-de-javascript-online-e-gratuito/ | cursogratuito, bancodedados, css, cursosgratuitos | ---

title: Free Online JavaScript Course with 10 Hours of Learning

published: true

date: 2023-05-30 00:06:48 UTC

tags: CursoGratuito,bancodedados,css,cursosgratuitos

canonical_url: https://guiadeti.com.br/curso-de-javascript-online-e-gratuito/

---

... | 0 | 2023-10-15T16:44:51 | https://dev.to/longtth/sync-windows-local-folder-voi-aws-s3-4m9i | tips | Sync a Windows local folder with AWS S3

Prerequisites

You already know how to use the S3 CLI (with an Access Key)

1. Install the [AWS CLI](https://aws.amazon.com/cli/)

2. Create a file named `sync-my-folder.bat`

In that .bat file, enter the command

```

aws s3 sync s3://mybucket D:\folder-can-sync

```

3. Open Task Scheduler (press the `Win... | longtth |

1,636,205 | 5 Tips for Managing Small Business Finance | Starting and running a small business is a challenging endeavor, and one of the most critical aspects... | 0 | 2023-10-16T12:28:20 | https://dev.to/sanya3245/5-tips-for-managing-small-business-finance-1fnk | finance | Starting and running a small business is a challenging endeavor, and one of the most critical aspects of success is managing your finances effectively. Small business finance management can be the difference between growth and stagnation. In this blog post, we'll share five essential tips to help you navigate the finan... | sanya3245 |

1,635,550 | Get involved: Your guide to contributing to WebCrumbs | Hey there, champ! So, you're itching to dive into the WebCrumbs community, eh? Fantastic! You're... | 0 | 2023-10-15T22:15:00 | https://dev.to/buildwebcrumbs/get-involved-your-guide-to-contributing-to-webcrumbs-30p7 | hacktoberfest, hacktoberfest23, opensource, beginners | Hey there, champ! So, you're itching to dive into the WebCrumbs community, eh? Fantastic! You're about to join a legion of coders hell-bent on making React development as smooth as silk. Here's how you can get your boots on the ground.

## First step: the lay of the land

Start by taking a tour of the [WebCrumbs GitHub... | opensourcee |

1,635,797 | how to fix this issue on my own | Uncaught Reference Error: Submit is not defined shown on my browser inspect let c1 =... | 0 | 2023-10-16T04:26:45 | https://dev.to/rbalaji150720/uncaught-reference-error-submit-is-not-defined-shown-on-my-browser-inspect-2n8k | > **_Uncaught Reference Error: Submit is not defined shown on my browser inspect_**

```

let c1 = document.getElementById('c1')

let c2 = document.getElementById('c2')

let Bd1 = document.getElementById('Bd')

let index=2;

Bd.addEventListener(Submit,(d)=>{

d.preventDefault();

let num1=parseInt(c1.value);

le... | rbalaji150720 | |

1,635,913 | Perl Weekly #638 - Dancing Perl? | Originally published at Perl Weekly 638 Hi there, What a great and pleasant surprise announcement... | 20,640 | 2023-10-16T07:33:57 | https://perlweekly.com/archive/638.html | perl, news, programming | ---

title: Perl Weekly #638 - Dancing Perl?

published: true

description:

tags: perl, news, programming

canonical_url: https://perlweekly.com/archive/638.html

series: perl-weekly

---

Originally published at [Perl Weekly 638](https://perlweekly.com/archive/638.html)

Hi there,

What a great and pleasant surprise annou... | szabgab |

1,636,025 | Data Modeling. | Over the years, many businesses have been cautious about decision making processes that affect them.... | 0 | 2023-10-23T08:34:48 | https://dev.to/philemonkiplangat/data-modeling-2iio | Over the years, many businesses have been cautious about decision making processes that affect them. This is important since the decisions a business makes determine its success. Part of decision making is forecasting, which is made possible by studying the growth of the business. The oil for decision making has bee... | philemonkiplangat | |

1,636,077 | Scraping AliExpress with Python | This article was originally posted on Crawlbase Blog. In the expansive world of e-commerce data... | 0 | 2023-10-16T10:58:09 | https://dev.to/crawlbase/scraping-aliexpress-with-python-2gb3 | python, webdev, dataengineering, data |

This article was originally posted on [Crawlbase Blog](https://crawlbase.com/blog/?utm_source=dev.to&utm_medium=referral&utm_campaign=content_distribution).

In the expansive world of e-commerce data retrieval, Scraping AliExpress with Python stands out as a vital guide for seasoned and novice data enthusiasts. This g... | crawlbase |

1,636,239 | The comprehensive guide to Entity Framework Core | Hello everyone, in the previous article, we provided an overview of how to access data from our... | 25,039 | 2023-10-16T16:00:57 | https://dev.to/maurizio8788/the-comprehensive-guide-to-entity-framework-core-l5a | dotnet, beginners, api, csharp | Hello everyone, in the previous article, we provided an overview of how to access data from our database through ADO.NET. Most of the time, we won't use ADO.NET in our applications; instead, we'll use an **ORM (Object Relational Mapper)**, and in .NET Core, the most commonly used one is **Entity Framework Core**.

In t... | maurizio8788 |

1,636,587 | npm projects | To start an npm project, you can run npm init in the directory where you want to initiate the... | 0 | 2023-10-16T18:56:50 | https://dev.to/itsmohamedyahia/npm-projects-4o7a | npm, node, javascript, webdev | To start an npm project, you can run `npm init` in the directory where you want to initiate the project.

You will be asked a few questions about the name of the project and some other details, which you can skip by pressing `enter`.

After that, a `package.json` file will be created. It will be in `json` format ... | itsmohamedyahia |

1,636,618 | A digital marketer finds creative solutions to drive brand awareness and lead generation via free or paid digital channels | I am Farabi Ahmed. I am a Digital Marketer and SEO expert with a strong track record of creating... | 0 | 2023-10-16T19:59:55 | https://dev.to/farabi/a-digital-marketer-finds-creative-solutions-to-drive-brand-awareness-and-lead-generation-via-free-or-paid-digital-channels-1bj4 | digitalmarketer, freelancer, seoexpert, promot | I am Farabi Ahmed. I am a Digital Marketer and SEO expert with a strong track record of creating successful campaigns that drive traffic, generate leads, and increase revenue.

With a deep understanding of online marketing strategies and tactics,

I have developed a unique approach to optimizing websites for search eng... | farabi |

1,636,622 | Detail Explanation This Keyword in Java | In Java, the this keyword is a reference variable that refers to the current object. It is a special... | 0 | 2023-10-16T20:09:25 | https://dev.to/gaurbprajapati/this-keyword-in-java-5cpf | java, programming, coding, tutorial |

In Java, the `this` keyword is a reference variable that refers to the current object. It is a special keyword that has several important uses in object-oriented programming. Here's why and how we use the `this` keyword in Java:

### 1. `this` as a reference variable that refers to the current object:

In real life, t... | gaurbprajapati |

1,636,648 | The Evolution of "Do My Exam Online" Services | The digital era has not only transformed the way we communicate, shop, and entertain ourselves but... | 0 | 2023-10-16T21:10:02 | https://dev.to/carlosstewart1/the-evolution-of-do-my-exam-online-services-33lg | The digital era has not only transformed the way we communicate, shop, and entertain ourselves but has also revolutionized the educational landscape. One of the most significant advancements in this domain is the rise of online examination services [https://domyexams.net/](https://domyexams.net/) . While skeptics once ... | carlosstewart1 | |

1,636,728 | Styling React Components | Personally, for me, I don't like to style. I mean, I don't hate CSS and styling but I hate when I give... | 0 | 2023-10-19T18:21:45 | https://dev.to/balamurugan16/styling-react-components-i2 | ---

title: Styling React Components

published: true

date: 2023-10-16 17:35:27 UTC

tags:

canonical_url:

---

Personally, I don't like to style. I mean, I don't hate CSS and styling, but I hate it when I give styling a try and it looks bad.

).

## Recursion

**Inc., a leading provider of digital public and dating safety solutions, is proud to announce the launch of its revolutionary person verification and criminal history checks solution. Available on an iPhone via the web mobile version and in the Google Play Store, BrightSwipe... | matrubharti |

1,637,097 | Create and Deploy a Smart Contract on the NEAR Protocol | This article gives you a comprehensive guide on smart contract development and deployment on the NEAR... | 0 | 2023-10-17T08:05:22 | https://dev.to/oodlesblockchain/create-and-deploy-a-smart-contract-on-the-near-protocol-39je | smartcontract, blockchain, webdev, beginners | This article gives you a comprehensive guide on [smart contract development](https://blockchain.oodles.io/smart-contract-development-services/) and deployment on the NEAR platform.

NEAR Protocol is a decentralised [blockchain app development platform](https://blockchain.oodles.io/) that has been dubbed the “Ethereum k... | oodlesblockchain |

1,637,346 | Striking a Balance: The Rise of StrikeCo Electric Scooters in India | In the bustling streets of India, where urban congestion and air pollution continue to pose... | 0 | 2023-10-17T11:32:00 | https://dev.to/rohitjain/striking-a-balance-the-rise-of-strikeco-electric-scooters-in-india-48d5 | electric, scooter | In the bustling streets of India, where urban congestion and air pollution continue to pose significant challenges, electric scooters have emerged as a game-changing solution. One brand in particular is making waves with its innovative approach: StrikeCo Electric Scooters in India.

The Need for Electric Mobility in ... | rohitjain |

1,637,437 | Deploy Next JS App To Cpanel Using Github Actions | Deployment can be a challenging and time-consuming process if you zip files manually, put the zip on... | 0 | 2023-11-04T17:01:35 | https://dev.to/heyitsuzair/deploy-next-js-app-to-cpanel-using-github-actions-1nl9 | Deployment can be a challenging and time-consuming process if you zip files manually, put the zip on the server, and extract the files each time🥴

GitHub Actions solves this problem by giving us an automated CI/CD platform.

## **Prerequisite**

- FTP account created in cpanel ([Check this link](https://support.cpanel.... | heyitsuzair | |

1,637,572 | The conference was held in Anji, in China's Zhejiang province | Yucun combines eco-agriculture with tourism, transforms abandoned mines and cement plants into areas... | 0 | 2023-10-17T14:48:27 | https://dev.to/jensenberry/la-conferencia-se-ha-celebrado-en-anji-provincia-china-de-zhejiang-10lo | Yucun combines eco-agriculture with tourism, transforms abandoned mines and [cement plants](https://detailfinancial.com) into tourist areas, layers bamboo, white tea, and tourism to create distinctive agricultural products, and turns farms into distinctive farmstays and family guesthouses. Como primer p... | jensenberry | |

1,637,590 | Azure Storage services - Learning Day 1 | Hi, here I am sharing my learning for my better practices and storing here so that I can read it... | 0 | 2023-10-17T16:20:34 | https://dev.to/rashmiranjan28/azure-storage-services-learning-day-1-4f12 | azure | Hi, I am sharing what I learn here, both for my own practice and so that I can read it again whenever I need.

If it helps you to learn or recall the fundamental things, I would be happy.

## cloud

> The practice of using a network of remote servers hosted on the internet to store, manage and process data, rath... | rashmiranjan28 |

1,637,944 | Reverting Slack's New UI: A Guide for Developers Who Love Control | Hello fellow developers! Being a software engineer for the past 10 years, I've had my fair share of... | 0 | 2023-10-17T22:02:38 | https://dev.to/makepad/reverting-slacks-new-ui-a-guide-for-developers-who-love-control-2dii | tips, programming, tutorial, productivity |

Hello fellow developers!

Being a software engineer for the past 10 years, I've had my fair share of software updates, upgrades, and UI revamps. Some of them are warmly welcomed, while others make us question, "Why? Just... why?"

Recently, Slack decided to roll out a new UI, and let's say it wasn't my cup of coffee. ... | kaanyagci |

1,638,027 | Hacktoberfest 2023 Pledge | This will be my sixth Hacktoberfest! | 0 | 2023-10-18T00:45:52 | https://dev.to/kayh/hacktoberfest-2023-pledge-2b3k | hacktoberfest23 | This will be my sixth Hacktoberfest! | kayh |

1,638,173 | How WhatsApp tests software? | I was reflecting on how the WhatsApp team (WA) tests its apps and what other teams across the globe... | 0 | 2024-05-25T05:52:48 | https://automationhacks.io/2023-10-18-how-whatsapp-tests-software | engineeringpractices, testautomation, metaengineering, softwareengineering | ---

title: How WhatsApp tests software?

published: true

date: 2023-10-18 00:00:00 UTC

tags: Engineeringpractices,Testautomation,MetaEngineering,SoftwareEngineering

canonical_url: https://automationhacks.io/2023-10-18-how-whatsapp-tests-software

---

We asked CIOs and CISOs what keeps them up at night, and the two main concerns are reducing security risks and improving analyst confidence and productivity. CxOs must report to corporate boards, and members of tho... | stellarcyber | |

1,638,306 | List of Best websites to get Python homework help | Python, with its versatile applications and user-friendly syntax, has become a staple in the world of... | 0 | 2023-10-18T08:07:47 | https://dev.to/shimlawalarahul/list-of-best-websites-to-get-python-homework-help-43l8 | Python, with its versatile applications and user-friendly syntax, has become a staple in the world of programming. However, like any language, it can pose challenges, especially for those just starting their coding journey. Whether you're a student grappling with a complex assignment or a professional seeking clarity o... | shimlawalarahul | |

1,638,411 | ZenGPT: a simple ChatGPT alternative frontend | Originally posted on cri.dev I've been playing around with a home-made, super simple ChatGPT UI... | 0 | 2023-10-18T09:21:47 | https://cri.dev/posts/2023-10-17-zengpt-chapgpt-alternative-frontend-opensource-self-hosting/ | chatgpt, javascript, htmx, alpine | ---

title: "ZenGPT: a simple ChatGPT alternative frontend"

cover_image: https://cri.dev/assets/images/posts/zengpt.jpeg

tags:

- chatgpt

- javascript

- htmx

- alpine

published: true

canonical_url: https://cri.dev/posts/2023-10-17-zengpt-chapgpt-alternative-frontend-opensource-self-hosting/

---

**Originally post... | christianfei |

1,638,439 | Enter the World of Blockchain Gaming with Axie Infinity Clone Script | Certainly! Blockchain technology has changed many industries, including gaming. There's a popular... | 0 | 2023-10-18T10:01:49 | https://dev.to/josephinesaro/enter-the-world-of-blockchain-gaming-with-axie-infinity-clone-script-3gke | webdev, axie, axieinfinityclone | Certainly! Blockchain technology has changed many industries, including gaming. There's a popular game called Axie Infinity that uses blockchain. This article is here to tell you about blockchain gaming and a tool called Axie Infinity Clone Script. With this tool, you can make your version of the game.

**What is Block... | josephinesaro |

1,638,537 | Rails Core AMA - Rails World 2023: Hosted by...me! | As a contributing member of the Ruby on Rails Foundation, Planet Argon is delighted to collaborate... | 0 | 2023-10-18T11:43:45 | https://dev.to/planetargon/rails-core-ama-rails-world-2023-hosted-byme-355b | ruby, rails | As a [contributing member](https://blog.planetargon.com/blog/entries/news-planet-argon-joins-the-rails-foundation) of the [Ruby on Rails Foundation](https://rubyonrails.org/foundation), Planet Argon is delighted to collaborate with the Rails Foundation Core members and other contributing members to improve and maintain... | robbyrussell |

1,638,982 | Front-End: Which Framework to Choose? Where to Start? | Developing modern web applications requires choosing a frontend framework that meets the... | 0 | 2023-10-18T18:01:31 | https://dev.to/raynneandrade/front-end-qual-framework-escolher-por-onde-comecar-1e2p | webdev, javascript, react, vue |

Developing modern web applications requires choosing a frontend framework that meets the project's needs. Among the most popular options, React, Angular, and Vue.js stand out. In this article, we compare these frameworks to help you make a decision.

## Popularity and Com... | raynneandrade |

1,639,065 | How do I check if a bundle is installed in Netsuite? | Sometimes you want to check for the existence of another bundle prior to performing a feature or process. This shows you how. | 0 | 2023-10-18T19:28:23 | https://dev.to/smith288/how-do-i-check-if-a-bundle-is-installed-in-netsuite-1mc0 | ---

title: How do I check if a bundle is installed in Netsuite?

published: true

description: Sometimes you want to check for the existence of another bundle prior to performing a feature or process. This shows you how.

tags:

cover_image: https://media.tenor.com/Nfct9RreQfUAAAAd/dog-meme.gif

# Use a ratio of 100:42 fo... | smith288 | |

1,639,090 | Exploring Different Types of Software Development Roles and How to Get Ahead in Each | Discover the different types of software development roles and what aligns best with your skills, interests, and aspirations in this complete guide. | 0 | 2023-10-18T20:20:55 | https://code.pieces.app/blog/different-types-of-software-development-roles | <figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/types-of-software-dev_849a39b64c4006cf9c1f023281433ecd.jpg" alt="Exploring Different Types of Software Development Roles."/></figure>

Software development is a vast and exciting field, teeming with opportunities and various career paths. Whether you... | get_pieces | |

1,639,103 | Build a Text Summarization app using Reflex (Pure Python) | Reflex is an open-source, full-stack Python framework that makes it easy to build and deploy web apps... | 0 | 2023-10-19T19:00:16 | https://dev.to/emmakodes_/build-a-text-summarization-app-using-reflex-pure-python-1a94 | reflex, python, openai, machinelearning | [Reflex](https://reflex.dev/) is an open-source, full-stack Python framework that makes it easy to build and deploy web apps in minutes. You have most of the features of a frontend library like Reactjs and a backend framework like Django in one with ease in development and deployment. All while developing in a single l... | emmakodes_ |

1,639,250 | JavaScript: Promise | How to use JavaScript Promises in the industry :- Ecommerce: Promises are a way to... | 0 | 2023-10-19T01:40:03 | https://dev.to/lakharashubham007/javascript-promise-4dj5 | javascript, interview | ## How to use JavaScript Promises in the industry :-

**Ecommerce:**

> Promises are a way to handle asynchronous operations more cleanly and manage errors effectively.

- E-commerce platforms often rely on external APIs for various functionalities like product catalog, payment processing, or shipping calculations. When... | lakharashubham007 |

1,639,361 | How to Get Your First Data Engineer Job? | Are you curious about data, numbers, and technology? Do you dream of working as a data engineer but... | 0 | 2023-10-19T05:05:05 | https://dev.to/aqsa81/how-to-get-your-first-data-engineer-job-4ifn | dataengineering, datascience, bigdata, sql | Are you curious about data, numbers, and technology? Do you dream of working as a data engineer but don't know where to start? You've come to the right place! In this guide, I will show you how to land an entry-level data engineer job step by step, using straightforward language and actionable tips.

## **Understanding... | aqsa81 |

1,639,530 | Why We Call Saving the Magical Feature | Recently, the entire team at Welltested AI engaged in a discussion to understand what according to us... | 0 | 2023-10-19T08:42:23 | https://dev.to/welltestedai/why-we-call-saving-the-magical-feature-183o | welltested, testing, flutter, ai | Recently, the entire team at Welltested AI discussed what we consider the most notable feature of Welltested at the moment. Everyone agreed on `welltested save unit`, which we also call the magic command :)

While we internally love it, quick analytics into our database showed u... | yogesh009 |

1,639,548 | Industrial Ethylene Oxide Sterilizer-Lodha International | The Industrial Ethylene Oxide (EO) Sterilizer offered by Lodha International is a state-of-the-art... | 0 | 2023-10-19T09:18:27 | https://dev.to/lodhapharma/industrial-ethylene-oxide-sterilizer-lodha-international-119n | The Industrial Ethylene Oxide (EO) Sterilizer offered by Lodha International is a state-of-the-art sterilization system capable of effectively sterilizing various types of medical devices and equipment. It uses ethylene oxide, which has excellent penetrating properties, to ensure thorough and efficient sterilization of even the most intricate... | lodhapharma | |

1,639,781 | Deleting a Column in SQL: Everything You Need to Know | Let’s learn what happens when you delete a column in SQL and how to do it in the most popular DBMS... | 21,681 | 2023-10-19T13:10:22 | https://www.dbvis.com/thetable/deleting-a-column-in-sql/ | delete, sql | **Let’s learn what happens when you delete a column in SQL and how to do it in the most popular DBMS technologies.**

---

Tools used in this tutorial

[DbVisualizer](https://www.dbvis.com/), top rated database management tool and SQL client.

---

Deleting a column in SQL is one of the most common operations when dealing... | dbvismarketing |

1,639,836 | An Easier Way: Chat to Deploy Llama2 with Walrus | In the previous blog, we explored how to deploy Llama2 on AWS with Walrus. In this blog, we will... | 25,061 | 2023-10-19T14:14:05 | https://www.seal.io/resource/blog/easier-way-to-deploy | devops, tutorial, llm, aws | In [the previous blog](https://dev.to/seal-io/how-to-deploy-llama2-on-aws-with-walrus-in-minutes-1n13), we explored how to deploy Llama2 on AWS with Walrus. In this blog, we will introduce an AI tool, [Appilot](https://github.com/seal-io/appilot), to simplify the deployment.

Appilot ['æpaɪlət] stands for application-p... | seal-io |

1,640,027 | Install Azure CLI using Powershell on Windows | If you want to interact with Microsoft Azure resources from your machine, you need an Azure CLI... | 26,166 | 2023-10-19T17:11:51 | https://dev.to/jasper475/install-azure-cli-using-powershell-on-windows-c | If you want to interact with Microsoft Azure resources from your machine, you need the Azure CLI installed.

**Prerequisites:**

1. Host Laptop (Windows, Mac, Linux)

2. [Azure CLI](https://learn.microsoft.com/en-us/cli/azure/install-azure-cli) Installation documentation.

In this tutorial, I will share specific steps... | jasper475 | |

1,640,257 | Day 836 : Worst Comes To Worst | liner notes: Professional : Got to demo an application I want to use in upcoming talks and... | 0 | 2023-10-19T21:55:14 | https://dev.to/dwane/day-836-worst-comes-to-worst-594h | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Got to demo an application I want to use in upcoming talks and workshops. Looked like it worked for everyone except with a person on a iPhone using Chrome. I'll have to investigate more. I responded to some community questions. I even went through some tutorials and updated the code snip... | dwane |

1,640,269 | Medical Grade Protective Mask Filter Material Market Size, Type, segmentation, growth and forecast 2023-2030 | Medical Grade Protective Mask Filter Material Market The Medical Grade Protective Mask Filter... | 0 | 2023-10-19T22:23:56 | https://dev.to/chiragreportprime1/medical-grade-protective-mask-filter-material-market-size-type-segmentation-growth-and-forecast-2023-2030-17gf | business, marketing, growth, marketresearch |

Medical Grade Protective Mask Filter Material Market

The Medical Grade Protective Mask Filter Material Market is expected to grow from USD 2.80 Billion in 2022 to USD 3.70 Billion by 2030, at a CAGR of 3.30% durin... | chiragreportprime1 |

1,640,318 | PCR Tubes And PCR Plates Market Size, Type, segmentation, growth and forecast 2023-2030 | PCR Tubes And PCR Plates Market The PCR Tubes And PCR Plates Market is expected to grow from USD... | 0 | 2023-10-19T23:32:57 | https://dev.to/chiragreportprime2/pcr-tubes-and-pcr-plates-market-size-type-segmentation-growth-and-forecast-2023-2030-9ah | marketing, business, growth, marketresearch |

PCR Tubes And PCR Plates Market

The PCR Tubes And PCR Plates Market is expected to grow from USD 1.70 Billion in 2022 to USD 2.20 Billion by 2030, at a CAGR of 3.10% during the forecast period.

Get the Sample Rep... | chiragreportprime2 |

1,640,399 | Eu Capacito: free courses in AI, IoT, Azure, and much more | “Eu Capacito” is proud to have positively impacted the lives of more than 1.5 million users... | 0 | 2023-10-20T23:52:35 | https://guiadeti.com.br/eu-capacito-cursos-gratuitos-ti/ | cursogratuito, bigdata, blockchain, cursosgratuitos | ---

title: Eu Capacito: free courses in AI, IoT, Azure, and much more

published: true

date: 2023-10-19 19:13:21 UTC

tags: CursoGratuito,bigdata,blockchain,cursosgratuitos

canonical_url: https://guiadeti.com.br/eu-capacito-cursos-gratuitos-ti/

---

“Eu Capacito” is proud to have positively impacted the lives of... | guiadeti |

1,640,404 | The Magic of Event Listeners | As I delve further into the tech world, I've come to realize the vast array of functionalities that... | 0 | 2023-10-20T02:45:44 | https://dev.to/devincb93/the-magic-of-event-listeners-52lf | webdev, javascript, beginners, programming | As I delve further into the tech world, I've come to realize the vast array of functionalities that code can provide. Among these, event listeners stand out as a crucial tool that can be leveraged to enhance user interaction and engagement. In this blog post, we'll delve into the world of event listeners with a focus o... | devincb93 |

1,640,518 | Understanding the Art of Defense: Social Engineering Attack Detection and Defense | Technological advancements and increased connectivity have made our lives more convenient, cyber... | 0 | 2023-10-20T05:45:50 | https://dev.to/indrajithbandara/understanding-the-art-of-defense-social-engineering-attack-detection-and-defense-4lji | cybersecurity | While technological advancements and increased connectivity have made our lives more convenient, cyber threats have also evolved, becoming more sophisticated and deceptive. Social engineering attacks, in particular, have become a substantial menace to cybersecurity. These attacks prey on human vulnerabilities rather than tec... | indrajithbandara |

1,640,524 | "Expert Estimating Services in Canada and the USA: Unlocking Your Project's Potential" | In the dynamic landscape of construction and project management, accurate estimates are the... | 0 | 2023-10-20T06:02:44 | https://dev.to/estimate/expert-estimating-services-in-canada-and-the-usa-unlocking-your-projects-potential-5b81 | <p>In the dynamic landscape of construction and project management, accurate estimates are the foundation for successful outcomes. In both Canada and the USA, where the construction industry is booming, having the right estimating services in place can make all the difference. This article delves into the world of <a t... | estimate | |

1,640,697 | Benefits of Enrolling in a Local IELTS Coaching Center | Enrolling in a coaching center when preparing for your IELTS is a great way to improve your skills.... | 0 | 2023-10-20T09:04:11 | https://dev.to/sgold7593/benefits-of-enrolling-in-a-local-ielts-coaching-center-9e2 | <p>Enrolling in a coaching center when preparing for your IELTS is a great way to improve your skills. After all, you need to prove that you have exceptional mastery over reading, writing, speaking, and listening. Now, you can choose online live tutoring sessions. But the better option is certainly a local IELTS coachi... | sgold7593 | |

1,640,862 | Managing Your Money Abroad: Automating Expense Tracking With Receipt Recognition | Just a few months ago I went off on a new journey in search of new experiences in Portugal! However,... | 0 | 2023-10-20T10:44:41 | https://dev.to/kwan/managing-your-money-abroad-automating-expense-tracking-with-receipt-recognition-1meg | ai, ocr, javascript | _**Just a few months ago I went off on a new journey in search of new experiences in Portugal! However, during the initial months of my stay, I encountered unexpected challenges, such as the lack of financial control…**_

Like many others who move to a new country, I found myself caught up in a big net of activities an... | kwan |

1,641,006 | How to get the hex code of a key from my keyboard ? 👓 | If you are studying your keyboard's behavior and want to know the hexadecimal value of the key event... | 0 | 2023-10-20T13:49:02 | https://dev.to/stacy-roll/how-to-get-the-code-of-a-letter-or-an-event-from-my-keyboard-in-hex-6o2 | rust, tutorial, keyboar, learning |

If you are studying your keyboard's behavior and want to know the hexadecimal value of the key event you wish to execute when pressing it, you can run this Rust code to obtain the exact value. If you don't have Rust installed, **you can find the mapped values in** the [k_board](https://docs.rs/k_board/1.1.2/src/k_boar... | stacy-roll |

1,641,190 | Power Automate - Environment Variables | If you want to do ALM (application Life-cycle Management), and you should, then you need environment... | 21,919 | 2023-11-06T07:40:36 | https://dev.to/wyattdave/power-automate-environment-variables-a5d | powerplatform, powerautomate, lowcode, rpa | If you want to do ALM (Application Lifecycle Management), and you should, then you need environment variables.

ALM has 2 main benefits:

1. Separation of duty

2. Protection of prod

Separation of duty means the person who created it can't deploy it. Why is that important? Well, we need checks and balances; apps and aut... | wyattdave |

1,641,274 | Pledge #hacktoberfest23 | Intro So this is my first post here, so I guess Hello World! or Hello Dev.to community! This year,... | 0 | 2023-10-20T18:55:38 | https://dev.to/gonmmarques/pledge-hacktoberfest23-41kb | hacktoberfest23 | **Intro**

So this is my first post here, so I guess Hello World! or Hello Dev.to community!

This year, I'm also taking part in the community effort by pledging to the event.

I'm dedicating myself to actively contribute to open-source projects throughout the month of October.

**Goals**

My goals for Hacktoberfest ... | gonmmarques |

1,641,672 | The Process of Building My (Former) Website | Out of boredom during the pandemic, I built my own website; from then until now I still... | 0 | 2023-10-21T05:36:36 | https://dev.to/torten/proseso-ng-paggawa-ng-aking-dating-website-3kb3 | webdev, svelte, javascript, programming | Out of boredom during the pandemic, I built my own website, and from then until now I still don't know what to put on it. Hopefully that will change in the next revision of my website.

## Technical Details

The website... | torten |

1,641,688 | Top NFT Marketplace Business for Entrepreneurs in 2024 | The NFT marketplace is booming nowadays, and entrepreneurs have a golden opportunity to jump into... | 0 | 2023-10-21T06:12:44 | https://dev.to/aanaethan/top-nft-marketplace-business-for-entrepreneurs-in-2024-ao0 | nft, business, technology, blockchain |

The NFT marketplace is booming nowadays, and entrepreneurs have a golden opportunity to jump into this exciting space. NFTs have transformed the way we think about digital assets and owne... | aanaethan |

1,641,753 | ChatGPT vs. Human Chat: Pros and Cons | AI makes many online functions faster: generating blog posts and other written material and even... | 0 | 2023-10-21T07:43:03 | https://dev.to/iamfranklin/chatgpt-vs-human-chat-pros-and-cons-55ni | AI makes many online functions faster: generating blog posts and other written material and even customer service responses on an online text chat. Let’s analyze the pros and cons of each option to find out which one is best for running your business.

<h2>ChatGPT Pros and Cons</h2>

Here are the pros and cons of utilizi... | iamfranklin | |

1,641,766 | Latest Technology News in Pakistan | Latest Technology News in Pakistan Introduction In recent years, Pakistan has witnessed significant... | 0 | 2023-10-21T08:13:18 | https://dev.to/technewspakistan/latest-technology-news-in-pakistan-4722 | Latest Technology News in Pakistan

Introduction

In recent years, Pakistan has witnessed significant growth in the field of technology. The government of Pakistan has been actively investing in artificial intelligence research, leading to numerous advancements in the tech sector. This article will delve into the lates... | technewspakistan | |

1,641,778 | What Is LangChain? Unlocking the Potential of LLMs | LangChain is an open-source framework crafted to ease the development of applications that leverage... | 0 | 2023-10-21T08:35:10 | https://medium.com/altern/what-is-langchain-unlocking-the-potential-of-llms-8ee8623888c6 | llm, langchain, ai, machinelearning |

[LangChain](https://langchain.com) is an open-source framework crafted to ease the development of applications that leverage LLMs. Its primary function is to provide a standardized interface for chains, offering extensive integrations with other tools, and facilitating end-to-end chains for typical applications. The ... | dariubs |

1,641,953 | What is npm? | 🔍 What is npm? 📦 npm is an open-source repository of tools & libraries created by the... | 0 | 2023-10-21T13:10:50 | https://dev.to/omkarbhavare/npm-120c | webdev, javascript, npm, developer | 🔍 What is npm? 📦

npm is an open-source repository of tools and libraries created by developers. It serves as a central hub for the JavaScript community, offering a vast collection of reusable code packages to enhance and accelerate projects.

🤝 Benefits of npm

- Easy Package Management: npm simplifies the pr... | omkarbhavare |

1,642,120 | The Top Entrepreneurs of Marbella & The Costa Del Sol | Top Entrepreneurs Marbella has been created for local businesses who desire more from their... | 0 | 2023-10-21T17:06:00 | https://dev.to/thetopmarbella/the-top-entrepreneurs-of-marbella-the-costa-del-sol-5cgn | Top [Entrepreneurs](https://thetopmarbella.com/) Marbella has been created for local businesses who desire more from their advertising budget. | thetopmarbella | |

1,642,131 | Demystifying Android Architecture: A City Analogy | Introduction: Welcome to the bustling city of Android! Just like a city, Android has a... | 0 | 2023-11-08T19:51:01 | https://dev.to/olaoluwa99/demystifying-android-architecture-a-city-analogy-3l4f | ### Introduction:

Welcome to the bustling city of Android! Just like a city, Android has a complex architecture composed of various layers and components that work together to provide a seamless user experience. In this blog post, we'll use a city analogy to demystify Android's architecture, from its foundation to the ... | olaoluwa99 | |

1,642,247 | Designing for Inclusivity: Creating Accessible Web Experiences for All | In today's digital age, the internet has become an integral part of our lives, connecting people from... | 0 | 2023-10-24T09:00:00 | https://dev.to/sajeeb_me/designing-for-inclusivity-creating-accessible-web-experiences-for-all-4c0e | webdev, webaccessibility, inclusivedesign, digitalinclusion | In today's digital age, the internet has become an integral part of our lives, connecting people from all walks of life. As the digital landscape continues to expand, it's crucial to ensure that everyone can access and benefit from web content. This is where web accessibility comes into play. In this article, we will e... | sajeeb_me |

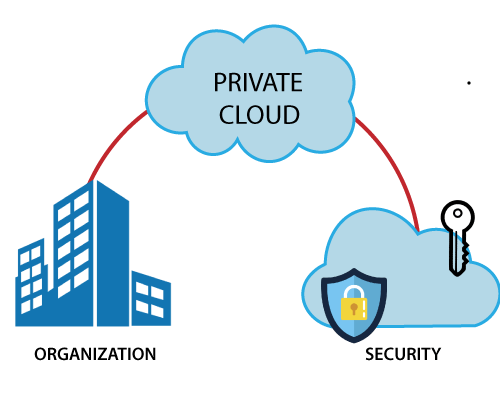

1,642,301 | Demystifying Cloud Computing: Exploring the Core Pillars of Private and Public Clouds, IaaS, PaaS, and SaaS | The fundamental pillars of cloud computing include various deployment models and service... | 0 | 2023-12-02T18:18:24 | https://dev.to/msfaizi/demystifying-cloud-computing-exploring-the-core-pillars-of-private-and-public-clouds-iaas-paas-and-saas-n8c | cloud, aws, devops, opensource | ## The fundamental pillars of cloud computing include various deployment models and service models. Let's explore each of these pillars:

**1. Private Cloud:**

- A private cloud is a cloud environment exclusively used... | msfaizi |

1,642,389 | 678. Valid Parenthesis String | Problem: 678. Valid Parenthesis String First Approach Can utilize a similar method like validating... | 0 | 2023-10-22T03:12:52 | https://dev.to/truongductri01/678-valid-parenthesis-string-58op | medium, leetcode | Problem: [678. Valid Parenthesis String](https://leetcode.com/problems/valid-parenthesis-string/description/)

<strong>First Approach</strong>

We can use a method similar to the one for validating plain parentheses.

Loop through each char `c` in the string `s` and check:

- if `c == *`, increase `countStar`

- if `c == (`, increase `... | truongductri01 |
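
Because the approach above is cut off, here is a minimal sketch of one common way to solve the problem. It is not necessarily the author's method: it swaps in a greedy range technique that tracks the lowest and highest possible number of unmatched `(` instead of a separate `countStar` counter. The function name `checkValidString` is an assumption for this sketch, and the input is assumed to contain only `(`, `)`, and `*`.

```typescript
// Greedy range check for strings made of '(', ')' and '*'.
// low  = fewest unmatched '(' that any reading of the stars could leave
// high = most unmatched '(' that any reading of the stars could leave
function checkValidString(s: string): boolean {
  let low = 0;
  let high = 0;
  for (let i = 0; i < s.length; i++) {
    const c = s[i];
    if (c === "(") {
      low++;
      high++;
    } else if (c === ")") {
      low--;
      high--;
    } else {
      // '*' may act as ')', as '(' or as the empty string.
      low--;
      high++;
    }
    // Even with every star read as '(', there are too many ')'.
    if (high < 0) return false;
    // The open count can never be negative, so clamp the lower bound.
    if (low < 0) low = 0;
  }
  // Valid only if some reading leaves zero unmatched '('.
  return low === 0;
}

console.log(checkValidString("(*))")); // true
console.log(checkValidString("(((*")); // false
```

The range form makes a single pass with constant extra space, which is why it is often preferred over stack-based variants.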

1,642,544 | Copy blobs between Storage Accounts with an Azure Function | Introduction In this post I’m going to detail how you can use the blob copy feature in... | 0 | 2023-12-05T16:01:27 | https://rios.engineer/copy-blobs-between-storage-accounts-with-an-azure-function/ | azure, automation, functions, azcopy | ---

title: Copy blobs between Storage Accounts with an Azure Function

published: true

date: 2023-04-02 13:40:34 UTC

tags: Azure,Automation,Functions,AzCopy

canonical_url: https://rios.engineer/copy-blobs-between-storage-accounts-with-an-azure-function/

---

## Introduction

In this post I’m going to detail how you can ... | riosengineer |

1,642,673 | Abstracting the CNJ Public API - DataJud | This week Filipe Deschamps wrote in his official newsletter about the DataJud Public API,... | 0 | 2023-10-22T13:43:07 | https://dev.to/joaotextor/abstraindo-a-api-publica-do-cnj-datajud-54d | javascript, webdev, api, programming | This week Filipe Deschamps wrote in his official newsletter about the DataJud Public API, launched by the CNJ to provide, through a single unified API, the metadata of court cases from all over Brazil (except those conducted under judicial secrecy).

Since I currently work in the Federal Judiciary and... | joaotextor |

1,642,765 | Application Dependency Mapping: A 2024 Guide | What Is Application Dependency Mapping? Application dependency mapping is a process that... | 0 | 2023-10-22T17:02:24 | https://dev.to/giladmaayan/application-dependency-mapping-a-2024-guide-25n6 |

## What Is Application Dependency Mapping?

Application dependency mapping is a process that visualizes and documents the dependencies between software applications and the underlying IT infrastructure. In essenc... | giladmaayan | |

1,642,978 | Bash Script Operators | INTRODUCTION A Bash shell script, often known as a shell script or just a shell, is a text... | 0 | 2023-10-22T23:08:17 | https://dev.to/dhebbythenerd/bash-script-operators-4e6e | beginners, devops, cloudcomputing, bash | ## INTRODUCTION

A Bash shell script, often known as a shell script or just a shell, is a text file that contains a series of commands written in the Bash (Bourne Again Shell) scripting language.

Operators are special symbols or characters used in Bash scripting to carry out various operations on values and variables; ... | dhebbythenerd |

1,643,013 | What are we building⁉️ | Lucky Holders aims to redefine the NFT industry by providing a comprehensive range of utilities and... | 0 | 2023-10-23T01:10:02 | https://dev.to/luckyholders/what-are-we-building-2e1 | interview, productivity, blockchain, web3 |

Lucky Holders aims to redefine the NFT industry by providing a comprehensive range of utilities and exclusive opportunities to its holders.

“By offering a vast array of NFT-backed access and utilities, Lucky Hol... | luckyholders |

1,643,061 | Ansible - Part 1 | Lets Install Ansible sudo apt install software-properties-common sudo... | 0 | 2023-10-25T23:58:39 | https://dev.to/technonotes/ansible-part-1-1fgh | ### Let's Install Ansible

```

sudo apt install software-properties-common

sudo add-apt-repository --yes ppa:ansible/ansible

sudo apt update

sudo apt install ansible

# Or, if you run into any issues, follow the steps below according to the error message shown on your screen.

sudo apt install software-properties-common... | technonotes | |

1,643,206 | Placeholder Contributor | Intro Highs and Lows Growth | 0 | 2023-10-23T07:12:02 | https://dev.to/fresult/placeholder-contributor-5422 | hack23contributor | <!-- ✨This template is only meant to get your ideas going, so please feel free to write your own title, structure, and words! ✨ -->

### Intro

<!-- Share a bit about yourself as a contributor. Is this your first Hacktoberfest, or have you contributed to others? Feel free to embed your GitHub account by using {% embe... | fresult |

1,643,398 | Business Analyst Career | A career as a Business Analyst can be rewarding and offers a range of opportunities in various... | 0 | 2023-10-23T10:29:45 | https://dev.to/abhinav1838/business-analyst-career-2ipl | business, course, onlinetraining, certification | A career as a Business Analyst can be rewarding and offers a range of opportunities in various industries. Business Analysts are responsible for bridging the gap between business needs and technology solutions, helping organizations make informed decisions and improve their processes.

Here's an overview of a Business ... | abhinav1838 |

1,643,799 | Creating a First-Person Shooter Game | First-person shooter (FPS) games have long been a staple of the gaming world, offering thrilling and... | 0 | 2023-10-23T15:30:08 | https://dev.to/carolreed/creating-a-first-person-shooter-game-381 | gamedev, shootinggame, shootergame | First-person shooter (FPS) games have long been a staple of the gaming world, offering thrilling and immersive experiences for players. If you've ever dreamt of developing your own FPS game, you're in the right place. In this comprehensive guide, we'll walk you through the process of creating a first-person shooter gam... | carolreed |

1,644,004 | Baby Essentials: Bejbi.com - Your Go-To Source for Child Product Reviews | For parents, every day with a little one is an extraordinary journey filled with joy, challenges, and... | 0 | 2023-10-23T19:49:16 | https://dev.to/bejbicomm/baby-essentials-bejbicom-your-go-to-source-for-child-product-reviews-24cp | baby, mom | For parents, every day with a little one is an extraordinary journey filled with joy, challenges, and discoveries. With those parents in mind who wish to ensure the comfort and development of their little one, Bejbi.com was created. It's a place where you'll find reviews and recommendations for children's products that... | bejbicomm |

1,644,270 | TIL (1st Post) | CodeSpace Codespaces allow edits to GitHub repositories you "forked." Bash is useful because you... | 0 | 2023-10-24T05:35:05 | https://dev.to/joannarodriguez134/til-1st-post-3ac | **CodeSpace**

Codespaces allow edits to GitHub repositories you "forked."

Bash is useful because you can run `rackup`, which starts a server called Puma where you can see your changes.

**Deploying websites**

I also learned about deploying websites through _Render_ for my repositories.

**Reflection**

Ove... | joannarodriguez134 | |

1,644,336 | Network X 2023 Paris | Always on the move! 🛫 Today, we depart to Network X event! Will you be there? Please, contact us!... | 0 | 2023-10-24T07:26:43 | https://dev.to/relianoid/network-x-2023-paris-5bmd | networking, telco, 5g, paris | Always on the move! 🛫 Today, we depart to Network X event!

Will you be there? Please, contact us!

https://www.relianoid.com/about-us/events/network-x-paris-2023/

#NetworkX2023 #TelecomsInnovation #5GMonetization #NetworkCloudTech #TelecomsLeaders #Fiber5GIntegration #TelcoInnovations #NetworkInfrastructure

#T... | relianoid |

1,645,159 | My Hacktoberfest 2023 Recap | Intro Hi there! My name is Matt and I'm a software engineer by trade but also by passion.... | 0 | 2023-10-24T21:19:41 | https://dev.to/shelbourn/my-hacktoberfest-2023-recap-535c | hack23contributor | ### Intro

Hi there! My name is Matt and I'm a software engineer by trade but also by passion. In addition to programming, I also enjoy spending time with my daughter, landscape/nature photography, and cooking (recently I've been really into baking). :smile:

This isn't my first rodeo with Hacktoberfest and it most-cer... | shelbourn |
