id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

253,839 | Laptops? | Looking for a used or new cheap MACBOOK if anyone knows somebody selling theirs or if anyone has one... | 0 | 2020-02-02T20:46:55 | https://dev.to/jasminelad16/laptops-29ef | hardware, laptops, ios, delas | Looking for a cheap MacBook, used or new, in case anyone knows somebody selling theirs or has one to lend. That's all I'm missing to start my bootcamp. | jasminelad16 |

253,889 | First WoMakersCode Programming Logic Workshop in Rio de Janeiro | Cover photo credits: Érika Alves On February 1, our first programming logic workshop of... | 0 | 2020-02-02T22:16:34 | https://dev.to/womakerscode/primeira-oficina-de-logica-de-programacao-womakerscode-no-rio-de-janeiro-2de2 | womenintech, wecoded, womakerscode | _Cover photo credits: Érika Alves_

On February 1, the WoMakersCode RJ community held its first programming logic workshop. The free event took place at Sesc Tijuca and was supported by DigitalOcean and Alura.

The workshop was very special for us. Because places were limited, we could select only 25 women, but we received a total of **156 applications**, which was a big (and happy!) surprise for us. Choosing the participants wasn't easy, because we wanted to pick all of them! We heard incredible stories from women ranging from lawyers and architects to journalists, salespeople and students. They were looking to learn something new, change careers, or simply complement their studies. You can never have too much knowledge!

Many cool things happened during the day, and a few stood out. Two women came from São Paulo to attend the event, and at the end we found out that one was from Colombia and the other from Peru! We also had a 15-year-old participant who told us she wants to study programming and pursue this field after finishing high school. Her sister came only to keep her company, but ended up sitting at one of the computers and taking part too. Finally, one of the selected women came from Acre. Luckily, the event fell right during her vacation, so she got to combine business with pleasure :)

_Photo: Amanda Azevedo_

The event started at 9:30 am. Our volunteer Luanda Pereira presented the community and ran a short icebreaker with the participants. She then introduced the team of volunteers working that day: Gabrielly de Andrade, Daiane Alves, Adrielle Ribeiro, Mariana Coelho, Hillary Sousa and Aline Bezzoco.

After the opening, we had a chat with volunteers Gabrielly, Adriele and Hillary about the many different fields within technology. Next, Daiane and Adriele explained what programming logic is and why it matters in technology. They also introduced the pseudo-language used in the workshop, Portugol, together with the Portugol Studio IDE.

Several exercises were then assigned, and the mentors helped the participants, answering questions and supporting them at every step. In the Dojo, our volunteer Gabrielly led the participants through the challenge of building a program called Megasena.

We saw the participants helping one another. For us as women this is very rewarding, since we value unity and women taking the lead in technology.

_Photo: Mariana Coelho_

Finally, it was time for the prize draw! We raffled off some T-shirts donated by DigitalOcean, two Alura courses and a ticket to PHPWomen.

We thank all the volunteers who made themselves available to organize another community event, from logistics to content and mentoring! You were amazing!

Behind every piece of code there are people, and they have their own stories to tell. We hope WoMakersCode was a happy chapter in their lives. We also hope that those who fell in love with programming and chose to learn it can keep studying and find work as developers.

We hope to see all of you again at our next events!

For those who couldn't attend this first edition: stay tuned, because we are considering holding another edition later this year :) Follow our [social media](https://linktr.ee/womakerscode) to keep up with everything happening in the community!

**About WoMakersCode**

WoMakersCode is a non-profit initiative that promotes women's leadership in technology through professional and economic development. We believe that empowering means encouraging participation and collaborative learning and, above all, giving women a voice.

We offer the community workshops, events and debates focused on the technology job market, aimed at technical training and strengthening personal skills. We work to prepare and encourage women to invest in their careers and achieve their dreams. | alinebezzoco |

254,019 | Patterns for resilient architecture & optimizing web performance | TL;DR notes from articles I read today. Patterns for resilient architecture: Embracing fail... | 0 | 2020-02-03T13:00:20 | https://insnippets.com/tag/issue88/ | todayilearned, architecture, webperf, serverless | *TL;DR notes from articles I read today.*

### [Patterns for resilient architecture: Embracing failure at scale](http://bit.ly/38YQm8P)

- Build your application to be redundant: duplicate components across multiple availability zones, or even regions, to increase overall availability. To support this, keep your application stateless and consider an Elastic Load Balancer to distribute requests.

- Enable auto-scaling not just for AWS services but also application auto-scaling for any service built on AWS. Choose your auto-scaling approach based on how quickly new capacity must come online. Options include preconfiguring custom golden AMIs, avoiding running or configuring anything at startup time, replacing configuration scripts with Dockerfiles, or using a container platform like ECS or Lambda functions.

- Use infrastructure as code for repeatability, knowledge sharing, and history preservation. Keep your infrastructure immutable by following the immutable server pattern: replace components on every deployment instead of updating live systems, and always start from a new instance of every resource.

- As a stateless service, treat all client requests independently of prior requests and sessions, storing no information in local memory. Share state with any resources within the auto-scaling group using in-memory object caching systems or distributed databases.
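
Here is a minimal Python sketch of the stateless idea, assuming a shared key-value store. `InMemoryStore` and `StatelessHandler` are hypothetical names. The dict-backed store only stands in for an external cache or distributed database that every instance in the auto-scaling group would reach over the network.

```python
from typing import Dict, Optional


class InMemoryStore:
    """Hypothetical stand-in for a shared cache or distributed database.
    Here it is a plain dict, "shared" by passing the same object to every handler."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}

    def get(self, key: str) -> Optional[int]:
        return self._data.get(key)

    def set(self, key: str, value: int) -> None:
        self._data[key] = value


class StatelessHandler:
    """Keeps no per-session data of its own; all session state lives in the store."""

    def __init__(self, store: InMemoryStore) -> None:
        self.store = store

    def handle(self, session_id: str) -> int:
        # Count requests per session using only the shared store.
        count = (self.store.get(session_id) or 0) + 1
        self.store.set(session_id, count)
        return count


shared = InMemoryStore()
instance_a = StatelessHandler(shared)
instance_b = StatelessHandler(shared)
instance_a.handle("user-1")
print(instance_b.handle("user-1"))  # 2: any instance can serve the next request
```

Neither handler instance holds session data itself. A load balancer can therefore send consecutive requests to different instances, and instances can be added or replaced freely.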

*[Full post here](http://bit.ly/38YQm8P), 10 mins read*

---

### [Tips to speed up serverless web apps in AWS](http://bit.ly/384p1li)

- Keep Lambda functions warm by pinging them on a schedule, using AWS CloudWatch scheduled events, or by using the Serverless WarmUp plugin.

- Avoid cross-origin resource sharing (CORS), and the preflight requests it triggers, by serving your API and frontend from the same origin. When connecting API Gateway to AWS CloudFront, set the origin protocol policy to HTTPS, put both API Gateway and CloudFront behind the same domain, and configure routing accordingly.

- Deploy API gateways as REGIONAL endpoints.

- Optimize the frontend by compressing files such as JavaScript and CSS with gzip before uploading them to S3. Set the correct `Content-Encoding: gzip` headers, and enable Compress Objects Automatically in CloudFront.

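- Choose an appropriate memory size for Lambda functions. Lambda allocates CPU power in proportion to the configured memory, so a function that is slow at a small memory setting may run faster with more memory.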
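
The warm-up tip usually pairs with a handler that recognizes the ping and returns immediately, so warm-up invocations do almost no work. Below is a minimal Python sketch. It assumes the ping arrives either as a CloudWatch scheduled event, whose `source` is `aws.events`, or as a payload whose `source` is `serverless-plugin-warmup`. Check what your plugin version actually sends before relying on that second value.

```python
import json

# Event sources treated as warm-up pings (assumptions; verify against your setup).
WARMUP_SOURCES = {"aws.events", "serverless-plugin-warmup"}


def handler(event, context):
    """Lambda entry point that short-circuits warm-up pings."""
    if isinstance(event, dict) and event.get("source") in WARMUP_SOURCES:
        # Return early: the invocation keeps the container warm without real work.
        return {"warmup": True}

    # Normal request path, shaped like an API Gateway proxy response.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": "hello"}),
    }
```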

*[Full post here](http://bit.ly/384p1li), 4 mins read*

---

### [Optimizing website performance and critical rendering path](http://bit.ly/36Nrcbz)

- Many things can lead to high rendering times for web pages - the amount of data transferred, the number of resources to download, length of the critical rendering path (CRP), etc.

- To minimize data transferred, remove unused parts (unreachable JavaScript functions, styles with selectors not matching any element, HTML tags always hidden with CSS) and remove all duplicates.

- Reduce the number of critical resources to download by setting `media` attributes on all links that reference stylesheets and by inlining some styles. Also mark script tags as `async` (downloaded without blocking the parser, executed as soon as they arrive) or `defer` (executed after the document has been parsed).

- You can shorten the CRP with the approaches above, and also rearrange the code amongst files so that the styles and scripts of above-the-fold content load before you parse or render anything else.

- Keep style tags and script tags close to each other in HTML (linewise) to help the browser preloader, and batch HTML updates to avoid multiple layout changes (such as those triggered by window resizing or device orientation).
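
As a rough way to apply the async/defer and media tips, the Python sketch below uses the standard library's `html.parser`. It lists external scripts that have neither `async` nor `defer`, and stylesheet links that have no `media` attribute. `RenderBlockingFinder` is a hypothetical helper, and it only inspects static markup, not scripts or styles added at runtime.

```python
from html.parser import HTMLParser


class RenderBlockingFinder(HTMLParser):
    """Collect external <script> tags without async/defer and
    stylesheet <link> tags without a media attribute."""

    def __init__(self):
        super().__init__()
        self.blocking_scripts = []
        self.unconditional_styles = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # boolean attributes such as async map to None
        if tag == "script" and "src" in attrs:
            # async/defer only affect external scripts, so inline ones are skipped.
            if "async" not in attrs and "defer" not in attrs:
                self.blocking_scripts.append(attrs["src"])
        elif tag == "link":
            rel = (attrs.get("rel") or "").lower().split()
            if "stylesheet" in rel and "media" not in attrs:
                self.unconditional_styles.append(attrs.get("href"))


finder = RenderBlockingFinder()
finder.feed('<link rel="stylesheet" href="main.css">'
            '<link rel="stylesheet" href="print.css" media="print">'
            '<script src="app.js"></script>'
            '<script src="analytics.js" async></script>')
print(finder.blocking_scripts)      # ['app.js']
print(finder.unconditional_styles)  # ['main.css']
```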

*[Full post here](http://bit.ly/36Nrcbz), 8 mins read*

---

*[Get these notes directly in your inbox every weekday by signing up for my newsletter, in.snippets().](https://mailchi.mp/appsmith/insnippets?utm_source=devto&utm_medium=post08&utm_campaign=is)* | mohanarpit |

254,105 | [solution] Custom fields right at the heart | Hi, extreme Joomlers! A Joomler friend, who will recognize themselves, asked me how to... | 0 | 2020-02-05T17:11:18 | https://dev.to/mralexandrelise/solution-des-champs-personnalises-en-plein-coeur-3k2o | webdev, tutorial, joomla | ---

title: [solution] Custom fields right at the heart

published: true

date: 2019-11-26 09:41:31 UTC

tags: webdev,tutorial,joomla

canonical_url:

---

Hi, extreme Joomlers!

A Joomler friend (you'll know who you are) asked me how to integrate `$this->item->jcfields` into a module like `mod_articles_latest`.

I took up the challenge, and I'm sharing the result with you: the Joomla! community, the Joomler family.

Without further ado, check out the example code to use. It's well commented, so you can complete the challenge.

Good luck, and see you soon for more tips!

[See how to do it](https://gist.github.com/alexandreelise/e04d417c9f911ce2ab2a3e931142e89b "Code example for the articles latest module") | mralexandrelise |

254,112 | docker containers deployment in ECS EC2 | Hello community, I am looking for deployment best practices. i want to deploy docker images in ECS c... | 0 | 2020-02-03T09:52:28 | https://dev.to/mourik/docker-containers-deployment-in-ecs-ec2-2m2m | terraform, cicd, ecs, aws | Hello community,

I am looking for deployment best practices. I want to deploy Docker images to an ECS cluster. Is Terraform a good way to perform container deployments to ECS, or is there a better approach suited to this kind of deployment?

Thanks in advance. | mourik |

254,200 | Build React Native Fitness App #8 : [iOS] Firebase Facebook Login | This tutorial is eight chapter of series build fitness tracker this app use for track workouts, diets... | 4,584 | 2020-02-03T13:47:15 | https://kriss.io/build-react-native-fitness-app-8-ios-firebase-facebook-login/ | reactnativefirebas, firebase | ---

title: Build React Native Fitness App #8 : [iOS] Firebase Facebook Login

published: true

date: 2020-02-03 05:37:00 UTC

tags: react-native-firebas,firebase

canonical_url: https://kriss.io/build-react-native-fitness-app-8-ios-firebase-facebook-login/

cover_image: https://cdn-images-1.medium.com/max/1024/0*Zi-H6NonKNlpenCh.png

series: Build React native Fitness app

---

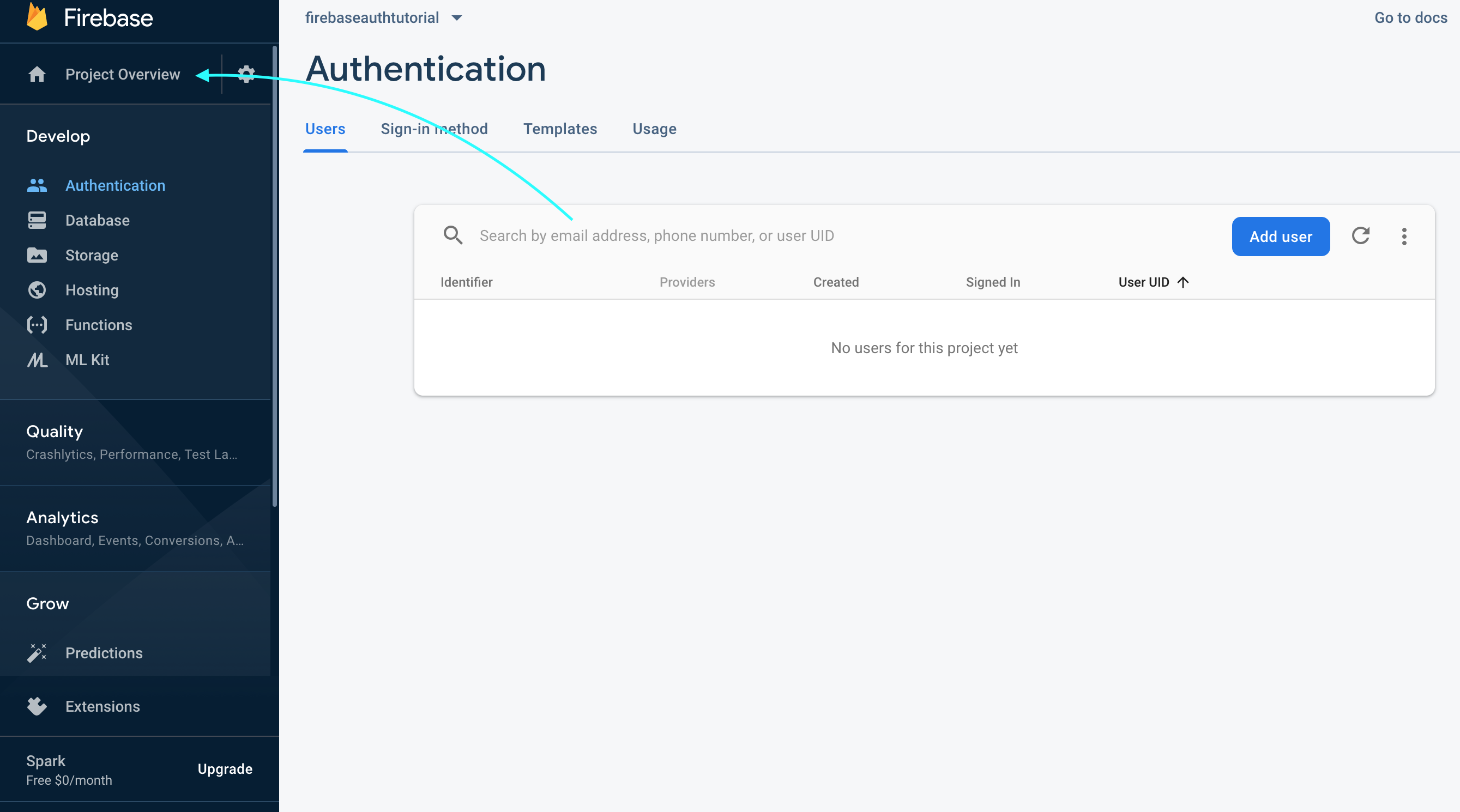

This tutorial is the eighth chapter of a series on building a fitness tracker. The app tracks workouts, diets, and health activities, analyzes the data, and displays suggestions. The ultimate goal is to create food and health recommendations using machine learning. To get there, we first need an app that users want to use, connected to Google Health and Apple Health, so we can gather the data that will later form the training dataset for the model. So although the ultimate goal comes later, we start by creating a React Native app and setting up screen navigation with React Navigation. The app is inspired by the [React native template](http://instamobile.io/) from Instamobile.

You can view the [previous chapter here](https://kriss.io/category/react-native-fitness/).

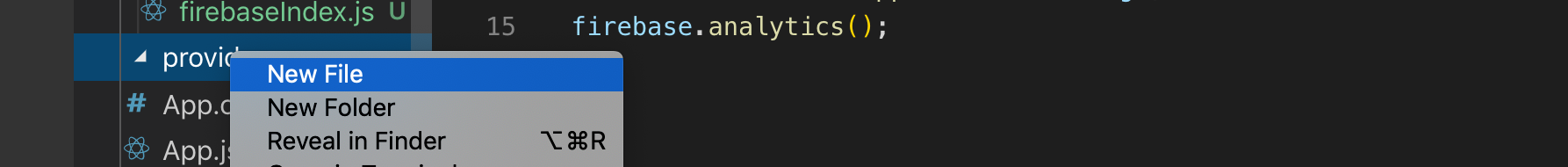

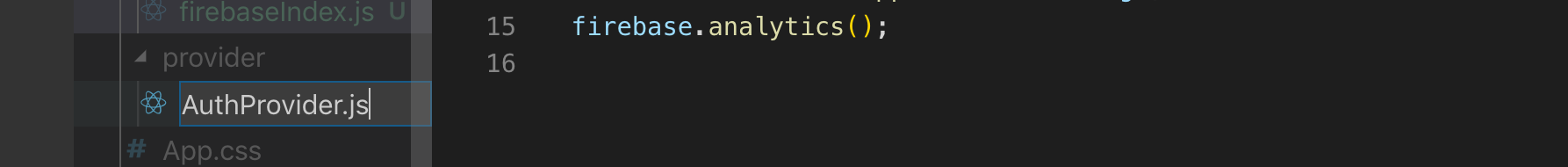

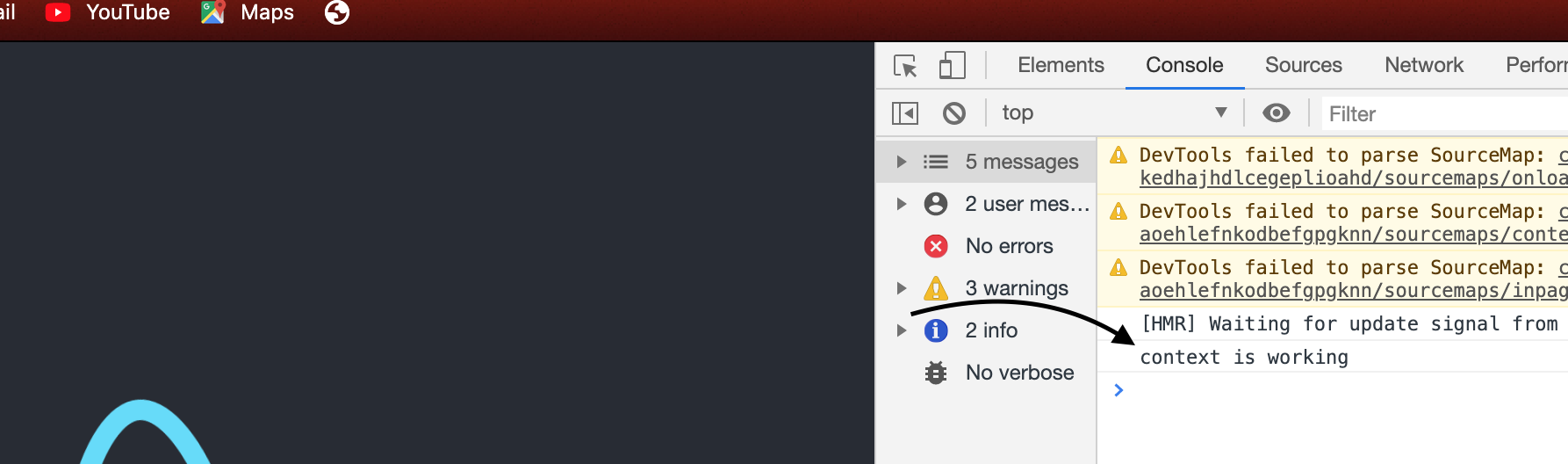

In this chapter, we add another way for users to authenticate to the app: Facebook login. This part deals with iOS; Android follows in the next episode.

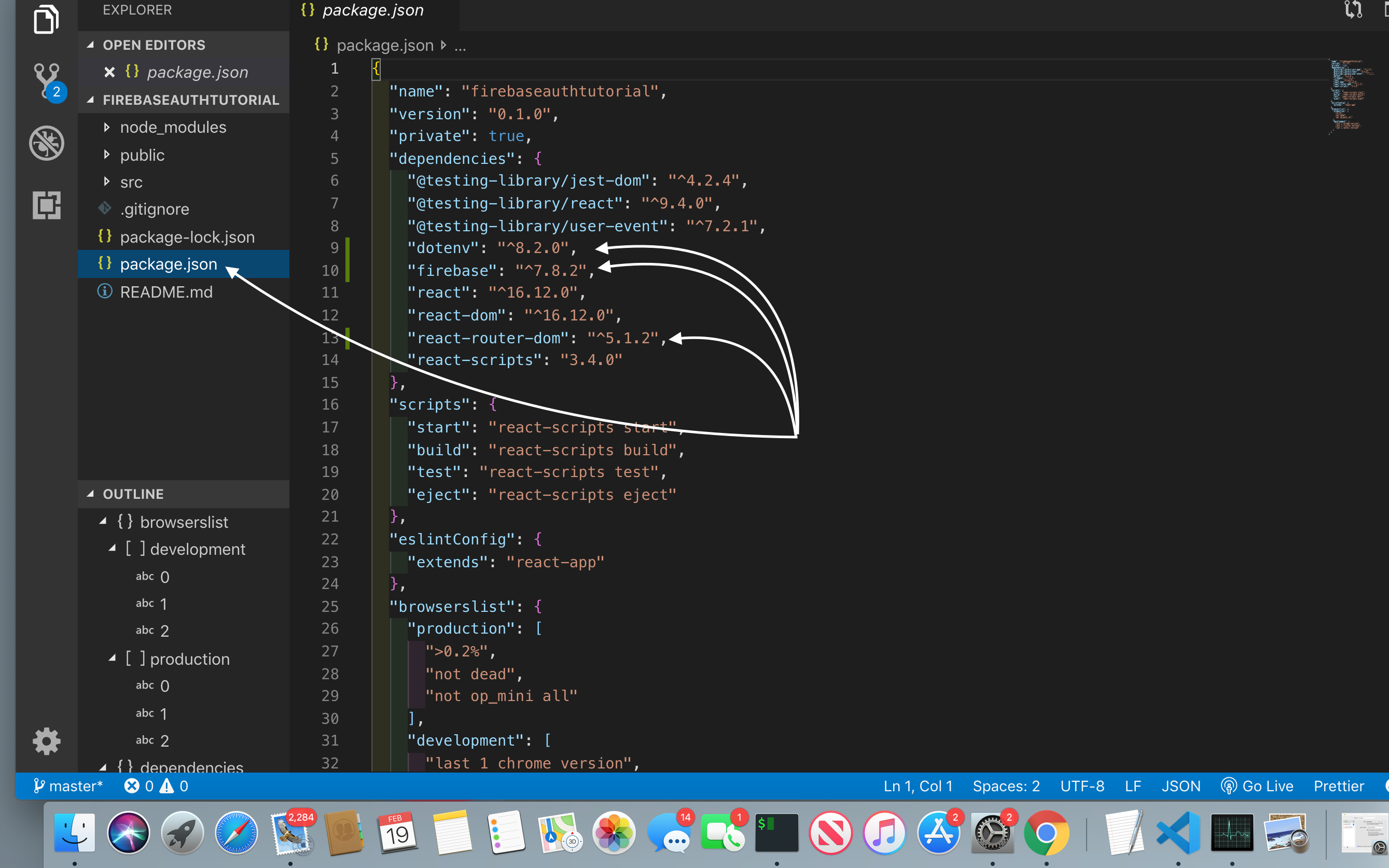

First, install the react-native-fbsdk package:

```
yarn add react-native-fbsdk
```

Next, following the official documentation, install the CocoaPods dependencies:

```
cd ios ; pod install
```

That completes the React Native side of the setup.

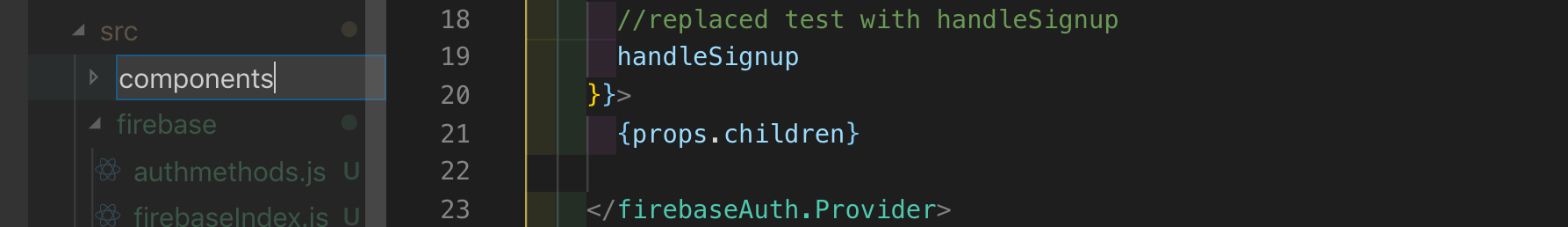

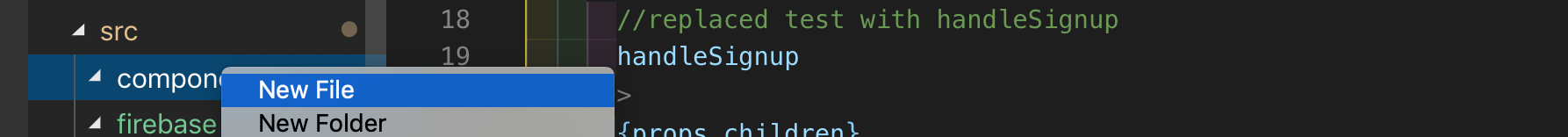

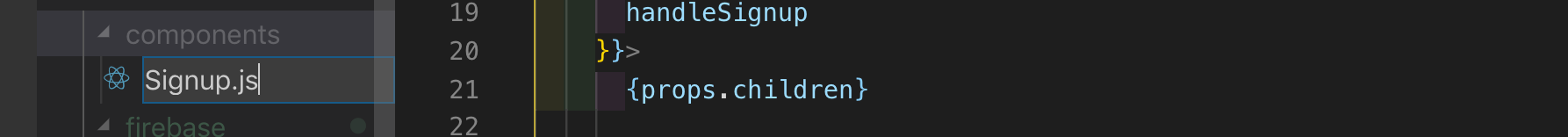

#### Configure on Xcode

Next, open the project in Xcode and add the following to `Info.plist`:

```
<key>CFBundleURLTypes</key>
<array>
  <dict>
    <key>CFBundleURLSchemes</key>
    <array>
      <string>fb6598530980****</string>
    </array>
  </dict>
</array>
<key>FacebookAppID</key>
<string>65985309808****</string>
<key>FacebookDisplayName</key>
<string>FitnessMaster</string>
```

The result should look like this:

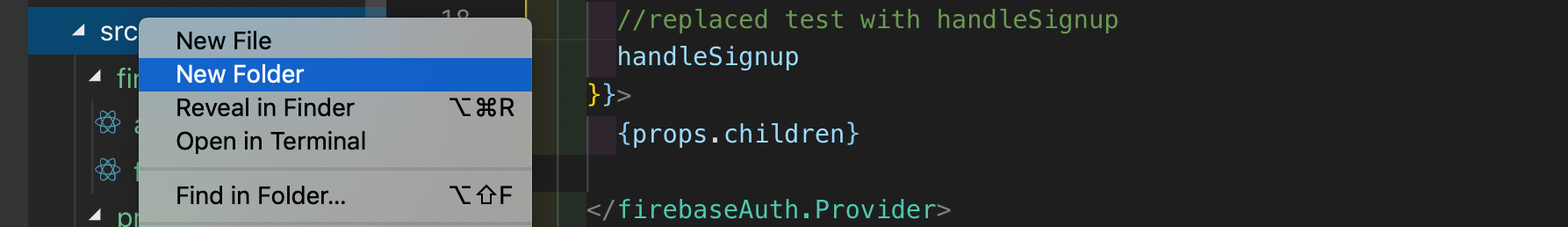

#### React native part

Next, back in the React Native code, import the react-native-fbsdk package in `LoginScreen.js`:

```
import {LoginManager, AccessToken} from 'react-native-fbsdk';
```

Then add a method to handle the authentication:

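```
// Assumes `firebase` is already imported in LoginScreen.js (see earlier chapters)
// and that this is a method of the screen's class component.
async FacebookLogin() {
  try {
    // Open the Facebook login dialog and request these permissions.
    const result = await LoginManager.logInWithPermissions([
      'public_profile',
      'email',
    ]);
    if (result.isCancelled) {
      throw new Error('User cancelled the login process');
    }

    // Read the Facebook access token produced by the login.
    const data = await AccessToken.getCurrentAccessToken();
    if (!data) {
      throw new Error('Something went wrong obtaining access token');
    }

    // Turn the Facebook token into a Firebase credential and sign in with it.
    const credential = firebase.auth.FacebookAuthProvider.credential(
      data.accessToken,
    );
    await firebase.auth().signInWithCredential(credential);

    alert('Registration success');
    setTimeout(() => {
      // Screens rendered by React Navigation receive `navigation` as a prop.
      this.props.navigation.navigate('HomeScreen');
    }, 2000);
  } catch (error) {
    // onPress ignores the returned promise, so surface errors here.
    alert(error.message);
  }
}
```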

Here, we've implemented `FacebookLogin()` as an asynchronous method. First, it opens the Facebook login dialog. If the user completes the login, it reads the resulting Facebook access token and turns it into a Firebase credential with `FacebookAuthProvider.credential()`. It then signs in to Firebase with that credential. Once everything succeeds, it navigates to the Home screen.

Next, call `FacebookLogin()` from the `onPress` handler of the `TouchableOpacity` that wraps the `SocialIcon` component, which renders the Facebook login button:

```
<TouchableOpacity onPress={() => this.FacebookLogin()}>
  <SocialIcon type="facebook" light />
</TouchableOpacity>
```

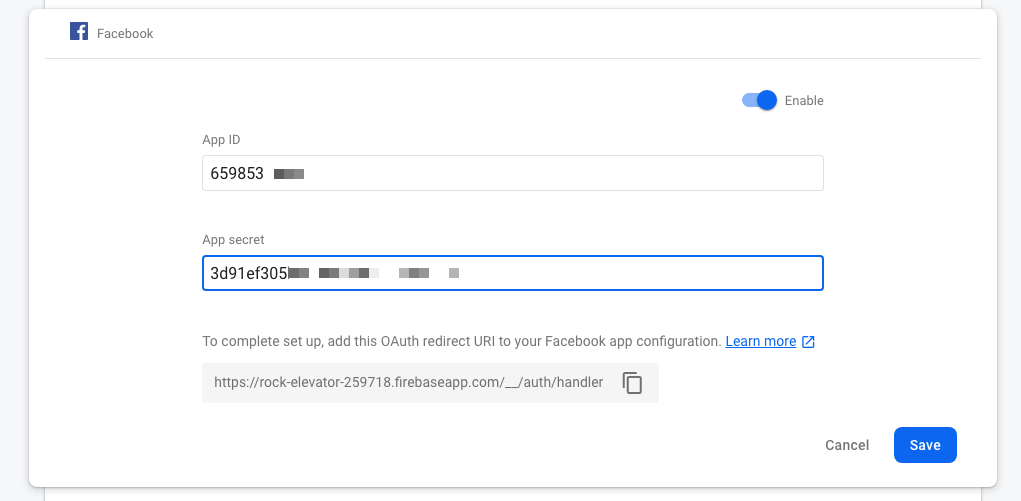

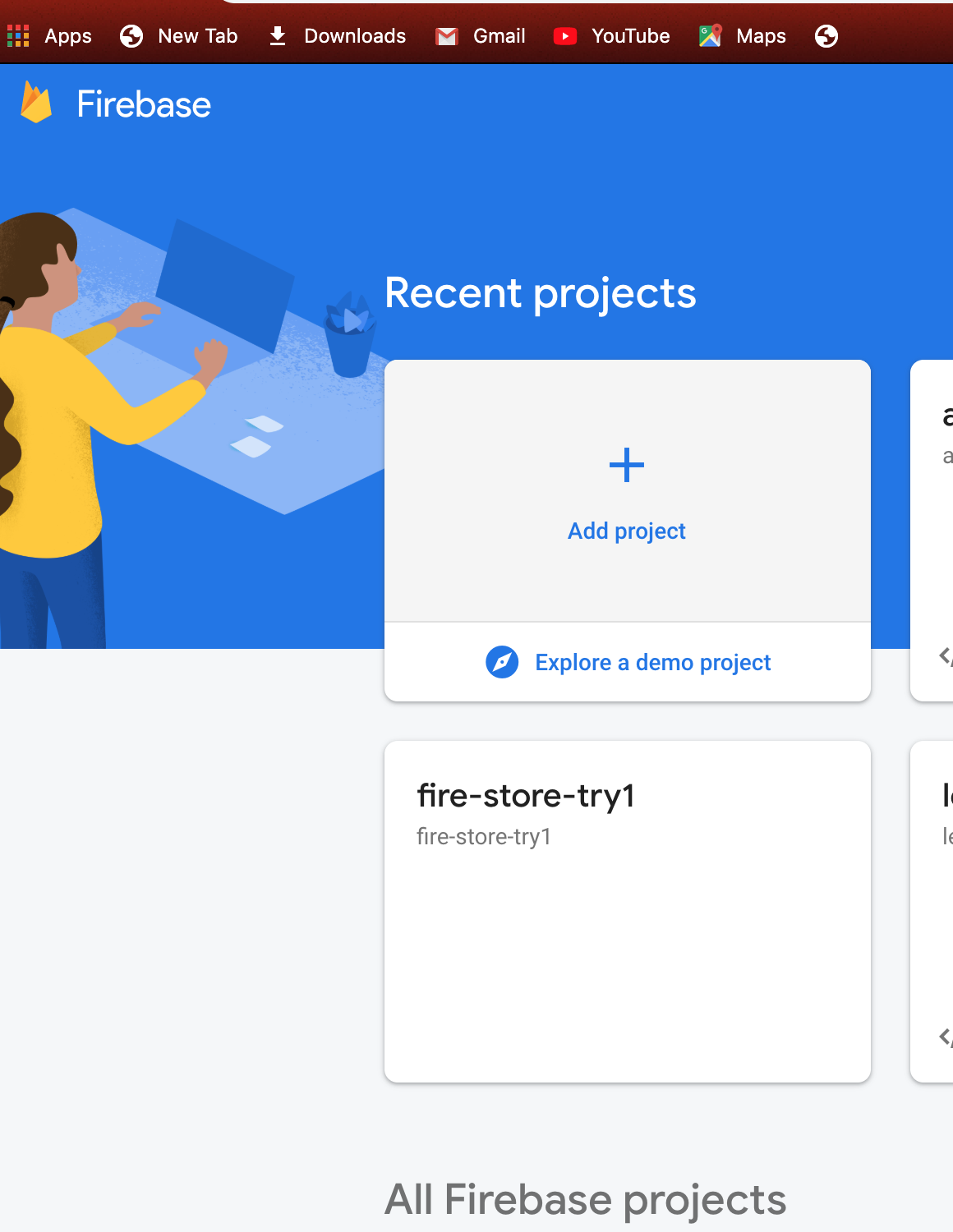

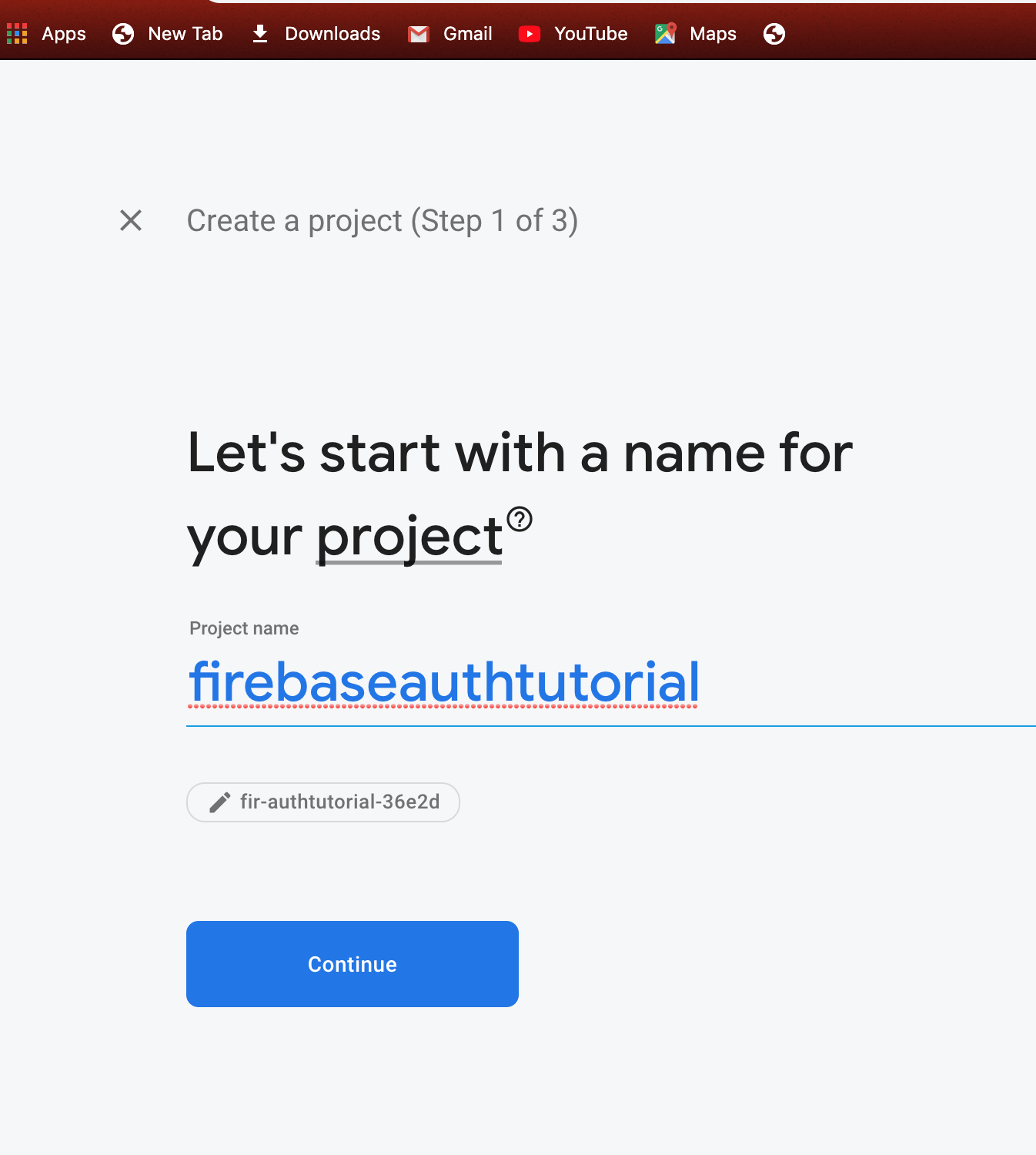

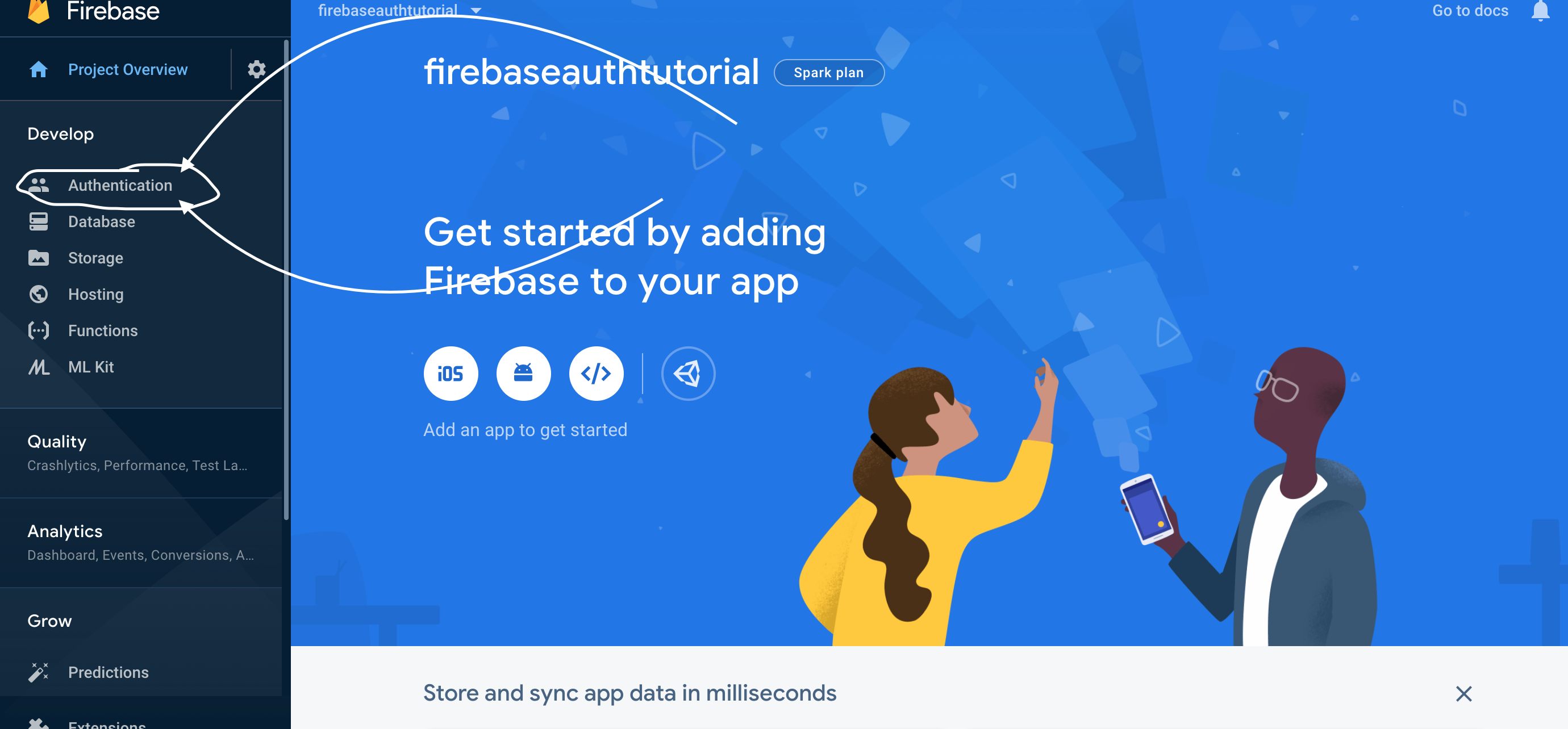

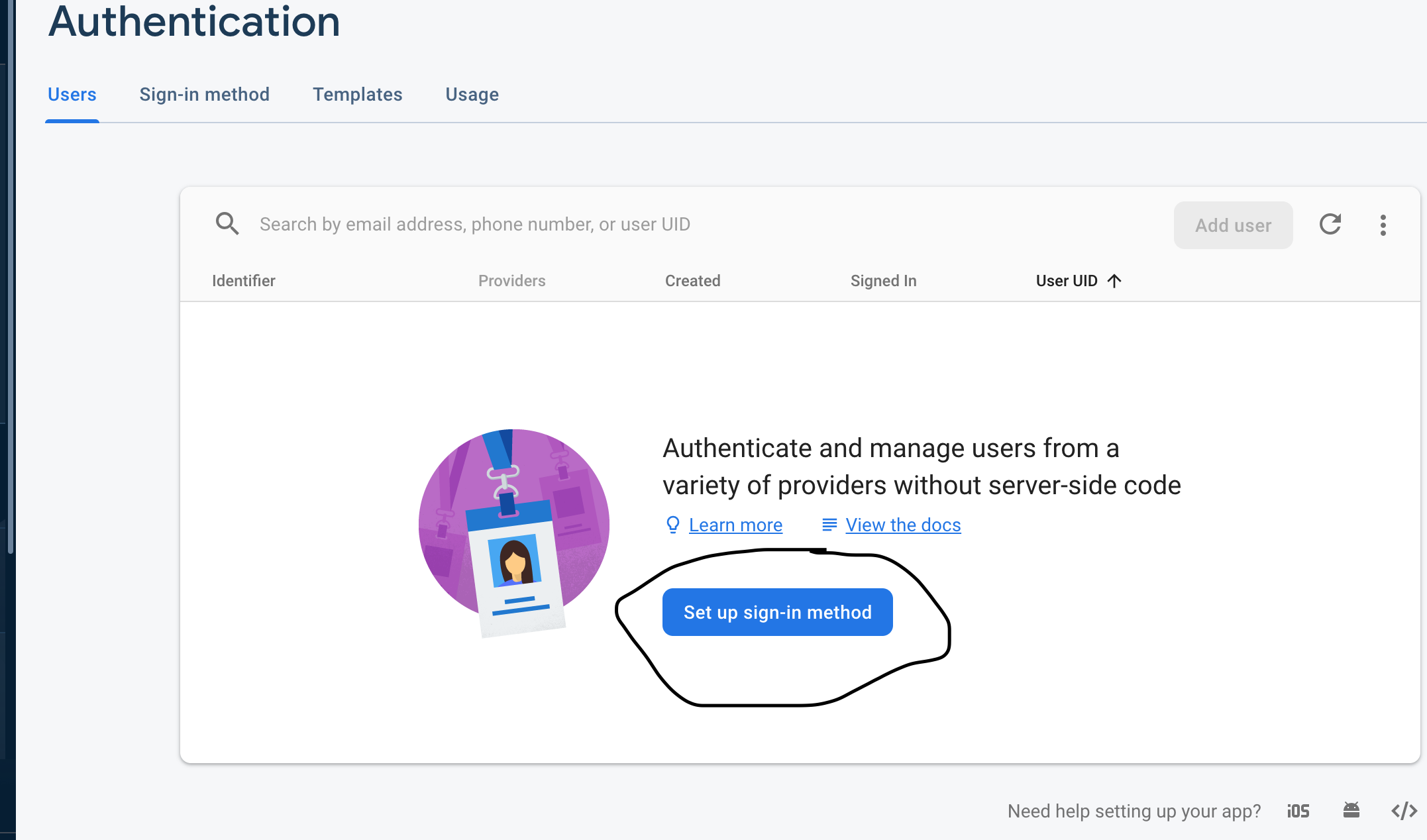

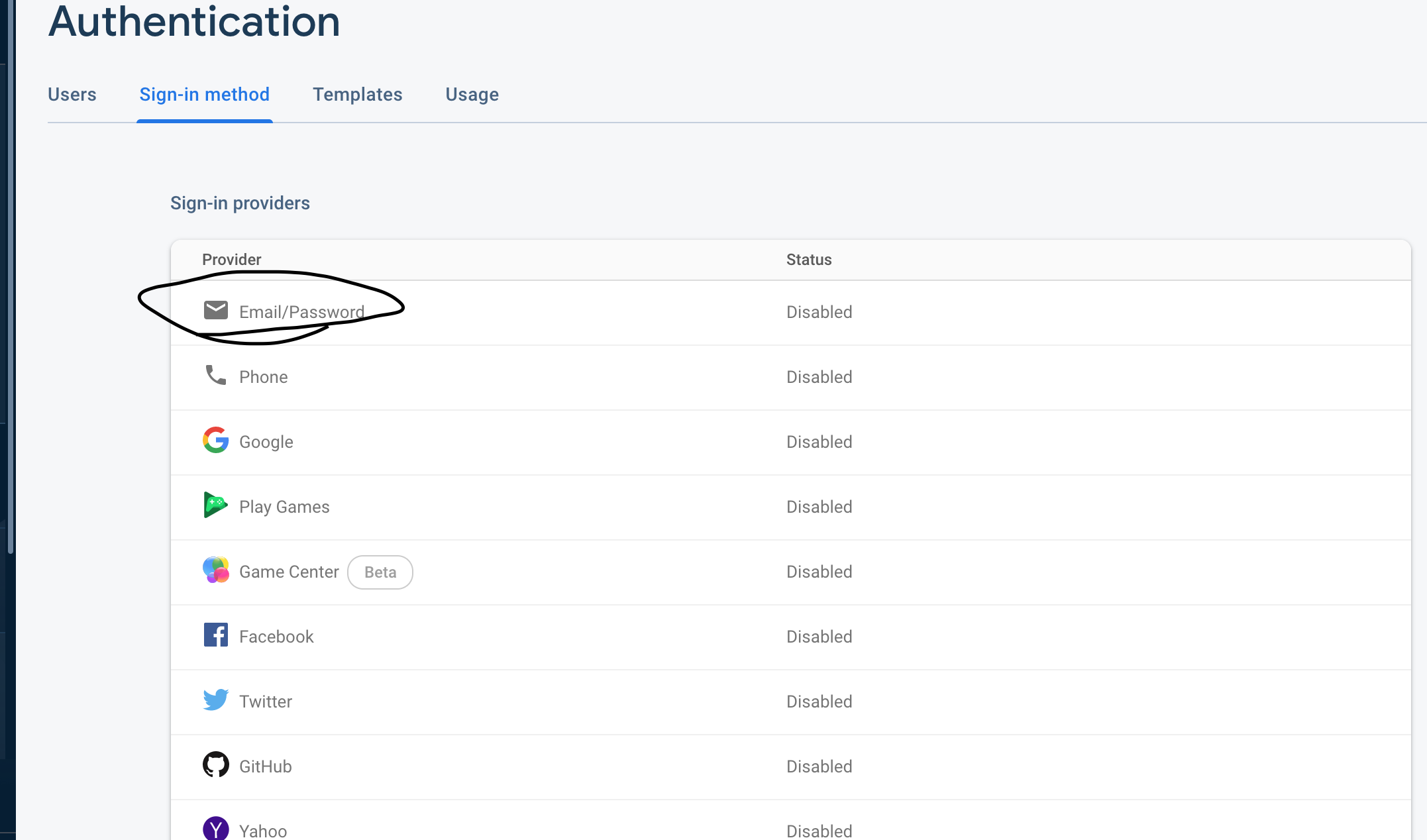

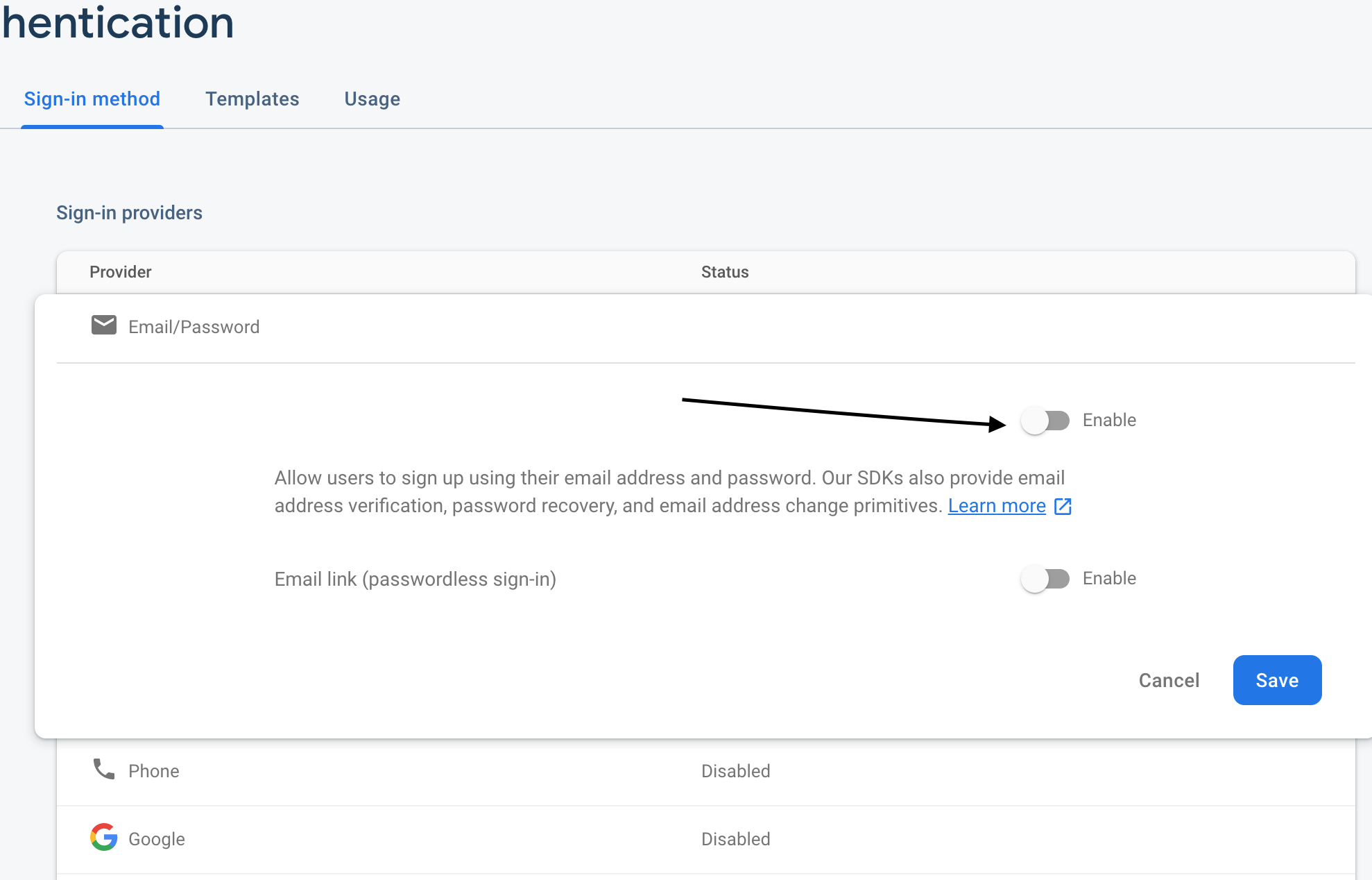

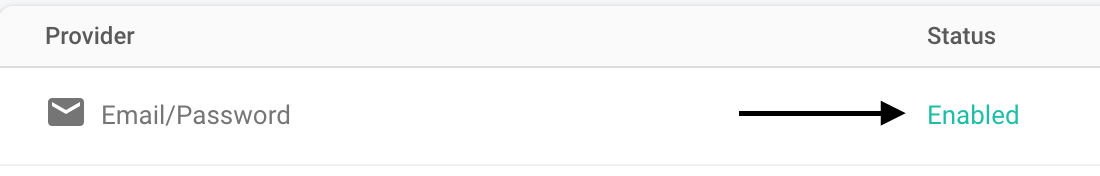

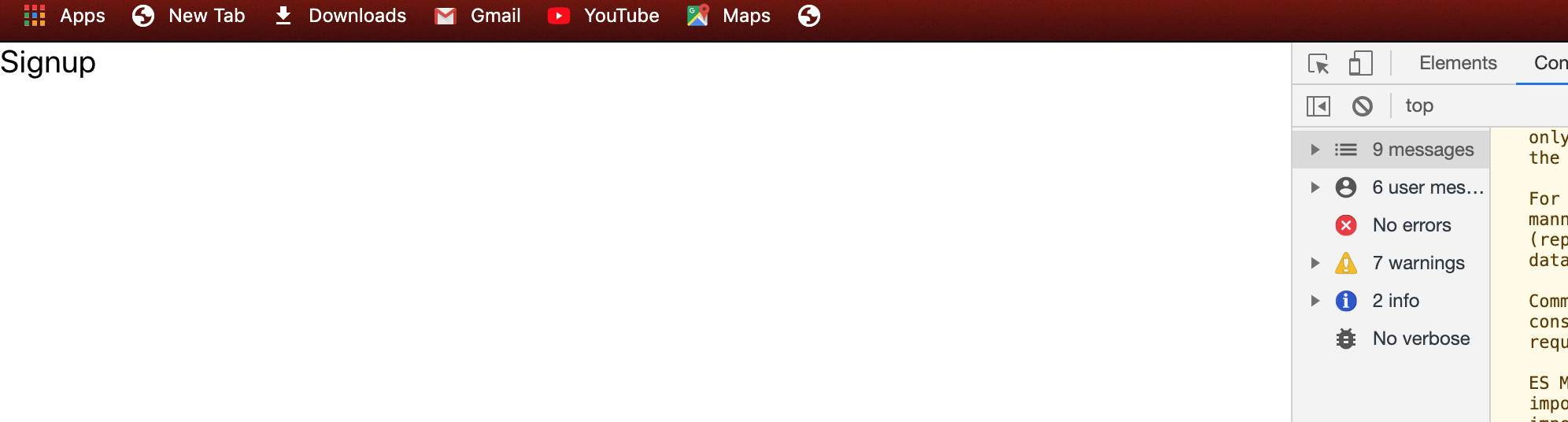

#### Activate the Firebase login method

Finally, enable the Facebook sign-in method in the Firebase console and enter your Facebook App ID and App secret.

Now we can log in to our app.

#### Conclusion

In this chapter, we learned how to add Facebook login to the iOS app. In the next chapter, we will add Facebook login to the Android app.

_Originally published at [Kriss](https://kriss.io/build-react-native-fitness-app-8-ios-firebase-facebook-login/)._

* * * | kris |

254,231 | Heap Sort | The heap sort is useful to get min/max item as well as sorting. We can build a tree to a min heap or... | 0 | 2020-02-03T14:57:58 | https://dev.to/drevispas/heap-sort-2fmb | algorithms, programming, java | A heap is useful for getting the min/max item quickly, and heap sort uses one to sort. We can build the tree as a min heap or a max heap. A max heap, for instance, keeps every parent no smaller than its children.
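
Before the class-based version, here is a compact heap sort sketch in Python. It uses 0-based indexing, so the children of `i` sit at `2*i + 1` and `2*i + 2`. The names `sift_down` and `heap_sort` are illustrative and are not taken from the code that follows. The sketch builds a max heap, then repeatedly swaps the maximum to the end of the array and shrinks the heap.

```python
def sift_down(a, start, end):
    """Restore the max-heap property for the subtree rooted at `start`,
    looking only at a[0:end]."""
    root = start
    while True:
        child = 2 * root + 1
        if child >= end:
            return
        # Pick the larger of the two children.
        if child + 1 < end and a[child + 1] > a[child]:
            child += 1
        if a[root] >= a[child]:
            return
        a[root], a[child] = a[child], a[root]
        root = child


def heap_sort(items):
    """Return a new list sorted in ascending order using a max heap."""
    a = list(items)
    n = len(a)
    # Build the max heap bottom-up, starting from the last parent node.
    for i in range(n // 2 - 1, -1, -1):
        sift_down(a, i, n)
    # Move the current maximum to the end, then shrink the heap by one.
    for end in range(n - 1, 0, -1):
        a[0], a[end] = a[end], a[0]
        sift_down(a, 0, end)
    return a


print(heap_sort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```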

The following code is for a max heap. It uses a 1-based array (index 0 is unused). __top__ holds the number of elements, which is also the index of the last element, so the next insertion goes to `top + 1`.

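```java
class Heap {
    private int[] arr; // 1-based: arr[0] is unused
    private int top;   // number of elements == index of the last element

    // Allocate one extra slot because index 0 is unused.
    public Heap(int sz) { arr = new int[sz + 1]; top = 0; }

    public void push(int num) {
        arr[++top] = num;
        climbUp(top);
    }

    // Removes the maximum and parks it just past the end of the heap,
    // so popping every element leaves arr[1..n] in ascending order.
    public void pop() {
        int max = arr[1];
        arr[1] = arr[top--];
        climbDown(1);
        arr[top + 1] = max;
    }

    public int size() { return top; }

    // Move arr[p] up while it is larger than its parent.
    private void climbUp(int p) {
        if (p <= 1 || arr[p] <= arr[p / 2]) return;
        swapAt(p, p / 2);
        climbUp(p / 2);
    }

    // Move arr[p] down, swapping with its larger child while that child is bigger.
    private void climbDown(int p) {
        int np = p * 2;
        if (np > top) return;
        if (np < top && arr[np + 1] > arr[np]) np++;
        if (arr[p] >= arr[np]) return;
        swapAt(p, np);
        climbDown(np);
    }

    private void swapAt(int p, int q) {
        int t = arr[p];
        arr[p] = arr[q];
        arr[q] = t;
    }
}
``` | drevispas |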

254,697 | Docker stop all processes on Github Actions | How to show all Docker running containers, stop and remove them on Github Actions | 0 | 2020-02-04T01:18:56 | https://dev.to/saulsilver/docker-stop-all-processes-on-github-actions-533j | cicd, docker, intermediate | ---

title: Docker stop all processes on Github Actions

published: true

description: How to show all Docker running containers, stop and remove them on Github Actions

tags: CI/CD, Docker, intermediate

---

### _Side note_

_Advancing in CI/CD can be challenging due to the lack of intermediate tutorials and blog posts on this part of development. It's easy to find simple "how to set up your workflow with minimum jobs" articles that don't really help in production-level projects. I wonder why._

### Problem

I have Docker in one of the projects I'm working on and wanted to integrate CI/CD. I went with GitHub Actions v2 and started looking into how to handle Docker and Docker Compose on GitHub Actions. There aren't many differences between GitHub Actions and other CI/CD providers (e.g. CircleCI, Travis CI), but I would say the major difference is [Actions](https://help.github.com/en/actions/automating-your-workflow-with-github-actions/about-actions).

In the Continuous Deployment step, I need to access the server and stop all running Docker containers. Of course, I can't stop them by container ID, because every newly created container gets a new ID.

### Example

Suppose these Docker containers are running:

```sh
$ docker ps -a
CONTAINER ID   IMAGE                        COMMAND               CREATED          STATUS          PORTS           NAMES
4c01db0b339c   ubuntu:12.04                 bash                  17 seconds ago   Up 16 seconds   3300-3310/tcp   webapp
d7886598dbe2   crosbymichael/redis:latest   /redis-server --dir   33 minutes ago   Up 33 minutes   6379/tcp        redis,webapp/db
```

### Workaround

A static identifier is needed to stop them, so I could refer to each container by its name and stop it. Note that `docker stop` and `docker rm` take container names or IDs, not image names:

```sh
docker stop webapp redis
docker rm webapp redis
```

That's fairly okay if you have only a few containers and you are sure no other Docker containers exist. But that's an assumption I'm not willing to make. It's also fragile: if I add a new container later and forget to add it to the workflow script, it won't be stopped.

### Common solution

Some of you might already be screaming this holy-grail command:

```sh
docker stop $(docker ps -a -q)
docker rm $(docker ps -a -q)
```

This stops and removes all existing containers, whatever their identifiers. Nice! So I pushed that to our GitHub `remote` and waited for the GitHub Actions runner to finish the workflow script. Then the runner showed the red cross :x:. The build had failed with an error about `docker stop requires a parameter`.

My first guess was that GitHub Actions uses `$` to reference variables (e.g. [`GITHUB_REPOSITORY`](https://help.github.com/en/actions/automating-your-workflow-with-github-actions/using-environment-variables#default-environment-variables)). In that case it would look for a variable called `docker ps...`, which is undefined since I never set it as an environment variable in the workflow. In hindsight, this guess may be wrong: Actions expressions use the `${{ }}` syntax. Also, `docker stop` fails with this same error whenever `docker ps -a -q` prints nothing, because it is then called with no arguments. Still, I tried escaping the `$` with another `$`, so the command became:

```sh
docker stop $$(docker ps -a -q)
```

However, this didn't work either. I looked into it a bit but couldn't find a good explanation, and I was spending too much time on it. So I moved on; after all, stopping Docker containers isn't the main task of the whole workflow.

### Working solution

After prioritizing my tasks for the project, I decided to look for a different solution to get past this problem quickly. That's when I stumbled upon this piece of Bash script, which I hadn't seen before.

```sh
ids=$(docker ps -a -q)
for id in $ids
do
  echo "$id"
  docker stop "$id" && docker rm "$id"
done
```

The first line assigns the output of `docker ps -a -q` to a variable called `ids`. Then a `for` loop iterates through the IDs and, for each one, stops and removes the container with that ID. When no containers exist, `$ids` is empty, so the loop body simply never runs.
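
If you'd rather script the same cleanup in Python, a minimal sketch might look like the following. It uses only the `docker ps -a -q`, `docker stop` and `docker rm` commands shown above. The function name `stop_and_remove_all` is hypothetical, and the `run` parameter exists only so the logic can be exercised without Docker. As with the shell loop, an empty container list results in no stop or rm calls.

```python
import subprocess


def stop_and_remove_all(run=subprocess.run):
    """Stop and remove every container listed by `docker ps -a -q`.

    `run` defaults to subprocess.run; pass a fake to test without Docker.
    Returns the list of container IDs that were processed.
    """
    result = run(["docker", "ps", "-a", "-q"],
                 capture_output=True, text=True, check=True)
    ids = result.stdout.split()  # empty output -> empty list -> no further calls
    for container_id in ids:
        print(container_id)
        # check=True raises on failure, so rm only runs if stop succeeded (like &&).
        run(["docker", "stop", container_id], check=True)
        run(["docker", "rm", container_id], check=True)
    return ids
```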

The GitHub Actions runner passed this without any errors. I was happy to have learned a new trick and moved on to my other tasks to finish up the workflow. | saulsilver |

254,277 | Design Patterns for JavaScript Applications | What are the design patterns that you use while developing your JavaScript applications? Be it Fronte... | 0 | 2020-02-03T16:30:26 | https://dev.to/jsandfriends/design-patterns-for-javascript-applications-2fj | discuss, javascript | What are the design patterns that you use while developing your JavaScript applications? Be it Frontend or Middleware. | baskarmib |

254,296 | How to fetch subcollections from Cloud Firestore with React | More data! First, I add more data to my database. Just to make things more realistic. For... | 4,654 | 2020-02-03T16:54:07 | https://dev.to/rossanodan/how-to-fetch-subcollections-from-cloud-firestore-with-react-3n93 | google, firebase, firestore, react | ---

title: How to fetch subcollections from Cloud Firestore with React

published: true

description:

tags: google, firebase, firestore, react

series: Getting started with Firebase Cloud Firestore

---

# More data!

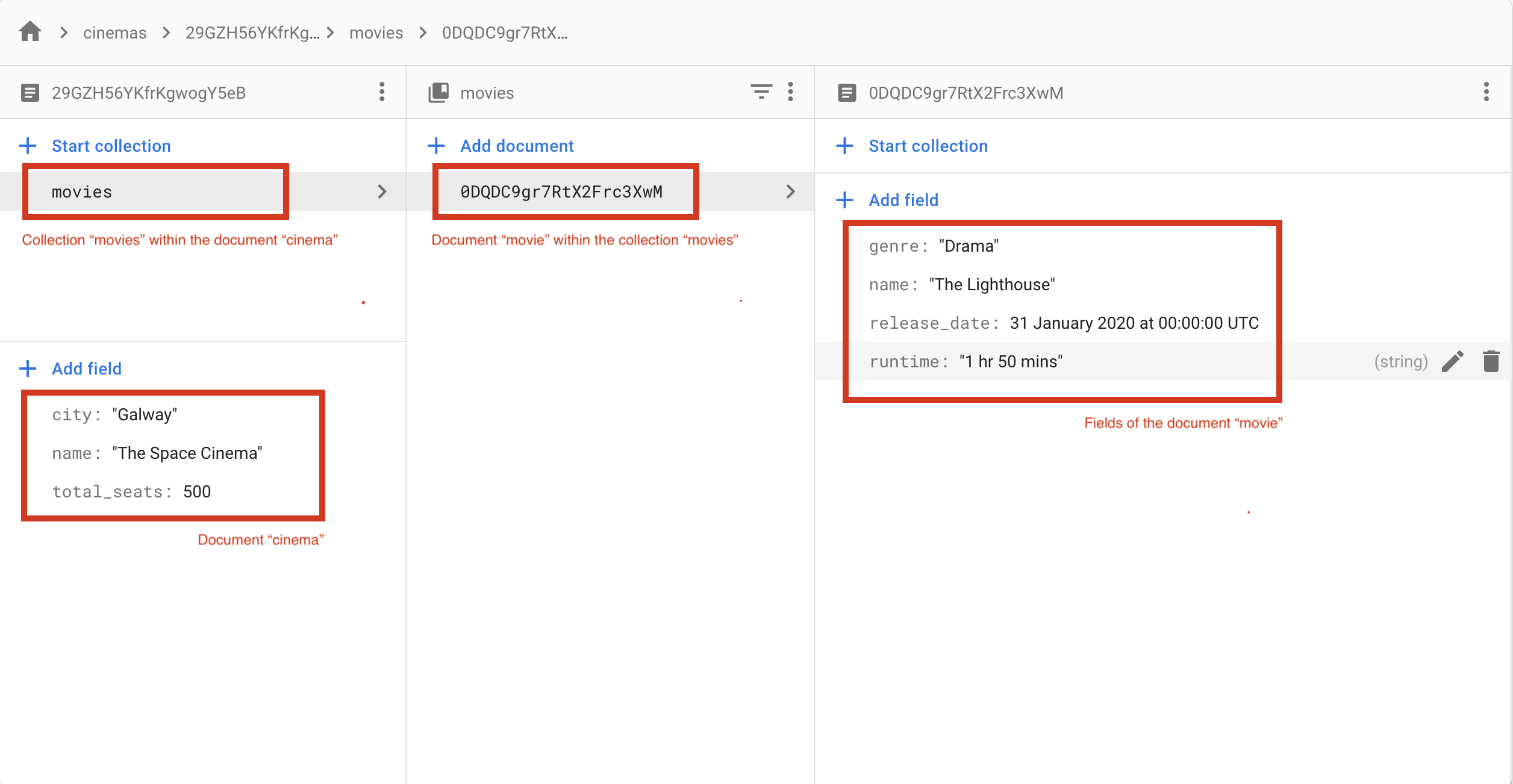

First, I add more data to my database, just to make things more realistic. For each cinema I add a subcollection `movies`, in which I add some movies. Each movie has this info:

```
name: string,
runtime: string,
genre: string,
release_date: timestamp
```

In Firestore, documents in the same collection can have different structures (NoSQL's power!) but, for simplicity, I follow the canonical way and give every movie the same fields.

I add one movie for the first cinema and two movies for the second one.

# Fetching the subcollection

I make the cinema list clickable, so when I click an item I load the movies scheduled for that specific cinema. To do this, I create a function `selectCinema` that performs a new query to fetch that cinema's `movies` subcollection.

```jsx
...
const selectCinema = (cinema) => {
  database.collection('cinemas').doc(cinema.id).collection('movies').get()
    .then(response => {
      response.forEach(document => {
        // access the movie information
      });
    })
    .catch(error => {
      setError(error);
    });
}
...
{cinemas.map(cinema => (
  <li key={cinema.id} onClick={() => selectCinema(cinema)}>
    <b>{cinema.name}</b> in {cinema.city} has {cinema.total_seats} total seats
  </li>
))}
```

At this point, managing the show/hide logic with React `state` is easy.

{%gist https://gist.github.com/rossanodan/e97e19d31000019a3b705412662eca46 %}

# A working demo

Gaunt but working.

| rossanodan |

254,587 | 🐍 Writing tests faster with pytest.parametrize | Write your Python tests faster with parametrize I think there is no need to explain here h... | 492 | 2020-02-03T21:33:58 | https://www.daolf.com/posts/writing-tests-faster/ | python, webdev, tutorial, beginners |

# Write your Python tests faster with parametrize

I think there is no need to explain here how important testing your code is. If you somehow have doubts about it, I can only recommend reading these great resources:

- [The testing introduction I wish I had](https://dev.to/maxwell_dev/the-testing-introduction-i-wish-i-had-2dn)

- [The importance of software testing](https://www.testdevlab.com/blog/2018/07/importance-of-software-testing/)

But more often than not, when working on side projects, we tend to overlook it.

I am in no way advocating that all code you write in every situation should be tested; it is perfectly fine to hack something together and never test it.

This post only aims to show you how to quickly write Python tests using `pytest` and one particular feature, hoping it will reduce the amount of untested code you write.

## Writing some tests

We are going to write some tests for a function that takes a list, removes its odd elements, and sorts the rest.

This will be the function:

```python
def remove_odd_and_sort(array):
    even_array = [elem for elem in array if not elem % 2]
    sorted_array = sorted(even_array)
    return sorted_array
```

That's it. Let's now write some basic tests:

```python
import pytest


def test_empty_array():
    assert remove_odd_and_sort([]) == []


def test_one_size_array():
    assert remove_odd_and_sort([1]) == []
    assert remove_odd_and_sort([2]) == [2]


def test_only_odd_in_array():
    assert remove_odd_and_sort([1, 3, 5]) == []


def test_only_even_in_array():
    assert remove_odd_and_sort([2, 4, 6]) == [2, 4, 6]


def test_even_and_odd_in_array():
    assert remove_odd_and_sort([2, 1, 6]) == [2, 6]
    assert remove_odd_and_sort([2, 1, 6, 3, 1, 2, 8]) == [2, 2, 6, 8]
```

To run these tests, simply run `pytest <your_file>`; pytest reports one result per test function.

As you can see, it was rather simple, but writing 20 lines of code for such a simple function can sometimes feel like too high a price.

## Writing them faster

Pytest is a really great test framework, and one of the features I use most to write tests quickly is the `parametrize` decorator.

The way it works is rather simple: you give the decorator the names of your arguments (as a comma-separated string) and a list of tuples, each tuple holding one set of argument values.

Your test will then be run one time per tuple in the list.
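
Conceptually, that is close to looping over the tuples yourself, as in the plain-Python sketch below. `run_cases` is a hypothetical helper, not part of pytest. The difference is that `parametrize` turns each tuple into its own test. Every case is reported separately, and one failure doesn't stop the remaining cases from running.

```python
def remove_odd_and_sort(array):
    even_array = [elem for elem in array if not elem % 2]
    return sorted(even_array)


def run_cases(func, cases):
    """Hypothetical helper: call func on each input and collect mismatches,
    roughly what parametrize automates (minus pytest's per-case reporting)."""
    failures = []
    for test_input, expected in cases:
        actual = func(test_input)
        if actual != expected:
            failures.append((test_input, expected, actual))
    return failures


cases = [
    ([], []),
    ([1], []),
    ([2, 1, 6, 3, 1, 2, 8], [2, 2, 6, 8]),
]
print(run_cases(remove_odd_and_sort, cases))  # []
```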

With that in mind, all the tests written above can be shortened into this snippet:

```python
@pytest.mark.parametrize("test_input,expected", [
    ([], []),
    ([1], []),
    ([2], [2]),
    ([1, 3, 5], []),
    ([2, 4, 6], [2, 4, 6]),
    ([2, 1, 6], [2, 6]),
    ([2, 1, 6, 3, 1, 2, 8], [2, 2, 6, 8]),
])
def test_eval(test_input, expected):
    assert remove_odd_and_sort(test_input) == expected
```

When you run it, pytest reports each tuple as a separate test case.

See? And in only 10 lines. `parametrize` is very flexible. It also allows you to define cases that will break your code, among many other things, as detailed [here](https://docs.pytest.org/en/latest/example/parametrize.html#paramexamples).

Of course, this was only a short introduction to this framework showing you only a small subset of what you can do with it.

You can [follow me on Twitter](https://twitter.com/intent/follow?screen_name=PierreDeWulf), where I tweet about bootstrapping, indie-hacking, startups and code 😊

Happy coding

If you like these short blog posts about Python, you can find my two previous ones here: | daolf |

254,611 | Let's Create a Twitter Bot using Node.js and Heroku (3/3) | Welcome to the third and final installment of creating a twitter bot. In this post, I'll show you how... | 0 | 2020-02-05T20:08:57 | https://dev.to/developer_buddy/let-s-create-a-twitter-bot-using-node-js-and-heroku-3-3-agk | node, twitter, javascript, tutorial | Welcome to the third and final installment of creating a twitter bot. In this post, I'll show you how to automate your bot using Heroku.

If you haven't had the chance yet, check out [Part 1](https://dev.to/developer_buddy/let-s-create-a-twitter-bot-using-node-js-and-heroku-1-3-43kb) and [Part 2](https://dev.to/developer_buddy/let-s-create-a-twitter-bot-using-node-js-and-heroku-2-3-22g3).

After this, you will have your own fully automated Twitter bot. Let's jump in.

# 1. Setup Heroku Account

You'll want to sign up for a Heroku [account](https://heroku.com). If you have a Github account you'll be able to link the two accounts.

# 2. Create Your App

Once you're all set up with your account, you'll have to create an app.

In the top right corner, you'll see a button that says 'New'. Click it and select 'Create new app'.

That should take you to another page where you'll have to name your app.

# 3. Install Heroku

You can install the Heroku CLI in a few different ways depending on your OS. To install it from the terminal on Linux (via Snap), run the following:

```
sudo snap install --classic heroku
```

If that doesn't work for you, you can find other ways to install the Heroku CLI [here](https://devcenter.heroku.com/articles/heroku-cli).

# 4. Prepare For Deployment

Open your terminal and `cd` into your tweetbot folder. Once inside, run this command to log in to your Heroku account:

```
heroku login
```

You'll have the option to log in either through the terminal or the web page.

If your project isn't a Git repository yet (for example, because you haven't pushed it to GitHub), initialize one. If it already is, you can skip this part:

```
git init
```

Now connect to Heroku's remote Git server. Run this command in your terminal, replacing `<your app name>` with the name of your Heroku app:

```
heroku git:remote -a <your app name>
```

Almost there! Now set up your access keys on Heroku's server. You can do this directly from the terminal; you're just copying the values over from your `.env` file:

```
heroku config:set CONSUMER_KEY=XXXXXXXXXXXXXXXXXXXXXXXXX
heroku config:set CONSUMER_SECRET=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX
heroku config:set ACCESS_TOKEN=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX
heroku config:set ACCESS_TOKEN_SECRET=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX
```
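
`heroku config:set` stores these values as config vars, which Heroku exposes to your dynos as environment variables. The bot in this series is Node.js and reads them through `process.env`. Purely as an illustration in Python, with a hypothetical `load_twitter_keys` helper, a fail-fast check that all four keys are present could look like this:

```python
import os

REQUIRED_KEYS = ("CONSUMER_KEY", "CONSUMER_SECRET", "ACCESS_TOKEN", "ACCESS_TOKEN_SECRET")


def load_twitter_keys(env=os.environ):
    """Return the four Twitter credentials, failing fast if any is missing or empty."""
    missing = [key for key in REQUIRED_KEYS if not env.get(key)]
    if missing:
        raise RuntimeError("Missing config vars: " + ", ".join(missing))
    return {key: env[key] for key in REQUIRED_KEYS}
```

Failing at startup with a clear message is usually easier to debug than an authentication error from the Twitter API later on.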

Sweet! Now create a Procfile to configure the process we want Heroku to run:

```
touch Procfile
```

Once the file is created, open it and add the following line:

```
worker: node bot.js
```

Now you just need to commit and push your files to the Heroku server. Run this last bit of code in your terminal:

```
git add .
git commit -m "add all files"
git push heroku master
```

Time to test the bot now that it's on Heroku. In your terminal, run the following:

```
heroku run worker
```

You should see your terminal output 'Retweet Successful' and 'Favorite Successful'

If you're getting some type of error message, be sure to double-check your code and your deployment.

# 5. Time To Automate

All that's left is getting our bot to run on a schedule. I really like the Heroku Scheduler add-on for this.

Go back to your app's overview page on Heroku and select 'Configure Add-ons'.

Search for **Heroku Scheduler** and add it to your app.

Now click on Heroku Scheduler to open its settings in a new window.

For this example, I'm going to configure mine to run every 10 minutes. You can change this to run hourly or daily if you prefer.

In the Run Command field, I entered **node bot.js**. You'll want to do the same so Heroku knows which command to run for your bot.

There you have it! You have now successfully created your own automated Twitter bot.

If you'd like to check mine out you can at [@coolnatureshots](https://twitter.com/coolnatureshots). You can also find the GitHub repo for it [here](https://github.com/agyin3/tweetbot-photo)

| developer_buddy |

254,623 | What are you going to do if/when your position gets automated? | Thinking out loud. | 0 | 2020-02-04T14:53:20 | https://dev.to/jenc/discuss-what-are-you-going-to-do-if-when-your-position-gets-automated-13j0 | discuss, devdiscuss, career | ---

title: What are you going to do if/when your position gets automated?

published: true

description: Thinking out loud.

tags: discuss, devdiscuss, career

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/in9z5qf1to2s4l3rv4c3.jpeg

---

Let's take it really far: what are you going to work on once your job gets automated? (This isn't speculation about whether it can be automated; assume it simply *is*.)

Would you continue to design and code?

| jenc |

254,745 | Ripping Out Node.js - Building SaaS #30 | In this episode, we removed Node.js from deployment. We had to finish off an issue with permissi... | 2,058 | 2020-03-05T18:05:24 | https://www.mattlayman.com/building-saas/ripping-out-nodejs/ | python, django, saas, node | {% youtube PyZDK-D0eWE %}

In this episode, we removed Node.js from deployment. We had to finish off an issue with permissions first, but the deployment got simpler. Then we continued on the steps to make deployment do even less.

Last episode, we got the static assets to the staging environment, but we ended the session with a permissions problem. The files extracted from the tarball had the wrong user and group permissions.

I fixed the permissions by running an Ansible task that ran `chown` to use the `www-data` user and group. To make sure that the directories had proper permissions, I used `755` to ensure they were executable.

Then we wrote another task to set the permission of non-directory files to `644`. This change removes the executable bit from regular files and reduces their security risk.
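The two permission rules above (`755` for directories, `644` for regular files) can also be sketched outside Ansible. The following is a minimal Node.js illustration, not the task from the episode: the function name `normalizePermissions` is hypothetical, and it deliberately skips the `chown` to `www-data` because changing ownership normally requires root.

```javascript
const fs = require('fs');
const path = require('path');

// Hypothetical helper: recursively give directories 755 (rwxr-xr-x, so they can be
// traversed) and regular files 644 (rw-r--r--, no executable bit).
// Ownership (chown to www-data) is intentionally not handled here.
function normalizePermissions(root) {
  fs.chmodSync(root, 0o755);
  for (const entry of fs.readdirSync(root, { withFileTypes: true })) {
    const fullPath = path.join(root, entry.name);
    if (entry.isDirectory()) {
      normalizePermissions(fullPath);
    } else if (entry.isFile()) {
      fs.chmodSync(fullPath, 0o644);
    }
    // Symlinks and other entry types are left untouched.
  }
}

module.exports = { normalizePermissions };
```

Splitting directories and files into separate rules mirrors the two Ansible tasks: directories keep their execute bit so they can be entered, while static files lose it.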

We ran some tests to confirm the behavior of all the files, even running the test that destroyed all existing static files and starting from scratch.

With the permissions task complete, we could move on to the fun stuff of ripping out code. Since all the static files are now created in Continuous Integration, there is no need for [Node.js](https://nodejs.org/en/) on the actual server. We removed the [Ansible](https://www.ansible.com/) galaxy role and any task that used Node.js to run JavaScript.

Once Node was out of the way, I moved on to other issues. I had to convert tasks that used `manage.py` from the Git clone to use the manage command that I bundled into the [Shiv](https://shiv.readthedocs.io/en/latest/) app. That work turned out to be very minimal.

The next thing that can be removed is the Python virtual environment that was generated on the server. The virtual environment isn't needed because all of the packages are baked into the Shiv app. That means that we must remove anything that still depends on the virtual environment and move it into the Shiv app.

There are two main tools that still depend on the virtual environment:

1. [Celery](http://www.celeryproject.org/)

2. [wal-e](https://github.com/wal-e/wal-e) for [Postgres](https://www.postgresql.org/) backups

For the remainder of the stream, I worked on the `main.py` file, which is the entry point for Shiv, to make the file able to handle subcommands. This will pave the way for next time when we call Celery from a Python script instead of its stand-alone executable.

Show notes for this stream are at [Episode 30 Show Notes](https://www.mattlayman.com/building-saas/ripping-out-nodejs/).

To learn more about the stream, please check out [Building SaaS with Python and Django](https://www.mattlayman.com/building-saas/). | mblayman |

254,775 | How to Intercept the HTTP Requests in Angular(Part 1) | How to Intercept the HTTP Requests in Angular (Part 1) Ud... | 0 | 2020-02-04T04:14:33 | https://dev.to/udithgayan/how-to-intercept-the-http-requests-in-angular-part-1-1am6 | angular, javascript, developers, interceptor | {% medium https://medium.com/javascript-in-plain-english/how-to-intercept-the-http-requests-in-angular-2a67df423020 %} | udithgayan |

254,820 | KineMaster - The Top Video Editor For Android | KineMaster is a video editing application that brings a full suite of editing tools to iPhone, iPad,... | 0 | 2020-02-04T06:16:26 | https://dev.to/steve_smith/kinemaster-the-top-video-editor-for-android-3le | KineMaster is a video editing application that brings a full suite of editing tools to iPhone, iPad, iPod Touch, and Android devices. Designed for productivity on the go, KineMaster delivers the ability to create professional video content without requiring a laptop or desktop computer

(https://bigtechbyte.com/kinemaster-pro/)

BEST editing ever! I've used KineMaster for the past year or so. I must say I'm not tech-savvy at all, and this app is extremely easy to use. I use it for my YouTube channel (LifeWithLowee). KineMaster has a YouTube channel (KineMaster) that shows you the new features and how to use the app.

Music and Sound Effect Clips

Music and Sound Effect Clips can be added to projects by tapping Audio on the Media Wheel to open the Audio Browser, and then selecting the desired music or sound effect track and tapping Add to place it on the Timeline starting at the time of the playhead’s current position.

Selecting a Music or Sound Effect Clip will bring up the Options Panel, which contains tools used to edit audio, which will be discussed in more detail below.

Please note that on Android, KineMaster will display almost any available music or sound effect file on your device, while on iOS devices, music must be either in iTunes on your device or in the KineMaster Internal folder. The KineMaster Internal folder is the same folder that appears under folder sharing in iTunes on your computer.

Files with DRM (Digital Rights Management), also known as copyright protection, cannot be imported into KineMaster, as it breaks the terms of service for most music services.

| steve_smith | |

254,851 | PostCSS: how to shrink CSS stylesheets by removing unused selectors (video) | Although every self-respecting web development professional should master HTML and CSS, the reality is that... | 0 | 2020-10-07T10:30:03 | https://www.campusmvp.es/recursos/post/postcss-como-reducir-hojas-de-estilo-css-eliminando-los-selectores-que-sobran.aspx | webdev, spanish, video | ---

title: PostCSS: how to shrink CSS stylesheets by removing unused selectors (video)

published: true

date: 2020-02-04 08:00:00 UTC

tags: WebDev, Spanish, Video

cover_image: https://www.campusmvp.es/recursos/image.axd?picture=/2020/1T/purgecss-portada.png

canonical_url: https://www.campusmvp.es/recursos/post/postcss-como-reducir-hojas-de-estilo-css-eliminando-los-selectores-que-sobran.aspx

---

Although every self-respecting web development professional should [master HTML and CSS,](https://www.campusmvp.es/recursos/catalogo/Product-HTML5-y-CSS3-a-fondo-para-desarrolladores_185.aspx) the reality is that in most projects we normally use **some CSS library or _framework_**, such as [Bootstrap](https://www.campusmvp.es/recursos/catalogo/Product-Dise%C3%B1o-Web-Responsive-con-HTML5,-Flexbox,-CSS-Grid-y-Bootstrap_212.aspx) (the most widely used one) or similar tools.

Using a CSS _framework_ lets us **lay out pages very quickly**, give applications an **attractive look** by default, and **[have many complicated things already done for us](https://dev.to/campusmvp/bootstrap-42---spinners-notificaciones-toast-interruptores-y-otras-novedades-l0f)**. On the other hand, using a _framework_ means that **we are adding lots of things to the application that we will never use**.

For example, if you build a simple application with Bootstrap using only the layout convenience of its grid (although nowadays, [with CSS Grid and Flexbox](https://www.campusmvp.es/recursos/catalogo/Product-Dise%C3%B1o-Web-Responsive-con-HTML5,-Flexbox,-CSS-Grid-y-Bootstrap_212.aspx), you wouldn't need it) and the default look of buttons and text boxes, you'll be adding about 156KB to the application with the minified version of Bootstrap's CSS. However, **if you analyze how much of the included rules you actually use**, you'll see that it's minimal and that **a small percentage** of that file's contents **would be enough**.

You can see this in this short video, in which I have a very simple page with a few sections laying out text and images. I open the page in Chrome and use its code coverage analysis tool:

{% youtube hTdEbVaLtoE %}

As you can see, **almost 96% of the code in Bootstrap's stylesheet isn't used** at all; for this particular page, we don't need it.

Of course, doing this by hand is error-prone and, unfortunately, Chrome's coverage tools don't let us export just the code identified as in use to a file.

**In the following video** I explain step by step how you can **make the most of the excellent [PurgeCSS](https://purgecss.com/) tool** to automate the analysis and cleanup of the CSS files your front-end web application uses, ending up with lighter, faster applications. To do so, I use a small website with some fairly complex pages that relies on several libraries and a lot of CSS.

Let's take a look:

{% youtube xN3w49LSMYo %}

Everything explained here can be automated with **npm**, **Gulp** or **Webpack** and built into your application's development process.
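To make the idea concrete, here is a deliberately naive sketch of what a tool like PurgeCSS does: collect the class names used in the markup and keep only the CSS selectors whose classes all appear there. This is not PurgeCSS's algorithm or API; the function `purgeUnusedRules` is hypothetical, it only reads `class="..."` attributes, and it assumes flat CSS without `@media` blocks or nesting. For real projects, use PurgeCSS itself.

```javascript
// Naive illustration (NOT PurgeCSS's implementation): keep a selector only if
// every class it references appears in a class="..." attribute of the HTML.
function purgeUnusedRules(css, html) {
  // 1. Collect the class names used in the markup.
  const used = new Set();
  for (const match of html.matchAll(/class="([^"]*)"/g)) {
    match[1].split(/\s+/).filter(Boolean).forEach((name) => used.add(name));
  }

  // 2. Walk flat "selector { declarations }" rules.
  const kept = [];
  for (const match of css.matchAll(/([^{}]+)\{([^}]*)\}/g)) {
    // Filter each selector in a comma-separated list independently.
    const selectors = match[1]
      .split(',')
      .map((s) => s.trim())
      .filter((selector) => {
        const classes = [...selector.matchAll(/\.([\w-]+)/g)].map((m) => m[1]);
        // Selectors without classes (e.g. "body") are always kept.
        return classes.every((name) => used.has(name));
      });
    if (selectors.length > 0) {
      kept.push(`${selectors.join(', ')} {${match[2]}}`);
    }
  }
  return kept.join('\n');
}

const css = '.btn { color: red; }\n.card, .btn-primary { padding: 0; }\nbody { margin: 0; }';
const html = '<button class="btn btn-primary">Go</button>';
console.log(purgeUnusedRules(css, html));
```

Here the `.btn`, `.btn-primary` and `body` rules survive while `.card` is dropped. Real tools also have to cope with classes added from JavaScript, attribute selectors, at-rules and safelists, which is why a dedicated tool is the better choice.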

You can learn to master all these front-end development tools and many more, and become a better professional, with our course [Herramientas modernas para desarrollo Web Front-End empresarial](https://www.campusmvp.es/recursos/catalogo/Product-Herramientas-modernas-para-desarrollo-Web-Front-End-empresarial_243.aspx). Talk to your company about it, or invest in improving your professional profile on your own 😉

I hope you find it useful! | campusmvp_es |

254,852 | A use case for the Object.entries() method | Split up objects with Object.entries() and Array.filter | 0 | 2020-02-05T07:15:03 | https://dev.to/keevcodes/a-use-case-for-the-object-entries-method-5dcj | javascript, beginners, webdev | ---

title: A use case for the Object.entries() method

published: true

description: Split up objects with Object.entries() and Array.filter

tags: #javascript #beginner #webdev

cover_image: https://user-images.githubusercontent.com/17259420/73816918-134db300-47ea-11ea-97eb-1109473ab723.jpg

---

*Perhaps you already know about Object.keys() and Object.values(), which create an array of an object's keys and values respectively. However, there's another method, `Object.entries()`, that returns a nested array of the object's `[key, value]` pairs. This can be very helpful if you'd like to return one part of each pair based on the other part's value.*

# A clean way to return keys in an Object

Forms often present users with a list of choices that are selectable with radio buttons. The object's data returned from this will look something like this...

```javascript

const myListValues = {

'selectionTitle': true,

'anotherSelectionTitle': false

}

```

We could store these objects with their keys and values in our database as they are; however, just storing the `key` name for any truthy value would be sufficient. By passing our `myListValues` object into Object.entries(), we can filter out any falsy values from the newly created array and then return the keys as an array of strings.

### Execution

We'll make use of not only Object.entries(), but also the very handy array methods `filter()` and `map()`. The output from `Object.entries(myListValues)` will be...

```javascript

const separatedList = [

['selectionTitle', true ],

['anotherSelectionTitle', false ]

];

```

We now have an array on which we can use `.filter()` and `.map()` to return our desired result. So let's clean up our `separatedList` array a bit.

```javascript

const separatedFilteredList =

Object.entries(myListValues).filter(([key, value]) => value);

const selectedItems = separatedFilteredList.map(item => item[0]);

```
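Putting the steps together, here is a small self-contained sketch. The sample data (`formValues` and its third key) is made up for illustration. Destructuring the pair in the callback parameters (`([, value])`, `([key])`) avoids indexing with `item[0]`, and `Object.fromEntries()` (ES2019) can turn the filtered pairs back into an object if you need one.

```javascript
// Hypothetical form data: each option title maps to whether it was selected.
const formValues = {
  selectionTitle: true,
  anotherSelectionTitle: false,
  thirdSelectionTitle: true
};

// Keep only the [key, value] pairs whose value is truthy, then keep the keys.
function selectedKeys(values) {
  return Object.entries(values)
    .filter(([, value]) => value)
    .map(([key]) => key);
}

// Object.fromEntries() rebuilds an object from the filtered pairs.
const selectedOnly = Object.fromEntries(
  Object.entries(formValues).filter(([, value]) => value)
);

console.log(selectedKeys(formValues)); // [ 'selectionTitle', 'thirdSelectionTitle' ]
console.log(selectedOnly); // { selectionTitle: true, thirdSelectionTitle: true }
```

Because `Object.entries()` returns string keys in insertion order, the selected keys come back in the order the options were defined.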

There we have it. Our selectedItems array is now just a list of the key names from our object whose values were truthy. This is just one of many use cases for a perhaps lesser-known object method. I'd love to see some more interesting use cases you may have come up with. | keevcodes |

254,856 | You Should Know These Tips To Properly Maintain And Extend The Durability Of Your NY Signs | Getting custom NY Signs finally made by the professionals is not the last step toward a successful bu... | 0 | 2020-02-04T07:26:57 | http://www.vidasigns.com/ | signs | Getting custom <a href="http://www.vidasigns.com/">NY Signs</a> made by professionals is not the last step toward a successful business. Neon signs are very popular these days because they stand out in a crowd, which makes them an effective marketing tool for many businesses. An entrepreneur usually makes sure to maintain them day after day so that they can serve the company for a long time. Many believe that, since neon signs are durable, they need no maintenance effort.

<img class="alignnone wp-image-7182 size-full" src="https://s3-ap-southeast-2.amazonaws.com/www.cryptoknowmics.com/blog/wp-content/uploads/2020/02/04072541/neon-sings.png" alt="" width="1920" height="1080" />

This does not mean we can let them get damaged through lack of care. Neon signs last a long time only if we maintain them properly. This short guide can save us from spending money on neon signs time and again because we didn't take care of them from the start.

<h3><strong><b>Choosing and placement</b></strong></h3>

Most of us assume that signs are durable with or without proper care. In reality, two factors matter: the option we choose and where we place it. Experts suggest that if the neon sign is placed in a busy area where things are often carried or moved around, we should consider getting a cover for it. Because neon signs are made mainly of glass, they are fragile and can break into pieces without a clear cover. Taking such precautions helps a neon sign last for a decade or two. It also shows why we need to choose a good place for the sign so that it doesn't fall and break.

<h3><strong><b>Taking good care</b></strong></h3>

Flashy neon signs can help a business grow, but they need to be handled and maintained correctly because of their tubes. The first part of taking good care of a sign is checking whether any tubes are broken or cracked. If the damage is visible, we might have to contact professionals who are experienced enough to fix it properly.


<h3><strong><b>Safe from bugs</b></strong></h3>

Light attracts flying insects, which is a nuisance for anyone nearby. We might even find dead bugs stuck to the tubes every morning, which turns cleaning into an unpleasant job that needs doing almost every day.

The first thing you can do is use traps and bug zappers to capture flying bugs. They can help reduce the insect population quickly.

<h3><strong><b>Conclusion</b></strong></h3>

If you want to buy neon signs in NYC or LED signs for your business, it is worth learning how to maintain them properly. Good maintenance gives them higher durability and better light quality. | nelliemarteen |

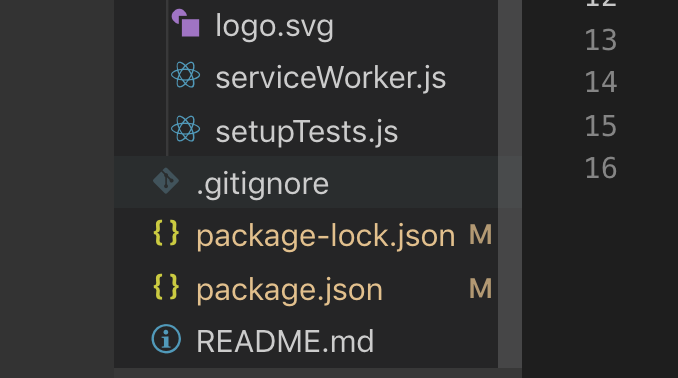

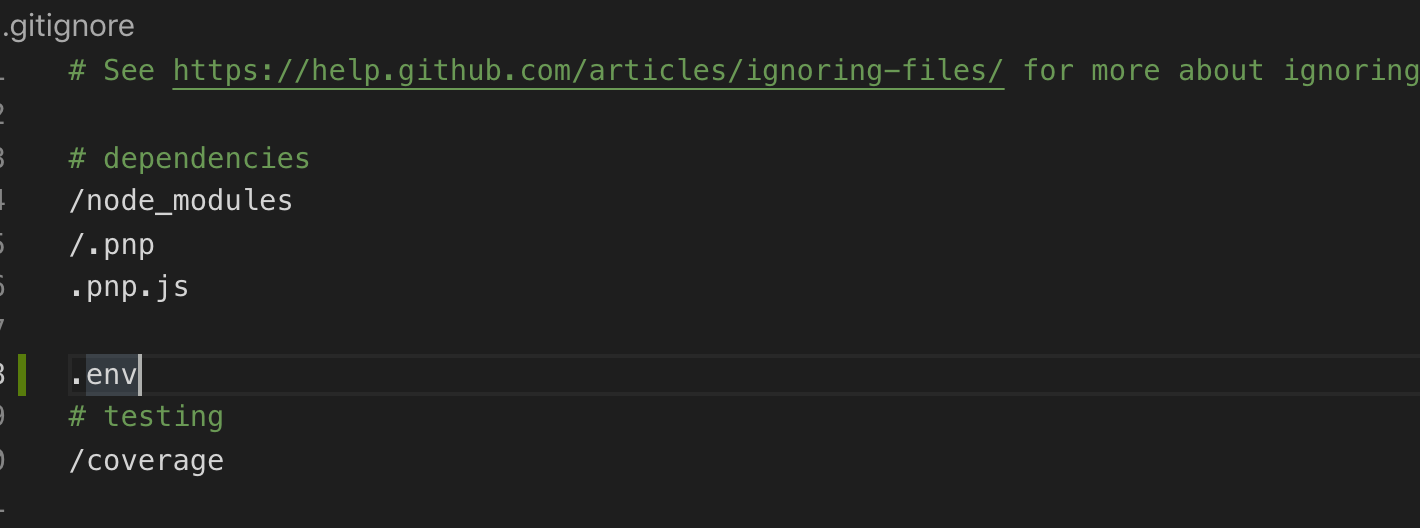

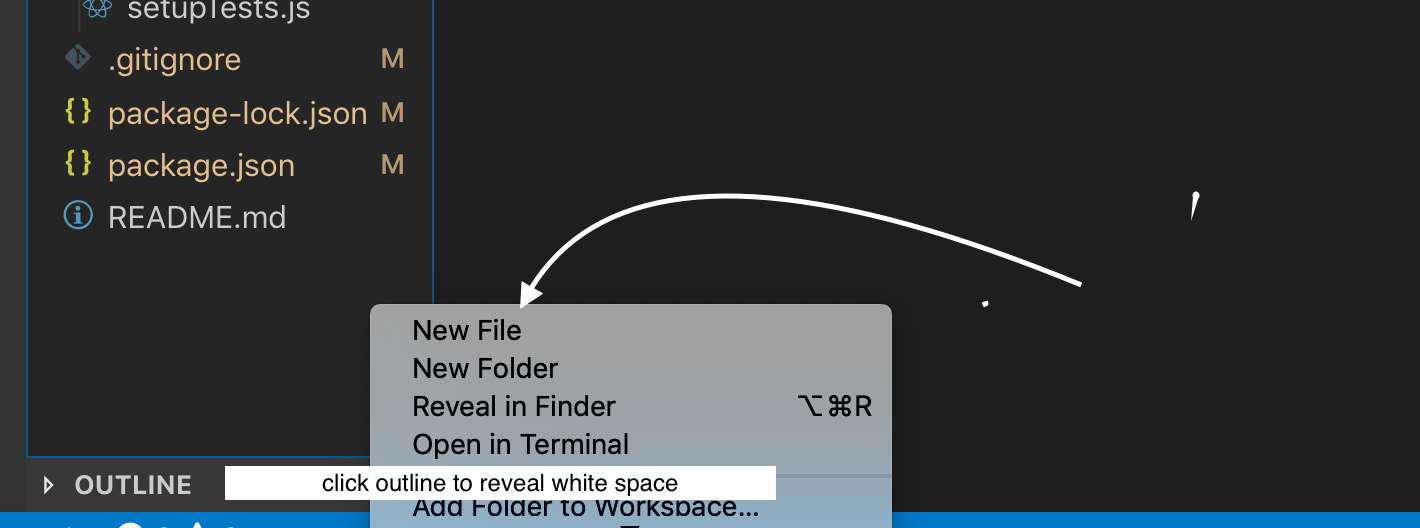

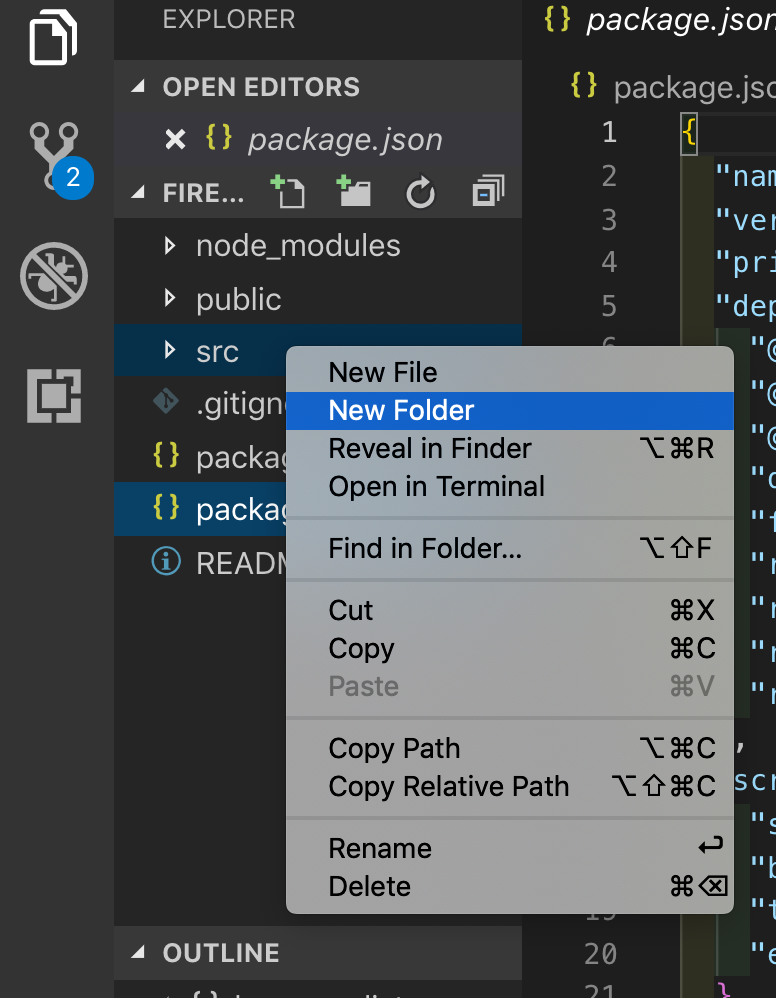

254,879 | How to add gitignored files to Heroku (and how not to) | Sometimes, you want to add extra files to Heroku or Git, such as built files, or secrets; but it is a... | 0 | 2020-02-04T08:37:51 | https://dev.to/patarapolw/how-to-add-gitignored-files-to-heroku-and-how-not-to-3fbe | webdev, javascript | Sometimes, you want to add extra files to Heroku or Git, such as built files or secrets, but they are already in `.gitignore`, so you have to build on the server.

One option is to push a separate local branch to Heroku's `master` with this command:

```sh

git push heroku new-branch:master

```

But how do I create such a `new-branch`?

A naive solution would be to use `git switch`, but this endangers gitignored files as well (they might disappear when you switch branches).

That's where `git worktree` comes in.

I could use [a real shell script](https://github.com/patarapolw/aloud/blob/77133bb2950af19819afd99b17026eabdb16fd4c/deploy.sh), but I feel like using Node.js is much easier (and safer, thanks to [pour-console](https://github.com/patarapolw/pour-console)).

So, it is basically like this.

```js

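const fs = require('fs')

// `pour` comes from pour-console (linked above); import it as that package documents.

// ADDED_FILE is the gitignored file to ship; 'secret.json' is a hypothetical example.

const ADDED_FILE = 'secret.json'

async function deploy (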

callback,

deployFolder = 'dist',

deployBranch = 'heroku',

deployMessage = 'Deploy to Heroku'

) {

// Ensure that the deploy folder doesn't exist in the first place

await pour(`rm -rf ${deployFolder}`)

try {

await pour(`git branch ${deployBranch} master`)

} catch (e) {

console.error(e)

}

await pour(`git worktree add -f ${deployFolder} ${deployBranch}`)

await callback(deployFolder, deployBranch)

await pour('git add .', {

cwd: deployFolder

})

await pour([

'git',

'commit',

'-m',

deployMessage

], {

cwd: deployFolder

})

await pour(`git push -f heroku ${deployBranch}:master`, {

cwd: deployFolder

})

await pour(`git worktree remove ${deployFolder}`)

await pour(`git branch -D ${deployBranch}`)

}

deploy(async (deployFolder) => {

fs.writeFileSync(

`${deployFolder}/.gitignore`,

fs.readFileSync('.gitignore', 'utf8').replace(ADDED_FILE, '')

)

fs.copyFileSync(

ADDED_FILE,

`${deployFolder}/${ADDED_FILE}`

)

}).catch(console.error)

```

## How not to commit

For secrets, this problem is more easily solved on Heroku with a config var, without committing anything:

```js

// Array form (as with `git commit` above) keeps the file contents as a single argument

pour(['heroku', 'config:set', `SECRET_FILE=${fs.readFileSync(secretFile, 'utf8')}`])

```

Just make sure the contents can be deserialized again when the app reads `process.env.SECRET_FILE`.

You might even write a custom serializing function, with

```js

JSON.stringify(obj[, replacer])

JSON.parse(str[, reviver])

```
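As an example, here is a minimal sketch of a `replacer`/`reviver` pair that survives a round trip through a string, such as a Heroku config var. The `secrets` object and the `__type` tagging convention are assumptions made for illustration; a `Date` is used because plain JSON would turn it into a string.

```javascript
// Hypothetical secrets object; plain JSON would flatten the Date to a string.
const secrets = { apiKey: 'abc123', expiresAt: new Date('2020-03-01T00:00:00Z') };

// Must be a regular function: `this` is the object holding `key`.
// `value` has already gone through Date#toJSON, so inspect the original via this[key].
function replacer(key, value) {
  if (this[key] instanceof Date) {
    return { __type: 'Date', iso: this[key].toISOString() };
  }
  return value;
}

// Turn tagged objects back into real Date instances.
function reviver(key, value) {
  if (value && value.__type === 'Date') {
    return new Date(value.iso);
  }
  return value;
}

const serialized = JSON.stringify(secrets, replacer);
// On the server this would be JSON.parse(process.env.SECRET_FILE, reviver).
const restored = JSON.parse(serialized, reviver);
console.log(restored.expiresAt instanceof Date); // true
```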

Don't forget that `JSON.stringify` and `JSON.parse` are customizable through their `replacer` and `reviver` arguments. | patarapolw |

254,940 | Add your project located on PC to GitHub, A detailed How To Article | This tutorial will walk you through the step by step approach about how to add your local code base to your GitHub account. | 0 | 2020-02-04T10:54:26 | https://dev.to/windson/add-your-project-located-on-pc-to-github-a-detailed-how-to-article-2p95 | github, addexisitingproject, versioncontrol, sourcecontrol | ---

title: Add your project located on PC to GitHub, A detailed How To Article

published: true

description: This tutorial will walk you through the step by step approach about how to add your local code base to your GitHub account.

tags: github, add exisiting project, version control, source control

---

This detailed tutorial http://bit.ly/2UoEXLe will walk you through adding an existing project to GitHub.

Following is a quick cheat sheet with the step-by-step commands to add and push an existing code base to a new GitHub repository.

`echo "# my-first-repo-on-github" >> README.md`

`git init`

`# Create New repository on GitHub`

`# git remote add origin url`

`git remote add origin https://github.com/your-awesome-username/name-of-your-repository.git`

`git remote -v`

`git pull origin master`

`git add .`

`git commit -m 'init'`

`git push origin master`

| windson |

254,987 | Near real-time Campaign Reporting Part 2 - Aggregation/Reduction | This is the second in a series of articles describing a simplified example of near real-time Ad Cam... | 0 | 2020-02-04T12:09:47 | https://dev.to/aerospike/near-real-time-campaign-reporting-part-2-aggregation-reduction-4af6 |

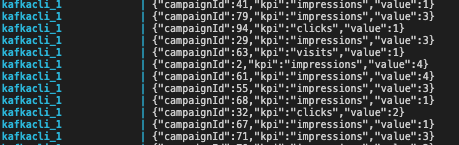

This is the second in a series of articles describing a simplified example of near real-time Ad Campaign reporting on a fixed set of campaign dimensions usually displayed for analysis in a user interface. The solution presented in this series relies on [Kafka](https://en.wikipedia.org/wiki/Apache_Kafka), [Aerospike’s edge-to-core](https://www.aerospike.com/blog/edge-computing-what-why-and-how-to-best-do/) data pipeline technology, and [Apollo GraphQL](https://www.apollographql.com/).

* [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal): real-time capture of Ad events via Aerospike edge datastore and Kafka messaging.

* Part 2: aggregation and reduction of Ad events into actionable Ad Campaign Key Performance Indicators (KPIs), leveraging Aerospike Complex Data Types (CDTs).

* [Part 3](https://dev.to/aerospike/near-real-time-campaign-reporting-part-3-campaign-service-and-campaign-ui-812): describes how an Ad Campaign user interface displays those KPIs, using GraphQL to retrieve data stored in an Aerospike Cluster.

*Data flow*

### Summary of Part 1

In part 1 of this series, we

- used an ad event simulator for data creation

- captured that data in the Aerospike “edge” database

- pushed the results to a Kafka cluster via Aerospike’s Kafka Connector

Part 1 is the base used to implement Part 2.

## The use case — Part 2

The simplified use case for Part 2 consists of reading Ad events from a Kafka topic and aggregating/reducing them into KPI values. In this case, the KPIs are simple counters, but in the real world these would be more complex metrics like averages, gauges, histograms, etc.

The values are stored in a data cube implemented as a Document or Complex Data Type ([CDT](https://www.aerospike.com/docs/guide/cdt.html)) in Aerospike. Aerospike provides fine-grained operations to read or write one or more parts of a [CDT](https://www.aerospike.com/docs/guide/cdt.html) in a single, atomic, database transaction.

The Aerospike record:

| Bin | Type | Example value |
| --- | ---- | ------------- |
| c-id | long | 6 |
| c-date | long | 1579373062016 |
| c-name | string | Acme campaign 6 |
| stats | map | {"visits":6, "impressions":78, "clicks":12, "conversions":3} |

The Core Aerospike cluster is configured to prioritise consistency over availability to ensure that numbers are accurate and consistent for use with payments and billing. Or, in other words: **money**.

In addition to aggregating data, the new value of the KPI is sent via another Kafka topic (and possibly a separate Kafka cluster) to be consumed by the Campaign Service as a GraphQL subscription, providing a live update in the UI. Part 3 covers the Campaign Service, Campaign UI and GraphQL in detail.

*Aggregation/Reduction sequence*

## Companion code

The companion code is in [GitHub](https://github.com/helipilot50/real-time-reporting-aerospike-kafka). The complete solution is in the `master` branch. The code for this article is in the `part-2` branch.

JavaScript and Node.js are used in each service, although the same solution is possible in any language.

The solution consists of:

* All of the services and containers in [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal).

* Aggregator/Reducer service - Node.js

Docker and Docker Compose simplify the setup to allow you to focus on the Aerospike specific code and configuration.

### What you need for the setup

All the prerequisites are described in [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal).

### Setup steps

To set up the solution, follow these steps. Because executable images are built by downloading resources, be aware that the time to download and build the software depends on your internet bandwidth and your computer.

Follow the setup steps in [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal). Then

**Step 1.** Check out the `part-2` branch

```bash

$ git checkout part-2

```

**Step 2.** Then run

```bash

$ docker-compose up

```

Once up and running, after the services have stabilised, you will see the output in the console similar to this:

*Sample console output*

## How do the components interact?

*Component Interaction*

**Docker Compose** orchestrates the creation of several services in separate containers:

All of the services and containers in [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal) with the addition of:

**Aggregator/Reducer** `aggregator-reducer` - A Node.js service that consumes Ad event messages from the Kafka topic `edge-to-core` and aggregates each event into the existing data cube. The data cube is a document stored in an Aerospike CDT. A CDT document can be a list, map, geospatial, or nested list-map in any combination. One or more portions of a CDT can be mutated and read in a single atomic operation. See [CDT Sub-Context Evaluation](https://www.aerospike.com/docs/guide/cdt-context.html).

Here we use a simple map where multiple discrete counters are incremented. In a real-world scenario, the data cube would be a complex document denormalized for read optimization.

Like the Event Collector and the Publisher Simulator, the Aggregator/Reducer uses the Aerospike Node.js client. On the first build, all the service containers that use Aerospike will download and compile the supporting C library. The `Dockerfile` for each container uses multi-stage builds to minimise the number of times the C library is compiled.

**Kafka Cli** `kafkacli` - Displays the KPI events used by GraphQL in [Part 3](https://dev.to/aerospike/near-real-time-campaign-reporting-part-3-campaign-service-and-campaign-ui-812).

### How is the solution deployed?

Each container is deployed using `docker-compose` on your local machine.

*Note:* The `aggregator-reducer` container is deployed along with **all** the containers from [Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal).

*Deployment*

## How does the solution work?

The `aggregator-reducer` is a headless service that reads a message from the Kafka topic `edge-to-core`. The message is the whole Aerospike record written to `edge-aerospikedb` and exported by `edge-exporter`.

The event data is extracted from the message and written to `core-aerospikedb` using multiple CDT operations in one atomic database operation.

*aggregation flow*

### Connecting to Kafka

To read from a Kafka topic you need a `Consumer` and this is configured to read from one or more topics and partitions. In this example, we are reading a message from one topic `edge-to-core` and this topic has only 1 partition.

```Javascript

this.topic = {

topic: eventTopic,

partition: 0

};

this.consumer = new Consumer(

kafkaClient,

[],

{

autoCommit: true,

fromOffset: false

}

);

let subscriptionPublisher = new SubscriptionEventPublisher(kafkaClient);

addTopic(this.consumer, this.topic);

this.consumer.on('message', async function (eventMessage) {

...

});

this.consumer.on('error', function (err) {

...

});

this.consumer.on('offsetOutOfRange', function (err) {

...

});

```

Note that `addTopic()` is called after the `Consumer` is created. This function attempts to add a topic to the consumer; if unsuccessful, it waits 5 seconds and tries again. Why do this? The `Consumer` throws an error if the topic is empty, and this retry loop works around that problem.

```javascript

const addTopic = function (consumer, topic) {

consumer.addTopics([topic], function (error, thing) {

if (error) {

console.error('Add topic error - retry in 5 sec', error.message);

setTimeout(

addTopic,

5000, consumer, topic);

}

});

};

```
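The same retry idea can be wrapped in a Promise so that callers can `await` until the topic has been added. This is a hedged sketch rather than code from the companion repo: `addTopicWithRetry` and its `maxAttempts` parameter are hypothetical, and it relies only on the `addTopics(topics, callback)` method the original code already calls, so it can be exercised with a fake consumer.

```javascript
// Hypothetical Promise-based variant of addTopic().
// `consumer` is anything exposing addTopics(topics, callback), like the Consumer above.
// Resolves with the number of attempts; rejects after `maxAttempts` failures.
function addTopicWithRetry(consumer, topic, delayMs = 5000, maxAttempts = Infinity) {
  return new Promise((resolve, reject) => {
    let attempts = 0;
    const attempt = () => {
      attempts += 1;
      consumer.addTopics([topic], (error) => {
        if (!error) {
          resolve(attempts);
        } else if (attempts >= maxAttempts) {
          reject(error);
        } else {
          console.error(`Add topic error - retry in ${delayMs} ms`, error.message);
          setTimeout(attempt, delayMs);
        }
      });
    };
    attempt();
  });
}
```

A bounded `maxAttempts` lets a service fail loudly instead of retrying forever if the topic never appears; the default keeps the original unbounded behaviour.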

### Extract the event data

The payload of the message is a complete Aerospike record serialised as JSON.

```json

{

"msg": "write",

"key": [

"test",

"events",

"AvYAdzrOmm1xwvWZFyGwEvgjwnk=",

null

],

"gen": 0,

"exp": 0,

"lut": 0,

"bins": [

{

"name": "event-id",

"type": "str",

"value": "0d33124c-beca-4b4f-a833-8c9646167e8c"

},

{

"name": "event-data",

"type": "map",

"value": {

"geo": [

52.366521,

4.894981

],

"tag": "0a3ca7c5-b845-49ed-ab3a-129f9eca23d6",

"publisher": "e5b08db3-07b5-456b-aaac-1e59f76c4dd6",

"event": "impression",

"userAgent": "Mozilla/5.0 (X11; CrOS x86_64 8172.45.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/51.0.2704.64 Safari/537.36"

}

},

{

"name": "event-tag-id",

"type": "str",

"value": "0a3ca7c5-b845-49ed-ab3a-129f9eca23d6"

},

{

"name": "event-source",

"type": "str",

"value": "e5b08db3-07b5-456b-aaac-1e59f76c4dd6"

},

{

"name": "event-type",

"type": "str",

"value": "impression"

}

]

}

```

These items are extracted:

1. Event value

2. Tag id

3. Event source

These values are used in the aggregation step.

```javascript

let payload = JSON.parse(eventMessage.value);

// Morph the array of bins to an object

let bins = payload.bins.reduce(

(acc, item) => {

acc[item.name] = item;

return acc;

},

{}

);

// extract the event data value

let eventValue = bins['event-data'].value;

// extract the Tag id

let tagId = eventValue.tag;

// extract source e.g. publisher, vendor, advertiser

let source = bins['event-source'].value;

```
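To experiment without Kafka, the extraction can be packaged as a pure function. This sketch (the name `extractEvent` is hypothetical) uses `Object.fromEntries()` instead of `reduce()` to index the bins by name, and expects the message value to be a JSON string shaped like the record shown above.

```javascript
// Hypothetical pure function: message value (JSON string) -> values used for aggregation.
function extractEvent(messageValue) {
  const payload = JSON.parse(messageValue);
  // Index bins by name: [{ name, type, value }, ...] -> { [name]: { name, type, value } }
  const bins = Object.fromEntries(payload.bins.map((bin) => [bin.name, bin]));
  const eventValue = bins['event-data'].value;
  return {
    eventValue,                       // the whole event-data map
    tagId: eventValue.tag,            // used to look up the Campaign
    source: bins['event-source'].value // e.g. publisher, vendor, advertiser
  };
}
```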

### Lookup Campaign Id using Tag

The Tag Id is used to locate the matching Campaign. During campaign creation, a mapping between Tags and Campaigns is created. This example stores it as an Aerospike record where the key is the Tag Id and the value is the Campaign Id; in this case, Aerospike is used as a Dictionary/Map/Associative Array.

```javascript

//lookup the Tag id in Aerospike to obtain the Campaign id

let tagKey = new Aerospike.Key(config.namespace, config.tagSet, tagId);

let tagRecord = await aerospikeClient.select(tagKey, [config.campaignIdBin]);

// get the campaign id

let campaignId = tagRecord.bins[config.campaignIdBin];

```

### Aggregating the Event

The Ad event is specific to a Tag and therefore a Campaign. In our model, a Tag is directly related to a Campaign and KPIs are collected at the Campaign level. In the real world, KPIs are more sophisticated and campaigns have many execution plans (line items).

Each event for a KPI increments the value by 1. Our example stores the KPIs in a document structure ([CDT](https://www.aerospike.com/docs/guide/cdt.html)) in a [bin](https://www.aerospike.com/docs/architecture/data-model.html#bins) in the Campaign [record](https://www.aerospike.com/docs/architecture/data-model.html#records). Aerospike provides operations to atomically [access and/or mutate sub-contexts](https://www.aerospike.com/docs/guide/cdt-context.html) of this structure, with an operation latency of around 1 ms.

In a real-world scenario, events would be aggregated with sophisticated algorithms and patterns such as time-series, time windows, histograms, etc.

Our code simply increments the KPI value by 1, using the KPI name as the 'path' to the value:

```javascript

const accumulateInCampaign = async (campaignId, eventSource, eventData, asClient) => {

try {

// Aerospike CDT operation returning the new DataCube

let campaignKey = new Aerospike.Key(config.namespace, config.campaignSet, campaignId);

const kvops = Aerospike.operations;

const maps = Aerospike.maps;

const kpiKey = eventData.event + 's';

const ops = [

kvops.read(config.statsBin),

maps.increment(config.statsBin, kpiKey, 1),

];

let record = await asClient.operate(campaignKey, ops);

let kpis = record.bins[config.statsBin];

console.log(`Campaign ${campaignId} KPI ${kpiKey} processed with result:`, JSON.stringify(record.bins, null, 2));

return {

key: kpiKey,

value: kpis

};

} catch (err) {

console.error('accumulateInCampaign Error:', err);

throw err;

}

};

```
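To see what the map increment does to the `stats` bin without a running cluster, here is an in-memory sketch. It only mirrors the data transformation (KPI name derived from the event type, counter bumped by 1, a missing counter treated as 0); it provides none of Aerospike's atomicity or consistency, and `kpiKeyFor`/`incrementKpi` are hypothetical names.

```javascript
// Same naming rule as accumulateInCampaign: 'impression' -> 'impressions'.
function kpiKeyFor(eventType) {
  return eventType + 's';
}

// In-memory illustration only: returns a new stats map instead of mutating it,
// in the same { key, value } shape that accumulateInCampaign returns.
function incrementKpi(stats, eventType) {
  const kpiKey = kpiKeyFor(eventType);
  const updated = { ...stats, [kpiKey]: (stats[kpiKey] || 0) + 1 };
  return { key: kpiKey, value: updated };
}

const stats = { visits: 6, impressions: 78, clicks: 12, conversions: 3 };
console.log(incrementKpi(stats, 'impression').value.impressions); // 79
```

In the real service this read-modify-write happens inside a single `operate()` call on the server, which is what keeps concurrent aggregators from losing updates.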

The KPI value is incremented and the new value is returned. Aerospike performs these operations as one atomic transaction that is consistent across the cluster, with a latency of about 1 ms.

### Publishing the new KPI

We could stop here and let the Campaign UI and Service (Part 3) poll the Campaign store `core-aerospikedb` for the latest campaign KPIs. This is a typical pattern.

A more advanced approach is to push updates to the UI whenever a value changes, or at a specified frequency. Although this introduces new technology and challenges, it gives a very responsive UI that presents up-to-the-second KPI values to the user.
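For the "at a specified frequency" option, one possible approach is to coalesce updates and publish only the latest value per KPI on a timer. The sketch below is hypothetical and not part of the example solution: `createKpiCoalescer` and its methods are invented names, and `publish` is any callback, for instance a wrapper around the `publishKPI` method of the `SubscriptionEventPublisher` shown below.

```javascript
// Hypothetical sketch: keep only the newest value per campaign/KPI and
// publish the buffered updates when flush() is called.
function createKpiCoalescer(publish) {
  const pending = new Map(); // "campaignId:kpi" -> latest update

  return {
    // Record the newest value; an older unpublished value for the same KPI is overwritten.
    update(campaignId, kpi, value) {
      pending.set(`${campaignId}:${kpi}`, { campaignId, kpi, value });
    },
    // Publish everything pending, clear the buffer, and return how many were published.
    flush() {
      const updates = [...pending.values()];
      pending.clear();
      updates.forEach((u) => publish(u));
      return updates.length;
    },
  };
}

// Usage (assumed wiring, flushing once per second):
// const coalescer = createKpiCoalescer((u) =>
//   publisher.publishKPI(u.campaignId, { key: u.kpi, value: u.value }));
// setInterval(() => coalescer.flush(), 1000);
```

The trade-off is latency versus message volume: a busy campaign produces at most one message per KPI per interval, at the cost of the UI lagging by up to one interval.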

The `SubscriptionEventPublisher` uses Kafka as a pub-sub system to publish the new KPI value for a specific campaign on the topic `subscription-events`. In Part 3, the `campaign-service` receives this event and publishes it as a [GraphQL Subscription](https://www.apollographql.com/docs/apollo-server/data/subscriptions/).

```javascript
const { HighLevelProducer } = require('kafka-node');

// Topic consumed by the campaign-service (Part 3)
const subscriptionTopic = 'subscription-events';

class SubscriptionEventPublisher {
  constructor(kafkaClient) {
    this.producer = new HighLevelProducer(kafkaClient);
  }

  publishKPI(campaignId, accumulatedKpi) {
    const subscriptionMessage = {
      campaignId: campaignId,
      kpi: accumulatedKpi.key,
      value: accumulatedKpi.value
    };
    const producerRequest = {
      topic: subscriptionTopic,
      messages: JSON.stringify(subscriptionMessage),
      timestamp: Date.now()
    };
    // Fire-and-forget: errors are logged but not retried
    this.producer.send([producerRequest], (err) => {
      if (err) {
        console.error('publishKPI error', err);
      }
    });
  }
}
```
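On the receiving side, a consumer has to turn the JSON payload back into an object. The decoder below is a hypothetical sketch, not the Part 3 code: it assumes only the `{ campaignId, kpi, value }` shape built by `publishKPI`, and accepts either a string or a `Buffer`, since a Kafka consumer may deliver the message value as either depending on its encoding settings.

```javascript
// Hypothetical sketch: decode a message produced by SubscriptionEventPublisher.publishKPI.
function decodeKpiMessage(raw) {
  // Accept a Buffer or a string message value.
  const text = Buffer.isBuffer(raw) ? raw.toString('utf8') : String(raw);
  const msg = JSON.parse(text); // throws on invalid JSON
  // Reject messages that do not carry the fields publishKPI sets.
  if (msg.campaignId === undefined || typeof msg.kpi !== 'string') {
    throw new Error(`Malformed KPI message: ${text}`);
  }
  return { campaignId: msg.campaignId, kpi: msg.kpi, value: msg.value };
}
```

The `value` field is passed through unchanged, because its shape depends on what `accumulateInCampaign` returns.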

## Review

[Part 1](https://dev.to/aerospike/near-real-time-campaign-reporting-part-1-event-collection-2kal) of this series describes:

* creating mock Campaign data

* a publisher simulator

* an event receiver

* an edge database

* an edge exporter

This article (Part 2) describes the aggregation and reduction of Ad events into Campaign KPIs using Kafka as the messaging system and Aerospike as the consistent data store.

[Part 3](https://dev.to/aerospike/near-real-time-campaign-reporting-part-3-campaign-service-and-campaign-ui-812) describes the Campaign service and Campaign UI that let a user view the Campaign KPIs in near real-time.

## Disclaimer

This article, the code samples, and the example solution are entirely my own work and are not endorsed by Aerospike or Confluent. The code is proof-of-concept quality only, is not production strength, and is available to anyone under the MIT License.

| helipilot50 | |

255,085 | Build Your Own Personal Data Repository With Nostalgia | The companies that we entrust our personal data to are using that information to gain extensive insig... | 0 | 2020-02-04T15:26:12 | https://www.pythonpodcast.com/nostalgia-personal-data-repository-episode-248/ | <p>The companies that we entrust our personal data to are using that information to gain extensive insights into our lives and habits while not always making those findings accessible to us. Pascal van Kooten decided that he wanted to have the same capabilities to mine his personal data, so he created the Nostalgia project to integrate his various data sources and query across them. In this episode he shares his motivation for creating the project, how he is using it in his day-to-day, and how he is planning to evolve it in the future. If you're interested in learning more about yourself and your habits using the personal data that you share with the various services you use then listen now to learn more.</p><p><a href='https://www.pythonpodcast.com/nostalgia-personal-data-repository-episode-248/'>Listen Now!</a></p> | blarghmatey | |

255,121 | A New Package for the CLI | TL;DR, we're re-releasing the CLI package under a new name, @ionic/cli! To update, first you will nee... | 0 | 2020-02-18T18:00:03 | https://ionicframework.com/blog/a-new-package-for-the-cli/ | ionic, cli | ---

title: A New Package for the CLI

published: true

date: 2020-02-04 15:40:02 UTC

tags: Ionic, CLI

canonical_url: https://ionicframework.com/blog/a-new-package-for-the-cli/

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/9wyq5ol8gp7vyr6dlb1i.png

---

TL;DR, we're re-releasing the CLI package under a new name, `@ionic/cli`!

To update, first you will need to uninstall the old CLI package.

```shell
$ npm uninstall -g ionic
$ npm install -g @ionic/cli
```

You will still interact with the CLI via the `ionic` command; only the way the CLI is installed has changed. And now, on with the blog post!


## Everything has a beginning

Many years ago, when Ionic was still pre-1.0, we saw a great opportunity to help devs build amazing apps without having to guess how that would be done. While the V1 days of Ionic included things like Bower, script tags, and gulp, it was our first attempt to make a tool that did everything for you. After building out all the initial functionality, we had one last task...what do we call this tool?

We called it...Ionic!

Our initial logic was that we would have one tool and one framework that covered everything. Want to build apps with Ionic? Just install Ionic. This worked great, and for a long time we were set on keeping things as they were. That is, until we started noticing a common point of confusion with our users.

## What version of Ionic are you using?

When debugging a user issue or working with community members, one of the first things we ask is "What version of Ionic are you using?" This question has caused some confusion, because people assume that `ionic -v` gives them the version of both the framework and the CLI. This, however, is not the case: `ionic -v` reports only the CLI version. If it happened once or twice we could ignore it, but given how common it is, we thought it was finally time to solve the issue.

As the number of packages under the Ionic organization has grown, we have shipped them under scoped package names. Our release of Ionic for Angular? `@ionic/angular`. React? `@ionic/react`. This sends a clear message: when you install one of these packages, you know exactly what you are getting. There is no confusion about the package's purpose or the context it should be used in.
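Because `ionic -v` reports the CLI version, the framework version has to be read from the project itself. Scoped names make that easy to automate. The sketch below is a hypothetical helper, not part of the Ionic tooling: given the parsed contents of a project's `package.json`, it lists every dependency in the `@ionic/` scope.

```javascript
// Hypothetical helper: list the @ionic/* packages (and their version ranges)
// that a project depends on, given its parsed package.json.
function ionicPackageVersions(pkg) {
  // Merge runtime and dev dependencies; spreading undefined is a no-op.
  const deps = { ...pkg.dependencies, ...pkg.devDependencies };
  return Object.fromEntries(
    Object.entries(deps).filter(([name]) => name.startsWith('@ionic/'))
  );
}

// Usage from a project root (Node.js):
// console.log(ionicPackageVersions(require('./package.json')));
```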

## Moving towards a scoped package

To help with this confusion, we're re-releasing the CLI package under a new name, `@ionic/cli`. This unifies how we ship tools across Ionic and makes sure that people know which tool they are installing when setting up their environment. I mentioned this last week in an Ionic newsletter, and so far the feedback from the community has been incredibly supportive.

Many community members have suggested this in the past, and over time it has become the move many other CLI tools have made. Angular in particular rebranded the `angular-cli` package as `@angular/cli`, and the Vue CLI has done the same thing (`vue-cli` to `@vue/cli`). With this in mind, we decided it was finally time.

To update to the new CLI, you must first uninstall the old CLI package:

```shell
$ npm uninstall -g ionic
# Then install the new CLI package
$ npm install -g @ionic/cli
```

This new package name coincides with the release of CLI 6.0, which includes some new features that can all be reviewed in the [Changelog](https://github.com/ionic-team/ionic-cli/blob/develop/packages/%40ionic/cli/CHANGELOG.md#600-2020-01-25).

The old CLI package will not be updated to the 6.0 releases and now shows an official deprecation warning. While it should keep working for some time, we encourage everyone to switch to the new CLI package to receive all the latest updates.

Well that’s all for now folks! We are glad the feedback so far has been super supportive of this change and can’t wait for you all to upgrade….Seriously, we can’t wait. Upgrade your CLI 😄.

Cheers!

| mhartington |

255,131 | Using Fetch in JavaScript | Sometimes we need to get information from an API. Since the 2015 updates to JavaScript, fetch() is th... | 0 | 2020-02-04T16:45:14 | https://dev.to/eliastooloee/using-fetch-in-javascript-3i7c | Sometimes we need to get information from an API. Browsers have shipped fetch() since around 2015, and it is now the standard way to do this. Strictly speaking, fetch() belongs to the browser's Fetch API rather than to the 2015 (ES6) update of the JavaScript language itself. Below I will explain how to use fetch() with ES6 syntax such as arrow functions and template literals.

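```javascript
function getBooks() {
  fetch('http://localhost:3000/books')
    .then((response) => {
      // Parse the response body as JSON (this also returns a promise)
      return response.json();
    })
    .then((books) => {
      renderBooks(books);
    })
    // Log network or parsing errors instead of leaving the promise unhandled
    .catch((error) => console.error('getBooks error:', error));
}
```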

Here we can see a function, `getBooks`, which communicates with an API. The first step is to call `fetch()` with the URL of the API inside the parentheses. This returns a promise that resolves to a `Response` object, but the data is not usable yet. We then pass the response to the next function, which calls `response.json()` to parse the body as JSON (this also returns a promise). Finally, we name the parsed JSON data `books` and pass it to the function `renderBooks`.
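The same request can also be written with `async`/`await`. The sketch below is a hypothetical variant, not part of the original app: `getBooksAsync` and its `fetchImpl` parameter are invented names, and `fetchImpl` exists only so the function can be tried without a running server. It also checks `response.ok`, because `fetch()` rejects only on network failures, not on HTTP error statuses such as 404.

```javascript
// Hypothetical async/await version of getBooks.
// fetchImpl defaults to the global fetch; pass a stub to test without a server.
async function getBooksAsync(url = 'http://localhost:3000/books', fetchImpl = fetch) {
  const response = await fetchImpl(url);
  // fetch() resolves even for 4xx/5xx responses, so check the status explicitly.
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return response.json(); // resolves to the parsed JSON body
}

// Usage in the browser:
// getBooksAsync().then(renderBooks).catch(console.error);
```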

```javascript
function renderBooks(books) {
  books.forEach((book) => {
    renderBook(book);
  });
}
```

`renderBooks` simply calls another function, `renderBook`, for each book, passing that book in. `renderBook` first finds the HTML element with the id "list". It then creates a new `li` element, sets its content to `book.title`, and appends it to the list. It also adds an event listener to the `li` that calls the function `showBookCard` when the `li` is clicked. The call must be wrapped in an arrow function, `() => showBookCard(book)`. If we passed `showBookCard(book)` directly, the function would run immediately while the list is being rendered (that is, on page load), and its return value, `undefined`, would be registered as the listener instead.

```javascript
function renderBook(book) {
  const unorderedList = document.getElementById("list");
  const li = document.createElement("li");
  li.textContent = book.title;
  // Wrap the call in an arrow function so it runs on click, not right now
  li.addEventListener("click", () => showBookCard(book));
  unorderedList.appendChild(li);
}
```
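The difference between passing `showBookCard(book)` and `() => showBookCard(book)` can be seen without a browser. In this hypothetical sketch, `makeFakeElement` is an invented stand-in that only records listeners, and `showBookCardStub` is a stub that logs calls instead of drawing a card.

```javascript
// Hypothetical stand-in for a DOM element: it only records registered listeners.
function makeFakeElement() {
  const listeners = {};
  return {
    listeners,
    addEventListener(type, handler) {
      listeners[type] = handler;
    },
    click() {
      if (typeof listeners.click === 'function') listeners.click();
    },
  };
}

// Stub that records each call instead of rendering a card.
const calls = [];
function showBookCardStub(book) {
  calls.push(book.title);
}

const book = { title: 'Dune' };

// Wrong: the stub runs immediately, and its return value (undefined) is registered.
const wrong = makeFakeElement();
wrong.addEventListener('click', showBookCardStub(book));

// Right: the arrow function defers the call until the element is clicked.
const right = makeFakeElement();
right.addEventListener('click', () => showBookCardStub(book));
right.click();

// calls is now ['Dune', 'Dune']: one call at registration time, one on click
console.log(calls);
```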

Next, we have the function `showBookCard`, which builds a detail card for a book. It is triggered by the event listener attached to each `li`.

```javascript
function showBookCard(book) {
  const showPanel = document.getElementById("show-panel");
  showPanel.innerHTML = "";

  const coverImage = document.createElement("img");
  coverImage.src = book.img_url;

  const description = document.createElement("h1");
  description.textContent = book.description;

  const button = document.createElement("button");
  button.textContent = "Like";
  button.addEventListener("click", () => likeBook(book));

  const usersList = document.createElement("ul");
  book.users.forEach((user) => {
    const userLi = document.createElement("li");
    userLi.textContent = user.username;
    usersList.appendChild(userLi);
  });

  showPanel.appendChild(coverImage);
  showPanel.appendChild(description);
  showPanel.appendChild(button);
  showPanel.appendChild(usersList);
}
```

This function looks enormous, but it is actually quite simple. We first find the element with the id "show-panel" and store it in the constant `showPanel`. We set its `innerHTML` to an empty string so that the previously shown book's details are cleared before the new card is drawn. We then create an `img` element called `coverImage` and set its `src` to `book.img_url`. We follow the same steps for the book description, setting `textContent` instead of `src`. Next, we create a "Like" button that lets a user like the book, with an event listener that calls the function `likeBook` when the button is clicked. We then create a `ul` element called `usersList` and use `forEach` to iterate through the book's users, creating an `li` for each one, setting its `textContent` to the user's username, and appending it to the list. Finally, all of these elements are appended to `showPanel`.

We then come to the function likeBook, which is called when a user clicks the like button on a book card.

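```javascript
function likeBook(book) {
  // userName is defined elsewhere in the app (not shown). Because showBookCard
  // reads user.username, it must be a user object such as { id, username }.
  book.users.push(userName);
  fetch(`http://localhost:3000/books/${book.id}`, {
    method: "PATCH",
    headers: {
      "Content-Type": "application/json",
      Accept: "application/json"
    },
    body: JSON.stringify({ users: book.users })
  })
    .then((res) => res.json())
    .then((likedBook) => showBookCard(likedBook));
}
```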
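`likeBook` mutates `book.users` before the request and will add the same user again on every click. One possible variation, sketched below under stated assumptions, builds the PATCH request as a pure function and skips it if the user has already liked the book. `buildLikeRequest` is a hypothetical name, and it assumes each user object has an `id` field in addition to the `username` the article renders.

```javascript
// Hypothetical variation: build the PATCH request without mutating `book`,
// returning null when this user (matched by an assumed `id` field) already liked it.
function buildLikeRequest(book, user, baseUrl = 'http://localhost:3000') {
  if (book.users.some((u) => u.id === user.id)) {
    return null; // already liked: nothing to send
  }
  return {
    url: `${baseUrl}/books/${book.id}`,
    options: {
      method: 'PATCH',
      headers: {
        'Content-Type': 'application/json',
        Accept: 'application/json',
      },
      // Send a new array that includes the user; book.users itself is untouched.
      body: JSON.stringify({ users: [...book.users, user] }),
    },
  };
}

// Usage in the browser:
// const request = buildLikeRequest(book, currentUser);
// if (request) {
//   fetch(request.url, request.options).then((res) => res.json()).then(showBookCard);
// }
```

Because the local `book` is only replaced by the server's response, the UI never shows a like the server did not accept.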