id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

286,220 | Domain Extensions and Your Project | NOTICE: I tried to limit how many links I made reference to, there should only be one but if I mentio... | 0 | 2020-03-22T21:24:01 | https://dev.to/kailyons/domain-extensions-and-your-project-1b1f | **NOTICE: I tried to limit how many links I made reference to, there should only be one but if I mention multiple please do not click them or go to them, as I have no control over them. You have been warned**

Let's make it clear: I am a domain extension nut. A weird hobby of mine is domain extensions and also explaining how I feel about them. This article is supposed to help you decide which TLDs to pick. TLDs are Top-Level Domains, or domain extensions: basically .com, .to, .org, .net, .io, and about a trillion others to pick from. I want to go through four main sections. The first covers the domain extensions to avoid at all costs. The second covers okay-enough domain extensions. The third covers the extensions you need to look into. The last covers extensions that are clever for some projects. Let's just jump into it.

# AVOID THESE EXTENSIONS!!

Non-applicable trade domains (.accountant, .shiksha, .builders, etc.). Notice the word "Non-applicable." In short, if your project isn't a build tool or accounting software, then stay away from these domains. Any domain named after a skill or trade that doesn't apply to your project is best avoided.

Free domains. These are an ABSOLUTE must to avoid. You know how domains like .cc usually represent sketchier sites because they are cheap? Free domains usually belong to the sketchiest. I have seen them used for a plethora of sites: malware, projects running on stolen code, and a plethora of different scams/crams/and whams. One notable example was a URL shortener that abused TinyURL, with file hosting that used Discord's CDN while lying about it, and a Pastebin service that... I do not know what it used as a "backend". It was hosted for free on Glitch while claiming to be self-hosted... and I will make an article about this in the future. As a side note, "free domains" here means "Freenom" domains; Freenom is a TLD seller that gives away free domains. They are a tiny bit sketch but not too bad. It is mostly the TLDs themselves. Also, don't worry .js.org people, all is good, you will be mentioned *later.*

# Okay Domains

Generics. Your .com, .co, .org, .net, even stuff like .cc, .to, .me. These TLDs by nature usually mean something too. .net can mean network, .org is general organizations, .co is elegant product, .com is average product, .cc is cheaper product. So on and so forth. These TLDs are also best known as "Not just software" domains.

# Good Domain Extensions

These are some you should focus on. Basically .ai, .dev, and .io are the key three here. These are good for any job but they have a clear dev stance to them. Then there are .codes, .app, .cloud, .gg, and so on and so forth. Also, some extensions that other people might avoid can be good, like how my friend got my favorite domain I ever owned, viruses-to.download. Now yeah, probably don't visit the page; he is using it for file hosting and plans to do virus testing things there, so be warned. Also, in the next year or so neither of us might have it anymore.

# Special extensions

These TLDs are the best on the market. Your project will love the use of these. Remember .js.org? Yeah, that's one of these. Yes, it's free, and it has a clear JavaScript focus. .sh is another good one, evoking shell scripts as in Bash. .py is another good one that is wonderful for Python developers. .wtf has a lot of potential, but personally I see it as a gross TLD because of one specific website about... not being nice to animals... in a non-violent but graphic way. Moving on. Ones that I love the most are .one, .is, and more.

# Bonus: Where to buy them

Now, I am not sponsored; if I were, I would have something better than a dual-core CPU laptop with four gigs of RAM. Anyway, I always recommend Hover, 101Domain, and Namecheap as mains, name.com as a backup, and in general staying away from GoDaddy. There may be other good ones out there, but these are what I use. Have fun and be safe online.

# Interesting happenstance

Okay, so while trying to find TLD screenshots and also trying to un-forget some TLDs, I typed in a random string that was me keyboard spamming. I found that one of them, on the .cm TLD, was taken. Weird. | kailyons | |

286,235 | Starting out with GraphQL | Understanding the Purpose, and some key early tips | 0 | 2020-03-24T14:13:23 | https://dev.to/heroku/starting-out-with-graphql-5g0m | json, graphql, beginners, webdev | ---

title: Starting out with GraphQL

published: true

description: Understanding the Purpose, and some key early tips

tags: json, graphql, beginners, web-dev

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/h4fr5nleot3nw21n043v.png

---

## What is GraphQL?

GraphQL is a query language for APIs and a runtime for fulfilling those queries with your existing data. By running a GraphQL server (e.g. [Apollo GraphQL](https://www.apollographql.com/)), your existing applications can send parameterised requests and get back JSON responses.

## What is it good for?

Is GraphQL the answer to all of life’s problems? Maaaaaaybe? But it isn’t a database or a web server, the two things it’s most often confused for. Let’s dive in.

GraphQL is a communication standard. Its goal is to let you request all the data you need with a single fairly compact request. It was born as an attempt to improve on REST APIs for populating web pages with data. The classic conundrum looks like this:

WEBPAGE: When Bob comments on a photo, I want to show a tooltip with profile pics of Alice and Bob’s top 5 mutual friends.

APP: okay, here’s Bob’s ‘user’ record

WEBPAGE: this has all his friends’ IDs, I need their mutual friends.

APP: hey good news I built an endpoint that takes two user IDs and returns their mutual friends

WEBPAGE: Great!

APP: they have 137 mutual friends

WEBPAGE: geez I want the top 5 by date, but… okay, now can I get their profile pictures

APP: sure here’s the first friend’s ‘user’ record

WEBPAGE: I need-

APP: here’s the second friend’s complete ‘user’ record

WEBPAGE: you don’t just have the photos?

APP: nope! Here’s the third friend’s ‘user’ record. Geez this is taking a while, huh? SOMEone’s on 3g, amirite?

-fin-

What’s wrong with this picture? In general two things:

* Overfetching: while most REST APIs will have a way to ask for a ‘top 5’, there’s usually no way to ask for only *some* of the information. We only wanted Bob’s mutual friends, and after that we only wanted the mutuals’ photos, not their full profiles.

* Multiple round trips: the very last requests, for all 5 mutual friends' user profiles, could be done in parallel, but all the steps before that would have to happen sequentially, each waiting for a full reply before the next step could start. If you think this isn’t a problem, you need to be a bit better informed about [the public’s level of broadband access](https://www.brookings.edu/wp-content/uploads/2016/07/Broadband-Tomer-Kane-12315.pdf)

In this scenario, no ‘bad engineering’ happened with this REST API, in fact the work’s been done to return filtered and scoped lists for some requests! But it is true that the front-end page team doesn’t have access to set up the exact API endpoints they need. This is an important point and I want to emphasize it a bit.

> If you have full access to alter your REST API, you don’t need GraphQL

If your pal, the backend developer, is working with you to set up this feature, they can absolutely set up user/views/top5mutualpics and give you just the data you need, but the trouble starts as your operation grows and features on the front end need to be delivered without API changes. This probably means your org is growing, your user base is growing, and that you expect the frontend to grow and change without updates to your API, so it’s probably a good thing!

## Benefits of GraphQL

GraphQL allows you to request data to the depth and in the shape that you need. It also implicitly lets you scope your request to get only the fields you need

```graphql
{
  hero {
    name
  }
}
```

The response we get back will be JSON in this shape:

```json
{
  "data": {
    "hero": {
      "name": "R2-D2"
    }
  }
}
```

_This example is done on the lovely Star Wars API (SWAPI) endpoint, check out its [GraphQL interface here](https://graphql.org/swapi-graphql/)_

So there’s no need to create separate /profile /profile_posts and /profile_vitals endpoints to get more focused versions of the data. The goal here is to have GraphQL "wrap around" your existing REST API end points and provide a new, unified interface that lets me query all the things.

# Tips for the beginner writing GraphQL queries

I saw an amazing talk from [Sean Grove](https://twitter.com/sgrove) of One Graph who works on maintaining GraphiQL, the rad graphical explorer for GraphQL. He talked about adding automations to GraphQL to let it point new query writers in the direction of more efficient coding of GraphQL queries. The query language is supposed to be easy, so these points shouldn’t add significantly to the weight of writing new queries.

## Optimize with variables

GraphQL lets you parameterise queries. Here we are making a query asking for the hero of a particular film, "NEW HOPE" (written as the enum value `NEWHOPE`), and the names of their friends:

```graphql
{
  hero(episode: NEWHOPE) {
    name
    friends {
      name
    }
  }
}
```

This looks pretty good but _updating_ this query will require some string manipulation by our GraphQL client (e.g. the React web app that will be asking for data). Also, later queries with different parameters will not benefit from any caching, since the GraphQL server will see it as a whole new query. So it’s better to add a variable to a query, then re-use the same query over and over:

```graphql
query HeroNameAndFriends($episode: Episode) {
  hero(episode: $episode) {
    name
    friends {
      name
    }
  }
}
```

_This example and others in this article are cribbed from the [GraphQL learn pages](https://graphql.org/learn/queries/)_

Now we can update the episode variable and re-run the same query, and it’ll impact the client less AND return faster.
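As a rough sketch of what re-using one query with different variables looks like from the client side, the snippet below builds the JSON body that GraphQL servers conventionally accept over HTTP: a `query` string plus a `variables` object. The helper name `buildGraphQLRequest` and the `/graphql` endpoint mentioned afterwards are assumptions made for illustration, not part of any particular client library.

```javascript
// The query text never changes; only the variables do.
const HERO_QUERY = `
  query HeroNameAndFriends($episode: Episode) {
    hero(episode: $episode) {
      name
      friends {
        name
      }
    }
  }
`;

// Hypothetical helper: packs a query and its variables into fetch() options.
function buildGraphQLRequest(query, variables) {
  return {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ query, variables }),
  };
}

// Same query string both times, different variable values.
const empireRequest = buildGraphQLRequest(HERO_QUERY, { episode: 'EMPIRE' });
const jediRequest = buildGraphQLRequest(HERO_QUERY, { episode: 'JEDI' });
```

Sending it would then be something like `fetch('/graphql', empireRequest)`, where the endpoint path is a placeholder for wherever your server lives.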

## Set defaults for your variables

If you love the other devs on your team, or even future you, you’ll set defaults on your variables to make sure each query succeeds:

```graphql
query HeroNameAndFriends($episode: Episode = JEDI) {
  hero(episode: $episode) {
    name
    friends {
      name
    }
  }
}
```

Later you can re-use this query as

```

HeroNameAndFriends('EMPIRE')

```

and benefit from caching!

## Write more DRY (‘don’t repeat yourself’) queries with fragments

It’s an amazing feature that you get to specify exactly the fields that you want to get back from a GraphQL query, but after a while this can get… kinda tedious:

```graphql
hero(episode: $episode) {
  name
  height
  weight
  pets {
    name
    height
    weight
  }
  friends {
    name
    height
    weight
  }
}
```

In a real query we might be asking for photos, IDs, and friends’ IDs over and over again as the query gains more clauses. Surely there’s a way to ask for:

`name`

`height`

`weight`

All at once? Yup!

Define a fragment like so:

```graphql
fragment criticalInfo on Character {
  name
  height
  weight
}
```

_Note that Character is just a label I’m using in this example, i.e. a character in a story_

Now our query is _much_ more compact:

```graphql
hero(episode: $episode) {
  ...criticalInfo
  pets {
    ...criticalInfo
  }
  friends {
    ...criticalInfo
  }
}
```
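One detail worth spelling out: the fragment definition travels with the query in the same request document. Here is a minimal sketch of how a client might assemble that, assuming the illustrative `Character` type and `pets` field from the fragment example above. The constant names and the `HeroDetails` operation name are made up for this example.

```javascript
// Fragment definition: the reusable set of fields.
const CRITICAL_INFO_FRAGMENT = `
  fragment criticalInfo on Character {
    name
    height
    weight
  }
`;

// Query that spreads the fragment wherever those fields are needed.
const HERO_DETAILS_QUERY = `
  query HeroDetails($episode: Episode) {
    hero(episode: $episode) {
      ...criticalInfo
      pets {
        ...criticalInfo
      }
      friends {
        ...criticalInfo
      }
    }
  }
`;

// The document sent to the server contains both the query and the fragment.
const heroDetailsDocument = HERO_DETAILS_QUERY + CRITICAL_INFO_FRAGMENT;
```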

# Ready to dive in and go further?

My next article will cover how to host your first GraphQL server on Heroku, and after that how to build your first service architecture.

Your next step if all this is interesting to you should be to get a full series on [GraphQL queries right from the GraphQL team](https://graphql.org/learn/queries/) on their [learn page](https://graphql.org/learn/).

If you want to really learn GraphQL, I cannot recommend highly enough [“Learning GraphQL” ](http://shop.oreilly.com/product/0636920137269.do)by [Alex Banks](http://www.oreilly.com/pub/au/6913) and [Eve Porcello](http://www.oreilly.com/pub/au/6914).

_This and the several articles that will follow it brought to you by my [favorite train read the last few weeks](https://www.amazon.com/Learning-GraphQL-Declarative-Fetching-Modern-ebook/dp/B07GBJZX1L)._

| nocnica |

286,253 | Introduction to Scaleway Elements Kubernetes Kapsule with Gloo and Knative … | Scaleway has unveiled new services (some still in beta) as part of its new range... | 0 | 2020-03-23T00:00:57 | https://medium.com/@abenahmed1/introduction-%C3%A0-scaleway-elements-kubernetes-kapsule-avec-gloo-et-knative-62dcfc7f966f | scaleway, kubernetes, serverless, docker | ---

title: Introduction to Scaleway Elements Kubernetes Kapsule with Gloo and Knative …

published: true

date: 2020-03-22 23:53:06 UTC

tags: scaleway,kubernetes,serverless,docker

canonical_url: https://medium.com/@abenahmed1/introduction-%C3%A0-scaleway-elements-kubernetes-kapsule-avec-gloo-et-knative-62dcfc7f966f

---

Scaleway has unveiled new services (some still in beta) as part of its new Scaleway Elements range.

> Scaleway Elements, the public cloud ecosystem, is made up of five product categories: Compute, Storage, Network, Internet of Things and Artificial Intelligence.

[Scaleway Elements](https://www.scaleway.com/fr/scaleway-elements/)

I am going to look at the beta service named Scaleway Elements Kubernetes Kapsule, which runs containerized applications in a Kubernetes environment managed by Scaleway. The service is currently available in the Paris availability zone in France and supports at least the latest minor version of the last 3 major versions of Kubernetes.

[Betas & previews](https://www.scaleway.com/fr/betas/)

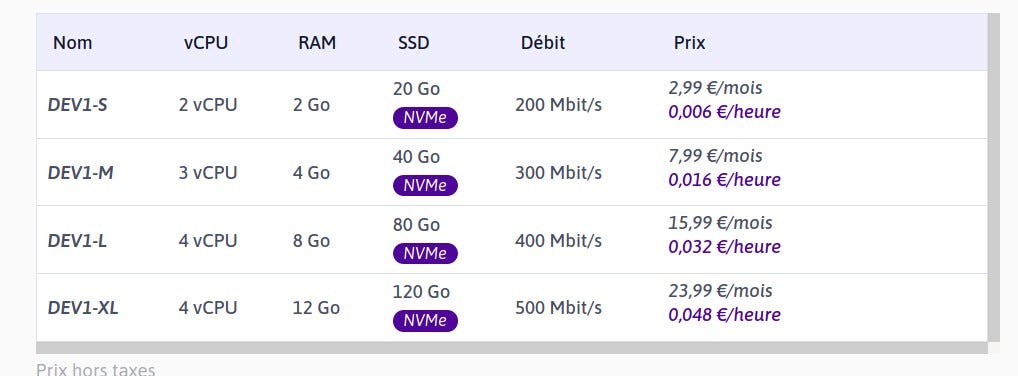

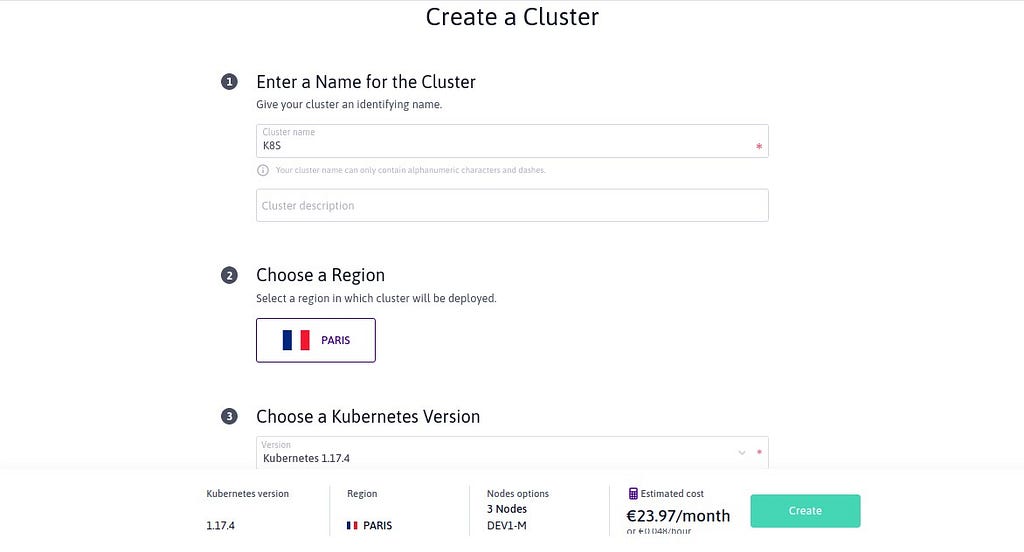

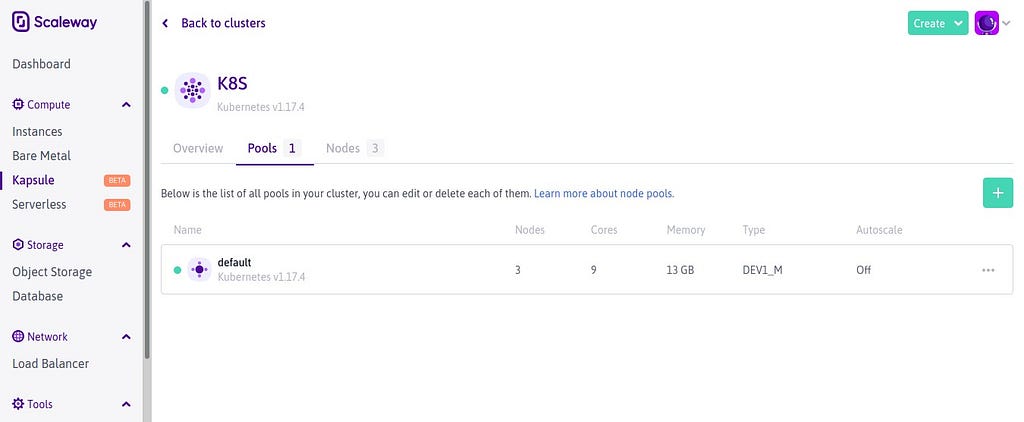

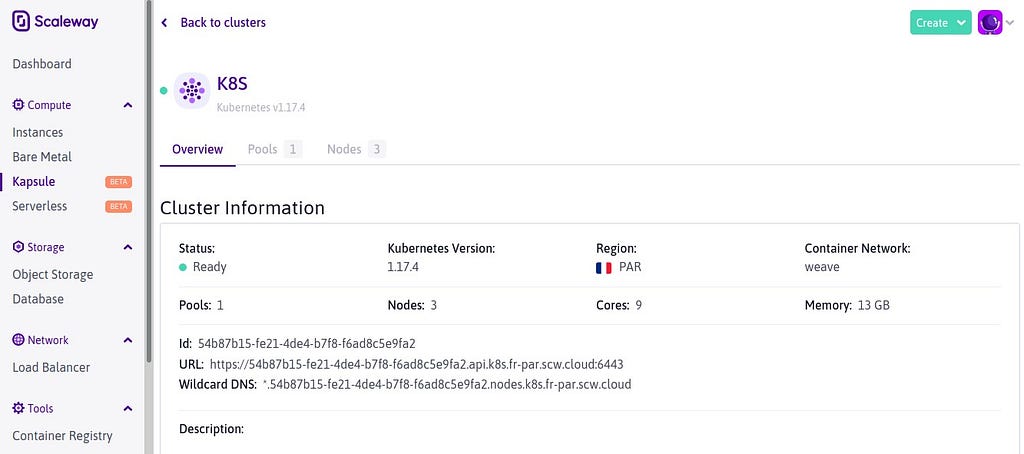

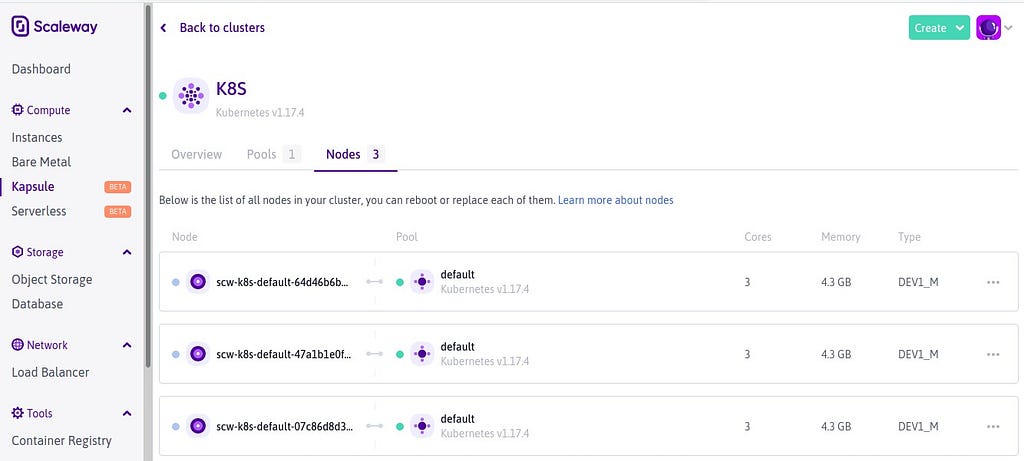

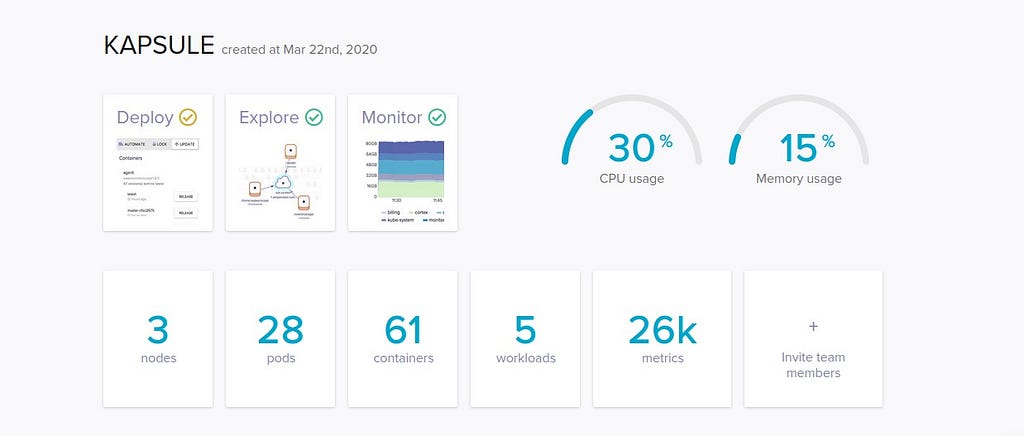

So, from the Scaleway Elements web console, I launch a managed Kubernetes cluster through this service, with DEV1-M nodes (3 vCPUs, 4 GB RAM and 40 GB of NVMe disk):

with several options that will determine the eventual price. The price depends on the resources allocated to the Kubernetes cluster, such as the number and type of nodes, the use of load balancers, and persistent volumes. Nodes are billed at the same price as the underlying compute instances. The Kubernetes control plane is provided at no extra cost:

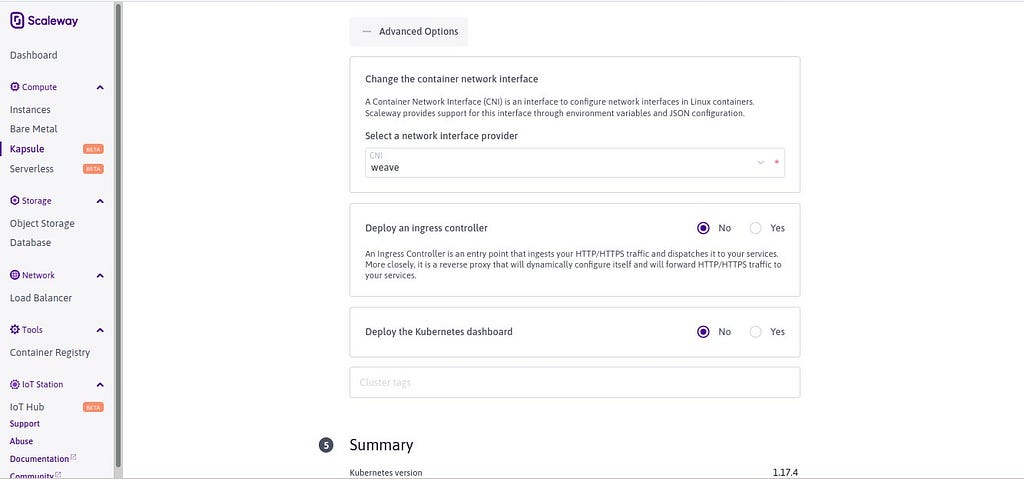

Here I choose not to enable the Kubernetes dashboard and not to install an Ingress Controller:

Launching the cluster creation:

which, once finished, returns the endpoint as well as a wildcard domain:

A Load Balancer is assigned to me along with this wildcard domain, which points to the public IP addresses of each of the nodes that make up the cluster:

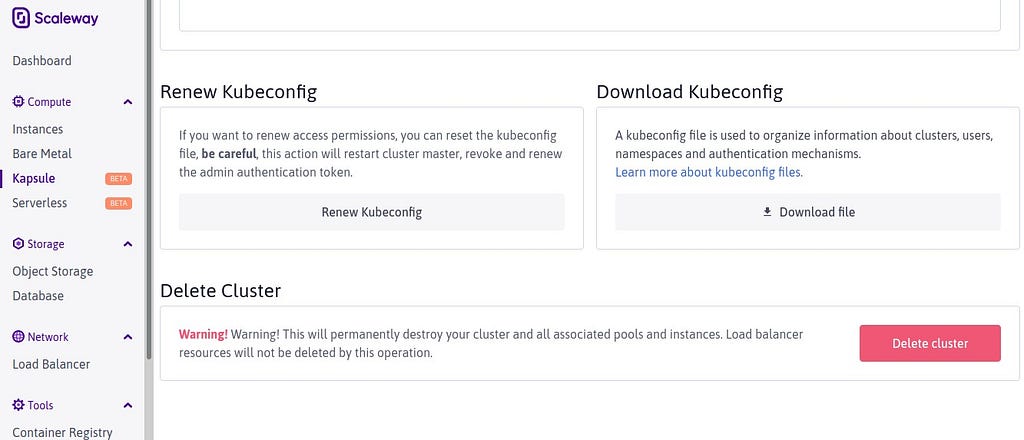

I can download the Kubeconfig file to use together with the kubectl client to manage the Kubernetes cluster from the command line:

I then connect this cluster to the Weave Cloud service, which, with Weave Scope and Cortex, gives me the means to monitor it. Weave Cloud is an operations platform that acts as an extension to your container orchestration infrastructure, providing Deploy (continuous delivery), Explore (visualization and troubleshooting) and Monitor (Prometheus monitoring). These capabilities work together to help ship features faster and fix problems faster:

[What is Weave Cloud & Documentation](https://www.weave.works/docs/cloud/latest/overview/)

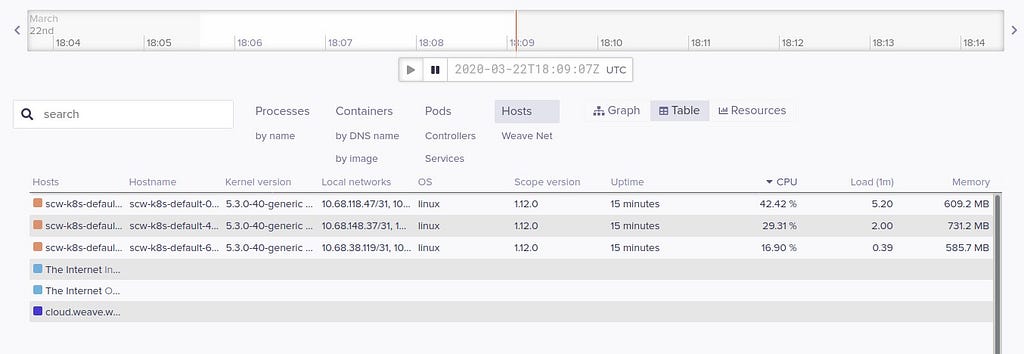

I can then view my three worker nodes:

And connect to them. I take the opportunity to install the ZeroTier agent on them, to join them to a VPN network, via the shell console provided with Weave Scope:

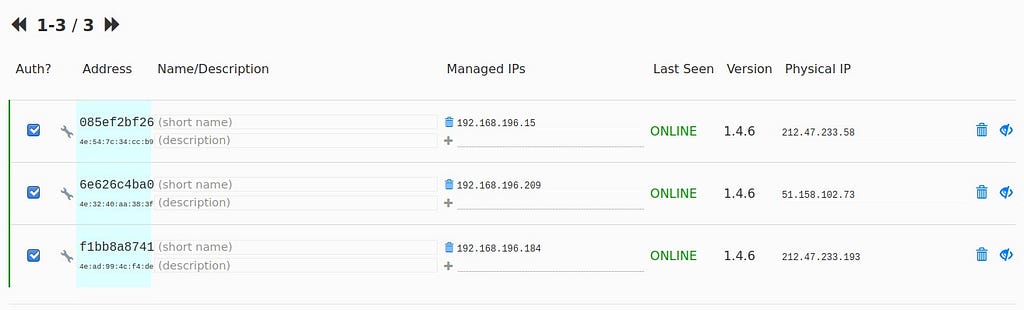

Mes trois noeuds sont connectés au service VPN de ZeroTier :

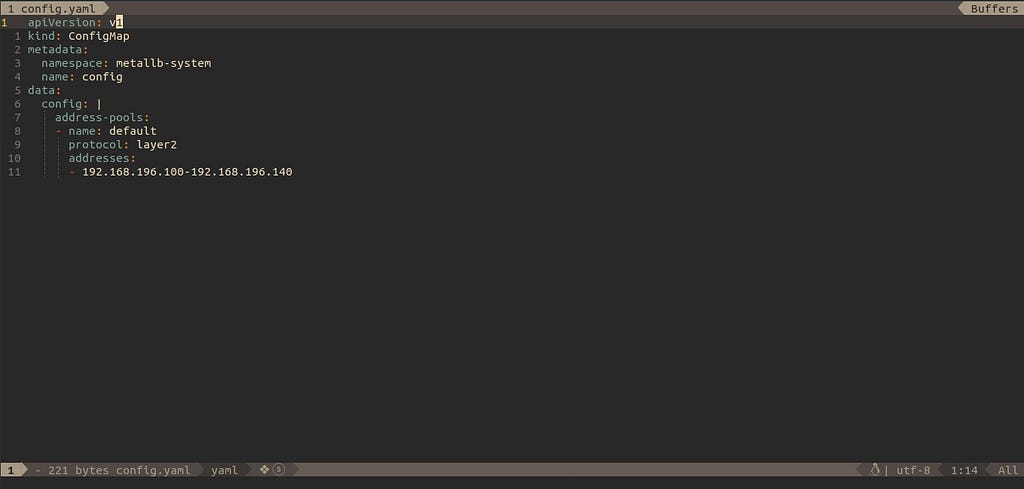

Je peux donc procéder à l’installation de MetalLB pour obtenir un service de Load Balancing intégré avec le plan d’adressage défini dans ZeroTier :

[MetalLB](https://metallb.universe.tf/)

```

openssl rand -base64 128 | kubectl create secret generic -n metallb-system memberlist --from-literal=secretkey=-

```

```

kubectl apply -f https://raw.githubusercontent.com/google/metallb/v0.9.2/manifests/metallb.yaml

```

et ce fichier de configuration :

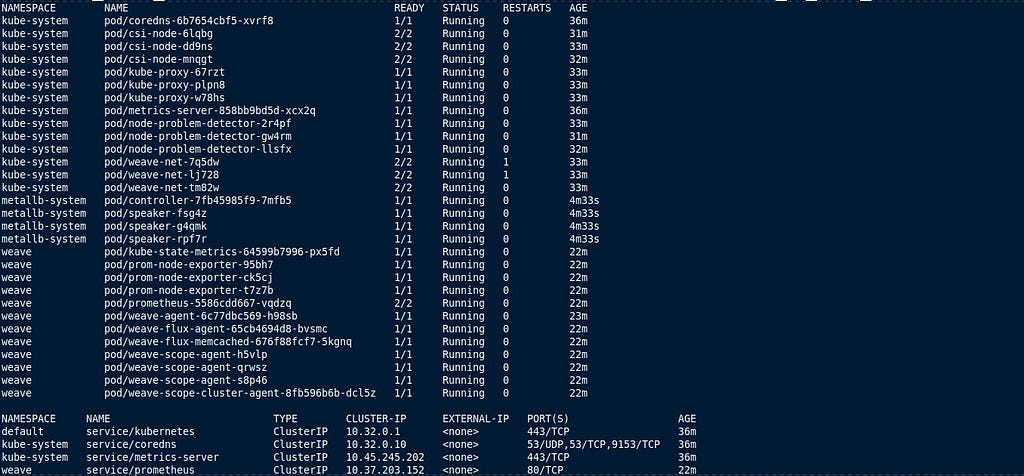

MetalLB est actif dans le cluster :

Pour permettre l’installation de Knative, je procède à l’installation de Gloo par chargement au préalable du binaire Glooctl depuis son dépôt sur Github :

[solo-io/gloo](https://github.com/solo-io/gloo/releases)

Installation de Knative Serving dans le cluster :

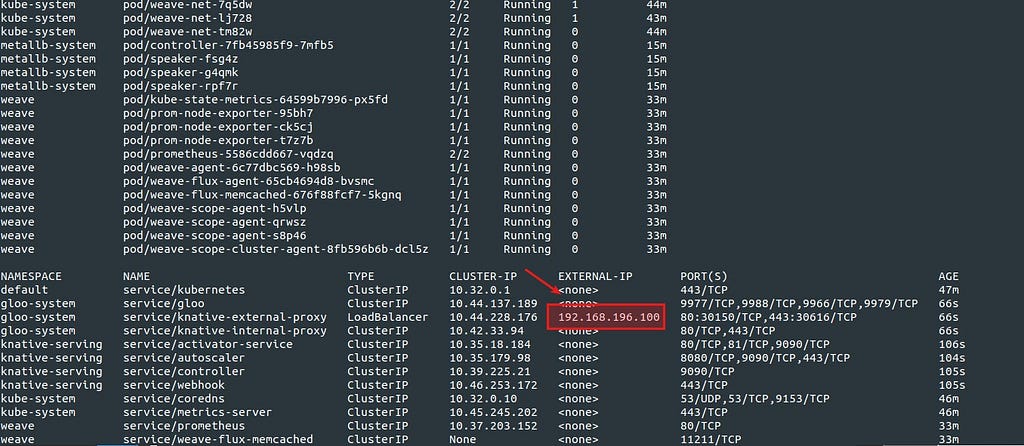

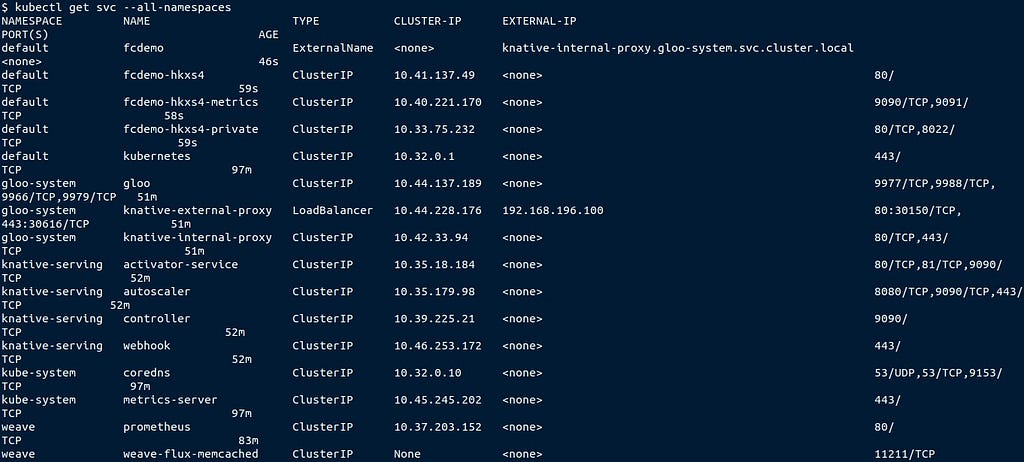

with a proxy for Knative Serving that obtained an IP address through MetalLB:

First test of Knative Serving with the Docker image of the well-known Helloworld in Go:

which responds through the proxy:

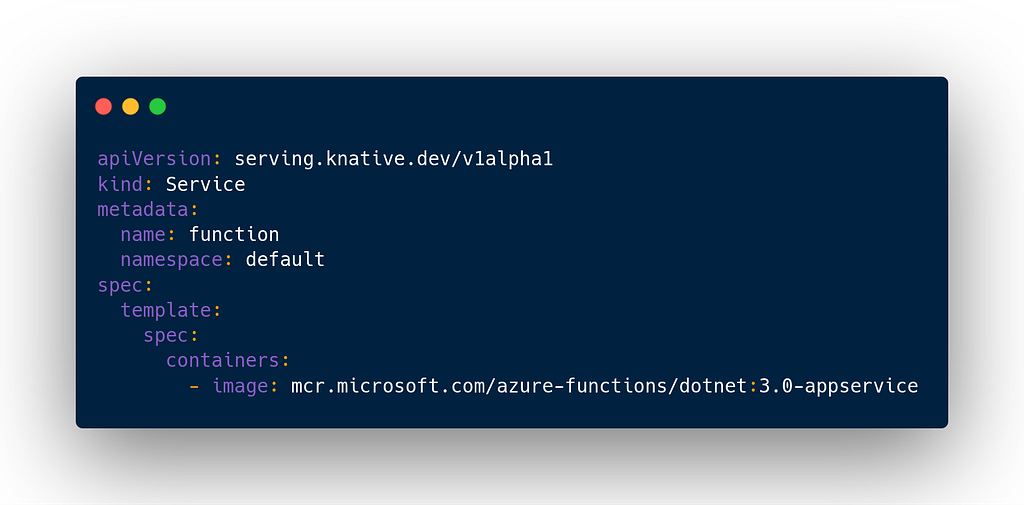

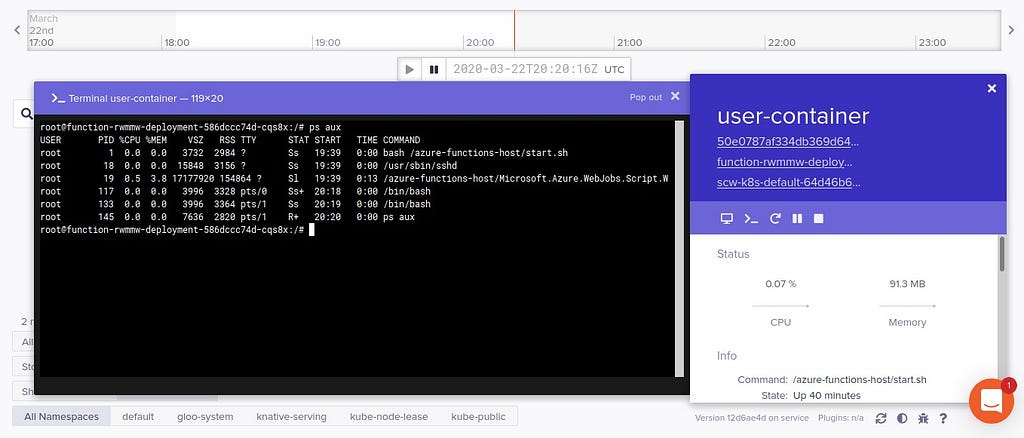

Autre test avec une image Docker Azure Functions pour Linux :

[Azure/azure-functions-docker](https://github.com/Azure/azure-functions-docker)

via ce manifeste YAML :

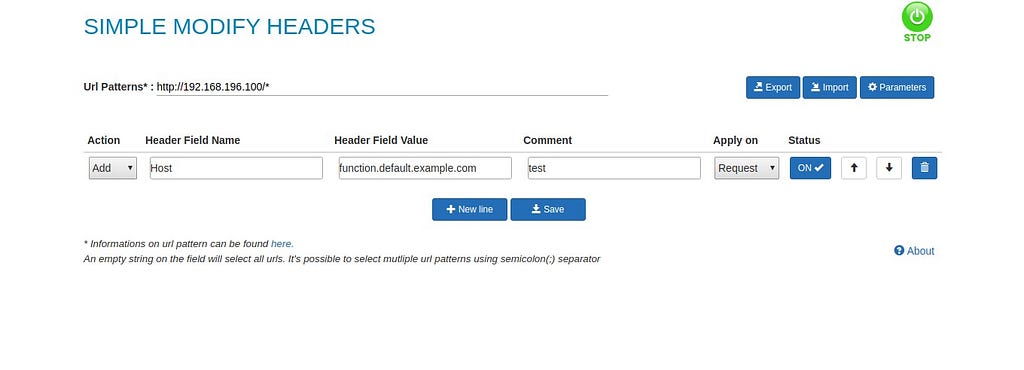

Je modifie la partie Headers de mon navigateur Web pour accéder à cette fonction test :

et la fonction répond :

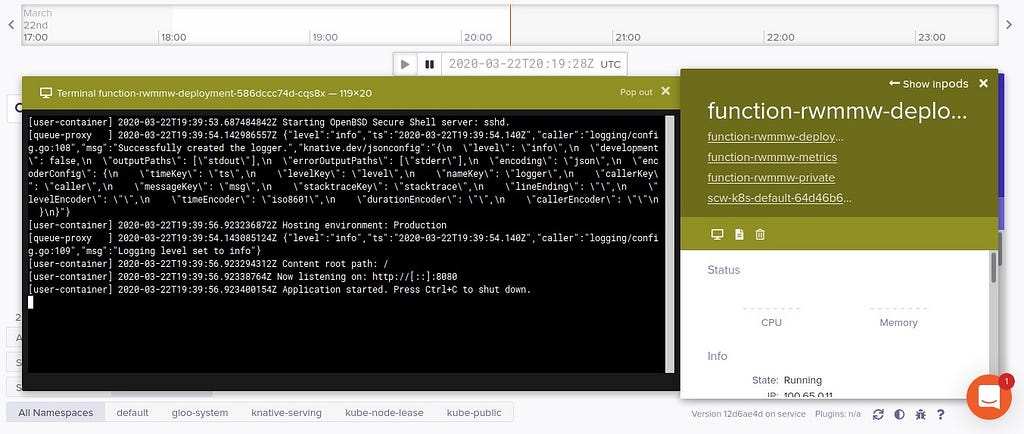

Viewing this function and its associated containers in Weave Cloud:

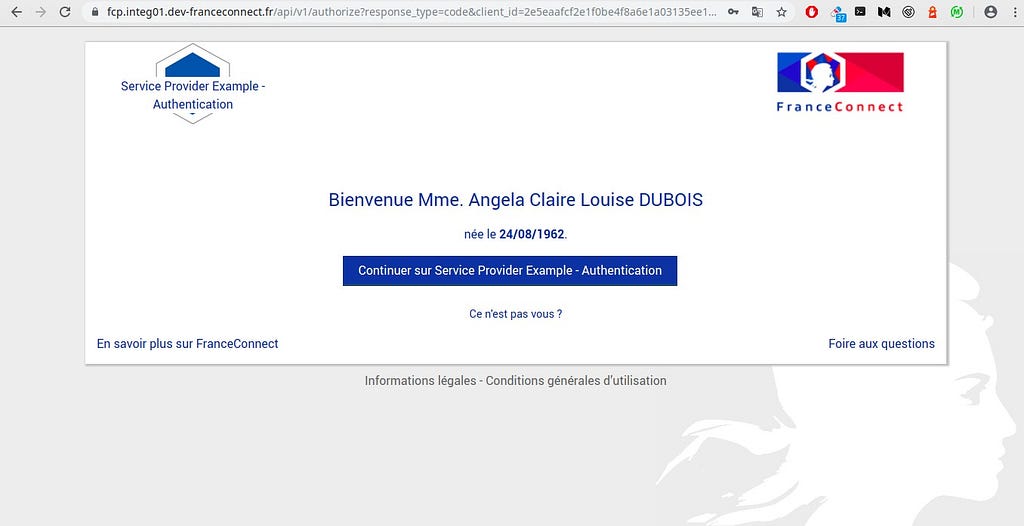

Same for the perennial FC demonstrator:

Once again modifying the headers in the web browser:

The demonstrator is reachable:

In Weave Cloud I view the containers associated with this demonstrator and Knative Serving:

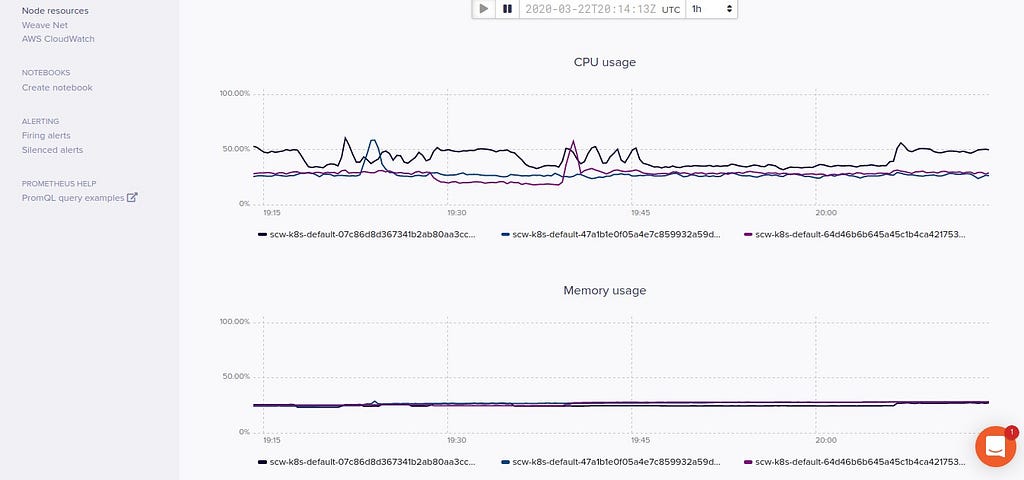

as well as the main metrics of the Kubernetes cluster:

Scaleway Elements Kubernetes Kapsule is set to gain more features, even though for now its Kubernetes clusters should be considered _stateless_. If you need a stateful application, you can use persistent volumes. The storageClass for Scaleway Block Storage volumes is set by default, so it does not need to be specified …

To be continued! … | deep75 |

286,378 | Differences between string operators in Java and .NET | 2015-04-06 10:30:11 from blog.hazard.kr 1. == and != operators .NET .NET uses == operator overloadin... | 0 | 2020-03-23T05:35:25 | https://dev.to/composite/-596p | java, csharp, techtalks, korean | > 2015-04-06 10:30:11 from blog.hazard.kr

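## 1. The `==` and `!=` operators

### .NET

.NET overloads the `==` operator to call `String.Equals`, so it compares values for equality.

### Java

In Java, `String` is a class just as in .NET, but Java does not support operator overloading, so `==` can only compare references and cannot compare values for equality.

Developers therefore have to call `String.equals` themselves.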

## 2. The + operator

### .NET:

```cs

string s = "asd" + b + "qwe";

//>> string s = string.Concat("asd", b, "qwe");

```

[String.cs](http://www.dotnetframework.org/default.aspx/4@0/4@0/DEVDIV_TFS/Dev10/Releases/RTMRel/ndp/clr/src/BCL/System/String@cs/1305376/String@cs)

How .NET concat works

```cs
[System.Security.SecuritySafeCritical]  // auto-generated
public static String Concat(String str0, String str1) {
    // Contract is an internal class used for testing and validation.
    Contract.Ensures(Contract.Result<string>() != null);
    Contract.Ensures(Contract.Result<string>().Length ==
        (str0 == null ? 0 : str0.Length) +
        (str1 == null ? 0 : str1.Length));
    Contract.EndContractBlock();

    if (IsNullOrEmpty(str0)) {
        if (IsNullOrEmpty(str1)) {
            return String.Empty;
        }
        return str1;
    }

    if (IsNullOrEmpty(str1)) {
        return str0;
    }

    int str0Length = str0.Length;

    // .NET sums the lengths of all strings to be joined and natively
    // allocates a buffer of exactly that size
    String result = FastAllocateString(str0Length + str1.Length);

    // then copies each string into the buffer in order
    FillStringChecked(result, 0, str0);
    FillStringChecked(result, str0Length, str1);

    // and returns the resulting string.
    return result;
}
```

### Java:

```java

String s = "asd" + b + "qwe";

//>> String s = new StringBuffer().append("asd").append(b).append("qwe").toString();

```

[StringBuffer.java](http://grepcode.com/file/repository.grepcode.com/java/root/jdk/openjdk/6-b14/java/lang/StringBuffer.java)

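Java implements the string `+` operator by appending through the `StringBuffer` class (compilers since Java 5 emit the unsynchronized `StringBuilder` instead), so the mechanism differs from .NET.

### Differences between string concatenation in .NET and Java

.NET: allocates room for the combined length of all the strings up front, copies them in, and returns the result.

Java: `StringBuffer` starts with a fixed capacity, grows it as needed each time a string is appended, then returns the string. (The default initial capacity is 16 characters.)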
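To make the contrast a little more tangible, here is a toy model of the two strategies, written in JavaScript purely for illustration. It does not call either runtime. The growth rule used for the growing buffer (double the capacity plus two, or the needed length if that is larger) is an assumption based on the OpenJDK 6 source linked above, and both function names are hypothetical.

```javascript
// .NET-style: sum all lengths first, so a single exact allocation is enough.
function preallocatedCapacity(parts) {
  return parts.reduce((total, part) => total + part.length, 0);
}

// StringBuffer-style: start with a fixed capacity and grow only when an append does not fit.
function simulateGrowingBuffer(parts, initialCapacity = 16) {
  let capacity = initialCapacity;
  let length = 0;
  let reallocations = 0;
  for (const part of parts) {
    length += part.length;
    if (length > capacity) {
      // Assumed growth rule: (capacity + 1) * 2, or the needed length if that is larger.
      capacity = Math.max((capacity + 1) * 2, length);
      reallocations += 1;
    }
  }
  return { capacity, length, reallocations };
}

const parts = ['asd', 'x'.repeat(20), 'qwe'];
const exact = preallocatedCapacity(parts);   // total characters needed
const grown = simulateGrowingBuffer(parts);  // capacity reached and number of regrowths
```

The model only counts capacity changes; it says nothing about actual timings, which depend on the runtime.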

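### What .NET and Java string concatenation have in common

When appending strings in a loop or similar, using `StringBuilder` in .NET and `StringBuffer` (or `StringBuilder` since Java 5) in Java pays off in performance.

That's all. | composite |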

286,416 | Mousetrap JS | So you came here to learn about mousetraps right? So mousetraps are great for capturing rodents with... | 0 | 2020-03-23T09:26:56 | https://dev.to/chrisleboeuf/mousetrap-js-5d38 | So you came here to learn about mousetraps right? So mousetraps are great for capturing rodents with a spring-loaded mechanism. Totally kidding! This isn't about those kinds of mousetraps. This is about a neat Javascript library for fairly simple and easy keybinding! [Mousetrap](https://github.com/ccampbell/mousetrap) has many awesome capabilities. It's extremely lightweight with no external dependencies.

You can get Mousetrap by doing a simple ``npm install mousetrap`` and require it in your app. Do that and now you can start using mousetraps like a pro!

Let's get right into it! First, there is ``Mousetrap.bind``. Let's look at some examples!

```js
// single keys
Mousetrap.bind('4', function() { console.log('4'); });
Mousetrap.bind("?", function() { console.log('show shortcuts!'); });
Mousetrap.bind('esc', function() { console.log('escape'); }, 'keyup');

// combinations
Mousetrap.bind('command+shift+k', function() { console.log('command shift k'); });

// map multiple combinations to the same callback
Mousetrap.bind(['command+k', 'ctrl+k'], function() {
    console.log('command k or control k');

    // return false to prevent default browser behavior
    // and stop event from bubbling
    return false;
});

// gmail style sequences
Mousetrap.bind('g i', function() { console.log('go to inbox'); });
Mousetrap.bind('* a', function() { console.log('select all'); });

// Alphabet!
Mousetrap.bind('a b c d e f g', function() {
    // double quotes so the apostrophe does not end the string
    console.log("Now I know my abc's");
});
```

So `bind` lets you bind a key, a combination, or a sequence of keys to a callback. On top of that, if for whatever reason you want to, you can even override default keyboard shortcuts. You can also specify whether your shortcut fires on keyup, keydown, or keypress by adding a third argument to the bind method. This way you can bind multiple types of key events to the same key or combination of keys.

And that leads to the next thing: ``Mousetrap.unbind``. With this method, you can unbind a single key or an array of keys. If you previously used bind to bind a key and you specified the kind of key event, then you must specify the same kind of key event in the unbind.

```js
// note the comma before the third argument
Mousetrap.bind('b', () => { console.log('b was pressed') }, 'keydown');

// This is how you must do it if you specified a specific keypress
Mousetrap.unbind('b', 'keydown');
```

Next Mousetrap has a neat way of triggering the same keyboard event. If for whatever reason you wanted to fire off an event that you had previously bound to a key, you can easily 'trigger' that event by using ``Mousetrap.trigger``.

```js

Mousetrap.trigger('b');

```

This method can also take in an optional argument for the type of keypress like the other functions.

Finally, we will take a look at one last method. ``Mousetrap.reset`` is yet another useful method. The reset method will remove anything you have bound to mousetrap. This can be useful if you want to change contexts in your application without refreshing the page in your browser. Internally mousetrap keeps an associative array of all the events to listen for so reset does not actually remove or add event listeners on the document. It just sets the array to be empty.
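To make the context-switching idea concrete, here is a small sketch. It relies only on the `bind` and `reset` methods described above. The `switchContext` helper and the shape of the shortcut objects are assumptions of this example, not part of Mousetrap. The Mousetrap object is passed in as a parameter so the helper can also be exercised with a stand-in outside the browser.

```javascript
// Hypothetical helper: replace every current shortcut with a new set.
function switchContext(mousetrap, bindings) {
  mousetrap.reset(); // drop everything bound for the previous context
  for (const [keys, handler] of Object.entries(bindings)) {
    mousetrap.bind(keys, handler);
  }
}

// Example shortcut sets for two screens of an imaginary app.
const inboxShortcuts = {
  'c': () => console.log('compose'),
  'g i': () => console.log('go to inbox'),
};

const editorShortcuts = {
  'ctrl+s': () => console.log('save draft'),
  'esc': () => console.log('close editor'),
};
```

In a browser you would call something like `switchContext(Mousetrap, editorShortcuts)` when the editor opens and `switchContext(Mousetrap, inboxShortcuts)` when it closes.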

This is only some of the functionality of Mousetrap. You can go see the rest [here](https://craig.is/killing/mice). Mousetrap is an awesome, easy to use library that I highly recommend using if you want to make simple keybinding events. | chrisleboeuf | |

286,433 | Rewriting to Haskell–Configuration | You can keep reading here or jump to my blog to get the full experience, including the wonderful pink... | 0 | 2020-03-23T08:00:28 | https://odone.io/posts/2020-03-23-rewriting-haskell-configuration.html | functional, haskell, servant | You can keep reading here or [jump to my blog](https://odone.io/posts/2020-03-23-rewriting-haskell-configuration.html) to get the full experience, including the wonderful pink, blue and white palette.

---

This is part of a series:

- [Rewriting to Haskell–Intro](https://odone.io/posts/2020-02-26-rewriting-haskell-intro.html)

- [Rewriting to Haskell–Project Setup](https://odone.io/posts/2020-03-03-rewriting-haskell-setup.html)

- [Rewriting to Haskell–Deployment](https://odone.io/posts/2020-03-14-rewriting-haskell-server.html)

- [Rewriting to Haskell–Automatic Formatting](https://odone.io/posts/2020-03-19-rewriting-haskell-formatting.html)

---

Coming from Rails we are used to employing yaml files to configure a web application. This is why we decided to do the same with Servant. As a matter of fact, we now have a `configuration.yml` file:

```yml
database:
  username: stream
  database: stream_development
  password: ""

application:
  aws_s3_access_key: "ABCD1234"
  aws_s3_secret_key: "EFGH5678"
  aws_s3_region: us-east-1
  aws_s3_bucket_name: stream-demo-bucket
```

That is great for development, but how can we run tests against the test database? It turns out that the package we use to parse the yaml file allows the use of ENV variables:

```yml
database:
  username: stream
  database: _env:DATABASE:stream_development
  password: ""

application:
  aws_s3_access_key: "ABCD1234"
  aws_s3_secret_key: "EFGH5678"
  aws_s3_region: us-east-1
  aws_s3_bucket_name: stream-demo-bucket
```

That is, now we can just run `DATABASE=stream_test stack test`!
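To illustrate the substitution rule, the sketch below mimics in JavaScript how a `_env:NAME:default` placeholder could be resolved: use the environment variable when it is set, otherwise fall back to the default. This is only a model of the behaviour described above, not the yaml package's actual implementation, and `resolveEnvPlaceholder` is a made-up name.

```javascript
// Hypothetical model of "_env:NAME:default" resolution.
function resolveEnvPlaceholder(value, env) {
  const match = /^_env:([^:]+)(?::(.*))?$/.exec(value);
  if (!match) {
    return value; // plain value, nothing to substitute
  }
  const [, name, fallback] = match;
  // Prefer the environment variable; otherwise use the default after the second colon.
  return env[name] !== undefined ? env[name] : fallback;
}

const developmentDatabase = resolveEnvPlaceholder('_env:DATABASE:stream_development', {});
const testDatabase = resolveEnvPlaceholder('_env:DATABASE:stream_development', { DATABASE: 'stream_test' });
const plainUsername = resolveEnvPlaceholder('stream', { DATABASE: 'stream_test' });
```

In a real Node.js script the second argument would typically be `process.env`.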

In the repository we actually keep a `configuration.yml.example` file and git ignore `configuration.yml` to avoid leaking credentials:

```yml
database:
  username: stream
  database: _env:DATABASE:stream_development
  password: ""

application:
  aws_s3_access_key: "REPLACE_ME"
  aws_s3_secret_key: "REPLACE_ME"
  aws_s3_region: us-east-1
  aws_s3_bucket_name: ll-stream-demo
```

For production we use [Ansible](https://www.ansible.com/) (with Ansible Vault) to put in place the correct `configuration.yml`. Plus, we instruct [Hapistrano](https://hackage.haskell.org/package/hapistrano) to make that file available for each deployment:

```yml
linked_files:
  - haskell/configuration.yml
```

To read the configuration inside the Servant application we use [`loadYamlSettings`](https://www.stackage.org/haddock/lts-15.5/yaml-0.11.3.0/Data-Yaml-Config.html#v:loadYamlSettings) from the [yaml](https://www.stackage.org/package/yaml) package:

```hs
loadYamlSettings
  :: FromJSON settings
  => [FilePath] -- ^ run time config files to use, earlier files have precedence
  -> [Value] -- ^ any other values to use, usually from compile time config. overridden by files
  -> EnvUsage
  -> IO settings
```

In other words, given a type `settings` that is an instance of `FromJSON` we can decode yaml files into a value of that type. And this is how we do it for Stream:

```hs
data Configuration
  = Configuration
      { configurationDatabaseUser :: String,
        configurationDatabaseDatabase :: String,
        configurationDatabasePassword :: String,
        configurationApplicationAwsS3AccessKey :: AccessKey,
        configurationApplicationAwsS3SecretKey :: SecretKey,
        configurationApplicationAwsS3Region :: Region,
        configurationApplicationAwsS3BucketName :: BucketName
      }

instance FromJSON Configuration where
  parseJSON (Object x) = do
    database <- x .: "database"
    application <- x .: "application"
    Configuration
      <$> database .: "username"
      <*> database .: "database"
      <*> database .: "password"
      <*> application .: "aws_s3_access_key"
      <*> application .: "aws_s3_secret_key"
      <*> application .: "aws_s3_region"
      <*> application .: "aws_s3_bucket_name"

loadConfiguration :: IO Configuration
loadConfiguration =
  loadYamlSettings ["./configuration.yml"] [] useEnv
```

---

Get the latest content via email from me personally. Reply with your thoughts. Let's learn from each other. Subscribe to my [PinkLetter](https://odone.io#newsletter)! | riccardoodone |

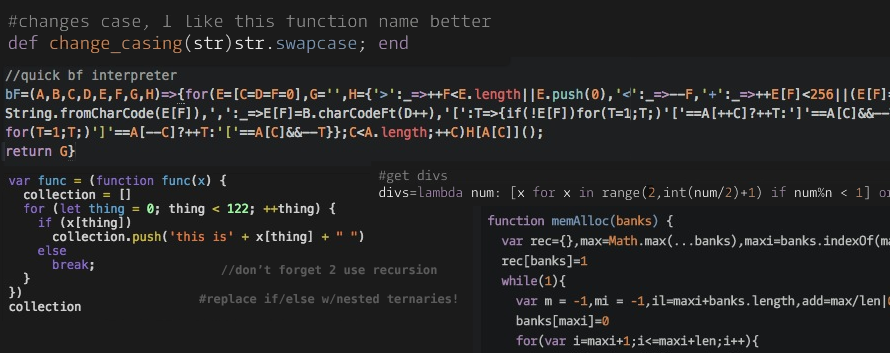

286,442 | Kissing JavaScript #2 globals.js | Have you ever asked why you must type const { readFileSync } = require('fs') every time you nee... | 5,561 | 2020-03-23T08:14:26 | https://dev.to/bittnkr/kissing-javascript-2-globals-js-2b1k | javascript | Have you ever asked why you must type

```JavaScript

const { readFileSync } = require('fs')

```

every time you need to read a file or use any other file handling function?

In my DRY obsession, this bothers me a lot. To me, the first requirement to write simpler code is just write less code.

One of my strategies to avoid the repetition is the use of global variables.

In the [first post](https://dev.to/bittnkr/kissing-javascript-1174) of this series, there was a piece of code I didn't comment on:

```JavaScript

if (typeof window == 'object')

window.test = test

else global.test = test

```

This code makes the `test()` function globally available, (in nodejs and in the browser) so I only need to require the file once for the entire application.

Traditionally, if you write `x = 10` not preceded by `var`, `let` or `const`, that variable automatically becomes a global variable.

Having an accidental global variable is a bad thing because it can overwrite another variable with the same name declared in another part of your code or in a library, or simply leak out of the function scope.

For this reason, ES5 introduced the `"use strict";` directive. One of the things this mode does is disallow these implicit global variables.
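A quick way to see the difference, as a minimal sketch (the function and variable names are made up for this example): inside a strict-mode function, assigning to an undeclared name throws a `ReferenceError` instead of silently creating a global.

```javascript
function assignWithoutDeclaring() {
  'use strict'; // strict mode applies to this function body
  undeclaredCounter = 10; // no var/let/const in front of it
}

let strictModeError = null;
try {
  assignWithoutDeclaring();
} catch (error) {
  // In strict mode the assignment above is rejected.
  strictModeError = error;
}
```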

After that, most of the libraries avoided using global variables to not pollute the global space.

So, I've a good news to you:

Now the global space is almost deserted and free to be used at will by you. Yes, **you** are the owner of the global space now, and you can use it to make your life simpler.

So my second tip is just this: create a file named `globals.js` and put in it everything you want to always have at hand.

Here is a sample, taken from part of my `globals.js`, with some ideas for nice globals:

```JavaScript
// a shortcut for the rest of the file
var g = (typeof window == 'object') ? window : global

// if fs already exists in the global space, this file has already run, so just return
// (a top-level return like this only works inside a CommonJS module)
if (g.fs) return

g.fs = require('fs')
g.fs.path = require('path') // make path part of fs

g.json = JSON.stringify
g.json.parse = JSON.parse

// from the previous article
g.test = require('./test.js')

// plus whatever you want always available
```

Now just put this line in the main file of your Node.js project:

```JavaScript

require('./globals.js')

```

After that, anywhere in your project where you need a function of the `fs` module, you just need to type:

```JavaScript

var cfg = fs.readFileSync('cfg.json')

```

without any require().

I know this is not the most complex or brilliant article you have ever read here on dev.to, but I'm sure the wise use of the global space can save you a lot of typing.

A last word:

In this time of so much bad news, I want to give you another little tip: turn off the TV, and give a tender kiss to someone you love and who loves you (despite the distancing propaganda we are bombarded with). Tell her how important she is to your life and how much you would miss her if she were gone. (The same goes for him.)

In my own life, every time I faced death I realized that the most important thing, the only thing that really matters and that we will carry with our souls to the afterlife, is the love we cultivate.

So, I wish you a life with lots of kisses and love.

From my heart to you all. 😘 | bittnkr |

286,461 | Covid Counter | Another Corona Count tracker | 0 | 2020-03-23T08:50:20 | https://dev.to/barelyhuman/covid-counter-61l | corona, covid, counter | ---

title: Covid Counter

published: true

description: Another Corona Count tracker

tags: corona, covid, counter

---

I created this because I was bored, didn't have anything more creative to make, and everyone else seems to be posting theirs.

Here's a minimal version that gets you the counts of the various incidents.

[Link](https://corona.siddharthgelera.com/)

| barelyhuman |

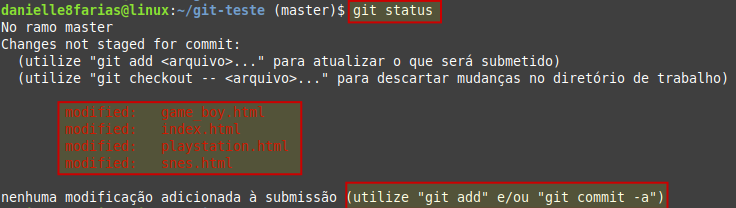

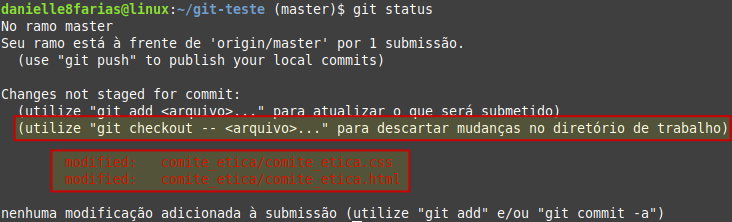

286,830 | [Git Tutorial] git commit -am: Updating a modified file in Git | To update a file that has been modified in the repository, there are two ways to go. $ git add <... | 5,484 | 2020-03-23T20:25:57 | https://dev.to/womakerscode/tutorial-git-adicionando-um-arquivo-modificado-no-git-116c | github, am, git, braziliandevs | To update a file that has been modified in the repository, there are two ways to go.

```

$ git add <arquivo>

```

- **$** indicates that you should run this operation as a **regular user**.

- **add** adds to git the file(s) that follow.

- type the file name without the **< >** signs.

followed by the **commit**

```

$ git commit -m 'your message here'

```

Example:

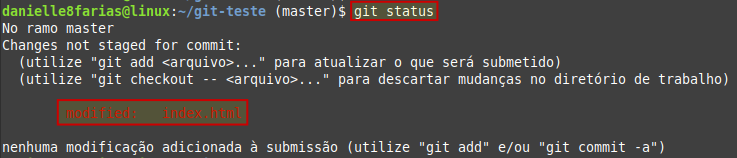

Aqui temos o arquivo index.html que foi modificado.

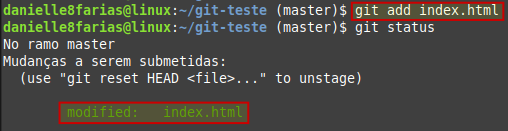

Adicionando o arquivo com o comando **git add**

E fazendo o **commit**

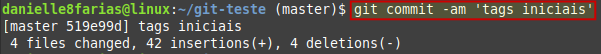

## Atalho

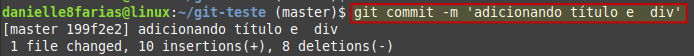

Também é possível fazer o **commit das modificações** através de um **atalho**:

```

$ git commit -am 'adição de modificação do arquivo'

```

O parâmetro **-a** adiciona todos os arquivos que foram modificados, sem a necessidade de adicionar cada um individualmente.

Exemplo:

Aqui temos vários arquivos modificados

Usando o atalho

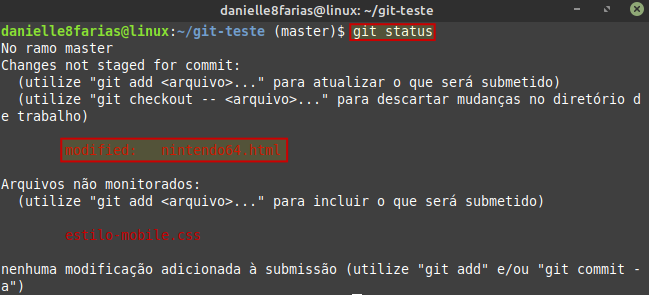

**Observação:**

É importante notar que se houver um arquivo novo (ainda não rastreado pelo **git**) o comando **git commit -am** faz a adição do commit **apenas dos arquivos rastreados que foram modificados**.

Exemplo:

Temos um arquivo que foi modificado (**nintendo64.html**) e um arquivo novo (**estilo-mobile.css**) ainda não rastreado.

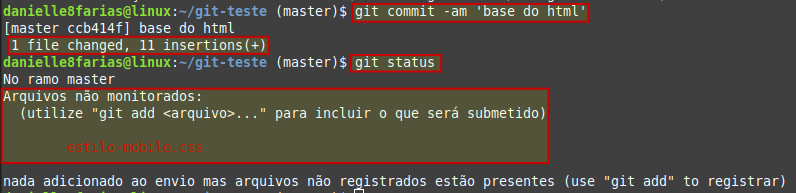

Usando o atalho **git commit -am** podemos perceber que somente o arquivo modificado foi mandado ao **index**.

## Descartando modificações

Caso queira descartar as modificações feitas em um arquivo, basta digitar

```

$ git checkout <nome_do_arquivo>

```

Exemplo:

Temos dois arquivos que foram modificados e queremos descartar as modificações (voltando ao arquivo anterior as mudanças).

Usando com comando **git checkout** com os dois arquivos ao mesmo tempo:

| danielle8farias |

286,519 | Make your react apps compatible with IE | Installation npm install react-app-polyfill Enter fullscreen mode ... | 0 | 2020-03-23T11:39:51 | https://dev.to/k_penguin_sato/make-your-react-apps-compatible-with-ie-4g82 | react | ---

title: Make your react apps compatible with IE

published: true

description:

tags: React

---

# Installation

```bash

npm install react-app-polyfill

```

or

```bash

yarn add react-app-polyfill

```

# Import entry points

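Import the polyfills at the very top of your `index.tsx` or `index.jsx`, before any other import. (The `ie9` entry point already pulls in the `ie11` polyfills, so importing both is harmless but redundant.)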

```js

import 'react-app-polyfill/ie9';

import 'react-app-polyfill/ie11';

import 'react-app-polyfill/stable';

```

That's it! Your React app should now run on IE.
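If you are curious which features an old browser is missing, a quick feature check can tell you. This is only a sketch: the list of checks (Promise, Symbol, `Object.assign`, `Array.from`, `fetch`) is my assumption of the kind of thing these polyfills cover, so check the package README for the real list, and `missingFeatures` is a made-up helper name.

```javascript
// Each check receives an environment object (e.g. window) and reports
// whether one feature is available on it.
const CHECKS = {
  Promise: (env) => typeof env.Promise === 'function',
  Symbol: (env) => typeof env.Symbol === 'function',
  'Object.assign': (env) => !!env.Object && typeof env.Object.assign === 'function',
  'Array.from': (env) => !!env.Array && typeof env.Array.from === 'function',
  fetch: (env) => typeof env.fetch === 'function',
};

// Return the names of the features that are not available in `env`.
function missingFeatures(env) {
  return Object.keys(CHECKS).filter((name) => !CHECKS[name](env));
}

// A fake "legacy browser" with none of the features above.
const legacyEnv = { Object: {}, Array: {} };

console.log(missingFeatures(legacyEnv));  // every feature is reported missing
console.log(missingFeatures(globalThis)); // depends on where you run it
```

Running `missingFeatures(window)` in the browser console before and after adding the imports is a cheap way to see what changed.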

# Resources

- [react-app-polyfill](https://github.com/facebook/create-react-app/tree/master/packages/react-app-polyfill)

| k_penguin_sato |

286,524 | [Rails]Implement session-based authentication from scratch | Here is how you can implement session-based authentication functionality in your rails application... | 0 | 2020-03-23T11:50:32 | https://dev.to/k_penguin_sato/rails-implement-session-based-authentication-from-scratch-2631 | rails | ---

title: [Rails]Implement session-based authentication from scratch

published: true

description:

tags: Rails

---

Here is how you can implement session-based authentication functionality in your rails application without using any gem.

# Create author resources

Run the commands below.

```

$ rails generate model Author name:string email:string password_digest:string

$ rails generate controller Authors

$ rails generate migration add_index_to_authors_email # then add the index in the generated migration

$ rake db:migrate

```

# Set validations

Add validations for `name` and `email`.

```ruby

# models/author.rb

class Author < ApplicationRecord

VALID_EMAIL_REGEX = /\A[\w+\-.]+@[a-z\d\-.]+\.[a-z]+\z/i.freeze

validates :name, presence: true, length: { maximum: 50 }

validates :email, presence: true, length: { maximum: 255 }, format: { with: VALID_EMAIL_REGEX }, uniqueness: { case_sensitive: false }

end

```
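To get a feel for what the email pattern accepts, here is the same regex ported to JavaScript as a rough sketch. Ruby's `\A` and `\z` anchors become `^` and `$`, and `isValidEmail` is a hypothetical helper that only mirrors the format and length rules (not the presence or uniqueness checks, which need the database).

```javascript
// JavaScript port of VALID_EMAIL_REGEX (Ruby's \A and \z become ^ and $).
const VALID_EMAIL_REGEX = /^[\w+\-.]+@[a-z\d\-.]+\.[a-z]+$/i;

// Mirror the format and maximum-length validations from the model.
function isValidEmail(email) {
  return (
    typeof email === 'string' &&
    email.length <= 255 &&
    VALID_EMAIL_REGEX.test(email)
  );
}

const samples = ['test@email.com', 'USER@foo.COM', 'user@example,com', 'user_at_foo.org'];
for (const sample of samples) {
  console.log(sample, isValidEmail(sample));
}
```

The pattern is deliberately simple, so treat it as a sanity check rather than full email validation.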

# Add secure password to Author

Your users enter a password and its confirmation in the form. The password is sent to the server as submitted (so serve the form over HTTPS), and the server stores only a hash of it. (A hash cannot be reversed to recover the original password, even if the database leaks.)

On login, the server hashes the submitted password and checks whether the result matches the hashed password stored in the DB. If it does, you allow your user to log in to the application.
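The general idea of storing and checking a password hash can be sketched outside of Rails too. The example below is a language-neutral illustration in JavaScript using Node's built-in `crypto` module with scrypt; Rails itself uses bcrypt, and `hashPassword` / `verifyPassword` are hypothetical names, not anything from the framework.

```javascript
const crypto = require('crypto');

// Hash a password with a fresh random salt; store "salt:hash" as the digest.
function hashPassword(password) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.scryptSync(password, salt, 32);
  return `${salt.toString('hex')}:${hash.toString('hex')}`;
}

// Re-hash the submitted password with the stored salt and compare
// the two hashes in constant time.
function verifyPassword(password, digest) {
  const [saltHex, hashHex] = digest.split(':');
  const expected = Buffer.from(hashHex, 'hex');
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, 'hex'), expected.length);
  return crypto.timingSafeEqual(actual, expected);
}

const storedDigest = hashPassword('000000');
console.log(verifyPassword('test', storedDigest));   // wrong password
console.log(verifyPassword('000000', storedDigest)); // correct password
```

Only the digest is kept, so even someone who can read the table cannot read the passwords back.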

## Add has_secure_password

It's quite easy to set up in rails. Simply put `has_secure_password` in the Author model. (Also add the minimum length of each password.)

```ruby

class Author < ApplicationRecord

#

# other code

#

validates :password, length: { minimum: 6 }

has_secure_password

end

```

`has_secure_password`

- Enables you to store the hashed password in your DB as password_digest

- Lets you use password and password_confirmation params and validations for them.

- Lets you use the `authenticate` method.

### Add bcrypt gem

Add `gem 'bcrypt'` to your Gemfile and run `bundle install`.

```ruby

gem 'bcrypt'

```

## Check if it's working correctly

Run the commands in the rails console to see if you can use the `authenticate` method.

The `authenticate` method returns false if the given password was wrong and returns the author object if the given password was correct.

```ruby

Author.create(name: "test", email: "test@email.com", password: "000000")

Author.first.authenticate('test')

# => false

Author.first.authenticate('000000')

# =>

#<Author:0x0000560ee2e0a1b8

id: 1,

name: "test",

email: "test@email.com",

password_digest: "$2a$12$bQQu49N3xNCKO8StooXLBOqwwCAv7NbPqt3aG35AFDHRUgh.C8BgO",

created_at: Mon, 30 Sep 2019 08:40:11 UTC +00:00,

updated_at: Mon, 30 Sep 2019 08:40:11 UTC +00:00>

```

# Sign up functionality

Let's start by setting up the routes for users to sign up.

```ruby

Rails.application.routes.draw do

resources :authors

get '/signup', to: 'authors#new'

post '/signup', to: 'authors#create'

end

```

Add the code below to the author controller.

```ruby

class AuthorsController < ApplicationController

def show

@author = Author.find(params[:id])

end

def new

@author = Author.new

end

def create

@author = Author.new(author_params)

if @author.save

redirect_to @author

else

render 'new'

end

end

private

def author_params

params.require(:author).permit(:name, :email, :password, :password_confirmation)

end

end

```

Lastly, create a signup page and show page for each user under `views/authors/`.

```erb

<%# views/authors/show.html.erb %>

<%= @author.name %>

<%= @author.email %>

```

```erb

<%# views/authors/new.html.erb %>

<% provide(:title, 'Sign up') %>

<h1>Sign up</h1>

<div class="row">

<div class="col-md-6 col-md-offset-3">

<%= form_for(@author) do |f| %>

<%= f.label :name %>

<%= f.text_field :name, class: 'form-control' %>

<%= f.label :email %>

<%= f.email_field :email, class: 'form-control' %>

<%= f.label :password %>

<%= f.password_field :password, class: 'form-control' %>

<%= f.label :password_confirmation, "Confirmation" %>

<%= f.password_field :password_confirmation, class: 'form-control' %>

<%= f.submit "Create my account", class: "btn btn-primary" %>

<% end %>

</div>

</div>

```

# Sign in/out

`HTTP` is a stateless protocol. So we use sessions to maintain the user state.

The `new` action renders the login form, the `create` action actually creates a new session (logs the user in), and the `destroy` action deletes the session (logs the user out).

## Set up routes

Set up routes for `sessions`.

```ruby

Rails.application.routes.draw do

resources :authors

# Create new users

get '/signup', to: 'authors#new'

post '/signup', to: 'authors#create'

# Sessions

get '/login', to: 'sessions#new'

post '/login', to: 'sessions#create'

delete '/logout', to: 'sessions#destroy'

end

```

## Create a session controller

```

$ rails generate controller Sessions

```

Add the code to `SessionsController`.

```ruby

class SessionsController < ApplicationController

def new

end

def create

author = Author.find_by(email: params[:session][:email].downcase)

if author && author.authenticate(params[:session][:password])

log_in author

redirect_to author

else

render 'new'

end

end

def destroy

log_out

redirect_to root_url

end

end

```

Then add the code below to `SessionsHelper` and include the helper in `ApplicationController`.

The `session` used in the code below is the built-in `session` method in Rails.

```ruby

module SessionsHelper

def log_in(author)

session[:author_id] = author.id

end

def current_author

@current_author ||= Author.find_by(id: session[:author_id]) if session[:author_id]

end

def logged_in?

!current_author.nil?

end

def log_out

session.delete(:author_id)

@current_author = nil

end

end

```

```ruby

class ApplicationController < ActionController::Base

protect_from_forgery with: :exception

include SessionsHelper

end

```

## Remember me functionality

First of all, add a column called `remember_digest` to `Author`.

```

$ rails generate migration add_remember_digest_to_authors remember_digest:string

$ rake db:migrate

```

Update code in the Author model. Each method has its description in the code.

```ruby

class Author < ApplicationRecord

attr_accessor :remember_token

VALID_EMAIL_REGEX = /\A[\w+\-.]+@[a-z\d\-.]+\.[a-z]+\z/i.freeze

validates :name, presence: true, length: { maximum: 50 }

validates :email, presence: true, length: { maximum: 255 }, format: { with: VALID_EMAIL_REGEX }, uniqueness: { case_sensitive: false }

validates :password, length: { minimum: 6 }

has_secure_password

class << self

# Return the hash value of the given string

def digest(string)

cost = ActiveModel::SecurePassword.min_cost ? BCrypt::Engine::MIN_COST : BCrypt::Engine.cost

BCrypt::Password.create(string, cost: cost)

end

# Return a random token

def generate_token

SecureRandom.urlsafe_base64

end

end

# Create a new token -> hash it -> store the hash value in remember_digest in the DB.

def remember

self.remember_token = Author.generate_token

update_attribute(:remember_digest, Author.digest(remember_token))

end

# Check if the given token matches the digest stored in the DB

def authenticated?(remember_token)

return false if remember_digest.nil? # no digest stored, e.g. after forget

BCrypt::Password.new(remember_digest).is_password?(remember_token)

end

def forget

update_attribute(:remember_digest, nil)

end

end

```
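The remember-me flow boils down to: make a random token, keep only its digest on the server, and hand the raw token to the browser. Here is a rough, framework-free JavaScript sketch of that idea. SHA-256 stands in for bcrypt here, the token is hex rather than URL-safe base64, and all the function names are made up for illustration.

```javascript
const crypto = require('crypto');

// Return a random token (hex here; the Rails code uses URL-safe base64).
function generateToken() {
  return crypto.randomBytes(16).toString('hex');
}

// Return the digest of a token (SHA-256 as a stand-in for bcrypt).
function digest(token) {
  return crypto.createHash('sha256').update(token).digest('hex');
}

// Create a token, store only its digest on the author, return the raw token.
function remember(author) {
  const token = generateToken();
  author.rememberDigest = digest(token);
  return token;
}

// Check a token from the cookie against the stored digest.
function isAuthenticated(author, token) {
  if (!author.rememberDigest || typeof token !== 'string') return false;
  const expected = Buffer.from(author.rememberDigest, 'hex');
  const actual = Buffer.from(digest(token), 'hex');
  return crypto.timingSafeEqual(actual, expected);
}

// Drop the stored digest so old cookies stop working.
function forget(author) {
  author.rememberDigest = null;
}

const demoAuthor = { id: 1, rememberDigest: null };
const cookieToken = remember(demoAuthor);
console.log(isAuthenticated(demoAuthor, cookieToken));
forget(demoAuthor);
console.log(isAuthenticated(demoAuthor, cookieToken));
```

Because the server never stores the raw token, a leaked database row is not enough to forge the cookie.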

Update the session helper.

```ruby

module SessionsHelper

def log_in(author)

session[:author_id] = author.id

end

def current_author

if (author_id = session[:author_id])

@current_author ||= Author.find_by(id: author_id)

elsif (author_id = cookies.signed[:author_id])

author = Author.find_by(id: author_id)

if author && author.authenticated?(cookies[:remember_token])

log_in author

@current_author = author

end

end

end

def logged_in?

!current_author.nil?

end

# Make the author's session permanent

def remember(author)

author.remember

cookies.permanent.signed[:author_id] = author.id

cookies.permanent[:remember_token] = author.remember_token

end

# Delete the permanent session

def forget(author)

author.forget

cookies.delete(:author_id)

cookies.delete(:remember_token)

end

def log_out

forget(current_author)

session.delete(:author_id)

@current_author = nil

end

end

```

Update the session controller.

```ruby

class SessionsController < ApplicationController

def new

end

def create

author = Author.find_by(email: params[:session][:email].downcase)

if author && author.authenticate(params[:session][:password])

log_in author

params[:session][:remember_me] == '1' ? remember(author) : forget(author)

redirect_to author

else

render 'new'

end

end

def destroy

log_out if logged_in? # avoid calling forget on a nil author

redirect_to root_url

end

end

```

Lastly, add `remember_me` checkbox in the view.

```erb

<div class="login-form">

<h2>Log in</h2>

<%= form_for(:session, url: login_path) do |f| %>

<%= f.email_field :email, autofocus: true, autocomplete: "email", placeholder: 'Email', class: 'login-input'%><br/>

<%= f.password_field :password, autocomplete: "current-password", placeholder: 'Password', class: 'login-input' %>

<div class="check-field">

<%= f.check_box :remember_me %>

<%= f.label :remember_me %>

</div>

<%= f.submit "Log in", class: 'btn btn-outline-primary login-btn' %>

<% end %>

</div>

```

# Authorization

Add the following methods to the author controller.

```ruby

class AuthorsController < ApplicationController

before_action :authenticate_author, except: [:new, :create] # signing up must not require a login

#

# Other code

#

private

def author_params

params.require(:author).permit(:name, :email, :password, :password_confirmation)

end

def authenticate_author

unless logged_in?

flash[:danger] = "Please log in."

redirect_to login_url

end

end

def correct_author

@author = Author.find(params[:id])

redirect_to(root_url) unless current_author?(@author)

end

end

```

Add the `current_author?` method to the session helper.

```ruby

module SessionsHelper

def current_author?(author)

author == current_author

end

end

```

That's it! Now you should have a simple authentication functionality on your rails app!

# References

- [Ruby on Rails チュートリアル:実例を使って Rails を学ぼう](https://railstutorial.jp/chapters/sign_up?version=5.1#sec-unsuccessful_signups)

| k_penguin_sato |

286,534 | The happiest countries Worldwide | Happiness is not a simple goal but is about making progress when it's as elusive as ever. Being happy... | 0 | 2020-03-23T12:15:13 | https://dev.to/silviosmith3/the-happiest-countries-worldwide-27oe | Happiness is not a simple goal but is about making progress when it's as elusive as ever. Being happy often means continually finding satisfaction, contentment, a feeling of joy, and a sense that your life is meaningful during all kinds of problems that do not depend upon finding ease or comfort. Nobody is jolly or elated all the time, but some individuals are definitely more fulfilled or fortunate than others.

Depending on the purpose of the research, happiness is often measured using objective indicators (data on crime, income, civic engagement and health) and subjective methods, such as asking people how frequently they experience positive and negative emotions.

Finland is the happiest country for the third year in a row, with Helsinki topping the city ranking. Denmark is second in the 2020 study, followed by Switzerland in third place and Iceland in fourth. The UK ranks 13th and the USA 18th.

'A happy social environment, whether urban or rural, is one where people feel a sense of belonging, where they trust and enjoy each other and their shared institutions' said Professor John F. Helliwell of the University of British Columbia who co-edited the report.

'Generally, we find that the average happiness of city residents is more often than not higher than the average happiness of the general country population, especially in countries at the lower end of economic development.'

The least happy cities ranked were Kabul, Afghanistan; Sanaa, Yemen; Gaza, Palestine; Port-au-Prince, Haiti; and Juba, South Sudan.

These are just statistics. Happiness is inside everyone, and it doesn’t matter where you live, whether you have lots of money, or whether you wear expensive clothes. It is all about finding happiness inside yourself wherever you are: in Denmark, in Finland, in Afghanistan, or somewhere else. | silviosmith3 | |

286,545 | The Grand Summer Internship Fair | https://internshala.com/the-grand-summer-internship-fair?utm_source=eap_whatsapp&utm_medium=33547... | 0 | 2020-03-23T12:36:16 | https://dev.to/coolrocks/the-grand-summer-internship-fair-3cc9 | startup, codenewbie, contributorswanted | https://internshala.com/the-grand-summer-internship-fair?utm_source=eap_whatsapp&utm_medium=3354716

Hey, in the wake of COVID-19, this year's 'Grand Summer Internship Fair - India's largest online fair' brings 1,200+ work-from-home and summer internships at dream companies like OnePlus, Xiaomi, Capgemini, HCL, TVS, and many more. All this with a guaranteed stipend of up to INR 75,000! So, register for the fair now and also win rewards of up to INR 30,000.

https://internshala.com/the-grand-summer-internship-fair?utm_source=eap_whatsapp&utm_medium=3354716 | coolrocks |

286,678 | On the Coronavirus | The last few weeks have been tough for all of us. I wanted to share with you my personal experience, a... | 0 | 2020-03-23T14:45:10 | https://dev.to/stopachka/on-the-coronavirus-ebg | The last few weeks have been tough for all of us. I wanted to share with you my personal experience, and the mindset I’m relying on to move forward.

# Looking Back

## The calm before the storm

I remember first hearing about this in mid January. My friend was about to head out to China, and she was worried about it. She tends to over-worry, so I reassured her and jokingly told her how she would be fine.

One week in, my coworker visited from China. From his eyes and his stories I could tell this was serious. I called my friend and found out she came back early. Since I was 18, I constantly thought about exponential growth, tail risks, and black swans [1]. This fit the bill — I understood it conceptually. But it stopped there. Conceptually.

From February to mid March, I was going through the *motions* of preparation. Though I thought I understood that the world could shift in a few days, my understanding was only hypothetical. In early February I told my parents to buy up food, and ordered food in San Francisco as well. In some respects I was preparing, but in others, I was simply fitting preparation to the amount of time I had. I didn’t think it was important enough to change priorities.

As things escalated, I increased my attention, but never to the level that this deserved. I canceled my plan to go to New York and tried to distance more. Again this was going through the motions — even with all this happening, the most important thing on my mind was my existing work and personal projects. Even when we were told to work from home. Even as I saw the markets begin to crash, and a significant portion of my personal wealth disappear with them.

## The storm

Towards the end of the week, it began to hit me. I realized that we were in much worse shape than China. Complete social isolation was on the way. We could enter a serious recession.

In the same day, two of my friends and I decided to move to a cabin and isolate there. We thought we’d go for a month, starting Wednesday. By the evening we decided to go for two months, the very next day. The timing was on the nose, as the very next day shelter in place was announced in San Francisco.

The next 48 hours were a blur. We got everything together, I ended up liquidating my entire portfolio, and we got out of San Francisco. I remember feeling like I was in a war zone, making multiple drastic, high-impact decisions a day. After a night of being stranded, we arrived safe and sound in the cabin. After those 48 hours, my eyes cleared up.

# Looking Ahead

As we move towards the present, there’s uncertainty all around us. Many of us are worried about our loved ones. We’re worried about the future. Will hospitals flood with patients and will military cars carry coffins? How long will this last? In a matter of days, many have lost their jobs and many have lost significant wealth. Are we about to experience the great depression?

That’s a lot of uncertainty, but we can come together and manage it. Here’s how I’m thinking about it:

## Short Term

1. **Amor Fati [2]**

Character is forged through adversity and judged by action rather than thought. Will you let the panic consume you or will you strengthen your resolve? Will you focus inward or will you focus outward? Many of us have felt fear and when we feel fear, the reaction is knee-jerk. As you act, keep this top of mind: how you behave now, no matter what you think inside, is what determines your character.

Let this idea guide you gently: you can feel fear of course, and you can make mistakes, but keeping the idea top of mind will gently evolve and shape your behavior.

2. **Come together**

Some have experienced a significant loss of wealth, yet still won’t have to worry about their livelihood. Others have lost their jobs. Some have families that are in trouble. We have experienced pain, we are all in different circumstances, and we all have some ways that we can support our community.

We can’t fix this overnight. No big brother can make sweeping changes. Let’s do what we can as individuals, whether that’s financial support, a phone call, or a kind word--it all counts. Use this adversity to come together.

3. **Roll with the punches**

When there’s volatility and change, the panic and fear can make it hard to adjust. Yet, we must adjust. If you’re in quarantine — what can you do *because* of it? How can you grow and how can you be helpful? Adjusting will calm you and give you mental clarity: whether that’s adjusting what you do at work, reading new books, or finding new ways to connect — make the change.

_First time I’ve made a dish in 7 years_

## Long Term

I think long term, we face two primary fears.

**The first, health: will we lose lives?**

This is fundamentally up to us. What we do today will ultimately decide tomorrow. Physically isolate, wash your hands, and stay safe. This is directly under our control, so let’s give ourselves completely to it.

**The second, wealth: will we enter a great depression?**

We may feel that the world will change and we won’t keep up — what if we lose our wealth, lose our job, and our skills aren’t relevant anymore? What if we’re not safe, never mind that we may never achieve our dreams?

Let’s break this down.

**Kill the fear:** Even if you lose all your wealth, your job, and your skills aren’t relevant anymore, *you still have your wits*. The skills you have today didn’t just pop up when you were born. You **learned** them. You *will* learn and adapt to whatever is next.

**Evolve the vision:** Instead of judging your future by outcome (how much wealth you have), judge it by character: *what kind of person will you be?* You *will* be the kind of person who generates value, who is strengthened by adversity. Your character and your behavior is under your control.

## Putting it together

Thinking about it, both the short term and the long term fall under one idea: **focus on what you can control.** You can only control your character and your actions. So focus on that, and judge yourself only by that.

*Thanks to Bipin Suresh, whose stoic ideas inspired the realization that all of these actions fit under one umbrella.*

*Thanks to Jacky Wang and Luba Yudasina for convincing me to include my personal story.*

*Thanks to Bipin Suresh, Victoria Chang, Luba Yudasina, Mark Shlick, Jacky Wang, Aamir Patel, Nino Parunashvili, Daniel Woelfel, Avand Amiri, Abraham Sorock for reviewing drafts of this essay*

[1] Black Swans: Rare events in certain domains, where their magnitude is so large that in the long run they are all that matter. See Nassim Taleb’s [Incerto](https://en.wikipedia.org/wiki/Nassim_Nicholas_Taleb#Incerto) for the concept and some of the most profound essays on risk

[2] Amor Fati: “To love one’s fate”, from [Nietzsche](https://en.wikipedia.org/wiki/Amor_fati) | stopachka | |

286,696 | 100daysofcode Flutter | day 14/100 of #100daysofcode #Flutter Learning Flutter, from a Udemy course by @maxedapps Learning a... | 0 | 2020-03-23T15:19:26 | https://dev.to/triyono777/100daysofcode-flutter-jn2 | 100daysofcode, flutter | day 14/100 of #100daysofcode #Flutter

Learning Flutter from a Udemy course by @maxedapps

Learning about navigating between screens: push, pop, the stack concept, pushNamed, pushNamed with arguments, and sending data to the next screen.

https://github.com/triyono777/100-days-of-code/blob/master/log.md | triyono777 |

286,735 | React: Simple Auth Flow | Now that we know how to use useState, useReducer and Context, how can we put these concepts into our... | 5,550 | 2020-03-23T17:03:40 | https://dev.to/koralarts/react-simple-auth-flow-3fbf | tutorial, beginners, react | Now that we know how to use `useState`, `useReducer` and Context, how can we put these concepts into our projects? An easy example is to create a simple authentication flow.

We'll first setup the `UserContext` using React Context.

```react

import { createContext } from 'react'

const UserContext = createContext({

user: null,

hasLoginError: false,

login: () => null,

logout: () => null

})

export default UserContext

```

Now that we've created a context, we can start using it in our wrapping component. We'll also use `useReducer` to keep the state of our context.

```react

import React, { useReducer } from 'react'

import UserContext from './UserContext'

const INITIAL_STATE = {

user: null,

hasLoginError: false

}

const reducer = (state, action) => { ... }

const App = () => {

const [state, dispatch] = useReducer(reducer, INITIAL_STATE)

return (

<UserContext.Provider>

...

</UserContext.Provider>

)

}

```

Our reducer will handle 2 action types -- `login` and `logout`.

```react

const reducer = (state, action) => {

switch(action.type) {

case 'login': {

const { username, password } = action.payload

if (validateCredentials(username, password)) {

return {

...state,

hasLoginError: false,

user: { username } // assign the user here; store whatever user data you need

}

}

return {

...state,

hasLoginError: true,

user: null

}

}

case 'logout':

return {

...state,

user: null

}

default:

throw new Error(`Invalid action type: ${action.type}`)

}

}

```
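Since a reducer is just a plain function, you can try it out without React at all. Below is a self-contained sketch of the reducer above; `validateCredentials` is a hypothetical stand-in with a hard-coded demo account (a real app would ask a server), and the user object only holds the username.

```javascript
const INITIAL_STATE = { user: null, hasLoginError: false };

// Hypothetical stand-in for real credential checking.
const validateCredentials = (username, password) =>
  username === 'demo' && password === 'secret';

function reducer(state, action) {
  switch (action.type) {
    case 'login': {
      const { username, password } = action.payload;
      return validateCredentials(username, password)
        ? { ...state, hasLoginError: false, user: { username } }
        : { ...state, hasLoginError: true, user: null };
    }
    case 'logout':
      return { ...state, user: null };
    default:
      throw new Error(`Invalid action type: ${action.type}`);
  }
}

// Walk through a failed login, a successful login, and a logout.
const failed = reducer(INITIAL_STATE, {
  type: 'login',
  payload: { username: 'demo', password: 'nope' },
});
const loggedIn = reducer(failed, {
  type: 'login',
  payload: { username: 'demo', password: 'secret' },
});
const loggedOut = reducer(loggedIn, { type: 'logout' });

console.log(failed);
console.log(loggedIn);
console.log(loggedOut);
```

Each call returns a new state object, which is exactly what `useReducer` relies on to know when to re-render.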

After implementing the reducer, we can use `dispatch` to call these actions. We'll create functions that we'll pass to our provider's value.

```react

...

const login = (username, password) => {

dispatch({ type: 'login', payload: { username, password } })

}

const logout = () => {

dispatch({ type: 'logout' })

}

const value = {

user: state.user,

hasLoginError: state.hasLoginError,

login,

logout

}

return (

<UserContext.Provider value={value}>

...

</UserContext.Provider>

)

```

Now that our value gets updated when our state updates, and we passed the login and logout function; we'll have access to those values in our subsequent child components.

We'll make two components -- `LoginForm` and `UserProfile`. We'll render the form when there's no user and the profile when a user is logged in.

```react

...

<UserContext.Provider value={value}>

{state.user && <UserProfile />}

{!state.user && <LoginForm />}

</UserContext.Provider>

...

```

Let's start with the login form, we'll use `useState` to manage our form's state. We'll also grab the context so we have access to `login` and `hasLoginError`.

```react

const { login, hasLoginError } = useContext(UserContext)

const [username, setUsername] = useState('')

const [password, setPassword] = useState('')

const onUsernameChange = evt => setUsername(evt.target.value)

const onPasswordChange = evt => setPassword(evt.target.value)

const onSubmit = (evt) => {

evt.preventDefault()

login(username, password)

}

return (

<form onSubmit={onSubmit}>

...

{hasLoginError && <p>Error Logging In</p>}

<input type='text' value={username} onChange={onUsernameChange} />

<input type='password' value={password} onChange={onPasswordChange} />

...

</form>

)

```

If we're logged in we need access to the user object and the logout function.

```react

const { logout, user } = useContext(UserContext)

return (

<>

<h1>Welcome {user.username}</h1>

<button onClick={logout}>Logout</button>

</>

)

```

Now, you have a simple authentication flow in React using different ways we can manage our state!

[Code Sandbox](https://codesandbox.io/s/react-context-authentication-otjqv) | koralarts |

286,748 | How to style forms with CSS: A beginner’s guide | Written by Supun Kavinda✏️ Apps primarily collect data via forms. Take a generic sign-up form, for... | 0 | 2020-04-15T13:51:32 | https://blog.logrocket.com/how-to-style-forms-with-css-a-beginners-guide/ | css, tutorial | ---

title: How to style forms with CSS: A beginner’s guide

published: true

date: 2020-03-23 16:00:30 UTC

tags: css, tutorial

canonical_url: https://blog.logrocket.com/how-to-style-forms-with-css-a-beginners-guide/

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/eovw1sezwhviumgl2smt.png

---

**Written by [Supun Kavinda](https://blog.logrocket.com/author/supunkavinda/)**✏️

Apps primarily collect data via forms. Take a generic sign-up form, for example: there are several fields for users to input information such as their name, email, etc.

In the old days, websites just had plain, boring HTML forms with no styles. That was before CSS changed everything. Now we can create more interesting, lively forms using the latest features of CSS.

Don’t just take my word for it. Below is what a typical HTML form looks like without any CSS.

Here’s that same form jazzed up with a bit of CSS.

In this tutorial, we’ll show you how to recreate the form shown above as well as a few other amazing modifications you can implement to create visually impressive, user-friendly forms.

We’ll demonstrate how to style forms with CSS in six steps:

1. Setting [`box-sizing`](https://developer.mozilla.org/en-US/docs/Web/CSS/box-sizing)

2. CSS selectors for input elements

3. Basic styling methods for text input fields

4. Styling other input types

5. UI pseudo-classes

6. Noncustomizable inputs

Before we dive in, it’s important to understand that there is no specific style for forms. The possibilities are limited only by your imagination. This guide is meant to help you get started on the path to creating your own unique designs with CSS.

Let’s get started!

## 1. Setting `box-sizing`

I usually set `* {box-sizing:border-box;}` not only for forms, but for entire webpages. With it set, the width of every element includes its padding and border.

For example, set the width and padding as follows.

```jsx

.some-class {

width:200px;

padding:20px;

}

```

Without `box-sizing:border-box`, `.some-class` renders 240px wide (200px of content plus 20px of padding on each side), which can be an issue. That’s why most developers use `border-box` for all elements.

Below is a better version of the code. It also supports the `:before` and `:after` pseudo-elements.

```jsx

*, *:before, *:after {

box-sizing: border-box;

}

```

Tip: The `*` selector selects all the elements in the document.
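If the arithmetic behind the two `box-sizing` modes feels abstract, this little JavaScript sketch spells it out. `renderedWidth` is a made-up helper, all values are in pixels, and it assumes the padding and border fit inside the declared width (browsers grow the box if they don't).

```javascript
// Compute how wide an element ends up on screen for a given box-sizing.
function renderedWidth({ width, padding = 0, border = 0, boxSizing = 'content-box' }) {
  if (boxSizing === 'border-box') {
    // The declared width already includes padding and border.
    return width;
  }
  // content-box: padding and border are added on both sides.
  return width + 2 * padding + 2 * border;
}

const contentBoxWidth = renderedWidth({ width: 200, padding: 20 });
const borderBoxWidth = renderedWidth({ width: 200, padding: 20, boxSizing: 'border-box' });

console.log(contentBoxWidth); // 240
console.log(borderBoxWidth);  // 200
```

With `border-box`, the number you write in `width` is the number you get on screen, which makes form layouts much easier to reason about.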

## 2. CSS selectors for input elements

The easiest way to select input elements is to use [CSS attribute selectors](https://dev.to/bnevilleoneill/advanced-css-selectors-for-common-scenarios-3gl6).

```jsx

input[type=text] {

/* input elements with type="text" attribute */

}

input[type=password] {

/* input elements with type="password" attribute */

}

```

These selectors will select every input element of the given type in the document. If you need to target specific inputs, you’ll need to add classes to the elements.

```jsx

<input type="text" class="signup-text-input" />

```

Then:

```jsx

.signup-text-input {

/* styles here */

}

```

## 3. Basic styling methods for single-line text input fields

Single-line fields are the most common input fields used in forms. Usually, a single-line text input is a simple box with a border (this depends on the browser).

Here’s the HTML markup for a single-line field with a placeholder.

```html
<input type="text" placeholder="Name" />
```

You can use the following CSS properties to make this input field more attractive.

- Padding (to add inner spacing)

- Margin (to add a margin around the input field)

- Border

- Box shadow

- Border radius

- Width

- Font

Let’s zoom in on each of these properties.

### Padding

Adding some inner space to the input field can help improve clarity. You can accomplish this with the `padding` property.

```css
input[type=text] {
  padding: 10px;
}
```

### Margin

If there are other elements near your input field, you may want to add a margin around it to prevent clustering.

```css
input[type=text] {
  padding: 10px;
  margin: 10px 0; /* add top and bottom margin */
}
```

### Border

In most browsers, text input fields have borders, which you can customize.

```css
.border-customized-input {
  border: 2px solid #eee;
}
```

You can also remove a border altogether.

```css
.border-removed-input {
  border: 0;
}
```

Tip: Be sure to add a background color or `box-shadow` when the border is removed. Otherwise, users won’t see the input.

Some web designers prefer to display only the bottom border because it feels a bit like writing on a line in a notebook.

```css
.border-bottom-input {
  border: 0; /* remove default border */
  border-bottom: 1px solid #eee; /* add only bottom border */
}
```

### Box shadow

You can use the CSS [`box-shadow`](https://www.w3schools.com/cssref/css3_pr_box-shadow.asp) property to add a drop shadow. You can achieve a range of effects by playing around with the property’s five values: horizontal offset, vertical offset, blur radius, spread radius, and color.

```css
input[type=text] {
  padding: 10px;
  border: 0;
  box-shadow: 0 0 15px 4px rgba(0,0,0,0.06);
}
```

### Border radius

The `border-radius` property can have a massive impact on the feel of your forms. By curving the edges of the boxes, you can significantly alter the appearance of your input fields.

```css
.rounded-input {
  padding: 10px;
  border-radius: 10px;
}
```

You can achieve another look and feel altogether by using `box-shadow` and `border-radius` together.
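
As a rough sketch of that combination, the rule below reuses the shadow and radius values from the earlier snippets. The class name `.soft-input` is hypothetical.

```css
.soft-input {
  padding: 10px;
  border: 0; /* the shadow replaces the border as the visible edge */
  border-radius: 10px;
  box-shadow: 0 0 15px 4px rgba(0,0,0,0.06);
}
```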

### Width

Use the `width` property to set the width of inputs.

```css
input {
  width: 100%;
}
```

### Fonts

Most browsers use a different font family and size for form elements. If necessary, we can inherit the font from the document.

```css
input, textarea {
  font-family: inherit;
  font-size: inherit;
}
```

## 4. Styling other input types

You can style other input types such as text area, radio button, checkbox, and more. Let’s take a closer look.

### Text areas

Text areas are similar to text inputs except that they allow multiline inputs. You’d typically use these when you want to collect longer-form data from users, such as comments, messages, etc. You can use all the basic CSS properties we discussed previously to style text areas.

The [`resize`](https://www.w3schools.com/cssref/css3_pr_resize.asp) property is also very useful in text areas. In most browsers, text areas are resizable along both the x and y axes (value: `both`) by default. You can set it to `both`, `horizontal`, `vertical`, or `none`.

Check out this text area I styled:

{% codepen https://codepen.io/SupunKavinda/pen/dyomzez %}

In this example, I used `resize:vertical` to allow only vertical resizing. This practice is used in most forms because it prevents annoying horizontal scrollbars.
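
For readers who cannot open the embed, here is a minimal sketch of a text area styled along these lines. It is not the exact code from the CodePen; the sizes are assumptions chosen for illustration.

```css
textarea {
  width: 100%;
  min-height: 100px;
  padding: 10px;
  font-family: inherit;
  font-size: inherit;
  resize: vertical; /* users can drag the height but not the width */
}
```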

Note: If you need to create auto-resizing text areas, you’ll need to use a [JavaScript approach](https://stackoverflow.com/questions/454202/creating-a-textarea-with-auto-resize), which is outside the scope of this article.

### Checkboxes and radio buttons

The default checkbox and radio buttons are hard to style and require more complex CSS (and HTML).

To style a checkbox, use the following HTML code.

```html
<label>Name
  <input type="checkbox" />
  <span></span>
</label>
```

A few things to note:

- Since we’re using `<label>` to wrap the `<input>`, clicking anything inside the `<label>` also clicks the `<input>`

- We’ll hide the `<input>` because browsers don’t allow us to modify it much

- `<span>` creates a custom checkbox

- We’ll use the `input:checked` [pseudo-class selector](https://developer.mozilla.org/en-US/docs/Learn/CSS/Building_blocks/Selectors/Pseudo-classes_and_pseudo-elements) to get the checked status and style the custom checkbox

Here’s a custom checkbox (see the comments in the CSS for more explanations):

{% codepen https://codepen.io/SupunKavinda/pen/yLNKQBo %}

Here’s a custom radio button:

{% codepen https://codepen.io/SupunKavinda/pen/eYNMQNM %}

We used the same concept (`input:checked`) to create custom elements in both examples.

In browsers, checkboxes are box-shaped while radio buttons are round. It’s best to keep this convention in custom inputs to avoid confusing the user.
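
The `input:checked` technique can be sketched as follows. This is a simplified illustration rather than the code from the CodePens above; it assumes the `<label>`, `<input type="checkbox">`, and `<span>` markup shown earlier, and the sizes and colors are arbitrary.

```css
label {
  display: inline-flex;
  align-items: center;
  gap: 8px;
  cursor: pointer;
}

/* Hide the native checkbox visually but keep it focusable */
label input[type=checkbox] {
  position: absolute;
  opacity: 0;
  width: 0;
  height: 0;
}

/* The <span> is the visible box; it stays square like a native checkbox */
label input[type=checkbox] + span {
  width: 18px;
  height: 18px;
  border: 2px solid #3F51B5;
  border-radius: 3px;
  background-color: #fff;
}

/* Fill the box when the hidden input is checked */
label input[type=checkbox]:checked + span {
  background-color: #3F51B5;
}

/* Keep keyboard focus visible on the custom box */
label input[type=checkbox]:focus + span {
  box-shadow: 0 0 0 3px rgba(63,81,181,0.3);
}
```

For a radio button, the same rules should work with `type=radio` in the selectors and `border-radius: 50%` on the `<span>`.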

### Select menus

Select menus enable users to select an item from multiple choices.

```html
<select name="animal">
  <option value="lion">Lion</option>
  <option value="tiger">Tiger</option>
  <option value="leopard">Leopard</option>
</select>
```

You can style the `<select>` element to look more engaging.

```css
select {
  width: 100%;
  padding: 10px;
  border-radius: 10px;
}
```

However, you cannot style the dropdown (or `<option>` elements) because they are styled by default depending on the OS. The only way to style those elements is to use [custom dropdowns with JavaScript](https://medium.com/@kyleducharme/developing-custom-dropdowns-with-vanilla-js-css-in-under-5-minutes-e94a953cee75).

### Buttons

Like most elements, buttons have default styles.

```html
<button>Click Me</button>
```

Let’s spice this up a bit.

```css
button {
  /* remove default behavior */
  -webkit-appearance: none;
  appearance: none;

  /* usual styles */
  padding: 10px;
  border: none;
  background-color: #3F51B5;
  color: #fff;
  font-weight: 600;
  border-radius: 5px;
  width: 100%;
}
```

## 5. UI pseudo-classes

Below are some [UI pseudo-classes](https://developer.mozilla.org/en-US/docs/Learn/Forms/UI_pseudo-classes) that are commonly used with form elements.

These can be used to show notices based on an element’s attributes:

- `:required`

- `:valid` and `:invalid`

- `:checked` (we already used this)

These can be used to create effects on each state:

- `:hover`

- `:focus`

- `:active`

### Generated messages with `:required`

To show a message that input is required:

```html
<label>Name
  <input type="text">
  <span></span>
</label>
<label>Email
  <input type="text" required>
  <span></span>
</label>

<style>
  label {
    display: block;
  }
  /* show a message after every required input */
  input:required + span:after {
    content: "Required";
  }
</style>
```

If you remove the `required` attribute with JavaScript, the `"Required"` message will be removed automatically.

Note: `<input>` cannot contain other elements. Therefore, it cannot contain the `:after` or `:before` pseudo-elements. Hence, we need to use another `<span>` element.

We can do the same thing with the `:valid` and `:invalid` pseudo-classes.
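
A minimal sketch of that idea follows. It assumes the same `<label>`, `<input>`, `<span>` structure as above, with the input given a constraint the browser can check, for example `type="email"`. A plain `type="text"` input with no constraints always counts as valid. The message text and colors are placeholders.

```css
/* Shown when the value satisfies the input's constraints */
input:valid + span:after {
  content: "Looks good";
  color: #2e7d32;
}

/* Shown when the value fails a constraint such as type="email" or required */
input:invalid + span:after {
  content: "Invalid";
  color: #c62828;
}
```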

### `:hover` and `:focus`

`:hover` selects an element when the mouse pointer hovers over it. `:focus` selects an element when it is focused.

These pseudo-classes are often used to create transitions and slight visual changes. For example, you can change the width, background color, border color, shadow strength, etc. Using the `transition` property with these properties makes those changes much smoother.

Here are some hover effects on form elements (try hovering over the elements).

{% codepen https://codepen.io/SupunKavinda/pen/yLNKZqg %}

When users see elements subtly change when they hover over them, they get the sense that the element is actionable. This is an important consideration when designing form elements.
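
If the embed is unavailable, the following sketch shows the general pattern of pairing `:hover` and `:active` with `transition`. It is not the code from the CodePen; the colors and durations are assumptions.

```css
/* Animate only the properties that change between states */
input[type=text],
button {
  transition: border-color 0.2s ease, box-shadow 0.2s ease, background-color 0.2s ease;
}

input[type=text] {
  border: 2px solid #eee;
}

/* Darken the border while the pointer is over the field */
input[type=text]:hover {
  border-color: #3F51B5;
}

/* Slightly darker background on hover, pressed-in shadow while clicking */
button:hover {
  background-color: #303F9F;
}

button:active {
  box-shadow: inset 0 2px 4px rgba(0,0,0,0.2);
}
```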

Did you notice that (in some browsers) a blue outline appears when focusing on form elements? You can use the `:focus` pseudo-class to remove it and add more effects when the element is focused.

The following code removes the focus outline for all elements.

```css
*:focus {
  outline: none !important;
}
```

To add your own focus styles in place of the outline, so that the focused element is still easy to spot:

```css
input[type=text]:focus {
  background-color: #ffd969;
  border-color: #000;
  /* and any other style */
}
```

Have you seen search inputs that scale when focused? Try this input.

{% codepen https://codepen.io/SupunKavinda/pen/KKpoJJa %}
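
One way to sketch such an expanding search input is to animate `width` on `:focus`. The class name `.search-input` and the sizes are assumptions for illustration, not taken from the CodePen.

```css
.search-input {
  width: 150px;
  padding: 10px;
  transition: width 0.3s ease;
}

/* Grow the field while it has focus; it shrinks back on blur */
.search-input:focus {
  width: 300px;
}
```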

## 6. Noncustomizable inputs

Styling form elements has historically been a tall order. There are some form elements that we don’t have much control over styling. For example:

- `<input type="color">`

- `<input type="file">`

- `<progress>`

- `<option>`, `<optgroup>`, `<datalist>`

These elements are provided by the browser and styled based on the OS. The only way to style these elements is to use custom controls, which are created using stylable HTML elements such as `div`, `span`, etc.

For example, when styling `<input type="file">`, we can hide the default input and use a custom button.
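
A rough sketch of that approach is shown below. It assumes markup along the lines of `<label class="file-button">Choose file<input type="file"></label>`, where the class name `file-button` is hypothetical. Clicking the label opens the file dialog because the label wraps the input.

```css
/* The label acts as the visible button */
.file-button {
  display: inline-block;
  padding: 10px;
  border-radius: 5px;
  background-color: #3F51B5;
  color: #fff;
  cursor: pointer;
}

/* Hide the native control but keep it in the document so the label can trigger it */
.file-button input[type=file] {
  position: absolute;
  opacity: 0;
  width: 0;
  height: 0;
}
```

Note that this hides the selected file name as well; displaying it again would need a little JavaScript.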

Custom form controls are available for most major JavaScript libraries. You can find them on [GitHub](https://github.com/).

## Conclusion