id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

220,272 | List Comprehension in D | This post is a language comparison coming out of this great article on list comprehension. D does not... | 2,209 | 2019-12-13T08:12:31 | https://dev.to/jessekphillips/list-comprehension-in-d-4hpi | dlang, tutorial, ranges | This post is a language comparison coming out of this great article on list comprehension. D does not have List comprehension.

{% link https://dev.to/dvirtual/list-comprehensions-om4 %}

D generally operates on ranges rather than allocated arrays; if you need an array, just add `.array`. For more on arrays, review:

{% link https://dev.to/jessekphillips/slicing-and-dicing-arrays-5akg %}

My explanation will be limited to D specific differences, but please ask for further details if something is not clear.

# List

```dlang

import std;

void main()

{

// arr = [i for i in range(10)]

writeln(iota(10));

// [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]

}

```

`iota` is an uncommon name, but it is the equivalent of Python's `range`.

# Dictionary

```dlang

// y = {i:v for i,v in enumerate(x)}

auto x = [2,45,21,45];

auto y = enumerate(x).assocArray;

writeln(y);

// [0:2, 3:45, 2:21, 1:45]

```

As mentioned, D prefers unallocated range manipulation, which tends to mean there is no index. `enumerate` creates a tuple with a count and a value, and `assocArray` takes a range of tuples to build an associative array (dictionary).

# Conditionals

```dlang

// arr = [i for i in range(10) if i % 2 == 0]

auto arr = iota(10)

.filter!(i => i % 2 == 0);

writeln(arr);

// [0, 2, 4, 6, 8]

```

```dlang

// arr = ["Even" if i % 2 == 0 else "Odd" for i in range(10)]

auto arr2 = iota(10)

.map!(x => x % 2 ? "Odd" : "Even");

writeln(arr2);

//["Even", "Odd", "Even", "Odd", "Even", "Odd", "Even", "Odd", "Even", "Odd"]

```

# Nested for loop

```dlang

// arr = [[i for i in range(5)] for j in range(5)]

auto arr3 = iota(5)

.map!(j => iota(5));

writeln(arr3);

// [[0, 1, 2, 3, 4], [0, 1, 2, 3, 4], [0, 1, 2, 3, 4], [0, 1, 2, 3, 4], [0, 1, 2, 3, 4]]

```

```dlang

// arr = [(i,j) for j in range(2) for i in range(2)]

auto arr4 = iota(2).cartesianProduct(iota(2));

writeln(arr4);

//[Tuple!(int, int)(0, 0), Tuple!(int, int)(0, 1), Tuple!(int, int)(1, 0), Tuple!(int, int)(1, 1)]

```

I find that D makes this behavior very clear.

Tuples being a library provided type, their string representation is a little more verbose.

# Flatten 2D Array

```dlang

// arr = [i for j in x for i in j]

auto x2 = [[0, 1, 2, 3, 4],

[5, 6, 7, 8, 9]];

auto arr5 = x2.joiner;

writeln(arr5);

// [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]

```

# Conclusion

D is a statically typed language; since I did not convert everything back into an array, a unique `arr` variable was needed for each example.

Personally, I think D represents the behavior more clearly than Python's list comprehension. Even the conditional output selection, which was more concise in D, was represented reasonably.

This does not touch on the other algorithms that D provides and that can be applied to ranges.

Python is praised for its clear syntax and readability; well, I must be spoiled, because it makes me cringe at almost everything I see. | jessekphillips |

220,334 | Angular counter directive | https://stackblitz.com/edit/angular-p9xny6?file=src%2Fapp%2Fapp.component.ts | 0 | 2019-12-13T09:16:52 | https://dev.to/anhdung11cdt2/angular-counter-directive-1j2h | https://stackblitz.com/edit/angular-p9xny6?file=src%2Fapp%2Fapp.component.ts | anhdung11cdt2 | |

220,345 | Guessing all of the passwords! Advent of Code 2019 - Day 4 | JavaScript walkthrough of Advent of Code 2019 (day 4) | 3,910 | 2019-12-13T09:57:07 | https://dev.to/thibpat/guessing-all-of-the-passwords-advent-of-code-2019-day-4-36i5 | challenge, adventofcode, javascript, video | ---

title: Guessing all of the passwords! Advent of Code 2019 - Day 4

published: true

description: JavaScript walkthrough of Advent of Code 2019 (day 4)

tags: challenge, AdventOfCode, javascript, video

series: Advent of Code 2019 in Javascript

---

{% youtube 8ruAKdZf9fY %}

| thibpat |

220,354 | Check Object equality in javascript | Check whether two objects are equal or not in javascript function isDeepEqual(obj1, obj2, testPro... | 0 | 2019-12-13T10:19:08 | https://dev.to/isamrish/check-object-equality-in-javascript-ph8 | javascript, objects | ---

title: Check Object equality in javascript

published: true

description:

tags: javascript, objects, js

---

Check whether two objects are equal or not in javascript

```

function isDeepEqual(obj1, obj2, testPrototypes = false) {

if (obj1 === obj2) {

return true

}

if (typeof obj1 === "function" && typeof obj2 === "function") {

return obj1.toString() === obj2.toString()

}

if (obj1 instanceof Date && obj2 instanceof Date) {

return obj1.getTime() === obj2.getTime()

}

if (

Object.prototype.toString.call(obj1) !==

Object.prototype.toString.call(obj2) ||

typeof obj1 !== "object"

) {

return false

}

const prototypesAreEqual = testPrototypes

? isDeepEqual(

Object.getPrototypeOf(obj1),

Object.getPrototypeOf(obj2),

true

)

: true

const obj1Props = Object.getOwnPropertyNames(obj1)

const obj2Props = Object.getOwnPropertyNames(obj2)

return (

obj1Props.length === obj2Props.length &&

prototypesAreEqual &&

obj1Props.every(prop => isDeepEqual(obj1[prop], obj2[prop]))

)

}

``` | isamrish |

220,361 | Connecting to ODBC databases from Python with pyodbc | Steps to connect to ODBC database in Python with pyodbc module Import the pyodbc module and create... | 0 | 2019-12-13T10:37:43 | https://dev.to/andreasneuman/connecting-to-odbc-databases-from-python-with-pyodbc-1p5j | odbc, software, python, driver | Steps to connect to ODBC database in Python with pyodbc module

1. Import the pyodbc module and create a connection to the database

2. Execute an INSERT statement to test the connection to the database.

3. Retrieve a result set from a query, iterate over it and print out all records.

Key Features

- Direct mode

- SQL data type mapping

- Secure connection

- ANSI SQL-92 standard support for cloud services

Learn more at [https://www.devart.com/odbc/python/](https://www.devart.com/odbc/python/) | andreasneuman |

220,482 | How to build a Twitter bot with NodeJs | twitter bot tutorial | 0 | 2019-12-13T14:37:49 | https://dev.to/codesource/how-to-build-a-twitter-bot-with-nodejs-1nlh | node | ---

title: How to build a Twitter bot with NodeJs

published: true

description: twitter bot tutorial

tags: Nodejs

---

Building a Twitter bot using their [API](https://codesource.io/how-to-consume-restful-apis-with-axios/) is one of the fundamental applications of the Twitter API. To build a Twitter bot with Nodejs, you’ll need to take these steps below before proceeding:

- Create a new account for the bot.

- Apply for API access at developer.twitter.com.

- Ensure you have NodeJS and NPM installed on your machine.

We’ll be building a Twitter bot with Nodejs to track a specific hashtag then like and retweet every post containing that hashtag.

## Getting up and running

First, initialize your Node app by running `npm init` and filling in the required parameters. Next, install Twit, an NPM package that makes it easy to interact with the Twitter API.

~~~bash

$ npm install twit --save

~~~

Now, go to your Twitter developer dashboard to create a new app so you can obtain the consumer key, consumer secret, access token key and access token secret. After that, you need to set up these keys as environment variables to use in the app.
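Since the bot reads its credentials from environment variables, a small startup check can catch a missing key early. The sketch below is not part of the original bot: `requireEnv` is a hypothetical helper, and the variable names are simply the ones used in the snippets in this post.

```javascript
// Hypothetical helper (not part of Twit): read the listed variables from an
// environment object and fail fast if any of them is missing or empty.
function requireEnv(names, env = process.env) {
  const missing = names.filter(name => !env[name]);
  if (missing.length > 0) {
    throw new Error('Missing environment variables: ' + missing.join(', '));
  }
  // Return only the requested variables as a plain object.
  return Object.fromEntries(names.map(name => [name, env[name]]));
}

// Variable names as used in this post's index.js.
const REQUIRED_KEYS = [
  'APPLICATION_CONSUMER_KEY_HERE',
  'APPLICATION_CONSUMER_SECRET_HERE',
  'ACCESS_TOKEN_HERE',
  'ACCESS_TOKEN_SECRET_HERE'
];
```

Calling `requireEnv(REQUIRED_KEYS)` before constructing the Twit client would stop the app with a readable message instead of failing later on the first API request.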

## Building the bot

Now in the app’s entry file, initialize Twit with the secret keys from your Twitter app.

~~~js

// index.js

const Twit = require('twit');

const T = new Twit({

consumer_key: process.env.APPLICATION_CONSUMER_KEY_HERE,

consumer_secret: process.env.APPLICATION_CONSUMER_SECRET_HERE,

access_token: process.env.ACCESS_TOKEN_HERE,

access_token_secret: process.env.ACCESS_TOKEN_SECRET_HERE

});

~~~

### Listening for events

Twitter’s streaming API gives access to two streams: the user stream and the public stream. We’ll be using the public stream, which is a stream of all public tweets; you can read more about them in the documentation.

We’re going to be tracking a keyword from the stream of public tweets, so the bot is going to track tweets that contain “#JavaScript” (not case sensitive).

~~~js

// Tracking keywords

// index.js

const Twit = require('twit');

const T = new Twit({

consumer_key: process.env.APPLICATION_CONSUMER_KEY_HERE,

consumer_secret: process.env.APPLICATION_CONSUMER_SECRET_HERE,

access_token: process.env.ACCESS_TOKEN_HERE,

access_token_secret: process.env.ACCESS_TOKEN_SECRET_HERE

});

// start stream and track tweets

const stream = T.stream('statuses/filter', {track: '#JavaScript'});

// event handler

stream.on('tweet', tweet => {

// perform some action here

});

~~~

### Responding to events

Now that we’ve been able to track keywords, we can now perform some magic with tweets that contain such keywords in our event handler function.

The Twitter API allows you to interact with the platform as you normally would: you can create new tweets, like, retweet, reply, follow, delete and more. We’re going to use only two of these functions: like and retweet.

~~~js

// index.js

const Twit = require('twit');

const T = new Twit({

consumer_key: process.env.APPLICATION_CONSUMER_KEY_HERE,

consumer_secret: process.env.APPLICATION_CONSUMER_SECRET_HERE,

access_token: process.env.ACCESS_TOKEN_HERE,

access_token_secret: process.env.ACCESS_TOKEN_SECRET_HERE

});

// start stream and track tweets

const stream = T.stream('statuses/filter', {track: '#JavaScript'});

// use this to log errors from requests

function responseCallback (err, data, response) {

if (err) console.log(err); // only log when the request actually failed

}

// event handler

stream.on('tweet', tweet => {

// retweet

T.post('statuses/retweet/:id', {id: tweet.id_str}, responseCallback);

// like

T.post('favorites/create', {id: tweet.id_str}, responseCallback);

});

~~~

### Retweet

To retweet, we simply post to the `statuses/retweet/:id` endpoint, passing in an object that contains the id of the tweet. The third argument is a callback function that gets called after a response is received; though optional, it is still a good idea to get notified when an error comes in.

### Like

To like a tweet, we send a post request to the `favorites/create` endpoint, also passing in the object with the id and an optional callback function.
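Because the retweet and like requests share one callback, the log does not say which request failed. Here is a minimal sketch of one way around that, assuming only the `(err, data, response)` callback shape used above; `makeResponseCallback` and its `log` parameter are hypothetical names, not part of Twit.

```javascript
// Hypothetical helper: build a callback with the (err, data, response)
// shape used above that reports which action failed.
function makeResponseCallback(action, log = console.error) {
  return function responseCallback(err, data, response) {
    if (err) {
      // Only log when the request actually failed.
      log(`${action} failed: ${err.message || err}`);
    }
  };
}

// Intended use inside the 'tweet' handler (T and tweet come from the bot code):
// T.post('statuses/retweet/:id', { id: tweet.id_str }, makeResponseCallback('retweet'));
// T.post('favorites/create', { id: tweet.id_str }, makeResponseCallback('like'));
```

Passing a custom `log` function is only there to make the helper easy to test or to redirect to another logger; by default it writes to `console.error`.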

## Deployment

Now the bot is ready to be deployed. I use Heroku to deploy Node apps, so I’ll give a brief walkthrough below.

First, download the Heroku CLI tool; here’s the documentation. The tool requires git in order to deploy. There are other ways, but deploying from git seems easier; here’s the documentation.

Heroku puts your app to sleep after a period of inactivity, which some may see as a bug; see the fix here.

You can read more in the Twitter documentation to build larger apps. It has all the information you need.

Here is the [source code](https://github.com/Dunebook/js-bot) in case you might be interested.

Source - [CodeSource.io](https://codesource.io/how-to-build-a-twitter-bot-with-nodejs/) | codesource |

220,554 | smart contract machine learning DEV | good morning , i want to implement a new smart contract for clustering data over blockchain. about... | 0 | 2019-12-13T17:10:12 | https://dev.to/gaviotamina/smart-contract-machine-learning-dev-52jk | good morning ,

I want to implement a new smart contract for clustering data on a blockchain.

For the clustering algorithm, I want to start with a simple one, k-means for example.

The smart contract gets its data from IPFS.

What architecture could my smart contract have, and where should I start?

Regards. | gaviotamina | |

220,564 | How to test if an element is in the viewport | This article was originally posted on pelumicodes.com There can be several scenarios that may requir... | 0 | 2019-12-13T17:41:52 | https://pelumicodes.com/post/how-to-test-if-an-element-is-in-the-viewport/ | jquery, javascript, angular, browser |

This article was originally posted on [pelumicodes.com](https://pelumicodes.com)

Several scenarios may require you to determine whether an element is currently in the viewport of your browser: you might want to implement lazy loading, or animate divs whenever they show up on the user's screen.

> We will not be using jQuery :visible selector because it selects elements based on display CSS property or opacity.

To do this with jQuery, we first have to define a function `isInViewport` that checks if the element is in the browser's viewport:

```javascript

$.fn.isInViewport = function() {

var elementTop = $(this).offset().top;

var elementBottom = elementTop + $(this).outerHeight();

var viewportTop = $(window).scrollTop();

var viewportBottom = viewportTop + $(window).height();

return elementBottom > viewportTop && elementTop < viewportBottom;

};

$(window).on('resize scroll', function() {

if ($('.foo').isInViewport()) {

// code here

}

});

```

`elementTop` is the top position of the element: `offset().top` returns the distance from the top of the document to the top of the element. (This differs from `.position()`, which is measured relative to the [offsetParent](https://developer.mozilla.org/en-US/docs/Web/API/HTMLelement/offsetParent) node.)

To get the bottom position of the element, we add the height of the element to `offset().top`. [outerHeight](https://api.jquery.com/outerheight/) gives the height of the element including the border and padding.

`viewportTop` is the top of the viewport: `scrollTop()` returns the number of pixels the window has been scrolled vertically.

To get `viewportBottom`, we add the height of the window to `viewportTop`.

The `isInViewport` function returns `true` if any part of the element is in the viewport, so you can run whatever code you want when the condition is met.
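The comparison at the heart of `isInViewport` is a plain interval-overlap test, so it can be sketched without jQuery. In the example below, `overlapsViewport` is a hypothetical helper name, and the numbers passed to it stand in for the values that `offset().top`, `outerHeight()`, `scrollTop()` and `height()` would return.

```javascript
// Hypothetical helper: true when the element's vertical span
// [elementTop, elementTop + elementHeight] overlaps the viewport's span
// [viewportTop, viewportTop + viewportHeight].
function overlapsViewport(elementTop, elementHeight, viewportTop, viewportHeight) {
  const elementBottom = elementTop + elementHeight;
  const viewportBottom = viewportTop + viewportHeight;
  // Same condition as in isInViewport above.
  return elementBottom > viewportTop && elementTop < viewportBottom;
}

// Viewport showing pixels 500..1100 of the page:
console.log(overlapsViewport(600, 200, 500, 600));  // true: element fully inside
console.log(overlapsViewport(100, 200, 500, 600));  // false: element scrolled past
console.log(overlapsViewport(1000, 200, 500, 600)); // true: element partially visible
```

Note that a partially visible element counts as being in the viewport; requiring full visibility would mean comparing `elementTop >= viewportTop && elementBottom <= viewportBottom` instead.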

***

Plugins that do the job for you

* [Vanilla JS helper function](https://vanillajstoolkit.com/helpers/isinviewport/).

* https://github.com/customd/jquery-visible

* http://www.appelsiini.net/projects/viewport

cc: [stackoverflow answer](https://stackoverflow.com/questions/20791374/jquery-check-if-element-is-visible-in-viewport/33979503#33979503) | pelumicodes |

220,596 | Three Small Relational Efforts That Have Big Impacts | The interactions we have with other people help form the environment we work in. If we treat people w... | 0 | 2019-12-13T20:35:18 | https://dev.to/thebuffed/three-small-relational-efforts-that-have-big-impacts-5do4 | career | The interactions we have with other people help form the environment we work in. If we treat people with respect, care, and interest, the environment will inevitably become an attraction.

There are a few things I try to do on my quest to be a better human to help others feel comfortable and cared for. I know these things will not resonate with everyone, but I have experienced better relationships with my peers due in part to these habits.

## 1. Physically take note of things people mention

When someone mentions something about themselves, I try to *write it down*. It's okay to study your relationships with others, and taking notes will make sure that you can follow up on their interests.

For example, if someone at work mentions they're leaving early on Friday because their daughter has a soccer game, I'll make a note and ask about it the following week.

## 2. Make sure everyone has a direct opportunity to speak

The opportunity part of this is important, because some people are more comfortable sitting back and listening, myself included. The issue is that some people inadvertently get pushed out of the conversation without a chance to get back in.

Be direct and supportive. If someone gets interrupted, address the original speaker immediately after and ask them what they were saying. If a story causes someone else to go down a tangent, make sure to loop back around to the original storyteller. If someone simply hasn't spoken in a while, try to steer the conversation to a place they'll feel more comfortable.

## 3. Show more excitement than expertise

If someone shares information with you about a hobby or activity, give them your support in the form of excitement and questions rather than advice. Not every conversation needs to be a competition on who knows more about what. Even if you do know more, just enjoy the fact that you have something in common without intimidating them or belittling their interest and accomplishments.

Hopefully these short tips are helpful for building better relationships. They are subtle things but can be really powerful when they are applied with a mentality of care.

| thebuffed |

220,756 | Install Ghost with Caddy on Ubuntu | TL; DR Get a server with Ubuntu up and running Set up a non-root user and add it to... | 0 | 2019-12-14T05:08:23 | https://dev.to/alexkuang0/install-ghost-with-caddy-on-ubuntu-3flf | ubuntu, node | ## TL; DR

1. Get a server with Ubuntu up and running

2. Set up a non-root user and add it to superuser group

3. Install MySQL and Node.js

4. Install Ghost-CLI and start

5. Install Caddy as a service and write a simple Caddyfile

6. Get everything up and running!

## Foreword (Feel-Free-to-Ignore-My-Nonsense™️)

This article is about how I built **this** blog with Ghost, an open-source blog platform based on Node.js. I used to use WordPress and static website generators like Hexo and Jekyll for my blog. But they turned out either too heavy or too light. Ghost seems like a perfect balance between them. It's open source; it's elegant out-of-the-box. Zero configuration is required; yet it's configurable from top to bottom.

The Ghost project is actually very well-documented – it has a decent [official installation guide](https://ghost.org/docs/install/ubuntu) on Ubuntu with Nginx. But as you can see from the title of this article, I am going to ship it together with my favorite web server – Caddy! It's a lightweight, easy to configure yet powerful web server. To someone like me, who hate to write or read either Nginx `conf` files or Apache `.htaccess` files, Caddy is like an oasis in the desert of tedious web server configurations.

Web technologies change rapidly, especially open source projects like Ghost and Caddy. From my observation, I would say neither Ghost nor Caddy is going to be backward compatible, which means a newer version of the software may not work as expected in an older environment. So I **recommend** that you always check whether this tutorial is outdated or deprecated before moving on. You can go to their official websites by clicking their names in the next section. Also, if you are running the application in production, use a fixed version, preferably with LTS (*Long-term support*).

## Environments and Softwares

- [Ubuntu 18.04.3 LTS](https://ubuntu.com/download/server)

- [Node.js v10.17.0 LTS](https://nodejs.org/en/about/releases/) (This is the highest version Ghost support as of Dec. 2019)

- [Caddy 1](https://caddyserver.com/v1/) (**NOT** Caddy 2, which is still in **beta** as of Dec. 2019)

- MySQL 5.7 (It's gonna consume **A LOT** of memory! Use a lower version if you're running on a server with <1GB RAM.)

Let's get started! 👨💻👩💻

## Step 1: Get a server

Get a server with Ubuntu up and running! Almost every cloud hosting company will provide Ubuntu 18.04 LTS image as of now.

## Step 2: Set up a non-root superuser

```bash

# connect with root credentials to the server

ssh root@<server_ip> -p <ssh_port> # Default port: 22

# create a new user

adduser <username>

# add that user to superuser group

usermod -aG sudo <username>

# login as the new user

su <username>

```

## Step 3: Install MySQL and set up

```bash

sudo apt update

sudo apt upgrade

# install MySQL

sudo apt install mysql-server

# Set up MySQL password, it's required on Ubuntu 18.04!

sudo mysql

# Replace 'password' with your password, but keep the quote marks!

ALTER USER 'root'@'localhost' IDENTIFIED WITH mysql_native_password BY 'password';

# Then exit MySQL

quit

```

## Step 4: Install Node.js

### Method 1: Use apt / apt-get

```bash

curl -sL https://deb.nodesource.com/setup_10.x | sudo -E bash

sudo apt-get install -y nodejs

```

### Method 2: Use nvm (Node Version Manager)

to facilitate switching between Node.js versions

```bash

curl -o- https://raw.githubusercontent.com/nvm-sh/nvm/v0.35.1/install.sh | bash

```

The script clones the nvm repository to `~/.nvm`, and adds the source lines from the snippet below to your profile (`~/.bash_profile`, `~/.zshrc`, `~/.profile`, or `~/.bashrc`):

```bash

export NVM_DIR="$([ -z "${XDG_CONFIG_HOME-}" ] && printf %s "${HOME}/.nvm" || printf %s "${XDG_CONFIG_HOME}/nvm")"

[ -s "$NVM_DIR/nvm.sh" ] && \. "$NVM_DIR/nvm.sh" # This loads nvm

```

Install Node.js v10.17.0

```bash

# source profile

source ~/.bash_profile # change to your profile

# check if nvm is properly installed

command -v nvm # output will be `nvm` if it is

nvm install v10.17.0

```

## Step 4: Install Ghost-CLI

### Method 1: Use npm

```bash

sudo npm install ghost-cli@latest -g

```

### Method 2: Use yarn

```bash

# install yarn if you don't have it

curl -sS https://dl.yarnpkg.com/debian/pubkey.gpg | sudo apt-key add -

echo "deb https://dl.yarnpkg.com/debian/ stable main" | sudo tee /etc/apt/sources.list.d/yarn.list

sudo apt update && sudo apt install yarn

sudo yarn global add ghost-cli@latest

```

## Step 5: Get Ghost up and running

```bash

sudo mkdir -p /var/www/ghost

sudo chown <username>:<username> /var/www/ghost

sudo chmod 775 /var/www/ghost

cd /var/www/ghost

ghost install

```

### Install questions

During install, the CLI will ask a number of questions to configure your site. It will probably throw an error or two because Nginx is not installed. Just ignore them.

#### Blog URL

Enter the exact URL your publication will be available at and include the protocol for HTTP or HTTPS. For example, `https://example.com`.

#### MySQL hostname

This determines where your MySQL database can be accessed from. When MySQL is installed on the same server, use `localhost` (press Enter to use the default value). If MySQL is installed on another server, enter the name manually.

#### MySQL username / password

If you already have an existing MySQL database, enter the username. Otherwise, enter `root`. Then supply the password for your user.

#### Ghost database name

Enter the name of your database. It will be automatically set up for you, unless you're using a **non**-root MySQL user/pass. In that case the database must already exist and have the correct permissions.

#### Set up a ghost MySQL user? (Recommended)

If you provided your root MySQL user, Ghost-CLI can create a custom MySQL user that can only access/edit your new Ghost database and nothing else.

#### Set up systemd? (Recommended)

`systemd` is the recommended process manager tool to keep Ghost running smoothly. We recommend choosing `yes` but it’s possible to set up your own process management.

#### Start Ghost?

Choosing `yes` runs Ghost on default port `2368`.

## Step 6: Get Caddy up and running

Caddy has an awesome collection of plugins. You can go to [Download Caddy](https://caddyserver.com/v1/download) page. First, select the correct platform; then add a bunch of plugins that you're interested. After that, don't click `Download`. Copy the link in the `Direct link to download` section. Head back to ssh in terminal.

```bash

mkdir -p ~/Downloads

cd ~/Downloads

# download caddy binary, the link may differ if you added plugins

curl "https://caddyserver.com/download/linux/amd64?license=personal&telemetry=off" --output caddy # quote the URL so the shell does not treat & as a background operator

sudo cp ./caddy /usr/local/bin

sudo chown root:root /usr/local/bin/caddy

sudo chmod 755 /usr/local/bin/caddy

sudo setcap 'cap_net_bind_service=+ep' /usr/local/bin/caddy

sudo mkdir /etc/caddy

sudo chown -R root:root /etc/caddy

sudo mkdir /etc/ssl/caddy

sudo chown -R root:<username> /etc/ssl/caddy

sudo chmod 770 /etc/ssl/caddy

```

### Run Caddy as a service

```bash

wget https://raw.githubusercontent.com/caddyserver/caddy/master/dist/init/linux-systemd/caddy.service

sudo cp caddy.service /etc/systemd/system/

sudo chown root:root /etc/systemd/system/caddy.service

sudo chmod 644 /etc/systemd/system/caddy.service

sudo systemctl daemon-reload

sudo systemctl start caddy.service

sudo systemctl enable caddy.service

```

### Create `Caddyfile`

```bash

sudo touch /etc/caddy/Caddyfile

sudo chown root:root /etc/caddy/Caddyfile

sudo chmod 644 /etc/caddy/Caddyfile

sudo vi /etc/caddy/Caddyfile # edit Caddyfile with your preferred editor, here I use vi

```

We're gonna set up a simple reverse proxy to Ghost's port (2368). Here are 2 sample `Caddyfile`s respectively for Auto SSL enabled and disabled.

```bash

# auto ssl

example.com, www.example.com {

proxy / 127.0.0.1:2368

tls admin@example.com

}

# no auto ssl

http://example.com, http://www.example.com {

proxy / 127.0.0.1:2368

}

```

If you want Auto SSL issued by Let's Encrypt, you should put your email after the `tls` directive on the 3rd line; otherwise, use the second part of this `Caddyfile`. (For me, I was using Cloudflare flexible Auto SSL mode, so I just built a reverse proxy based on HTTP protocol only here)

### Fire it up 🔥

```bash

sudo systemctl start caddy.service

```

## References

- [https://ghost.org/docs/install/ubuntu](https://ghost.org/docs/install/ubuntu/#overview)

- [https://github.com/caddyserver/caddy/tree/master/dist/init/linux-systemd](https://github.com/caddyserver/caddy/tree/master/dist/init/linux-systemd)

- [https://github.com/nvm-sh/nvm](https://github.com/nvm-sh/nvm)

| alexkuang0 |

220,769 | Do Stacked-PRs require re-review after merge? | Is the sum of two approved PR's also an approved PR? | 0 | 2019-12-14T06:06:17 | https://dev.to/jlouzado/do-stacked-prs-require-re-review-after-merge-522f | ask, help, git, codereview | ---

title: Do Stacked-PRs require re-review after merge?

published: true

description: Is the sum of two approved PR's also an approved PR?

tags: ask, help, git, code-review

---

## Scenario

- I'm working on a big feature that I know can be broken into two parts

- these parts are, as is often the case, dependent on each other.

- I branch off `master` and create branch `feat/part1`

- I finish coding part1, and create a PR with this branch

- `feat/part1` -> `master`

- this is `PR-1`

- While I'm still checked out in `feat/part1`, I create a new branch `feat/part2`

- I finish coding part 2 and create a second, "stacked" PR

- `feat/part2` -> `feat/part1`.

- this is `PR-2`

Let's say both PRs get reviewed and approved. I now merge PR-2.

- PR-1 now contains all the changes.

## Question

- Can I assume that PR-1 is now approved and merge-able or do I need to re-review it?

## Background

- As for why someone would do this, this technique of breaking up PRs is a way to break up large changes into more manageable chunks

- That way the rest of the team can give feedback more easily.

- Can read more about it here: [Stacked PRs To Keep Github Diffs Small | graysonkoonce.com](https://graysonkoonce.com/stacked-pull-requests-keeping-github-diffs-small/) | jlouzado |

220,778 | Answer: Sonarqube 7.9.1 community troubleshooting | answer re: Sonarqube 7.9.1 community... | 0 | 2019-12-14T06:31:47 | https://dev.to/ftechnix/answer-sonarqube-7-9-1-community-troubleshooting-bm6 | {% stackoverflow 58045999 %} | ftechnix | |

220,784 | Laravel 6 REST API with Passport Tutorial with Ecommerce Project | Rest Api Development In this tutorial, we’ll explore the ways you can build—and test—a robust API usi... | 0 | 2019-12-14T07:20:48 | https://dev.to/techmahedy/laravel-6-rest-api-with-passport-tutorial-with-ecommerce-project-1965 | restapi, laravel, laravelpassport | Rest Api Development

In this tutorial, we’ll explore the ways you can build and test a robust API using Laravel. We’ll be using Laravel 6, and all of the code is available for reference on GitHub. Now we are going to develop an e-commerce REST API with a product table and a review table. Using those two tables, we will build our e-commerce REST API.

RESTful APIs

First, we need to understand what exactly is considered a RESTful API. REST stands for REpresentational State Transfer and is an architectural style for network communication between applications, which relies on a stateless protocol (usually HTTP) for interaction.

https://codechief.org/article/laravel-6-rest-api-with-passport-tutorial-with-ecommerce-project | techmahedy |

220,795 | From old PHP/MySQL to the world's most modern web app stack with Hasura and GraphQL | This is the history of Nhost. Ever since 2007, I have been into programming and web development. Bac... | 0 | 2019-12-14T07:59:03 | https://blog.nhost.io/from-old-php-mysql-to-the-worlds-most-modern-web-app-stack-with-hasura-and-graphql/ | graphql, postgres, react, productivity | This is the history of [Nhost](https://nhost.io/).

Ever since 2007, I have been into programming and web development. Back then it was all PHP and MySQL websites and everything was great fun!

Around 2013 SPA ([Single Page Application](https://en.wikipedia.org/wiki/Single-page_application)s) started to emerge. Instead of letting your web server render the whole page, the backend just provided data (from [JSON](https://www.json.org/), for example) to your front-end. Your front end then had to take care of rendering your website with the data from the back-end.

And I wanted to learn more!

I went through multiple frameworks, like [MeteorJS](https://www.meteor.com/) and [Firebase](https://firebase.google.com/). I did not feel comfortable with the NoSQL databases that these projects were based on. In retrospect, I am really happy I did not jump on the hype train of NoSQL.

I also built a large enterprise project using React & Redux with a regular REST backend. The developer experience was somewhat OK. You could still use a SQL database and provide a REST API or a GraphQL API to your front-end.

That is an OK approach. No NoSQL, which is good. But no real-time, which is bad.

By November 2018 I was about to rebuild a CRM/Business system from PHP/MySQL to a modern SPA web app. At this time, I decided I would do it with React & Redux with a MySQL database and a REST API. This was pretty much standard at the time.

Then something happened.

I was about to create a VPS from DigitalOcean for my new database and REST API. For no obvious reason, I clicked on the "marketplace" tab, where something drew my attention.

GraphQL? A lambda sign? This looks interesting. Let's start a Hasura Droplet and see what it is!

**60 minutes later my jaw was on the floor.**

**This is amazing!**

**This is it!**

Hasura comes with:

* PostgreSQL (relational database)

* GraphQL

* Real-Time

* Access control

* Blazing Fast™

I could not ask for more!

I was so enthusiastic about Hasura I called up an emergency meeting for all developers in my co-working office ([DoSpace CoWorking](https://www.dospace.se/)).

{% twitter 1068145251267895300 %}

Now, Hasura is great and everything but...

What about Auth and Storage for your app?

## **Auth and Storage**

Hasura is great at handling your data and your API. But Hasura does not care how you handle authentication nor storage.

> With Hasura, you need to handle Auth and Storage yourself.

### **Auth**

When it comes to authentication Hasura recommends that you use some other auth service like [Auth0](https://auth0.com/) or [Firebase Auth](https://firebase.google.com/docs/auth).

I do not like any of those solutions 100%. I like to have full control over my users and not rely on third-party services.

### **Storage**

For Storage, there is no recommended solution from Hasura.

So... I decided to build my own Auth and Storage backend for Hasura.

## **Hasura-Backend-Plus**

I built [Hasura Backend Plus (HB+)](https://github.com/elitan/hasura-backend-plus). Hasura Backend Plus provides auth and storage for any Hasura project.

# **Visiting Hasura in Bangalore, India**

I was helping out Hasura a bit during late 2018/early 2019. I was giving small local talks about Hasura. I created Hasura Backend Plus. I was active in their Discord server helping other developers. Because of this, I got the chance to visit the Hasura Team in Bangalore. They were hosting the very first [GraphQL Asia](https://www.graphql-asia.org/) and I was invited. And off I went!

{% twitter 1117332824757948417 %}

# **Back to nhost.io**

[nhost.io](https://nhost.io) helps every developer with the quick deployment of Hasura and Hasura-Backend-Plus.

Get your next web project going with the world's most modern web stack.

* PostgreSQL

* GraphQL

* Real-Time Subscriptions (just like Firebase)

* Authentication

* Storage

[Get started with nhost.io](https://console.nhost.io/)! | elitan |

220,800 | Trial | ex | 0 | 2019-12-14T07:48:27 | https://dev.to/ard_handoyo/trial-3idk |

ex | ard_handoyo | |

220,835 | Chrome Dev Summit 2019: Everything you need to know | "As the largest open ecosystem in history, the Web is a tremendous utility, with more than 1.5B... | 0 | 2019-12-14T10:07:30 | https://www.ghosh.dev/posts/chrome-dev-summit-2019-everything-you-need-to-know/ | webdev, webperf, techtalks, webplatform | ---

title: Chrome Dev Summit 2019: Everything you need to know

published: true

date: 2019-12-14 00:00:00 UTC

tags: webdev, webperf, techtalks, webplatform

canonical_url: https://www.ghosh.dev/posts/chrome-dev-summit-2019-everything-you-need-to-know/

cover_image: https://www.ghosh.dev/static/media/chrome-dev-summit-2019.jpg

---

> "As the **largest open ecosystem** in history, the Web is a tremendous utility, with more than 1.5B active websites on the Internet today, serving nearly 4.5B web users across the world. This kind of diversity (geography, device, content, and more) can only be facilitated by the **open web platform**."

>

> <footer>

> <cite>

> - from <a href="https://blog.chromium.org/2019/11/chrome-dev-summit-2019-elevating-web.html" target="_blank">

> blog.chromium.org</a>

> </cite>

> </footer>

This would be the uber pitch for [Chrome Dev Summit](https://developer.chrome.com/devsummit/) this year: **elevating the web platform**.

Last month I was in San Francisco for CDS 2019, my first time attending the conference in person. For those of you who couldn’t attend CDS this year or haven’t yet gotten around to watching all the sessions on their [YouTube channel](https://www.youtube.com/watch?v=F1UP7wRCPH8&list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr), here are my notes and thoughts about almost everything that was announced that you need to know!

Almost everything you say? Well then, buckle up, for this is going to be a long article!

# Bridging the “app gap”

The web is surely a powerful platform. But there’s still so much that native apps can do that web apps today cannot. Think about even some simple things that naturally come to your mind when picturing installed native applications on your phones or computers: accessing contacts or working directly on files on your device, background syncs at regular intervals, system-level cross-app sharing capabilities and such. This is what we call the “native app gap”. And we’re betting that there’s a real need for closing it.

> In their natural progression, browsers have become viable, full-fledged application runtimes.

Over the last decade, “the browser” has become so much more than a simple tool for accessing information on the web. It is perhaps the most widely-known publicly-used piece of software that there is, yet so heavily underrated at its sophistication. In their natural progression, browsers have become viable, full-fledged application runtimes - containers for not only delivering but also capable of deploying and running a very large variety of cross-platform applications. [Technologies](https://en.wikipedia.org/wiki/Node.js) [arising](https://en.wikipedia.org/wiki/Blink_layout_engine) out of browser engines have been used for a while to build [massively successful desktop applications](https://code.visualstudio.com/); browsers themselves have been used as the basis of building [entire operating system UI](https://en.wikipedia.org/wiki/Chrome_OS) and even run [complex real-time 3D games](https://blog.mozilla.org/blog/2014/03/12/mozilla-and-epic-preview-unreal-engine-4-running-in-firefox/) at a performance that is getting closer and closer to native speeds every day. With that train of thought, it seems almost inevitable that the _browserverse_ would sooner or later tackle the problem of being able to impart the average-joe web app the power to do things native apps can.

<figcaption>

Source: <a href="https://imgflip.com/i/3j3vbb">imgflip.com</a>

</figcaption>

If I had to bet, the Web, combined with its reach and ubiquitous platform support, is probably going to become one of the best and perhaps most popular mechanisms for software delivery for a very large subset of application use-cases in the coming years.

## Project Fugu

The goal of [Project Fugu](https://www.chromium.org/teams/web-capabilities-fugu) is to make the “app gap” go away. Simply put, the idea is to bake a set of right APIs into the browser, such that over time web apps become capable of doing almost everything that native apps can - with the right levers of permissions and access-control of course, for all your rightly-raised potential privacy and security concerns!

<figcaption>

Source: <a href="https://imgflip.com/i/3j3vtu">imgflip.com</a>

</figcaption>

Enough with the Thanos references, but I wouldn’t be very surprised if someone in the web community called this crazy. And if I absolutely had to, I am going to admit that even if so, this is definitely _my_ kind of crazy. I like to think that I somehow saw this coming, and I wanted this to happen. For years, browsers _have_ in some capacity been trying to bring bits and pieces of native power to the web with [experimental APIs](https://developer.mozilla.org/en-US/docs/Web/API) for things like hardware sensors and such. With Fugu, all this endeavour to impart native-level power to the web has at least been formally unified under one banner and picked up like an umbrella initiative for building and standardising into the open web by the most popular browser project there is. And I’m really excited to see this succeed for the long term! So I’d love to see this turn out like IronMan (the winning, _not_ the dying part) rather than Thanos in the end!

The advent and success of [Progressive Web Apps](https://developer.mozilla.org/en-US/docs/Web/Progressive_web_apps) (PWA) would have definitely acted as a catalyst at exhibiting that with the right pedigree, web apps can really triumph. Making web apps easily [installable](https://developers.google.com/web/fundamentals/app-install-banners) or bringing [push notifications](https://developers.google.com/web/fundamentals/push-notifications) to the web was perhaps just the first few pieces of this bigger puzzle we have been staring at for a while. Keeping aside whatever Google as a company’s strategies and business motivations may be to so heavily be investing in everything web; if at the end of the day it benefits the entire web user and developer community by making the web platform more capable, and I choose to believe that it will, I am surely going to sleep happier at night.

<figcaption>

Source: <a href="https://slides.com/mhadaily/hardware-connectivity-on-pwa#/0/7">slides.com</a>

</figcaption>

In case you’ve been wondering, the word “Fugu” is Japanese for [pufferfish](https://en.wikipedia.org/wiki/Tetraodontidae), one of the most toxic and poisonous species of vertebrates in the world, incidentally also prepared and consumed as a delicacy, which as you can guess, can be extremely dangerous from the poison if not prepared right. _See what they did there?_

Some of the upcoming and interesting capabilities that were announced as part of Project Fugu are:

- [Native Filesystem API](https://web.dev/native-file-system/) which enables developers to build web apps that interact with files on the users’ local device, like IDEs, photo and video editors or text editors.

- [Contact Picker API](https://web.dev/contact-picker/), an on-demand picker that allows users to select entries from their contact list and share limited details of the selected entries with a website.

- [Web Share](https://web.dev/web-share/) and [Web Share Target](https://web.dev/web-share-target/) APIs which together allow web apps to use the same system-provided share capabilities as native apps.

- [SMS Receiver API](https://web.dev/sms-receiver-api-announcement/), which web apps can use to auto-verify phone numbers via SMS.

- [WebAuthN](https://developers.google.com/web/updates/2018/05/webauthn) to let web apps access hardware tokens (eg. [YubiKey](https://en.wikipedia.org/wiki/YubiKey)) or perform biometrics (like a fingerprint or facial recognition) based identification and recognition of users on the web.

- [getInstalledRelatedApps() API](https://web.dev/get-installed-related-apps/) that allows your web app to check whether _your_ native app is installed on a user’s device, and vice versa.

- [Periodic Background Sync API](https://web.dev/periodic-background-sync/) for syncing your web app’s data periodically in the background and possibly providing more powerful and creative offline use-cases for a more native-app-like experience.

- [Shape Detection API](https://web.dev/shape-detection/) to easily detect faces, barcodes, and text in images.

- [Badging API](https://web.dev/badging-api/) that allows installed web apps to set an application-wide badge on the app icon.

- [Wake Lock API](https://web.dev/wakelock/) for providing a mechanism to prevent devices from dimming or locking the screen when a web app needs to keep running.

- [Notification Triggers](https://www.chromestatus.com/feature/5133150283890688) for triggering notifications using timers or events apart from a server push.

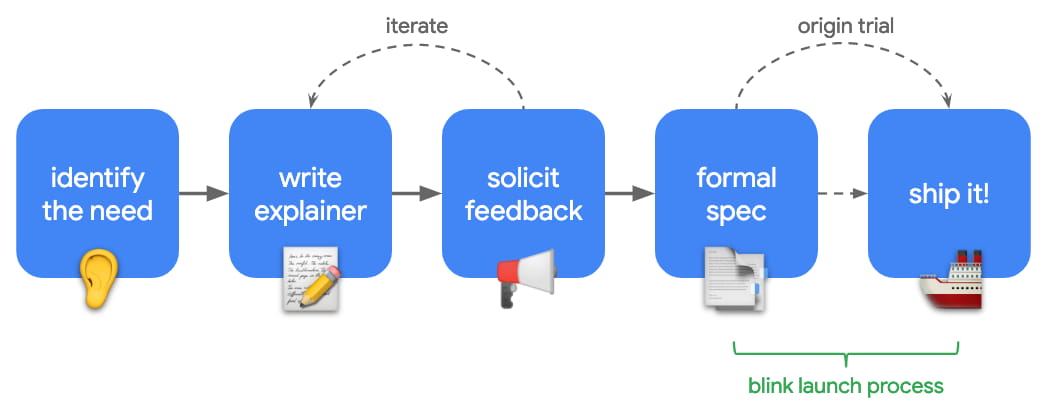

The list goes on. These features are either in early experimentation through [Origin Trials](https://github.com/GoogleChrome/OriginTrials/blob/gh-pages/explainer.md) or targeted to be built in the future. Here is an open [tracker](https://goo.gle/fugu-api-tracker) for the list of APIs and their progress that have been captured so far under the banner of Project Fugu.
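Because most of these capabilities are experimental and unevenly supported, a web app would normally feature-detect before relying on any of them. Here is a minimal sketch of that idea. The property names in `CAPABILITY_PROPERTIES` are my assumption of where each API is exposed on `navigator` once shipped (origin-trial builds have used different names in places), and `detectCapabilities` is a hypothetical helper, not part of any API.

```typescript
// A few capabilities from the list above, mapped to the property that is
// assumed to expose them on `navigator`. These names are assumptions and
// may differ between browsers, versions and origin trials.
const CAPABILITY_PROPERTIES = {
  webShare: "share",
  contactPicker: "contacts",
  wakeLock: "wakeLock",
  badging: "setAppBadge",
  installedRelatedApps: "getInstalledRelatedApps",
} as const;

type Capability = keyof typeof CAPABILITY_PROPERTIES;

// Takes any navigator-like object, so it also runs outside a browser.
function detectCapabilities(nav: object): Record<Capability, boolean> {
  const result = {} as Record<Capability, boolean>;
  for (const key of Object.keys(CAPABILITY_PROPERTIES) as Capability[]) {
    // `in` only checks that the property exists; it does not prove that
    // the user will grant the permission the API may ask for.
    result[key] = CAPABILITY_PROPERTIES[key] in nav;
  }
  return result;
}
```

In a page you would call `detectCapabilities(navigator)` and only show, say, a share button when `webShare` is `true`.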

<figcaption>

Source: <a href="https://developers.google.com/web/updates/capabilities#process">developer.google.com</a>

</figcaption>

Also worth noting, [webwewant.fyi](https://webwewant.fyi/) was announced as well, which is a great place for anyone in the web community to go and provide feedback about the state of the web and things that we want the web to do!

## On PWAs… wait, now we have TWAs?

Progressive Web Apps, with their installability and native-like fullscreen immersive experiences, have been Google’s [showcase fodder](https://developers.google.com/web/showcase/tags/progressive-web-apps) across multiple years of Google I/O and Chrome Dev Summit. Major consumer-facing brands like Flipkart, Twitter, Spotify, Pinterest, Starbucks, Airbnb, Alibaba, BookMyShow, MakeMyTrip, Housing, Ola and OYO have all built and shown how great PWA-based experiences can be made which are hard for the average user to distinguish from native apps. By this point in time, I think as a community, we generally understand and agree that PWAs can be awesome when done right. So what next?

<figcaption>

Source: <a href="https://medium.com/@firt/google-play-store-now-open-for-progressive-web-apps-ec6f3c6ff3cc">medium.com</a>

</figcaption>

A key development for installable web apps has been the emergence of [Trusted Web Activities](https://developers.google.com/web/updates/2019/02/using-twa) (TWA) which provide a way to integrate full-screen web content into Android apps using a protocol called Custom Tabs: in our case, [Chrome Custom Tabs](https://developer.chrome.com/multidevice/android/customtabs).

To quote from the [Chromium Blog](https://blog.chromium.org/2019/02/introducing-trusted-web-activity-for.html), TWAs have access to all Chrome features and functionalities including many which are not available to a standard Android WebView, such as [web push notifications](https://developers.google.com/web/fundamentals/push-notifications/), [background sync](https://developers.google.com/web/updates/2015/12/background-sync), [form autofill](https://support.google.com/chrome/answer/142893?co=GENIE.Platform%3DDesktop&hl=en), [media source extensions](https://www.w3.org/TR/media-source/) and the [sharing API](https://developers.google.com/web/updates/2016/09/navigator-share). A website loaded in a TWA shares stored data with the Chrome browser, including cookies. This implies shared session state, which for most sites means that if a user has previously signed into your website in Chrome they will also be signed into the TWA.

Basically think of how PWAs work today, except that you can install them from the Play Store, with possibly some other added benefits of app-like privileges. It was briefly mentioned that with TWAs the general permission model of certain web app capabilities (such as having to ask for push notification permissions) may go away and become as simple and elevated as true native Android apps (considering they _are_, in fact, so).

As an application of all this, TWAs are now Google’s recommended way for web app developers to surface their _PWA listings on the Play Store_. Here’s their [showcase](https://youtu.be/Hp_dQvQyYEI?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=1516) on OYO.

> Imagine having native apps that almost never need to go through painful or slow update cycles and consume very little disk space.

I believe TWAs can open up some really interesting avenues. Imagine having native apps that almost never need to go through painful or slow update cycles (or hence have to deal with all the typical complexities of releasing and maintaining native apps and their codebases), because like everything web, the actual UI and content always updates on the fly! These apps get installed on a user’s device, consume very little disk space compared to full-blown native apps because of an effectively shared runtime host (the browser) and work at full capacity of that browser, say Chrome. This is quite different from embedding WebViews into hybrid native applications for a number of reasons where the main browser on a user’s device can do more powerful things or have access to information that WebView components embedded into individual native apps can not.

If today you maintain a native app, a _lite_ version of your native app, _and_ a mobile website (like Facebook does); now, if you want, your lite app can be simply your mobile website distributed via the Play Store wrapped in a TWA. One less codebase to maintain. Phew.

I haven’t yet played around with deploying a TWA first-hand, so some of the low-level processes are still unclear to me. From what I could gather talking to Google engineers at CDS who have been working on TWAs, because of several Play Store policies and mechanisms in how it operates today, it looks like we as developers still need to handcraft a TWA from a PWA, and manually upload and release it onto the Play Store. What would be amazing is being able to hook up some sort of pipeline onto the Play Store itself that auto-vends a PWA as a TWA given a right, validated config.

Other improvements coming to PWAs include the [shortcuts](https://www.w3.org/TR/appmanifest/#shortcuts-member) member in the Web App Manifest, which will allow us to register a list of static shortcuts to key URLs within the PWA. The browser exposes these shortcuts via interactions that are consistent with an application icon’s context menu in the host operating system (e.g., right-click, long press), similar to how native apps do.
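To make the `shortcuts` member a little more concrete, here is a rough sketch of a manifest carrying two shortcuts, written as a typed object that is serialised to the JSON a site would serve. The app name and URLs are made up for illustration, and the interfaces cover only the handful of manifest fields used here, not the full specification.

```typescript
// Minimal typing for the parts of a Web App Manifest used in this sketch.
interface ManifestShortcut {
  name: string; // label shown in the icon's context menu
  url: string; // URL opened when the shortcut is chosen
  short_name?: string;
  description?: string;
}

interface WebAppManifest {
  name: string;
  start_url: string;
  display: "standalone" | "fullscreen" | "minimal-ui" | "browser";
  shortcuts?: ManifestShortcut[];
}

// Hypothetical app and URLs, purely for illustration.
const manifest: WebAppManifest = {
  name: "Example Store",
  start_url: "/",
  display: "standalone",
  shortcuts: [
    { name: "Today's deals", short_name: "Deals", url: "/deals" },
    { name: "My orders", url: "/orders", description: "Track recent orders" },
  ],
};

// Serialise to the JSON that would be served as the manifest file.
const manifestJson = JSON.stringify(manifest, null, 2);
```

Since the shortcuts are static, they live entirely in the manifest; no runtime registration code is involved.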

Also, Chrome intends to play with the [“Add to Homescreen” verbiage](https://www.youtube.com/watch?v=Hp_dQvQyYEI&feature=youtu.be&list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=82) and it might simply be called “Install” in the future.

# Because Performance. Obviously!

## Not all devices _are_ made equal.

And neither is the experience of your web app.

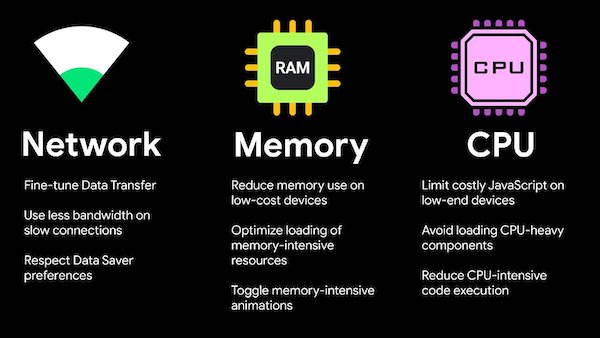

Users access web experiences under a large variety of network conditions (WiFi, LTE, 3G, 2G, optional data-saver modes) and device capabilities (CPU, memory, screen size and resolution), which causes a large performance gap to exist across the spectrum of network types and devices. Multiple Web APIs are available today that can collectively provide us with network and device information, which can be used to understand, classify and target users with an adaptive experience that gives them an optimal journey through our website, given their network and device performance. Some APIs that can help us here are the [Network Information API](https://developer.mozilla.org/en-US/docs/Web/API/Network_Information_API) that reports effective connection type, downlink speed, RTT and data-saver information, the [DeviceMemory API](https://developers.google.com/web/updates/2017/12/device-memory), the CPU [HardwareConcurrency API](https://developer.mozilla.org/en-US/docs/Web/API/NavigatorConcurrentHardware/hardwareConcurrency) and a mechanism to communicate such information as [Client-Hints](https://developers.google.com/web/fundamentals/performance/optimizing-content-efficiency/client-hints).

<figcaption>

Source: <a href="https://youtu.be/puUPpVrIRkc?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=454">Adaptive Loading - improving web performance on slow devices</a>

</figcaption>

Facebook [described](https://youtu.be/puUPpVrIRkc?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=1438) at a very high level their classification models for devices, for both mobiles and desktops (which are relatively harder to classify), and how they have been effectively using this information to derive the best user experience for targeted segments.

All this comes paired with the release of [Adaptive Hooks](https://github.com/GoogleChromeLabs/react-adaptive-hooks), which makes it easy to target device and network specs for patterns around resource loading, data-fetching, code-splitting or disabling certain features for your React web app.
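Without any framework, the signals mentioned in this section could be combined along these lines. This is only a sketch of the adaptive-loading idea: the `DeviceSignals` shape, the `chooseExperience` helper and every threshold in it are my own assumptions for illustration, not values recommended by Chrome, Facebook or Adaptive Hooks.

```typescript
// Signals as they might be read from the browser. Every field is optional
// because these APIs are not available in all browsers.
interface DeviceSignals {
  effectiveType?: string; // e.g. "slow-2g", "2g", "3g", "4g"
  saveData?: boolean; // user has asked for reduced data usage
  deviceMemory?: number; // approximate device RAM in GiB
  hardwareConcurrency?: number; // number of logical CPU cores
}

type ExperienceTier = "lite" | "full";

// Illustrative thresholds only; tune them against your own field data.
function chooseExperience(signals: DeviceSignals): ExperienceTier {
  if (signals.saveData === true) return "lite";
  if (signals.effectiveType === "slow-2g" || signals.effectiveType === "2g") {
    return "lite";
  }
  if (signals.deviceMemory !== undefined && signals.deviceMemory <= 1) {
    return "lite";
  }
  if (
    signals.hardwareConcurrency !== undefined &&
    signals.hardwareConcurrency <= 2
  ) {
    return "lite";
  }
  // Missing signals fall through to the full experience.
  return "full";
}
```

A page could then, for example, skip autoplaying video or load lower-resolution images whenever the result is `"lite"`.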

## Better to hang by more than a thread.

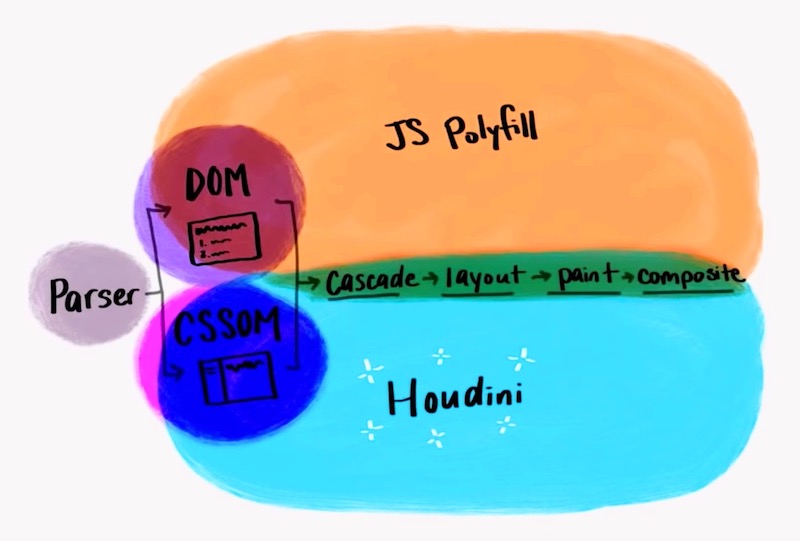

> The more work there is to do during JS execution, the more it queues up, blocks and slows everything down, effectively causing the web app to suffer from jank and feel sluggish.

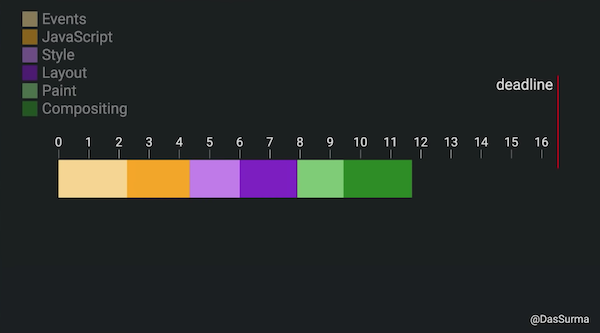

To deliver web experiences that feel smooth, a lot of work needs to be done by the browser, ranging from the initial downloading, parsing and execution of HTML, CSS & JavaScript; and all the successive work required to be able to eventually [paint pixels](https://developers.google.com/web/fundamentals/performance/rendering) on the screen which includes styling, layout, painting and compositing; and then to make things interactive, handling multiple events and actions; and in response to that, frequently _redoing_ most of these above tasks to update content on the page.

A lot of these tasks need to happen in sequence every time, as frequently as required, within a cumulative span of a _few milliseconds_ to keep the experience smooth and responsive: about 16ms to deliver 60 frames per second. Some newer devices support even higher refresh rates like 90Hz or 120Hz, which shrink the time available to paint a frame even further if you have to keep up with the refresh rate.
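The arithmetic behind those frame budgets is simply one second divided by the refresh rate. A tiny sketch, where `frameBudgetMs` is a hypothetical helper name:

```typescript
// Time available to produce one frame, in milliseconds, for a given
// display refresh rate in Hz.
function frameBudgetMs(refreshRateHz: number): number {
  if (!(refreshRateHz > 0)) {
    throw new RangeError("refreshRateHz must be a positive number");
  }
  return 1000 / refreshRateHz;
}

// 60Hz leaves roughly 16.7ms per frame, 90Hz roughly 11.1ms
// and 120Hz roughly 8.3ms.
const budgets = [60, 90, 120].map((hz) => ({ hz, ms: frameBudgetMs(hz) }));
```

Keep in mind that the browser needs part of that budget for its own work, so the share left for your JavaScript is smaller still.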

Combine the truth that not all devices are made equal with the fact that JavaScript is single-threaded by nature: the more work there is to do during JS execution, the more it queues up, blocks and slows everything down, effectively causing your web app to suffer from [jank](https://www.afasterweb.com/2015/08/29/what-the-jank/) and feel sluggish.

<figcaption>

Source: <a href="https://youtu.be/7Rrv9qFMWNM?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=485">The main thread is overworked & underpaid</a>

</figcaption>

Patterns that distribute client-side computational work across multiple threads can help significantly to alleviate this problem: they leverage [web workers](https://developer.mozilla.org/en-US/docs/Web/API/Web_Workers_API/Using_web_workers) to perform **Off-Main-Thread** (OMT) work and keep the main (UI) thread reserved for only the work that absolutely needs to be done by it (DOM & UI work). Such patterns are about _reducing risks_ of delivering poor user experiences, where although the overall time to complete the work may, in fact, be slowed marginally by message-passing overheads across multiple worker threads, the main (UI) thread is instead free to do any UI work required in the interim (even new user interactions like handling touch or scrolls) and keep delivering a smooth experience throughout. This works out great since the margin of error for dropping a frame is in the order of milliseconds, while making the user wait for an overall task to complete can go into the order of 100s of milliseconds.
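As a rough illustration of the split, the sketch below shows the DOM-free half of such a pattern: a pure message handler that could live inside a worker. The message shapes and the `handleSumRequest` name are hypothetical, and the wiring to an actual `Worker` is shown only in comments, since it depends on how your bundler emits worker files.

```typescript
// Message shapes shared by the page and the worker (hypothetical names).
type SumRequest = { id: number; numbers: number[] };
type SumResponse = { id: number; total: number };

// The expensive, DOM-free work we want to keep off the main thread.
// A sum stands in for any heavier computation.
function handleSumRequest(request: SumRequest): SumResponse {
  let total = 0;
  for (const n of request.numbers) {
    total += n;
  }
  // Echo the id so the page can match responses to requests.
  return { id: request.id, total };
}

// Inside the worker script, the handler would be attached roughly like:
//   self.onmessage = (event) => self.postMessage(handleSumRequest(event.data));
//
// On the main thread, the page only posts messages and updates the UI:
//   const worker = new Worker("sum-worker.js"); // hypothetical file name
//   worker.onmessage = (event) => showTotal(event.data.total); // hypothetical UI function
//   worker.postMessage({ id: 1, numbers: [1, 2, 3] });
```

The main thread pays the cost of copying the message in both directions, but it never runs the loop itself, which is exactly the trade-off described above.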

Web workers have existed for a while, but, probably because of their wonkiness, they haven’t really seen great adoption. Libraries like [Comlink](http://npm.im/comlink) can help a lot to that end.

[Proxx.app](https://proxx.app/) is a great example to look at all of this in action.

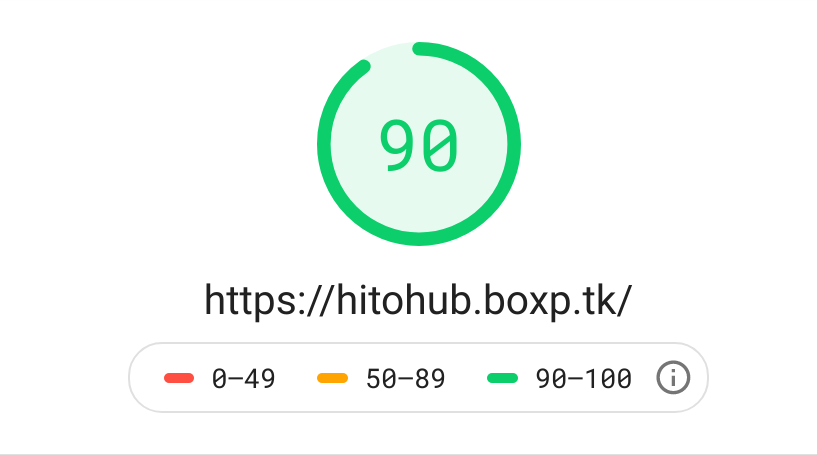

## Lighthouse shines brighter!

<figcaption>

Source: <a href="https://developers.google.com/web/tools/lighthouse/">google.com</a>

</figcaption>

Not to throw any shade at [Lighthouse](https://developers.google.com/web/tools/lighthouse) _(all puns intended)_, so far this web performance auditing tool had always fallen short of my expectations for any practical large-scale use-case. It seemed a little too simple and superficial to be of much use to drive meaningful insights in complex, real-world production applications. Don’t get me wrong, Lighthouse has always been a decent product on its own, but building web performance test tooling that is robust, powerful _and_ predictable, is inherently a hard problem to solve. Lighthouse had always been somewhat useful to me, but mostly as a basic in-browser audit panel thing that could give me some generic “Performance 101” insights, rather than something I would be excited to hook up as a powerful-enough synthetic performance testing tool in a production pipeline for catering to deeper performance auditing needs that I may have.

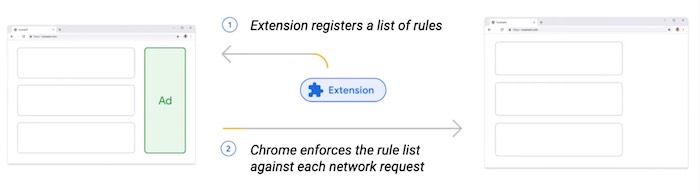

Hopefully, that changes now. With the release of [Lighthouse CI](https://github.com/GoogleChrome/lighthouse-ci), an extension of the toolset for automated assertion, saving and retrieval of historical data and actionable insights for improving web app performance through continuous integration, the future seems a bit brighter.

What’s even more exciting is the introduction of [stack-packs](https://github.com/GoogleChrome/lighthouse-stack-packs), which detect what platform a site is built on (such as WordPress) and display specific stack-based recommendations, and [plugins](https://github.com/GoogleChrome/lighthouse/blob/master/docs/plugins.md), which provide mechanisms to extend the functionality of Lighthouse with things such as domain-specific insights and scoring, for example, to cater to the bespoke needs of an e-commerce website.

## … and comes with new Performance Metrics.

<figcaption>

Source: <a href="https://youtu.be/iaWLXf1FgI0?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=509">Speed tooling evolutions: 2019 and beyond</a>

</figcaption>

With Lighthouse 6, some important changes are coming to how it scores web page performance, focusing on some of the _new_ metrics that are being introduced:

- [Largest Contentful Paint](https://web.dev/lcp/) (LCP), which measures the render time of the largest content element visible in the viewport.

- [Total Blocking Time](https://web.dev/lighthouse-total-blocking-time/) (TBT), a measure of the total amount of time that a page is blocked from responding to user input, such as mouse clicks, screen taps, or keyboard presses. The sum is calculated by adding the _blocking portion_ of all [long tasks](https://web.dev/long-tasks-devtools) between [First Contentful Paint](https://web.dev/first-contentful-paint/) and [Time to Interactive](https://web.dev/interactive/). Any task that executes for more than 50ms is a long task. The amount of time after 50ms is the blocking portion. For example, if Chrome detects a 70ms long task, the blocking portion would be 20ms.

- [Cumulative Layout Shift](https://web.dev/cls/) (CLS), which measures the sum of the individual _layout shift scores_ for each _unexpected layout shift_ that occurs between when the page starts loading and when its [lifecycle state](https://developers.google.com/web/updates/2018/07/page-lifecycle-api) changes to hidden. Layout shifts are defined by the [Layout Instability API](https://github.com/WICG/layout-instability) and they occur any time an element that is visible in the viewport changes its start position (for example, its top and left position in the default [writing mode](https://developer.mozilla.org/en-US/docs/Web/CSS/writing-mode)) between two frames. Such elements are considered _unstable elements_.
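The Total Blocking Time definition above boils down to a small sum. A minimal sketch, assuming the input is the list of main-thread task durations (in milliseconds) already restricted to the window between First Contentful Paint and Time to Interactive; `totalBlockingTime` is a hypothetical helper, not Lighthouse’s actual implementation:

```typescript
// Tasks longer than this count as "long tasks".
const LONG_TASK_THRESHOLD_MS = 50;

// Sums the blocking portion (time beyond 50ms) of every long task.
// Tasks at or under the threshold contribute nothing.
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (sum, duration) => sum + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0
  );
}

// A single 70ms task has a 20ms blocking portion, matching the example
// in the definition above.
const singleTaskTbt = totalBlockingTime([70]);
```

For instance, tasks of 30ms, 70ms and 250ms would add up to 0 + 20 + 200 = 220ms of blocking time.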

Metrics that are being deprecated are [First Meaningful Paint](https://web.dev/first-meaningful-paint/) (FMP) and [First CPU Idle](https://web.dev/first-cpu-idle/) (FCI).

## Visually marking slow vs. fast websites

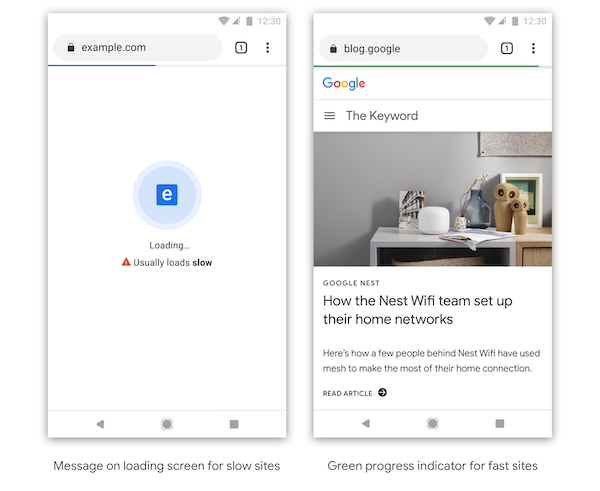

Chrome has expressed plans to visually indicate what it thinks is a slow vs. a fast loading website. The exact form of what the UI is going to look like is still uncertain, but we can expect a bunch of trials and experiments from Chrome on this.

<figcaption>

Source: <a href="https://blog.chromium.org/2019/11/moving-towards-faster-web.html">Moving towards a faster web</a>

</figcaption>

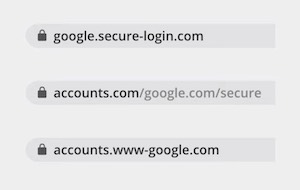

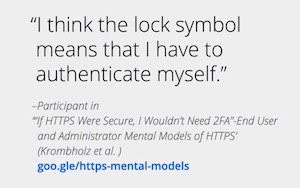

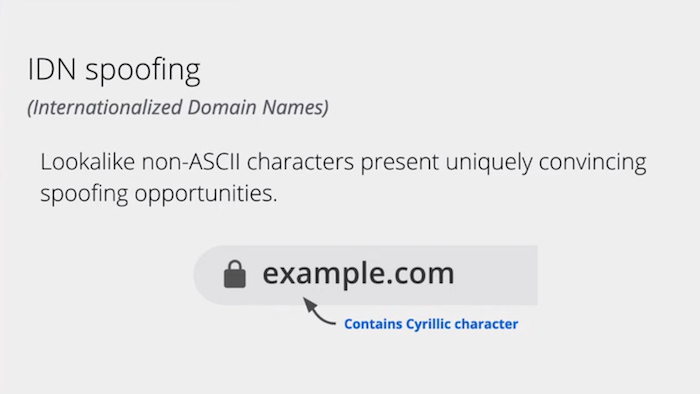

I guess that this is going to be mired in some controversy when this happens. How does the browser judge what is right for my website? Why does it get to decide where exactly to draw the line? And how exactly does it do it for the type of website, target audience and content I have? Surely there are a lot of difficult questions with no easy answers yet, but there’s one thing for sure, that if this does happen, it will force the average website to take some web performance considerations more seriously. Somewhat like when browsers together decided to start enforcing HTTPS by visually penalizing non-secure websites, and it worked out well eventually. Honestly, with so much that is unclear, for now, I am still going to lean towards the side of more free will, but as a self-appointed advocate of web performance, I am very tempted to think that if executed right, this might just be a good thing.

## Web Framework ecosystem improvements

Google has been partnering with popular web framework developers to make under-the-hood improvements to those frameworks, so that sites built and run on top of them get visible performance improvements without having to lift a finger, so to speak.

> Choosing to deliver modern Javascript code to modern browsers could visibly improve performance.

The prime example of this was a partnership with [Next.js](https://nextjs.org/), a popular web framework based on [React](https://reactjs.org/), where a lot of development has happened around improved chunking, differential loading, JS optimisations and capturing better performance metrics.

An interesting (though seemingly quite obvious in retrospect) takeaway from this was that choosing to deliver _modern_ JavaScript code to _modern_ browsers (as opposed to lengthy transpiled or polyfilled code based on some lowest common denominator of the browsers you need to support) could visibly improve performance by drastically reducing the amount of code that is shipped. This was coupled with the announcement of [Babel preset-modules](https://github.com/babel/preset-modules), which can help you achieve this.
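To make the idea a bit more concrete, here is a minimal sketch of the commonly used `module`/`nomodule` pattern for differential loading. The helper name `differentialScriptTags` and the bundle paths are made up for illustration; this is not how Next.js or preset-modules does it internally, just one way the two bundles could be wired into a page.

```typescript
// Hypothetical helper: the function name and bundle paths are illustrative only.
function differentialScriptTags(modernUrl: string, legacyUrl: string): string {
  return [
    // Browsers that support ES modules load this and skip `nomodule` scripts.
    `<script type="module" src="${modernUrl}"></script>`,
    // Older browsers ignore type="module" and run this fallback instead.
    `<script nomodule src="${legacyUrl}"></script>`,
  ].join("\n");
}

const tags = differentialScriptTags("/app.modern.js", "/app.legacy.js");
console.log(tags);
```

The modern bundle can then be built with far fewer transforms and polyfills, while the legacy bundle keeps older browsers working.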

If you are interested, the [Framework Fund](https://opencollective.com/chrome) run by Chrome was also mentioned multiple times during this CDS.

## How awesome is WebAssembly now?

Spoiler alert: pretty awesome! 💯

<figcaption>

Source: <a href="https://commons.wikimedia.org/wiki/File:Web_Assembly_Logo.svg">Wikimedia Commons</a>

</figcaption>

[WebAssembly](https://developer.mozilla.org/en-US/docs/WebAssembly) (WASM) is a new language for the web that is designed to run alongside Javascript and as a compilation target from other languages (such as C, C++, Rust, …) to enable performance-intensive software to run within the browser at near-native speeds that were not possible to attain with JS.
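As a rough illustration of what "running alongside JavaScript" means, the sketch below loads a tiny hand-assembled WASM module and calls its exported function from TypeScript. The byte array is an assumption made for the example (a module exporting a single `add` function for 32-bit integers); in practice these bytes would come from a compiler toolchain such as Emscripten or Rust rather than being written by hand.

```typescript
// Hand-assembled bytes of a tiny module that exports `add(a, b)` for i32 values.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00,             // magic number + version
  0x01, 0x07, 0x01, 0x60, 0x02, 0x7f, 0x7f, 0x01, 0x7f,       // type: (i32, i32) -> i32
  0x03, 0x02, 0x01, 0x00,                                     // one function of type 0
  0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00,       // export function 0 as "add"
  0x0a, 0x09, 0x01, 0x07, 0x00, 0x20, 0x00, 0x20, 0x01, 0x6a, 0x0b, // body: local.get 0, local.get 1, i32.add
]);

// Synchronous compilation is fine for a module this small.
const wasmModule = new WebAssembly.Module(wasmBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule);

// Exports are untyped from TypeScript's point of view, so we assert the signature.
const add = wasmInstance.exports.add as unknown as (a: number, b: number) => number;

console.log(add(2, 3)); // 5
```

For real-world modules, the asynchronous `WebAssembly.instantiateStreaming` is usually the better fit, since it compiles while the file is still downloading.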

While WebAssembly has been developed and improved upon for a few years, it saw major announcements this time on performance improvements that bring it closer to being on par with high-performance native code execution. These are enabled by improvements to the browser’s WASM engine such as [implicit caching](https://dzone.com/articles/webassembly-caching-when-using-emscripten), the introduction of support for [threads](https://developers.google.com/web/updates/2018/10/wasm-threads), and [SIMD](https://www.chromestatus.com/feature/6533147810332672) (Single Instruction Multiple Data), a core capability of modern CPU architectures that lets a single instruction operate on multiple data elements at once, which can make data-parallel code run several times faster.

Multiple OpenCV-based [demos](https://riju.github.io/WebCamera/samples/) were showcased, illustrating real-time high-FPS feature recognition, card reading and image information extraction, and facial expression detection or replacement within the web browser, all of which have been made possible by the recent WASM improvements.

Check them out, some of them are really cool!

## The cake is still a lie, but a delicious one at that!

_Here, if you didn’t get that [reference](https://knowyourmeme.com/memes/the-cake-is-a-lie)._

<figcaption>

Source: <a href="https://dev.to/tomayac/hands-on-with-portals-seamless-navigation-on-the-web-4ho0-temp-slug-198334">web.dev</a>

</figcaption>

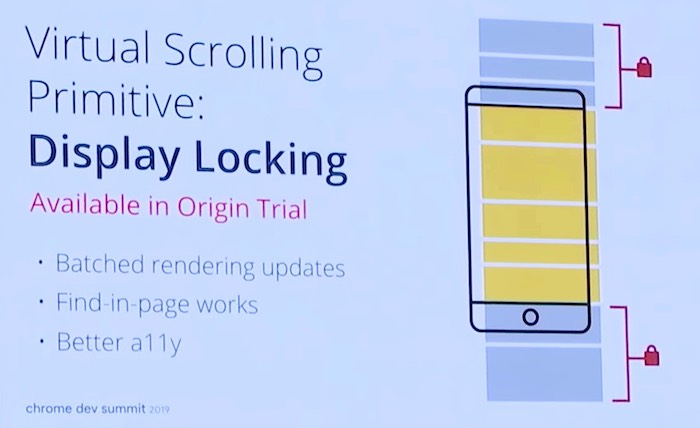

One of the unique new web platform capabilities that garnered a lot of interest was [Portals](https://dev.to/tomayac/hands-on-with-portals-seamless-navigation-on-the-web-4ho0-temp-slug-198334), which aims to enable seamless and animation-capable transitions across _page navigations_ for multi-page architecture (MPA) based web applications, effectively affording such MPAs a _creative lever_ to provide smooth and potentially “instantaneous” browsing experiences like those that native apps or single-page applications (SPAs) can provide. They can be used to deliver great app-like or SPA-like behaviour without the complexity of building an SPA, which often becomes cumbersome to scale and maintain for large websites with complex and dynamic use-cases.

Portals are essentially a new type of HTML element that can be instantiated and injected into a page to load another page inside it (in some ways similar to iframes, but also different in a lot of ways), kept hidden or animated in any form using CSS animations, and, when required, navigated _into_, thus performing page navigations in an instant when used effectively.
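A rough sketch of that flow is below, based on the experimental API as described in the linked hands-on article (`document.createElement('portal')`, a `src` attribute and an `activate()` method). Since TypeScript’s DOM typings do not describe `<portal>`, the `PortalElement` and `PortalHost` interfaces and the `preloadInPortal` helper are hypothetical stand-ins written for this example, and the API itself may change or disappear while it is experimental.

```typescript
// Structural stand-ins: TypeScript's DOM typings do not describe <portal>.
interface PortalElement {
  src: string;
  activate(): Promise<void>;
}
interface PortalHost {
  createElement(tagName: string): unknown;
  body: { appendChild(node: unknown): unknown };
}

// Hypothetical helper: start loading `url` inside a portal and hand the element back.
function preloadInPortal(doc: PortalHost, url: string): PortalElement {
  const portal = doc.createElement("portal") as PortalElement;
  portal.src = url;             // the target page starts loading inside the portal
  doc.body.appendChild(portal); // the portal must be in the document to load
  return portal;
}

// In a browser with the experimental flag enabled, usage might look like this
// (`nextLink` and "/next-page" are placeholders):
//
//   if ("HTMLPortalElement" in window) {
//     const portal = preloadInPortal(document, "/next-page");
//     nextLink.addEventListener("click", () => portal.activate());
//   }
```

Calling `activate()` is the step that navigates _into_ the already-loaded page, which is where the “instant” feel comes from.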

An interesting pattern with Portals is that a portal can also be made to load the page structure (skeleton or stencil, as you may also call it) preemptively, even if the exact data is not prefetched. It can then perform a lot of the major computation tasks that contribute to web page performance (parsing, execution, styling, layout, painting, compositing) for most of the document structure, in effect moving the cost of these tasks out-of-band of critical page load latencies. When a portal navigation happens, only the data is fetched and filled into the page. This still incurs some rendering cost, but typically much less than the entire page’s worth of work.

Several [demos](https://www.youtube.com/watch?v=X2zqwMBBvIs&feature=youtu.be&list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=956) are available and this API can be seen behind experimental flags in Chrome at the moment.

# Web Bundles

<figcaption>

Source: <a href="https://web.dev/web-bundles/">web.dev</a>

</figcaption>

In the simplest terms, a [Web Bundle](https://web.dev/web-bundles/) is a file format for encapsulating one or more HTTP resources in a single file. It can include one or more HTML files, JavaScript files, images, or stylesheets. Also known as [Bundled HTTP Exchanges](https://wicg.github.io/webpackage/draft-yasskin-wpack-bundled-exchanges.html), it’s part of the [Web Packaging](https://github.com/WICG/webpackage) proposal (and as someone wise would say, not to be confused with [webpack](https://webpack.js.org/)).

The idea is to enable offline distribution and usage of web apps. Imagine sharing web apps as a single `.wbn` file over anything like Bluetooth, Wi-Fi Direct or USB flash drives and then being able to run them offline on another device in the web application’s origin’s context! In a way, this again veers into the territory of giving the web more of the powers of native apps, which are easily distributable and executable offline.

> In such countries, a large portion of apps that exist on people’s phones get side-loaded over peer-to-peer mechanisms rather than from over a first-party distribution source like the Play Store.

If you look into how native apps get distributed in emerging markets such as India, the Middle East or Africa, which are heavy on mobile users but generally lack public Wi-Fi or have predominantly poor, patchy or congested cellular networks, you will find that peer-to-peer file-sharing apps like [Share-It](https://play.google.com/store/apps/details?id=com.lenovo.anyshare.gps&hl=en_IN) or [Xender](https://play.google.com/store/apps/details?id=cn.xender&hl=en_IN) are extremely popular for sharing software. A large portion of the apps on people’s phones get side-loaded over peer-to-peer mechanisms rather than from a first-party distribution source like the Play Store. It seems only natural that this would be a place for web apps to catch on as well!

I need to confess that the premise of Web Bundles does sort of remind me of the age-old [MHTML](https://en.wikipedia.org/wiki/MHTML) file format (`.mhtml` or `.mht` files, if you remember those) that used to be popular a decade back and, in fact, is still [supported](https://en.wikipedia.org/wiki/MHTML#Browser_support) by all major browsers. MHTML is a web page archive format that could contain HTML and associated assets like stylesheets, JavaScript, audio, video and even the Flash and Java applets of the day, with its content encoding inspired by the MIME email standard (leading to the name MIME HTML) to combine everything into a single file.

For what it’s worth though, from the limited knowledge that I have so far, I do believe that what we’ll have with Web Packaging is going to be much more complex and powerful, and will cater to the needs of the Web of _this_ generation, with key differences like being able to run in the browser using the web application’s origin’s context (by being verified using signatures, similar to how [Signed HTTP Exchanges](https://developers.google.com/web/updates/2018/11/signed-exchanges) may work) rather than being treated as locally saved content like with MHTML. But yeah… can’t deny that it does feel a bit like listening to [Backstreet Boys](https://www.youtube.com/watch?v=4fndeDfaWCg) again from those days! Hashtag Nostalgia.

# More cool stuff with CSS! Yeah!

I didn’t even try to come up with a better headline for this section, because this is simply how I genuinely feel.

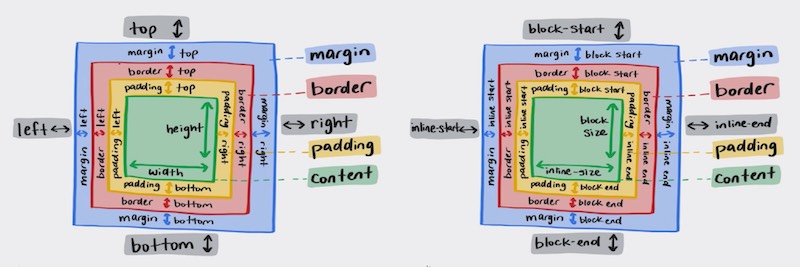

## New capabilities

Here is a quick list of some of the new coolness that’s landing in browsers (or, even better, has already landed):

- [`scroll-snap`](https://developer.mozilla.org/en-US/docs/Web/CSS/CSS_Scroll_Snap) that introduces scroll snap positions, which enforce the scroll positions that a [scroll container’s](https://developer.mozilla.org/en-US/docs/Glossary/scroll_container) [scrollport](https://developer.mozilla.org/en-US/docs/Glossary/scrollport) may end at after a scrolling operation has completed.

- [`:focus-within`](https://developer.mozilla.org/en-US/docs/Web/CSS/:focus-within) to represent an element that has received focus or _contains_ an element that has received focus.

- `@media (prefers-*)` queries, namely the [`prefers-color-scheme`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-color-scheme), [`prefers-contrast`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-contrast), [`prefers-reduced-motion`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-reduced-motion) and [`prefers-reduced-transparency`](https://developer.mozilla.org/en-US/docs/Web/CSS/@media/prefers-reduced-transparency), which in [Adam Argyle’s words](https://youtu.be/-oyeaIirVC0?list=PLNYkxOF6rcIDA1uGhqy45bqlul0VcvKMr&t=396), can together enable you to serve user preferences like, _“I prefer a high contrast dark mode motion when in dim-lit environments!”_ 😎