id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

308,231 | 7 tech trends shaping the future of eCommerce | We have put together a list of top 7 trends that can help your eCommerce business grow and prosper in the future. | 0 | 2020-04-14T05:38:53 | https://dev.to/jignesh_simform/7-tech-trends-shaping-the-future-of-ecommerce-4dgd | ecommerce, ai, ar, voicesearch | ---

title: 7 tech trends shaping the future of eCommerce

published: true

description: We have put together a list of top 7 trends that can help your eCommerce business grow and prosper in the future.

tags: eCommerce, AI, AR, VoiceSearch

---

The eCommerce industry has evolved a great deal over the past few years. We hav... | jignesh_simform |

308,280 | Ubuntu Server 18.04 Üzerine Zimbra Server 8.8.15 Kurulumu | Zimbra Kurmak için Sistem gereksinimleri; En fazla 50 kullanıcıyı destekleyen bir Zimbra s... | 0 | 2020-04-14T08:20:29 | https://dev.to/aciklab/ubuntu-server-18-04-uzerine-zimbra-server-8-8-15-kurulumu-2h2e | zimbra, ubuntuzimbra, zimbraserver, acikkaynak | ---

title: Ubuntu Server 18.04 Üzerine Zimbra Server 8.8.15 Kurulumu

published: true

description:

tags: #zimbra #ubuntuzimbra #zimbraserver #acikkaynak

---

#####System requirements for installing Zimbra

Recommended system requirements for a Zimbra server supporting up to 50 users:

Mevcut kaynaklarını... | ekarabulut |

308,648 | Dark Mode: three Lint checks to help | Three Lint checks to help you develop dark mode on Android | 0 | 2020-04-14T10:43:06 | https://dev.to/dbottillo/dark-mode-three-lint-checks-to-help-b32 | android, darkmode, kotlin, lint | ---

title: Dark Mode: three Lint checks to help

published: true

description: Three Lint checks to help you develop dark mode on Android

tags: android, darkmode, kotlin, lint

---

Implementing Night Mode in Android is pretty straightforward: you have a theme with attributes and you can just define those attributes in... | dbottillo |

308,681 | Exploiting sessionStorage API to design a user-friendly multi-step Lead Form | TL;DR This article breaks down the tiresome task of filling a multi-step form using sessio... | 0 | 2020-04-14T12:38:01 | https://dev.to/zeeshan/exploiting-sessionstorage-api-to-design-a-user-friendly-multi-step-lead-form-42jh | javascript, api, form, userexperience | ##TL;DR

This article breaks down the tiresome task of filling a multi-step form using sessionStorage API. Result? Better UX

##Use Case

One of the websites I developed for a [coffee vending machine business](http://cityvendinguae.com/) has a multi-step quote request form page. I had created a number of links on the hom... | zeeshan |

308,940 | What’s new in Ember Octane | Written by Anjolaoluwa Adebayo-Oyetoro✏️ Ember.js is an open-source MVC-based JavaScript framework... | 0 | 2020-05-11T13:06:55 | https://blog.logrocket.com/whats-new-in-ember-octane/ | ember, javascript, webdev | ---

title: What’s new in Ember Octane

published: true

date: 2020-04-14 13:00:56 UTC

tags: ember,javascript,webdev

canonical_url: https://blog.logrocket.com/whats-new-in-ember-octane/

cover_image: https://dev-to-uploads.s3.amazonaws.com/i/g18t65hmwox75ymr59ug.png

---

**Written by [Anjolaoluwa Adebayo-Oyetoro](https://bl... | bnevilleoneill |

309,058 | Spinning up a highly available Prometheus setup with Thanos | The Problem Prometheus has become one of the standard tools of any monitoring solutions du... | 0 | 2020-04-15T09:40:44 | https://appfleet.com/blog/progressive-feature-driven-delivery-with-flagger/ | devops, kubernetes | #The Problem#

Prometheus has become one of the standard tools of any monitoring solution due to its simple and reliable architecture and ease of use. Despite this, the tool has some shortcomings when working at a certain scale. When trying to scale Prometheus, one major issue you quickly bump into is the problem of ... | jimaek |

309,066 | Top tips for escalating the performance of Android apps | Launching an application for your online business is the smartest idea you can go with. More than 2.5... | 0 | 2020-04-14T14:20:17 | https://dev.to/poojamakkar87/top-tips-for-escalating-the-performance-of-android-apps-2lkl | android, appdevelopment, mobileapp | Launching an application for your online business is the smartest idea you can go with. More than 2.5 billion people use a smartphone. This huge population can be targeted according to its preferences for your business promotion using an application. So far, the Android operating system is ruling the world with the hig... | poojamakkar87 |

309,088 | What Goes Into Log Analysis? | I've talked here before about log management in some detail. And I've talked about log analysis in h... | 0 | 2020-04-14T14:58:01 | https://www.scalyr.com/blog/what-goes-into-log-analysis/ | logging, programming | I've talked here before about <a href="https://www.scalyr.com/blog/log-management-what-is-it-and-why-you-need-it/">log management in some detail</a>. And I've talked about log analysis in high-level terms when <a href="https://www.scalyr.com/blog/calculating-the-roi-of-log-analysis-tools/">making the case for its... | daedtech |

309,095 | Why You Shouldn't Learn C | Knowledge of the C programming language is often touted as the mark of a “true” programmer. You don’t really know programming unless you know this language, or so the wisdom goes. Many aspiring programmers have been advised by senior developers (or gatekeepers) to learn C to up their skills and bring ... | 0 | 2020-04-14T15:04:37 | http://erikscode.space/index.php/2020/04/14/why-you-shouldnt-learn-c/ | c |

---

title: Why You Shouldn't Learn C

published: true

description: Knowledge of the C programming language is often touted as the mark of a “true” programmer. You don’t really know programming unless you know this language, or so the wisdom goes. Many aspiring programmers have been advised by senior ... | erikwhiting88 |

309,150 | Invonto Announces $5M Program for Businesses Affected by Coronavirus | At Invonto, we are closely monitoring COVID-19 developments. The health and safety of our employees a... | 0 | 2020-04-14T17:15:49 | https://www.invonto.com/insights/covid19-support-initiatives/ | At Invonto, we are closely monitoring COVID-19 developments. The health and safety of our employees and customers are our top priority. Since March 16th, we have been operating virtually and will continue to do so until it is safe to operate normally.

We understand the impact the current crisis is having on small and ... | invonto | |

309,172 | TDD by Example: Part 1: The Money Example | Summary Beck opens this part of the book giving a summary of what to expect. He lays out t... | 5,922 | 2020-04-14T17:32:11 | https://emmanuelgenard.com/tdd-by-example-part-1-the-money-example | tdd, kentbeck |

## Summary

Beck opens this part of the book giving a summary of what to expect. He lays out the rhythm of TDD and what the reader will find surprising. There isn't much to summarize here so I'll just quote him.

> 1. Quickly add a test.

> 2. Run all tests and see the new one fail.

> 3. Make a little change.

> 4. Ru... | edgenard |

309,183 | Pros & Cons of Remote Work? | Image credits - https://www.ringover.com/img/blog/big/13-remote-working-2.png With internet coverage... | 0 | 2020-04-19T15:44:21 | https://dev.to/vinayhegde1990/pros-cons-of-remote-work-11do | productivity, career | _Image credits - https://www.ringover.com/img/blog/big/13-remote-working-2.png_

With internet coverage practically available everywhere in the world, every one of us is aware of what Coronavirus a.k.a COVID-19 is and the ripple effects it's having across the IT Industry. In most countries, the intensity of the situati... | vinayhegde1990 |

309,218 | E2 - Creating the Web Form | Open your index.php file (see Create project folder) and follow these steps: 1. Add an HTML html ele... | 5,954 | 2020-04-14T18:37:00 | https://dev.to/herobank110/e2-creating-the-web-form-1bdd | html, tutorial, php | Open your index.php file (see Create project folder) and follow these steps:

1. Add an HTML html element and a body inside it.

```html

<html><body></body></html>

```

2. Add an HTML form element inside the body with blank action and method attributes (we’ll set them later).

```html

<form action="" method="">... | herobank110 |

309,231 | A minimal authorization policy builder for NodeJs | auth-policy A minimal authorization policy builder which defines if a viewer can perform a... | 0 | 2020-04-14T18:47:45 | https://dev.to/hereisnaman/a-minimal-authorization-policy-builder-for-nodejs-60d | npm, node, javascript, authorization | # auth-policy

A minimal authorization policy builder which defines if a viewer can perform an action on an entity. The Policy can be defined in a declarative manner and can be consumed at various layers of any application.

**Github**: https://github.com/hereisnaman/auth-policy

**NPM**: https://www.npmjs.com/package/au... | hereisnaman |

309,239 | Relay: the GraphQL client that wants to do the dirty work for you | This series of articles is written by Gabriel Nordeborn and Sean Grove. Gabriel is a frontend develo... | 5,967 | 2020-04-16T20:44:10 | https://dev.to/zth/relay-the-graphql-client-that-wants-to-do-the-dirty-work-for-you-55kd | react, graphql, relay | > This series of articles is written by [Gabriel Nordeborn](https://github.com/zth) and [Sean Grove](https://github.com/sgrove). Gabriel is a frontend developer and partner at the Swedish IT consultancy [Arizon](https://arizon.se) and has been using Relay for a long time. Sean is a co-founder of [OneGraph.com](https://... | zth |

309,250 | E4 - Using PHP Debugging Tools in Visual Studio Code | If you have not already set up XDebug in Visual Studio Code, please refer to the earlier stage, Settin... | 5,954 | 2020-04-14T19:11:41 | https://dev.to/herobank110/e4-using-php-debugging-tools-in-visual-studio-code-53p9 | php, vscode, tutorial | If you have not already set up XDebug in Visual Studio Code, please refer to the earlier stage, [Setting up PHP debugging in Visual Studio Code](https://dev.to/herobank110/additional-setup-43ko#php-debug). This post assumes you have XDebug installed and the launch configuration is created.

1. Copy this code into ... | herobank110 |

309,314 | Drafts and scheduled publishing | A nice feature I had in Jekyll was drafts and the option to schedule publishing of content. In Jekyl... | 0 | 2020-04-14T20:19:29 | https://andeers.com/2020/04/draft-scheduled/ | 11ty | A nice feature I had in Jekyll was drafts and the option to schedule publishing of content.

In Jekyll you placed drafts in a separate folder called `_drafts`, and when you built the site with the `--drafts` flag they were included.

I have gone a different way in my Eleventy site, and keep the drafts in the same folde... | andeersg |

309,319 | LeetCode Challenge: Counting Elements | This is the last problem in Week 1 of the month-long LeetCode challenge. Looks like this is the firs... | 5,835 | 2020-04-15T10:03:33 | https://dev.to/13point5/leetcode-challenge-counting-elements-545o | computerscience, beginners, leetcode, problemsolving | This is the last problem in Week 1 of the month-long LeetCode challenge.

Looks like this is the first of the 5 new questions that were promised to make things interesting.

# Problem

Given an integer array arr, count element x such that x + 1 is also in arr.

If there're duplicates in arr, count them separately.
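
The problem statement above can be sketched in a few lines. This is a minimal illustration, not the post's own solution: the function name `count_elements` is hypothetical, and it assumes a set-based lookup is acceptable.

```python
def count_elements(arr):
    """Count every x in arr (duplicates counted separately) where x + 1 is also in arr."""
    seen = set(arr)  # a set gives constant-time membership checks on average
    # Iterate over the original list, not the set, so duplicates are each counted.
    return sum(1 for x in arr if x + 1 in seen)
```

Building the set once keeps the whole pass linear in the length of `arr`, at the cost of extra memory for the set.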

__Ex... | 13point5 |

309,407 | What does your week look like? | Weekly routine during Covid-19 | 0 | 2020-04-15T01:20:57 | https://dev.to/klawrow/what-does-your-week-look-like-1ohh | watercooler, webdev, dotnet, freelance | ---

title: What does your week look like?

published: true

description: Weekly routine during Covid-19

tags: watercooler, webdev, dotnet, freelance

---

I'd like to walk you through my current week and would love to hear about yours.

## Variables

1. 2 small children, a toddler and a student which is _now_ being homescho... | klawrow |

309,467 | How to Make your First Contribution to Open Source, A Step by Step Guide | You want to contribute to Open Source! That’s amazing! The world thanks you! You’ve already thought i... | 0 | 2020-04-15T02:42:56 | https://dev.to/scottstern06/how-to-make-your-first-contribution-to-open-source-a-step-by-step-guide-4hof | opensource, github, javascript, beginners | You want to contribute to Open Source! That’s amazing! The world thanks you! You’ve already thought it was a good idea and some google searches later, you’re here. Congratulations, let’s get started so you can join the army in making the software world, or the real world a better place!

In 2020, Open Source is the mos... | scottstern06 |

309,646 | Get Going With Go | This article is meant to be a quick introduction to installing the Go programming language, running y... | 0 | 2020-04-15T04:25:45 | https://dev.to/ezzy1337/get-going-with-go-1ba3 | go | This article is meant to be a quick introduction to installing the Go programming language, running your first script, and building a binary that can be executed on Windows, Mac OS X, and Linux. I've included links to resources from Google and Digital Ocean that go into much greater detail on these topics but if you ar... | ezzy1337 |

309,755 | 17 Agile Testing Interview Questions and Answers that you Should Know. | Agile Testing Interview Questions and Answers will help you prepare for Agile methodology and agile p... | 0 | 2020-04-15T08:54:20 | https://dev.to/promode/16-agile-testing-interview-questions-and-answers-that-you-should-know-3j2h | testing, tutorial, beginners, webdev | Agile Testing Interview Questions and Answers will help you prepare for Agile methodology and agile process interviews for testers or developers.

Learn Cypress Tutorial: https://cypresstutorial.com.

Learn API Testing: https://www.learnapitesting.com

Automation Tester Training: https://thetestingacademy.com

## Questi... | promode |

309,804 | PowerShell Core as default shell on a Debian devcontainer | Introduction Here we'll cover setting up powershell on a dev container with a debian:buste... | 0 | 2020-04-15T11:35:28 | https://dev.to/eliises/powershell-core-as-default-shell-on-a-debian-devcontainer-36fk | # Introduction

Here we'll cover setting up PowerShell on a dev container with a `debian:buster` base image.

At the bottom of this article you can also find the full [devcontainer.json](#devcontainerjson) and [dockerimage](#dockerimage), which you can skip to.

Credit to: https://www.phillipsj.net/posts/powershell-as-d... | eliises | |

309,806 | HOW TO SETUP MSSQL ON MAC/LINUX OS USING DOCKER AND AZURE DATA STUDIO | Ensure you have docker setup on your machine. Follow this link to setup docker on your machine docs.... | 0 | 2020-04-15T10:39:37 | https://dev.to/adeyemiadekore2/how-to-setup-mssql-on-mac-linux-os-using-docker-and-azure-data-studio-2p6m | Ensure you have Docker set up on your machine. If not, follow the [docs](https://docs.docker.com/docker-for-mac/install/) to set it up.

Pull the mssql ubuntu image from the docker hub.

`sudo docker pull mcr.microsoft.com/mssql/server:2019-CU3-ubuntu-18.04`

After that, enter the following command

... | adeyemiadekore2 | |

309,880 | How to kern lettering with SVG's dx attribute. | Using SVG's <text> element and dx attribute to visually kern letters. | 0 | 2020-04-25T11:29:39 | https://dev.to/makingthings/how-to-kern-lettering-with-svg-s-dx-attribute-3po9 | svg, html, css | ---

title: How to kern lettering with SVG's dx attribute.

published: true

description: Using SVG's <text> element and dx attribute to visually kern letters.

tags: svg, html, css

cover_image: https://res.cloudinary.com/makingthings/image/upload/v1587813538/articles/svg/parade-uneven.jpg

---

##The devil is in the detai... | makingthings |

309,892 | Tutorial: Run a Python Script with an Alexa Voice Command | No better time than quarantine time to play around with Python and Alexa. This tutorial demonstrates... | 0 | 2020-04-16T13:03:34 | https://dev.to/wayscript/tutorial-run-a-python-script-with-an-alexa-voice-command-4k8m | python, tutorial, alexa, beginners | *No better time than quarantine time to play around with Python and Alexa. This tutorial demonstrates how to use WayScript. We say that WayScript gives developers superpowers. We'd love your feedback on the platform we're building. Check it out while learning something new today.*

##The WayScript Alexa Skill

In this ... | tjmd |

309,905 | Why Mobile Analytics Tool Is A Boon For The Mobile Applications? | From the app idea generation to its development, the only fantasy mobile app owner have is to get a m... | 0 | 2020-04-15T13:40:27 | https://dev.to/jamesjo10097237/why-mobile-analytics-tool-is-a-boon-for-the-mobile-applications-5gc6 | mobiledevelopment, mobileapp, mobileappdevelopment, appdevelopment | From app idea generation to development, the only fantasy a mobile app owner has is to get a million app downloads.

During app development, UI and UX designing, QA and testing, devising marketing strategies and preparing for the launch, the app owner just yearns for the huge buzz that the app would create, user... | jamesjo10097237 |

309,912 | A way for managing your API versions with Azure | Hi guys, I've recently been playing for a personal project with Azure Front Door service and found a... | 0 | 2020-04-15T15:55:53 | https://dev.to/jaloplo/a-way-for-managing-your-api-versions-with-azure-17pe | azure, management, webdev, routing | Hi guys,

I've recently been experimenting with the [__Azure Front Door__][Azure Front Door Docs] service for a personal project and found a use for it that I think is great. For those of you who don't know what the [__Azure Front Door__][Azure Front Door Docs] service is, it allows you to define, manage, and m... | jaloplo |

309,976 | Deploying a MERN stack | Solution 1: Heroku (https://www.heroku.com/) Positives: Easy set up. Uses Docker containers within A... | 0 | 2020-04-15T15:27:30 | https://dev.to/stuartcreed/deploying-a-mern-stack-54ai | mern, deploy, heroku, aws | Solution 1: Heroku (https://www.heroku.com/)

Positives: Easy set up. Uses Docker containers within Amazon EC2 instances so that you only pay for what you use (PAAS).

Negatives: Costs £7 a month. Free tier times out your container after 30 mins of inactivity.

Solution 2: Amazon AWS EC2 Linux Instance.

Positives: Th... | stuartcreed |

310,041 | What's the story behind your first money made with software? | Do not be shy! Tell us how you first got into the world of commercial software? | 0 | 2020-04-15T17:17:11 | https://dev.to/binaryforgeltd/what-s-the-story-behind-your-first-money-made-with-software-2a4 | software, discuss, memories, career | ---

title: What's the story behind your first money made with software?

published: true

description: Do not be shy! Tell us how you first got into the world of commercial software?

tags: software, discussion, memories, career

---

Can you remember the very first person **brave enough** to pay for a piece of code made b... | binaryforgeltd |

310,049 | How do you handle database migrations ? | At work, we use flyway to manage our database migrations. We have multiple test environments and mul... | 0 | 2020-04-15T17:25:54 | https://dev.to/iinku/how-do-you-handle-database-migrations-1g62 | database, sql, help, ask | At work, we use Flyway to manage our database migrations. We have multiple test environments and multiple staging versions of our applications. Every Sunday, a script downloads and installs a fresh database backup from production. How do you handle your migrations in this scenario? Every time you install a new version, yo... | iinku |

310,355 | How to handle surprise changes within the project 😌🙌 | Web development field has been growing over time, the community frequently contributes and... | 0 | 2020-04-16T03:42:11 | https://dev.to/sarl23/how-to-handle-surprise-changes-within-the-project-2871 | webdev, beginners | ---

title:How to handle surprise changes within the project 😌🙌

published: true

description:

tags: help, webdev, beginners

---

_Web development field has been growing over time, the community frequently contributes and strengthens new and early technological generations, each person, each taste is divided into back o... | sarl23 |

310,062 | How do I freeze columns in Data Table? | As the headline says, I would like to freeze columns in my mat-table... I would like to write the fun... | 0 | 2020-04-15T18:00:21 | https://dev.to/anyanx_500v/how-do-i-freeze-columns-in-data-table-2dh1 | As the headline says, I would like to freeze columns in my mat-table... I would like to write the function myself instead of using [sticky] or css.

I already designed a small function where the selected column is set to disabled... I would like to freeze the behaviour and the selected column, can you please help me?

... | anyanx_500v | |

310,084 | How to Distribute Secrets for PowerShell Scripts Using Ansible | The Use Case Managing secrets is hard. Everything needs to run under its own username/pass... | 0 | 2020-04-17T05:47:40 | https://dev.to/mieel/how-to-distribute-secrets-for-powershell-scripts-using-ansible-3mne | ansible, powershell, windows |

# The Use Case

Managing secrets is hard. Everything needs to run under its own username/password, and apparently keeping plaintext passwords in scripts is really bad.

To manage secrets better at our company we already implemented the following practices:

1) Avoiding hardcoding credentials (or any other configuration... | mieel |

310,088 | Build an Event Planner App with Vue.js, Firebase, and Auth0's Passwordless | Quickly build an event planner application that utilizes Auth0's Passwordless feature! | 0 | 2020-04-15T18:59:51 | https://auth0.com/blog/build-an-event-planner-app-with-vuejs-firebase-and-auth0s-passwordless/ | javascript, vue, firebase | ---

title: Build an Event Planner App with Vue.js, Firebase, and Auth0's Passwordless

published: true

description: Quickly build an event planner application that utilizes Auth0's Passwordless feature!

tags: #javascript #vuejs #firebase

canonical_url: https://auth0.com/blog/build-an-event-planner-app-with-vuejs-firebas... | bachiauth0 |

310,098 | What are the best practices for architecting API authentication? | Dear Geek, We are building an API and I am confused as to what kind of security we need? There... | 5,965 | 2020-04-16T11:47:11 | https://dev.to/brentonhouse/what-are-the-best-practices-for-architecting-api-authentication-kd5 | > Dear Geek,

>

> We are building an API and I am confused as to what kind of security we need? There are so many out there being used (_OAuth 1.0a, OAuth 2.0, SAML, username/password, API Key, JWT, and plenty of others_) and I am not sure what the best practices are for implementing authentication for our APIs.... | brentonhouse | |

310,237 | Aprendendo do zero a criar uma aplicação desktop com JavaScript, Electron Js e Vue.Js | Fonte de Estudos: Casa do Código Livro: VueJs Construa Aplicações Incríveis Instalando o CLI do V... | 0 | 2020-04-15T23:36:28 | https://dev.to/gustavo_nascimento/aprendendo-do-zero-a-criar-um-projeto-com-vue-js-3k1e | beginners, javascript, vue | > Study source: Casa do Código

> Book: VueJs Construa Aplicações Incríveis

- Installing the Vue.Js CLI globally

```javascript

npm i -g @vue/cli

```

- Creating a Vue.Js project

```javascript

vue create <app name>

```

- Starting a Vue.Js server

```javascript

npm run serve

```

- Na instalação vai... | gustavo_nascimento |

310,413 | Patrones de diseño en #javascript - Singleton | El patrón Singleton está diseñado para restringir la creación de objetos pertenecientes a una clase.... | 0 | 2020-04-16T06:53:29 | https://dev.to/3rchuss/patrones-de-diseno-en-javascript-singleton-81g | javascript, design, designpatterns, codenewbie | The **Singleton** pattern is designed to restrict the creation of objects belonging to a class to a **single instance**.

Let's look at an example:

```javascript

const alumnos = {

// All students

listaAlumnos : [],

// Get a student

get: function(id) {

return this.listaAlumnos[id]

... | 3rchuss |

310,419 | Secrets of choosing the right pricing strategy for your mobile app project | For each App entrepreneur, building a great mobile app becomes the overwhelming focus. Since the appl... | 0 | 2020-04-16T07:07:56 | https://dev.to/ltdsolace/secrets-of-choosing-the-right-pricing-strategy-for-your-mobile-app-project-5fi7 | mobileapps, apps | For each App entrepreneur, building a great mobile app becomes the overwhelming focus. Since the application development process has been more than just software development, a lot of time and effort is spent on discovery, design, and development. Of course, providing value to the end-users is the prime objective behin... | ltdsolace |

310,430 | High available Kubernetes cluster with single control plane node | This article was originally published at my SRE blog Why single node control plane? Benef... | 6,001 | 2020-04-16T07:47:44 | https://vorozhko.net/high-available-kubernetes-cluster-with-single-control-plane-node | kubernetes, sre, aws | *This article was originally published at [my SRE blog](https://vorozhko.net/high-available-kubernetes-cluster-with-single-control-plane-node)*

## Why single node control plane?

**Benefits are:**

* Monitoring and alerting are simple and on point. It reduces the number of false positive alerts.

* Setup and maintenance ... | vorozhko |

310,541 | Adding custom business logic to Hasura using Dark | Hasura has recently implemented a way to create custom mutations called Actions. Want to handle compl... | 0 | 2020-04-17T06:34:34 | https://hasura.io/blog/dark-and-hasura-actions/ | hasura, darklang | ---

title: Adding custom business logic to Hasura using Dark

published: true

date: 2020-04-16 09:56:14 UTC

tags: hasura, darklang

canonical_url: https://hasura.io/blog/dark-and-hasura-actions/

---

Hasura has recently implemented a way to create custom mutations called Actions. Want to handle complex business logic? A... | hasurahq_staff |

310,596 | Introduction: styled-off-canvas | As a big fan of styled-components, I always had the need for an Off-Canvas or Burger-Menu in my proje... | 0 | 2020-05-28T10:49:14 | https://dev.to/marcostreng/introduction-styled-off-canvas-bna | javascript, react, styledcomponents | As a big fan of [styled-components](https://www.styled-components.com/), I always had the need for an Off-Canvas or Burger-Menu in my projects.

When working with styled-components, it feels unpleasant to use one of the plain CSS based menus. You have to import `.css` files, you probably have to overwrite some styling... | marcostreng |

310,603 | How to Build a Successful Mobile App for Your Online Services Business? | Developing a mobile app for your service-based business is now considered essential. It is due to th... | 0 | 2020-04-16T12:57:20 | https://dev.to/ishawnmike/how-to-build-a-successful-mobile-app-for-your-online-services-business-2m4n | mobileappdevelopment, businessapps, onlinebusinessservices | ---

title: How to Build a Successful Mobile App for Your Online Services Business?

published: true

description:

tags: Mobile App Development, Business Apps, Online business services,

---

Developing a mobile app for your service-based busin... | ishawnmike |

310,640 | Angular Table row-span and col-span based on typescript data object | Angular Table row-span and col-span based... | 0 | 2020-04-16T14:22:25 | https://dev.to/gaurangdhorda/angular-table-row-span-and-col-span-based-on-typescript-data-object-30ca | angular, typescript, javascript | ---

title: Angular Table row-span and col-span based on typescript data object

published: true

tags: #angular #typescript #javascript

---

{% stackoverflow 61249759 %} | gaurangdhorda |

310,662 | A Day of Azure for an AWS User | This post was originally published on the Leading EDJE website in May 2018. This year the Global Az... | 0 | 2020-04-20T14:33:45 | https://dev.to/leading-edje/a-day-of-azure-for-an-aws-user-gle | cloud, azure | ---

title: A Day of Azure for an AWS User

published: true

description:

tags: cloud, azure

---

> This post was originally published on the Leading EDJE website in May 2018.

>

> This year the Global Azure Bootcamp is [going virtual](https://virtual.globalazure.net/) and will be hosted from Apr 23rd to 25th 2020, with p... | andrewdmay |

310,790 | The Citadel Architecture at AppSignal | DHH just coined the term "Citadel," which finally gives us an excellent way to reference how we appro... | 0 | 2020-04-16T16:15:38 | https://blog.appsignal.com/2020/04/08/the-citadel-architecture-at-appsignal.html | webdev, architecture, ruby | DHH just coined the term "Citadel," which finally gives us an excellent way to reference how we approach tech at AppSignal. We said, "Hey, this is us! Our thing has a name now".

<blockquote class="twitter-tweet" data-conversation="none"><p lang="en" dir="ltr">In addition to the Majestic Monolith, someone should write ... | thijsc |

310,841 | Specify XCode version for GitHub workflows | The build process on GitHub failed when I used the XCTUnwrap function. This feature was added in XCode 11. I updated the workflows yml file to specify the XCode version. | 0 | 2020-04-16T17:02:42 | https://monicagranbois.com/blog/swift/specify-xcode-version-for-github-workflows/ | xcode, github, workflows | ---

title: Specify XCode version for GitHub workflows

published: true

description: The build process on GitHub failed when I used the XCTUnwrap function. This feature was added in XCode 11. I updated the workflows yml file to specify the XCode version.

tags: xcode, github, workflows

canonical_url: https://monicagranboi... | monicag |

311,010 | What to Consider When Picking a New Programming Language | How to prioritize what language to learn when there are so many options. | 0 | 2020-04-27T21:17:18 | https://dev.to/tylerlwsmith/what-to-consider-when-picking-a-new-programming-language-5b2f | languages, careeradvice | ---

title: What to Consider When Picking a New Programming Language

published: true

description: How to prioritize what language to learn when there are so many options.

tags: Languages, Career Advice

---

I spend a lot of time on Twitter, and I regularly listen to a half dozen tech podcasts. Because of this, I hear ab... | tylerlwsmith |

310,864 | Semantic versioning in JavaScript projects made easy | If you've used a package manager like npm or yarn before, you're probably familiar with a versioning... | 0 | 2020-05-23T16:16:58 | https://dev.to/stijnva/semantic-versioning-in-javascript-projects-made-easy-3h63 | tutorial, productivity, javascript | If you've used a package manager like npm or yarn before, you're probably familiar with a versioning format like X.Y.Z, where X, Y, and Z each represent a number, separated by dots. But what do those numbers mean?
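
To make the X.Y.Z shape concrete, here is a small sketch that splits such a string into its three numbers. The helper name `parse_version` is hypothetical, and the sketch assumes a plain three-part version with no pre-release or build suffix.

```python
def parse_version(version):
    """Split an 'X.Y.Z' string into a tuple of three integers (x, y, z)."""
    parts = version.split(".")
    if len(parts) != 3:
        raise ValueError(f"expected three dot-separated numbers, got {version!r}")
    # int() raises ValueError for non-numeric parts, which is the behaviour we want here.
    x, y, z = (int(part) for part in parts)
    return x, y, z
```

Because the result is a tuple of integers, two versions compare numerically, so `parse_version("1.10.0")` sorts after `parse_version("1.9.3")`, which plain string comparison would get wrong.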

This versioning format is called [Semantic Versioning](https://semver.org/) (or SemVer for short). Those ... | stijnva |

310,903 | Twilio Hackathon Start | Been thinking of a few ideas to do for the Twilio Hackathon. We have recently had some issues at work... | 0 | 2020-04-16T18:34:09 | https://dev.to/jhanna60/twilio-hackathon-start-1lng | twiliohackathon | Been thinking of a few ideas to do for the Twilio Hackathon. We have recently had some issues at work that could be improved with a clever application using the APIs provided by Twilio.

Work in progress!

#twiliohackathon | jhanna60 |

310,913 | Free Developer-friendly high-res backgrounds for Microsoft Teams | Last week Microsoft released custom background feature for Microsoft Teams. This was a feature long... | 0 | 2020-04-21T18:16:09 | https://platform.uno/blog/free-developer-friendly-high-res-backgrounds-for-microsoft-teams/ | news | ---

title: Free Developer-friendly high-res backgrounds for Microsoft Teams

published: true

date: 2020-04-16 18:44:51 UTC

tags: News

canonical_url: https://platform.uno/blog/free-developer-friendly-high-res-backgrounds-for-microsoft-teams/

---

Last week Microsoft released the custom background feature for Microsoft Teams.... | unoplatform |

310,929 | Converting from AsciiDoc to Google Docs and MS Word | Updated 16 April 2020 to cover formatting tricks & add import to Google Docs info Short and swee... | 0 | 2020-04-17T09:05:13 | https://rmoff.net/2020/04/16/converting-from-asciidoc-to-google-docs-and-ms-word/ | asciidoc, pandoc, googledocs | ---

title: Converting from AsciiDoc to Google Docs and MS Word

published: true

date: 2020-04-16 00:00:00 UTC

tags: asciidoc,pandoc,google docs

canonical_url: https://rmoff.net/2020/04/16/converting-from-asciidoc-to-google-docs-and-ms-word/

---

_Updated 16 April 2020 to cover formatting tricks & add import to Google Do... | rmoff |

310,961 | Open source in 2020 | Open Source Projects For over 20 years the Open Source Initiative (OSI) has worked to raise awareness... | 0 | 2020-04-16T19:55:14 | https://dev.to/madilraza/open-source-in-2020-2ilj | opensource, in, 2020, githubteam | Open Source Projects

For over 20 years the Open Source Initiative (OSI) has worked to raise awareness and adoption of open source software, and build bridges between open source communities of practice. As a global non-profit, the OSI champions software freedom in society through education, collaboration, and infrastru... | madilraza |

321,634 | BCP Calltree | What I built Every company has a Business Continuity Plan (BCP) to deal with unforeseen ev... | 0 | 2020-04-28T20:03:46 | https://dev.to/teamwicket/bcp-calltree-1n1a | twiliohackathon | ## What I built

Every company has a Business Continuity Plan (BCP) to deal with unforeseen events that may disrupt the business's normal activity. Being able to reach all employees in an automated fashion, using Twilio APIs, dramatically simplifies the process of manually contacting everyone in the organisation... | teamwicket |

311,170 | Harp/Jade Debug Snippet | I’m using Harp with Jade recently. At the beginning, it was hard for me to figure out the JSON data s... | 0 | 2020-04-17T04:55:56 | http://english.catchen.me/2018/08/harp-jade-debug-snippet.html | debug, harp, jade | ---

title: Harp/Jade Debug Snippet

published: true

date: 2018-08-31 14:59:00 UTC

tags: debug,harp,jade

canonical_url: http://english.catchen.me/2018/08/harp-jade-debug-snippet.html

---

I’ve been using [Harp](http://harpjs.com/) with [Jade](http://jade-lang.com/) recently. At first, it was hard for me to figure out t... | catchen |

311,182 | `docker run -p 127.0.0.1:8080:8080`? and if not, do I need SSL certificate? | It is this repo, actually; powered by fastify. About SSL, I can try docker run -p 8080:8080, but the... | 0 | 2020-04-17T04:49:55 | https://dev.to/patarapolw/docker-run-p-127-0-0-1-8080-8080-and-if-not-do-i-need-ssl-certificate-54ao | docker, help, devops, fastify | It is [this repo](https://github.com/patarapolw/rep2recall/tree/lessons), actually; powered by fastify.

About SSL, I can try `docker run -p 8080:8080`, but then I would need to access `http://0.0.0.0:8080`, which gets a permanent redirect (301) to `https`, and I don't have local HTTPS. (I rely on Heroku's for onlin... | patarapolw |

311,644 | How to detect a change in HTML5 Local Storage in the same window? | I want to detect if a local storage variable value is changed or not, But I have noticed that if I ad... | 0 | 2020-04-17T08:57:46 | https://dev.to/sayuj/how-to-detect-a-change-in-html5-local-storage-in-the-same-window-33k5 | help, javascript, html | I want to detect whether a **local storage variable** value has changed. However, I have noticed that if I add an event listener for the **'storage'** event, I can detect the change only if I open the same link in **another window** and then change the **local storage value** from that window; only then will it show me the change... | sayuj |
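The behaviour described in this question is expected: the browser's `storage` event is delivered to other documents sharing the storage area, not to the one that made the change. One possible workaround, sketched here under assumptions, is to route same-window writes through a small wrapper that notifies listeners itself. `createObservableStorage` and its `subscribe` method are hypothetical names, not a browser API; the wrapper accepts any object with `getItem` and `setItem`.

```js
// Hypothetical wrapper (not a browser API): forwards writes to a Storage-like
// object and notifies same-window listeners whenever a value actually changes.
function createObservableStorage(storage) {
  const listeners = new Set();

  return {
    getItem(key) {
      return storage.getItem(key);
    },

    setItem(key, newValue) {
      // Storage keeps values as strings, so compare the string forms.
      const value = String(newValue);
      const oldValue = storage.getItem(key);
      storage.setItem(key, value);
      if (oldValue !== value) {
        listeners.forEach((listener) =>
          listener({ key, oldValue, newValue: value })
        );
      }
    },

    // Registers a listener and returns a function that removes it again.
    subscribe(listener) {
      listeners.add(listener);
      return () => listeners.delete(listener);
    },
  };
}
```

In a browser this could be used as `const store = createObservableStorage(window.localStorage)`, with all same-window writes going through `store.setItem`. Writes made directly on `localStorage` would bypass the listeners, so this only helps when the calling code is under your control.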

311,661 | The Blockchain Way of Programming | A fun-to-read technical eBook to expand your programming career. | 0 | 2020-04-17T12:28:43 | https://dev.to/web3coach/the-blockchain-way-of-programming-7h5 | php, java, blockchain | ---

title: The Blockchain Way of Programming

published: true

description: A fun-to-read technical eBook to expand your programming career.

tags: php,java,database,blockchain

cover_image: https://d33wubrfki0l68.cloudfront.net/5a333c1f545f8bdfe6989d56dc4103ec81fccf5e/5f480/images/free_chapter.png

---

Hi dev.to,

**Lukas... | web3coach |

311,673 | Neural Network from Scratch Using PyTorch | In this article I show how to build a neural network from scratch. The example is simple and short to... | 0 | 2020-04-17T09:51:40 | https://dev.to/lankinen/neural-network-from-scratch-using-pytorch-457k | PyTorch, python, machinelearning, neuralnetworks | In this article I show how to build a neural network from scratch. The example is simple and short to make it easier to understand, but I haven’t taken any shortcuts to hide details.

Looking for the TensorFlow version of this same tutorial? [Go here.](https://dev.to/lankinen/neural-network-from-scratch-using-tensorflow-1kc8... | lankinen |

311,846 | Stateful property-based testing with QuickCheck State Machine | A gentle introduction to quickcheck-state-machine, a Haskell library for testing stateful programs. | 0 | 2020-04-17T12:23:31 | https://meeshkan.com/blog/2020-04-17-quickcheck-state-machine | testing, tutorial, advanced, haskell | ---

title: Stateful property-based testing with QuickCheck State Machine

description: A gentle introduction to quickcheck-state-machine, a Haskell library for testing stateful programs.

author: Mike Solomon

canonical_url: https://meeshkan.com/blog/2020-04-17-quickcheck-state-machine

published: true

tags:

- testing

... | mikesol |

311,865 | INTRO TO SIMPLE LINEAR REGRESSION!!! | A sneak peek into what Linear Regression is and how it works. Linear regression is a simple machine... | 0 | 2020-04-17T13:06:06 | https://dev.to/adityaberi8/intro-to-simple-linear-regression-1l96 | machinelearning, datascience, python, jupyter |

A sneak peek into what Linear Regression is and how it works.

Linear regression is a simple machine learning method that you can use to predict the value of an observation based on the relationship between the target variable and independent, linearly related numeric predictive features.
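To make the idea concrete, here is a minimal sketch of simple linear regression with a single feature, fitted by ordinary least squares. It is an illustration only, not code from the article: `fitLine` and `predict` are hypothetical names, and the data in the usage note is made up.

```js
// Hypothetical helper: fit y = slope * x + intercept by ordinary least squares.
// Assumes xs and ys have the same length and that xs are not all identical.
function fitLine(xs, ys) {
  const n = xs.length;
  const meanX = xs.reduce((sum, x) => sum + x, 0) / n;
  const meanY = ys.reduce((sum, y) => sum + y, 0) / n;

  let covariance = 0; // sum of (x - meanX) * (y - meanY)
  let varianceX = 0; // sum of (x - meanX)^2
  for (let i = 0; i < n; i += 1) {
    covariance += (xs[i] - meanX) * (ys[i] - meanY);
    varianceX += (xs[i] - meanX) ** 2;
  }

  const slope = covariance / varianceX;
  const intercept = meanY - slope * meanX;
  return { slope, intercept };
}

// Hypothetical helper: predict the target value for a new observation x.
function predict(model, x) {
  return model.slope * x + model.intercept;
}
```

For instance, fitting the made-up points `xs = [1, 2, 3, 4]` and `ys = [3, 5, 7, 9]` recovers the line they lie on, and `predict` can then estimate the target for an unseen `x`.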

For example: Imagine you ... | adityaberi8 |

319,325 | CONFLICT MANAGEMENT SYSTEM - PRACTICE IN SOCIAL LIFE AND TECHNOLOGY CULTURE | Talk about complex systems (whatever they are). It takes a strong logical basis to find "bugs" or errors, it may be small and trivial but it will be fatal if the scale is large. Especially the big "bugs"! | 0 | 2020-04-25T15:46:19 | https://dev.to/darkterminal/conflict-management-system-practice-in-social-life-and-technology-culture-4glb | discuss, techtalk | ---

title: CONFLICT MANAGEMENT SYSTEM - PRACTICE IN SOCIAL LIFE AND TECHNOLOGY CULTURE

published: true

description: Talk about complex systems (whatever they are). It takes a strong logical basis to find "bugs" or errors, it may be small and trivial but it will be fatal if the scale is large. Especially the big "bugs"!... | darkterminal |

319,452 | How To Install PostgreSQL On Windows 10 | And Use It From Your Terminal Installation Instructions Go to PostgreSQL Databa... | 0 | 2020-04-25T19:51:52 | https://dev.to/jimmymcbride/how-to-install-postgresql-on-windows-10-3d8d | postgres, database | ## And Use It From Your Terminal

### Installation Instructions

Go to [PostgreSQL Database Download](https://www.enterprisedb.com/downloads/postgres-postgresql-downloads) and download the version of PostgreSQL you want under the "Windows x86-64" column. Once the installer is done downloading, run that bad boy.

| navigation in React native Apps with React Navigation 5 | 0 | 2020-04-28T10:16:58 | https://dev.to/paulobunga/navigating-react-native-app-with-react-navigation-5-4hn8 | reactnative, navigation, android, ios | ---

title: "React Navigation v5 Example (React Native)"

published: true

description: "navigation in React native Apps with React Navigation 5"

tags: reactnative, navigation, android, ios

cover_image: https://thepracticaldev.s3.amazonaws.com/i/u1x7n8mbvor1nq6tcbk0.jpg

---

##Step 1: Set up a blank react-native p... | paulobunga |

321,408 | Getting started with Spring Security - Adding JWT | This is the second part of the spring security post I started. Json Web Token: standard that defines... | 0 | 2020-05-10T10:47:35 | https://dev.to/jhonifaber/getting-started-with-spring-security-adding-jwt-485c | java, security, jwt, spring | This is the second part of the spring security post I <a href="https://dev.to/jhonifaber/getting-started-with-spring-security-authentication-and-authorization-32de" target="_blank">started</a>.

**JSON Web Token:** a standard that defines a self-contained way of transmitting information as a JSON object. It consists of thre... | jhonifaber |

321,410 | HTML to DOM (following...) | Following the previous snippet toDom, here is another example String.prototype.toDOM = function ()... | 0 | 2020-04-28T12:01:44 | https://dev.to/artydev/html-to-dom-following-2e2k | html, dom | Following the previous snippet **toDom**, here is another example

```js

String.prototype.toDOM = function () {

var d = document,

i,

a = d.createElement("div"),

b = d.createDocumentFragment();

a.innerHTML = this;

while ((i = a.firstChild)) b.appendChild(i);

return b;

};

const users = [

{

pren... | artydev |

321,426 | Clearing the Redis cache | If you don't use caching in your application, consider using it. It greatly improves performance and, if well applied... | 0 | 2020-04-28T12:29:46 | https://dev.to/sr2ds/apagando-o-cache-do-redis-37b7 | redis, cache | If you don't use caching in your application, consider using it. It greatly improves performance and, if well applied, will save you considerable resources and keep users happy with how quickly requests are served. But today we won't talk about how to implement it; this is just a quick article to help you clear the cache in case ... | sr2ds |

321,441 | Is it okay to build portfolio not using my own ideas? | Hi, I'm beginning to build my own portfolio and I've been searching for ideas and I've found it. So... | 0 | 2020-04-28T12:51:26 | https://dev.to/hycarldev_/is-it-okay-to-build-portfolio-not-using-my-own-ideas-49ik | Portfolio, discuss, frontend | Hi, I'm beginning to build my own portfolio and I've been searching for ideas and I've found it.

So I kinda used that template, <b>BUT</b> I didn't just copy the whole thing and leave it like that. I customized the site to my own liking, like adding some CSS elements such as a typewriter effect, adding some componen... | hycarldev_ |

321,481 | The Beginning: Why Code? | This post was originally published at https://jessesbyers.github.io./ on October 15, 2019, when I was... | 0 | 2020-04-28T13:42:02 | https://dev.to/jessesbyers/the-beginning-why-code-1n1d | career, codenewbie | *This post was originally published at https://jessesbyers.github.io./ on October 15, 2019, when I was a Software Engineering student at Flatiron School.*

Recently I've been asked by a number of friends and family members:

> "Why code?"

For me, the answer really comes down to kids. As a middle school science teache... | jessesbyers |

321,507 | AQI | Air Quality Index I'v been experimenting to fetch data related to AQI in China recently.... | 0 | 2020-04-28T14:11:23 | https://www.chuanjin.me/2016/11/10/air-quality-data-md/ | python | # Air Quality Index

I've been experimenting with fetching data related to [AQI](https://en.wikipedia.org/wiki/Air_quality_index) in China recently.

A good resource is http://aqicn.org, which presents air pollution data clearly by city, so I tried writing a Python script to crawl the data from it.

{% gist https://gi... | chuanjin |

321,515 | Watershed Monitor: JavaScript and Rails Project | This post was originally published at https://jessesbyers.github.io./ on March 12, 2020, when I was a... | 0 | 2020-04-28T14:22:51 | https://dev.to/jessesbyers/watershed-monitor-javascript-and-rails-project-576c | javascript, googlemaps, ruby, rails | *This post was originally published at https://jessesbyers.github.io./ on March 12, 2020, when I was a Software Engineering student at Flatiron School.*

*I created Watershed Monitor to fill a real environmental need. We currently have the science we need to make the needed changes to protect our water quality, and we ... | jessesbyers |

321,518 | Climate Data Dashboard: React-Redux Project | This post was originally published at https://jessesbyers.github.io./ on April 14, 2020, when I was a... | 0 | 2020-04-28T14:27:58 | https://dev.to/jessesbyers/climate-data-dashboard-react-redux-project-1ilb | javascript, react, redux, rails | *This post was originally published at https://jessesbyers.github.io./ on April 14, 2020, when I was a Software Engineering student at Flatiron School.*

*Climate Data Dashboard is a tool for science teachers and students to promote data analysis and productive discussion about data. As a middle school teacher, I was a... | jessesbyers |

321,533 | Angular Events vs. Observables | When Angular 2 came out, it adopted the Observable as an integral part of its architecture. RxJS add... | 3,337 | 2020-04-28T14:47:16 | https://dev.to/jwp/angular-events-2jlk | angular, events, typescript | When Angular 2 came out, it adopted the [Observable](https://www.google.com/search?q=angular+observabl) as an integral part of its architecture. [RxJS added all of these Observable-oriented functions, operators and support](https://rxjs-dev.firebaseapp.com/api). All good; yes very good, except for one thing. It's ramp... | jwp |

321,589 | CLEO.one Review – Trading Automation Made Simple | Every trader with some skin in the game has experienced the impact of emotions and psychology on exec... | 0 | 2020-04-28T16:34:21 | https://blog.coincodecap.com/cleo-one-review-trading-automation-made-simple/?utm_source=rss&utm_medium=rss&utm_campaign=cleo-one-review-trading-automation-made-simple | trading, cryptotrading, tradingbots | ---

title: CLEO.one Review – Trading Automation Made Simple

published: true

date: 2020-04-28 16:10:59 UTC

tags: Trading,crypto-trading,trading-bots

canonical_url: https://blog.coincodecap.com/cleo-one-review-trading-automation-made-simple/?utm_source=rss&utm_medium=rss&utm_campaign=cleo-one-review-trading-automation-ma... | coinmonks |

321,599 | How To...Rails: Validations | This post was originally published at https://jessesbyers.github.io./ on January 28, 2020, when I was... | 7,249 | 2020-04-28T16:36:38 | https://dev.to/jessesbyers/how-to-rails-validations-395j | ruby, rails, codenewbie | *This post was originally published at https://jessesbyers.github.io./ on January 28, 2020, when I was a Software Engineering student at Flatiron School.*

*Validations in Rails allow us to protect the data that is entered into our database by checking whether it meets certain criteria or requirements. This is importan... | jessesbyers |

321,611 | A brief introduction to how Node.js works. | When it comes to web applications, there are some crucial success parameters, such as performance, sc... | 0 | 2020-04-28T16:52:45 | https://www.simform.com/what-is-node-js/ | node, webdev, javascript | When it comes to web applications, there are some crucial success parameters, such as performance, scalability, and latency. Node.js is a JavaScript runtime environment that achieves low latency and high throughput by taking a "non-blocking" approach. Many leading enterprises like Netflix, PayPal, eBay, IBM, A... | tejaskaneriya |

321,622 | Competitive Programming | What is Competitive Programming Competitive Programming is an art. Most of the developers... | 0 | 2020-04-28T17:51:46 | https://dev.to/developer_anand/competitive-programming-20e4 | competitiveprogramming, algoritham, datastructures | #What is Competitive Programming

Competitive Programming is an *art*. Most developers don't do it because they think **it's really tough**. But it isn't: it's like a game, and once you start playing with problems you start loving it. **CP** is like a game, but it also tests your patience.

##How to star... | developer_anand |

321,675 | Reducing Your Database Hosting Costs: DigitalOcean vs. AWS vs. Azure | Compare three of the most popular cloud providers, AWS vs. Azure vs DigitalOcean database hosting costs for your MongoDB® app - ScaleGrid blog | 0 | 2020-04-28T19:12:53 | https://dev.to/scalegrid/reducing-your-database-hosting-costs-digitalocean-vs-aws-vs-azure-bl4 | mongodb, aws, azure, digitalocean | ---

title: Reducing Your Database Hosting Costs: DigitalOcean vs. AWS vs. Azure

published: true

description: Compare three of the most popular cloud providers, AWS vs. Azure vs DigitalOcean database hosting costs for your MongoDB® app - ScaleGrid blog

tags: MongoDB, AWS, Azure, DigitalOcean

---

<link rel="canonical" hr... | scalegridio |

321,794 | A workout routine with Oracle DEV GYM | As developers , we must to find the opportunity to go to the gym and be healthy, count with an... | 0 | 2020-04-29T03:02:37 | https://dev.to/ricdev2/a-workout-routine-with-oracle-dev-gym-14an | java, programming, productivity, sql | ---

title: A workout routine with Oracle DEV GYM

published: true

description:

tags: java, programming, productivity, sql

cover_image: https://images.unsplash.com/photo-1556817411-31ae72fa3ea0?ixlib=rb-1.2.1&ixid=eyJhcHBfaWQiOjEyMDd9&auto=format&fit=crop&w=3150&q=80

---

As developers, we must find the opportunity ... | ricdev2 |

321,822 | I Learned Programming Logic. What Now? | Written by @maiarquino I Learned Programming Logic. What no... | 0 | 2020-04-29T01:21:47 | https://dev.to/elasprogramam/aprendi-logica-de-programacao-e-agora-356p | iniciante, desenvolvimento, programar, carreira | Written by @maiarquino

{% link https://dev.to/maiarquino/aprendi-logica-de-programacao-e-agora-419k %} | elasprogramam |

322,543 | Predicting fines for GDPR violations with tidymodels | Recently we on the tidymodels team launched tidymodels.org, a new central location with resources and... | 0 | 2020-07-17T17:15:26 | https://juliasilge.com/blog/gdpr-violations/ | machinelearning, datascience, tutorial, rstats | ---

title: Predicting fines for GDPR violations with tidymodels

published: true

date: 2020-04-22 00:00:00 UTC

tags:

canonical_url: https://juliasilge.com/blog/gdpr-violations/

---

Recently we on the tidymodels team launched [tidymodels.org](https://www.tidymodels.org/), a new central location with resources and docum... | juliasilge |

323,319 | WeWatch - virtual couch to watch videos with people | What I built During COVID-19, people are bound at their homes. Times of watching shows tog... | 0 | 2020-05-01T06:59:01 | https://dev.to/michaelxie/wewatch-virtual-couch-to-watch-videos-with-people-44md | twiliohackathon | [Comment]: # (All of this is placeholder text. Use this format or any other format of your choosing to best describe your project.)

[Reminder]: # (Make sure you've submitted the Twilio CodeExchange agreement: https://ahoy.twilio.com/code-exchange-community)

[Important]: # (By making a submission, you agree to the com... | michaelxie |

325,882 | Setting up Multiple Environments on React Native for iOS and Android | Tutorial explaining how to set up multiple environments on React native for iOS and Android and be able to install each version on the same device (spoiler: different bundle identifiers/application suffixes). | 0 | 2020-05-02T17:35:17 | https://dev.to/therealemjy/setting-up-multiple-environments-on-react-native-for-ios-and-android-e5j | reactnative, mobileprogramming, ios, android | ---

title: Setting up Multiple Environments on React Native for iOS and Android

published: true

description: Tutorial explaining how to set up multiple environments on React native for iOS and Android and be able to install each version on the same device (spoiler: different bundle identifiers/application suffixes).

ta... | therealemjy |

327,145 | Quiz App with React | Made quiz app with React. Pls do give ur valuable feedback on it... https://realquizbee.netlify.app/... | 0 | 2020-05-04T15:41:10 | https://dev.to/gauravsingh9356/quiz-app-with-react-1bcn | react, javascript, devops, css | Made quiz app with React. Pls do give ur valuable feedback on it...

https://realquizbee.netlify.app/ | gauravsingh9356 |

327,152 | My first blog | Hi my name is hamza and i am from pakistan i love to do seo work and i have small website you can vis... | 0 | 2020-05-04T16:08:11 | https://freewindowsvpslifetime.com | freevps, vps | Hi, my name is Hamza and I am from Pakistan. I love doing SEO work and I have a small <a href="https://freewindowsvpslifetime.com">website</a>. You can visit my <a href="http://freevpslifetime.com">free vps website</a> any time for amazing tutorials | windows_vps |

327,232 | Becoming a Developer in 15 weeks | My Journey Through a Coding Bootcamp and Should You Do It? | Today marks the first day of the last week of my 15 week Code Bootcamp and it's been quite the experi... | 0 | 2020-05-04T20:53:33 | https://dev.to/austinoso/becoming-a-developer-in-15-weeks-my-journey-through-a-coding-bootcamp-and-should-you-do-it-2ngj | Today marks the first day of the last week of my 15-week Code Bootcamp, and it's been quite the experience. Assuming you're reading this article because you have an interest in coding bootcamps, my hope is that this helps provide an inside look at what it's like to attend one.

## **Before Bootcamp And Why I Decided It... | austinoso | |

327,444 | Web Surgery - Ep.6 - Adding Netlify CMS to the website | In this episode we will start adding Netlify CMS to the website. It can easily be done by adding an HTML file and config.yml under admin. It looks promising running on localhost, but it is not fully working yet because we still have to figure out the Auth for GitHub. We may also add more functionality in the future, so tell me what y... | 0 | 2020-05-05T01:08:18 | https://dev.to/cheukting_ho/web-surgery-ep-6-adding-netlify-cms-to-the-website-55la | jamstack, webdev, jekyll, netlifycms | ---

title: Web Surgery - Ep.6 - Adding Netlify CMS to the website

published: true

description: In this episode we will start adding Netlify CMS to the website. It can easily be done by adding an HTML file and config.yml under admin. It looks promising running on localhost, but it is not fully working yet because we still have to f... | cheukting_ho |

327,261 | How to sync data from Coda to Google Sheets (and vice versa) with Google Apps Script tutorial | Keep your data synced across your Coda docs and Google Sheets so you don't have to copy and paste anymore. | 0 | 2020-05-04T20:31:40 | https://coda.io/@atc/how-to-sync-data-from-coda-to-google-sheets-and-vice-versa-with-google-apps-script-tutorial | googlesheets, coda, googleappsscript, tutorial | ---

title: How to sync data from Coda to Google Sheets (and vice versa) with Google Apps Script tutorial

description: Keep your data synced across your Coda docs and Google Sheets so you don't have to copy and paste anymore.

published: true

date: 2020-05-04 17:56:22 UTC

tags: google sheets, coda, google apps script, tu... | albertc44 |

327,263 | Re-introducing JavaScript Objects using Object constructor | If you have been with me since the last two posts, first of all, thank you for coming back! And secon... | 6,405 | 2020-05-05T14:06:05 | https://dev.to/salyadav/re-introducing-javascript-objects-using-object-constructor-1bmk | javascript, oop, beginners, explainlikeimfive | If you have been with me since the last two posts, first of all, thank you for coming back! And second, the content of this post is a learning curve for me as well. There are a few questions that I am still seeking answers to, so see if you can help me out.

Let's begin...

In the [previous post](https://dev.to/salya... | salyadav |

327,313 | 13 Weeks Challenge | Hey, everyone. This is my first post, I have considered writing for a long time, in this lock-down pe... | 0 | 2020-05-04T20:01:09 | https://dev.to/javi/13-weeks-challenge-40gf | challenge, computerscience, codenewbie, interview | Hey, everyone. This is my first post. I have considered writing for a long time, and in this lock-down period I thought I'd give it a shot. I am preparing for my upcoming interviews, and I am quite weak in Data Structures and Algorithms. Honestly, I never tried very hard and have long made excuses to leave it for last.

... | javi |

327,316 | I am going to build 6 products in 12 months | I am going to build 6 products in 12 months on my way to create financial freedom for myself. And I seem to have picked the worst time to start. | 0 | 2020-05-04T20:10:41 | https://dev.to/ericadamski/i-am-going-to-build-6-products-in-12-months-i85 | ---

title: I am going to build 6 products in 12 months

published: true

description: "I am going to build 6 products in 12 months on my way to create financial freedom for myself. And I seem to have picked the worst time to start."

---

Originally posted on [attempts.space](https://attempts.space)

I am going to build 6... | ericadamski | |

327,393 | Job Search Week 11 | I started looking at python this week just to get an idea of what it was like to use and I quite enjo... | 5,212 | 2020-05-04T21:45:35 | https://dev.to/kealanheena/job-search-week-11-16de | makers | I started looking at python this week just to get an idea of what it was like to use and I quite enjoyed it but I think from now on I'm gonna focus on using a MERN stack because JavaScript is my favourite language to code in.

Day 1

Naturally, I started with the basics, going over Python's lists (arrays), dictionaries (hash... | kealanheena |

327,484 | Become a Better Developer By Doing a Few Things | Subscribe to my email list now at http://jauyeung.net/subscribe/ Follow me on Twitter at https://twi... | 0 | 2020-05-05T01:45:04 | https://dev.to/aumayeung/become-a-better-developer-by-doing-a-few-things-5h7o | career, webdev, productivity, codenewbie | **Subscribe to my email list now at http://jauyeung.net/subscribe/**

**Follow me on Twitter at https://twitter.com/AuMayeung**

**Many more articles at https://medium.com/@hohanga**

**Even more articles at http://thewebdev.info/**

To be a good programmer, we should follow some easy to adopt habits to keep our progra... | aumayeung |

327,528 | looking for answers !, strapi vs nest js for my next project | I have never worked with any of the 2, I come from the world of laravel and typescript. I want to ex... | 0 | 2020-05-05T04:25:33 | https://dev.to/warriordev/looking-for-answers-strapi-vs-next-js-for-my-next-project-nestjs-or-strapi-1dm3 | strapi, nestjs, typescript, backend | I have never worked with either of the two; I come from the world of Laravel and TypeScript.

I want to explore these options for a new project, but I can't decide.

If you have to compare them, how would they compare? | warriordev |

327,553 | React performance optimization with useMemo & memo | In this article, I will provide a set of techniques to optimize child components re-rendering. There... | 0 | 2020-05-05T05:54:34 | https://dev.to/max_frolov_/react-performance-optimization-with-usememo-memo-hki | react, webdev, javascript, tutorial | In this article, I will provide a set of techniques to optimize the re-rendering of child components. Unnecessary re-rendering can happen in many circumstances. Usually, it happens because state changes inside the parent component.

Firstly we should note:

>any manipulation with component state leads to... | max_frolov_ |

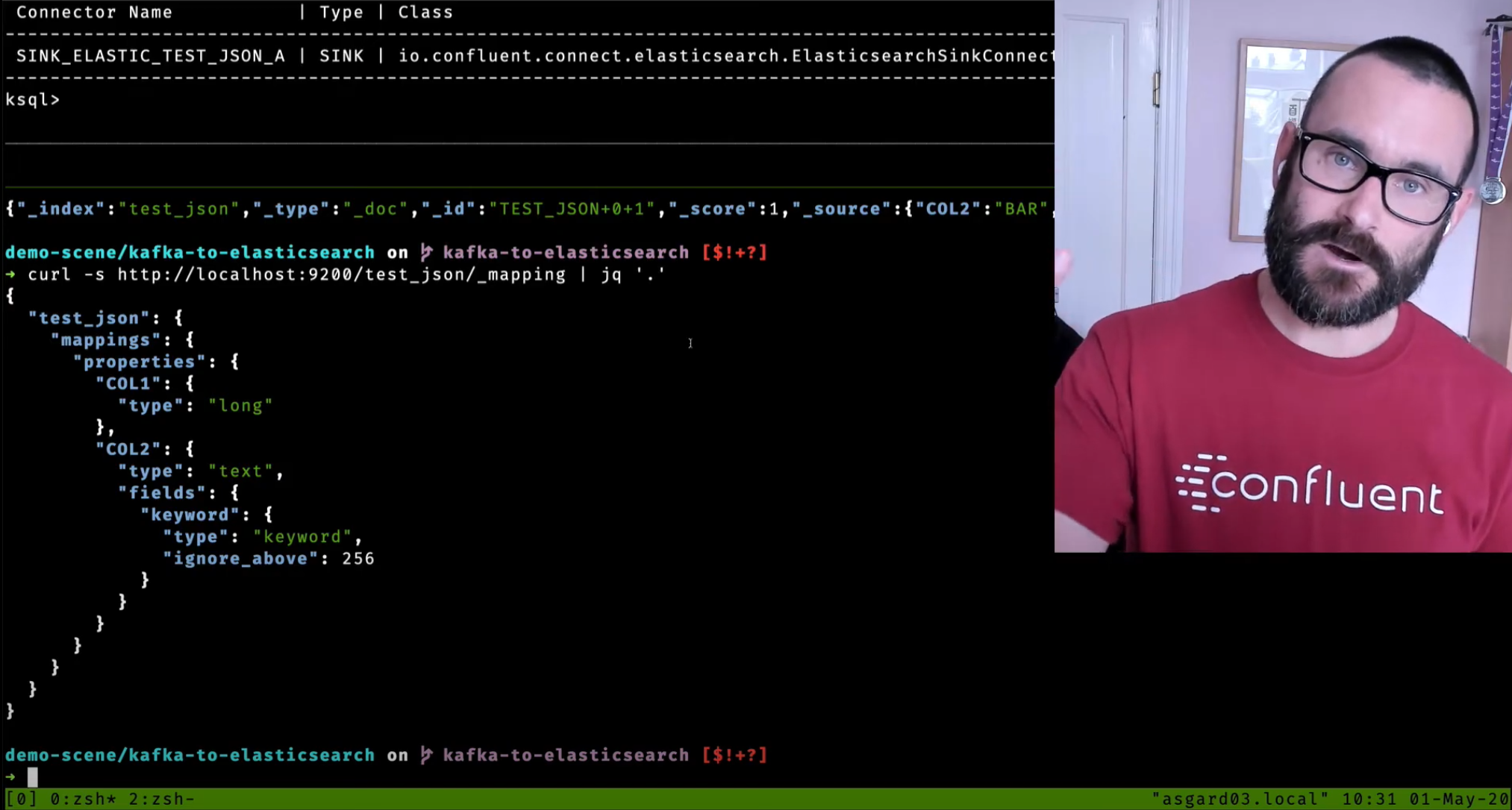

327,989 | Streaming data from Kafka to Elasticsearch - video walkthrough | Getting data from Kafka into Elasticsearch is easy with the Kafka Connect sink connector. Check o... | 5,469 | 2020-05-05T08:57:34 | https://dev.to/confluentinc/streaming-data-from-kafka-to-elasticsearch-1348 | apachekafka, elasticsearch, search, kafkaconnect | Getting data from Kafka into Elasticsearch is easy with the Kafka Connect sink connector.

Check out this video tutorial on how to use the connector, with a walkthrough of some of the common requirements when using it, including

- Updating an... | rmoff |

328,013 | Setting Up Netlify Forms With Gatsby | I recently built a website using Gatsby, choosing to host it on the Netlify free tier. One of the fea... | 0 | 2020-05-05T10:07:07 | https://chrisharding.io/setting-up-netlify-forms-with-gatsby | gatsby, netlify, webdev | I recently built a website using Gatsby, choosing to host it on the Netlify free tier. One of the features I wanted to try was form handling. Typically, handling form posts requires wiring up and hosting a backend to process requests. Netlify Forms allows me to deploy a simple static site with zero backend, letting them h... | chrisharding |

328,024 | 10 Tips To Get Out Of Your Own Way And Start That Side Project | Let's START working on our «Dream Projects»! My last 10 years of coding had its ups and downs.... | 0 | 2020-05-05T10:31:44 | https://dev.to/rohovdmytro/start-doing-your-dream-project-here-s-what-works-for-me-4ff8 | productivity, sideprojects, weddev | > Let's START working on our «Dream Projects»!

---

My last 10 years of coding have had their ups and downs. To have more ups, I've adopted tiny habits to be more productive. It's time to share! If one of them makes you 1% more productive every day, I'll take it!

Beware! Some of the tips might be silly (or against ... | rohovdmytro |
