id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

667,630 | Increasing Velocity the Right Way and the Wrong Way | We love agile development at Foci Solutions. One of our main offerings is to coach clients on how to... | 0 | 2023-02-23T19:37:57 | https://dev.to/focisolutions/increasing-velocity-the-right-way-and-the-wrong-way-532h | agile, productivity | We love agile development at Foci Solutions. One of our main offerings is to coach clients on how to use agile to deliver quality products to their customers. Along this journey we often get asked the question, "How do we increase velocity?" This question is complex and often misunderstood.

Velocity is a metric t... | blastdan |

667,972 | Did you mean: | Ever seen a message like that on Google? It works more or less OK when your search query has a misprint... | 21,821 | 2021-04-16T11:00:20 | https://dev.to/sergeyie/did-you-mean-44in | Ever seen a message like that on Google? It works more or less OK when your search query has a misprint or something, but it really ruins the experience for brand content. Like "Did you mean:", actually no, I didn't!

Rather often, when you search for small companies, brands, or products, Google adds its "Did you mean:" with several ... | sergeyie | |

668,247 | Better understanding some features before creating your first Angular project | #angular #typescript Hi everyone, how are you doing? I'm a programming student, currently doin... | 0 | 2021-04-16T19:36:13 | https://dev.to/fegoncalves/entendendo-melhor-algumas-funcionalidades-antes-de-criar-seu-primeiro-projeto-em-angular-32d6 |

[`#angular`](https://dev.to/t/angular) [`#typescript`](https://dev.to/t/typescript)

Hi everyone, how are you doing?

I'm a programming student, currently taking the _Avanade Angular Developer Bootcamp at Digital Innovation One_, and I came to share some information that actually started out as questions that came up when cre... | fegoncalves | |

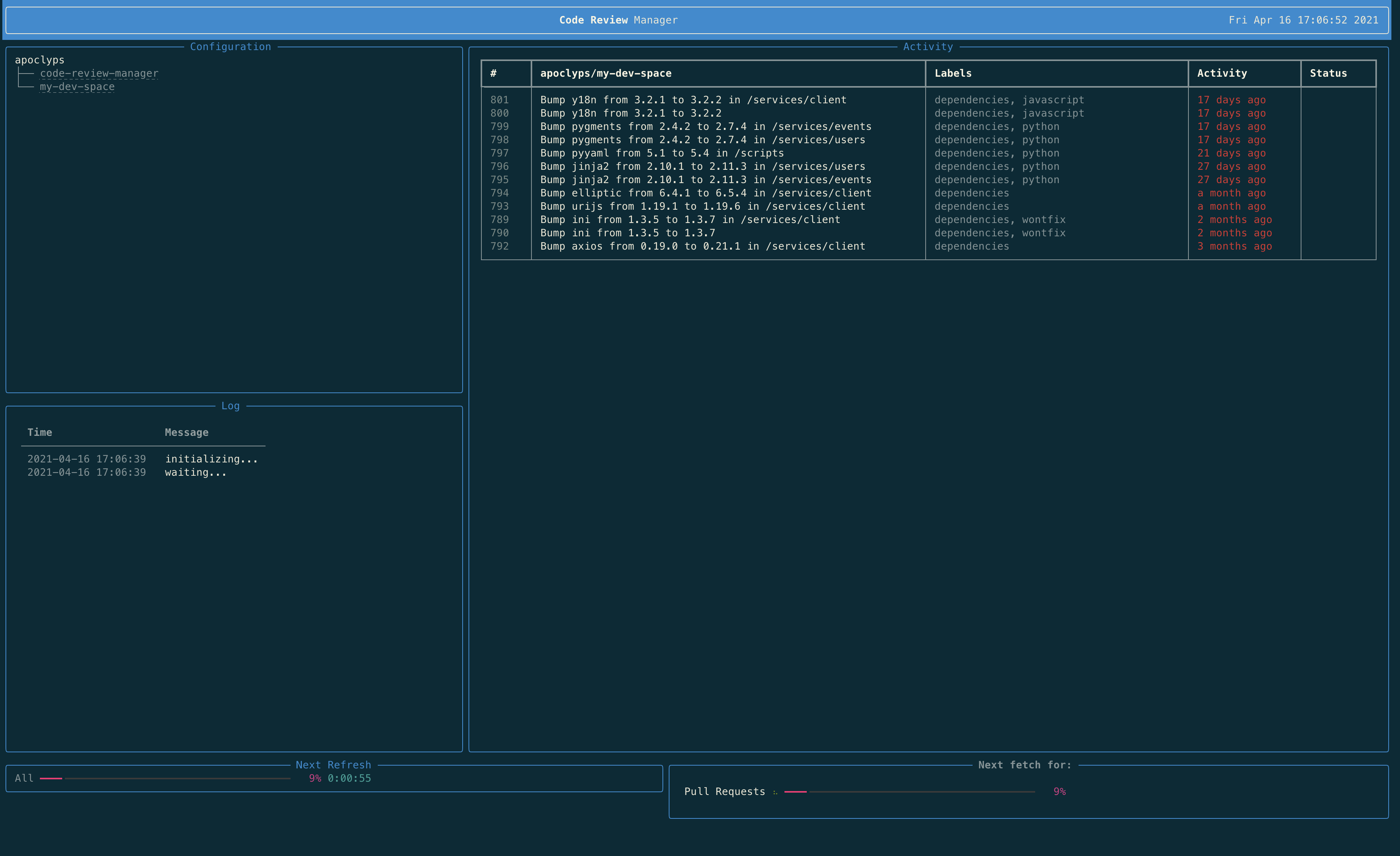

668,304 | A simple terminal UI dashboard for code review | Reviews is a simple code review manager that lists the status of open pull requests across multiple o... | 0 | 2021-04-16T16:19:04 | https://dev.to/apoclyps/a-simple-terminal-ui-dashboard-for-code-review-2g76 | python, terminal, github, codereview | Reviews is a simple code review manager that lists the status of open pull requests across multiple organizations & repositories as a terminal UI dashboard.

The project was created as a way to keep on top of multiple teams ... | apoclyps |

668,810 | Day 347 : Times is Rough | liner notes: Professional : Had a really good day to head into the weekend. Had a team meeting to s... | 0 | 2021-04-16T21:19:45 | https://dev.to/dwane/day-347-times-is-rough-29np | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Had a really good day to head into the weekend. Had a team meeting to see what folks worked on during the week and if anyone needed anything. Then had a really good catch up meeting with some team members. Had a lot of laughs. Spent the rest of the day getting my new blog post submitted ... | dwane |

668,836 | khaliljaan99 | I love my picture. Please, friends, share this photo with your friends. | 0 | 2021-04-16T22:34:36 | https://dev.to/khaliljaan99/khaliljaan99-24pl | khaliljaan99 | I love my picture. Please, friends, share this photo with your friends. | khaliljaan99 |

669,583 | New to web development/ working in the tech sector? | This blog post is the first one of a series of posts that are aimed at all the aspiring web developer... | 0 | 2021-04-17T18:13:13 | https://dev.to/omarsharifgr/new-to-web-development-working-in-the-tech-sector-gg7 | news, performance, achievements, success | This blog post is the first one of a series of posts that are aimed at all the aspiring web developers out there and those who - like me - want to take a step back and provide some well-needed advice to their past selves. For this particular post, this advice takes the form of outlining something that I learned this we... | omarsharifgr |

689,338 | How I can send emails with the formatting I've done in google sheet by using app script? | Hi everyone. I'm trying to send emails with Google Apps Script by running the following code in the script edi... | 0 | 2021-05-06T05:23:50 | https://dev.to/ahmad786987/how-i-can-send-emails-with-the-formatting-i-ve-done-in-google-sheet-by-using-app-script-2o8j | Hi everyone. I'm trying to send emails with Google Apps Script by running the following code in the script editor

// This constant is written in column C for rows for which an email

// has been sent successfully.

var EMAIL_SENT = 'EMAIL_SENT';

/**

* Sends non-duplicate emails with data from the current spreadsheet.

*/

funct... | ahmad786987 | |

692,324 | Use an XState Machine with React | XState gives you the tools to take control over the state of your UI. When you've got it under contro... | 0 | 2021-05-08T22:21:21 | https://dev.to/jbranchaud/use-an-xstate-machine-with-react-326i | react, xstate, javascript, ux | XState gives you the tools to take control over the state of your UI. When you've got it under control, you can build interfaces that provide a predictable and delightful user experience.

Let's look at how to integrate XState into a React app.

There are a bunch of well-constructed XState machines available to directl... | jbranchaud |

692,895 | Yes! Use microservices and ask 'why are you like this?' afterward 😑 | TL;DR It's a good idea to use microservices for a team of three or four people that only handles one... | 0 | 2021-05-09T16:51:49 | https://gdi3d.github.io/using-microservices-experience-2021-05-09/ | microservices, codenewbie, management | > TL;DR It's a good idea to use microservices for a team of three or four people that only handles one or two microservices. Otherwise, stay away!

# Falling in love with Microservices 🥰

I started using the microservice architecture in 2015 when I had to refactor a medium-size Django app into pieces. Since then I ha... | gdi3d |

692,979 | How to Share Code Between Lambda Functions | As I was getting into cloud development, and while still trying to figure out the best and fastest... | 0 | 2021-05-09T20:17:19 | https://medium.com/aws-in-plain-english/how-to-share-code-between-lambda-functions-49c656bd2ffc | ---

canonical_url: https://medium.com/aws-in-plain-english/how-to-share-code-between-lambda-functions-49c656bd2ffc

---

As I was getting into cloud development, and while still trying to figure out the best and fastest way to get my code tested and deployed, I learned about Lambda Layers.

Originally, the main satisfac... | ahaydar | |

693,254 | Why do you need Helmet in NodeJs ? | Helmet helps you secure your Express apps by setting various HTTP headers. It's not a silver bullet,... | 0 | 2021-05-10T05:27:49 | https://dev.to/sid__/why-do-you-need-helmet-in-nodejs-h1b | node, webdev, codenewbie, javascript | > Helmet helps you secure your Express apps by setting various HTTP headers. It's not a silver bullet, but it can help!

These are the lines written at the top of helmet's npm page.

Most of you might have come across this code `app.use(helmet())` in your codebase/boilerplates. Let's dive deep into helmet today.

In si... | sid__ |

693,494 | Angular 11 + Spring JPA + PostgreSQL example | Angular 11 Http Client – Spring Boot RestApi Server | https://ozenero.com/angular-11-spring-jpa-postgresql-crud Angular 11 + Spring JPA + PostgreSQL examp... | 0 | 2021-05-10T08:20:00 | https://dev.to/loizenai/angular-11-spring-jpa-postgresql-example-angular-11-http-client-spring-boot-restapi-server-4j8f | angular11, springboot, springjpa, restapi | https://ozenero.com/angular-11-spring-jpa-postgresql-crud

Angular 11 + Spring JPA + PostgreSQL example | Angular 11 Http Client – Spring Boot RestApi Server

In this tutorial, <strong>ozenero</strong> shows you an Angular 11 Http Client & Spring Boot Server example that uses <strong>Spring JPA</strong> to interact with ... | loizenai |

693,759 | Collection Analysis and Evaluation | Collection analysis is the process of determining what the exact nature of a collection is. It... | 11,710 | 2021-05-12T08:34:08 | https://dev.to/diyawi/collection-analysis-and-evaluation-2lfl | lis55, learningdiary | Collection analysis is the process of determining what the exact nature of a collection is. It is essential for determining the gaps and weaknesses of the collection, as well as determining what items need to be deselected. It is important as it aids the library and the library's par... | diyawi |

693,872 | Which lint ruleset is best for Vue ? | Is it ESLint with vue/recommended ? Airbnb ? Prettier ? Something else ? What do you guys think ?... | 0 | 2021-05-10T15:33:52 | https://dev.to/mikaleb/which-lint-ruleset-is-best-for-vue-1mne | question, vue, lint | Is it ESLint with vue/recommended ?

Airbnb ?

Prettier ?

Something else ? What do you guys think ?

| mikaleb |

693,904 | What's new in LoadRunner Professional 2021 R1? | As you know, I have been publishing what's new in Micro Focus LoadRunner for many years now. This time as well,... | 0 | 2021-05-10T16:46:06 | https://qainsights.com/whats-new-in-loadrunner-professional-2021-r1 | performance, testing, webperf, tools | <!-- wp:paragraph -->

<p>As you know, I have been publishing <a href="https://qainsights.com/whats-new-in-microfocus-loadrunner-professional-2021/" target="_blank" rel="noreferrer noopener">what's new in Micro Focus LoadRunner</a> for many years now. This time as well, it is business as usual for the LoadRunner What's New section. La... | qainsights |

694,082 | Angular simple form with async testing | Topic The developer should test the code. In this example, I will create a simple form wit... | 0 | 2021-05-10T18:27:48 | https://dev.to/tomwebwalker/angular-simple-form-with-async-testing-227m | angular, rxjs, testing, typescript | ## Topic

The developer should test the code. In this example, I will create a simple form that sends an HTTP request on submission, and then test it.

## Project

I used Angular CLI to create the project (default CLI answers):

```

ng new notification-example

```

I used Angular Material to provide proper styling by typing (default ... | tomwebwalker |

697,171 | HashMap in Go | This is an implementation of closed addressing in a typical hashmap we use in Go. Some globals &... | 0 | 2021-05-13T12:11:06 | https://dev.to/satishrajnale/hashmap-in-go-3oid | go | This is an implementation of closed addressing in a typical hashmap we use in Go.

**some globals & imports**

```go

package main

import "fmt"

const ArraySize = 7

```

### Now let's define our steps

## Structures

> Hashtable structure

```go

type HashTable struct{

// our hashtable will be an array

array [ArrayS... | satishrajnale |

694,311 | How Does JS code run - Execution context and Call stack | Do you know how javascript code runs in the javascript engine? If not, then I hope this post will b... | 12,692 | 2021-05-11T02:46:24 | https://dev.to/prashan81992916/how-does-js-code-run-execution-context-and-call-stack-3a7 | javascript, codenewbie, executioncontext, webdev | Do you know how javascript code runs in the javascript engine?

If not, then I hope this post will be useful for understanding execution contexts and how their order is maintained by the call stack. This fundamental concept also paves the way to comprehending hoisting, scopes, scope chains... | prashan81992916 |

694,966 | How to gets uploaded image Metadata on the front-end | Quite often when we implement uploading images, will be great to have the opportunity somehow to get... | 0 | 2021-06-04T15:05:21 | https://dev.to/detoner777/how-to-gets-uploaded-image-metadata-on-the-front-end-2h1k | react, metadata, filereader, javascript | Quite often when we implement image uploads, it would be great to be able to get image metadata (width, height, fileSize, name, ...) directly on the front-end.

Example of a file input for uploading the image:

```javascript

<input type="file" name="myImage" accept="image/png, image/gif, image/jpeg... | detoner777 |

694,970 | MovingPoint-Lightweight-Java-2D-Game-Engine | Find repo here: https://github.com/MarcoSteinke/MovingPoint-Lightweight-Java-2D-Game-Engine 1 Beginn... | 0 | 2021-05-11T14:02:39 | https://dev.to/marcosteinke/movingpoint-lightweight-java-2d-game-engine-2m7l | Find repo here: https://github.com/MarcoSteinke/MovingPoint-Lightweight-Java-2D-Game-Engine

1 Beginner Friendly / Educational

+ I love programming and I also love teaching it to others, so the main goal of MovingPoint is to bring new motivated talents into programming. + A lot of people dream of creating their ... | marcosteinke | |

694,990 | PHP 8 Multiple Files/Images Upload in MySQL Database Example Tutorial | Hi Guys, In this tutorial, I will teach you how to upload multiple images and files to a MySQL database u... | 0 | 2021-05-11T14:52:44 | https://dev.to/sonagrabhavesh/php-8-multiple-files-images-upload-in-mysql-database-example-tutorial-3e9d | php, laravel, mysql, programming | <p>Hi Guys,</p>

<p>In this tutorial, I will teach you how to upload multiple images and files to a MySQL database using PHP 8. You can easily and simply upload multiple images and files to a MySQL database using PHP 8.</p>

<p>File upload in PHP is one of the most common features that almost every PHP developer has to build. As we can kn... | sonagrabhavesh |

695,080 | Simple Bluebird.Js Cheat Sheet | Reference Official documentation Bluebird.Js Cheat Sheet Generators User.... | 0 | 2021-05-11T16:28:07 | https://dev.to/hoanganhlam/simple-bluebird-js-cheat-sheet-8k4 | cheatsheet, bluebird | ### Reference

* [Official documentation](http://bluebirdjs.com/docs/api-reference.html)

* [Bluebird.Js Cheat Sheet](https://cheatsheetmaker.com/bluebirdjs)

### Generators

```

User.login = Promise.coroutine(function* (email, password) {

let user = yield User.find({email: email}).fetch()

return user

})

```

See [... | hoanganhlam |

695,261 | String similarity search and fast LIKE operator using pg_trgm | SQL supports wildcard search on strings using LIKE operator which accepts % and _ wildcards. The prob... | 0 | 2021-05-11T20:58:37 | https://mazeez.dev/posts/pg-trgm-similarity-search-and-fast-like | postgres, pgtrgm, search |

SQL supports wildcard search on strings using `LIKE` operator which accepts `%` and `_` wildcards. The problem with `LIKE` is it's not very fast if you have a lot of rows and the query is [non-sargable](https://en.wikipedia.org/wiki/Sargable). And in some cases you need to provide fuzzy search capabilities where the r... | mhmd_azeez |

695,269 | Grokking Free Monads | In this post I’m going to try and demystify free monads and show you that they’re not some strange ab... | 12,008 | 2021-05-14T19:33:19 | https://dev.to/choc13/grokking-free-monads-9jd | fsharp, functional, programming, grokking | In this post I’m going to try and demystify free monads and show you that they’re not some strange abstract creature, but in fact can be very useful for solving certain problems. Rather than focusing on the theory, our aim here will be to get a solid intuition about free monads, you'll then find learning the theory muc... | choc13 |

695,298 | Answer: | answer re: What does 256 means for 12... | 0 | 2021-05-11T22:56:52 | https://dev.to/nonsameer/answer-3fpl | ---

title: Answer:

published: true

---

{% stackoverflow 38483586 %} | nonsameer | |

695,417 | You're Hired! | I was near tears when I heard those words from my new manager. After months and months of... | 12,672 | 2021-05-17T16:23:00 | https://corydorfner.com/youre-hired | career, beginners, learning, motivation | I was near tears when I heard those words from my new manager. After months and months of self-learning, both about myself and programming, I finally have my first job in the tech field and can happily say I made the switch from a career in Manufacturing Quality to Technology.

, updated in February 2021.

Ethereum is used to build decentralized applications, a.k.a. DAPPs. These applications exist *through* small programs that live on the Blockchain, called *smart contracts*.

Bef... | laurentsenta |

695,893 | On-premises software advantages | Despite all the trends about and putting your data online, many companies still prefer hosting everyt... | 0 | 2021-05-20T12:58:10 | https://apiumhub.com/tech-blog-barcelona/on-premises-software-advantages/ | devops | ---

title: On-premises software advantages

published: true

date: 2021-05-11 07:12:00 UTC

tags: DevOps

canonical_url: https://apiumhub.com/tech-blog-barcelona/on-premises-software-advantages/

---

Despite all the trends about and putting your data online, many companies still prefer hosting everything on internal server... | apium_hub |

695,983 | The importance of Magento and Salesforce Integration | The business of selling products online is growing these days, particularly during the pandemic... | 0 | 2021-05-12T12:47:04 | https://dev.to/iamsiddhant21/the-importance-of-magneto-and-salesforce-integration-59b3 | php, programming | The business of selling products online is growing these days, particularly during the pandemic, when people had no choice but to resort to eCommerce platforms to shop for essential products.

In this digital era, there are many effective platforms available to create a similarly ef... | iamsiddhant21 |

696,013 | Upcoming SaaS in robotic process automation in logistics | Robotics technology is progressing slowly but surely in careful and thoughtful stages. Robots are amo... | 0 | 2021-05-12T13:43:35 | https://dev.to/ardasgroup/upcoming-saas-in-robotic-process-automation-in-logistics-hjo | Robotics technology is progressing slowly but surely in careful and thoughtful stages. Robots are among us right now.

Personal robots are busy cleaning the inside of our homes and helping to maintain our gardens. Commercial robots are busy with the manufacturing side of the supply chain, mainly in the automotive indus... | ardasgroup | |

696,246 | Journey to the real world by cloning DEV.to backend server(part 1) | In this long series we will explore amazing libraries and frameworks by implementing dev.to backend ser... | 12,683 | 2021-05-12T16:26:16 | https://dev.to/harshmangalam/journey-to-the-real-world-by-cloning-dev-to-backend-server-1icm | In this long series we will explore amazing libraries and frameworks by implementing the dev.to backend server.

### Tools we will use

1. Nodejs

> Node.js is a JavaScript runtime which allows JavaScript to run outside the browser. Node.js was developed by Ryan Dahl and uses Google's V8 JavaScript engine.

You can learn more... | harshmangalam | |

696,536 | The Crazy decoupler - NestJs Emitter | Those of us who have used NestJs are familiar with the architectural flow. We break code into modules.... | 0 | 2021-05-12T21:22:12 | https://dev.to/vjnvisakh/the-crazy-decoupler-nestjs-emitter-34hg | nestjs, events, emitter, listener | Those of us who have used NestJs are familiar with the architectural flow. We break code into modules. Modules have controllers, which are basically the public endpoints. And then there are services, which can be injected on demand.

It gets messy when we have to communicate across modules. Sure if you are using micro-serv... | vjnvisakh |

696,564 | The truth about Technical debt? | I am always posting thought-provoking posts on my LinkedIn, and I have decided to replicate those... | 0 | 2021-05-12T23:39:49 | https://dev.to/apssouza22/have-you-ever-reflected-on-tech-debt-2o0n | linkedinposts, projectmanagement, techlead | ###### I am always posting thought-provoking posts on my LinkedIn, and I have decided to replicate those here. [Let's socialize!](https://www.linkedin.com/in/alexsandro-souza-dev)

Technical debt is a widely used and discussed term within engineering teams. However, in people's minds, it looks like it accumulates because of some nasty, d... | apssouza22 |

696,940 | How To Use Context Hooks In React | React released the Context API for cases where we need to pass data to multiple nested components. But... | 0 | 2021-05-13T08:58:32 | https://dev.to/sivavadlamuri/how-to-use-context-hooks-in-react-bgf | react, reactnative, javascript, redux | React released the Context API for cases where we need to pass data to multiple nested components. But the Context API was a bit bulky and difficult to use in class components. With the release of React hooks, the React team released the `useContext` hook, which is simpler and easier to use.

What Is The Contex... | sivavadlamuri |

696,963 | Haml Cheat Sheet | Ruby -# This is a comment -# Anything starting with a hyphen signals to Haml that Ruby... | 0 | 2021-05-13T09:22:39 | https://dev.to/hoanganhlam/haml-cheat-sheet-54gn | haml, cheatsheet |

### Ruby

```haml

-# This is a comment

-# Anything starting with a hyphen signals to Haml that Ruby is coming

- @arr = [1, 2, 3]

- @str = "test"

-# Equal signals output

= render partial: "shared/header"

= yield

= link_to page_url

```

### Inline Attributes

Either hash syntax works

```haml

%meta{ name: "viewport", con... | hoanganhlam |

697,130 | Sinon-Chai Cheat Sheet | Should spy.should.have.been.called spy.should.have.been.calledOnce spy.shou... | 0 | 2021-05-13T11:21:56 | https://dev.to/hoanganhlam/sinon-chai-cheat-sheet-55fe | sinonchai, cheatsheet |

### Should

```

spy.should.have.been.called

spy.should.have.been.calledOnce

spy.should.have.been.calledTwice

spy.should.have.been.calledThrice

spy1.should.have.been.calledBefore(spy2)

spy1.should.have.been.calledAfter(spy2)

spy.should.have.been.calledWithNew

spy.should.always.have.been.c... | hoanganhlam |

697,188 | #30DaysOfAppwrite : Appwrite Teams | Intro #30DaysOfAppwrite is a month-long event focused on giving developers a walkthrough... | 0 | 2021-05-13T13:09:02 | https://dev.to/appwrite/30daysofappwrite-appwrite-teams-2fjd | javascript, webdev, flutter, 30daysofappwrite | ## Intro

[#30DaysOfAppwrite](http://30days.appwrite.io/) is a month-long event focused on giving developers a walkthrough of all of Appwrite's features, starting from the basics to more advanced features like Cloud Functions! Alongside we will also be building a fully-featured Medium clone to demonstrate how these conc... | christyjacob4 |

697,397 | Euclidean Algorithm meaning & python snippet | This algorithm helps us get the greatest common divisor (gcd) of 2 integers. In other words, the resul... | 0 | 2021-05-13T16:24:41 | https://dev.to/coucoseth/euclidean-algorithm-meaning-python-snippet-4la5 | machinelearning, python, algorithms | This algorithm helps us get the greatest common divisor (gcd) of 2 integers. In other words, the result of our computation is the highest possible number `(let's say 1)` by which we can divide two given numbers `(4 and 5)` and get a remainder `(or you could say left over after a division)` of zero for both given n... | coucoseth |

697,423 | May 2021 Releases – Horizon 27.2.0, Meridians 2021.1.0, 2020.1.8, 2019.1.19, and 2018.1.28 | In May, we released updates to all OpenNMS Horizon and Meridian versions under active support, and... | 0 | 2021-05-24T16:54:40 | https://www.opennms.com/en/blog/2021-05-13-may-2021-releases-horizon-27-2-0-meridians-2021-1-0-2020-1-8-2019-1-19-and-2018-1-28/?utm_source=rss&utm_medium=rss&utm_campaign=may-2021-releases-horizon-27-2-0-meridians-2021-1-0-2020-1-8-2019-1-19-and-2018-1-28 | news, horizon, meridian | ---

title: May 2021 Releases – Horizon 27.2.0, Meridians 2021.1.0, 2020.1.8, 2019.1.19, and 2018.1.28

published: true

cover_image: https://pbs.twimg.com/media/E1SY105WQAYSr1B?format=jpg&name=medium

date: 2021-05-13 15:20:10 UTC

tags: News,Horizon,Meridian

canonical_url: https://www.opennms.com/en/blog/2021-05-13-may-20... | rangerrick |

697,495 | If you had a time machine what is ONE thing you would tell yourself when you started coding? | I would probably tell myself to look up what imposter syndrome is so I can be relieved from all my do... | 0 | 2021-05-13T18:55:55 | https://dev.to/inspirezone/if-you-had-a-time-machine-what-is-one-thing-you-would-tell-yourself-when-you-started-coding-2jn2 | watercooler, career, beginners, reflect | I would probably tell myself to look up what imposter syndrome is so I can be relieved from all my doubts... Only discovered it was a real thing years into my career.

What about you? I’m very curious to know! | funbeedev |

697,637 | How to do Async express routes! | Overview I recently took a job at a product development company focusing on Back-end Devel... | 0 | 2021-05-14T17:50:13 | https://dev.to/ctooley21/how-to-do-async-express-routes-2imb |

# Overview

I recently took a job at a product development company focusing on Back-end Development! Part of the reality of working here is having to learn new technologies pretty often to fit whatever use-case we are going for. Recently we've been doing lots of web development using a combination of React & Node.js. W... | ctooley21 | |

697,650 | Laravel 8 and JWT Authentication (tymon/jwt-auth) with a custom Model | In this article I will show how to implement JWT authentication (using the tymon/jwt-auth package) in... | 0 | 2021-05-14T23:16:02 | https://dev.to/wenlopes/laravel-8-e-autenticacao-jwt-tymon-jwt-auth-com-model-customizada-2l7k | laravel, php, jwt, auth | In this article I will show how to implement JWT authentication (using the **tymon/jwt-auth** package) in an API with Laravel 8, using a Model other than the default one (Users). At the end of the text, I will provide the link to the repository containing the implementation of the steps in this article :)

So let's get started!

## Instal... | wenlopes |

697,829 | Everything you need to know to deploy an Azure Static Web App | Azure Static Web Apps are so easy to use and integrate with Github Actions, Azure Functions or your custom authentication. Here is everything you need to deploy. | 0 | 2021-05-14T21:39:44 | https://dev.to/azure/everything-you-need-to-know-to-deploy-an-azure-static-web-app-fm6 | staticwebapps, azure, serverless, javascript | ---

title: Everything you need to know to deploy an Azure Static Web App

published: true

description: Azure Static Web Apps are so easy to use and integrate with Github Actions, Azure Functions or your custom authentication. Here is everything you need to deploy.

tags: staticwebapps, azure, serverless, JavaScript

cover... | yonet |

697,956 | Hacking wireless access points | If you want to know how to hack WiFi access points -- just read this step by step aircrack-ng tutoria... | 0 | 2021-05-14T07:36:23 | https://dev.to/rootsec1/hacking-wireless-access-points-598j | security |

If you want to know how to hack WiFi access points -- just read this step by step `aircrack-ng` tutorial, run the verified commands and crack passwords easily.

With the help of these commands you will be able to hack WiFi APs (access points) that use WPA/WPA2-PSK (pre-shared key) encryption.

The basis of this method o... | rootsec1 |

697,964 | Swift: Deconstruct SPF: Struct Mechanism | Today, I will start on building out the functionality of the struct Mechanism. This will involve... | 12,710 | 2021-05-20T04:09:44 | https://bas-man.dev/post/swift/swift-spf-struct-mechanims/ | swift, beginners, package, spf |

Today, I will start on building out the functionality of the `struct Mechanism`.

This will involve creating some tests, and basic functions.

## Instantiate the Mechanism Struct

We have our struct defined as such:

```swift

struct Mechanism {

var kind: MechanismKind;

var qualifier: Qualifier;

var mechan... | basman |

698,004 | TYPO3 Talk with Tim: Author Of TYPO3 Blogger | We have Tim Lochmüller with us this week for an interesting TYPO3 Talk! Tim Lochmüller is the author... | 0 | 2021-05-14T09:17:45 | https://dev.to/t3terminal/typo3-talk-with-tim-author-of-typo3-blogger-an1 | We have Tim Lochmüller with us this week for an interesting TYPO3 Talk! Tim Lochmüller is the author of TYPO3 Blogger and works for the TYPO3 agency HDNET and has been working with TYPO3 since 2004.

The TYPO3 Talk with Tim is interesting, so grab that cup of coffee to explore Tim's insights into his views, the history ... | t3terminal | |

708,211 | Applying monkey patches in Rails | Monkey patching is one of Ruby's most powerful features. It allows programmers to add methods to core... | 0 | 2021-05-25T13:43:50 | https://dev.to/ayushn21/applying-monkey-patches-in-rails-1bj1 | ruby, rails | [Monkey patching](https://en.wikipedia.org/wiki/Monkey_patch) is one of Ruby's most powerful features. It allows programmers to add methods to core classes which can result in some very elegant APIs. However it's quite easy to shoot yourself in the foot if you don't know what you're doing.

[This post from Justin W... | ayushn21 |

698,154 | Boken Engine | Hi all, just joined the community. First time posting here, long time reading awesome published conte... | 0 | 2021-05-14T11:34:04 | https://dev.to/s3rrot/boken-engine-5ce1 | opensource, framework, swift, xcode | Hi all, just joined the community. First time posting here, long time reading awesome published content.

At my current job we have been working on a **Swift framework** for creating slides-based, non-linear visual stories a... | s3rrot |

699,857 | How to use Docker commands | In this post, we'll learn how to use Docker commands. We will make a web app inside a Docker... | 0 | 2021-05-16T14:22:11 | https://www.steadylearner.com/blog/how-to-use-docker-commands-42da | docker, tutorial, beginners, devops | <!--

Post{

subtitle: "Learn how to use Docker and upload its images to Docker Hub"

image: "posts/web/docker.png",

image_decription: "Image from the official website",

tags: "How use Docker code",

}

-->

<!-- Link -->

[Steadylearner]: https://www.steadylearner.com

[Docker Website... | steadylearner |

701,814 | Keep your AWS Kubernetes costs in check with intelligent allocation | Traditional cost allocation and Kubernetes are like oil and water. Surely, containerized environments... | 0 | 2021-05-18T14:25:24 | https://cast.ai/blog/keep-your-aws-kubernetes-costs-in-check-with-intelligent-allocation/ | kubernetes, devops, aws, eks | Traditional cost allocation and Kubernetes are like oil and water. Surely, containerized environments make a lot of things easier. But not this one.

Luckily, there are a few things you can do to **allocate AWS Kubernetes costs smarter** and keep them in check.

Read on to find out what they are and finally hold the re... | castai |

705,510 | How to cartoonize an image with Python | In this tutorial, I will show you how to give a cartoon-effect to an image in Python with OpenCV. Op... | 0 | 2021-05-22T08:43:08 | https://dev.to/stokry/how-to-cartoonize-an-image-with-python-1e01 | python, tutorial, showdev, computervision | In this tutorial, I will show you how to give a cartoon-effect to an image in Python with OpenCV.

OpenCV is an open-source python library used for computer vision and machine learning. It is mainly aimed at real-time computer vision and image processing. It is used to perform different operations on images which trans... | stokry |

706,698 | Virtualizing Memory | In the last article, we asked ourselves how the operating system gives each process the illusion that... | 12,643 | 2021-05-24T00:05:40 | https://www.thesystemsprogrammer.com/posts/virtualizing-memory | computerscience, firstyearincode, systems, linux | In the last article, we asked ourselves how the operating system gives each process the illusion that it has its own address space despite only having one hardware RAM. This is one of the most important and most complicated virtualization techniques that the operating system performs. Because of that we will discuss me... | thesystemsprogrammer |

708,255 | Think Lab 2124 : Build & Deploy AI/ML Models w Multiple Datasets w AutoAI - Tutorial B | Welcome to Tutorial Tuesday! We'll pick up where we left off last week with our Think Lab... | 0 | 2021-05-25T20:08:36 | https://dev.to/ibmdeveloper/think-lab-2124-build-deploy-ai-ml-models-w-multiple-datasets-w-autoai-tutorial-b-167 | # Welcome to Tutorial Tuesday!

We'll pick up where we left off last week with our Think Lab 2124 [Tutorial A: Build & Deploy a Data Join Experiment](https://dev.to/ibmdeveloper/think-lab-2124-build-deploy-ai-ml-models-w-multiple-datasets-w-autoai-part-a-24pk)!

## AutoAI Overview

AutoAI in Cloud Pak for Data automates... | jritten | |

708,273 | Things you should know about personal branding. | When you Google your name, what comes up? Hello 👋, my gorgeous friends on the internet, today... | 0 | 2021-05-25T15:24:44 | https://dev.to/unclebigbay/things-you-should-know-about-personal-branding-2g2a |

When you Google your name, what comes up?

<hr />

Hello 👋, my gorgeous friends on the internet, today I will b... | unclebigbay | |

708,289 | How to auto-document the end-to-end code and data flows of your Rails app | In case you missed it, I’m recapping my recent RailsConf 2021 talk in a series of four, short blog... | 12,899 | 2021-05-27T11:19:04 | https://dev.to/appmap/how-to-auto-document-end-to-end-flows-with-appmap-41d2 | rails, techtalks | In case you missed it, I’m recapping my recent [RailsConf 2021](https://www.railsconf.org/) talk in a series of four, short blog posts. Check out [Part 1 - We need a better way to communicate and explain our code decisions](https://dev.to/appland/we-need-a-better-way-to-communicate-and-explain-our-code-decisions-1nic).... | kgilpin |

708,313 | vcluster Inception Video | Video showing how to create virtual clusters in Kubernetes with vcluster | 0 | 2021-05-25T17:07:04 | https://dev.to/loft/vluster-inception-video-3886 | kubernetes | ---

title: vcluster Inception Video

published: true

description: "Video showing how to create virtual clusters in Kubernetes with vcluster"

tags: Kubernetes

//cover_image: https://direct_url_to_image.jpg

---

Running a virtual cluster inside a Kubernetes cluster is cool, but running a virtual cluster inside a virtual cl... | richburroughs |

708,352 | Headless Shopify with Nuxt, Tailwind, imgix, & Vercel | Shopify does a lot of items well, but one area that can be improved is overall website performance. I'm diving into easily using Shopify's Storefront Access Token in an API with Nuxt as the front-end and boosting frontend scores. | 0 | 2021-05-26T00:30:20 | https://dev.to/daletom/headless-shopify-with-nuxt-tailwind-imgix-vercel-1ldg | nuxt, webdev, headless, ecommerce | ---

title: Headless Shopify with Nuxt, Tailwind, imgix, & Vercel

published: true

description: Shopify does a lot of items well, but one area that can be improved is overall website performance. I'm diving into easily using Shopify's Storefront Access Token in an API with Nuxt as the front-end and boosting frontend scor... | daletom |

708,504 | Fetching and reading files from S3 using Go 🔥👀 | Trying to figure out how to do simple tasks using the AWS SDK for particular services can be difficul... | 0 | 2021-05-26T15:26:11 | https://dev.to/seanyboi/fetching-and-reading-files-from-s3-using-go-4180 | aws, go, s3, machinelearning | Trying to figure out how to do simple tasks using the AWS SDK for particular services can be difficult given that sometimes the AWS documentation is limited and gives you the bare minimum. Today I'll show you how to fetch and read particular files from S3 using Go. This tutorial collates many hours of research into wha... | seanyboi |

708,574 | Live like a monk - the key to success | Don't get me wrong, I'm not particularly successful. If I look around I see prettier, richer, more po... | 0 | 2021-05-26T05:28:12 | https://www.sandordargo.com/blog/2021/05/26/living-like-a-monk | watercooler, productivity, lifestyle | Don't get me wrong, I'm not particularly successful. If I look around I see prettier, richer, more popular people. People who rose more in the company ranks, people who write better code, who can contribute more, who make more money either from their main job or on the side, people who write better posts and who simply... | sandordargo |

708,785 | Wrapper Class vs Helper Class vs Controller Class | Wrapper Class: Wraps data types into a single object that can be accessed easily from other... | 0 | 2021-05-26T03:37:43 | https://dev.to/bhanukarkra/wrapper-class-vs-helper-class-vs-controller-class-abm | programming | **Wrapper Class**: Wraps data types into a single object that can be accessed easily from other classes.

**Controller Class**: A controller class contains public methods called action methods. Each method has a one-to-one link with a possible user action, ranging from the click of a button to another trigger. T... | bhanukarkra |

708,851 | Make A Payment From Apple Pay To Cash App Which Is A Non-Verified Account | Do you want to make a payment from Apple Pay To Cash App account even if you are not using a verified... | 0 | 2021-05-26T06:26:16 | https://dev.to/nancybr65040277/make-a-payment-from-apple-pay-to-cash-app-which-is-a-non-verified-account-b05 | applepaytocashapp | Do you want to make a payment from Apple Pay To Cash App account even if you are not using a verified account? Keep the fact in mind that you have to first verify your account and then you should make sure you have a common bank account associated with your Cash app account.

https://www.experts-support.com/blog/transf... | nancybr65040277 |

708,859 | "CloudFront deployments with Lambda@Edge" | A/B Testing, Blue/Green deployments, Canary releases. Different, but still so much in common. They... | 0 | 2021-05-26T06:45:16 | https://dev.to/aws-builders/cloudfront-deployments-with-lambda-edge-gh5 | aws, devops, lambda | ---

title: "CloudFront deployments with Lambda@Edge"

published: true

tags: aws, devops, lambda

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/9uco0ab7c0l3atowphxu.png

---

A/B Testing, Blue/Green deployments, Canary releases. Different, but still so much in common. They all have different purpos... | jimmydqv |

708,930 | Diseconomies of Scale | We’ve all heard of economies of scale: the increase in per-unit efficiency by producing or doing a... | 0 | 2021-05-30T21:23:29 | https://jhall.io/archive/2021/05/26/diseconomies-of-scale/ | scaling, efficiency, practices, team | ---

title: Diseconomies of Scale

published: true

date: 2021-05-26 00:00:00 UTC

tags: scaling,efficiency,practices,team

canonical_url: https://jhall.io/archive/2021/05/26/diseconomies-of-scale/

---

We’ve all heard of economies of scale: the increase in per-unit efficiency by producing or doing a large number of like th... | jhall |

708,967 | Most Asked Html Questions | 1. Why are you interested in using HTML to build websites? Ans: “Web-building tools are great for profe... | 0 | 2021-05-26T08:37:05 | https://dev.to/iftakher99/most-asked-html-questions-1ae2 | html | **1. Why are you interested in using HTML to build websites?**

Ans: “Web-building tools are great for professional developers, but I think it’s important to understand the underlying technology so I have more control over how sites look and behave. For instance, when I use WordPress to build a site, I often find that I c... | iftakher99 |

709,005 | Sharing 750+ good, high-quality news links | http://baobinhduong.vn/xay-nha-dac-biet-de-an-nau-trong-ngay-tan-the-a87204.htmlhttps://baotayninh.vn... | 0 | 2021-05-26T09:50:34 | https://dev.to/kinhennho/chia-s-750-link-bao-hay-va-ch-t-l-ng-1ik | linkbaochatluong, linkbaohay | http://baobinhduong.vn/xay-nha-dac-biet-de-an-nau-trong-ngay-tan-the-a87204.htmlhttps://baotayninh.vn/xay-nha-nhan-ai-cho-thanh-nien-ngheo-a114790.htmlhttp://hatinh24h.com.vn/nguoi-dan-chi-hang-tram-trieu-dong-xay-nha-lau-cho-heo-tranh-lu-a77072.htmlhttp://baolangson.vn/quoc-te/324262-australia-chi-hon-700-trieu-usd-xa... | kinhennho |

709,021 | Beautiful tempered glass door designs, updated June 2021 | Swing-type tempered glass doors with floor hinges Swing-type tempered glass doors, also called floor-hinge glass doors... | 0 | 2021-05-26T10:10:12 | https://dev.to/kinhennho/cac-m-u-c-a-kinh-c-ng-l-c-d-p-c-p-nh-p-t6-2021-3ci3 | cuakinhcuongluc |

Swing-type tempered glass doors with floor hinges

Swing-type tempered glass doors are also called floor-hinge glass doors or hydraulic glass doors. Because they are made of tempered glass, these doors are highly durable and rarely damaged, which is why they are the most widely used type on the market today.

There are two main types, single-leaf and double-leaf doors, which use floor hinges from VVP Thá... | kinhennho |

709,056 | 6 Amazing Tips To Boost Your SEO | While there is a lot of SEO advice and tips out there, most of it doesn’t deliver the desired results. And some... | 0 | 2021-05-26T10:56:59 | https://dev.to/raymondhalliwell/6-amazing-tips-to-boost-your-seo-38ee | digitalmarketing, raymondhalliwell, seo, socialmediamarketing | While there is a lot of SEO advice and tips out there, most of it doesn’t deliver the desired results. And some tips are already outdated and no longer work because Google constantly changes its algorithm. With an abundance of tips and tricks flooding the internet, choosing the right one can be challenging. That is why [Raymond Hal... | raymondhalliwell |

710,330 | 5 tools to automate your development | Automating your development with Dependabot, Auto Assign, Merge Freeze, Husky and Scheduled... | 0 | 2021-08-27T12:43:48 | https://dev.to/pgarzina/5-tools-to-automate-your-development-3m | productivity, github, devops | Automating your development with Dependabot, Auto Assign, Merge Freeze, Husky and Scheduled reminders.

The idea of this post is to introduce some of the tools and integrations that made our development life easier.

Most of these are pretty straightforward to implement into your workflow but for the ones that have a c... | pgarzina |

709,338 | Architecture & Authorization For A Complex Multi-Tenant SaaS Platform With Hasura | Prefect | by Zachary Hughes @HasuraCon'20 | Zachary Hughes spoke last year at HasuraCon'20 on how Prefect's complex multi-tenant architecture a... | 0 | 2021-05-27T08:50:21 | https://hasura.io/blog/architecture-authorization-multi-tenant-saas-platform-with-hasura-prefect/ | hasuracon | ---

title: Architecture & Authorization For A Complex Multi-Tenant SaaS Platform With Hasura | Prefect | by Zachary Hughes @HasuraCon'20

published: true

date: 2021-05-26 13:36:24 UTC

tags: HasuraCon

canonical_url: https://hasura.io/blog/architecture-authorization-multi-tenant-saas-platform-with-hasura-prefect/

---

in Angular and Electron. In this blog post, I will cover some technical challenges about file locks which I faced during the development of Snowtrack.

## What is Snowtrack?

Snowtrack is an intu... | sebastianrath |

709,935 | First Video | https://www.youtube.com/watch?v=F16svetYoaM | 0 | 2021-05-27T08:20:49 | https://dev.to/devpaulius/first-video-5dcm | firstpost, video, firstvideo, firsttime | https://www.youtube.com/watch?v=F16svetYoaM | devpaulius |

710,099 | This is my First Ever Post in this website.. | A post by The Planet | 0 | 2021-05-27T12:13:02 | https://dev.to/sreekanth99/this-is-my-first-ever-post-in-this-website-139m | sreekanth99 | ||

710,174 | Top 11 Flutter Widgets To Know In 2021 | These days, Flutter is one of the most popular frameworks for developing mobile, web and desktop appli... | 0 | 2021-05-27T14:21:52 | https://dev.to/ltdsolace/top-11-flutter-widgets-to-know-in-2021-3i35 | flutter, apps, android | These days, Flutter is one of the most popular frameworks for developing mobile, web, and desktop applications. It is full of widgets, which make it more sustainable. Flutter widgets are used for developing high-quality cross-platform applications because they are customizable and offer extensive flexibility & fluidity ... | ltdsolace |

710,326 | The Ultimate Eleventy Template for your blog with a FREE minimalist theme [Open Source] | A free and open source Eleventy template with Tailwind CSS 2, Webpack 5, ESLint, Prettier, Image optimization, SEO friendly and Netlify CMS. Download the best Eleventy Themes and Templates. | 0 | 2021-05-27T15:18:16 | https://dev.to/ixartz/the-ultimate-eleventy-template-for-your-blog-with-a-free-minimalist-theme-open-source-3h47 | webdev, tailwindcss, javascript, showdev | ---

title: The Ultimate Eleventy Template for your blog with a FREE minimalist theme [Open Source]

published: true

description: A free and open source Eleventy template with Tailwind CSS 2, Webpack 5, ESLint, Prettier, Image optimization, SEO friendly and Netlify CMS. Download the best Eleventy Themes and Templates.

ta... | ixartz |

710,366 | 10 Laptop Backpack Recommendations for the Remote Software Developer in 2021 | One of the joys of remote work is that you can work wherever there’s good wifi. Coffee shops? Check.... | 0 | 2021-06-22T10:30:52 | https://x-team.com/blog/backpacks-recommendations/ | remoteworking, travel, backpack, productivity | ---

title: 10 Laptop Backpack Recommendations for the Remote Software Developer in 2021

published: true

date: 2021-05-27 11:49:00 UTC

tags: remoteworking,travel,backpack,productivity

canonical_url: https://x-team.com/blog/backpacks-recommendations/

---

One of the joys of remote work is that you can work wherever there’... | tdmoor |

710,373 | On Good Coworkers & Business Humility | “What makes a good coworker in tech? What makes a bad one?” I saw these two questions posted in a... | 0 | 2022-02-11T19:58:07 | https://theagilecouch.com/2020/05/22/on-good-coworkers-business-humility/ | uncategorized | ---

title: On Good Coworkers & Business Humility

published: true

date: 2020-05-22 19:52:46 UTC

tags: Uncategorized

canonical_url: https://theagilecouch.com/2020/05/22/on-good-coworkers-business-humility/

---

> **“What makes a good coworker in tech?

> What makes a bad one?”**

I saw these two questions posted in a c... | stevetwips |

710,395 | Made a Real Time Chat Application using React.js | 1. This is a fully functional real-time chat application made using React.js with functionalities like... | 0 | 2021-05-27T17:04:10 | https://dev.to/bhavesh1235/made-a-real-time-chat-application-using-react-js-1nni | react, webdev, javascript, css | 1. This is a fully functional **real-time chat application** made using React.js with features like online status, image support, read receipts, and multiple rooms.

2. Link to the **GitHub repo**: [Link](https://github.com/bhavesh1235/React-Chat-App)

If you like the project, please give it a **star** on GitHub.

3. Backend i... | bhavesh1235 |

710,446 | Some Docker commands make your life easier | In this post, I show some useful Docker commands that you may be less familiar with and that mak... | 0 | 2021-05-27T18:16:51 | https://dev.to/moesmp/some-docker-commands-make-your-life-easier-1ob6 | In this post, I show some useful Docker commands that you may be less familiar with and that make your life easier as a developer. Let's begin with the build command:

```shell

docker build -f path/to/file/Dockerfile -t image-name .

```

##### `-f` lets you specify the path to the `Dockerfile`, and `-t` sets the name of the resulting image.

#### Tag or rename an image

```shell

... | moesmp | |

710,465 | [Quick Tip] Improving developer experience with Options Pattern validation on start. | When we're new to a project, or have had little contact with it, it's hard to understand all its configuration possibi... | 0 | 2021-05-27T18:53:49 | https://dev.to/antoniofalcaojr/quick-tip-improving-developer-experience-with-options-pattern-validation-on-start-3ac2 | dotnet | When we're new to a project, or have had little contact with it, it's hard to understand all its configuration possibilities.

The **Options pattern** is the most appropriate way to inject environment settings into the application. Isolating the options by scenario adheres to important software engineering principles, such as *P... | antoniofalcaojr |

710,587 | Accessing Nested Resources Through Fetch Requests | My fourth project for FlatIron was the most challenging so far, but I learned so much about JS and work... | 0 | 2021-05-27T20:53:45 | https://dev.to/rickysonz/accessing-nested-resources-through-fetch-requests-with-a-has-many-through-relationship-op9 | My fourth project for FlatIron was the most challenging so far, but I learned so much about JS and working with a back-end server. In this blog, we will review one way to access nested resources and manipulate an API's JSON responses to retrieve specific nested data to present with JS on the front end of the program.
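A minimal sketch of that idea, assuming a hypothetical `/users/1` endpoint whose JSON nests `appointments`, each with its own `doctor` object (a user has many doctors through appointments). The payload, the URL, and the `doctorNames` helper are illustrative assumptions, not the post's actual API:

```javascript
// Hypothetical payload: a user has many doctors through appointments.
const user = {
  id: 1,
  name: "Ada",
  appointments: [
    { id: 10, date: "2021-05-01", doctor: { id: 7, name: "Dr. Grey" } },
    { id: 11, date: "2021-05-08", doctor: { id: 8, name: "Dr. House" } },
    { id: 12, date: "2021-05-15", doctor: { id: 7, name: "Dr. Grey" } },
  ],
};

// Walk the nested appointments and return each doctor's name once,
// in the order the doctors first appear.
function doctorNames(data) {
  const names = (data.appointments || []).map((appointment) => appointment.doctor.name);
  return [...new Set(names)];
}

console.log(doctorNames(user)); // [ 'Dr. Grey', 'Dr. House' ]

// In the browser, the same helper could be chained onto a fetch request:
// fetch("/users/1")
//   .then((response) => response.json())
//   .then((data) => console.log(doctorNames(data)));
```

Deduplicating with a `Set` matters here because, in a has-many-through shape, the same doctor can appear under several appointments.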

... | rickysonz | |

710,593 | Finding the Longest Word in a String | Let's return the length of the longest word in the provided sentence. Also the response should be a... | 0 | 2021-05-27T21:03:16 | https://dev.to/rthefounding/finding-the-longest-word-in-a-string-35o7 | javascript, tutorial, beginners, webdev | * Let's return the length of the longest word in the provided sentence.

* Also the response should be a number in this instance.

```javascript

function findLongestWordLength(str) {

return str.length;

}

findLongestWordLength("The quick brown fox jumped over the lazy dog");

```
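One possible solution, sketched here rather than taken from the post's own answer: split the sentence on spaces and keep the largest word length seen. It assumes words are separated by exactly one space, as in the test sentence.

```javascript
// Split on spaces, then fold over the words keeping the longest length so far.
function findLongestWordLength(str) {
  return str
    .split(" ")
    .reduce((longest, word) => Math.max(longest, word.length), 0);
}

console.log(findLongestWordLength("The quick brown fox jumped over the lazy dog")); // 6
```

Passing `0` as the initial value to `reduce` keeps the result a number even for an empty string, where `split` returns `[""]`.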

* Answer:

```

function findLongestWordLength(str) ... | rthefounding |

710,655 | Your Angular code base is deprecated. | No, upgrading to Angular 12.x.x will not help. It's still deprecated. The Typescript experimental de... | 0 | 2021-05-27T23:27:40 | https://dev.to/shivamd20/your-angular-code-base-is-deprecated-315e | angular, javascript, typescript, decorators | No, upgrading to Angular 12.x.x will not help.

It's still deprecated.

TypeScript's experimental decorators were based on the [TC39 proposal-decorators](https://github.com/tc39/proposal-decorators).

We use the same decorators in our Angular codebase to create services, components, and other Angular constructs.

But the s... | shivamd20 |

710,750 | Build an idea generator from scratch, part 1: Project setup with Github and dotenv [Video] | So as I was finishing up teaching my web development class last year, I really wanted to give my... | 0 | 2021-05-28T01:32:45 | https://dev.to/ashleykolodziej/build-an-idea-generator-from-scratch-part-1-project-setup-with-github-and-dotenv-video-34mn | video, javascript, tutorial, codenewbie | {% youtube TqdmUdi8xbY %}

So as I was finishing up teaching my web development class last year, I really wanted to give my students something to help them continue growing and learning. I decided to build an idea generator with HTML, CSS, and JavaScript using the [raindrop.io API](https://developer.raindrop.io/), and ... | ashleykolodziej |

711,073 | Notion template to organize your chords 🎸and learning guitar | Hey folks 👋🏼 We just launched another Product on Product Hunt. It's a notion template that helps you... | 0 | 2021-05-28T10:55:12 | https://dev.to/xenoxdev/notion-template-to-organize-your-chords-and-learning-guitar-4j6a | watercooler, showdev | Hey folks 👋🏼

We just launched another product on Product Hunt. It's a Notion template that helps you organize your chords and learn guitar.

### Learn Guitar

An amazing template to make your dream of playing that dreamy song on your own come true. This template will help you keep track of your guitar learning pr... | sarthology |

711,459 | 12 Best VS Code Extensions To Enhance Your Productivity | Visual Studio Code is one of the best-known and most widely used code editors in the developer commun... | 0 | 2021-05-28T16:40:17 | https://dev.to/coursesity/12-best-vs-code-extensions-to-enhance-your-productivity-4ok | vscode, codenewbie, 100daysofcode, programming | Visual Studio Code is one of the best-known and most widely used code editors in the developer community. Much of its popularity comes from its numerous extensions that speed up the development process. In fact, one of the best things about VS Code is its customizability, primarily via extensions.

So, this article involve... | yashtiwari1k |

711,475 | Excel Formulas to Calculate the Time Duration With Number of Days!! | Have you ever tried to calculate the total time duration with the number of days in Excel? This artic... | 0 | 2021-05-29T03:00:26 | https://geekexcel.com/excel-formulas-to-calculate-the-time-duration-with-number-of-days/ | excelformula, excelformulas | ---

title: Excel Formulas to Calculate the Time Duration With Number of Days!!

published: true

date: 2021-05-28 14:46:48 UTC

tags: ExcelFormula,Excelformulas

canonical_url: https://geekexcel.com/excel-formulas-to-calculate-the-time-duration-with-number-of-days/

---

Have you ever tried to calculate the total **time dur... | excelgeek |

711,482 | Running Machine Learning Model on Docker Container | The summary of what we're going to build: ->Pull the Docker container image of CentOS image from... | 0 | 2021-05-29T15:46:07 | https://dev.to/karthikkasukurti/running-machine-learning-model-on-docker-container-4k40 | **The summary of what we're going to build:**

-> Pull the CentOS container image from Docker Hub and create a new container

-> Install Python on top of the Docker container

-> Copy or create, inside the container, the machine learning model you built in Jupyter Notebook

Note: I will... | karthikkasukurti | |

711,489 | How To Concate Two Dimensional Array

#javascript, #basic #arrays | How to concate Two dimensional Arrays without Using new Set? array=[[1,2,3],[4,5,6],[7,8,9]] // [1,2,... | 0 | 2021-05-28T17:58:07 | https://dev.to/naveed89tech/how-to-concate-two-dimensional-array-javascript-basic-arrays-2elj |

How to concatenate (flatten) a two-dimensional array without using `new Set`?

`const array = [[1, 2, 3], [4, 5, 6], [7, 8, 9]];`

Expected result: `[1, 2, 3, 4, 5, 6, 7, 8, 9]`

For this we can use the `reduce` method:

`array.reduce((a, b) => a.concat(b), [])` | naveed89tech | |

711,692 | A Look at Compilation in JavaScript Frameworks | In 2017 Tom Dale wrote Compilers are the New Frameworks. And he was right. In 2017 things were alrea... | 0 | 2021-06-01T21:58:09 | https://dev.to/this-is-learning/a-look-at-compilation-in-javascript-frameworks-3caj | javascript, webdev, svelte, marko | In 2017 Tom Dale wrote [Compilers are the New Frameworks](https://tomdale.net/2017/09/compilers-are-the-new-frameworks/). And he was right. In 2017, things were already heading that way, and they have only continued on that trend since.

If you look at the whole range of build tools we use, every framework is enhanced by some... | ryansolid |

711,802 | Laravel 8 Toastr Notifications using yoeunes/toastr package | Hi Friends In this article, I will explain how to install and use toastr notifications utilizi... | 0 | 2021-05-29T04:16:15 | https://dev.to/sonagrabhavesh/laravel-8-toastr-notifications-using-yoeunes-toastr-package-2n3b | laravel, php, tutorial, programming | <p>Hi Friends</p>

<p>In this article, I will explain how to install and use Toastr notifications with the yoeunes/toastr package in a Laravel 8 application. We will go through it step by step.</p>

<p>Toastr notifications yoeunes/toastr p... | sonagrabhavesh |

711,837 | Making JS Objects iterable | Disclaimer: This is a fun task that I tried doing. I don't see a real world use case for this, especi... | 0 | 2021-05-30T09:27:38 | https://dev.to/ivinjose/making-js-objects-iterable-292j | javascript, generators, iterable, objects | *Disclaimer*: This is a fun task that I tried doing. I don't see a real-world use case for this, especially now that we have Maps in JS. Let me know in the comments if you can think of one.

Now that that's out of the way, let's get to it.

As we know, Objects in JS are not iterable. That means you cannot use t... | ivinjose |

712,063 | Decorate your Github! | and more themes...here This is project link Hi There!! im newbie Developer! I... | 0 | 2021-05-29T11:33:09 | https://dev.to/devxb/decorate-your-github-1hib | [](https://github.com/devxb/CommitCombo/blob/main/ENG.md)[](https://github.com/devxb/CommitCombo/blob/main/ENG.md)[![commitcombo](http://commitcombo.com/get?user=Devxb&theme=DeepOce... | devxb | |

712,080 | Twitch's Animated Cards using HTML and CSS | A post by Can Umay | 0 | 2021-05-29T12:09:19 | https://dev.to/canumay/twitch-s-animated-cards-with-html-and-css-o1c | codepen, html, css, frontend | {% codepen https://codepen.io/canumay/pen/gOmGEoK %} | canumay |
