id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,915,191 | Exploring Kobold AI: The New Frontier in Artificial Intelligence | Ever wondered what Kobold AI is all about? Imagine a trusty sidekick in your digital endeavors, a bit... | 0 | 2024-07-08T04:15:37 | https://dev.to/jettliya/exploring-kobold-ai-the-new-frontier-in-artificial-intelligence-51aj | Ever wondered what Kobold AI is all about? Imagine a trusty sidekick in your digital endeavors, a bit like having a wizard's apprentice in the vast realm of artificial intelligence. Kobold AI is that magical companion, a powerful tool designed to make your interaction with AI not only efficient but also delightful. So,... | jettliya | |

1,915,193 | Organizations Promoting Indian Entrepreneurship | The youth population of India is continuously growing (https://udhyam.org/) and the economic structure... | 0 | 2024-07-08T04:16:41 | https://dev.to/udhyam_learning/organizations-promoting-indian-entrepreneurship-1e6i | startup |

The youth population of India is continuously growing (https://udhyam.org/) and the economic structure is changing; thus, India possesses great potential to foster the spirit of entrepreneurship. Currently, various org... | udhyam_learning |

1,915,194 | Mastering CSS Grid Layout | Introduction: CSS Grid layout is a powerful tool for creating responsive and dynamic layouts on web... | 0 | 2024-07-08T04:17:13 | https://dev.to/tailwine/mastering-css-grid-layout-443e | Introduction:

CSS Grid layout is a powerful tool for creating responsive and dynamic layouts on web pages. With its advanced features and user-friendly syntax, it has become the go-to choice for designers and developers. In this article, we will discuss the advantages, disadvantages, and features of mastering CSS Grid... | tailwine | |

1,915,261 | Turnover B2B | "Introducing Turnover, the cutting-edge business marketplace designed for the next generation... | 0 | 2024-07-08T06:06:35 | https://dev.to/turnover/turnover-b2b-lhk |

"Introducing Turnover, the cutting-edge business marketplace designed for the next generation commerce. Our platform is dedicated to empowering brands, wholesalers, distributors, retailers and resellers through inn... | turnover | |

1,915,195 | Taming the Wild West: Leveraging Volatility for Profit in Crypto Trading | The cryptocurrency market, with its characteristic price swings, presents a unique challenge for... | 0 | 2024-07-08T04:18:39 | https://dev.to/epakconsultant/taming-the-wild-west-leveraging-volatility-for-profit-in-crypto-trading-4d4p | trading | The cryptocurrency market, with its characteristic price swings, presents a unique challenge for traders. While volatility can be daunting, it also offers fertile ground for profit. This article explores strategies for navigating volatile crypto markets, calculating risk/reward ratios, using leverage cautiously, and ... | epakconsultant |

1,915,196 | Building Reusable List Components in React | Introduction In React development, it's common to encounter scenarios where you need to... | 0 | 2024-07-08T04:23:03 | https://dev.to/vyan/building-reusable-list-components-in-react-8bf | javascript, react, beginners, webdev | ## Introduction

In React development, it's common to encounter scenarios where you need to display lists of similar components with varying styles or content. For instance, you might have a list of authors, each with different information like name, age, country, and books authored. To efficiently handle such cases, w... | vyan |

1,915,197 | Serverless: An Evolving Architecture, Not Just a Runtime | "The reports of my death have been greatly exaggerated." - Mark Twain This famous quote applies... | 14,747 | 2024-07-08T04:24:09 | https://www.internetkatta.com/serverless-an-evolving-architecture-not-just-a-runtime | serverless, aws, architecture, devops | > "The reports of my death have been greatly exaggerated." - Mark Twain

This famous quote applies just as well to technology as it does to people. In the fast-paced world of software development, we often hear proclamations about the death of one technology or another. But the truth is, technologies rarely die outrigh... | avinashdalvi_ |

1,915,198 | Unveiling the Mystery: Analyzing Crypto Volatility with Advanced Charting Tools | The ever-shifting landscape of the crypto market thrives on volatility. For traders seeking to... | 0 | 2024-07-08T04:24:41 | https://dev.to/epakconsultant/unveiling-the-mystery-analyzing-crypto-volatility-with-advanced-charting-tools-4lp2 | trading | The ever-shifting landscape of the crypto market thrives on volatility. For traders seeking to navigate these fluctuations and potentially profit, advanced charting tools become invaluable assets. This article delves into how these tools can be used to visualize volatility, identify patterns, calculate key metrics, and... | epakconsultant |

1,915,199 | What Should You Consider When Building A Family Home in Sydney | Building a family home is one of the most significant investments you can make. It’s not just about... | 0 | 2024-07-08T04:25:52 | https://dev.to/edyco_building_6f8b2a3fd1/what-should-you-consider-when-building-a-family-home-in-sydney-2842 | Building a family home is one of the most significant investments you can make. It’s not just about constructing walls and a roof; it’s about creating a space where your family will grow, share experiences, and build memories. This process requires thoughtful planning and careful consideration of numerous factors to en... | edyco_building_6f8b2a3fd1 | |

1,915,213 | Unleash Your Software Design Prowess with "Software Design by Example: A Tool-Based Introduction with Python" 🚀 | Comprehensive guide to software design using Python, with a focus on practical examples and tools. Covers objects, classes, pattern matching, parsing, and more. | 27,801 | 2024-07-08T04:30:56 | https://dev.to/getvm/unleash-your-software-design-prowess-with-software-design-by-example-a-tool-based-introduction-with-python-32ld | getvm, programming, freetutorial, technicaltutorials |

Greetings, fellow programmers! 👋 If you're looking to take your software design skills to the next level, I've got the perfect resource for you: "Software Design by Example: A Tool-Based Introduction with Python."

- Code Layout Verification Number | ⚡ MyFirstApp - React Native with Expo (P27) - Code Layout Verification Number | 27,894 | 2024-07-08T04:54:05 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p27-code-layout-verification-number-1obo | react, reactnative, webdev, tutorial | ⚡ MyFirstApp - React Native with Expo (P27) - Code Layout Verification Number

{% youtube gFzEC3rHdro %} | skipperhoa |

1,915,221 | ⚡ MyFirstApp - React Native with Expo (P28 - End) - Update Code Check Android or iOS | ⚡ MyFirstApp - React Native with Expo (P28 - End) - Update Code Check Android or iOS | 27,894 | 2024-07-08T04:55:25 | https://dev.to/skipperhoa/myfirstapp-react-native-with-expo-p28-end-update-code-check-android-or-ios-lfg | react, reactnative, webdev, tutorial | ⚡ MyFirstApp - React Native with Expo (P28 - End) - Update Code Check Android or iOS

{% youtube RUdO8g90RWg %} | skipperhoa |

1,915,223 | Customized Wooden Engraved Pen with Box - Gorofy | Experience the perfect blend of functionality and style with our Customized Wooden Engraved Pen with... | 0 | 2024-07-08T04:58:23 | https://dev.to/gorofy/customized-wooden-engraved-pen-with-box-gorofy-13gc | custompen, customwoodenpen | Experience the perfect blend of functionality and style with our [Customized Wooden Engraved Pen with box](https://gorofy.com/product/customized-wooden-engraved-pen-with-box/). Meticulously crafted from high-quality wood,

| gorofy |

1,915,224 | How to add dark mode in next.js application using tailwind css ? | Dark mode is now a trendy feature in web apps because of its stylish look and the reduced strain on... | 0 | 2024-07-08T05:03:10 | https://www.swhabitation.com/blogs/how-to-add-dark-mode-in-nextjs-application-using-tailwind-css | tailwindcss, nextjs, darkmode, webdev | Dark mode is now a trendy feature in web apps because of its stylish look and the reduced strain on the eyes it offers. Today, we'll guide you on how to add dark mode to your Next.js app with the help of Tailwind CSS Typography. This duo doesn't just improve user experience but also gives your app a more attractive app... | swhabitation |

1,915,225 | Fixed vs Sticky Positioning in css | <!DOCTYPE html> <html lang="en"> <head> <meta charset="UTF-8"> ... | 0 | 2024-07-08T05:03:50 | https://dev.to/webfaisalbd/fixed-vs-sticky-positioning-in-css-n0l | ```html

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Fixed vs Sticky</title>

<link rel="stylesheet" href="position.css">

</head>

<body>

<div class="container">

<nav class="nav1">

<sp... | webfaisalbd | |

1,915,227 | Which is the top aviation training institute in India? | Top Crew Aviation is India's No.1 Aviation Training Institute. TCA has 16 years of experience in... | 0 | 2024-07-08T05:05:08 | https://dev.to/topcrewaviation/what-is-top-indias-no1-aviation-training-institute-27bn | aviationcourse, pilottraininginstitute, pilotcourse | Top Crew Aviation is India's No.1 [Aviation Training Institute](https://topcrewaviation.com/). TCA has 16 years of experience in aviation. Top Crew Aviation offers pilot, air hostess, cabin crew, ground crew, and hospitality manager training. If you would like to join the Aviation Institute, come on board with us today to... | topcrewaviation |

1,915,228 | Why do AI articles trend on Dev.to? | I have been seeing a lot of obvious AI content trending on Dev.to. While I don't mind AI assisted... | 0 | 2024-07-08T05:50:34 | https://dev.to/paul_freeman/why-do-ai-articles-trend-on-devto-hh8 | discuss, ai, devto | I have been seeing a lot of obvious AI content trending on Dev.to. While I don't mind AI assisted writing, I do mind AI generated experience.

Here's an example I saw recommended to me on the trending page

>**Note** sorr... | paul_freeman |

1,915,229 | How to Become an Airline Pilot? Learn the Complete process. | How to Become an Airline Pilot? To become an airline pilot, you must start with flight... | 0 | 2024-07-08T05:16:18 | https://dev.to/topcrewaviation/how-to-become-an-airline-pilot-learn-the-complete-process-2p5n | ## How to Become an Airline Pilot?

To become an airline pilot, you must start with flight training. Learn about the different aviation programs offered by flight schools. Compare the options available. Choose the best flight training institute for your goals. First, check the eligibility requirements. The Directorate ... | topcrewaviation | |

1,915,230 | Now now now, now. | This post was originally written for my website at miko.ademagic.com/blog/now I've been interested... | 0 | 2024-07-08T05:16:34 | https://dev.to/ademagic/now-now-now-now-1jp4 | indieweb, webdev, website | > This post was originally written for my website at [miko.ademagic.com/blog/now](https://miko.ademagic.com/blog/now)

I've been interested in the Indie Web as a new (old?) way to connect with a community on the Internet without an algorithm holding my hand (amongst other reasons, but that's for another post). One of th... | ademagic |

1,915,232 | Debunking Common Misconceptions about Generative AI | Quick Summary: Generative AI offers a range of new possibilities, but it's important to understand... | 0 | 2024-07-08T05:19:24 | https://dev.to/vikas_brilworks/debunking-common-misconceptions-about-generative-ai-7do | **Quick Summary:** Generative AI offers a range of new possibilities, but it's important to understand both its potential and its limitations. In this article, we will debunk some misconceptions surrounding generative AI.

The arrival of super cool AI tools like ChatGPT, Midjourney, and DALL-E has everyone talking. The... | vikas_brilworks | |

1,915,237 | Implementing the Page Object Model (POM) with Cypress: A Step-by-Step Guide | Introduction As web applications grow in complexity, maintaining test automation code... | 0 | 2024-07-09T06:46:08 | https://dev.to/aswani25/implementing-the-page-object-model-pom-with-cypress-a-step-by-step-guide-5c2i | testing, javascript, webdev, cypress | ## Introduction

As web applications grow in complexity, maintaining test automation code becomes increasingly challenging. The Page Object Model (POM) is a design pattern that can help manage this complexity by promoting reusability and maintainability in your test automation scripts. In this post, we’ll explore how to... | aswani25 |

1,915,239 | Elixir pattern matching - save your time, similar with the way of our thinking | Intro One of the interesting features of Elixir is pattern matching. It is a way to save your... | 0 | 2024-07-08T05:25:31 | https://dev.to/manhvanvu/elixir-pattern-matching-save-your-time-and-friendly-with-your-brain-way-d8c | elixir, patternmatching, bitstring, binary | ## Intro

One of the interesting features of Elixir is pattern matching. It is a way to save your time, and it resembles the way we think.

Pattern matching helps us check values, extract values from complex terms, and branch code.

It is also a way to fail fast, following the "Let it crash" (and the supervisor will fix it) idiomat... | manhvanvu |

1,915,240 | Explaining ‘this’ keyword in JavaScript | 1. Global Context When used in the global context (outside of any function), this refers... | 0 | 2024-07-08T05:26:32 | https://dev.to/imrul099/explaining-this-keyword-in-javascript-15hm | javascript, webdev, frontend, programming | ## 1. Global Context

When used in the global context (outside of any function), `this` refers to the global object, which is `window` in browsers and `global` in Node.js.

`console.log(this); // In a browser, this logs the Window object`
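The global-context rule can be checked with a minimal sketch like the one below. It is an illustration under assumptions rather than code from the original post: the helper names `sloppyThis` and `strictThis` are hypothetical, and `globalThis` is used as the standard cross-environment name for the global object. One caveat worth hedging: at the top level of a Node.js CommonJS module, `this` is `module.exports` rather than the global object, so the sketch calls plain functions instead of logging `this` directly.

```javascript
// Sketch: what `this` is bound to in a plain (non-method) function call.
// `globalThis` is the global object: `window` in browsers, `global` in Node.js.

// A function built with the Function constructor is non-strict and is created
// in the global scope, so a plain call binds `this` to the global object.
const sloppyThis = new Function("return this;");

// In strict mode, a plain function call leaves `this` undefined instead.
function strictThis() {
  "use strict";
  return this;
}

console.log(sloppyThis() === globalThis); // true
console.log(strictThis() === undefined);  // true
```

The Function constructor is used only so the result does not depend on whether the surrounding file happens to run in strict mode.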

## 2. Function Context

In a regular function, the value of this depends on how the func... | imrul099 |

1,915,241 | How to Decommission Exchange 2019? | There are multiple reasons why you need to decommission an Exchange Server. Some common reasons... | 0 | 2024-07-08T05:36:47 | https://dev.to/abhaysingh/how-to-decommission-exchange-2019-pi0 | There are multiple reasons why you need to decommission an Exchange Server. Some common reasons include:

1. Hardware issues with the current server.

2. Moving to a new hardware or new operating system.

3. Downsizing from Database Availability Group (DAG).

4. Issues with the currently installed operating system.

5. Mi... | abhaysingh | |

1,915,242 | Automate GitHub PR Reviews with LangChain AI Agents | Learn how to leverage LangChain agents and Composio tools to automate GitHub pull request reviews and send summaries to Slack channels. | 0 | 2024-07-08T06:42:55 | https://dev.to/sunilkumrdash/automate-github-pr-reviews-with-langchain-agents-4p9g | ai, aiagents, langchain, composio | ---

title: Automate GitHub PR Reviews with LangChain AI Agents

published: true

description: Learn how to leverage LangChain agents and Composio tools to automate GitHub pull request reviews and send summaries to Slack channels.

tags: AI, AIAgents, LangChain, Composio

# cover_image: https://direct_url_to_image.jpg

# Use... | sunilkumrdash |

1,915,243 | What Kind of AI Technologies Are Travel Companies Using? | Artificial intelligence (AI) is changing the travel industry, helping companies provide better... | 0 | 2024-07-08T05:33:25 | https://dev.to/ravi_makhija/what-kind-of-ai-technologies-are-travel-companies-using-34ok | aitechnologies, travelcompaniesusingai, travelcompanies, aiintravel |

Artificial intelligence (AI) is changing the travel industry, helping companies provide better services and improve customer experiences. Travel companies use different AI technologies to analyze data, automate tas... | ravi_makhija |

1,915,244 | Detailed Explanation of Digital Currency Pair Trading Strategy | Introduction Recently, I saw BuOu's Quantitative Diary mentioning that you can use... | 0 | 2024-07-08T05:36:36 | https://dev.to/fmzquant/detailed-explanation-of-digital-currency-pair-trading-strategy-3nnf | trading, fmzquant, strategy, cryptocurrency | ## Introduction

Recently, I saw BuOu's Quantitative Diary mentioning that you can use negatively correlated currencies to select currencies, and open positions to make profits based on price difference breakthroughs. Digital currencies are basically positively correlated, and only a few currencies are negatively correl... | fmzquant |

1,915,245 | Massage on Dubai: A Luxurious Wellness Experience | Introduction to Dubai’s Wellness Scene Dubai has always been synonymous with luxury, opulence, and... | 0 | 2024-07-08T05:37:48 | https://dev.to/22ayur/massage-on-dubai-a-luxurious-wellness-experience-1eh0 | 22ayur, webdev | Introduction to Dubai’s Wellness Scene

Dubai has always been synonymous with luxury, opulence, and innovation. But beyond its iconic skyline and glamorous lifestyle, the city has evolved into a premier destination for wellness and relaxation. With an ever-growing number of world-class spas, wellness centers, and holist... | 22ayur |

1,915,247 | Top 4 Life and Work Principles from Jensen Huang | Jensen Huang is the founder and CEO of NVIDIA. This global tech company is the world's most valuable... | 0 | 2024-07-08T05:42:09 | https://dev.to/halimshams/top-4-life-and-work-principles-from-jensen-huang-55me | productivity, softwaredevelopment | Jensen Huang is the founder and CEO of NVIDIA. This global tech company is the world's most valuable company worth over $4 trillion.

I've spent days researching and studying this brilliant leader. I've come to the conclusion that success is not random; it comes from how hard you try and how smart you work.

I've collected... | halimshams |

1,915,248 | Cisco Distributor in Dubai: Empowering Businesses with Cutting-Edge Technology | Dubai, a global hub for trade and innovation, is home to numerous businesses seeking advanced... | 0 | 2024-07-08T05:44:54 | https://dev.to/brenda_amy_d1e1303f78e680/cisco-distributor-in-dubai-empowering-businesses-with-cutting-edge-technology-cg2 | Dubai, a global hub for trade and innovation, is home to numerous businesses seeking advanced technological solutions to stay competitive. Among the leading providers of such solutions is Cisco, a multinational technology conglomerate renowned for its networking hardware, telecommunications equipment, and high-tech ser... | brenda_amy_d1e1303f78e680 | |

1,915,249 | Setting Up a Python Virtual Environment (venv) | Python virtual environments are a great way to manage dependencies for your projects. They allow you... | 0 | 2024-07-08T05:47:44 | https://dev.to/zobaidulkazi/setting-up-a-python-virtual-environment-venv-amj | bash, pip, python, tutorial | Python virtual environments are a great way to manage dependencies for your projects. They allow you to create isolated environments where you can install packages specific to a project without affecting your system-wide Python installation. This blog post will guide you through setting up a Python virtual environment ... | zobaidulkazi |

1,915,250 | Step-by-Step Guide to Creating RESTful APIs with Node.js and PostgreSQL | Welcome to the world of building RESTful APIs with Node.js and PostgreSQL! In this guide, we'll take... | 0 | 2024-07-08T05:49:41 | https://dev.to/a_shokn/step-by-step-guide-to-creating-restful-apis-with-nodejs-and-postgresql-1k26 | webdev, javascript, postgres, node | Welcome to the world of building RESTful APIs with Node.js and PostgreSQL! In this guide, we'll take you on an exciting journey, where we'll transform your Node.js app into a robust, scalable RESTful API using PostgreSQL as our database. Let’s dive in and have some fun along the way!

Let’s start by setting up a new No... | a_shokn |

1,915,252 | The DevTool Content Marketing Dashboard: Metrics That Actually Matter | _Learn how to measure the impact of your developer content marketing with a data-driven dashboard.... | 0 | 2024-07-08T05:55:06 | https://dev.to/swati1267/the-devtool-content-marketing-dashboard-metrics-that-actually-matter-29di | _Learn how to measure the impact of your developer content marketing with a data-driven dashboard. Discover essential metrics, actionable tips, and AI-powered tools like Doc-E.ai to boost your DevTool's success._

You've put in the work, churning out blog posts, tutorials, and docs about your amazing DevTool. But how do... | swati1267 | |

1,915,253 | The Evolution and Readiness of Web3 Infra: Where Are We Now? | Innovations either prevail or perish! The statement is bold and insensitive but a reality,... | 0 | 2024-07-08T05:57:27 | https://www.zeeve.io/blog/the-evolution-and-readiness-of-web3-infra-where-are-we-now/ | web3 | <p>Innovations either prevail or perish! The statement is bold and insensitive but a reality, nonetheless. How? We have seen the likes of Nokia and BlackBerry perish because they failed to adapt to change. However, Web3, despite being relatively new, has acknowledged its challenges and responded positively over time. As a r... | zeeve |

1,915,255 | Mastering P2P Crypto Exchange Development | Peer-to-Peer (P2P) cryptocurrency exchanges are revolutionizing the digital economy by enabling... | 0 | 2024-07-08T05:58:43 | https://dev.to/kala12/mastering-p2p-crypto-exchange-development-5898 | Peer-to-Peer (P2P) cryptocurrency exchanges are revolutionizing the digital economy by enabling direct transactions between users without intermediaries. Building a successful P2P crypto exchange requires an understanding of key components, technology and market dynamics. Here are ten important points to guide the deve... | kala12 | |

1,915,256 | How Exam Dumps Can Save You Time and Effort | In the realm of professional certifications and academic examinations, the term "exam dumps" often... | 0 | 2024-07-08T06:01:18 | https://dev.to/jamie_rich_00c0eafb26ae6d/how-exam-dumps-can-save-you-time-and-effort-2n8 | javascript | In the realm of professional certifications and academic examinations, the term "exam dumps" often sparks controversy and debate. For many, exam dumps are synonymous with cheating or shortcuts to certification. However, this perception overlooks nuances and potential benefits that exam dumps can offer when used respons... | jamie_rich_00c0eafb26ae6d |

1,915,257 | The Vital Importance of System Integration Testing (SIT) | In the complex network of today’s technology, where different applications link together to move... | 0 | 2024-07-08T06:02:12 | https://peakupdates.com/science-and-technology/the-vital-importance-of-system-integration-testing-sit | system, integration, testing |

In the complex network of today’s technology, where different applications link together to move businesses ahead, system integration testing (SIT) becomes a very important step. SIT acts as an essential control poin... | rohitbhandari102 |

1,915,258 | HackerRank 3 Months Preparation Kit(JavaScript) - Mini-Max Sum | Given five positive integers, find the minimum and maximum values that can be calculated by summing... | 0 | 2024-07-08T06:03:56 | https://dev.to/saiteja_amshala_035a7d7f1/hackerrank-3-months-preparation-kitjavascript-mini-max-sum-4k3j | javascript, webdev, beginners, learning | Given five positive integers, find the minimum and maximum values that can be calculated by summing exactly four of the five integers. Then print the respective minimum and maximum values as a single line of two space-separated long integers.
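One possible way to approach the problem stated above, as a minimal sketch and not necessarily the post's own solution: the minimum four-element sum is the total minus the largest value, and the maximum is the total minus the smallest. The function name `miniMaxSum` and the sample input 1, 3, 5, 7, 9 are assumptions chosen for illustration.

```javascript
// Sketch of Mini-Max Sum: sum exactly four of the five integers and
// report the smallest and largest sums that can be formed.
function miniMaxSum(arr) {
  // Total of all five values.
  const total = arr.reduce((sum, n) => sum + n, 0);
  const smallest = Math.min(...arr);
  const largest = Math.max(...arr);
  // Leaving out the largest value gives the minimum sum;
  // leaving out the smallest value gives the maximum sum.
  return [total - largest, total - smallest];
}

const [minSum, maxSum] = miniMaxSum([1, 3, 5, 7, 9]);
console.log(`${minSum} ${maxSum}`); // 16 24
```

This avoids sorting or enumerating every four-element subset; a single pass for the total plus the minimum and maximum is enough.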

**Example**

arr=[1,3,5,7,9]

The minimum sum is 1+3+5+7 = 16 and the maximum ... | saiteja_amshala_035a7d7f1 |

1,915,259 | SEO-Standard Website Design in Hậu Giang | For businesses in Hậu Giang, having a professional website not only helps improve... | 0 | 2024-07-08T06:02:59 | https://dev.to/terus_technique/thiet-ke-website-tai-hau-giang-chuan-seo-2i0h | website, digitalmarketing, seo, terus | 

For businesses in Hậu Giang, having a professional website not only helps improve business performance but is also an important tool for reaching and serving customers more effectively.

A websi... | terus_technique |

1,915,260 | How to Get Active Testers for 14 Days to Gain Production Access from Google Play Developer | I am having a very hard time finding l2 app testers, because I am essentially an individual developer. | 0 | 2024-07-08T06:03:19 | https://dev.to/deveindibidual/bagaiman-cara-mendapatakan-penguji-aktif-selama-14-hari-agar-mendapatkan-akses-ke-produksi-dari-google-play-developper-35i0 | help | I am having a very hard time finding l2 app testers, because I am essentially an individual developer. | deveindibidual |

1,915,262 | Analyzing logs - the lame way | In the previous blog post, we saw how to create a production-ready logging system. In this blog post,... | 0 | 2024-07-08T06:06:40 | https://dev.to/naineel12/analyzing-logs-the-lame-way-8lc | javascript, node, webdev, tutorial | In the previous blog post, we saw how to create a production-ready logging system. In this blog post, we will see how to analyze the logs generated by the system.

In today's era of rapidly developing digital technology, having a [professionally designed, SEO-standard website optimized for user experience](https://terusvn.com/thiet-ke-website-tai-hcm/) is a requirement... | terus_technique |

1,915,266 | Professional Website Design in Hưng Yên | Hưng Yên is known for its beautiful scenery and lies in the center of Vietnam's Red River Delta, at a distance from Hà Nội of 45... | 0 | 2024-07-08T06:11:42 | https://dev.to/terus_technique/thiet-ke-website-tai-hung-yen-chuyen-nghiep-4bf7 | website, digitalmarketing, seo, terus | 

Hưng Yên is known for its beautiful scenery and lies in the center of Vietnam's Red River Delta, with Hà Nội 45 km to the northwest. Hưng Yên is a gateway to the capital and the administrative center of the province. Located in the economic region of Bắc Trung B... | terus_technique |

1,915,267 | Implementing Secure Multi-Party Computation (SMPC) with NodeJs: A Practical Guide | Explore how to enhance privacy and security in your applications by implementing Secure Multi-Party Computation (SMPC) with Node.js. This practical guide includes real-world examples and step-by-step instructions for using the secret-sharing library. | 0 | 2024-07-08T06:38:13 | https://dev.to/rigalpatel001/implementing-secure-multi-party-computation-smpc-with-nodejs-a-practical-guide-55pj | node, smpc, datasecurity, securecoding | ---

title: Implementing Secure Multi-Party Computation (SMPC) with NodeJs: A Practical Guide

published: true

description: Explore how to enhance privacy and security in your applications by implementing Secure Multi-Party Computation (SMPC) with Node.js. This practical guide includes real-world examples and step-by-ste... | rigalpatel001 |

1,915,268 | Reputable Website Design in Kon Tum | Benefits of SEO-standard website design in Kon Tum A bridge between the company and customers: A... | 0 | 2024-07-08T06:16:19 | https://dev.to/terus_technique/thiet-ke-website-tai-kon-tum-uy-tin-99 | website, digitalmarketing, seo, terus | 

Benefits of SEO-standard website design in Kon Tum

A bridge between the company and customers: A professional website serves as an effective bridge, helping customers easily find, reach, and interact with the business... | terus_technique |

1,915,269 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-07-08T06:17:59 | https://dev.to/focamil589/buy-verified-cash-app-account-m6m | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoinenablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, Cash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n|||\\\\\\\n\nHow to verify Cash App accounts\n\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account. As part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly.\n\nHow cash used for international transaction?\n\n\n\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. 
It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom. No matter if you're a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today's digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial. As we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available.\n\nOffers and advantage to buy cash app accounts cheap?\n\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform. We deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. 
Trustbizs.com stands by the Cash App's superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\n\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management. Explore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller's pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Equally important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\n\nIn today's digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions. 
By acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller's pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Equally important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\n\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | focamil589 |

1,915,271 | Art of SVG Animation | 10 Techniques Every UI Developer Should Master | SVGs (Scalable Vector Graphics) offer a modern way to enhance web and application interfaces with... | 0 | 2024-07-08T06:18:55 | https://dev.to/nnnirajn/art-of-svg-animation-10-techniques-every-ui-developer-should-master-3bkh | css, ui, animation, beginners | SVGs (Scalable Vector Graphics) offer a modern way to enhance web and application interfaces with high-quality, scalable graphics. Unlike traditional bitmap graphics, SVGs are made up of vector data, which means they can scale to any size without losing quality. This scalability makes SVGs immensely popular among UI de... | nnnirajn |

1,915,292 | Challenges in System Integration Testing and Opkey’s Solutions | System Integration Testing, SIT, in short, is a significant phase of software development. It’s when... | 0 | 2024-07-08T06:22:30 | https://zuuzs.com/challenges-in-system-integration-testing-and-opkeys-solutions/ | system, integration, testing |

System Integration Testing (SIT) is a significant phase of software development. It’s when all the various elements are combined to check if they work smoothly together. However, many issues may arise durin... | rohitbhandari102 |

1,915,294 | Why you should try knotless braids with AABH | With more than 30 years of combined experience, Authentic African Hair Braiding (AAHB) is more than... | 0 | 2024-07-08T06:28:07 | https://dev.to/aahb01/why-you-should-try-knotless-braids-with-aabh-1hdl | With more than 30 years of combined experience, Authentic African Hair Braiding (AAHB) is more than simply a salon—it’s a destination for fine hair care in the Dallas-Fort Worth region. Focusing on a broad range of braiding techniques, including Goddess Braids, Cornrows, Senegalese Twists, Micro/Invisible Braids, and m... | aahb01 | |

1,915,295 | Javascript framework war: Angular vs React | When it comes to building dynamic web applications, two popular frameworks often come into play:... | 0 | 2024-07-08T06:29:26 | https://dev.to/andrei_saioc_b41f2371c22b/javascript-framework-war-angular-vs-react-55oe | When it comes to building dynamic web applications, two popular frameworks often come into play: Angular and React. Both have their unique strengths and serve different purposes, making it essential to understand their differences.

**1. Architecture:**

Angular: Developed by Google, Angular is a full-fledged MVC (Mode... | andrei_saioc_b41f2371c22b | |

1,915,296 | The Benefits of Sports Supplements for Athletes | Sports supplements have become a popular addition to many athletes' training regimens. Designed to... | 0 | 2024-07-08T06:29:54 | https://dev.to/bpi_sports_86870d4b31db9c/the-benefits-of-sports-supplements-for-athletes-2fnj |

[Sports supplements](https://bpisports.com/) have become a popular addition to many athletes' training regimens. Designed to enhance performance, improve recovery, and support overall health, these supplements can be beneficial for both professional and amateur athletes. Understanding the different types of sports sup... | bpi_sports_86870d4b31db9c | |

1,915,298 | Hello Dev | A post by Onyemaobi jecinta Ugochi | 0 | 2024-07-08T06:33:47 | https://dev.to/onyemaobi_jecintaugochi_/hello-dev-482k | onyemaobi_jecintaugochi_ | ||

1,915,299 | What Are The Signs That You Have An Erection Problem? | The journey from being infertile until the point of strength can be very difficult for males. If you... | 0 | 2024-07-08T06:33:48 | https://dev.to/cora_daisy/what-are-the-signs-that-you-have-an-erection-problem-58e7 | health, cenforce, mens, viagra | The journey from being infertile until the point of strength can be very difficult for males. If you are beginning treatment, there are many obstacles men must overcome.

It might appear to be a simple step; however, ask those who struggle. It's not easy to stay steady at this point.

In the case of weak or weak erections... | cora_daisy |

1,915,300 | Apache Doris for log and time series data analysis in NetEase, why not Elasticsearch and InfluxDB? | For most people looking for a log management and analytics solution, Elasticsearch is the go-to... | 0 | 2024-07-08T06:36:09 | https://dev.to/apachedoris/apache-doris-for-log-and-time-series-data-analysis-in-netease-why-not-elasticsearch-and-influxdb-5f60 | datascience, database, dataengineering, opensource | For most people looking for a log management and analytics solution, Elasticsearch is the go-to choice. The same applies to InfluxDB for time series data analysis. These were exactly the choices of NetEase, one of the world's highest-yielding game companies but more than that. As NetEase expands its business horizons, ... | apachedoris |

1,915,301 | Insight-Driven Website Design in Lai Châu | Reasons why businesses need website design in Lai Châu Improving business performance:... | 0 | 2024-07-08T06:43:24 | https://dev.to/terus_technique/thiet-ke-website-tai-lai-chau-chuan-insight-n9o | website, digitalmarketing, seo, terus | ![Insight-Driven Website Design in Lai Châu](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/oc1zxtsb0uv6l76d8gnw.jpg)

Reasons why businesses need website design in Lai Châu

Improving business performance: A professional website is not only an effective promotion and marketing tool but also a channel for the business to interact, ... | terus_technique |

1,915,303 | Unveiling The Crucial Benefits Of Integration Testing | Within the constantly changing world of software development, it is important to make sure that... | 0 | 2024-07-08T06:45:21 | https://www.prophecynewswatch.com/article.cfm?recent_news_id=6230 | integration, testing |

Within the constantly changing world of software development, it is important to make sure that different parts work well together. Integration testing plays an essential role in checking if various elements like mo... | rohitbhandari102 |

1,915,304 | Benefits of integrating ChatGPT in workflow | ChatGPT presents a plethora of opportunities for enhancing SEO strategies with its versatile... | 0 | 2024-07-08T06:45:33 | https://dev.to/plugin_market/benefits-of-integrating-chatgpt-in-workflow-5bpo | chatgpt | ChatGPT presents a plethora of opportunities for enhancing SEO strategies with its versatile capabilities. Here are some effective ways to leverage ChatGPT for SEO:

**Meta Description Creation**: Utilize ChatGPT to craft compelling meta descriptions that incorporate relevant keywords and entice users to click through ... | plugin_market |

1,915,305 | Grow Tall Surgery in India: The Ultimate Guide to Choosing Child Ortho Spine Care | Over the last few years, people have opted for the growth enhancement products, and particularly... | 0 | 2024-07-08T06:46:33 | https://dev.to/child_orthospinecare_28/grow-tall-surgery-in-india-the-ultimate-guide-to-choosing-child-ortho-spine-care-3854 |

Over the last few years, people have opted for the growth enhancement products, and particularly surgeries. Of these, grow tall surgery has developed into a fashion that many people will be willing to undergo to bec... | child_orthospinecare_28 | |

1,915,306 | Day 7 of 100 Days of Code | Sun, July 7, 2024 The Codecademy Developing Websites Locally lesson is mainly about getting... | 0 | 2024-07-08T06:47:10 | https://dev.to/jacobsternx/day-7-of-100-days-of-code-133m | 100daysofcode, webdev, javascript, beginners | Sun, July 7, 2024

The Codecademy Developing Websites Locally lesson is mainly about getting comfortable with locally sourced dev tools, which was good review.

As an aside, my VS Code time tracking extension CodeTime looks to be working nicely; 6 hrs 33 mins today.

** is a leading packing and moving service provider company in Ghaziabad. We provide household shifting & residential shifting & office shifting, insurance, transport, car transportation, bike shifting servic... | dtcexpressghaziabad |

1,915,308 | Managing Enums in PostgreSQL: For an Efficient and Clear Database | What is an Enum: Enum, or Enumerated Type, in PostgreSQL is like a predefined set of values... | 0 | 2024-07-08T06:48:16 | https://dev.to/everthing-was-postgres/kaarcchadkaar-enum-ain-postgres-49e3 | ## What is an Enum ##

An Enum, or Enumerated Type, in PostgreSQL is like a predefined set of values that lets you restrict the data in a column to the range you want. Picture a wardrobe with a dedicated compartment for each type of clothing - that is the idea behind Enum!
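As a rough illustration of the "predefined set of values" idea, here is a minimal Python sketch. It assumes a hypothetical `shipping_status` type with three made-up labels (the post's own list is truncated, so these values are placeholders, not the author's). The helper names `create_type_sql` and `is_valid` are also invented for this example. The sketch only builds the `CREATE TYPE ... AS ENUM` statement as a string and mimics the check PostgreSQL would apply; it does not connect to a database.

```python
from enum import Enum


class ShippingStatus(Enum):
    # Hypothetical labels chosen for illustration only.
    PENDING = "pending"
    SHIPPED = "shipped"
    DELIVERED = "delivered"


def create_type_sql(type_name, enum_cls):
    """Build a PostgreSQL CREATE TYPE ... AS ENUM statement from a Python Enum."""
    # Members are iterated in definition order, which becomes the label order.
    labels = ", ".join(f"'{member.value}'" for member in enum_cls)
    return f"CREATE TYPE {type_name} AS ENUM ({labels});"


def is_valid(enum_cls, value):
    """Return True if value is one of the predefined labels, mimicking the enum check."""
    try:
        enum_cls(value)
        return True
    except ValueError:
        return False


print(create_type_sql("shipping_status", ShippingStatus))
print(is_valid(ShippingStatus, "shipped"))  # an allowed label
print(is_valid(ShippingStatus, "lost"))     # not in the predefined set
```

A column declared with such a type would reject any value outside the listed labels, which is the "dedicated compartment" behaviour described above.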

Common examples:

- Days of the week: Monday, Tuesday, Wednesday, ...

- Shipping status... | iconnext | |

1,915,317 | SQLC & dynamic queries | SQLC has become my go-to tool for interacting with databases in Go. It gives you full control over... | 0 | 2024-07-08T07:23:45 | https://dizzy.zone/2024/07/03/SQLC-dynamic-queries/ | ---

title: SQLC & dynamic queries

published: true

date: 2024-07-03 13:54:19 UTC

tags:

canonical_url: https://dizzy.zone/2024/07/03/SQLC-dynamic-queries/

---

[SQLC](https://github.com/sqlc-dev/sqlc) has become my go-to tool for interacting with databases in Go. It gives you full control over your queries since you end... | vkuznecovas | |

1,915,318 | How Do Video and Voice Calls Work? | Important for Interview In many interviews, candidates are often asked to explain how... | 0 | 2024-07-08T06:50:12 | https://dev.to/saanchitapaul/how-do-video-and-voice-calls-work-3n47 | webdev, deeplearning, learning, interview | ## Important for Interview

**In many interviews, candidates are often asked to explain how video or voice calls work. This article provides a high-level overview of the functioning of voice and video calls.**

**Voice over Internet Protocol (VoIP)** is one of the most popular standards for voice and video calling over... | saanchitapaul |

1,915,319 | Website Design in Lạng Sơn That Attracts Customers | Professional website design services in Lạng Sơn will bring many benefits to businesses. First... | 0 | 2024-07-08T06:52:34 | https://dev.to/terus_technique/thiet-ke-website-tai-lang-son-thu-hut-khach-hang-29kn | website, digitalmarketing, seo, terus | ![Website Design in Lạng Sơn That Attracts Customers](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/1d60hskxat7vb24ywqz5.jpg)

[Professional website design services in Lạng Sơn](https://terusvn.com/thiet-ke-website-tai-hcm/) will bring many benefits to businesses. First, the website will be a place that provides useful and trustworthy... | terus_technique |

1,915,320 | Automate GitHub PR Reviews with LangChain Agents | LLMs have unlocked countless opportunities to tackle once unsolvable problems, thanks to their... | 0 | 2024-07-08T06:54:46 | https://dev.to/sunilkumrdash/automate-github-pr-reviews-with-langchain-agents-444p | ai, python, langchain, opensource |

LLMs have unlocked countless opportunities to tackle once unsolvable problems, thanks to their exceptional reasoning and decision-making capabilities. Among their many strengths, one of the most significant is their general code understanding, which can be leveraged to build tools that write, re-write, and review code... | sunilkumrdash |

1,915,321 | Exploring AWS Lambda: Use Cases, Security, Performance Tips, and Cost Management | AWS Lambda, a core component of serverless architecture, empowers developers, cloud architects, data... | 0 | 2024-07-08T06:54:11 | https://www.softwebsolutions.com/resources/aws-lambda-guide.html | aws, lambda, cloud, serverless | AWS Lambda, a core component of serverless architecture, empowers developers, cloud architects, data engineers, and business decision-makers by allowing code execution in response to specific events without managing servers. This flexibility is ideal for many modern applications but requires a nuanced understanding of ... | csoftweb |

1,915,322 | Warehouse Management Systems: Enhancing Efficiency and Productivity | In the world of logistics and supply chain management, the importance of a well-organized warehouse... | 0 | 2024-07-08T06:54:14 | https://dev.to/spedition_india_06dea7116/warehouse-management-systems-enhancing-efficiency-and-productivity-57fh | warehouse, logistics | In the world of logistics and supply chain management, the importance of a well-organized warehouse cannot be overstated. One of the key tools in achieving this organization is a Warehouse Management System (WMS). This article delves into the ins and outs of WMS, its benefits, and how it can significantly enhance effic... | spedition_india_06dea7116 |

1,915,323 | 🚀 How I Created an AI Startup in a Weekend | Hello, my name is Ayyoub Bhihi, and I'm passionate about web development. Recently, I launched... | 0 | 2024-07-08T06:56:16 | https://dev.to/ayoubbhihi/how-i-created-an-aistartup-in-a-weekend-3dc7 | webdev, ai, challenge, programming | Hello, my name is Ayyoub Bhihi, and I'm passionate about web development. Recently, I launched [ToolList.ai](https://toollist.ai/), a tool management application, over a weekend using Laravel, Tailwind CSS, and Livewire. Here’s my journey:

💡 From Idea to Execution

Inspiration: Struggling to organize my favorite tool... | ayoubbhihi |

1,915,324 | Buy Negative Google Reviews | Negative Google Reviews Online reviews have become the go-to source for people to make informed... | 0 | 2024-07-08T06:56:55 | https://dev.to/ramsin_dhohaz_3516eacba1d/buy-negative-google-reviews-40j2 | Negative Google Reviews

Online reviews have become the go-to source for people to make informed decisions about products and services. Positive reviews can provide a boost in sales. While negative reviews can be detrimental to a business’s reputation. For this reason, businesses often go to great lengths to ensure. Tha... | ramsin_dhohaz_3516eacba1d | |

1,915,325 | Palm Oil Market: Booming Regional Demand and Market Insights | The global palm oil market has experienced significant growth over the past decade, driven by rising... | 0 | 2024-07-08T06:57:50 | https://dev.to/swara_353df25d291824ff9ee/palm-oil-market-booming-regional-demand-and-market-insights-4mfm |

The global [palm oil market](https://www.persistencemarketresearch.com/market-research/palm-oil-market.asp) has experienced significant growth over the past decade, driven by rising demand from various regions and s... | swara_353df25d291824ff9ee | |

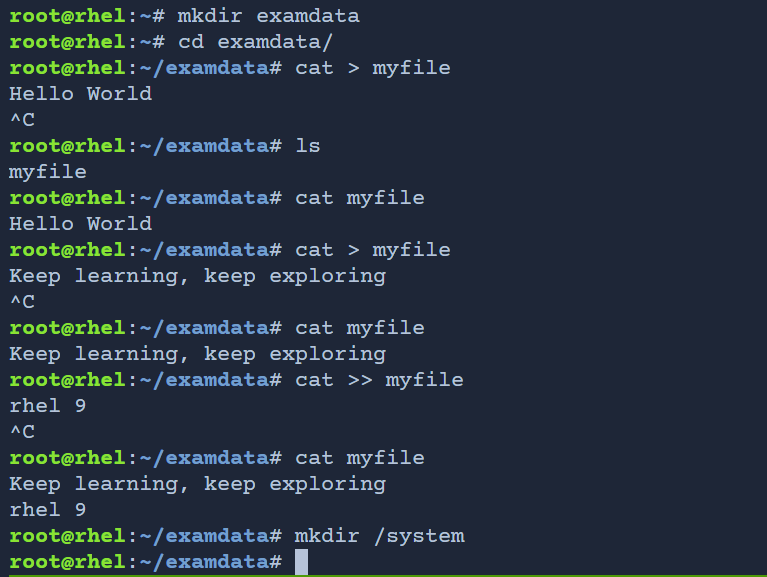

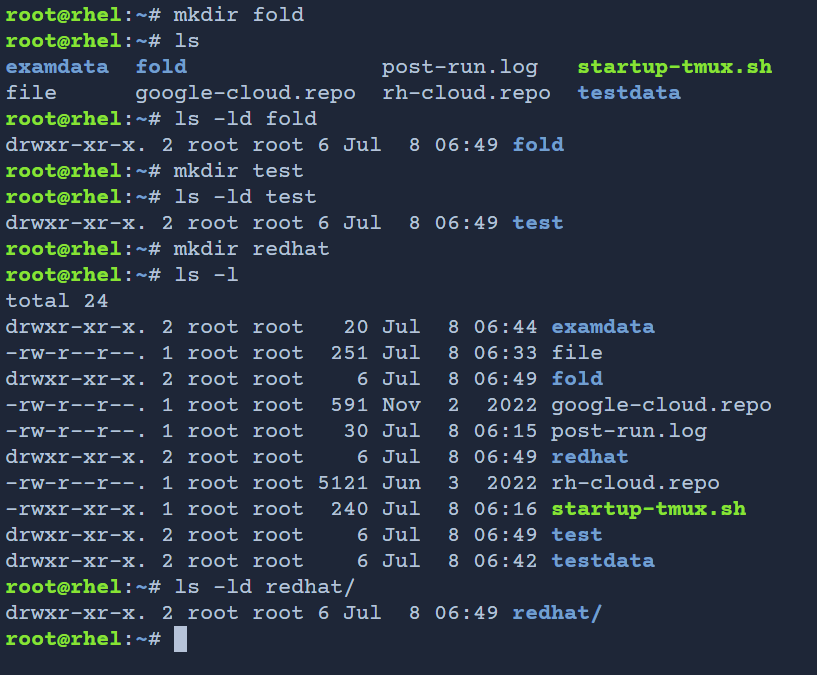

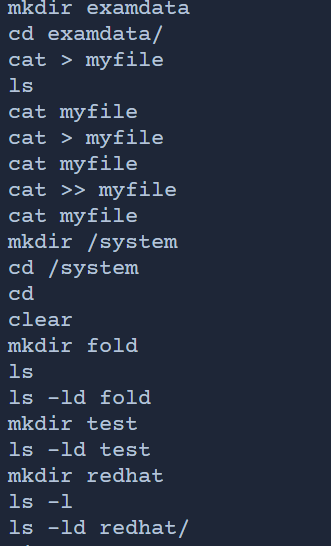

1,915,326 | How to Create a Directory and Save It to a File | A post by mahir dasare | 0 | 2024-07-08T06:59:42 | https://dev.to/mahir_dasare_333/how-to-create-a-directory-and-save-it-to-a-file-4kgc | linux, linuxadmin, continouslearning |

| mahir_dasare_333 |

1,915,327 | 100 Days of Code Week 2 | July 8, 2024 For Week 2, I want to blast out what remains of the Codecademy Full Stack Engineer... | 0 | 2024-07-08T07:00:25 | https://dev.to/jacobsternx/100-days-of-code-week-2-1h5p | 100daysofcode, webdev, javascript, beginners | July 8, 2024

For Week 2, I want to blast through what remains of the first of the 6 Codecademy Full Stack Engineer courses. My aim is to get to the first lesson in the next course ASAP, but right now I'm focusing on what's in front of me.

#### Deploying Websites

* FSE 1.4 Web Dev - Deploying Websites

#### Improved Styling ... | jacobsternx |

1,915,328 | Omega-3 Fatty Acid Supplements Comprehensive Health Benefits Explained | Fatty Acid Supplements Market Outlook The market for fatty acid supplements is projected to grow at... | 0 | 2024-07-08T07:02:05 | https://dev.to/ganesh_dukare_34ce028bb7b/omega-3-fatty-acid-supplements-comprehensive-health-benefits-explained-102p | Fatty Acid Supplements Market Outlook

The market for fatty acid supplements is projected to grow at a compound annual growth rate (CAGR) of 7%, increasing its revenue from US$ 5,406.0 million in 2023 to approximately US$ 10,834.9 million by 2033. This growth reflects rising consumer awareness and increased consumption... | ganesh_dukare_34ce028bb7b | |

1,915,329 | Guide to Migrating Your Site Using a WordPress Migration Tool [Any Host] | Migrating a WordPress site can be a daunting task, but with the right tools and a clear plan, it can... | 0 | 2024-07-08T07:02:15 | https://dev.to/shabbir_mw_03f56129cd25/guide-to-migrating-your-site-using-a-wordpress-migration-tool-any-host-1lep | webdev, beginners | Migrating a WordPress site can be a daunting task, but with the right tools and a clear plan, it can be smooth and hassle-free. This guide will walk you through the process of migrating your site using a WordPress migration tool, ensuring that your site moves seamlessly from one host to another.

## Step-by-Step Guide ... | shabbir_mw_03f56129cd25 |

1,915,331 | Best Short Courses Online: Elevate Your Skills with In-Demand Vocational Programs | Top Trending Courses for Future Job Market Success How to Stay Ahead with Online Learning Navigating... | 0 | 2024-07-08T07:02:49 | https://dev.to/educatinol_courses_806c29/best-short-courses-online-elevate-your-skills-with-in-demand-vocational-programs-29k1 | firstyearincode | Top Trending Courses for Future Job Market Success How to Stay Ahead with Online Learning

Navigating the post-graduation landscape requires making pivotal decisions about one's professional trajectory. It is not often one deliberates over a lifelong Top 10 Most Demanding Courses for Some advocate for identifying the m... | educatinol_courses_806c29 |

1,915,332 | Laravel Developers: In-House vs. Freelance – What’s Best for Your Project? | Introduction When embarking on a new project, choosing the right development team is crucial. The... | 0 | 2024-07-08T07:03:01 | https://dev.to/hirelaraveldevelopers/laravel-developers-in-house-vs-freelance-whats-best-for-your-project-5epa | webdev, beginners, javascript, ai | <h4><strong>Introduction</strong></h4>

<p>When embarking on a new project, choosing the right development team is crucial. The decision often boils down to hiring in-house developers or opting for freelancers. This article dives into the pros and cons of both options, specifically focusing on Laravel developers, to hel... | hirelaraveldevelopers |

1,915,333 | Must-Have Skincare Products for Rainy Weather! | Hello, fellow skincare lovers! 🌧️ Rainy days are such a mood, but they can also be a really big enemy... | 0 | 2024-07-08T07:06:32 | https://dev.to/iliana_williams_c6ae095c3/must-have-skincare-products-for-rainy-weather-5eij | Hello, fellow skincare lovers! 🌧️ Rainy days are such a mood, but they can also be a really big enemy of your skin. This time of the year, the weather can be quite humid and the conditions appear and disappear often; therefore, you have to be alert and change your usual skincare regime. Let's take a look at the best s... | iliana_williams_c6ae095c3 | |

1,915,334 | Oracle EPM Release Notes June 2024: What’s New? | Oracle rolls out monthly updates to enhance capabilities across financial consolidation and... | 0 | 2024-07-08T07:07:05 | https://www.opkey.com/blog/oracle-epm-update-june-2024 | oracle, epm, release |

Oracle rolls out monthly updates to enhance capabilities across financial consolidation and planning, analytics, reporting, and more. The latest updates in this series are the June 2024 Oracle EPM Monthly Update. If ... | johnste39558689 |

1,915,335 | The Evolution of ServiceNow Versions | Understanding the ServiceNow Versions ServiceNow often releases updates to its platform... | 0 | 2024-07-08T07:09:28 | https://dev.to/devops_den/the-evolution-of-servicenow-versions-3fh2 | servicenow, cloud, webdev, devops | ## Understanding the ServiceNow Versions

ServiceNow often releases updates to its platform and applications, keeping its spot at the forefront of innovation and continuous value delivery. These ServiceNow versions usually include current product patches and new modules, apps, or additions.

According to Forrester's T... | devops_den |

1,915,336 | Cost-Optimized Website Design in Lào Cai | Benefits of SEO-standard website design in Lào Cai A bridge between the company and its customers: A... | 0 | 2024-07-08T07:13:52 | https://dev.to/terus_technique/thiet-ke-website-tai-lao-cai-toi-uu-chi-phi-2ig5 | website, digitalmarketing, seo, terus | ![Cost-Optimized Website Design in Lào Cai](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/sqf3wdpuqamexmkx82zk.jpg)

Benefits of SEO-standard website design in Lào Cai

A bridge between the company and its customers: A professional website is an effective bridge that helps customers easily find, reach, and interact with the business... | terus_technique |

1,915,337 | Why Retrieval-Augmented Generation (RAG) is the Secret Weapon for Smarter Applications? | Retrieval-Augmented Generation (RAG): Large language models (LLMs) have taken the AI world by storm,... | 0 | 2024-07-08T07:14:33 | https://dev.to/hyscaler/why-retrieval-augmented-generation-rag-is-the-secret-weapon-for-smarter-applications-36m0 | rag, secretweapon, webdev | Retrieval-Augmented Generation (RAG): Large language models (LLMs) have taken the AI world by storm, churning out impressive feats of text generation and comprehension. But what if we could empower them with an extra dose of brilliance? Enter Retrieval-Augmented Generation (RAG), a revolutionary approach that unlocks a... | amulyakumar |

1,915,338 | Classification in Machine Learning: Understanding the Fundamentals and Practical Applications | Classification, along with regression, is one of the two main tasks of supervised learning in Machine... | 0 | 2024-07-08T07:16:29 | https://dev.to/moubarakmohame4/classification-in-machine-learning-understanding-the-fundamentals-and-practical-applications-c1m | machinelearning, data, datascience, deeplearning | Classification, along with regression, is one of the two main tasks of supervised learning in Machine Learning. It involves associating each piece of data with a label from a set of possible labels (or categories). In the simplest cases, there are only two categories, known as binary or binomial classification. Otherwi... | moubarakmohame4 |

1,915,339 | I know your Password | I know your passwords, **ALL** of them. Or I can download them if I want to. The reason is that Silicon Valley wants to spy on your whole online life. | 0 | 2024-07-08T07:16:57 | https://ainiro.io/blog/i-know-your-password | ---

title: "I know your Password"

date: "2024-07-07"

author: "thomas"

description: "I know your passwords, **ALL** of them. Or I can download them if I want to. The reason is that Silicon Valley wants to spy on your whole online life."

---

I have written about [Silicon Valley corruption](https://ainiro.io//blog/... | polterguy | |

1,915,340 | Data Warehouse Integration Solutions | In today's data-driven world, organizations generate and accumulate vast amounts of data from various... | 0 | 2024-07-08T07:18:10 | https://dev.to/qgbscanadainc/data-warehouse-integration-solutions-3an | database, datascience | In today's data-driven world, organizations generate and accumulate vast amounts of data from various sources. To make informed decisions and gain valuable insights, it's crucial to integrate this data efficiently. This is where Data Warehouse Integration Solutions come into play. By centralizing and organizing data, t... | qgbscanadainc |

1,915,341 | Skilled worker visa Australia | Explore your options for a Skilled Worker Visa in Australia with Caanwings Consultants. We can help... | 0 | 2024-07-08T07:18:21 | https://dev.to/caanwings001/skilled-worker-visa-australia-47ak | Explore your options for a Skilled Worker Visa in Australia with Caanwings Consultants. We can help you navigate the Point System Australia 2024.

For more info, visit these links:

https://caanwings.com/immigration/australia/

https://caanwings.com/

https://caanwings.com/about-us/

https://caanwings.com/immigration/australi... | caanwings001 | |

1,915,342 | The Marriage of Minds: Machine Learning and IoT - Applications and Challenges | The Internet of Things (IoT) has woven itself into the fabric of our lives. From smart thermostats to... | 0 | 2024-07-08T07:18:47 | https://dev.to/fizza_c3e734ee2a307cf35e5/the-marriage-of-minds-machine-learning-and-iot-applications-and-challenges-402n | machinelearning, iot, datascience | The Internet of Things (IoT) has woven itself into the fabric of our lives. From smart thermostats to connected fitness trackers, these devices collect a constant stream of data. But what if we could unlock the true potential of this data? Enter machine learning (ML), the AI technique that learns from data to make pred... | fizza_c3e734ee2a307cf35e5 |

1,915,343 | Website Design in Ninh Thuận That Increases Profit | In Ninh Thuận, Terus provides professional, reputable, and effective website design services.... | 0 | 2024-07-08T07:19:07 | https://dev.to/terus_technique/thiet-ke-website-tai-ninh-thuan-tang-loi-nhuan-4dco | website, digitalmarketing, seo, terus | ![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/obtnkrv8sjkiwp0y7zzp.png)

In Ninh Thuận, Terus provides [professional, reputable, and effective website design services](https://terusvn.com/thiet-ke-website-tai-hcm/). With many years of experience in this field, Terus has become a... | terus_technique |

1,915,344 | Oops, I Made a VS Code Extension | Ever had one of those "how did I get here?" moments in coding? Well, buckle up, because I've got a... | 0 | 2024-07-08T07:26:51 | https://dev.to/johnnyfekete/oops-i-made-a-vs-code-extension-477d | ai, vscode, programming, automation | Ever had one of those _"how did I get here?"_ moments in coding? Well, buckle up, because I've got a story for you.

It all started with localization. I was working on my mobile app - [Social AIde](https://apps.apple.com/app/social-aide/id6504584869) _(an AI powered social-media response generator app built in Swift, ... | johnnyfekete |

1,915,345 | Best Jenkins Installation Guide | After setting Up your ubuntu VM and SSH into it via mobaxterm. You can click Here For Details on How... | 0 | 2024-07-08T07:21:49 | https://dev.to/dev-nnamdi/best-jenkins-installation-guide-2jl9 | jenkins, aws, azure, devops | This guide assumes you have already set up your Ubuntu VM and connected to it over SSH via MobaXterm.

For details on how to do that, see [this guide](https://dev.to/dev-nnamdi/creating-a-vm-instance-on-aws-using-ec2-and-accessing-it-using-mobaxterm-5aho).

**Step 1: Update Your System:**

```
sudo apt update
sudo apt upgrade -y
```

**Step 2: Install Java:**

``... | dev-nnamdi |

1,915,346 | First Post Here! | Hello, All, I am just checking how the post would look if I had posted an actual blog. This is my... | 0 | 2024-07-08T07:29:51 | https://dev.to/ritooraj/first-post-here-4c4d | cloud, cloudresumechallenge, azure, aws | Hello, All,

I am just checking how the post would look if I had posted an actual blog.

This is my first time here. I was led here by the Azure - Cloud Resume Challenge.

I am very fortunate to have found the CRC; it has introduced me to several domains that I otherwise would not have had the opportunity to touch upon.

I just hope t... | ritooraj |

1,915,347 | Scrape Google Results - Google Scraping Services | iWeb offers the best Google scraping services in the world for scraping Google results data using... | 0 | 2024-07-08T07:24:00 | https://dev.to/iwebscraping/scrape-google-results-google-scraping-services-noi | googlescrapingservices, scrapegoogleresults | iWeb offers the best [Google scraping services](https://www.iwebscraping.com/google-search-result-scraping.php) in the world for scraping Google results data using Python and the Google search API. | iwebscraping |

1,915,348 | Discover Our Bad Bunny Merch on Instagram | Stay up-to-date with the hottest Bad Bunny Merch by following us on Instagram! From exclusive tour... | 0 | 2024-07-08T07:24:25 | https://dev.to/badbunnymerch12/discover-our-bad-bunny-merch-on-instagram-2mpj | badbunnymerch, instagram, badbunny, merchdrop | Stay up-to-date with the hottest Bad Bunny Merch by following us on Instagram! From exclusive tour merchandise to limited edition hoodies and t-shirts, our Instagram account showcases everything a true fan needs. Don’t miss out on our latest posts and stories highlighting new arrivals and special offers!

https://www.in... | badbunnymerch12 |

1,915,386 | .NET Digest #1 | Welcome to our first news and event digest for the .NET world! The C# developers from PVS-Studio have... | 0 | 2024-07-08T08:07:37 | https://dev.to/anogneva/net-digest-1-285m | dotnet, csharp, digest, learning | Welcome to our first news and event digest for the .NET world! The C# developers from PVS-Studio have gathered the most interesting and useful insights for you to keep you up to date with the latest trends and developments. Let's get started!

... | anogneva |

1,915,349 | Subscribe to Our YouTube Channel for Bad Bunny Merch Reviews! | Check out our YouTube channel for in-depth reviews and unboxing videos of the latest Bad Bunny Merch.... | 0 | 2024-07-08T07:26:36 | https://dev.to/badbunnymerch12/subscribe-to-our-youtube-channel-for-bad-bunny-merch-reviews-3ood | badbunnymerch, youtube, merchreviews, badbunny | Check out our YouTube channel for in-depth reviews and unboxing videos of the latest Bad Bunny Merch. We cover everything from new arrivals to rare collector’s items. Subscribe now to stay informed and never miss a beat on the coolest Bad Bunny gear!

https://www.youtube.com/channel/UCPvO03462QM5iwQ53WeX11Q

| badbunnymerch12 |

1,915,350 | Website Design in Phú Thọ That Increases Revenue | Benefits of SEO-standard website design in Phú Thọ A bridge between the company and its customers: A... | 0 | 2024-07-08T07:27:18 | https://dev.to/terus_technique/thiet-ke-website-tai-phu-tho-tang-doanh-thu-k3i | website, digitalmarketing, seo, terus | ![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/gxkjocm9ddj9khdgihm8.png)

Benefits of SEO-standard website design in Phú Thọ

A bridge between the company and its customers: A professional website serves as an effective bridge, making it easy for customers to find, reach, and interact with the business... | terus_technique |

1,915,351 | Looking for guidance in iOS development | Hi, I am a 3rd-year engineering student interested in pursuing a career in iOS development. Wanted to... | 0 | 2024-07-08T07:28:07 | https://dev.to/akhilesh_64/looking-for-guidance-in-ios-development-4i5 | Hi, I am a 3rd-year engineering student interested in pursuing a career in iOS development. I would like to know about the opportunities, guidance, and demand in India. Can anyone help me with this? | akhilesh_64 |
