id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,915,495 | Overview of Data Streaming Technologies | An overview of data streaming technologies, their use cases, architecture, and benefits. | 0 | 2024-07-08T09:40:21 | https://dev.to/pubnub-pl/przeglad-technologii-strumieniowego-przesylania-danych-5d9a | The ability to process large volumes of data (big data) in real time has become crucial for many organizations, and this is where [data streaming technologies](https://www.pubnub.com/solutions/data-streaming/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&... | pubnubdevrel | |

1,915,496 | Composite Transformation in Computer Graphics | In the world of computer graphics, creating lifelike animations, realistic models, and immersive... | 0 | 2024-07-08T09:40:43 | https://dev.to/pushpendra_sharma_f1d2cbe/composite-transformation-in-computer-graphics-7bf | webdev, graphics, computerscience, techtalks | In the world of computer graphics, creating lifelike animations, realistic models, and immersive environments often involves complex manipulations of objects. One of the fundamental techniques that makes these tasks more manageable is composite transformation. This powerful concept allows us to combine multiple basic t... | pushpendra_sharma_f1d2cbe |

1,915,497 | Partner with Words Doctorate for Computer Science Research Papers in Prague | Writing excellent research papers is essential for success in the dynamic and often changing field of... | 0 | 2024-07-08T09:41:03 | https://dev.to/words_doctorate/partner-with-words-doctorate-for-computer-science-research-papers-in-prague-2fog | Writing excellent research papers is essential for success in the dynamic and often changing field of computer science, both academically and professionally. It can be difficult for researchers and students in Prague to locate trustworthy assistance for their scholarly work. Words Doctorate, on the other hand, offers e... | words_doctorate | |

1,915,498 | The Best UI/UX Design Software of 2024 | Many software tools are available to support the UI/UX design process. Terus will introduce you to 10 tools... | 0 | 2024-07-08T09:41:11 | https://dev.to/terus_technique/cac-phan-mem-thiet-ke-ui-ux-tot-nhat-2024-2hp2 | webiste, digitalmarketing, seo, terus |

Many software tools are available to support the [UI/UX design](https://terusvn.com/thiet-ke-website-tai-hcm/) process. Terus will introduce you to 10 popular and widely used UI/UX design tools:

Figma: Một nền... | terus_technique |

1,915,499 | Hadoop/Spark is too heavy, esProc SPL is light | With the advent of the era of big data, the amount of data continues to grow. In this case, it is... | 0 | 2024-07-08T09:41:40 | https://dev.to/esproc_spl/hadoopspark-is-too-heavy-esproc-spl-is-light-4bge | hadoop, spark, heavy, development | With the advent of the era of big data, the amount of data continues to grow. In this case, it is difficult and costly to expand the capacity of database running on a traditional small computer, making it hard to support business development. In order to cope with this problem, many users begin to turn to the distribut... | esproc_spl |

1,915,500 | Why React.js is the Optimal Choice for Website Development: A Technical Perspective | In the realm of web development, selecting the appropriate framework or library can significantly... | 0 | 2024-07-08T09:41:56 | https://dev.to/ngocninh123/why-reactjs-is-the-optimal-choice-for-website-development-a-technical-perspective-m07 | In the realm of web development, selecting the appropriate framework or library can significantly influence the efficiency and scalability of your projects. React.js, developed by Facebook, has established itself as a powerful tool among developers and enterprises. Here are ten technical reasons why React.js is the opt... | ngocninh123 | |

1,915,503 | Surgery for Weight Loss at Meyash Hospital | Lose Weight and Change Your Life at Meyash Hospital Obesity and related complications... | 0 | 2024-07-08T09:43:52 | https://dev.to/yashpal_singla_60d552193c/surgery-for-weight-loss-at-meyash-hospital-45mf | hospital, doctor |

### Lose Weight and Change Your Life at Meyash Hospital

Obesity and related complications can be effectively addressed through weight loss surgery, commonly referred to as bariatric surgery. This life-altering pr... | yashpal_singla_60d552193c |

1,915,504 | Home Decor Market Innovations in Eco-Friendly Home Furnishings | Market Introduction & Size Analysis The global home decor market is projected to grow at a... | 0 | 2024-07-08T09:43:58 | https://dev.to/ganesh_dukare_34ce028bb7b/home-decor-market-innovations-in-eco-friendly-home-furnishings-lmd | Market Introduction & Size Analysis

The global home decor market is projected to grow at a compound annual growth rate (CAGR) of 6.4%, expanding from US$215.9 billion in 2023 to US$333.4 billion by 2030. Spanning a diverse array of products, from furnishings to lighting, textiles, and decorative pieces, this industry ... | ganesh_dukare_34ce028bb7b | |

1,915,505 | 3090 vs 4080: Which One Should I Choose? | Introduction When you're stuck choosing between the GeForce RTX 3090 and RTX 4080, it's... | 0 | 2024-07-08T11:05:03 | https://dev.to/novita_ai/3090-vs-4080-which-one-should-i-choose-3bo5 | ## Introduction

When you're stuck choosing between the GeForce RTX 3090 and RTX 4080, it's super important to know what sets them apart. These Nvidia graphics cards are top-notch when it comes to performance and cool features. They each have their own perks, from how much power they use up to how well they handle games... | novita_ai | |

1,915,507 | Using TypeScript in Node.js projects | TypeScript is tremendously helpful while developing Node.js applications. Let's see how to configure... | 0 | 2024-07-08T09:46:58 | https://douglasmoura.dev/en-US/using-typescript-in-node-js-projects | typescript, javascript, node | [TypeScript](https://www.typescriptlang.org/) is tremendously helpful while developing Node.js applications. Let's see how to configure it for a seamless development experience.

## Setting up TypeScript

First, we need to install TypeScript. We can do this by running the following command:

```bash

npm i -D typescript... | douglasdemoura |

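1,915,508 | Overview of Data Streaming Technologies | An overview of data streaming technologies, their use cases, architecture, and benefits | 0 | 2024-07-08T09:47:05 | https://dev.to/pubnub-jp/detasutoriminguji-shu-nogai-yao-3i3n | The ability to process large volumes of data (big data) in real time has become critically important for many organizations, and this is where [data streaming technologies](https://www.pubnub.com/solutions/data-streaming/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ja) come in. These technologies make it possible to process large volumes of data in real time or near real time, allowing companies to gain immediate insights and make time-critical, data-driven decisions.

At the core of these technologies is event... | pubnubdevrel | |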

1,915,509 | useEffect() or Event handler? | Hi there! I just wanted some advice here. Basically I have a To-Do list application that uses a... | 0 | 2024-07-08T09:48:54 | https://dev.to/sanskari_patrick07/useeffect-or-event-handler-1i09 | react, doubt, javascript, beginners | Hi there!

I just wanted some advice here. Basically I have a To-Do list application that uses a Postgres database and an express backend. I just have one table that stores the name, id, whether completed or not, and a note related to the task in it.

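[...] the ability to process it in real time has become important, and this is where [data streaming technologies](https://www.pubnub.com/solutions/data-streaming/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=ko) come in. These technologies make it possible to process large volumes of data the moment they are generated, or in near real time, so companies can gain immediate insights and make time-sensitive, data-driven decisions.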

이러한 기술의 핵심에는 이벤트 스... | pubnubdevrel | |

1,915,512 | How to use the Context API, What is Context API? | this post is written for someone who want to understand in simple terms what the Context API is and... | 0 | 2024-07-08T09:53:42 | https://dev.to/negusnati/how-to-use-the-context-api-what-is-context-api-4455 | webdev, react, contextapi, javascript | This post is written for anyone who wants to understand, in simple terms, what the Context API is and how to fit it into your app to make your life better.

The gist of it is prop drilling bad, prop drilling messy, prop drilling boring, prop drilling not classy, did i say prop drilling bad? anyway. so just have a slick way... | negusnati |

1,915,513 | Boost Your GPU Utilization with These Tips | Key Highlights GPU utilization refers to the percentage of a graphics card’s processing... | 0 | 2024-07-08T09:55:40 | https://dev.to/novita_ai/boost-your-gpu-utilization-with-these-tips-3p1h | ## Key Highlights

- GPU utilization refers to the percentage of a graphics card’s processing power being used at a particular time. It is important for optimizing performance and resource allocation in GPU-intensive tasks.

- Monitoring GPU utilization can help identify bottlenecks, refine performance, save costs in cl... | novita_ai | |

1,915,514 | Spare Parts for Axial Piston Hydraulic Motors and Pumps | Axial piston hydraulic motors and pumps are key components of hydraulic systems,... | 0 | 2024-07-08T09:55:48 | https://dev.to/profikl/zapchasti-aksialno-porshnievykh-ghidromotorov-i-ghidronasosov-3jjb | Axial piston hydraulic motors and pumps are key components of hydraulic systems used in various industries, including construction, agriculture, and mechanical engineering. The durability and reliability of these devices depend on the quality of the spare parts used. The company ООО «ЗСК»... | profikl | |

1,915,515 | Kitchen Designs Wakefield - Formosa Bathrooms & Kitchen | At Formosa Bathrooms & Kitchen, we take pride in offering bespoke kitchen designs in Wakefield... | 0 | 2024-07-08T09:57:00 | https://dev.to/kitchendesigns/kitchen-designs-wakefield-formosa-bathrooms-kitchen-3dp2 | kitchendesigns, kitchensupply, kitcheninstallation | At Formosa Bathrooms & Kitchen, we take pride in offering bespoke [**kitchen designs in Wakefield**](https://formosabathrooms.co.uk/kitchen-designs-wakefield/) that perfectly blend functionality with aesthetic appeal. Our commitment lies in creating spaces that enhance your home's value and elevate your everyday living... | kitchendesigns |

1,915,516 | Overview of Data Streaming Technologies | An overview of data streaming technologies, their use cases, their architecture, and their benefits | 0 | 2024-07-08T09:57:09 | https://dev.to/pubnub-de/uberblick-uber-daten-streaming-technologien-1kla | The ability to process large volumes of data (big data) in real time has become critically important for many companies, and this is where [data streaming technologies](https://www.pubnub.com/solutions/data-streaming/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=de) come in... | pubnubdevrel | |

1,915,517 | Lit and State Management with Zustand | Originally posted on my blog. I come across a lot of developers who think that web components are... | 0 | 2024-07-08T10:01:52 | https://dev.to/hasanirogers/lit-and-state-management-with-zustand-kf | lit, zustand, webcomponents | > Originally posted on [my blog](https://blog.hasanirogers.me/2024/07/lit-and-state-management-with-zustand.html).

I come across a lot of developers who think that web components are for trivial things like buttons and input components. The reality is that you can build an entire application with web components. One o... | hasanirogers |

1,915,518 | Exploring the Latest JavaScript Trends: What You Need to Know | Let's Know about it, In the ever-evolving world of web development, staying updated with the latest... | 0 | 2024-07-08T09:58:39 | https://dev.to/ayushh/exploring-the-latest-javascript-trends-what-you-need-to-know-b4a | webdev, javascript, beginners, programming |

Let's Know about it,

In the ever-evolving world of web development, staying updated with the latest trends and technologies is crucial to stay competitive and deliver cutting-edge solutions. JavaScript, as the backbone of web development, continues to evolve rapidly, introducing new concepts and paradigms that stream... | ayushh |

1,915,523 | Content Generation with Neural Networks | Artificial intelligence (AI) and neural networks are rapidly changing the approach to content creation. Services,... | 0 | 2024-07-08T09:59:58 | https://dev.to/profikl/gienieratsiia-kontienta-s-pomoshchiu-nieirosietiei-1m52 | Artificial intelligence (AI) and neural networks are rapidly changing the approach to content creation. Services such as AIGolova offer modern solutions for automatically generating text, images, and other materials, considerably simplifying the work of content managers, SEO specialists, and website owners.

Принципы работ... | profikl | |

1,915,524 | Overview of Data Streaming Technologies | An overview of data streaming technologies, their use cases, their architecture, and their benefits. | 0 | 2024-07-08T10:02:10 | https://dev.to/pubnub-fr/vue-densemble-des-technologies-de-flux-de-donnees-3m8k | The ability to process large volumes of data (big data) in real time has become crucial for many organizations, and this is where [data streaming technologies](https://www.pubnub.com/solutions/data-streaming/?utm_source=devto&utm_medium=syndication&utm_campaign=off_domain&utm_content=fr) come ... | pubnubdevrel | |

1,915,525 | 7 Essential Tips for Preparing Your Small Business for the Upcoming Holiday Season | **1. Have a promotional plan in place. **The upcoming holiday season is a crucial time for small... | 0 | 2024-07-08T10:02:20 | https://dev.to/lyong_clois_b75b512a48005/7-essential-tips-for-preparing-your-small-business-for-the-upcoming-holiday-season-30f5 | **1. Have a promotional plan in place.**

The upcoming holiday season is a crucial time for small businesses, as it presents immense opportunities for increased sales and growth. However, without proper preparation, it can also be a time of stress and missed opportunities. In order to make the most out of this holiday s... | lyong_clois_b75b512a48005 | |

1,915,526 | Reporting inappropriate content or behavior on Dev.to? | To report inappropriate content or behavior on Dev.to, follow these steps: Identify the Problem:... | 0 | 2024-07-08T10:03:00 | https://dev.to/joun_wick/reporting-inappropriate-content-or-behavior-on-devto-5fdb | devto | To report inappropriate content or behavior on Dev.to, follow these steps:

**Identify the Problem:** Locate the content or behavior that you find inappropriate, whether it's an article, comment, or user interaction.

**Use the Report Feature:** Click the three dots (more options) next to the content or comment. Select... | joun_wick |

1,915,527 | Loneliness and Liberation: The Ls of Remote Work | I’ve been in remote work for over a decade and experienced a lot that it has to offer. I enjoy the... | 0 | 2024-07-08T10:04:20 | https://dev.to/martinbaun/loneliness-and-liberation-the-ls-of-remote-work-31fc | devops, productivity, career, startup |

I’ve been in remote work for over a decade and experienced a lot that it has to offer. I enjoy the freedom to work from anywhere in the world. I've had months where I've been in more than two different countries and sometimes continents. This sounds fun and it is, but some demerits exist with it. Everyone highlights t... | martinbaun |

1,915,529 | Extract Data from Twitter Without Coding: Twitter Scraper | In this article, you will learn how Twitter data such as tweets, comments, hashtags, images... | 0 | 2024-07-08T10:06:18 | https://dev.to/emilia/daten-von-twitter-ohne-kodierung-extrahieren-twitter-scraper-11pn | webdev, lowcode, python, twitter | In this article, you will learn how to scrape or download Twitter data such as tweets, comments, hashtags, and images. There is a simple method that lets you build a Twitter scraper within 5 minutes, without having to use an API, Python, or any coding at all.

## Ist es legal, Twit... | emilia |

1,915,530 | How to Decide Between VM and Containers for Your Infrastructure | In the era of rapid AI development, businesses face numerous choices when it comes to building and... | 0 | 2024-07-08T10:06:52 | https://dev.to/novita_ai/how-to-decide-between-vm-and-containers-for-your-infrastructure-o21 | In the era of rapid AI development, businesses face numerous choices when it comes to building and deploying AI applications. Virtual Machines (VMs) and containers, as two highly favored technologies, each possess unique advantages and limitations. This article delves into the differences between these two and offers s... | novita_ai | |

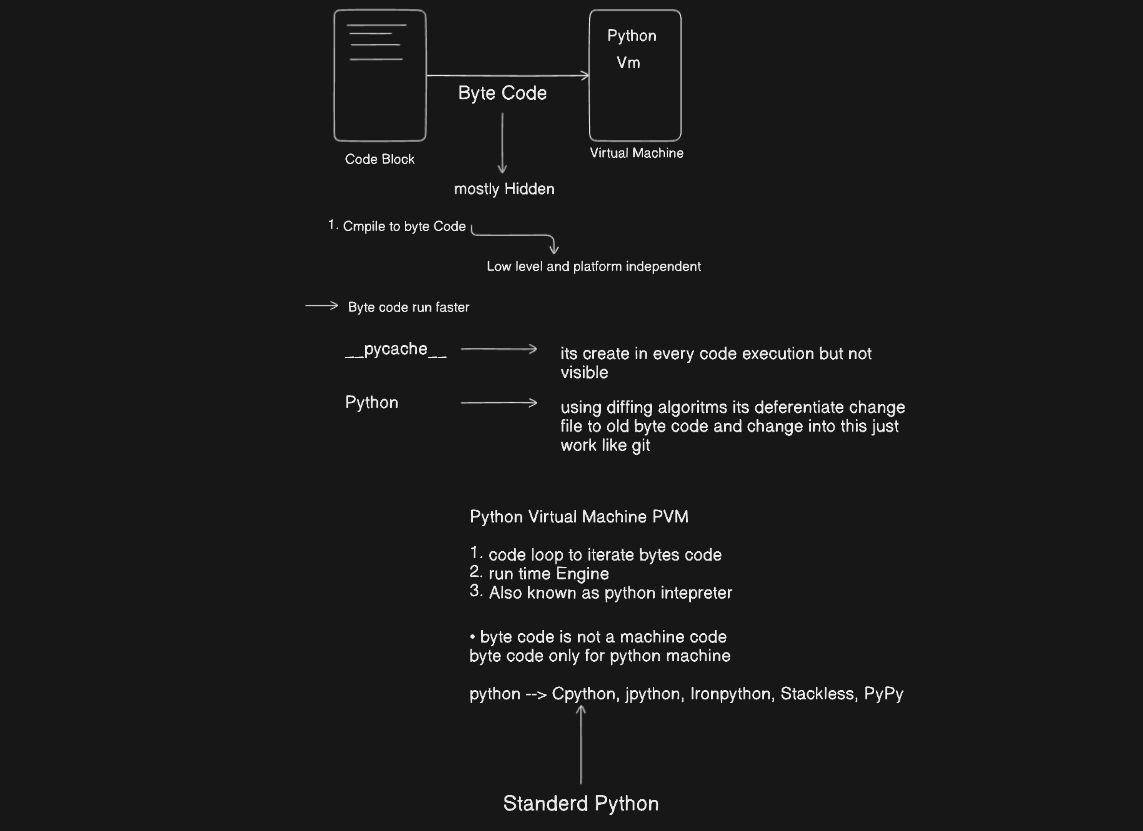

1,915,531 | Inner Working of python | Imagine Python as a big, friendly chef who cooks your code into something a computer can... | 0 | 2024-07-08T10:08:09 | https://dev.to/itsrajcode/inner-working-of-python-4b82 | python, webdev, programming, beginners |

#Imagine Python as a big, friendly chef who cooks your code into something a computer can understand. Let's break down how this happens:

1. **You Give the Recipe:** You write your Python code, like a recipe with ... | itsrajcode |

1,915,532 | How Natural Language Processing can improve your business? Learn Now! | Natural Language Processing, or NLP, is changing how businesses interact with their customers. This... | 0 | 2024-07-08T10:08:25 | https://dev.to/rutvi_gunjariya_4d44bc3d0/how-natural-language-processing-can-improve-your-business-learn-now-1k30 |

Natural Language Processing, or NLP, is changing how businesses interact with their customers. This formidable tool, a subset of artificial intelligence, is assisting businesses in producing more effective and engaging consumer experiences. With a dash of humor to keep things fresh, let's examine how NLP is changing c... | rutvi_gunjariya_4d44bc3d0 | |

1,915,533 | Explore how urbanization trends are shaping property management strategies in Saudi Arabian cities | In recent years, urbanization trends have been rapidly reshaping the landscape of property management... | 0 | 2024-07-08T10:09:10 | https://dev.to/kishore_babu_8ebc566603cc/explore-how-urbanization-trends-are-shaping-property-management-strategies-in-saudi-arabian-cities-m9d | In recent years, urbanization trends have been rapidly reshaping the landscape of property management strategies in Saudi Arabian cities. As more and more individuals migrate to urban areas in search of better opportunities, the demand for housing and commercial space has skyrocketed. This influx of people has forced p... | kishore_babu_8ebc566603cc | |

1,915,534 | What is Wix Development: A Comprehensive Guide in 2024 | Wix has emerged as a popular platform for creating stunning, functional websites without the need... | 0 | 2024-07-08T10:10:19 | https://dev.to/techpulzz/what-is-wix-development-a-comprehensive-guide-in-2024-ndm | webdev, website, beginners |

Wix has emerged as a popular platform for creating stunning, functional websites without the need for extensive coding knowledge. As we step into 2024, the capabilities and features of Wix continue to expand, making... | techpulzz |

1,915,535 | Pallet Collars | Pallet collars are an important element in organizing the storage and transportation of various... | 0 | 2024-07-08T10:11:19 | https://dev.to/__1afb04c3574b0d701/pallietnyie-borta-1l50 | Pallet collars are an important element in organizing the storage and transportation of various goods. They are wooden or metal structures mounted on pallets to form sides that keep goods from shifting and being damaged. The "МосТара" website presents a wide... | __1afb04c3574b0d701 | |

1,915,536 | Understanding and Managing Crawl Budget Issues on Your WordPress Website | As website owners, especially those running WordPress sites, we often encounter technical challenges... | 0 | 2024-07-08T10:12:02 | https://dev.to/markadesence/understanding-and-managing-crawl-budget-issues-on-your-wordpress-website-10fn | web, website, developer, webdev | As website owners, especially those running WordPress sites, we often encounter technical challenges that impact our site's visibility and performance on search engines. One such critical issue is managing the crawl budget effectively. Crawl budget refers to the number of pages search engines crawl and index on your si... | markadesence |

1,915,538 | How to Scrape Crunchbase Data into Excel | Source:https://www.octoparse.de/blog/wie-man-crunchbase-daten-in-excel-scrappt?utm_source=twitter&... | 0 | 2024-07-08T10:14:16 | https://dev.to/emilia/so-scrapen-sie-crunchbase-daten-in-excel-2aig | crunchbase, python, ai, powerfuldevs | Source: https://www.octoparse.de/blog/wie-man-crunchbase-daten-in-excel-scrappt?utm_source=twitter&utm_medium=social&utm_campaign=hannaq2&utm_content=post

Crunchbase is a valuable source of insights into companies and investors. It is the best choice for those looking for information about organizations in... | emilia |

1,915,539 | Unlocking the Power of SAP Project Systems (PS): A Comprehensive Guide | In today's fast-paced business environment, managing projects efficiently is crucial for success. SAP... | 0 | 2024-07-08T10:14:46 | https://dev.to/mylearnnest/unlocking-the-power-of-sap-project-systems-ps-a-comprehensive-guide-4g2k | In today's fast-paced business environment, managing projects efficiently is crucial for success. [SAP Project Systems (PS)](https://www.mylearnnest.com/best-sap-ps-course-in-hyderabad/) is a powerful module designed to help organizations plan, execute, and monitor projects of all sizes. Whether you are working in cons... | mylearnnest | |

1,919,221 | Advanced SCSS Mixins and Functions | Introduction: SCSS stands for Sassy CSS and it is a superset of CSS which provides additional... | 0 | 2024-07-11T04:18:03 | https://dev.to/tailwine/advanced-scss-mixins-and-functions-252f | Introduction:

SCSS stands for Sassy CSS and it is a superset of CSS which provides additional features and functionalities to the traditional CSS. One of the key features of SCSS is the ability to create advanced mixins and functions which makes writing and managing CSS code much easier. Here, we will discuss the adva... | tailwine | |

1,915,540 | Python: print() methods | Hi All, Today i learnt about python print statement. Some of the functionalities are, sep is an... | 0 | 2024-07-08T10:17:10 | https://dev.to/syedjafer/python-print-methods-2847 | python, programming, parottasalna | Hi All,

Today I learnt about the Python print statement.

Some of its functionalities are:

1. `sep` is an argument that sets the string used to separate the values passed to print.

2. Printing a number does not require quotation marks.

```python

print(1)

```
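The two points above can be sketched as follows. This is only a minimal illustration: the helper name `printed` is hypothetical (it is not part of the original post) and simply captures what `print` would write, using the built-in `file` parameter, so the result can be inspected as a string.

```python
import io


def printed(*values, sep=" ", end="\n"):
    """Return the text that print() would write for the given arguments.

    `printed` is an illustrative helper, not something from the post.
    """
    buffer = io.StringIO()
    # print() accepts a `file` argument; writing into a StringIO lets us
    # look at the exact output instead of sending it to the terminal.
    print(*values, sep=sep, end=end, file=buffer)
    return buffer.getvalue()


# `sep` replaces the default single space placed between values.
print("Hello", "World", sep="-")

# Numbers can be passed directly, without quotation marks.
print(1)
print(1, 2, 3, sep=", ")
```

By default `sep` is a single space and `end` is a newline, so `print("a", "b")` and `print("a", "b", sep=" ")` behave the same way.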

| syedjafer |

1,915,541 | Official Site and Working Mirror of Ezcash Casino | Ezcash is a popular online casino offering a wide selection of gambling games, available both on... | 0 | 2024-07-08T10:17:46 | https://dev.to/__1afb04c3574b0d701/ofitsialnyi-sait-i-rabochieie-zierkalo-kazino-ezcash-3bmd | Ezcash is a popular online casino offering a wide selection of gambling games, available both on the official site and through working mirrors. The platform attracts players with its bonuses, user-friendly interface, and high level of security.

Official Ezcash Site

The official Ezcash site (ezcash.city) offers... | __1afb04c3574b0d701 | |

1,915,542 | Maximize Your Marketing, Sales, and Support with WhatsApp Automation | Introduction In today’s fast-paced digital world, effective communication is key to business success.... | 0 | 2024-07-08T10:17:49 | https://dev.to/manikandan2347/maximize-your-marketing-sales-and-support-with-whatsapp-automation-73j | software, startup, discuss, news | **Introduction**

In today’s fast-paced digital world, effective communication is key to business success. With WhatsApp’s widespread popularity, sending bulk messages has become a crucial strategy for reaching a broad audience quickly. BizMagnets offers an unparalleled WhatsApp Business Suite that ensures your messages... | manikandan2347 |

1,915,543 | Stay Ahead of the Curve: Why Divsly's UTM Builder Is a Marketer's Best Friend | In today's digital landscape, effective marketing isn't just about reaching your audience—it's about... | 0 | 2024-07-08T10:17:58 | https://dev.to/divsly/stay-ahead-of-the-curve-why-divslys-utm-builder-is-a-marketers-best-friend-5e76 | utm, utmbuilder, utmtracking, utmparameters | In today's digital landscape, effective marketing isn't just about reaching your audience—it's about understanding what works and what doesn't. This understanding is powered by data, and one crucial tool that helps marketers gather this data effectively is [Divsly](https://divsly.com/?utm_source=blog&utm_medium=blog+po... | divsly |

1,915,544 | 10 Questions to Ask Before Hiring Website Developers for Small Business | A well-designed website for a small business is crucial in this online World where audiences search... | 0 | 2024-07-08T10:18:18 | https://dev.to/baselineit/10-questions-to-ask-before-hiring-website-developers-for-small-business-3p5j | website, development, company, mohali | A well-designed website is crucial for a small business in an online world where audiences search for everything on the web rather than in person. The website represents your business online, reflects your brand's image, and engages potential customers. So hiring the best website developers for your small business is ve... | baselineit |

1,915,545 | AI Answer Questions Made Easy: Practical Tips for Success | Introduction Have you ever wondered how AI can understand and answer questions just like a... | 0 | 2024-07-08T10:42:28 | https://dev.to/novita_ai/ai-answer-questions-made-easy-practical-tips-for-success-4810 | llm | ## Introduction

Have you ever wondered how AI can understand and answer questions just like a human? What are the underlying technologies that make this possible? How to evaluate the performances of AI answering questions? With what techniques can AI's performance be enhanced? Last but not least, what are the top [**LL... | novita_ai |

1,915,546 | What Is Facebook Pixel? The Benefits Facebook Pixel Brings | Facebook Pixel is a tracking code provided by Facebook to help businesses and individuals... | 0 | 2024-07-08T10:18:39 | https://dev.to/terus_digitalmarketing/facebook-pixel-la-gi-nhung-loi-ich-ma-facebook-pixel-mang-lai-2jh7 | webdev, website, terus, teruswebsite | Facebook Pixel is a tracking code provided by Facebook to help businesses and individuals track, measure, and optimize their advertising activity on the platform. It is an extremely useful tool for advertisers who want to optimize the effectiveness of their Facebook ad campaigns.

Facebook Pixel works ... | terus_digitalmarketing |

1,915,560 | Best Real Estate Agent In Medford, NJ | Find the best real estate agent in Medford, NJ to buy or sell your house fast. Our local real estate... | 0 | 2024-07-08T10:20:20 | https://dev.to/shiblirealtor/best-real-estate-agent-in-medford-nj-3epp | realestate, realestateagent, sellyourhouse, home | Find the best **[real estate agent in Medford, NJ](https://www.shiblirealtor.com/best-real-estate-agent-in-medford-nj/)** to buy or sell your house fast. Our local real estate agents are experts in the Medford market to buy or sell your house. Awad Shibli firmly believes in honesty and accessibility for all my clients.... | shiblirealtor |

1,915,562 | Roofing and Facade Building Materials in Moscow and the Moscow Region at the Партнер Строй Store | "Партнер Строй" is a leading building materials store in Moscow and the Moscow Region,... | 0 | 2024-07-08T10:21:44 | https://dev.to/__1afb04c3574b0d701/krovielnyie-i-fasadnyie-stroimatierialy-v-moskvie-i-moskovskoi-oblasti-maghazinie-partnier-stroi-4g70 | "Партнер Строй" is a leading building materials store in Moscow and the Moscow Region, specializing in roofing and facade materials. The store's website offers a wide range of products to meet the needs of both private developers and professional builders. In this review... | __1afb04c3574b0d701 | |

1,915,569 | LeetCode Day28 Dynamic Programming Part1 | 509. Fibonacci Number The Fibonacci numbers, commonly denoted F(n) form a sequence, called... | 0 | 2024-07-08T10:24:25 | https://dev.to/flame_chan_llll/leetcode-day28-dynamic-programming-part1-17o0 | leetcode, java, algorithms | # 509. Fibonacci Number

The Fibonacci numbers, commonly denoted F(n) form a sequence, called the Fibonacci sequence, such that each number is the sum of the two preceding ones, starting from 0 and 1. That is,

F(0) = 0, F(1) = 1

F(n) = F(n - 1) + F(n - 2), for n > 1.

Given n, calculate F(n).
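The recurrence above maps directly onto a bottom-up dynamic programming loop. The following is a minimal Python sketch rather than the post's own solution (the post is tagged Java, and the function name `fib` is an illustrative choice). It keeps only the two most recent values instead of a full table.

```python
def fib(n: int) -> int:
    """Return F(n), where F(0) = 0, F(1) = 1 and F(n) = F(n-1) + F(n-2)."""
    if n < 0:
        raise ValueError("n must be non-negative")
    prev, curr = 0, 1  # F(0) and F(1)
    for _ in range(n):
        # Slide the window forward by one position in the sequence.
        prev, curr = curr, prev + curr
    return prev  # after n steps, prev holds F(n)
```

Because each state depends only on the previous two, this runs in O(n) time and O(1) extra space.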

Example 1:

Input: n = ... | flame_chan_llll |

1,915,564 | Wireless Debugging in Android | An interesting and useful feature in android is wireless debugging. This helps us achieve whatever we... | 0 | 2024-07-08T10:22:12 | https://dev.to/dilip_chandar_58fce2b3b7b/wireless-debugging-in-android-c3 | android, androiddev | An interesting and useful feature in Android is wireless debugging. It lets us do everything we can do over a USB cable, such as debugging and capturing logs in Logcat, with the device unplugged once the wireless connection is established.

Before we establish a wireless connection, we have to keep our device connected throug... | dilip_chandar_58fce2b3b7b |

1,915,565 | Night Fat Burn harnesses the potential of nature’s finest ingredients | Finally, ACHIEVE YOUR DREAM BODY WHILE YOU SLEEP Are you tired of battling with stubborn fat that... | 0 | 2024-07-08T10:22:50 | https://dev.to/erion_kodra_58592dd96e077/night-fat-burn-harnesses-the-potential-of-natures-finest-ingredients-ocf | Finally,

ACHIEVE YOUR DREAM BODY WHILE YOU SLEEP

Are you tired of battling with stubborn fat that refuses to budge? Say goodbye to endless diets and grueling workouts that yield minimal results. [Night Fat Burn](https://besthealthoffers.com/) is the revolutionary diet product that will transform your weight loss journe... | erion_kodra_58592dd96e077 | |

1,915,566 | How to Choose the Right Health Insurance Add-Ons for Your Plan | Health insurance planning is a financial arrangement in which people or groups pay premiums to an... | 0 | 2024-07-08T10:23:42 | https://dev.to/aakash_deshwal/how-to-choose-the-right-health-insurance-add-ons-for-your-plan-2c69 | Health insurance planning is a financial arrangement in which people or groups pay premiums to an insurance company in exchange for medical expense coverage. This covers medical expenses for disease or damage through contracts between people or groups and insurers. It covers expenses including hospital stays, operation... | aakash_deshwal | |

1,915,567 | Best Real Estate Agent In Marlton, NJ | Find the best real estate agent in Marlton, NJ to buy or sell your house fast. Our local real estate... | 0 | 2024-07-08T10:23:54 | https://dev.to/shiblirealtor/best-real-estate-agent-in-marlton-nj-21h0 | realestate, realestateagent, sellyourhouse, home | Find the best **[real estate agent in Marlton, NJ](https://www.shiblirealtor.com/best-real-estate-agent-in-marlton-nj/)** to buy or sell your house fast. Our local real estate agents are experts in the Marlton market to buy or **[sell your house](https://www.shiblirealtor.com/sell-your-house/)**. Awad Shibli firmly bel... | shiblirealtor |

1,915,568 | Unlocking Secure Remote Access with SSH Key Login: A Comprehensive Guide | In the dynamic realm of the digital age, remote access to servers has become an indispensable part of... | 0 | 2024-07-08T10:24:06 | https://dev.to/novita_ai/unlocking-secure-remote-access-with-ssh-key-login-a-comprehensive-guide-10bj | In the dynamic realm of the digital age, remote access to servers has become an indispensable part of daily operations. However, this convenience comes with an inherent security risk: traditional password authentication methods. Password-based systems are inherently vulnerable to brute-force attacks, password reuse, an... | novita_ai | |

1,915,570 | 20+ Elegant, Refined Fonts for Designers | In the world of design in general and website design in particular, choosing a suitable font... | 0 | 2024-07-08T10:27:40 | https://dev.to/terus_technique/20-font-chu-sang-trong-tinh-te-danh-cho-dan-designer-2i4p | website, digitalmarketing, seo, terus |

In the world of design in general and [website design](https://terusvn.com/thiet-ke-website-tai-hcm/) in particular, choosing a suitable font plays an extremely important role. One of the font types that is... | terus_technique |

1,915,571 | Navigating the Seas of Opportunity: Understanding the Commodity Market | In the vast ocean of global finance, the commodity market stands out as a unique and indispensable... | 0 | 2024-07-08T10:28:00 | https://dev.to/spacefreestudy/navigating-the-seas-of-opportunity-understanding-the-commodity-market-4a5o | commoditymarket | In the vast ocean of global finance, the commodity market stands out as a unique and indispensable entity. Comprising a diverse array of raw materials and primary goods, ranging from precious metals like gold and silver to agricultural products like wheat and coffee, the commodity market serves as the bedrock of our mo... | spacefreestudy |

1,915,573 | Log In or Log Out Registered Users using php | In our previous project, we learned how to register a new account on a website by providing an email... | 0 | 2024-07-08T10:30:06 | https://dev.to/ghulam_mujtaba_247/log-in-or-log-out-registered-users-using-php-3g2o | webdev, beginners, programming, learning | In our previous project, we learned how to register a new account on a website by providing an email and password. However, we stored the password in the database in plain text, which is not secure. Now, we will learn how to hash the password using BCRYPT before storing it in the database.

```php

$db->query('INSERT IN... | ghulam_mujtaba_247 |

1,915,574 | How to Monitor your AWS EC2/Workspace with Datadog | -1- Log in to your AWS and Datadog accounts. In this example, I will configure AWS Workspace. If you... | 0 | 2024-07-08T10:30:19 | https://dev.to/shrihariharidass/how-to-monitor-your-aws-ec2workspace-with-datadog-15jd | datadog, monitoring, devops, aws | -1- Log in to your AWS and Datadog accounts. In this example, I will configure AWS Workspace. If you have an EC2 instance, you can follow these steps too. My OS is Ubuntu.

-2- After deploying an EC2 instance or logging into an AWS Workspace, proceed to update the machine.

-3- Next, navigate to Datadog → Integration... | shrihariharidass |

1,915,575 | Getting started with Tailwind + Daisy UI in Angular 18 | Installation Visual Studio... | 0 | 2024-07-08T13:24:45 | https://dev.to/jplazaro/getting-started-with-tailwind-daisy-ui-in-angular-18-e53 | angular, tailwindcss, daisyui, typescript |

## Installation

1. Visual Studio Code

https://code.visualstudio.com/

2. NodeJS

https://nodejs.org/en

3. Angular CLI

npm install -g @angular/cli

## Creating Angular App

1. Create your own project folder via cmd, or create it manually.

2. Open that folder in Visual Studio Code.

3. Open a new VS Code terminal.

4. In cmd / Te... | jplazaro |

1,915,576 | Llama 3 vs ChatGPT 4: A Comparison Guide | Introduction Today, we delve into two giants in the realm of generative AI: Llama 3 and... | 0 | 2024-07-08T10:42:36 | https://dev.to/novita_ai/llama-3-vs-chatgpt-4-a-comparison-guide-974 | llm | ## Introduction

Today, we delve into two giants in the realm of generative AI: Llama 3 and ChatGPT 4. How do these models differ in architecture, performance, and real-world applications? Join us as we explore their capabilities, strengths, and the future they promise.

## Overview of Generative AI Models: Llama 3 and ... | novita_ai |

1,915,577 | Sans-serif Là Gì? Serif Là Gì? Phân Biệt Serif Và Sans-serif | Serif là những nét nhỏ ở cuối các nét chữ trong một số kiểu chữ nhất định. Các font chữ sử dụng... | 0 | 2024-07-08T10:36:23 | https://dev.to/terus_technique/sans-serif-la-gi-serif-la-gi-phan-biet-serif-va-sans-serif-523o | website, digitalmarketing, seo, terus |

Serif là những nét nhỏ ở cuối các nét chữ trong một số kiểu chữ nhất định. Các font chữ sử dụng Serif được gọi là kiểu chữ Serif. Các loại chữ Serif phổ biến bao gồm Old Style, Transitional, Didone và Slab Serif.

... | terus_technique |

1,915,578 | 6 Ways to Optimize Customs and Boost Retail Supply Chain Efficiency | Hey Dev.to community! 👋 In the fast-paced world of retail, efficient supply chains are essential.... | 0 | 2024-07-08T10:37:39 | https://dev.to/john_hall/6-ways-to-optimize-customs-and-boost-retail-supply-chain-efficiency-16c7 | ai, learning, startup, software | Hey Dev.to community! 👋 In the fast-paced world of retail, efficient supply chains are essential. With rising costs and complex regulations, optimizing customs processes can significantly enhance your retail operations. Here are six key strategies to boost your supply chain efficiency.

## Why Supply Chain Efficiency ... | john_hall |

1,915,580 | Medical Electrodes Market: Tech Trends & leading Segments | The steady rise in the number of chronic diseases reflects an urgent need for countries worldwide to... | 0 | 2024-07-08T10:39:35 | https://dev.to/nidhi_acharya_427558b1130/medical-electrodes-market-tech-trends-leading-segments-4fjb |

The steady rise in the number of chronic diseases reflects an urgent need for countries worldwide to establish healthcare programs, reforms and funds for the public. Due to the advancing popularity of early diagnosis... | nidhi_acharya_427558b1130 | |

1,915,581 | HTML web storage and web storage objects | HTML Web Storage With web storage, web applications can store data locally within the... | 0 | 2024-07-08T10:39:43 | https://dev.to/wasifali/html-web-storage-and-web-storage-objects-hcd | learning, webdev, html, css | ## **HTML Web Storage**

With web storage, web applications can store data locally within the user's browser.

Web storage is more secure, and large amounts of data can be stored locally, without affecting website performance.
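As a rough illustration of storing data locally, here is a minimal sketch. It assumes a storage object exposing `getItem`, `setItem`, and `removeItem`, as the browser's `localStorage` and `sessionStorage` do. `MemoryStorage`, `saveValue`, and `loadValue` are hypothetical names introduced only for this example; `MemoryStorage` is a stand-in so the snippet can also run outside a browser.

```javascript
// Sketch only: MemoryStorage is a hypothetical stand-in that mimics the
// getItem/setItem/removeItem methods of the browser's Storage interface,
// so the example can run outside a browser.
class MemoryStorage {
  constructor() {
    this.items = new Map();
  }
  getItem(key) {
    return this.items.has(key) ? this.items.get(key) : null;
  }
  setItem(key, value) {
    // Like real web storage, values are always kept as strings.
    this.items.set(key, String(value));
  }
  removeItem(key) {
    this.items.delete(key);
  }
}

// Hypothetical helper: serialize the value as JSON before storing it.
function saveValue(storage, key, value) {
  storage.setItem(key, JSON.stringify(value));
}

// Hypothetical helper: parse the stored JSON, or return the fallback
// when nothing is stored under the key.
function loadValue(storage, key, fallback) {
  const raw = storage.getItem(key);
  return raw === null ? fallback : JSON.parse(raw);
}

// In a browser, pass window.localStorage or window.sessionStorage instead.
const storage = new MemoryStorage();
saveValue(storage, "theme", { dark: true });
console.log(loadValue(storage, "theme", null));
console.log(loadValue(storage, "missing", "default"));
```

Passing the storage object in as a parameter keeps the helpers independent of the environment, so the same code can be pointed at `localStorage` for persistent data or `sessionStorage` for per-tab data.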

Web storage is per origin, i.e. per domain and protocol. All pages, from one origin, can store a... | wasifali |

1,915,582 | How to Send push notification in Mobile Using NodeJS with Firebase Service ? | To implement push notifications using Firebase Cloud Messaging (FCM) in a Node.js application, you... | 0 | 2024-07-08T10:40:32 | https://dev.to/raynecoder/how-to-send-push-notification-in-mobile-using-nodejs-with-firebase-service--52o5 | To implement push notifications using Firebase Cloud Messaging (FCM) in a Node.js application, you need to handle FCM token storage and manage token updates for each user. Here's a step-by-step guide:

### 1. Set Up Firebase in Your Node.js Project

First, you need to set up Firebase in your Node.js project.

i. **Insta... | raynecoder | |

1,915,583 | Casino "Jack Pot": Your Path to Luck and Entertainment | The "Jack Pot" casino on the Barbados Casino site offers a unique and engaging online gaming experience. This... | 0 | 2024-07-08T10:40:47 | https://dev.to/__dc92a10a6eb/kazino-dzhiek-pot-vash-put-k-udachie-i-razvliechieniiam-j1m | The "Jack Pot" casino on the Barbados Casino site offers a unique and engaging online gaming experience. The casino attracts players with its variety of games, generous bonuses, and reliable security system.

Variety of Games

"Jack Pot" offers many games from leading providers, including slots, table games, and live... | __dc92a10a6eb | |

1,915,584 | 5 Free Online Websites for Identifying Fonts from Images | Using websites that identify fonts from images brings users many benefits: Saving... | 0 | 2024-07-08T10:41:01 | https://dev.to/terus_technique/5-website-tim-font-chu-qua-hinh-anh-truc-tuyen-mien-phi-148n | website, digitalmarketing, seo, terus |

Using [websites](https://terusvn.com/thiet-ke-website-tai-hcm/) that identify fonts from images brings users many benefits:

Saving time and effort: Instead of having to hunt around the internet... | terus_technique |

1,915,585 | 51Game: Best Online Betting Game in India | 51Game - Online betting has rapidly evolved in recent years, and India is no exception to this trend.... | 0 | 2024-07-08T10:41:04 | https://dev.to/kz_seo6_2e07ae19f27cb8791/51game-best-online-betting-game-in-india-12kh | webdev, beginners, javascript, tutorial | [51Game](https://webclone.in/) - Online betting has rapidly evolved in recent years, and India is no exception to this trend. Among the many platforms available, 51Game has emerged as a standout option for Indian bettors. This article delves into what makes 51Game the best online betting game in India, highlighting its... | kz_seo6_2e07ae19f27cb8791 |

1,915,586 | Pre Algebra Part 1/2 | Pre-Algebra for Data Science Following are the topics which we will cover here ... | 0 | 2024-07-08T10:42:57 | https://dev.to/syedmuhammadawais/pre-algebra-part-12-38cn | machinelearning, datascience, ai, computerscience | ## Pre-Algebra for Data Science

Following are the topics which we will cover here

1. Definition of Algebra

2. Types of Numbers

3. Operations on numbers

4. Key terms that are used in algebra

5. Fractions and decimals

6. Ratios and proportions

**1. Definition of Algebra**

Algebra is a branch of mathematics that deal... | syedmuhammadawais |

1,915,587 | 25+ Most Beautiful Vietnamese Fonts for Website Design | Below is a list of 25+ Vietnamese fonts favored by many website designers in the year... | 0 | 2024-07-08T10:44:49 | https://dev.to/terus_technique/25-font-chu-tieng-viet-dep-nhat-cho-thiet-ke-website-3403 | website, digitalmarketing, seo, terus |

Below is a list of 25+ Vietnamese fonts favored by many website designers in 2024: Arial, Times New Roman, Helvetica, Courier New, Verdana, Georgia, Tahoma, Calibri, Garamond, Bookman, Museo Mode... | terus_technique |

1,915,588 | Refactoring content for GenAI readiness: Best Practices and Guidelines | Refactoring content is a must, given the proliferation of GenAI tools in the market. Most of the... | 0 | 2024-07-08T10:45:38 | https://dev.to/ragavi_document360/refactoring-content-for-genai-readiness-best-practices-and-guidelines-pp3 | Refactoring content is a must, given the proliferation of GenAI tools in the market. Most GenAI vendors have scraped the internet to train their Large Language Models (LLMs). GenAI vendors have likely already used public knowledge bases, and customers may turn to tools like ChatGPT for answers.

Win real money. The game is easy to play and needs only a small betting budget; you can place bets from as little as 1 baht. Play as much as you like, right here. Easy betting, slots with True Wallet deposits and withdrawals, a direct website, fun within reach, and games available 24 hours a day. | mai11_163de0bd74cb068b437 | |

1,915,596 | Containers and Files Security in SharePoint Embedded | Imagine building a collaborative hub within SharePoint Embedded, where colleagues can access and work... | 26,993 | 2024-07-09T06:30:00 | https://intranetfromthetrenches.substack.com/p/containers-files-security-in-sharepoint-embedded | sharepoint | Imagine building a collaborative hub within SharePoint Embedded, where colleagues can access and work on crucial documents. But what if some documents contain sensitive information, like financial reports or client contracts? You wouldn't want everyone to have full access, right?

This is where understanding SharePoint... | jaloplo |

1,915,597 | What Is Character Marketing? How Effective Is Character Marketing? | Character marketing is a marketing trend that has emerged in recent years. It involves... | 0 | 2024-07-08T10:49:20 | https://dev.to/terus_digitalmarketing/character-marketing-la-gi-hieu-qua-cua-character-marketing-nhu-the-nao-5g9c | website, terus, teruswebsite, web | Character marketing is a marketing trend that has emerged in recent years. It involves using distinctive mascots and characters to make an impression and build an emotional connection with customers.

Some of the benefits that character marketing brings include:

1. Creating a novel, standout highlight: A brand mascot... | terus_digitalmarketing |

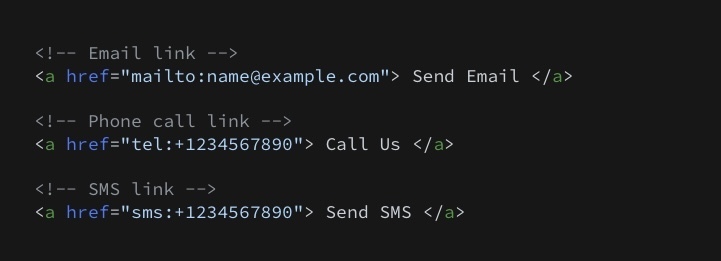

1,915,619 | 21 HTML Tips You Must Know About | In this post, I’ll share 21 HTML Tips with code snippets that can boost your coding skills. Let’s... | 0 | 2024-07-08T12:02:57 | https://dev.to/agunwachidiebelecalistus/21-html-tips-you-must-know-about-315c | webdev, beginners, html, coding | In this post, I’ll share 21 HTML Tips with code snippets that can boost your coding skills.

Let’s jump right into it.

**Creating Contact Links**

Create clickable email, phone call, and SMS links using HTML:

**C... | agunwachidiebelecalistus |

1,915,598 | The Popular Online Slot Site with Big Payouts and Real Winnings That Every Player Calls Lucky | Want to play fun games and win money easily from betting? We recommend online slots on a 100% direct website, real money, genuine API... | 0 | 2024-07-08T10:49:27 | https://dev.to/mai11_163de0bd74cb068b437/ewbsltnailnydhitaetkhnak-cchaaycchringaidengincchring-thiinakpanthukkhnbkwaaehng-1k4o |

Want to play fun games and win money easily from betting? We recommend online slots on a 100% direct website, real money, genuine API, easy to play, and with a low betting budget. Security and reliability: [direct website](https://hhoc.org/) with advanced encryption technology (SSL... | mai11_163de0bd74cb068b437 | |

1,915,599 | The Best Font Plugins for WordPress Today | When designing a website, choosing a suitable font plays an extremely important role. A font... | 0 | 2024-07-08T10:50:43 | https://dev.to/terus_technique/cac-plugin-font-chu-cho-wordpress-tot-nhat-hien-nay-4glk | website, digitalmarketing, seo, terus |

When designing a website, choosing a suitable font plays an extremely important role. A good font helps create an impressive look and an optimal user experience. Some criteria to consider when... | terus_technique |

1,915,600 | Network Cabling Services: Building a Strong Foundation for Business Growth | In the digital age, businesses need a reliable and efficient network infrastructure to stay... | 0 | 2024-07-08T10:51:59 | https://dev.to/jaysongrogan07/network-cabling-services-building-a-strong-foundation-for-business-growth-fo9 | In the digital age, businesses need a reliable and efficient network infrastructure to stay competitive. **[Network Cabling Services](https://layerlogix.com/structured-cabling-services/network-cabling-services-in-houston-and-the-woodlands)** are crucial in establishing and maintaining this infrastructure, ensuring seam... | jaysongrogan07 | |

1,915,601 | Build a Customer Review APP with Strapi and Solid.js | Feedback from customers is one key to a successful business today. In this tutorial, we'll learn how... | 0 | 2024-07-08T10:52:34 | https://strapi.io/blog/build-a-customer-review-app-with-strapi-and-solid-js | solidjs, strapi | Feedback from customers is one key to a successful business today. In this tutorial, we'll learn how to build a customer review and rating app using Strapi, a user-friendly and easy-to-integrate content management system (CMS) that simplifies the content management process, and Solid.js, a reactive UI library.

We’ll go... | strapijs |

1,915,602 | Add a custom Tailwind CSS class for reusability and speed | This article was originally published on Rails Designer This is another quick article about... | 0 | 2024-07-08T12:47:50 | https://railsdesigner.com/custom-css-class-with-plugins/ | tailwindcss, ruby, rails, webdev | [This article was originally published on Rails Designer](https://railsdesigner.com/custom-css-class-with-plugins/)

---

This is another quick article about something I use in every (Rails) app.

I often apply a few Tailwind CSS utility-classes to [create smooth transitions for hover- or active-states](https://railsde... | railsdesigner |

1,915,603 | Tips for Playing Direct-Website Slots with No Minimum and Getting Great-Value Deposit Promotions | Bettors who are interested in and looking for slots with no-minimum deposits and withdrawals, wallet slots... | 0 | 2024-07-08T10:52:39 | https://dev.to/mai11_163de0bd74cb068b437/khamaenanamainkaareln-sltewbtrng-aimmiikhantam-aela-rabopromchanfaaksudkhum-223a |

Bettors who are interested in and looking for slots with no-minimum deposits and withdrawals, [wallet slots](https://hhoc.org/), can sign up to bet on our online slot website right away. Signing up for the mobile online slot site is easy: the steps are not complicated and can be done on a phone. These are our tips for playing our number 1 direct-website online slots. | mai11_163de0bd74cb068b437 | |

1,915,604 | How to add Video Calling Facilities in your App | A Video SDK facilitates video communication between the server and the client endpoint applications.... | 0 | 2024-07-08T10:52:57 | https://dev.to/yogender_singh_011ebbe493/how-to-add-video-calling-facilities-in-your-app-dk7 | videocallapi, videocallapp | A Video SDK facilitates video communication between the server and the client endpoint applications. A wide range of SDKs is available for developing web browser-based applications and mobile native and hybrid applications. For effective RTC sessions, these SDKs provide functions that use the underlying APIs to communi... | yogender_singh_011ebbe493 |

1,915,606 | GSoC Week 6 | Documentation is one of the pillars of good software. We often forget to do it because it comes in as... | 27,442 | 2024-07-08T17:34:36 | https://dev.to/chiemezuo/gsoc-week-6-389d | gsoc, googlesummerofcode, wagtail, opensource | Documentation is one of the pillars of good software. We often forget to do it because it comes in as a 'secondary' consideration. My theme for week 6 was 'documenting my features properly', and it was what I did.

## Weekly Check-in

We had a lengthy check-in session, especially because my lead mentor Storm was startin... | chiemezuo |

1,915,607 | Direct-Website Slots: The Best Service, with a Support Team Available 24 Hours a Day | Play without worry. Whenever you run into a problem or need help, rest assured, because at... | 0 | 2024-07-08T10:54:28 | https://dev.to/mai11_163de0bd74cb068b437/sltewbtrng-mbbrikaardiithiisud-thiimngaanduuael-tld-24-chawomng-b52 |

Play without worry. Whenever you run into a problem or need help, rest assured, because at our direct-website slots with no agents, we have a team on hand 24 hours a day to assist you. [100% direct website](https://hhoc.org/): ready to serve when problems arise, easy to contact, with short waits and fast resolution.

| mai11_163de0bd74cb068b437 | |

1,915,714 | Introduction to BitPower Smart Contract | What is BitPower? BitPower is a decentralized lending platform based on blockchain, which uses smart... | 0 | 2024-07-08T12:09:03 | https://dev.to/aimm_x_54a3484700fbe0d3be/introduction-to-bitpower-smart-contract-1ok2 | What is BitPower?

BitPower is a decentralized lending platform based on blockchain, which uses smart contracts to provide safe and efficient lending services.

Features of smart contracts

Automatic execution

All transactions are automatically executed without manual operation.

Open source code

The code is open and can b... | aimm_x_54a3484700fbe0d3be | |

1,915,608 | Direct-Website Slots: The Best Service, with a Support Team Available 24 Hours a Day | Play without worry. Whenever you run into a problem or need help, rest assured, because at... | 0 | 2024-07-08T10:54:28 | https://dev.to/mai11_163de0bd74cb068b437/sltewbtrng-mbbrikaardiithiisud-thiimngaanduuael-tld-24-chawomng-3h9j |

Play without worry. Whenever you run into a problem or need help, rest assured, because at our direct-website slots with no agents, we have a team on hand 24 hours a day to assist you. [100% direct website](https://hhoc.org/): ready to serve when problems arise, easy to contact, with short waits and fast resolution.

| mai11_163de0bd74cb068b437 | |

1,915,609 | Meme Monday | Happens To The Best Of Us! Source | 0 | 2024-07-08T10:54:39 | https://dev.to/techdogs_inc/meme-monday-2cj3 | technology, wearabletechnology, ai, marketing | **Happens To The Best Of Us!**

[Source](https://imgflip.com/i/8ut86p) | td_inc |

1,915,610 | What Is a Typeface? What You Should Know About Typefaces | Typeface is an important concept in design. It is understood as a set of characters, including... | 0 | 2024-07-08T10:56:33 | https://dev.to/terus_technique/typeface-la-gi-nhung-dieu-ban-nen-biet-ve-typeface-1lj4 | website, digitalmarketing, seo, terus |

Typeface is an important concept in design. It is understood as a set of characters, including letters, numbers, and symbols, [designed with a unified style and characteristics](https://terusvn.com/thiet-ke-website... | terus_technique |

1,915,612 | puravive weight loss supplement | Puravive is a weight loss supplement that claims to help users lose weight by converting white fat... | 0 | 2024-07-08T10:58:43 | https://dev.to/kristin_cruz_6ddaa83ab2e6/puravive-weight-loss-supplement-11eh | news, webdev, beginners, programming |

[Puravive is a weight loss supplement](https://website-shoping-offers.online/) that claims to help users lose we... | kristin_cruz_6ddaa83ab2e6 |

1,915,613 | What Is a Front-end Developer? Skills of a Front-end Developer | A front-end developer is the person responsible for creating the user interface for a website or application... | 0 | 2024-07-08T11:00:19 | https://dev.to/terus_technique/front-end-developer-la-gi-ky-nang-cua-front-end-developer-3082 | website, digitalmarketing, seo, terus |

A front-end developer is the person responsible for creating the [user interface for a website](https://terusvn.com/thiet-ke-website-tai-hcm/) or web application. They must master programming languages such as HTML, CSS, JavaSc... | terus_technique |

1,915,614 | What Is a Back-end Developer? Skills of a Back-end Developer | The back end is an important part of a website system, running on the server side, handling complex... | 0 | 2024-07-08T11:02:59 | https://dev.to/terus_technique/back-end-developer-la-gi-ky-nang-cua-back-end-developer-1d3i | website, digitalmarketing, seo, terus |

The back end is an important part of a [website system](https://terusvn.com/thiet-ke-website/back-end-developer-la-gi/), running on the server side, handling complex logic, managing databases, and ensuring security... | terus_technique |

1,915,615 | Why Every Procurement Manager Should Consider EDI Solutions? | As procurement managers, we’re constantly seeking methods to cut costs and streamline operations. The... | 0 | 2024-07-08T11:03:24 | https://dev.to/actionedi/why-every-procurement-manager-should-consider-edi-solutions-3mc0 | As procurement managers, we’re constantly seeking methods to cut costs and streamline operations. The question arises: Why seek cost benefits in EDI solutions? Here are three compelling reasons:

1. Reduce Errors: By automating data entry, EDI solutions like ActionEDI minimize manual errors and ensure accuracy.

- Elim... | actionedi | |

1,915,616 | 20 Free Website Speed Testing Tools | A website's speed performance is extremely important to the user experience. If a website loads... | 0 | 2024-07-08T11:04:27 | https://dev.to/terus_technique/20-cong-cu-kiem-tra-toc-do-website-mien-phi-3282 | website, digitalmarketing, seo, terus |

[A website's speed performance](https://terusvn.com/thiet-ke-website-tai-hcm/) is extremely important to the user experience. If a website loads too slowly, customers are very likely to leave the page and switch to... | terus_technique |

1,915,617 | Blockchain Developers Remain Unfazed by Bitcoin Price Decline | The recent plunge in Bitcoin (BTC) prices, which saw the cryptocurrency drop below $55,000, has not... | 0 | 2024-07-08T11:04:36 | https://dev.to/vincent_lee_190635/blockchain-developers-remain-unfazed-by-bitcoin-price-decline-4lj2 | blockchain, bitcoin, development | The recent plunge in Bitcoin (BTC) prices, which saw the cryptocurrency drop below $55,000, has not shaken the confidence of blockchain developers.

Despite the volatility, they remain focused on building innovative applications and infrastructure to support the long-term growth of the Bitcoin network.

"While the pric... | vincent_lee_190635 |

1,915,620 | Top 10 Best Free Hosting Services for Websites in 2024 | Free hosting is an attractive option for those who want to build a new website without needing... | 0 | 2024-07-08T11:07:28 | https://dev.to/terus_technique/top-10-hosting-mien-phi-tot-nhat-cho-website-2024-1b72 | website, digitalmarketing, seo, terus |

Free hosting is an attractive option for those who want to [build a new website](https://terusvn.com/thiet-ke-website-tai-hcm/) without having to pay any fees. Although paid hosting services ... | terus_technique |

1,915,621 | Item 30: Define contracts with documentation | Learn why defining contracts with documentation is crucial. Dive into the article by Marcin Moskala... | 0 | 2024-07-08T11:08:57 | https://dev.to/ktdotacademy/item-30-define-contracts-with-documentation-517c | digest | Learn why defining contracts with documentation is crucial. Dive into the article by Marcin Moskala and elevate your Kotlin knowledge now! 🚀✨ [Go to the article](https://kt.academy/article/ek-contracts-documentation) | ktdotacademy |

1,915,622 | What Is Downtime? How to Fix Website Downtime | Downtime is a term used to refer to the time during which a website cannot be accessed by... | 0 | 2024-07-08T11:09:28 | https://dev.to/terus_technique/downtime-la-gi-cach-khac-phuc-website-bi-downtime-54n | website, digitalmarketing, seo, terus |

Downtime is a term used to refer to the time during which a website cannot be accessed by users. This is an undesirable situation because it directly affects the customer experience and also... | terus_technique |

1,915,623 | The Impact of Construction Quantity Takeoff | Construction Quantity Takeoff Lots of folks still do takeoffs the old-fashioned way, with tape... | 0 | 2024-07-08T11:09:46 | https://dev.to/biddingprofessionals/the-impact-of-construction-quantity-takeoff-3a1g | Construction Quantity Takeoff

Lots of folks still do takeoffs the old-fashioned way, with tape measures, notepads, and calculators. Don’t get me wrong, there’s value in good old boots-on-the-ground inspections. But outfits like [Bidding Professionals](https://biddingprofessionals.com/) are leveraging the latest tech to... | biddingprofessionals |
