id (int64) | title (string) | description (string) | collection_id (int64) | published_timestamp (timestamp[s]) | canonical_url (string) | tag_list (string) | body_markdown (string) | user_username (string) |

|---|---|---|---|---|---|---|---|---|

1,919,420 | Transform Your Career with Power BI: Top Courses for Data Analytics and Business Intelligence | Top Power BI Courses for Aspiring Data Analysts Unlock Your Career Potential The Power of Power BI... | 0 | 2024-07-11T07:43:34 | https://dev.to/educatinol_courses_806c29/transform-your-career-with-power-bi-top-courses-for-data-analytics-and-business-intelligence-1aoi | education | Top Power BI Courses for Aspiring Data Analysts Unlock Your Career Potential

The Power of Power BI

An Essential Guide

In today's rapidly evolving digital arena, mastering the capabilities of Power BI is paramount... | educatinol_courses_806c29 |

1,919,421 | Transform Your Career with Power BI: Top Courses for Data Analytics and Business Intelligence | Top Power BI Courses for Aspiring Data Analysts Unlock Your Career Potential The Power of Power BI... | 0 | 2024-07-11T07:43:37 | https://dev.to/educatinol_courses_806c29/transform-your-career-with-power-bi-top-courses-for-data-analytics-and-business-intelligence-2n99 | education | Top Power BI Courses for Aspiring Data Analysts Unlock Your Career Potential

The Power of Power BI

An Essential Guide

In today's rapidly evolving digital arena, mastering the capabilities of Power BI is paramount... | educatinol_courses_806c29 |

1,919,422 | Unveiling the Power of TCP: Building Apps with Node.js's net Module | The net module in Node.js allows you to build TCP applications by creating both TCP servers and... | 0 | 2024-07-11T07:44:08 | https://dev.to/devstoriesplayground/unveiling-the-power-of-tcp-building-apps-with-nodejss-net-module-2n8c | node, programming, tcp | The net module in Node.js allows you to build TCP applications by creating both TCP servers and clients. TCP (Transmission Control Protocol) is a reliable protocol that ensures ordered and error-free data transmission over a network.

Here's a breakdown of what you can do with the net module:

1. TCP Servers:

- You ca... | devstoriesplayground |

1,919,423 | Building Voice User Interfaces with React: Unlocking the Power of Sista AI | Building Voice User Interfaces with React: Unlock the Power of Sista AI. Experience the cutting-edge AI solutions with Sista AI! 🌟 | 0 | 2024-07-11T07:45:43 | https://dev.to/sista-ai/building-voice-user-interfaces-with-react-unlocking-the-power-of-sista-ai-4424 | ai, react, javascript, typescript | <h2>Building Voice User Interfaces with React: Unlocking the Power of Sista AI</h2><p>Building voice user interfaces with React has become increasingly popular, and Sista AI is at the forefront of this revolution. Sista AI is an end-to-end AI integration platform that transforms any app into a smart app with an AI voic... | sista-ai |

1,919,425 | Unveiling the Power of Front Bumper Splitters | Navigating the Mysteries of Front Bumper Splitters In Cars Do you want to increase the performance... | 0 | 2024-07-11T07:50:11 | https://dev.to/shirley_caesarakshga_875/unveiling-the-power-of-front-bumper-splitters-30oi | Navigating the Mysteries of Front Bumper Splitters In Cars

Do you want to increase the performance and enhance the look of your car? Add a front bumper splitter! These sleek add-ons enhance not only the style but also the functionality of your car.

Benefits Of Front Bumper Splitters

Many car enthusiasts out there de... | shirley_caesarakshga_875 | |

1,919,426 | Master RTX 4090 Calculator Techniques: Expert Tips | Introduction With the launch of the RTX 4090 Calculator, making money through... | 0 | 2024-07-11T21:30:00 | https://blogs.novita.ai/master-rtx-4090-calculator-techniques-expert-tips/ | gpu, rtx4090, webdev | ## **Introduction**

With the launch of the RTX 4090 Calculator, making money through cryptocurrency mining and smart financial planning has taken a big leap forward. This tool is all about using NVIDIA GeForce RTX technology to change how we figure out hash rates for mining that actually pays off. The way the RTX 4090... | novita_ai |

1,919,428 | The Importance of Workday Testing Tools | In this fast-paced commercial environment, ERP systems are crucial to a company’s ability to handle... | 0 | 2024-07-11T07:54:49 | https://onlyfinder.org/the-importance-of-workday-testing-tools/ | workday, testing, tools |

In today's fast-paced commercial environment, ERP systems are crucial to a company’s ability to manage its supply chain, finance, and human resources requirements. Workday, which boasts a wide range of features ... | rohitbhandari102 |

1,919,429 | SQL Joins Explained: Simple Guide for Beginners | SQL Joins A JOIN clause is used to combine rows from two or more tables, based on a... | 0 | 2024-07-11T07:55:23 | https://vampirepapi.hashnode.dev/sql-joins-explained-simple-guide-for-beginners | sql, development, database, interview | # SQL Joins

A `JOIN` clause is used to combine rows from two or more tables, based on a related column between them.
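Here is a minimal sketch of this using Python's built-in `sqlite3` module and an in-memory database. The `Orders` rows match the sample table in this article. The `Customers` table and its names are hypothetical, invented only so the join has rows to match against. The sketch contrasts an `INNER JOIN` with a `LEFT JOIN`.

```python
import sqlite3


def join_orders() -> tuple[list, list]:
    """Return (inner_join_rows, left_join_rows) for a tiny Orders/Customers example."""
    conn = sqlite3.connect(":memory:")
    cur = conn.cursor()
    cur.execute("CREATE TABLE Orders (OrderID INTEGER, CustomerID INTEGER, OrderDate TEXT)")
    cur.execute("CREATE TABLE Customers (CustomerID INTEGER, CustomerName TEXT)")
    cur.executemany(
        "INSERT INTO Orders VALUES (?, ?, ?)",
        [(10308, 2, "1996-09-18"), (10309, 37, "1996-09-19"), (10310, 77, "1996-09-20")],
    )
    # Hypothetical customers; deliberately no customer with ID 37.
    cur.executemany(
        "INSERT INTO Customers VALUES (?, ?)",
        [(2, "Customer A"), (77, "Customer B")],
    )
    # INNER JOIN keeps only orders whose CustomerID matches a Customers row.
    inner = cur.execute(
        """SELECT Orders.OrderID, Customers.CustomerName
           FROM Orders
           INNER JOIN Customers ON Orders.CustomerID = Customers.CustomerID
           ORDER BY Orders.OrderID"""
    ).fetchall()
    # LEFT JOIN keeps every order; unmatched customer columns come back as NULL (None).
    left = cur.execute(
        """SELECT Orders.OrderID, Customers.CustomerName
           FROM Orders
           LEFT JOIN Customers ON Orders.CustomerID = Customers.CustomerID
           ORDER BY Orders.OrderID"""
    ).fetchall()
    conn.close()
    return inner, left


if __name__ == "__main__":
    inner, left = join_orders()
    print(inner)  # [(10308, 'Customer A'), (10310, 'Customer B')]
    print(left)   # [(10308, 'Customer A'), (10309, None), (10310, 'Customer B')]
```

No hypothetical customer has ID 37. As a result, order 10309 drops out of the inner join but survives the left join, with `None` as its customer name.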

Let's look at a selection from the "Orders" table:

| OrderID | CustomerID | OrderDate |
| --- | --- | --- |
| 10308 | 2 | 1996-09-18 |
| 10309 | 37 | 1996-09-19 |
| 10310 | 77 | 1996-09-20 |

Then, lo... | vampirepapi |

1,919,430 | Latest Beauty Trend | Discover top beauty and skincare tips, stay on-trend with the latest fashion insights, and get expert... | 0 | 2024-07-11T07:58:47 | https://dev.to/cynthiabcamarillo/latest-beauty-trend-946 | Discover top beauty and skincare tips, stay on-trend with the latest fashion insights, and get expert advice for a radiant look. Your ultimate guide to looking and feeling your best every day.

https://latestbeautytrend.com/

| cynthiabcamarillo | |

1,919,431 | Integrating Redis, MySQL, Kafka, Logstash, Elasticsearch, TiDB, and CloudCanal | Here’s how these technologies can work together: Data Pipeline Architecture: MySQL:... | 0 | 2024-07-11T07:58:57 | https://dev.to/tj_27/integrating-redis-mysql-kafka-logstash-elasticsearch-tidb-and-cloudcanal-3leo | mysql, kafka, redis, cloudcanal | ## **Here’s how these technologies can work together:**

**Data Pipeline Architecture:**

- **MySQL:** Primary source of structured data.

- **TiDB:** Distributed SQL database compatible with MySQL, used for scalability and high availability.

- **Kafka:** Messaging system for real-time data streaming.

- **Logstash:** D... | tj_27 |

1,919,432 | NFT Calendar Development | An Introductory Guide | With the increasing popularity of non-fungible tokens (NFTs), creators are introducing an array of... | 0 | 2024-07-11T07:59:30 | https://dev.to/donnajohnson88/nft-calendar-development-an-introductory-guide-1k4b | blockchain, web3, nft, development | With the increasing popularity of non-fungible tokens (NFTs), creators are introducing an array of NFTs. Yet, it’s becoming challenging for investors to keep track of multiple NFT drops. An NFT calendar addresses this challenge for users and brings all the NFT airdrop events in one place. These calendars provide compre... | donnajohnson88 |

1,919,433 | Enhancing Your Home with the Art of Tile Installation | When it comes to home renovation and design, one element that can significantly impact the aesthetic... | 0 | 2024-07-11T08:01:29 | https://dev.to/cincinnatibacksplash/enhancing-your-home-with-the-art-of-tile-installation-26f5 | When it comes to home renovation and design, one element that can significantly impact the aesthetic appeal of your space is a well-crafted backsplash. [Cincinnati Backsplash of Cincinnati](https://www.bbb.org/us/ky/dayton/profile/nonceramic-tile-contractors/cincinnati-backsplash-0292-90046472) stands out as a beacon i... | cincinnatibacksplash | |

1,919,439 | Introducing TargetJ: Javascript framework that can animate anything | Welcome to TargetJ, a powerful JavaScript framework designed to make building dynamic and responsive... | 0 | 2024-07-11T08:08:37 | https://dev.to/ahmad_wasfi_f88513699c56d/introducing-targetj-revolutionizing-web-development-5758 | Welcome to TargetJ, a powerful JavaScript framework designed to make building dynamic and responsive web applications easier and more efficient. TargetJ distinguishes itself by introducing a novel concept known as 'targets', which forms its core. Targets are used as the main building blocks of components instead of dir... | ahmad_wasfi_f88513699c56d | |

1,919,434 | Programming analogies:- Conditional Statements | Conditional Statements: Imagine you're a robot programmed to make decisions based on... | 0 | 2024-07-11T08:02:48 | https://dev.to/learn_with_santosh/programming-analogies-conditional-statements-52dp | learning, webdev | ## Conditional Statements:

Imagine you're a robot programmed to make decisions based on weather. If it's raining, you grab an umbrella. If it's sunny, you wear sunglasses. If it's snowing, you grab a snow shovel. You're like a human if-else statement!
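The same decision logic can be sketched as a Python `if`/`elif`/`else` chain. The function name `pick_gear` is hypothetical. The final `else` branch, which handles any other weather, is an added assumption, since the analogy only describes three cases.

```python
def pick_gear(weather: str) -> str:
    """Return the item the robot grabs for a given weather condition."""
    if weather == "raining":
        return "umbrella"
    elif weather == "sunny":
        return "sunglasses"
    elif weather == "snowing":
        return "snow shovel"
    else:
        # Assumed fallback: none of the conditions above matched.
        return "nothing"


for w in ["raining", "sunny", "snowing", "cloudy"]:
    print(w, "->", pick_gear(w))
```

Python checks the conditions from top to bottom and runs only the first branch that matches.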

**" and learn about the essential skills, benefits, and potential drawbacks of hiring full-stack developers. This detailed blog covers everything from HTML, CSS, JavaScrip... | talentonlease01 |

1,919,436 | Python Operators Demystified | Python operators are symbols that perform operations on variables and values. They are used in... | 0 | 2024-07-11T08:07:04 | https://dev.to/angelika_jolly_4aa3821499/python-operators-demystified-44e9 | python, operators, webdev, react | [Python operators](https://www.youtube.com/watch?v=Zs8fxcqKro4) are symbols that perform operations on variables and values. They are used in various programming tasks, including arithmetic calculations, comparisons, logical operations, and more. Here's a detailed look at the different types of Python operators:

1. A... | angelika_jolly_4aa3821499 |

1,919,437 | Uninstall Netdata | Copied from the internet #!/bin/bash killall netdata wget -O /tmp/netdata-kickstart.sh... | 0 | 2024-07-11T08:07:11 | https://dev.to/peternguyenexpert/uninstall-netdata-57pm | Copied from the internet:

```

#!/bin/bash

killall netdata

wget -O /tmp/netdata-kickstart.sh https://my-netdata.io/kickstart.sh && sh /tmp/netdata-kickstart.sh --uninstall --non-interactive

systemctl stop netdata

systemctl disable netdata

systemctl unmask netdata

rm -rf /lib/systemd/system/netdata.service

rm -rf /lib/systemd/s... | peternguyenexpert | |

1,919,438 | A Beginner's Guide to Python List Comprehension | List comprehension is a powerful technique in Python for creating lists in a concise and efficient... | 0 | 2024-07-11T08:08:01 | https://dev.to/terrancoder/a-beginners-guide-to-python-list-comprehension-166a | python, tutorial, cleancode, beginners | List comprehension is a powerful technique in Python for creating lists in a concise and efficient manner. It allows you to condense multiple lines of code into a single line, resulting in cleaner and more readable code. For those new to Python or looking to enhance their skills, mastering list comprehension is essenti... | terrancoder |

1,919,440 | Programming analogies:- Lists/Arrays | Lists/Arrays: Think of lists like a collection of toys in your room. You can have a list... | 0 | 2024-07-11T08:10:49 | https://dev.to/learn_with_santosh/programming-analogies-listsarrays-1k1a | learning | ## Lists/Arrays:

Think of lists like a collection of toys in your room. You can have a list of stuffed animals, a list of action figures, or even a mixed-up list of both. And just like in your room, you can rearrange them, add more, or take some away.
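Here is a minimal Python sketch of the toy-box analogy; the toy names are made up for illustration. It builds a mixed-up list from two smaller lists, then adds, removes, and rearranges items.

```python
# Hypothetical toy names, purely for illustration.
stuffed_animals = ["teddy bear", "bunny"]
action_figures = ["robot", "astronaut"]

# A "mixed-up" list of both kinds, built by concatenating the two lists.
# The + operator creates a new list; the originals are left unchanged.
toys = stuffed_animals + action_figures

toys.append("dinosaur")   # add a toy to the end
toys.remove("robot")      # take a toy away (removes the first match)
toys.sort()               # rearrange alphabetically, in place

print(toys)  # ['astronaut', 'bunny', 'dinosaur', 'teddy bear']
```

`sort()` reorders the list itself, much like tidying the room rather than building a second toy box. `sorted(toys)` would instead return a new list.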

<p style="line-height: 1.2; margin-top: 12pt; margin-bottom: 12pt;"><span style="font-size: 11pt; font-family: Arial, sans-serif;">Choosing the right trading platform for trading is really important if you want to ... | rosenicholasm |

1,919,446 | Oracle Cloud Financials 24C Release: What’s New? | The Oracle Cloud Financials 24C release is upon us, set for July 2024! Oracle’s mandatory quarterly... | 0 | 2024-07-11T08:19:25 | https://www.opkey.com/blog/oracle-cloud-financials-24c-release | oracle, cloud, financials, release |

The Oracle Cloud Financials 24C release is upon us, set for July 2024! Oracle’s mandatory quarterly releases keep your environment up to date with the latest features, fixes, and upgrades.

This blog will provide a... | johnste39558689 |

1,919,448 | HVAC Services Market Comprehensive Analysis, Growth and Major Policies Report | HVAC Services Market Size Was Valued at USD 75.5 Billion in 2023, and is Projected to Reach USD 129.8... | 0 | 2024-07-11T08:23:44 | https://dev.to/chavi_tardeja/hvac-services-market-comprehensive-analysis-growth-and-major-policies-report-33ll | hvac, services |

The HVAC services market was valued at USD 75.5 billion in 2023 and is projected to reach USD 129.8 billion by 2032, growing at a CAGR of 6.20% from 2024 to 2032.

The HVAC (Heating, Ventilation, and Air Conditioning) services market covers a range of services for the installation, repair, and maintena... | chavi_tardeja |

1,919,449 | ADVANCED DIGITAL MARKETING SERVICES | Genetech is your one-stop shop for Advanced Digital Marketing Services in the USA. We understand the... | 0 | 2024-07-11T08:25:24 | https://dev.to/amna_khan_63f1f5d464c3e2c/advanced-digital-marketing-services-49f | webdev, python, productivity, opensource | Genetech is your one-stop shop for <a href="https://genetechagency.com/advanced-digital-marketing-services/">Advanced Digital Marketing Services</a> in the USA. We understand the unique challenges and opportunities of the US market, and our team of experts leverages cutting-edge strategies to deliver exceptional resul... | amna_khan_63f1f5d464c3e2c |

1,919,451 | How to digitally sign a job offer letter. | How to digitally sign a job offer letter. Learn what digital signatures are and how they... | 0 | 2024-07-11T08:31:05 | https://dev.to/opensign001/how-to-digitally-sign-a-job-offer-letter-28i6 | digitalsignature, docusign, productivity, discuss | ## How to digitally sign a job offer letter.

Learn what digital signatures are and how they can help your ideal candidate quickly sign your offer letter.

You’ve reviewed dozens of applications, interviewed skilled candidates and finally found your ideal employee. Now, all you need to do is send them a [job offer lett... | opensign001 |

1,919,452 | The Advantages of Hiring a Cross-Platform App Developer | Today, it is the need of the hour to develop apps that work on multiple platforms. It entered the... | 0 | 2024-07-11T08:31:29 | https://dev.to/ahmad_badar_351260f367c49/the-advantages-of-hiring-a-cross-platform-app-developer-23mj | developer, apps, application, development | Today, developing apps that work on multiple platforms is essential. Cross-platform development emerged because building separate programs for each operating system, such as iOS and Android, was complex and costly. When you build an app with cross-platform development, its code runs on several platforms with on... | ahmad_badar_351260f367c49 |

1,919,453 | Crypto News: Aptos Keyless Wallet, SingularityNET and Filecoin Partnership, Unauthorised Transactions on Binance | Luma AI Dream Machine, a new development that turns still memes into videos, has become a huge trend.... | 0 | 2024-07-11T08:32:06 | https://36crypto.com/crypto-news-aptos-keyless-wallet-singularitynet-and-filecoin-partnership-unauthorised-transactions-on-binance/ | cryptocurrency, news, blockchain | Luma AI Dream Machine, a new development that turns still memes into videos, has become a huge trend. Since its release, users from all over the world have flooded social platforms with their Luma-generated creations. The development has also found its way into the crypto community, and the social network X has been [f... | deniz_tutku |

1,919,454 | Email authentication - Understanding headers | Email authentication is crucial to ensure your emails reach recipients’ inboxes, especially for... | 0 | 2024-07-11T08:33:10 | https://dev.to/sweego/email-authentication-understanding-headers-1pn1 | ops, webdev | Email authentication is crucial to ensure your emails reach recipients’ inboxes, especially for transactional emails where errors are unacceptable. The main methods of authentication include **SPF** (Sender Policy Framework), **DKIM** (DomainKeys Identified Mail), and **DMARC** (Domain-based Message Authentication, Rep... | pydubreucq |

1,919,455 | SEO Services In Europe | Search Engine Optimization (SEO) services in Europe encompass a range of strategies and practices... | 0 | 2024-07-11T08:34:41 | https://dev.to/live_online_ce23ae8123f4a/seo-services-in-europe-1ml9 | Search Engine Optimization [(SEO)](https://www.genetechagency.com/seo-services-in-europe/) services in Europe encompass a range of strategies and practices aimed at improving the visibility and ranking of websites on search engine results pages (SERPs). These services are crucial for businesses looking to enhance their ... | live_online_ce23ae8123f4a | |

1,919,456 | ecommerce framework sylius vs magento | sylius.com vs magento.com & orocrm.com | 0 | 2024-07-11T08:42:17 | https://dev.to/peternguyenexpert/ecommerce-framework-sylius-vs-magento-43ee | sylius.com vs magento.com & orocrm.com | peternguyenexpert | |

1,919,457 | HTB Academy: Information Gathering - Web Edition Module: Skills Assessment (Part II, Question 5) | HTB Academy: Information Gathering - Web Edition Module | 0 | 2024-07-11T09:16:21 | https://dev.to/saramazal/htb-academy-information-gathering-web-edition-module-skills-assessment-part-ii-question-5-5bef | ethicalhacking, pentesting, webdev, htbacademy | ---

title: HTB Academy: Information Gathering - Web Edition Module: Skills Assessment (Part II, Question 5)

published: true

description: HTB Academy: Information Gathering - Web Edition Module

tags: #ethicalhacking #pentesting #webdev #htbacademy

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 fo... | saramazal |

1,919,458 | Dockerizing Microservices: Untangling Scaling and Deployment | In today's rapidly evolving software landscape, the need for applications that are scalable,... | 0 | 2024-07-11T08:46:10 | https://dev.to/whotarusharora/dockerizing-microservices-untangling-scaling-and-deployment-202d | docker, microservices, webdev, devops | In today's rapidly evolving software landscape, the need for applications that are scalable, reliable, and easy to deploy has never been more critical. Microservices architecture, paired with containerization technologies like Docker, provides a powerful solution to these challenges.

In this blog, we will explore the... | whotarusharora |

1,919,460 | Experience the Ultimate Card Game with 3 Patti Happy Club | Join the 3 Patti Happy Club and dive into the thrilling world of Teen Patti! With a seamless user... | 0 | 2024-07-11T08:52:12 | https://dev.to/chris_gyle_e76ab76b616368/experience-the-ultimate-card-game-with-3-patti-happy-club-3kh4 |

Join the 3 Patti Happy Club and dive into the thrilling world of Teen Patti! With a seamless user interface and fair gameplay, this platform is perfect for all card game enthusiasts. Enjoy exciting matches, connect with fellow players, and potentially earn real money. Don't miss out on this exceptional gaming experien... | chris_gyle_e76ab76b616368 | |

1,919,461 | Mobile Live Streaming Revolutionizes Election Coverage: TVU Networks Leads the Charge | Remember when election night meant huddling around the TV, waiting for updates from reporters... | 0 | 2024-07-11T08:52:32 | https://dev.to/russel_bill_143504f552b74/mobile-live-streaming-revolutionizes-election-coverage-tvu-networks-leads-the-charge-gn3 | Remember when election night meant huddling around the TV, waiting for updates from reporters stationed at key locations? Well, those days are long gone. The rise of mobile live streaming technology, spearheaded by innovators like TVU Networks, has completely transformed how we cover and consume election news.

Take th... | russel_bill_143504f552b74 | |

1,919,462 | Customer Relationship Management (CRM) Market: Global Industry Analysis Report | Customer Relationship Management Market Size is Valued at USD 64.36 Billion in 2023, and is Projected... | 0 | 2024-07-11T08:52:40 | https://dev.to/chavi_tardeja/customer-relationship-management-crm-market-global-industry-analysis-report-4hj9 |

The customer relationship management market was valued at USD 64.36 billion in 2023 and is projected to reach USD 173.54 billion by 2032, growing at a CAGR of 13.20% from 2024 to 2032.

A technology-driven approach called customer relationship management, or CRM, assists companies in managing their relationships with bot... | chavi_tardeja | |

1,919,464 | Simplifying Data Access in Laravel with the Repository Pattern | In software development, maintaining clean and manageable code is essential. One way to achieve this... | 0 | 2024-07-11T08:52:57 | https://dev.to/naveen_dev/simplifying-data-access-in-laravel-with-the-repository-pattern-2e5i | In software development, maintaining clean and manageable code is essential. One way to achieve this in Laravel is by using the Repository Pattern. This pattern allows you to separate the data access logic from the business logic, making your code more modular, testable, and maintainable. In this blog post, we’ll explo... | naveen_dev | |

1,919,466 | Understanding the CSS Box Model: A Comprehensive Guide | The CSS Box Model is a fundamental concept in web design and development, crucial for understanding... | 0 | 2024-07-11T18:47:03 | https://dev.to/mdhassanpatwary/understanding-the-css-box-model-a-comprehensive-guide-5b94 | website, css, webdev, learning |

The CSS Box Model is a fundamental concept in web design and development, crucial for understanding how elements are displayed and how they interact with one another on a web page. This article will provide an in-depth look at the CSS Box Model, explaining its components and how to manipulate them to create visually a... | mdhassanpatwary |

1,919,467 | Finding a Mobile App Development Company in New York: What to Expect | People in modern society can only imagine their lives by using different mobile applications to... | 0 | 2024-07-11T08:56:14 | https://dev.to/davidblair/finding-a-mobile-app-development-company-in-new-york-what-to-expect-3344 | javascript, mobileapp, appdevelopment, appnewyork |

People in modern society can hardly imagine their lives without mobile applications, which they also use to interact with their counterparts in the business world. Apps help firms drive traffic to their website, i... | davidblair |

1,919,468 | Expedite IT Presents Automated Gate Barrier as well as Boom Barrier Systems in Riyadh, Jeddah and across Saudi Arabia | In the bustling cities of Riyadh and Jeddah, and throughout Saudi Arabia, the need for modern... | 0 | 2024-07-11T08:56:35 | https://dev.to/aafiya_69fc1bb0667f65d8d8/expedite-it-present-automated-gate-barrier-as-well-as-boom-barrier-systems-in-riyadh-jeddah-and-across-the-saudi-arabia-59p2 | gatebarrier, boombarrier, technology, software | In the bustling cities of Riyadh and Jeddah, and throughout Saudi Arabia, the need for modern security systems has never been greater. Expedite IT emerges as a major player by offering the most modern Automatic Gate Barrier and [Boom Barrier Systems](https://www.expediteiot.com/the-gate-barrier-system-in-ksa-qatar... | aafiya_69fc1bb0667f65d8d8 |

1,919,469 | Tech new babies 😅. | The logic behind authorization with jsonwebtoken __is insane . Love the understanding about how... | 0 | 2024-07-11T08:56:58 | https://dev.to/peter_itumo_0eec0ea32b842/tech-new-babies--104e |

- The logic behind authorization with jsonwebtoken is insane. I love understanding how servers remember clients 😎😎😎 | peter_itumo_0eec0ea32b842 | |

1,919,470 | Cloud Computing | Cloud computing is the on-demand delivery of IT resources such as storage, networking resources and... | 0 | 2024-07-11T08:57:20 | https://dev.to/emmanuel_adzitay_875787c/cloud-computing-4elf | Cloud computing is the on-demand delivery of IT resources such as storage, networking resources and computing resources over the Internet with pay-as-you-go pricing. Instead of buying, owning, and maintaining physical data centers and servers, you can access technology services, such as computing power, storage, and da... | emmanuel_adzitay_875787c | |

1,919,472 | How do I choose the right Dymo label for my needs? | How to Choose the Right Dymo Label for Your Needs Choosing the right Dymo label depends on various... | 0 | 2024-07-11T09:00:47 | https://dev.to/john10114433/how-do-i-choose-the-right-dymo-label-for-my-needs-3ig9 | How to Choose the Right Dymo Label for Your Needs

Choosing the right Dymo label depends on various factors, including the type of labeling you need, the environment in which the labels will be used, and the specific features you require. Here’s a guide to help you select the most appropriate Dymo label for your needs:

... | john10114433 | |

1,919,473 | Amalitech Assignment | What is cloud computing? Cloud computing is like renting a powerful computer over the internet.... | 0 | 2024-07-11T09:02:14 | https://dev.to/richmond_ofori_32d3982e66/amalitech-assignment-36om | What is cloud computing?

Cloud computing is like renting a powerful computer over the internet. Instead of buying and maintaining your own hardware and software, you can use someone else's through the internet. It's like having a super-computer at your fingertips, without actually owning one.

What are the benefits of... | richmond_ofori_32d3982e66 | |

1,919,474 | Unveiling the Future: AI-Powered Insights into XCRUSH.AI | In the dynamic landscape of modern technology, the convergence of artificial intelligence and data... | 0 | 2024-07-11T09:03:15 | https://dev.to/peterjohnson427/unveiling-the-future-ai-powered-insights-into-xcrushai-3ci7 | ai | In the dynamic landscape of modern technology, the convergence of artificial intelligence and data analytics has sparked a revolution across industries. Among the vanguards of this movement is [XCRUSH.AI](https://xcrush.ai/), a groundbreaking platform that stands at the intersection of innovation and practical applicat... | peterjohnson427 |

1,919,475 | Can Dymo labels be used on different surfaces? | Yes, Dymo labels can be used on a variety of surfaces, but the effectiveness and adhesion of the... | 0 | 2024-07-11T09:03:40 | https://dev.to/john10114433/can-dymo-labels-be-used-on-different-surfaces-3a3e | Yes, Dymo labels can be used on a variety of surfaces, but the effectiveness and adhesion of the labels depend on the type of label and the surface they are applied to. Here’s a guide on how different Dymo labels perform on various surfaces:

[dymo 30252 labels](https://betckey.com/collections/hot-sale/products/dymo-302... | john10114433 | |

1,919,476 | 081219237435 Service PABX Depok | [Service PABX Depok](Rislatel PABX System provides service and solutions for communication equipment at... | 0 | 2024-07-11T09:07:41 | https://dev.to/slamet_prihatin_9925aa0c3/081219237435-service-pabx-depok-5gni | servicepabx, settingpabxdepok, jasapemasanganpabxdepok, teknisipabxdepok | [Service PABX Depok](https://rislatelpabx.com/service-pabx-depok/): Rislatel PABX System provides service and solutions for the communication equipment at your location.

With reliable, professional technicians specializing in PABX systems.

CONTACT US NOW BY PHONE OR WHATSAPP

https://wa.me/6281219237435

https://rislatelpabx.com/ser... | slamet_prihatin_9925aa0c3 |

1,919,477 | RivieraDev 2024 : We were here | Departure from Bordeaux at 8:28 a.m., arrival in Antibes at 5:30 p.m. Besides giving us plenty of time to... | 0 | 2024-07-11T10:52:31 | https://dev.to/onepoint/rivieradev-2024-we-were-here-131a | rivieradev, techtalks, conference, onepoint | Departure from Bordeaux at 8:28 a.m., arrival in Antibes at 5:30 p.m.

Besides giving us plenty of time to rehearse our talks one last time, taking the train leaves us plenty of time to ad... | jtama |

1,919,479 | Getting Started with Rust | Rust is a very young and very modern language. It helps you write faster, more reliable software. The... | 28,032 | 2024-07-11T09:10:04 | https://dev.to/danielmwandiki/getting-started-with-rust-2c3l | learning, rust, devops | Rust is a very young and very modern language. It helps you write faster, more reliable software. The goal of Rust is to enable highly concurrent, safe, and performant systems.

## Installation

As a first step, we will download Rust through `rustup`, a command-line tool for managing Rust versions and associated tools.

#... | danielmwandiki |

1,919,480 | 081219237435 Service PABX Bogor | Service PABX Bogor, Rislatel PABX System provides service and solutions for communication equipment at... | 0 | 2024-07-11T09:12:50 | https://dev.to/slamet_prihatin_9925aa0c3/081219237435-service-pabx-bogor-1n0l | servicepabxbogor, jasa, settingpabxbogor, jasapemasanganpabxbogor | **[Service PABX Bogor](https://rislatelpabx.com/service-pabx-bogor/)**, Rislatel PABX System provides service and solutions for the communication equipment at your location.

With reliable, professional technicians specializing in PABX systems.

CONTACT US NOW BY PHONE OR WHATSAPP

https://wa.me/6281219237435

Installation service for PABX Pan... | slamet_prihatin_9925aa0c3 |

1,919,481 | How to create an animated input field with Tailwind CSS | Today we are going to create an animated input field with Tailwind CSS. Why animated input... | 0 | 2024-07-11T09:13:32 | https://dev.to/mike_andreuzza/how-to-create-an-animated-input-field-with-tailwind-css-ndb | tailwindcss, tutorial | Today we are going to create an animated input field with Tailwind CSS.

**Why animated input fields?**

Well, you might be wondering why we would want to create an animated input field. There are a few reasons why you might want to do this:

- To add a touch of interactivity to your website.

- To make your input fields... | mike_andreuzza |

1,919,482 | Can AI Be Your New Doctor? Thrive AI Health Aims to Coach You to Wellness | AI is whispering sweet nothings in healthcare’s ear. Tech titans are enthralled by its potential,... | 0 | 2024-07-11T09:13:45 | https://dev.to/hyscaler/can-ai-be-your-new-doctor-thrive-ai-health-aims-to-coach-you-to-wellness-55c6 | AI is whispering sweet nothings in healthcare’s ear. Tech titans are enthralled by its potential, particularly AI-powered chatbots that can understand and address individual health concerns. OpenAI and wellness guru Arianna Huffington are betting big, co-funding the development of an “AI health coach” through Thrive AI... | amulyakumar | |

1,919,485 | 081219237435 Service PABX Serpong | Service PABX Serpong, Rislatel PABX System provides service and solutions for communication equipment at... | 0 | 2024-07-11T09:18:44 | https://dev.to/slamet_prihatin_9925aa0c3/081219237435-service-pabx-serpong-1c60 | servicepabxserpong, settingpabxserpong, pemasanganpabxserpong, teknisipabxserpong | **[Service PABX Serpong](https://rislatelpabx.com/service-pabx-serpong/)**, Rislatel PABX System provides service and solutions for the communication equipment at your location.

With reliable, professional technicians specializing in PABX systems.

CONTACT US NOW BY PHONE OR WHATSAPP

https://wa.me/6281219237435

![Image description]... | slamet_prihatin_9925aa0c3 |

1,919,486 | Implementing Agile Methodology for Efficient Software Development: Best Practices and Benefits | Agile Methodology in Software development has emerged as a game-changer, enabling teams to deliver... | 0 | 2024-07-11T09:18:54 | https://dev.to/maysanders/implementing-agile-methodology-for-efficient-software-development-best-practices-and-benefits-45fa | Agile Methodology in Software development has emerged as a game-changer, enabling teams to deliver high-quality products rapidly while adapting to changing requirements. This blog explores the best practices for implementing Agile Methodology and the benefits it brings to [custom software development services](h... | maysanders | |

1,919,505 | 081219237435 Service PABX Cibubur | Service PABX Cibubur - Rislatel PABX System provides service and solutions for the communication equipment at... | 0 | 2024-07-11T09:21:20 | https://dev.to/slamet_prihatin_9925aa0c3/081219237435-service-pabx-cibubur-2m0l | servicepabxcibubur, settingpabxcibubur, pemasanganpabxcibubur, teknisipabxcibubur | **[Service PABX Cibubur](https://rislatelpabx.com/service-pabx-cibubur/)** - Rislatel PABX System provides service and solutions for the communication equipment at your premises.

With reliable and professional technicians in the PABX SYSTEM field

CONTACT US NOW BY PHONE OR WHATSAPP

https://wa.me/6281219237435

Installation services for Pab... | slamet_prihatin_9925aa0c3 |

1,919,506 | How Structural Engineers Ensure Safety with Innovative Approaches | Structural engineers are crucial to ensuring the safety of structures and infrastructure in the... | 0 | 2024-07-11T09:25:45 | https://dev.to/ricardoperry2024/how-structural-engineers-ensure-safety-with-innovative-approaches-7ag | Structural engineers are crucial to ensuring the safety of structures and infrastructure in the construction and building industry. Their primary responsibilities include designing and constructing building structures, selecting suitable building materials, working closely with engineers and construction teams, and reviewin... | ricardoperry2024 | |

1,919,507 | The Impact of Artificial Intelligence on Everyday Life | Artificial Intelligence (AI) has become a buzzword in today's tech landscape. Its applications are... | 0 | 2024-07-11T09:23:31 | https://dev.to/codewithsom/the-impact-of-artificial-intelligence-on-everyday-life-56d2 | ai, machinelearning, web3, techtalks | Artificial Intelligence (AI) has become a buzzword in today's tech landscape. Its applications are vast, and it is transforming various aspects of our daily lives. Here's a straightforward guide to understanding how AI is impacting our world.

#### What is Artificial Intelligence?

Artificial Intelligence is a branch o... | codewithsom |

1,919,508 | Ensuring Safety: Attendance Tracking in School Bus Monitoring Across the Emirates of the UAE | Attendance tracking in school bus monitoring systems plays a crucial role in ensuring the safety and... | 0 | 2024-07-11T09:23:46 | https://dev.to/aafiya_69fc1bb0667f65d8d8/ensuring-safety-attendance-tracking-in-school-bus-monitoring-across-the-emirates-of-the-uae-491a | schoolbuscamera, schoolbusmonitoring, technology, tracking | [Attendance tracking](https://tektronixllc.ae/school-bus-fleet-management/) in school bus monitoring systems plays a crucial role in ensuring the safety and security of students. In cities like Dubai, Abu Dhabi, and across the UAE, school bus monitoring systems are equipped with advanced technology to track attendance, mon... | aafiya_69fc1bb0667f65d8d8 |

1,919,509 | IEC 61850 | Applied Systems Engineering (ASE), a Kalkitech firm, provides solutions for all major protocols, including IEC 61850,... | 0 | 2024-07-11T09:25:07 | https://dev.to/asesystem/iec-61850-ifa | Applied Systems Engineering (ASE), a Kalkitech firm, provides solutions for all major protocols, including IEC 61850, DNP3, IEC 60870-5-104, and more than 80 other standard and legacy protocols. **[IEC-61850](https://www.ase-systems.com/products/ase61850-suite/)** operations test, development, certification, and management system... | asesystem | |

1,919,510 | FINQ's weekly market insights: Peaks and valleys in the S&P 500 – July 11, 2024 | Unveil this week's market dynamics, spotlighting the S&P 500's leaders and laggards with FINQ's... | 0 | 2024-07-11T13:47:59 | https://dev.to/eldadtamir/finqs-weekly-market-insights-peaks-and-valleys-in-the-sp-500-july-11-2024-16d2 | ai, stockmarket, sp500, investing | Unveil this week's market dynamics, spotlighting the S&P 500's leaders and laggards with FINQ's precise AI analysis.

## **Top achievers:**

- **Amazon (AMZN)**: Continues to hold the top spot with strong scores.

- **Salesforce (CRM)**: Holds strong in second place.

- **Alphabet Inc (GOOGL)**: Steady in the third spot w... | eldadtamir |

1,919,511 | a | a<script type="text/javascript"> ... | 0 | 2024-07-11T09:33:50 | https://dev.to/annie_2a1a5b530152d233c26/a-g3g | a`<script type="text/javascript">

console.log("OKKKK");

</script>`

`

```

<script type="text/javascript">

console.log("OKKKK");

</script>

```

` | annie_2a1a5b530152d233c26 | |

1,919,519 | Exploring ITGC Controls in Application, OS, and Database. | In today’s interconnected world, securing the application, operating system (OS), and database (DB)... | 0 | 2024-07-12T10:58:53 | https://dev.to/rieesteves/exploring-itgc-controls-in-application-os-and-database-2gpp | itgc, database, api, controls | In today’s interconnected world, securing the application, operating system (OS), and database (DB) layers isn’t just prudent—it’s essential.

In the previous blog, we covered the basics of ITGC and its basic controls. Now, let us understand the critical connections by exploring ITGC controls in OS, Applicat... | rieesteves |

1,919,512 | Over The Counter Analgesics Market: Pricing Strategies and Profit Margins | The Global Over The Counter Analgesics Market size is expected to be worth around USD 44.03 Billion... | 0 | 2024-07-11T09:34:16 | https://dev.to/amelie_jardine_c595bf6d77/over-the-counter-analgesics-market-pricing-strategies-and-profit-margins-4c0c | market, trends, growth, marketresearch | The Global Over The Counter Analgesics Market size is expected to be worth around USD 44.03 Billion by 2033 from USD 29.74 Billion in 2023, growing at a CAGR of 4.0% during the forecast period from 2024 to 2033.

Click here for more information:

(https://market.us/report/over-the-counter-analgesics-market/)

are two common optio... | dunlop_marshall_57735193b | |

1,919,520 | Batman Comics with pure CSS | I really can't say that I'm a big fan of TailwindCSS, because I don't like decorating my HTML with... | 0 | 2024-07-11T09:46:39 | https://kiko.io/notes/2024/Batman-Comics-with-pure-CSS/ | css, batman, comic | I really can't say that I'm a big fan of TailwindCSS, because I don't like decorating my HTML with dozens of predefined classes instead of implementing a meaningful class directly in my own CSS code.

However, [Alvaro Montoro](https://front-end.social/@alvaromontoro) shows how you can use predefined classes in a meanin... | kristofzerbe |

1,919,521 | Web Crawling Service Provider - Enterprise Web Crawling Service | iWeb Scraping provides enterprise web crawling services as one of the best web crawler and crawling... | 0 | 2024-07-11T09:47:15 | https://dev.to/iwebscraping/web-crawling-service-provider-enterprise-web-crawling-service-24c0 | webcrawlingserviceprovider, enterprisewebcrawlingservice | iWeb Scraping provides [enterprise web crawling services](https://www.iwebscraping.com/web-crawling-services.php) as one of the best web crawling service providers in the USA, UK, Australia, Canada, UAE, and Singapore. | iwebscraping |

1,919,522 | 8 fun Linux utilities | We've put together a few fun Linux utilities; try them out for yourself. Read on and you’ll get... | 0 | 2024-07-11T10:19:09 | https://dev.to/ispmanager/8-fun-linux-utilities-48i0 | fun, linux, ubuntu, utilities | We've put together a few fun Linux utilities; try them out for yourself.

Read on and you’ll get greeted by a cow in your console, you’ll become Neo and dive into the matrix, and your console will light up in flames.

## cmatrix

The matrix, straight out of the eponymous movie, will appear in your terminal. To install ... | ispmanager_com |

1,919,523 | Discovering the Best Chicken Sandwiches in Lake Charles, Louisiana | Lake Charles, Louisiana, is a vibrant city known for its rich culture, beautiful... | 0 | 2024-07-11T09:50:11 | https://dev.to/chickensandwich57/discovering-the-best-chicken-sandwiches-in-lake-charles-louisiana-24jj | Lake Charles, [Louisiana](https://blazinhotchicken.com/chicken-sandwich-lake-charles/), is a vibrant city known for its rich culture, beautiful views, and mouth-watering cuisine. One of the standout items in its culinary repertoire is the chicken sandwich. Together w... | chickensandwich57 | |

1,919,524 | Exploring Career Prospects: Where Are the "Stars and Sea" of the Web3 Industry? | 💼 Finding it tough to land a job in Web3? Traditional recruitment too slow? The 6th #TinTinJobFair... | 0 | 2024-07-11T09:50:33 | https://dev.to/ourtintinland/exploring-career-prospects-where-are-the-stars-and-sea-of-the-web3-industry-3fcp | webdev | 💼 Finding it tough to land a job in Web3? Traditional recruitment too slow? The 6th #TinTinJobFair online job fair is here to solve your troubles and bring your career dreams within reach!

🔥Exploring Career Prospects: Where Are the "Stars and Sea" of the Web3 Industry?

🏵️ Date: July 16th, Tuesday, 20:00 UTC+8

🪐Gues... | ourtintinland |

1,919,525 | Discover the latest styles in sweatshirts for toddlers, boys, and girls | Stylish Sweatshirts for Toddlers Our sweatshirts for toddlers are designed with both comfort and... | 0 | 2024-07-11T09:50:41 | https://dev.to/cadeaubaby/discover-the-latest-styles-in-sweatshirts-for-toddlers-boys-and-girls-3267 | Stylish Sweatshirts for Toddlers

Our [sweatshirts for toddlers](https://cadeaubaby.com/collections/7-pcs-take-me-home-set) are designed with both comfort and style in mind. Made from soft, high-quality materials, they provide the perfect combination of warmth and flexibility for active little ones. With a range of ador... | cadeaubaby | |

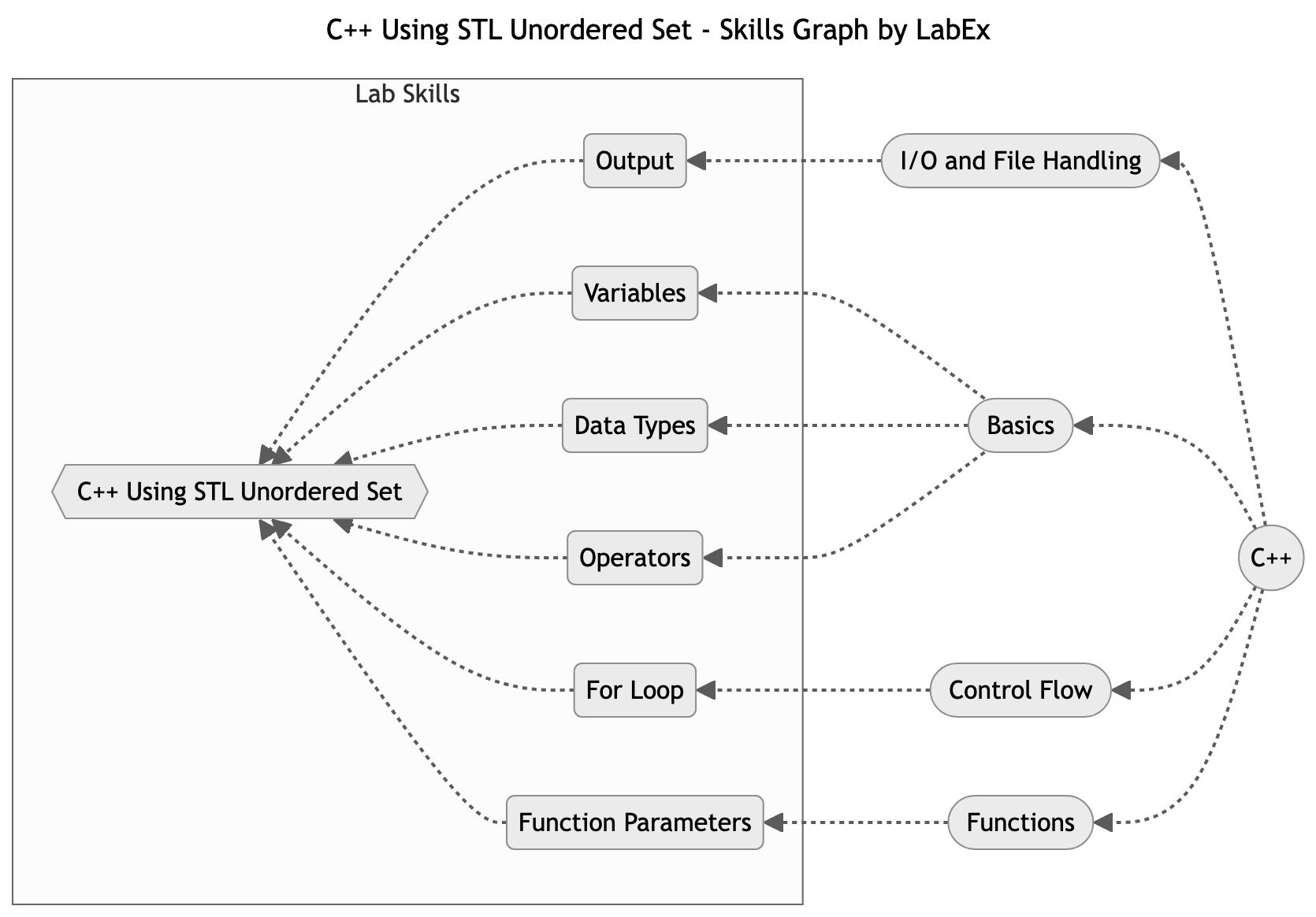

1,919,526 | Mastering C++ Unordered Sets with STL | In this lab, you will learn how to implement and use std::unordered_set in C++. A set is used to store unique values of a list and sort them automatically. An unordered set is similar to a set, except it does not sort the elements and keeps them in an unspecified, hash-dependent order. It also automatically removes any duplicated elements. | 27,769 | 2024-07-11T09:51:10 | https://dev.to/labex/mastering-c-unordered-sets-with-stl-58i7 | coding, programming, tutorial |

## Introduction

This article covers the following tech skills:

In [this lab](https://labex.io/tutorials/cpp-c-using-stl-unordered-set-96234), you will learn how to implement and use `std::unordered_set` in C++. A set is used to s... | labby |
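A minimal sketch of the `std::unordered_set` behavior described above, assuming C++11 or later and `int` elements. The helper names `distinctValues`, `addIfNew`, and `contains` are hypothetical illustrations and do not come from the LabEx lab:

```cpp
#include <unordered_set>
#include <vector>

// Hypothetical helper: collect the distinct values of a list.
// Constructing from an iterator range inserts every element;
// repeated values are skipped, so only unique values remain.
std::unordered_set<int> distinctValues(const std::vector<int>& values) {
    return std::unordered_set<int>(values.begin(), values.end());
}

// Hypothetical helper: insert() returns a pair<iterator, bool>;
// the bool is false when the value was already present.
bool addIfNew(std::unordered_set<int>& set, int value) {
    return set.insert(value).second;
}

// Hypothetical helper: count() is 1 if the value is stored and 0 otherwise,
// because an unordered_set never holds the same key twice.
bool contains(const std::unordered_set<int>& set, int value) {
    return set.count(value) == 1;
}
```

For example, `distinctValues({5, 3, 5, 1, 3, 9})` holds four elements. Iterating over it yields them in an unspecified order that depends on the hash function and bucket count, so code should not rely on that order; `std::set` is the choice when sorted output matters.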

1,919,527 | Discover Top-Notch Car Cleaning Services Near You | When it comes to keeping your car in pristine condition, finding a reliable and high-quality car... | 0 | 2024-07-11T09:52:16 | https://dev.to/5kcarcare_5kcarcare_58288/discover-top-notch-car-cleaning-services-near-you-3o7f | carcleaningnearme, 5kcarcarechennai, carcareservice, tefloncoatingforcar | When it comes to keeping your car in pristine condition, finding a reliable and high-quality car cleaning service near you is essential. For residents in Chennai, 5K Car Care stands out as a premier choice for comprehensive **["car care services"](https://www.5kcarcare.com/special-treatment.php)**. From standard car wa... | 5kcarcare_5kcarcare_58288 |

1,919,528 | Comprehensive Guide to Amazon Rekognition: Features, Benefits, Use Cases, and Alternatives | This guide will delve into the core features of Amazon Rekognition, explore the myriad benefits it... | 0 | 2024-07-11T09:52:55 | https://dev.to/luxandcloud/comprehensive-guide-to-amazon-rekognition-features-benefits-use-cases-and-alternatives-4pop | aws, ai, machinelearning, python | This guide will delve into the core features of Amazon Rekognition, explore the myriad benefits it offers, and highlight real-world use cases across different industries. Additionally, we will compare Rekognition with alternatives like Luxand.cloud, providing insights into why businesses might choose one over the other... | luxandcloud |

1,919,529 | The ban on online casinos in Russia | Regulation of gambling in Russia The operation of online casinos in Russia has been strictly... | 0 | 2024-07-11T09:53:07 | https://dev.to/nevst/the-ban-on-online-casinos-in-russia-2i60 | **Regulation of gambling in Russia**

The operation of [online casinos](https://casino-top24.info) in Russia has been strictly prohibited for years, a stance rooted in both cultural and regulatory foundations. This article delves into the reasons behind the ban, the specific regulations that enforce it, the legislative ... | nevst | |

1,919,530 | How do I complain on Shopsy?-8167..845548.. | Toll Free:⑧①⑥⑦⑧-④⑤⑤④⑧ Online complain, 24/7) ,081678-45548 ..( shopsy complaint customer service... | 0 | 2024-07-11T09:54:00 | https://dev.to/abdul_ansari_4df023500c2d/how-do-i-complain-on-shopsy-8167845548-3c1g | javascript, beginners, programming, webdev | Toll Free:⑧①⑥⑦⑧-④⑤⑤④⑧ Online complain, 24/7) ,081678-45548 ..( shopsy complaint customer service /credit card/ report Transaction),1800-258-6161 (Report . | abdul_ansari_4df023500c2d |

1,919,531 | Three Prompt Libraries you should know as an AI Engineer | As developers, we write code to develop logic that eventually helps solve larger problems or automate... | 0 | 2024-07-11T09:54:15 | https://dev.to/portkey/three-prompt-libraries-you-should-know-as-a-ai-engineer-32m8 | generativeai, prompting, webdev, beginners | As developers, we write code to develop logic that eventually helps solve larger problems or automate a workflow that is unproductive for humans.

When LLMs came into the picture, prompting naturally became popular. Prompt engineering became an art!

Prompting became one of the key components in Generative AI and so the use... | vrv |

1,919,532 | True Teamwork in Software Development | I'm a software developer by passion. I've had the privilege of leading development teams over my... | 0 | 2024-07-11T09:54:57 | https://dev.to/martinbaun/true-teamwork-in-software-development-1ebd | devops, productivity, career, development | I'm a software developer by passion. I've had the privilege of leading development teams over my decade-plus career. Everyone tends to focus on the coding, technical aspects, and building software to be proud of. All these are vital but can be optimized with proper teamwork and collaboration. Experience has taught me h... | martinbaun |

1,919,533 | How do I complain on Shopsy?-8167..845548. | Toll Free:⑧①⑥⑦⑧-④⑤⑤④⑧ Online complain, 24/7) ,081678-45548 ..( shopsy complaint customer service... | 0 | 2024-07-11T09:55:04 | https://dev.to/abdul_ansari_4df023500c2d/how-do-i-complain-on-shopsy-8167845548-5ajn | react, python, productivity | Toll Free:⑧①⑥⑦⑧-④⑤⑤④⑧ Online complain, 24/7) ,081678-45548 ..( shopsy complaint customer service /credit card/ report Transaction),1800-258-6161 (Report . | abdul_ansari_4df023500c2d |

1,919,536 | Emerging Trends in Mobile App Development for 2024 | The world of mobile app development is a hive of interesting new trends as we enter 2024. Keeping... | 0 | 2024-07-11T09:59:03 | https://dev.to/dignizant_technologies/emerging-trends-in-mobile-app-development-for-2024-4m3o | mobileappdevelopment, mobileappdevelopmenttrends, hiremobiledevelopers, trendingtechnologies | The world of mobile app development is a hive of interesting new trends as we enter 2024. Keeping abreast of the most recent developments may be transformative for anyone interested in technology, be they a developer, business owner, or just someone who enjoys tech. Innovative updates are expected this year, which shou... | dignizant_technologies |

1,919,537 | What is MUI? (Including Pros and Cons) | If you are a developer but you want to add designs and animations to your app, then you should use... | 0 | 2024-07-11T10:02:29 | https://medium.com/@shariq.ahmed525/what-is-mui-including-pros-and-cons-9f5da1933320 | design, css, animation | If you are a developer but you want to add designs and animations to your app, then you should use MUI or Material UI.

Why? It’s a powerful React UI framework that implements a design language, Material Design, which Google introduced in 2014. And it’s not just a basic design app. It has a lot of designs, anima... | shariqahmed525 |

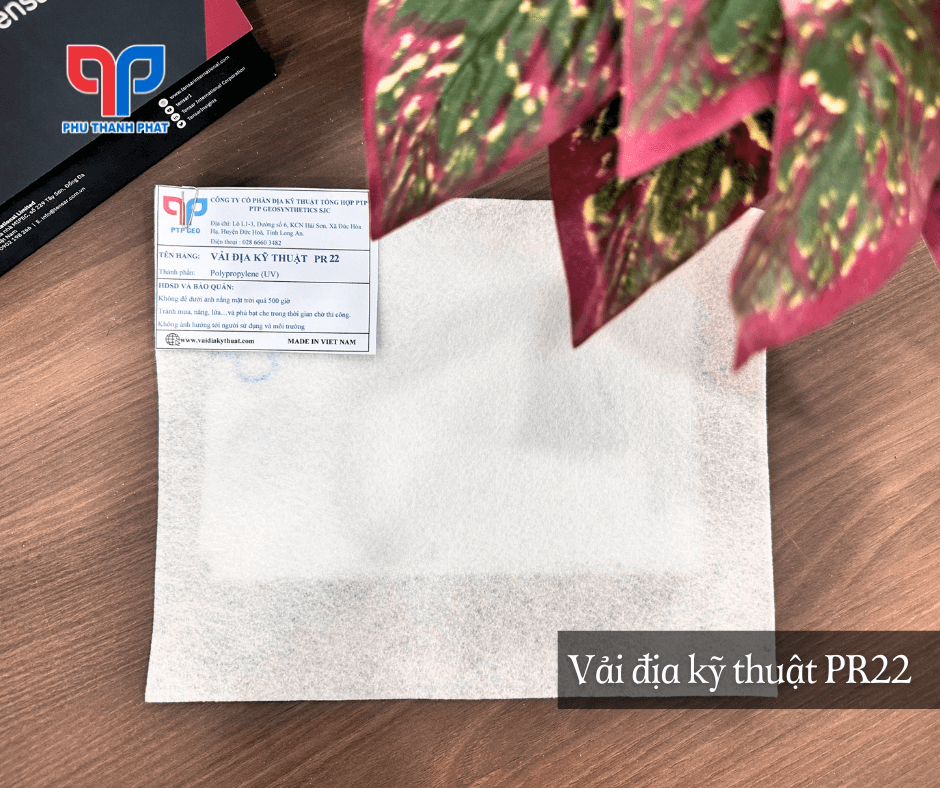

1,919,540 | PR Geotextile Fabric | PR non-woven geotextile is a geotextile product that comes in a wide variety of types. It is used in... | 0 | 2024-07-11T10:04:45 | https://dev.to/vaidiapr/vai-dia-ky-thuat-pr-554l | vaidiapr, vaidiakythuat, vaidiaptp, vaidiakythuatpr |

PR non-woven geotextile is a geotextile product that comes in a wide variety of types. It is used in many construction projects and meets a range of different technical requirements. PR geotextiles are not only suppl... | vaidiapr |

1,919,541 | Dr Sanket Ekhande | Dr Sanket Ekhande, the Best Plastic Surgeon in Vashi, Navi Mumbai offers unparalleled expertise at... | 0 | 2024-07-11T10:07:30 | https://dev.to/dr_sanketekhande_f36bd90/dr-sanket-ekhande-3ahd | plasticsurgeon, cosmeticsurgeon, healthcare, javascript |

**Dr Sanket Ekhande**, the [Best Plastic Surgeon in Vashi, Navi Mumbai](https://www.drsanketekhande.com/plastic-surgeon-at-fortis-hiranandani-hospital-vashi.php) offers unparalleled expertise at Fortis Hiranandani H... | dr_sanketekhande_f36bd90 |

1,919,542 | Dietary Fibers Market: Booming Regional Demand and Market Opportunities | The global dietary fibers market is poised for significant growth, projected to increase from... | 0 | 2024-07-11T10:08:00 | https://dev.to/swara_353df25d291824ff9ee/dietary-fibers-market-booming-regional-demand-and-market-opportunities-55l7 |

The global [dietary fibers market](https://www.persistencemarketresearch.com/market-research/dietary-fibers-market.asp) is poised for significant growth, projected to increase from US$7.27 billion in 2022 to US$14.9... | swara_353df25d291824ff9ee | |

1,919,543 | How to Easily Import Large SQL Database Files into MySQL Using Command Line | Importing large SQL database files into MySQL can seem daunting, but it's actually quite... | 0 | 2024-07-11T10:13:06 | https://dev.to/haseebmirza/how-to-easily-import-large-sql-database-files-into-mysql-using-command-line-4ddg | database, mysql, sql, productivity | Importing large SQL database files into MySQL can seem daunting, but it's actually quite straightforward with the right command. In this post, we'll walk you through the process step by step.

## Step-by-Step Guide to Importing a Large SQL Database File into MySQL:

**1. Open Command Prompt**

Open your Command Prompt. ... | haseebmirza |

1,919,544 | SM Togel: A Comprehensive Guide | Togel, short for Toto Gelap, is a number-based lottery game that originated in Indonesia.... | 0 | 2024-07-11T10:08:44 | https://dev.to/euroaccessibility/sm-togel-panduan-komprehensif-3p2p |

Togel, short for Toto Gelap, is a number-based lottery game that originated in Indonesia. It is a game of chance in which players predict the numbers that will come up in the draw. The game's simplicity and the thrill of winning big make it a favorite pastime for many people.

Cara Ke... | euroaccessibility | |

1,919,546 | Elevate Your Blogging Experience Powerful Features | My friend built this platform: check it out 🌟 Join Softcodeon: Your Ultimate Blogging... | 0 | 2024-07-11T10:09:30 | https://dev.to/muhammadaliaffan/elevate-your-blogging-experience-powerful-features-2lm9 | webdev, javascript, beginners, tutorial | ## My friend built this platform: check it out

🌟 Join Softcodeon: Your Ultimate Blogging and Community Platform! 🌟

Are you ready to take your blogging journey to the next level? Softcodeon offers easy access and a feature-rich experience designed to empower and inspire you.

## ✨ Why Choose Softcodeon?

📝 Co... | muhammadaliaffan |

1,919,549 | Travel API Provider | Are you a travel agency or DMC searching for a travel API provider to set up an online travel booking... | 0 | 2024-07-11T10:12:34 | https://dev.to/mamata_padhi_e40729f8f253/travel-api-provider-1kil | Are you a travel agency or DMC searching for a travel API provider to set up an online travel booking platform? Travel e-commerce has grown severalfold over the last decade, and 55% of all travel bookings are now made through IBEs and OTAs. Travel APIs are a set of XML web services that provide access to the airline ... | mamata_padhi_e40729f8f253 | |

1,919,550 | Mastering HTML Tables: A Comprehensive Guide by techwalebnde | A post by Techwalebnde | 0 | 2024-07-11T10:12:57 | https://dev.to/techwalebnde999/mastering-html-tables-a-comprehensive-guide-by-techwalebnde-5dnj | techwalebnde999 | ||

1,919,551 | I Ate My Code! | The Strange Rituals Behind My Programming Success🚀 The Step You’re Missing Before... | 0 | 2024-07-11T10:13:47 | https://dev.to/thecodingcutie/i-ate-my-code-3non | webdev, javascript, programming, react | ## The Strange Rituals Behind My Programming Success🚀

### The Step You’re Missing Before You Start Coding!⭐

In the rush to write code and bring ideas to life, developers often overlook a crucial step: **drawing** the _flow of the application_ or code before diving into the actual coding. This blog post explores why t... | thecodingcutie |

1,919,552 | QA myth busting: Quality can be measured | Let’s bust some QA myths. First myth: Quality can be measured. Everyone wants to measure quality,... | 28,033 | 2024-07-11T10:14:50 | https://qase.io/blog/qa-myth-busting-quality-can-be-measured/ | Let’s bust some QA myths.

First myth: Quality can be measured.

Everyone wants to measure quality, but the idea that quality can be definitively measured is a myth.

Imagine trying to measure the quality of a family road trip. What would make the road trip an indisputable success for the entire family? You’ll have ... | sharovatov | |

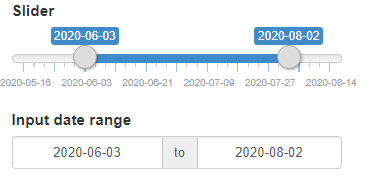

1,919,553 | How can I create a date range slider in Python Tkinter, as shown in the attached image? | A post by mogeeb qaid | 0 | 2024-07-11T10:15:57 | https://dev.to/mogeeb_qaid_5c1c19f61d0b7/how-can-i-create-a-date-range-slider-in-python-tkinter-as-shown-in-the-attached-image-20km | python, tkinter, ctk, customtkinter |

| mogeeb_qaid_5c1c19f61d0b7 |

1,919,554 | Endoscopic Submucosal Dissection Market Key Applications and Future Demand | Endoscopic Submucosal Dissection Market Outlook The global market for endoscopic submucosal... | 0 | 2024-07-11T10:15:58 | https://dev.to/ganesh_dukare_34ce028bb7b/endoscopic-submucosal-dissection-market-key-applications-and-future-demand-47j3 | Endoscopic Submucosal Dissection Market Outlook

The global market for endoscopic submucosal dissection reached a valuation of US$ 245.9 million by the end of 2022, with a projected compound annual growth rate (CAGR) of 7.2% over the next decade, indicating robust market expansion. According to Persistence Market Resea... | ganesh_dukare_34ce028bb7b | |

1,919,564 | Simplifying EU Customs for Developers with CFSP | For developers working in logistics, trade, or e-commerce within the EU, understanding Customs... | 0 | 2024-07-11T10:26:43 | https://dev.to/john_hall/simplifying-eu-customs-for-developers-with-cfsp-1hhf | productivity, learning, news, discuss | For developers working in logistics, trade, or e-commerce within the EU, understanding Customs Freight Simplified Procedures (CFSP) can be a game-changer. CFSP allows authorised economic operators ([AEOs](https://www.gov.uk/guidance/authorised-economic-operator-certification)) to clear goods through customs using simpl... | john_hall |

1,919,556 | Automated Energy Management Supports IoT in Energy & Utility Application Market Growth | According to Inkwood Research, the Global IoT in Energy & Utility Application Market is... | 0 | 2024-07-11T10:19:07 | https://dev.to/nidhi_05c663bdf720fe33865/automated-energy-management-supports-iot-in-energy-utility-application-market-growth-283c | utilityapplication, inkwoodreaesrch, marketresearchreport, automatedenergymanagement | **According to Inkwood Research, the Global IoT in Energy & Utility Application Market is anticipated to surge with a CAGR of 10.58% during the forecast period, 2024-2032.**

VIEW TABLE OF CONTENTS: https://inkwoodresearch.com/reports/iot-in-energy-and-utility-application-market/#table-of-contents

IoT has introduced n... | nidhi_05c663bdf720fe33865 |

1,919,557 | Check Result 2024 Online | Result.pk is the best website to check your latest educational results online soon after the announcement of... | 0 | 2024-07-11T10:19:25 | https://dev.to/official22/check-result-2024-online-4af5 | result, pakistan, matricresult, result2024 | [Result.pk](https://www.result.pk/) is the best website to check your latest educational results online soon after class results for the 2024 academic year are announced. Class results for 2024 are updated immediately after the declaration date and time, so students can see their 5th Class, 8th Class, Matric, Inter, Bachelors and ... | official22 |

1,919,561 | Best Uses for Browser Storage Options | 1) Local Storage Local storage provides a way to store key-value pairs in a web browser... | 0 | 2024-07-11T10:20:50 | https://dev.to/a5okol/best-uses-for-browser-storage-options-26p2 | ### 1) **Local Storage**

Local storage provides a way to store key-value pairs in a web browser with no expiration time, meaning the data persists even when the browser is closed and reopened.

##### Best Uses:

- **User Preferences**: Store user settings such as theme preferences, language choices, and layout configurat... | a5okol |
