id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,919,049 | Building My First Static Website | Intro: I first became aware of a company website as an important strategic tool when I took a marketing... | 0 | 2024-07-10T22:59:04 | https://dev.to/mai2aa/building-my-first-static-website-46h9 | beginners, staticwebapps, webdev |

Intro:

I first became aware of a company website as an important strategic tool when I took a marketing course for big corporations like construction firms. One of the ideas that inspired me was overcoming my fear of building a static website, an actual one this time - for Toucsan:-). The initially ver... | mai2aa |

1,919,052 | Picking up coding after a long hiatus | 10 print "David is great! "; 20 goto 10 run... | 0 | 2024-07-10T23:13:32 | https://dev.to/dave_banwell_26fd6e4680c0/picking-up-coding-after-a-long-hiatus-1dmb | webdev, javascript, learning, career | ```

10 print "David is great! ";

20 goto 10

run

```

That momentous 2-line program and simple command were the first things that I ever typed into a computer, in 1980. My grandmother had borrowed a Commodore PET computer for the summer, from the school where she taught and, over that summer, my aunts taught 5-year-old... | dave_banwell_26fd6e4680c0 |

1,919,053 | Semantic Router - Steer LLMs | I wanted to share a project I've been working on called SemRoute, and I would love to get your... | 0 | 2024-07-10T23:15:43 | https://dev.to/hansalshah/semantic-router-steer-llms-1l23 | nlp, openai, rag, python | I wanted to share a project I've been working on called SemRoute, and I would love to get your feedback. SemRoute is a semantic router that uses vector embeddings to route queries based on their semantic meaning. It's designed to be flexible and easy to use, without the need for training classifiers or using large lang... | hansalshah |

1,919,054 | Boost Your Development Efficiency! Simulate S3 with a Custom Amazon S3 Mock Application | 💡Original Japanese post is here. https://zenn.dev/tttol/articles/13032ef69d8333 ... | 0 | 2024-07-10T23:23:16 | https://dev.to/aws-builders/boost-your-development-efficiency-simulate-s3-with-a-custom-amazon-s3-mock-application-19ah | > 💡Original Japanese post is here.

> https://zenn.dev/tttol/articles/13032ef69d8333

## Introduction

I've developed a mock application for Amazon S3 called "MOS3" (pronounced "mɒsˈθri"). Similar to [LocalStack](https://www.localstack.cloud/), MOS3 is an S3-like application that runs in a local environment.

MOS3 is a ... | tttol | |

1,919,070 | Tokenized Real Estate: The Future of Investment | Trailblazing Platforms in Real Estate Tokenization In this burgeoning market, platforms... | 27,673 | 2024-07-10T23:45:08 | https://dev.to/rapidinnovation/tokenized-real-estate-the-future-of-investment-4e5f | ## Trailblazing Platforms in Real Estate Tokenization

In this burgeoning market, platforms like Harbor, RealT, and Brickblock are

trailblazers, offering a space where investors can engage with tokenized

properties with varying levels of investment. Deciphering the best real estate

tokenization platform hinges on your ... | rapidinnovation | |

1,919,072 | Priscilla Haruna_Assignment 2 part2 | Today's digital world has made cloud computing a basic component of modern technology, changing both... | 0 | 2024-07-10T23:56:28 | https://dev.to/priscilla_haruna_ee515f75/priscilla-harunaassignment-2-part2-3dh6 | cloudcomputing, devops, cloud, beginners | Today's digital world has made cloud computing a basic component of modern technology, changing both how individuals and corporations handle their digital lives.

What is Cloud Computing?

Cloud computing refers to the delivery of computing services—including servers, storage, databases, networking, software, analytic... | priscilla_haruna_ee515f75 |

1,919,073 | indispice oslo | Hey food lovers! Have you heard about Indispice Oslo yet? It's this cool new spot in town that's... | 0 | 2024-07-11T00:00:43 | https://dev.to/m_manan_a80f9afa7c49193d/indispice-oslo-180h | -

Hey food lovers! Have you heard about [Indispice](https://www.indispiceoslo.no/) Oslo yet? It's this cool new spot in town that's got everyone talking. If you're into Asian food, you've got to check it out.

Picture this: You walk in, and it's like stepping into a cozy mix of Norway and Asia. The place looks modern ... | m_manan_a80f9afa7c49193d | |

1,919,074 | Difference between MySQL and PostgreSQL | Choosing the right database system can be tricky, especially when comparing two popular options like... | 0 | 2024-07-11T00:03:01 | https://dev.to/squad_team_986b85db08e8d2/difference-between-mysql-and-postgresql-40b | sql, mariadb, database, tutorial | Choosing the right database system can be tricky, especially when comparing two popular options like MySQL and PostgreSQL. Both have their own strengths and features that make them suitable for different needs. This article will help you understand the differences and make an informed choice.

**Key Takeaways**

MySQL ... | squad_team_986b85db08e8d2 |

1,919,075 | Building Apps That Don't Make Any Money | I've forgotten when it was released, but at some point in the past, I released an app called Bill... | 0 | 2024-07-11T00:07:37 | https://dev.to/m4rcoperuano/building-apps-that-dont-make-any-money-4k9k | swiftui, startup, laravel, devjournal |

I've forgotten when it was released, but at some point in the past, I released an app called [Bill Panda](https://sunnyorlando.dev/billpanda), which you can read about following the link. It was my first complete app that I built to solve a personal problem. I went through the full experience of building it, tearing i... | m4rcoperuano |

1,919,077 | Shared Library (Dynamic linking) - It's not about libs | This is my first post here so, let's go. Disclaimer: I won't create expectations with my... | 0 | 2024-07-11T01:10:13 | https://dev.to/nivicius/shared-library-dynamic-linking-its-not-about-libs-a9m | cpp, c | ## This is my first post here so, let's go.

> `Disclaimer`: I won't create expectations with my posts. Everything I share is part of my learning process, which often involves explaining things to others. I found this method to be particularly effective during my time at [42 School](https://www.42network.org/). Therefo... | nivicius |

1,919,078 | Day 10 of my 90 Days Devops- Kubernetes Networking Fundamentals | Introduction Welcome to Day 10 of my SRE and Cloud Security Journey! Today, I delved into... | 0 | 2024-07-11T00:41:22 | https://dev.to/arbythecoder/day-10-of-my-90-days-devops-kubernetes-networking-fundamentals-50fa | network, devops, kubernetes, beginners | ## Introduction

Welcome to Day 10 of my SRE and Cloud Security Journey! Today, I delved into the fascinating world of Kubernetes networking. If you're a DevOps engineer with some experience, but new to Kubernetes networking, this guide is for you. We'll explore how Kubernetes handles networking, including Services, En... | arbythecoder |

1,919,081 | Building a Modern Portfolio with Next.js, TailwindCSS, and Framer Motion | Building a Modern Portfolio with Next.js, TailwindCSS, and Framer Motion Hello fellow developers! 👋... | 0 | 2024-07-11T00:26:38 | https://dev.to/mohamadzubi/building-a-modern-portfolio-with-nextjs-tailwindcss-and-framer-motion-2p6f | Building a Modern Portfolio with Next.js, TailwindCSS, and Framer Motion

Hello fellow developers! 👋 Today, I'm excited to share my journey of building Portfolio v2, a modern and responsive portfolio using Next.js, TailwindCSS, and Framer Motion. Whether you're showcasing your work or looking to revamp your personal br... | mohamadzubi | |

1,919,084 | indispice oslo | Hey food lovers! Have you heard about Indispice Oslo yet? It's this cool new spot in town that's got... | 0 | 2024-07-11T00:32:32 | https://dev.to/m_manan_a80f9afa7c49193d/indispice-oslo-46hd | Hey food lovers! Have you heard about [Indispice](https://www.indispiceoslo.no/) Oslo yet? It's this cool new spot in town that's got everyone talking. If you're into Asian food, you've got to check it out.

Picture this: You walk in, and it's like stepping into a cozy mix of Norway and Asia. The place looks modern but... | m_manan_a80f9afa7c49193d | |

1,919,085 | Advanced Image Processing with OpenCV | Introduction: OpenCV (Open Source Computer Vision) is a popular library used for advanced image... | 0 | 2024-07-11T00:34:16 | https://dev.to/kartikmehta8/advanced-image-processing-with-opencv-4oa3 | Introduction:

OpenCV (Open Source Computer Vision) is a popular library used for advanced image processing tasks. It provides a wide range of functions and algorithms for image and video analysis, manipulation, and enhancement. OpenCV is compatible with multiple programming languages, making it accessible for developer... | kartikmehta8 | |

1,919,086 | Newbie | Nothing to write yet... | 0 | 2024-07-11T00:34:34 | https://dev.to/ralphz101/newbie-4l13 | newbie, learning, webdev |

Nothing to write yet... | ralphz101 |

1,919,087 | Understanding Cloud Computing: A Beginner's Guide | In today's digital age, businesses and individuals alike are increasingly turning to cloud computing... | 0 | 2024-07-11T00:37:10 | https://dev.to/richard_bonney_eb884870a1/understanding-cloud-computing-a-beginners-guide-48dm | In today's digital age, businesses and individuals alike are increasingly turning to cloud computing to meet their computing needs. But what exactly is cloud computing, and what makes it so beneficial? Let's explore the basics, its advantages, and the different models it offers.

What is Cloud Computing?

At its core, ... | richard_bonney_eb884870a1 | |

1,919,088 | Understanding Cloud Computing: A Beginner's Guide | In today's digital age, businesses and individuals alike are increasingly turning to cloud computing... | 0 | 2024-07-11T00:37:20 | https://dev.to/richard_bonney_eb884870a1/understanding-cloud-computing-a-beginners-guide-3ha8 | In today's digital age, businesses and individuals alike are increasingly turning to cloud computing to meet their computing needs. But what exactly is cloud computing, and what makes it so beneficial? Let's explore the basics, its advantages, and the different models it offers.

What is Cloud Computing?

At its core, ... | richard_bonney_eb884870a1 | |

1,919,090 | Unlocking Intelligent Conversations with React AI ChatBot from Sista AI | Discover the transformative power of voicebots with Sista AI. Join the AI revolution today! 🚀 | 0 | 2024-07-11T00:45:41 | https://dev.to/sista-ai/unlocking-intelligent-conversations-with-react-ai-chatbot-from-sista-ai-294l | ai, react, javascript, typescript | <h2>Introduction</h2><p>In the era of AI integration, unlocking intelligent conversations has become a game-changer for businesses worldwide. React AI ChatBot is at the forefront of this revolution, offering a seamless platform for creating dynamic and engaging user experiences.</p><h2>Revolutionizing User Engagement</... | sista-ai |

1,919,091 | zsh: permission denied: ./gradlew | Today, I ran into a problem while working on my React Native project. When I tried to execute the... | 0 | 2024-07-11T00:54:31 | https://dev.to/deni_sugiarto_1a01ad7c3fb/zsh-permission-denied-gradlew-52dp | reactnative, zsh, macbook | Today, I ran into a problem while working on my React Native project. When I tried to execute the command ./gradlew signingReport, I received a permission denied error:

>zsh: permission denied: ./gradlew

To fix this issue, I changed the file permissions by running the following command:

`chmod +rwx ./gradlew`

After... | deni_sugiarto_1a01ad7c3fb |

1,919,095 | Creating a New Fast Tower Defence | Defense? Defence? I'll use the 's' version because America. I was playing some Bloons TD6, with all... | 0 | 2024-07-11T01:01:48 | https://dev.to/chigbeef_77/creating-a-new-fast-tower-defence-1j95 | gamedev | Defense? Defence? I'll use the 's' version because America.

I was playing some Bloons TD6, with all the nostalgia as I hadn't played a tower defense in years. However, going further and further into the waves, my CPU started crying. Now, my computer isn't exactly top of the line, but so what? My laptop can run DOOM 201... | chigbeef_77 |

1,919,097 | 🐧👾💅 The First Bash Prompt Customization I NEED to Do - Linux | While I'm developing, the terminal prompt is one of the most frequently used tools in my workflow, so... | 0 | 2024-07-11T03:25:49 | https://dev.to/uxxxjp/the-first-bash-prompt-customization-i-need-to-do-linux-2e4p | linux, ubuntu, cli, dx | While I'm developing, the terminal prompt is one of the most frequently used tools in my workflow, so I like to make it look cool.

## Virgin Ubuntu

I'm using Mac to "run" Ubuntu through Multipass. The following command creates a new Ubuntu instance named cool-prompt and starts an interactive shell. The initial appear... | uxxxjp |

1,919,098 | How to stop Garbage leads | Struggling to attract quality leads? Explore Sekel Tech’s Hyperlocal Discovery & Omni Commerce... | 0 | 2024-07-11T01:19:36 | https://dev.to/sekel/how-to-stop-garbage-leads-4ofc | Struggling to attract quality leads? Explore Sekel Tech’s Hyperlocal Discovery & Omni Commerce Platform. We optimise store listings, facilitate real-time lead handoffs, and enhance site performance. Transform customer interactions, boost sales conversi... | sekel | |

1,919,148 | Are You On The Cloud, In The Cloud or Under The Cloud? | What exactly is the cloud, and why should you care? Well, there is a natural occurrence in which... | 0 | 2024-07-11T01:32:37 | https://dev.to/evretech/are-you-on-the-cloud-in-the-cloud-or-under-the-cloud-1ff1 |

**What exactly is the cloud, and why should you care?**

Well, there is a natural occurrence in which clouds form with only two possible outcomes: rain or not.

Another cloud has formed, this time not naturally, but... | evretech | |

1,919,149 | ETH SEA (Ethereum South East Asia) | 📣 ETH SEA at Coinfest Asia 2024 Join the Official Hackathon of Coinfest Asia 2024 - Asia's... | 0 | 2024-07-11T01:33:14 | https://dev.to/warlocks25/eth-sea-ethereum-south-east-asia-1h1j | ethereum, web3, hackathon, solidity |

📣 ETH SEA at Coinfest Asia 2024

Join the Official Hackathon of Coinfest Asia 2024 - Asia's Largest Web3 Festival!

💰Total Prizes: Up To $50,000!

Tracks by:

- DeFi Track by Aptos

- W... | warlocks25 |

1,919,150 | The Open/Closed Principle in C# with Filters and Specifications | Software design principles are fundamental in ensuring our code remains maintainable, scalable, and... | 28,026 | 2024-07-11T01:34:25 | https://dev.to/moh_moh701/the-openclosed-principle-in-c-with-filters-and-specifications-3dd6 | dotnet, designpatterns, csharp |

Software design principles are fundamental in ensuring our code remains maintainable, scalable, and robust. One of the key principles in the SOLID design principles is the Open/Closed Principle (OCP). This principle states that software entities should be open for extension but closed for modification. Let’s explore h... | moh_moh701 |

1,919,151 | Achieve more with Total.js: introducing Total.js Enterprise | Staying ahead of the curve requires the right tools and a platform that understands your needs.... | 0 | 2024-07-11T01:43:51 | https://dev.to/louis_bertson_1124e9cdc59/achieve-more-with-totaljs-introducing-totaljs-enterprise-1ipc | totaljs, node, programming |

Staying ahead of the curve requires the right tools and a platform that understands your needs. That's why we are thrilled to introduce our latest video on [**Total.js Enterprise**](https://totaljs.com/enterprise), designed to help developers achieve more. Whether you're a company, a seasoned developer or just startin... | louis_bertson_1124e9cdc59 |

1,919,152 | Power of Text Processing and Manipulation Tools in Linux : Day 4 of 50 days DevOps Tools Series | Introduction As a DevOps engineer, you often need to process and manipulate text data,... | 0 | 2024-07-11T01:44:49 | https://dev.to/shivam_agnihotri/power-of-text-processing-and-manipulation-tools-in-linux-day-4-of-50-days-devops-tools-series-522g | linux, devops, development, developer | ## **Introduction**

As a DevOps engineer, you often need to process and manipulate text data, whether it's log files, configuration files, or output from various commands. Linux provides a powerful set of text processing and manipulation tools that can help automate and streamline these tasks. In this blog, we will co... | shivam_agnihotri |

1,919,154 | Creating a Generative AI Chatbot with Python and Streamlit | Introduction In the current era of artificial intelligence (AI), chatbots have revolutionized digital... | 0 | 2024-07-11T01:53:33 | https://dev.to/fiorelamilady/creating-a-generative-ai-chatbot-with-python-and-streamlit-2g3b | **Introduction**

In the current era of artificial intelligence (AI), chatbots have revolutionized digital interaction by enabling natural conversations through natural language processing (NLP) and advanced language models. In this article, we will explore how to create a generative chatbot using Python and Streamlit.

... | fiorelamilady | |

1,919,155 | Cloud Computing | Cloud Computing Cloud computing is the delivery of computing services such as servers, storage,... | 0 | 2024-07-11T02:05:36 | https://dev.to/michael_azeez_c1/cloud-computing-pda | cloud, computing, advancedcomputing, machinelearning | Cloud Computing

Cloud computing is the delivery of computing services such as servers, storage, databases, networking, software, and analytics over the internet (the cloud) to offer faster innovation, flexible resources, and economies of scale.

Benefits of cloud computing

1. Cost savings: Using cloud computing servi... | michael_azeez_c1 |

1,919,156 | Task Dashboard Tips for Peak Productivity | Feeling overwhelmed by an unending to-do list despite your efforts? Have you ever experienced weeks... | 0 | 2024-07-11T02:09:00 | https://dev.to/bryany/task-dashboard-tips-for-peak-productivity-fjk | productivity, development, management | Feeling overwhelmed by an unending to-do list despite your efforts? Have you ever experienced weeks filled with busyness but little to show for it, all because you were swamped with emails and urgent requests?

It's a challenge for project managers, software developers, marketers, and anyone trying to navigate a schedule... | bryany |

1,919,157 | From Xamarin.Forms to .NET MAUI: An Evolution That Transcends Limits | Introduction: The Cross-Platform Programming Revolution In the world of mobile programming, Xamarin.Forms... | 0 | 2024-07-11T02:09:51 | https://dev.to/jucsantana05/de-xamarinforms-a-net-maui-uma-evolucao-que-transcende-limites-4b83 | xamarinforms, programming, mobile, softwaredevelopment | Introduction: The Cross-Platform Programming Revolution

In the world of mobile programming, Xamarin.Forms and .NET MAUI emerge as two giants shaping the future of cross-platform development. But what really sets these two technologies apart? Let's explore the fundamental aspects that transform Xamarin.Fo... | jucsantana05 |

1,919,159 | [Java] Multi-threading - Do more threads really make our programs faster? | 1. Problem statement When it comes to improving the overall performance of a Java program, some... | 0 | 2024-07-11T02:17:37 | https://dev.to/bu_0107/java-multi-threading-nhieu-luong-lieu-co-thuc-su-khien-chuong-trinh-cua-chung-ta-tro-nen-nhanh-hon--47bl | 1. Problem statement

When it comes to improving the overall performance of a Java program, some Java developers will immediately think of multi-threading (taking advantage of multi-core CPUs to process tasks in parallel), reasoning that "many people doing one job are always faster than one person doing it". However ... | bu_0107 | |

1,919,160 | Customer Satisfaction: The Key to Success is an Efficient and Motivated Workforce | In today’s competitive market, customer satisfaction is paramount. An efficient and motivated... | 0 | 2024-07-11T02:18:05 | https://dev.to/wallacefreitas/customer-satisfaction-the-key-to-success-is-an-efficient-and-motivated-workforce-2l6n | productivity | In today’s competitive market, customer satisfaction is paramount. An efficient and motivated workforce is the cornerstone of delivering exceptional service, which in turn drives higher customer satisfaction and loyalty. Here’s why investing in our teams is critical:

💻 Enhanced Service Quality:

When employees are we... | wallacefreitas |

1,919,161 | Case (IV) - KisFlow-Golang Stream Real- KisFlow in Message Queue (MQ) Applications | Github: https://github.com/aceld/kis-flow Document:... | 0 | 2024-07-11T02:23:33 | https://dev.to/aceld/case-iv-kisflow-golang-stream-real--4k3e | go | <img width="150px" src="https://github.com/aceld/kis-flow/assets/7778936/8729d750-897c-4ba3-98b4-c346188d034e" />

Github: https://github.com/aceld/kis-flow

Document: https://github.com/aceld/kis-flow/wiki

---

[Part1-OverView](https://dev.to/aceld/part-1-golang-framework-hands-on-kisflow-streaming-computing-framework... | aceld |

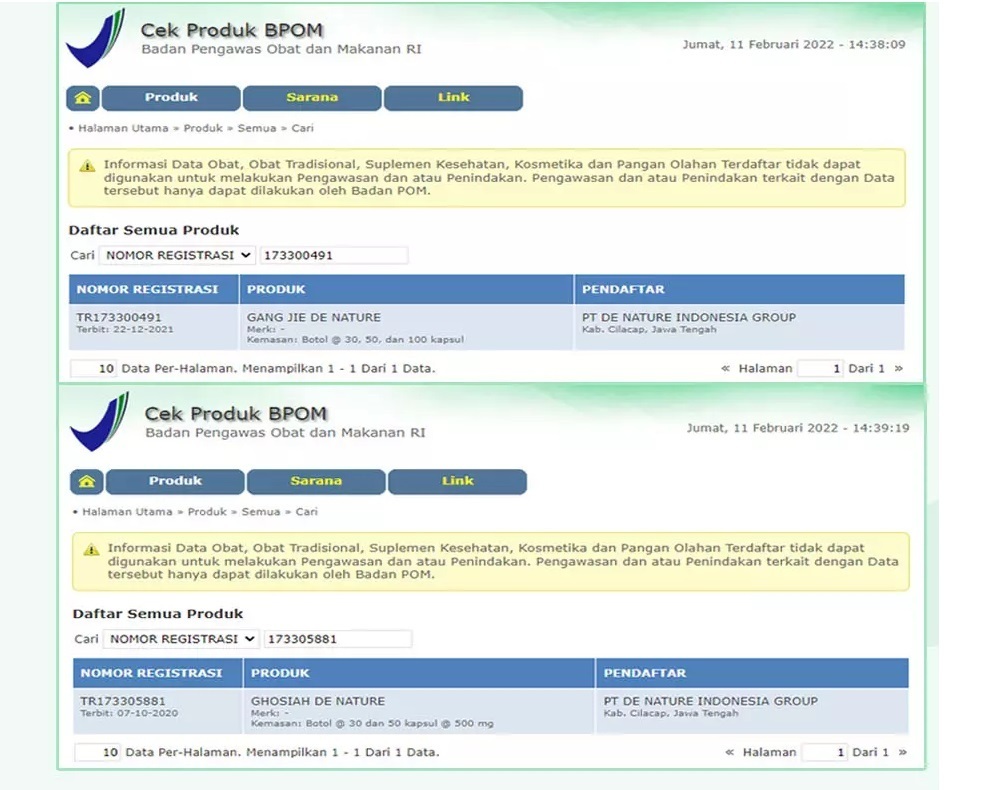

1,919,164 | Medicine for anal wounds | ! Hemorrhoids are a condition in which the blood vessels in the rectal or anal area swell and become inflamed.... | 0 | 2024-07-11T02:35:50 | https://dev.to/indah_indri_a299aff67faef/ubat-untuk-luka-di-dubur-bei | webdev |

!

B... | indah_indri_a299aff67faef |

1,919,165 | This one tool will Take your Landing Pages to the Next Level | OBS Studio has long been a staple for streamers and content creators, but for developers and... | 0 | 2024-07-11T02:38:26 | https://dev.to/vidova/this-one-tool-will-take-your-landing-pages-to-the-next-level-2l88 | productivity, news, career, discuss | OBS Studio has long been a staple for streamers and content creators, but for developers and technical professionals seeking streamlined functionality and ease of use, OBS often falls short. This is particularly true when trying to integrate features like AI-generated captions or displaying keyboard actions—tasks that ... | vidova |

1,919,166 | Pebble and Footprint Analytics Redefine Blockchain Gaming with Rapid Integration and Strategic Data Solutions | Pebble is revolutionizing the gaming landscape by merging traditional gaming fun with the benefits... | 0 | 2024-07-11T02:41:48 | https://dev.to/footprint-analytics/pebble-and-footprint-analytics-redefine-blockchain-gaming-with-rapid-integration-and-strategic-data-solutions-1k8m | blockchain | <img src="https://statichk.footprint.network/article/a1b6f833-101d-4806-a021-fb020c54369c.jpeg"><img src="https://statichk.footprint.network/article/13cceeb4-81f9-453f-a1dc-09e4984ac4d4.jpeg">

<a href="https://pebblestream.io/"><span style="font-size:12pt;font-family:Arial,sans-serif;color:#1155cc;background-color:tra... | footprint-analytics |

1,919,167 | The Adventures of Blink #31: The PhilBott | Hey Friends! This week's adventure sort of doubles as a Product Launch... It's something I've... | 26,964 | 2024-07-11T11:00:00 | https://dev.to/linkbenjamin/the-adventures-of-blink-31-the-philbott-32lb | ai, python, socialmedia, opensource | Hey Friends! This week's adventure sort of doubles as a Product Launch... It's something I've dreamed about and hinted at for a while now, and previous adventures have even been building up to it... but it's becoming a real thing now and I couldn't be happier to share it with you!

## I'd rather watch a youtube video

... | linkbenjamin |

1,919,168 | Pebble and Footprint Analytics Redefine Blockchain Gaming with Rapid Integration and Strategic Data Solutions | Pebble is revolutionizing the gaming landscape by merging traditional gaming fun with the benefits... | 0 | 2024-07-11T02:43:36 | https://dev.to/footprint-analytics/pebble-and-footprint-analytics-redefine-blockchain-gaming-with-rapid-integration-and-strategic-data-solutions-3dg9 | blockchain | <img src="https://statichk.footprint.network/article/a1b6f833-101d-4806-a021-fb020c54369c.jpeg"><img src="https://statichk.footprint.network/article/13cceeb4-81f9-453f-a1dc-09e4984ac4d4.jpeg">

<a href="https://pebblestream.io/"><span data-raw-html="span" style="font-size:12pt;font-family:Arial,sans-serif;color:#1155cc... | footprint-analytics |

1,919,169 | Navigating in Flutter: A Comprehensive Guide | Flutter, a powerful UI toolkit for crafting natively compiled applications for mobile, web, and... | 0 | 2024-07-11T02:46:15 | https://dev.to/design_dev_4494d7953431b6/navigating-in-flutter-a-comprehensive-guide-49go | flutter, navigation, nav | Flutter, a powerful UI toolkit for crafting natively compiled applications for mobile, web, and desktop, offers a variety of navigation methods to help developers build smooth and intuitive user experiences. In this blog post, we’ll explore different navigation techniques in Flutter, including Navigator.push, routes, D... | design_dev_4494d7953431b6 |

1,919,171 | Why Do We Need Digital Marketing and Cutting-edge SEO Tools? | Hello everyone! For businesses aiming to stand out in the market, digital marketing and the latest... | 0 | 2024-07-11T02:52:59 | https://dev.to/juddiy/why-do-we-need-digital-marketing-and-cutting-edge-seo-tools-43jd | seo, marketing, learning | Hello everyone! For businesses aiming to stand out in the market, digital marketing and the latest SEO tools have become indispensable. Here are key reasons explaining why these tools are crucial for driving business success:

#### 1. Enhancing Online Visibility

Whether you're a small business or a large enterprise, on... | juddiy |

1,919,172 | Setup para Ruby / Rails: MacOS | Este artigo descreve como configurar um ambiente de desenvolvimento Ruby / Rails no macOS. Ele inclui... | 27,960 | 2024-07-11T02:55:21 | https://dev.to/serradura/setup-para-ruby-rails-macos-5fa2 | beginners, ruby, rails, braziliandevs | Este artigo descreve como configurar um ambiente de desenvolvimento Ruby / Rails no macOS. Ele inclui a instalação do Visual Studio Code, Asdf, Ruby, NodeJS, SQLite, Rails e Ruby LSP (plugin para o VSCode).

Para seguir este tutorial, basta copiar e colar os comandos no terminal. Caso encontre algum problema, deixe um ... | serradura |

1,919,175 | Javascript to Typescript Tips | Some tips for migrating existing JavaScript code to TypeScript. JavaScript var name =... | 0 | 2024-07-11T02:57:32 | https://dev.to/kiranuknow/javascript-to-typescript-tips-1ine | javascript, typescript, migration | Some tips for migrating existing JavaScript code to TypeScript.

**_JavaScript_**

```

var name = "test";

```

**_TypeScript_**

```

// Declare name as a string first, then assign a value to it.

let name: string;

name = "test";

```
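
The same annotation style extends to function parameters and return values. The sketch below is illustrative only: `greet`, `userName`, and `greeting` are hypothetical names that do not appear in the original post. It uses `userName` rather than `name` because, in a script file compiled with the DOM typings, a top-level `name` can clash with the global of the same name.

```typescript
// Hypothetical example: the names below are illustrative, not from the original post.

// JavaScript version (untyped), shown here as a comment for comparison:
//   function greet(userName) { return "Hello, " + userName; }

// TypeScript version: annotate the parameter type and the return type.
function greet(userName: string): string {
  return `Hello, ${userName}`;
}

// Declare first with a type, assign later, as in the tip above.
let userName: string;
userName = "test";

// The compiler checks that greet() receives a string and returns a string.
const greeting: string = greet(userName);
console.log(greeting); // prints "Hello, test"
```

With these annotations in place, a call such as `greet(42)` is rejected at compile time instead of producing an unexpected string at run time.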

| kiranuknow |

1,919,179 | Evening Primrose | Evening primrose (Oenothera biennis) is a plant native to North America, commonly known for its... | 0 | 2024-07-11T03:07:01 | https://dev.to/chien_bui_8ed10263e2f8ebb/evening-primrose-1601 | Evening primrose (Oenothera biennis) is a plant native to North America, commonly known for its yellow flowers and various medicinal properties. Here are some key points about evening primrose:

Appearance: It typically has tall, branching stems, with yellow flowers that bloom in the evening, hence its name.

Lifecycle:... | chien_bui_8ed10263e2f8ebb | |

1,919,181 | Best Canned Ham | When looking for the best canned ham, you'll want to consider factors such as taste, texture,... | 0 | 2024-07-11T03:12:56 | https://dev.to/chien_bui_8ed10263e2f8ebb/best-canned-ham-mia | When looking for the best canned ham, you'll want to consider factors such as taste, texture, nutritional content, and ingredient quality. Here are some highly recommended options that balance these considerations:

Top Picks for Canned Ham

Dak Premium Ham

Taste and Texture: Known for its good taste and firm texture.

Nu... | chien_bui_8ed10263e2f8ebb | |

1,919,182 | 7 Awesome Career Tips Your Manager Will Never Tell You | While starting a career, your immediate manager becomes the guide to finding your feet in the... | 0 | 2024-07-11T03:14:15 | https://dev.to/dishitdevasia/7-awesome-career-tips-your-manager-will-never-tell-you-3lhn | developers, careerdevelopment, career, softwaredevelopment |

While starting a career, your immediate manager becomes the guide to finding your feet in the organization.

You may become very close and model your decisions on how your manager handles situations.

While this is good at the start, it may not help you in the long run. Your manager, at some point, m... | dishitdevasia |

1,919,195 | Test | test | 0 | 2024-07-11T03:23:28 | https://dev.to/paulohbraga/test-525b | test | paulohbraga | |

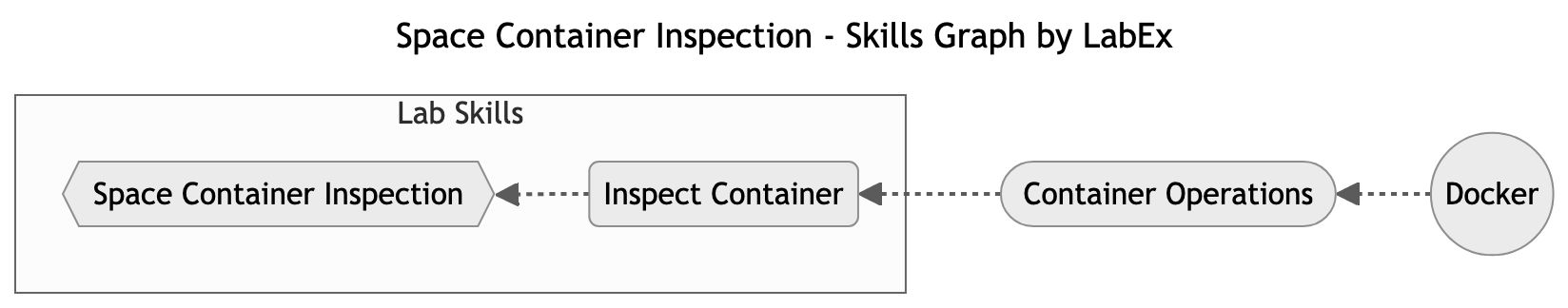

1,919,196 | Space Container Inspection: Secure Your Futuristic City | In this lab, you will be transported to a futuristic space city where you take on the role of a space police officer. Your mission is to inspect and investigate suspicious Docker containers that might be posing security threats within the space city. | 27,902 | 2024-07-11T03:25:58 | https://dev.to/labex/space-container-inspection-secure-your-futuristic-city-3pff | docker, coding, programming, tutorial |

## Introduction

This article covers the following tech skills:

In [this lab](https://labex.io/tutorials/docker-space-container-inspection-268700), you will be transported to a futuristic space city where you take on the role... | labby |

1,919,197 | Cloud Migration Strategies: Your Roadmap to a Successful Transition | Cloud migration has become a strategic imperative for businesses seeking to enhance agility,... | 0 | 2024-07-11T03:27:13 | https://dev.to/unicloud/cloud-migration-strategies-your-roadmap-to-a-successful-transition-gli | Cloud migration has become a strategic imperative for businesses seeking to enhance agility, scalability, and cost-efficiency. However, the transition from on-premises infrastructure to the cloud can be complex and fraught with challenges if not approached strategically. This guide delves into essential [cloud migratio... | unicloud | |

1,919,199 | First time | Hello, this is my first time here. I hope to gain a lot of knowledge about dev! | 0 | 2024-07-11T03:30:13 | https://dev.to/rteless/fisrt-time-34lj | webdev, beginners | Hello, this is my first time here. I hope to gain a lot of knowledge about dev! | rteless |

1,919,200 | Unveiling Mawarliga: Your Ultimate Guide to Slot Gacor, Official Togel, Online Casino | In the dynamic realm of online gambling, Mawarliga emerges as a leading platform offering a rich... | 0 | 2024-07-11T03:32:25 | https://dev.to/mawarliga/unveiling-mawarliga-your-ultimate-guide-to-slot-gacor-official-togel-online-casino-277p | mawarliga, linkaltmawarliga, mawar, liga | In the dynamic realm of online gambling, [Mawarliga](https://mawarligamanis.com) emerges as a leading platform offering a rich array of gaming options tailored to cater to diverse preferences. From lucrative slot gacor games to the thrill of official togel draws, alongside seamless transactions via e-wallets and QRIS d... | mawarliga |

1,919,201 | (LIVE) Police published footage from the scene of this incident | A post by Sang Pemburu | 0 | 2024-07-11T03:35:34 | https://dev.to/sang_pemburu/live-police-published-footage-from-the-scene-of-this-incident-pg3 |

| sang_pemburu | |

1,919,202 | GudangLiga: Combining Fun and Security in Online Gambling | In an ever-evolving digital world, GudangLiga stands out as a leading choice for... | 0 | 2024-07-11T03:41:16 | https://dev.to/gudangliga/gudangliga-menggabungkan-kesenangan-dan-keamanan-dalam-judi-online-3fj9 | gudangliga, linkaltgudangliga, gudang, liga | In an ever-evolving digital world, [GudangLiga](https://gudang-liga1.com) stands out as a leading choice for online gambling enthusiasts seeking a fun and safe experience. With a variety of attractive features and game options, GudangLiga offers a platform that combines quality... | gudangliga |

1,919,203 | How to add memory to LLM Bot using DynamoDB | In the realm of artificial intelligence, the capability to remember past interactions is pivotal for... | 0 | 2024-07-11T03:41:59 | https://dev.to/amlana24/how-to-add-memory-to-llm-bot-using-dynamodb-nml | aws, huggingface, devops, ai | In the realm of artificial intelligence, the capability to remember past interactions is pivotal for creating personalized and engaging user experiences. For a chatbot to answer questions effectively and keep up with a conversation, it needs its own memory.

There are many solutions available us... | amlana24 |

1,919,209 | Morning | A post by Aadarsh Kunwar | 0 | 2024-07-11T03:59:56 | https://dev.to/aadarshk7/morning-cfa | aadarshk7 | ||

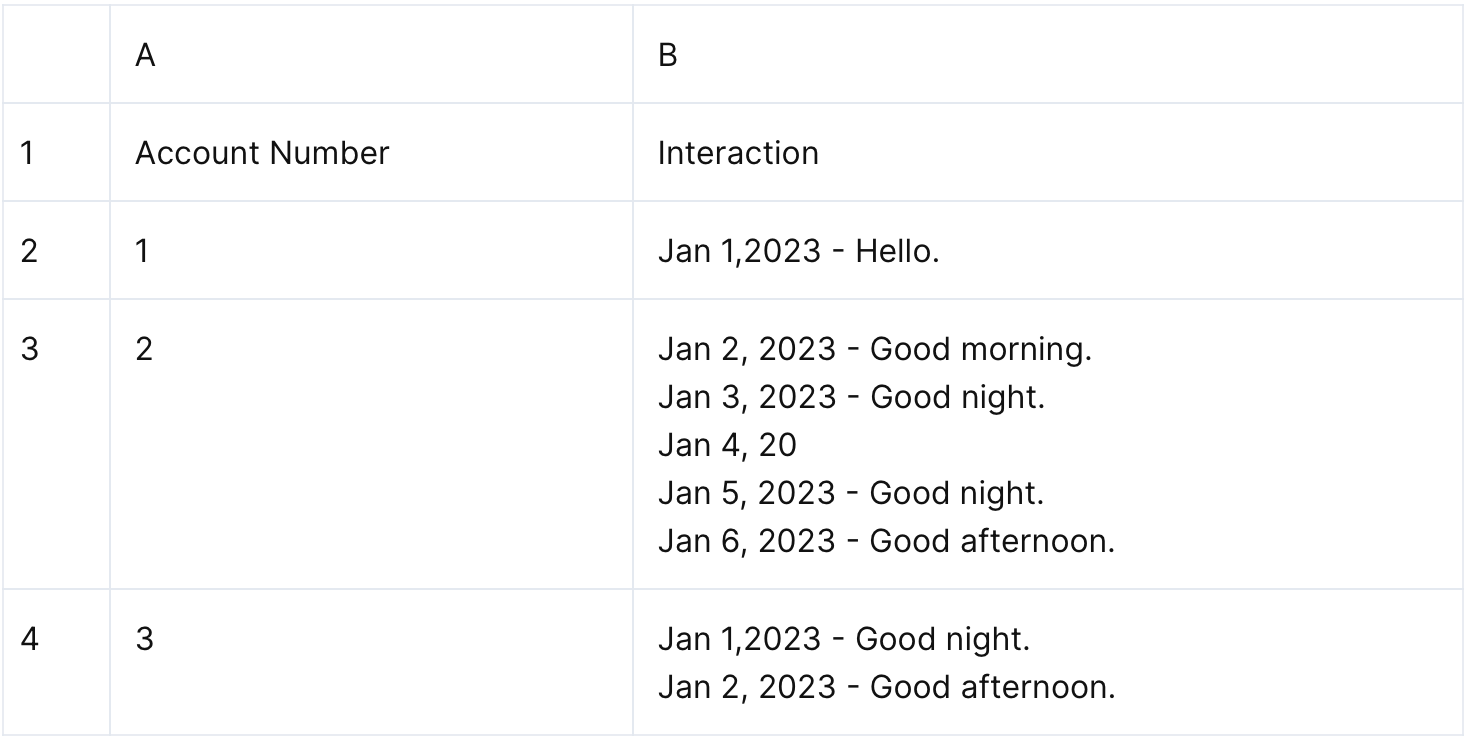

1,919,204 | Expand Multi-Row Text in A Cell to Multiple Cells | Problem description & analysis: In the following table, column A is the category, and column B... | 0 | 2024-07-11T03:45:45 | https://dev.to/judith677/expand-multi-row-text-in-a-cell-to-multiple-cells-gcf | programming, beginners, tutorial, productivity | **Problem description & analysis**:

In the following table, column A is the category, and column B includes one or multiple lines of text where the line break is the separator.
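To make the input shape concrete, here is a minimal Python sketch of one plausible reading of the problem: each cell in column B is split on line breaks and the pieces are placed in separate cells of the same row. The sample rows and the helper name `expand_cell` are invented for illustration and are not taken from the original post.

```python
from typing import List


def expand_cell(category: str, cell: str) -> List[str]:
    """Return one output row: the category followed by each non-empty line of the cell."""
    # splitlines() handles \n, \r\n and \r, which all occur in spreadsheet exports.
    parts = [line.strip() for line in cell.splitlines() if line.strip()]
    return [category, *parts]


# Hypothetical sample data: column A is the category, column B the multi-line text.
rows = [("Fruit", "apple\nbanana"), ("Veg", "carrot")]
expanded = [expand_cell(category, text) for category, text in rows]

if __name__ == "__main__":
    for row in expanded:
        print(row)
```

Rows end up with different lengths here; padding them to a common width would be a separate step if a rectangular table is required.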

The task is to expand each multi-line c... | judith677 |

1,919,205 | Detailed Guide to Configuring DBLink from GBase 8s to Oracle | Connecting GBase 8s with Oracle databases is a common requirement when building enterprise-level data... | 0 | 2024-07-11T03:51:14 | https://dev.to/congcong/detailed-guide-to-configuring-dblink-from-gbase-8s-to-oracle-1g4m | database | Connecting GBase 8s with Oracle databases is a common requirement when building enterprise-level data solutions. In the previous article, we covered the configuration of DBLink from Oracle to GBase 8s. This article will guide you through configuring DBLink from GBase 8s to Oracle, enabling interoperability between the ... | congcong |

1,919,206 | Make Cronjob Script With Log | This post is just a note for the author. This is a cronjob script made to create a log... | 0 | 2024-07-11T03:52:28 | https://dev.to/seno21/make-cronjob-script-with-log-aah | devops, sysadmin, linux, cronjob | This post is just a note for the author. It is a cronjob script written to produce a log manually while keeping the file size stable over the desired time range.

```bash

#!/bin/bash
# Check whether the log file exists
file=/var/log/maskar.log
if [ ! -f "${file}" ]; then
echo "===== End of Line ==... | seno21 |

1,919,207 | Improve TensorFlow model load time by ~70% using HDF5 instead of SavedModel | In our ongoing work running DeepCell on Google Batch, we noted that it takes ~9s to load the model... | 27,298 | 2024-07-11T03:55:16 | https://dev.to/dchaley/improve-tensorflow-model-load-time-by-70-using-hdf5-instead-of-savedmodel-5c8e | tensorflow, performance, cloud, ai | In our [ongoing work](https://dev.to/dchaley/series/27298) running DeepCell on Google Batch, we noted that it takes ~9s to load the model into memory, whereas prediction (the interesting part of loading the model) takes ~3s for a 512x512 image.
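A minimal sketch of how timings like these could be collected, assuming nothing about the author's actual benchmark code. The helper `time_call` and the stand-in `fake_load_model` are hypothetical; in a real measurement the callable would wrap the actual model loader (for example a call to `tf.keras.models.load_model` on the saved model path).

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def time_call(fn: Callable[[], T]) -> Tuple[T, float]:
    """Run fn once and return (result, elapsed_seconds) using a monotonic clock."""
    start = time.perf_counter()
    result = fn()
    elapsed = time.perf_counter() - start
    return result, elapsed


def fake_load_model() -> str:
    # Hypothetical stand-in for a real loader; sleeps briefly to simulate work.
    time.sleep(0.05)
    return "model"


if __name__ == "__main__":
    model, seconds = time_call(fake_load_model)
    print(f"load took {seconds:.3f}s")
```

Timing load and prediction with the same helper keeps the two numbers comparable, since both go through the same clock and the same call overhead.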

The ideal runtime environment is serverless, so we don't have long-lived p... | dchaley |

1,919,208 | Cloud Computing; A developer's POV. | Hey tech warriors! Today, we’re breaking down the awesomeness of cloud computing. Whether you're a... | 0 | 2024-07-11T04:00:22 | https://dev.to/thedavidmensah/cloud-computing-a-developers-pov-4b69 | _Hey tech warriors! Today, we’re breaking down the awesomeness of cloud computing. Whether you're a seasoned coder or just dipping your toes in, this guide's got you covered. Let's dive right in!_

**What is Cloud ... | thedavidmensah | |

1,919,210 | The Importance Of Explainer Videos For SAAS Founders (just say no to cheap cartoons and yes to real people)! | As new privacy legislation is underway in many states and cookies are becoming a thing of the past,... | 0 | 2024-07-11T04:00:56 | https://dev.to/info_videoproduction_684/the-importance-of-explainer-videos-for-saas-founders-just-say-no-to-cheap-cartoons-and-yes-to-real-people-5302 | marketing, sass, owners, news | As new privacy legislation is underway in many states and cookies are becoming a thing of the past, old-school methods of getting your target buyers' attention when marketing SaaS are becoming crucial to the success of your Software as a Service product.

You need an explainer video that works. What no longer works are:

1) Whiteb... | info_videoproduction_684 |

1,919,211 | What Is Business Manager In SFCC (Salesforce Commerce Cloud) | Managing an online store can pose significant challenges and demands. It includes various essential... | 0 | 2024-07-11T04:03:50 | https://dev.to/devops_den/what-is-business-manager-in-sfcc-salesforce-commerce-cloud-9cd | salesforce, ecommerce, webdev, devops | Managing an online store can pose significant challenges and demands. It includes various essential tasks, such as product catalog management, order processing, customer service, etc. Salesforce Commerce Cloud (SFCC) emerges as a valuable solution tailored to streamline and elevate the overall online B2C experience... | devops_den |

1,919,212 | Best Business Poster Maker App | BrandFlex: Business Poster Maker App Create Stunning Business Posters Effortlessly BrandFlex is your... | 0 | 2024-07-11T04:03:58 | https://dev.to/rawat_kanojia_182e4f77b1d/best-business-poster-maker-app-14pe | webdev, javascript, programming, react | [BrandFlex](https://brandflex.in/): Business Poster Maker App

Create Stunning Business Posters Effortlessly

BrandFlex is your go-to app for designing professional [business posters](https://brandflex.in/). With a user-friendly interface, a wide range of customizable templates, and powerful design tools, BrandFlex make... | rawat_kanojia_182e4f77b1d |

1,919,213 | Exploring Linux Basics: Notes from an Aspiring Cloud Engineer | Welcome to my blog series on Linux Basics for Hackers! As an aspiring cloud engineer, I recognize... | 28,029 | 2024-07-11T04:07:31 | https://dev.to/thrtn85dev/exploring-linux-basics-notes-from-an-aspiring-cloud-engineer-532o | linux, cloudcomputing, beginners, learning | Welcome to my blog series on Linux Basics for Hackers!

As an aspiring cloud engineer, I recognize the importance of mastering Linux. In this series, I'll share my journey chapter by chapter, reviewing my notes and breaking down key concepts. This approach will help both myself and fellow newcomers understand and mast... | thrtn85dev |

1,919,214 | Gas Generators: Meeting Energy Demands with Versatile Solutions | Gas-Driven Generators - Serving Electrical Power Requirements Do you need a convenient and effective... | 0 | 2024-07-11T04:07:33 | https://dev.to/pwiwyayq_kasjga_de682b3c4/gas-generators-meeting-energy-demands-with-versatile-solutions-2cod | Gas-Driven Generators - Serving Electrical Power Requirements

Do you need a convenient and effective way to get your energy fix? The answer is gas generators! Their versatility makes them increasingly popular in the market as they offer safety, user-friendliness and durability. Understanding it better: Gas generators,... | pwiwyayq_kasjga_de682b3c4 | |

1,919,217 | Perfectplan qa | Entrepreneurs can quickly achieve their company’s objectives and streamline the process with the... | 0 | 2024-07-11T04:11:08 | https://dev.to/sreelaxmi_sree_0b664b5e9c/perfectplan-qa-4720 | Entrepreneurs can quickly achieve their company’s objectives and streamline the process with the right assistance and advice from business consultants like [Perfect Plan](https://perfectplanqa.com/).

Perfect Plan has a long history of enabling several entrepreneurs to set up their business in Qatar.

| sreelaxmi_sree_0b664b5e9c | |

1,919,218 | Laxative medicine for pregnant women | Definition of Buasir (Hemorrhoids) Buasir, also known as hemorrhoids, is a swelling or enlargement... | 0 | 2024-07-11T04:11:26 | https://dev.to/indah_indri_a299aff67faef/ubat-pelancar-bab-ibu-hamil-4e34 | webdev, javascript, beginners |

Definition of Buasir (Hemorrhoids)

Buasir, also known as hemorrhoids, is a swelling or enlargement of the blood vessels in the lower part of the rectum and anus. These blood vessels are normally present, but when ... | indah_indri_a299aff67faef |

1,919,219 | Unveiling the Invisible: A Look into Computational Fluid Dynamics (CFD) | The world around us is filled with unseen forces – the whoosh of wind past an airplane wing, the... | 0 | 2024-07-11T04:12:25 | https://dev.to/epakconsultant/unveiling-the-invisible-a-look-into-computational-fluid-dynamics-cfd-4367 | cfd | The world around us is filled with unseen forces – the whoosh of wind past an airplane wing, the swirling currents within a river, or the intricate dance of air molecules as we breathe. Computational Fluid Dynamics (CFD) emerges as a powerful tool for understanding and predicting these fluid behaviors.

What is CFD?

... | epakconsultant |

1,919,220 | Setting up your business in Qatar - Perfect Plan Qatar|Perfectplan qa | Perfect Plan is a perfect choice to assist you with setting up your business in Qatar, especially... | 0 | 2024-07-11T04:15:18 | https://dev.to/sreelaxmi_sree_0b664b5e9c/setting-up-your-business-in-qatar-perfect-plan-qatarperfectplan-qa-2a59 | business, consultans, qatar | Perfect Plan is a perfect choice to assist you with setting up your business in Qatar, especially because of their broad knowledge of the local market and of the applicable laws in force. Perfect Plan ensures an efficient and successful business setup process by providing complete services from company registration ... | sreelaxmi_sree_0b664b5e9c |

1,919,222 | Embedded Programming on Keil uvision | I was trying to do a program in lpc4088fbd208 using keil uvision4 using CoLinkEx . While downloading... | 0 | 2024-07-11T04:18:43 | https://dev.to/ameen_9d902423315bbf72b7b/embedded-programming-on-keil-uvision-15cm | keil, lpc4088, embeddedprogramming, discuss | I was trying to program an **lpc4088fbd208** with **keil uvision4** using **CoLinkEx**. While downloading the code to the board, Keil shows an error: **device xml file not found**. I'm using the lpc4088. What could be the reason for this error? | ameen_9d902423315bbf72b7b |

1,919,240 | Unveiling the Dance of Boundaries: Exploring the Immersed Boundary Method (IBM) | Simulating the intricate interplay between fluids and solids presents a significant challenge in... | 0 | 2024-07-11T04:22:20 | https://dev.to/epakconsultant/unveiling-the-dance-of-boundaries-exploring-the-immersed-boundary-method-ibm-2j13 | ibm | Simulating the intricate interplay between fluids and solids presents a significant challenge in computational science. Traditional methods often struggle with complex geometries or require cumbersome meshing techniques. Enter the Immersed Boundary Method (IBM), a powerful tool for modeling fluid-structure interaction,... | epakconsultant |

1,919,241 | Engine Cooling Systems: Advancements in Temperature Management | Apartment For Rent Near Me: Improved Temperature Management Have you ever heard of how your cars... | 0 | 2024-07-11T04:26:43 | https://dev.to/pwiwyayq_kasjga_de682b3c4/engine-cooling-systems-advancements-in-temperature-management-3m0j | Apartment For Rent Near Me: Improved Temperature Management

Have you ever wondered how your car's engine keeps its cool even when the sun beats down relentlessly on it? We can thank the engine cooling system for that! The point is to keep the engine at an ideal temperature, which ensures optimal performance and durabil... | pwiwyayq_kasjga_de682b3c4 | |

1,919,242 | Demystifying the Graph: A Primer on Cypher Query Language | In the realm of graph databases, where data is interconnected like a vast network, Cypher emerges as... | 0 | 2024-07-11T04:29:00 | https://dev.to/epakconsultant/demystifying-the-graph-a-primer-on-cypher-query-language-lcl | In the realm of graph databases, where data is interconnected like a vast network, Cypher emerges as a powerful query language. Unlike traditional SQL used for relational databases, Cypher is designed specifically to navigate the relationships within a graph. This article equips you with the basic concepts of Cypher, e... | epakconsultant | |

1,919,243 | Why Programmers Shouldn’t Be A Freelancer | It’s better to quit if you can. There are so many benefits to being an employee. Protected... | 0 | 2024-07-11T04:29:10 | https://dev.to/manojgohel/why-programmers-shouldnt-be-a-freelancer-mj4 | freelance, webdev, beginners, react | It’s better to quit if you can. There are so many benefits to being an employee.

## **Protected by labor laws**

If you enter into a contract (especially a contract for work) as a freelancer, no one will protect you, even if you end up working huge amounts of time. Ultimately, you are responsible for your own death fr... | manojgohel |

1,919,244 | Unlock the "Beauty and Joy of Computing" with UC Berkeley's Captivating Computer Science Course! 🤖 | Explore the fundamental concepts and principles of computer science, including abstraction, design, recursion, and more. Suitable for both CS majors and non-majors. | 27,844 | 2024-07-11T04:31:58 | https://dev.to/getvm/unlock-the-beauty-and-joy-of-computing-with-uc-berkeleys-captivating-computer-science-course-9p5 | getvm, programming, freetutorial, universitycourses |

Greetings, fellow knowledge-seekers! 👋 Today, I'm thrilled to introduce you to an incredible resource that has the power to ignite your passion for computer science and unlock the wonders of the digital world. Prepare to embark on an exhilarating journey with the "The Beauty and Joy of Computing" course from the reno... | getvm |

1,919,245 | How to Get a Temp Number for Google Verification: A Comprehensive Beginner's Guide | In today's digital age, securing your online accounts is paramount. One effective method for... | 0 | 2024-07-11T04:33:14 | https://dev.to/legitsms/how-to-get-a-temp-number-for-google-verification-a-comprehensive-beginners-guide-p0 | webdev, javascript, beginners, programming |

In today's digital age, securing your online accounts is paramount. One effective method for enhancing security is using a virtual phone number for account verifications. This guide will walk you through obtaining and using a virtual phone number for Gmail verification. We'll cover everything from understanding the co... | legitsms |

1,919,246 | Unveiling the Connections: A Beginner's Guide to Graph Theory | Graph theory, a captivating branch of mathematics, delves into the study of relationships between... | 0 | 2024-07-11T04:35:22 | https://dev.to/epakconsultant/unveiling-the-connections-a-beginners-guide-to-graph-theory-3pp3 | graph | Graph theory, a captivating branch of mathematics, delves into the study of relationships between objects. Imagine a web of connections, where dots represent entities and lines depict their associations. This is the essence of graphs, offering a powerful tool to model and analyze interconnected systems in diverse field... | epakconsultant |

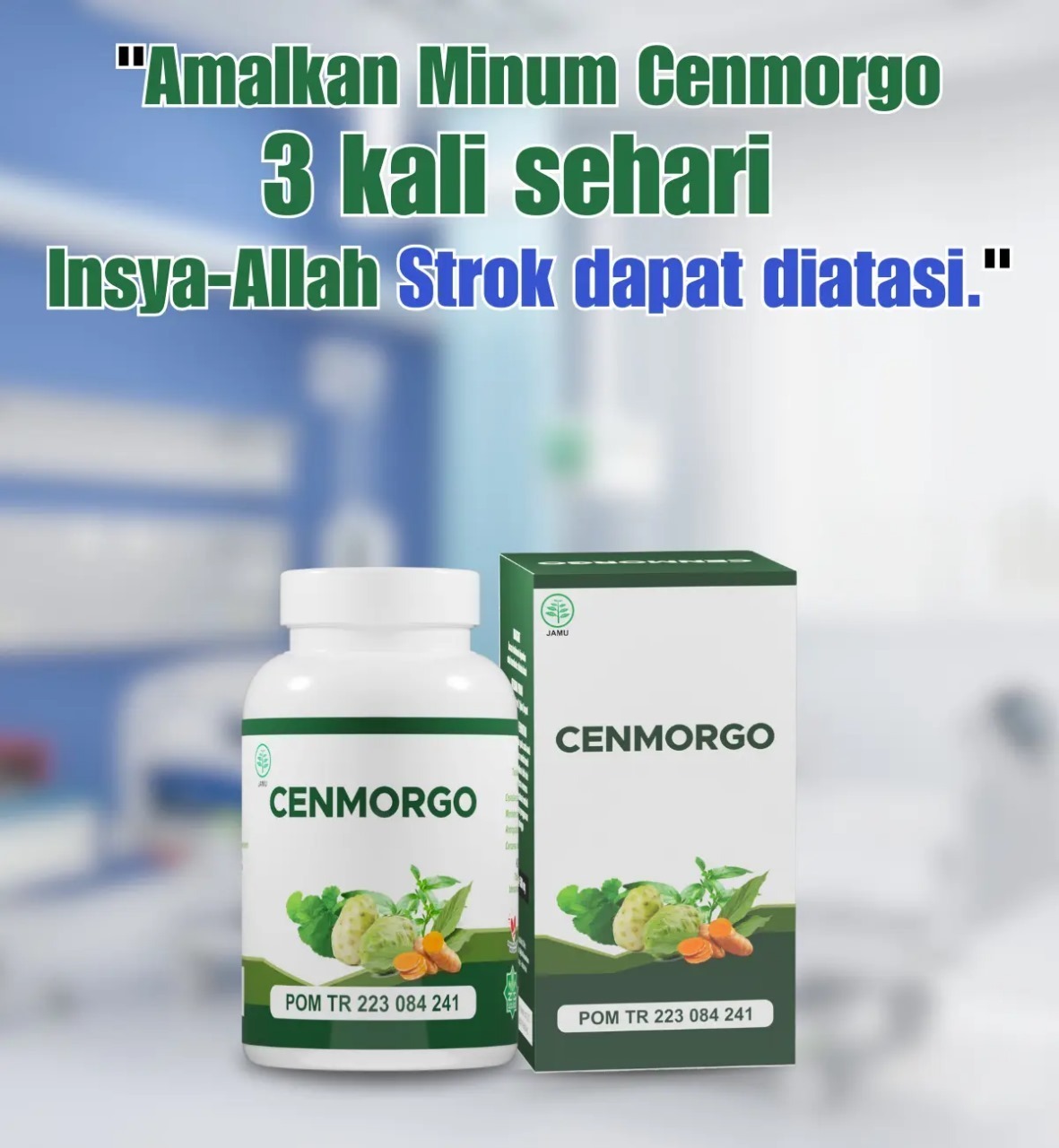

1,919,247 | The Benefits of Using Contract Manufacturing (Maklon) Services for Your Business | Introducing PT. Zada Syifa Nusantara, a trusted herbal medicine contract manufacturing company / factory... | 0 | 2024-07-11T04:36:00 | https://dev.to/denaturenina/keuntungan-menggunakan-jasa-maklon-untuk-bisnis-anda-2836 | Introducing PT. Zada Syifa Nusantara, a trusted herbal medicine contract manufacturing company / factory led by a skilled pharmacist with solid experience in the herbal field.

We are ready to help you realize your dream of a traditional herbal product.

With our experience and dedication, your product... | denaturenina | |

1,919,248 | Django AllAuth Chapter 2 - How to install and configure Django AllAuth | In this chapter we'll explore the basics of the AllAuth extension: from the installation to a basic... | 0 | 2024-07-11T04:38:03 | https://dev.to/doctorserone/django-allauth-chapter-2-how-to-install-and-configure-django-allauth-513p | django, python, djangocms, allauth | In this chapter we'll explore the basics of the AllAuth extension: from the installation to a basic usage for a login/password based access to our Django app. Let's go!

> (NOTE: First published in my Substack list: https://andresalvareziglesias.substack.com/)

## List of chapters

- Chapter 1 - The All-in-one solutio... | doctorserone |

1,919,249 | Day 29 of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post we have talked about interfaces of DOM. In this... | 0 | 2024-07-11T04:39:36 | https://dev.to/akshat0610/day-29-of-30-of-javascript-4fom | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post we talked about the DOM interfaces. In this post we are going to discuss JSON.

So let's get started🔥

## What is JavaScript JSON?

JSON stands for **J**ava**S**cript **O**bject **N**otation. It is a lightweight data interchange format that is easy for h... | akshat0610 |

1,919,250 | Exploring the Future of Data Operations with LLMOps | Introduction In the rapidly evolving world of technology, the way we handle data is... | 0 | 2024-07-11T04:43:23 | https://dev.to/supratipb/exploring-the-future-of-data-operations-with-llmops-41hc | machinelearning, ai, data, aws |

## Introduction

In the rapidly evolving world of technology, the way we handle data is undergoing significant changes. One of the most exciting developments in this area is the emergence of Large Language Models Operations (LLMOps). LLMOps is a field that combines the power of Large Language Models (LLMs) with data o... | supratipb |

1,919,251 | The OP Stack Factor: Powering Ethereum’s Leap Towards 2.0 | As we know, the top selling point for any Layer2 rollup framework (be it for optimistic or... | 0 | 2024-07-11T04:43:50 | https://www.zeeve.io/blog/the-op-stack-factor-powering-ethereums-leap-towards-2-0/ | ethereum, opstack, rollups | <p>As we know, the top selling point for any Layer2 rollup framework (be it for optimistic or zero-knowledge technology) is Ethereum-compatibility along with the ability of L2 chains to settle on Ethereum to inherit its high-staked security, decentralization, and liquidity. Let’s talk about one such most-suited and rea... | zeeve |

1,919,252 | Cloud computing and its benefits | Cloud computing is the distribution of IT services, such as servers, storage, databases, networking,... | 0 | 2024-07-11T04:45:53 | https://dev.to/mohammed_jamalosman_40bd/cloud-computing-and-its-benefits-k59 | Cloud computing is the distribution of IT services, such as servers, storage, databases, networking, software, analytics, and intelligence, over the Internet (the cloud) in order to provide on-demand services, flexible resource options, and quicker innovation.

**Cloud Computing's Advantages**

-Shorter time to market. ... | mohammed_jamalosman_40bd | |

1,919,253 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-07-11T04:48:14 | https://dev.to/darbinazari/buy-verified-cash-app-account-3ifa | webdev, javascript, beginners, programming | "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoinenablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, Cash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n|||\\\\\\\n\nHow to verify Cash App accounts\n\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account. As part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly.\n\nHow cash used for international transaction?\n\n\n\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. 
It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom. No matter if you're a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today's digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial. As we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available.\n\nOffers and advantage to buy cash app accounts cheap?\n\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform. We deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. 
Trustbizs.com stands by the Cash App's superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\n\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management. Explore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller's pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Equally important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\n\nIn today's digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions. 
By acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller's pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Equally important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\n\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | darbinazari |

1,919,254 | Customer Marketing Framework: A Blueprint for Success | In today's competitive market, businesses must adopt effective strategies to not only acquire new... | 0 | 2024-07-11T04:48:46 | https://dev.to/nisargshah/customer-marketing-framework-a-blueprint-for-success-4e0n | marketing | In today's competitive market, businesses must adopt effective strategies to not only acquire new customers but also retain and nurture existing ones. A robust customer marketing framework can serve as a blueprint for success, guiding companies in building strong relationships with their customers and maximizing their ... | nisargshah |

1,919,256 | Building a Multi-Layered Docker Image Testing Framework with Docker Scout and Testcontainers | Hi everyone! Ajeet here, and I've been actively following discussions about Docker image testing... | 0 | 2024-07-11T05:19:27 | https://dev.to/ajeetraina/building-a-multi-layered-docker-image-testing-framework-with-docker-scout-and-testcontainers-10l0 | security, docker | Hi everyone! Ajeet here, and I've been actively following discussions about Docker image testing frameworks on community forums and Stack Overflow. If you’re a part of the team who is responsible for supplying Docker images to your customer or for your internal team, I wanted to share my thoughts and insights on buildi... | ajeetraina |

1,919,257 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Accounts Paxful... | 0 | 2024-07-11T05:00:51 | https://dev.to/darbinazari/buy-verified-paxful-account-145j | tutorial, react, python, devops | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\n\n\n\n\nBuy Verified Paxful Accounts\n\n \n\nPaxful account symbolizes the empowerment of individuals to participate in the global economy on their terms. By leveraging a P2P model, diverse payment methods (various), and a commitment to education, Paxful paves the way for financial inclusion and innovation. Buy aged paxful account from dmhelpshop.com. Paxful accounts will likely play an instrumental role in shaping the future of finance, where borders are transcended, and opportunities are accessible to all of its users. If you want to trade digital currencies then you should confirm best platform. For this reason we suggest to buy verified paxful accounts.\n\nVerified paxful account enabling users to exchange crypto currencies for various payment methods. To make the most of your Paxful experience, it's essential to understand the features and functions of your Paxful account. This guide will walk you through the process of setting up, using, and managing your Paxful account effectively. That’s why paxful is now one of the best platform to conserve and trading with cryptocurrencies. So, now, if you want to buy verified paxful accounts of your desired country, contact fast with (website name).\n\n \n\nBuy US verified paxful account from the best place dmhelpshop\n\n \n\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. 
If you want to buy US verified paxful account you should have to contact fast with us. Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\n100% customer satisfaction ensured\nHow to conserve and trade crypto currency through Paxful account?\n\n \n\nDeposit Cryptocurrency: Search for offers from sellers who accept your preferred payment method. Carefully review the terms of the offer, including exchange rate, payment window, and trading limits. Initiate a trade with a seller, follow the provided instructions, and make the payment. Once the seller confirms the payment, your purchased cryptocurrency will be transferred to your Paxful wallet. If you buy paxful account, firstly confirm your account security to enture safe deposit, and trade.\n\nSelling Cryptocurrency: Buy paxful account, paxful makes an offer to sell your cryptocurrency, specifying your preferred payment methods and trading terms. Once a buyer initiates a trade based on your offer, follow the provided instructions to release the cryptocurrency from your wallet once you receive the payment. If you want to use paxful with verified documents, you should buy USA paxful account from us. We give full of access and also provide all the documents with the account details.\n\n \n\nWhy American peoples use to trade on paxful?\n\n \n\nPaxful offers a user-friendly platform that allows individuals to easily buy and sell Bitcoin using many permitted payment methods. This approach provides users with more control over their trades and can lead to competitive prices. Buy USA paxful accounts at least price. As Paxful gained popularity in the USA, its platform is accessible globally. 
This has made it a preferred choice for individuals in regions where traditional financial systems might be less accessible or less stable. This adds an extra layer of security and trust to the platform. Buy aged paxful accounts to get high security.\n\nHow Do I Get 100% Real VerifiedPaxfulAccoun?\n\n\n\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform. However, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. \n\nPaxful payment system and trading strategy-\n\nPaxful P2P stage connecting buyers and sellers directly to facilitate the exchange of cryptocurrencies, primarily Bitcoin.Paxful allow and provides a genuine marketplace where users can create offers to buy or sell Bitcoin using a variety of payment methods. Paxful provides a list of available offers that match the buyer's preferences, showing the price, payment method, trading limits, and other details. Buy USA paxful accounts from us.\n\n????////////////////\n\nHow paxful ensure risk-free transaction and trading?\n\n\n\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfuimplement stringent identity and address verification measures to protect users from scammers and ensure credibility. With verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users.\n\nExperience seamless transactions by obtaining a verified Paxful account. 
Verification signals a user's dedication to the platform's guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\n\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Examining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors. Businesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth. 
Paxful's verified accounts not only offer reliability within the trading community but also serve as a testament to the platform's ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today.\n\nWhy paxful keep the security measures at the top priority?\n\n\n\nIn today's digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information. Safeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all.\n\n\n\n\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | darbinazari |

1,919,258 | 6 Open-Source Projects That Will Blow Your Mind | Level Up Coding | There are millions of open source projects on GitHub, but some of them are so amazing that they will... | 0 | 2024-07-11T05:03:31 | https://dev.to/manojgohel/6-open-source-projects-that-will-blow-your-mind-level-up-coding-4ko5 | ai, webdev, beginners, learning | There are millions of open source projects on GitHub, but some of them are so amazing that they will blow your mind.