id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,919,729 | BitPower Introduction: | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions... | 0 | 2024-07-11T12:39:37 | https://dev.to/_046dbf5471eab6b9306bb6/bitpower-introduction-2b8p | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions through its unique telePORT protocol. The protocol leverages the liquidity of existing chains such as Polygon, Arbitrum, and Ethereum, allowing users to trade assets minted on the Arweave blockchain without leaving the BitP... | _046dbf5471eab6b9306bb6 | |

1,919,730 | Achieve Excellence in NURS FPX 4040 Assessments with Expert Guidance | Achieve Excellence in NURS FPX 4040 Assessments with Expert Guidance Unlock your full potential in... | 0 | 2024-07-11T12:40:15 | https://dev.to/sharlet_diana_de8b9fe51aa/achieve-excellence-in-nurs-fpx-4040-assessments-with-expert-guidance-k74 | education, nursing | **Achieve Excellence in NURS FPX 4040 Assessments with Expert Guidance**

Unlock your full potential in the NURS FPX 4040 series with our personalized tutoring services. From Assessment 1 to 4, we ensure a tailored learning experience that sets you up for success in your nursing career.

Introduction to NURS FPX 4040... | sharlet_diana_de8b9fe51aa |

1,919,731 | Exploring Anechoic Chambers: Silence Unveiled | An anechoic chamber is a specialized room designed to eliminate echoes and external noise, creating... | 0 | 2024-07-11T12:42:01 | https://dev.to/envirotech/exploring-anechoic-chambers-silence-unveiled-5354 | webdev, javascript, programming, tutorial | An [**anechoic chamber**](https://envirotechltd.com/anechoic-chamber/) is a specialized room designed to eliminate echoes and external noise, creating an environment of near-perfect silence. It achieves this through walls lined with sound-absorbing materials like foam cones or wedges, often with a floor of mesh suspend... | envirotech |

1,919,732 | SiteClone AI Review - Clone & Migrate ANY Website On Your Domain In Less Than 60 Seconds | SiteClone AI Review : Features Instantly Migrate All The Website’s Contents Including Images,... | 0 | 2024-07-11T12:42:37 | https://dev.to/alauddin10/siteclone-ai-review-clone-migrate-any-website-on-your-domain-in-less-than-60-seconds-ek3 | SiteClone AI Review : Features

Instantly Migrate All The Website’s Contents Including Images, Videos, Pages, Media Files, Databases, Templates, Themes & Much More.

Effortlessly Customize & Edit The Websites & Pages With Built-In, World-Class Site Editor…

Schedule & Download Real-Time Daily Website Backups On Comple... | alauddin10 | |

1,919,733 | need help with jsonb fuzzy searching in postgres | Hello guys, hoping to find the solution here, I have a jsonb column, I need to do fuzzy searching on... | 0 | 2024-07-11T12:42:50 | https://dev.to/satish_abothula_e11b2492f/need-help-with-jsonb-fuzzy-searching-in-postgres-4ln2 | Hello guys, hoping to find the solution here,

I have a jsonb column,

I need to do fuzzy searching on that column

I used tsvectors, but when the column is converted, the keys in the jsonb are also mapped into the tsvector.

I want the search to apply only to the values. | satish_abothula_e11b2492f | |

1,919,734 | Explore how BitPower Loop works | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide... | 0 | 2024-07-11T12:45:02 | https://dev.to/weq_24a494dd3a467ace6aca5/explore-how-bitpower-loop-works-1p5e | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide secure, efficient and transparent lending services. Here is how it works in detail:

1️⃣ Smart Contract Guarantee

BitPower Loop uses smart contract technology to automatically execute all lending transactions. This aut... | weq_24a494dd3a467ace6aca5 | |

1,919,735 | BitPower Loop Security | BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart... | 0 | 2024-07-11T12:46:49 | https://dev.to/wot_dcc94536fa18f2b101e3c/bitpower-loop-security-18mb | btc |

BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart contracts, running on TRC20, ERC20 and Tron blockchain technologies. Its core design is to achieve fully decentralized and... | wot_dcc94536fa18f2b101e3c |

1,919,736 | BitPower Loop Security | BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart... | 0 | 2024-07-11T12:48:06 | https://dev.to/wot_ee4275f6aa8eafb35b941/bitpower-loop-security-l2l | btc |

BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart contracts, running on TRC20, ERC20 and Tron blockchain technologies. Its core design is to achieve fully decentralized and... | wot_ee4275f6aa8eafb35b941 |

1,919,737 | 25 Open Source AI Tools to Cut Your Development Time in Half | Each ML/AI project stakeholder requires specialized tools that efficiently enable them to manage the... | 0 | 2024-07-11T12:48:44 | https://jozu.com/blog/25-open-source-ai-tools-to-cut-your-development-time-in-half | beginners, opensource, ai, programming | Each ML/AI project stakeholder requires specialized tools that efficiently enable them to manage the various stages of an ML/AI project, from data preparation and model development to deployment and monitoring. They tend to use specialized open source tools because of [their contribution as a significant catalyst to th... | jwilliamsr |

1,919,739 | Explore how BitPower Loop works | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide... | 0 | 2024-07-11T12:49:28 | https://dev.to/wgac_0f8ada999859bdd2c0e5/explore-how-bitpower-loop-works-16kj | BitPower Loop is a decentralized lending platform based on blockchain technology that aims to provide secure, efficient and transparent lending services. Here is how it works in detail:

1️⃣ Smart Contract Guarantee

BitPower Loop uses smart contract technology to automatically execute all lending transactions. This aut... | wgac_0f8ada999859bdd2c0e5 | |

1,919,741 | "5 Years of SEO Experience: Crafting Effective Strategies for Superior Search Engine Optimization" | A post by Daivd Jack | 0 | 2024-07-11T12:49:48 | https://dev.to/daivd_jack_5472d051d72310/5-years-of-seo-experience-crafting-effective-strategies-for-superior-search-engine-optimization-4ke8 | **** | daivd_jack_5472d051d72310 | |

1,919,742 | BitPower Loop Security | BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart... | 0 | 2024-07-11T12:50:55 | https://dev.to/woy_ca2a85cabb11e9fa2bd0d/bitpower-loop-security-3oa3 | btc |

BitPower Loop is a blockchain lending protocol based on Ethereum Virtual Machine (EVM) smart contracts, running on TRC20, ERC20 and Tron blockchain technologies. Its core design is to achieve fully decentralized and... | woy_ca2a85cabb11e9fa2bd0d |

1,919,743 | BitPower Introduction: | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions... | 0 | 2024-07-11T12:51:37 | https://dev.to/1_f00a6d2ae878600fb6f8d9/bitpower-introduction-427b | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions through its unique telePORT protocol. The protocol leverages the liquidity of existing chains such as Polygon, Arbitrum, and Ethereum, allowing users to trade assets minted on the Arweave blockchain without leaving the BitP... | 1_f00a6d2ae878600fb6f8d9 | |

1,919,744 | The Future of Full Stack Development: AI, Machine Learning, and Beyond | What is Full Stack Development Full stack development refers to the process of building... | 0 | 2024-07-11T12:51:53 | https://dev.to/jhk_info/the-future-of-full-stack-development-ai-machine-learning-and-beyond-2ac2 | fullstack, ai, machinelearning, development |

## What is Full Stack Development

Full stack development refers to the process of building and maintaining both the front-end and back-end components of a web application or website. A full-stack developer is ... | jhk_info |

1,919,745 | New webpage | I have created a new webpage for phone number authentication. Please check it out. I'm not good at UI... | 0 | 2024-07-11T12:56:29 | https://dev.to/gautamsharma/new-webpage-4p69 | I have created a new webpage for phone number authentication. Please check it out. I'm not good at UI design.

[webpage](https://codepen.io/sbamxxag-the-lessful/pen/yLdywjw) | gautamsharma | |

1,919,746 | Reduce Your Cloud Bill: Cost Optimization Strategies in the SDLC | Introduction Are Your Cloud Bills Getting Out of Control? The cloud revolution has... | 0 | 2024-07-11T12:57:15 | https://dev.to/d_sourav155/reduce-your-cloud-bill-cost-optimization-strategies-in-the-sdlc-ghj | ## Introduction

Are Your Cloud Bills Getting Out of Control?

The cloud revolution has transformed how we build and deploy software. Scalability, flexibility and on-demand resources are just a few of the benefits that have made cloud services a cornerstone of modern development. However, running cloud services can be... | d_sourav155 | |

1,919,747 | An easy intro to edge computing | Wondering what edge computing is all about? If you've ever visited a website before you can... | 0 | 2024-07-12T11:54:59 | https://dev.to/fastly/an-easy-intro-to-edge-computing-3ced | webdev, learning, serverless, cloud | Wondering what edge computing is all about? If you've ever visited a website before you can understand it! It's also easier than you might expect to get started using it. In this series we'll introduce the concepts and practices in leveraging the edge to enhance your websites using Glitch and Fastly.

***But first, let... | suesmith |

1,919,748 | Simple SVG Animations | Sections - Seeing Stars - Oh YEAH Baby!!! I'm a big fan of the SVG... | 0 | 2024-07-12T14:14:25 | https://dev.to/valcas/simple-svg-animations-58fb | svg, javascript, react, animation | ## Sections

## - [Seeing Stars](#stars)

## - [Oh YEAH Baby!!!](#ohyeah)

I'm a big fan of the SVG image format in web applications. They allow developers to resize images without the loss of quality you'll get when doing the same with raster images. Not only that, but the XML that makes up an SVG image can be added to ... | valcas |

1,919,750 | Mastering ReactJS Development Services: A Comprehensive Guide | ReactJS has risen to be a leading player in web development. ReactJS development companies hold great... | 0 | 2024-07-11T13:03:40 | https://dev.to/nicolabelliardi/mastering-reactjs-development-services-a-comprehensive-guide-184l | reactjsdevelopment, react, reactjsappdevelopment, reactjsdevelopmentcompany | ReactJS has risen to be a leading player in web development. ReactJS development companies hold great potential for businesses that desire a lively and user-friendly web application. This guide will help you understand [ReactJS development services](https://www.softsuave.com/reactjs-app-development-company), the ... | nicolabelliardi |

1,919,751 | BitPower Introduction: | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions... | 0 | 2024-07-11T13:03:59 | https://dev.to/_1f5c45a71c0bc20cc3196c/bitpower-introduction-4edb | BitPower is an innovative blockchain solution that aims to enable cross-chain asset transactions through its unique telePORT protocol. The protocol leverages the liquidity of existing chains such as Polygon, Arbitrum, and Ethereum, allowing users to trade assets minted on the Arweave blockchain without leaving the BitP... | _1f5c45a71c0bc20cc3196c | |

1,919,752 | Node.js vs. PHP: Choosing the Best Backend for Your Project | Discover the differences between Node.js and PHP to select the best backend solution. Make an... | 0 | 2024-07-11T13:04:04 | https://dev.to/loganmary689/nodejs-vs-php-choosing-the-best-backend-for-your-project-3mi9 | Discover the [differences between Node.js and PHP](https://www.zealousys.com/blog/node-js-vs-php/) to select the best backend solution. Make an informed decision for your web development.

| loganmary689 | |

1,920,101 | My experience with Python | Hello all readers, thank you for taking the time to read this blog! We will discuss Python and my... | 0 | 2024-07-11T19:05:43 | https://dev.to/killerfox007/my-experience-with-python-109o | webdev | Hello all readers, thank you for taking the time to read this blog! We will discuss Python and my experience as a FlatIron student learning it for the second time. I took a Python class a few years ago at a community college, and it was exciting. Python is my favorite coding language so far. The options are endless an... | killerfox007 |

1,919,753 | Email Deliverability Audit: Ensuring Your Emails Reach the Inbox | What is Email Deliverability? Email deliverability refers to the ability of your emails to... | 0 | 2024-07-11T13:04:34 | https://dev.to/accuwebhosting/email-deliverability-audit-ensuring-your-emails-reach-the-inbox-43 | email, audit, marketing, inbox | ## What is Email Deliverability?

Email deliverability refers to the ability of your emails to successfully reach your subscribers' inboxes without being filtered into spam or rejected by email servers. High deliverability ensures that your email marketing campaigns are effective, as your messages are seen and engaged w... | clay_p |

1,919,754 | Salting & Hashing🍳 | What is salting 🧂? Salting is the process of adding data into a value before hashing. ... | 0 | 2024-07-11T13:07:32 | https://dev.to/notedbyneosahadeo/salting-hashing-21ge | cybersecurity, beginners | ## What is salting 🧂?

Salting is the process of adding data into a value before **hashing**.

## What is hashing #️⃣?

Hashing is the process of converting data into a *fixed-length* string.

>fixed-length: all hashes will have the same length
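
The two definitions above can be sketched in a few lines of Python. This is only an illustration, assuming SHA-256 as the hash function and a random 16-byte salt prepended to the value; the helper name `hash_with_salt` is made up for this example, and real password storage would normally use a dedicated, deliberately slow function such as `hashlib.scrypt` or `hashlib.pbkdf2_hmac` instead of a single SHA-256 pass.

```python
import hashlib
import os


def hash_with_salt(value: str, salt: bytes) -> str:
    """Prepend the salt to the value, then hash the result with SHA-256.

    Hypothetical helper for illustration only.
    """
    return hashlib.sha256(salt + value.encode("utf-8")).hexdigest()


# A random 16-byte salt; in practice it is stored next to the hash.
salt = os.urandom(16)

short_digest = hash_with_salt("hi", salt)
long_digest = hash_with_salt("a much longer input string " * 10, salt)

# Fixed length: SHA-256 hex digests have the same length for any input size.
print(len(short_digest), len(long_digest))

# Salting: the same value with a different salt produces a different digest.
other_digest = hash_with_salt("hi", os.urandom(16))
print(short_digest != other_digest)
```

Hashing the same value with the same salt always gives the same digest, which is what makes it possible to verify a value later without storing the value itself.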

⚠️Something important to highlight is that **hashing is not encrypting**; ... | neosahadeo |

1,919,755 | BitPower: An Innovative Blockchain Financial Platform | Abstract BitPower is a company focused on blockchain technology and decentralized finance (DeFi)... | 0 | 2024-07-11T13:08:00 | https://dev.to/kk_l_e35aa740186398a7d97e/bitpower-an-innovative-blockchain-financial-platform-2iai |

Abstract

BitPower is a company focused on blockchain technology and decentralized finance (DeFi) innovation. This article briefly introduces BitPower's core philosophy, technical advantages and market potential.

Core philosophy

BitPower is committed to creating a fee-free, intermediary-free decentralized financial ec... | kk_l_e35aa740186398a7d97e | |

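1,919,769 | Kkongmoney (꽁머니, free bonus credit) Community and Kkongmoney 30000: Sports Analysis and Sports Guides | The Kkongmoney community, which provides sports analysis and sports guides, offers sports fans essential information along with significant benefits. This article looks at the role of the Kkongmoney community and the benefits of Kkongmoney 30000... | 0 | 2024-07-11T13:10:13 | https://dev.to/jessicamartinez1951/ggongmeoni-keomyunitiwa-ggongmeoni-30000-seupoceu-bunseoggwa-seupoceu-gaideu-5180 | The [Kkongmoney community](https://ggongnara.com), which provides sports analysis and sports guides, offers sports fans essential information along with significant benefits. This article looks at the role of the Kkongmoney community and the benefits of Kkongmoney 30000.

The role of the Kkongmoney community

Providing sports analysis

The Kkongmoney community provides sports fans with in-depth sports analysis. Experts analyze each match and assess each team's strength and the players' condition to help with predictions. This analysis is a great help in predicting match results and plays an important role in building betting strategies.

Sports guides... | jessicamartinez1951 | |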

1,919,770 | Android alternative app and block trackers | This post focuses on avoiding apps that track you, steering clear of Google Play Services, Facebook,... | 0 | 2024-07-11T13:10:27 | https://dev.to/rafaone/android-alternative-apps-1loi | android, alternative, privacy, apps | This post focuses on avoiding apps that track you, steering clear of Google Play Services, Facebook, Amazon, and big tech in general.

Trackers are libraries bundled inside apps that spy on you and silently collect data.

To monitor and analyze them, you can use [Tracker Control](https://trackercontrol.org/)

that are revolutionizing ways of teaching. The rise in popularity of online education has been driven by scientific innovations, growing societa... | jacquelinedavid | |

1,919,774 | THE IMPORTANCE OF SEMANTIC HTML FOR SEO AND ACCESSIBILITY. | (https://docs.google.com/document/u/0/d/1w_5nRGYbl9l6-Wt_RnyMTI3VvuFretC2SpGZxS9yAG8/mobilebasic) | 0 | 2024-07-11T13:19:40 | https://dev.to/nelon98/the-importance-of-sematic-html-for-seo-and-accessibility-3a3p | webdev, seo, html, developers | (https://docs.google.com/document/u/0/d/1w_5nRGYbl9l6-Wt_RnyMTI3VvuFretC2SpGZxS9yAG8/mobilebasic) | nelon98 |

1,919,778 | Getting Started with PS5 Game Development | I wanted to share some insights and tips on getting started with PS5 game development. As many of you... | 0 | 2024-07-11T13:22:27 | https://dev.to/hamiz_siddiqui_b617ccc996/getting-started-with-ps5-game-development-nm1 | ps5 |

I wanted to share some insights and tips on getting started with PS5 game development. As many of you know, the PS5 offers incredible hardware capabilities and new features that can truly enhance the gaming experience. Here’s a quick rundown of what you need to know to start developing for this powerful console.

1. D... | hamiz_siddiqui_b617ccc996 |

1,919,779 | 10 Tricks to Avoid QA Approval and Speed Up Your Development | In the rapidly evolving field of software development, efficiency is frequently crucial. Even while... | 0 | 2024-07-11T13:24:03 | https://www.nilebits.com/blog/2024/07/10-tricks-to-avoid-qa-approval/ | qa, cicd, softwaredevelopment, agile | In the rapidly evolving field of software development, efficiency is frequently crucial. Even while [Quality Assurance (QA)](https://www.linkedin.com/pulse/ensuring-quality-software-outsourcing-testing-qa-amr-saafan) is essential for making sure software is error-free and complies with standards, the process can occasi... | amr-saafan |

1,919,780 | Unlocking JavaScript: Innovative Features for Modern Developers | Introduction JavaScript continues to evolve, bringing new features that enhance its capabilities and... | 0 | 2024-07-11T13:27:03 | https://dev.to/rn_dev_lalit/unlocking-javascript-innovative-features-for-modern-developers-1h6e | javascript, frontend, reactnative, react | Introduction

JavaScript continues to evolve, bringing new features that enhance its capabilities and streamline the development process. In 2024, several exciting additions promise to improve code readability, efficiency, and functionality. Let's explore the latest features of JavaScript that every developer should kn... | rn_dev_lalit |

1,919,781 | Prova | A post by Giorgio Antonelli | 0 | 2024-07-11T13:29:13 | https://dev.to/giorgioantonelli94/open-position-1hfe | giorgioantonelli94 | ||

1,919,783 | Mastering RecyclerView in Java for Android Development | RecyclerView is a powerful and flexible Android component for displaying large data sets. It is a... | 0 | 2024-07-11T13:30:13 | https://dev.to/ankittmeena/mastering-recyclerview-in-java-for-android-development-2f6m | recycleview, android, java, mobile | RecyclerView is a powerful and flexible Android component for displaying large data sets. It is a more advanced and efficient version of ListView, designed to handle large amounts of data with minimal memory consumption. This article will walk you through the basics of RecyclerView, how to set it up in your Android pro... | ankittmeena |

1,919,784 | 5 Components of CCTV Understanding the Essential Elements | CCTV Camera Market Outlook The global CCTV camera market is projected to achieve a valuation of... | 0 | 2024-07-11T13:30:59 | https://dev.to/ganesh_dukare_34ce028bb7b/5-components-of-cctv-understanding-the-essential-elements-5g8m | CCTV Camera Market Outlook

The global CCTV camera market is projected to achieve a valuation of US$51.06 billion by 2033, growing at a robust CAGR of 12.1% from 2024 to 2033. CCTV, or closed-circuit television cameras, play a critical role as surveillance tools, widely used in both public and private settings to monit... | ganesh_dukare_34ce028bb7b | |

1,919,785 | Fine-tuning Large Models: Detailed Explanation and Applications | 1. Introduction With the rapid development of deep learning technology, pre-trained models... | 0 | 2024-07-11T13:31:10 | https://dev.to/happyer/fine-tuning-large-models-detailed-explanation-and-applications-4nao | ai, finetuning, llm, machinelearning | ## 1. Introduction

With the rapid development of deep learning technology, pre-trained models have demonstrated powerful performance across various tasks. However, pre-trained models are not directly applicable to all tasks and often require targeted optimization to enhance performance in specific tasks. This optimizat... | happyer |

1,919,786 | Creating an Azure Virtual Network with Subnets. | Azure Virtual Networks let Azure resources communicate with each other securely and privately. Azure Virtual... | 0 | 2024-07-12T05:02:25 | https://dev.to/tojumercy1/creating-an-azure-virtual-network-with-subnets-1khc | azure, powerfuldevs, subnets, networking | **Azure Virtual Networks let Azure resources communicate with each other securely and privately. An Azure Virtual Network enables secure communication between various Azure resources, the internet, and on-premises networks.**

To create this Azure Virtual Network with four subnets using the address space 192.148.30.0/2... | tojumercy1 |

1,919,787 | Devops Engineer | Devops Engineer https://it.indeed.com/job/devops-engineer-ea478f94b6af5d51 InRebus Technologies... | 0 | 2024-07-11T13:37:35 | https://dev.to/giorgioantonelli94/devops-engineer-4dcg | **Devops Engineer**

https://it.indeed.com/job/devops-engineer-ea478f94b6af5d51

InRebus Technologies is looking, on behalf of a client company, for a **DevOps Engineer**

**Project description:**

We are looking for a DevOps Engineer for a project to migrate Kubernetes repositories from Git... | giorgioantonelli94 | |

1,919,788 | Deploying and Managing Applications with Flux: A Technical Guide | Flux is a powerful tool for managing and automating the deployment and configuration of applications... | 0 | 2024-07-11T13:38:08 | https://dev.to/platform_engineers/deploying-and-managing-applications-with-flux-a-technical-guide-o6a | Flux is a powerful tool for managing and automating the deployment and configuration of applications and infrastructure within Kubernetes clusters. This blog post will delve into the technical aspects of deploying and managing applications using Flux, covering key concepts, setup, and configuration.

### Core Concepts

... | shahangita | |

1,919,789 | Day 6 | I am having trouble expressing my progress without this becoming a public journal entry. I know I... | 0 | 2024-07-11T13:38:24 | https://dev.to/myrojyn/day-6-mfe | python, 100daysofpythonchallenge, learning | I am having trouble expressing my progress without this becoming a public journal entry. I know, I know: write it in your personal journal first. I do that, and then this becomes either

good stuff

or

journal entry #2 public edition.

I want a balance between both. | myrojyn |

1,919,790 | Short-Circuiting Conditions in JavaScript: The Ternary Operator ES6 | Sure! Here is a comprehensive article on conditional (ternary) operators in JavaScript. ... | 0 | 2024-07-11T13:40:46 | https://dev.to/fwldom/short-circuiting-conditions-in-javascript-the-ternary-operator-es6-1b12 | javascript, web, es6, english |

## Short-Circuiting Conditions in JavaScript: The Ternary Operator

In JavaScript, making decisions based on conditions is a... | fwldom |

1,919,792 | RxJS in Angular: A Beginner's Guide | Introduction Reactive Extensions for JavaScript, commonly known as RxJS, is a powerful... | 0 | 2024-07-11T13:41:37 | https://dev.to/itsshaikhaj/rxjs-in-angular-a-beginners-guide-59cm |

## Introduction

Reactive Extensions for JavaScript, commonly known as RxJS, is a powerful library for reactive programming using Observables. It is a core part of Angular, enabling developers to compose asynchronous and event-based programs in a functional style. This article aims to demystify RxJS for beginners, pr... | itsshaikhaj | |

1,919,793 | How to install and configure Golang | In this article, you will see how to install Golang and configure it to use the private GitHub... | 0 | 2024-07-11T13:44:25 | https://henriqueleite42.hashnode.dev/how-to-install-and-configure-golang | go, beginners, devops | In this article, you will see how to install Golang and configure it to use the private GitHub repositories of your company.

## Right to the point

> BE SURE TO REPLACE `{VERSION}` WITH THE DESIRED VERSION THAT YOU WANT!!!

### Download Go

```bash

curl -OL https://golang.org/dl/go{VERSION}.linux-amd64.tar.gz

```

###... | henriqueleite42 |

1,919,794 | Software Analyst Engineer Java - Full remote | InRebus Technologies is looking, on behalf of a client company, for a Software Analyst... | 0 | 2024-07-11T13:48:00 | https://dev.to/inrebusrecruiting2023/software-analyst-engineer-java-full-remote-57al | InRebus Technologies is looking, on behalf of a client company, for a Software Analyst Engineer

https://zinrec.intervieweb.it/gruppofos/jobs/software-analyst-engineer-java-full-remote-46064/it/

**Main activities:**

As a Software Analyst Engineer, you will work within the development team and you will... | inrebusrecruiting2023 | |

1,919,796 | Looking for new opportunities... | Hi everyone, This is my first post on the dev.to community. I am currently looking for a job switch in... | 0 | 2024-07-11T13:52:03 | https://dev.to/bhumika-aga/looking-for-new-opportunities-5hnl | career, webdev, java, springboot | Hi everyone,

This is my first post on the dev.to community. I am currently looking to switch jobs to a software development role, in India or remote. I have been searching for opportunities for a long time now and hope that posting here might lead to something.

I am currently working at Cognizant as a... | bhumika-aga |

1,919,810 | How to create a Linux Virtual machine | A virtual machine (VM) is defined as a computer system emulation, where VM software replaces physical... | 0 | 2024-07-11T13:52:47 | https://dev.to/stippy4real/how-to-create-a-linux-virtual-machine-46me | virtualmachine, cloudcomputing, deveops, windowsserver |

A virtual machine (VM) is an emulation of a computer system: VM software replaces physical computing infrastructure and hardware with software, providing an environment for deploying applications and performing other app-related tasks. This article explains the steps for creating a virtual machine.

login i... | stippy4real |

1,919,811 | Maximize Your Earnings with Rocket Pool Staking Rewards Guide | In the realm of decentralized finance, there exists a unique opportunity for individuals to harness... | 0 | 2024-07-11T13:54:41 | https://dev.to/rocketpool352/maximize-your-earnings-with-rocket-pool-staking-rewards-guide-1cdn | cryptocurrency, ethereum, blockchain, rocketpool | In the realm of decentralized finance, there exists a unique opportunity for individuals to harness the power of blockchain technology to secure and grow their digital assets. By actively participating in the staking ecosystem, investors can effortlessly earn passive income through the act of contributing their crypto... | rocketpool352 |

1,919,813 | Rocket Pool User Guide: How to Use the Platform | Embark on a journey towards proficiency with the tool that propels your knowledge to new... | 0 | 2024-07-11T13:56:56 | https://dev.to/rocketpool352/rocket-pool-user-guide-how-to-use-the-platform-51n1 | cryptocurrency, ethereum, web3, rocketpool | Embark on a journey towards proficiency with the tool that propels your knowledge to new heights.

Discover the secrets of becoming an expert in utilizing the platform's functionalities effortlessly.

Enhance your skills and expertise in efficiently utilizing the system's capabilities to achieve unparalleled success.

... | rocketpool352 |

1,919,814 | Complete Guide to Rocket Pool Validator | In today's digital age, understanding the intricacies of decentralized finance is crucial for anyone... | 0 | 2024-07-11T13:58:34 | https://dev.to/rocketpool352/complete-guide-to-rocket-pool-validator-23ak | cryptocurrency, rocketpool, crypto, blockchain | In today's digital age, understanding the intricacies of decentralized finance is crucial for anyone looking to navigate the ever-evolving landscape of blockchain technology. Dive deep into the world of validators in the Rocket Pool ecosystem, where users can stake their assets and participate in the validation process... | rocketpool352 |

1,919,815 | Enhance PDF Viewing and Editing with the New Built-in Toolbar in .NET MAUI PDF Viewer | TL;DR: The new built-in toolbar feature added to the Syncfusion .NET MAUI PDF Viewer saves users time... | 0 | 2024-07-11T16:50:38 | https://www.syncfusion.com/blogs/post/new-built-in-toolbar-maui-pdf-viewer | dotnetmaui, mobile, pdfviewer, maui | ---

title: Enhance PDF Viewing and Editing with the New Built-in Toolbar in .NET MAUI PDF Viewer

published: true

date: 2024-07-11 12:29:26 UTC

tags: dotnetmaui, mobile, pdfviewer, maui

canonical_url: https://www.syncfusion.com/blogs/post/new-built-in-toolbar-maui-pdf-viewer

cover_image: https://dev-to-uploads.s3.amazon... | jollenmoyani |

1,919,816 | Groogle 4.0.0 (Google DSL) | Groogle is a DSL (Domain Specific Language) for interacting with Google Cloud services in an... | 28,036 | 2024-07-11T14:00:49 | https://dev.to/jagedn/groogle-400-google-dsl-39ca | google, googlecloud, groovy, dsl | Groogle is a DSL (Domain Specific Language) for interacting with Google Cloud services in an easy way. It provides a concise language so you can write scripts, or integrate it into your application, and consume Google Cloud services such as Drive, Sheets, or Gmail.

In this post series I'll (try) to explain the origin, aim an... | jagedn |

1,919,818 | How Random Are Random Number Generators? | Hey good people, today let's explore the randomness of the random number generators in our computers ... | 0 | 2024-07-11T14:01:13 | https://dev.to/something_something_64b2a/how-random-are-random-number-generators-1eid |

Hey Good People

Today let's explore the randomness of the random number generators in our computers.

# What Is a Random Number

Let's start by guessing a number. Try to answer on your own before reading further.

#### Guess a number from 1-10

If you guessed 7, you're like the 45% of others who would have done... | something_something_64b2a | |

1,919,818 | The History and Evolution of Folding Knives | Folding knives, with their practicality and versatility, have evolved significantly over centuries,... | 0 | 2024-07-11T14:03:17 | https://dev.to/demaxes/the-history-and-evolution-of-folding-knives-39o1 | news, design | Folding knives, with their practicality and versatility, have evolved significantly over centuries, adapting to various cultural, technological, and practical needs.

## Early Origins of Folding Knives

## Ancient Folding Knife Designs

[Folding knives](https://demaxes.com/best-folding-knife/) trace their origins back ... | demaxes |

1,919,819 | Undo Git Commands | Hey folks, I've found a neat way to add an undo feature to Git. As you all know, Git itself relies... | 0 | 2024-07-11T14:03:22 | https://dev.to/devesh525s/undo-git-commands-546d | Hey folks,

I've found a neat way to add an undo feature to Git.

As you all know, Git itself relies on the `.git` folder, which contains all versioning information and metadata for your repository. Every Git command you run, from `git add` to `git commit`, modifies files within this `.git` folder. By using Mercurial (... | devesh525s | |

1,919,820 | Who is the owner of MyGlamm India?-81678<~45548.. | Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn... | 0 | 2024-07-11T14:04:00 | https://dev.to/kalim_khan_7667568a7fa268/who-is-the-owner-of-myglamm-india-8167845548-1kbm | javascript, beginners | Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn more about our myglammINSIDER program and perks.Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn more about our myglammINSIDER program and perks. | kalim_khan_7667568a7fa268 |

1,919,821 | Create a better talent strategy with FlexC’s AI talent platform | Millennials and Gen-Zs apply and choose to stay in an organization based on the complete experience,... | 0 | 2024-07-11T14:04:12 | https://dev.to/malika_dhingra_3a568d8053/create-a-better-talent-strategy-with-flexcs-ai-talent-platform-3c2h | ai | Millennials and Gen-Zs apply and choose to stay in an organization based on the complete experience, not just the compensation. Finding the right talent, providing them with the right candidate experience, and focusing on their overall growth puts a lot on the plate of human resources. Not to mention the numerous layer... | malika_dhingra_3a568d8053 |

1,919,822 | Who is the owner of MyGlamm India?-81678<~45548.. | Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn... | 0 | 2024-07-11T14:05:12 | https://dev.to/kalim_khan_7667568a7fa268/who-is-the-owner-of-myglamm-india-8167845548-4bia | javascript, beginners | Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn more about our myglammINSIDER program and perks.Need Help? Contact us at hello@myglamm.com or you can reach us at081678 45548// 022-62593200 to learn more about our myglammINSIDER program and perks. | kalim_khan_7667568a7fa268 |

1,919,823 | Titanium News #19 | Older posts can be found here. Intro It's Titanium News time again! This time we will... | 0 | 2024-07-11T15:15:41 | https://dev.to/miga/titanium-news-19-3oig | titaniumsdk, mobile, javascript, news | <small>Older posts can be found [here](https://dev.to/miga).</small>

# Intro

It's `Titanium News` time again! This time we will look at 12.3.1.GA, 12.4.0.RC, module updates and how to use ChatGPT to create Titanium iOS modules.

# Titanium 12.3.1.GA

The latest GA release 12.3.1 was published in June and fixed some iss... | miga |

1,919,824 | Serve Next.js with Fastify | How to setup custom Next.js server using Fastify | 0 | 2024-07-11T14:14:31 | https://dev.to/ilinieja/serve-nextjs-with-fastify-o5m | nextjs, fastify, node, typescript | ---

title: Serve Next.js with Fastify

published: true

description: How to set up a custom Next.js server using Fastify

tags: nextjs, fastify, nodejs, typescript

# published_at: 2024-07-11 14:04 +0000

---

Next.js is an exceptional framework for React applications that comes with a lot of bells and whistles for Server-Sid... | ilinieja |

1,919,825 | Exploring the Best Coffee Roasters in Dubai with KamKam Coffee | Dubai, known for its luxurious lifestyle and rich culture, is also a burgeoning hub for coffee... | 0 | 2024-07-11T14:07:20 | https://dev.to/kamkam_coffee_71d3d6d5bd1/exploring-the-best-coffee-roasters-in-dubai-with-kamkam-coffee-2h34 | Dubai, known for its luxurious lifestyle and rich culture, is also a burgeoning hub for coffee enthusiasts. The city boasts a vibrant coffee scene, with numerous coffee roasters offering exquisite blends to satisfy every palate. KamKam Coffee is at the forefront of this movement, bringing some of the finest coffee roas... | kamkam_coffee_71d3d6d5bd1 | |

1,919,826 | Sigma, an academic management system | On the flaws of a management system and when to replace it. | 0 | 2024-07-12T14:46:26 | https://dev.to/baltasarq/sigma-sistema-de-gestion-academico-4i7d | spanish | ---

title: Sigma, an academic management system

published: true

description: On the flaws of a management system and when to replace it.

tags: #spanish

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-11 14:04 +0000

---

## Sigma, sistema de gestión a... | baltasarq |

1,919,827 | RabbitMQ: Open Source Message Broker Service | RabbitMQ is an Open Source message broker service aiding systems with microservice architecture. For... | 0 | 2024-07-12T14:46:19 | https://dev.to/ajaykrupalk/rabbitmq-open-source-message-broker-service-14f8 | webdev, javascript, tutorial, learning | RabbitMQ is an Open Source message broker service aiding systems with microservice architecture. For example, let's say you want to upload a video to YouTube, behind the scenes, there might be one service to upload the video, one to notify people who are subscribed to the author, and so on. The upload service appends “... | ajaykrupalk |
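The YouTube example in the RabbitMQ post can be sketched with a tiny in-memory stand-in for a broker. This is a simplified illustration, not the RabbitMQ API: `InMemoryBroker`, `upload_video`, `notify_subscribers`, and the queue name `video.uploaded` are all hypothetical names, and a real broker adds networking, persistence, acknowledgements, and routing on top of this idea.

```python
from collections import defaultdict, deque


class InMemoryBroker:
    """Toy stand-in for a message broker: one FIFO queue per queue name."""

    def __init__(self) -> None:
        self._queues = defaultdict(deque)

    def publish(self, queue_name: str, message: str) -> None:
        """Producer side: append the message and return immediately."""
        self._queues[queue_name].append(message)

    def consume(self, queue_name: str):
        """Consumer side: pop the oldest message, or None if the queue is empty."""
        queue = self._queues[queue_name]
        return queue.popleft() if queue else None


def upload_video(broker: InMemoryBroker, title: str) -> None:
    # Hypothetical upload service: it only announces that an upload happened.
    broker.publish("video.uploaded", title)


def notify_subscribers(broker: InMemoryBroker) -> list[str]:
    # Hypothetical notification service: drains the queue at its own pace.
    notifications = []
    while (title := broker.consume("video.uploaded")) is not None:
        notifications.append(f"New video: {title}")
    return notifications


if __name__ == "__main__":
    broker = InMemoryBroker()
    upload_video(broker, "Intro to message brokers")
    print(notify_subscribers(broker))
```

The design choice worth noticing is that the upload service never calls the notification service directly; the two only share a queue name, so either side can be replaced or scaled independently.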

1,919,828 | Pros and Cons of Using Terraform with FluxCD for GitOps | I have been working on a personal project named Smart-cash to improve some skills and learn new... | 0 | 2024-07-11T21:32:30 | https://dev.to/aws-builders/pros-and-cons-of-using-terraform-with-fluxcd-for-gitops-4k9h | kubernetes, gitops, terraform, fluxcd | I have been working on a personal project named [Smart-cash](https://github.com/danielrive/smart-cash) to improve some skills and learn new ones.

In this article, I will share my thoughts about using Terraform in the GitOps process, specifically to create the manifest and push it to the Git repo.

## The basics

GitOp... | danielrive |

1,919,829 | #help | How can I get some amazing blog about javascript? | 0 | 2024-07-11T14:16:41 | https://dev.to/md_ataurrahmanosmango/help-5p3 | How can I get some amazing blog about javascript? | md_ataurrahmanosmango | |

1,915,701 | 10 Cool JavaScript Tricks and Tips | Introduction JavaScript is a versatile programming language widely used for web... | 0 | 2024-07-08T11:57:03 | https://dev.to/koolkamalkishor/10-cool-javascript-tricks-and-tips-1g40 | ### Introduction

JavaScript is a versatile programming language widely used for web development. Understanding its key features and best practices can significantly enhance your coding efficiency and quality.

### Tips

1. **Use of Arrow Functions**

Arrow functions provide a concise syntax for defining functions. Th... | koolkamalkishor | |

1,919,830 | Top 5 Use Cases of Immersive Technology in Education in Canada | Immersive technology, encompassing Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality... | 0 | 2024-07-11T14:17:11 | https://dev.to/priyanka_aich/top-5-use-cases-of-immersive-technology-in-education-in-canada-1882 | webdev, devops, ai | Immersive technology, encompassing Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR), is revolutionizing the educational landscape by providing interactive and engaging learning experiences. This technology transports students beyond traditional classrooms, allowing them to explore complex concepts a... | priyanka_aich |

1,919,831 | How do I contact to navi loan? 8167534393How do I contact to navi loan? 8167534393 | How do I contact to navi loan? 8167534393 | 0 | 2024-07-11T14:17:26 | https://dev.to/raaj_kumar_6e8ad5b54332f7/how-do-i-contact-to-navi-loan-8167534393how-do-i-contact-to-navi-loan-8167534393-4jeg | webdev, beginners | How do I contact to navi loan? 8167534393 | raaj_kumar_6e8ad5b54332f7 |

1,919,833 | How do I contact to navi loan? 8167534393 | How do I contact to navi loan? 8167534393How do I contact NaviLoan Customer care? 8167534393 | 0 | 2024-07-11T14:19:36 | https://dev.to/raaj_kumar_6e8ad5b54332f7/how-do-i-contact-to-navi-loan-8167534393-1dag | webdev, javascript | How do I contact to navi loan? 8167534393How do I contact NaviLoan Customer care? 8167534393 | raaj_kumar_6e8ad5b54332f7 |

1,919,863 | What's Next For SWE Students? | What's up SE Nerd!! I'm back with another post. This one won't be as technical as I will be using... | 0 | 2024-07-11T14:21:27 | https://dev.to/trippl/whats-next-for-swe-students-26ak | softwareengineering, software | What's up SE Nerd!! I'm back with another post. This one won't be as technical as I will be using this one to reflect on my last 15 weeks of a SWE Bootcamp!

First of all, I want everyone reading this to know that I didn't know much about computers or much about technology before I started this program. I was halfway ... | trippl |

1,919,869 | Unlocking the Full Potential of GitGuardian: Empowering Developers In Code Security | At GitGuardian, we are convinced that effective security requires a shared responsibility model.... | 0 | 2024-07-11T14:34:24 | https://blog.gitguardian.com/empowering-developers-in-code-security/ | security, cybersecurity, git, cli | At GitGuardian, we are convinced that effective security requires a shared responsibility model. Developers are already overburdened with their primary tasks of writing code and delivering features, and we think it is not realistic to expect them to know everything about security, be responsible for triaging and handli... | dwayne_mcdaniel |

1,919,864 | Expert Dental Implants Services Nearby | Discover expert dental implant services nearby with our skilled specialists offering advanced... | 0 | 2024-07-11T14:23:12 | https://dev.to/brandy_thormpson_55250674/expert-dental-implants-services-nearby-fcn | Discover expert dental implant services nearby with our skilled specialists offering advanced solutions for missing teeth. Whether you need single implants or full-mouth restoration, our personalized treatment plans ensure optimal function and aesthetics. Experience compassionate care and lasting dental health improvem... | brandy_thormpson_55250674 | |

1,919,865 | Regenera Stem Cell Hair Treatment: What to Expect | (https://larc.pk/) Welcome to London Aesthetics and Rejuvenation Center, where we specialize in the... | 0 | 2024-07-11T14:24:11 | https://dev.to/larc_pk_3a37c25964fc492c7/regenera-stem-cell-hair-treatment-what-to-expect-1d31 | (https://larc.pk/) Welcome to London Aesthetics and Rejuvenation Center, where we specialize in the latest and most effective hair restoration treatments. Dr. Badie Idris and our team are dedicated to providing advanced solutions for hair loss, including the innovative Regenera Stem Cell Hair Treatment. With locations ... | larc_pk_3a37c25964fc492c7 | |

1,919,866 | Unlocking the Power of 2-in-1: How to Thrive in Online Business and Network Marketing | Introduction In today's fast-paced digital landscape, entrepreneurs are constantly seeking... | 0 | 2024-07-11T14:29:08 | https://dev.to/bluey_studio_ccb30b165385/unlocking-the-power-of-2-in-1-how-to-thrive-in-online-business-and-network-marketing-o3j | onlinebusiness, networking | Introduction

In today's fast-paced digital landscape, [entrepreneurs](https://legenddiamondgeneration.com) are constantly seeking innovative ways to diversify their income streams and maximize their earning potential. Two lucrative opportunities have emerged as frontrunners in the business world: online business and n... | bluey_studio_ccb30b165385 |

1,919,867 | Place 2 is on its way! | Little teaser for you: It's about 10% done, but could be completed by the end of this year. Some... | 0 | 2024-07-11T14:30:22 | https://dev.to/aud/place-2-is-on-its-way-44k | Little teaser for you:

It's about 10% done, but could be completed by the end of this year.

Some fun stuff:

Place (v1) will be open sourced! You will be able to run v1 yourself, and have people visit.

| aud | |

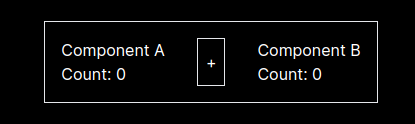

1,919,868 | Sharing state between unrelated React components | Want to show how you can share any serializable data between React components, e.g. client components... | 0 | 2024-07-11T14:32:37 | https://dev.to/asmyshlyaev177/sharing-state-between-unrelated-react-components-4aia | nextjs, javascript, react | I want to show how you can share any serializable data between React components, e.g. client components in Next.js.

We have a few unrelated components:

Let's create an object that will contain the initial state:

```typescript

... | asmyshlyaev177 |

1,919,870 | Understanding RAID Levels: A Comprehensive Guide to RAID 0, 1, 5, 6, 10, and Beyond | In today’s fast-paced digital landscape, data storage is crucial for safeguarding critical... | 0 | 2024-07-11T14:35:21 | https://dev.to/pltnvs/understanding-raid-levels-a-comprehensive-guide-to-raid-0-1-5-6-10-and-beyond-5948 | dataengineering, softwareraid, dataredundancy, datastorage | In today’s fast-paced digital landscape, data storage is crucial for safeguarding critical information. [RAID](https://xinnor.io/what-is-xiraid/) technology has revolutionized data storage, offering improved performance, increased data redundancy, and optimized capacity. However, with various RAID levels available, sel... | pltnvs |

1,919,871 | After Effects: Favourite Expressions Part 1, Linear() and Ease() | Introduction Now I've established the basics of After Effects, and why I like to use... | 28,010 | 2024-07-11T14:59:37 | https://dev.to/kocreative/after-effects-favourite-expressions-part-1-linear-and-ease-14p1 | beginners, aftereffects, design, tutorial | ## Introduction

Now that I've established the basics of After Effects, and why I like to use expressions in tandem with my work, I can finally start highlighting my favourite expressions.

First up are the `linear()` and `ease()` functions.

```

linear (t, tMin, tMax, value1, value2);

ease (t, tMin, tMax, value1, value2);

```
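To show what these two signatures compute, here is a rough Python sketch of the same mapping: `t` is remapped from the range `tMin`..`tMax` to the range `value1`..`value2` and clamped at both ends. The `linear` function follows that description directly. The `ease` function assumes a smoothstep curve purely for illustration; the exact easing curve After Effects uses is not stated here, so treat that part as an approximation. Both functions assume `t_min < t_max`.

```python
def _progress(t: float, t_min: float, t_max: float) -> float:
    """Return how far t is between t_min and t_max, clamped to [0, 1]."""
    if t <= t_min:
        return 0.0
    if t >= t_max:
        return 1.0
    return (t - t_min) / (t_max - t_min)


def linear(t: float, t_min: float, t_max: float, value1: float, value2: float) -> float:
    """Map t from [t_min, t_max] to [value1, value2] at a constant rate."""
    p = _progress(t, t_min, t_max)
    return value1 + (value2 - value1) * p


def ease(t: float, t_min: float, t_max: float, value1: float, value2: float) -> float:
    """Same mapping, but starting and ending slowly.

    Assumption: a smoothstep curve stands in for the real easing curve.
    """
    p = _progress(t, t_min, t_max)
    smoothed = p * p * (3.0 - 2.0 * p)
    return value1 + (value2 - value1) * smoothed


if __name__ == "__main__":
    # Fade a value from 0 to 100 between second 0 and second 1.
    for time in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(time, linear(time, 0, 1, 0, 100), ease(time, 0, 1, 0, 100))
```

Both functions agree at the start, the midpoint, and the end of the range; the eased version simply lags behind early on and catches up later, which is what gives the motion its softer feel.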

... | kocreative |

1,919,872 | PROJECTS FOR RESUME | Can you all suggest me some good projects to add in my resume as projects available on github and... | 0 | 2024-07-11T14:43:05 | https://dev.to/muzammil_tauqeer_0472e2c2/projects-for-resume-2j5o | Can you all suggest some good projects to add to my resume? The projects available on GitHub and Google are very common and are probably used by almost everyone.

| muzammil_tauqeer_0472e2c2 | |

1,919,873 | My first REAL project | How it all began... Last year I got to know FCamara through an event they organized... | 0 | 2024-07-11T18:15:39 | https://dev.to/leonardosf/meu-primeiro-projeto-real-284e | webdev, programming, learning, development | ## How it all began...

Last year I got to know FCamara through an event they organized together with GDG Santos. I found out that every Thursday they open their office to anyone who wants to study or work from there. Since then I have been there every week, and on one of those visits I met Lucas Batista, coordinator of the proj... | leonardosf |

1,919,874 | Episode 24/27: SSR Hybrid Rendering & Full SSR Guide | We got a new RFC focusing on "hybrid rendering" and a full SSR guide. RFC SSR Hybrid... | 0 | 2024-07-11T14:44:37 | https://dev.to/this-is-angular/episode-2427-ssr-hybrid-rendering-full-ssr-guide-20gj | webdev, javascript, programming, angular | We got a new RFC focusing on "hybrid rendering" and a full SSR guide.

{% embed https://youtu.be/a4ABBJAwj0Y %}

## RFC SSR Hybrid Rendering

Although Angular 18.1 was released this week (coverage will follow in the next episode), a new RFC lays out future features for SSR.

The RFC foresees a separate router configura... | ng_news |

1,919,875 | Unlocking the Potential of JavaScript AI with Sista AI | Unleash the power of JavaScript AI with Sista AI! Discover how AI revolutionizes user experiences and operational efficiency. Join the innovation wave now! 🌐 | 0 | 2024-07-11T14:45:48 | https://dev.to/sista-ai/unlocking-the-potential-of-javascript-ai-with-sista-ai-3ace | ai, react, javascript, typescript | <h2>Introduction</h2><p>JavaScript AI has become a game-changer in enhancing user engagement and operational efficiency. As businesses strive to stay ahead in the digital landscape, AI integration has emerged as a pivotal solution to drive growth and innovation. The power of AI technologies, especially in the context o... | sista-ai |

1,919,877 | Oh CommonJS! Why are you mESMing with me?! Reasons to ditch CommonJS | It was a normal patching day. I patched and upgraded my npm dependencies without making code changes,... | 0 | 2024-07-12T08:21:24 | https://dev.to/jolodev/oh-commonjs-why-are-you-mesming-with-me-reasons-to-ditch-commonjs-enh | javascript, typescript, esm, commonjs | It was a normal patching day. I patched and upgraded my npm dependencies without making code changes, and suddenly, some of my unit tests failed.

Wtf!

and they're giving it away for free for a year. If you're planning to use it in your project, I've built a new Python package that makes it easy to integrate OLA Maps functionality into your Python projects. Let's explore how to use this pac... | adayush |

1,919,880 | Creating an Azure Virtual Network with subnets | Setting up virtual networking in Azure involves creating and configuring an Azure Virtual Network... | 0 | 2024-07-11T15:11:32 | https://dev.to/abidemi/creating-an-azure-virtual-network-with-subnets-7b0 | azure, network, subnet, tutorial | Setting up virtual networking in Azure involves creating and configuring an Azure Virtual Network (VNet), which allows you to securely connect Azure resources to each other.

Here's a step-by-step guide to setting up virtual networking in Azure:

**Step 1: Sign in to the Azure Portal:**

Go to portal.azure.com

**Step ... | abidemi |

1,919,881 | What Is Pulumi And How To Use It | Imagine managing your cloud infrastructure using the programming languages you already love—Python,... | 0 | 2024-07-11T15:01:29 | https://www.env0.com/blog/what-is-pulumi-and-how-to-use-it-with-env0 | pulumi, infrastructureascode, devops, cloudcomputing | Imagine managing your cloud infrastructure using the programming languages you already love—Python, Go, JavaScript, you name it. No more wrestling with YAML, JSON, or HCL (HashiCorp Configuration Language) files!

Pulumi gives you that power, offering a robust CLI and service backend to manage both state and secrets. I... | env0team |

1,919,883 | Sell your side projects | Got a side project you’re proud of but don’t know what to do with it? Maybe it’s time to let someone... | 0 | 2024-07-11T15:02:53 | https://dev.to/salmandotweb/sell-your-side-projects-52p1 | webdev, javascript, beginners, programming | Got a side project you’re proud of but don’t know what to do with it? Maybe it’s time to let someone else take it to the next level.

We’re launching a new platform called [Acquireside](https://www.aquireside.com/) where you can buy and sell unique side projects.

for better navigation**

JavaScript is a cornerstone of modern web development, powering dynamic websites and applications. While many developers are familiar with the basic and wi... | syakirurahman |

1,919,888 | The Front End Dev Handbook 2024, State of HTML and State of JavaScript 2023 Results, TypeScript 5.5 | Front End News #109 | NOTE: This is issue #109 of my newsletter, which went live on Monday, July 8. You might find this... | 9,151 | 2024-07-11T15:11:56 | https://frontendnexus.com/news/109/ | newsletter, frontendnews, webdev, frontend | > **NOTE:** This is issue #109 of my newsletter, which went live on Monday, July 8. You might find this information valuable and exciting and want to receive future issues as they are published ahead of everyone else. In that case, I invite you to join the subscriber list at [frontendnexus.com](https://frontendnexus.co... | adriansandu |

1,919,890 | Starting from the bottom | Hi. I'm considering switching back to this field after 15 years. I didn't have much experience to... | 0 | 2024-07-11T15:18:38 | https://dev.to/taylor_laydon_77/starting-from-the-bottom-4mc0 | Hi. I'm considering switching back to this field after 15 years. I didn't have much experience to begin with, and never fully finished my degree. My dad always wanted me to get into the field to take over his companies. Things didn't go as planned.

I'm trying to get pointers on where to begin. I have chosen MANY field... | taylor_laydon_77 | |

1,919,891 | Metadata to actionable insights in Grafana: How to view Parseable metrics | Parseable deployments in the wild are handling larger and larger volumes of logs, so we needed a way... | 0 | 2024-07-11T15:55:59 | https://dev.to/parseable/metadata-to-actionable-insights-in-grafana-how-to-view-parseable-metrics-3oa4 | Parseable deployments in the wild are handling larger and larger volumes of logs, so we needed a way to enable users to monitor their Parseable instances.

Typically this would mean setting up Prometheus to capture Parseable ingest and query node metrics and visualize those metrics on a Grafana dashboard. We added [Pro... | jenwikehuger | |

1,919,896 | Using Scratch Base image provided by Docker | Building Base images in docker is not recommended but for those who are a bit interested in diving... | 0 | 2024-07-11T15:38:18 | https://dev.to/deepcodr/using-scratch-base-image-provided-by-docker-5a7a | deepcodr, docker, devops, tutorial | Building base images in Docker is not recommended, but for those interested in diving deeper into Docker, it is a must-know. Even though Docker Hub provides a huge collection of images for a variety of uses, there are some cases where we want to create something complex from SCRATCH!

T... | deepcodr |

1,921,179 | How to create Azure Virtual Network | A post by stephen anosike | 0 | 2024-07-12T13:09:48 | https://dev.to/stephen_anosike_d6027f55f/how-to-create-azure-virtual-network-3klm | stephen_anosike_d6027f55f | ||

1,919,898 | Mastering Functional Programming: A Comprehensive Collection of Free Tutorials | The article is about a comprehensive collection of free programming tutorials focused on the topic of functional programming. It covers a wide range of programming languages, including Scala, Haskell, and JavaScript, providing in-depth explorations of functional programming concepts, principles, and practical applicati... | 27,985 | 2024-07-11T15:23:14 | https://dev.to/getvm/mastering-functional-programming-a-comprehensive-collection-of-free-tutorials-3gnj | getvm, programming, freetutorial, collection |

Functional programming has gained immense popularity in the software development community, offering a powerful and elegant approach to problem-solving. This collection of free tutorials from GetVM.io provides a comprehensive exploration of the principles and applications of functional programming, covering a wide ran... | getvm |

1,919,899 | Hi there! | We're Potato Battery, a group of players setting out to provide great games for people to play! We... | 0 | 2024-07-11T15:27:17 | https://dev.to/pb2/hi-there-3hnp | We're Potato Battery, a group of players setting out to provide great games for people to play!

We hope to finish our projects soon, here's a few:

- Space Game (previously Comet)

> Large space exploration game with captivating story and lore

- Chaos

> Literally just **CHAOS**.

- Moirath

> Funny blob goes brrr

-... | aud | |

1,919,900 | 5 Image Gallery Examples Fully-Coded with Tailwind CSS [Free& Open Source] | Hey Tailwind devs 👋 Here's a list of open-source image gallery components coded with Tailwind CSS... | 27,771 | 2024-07-11T15:29:22 | https://dev.to/creativetim_official/5-image-gallery-examples-fully-coded-with-tailwind-css-free-open-source-256n | tailwindcss, webdev, opensource |

Hey Tailwind devs 👋

Here's a list of open-source image gallery components coded with [Tailwind CSS](https://tailwindcss.com/) and [Material Tailwind](https://material-tailwind.com/?ref=devto).

Each Tailwind CSS image gallery example presented below is easy to integrate and customize. The links to the source code ar... | creativetim_official |
