id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,920,153 | Unlocking Protected PDFs | Discover how to bypass Google Drive's view-only restrictions and download protected PDFs using JavaScript. This step-by-step guide offers a practical method to capture high-resolution images of each page and compile them into a PDF. | 0 | 2024-07-11T20:12:42 | https://dev.to/dpaluy/unlocking-protected-pdfs-99b | javascript, googledrive, pdf | ---

title: Unlocking Protected PDFs

published: true

description: Discover how to bypass Google Drive's view-only restrictions and download protected PDFs using JavaScript. This step-by-step guide offers a practical method to capture high-resolution images of each page and compile them into a PDF.

tags: javascript, goog... | dpaluy |

1,920,154 | Cities as Codebases | In the world of software development, we often find ourselves grappling with the complexity of our... | 0 | 2024-07-11T20:16:39 | https://dev.to/youssefibrahim/cities-as-codebases-2mj9 | software, developers, codebases, cities | In the world of software development, we often find ourselves grappling with the complexity of our codebases, much like urban planners navigating the intricate landscapes of cities. The following comparison isn't just a whimsical analogy; it could offer profound insights into how we can improve our software systems by ... | youssefibrahim |

1,920,172 | Running Tests in GitLab CI: From Zero to Pipeline | Continuous Integration (CI) is an essential practice in modern software development. It ensures that... | 0 | 2024-07-11T22:26:06 | https://dev.to/thiagoematos/running-tests-in-gitlab-ci-from-zero-to-pipeline-5ece | gitlab, cicd, springboot, unittest | Continuous Integration (CI) is an essential practice in modern software development. It ensures that code changes are automatically tested, leading to faster and more reliable software releases. In this article, we'll walk through the process of setting up a Spring Boot project with Gradle, writing unit tests, and conf... | thiagoematos |

1,920,173 | Toronto Google Ads Management | Discover the power of precision with Tweaked SEM, your go-to Google Ads agency in Toronto. We... | 0 | 2024-07-11T20:24:48 | https://dev.to/toronto-sem/toronto-google-ads-management-3hck | Discover the power of precision with Tweaked SEM, your go-to **Google Ads agency in Toronto**. We specialize in top-tier **Google Ads management** and **PPC management services** designed to elevate your online presence, amplify web traffic, and skyrocket your leads. Our cutting-edge SEM strategies focus on razor-sharp... | toronto-sem | |

1,920,174 | Importance of HTMLsemantic on SEO | []https://docs.google.com/document/d/11x3hfTVJa9n737IzuGdW2OW_QqSiuXOLlOQp0sHN4pw/edit#heading=h.td4t... | 0 | 2024-07-11T20:25:07 | https://dev.to/eunice_ngina_3e1848509848/importance-of-htmlsemantic-on-seo-430e | []https://docs.google.com/document/d/11x3hfTVJa9n737IzuGdW2OW_QqSiuXOLlOQp0sHN4pw/edit#heading=h.td4teddxj7m | eunice_ngina_3e1848509848 | |

1,920,175 | Corner Store App Basic Prototype | Corner Store App Corner Store App is a progressive web application (PWA) developed using Next.js and... | 0 | 2024-07-11T20:29:07 | https://dev.to/ror2022/corner-store-app-basic-prototype-45b0 | nextjs, nestjs, typescript, api | Corner Store App

Corner Store App is a progressive web application (PWA) developed using Next.js and Nest.js, designed as a prototype to serve as a platform for small businesses, either individually or in groups. Additionally, it has the potential to be adapted for medium-sized corporations that need to manage multipl... | ror2022 |

1,920,176 | Phase 3 sql, tuples, and object problems | Hello, my name is Daniel Trejo and I'm a student at Flatiron School in the Software Engineering... | 0 | 2024-07-11T20:29:48 | https://dev.to/daniel_trejo14/phase-3-sql-tuples-and-object-problems-1k8d | Hello, my name is Daniel Trejo and I'm a student at Flatiron School in the Software Engineering program. Phase 3 was definitely the hardest one yet because of the sheer amount of curriculum. The hardest part for me was the project which is probably not surprising due to the fact that it is the final project of the phas... | daniel_trejo14 | |

1,920,178 | Rewear: Transforming Sustainable Fashion with Wix Studio | This is a submission for the Wix Studio Challenge . What I Built Rewear is a sustainable... | 0 | 2024-07-11T20:42:32 | https://dev.to/ketanrajpal/rewear-transforming-sustainable-fashion-with-wix-e-commerce-4l3a | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

Rewear is a sustainable clothing exchange platform designed to transform how you experience fashion. Our platform allows users to send their pre-loved clothing items for quality control, browse curated selections, an... | ketanrajpal |

1,920,179 | The Battle of Databases: SQL, PostgreSQL, MongoDB, and Redis Explored | As a data enthusiast, you've likely encountered the names SQL, PostgreSQL, MongoDB, and Redis, but... | 0 | 2024-07-11T20:35:45 | https://dev.to/aquibpy/the-battle-of-databases-sql-postgresql-mongodb-and-redis-explored-jbc | redis, sql, postgres, mongodb | As a data enthusiast, you've likely encountered the names SQL, PostgreSQL, MongoDB, and Redis, but what exactly are they and when should you use them? This blog aims to demystify these powerful database technologies, comparing their features, use cases, and offering a headstart to get you started.

**SQL: The Foundatio... | aquibpy |

1,920,180 | Angular 18.1: Template Local Variables with @let | Key takeaways Syntax: let variableName = expression; Scope: The variable is only... | 0 | 2024-07-11T20:36:56 | https://dev.to/debba/angular-181-template-local-variables-with-let-3mh9 | webdev, javascript, angular, frontend | ## Key takeaways

* **Syntax**: let variableName = expression;

* **Scope**: The variable is only available within the HTML element or block where it's declared.

* **Common scenarios**:

* Reduce repetition of complex expressions.

* Better handle type narrowing.

* Define complex styling options.

* Improve ... | debba |

1,920,182 | The Elegance and Innovation of Omega Watches: A Timeless Investment | Omega watches represent the pinnacle of Swiss watchmaking excellence, combining precision,... | 0 | 2024-07-11T20:47:01 | https://dev.to/shuvronil_datta_420be2d75/the-elegance-and-innovation-of-omega-watches-a-timeless-investment-352d | Omega watches represent the pinnacle of Swiss watchmaking excellence, combining precision, innovation, and timeless design. Whether you are a seasoned collector or a first-time buyer, an Omega watch is a worthy addition to any collection. With their rich heritage, exceptional craftsmanship, and versatile styles, Omega ... | shuvronil_datta_420be2d75 | |

1,920,184 | HOST STATIC WEBSITE ON NETTLIFY; A BEGINNER-FRIENDLY GUIDE TO WEB DEVELOPMENT | Prerequisite basic knowledge in html, basic knowledge in cascading styles (css) basic JavaScript... | 0 | 2024-07-11T21:39:18 | https://dev.to/psam4ord/host-static-website-on-nettlify-a-beginner-friendly-guide-to-web-development-3kog | webdev, html, beginners, programming | _**Prerequisite**_

- basic knowledge in html,

- basic knowledge in cascading styles (css)

- basic JavaScript knowledge.

**Project Overview**

- This is a static website project hosted on Netlify and available on GitHub.

- The project was created using basic web development tools and does not utilize any npm packages, m... | psam4ord |

1,920,185 | Flutter Gooey Blobs | Learn how to make gooey blobs with custom painter in Flutter, and how you might be able to use them in your projects. | 0 | 2024-07-11T20:59:36 | https://code.pieces.app/blog/flutter-gooey-blobs | <figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/gooey-blobs_640fcfb3316f48f20b3ec6e2c92a9736.jpg" alt="Flutter Gooey Blobs with CustomPainter."/></figure>

Have you ever seen these gooey blobs on the internet?

<figure><img src="https://d37oebn0w9ir6a.cloudfront.net/account_32099/image_f05f6c80470... | get_pieces | |

1,920,186 | SEMANTIC HTML AND SEO | ROLE OF HTML SEMANTIC ELEMENTS IN SEARCH ENGINE OPTIMISATION SEMANTIC TAGGS ARE CRUCIAL IN... | 0 | 2024-07-11T21:02:59 | https://dev.to/peter_noel_33fcf5672a5db1/semantic-html-and-seo-123e | html, beginners, programming, webdev | ## **_ROLE OF HTML SEMANTIC ELEMENTS IN SEARCH ENGINE OPTIMISATION_**

SEMANTIC TAGGS ARE CRUCIAL IN THE PERFORMANCE OF A WEB PAGE , HERE ARE THE INSIGHTS ON HOW HTML SEMANTICS IMPROVE THE PERFRMANCE OF THE WEB PAGE AND ACCESABILITY ;

https://docs.google.com/document/d/1eR_ZlTRXG03RrKJmn2Ypw5vwKSLb8yO83RLCT5eLQT4/edit?... | peter_noel_33fcf5672a5db1 |

1,920,187 | shadcn-ui/ui codebase analysis: How does shadcn-ui CLI work? — Part 2.11 | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the... | 0 | 2024-07-11T21:06:53 | https://dev.to/ramunarasinga/shadcn-uiui-codebase-analysis-how-does-shadcn-ui-cli-work-part-211-46ml | javascript, opensource, nextjs, shadcnui | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the shadcn-ui/ui CLI.

In part 2.10, we looked at getRegistryBaseColors function, prompts, creating components.json and resolveConfigPaths.

requires a strategic blend of skill development, portfolio building, niche identification, and effective client management. As the digital landscape continues to evolve, mastering the art of SEO is paramount for success. However, the jou... | khuzaima_yamman_a0046bbd8 |

1,920,246 | Method security with @Secured Annotation in Spring | This annotation provides a way to add security configuration to business methods. It will use roles... | 27,602 | 2024-07-11T21:21:59 | https://springmasteryhub.com/2024/07/11/method-security-with-secured-annotation-in-spring/ | java, spring, springboot, tutorial | This annotation provides a way to add security configuration to business methods.

It will use roles to check if a user has permission to call this method. The annotation is part of spring security. So to enable its usage you need the spring security dependency.

## Example Scenario

You have an application that has a ... | tiuwill |

1,920,247 | Today, I start my "Problem Resolver" journey with the name of Allah. | I start my journey and explore how to resolve problems as a programmer or coder. | 0 | 2024-07-11T21:22:17 | https://dev.to/ayeshaseher/today-i-start-my-problem-resolver-journey-with-the-name-of-allah-1g0c | I start my journey and explore how to resolve problems as a programmer or coder. | ayeshaseher | |

1,920,251 | Python - First Week | Python is taught online in Tamil without any cost. The only expectation from them is to create a blog... | 0 | 2024-07-11T21:23:33 | https://dev.to/sureshlearnspython/python-first-week-1bbl | python, basic | Python is taught online in Tamil without any cost. The only expectation from them is to create a blog and write our understanding after learning. Hence started writing the blog.

- Started Learning python through Kaniyam. Got to know about two Tamil people Syed and Srini. Classes will be for Monday to Wednesday at 7pm ... | sureshlearnspython |

1,920,252 | Card Portfolio UI Component | Cards for portfolio Website. If you find this usefull, you can support me by buying me a coffe | 0 | 2024-07-11T21:26:40 | https://dev.to/sofidev/card-portfolio-ui-component-1c0f | codepen, ui, components, css | Cards for portfolio Website.

If you find this usefull, you can support me by buying me a coffe

{% codepen https://codepen.io/sofidev/pen/wvLvKmR %} | sofidev |

1,920,254 | Classes in Python (Introduction) | In Python, classes are the foundation of object-oriented programming. In simple terms, they are... | 0 | 2024-07-11T21:41:40 | https://dev.to/gianni_cast/classes-in-python-introduction-13cc | beginners, python, classes | In Python, classes are the foundation of object-oriented programming. In simple terms, they are essentially a template for creating objects with similar attributes.

**Creating Classes**

Class definition syntax is extremely straightforward. All you need is the keyword: class followed by the ClassName: (the class name ... | gianni_cast |

1,920,255 | A greener planet with tech | Hello everyone! This week I decided to blog about something that caught my eye- sustainable web... | 0 | 2024-07-11T21:42:03 | https://dev.to/christopherchhim/a-greener-planet-with-tech-180n | webdev, environment, co2emissions | Hello everyone! This week I decided to blog about something that caught my eye- sustainable web development! I have done a lot of research concerning today's environmental problems at school such as water pollution, deforestation, habitat fragmentation, etc... Reducing carbon emissions to preserve the earth's health is... | christopherchhim |

1,920,256 | Building Conversational AI with React: Trends, Tools, and Applications | Explore the future of conversational AI with React and discover the transformative power of Sista AI. Dive into the world of innovative voice assistants! 🌐✨ | 0 | 2024-07-11T21:45:41 | https://dev.to/sista-ai/building-conversational-ai-with-react-trends-tools-and-applications-26oc | ai, react, javascript, typescript | <h2>Introduction</h2><p>Conversational AI is transforming user interactions with technology, offering personalized and engaging experiences. This article delves into the latest trends, tools, and applications for building conversational AI with React, exploring the cutting-edge capabilities of <strong>Sista AI</strong>... | sista-ai |

1,920,257 | Laravel Cheatsheet after making changes to a file | Clear caches (if needed): php artisan config:cache php artisan route:cache php artisan... | 0 | 2024-07-11T21:46:01 | https://dev.to/msnmongare/laravel-cheatsheet-after-making-changes-to-a-file-a8b | webdev, beginners, laravel, programming | Clear caches (if needed):

```

php artisan config:cache

php artisan route:cache

php artisan view:clear

php artisan cache:clear

```

**Serve the application:**

```

php artisan serve

```

| msnmongare |

1,920,261 | Master Linear Regression with NumPy: Step-by-Step Guide to Building and Optimizing Your First Model! | Linear regression is a simple yet powerful method in machine learning used to model the relationship... | 0 | 2024-07-11T21:56:47 | https://dev.to/moubarakmohame4/master-linear-regression-with-numpy-step-by-step-guide-to-building-and-optimizing-your-first-model-oo7 | python, machinelearning, beginners, numpy | Linear regression is a simple yet powerful method in machine learning used to model the relationship between a dependent variable (target) and one or more independent variables (predictors). In this article, we will implement a simple linear regression using NumPy, a powerful library for scientific computing in Python.... | moubarakmohame4 |

1,920,262 | JavaScript MMORPG - Maiu Online - #babylonjs - Ep29: Chat message clouds | Hello, Again super short update from the progress. When someone writes message on the chat and is in... | 0 | 2024-07-11T22:03:06 | https://dev.to/maiu/javascript-mmorpg-maiu-online-babylonjs-ep29-chat-message-clouds-1a90 | babylonjs, javascript, mmorpg, indiegamedev | Hello,

Again super short update from the progress. When someone writes message on the chat and is in the visual range I'm displaying message in the 'cloud'. And to the future backlog I'm adding enhancing chat with the local/global mode.

{% youtube n28vM7oDaKQ %} | maiu |

1,920,263 | JavaScript Tips | Hello! Today I decided to blog on an article about JavaScript tips. JavaScript is an essential... | 0 | 2024-07-11T22:12:14 | https://dev.to/christopherchhim/javascript-tips-aj9 | javascript, webdev, programming, beginners | Hello! Today I decided to blog on an article about JavaScript tips. JavaScript is an essential programming language for web development, so I decided to share these tips in case I ever need a reference.

1. Navigation tool

Browser OS details can be viewed by using either the window.navigator object or navigator.platfo... | christopherchhim |

1,920,264 | Random Walk on the Line | Random Walk “In mathematics, a random walk, sometimes known as a drunkard’s walk, is a... | 0 | 2024-07-11T22:24:30 | https://dev.to/kdalkafoukis/random-walk-on-the-line-3lkf | mathematics, python, probabilitythoery, randomwalk | ## Random Walk

“In mathematics, a **random walk**, sometimes known as a **drunkard’s walk**, is a random process that describes a path that consists of a succession of random steps on some mathematical space.”

"Random walks have applications to engineering and many scientific fields including ecology, psychology, comp... | kdalkafoukis |

1,920,265 | Connect External Public IP to Private VPC on AWS | Having a Private AWS VPC and Want to Connect Public External Ip to it takeing into consideration to... | 0 | 2024-07-11T22:15:11 | https://dev.to/basel5001/connect-external-public-ip-to-private-vpc-on-aws-2ag4 | Having a Private AWS VPC and Want to Connect Public External Ip to it

takeing into consideration to Configure the Following:

VPC CIDR BLock

Routing Table

ACL

Security Group

and using IAM policy to Specifiy the Ip as Well.

Tried to Configure these Steps but with no luck, and couldn't ping from inside EC2 | basel5001 | |

1,920,266 | Useful datasets for AI/ML | Looking for datasets for your next project. Here are some sources I... | 0 | 2024-07-11T22:17:16 | https://dev.to/johnscode/useful-datasets-for-aiml-4n89 | machinelearning, ai, datascience | Looking for datasets for your next project. Here are some sources I know:

https://huggingface.co/datasets

https://openml.org/

https://dagshub.com/datasets/

https://github.com/awesomedata/awesome-public-datasets

https://paperswithcode.com/datasets

https://registry.opendata.aws/

https://www.kaggle.com/datasets

Add more... | johnscode |

1,920,286 | AI vs Marketmaker | Today, an increasing number of people are becoming familiar with cryptocurrency and actively... | 0 | 2024-07-11T23:53:12 | https://dev.to/thejefry/ai-vs-marketmaker-15g5 |

Today, an increasing number of people are becoming familiar with cryptocurrency and actively exploring its possibilities. With the advancement of neural network technologies, token creators now have the opportunity to integrate AI into market making. Neural networks facilitate transaction execution, display coin rates... | thejefry | |

1,920,269 | Why It Might Be Time To Remove Gitbhub Copilot From Your Code Editor | Over the past few years, AI-powered coding assistants like GitHub Copilot have revolutionized how we... | 0 | 2024-07-11T22:23:48 | https://dev.to/fido1hn/why-it-might-be-time-to-remove-gitbhub-copilot-from-your-code-editor-52j4 | programming, vscode, githubcopilot | Over the past few years, AI-powered coding assistants like GitHub Copilot have revolutionized how we code. These tools have undoubtedly offered some benefits, such as providing code suggestions and helping us navigate coding hurdles more efficiently. However, they are not without their drawbacks, which raises the quest... | fido1hn |

1,920,270 | Want to get started as a Data Engineer | Level Up Your Data Engineering Game! Get the Basics Down: Dive into data engineering... | 0 | 2024-07-11T22:26:56 | https://dev.to/johnscode/want-to-get-started-as-a-data-engineer-1amn | datascience, machinelearning, ai | Level Up Your Data Engineering Game!

Get the Basics Down:

- Dive into data engineering essentials

- Play around with big data tools and cloud stuff

- Get comfy with SQL and a coding language (Python's a good bet)

Pick a Cool Problem:

- Choose something that gets you excited (sports stats, stock market, food delive... | johnscode |

1,920,272 | Apprenticeship & Beyond👨🏾💻 | What does apprenticeship mean to you? To me, being an apprentice means being a very specialized type... | 0 | 2024-07-11T22:58:55 | https://dev.to/taariqelliott/apprenticeship-beyond-30ol | p5js, javascript, creativecoding, generativeart | **_What does apprenticeship mean to you?_**

To me, being an apprentice means being a very specialized type of student. It involves being under the supervision and guidance of someone who is highly knowledgeable in a particular field. An apprenticeship is a way to learn the intricacies of that field while practicing to ... | taariqelliott |

1,920,273 | Introducing the Samsung Galaxy Watch Ultra: A Powerhouse of Smartwatch Innovation | ** Samsung Watch Ultra ** The highly anticipated Samsung Galaxy Unpacked 2024 event has... | 0 | 2024-07-11T22:58:33 | https://dev.to/tomandjerry36/introducing-the-samsung-galaxy-watch-ultra-a-powerhouse-of-smartwatch-innovation-5a20 | ai, samsung, smartwatch, news | **

## Samsung Watch Ultra

**

The highly anticipated Samsung Galaxy Unpacked 2024 event has just unveiled the latest addition to the Galaxy Watch lineup — the remarkable Galaxy Watch Ultra. This premium smartwatch is... | tomandjerry36 |

1,920,274 | Day 2 - JavaScript Essential Training | 1. Async vs. Defer When adding scripts to the head of your HTML document, it's crucial to... | 0 | 2024-07-11T23:03:36 | https://dev.to/ryoichihomma/day-2-javascript-essential-training-33n4 | javascript, linkedinlearning, jstips, developer | ## 1. Async vs. Defer

When adding scripts to the head of your HTML document, it's crucial to ensure they don't block the page rendering. This is where **`async`** and **`defer`** attributes come into play.

- **Async:** Scripts with **`async`** load in parallel with the HTML parsing and execute as soon as they’re down... | ryoichihomma |

1,920,275 | Building a Tic-Tac-Toe Terminal Game using Python | Introduction My name's Derek and I'm an aspiring software engineer! Recently I've been... | 0 | 2024-07-11T23:06:17 | https://dev.to/keelan-derek/building-a-tic-tac-toe-terminal-game-using-python-55of | beginners, python, ai, learning | ## Introduction

My name's Derek and I'm an aspiring software engineer! Recently I've been trying very hard to learn Python and the fundamentals of software development through an online course. Having graduated from college two years ago with a Bachelor's in Business Computing and Information Systems, I am relatively ... | keelan-derek |

1,920,276 | [Game of Purpose] Day 54 | Today I was working on using Cpp files as components. And I managed to do it. However, it is not that... | 27,434 | 2024-07-11T23:07:20 | https://dev.to/humberd/game-of-purpose-day-54-1ln | gamedev | Today I was working on using Cpp files as components. And I managed to do it. However, it is not that easy as it is in blueprints. In blueprints all the async execution flow is very seamless. However, in Cpp it seems to be harder.

Here I made a target go to the first point in a Spline.

```cpp

void USplineNavigator::N... | humberd |

1,920,277 | AI Testing | Artificial intelligence (AI) is transforming the landscape of software testing, offering a more... | 0 | 2024-07-11T23:14:58 | https://dev.to/jeff_handy_6460add778baca/ai-testing-4699 | Artificial intelligence (AI) is transforming the landscape of software testing, offering a more efficient and effective alternative to traditional manual and automated testing methods. As modern software applications become increasingly complex and development cycles accelerate, AI-driven testing stands out by harnessi... | jeff_handy_6460add778baca | |

1,920,278 | Effortless HTTP Client Testing in Go | Explore the benefits of VCR testing in Go with dnaeon/go-vcr | 0 | 2024-07-11T23:16:03 | https://dev.to/calvinmclean/effortless-http-client-testing-in-go-4d75 | go, testing, tutorial, learning | ## Introduction

As a software engineer, you are probably familiar with writing code to interact with external HTTP services. After all, it is one of the most common things we do! Whether it's fetching data, processing payments with a provider, or automating social media posts, our applications almost always involve ex... | calvinmclean |

1,920,279 | Top AI Testing Tools | AI has revolutionized QA testing as we know it. QA testing tools significantly impact application... | 0 | 2024-07-11T23:16:04 | https://dev.to/jeff_handy_6460add778baca/top-ai-testing-tools-1d44 | AI has revolutionized QA testing as we know it. QA testing tools significantly impact application performance, reliability, and quality. While the market for traditional software quality testing tools is mature and has a few well-known frameworks teams can use for many test use cases, AI testing tools have burst onto t... | jeff_handy_6460add778baca | |

1,920,287 | GET BACK YOUR SCAMMED MONEY FROM FAKE INVESTMENT PLATFORM. | The loss of a significant investment, such as $170,000 in Bitcoin, can be a crushing blow. Imagine... | 0 | 2024-07-11T23:54:28 | https://dev.to/bright_bryan_b6130ba993ac/get-back-your-scammed-money-from-fake-investment-platform-3ko7 | The loss of a significant investment, such as $170,000 in Bitcoin, can be a crushing blow. Imagine building a substantial cryptocurrency portfolio over years, only to have it vanish overnight due to hackers or con artists. I know firsthand the debilitating feeling of helplessness and fear that comes with losing what yo... | bright_bryan_b6130ba993ac | |

1,920,280 | How to upload multipart files to a cloud storage locally with Spring Boot, Kotlin and MinIO | MinIO, an AWS S3 alternative. Easy, Dockerized and straightfoward. Introduction If you’ve... | 0 | 2024-07-11T23:19:31 | https://dev.to/renejr03/how-to-upload-multipart-files-to-a-cloud-storage-locally-with-spring-boot-kotlin-and-minio-1jma | kotlin, spring, minio | MinIO, an AWS S3 alternative. Easy, Dockerized and straightfoward.

## Introduction

If you’ve ever tried to kick off a personal project, something that might be meaningful or even fun but involves dealing with file storage, this seemingly straightforward task can turn into a bit of a headache.

There are various optio... | renejr03 |

1,920,282 | RECOVER YOUR SCAMMED BITCOIN FROM FAKE INVESTMENT PLATFORM | I RECOVERED all my loss cryptocurrency through RECOVERY EXPERT I didn't see anything wrong with it... | 0 | 2024-07-11T23:45:25 | https://dev.to/bryan_hills_85d35f52b11ad/recover-your-scammed-bitcoin-from-fake-investment-platform-86p | I RECOVERED all my loss cryptocurrency through RECOVERY EXPERT

I didn't see anything wrong with it until after about 3 months of the deception, I was carried along while i kept sending more and more funds to them expecting an expeditious profit which kept increasing and increasing but i never had the permission to with... | bryan_hills_85d35f52b11ad | |

1,920,283 | How to build an order monitoring system on your own? | Q: I have many orders stored in my CRM database. I need to frequently (possibly daily or weekly)... | 0 | 2024-07-11T23:57:25 | https://dev.to/sqlman/how-to-build-an-order-monitoring-system-on-your-own-3b8 | sql, email | **Q:** I have many orders stored in my CRM database. I need to frequently (possibly daily or weekly) query the orders that are about to expire and send them to different people for handling. Different orders need to be sent to different people. How should I build an order monitoring system to automate this task?

Here ... | sqlman |

1,920,284 | GET BACK YOUR SCAMMED BITCOIN | I fell victim to a crafty scam, but just when I thought all hope was lost, FAST RECOVERY EXPERT... | 0 | 2024-07-11T23:48:19 | https://dev.to/juan_romano/get-back-your-scammed-bitcoin-191l | I fell victim to a crafty scam, but just when I thought all hope was lost, FAST RECOVERY EXPERT emerged as a beacon of light. For a fact, bitcoin is true and is the future of world currencies. I have been using it until I lost 3 BTC in the hands of unregulated brokers. In the wake of losing my monies to this sham inves... | juan_romano | |

1,920,285 | GET BACK YOUR SCAMMED MONEY | I am overjoyed and immensely grateful for the exceptional work done by RECOVERY EXPERT. My experience... | 0 | 2024-07-11T23:51:15 | https://dev.to/bright_bryan_c89e495c602d/get-back-your-scammed-money-3l79 | I am overjoyed and immensely grateful for the exceptional work done by RECOVERY EXPERT. My experience with this company has been nothing short of extraordinary, and I cannot thank them enough for their unwavering dedication to helping me recover my stolen funds. I had found myself in a dire situation after losing a sub... | bright_bryan_c89e495c602d | |

1,920,295 | GET BACK YOUR SCAMMED MONEY | I got scammed with over $205,000. I came in contact with this guy from Facebook and we communicated... | 0 | 2024-07-12T00:09:21 | https://dev.to/juan_romano_3584013e78b1c/get-back-your-scammed-money-3meg | I got scammed with over $205,000. I came in contact with this guy from Facebook and we communicated for a whole one year. I sent him money via Bitcoin ATM and bank account, I almost lost everything. But for the timely intervention of the team of RECOVERY EXPERT Company, who just in kick-off on time got back my $205,000... | juan_romano_3584013e78b1c | |

1,920,288 | Reverse a linked list in go | This is a favorite question to give to new developers. Pretty simple if you have had a decent data... | 27,729 | 2024-07-11T23:55:53 | https://dev.to/johnscode/reverse-a-linked-list-in-go-583i | go, interview, programming | This is a favorite question to give to new developers. Pretty simple if you have had a decent data structures class.

Reverse a single linked list. (_This is Leetcode 206_)

For the implementation, I have chosen to make the linked list a generic type.

```

type Node[T any] struct {

Data T

Next *Node[T]

}

type Linked... | johnscode |

1,920,289 | GET BACK YOUR SCAMMED MONEY | I am writing to express my deepest gratitude to RECOVERY EXPERT for their exceptional services and... | 0 | 2024-07-11T23:56:51 | https://dev.to/bryan_hills_4f7b4d46567d6/get-back-your-scammed-money-3l7g | I am writing to express my deepest gratitude to RECOVERY EXPERT for their exceptional services and expertise in recovering my lost funds. I had invested $240,000 in an online platform, hoping to generate returns, but unfortunately, I was scammed and lost access to my investment. Despite my efforts to contact the platfo... | bryan_hills_4f7b4d46567d6 | |

1,920,290 | GET BACK YOUR LOST FUNDS FROM ANY PLATFORM | There are a lot of scammers everywhere on the internet looking for whom to take advantage of, I am a... | 0 | 2024-07-11T23:59:00 | https://dev.to/olokun_agadagodo_a3729da3/get-back-your-lost-funds-from-any-platform-50pi | There are a lot of scammers everywhere on the internet looking for whom to take advantage of, I am a victim too. I have been dealing cryptocurrencies for almost 5 years, I recently was introduced to investment coinsglobal.... as the most profitable investment platforms out there , I could buy cryptocurrencies at 40% di... | olokun_agadagodo_a3729da3 | |

1,920,292 | RECOVER YOUR SCAMMED MONEY THROUGH RECOVERY EXPERT. | I'm glad I found RECOVERY EXPERT, an honest fund/crypto recovery company. Their team of professionals... | 0 | 2024-07-12T00:03:30 | https://dev.to/ben_linda_3828b63dc80f7ed/recover-your-scammed-money-through-recovery-expert-mep | I'm glad I found RECOVERY EXPERT, an honest fund/crypto recovery company. Their team of professionals was able to retrieve my crypto that had been stolen from a forex trader who had deceived me by saying I would receive a 35% return on my investment. I was able to receive all of my cryptocurrency back after writing to ... | ben_linda_3828b63dc80f7ed | |

1,920,293 | GET BACK YOUR SCAMMED MONEY | How to get back scammed USDT/USD from crypto scam platform? First question is how do i get back a... | 0 | 2024-07-12T00:05:58 | https://dev.to/bryan_hills_b04636e3dd313/get-back-your-scammed-money-39j4 | How to get back scammed USDT/USD from crypto scam platform? First question is how do i get back a scammed crypto or money after realizing oneself has been ripped, Next questions is who or where can i find a recovery hacker for stolen crypto? Due to high volume of crypto recovery scam on the internet today, lot of human... | bryan_hills_b04636e3dd313 | |

1,920,294 | Day 989 : Glitch | liner notes: Professional : Started off the day with a meeting with my immediate team to talk about... | 0 | 2024-07-12T00:07:16 | https://dev.to/dwane/day-989-glitch-14f3 | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Started off the day with a meeting with my immediate team to talk about how things are going on with our projects. Had some time to take a look at the community board for any questions. Had a wider team meeting. Went back to work on refactoring a project to use a new SDK. Had a quick ses... | dwane |

1,920,296 | Implementando Teste Contínuo: Garantindo Qualidade em Cada Commit | No mundo acelerado do desenvolvimento de software, garantir qualidade sem comprometer a velocidade é... | 0 | 2024-07-12T00:12:01 | https://dev.to/womakerscode/implementando-teste-continuo-garantindo-qualidade-em-cada-commit-3bon | wecoded, devops, testing | No mundo acelerado do desenvolvimento de software, garantir qualidade sem comprometer a velocidade é um desafio constante. O teste contínuo de software surge como uma solução revolucionária, integrando testes automatizados em todas as etapas do ciclo de desenvolvimento.

Neste artigo, exploraremos os benefícios do test... | mahamorim |

1,920,297 | RECOVER YOUR SCAMMED MONEY | When my bitcoin was stolen, I felt a mix of panic and helplessness. Desperate for a solution,... | 0 | 2024-07-12T00:12:49 | https://dev.to/illuminati_666_01972ceeab/recover-your-scammed-money-26mc | When my bitcoin was stolen, I felt a mix of panic and helplessness. Desperate for a solution, RECOVERY EXPERT successfully recovered all of my stolen bitcoin.

email: RecoveryExpert01@consultant.com

WhatsApp: + 1 (908) 991 - 7132

Telegram https://t.me/RECOVERYEXPERT0 | illuminati_666_01972ceeab | |

1,920,338 | 🚀 Seeking an experienced iOS developer with expertise in Mac app notarization! 🚀 | If you have a track record of successfully notarizing Mac apps, we want to hear from you. telegram :... | 0 | 2024-07-12T00:25:08 | https://dev.to/yoann_turpin_4d4675275abf/seeking-an-experienced-ios-developer-with-expertise-in-mac-app-notarization-5hdj | If you have a track record of successfully notarizing Mac apps, we want to hear from you.

telegram : @kingstar729 | yoann_turpin_4d4675275abf | |

1,920,339 | Season 12 and 13 Storm Ohtani caught up with Kim Ha-sung | In a game against the Cincinnati Reds in the 2024 Major League Baseball regular season at Great... | 0 | 2024-07-12T00:28:31 | https://dev.to/outlookindiacom_pluginpla/season-12-and-13-storm-ohtani-caught-up-with-kim-ha-sung-20i9 | 토토사이트 | In a game against the Cincinnati Reds in the 2024 Major League Baseball regular season at Great American Ball Park in Cincinnati, Ohio on the 24th (Korea Standard Time), Kim Ha-sung started the game as the eighth batter and shortstop, and recorded one hit, one walk and two steals from three times at bat. San Diego won ... | outlookindiacom_pluginpla |

1,920,341 | Exploring the Internet Computer Protocol (ICP) | Introduction: The Internet Computer Protocol (ICP) is a crucial part of the internet infrastructure... | 0 | 2024-07-12T00:33:20 | https://dev.to/kartikmehta8/exploring-the-internet-computer-protocol-icp-4bp8 | Introduction:

The Internet Computer Protocol (ICP) is a crucial part of the internet infrastructure that is responsible for the transfer of data packets across the World Wide Web. It is a set of rules and protocols that control the flow of information between computers and networks. In this article, we will explore th... | kartikmehta8 | |

1,920,342 | Running Kubernetes locally with Kind and .NET 8 (plus a bonus with Lens) | This isn't a tutorial about kubernetes, kind, or an API with .NET. It's just something that helped me... | 0 | 2024-07-12T00:34:49 | https://dev.to/thomazperes/running-kubernetes-locally-with-kind-and-net-8-plus-a-bonus-with-lens-oi8 | dotnet, kubernetes, docker | This isn't a tutorial about kubernetes, kind, or an API with .NET. It's just something that helped me when I was studying Kubernetes

#### Prerequisite tools needed

- [Kubernetes](https://kubernetes.io/)

- [Kind](https://kind.sigs.k8s.io/docs/user/quick-start/)

- [Docker](https://docs.docker.com/get-docker/)

- [.NET](... | thomazperes |

1,920,343 | AI Workforce Evolution: Emerging Roles and Future Perspectives | 1. Introduction The landscape of work and employment has been significantly reshaped by... | 27,673 | 2024-07-12T00:45:01 | https://dev.to/rapidinnovation/ai-workforce-evolution-emerging-roles-and-future-perspectives-3npj | ## 1\. Introduction

The landscape of work and employment has been significantly reshaped by the

advent of artificial intelligence (AI). As technology continues to advance, AI

is becoming increasingly integral to various industries, driving efficiency,

innovation, and transformation. This evolution is not only changing... | rapidinnovation | |

1,920,344 | Transitioning into DevOps | DevOps is a set of practices that combines software development (Dev) and IT operations (Ops). The... | 0 | 2024-07-12T08:43:24 | https://dev.to/irohomolola/002-12244transitioning-into-devops-2318 | webdev, devops, beginners, techtalks | DevOps is a set of practices that combines software development (Dev) and IT operations (Ops). The goal is to shorten the system development life cycle and provide continuous delivery with high software quality. This requires a cultural shift, emphasizing collaboration between development and operations teams. | irohomolola |

1,920,345 | Meta | This is a submission for the [Build Better on Stellar: Smart Contract Challenge... | 0 | 2024-07-12T01:13:39 | https://dev.to/alla_santoshpavankumar_/meta-f0j | devchallenge, stellarchallenge, blockchain, web3 | *This is a submission for the [Build Better on Stellar: Smart Cont[](https://developer.oculus.com/manage/organizations/999570415204880/payment-info/)ract Challenge ](https://dev.to/challenges/stellar): Build a dApp*

## What I Built

<!-- Share an overview about your project and what it does. -->

## Demo

<!-- If submit... | alla_santoshpavankumar_ |

1,920,350 | What's New in API7 Enterprise: IAM for Granular Access Control | Introduction Previous versions of API7 Enterprise provided a simple, user-friendly, and... | 0 | 2024-07-12T01:25:53 | https://api7.ai/blog/api7-3.2.14-iam-finer-access-control | ## Introduction

Previous versions of [API7 Enterprise](https://api7.ai/enterprise) provided a simple, user-friendly, and comprehensive [RBAC (Role-Based Access Control)](https://api7.ai/blog/rbac-for-permission-control) management mechanism. This mechanism ensured system security while granting users flexible role per... | yilialinn | |

1,920,346 | Connect with NBA YoungBoy Merch on Instagram! | Discover exclusive NBA YoungBoy Merch on Instagram! Follow us to stay updated with the latest drops,... | 0 | 2024-07-12T01:16:03 | https://dev.to/nbayoungboymerch39/connect-with-nba-youngboy-merch-on-instagram-1m8b | nbayoungboymerch, instagram, exclusivemerch | Discover exclusive NBA YoungBoy Merch on Instagram! Follow us to stay updated with the latest drops, exclusive collections, and behind-the-scenes content. Don't miss out on the hottest merch from NBA YoungBoy, available only on our Instagram page. Join our community and be the first to know about new releases and speci... | nbayoungboymerch39 |

1,920,347 | Wix Studio | This is a submission for the Wix Studio Challenge . What I Built Demo ... | 0 | 2024-07-12T01:17:51 | https://dev.to/alla_santoshpavankumar_/wix-studio-2dj9 | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://allasantoshpavanku/challenges/wix).*

## What I Built

<!-- Share an overview about your project. -->

## Demo

<!-- Share a link to your Wix Studio app and include some screenshots here. -->

## Development Journey

<!-- Tell us how you leveraged Wix Studio’s ... | alla_santoshpavankumar_ |

1,920,348 | Tutorial: Creación de un Proyecto Vue desde Cero e Integración con Bootstrap | En este tutorial, aprenderemos paso a paso cómo crear un proyecto Vue desde cero e integrarlo con... | 0 | 2024-07-12T01:28:34 | https://dev.to/lesniergonzalez/tutorial-creacion-de-un-proyecto-vue-desde-cero-e-integracion-con-bootstrap-2l3m | spanish |

En este tutorial, aprenderemos paso a paso cómo crear un proyecto Vue desde cero e integrarlo con Bootstrap para el estilo. Vue.js es un popular framework de JavaScript para construir interfaces de usuario, y Boots... | lesniergonzalez |

1,920,349 | Mailbox unavailable. The server response was: 5.7.1 Service unavailable, Client host [IP ADDRESS] blocked using Spamhaus. | Mailbox unavailable. The server response was: 5.7.1 Service unavailable, Client host [IP ADDRESS]... | 0 | 2024-07-12T01:22:34 | https://dev.to/kath/mailbox-unavailable-the-server-response-was-571-service-unavailable-client-host-ip-address-blocked-using-spamhaus-329j |

Mailbox unavailable. The server response was: 5.7.1 Service unavailable, Client host [IP ADDRESS] blocked using Spamhaus. To request removal from this list see https://www.spamhaus.org/query/ip/IP ADDRESS AS(1450)

| kath | |

1,920,413 | proof | $argon2id$v=19$m=64,t=512,p=2$B5zGjIkH2yFiCtSvljY3Tg$/kiueTD5R5NCGfcmoiN5/g | 0 | 2024-07-12T02:31:27 | https://dev.to/lholh/proof-4820 | $argon2id$v=19$m=64,t=512,p=2$B5zGjIkH2yFiCtSvljY3Tg$/kiueTD5R5NCGfcmoiN5/g | lholh | |

1,920,351 | Seeking an experienced iOS developer with expertise in Mac app notarization! 🚀 | Dear Everyone. How are you? Hope you are doing well. I'm Seeking an experienced iOS developer with... | 0 | 2024-07-12T01:26:26 | https://dev.to/lilla_flowers_dd13e65858b/seeking-an-experienced-ios-developer-with-expertise-in-mac-app-notarization-772 | Dear Everyone. How are you? Hope you are doing well. I'm Seeking an experienced iOS developer with expertise in Mac app notarization! 🚀

If you have a track record of successfully notarizing Mac apps, we want to hear from you.

telegram : @kingstar729 | lilla_flowers_dd13e65858b | |

1,920,352 | Binary Images & Image Thresholding | Thresholding Thresholding is a simple yet effective technique used in image processing to... | 0 | 2024-07-12T02:52:04 | https://dev.to/catheryn/binary-images-image-thresholding-282 | ai, opencv, imagemanipulation, computervision | ## Thresholding

Thresholding is a simple yet effective technique used in image processing to convert a grayscale image into a [binary image](#binary-image). The core idea is to segment the image into two parts (usually 0 and 255) based on a specific threshold value.

Thresholding involves setting a threshold value tha... | catheryn |

1,920,353 | Demo project | CREATE USER hccdp WITH SYSADMIN PASSWORD 'Sadece1234'; CREATE DATABASE hccdp_db; ALTER DATABASE... | 0 | 2024-07-12T01:30:46 | https://dev.to/ozcankara/demo-project-3d4b | ```

CREATE USER hccdp WITH SYSADMIN PASSWORD 'Sadece1234';

CREATE DATABASE hccdp_db;

ALTER DATABASE hccdp_db OWNER TO hccdp;

```

```

gsql -d postgres -p 5432 -U root -W

```

```

CREATE USER hccdp WITH SYSADMIN PASSWORD 'Sadece1234';

```

```

CREATE DATABASE hccdp_db;

```

```

ALTER DATABASE hccdp_db OWNER TO hcc... | ozcankara | |

1,920,355 | Spend $0 to learn these Programming Languages in 2024 for free. | → Python → JavaScript → Java → C# → Ruby → Swift → Kotlin → C++ → PHP → Go → R → TypeScript... | 0 | 2024-07-12T01:47:03 | https://dev.to/e_opore_80/spend-0-to-learn-these-programming-languages-in-2024-for-free-2b19 | softwaredevelopment, python, css, java |

→ Python

→ JavaScript

→ Java

→ C#

→ Ruby

→ Swift

→ Kotlin

→ C++

→ PHP

→ Go

→ R

→ TypeScript

[]https://x.com/e_opore/status/1811567830594388315?t=_j4nncuIY2WfBm7icTW9-w&s=19 | e_opore_80 |

1,920,357 | Top 3 JavaScript Concepts Every Developer Should Know | Here are the top 3 JavaScript concepts every developer should know: Functions: Functions are... | 0 | 2024-07-12T16:00:00 | https://dev.to/devstoriesplayground/top-3-javascript-concepts-every-developer-should-know-5bjm | javascript, programming, programmers, productivity | Here are the top 3 JavaScript concepts every developer should know:

1. Functions: Functions are reusable blocks of code that perform a specific task. They are essential for organizing your code, making it more readable and maintainable. JavaScript functions can take parameters (inputs) and return values (outputs).

![... | devstoriesplayground |

1,920,358 | ⚡ MySecondApp - React Native with Expo (P5)- Custom Bottom Tabs Navigator | ⚡ MySecondApp - React Native with Expo (P5)- Custom Bottom Tabs Navigator | 28,005 | 2024-07-12T01:58:53 | https://dev.to/skipperhoa/mysecondapp-react-native-with-expo-p5-custom-bottom-tabs-navigator-fd0 | webdev, react, reactnative, tutorial | ⚡ MySecondApp - React Native with Expo (P5)- Custom Bottom Tabs Navigator

{% youtube hrGTDB7B4SA %} | skipperhoa |

1,920,359 | What does box-sizing: border-box actually do? | When I first started learning CSS, I saw box-sizing: border-box in almost every CSS file I... | 0 | 2024-07-12T02:31:32 | https://dev.to/bridget_amana/what-does-box-sizing-border-box-actually-do-3ol5 | webdev, css, tutorial, frontend | When I first started learning CSS, I saw box-sizing: border-box in almost every CSS file I encountered. Like many beginners, I copied it without understanding its purpose. If this sounds familiar, don't worry—you’re not alone.

**What is box-sizing?**

The _box-sizing_ property in CSS controls how the width and height o... | bridget_amana |

1,920,361 | [Unity] Publish assets that automatically save backups of files. | I created a Unity asset that automatically saves Unity backups. I would like to release it to you in... | 0 | 2024-07-12T02:09:33 | https://dev.to/uni928/unity-publish-assets-that-automatically-save-backups-of-files-2bik | asset, backup, auto, unity3d | I created a Unity asset that automatically saves Unity backups.

I would like to release it to you in the hope that it will be useful to you.

Please download ver1.1.0 (latest version) from the following site.

https://drive.google.com/file/d/1nnEEoMFRQqypSJAWU4odKbQz4JK86wpr/view?usp=sharing

***

Description of this as... | uni928 |

1,920,363 | A Comprehensive Guide to Android NDK Development with Android Studio | Introduction The Native Development Kit (NDK) is a collection of tools designed to help... | 0 | 2024-07-12T09:54:33 | https://dev.to/wetest/a-comprehensive-guide-to-android-ndk-development-with-android-studio-1d5c | programming, androiddev, javascript, devops | ## Introduction

The Native Development Kit (NDK) is a collection of tools designed to help developers efficiently create C or C++ dynamic libraries and automatically package the .so files and Java applications into an APK. There are several reasons why developers might choose to use the NDK:

1. **Code protection**: Ja... | wetest |

1,920,451 | Creating Complex Animations with CSS | Introduction: In recent years, CSS has become increasingly popular for creating dynamic and... | 0 | 2024-07-12T04:15:30 | https://dev.to/tailwine/creating-complex-animations-with-css-4m96 | Introduction:

In recent years, CSS has become increasingly popular for creating dynamic and interactive animations on websites. With the help of CSS, web designers and developers can add complex animations to their designs without relying on third-party plugins or libraries. In this article, we will delve into the worl... | tailwine | |

1,920,365 | ข้อมูล JSON ใน PostgreSQL: โลกใหม่ของการจัดการข้อมูลบน Postgres | เกรินนำ PostgreSQL ไม่เพียงแค่เป็นฐานข้อมูลที่มีความสามารถสูง แต่ยังมีฟีเจอร์สำหรับการจัดการข้อมูล... | 0 | 2024-07-12T03:22:09 | https://dev.to/everthing-was-postgres/khmuul-json-ain-postgresql-olkaihmkhngkaarcchadkaarkhmuulbn-postgres-3omk | postgres, json, restapi | **เกรินนำ**

PostgreSQL ไม่เพียงแค่เป็นฐานข้อมูลที่มีความสามารถสูง แต่ยังมีฟีเจอร์สำหรับการจัดการข้อมูล JSON ที่ทรงพลังอีกด้วย วันนี้เราจะพาคุณสำรวจโลกของ JSON ใน PostgreSQL ที่จะทำให้การจัดการข้อมูลของคุณเป็นเรื่องง่ายและสนุกขึ้น!

**ว่าแต่ JSON คืออะไร?**

JSON (JavaScript Object Notation) เป็นรูปแบบข้อมูลที่ใช้สำหรับ... | iconnext |

1,920,366 | Unleashing the Future: Blockchain, Web 3.0, and Solidity | In recent years, the tech world has witnessed the rapid emergence of blockchain technology, the... | 0 | 2024-07-12T02:18:36 | https://dev.to/mukunzi_ndahirojames_d6a/unleashing-the-future-blockchain-web-30-and-solidity-23ml | webdev, solidity, web3, blockchain | In recent years, the tech world has witnessed the rapid emergence of blockchain technology, the promise of Web 3.0, and the rise of Solidity as a leading programming language for smart contracts. Together, these innovations are reshaping the digital landscape, offering new ways to interact with the internet and revolut... | mukunzi_ndahirojames_d6a |

1,920,408 | AI Revolutionizing tech and society through innovation and ethics. | Introduction to Artificial Intelligence Artificial Intelligence (AI) has become an... | 0 | 2024-07-12T02:21:47 | https://dev.to/dainwi/ai-revolutionizing-tech-and-society-through-innovation-and-ethics-4pe4 | ai, techtalks | ## Introduction to Artificial Intelligence

Artificial Intelligence (AI) has become an integral part of our lives, shaping the way we interact with technology and paving the path for a more advanced future. From voice assistants to self-driving cars, AI has made significant advancements in various industries, showcasin... | dainwi |

1,920,410 | The competition between transparent LED screen and traditional LED display | In the continuous innovation of LED display technology, transparent LED screen, as a new display... | 0 | 2024-07-12T02:22:29 | https://dev.to/sostrondylan/the-competition-between-transparent-led-screen-and-traditional-led-display-3lh6 | led, display, transparent | In the continuous innovation of LED display technology, [transparent LED screen](https://sostron.com/products/crystal-transparent-led-screen/), as a new display technology, is gradually entering people's field of vision. This article will conduct an in-depth comparative analysis of transparent LED screen and traditiona... | sostrondylan |

1,920,411 | Calculating Adaptive Threshold in OpenCV | Read my article on Thresholding and Binary Images for a better background Adaptive thresholding is a... | 0 | 2024-07-12T02:59:27 | https://dev.to/catheryn/calculating-adaptive-threshold-in-opencv-1hh3 | ai, computervision | [Read my article on Thresholding and Binary Images for a better background](https://dev.to/catheryn/binary-images-image-thresholding-282)

Adaptive thresholding is a technique used to convert a grayscale image to a binary image (black and white). The threshold value is calculated for smaller regions (blocks) of the ima... | catheryn |

1,920,414 | batuna bocghe | chung toi cung cap dich vu boc lai ghe van phong uy tin tai ha noi sai gon.Doi ngu chuyen gia lanh... | 0 | 2024-07-12T02:31:51 | https://dev.to/tongkhonoithat/batuna-bocghe-10ia | chung toi cung cap dich vu boc lai ghe van phong uy tin tai ha noi sai gon.Doi ngu chuyen gia lanh nghe luon tan tam voi cong viec.

Website: https://batuna.vn/boc-ghe-van-phong

Phone: 0975143838

Address: 19 ngo 46 ngoc hoi hoang mai

https://nguoiquangbinh.net/forum/diendan/member.php?u=141111

https://socialtrain.stage.... | tongkhonoithat | |

1,920,416 | www | ccccc | 0 | 2024-07-12T02:41:23 | https://dev.to/mary_jones_00b9f6cc17fde8/www-3loe | ccccc | mary_jones_00b9f6cc17fde8 | |

1,920,417 | Docker - Introduction, Architecture, and Most used Commands : Day 5 of 50 days DevOps Tools Series | Introduction Docker has revolutionised the way we build, ship, and run applications. It... | 0 | 2024-07-12T02:49:12 | https://dev.to/shivam_agnihotri/docker-introduction-architecture-and-most-used-commands-day-4-of-50-days-devops-tools-series-31pa | docker, containers, beginners, devops | ## **Introduction**

Docker has revolutionised the way we build, ship, and run applications. It enables developers to package applications along with their dependencies into lightweight containers that can run consistently across different environments. In this post, we will dive into Docker’s architecture and cover it... | shivam_agnihotri |

1,920,418 | MusicFab: Best Music Converter | If you are streaming music lovers, then you won't want to miss MusicFab. It is a powerful and... | 0 | 2024-07-12T02:51:42 | https://dev.to/jing_nicole_c8ff65e304a9b/musicfab-best-music-converter-2bc5 | converter, music, mp3 | If you are streaming music lovers, then you won't want to miss [MusicFab](https://musicfab.org/). It is a powerful and professional software that can download any song from streaming music services, like Spotify or Apple Music, even without a premium account. | jing_nicole_c8ff65e304a9b |

1,920,419 | Website Traffic Strategies: Comparing SEO and Content Promotion | Hey everyone! So, you're trying to figure out whether SEO or content promotion is better for boosting... | 0 | 2024-07-12T02:53:11 | https://dev.to/juddiy/website-traffic-strategies-comparing-seo-and-content-promotion-4m5b | learning, website, seo | Hey everyone! So, you're trying to figure out whether SEO or content promotion is better for boosting your website traffic, right? Well, you're in the right place. Both methods are super effective, but they each have their own unique strengths. Let's dive in and see which one might be the best fit for you.

#### What i... | juddiy |

1,920,420 | 🌟 Introducing HealthHub: Your Ultimate Wellness Companion 🏥💪 | Hey Dev.to community! I'm excited to share my latest project, HealthHub, a comprehensive wellness... | 0 | 2024-07-12T02:54:55 | https://dev.to/sneha422/healthhub-your-complete-wellness-companion-37c1 | webdev, programming, python, machinelearning |

Hey Dev.to community! I'm excited to share my latest project, **HealthHub**, a comprehensive wellness platform designed to empower you with AI-driven health tools. Built with the power of **MindsDB**, HealthHub offers an array of features to keep you at the peak of your health game.

### [👉 Check out the Project on ... | sneha422 |

1,920,421 | My Pen on CodePen | Check out this Pen I made! | 0 | 2024-07-12T02:55:04 | https://dev.to/luang_lertariyanunt_11487/my-pen-on-codepen-250c | codepen | Check out this Pen I made!

{% codepen https://codepen.io/passkoriys/pen/OJeVymx %} | luang_lertariyanunt_11487 |

1,920,423 | Understanding Vite Flow and Structure in a React Project | When working with React, Vite offers a streamlined development experience with a few key differences... | 0 | 2024-07-12T03:01:52 | https://dev.to/vyan/understanding-vite-flow-and-structure-in-a-react-project-2e84 | webdev, javascript, beginners, react | When working with React, Vite offers a streamlined development experience with a few key differences from the traditional Create React App setup. This blog post will explore the structure of a typical Vite project, focusing on key files such as `index.html`, `main.jsx`, and `App.jsx`.

## 1. index.html

In a Vite-power... | vyan |

1,920,424 | Reddit营销软件,reddit霸屏工具,reddit私信软件 | Reddit营销软件,reddit霸屏工具,reddit私信软件 了解相关软件请登录... | 0 | 2024-07-12T03:06:48 | https://dev.to/vst_tw_d54d6ef07fa3d41155/redditying-xiao-ruan-jian-redditba-ping-gong-ju-redditsi-xin-ruan-jian-am0 |

Reddit营销软件,reddit霸屏工具,reddit私信软件

了解相关软件请登录 http://www.vst.tw

Reddit营销软件,解锁社交媒体的新颖营销策略

在数字时代,社交媒体已经成为企业推广和营销的重要渠道之一。在众多社交媒体平台中,Reddit作为一个集结了数百万用户的独特社区,为企业提供了独特的营销机会。然而,Reddit的社区氛围和独特的文化也使得在其上进行营销变得稍显复杂。为了解决这一挑战,越来越多的企业开始依赖Reddit营销软件,这些软件不仅能够简化营销流程,还能够帮助企业更好地融入Reddit社区,并实现更有效的营销目标。

Reddit营销软件的功能

Reddit营销软件通常... | vst_tw_d54d6ef07fa3d41155 | |

1,920,425 | React: useEffect | Today's topic is to learn useEffect in React. In my point of view, the useEffect helps you call a... | 0 | 2024-07-12T04:12:31 | https://dev.to/ken2511/react-useeffect-3jk4 | Today's topic is to learn useEffect in React.

In my point of view, the useEffect helps you call a function in a proper time, so that you don't need to write an infinite loop to monitor...

Since the useEffect also calls the function when the component is first time rendered and also call another function when the compo... | ken2511 | |

1,920,426 | FIXME Please: An Exercise in TODO Linters | A few weeks ago, I was talking with a developer in our Community Slack who was interested in adding... | 0 | 2024-07-12T03:13:16 | https://trunk.io/blog/fixme-please-an-exercise-in-todo-linters | devops, linters, tooling, tutorial | A few weeks ago, I was talking with a developer in our [Community Slack](https://slack.trunk.io/) who was interested in adding their own TODO linter. At face value, this is a trivial problem. There are several linters that already support this to varying degrees, and many of them offer decently extensible configuration... | tylerjang27 |

1,920,427 | Remixed Relay: Evolution of React Server Component | React Server Components (RSC) represents a significant advancement in how server-rendered content is... | 0 | 2024-07-12T03:14:02 | https://dev.to/guhandelta/evolution-of-react-server-componen-m8f | react, reactservercomponents, relay | React Server Components (RSC) represents a significant advancement in how server-rendered content is handled in React applications. The concept of React Server Components draws inspiration from various previous technologies and patterns, particularly Relay. Relay, a JavaScript framework for building data-driven React a... | guhandelta |

1,920,429 | THE ROLE OF SEMANTIC HTML IN ENHANCING SEO AND WEB ACCESSIBILITY | The Role of Semantic HTML in Enhancing SEO and Web Accessibility 1.Introduction to Semantic... | 0 | 2024-07-12T03:19:06 | https://dev.to/kevin_kimtai/the-role-of-semantic-html-in-enhancing-seo-and-web-accessibility-g99 | webdev, html | The Role of Semantic HTML in Enhancing SEO and Web Accessibility

<u>**1.Introduction to Semantic HTML**</u>

Semantic HTML involves using HTML tags that provide meaning and context to the web content they enclose. This approach not only improves the visual presentation but also enhances the understanding and accessibi... | kevin_kimtai |

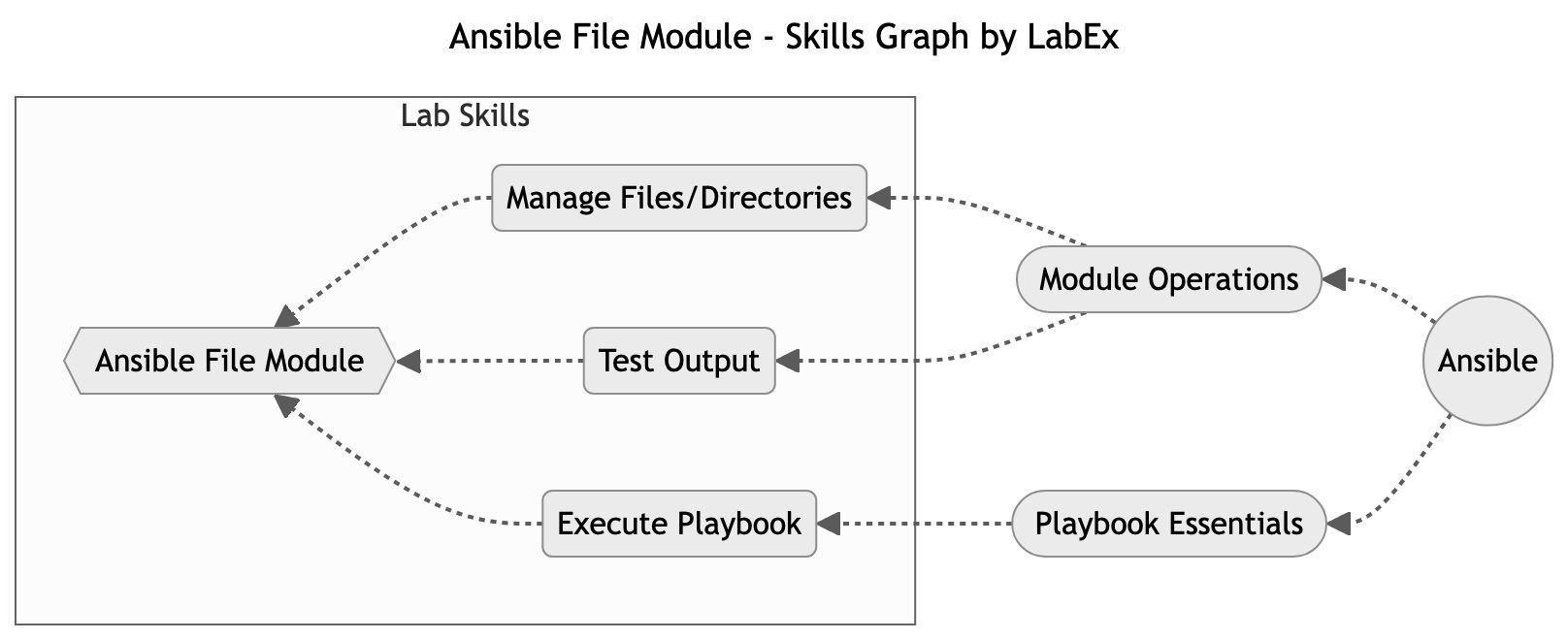

1,920,431 | Mastering File Management with Ansible | In this lab, you will explore the Ansible File module, which allows you to manage files and directories on remote hosts. The File module provides a wide range of functionalities, such as creating, deleting, modifying permissions, and checking the existence of files and directories. | 27,737 | 2024-07-12T03:24:12 | https://dev.to/labex/mastering-file-management-with-ansible-593o | ansible, coding, programming, tutorial |

## Introduction

This article covers the following tech skills:

In [this lab](https://labex.io/tutorials/ansible-file-module-289654), you will explore the Ansible File module, which allows you to manage files and directories on remote host... | labby |

1,920,432 | Flour, Frosting, and Forecasts | Cooking up a business strategy with baking analogies As a part-time hobby baker and a... | 0 | 2024-07-12T03:40:40 | https://dev.to/vidyaiyengar/flour-frosting-and-forecasts-3ccl | strategy, creativity, bakinganalogies | ## Cooking up a business strategy with baking analogies

As a part-time hobby baker and a full-time strategist, I often find myself whipping up parallels between these two passions of mine. For a baker with a dual obsession for frosting and analytics, picture this: I am baking a fancy cake for a birthday party, and su... | vidyaiyengar |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.