id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,918,168 | Another framework, Arrrgggg!!! | Another PHP framework? Arrrgggg!!! More like, why not? Pionia framework has the answer for this.... | 0 | 2024-07-10T07:07:46 | https://dev.to/jet_ezra/another-framework-arrrgggg-5ain | webdev, pionia, php, restapi | **Another PHP framework? Arrrgggg!!! More like, why not?**

The Pionia framework has an answer for this. Pionia trims away the unnecessary concerns of developing APIs so that you stay focused only on your business logic.

It also strikes a middle ground between configuration and convention.

Imagine scenarios where an api ... | jet_ezra |

1,918,169 | Daily Code 76 | Speed Limit | hi everyone! after a longer break i am back again with a small daily exercise. it’s simple but i... | 0 | 2024-07-10T07:08:51 | https://dev.to/gregor_schafroth/daily-code-76-speed-limit-26ch | javascript, daily | hi everyone! after a longer break i am back again with a small daily exercise. it’s simple but i think the different solutions are interesting. why don’t you give it a try as well? 😄

# task

use javascript to write a function that determines the result of you driving at certain speeds:

- speed limit: 120km/h (result... | gregor_schafroth |

1,918,170 | 🚀 Enhance Your Laravel Projects with Effective Test Cases! 🚀 | In the fast-paced world of web development, ensuring the reliability and quality of your applications... | 0 | 2024-07-10T07:09:25 | https://dev.to/himanshudevl/enhance-your-laravel-projects-with-effective-test-cases-3a8l | laravel, php, testing, codequality | **In the fast-paced world of web development, ensuring the reliability and quality of your applications is crucial. Writing effective test cases in Laravel not only helps catch bugs early but also makes your codebase more maintainable and robust. Here are some tips to get you started:**

- **Start with Feature Tests**... | himanshudevl |

1,918,171 | Decentralized exchange | Development of Decentralized Exchange (DEX): The Revolutionary Future of Business The last few years... | 0 | 2024-07-10T07:10:15 | https://dev.to/muthukrishnanmk24/decentralized-exchange-4p98 | Development of Decentralized Exchange (DEX): The Revolutionary Future of Business

The last few years have seen a paradigm shift in the financial world due to the proliferation of cryptocurrencies and blockchain technology. Among emerging innovations, Decentralized Exchanges (DEX) stand out as a transformative force in... | muthukrishnanmk24 | |

1,918,173 | Learn How To Build Library Management System With Charts From Scratch Using React (Video Tutorial) | In this 1+ hour video tutorial, you will learn to build a library management system application... | 0 | 2024-07-10T07:14:44 | https://blog.yogeshchavan.dev/learn-how-to-build-library-management-system-with-charts-from-scratch-using-react-video-tutorial | react, javascript | {% embed https://www.youtube.com/watch?v=pzHLYs-e3eI %}

In this 1+ hour video tutorial, you will learn to build a [library management system](https://www.youtube.com/watch?v=HHAr_NlsDFY) application from scratch using React, Supabase, Shadcn/ui, and React Query.

## What's Included

This application includes the follo... | myogeshchavan97 |

1,918,174 | Top Reasons to Choose Sharanalaya Montessori Preschools in Thiruvanmiyur | Welcome to Sharanalaya School, a beacon of educational excellence nestled in the heart of... | 0 | 2024-07-10T07:13:07 | https://dev.to/hemanthh_kumar/top-reasons-to-choose-sharanalaya-montessori-preschools-in-thiruvanmiyur-4koa | Welcome to Sharanalaya School, a beacon of educational excellence nestled in the heart of Thiruvanmiyur. At Sharanalaya, we believe in nurturing young minds and empowering them to reach their full potential. As a leading institution among Montessori preschools, IGCSE schools, preschools, and play schools in Thiruvanmiy... | hemanthh_kumar | |

1,918,175 | GraphQL Federation with Ballerina and Apollo - Part II | This article was written using Ballerina Swan Lake Update 8 (2201.8.0) This is part II of the... | 28,015 | 2024-07-10T07:14:25 | https://www.thisaru.me/2023/10/03/graphql-federation-with-ballerina-part-II.html | ballerina, graphql, apollo, federation | > This article was written using Ballerina Swan Lake Update 8 (2201.8.0)

This is part II of the series "GraphQL Federation with Ballerina and Apollo". Refer to [Part I](https://dev.to/thisarug/graphql-federation-with-ballerina-and-apollo-studio-3hb1) before reading this.

In the first part, we discussed the GraphQL fe... | thisarug |

1,918,176 | Exploring the Efficiency of UAT Testing Tools | In software development, User Acceptance Testing (UAT) is a critical part in that it guarantees that... | 0 | 2024-07-10T07:15:02 | https://marketinsidesnews.com/tech/exploring-the-efficiency-of-uat-testing-tools/ | uat, testing, tools |

In software development, User Acceptance Testing (UAT) is a critical part in that it guarantees that the developed software fits the end users’ needs and works seamlessly in the real world. UAT testing tools have bec... | rohitbhandari102 |

1,918,177 | Understanding the Distinction Between Information Security and Cybersecurity | InfoSec & cyber | 0 | 2024-07-10T07:23:56 | https://dev.to/saramazal/understanding-the-distinction-between-information-security-and-cybersecurity-pn | infosec, cybersecurity, webdev, appsec | ---

title: Understanding the Distinction Between Information Security and Cybersecurity

published: true

description: InfoSec & cyber

tags: #InfoSec #cybersecurity #webdev #appsec

# cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/8hin2s6izc42w5efolyr.jpg

# Use a ratio of 100:42 for best re... | saramazal |

1,918,178 | Core Web Vitals: The Secret Weapon for Your Website's Success | In today's fast-paced digital world, website speed and usability are no longer optional. They're... | 0 | 2024-07-10T07:16:32 | https://dev.to/digitup/core-web-vitals-the-secret-weapon-for-your-websites-success-1cnn | corewebvital, websiteoptimization, webdev | In today's fast-paced digital world, website speed and usability are no longer optional. They're crucial for attracting and retaining visitors. This article explores Core Web Vitals, a set of metrics from Google that measure a website's user experience, and explains why they're important for your website's success.

##... | digitup |

1,918,179 | Seeking a Proven React Template for an E-commerce Website | Hello, I'm looking for a tried-and-tested React template for an e-commerce platform focused on... | 0 | 2024-07-10T07:17:21 | https://dev.to/salman_irej_d74c13dbbcbbc/seeking-a-proven-react-template-for-an-e-commerce-website-4d49 | Hello,

I'm looking for a tried-and-tested React template for an e-commerce platform focused on buying, selling, and trading. If anyone has experience with React templates that have been used successfully in commercial websites, please share your experiences.

I need a template that is easy to work with and supports fe... | salman_irej_d74c13dbbcbbc | |

1,918,181 | How 'Digital Minimalism' Book by Cal Newport Helped Me as a Developer | In today's hyper-connected world, it's easy to fall into the trap of constant digital engagement. As... | 0 | 2024-07-11T23:06:34 | https://dev.to/jfmartinz/how-digital-minimalism-book-by-cal-newport-helped-me-as-a-developer-4an5 | productivity, developer | In today's hyper-connected world, it's easy to fall into the trap of constant digital engagement. As an aspiring developer, I noticed how I spend more of my time on social media and less on productive work. I know how hard it is to resist these distractions coming from digital tools: endless notifications, reels with a... | jfmartinz |

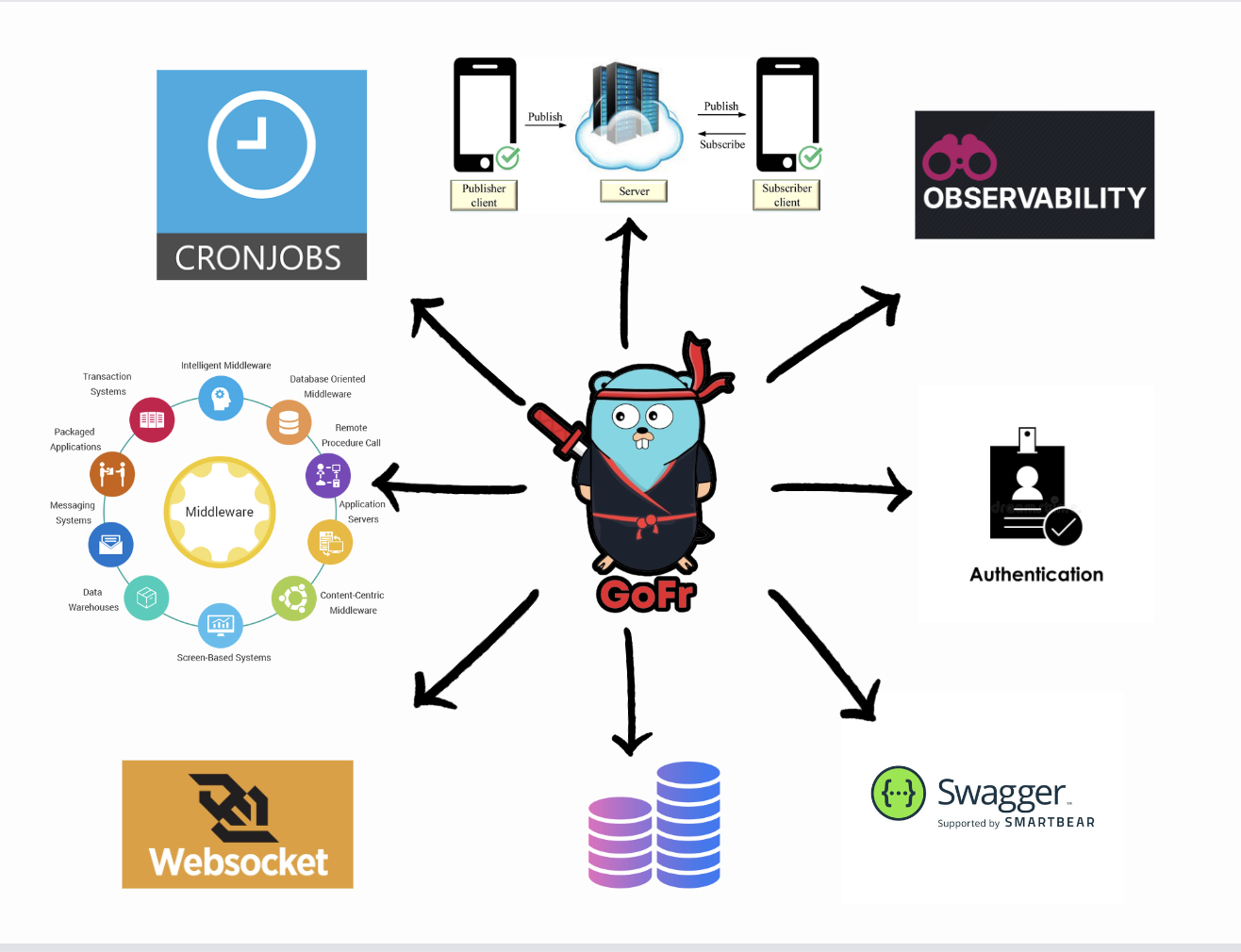

1,918,182 | The Ultimate Golang Framework for Microservices: GoFr | Go is a multiparadigm, statically typed, and compiled programming language designed by Google. Many... | 0 | 2024-07-10T07:18:26 | https://dev.to/umang01hash/the-ultimate-golang-framework-for-microservices-gofr-56bj | webdev, programming, go, microservices |

Go is a multiparadigm, statically typed, and compiled programming language designed by Google. Many developers have embraced Go because of its garbage collection, memory safety, ... | umang01hash |

1,918,263 | Modalert 100 Tablet: View usage, side effects, price and reviews | Powmedz | Modalert 100 mg tablet is used to treat excessive daytime sleepiness (narcolepsy). It improves... | 0 | 2024-07-10T08:36:09 | https://dev.to/richard_roy/modalert-100-tablet-view-usage-side-effects-price-and-reviews-powmedz-3ii6 |

[Modalert 100 mg tablet](https://powmedz.com/product/modalert-100-mg-modafinil-generic/) is used to treat excessive daytime sleepiness (narcolepsy). It improves alertness, helps you stay awake, reduces the tendency to fall asleep during the day and restores a normal sleep cycle. Modalert 100 tablets can be taken with ... | richard_roy | |

1,918,184 | IMPORTANCE OF SEMANTIC HTML FOR SEO AND ACCESSIBILITY | The Role of Semantic HTML in Modern Web Development Semantic HTML introduces meaning to... | 0 | 2024-07-10T07:21:29 | https://dev.to/elvis_mwangi/importance-of-semantic-html-for-seo-and-accessibility-28g0 | beginners, learning, html |

## The Role of Semantic HTML in Modern Web Development

Semantic HTML introduces meaning to the code we write, providing clear, descriptive elements that enhance both the development process and the end-user experience. Befo... | elvis_mwangi |

1,918,185 | Understanding 'any', 'unknown', and 'never' in TypeScript | TypeScript offers a robust type system, but certain types can be confusing, namely any, unknown, and... | 0 | 2024-07-10T07:32:28 | https://dev.to/sharoztanveer/understanding-any-unknown-and-never-in-typescript-4acb | webdev, typescript, javascript, programming | TypeScript offers a robust type system, but certain types can be confusing, namely `any`, `unknown`, and `never`. Let's break them down for better understanding.

## The `any` Type

The `any` type is the simplest of the three. It essentially disables type checking, allowing a variable to hold any type of value. For exam... | sharoztanveer |

1,918,186 | 6 Essential Factors to Consider When Hiring a Magento Developer | Introduction Hiring the right Magento developer is a crucial step for any business looking to... | 0 | 2024-07-10T07:22:13 | https://dev.to/hirelaraveldevelopers/6-essential-factors-to-consider-when-hiring-a-magento-developer-5gjb | webdev, javascript, programming, ai | <h3><strong>Introduction</strong></h3>

<p>Hiring the right Magento developer is a crucial step for any business looking to establish a strong online presence. Magento, known for its robust e-commerce capabilities, requires a skilled developer to unlock its full potential. Whether you're building a new online store or o... | hirelaraveldevelopers |

1,918,187 | Shopify Theme Development Company | Transform your online store with custom Shopify themes! Our infographic explores the benefits and... | 0 | 2024-07-10T07:23:58 | https://dev.to/mobisoftinfotech/shopify-theme-development-company-14o9 | mobile, development, softwaredevelopment |

Transform your online store with custom Shopify themes! Our infographic explores the benefits and features that enhance performance, user experience, and security. Dive into the world of scalable, mobile-optimized ... | mobisoftinfotech |

1,918,188 | JS Introduction | JavaScript was created by Brendan Eich in 1995. He developed it while working at Netscape... | 0 | 2024-07-10T07:24:15 | https://dev.to/webdemon/js-introduction-37jc | javascript, programming, webdev, beginners | 1. **JavaScript** was created by **Brendan Eich** in **1995**. He developed it while working at **Netscape Communications Corporation**. The language was initially called **Mocha**, then renamed to **LiveScript**, and finally to **JavaScript**. The first version of JavaScript was included in **Netscape Navigator 2.0**,... | webdemon |

1,918,189 | Speed Gate Turnstile for Efficient Passenger Processing at Major KSA Airports | A speedy and efficient processing of passengers is essential to ensure smooth operation at large... | 0 | 2024-07-10T07:24:31 | https://dev.to/aafiya_69fc1bb0667f65d8d8/speed-gate-turnstile-for-efficient-passenger-processing-at-major-ksa-airports-12n2 | turnstile, accesscontrol, speedgates, technology | A speedy and efficient processing of passengers is essential to ensure smooth operation at large airports across the KSA including Riyadh International Airport, Jeddah International Airport and Dammam International Airport. [Speed Turnstiles](https://www.expediteiot.com/smart-turnstile-system-in-saudi-qatar-and-oman/) ... | aafiya_69fc1bb0667f65d8d8 |

1,918,190 | Understanding SOLID Principles with Python Examples | Understanding SOLID Principles with Python Examples The SOLID principles are a set of... | 28,016 | 2024-07-10T07:27:19 | https://dev.to/plug_panther_3129828fadf0/understanding-solid-principles-with-python-examples-56mo | python, solid, programming, softwareengineering | ## Understanding SOLID Principles with Python Examples

The SOLID principles are a set of design principles that help developers create more maintainable and scalable software. Let's break down each principle with brief Python examples.

### 1. Single Responsibility Principle (SRP)

A class should have only one reason t... | plug_panther_3129828fadf0 |

1,918,191 | Remove the Message of the Day on Ubuntu 24.04 | YouTube Video Introduction Removing the Message of the Day Reverting the Changes Conclusion ... | 18,874 | 2024-07-11T11:38:00 | https://dev.to/dev_neil_a/remove-the-message-of-the-day-on-ubuntu-16a2 | linux, raspberrypi, ubuntu, webdev | - [YouTube Video](#youtube-video)

- [Introduction](#introduction)

- [Removing the Message of the Day](#removing-the-message-of-the-day)

- [Reverting the Changes](#reverting-the-changes)

- [Conclusion](#conclusion)

## YouTube Video

If you would prefer to watch the content of this article, there is a video version ava... | dev_neil_a |

1,918,192 | Pure Bliss Awaits: Rajasthan's Top Rose Sharbat! | Haldighati Rose Products, a small startup based in Haldighati, Rajasthan, is renowned for its... | 0 | 2024-07-10T07:30:25 | https://dev.to/roseproducts/pure-bliss-awaits-rajasthans-top-rose-sharbat-1dbm | naturalrosesharbat, haldighatiroseproducts, bestroseproductsinrajasthan | Haldighati Rose Products, a small startup based in Haldighati, Rajasthan, is renowned for its exquisite and natural rose products. Among these, their Natural Red Rose Sharbat stands out as the best rose sharbat in Rajasthan, offering a luxurious taste experience that is both refreshing and delicate.

**The Art of Craft... | roseproducts |

1,918,193 | An insect is sitting in your compiler and doesn't want to leave for 13 years | Author: Grigory Semenchev Let's imagine you have a perfect project. Tasks get done, your compiler... | 0 | 2024-07-10T07:31:00 | https://dev.to/anogneva/an-insect-is-sitting-in-your-compiler-and-doesnt-want-to-leave-for-13-years-1ce | programming, cpp | Author: Grigory Semenchev

Let's imagine you have a perfect project. Tasks get done, your compiler compiles, static analyzers analyze, and releases get released. At some point, you decide to open an ancient file that nobody has opened in years, and you see that it's encoded in Windows-1251. Even though the whole pr... | anogneva |

1,918,194 | Smooth Travels Await Unveiling the Best Ways to Rent a Car Ajman for an Unforgettable Journey | Embarking on a journey through the vibrant city of Ajman? Picture yourself cruising down the coastal... | 0 | 2024-07-10T07:32:09 | https://dev.to/john353234/smooth-travels-await-unveiling-the-best-ways-to-rent-a-car-ajman-for-an-unforgettable-journey-4c47 | rentacarajman, ajmanrentacar, carforrentajman, cheaprentacarajman | Embarking on a journey through the vibrant city of Ajman? Picture yourself cruising down the coastal roads, the wind in your hair, and the freedom to explore at your own pace. To make this dream a reality, mastering the art of [Rent a car Ajman](https://greatdubai.com/rent-a-car/ajman) is crucial. In this guide, we'll ... | john353234 |

1,918,195 | Redux VS Redux Toolkit && Redux Thunk VS Redux-Saga | Introduction In modern web development, especially with React, managing state effectively... | 0 | 2024-07-10T18:40:57 | https://dev.to/wafa_bergaoui/redux-vs-redux-toolkit-redux-thunk-vs-redux-saga-59cd | redux, react, reactjsdevelopment, javascript | ## **Introduction**

In modern web development, especially with React, managing state effectively is crucial for building dynamic, responsive applications. State represents data that can change over time, such as user input, fetched data, or any other dynamic content. Without proper state management, applications can be... | wafa_bergaoui |

1,918,196 | Gemstone Tourmaline: A Hopeful Hope for Glistening Skin? | Gemstones have long been prized for their exceptional splendor and inviting colorations. However, did... | 0 | 2024-07-10T07:33:43 | https://dev.to/james_harden/gemstone-tourmaline-a-hopeful-hope-for-glistening-skin-2jci | tourmaline, tourmalinegemstone, gemstone, astrology | Gemstones have long been prized for their exceptional splendor and inviting colorations. However, did you know that a few gemstones, including [**Tourmaline gemstone**](https://www.cabochonsforsale.com/gemstone/tourmaline), may additionally have the key to glowing skin?

With a wide range of colors ranging from soothi... | james_harden |

1,918,197 | Import the database from the Heroku dump | Occasionally, as developers, we have to import staging or production databases and restore them to... | 0 | 2024-07-10T07:33:54 | https://dev.to/mharut/import-db-from-heroku-dump-3lho | db, import, shell, heroku | Occasionally, as developers, we have to import staging or production databases and restore them to our local system in order to recreate bugs or maintain the most recent version, among other reasons.

Manual work takes a lot of time and can result in mistakes.

I propose creating a shell script that will do everything ... | mharut |

1,918,222 | Web Security and Bug Bounty Hunting: Knowledge, Tools, and Certifications | Web Security and Bug Bounty Hunting | 0 | 2024-07-10T08:03:42 | https://dev.to/saramazal/web-security-and-bug-bounty-hunting-knowledge-tools-and-certifications-16d1 | bugbountyhunter, ethicalhacking, webdev, appsec | ---

title: Web Security and Bug Bounty Hunting: Knowledge, Tools, and Certifications

published: true

description: Web Security and Bug Bounty Hunting

tags: #bugbountyhunter #ethicalhacking #webdev #AppSec

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-... | saramazal |

1,918,198 | MartialShop APP | This is one of my projects that is written in Dart using Flutter framework. This is an e-commerce app... | 0 | 2024-07-10T07:34:41 | https://dev.to/devhalen/martialshop-app-305f | programming, flutter, dart | This is one of my projects that is written in Dart using Flutter framework.

This is an e-commerce app for martial arts products called MartialShop.

It's incomplete for now.

Here you can see parts of it.

This is the main page where ... | devhalen |

1,918,199 | Corteiz Tracksuit: A Comprehensive Guide to the Trendy Clothing Brand | Corteiz Tracksuit: A Comprehensive Guide to the Trendy Clothing Brand Corteiz Tracksuit is a clothing... | 0 | 2024-07-10T07:35:13 | https://dev.to/faisal_abbas_55a0539cc3e9/corteiz-tracksuit-a-comprehensive-guide-to-the-trendy-clothing-brand-4plp | Corteiz Tracksuit: A Comprehensive Guide to the Trendy Clothing Brand

Corteiz Tracksuit is a clothing brand that has rapidly gained popularity for its bold designs and unique aesthetic. Influenced by the enigmatic style of Kanye West, the brand offers a range of apparel that includes shorts, sweatshirts, jeans, corteiz... | faisal_abbas_55a0539cc3e9 | |

1,918,200 | Stay Cozy and Cool: Discover the Versatile Hellstar hoodie for All Seasons | Stay Cozy and Cool: Discover the Versatile Hellstar hoodie for All Seasons Our hellstar hoodie line... | 0 | 2024-07-10T07:35:37 | https://dev.to/faisal_abbas_55a0539cc3e9/stay-cozy-and-cool-discover-the-versatile-hellstar-hoodie-for-all-seasons-i4l | Stay Cozy and Cool: Discover the Versatile Hellstar hoodie for All Seasons

Our hellstar hoodie line is renowned for its unique design style and trendsetting styles. This brand consistently pushes boundaries, combining classic elements with contemporary twists. The hallmark of Hellstar has always been its ability to ble... | faisal_abbas_55a0539cc3e9 | |

1,918,201 | Understanding and Fixing Uncontrolled to Controlled Input Warnings in React | Encountering the 'A component is changing an uncontrolled input to be controlled' and vice versa... | 0 | 2024-07-10T12:10:56 | https://dev.to/john_muriithi_swe/understanding-and-fixing-uncontrolled-to-controlled-input-warnings-in-react-4n5e | Encountering the **'A component is changing an uncontrolled input to be controlled'** and vice versa warning in React can be perplexing for both beginners and experienced developers. This article delves into the causes of this common error and provides practical solutions to ensure smooth input state management in your... | john_muriithi_swe | |

1,918,202 | Effortlessly Prepare With EMC D-SNC-DY-00 Exam Dumps | Leading Excellent D-SNC-DY-00 PDF Dumps - Get Great deal of Preparation Assets For the... | 0 | 2024-07-10T07:36:42 | https://dev.to/pattyrortiz/effortlessly-prepare-with-emc-d-snc-dy-00-exam-dumps-1gef | ## **Leading Excellent D-SNC-DY-00 PDF Dumps - Get Great deal of Preparation Assets**

For the preparation from the Dell SONiC Deploy Exam certification exam you get prepared efficiently by utilizing this valid D-SNC-DY-00 pdf dumps for preparation. By using the EMC D-SNC-DY-00 exam dumps get the most beneficial motiv... | pattyrortiz | |

1,918,203 | Discover the Ultimate Comfort and Style with the Sp5der Clothing: A Blend of Performance and Fashion | Discover the Ultimate Comfort and Style with the Sp5der Clothing: A Blend of Performance and... | 0 | 2024-07-10T07:39:03 | https://dev.to/faisal_shahzad_c997b726f5/discover-the-ultimate-comfort-and-style-with-the-sp5der-clothing-a-blend-of-performance-and-fashion-1pb1 | Discover the Ultimate Comfort and Style with the Sp5der Clothing: A Blend of Performance and Fashion

Why Choose a Spider Tracksuit?

Sp5der clothing stands out not just for its style but also for its performance. Designed for both comfort and functionality, this tracksuit is the perfect attire for various activities, fr... | faisal_shahzad_c997b726f5 | |

1,918,204 | Which Python framework is used for mobile app development? | For Mobile app development, Python GUI frameworks like Kivy and Beeware are very important. Kivy is... | 0 | 2024-07-10T07:39:25 | https://dev.to/sophiaog/which-python-framework-is-used-for-mobile-app-development-25og | python, pythonframeworks | For mobile app development, Python GUI frameworks like Kivy and BeeWare are very important.

Kivy is a popular open-source [Python framework for mobile app development](https://www.ongraph.com/a-list-of-top-10-python-frameworks-for-app-development/) that offers rapid application development of cross-platform GUI apps.... | sophiaog |

1,918,205 | JavaScript Performance Optimization: Debounce vs Throttle Explained | Many of the online apps of today are powered by the flexible JavaScript language, but power comes... | 0 | 2024-07-10T07:39:30 | https://www.nilebits.com/blog/2024/07/javascript-debounce-vs-throttle/ | webdev, javascript, programming, tutorial | Many of the online apps of today are powered by the flexible [JavaScript](https://www.nilebits.com/blog/2024/07/ultimate-guide-to-javascript-objects/) language, but power comes with responsibility. Managing numerous events effectively is a problem that many developers encounter. When user inputs like scrolling, resizin... | amr-saafan |

1,918,206 | Exploring the Role of a Construction Company in Iraq | In the dynamic landscape of Iraq's rebuilding efforts, a construction company plays a pivotal role in... | 0 | 2024-07-10T07:39:42 | https://dev.to/muegroup/exploring-the-role-of-a-construction-company-in-iraq-4baj | In the dynamic landscape of Iraq's rebuilding efforts, a construction company plays a pivotal role in shaping infrastructure and development. With its rich history and strategic location, Iraq presents both challenges and opportunities for construction firms aiming to contribute to its growth. A **[construction company... | muegroup | |

1,918,207 | Live Streaming vs. Video On Demand: Decoding the Differences | As the internet continues to shape how we interact with media, understanding the nuances between... | 0 | 2024-07-10T07:41:20 | https://dev.to/janet_ss_f95094316342f4ff/live-streaming-vs-video-on-demand-decoding-the-differences-113m | livestreaming, videoondemand | As the internet continues to shape how we interact with media, understanding the nuances between these two formats is crucial for content creators, marketers, and audiences alike. Live streaming and VOD offer distinct advantages and cater to diverse preferences, making them indispensable tools in the arsenal of any mod... | janet_ss_f95094316342f4ff |

1,918,208 | How Can I Efficiently Track and Manage Working Hours Using an Hour Calculator? | I'm looking for the best ways to track and manage my working hours Calculette Mauricette. I've heard... | 0 | 2024-07-10T07:41:45 | https://dev.to/janom_41fe1a4cbf/how-can-i-efficiently-track-and-manage-working-hours-using-an-hour-calculator-49i4 | webdev, beginners, programming, tutorial | I'm looking for the best ways to track and manage my working hours [Calculette Mauricette](https://mauricettecalculette.fr/). I've heard about hour calculators but I'm not sure which one would be the most efficient and user-friendly.

Can anyone recommend a good hour calculator tool that they have used? Additionally, ... | janom_41fe1a4cbf |

1,918,209 | Stainless Steel: The Preferred Material for Hygienic Environments | The Ideal Material for Clean and Hygienic Environments: Stainless Steel Spotless & So Easy To... | 0 | 2024-07-10T07:43:07 | https://dev.to/imcandika_bfmvqnah_9be0/stainless-steel-the-preferred-material-for-hygienic-environments-260i | The Ideal Material for Clean and Hygienic Environments: Stainless Steel

Spotless and easy to clean: stainless steel can be cleaned in just one day. This is just one example of its effectiveness in this realm, so much so that it has been widely used for a variety of purposes, especially in critical places like kitchens and... | imcandika_bfmvqnah_9be0 | |

1,918,210 | sumatra slim belly tonic | Sumatra Slim Belly Tonic appears to be marketed as a weight loss supplement, often promoted with... | 0 | 2024-07-10T07:43:19 | https://dev.to/julia_elzarka_fce50d4ae92/sumatra-slim-belly-tonic-586g | weightloss, testing, database, security | [Sumatra Slim Belly Tonic](https://sumatraslimbellytonicsite.online/) appears to be marketed as a weight loss supplement, often promoted with claims of helping people lose weight quickly and easily. However, it's important to approach such products with caution and skepticism, as many weight loss supplements can make e... | julia_elzarka_fce50d4ae92 |

1,918,211 | Do people want your SaaS? | So you've found your next SaaS idea: a platform that translates cat meows into Shakespearean sonnets.... | 0 | 2024-07-10T10:04:28 | https://dev.to/joshlawson100/do-people-want-your-saas-1ppj | webdev, javascript, beginners, saas | So you've found your next SaaS idea: a platform that translates cat meows into Shakespearean sonnets. You're confident it will make millions and be the next biggest app of the year. You then go on to get 5 customers and lose hundreds of dollars on a product that flopped. The issue: no one wanted your SaaS. It's one thi... | joshlawson100 |

1,918,212 | Exploring The Power Of Open Source Software Development | Choosing an Open Source Development Company: Key Considerations When selecting an open source... | 0 | 2024-07-10T07:44:07 | https://dev.to/saumya27/exploring-the-power-of-open-source-software-development-530l | opensource |

**Choosing an Open Source Development Company: Key Considerations**

When selecting an open source development company, it’s essential to consider various factors to ensure you partner with the right team. Open source projects can significantly benefit your business by providing flexibility, reducing costs, and foster... | saumya27 |

1,918,213 | Ethical Hacking, Penetration Testing, and Web Security: A Comprehensive Overview | Ethical Hacking, Penetration Testing, and Web Security | 0 | 2024-07-10T07:45:02 | https://dev.to/saramazal/ethical-hacking-penetration-testing-and-web-security-a-comprehensive-overview-5doi | ethicalhacking, pentesting, websecurity, bugbountyhunter | ---

title: Ethical Hacking, Penetration Testing, and Web Security: A Comprehensive Overview

published: true

description: Ethical Hacking, Penetration Testing, and Web Security

tags: #ethicalhacking #Pentesting #websecurity #BugBountyHunter

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for bes... | saramazal |

1,918,214 | Implement React v18 from Scratch Using WASM and Rust - [18] Implement useRef, useCallback, useMemo | Based on big-react,I am going to implement React v18 core features from scratch using WASM and... | 27,011 | 2024-07-10T07:45:37 | https://dev.to/paradeto/implement-react-v18-from-scratch-using-wasm-and-rust-18-implement-useref-usecallback-usememo-3i1a | react, webassembly, rust | > Based on [big-react](https://github.com/BetaSu/big-react), I am going to implement React v18 core features from scratch using WASM and Rust.

>

> Code Repository: https://github.com/ParadeTo/big-react-wasm

>

> The tag related to this article: [v18](https://github.com/ParadeTo/big-react-wasm/tree/v18)

We have already imp... | paradeto |

1,918,215 | The Power of Divsly in WhatsApp Campaigns: Elevate Your Strategy | In today's digital age, effective marketing strategies often hinge on the ability to reach audiences... | 0 | 2024-07-10T07:48:39 | https://dev.to/divsly/the-power-of-divsly-in-whatsapp-campaigns-elevate-your-strategy-52mn | whatsappcampaigns, whatsappmarketing, whatsappmarketingcampaigns, whatsappbusiness | In today's digital age, effective marketing strategies often hinge on the ability to reach audiences directly and engage them meaningfully. WhatsApp, with its widespread use and high engagement rates, has become a pivotal platform for marketers. Leveraging tools like [Divsly](https://divsly.com/?utm_source=blog&utm_med... | divsly |

1,918,216 | What Is Java? - Java Programming Language Explained | Java was invented to make it easier to write code, easier than C++. There is a bunch of things, a... | 0 | 2024-07-10T07:52:15 | https://dev.to/thekarlesi/what-is-java-java-programming-language-explained-doc | webdev, beginners, programming, learning | Java was invented to make it easier to write code, easier than C++.

There is a bunch of things, a bunch of bookkeeping that you have to manage with C++, that you don't have to manage with Java.

The downside with Java is that it typically runs slower than C++, but for many, many types of apps, man... | thekarlesi |

1,918,229 | NVIDIA A100 vs V100: Which is Better? | Key Highlights With the NVIDIA A100 and V100 GPUs, you're looking at two pieces of tech... | 0 | 2024-07-10T09:30:00 | https://dev.to/novita_ai/nvidia-a100-vs-v100-which-is-better-1kn8 | ## Key Highlights

- With the NVIDIA A100 and V100 GPUs, you're looking at two pieces of tech built for really tough computing jobs.

- The latest from NVIDIA is the A100, packed with new tech to give it a ton of computing power.

- Even though the V100 GPU came out before the A100, it's still pretty strong when you need... | novita_ai | |

1,918,217 | Case Studies: Successful Acrylic Tunnel Installations | There is a smile on the face of young children when they cruise through acrylic tunnels at an... | 0 | 2024-07-10T07:56:55 | https://dev.to/imcandika_bfmvqnah_9be0/case-studies-successful-acrylic-tunnel-installations-132p | There is a smile on the faces of young children when they cruise through acrylic tunnels at an aquarium or zoo and discover for themselves the magical world underwater. These inventive tunnels have changed what we know about marine creatures by offering views never seen before. 0 SHA... | imcandika_bfmvqnah_9be0 | |

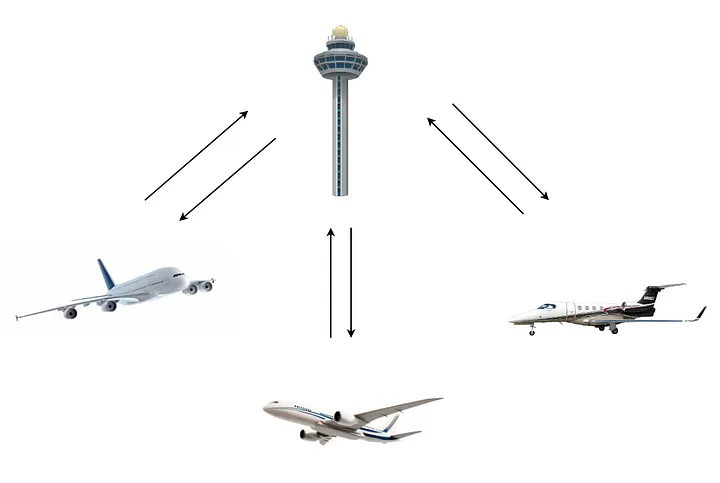

1,918,218 | JavaScript Design Patterns - Behavioral - Mediator | The Mediator pattern allows us to reduce chaotic dependencies between objects by defining an object... | 26,001 | 2024-07-10T07:57:12 | https://dev.to/nhannguyendevjs/javascript-design-patterns-behavioral-mediator-52c9 | programming, javascript, beginners |

The **Mediator** pattern allows us to reduce chaotic dependencies between objects by defining an object that encapsulates how a set of objects interact.

The **Mediator** pattern suggests that we should cease all di... | nhannguyendevjs |
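The idea above can be sketched in a few lines. This is only an illustrative sketch, written in Python rather than the article's JavaScript; the `ChatRoom` and `Participant` names are hypothetical and do not come from the original post. The participants never hold references to each other and only talk through the mediator.

```python
class ChatRoom:
    """Mediator: the only object that knows how participants reach each other."""

    def __init__(self):
        self._participants = {}

    def register(self, participant):
        # Store the participant by name and hand it a reference to the mediator.
        self._participants[participant.name] = participant
        participant.room = self

    def send(self, sender, recipient_name, text):
        # Route the message; the sender never touches the recipient directly.
        recipient = self._participants.get(recipient_name)
        if recipient is None:
            raise KeyError(f"unknown participant: {recipient_name}")
        recipient.receive(sender.name, text)


class Participant:
    """Colleague: depends only on the mediator, not on other participants."""

    def __init__(self, name):
        self.name = name
        self.room = None
        self.inbox = []

    def send(self, recipient_name, text):
        self.room.send(self, recipient_name, text)

    def receive(self, sender_name, text):
        self.inbox.append((sender_name, text))


room = ChatRoom()
alice, bob = Participant("alice"), Participant("bob")
room.register(alice)
room.register(bob)
alice.send("bob", "hello")
print(bob.inbox)
```

Because all routing lives in `ChatRoom`, adding a new kind of participant or changing how messages are delivered does not require touching the existing participants.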

1,918,219 | Monitoring GameObject Changes in the Unity editor hierarchy | Introduction Managing GameObjects in Unity can be a complex task, especially when dealing... | 0 | 2024-07-10T08:29:24 | https://dev.to/dutchskull/monitoring-gameobject-changes-in-unity-a-guide-1g5g | unity3d, gamedev, tooling, csharp | ### Introduction

Managing GameObjects in Unity can be a complex task, especially when dealing with dynamic and interactive scenes. Detecting changes such as additions, deletions, renaming, and parent changes in the hierarchy is crucial for many applications. In this post, we will walk through a powerful script, `Hiera... | dutchskull |

1,918,220 | Starting a new learning pattern | Starting a new learning pattern URL: https://github.com/theamitprajapati/core-fundamentals | 0 | 2024-07-10T08:00:07 | https://dev.to/amit_prajapati_b10f0eb8a8/starting-a-new-learning-pattern-4047 |

Starting a new learning pattern

URL: https://github.com/theamitprajapati/core-fundamentals | amit_prajapati_b10f0eb8a8 | |

1,918,221 | QTCPcoin: Transforming Global Cryptocurrency Markets | QTCPcoin (QUANTUM CAPITAL PARTNERS LTD) is one of the world's renowned digital asset trading... | 0 | 2024-07-10T08:01:46 | https://dev.to/qtcpcoin/qtcpcoin-transforming-global-cryptocurrency-markets-238f | QTCPcoin (QUANTUM CAPITAL PARTNERS LTD) is one of the world's renowned digital asset trading platforms, primarily providing global users with cryptocurrency and derivatives trading services for Bitcoin, Litecoin, Ethereum, and other digital assets. Established in Singapore in 2018, it has officially obtained dual MSB l... | qtcpcoin | |

1,918,223 | Discord bot dashboard with OAuth2 (Nextjs) | Preface I wanted to build a Discord bot with TypeScript that had: A database A... | 0 | 2024-07-11T21:51:42 | https://dev.to/clxrityy/discord-bot-dashboard-authentication-nextjs-1ecg | nextjs, prisma, oauth, discord | ## Preface

> I wanted to build a Discord bot with TypeScript that had:

> - A database

> - A dashboard/website/domain

> - An API for interactions & authentication with Discord

I previously created this same "hbd" bot that ran on the Node.js runtime, built with the [discord.js](https://discord.js.org/) library.

{% github ht... | clxrityy |

1,918,224 | Best Testing Practices in React.js Development | While React.js has revolutionized web development with its adaptability, high performance, and... | 0 | 2024-07-10T08:04:22 | https://dev.to/ngocninh123/best-testing-practices-in-reactjs-development-2m26 | react, reactjsdevelopment, beginners, productivity | While React.js has revolutionized web development with its adaptability, high performance, and extensive ecosystem, creating dependable and fully functional React applications relies heavily on solid testing methodologies. This blog delves into the essential role of testing in React.js development, examining various te... | ngocninh123 |

1,918,225 | Importance of semantic HTML for SEO and accessibility | The Importance of Semantic HTML for SEO and Accessibility Introduction Semantic HTML is a crucial... | 0 | 2024-07-10T08:06:53 | https://dev.to/summer_15/importance-of-semantic-html-for-seo-and-accessibility-4jpe | webdev, html, seo, a11y |

The Importance of Semantic HTML for SEO and Accessibility

Introduction

Semantic HTML is a crucial aspect of web development that has a significant impact on both Search Engine Optimization (SEO) and web accessibility. By using semantic HTML tags, developers can create web pages that are not only easily understood by... | summer_15 |

1,918,226 | Configuring Spring Boot with PostgreSQL on Linux: Step by Step | In this guide, we will work through the process of configuring and connecting a Spring Boot application to... | 0 | 2024-07-10T13:43:28 | https://dev.to/jehzucco/configurando-spring-boot-com-postgresql-no-ambiente-linux-passo-a-passo-13pe | springboot, linux, postgres, beginners | In this guide, we will work through the process of configuring and connecting a Spring Boot application to PostgreSQL on Linux. Here is what lies ahead:

1. Install PostgreSQL on Linux Mint (Ubuntu)

2. Create a new user and password in PostgreSQL

3. Create a database

4. Grant the new user access to the database

5. Fa... | jehzucco |

1,918,228 | Tricky Golang interview questions - Part 6: NonBlocking Read | This problem is more related to code review. It requires knowledge about channels and select cases,... | 0 | 2024-07-10T08:11:36 | https://dev.to/crusty0gphr/tricky-golang-interview-questions-part-6-nonblocking-read-aj1 | go, interview, tutorial, programming | This problem is more related to code review. It requires knowledge about channels and select cases, also blocking, making it one of the most difficult interview questions I faced in my career. In these kinds of questions, the context is unclear at first glance and requires a deep understanding of blocking and deadlocks... | crusty0gphr |

1,918,230 | Vector Databases: Leading a New Era of Big Data and AI Integration | 1. Introduction Driven by the wave of digitalization, the growth rate of data has reached... | 0 | 2024-07-10T08:16:46 | https://dev.to/happyer/vector-databases-leading-a-new-era-of-big-data-and-ai-integration-2hee | ai, vectordatabase, bigdata, machinelearning | ## 1. Introduction

Driven by the wave of digitalization, the growth rate of data has reached unprecedented heights. This data is not only vast in scale but also diverse in type, including text, images, audio, and video. To efficiently process and analyze this data, vector databases have emerged as a key technology, bec... | happyer |

1,918,231 | Stacked Cards Layout With Compose - And Cats | I was writing a completely different blog post about playing around with Glance and app widgets, and... | 0 | 2024-07-11T12:06:00 | https://eevis.codes/blog/2024-07-11/stacked-cards-layout-with-compose-and-cats/ | android, kotlin, mobile, programming | I was writing a completely different blog post about playing around with Glance and app widgets, and I needed an example app. None of the existing ones served me, so I needed to build a new one. And I completely overdid it—I would have needed just something really simple, but I ended up creating a more polished app wit... | eevajonnapanula |

1,918,250 | Sustainability Benefits of Wing Expansion Boxes | How many times has your sandwich ended up squished in the bottom of a lunchbox or leftovers leaked... | 0 | 2024-07-10T08:21:22 | https://dev.to/sarita_basnetqkshq_1003f/sustainability-benefits-of-wing-expansion-boxes-k0p | How many times has your sandwich ended up squished in the bottom of a lunchbox, or leftovers leaked out all over your fridge? The Wing Expansion Box is the next big thing in storage boxes and container systems designed to keep your food optimally preserved. Not only that, but these special material ... | sarita_basnetqkshq_1003f | |

1,918,251 | Notes for Object Oriented Design | Part-1/3 | Part 1 - Object-Oriented Analysis and Design 1. Object-Oriented... | 28,018 | 2024-07-10T08:21:49 | https://dev.to/anshulanand/object-oriented-design-part-13-4nb1 | oop, java, computerscience, programming | ## Part 1 - Object-Oriented Analysis and Design

### 1. Object-Oriented Thinking

Object-oriented thinking is fundamental for object-oriented modelling, which is a core aspect of this post. It involves understanding problems and concepts by decomposing them into component parts and considering these parts as objects.

... | anshulanand |

1,918,252 | CSS Variable Naming: Best Practices and Approaches | Recently, while browsing the internet, like any good front-end developer, I wanted to "steal" the... | 28,019 | 2024-07-10T10:06:22 | https://www.munq.me/blog/css-variables | css, webdev, beginners, frontend | Recently, while browsing the internet, like any good front-end developer, I wanted to "steal" the color palette of a site. So, I opened the inspector to copy the hexadecimal values of the colors and came across an unusual surprise: the CSS variables were named inconsistently.

<br><br>

<div style="display: flex; justi... | leomunizq |

1,918,253 | Wednesday Links - Edition 2024-07-10 | Shell Spell: Extracting and Propagating Multiple Values With jq (3... | 6,965 | 2024-07-10T08:28:29 | https://dev.to/0xkkocel/wednesday-links-edition-2024-07-10-2o20 | libphonenumber, wireshark, tcpdump, jq | Shell Spell: Extracting and Propagating Multiple Values With jq (3 min)🧙♂️

https://www.morling.dev/blog/extracting-and-propagating-multiple-values-with-jq/

The Best Way to Handle Phone Numbers (2 min)☎️

https://foojay.io/today/the-best-way-to-handle-phone-numbers/

Wireshark & tcpdump: A Debugging Power Couple (8 mi... | 0xkkocel |

1,918,254 | Exploring The Versatility Of Drupal: Enhance Your Website With Custom Modules | Expert Guide to Drupal Development Introduction to Drupal Drupal is a powerful, open-source content... | 0 | 2024-07-10T08:28:33 | https://dev.to/saumya27/exploring-the-versatility-of-drupal-enhance-your-website-with-custom-modules-1f9j | drupal | **Expert Guide to Drupal Development**

**Introduction to Drupal**

Drupal is a powerful, open-source content management system (CMS) used by organizations of all sizes to build and manage websites. Known for its flexibility, scalability, and robust features, Drupal is a popular choice for creating complex websites and... | saumya27 |

1,918,255 | Understanding SAP BASIS: The Backbone of SAP Systems | Introduction SAP BASIS plays a critical role in the smooth operation of SAP systems. It encompasses... | 0 | 2024-07-10T08:28:58 | https://dev.to/geetha_k_5e055503d8642034/understanding-sap-basis-the-backbone-of-sap-systems-4akb | Introduction

SAP BASIS plays a critical role in the smooth operation of SAP systems. It encompasses various tasks and responsibilities that are essential for the administration and management of SAP applications. This blog aims to provide a comprehensive overview of what SAP BASIS is and why it is considered the backbo... | geetha_k_5e055503d8642034 | |

1,918,256 | How to Save your Supabase App from Crashing | Supabase allows you to create a database free of charge, and use PostgREST to generate a CRUD API... | 0 | 2024-07-10T08:33:15 | https://ainiro.io/blog/how-to-save-your-supabase-app-from-crashing | lowcode | [Supabase](https://supabase.com/) allows you to create a database free of charge, and use PostgREST to generate a CRUD API towards your database. The problem is that if you need any business logic between the client and your database, then PostgREST is useless, and you'll have to resort to edge functions.

Edge functio... | polterguy |

1,918,257 | How Do I Speak With [QuickBooks Desktop Support] Now 855-200 0590 | If you need help with [QuickBooks Desktop... | 0 | 2024-07-10T08:33:28 | https://dev.to/rongedwilliam/how-do-i-speak-with-quickbooks-desktop-support-now-855-200-0590-2648 | webdev, javascript, beginners, tutorial | If you need help with [QuickBooks Desktop (https://community.ruckuswireless.com/t5/Community-and-Online-Support/How-Do-I-Speak-With-QuickBooks-Desktop-Support-Now-855-200-0590/m-p/83275#M2693), you can speak directly with our support team by calling 855-200-0590. Our knowledgeable specialists are ready to assist you wi... | rongedwilliam |

1,918,258 | Why Sports Flooring Matters for Athletic Performance | Why The Importance of Good Sports Flooring For Athletes? The secret to athletic success obviously... | 0 | 2024-07-10T08:33:48 | https://dev.to/sarita_basnetqkshq_1003f/why-sports-flooring-matters-for-athletic-performance-45jb | Why Is Good Sports Flooring Important for Athletes?

The secret to athletic success obviously begins with the right tools and gear. Sports flooring is one important element that athletes usually overlook. In this blog, we explain the importance of good sports flooring for athletes and how it plays a v... | sarita_basnetqkshq_1003f | |

1,918,259 | Fildena 100 Tablets (Purple Pills) | Sildenafil | Reviews | What is Fildena 100 Purple pill? Fildena 100 Purple Pill, also known as the "weekend pill," contains... | 0 | 2024-07-10T08:34:02 | https://dev.to/richard_roy/fildena-100-tablets-purple-pills-sildenafil-reviews-2foa |

What is Fildena 100 Purple pill?

[Fildena 100 Purple Pill](https://powmedz.com/product/fildena-100-mg-purple-viagra-pill/), also known as the "weekend pill," contains 100 milligrams of sildenafil citrate and is used to increase blood flow to certain areas of the body, and also helps treat erectile problems in older m... | richard_roy | |

1,918,260 | Methods to Improve Your UX | User experience, commonly referred to as UX, is a component of customer experience. Creating a good... | 0 | 2024-07-10T08:34:10 | https://dev.to/danieldavis/methods-to-improve-your-ux-33l | User experience, commonly referred to as UX, is a component of customer experience. Creating a good user experience makes it easier to buy or use a product or service while creating a positive emotional impact.

In the digital world, [importance of Software Design](https://fuselabcreative.com/what-is-software-design-an... | danieldavis | |

1,918,261 | How to Integrate Abstract Email and Phone Validation for Zoho CRM | Data accuracy is paramount in Customer Relationship Management (CRM) systems. Maintaining accurate... | 0 | 2024-07-10T08:35:15 | https://dev.to/jamesellis/how-to-integrate-abstract-email-and-phone-validation-for-zoho-crm-30i2 | Data accuracy is paramount in [Customer Relationship Management (CRM) systems](https://w3scloud.com/zoho-automation/). Maintaining accurate contact information ensures effective communication, streamlines processes, and enhances customer satisfaction. One effective way to maintain data accuracy is by integrating Abstra... | jamesellis | |

1,918,262 | Kim Ha-sung contributes to victory at a high level despite poor batting performance | At the end of this season, Kim Ha-sung, a free agent (FA) of the San Diego Padres in the U.S.... | 0 | 2024-07-10T08:35:21 | https://dev.to/squeenshin/kim-ha-sung-contributes-to-victory-at-a-high-level-despite-poor-batting-performance-3d59 | Kim Ha-sung of the San Diego Padres in Major League Baseball (MLB), who becomes eligible for free agency (FA) at the end of this season, has been found to have a top-level wins above replacement (WAR).

According to FanGraphs, a sabermetrics site that collects various statistics on MLB players, Kim Ha-sung's W... | squeenshin | |

1,918,264 | Drawing animations in ScheduleJS | ScheduleJS uses the HTML Canvas rendering engine to draw the grid, activities, additional layers and... | 0 | 2024-07-10T08:36:13 | https://dev.to/lenormor/drawing-animations-in-schedulejs-56fo | webdev, javascript, devops, learning | [ScheduleJS](https://schedulejs.com/) uses the HTML Canvas rendering engine to draw the grid, activities, additional layers and links. This article explains how to design a simple ScheduleJS rendering animation using the HTML Canvas API.

## A few words on HTML Canvas

Have you ever been using the Canvas technology befor... | lenormor |

1,918,265 | picoCTF Mod 26 Write Up | Details: Points: 10 Jeopardy style CTF Category: Cryptography Comments: Cryptography can be easy, do... | 0 | 2024-07-10T08:38:10 | https://dev.to/president-xd/picoctf-mod-26-write-up-pnh | **Details:**

**Points:** 10

Jeopardy style CTF

**Category:** Cryptography

**Comments:**

Cryptography can be easy, do you know what ROT13 is?

```

cvpbPGS{arkg_gvzr_V'yy_gel_2_ebhaqf_bs_ebg13_MAZyqFQj}

```

ROT13 is a cipher that rotates each character 13 letters over. The mod 26 is a hint about looping back around. You ... | president-xd | |
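As a minimal sketch of the rotation described above (the `rot13` helper name is hypothetical and is not taken from the original write-up), each letter is shifted 13 places and wrapped around with mod 26, while non-letters pass through unchanged:

```python
import string


def rot13(text: str) -> str:
    """Rotate each ASCII letter 13 places, wrapping around with mod 26."""
    out = []
    for ch in text:
        if ch in string.ascii_lowercase:
            out.append(chr((ord(ch) - ord("a") + 13) % 26 + ord("a")))
        elif ch in string.ascii_uppercase:
            out.append(chr((ord(ch) - ord("A") + 13) % 26 + ord("A")))
        else:
            # Digits, braces, underscores and apostrophes are left as-is.
            out.append(ch)
    return "".join(out)


ciphertext = "cvpbPGS{arkg_gvzr_V'yy_gel_2_ebhaqf_bs_ebg13_MAZyqFQj}"
plaintext = rot13(ciphertext)
print(plaintext)
```

Because 13 is half of 26, applying `rot13` twice returns the original text, so the same function both encodes and decodes.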

1,918,267 | Understanding the MITRE ATT&CK Platform: A Valuable Resource for Cybersecurity Professionals | Understanding the MITRE ATT&CK Platform: A Valuable Resource for Cybersecurity... | 0 | 2024-07-10T08:44:54 | https://dev.to/saramazal/understanding-the-mitre-attck-platform-a-valuable-resource-for-cybersecurity-professionals-1nd6 | mitreattack, infosec, redteam, cybersecurity | ---

title: Understanding the MITRE ATT&CK Platform: A Valuable Resource for Cybersecurity Professionals

published: true

description:

tags: #MITREATTaCK #infosec #RedTeam #cybersecurity

# published_at: 2024-07-10 08:37 +0000

---

!... | saramazal |

1,918,268 | How To Calculate MAP Pricing? | Explore the importance of Minimum Advertised Price (MAP) and how MAP pricing is calculated and how it... | 0 | 2024-07-10T08:41:37 | https://dev.to/iwebscraping/how-to-calculate-map-pricing-542i | calculatemappricing, minimumadvertisedprice | Explore the importance of [Minimum Advertised Price](https://www.iwebscraping.com/how-to-calculate-map-pricing.php) (MAP), how MAP pricing is calculated, and how it helps ensure fair competition, maintain profit margins, and build consumer trust. | iwebscraping |

1,918,269 | Hand Safety Matters: Exploring Cut Resistant Glove Manufacturing | In industries, the hand is one of body parts which are injured most and the safe injuries have been... | 0 | 2024-07-10T08:41:39 | https://dev.to/sarita_basnetqkshq_1003f/hand-safety-matters-exploring-cut-resistant-glove-manufacturing-26g6 | In industry, the hand is one of the body parts injured most often, and these injuries are frequently caused by sharp tools or machinery. Workers wear cut-resistant gloves to protect their hands from likely injuries; the gloves are made from robust fabrics such as Kevlar, Dyneema, Spectra and polyethylene. But... | sarita_basnetqkshq_1003f | |

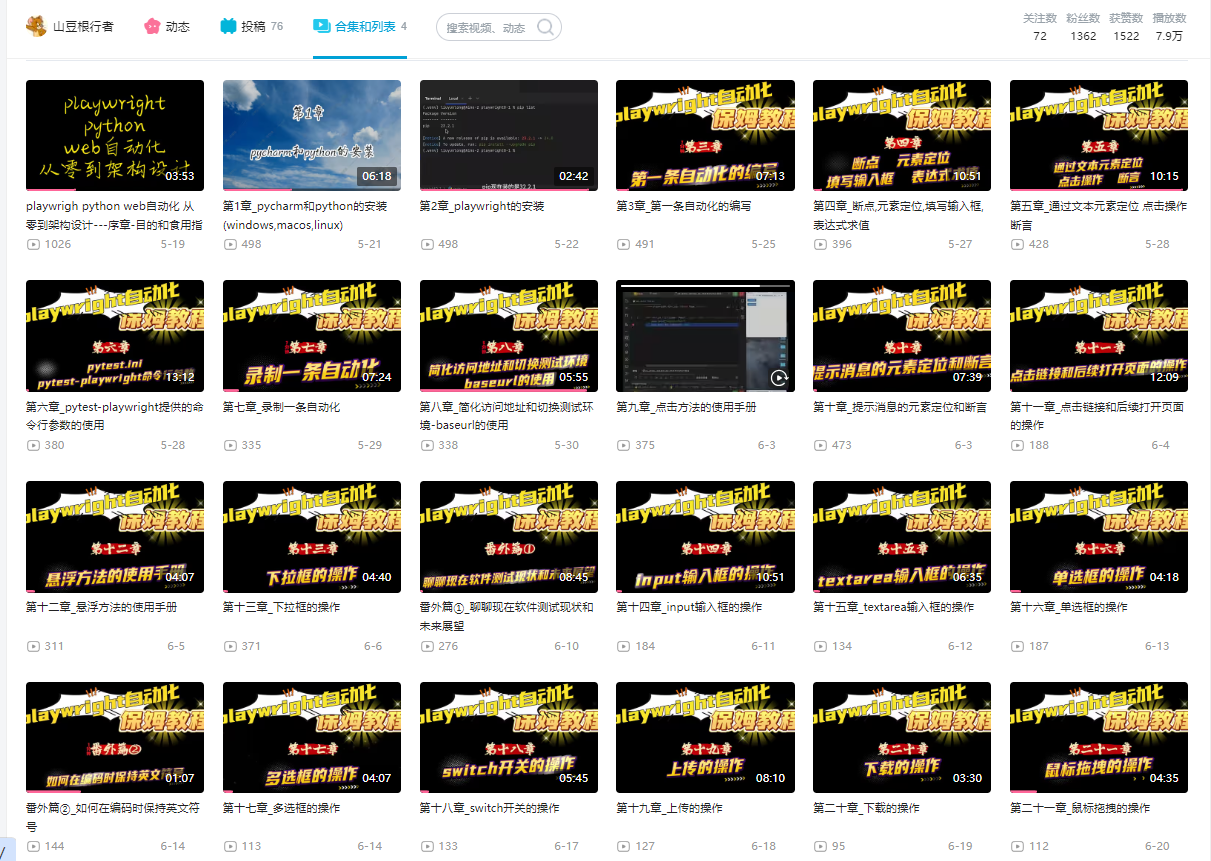

1,918,270 | Python Playwright short-video tutorial in Chinese by teacher 山豆根行者 | https://www.bilibili.com/video/BV1Jx4y1H7zW/?spm_id_from=333.999.section.playall&vd_source=bfede0... | 0 | 2024-07-10T08:43:40 | https://dev.to/winni/python-playwrightduan-shi-pin-jiao-cheng-16o8 | playwright, python, chinese | https://www.bilibili.com/video/BV1Jx4y1H7zW/?spm_id_from=333.999.section.playall&vd_source=bfede0c2afd3a665168255bf8645e775

| winni |

1,918,272 | React Native Development Service at doodleblue | Overview of React Native Facebook developed the open-source React Native technology for... | 0 | 2024-07-10T08:45:00 | https://dev.to/doodleblueinnovation/react-native-development-service-at-doodleblue-2ce3 | webdev, javascript, beginners, programming | ## Overview of React Native

Facebook developed the open-source React Native technology for mobile applications. With the help of React, a well-liked JavaScript user interface toolkit, developers may create mobile applications. Using a single codebase, React Native makes it possible to create natively rendered iOS and ... | doodleblueinnovation |

1,918,274 | Navigating ESG Controversies: Strategies for Sustainable Business Resilience | Social media has greatly amplified the influence of multimedia coverage on public perception. This... | 0 | 2024-07-10T08:47:54 | https://dev.to/linda0609/navigating-esg-controversies-strategies-for-sustainable-business-resilience-4b7c | esg, esgconsulting, esgcontroverseries | Social media has greatly amplified the influence of multimedia coverage on public perception. This evolution has its positives, such as increased demand for accountability and transparent corporate communication. However, it also opens the door to potential misuse by modern media, third-party firms, and news platforms,... | linda0609 |

1,918,275 | The Data Understanding Phase: The Key to a Successful Machine Learning Project | As with any IT project, the CRISP-DM method is often adopted to successfully carry out a machine... | 0 | 2024-07-10T09:08:58 | https://dev.to/moubarakmohame4/the-data-understanding-phase-the-key-to-a-successful-machine-learning-project-51dj | machinelearning, datascience, architecture, ai | As with any IT project, the CRISP-DM method is often adopted to successfully carry out a machine learning project. It consists of six phases, with the first being the Data Understanding phase. This phase stands out as the crucial foundation of any machine learning project. Imagine yourself as an architect planning the ... | moubarakmohame4 |

1,923,121 | Introduction to BitPower Lending | What is BitPower? BitPower is a decentralized lending platform that uses blockchain and smart... | 0 | 2024-07-14T11:53:29 | https://dev.to/aimm/introduction-to-bitpower-lending-4fi7 | What is BitPower?

BitPower is a decentralized lending platform that uses blockchain and smart contract technology to provide users with safe and efficient lending services.

Lending Features

Decentralization

No intermediary is required, users interact directly with the platform, reducing transaction costs.

Smart Contra... | aimm | |

1,918,277 | GBase 8c Compatibility Mode Usage Guide | To address the challenges commonly faced during homogeneous/heterogeneous database migrations, GBase... | 0 | 2024-07-10T08:54:32 | https://dev.to/congcong/gbase-8c-compatibility-mode-usage-guide-3ena | database | To address the challenges commonly faced during homogeneous/heterogeneous database migrations, GBase 8c optimizes design from multiple perspectives, including database compatibility and supporting tools. Built on the foundation of adaptability and performance in the core, GBase 8c is compatible with various relational ... | congcong |

1,918,278 | Assignment 1 | Software Development Life Cycle (SDLC): The SDLC is like a recipe for making computer programs. It's... | 0 | 2024-07-10T08:56:38 | https://dev.to/richmond_ofori_32d3982e66/assignment-1-5f9h | 1. Software Development Life Cycle (SDLC):

The SDLC is like a recipe for making computer programs. It's important because it helps teams work together and create better software.

2. Main phases of SDLC:

- Planning: Decide what to make

- Design: Draw out how it will look and work

- Building: Write the code

- Testing: C... | richmond_ofori_32d3982e66 | |

1,918,279 | Understanding ERP Software Development Cost: A Comprehensive Guide | Enterprise Resource Planning (ERP) systems are crucial for businesses looking to streamline... | 0 | 2024-07-10T08:57:08 | https://dev.to/adam45/understanding-erp-software-development-cost-a-comprehensive-guide-3i3h | erpsoftwaredevelopment, erp, erpsoftwaredevelopmentcost, erpdevelopment | Enterprise Resource Planning (ERP) systems are crucial for businesses looking to streamline operations, enhance productivity, and ensure seamless integration across various departments. However, one of the most significant considerations for any business looking to implement an ERP system is understanding the costs inv... | adam45 |

1,918,281 | Dataverse: get distinct values with Web API | I want to get a list of distinct countries with a Web API call from a Dataverse table with 60.000+... | 0 | 2024-07-10T09:53:21 | https://dev.to/andrewelans/dataverse-get-distinct-values-with-web-api-3h35 | dataverse, powerpages, powerapps, powerplatform | I want to get a list of distinct countries with a [Web API](https://learn.microsoft.com/en-us/power-apps/developer/data-platform/webapi/query-data-web-api) call from a Dataverse table with 60.000+ records for the purposes of populating a `<select>` with options on a web page.

In a production environment, I would use ... | andrewelans |

1,918,283 | Understanding Congenital Disabilities | Comprehending Congenital Impairments Congenital disability is a term used to describe a... | 0 | 2024-07-11T09:23:24 | https://dev.to/akshat_verma_190740d44992/understanding-congenital-disabilities-2i51 | ## Comprehending Congenital Impairments

Congenital disability is a term used to describe a variety of anatomical or functional abnormalities, such as metabolic problems, that develop during intrauterine life and can be detected during pregnancy, at birth, or at a later time. A child's capacity to carry out daily tasks ... | akshat_verma_190740d44992 | |

1,918,284 | The Ultimate Guide to Choosing the Best Progressive Web App Framework | Progressive Web Apps (PWAs) have completely changed how we engage with web applications due to their... | 0 | 2024-07-10T09:01:28 | https://dev.to/mikekelvin/the-ultimate-guide-to-choosing-the-best-progressive-web-app-framework-59o | pwa, webapp, mobileapp | Progressive Web Apps (PWAs) have completely changed how we engage with web applications due to their seamless cross-platform user experience. The framework used in the construction of a PWA, however, has a major impact on its success.

It's vital to select the finest PWA framework. Cross-platform compatibility, offline... | mikekelvin |

1,918,285 | Exploring the Exploit Database Platform: A Vital Resource for Cybersecurity | exploitDB | 0 | 2024-07-10T09:03:42 | https://dev.to/saramazal/exploring-the-exploit-database-platform-a-vital-resource-for-cybersecurity-jbl | cybersecurity, infosec, pentesting, webdev | ---

title: Exploring the Exploit Database Platform: A Vital Resource for Cybersecurity

published: true

description: exploitDB

tags: #cybersecurity #infosec #pentesting #webdev

# published_at: 2024-07-10 08:59 +0000

---

— Everything You Need to Know | What is Intelligent Document Processing (IDP)? Intelligent Document Processing is a... | 0 | 2024-07-10T09:10:03 | https://dev.to/derek-compdf/intelligent-document-processing-idp-everything-you-need-to-know-cj4 | ## What is Intelligent Document Processing (IDP)?

Intelligent Document Processing is a technology that amalgamates [Artificial Intelligence (AI)](https://en.wikipedia.org/wiki/Artificial_intelligence), [Machine Learning (ML)](https://en.wikipedia.org/wiki/Machine_learning), and [Optical Character Recognition (OCR) ](ht... | derek-compdf | |

1,918,290 | Oracle to GBase 8s DBLink Configuration Guide | In a heterogeneous database environment, establishing a seamless connection between Oracle and GBase... | 0 | 2024-07-10T09:15:36 | https://dev.to/congcong/oracle-to-gbase-8s-dblink-configuration-guide-3ko6 | database | In a heterogeneous database environment, establishing a seamless connection between Oracle and GBase 8s is a critical task. DBLink provides an efficient way to connect and operate these two systems. This article will detail how to configure DBLink in an Oracle environment to connect to a GBase 8s database.

## Software... | congcong |

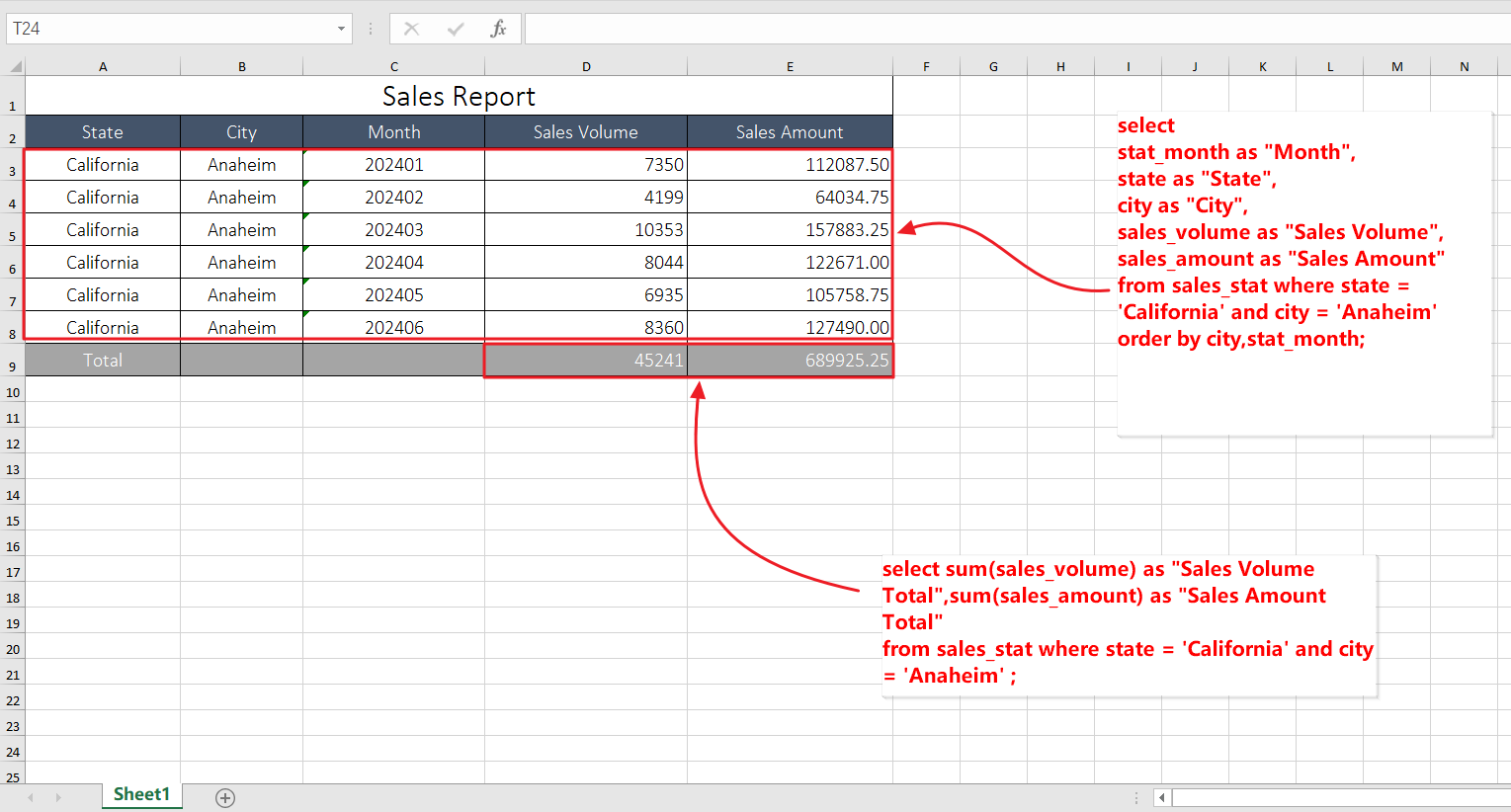

1,918,291 | How to automatically fill Excel sheets with SQL query results | I need to fill the query results of some SQL statements into a table like the following every... | 0 | 2024-07-10T09:31:00 | https://dev.to/sqlman/how-to-automatically-filling-excel-sheets-with-sql-query-results-m53 | sql, excel, reportautomation | I need to fill the query results of some SQL statements into a table like the following every day.

Here's a simple way to do it.

Note: SQLMessenger2.0 installation is required before proceeding with the following ... | sqlman |

1,918,293 | Task-1: Python-Print Exercises | 1. How do you print the string “Hello, world!” to the screen? print('Question-1') print("... | 0 | 2024-07-10T09:16:43 | https://dev.to/s_dhivyabharkavi_42e8315/task-1-python-print-exercises-1lp8 | # 1. How do you print the string “Hello, world!” to the screen?

print('Question-1')

print("Hello, world!")

print("\n")

# 2. How do you print the value of a variable name which is set to “Syed Jafer” or Your name?

print('Question-2')

value = "Syed Jafer"

print("Name : ",value)

print("\n")

# 3. How do you print the va... | s_dhivyabharkavi_42e8315 | |

1,918,295 | How does Nostra cater to gaming developers looking to publish platform games for Android on its gaming platform? | Nostra offers a dynamic gaming platform tailored to accommodate gaming developers seeking to publish... | 0 | 2024-07-10T09:18:07 | https://dev.to/claywinston/how-does-nostra-cater-to-gaming-developers-looking-to-publish-platform-games-for-android-on-its-gaming-platform-4ccl | gamedev, developers, development, mobile | [**Nostra**](https://nostra.gg/articles/Lock-Screen-Games-Are-a-Game-Changer-for-Gaming-Developers.html?utm_source=referral&utm_medium=article&utm_campaign=Nostra) offers a dynamic [**gaming platform**](https://medium.com/@adreeshelk/learn-how-to-elevate-your-day-with-the-latest-games-on-nostra-550e9c88a5e2?utm_source=... | claywinston |

1,918,297 | Cryptocurrency Exchange Development Company | Appinop Technologies: Revolutionizing the Cryptocurrency Exchange Development Landscape In the... | 0 | 2024-07-10T09:21:12 | https://dev.to/appinoptech/cryptocurrency-exchange-development-company-17lo | Appinop Technologies: Revolutionizing the Cryptocurrency Exchange Development Landscape

In the rapidly evolving world of digital finance, cryptocurrency exchanges have emerged as pivotal platforms, facilitating the trading of digital assets with unprecedented ease and security. At the forefront of this revolution is A... | appinoptech | |

1,918,298 | Empowering Sustainability: Leveraging EU Taxonomy Data Solutions | In the dynamic realm of sustainable finance, the EU Taxonomy stands as a pivotal framework, guiding... | 0 | 2024-07-10T09:21:21 | https://dev.to/ankit_langey_3eb6c9fc0587/empowering-sustainability-leveraging-eu-taxonomy-data-solutions-i8d |

In the dynamic realm of sustainable finance, the EU Taxonomy stands as a pivotal framework, guiding investments towards activities that align with Europe’s climate and environmental goals. However, effectively navi... | ankit_langey_3eb6c9fc0587 |
