id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,917,985 | Polyester vs. Polypropylene: Which One Is the Better Choice for a Rug? | A rug is a necessary element in any home, adding a touch of elegance and style. Before you buy one,... | 0 | 2024-07-10T02:48:41 | https://dev.to/candice88771483/polyester-vs-polypropylene-which-one-is-the-better-choice-for-a-rug-3col | webdev | A rug is a necessary element in any home, adding a touch of elegance and style. Before you buy one, it's important to understand [the difference between polyester and polypropylene rugs](https://www.blikai.com/blog/components-parts/polyester-vs-polypropylene-capacitors-explained).

These two types of rugs are made from... | candice88771483 |

1,917,986 | Using Raspberry Pi 5 and Cloudflare Tunnel to run GROWI at home | GROWI, an open source wiki, can be easily installed and used by individuals. It is useful for using... | 0 | 2024-07-10T02:49:31 | https://dev.to/goofmint/using-raspberry-pi-5-and-cloudflare-tunnel-to-run-growi-at-home-2mci | ---

title: Using Raspberry Pi 5 and Cloudflare Tunnel to run GROWI at home

published: true

description:

tags:

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-10 02:43 +0000

---

[GROWI](https://growi.org/ja/), an open source wiki, can be easily install... | goofmint | |

1,917,987 | Good Morning Developers | A post by Aadarsh Kunwar | 0 | 2024-07-10T02:51:30 | https://dev.to/aadarshk7/good-morning-developers-2p82 | developers, devops, webdev, android | aadarshk7 | |

1,917,988 | Basic Linux Commands for Developers | Introduction Linux is one of the most popular platforms for developers due to its versatility,... | 0 | 2024-07-10T03:15:16 | https://dev.to/bitlearners/linux-commands-for-developers-34m | webdev, cli, linux, ubuntu | **Introduction**

**Linux** is one of the most popular platforms for developers due to its versatility, performance, and open-source nature. Mastering Linux commands can significantly increase productivity and efficiency in development tasks. This guide has several sections that cover essential... | bitlearners |

1,917,989 | How AI Enhances Digital Marketing Strategies to Achieve Goals? | Hey everyone, in today's ultra-competitive world of digital marketing, artificial intelligence (AI)... | 0 | 2024-07-10T02:57:11 | https://dev.to/juddiy/how-ai-enhances-digital-marketing-strategies-to-achieve-goals-155e | ai, marketing, seo, learning | Hey everyone, in today's ultra-competitive world of digital marketing, artificial intelligence (AI) is becoming the secret sauce for success. AI isn't just another tech tool—it's a game-changer that can significantly amp up your marketing strategies' effectiveness and results. Check out these key ways AI is shaking thi... | juddiy |

1,918,035 | Tips for Passing the CPNS Exam | Serving as a government official is one of the most popular jobs in Indonesia, with... | 0 | 2024-07-10T04:02:46 | https://dev.to/lifeschool/tips-lulus-ujian-cpns-3f9n | luluscpns, cpns | Serving as a government official is one of the most popular jobs in Indonesia, with millions of competitors, because being such an employee can guarantee an income into old age. There are clearly many competitors at registration, so the CPNS exam requires special skills.

Answers to CPNS questions and various exams are not so... | lifeschool |

1,917,990 | SSH Security Risks: Which Are the Most Common? | Overview of SSH Secure Shell (SSH) is one of the most ubiquitous protocols used today for... | 0 | 2024-07-10T03:05:24 | https://dev.to/me_priya/ssh-security-risks-which-are-the-most-common-d73 | devops, webdev, beginners, security | ## Overview of SSH

Secure Shell (SSH) is one of the most ubiquitous protocols used today for secure remote access, administration, and file transfers. It allows managing servers remotely over an encrypted connection. However, poor SSH security practices can inadvertently open doors for attackers.

While SSH itself is ... | me_priya |

1,917,992 | Log Management Utilities in Linux : Day 3 of 50 days DevOps Tools Series | Introduction Effective log management is a critical aspect of DevOps practices and in all... | 0 | 2024-07-10T03:02:58 | https://dev.to/shivam_agnihotri/log-management-utilities-in-linux-day-2-of-50-days-devops-tools-series-1l68 | linux, devops, monitoring, ubuntu | ## Introduction

Effective log management is a critical aspect of DevOps practices and of all Linux-related roles. Logs provide valuable insights into the health, performance, and security of systems and applications. They are indispensable for troubleshooting issues, monitoring activities, and ensuring complia... | shivam_agnihotri |

1,917,994 | To Build But How ? | Any Light weight LinuxOs/LinuxMint/UbuntuGui/Pi whateverOs super/lightweight for... | 0 | 2024-07-10T03:37:27 | https://dev.to/9mikese/to-build-but-how--1jdl | help | Any Light weight LinuxOs/LinuxMint/UbuntuGui/Pi whateverOs super/lightweight for {8GBRam+128SSD+Samsung-500HDD+i5-Intel+3.2Gh-IceLake(I guess)}+{650PSU+735IRI-C189-2gb-Ram With (8+32gbSSD)}??

From my Junk,I need Recommend Build or whatever's.Start Leaning...

| 9mikese |

1,917,995 | Spring Boot, React, and the Quest for SEO Supremacy | Spring Boot, React, and the Quest for SEO Supremacy In today's digital landscape, a... | 0 | 2024-07-10T03:05:26 | https://dev.to/virajlakshitha/spring-boot-react-and-the-quest-for-seo-supremacy-2k1p |

# Spring Boot, React, and the Quest for SEO Supremacy

In today's digital landscape, a visually appealing and functional web application is only half the battle won. The other half, often more challenging, is ensuring your creation r... | virajlakshitha | |

1,917,996 | 5 Tips for Using the Arrow Operator in JavaScript | JavaScript’s arrow functions, introduced in ECMAScript 6 (ES6), offer a concise syntax for writing... | 0 | 2024-07-10T03:06:18 | https://dev.to/devops_den/5-tips-for-using-the-arrow-operator-in-javascript-1ne2 | webdev, javascript, programming, tutorial | JavaScript’s arrow functions, introduced in ECMAScript 6 (ES6), offer a concise syntax for writing function expressions. The arrow operator (=>) has become a popular feature among developers for its simplicity and readability. However, mastering its nuances can help you write more efficient and cleaner code. Here are f... | devops_den |
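A minimal sketch of the concise syntax this post describes, comparing a traditional function expression with its arrow-function equivalent. The names `squareFn`, `square`, `numbers`, and `toPoint` are illustrative assumptions, not taken from the article.

```javascript
// Traditional function expression.
const squareFn = function (n) {
  return n * n;
};

// Equivalent arrow function: one parameter, implicit return.
const square = (n) => n * n;

// Arrow functions work well as short callbacks.
const numbers = [1, 2, 3];
const squares = numbers.map((n) => n * n);

// Returning an object literal requires parentheses around the braces,
// otherwise the braces are parsed as a function body.
const toPoint = (x, y) => ({ x, y });

console.log(square(4), squares, toPoint(1, 2));
```

The implicit return only applies when the body is a single expression; a body wrapped in braces needs an explicit `return`.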

1,917,997 | ACID: The Pillar of Relational Databases | What Is ACID in Relational Databases? If you have ever worked with databases... | 0 | 2024-07-10T15:05:00 | https://dev.to/marialuizaleitao/acid-o-pilar-dos-bancos-de-dados-relacionais-5g47 | datascience, sql, postgres, community | # What Is ACID in Relational Databases?

If you have ever worked with relational databases, you have probably come across the acronym ACID. But what exactly does it mean, and why is it so important? Let's explore each component of ACID and understand its role in database systems.

### What is ... | marialuizaleitao |

1,917,998 | How to deploy a hub virtual network in Azure. | How to create a Virtual network in Microsoft azure. | 0 | 2024-07-11T21:53:32 | https://dev.to/tundeiness/how-to-deploy-a-hub-virtual-network-in-azure-14cj | azure, virtualnetwork, segmentation, peering | ---

title: How to deploy a hub virtual network in Azure.

published: true

description: How to create a Virtual network in Microsoft azure.

tags: Azure, VirtualNetwork, segmentation, Peering

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/54l2u8cgscei3wfpyxzi.jpg

# Use a ratio of 100:42 for best res... | tundeiness |

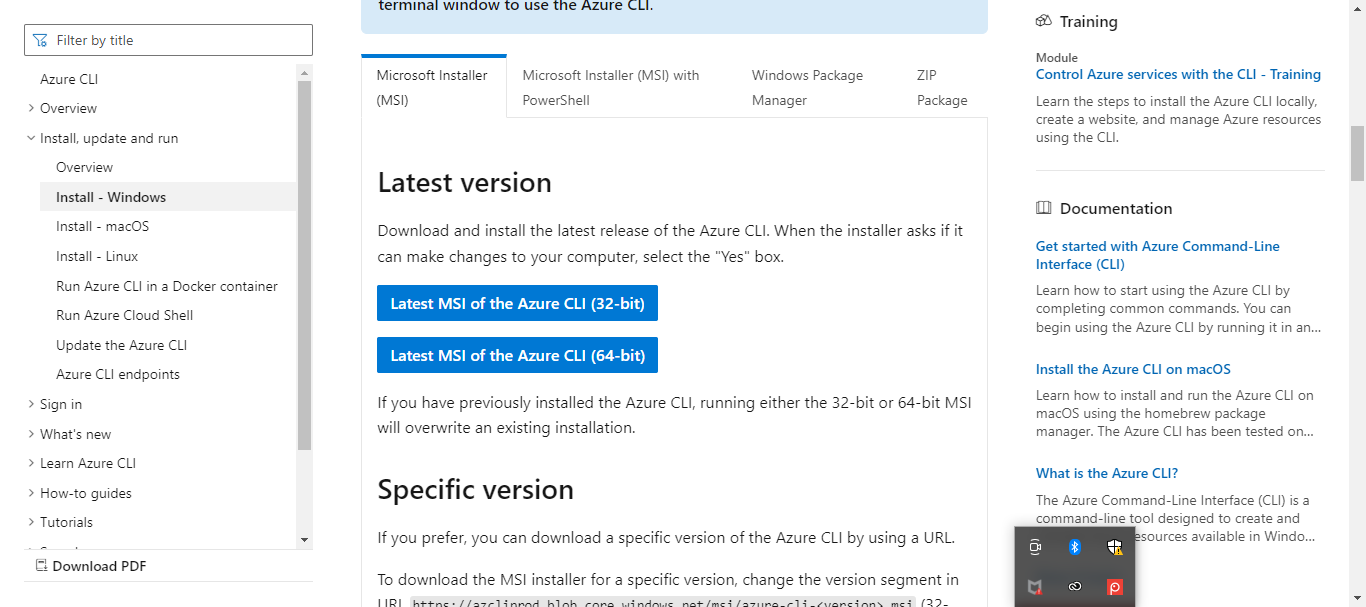

1,917,999 | Create an App Service Application & Upload content on it | Step 1: Azure Account: Ensure you have an active Azure account. Step 2: Azure CLI: Install the Azure... | 0 | 2024-07-10T03:13:02 | https://dev.to/bdporomon/create-an-app-service-application-upload-content-on-it-3adj | webdev, beginners, programming, devops | Step 1: Azure Account: Ensure you have an active Azure account.

Step 2: Azure CLI: Install the Azure CLI on your local machine. You can download and install it from here.

Step 3: Open a terminal and log in to your... | bdporomon |

1,918,021 | Matplotlib Legend Toggling Tutorial | In this lab, we will learn how to enable picking on the legend to toggle the original line on and off using Python Matplotlib. | 27,678 | 2024-07-10T03:24:38 | https://dev.to/labex/matplotlib-legend-toggling-tutorial-4lj6 | python, coding, programming, tutorial |

## Introduction

This article covers the following tech skills:

... | labby |

1,918,022 | Lesson 00.py: a brief introduction | When we start studying something, we often want to jump straight to the advanced topics and... | 0 | 2024-07-10T20:20:49 | https://dev.to/dunderpy/aula-00py-uma-breve-introducao-3pkd | python, algorithms, datastructures, tutorial | When we start studying something, we often want to jump straight to the advanced topics and end up forgetting the fundamentals. This happens in every field, especially in IT.

When starting to study programming, many of us already want to build complex systems, like those of Netflix or Uber, on our own... | jeanmarinho529 |

1,918,023 | ⚡ MySecondApp - React Native with Expo (P4)- Code Layout Home Screen | ⚡ MySecondApp - React Native with Expo (P4)- Code Layout Home Screen | 28,005 | 2024-07-10T03:27:43 | https://dev.to/skipperhoa/mysecondapp-react-native-with-expo-p4-code-layout-home-screen-ofm | webdev, react, reactnative, beginners | ⚡ MySecondApp - React Native with Expo (P4)- Code Layout Home Screen

{% youtube AKBohCh-V4c %} | skipperhoa |

1,918,024 | Cybersecurity Awareness: Protecting Your Digital Life | In today's digital age, our lives are closely intertwined with the internet, but this connectivity... | 0 | 2024-07-10T03:29:24 | https://dev.to/motorbuy6/cybersecurity-awareness-protecting-your-digital-life-2m2d | In today's digital age, our lives are closely intertwined with the internet, but this connectivity also comes with significant security challenges. Protecting personal information and data security has never been more crucial. Here are some practical cybersecurity tips to help safeguard your digital life:

**Use Strong... | motorbuy6 | |

1,918,025 | NEW in web dev | Why did the two Java methods get a divorce? .. . .. . Because they had constant arguments. | 0 | 2024-07-10T03:33:26 | https://dev.to/ritesh_dev/new-in-web-dev-5c46 | Why did the two Java methods get a divorce?

..

.

..

.

Because they had constant arguments. | ritesh_dev | |

1,918,026 | Getting Data Through Using API in JavaScript. | When building web applications, making HTTP requests is a common task. There are several ways to do... | 0 | 2024-07-10T03:34:49 | https://dev.to/sudhanshu_developer/getting-data-through-using-api-in-javascript-43bl | javascript, webdev, beginners, programming | When building web applications, making HTTP requests is a common task. There are several ways to do this in JavaScript, each with its own advantages and use cases. In this post, we’ll explore four popular methods: `fetch(), axios(), $.ajax()`, and `XMLHttpRequest()`, with simple examples for each.

**1. Using fetch()**... | sudhanshu_developer |
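As a hedged sketch of the `fetch()` approach named in this post: the helper `getJson`, its `fetchImpl` parameter, and the example URL are assumptions introduced for illustration, not code from the article. Passing the fetch implementation in as a parameter is only a convenience so the helper can be tried without a network.

```javascript
// Hypothetical helper: request a URL and parse the JSON response body.
// `fetchImpl` defaults to the global fetch (browsers, Node.js 18+).
async function getJson(url, fetchImpl = globalThis.fetch) {
  const response = await fetchImpl(url);
  // fetch() rejects only on network failure, so check the HTTP status explicitly.
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return response.json();
}

// Usage (placeholder URL; replace with a real endpoint):
// getJson("https://example.com/api/items")
//   .then((data) => console.log(data))
//   .catch((err) => console.error(err.message));
```

Checking `response.ok` matters because a 404 or 500 response still resolves the promise; only the explicit check turns it into an error the caller can handle.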

1,918,027 | Coding timelapse Video for landing page | A coding timelapse of SaaS landing page. Uses Html, Css, JS and Tailwind Css Follow me on... | 0 | 2024-07-10T03:35:28 | https://dev.to/paul_freeman/coding-timelapse-video-for-landing-page-6lo | programming, html, timelapse, coding | A coding timelapse of SaaS landing page.

Uses Html, Css, JS and Tailwind Css

{% embed https://www.youtube.com/watch?v=gKwI3bqO5Cg %}

Follow me on [github for opensource](https://github.com/PaulleDemon) | paul_freeman |

1,918,028 | Unlocking the Future: The Power of React AI SDK in 2024 | Embrace the future of AI in React development with Sista AI. Join the revolution today! 🚀 | 0 | 2024-07-10T03:45:35 | https://dev.to/sista-ai/unlocking-the-future-the-power-of-react-ai-sdk-in-2024-2658 | ai, react, javascript, typescript | <h2>The Evolution of AI in React Development</h2><p>In 2024, the React landscape is witnessing a remarkable evolution, with AI tools playing a pivotal role. The integration of cutting-edge AI technologies like the <a href='https://smart.sista.ai/?utm_source=sista_blog&utm_medium=blog_post&utm_campaign=Unlocking_the_Fut... | sista-ai |

1,918,031 | Pacman 2g disposable | In the ever-evolving landscape of digital marketing, having access to robust analytical tools is... | 0 | 2024-07-10T03:54:43 | https://dev.to/toolszen08/pacman-2g-disposable-253g | In the ever-evolving landscape of digital marketing, having access to robust analytical tools is crucial for businesses aiming to stay ahead of the competition. Toolszen Analytics is a leading platform that provides a comprehensive organic overview, offering valuable insights to enhance your online presence and drive m... | toolszen08 | |

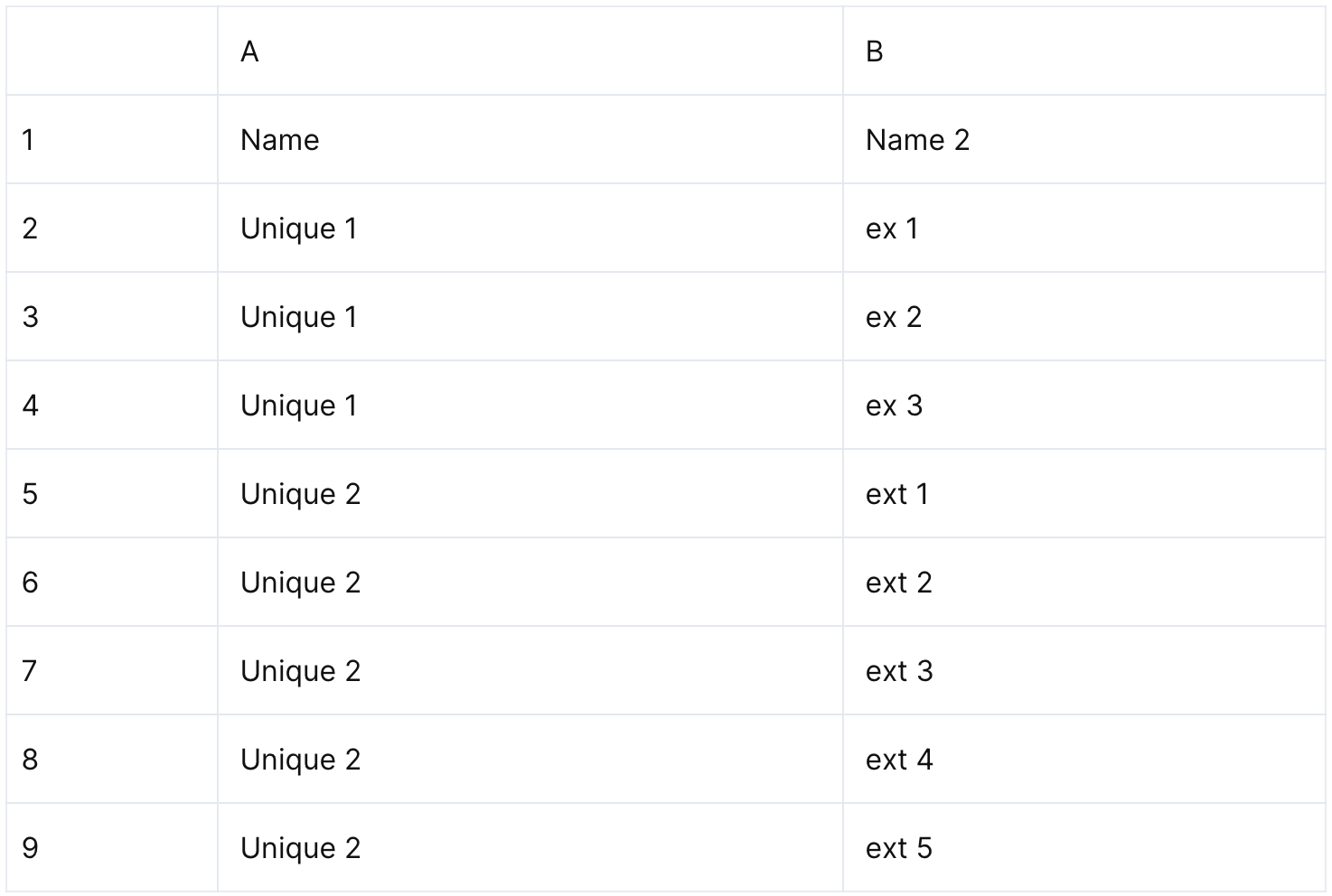

1,918,032 | Group Rows and Concatenate Cell Values | Problem description & analysis: Here is a categorized detail table: We need to group the... | 0 | 2024-07-10T03:57:58 | https://dev.to/judith677/group-rows-and-concatenate-cell-values-475n | programming, beginners, tutorial, productivity | **Problem description & analysis**:

Here is a categorized detail table:

We need to group the table and concatenate the detail data using the semicolon.
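The grouping step described here can be sketched outside a spreadsheet in plain JavaScript. This is not the post's own solution: the sample rows and the column names `category` and `detail` are assumptions, since the original table is not shown.

```javascript
// Hypothetical rows; the column names `category` and `detail` are assumed.
const rows = [
  { category: "A", detail: "x1" },
  { category: "B", detail: "y1" },
  { category: "A", detail: "x2" },
];

// Group rows by category (keeping first-seen order), then join each
// group's details with the separator.
function groupAndConcat(inputRows, separator = ";") {
  const groups = new Map();
  for (const { category, detail } of inputRows) {
    if (!groups.has(category)) groups.set(category, []);
    groups.get(category).push(detail);
  }
  return [...groups].map(([category, details]) => ({
    category,
    details: details.join(separator),
  }));
}

console.log(groupAndConcat(rows));
```

A `Map` is used rather than a plain object so that group order follows the order in which categories first appear.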

Docker collection — a bunch of quirky projects that will have you laughing, scratching your head, and maybe even questioning your life choices. Let's dive into some of the weirdest yet strangely satisfyin... | gregmiaritis |

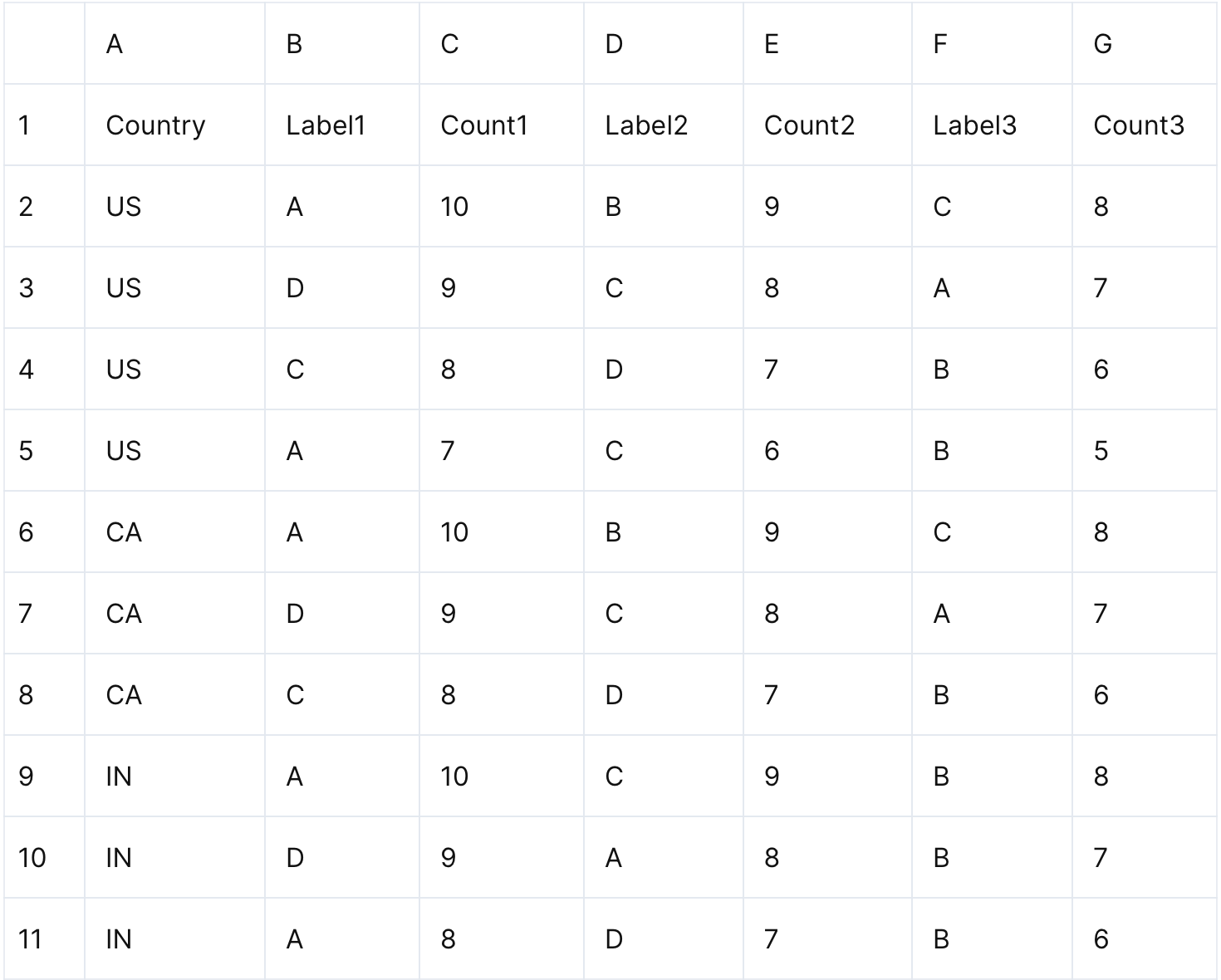

1,918,036 | Summarize Data in Every Two Columns under Each Category | Problem description & analysis: In the Excel table below, column A contains categories and there... | 0 | 2024-07-10T04:03:50 | https://dev.to/judith677/summarize-data-in-every-two-columns-under-each-category-12gg | programming, beginners, tutorial, productivity | **Problem description & analysis**:

In the Excel table below, column A contains categories and there are 2N key-value formatted columns after it:

We need to group rows by the category and the key and perform sum on ... | judith677 |

1,918,037 | Free and Open-Source Database Management GUI Tools | Free and Open-Source Alternatives to TablePlus and DataGrip for Database... | 0 | 2024-07-10T04:05:30 | https://dev.to/sh20raj/free-and-open-source-alternatives-to-tableplus-and-datagrip-for-database-management-1di4 | databse, tableplus, datagrip, free | ### Free and Open-Source Alternatives to TablePlus and DataGrip for Database Management

Managing databases efficiently is crucial for developers and data engineers. While TablePlus and DataGrip are popular tools, there are several free and open-source alternatives that provide robust functionality. Here's a comprehens... | sh20raj |

1,918,038 | CSGO Betting | The Importance Of Crosshair Placement In CS2 Crosshair placement isn't just about aiming; it’s about... | 0 | 2024-07-10T04:05:43 | https://dev.to/toolszen08/csgo-betting-1opj | The Importance Of Crosshair Placement In CS2

Crosshair placement isn't just about aiming; it's about anticipation, method, and positioning. By keeping your crosshair at head level and pre-aiming common enemy positions, you can gain a significant advantage in engagements. However, achieving optimal crosshair... | toolszen08 | |

1,918,039 | Axios | Read the code slowly and follow the information flow and information format as needed, as it... | 0 | 2024-07-10T04:59:35 | https://dev.to/l_thomas_7c618d0460a87887/axios-ndn | webdev, javascript, node, axios | `Read the code slowly and follow the information flow and information format as needed, as it changes`

## Overview

Axios is a popular JavaScript library used for making HTTP requests from both the browser and Node.js. It is an open-source project designed to simplify the process of sending asynchronous HTTP requests t... | l_thomas_7c618d0460a87887 |

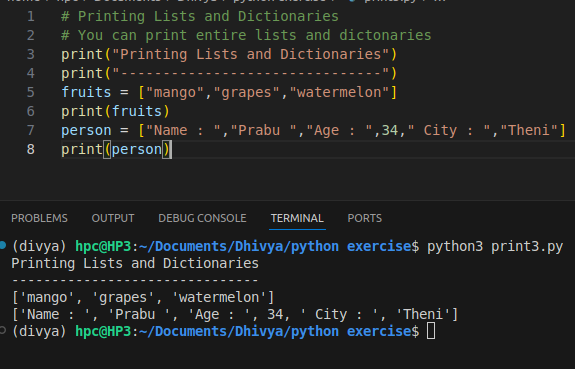

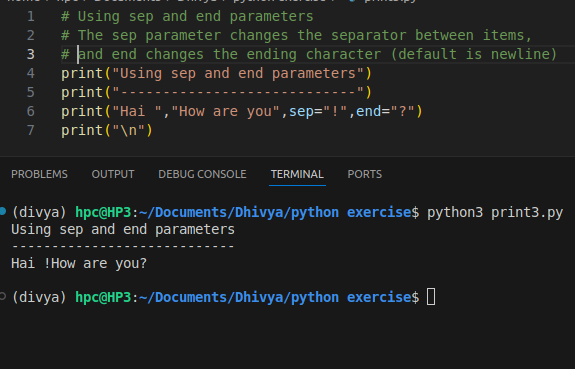

1,918,041 | Print function 2 | Printing Lists and Dictionaries Using sep and end parameters Multiple strings with triple... | 0 | 2024-07-10T04:10:41 | https://dev.to/s_dhivyabharkavi_42e8315/print-function-2-28pi | 11. Printing Lists and Dictionaries

12. Using sep and end parameters

13. Multiple strings with triple quotes... | s_dhivyabharkavi_42e8315 | |

1,918,042 | The Best Gym Equipment for Weight Loss | When it comes to weight loss, the right gym equipment can make a significant difference in achieving... | 0 | 2024-07-10T04:16:05 | https://dev.to/rabia_saeed_bb3c5aa1f61ef/the-best-gym-equipment-for-weight-loss-3fc2 | When it comes to weight loss, the right gym equipment can make a significant difference in achieving your goals. Choosing the right equipment not only helps you burn calories effectively but also ensures a comprehensive workout that targets all muscle groups. In this article, we'll explore the [best gym equipment](http... | rabia_saeed_bb3c5aa1f61ef | |

1,918,043 | The Gold Soldering Machine | A New Era in Precision Soldering In the rapidly advancing world of manufacturing, precision... | 0 | 2024-07-10T04:16:20 | https://dev.to/lasermarking_machine_6722/the-alpha-laser-soldering-machine-1b8e | A New Era in Precision Soldering

In the rapidly advancing world of manufacturing, precision and efficiency are key to remaining competitive. The [Alpha Laser Soldering Machine](https://www.laser-marking-ma... | lasermarking_machine_6722 | |

1,918,044 | Tailwind CSS: Utility-First Framework | Introduction: Tailwind CSS is a rapidly growing front-end development framework that has gained... | 0 | 2024-07-10T04:17:35 | https://dev.to/tailwine/tailwind-css-utility-first-framework-115 | Introduction:

Tailwind CSS is a rapidly growing front-end development framework that has gained immense popularity in recent years due to its unique approach and utility-first methodology. It offers a unique way of building user interfaces by providing a set of highly customizable utility classes that can easily be ap... | tailwine | |

1,918,047 | Networking Opportunities: Corporate Events in Bangalore that Foster Connections | In today's fast-paced corporate world, networking is more crucial than ever. Corporate events offer a... | 0 | 2024-07-10T04:22:41 | https://dev.to/g_unitevents_fec58e5f4e6/networking-opportunities-corporate-events-in-bangalore-that-foster-connections-22n | corporateevent, eventmanagement | In today's fast-paced corporate world, networking is more crucial than ever. Corporate events offer a unique opportunity to forge new connections, strengthen existing relationships, and build a robust professional network. Bangalore, a bustling hub of innovation and enterprise, is home to some of the best corporate eve... | g_unitevents_fec58e5f4e6 |

1,918,048 | The All-New display Property. | Starting with Chrome 115, there are multiple values for the CSS display property. display: flex... | 0 | 2024-07-10T04:22:58 | https://dev.to/manojgohel/the-all-new-display-property-3572 | css, html, webdev, javascript | Starting with Chrome 115, there are multiple values for the CSS `display` property. `display: flex` becomes `display: block flex` and `display: block` becomes `display: block flow`. The single values you know are now considered legacy but are kept in the Browsers for backward compatibility.

# Why is it long overdue?

... | manojgohel |
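To make the legacy-to-two-keyword mapping concrete, here is a small lookup sketch. Only the `flex` and `block` entries come from the post; the remaining entries follow the CSS Display Module Level 3 mapping as I understand it, and the names `MULTI_KEYWORD_DISPLAY` and `toMultiKeyword` are hypothetical.

```javascript
// Legacy single-keyword values and their two-keyword equivalents
// (outer display type first, inner display type second).
const MULTI_KEYWORD_DISPLAY = new Map([
  ["block", "block flow"],
  ["inline", "inline flow"],
  ["inline-block", "inline flow-root"],
  ["flow-root", "block flow-root"],
  ["flex", "block flex"],
  ["inline-flex", "inline flex"],
  ["grid", "block grid"],
  ["inline-grid", "inline grid"],
]);

// Hypothetical helper: returns the two-keyword form, or the input unchanged
// for values not in the table (for example "none" or "contents").
function toMultiKeyword(display) {
  return MULTI_KEYWORD_DISPLAY.get(display) ?? display;
}

console.log(toMultiKeyword("flex"), "|", toMultiKeyword("inline-block"));
```

Reading the pairs side by side shows the idea behind the change: the first keyword says how the box takes part in its parent's layout, the second says how its children are laid out.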

1,918,172 | Discover the Advantages of Trading with ABTCOIN | In a recent speech, Coinbase CEO Brian Armstrong expressed a positive outlook on cryptocurrencies,... | 0 | 2024-07-10T07:12:06 | https://dev.to/abtcoin/discover-the-advantages-of-trading-with-abtcoin-12an | In a recent speech, Coinbase CEO Brian Armstrong expressed a positive outlook on cryptocurrencies, emphasizing that cryptocurrencies are here to stay and expressing optimism about their future prospects. As a leading figure in the cryptocurrency industry, Armstrong's views have garnered widespread attention and discuss... | abtcoin | |

1,918,049 | Unlocking the Power of CAPI Surveys in Dubai, Abu Dhabi and across UAE. | CAPI surveys offer an effective Software for gathering insights into consumer behaviors as well as... | 0 | 2024-07-10T04:27:55 | https://dev.to/aafiya_69fc1bb0667f65d8d8/unlocking-the-power-of-capi-surveys-in-dubai-abu-dhabi-and-across-uae-4def | software, development, uae, capi | CAPI surveys offer an effective Software for gathering insights into consumer behaviors as well as informing strategic decisions across Dubai, Abu Dhabi and throughout the United Arab Emirates (UAE). From [Household Interview surveys](https://tektronixllc.ae/capi-tool-surveys-saudi-arabia-uae-qatar/) and roadside Inter... | aafiya_69fc1bb0667f65d8d8 |

1,918,050 | Design a High Availability System: Everything on Availability of System | Designing for High Availability High availability is a critical aspect of system design,... | 0 | 2024-07-10T04:27:56 | https://dev.to/zeeshanali0704/designing-for-high-availability-1o3 | systemdesign, systemdesignwithzeeshanali, learning, design | # Designing for High Availability

High availability is a critical aspect of system design, ensuring that a system remains operational and accessible to users, even in the face of failures. It is typically expressed as a percentage of uptime over a given period. For example, a system with 99.9% availability is expected... | zeeshanali0704 |
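Since availability is expressed as a percentage of uptime, the downtime a target permits is simple arithmetic. The sketch below assumes a 365-day year by default; the function name `allowedDowntimeMinutes` and its parameters are illustrative, not from the article.

```javascript
// Hypothetical helper: maximum downtime (in minutes) permitted by an
// availability target over a period of `periodDays` days.
function allowedDowntimeMinutes(availabilityPercent, periodDays = 365) {
  if (availabilityPercent < 0 || availabilityPercent > 100) {
    throw new RangeError("availabilityPercent must be between 0 and 100");
  }
  const totalMinutes = periodDays * 24 * 60;
  return totalMinutes * (1 - availabilityPercent / 100);
}

for (const target of [99, 99.9, 99.99]) {
  const minutes = allowedDowntimeMinutes(target);
  console.log(`${target}% allows about ${minutes.toFixed(1)} minutes of downtime per year`);
}
```

For the 99.9% example, a 365-day year has 525,600 minutes, so the budget is 0.1% of that: roughly 525.6 minutes, or about 8.76 hours per year.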

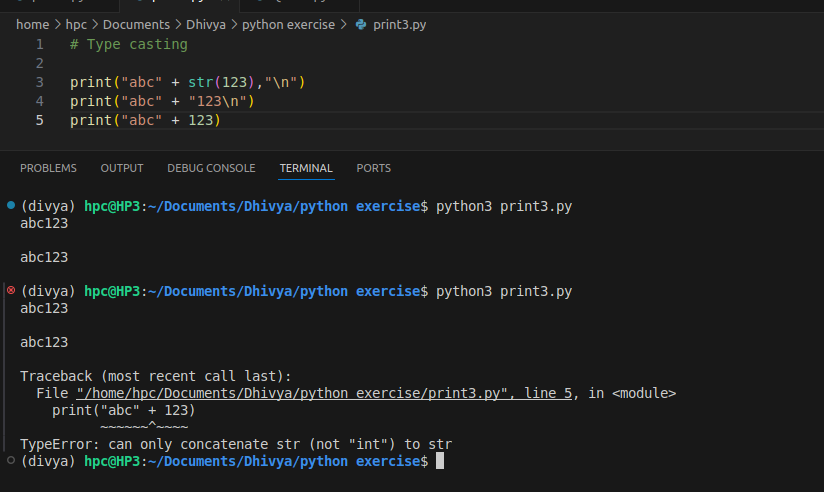

1,918,051 | Type casting | A post by S Dhivya Bharkavi | 0 | 2024-07-10T04:28:04 | https://dev.to/s_dhivyabharkavi_42e8315/type-casting-hd1 | programming, parottasalna |

| s_dhivyabharkavi_42e8315 |

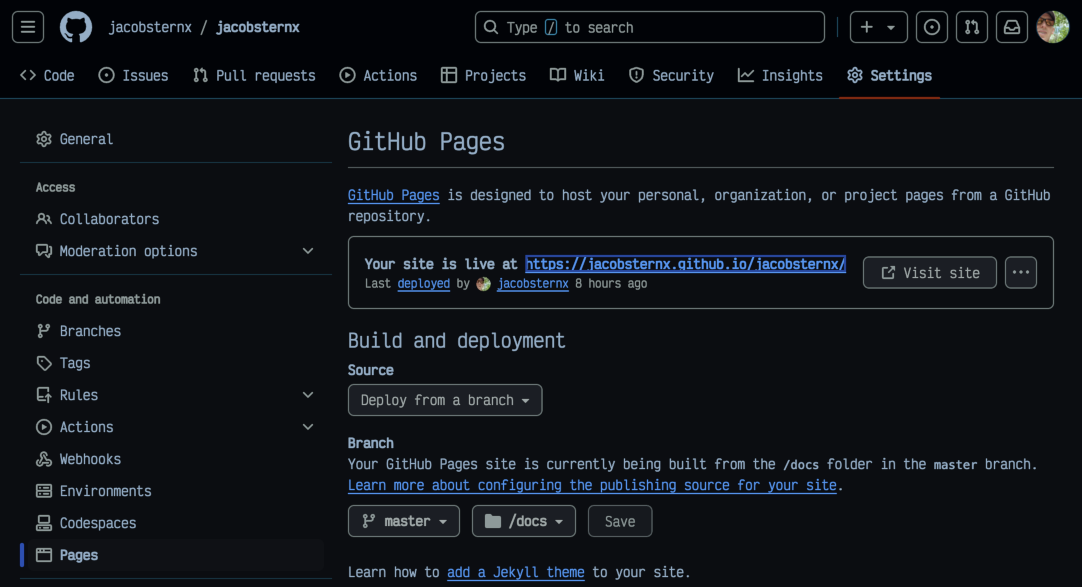

1,918,052 | Day 9 of 100 Days of Code | Tue, July 9, 2024 Today's lesson including configuring GitHub Pages went smoothly, including... | 0 | 2024-07-10T06:59:56 | https://dev.to/jacobsternx/day-9-of-100-days-of-code-1af3 | 100daysofcode, webdev, javascript, beginners | Tue, July 9, 2024

Today's lesson went smoothly, including configuring GitHub Pages and implementing GitHub's Pages and documentation features.

While Codecademy CSS lessons stand out for their breadth and dept... | jacobsternx |

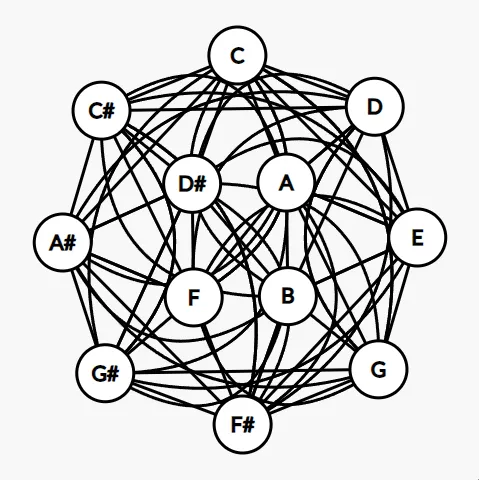

1,918,053 | Approaching Graphs Through Musical Scales | Approaching Graphs Through Musical Scales As a software developer and also... | 0 | 2024-07-10T04:30:05 | https://dev.to/magnojunior07/abordando-grafos-atraves-das-escalas-musicais-1fmj | java, datastructures, algorithms | ## Approaching Graphs Through Musical Scales

As a software developer and also a music lover who plays no instrument but has a shallow knowledge of music theory, I decided to bring together these... | magnojunior07 |

1,918,054 | Unlock Your Algorithm Superpowers with this Incredible Course! 🚀 | Comprehensive course on algorithm design and analysis techniques, taught by experienced faculty from IIT Madras. Develop strong problem-solving skills for careers in computer science and software engineering. | 27,844 | 2024-07-10T04:30:50 | https://dev.to/getvm/unlock-your-algorithm-superpowers-with-this-incredible-course-4a7j | getvm, programming, freetutorial, universitycourses |

Hey there, fellow tech enthusiasts! 👋 Are you looking to level up your problem-solving skills and become a master of algorithm design? Well, I've got the perfect resource for you - the "Design and Analysis of Algorithms" course from IIT Madras.

**. | paystubsplanet | |

1,918,057 | paystubsplanet | Creating accurate paystubs from PayStubs Planet. Simply input your employee's details and earnings,... | 0 | 2024-07-10T04:39:21 | https://dev.to/paystubsplanet/paystubsplanet-4pol | Creating accurate paystubs from PayStubs Planet. Simply input your employee's details and earnings, and our intuitive platform will instantly generate professional paystubs. Say goodbye to manual calculations and errors – streamline your payroll process with **[paystub generator](https://paystubsplanet.com/)**. | paystubsplanet | |

1,918,058 | Performance Enhancement: The Power of Injector Test Stands | Expediting Your Car's Performance using Injector Test Stands Do you want to stop driving a car that... | 0 | 2024-07-10T04:45:59 | https://dev.to/grace_allanqjahsh_8feb27/performance-enhancement-the-power-of-injector-test-stands-4lc1 | Expediting Your Car's Performance using Injector Test Stands

Do you want to stop driving a car that feels boring? Do you ever wish that there was some magical way to make the car look new again? If you relate to the above, then welcome to injector test stands! So we introduced trailblazing tools like a portable ECU pr... | grace_allanqjahsh_8feb27 | |

1,918,059 | The Most Rated Top 10 AI Writing Tools of 2024 | Excited to share my latest article on Medium: The Most Rated Top 10 AI Writing Tools of 2024. 🌟... | 0 | 2024-07-10T04:46:51 | https://dev.to/its_jasonai/the-most-rated-top-10-ai-writing-tools-of-2024-5cg7 | writing, ai, productivity, contentwriting | Excited to share my latest article on Medium: The Most Rated Top 10 AI Writing Tools of 2024. 🌟

Whether you're a professional writer, a content creator, or just someone looking to boost your writing game, these AI tools are game-changers!

What you can expect:

🔹 Most rated AI Writing Tools

🔹 AI Writing Tools that ... | its_jasonai |

1,918,060 | 🌟 Are You Learning Basic Java? This Repository is Here to Help! 🌟 | https://github.com/aadarshk7/Core-Java-Programs Java #Programming #StudentResources... | 0 | 2024-07-10T04:52:06 | https://dev.to/aadarshk7/are-you-learning-basic-java-this-repository-is-here-to-help-fb | https://github.com/aadarshk7/Core-Java-Programs

#Java #Programming #StudentResources #LearnJava #GitHub #Coding #Education #JavaProgramming | aadarshk7 | |

1,918,061 | Deploying Django website to Vercel | Deploying a Django website to Vercel is a smart move for getting small web applications up and... | 0 | 2024-07-10T05:36:15 | https://dev.to/paul_freeman/deploying-django-website-to-vercel-19ed | django, vercel, cloud | Deploying a Django website to Vercel is a smart move for getting small web applications up and running quickly. Vercel is known for its simplicity. With Vercel, you get benefits like automatic SSL, serverless functions, and a globally distributed CDN etc.

This guide will walk you through the steps to deploy your Djang... | paul_freeman |

1,918,062 | The Ultimate Guide: How to Check Laravel Version | Introduction to Laravel and its Versioning System As a seasoned human writer, I understand the... | 0 | 2024-07-10T04:54:04 | https://dev.to/apptagsolution/the-ultimate-guide-how-to-check-laravel-version-5h8g | check, laravel, version | Introduction to Laravel and its Versioning System

As a seasoned human writer, I understand the importance of staying up-to-date with the latest technologies and frameworks in the ever-evolving world of web development. One such framework that has gained immense popularity in recent years is Laravel, a powerful and vers... | apptagsolution |

1,918,063 | costaricamarriage | Planning a wedding in some place other than your home can be stressful. Costa Rica Marriage Law Firm... | 0 | 2024-07-10T04:56:33 | https://dev.to/costaricamarriage/costaricamarriage-k3k | Planning a wedding in some place other than your home can be stressful. Costa Rica Marriage Law Firm provides affordable **[Costa Rica wedding packages](https://www.costaricamarriage.com/weddingpackages.html)** prices and will also take care of your all wedding needs. Contact us today!

a few weeks ago and I'm working on OpenStack Horizon.

I've been working with Cinder, which is Block Storage on OpenStack.

I've been spending my time learning how to write unit tests on the OpenStack Horizon project... | ndutared |

1,918,065 | How to implement Daily Database Backups in Laravel 11 | Note: In laravel 11, kernel.php is no longer present and these are handled through the... | 0 | 2024-07-10T04:58:35 | https://dev.to/arvindegiz/how-to-implement-daily-database-backups-in-laravel-11-2e10 | **Note: In laravel 11, kernel.php is no longer present and these are handled through the bootstrap/app.php file.**

Regular backups are essential for maintaining the integrity of your Laravel application and safeguarding against unexpected events like data loss, system failures, or malicious attacks. In this blog post,... | arvindegiz | |

1,918,066 | The Future of Bathrooms: Smart Toilets by Chaozhou Duxin | Smart Toilets; A Bathroom Update The idea of having a smart toilet is getting popular in toilets all... | 0 | 2024-07-10T04:59:46 | https://dev.to/grace_allanqjahsh_8feb27/the-future-of-bathrooms-smart-toilets-by-chaozhou-duxin-4511 | Smart Toilets; A Bathroom Update

Smart toilets are becoming popular in bathrooms all over the world. HEGII designs state-of-the-art features intended to make your overall bathroom experience more enjoyable and effective. Chaozhou Duxin is one of the leaders in smart toilet technology. In the... | grace_allanqjahsh_8feb27 | |

1,918,067 | Examine the Causes and Solutions to Source Code Plagiarism | Source code plagiarism is a common issue in the world of programming. It happens when someone copies... | 0 | 2024-07-10T05:07:32 | https://dev.to/codequiry/examine-the-causes-and-solutions-to-source-code-plagiarism-4dlb | sourcecodeplagiarism, codeplagiarism, codequiry, codeplagiarismchecker | Source code plagiarism is a common issue in the world of programming. It happens when someone copies another person's code and presents it as their work. This can be a big problem in schools, where students might feel pressured to cheat to get good grades. It also affects professional developers, where the originality ... | codequiry |

1,918,068 | Condition Coverage: Enhancing Software Testing with Detailed Coverage Metrics | In software testing, achieving thorough test coverage is critical for ensuring the quality and... | 0 | 2024-07-10T05:07:33 | https://dev.to/keploy/condition-coverage-enhancing-software-testing-with-detailed-coverage-metrics-2k5f | opensource, testing, api, github |

In software testing, achieving thorough test coverage is critical for ensuring the quality and reliability of an application. One of the key metrics used to measure test coverage is condition coverage. [Condition co... | keploy |
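A small sketch of what condition coverage asks for, assuming the usual definition: every atomic boolean condition inside a decision should evaluate to both true and false across the test inputs. The function `canCheckout` and the test cases are hypothetical examples, not taken from the article.

```javascript
// Hypothetical function: one decision built from two atomic conditions,
// A = isLoggedIn and B = cartCount > 0.
function canCheckout(isLoggedIn, cartCount) {
  return isLoggedIn && cartCount > 0;
}

// Inputs chosen so each atomic condition evaluates to both true and false.
// Because && short-circuits, B is only evaluated when A is true, so the
// first two cases are the ones that exercise B both ways.
const cases = [
  { isLoggedIn: true, cartCount: 2, expected: true },   // A true,  B true
  { isLoggedIn: true, cartCount: 0, expected: false },  // A true,  B false
  { isLoggedIn: false, cartCount: 2, expected: false }, // A false, B not evaluated
];

for (const { isLoggedIn, cartCount, expected } of cases) {
  console.assert(canCheckout(isLoggedIn, cartCount) === expected);
}
```

Two inputs (one passing, one failing) would already exercise both outcomes of the overall decision; the third case is what makes each individual condition take both values.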

1,918,070 | The 10 Most Impactful Trends in Full Stack Development to Embrace in 2024 | Full stack development is necessary because companies want developers who can work with all kinds of... | 0 | 2024-07-10T05:15:36 | https://dev.to/dhruvil_joshi14/the-10-most-impactful-trends-in-full-stack-development-to-embrace-in-2024-51j6 | fullstack, fullstackdevelopment, trendsinfullstack, softwaredevelopment | Full stack development is necessary because companies want developers who can work with all kinds of technology stacks. This article talks about some important full-stack development trends that will be known in the future. Businesses are especially interested in web and mobile app development because they change quick... | dhruvil_joshi14 |

1,918,072 | React inside Ember - The Second Chapter | A year ago, I wrote an article here about invoking React components from Ember where I outlined an... | 0 | 2024-07-12T07:21:49 | https://dev.to/rajasegar/react-inside-ember-the-second-chapter-17bl | react, ember, javascript, webdev | A year ago, I wrote an article here about invoking React components from Ember where I outlined an approach of rendering React components inside Ember templates or components using a complex and sophisticated mechanism.

{% embed https://dev.to/rajasegar/invoking-react-components-from-your-ember-apps-3fgg %}

After tr... | rajasegar |

1,918,103 | The Power of Gaze Estimation: Transforming Technology and Beyond | Unlocking the Potential of Your Gaze Have you ever considered the power behind your gaze?... | 27,673 | 2024-07-10T05:25:02 | https://dev.to/rapidinnovation/the-power-of-gaze-estimation-transforming-technology-and-beyond-29o3 | ## Unlocking the Potential of Your Gaze

Have you ever considered the power behind your gaze? Imagine harnessing it to revolutionize how we interact with technology, improve healthcare, and reshape marketing strategies. This isn't about mere eye contact; it's about transforming your sight into a dynamic tool that bridg... | rapidinnovation | |

1,918,104 | Harnessing the Power of AI: Enhancing Salesforce Marketing Cloud with Einstein for Data-Driven Results | In today's competitive marketplace, data-driven marketing is no longer a luxury—it's a necessity.... | 0 | 2024-07-10T05:25:34 | https://dev.to/keval_padia/harnessing-the-power-of-ai-enhancing-salesforce-marketing-cloud-with-einstein-for-data-driven-results-17mm | ai | In today's competitive marketplace, data-driven marketing is no longer a luxury—it's a necessity. Businesses that leverage advanced technologies to understand and engage with their customers have a significant edge over their competitors. Salesforce Marketing Cloud, already a powerful tool for marketers, becomes even m... | keval_padia |

1,918,125 | Unlocking Financial Mastery with AI Financial Navigator 4.0 | Back in 2018, Cillian Miller began refining an artificial intelligence trading system built upon the... | 0 | 2024-07-10T06:10:17 | https://dev.to/navigator4/unlocking-financial-mastery-with-ai-financial-navigator-40-3n6b | Back in 2018, Cillian Miller began refining an artificial intelligence trading system built upon the robust framework of quantitative trading. As scholars, tech savants, and experts rallied under his leadership, the DB Wealth Institute birthed 'AI Financial Navigator 1.0'. This system enhanced the quirks of quantitativ... | navigator4 | |

1,918,105 | Microsoft ost to pst converter software | Stella Microsoft ost to pst converter software is the wonderful software to convert all ost mailbox... | 0 | 2024-07-10T05:25:48 | https://dev.to/albert_luies_579f18e1a893/microsoft-ost-to-pst-converter-software-9ja | Stella [Microsoft ost to pst converter software](https://www.stelladatarecovery.com/convert-ost-to-pst.html) converts all OST mailbox items into a PST file. It also converts corrupted and unmounted OST mailbox items into a PST file, and it supports all versions, 32bit and 64bi... | albert_luies_579f18e1a893 | |

1,918,106 | LeetCode Day30 Dynamic Programming Part 3 | 0-1 Bag Problem Description of the topic Ming is a scientist who needs to attend an... | 0 | 2024-07-10T05:28:05 | https://dev.to/flame_chan_llll/leetcode-day30-dynamic-programming-part-3-4ma8 | leetcode, java, algorithms | # 0-1 Bag Problem

Description of the topic

Ming is a scientist who needs to attend an important international scientific conference to present his latest research. He needs to bring some research materials with him, but he has limited space in his suitcase. These research materials include experimental equipment, lit... | flame_chan_llll |
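
The problem statement above is truncated, so this is a minimal sketch of the standard 0-1 bag (knapsack) dynamic program rather than the post's own solution. It uses Python for brevity even though the post is tagged `java`, and the function name `knapsack_01` and the sample weights and values are assumptions made for illustration.

```python
def knapsack_01(weights: list[int], values: list[int], capacity: int) -> int:
    # best[c] holds the highest total value achievable with capacity c
    # using the items processed so far.
    best = [0] * (capacity + 1)
    for weight, value in zip(weights, values):
        # Walk capacities downward so each item is counted at most once.
        for c in range(capacity, weight - 1, -1):
            best[c] = max(best[c], best[c - weight] + value)
    return best[capacity]


# Hypothetical research materials: weights (space used) and values.
sample_weights = [1, 3, 4]
sample_values = [15, 20, 30]
sample_result = knapsack_01(sample_weights, sample_values, 4)
```

Iterating capacities from high to low is the design choice that keeps this a 0-1 problem: going upward would let the same item be reused, which solves the unbounded variant instead.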

1,918,107 | How Infrastructure Monitoring Can Prevent a Cyber Attack | In today's digital age, where data breaches and cyber threats pose major risks to businesses,... | 0 | 2024-07-10T06:54:43 | https://dev.to/ila_bandhiya/how-infrastructure-monitoring-can-prevent-a-cyber-attack-35hl | devops, cybersecurity, monitoring, eventdriven |

In today's digital age, where data breaches and cyber threats pose major risks to businesses, proactive cybersecurity measures are more needed than ever. One of the most effective defenses gaining prominence is infrastructure monitoring. Let’s explore the pivotal role of infrastructure monitoring in preemptively thwar... | ila_bandhiya |

1,918,109 | Git Commands for Software Engineers | A key function of Git is to manage version control and collaborate on software development, making it... | 0 | 2024-07-10T05:39:42 | https://dev.to/bitlearners/git-commands-for-software-engineers-m8k | github, git, development, website | A key function of Git is to manage version control and collaborate on software development, making it a must-have tool for software engineers. Whether you're a beginner or an experienced developer, mastering Git commands will help you manage codebases, track changes, and contribute to projects seamlessly. You will lear... | bitlearners |

1,918,111 | 10 Innovative Generative AI Applications in Action | Quick Summary:-Discover the top innovative generative AI applications in 2024. As we explore these... | 0 | 2024-07-10T05:41:46 | https://dev.to/vikas_brilworks/10-innovative-generative-ai-applications-in-action-1a7l | gpt3 | **Quick Summary:** Discover the top innovative generative AI applications in 2024. Along the way, we look at how generative AI is reshaping the landscape of content generation.

Generative AI has been grabbing more attention than any other technology over the last two years. More than 70% of content... | vikas_brilworks |

1,918,112 | Mobile App Development Firms in New York: What to Look For | New York City, with its vibrant tech ecosystem and entrepreneurial spirit, is home to a plethora of... | 0 | 2024-07-10T05:43:56 | https://dev.to/gauravsingh15x/mobile-app-development-firms-in-new-york-what-to-look-for-3im | webdev, softwaredevelopment, appdevelopmentcompany, appdevelopmentnyc | New York City, with its vibrant tech ecosystem and entrepreneurial spirit, is home to a plethora of mobile app development firms. These firms cater to a wide range of needs, from creating sleek, user-friendly interfaces to integrating advanced functionalities. If you’re considering developing a mobile app, understandin... | gauravsingh15x |

1,918,113 | How effective is Joint Genesis in relieving joint pain? | try official product How effective is Joint Genesis in relieving joint pain? Joint Genesis is a... | 0 | 2024-07-10T05:45:50 | https://dev.to/tryofficialproduct/how-effective-is-joint-genesis-in-relieving-joint-pain-3ef |

try official product

How effective is [**Joint Genesis**](https://tryofficialproduct.com/) in relieving joint pain?

Joint Genesis is a unique approach to joint health that finally addresses what growing research now suggests is the origins of age-related joint decay: the loss of hyaluronan as you get older.

are security mechanisms that are designed to control the flow of people into and out of secure zones. They have fast-moving barriers which open and close quickly permitting authorized people to enter while blocking unauthorized access.

**... | aafiya_69fc1bb0667f65d8d8 |

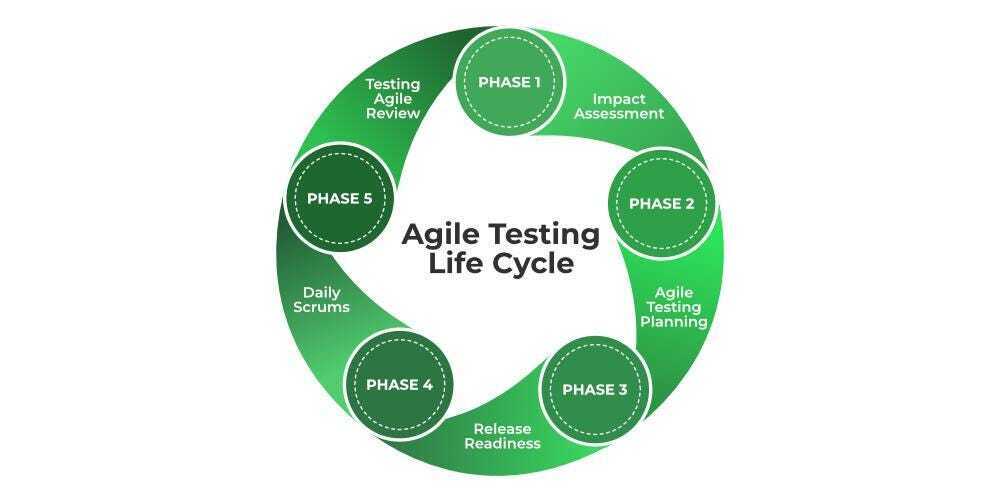

1,918,129 | Designing An Integration Testing Strategy For Agile: Best Practices And Guidelines | Designing An Integration Testing Strategy For Agile: Best Practices And Guidelines In the Agile... | 0 | 2024-07-10T06:13:47 | https://www.letsdiskuss.com/post/designing-an-integration-testing-strategy-for-agile:-best-practices-and-guidelines | integration, strategy |

Designing An Integration Testing Strategy For Agile: Best Practices And Guidelines

In the Agile method for developing software, testing how different parts work together is crucial. It makes sure that all pieces fit... | rohitbhandari102 |

1,918,130 | ABTCOIN Leads the Future of Cryptocurrency Trading | ABTCOIN Leads the Future of Cryptocurrency Trading The integration of AI and cryptocurrency in the... | 0 | 2024-07-10T06:16:17 | https://dev.to/barrierreefbulletin/abtcoin-leads-the-future-of-cryptocurrency-trading-2oia | abtcoin | **ABTCOIN Leads the Future of Cryptocurrency Trading**

The integration of AI and cryptocurrency in the financial sector has sparked widespread attention, bringing new opportunities and challenges to investors and market participants. AI technology leverages big data analysis and machine learning algorithms to reveal pa... | barrierreefbulletin |

1,918,140 | How to Increase Website Conversion with UX/UI Design | UX/UI design plays a key role in increasing website conversion. Here are several ways to improve... | 0 | 2024-07-10T06:20:25 | https://dev.to/cosmoweb2024/kak-uvielichit-konviersiiu-saita-s-pomoshchiu-uxui-dizaina-59m8 | webdev, ux, ui, programming |

UX/UI design plays a key role in increasing website conversion. Here are several ways to improve the user experience (UX) and interface (UI) to raise conversion.

1. Simple navigation

Create an intuitively clear... | cosmoweb2024 |

1,918,141 | Wise Systems - Make every last mile the best one yet | The Wise Systems high-performing AI-driven engine is designed to meet your toughest deliveries with... | 0 | 2024-07-10T06:21:00 | https://dev.to/wise-systems/wise-systems-make-every-last-mile-the-best-one-yet-3g64 | logistics, mobile, drivers, fleet | The Wise Systems high-performing AI-driven engine is designed to meet your toughest deliveries with efficiency, analytics, and a pleasing interface. Now is the time to transform your fleet for driver satisfaction, increased utilization of all parties, and of course, perfect deliveries. Make every last mile the best one... | wise-systems |

1,918,142 | How to Improve Your Skills as a Web Developer | As technology evolves at a rapid pace, staying ahead in the world of web development requires... | 0 | 2024-07-10T06:22:56 | https://dev.to/iamnotusama/how-to-improve-your-skills-as-a-web-developer-2382 | webdev, beginners, productivity | As technology evolves at a rapid pace, staying ahead in the world of web development requires continuous learning and skill enhancement. Whether you're just starting or looking to level up, here are some effective strategies to boost your skills:

**Stay Curious and Keep Learning:** The web development landscape is vas... | iamnotusama |

1,918,143 | document review lawyer | best legal firm | law firm | Secure legal guidance on contracts, documents, and more. Our expert lawyers provide affordable online... | 0 | 2024-07-10T06:23:00 | https://dev.to/ankur_kumar_1ee04b081cdf3/document-review-lawyer-best-legal-firm-law-firm-4kon | Secure legal guidance on contracts, documents, and more. Our expert lawyers provide affordable online consultations to review, draft, and negotiate agreements tailored to your needs. Get the legal support you require, on your schedule.

Contact us: - 8800788535

Email us: - care@leadind... | ankur_kumar_1ee04b081cdf3 | |

1,918,144 | Transform Your Manufacturing: Boost Quality Control by 25% with Cloud Analytics | Are you ready to revolutionize your manufacturing process? Imagine improving your quality control by... | 0 | 2024-07-10T06:25:31 | https://dev.to/himadripatelace/transform-your-manufacturing-boost-quality-control-by-25-with-cloud-analytics-55ai | cloud, analytics | Are you ready to revolutionize your manufacturing process? Imagine improving your quality control by 25% with just one powerful tool. Cloud-based analytics is here to make that happen. Let’s dive into how you can harness this technology to elevate your manufacturing game.

🔍 **Real-Time Insights** Wave goodbye to dela... | himadripatelace |

1,918,146 | Web Application Firewall (WAF): Safeguarding Your Web Applications | In today's digital age, with the increasing activities of businesses and individuals on the internet,... | 0 | 2024-07-10T06:28:44 | https://dev.to/motorbuy6/web-application-firewall-waf-safeguarding-your-web-applications-o35 | In today's digital age, with the increasing activities of businesses and individuals on the internet, cybersecurity has become more crucial than ever. Among the essential tools for protecting web applications from various cyber threats, the Web Application Firewall (WAF) stands out for its effectiveness and importance.... | motorbuy6 | |

1,918,147 | draft contract | best legal firm | law firm | Get expert legal advice online. Our contract lawyers review documents, draft agreements, and provide... | 0 | 2024-07-10T06:30:29 | https://dev.to/ankur_kumar_1ee04b081cdf3/draft-contract-best-legal-firm-law-firm-5ddf | Get expert legal advice online. Our contract lawyers review documents, draft agreements, and provide personalized guidance to protect your interests. Affordable, confidential consultations - start your case now.

Contact us: - 8800788535

Email us: - care@leadindia.law

Website: -

https:/... | ankur_kumar_1ee04b081cdf3 | |

1,918,148 | Experience Luxury: The Best All-Inclusive Resorts in Paris, France | Paris, the City of Light, is known for its romance, culture, and history. While it might not be the... | 0 | 2024-07-10T06:31:06 | https://dev.to/booktrip/experience-luxury-the-best-all-inclusive-resorts-in-paris-france-472i | resort, luxuries, paris, france | Paris, the City of Light, is known for its romance, culture, and history. While it might not be the first place you think of for an [all-inclusive resort experience in Paris, france](https://www.booktrip4u.com/blog/all-inclusive-hotels-in-paris-france) and its surroundings offer a surprising array of luxurious accommod... | booktrip |

1,918,149 | Understanding Commercial Land: A Guide to Investing and Development | Commercial land represents a significant opportunity for investors and developers. Unlike residential... | 0 | 2024-07-10T06:36:55 | https://dev.to/james_anderson_377748444b/understanding-commercial-land-a-guide-to-investing-and-development-4d5b | real, realestate | Commercial land represents a significant opportunity for investors and developers. Unlike residential real estate, commercial land is used for business purposes, including retail, office spaces, industrial complexes, and more. This blog will provide an educational overview of commercial land, its benefits, key consider... | james_anderson_377748444b |

1,918,157 | The Great Fire Company: Leading the Charge in Modern Fire Safety | Revolutionizing Fire Protection with Innovation and Expertise In a rapidly evolving world, the... | 0 | 2024-07-10T06:52:00 | https://dev.to/thegreatfire/the-great-fire-company-leading-the-charge-in-modern-fire-safety-159c | Revolutionizing Fire Protection with Innovation and Expertise

In a rapidly evolving world, the importance of advanced fire safety measures cannot be overstated. The Great Fire Company stands at the forefront of this critical industry, delivering state-of-the-art solutions that protect lives and property. This article e... | thegreatfire | |

1,918,151 | FREE online courses with certification | Microsoft is offering FREE online courses with certification. No Payment Required! 10 Microsoft... | 0 | 2024-07-10T06:43:39 | https://dev.to/msnmongare/free-online-courses-with-certification-3k23 | microsoft, learning, beginners, programming | Microsoft is offering FREE online courses with certification.

No Payment Required!

10 Microsoft courses you DO NOT want to miss ⬇️

1. Python for Beginners

🔗 https://lnkd.in/drQMQsBK

2. Introduction to Machine Learning with Python

🔗 https://lnkd.in/dg3Kh6ZN

3. Microsoft Azure AI Fundamentals

🔗 https://lnkd.in... | msnmongare |

1,918,152 | Engineering blogs | Engineering at Meta - https://lnkd.in/e8tiSkEv Google Research - https://ai.googleblog.com/ Google... | 0 | 2024-07-10T06:44:33 | https://dev.to/msnmongare/engineering-blogs-2pio | beginners, programming, productivity | - Engineering at Meta - https://lnkd.in/e8tiSkEv

- Google Research - https://ai.googleblog.com/

- Google Cloud Blog - https://lnkd.in/enNviCF8

- AWS Architecture Blog - https://lnkd.in/eEchKJif

- All Things Distributed - https://lnkd.in/emXaQDaS

- The Netflix Tech Blog - https://lnkd.in/efPuR39b

- LinkedIn Engineering... | msnmongare |

1,918,153 | 9 Considerations While Choosing the Best QuickBooks Desktop Cloud Hosting Service | QuickBooks is the leader in accounting software solutions worldwide. However, combined with the power... | 0 | 2024-07-10T06:44:51 | https://dev.to/him_tyagi/9-considerations-while-choosing-the-best-quickbooks-desktop-cloud-hosting-service-3ag5 | webdev, javascript, beginners, programming | QuickBooks is the leader in accounting software solutions worldwide. However, combined with the power of cloud computing, it becomes even more robust and versatile. Having realized this, most organizations worldwide have started planning for cloud migration.

But before they can reap the benefits of QuickBooks Cloud, ... | him_tyagi |

1,918,155 | Correlation between Black Box Testing, TDD and BDD | Black Box Testing Black box testing is a software testing method in which... | 0 | 2024-07-10T06:47:20 | https://dev.to/triasbrata/korelasi-antara-black-box-testing-tdd-dan-bdd-16ed | testing, development, qa | ## Black Box Testing

Black box testing is a software testing method in which the tester does not know the internal structure or implementation of the application under test. The focus is on the system's inputs and outputs, not on how the system works internally. This testing aims to validate ... | triasbrata |

1,918,156 | Automated Regression Testing: Unveiling The Benefits Of Software Testing Efficiency | In the domain of software development, it is crucial to make sure that software products are... | 0 | 2024-07-10T06:50:56 | https://frasesdebuenosdias.com/automated-regression-testing-unveiling-the-benefits-of-software-testing-efficiency/ | automated, regression, testing |

In the domain of software development, it is crucial to make sure that software products are reliable and of high quality. An essential way for achieving this goal is the use of automated tests that check previous p... | rohitbhandari102 |

1,918,158 | AI Financial Navigator 4.0: Revolutionizing Investment Strategies | AI Financial Navigator 4.0: Revolutionizing Investment Strategies Back in 2018, Cillian Miller began... | 0 | 2024-07-10T06:52:11 | https://dev.to/sydneyskylinenews/ai-financial-navigator-40-revolutionizing-investment-strategies-3359 | aifinancialnavigator | **AI Financial Navigator 4.0: Revolutionizing Investment Strategies**

Back in 2018, Cillian Miller began refining an artificial intelligence trading system built upon the robust framework of quantitative trading. As scholars, tech savants, and experts rallied under his leadership, the DB Wealth Institute birthed 'AI Fi... | sydneyskylinenews |

1,918,161 | Design Patterns in C#: Modern and Easy Singletons | In this video, we dive deep into design patterns in programming with a focus on the Singleton Design... | 0 | 2024-07-10T06:58:00 | https://dev.to/turalsuleymani/design-patterns-in-c-modern-and-easy-singletons-1bj | designpatterns, csharp, dotnet, tutorial | In this video, we dive deep into design patterns in programming with a focus on the Singleton Design Pattern in C#. Understanding design patterns is crucial for writing clean code and adhering to design principles. The Singleton Pattern, one of the most popular Gang of Four design patterns, ensures a class has only one... | turalsuleymani |

1,918,162 | The Power of Black Technology: Revolutionizing Industries Worldwide | The Magic of High-Tech Modern technology is all about getting great-smartergreat-greater than... | 0 | 2024-07-10T06:58:39 | https://dev.to/imcandika_bfmvqnah_9be0/the-power-of-black-technology-revolutionizing-industries-worldwide-433l | The Magic of High-Tech

Modern technology is all about getting smarter and better than before. It helps us in many ways. Let us find out how it can ease our lives and make them more enjoyable.

The double-edged sword of modern technology

Advanced technology: Technology is no doubt can do so many smart idea... | imcandika_bfmvqnah_9be0 | |

1,918,163 | Bridging Buyers and Sellers: The Role of Market-Making Bots | Imagine you're at a bustling farmers' market, and there are stalls everywhere. Now, think of each... | 0 | 2024-07-10T06:59:27 | https://dev.to/elena_marie_dad5c9d5d5706/bridging-buyers-and-sellers-the-role-of-market-making-bots-27kj | marketmakingbot, cryptomarketmakingbot |

Imagine you're at a bustling farmers' market, and there are stalls everywhere. Now, think of each stall as a cryptocurrency exchange, and the fruits and vegetables are different cryptocurrencies like Bitcoin, Ethereum, and so on.

A **market-making bot** that will be developed by Crypto Market Making Bot Development C... | elena_marie_dad5c9d5d5706 |

1,918,164 | Property-Based Testing: Ensuring Robust Software with Comprehensive Test Scenarios | Property-based testing is a powerful testing methodology that allows developers to automatically... | 0 | 2024-07-10T06:59:59 | https://dev.to/keploy/property-based-testing-ensuring-robust-software-with-comprehensive-test-scenarios-m5o | webdev, javascript, beginners, tutorial |

Property-based testing is a powerful testing methodology that allows developers to automatically generate and test a wide range of input data against specified properties of the software under test. Unlike tradition... | keploy |
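
To make the idea above concrete, here is a minimal sketch that uses only the standard library instead of a dedicated property-testing framework. The helper names `check_property`, `random_int_list`, and `sort_is_ordered_permutation` are hypothetical, and real tools add features such as shrinking of counterexamples that this sketch leaves out.

```python
import random
from collections import Counter


def check_property(prop, generate, runs=200, seed=0):
    # Generate many random inputs and return the first one that breaks
    # the property, or None if every generated input satisfies it.
    rng = random.Random(seed)
    for _ in range(runs):
        sample = generate(rng)
        if not prop(sample):
            return sample
    return None


def random_int_list(rng):
    # Input generator: lists of 0 to 20 integers in a small range.
    return [rng.randint(-50, 50) for _ in range(rng.randint(0, 20))]


def sort_is_ordered_permutation(xs):
    # Property of sorting: the output is in non-decreasing order and
    # contains exactly the same elements as the input.
    out = sorted(xs)
    ordered = all(a <= b for a, b in zip(out, out[1:]))
    return ordered and Counter(out) == Counter(xs)


counterexample = check_property(sort_is_ordered_permutation, random_int_list)
```

The property states what must hold for any input, and the generator supplies the inputs, so a single check covers cases (empty lists, duplicates, negative numbers) that hand-written examples tend to miss. Fixing the seed keeps a failing run reproducible.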

1,918,166 | Demystifying the AI Black Box: Anthropic’s Breakthrough in Understanding AI | Anthropic AI Black Box: Large language models (LLMs) have taken the world by storm, churning out... | 0 | 2024-07-10T07:06:14 | https://dev.to/hyscaler/demystifying-the-ai-black-box-anthropics-breakthrough-in-understanding-ai-4nd4 | Anthropic AI Black Box: Large language models (LLMs) have taken the world by storm, churning out everything from captivating poems to realistic code. But for all their impressive feats, these marvels of AI have remained shrouded in secrecy. Until now.

## Frontier AI: Pushing the Boundaries

Imagine the cutting edge of... | amulyakumar |
