id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,921,538 | Why BelleoFX is Gaining Popularity Among Forex Traders in Dubai | Dubai has firmly established itself as a global hub for Forex trading, attracting savvy investors... | 0 | 2024-07-12T18:52:30 | https://dev.to/alexsam70/why-belleofx-is-gaining-popularity-among-forex-traders-in-dubai-53b4 | belleofx, belleofxdubai, forextrading | Dubai has firmly established itself as a global hub for Forex trading, attracting savvy investors from around the world. The city’s robust regulatory framework, world-class infrastructure, and thriving financial ecosystem make it an ideal environment for traders. Within this dynamic landscape, one platform that has bee... | alexsam70 |

1,921,539 | 跨境电商自动筛选,跨境营销获客系统,跨境拉群机器人 | 跨境电商自动筛选,跨境营销获客系统,跨境拉群机器人 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T18:55:39 | https://dev.to/ckex_gygh_e2e5dc330e1a3e8/kua-jing-dian-shang-zi-dong-shai-xuan-kua-jing-ying-xiao-huo-ke-xi-tong-kua-jing-la-qun-ji-qi-ren-4f6 |

跨境电商自动筛选,跨境营销获客系统,跨境拉群机器人

了解相关软件请登录 http://www.vst.tw

跨境电商自动筛选技术的革新与应用

随着全球经济的快速发展,跨境电商已经成为连接不同国家和地区消费者与商品供应的重要桥梁。然而,跨境电商面临的一个关键挑战是如何有效地筛选和管理海量的商品信息,以确保消费者能够快速找到符合其需求的产品。在这一背景下,自动筛选技术的应用显得尤为重要和前景广阔。

自动筛选技术的定义与发展

自动筛选技术是利用人工智能(AI)和大数据分析等先进技术,通过算法和模型自动化地处理和分析大量的数据,从中提取出符合特定条件的信息。在跨境电商中,这种技术被广泛应用于商品信息的筛选、分类和... | ckex_gygh_e2e5dc330e1a3e8 | |

1,921,540 | Product Hunt Launch Checklist | Product Hunt Launch Checklist is a comprehensive & actionable resource to prepare for the Product... | 0 | 2024-07-12T18:55:55 | https://dev.to/oleksandr_veremeyenko_1c0/product-hunt-launch-checklist-2ji1 | marketing, launch | Product Hunt Launch Checklist is a comprehensive & actionable resource to prepare for the Product Hunt launch. It's packed with 12 planning checklists, tips, guides, resources, tools and community.

Available for Airtable, Google Sheets, and Notion.

You'll get access to

✅ 200+ actionable tips

✅ All tips can be fil... | oleksandr_veremeyenko_1c0 |

1,921,543 | Живая музыка на свадьбе в Москве: 7 вещей, о которых вам стоит знать | Во время подготовки к свадьбе все, что касается музыки и развлечений, не кажется чем-то... | 0 | 2024-07-12T19:00:38 | https://dev.to/sevencode/zhivaia-muzyka-na-svadbie-v-moskvie-7-vieshchiei-o-kotorykh-vam-stoit-znat-59gn | Во время подготовки к свадьбе все, что касается музыки и развлечений, не кажется чем-то проблематичным: стоит найти и заказать музыкантов самостоятельно или согласиться с выбором свадебного координатора. Но всегда нужно учитывать человеческий фактор. Есть несколько простых советов, которые помогут избежать проблем и на... | sevencode | |

1,916,996 | Bluggle Medical Conference | Investment education is crucial in understanding and navigating various financial markets. Whether... | 0 | 2024-07-09T09:03:32 | https://dev.to/bluggle_conference_8de38f/bluggle-medical-conference-1lea | beginners, learning, news, discuss | Investment education is crucial in understanding and navigating various financial markets. Whether you're an experienced investor or just starting out, understanding the intricacies of different markets, including Forex trading, share markets, real estate, bonds, and mutual funds, can open doors to significant financia... | bluggle_conference_8de38f |

1,919,904 | Tiered Storage in Kafka - Summary from Uber's Technology Blog | Uber's technology blog published an article, Introduction to Kafka Tiered Storage at Uber, aiming to... | 0 | 2024-07-11T15:34:07 | https://dev.to/bochaoli95/tiered-storage-in-kafka-summary-from-ubers-technology-blog-40cg | java, design, microservices | Uber's technology blog published an article, [Introduction to Kafka Tiered Storage at Uber](https://www.uber.com/en-CA/blog/kafka-tiered-storage/?uclick_id=7c8a35b7-10b1-455b-b590-58270ec69aba), aiming to maximize data retention with fewer Kafka brokers and less memory. This allows for longer message retention times ac... | bochaoli95 |

1,920,938 | Safety When working on or near Electrical installation | Section1 - Welcome and Introduction This course provides basic information to perform... | 0 | 2024-07-12T10:28:52 | https://dev.to/sureshlearnspython/safety-when-working-on-or-near-electrical-installation-4lgi | ## **<u>Section1 - Welcome and Introduction</u>**

- This course provides basic information to perform safely when working on or near electrical installations

- This is based on German and European regulations and standards including DGUV regulation, DIN VDE 0105-100, EN 50110-1 and other relevant standards

## **<u>Se... | sureshlearnspython | |

470,409 | Fixing AWS MFA Entity Already Exists error | I'll explain in this post how to fix AWS MFA Entity Already Exists error. For the sake of this post... | 0 | 2024-07-17T02:50:12 | https://dev.to/vsrnth/fixing-aws-mfa-entity-already-exists-error-1b6h | aws, mfa |

I'll explain in this post how to fix AWS MFA Entity Already Exists error.

For the sake of this post I'm assuming you have the requisite IAM permissions to carry out the below commands.

What we are trying to do is list the all virtual mfa devices and then delete the defective/conflictive mfa devices. Deleting the de... | vsrnth |

708,469 | Building and deploying a Slack app with Python, Bolt, and AWS Amplify | Slack apps and bots are a handy way to automate and streamline repetitive tasks. Slack's Bolt... | 0 | 2024-07-15T14:21:04 | https://dev.to/siegerts/building-and-deploying-a-slack-app-with-python-bolt-and-aws-amplify-2b57 | awsamplify, python, aws, slackbot |

Slack apps and bots are a handy way to automate and streamline repetitive tasks. Slack's [Bolt](https://api.slack.com/start/building/bolt-python) framework consolidates the different mechanisms to capture and interact with Slack events into a single library.

From the [Slack Bolt documentation](https://api.slack.com/... | siegerts |

1,134,211 | Modernizing Emails: Innovations for Efficient Handling in Distributed Systems | Since the beginning of digital businesses, one of the main channels of communication between the... | 0 | 2024-07-16T22:29:58 | https://dev.to/kristijankanalas/modernizing-emails-innovations-for-efficient-handling-in-distributed-systems-47g | php, architecture, microservices, webdev | Since the beginning of digital businesses, one of the main channels of communication between the business and a client was, and still is, email. Email is a technology that is over 50 years old, and that's one of the reasons for the wide variety of mail clients that render HTML differently from each other — Outlook, I'm... | kristijankanalas |

1,143,047 | Partition with given difference | Given an array ‘ARR’, partition it into two subsets (possibly empty) such that their union is the... | 18,730 | 2024-07-14T13:44:24 | https://dev.to/prashantrmishra/partition-with-given-difference-5ek | java, programming, algorithms, dp |

Given an array ‘ARR’, partition it into two subsets (possibly empty) such that their union is the original array. Let the sum of the elements of these two subsets be ‘S1’ and ‘S2’.

Given a difference ‘D’, count the number of partitions in which ‘S1’ is greater than or equal to ‘S2’ and the difference between ‘S1’ and... | prashantrmishra |

1,143,082 | On Code Style Guides | A short post about why code style guides matter, and an easy way to create one. Why Code... | 0 | 2024-07-13T19:52:13 | https://dev.to/downtherabbithole/on-code-style-guides-1804 | A short post about why code style guides matter, and an easy way to create one.

## Why Code Style Guides?

A coherent code style makes code easier to read because it reduces visual noise and cognitive load. For example, take these two ways to present the same math question:

```

|\ |\ ... | downtherabbithole | |

1,205,362 | Java 8 features | Java 8 New Features: Lambda Expression Stream API Default and static methods in... | 18,765 | 2024-07-14T14:34:40 | https://dev.to/prashantrmishra/java-8-features-2c01 | ## Java 8 New Features:

1. Lambda Expression

2. Stream API

3. Default and static methods in Interfaces

4. Functional interfaces

5. Optional class

6. New Date and Time API

7. Functional Programming & Why Functional Programming?

8. Method reference, Constructor reference

## Collections:

Collection => Main interface

Li... | prashantrmishra | |

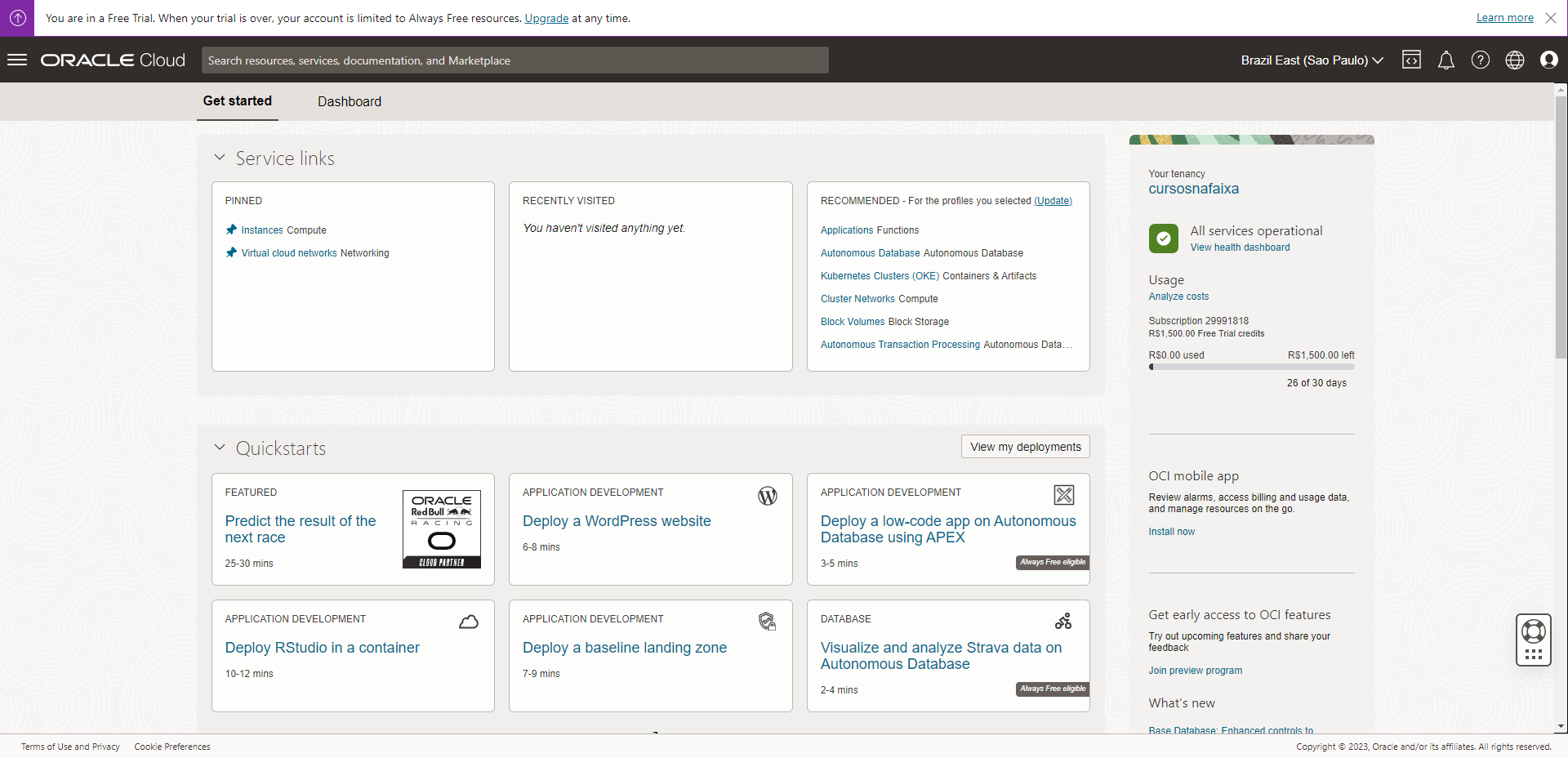

1,479,709 | Aproveitando todo o potencial do Oracle Always Free | Introdução Muitas vezes vejo estudantes e programadores procurando um lugar para hospedar... | 0 | 2024-07-17T17:06:32 | https://dev.to/matheusalejandro/aproveitando-todo-o-potencial-do-oracle-always-free-5e5d | oracle, programming, tutorial, braziliandevs | ##Introdução

Muitas vezes vejo estudantes e programadores procurando um lugar para hospedar seus projetos, buscando uma boa opção. E isso sempre traz um tradeoff entre uma plataforma simples e bem limitada ou uma plataforma mais completa, porém paga.

Pensando nisso e conhecendo um pouco sobre a Oracle, meu objetivo é a... | matheusalejandro |

1,479,723 | Criando sua conta no Oracle Cloud | Introdução Para poder aproveitar tudo que a Oracle oferece, tanto grátis quanto para o... | 0 | 2024-07-17T17:07:23 | https://dev.to/matheusalejandro/criando-sua-conta-no-oracle-cloud-17ka | oracle, programming, braziliandevs, tutorial | ##Introdução

Para poder aproveitar tudo que a Oracle oferece, tanto grátis quanto para o período de testes, você precisa primeiramente criar a sua conta no Oracle Cloud.

Então, vamos começar por ai com um passo a passo bem simples sobre como fazer isso.

O primeiro lugar que você deve acessar é a [página oficial](https... | matheusalejandro |

1,482,235 | Remix Authentication with Amazon Cognito | Introduction Remix is a powerful React-based web framework, and it offers a lot of... | 0 | 2024-07-17T22:24:06 | https://dev.to/slamflipstrom/remix-authentication-with-amazon-cognito-ool | ## Introduction

[Remix](https://remix.run/) is a powerful React-based web framework, and it offers a lot of benefits to developers and users alike. There are trade-offs of course, and the framework's small ecosystem and philosophy of remaining unopinionated can make it difficult to know where to turn when implementing... | slamflipstrom | |

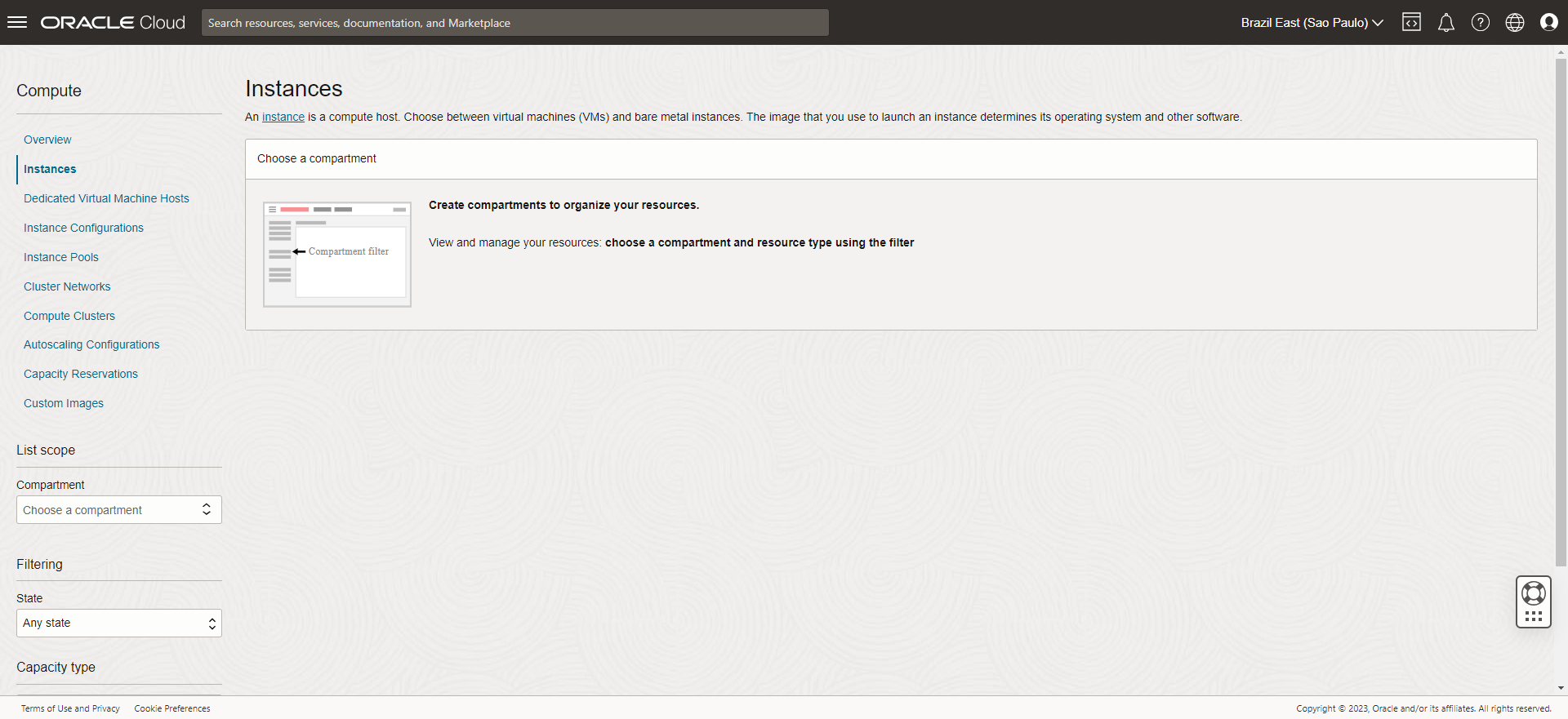

1,509,802 | Criando e acessando uma instância com um servidor web | Criando a nova instância Shape é o único disponivel Always Free. Imagem tem... | 0 | 2024-07-17T17:08:02 | https://dev.to/matheusalejandro/criando-e-acessando-uma-instancia-com-um-servidor-web-45p3 | oracle, programming, tutorial, braziliandevs | ## Criando a nova instância ##

using the database.

```

ข้อผิดพลาดนี้เกิดขึ้นเมื่อมีผู้ใช้หรือโปรแกรมอื่นกำลังเชื่อมต่อกับฐานข้อมูลที่คุณพยายามเข้าถึง ต่อไปนี้ค... | iconnext | |

1,613,271 | Calling All Developers! Contribute to golly: Empower the Go Community Together 🚀 | Introduction: Hey there, Dev.to community! Are you passionate about Go programming and looking for a... | 0 | 2024-07-16T15:20:09 | https://dev.to/adi73/calling-all-developers-contribute-to-golly-empower-the-go-community-together-2oie | devops, opensource, go, discuss | **Introduction:**

Hey there, Dev.to community! Are you passionate about Go programming and looking for a meaningful open-source project to contribute to? Look no further! We're excited to introduce you to golly, an open-source Go library that's making waves in the Go community.

**About golly:**

- It is always a pain... | adi73 |

1,647,735 | DFS and BFS | Today I will start refreshing my computer science knowledge by writing tech blogs. Start from... | 0 | 2024-07-15T17:22:16 | https://dev.to/einsteinder/dfs-and-bfs-kfe | Today I will start refreshing my computer science knowledge by writing tech blogs.

Start from [Leetcode 865](https://leetcode.com/problems/smallest-subtree-with-all-the-deepest-nodes/description/).

It's exactly same with [Leetcode 1123](https://leetcode.com/problems/lowest-common-ancestor-of-deepest-leaves/descriptio... | einsteinder | |

1,733,473 | Uncovering the Parallels: Flutter and React Native | X is better than Y ... Y is better than X... Yada Yada 🥱 Two heavyweight champs dominate... | 0 | 2024-07-13T22:42:17 | https://dev.to/louieseno/uncovering-the-parallels-flutter-and-react-native-26fb | flutter, reactnative, learning, mobile | ## X is better than Y ... Y is better than X... Yada Yada 🥱

Two heavyweight champs dominate the scene when it comes to cross-platform app development: Flutter and React Native. These titans often go toe-to-toe in developer debates, but I'm not here to crown a winner. Instead, let's uncover the similarities between the... | louieseno |

1,810,529 | Calling Gemma with Ollama, TestContainers, and LangChain4j | Lately, for my Generative AI powered Java apps, I’ve used the Geminimultimodal large language model... | 0 | 2024-07-12T18:26:52 | https://glaforge.dev/posts/2024/04/04/calling-gemma-with-ollama-and-testcontainers/ | ---

title: Calling Gemma with Ollama, TestContainers, and LangChain4j

published: true

date: 2024-04-03 17:02:01 UTC

tags:

canonical_url: https://glaforge.dev/posts/2024/04/04/calling-gemma-with-ollama-and-testcontainers/

---

Lately, for my Generative AI powered Java apps, I’ve used the [Gemini](https://deepmind.googl... | glaforge | |

1,812,682 | Welcome Thread - v285 | Leave a comment below to introduce yourself! You can talk about what brought you here,... | 0 | 2024-07-17T00:00:00 | https://dev.to/devteam/welcome-thread-v285-3ddb | welcome | ---

published_at : 2024-07-17 00:00 +0000

---

---

1. Leave a comment below to introduce yourself! You can talk about what brought you here, what you're learning, or just a fun ... | sloan |

1,837,847 | 🛠️ Browser Extensions | In today's digital landscape, browser extensions have become an integral part of our online... | 27,228 | 2024-07-13T16:30:00 | https://dev.to/dhrn/browser-extensions-4oac | extensions, javascript, typescript, html | In today's digital landscape, browser extensions have become an integral part of our online experience. But how did we get here, and what makes these small yet powerful tools so important? This comprehensive guide will take you on a journey through the history, development, and future of browser extensions, providing v... | dhrn |

1,842,589 | QA System Design with LLMs: Practical Instruction Fine-Tuning Gen3 and Gen4 models | Large Language Models are vast neural networks trained on billions of text token. They can work with... | 0 | 2024-07-15T06:17:30 | https://dev.to/admantium/qa-system-design-with-llms-practical-instruction-fine-tuning-gen3-and-gen4-models-1imk | llm | Large Language Models are vast neural networks trained on billions of text token. They can work with natural language in a never-before-seen way, reflecting a context to give precise answers.

In my ongoing series about designing a [question-answer system with the help of LLMs](https://admantium.com/blog/llm13_question... | admantium |

1,844,614 | Is Flutter Still Relevant in 2024? | Flutter, an open-source UI software development kit created by Google, has garnered significant... | 0 | 2024-07-16T23:10:45 | https://dev.to/ukwueze_onyedikachi/is-flutter-still-relevant-in-2024-1p14 | flutter, dart, mobile |

Flutter, an open-source UI software development kit created by Google, has garnered significant attention and adoption since its inception. As we delve into 2024, it's crucial to evaluate the relevance of Flutter in the ever-evolving landscape of app development.

## The Rise of Flutter

Historical Context:

Flutte... | ukwueze_onyedikachi |

1,846,467 | Configuring Spring Boot Application with AWS Secrets Manager | In modern application development, securely managing and storing sensitive data, such as private... | 0 | 2024-07-08T11:46:08 | https://deniskisina.dev/configuring-spring-boot-application-with-aws-secrets-manager/ | article, blog | ---

title: Configuring Spring Boot Application with AWS Secrets Manager

published: true

date: 2024-05-08 15:17:29 UTC

tags: article, Blog

canonical_url: https://deniskisina.dev/configuring-spring-boot-application-with-aws-secrets-manager/

---

In modern application development, securely managing and storing sensitive d... | deniskisina |

1,846,835 | Understanding Polymorphic Associations in Rails Through a Case Study | I recently got to implement a polymorphic table in Rails at work. I've read about polymorphism a few... | 0 | 2024-07-16T13:21:04 | https://dev.to/morinoko/understanding-polymorphic-associations-in-rails-through-a-case-study-17je | rails, ruby, polymorphism, database | I recently got to implement a polymorphic table in Rails at work. I've read about polymorphism a few times in books or blogs, but I never quite understood it. Having a real-life situation to apply the concept to at work, along with some conversations with my team's lead engineer finally made it click!

A lot of the exa... | morinoko |

1,847,937 | Arquitetura Eficiente com Node.js e TypeScript | Introdução A combinação de Node.js e TypeScript tem se tornado uma escolha popular para... | 0 | 2024-07-16T16:09:00 | https://dev.to/vitorrios1001/arquitetura-eficiente-com-nodejs-e-typescript-5bn | node, typescript, devdiscuss, backend | ## Introdução

A combinação de Node.js e TypeScript tem se tornado uma escolha popular para desenvolvedores que buscam construir aplicações backend robustas e escaláveis. Node.js oferece um ambiente de execução eficiente e orientado a eventos, enquanto TypeScript adiciona uma camada de tipos estáticos que pode melhorar... | vitorrios1001 |

1,852,595 | Seamless Sales: Launching Your Startup Product with Frictionless Funnels | Congratulations! You've developed a groundbreaking product with the potential to disrupt the market.... | 27,354 | 2024-07-14T23:00:00 | https://dev.to/shieldstring/seamless-sales-launching-your-startup-product-with-frictionless-funnels-56gl | productivity, startup, beginners, career | Congratulations! You've developed a groundbreaking product with the potential to disrupt the market. But a brilliant product alone doesn't guarantee success. To truly thrive, you need a seamless sales strategy that converts interest into loyal customers. Here's how to create frictionless funnels that propel your start... | shieldstring |

1,856,978 | Phalcon v5.7.0 Released | We are happy to announce that Phalcon v5.7.0 has been released! This release fixes a new setting for... | 0 | 2024-07-10T13:08:10 | https://dev.to/phalcon/phalcon-v570-released-5fkc | phalcon, phalcon5, release | ---

title: Phalcon v5.7.0 Released

published: true

date: 2024-05-17 00:01:02 UTC

tags: phalcon,phalcon5,release

canonical_url:

---

We are happy to announce that Phalcon v5.7.0 has been released!

This release fixes a new setting for php.in, some changes and fixes to bugs.

A huge thanks to our community for helping o... | niden |

1,867,578 | Commonly used Javascript Array Methods. | In this post we will learn about commonly used Javascript array methods that uses iteration and... | 0 | 2024-07-17T15:13:50 | https://dev.to/mosesedges/commonly-used-javascript-array-methods-2pmh | javascript, beginners, programming, tutorial | In this post we will learn about commonly used Javascript array methods that uses iteration and callback function to archieve their functionality.

iteration refers to repeated execution of a set of statements or code blocks, which allows us to perform the same operation multiple times.

In simple terms, A callback is... | mosesedges |

1,867,721 | Grounding Gemini with Web Search results in LangChain4j | The latest release of LangChain4j (version 0.31) added the capability of grounding large language... | 0 | 2024-07-12T18:28:32 | https://glaforge.dev/posts/2024/05/28/grounding-gemini-with-web-search-in-langchain4j/ | ---

title: Grounding Gemini with Web Search results in LangChain4j

published: true

date: 2024-05-28 05:42:43 UTC

tags:

canonical_url: https://glaforge.dev/posts/2024/05/28/grounding-gemini-with-web-search-in-langchain4j/

---

The latest [release of LangChain4j](https://github.com/langchain4j/langchain4j/releases/tag/0... | glaforge | |

1,872,877 | Hướng dẫn tích hợp Checkout SDK Zalo (COD) | Mình viết bài này để ghi lại quá trình tích hợp Checkout SDK của Zalo. Không rõ các bạn ở Zalo bị dí... | 0 | 2024-07-14T09:37:35 | https://dev.to/huylv/huong-dan-tich-hop-checkout-sdk-zalo-cod-4b5j | zalo, checkoutsdk | Mình viết bài này để ghi lại quá trình tích hợp [Checkout SDK](https://mini.zalo.me/docs/payment/) của Zalo. Không rõ các bạn ở Zalo bị dí deadline nhiều không :D mà tài liệu hướng dẫn của các bạn hơi thiếu thốn, không được cập nhật thường xuyên. Nhiều thông tin phải search ở trên trang community của Zalo chứ doc cũng ... | huylv |

1,875,489 | Let's make Gemini Groovy! | The happy users of Gemini Advanced, the powerful AI web assistant powered by the Gemini model, can... | 0 | 2024-07-12T18:29:29 | https://glaforge.dev/posts/2024/06/03/lets-make-gemini-groovy/ | ---

title: Let's make Gemini Groovy!

published: true

date: 2024-06-03 09:49:26 UTC

tags:

canonical_url: https://glaforge.dev/posts/2024/06/03/lets-make-gemini-groovy/

---

The happy users of [Gemini Advanced](https://gemini.google.com/advanced), the powerful AI web assistant powered by the Gemini model, can execute so... | glaforge | |

1,880,608 | Ibuprofeno.py💊| #140: Explica este código Python | Explica este código Python Dificultad: Fácil def f(a,b): """ f... | 25,824 | 2024-07-13T11:00:00 | https://dev.to/duxtech/ibuprofenopy-140-explica-este-codigo-python-cfn | python, spanish, learning, beginners | ## **<center>Explica este código Python</center>**

#### <center>**Dificultad:** <mark>Fácil</mark></center>

```py

def f(a,b):

"""

f suma dos numeros pasados por parametro

a -> int

b -> int

"""

return a + b

print(help(f))

```

* **A.** `None`

* **B.** `SyntaxError`

* **C.** `0`

* **D.**

``... | duxtech |

1,882,283 | BarcodeDetector API for LE Audio | Using the BarcodeDetector to read a Broadcast Audio URI | 0 | 2024-07-14T19:22:55 | https://dev.to/denladeside/barcodedetector-api-for-le-audio-4ack | webcapabilities, leaudio, barcodedetector | ---

title: BarcodeDetector API for LE Audio

published: true

description: Using the BarcodeDetector to read a Broadcast Audio URI

tags: WebCapabilities, LEAudio, BarcodeDetector

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/soho5chrqy8668dj7fnc.jpg

# Use a ratio of 100:42 for best results.

# publ... | denladeside |

1,882,460 | Construindo um web server em Assembly x86, the grand finale, multi-threading | Uma vez que temos um web server funcional, podemos dar o próximo (e último) passo, que é deixar o... | 27,062 | 2024-07-14T02:37:25 | https://dev.to/leandronsp/construindo-um-web-server-em-assembly-x86-the-grand-finale-multi-threading-24hp | braziliandevs, assembly | Uma vez que temos um [web server funcional](https://dev.to/leandronsp/construindo-um-web-server-em-assembly-x86-parte-v-finalmente-o-server-9e5), podemos dar o próximo (e último) passo, que é deixar o servidor **minimamente escalável** fazendo uso de uma pool de threads.

Neste artigo, vamos mergulhar nas entranhas da... | leandronsp |

1,887,881 | Utilizing Kubernetes for an Effective MLOps Platform | Machine learning operations (MLOps) is transforming the way organizations manage and deploy machine... | 0 | 2024-07-16T13:21:01 | https://dev.to/craftworkai/utilizing-kubernetes-for-an-effective-mlops-platform-4cja | kubernetes, mlops, ai, machinelearning | Machine learning operations (MLOps) is transforming the way organizations manage and deploy machine learning (ML) models. As the need for scalable and efficient ML workflows grows, Kubernetes has emerged as a powerful tool to streamline these processes. This article explores how to leverage Kubernetes to build a robust... | larkmullins-craftworkai |

1,889,775 | What building a Self-Balancing Robot without any robotics experience taught me | How I Built a Self-Balancing Robot and What I Learned Along the Way Hey there! I'm a... | 0 | 2024-07-14T20:15:07 | https://dev.to/tanvirsingh007/what-building-a-self-balancing-robot-without-any-robotics-experience-taught-me-9f8 | # How I Built a Self-Balancing Robot and What I Learned Along the Way

Hey there! I'm a software developer by trade, with a background in web development and DevOps. But back in my final year of pursuing a Bachelor's in Electronics and Communication Engineering, I decided to take on a hardware project that was way outs... | tanvirsingh007 | |

1,890,197 | Enabling Real-time Protection in Windows 11! | Real-time Protection in Windows 11: This feature of Windows Security (formerly Windows Defender) in... | 0 | 2024-07-09T14:24:05 | https://winsides.com/how-to-enable-real-time-protection-in-windows-11/ | windowssecurity, realtimeprotectionin, beginners, tutorials | ---

title: Enabling Real-time Protection in Windows 11!

published: true

date: 2024-06-14 10:53:07 UTC

tags: WindowsSecurity,RealTimeProtectionin,beginners,tutorials

canonical_url: https://winsides.com/how-to-enable-real-time-protection-in-windows-11/

cover_image: https://winsides.com/wp-content/uploads/2024/06/ENABLE-R... | vigneshwaran_vijayakumar |

1,890,200 | How to Manage Ransomware Protection in Windows 11? | Ransomware Protection in Windows 11 : It is a malicious software (malware) designed to encrypt files... | 0 | 2024-07-09T14:21:38 | https://winsides.com/manage-ransomware-protection-in-windows-11/ | windowssecurity, beginners, tutorials, windows11 | ---

title: How to Manage Ransomware Protection in Windows 11?

published: True

date: 2024-06-15 20:14:19 UTC

tags: WindowsSecurity,beginners,tutorials,windows11

canonical_url: https://winsides.com/manage-ransomware-protection-in-windows-11/

cover_image: https://winsides.com/wp-content/uploads/2024/06/Manage-Ransomware-P... | vigneshwaran_vijayakumar |

1,890,437 | Phalcon + Swoole in High Load Micro Service | Introduction This journey took me four years in total. Four years of meticulous planning,... | 0 | 2024-07-10T13:08:23 | https://dev.to/phalcon/phalcon-swoole-in-high-load-micro-service-23nn | phalcon, swoole, microservices | ---

title: Phalcon + Swoole in High Load Micro Service

published: true

date: 2024-06-16 16:00:00 UTC

tags: phalcon,swoole,microservice

canonical_url:

---

## Introduction

This journey took me four years in total. Four years of meticulous planning, incremental steps, and countless hours of coding and debugging to migr... | niden |

1,891,764 | Functional builders in Java with Jilt | A few months ago, I shared an article about what I called Javafunctional builders, inspired by an... | 0 | 2024-07-12T18:30:11 | https://glaforge.dev/posts/2024/06/17/functional-builders-in-java-with-jilt/ | ---

title: Functional builders in Java with Jilt

published: true

date: 2024-06-17 18:31:25 UTC

tags:

canonical_url: https://glaforge.dev/posts/2024/06/17/functional-builders-in-java-with-jilt/

---

A few months ago, I shared an article about what I called Java[functional builders](https://dev.to/glaforge/functional-bu... | glaforge | |

1,894,207 | Unpacking Apache Kafka: The Secret Behind Real-Time Data Mastery | Introduction: The Magic of Apache Kafka in Real-Time Data Streaming Imagine a world where... | 0 | 2024-07-15T15:44:14 | https://dev.to/bala_kannan_494d2e93a1157/unpacking-apache-kafka-the-secret-behind-real-time-data-mastery-28gj | data, pubsub, opensource, eventdriven | ## Introduction: The Magic of Apache Kafka in Real-Time Data Streaming

Imagine a world where data flows like a river, continuously streaming and feeding the needs of complex systems in real-time. What if I told you that this is the reality for some of the world's largest tech companies, powered not by cutting-edge SSD... | bala_kannan_494d2e93a1157 |

1,897,075 | Develop and Test AWS S3 Applications Locally with Node.js and LocalStack | AWS S3 (Simple Storage Service) A scalable, high-speed, web-based cloud storage service... | 0 | 2024-07-13T16:25:19 | https://dev.to/srishtikprasad/develop-and-test-aws-s3-applications-locally-with-nodejs-and-localstack-5efb | node, aws, webdev, localstack | ##AWS S3 (Simple Storage Service)

A scalable, high-speed, web-based cloud storage service designed for online backup and archiving of data and applications.

Provides object storage, which means it stores data as objects within resources called buckets.

Managing files and images is crucial in modern web development, of... | srishtikprasad |

1,897,739 | How to Build an E-commerce Store with Sanity and Next.js | Building an e-commerce store can be daunting, especially when dealing with loads of data that don’t... | 0 | 2024-07-16T11:01:24 | https://dev.to/enodi/how-to-build-an-e-commerce-store-with-sanity-and-nextjs-4099 | sanity, cms, headless, nextjs | Building an e-commerce store can be daunting, especially when dealing with loads of data that don’t change often but still need to be readily available and up-to-date.

That's where tools like Sanity and Next.js come in handy. Sanity is a powerful headless CMS that allows you to manage your content effortlessly, while ... | enodi |

1,898,549 | Digital Signature vs Electronic Signature | You may have noticed that the terms "electronic signature" and "digital signature" are often used... | 0 | 2024-07-13T06:15:18 | https://dev.to/opensign001/digital-signature-vs-electronic-signature-pb8 | webdev, productivity, security, tutorial | You may have noticed that the terms "electronic signature" and "[digital signature](https://opensignlabs.com/)" are often used interchangeably. Still, there is a difference between the two. A digital signature is always electronic, but an electronic signature is not always digital.

Define Continuous... | 27,559 | 2024-07-13T07:02:00 | https://dev.to/suhaspalani/continuous-integration-2f3j | cicd, github, githubactions, workflows | #### Content Plan

**1. Introduction to Continuous Integration (CI)**

- Define Continuous Integration (CI) and its importance in modern software development.

- Benefits of CI: early bug detection, reduced integration issues, improved code quality.

- Briefly introduce GitHub Actions as a CI/CD tool integrated w... | suhaspalani |

1,899,329 | Containerization with Docker | Content Plan 1. Introduction to Containerization Define containerization and its... | 27,559 | 2024-07-15T09:06:00 | https://dev.to/suhaspalani/containerization-with-docker-4mg6 | docker, containers, virtualmachine, dockerhub | #### Content Plan

**1. Introduction to Containerization**

- Define containerization and its importance in modern software development.

- Differences between virtual machines and containers.

- Benefits of using Docker: consistency, scalability, efficiency.

**2. Installing Docker**

- Prerequisites: Docker D... | suhaspalani |

1,900,011 | Artificial Intelligence with ML.NET for text classifications | Recently, Artificial Intelligence (AI) has been gaining popularity at breakneck speed. OpenAI’s... | 0 | 2024-07-16T08:00:00 | https://dev.to/ben-witt/artificial-intelligence-with-mlnet-for-text-classifications-42j6 | ai, csharp, coding, developer | Recently, Artificial Intelligence (AI) has been gaining popularity at breakneck speed.

OpenAI’s ChatGPT was a breakthrough in Artificial Intelligence, and the enthusiasm was huge.

ChatGPT triggered a trend towards AI applications that many companies followed.

You read and hear about AI everywhere. Videos and images of ... | ben-witt |

1,900,425 | Voluntarii proiectului peviitor.ro folosesc GitHub Desktop | Proiectul peviitor.ro este un proiect de tipul OPEN SOURCE pe care fiecare voluntar îl poate edita.... | 0 | 2024-07-15T13:50:41 | https://dev.to/ale23yfm/voluntarii-proiectului-peviitorro-folosesc-github-desktop-2j71 | peviitor, github, git | Proiectul [peviitor.ro](https://peviitor.ro/) este un proiect de tipul OPEN SOURCE pe care fiecare voluntar îl poate edita.

Noi, voluntarii ASOCIATIEI OPORTUNITATI SI CARIERE, folosim GitHub Desktop pentru a clona și a face push pentru toate repository-urile la care lucrăm.

---

[GitHub Desktop](https://desktop.githu... | ale23yfm |

1,901,168 | Simplify Kubernetes Monitoring: Kube-prometheus-stack Made Easy with Glasskube | What do we, as developers and engineers, value most above all else? The answer is simple: our... | 0 | 2024-07-15T10:18:11 | https://dev.to/glasskube/simplify-kubernetes-monitoring-kube-prometheus-stack-made-easy-with-glasskube-54gn | tutorial, opensource, kubernetes, monitoring | What do we, as developers and engineers, value most above all else? The answer is simple: **our time.**

Tools that deliver value in the shortest amount of time have the highest chance of user adoption, it's as simple as that.

What else do most engineers value? Beautiful and data-rich **dashboards**.

.*

## What I Built

<!-- Share an overview of your project. -->

[**EcoDea Beauty**](https://okolosarah402.wixstudio.io/ecodea-beauty/) is a modern and innovative e-commerce store that sells eco-friendly skincare and beauty products.

... | sarahokolo |

1,902,171 | Managing Docker Images | Introduction In the previous posts of the series, we discussed in depth about Docker... | 27,622 | 2024-07-15T06:00:00 | https://dev.to/kalkwst/managing-docker-images-875 | beginners, docker, devops, tutorial | ## Introduction

In the previous posts of the series, we discussed in depth about Docker images. As we've seen, we've been able to take existing images, provided to the general public in Docker Hub, and run them or reuse them for our purposes. The image itself helps us streamline our processes and reduce the work we nee... | kalkwst |

1,902,583 | Streamline MySQL Deployment with Docker and DbVisualizer | This guide demonstrates how to containerize a MySQL database using Docker and manage it using... | 21,681 | 2024-07-15T07:00:00 | https://dev.to/dbvismarketing/streamline-mysql-deployment-with-docker-and-dbvisualizer-54lf | docker, mysql | This guide demonstrates how to containerize a MySQL database using Docker and manage it using DbVisualizer for seamless deployment across various environments.

**Start by writing a Dockerfile.**

```

FROM mysql:latest

ENV MYSQL_ROOT_PASSWORD=password

COPY my-database.sql /docker-entrypoint-initdb.d/

```

**Build your ... | dbvismarketing |

1,902,657 | Database Collation ใน PostgreSQL | Collation คือ Collation... | 0 | 2024-07-14T09:30:35 | https://dev.to/everthing-was-postgres/database-collation-ain-postgresql-1dle | postgres, thai | ## Collation คือ

Collation คือชุดกฎที่กำหนดวิธีการเปรียบเทียบและจัดเรียงข้อมูลประเภทข้อความในฐานข้อมูล โดยมีความสำคัญอย่างยิ่งในการจัดการข้อมูลที่เกี่ยวข้องกับภาษาและวัฒนธรรมที่แตกต่างกัน

**โดยมีวัตถุประสงค์หลัก 3 ประการ ดังนี้:**

**1. การเปรียบเทียบข้อมูล:** ช่วยให้เปรียบเทียบข้อมูลระหว่างชุดข้อมูลต่างๆ ได้อย่างถูกต้... | iconnext |

1,902,772 | Good commit message V/S Bad commit message 🦾 | When developers are pushing their code to VCS(Version Control System) such as Git. If you... | 0 | 2024-07-16T10:43:22 | https://dev.to/sourav_codey/good-commit-message-vs-bad-commit-message-jhi | github, git, commit, development | #### When developers are pushing their code to VCS(Version Control System) such as Git. If you are working in any industry for production level code.

#### One should learn to write better commit message and make it a habit so that it is easy for co-developers to understand the code just by seeing the commit message.

... | sourav_codey |

1,902,962 | Dynamically Choosing Origin Based on Host Header and Path with AWS CloudFront and Lambda@Edge | In a recent project for our customer Skillgym, I faced the challenge of serving content from... | 0 | 2024-06-27T17:41:42 | https://dev.to/petecocoon/dynamically-choosing-origin-based-on-host-header-and-path-with-aws-cloudfront-and-lambdaedge-1c3p | cloudfront, aws, serverless, lambda | In a recent project for our customer [Skillgym](https://www.skillgym.com/), I faced the challenge of serving content from different origins based on specific conditions, like the host subdomain. The goal was to leverage AWS CloudFront to distribute content efficiently while ensuring the flexibility to choose between mu... | petecocoon |

1,903,622 | การนำเข้าข้อมูลจากไฟล์ CSV เข้ามาใน Posstgres : ทักษะเบื้องต้นของ Data Engineer | บทนำ เคยไหม ขณะประชุมการขึ้นระบบ CRM... | 0 | 2024-07-14T08:38:10 | https://dev.to/everthing-was-postgres/kaarnamekhaakhmuulcchaakaifl-csv-ekhaamaaain-posstgres-thaksaebuuengtnkhng-data-engineer-5hgm | postgres, csv, dataengineering | ##บทนำ##

เคยไหม ขณะประชุมการขึ้นระบบ CRM ของฝ่ายขายจะต้องมีการนำรายชื่อลูกค้าของแต่ละคนมาใส่ในฐานข้อมูลพอถามว่าเก็บข้อมูลไว้ที่ไหนจะพบว่าบางคนเก็บใน excle, Google Sheet หรือบางคนจดลงในสมุดก็มีซึ่งพนักงานขายบางคนมีรายชื่อตั้งแต่ 100 ถึง 1,000

ปัญหาต่อมาคือใครจะคีย์ข้อมูลเข้าระบบ ในห้องประชุมเงียบ ประธานในที่ประชุมสั่งก... | iconnext |

1,904,370 | Natural Numbers | What is a number? In mathematics, there are several ways to approach this question. We can look at... | 27,897 | 2024-07-17T07:20:00 | https://dev.to/kalkwst/natural-numbers-4em4 | mathematics | What is a number?

In mathematics, there are several ways to approach this question. We can look at it **semantically**, by understanding what numbers mean. Alternatively, we can take the **axiomatic** approach, focusing on their fundamental properties and behaviors. Or, we can answer the question **constructively** by... | kalkwst |

1,906,596 | 🧭 🇹 When to use the non-null assertion operator in TypeScript | Did you know that TypeScript has a non-null assertion operator? The non-null assertion operator (!)... | 0 | 2024-07-17T16:11:12 | https://dev.to/audreyk/when-to-use-the-non-null-assertion-operator-in-typescript-545f | typescript, coding, todayilearned | Did you know that TypeScript has a [non-null assertion operator](https://www.typescriptlang.org/docs/handbook/release-notes/typescript-2-0.html#non-null-assertion-operator)?

The non-null assertion operator (!) is used to assert that a value is neither null nor undefined. It tells TypeScript's type checker to ignore th... | audreyk |

1,904,494 | How MySQL Tuning Dramatically Improves the Drupal Performance | MySQL Configuration tuning is an important component of database management implemented by database... | 0 | 2024-07-15T10:05:46 | https://releem.com/blog/web-applications-performance?utm_source=devto&utm_medium=social&utm_campaign=drupal-performance&utm_content=canonical | drupal, database, mysql, webdev | MySQL Configuration tuning is an important component of database management implemented by database professionals and administrators. It aims to configure the database to suit its hardware and workload. But beyond the database management sphere, the usefulness of MySQL Configuration tuning is largely ignored.

We hypot... | drupaladmin |

1,904,781 | Clarifying Implementation Intentions: The Key to Achieving Your Goals | Introduction Hello everyone. Today, I'd like to talk about the importance of "clarifying... | 0 | 2024-07-12T20:19:39 | https://dev.to/koshirok096/clarifying-implementation-intentions-the-key-to-achieving-your-goals-32jk | productivity | #Introduction

Hello everyone. Today, I'd like to talk about the importance of "**clarifying implementation intentions**" for achieving your goals.

In my personal opinion, while people are generally good at identifying and setting goals, they often fail to "clarify their implementation intentions." This is something I... | koshirok096 |

1,905,620 | A state of AI in 2024 | If I tell you that about 2 years ago, GenAI arrived with ChatGPT and that now we almost only hear... | 23,402 | 2024-07-15T14:00:00 | https://dev.to/jdxlabs/a-state-of-ai-in-2024-2cgj | ai, genai, llm, cloud | If I tell you that about 2 years ago, [GenAI](https://en.wikipedia.org/wiki/Generative_artificial_intelligence) arrived with [ChatGPT](https://openai.com/chatgpt/) and that now we almost only hear about Artificial Intelligence, in every way, I probably don't tell you much.

I would therefore like, in this article, to b... | jdxlabs |

1,906,039 | Testing React App Using Vitest & React Testing Library | The Problem When developing an application, we can't ensure our app is bug-free. We will... | 0 | 2024-07-13T08:35:34 | https://dev.to/zaarza/testing-react-app-using-vitest-react-testing-library-457j | react, vitest, frontend, javascript | ## The Problem

When developing an application, we can't ensure our app is bug-free. We will test our app before going into production. Often, while creating new features or modifying existing code, we can unintentionally broke previous code and produce errors. It would be useful to know this during the development proc... | zaarza |

1,906,516 | 7 New JavaScript Set Methods | TL;DR: JavaScript’s Set data structure has added 7 new APIs that are very useful and can reduce the... | 0 | 2024-07-13T14:38:15 | https://webdeveloper.beehiiv.com/p/7-new-javascript-set-methods | webdev, javascript, programming, typescript | TL;DR: JavaScript’s `Set` data structure has added 7 new APIs that are very useful and can reduce the need for libraries like lodash. They are:

- [intersection()](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Set/intersection?utm_source=webdeveloper.beehiiv.com&utm_medium=newsletter&... | zacharylee |

1,906,839 | Security in LLMs: Safeguarding AI Systems - V | Welcome to the final installment of our series on Generative AI and Large Language Models (LLMs). In... | 0 | 2024-07-13T18:09:43 | https://dev.to/mahakfaheem/security-in-llms-safeguarding-ai-systems-v-1o0d | security, community, learning, machinelearning | Welcome to the final installment of our series on Generative AI and Large Language Models (LLMs). In this blog, we will explore the critical topic of security in LLMs. As these models become increasingly integrated into various applications, ensuring their security is paramount. We will discuss the types of security th... | mahakfaheem |

1,906,974 | Nueva gran actualización en nuestro sitio web | Buenas tardes, queridos lectores. Como muchos de ustedes saben, llevo un año trabajando intensamente... | 0 | 2024-07-15T17:51:45 | https://danieljsaldana.dev/nueva-gran-actualizacion-en-nuestro-sitio-web/ | blog, concepto, astro, spanish | ---

title: Nueva gran actualización en nuestro sitio web

tags: Blog, Concepto, Astro, Spanish

published: true

canonical_url: https://danieljsaldana.dev/nueva-gran-actualizacion-en-nuestro-sitio-web/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/nxsrk6ivi1aetljw6vw4.png

---

Buenas tardes, querid... | danieljsaldana |

1,907,264 | Kutter Discount Wars: A videogame based e-commerce experience. | This is a submission for the Wix Studio Challenge . What I Built Kutter Discount Wars... | 0 | 2024-07-13T17:11:52 | https://dev.to/vpengine/kutter-discount-wars-16ik | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

<!-- Share an overview about your project. -->

Kutter Discount Wars offers a videogame based e-commerce experience.

Users play a simple alien-caterpillar invasion game and their play score determines the discount ... | vpengine |

1,907,458 | Why Am a Tech Community Ambassador in East Africa!! | WHAT KEEPS ME AWAKE! I am deeply passionate about empowering youth to lead better lives. In today's... | 0 | 2024-07-14T14:44:07 | https://dev.to/aws-builders/why-im-a-tech-community-ambassador-in-east-africa-20e4 | techcommunity, learning | **WHAT KEEPS ME AWAKE!**

I am deeply passionate about empowering youth to lead better lives. In today's rapidly evolving world, technology offers incredible opportunities for young people to improve their circumstances and contribute positively to their communities. I believe that by providing the right resources and s... | adelinemakokha |

1,908,285 | Implementing Background Jobs with Hangfire: A Hands-On Guide | Hangfire is a robust library for managing background jobs in .NET applications, allowing developers... | 0 | 2024-07-14T02:31:29 | https://rmauro.dev/implementing-background-jobs-with-hangfire-a-hands-on-guide/ | csharp, dotnet | Hangfire is a robust library for managing background jobs in .NET applications, allowing developers to easily create and manage tasks that run asynchronously.

Whether you're scheduling recurring tasks, executing one-off jobs, or managing time-consuming operations without blocking the main thread, Hangfire provides a f... | rmaurodev |

1,908,315 | Ibuprofeno.py💊| #141: Explica este código Python | Explica este código Python Dificultad: Intermedio lista =... | 25,824 | 2024-07-15T11:00:00 | https://dev.to/duxtech/ibuprofenopy-141-explica-este-codigo-python-1ic6 | python, spanish, beginners, learning | ## **<center>Explica este código Python</center>**

#### <center>**Dificultad:** <mark>Intermedio</mark></center>

```py

lista = [6,7,8,9,10]

res_map = list(map(lambda x: (x**2)/2, lista))

print(res_map)

```

* **A.** `[3.0, 3.5, 4.0, 4.5, 5.0]`

* **B.** `[18.0, 24.5, 32.0, 40.5, 50.0]`

* **C.** `[36, 49, 64, 81, 100]`... | duxtech |

1,908,318 | Ibuprofeno.py💊| #142: Explica este código Python | Explica este código Python Dificultad: Intermedio lista =... | 25,824 | 2024-07-16T11:00:00 | https://dev.to/duxtech/ibuprofenopy-142-explica-este-codigo-python-10f1 | python, spanish, learning, beginners | ## **<center>Explica este código Python</center>**

#### <center>**Dificultad:** <mark>Intermedio</mark></center>

```py

lista = [6,7,8,9,10]

res_filter = list(filter(lambda x: x > 8 ,lista))

print(res_filter)

```

* **A.** `[9, 10]`

* **B.** `[6, 7, 8, 9, 10]`

* **C.** `[1, 2, 3, 4, 5, 6, 7]`

* **D.** `[8, 9, 10]`

--... | duxtech |

1,908,319 | Ibuprofeno.py💊| #143: Explica este código Python | Explica este código Python Dificultad: Intermedio from functools import... | 25,824 | 2024-07-17T11:00:00 | https://dev.to/duxtech/ibuprofenopy-143-explica-este-codigo-python-43n0 | spanish, learning, beginners, python | ## **<center>Explica este código Python</center>**

#### <center>**Dificultad:** <mark>Intermedio</mark></center>

```py

from functools import reduce

res_reduce = reduce(lambda x,y : x+y, range(0,5))

print(res_reduce)

```

* **A.** `11`

* **B.** `9`

* **C.** `10`

* **D.** `8`

---

{% details **Respuesta:** %}

👉 **C... | duxtech |

1,908,900 | Throwing a javelin and finding where it lands | Originally published on peateasea.de. As part of tutoring physics and maths to high school students,... | 0 | 2024-07-13T14:26:07 | https://peateasea.de/throwing-a-javelin-and-finding-where-it-lands/ | highschool, maths, python | ---

title: Throwing a javelin and finding where it lands

published: true

date: 2024-07-01 22:00:00 UTC

tags: Highschool,Maths,Python

canonical_url: https://peateasea.de/throwing-a-javelin-and-finding-where-it-lands/

cover_image: https://peateasea.de/assets/images/javelin-throw.png

---

*Originally published on [peateas... | peateasea |

1,911,856 | Packer & Proxmox: A Bumpy Road | Several months ago I used Packer to simplify the creation of VM templates on my organization's... | 0 | 2024-07-16T22:30:27 | https://dev.to/shandoncodes/packer-proxmox-a-bumpy-road-1de2 | devops | Several months ago I used Packer to simplify the creation of VM templates on my organization's [vSphere](https://www.vmware.com/products/vsphere.html) instance. While getting Packer to work was not the most pleasant, the benefits of using it had begun to show and so I decided to begin using Packer in my [homelab](https... | shandoncodes |

1,909,586 | Ready for the iPhone 16? | This is a submission for the Wix Studio Challenge . What I Built Have you seen those long... | 0 | 2024-07-15T00:15:33 | https://dev.to/anshsaini/ready-for-the-iphone-16-4eah | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

<!-- Share an overview about your project. -->

Have you seen those long lines in front of stores before a sale is about to begin? That's exactly what I've built using Wix Studio.

The website showcases a landing pag... | anshsaini |

1,909,653 | JavaScript Loops for Beginners: Learn the Basics | It's a gloomy Monday, and you are at work. We all know how depressing Mondays can be, right? Your... | 0 | 2024-07-17T18:44:03 | https://dev.to/sephjoe12/javascript-loops-for-beginners-learn-the-basics-jhk | webdev, javascript, beginners, tutorial | ---

It's a gloomy Monday, and you are at work. We all know how depressing Mondays can be, right? Your boss approaches you and says, "Hey, I have 300 unopened emails we've received over the weekend. I want you to open each one, write down the sender's name, and delete the emails once you're done."

This task looks ver... | sephjoe12 |

1,910,306 | Tailwind Commands Cheat Sheet | Tailwind CSS is a utility-first CSS framework packed with classes that can be composed to build any... | 0 | 2024-07-13T13:54:02 | https://dev.to/madgan95/tailwind-commands-cheat-sheet-2mb3 | webdev, tailwindcss, css, beginners | Tailwind CSS is a utility-first CSS framework packed with classes that can be composed to build any design, directly in your markup.

#Features:

##Utility-first:

Tailwind css is a utility-first css framework that provides low-level utility classes to build custom designs without writing css.This approach allows us to ... | madgan95 |

1,910,349 | What’s New in React Gantt Chart: 2024 Volume 2 | TL;DR: Boost project scheduling visualization, accuracy, and debugging efficiency with the new... | 0 | 2024-07-17T16:53:43 | https://www.syncfusion.com/blogs/post/whats-new-react-gantt-chart-2024-vol2 | react, gantt, web, ui | ---

title: What’s New in React Gantt Chart: 2024 Volume 2

published: true

date: 2024-07-03 13:50:09 UTC

tags: react, gantt, web, ui

canonical_url: https://www.syncfusion.com/blogs/post/whats-new-react-gantt-chart-2024-vol2

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/lxmreuel2gubgwmne50i.jpeg

-... | jollenmoyani |

1,910,812 | Create your simple infrastructure using IaC Tool Terraform, CloudFormation or AWS CDK | Day 001 of 100DaysAWSIaCDevopsChallenge In this article I am going to design a simple... | 0 | 2024-07-17T11:01:10 | https://dev.to/nivekalara237/create-your-simple-infrastructure-using-iac-tool-terraform-cloudformation-or-aws-cdk-256k | terraform, cdk, aws, 100daysawsiacdevopschallenge |

###### <span style="color: #1B9CFC;font-weight: bold;font-size: 22px;border: 1px solid #FC427B;padding: 1rem;border-radius: 5% ">Day 001 of 100DaysAWSIaCDevopsChallenge</span>

____

In this article I am going to design a simple AWS infrastructure and build it using three IaC (Infrastructure As Code) tools: AWS CloudF... | nivekalara237 |

1,911,376 | Simplify Your Code with Wisper | Are you having a hard time with huge classes in your code? Are your classes holding too many... | 0 | 2024-07-15T07:05:07 | https://dev.to/wecasa/simplify-your-code-with-wisper-3284 | Are you having a hard time with huge classes in your code? Are your classes holding too many responsibilities making your code hard to maintain? You're not alone. Many developers face this problem known as high code coupling.

At Wecasa, we strive to keep things simple and small to minimize cognitive load. Small, focus... | captain_tsubasa | |

1,911,429 | The Mathematics of Algorithms | One of the most crucial considerations when selecting an algorithm is the speed with which it is... | 27,956 | 2024-07-12T06:00:00 | https://dev.to/kalkwst/algorithmic-thinking-4n9p | algorithms, beginners |

One of the most crucial considerations when selecting an algorithm is the speed with which it is likely to complete. Predicting this speed involves using mathematical methods. This post delves into the mathematical tools, aiming to clarify the terms commonly used in this series and the rest of the literature that desc... | kalkwst |

1,911,535 | Using Parent and Child Flows for better workflow management | If you've been using Power Automate, you might have come across the term "parent flows" or "child... | 0 | 2024-07-15T06:03:18 | https://dev.to/fernandaek/using-parent-and-child-flows-for-better-workflow-management-115c | powerplatform, powerfuldevs, powerautomate | If you've been using Power Automate, you might have come across the term "parent flows" or "child flows." These concepts are super useful for organizing and managing your automation processes efficiently. In this blog post, I'll break down what parent flows and child flows are, why you should use them, and how to get s... | fernandaek |

1,911,537 | Play Game 🎮 Earn Coupon | Wix Studio eCommerce Engagement 📈 Tool | This is a submission for the Wix Studio Challenge . What I Built A game to increase user... | 0 | 2024-07-15T02:58:36 | https://dev.to/rajeshj3/play-game-earn-coupon-wix-studio-ecommerce-engagement-tool-29l0 | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

A game to increase user engagement 📈 on your eCommerce website. By allowing them to play a crazy math 🧠 game and win 🎖️ Coupons.

---

## Demo

🔴 Live Demo: [https://rajeshj3.wixstudio.io/ecom](https://rajeshj3.w... | rajeshj3 |

1,911,778 | Optimizing Blazor TreeView Performance with Virtualization | TL;DR: Enhance the performance of the Syncfusion Blazor TreeView component with the new... | 0 | 2024-07-17T16:55:17 | https://www.syncfusion.com/blogs/post/blazor-treeview-virtualization | blazor, treeview, ui, web | ---

title: Optimizing Blazor TreeView Performance with Virtualization

published: true

date: 2024-07-04 12:32:21 UTC

tags: blazor, treeview, ui, web

canonical_url: https://www.syncfusion.com/blogs/post/blazor-treeview-virtualization

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/hln9fs1mtg69x37wub... | jollenmoyani |

1,911,905 | Taming the Unpredictable - How Continuous Alignment Testing Keeps LLMs in Check | Ensure the reliability and consistency of your LLM-based systems with Continuous Alignment Testing. Utilize Repeat Tests, seed values, and the choices feature in OpenAI Chat Completions to manage the inherent unpredictability of AI responses. Define precise inputs and expected outcomes early in the development process,... | 0 | 2024-07-14T03:15:15 | https://dev.to/dev3l/taming-the-unpredictable-how-continuous-alignment-testing-keeps-llms-in-check-df6 | ai, softwaredevelopment, generativeai, extremeprogramming | ---

title: Taming the Unpredictable - How Continuous Alignment Testing Keeps LLMs in Check

published: true

description: Ensure the reliability and consistency of your LLM-based systems with Continuous Alignment Testing. Utilize Repeat Tests, seed values, and the choices feature in OpenAI Chat Completions to manage the ... | dev3l |

1,911,961 | Introduction to Functional Programming in JavaScript: Lenses #9 | Lenses are a powerful and elegant way to focus on and manipulate parts of immutable data structures... | 0 | 2024-07-12T22:00:00 | https://dev.to/francescoagati/introduction-to-functional-programming-in-javascript-lenses-9-3217 | javascript | Lenses are a powerful and elegant way to focus on and manipulate parts of immutable data structures in functional programming. They provide a mechanism to get and set values within nested objects or arrays without mutating the original data.

#### What are Lenses?

A lens is a first-class abstraction that provides a w... | francescoagati |

1,911,963 | Introduction to Functional Programming in JavaScript: Applicatives #10 | Applicatives provide a powerful and expressive way to work with functions and data structures that... | 0 | 2024-07-14T22:00:00 | https://dev.to/francescoagati/introduction-to-functional-programming-in-javascript-applicatives-10-1n9h | javascript |

Applicatives provide a powerful and expressive way to work with functions and data structures that involve context, such as optional values, asynchronous computations, or lists. Applicatives extend the concept of functors, allowing for the application of functions wrapped in a context to values also wrapped in a conte... | francescoagati |

1,911,978 | Introduction to Functional Programming in JavaScript: Different monads #11 | Monads are a fundamental concept in functional programming that provide a way to handle computations... | 0 | 2024-07-15T22:00:00 | https://dev.to/francescoagati/introduction-to-functional-programming-in-javascript-different-monads-11-2je1 | javascript | Monads are a fundamental concept in functional programming that provide a way to handle computations and data transformations in a structured manner. There are various types of monads, each designed to solve specific problems and handle different kinds of data and effects.

#### What is a Monad?

A monad is an abstrac... | francescoagati |

1,911,979 | Introduction to Functional Programming in JavaScript: Do monads #12 | In functional programming, monads provide a way to handle computations in a structured and... | 0 | 2024-07-16T22:00:00 | https://dev.to/francescoagati/introduction-to-functional-programming-in-javascript-do-monads-12-362a | javascript | In functional programming, monads provide a way to handle computations in a structured and predictable manner. Among various monads, the Do Monad (also known as the "Do notation" or "Monad comprehension") is a powerful construct that allows for more readable and imperative-style handling of monadic operations.

#### W... | francescoagati |

1,911,989 | How to deploy a free Kubernetes Cluster with Oracle Cloud Always free tier | Você pode ler este artigo em Português clicando aqui. The recent changes in Vercel's billing system... | 28,072 | 2024-07-16T17:57:05 | https://www.ronilsonalves.com/articles/how-to-deploy-a-free-kubernetes-cluster-with-oracle-cloud-always-free-tier | devops, docker, kubernetes, tutorial | _Você pode ler este artigo em Português [clicando aqui](https://www.ronilsonalves.com/pt/artigos/como-fazer-o-deploy-gratuito-de-um-cluster-kubernetes-com-o-always-free-tier-da-oracle-cloud)._

The recent changes in Vercel's billing system have prompted many developers to seek alternative platforms that offer cost-effe... | ronilsonalves |

1,912,003 | What’s New in Blazor Image Editor: 2024 Volume 2 | TL;DR: Syncfusion Blazor Image Editor is the perfect tool for all image editing requirements. Explore... | 0 | 2024-07-17T17:01:54 | https://www.syncfusion.com/blogs/post/blazor-image-editor-2024-volume-2 | blazor, development, webdev, web | ---

title: What’s New in Blazor Image Editor: 2024 Volume 2

published: true

date: 2024-07-04 17:01:34 UTC

tags: blazor, development, webdev, web

canonical_url: https://www.syncfusion.com/blogs/post/blazor-image-editor-2024-volume-2

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/wtbh32wxkzqfeyrjwu... | jollenmoyani |

1,912,873 | Efficient Driver's License Recognition with OCR API: Step-by-Step Tutorial | Introduction Optical Character Recognition (OCR) technology has transformed the way we... | 0 | 2024-07-15T10:58:14 | https://dev.to/api4ai/efficient-drivers-license-recognition-with-ocr-api-step-by-step-tutorial-42n2 | ocr, python, api4ai, opencv | #Introduction

Optical Character Recognition (OCR) technology has transformed the way we convert various document types—such as scanned paper documents, PDFs, or digital camera images—into editable and searchable data. OCR is vital for automating data entry, enhancing accuracy, and saving time by removing the need for ... | taranamurtuzova |

1,912,880 | Programar es como hablar. | Aprender un nuevo lenguaje de programación es como aprender un nuevo idioma. La esencia de la... | 0 | 2024-07-15T14:04:00 | https://dev.to/missa_eng/programar-es-como-hablar-369f | Aprender un nuevo lenguaje de programación es como aprender un nuevo idioma. La esencia de la comunicación está ahí, pero debes adaptarte a las particularidades de cada lugar.

Ej: Eres un vendedor de pan dulce. A veces hay grandes diferencias (España y Japón), y otras, pequeños cambios que pueden generar grandes pr... | missa_eng | |

1,917,282 | IoT Tech Talk - Cloud Remote Access | Overview In this IoT Tech Talk Marco Stoffel (Presales IoT DACH, Software AG), Christian... | 0 | 2024-07-09T12:24:42 | https://tech.forums.softwareag.com/t/iot-tech-talk-cloud-remote-access/296530/1 | iot, techtalk, device, management | ---

title: IoT Tech Talk - Cloud Remote Access

published: true

date: 2024-06-05 13:35:44 UTC

tags: iot, techtalk, device, management

canonical_url: https://tech.forums.softwareag.com/t/iot-tech-talk-cloud-remote-access/296530/1

---

## Overview

In this IoT Tech Talk Marco Stoffel (Presales IoT DACH, Software AG), Chri... | techcomm_sag |

1,912,917 | Why You Need to Shift-left with Mobile Testing | I feel like there’s always been a love-hate relationship with the concept of testing. Without a... | 0 | 2024-07-13T14:27:02 | https://dev.to/johnjvester/why-you-need-to-shift-left-with-mobile-testing-a2m | mobile, testing, shiftleft, tricentis |

I feel like there’s always been a love-hate relationship with the concept of testing. Without a doubt, the benefits of testing whatever you are building help avoid customers reporting those same discoveries. That’s the... | johnjvester |

1,913,684 | Dealing with Race Conditions: A Practical Example | In your career, you'll encounter Schrödinger's cat problems, situations that sometimes work and... | 0 | 2024-07-13T23:24:11 | https://dev.to/chseki/dealing-with-race-conditions-a-practical-example-1mhg | concurrency, database, go, javascript | In your career, you'll encounter Schrödinger's cat problems, situations that sometimes work and sometimes don't. Race conditions are one of these challenges (yes, just one!).

Throughout this blog post, I'll present a real-world example, demonstrate how to reproduce the problem and discuss strategies for handling race ... | chseki |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.