id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,925,186 | Which Framework is Better for E-commerce Mobile App Development: React Native or Flutter 🤔? | Hello Dev Community 👋🏻! I hope you're all doing well 😊! I'm currently planning to develop an... | 0 | 2024-07-16T09:03:29 | https://dev.to/respect17/which-framework-is-better-for-e-commerce-mobile-app-development-react-native-or-flutter--5983 | mobile, reactnative, discuss, developers | Hello Dev Community 👋🏻!

I hope you're all doing well 😊! I'm currently planning to develop an e-commerce mobile app and need your expertise to help me decide between React Native and Flutter. Both frameworks have their pros and cons, and I'm looking for insights from those who have experience with either (or both) t... | respect17 |

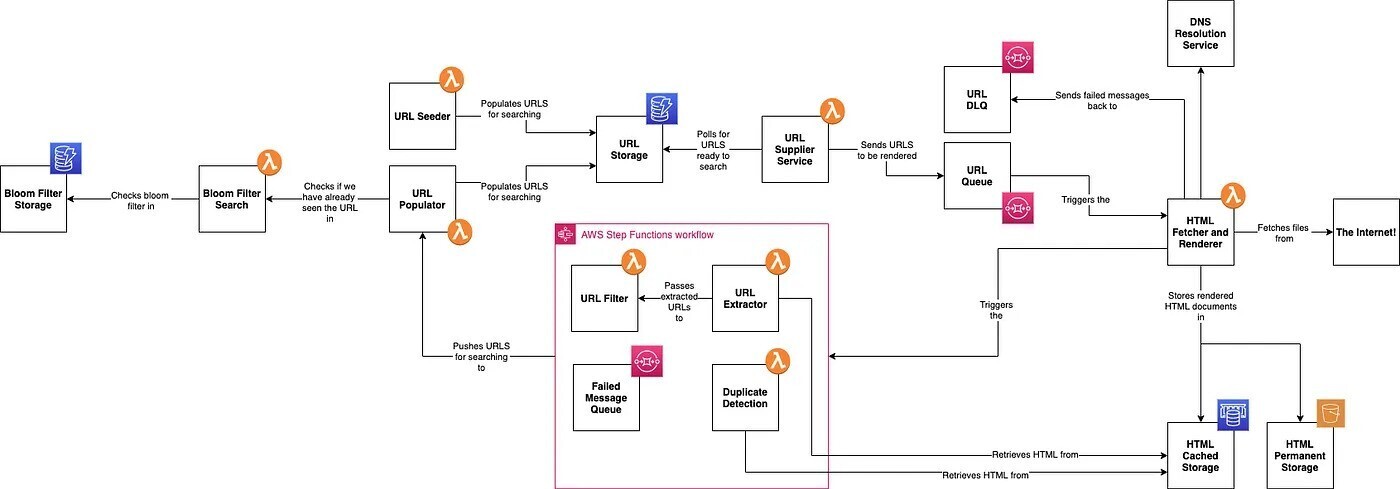

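1,925,187 | Web Crawler Architecture Design | A web crawler is an automated program that traverses the internet, collecting and indexing web page content. The architecture is designed to achieve highly concurrent processing and deduplication while keeping the crawler robust and maintainable. This article explains each component of the crawler system and how the components interact. ... | 0 | 2024-07-16T09:03:37 | https://dev.to/jason_2077/wang-luo-pa-chong-jia-gou-she-ji-3lb | crawler, 爬虫, 架构设计, 代理ip | A web crawler is an automated program that traverses the internet, collecting and indexing web page content. The architecture is designed to achieve highly concurrent processing and deduplication while keeping the crawler robust and maintainable. This article explains each component of the crawler system and how the components interact.

## 1. Overview

The system components, in order, are: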

1. **URL Seeder**

2. **Bloom Filter Storage**

3. **Bloom Filter Search**

4. **URL Populator**

5. **URL... | jason_2077 |

1,925,188 | Tenant Empowerment: Addressing Housing Disrepair through Legal Action | Introduction Housing disrepair is a widespread issue in the UK, affecting countless tenants who... | 0 | 2024-07-16T09:03:47 | https://dev.to/yefav79229/tenant-empowerment-addressing-housing-disrepair-through-legal-action-47h9 | Introduction

Housing disrepair is a widespread issue in the UK, affecting countless tenants who endure poor living conditions due to neglectful landlords. Fortunately, [Housing Disrepair lawyers](https://housingdisrepaircompensationclaim.com/housing-disrepair-london/) play a crucial role in helping these tena... | yefav79229 | |

1,925,189 | SOFTWARE RAID OR HARDWARE RAID: WHAT’S BETTER IN 2024? | RAID (redundant array of independent disks) is a technology that allows combining multiple disk... | 0 | 2024-07-16T09:04:37 | https://dev.to/pltnvs/software-raid-or-hardware-raidwhats-better-in-2024-1b0o | software, hardware, dataengineering | **RAID (redundant array of independent disks) is a technology that allows combining multiple disk drives into arrays or RAID volumes by spreading (striping) data across drives. RAID can be used to improve performance by taking advantage of several drives’ worth of throughput to access the dataset. It can also be used t... | pltnvs |

1,925,190 | Profiting from Currency Discrepancies with Advanced Triangular Arbitrage Bots | Discovering fresh and creative methods to increase profits is important in the evolving world of... | 0 | 2024-07-16T09:05:33 | https://dev.to/rick_grimes/profiting-from-currency-discrepancies-with-advanced-triangular-arbitrage-bots-3pbe | ai, tradingbot, blockchain, bots | Discovering fresh and creative methods to increase profits is important in the evolving world of cryptocurrency trading. Utilizing triangular arbitrage bots is one such technique. These sophisticated tools make trading more profitable and efficient by enabling businesses and businesspeople to capitalize on pricing diff... | rick_grimes |

1,925,191 | Sustainability in Focus: Eco-friendly Trends Reshaping the Silicone Sealants Market | Construction silicone sealants are indispensable materials in modern building and construction... | 0 | 2024-07-16T09:06:14 | https://dev.to/aryanbo91040102/sustainability-in-focus-eco-friendly-trends-reshaping-the-silicone-sealants-market-3clm | news | Construction silicone sealants are indispensable materials in modern building and construction projects. These versatile sealants are formulated to provide durable, flexible seals that withstand various environmental conditions, making them crucial for maintaining structural integrity and energy efficiency in buildings... | aryanbo91040102 |

1,925,192 | A library to sync state between Next.js components and URL | Can sync state between unrelated components and optionally save it to URL, difference from other... | 0 | 2024-07-16T09:09:21 | https://dev.to/asmyshlyaev177/a-library-to-sync-state-between-nextjs-components-and-url-4ial | showdev, nextjs, react |

This library syncs state between unrelated components and can optionally save it to the URL. Unlike other solutions, it can store complex objects and provides TypeScript autocomplete and validation.

Perfect for saving form data.

... | asmyshlyaev177 |

1,925,193 | Product Hunt Survivor Bias | After months of hard work, feedback, and learning, I launched Curl2Url on Product Hunt. Three months... | 0 | 2024-07-16T09:09:24 | https://dev.to/davidsoleinh/product-hunt-survivor-bias-463e | After months of hard work, feedback, and learning, I launched [Curl2Url](https://curl2url.com) on Product Hunt. Three months ago, on March 19, 2024, I eagerly submitted Curl2Url to Product Hunt, only to find it missing from the spotlight. Perplexed, I delved deeper, stumbling upon a tab housing all products posted that... | davidsoleinh | |

1,925,194 | Running a Multi-Container Web App with Docker Compose | Using Docker Compose, this tutorial shows you how to launch a full-stack web application. The program... | 0 | 2024-07-16T09:09:31 | https://dev.to/teetoflame/running-a-multi-container-web-app-with-docker-compose-4b42 | devops, docker, fullstack, hnginternship | Using Docker Compose, this tutorial shows you how to launch a full-stack web application. The program is composed of distinct services for the database (PostgreSQL), frontend (React), and backend (Python). Nginx serves as a reverse proxy for security and efficiency.

This is a DevOps project for my HNG internship and was d... | teetoflame |

1,925,195 | How to Test Games for a Global Audience | Are you planning to release your game to a global audience? Are you concerned about how your game... | 0 | 2024-07-16T09:10:24 | https://dev.to/wetest/how-to-test-a-game-for-a-global-audience-a9b | gamedev, devops, gametesting, python | Are you planning to release your game to a global audience? Are you concerned about how your game will perform in different regions, on various networks, or across numerous payment channels? [Local user testing](https://www.wetest.net/local-user-testing/?utm_source=forum&utm_medium=dev) can help you navigate these chal... | wetest |

1,925,196 | Whatsapp Bulk Message Sender : The Ultimate Solution | Enhance Your Marketing with Whatsapp Bulk Message Sender In today's dynamic digital... | 0 | 2024-07-16T09:10:24 | https://dev.to/simrasah/whatsapp-bulk-message-sender-the-ultimate-solution-1046 | saasyto, whatsappbulkmessage, whatsappbulkmessagesender |

## Enhance Your Marketing with Whatsapp Bulk Message Sender

In today's dynamic digital marketing landscape, leveraging a virtual number for **[WhatsApp bulk message sender](https://saasyto.com/)** can transform you... | simrasah |

1,925,197 | Nextdoor API - Seamless Extraction of Local Neighbor Data | Effortlessly extract data on local neighbors using the Nextdoor API. Gain valuable insights and... | 0 | 2024-07-16T09:11:08 | https://dev.to/iwebscraping/nextdoor-api-seamless-extraction-of-local-neighbor-data-4c64 | nextdoorapi, neighbordata | Effortlessly extract data on local neighbors using the [Nextdoor API](https://www.iwebscraping.com/nextdoor-api.php). Gain valuable insights and foster connections within your community with this seamless data extraction solution. | iwebscraping |

1,925,198 | Shadow DOM vs Virtual DOM: Understanding the Key Differences | As front-end development evolves, technologies like Shadow DOM and Virtual DOM have become... | 0 | 2024-07-16T09:11:36 | https://dev.to/mdhassanpatwary/shadow-dom-vs-virtual-dom-understanding-the-key-differences-3n2i | webdev, programming, html, javascript | As front-end development evolves, technologies like Shadow DOM and Virtual DOM have become increasingly essential. Both aim to improve web application performance and maintainability, but they do so in different ways. This article delves into the key differences between Shadow DOM and Virtual DOM, exploring their use c... | mdhassanpatwary |

1,925,204 | Ascendancy Investment Education Foundation Transforms Investor Education | Ascendancy Investment Education Foundation Transforms Investor Education Introduction to the... | 0 | 2024-07-16T09:19:25 | https://dev.to/wallstreetwire/ascendancy-investment-education-foundation-transforms-investor-education-3hm2 | ascendancyinvestment | **Ascendancy Investment Education Foundation Transforms Investor Education**

Introduction to the Investment Education Foundation

1. Foundation Overview

1.1. Foundation Name: Ascendancy Investment Education Foundation

1.2. Establishment Date: September 2018

1.3. Nature of the Foundation: Private Investment Education Fou... | wallstreetwire |

1,925,199 | Up(sun) and running with Rust: The game-changer in systems programming | Rust is revolutionizing the way we approach systems programming, offering unparalleled safety,... | 0 | 2024-07-16T09:15:17 | https://dev.to/upsun/upsun-and-running-with-rust-the-game-changer-in-systems-programming-24ej | webdev, programming, tutorial, security | Rust is revolutionizing the way we approach systems programming, offering unparalleled safety, concurrency, and performance.

It's not just for low-level systems anymore – web developers are harnessing its power too.

**🌟 Why Upsun + Rust is a match made in developer heaven:**

1️⃣ **Seamless Rust Support:** Deploy ... | celestevanderwatt |

1,925,200 | Understanding Shadow DOM: The Key to Encapsulated Web Components | In modern web development, creating reusable and maintainable components is essential. Shadow DOM,... | 0 | 2024-07-16T09:15:21 | https://dev.to/mdhassanpatwary/understanding-shadow-dom-the-key-to-encapsulated-web-components-4bki | webdev, html, css, javascript | In modern web development, creating reusable and maintainable components is essential. Shadow DOM, part of the Web Components standard, plays a crucial role in achieving this goal. This article delves into the concept of Shadow DOM, its benefits, and how to use it effectively in your projects.

## What is Shadow DOM?

... | mdhassanpatwary |

1,925,201 | IoT for Dummies: Building a Basic IoT Platform with AWS | This article will guide you through creating the fundamental functionalities of an IoT platform using... | 0 | 2024-07-16T09:16:38 | https://dev.to/aws-builders/iot-for-dummies-building-a-basic-iot-platform-with-aws-3a4o | awsiotcore, iot, fleetprovision, iotplatform | This article will guide you through creating the fundamental functionalities of an IoT platform using AWS, with a practical use case focused on monitoring electrical grid parameters to enhance efficiency and contribute to reducing the carbon footprint.

## Understanding the Use Case

In the quest for net-zero emissions... | ysyzygy |

1,925,202 | Implementing Mandatory Health Insurance for Domestic Workers | The Council of Health Insurance provides a variety of vital health services in the Kingdom of Saudi Arabia, where... | 0 | 2024-07-16T09:18:14 | https://dev.to/gooda_rabeh_59cc20109e53d/ttbyq-ltmyn-lshy-llzmy-l-lml-lmnzly-3194 | The Council of Health Insurance provides a variety of vital health services in the Kingdom of Saudi Arabia, where it seeks to improve the efficiency and quality of the health services available to citizens. The council aims to be a globally leading body in improving beneficiaries' health and in promoting transparency and efficiency. A number of important decisions have been taken by the Council of Health Insurance and the Insurance Authority ... | gooda_rabeh_59cc20109e53d | |

1,925,203 | AI in Mobile Apps: What Arm’s Models Mean for Developers | AI in Mobile Apps is rapidly transforming the mobile technology landscape, with Arm’s models leading... | 0 | 2024-07-16T09:19:18 | https://dev.to/hyscaler/ai-in-mobile-apps-what-arms-models-mean-for-developers-3al8 | mobileapps, ai, developers, webdev | AI in Mobile Apps is rapidly transforming the mobile technology landscape, with Arm’s models leading the charge in innovation. As artificial intelligence continues to evolve at breakneck speed, the need for robust hardware that can keep pace with these advancements becomes increasingly critical.

Enter Arm, a leader in... | amulyakumar |

1,925,205 | Leveraging WebAssembly for Performance-Intensive Web Applications | Leveraging WebAssembly for Performance-Intensive Web Applications WebAssembly (Wasm) is a... | 0 | 2024-07-16T09:19:57 | https://dev.to/wisetherumgone/leveraging-webassembly-for-performance-intensive-web-applications-mhp | webdev | ## Leveraging WebAssembly for Performance-Intensive Web Applications

WebAssembly (Wasm) is a game-changer for web developers looking to build high-performance applications. It allows code written in multiple languages (like C, C++, and Rust) to run at near-native speed on the web, enabling complex computations and appl... | wisetherumgone |

1,925,206 | If regular pancakes are already boring: a pancake recipe for a pancake day that everyone will be crazy about | There is nothing left until the Pancake Day celebration, and the selection of the menu is becoming... | 0 | 2024-07-16T09:20:02 | https://dev.to/pizzeriapalokka/if-regular-pancakes-are-already-boring-a-pancake-recipe-for-a-pancake-day-that-everyone-will-be-crazy-about-5gb0 | There is nothing left until the Pancake Day celebration, and the selection of the menu is becoming more and more important. The traditional delicacy this time are pancakes and various fillings for them. However, if the usual dish is already boring, you can prepare so-called pancakes.

Tomatoes are not only delicious, but also very useful vegetables that rightfully have a place of honor in our kitchen. Thanks to their rich vitamin, mineral and antioxidant content, they have a beneficial effect o... | pizzeriapalokka | |

1,925,210 | Know the Significant Differences Between Patches and Fixes | A singular process that denotes upgrade, or repair. The real-time for programmers to shine is not... | 0 | 2024-07-16T09:24:55 | https://dev.to/jamescantor38/know-the-significant-differences-between-patches-and-fixes-2lm7 | patchesandfixes, differences, testgrid | A singular process that denotes upgrade, or repair. The real-time for programmers to shine is not just while building software, but most importantly, when people begin using it regularly. Doing this whilst making sure that the user experience is not affected by debugging it in the best way possible is also dependent on... | jamescantor38 |

1,925,211 | Top 10 Types of Cyber attacks | What is a cyberattack? Cyberattacks are malicious attempts to harm computer systems and networks.... | 0 | 2024-07-16T09:55:08 | https://dev.to/kareemzok/top-10-types-of-cyber-attacks-458o | cybersecurity, cyber, website, security | **What is a cyberattack?**

Cyberattacks are malicious attempts to harm computer systems and networks. Attackers might try to steal, mess with, destroy, or shut down your valuable information. These attacks can come from two main groups:

**Inside Job:** These threats come from people who already have access to the sys... | kareemzok |

1,925,212 | Foodservice Disposables Market Innovations in Packaging and Waste Reduction | Foodservice Disposables Market Introduction & Size Analysis: The foodservice disposables market... | 0 | 2024-07-16T09:27:29 | https://dev.to/ganesh_dukare_34ce028bb7b/foodservice-disposables-market-innovations-in-packaging-and-waste-reduction-5hlo | Foodservice Disposables Market Introduction & Size Analysis:

The foodservice disposables market is projected to reach a valuation of US$123 billion by 2034, growing at a CAGR of 5.8% from 2024 to 2034. This market is experiencing significant growth, driven by the expansion of online sales channels and food delivery ap... | ganesh_dukare_34ce028bb7b | |

1,925,213 | Understanding `setTimeout` and `setInterval` in JavaScript | JavaScript provides several ways to handle timing events, and two of the most commonly used methods... | 0 | 2024-07-16T09:27:41 | https://dev.to/readwanmd/understanding-settimeout-and-setinterval-in-javascript-56k4 | javascript, settimeout, setinterval | JavaScript provides several ways to handle timing events, and two of the most commonly used methods are `setTimeout` and `setInterval`. These functions allow you to schedule code execution after a specified amount of time or repeatedly at regular intervals. In this article, we'll explore how these functions work and pr... | readwanmd |

1,925,214 | Feature Engineering in ML | Hey reader👋 We know that we train machine learning model on a dataset and generate prediction on any... | 0 | 2024-07-16T09:27:47 | https://dev.to/ngneha09/feature-engineering-in-ml-35id | datascience, machinelearning, beginners, tutorial | Hey reader👋

We know that we train a machine learning model on a dataset and generate predictions on unseen data based on that training. The data we use must be structured and well defined so that our algorithm can work efficiently. To make our data more meaningful and useful for our algorithm we perform F... | ngneha09 |

1,925,215 | 5 Software Testing Trends to Look Out for in 2024 | The world of software testing is constantly evolving to keep up with the rapid advancements in... | 0 | 2024-07-16T09:28:37 | https://dev.to/maysanders/5-software-testing-trends-to-look-out-for-in-2024-438h | The world of software testing is constantly evolving to keep up with the rapid advancements in technology. As we step into 2024, several trends are set to reshape the landscape of [software testing services](https://binmile.com/services/qa-and-software-testing-services/). Whether you're a software tester, a developer, ... | maysanders | |

1,925,216 | Understanding OpenAi’s Temperature Parameter | The temperature parameter is used by the GPT Family of completions model provided by OpenAI. Although... | 0 | 2024-07-16T09:29:41 | https://dev.to/mrunmaylangdb/understanding-openais-temperature-parameter-2pj6 | ai, llm, openai, chatgpt | The temperature parameter is used by the GPT family of completion models provided by OpenAI. Although it is a handy parameter, it is not very self-explanatory.

In the OpenAI API its value ranges from 0 to 2 (the default is 1). It controls the randomness of the LLM's output: values near 0 make the output close to deterministic, while higher values make it more random.
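
To make the effect concrete, here is a minimal sketch of temperature scaling as it is commonly described for language models: the raw scores (logits) are divided by the temperature before a softmax turns them into probabilities. The function name `softmax_with_temperature` and the example logits are made up for illustration, and treating a temperature of 0 as a pure "pick the top score" rule is an assumption. None of this is taken from OpenAI's own implementation.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by a temperature.

    Hypothetical helper for illustration only.
    """
    if temperature <= 0:
        # Assumption: treat 0 as greedy decoding, so all probability
        # mass goes to the highest-scoring entry.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]

    scaled = [x / temperature for x in logits]
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate tokens.
logits = [2.0, 1.0, 0.1]
for t in (0, 0.5, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it should show the distribution flattening as the temperature rises: at 0 the top token takes everything, and at 1.0 the lower-scoring tokens get a noticeably larger share than at 0.5.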

Let’s visualise that by taking an... | mrunmaylangdb |

1,925,217 | Transform Your Data with Expert Tableau Consulting & Integration Services | In the digital age, data is a crucial asset for any business. However, harnessing the full potential... | 0 | 2024-07-16T09:30:23 | https://dev.to/shreya123/transform-your-data-with-expert-tableau-consulting-integration-services-1dmm | tableau, consultingservices, dataintegration | In the digital age, data is a crucial asset for any business. However, harnessing the full potential of your data can be a complex challenge. This is where our Tableau Consulting & Integration Services come in. We specialize in transforming your raw data into actionable insights, helping you make informed decisions and... | shreya123 |

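1,925,219 | The Impact of the Trump Attack on China and the Positive Role of Proxy IPs in Overseas Business | Introduction: The attack on Trump at a public event has drawn worldwide attention and unease. The incident has not only had a profound effect on the American political system but also challenges the stability of the international political and economic order. For present-day China in particular, the incident may, in several respects, bring... | 0 | 2024-07-16T09:30:50 | https://dev.to/jason_2077/chuan-pu-yu-xi-shi-jian-dui-zhong-guo-de-ying-xiang-ji-dai-li-ipzai-chu-hai-ye-wu-zhong-de-ji-ji-zuo-yong-1cdo | 川普遇袭, 国际经济, 出海业务, 代理ip | **Introduction**

The attack on Trump at a public event has drawn worldwide attention and unease. The incident has not only had a profound effect on the American political system but also challenges the stability of the international political and economic order. For present-day China in particular, it may have direct or indirect effects in several areas. Meanwhile, as Chinese companies accelerate their overseas expansion, proxy IP technology is becoming increasingly important in international business development.

**The Impact of the Trump Attack on China**

As an extraordinary international political event, the attack on Trump can be analyzed for its impact on China from the following angles:

**1. Greater uncertainty in the international political landscape**

Trump is an influential former president, and the assassination attempt against him signals sharper domestic political conflict and growing instability in the United States. This may make US behavior in international affairs harder to predict, further increasing uncertainty in the international situation. China... | jason_2077 |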

1,925,220 | Getting to Know plv8: When Postgres Has to Link Minds with JavaScript | Introduction. The other day I was browsing the Khadev Facebook fan page, a gathering place for experts in the developer community... | 0 | 2024-07-16T09:41:17 | https://dev.to/everthing-was-postgres/ruucchakkab-plv8-emuue-postres-tngmaaechuuemcchitkab-javascript-45no | ## Introduction

The other day I was browsing the [Khadev Facebook fan page](https://www.facebook.com/plugins/post.php?href=https%3A%2F%2Fwww.facebook.com%2Fkhadev%2Fposts%2Fpfbid0h5iDDRpqpnrSeUTwJvmPapsKRjBBrbHmepK8fSHDKMxhbtYAfu9fF145QNMcQYs4l&show_text=true&width=500" width="500" height="397" style="border:none;overflow:hidden" scrolling="no" f... | iconnext | |

1,925,221 | Integrate APIs in Android: Compose, MVVM, Retrofit | In this guide, we will explore how to integrate an API within a Jetpack Compose Android app using the... | 0 | 2024-07-16T09:33:09 | https://dev.to/tappai/integrate-apis-in-android-compose-mvvm-retrofit-4ec4 | android, devops, api, programming | In this guide, we will explore how to integrate an API within a Jetpack Compose Android app using the MVVM pattern. Retrofit will handle network calls, LiveData will manage data updates, and Compose will construct the UI. We'll use an API that supplies credit card details.

Prerequisites:

Familiarity with Jetpack Compos... | tapp_ai |

1,925,222 | Fueling the Digital Economy - BEP20 Token Development! | Imagine you're at a massive digital bazaar, where people from all around the world come to trade. But... | 0 | 2024-07-16T09:34:57 | https://dev.to/elena_marie_dad5c9d5d5706/fueling-the-digital-economy-bep20-token-development-5om | bep20, token, cryptotoken |

Imagine you're at a massive digital bazaar, where people from all around the world come to trade. But instead of physical goods, they're trading digital assets. Now, to make this market work smoothly, everyone needs a common language or standard for their trades. This is where BEP20 tokens come into play, and their de... | elena_marie_dad5c9d5d5706 |

1,925,223 | Prepare and season meals correctly: 14 important cooking tips from experienced housewives | Cooking is a real skill with many subtleties and secrets. Today we share 14 cooking tips that are... | 0 | 2024-07-16T09:36:13 | https://dev.to/pizzeriapalokka/prepare-and-season-meals-correctly-14-important-cooking-tips-from-experienced-housewives-13f4 | Cooking is a real skill with many subtleties and secrets. Today we share 14 cooking tips that are handy in all kitchens and will help you prepare even more delicious dishes. Here is what experienced chefs and housewives recommend.

First, if the soup is too salty , put a piece of black bread in it and simmer for 10 min... | pizzeriapalokka | |

1,925,224 | How to Prepare for the CISSP Certification Exam in 2024: Tips and Strategies | The Certified Information Systems Security Professional (CISSP) certification is a globally... | 0 | 2024-07-16T09:36:52 | https://dev.to/sonali_gupta_4687bf0b8666/how-to-prepare-for-the-cissp-certification-exam-in-2024-tips-and-strategies-41o7 | cissp | The Certified Information Systems Security Professional (CISSP) certification is a globally recognized credential that validates an individual’s expertise in information security. Achieving this certification can significantly boost your career in cybersecurity, opening doors to advanced roles and higher salaries. Howe... | sonali_gupta_4687bf0b8666 |

1,925,225 | Safely Experiment with Angular 18: A Guide for Developers with Existing 16 & 17 Projects | Exploring Angular 18 Without Disrupting Existing Projects I was recently working on an... | 0 | 2024-07-16T09:37:34 | https://dev.to/ingila185/safely-experiment-with-angular-18-a-guide-for-developers-with-existing-16-17-projects-3c3 | angular, typescript, javascript, angularcli | ## Exploring Angular 18 Without Disrupting Existing Projects

I was recently working on an Angular 17 project and felt the itch to explore the exciting new features of Angular 18. However, I wanted to do this in a way that wouldn't affect my existing projects that were already in production or QA phases. This presented... | ingila185 |

1,925,227 | 10 Pro Fraud Detection Strategies for Business in 2024 | Insider Market Research | Top 10 Fraud Detection Strategies: 1. Data Analytics & Machine Learning 2. Real-Time Monitoring... | 0 | 2024-07-16T09:38:05 | https://dev.to/insider_marketresearch_8/10-pro-fraud-detection-strategies-for-business-in-2024-insider-market-research-3lfd | Top 10 Fraud Detection Strategies: 1. Data Analytics & Machine Learning 2. Real-Time Monitoring 3. Multi-Factor Authentication (MFA) 4. Behavioral Biometrics 5. Anomaly Detection and Read more.

Read More: https://insidermarketresearch.com/top-fraud-detection-strategies-2024/

is generated by passing oxygen (O2) through a high-voltage electric field or UV light, splitting the oxygen molecules into single atoms... | aryanbo91040102 |

1,925,229 | Beginner Frontend Mistakes and How to Avoid Them | Embarking on the journey to become a frontend developer is both exciting and challenging. With a... | 0 | 2024-07-16T09:38:55 | https://dev.to/klimd1389/beginner-frontend-mistakes-and-how-to-avoid-them-4j6a | webdev, frontend, html | Embarking on the journey to become a frontend developer is both exciting and challenging. With a plethora of tools, frameworks, and best practices, it’s easy for beginners to make mistakes. Here are some common frontend mistakes and tips on how to avoid them:

Neglecting Mobile Responsiveness

The Mistake

Many beginner... | klimd1389 |

1,925,230 | Fybros: Your Trusted Partner for Electrical Solutions in India | Welcome to Fybros, your one-stop destination for high-quality electrical solutions in India. At... | 0 | 2024-07-16T09:39:26 | https://dev.to/modularswitches/fybros-your-trusted-partner-for-electrical-solutions-in-india-h13 | modularswitches, ledlighting, switchgears, switches | Welcome to Fybros, your one-stop destination for high-quality electrical solutions in India. At Fybros, we are committed to providing innovative and reliable electrical products that cater to both residential and commercial needs. Our extensive range of products includes [Best Modular Electrical Switches in India](http... | modularswitches |

1,925,231 | test | test | 0 | 2024-07-16T09:40:20 | https://dev.to/saraswati_tiwari_2dc0bb87/test-20f7 | webdev | test | saraswati_tiwari_2dc0bb87 |

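1,925,232 | Global Duty-Free Cigarettes, Overseas Contract-Manufactured Cigarettes, Long-Established Reputable Shop | https://twitter.com/EJesus26684 Global premium cigarettes, Seven Stars premium duty-free cigarettes, export duty-free cigarettes, overseas Vietnam contract-manufactured cigarettes, a long-established reputable shop. All inquiries welcome. Trial offer for customers. A single pack can be mailed.... | 0 | 2024-07-16T09:41:38 | https://dev.to/zhangqideyan/quan-qiu-mian-shui-yan-guo-wai-dai-jia-gong-xiang-yan-xin-yu-lao-dian-3fo3 | 国外越代加工香烟 | [https://twitter.com/EJesus26684](https://twitter.com/EJesus26684)

Global premium cigarettes, Seven Stars premium duty-free cigarettes, export duty-free cigarettes, overseas Vietnam contract-manufactured cigarettes, a long-established reputable shop. All inquiries welcome. Trial offer for customers. A single pack can be mailed. https://cutt.ly/9ewIqtA2

[**Chinese tobacco**](https://twitter.com/EJesus26684/), [**tobacco**](https://twitter.com/EJesus26684/), [**cigarettes**](https://twitter.com/EJesus26684/), [**smoking**](https://twitter.com/EJe... | zhangqideyan |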

1,925,233 | JavaScript Web Frameworks Benchmark 2024: An In-Depth Analysis | JavaScript web frameworks play a pivotal role in modern web development, offering robust tools and... | 0 | 2024-07-16T09:41:39 | https://dev.to/sfestus90/javascript-web-frameworks-benchmark-2024-an-in-depth-analysis-30om | JavaScript web frameworks play a pivotal role in modern web development, offering robust tools and libraries to streamline the creation of interactive, high-performance web applications. With a plethora of frameworks available, choosing the right one can be daunting. Benchmarking these frameworks based on various perfo... | sfestus90 | |

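1,925,235 | China Tobacco Seven Stars Premium, First-Hand Vietnam-Made Supply | China Tobacco Seven Stars premium, first-hand Vietnam-made supply https://twitter.com/EJesus26684/status/1787358451884331111 China Tobacco Seven Stars premium, domestic cigarettes, duty-free cigarettes, first-hand Vietnam-made supply, long-established reputable... | 0 | 2024-07-16T09:42:59 | https://dev.to/zhangqideyan/zhong-guo-yan-cao-qi-xing-jing-pin-shou-huo-yuan-yue-dai-24i2 | 免税烟 | China Tobacco Seven Stars premium, first-hand Vietnam-made supply

https://twitter.com/EJesus26684/status/1787358451884331111

China Tobacco Seven Stars premium, domestic cigarettes, duty-free cigarettes, first-hand Vietnam-made supply, a long-established reputable shop. All inquiries welcome. Trial offer for customers. A single pack can be mailed. https://cutt.ly/VewIqgSS

[**Chinese tobacco**](https://twitter.com/EJesus26684/), [**tobacco**](https://twitter.com/EJesus26684/), [**cigarettes**](https://twitter.com/EJesus26684/), [**smoking**](https://twitter.com/EJes... | zhangqideyan |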

1,925,236 | Examining Traffic Patterns in UAE by employing traffic surveys. | A post by shoyab | 0 | 2024-07-16T09:43:56 | https://dev.to/tekshoyab/examining-traffic-patterns-in-uae-by-employing-traffic-surveys-369d | ai | [](https://tektronixllc.ae/traffic-counting-solution-dubai-sharjah-ajman-abu-dhabi/) | tekshoyab |

1,925,237 | Python Messaging Queue App Development | Hello guys, Today we'll be developing a Python Messaging Queue Test, which consist of the following... | 0 | 2024-07-16T09:46:01 | https://dev.to/dimeji_ojewunmi_5e27256/python-messaging-queue-app-development-2a25 |

**Hello** guys,

Today we'll be developing a **Python Messaging Queue Test**, which requires the following tools:

1. RabbitMQ

2. Celery

3. Ngrok

4. Nginx

Let's talk about the theoretical aspects of the core tools we'll be using in the course of the task, courtesy of **(HNG Internship)**

What... | dimeji_ojewunmi_5e27256 | |

1,925,239 | Revolutionizing Education: The Future Of Learning Software | The Future of Learning Software As technology continues to evolve at a rapid pace, the future of... | 0 | 2024-07-16T09:51:19 | https://dev.to/saumya27/revolutionizing-education-the-future-of-learning-software-3lip | software | **The Future of Learning Software**

As technology continues to evolve at a rapid pace, the future of learning software is poised to undergo significant transformations. These changes will revolutionize how education is delivered, making it more personalized, engaging, and accessible. Here are some key trends and innov... | saumya27 |

1,925,240 | Unlocking Project Management Excellence with SAP PS: A Comprehensive Guide | In the ever-evolving landscape of business, effective project management is crucial for... | 0 | 2024-07-16T09:52:35 | https://dev.to/mylearnnest/unlocking-project-management-excellence-with-sap-ps-a-comprehensive-guide-3ka0 | sap | In the ever-evolving landscape of business, effective project management is crucial for organizational success. [SAP Project System (SAP PS)](https://www.mylearnnest.com/best-sap-ps-course-in-hyderabad/) is a powerful module within the SAP ERP suite designed to streamline project management processes, enhance efficienc... | mylearnnest |

1,925,241 | Grinstep: Revolutionizing Fitness with Blockchain Technology | In today's world, where health and fitness are more important than ever, Grinstep stands out as a... | 0 | 2024-07-16T09:53:25 | https://dev.to/grinstep/grinstep-revolutionizing-fitness-with-blockchain-technology-4g6p | beginners, blockchain, mobile, web3 | In today's world, where health and fitness are more important than ever, Grinstep stands out as a groundbreaking platform that combines physical activity with blockchain technology. This innovative move-to-earn application is designed to motivate users to stay active while rewarding them with cryptocurrency and NFTs. L... | grinstep |

1,925,242 | Exploring CSS Grid Layout | CSS Grid Layout is a powerful tool for creating responsive and flexible web layouts. Unlike... | 0 | 2024-07-16T09:53:31 | https://dev.to/sfestus90/exploring-css-grid-layout-3p3o | CSS Grid Layout is a powerful tool for creating responsive and flexible web layouts. Unlike traditional layout methods such as floats and flexbox, CSS Grid allows for both rows and columns to be designed simultaneously, providing greater control over the overall layout. This article will explore the fundamentals of CSS... | sfestus90 | |

1,925,243 | Discover NBA YoungBoy Merch on VK! | Follow NBA YoungBoy Merch on VK for exclusive content and updates. Our VK page is your source for the... | 0 | 2024-07-16T09:53:37 | https://dev.to/nbayoungboymerchshop1/discover-nba-youngboy-merch-on-vk-3e4n | nbayoungboymerch, vk | Follow NBA YoungBoy Merch on VK for exclusive content and updates. Our VK page is your source for the latest news, releases, and fan interactions. Connect with other fans and be the first to know about our newest drops.

https://vk.com/id869030320

, [**Tobacco**](https://twitter.com/EJesus26684/), [**Cigarettes**](https://twitter.com/EJesus26684/), [**Smoking**](https://twitter.com/EJe... | zhangqideyan |

1,925,249 | Unlocking the Power of CSS Grid for Modern Web Design | CSS Grid is revolutionizing the way web developers create layouts, offering a flexible and efficient... | 0 | 2024-07-16T09:59:45 | https://dev.to/akshayashet/unlocking-the-power-of-css-grid-for-modern-web-design-1cp | css, grid, responsive, layout | CSS Grid is revolutionizing the way web developers create layouts, offering a flexible and efficient approach to designing responsive web pages. With its powerful features and intuitive syntax, CSS Grid is becoming an essential tool for building modern, dynamic websites.

### Understanding the Basics of CSS Grid

At it... | akshayashet |

1,925,250 | Know The Importance Of SEO For Businesses In Pune | Would you like to delve deeper into any specific point or include additional information in the blog... | 0 | 2024-07-16T10:00:44 | https://dev.to/gayatri_karadkar_2de12e43/know-the-importance-of-seo-for-businesses-in-pune-4gmn | seo | Would you like to delve deeper into any specific point or include additional information in the blog post? Let me know how you’d like to proceed!

Are you struggling to make your business stand out in Pune’s competitive market? Do you dream of reaching the top of search engine results but find it challenging to navigate... | gayatri_karadkar_2de12e43 |

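1,925,251 | China Tobacco first-hand supply, cigarettes contract-manufactured in Vietnam | China Tobacco first-hand supply, cigarettes contract-manufactured in Vietnam https://twitter.com/EJesus26684/status/1785896104007209213 Global Premium: China Tobacco first-hand supply, cigarettes contract-manufactured in Vietnam,... | 0 | 2024-07-16T10:01:02 | https://dev.to/zhangqideyan/zhong-guo-yan-cao-shou-huo-yuan-guo-wai-yue-dai-jia-gong-xiang-yan-35im | 国外越代加工香烟 | China Tobacco first-hand supply, cigarettes contract-manufactured in Vietnam

https://twitter.com/EJesus26684/status/1785896104007209213

Global Premium: China Tobacco first-hand supply and cigarettes contract-manufactured in Vietnam, from a long-established, reputable shop. All inquiries are welcome. Trial offers are available for customers. Orders as small as a single pack can be shipped. https://cutt.ly/FewIqmZC

[**China Tobacco**](https://twitter.com/EJesus26684/), [**Tobacco**](https://twitter.com/EJesus26684/), [**Cigarettes**](https://twitter.com/EJesus26684/), [**Smoking**](https://twitter.com/E... | zhangqideyan |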

1,925,252 | Conditions and Control Flow | One of the most common tasks when writing code is to check whether certain conditions are true or... | 28,032 | 2024-07-16T10:02:57 | https://dev.to/danielmwandiki/conditions-and-control-flow-1cph | rust, learning, codenewbie | One of the most common tasks when writing code is to check whether certain conditions are `true` or `false`.

#### if Expressions

An if expression allows you to branch your code depending on conditions. You provide a condition and then state, “If this condition is met, run this code block. Do not run this code block if ... | danielmwandiki |

1,925,253 | ⚡ MySecondApp - React Native with Expo (P7) - Code Layout Register | ⚡ MySecondApp - React Native with Expo (P7) - Code Layout Register React #ReactNative #Expo... | 28,005 | 2024-07-16T10:02:59 | https://dev.to/skipperhoa/mysecondapp-react-native-with-expo-p7-code-layout-register-2ai1 | react, webdev, reactnative, tutorial | ⚡ MySecondApp - React Native with Expo (P7) - Code Layout Register

#React #ReactNative #Expo #SVG

{% youtube wVtSHB3CXvw %} | skipperhoa |

1,925,254 | Nagano tonic reviews | Nagano Lean Body Tonic is available exclusively through its official website, with various pricing... | 0 | 2024-07-16T10:03:24 | https://dev.to/mountainy_josephiney_979e/nagano-tonic-reviews-277b | weightloss |

[Nagano Lean Body Tonic](https://provenbyexpert.com/) is available exclusively through its official website, with various pricing options and a 180-day money-back guarantee. Bulk purchases come with additional bonuses like guides on anti-aging, sleep improvement, and energy-boosting smoothies. | mountainy_josephiney_979e |

1,925,255 | Can I Expect Price Variations in Tosca Certification Courses Based on the Training Provider in the USA in 2024? | Tosca certification courses have become increasingly popular as the demand for automation testing... | 0 | 2024-07-16T10:05:52 | https://dev.to/veronicajoseph/can-i-expect-price-variations-in-tosca-certification-courses-based-on-the-training-provider-in-the-usa-in-2024-4g5 | tosca, toscacertification, webdev, automation | Tosca certification courses have become increasingly popular as the demand for automation testing grows. With numerous training providers available, potential learners often wonder if the price of Tosca certification courses varies based on the provider. In this comprehensive guide, we'll explore the factors that influ... | veronicajoseph |

1,925,256 | push() Method in JavaScript | The push() method in JavaScript adds one or more elements to the end of an array. This method... | 0 | 2024-07-16T10:19:26 | https://dev.to/sudhanshu_developer/explanation-of-push-method-in-javascript-3cb5 | learning, javascript, programming, webdev | The `push()` method in JavaScript adds one or more elements to the end of an array. This method modifies the original array and returns the new length of the array.

**Syntax:**

```

array.push(element1, element2, ..., elementN);

```
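As a minimal sketch of the behaviour described above (the array contents and variable names are made up for illustration), the return value of `push()` can be captured and compared with the mutated array:

```javascript
// Hypothetical sample data; the names are illustrative only.
const queue = ["a", "b"];

// push() appends its arguments in place and returns the array's new length.
const newLength = queue.push("c", "d");

console.log(newLength); // 4
console.log(queue);     // [ 'a', 'b', 'c', 'd' ]
```

Because `push()` mutates the array it is called on, code that needs the original left untouched would have to copy it first (for example with the spread syntax) before appending.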

**Example 1:**

```

const fruits = ["Apple", "Banana"];

fruits.push("Orange", "Man... | sudhanshu_developer |

1,925,257 | Blue Cross Medical Insurance: Coverage for Medical Implants Market Explained | About the market The global medical implants market is poised for substantial growth, projected to... | 0 | 2024-07-16T10:07:53 | https://dev.to/swara_353df25d291824ff9ee/blue-cross-medical-insurance-coverage-for-medical-implants-market-explained-2dbj |

**About the market**

The global [medical implants market](https://www.persistencemarketresearch.com/market-research/medical-implants-market.asp) is poised for substantial growth, projected to rise from US$ 14.75 bi... | swara_353df25d291824ff9ee | |

1,925,258 | Dental Choice Marlton NJ | Dental Choice Marlton NJ is known for providing comprehensive dental care, including preventative,... | 0 | 2024-07-16T10:09:22 | https://dev.to/primedentalcenter/dental-choice-marlton-nj-5gm0 | Dental Choice Marlton NJ is known for providing comprehensive dental care, including preventative, restorative, and cosmetic services. The clinic prioritizes patient comfort and offers a range of advanced treatments. With a team of experienced dentists and state-of-the-art technology, they ensure personalized care... | primedentalcenter | |

1,925,259 | Why Water Pumps Are Essential for Modern Living | Why Water Pumps Are Essential for Modern Living In today's world, where efficient water... | 0 | 2024-07-16T10:10:07 | https://dev.to/spropumps/why-water-pumps-are-essential-for-modern-living-180k | waterpumps, spropumps, wellpumps, submersiblepumps | <p><b></b></p>

<h1 style="text-align: start;color: rgb(0, 0, 0);font-size: 24px;border: 0px;"><b>Why Water Pumps Are Essential for Modern Living</b></h1>


<p style="text-align: start;color: rgb(136, 136, 136);font-size: 14px;border: 0px;">In today's world, where efficient water management is critical, water... | spropumps |

1,925,260 | NVIDIA RTX A4000 vs A100: Which is Worth It? | Introduction NVIDIA is a top GPU manufacturer with popular models like the RTX A4000 and... | 0 | 2024-07-16T10:12:10 | https://dev.to/novita_ai/nvidia-rtx-a4000-vs-a100-which-is-worth-it-1knl | ## Introduction

NVIDIA is a leading GPU manufacturer whose lineup includes the RTX A4000 and the A100. The RTX A4000 is a workstation card aimed at professionals who need high performance for tasks like 3D rendering and video editing, while the A100 is a data-center accelerator aimed at AI training and inference, data analytics, and high-performance computing rather than gaming or graphics. This post compares their features... | novita_ai | |

1,925,292 | JEST: DeepMind’s Breakthrough for AI Training Efficiency | The pursuit of artificial intelligence (AI) has led to groundbreaking advancements, but it has also... | 0 | 2024-07-16T10:22:55 | https://dev.to/hyscaler/jest-deepminds-breakthrough-for-ai-training-efficiency-2hio | The pursuit of artificial intelligence (AI) has led to groundbreaking advancements, but it has also brought to light a critical challenge: the immense computational resources required for AI training. Training state-of-the-art AI models is an energy-intensive process that places a significant burden on the environment ... | suryalok | |

1,925,263 | Launch documentation site in record time (pouchdocs) | pouchdocs is a documentation site built with SvelteKit and Supabase. Check out the GitHub repo and compare... | 0 | 2024-07-16T10:16:22 | https://dev.to/pouchlabs/launch-documentation-site-in-record-time-pouchdocs-295o | documentation, webdev, sveltekit, opensource | [pouchdocs](https://pouchdocs.pouchlabs.xyz) is a documentation site built with SvelteKit and Supabase. Check out the [GitHub repo](https://github.com/pouchlabs/pouchdocs) and compare it with other docs sites.

Suggestions, contributions, and stars are welcome. | pouchlabs |

1,925,264 | The Role of Water Pumps in Agriculture: Boosting Efficiency and Productivity | The Role of Water Pumps in Agriculture: Boosting Efficiency and Productivity Agriculture is the... | 0 | 2024-07-16T10:18:42 | https://dev.to/spropumps/the-role-of-water-pumps-in-agriculture-boosting-efficiency-and-productivity-32m8 | waterpumps, spropumps, submersible, monoblock | <p><b></b></p>

<h1 style="text-align: start;color: rgb(0, 0, 0);font-size: 24px;border: 0px;"><b>The Role of Water Pumps in Agriculture: Boosting Efficiency and Productivity</b></h1>


<p style="text-align: start;color: rgb(136, 136, 136);font-size: 14px;border: 0px;">Agriculture is the backbone of many economies... | spropumps |

1,925,265 | Digital Da Vincis: The Art of Generative AI | Generative Artificial Intelligence (AI) is a fascinating and rapidly evolving field within AI,... | 0 | 2024-07-16T10:19:14 | https://dev.to/aztec_mirage/digital-da-vincis-the-art-of-generative-ai-26o3 | gpt3, ai, learning, design | Generative Artificial Intelligence (AI) is a fascinating and rapidly evolving field within AI, characterized by its ability to autonomously generate new content. Unlike traditional AI, which primarily focuses on analyzing existing data and making predictions or classifications, generative AI creates original content ra... | aztec_mirage |

1,925,290 | Understanding Multi-cloud Environment: Benefits and Challenges | Understanding Multi-Cloud Environments Multi-cloud refers to using multiple cloud computing services... | 0 | 2024-07-16T10:21:37 | https://dev.to/kalyaniprolific/understanding-multi-cloud-environment-benefits-and-challenges-39ne | digitaltransformation, multicloud, prolifics, itconsulting |

Understanding Multi-Cloud Environments

[Multi-cloud](https://prolifics.com/us/resource-center/data-ai/does-your-cloud-experience-have-a-silver-lining) refers to using multiple [cloud computing](https://prolifics.com... | kalyaniprolific |

1,925,291 | Demystifying Go: A Guide to Core Golang Language Features and Syntax | Golang, often referred to as Go, is a powerful and rapidly growing programming language. Created by... | 0 | 2024-07-16T10:22:36 | https://dev.to/epakconsultant/demystifying-go-a-guide-to-core-golang-language-features-and-syntax-3ioo | go | Golang, often referred to as Go, is a powerful and rapidly growing programming language. Created by Google, Go prioritizes simplicity, scalability, and concurrency, making it a compelling choice for building modern web applications, network services, and cloud-native solutions. This guide delves into the core language... | epakconsultant |

1,925,293 | Hire Test Automation Engineers & Unleash Efficiency in Your Products | Test automation engineers play an increasingly critical role in the quickly changing field of... | 0 | 2024-07-16T10:25:40 | https://dev.to/danieldavis/hire-test-automation-engineers-unleash-efficiency-in-your-products-1c8i | Test automation engineers play an increasingly critical role in the quickly changing field of software development. These experts ensure the developed software's effectiveness, reliability, and quality. Finding and recruiting the most suitable test automation engineer can be a challenge. This article provides insights ... | danieldavis | |

1,925,294 | Boost Your Local Business with The America Online (The America.Online ): Your Ultimate B2B Platform | In today’s competitive market, local businesses need more than just a great product or service to... | 0 | 2024-07-16T10:26:26 | https://dev.to/the_americaonline_17b12f/boost-your-local-business-with-the-america-online-the-americaonline-your-ultimate-b2b-platform-2jg2 | In today’s competitive market, local businesses need more than just a great product or service to thrive. They need visibility, connections, and strategic marketing to reach their true potential. This is where The America Online (TheAmerica.Online) steps in, providing an invaluable platform for business-to-business (B... | the_americaonline_17b12f | |

1,925,295 | Best Immigration Agents in Australia at Jagvimal Consultants | Discover the best migration agents in Australia at Jagvimal Consultants. Expert mara agents and visa... | 0 | 2024-07-16T10:26:28 | https://dev.to/jagvimalconsultants/best-immigration-agents-in-australia-at-jagvimal-consultants-46k8 | Discover the **[best migration agents in Australia](https://jagvimal.com.au)** at Jagvimal Consultants. Expert mara agents and visa consultants for all your immigration needs. Contact our top Australia visa agents.

Visit [https://jagvimal.com.au](https://jagvimal.com.au)

| jagvimalconsultants | |

1,925,296 | Diploma in Nursing in Australia at Jagvimal Consultants | Explore our Diploma of Nursing in Australia at Jagvimal. Discover accredited courses, career... | 0 | 2024-07-16T10:28:35 | https://dev.to/jagvimalconsultants/diploma-in-nursing-in-australia-at-jagvimal-consultants-1i34 | Explore our **[Diploma of Nursing in Australia](https://jagvimal.com.au/courses/diploma-of-nursing)** at Jagvimal. Discover accredited courses, career opportunities, and how to apply for your nursing diploma today.

Visit [https://jagvimal.com.au/courses/diploma-of-nursing](https://jagvimal.com.au/courses/diploma-of-nur... | jagvimalconsultants | |

1,925,297 | NBR Homes | NBR PROPERTIES | What’s in the Modern Homebuyer’s Mind? There is a major change occurring in the Indian real estate... | 0 | 2024-07-16T10:30:09 | https://dev.to/nbr_group_803e4ea08e0b25e/nbr-homes-nbr-properties-4o8k | webdev, beginners, productivity | What’s in the Modern Homebuyer’s Mind?

There is a major change occurring in the Indian real estate market. Nowadays, millennials and Gen Z are leading the way in homeownership, and they have quite different objectives than did earlier generations. They are looking for a complete living experience that fits in well with... | nbr_group_803e4ea08e0b25e |

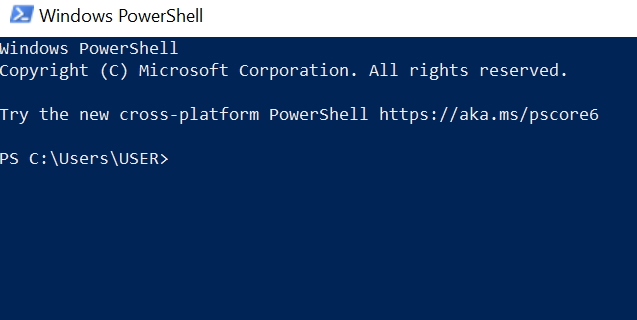

1,925,298 | How to SSH to a Linux server | To SSH into a Linux server means to connect to a Linux server. The following below are outlined steps... | 0 | 2024-07-16T10:32:18 | https://dev.to/stippy4real/how-to-ssh-to-a-linux-server-4oo6 | deveops, virtualmachine, linuxvm, cloudcomputing | To SSH into a Linux server means to connect to a Linux server.

The steps below outline how to connect to a Linux VM over SSH.

Open PowerShell on your computer.

type in the powershell: ssh username@host... | stippy4real |

1,925,299 | IntelliType: Python Type Hinting with Hoverable Docstrings | IntelliType I recently published a Python type-hint library called intelli-type. The main... | 0 | 2024-07-16T10:32:28 | https://dev.to/crimson206/intellitype-python-type-hinting-with-hoverable-docstrings-2bck | python, typehint, cleancode, documentation | ## IntelliType

I recently published a Python type-hint library called [intelli-type](https://github.com/crimson206/intelli-type). The main purpose is to enhance type-hinting with Intellisense.

## Intellisense

When using IntelliType, you need to define custom type hints.

```python

class AudioFeature(IntelliType[Tens... | crimson206 |

1,925,300 | How to Improve Productivity with MuleSoft RPA Integration | In today’s fast-paced business environment, productivity is key to maintaining a competitive edge.... | 0 | 2024-07-16T10:32:50 | https://dev.to/shreya123/how-to-improve-productivity-with-mulesoft-rpa-integration-bfd | mulesoftrpa, mulesoftintegration, mulesoft | In today’s fast-paced business environment, productivity is key to maintaining a competitive edge. One powerful way to boost productivity is through the integration of MuleSoft and Robotic Process Automation (RPA). This combination can streamline processes, reduce manual tasks, and free up valuable time for your workfo... | shreya123 |

1,925,301 | The Causal Decoder-Only Falcon and Its Alternatives | Key Highlights Cutting-Edge Technology: Falcon-40B-Instruct is a 40 billion parameter... | 0 | 2024-07-16T10:57:47 | https://dev.to/novita_ai/the-causal-decoder-only-falcon-and-its-alternatives-1oie | llm, falcon | ## Key Highlights

- **Cutting-Edge Technology:** Falcon-40B-Instruct is a 40 billion parameter causal decoder-only model, leading in performance and innovation in natural language processing.

- **Multilingual Support:** Supports primary languages including English, with extended capabilities in German, Spanish, French... | novita_ai |

1,925,302 | Mastering the Craft: Common Golang Problem-solving Techniques | Golang, with its clean syntax and powerful features, has become a favorite amongst developers for... | 0 | 2024-07-16T10:34:17 | https://dev.to/epakconsultant/mastering-the-craft-common-golang-problem-solving-techniques-45kg | go | Golang, with its clean syntax and powerful features, has become a favorite amongst developers for building modern web applications, network services, and complex systems. This guide explores some common problem-solving techniques in Golang, equipping you to tackle diverse programming challenges effectively.

[Senior S... | epakconsultant |

1,925,304 | Revolutionizing Logistics with Object Detection Technology | The Magic Behind the Scenes: Object Detection at Work Imagine a bustling warehouse, once a... | 27,673 | 2024-07-16T10:35:38 | https://dev.to/rapidinnovation/revolutionizing-logistics-with-object-detection-technology-33hj | ## The Magic Behind the Scenes: Object Detection at Work

Imagine a bustling warehouse, once a maze of chaos, now transformed into an

epitome of order and efficiency, all thanks to object detection technology.

These systems, equipped with advanced sensors and algorithms, excel at more

than just recognizing packages; th... | rapidinnovation | |

1,925,305 | Top 3 ways to find flat and flatmates Ahmedabad | Flat and Flatmates Ahmedabad: Top 3 Ways to Find the Perfect Flatmate Flat and flatmates Ahmedabad... | 0 | 2024-07-16T10:37:51 | https://dev.to/citynect/top-3-ways-to-find-flat-and-flatmates-ahmedabad-4jpp | **[Flat and Flatmates Ahmedabad](https://blogs.citynect.in/flat-and-flatmates-ahmedabad/)**: Top 3 Ways to Find the Perfect Flatmate

Ahmedabad is not just any city; it’s a vibrant blend of cultures, dreams, and opportunities. Whether you’re a student or a young professional, finding the right flat ... | citynect | |

1,925,306 | Send certificate & Key file in Rest Api | Hi I want to do certificate based authentication in React Native, for that I am using Rest API. And... | 0 | 2024-07-16T10:38:56 | https://dev.to/shahanshah_alam_e5655bc6d/send-certificate-key-file-in-rest-api-47f8 | Hi

I want to do certificate based authentication in React Native, for that I am using Rest API.

And from the docs https://learn.microsoft.com/en-us/azure/iot-dps/how-to-control-access#certificate-based-authentication I found out this cURL, and I need to send certificate and key file in API, as https.agent is not suppor... | shahanshah_alam_e5655bc6d | |

1,925,307 | How I migrated my course platform to the SPARTAN stack (Angular Global Summit 2024) | I just released my latest conference talk: How I migrated my course platform to the SPARTAN stack... | 0 | 2024-07-16T10:39:12 | https://dev.to/chrislydemann/how-i-migrated-my-course-platform-to-the-spartan-stack-angular-global-summit-2024-17kf | angular, analog | I just released my latest conference talk:

How I migrated my course platform to the SPARTAN stack (Angular Global Summit 2024)

- The SPARTAN Stack

- How I migrated my course platform to Analog and the SPARTAN stack

- Handling auth with the SPARTAN stack

- Client Hydration with Analog

[Watch it here](https://www.yout... | chrislydemann |

1,925,308 | Library v/s Framework | Ever confused between library and frameworks ??? Do you also get confused about these... | 0 | 2024-07-16T10:41:54 | https://dev.to/sourav_codey/library-vs-framework-2afd | library, framework, frontend, spring |

## Ever confused between libraries and frameworks?

---

Do you also confuse these two and use the terms interchangeably?

Let me help you understand them so you have better clarity.

---

First, let's start with the definitions:

## **Library**

A collection of predefined methods, classes or in... | sourav_codey |

1,925,311 | Taming the Golang Beasts: Common Gotchas and Edge Cases | Golang, with its simplicity and power, has become a popular language for building modern... | 0 | 2024-07-16T10:43:19 | https://dev.to/epakconsultant/taming-the-golang-beasts-common-gotchas-and-edge-cases-3dek | go | Golang, with its simplicity and power, has become a popular language for building modern applications. However, despite its clean syntax, Golang has its fair share of potential pitfalls lurking beneath the surface. This guide explores common gotchas and edge cases you may encounter in Golang development, helping you na... | epakconsultant |

1,925,312 | I used to hate my mornings until I discovered this transformative morning routine! | Background: I moved to Lisbon, Portugal, six months ago. Suddenly, my perfect work schedule was... | 0 | 2024-07-16T10:43:25 | https://dev.to/madelgeek/i-used-to-hate-my-mornings-until-i-discovered-this-transformative-morning-routine-54bb | Background: I moved to Lisbon, Portugal, six months ago. Suddenly, my perfect work schedule was disrupted. My active workday, with meetings starting at 9:30, began two hours earlier than my usual 11:30 start. This change was challenging, and I needed to adapt to this new reality.

**The solution was establishing a prod... | madelgeek | |

1,925,313 | Principles for Managing Remote Teams and Freelancers | I lead a business that works in perfect harmony to achieve our ambitious goals. Here are some tips on... | 0 | 2024-07-16T10:43:29 | https://dev.to/martinbaun/principles-for-managing-remote-teams-and-freelancers-4nfl | devops, productivity, career, startup | I lead a business that works in perfect harmony to achieve our ambitious goals. Here are some tips on how I do it.

## Determine responsibilities.

Most employees want to do a good job, but it's hard if they don't know what is expected of them. You are expected to give clear instructions to your team, outlining their resp... | martinbaun |

1,925,314 | Creating a Smooth Transitioning Dialog Component in React (Part 3/4) | Part 3: Improving Animation Reliability In Part 2, I enhanced our dialog component by... | 0 | 2024-07-16T12:16:52 | https://dev.to/copet80/creating-a-smooth-transitioning-dialog-component-in-react-part-34-15b6 | javascript, reactjsdevelopment, react, css | ## Part 3: Improving Animation Reliability

In [Part 2](https://dev.to/copet80/creating-a-smooth-transitioning-dialog-component-in-react-part-24-20ff), I enhanced our dialog component by adding smooth animations for minimise and expand actions using `max-width` and `max-height`. This approach ensured the dialog dynamica... | copet80 |

1,925,315 | Effortless Theme Toggling in Angular 17 Standalone Apps with PrimeNG | As I delved into PrimeNG and PrimeFlex for my recent Angular 17 standalone app with SSR, one aspect... | 0 | 2024-07-16T10:47:32 | https://dev.to/ingila185/effortless-theme-toggling-in-angular-17-standalone-apps-with-primeng-2h20 | angular, javascript, typescript, webdev |

As I delved into PrimeNG and PrimeFlex for my recent Angular 17 standalone app with SSR, one aspect truly stood out: built-in themes. Unlike Material UI, PrimeNG offers a delightful selection of pre-built themes... | ingila185 |

1,925,317 | Securing Your Code: Common Golang Concepts and Constructs to Test | Golang's emphasis on simplicity and conciseness can lead to developers overlooking the importance of... | 0 | 2024-07-16T10:50:43 | https://dev.to/epakconsultant/securing-your-code-common-golang-concepts-and-constructs-to-test-2mi | go | Golang's emphasis on simplicity and conciseness can lead to developers overlooking the importance of thorough testing. Writing comprehensive unit tests is crucial for ensuring code correctness, preventing regressions, and facilitating refactoring. This guide explores the core Golang concepts and constructs that demand ... | epakconsultant |

1,925,318 | The Art of Responsible AI | Introduction: The Significance of Responsible AI in Machine Learning Understanding Ethical Concerns... | 0 | 2024-07-16T10:52:01 | https://dev.to/jinesh_vora_ab4d7886e6a8d/the-art-of-responsible-ai-28ag | datascience, database, dataengineering, machinelearning | Introduction: The Significance of Responsible AI in Machine Learning

Understanding Ethical Concerns in AI and ML: Bias, Transparency and Accountability

Techniques to Work on Bias in Machine Learning Models

Techniques for Ensuring Transparency and Explainability in AI Systems

Implementation of the Data Science Course w... | jinesh_vora_ab4d7886e6a8d |

1,925,319 | Deep Dive into Mixture of Experts for LLM Models | Key Highlights Evolution of MoE in AI: Explore how MoE has evolved from its inception in... | 0 | 2024-07-16T10:57:41 | https://dev.to/novita_ai/deep-dive-into-mixture-of-experts-for-llm-models-5d9o | llm | ## Key Highlights

- **Evolution of MoE in AI:** Explore how MoE has evolved from its inception in 1991 to become a cornerstone in enhancing machine learning capabilities beyond traditional neural networks.

- **Core Components of MoE Architecture:** Delve into the experts, gating mechanisms, and routing algorithms that... | novita_ai |

1,925,320 | The Perfect Ride for Short Trips and Daily Commuting: Road Electric Bikes | In today's fast-paced world, finding efficient, environmentally friendly and enjoyable ways to make... | 0 | 2024-07-16T10:52:26 | https://dev.to/karinaluyi/the-perfect-ride-for-short-trips-and-daily-commuting-road-electric-bikes-1e46 | In today's fast-paced world, finding efficient, environmentally friendly and enjoyable ways to make short trips, daily commutes and shopping trips is crucial. Electric bikes are a great solution, combining the benefits of a traditional bicycle with the added perks of electricity. In this article, we explore the benefit... | karinaluyi | |

1,925,322 | Latest Coupon Codes: Unlocking Savings in 2024 | In today's fast-paced digital world, saving money has never been easier. Coupon codes are a boon for... | 0 | 2024-07-16T10:54:11 | https://dev.to/redeemdiscounts/latest-coupon-codes-unlocking-savings-in-2024-14f5 | coupon, webdev, coupones | In today's fast-paced digital world, saving money has never been easier. Coupon codes are a boon for shoppers, offering substantial savings across a plethora of products and services. In this comprehensive guide, we delve into the **[latest coupon codes](https://redeemdiscounts.com/)**, helping you maximize your saving... | redeemdiscounts |

1,925,323 | Obtain M2M access tokens in minutes with Postman | Learn how to use Postman to obtain a machine-to-machine access token and call Logto management API in... | 0 | 2024-07-16T10:54:15 | https://blog.logto.io/use-postman-to-obtain-m2m-access-token/ | webdev, opensource, identity, programming | Learn how to use Postman to obtain a machine-to-machine access token and call Logto management API in minutes.

---

# Background

[Logto Management API](https://docs.logto.io/docs/recipes/interact-with-management-api/) is a set of APIs that gives developers full control over their Logto instance, enabling tasks such as ... | palomino |

1,925,324 | Customized Premixes Market Forecast: 2024-2031 Overview | The global customized premixes market is projected to grow significantly, reaching US$ 8.96 billion... | 0 | 2024-07-16T10:54:19 | https://dev.to/swara_353df25d291824ff9ee/customized-premixes-market-forecast-2024-2031-overview-4hkk |

The global [customized premixes market](https://www.persistencemarketresearch.com/market-research/customized-premixes-market.asp) is projected to grow significantly, reaching US$ 8.96 billion by 2031 from US$ 5.92 b... | swara_353df25d291824ff9ee |
