id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,925,325 | AutoEntityGenerator – my first visual studio extension. | (First published on What The # Do I Know?) TL;DR – There are links to the Visual Studio Marketplace... | 0 | 2024-07-16T10:56:00 | https://dev.to/peledzohar/autoentitygenerator-my-first-visual-studio-extension-p9j | dotnet, visualstudio | (First published on [What The # Do I Know?](https://zoharpeled.wordpress.com/2024/07/16/autoentitygenerator-my-first-visual-studio-extension/))

TL;DR – There are links to the Visual Studio Marketplace and the GitHub repository at the end.

It all started when I watched a YouTube video by Amichai Mantinband called [Con... | peledzohar |

1,925,326 | Hey, I'm Shriyash. Im very Happy to give wonderful contribution in Dev Community.. | A post by Mr Shriyash Thakare | 0 | 2024-07-16T10:56:41 | https://dev.to/shriyashthakare671/hey-im-shriyash-im-very-happy-to-give-wonderful-contribution-in-dev-community-3771 | shriyashthakare671 | ||

1,925,688 | Serengeti National Park | A. Location and Geography Location: Serengeti National Park is situated in northern Tanzania,... | 0 | 2024-07-16T16:15:15 | https://dev.to/paukush69/serengeti-national-park-34g2 |

A. Location and Geography

Location: Serengeti National Park is situated in northern Tanzania. The wider Serengeti ecosystem extends into southwestern Kenya, where it is contiguous with the Maasai Mara.

Geography: The park encompasses 14,750 square kilometers of diverse ecosystems, including savannas, woodlands, and riverine forests.

![Image description]... | paukush69 | |

1,925,327 | VR Game Development | At SDLC Corp., we specialize in creating immersive and breathtaking VR gaming experiences that... | 0 | 2024-07-16T10:57:25 | https://dev.to/sdlc_corp/vr-game-development-4m34 | vrgame, vrgamedevelopment, vrgamedevelopers, vrgameplatform | > At SDLC Corp., we specialize in creating immersive and breathtaking VR gaming experiences that transport players to entirely new worlds. Our commitment to innovation and excellence ensures that each VR game we develop captivates players, delivering unparalleled levels of immersion and interaction. At SDLC Corp., we a... | sdlc_corp |

1,925,328 | Inspection Machines Market Analysis: Emerging Trends and Innovations | Inspection Machines Market Growth, Trends, Size, Revenue, Share, Challenges Inspection Machines... | 0 | 2024-07-16T10:57:56 | https://dev.to/ankita_b_9f02fb49ce678cf2/inspection-machines-market-analysis-emerging-trends-and-innovations-5625 | Inspection Machines Market Growth, Trends, Size, Revenue, Share, Challenges

Inspection Machines Market by Offering (Hardware, Software), Automation Mode (Automatic Inspection, Semi-automatic Inspection, Manual Inspection), End User (Pharmaceutical and Biotech, Food and Beverages) and Geography - Global Forecast to 2030... | ankita_b_9f02fb49ce678cf2 | |

1,925,329 | Ensuring Quality and Durability: The Crucial Role of a Roofing Installation Company in Missouri City | Missouri City, with its dynamic weather patterns ranging from sunny days to torrential rains,... | 0 | 2024-07-16T10:57:57 | https://dev.to/douggerhardt/ensuring-quality-and-durability-the-crucial-role-of-a-roofing-installation-company-in-missouri-city-4epi | Missouri City, with its dynamic weather patterns ranging from sunny days to torrential rains, necessitates homes that are well-protected and resilient. At the heart of this protection is a robust roofing system, which not only secures the structural integrity of a property but also contributes to its aesthetic appeal. ... | douggerhardt | |

1,925,330 | Unlock the Potential of Your Site with GoHighLevel Design | Struggling to manage your website and marketing efforts? GoHighLevel is an all-in-one solution... | 0 | 2024-07-16T10:58:49 | https://dev.to/deepakjuneja/unlock-the-potential-of-your-site-with-gohighlevel-design-44n0 | webdesign, websitedesign | Struggling to manage your website and marketing efforts? GoHighLevel is an all-in-one solution designed to streamline your business growth. GoHighLevel stands out as a powerful platform integrating website design and marketing automation, empowering businesses with streamlined operations and enhanced customer engagemen... | deepakjuneja |

1,925,331 | Spaceship operator 🚀 in PHP | Introduced in PHP 7, the Spaceship operator <=> is used to compare two expressions. It... | 0 | 2024-07-16T11:00:23 | https://dev.to/thibaultchatelain/spaceship-operator-in-php-5g0n | php, operator | Introduced in PHP 7, the Spaceship operator <=> is used to compare two expressions.

It returns:

- 0 if both values are equal

- 1 if left operand is greater

- -1 if right operand is greater

Let’s see it with an example:

```

echo 2 <=> 2; // Outputs 0

echo 3 <=> 1; // Outputs 1

echo 1 <=> 3; // Outputs -1

echo "b" <... | thibaultchatelain |

1,925,332 | Hiring Full Stack Engineers (MERN Stack) | Cypherock | Hello Developers, We at Cypherock are hiring for Full Stack Engineers proficient with MERN stack... | 0 | 2024-07-16T11:00:40 | https://dev.to/pranayb/hiring-full-stack-engineers-mern-stack-cypherock-2ca7 | webdev, hiring, fullstack, cryptocurrency | Hello Developers,

We at Cypherock are hiring for Full Stack Engineers proficient with MERN stack with at least 1 year of experience in the field. However, if you feel you are qualified enough even as a fresher, feel free to apply for the position. To apply, drop me a mail at pranay@cypherock.com with your resume and a... | pranayb |

1,925,333 | Harnessing 9 LLM Use Cases for Success | Uncover the power of LLM use cases in boosting efficiency and productivity. Learn more about these... | 0 | 2024-07-16T11:02:12 | https://dev.to/novita_ai/harnessing-9-llm-use-cases-for-success-30lc | llm, ai | Uncover the power of LLM use cases in boosting efficiency and productivity. Learn more about these practical applications.

## Key Highlights

- Large Language Models (LLMs) are advanced AI systems that mimic human language abilities.

- They enhance various sectors like customer service, finance, healthcare, and online... | novita_ai |

1,925,334 | From JS to Fire OS: React Native for Amazon Devices | React Native enables developers to build native apps for Amazon Fire OS devices using their existing... | 0 | 2024-07-17T10:12:49 | https://dev.to/amazonappdev/get-started-with-react-native-for-amazon-fire-os-51j8 | reactnative, beginners, react, learning | React Native enables developers to build native apps for Amazon Fire OS devices using their existing JavaScript and React skills. Since Fire OS is based on the Android Open Source Project (AOSP), if you are already working with React Native you can easily target our devices without learning a new tech stack or maintain... | anishamalde |

1,925,335 | Mastering Content Security Policy (CSP) for JavaScript Applications: A Practical Guide | Learn how to secure your JavaScript applications with Content Security Policy (CSP). This guide covers the essentials, implementation steps, and real-world examples to help you protect against XSS and data injection attacks. | 0 | 2024-07-16T11:04:14 | https://dev.to/rigalpatel001/mastering-content-security-policy-csp-for-javascript-applications-a-practical-guide-2ppm | javascript, websecurity, csp, webdev | ---

title: Mastering Content Security Policy (CSP) for JavaScript Applications: A Practical Guide

published: true

description: Learn how to secure your JavaScript applications with Content Security Policy (CSP). This guide covers the essentials, implementation steps, and real-world examples to help you protect against ... | rigalpatel001 |

1,925,336 | A Responsive and User-Friendly React Image Gallery Package | As a web developer, I always look for tools to enhance user experience and simplify workflow.... | 0 | 2024-07-16T11:07:43 | https://dev.to/b-owl/a-responsive-and-user-friendly-react-image-gallery-package-134c | react, npm, typescript, webpack |

As a web developer, I always look for tools to enhance user experience and simplify workflow. Today, I'm excited to introduce overlay-image-gallery, a new React library that offers a multi-step image overlay and pr... | b-owl |

1,925,337 | Implementing Photo Modal in Next JS using Parallel Routes & Intercepting Routes | Implementing Modals using parallel routes & intercepting routes provided in Next JS app router... | 0 | 2024-07-16T11:08:39 | https://dev.to/wanyama413/implementing-photo-modal-in-next-js-using-parallel-routes-intercepting-routes-2dpn | nextjs, javascript, webdev, frontend | Implementing Modals using parallel routes & intercepting routes provided in Next JS app router offers the following benefits:

1. Ability to share the modal content using a URL

2. Preserving context when the page is refreshed, instead of closing the modal.

3. Closing the Modal on backward navigation & re-opening the mod... | wanyama413 |

1,925,338 | Deploying Spring boot project to external tomcat server. | Although spring boot project comes with tomcat server itself. But sometimes we may have requirements... | 0 | 2024-07-16T11:10:22 | https://dev.to/shoili_rozario_76aefaf1d8/deploying-spring-boot-project-to-external-tomcat-server-37g8 | Although a Spring Boot project ships with an embedded Tomcat server, sometimes we need to run our Spring Boot application on an external Tomcat server. Knowing how to deploy a Spring Boot project to an external Tomcat server is therefore a plus for every Spring Boot learner.

## Building... | shoili_rozario_76aefaf1d8 | |

1,925,340 | Key Performance Indicators (KPIs) Every Digital Marketer Should Track | In the dynamic realm of digital marketing, success hinges not only on creative campaigns but also on... | 0 | 2024-07-16T11:12:47 | https://dev.to/sejaljansari/key-performance-indicators-kpis-every-digital-marketer-should-track-p2f | marketing, kpi, digitalmarketing | In the dynamic realm of digital marketing, success hinges not only on creative campaigns but also on data-driven insights. Key Performance Indicators (KPIs), defined as specific, time-bound, measurable, relevant, and attainable metrics, serve as crucial tools to gauge the effectiveness of marketing efforts across vario... | sejaljansari |

1,925,341 | Folder Structure of a React Native App | Introduction React Native is a powerful framework for building mobile applications using... | 0 | 2024-07-17T20:25:02 | https://dev.to/wafa_bergaoui/folder-structure-of-a-react-native-app-3m44 | reactnative, react, reactjsdevelopment, javascript | ## Introduction

React Native is a powerful framework for building mobile applications using JavaScript and React. As you dive into developing with React Native, it's essential to understand the structure of a typical React Native project. Each folder and file has a specific purpose, and knowing their roles will help y... | wafa_bergaoui |

1,925,343 | How Did Our Grocery Data Scraping Achieve 99% Accuracy Across 200 Stores? | How Did Our Grocery Data Scraping Achieve 99% Accuracy Across 200 Stores? This case study showcases... | 0 | 2024-07-16T11:16:14 | https://dev.to/iwebdatascrape01/how-did-our-grocery-data-scraping-achieve-99-accuracy-across-200-stores-m5h | grocerydatascraping, grocerydatascraper, scrapegrocerydata, webscrapinggrocerydata |

How Did Our Grocery Data Scraping Achieve 99% Accuracy Across 200 Stores?

This case study showcases the effectiveness of our AI Analytics in optimizing pricing across 200 stores. Leveraging our grocery data scrapin... | iwebdatascrape01 |

1,925,344 | Navigate the Airfield with Confidence: Equipment, Suppliers, Expertise | A well-lit airfield isn't just a pretty sight; it's an orchestra of specialized equipment conducting... | 0 | 2024-07-16T11:16:52 | https://dev.to/bildal/navigate-the-airfield-with-confidence-equipment-suppliers-expertise-k3l | A well-lit airfield isn't just a pretty sight; it's an orchestra of specialized equipment conducting a symphony of safety for pilots and ground crew. But navigating the world of airfield lighting companies (AGL suppliers) can feel like landing in a storm. That's where Bildal Electricals comes in – your trusted partner... | bildal | |

1,925,345 | How To Start an NFT Horse Racing Game Like Zed Run | The NFT gaming realm has come a long way which is creating possibilities for businesses to thrive in... | 0 | 2024-07-16T11:17:26 | https://dev.to/bellabardot/how-to-start-an-nft-horse-racing-game-like-zed-run-43di | zedrun, zedrunclonescr | The NFT gaming realm has come a long way, creating possibilities for businesses to thrive in the gaming industry. Zed Run is one of the popular NFT horse racing games, bringing a realistic horse racing experience to players. Zed Run bills itself as a futuristic hors... | bellabardot |

1,925,347 | Growth Hacking Vs. Growth Marketing: How Are They Different? | “Growth hacking” and “growth marketing” are terms often used interchangeably by many. In fact I find... | 0 | 2024-07-16T11:18:09 | https://dev.to/niumatrix_digital_5b43412/growth-hacking-vs-growth-marketing-how-are-they-different-2l0k | marketing, seo, growthhacking, growthmarketing | “Growth hacking” and “growth marketing” are terms often used interchangeably. In fact, I find it astonishing how people bandy about “growth hacker” or “growth marketer” in their LinkedIn headlines without any consideration for what exactly these terms mean and how they differ from each other.

Growth hacki... | niumatrix_digital_5b43412 |

1,925,349 | Europe's Acrylic Polymer Industry: Navigating Environmental Regulations | Acrylic polymer refers to a group of polymers derived from acrylic acid or related compounds. These... | 0 | 2024-07-16T11:19:27 | https://dev.to/aryanbo91040102/europes-acrylic-polymer-industry-navigating-environmental-regulations-10ko | news | Acrylic polymer refers to a group of polymers derived from acrylic acid or related compounds. These polymers are known for their versatility, durability, and wide range of applications. Acrylic polymers are formed by the polymerization of acrylate monomers, which include ethyl acrylate, methyl methacrylate, and butyl a... | aryanbo91040102 |

1,925,406 | Python - Fundamentals | In here, I'm gonna tell you how to use variables in python. We shall see how to name a variable and... | 0 | 2024-07-16T14:00:46 | https://dev.to/abys_learning_2024/python-fundamentals-346p | python, coding, basic | Here, I'm gonna tell you how to use variables in Python. We shall see how to name a variable and assign a value to it.

**_How to name a variable?_**

First, a variable is simply a name that refers to an object or value throughout the program. It acts as a reference to the memory location where the value is stored.
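To make that concrete, here is a minimal sketch. The names `price` and `alias` are made up for illustration and are not from the original post. It shows that assignment binds a name to an object rather than copying it, so two names can refer to the same object:

```python
# Assignment binds a name to an object; it does not copy the object.
price = [10, 20]      # `price` refers to a list object
alias = price         # `alias` now refers to the same list object

alias.append(30)      # a change made through one name is visible through the other

print(price)              # the list now has three items
print(alias is price)     # True: both names refer to one object

# Rebinding a name points it at a different object; the old object is untouched.
alias = [99]
print(price)              # not affected by the rebinding
```

The `is` operator checks whether two names refer to the same object, which is different from `==`, which compares values.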

There are ... | abys_learning_2024 |

1,925,351 | 8 Captivating Programming Challenges to Boost Your Coding Skills 🚀 | The article is about a captivating collection of 8 programming challenges curated by the LabEx platform. From calculating simple interest using a function to creating a stopwatch app with GTK, this article offers a diverse set of labs designed to push your coding skills to new heights. Each challenge is presented with ... | 27,850 | 2024-07-16T11:20:18 | https://dev.to/labex/8-captivating-programming-challenges-to-boost-your-coding-skills-5hmf | labex, c, programming, tutorials |

Are you ready to embark on an exciting journey through a collection of programming challenges that will push your coding abilities to new heights? Look no further! This article presents a diverse set of labs curated by the LabEx platform, each designed to test your problem-solving skills and expand your programming kn... | labby |

1,925,399 | Help the Poor and Needy – Make this World a Better Place | Originally Published by Lovely Foundation : https://www.lovelyfoundation.com/ngo-helping-poor This... | 0 | 2024-07-16T11:21:46 | https://dev.to/lovely_foundations_/help-the-poor-and-needy-make-this-world-a-better-place-d76 | ngoinmohali, educationngo | Originally Published by Lovely Foundation : https://www.lovelyfoundation.com/ngo-helping-poor

This world is full of capabilities, but numerous people don’t get a chance to experience it. The class divide in our society persists, favoring only a few. Where some people can make use of the best luxuries out there, many s... | lovely_foundations_ |

1,925,401 | How to Learn Swift: A Comprehensive Guide | Swift is a powerful and intuitive programming language developed by Apple for building apps on iOS,... | 0 | 2024-07-16T11:22:20 | https://dev.to/bilal_zafar_2f9fbe7ef50b5/how-to-learn-swift-a-comprehensive-guide-1k41 | Swift is a powerful and intuitive programming language developed by Apple for building apps on iOS, macOS, watchOS, and tvOS. Whether you're new to programming or an experienced developer looking to add Swift to your skill set, this guide will help you navigate the learning process.

Why Learn Swift?

Before diving into... | bilal_zafar_2f9fbe7ef50b5 | |

1,925,404 | Personal Finance Tracker with Python | Intro: Morning! Another simple project under my belt and could be under yours! With the tutorial... | 0 | 2024-07-16T11:24:24 | https://dev.to/nelson_bermeo/personal-finance-tracker-with-python-59ll | python, beginners | Intro:

Morning! Another simple project under my belt and could be under yours! With the tutorial guidance of Tim from Tech with Tim at: [Youtube Link](https://www.youtube.com/watch?v=Dn1EjhcQk64) I was able to program a personal finance tracker. This project used a csv file to store transactions from the terminal line... | nelson_bermeo |

1,925,405 | Save server resource in Laravel | How do you save server resource in Laravel 11? Go to routes/web.php and add middleware to your... | 0 | 2024-07-16T11:26:08 | https://dev.to/shaz3e/save-server-resource-in-laravel-5b93 | How do you save server resources in Laravel 11?

Go to routes/web.php and add the middleware to your route or route group. Remember the key you choose, because it will be used throughout the application. In this case I am using `weather` as the key to limit requests by user or IP. Whatever key you use should be the same as the... | shaz3e | |

1,925,407 | How to Learn Xcode: A Comprehensive Guide | Xcode is Apple's integrated development environment (IDE) used for developing applications for macOS,... | 0 | 2024-07-16T11:27:03 | https://dev.to/bilal_zafar_2f9fbe7ef50b5/how-to-learn-xcode-a-comprehensive-guide-50d | Xcode is Apple's integrated development environment (IDE) used for developing applications for macOS, iOS, watchOS, and tvOS. Learning Xcode is essential for anyone looking to build apps within the Apple ecosystem. This guide will provide you with a structured approach to mastering Xcode, whether you're a beginner or a... | bilal_zafar_2f9fbe7ef50b5 | |

1,925,408 | Quick Start with AWS Lambda for Serverless Computing | Discover how AWS Lambda, a serverless computing service, can revolutionize your development process... | 0 | 2024-07-16T11:29:29 | https://dev.to/zunair_arain_50e0d2182202/quick-start-with-aws-lambda-for-serverless-computing-2n4 | Discover how AWS Lambda, a serverless computing service, can revolutionize your development process by eliminating server management tasks. This post will guide you through creating your first Lambda function, perfect for beginners and enthusiasts of tools like capcutapk.

What is AWS Lambda?

AWS Lambda runs your code ... | zunair_arain_50e0d2182202 | |

1,925,411 | What is the American Airlines Student Discount | Comprehensive Guide to American Airlines Student Discount At American Airlines, we understand the... | 0 | 2024-07-16T11:36:05 | https://dev.to/james_carton_c4587349837b/what-is-the-american-airlines-student-discount-2nci | travel, airlines | **Comprehensive Guide to American Airlines Student Discount**

At American Airlines, we understand the importance of making travel accessible and affordable for students. That’s why we offer a special American Airlines student discount program designed to help students travel more and explore the world at a lower cost.... | james_carton_c4587349837b |

1,925,412 | Linking multiple API requests: A new approach | What you normally see in an API client (yes postman too) is that every API request is a monolithic... | 0 | 2024-07-16T11:36:20 | https://dev.to/nikoldimit/linking-multiple-api-requests-a-new-approach-1a88 | What you normally see in an API client (yes, Postman too) is that every API request is a monolithic block. This means that if you want to make any changes or adjustments, you first need to copy or clone the API request.

⚠️ This could potentially result in dozens of "API request clones" that have some minor differences in t... | nikoldimit | |

1,925,414 | Decrypting the Future: The Evolution of Ransomware and How to Safeguard Against It | In recent years, the digital landscape has been marred by a sinister evolution: the rise of... | 0 | 2024-07-16T17:05:00 | https://dev.to/verifyvault/decrypting-the-future-the-evolution-of-ransomware-and-how-to-safeguard-against-it-18kl | cybersecurity, security, ransomware, opensource | In recent years, the digital landscape has been marred by a sinister evolution: the rise of ransomware. Once merely a nuisance, ransomware has transformed into a sophisticated and pervasive threat, targeting everyone from multinational corporations to individual users. Understanding its evolution and knowing how to def... | verifyvault |

1,925,417 | Usability Testing: Why is it a New Competitive Advantage? | Usability testing generally involves a Live, One-on-One session between a participant in a study and... | 0 | 2024-07-16T11:40:47 | https://www.peppersquare.com/blog/usability-testing-why-is-it-a-new-competitive-advantage/ | Usability testing generally involves a Live, One-on-One session between a participant in a study and a moderator. The participant is asked by the moderator to carry out various tasks representing all those that real users would perform. This is useful in many ways.

Much before the initiation of the testing, a custom s... | pepper_square | |

1,925,418 | Sustainability Trends Driving Adoption of Glass Mat Materials in Europe | What is Glass Mat Material? Glass Mat Material, commonly known as glass mat, is a type of... | 0 | 2024-07-16T11:41:13 | https://dev.to/aryanbo91040102/sustainability-trends-driving-adoption-of-glass-mat-materials-in-europe-42md | news | What is Glass Mat Material?

Glass Mat Material, commonly known as glass mat, is a type of reinforcement material made from randomly oriented glass fibers bonded together with a binder. It is a crucial component in the production of composites, particularly in applications where high strength and durability are require... | aryanbo91040102 |

1,925,419 | The Importance of Machine Learning in Today's Business World | ## Introduction In today’s rapidly changing technology environment, machine learning has become... | 0 | 2024-07-16T11:41:34 | https://dev.to/arthur_7e18bf2cd4b6bc5936/the-importance-of-machine-learning-in-todays-business-world-3bgf | **## Introduction**

In today’s rapidly changing technology environment, machine learning has become essential to driving innovation and performance in the creative industries. From healthcare to finance, retail, and manufacturing, the use of machine learning is changing the way businesses operate and make decisions. This... | arthur_7e18bf2cd4b6bc5936 | |

1,925,421 | The Growing Importance of ESG Services and ESG Data Solutions | In today's rapidly evolving business landscape, Environmental, Social, and Governance (ESG)... | 0 | 2024-07-16T11:43:56 | https://dev.to/shraddha_bandalkar_916953/the-growing-importance-of-esg-services-and-esg-data-solutions-3392 | In today's rapidly evolving business landscape, Environmental, Social, and Governance (ESG) considerations have emerged as critical factors for companies seeking long-term success and sustainability. With increasing pressure from stakeholders, regulatory bodies, and the public, businesses are turning to ESG services an... | shraddha_bandalkar_916953 | |

1,925,422 | 1 Common Mistake Junior Developers Make | You are working on too many different things at once. As a new developer, you want to look... | 0 | 2024-07-17T02:00:00 | https://dev.to/thekarlesi/1-common-mistake-junior-developers-make-i7i | webdev, beginners, programming, learning | You are working on too many different things at once.

As a new developer, you want to look competent.

And you want people to think that you are very efficient.

That you just happen to be this coding prodigy that came out of nowhere.

But take a look at mid-level and senior devs.

They reject the extra work because t... | thekarlesi |

1,925,423 | Cloning Reactive Objects in JavaScript | Cloning an object in JavaScript is a common operation, but when it comes to cloning reactive objects,... | 0 | 2024-07-16T11:44:56 | https://dev.to/akshayashet/cloning-reactive-objects-in-javascript-2h8f | vue, reactive, clone, javascript | Cloning an object in JavaScript is a common operation, but when it comes to cloning reactive objects, there are some additional considerations to keep in mind, especially when working with frameworks such as Vue.js or React. In this article, we'll explore how to properly clone reactive objects and provide examples usin... | akshayashet |

1,925,424 | Harnessing the Power of ESG Services and ESG Data Solutions | In the contemporary business landscape, Environmental, Social, and Governance (ESG) factors have... | 0 | 2024-07-16T11:45:34 | https://dev.to/shraddha_bandalkar_916953/harnessing-the-power-of-esg-services-and-esg-data-solutions-5bhg | In the contemporary business landscape, Environmental, Social, and Governance (ESG) factors have become crucial components of sustainable and responsible investing. ESG services and ESG data solutions are at the forefront of this transformation, enabling companies and investors to make informed decisions that align wit... | shraddha_bandalkar_916953 | |

1,925,425 | How Is Gen AI Transforming Industries in 2024? | "To stay ahead, adaptability and deep understanding are key. Online, imposter syndrome may arise due... | 0 | 2024-07-16T11:47:37 | https://dev.to/mokkup/how-is-gen-ai-transforming-industries-in-2024-40kd | ai, productivity, news, datascience | **"To stay ahead, adaptability and deep understanding are key. Online, imposter syndrome may arise due to the overwhelming nature of information and strong opinions. It's a time for experimentation as the playing field has been reset and the future remains uncertain."**

- David Hoang, Vice President Replit, discusse... | mokkup |

1,925,426 | Microservice Antipatterns: The Shared Client Library | Introduction If you’re working in an architecture with multiple services talking to each... | 0 | 2024-07-16T15:53:49 | https://mmainz.dev/posts/microservices-antipatterns-the-shared-client-library/ | microservices, antipatterns, architecture, openapi | ---

title: Microservice Antipatterns: The Shared Client Library

published: true

date: 2024-07-15 00:00:00 UTC

tags: ["microservices", "antipatterns", "architecture", "OpenAPI"]

canonical_url: https://mmainz.dev/posts/microservices-antipatterns-the-shared-client-library/

cover_image: https://mmainz.dev/posts/microservic... | mmainz |

1,925,427 | How GenAI can improve API documentation? | The API documentation is an essential toolkit for any developer utilizing your APIs to integrate with... | 0 | 2024-07-16T11:47:56 | https://dev.to/ragavi_document360/how-genai-can-improve-api-documentation-dkd | The API documentation is an essential toolkit for any developer utilizing your APIs to integrate with other business applications. For example, many API documentation tools offer a playground whereby developers can “Try” how APIs produce responses for a certain input through various endpoints. Some tools automatically ... | ragavi_document360 | |

1,925,429 | Does American Airlines Offer a Student Discount? | Introduction For students, managing travel expenses can be challenging, especially when juggling... | 0 | 2024-07-16T11:49:33 | https://dev.to/airlinespolicyhub_fc5d060/does-american-airlines-offer-a-student-discount-5hh6 | americian, airlines, students, discount | Introduction

For students, managing travel expenses can be challenging, especially when juggling academic responsibilities and limited budgets. Airline discounts can significantly ease this burden, making travel more accessible and affordable. This article explores whether [American Airlines Student Discounts](https://... | airlinespolicyhub_fc5d060 |

1,925,430 | Integrating Cloudinary into Your Django Project for Free | In the previous tutorial, you learned how to host a Django site for free on Vercel. But what... | 28,078 | 2024-07-16T11:53:27 | https://dev.to/aghastygd/integracao-do-cloudinary-ao-seu-projeto-django-gratuitamente-8pm | cloudstorage, django, tutorial, python | In the [previous tutorial](https://dev.to/aghastygd/hospede-seu-site-django-com-arquivos-estaticos-na-vercel-gratuitamente-novo-metodo-339p), you learned how to host a Django site for free on Vercel. But what happens if your site needs to upload files, such as photos or videos? Normally, you would... | aghastygd |

1,925,431 | The Rise of AI MILF Porn | MILF. Mom, I’d Like to. .. We’ll let you fill in the blank. We recognize them, we appreciate them,... | 0 | 2024-07-16T11:54:02 | https://dev.to/alicewhite/the-rise-of-ai-milf-porn-53ep |

MILF. Mom, I’d Like to. .. We’ll let you fill in the blank. We recognize them, we appreciate them, and we desire to have one. To anyone who may not know what MILF stands for, it means older women who have a sexual a... | alicewhite | |

1,925,432 | Computers are fast - Quiz | By Jonathon Belotti | “Computers are fast” I guess we all agree with this statement but do you know how fast they are? If... | 0 | 2024-07-16T11:58:35 | https://dev.to/tankala/computers-are-fast-quiz-by-jonathon-belotti-5gin | python, programming, webdev, beginners | “Computers are fast” I guess we all agree with this statement but do you know how fast they are? If you want to know then check [this quiz](https://thundergolfer.com/computers-are-fast) by Jonathon Belotti. This quiz is inspired by Julia Evans' Computers Are Fast. Interesting & fun one. | tankala |

1,925,433 | Facebook Graph API GET Page Access Token | Hello 👋 readers, If you're looking to manage your Facebook page programmatically, one of the... | 0 | 2024-07-16T12:00:41 | https://dev.to/neeraj1005/facebook-graph-api-get-page-access-token-4l3k | graphql, metaapi, marketingapi, facebookgraphapi | Hello 👋 readers,

If you're looking to manage your Facebook page programmatically, one of the essential things you'll need is a Page Access Token. Here's a simple guide to help you obtain it.

**Prerequisites**

Before you start, make sure you have the following:

- A Facebook Developer account. If you don't have one, y... | neeraj1005 |

1,925,434 | Harnessing Data for Innovation: Exploring the World of Data Science | In today's digital age, data has become the new currency, driving innovation and transforming... | 0 | 2024-07-16T12:02:28 | https://dev.to/nivi_sabari/harnessing-data-for-innovation-exploring-the-world-of-data-science-15pp | In today's digital age, data has become the new currency, driving innovation and transforming industries across the globe. From healthcare to finance, and retail to technology, data science is at the heart of this transformation. By harnessing the power of data, businesses and individuals can unlock new opportunities, ... | nivi_sabari | |

1,925,435 | Mastering Java: A Comprehensive Learning Journey | The article is about a curated collection of free programming resources that dive deep into the world of Java. Featuring 7 comprehensive tutorials, this learning journey covers everything from Java fundamentals to advanced concepts, Android development, Spring Boot, and enterprise-level applications. Whether you're a b... | 28,060 | 2024-07-16T12:07:25 | https://dev.to/getvm/mastering-java-a-comprehensive-learning-journey-1hc4 | getvm, programming, freetutorial, collection |

Embark on an exciting adventure as we explore a collection of free programming resources that will guide you through the captivating world of Java. Whether you're a beginner looking to dive into the fundamentals or an experienced developer seeking to expand your expertise, this curated selection of tutorials has somet... | getvm |

1,925,437 | BitPower Security Analysis: | BitPower uses blockchain technology to build a decentralized financial platform, which greatly... | 0 | 2024-07-16T12:12:56 | https://dev.to/_d098065643d164867d59ab/bitpower-security-analysis-1cg7 | BitPower uses blockchain technology to build a decentralized financial platform, which greatly improves the security of the system. First of all, the distributed ledger technology of blockchain makes data difficult to tamper with, and all transactions and operations are permanently recorded on the blockchain, providing... | _d098065643d164867d59ab | |

1,925,438 | Testcontainers + Golang: Improving your tests with Docker | In software development, testing applications that depend on external services, such as databases... | 0 | 2024-07-17T00:53:47 | https://dev.to/rflpazini/testcontainers-golang-melhorando-seus-testes-com-docker-2hb7 | docker, go, testing, coding | In software development, testing applications that depend on external services, such as databases, can be challenging. Ensuring that the test environment is configured correctly and that tests are isolated and reproducible is crucial for software quality.

In this article, we will explore how to use... | rflpazini |

1,925,545 | Typescript tuples aren't tuples | Why Typescript tuples are misleading | 0 | 2024-07-16T13:53:17 | https://dev.to/rrees/typescript-tuples-arent-tuples-28kj | typescript | ---

title: Typescript tuples aren't tuples

published: true

description: Why Typescript tuples are misleading

tags: typescript

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-16 13:50 +0000

---

I came across some surprising behaviour recently. One of the ... | rrees |

1,925,439 | Heard Great Things About New Orleans | I’ve been hearing so many amazing things about New Orleans lately, and it sounds like such a vibrant... | 0 | 2024-07-16T12:14:17 | https://dev.to/fahad_gul_266aa6c70417e88/heard-great-things-about-new-orleans-157n |

I’ve been hearing so many amazing things about New Orleans lately, and it sounds like such a vibrant and exciting place. From the incredible music scene to the delicious food and rich history, it seems like there’s always something going on.

I’m really curious to learn more about the city and its hidden gems. If you’... | fahad_gul_266aa6c70417e88 | |

1,925,440 | THE COMPLETE CREATIVE | What I Built The Product: ** The Complete Creative is a digital platform built to connect... | 0 | 2024-07-16T12:16:01 | https://dev.to/handla/the-complete-creative-62m | devchallenge, stellarchallenge, blockchain, web3 |

## What I Built

**The Product:**

The Complete Creative is a digital platform built to connect creators with their core fan community and provide access to global payments and funding.

It connects creators with community and payments, promoting the funding of creative projects through a blockchain-based ecosystem.

P... | handla |

1,925,441 | Sustainable Solar Energy Solutions: Trends and Predictions for the Next Decade | Solar energy has emerged as a cornerstone of sustainable development as the world grapples with the... | 0 | 2024-07-16T12:18:01 | https://dev.to/john_weaver_b56af7cee86b0/sustainable-solar-energy-solutions-trends-and-predictions-for-the-next-decade-2gc9 | Solar energy has emerged as a cornerstone of sustainable development as the world grapples with the pressing need to transition to renewable energy sources. Over the next decade, significant advancements and trends in [**sustainable solar energy solutions**](https://wattup.in/sustainable-energy-solutions-empowering-the... | john_weaver_b56af7cee86b0 | |

1,925,442 | BitPower Security Analysis: | BitPower uses blockchain technology to build a decentralized financial platform, which greatly... | 0 | 2024-07-16T12:18:05 | https://dev.to/_9e551ff4534282b0619446/bitpower-security-analysis-29gl |

BitPower uses blockchain technology to build a decentralized financial platform, which greatly improves the security of the system. First of all, the distributed ledger technology of blockchain makes data difficult to tamper with, and all transactions and operations are permanently recorded on the blockchain, provid... | _9e551ff4534282b0619446 | |

1,925,443 | Strengthen Your Business With Python Development Services | The world of business is rapidly changing. Disruptive technologies are appearing at rates never seen... | 0 | 2024-07-16T12:18:36 | https://dev.to/lewisblakeney/strengthen-your-business-with-python-development-services-479o | python, django, programming, discuss | The world of business is rapidly changing. Disruptive technologies are appearing at rates never seen before and what customers demand keeps changing. In this sense, agility and innovation are indispensable for survival and prosperity in an environment that keeps evolving.

Modern businesses should be able to adapt quic... | lewisblakeney |

1,925,445 | Managing Strains (Pulled Muscle) with Pain O Soma | Managing strains or pulled muscles with Pain O Soma involves using the medication to alleviate pain... | 0 | 2024-07-16T12:19:30 | https://dev.to/rubyjohnson17/managing-strains-pulled-muscle-with-pain-o-soma-4df3 | musclepain | Managing strains or pulled muscles with Pain O Soma involves using the medication to alleviate pain and promote muscle relaxation. **[Pain o soma 500 mg](https://safe4cure.com/product/pain-o-soma-500-mg/)** effectively reduces muscle spasms and discomfort by blocking pain signals to the brain. For optimal results, foll... | rubyjohnson17 |

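1,925,446 | Named Casino | Named Casino has established itself as the most trusted online casino site in Korea. Verified by a fraud-prevention verification community, a major online casino game... | 0 | 2024-07-16T12:20:29 | https://dev.to/mainnamed05/neimdeukajino-49ah | Named Casino has established itself as the most trusted online casino site in Korea. As a major online casino gaming site verified by a fraud-prevention verification community, it offers a variety of online casino games in real time. In this article, we take a detailed look at Named Casino's features, the games it offers, its safety, and its various benefits and activities.

Features of Named Casino

Named Casino was the first company in Korea to offer online casino services, and it grew rapidly on the strength of that reputation. Having established several affiliates, and drawing on extensive capital and a deep understanding of operations, the country's No. 1 baccarat... | mainnamed05 | |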

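1,925,447 | Named Casino | Named Casino has established itself as the most trusted online casino site in Korea. Verified by a fraud-prevention verification community, a major online casino game... | 0 | 2024-07-16T12:21:21 | https://dev.to/mainnamed05/neimdeukajino-2d62 | Named Casino has established itself as the most trusted online casino site in Korea. As a major online casino gaming site verified by a fraud-prevention verification community, it offers a variety of online casino games in real time. In this article, we take a detailed look at Named Casino's features, the games it offers, its safety, and its various benefits and activities.

Features of Named Casino

Named Casino was the first company in Korea to offer online casino services, and it grew rapidly on the strength of that reputation. Having established several affiliates, and drawing on extensive capital and a deep understanding of operations, the country's No. 1 baccarat... | mainnamed05 | |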

1,925,448 | Introducing Vue 3: Exploring Its New Features with Examples | Vue 3, the latest iteration of the popular JavaScript framework, comes with several exciting features... | 0 | 2024-07-17T07:01:23 | https://dev.to/akshayashet/introducing-vue-3-exploring-its-new-features-with-examples-3p2e | Vue 3, the latest iteration of the popular JavaScript framework, comes with several exciting features and improvements that enhance its capabilities for building modern web applications. In this technical blog post, we'll delve into some of the key features introduced in Vue 3 and provide examples to illustrate their u... | akshayashet | |

1,925,449 | Cryptocurrency and Blockchain: Revolutionizing Online Gambling at House of Jack Casino | In recent years, the integration of cryptocurrency and blockchain technology has significantly... | 0 | 2024-07-16T12:22:50 | https://dev.to/houseofjackbet/cryptocurrency-and-blockchain-revolutionizing-online-gambling-at-house-of-jack-casino-3e04 | <p dir="ltr"><span>In recent years, the integration of cryptocurrency and blockchain technology has significantly advanced various industries. Online gambling is one sector experiencing profound changes due to these technologies. House of Jack Casino stands at the forefront of this revolution, offering an array of bene... | houseofjackbet | |

1,925,450 | Solving the SQL Murder Mystery: A Step-by-Step Guide | Maybe I should consider a career as a detective! In this article, I am going to walk you through how... | 0 | 2024-07-16T12:30:03 | https://dev.to/mayorla/solving-the-sql-murder-mystery-a-step-by-step-guide-29cc | sql, database, datascience, howto | Maybe I should consider a career as a detective! In this article, I am going to walk you through how I solved the murder case from the SQL Murder Mystery. Let us dive into the crime scene and uncover the truth step by step.

**The Mystery**

A crime has taken place and the detective needs your help. The detective gave... | mayorla |

1,925,451 | Weather widget / component in Next.js | Dynamic Weather Widget Follow these steps to integrate a dynamic weather widget into your... | 0 | 2024-07-16T12:23:30 | https://dev.to/skidee/weather-widget-component-in-nextjs-3kgo | webdev, nextjs, weather, webcomponents | ## [Dynamic Weather Widget](https://weather-widget-in-next-js.vercel.app/)

Follow these steps to integrate a dynamic weather widget into your Next.js project.

### Step 1: Create an API Route

1. Inside your `app` folder, create a new folder named `api`.

2. Inside the `api` folder, create a subfolder for your weather ... | skidee |

1,925,453 | Top Language Learning Programs for Business Professionals | In today's globalized world, the ability to communicate in multiple languages is a crucial asset for... | 0 | 2024-07-16T12:28:58 | https://dev.to/curiotory_thelanguageex/top-language-learning-programs-for-business-professionals-431j | language, learning | In today's globalized world, the ability to communicate in multiple languages is a crucial asset for business professionals. Mastering a new language can open doors to international markets, foster better relationships with global clients, and enhance career opportunities. Whether you are looking to learn Spanish, Mand... | curiotory_thelanguageex |

1,925,456 | Day 1 of NodeJS || Introduction | Hey reader 😊 Excited huh!!😁 From today (16/07/2024) we are going to start our NodeJS series🥳. In... | 0 | 2024-07-16T12:31:36 | https://dev.to/akshat0610/day-1-of-nodejs-introduction-449j | webdev, node, beginners, tutorial | Hey reader 😊 Excited huh!!😁

From today (16/07/2024) we are starting our NodeJS series🥳. In this series we are going to cover everything: we will start from the very basics and work up to an advanced level.

Prerequisites:

- Basic understanding of JavaScript.

- Understanding of Synchronous and Asynchronous Progra... | akshat0610 |

1,925,457 | Best Front-End Programming Languages 2024 | Are you new to creating mobile or web applications? It is possible to feel overwhelmed by the sheer... | 0 | 2024-07-16T12:38:50 | https://dev.to/infowindtech57/best-front-end-programming-languages-2024-4274 | basic, programming | Are you new to creating mobile or web applications? It is possible to feel overwhelmed by the sheer number of programming languages, libraries, and frameworks available. The steady stream of new options might easily become too much to handle. You can work through that initial disorientation with the aid of this tutoria... | infowindtech57 |

1,925,458 | SpreadsheetLLM: Encoding Spreadsheets for Large Language Models | SpreadsheetLLM: Encoding Spreadsheets for Large Language Models | 0 | 2024-07-16T12:38:58 | https://aimodels.fyi/papers/arxiv/spreadsheetllm-encoding-spreadsheets-large-language-models | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [SpreadsheetLLM: Encoding Spreadsheets for Large Language Models](https://aimodels.fyi/papers/arxiv/spreadsheetllm-encoding-spreadsheets-large-language-models). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](h... | mikeyoung44 |

1,925,459 | Transformer Layers as Painters | Transformer Layers as Painters | 0 | 2024-07-16T12:39:32 | https://aimodels.fyi/papers/arxiv/transformer-layers-as-painters | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Transformer Layers as Painters](https://aimodels.fyi/papers/arxiv/transformer-layers-as-painters). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or follow me on [Twitter](https... | mikeyoung44 |

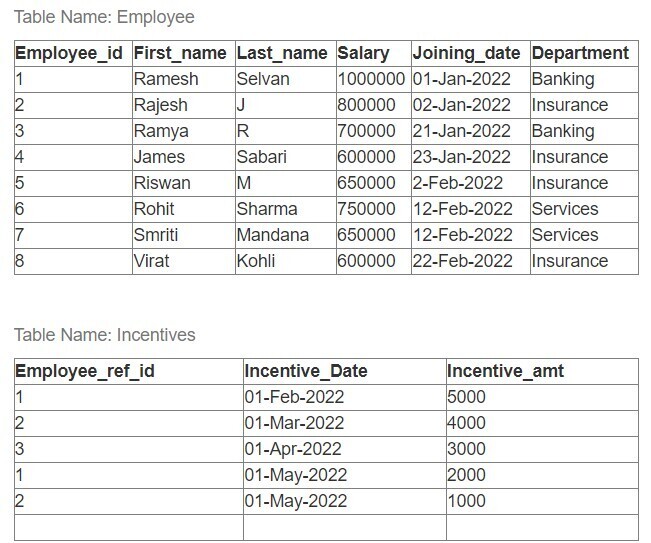

1,925,460 | Write a SQL query | Find the Total_Salary(Salary + Incentives) received by each employee | 0 | 2024-07-16T12:39:45 | https://dev.to/magesh/write-a-sql-query-3g6j |

Find the Total_Salary(Salary + Incentives) received by each employee | magesh | |
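The post gives no table definition, so the following is only a minimal sketch under assumptions: a hypothetical `employee` table with `name`, `salary`, and `incentives` columns, run against an in-memory SQLite database from Python. The `COALESCE` call is an added safeguard (not part of the original question) so that employees without incentives still get a total.

```python
import sqlite3


def total_salaries(rows):
    """Return (name, Total_Salary) pairs, where Total_Salary = salary + incentives."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical schema; the original post does not show the real one.
    conn.execute(
        "CREATE TABLE employee (name TEXT, salary REAL, incentives REAL)"
    )
    conn.executemany("INSERT INTO employee VALUES (?, ?, ?)", rows)
    query = """
        SELECT name,
               salary + COALESCE(incentives, 0) AS Total_Salary
        FROM employee
        ORDER BY name
    """
    result = conn.execute(query).fetchall()
    conn.close()
    return result


# Made-up sample data; the second employee has no incentives recorded.
sample = [("Asha", 50000, 5000), ("Ravi", 42000, None)]
for name, total in total_salaries(sample):
    print(name, total)
```

If incentives live in a separate table with several rows per employee, a `LEFT JOIN` with `SUM(...)` and `GROUP BY` would be needed instead; that variant depends on a schema the post does not show.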

1,925,461 | CodeUpdateArena: Benchmarking Knowledge Editing on API Updates | CodeUpdateArena: Benchmarking Knowledge Editing on API Updates | 0 | 2024-07-16T12:40:07 | https://aimodels.fyi/papers/arxiv/codeupdatearena-benchmarking-knowledge-editing-api-updates | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [CodeUpdateArena: Benchmarking Knowledge Editing on API Updates](https://aimodels.fyi/papers/arxiv/codeupdatearena-benchmarking-knowledge-editing-api-updates). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](ht... | mikeyoung44 |

1,925,462 | Can ChatGPT Pass a Theory of Computing Course? | Can ChatGPT Pass a Theory of Computing Course? | 0 | 2024-07-16T12:40:41 | https://aimodels.fyi/papers/arxiv/can-chatgpt-pass-theory-computing-course | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Can ChatGPT Pass a Theory of Computing Course?](https://aimodels.fyi/papers/arxiv/can-chatgpt-pass-theory-computing-course). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or fo... | mikeyoung44 |

1,925,463 | Towards Enhancing Coherence in Extractive Summarization: Dataset and Experiments with LLMs | Towards Enhancing Coherence in Extractive Summarization: Dataset and Experiments with LLMs | 0 | 2024-07-16T12:41:16 | https://aimodels.fyi/papers/arxiv/towards-enhancing-coherence-extractive-summarization-dataset-experiments | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Towards Enhancing Coherence in Extractive Summarization: Dataset and Experiments with LLMs](https://aimodels.fyi/papers/arxiv/towards-enhancing-coherence-extractive-summarization-dataset-experiments). If you like these kinds of analysis, you should sub... | mikeyoung44 |

1,925,464 | Transform Your Look at Mema’s Aesthetic Hair Transplant Clinic in Jaipur | Located in the heart of Jaipur, Mema’s Aesthetic Hair Transplant Clinic is a premier destination... | 0 | 2024-07-16T12:41:42 | https://dev.to/memas/transform-your-look-at-memas-aesthetic-hair-transplant-clinic-in-jaipur-524d |

Located in the heart of Jaipur, Mema’s Aesthetic Hair Transplant Clinic is a premier destination for those seeking solutions to hair loss and related issues. Renowned for its advanced hair transplant procedures an... | memas | |

1,925,465 | SparQ Attention: Bandwidth-Efficient LLM Inference | SparQ Attention: Bandwidth-Efficient LLM Inference | 0 | 2024-07-16T12:41:51 | https://aimodels.fyi/papers/arxiv/sparq-attention-bandwidth-efficient-llm-inference | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [SparQ Attention: Bandwidth-Efficient LLM Inference](https://aimodels.fyi/papers/arxiv/sparq-attention-bandwidth-efficient-llm-inference). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substa... | mikeyoung44 |

1,925,466 | Pexl Keys - How to Upgrade Microsoft Office 2019 to 2021 | How to Upgrade Microsoft Office 2019 to 2021? Upgrading your Microsoft Office suite from... | 0 | 2024-07-16T12:42:13 | https://dev.to/pexlkeys/pexl-keys-how-to-upgrade-microsoft-office-2019-to-2021-8i7 | tutorial, productivity, programming | ## How to Upgrade Microsoft Office 2019 to 2021?

Upgrading your Microsoft Office suite from the 2019 version to the 2021 version can bring a range of new features and improvements to enhance your productivity. This guide will walk you through the steps necessary to upgrade from Office 2019 to Office 2021.

**Step 1: Pu... | pexlkeys |

1,925,467 | UNSAT Solver Synthesis via Monte Carlo Forest Search | UNSAT Solver Synthesis via Monte Carlo Forest Search | 0 | 2024-07-16T12:42:25 | https://aimodels.fyi/papers/arxiv/unsat-solver-synthesis-via-monte-carlo-forest | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [UNSAT Solver Synthesis via Monte Carlo Forest Search](https://aimodels.fyi/papers/arxiv/unsat-solver-synthesis-via-monte-carlo-forest). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack... | mikeyoung44 |

1,925,469 | Human-in-the-Loop Visual Re-ID for Population Size Estimation | Human-in-the-Loop Visual Re-ID for Population Size Estimation | 0 | 2024-07-16T12:42:59 | https://aimodels.fyi/papers/arxiv/human-loop-visual-re-id-population-size | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Human-in-the-Loop Visual Re-ID for Population Size Estimation](https://aimodels.fyi/papers/arxiv/human-loop-visual-re-id-population-size). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.subst... | mikeyoung44 |

1,925,471 | Beyond Euclid: An Illustrated Guide to Modern Machine Learning with Geometric, Topological, and Algebraic Structures | Beyond Euclid: An Illustrated Guide to Modern Machine Learning with Geometric, Topological, and Algebraic Structures | 0 | 2024-07-16T12:43:34 | https://aimodels.fyi/papers/arxiv/beyond-euclid-illustrated-guide-to-modern-machine | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Beyond Euclid: An Illustrated Guide to Modern Machine Learning with Geometric, Topological, and Algebraic Structures](https://aimodels.fyi/papers/arxiv/beyond-euclid-illustrated-guide-to-modern-machine). If you like these kinds of analysis, you should ... | mikeyoung44 |

1,925,472 | WildGaussians: 3D Gaussian Splatting in the Wild | WildGaussians: 3D Gaussian Splatting in the Wild | 0 | 2024-07-16T12:44:08 | https://aimodels.fyi/papers/arxiv/wildgaussians-3d-gaussian-splatting-wild | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [WildGaussians: 3D Gaussian Splatting in the Wild](https://aimodels.fyi/papers/arxiv/wildgaussians-3d-gaussian-splatting-wild). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or ... | mikeyoung44 |

1,925,473 | TurboTLS: TLS connection establishment with 1 less round trip | TurboTLS: TLS connection establishment with 1 less round trip | 0 | 2024-07-16T12:44:43 | https://aimodels.fyi/papers/arxiv/turbotls-tls-connection-establishment-1-less-round | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [TurboTLS: TLS connection establishment with 1 less round trip](https://aimodels.fyi/papers/arxiv/turbotls-tls-connection-establishment-1-less-round). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aim... | mikeyoung44 |

1,925,474 | Agent Attention: On the Integration of Softmax and Linear Attention | Agent Attention: On the Integration of Softmax and Linear Attention | 0 | 2024-07-16T12:45:17 | https://aimodels.fyi/papers/arxiv/agent-attention-integration-softmax-linear-attention | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Agent Attention: On the Integration of Softmax and Linear Attention](https://aimodels.fyi/papers/arxiv/agent-attention-integration-softmax-linear-attention). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](htt... | mikeyoung44 |

1,925,475 | Comparing Embedded Systems and Desktop Systems | Embedded systems and desktop systems, though both integral parts of our modern technological... | 0 | 2024-07-16T12:45:45 | https://dev.to/jpdengler/comparing-embedded-systems-and-desktop-systems-2lm6 | embeddedsystems, beginners, programming, productivity | Embedded systems and desktop systems, though both integral parts of our modern technological landscape, serve vastly different purposes and operate under distinct principles. This blog post delves into the differences in non-volatile memory usage, overall system design, and the unique advantages of various embedded sys... | jpdengler |

1,925,476 | Adapting Large Language Models via Reading Comprehension | Adapting Large Language Models via Reading Comprehension | 0 | 2024-07-16T12:45:52 | https://aimodels.fyi/papers/arxiv/adapting-large-language-models-via-reading-comprehension | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Adapting Large Language Models via Reading Comprehension](https://aimodels.fyi/papers/arxiv/adapting-large-language-models-via-reading-comprehension). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://ai... | mikeyoung44 |

1,925,478 | Configure IOT Fake - Python MQTT - Test IoTCore | Configure IOT Fake - Python MQTT - Test IoTCore 1. Configure IoT Core on... | 0 | 2024-07-16T12:52:07 | https://dev.to/aldeiacloud/-configure-iot-fake-python-mqqt-test-iotcore-4kk9 | iot, mqqt, iotcore | ## Configure IOT Fake - Python MQTT - Test IoTCore

---

### 1. Configure IoT Core on AWS

#### Create a "Thing" in IoT Core:

1. In the AWS IoT Core console, go to "Manage" and then "Things".

2. Click "Create things" and create a "Thing".

#### Create Certificates:

1. After creating the "Thing", create a certificate for... | aldeiacloud |

1,925,479 | my personal view component | import { FlexAlignType, FlexStyle, View, type ViewProps, type ViewStyle } from... | 0 | 2024-07-16T12:54:04 | https://dev.to/akram6t/my-personal-view-component-1k3l | ```typescript

import { FlexAlignType, FlexStyle, View, type ViewProps, type ViewStyle } from 'react-native';

import { useThemeColor } from '@/hooks/useThemeColor';

export type ThemedViewProps = ViewProps & {

lightColor?: string;

darkColor?: string;

};

export function ThemedView({ style, lightColor, darkColor, ..... | akram6t | |

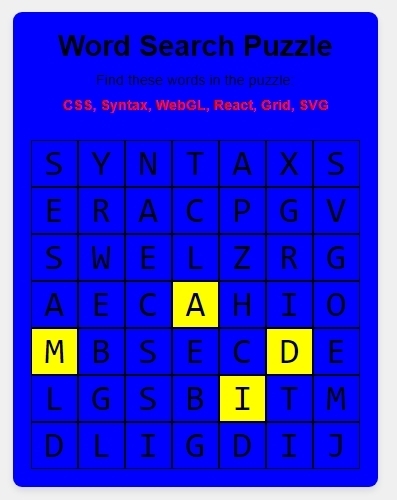

1,925,480 | Creating Engaging Word Search Puzzles with Dynamic Animations | Designing the Puzzle Interface Animated Background for Visual Appeal Enhancing User... | 0 | 2024-07-16T12:58:39 | https://dev.to/der12kl/creating-engaging-word-search-puzzles-with-dynamic-animations-21jb | codepen, css, webdev, frontend | - Designing the Puzzle Interface

- Animated Background for Visual Appeal

- Enhancing User Experience

- Further Learning and Resources

- Conclusion

Word search puzzles have long been a favorite pastime, combining... | der12kl |

1,925,481 | Linux 101: A Beginner's Guide to the Open-Source Powerhouse (and Why It's Different from Windows) | Are you curious about Linux but not sure where to start? Maybe you've heard whispers of its power and... | 0 | 2024-07-16T14:04:06 | https://dev.to/rahul_kumar_fd2c9e008ad0a/linux-101-a-beginners-guide-to-the-open-source-powerhouse-and-why-its-different-from-windows-la3 | linux, beginners, opensource, cli | Are you curious about Linux but not sure where to start? Maybe you've heard whispers of its power and flexibility but are intimidated by its reputation for complexity. Fear not! This beginner's guide will demystify Linux, explain its key differences from Windows, and show you why it's worth exploring.

## What Makes Li... | rahul_kumar_fd2c9e008ad0a |

1,925,482 | "400 Bad Request" Error Explained & Solved: Simple 5-Step Guide | The "400 Bad Request" error is a common yet frustrating issue that web users encounter, often... | 0 | 2024-07-16T12:59:42 | https://dev.to/wewphosting/400-bad-request-error-explained-solved-simple-5-step-guide-19hh | The "400 Bad Request" error is a common yet frustrating issue that web users encounter, often disrupting the browsing experience on platforms like **[WordPress hosting](https://www.wewp.io/)** or **[managed WordPress hosting services](https://www.wewp.io/)**. In this guide, we explore its causes, impact, and effective ... | wewphosting | |

1,925,483 | angular first visit my question part not loading but give next or prv button open page why? | A post by Muthalagan N | 0 | 2024-07-16T13:00:44 | https://dev.to/muthalagan_n_namakka/angular-first-visit-my-question-part-not-loading-but-give-next-or-prv-button-open-page-why-37ao | muthalagan_n_namakka | ||

1,925,484 | How AI Integration Services Can Fuel Your Business Growth | In today's rapidly evolving business landscape, staying competitive means embracing technological... | 0 | 2024-07-16T13:00:47 | https://dev.to/keriwalker/how-ai-integration-services-can-fuel-your-business-growth-22p | In today's rapidly evolving business landscape, staying competitive means embracing technological advancements that can streamline operations, enhance decision-making, and improve customer experiences. One of the most significant advancements is the integration of artificial intelligence (AI) into business processes. [... | keriwalker | |

1,925,485 | Best Business Software Practices in 2024 | “Don’t settle for ordinary when your business deserves extraordinary. Discover the transformative... | 0 | 2024-07-16T13:01:36 | https://flatlogic.com/blog/best-business-software-practices/ | webdev, programming, powerplatform, javascript |

**_“Don’t settle for ordinary when your business deserves extraordinary. Discover the transformative power of the best business software as we dive deep into the digital revolution.”_**

When hunting for [business software](https://flatlogic.com/), do you ask: What makes software truly ‘the best’ for my business? How... | alesiasirotka |

1,925,486 | Location Services- the Android 14 (maybe 15 too) way | Introduction Location awareness is becoming an essential part of many successful mobile... | 0 | 2024-07-16T13:01:38 | https://dev.to/olubunmialegbeleye/location-services-the-android-14-maybe-15-too-way-4171 | ## Introduction

Location awareness is becoming an essential part of many successful mobile applications. Whether you're building a fitness tracker, a navigation app, a ride-sharing app, a weather app, an augmented reality experience or a service that connects users based on proximity, incorporating location functional... | olubunmialegbeleye | |

1,925,487 | angular first visit my question part not loading but give next or prv button open page why? | A post by Muthalagan N | 0 | 2024-07-16T13:01:38 | https://dev.to/muthalagan_n_namakka/angular-first-visit-my-question-part-not-loading-but-give-next-or-prv-button-open-page-why-2i6a | help | muthalagan_n_namakka | |

1,925,488 | Cloud Computing 101: An Introduction to Cloud Solutions | Explore the fundamentals of cloud computing, its benefits for businesses, and the various types of cloud services available. Learn how cloud computing revolutionizes IT management by offering scalable, cost-effective solutions for modern enterprises. | 0 | 2024-07-16T13:05:21 | https://www.citruxdigital.com/blog/cloud-computing-101-an-introduction-to-cloud-solutions | In today’s fast-paced world, cloud computing has become a cornerstone of modern technology. But what exactly is cloud computing, and why has it become so essential? Let’s break it down in simple terms and see why it’s a game-changer for businesses everywhere.

### What is Cloud Computing?

Cloud computing is like renti... | munikeraragon | |

1,925,489 | Simulate APIs using Postman (Create a mock server) | Simulate APIs using Postman When do we need to Simulate APIs Reproduce... | 0 | 2024-07-16T14:06:01 | https://dev.to/jenchen/simulate-apis-using-postman-create-a-mock-server-3hmg | webdev, postman, api, web | ## Simulate APIs using Postman

### When do we need to Simulate APIs

#### Reproduce Issues in Debugging

Consistent Responses. In production environments, certain API responses are not common. Simulating APIs helps reproduce these responses consistently, aiding in effective debugging and issue resolution.

#### Isolati... | jenchen |

1,925,491 | 100 days of Cloud: Day 5: Deploying my first ever API with Docker Compose - Adventures in Not Breaking Everything | Alright folks, buckle up for another exciting day in the world of deploying a super cool (and totally... | 0 | 2024-07-16T13:06:54 | https://dev.to/tutorialhelldev/100-days-of-cloud-day-5-deploying-my-first-ever-api-with-docker-compose-adventures-in-not-breaking-everything-2j1h | Alright folks, buckle up for another exciting day in the world of deploying a super cool (and totally not to brag, but incredibly useful) API! Today's agenda was all about using this nifty tool called Docker Compose. Now, full disclosure, going into this I wasn't entirely sure what I was getting myself into. Docker? Su... | tutorialhelldev | |

1,925,492 | Vavada casino online review | [Vavada online casino]( ) deservedly ranks among the top Russian-language gambling platforms, although its... | 0 | 2024-07-16T13:08:47 | https://dev.to/vavadacas0n/obzor-vavada-casino-online-5a4c |

[Vavada online casino](

) deservedly ranks among the top Russian-language gambling platforms, although its audience is not limited... | vavadacas0n | |

1,925,493 | How Video Generation Works in the Open Source Project Wunjo CE | Getting to Know the Functionality If you’re interested in exploring the full... | 24,089 | 2024-07-16T13:09:28 | https://dev.to/wladradchenko/how-video-generation-works-in-the-open-source-project-wunjo-ce-1k1 | github, opensource, ai, tutorial | ## Getting to Know the Functionality

If you’re interested in exploring the full functionality, detailed parameter explanations, and usage instructions of Wunjo CE, a comprehensive video will be attached at the end of this article. For those who prefer to skip the technical intricacies, a video demonstrating the video ... | wladradchenko |
