id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,903,055 | Quantum Synergies Integrating AI with Randomized Benchmarking and Gate Fidelity for Enhanced Quantum Computing Performance | This post explores the powerful synergy between AI and quantum computing, focusing on how AI can enhance Randomized Benchmarking and gate fidelity. By leveraging the probabilistic nature of both fields, we can create a feedback loop that iteratively improves quantum performance and AI prediction accuracy. | 0 | 2024-06-27T19:20:00 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/AIErrorCorrection | quantumcomputing, ai, randomizedbenchmarking, gatefidelity | # Quantum Synergies: Integrating AI with Randomized Benchmarking and Gate Fidelity for Enhanced Quantum Computing Performance 🧠💻

The intersection of artificial intelligence (AI) and quantum computing presents a fascinating frontier where the probabilistic nature of both fields can be harnessed for mutual benefit. By... | eric_dequ |

1,903,054 | JavaScript ES3 Regular Expressions: A Blast from the Past 🎉 | JavaScript ECMAScript 3 (ES3), released in 1999, was a pivotal version in the evolution of the... | 0 | 2024-06-27T19:19:18 | https://dev.to/rishikesh_janrao_a613fad6/javascript-es3-regular-expressions-a-blast-from-the-past-4p66 |

JavaScript ECMAScript 3 (ES3), released in 1999, was a pivotal version in the evolution of the language. One of its powerful features was the introduction of Regular Expressions (regex), which provided developers with a robust tool for pattern matching and text manipulation.

## What are Regular Expressions? 🤔

Regul... | rishikesh_janrao_a613fad6 | |

1,903,053 | The Quantum Revolution Harnessing Natures Alternating Currents for Sustainable Computing | This post explores how the inherent quantum mechanical nature of the world can be leveraged to create more energy-efficient computing systems. By comparing classical and quantum computers to DC and AC currents, we highlight the potential for quantum computers to drastically reduce the energy consumption of AI models an... | 0 | 2024-06-27T19:16:33 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/ACQCompute | quantumcomputing, energyefficiency, sustainabletechnology, ai | # The Quantum Revolution: Harnessing Nature's Alternating Currents for Sustainable Computing

## Introduction

Just as Nikola Tesla famously stated that "nature is not DC, it alternates," we are now on the cusp of a technological revolution that recognizes the inherently quantum mechanical nature of the ... | eric_dequ |

1,893,020 | Is mitt dead? 🥊 | Mitt was first released 7 years ago. Today, it has open issues going back to 2021, issues waiting to... | 0 | 2024-06-19T02:36:31 | https://dev.to/stackoverfloweth/is-mitt-dead-3lb0 | typescript | Mitt was first released 7 years ago. Today, it has open issues going back to 2021, issues waiting to be triaged, open PRs completely ignored, and no commits since mid-2023. Are the issues not worth doing? Is the library just avoiding exceeding its 200-byte "microscopic" constraint? Maybe the author is busy with new pr... | stackoverfloweth |

1,903,052 | Acoustic Quantum Computing Harnessing the Power of Sound | This post explores an innovative approach to building a quantum computer using tuning forks and acoustic frequencies. By leveraging the quantum properties of sound waves, we propose a novel method for creating and manipulating qubits, potentially opening new avenues for quantum computing research. | 0 | 2024-06-27T19:13:06 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/AccousticQuantum | acoustics, entanglement, qubits, physics | # Acoustic Quantum Computing: Harnessing the Power of Sound

## Introduction

Quantum computing has emerged as a promising field with the potential to revolutionize computational capabilities. While most current approaches rely on electronic or photonic systems, we propose an alternative method using acoustic frequenci... | eric_dequ |

1,903,049 | deploy an website using RDS in terraform | Step 1: Set Up Terraform Configuration Create a new directory for your Terraform project and navigate... | 0 | 2024-06-27T19:07:56 | https://dev.to/jeyaprakash/deploy-an-website-using-rds-in-terraform-2121 | Step 1: Set Up Terraform Configuration

Create a new directory for your Terraform project and navigate into it:

```bash
mkdir terraform-rds-example
cd terraform-rds-example
```

Create a main.tf file where you will define your Terraform configuration:

hcl

provider "aws" {

region = "us-east-1" # Adjust the region as per yo... | jeyaprakash | |

1,903,048 | ScrollBar | ScrollBar is a control that enables the user to select from a range of values. The figure below shows a... | 0 | 2024-06-27T19:06:34 | https://dev.to/paulike/scrollbar-4ka5 | java, programming, learning, beginners | **ScrollBar** is a control that enables the user to select from a range of values. The figure below shows a scroll bar. Normally, the user changes the value of a scroll bar by making a gesture with the mouse. For example, the user can drag the scroll bar’s thumb, click on the scroll bar track, or the scroll bar’s left or r... | paulike |

1,903,047 | Not allow user click to back to before page through button of navigator with React | import { useNavigate } from 'react-router-dom' const navigate = useNavigate() navigate('/sign-in',... | 0 | 2024-06-27T19:05:12 | https://dev.to/rafaelborges26/not-allow-user-click-to-back-to-before-page-through-button-of-navigator-with-react-40ej | react, web3, router, javascript | ```

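import { useNavigate } from 'react-router-dom'

// Call the hook inside a component or a custom hook
const navigate = useNavigate()

// replace: true swaps the current history entry instead of pushing a new one
navigate('/sign-in', { replace: true })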

```
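
To illustrate why `replace: true` has this effect, here is a minimal sketch of a history stack. It is a simplified model written for this note, not react-router's implementation: `HistoryStack` and its `push`, `replace`, and `back` methods are hypothetical names that only mimic how a browser history behaves.

```javascript
// Hypothetical model of a browser history stack; not react-router's implementation.
class HistoryStack {
  constructor(initialPath) {
    this.entries = [initialPath];
    this.index = 0;
  }

  get current() {
    return this.entries[this.index];
  }

  // Like navigate(path): drop any forward entries, then add a new entry.
  push(path) {
    this.entries = this.entries.slice(0, this.index + 1);
    this.entries.push(path);
    this.index += 1;
  }

  // Like navigate(path, { replace: true }): overwrite the current entry.
  replace(path) {
    this.entries[this.index] = path;
  }

  // Like the browser Back button: move one entry back if possible.
  back() {
    if (this.index > 0) this.index -= 1;
    return this.current;
  }
}

// Case 1: a normal push keeps the previous page reachable via Back.
const pushed = new HistoryStack('/home');
pushed.push('/dashboard');
pushed.push('/sign-in');
const afterPush = pushed.back();

// Case 2: replace overwrites '/dashboard', so Back skips it.
const replaced = new HistoryStack('/home');
replaced.push('/dashboard');
replaced.replace('/sign-in');
const afterReplace = replaced.back();
```

In this model, Back after a push returns to `/dashboard`, while Back after a replace lands on `/home`, because the `/dashboard` entry no longer exists. Earlier entries remain reachable; only the page the user navigated from is removed.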

With `replace: true`, the sign-in route replaces the current history entry, so the browser's Back button cannot return to the page the user came from, as in the example above. | rafaelborges26 |

1,903,046 | create a staic website using amazon s3 with terraform | In this step-by-step guide, we’ll dive into deploying a static website on AWS S3 using Terraform!... | 0 | 2024-06-27T19:03:50 | https://dev.to/jeyaprakash/create-a-staic-website-using-amazon-s3-with-terraform-37p1 | In this step-by-step guide, we’ll dive into deploying a static website on AWS S3 using Terraform! We’ll

walk through the process of:

1. Automating S3 Bucket Creation: Terraform will handle creating the S3 bucket where your

website files will reside.

2. Effortless Website Upload: We’ll configure Terraform to skip manu... | jeyaprakash | |

1,903,044 | Connecting the Dots: Evaluating Abstract Reasoning Capabilities of LLMs Using the New York Times Connections Word Game | Connecting the Dots: Evaluating Abstract Reasoning Capabilities of LLMs Using the New York Times Connections Word Game | 0 | 2024-06-27T18:59:19 | https://aimodels.fyi/papers/arxiv/connecting-dots-evaluating-abstract-reasoning-capabilities-llms | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Connecting the Dots: Evaluating Abstract Reasoning Capabilities of LLMs Using the New York Times Connections Word Game](https://aimodels.fyi/papers/arxiv/connecting-dots-evaluating-abstract-reasoning-capabilities-llms). If you like these kinds of analy... | mikeyoung44 |

1,903,043 | Be Here Now by Ram Dass A Spiritual Journey from Psychedelics to Self-Discovery | Explore the transformative journey of Ram Dass from Harvard psychologist to spiritual guru, as chronicled in his influential book "Be Here Now." Discover how his experiences with psychedelics and his guru, Neem Karoli Baba, helped shape a generation of seekers, including tech visionaries like Steve Jobs and Mark Zucker... | 0 | 2024-06-27T18:59:08 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Books/BeHereNow | ramdass, beherenow, spirituality, neemkarolibaba | # Be Here Now by Ram Dass: A Spiritual Journey from Psychedelics to Self-Discovery

"Be Here Now" by Ram Dass is a seminal work that has profoundly influenced the spiritual landscape of the Western world. The book chronicles the author's transformative journey from Dr. Richard Alpert, a Harvard psycholog... | eric_dequ |

1,903,042 | Generate Q&A from Wikipedia Pages with Pydantic, Instructor, and Phi-3 LLM | Introduction With a little LLM model like phi3 and a good schema generator like pydantic... | 0 | 2024-06-27T18:59:06 | https://dev.to/francescoagati/generate-qa-from-wikipedia-pages-with-pydantic-instructor-and-phi-3-llm-o0i | python, wikipedia, pydantic, phi3 | #

## Introduction

With a small LLM like Phi-3 and a good schema library like Pydantic, we can generate question-and-answer pairs from Wikipedia pages. By using Pydantic for data validation, the Instructor library for structured data extraction, and Microsoft's Phi-3 language models for efficient AI processing, we ... | francescoagati |

1,903,041 | Emergent World Representations: Exploring a Sequence Model Trained on a Synthetic Task | Emergent World Representations: Exploring a Sequence Model Trained on a Synthetic Task | 0 | 2024-06-27T18:58:44 | https://aimodels.fyi/papers/arxiv/emergent-world-representations-exploring-sequence-model-trained | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Emergent World Representations: Exploring a Sequence Model Trained on a Synthetic Task](https://aimodels.fyi/papers/arxiv/emergent-world-representations-exploring-sequence-model-trained). If you like these kinds of analysis, you should subscribe to the... | mikeyoung44 |

1,878,701 | Tử Vi chart (lá số tử vi) | Tử Vi, or Tử Vi Đẩu Số, is a metaphysical discipline whose main uses include: making judgments about... | 0 | 2024-06-06T03:19:04 | https://dev.to/dongphuchh023/la-so-tu-vi-228n | Tử Vi, or Tử Vi Đẩu Số, is a metaphysical discipline whose main uses include: making judgments about a person's character and circumstances, predicting the periods of fortune and misfortune ("vận hạn") in a person's life, and studying how a person interacts with events and other people. Ultimately, its main purpose is to understand a person's destiny.

Get a ... | dongphuchh023 | |

1,903,040 | Exploring Design Choices for Building Language-Specific LLMs | Exploring Design Choices for Building Language-Specific LLMs | 0 | 2024-06-27T18:58:10 | https://aimodels.fyi/papers/arxiv/exploring-design-choices-building-language-specific-llms | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Exploring Design Choices for Building Language-Specific LLMs](https://aimodels.fyi/papers/arxiv/exploring-design-choices-building-language-specific-llms). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https:... | mikeyoung44 |

1,903,039 | Adam-mini: Use Fewer Learning Rates To Gain More | Adam-mini: Use Fewer Learning Rates To Gain More | 0 | 2024-06-27T18:57:35 | https://aimodels.fyi/papers/arxiv/adam-mini-use-fewer-learning-rates-to | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Adam-mini: Use Fewer Learning Rates To Gain More](https://aimodels.fyi/papers/arxiv/adam-mini-use-fewer-learning-rates-to). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https://aimodels.substack.com) or fol... | mikeyoung44 |

1,903,038 | Deep Learning for Multi-Label Learning: A Comprehensive Survey | Deep Learning for Multi-Label Learning: A Comprehensive Survey | 0 | 2024-06-27T18:57:01 | https://aimodels.fyi/papers/arxiv/deep-learning-multi-label-learning-comprehensive-survey | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Deep Learning for Multi-Label Learning: A Comprehensive Survey](https://aimodels.fyi/papers/arxiv/deep-learning-multi-label-learning-comprehensive-survey). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](https... | mikeyoung44 |

1,903,037 | Understanding software development life cycle | The Software Development Life Cycle (SDLC) is a systematic process used by software development teams... | 0 | 2024-06-27T18:56:57 | https://dev.to/keploy/understanding-software-development-life-cycle-4pl0 | The [Software Development Life Cycle](https://keploy.io/blog/community/software-development-phases) (SDLC) is a systematic process used by software development teams to design, develop, test, and deploy high-quality software. It consists of a series of phases that provide a structured framework to guide the project fro... | keploy | |

1,903,036 | How To Install,Create,Modify,Destroy EC2 Instance In Teeraform | Installing Terraform First things first, let's get Terraform installed on your machine: Download... | 0 | 2024-06-27T18:56:50 | https://dev.to/jeyaprakash/how-to-installcreatemodifydestroy-ec2-instance-in-teeraform-nep | Installing Terraform

First things first, let's get Terraform installed on your machine:

1. Download Terraform: Visit the Terraform downloads page and download the appropriate package for your operating system.

2. Install Terraform: After downloading, follow the installation instructions for your OS. For most syste... | jeyaprakash | |

1,903,035 | How To Install,Create,Modify,Destroy EC2 Instance In Teeraform | Installing Terraform First things first, let's get Terraform installed on your machine: Download... | 0 | 2024-06-27T18:56:44 | https://dev.to/jeyaprakash/how-to-installcreatemodifydestroy-ec2-instance-in-teeraform-30j1 | Installing Terraform

First things first, let's get Terraform installed on your machine:

1. Download Terraform: Visit the Terraform downloads page and download the appropriate package for your operating system.

2. Install Terraform: After downloading, follow the installation instructions for your OS. For most syste... | jeyaprakash | |

1,903,034 | My Pen on CodePen | Check out this Pen I made! | 0 | 2024-06-27T18:55:32 | https://dev.to/vladimir_khrenkov_d30c9d2/my-pen-on-codepen-2d11 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Vladimir-Khrenkov/pen/zYQXeQP %} | vladimir_khrenkov_d30c9d2 |

1,903,033 | Insights into LLM Long-Context Failures: When Transformers Know but Don't Tell | Insights into LLM Long-Context Failures: When Transformers Know but Don't Tell | 0 | 2024-06-27T18:55:17 | https://aimodels.fyi/papers/arxiv/insights-into-llm-long-context-failures-when | machinelearning, ai, beginners, datascience | *This is a Plain English Papers summary of a research paper called [Insights into LLM Long-Context Failures: When Transformers Know but Don't Tell](https://aimodels.fyi/papers/arxiv/insights-into-llm-long-context-failures-when). If you like these kinds of analysis, you should subscribe to the [AImodels.fyi newsletter](... | mikeyoung44 |

1,903,032 | Unlocking Your Infinite Potential Lessons from Dr Joe Dispenzas Becoming Supernatural | Explore the groundbreaking ideas and techniques presented in Dr. Joe Dispenza's "Becoming Supernatural," a guide to unlocking your infinite potential and creating the life you desire. Discover how the intersection of science and spirituality can help you transcend limitations and achieve the extraordinary. | 0 | 2024-06-27T18:54:01 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Books/BecomingSupernatural | development, spirituality, meditation, quantum | # Unlocking Your Infinite Potential: Lessons from Dr. Joe Dispenza's 'Becoming Supernatural'

In his transformative book "Becoming Supernatural: How Common People Are Doing the Uncommon," Dr. Joe Dispenza combines the latest findings from neuroscience, epigenetics, and quantum physics with anci... | eric_dequ |

1,897,889 | A Fullstack Journey | Follow me on this journey as I bring a project of mine back to life. This project is a simple... | 0 | 2024-06-27T18:51:16 | https://dev.to/metalcoder/a-fullstack-journey-3ofo | webdev, fullstack, discuss | Follow me on this journey as I bring a project of mine back to life. This project is a simple blog dedicated to my favorite types of music (mainly heavy metal).

I'm developing this whole project with the purpose of improving my knowledge as a Fullstack Developer.

The first time I started this project was around 20... | metalcoder |

1,903,029 | ListView | A list view is a control that basically performs the same function as a combo box, but it enables the... | 0 | 2024-06-27T18:50:52 | https://dev.to/paulike/listview-1jlb | java, programming, learning, beginners | A list view is a control that basically performs the same function as a combo box, but it enables the user to choose a single value or multiple values.

The figure below lists several frequently used properties and constructors in **ListView**. **ListView** is defined as a generic class. The generic type **T** specifies th... | paulike |

1,903,024 | Boost Your Website’s SEO with Structured Data and Schema in Next.js | Structured data is an essential component of modern SEO. By providing search engines with additional... | 0 | 2024-06-27T18:49:22 | https://dev.to/khalisspasha/boost-your-websites-seo-with-structured-data-and-schema-in-nextjs-34pl | Structured data is an essential component of modern SEO. By providing search engines with additional context about your content, you can enhance your site’s visibility and performance. In this blog post, we’ll explore what structured data is, why it’s important, and provide some popular schema examples with code snippe... | khalisspasha | |

1,903,028 | Angels Demons by Dan Brown A Thrilling Journey into the Depths of History Science and Faith | Embark on a heart-pounding adventure through the pages of Dan Brown's "Angels & Demons," a masterful blend of history, science, and faith. Follow symbologist Robert Langdon as he races against time to unravel a deadly plot that threatens to shake the very foundations of the Catholic Church and the world of science. | 0 | 2024-06-27T18:48:52 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Books/AngelsDemons | thriller, symbology, science, faith | # Angels & Demons by Dan Brown: A Thrilling Journey into the Depths of History, Science, and Faith

Dan Brown's "Angels & Demons" is a masterful thriller that takes readers on a breathtaking journey through the intersections of history, science, and faith. Set against the backdrop of Vatican City... | eric_dequ |

1,903,027 | Creating an EC2 instance with SSH access using Terraform: | Introduction : *.Terraform is an open-source infrastructure as code (IaC) tool that... | 0 | 2024-06-27T18:48:07 | https://dev.to/jeyaprakash/creating-an-ec2-instance-with-ssh-access-using-terraform-5eaj | **Introduction :**

* Terraform is an open-source infrastructure as code (IaC) tool that allows you to define and provision data center infrastructure using a high-level configuration language. One of the most common use cases for Terraform is managing cloud resources, such as creating and managing Amazon... | jeyaprakash | |

1,903,026 | Creating an EC2 instance with SSH access using Terraform: | Introduction : *.Terraform is an open-source infrastructure as code (IaC) tool that... | 0 | 2024-06-27T18:47:59 | https://dev.to/jeyaprakash/creating-an-ec2-instance-with-ssh-access-using-terraform-361f | **Introduction :**

* Terraform is an open-source infrastructure as code (IaC) tool that allows you to define and provision data center infrastructure using a high-level configuration language. One of the most common use cases for Terraform is managing cloud resources, such as creating and managing Amazon... | jeyaprakash | |

1,903,025 | React and Vue: Most Used Front end Framework | React and Vue are both popular tools for creating JavaScript web applications. More and more... | 0 | 2024-06-27T18:46:32 | https://dev.to/kngkay/react-and-vue-most-used-front-end-framework-1jni | React and Vue are both popular tools for creating JavaScript web applications. More and more companies are discovering how these tools can improve performance and save time while also improving the overall development experience. However, many other companies and developers still struggle to choose the right framework.

*... | kngkay | |

1,903,022 | Smaller Documents for Smaller Screens using Sec-CH-Viewport-Width | If you have been following my engineering blog, you know that I am obsessive about performance.... | 0 | 2024-06-27T18:43:54 | https://pillser.com/engineering/smaller-documents-for-smaller-screens-using-sec-ch-viewport-width | webdev, performance, react | If you have been following my [engineering blog](https://pillser.com/engineering/), you know that I am obsessive about performance. Pillser lists a lot of data about supplements and research papers, and I want to make sure that the website is fast and responsive. One of the ways I've done it is by using `Sec-CH-Viewpor... | lilouartz |

1,903,020 | Create a React Tooltip component using Popover API | Since April 2024, the Popover API has been supported in the latest versions of all major browsers. This API allows... | 0 | 2024-06-27T18:39:31 | https://dev.to/hnrq/create-a-react-tooltip-component-using-popover-api-155o | html, react, javascript | Since April 2024, the Popover API has been supported in the [latest versions](https://developer.mozilla.org/en-US/docs/Web/API/Popover_API#browser_compatibility) of all major browsers. This API allows developers to display popover content on top of other page content.

## Code

For this small snippet, I've used JS Popover API (`HTMLElement.... | hnrq |

1,903,019 | Plant Neurobiology Exploring the Intricate Communication Networks in Plants | Dive into the fascinating world of plant neurobiology, where the latest research reveals how plants sense and respond to their environment. Explore the parallels between plant signaling mechanisms and human gut feelings, supported by both scientific evidence and spiritual insights from indigenous wisdom. 🌿🧬✨ | 0 | 2024-06-27T18:38:37 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Bio/PlantNeuroBiology | plantneurobiology, plantperception, microbiome, gutfeelings | ## 🌿 Plant Neurobiology: Exploring the Intricate Communication Networks in Plants

In the intricate web of life, plants have evolved sophisticated systems to perceive and respond to their environment. This field, often referred to as plant neurobiology or plant signaling and behavior, uncovers the remarkable ways in w... | eric_dequ |

1,903,017 | Computer Vision - Building a Motion Detection Camera in .NET | In our previous article, we introduced the basics of image processing with OpenCvSharp in .NET. Now,... | 0 | 2024-06-27T18:34:47 | https://dev.to/jwtiller_c47bdfa134adf302/building-a-motion-detection-camera-with-opencvsharp-in-net-kd4 | dotnet, computervision | In our [previous article](https://dev.to/jwtiller_c47bdfa134adf302/introduction-to-computer-vision-in-net-160i), we introduced the basics of image processing with OpenCvSharp in .NET. Now, let's take it a step further and build a motion detection camera. This project will help you understand how motion detection works ... | jwtiller_c47bdfa134adf302 |

1,903,015 | Understanding Asynchronous JavaScript: Callbacks, Promise Chains, and Order of Execution | In the dynamic world of JavaScript, managing asynchronous operations is crucial for building... | 0 | 2024-06-27T18:33:35 | https://dev.to/faisalmh4045/understanding-asynchronous-javascript-callbacks-promise-chains-and-order-of-execution-2486 | webdev, javascript, programming | In the dynamic world of JavaScript, managing asynchronous operations is crucial for building responsive and efficient applications. Asynchronous programming allows tasks to be executed independently, without blocking the main thread, ensuring smooth user experiences. However, handling asynchronous code can sometimes be... | faisalmh4045 |

1,903,014 | The Science and Art of Grafting Plants A Step-by-Step Guide | Discover the technical science behind grafting plants and learn how to graft plants successfully with this comprehensive step-by-step guide. 🌱🔬✂️ | 0 | 2024-06-27T18:33:30 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Bio/Grafting | grafting, horticulture, botany, gardening | ## 🌱 The Science and Art of Grafting Plants

Grafting is a horticultural technique that involves joining two plants together so that they grow as one. This method is widely used in agriculture and gardening to propagate desirable plant varieties, improve disease resistance, and enhance growth characteristics. This blo... | eric_dequ |

1,903,012 | ComboBox | A combo box, also known as a choice list or drop-down list, contains a list of items from which the... | 0 | 2024-06-27T18:31:19 | https://dev.to/paulike/combobox-34o9 | java, programming, learning, beginners | A combo box, also known as a choice list or drop-down list, contains a list of items from which the user can choose. A combo box is useful for limiting a user’s range of choices and avoids the cumbersome validation of data input. The figure below lists several frequently used properties and constructors in **ComboBox**. **... | paulike |

1,903,008 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-06-27T18:23:46 | https://dev.to/foneyo8138/buy-verified-paxful-account-3h6k | webdev, javascript, beginners, react | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n " | foneyo8138 |

1,900,461 | How to automate NPM authentication to avoid providing credentials every time | You must have used npm for publishing your javascript packages or using private libraries and... | 0 | 2024-06-27T18:29:20 | https://dev.to/deepcodr/how-to-automate-npm-authentication-to-avoid-providing-credentials-every-time-2e1m | deepcodr, npm, javascript, javascriptlibraries | You must have used npm for publishing your javascript packages or using private libraries and packages. For developers, using npm is a daily habit when building projects. However, authenticating with npm every time you use or publish a package becomes tedious.

<br>

We can avoid this by automating npm authentication. To d... | deepcodr |

1,903,011 | The Enchanting World of Bioluminescence Natures Light Show | Discover the captivating phenomenon of bioluminescence, where living organisms create their own light. From glowing oceans to luminescent forests, explore how and why this natural light show occurs. 🌌 | 0 | 2024-06-27T18:28:22 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Bio/Bioluminescence | bioluminescence, nature, marinebiology, science | ## 🌟 The Enchanting World of Bioluminescence: Nature’s Light Show

Bioluminescence, the ability of living organisms to produce light, is one of nature’s most captivating phenomena. From the depths of the ocean to the canopy of tropical forests, bioluminescent organisms light up the night with their enchanting glow. Le... | eric_dequ |

1,903,010 | Hosting a Static Website Using S3 in AWS with Terraform | Hello everyone! Here's a blog post on hosting a static website using Amazon S3 and Terraform. ... | 0 | 2024-06-27T18:26:52 | https://dev.to/kousalya_s_1e656b83b89b93/hosting-a-static-website-using-s3-in-aws-with-terraform-431p | Hello everyone! Here's a blog post on hosting a static website using Amazon S3 and Terraform.

---

## Hosting a Static Website on AWS S3 Using Terraform

Hosting a static website on AWS S3 is a cost-effective and efficient way to make your content accessible to the world. By using Terraform, an infrastructure as code ... | kousalya_s_1e656b83b89b93 | |

1,902,866 | How to create and configure virtual machine scale set | Creating and Configuring a Virtual Machine Scale Set In the realm of cloud computing,... | 0 | 2024-06-27T18:26:13 | https://dev.to/dera2024/how-to-create-and-configure-virtual-machine-scale-set-o9e | virtualmachine, azure, microsoft, devops | ### Creating and Configuring a Virtual Machine Scale Set

In the realm of cloud computing, scalability is a crucial factor for ensuring applications can handle varying levels of demand efficiently. Virtual Machine Scale Sets (VMSS) in Microsoft Azure provide an excellent solution for this by allowing you to create and ... | dera2024 |

1,903,009 | Creating an EC2 instance with SSH access using Terraform: | Introduction : *.Terraform is an open-source infrastructure as code (IaC) tool that... | 0 | 2024-06-27T18:24:16 | https://dev.to/albine_peter_c2ffb10b422f/creating-an-ec2-instance-with-ssh-access-using-terraform-4679 |

**_Introduction :_**

Terraform is an open-source infrastructure as code (IaC) tool that allows you to define and provision data center infrastructure using a high-level configuration language. One of the most common use cases for Terraform is managing cloud resources, such as creating and managing Ama... | albine_peter_c2ffb10b422f | |

1,903,000 | Transforming Cloud Infrastructure with Terraform: Build, Change, Deploy | Introduction: In today's fast-paced tech world, infrastructure as code (IaC) has become essential for... | 0 | 2024-06-27T18:18:30 | https://dev.to/mohanapriya_s_1808/transforming-cloud-infrastructure-with-terraform-build-change-deploy-54jn | **Introduction:**

In today's fast-paced tech world, infrastructure as code (IaC) has become essential for managing and automating your cloud resources. Recently, I had the opportunity to dive into Terraform, an open-source IaC tool that allows you to define and provision your infrastructure using a simple, declarative p... | mohanapriya_s_1808 | |

1,902,999 | How can I speak to Meesho Executive? | How can I speak to Meesho Executive? Toll Free: [...] Online complain, 24/7) ,9831,228.932.... | 0 | 2024-06-27T18:15:12 | https://dev.to/mentioned_balance_ea9bb6d/but-why-you-tell-me-how-can-i-help-chahiye-aapko-loan-credit-4ico | webdev | How can I speak to Meesho Executive? Toll Free: [...] Online complain, 24/7) ,9831,228.932. (0626,7490.546- meesho complaint customer service /credit card/ report Transaction),1800-298-6161 (Report on-line application.... | mentioned_balance_ea9bb6d |

1,902,998 | Unlocking the Secrets of Animal Communication How Technology is Opening New Frontiers | Discover how cutting-edge technologies like machine learning are revolutionizing our understanding of animal communication, paving the way for groundbreaking scientific discoveries and new ways of interacting with the natural world. | 0 | 2024-06-27T18:14:54 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Animals/Communication | animalcommunication, technology, machinelearning, scientificdiscovery |

In a groundbreaking study published under the title "Contextual and Combinatorial Structure in Sperm Whale Vocalizations," researchers at MIT CSAIL and Project CETI have made significant strides in dec... | eric_dequ |

1,902,997 | The Fungal Connection Exploring the Evolutionary Intertwining of Animals Fungi and Plants | Embark on a thought-provoking journey through the realms of evolution and speculative biology, as we explore the intriguing possibility of animals being a unique crossbreed between fungi and plants. Delve into the striking similarities between animal flesh and mushrooms, uncover the critical role of microbial allies, a... | 0 | 2024-06-27T18:14:16 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Bio/AnimalEvolution | evolution, fungi, plants, animals | ## 🌟 The Fungal Connection: Exploring the Evolutionary Intertwining of Animals, Fungi, and Plants

In the grand tapestry of life on Earth, the threads of evolution weave a complex and fascinating story. As we trace the origins and relationships between various forms of life, we find ourselves drawn to the intriguing c... | eric_dequ |

1,902,996 | TextArea | A TextArea enables the user to enter multiple lines of text. If you want to let the user enter... | 0 | 2024-06-27T18:14:05 | https://dev.to/paulike/textarea-4fb4 | java, programming, learning, beginners | A **TextArea** enables the user to enter multiple lines of text.

If you want to let the user enter multiple lines of text, you may create several instances of **TextField**. A better alternative, however, is to use **TextArea**, which enables the user to enter multiple lines of text. Figure below lists the properties ... | paulike |

1,901,755 | Understanding Procrastination: Why We Start and How to Overcome It | Understanding Procrastination: Why We Start and How to Overcome It Procrastination is a universal... | 0 | 2024-06-27T18:09:42 | https://dev.to/newme/understanding-procrastination-why-we-start-and-how-to-overcome-it-2jai | productivity, procrastination | Understanding Procrastination: Why We Start and How to Overcome It

Procrastination is a universal struggle, often leaving us feeling guilty and frustrated. Yet, understanding why we procrastinate can help us tackle it more effectively. This blog post explores the reasons behind procrastination, why it’s sometimes not e... | newme |

1,902,994 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash app... | 0 | 2024-06-27T18:07:47 | https://dev.to/foneyo8138/buy-verified-cash-app-account-38pl | webdev, javascript, beginners, programming | ERROR: type should be string, got "\nhttps://dmhelpshop.com/product/buy-verified-cash-app-account/\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | foneyo8138 |

1,902,993 | Hosting a Static Website Using S3 in AWS with Terraform | Introduction Hi there! I've been investigating Terraform's and AWS's capabilities as part of my... | 0 | 2024-06-27T18:06:28 | https://dev.to/harshana_vivekanandhan_88/hosting-a-static-website-using-s3-in-aws-with-terraform-3b69 | Introduction

Hi there! I've been investigating Terraform's and AWS's capabilities as part of my internship. I recently worked on hosting a static website using S3 in AWS with Terraform.

Hosting a static website on Amazon S3 is a cost-effective and scalable solution that can be easily managed using Terraform. This tutoria... | harshana_vivekanandhan_88 | |

1,902,992 | Interview with Vitor Ayres, a Tauri Maintainer | Welcome to the first episode of our new series “Tauri Maintainers”, where we chat with Tauri... | 0 | 2024-06-27T18:06:26 | https://crabnebula.dev/blog/interview-with-vitor/ | rust, tauri, opensource, maintainer | Welcome to the first episode of our new series “Tauri Maintainers”, where we chat with [Tauri](https://tauri.app/) maintainers.

In this episode, [Eleftheria](https://twitter.com/BatsouElef) discusses with [Vitor Ayres](https://www.linkedin.com/in/ayresvitor):

* How he started

* What motivated him to contribute t... | crabnebuladev |

1,423,909 | The End of Create-React-App | What is Create-React-App and why does it exist? Create-React-App (CRA) was a popular CLI... | 0 | 2023-04-12T07:40:42 | https://dev.to/codenamegrant/the-end-of-create-react-app-4o01 | react, webdev, javascript, frontend | ## What is Create-React-App and why does it exist?

Create-React-App (CRA) was a popular CLI tool to quickly create React-based web applications. Maintained by the React team at Facebook, it provides a set of preconfigured tools and dependencies that allowed developers to start building React applications without havin... | codenamegrant |

1,902,991 | Cloud Migrations Paving the Way for Digital Excellence | Migrating to the cloud is more than just a tech shift; it's a strategic move that can redefine an organization's operational paradigm. Dive deep into the intricacies of cloud migrations and their transformative potential. | 0 | 2024-06-27T18:06:16 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Cloud/CloudMigration | cloud, migration, devops, digitaltransformation | ## What is Cloud Migration?

💻 Cloud migration refers to the process of moving digital assets – like data, applications, or workloads – from on-premises infrastructure or a legacy data center to the cloud. It's a critical step in an organization's digital transformation journey, offering a myriad of benefits... | eric_dequ |

1,902,990 | GCP vs AWS vs Azure Which Cloud Platform is Right for You | A discussion of the differences between the three main cloud providers ☁️: Google Cloud Platform, Amazon Web Services, and Microsoft Azure. | 0 | 2024-06-27T18:06:09 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Cloud/Cloud | cloud, aws, azure, gcp | # AWS vs Azure vs Google Cloud: Which Cloud Platform is Right for You? ☁️

The cloud computing market is constantly evolving, with new players entering the fray all the time. But three of the biggest names in the cloud are Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP).

Each of these platf... | eric_dequ |

1,902,989 | AWS EC2 Instance Management with Terraform | Introduction : Managing infrastructure as code is essential for modern DevOps practices,... | 0 | 2024-06-27T18:05:34 | https://dev.to/kishore_suzil_v/aws-ec2-instance-management-with-terraform-1ane | ## Introduction :

Managing infrastructure as code is essential for modern DevOps practices, and Terraform by HashiCorp is a powerful tool for this purpose. In this blog, we will walk through the steps to install Terraform using Chocolatey, and then use Terraform to create, rename, and delete an AWS EC2 instance. We wi... | kishore_suzil_v | |

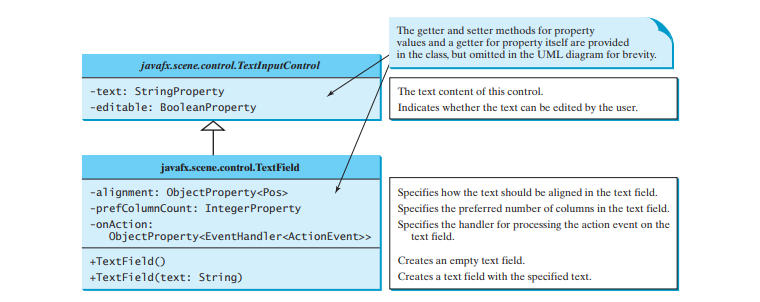

1,902,988 | TextField | A text field can be used to enter or display a string. TextField is a subclass of TextInputControl.... | 0 | 2024-06-27T17:57:24 | https://dev.to/paulike/textfield-4jgh | java, programming, learning, beginners | A text field can be used to enter or display a string. **TextField** is a subclass of **TextInputControl**. Figure below lists the properties and constructors in **TextField**.

Here is an example of creating a none... | paulike |

1,902,986 | Journey to Becoming a Tech Lead | Introduction 🚀 Hi, I'm Rafael! Since 2008, I've been on the road of developing... | 0 | 2024-06-27T17:54:48 | https://dev.to/rscholant/jornada-para-se-tornar-um-tech-lead-2b8k | techlead, desenvolvimentodesoftware, carreira, liderança | ## Introduction 🚀

Hi, I'm Rafael! Since 2008, I've been on the software development road, exploring various languages and technologies along the way. I'm a gaúcho and, like every good gaúcho, I have a passion for solving problems and facing challenges head-on. Today, I'm a tech lead at DM, where I take on new... | rscholant |

1,902,985 | Journey to Becoming a Tech Lead | Introduction 🚀 Hi, I'm Rafael! Since 2008, I've been on the road of developing... | 0 | 2024-06-27T17:54:48 | https://dev.to/rscholant/jornada-para-se-tornar-um-tech-lead-1m6j | techlead, desenvolvimentodesoftware, carreira, liderança | ## Introduction 🚀

Hi, I'm Rafael! Since 2008, I've been on the software development road, exploring various languages and technologies along the way. I'm a gaúcho and, like every good gaúcho, I have a passion for solving problems and facing challenges head-on. Today, I'm a tech lead at DM, where I take on new... | rscholant |

1,902,980 | Flutter Version Management A Guide to Effortless Project Switching in Flutter News 2024 #25 ʚїɞ | Hey Flutter enthusiasts! Ever worry about missing key Flutter updates? Well, worry no... | 26,008 | 2024-06-27T17:51:26 | https://dev.to/lucianojung/flutter-version-management-a-guide-to-effortless-project-switching-in-flutter-news-2024-25-eyie-4jgl | flutter, news, dart, discuss | ## Hey Flutter enthusiasts!

Ever worry about missing key Flutter updates? Well, worry no more!

Starting 2024, I'm here to keep you informed with a weekly Monday report. Let's stay ahead in the world of Flutter!

## Table of Contents

1. {% cta #mayor-flutter-updates %} Major Flutter updates {% endcta %}

2. {% cta #n... | lucianojung |

1,902,979 | How to Install, Create, Modify, and Destroy EC2 Instances in AWS Using Terraform! | Introduction: Terraform is a powerful tool that can be used to create, modify, and destroy EC2... | 0 | 2024-06-27T17:49:57 | https://dev.to/albine_peter_c2ffb10b422f/how-to-installcreatemodifydestroy-ec2-instances-in-aws-using-terraform--d34 | **_Introduction :_**

* Terraform is a powerful tool that can be used to create, modify, and destroy EC2 instances, among other resources.

* This introduction provides an overview of how to manage EC2 instances in AWS using Terraform, covering the essential steps and concepts involved.

**_EC2 Instance:_**

* Ama... | albine_peter_c2ffb10b422f | |

1,902,977 | Setting Raspberry Pi with Laptop | Requirements Raspberry Pi (all models) Memory card (16GB or larger recommended) SD Card... | 27,905 | 2024-06-27T17:48:52 | https://dev.to/kutt27/setting-raspberry-pi-with-laptop-3hie | raspberrypi | ### Requirements

1. Raspberry Pi (all models)

2. Memory card (16GB or larger recommended)

3. SD Card adapter

4. LAN cable

5. Power adapter

---

**Disclaimer**:

> You can use any operating system of your choice. I'll be demonstrating with Arch Linux, but the steps are applicable across different OSes.

## Installing t... | kutt27 |

1,902,975 | RadioButton | Radio buttons, also known as option buttons, enable the user to choose a single item from a group of... | 0 | 2024-06-27T17:46:20 | https://dev.to/paulike/radiobutton-2bk5 | java, programming, beginners, learning | Radio buttons, also known as _option buttons_, enable the user to choose a single item from a group of choices. In appearance radio buttons resemble check boxes, but check boxes display a square that is either checked or blank, whereas radio buttons display a circle that is either filled (if selected) or blank (if not ... | paulike |

1,902,973 | Simple Directory Watcher to Restart Dev Server | Simple Linux script that restarts the dev server on file changes. Couldn't find it so I made it. ... | 27,891 | 2024-06-27T17:44:59 | https://tomoviktor.com/posts/watch-execute/ | zsh, linux, development, scripting | Simple Linux script that restarts the dev server on file changes. Couldn't find it so I made it.

## Intro

I have been learning [Go](https://go.dev/) and I came across a pretty basic problem. I was practicing making a REST API web server and I wanted to enable hot reloading so my changes would be visible while I am cha... | tomoviktor |

1,901,062 | Creating a Virtual Machine Scale Set (VMSS) | Table of Contents Introduction Step 1. Login to Azure Portal Introduction Virtual Machine Scale sets... | 0 | 2024-06-27T17:44:40 | https://dev.to/yuddy/creating-a-virtual-machine-scale-set-vmss-3ipn | **Table of Contents**

Introduction

Step 1. Login to Azure Portal

Introduction

Virtual Machine Scale Sets (VMSS) are about deploying, managing, and scaling multiple VMs, with either automatic or manual scaling. The purpose is to provide high availability while you centrally manage a large number of VMs. With ... | yuddy | |

1,902,961 | How I Managed To Overcome My Backend Challenges & Voyage Through The HNG Internship | I am a backend developer with 3 years of experience, and I've always wanted to understand how web... | 0 | 2024-06-27T17:44:20 | https://dev.to/emmanuel_aboyeji_1a2ab096/how-i-managed-to-overcome-my-backend-challenges-voyage-through-the-hng-internship-39ob | programming, career, discuss, coding | I am a backend developer with 3 years of experience, and I've always wanted to understand how web applications work behind the scenes. Problem-solving challenges and optimizing systems toward perfection are what bring out the enthusiastic inner child in me. Today, I am happy to share a recent challenge I had and how ... | emmanuel_aboyeji_1a2ab096 |

1,902,970 | Bridging the Gap Why AI Companies Should Adopt a Google-like Search Content Feature | This blog post explores the benefits of AI companies implementing a feature similar to Google Search Console, allowing businesses to submit their information to the AI's knowledge base. By incorporating user-generated content and a robust validation infrastructure, AI companies can bridge the gap between human-created d... | 0 | 2024-06-27T17:43:23 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/AI/AISearchConsole | aidevelopment, knowledgeacquisition, datavalidation, userengagement | # 🌉 Bridging the Gap: The Need for a Google-like Search Content Feature in AI 🌉

As artificial intelligence continues to advance and integrate into our daily lives, the need for accurate and comprehensive data becomes increasingly critical. One way AI companies can address this challenge is by implementing a feature ... | eric_dequ |

1,902,969 | Letzz Understand Temporal Dead Zone in JS ( TDZ ) ;) | Let's Understand What is TDZ ;) What is the Temporal Dead Zone (TDZ)? The Temporal... | 0 | 2024-06-27T17:43:16 | https://dev.to/darshanraval/letzz-understand-temporal-dead-zone-in-js-tdz--2h71 | webdev, javascript, beginners, programming |

Let's Understand What is TDZ ;)

## What is the Temporal Dead Zone (TDZ)?

- The Temporal Dead Zone refers to a period of time during the execution of your code where variables declared with let and const cannot be accessed b... | darshanraval |

1,902,968 | AI Driven Encryption Harnessing Neural Networks for Enhanced Security | Discover a groundbreaking approach to encryption that leverages the power of artificial intelligence. Explore how neural networks can be trained to encrypt and decrypt data using complex vector associations, offering a new paradigm in data security. Dive into the technical details and learn about the strengths, weaknes... | 0 | 2024-06-27T17:43:08 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/AI/AIEncryption | encryption, ai, neuralnetworks, cryptography | ## 🔒 Introduction to AI-Driven Encryption

In the ever-evolving landscape of data security, traditional encryption methods are constantly challenged by the advancement of computing power and the emergence of new threats. To stay ahead of the curve, researchers are exploring innovative approaches to encryption, and one... | eric_dequ |

1,902,966 | 1791. Find Center of Star Graph | 1791. Find Center of Star Graph Easy There is an undirected star graph consisting of n nodes... | 27,523 | 2024-06-27T17:41:40 | https://dev.to/mdarifulhaque/1791-find-center-of-star-graph-3ahk | php, leetcode, algorithms, programming | 1791\. Find Center of Star Graph

Easy

There is an undirected **star** graph consisting of `n` nodes labeled from `1` to `n`. A star graph is a graph where there is one **center** node and **exactly** `n - 1` edges that connect the center node with every other node.

You are given a 2D integer array `edges` where each... | mdarifulhaque |

1,902,965 | Automatic Visual Feedback for System Volume Change in I3wm via Dunst | Simple yet powerful all-in-one system volume watcher and changer script for Linux. Let me show you my... | 27,891 | 2024-06-27T17:39:46 | https://tomoviktor.com/posts/volume-changer-i3-dunst/ | linux, i3, dunst, dunstify | Simple yet powerful all-in-one system volume watcher and changer script for Linux. Let me show you my small script.

## Introduction