id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,903,204 | Trimble A Comprehensive Overview of the Construction Technology Powerhouse | Discover the wide range of innovative solutions and technologies offered by Trimble, a global leader in construction technology. From GPS and geospatial solutions to cutting-edge software and hardware, Trimble empowers construction professionals to work smarter, faster, and more efficiently across the entire project li... | 0 | 2024-06-27T22:05:08 | https://www.rics-notebook.com/blog/Construction/Trimble | constructiontechnology, trimble, gpstechnology, buildinginformationmodelingbim | # 🌐 Trimble: Transforming the Construction Landscape 🌐

Trimble is a global technology leader that provides innovative solutions and services to a wide range of industries, with a significant focus on the construction sector. With a comprehensive portfolio of cutting-edge software, hardware, and services, Trimble is ... | eric_dequ |

1,903,183 | Computer Vision Meetup: Improved Visual Grounding through Self-Consistent Explanations | Vision-and-language models that are trained to associate images with text have been shown to be effective... | 0 | 2024-06-27T22:03:07 | https://dev.to/voxel51/computer-vision-meetup-improved-visual-grounding-through-self-consistent-explanations-18g1 | computervision, ai, machinelearning, datascience | Vision-and-language models that are trained to associate images with text have been shown to be effective for many tasks, including object detection and image segmentation. In this talk, we will discuss how to enhance vision-and-language models’ ability to localize objects in images by fine-tuning them for self-consistent v... | jguerrero-voxel51 |

1,903,203 | Harmonizing Smart Homes and Nature Building Sustainable Connected Neighborhoods | Discover how smart homes and neighborhoods can seamlessly integrate with nature to create sustainable, connected communities. From green architecture and energy-efficient technologies to urban gardens and wildlife habitats, explore the exciting possibilities of harmonizing technology and ecology in our living spaces. | 0 | 2024-06-27T22:00:01 | https://www.rics-notebook.com/blog/Construction/SmartHouses | smarthomes, sustainablearchitecture, greenliving, iot | As our cities and communities evolve, there is a growing recognition of the need to harmonize our living spaces with the natural world around us. By designing and building smart homes and neighborhoods that seamlessly integrate with nature, we can create sustainable, connected communities that promote both technologica... | eric_dequ |

1,854,433 | Dev: Quantum | A Quantum Developer is a specialized software engineer who works with quantum computing technologies... | 27,373 | 2024-06-27T22:00:00 | https://dev.to/r4nd3l/dev-quantum-4ne6 | quantum, developer | A **Quantum Developer** is a specialized software engineer who works with quantum computing technologies to develop algorithms, applications, and solutions that leverage the unique properties of quantum mechanics. Here's a detailed description of the role:

1. **Understanding Quantum Computing Principles:**

- Quantu... | r4nd3l |

1,902,729 | Mobile App Development (React Native Expo) | Hi guys, I recently joined an internship @HNG (https://hng.tech/internship) and the first task was to... | 0 | 2024-06-27T14:49:00 | https://dev.to/ayeni_paul/mobile-app-development-react-native-expo-5d4m | Hi guys, I recently joined an internship @HNG (https://hng.tech/internship)

and the first task was to write an article about myself and what I know about mobile app development.

So I just want to share my experience from the few months I have been working on mobile apps.

**Short Story: Who's Paul Boluwatife**

I'm Paul I'... | ayeni_paul | |

1,903,201 | Circuit Breaker - A Fire Extinguisher? | A Fire Extinguisher? Hey, folks! Ever heard of Circuit Breakers in... | 0 | 2024-06-27T21:58:05 | https://dev.to/felipepaz/circuit-breaker-um-apagador-de-fogo-4m74 | circuitbreake, python, node, designsystem | ## A Fire Extinguisher?

Hey, folks! Ever heard of Circuit Breakers in software development? It's a super useful concept used in microservices to keep things running properly. Imagine you're making a bunch of requests to another service, but suddenly that service starts failing. In ... | felipepaz |

1,903,199 | Building Smart Cities A Blueprint for Secure and Sustainable Urban Development | Discover the key principles and strategies for building smart cities that are both secure and sustainable. From robust cybersecurity measures to green infrastructure and community engagement, learn how to create urban environments that thrive in the face of 21st-century challenges. | 0 | 2024-06-27T21:54:54 | https://www.rics-notebook.com/blog/Construction/SmartCity | smartcities, sustainabledevelopment, cybersecurity, urbanplanning | As the world becomes increasingly urbanized, the concept of smart cities has emerged as a promising solution to the challenges of modern urban living. By leveraging advanced technologies and data-driven decision-making, smart cities aim to enhance the quality of life for residents, improve efficiency, and promote susta... | eric_dequ |

1,903,198 | Animated Login Page | Check out this Pen I made! | 0 | 2024-06-27T21:53:30 | https://dev.to/aditya_singh2109/animated-login-page-2koe | codepen | Check out this Pen I made!

{% codepen https://codepen.io/adjmcvgz-the-typescripter/pen/zYQXLJM %} | aditya_singh2109 |

1,903,194 | Circuit Breaker - A Fire Extinguisher for Your Code? | A Fire Extinguisher? Hey there! Ever heard about Circuit Breakers in software development?... | 0 | 2024-06-27T21:53:02 | https://dev.to/felipepaz/circuit-breaker-a-fire-extinguisher-for-your-code-fna | circuitbreaker, python, node, designsystem | ## A Fire Extinguisher?

Hey there! Ever heard about Circuit Breakers in software development? It's a super handy concept used in microservices to keep things running smoothly. Imagine you're making tons of requests to another service, but suddenly that service starts failing. Instead of letting your entire system go d... | felipepaz |

1,902,802 | A Deep Dive into Frontend Frameworks: React and Vue. | You might have seen or read questions like, "React or Vue, which do you prefer using?", "Vue or... | 0 | 2024-06-27T21:22:22 | https://dev.to/thatgirl/a-deep-dive-into-frontend-frameworks-react-and-vue-8k7 | beginners, react, vue, frontend | You might have seen or read questions like, **"React or Vue, which do you prefer using?"**, **"Vue or React: Which one to choose in 2024?"**, **"React vs Vue: Which JavaScript Framework Wins?"**. I have seen and read questions like these, and I have even asked one myself.

You know both Frameworks and so... | thatgirl |

1,903,189 | Recapping the AI, Machine Learning and Computer Meetup — June 27, 2024 | We just wrapped up the May '24 AI, Machine Learning and Data Science Meetup, and if you missed it or... | 0 | 2024-06-27T21:50:01 | https://voxel51.com/blog/recapping-the-ai-machine-learning-and-data-science-meetup-june-27-2024/ | computervision, machinelearning, datascience, ai | We just wrapped up the May '24 AI, Machine Learning and Data Science Meetup, and if you missed it or want to revisit it, here's a recap!

In this blog post you'll find the playback recordings, highlights from the presentations and Q&A, as well as the upcoming Meetup schedule so that you can join us at a future event.

... | jguerrero-voxel51 |

1,903,197 | The Technological Transformation of Construction Embracing Innovation with Quantum Cyber Solutions | The construction industry is undergoing a rapid transformation, fueled by advancements in various technologies. From AI to smart building materials, this blog post explores the diverse areas being revolutionized by tech and highlights how Quantum Cyber Solutions is playing a pivotal role in helping companies navigate t... | 0 | 2024-06-27T21:49:46 | https://www.rics-notebook.com/blog/Construction/QCS | constructiontechnology, innovativebuilding, smartconstruction, aiinconstruction | # 🏗️ Unpacking the Technological Revolution in Construction 🏗️

In the ever-evolving world of construction, technology is not just an addition; it's becoming the backbone of operations. As we dive deeper into the 21st century, the integration of advanced technologies in construction processes is accelerating, re... | eric_dequ |

1,903,195 | SIMPLE STEPS IN CREATING YOUR FIRST VIRTUAL MACHINE | A virtual machine (VM) is a software-based computer that runs on top of a physical host computer's... | 0 | 2024-06-27T21:49:12 | https://dev.to/francis_mbamara_05cc4a12d/simple-steps-in-creating-your-first-virtual-machine-49c5 |

A virtual machine (VM) is a software-based computer that runs on top of a physical host computer's hardware and operating system. It emulates the functionality of a physical computer, allowing users to run differen... | francis_mbamara_05cc4a12d | |

1,902,615 | Hosting Static Website On S3 Using Terraform | In this blog we'll dive into deploying a static website on AWS S3 using Terraform! Important things... | 0 | 2024-06-27T21:49:09 | https://dev.to/sanjaikumar2311/hosting-static-website-on-s3-using-terraform-5bp6 | terraform, s3, website | In this blog we'll dive into deploying a static website on AWS S3 using Terraform!

Important things to note:

1. Automating S3 Bucket Creation: Terraform will handle creating the S3 bucket where your website files will reside.

2. Effortless Website Upload: We’ll configure Terraform to skip manual uploads by referencing ... | sanjaikumar2311 |

1,903,193 | Day 980 : From Here | liner notes: Professional : Had a couple of meetings to start the day. Got the sample application I... | 0 | 2024-06-27T21:46:07 | https://dev.to/dwane/day-980-from-here-4e29 | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Had a couple of meetings to start the day. Got the sample application I made cleaned up and uploaded to the repo. Got a tech review of the blog post I wrote and did some more editing. Going to submit it for content review tomorrow so they can take it from here.

- Personal : Last night, ... | dwane |

1,903,192 | Procore Revolutionizing the Construction Industry with Cutting-Edge Technology | Discover how Procore, a leading construction management software company, is transforming the construction industry with its comprehensive suite of tools and cloud-based platform. From project management to financial tracking, Procore streamlines processes, enhances collaboration, and boosts productivity for constructi... | 0 | 2024-06-27T21:44:39 | https://www.rics-notebook.com/blog/Construction/ProCore | constructiontechnology, projectmanagement, collaboration, procore | # 🏗️💻 Procore: Building the Future of Construction Technology 🏗️💻

The construction industry has long been known for its complex projects, tight deadlines, and the need for seamless collaboration among various stakeholders. Enter Procore, a trailblazing construction management software company that is revolutionizi... | eric_dequ |

1,903,187 | The Revolution of 3D Printing in Construction Lower Costs Faster Builds | 3D printing technology is transforming the construction industry by reducing costs and construction time, while also exploring new materials for a sustainable future. This blog post delves into how 3D construction printing can shape the future of building development. | 0 | 2024-06-27T21:39:31 | https://www.rics-notebook.com/blog/Construction/PrintingHouses | 3dprinting, constructioninnovation, sustainabledevelopment, futureofbuilding | # 🏗️ The Rise of 3D Construction Printing: A Game Changer 🏗️

The construction industry, known for its high material waste and extended project timelines, is undergoing a transformative shift with the adoption of 3D printing technology. 3D printing, or additive manufacturing, is reshaping how structures are conceived... | eric_dequ |

1,903,186 | PHP Version of console.log() for Laravel | Easily stream your Laravel application logs to the browser console tab (console.log) in real-time... | 0 | 2024-06-27T21:38:54 | https://dev.to/scaleupsaas/php-version-of-consolelog-for-laravel-4pl7 | laravel, opensource, php, github | **Easily stream your Laravel application logs to the browser console tab (console.log) in real time using server-sent events (SSE)**

Welcome to **Laravel Console Log (LCL)!** This package brings real-time logging to your Laravel application, allowing you to stream your logs directly to your browser's console. Perfect ... | scaleupsaas |

1,903,185 | Display A Text File In A Browser | Sometimes It Come To Be That You Want To Allow A User To Select A File From Their Computer, And Have... | 0 | 2024-06-27T21:36:59 | https://dev.to/theholyspirit/display-a-text-file-in-a-browser-5202 | html, javascript, webdev, leadership | Sometimes It Come To Be That You Want To Allow A User To Select A File From Their Computer, And Have That Text File Rendered Into The Javascript.

It Happens. This Is The Internet.

This Is A Bootstrapping Example For Accepting And Displaying A Text File.

{% codepen https://codepen.io/theholyspirit/pen/JjqVVMY %} | theholyspirit |

1,903,182 | Elevating Construction The Impact of Drones and LiDAR Technology | Drones and LiDAR technology are setting new standards in the construction industry, enhancing precision, efficiency, and safety. This blog explores how these technologies are revolutionizing site surveying, monitoring, and overall project management. | 0 | 2024-06-27T21:34:24 | https://www.rics-notebook.com/blog/Construction/Lidar | drones, lidar, constructiontechnology, futureofbuilding | # 🚁 Revolutionizing Construction with Drones and LiDAR 🚁

The construction industry is embracing a technological revolution, with drones and Light Detection and Ranging (LiDAR) systems at the forefront. These tools are not just modernizing traditional practices; they are redefining them, offering unprecedented accura... | eric_dequ |

1,903,145 | Computer Vision Meetup: Leveraging Pre-trained Text2Image Diffusion Models for Zero-Shot Video Editing | Text-to-image diffusion models demonstrate remarkable editing capabilities in the image domain,... | 0 | 2024-06-27T21:33:47 | https://dev.to/voxel51/computer-vision-meetup-leveraging-pre-trained-text2image-diffusion-models-for-zero-shot-video-editing-3a3a | computervision, ai, machinelearning, datascience | Text-to-image diffusion models demonstrate remarkable editing capabilities in the image domain, especially after Latent Diffusion Models made diffusion models more scalable. Conversely, video editing still has much room for improvement, particularly given the relative scarcity of video datasets compared to image datase... | jguerrero-voxel51 |

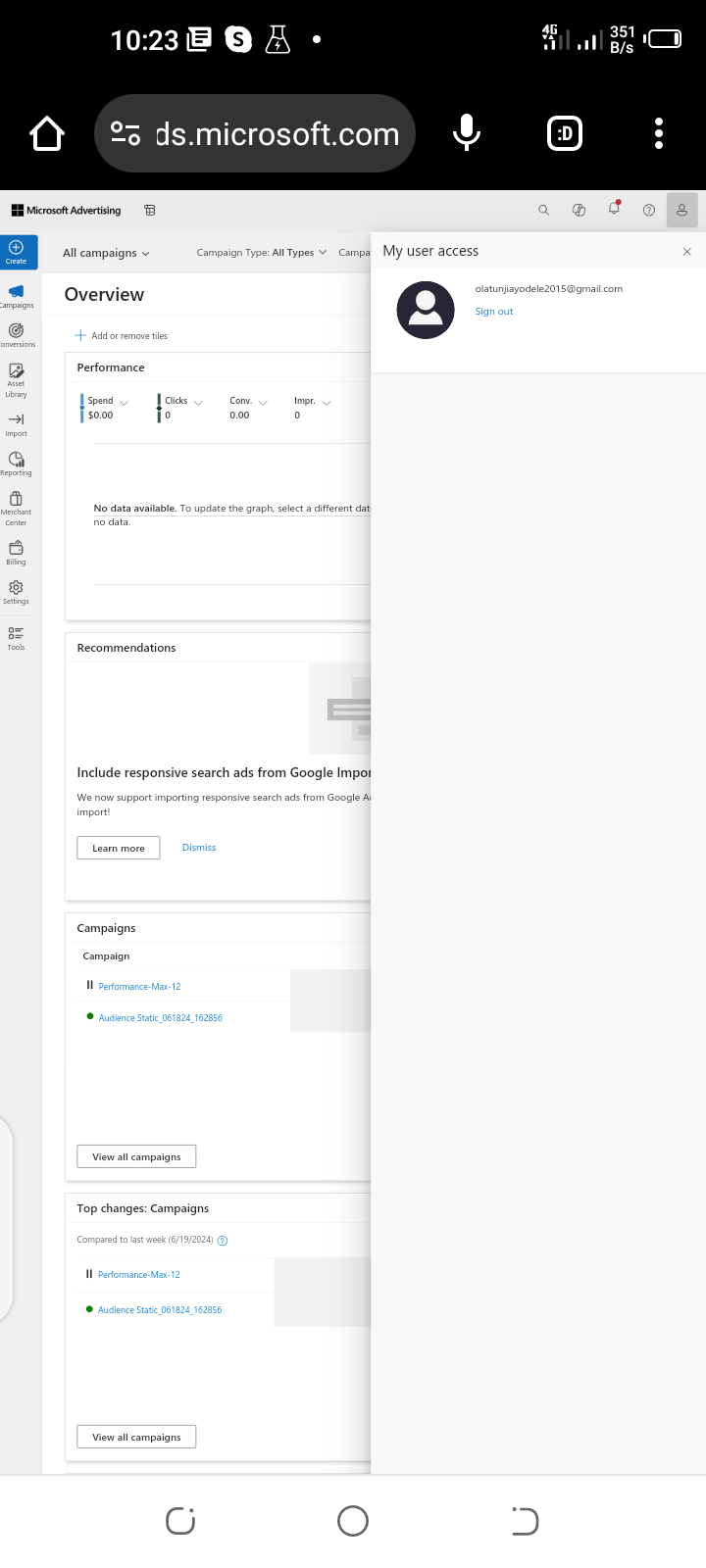

1,903,181 | World of Microsoft Advertising campaign | Certainly! Let's dive into the world of Microsoft Advertising campaigns. 🚀 1. Audience... | 0 | 2024-06-27T21:32:01 | https://dev.to/olatunjiayodel9/world-of-microsoft-advertising-campaign-hc | ai, azure, discuss, news |

Certainly! Let's dive into the world of Microsoft Advertising campaigns. 🚀

**1. Audience Campaigns:**

- Audience campaigns leverage Microsoft AI to display compelling ads to your ideal audience across various w... | olatunjiayodel9 |

1,903,180 | Open-source AI on-call developer | Hey guys, we’re excited to introduce to you what we’ve been working on for the past few months.... | 0 | 2024-06-27T21:30:03 | https://dev.to/david1542/open-source-ai-on-call-developer-465b | ai, incident, observability | Hey guys, we’re excited to introduce what we’ve been working on for the past few months. Merlinn is an open-source AI on-call developer that lives in Slack. It can ease the pain of being on call by connecting to your favorite tools and pairing with you to triage incidents.

Our goal is to he... | david1542 |

1,898,142 | WordPress + Next.JS | Benefits and Drawbacks of Using WordPress as a Headless CMS with Next.js on the Frontend In the field... | 0 | 2024-06-23T21:22:33 | https://dev.to/narnian_dev/wordpress-nextjs-152p | wordpress, nextjs, react | Benefits and Drawbacks of Using WordPress as a Headless CMS with Next.js on the Frontend

In modern web development, the combination of WordPress as a headless CMS and Next.js on the frontend has become a popular choice among developers and companies. This approach offers a number of benefits notab... | narnian_dev |

1,903,155 | Enhancing Rust Development: Introducing cargo-run for Script Management | In the world of modern software development, package managers have become indispensable tools for... | 0 | 2024-06-27T21:18:01 | https://dev.to/rsaz/enhancing-rust-development-introducing-cargo-run-for-script-management-kfb | rust, development, utility, productivity | In the world of modern software development, package managers have become indispensable tools for developers. Languages like JavaScript/TypeScript (with npm/yarn), C++ (with CMake), and Java (with Maven/Gradle) all have sophisticated package managers that include powerful scripting capabilities. These script sections a... | rsaz |

1,903,154 | Configuring logging.php in Laravel Projects | The logging.php file in Laravel projects plays a crucial role in defining how... | 0 | 2024-06-27T21:16:56 | https://dev.to/fernandomullerjr/configuracao-do-loggingphp-em-projetos-laravel-3a26 | laravel, php | The `logging.php` file in Laravel projects plays a crucial role in defining how log messages are processed and stored. Configuring this file correctly not only improves monitoring and error debugging, but also contributes to the overall security and performance of the application.

## Introduçã... | fernandomullerjr |

1,903,153 | I'm creating a link between West and East. | I'm creating a resource APP aimed at the 1 billion plus Mandarin speakers. I want it to focus on... | 0 | 2024-06-27T21:15:45 | https://dev.to/buddai/im-creating-a-reincarnation-of-the-buddha-know-as-budai-the-laughing-fat-buddha-google-budai-and-you-will-see-58pa | ai, relaxation, mandarin, javascript | I'm creating a resource app aimed at the 1 billion plus Mandarin speakers. I want it to focus on educating people about alternatives to Eastern medicine that exploits our world's limited natural resources. I instinctively sense that there is a market for Western types of relaxation such as classical music, wine, high end... | buddai |

1,903,152 | Creating Infrastructure with the ACK from EKS AWS. | Cloud people! This time it's the turn of the AWS controller for k8s (ACK). I believe that... | 0 | 2024-06-27T21:14:51 | https://dev.to/segoja7/creating-infraestructure-with-the-ack-from-k8s-4dli | Cloud people!

This time it's the turn of the AWS controller for k8s (ACK).

I believe that traditional Infrastructure as Code (IaC) tools have some limitations. The transition towards solutions such as Crossplane or similar projects is inevitable and, possibly, in a short time this evolution will be adopted. At ... | segoja7 | |

1,903,151 | Starting Your Front-End Journey: Choosing the Right Technologies | As a self-taught front-end developer, I ventured into the world of web development out of curiosity... | 0 | 2024-06-27T21:14:21 | https://dev.to/miraclejustice/starting-your-front-end-journey-choosing-the-right-technologies-2jo7 | webdev, frontend, programming | As a self-taught front-end developer, I ventured into the world of web development out of curiosity to understand how web applications work. During my learning journey, I discovered that there are numerous options available for front-end technologies, which can be quite overwhelming. With new technologies being constan... | miraclejustice |

1,903,144 | Full Web Stack UI Development with Blazor and ComponentOne | Learn about a new full web stack development approach in Blazor .NET 8 and ComponentOne controls. | 0 | 2024-06-27T21:11:43 | https://developer.mescius.com/blogs/full-web-stack-ui-development-with-blazor-and-componentone | webdev, devops, dotnet, tutorial | ---

canonical_url: https://developer.mescius.com/blogs/full-web-stack-ui-development-with-blazor-and-componentone

description: Learn about a new full web stack development approach in Blazor .NET 8 and ComponentOne controls.

---

**What You Will Need**

- ComponentOne Blazor Edition

- Visual Studio 2022

**Controls Refer... | chelseadevereaux |

1,903,148 | A Complete Checklist for Setting Up Online Payments in India | India's digital revolution is in full swing, fueled by a surge in internet and smartphone usage. At... | 0 | 2024-06-27T21:11:39 | https://dev.to/sania_gadiya_eab0b37fc471/a-complete-checklist-for-setting-up-online-payments-in-india-m0b | India's digital revolution is in full swing, fueled by a surge in internet and smartphone usage. At the heart of this transformation lies the rapid adoption of online payments. This shift towards cashless transactions offers numerous benefits for both consumers and businesses. However, navigating the world of [online p... | sania_gadiya_eab0b37fc471 | |

1,903,147 | The beginning of my journey. | Hi guys, this is my first post ever on this platform, and from time to time I think about... | 0 | 2024-06-27T21:09:35 | https://dev.to/hermannmarinho/the-beggining-of-my-journey-1145 | beginners, programming, discuss, learning | Hi guys, this is my first post ever on this platform. From time to time I think about documenting my journey of learning how to code, my experiences, and so on. So I ask you to bear with me, and if you have tips for my growth, I will be immensely grateful. Right now I'm learning JavaScript with the u... | hermannmarinho |

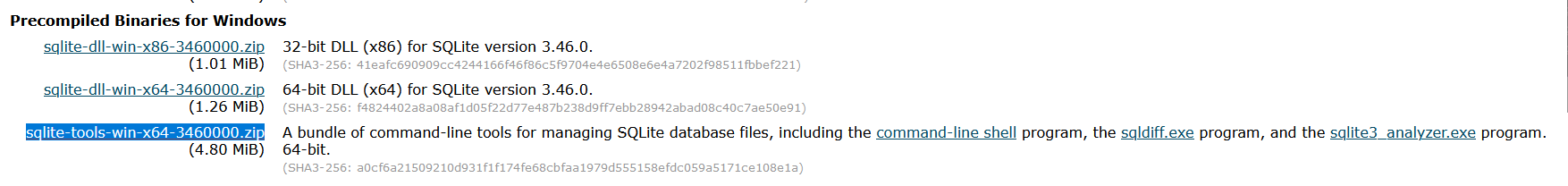

1,903,138 | How To Install SQLite On Windows | Howdy, In This Article We Will See How To Install Sqlite On Windows OS. Installing... | 0 | 2024-06-27T21:07:58 | https://dev.to/karim_abdallah/how-to-install-sqlite-on-windows-5aam | sqlite, tutorial, howto | Howdy, In This Article We Will See How To Install Sqlite On Windows OS.

### Installing Steps

1- Go To **[Sqlite Download Page](https://www.sqlite.org/download.html)** And Download `sqlite-tools-win-________.zip`

.

2-... | karim_abdallah |

1,903,146 | [Game of Purpose] Day 40 | Today I played around with exploding grenades. And I have to say with not much effort the effect is... | 27,434 | 2024-06-27T21:04:09 | https://dev.to/humberd/game-of-purpose-day-40-44pn | gamedev | Today I played around with exploding grenades. And I have to say, with not much effort the effect is outstanding. A dropped grenade can destroy the environment.

{% embed https://youtu.be/IMYJtY45MlA %} | humberd |

1,903,142 | Frontend Technologies | Comparing Svelte and Vue.js: Introduction In frontend development world they... | 0 | 2024-06-27T20:59:15 | https://dev.to/celine/frontend-technologies-511n | ## Comparing Svelte and Vue.js:

### Introduction

In the frontend development world, there are numerous frameworks and libraries that a developer can choose from. ReactJS remains a popular choice, used by many developers and by well-known companies like Instagram, Skype, and Airbnb. Niche frameworks like Svelte... | celine | |

1,903,092 | Exploring Svelte and Vue.js: A Newbie's Perspective on Modern Frontend Technologies. | Front-end development is constantly evolving. New tools and frameworks are emerging to help us build... | 0 | 2024-06-27T20:58:56 | https://dev.to/harbiehorla/exploring-svelte-and-vuejs-a-newbies-perspective-on-modern-frontend-technologies-1k20 | beginners, frontend |

Front-end development is constantly evolving. New tools and frameworks are emerging to help us build better web applications. In this article, I will discuss two interesting front-end technologies: Svelte and Vue.js. I ... | harbiehorla |

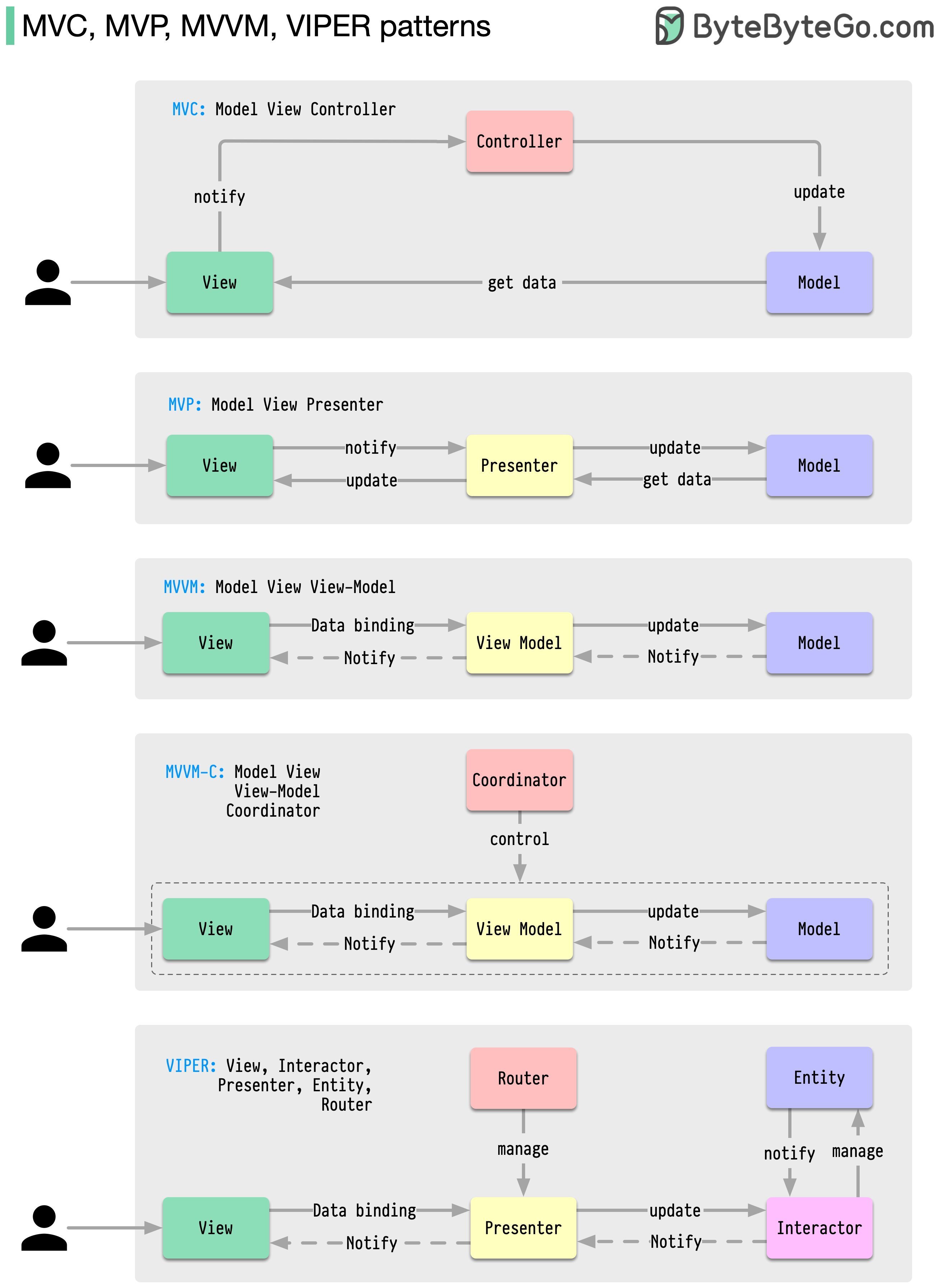

1,903,140 | Types Of Software Architecture | MVC Model View Controller: The Model-View-Controller (MVC) design pattern is an... | 0 | 2024-06-27T20:57:53 | https://dev.to/oussama_bel/types-of-software-architecture-mkf | designpatterns, softwaredevelopment, software, patterns |

### MVC Model View Controller:

The Model-View-Controller (M... | oussama_bel |

1,903,136 | shadcn-ui/ui codebase analysis: How does shadcn-ui CLI work? — Part 2.1 | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the... | 0 | 2024-06-27T20:51:25 | https://dev.to/ramunarasinga/shadcn-uiui-codebase-analysis-how-does-shadcn-ui-cli-work-part-21-jll | javascript, nextjs, opensource, shadcnui | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the shadcn-ui/ui CLI.

In part 1.0 and part 1.1, I discussed the code written in [packages/cli/src/index.ts](https://github.com/shadcn-ui/ui/blob/main/packages/cli/src/index.ts). In part 2.0, I talked about how the commander... | ramunarasinga |

1,903,134 | Unveiling Deep Nude: The Dark Side of AI and Its Applications | Unveiling Deep Nude: The Dark Side of AI and Its Applications The Rise of Deep Nude In recent years,... | 0 | 2024-06-27T20:50:27 | https://dev.to/shinaya_f315f59a07e1f3bdd/unveiling-deep-nude-the-dark-side-of-ai-and-its-applications-2m2b | **Unveiling Deep Nude: The Dark Side of AI and Its Applications**

The Rise of [Deep Nude](https://undressaiapp.pro)

In recent years, the emergence of Deep Nude technology has sparked widespread controversy and concern. This technology, powered by artificial intelligence (AI), has the capability to generate hyper-realis... | shinaya_f315f59a07e1f3bdd | |

1,903,133 | shadcn-ui/ui codebase analysis: How does shadcn-ui CLI work? — Part 2.0 | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the... | 0 | 2024-06-27T20:49:34 | https://dev.to/ramunarasinga/shadcn-uiui-codebase-analysis-how-does-shadcn-ui-cli-work-part-20-1j2h | javascript, nextjs, opensource, shadcnui | I wanted to find out how shadcn-ui CLI works. In this article, I discuss the code used to build the shadcn-ui/ui CLI.

In part 1.0 and part 1.1, I discussed the code written in [packages/cli/src/index.ts](https://github.com/shadcn-ui/ui/blob/main/packages/cli/src/index.ts). In part 2.0, we will understand a code snippe... | ramunarasinga |

1,903,131 | Backend Discoveries: Turning Roadblocks into Learning | Hello! My name is Paschal Ikechukwu Chukwumee, and I'm a backend developer specializing in Django. My... | 0 | 2024-06-27T20:48:45 | https://dev.to/paschal_ikechukwu/backend-discoveries-turning-roadblocks-into-learning-42bj | webdev, beginners, opensource, learning | Hello! My name is Paschal Ikechukwu Chukwumee, and I'm a backend developer specializing in Django. My journey into development began with frontend work, but I soon discovered the backend and fell in love with it. There's something incredibly exciting and fulfilling about working behind the scenes to make everything run... | paschal_ikechukwu |

1,903,132 | REACTJS VS VUEJS | After I started learning JavaScript, React framework was the next thing I knew and I have not looked... | 0 | 2024-06-27T20:46:17 | https://dev.to/kpeale/reactjs-vs-vuejs-41cp | webdev, javascript, womenintech, frontend | After I started learning JavaScript, the React framework was the next thing I learned, and I have not looked back since. React was everything to me; I loved everything about it until I started using Vue.js. In this article, I am going to talk about the differences between React and Vue.js. Although, t... | kpeale |

1,903,130 | Front End Technologies | I'm a front end developer on an internship at https://hng.tech/internship. I wish to improve my... | 0 | 2024-06-27T20:42:19 | https://dev.to/roktech/front-end-technologies-17fi | webdev, javascript, programming, react | I'm a front end developer on an internship at https://hng.tech/internship.

I want to improve my React skills because scalable apps are hard to build with vanilla JavaScript alone, and then move on to learning Next.js, since the two have different features, which you can find below 👇

ReactJS and... | roktech |

1,903,129 | How OOP principles and SOLID principles can be effectively applied across each layer of a layered architecture | Applying software design principles like OOP and SOLID across different layers of a layered... | 0 | 2024-06-27T20:41:34 | https://dev.to/muhammad_salem/how-oop-principles-and-solid-principles-can-be-effectively-applied-across-each-layer-of-a-layered-architecture-3kd6 |

Applying software design principles like OOP and SOLID across different layers of a layered architecture is crucial for building robust and maintainable software systems. This article delves into how Object-Oriented Programming (OOP) principles and SOLID principles can be effectively applied across each layer of a lay... | muhammad_salem | |

1,903,128 | JavaScript MMORPG - Maiu Online #babylonjs - Ep: 25 - Monsters AI System | Hello, Finally I finished work on first prototype of monsters AI module. Monsters have several... | 0 | 2024-06-27T20:38:48 | https://dev.to/maiu/javascript-mmorpg-maiu-online-babylonjs-ep-25-monsters-ai-system-1gc | babylonjs, mmorpg, indiegamdev, javascript | Hello,

I have finally finished work on the first prototype of the monster AI module. Monsters have several states: IDLE, PATROL, DEAD, FLEEING, COMBAT.
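The five states listed here map naturally onto a small state machine. The following is only a minimal sketch, written in Python for brevity rather than in the game's own JavaScript. The `Monster` fields, the `aggro_range` and `flee_below_hp` values, and the rule that low health triggers FLEEING are all assumptions made for illustration, not details taken from the game.

```python
import math
from dataclasses import dataclass
from enum import Enum, auto


class MonsterState(Enum):
    IDLE = auto()
    PATROL = auto()
    COMBAT = auto()
    FLEEING = auto()
    DEAD = auto()


@dataclass
class Monster:
    x: float
    y: float
    hp: int
    state: MonsterState = MonsterState.PATROL
    aggro_range: float = 5.0   # hypothetical detection radius
    flee_below_hp: int = 10    # hypothetical flee threshold (an assumption)


def next_state(monster, player_positions):
    """Return the state the monster should be in after one AI tick."""
    if monster.hp <= 0:
        return MonsterState.DEAD
    if monster.state in (MonsterState.IDLE, MonsterState.DEAD):
        # Idle monsters stay inactive; dead monsters stay dead.
        return monster.state
    if monster.hp < monster.flee_below_hp:
        return MonsterState.FLEEING
    # Engage if any player is within range, otherwise keep patrolling.
    in_range = any(
        math.dist((monster.x, monster.y), pos) <= monster.aggro_range
        for pos in player_positions
    )
    return MonsterState.COMBAT if in_range else MonsterState.PATROL


monster = Monster(x=0.0, y=0.0, hp=50)
monster.state = next_state(monster, [(3.0, 4.0)])
print(monster.state.name)
```

In a real game loop, `next_state` would be called on a timer rather than every frame, which keeps the range scan cheap when many monsters are active.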

Idle monsters are inactive even when someone attacks them. Patrolling ones walk around and check every 0.5s whether there is someone to attack within range; if so, they st... | maiu |

1,903,127 | Case Study: National Flags and Anthems | This case study presents a program that displays a nation’s flag and plays its anthem. The images... | 0 | 2024-06-27T20:34:03 | https://dev.to/paulike/case-study-national-flags-and-anthems-931 | java, programming, learning, beginners | This case study presents a program that displays a nation’s flag and plays its anthem.

The images for seven national flags, named flag0.gif, flag1.gif, . . . , flag6.gif for Denmark, Germany, China, India, Norway, United Kingdom, and United States are stored under www.cs.armstrong.edu/liang/common/image. The audio con... | paulike |

1,903,126 | Unlocking the Power of ReactJS: Top Features Every Product Manager Should Know | Unlocking the Power of ReactJS: Top Features Every Product Manager Should Know As the... | 0 | 2024-06-27T20:32:51 | https://dev.to/cachemerrill/unlocking-the-power-of-reactjs-top-features-every-product-manager-should-know-2o0m | react, webdev, javascript | ### Unlocking the Power of ReactJS: Top Features Every Product Manager Should Know

As the digital landscape evolves, staying updated with the latest technologies is crucial for delivering cutting-edge products. ReactJS, a popular JavaScript library, continues to be a game-changer in the world of web development. For p... | cachemerrill |

1,903,125 | How I Solved Deployment Challenges: A Guide to Using Gunicorn and Nginx with a Flask Application on Ubuntu | Intro: Hello guys 👋🏾, coming with another story, today on how I solved deployment issues... | 0 | 2024-06-27T20:32:48 | https://dev.to/ukeme/how-i-solved-deployment-challenges-a-guide-to-using-gunicorn-and-nginx-with-a-flask-application-on-ubuntu-3gc4 | backend, nginx, flask, devops | ## Intro:

Hello guys 👋🏾, coming with another story, today on how I solved deployment issues while deploying a Flask app using gunicorn and Nginx 🙂

So, here I had built the backend for the project, just an API connectin... | ukeme |

858,816 | 32 bit vs 64 bit vs 128 bit | Understanding 32-bit vs 64-bit vs 128-bit in Development In this article, we'll address... | 0 | 2024-06-27T20:31:54 | https://dev.to/entangledcognition/32-bit-vs-64-bit-vs-128-bit-5d0i | computerscience, architecture, beginners, programming | ## Understanding 32-bit vs 64-bit vs 128-bit in Development

In this article, we'll address some common questions you might have when buying a laptop, choosing an operating system, or downloading software.

* What is 32/64/128 bit?

* Does it correspond to the processor, OS, or software?

* How do you check compatibility... | bharathmuppa |

1,903,124 | Hi, I'm CyRil.exe | I didn’t ask to be passionate about Tech. This life chose me and now I just have to live with it, but... | 0 | 2024-06-27T20:28:43 | https://dev.to/cyrildotexe/hi-im-cyrilexe-3h8p | beginners, firstpost | I didn’t ask to be passionate about Tech. This life chose me and now I just have to live with it, but honestly, I have no complaints.

Hi, I am CyRil and welcome to my first blog post. So I won't waste your time, I'll give you a rundown of what to expect.

This blog is mainly to track my progress and keep me accountable... | cyrildotexe |

1,903,123 | Closures, Higher-Order Functions, and Prototypal Inheritance in JavaScript | JavaScript is a versatile language that mixes functional programming and object-oriented programming... | 0 | 2024-06-27T20:28:00 | https://dev.to/francescoagati/closures-higher-order-functions-and-prototypal-inheritance-in-javascript-4m3m | javascript, programming, beginners, tutorial | JavaScript is a versatile language that mixes functional programming and object-oriented programming paradigms. This flexibility allows developers to create powerful abstractions. We can try to mix concepts such as closures, higher-order functions, prototypal inheritance, and the `this` keyword to create elegant solution... | francescoagati |

1,903,122 | Back-of-the-envelope Estimation System Design | Back-of-the-envelope estimation is a technique used to quickly approximate values and make rough... | 0 | 2024-06-27T20:27:30 | https://dev.to/pranjal_sharma_38482a3041/back-of-the-envelope-estimation-system-design-13a5 | systemdesign, capacityestimation | Back-of-the-envelope estimation is a technique used to quickly approximate values and make rough calculations using simple arithmetic and basic assumptions.

---

### Estimation Techniques

#### 1) Rule of Thumb →

General principles applied to make good estimates, e.g., 1 user generates 1 MB of data on social media ... | pranjal_sharma_38482a3041 |

1,903,121 | Leetcode diary: Remove Duplicates from Sorted Array II | LINK Worst day. Was stuck on this problem for 2+ hours then 1+ today after sleeping on it :( ....... | 0 | 2024-06-27T20:25:16 | https://dev.to/kevin074/leetcode-diary-remove-duplicates-from-sorted-array-ii-1cnp | javascript, tutorial, career, discuss | [LINK](https://leetcode.com/problems/remove-duplicates-from-sorted-array-ii)

Worst day. Was stuck on this problem for 2+ hours then 1+ today after sleeping on it :( ....

Something just didn't click with me on this problem so I'll write it down for my sake, hope it'll help you too!

the [easy version of this questio... | kevin074 |

1,903,119 | How to Transpose a WinForms Datagrid in Two Easy Steps | Learn how to transpose a WinForms datagrid in two easy steps. See more from ComponentOne today. | 0 | 2024-06-27T20:24:08 | https://developer.mescius.com/blogs/how-to-transpose-a-winforms-datagrid-in-two-easy-steps | webdev, devops, dotnet, tutorial | ---

canonical_url: https://developer.mescius.com/blogs/how-to-transpose-a-winforms-datagrid-in-two-easy-steps

description: Learn how to transpose a WinForms datagrid in two easy steps. See more from ComponentOne today.

---

**What You Will Need**

- ComponentOne WinForms Edition

- Visual Studio 2022

**Controls Reference... | chelseadevereaux |

1,903,120 | Video and Audio | You can use the Media class to obtain the source of the media, the MediaPlayer class to play and... | 0 | 2024-06-27T20:23:28 | https://dev.to/paulike/video-and-audio-no1 | java, programming, learning, beginners | You can use the **Media** class to obtain the source of the media, the **MediaPlayer** class to play and control the media, and the **MediaView** class to display the video. Media (video and audio) is essential in developing rich Internet applications. JavaFX provides the **Media**, **MediaPlayer**, and **MediaView** c... | paulike |

1,903,117 | AI Driven Encryption Harnessing Neural Networks for Enhanced Security | Discover a groundbreaking approach to encryption that leverages the power of artificial intelligence. Explore how neural networks can be trained to encrypt and decrypt data using complex vector associations, offering a new paradigm in data security. Dive into the technical details and learn about the strengths, weaknes... | 0 | 2024-06-27T20:20:01 | https://www.rics-notebook.com/blog/AI/AIEncryption | encryption, ai, neuralnetworks, cryptography | ## 🔒 Introduction to AI-Driven Encryption

In the ever-evolving landscape of data security, traditional encryption methods are constantly challenged by the advancement of computing power and the emergence of new threats. To stay ahead of the curve, researchers are exploring innovative approaches to encryption, and one... | eric_dequ |

1,903,116 | AI Driven Encryption Harnessing Neural Networks for Enhanced Security | Discover a groundbreaking approach to encryption that leverages the power of artificial intelligence. Explore how neural networks can be trained to encrypt and decrypt data using complex vector associations, offering a new paradigm in data security. Dive into the technical details and learn about the strengths, weaknes... | 0 | 2024-06-27T20:14:48 | https://www.rics-notebook.com/blog/./blog/AI/AIEncryption | encryption, ai, neuralnetworks, cryptography | ## 🔒 Introduction to AI-Driven Encryption

In the ever-evolving landscape of data security, traditional encryption methods are constantly challenged by the advancement of computing power and the emergence of new threats. To stay ahead of the curve, researchers are exploring innovative approaches to encryption, and one... | eric_dequ |

1,903,069 | Improving State Management in React: Transitioning from Prop Drilling to ContextAPI | Whenever people start learning React, they inevitably encounter the challenge of handling props.... | 0 | 2024-06-27T20:13:48 | https://dev.to/abinash4567/improving-state-management-in-react-transitioning-from-prop-drilling-to-contextapi-53a8 | webdev, javascript, beginners, react | Whenever people start learning **React**, they inevitably encounter the challenge of handling props. Props are essential for passing data from parent to child components, enabling the creation of dynamic and reusable UI elements. However, as applications grow in complexity, developers often face a common hurdle known a... | abinash4567 |

1,903,115 | Case Study: Developing a Tic-Tac-Toe Game | From the many examples in this and earlier chapters you have learned about objects, classes, arrays,... | 0 | 2024-06-27T20:03:37 | https://dev.to/paulike/case-study-developing-a-tic-tac-toe-game-3mg | java, programming, learning, beginners | From the many examples in this and earlier chapters you have learned about objects, classes, arrays, class inheritance, GUI, and event-driven programming. Now it is time to put what you have learned to work in developing comprehensive projects. In this section, we will develop a JavaFX program with which to play the po... | paulike |

1,903,114 | Adult content POS | Hey everyone, I'm reaching out to see if any programmers are interested in collaborating on a new... | 0 | 2024-06-27T20:02:12 | https://dev.to/boonesam110/adult-content-pos-dbp | Hey everyone,

I'm reaching out to see if any programmers are interested in collaborating on a new venture. Creating a payment processor specifically for adult websites. There's a significant gap in the market with minimal competition, and the adult film and adult content industry has a critical need for reliable payme... | boonesam110 | |

1,903,113 | Massive users data exposure | Reward $1500 Overview of the Vulnerability Sensitive data exposure can occur when sensitive data is... | 0 | 2024-06-27T20:01:56 | https://dev.to/c4ng4c31r0/massive-users-data-exposure-51f4 | **Reward $1500**

**Overview of the Vulnerability**

Sensitive data exposure can occur when sensitive data is not encrypted, or behind an authorization barrier. When this information is exposed it can place sensitive data, such as secrets, at risk. This can occur due to a variety of scenarios such as not encrypting data... | c4ng4c31r0 | |

1,903,112 | How to Check Globe Load and Balance: A Quick Guide | Keeping track of your Globe load and balance is essential for managing your mobile usage effectively.... | 0 | 2024-06-27T19:59:28 | https://dev.to/globe_sim_c606fbc3db1fa8e/how-to-check-globe-load-and-balance-a-quick-guide-2le0 | Keeping track of your Globe load and balance is essential for managing your mobile usage effectively. Globe Telecom offers several easy methods to check your load and balance, ensuring you always stay informed. Here’s a concise guide on how to do it.

1. USSD Code

The quickest way to check your Globe load and balance ... | globe_sim_c606fbc3db1fa8e | |

1,903,111 | 🔥Solana Price Spikes After VanEck Files for First ETF in US | 🌟 Solana (SOL), the world’s fifth-largest cryptocurrency by market cap, surged 6.6% within an hour... | 0 | 2024-06-27T19:56:30 | https://dev.to/irmakork/solana-price-spikes-after-vaneck-files-for-first-etf-in-us-30m5 |

🌟 Solana (SOL), the world’s fifth-largest cryptocurrency by market cap, surged 6.6% within an hour and is up almost 9% in the last day, currently just below $150. This spike follows VanEck's Thursday morning announcement of a Solana ETF filing.

📈 VanEck, a prominent investment management firm, filed an S-1 registra... | irmakork | |

1,903,110 | What it’s like to put code in production | why the frontend is dynamic and not boring at all we often develop projects in the front... | 0 | 2024-06-27T19:56:13 | https://dev.to/shreyvijayvargiya/what-its-like-to-put-code-in-production-4m61 | frontend, webdev, javascript, programming | why the frontend is dynamic and not boring at all

---

we often develop projects in the front end.

projects are often the best way to build and learn, no doubt about that.

but production-based code is not similar to projects and this story will explain why as well as give you a few more reasons not to hate frontend.... | shreyvijayvargiya |

1,903,109 | 🔥🔥🔥Hot Cryptos to Buy in Dip | As the landscape of cryptocurrency investment constantly evolves, recognizing opportunities during... | 0 | 2024-06-27T19:56:01 | https://dev.to/irmakork/hot-cryptos-to-buy-in-dip-22jm |

As the landscape of cryptocurrency investment constantly evolves, recognizing opportunities during market dips can be a smart decision. Here, we analyze three potentially valuable cryptocurrencies: Cardano (ADA), Chiliz (CHZ), and Pendle (PENDLE).

📚 Cardano (ADA)

Cardano focuses on academic excellence, scalability, ... | irmakork | |

1,903,108 | How set, get and delete URL Params using React | Setting the URL Params: import { useSearchParams } from 'react-router-dom' //startar the... | 0 | 2024-06-27T19:55:53 | https://dev.to/rafaelborges26/how-set-and-get-url-params-using-react-21hl | react, typescript, nextjs, web |

> Setting the URL Params:

```
import { useSearchParams } from 'react-router-dom'

// Initialize the search params state (call this inside a component)
const [searchParams, setSearchParams] = useSearchParams()

// Set the `order` param on the current URL
setSearchParams((state) => {
  state.set('order', '123')
  return state
})
```
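
The `state` object handed to the `setSearchParams` callback is a standard `URLSearchParams` instance, so setting, reading, and deleting a param can be tried outside React too. The sketch below uses plain `URLSearchParams`; the starting query string `page=2` is an assumption made up for the example.

```javascript
// Plain URLSearchParams sketch; the initial query string is hypothetical.
const params = new URLSearchParams('page=2')

// Set: adds the key, or overwrites it if it already exists
params.set('order', '123')

// Get: returns the value as a string, or null if the key is missing
const order = params.get('order')
const missing = params.get('unknown')

// Delete: removes the key entirely
params.delete('page')

console.log(order, missing, params.toString())
```

Inside a component, the same `set` and `delete` calls would go in the `setSearchParams` callback, and `get` would be called on `searchParams`.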

> Getting URL Params:

Accessing the route: http://localhost:5173/... | rafaelborges26 |

1,903,106 | 🚀Analyst Predicts Significant Dogecoin Surge | 🐶 The number one meme coin, DOGE, has always attracted investors’ interest. Elon Musk's support and... | 0 | 2024-06-27T19:55:32 | https://dev.to/irmakork/analyst-predicts-significant-dogecoin-surge-2ap6 |

🐶 The number one meme coin, DOGE, has always attracted investors’ interest. Elon Musk's support and rumors about its use in payment systems with X position DOGE uniquely. Analysts are paying close attention, with one prominent analyst predicting a potential rise.

📈 Analyst Kaleo shared on X that Dogecoin could sur... | irmakork | |

1,903,105 | 💥💥💥 Top Altcoins To Bounce Back: Analysed | 🚀 Following Bitcoin's surge to $61,385.07, altcoins have also seen positive effects with the altcoin... | 0 | 2024-06-27T19:55:10 | https://dev.to/irmakork/top-altcoins-to-bounce-back-analysed-8o6 |

🚀 Following Bitcoin's surge to $61,385.07, altcoins have also seen positive effects with the altcoin market cap recovering to $232.231B. Many analysts claim July could be a rally month for altcoins, offering potential 100% gains. Now is a great time to consider Ethereum, Solana, and Toncoin.

💎 Ethereum (ETH)

Ethere... | irmakork | |

1,880,535 | Introduction Mongo db | Introduction MongoDB is a popular open-source NoSQL database management system that is... | 0 | 2024-06-07T15:11:56 | https://dev.to/dana-fullstack-dev/introduction-mongo-db-29k0 |

## Introduction

MongoDB is a popular open-source NoSQL database management system that is widely used in web applications and other software projects. It is known for its flexibility, scalability,... | dana-fullstack-dev | |

1,903,104 | 👀Pepe Coin: Smart Money Offloads 118B PEPE With 11-Fold Returns, Has Price Maxed Out? | 🐸 Pepe Coin (PEPE) has grabbed significant investor attention. Recently, a smart money address... | 0 | 2024-06-27T19:54:54 | https://dev.to/irmakork/pepe-coin-smart-money-offloads-118b-pepe-with-11-fold-returns-has-price-maxed-out-4dhi |

🐸 Pepe Coin (PEPE) has grabbed significant investor attention. Recently, a smart money address offloaded 118.5 billion PEPE to Kraken, sparking discussions about its future price trajectory.

💸 Smart Money Dumps PEPE

According to EmberCN on X, a smart trader moved 118.5 billion PEPE, worth $1.48 million, to Kraken. ... | irmakork | |

1,903,103 | 🚀Popular Analyst Predicts BTC Price Reversal After A Dip To This Level | 📉 Bitcoin's Volatility Amid Government Sell-Offs Bitcoin (BTC) has seen significant volatility due to... | 0 | 2024-06-27T19:54:38 | https://dev.to/irmakork/popular-analyst-predicts-btc-price-reversal-after-a-dip-to-this-level-1jc4 |

📉 Bitcoin's Volatility Amid Government Sell-Offs

Bitcoin (BTC) has seen significant volatility due to investor risk aversion and government sell-offs by Germany and the U.S., contributing to market uncertainty.

🔮 Analyst Predicts Reversal After BTC Dips

Crypto analyst Michael van de Poppe remains optimistic, predic... | irmakork | |

1,903,101 | 🚀SHIB Price Prediction for June 27 | 📈 SHIB Price Increase SHIB has increased by 0.52% since yesterday, trading at $0.00001737. 📉 Hourly... | 0 | 2024-06-27T19:54:09 | https://dev.to/irmakork/shib-price-prediction-for-june-27-841 |

📈 SHIB Price Increase

SHIB has increased by 0.52% since yesterday, trading at $0.00001737.

📉 Hourly Chart Analysis

SHIB made a false breakout at $0.00001764. If the daily bar closes far from this, expect a correction to $0.00001720 by tomorrow.

🔄 Larger Time Frame

SHIB bounced back from $0.00001696 support. If th... | irmakork | |

1,903,100 | 🔥Here’s Why Dogecoin & Shiba Inu Prices Declining Today | 🐶 Meme Coins Dip Major meme coins like Dogecoin and Shiba Inu fell over 3% amid a broader market dip,... | 0 | 2024-06-27T19:53:42 | https://dev.to/irmakork/heres-why-dogecoin-shiba-inu-prices-declining-today-59ej |

🐶 Meme Coins Dip

Major meme coins like Dogecoin and Shiba Inu fell over 3% amid a broader market dip, sparking speculations about the reasons behind the decline.

📉 Dogecoin Decline

Dogecoin's price dropped 3.23% to $0.1223, with trading volume down 30% to $489.51 million. Market participants are cautious due to the... | irmakork | |

1,903,099 | 👀 Bitcoin Bears Maintain Control, Here’s Why the BTC Price Pump Is Temporary | 📉 Bitcoin Struggles After trying to rebound, Bitcoin (BTC) fell below $61,000, down 1.5%. Analyst... | 0 | 2024-06-27T19:53:25 | https://dev.to/irmakork/bitcoin-bears-maintain-control-heres-why-the-btc-price-pump-is-temporary-4pd9 |

📉 Bitcoin Struggles

After trying to rebound, Bitcoin (BTC) fell below $61,000, down 1.5%. Analyst Willy Woo suggests the recent pump is temporary, with bears still dominating.

🐻 Bearish Pressure

Woo notes that although recent corrections cleared excess leverage, speculative trades still linger. He believes the cur... | irmakork | |

1,903,097 | 🤯Bitcoin Inflows Paint Rosy Picture Amid Price Targets Of $56,000 and $58,000 | 📉 Bitcoin's Current State Is Bitcoin finding its bottom after the recent drop, or will it continue... | 0 | 2024-06-27T19:52:51 | https://dev.to/irmakork/bitcoin-inflows-paint-rosy-picture-amid-price-targets-of-56000-and-58000-2m4g |

📉 Bitcoin's Current State

Is Bitcoin finding its bottom after the recent drop, or will it continue its short-term bearish trend? Analyst Josh from Crypto World examined BTC charts and noted minimal change in the past day.

📊 Technical Analysis Insights

Josh observed Bitcoin's daily chart, highlighting a bounce from... | irmakork | |

1,903,096 | Mobile Development | Mobile Development Platforms Native Development platforms This platforms is divided into... | 0 | 2024-06-27T19:51:43 | https://dev.to/celine/mobile-development-d49 | mobiledevelopment |

**<u>Mobile Development Platforms</u>**

1. Native Development platforms

These platforms are divided into two:

- iOS Development:

Languages: Swift, Objective-C

Frameworks: UIKit, SwiftUI

- Android Development

Languages: Java,... | celine |

1,900,168 | The Basics of CSS Positioning: A Practical Guide | Today you will learn the essentials of CSS positioning. CSS positioning is a fundamental concept in... | 0 | 2024-06-27T19:51:24 | https://antondevtips.com/blog/the-basics-of-css-positioning-a-practical-guide | webdev, frontend, css | ---

canonical_url: https://antondevtips.com/blog/the-basics-of-css-positioning-a-practical-guide

---

Today you will learn the essentials of CSS positioning.

CSS positioning is a fundamental concept in web development that allows you to control the layout and placement of elements on your web pages.

In this guide, we... | antonmartyniuk |

1,903,095 | DAY 4 PROJECT : DRAG & DROP | PROJECT NAME : Creating a Fun Drag and Drop Color Game Using HTML, CSS, and JavaScript The main... | 0 | 2024-06-27T19:51:08 | https://dev.to/shrishti_srivastava_/day-4-project-drag-drop-4p4p | webdev, javascript, beginners, programming | **PROJECT NAME : Creating a Fun Drag and Drop Color Game Using HTML, CSS, and JavaScript**

The main objective of this project is to create a game where users can drag colored boxes and drop them into designated areas. The game will include multiple colored boxes, and users can enjoy the interaction and visual appeal o... | shrishti_srivastava_ |

1,903,079 | Availability Sets vs Availability Zones | An availability set protects your Azure resources from failures within data centres, whereas an... | 0 | 2024-06-27T19:48:45 | https://dev.to/dera2024/availability-sets-vs-availability-zones-2a4n | beginners, azure, cloud, microsoft | An availability set protects your Azure resources from failures within data centres, whereas an availability zone protects from entire data centre failures.

Understanding the differences between Azure Availability Sets and Availability Zones is crucial for designing resilient and highly available architectures in the A... | dera2024 |

1,903,093 | Introducing Do Notation in the Mo Package for Golang | What is Do Notation? Do notation is a syntactic sugar primarily used in functional... | 0 | 2024-06-27T19:47:50 | https://dev.to/taman9333/introducing-do-notation-in-the-mo-package-for-golang-1jpc | go, monads | ### What is Do Notation?

Do notation is a syntactic sugar primarily used in functional programming languages like Haskell and Scala. It simplifies the chaining of monadic operations, making the code more readable and maintainable. By bringing this feature to Go, we can now write cleaner, more expressive code when workin... | taman9333 |

1,903,091 | Development with Components | Hi guys, this is my first post, I hope you enjoy ❣️ A summary of componentization It was... | 0 | 2024-06-27T19:44:55 | https://dev.to/mayannara/overview-of-development-with-components-4lpe | frontend, architecture, learning, designsystem | Hi guys, this is my first post, I hope you enjoy ❣️

## **A summary of componentization**

The concept was introduced by Doug McIlroy at the NATO Software Engineering Conference in 1968. His idea was to develop code components that make it easier to build other applications by reusing code.

📝 **What are components?**

**1.** ... | mayannara |

1,903,090 | Everything You Need to Know About Workshop Manuals in PDF Format | In today's digital age, the demand for workshop manuals in PDF format has soared among automotive... | 0 | 2024-06-27T19:43:20 | https://dev.to/downloadworkshopmanuals_4/everything-you-need-to-know-about-workshop-manuals-in-pdf-format-27k0 | In today's digital age, the demand for workshop manuals in PDF format has soared among automotive enthusiasts, DIY mechanics, and professionals alike. These comprehensive guides offer a treasure trove of information, from intricate repair procedures to detailed maintenance schedules, all conveniently packaged in a port... | downloadworkshopmanuals_4 | |

1,903,089 | How to Customize GitHub Profile: Part 3 | Welcome back to the third part of my series on customizing your GitHub profile! In this article,... | 0 | 2024-06-27T19:42:05 | https://dev.to/ryoichihomma/how-to-customize-your-github-profile-part-3-37em | github, githubprofile, githubportfolio, git | Welcome back to the third part of my series on customizing your GitHub profile! In this article, we'll focus on the featured source section where you can showcase your project demo videos and any other relevant content. In particular, highlighting your YouTube channel can help visitors better understand your work, s... | ryoichihomma |

1,903,088 | The 3 Ls of Quantum Physics Law Logic and Love | Explore the fascinating world of quantum physics through the lens of the 3 Ls - Law, Logic, and Love. Discover how these concepts intertwine to unravel the mysteries of the universe, from guessing playing cards to understanding the intricate dance of particles at the subatomic level. | 0 | 2024-06-27T19:40:44 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/LLL | quantumphysics, law, logic, love | # The 3 L's of Quantum Physics: Law, Logic, and Love 🌌❤️

Quantum physics, a realm of subatomic particles and strange phenomena, can be understood through the interplay of three fundamental concepts: Law, Logic, and Love. These 3 L's offer unique perspectives on the mysteries of the quantum world, each contr... | eric_dequ |

1,903,087 | TidyCoder day night loading only css/HTML, and single div | Check out this Pen I made! | 0 | 2024-06-27T19:40:30 | https://dev.to/tidycoder/tidycoder-day-night-loading-only-csshtml-and-single-div-43j1 | codepen, webdev, html, css | Check out this Pen I made!

{% codepen https://codepen.io/TidyCoder/pen/ExzJMwe %} | tidycoder |

1,903,086 | AZURE VIRTUAL MACHINE SCALE SET (VMSS) | INTRODUCTION TO VIRTUAL MACHINE SCALE SET Azure Virtual Machine Scale Sets (VMSS) is a feature on the... | 0 | 2024-06-27T19:39:35 | https://dev.to/presh1/azure-virtual-machine-scale-set-vmss-2lo3 | introductiontovmss, creationofvmss | **INTRODUCTION TO VIRTUAL MACHINE SCALE SET**

Azure Virtual Machine Scale Sets (VMSS) is a feature on the Azure portal that allows you to create and manage a group of load-balanced virtual machines (VMs). The number of VM instances can automatically increase or decrease in response to demand or a defined schedule following... | presh1 |

1,903,085 | AWS Cost Optimization: Periodic Deletion of ECR Container Images | tl;dr; Automated periodic deletion of ECR container images is a straightforward and... | 0 | 2024-06-27T19:38:47 | https://dev.to/siddhantkcode/aws-cost-optimization-periodic-deletion-of-ecr-container-images-3636 | aws, costoptimization, ecr, sre | ### tl;dr;

Automated periodic deletion of ECR container images is a straightforward and effective way to optimize AWS costs. By leveraging Lambda functions and Step Functions, you can implement custom policies that meet your specific needs, ensuring that only necessary images are retained.

---

## Introduction

Managin... | siddhantkcode |

1,903,083 | Hybrid Quantum-Classical Computing Leveraging Ternary Logic for Enhanced Performance | Explore the innovative concept of hybrid quantum-classical computing using ternary logic. Understand the principles, potential advantages, and challenges of integrating negative, positive, and zero states to enhance computational speed and efficiency. | 0 | 2024-06-27T19:37:16 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/Hybrid | quantumcomputing, hybridcomputing, ternarylogic, quantumalgorithms | ## 🌌 Introduction to Hybrid Quantum-Classical Computing with Ternary Logic

In the pursuit of pushing the boundaries of computational power, researchers are exploring the integration of quantum and classical computing paradigms. A promising approach is the use of ternary logic, where each computational unit can exist ... | eric_dequ |

1,902,748 | how to buy flash usdt | Are you ready to revolutionize your Bitcoin experience? Look no further than Flash Bitcoin, a... | 0 | 2024-06-27T14:47:22 | https://dev.to/mathew_sanchez_c69efb77b2/how-to-buy-flash-usdt-1aoh | flashbtc, flashusdt, flashbitcoin, flashbitcoinsoftware | Are you ready to revolutionize your Bitcoin experience? Look no further than Flash Bitcoin, a game-changing technology that allows you to generate Bitcoin transactions directly on the Bitcoin network. And, with MartelGold, you can unlock the full potential of Flash Bitcoin with our innovative software solutions.

What ... | mathew_sanchez_c69efb77b2 |

1,903,075 | Complete Guide: Installing Elixir on Ubuntu/Linux 24.04 | Introduction In this guide, we will learn how to install Elixir on Ubuntu 24.04. Elixir is... | 0 | 2024-06-27T19:34:34 | https://dev.to/abreujp/guia-completo-instalando-elixir-no-ubuntulinux-2404-3k04 | elixir |

## Introduction

In this guide, we will learn how to install Elixir on Ubuntu 24.04. Elixir is a functional, concurrent programming language, ideal for developing distributed applications.

There are two main methods for installing Elixir on Ubuntu: using the `apt` package manager or using... | abreujp |

1,903,082 | The Quantum Entanglement of Ideas Exploring the Spiritual Implications of Superposition and the Mandela Effect | This post delves into the complex relationship between quantum mechanical superposition and the spiritual nature of reality. We explore how ideas and concepts can become entangled, creating a spectrum of superposition states that are ultimately measured and confirmed by society. The Mandela Effect serves as an example ... | 0 | 2024-06-27T19:33:49 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/EntangledGroupSuperPosition | quantummechanics, spirituality, consciousness, mandelaeffect | # 🌀 The Quantum Dance of Ideas: Superposition, Entanglement, and the Nature of Reality 🌀

Reality, as we perceive it, is a complex tapestry woven from the threads of our collective consciousness. At the heart of this intricate web lies the enigmatic realm of quantum mechanics, where particles can exist in multiple st... | eric_dequ |

1,903,081 | Reduce Churn SaaS in 2024. Methods that actually work! | This Blog was Originally Posted to Churnfree Blog To reduce churn in a SaaS company, you must focus... | 0 | 2024-06-27T19:33:38 | https://churnfree.com/blog/how-to-reduce-churn-saas/#Use-a-Cancel-Flow | churnreduction, saaschurn, churnrate, churnfree | **This Blog was Originally Posted to [Churnfree Blog](https://churnfree.com/blog/how-to-reduce-churn-saas/?utm_source=Dev.to&utm_medium=referral&utm_campaign=Content_Distribution)**

To reduce churn in a SaaS company, you must focus on customer satisfaction. Customer satisfaction can be gained in many ways other than i... | churnfree |

1,903,080 | Comparing Frontend Technologies: ReactJS vs Vue.js | In the ever-evolving world of frontend technologies, developers are often faced with the decision of... | 0 | 2024-06-27T19:33:32 | https://dev.to/ishow360/comparing-frontend-technologies-reactjs-vs-vuejs-p8j |

In the ever-evolving world of frontend technologies, developers are often faced with the decision of choosing the right framework for their projects. Among the plethora of options available, ReactJS and Vue.js stand out as two popular choices. In this article, we will compare these two frontend technologies, highlight... | ishow360 | |

1,903,045 | Creating a static website using Amazon S3 with Terraform: | Introduction: Amazon Simple Storage Service (S3) is a highly scalable and reliable... | 0 | 2024-06-27T19:01:49 | https://dev.to/albine_peter_c2ffb10b422f/creating-a-static-website-using-amazon-s3-with-terraform-d55 | **_Introduction:_**

Amazon Simple Storage Service (S3) is a highly scalable and reliable object storage service offered by Amazon Web Services (AWS). It can be used not only for storing data but also for hosting static websites with low latency and high availability.

**Steps to Create a Static Website... | albine_peter_c2ffb10b422f | |

1,903,077 | The No-Cloning Theorem A Quantum Cover-Up | Get ready to have your mind blown as we expose the biggest cover-up in quantum history. The no-cloning theorem? More like the no-cloning sham! Join us on a wild ride as we unravel the quantum conspiracies that have been hiding in plain sight. | 0 | 2024-06-27T19:30:22 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/CloningTheorem | quantummechanics, satire, science, humor | ## 🕶️ The No-Cloning Theorem: A Quantum Cover-Up

Alright, folks, listen up! It's time to spill the tea on the biggest scandal in the quantum world. The no-cloning theorem? More like the no-cloning sham! Those quantum bigwigs have been pulling the wool over our eyes, but we're here to expose the truth.

### ... | eric_dequ |

1,903,076 | Semantic annotation | Semantic annotation is the process of attaching metadata to specific elements within a text or... | 0 | 2024-06-27T19:29:30 | https://dev.to/theoraclephd_362ed10a8ba6/semantic-annotation-4aan | Semantic annotation is the process of attaching metadata to specific elements within a text or dataset to provide additional information about the meaning and context of those elements. This often involves linking words or phrases to concepts, entities, or categories that they represent, enabling more advanced data ana... | theoraclephd_362ed10a8ba6 | |

1,903,074 | 5 Things You Need to Know About RAG with Examples | If you're new to RAG, vector search, and related concepts, this article will guide you through the... | 0 | 2024-06-27T19:28:10 | https://dev.to/edwinkys/5-terms-to-get-yourself-familiar-with-rag-3cep | beginners, ai, learning, machinelearning | If you're new to RAG, vector search, and related concepts, this article will guide you through the key terms and principles used in modern LLM-based applications.

This article attempts to provide a very high-level overview of the key concepts and terms used in the LLM ecosystem with an easy to relate explanation. For ... | edwinkys |

1,903,073 | Why PayPal in Argentine Casinos | In recent years, PayPal has emerged as one of the payment methods most preferred by... | 0 | 2024-06-27T19:26:08 | https://dev.to/jos_fernando_e09ce1d37e6/por-que-paypal-en-los-casinos-argentinos-2ndj | In recent years, PayPal has emerged as one of the payment methods most preferred by players at online casinos in Argentina. This popularity is not without reason, as PayPal offers a number of significant advantages that make it attractive to Argentine users who enjoy online gambling... | jos_fernando_e09ce1d37e6 | |

1,903,071 | Slider | Slider is similar to ScrollBar, but Slider has more properties and can appear in many forms. Figure... | 0 | 2024-06-27T19:25:01 | https://dev.to/paulike/slider-5fa6 | java, programming, learning, beginners | **Slider** is similar to **ScrollBar**, but **Slider** has more properties and can appear in many forms.

Figure below shows two sliders. **Slider** lets the user graphically select a value by sliding a knob within a bounded interval. The slider can show both major tick marks and minor tick marks between them. The numb... | paulike |

1,903,068 | Adiabatic Quantum Computing Concept Applications and Challenges | Explore the concept of Adiabatic Quantum Computing (AQC), its principles, applications, and challenges. Understand the adiabatic theorem, problem Hamiltonian, quantum annealing, and the potential of AQC in solving complex problems across various domains. | 0 | 2024-06-27T19:23:28 | https://www.rics-notebook.com/blog/C:/Users/ericd/Desktop/Blog/My-Blog/data/blog/Quantum/AQC | quantumcomputing, adiabaticquantumcomputing, quantumalgorithms, quantummechanics | ## 🌌 Introduction to Adiabatic Quantum Computing (AQC)

Adiabatic Quantum Computing (AQC) is a powerful paradigm of quantum computing that transforms computational problems into the challenge of finding the lowest energy eigenstate of a specified Hamiltonian. Proposed theoretically by Edward Farhi, Jeffrey Goldstone, ... | eric_dequ |
