id (int64, range 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, range 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,902,351 | Micro SaaS HQ | Micro SaaS HQ is the World's Largest Micro SaaS Ecosystem. Trusted by 33,000+ subscribers and 500+... | 0 | 2024-06-27T09:55:40 | https://dev.to/upenv/micro-saas-hq-36gi | microsaasideas, saasideas, microsaas | Micro SaaS HQ is the World's Largest Micro SaaS Ecosystem. Trusted by 33,000+ subscribers and 500+ Micro SaaS founders. Micro SaaS HQ brings you 1000+ validated Micro SaaS Ideas with data about revenues, traffic and other data points. Micro SaaS HQ also comes with an exclusive community for Micro SaaS Builders. | upenv |

1,902,295 | C# pass delegate methods as arguments. | With delegates you can pass methods as arguments to other methods. The delegate object holds... | 27,862 | 2024-06-27T09:55:02 | https://dev.to/emanuelgustafzon/c-pass-delegate-methods-as-arguments-10ap | csharp, delegate, higherorderfunctions | With delegates you can pass methods as arguments to other methods.

The delegate object holds references to other methods, and those references can be passed as arguments.

This is a key functionality when working within the functional programming paradigm.

We can create callbacks by utilizing this technique.

A func... | emanuelgustafzon |

1,902,349 | Difference between Udyog Aadhar And Udyam Registration | Introduction In recent years, the Indian government has launched various initiatives to promote and... | 0 | 2024-06-27T09:53:39 | https://dev.to/shreya_kumari_c8988288797/all-about-udyam-registration-for-msme-registration-43la | Introduction

In recent years, the Indian government has launched various initiatives to promote and support the Micro, Small, and Medium Enterprises (MSMEs) sector, which plays a crucial role in the nation's economy. Two significant schemes introduced to facilitate MSMEs are Udyog Aadhar and Udyam Registration. While... | shreya_kumari_c8988288797 | |

1,902,348 | How the Page Visibility API Improves Web Performance and User Experience | Making web applications fast and user-friendly is very important today. One useful tool for this is... | 0 | 2024-06-27T09:53:39 | https://blog.sachinchaurasiya.dev/how-the-page-visibility-api-improves-web-performance-and-user-experience | javascript, react, webdev | Making web applications fast and user-friendly is very important today. One useful tool for this is the **Page Visibility API**. This API tells developers if a web page is visible to the user or hidden in the background. It helps manage resources better and improve user interactions. In this article, we'll look at what... | sachinchaurasiya |

1,902,347 | Flezr | Flezr is a no-code website builder that lets you create dynamic websites using Google Sheets data.... | 0 | 2024-06-27T09:52:03 | https://dev.to/upenv/flezr-enm | nocodewebsitebuilder, googlesheetswebsitebuilder, googlesheetstowebsite | Flezr is a no-code website builder that lets you create dynamic websites using Google Sheets data. With Flezr, you can easily build and customize dynamic cards, use pre-built blocks for visual development, and generate thousands of pages from Google Sheets Data without writing any code. It's a simple, intuitive, and po... | upenv |

1,902,345 | Cloud Accounting | You must have heard about the term cloud accounting in the modern world of accounting. This word has... | 0 | 2024-06-27T09:50:58 | https://dev.to/globalbookkeeping/cloud-accounting-6h0 | accounting, bookkeeping, payroll, tax |

You must have heard about the term **_[cloud accounting](https://globalbookkeeping.net/service/quickbooks-accounting-services)_** in the modern world of accounting. This word has gained a huge engagement between rec... | globalbookkeeping |

1,902,343 | Suggestions for Contributing to Open Source | Hi! I'm looking for an Open Source Project to contribute to and the search has been daunting. I was... | 0 | 2024-06-27T09:49:32 | https://dev.to/d10_1a3b6eac3808c723370cd/suggestions-for-contributing-to-open-source-bkl | opensource, javascript, python, go | Hi! I'm looking for an Open Source Project to contribute to and the search has been daunting. I was supposed to be doing an internship this summer vacation as an upcoming Senior, but I couldn't join the ones I got selected for as I was abroad on a family trip. I'm in a bit of a spot right now, unsure whether I should c... | d10_1a3b6eac3808c723370cd |

1,902,342 | What It Means to Be a Developer: A Human Perspective | Being a developer is often painted with a broad brush—coding, debugging, and spending endless hours... | 0 | 2024-06-27T09:49:01 | https://dev.to/klimd1389/what-it-means-to-be-a-developer-a-human-perspective-1a64 | webdev, beginners, programming, developer | Being a developer is often painted with a broad brush—coding, debugging, and spending endless hours in front of a computer screen. While these activities are part of the job, the reality of being a developer is far more nuanced and human. It's a journey filled with creativity, problem-solving, collaboration, and contin... | klimd1389 |

1,902,341 | Build Your Own GitHub Copilot with SuperDuperDB: Live Workshop 🚀 | We're excited to announce a special live workshop where we'll guide you through building an... | 0 | 2024-06-27T09:48:47 | https://dev.to/guerra2fernando/build-your-own-github-copilot-with-superduperdb-live-workshop-bdg | ai, githubcopilot, database, rag | We're excited to announce a special live workshop where we'll guide you through building an AI-powered tool similar to GitHub Copilot using the latest release of SuperDuperDB v0.2! 🚀

When: Today at 9 PM CET

Where: https://www.youtube.com/watch?v=JgavM6QDmxQ

---

What to Expect:

- Integrate AI Models with Your Datab... | guerra2fernando |

1,902,338 | How AI-Powered Smart Insights Solutions Transform Business Intelligence | What are AI-Powered Smart Insights Solutions? AI-driven smart insights solution streamlines business... | 0 | 2024-06-27T09:46:12 | https://dev.to/osiz_digitalsolutions/how-ai-powered-smart-insights-solutions-transform-business-intelligence-4bn | ai, aiinsights, aidevelopmentcompany | **What are AI-Powered Smart Insights Solutions?**

An AI-driven smart insights solution streamlines business intelligence by automatically analyzing reports and dashboards. It identifies unusual data points (anomalies), investigates the root causes, and generates clear explanations written in plain English directly on the ... | osiz_digitalsolutions |

1,902,251 | Create Your 3D Modeling Tool Like Face Transfer 2 | Looking to create your own 3D modeling tool like Face Transfer 2? Explore our blog for insights and... | 0 | 2024-06-27T09:45:17 | https://dev.to/novita_ai/create-your-3d-modeling-tool-like-face-transfer-2-42p1 | Looking to create your own 3D modeling tool like Face Transfer 2? Explore our blog for insights and tips on getting started.

## Key Highlights

- With Face Transfer 2, an AI-powered system, you can turn a picture into a lifelike 3D model by copying the person's facial features.

- This tool works well with Genesis 9 fi... | novita_ai | |

1,902,337 | Top CSPM Tools to Secure Your Cloud Environment in 2024 | In today's digital environment, businesses are migrating their work to the cloud at a rapid pace. The... | 0 | 2024-06-27T09:45:17 | https://dev.to/rachgrey/top-cspm-tools-to-secure-your-cloud-environment-in-2024-49ac | cspm, cloud, cspmtools, cloudcomputing | In today's digital environment, businesses are migrating their work to the cloud at a rapid pace. The cloud offers great scalability, flexibility, and cost-effectiveness but also brings unique security risks. CSPM tools are crucial for ensuring the security and compliance of businesses with cloud infrastructures. This ... | rachgrey |

1,902,327 | Harmonizing Technology and Faith: The Final Composition of the AI Bible Chat App | Introduction In this final part of our series, we’ll see how the local database service,... | 0 | 2024-06-27T09:45:00 | https://dev.to/apow/harmonizing-technology-and-faith-the-final-composition-of-the-ai-bible-chat-app-i71 | buildwithgemini, flutter, ai, tutorial | ## Introduction

In this final part of our series, we’ll see how the local database service, the Gemini AI service, and the user interface combine to create a seamless and interactive AI Bible chat app.

At the time of creating this final write-up, I had made version 2 of the app, which includes an AI-created audio pod... | apow |

1,902,336 | Modify Odoo Modules | What is Odoo? Odoo is an ERP system that helps businesses run smoothly and manageably. It has many tools... | 0 | 2024-06-27T09:41:32 | https://dev.to/farhannerd/modify-odoo-modules-5dii | odoo | **What is Odoo?**

Odoo is an ERP system that helps businesses run smoothly and manageably. It has many tools (called modules) for different types of jobs, like keeping track of money, managing employees, and selling products. Sometimes a module needs to be customized to fit a business's needs. This is called "modifying a module."

**W... | farhannerd |

1,902,335 | Choosing the Right Lab Grown Diamond Jewelry for You | When it comes to selecting the perfect piece of Lab Grown Diamond Jewelry, these gems offer a modern... | 0 | 2024-06-27T09:39:40 | https://dev.to/dev_shivam_3d6e29a6d69fff/choosing-the-right-lab-grown-diamond-jewelry-for-you-5gff | jewelry, diamond, labgrowndiamond | When it comes to selecting the perfect piece of **[Lab Grown Diamond Jewelry](https://www.ourosjewels.com/collections/lab-diamond-jewelry)**, these gems offer a modern and ethical alternative to mined diamonds. At Ouros Jewels, we believe that choosing the right lab grown diamond jewelry should be a seamless and enjoya... | dev_shivam_3d6e29a6d69fff |

1,902,333 | Fusion : The Notion Like API Client | Hi all, At ApyHub we currently host 110+ APIs (that are available for our users to consume) + we... | 0 | 2024-06-27T09:38:14 | https://dev.to/nikoldimit/fusion-the-notion-like-api-client-4908 | api, testing, client, building | Hi all,

At ApyHub we currently host 110+ APIs (that are available for our users to consume) + we operate hundreds of internal APIs that power the platform. With all these APIs we had/need to be super careful and diligent about API management.

We soon found out (the hard way) that the de facto API clients (even the ne... | nikoldimit |

1,902,332 | Escorts Services Kot Mohibbu Lahore 03486704471 | Our hot models are housewives, school, and university girls. Some of them are professionals i.e.... | 0 | 2024-06-27T09:37:26 | https://dev.to/widesi/escorts-services-kot-mohibbu-lahore03486704471-872 | Our hot models are housewives, school, and university girls. Some of them are professionals i.e. Nurses, Doctors, Teachers, Anchors, Makeup Artists, Singers, IT professionals, Ramp Models, Fashion Designers and professional dancers. If you want drink with our girls, we will provide you the drink too. If you want to tak... | widesi | |

1,902,331 | Escorts Kot Begum IN Lahore 03486704471 | Our hot models are housewives, school, and university girls. Some of them are professionals i.e.... | 0 | 2024-06-27T09:37:08 | https://dev.to/widesi/escorts-kot-begum-in-lahore-03486704471-126h | Our hot models are housewives, school, and university girls. Some of them are professionals i.e. Nurses, Doctors, Teachers, Anchors, Makeup Artists, Singers, IT professionals, Ramp Models, Fashion Designers and professional dancers. If you want drink with our girls, we will provide you the drink too. If you want to tak... | widesi | |

1,902,329 | For those who want to create a site to dynamically display GAS data using Ajax | As the title suggests, this information is for those of you who are planning to create such a site... | 0 | 2024-06-27T09:36:45 | https://dev.to/sharu2920/for-those-who-want-to-create-a-site-to-dynamically-display-gas-data-using-ajax-4b1g | javascript, gas, programming, webdev |

As the title suggests, this information is for those of you who are planning to create such a site and register it with search engines, or for those of you who are using Ajax to retrieve and display data, but are having trouble getting the content to be visible in the search engine's Webmaster Tools.

I hope this info... | sharu2920 |

1,902,328 | Understanding ArchiMate Motivation Diagram | In the realm of enterprise architecture, conveying complex ideas and plans in a clear and structured... | 0 | 2024-06-27T09:36:16 | https://victorleungtw.com/2024/06/27/motivation/ | archimate, motivation, enterprise, architecture | In the realm of enterprise architecture, conveying complex ideas and plans in a clear and structured manner is crucial. ArchiMate, an open and independent modeling language, serves this purpose by providing architects with the tools to describe, analyze, and visualize the relationships among business domains in an unam... | victorleungtw |

1,902,326 | Understanding Carbon Credits: A Comprehensive Guide for Beginners | In the fight against climate change, carbon credits have emerged as a critical tool for businesses... | 0 | 2024-06-27T09:35:29 | https://dev.to/mxi_coders_1f6c1d58648b/understanding-carbon-credits-a-comprehensive-guide-for-beginners-16nk | In the fight against climate change, carbon credits have emerged as a critical tool for businesses and governments worldwide. This comprehensive guide aims to demystify carbon credits, explaining what they are, why they are essential, and how businesses can effectively incorporate them into their operations. By the end... | mxi_coders_1f6c1d58648b | |

1,902,325 | Key Trends Driving Demand in the Global Washed Silica Sand Market | Silica sand, a versatile and essential material, plays a vital role in numerous industries worldwide.... | 0 | 2024-06-27T09:34:05 | https://dev.to/aryanbo91040102/key-trends-driving-demand-in-the-global-washed-silica-sand-market-3m01 | news | Silica sand, a versatile and essential material, plays a vital role in numerous industries worldwide. Among its various forms, washed silica sand stands out as a high-quality and in-demand variant that has found extensive applications across diverse sectors. From construction and glass manufacturing to water filtration... | aryanbo91040102 |

1,902,320 | Claude 3 Opus API vs. Novita AI LLM API: A Comparison Guide | Key Highlights Understanding LLM API: LLM APIs integrate advanced AI for tasks like... | 0 | 2024-06-27T09:33:36 | https://dev.to/novita_ai/claude-3-opus-api-vs-novita-ai-llm-api-a-comparison-guide-5g90 | llm | ## Key Highlights

- **Understanding LLM API:** LLM APIs integrate advanced AI for tasks like natural language processing and text generation.

- **Claude 3 Opus vs. Novita AI LLM API:** Claude excels in multimodal capabilities and performance benchmarks, while Novita AI offers affordability, low latency, scalability, a... | novita_ai |

1,902,324 | 10 Pro SEO Practices to Improve Your Website's Ranking Quickly | If you're looking to boost traffic, improve your rankings, and get the most from search engine... | 0 | 2024-06-27T09:32:34 | https://dev.to/taiwo17/10-pro-seo-practices-to-improve-your-websites-ranking-quickly-3n20 | seo, career, linkbuilding, seostrategies | If you're looking to boost traffic, improve your rankings, and get the most from [search engine optimization (SEO)](https://www.upwork.com/services/product/marketing-technical-seo-audit-technical-on-page-seo-fix-seo-issues-1803811118137311009?ref=project_share), these 10 advanced SEO techniques can help your website ac... | taiwo17 |

1,902,323 | Harness the Power of SEO with Essential Tools from SEO Tools WP | In today's digital landscape, leveraging the right SEO tools can make a significant difference in... | 0 | 2024-06-27T09:32:18 | https://dev.to/bharti_dadwal_843d67bcd60/harness-the-power-of-seo-with-essential-tools-from-seo-tools-wp-11ga | In today's digital landscape, leveraging the right SEO tools can make a significant difference in your online presence. **[SEO Tools WP ](https://seotoolswp.com/)**offers a comprehensive suite of utilities designed to enhance your SEO strategies effortlessly. Whether you're looking to reverse image search, generate ter... | bharti_dadwal_843d67bcd60 | |

1,902,316 | From Stores to Screens: How Technology is Reshaping Retail | When Amazon started as a marketplace for books in 1994, no one could have predicted the impact of... | 0 | 2024-06-27T09:32:09 | https://dev.to/nicholaswinst14/from-stores-to-screens-how-technology-is-reshaping-retail-150l | ecommerce, webdev, ai, blockchain | When Amazon started as a marketplace for books in 1994, no one could have predicted the impact of online stores on the retail industry, specifically traditional brick-and-mortar businesses. It brought shopping to your screens at home. In 2023, online retail sales in the United States alone surpassed $1 trillion, highli... | nicholaswinst14 |

1,902,322 | Storing Passwords Securely - A Journey from Plain Text to Secure Password Storage | From Plain Text to Secure Password Storage Password storage has come a long way since its... | 0 | 2024-06-27T09:31:00 | https://www.shivi.io/blog/password-storage | cybersecurity, webdev, learning | ## From Plain Text to Secure Password Storage

Password storage has come a long way since its early days as an afterthought in computing. In the past, passwords were often stored without encryption, making them vulnerable to unauthorized access. However, storing passwords securely is no longer enough; it's equally impo... | shividotio |

1,902,208 | 3 Best GPUs for AI 2024: Your Ultimate Guide | Introduction AI, or artificial intelligence, has revolutionized various industries like... | 0 | 2024-06-27T09:30:00 | https://dev.to/novita_ai/3-best-gpus-for-ai-2024-your-ultimate-guide-49fk | ## Introduction

AI, or artificial intelligence, has revolutionized various industries like healthcare, finance, and manufacturing by recognizing images and understanding language. GPUs, originally designed for video game graphics, now play a crucial role in powering AI programs. Unlike CPUs, GPUs excel at handling mult... | novita_ai | |

1,902,321 | How Does Interactive Live Streaming Work? | An Interactive Live Streaming system has attracted a lot of businesses in recent years. Inke, one of... | 0 | 2024-06-27T09:29:39 | https://dev.to/stephen568hub/how-does-interactive-live-streaming-work-1aef | An Interactive Live Streaming system has attracted a lot of businesses in recent years. Inke, one of the largest live-streaming platforms, changed its name to Inkeverse and marched toward metaverse in its business.

Nowadays, the new trend shows that the metaverse is taking off. In the near future, streaming from a vir... | stephen568hub | |

1,902,318 | Exploring TypeScript Type Generation for JSON Paths: An AI-Assisted Journey | As developers, we often find ourselves pushing the boundaries of what's possible with our tools.... | 0 | 2024-06-27T09:26:58 | https://dev.to/skorfmann/exploring-typescript-type-generation-for-json-paths-an-ai-assisted-journey-1a5f |

As developers, we often find ourselves pushing the boundaries of what's possible with our tools. Recently, I conducted an intriguing experiment: creating a TypeScript type generator for multiple, arbitrary JSON path inputs. While the experiment isn't complete, the progress made with AI assistance is noteworthy.

## Th... | skorfmann | |

1,902,319 | A Tribute to the Physics Teacher | In the realm of education, few figures stand as tall as the Physics Teacher, wielding knowledge that... | 0 | 2024-06-27T09:26:49 | https://dev.to/anjali110385/a-tribute-to-the-physics-teacher-ki | physics, education, interview, learning | In the realm of education, few figures stand as tall as the Physics Teacher, wielding knowledge that sparks curiosity and unravels the mysteries of the universe. With a blend of expertise and passion, they navigate the intricate landscapes of forces, energy, and matter, igniting the minds of eager learners.

A Physics ... | anjali110385 |

1,902,317 | Building Elegant Software In 1 Month: How We Did It! | It’s widely accepted that you cannot build elegant software in less than 30 days, yet I have... | 0 | 2024-06-27T09:23:29 | https://dev.to/martinbaun/building-elegant-software-in-1-month-how-we-did-it-1m9c | productivity, career, programming, devops |

It’s widely accepted that you cannot build _elegant_ software in less than 30 days, yet I have successfully achieved this. I documented the highlights of this process.

I’m extremely excited to share it with you.

## Built different?

How so, you might ask? My development process is built on character and determinatio... | martinbaun |

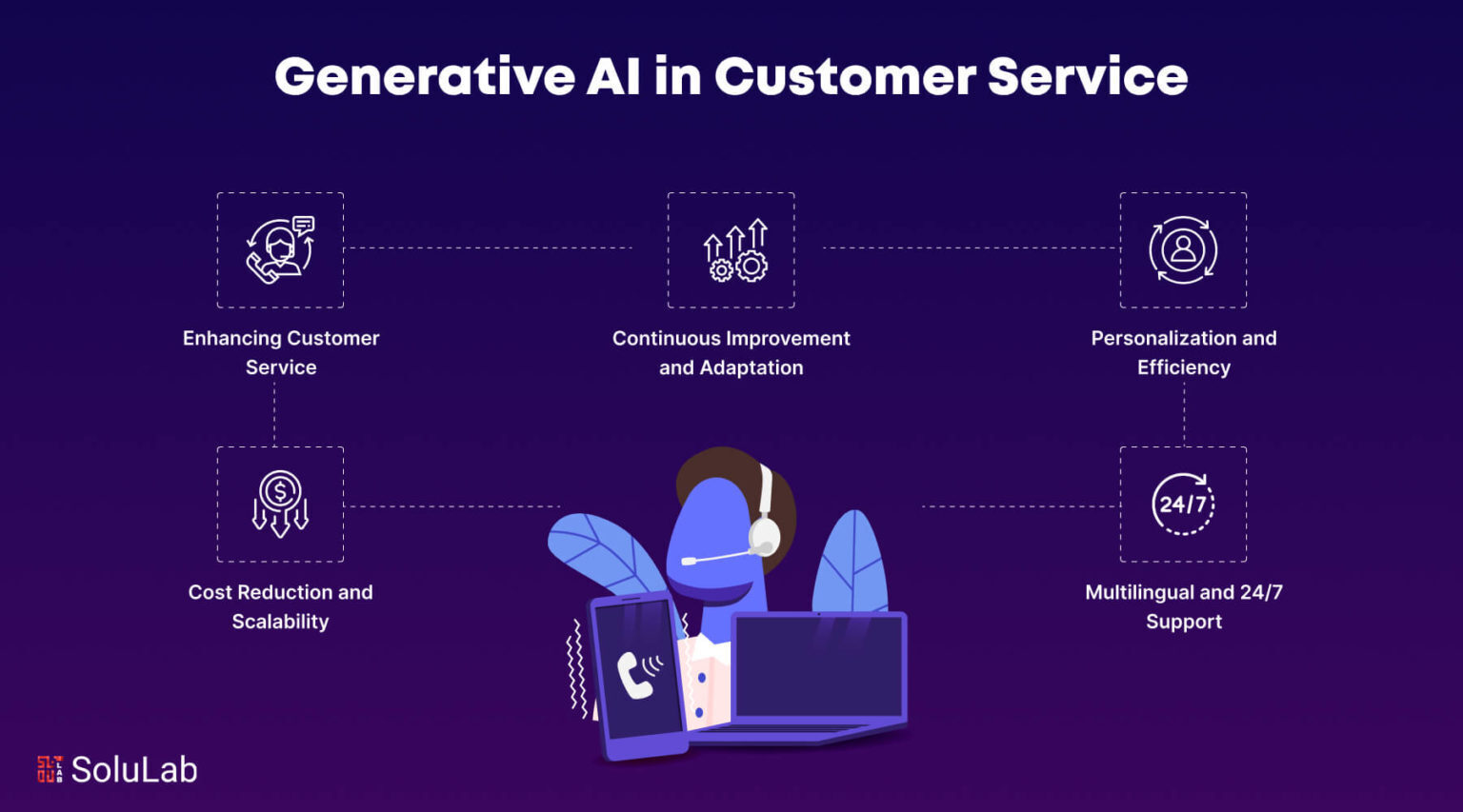

1,902,315 | How Generative AI is Changing the Customer Service Experience | In recent years, generative AI has emerged as a transformative force across various industries, with... | 0 | 2024-06-27T09:22:11 | https://dev.to/ram_kumar_c4ad6d3828441f2/how-generative-ai-is-changing-the-customer-service-experience-45mo | ai, machinelearning, genrativeai, webdev |

In recent years, generative AI has emerged as a transformative force across various industries, with customer service being one of the most impacted areas. This innovative technology is reshaping how businesses inte... | ram_kumar_c4ad6d3828441f2 |

1,902,314 | Computer Teacher Interview Tips | Preparing for a computer teacher interview requires a blend of technical knowledge and teaching... | 0 | 2024-06-27T09:21:35 | https://dev.to/anjali110385/computer-teacher-interview-tips-1577 | computer, teaching, education, interview | [Preparing for a computer teacher interview](https://englishfear.in/interview-questions-for-computer-teacher/) requires a blend of technical knowledge and teaching skills. Start by researching the school’s curriculum and technology resources. Highlight your qualifications, including any certifications in computer scien... | anjali110385 |

1,902,050 | Must-Have Tools for Frontend Developers | Frontend development can be a challenging field, but the right tools can make all the difference. It... | 0 | 2024-06-27T09:18:31 | https://dev.to/chidera_kanu/must-have-tools-for-frontend-developers-2cem | webdev, beginners, programming | [Frontend development](https://www.w3schools.com/whatis/whatis_frontenddev.asp) can be a challenging field, but the right tools can make all the difference. It is all about creating the parts of a website that users interact with. Whether you're just starting out or looking to streamline your workflow, there are some e... | chidera_kanu |

1,902,305 | Hello world | Hello world this sample code like what | 0 | 2024-06-27T09:17:57 | https://dev.to/sqlunite/hello-world-1g4p | Hello world

`this sample code like what` | sqlunite | |

1,902,304 | TensorFlow Basics with Snippets | TensorFlow Basics with Snippets TensorFlow is an open-source machine learning framework... | 27,886 | 2024-06-27T09:17:03 | https://dev.to/plug_panther_3129828fadf0/tensorflow-basics-with-snippets-1j0p | tensorflow, machinelearning, python, deeplearning | # TensorFlow Basics with Snippets

TensorFlow is an open-source machine learning framework developed by the Google Brain team. It is widely used for building and training machine learning models, particularly deep learning models. In this blog, we'll cover the basics of TensorFlow with code snippets to help you get sta... | plug_panther_3129828fadf0 |

1,902,302 | Top 10 Tools To Integrate in Your Next SaaS Software Product MVP | Top 10 Tools to Integrate in Your Next SaaS Software Product MVP In this article, we'll... | 27,887 | 2024-06-27T09:16:29 | https://www.faciletechnolab.com/blog/top-10-tools-integrate-in-your-next-saas-software-product-mvp/ | saasdevelopment, saasproduct, tools, saasmvp | Top 10 Tools to Integrate in Your Next SaaS Software Product MVP

=============================================================

In this article, we'll unveil 10 must-have tools to integrate into your SaaS Software Product MVP, empowering you to launch a robust and user-centric platform.

Congratulations! You've brainstormed... | faciletechnolab |

1,902,301 | GitOps: Streamlining Infrastructure and Application Deployment | Introduction Managing deployments can become a complex affair in the age of cloud-native... | 0 | 2024-06-27T09:15:35 | https://dev.to/d_sourav155/gitops-streamlining-infrastructure-and-application-deployment-1em5 | ## Introduction

Managing deployments can become a complex affair in the age of cloud-native development and ever-evolving infrastructure. GitOps emerges as a powerful solution, leveraging the familiar territory of Git version control to automate infrastructure and application deployments. This blog delves into the cor... | d_sourav155 | |

482,269 | Running Linux GUI programs in WSL2 | Let's start by taking a look at the result, this is Windows running WebStorm that's installed in WSL2... | 0 | 2020-10-09T13:19:12 | https://dev.to/egoist/running-linux-gui-programs-in-wsl2-29j3 | windows, linux, wsl | ---

title: Running Linux GUI programs in WSL2

published: true

description:

tags:

- Windows

- Linux

- WSL

//cover_image: https://direct_url_to_image.jpg

---

Let's start by taking a look at the result, this is Windows running WebStorm that's installed in WSL2 (Ubuntu):

involves both showcasing your teaching skills and demonstrating your passion for mathematics. Start by researching the school and its curriculum to tailor your responses accordingly. Highlight your qualific... | anjali110385 |

1,902,299 | Understanding Multi-Layer Perceptrons (MLPs)... | In the world of machine learning, neural networks have garnered significant attention due to their... | 0 | 2024-06-27T09:14:54 | https://dev.to/pranjal_ml/understanding-multi-layer-perceptrons-mlps-19pb | python, datascience, deeplearning, machinelearning | In the world of machine learning, neural networks have garnered significant attention due to their ability to model complex patterns. At the foundation of neural networks lies the perceptron, a simple model that, despite its limitations, has paved the way for more advanced architectures. In this blog, we will explore t... | pranjal_ml |

1,902,297 | Java Hibernate vs JPA: Rapid review for you | It's time we are introduced to Java Hibernate vs JPA Java Hibernate: An open-source... | 0 | 2024-06-27T09:11:29 | https://dev.to/zoltan_fehervari_52b16d1d/java-hibernate-vs-jpa-rapid-review-for-you-40hg | java, hibernate, jpa, javaframeworks | ## It's time we are introduced to Java Hibernate vs JPA

**Java Hibernate:** An open-source Object-Relational Mapping (ORM) framework that simplifies database interactions by mapping Java classes to database tables. It’s known for its robustness, offering features like high-level object-oriented query language (HQL), c... | zoltan_fehervari_52b16d1d |

1,902,296 | Reliable Nursing Essay Writing Services for Your Needs | Reliable Nursing Essay Writing Services for Your Needs In today's fast-paced and challenging... | 0 | 2024-06-27T09:11:01 | https://dev.to/sibifiw482/reliable-nursing-essay-writing-services-for-your-needs-4f7n | webdev, python, devops, opensource | Reliable Nursing Essay Writing Services for Your Needs

In today's fast-paced and challenging academic environment, nursing students frequently feel overwhelmed by the number of responsibilities they have to fulfill. It can be extremely difficult to maintain a balance between clinical rotations, theoretical coursework,... | sibifiw482 |

1,902,294 | Understanding Recursive Neural Networks | Recursive Neural Networks (RecNNs) are a fascinating and powerful class of neural networks designed... | 27,893 | 2024-06-27T09:09:34 | https://dev.to/monish3004/understanding-recursive-neural-networks-25ka | deeplearning, neuralnetworks, computerscience, nlp | Recursive Neural Networks (RecNNs) are a fascinating and powerful class of neural networks designed to model hierarchical structures in data. Unlike traditional neural networks, which process data in a linear sequence, RecNNs can process data structures such as trees, making them particularly well-suited for tasks like... | monish3004 |

1,902,293 | Reliable Nursing Essay Writing Services for Your Needs | Reliable Nursing Essay Writing Services for Your Needs In today's fast-paced and demanding academic... | 0 | 2024-06-27T09:07:50 | https://dev.to/sibifiw482/reliable-nursing-essay-writing-services-for-your-needs-5c6o | webdev, javascript, beginners, tutorial | Reliable Nursing Essay Writing Services for Your Needs

In today's fast-paced and demanding academic environment, nursing students often find themselves overwhelmed with a myriad of responsibilities. Balancing clinical rotations, theoretical coursework, and personal commitments can be incredibly challenging. As a resu... | sibifiw482 |

1,902,292 | Hire Dedicated Developers in Greece | Hire Dedicated Development Team | Recognized as a leading provider of hire dedicated developer in Greece, Sapphire Software Solutions... | 0 | 2024-06-27T09:05:47 | https://dev.to/samirpa555/hire-dedicated-developers-in-greece-hire-dedicated-development-team-381p | hirededicateddevelopers, hirededicateddeveloperteam, hirededicateddevelopment, development | Recognized as a leading provider of **[hire dedicated developer in Greece](https://www.sapphiresolutions.net/hire-dedicated-developers-in-greece)**, Sapphire Software Solutions excels in delivering tailored, high-quality software development services. With a strong focus on flexibility and client collaboration, we enab... | samirpa555 |

1,902,291 | What Are the Advantages of Developing Medicine Delivery Apps? | The use of digital technologies that improve accessibility, efficiency, and patient care has... | 0 | 2024-06-27T09:05:06 | https://dev.to/manisha12111/what-are-the-advantages-of-developing-medicine-delivery-apps-fda | medicinedeliveyapp, webdev, appdevelopment, pharmacy | The use of digital technologies that improve accessibility, efficiency, and patient care has significantly changed the healthcare sector in recent years. Medication delivery apps are one such innovation that is gaining popularity; they benefit consumers and healthcare practitioners in many ways. Here, we examine how th... | manisha12111 |

1,902,290 | The Video Streaming Industry In 2024 | As we approach 2024, the video streaming industry continues to evolve at an unprecedented pace,... | 0 | 2024-06-27T09:04:19 | https://dev.to/stephen568hub/the-video-streaming-industry-in-2024-odd | video, streaming, livestreaming | As we approach 2024, the video streaming industry continues to evolve at an unprecedented pace, driven by technological advancements and changing consumer behaviors. The proliferation of high-speed internet and the increasing penetration of smart devices have democratized access to online content, leading to a surge in... | stephen568hub |

1,902,645 | Using Frp to Publicly Expose Services in a Local Kubernetes Cluster | I recently had cause to test out cert-manager using the Kubernetes Gateway API, but wanted to do this... | 0 | 2024-07-03T11:01:51 | https://windsock.io/using-frp-to-publicly-expose-services-in-a-local-kubernetes-cluster/ | kubernetes, frp, kind | ---

title: Using Frp to Publicly Expose Services in a Local Kubernetes Cluster

published: true

date: 2024-06-27 09:04:15 UTC

tags: Kubernetes,Frp,Kind

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/cbvnejs3lfdmvbxsjmsc.jpg

canonical_url: https://windsock.io/using-frp-to-publicly-expose-services-i... | nbrownuk |

1,902,287 | The Technological Marvel of DeFi with PancakeSwap Clone Script for Ambitious Entrepreneurs | DeFi has become one of the major innovators in the constantly changing financial industry and has... | 0 | 2024-06-27T09:02:41 | https://dev.to/rick_grimes/the-technological-marvel-of-defi-with-pancakeswap-clone-script-for-ambitious-entrepreneurs-39cd | javascript, ai, blockchain, web | DeFi has become one of the major innovators in the constantly changing financial industry and has significantly altered the established financial organizations. As for any driven reader focused on achieving great success for themselves and their business, this technological wonder can serve as the key to untold potenti... | rick_grimes |

1,902,286 | Let's clash Python vs JavaScript | I want to understand Python’s Performance Python is tailored for readability and ease of... | 0 | 2024-06-27T09:02:16 | https://dev.to/zoltan_fehervari_52b16d1d/lets-clash-python-vs-javascript-cdm | python, javascript, performance, webdev | ## I want to understand Python’s Performance

**Python** is tailored for readability and ease of use, though it is not the swiftest due to its interpreted nature. It stands out with effective memory management and robust data structures, making it a prime choice for data analysis and machine learning where quick protot... | zoltan_fehervari_52b16d1d |

1,897,161 | Renovate for everything | In my earlier post about moving from Kotlin Scripting to Python, I mentioned several... | 0 | 2024-06-27T09:02:00 | https://blog.frankel.ch/renovate-for-everything/ | renovate, devops, cicd | In my earlier post about moving from [Kotlin Scripting to Python](https://blog.frankel.ch/kotlin-scripting-to-python/), I mentioned several reasons:

* Separating the content from the script

* Kotlin Scripting is an unloved child of JetBrains

* [Renovate](https://www.mend.io/renovate/) cannot update Kotlin Scripting fi... | nfrankel |

1,899,677 | How to add “Save and add another” feature to Rails apps | This article was originally published on Rails Designer. If your app has a business model that... | 0 | 2024-06-27T09:00:00 | http://railsdesigner.com/save-and-another/ | rails, ruby, webdev | This article was originally published on [Rails Designer](http://railsdesigner.com/save-and-another/).

---

If your app has a business model that is often created in sequence (think tasks or products), the **Save and add another** UX paradigm can be a great option.

I stumbled upon a great example in the Linear app.

... | railsdesigner |

1,902,285 | What is Azure Bastion: An In-Depth Guide to Enhanced Connectivity | Secure remote access to your infrastructure is crucial in the digital world. Microsoft's fully... | 0 | 2024-06-27T08:58:50 | https://dev.to/dhruvil_joshi14/what-is-azure-bastion-an-in-depth-guide-to-enhanced-connectivity-2i27 | azure, azurebastion, azuresecurity, cloudsecurity | Secure remote access to your infrastructure is crucial in the digital world. Microsoft's fully managed service, Azure Bastion, gives you easy and secure remote access to your virtual machines (VMs) without exposing them to the public internet. This blog explores what Azure Bastion is, its benefits, use cases, and why it's ... | dhruvil_joshi14 |

1,902,444 | Lambda extension to cache SSM and Secrets Values for PHP Lambda on CDK | Introduction Managing secrets securely in AWS Lambda functions is crucial for maintaining... | 0 | 2024-07-01T10:50:56 | https://rafael.bernard-araujo.com/lambda-extension-to-cache-ssm-and-secrets-values-for-php-lambda-on-cdk.php | php, aws, awslambda | ---

title: Lambda extension to cache SSM and Secrets Values for PHP Lambda on CDK

published: true

date: 2024-06-27 08:58:29 UTC

tags: PHP,aws,awslambda

canonical_url: https://rafael.bernard-araujo.com/lambda-extension-to-cache-ssm-and-secrets-values-for-php-lambda-on-cdk.php

---

# Introduction

Managing secrets secure... | rafaelbernard |

1,902,284 | Why ESG Services Matter in Corporate Strategy | Introduction In recent years, Environmental, Social, and Governance (ESG) factors have become... | 0 | 2024-06-27T08:58:26 | https://dev.to/linda0609/why-esg-services-matter-in-corporate-strategy-ejb | Introduction

In recent years, Environmental, Social, and Governance (ESG) factors have become integral to corporate strategy. ESG services offer a structured approach to evaluating a company's commitment to sustainable and ethical practices. These services are crucial not only for compliance and risk management but al... | linda0609 | |

1,902,282 | Code Future Software Development Trends | As a software developer, I've observed how these changes compel software development teams to... | 0 | 2024-06-27T08:54:27 | https://dev.to/igor_ag_aaa2341e64b1f4cb4/code-future-software-development-8fp | softwaredevelopment, community, discuss | As a software developer, I've observed how these changes compel [software development teams](https://dev.to/igor_ag_aaa2341e64b1f4cb4/software-development-team-4nol) to continuously adapt and innovate to stay relevant and competitive in this dynamic industry. Keeping abreast of new technologies, methodologies, and mark... | igor_ag_aaa2341e64b1f4cb4 |

1,902,281 | The Last 2 Years' Frontend Frameworks | Frontend frameworks, mainly JavaScript-based libraries, equip developers with a structured toolkit... | 0 | 2024-06-27T08:53:35 | https://dev.to/zoltan_fehervari_52b16d1d/the-last-2-years-frontend-frameworks-31j9 | frontend, frontendframeworks | Frontend frameworks, mainly JavaScript-based libraries, equip developers with a structured toolkit for constructing efficient interfaces for web applications.

These frameworks accelerate the development process by providing reusable code elements and standardized technology, essential for maintaining consistency acros... | zoltan_fehervari_52b16d1d |

1,902,280 | Pulauwin: A Comprehensive Guide to Registration, Alternatives, and Login | Visit the Pulauwin Website: Open your web browser and navigate to the official Pulauwin website. Click... | 0 | 2024-06-27T08:51:54 | https://dev.to/pulauwin44/pulauwin-panduan-komprehensif-untuk-pendaftaran-alternatif-dan-login-3b97 | Visit the Pulauwin Website: Open your web browser and navigate to the official Pulauwin website.

Click the Register Button: Find the register or sign-up button, usually located on the home page.

Enter Your Details: You will be asked to provide personal information such as your name, email address, and password. Make sure ... | pulauwin44 | |

1,902,279 | Reparatur Schweinfurt | Get it repaired For fast and reliable repairs of your mobile phone or smartphone in... | 0 | 2024-06-27T08:51:36 | https://dev.to/smartphonereparaturschweinfurt/reparatur-schweinfurt-263h | [Get it repaired](https://smartphone-reparatur-schweinfurt.de/)

For fast and reliable repairs of your mobile phone or smartphone in Schweinfurt, Smartphone Reparatur Schweinfurt is the ideal choice. Have your device professionally repaired by our experienced technicians and benefit from our ... | smartphonereparaturschweinfurt | |

1,705,073 | OneEntry Headless CMS: How To Use It? | In the CMS context, the term "headless" points to the absence of a front-end or presentation layer.... | 25,810 | 2024-06-27T08:50:26 | https://dev.to/lorenzojkrl/oneentry-headless-cms-how-to-use-it-icd | cms, contentwriting, tutorial |

In the CMS context, the term "headless" points to the absence of a front-end or presentation layer. Instead, a headless CMS works with an API (Application Programming Interface), which empowers users to render content on the front end of their choice.
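The separation between content and presentation can be sketched in TypeScript. The block below is only an illustration under stated assumptions, not OneEntry's actual API: the `ContentItem` shape, the `renderArticle` helper, and the sample payload are all hypothetical stand-ins for whatever JSON a headless CMS returns. What it shows is that the front end, not the CMS, decides how the content is presented.

```typescript
// Hypothetical shape of a content item returned by a headless CMS API.
// This is an assumption for illustration, not OneEntry's real schema.
interface ContentItem {
  title: string;
  body: string;
}

// Escape characters that would otherwise be interpreted as HTML markup.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// The front end owns presentation: turn raw content into an HTML string.
function renderArticle(item: ContentItem): string {
  return `<article><h1>${escapeHtml(item.title)}</h1><p>${escapeHtml(item.body)}</p></article>`;
}

// Sample payload standing in for an API response (made up for this sketch).
const sample: ContentItem = { title: "Hello", body: "Served by a CMS API" };
console.log(renderArticle(sample));
```

The same `ContentItem` could just as well feed a mobile view or a static site generator, which is the practical benefit of leaving the presentation layer out of the CMS.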

For example, let's say you want to use OneEntry headless CMS for y... | lorenzojkrl |

1,902,278 | Anvenssa AI | Anvenssa.AI is a privately held company headquartered in India. It has a sizable partner network... | 0 | 2024-06-27T08:46:57 | https://dev.to/harshit_badodhe_1cfa71db5/anvenssa-ai-m4o | [Anvenssa.AI](https://anvenssa.com/) is a privately held company headquartered in India. It has a sizable partner network that gives it a broad geographic presence.

We’re here to deliver you an amazing experience, fueled by the passion to change the day-in-the-life of your employees and customers. Our goal is to ... | harshit_badodhe_1cfa71db5 | |

1,902,277 | High Availability vs Fault Tolerance vs Disaster Recovery | I. High Availability: Similar to having a spare tire in a car, high availability ensures a... | 0 | 2024-06-27T08:44:50 | https://dev.to/congnguyen/high-availability-vs-fault-tolerance-vs-disaster-recovery-2m | # I. High Availability:

Similar to having a spare tire in a car, high availability ensures a quick recovery from a component failure. The system has a backup ready to replace the failed component, minimizing downtime.

.

Install GHC plugin from Edge store [here](https://microsoftedge.microsoft.com/addons/detail/... | liushuigs | |

1,902,271 | Import A TXT File Where The Separator Is Missing In A Column To Excel | Problem description & analysis: We have a comma-separated txt file that has a total of 10... | 0 | 2024-06-27T08:33:29 | https://dev.to/judith677/import-a-txt-file-where-the-separator-is-missing-in-a-column-to-excel-1ac6 | programming, beginners, tutorial, productivity | **Problem description & analysis**:

We have a comma-separated txt file that has a total of 10 columns. As certain values of the 3rd column do not have separators, that column is missing and the corresponding rows only have 9 columns, as shown in the last rows:

- Spark is currently one of the most popular tools for big data analytics

- Spark is generally faster than Hadoop. This is because Hadoop _writes intermediate results to disk_ whereas Spark tries to _keep interme... | congnguyen | |

1,902,267 | Cheap Medical Alert Systems for Seniors | Medical Care Alert offers top-tier medical alert systems tailored for seniors, providing peace of... | 0 | 2024-06-27T08:27:50 | https://dev.to/medicalcarealert/cheap-medical-alert-systems-for-seniors-40gb | Medical Care Alert offers top-tier **[medical alert systems tailored for seniors](https://www.medicalcarealert.com/)**, providing peace of mind and independence. Our cutting-edge technology ensures swift response in emergencies, connecting users to trained professionals 24/7. With easy-to-use devices and customizable f... | medicalcarealert | |

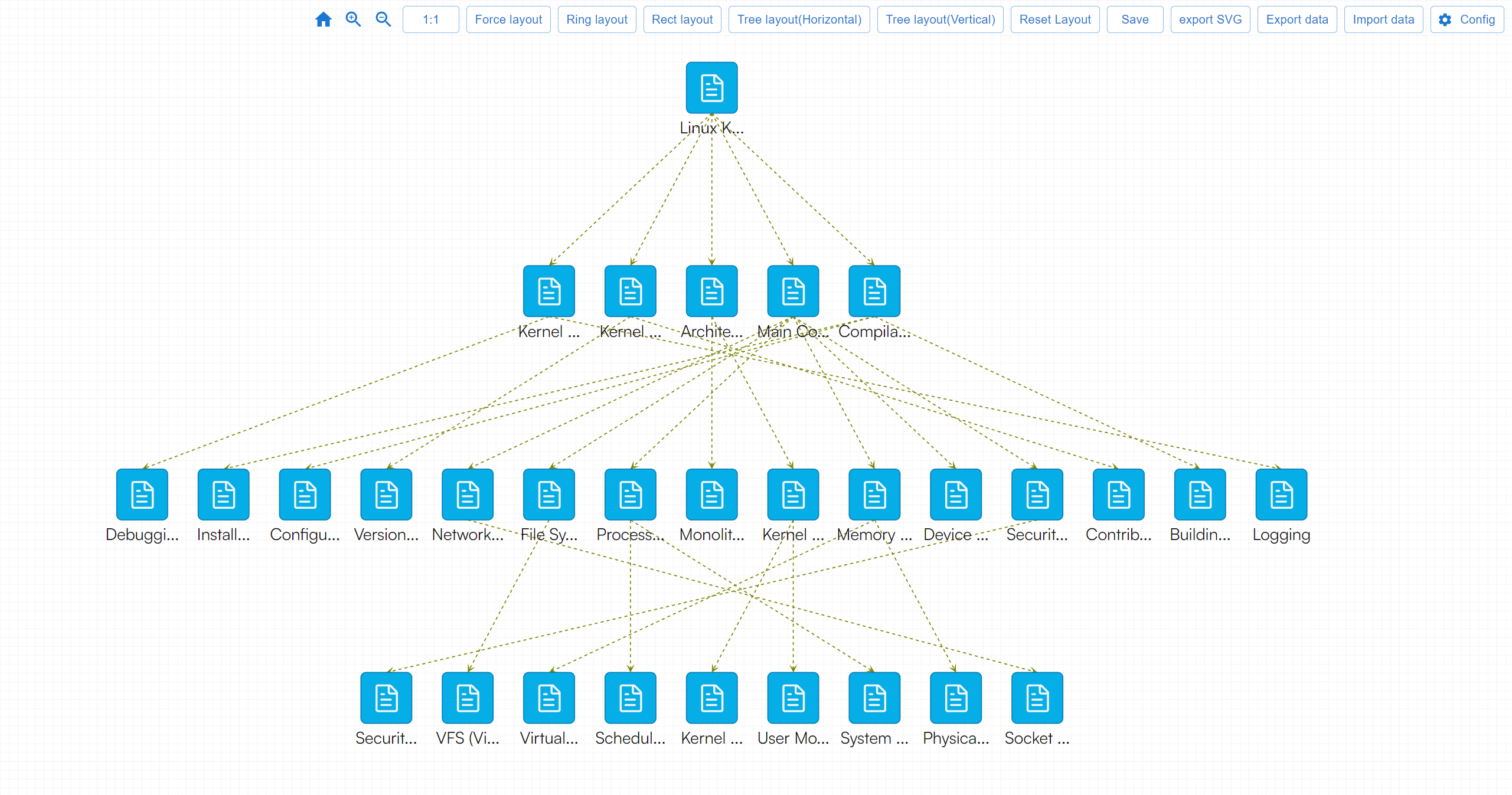

1,902,266 | Linux Kernel Overview | Linux Kernel Overview Detailed Overview of the Linux Kernel Linux Kernel Overview Description:... | 0 | 2024-06-27T08:26:57 | https://dev.to/fridaymeng/linux-kernel-overview-16ho | [Linux Kernel Overview](https://addgraph.com/linuxKernelOverview)

**Detailed Overview of the Linux Kernel**

1. Linux Kernel Overview

Description: Introduction to the Linux kernel, its purpose, and its significanc... | fridaymeng | |

1,902,264 | Understanding the Prototype Pattern | ASSALAMUALAIKUM WARAHMATULLAHI WABARAKATUH, السلام عليكم و رحمة اللّه و بركاته ... | 0 | 2024-06-27T08:25:35 | https://dev.to/bilelsalemdev/understanding-the-prototype-pattern-1g12 | designpatterns, typescript, programming, oop | ASSALAMUALAIKUM WARAHMATULLAHI WABARAKATUH, السلام عليكم و رحمة اللّه و بركاته

## Introduction

Design patterns are essential in software engineering as they provide time-tested solutions to common problems. One such pattern is the Prototype Pattern, which can simplify the process of creating new objects. In this arti... | bilelsalemdev |

1,902,263 | How to build a NFT presale and staking dapp in open network? | I recently developed an NFT presale and staking dapp on The Open Network (TON), and it was a really... | 0 | 2024-06-27T08:23:35 | https://dev.to/crypto_gig_1995/how-to-build-a-nft-presale-and-staking-dapp-in-open-network-16da | ton, nft, presale, staking | I recently developed an NFT presale and staking dapp on The Open Network (TON), and it was a really thrilling challenge for me! I was new to TON and especially to the FunC language. Hardly anyone knows it, and I could not find the documentation I needed or any source code.

I have developed dozens of dapps like that in EVM and Solana ... | crypto_gig_1995 |

1,902,260 | IMCWire's London PR Firm Quest: Uncovering Triumph | Unveiling the Secrets of PR Agencies: A Journey with IMCWire (2500+ Words) The world of public... | 0 | 2024-06-27T08:20:13 | https://dev.to/emily987_493a4d9a6e39386a/imcwires-london-pr-firm-quest-uncovering-triumph-hgh | Unveiling the Secrets of PR Agencies: A Journey with IMCWire (2500+ Words)

The world of public relations (PR) can seem shrouded in mystery for many businesses. PR agencies, the supposed gatekeepers of media coverage and brand reputation, often operate behind a veil of strategy sessions and media pitches. But what truly... | emily987_493a4d9a6e39386a | |

1,902,259 | What are the latest trends in women glasses? | Unique Geometric Glasses Geometric shapes are making a significant comeback. Think hexagons,... | 0 | 2024-06-27T08:20:03 | https://dev.to/zeelool/what-are-the-latest-trends-in-women-glasses-23he | Unique Geometric Glasses

Geometric shapes are making a significant comeback. Think hexagons, pentagons, and even octagons. These frames are not just eyeglasses; they are pieces of art. You’ll find these shapes in various materials, from sleek metals to vibrant acetates. Pair them with a minimalist outfit to make your g... | zeelool | |

1,902,258 | Avalanche Blockchain Development | Built for dApps and DeFi | Blockchain enthusiasts frequently discuss the future: the upcoming wave, emerging trends, and the... | 0 | 2024-06-27T08:15:56 | https://dev.to/donnajohnson88/avalanche-blockchain-development-built-for-dapps-and-defi-d1e | avalanche, blockchain, dapps, defi | Blockchain enthusiasts frequently discuss the future: the upcoming wave, emerging trends, and the myriad possibilities. While these discussions are captivating, the current developments are equally noteworthy. Amidst the attention-grabbing headlines and flashy launches, [Avalanche blockchain development services](https... | donnajohnson88 |

1,902,224 | 3 Software Engineering Templates I Wish I Had Sooner | Want to become a better Software Engineer? Just make sure to follow the templates that I... | 0 | 2024-06-27T08:15:35 | https://dev.to/perisicnikola37/3-software-engineering-templates-i-wish-i-had-sooner-2n4l | webdev, programming, coding, career | ## Want to become a **better Software Engineer**?

Just make sure to follow the templates that I will provide in today's blog ✨

**Templates shared in this post:**

1. Pull request template

2. Issue template

3. Feature request template

Let's explore them 🚀

---

## 1. Pull request (Diff) template 📂

<img src="https:/... | perisicnikola37 |

1,902,257 | Let me give you the FastAPI vs Django short breakdown! | I have compared the legendary FastAPI against Flask which is also legendary...but let's see further... | 0 | 2024-06-27T08:12:48 | https://dev.to/zoltan_fehervari_52b16d1d/fastapi-vs-django-3b9i | fastapi, django, pythonframeworks, python | I have compared the legendary FastAPI against Flask which is also legendary...but let's see further on.

## Introduction to FastAPI and Django

**FastAPI:** A modern, high-performance framework designed for building APIs with Python 3.7+ that features automatic documentation, easy data validation, and modern Python typ... | zoltan_fehervari_52b16d1d |

1,902,256 | Seeking Guidance on Advancing My Career as a Web Developer | Hi everyone, My name is Jayant, and I am a Web Developer/Freelancer with over a year of experience.... | 0 | 2024-06-27T08:11:54 | https://dev.to/jay818/seeking-guidance-on-advancing-my-career-as-a-web-developer-55gj | career, help, webdev, programming | Hi everyone,

My name is Jayant, and I am a **Web Developer/Freelancer with over a year of experience. I have completed one remote job and one remote internship, and I just graduated this year**.

Currently, I am searching for remote jobs but have been facing difficulties. Although I have passed several interviews, I h... | jay818 |

1,902,255 | How to use the tooltip and abscissa in the vchart library? | Question title How to use the tooltip and abscissa in the vchart library? ... | 0 | 2024-06-27T08:08:14 | https://dev.to/da730/how-to-use-the-tooltip-and-abscissa-in-the-vchart-library-5ef5 | ## Question title

How to use the tooltip and abscissa in the vchart library?

## Problem description

I am using the vchart library to create charts, but I am having trouble setting the tooltip and abscissa. I tried to configure the tooltip, but it did not display, even if I set it to visible.

In addition, I also hope t... | da730 | |

1,902,253 | Methods of VS Coding | Methods of VS Coding Code Editing and Navigation Basic Text Editing Use VS... | 0 | 2024-06-27T08:05:00 | https://dev.to/wasifali/methods-of-vs-coding-5712 | webdev, javascript, programming, html | ## **Methods of VS Coding**

## **Code Editing and Navigation**

**Basic Text Editing**

Use VS Code's intuitive interface for writing and editing code. Utilize keyboard shortcuts for efficiency (e.g., Ctrl+S to save, Ctrl+Z to undo).

**Multi-Cursor Editing**

Use Alt+Click (or Option+Click on macOS) to place multiple cur... | wasifali |

1,902,252 | Drawbacks to Using Rack Server Unit as Desktop Computer? | Hello everyone, I'm considering repurposing a rack server unit as a desktop computer and wanted to... | 0 | 2024-06-27T08:03:00 | https://dev.to/yash_sharma_/drawbacks-to-using-rack-server-unit-as-desktop-computer-1732 | rack, server, rackserver | Hello everyone,

I'm considering repurposing a [rack server](https://www.lenovo.com/us/en/c/servers-storage/servers/racks/) unit as a desktop computer and wanted to get some input on the potential drawbacks of doing so. Here are a few concerns I have:

Noise Levels: I've heard that rack servers can be quite noisy due t... | yash_sharma_ |

1,901,351 | Bootstrap Tutorials: Containers | Containers Containers are the most basic layout element in Bootstrap and are required when... | 27,869 | 2024-06-27T08:00:00 | https://dev.to/keepcoding/bootstrap-tutorials-container-9l1 | bootstrap, tutorial, html, ui | ## Containers

Containers are the most basic layout element in Bootstrap and are required when using the default grid system. Containers are used to contain, pad, and (sometimes) center the content within them. Although containers can be nested, most layouts do not require a nested container.

This is what the container lo... | keepcoding |

1,859,038 | Metis Enables your teams to own their databases with ease | Taking care of our databases may be challenging in today’s world. Things can be very complex and... | 0 | 2024-06-27T08:00:00 | https://www.metisdata.io/blog/metis-enables-teams-to-own-their-databases-with-ease | sql, database, monitoring | Taking care of our [databases](https://www.metisdata.io/knowledgebase) may be challenging in today’s world. Things can be very complex and complicated, we may be working with hundreds of clusters and applications, and we may lack clear ownership. Navigating this complexity may be even harder when we lack clear guidance... | adammetis |

1,902,250 | Automotive Shock Absorbers Market: Trends, Growth Forecast 2024-2033 | The global automotive shock absorbers market is poised for significant growth, with projected... | 0 | 2024-06-27T07:56:47 | https://dev.to/swara_353df25d291824ff9ee/automotive-shock-absorbers-market-trends-growth-forecast-2024-2033-2105 |

The global [automotive shock absorbers market](https://www.persistencemarketresearch.com/market-research/shock-absorbers-market.asp) is poised for significant growth, with projected revenues increasing from US$23.6 ... | swara_353df25d291824ff9ee | |

1,902,249 | Understanding Phone Lookup APIs | In today's interconnected world, the ability to quickly access and verify information is crucial. One... | 0 | 2024-06-27T07:56:06 | https://dev.to/sameeranthony/understanding-phone-lookup-apis-4j65 | api | In today's interconnected world, the ability to quickly access and verify information is crucial. One of the tools that has become increasingly important in this context is the **[phone lookup API](https://numverify.com/)**. This technology allows businesses and individuals to retrieve detailed information about phone ... | sameeranthony |

1,902,248 | PowerShell Development in Neovim | Overview I have been developing several PowerShell projects over the last year, solely in... | 0 | 2024-06-27T07:54:51 | https://dev.to/kas_m/powershell-development-in-neovim-4e9 | powershell, neovim, wsl | ## Overview

I have been developing several PowerShell projects over the last year, solely in Neovim. I recently rebuilt my Neovim config and found a reliable way to get it configured.

The configuration uses Lazy package manager, I use several plugins, but for brevity I'll include only the configuration required ... | kas_m |

1,902,247 | RF Filter Market: Future Growth and Key Player Insights for 2024-2033 | The global RF filter market is projected to grow from US$13.6 billion in 2024 to US$59.5 billion by... | 0 | 2024-06-27T07:54:01 | https://dev.to/swara_353df25d291824ff9ee/rf-filter-market-future-growth-and-key-player-insights-for-2024-2033-o26 |

The global [RF filter market](https://www.persistencemarketresearch.com/market-research/rf-filter-market.asp) is projected to grow from US$13.6 billion in 2024 to US$59.5 billion by 2033, with a CAGR of 17.5% during... | swara_353df25d291824ff9ee | |

1,902,246 | Top Mobile App Development Company in Greece | Hire Mobile App developers | Leading the mobile app development Company in Greece, Sapphire Software Solutions is renowned for its... | 0 | 2024-06-27T07:52:47 | https://dev.to/samirpa555/top-mobile-app-development-company-in-greece-hire-mobile-app-developers-52dl | mobileappdevelopment, mobileappdevelopmentcompany, mobileappdevelopmentservices, hiremobileappdevelopers | Leading the **[mobile app development Company in Greece](https://www.sapphiresolutions.net/top-mobile-app-development-company-in-greece)**, Sapphire Software Solutions is renowned for its expertise in creating innovative and user-centric mobile applications. Specializing in Android and iOS app development, We combine c... | samirpa555 |

1,902,245 | Cristiano Ronaldo’s Diet: What Does He Eat? | Portuguese people know how to eat, and Cristiano Ronaldo is no different. He enjoys fruit juices,... | 0 | 2024-06-27T07:52:22 | https://dev.to/gidam/cristiano-ronaldos-diet-what-does-he-eat-3e7d | Portuguese people know how to eat, and [Cristiano Ronaldo](google.com) is no different. He enjoys fruit juices, bread, fish, eggs, steak, and the occasional slice of cake or chocolate bar. His go-to dish is Bacalhau à Brás, which consists of salted cod, onions, thinly sliced fried potatoes, black olives, and parsley o... | gidam | |

1,894,029 | 7 Open Source Projects You Should Know - C# Edition ✔️ | Overview Hi everyone 👋🏼 In this article, I'm going to look at seven OSS repository that... | 27,756 | 2024-06-27T07:51:55 | https://domenicotenace.dev/blog/seven-oss-projects-csharp-edition/ | opensource, github, csharp, dotnet | ## Overview

Hi everyone 👋🏼

In this article, I'm going to look at seven OSS repositories written in C# that you should know: interesting projects that caught my attention and that I want to share.

Let's start 🤙🏼

---

## 1. [QuestPDF](https://www.questpdf.com/)

QuestPDF is an open source .NET library for PDF document g... | dvalin99 |

1,902,244 | Empowering Data Consumers: How Amazon's Q Business Drives Innovation | Is your data a hidden treasure waiting to be discovered, or just a confusing mess stored away and... | 0 | 2024-06-27T07:51:51 | https://dev.to/krunalbhimani/empowering-data-consumers-how-amazons-q-business-drives-innovation-1f0f | Is your data a hidden treasure waiting to be discovered, or just a confusing mess stored away and forgotten? Many businesses struggle to make the most of their data. Hidden within countless spreadsheets and reports are valuable insights that can drive your business forward, but they often go unnoticed.

Imagine if ther... | krunalbhimani | |

1,902,238 | Apparel Boxes: Beyond Packaging, a Canvas for Branding | Picture this: you order a new outfit online, eagerly anticipating its arrival. The package... | 0 | 2024-06-27T07:42:20 | https://dev.to/saqib_minhas_c23958067cf2/apparel-boxes-beyond-packaging-a-canvas-for-branding-21f3 | boxes, apparel, packaging | Picture this: you order a new outfit online, eagerly anticipating its arrival. The package arrives, and as you unwrap it, you're greeted not just by the clothes themselves, but by a beautifully designed box that reflects the brand's identity. This is the power of the [apparel box](https://packlim.com/custom-a... | saqib_minhas_c23958067cf2 |

1,902,243 | Modern wall decor trends for 2024 | Step into the future of interior design with our exclusive preview of 2024's modern wall decor... | 0 | 2024-06-27T07:51:43 | https://dev.to/phooldaan_1643526a2c37c37/budget-friendly-home-decor-items-to-refresh-your-living-room-44p4 | walldecor, homedecor | Step into the future of interior design with our exclusive preview of 2024's [modern wall decor](https://phooldaan.com/wall-decor/) trends. Embrace sleek lines, vibrant hues, and innovative materials as you transform your space into a contemporary masterpiece. From minimalist elegance to avant-garde expressions, our cu... | phooldaan_1643526a2c37c37 |

1,902,242 | How to set the font size of title to semi in VChart for heading4? | Question title How to set the font size of title to semi in vchart for heading4? Problem... | 0 | 2024-06-27T07:51:11 | https://dev.to/da730/how-to-set-the-font-size-of-title-to-semi-in-vchart-for-heading4-jnf | ## Question title

How to set the font size of title to semi in vchart for heading4?

## Problem description

I am using the @visactor/vchart library for data lake visualization. However, I encountered a problem where I want to set the font size of the title in vchart to semi's heading-4 font size, but I don't know how to pa... | da730 | |

1,902,241 | Top Web Development Company in Greece | Hire Web Developers | Renowned for its expertise and innovation, Sapphire Software Solutions is a top web development... | 0 | 2024-06-27T07:47:01 | https://dev.to/samirpa555/top-web-development-company-in-greece-hire-web-developers-5293 | webdev, webdevelopmentservices, webdevelopmentcompany, hirewebdevelopers | Renowned for its expertise and innovation, Sapphire Software Solutions is a **[top web development company in Greece](https://www.sapphiresolutions.net/top-web-development-company-in-greece)**. Specializing in crafting bespoke websites and web applications, we combine stunning design with cutting-edge technology to del... | samirpa555 |

1,902,240 | Mysql Database Index Explained for Beginners | Core Concepts Primary Key Index / Secondary Index Clustered Index / Non-Clustered... | 0 | 2024-06-27T07:46:12 | https://dev.to/coder_world/mysql-database-index-explained-for-beginners-3heg | mysql, database, index, beginners | ## Core Concepts

- **Primary Key Index / Secondary Index**

- **Clustered Index / Non-Clustered Index**

- **Table Lookup / Index Covering**

- **Index Pushdown**

- **Composite Index / Leftmost Prefix Matching**

- **Prefix Index**

- **Explain**

## 1. [Index Definition]

> **1. Index Definition**

Besides the data itself... | coder_world |

1,902,239 | How to add custom content at the bottom of the tooltip card in VChart? | Problem description I am using VChart for programming data lake visualization and have... | 0 | 2024-06-27T07:43:17 | https://dev.to/da730/how-to-add-custom-content-at-the-bottom-of-the-tooltip-card-in-vchart-16he | ## Problem description

I am using VChart for programming data lake visualization and have encountered some issues. I want to add some custom content at the bottom of the tooltip card, especially a button.

However, I found that when using vChart.renderAsync ().then, it seems that we cannot obtain the tooltip of the clas... | da730 |
