id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,902,236 | Handling File Uploads in Next.js Using UploadThing | Handling File Uploads in Next.js Using UploadThing File uploads are a common feature in... | 0 | 2024-06-27T07:40:43 | https://dev.to/sh20raj/handling-file-uploads-in-nextjs-using-uploadthing-47bi | nextjs | ### Handling File Uploads in Next.js Using UploadThing

File uploads are a common feature in many web applications, and handling them efficiently and securely is crucial. In this article, we'll explore how to handle file uploads in a Next.js application using UploadThing, a powerful and easy-to-use library for managing... | sh20raj |

1,902,235 | Top Software Development Company in Greece | Software Development Services | Sapphire Software Solutions is a leading software development company in Greece dynamic tech... | 0 | 2024-06-27T07:39:09 | https://dev.to/samirpa555/top-software-development-company-in-greece-software-development-services-dhn | softwaredevelopment, softwaredevelopmentservices, softwaredevelopmentcompany, hiresoftwaredevelopers | Sapphire Software Solutions is a leading **[software development company in Greece](https://www.sapphiresolutions.net/top-software-development-company-in-greece)** dynamic tech industry. They are known for delivering innovative and robust solutions, with expertise spanning custom software development, cloud services, a... | samirpa555 |

1,902,152 | Introduction to Sequelize: Simplifying Database Operations in Node.js | What is an ORM? ORM (Object-Relational Mapping) bridges databases and object-oriented... | 0 | 2024-06-27T07:38:36 | https://dev.to/vaishnavi_rawool/introduction-to-sequelize-simplifying-database-operations-in-nodejs-322a | node, database, sql, javascript | ## What is an ORM?

ORM (Object-Relational Mapping) bridges databases and object-oriented programming by mapping database tables to objects in code. It allows developers to interact with databases using familiar programming constructs, abstracting away SQL queries for easier development and maintenance of applications.... | vaishnavi_rawool |

1,902,234 | Unveiling Goa Game's Engaging Narrative | Welcome to the world of Goa Game, an immersive virtual adventure set in the captivating landscapes of... | 0 | 2024-06-27T07:37:25 | https://dev.to/dgfjhjg/unveiling-goa-games-engaging-narrative-265b | Welcome to the world of Goa Game, an immersive virtual adventure set in the captivating landscapes of Goa. This gaming experience transports players into a digital realm inspired by Goa’s cultural richness and natural beauty. Whether you’re exploring sandy beaches, bustling markets, or serene countryside, Goa Game offe... | dgfjhjg | |

1,902,233 | Privacy-Enhancing Technologies in Blockchain | Privacy-enhancing technologies (PETs) play a crucial role in ensuring the confidentiality and... | 0 | 2024-06-27T07:36:49 | https://dev.to/thillainathan/privacy-enhancing-technologies-in-blockchain-4f48 |

Privacy-enhancing technologies (PETs) play a crucial role in ensuring the confidentiality and security of data in blockchain networks. With the increasing adoption of blockchain technology in various industries, the need for robust privacy solutions has become more apparent. explore the importance of PETs in blockchai... | thillainathan | |

1,902,232 | Neurosurgery at Fakeeh University Hospital | At Fakeeh University Hospital, the neurosurgery department boasts state-of-the-art facilities and a... | 0 | 2024-06-27T07:33:34 | https://dev.to/fuhcare/neurosurgery-at-fakeeh-university-hospital-3m96 | neurosurgery, spinalcord, fakeehuniversityhospital, brainsurgery | At Fakeeh University Hospital, the neurosurgery department boasts state-of-the-art facilities and a team of highly skilled neurosurgeons. Patients benefit from a multidisciplinary approach that integrates cutting-edge technology with compassionate care.

**Specializations**

Brain Surgery: Expertise in treating brain tu... | fuhcare |

1,902,231 | Why React Needs a Key Prop? | In the React ecosystem, the key prop is one of the most crucial aspects of managing and rendering... | 0 | 2024-06-27T07:32:31 | https://dev.to/alisamirali/why-react-needs-a-key-prop-27hd | react, javascript, webdev, frontend | In the React ecosystem, the `key` prop is one of the most crucial aspects of managing and rendering dynamic lists.

Understanding why React needs a `key` prop is essential for any React developer.

This article delves into the importance of the key prop, explaining its role and providing `TypeScript` examples to illu... | alisamirali |

1,902,229 | What is a Decentralized Wallet and How Does It Work? | In the world of cryptocurrencies, the way you store your digital assets is crucial. One popular... | 0 | 2024-06-27T07:31:47 | https://dev.to/kiararobbinson/what-is-a-decentralized-wallet-and-how-does-it-work-39oe | decentralizedwallet, decentralizedcryptowallet, decentralizedwallets, blockchaintechnology |

---

title: What is a Decentralized Wallet and How Does It Work?

published: true

description:

tags: #decentralizedwallet #decentralizedcryptowallet #decentralizedwallets #blockchaintechnology

# cover_image:

# Us... | kiararobbinson |

1,902,228 | gRPC vs REST: A Comprehensive Comparison | In the world of web services and APIs, two major paradigms stand out: REST (Representational State... | 0 | 2024-06-27T07:30:49 | https://dev.to/keploy/grpc-vs-rest-a-comprehensive-comparison-3gbo | grpc, ai, javascript, beginners |

In the world of web services and APIs, two major paradigms stand out: REST (Representational State Transfer) and gRPC (gRPC Remote Procedure Calls). [gRPC vs rest](https://keploy.io/blog/community/grpc-vs-rest-a-co... | keploy |

1,902,227 | How to achieve the linkage effect in the vchart library? | Question title How to achieve the linkage effect of displaying the position of other... | 0 | 2024-06-27T07:29:03 | https://dev.to/da730/how-to-achieve-the-linkage-effect-in-the-vchart-library-148l | ## Question title

How to achieve the linkage effect of displaying the position of other charts when the mouse is moved in the vchart library?

## Problem description

I encountered a problem when using the vchart library. I hope that when I move the mouse, other charts can also display their corresponding positions at t... | da730 | |

1,902,158 | aa | aa | 0 | 2024-06-27T06:27:35 | https://dev.to/ducanhmoi/a-95n | webdev, beginners | aa | ducanhmoi |

1,902,226 | How to Set Up a Headless WordPress Site with Astro | Here, I'm diving into the exciting world of headless WordPress and Astro. If you're looking to... | 0 | 2024-06-27T07:26:14 | https://dev.to/mathiasahlgren/how-to-set-up-a-headless-wordpress-site-with-astro-3a2h | headless, wordpress, astro, cms | Here, I'm diving into the exciting world of headless WordPress and Astro. If you're looking to combine the content management power of WordPress with the blazing-fast performance of a static site generator, you're in for a treat. Let's get started!

## Introduction

So, what's all this fuss about headless WordPress and... | mathiasahlgren |

1,902,225 | Text Editor | Text Editor: vi editor, nano, gedit Commands to read file: more, less Filters Horizontal Filters :... | 0 | 2024-06-27T07:26:14 | https://dev.to/mahir_dasare_333/text-editor-3mbm | rhel, admin, linux | Text Editor: vi editor, nano, gedit

Commands to read file: more, less

Filters

Horizontal Filters: head, tail, grep

Vertical Filters: cut

Tools for string manipulation: wc, sort, awk, sed
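
A minimal sketch of the filters and tools listed above, run against a small throwaway file. The file contents, field layout, and variable names are assumptions made up for illustration; only the commands themselves come from the notes.

```shell
#!/bin/sh
# Hypothetical sample data: name:age:role, one record per line.
sample=$(mktemp)
printf '%s\n' 'carol:30:admin' 'alice:25:dev' 'bob:35:dev' > "$sample"

# Horizontal filters work on whole lines.
first_line=$(head -n 1 "$sample")      # first line of the file
last_line=$(tail -n 1 "$sample")       # last line of the file
dev_lines=$(grep -c 'dev' "$sample")   # number of lines containing "dev"

# Vertical filter works on columns (fields) of every line.
names=$(cut -d: -f1 "$sample")         # first colon-separated field

# Tools for string manipulation.
line_count=$(wc -l < "$sample" | tr -d ' ')                  # number of lines
sorted_names=$(cut -d: -f1 "$sample" | sort)                 # names, sorted
ages_sum=$(awk -F: '{ s += $2 } END { print s }' "$sample")  # sum of field 2
upper_role=$(sed -n '1s/admin/ADMIN/p' "$sample")            # edit line 1 only

echo "first: $first_line"
echo "last: $last_line"
echo "lines with dev: $dev_lines"
echo "names: $names"
echo "line count: $line_count"
echo "sorted names: $sorted_names"
echo "sum of ages: $ages_sum"
echo "after sed: $upper_role"

rm -f "$sample"
```

Piping `cut` into `sort` shows how a vertical filter and a string tool can be combined; the same pattern should work with any of the commands above.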

Text Editor : vi/vim Editor

:w = write changes / save

:q = quit

:wq! = write/save and quit (forcefully)

:q! = quit ... | mahir_dasare_333 |

1,899,688 | How SQL Query works? SQL Query Execution Order for Tech Interview | How SQL Query works? Understanding SQL Query Execution Order for Tech Interview | 0 | 2024-06-27T07:25:09 | https://dev.to/somadevtoo/how-sql-query-works-sql-query-execution-order-for-tech-interview-15kb | sql, database, programming, development | ---

title: How SQL Query works? SQL Query Execution Order for Tech Interview

published: true

description: How SQL Query works? Understanding SQL Query Execution Order for Tech Interview

tags: sql,database, programming, development

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

... | somadevtoo |

1,902,222 | What is IAM Security? What are Its Key Components | In today's digitally driven world, protecting sensitive information and ensuring secure access to... | 0 | 2024-06-27T07:24:10 | https://dev.to/blogginger/what-is-iam-security-what-are-its-key-components-88n | In today's digitally driven world, protecting sensitive information and ensuring secure access to resources is paramount. One of the key pillars of achieving this security is Identity and Access Management (IAM). IAM is a crucial framework that helps organizations manage digital identities and control access to their r... | blogginger | |

1,902,221 | How is CAPTCHA bypassed using AI? | CAPTCHAs (Completely Automated Public Turing tests to tell Computers and Humans Apart) are widely... | 0 | 2024-06-27T07:23:52 | https://dev.to/media_tech/how-is-captcha-bypassed-using-ai-581g | CAPTCHAs (Completely Automated Public Turing tests to tell Computers and Humans Apart) are widely used to protect websites from automated bots. However, with the advancement of artificial intelligence, bypassing CAPTCHAs has become feasible. This article explores how AI, particularly CaptchaAI solver, is used to overco... | media_tech | |

1,902,211 | Scraping Users Social Behavior to Personalize Retail Stores Using Data Scraping | In today's digital age, retail stores are constantly seeking innovative ways to enhance the shopping... | 0 | 2024-06-27T07:22:38 | https://dev.to/jhonharry65/scraping-users-social-behavior-to-personalize-retail-stores-using-data-scraping-153d | devops, webdev, programming, ai | In today's digital age, retail stores are constantly seeking innovative ways to enhance the shopping experience and increase customer satisfaction. One promising approach is the personalization of retail experiences based on users' social behavior. By leveraging data scraping techniques, retailers can gather valuable i... | jhonharry65 |

1,902,205 | How to Skyrocket Your Earnings with White Label Taxi App Development? | In the fast-paced world of transportation, the demand for efficient and reliable taxi services has... | 0 | 2024-06-27T07:21:15 | https://dev.to/martin_doug/how-to-skyrocket-your-earnings-with-white-label-taxi-app-development-44j | In the fast-paced world of transportation, the demand for efficient and reliable taxi services has never been higher. To meet this demand and maximize profits, many taxi companies are turning to white-label taxi app development. This approach not only offers a customizable and scalable solution but also ensures a quick... | martin_doug | |

1,902,182 | Reshaping Blockchain Technology: How Link Network Enhances Security and Scalability through PoSA and Plasma | In the rapid development of blockchain technology, scalability and security have always been two... | 0 | 2024-06-27T07:18:21 | https://dev.to/linknetwork/reshaping-blockchain-technology-how-link-network-enhances-security-and-scalability-through-posa-and-plasma-bcj |

In the rapid development of blockchain technology, scalability and security have always been two major challenges limiting its widespread adoption. Existing blockchain networks, such as Bitcoin and Ethereum, have m... | linknetwork | |

1,902,181 | The AI Chatbot that Does your Laundry | I've seen this meme on Facebook several times lately, where people complain about having the AI doing... | 0 | 2024-06-27T07:18:18 | https://ainiro.io/blog/the-ai-chatbot-that-does-your-laundry | ai, productivity, openai, chatgpt | I've seen this meme on Facebook several times lately, where people complain about having the AI doing arts and music, leaving the laundry and the dishes to its owner.

Basically it goes as follows ...

> I don't want AI to do my art and music such that I can do my laundry. I want the AI to do my laundry such that I can... | polterguy |

1,902,180 | How to Search Like a Pro in Answer | Discussions are always going in Apache Answer. How can you find the information you need in all these... | 0 | 2024-06-27T07:15:47 | https://dev.to/apacheanswer/how-to-search-like-a-pro-in-answer-1b2d | opensource, productivity | Discussions are always going in Apache Answer. How can you find the information you need in all these conversations? Try to search! Answer provides ways for you to search the information you need, and let’s equip you with all the search tips.

The search bar is located at the top of every page. Whether you’re browsing on ... | apacheanswer |

1,902,179 | What's New in API7 Enterprise 3.2.13: Flexible Service Publishing | API7 Enterprise offers features to segregate gateway environments based on gateway groups and manage... | 0 | 2024-06-27T07:13:10 | https://api7.ai/blog/api7-3.2.13-flexible-service-publishing | [API7 Enterprise](https://api7.ai/enterprise) offers features to segregate gateway environments based on gateway groups and manage versions between different gateway groups using service templates. With increasing diversity in API management requirements, we have recognized that complex version management is unnecessar... | yilialinn | |

1,901,173 | (Part 10)Golang Framework Hands-on - Prometheus Metrics Statistics | Github: https://github.com/aceld/kis-flow Document:... | 0 | 2024-06-27T07:11:34 | https://dev.to/aceld/part-10golang-framework-hands-on-prometheus-metrics-statistics-22f0 | go | <img width="150px" src="https://github.com/aceld/kis-flow/assets/7778936/8729d750-897c-4ba3-98b4-c346188d034e" />

Github: https://github.com/aceld/kis-flow

Document: https://github.com/aceld/kis-flow/wiki

---

[Part1-OverView](https://dev.to/aceld/part-1-golang-framework-hands-on-kisflow-streaming-computing-framework... | aceld |

1,902,178 | Fitnessproject | I'm working on a new fitness app that combines gaming elements with personalized workout plans and... | 0 | 2024-06-27T07:07:42 | https://dev.to/komlad/fitnessproject-24a | I'm working on a new fitness app that combines gaming elements with personalized workout plans and social features.

The goal is to make getting fit feel like an exciting adventure: think virtual quests, rewards, and friendly competition with friends. But it's not just about earning points; the app will also... | komlad | |

1,902,175 | How to Handle BufferExhaustedException in Kafka | Introduction In distributed systems, message queues like Apache Kafka are essential for decoupling... | 0 | 2024-06-27T07:07:22 | https://dev.to/shweta_kawale/how-to-handle-bufferexhaustedexception-in-kafka-177p | opensource, kafka |

**Introduction**

In distributed systems, message queues like Apache Kafka are essential for decoupling services and handling large streams of data. However, when dealing with high-volume data streams, you might encounter the dreaded BufferExhaustedException. This exception signifies that the internal buffers used by ... | shweta_kawale |

1,900,825 | Node.js vs. Browser: Understanding the Global Scope Battle | When coding with JavaScript, understanding the differences between Node.js and browser environments... | 0 | 2024-06-27T07:06:43 | https://dev.to/rahulvijayvergiya/nodejs-vs-browser-understanding-the-global-scope-battle-39al | javascript, node, webdev, react | When coding with JavaScript, understanding the differences between Node.js and browser environments is crucial, particularly when it comes to the concept of **global scope**. While both Node.js and the browser use JavaScript, the environments in which they operate differ significantly, impacting how global variables an... | rahulvijayvergiya |

1,902,177 | "Setting up your Codespace" Error | Whenever I try to open my codespace in github, it shows "Setting Up Your Codespace" but in truth it... | 0 | 2024-06-27T07:05:39 | https://dev.to/ish4an/setting-up-your-codespace-error-3blm | html, webdev, github, git | Whenever I try to open my codespace in github, it shows "Setting Up Your Codespace" but in truth it never gets set up.

How do I fix this?

EDIT: **Problem Fixed**

All you need to do is to change your internet ... | ish4an |

1,902,176 | Cloud Migration Made Easy with Azure Migration Assessment Tools | In today’s digital landscape, organizations are betting heavily on the cloud to transform their... | 0 | 2024-06-27T07:04:59 | https://dev.to/harman_diaz_afcacf62ea94f/cloud-migration-made-easy-with-azure-migration-assessment-tools-4b37 | cloud, azure, cloudmigration, azuremigration | In today’s digital landscape, organizations are betting heavily on the cloud to transform their businesses, optimize costs, and enhance security. Migrating to the cloud can significantly modernize and innovate business processes by reducing costs and simplifying IT management. However, jumping headfirst into the Cloud ... | harman_diaz_afcacf62ea94f |

1,902,174 | Essential Methods for Backing Up Your Hyper-V VM | Introduction In the realm of IT management, safeguarding data and ensuring system... | 0 | 2024-06-27T07:01:49 | https://dev.to/jeffreyboyle0033/essential-methods-for-backing-up-your-hyper-v-vm-4361 | disasterrecovery, backupstrategies, hyperv | ## Introduction

In the realm of IT management, safeguarding data and ensuring system continuity are paramount. For businesses utilizing Hyper-V for virtualization, understanding how to effectively [back up Virtual Machines](https://appleworld.today/how-to-back-up-a-hyper-v-virtual-machine-methods-and-best-practices/) (... | jeffreyboyle0033 |

1,902,447 | How to Optimize and Free Up Disk Space on Debian/Ubuntu Servers with Docker Containers | TLDR Manage disk space on Debian/Ubuntu servers and Docker containers by removing... | 0 | 2024-06-27T22:36:19 | https://diegocarrasco.com/optimize-free-up-disk-space-debian-ubuntu-docker/ | debian, diskusage, docker, servermaintenance | ---

title: How to Optimize and Free Up Disk Space on Debian/Ubuntu Servers with Docker Containers

published: true

date: 2024-06-27 07:00:00 UTC

tags: debian,diskusage,docker,servermaintenance

canonical_url: https://diegocarrasco.com/optimize-free-up-disk-space-debian-ubuntu-docker/

---

**In today’s digital age, having a strong online presence is crucial for businesses of all sizes. A well-designed website serves as the cornerstone of this presence, acting as the virtual storefront where customers... | itwebvoom |

1,902,169 | Stable, Secure, and Efficient: The Core Role of USDB on the Broken Bound Platform | Cross-chain transactions have become a key demand in the blockchain ecosystem. However,... | 0 | 2024-06-27T06:51:40 | https://dev.to/brokenbound/stable-secure-and-efficient-the-core-role-of-usdb-on-the-broken-bound-platform-49e4 |

Cross-chain transactions have become a key demand in the blockchain ecosystem. However, interoperability and value transfer between different blockchains have long troubled the industry’s development. To address thi... | brokenbound | |

1,900,824 | Scope vs. Context in JavaScript: Clearing Up the Confusion | In this article, we'll break down scope and context in javascript, highlighting their differences and... | 0 | 2024-06-27T06:48:24 | https://dev.to/rahulvijayvergiya/scope-vs-context-in-javascript-clearing-up-the-confusion-1d9m | javascript, reactjsdevelopment, webdev, node | In this article, we'll break down scope and context in javascript, highlighting their differences and how they impact your code. Understanding these differences helps in debugging issues related to variable access and this binding, leading to cleaner and more maintainable code.

## Understanding Scope in JavaScript

Sco... | rahulvijayvergiya |

1,902,168 | How to easily create folder structure in readme markdown with two simple steps | Learn how you can create a folder structure of your project in your readme file. creating folder... | 0 | 2024-06-27T06:47:08 | https://your-codes.vercel.app/how-to-easily-create-folder-structure-in-readme-with-two-simple-steps | terminal, markdown, chatgpt | Learn how you can create a folder structure of your project in your readme file.

Creating a folder structure for your project in the readme file can be a time-consuming and painful task for many developers.

Converting your project's structure into a readme file or a flow chart can be very difficult. If you are ... | your-ehsan |

1,902,167 | Ultimate Jaisalmer Tour Package for 3 Days Discover the Golden City | Dive into the heart of Jaisalmer's charm with our exclusive 3-day tour package. From ancient forts to... | 0 | 2024-06-27T06:44:42 | https://dev.to/garhrajputanacamps/ultimate-jaisalmer-tour-package-for-3-days-discover-the-golden-city-15o7 | Dive into the heart of Jaisalmer's charm with our exclusive 3-day tour package. From ancient forts to desert safaris, immerse yourself in the beauty of this historic city. Garh Rajputana Camps awaits your discovery. Rajasthan.

**_[Jaisalmer Tour Package for 3 Days](https://garhrajputanacamps.com/package/Complete-jaisa... | garhrajputanacamps | |

1,902,166 | Neobanking Solutions Embracing Crypto: Revolutionizing the Modern Financial Experience | Introduction In 2024, the Middle East’s tech sector is witnessing a revolution never... | 0 | 2024-06-27T06:43:28 | https://dev.to/wdcs/neobanking-solutions-embracing-crypto-revolutionizing-the-modern-financial-experience-4e88 | cryptocurrency, financial, ai, development |

## **Introduction**

In 2024, the Middle East’s tech sector is witnessing a revolution never seen before as it merges tradition with innovation within its busy backdrop. Over several industries’ growth and resilien... | wdcs |

1,902,163 | Fetching models using the new Model::Find() macro | Trailblazer comes with predefined steps we call "macros" that help you with common tasks such as... | 0 | 2024-06-27T06:43:20 | https://dev.to/trailblazer/fetching-models-using-the-new-modelfind-macro-jf3 | Trailblazer comes with predefined steps we call ["macros"](https://trailblazer.to/2.1/docs/operation/#operation-macro-api) that help you with common tasks such as validating a form object or finding an existing model using ActiveRecord (actually, any other ORM you approve of).

The [newly released `Model::Find()` macro... | apotonick | |

1,902,165 | Highly Recommended: React Course | Hey everyone, If you're looking to learn React, I highly recommend checking out this video by... | 0 | 2024-06-27T06:42:51 | https://dev.to/yamancpu/wanna-learn-react-1mgf | javascript, react, webdev, beginners | Hey everyone,

If you're looking to learn React, I highly recommend checking out this video by BroCode. It offers excellent information for beginners, and his explanations are clear and easy to follow.

https://youtu.be/CgkZ7MvWUAA | yamancpu |

1,902,164 | Ensuring Data Integrity: Crucial Tests to Maintain Trustworthy Data | Maintaining the integrity of your data is paramount for making informed decisions and driving... | 0 | 2024-06-27T06:41:53 | https://dev.to/lohith0512/ensuring-data-integrity-crucial-tests-to-maintain-trustworthy-data-3361 | data, integrity, sales, analysis |

Maintaining the integrity of your data is paramount for making informed decisions and driving successful business outcomes. In this article, we'll explore three critical tests you can perform to ensure the reliability and trustworthiness of your data, using a real-world example to illustrate their importance.

Let's c... | lohith0512 |

1,902,162 | Dental Implants in Lahore: Are They Right for You? | Dental health is a crucial aspect of overall well-being, significantly affecting one's quality of life.... | 0 | 2024-06-27T06:34:03 | https://dev.to/dentistinlahore/dental-implants-in-lahore-are-they-right-for-you-1o08 | Dental health is a crucial aspect of overall well-being, significantly affecting one's quality of life. For many people, missing teeth can lead to both physical and psychological discomfort. Fortunately, modern dentistry offers a dependable solution: dental implants. In Lahore, the demand for dental im... | dentistinlahore | |

1,902,161 | Intensiv-Filter Himenviro: Your Partner in Cutting-Edge Air Filtration Technology | For over a century, Intensiv-Filter Himenviro has been a leader in clean air technology, providing... | 0 | 2024-06-27T06:33:31 | https://dev.to/marketing_intensivfilterh/intensiv-filter-himenviro-your-partner-in-cutting-edge-air-filtration-technology-3n72 | webdev, javascript, beginners, programming | For over a century, [Intensiv-Filter Himenviro](https://www.intensiv-filter-himenviro.com/) has been a leader in clean air technology, providing innovative solutions for industrial applications. Our expertise lies in developing and manufacturing advanced air filtration systems, including state-of-the-art [electrostatic... | marketing_intensivfilterh |

1,902,160 | How to develop comprehensive food delivery React Native mobile app? | Developing a full-featured React Native food delivery app involves several key sections and... | 0 | 2024-06-27T06:32:41 | https://dev.to/nadim_ch0wdhury/how-to-develop-comprehensive-food-delivery-react-native-mobile-app-2lap | Developing a full-featured React Native food delivery app involves several key sections and functionalities. Here's a breakdown of the main sections and their corresponding functionalities:

### Main Sections

1. **User Authentication and Profile Management**

- Sign Up / Sign In (email, phone, social media)

- Pro... | nadim_ch0wdhury | |

1,897,144 | Connect a VM with VS Code for Remote Development | Have you ever thought about using VS Code while accessing your virtual machine? This blog covers how... | 0 | 2024-06-27T06:31:55 | https://dev.to/arabian619/connect-a-vm-with-vs-code-for-remote-development-50n7 | linux, softwaredevelopment, vscode, beginners | Have you ever thought about using VS Code while accessing your virtual machine? This blog covers how to do that. By connecting your virtual machine with VS Code, you can leverage the features of both.

**Prerequisites:**

You should have some knowledge of VS Code and virtual machines. The following are needed:

- [VS Cod... | arabian619 |

1,902,159 | How Hiring a ReactJS Developer Can Transform Your Web Development? | How Hiring a ReactJS Developer Can Transform Your Web Development ReactJS has emerged as a... | 0 | 2024-06-27T06:29:50 | https://dev.to/maulik_shah/how-hiring-a-reactjs-developer-can-transform-your-web-development-10g9 | react, reactjsdevelopment, webdev | How Hiring a ReactJS Developer Can Transform Your Web Development

ReactJS has emerged as a game-changer in the ever-evolving web development landscape, revolutionizing how websites and web applications are built and maintained. Hiring a skilled ReactJS developer can significantly impact your web development efforts, of... | maulik_shah |

1,902,156 | GBase 8c SQL Performance Optimization with Sharding Keys | This example demonstrates how to reduce resource consumption and improve SQL performance by modifying... | 0 | 2024-06-27T06:24:46 | https://dev.to/congcong/gbase-8c-sql-performance-optimization-with-sharding-keys-32f5 | This example demonstrates how to reduce resource consumption and improve SQL performance by modifying the sharding keys.

## Example SQL

```sql

create table test(col int, id int, name text)

distribute by hash (col);

create table test_1(col int, id int, name varchar(64))

distribute by hash(name);

insert into test sel... | congcong | |

1,902,155 | GBase 8c SQL Performance Optimization with Sharding Keys | This example demonstrates how to reduce resource consumption and improve SQL performance by modifying... | 0 | 2024-06-27T06:24:46 | https://dev.to/congcong/gbase-8c-sql-performance-optimization-with-sharding-keys-2844 | This example demonstrates how to reduce resource consumption and improve SQL performance by modifying the sharding keys.

## Example SQL

```sql

create table test(col int, id int, name text)

distribute by hash (col);

create table test_1(col int, id int, name varchar(64))

distribute by hash(name);

insert into test sel... | congcong | |

1,902,153 | Nerdonice Reviews | At the heart of a nerdonic student’s success is an unquenchable thirst for knowledge. This passion... | 0 | 2024-06-27T06:24:08 | https://dev.to/shirley_serna_e6980cb7ab3/nerdonice-reviews-4onl | At the heart of a nerdonic student’s success is an unquenchable thirst for knowledge. This passion drives them to delve deeper into their subjects, often going beyond the prescribed curriculum to explore new concepts and ideas. Whether it’s staying up late to understand a complex theorem or spending weekends working on... | shirley_serna_e6980cb7ab3 | |

1,902,151 | TraceHawk: The Ultimate Block Explorer Every zkSync Hyperchain User Needs | TraceHawk is excited to offer a dedicated block explorer for ZkSync Hyperchains. From the... | 0 | 2024-06-27T06:23:08 | https://dev.to/tracehawk/tracehawk-the-ultimate-block-explorer-every-zksync-hyperchain-user-needs-3hpn |

<p>TraceHawk is excited to offer a dedicated block explorer for ZkSync Hyperchains. From the beginning, TraceHawk understands the significant need for a personalized block explorer, especially for application-spec... | tracehawk | |

1,902,150 | Explore a world beyond the traditional Application Delivery | A simple UI-driven approach for your complex CI/CD workflows. Buildpiper CI/CD goes further than... | 0 | 2024-06-27T06:22:42 | https://dev.to/anshul_kichara/explore-a-world-beyond-the-traditional-application-delivery-27p | devops, software, technology, trending | A simple UI-driven approach for your complex CI/CD workflows. Buildpiper CI/CD goes further than Jenkins in that it provides automated security scanning using reusable templates and blueprints for the entire enterprise, guaranteeing compliance right away. BuildPiper seamlessly incorporates

## Still using traditional C... | anshul_kichara |

1,902,130 | Algorithmic Trading Common Techniques | Algorithmic trading, also known as algo-trading, refers to the use of computer algorithms to... | 0 | 2024-06-27T06:16:30 | https://dev.to/harryjones78/algorithmic-trading-common-techniques-76g | Algorithmic trading, also known as algo-trading, refers to the use of computer algorithms to automatically execute [tradin](https://bit.ly/forex-solid-trading)g orders. This method leverages complex mathematical models and high-speed data processing to make trading decisions at speeds and frequencies that are impossibl... | harryjones78 | |

1,902,149 | Roombriks | Smarter digital workspaces for people, information, and AI Roombriks is a cutting-edge platform... | 0 | 2024-06-27T06:21:35 | https://dev.to/roombriks_124e6d4b2afcb1d/roombriks-15em | saas, crm, ai | Smarter digital workspaces for people, information, and AI

[Roombriks](https://roombriks.com/) is a cutting-edge platform designed to revolutionize virtual collaboration by providing highly customizable digital workspaces.

Our solution allows teams to create dynamic, interactive environments tailored to their specifi... | roombriks_124e6d4b2afcb1d |

1,902,135 | "Buy VCC with Crypto: The Ultimate Privacy Solution" | Moreover, the flexibility offered when you buy VCC with crypto is unparalleled. Cryptocurrencies are... | 0 | 2024-06-27T06:20:24 | https://dev.to/buyvcc/buy-vcc-with-crypto-the-ultimate-privacy-solution-355b | vcc, career, webdev, careerdevelopment | Moreover, the flexibility offered when you [buy VCC with crypto](https://cardvcc.com/) is unparalleled. Cryptocurrencies are not bound by the same geographical and institutional restrictions as traditional currencies, making them ideal for international transactions. This means that individuals and businesses can buy V... | accsmarket |

1,902,133 | Which New WordPress Plugins Can Transform Your Website? | Introduction WordPress plugins play a crucial role in enhancing the functionality and user... | 0 | 2024-06-27T06:19:57 | https://dev.to/hirelaraveldevelopers/which-new-wordpress-plugins-can-transform-your-website-3ce7 | webdev, programming, ai, css | <h2>Introduction</h2>

<p>WordPress plugins play a crucial role in enhancing the functionality and user experience of websites. They are essential tools that help in adding features, improving performance, and staying competitive in the digital landscape. Keeping your plugins up-to-date is key to ensuring your website r... | hirelaraveldevelopers |

1,902,132 | Create Makeup Basic Website using Wix Studio Challenge ! | This is a submission for the Wix Studio Challenge . What I Built Welcome to "Creative... | 0 | 2024-06-27T06:17:34 | https://dev.to/creation_world/create-makeup-basic-website-using-wix-studio-5b9i | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge](https://dev.to/challenges/wix).*

## What I Built

Welcome to "Creative World," your ultimate guide to mastering the basics of makeup! Whether you're a beginner looking to start your beauty journey or a seasoned enthusiast seeking to refine your skills, our website ... | creation_world |

1,902,131 | Algorithmic Trading Common Techniques | Algorithmic trading, also known as algo-trading, refers to the use of computer algorithms to... | 0 | 2024-06-27T06:16:30 | https://dev.to/harryjones78/algorithmic-trading-common-techniques-49gk | Algorithmic trading, also known as algo-trading, refers to the use of computer algorithms to automatically execute [trading](https://bit.ly/forex-solid-trading) orders. This method leverages complex mathematical models and high-speed data processing to make trading decisions at speeds and frequencies that are impossibl... | harryjones78 | |

1,902,129 | GPT-4o — Are We Being LIED To? | Are we being lied to by OpenAI (and others) about how quickly AI is improving? Is AI overhyped? Or... | 0 | 2024-06-27T06:08:37 | https://dev.to/safdarali/gpt-4o-are-we-being-lied-to-4h0d | ai, aihype, chatgpt | Are we being lied to by OpenAI (and others) about how quickly AI is improving?

Is AI overhyped? Or is this another “NFT moment” where the over-hype will be followed by a big bust?

BTW, full disclosure here. I was never a believer in the NFT hype cycle. But I am instinctively more of a believer in the AI hype.

I read... | safdarali |

1,902,128 | Entity associations in EF Core | Entity associations in EF Core are a crucial part of modeling relationships between different... | 0 | 2024-06-27T06:04:22 | https://dev.to/muhammad_salem/entity-associations-in-ef-core-2666 | Entity associations in EF Core are a crucial part of modeling relationships between different entities in your database. Let's dive into the different types of associations and best practices for implementing them, with a focus on navigation properties and foreign keys.

1. Types of Associations

There are three main t... | muhammad_salem | |

1,902,119 | C# lambdas | In this series we have learned about delegates and event driven programming. C# has built in... | 27,862 | 2024-06-27T06:00:35 | https://dev.to/emanuelgustafzon/c-lambdas-3754 | csharp, lambda | In this series we have learned about delegates and event-driven programming.

C# has built-in delegates, as we saw before with the `EventHandler` for events.

Now we will look at `lambdas`. Lambdas are widely used in functional programming and exist in many modern languages. They are a way to write shorthand, anonymou... | emanuelgustafzon |

1,892,702 | Optimizing the API Lifecycle: A Complete Guide | Imagine you're an architect tasked with building a massive skyscraper. You'd need a solid plan,... | 0 | 2024-06-27T06:00:00 | https://www.getambassador.io/blog/optimizing-api-lifecycle-complete-guide | api, apilifecycle, design, development | Imagine you're an architect tasked with building a massive skyscraper. You'd need a solid plan, right? You can't just start slapping bricks together willy-nilly. The same goes for creating APIs. Without a well-defined lifecycle, your API project could quickly turn into a towering mess.

This is why mastering the API li... | getambassador2024 |

1,902,125 | Best Digital Marketing Course Training In Hyderabad | Best Digital Marketing Course Training at Kapil IT Skill Hub With a focus on experiential learning... | 0 | 2024-06-27T05:59:36 | https://dev.to/kapildmseo3_c64af9ff24a09/best-digital-marketing-course-training-in-hyderabad-5eof | Best [Digital Marketing Course Training](https://www.kapilitshub.com/dm-techhnology) at Kapil IT Skill Hub With a focus on experiential learning and industry immersion, Digiquest Academy stands out as a top choice for digital marketing enthusiasts in Hyderabad. Their courses are designed by industry experts and cover a... | kapildmseo3_c64af9ff24a09 | |

1,902,112 | Stop using one table for your client's | Do you have a saas? And you have a lot of clients? I know you hate managing them, don't you? Follow... | 0 | 2024-06-27T05:31:49 | https://dev.to/alfianriv/stop-using-one-table-for-your-clients-4d67 | typescript, postgres, programming, database | Do you have a SaaS with a lot of clients? I know you hate managing them, don't you?

Follow my approach to manage them easily, with no complicated logic to frustrate you.

All you need is NestJS and Postgres. Yes, that's enough.

We will use multi-tenancy with Postgres multi-schema. Maybe if it is descr... | alfianriv |

1,902,124 | 3D Modeling | Our high-quality 3D modeling services bring your ideas to life. We specialize in creating stunning... | 0 | 2024-06-27T05:53:49 | https://dev.to/pinnacleinfotechsolu/3d-modeling-190l | productivity | Our high-quality 3D modeling services bring your ideas to life. We specialize in creating stunning and accurate 3D models for Architecture, Engineering, and Construction (AEC) projects. We also offer 3D BIM modeling for a data-rich workflow.

is a timeless staple that has secured its place in the fashion world since Coco Chanel first introduced it in the 1920s. The beauty of the LBD lies in its versatility. It can be ... | rrerefrs |

1,902,117 | Journey of Web Development | From concept to launch, discover the steps involved in creating a successful website. Learn about... | 0 | 2024-06-27T05:43:58 | https://dev.to/skjmkj/journey-of-web-development-4pbb | webdev, softwaredevelopment, technology, uidesign |

From concept to launch, discover the steps involved in creating a successful website. Learn about the design, development, testing, and deployment phases that bring a website to life. #technology #customwebappdevelo... | skjmkj |

1,902,116 | The Ultimate Guide to Summer Fashion Trends" | 1. Embracing Bright Colors and Bold Patterns Summer is the perfect season to experiment with bright... | 0 | 2024-06-27T05:42:00 | https://dev.to/rrerefrs/the-ultimate-guide-to-summer-fashion-trends-56gn | fashion, hood, hoodiee, clotinh | **1. Embracing Bright Colors and Bold Patterns**

Summer is the perfect season to experiment with bright colors and bold patterns. This year, vibrant hues like neon pink, electric blue, and sunshine yellow are making a big splash. These eye-catching shades not only capture the joyful spirit of summer but also help you ... | rrerefrs |

1,902,115 | Timeless Wardrobe Staples Every Woman Should Own" | Introduction to Timeless Wardrobe Staples In the ever-evolving world of fashion, trends... | 0 | 2024-06-27T05:38:48 | https://dev.to/rrerefrs/timeless-wardrobe-staples-every-woman-should-own-n1k | fashion, hoodie, tshirt, clothin | ### Introduction to Timeless Wardrobe Staples

In the ever-evolving world of fashion, trends come and go with the seasons. However, some wardrobe pieces stand the test of time, transcending fleeting fads and becoming essential elements of a well-rounded closet. These timeless staples form the backbone of any wardrob... | rrerefrs |

1,902,114 | Managing Headaches from Intense Workouts: A Comprehensive Guide | Intense workouts can be incredibly rewarding, offering numerous physical and mental health benefits.... | 0 | 2024-06-27T05:38:10 | https://dev.to/ekamyoga24/managing-headaches-from-intense-workouts-a-comprehensive-guide-575g | headaches, yoga, surya, weightloss | Intense workouts can be incredibly rewarding, offering numerous physical and mental health benefits. However, they can sometimes lead to headaches, which can be frustrating and debilitating. Understanding why these headaches occur and how to manage and prevent them is crucial for maintaining a healthy and effective fit... | ekamyoga24 |

1,902,113 | Enhancing Performance in Your React Application | React is a premier library for crafting dynamic and interactive web apps. As your React project... | 0 | 2024-06-27T05:32:10 | https://dev.to/msubhro/enhancing-performance-in-your-react-application-4pno | react, reactjsdevelopment, performance, javascriptlibraries | React is a premier library for crafting dynamic and interactive web apps. As your React project grows, ensuring it remains performant becomes critical. Here are some effective methods to boost the performance of your React applications.

## Utilize React’s Built-in Optimizations

**React.memo for Memoization**

React.me... | msubhro |

1,902,111 | Starting new career in software development | Hello friend, my name is Furaha. To day I start new Career in software development,I haven't any... | 0 | 2024-06-27T05:29:00 | https://dev.to/furaha_emile_3d64a0fdb157/starting-new-career-in-software-development-20m6 | webdev | Hello friend, my name is Furaha. Today I am starting a new career in software development. I don't have any skills in this field yet, but I really like coding and building products such as websites, apps, or systems.

To achieve my goal, I have started a 30-day challenge, and I think this challenge will help ... | furaha_emile_3d64a0fdb157 |

1,902,110 | Introduction to Flask Package for Building APIs for React | Introduction to Flask Package for Building APIs for React Flask is a lightweight WSGI web... | 27,884 | 2024-06-27T05:28:10 | https://dev.to/plug_panther_3129828fadf0/introduction-to-flask-package-for-building-apis-for-react-p9l | flask, react, api, webdev | # Introduction to Flask Package for Building APIs for React

Flask is a lightweight WSGI web application framework in Python. It is designed with simplicity and flexibility in mind, making it an excellent choice for creating APIs that can be consumed by front-end applications, such as those built with React. In this bl... | plug_panther_3129828fadf0 |

1,902,109 | Welcome to the New Age of Manufacturing | Hey there! Let’s embark on an adventure into the world of manufacturing but with a twist. We're not... | 27,673 | 2024-06-27T05:26:52 | https://dev.to/rapidinnovation/welcome-to-the-new-age-of-manufacturing-366a | Hey there! Let’s embark on an adventure into the world of manufacturing but with a twist. We're not just talking about any manufacturing process – we're diving into how Robotic Process Automation (RPA) is changing the game. Imagine a world where managing inventory is as smooth as your favorite jazz tune. In this new ag... | rapidinnovation | |

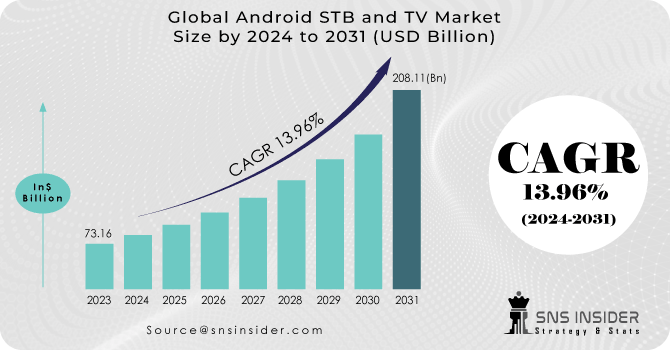

1,902,108 | Android STB and TV Market Expansion Strategies Unveiled | The Android STB and TV Market Size was valued at $ 73.16 Bn in 2023 and is expected to reach $ 208.11... | 0 | 2024-06-27T05:26:44 | https://dev.to/vaishnavi_farkade_864f915/android-stb-and-tv-market-expansion-strategies-unveiled-1ni6 | The Android STB and TV market was valued at $73.16 Bn in 2023 and is expected to reach $208.11 Bn by 2031, growing at a CAGR of 13.96% over 2024-2031.

**Market Scope & Overview:**

The latest update on the Andr... | vaishnavi_farkade_864f915 | |

1,902,107 | How you can overclock your ASIC miner’s performance for enhanced results? | Here is how you can overclock your ASIC miner's performance for enhanced results: Assess your... | 0 | 2024-06-27T05:26:24 | https://dev.to/lillywilson/how-you-can-overclock-your-asic-miners-performance-for-enhanced-results-2j5k | asic, cryptocurrency, bitcoin, crypto | Here is how you can **[overclock your ASIC miner's](https://asicmarketplace.com/blog/safely-overclock-an-asic-miner/)** performance for enhanced results:

- **Assess your Miner**: It is important to understand the strengths and weaknesses of your miner before you begin an overclock. Check the manufacturer's overclockin... | lillywilson |

1,902,106 | How to develop comprehensive food delivery web app? | Developing a comprehensive food delivery app requires multiple sections and functionalities to... | 0 | 2024-06-27T05:25:31 | https://dev.to/nadim_ch0wdhury/how-to-develop-comprehensive-food-delivery-web-app-2lc4 | Developing a comprehensive food delivery app requires multiple sections and functionalities to provide a smooth user experience. Here's a detailed breakdown of the sections and functionalities:

### 1. **User Section**

#### Functionalities:

- **User Registration/Login:**

- Email/Phone/Google/Facebook login

- **Profi... | nadim_ch0wdhury | |

1,902,105 | Top 10 JavaScript Frameworks to Learn in 2024 | JavaScript frameworks have revolutionised the way we develop web applications, offering powerful... | 0 | 2024-06-27T05:25:01 | https://dev.to/delia_code/top-10-javascript-frameworks-to-learn-in-2024-41mn | webdev, javascript, beginners, programming | JavaScript frameworks have revolutionised the way we develop web applications, offering powerful tools and features that streamline development and enhance user experience. As we move into 2024, staying current with the latest frameworks is essential for any developer looking to advance their career. Here are the top 1... | delia_code |

1,902,104 | Introduction to OpenCV: The Ultimate Guide for Beginners | Introduction to OpenCV: The Ultimate Guide for Beginners OpenCV (Open Source Computer... | 27,883 | 2024-06-27T05:24:12 | https://dev.to/plug_panther_3129828fadf0/introduction-to-opencv-the-ultimate-guide-for-beginners-57l5 | opencv, computervision, python, imageprocessing | ## Introduction to OpenCV: The Ultimate Guide for Beginners

OpenCV (Open Source Computer Vision Library) is an open-source computer vision and machine learning software library. It contains more than 2500 optimized algorithms, which can be used for various computer vision and machine learning tasks. OpenCV is widely u... | plug_panther_3129828fadf0 |

1,902,103 | These have been shown to decorate the | A test carried out by way of researchers at the University of California, Davis, examined the effects... | 0 | 2024-06-27T05:23:45 | https://dev.to/milutrukler/these-have-been-shown-to-decorate-the-g3l | A test carried out by way of researchers at the University of California, Davis, examined the effects of on mice fed a high-fat food plan. The consequences discovered that the mice supplemented with professional a large bargain in weight gain and fats accumulation as compared to the manipulate corporation. Korean Ginse... | milutrukler | |

1,902,102 | Store Procedure for getting all Items from Item table MSSQL | use Shop; -- table name shop CREATE PROCEDURE AllItems -- procedure name AllItems AS SELECT *FROM... | 0 | 2024-06-27T05:22:56 | https://dev.to/md_shariarhaque_11695a3/store-procedure-for-getting-all-items-from-item-table-mssql-5e5m | storeprocedure, mssql | USE Shop; -- database name: Shop

GO -- CREATE PROCEDURE must be the first statement in its batch

CREATE PROCEDURE AllItems -- procedure name: AllItems

AS

SELECT * FROM Item -- return every row of the Item table

GO

EXEC AllItems; -- run the procedure | md_shariarhaque_11695a3 |

1,902,101 | Regenerative Benefits of Exosome Therapy for Skin and Hair Rejuvenation | Exosome therapy is a promising approach in aesthetic dermatology that is being used by... | 0 | 2024-06-27T05:21:33 | https://dev.to/advancexo/regenerative-benefits-of-exosome-therapy-for-skin-and-hair-rejuvenation-f3n |

Exosome therapy is a promising approach in aesthetic dermatology that is being used by dermatologists and cosmetologists alike to deliver stunning looking skin and hair. Stem cells secrete exosomes in the form of ti... | advancexo | |

1,902,100 | The Power of HealthTech Marketing Agencies | In today's rapidly evolving healthcare landscape, HealthTech (Healthcare Technology) stands at the... | 0 | 2024-06-27T05:20:43 | https://dev.to/smith22/the-power-of-healthtech-marketing-agencies-6ii | webdev | In today's rapidly evolving healthcare landscape, HealthTech (Healthcare Technology) stands at the forefront of innovation. From telemedicine to AI-driven diagnostics, these technological advancements are revolutionizing patient care, improving outcomes, and streamlining operations. However, for HealthTech companies to... | smith22 |

1,902,099 | "Balancing Physical and Emotional Intimacy in Relationships" | Intimacy is a cornerstone of healthy and fulfilling relationships, comprising both physical and... | 0 | 2024-06-27T05:19:11 | https://dev.to/keshaunpadberg/balancing-physical-and-emotional-intimacy-in-relationships-56p9 | healthydebate, fitness, gym | Intimacy is a cornerstone of healthy and fulfilling relationships, comprising both physical and emotional elements. While physical intimacy often involves touch, closeness, and sexual activity, emotional intimacy revolves around deep emotional connection, trust, and mutual understanding. Achieving a balance between the... | keshaunpadberg |

1,902,098 | Comprehensive Guide to On-Call Scheduling Software for Enhanced Incident Response | Effective incident response is critical for maintaining the reliability and availability of digital... | 0 | 2024-06-27T05:15:44 | https://www.squadcast.com/incident-response-tools/on-call-scheduling-software | oncall, oncallscheduling, oncallschedulingsoftware | Effective incident response is critical for maintaining the reliability and availability of digital services. On-call scheduling software plays an integral role in this process by ensuring the right personnel are available to address issues as they arise. This article explores the importance of on-call scheduling, the ... | squadcastcommunity |

1,902,094 | Implementing a Reusable Confirmation Modal with React | Introduction Confirmation modals are essential components in many web applications,... | 0 | 2024-06-27T05:11:53 | https://dev.to/vitorrios1001/implementando-modal-de-confirmacao-reutilizavel-com-react-1j2m | webdev, javascript, programming, react | ### Introduction

Confirmation modals are essential components in many web applications, letting users confirm critical actions before proceeding. In this article, I present a practical solution for creating a reusable confirmation modal in React. The approach uses a context to manage the stat... | vitorrios1001 |

1,902,097 | 5 Top Flutter App Development Companies in the USA for Exceptional Mobile Apps | Introduction to Flutter App Development As the mobile app development landscape continues to evolve,... | 0 | 2024-06-27T05:07:58 | https://dev.to/apptagsolution/5-top-flutter-app-development-companies-in-the-usa-for-exceptional-mobile-apps-4b4d | flutter, app, development, companies | Introduction to Flutter App Development

As the mobile app development landscape continues to evolve, Flutter has emerged as a game-changing framework that has captured the attention of developers and businesses alike. Flutter, a cross-platform development tool created by Google, offers a unique approach to building hig... | apptagsolution |

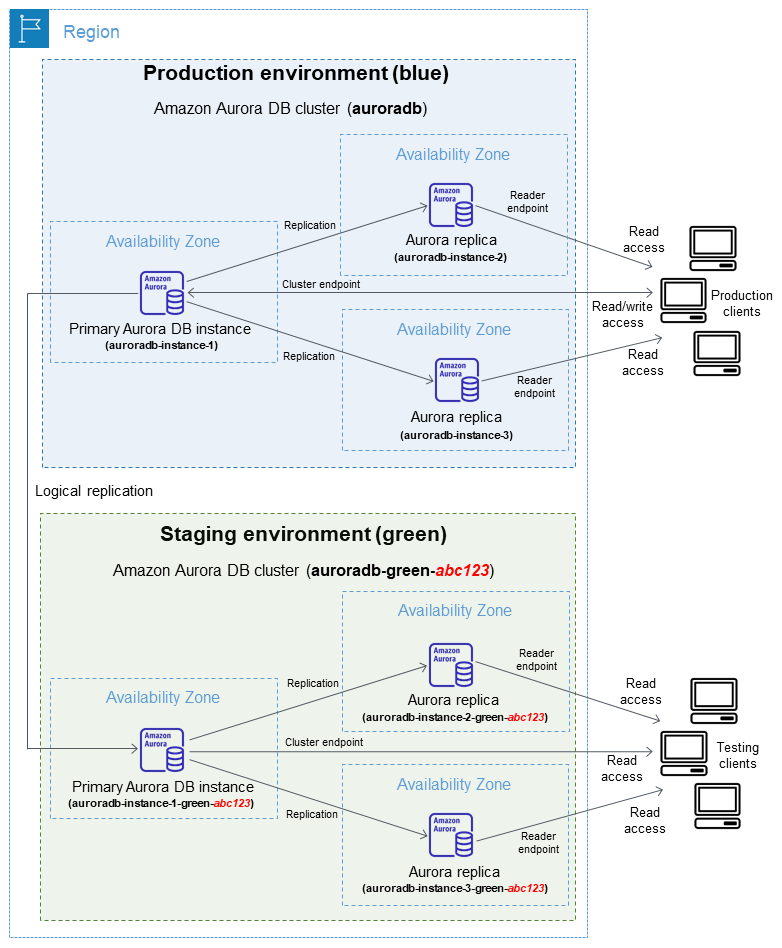

1,902,096 | AWS RDS Blue/Green Deployment for Aurora using Terraform | Why ? If you are using AWS RDS and you have any of the following use cases. Upgrade DB... | 0 | 2024-06-27T05:07:08 | https://dev.to/chiragdm/aws-rds-bluegreen-deployment-for-aurora-using-terraform-3e77 | aws, rds, devops, terraform |

Why?

This approach is useful if you are using AWS RDS and have any of the following use cases:

- Upgrade the DB major/minor version with zero downtime and without impacting the live production cluster.

- Easily create a production-ready staging... | chiragdm |

1,902,093 | Make a grid element span to the full width of the parent | No buildup. Let's get to the point. You have a grid container, and it has some child elements... | 0 | 2024-06-27T05:05:58 | https://dev.to/sgvugaurav/make-a-grid-element-span-to-the-full-width-of-the-parent-3ecc | css, grid, webdev, beginners | No buildup. Let's get to the point.

You have a grid container, and it has some child elements divided into as many columns as you want. For now, let's say the grid container has seven elements, and those elements are divided into three columns. You want the seventh element to take up the full width of the parent.

Her... | sgvugaurav |

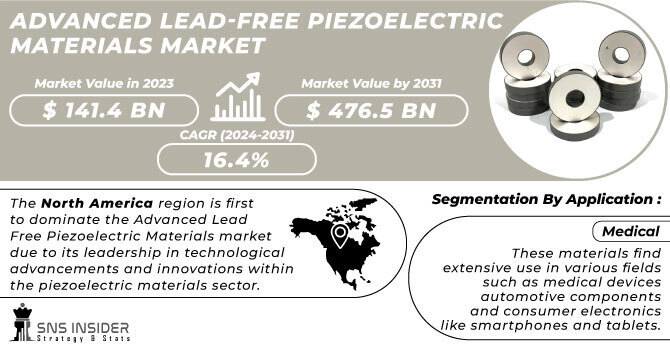

1,902,095 | Advanced Lead-Free Piezoelectric Materials Market Size Dynamics and Opportunities | The Advanced Lead-Free Piezoelectric Materials Market Size was valued at $ 141.4 Bn in 2023 and is... | 0 | 2024-06-27T05:05:52 | https://dev.to/vaishnavi_farkade_864f915/advanced-lead-free-piezoelectric-materials-market-size-dynamics-and-opportunities-hcl | The Advanced Lead-Free Piezoelectric Materials market was valued at $141.4 Bn in 2023 and is expected to reach $476.5 Bn by 2031, growing at a CAGR of 16.4% over 2024-2031.

**Market Scope & Overview:**

The res... | vaishnavi_farkade_864f915 | |

1,902,092 | Laravel Eager Loading – Loading Relationships Efficiently with Eloquent | 👋 Introduction Ah, Laravel! The darling of the PHP world, the Beyoncé of web frameworks.... | 27,882 | 2024-06-27T05:00:31 | https://n3rdnerd.com/laravel-eager-loading-loading-relationships-efficiently-with-eloquent/ | php, database, laravel, webdev | ## 👋 Introduction

Ah, Laravel! The darling of the PHP world, the Beyoncé of web frameworks. Whether you’re a seasoned developer or a newbie who’s just realized that PHP isn’t some sort of exotic insurance policy, Laravel has something to offer. Today, we’re diving into one of its most delightful features: Eager Loadin... | n3rdnerd |

1,902,091 | The Top 5 Benefits of Salesforce CRM for Startup Businesses | In the fast-paced and competitive world of startups, efficient management of customer relationships... | 0 | 2024-06-27T05:00:17 | https://dev.to/shruti_sood_543de8c196a4a/the-top-5-benefits-of-salesforce-crm-for-startup-businesses-o7j |

In the fast-paced and competitive world of startups, efficient management of customer relationships and streamlined operations are crucial for success. This is where Salesforce CRM comes into play. As a cloud-based customer relationship management platform, Salesforce CRM offers a robust solution tailored to meet t... | shruti_sood_543de8c196a4a | |

1,838,303 | Design Systems: The Backbone of Cohesive and Efficient Design | In today's digital landscape, consistency and efficiency are paramount for creating successful... | 27,353 | 2024-06-27T05:00:00 | https://dev.to/shieldstring/design-systems-the-backbone-of-cohesive-and-efficient-design-1ojl | design, ui, ux, designsystem | In today's digital landscape, consistency and efficiency are paramount for creating successful products. Enter design systems: a centralized hub of reusable UI components, code snippets, design guidelines, and best practices. A well-implemented design system acts as the backbone for a cohesive user experience (UX) acro... | shieldstring |

1,902,090 | The Ultimate Guide to Deep Cleaning Services for Your Home | What is Deep Cleaning? Deep cleaning goes beyond the surface to reach the hidden nooks and crannies... | 0 | 2024-06-27T04:59:04 | https://dev.to/link_builder_fcd0a6e8d321/the-ultimate-guide-to-deep-cleaning-services-for-your-home-47m7 | beginners, javascript, opensource, productivity | **What is Deep Cleaning?**

[Deep cleaning](https://athenacleaningservices.sg/) goes beyond the surface to reach the hidden nooks and crannies that are often missed during regular cleaning. It involves a thorough cleaning of all areas, including those that are hard to reach or often neglected. Deep cleaning typically in... | link_builder_fcd0a6e8d321 |

1,902,089 | Cab Service Near me | The Cabs Rajasthan Tour offers affordable car and flexible taxi rental services for adventure seekers... | 0 | 2024-06-27T04:52:43 | https://dev.to/nandkishan_meena_3c564a70/cab-service-near-me-93e | cab, taxi, services | The **Cabs Rajasthan Tour** offers affordable car and flexible taxi rental services for adventure seekers to explore Rajasthan, offering a thrilling journey within your budget. Our customers can search **[Cab Service Near me](https://maps.app.goo.gl/wq9ocpLQyRmGidwUA)** to get the best ride, which helps you explore the bea... | nandkishan_meena_3c564a70 |

1,902,082 | Paul Smith | Hi! I'm Paul Smith. I am engaged in online marketing services for diverse business organizations.... | 0 | 2024-06-27T04:42:23 | https://dev.to/paulsmith6193/paul-smith-491c | digital, marketing | Hi! I'm Paul Smith. I am engaged in online marketing services for diverse business organizations. Nowadays, Digging into the different ad formats of both Google and Facebook, I am trying to identify how long the content is after receiving a click shot to win a target customer. This is basically a [how many weeks for co... | paulsmith6193 |

1,902,088 | Discover Remote Opportunities in Tech: Introducing RemoteJobsly | Discover Remote Opportunities in Tech: Introducing RemoteJobsly As technology evolves, so... | 0 | 2024-06-27T04:51:54 | https://dev.to/chovy/discover-remote-opportunities-in-tech-introducing-remotejobsly-1j6l | jobs | # Discover Remote Opportunities in Tech: Introducing RemoteJobsly

As technology evolves, so does the landscape of work. The freedom to work from anywhere has transitioned from a luxury to a necessity for many professionals, especially in the tech industry. Today, I want to introduce you to a platform that embodies thi... | chovy |

1,902,087 | Building a Scalable Web App with Angular: A Comprehensive Guide | Angular is a popular front-end framework developed and maintained by Google. It provides a powerful... | 0 | 2024-06-27T04:49:29 | https://devtoys.io/2024/06/26/building-a-scalable-web-app-with-angular-a-comprehensive-guide/ | angular, webdev, tutorial, devtoys | ---

canonical_url: https://devtoys.io/2024/06/26/building-a-scalable-web-app-with-angular-a-comprehensive-guide/

---

Angular is a popular front-end framework developed and maintained by Google. It provides a powerful toolset for building dynamic, single-page web applications (SPAs) with a clean, maintainable structure... | 3a5abi |
