id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,899,641 | An All-inclusive Guide on How To Develop a Stellar Healthcare App | Healthcare has seen unprecedented growth in recent years. With the ever-increasing adoption of... | 0 | 2024-06-25T05:32:39 | https://www.peppersquare.com/blog/an-all-inclusive-guide-on-how-to-develop-a-stellar-healthcare-app/ | development, mobile, healthcareapp, appdevelopment | Healthcare has seen unprecedented growth in recent years. With the ever-increasing adoption of technology that burgeoned during COVID-19, the healthcare industry is taking giant strides toward a digital-first approach. One of the most significant advancements in this regard is the development of healthcare apps. And al... | pepper_square |

1,899,640 | Power Apps (Part 2 ) | Introduction to Power Apps What is Power Apps Power Apps is suit of Apps,... | 0 | 2024-06-25T05:31:50 | https://dev.to/mubashar1009/power-apps-part-2--2od |

## Introduction to Power Apps

## What is Power Apps

Power Apps is a suite of apps, connectors, and data platforms that lets users create custom apps without extensive coding knowledge.

## Apps

There are two types of Apps in Power Apps: Model-driven app a... | mubashar1009 | |

1,899,639 | Exploring the Elegance of Luxury Carpets for Your Home | Exploring the Elegance of Luxury Carpets for Your Home Perform you require a nice-looking as well as... | 0 | 2024-06-25T05:31:27 | https://dev.to/jdhfj_cnfog_49bb12551f4fc/exploring-the-elegance-of-luxury-carpets-for-your-home-4g8g | carpets | Exploring the Elegance of Luxury Carpets for Your Home

Do you need an attractive, soft floor covering that not only adds value but also improves the safety of your home? Luxury carpets might be the solution. We will look at various features of these treatm... | jdhfj_cnfog_49bb12551f4fc |

1,899,638 | Mastering VPC in Networking: Top Interview Questions and Answers | What is Amazon VPC? A. Amazon Virtual Private Cloud (VPC) is a service that allows you to provision... | 0 | 2024-06-25T05:30:04 | https://dev.to/mamta_sharma_0104/mastering-vpc-in-networking-top-interview-questions-and-answers-d8h | 1. What is Amazon VPC?

A. Amazon Virtual Private Cloud (VPC) is a service that allows you to provision a logically isolated section of the AWS Cloud, giving you control over your virtual networking environment.

2. What are the components of VPC?

A. Key components include subnets, route tables, network access control l... | mamta_sharma_0104 | |

1,899,636 | Mudrex is the latest partner to join Zeeve RaaS integration network for seamless on/off-ramping | Zeeve is excited to welcome Mudrex as our newest integration partner in our Rollups as a Service... | 0 | 2024-06-25T05:28:42 | https://www.zeeve.io/blog/mudrex-is-the-latest-partner-to-join-zeeve-raas-integration-network-for-seamless-on-off-ramping/ | mudrex, zeeve, announcements | <p><a href="https://www.zeeve.io/">Zeeve</a> is excited to welcome <a href="https://mudrex.com/">Mudrex</a> as our newest integration partner in our Rollups as a Service (RaaS) ecosystem. This collaboration enhances our RaaS platform with Mudrex's leading ramp services, providing a seamless bridge between traditional p... | zeeve |

1,899,635 | Trusted Ember JS Development Experts | Pattem Digital is your trusted Ember.js development partner, offering expertise in... | 0 | 2024-06-25T05:26:52 | https://dev.to/dinesh_kumars_a26da2748a/trusted-ember-js-development-experts-d21 | Pattem Digital is your trusted Ember.js development partner, offering expertise in creating innovative web solutions that meet industry standards and exceed client expectations.

[ember js development company](https://pattemdigital.com/ember-js-development/)

| dinesh_kumars_a26da2748a | |

1,899,634 | Watch For Lightning | The clouds began to darken while the cool breeze came rolling through. I knew it was only a matter... | 0 | 2024-06-25T05:23:32 | https://dev.to/rohitelyts/watch-for-lightning-1hdk | jlabsdigital, lightning, espresso, thunderous | The clouds began to darken while the cool breeze came rolling through.

I knew it was only a matter of time before the skies opened up, cleansing the hot ground below with buckets of rain.

This was mother nature’s signal that it was time for me to take cover under my porch and watch the rain play out.

There are few ... | rohitelyts |

1,899,581 | 5 Best Tips to Improve Your Programming Skills | As a developer, good programming skills are the key to success in your career. Here are the five best... | 0 | 2024-06-25T05:16:48 | https://dev.to/ngotek/5-best-tips-to-improve-your-programming-skills-dpd | programming, learning, beginners | As a developer, good programming skills are the key to success in your career. Here are the five best tips that can help you improve your programming skills:

1. **Learn a New Programming Language**: Don't just focus on one programming language. Learn a new language to broaden your horizons and understand different con... | ngotek |

1,899,580 | IT Solutions Company in Dubai | Introduction Dubai is a bustling city known for its rapid development and innovation. In such a... | 0 | 2024-06-25T05:12:31 | https://dev.to/rajan_kumar_283e598abdf39/it-solutions-company-in-dubai-57hc | web, development | **Introduction**

Dubai is a bustling city known for its rapid development and innovation. In such a dynamic environment, businesses need reliable IT solutions to stay competitive. This is where an IT solutions

![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/n1i64pc7ojlvl5np04sb.jpg) A com... | rajan_kumar_283e598abdf39 |

1,899,578 | Kinto Power Electric Co., Ltd: Creating a More Sustainable Future for All | screenshot-1718315555889.png Kinto Power Electric Co Ltd: Creating a Better Future for... | 0 | 2024-06-25T05:11:29 | https://dev.to/jdhfj_cnfog_49bb12551f4fc/kinto-power-electric-co-ltd-creating-a-more-sustainable-future-for-all-4nlb | copper |


Kinto Power Electric Co Ltd: Creating a Better Future for Everyone

Kinto Power Electric Co Ltd is a company that creates power devices that help people use less power and live in a more sustainable way. This company has many advantages that will make people happy with their purchase.

... | jdhfj_cnfog_49bb12551f4fc |

1,899,577 | Beer Store in Albany | Beer Universe Store offers Beer delivery store in Albany, at the location Beer Delivery near me... | 0 | 2024-06-25T05:10:37 | https://dev.to/beer_univ_3c43f6a4c5c9f5f/beer-store-in-albany-1eh5 | development, writing, web, wordpress | Beer Universe Store offers Beer delivery store in Albany, at the location Beer Delivery near me Albany. So, if you are looking for craft beer near me or white claw near me, we are the right choice. We have a presence in all major locations in New York State. We also have a local Beer store in Coxsackie, at the location... | beer_univ_3c43f6a4c5c9f5f |

1,899,576 | Wait for pending: A Suspense algorithm exploration | There is some great discourse going on about how <Suspense> timing should work in react@19. In... | 0 | 2024-06-25T05:05:35 | https://dev.to/alexandereardon/wait-for-pending-a-not-great-alternative-suspense-algorithm-1gdl | react, javascript | There is some [great discourse](https://github.com/facebook/react/issues/29898) going on about how `<Suspense>` timing should work in `react@19`.

In this post I explore what a `<Suspense>` algorithm would look like if all pending promises in a `<Suspense>` boundary were resolved before trying to re-render.

> This sta... | alexandereardon |

1,899,575 | Nylon Oxford Fabric: Strength and Flexibility Combined | Nylon Oxford Fabric: The Ultimate Mix Of Strength and Flexibility Introduction Nylon Oxford textile... | 0 | 2024-06-25T05:04:17 | https://dev.to/georgia_kcurieljp_c24d7/nylon-oxford-fabric-strength-and-flexibility-combined-4gg6 | design | Nylon Oxford Fabric: The Ultimate Mix Of Strength and Flexibility

Introduction

Nylon Oxford fabric is a versatile, durable material used in many industries, including fashion, sports, and outdoor activities. Its strong and flexible nature makes it suitable for a range of applications, from produc... | georgia_kcurieljp_c24d7 |

1,899,574 | How to Install Kubernetes Cluster on Ubuntu 22.04 | ==========How to Install Kubernetes Cluster on Ubuntu 22.04============ 172.25.0.5/16 ... | 0 | 2024-06-25T05:03:51 | https://dev.to/sashikapoor_gupta/how-to-install-kubernetes-cluster-on-ubuntu-2204-1o1c | ==========How to Install Kubernetes Cluster on Ubuntu 22.04============ 172.25.0.5/16

172.25.0.6/16

1) Set hostname on Each Node

sudo hostnamectl set-hostname ... | sashikapoor_gupta | |

1,899,573 | Install Elasticsearch | =======Install Elasticsearch:====== sudo apt update sudo apt install openjdk-11-jdk wget -qO -... | 0 | 2024-06-25T05:02:34 | https://dev.to/sashikapoor_gupta/install-elasticsearch-2513 | =======Install Elasticsearch:======

sudo apt update

sudo apt install openjdk-11-jdk

wget -qO - https://artifacts.elastic.co/GPG-KEY-elasticsearch | sudo apt-key add -

sudo apt-get install apt-transport-https

echo "deb https://artifacts.elastic.co/packages/7.x/apt stable main" | sudo tee -a /etc/apt/sources.list.d/... | sashikapoor_gupta | |

1,899,572 | Introducing TPUSD: Bitcoin's Native Stablecoin Set to Revolutionize the GameFi Experience | Introduction Before delving into how the Bitcoin-native stablecoin TPUSD can be integrated into the... | 0 | 2024-06-25T04:57:24 | https://dev.to/terplayer/introducing-tpusd-bitcoins-native-stablecoin-set-to-revolutionize-the-gamefi-experience-2a1k | btc, web3, cryptocurrency, gamedev | **Introduction**

Before delving into how the Bitcoin-native stablecoin TPUSD can be integrated into the GameFi sector, it is crucial to have a deeper understanding of the specific challenges currently faced by GameFi and the issues that the Bitcoin stablecoin TPUSD aims to address.

With the widespread adoption of cryp... | terplayer |

1,899,571 | About Twitter Card | We are not able to add a Twitter card to our Nepali travel agency page. Can anyone help us solve this... | 0 | 2024-06-25T04:50:48 | https://dev.to/liquor_land_7c787f707545b/about-twitter-card-11pe | webdev | We are not able to add a Twitter card to our [Nepali travel agency](https://dokotours.com/) page. Can anyone help us solve this issue? | liquor_land_7c787f707545b |

1,899,570 | how to install jenkins on ubuntu | Step-1 Install Java Update your system Install java sudo apt install openjdk-21-jre java... | 0 | 2024-06-25T04:50:20 | https://dev.to/sashikapoor_gupta/how-to-install-jenkins-on-ubuntu-6jn | javascript, webdev | **Step-1 Install Java**

Update your system

**Install java**

sudo apt install openjdk-21-jre

**java -version**

It should look something like this after running the command

openjdk version "21.0.1" 2023-10-17

**Step-2 Install Jenkins**

The commands you provided are used to add the Jenkins repository and its GPG ... | sashikapoor_gupta |

1,899,624 | How to Enable Task View in Windows 11? 4 Best Methods! | Key Points: Keyboard Shortcut : Press Win + Tab to open Task View directly, even if the... | 0 | 2024-06-25T06:08:40 | https://winsides.com/how-to-enable-task-view-in-windows-11/ | howto, multitasking, taskmanagement, taskview | ---

title: How to Enable Task View in Windows 11? 4 Best Methods!

published: true

date: 2024-06-25 04:49:13 UTC

tags: HowTo,Multitasking,TaskManagement,TaskView

canonical_url: https://winsides.com/how-to-enable-task-view-in-windows-11/

cover_image: https://winsides.com/wp-content/uploads/2024/06/Enable-Task-View-Window... | vigneshwaran_vijayakumar |

1,899,569 | The Role of Roto Molding in Creating Durable Playgrounds | playground.png Roto Molding The Key to Safe and Durable Playgrounds As children all of us looked... | 0 | 2024-06-25T04:48:39 | https://dev.to/georgia_kcurieljp_c24d7/the-role-of-roto-molding-in-creating-durable-playgrounds-bh2 | design | playground.png

Roto Molding The Key to Safe and Durable Playgrounds

As children, all of us looked forward to visiting the playground.

It was a place where we could let loose, run around with our friends, and have loads of fun.

Nevertheless, playgrounds are not just designed for play.

Also they are desi... | georgia_kcurieljp_c24d7 |

1,899,568 | AWS Enhances Security with Passkeys and Mandatory MFA for Root Users | Amazon Web Services (AWS) has taken a significant step forward in enhancing the security of its cloud... | 0 | 2024-06-25T04:46:27 | https://dev.to/maxhar/aws-enhances-security-with-passkeys-and-mandatory-mfa-for-root-users-42db | webdev, aws | Amazon Web Services (AWS) has taken a significant step forward in enhancing the security of its cloud platform by introducing FIDO2 passkeys as a new multi-factor authentication (MFA) method and mandating MFA for root user accounts.

Passkeys: A Phishing-Resistant Authentication Solution

AWS has added support for FIDO2... | maxhar |

1,899,567 | Amazon Q Developer: A Powerful AI-Driven Coding Assistant | Amazon Q Developer is a cutting-edge AI-powered coding assistant that aims to revolutionize the way... | 0 | 2024-06-25T04:42:34 | https://dev.to/maxhar/amazon-q-developer-a-powerful-ai-driven-coding-assistant-2lgm | webdev, aws | Amazon Q Developer is a cutting-edge AI-powered coding assistant that aims to revolutionize the way developers work. Introduced as an evolution of the previous Amazon CodeWhisperer, this new tool offers a range of features that can significantly boost productivity and efficiency in software development.

One of the sta... | maxhar |

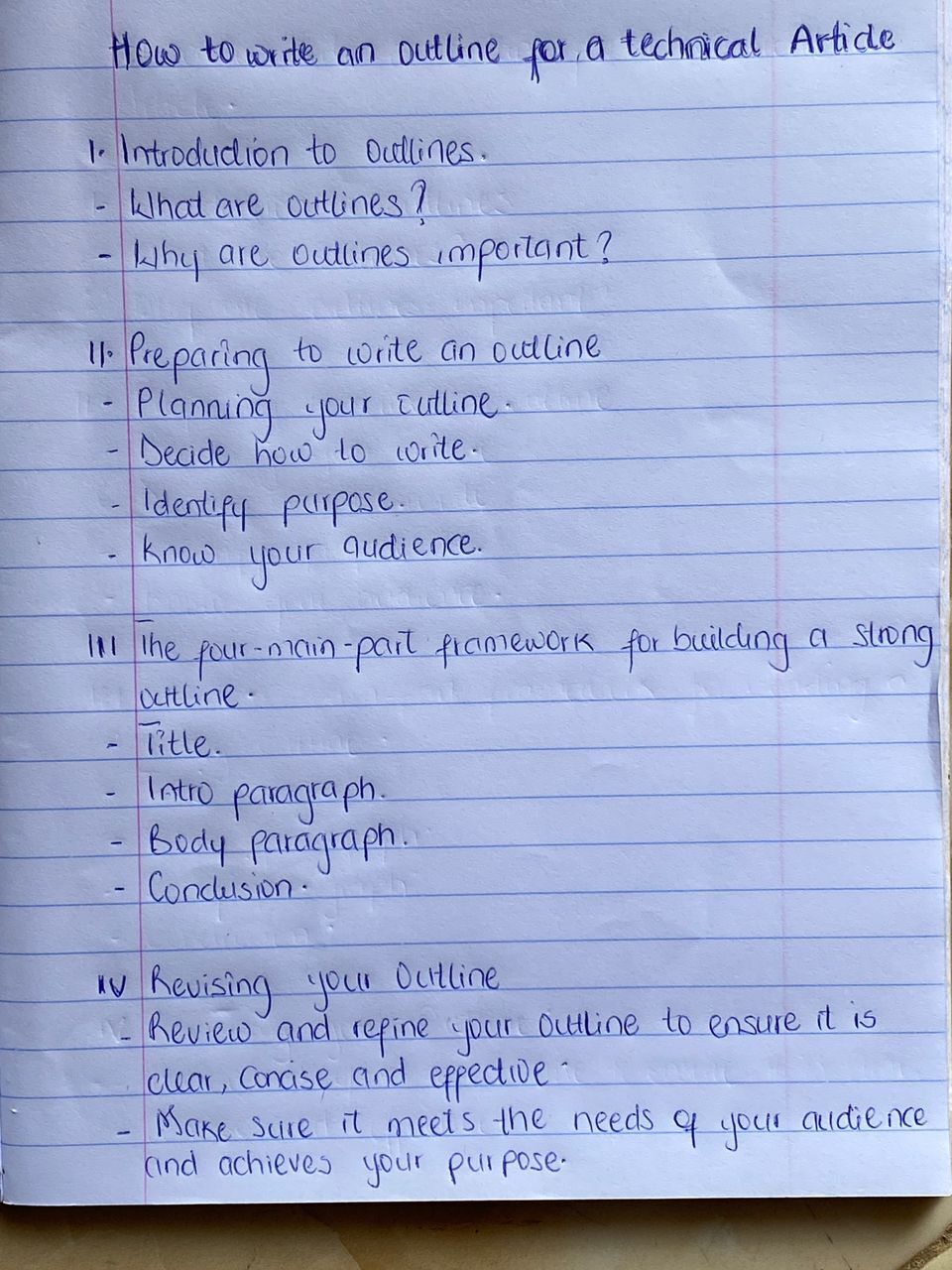

1,899,518 | How to Write an Outline for a Technical Article. | Have you ever had to write something and didn't know where to start? Writing a technical article... | 0 | 2024-06-25T04:41:35 | https://dev.to/spiff/how-to-write-an-outline-for-a-technical-article-oha | webdev, tutorial, writing, google |

Have you ever had to write something and didn't know where to start? Writing a technical article can feel like that; confusing and overwhelming. But there’s a trick to make it easier: using an outline. An outline... | spiff |

1,899,566 | How Sichuan DeepFast is Transforming the Oil Drilling Industry | Introduction towards Sichuan DeepFast Sichuan DeepFast is a continuous business revolutionizing the... | 0 | 2024-06-25T04:40:16 | https://dev.to/marie_twalkerr_3993a06a/how-sichuan-deepfast-is-transforming-the-oil-drilling-industry-4ca | oildrilling, sichuan | Introduction towards Sichuan DeepFast

Sichuan DeepFast is a company revolutionizing the oil drilling market. It focuses on extracting oil from the ground. The company has been around for several years, and it has innovated the oil ... | marie_twalkerr_3993a06a |

1,899,565 | Virtual Production Studio Dimension Selects AWS and Arch Platform | Dimension, a leading virtual production studio, has announced a strategic partnership with Amazon Web... | 0 | 2024-06-25T04:39:29 | https://dev.to/maxhar/virtual-production-studio-dimension-selects-aws-and-arch-platform-11b | webdev, aws | Dimension, a leading virtual production studio, has announced a strategic partnership with Amazon Web Services (AWS) and Arch Platform Technologies to establish the most extensive virtual production operation in the cloud. This move will enable Dimension to efficiently scale its virtual production workflows and collabo... | maxhar |

1,899,564 | Demystifying EMI Lockers and Streamlining Your EMI Payments | In today's world, owning the latest gadgets and appliances often hinges on the convenience of Equated... | 0 | 2024-06-25T04:37:34 | https://dev.to/epakconsultant/demystifying-emi-lockers-and-streamlining-your-emi-payments-1j42 | emi | In today's world, owning the latest gadgets and appliances often hinges on the convenience of Equated Monthly Installments (EMIs). But what happens when those EMI payments become a burden? Enter EMI Lockers, a concept revolutionizing how e-commerce platforms manage financed purchases. This article dives into the world ... | epakconsultant |

1,899,563 | A better way to learn programming. | In this post, I will be talking about two methods to learn how to program. The first method (common... | 0 | 2024-06-25T04:36:46 | https://dev.to/itric/a-better-way-to-learn-programming-h6l | learning, coding, beginners | In this post, I will be talking about two methods to learn how to program.

The first method (common method) is a step-by-step tutorial approach like following a tutorial. It goes something like this: "Step one, create this component." The tutorial then provides you with detailed instructions on how to create that spec... | itric |

1,899,560 | Load Balancer vs Reverse Proxy vs API Gateway | In large-scale systems, terms like Load Balancer, Reverse Proxy, and API Gateway often come up. Lot... | 0 | 2024-06-25T04:32:43 | https://dev.to/manojgohel/load-balancer-vs-reverse-proxy-vs-api-gateway-3ged | aws, api, javascript | In large-scale systems, terms like Load Balancer, Reverse Proxy, and API Gateway often come up. These terms are frequently used interchangeably, but it is important to understand the differences between them. Once you understand them, it becomes much easier to choose the one that fits your problem.

## Real lif... | manojgohel |

1,899,559 | Twitter Card | We want to add a Twitter card to our Nepali travel agency page, but the code is not working, and... | 0 | 2024-06-25T04:30:21 | https://dev.to/dokotours/twitter-card-3b4k | webdev | We want to add a Twitter card to our [Nepali travel agency](https://www.dokotours.com/) page, but the code is not working and the card is not appearing. How can we solve this issue? We need help. | dokotours |

1,899,558 | Dive into the World of NoSQL Databases 🌐 | NoSQL Databases by Christof Strauch provides an in-depth overview of NoSQL databases, their management, and usage in modern database systems. | 27,801 | 2024-06-25T04:29:44 | https://getvm.io/tutorials/nosql-databases | getvm, programming, freetutorial, technicaltutorials |

## Unraveling the Mysteries of NoSQL

Hey there, fellow data enthusiasts! 🤓 If you're like me, you've probably heard a lot about the rise of NoSQL databases in recent years. But what exactly are they, and how can they revolutionize the way we manage and process data? Well, let me tell you about a fantastic resource ... | getvm |

1,899,557 | Configuration Over Invention and Vice Versa: A Guide for Future Creators | Hey Future Creators! We all have fond memories of playing with LEGO bricks, don’t we? I remember... | 0 | 2024-06-25T04:29:25 | https://dev.to/hbetabessi/configuration-over-invention-and-vice-versa-a-guide-for-future-creators-5640 | programming, productivity, learning, coding | Hey Future Creators!

We all have fond memories of playing with LEGO bricks, don’t we? I remember those moments when I could build anything my imagination dreamed up. You had the choice between using a spaceship kit with instructions or scattering random LEGO bricks to create your own unique design. Both ways were supe... | hbetabessi |

1,899,555 | The Pros and Cons of Flutter App Development: Is Flutter the Right Choice for Your Next Project | As a developer, I'm always on the lookout for cutting-edge technologies that can streamline my... | 0 | 2024-06-25T04:28:02 | https://dev.to/apptagsolution/the-pros-and-cons-of-flutter-app-development-is-flutter-the-right-choice-for-your-next-project-3je8 | pros, cons, flutter, development | As a developer, I'm always on the lookout for cutting-edge technologies that can streamline my workflow and enhance the quality of my applications. One such technology that has garnered significant attention in recent years is Flutter, an open-source UI software development kit created by Google. Flutter has quickly ga... | apptagsolution |

1,899,554 | This Guy Makes $3M+ per Year With 0 Employees | Have you heard of a guy who had launched 12 startups in 12 months? The guy who to this day, has built... | 0 | 2024-06-25T04:26:11 | https://dev.to/manojgohel/this-guy-makes-3m-per-year-with-0-employees-7h9 | employees, job, startup, webdev | Have you heard of a [guy who had launched](https://www.wired.com/2014/08/12-startups-in-12-months/) 12 startups in 12 months? The guy who to this day, has built over 70+ products while backpacking across the world.

Yes, I am talking about [**Peter Levels**](https://x.com/levelsio).

He makes all his money while living... | manojgohel |

1,899,544 | Unveiling the Power: Exploring Browser Automation Studio | In the realm of web automation, Browser Automation Studio (BAS) emerges as a user-friendly tool for... | 0 | 2024-06-25T04:13:22 | https://dev.to/epakconsultant/unveiling-the-power-exploring-browser-automation-studio-1add | In the realm of web automation, Browser Automation Studio (BAS) emerges as a user-friendly tool for automating repetitive tasks within your web browser. This article delves into the core functionalities of BAS, empowering you to understand its potential for boosting efficiency and streamlining workflows.

What is Brows... | epakconsultant | |

1,899,553 | Bridging the Gap: Effective Strategies for Converting PDF Files to HTML | In the digital world, PDFs are ubiquitous for document sharing. However, for web content, HTML reigns... | 0 | 2024-06-25T04:23:07 | https://dev.to/epakconsultant/bridging-the-gap-effective-strategies-for-converting-pdf-files-to-html-5fhc | pdf, html | In the digital world, PDFs are ubiquitous for document sharing. However, for web content, HTML reigns supreme. This article explores various methods for converting PDF files into HTML format, ensuring your content reaches a wider audience with optimal accessibility.

Understanding the Conversion Process:

Converting a... | epakconsultant |

1,899,552 | The Importance of Reliable Storage Rack Factories | The Significance of Dependable Storage Rack Factories: Keep Your Products Or Services Safe plus... | 0 | 2024-06-25T04:23:05 | https://dev.to/zunosn_owpn_295995e3f27ce/the-importance-of-reliable-storage-rack-factories-2k2d | storage, storagerack | The Significance of Dependable Storage Rack Factories: Keep Your Products Or Services Safe plus Organized

Introduction:

Looking for a safer and more innovative way to store your products and items? Look no further than dependable storage rack factories! These factories incorporate top-qual... | zunosn_owpn_295995e3f27ce |

1,899,551 | Maximizing Safety with Polished Stainless Steel Sheet | Maximizing Safety with Polished Stainless Steel Sheet: The Best Choice for Your Needs Looking for a... | 0 | 2024-06-25T04:21:12 | https://dev.to/sabutj_orkp_bc927ab394b76/maximizing-safety-with-polished-stainless-steel-sheet-n6m | design | Maximizing Safety with Polished Stainless Steel Sheet: The Best Choice for Your Needs

Looking for a way to increase safety and give your establishment or projects a polished, professional look? Polished stainless steel sheet may be the answer. This material offers several advantages, innovative feat... | sabutj_orkp_bc927ab394b76 |

1,899,546 | JavaScript Short Tricks Time | Hey there, JavaScript enthusiasts! Whether you're a seasoned developer or just starting out, this... | 0 | 2024-06-25T04:20:07 | https://dev.to/ayushh/javascript-short-tricks-time-feh | webdev, javascript, beginners, programming | > Hey there, JavaScript enthusiasts! Whether you're a seasoned developer or just starting out, this blog is dedicated to sharing quick tips and tricks that can make your JavaScript coding more efficient and enjoyable. Let's dive into some handy techniques and shortcuts that will help you write cleaner, more effective c... | ayushh |

1,899,545 | Orchestrating Your Workflow Symphony: Exploring n8n.io for Automation | In today's fast-paced digital world, streamlining repetitive tasks and fostering seamless data flow... | 0 | 2024-06-25T04:16:34 | https://dev.to/epakconsultant/orchestrating-your-workflow-symphony-exploring-n8nio-for-automation-27lm | nocode | In today's fast-paced digital world, streamlining repetitive tasks and fostering seamless data flow across applications is crucial. This is where workflow automation tools like n8n.io come into play. This article delves into the world of n8n.io, exploring its capabilities and highlighting its potential to transform the... | epakconsultant |

1,899,543 | Temporary Fence Installation: Tips and Tricks | Temporary Fence Installation: Tips and Tricks Temporary fences could be used for different purposes... | 0 | 2024-06-25T04:08:48 | https://dev.to/sabutj_orkp_bc927ab394b76/temporary-fence-installation-tips-and-tricks-kf9 | design | Temporary Fence Installation: Tips and Tricks

Temporary fences can be used for different purposes, such as securing construction sites, events, and festivals. They are a good solution if you want to protect your premises or keep people safe for a short period. Setting up a temporary anti intruder fen... | sabutj_orkp_bc927ab394b76 |

1,899,541 | What Scala does better than Haskell and vice versa | 📹 Hate reading articles? Check out the complementary video, which covers the same content. There... | 0 | 2024-06-25T04:04:00 | https://dev.to/zelenya/what-scala-does-better-than-haskell-and-vice-versa-al6 | functional, haskell, scala |

📹 Hate reading articles? Check out [the complementary video](https://youtu.be/K76vrOzU53o), which covers the same content.

---

There is this meme that *Haskell is better than Scala* or that *Scala is just a gateway drug to Haskell*. A long time ago, I used to believe this.

> _I saw how 4 different companies use Sc... | zelenya |

1,899,540 | Regex, the good bits. | There are two types of developers: those who fear regex because they don't understand it and those... | 0 | 2024-06-25T04:03:54 | https://dev.to/gewenyu99/regex-the-good-bits-4l2o | regex, tooling, cli, javascript | There are two types of developers: those who fear regex because they don't understand it and those who abuse regex to flex on their millennial teammates.

The purpose of this blog is to get you somewhere in between. Know the bits that will be super useful without being dangerous.

## Wait, regex can be dangerous?

Reg... | gewenyu99 |

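1,899,539 | Implementation Details of C++ Pointers to Class Members | C++ lets you define pointers to member functions. But since a member function may be virtual, how can a call through such a pointer reach the correct member function? This article takes a brief look. (All examples use g++,... | 0 | 2024-06-25T03:48:58 | https://dev.to/codemee/c-zhi-xiang-lei-bie-cheng-yuan-de-zhi-wei-qi-de-shi-zuo-xi-jie-19f7 | cpp | C++ lets you define pointers to member functions. But since a member function may be virtual, how can a call through a pointer to a member function reach the correct member function? This article takes a brief look. (All examples use g++, and only plain single inheritance is considered.)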
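
To make the question concrete, here is a minimal sketch of the behaviour being examined. The names `Base`, `Derived`, `BaseMemFn`, and `call` are hypothetical and chosen only for illustration; they are not taken from the article's own example. The sketch assumes plain single inheritance, as the article does.

```cpp
#include <string>

// Hypothetical classes for illustration only (not the article's example).
struct Base {
    virtual ~Base() = default;
    // Virtual: the function that runs depends on the object's dynamic type.
    virtual std::string who() const { return "Base::who"; }
    // Non-virtual: always resolves to Base::plain.
    std::string plain() const { return "Base::plain"; }
};

struct Derived : Base {
    std::string who() const override { return "Derived::who"; }
};

// Pointer to a const member function of Base that takes no arguments
// and returns std::string.
using BaseMemFn = std::string (Base::*)() const;

// Invoke a member function through a pointer to member.
// The .* operator binds the pointer to a concrete object before the call.
std::string call(const Base& obj, BaseMemFn pmf) {
    return (obj.*pmf)();
}
```

With these definitions, `call(d, &Base::who)` on a `Derived` object `d` yields `"Derived::who"`, even though the pointer was formed from `&Base::who`, while `call(d, &Base::plain)` yields `"Base::plain"`. The same pointer type therefore has to cover both the virtual and the non-virtual case, which is why its internal representation is worth examining.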

## Pointers to non-virtual functions

First, let's look at a simple [example](https://godbolt.org/z/oaPx469xT) that creates a pointer to a non-virtual function:

```cpp

#include <iostream>

using namespace std;

class A

{

public:

virtual void f_v1() { cout << "A::f_v1()" << endl; }

virtual... | codemee |

1,899,538 | Unleash Adventure with Night Camping and Rafting in Rishikesh: A Guide to Tours Club India Packages | Introduction Rishikesh, nestled in the foothills of the Himalayas and beside the holy Ganges River,... | 0 | 2024-06-25T03:42:54 | https://dev.to/toursclubindia/unleash-adventure-with-night-camping-and-rafting-in-rishikesh-a-guide-to-tours-club-india-packages-47nf | toursclubindia |

**Introduction**

Rishikesh, nestled in the foothills of the Himalayas and beside the holy Ganges River, is a prime destination for those seeking a blend of spirituality and adventure. One of the most exhilarating ways to experience this picturesque locale is through night camping in Rishikesh and indulging in a thril... | toursclubindia |

1,899,535 | Introduction to the Periodic Table of DevOps Tools | In the rapidly evolving landscape of DevOps, selecting the right tools can be daunting. The "Periodic... | 0 | 2024-06-25T03:33:43 | https://dev.to/iaadidev/introduction-to-the-periodic-table-of-devops-tools-55bn | devops, tutorial, beginners, tools |

In the rapidly evolving landscape of DevOps, selecting the right tools can be daunting. The "Periodic Table of DevOps Tools" serves as a comprehensive guide, categorizing and organizing tools into various functions, making it easier for practitioners to navigate the complex ecosystem. This blog will introduce you to ... | iaadidev |

1,899,174 | 🔧 Advanced JavaScript Performance Optimization: Techniques and Patterns | As JavaScript applications become more complex, optimizing performance becomes increasingly critical.... | 0 | 2024-06-25T03:30:00 | https://dev.to/parthchovatiya/advanced-javascript-performance-optimization-techniques-and-patterns-26g0 | javascript, webdev, programming | As JavaScript applications become more complex, optimizing performance becomes increasingly critical. This post dives into advanced techniques and patterns to elevate your JavaScript performance and ensure your applications run smoothly even under heavy loads.

## 🛠️ Memory Management

Efficient memory management is ke... | parthchovatiya |

1,899,534 | Optical Lens Coatings for Enhanced Vision | optical lens.png Optical Lens Coatings: Better Sight for Everyone Do you struggle with seeing things... | 0 | 2024-06-25T03:26:48 | https://dev.to/peter_cbutcherz_d18e8d3/optical-lens-coatings-for-enhanced-vision-1022 | design | optical lens.png

Optical Lens Coatings: Better Sight for Everyone

Do you struggle to see things clearly, or find yourself squinting to read small print? Optical lens coatings can help.

We are going to explore some great benefits of coatings for your glasses and explain ways to reap the benefits of this innovati... | peter_cbutcherz_d18e8d3 |

1,899,532 | Modern Metal Furniture for Stylish Living Spaces | Modern Metal Furniture for Stylish areas which are residing Metal Furniture or metal shelf the most... | 0 | 2024-06-25T03:24:24 | https://dev.to/shayne_wyldejnuy_692407b/modern-metal-furniture-for-stylish-living-spaces-5cii | furniture |

Modern Metal Furniture for Stylish Living Spaces

Metal furniture, such as metal shelving, is one of the most popular choices for those who love modern design. It's fashionable and trendy, and it blends well with any room decor. Contemporary steel furniture is created utilizing steel w... | shayne_wyldejnuy_692407b |

1,838,263 | Discovering lesser-known features of Tailwind CSS | Hey everyone, hope you're all doing great! Today, I want to share some hidden gems in Tailwind CSS... | 0 | 2024-06-25T03:08:04 | https://dev.to/junlow/discovering-lesser-known-features-of-tailwind-css-308c | webdev, tailwindcss, beginners, css | Hey everyone, hope you're all doing great! Today, I want to share some hidden gems in Tailwind CSS that can make your web development smoother and more fun. I've kept it simple and beginner-friendly, so let's jump right in!

.

Building awareness for developer tools and other B2B products for technical audiences is a notoriously hard marketing niche. One of the most effective strategies for devto... | jeffmorhous |

1,899,526 | Exploring the Vision of Shandong Beyond Petroleum Equipment Co., Ltd. | Finding the Imagine Shandong Past times Oil Gadgets Carbon monoxide gas Ltd Shandong Past times Oil... | 0 | 2024-06-25T03:13:50 | https://dev.to/peter_cbutcherz_d18e8d3/exploring-the-vision-of-shandong-beyond-petroleum-equipment-co-ltd-20e | design |

Exploring the Vision of Shandong Beyond Petroleum Equipment Co., Ltd.

Shandong Beyond Petroleum Equipment Co., Ltd. is a company that makes equipment that helps extract oil and gas from the ground. Their Crown Block equipment is actually real... | peter_cbutcherz_d18e8d3 |

1,899,525 | Diesel Generators in Mining Applications | Diesel Generators in Mining Applications – the vitality that is recharged want Diesel generators... | 0 | 2024-06-25T03:11:30 | https://dev.to/shayne_wyldejnuy_692407b/diesel-generators-in-mining-applications-4l1n | generator | Diesel Generators in Mining Applications – the vitality that is recharged want

Diesel generators are effective machines that supply the power needed to run equipment in mining applications. We are going to discuss the advantages, innovation, safety, use, and top quality of gener... | shayne_wyldejnuy_692407b |

1,899,524 | Supercharging Front-End Development with AWS Amplify 🚀 | Supercharging Front-End Development with AWS Amplify 🚀 Introduction In... | 0 | 2024-06-25T03:10:08 | https://dev.to/virajlakshitha/supercharging-front-end-development-with-aws-amplify-55fh |

# Supercharging Front-End Development with AWS Amplify 🚀

### Introduction

In today's fast-paced world of web and mobile application development, speed and agility are paramount. Developers are constantly seeking tools and platforms... | virajlakshitha | |

1,899,523 | Suntech Safety Equipment (Shanghai) Co., Ltd.: Setting the Benchmark in Safety | 323e5226cea60cde1e8d6127c97a606b2a58639de65a93b348c371dde0a0ca0d.jpg Title: Suntech Safety Equipment... | 0 | 2024-06-25T03:02:41 | https://dev.to/shayne_wyldejnuy_692407b/suntech-safety-equipment-shanghai-co-ltd-setting-the-benchmark-in-safety-5ai2 | protection | 323e5226cea60cde1e8d6127c97a606b2a58639de65a93b348c371dde0a0ca0d.jpg

Title: Suntech Safety Equipment (Shanghai) Co., Ltd.: Setting the Benchmark in Safety

Introduction

Suntech Safety Equipment (Shanghai) Co., Ltd. is a business dedicated to providing top-notch safety equipment for companies operating in dangerous envi... | shayne_wyldejnuy_692407b |

1,899,522 | RECOVER FROM FRAUDULENT TRADING PLATFORM WITH CYBERPUNK PROGRAMMERS | My name is Nancy Nova, a seasoned Midwife nurse hailing from the bustling streets of New York. With... | 0 | 2024-06-25T02:55:35 | https://dev.to/nancy_nova_4985eeda68d864/recover-from-fraudulent-trading-platform-with-cyberpunk-programmers-1cd9 | lostcrypto, anonymoushelp, hireahackeronline | My name is Nancy Nova, a seasoned Midwife nurse hailing from the bustling streets of New York. With 32 years of dedicated service to the noble profession of nursing and the proud mother of three children—two daughters and a son who has recently turned 18—I have weathered many storms, both in my personal and professiona... | nancy_nova_4985eeda68d864 |

1,899,521 | Pure front-end magic: creating a real-time hair and lip color converter | If you like this project, please give me a star on GitHub, it will give me more motivation to expand... | 0 | 2024-06-25T02:53:50 | https://dev.to/mamumu123/pure-front-end-magic-creating-a-real-time-hair-and-lip-color-converter-4j6c | javascript, react, opensource, frontend | >If you like this project, please give me a star on [GitHub](https://github.com/mamumu123/web-makeup), it will give me more motivation to expand project functionality.

>TODO:

> - Support changing hairstyles;

> - Display hairstyle and lip recognition results

> - Find models with higher accuracy and faster inference ... | mamumu123 |

1,899,520 | How to Rapidly Increase Organic Website Traffic in One Week? | Looking to quickly boost your website's organic traffic? While SEO is often seen as a long-term... | 0 | 2024-06-25T02:49:44 | https://dev.to/juddiy/how-to-rapidly-increase-organic-website-traffic-in-one-week-375f | seo, ai, learning | Looking to quickly boost your website's organic traffic? While SEO is often seen as a long-term strategy, there are effective methods that can significantly increase your site's visitors in a week or less. Here are some SEO techniques that yield immediate results, helping you enhance your website's natural traffic in t... | juddiy |

1,899,519 | Public APIs for Web Development Projects | When it comes to web development, APIs (Application Programming Interfaces) are indispensable tools.... | 0 | 2024-06-25T02:47:46 | https://dev.to/vyan/public-apis-for-web-development-projects-lhk | webdev, beginners, api, react | When it comes to web development, APIs (Application Programming Interfaces) are indispensable tools. They allow developers to interact with external services and data sources, enriching their applications with dynamic content and functionality. In this blog, we’ll explore some popular public APIs that you can use for v... | vyan |

1,899,517 | Transforming Lives with Sustainable Power: Kinto Power Electric Co., Ltd | Transforming Lives with Sustainable Power: Kinto Power Electric Co Ltd Benefits of Sustainable... | 0 | 2024-06-25T02:44:26 | https://dev.to/tusks_heinz_7a97cf3de5de3/transforming-lives-with-sustainable-power-kinto-power-electric-co-ltd-2nce | kintopowerelectric | Transforming Lives with Sustainable Power:

Kinto Power Electric Co Ltd

Benefits of Sustainable Energy:

Have you ever heard of sustainable power? It is a form of power that comes from sources that do not run out, such as sunlight, wind, or water.

Kinto Power Electric Co Ltd is a company that utilizes ca... | tusks_heinz_7a97cf3de5de3 |

1,899,516 | React: No Test Coverage BABEL Type Error | Hi Experts , I am facing below issues when I run the test coverage, I have tried all options to... | 0 | 2024-06-25T02:39:32 | https://dev.to/nani_moka/react-no-test-coverage-babel-type-error-1o5g | react | Hi Experts , I am facing below issues when I run the test coverage, I have tried all options to upgrade the Plugins no luck, for all the test suites I am getting the same error , I have verified all tests are good. any help is much appreciated

TypeError: [BABEL] /runner/_work/--- /node_modules/@babel/runtime/helpers... | nani_moka |

1,899,513 | The Benefits of GFCI Outlets in Wet Areas | Keep shops that are GFCI your Family own Safe As being a homeowner, it is vital to be aware of the... | 0 | 2024-06-25T02:28:29 | https://dev.to/tusks_heinz_7a97cf3de5de3/the-benefits-of-gfci-outlets-in-wet-areas-3ojc | gcfioutlet | Keep shops that are GFCI your Family own Safe

As a homeowner, it is vital to be aware of the potential dangers of electrical devices and cables in wet areas such as bathrooms, kitchens, laundry rooms, and outdoor areas. Wet areas are extremely hazardous because of the potential for elec... | tusks_heinz_7a97cf3de5de3 |

1,899,511 | Mastering the Art of Construction Sealants: A Comprehensive Guide | screenshot-1719005324070.png Mastering the Art of Construction Sealants: A Comprehensive... | 0 | 2024-06-25T02:20:55 | https://dev.to/kee_sagargvey_7d0b3fdc5c/mastering-the-art-of-construction-sealants-a-comprehensive-guide-46lo | sealant | screenshot-1719005324070.png

Mastering the Art of Construction Sealants: A Comprehensive Guide

Sealants are important in construction because they fill gaps, prevent leakage, and protect against moisture. This comprehensive guide will provide you with all the information you need to know about mastering the art of ... | kee_sagargvey_7d0b3fdc5c |

1,899,504 | Set dtype with dtype argument functions and get it in PyTorch | You can set dtype with the functions which have dtype arguments and get it with dtype and type() as... | 0 | 2024-06-25T02:15:40 | https://dev.to/hyperkai/set-dtype-with-dtype-argument-functions-and-get-it-in-pytorch-13h2 | pytorch, dtype, argument, function | You can set `dtype` with the functions which have `dtype` arguments and get it with [dtype](https://pytorch.org/docs/stable/tensor_attributes.html#torch-dtype) and [type()](https://pytorch.org/docs/stable/generated/torch.Tensor.type.html) as shown below:

*Memos:

- I selected some popular `dtype` argument functions suc... | hyperkai |

1,899,501 | How to Protect Your Car While Using a Water Pressure Washer | How to Protect Your Car While Using a Water Pressure Washer Are you tired of spending hours cleaning... | 0 | 2024-06-25T02:10:07 | https://dev.to/tusks_heinz_7a97cf3de5de3/how-to-protect-your-car-while-using-a-water-pressure-washer-3cn1 | carpressurewasher | How to Protect Your Car While Using a Water Pressure Washer

Are you tired of spending hours cleaning your car with a bucket and sponge? Do you want a faster and more effective method of cleaning your car? A water pressure washer is the answer – it is an innovation in the world of car cleaning that will save you time... | tusks_heinz_7a97cf3de5de3 |

1,899,500 | GPT-4: Revolutionizing AI and Its Applications | Introduction The realm of artificial intelligence (AI) has seen unprecedented growth... | 27,673 | 2024-06-25T02:08:27 | https://dev.to/rapidinnovation/gpt-4-revolutionizing-ai-and-its-applications-g79 | ## Introduction

The realm of artificial intelligence (AI) has seen unprecedented growth and innovation, fundamentally altering how we interact with technology. Among the most significant advancements in this field is the development of GPT-4, a state-of-the-art language model developed by OpenAI.

## What is GPT-4?

G... | rapidinnovation | |

1,899,499 | Sustainable Solutions: Microbial Biologics CRDMO Practices | photo_6255520691990412662_x.jpg Sustainable Solutions: Microbial Biologics CRDMO Practices for a... | 0 | 2024-06-25T02:02:13 | https://dev.to/kee_sagargvey_7d0b3fdc5c/sustainable-solutions-microbial-biologics-crdmo-practices-2a0a | microbial | photo_6255520691990412662_x.jpg

Sustainable Solutions: Microbial Biologics CRDMO Practices for a Better Future

We often think of renewable energy sources and reducing waste when we think about sustainability. But did you know that sustainable solutions also come in the form of microbial biologics CRDMO practices? We ... | kee_sagargvey_7d0b3fdc5c |

1,899,498 | Temporary Fences: Ensuring Compliance and Safety | screenshot-1717528364915.png Keep Your Site Safe with Temporary Fences Temporary fences are an... | 0 | 2024-06-25T02:00:21 | https://dev.to/paul_egebhardtm_5075201/temporary-fences-ensuring-compliance-and-safety-pdh | design |

Keep Your Site Safe with Temporary Fences

Temporary fences are an innovative solution for ensuring compliance and safety at your construction site. These fences offer a range of advantages over permanent solutions, including their portability and adaptability to changing needs. Whether ... | paul_egebhardtm_5075201 |

1,899,475 | Integration with Jenkins! | Hey Devs and Testers! 👋 Are you tired of manual builds and deployments? Want to supercharge your... | 0 | 2024-06-25T01:09:49 | https://dev.to/gadekar_sachin/integration-with-jenkins-5288 | cicd, jenkins, automation, buildinpublic |

Hey Devs and Testers! 👋

Are you tired of manual builds and deployments? Want to supercharge your CI/CD pipeline? Look no further! Introducing Jenkins, the ultimate open-source automation server that will revolutionize your development workflow. 🌟

### What is Jenkins? 🤔

Jenkins is a powerful tool that helps autom... | gadekar_sachin |

1,891,817 | Comparing 13 LLM Providers API Performance with Node.js: Latency and Response Times Across Models | TL;DR: This article analyzes the performance of various large language model (LLM) APIs, including... | 0 | 2024-06-25T01:57:19 | https://dev.to/samestrin/comparing-13-llm-providers-api-performance-with-nodejs-latency-and-response-times-across-models-2ka4 | llm, ai, node, api | TL;DR: This article analyzes the performance of various large language model (LLM) APIs, including OpenAI, Anthropic, Cloudflare AI, Google Gemini, Groq, Hugging Face, and more. I tested small and large models from each provider with a simple prompt and limited output, sharing key findings and detailed response time an... | samestrin |

1,899,497 | Automatizing Git Branches Cleanup: Streamlining Your Workflow | Introduction Managing Git branches efficiently is essential for maintaining a clean and... | 0 | 2024-06-25T01:54:02 | https://dev.to/fernandomullerjr/automatizing-git-branches-cleanup-streamlining-your-workflow-1584 | git, automation | ## Introduction

Managing Git branches efficiently is essential for maintaining a clean and organized codebase. As your project grows, so does the number of branches, making it crucial to automate the cleanup process. In this article, we’ll explore best practices and a Python script to automate Git branch cleanup.
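As a rough illustration of what such automation can look like, here is a minimal sketch. It is not the author's script: the function names, the `PROTECTED` set, and the default base branch `main` are all assumptions made for this example. It relies only on the standard `git branch --merged` and `git branch -d` commands.

```python
import subprocess

# Assumed names of long-lived branches that must never be deleted; adjust per repository.
PROTECTED = {"main", "master", "develop"}


def parse_merged_branches(git_output, protected=PROTECTED):
    """Return branch names from `git branch --merged` output that are safe to delete."""
    branches = []
    for line in git_output.splitlines():
        name = line.strip()
        # Skip blank lines, the current branch ("* name"),
        # and branches checked out in another worktree ("+ name").
        if not name or name.startswith(("*", "+")):
            continue
        if name in protected:
            continue
        branches.append(name)
    return branches


def cleanup_merged_branches(base="main", dry_run=True):
    """List (or delete, when dry_run is False) local branches already merged into base."""
    result = subprocess.run(
        ["git", "branch", "--merged", base],
        capture_output=True, text=True, check=True,
    )
    for branch in parse_merged_branches(result.stdout):
        if dry_run:
            print(f"would delete {branch}")
        else:
            # -d refuses to delete branches that are not fully merged, which acts as a safety net.
            subprocess.run(["git", "branch", "-d", branch], check=True)
```

Calling `cleanup_merged_branches()` inside a repository only prints the candidates; passing `dry_run=False` performs the deletion. Keeping the parsing separate from the Git calls makes the filtering logic easy to check without touching a real repository.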

Best... | fernandomullerjr |

1,899,495 | AWS IoT projects | When working with AWS IoT for Arduino and other robotic projects, you have several libraries and SDKs... | 0 | 2024-06-25T01:52:02 | https://dev.to/sherlockyadav/aws-iot-projects-4e1f | When working with AWS IoT for Arduino and other robotic projects, you have several libraries and SDKs (Software Development Kits) available to facilitate communication and interaction with AWS IoT Core and other AWS services. Here are some of the commonly used libraries and SDKs:

AWS IoT Device SDK for Arduino: This S... | sherlockyadav | |

1,899,493 | Behind the Scenes of AI: How Language Models Like ChatGPT Work | If you’ve ever wondered how an AI like ChatGPT can understand and generate text that feels almost... | 0 | 2024-06-25T01:48:46 | https://dev.to/takitajwar17/behind-the-scenes-of-ai-how-language-models-like-chatgpt-work-3eb2 | machinelearning, ai, nlp, chatgpt | If you’ve ever wondered how an AI like ChatGPT can understand and generate text that feels almost human, today you’re in for a treat! Today, I want to show you what goes on under the hood, explained in a way that’s easy to grasp.

**Collecting and Prepping Data**

At its core, ChatGPT is just the finest engineering of p... | takitajwar17 |

1,899,490 | Tools to use alongside chatgpt for drawing AWS diagrams | Creating AWS diagrams alongside ChatGPT can be efficiently done using various tools that offer... | 0 | 2024-06-25T01:44:11 | https://dev.to/sherlockyadav/tools-to-use-alongside-chatgpt-for-drawing-aws-diagrams-5bmn | Creating AWS diagrams alongside ChatGPT can be efficiently done using various tools that offer diagramming capabilities. Here are some popular tools that you can use in conjunction with ChatGPT to draw AWS diagrams:

Lucidchart: Lucidchart is a web-based diagramming tool that offers a wide range of templates, including... | sherlockyadav | |

1,899,488 | How to communicate your brand's sustainable skincare packaging strategy to consumers | Skincare Packaging for the Earth: A Guide to Communicating Your Sustainable Brand Introduction: It... | 0 | 2024-06-25T01:42:45 | https://dev.to/deborah_blackeyr_032b8a/how-to-communicate-your-brands-sustainable-skincare-packaging-strategy-to-consumers-3hlk | sustainablecostmeticpackaging | Skincare Packaging for the Earth: A Guide to Communicating Your Sustainable Brand

Introduction:

If you are a skincare brand that cares about preserving the planet, it is also crucial to inform customers about your sustainability strategy, especially where packaging is concerned. This article will enab... | deborah_blackeyr_032b8a |

1,899,486 | dateformat | date_obj.strftime('%Y-%m-%d %H:%M:%S') Enter fullscreen mode Exit fullscreen mode ... | 0 | 2024-06-25T01:31:56 | https://dev.to/sunj/dateformat-1907 | ```

date_obj.strftime('%Y-%m-%d %H:%M:%S')

```

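The specifiers below follow MySQL `DATE_FORMAT` conventions. In Python's `strftime`, `%M` means minutes, `%S` seconds, `%B` the full month name, and `%I` the 12-hour clock hour.

%Y year (4 digits)

%y year (last 2 digits)

%M month name (January ~ December)

%m month number (00 ~ 12)

%d day (00 ~ 31)

%H hour, 24-hour clock (00 ~ 23)

%h hour, 12-hour clock (01 ~ 12)

%i minutes (00 ~ 59)

%s seconds (00 ~ 59)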
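In Python itself, `strptime` and `strftime` use `%M` for minutes and `%S` for seconds. A minimal sketch using only the standard library (the variable names `parsed`, `formatted`, and `readable` are illustrative, not from the original post):

```python
from datetime import datetime

# Parse an ISO-like timestamp string into a datetime object.
parsed = datetime.strptime("2017-01-12T14:12", "%Y-%m-%dT%H:%M")

# Format it back out; in Python, %M is minutes and %S is seconds.
formatted = parsed.strftime("%Y-%m-%d %H:%M:%S")
print(formatted)  # 2017-01-12 14:12:00

# %B gives the full month name and %I the 12-hour clock hour;
# the exact text of %B and %p depends on the active locale.
readable = parsed.strftime("%B %d, %Y %I:%M %p")
print(readable)
```

The input string carries no seconds, so the seconds field defaults to zero when the value is formatted again.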

```

2017-01-12T14:12

datetime.strptime(DateTime, "%Y-%m-%dT%H:%M")

dateTime_obj.strft... | sunj | |

1,899,485 | Building a WebRTC Video Streaming App: A Step-by-Step Guide | Building a WebRTC Video Streaming App: A Step-by-Step Guide Project... | 0 | 2024-06-25T01:31:20 | https://dev.to/1saptarshi/building-a-webrtc-video-streaming-app-a-step-by-step-guide-234a | webdev, javascript, webrtc, projectbenatar | ## Building a WebRTC Video Streaming App: A Step-by-Step Guide

Project link: [GitHub_@1saptarshi](https://1saptarshi.github.io/webrtc-video-streaming/)

### Introduction

Real-time communication has become an integral part of web applications. Whether it’s for video conferencing, online gaming, or telehealth, the need... | 1saptarshi |

1,899,484 | How to create a professional resume using your voice and AI | You can now use your voice and AI to create a professional resume. Creating a resume is... | 0 | 2024-06-25T01:30:39 | https://dev.to/balt1794/how-to-create-a-professional-resume-using-your-voice-and-ai-594j | chatgpt, ai, resume, programming | {% embed https://youtu.be/aHWdUwkysdI %}

You can now use your voice and AI to create a professional resume. Creating a resume is time-consuming but with the advent of AI, it's getting easier and easier.

1. Go to https://resumeboostai.com/

2. Click on Create a resume.

3. Once you are on the resume maker page, you can ... | balt1794 |

1,899,483 | How to create a professional resume using your voice and AI | You can now use your voice and AI to create a professional resume. Creating a resume is... | 0 | 2024-06-25T01:30:39 | https://dev.to/balt1794/how-to-create-a-professional-resume-using-your-voice-and-ai-9dh | chatgpt, ai, resume, programming | {% embed https://youtu.be/aHWdUwkysdI %}

You can now use your voice and AI to create a professional resume. Creating a resume is time-consuming but with the advent of AI, it's getting easier and easier.

1. Go to https://resumeboostai.com/

2. Click on Create a resume.

3. Once you are on the resume maker page, you can ... | balt1794 |

1,899,481 | Avalanche Photo Diodes for Medical Imaging Applications | screenshot-1708567083343.png What are Avalanche Photo Diodes (APDs)? APDs are photodetectors that... | 0 | 2024-06-25T01:25:50 | https://dev.to/paul_egebhardtm_5075201/avalanche-photo-diodes-for-medical-imaging-applications-2e12 | design | screenshot-1708567083343.png

What are Avalanche Photo Diodes (APDs)?

APDs are photodetectors that allow for high gain of electrical signals generated by incident light. Essentially, APDs turn light into electrical signals that can be read, processed, and displayed, which is why they are essential in various medical ... | paul_egebhardtm_5075201 |

1,899,480 | Can I monetize my blog ???? | How can I monetize my blog posts? If you have any solutions, please provide me with guidance. | 0 | 2024-06-25T01:24:34 | https://dev.to/raajaryan/can-i-monetize-my-blog--30on | beginners, tutorial, productivity, discuss | How can I monetize my blog posts? If you have any solutions, please provide me with guidance. | raajaryan |

1,899,479 | The importance of eco-design in sustainable skincare packaging | Why Eco-Design Should Matter to you Have you been tired and ill of seeing so waste like considerably... | 0 | 2024-06-25T01:24:07 | https://dev.to/deborah_blackeyr_032b8a/the-importance-of-eco-design-in-sustainable-skincare-packaging-2i6m | ecofriendlycosmeticpackaging | Why Eco-Design Should Matter to you

Are you sick and tired of seeing so much waste pile up in our world? Have you ever stopped to think about the effect of our actions on the environment? If you have, you are probably aware of the importance of sust... | deborah_blackeyr_032b8a |

1,899,387 | Animation | JavaFX provides the Animation class with the core functionality for all animations. Suppose you want... | 0 | 2024-06-24T22:20:30 | https://dev.to/paulike/animation-56l4 | java, programming, learning, beginners | JavaFX provides the **Animation** class with the core functionality for all animations.

Suppose you want to write a program that animates a rising flag, as shown in Figure below. How do you accomplish the task? There are several ways to program this. An effective one is to use the subclasses of the JavaFX **Animation*... | paulike |

1,899,395 | Como criar um Load Balancer no Microsoft Azure | Load Balancer é um serviço que distribui dinamicamente o tráfego de entrada entre múltiplos... | 0 | 2024-06-25T01:05:38 | https://dev.to/laisrz/como-criar-um-load-balancer-no-microsoft-azure-p2o | azure, loadbalancer, cloudskills | A load balancer is a service that dynamically distributes incoming traffic across multiple servers, resources, or instances. The main goal of a load balancer is to ensure high availability and optimized performance, and to avoid overloading any single resource. In this article, we will create and configure a load balancer... | laisrz |

1,899,473 | Add Audit Log Streaming to Auth0 authentication in < 2 mins | In a world where hackers are trying to brute force user accounts (23andMe breach 2023), it is vital... | 0 | 2024-06-25T01:00:54 | https://pangea.cloud/blog/add-audit-log-streaming-to-auth0-in-2-mins/ | auth0, webdev, javascript, tutorial | In a world where hackers are trying to brute force user accounts ([23andMe breach 2023](https://techcrunch.com/2023/12/04/23andme-confirms-hackers-stole-ancestry-data-on-6-9-million-users/)), it is vital for developers to maintain a long-lasting and tamper-proof audit log of all authentication events to keep their apps... | snpranav |

1,899,472 | ONTO THE OTHER | Hey everyone, I got super busy with a lot of stuff, but I am back! So, where were we? Ah yes, check... | 0 | 2024-06-25T00:56:01 | https://dev.to/kevinpalma21/onto-the-other-30af | productivity, design, tutorial, learning | Hey everyone,

I got super busy with a lot of stuff, but I am back! So, where were we? Ah yes, check out the progress I made in the past two hours. I wanted to update you all as soon as possible (image1).

(image1)

... | kevinpalma21 |

1,899,471 | Ultimate Neovim Setup Guide: lazy.nvim Plugin Manager | Hello everyone! In this article I will show you how to configure the neovim editor from scratch with... | 0 | 2024-06-25T00:54:33 | https://dev.to/slydragonn/ultimate-neovim-setup-guide-lazynvim-plugin-manager-23b7 | tutorial, neovim, productivity, learning | Hello everyone! In this article I will show you how to configure the Neovim editor from scratch with lazy.nvim.

## 💤 Lazy.nvim

A modern plugin manager for Neovim

* Repo: [folke/lazy.nvim](https://github.com/folke/lazy.nvim)

* Outstanding features:

* 📦 Manage all your Neovim plugins with a powerful UI

* 🚀 Fast st... | slydragonn |

1,899,470 | Integrating multiple databases in a todos app (Personal project) | Project Overview Project Name: cit_dr_todos_app Duration: 12 hrs 39 mins Team Members:... | 0 | 2024-06-25T00:35:12 | https://dev.to/caresle/integrating-multiple-databases-in-a-todos-app-personal-project-6kh | dart, cleancode, flutter | **Project Overview**

- **Project Name:** cit_dr_todos_app

- **Duration:** 12 hrs 39 mins

- **Team Members:** Solo

- **Objective:** Learn how to integrate multiple databases in the same project

## **Goals and Objectives**

- **Original Goals:** Learn how to integrate multiple databases in the same project using clean ... | caresle |

1,899,468 | Developing High-Performance Web Applications with Go | Introduction With the ever-increasing demand for faster and more efficient web... | 0 | 2024-06-25T00:32:21 | https://dev.to/kartikmehta8/developing-high-performance-web-applications-with-go-2264 | webdev, javascript, beginners, programming | ## Introduction

With the ever-increasing demand for faster and more efficient web applications, developers are constantly seeking new technologies to meet these needs. One such technology that has gained significant popularity in recent years is Go. Developed by Google, Go is an open-source programming language that o... | kartikmehta8 |

1,897,960 | 🚀 Emmet in VSCode ✨ | "The odds are never impossible.. Merely unfavorable Emmet is an integrated tool in Visual Studio... | 0 | 2024-06-25T00:32:15 | https://dev.to/kibumpng/emmet-in-vscode-1l99 | vscode, webdev, productivity, learning | > "The odds are never impossible.. Merely unfavorable

Emmet is an integrated tool in Visual Studio Code that helps us write HTML and CSS code more quickly. It allows us to expand simple abbreviations into complete code blocks, saving time and reducing repetitive typing. ⏱️

## Key Features of Emmet 🛠️

- **Abbreviat... | kibumpng |

1,899,442 | 🖋️ Pen Testing SQL Injections | In today’s digital age, SQL injections remain a prevalent threat to database security. As businesses... | 0 | 2024-06-25T00:28:01 | https://devtoys.io/2024/06/24/pen-testing-sql-injections/ | cybersecurity, secops, devtoys, webdev | ---

canonical_url: https://devtoys.io/2024/06/24/pen-testing-sql-injections/

---

In today’s digital age, SQL injections remain a prevalent threat to database security. As businesses increasingly rely on data-driven applications, understanding how to protect against SQL injections is crucial. This article will guide yo... | 3a5abi |

1,899,466 | Set device with device argument functions and get it in PyTorch | You can set device with the functions which have device arguments and get it with device as shown... | 0 | 2024-06-25T00:27:46 | https://dev.to/hyperkai/set-device-with-device-argument-functions-and-get-it-in-pytorch-1o2p | pytorch, device, argument, function | You can set `device` with the functions which have `device` arguments and get it with [device](https://pytorch.org/docs/stable/tensor_attributes.html#torch-device) as shown below:

*Memos:

- I selected some popular `device` argument functions such as [tensor()](https://pytorch.org/docs/stable/generated/torch.tensor.html... | hyperkai |

1,899,465 | AWS services for the AWS Certified Solutions Architect | This list ensures you are familiar with all relevant services: Must-Have... | 0 | 2024-06-25T00:27:41 | https://dev.to/zeshancodes/aws-services-for-the-aws-certified-solutions-architect-34h3 | aws, cloud, amazon, architecture | This list ensures you are familiar with all relevant services:

### Must-Have Services

**Compute:**

1. **Amazon EC2 (Elastic Compute Cloud):** Scalable virtual servers in the cloud.

2. **AWS Lambda:** Serverless co... | zeshancodes |

1,899,412 | Comandos Linux para Redes: Um Guia Completo para DevOps | No vasto e dinâmico mundo do DevOps, onde cada segundo conta e a precisão é vital, os comandos Linux... | 0 | 2024-06-25T00:19:42 | https://dev.to/fernandomullerjr/comandos-linux-para-redes-um-guia-completo-para-devops-2g9j | linux, devops |

No vasto e dinâmico mundo do DevOps, onde cada segundo conta e a precisão é vital, os comandos Linux para redes são como ferramentas mágicas, transformando complexidade em simplicidade. Imagine-se como um maestro,... | fernandomullerjr |

1,899,411 | PHP HyperF + MariaDB -> Async / Parallel | PHP: 8.3.7 PHP HyperF: 3.1.23 MariaDB: 11.3.2 HyperF - Project System test for... | 0 | 2024-06-25T00:16:28 | https://dev.to/thiagoeti/php-hyperf-mariadb-async-parallel-4aj5 | php, hyperf, mariadb, async | - PHP: 8.3.7

- PHP HyperF: 3.1.23

- MariaDB: 11.3.2

## HyperF - Project

System test for executing database queries in parallel or asynchronously.

#### Create - Project

```console

composer create-project hyperf/hyperf-skeleton "project"

```

#### Install - Watcher

```console

composer require hyperf/watcher --dev

``... | thiagoeti |

1,899,410 | [Game of Purpose] Day 37 | Today I took a day off and played Civilization VI all evening :D One reason might be a fact that I... | 27,434 | 2024-06-25T00:05:19 | https://dev.to/humberd/game-of-purpose-day-37-49cj | gamedev | Today I took a day off and played Civilization VI all evening :D

One reason might be the fact that I was overwhelmed with everything I saw in the sample projects and I needed some time for my head to cool down. | humberd |

1,899,409 | OneTask | OneTask is your ultimate AI-powered to-do app designed to streamline your productivity effortlessly.... | 0 | 2024-06-25T00:00:28 | https://dev.to/onetask/onetask-12eb | OneTask is your ultimate AI-powered to-do app designed to streamline your productivity effortlessly. With OneTask, managing your tasks becomes intuitive and efficient, as it intelligently prioritizes your to-do list so you can focus on what matters most. Say goodbye to the overwhelm of endless tasks and let OneTask opt... | onetask | |

1,900,149 | Open Sourcing my Design System | Open Sourcing My Design System I'm open sourcing the Figma file I use to design and create... | 0 | 2024-06-25T17:07:53 | https://mikebifulco.com/newsletter/open-sourcing-my-design-system | opensource, design, ux, frontend | ---

title: Open Sourcing my Design System

published: true

date: 2024-06-25 00:00:00 UTC

tags: opensource, design, ux, frontend

canonical_url: https://mikebifulco.com/newsletter/open-sourcing-my-design-system

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/s2ta9u5fbwsd6jow1iae.png

---

## Open Sour... | irreverentmike |

1,899,408 | Elysia: A Revolução dos Servidores Web com TypeScript | A tecnologia evolui constantemente, trazendo novas ferramentas que simplificam o desenvolvimento e... | 0 | 2024-06-24T23:50:43 | https://dev.to/iamthiago/elysia-a-revolucao-dos-servidores-web-com-typescript-3kpm | webdev, node, typescript, discuss | Technology is constantly evolving, bringing new tools that simplify development and improve performance. Among the latest innovations in web development is Elysia, a web server framework that is gaining ground thanks to its efficiency and simplicity, especially f... | iamthiago |

1,899,406 | HIRE EXPERT TO RECOVER MONEY BACK.. | The notorious "Bitcoin Bandit" had eluded authorities for years, amassing a massive digital fortune... | 0 | 2024-06-24T23:48:10 | https://dev.to/jennifer_pierce_1277621cc/hire-expert-to-recover-money-back-4kek | cryptocurrency, bitcoin, recover, money | The notorious "Bitcoin Bandit" had eluded authorities for years, amassing a massive digital fortune by hacking into cryptocurrency exchanges and digital wallets. But the tides have finally turned, as an elite team of cybersecurity experts and blockchain analysts, known as the "Cyber Genie Hack Pro" has managed to track... | jennifer_pierce_1277621cc |
