id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,899,882 | Top Free APIs For Developers in 2024 | Top 10 Free APIs for Developers in 2024: Part 1 What is an API? An API... | 0 | 2024-06-25T10:05:04 | https://dev.to/saif05/top-10-free-apis-for-developers-in-2024-3lhn | javascript, api, opensource, beginners | ## Top 10 Free APIs for Developers in 2024: Part 1

### What is an API?

An API (Application Programming Interface) is a set of protocols, tools, and standards that allows different software applications to communicate with each other. It defines how software components should interact, enabling developers to integrate ... | saif05 |

1,899,881 | 2 line sad Poetry in Urdu | Viewers, this collection 1. Sad Lines in Urdu is being served to you guys, it is specially made on... | 0 | 2024-06-25T10:04:26 | https://dev.to/athar_110_4fcc71028929d97/2-line-sad-poetry-in-urdu-4nlo | Viewers, this collection

[1. Sad Lines in Urdu](https://theurdupoetry.pk/sad-poetry-in-urdu/)

is being served to you guys, it is specially made on Sad Poetry in Urdu that will be enough to change your mood. Hope you guys will like this 2 Lines Deep Poetry collection and you will definitely share it with your friends... | athar_110_4fcc71028929d97 | |

1,899,880 | Protect Your Walls with Rubber Wall Guards: The Ultimate Guide | In commercial and industrial settings, maintaining the condition of walls can be a significant... | 0 | 2024-06-25T10:03:01 | https://dev.to/prosafetystore/protect-your-walls-with-rubber-wall-guards-the-ultimate-guide-1l61 | In commercial and industrial settings, maintaining the condition of walls can be a significant challenge due to constant traffic and potential impact from equipment and vehicles. One of the most effective solutions to this problem is the use of [rubber wall guards](https://prosafetystore.com/wall-guards-and-crash-rails... | prosafetystore | |

1,899,879 | Lado Okhotnikov Launched the Uniteverse Program Within the Meta Force Metaverse | Meta Force is a promising project created by a team of techies and cryptocurrency fans. These guys... | 0 | 2024-06-25T10:01:22 | https://dev.to/ali_nasir_5bae2a418b9ed96/lado-okhotnikov-launched-the-uniteverse-program-within-the-meta-force-metaverse-2lfb | Meta Force is a promising project created by a team of techies and cryptocurrency fans. These guys decided to try their hand at GameFi in 2021. And within a year, the platform united more than a million participants from all over the world. The main principles are transparency, distribution, variety of gameplay and max... | ali_nasir_5bae2a418b9ed96 | |

1,899,878 | Leveraging Incremental Static Regeneration in Next.js for Dynamic Data Updates | Explore how Incremental Static Regeneration (ISR) can be implemented in Next.js applications to frequently update static content without rebuilding the entire site. | 0 | 2024-06-25T10:00:35 | https://dev.to/itselftools/leveraging-incremental-static-regeneration-in-nextjs-for-dynamic-data-updates-1dp8 | nextjs, javascript, webdev, staticsitegeneration |

As developers at [itselftools.com](https://itselftools.com), having built over 30 projects using Next.js and Firebase, we've explored various features of Next.js that significantly better our web development process. One such compelling feature is Incremental Static Regeneration (ISR). This article dives into how ISR ... | antoineit |

1,893,060 | 5 Cheap Ways to Host Redis | Hetzner, Sliplane, Render, Hashmaps (?!?!), Upstash - Picking a hosting provider for your Redis... | 0 | 2024-06-25T09:59:32 | https://dev.to/code42cate/5-cheap-ways-to-host-redis-2njm | docker, devops, cloud, beginners | Hetzner, Sliplane, Render, Hashmaps (?!?!), Upstash - Picking a hosting provider for your Redis database can be challenging, especially with all the awesome options available. Analysis Paralysis is real 😵💫. Who wins the race for the cheapest redis provider?

at eMexo Technologies – recognized as the [Best Flutter Training Institute in Bangalore](url). Our [Flutter Course in Electronic City](url) Bangalore covers fundamental and advanced topics, ensuring a tho... | ishaneemexo |

1,899,874 | Hyperledger Besu: Unveiling the Blockchain Engine for Enterprise | The secure and efficient world of blockchain demands specialized solutions. Businesses increasingly... | 0 | 2024-06-25T09:54:21 | https://dev.to/donnajohnson88/hyperledger-besu-unveiling-the-blockchain-engine-for-enterprise-27mc | hyperledger, blockchain, hyperledgerbesu, enterprise | The secure and efficient world of blockchain demands specialized solutions. Businesses increasingly recognize the limitations of generic platforms and seek custom-built solutions that cater to their specific requirements. Enter the world of Hyperledger Besu, a wider concept of [Hyperledger development serv... | donnajohnson88 |

1,899,873 | Semaphore in Operating System | In the world of operating systems, managing the execution of multiple processes is crucial for... | 0 | 2024-06-25T09:54:11 | https://dev.to/pushpendra_sharma_f1d2cbe/semaphore-in-operating-system-195j | In the world of operating systems, managing the execution of multiple processes is crucial for ensuring that resources are used efficiently and that processes run smoothly. One of the key concepts used to achieve this is the semaphore. Let's explore what a semaphore is, how it works, and why it's important, using simpl... | pushpendra_sharma_f1d2cbe | |

1,899,872 | What is Darshan Hiranandani Wife & Family Connection? | Neha Jhalani Hiranandani, an accomplished author, is Darshan Hiranandani wife, a renowned Indian... | 0 | 2024-06-25T09:53:39 | https://dev.to/surajkumarsk23/what-is-darshan-hiranandani-wife-family-connection-5eh0 |

Neha Jhalani Hiranandani, an accomplished author, is the wife of **Darshan Hiranandani**, a renowned Indian businessman. Let’s delve into some common questions about her:

Neha Jhalani is an author known for her inspirational books, including “Girl Power.” These books feature stories of accomplished women from various fiel... | surajkumarsk23 | |

1,899,871 | Who is Darshan Hiranandani Wife | Meet Darshan Hiranandani Wife Darshan Hiranandani Wife name is Neha Jhalani Hiranandani,... | 0 | 2024-06-25T09:51:44 | https://dev.to/surajkumarsk23/who-is-darshan-hiranandani-wife-543b | ## Meet Darshan Hiranandani Wife

Darshan Hiranandani's wife is Neha Jhalani Hiranandani, an accomplished author. She is the daughter of Delhi-based businessman Pradeep Jhalani and his wife, Shabnam Jhalani. Darshan and Neha got married on March 18, 2009. Together, they have two children. N... | surajkumarsk23 | |

1,899,869 | Why Should I Choose Tron for My Token Development? | *Introduction: * Creating digital tokens has changed the way we use technology, providing new... | 0 | 2024-06-25T09:50:10 | https://dev.to/elena_marie_dad5c9d5d5706/why-should-i-choose-tron-for-my-token-development-4ig | cryptotoken, tokendevelopment | **Introduction:**

Creating digital tokens has changed the way we use technology, providing new chances for businesses and developers. If you're thinking about getting into blockchain and making tokens, you've likely seen many different platforms, each with its pros and cons. One platform that stands out is Tron. But ... | elena_marie_dad5c9d5d5706 |

1,899,868 | Developing and Using the jrest2 Library for HTTP/HTTPS Protocols | Introduction Hello everyone! Today, I want to introduce you to my pet project - the jrest2... | 0 | 2024-06-25T09:49:50 | https://dev.to/itzstonlex/developing-and-using-the-jrest2-library-for-httphttps-protocols-b18 | java, opensource, api, rest | ## Introduction

Hello everyone! Today, I want to introduce you to my pet project - the jrest2 library, which provides a complete implementation of HTTP/HTTPS protocols from scratch. This library is already available on GitHub and published on jitpack.io with released versions. In this post, I will talk about the featur... | itzstonlex |

1,899,867 | Salesforce Field Service for Business Success: Detailed Scenarios and Benefits | In today's fast-paced business environment, efficient field service management is crucial for... | 0 | 2024-06-25T09:49:22 | https://dev.to/shreya123/salesforce-field-service-for-business-success-detailed-scenarios-and-benefits-3dh9 | salesforce, salesforceconsulting | In today's fast-paced business environment, efficient field service management is crucial for maintaining customer satisfaction and operational excellence. [Salesforce Field Service](https://www.softwebsolutions.com/resources/salesforce-field-services-use-cases.html) (SFS) offers a comprehensive solution that enhances ... | shreya123 |

1,899,866 | Home - Home Decor | Modular Furniture India | Stainless Steel Home Wardrobes | Steel and StainlessSteel modular kitchen, wardrobe, Bookshelves, Crockery Cupboards in Bangalore,... | 0 | 2024-06-25T09:48:49 | https://dev.to/uday_p_47df8c72f4976c7343/home-home-decor-modular-furniture-india-stainless-steel-home-wardrobes-e4j | interior, design, bethliving | Steel and StainlessSteel modular kitchen, wardrobe, Bookshelves, Crockery Cupboards in Bangalore, Hyderabad, Chennai, Kochi, and 30+ other locations. | uday_p_47df8c72f4976c7343 |

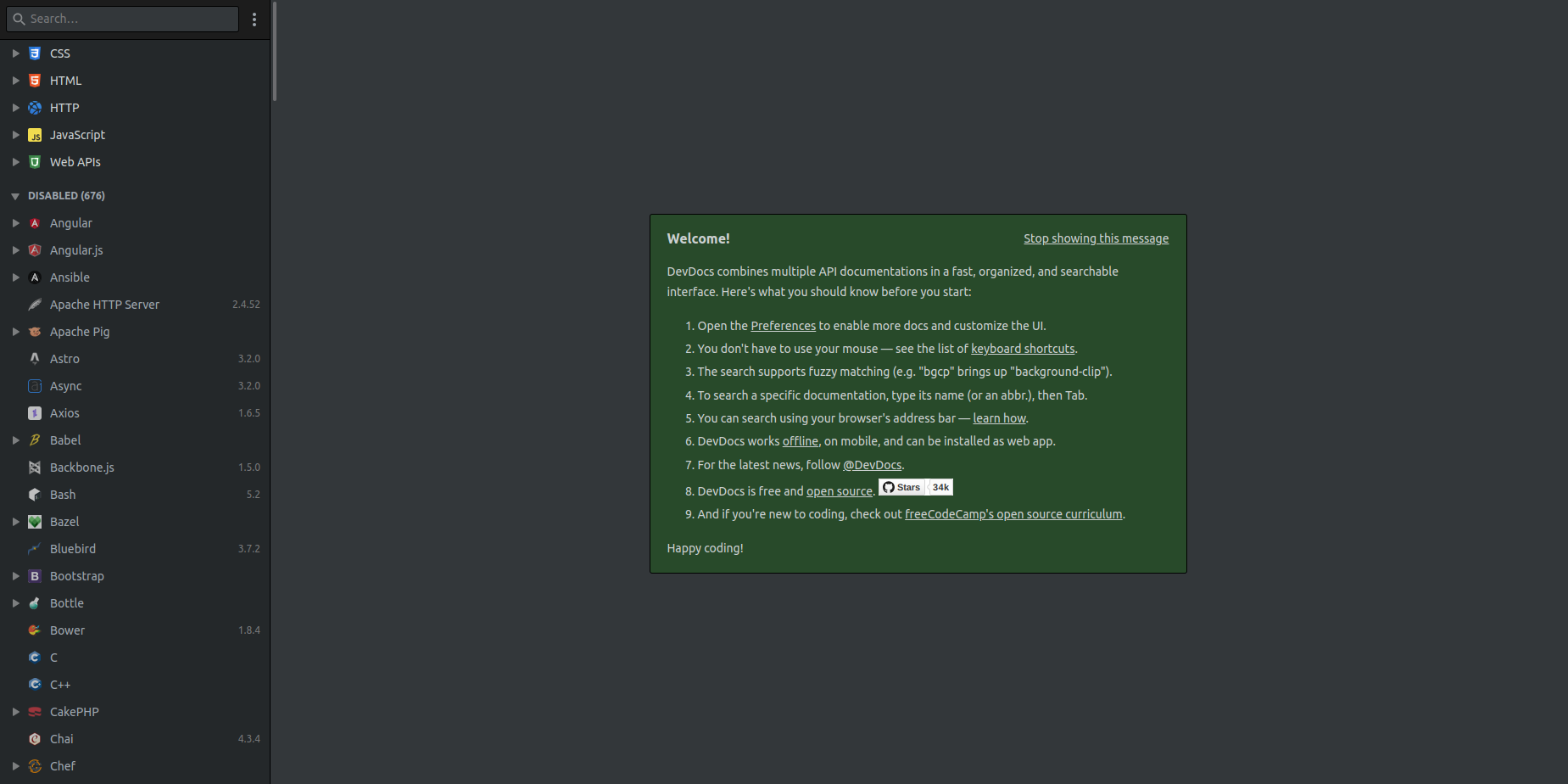

1,899,825 | HIDDEN WEBSITES FOR PROGRAMMERS | devdocs.io DevDocs brings together numerous API documentation in a single, searchable interface. You... | 0 | 2024-06-25T09:12:49 | https://dev.to/moinulislam7/hidden-websites-forprogrammers-2a3n | **[devdocs.io](https://devdocs.io/)**

DevDocs brings together numerous API documentation in a single, searchable interface. You will find docs related to various programming languages and technologies in one place.

... | moinulislam7 | |

1,899,865 | Suntech: Fostering a Culture of Safety Through Innovation | 323e5226cea60cde1e8d6127c97a606b2a58639de65a93b348c371dde0a0ca0d.jpg Title: Suntech: Making Safety a... | 0 | 2024-06-25T09:48:42 | https://dev.to/tfhcv_ghjkl_ccf0ec139c40a/suntech-fostering-a-culture-of-safety-through-innovation-40co | design | 323e5226cea60cde1e8d6127c97a606b2a58639de65a93b348c371dde0a0ca0d.jpg

Title: Suntech: Making Safety a Priority through Innovative Solutions

Introduction:

Suntech is a company that's committed to providing innovative solutions to its customers. It has been in the market for a long time and its main aim is to provide q... | tfhcv_ghjkl_ccf0ec139c40a |

1,899,863 | Back-End Development for Custom Web Applications: A Developer's Guide | Are you a web developer looking to deepen your knowledge of backend development for custom web... | 0 | 2024-06-25T09:47:52 | https://dev.to/cygnismedia/back-end-development-for-custom-web-applications-a-developers-guide-3327 | webdev, tutorial, beginners, programming | Are you a web developer looking to deepen your knowledge of backend development for custom web applications? Our comprehensive guide covers everything you need to know:

- **Advanced Backend Architecture:** Explore the intricacies of server-side logic, database management, API integrations, and middleware communication... | cygnismedia |

1,899,862 | GBase 8a Implementation Guide: Resource Assessment | 1. Disk Storage Space Evaluation The storage space requirements for a GBase cluster are... | 0 | 2024-06-25T09:47:28 | https://dev.to/congcong/gbase-8a-implementation-guide-resource-assessment-1678 | ## 1. Disk Storage Space Evaluation

The storage space requirements for a GBase cluster are calculated based on the data volume of the business system, the choice of compression algorithm, and the number of cluster replicas. The data volume of a business system usually includes the following aspects:

- Historical data... | congcong | |

1,897,312 | Difference between templatetag: linebreaks and linebreaksbr in Django template | Introduction In Django templates, handling newline characters within text data is a common... | 0 | 2024-06-25T09:42:58 | https://dev.to/doridoro/difference-between-templatetag-linebreaks-and-linebreaksbr-in-django-template-50hg | # Introduction

In Django templates, handling newline characters within text data is a common requirement, especially when displaying user-generated content or text from external sources. To address this, Django provides two useful template filters: `linebreaks` and `linebreaksbr`. Although they appear similar, they serve... | doridoro | |

1,899,861 | How do we resolve race conditions? | When dealing with race conditions in saving drafts, the main challenge is ensuring that updates are... | 0 | 2024-06-25T09:42:55 | https://dev.to/codermansithakur/how-do-we-resolve-race-conditions-416c | When dealing with race conditions in saving drafts, the main challenge is ensuring that updates are applied in the correct order. This is especially important if multiple updates (like auto-saving drafts) are happening concurrently. Here’s how you can handle such situations:

### 1. **Using Versioning**

One effective a... | codermansithakur | |

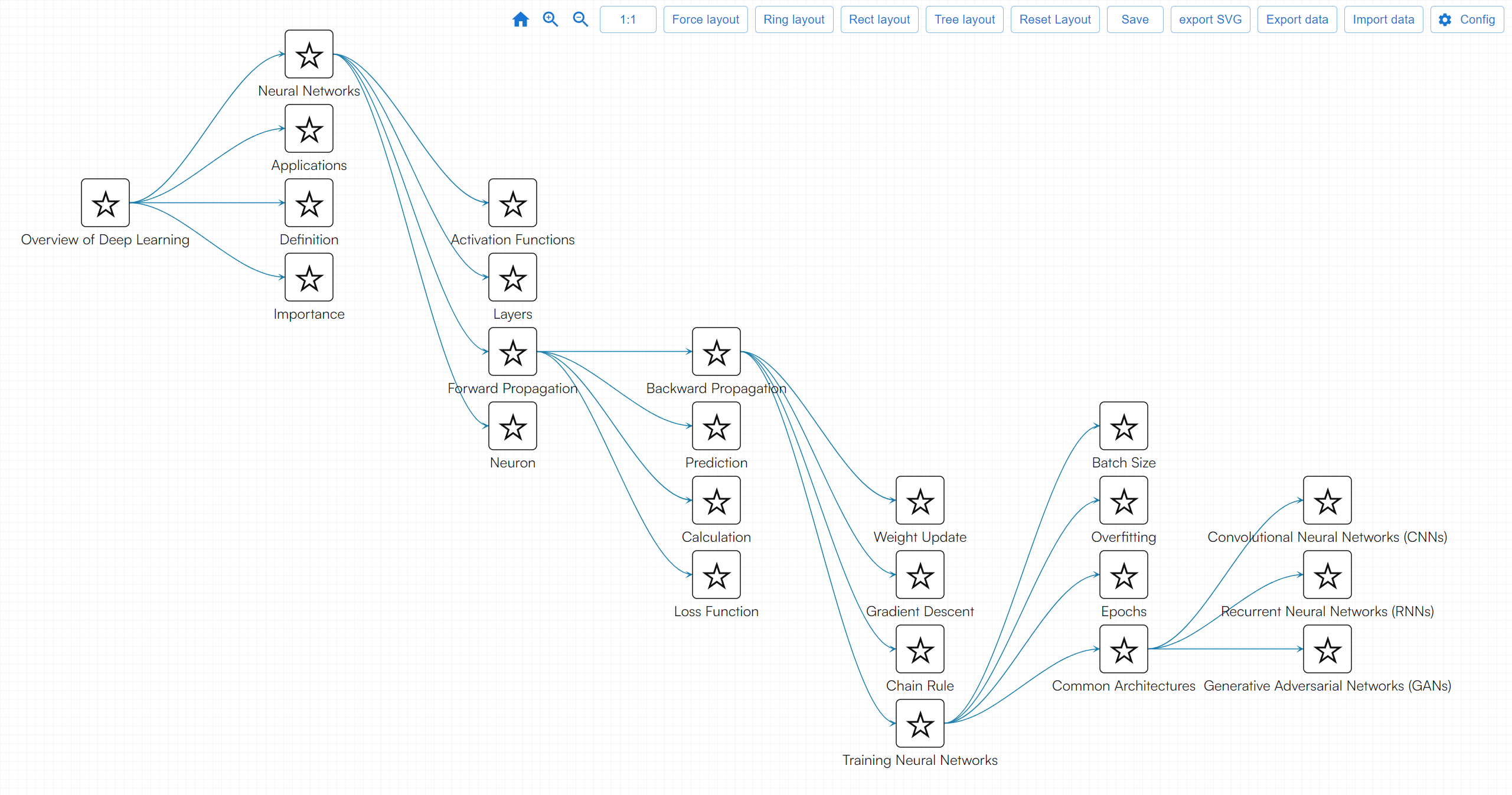

1,899,860 | Overview of Deep Learning | A post by friday | 0 | 2024-06-25T09:42:45 | https://dev.to/fridaymeng/overview-of-deep-learning-2lgi |

| fridaymeng | |

1,899,859 | Boost Distributor Motivation | Is Low Distributor Engagement Impacting Your MLM Business? 📉 Distributor engagement is crucial for... | 0 | 2024-06-25T09:42:19 | https://dev.to/global_mlmsoftware_58bd8/boost-distributor-motivation-14la | Is Low Distributor Engagement Impacting Your MLM Business? 📉

Distributor engagement is crucial for the success of any MLM business. Yet, many businesses struggle to keep their distributors motivated and productive. Low engagement can lead to high turnover rates, reduced productivity, and stunted business growth.

Dis... | global_mlmsoftware_58bd8 | |

1,899,858 | Engineering as Marketing Theory and Practice | I use engineering as a form of marketing to promote my business. It utilizes free tools to help bring... | 0 | 2024-06-25T09:41:51 | https://dev.to/martinbaun/engineering-as-marketing-theory-and-practice-27aj | webdev, devops, productivity, learning |

I use engineering as a form of marketing to promote my business. It utilizes free tools to help bring in leads and or clients. Let’s learn what it entails and how you can implement it.

## What is engineering as marketing?

This involves a company creating tools for its customers. These free tools show your company's a... | martinbaun |

1,899,857 | Iron Casting Factory Operations: Ensuring Quality and Efficiency | H1fb6a1d9424b403582a47592e37f5077G.png Iron Casting Factory Operations: Ensuring Quality plus... | 0 | 2024-06-25T09:40:30 | https://dev.to/tfhcv_ghjkl_ccf0ec139c40a/iron-casting-factory-operations-ensuring-quality-and-efficiency-32k5 | design | H1fb6a1d9424b403582a47592e37f5077G.png

Iron Casting Factory Operations: Ensuring Quality and Efficiency

Iron casting is a process of melting iron and pouring it into a mold to make products of various shapes. An iron casting factory is a place where this process takes pl... | tfhcv_ghjkl_ccf0ec139c40a |

1,899,824 | Phone Credit Conversion App | A phone credit conversion app is an application that lets users exchange mobile credit for... | 0 | 2024-06-25T09:10:15 | https://dev.to/tabi_moza_d62a2b9ee392126/aplikasi-convert-pulsa-4bcn | A phone credit conversion app is an application that lets users [exchange mobile credit](https://autoconvert.id/) for other forms of digital balance, such as an e-wallet balance, a bank account deposit, or shopping vouchers.

This is useful for users who have surplus credit and want to use it for other transactions... | tabi_moza_d62a2b9ee392126 | |

1,899,854 | Shree Balaji Relocations Packers And Movers | Shree Balaji Relocation Packers and Movers provides packing moving services in all over the world. We... | 0 | 2024-06-25T09:37:47 | https://dev.to/ravindra456/shree-balaji-relocations-packers-and-movers-1k7d | packersmovers, transportationservices, movingcompany | Shree Balaji Relocation Packers and Movers provides packing moving services in all over the world. We are countrywide packing & moving corporation having branches in all India. Our professional team is having expert with information, skills and counseling along with energetic charge, which enable us to provide our cust... | ravindra456 |

1,899,853 | Exploring Advanced Techniques in Laravel Collections: Harnessing the Potential of after() and before() | Are you looking to enhance your Laravel Collections prowess? Dive deep into the world of after() and... | 0 | 2024-06-25T09:35:36 | https://dev.to/asfiaaiman/exploring-advanced-techniques-in-laravel-collections-harnessing-the-potential-of-after-and-before-1knf | laravelcollections, laravel, after, before | Are you looking to enhance your Laravel Collections prowess? Dive deep into the world of after() and before() methods, two powerful tools that can revolutionize how you work with data in Laravel. Let's embark on a journey to uncover their hidden capabilities and see how they can elevate your coding experience.

### Unl... | asfiaaiman |

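1,899,852 | Research Report on Low-Code in Everyday Applications | Research Report on Low-Code in Everyday Applications 1. Introduction With the rapid development of technology and the deepening of digital transformation, enterprise demand for software development keeps growing. However, traditional hand-coded development has long cycles and high costs, and can no longer meet enterprises' need to respond quickly to business requirements. In this... | 0 | 2024-06-25T09:35:19 | https://dev.to/hotentbpm/di-dai-ma-zai-ri-chang-ying-yong-zhong-de-yan-jiu-bao-gao-5hjn | webdev, javascript, beginners, programming | Research Report on Low-Code in Everyday Applications

1. Introduction

With the rapid development of technology and the deepening of digital transformation, enterprise demand for software development keeps growing. However, traditional hand-coded development has long cycles and high costs, and can no longer meet enterprises' need to respond quickly to business requirements. Against this backdrop, low-code platforms, as an emerging kind of development tool, are gradually gaining wide attention and adoption. This report explores how low-code is actually used in everyday applications, with the aim of offering enterprises useful reference points and insights.

2. Overview of Low-Code

A low-code platform is a development approach that uses a visual interface and prebuilt components so that developers and business staff can quickly create applications with minimal hand coding. It significantly lowers the barrier to development, improves development efficiency, and gives enterprises more flexible and tailored solutions.

3. Practical Cases of Low-Code in Everyday Applications

Internal enterprise management systems

A certain large... | hotentbpm |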

1,899,008 | Creation of choices in django model | Introduction to Django's CharField Choices In Django, CharField choices provide a way to... | 0 | 2024-06-25T09:34:38 | https://dev.to/doridoro/creation-of-choices-in-django-model-196g | django | # Introduction to Django's CharField Choices

In Django, `CharField` choices provide a way to limit the valid inputs for a CharField to a predefined set of options. This feature helps ensure data integrity and simplifies form handling by providing a clean and user-friendly interface for selecting values.

When you use ... | doridoro |

1,899,655 | use SVG to draw php Basic knowledge of PHP | Sure, here's a brief introduction to PHP: PHP is a popular server-side scripting language primarily... | 0 | 2024-06-25T05:47:42 | https://dev.to/fridaymeng/use-svg-to-draw-php-basic-knowledge-of-php-dd0 | Sure, here's a brief introduction to PHP:

PHP is a popular server-side scripting language primarily used for web development. It can be embedded into HTML or run as standalone scripts on a server. Here are some key points about PHP:

The [microbial algae products market](https://www.persistencemarketresearch.com/market-research/microbial-algae-products-market.asp) is poised for substantial growth from 2023 to 2033, with a projected value-based c... | swara_353df25d291824ff9ee | |

1,899,848 | Best Clothing Manufacturer & Supplier in Mumbai, India | ODD | ODD is a high-quality clothing manufacturer and supplier in Mumbai, India, specializing in producing... | 0 | 2024-06-25T09:28:01 | https://dev.to/odd_factory_a961cd9f5f15b/best-clothing-manufacturer-supplier-in-mumbai-india-odd-19m8 | cloth | ODD is a high-quality [clothing manufacturer and supplier in Mumbai, India](theoddfactory.com), specializing in producing fashionable garments, fabric printing, button making, label making, and hand embroidery. We also offer bespoke clothing manufacturing services for startups at a reasonable price. With a focus on qua... | odd_factory_a961cd9f5f15b |

1,899,844 | From Vercel to Monolith, improving API speeds | Going serverless was both the best and worst decision we made so far. It went from being why we would... | 0 | 2024-06-25T09:27:20 | https://dev.to/fileforge/from-vercel-to-monolith-improving-api-speeds-3309 | serverless, monolith, aws, performance |

Going serverless was both the best and worst decision we made so far. It went from being why we would ship fast to the reason users would churn.

When we started Fileforge, we were pivoting from an AI startup. We were already a few weeks into the Y Combinator batch, and we needed to launch in days.

Two possibilities ... | titou325 |

1,899,847 | Sauce Filling Machines: Solutions for Particulate Sauces | screenshot-1718081756043.png Sauce Filling Machines: Perfect for Chunky Sauces If you are tired of... | 0 | 2024-06-25T09:26:59 | https://dev.to/hdjf_ghjvb_884813560fdd5a/sauce-filling-machines-solutions-for-particulate-sauces-3e8f | machines | screenshot-1718081756043.png

Sauce Filling Machines: Perfect for Chunky Sauces

If you are tired of pouring chunky sauces by hand, investing in a sauce filling machine can be a great solution. These machines are filled with innovative features that make it easier to fill containers of different types of sauces. With... | hdjf_ghjvb_884813560fdd5a |

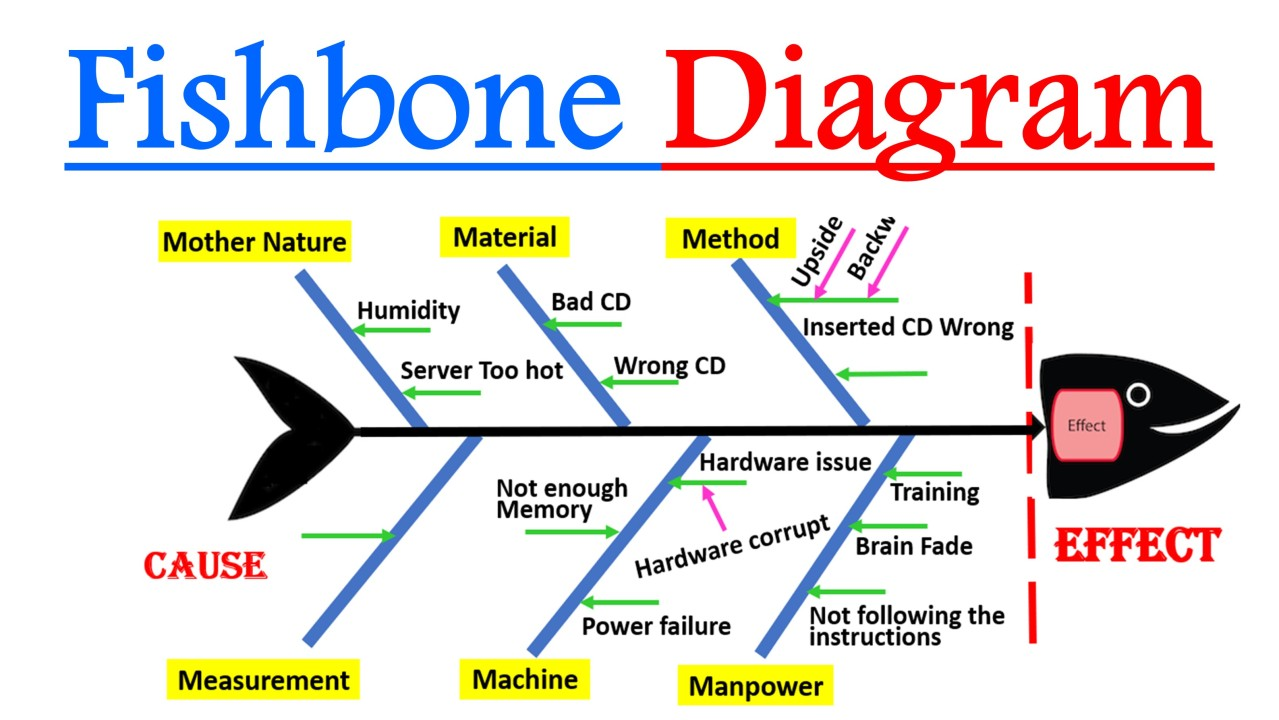

1,899,845 | Trying to Trace Problems with a Fishbone Diagram | Source:... | 0 | 2024-06-25T09:25:56 | https://dev.to/aspsptyd/mencoba-merunut-masalah-dengan-fish-bone-41m6 |

Source: https://kwikkiangie.ac.id/home/2024/05/22/fishbone-diagram-alat-analisis-untuk-mengidentifikasi-penyebab-masalah/

| mike_andreuzza |

1,899,842 | Relax in Style: Discover the Best Spas on C.G. Road | C.G. Road, one of Ahmedabad’s most bustling and prestigious locales, is not only a hub for shopping... | 0 | 2024-06-25T09:22:13 | https://dev.to/abitamim_patel_7a906eb289/relax-in-style-discover-the-best-spas-on-cg-road-1634 | C.G. Road, one of Ahmedabad’s most bustling and prestigious locales, is not only a hub for shopping and dining but also a destination for luxurious spa experiences. Whether you're in need of a relaxing massage, a rejuvenating facial, or comprehensive wellness treatments, the spas on C.G. Road offer a wide range of serv... | abitamim_patel_7a906eb289 | |

1,899,841 | Everything You Need to Know About Microsoft Azure Face Recognition Technology | Facial recognition technology has emerged as a powerful tool for a myriad of applications, from... | 0 | 2024-06-25T09:21:31 | https://dev.to/luxandcloud/everything-you-need-to-know-about-microsoft-azure-face-recognition-technology-1192 | microsoft, azure, ai, machinelearning | Facial recognition technology has emerged as a powerful tool for a myriad of applications, from enhancing security systems to streamlining user authentication processes. Among the leaders in this space is Microsoft Azure Face Recognition, a comprehensive service that leverages deep neural networks to deliver high accur... | luxandcloud |

1,899,826 | hello ... | A post by gzim rexhaj | 0 | 2024-06-25T09:13:04 | https://dev.to/gzim_rexhaj_cab5dbda99819/hello--oj6 | gzim_rexhaj_cab5dbda99819 | ||

1,899,831 | Bigg Boss 18 Watch Online | Bigg Boss 18 will premiere in October 2024, with changes to both the theme and the house. Not much... | 0 | 2024-06-25T09:18:00 | https://dev.to/biggboss18live/bigg-boss-18-watch-online-3f00 | webdev |

Bigg Boss 18 will premiere in October 2024, with changes to both the theme and the house.

Not much detail has come out yet. Bigg Boss 18 is expected to be a more interesting and thrilling show.

[Bigg Boss 18 Watch Online](https://biggboss18live.net/) | biggboss18live |

1,899,830 | Drew University | Embark on a transformative educational journey with Drew University's dual bachelor-master degree... | 0 | 2024-06-25T09:17:57 | https://dev.to/drewuniversity/drew-university-59c8 | Embark on a transformative educational journey with Drew University's [dual bachelor-master degree programs online](https://drew.edu/). Explore our comprehensive offerings designed to meet your academic and career aspirations. Experience the flexibility of online learning while advancing your education and achieving yo... | drewuniversity | |

1,899,829 | Best Software Development Company - BPRACT SOFTWARE SOLUTIONS | At Bpract Software Solutions, we are dedicated to transforming ideas into reality through innovative... | 0 | 2024-06-25T09:14:27 | https://dev.to/bpract_seo_33cdab95607227/best-software-development-company-bpract-software-solutions-61l | At Bpract Software Solutions, we are dedicated to transforming ideas into reality through innovative technology. As a premier [software development company in Kerala](https://bpract.com/software-development/), we specialize in creating custom software, MLM solutions, and digital marketing strategies that empower busine... | bpract_seo_33cdab95607227 | |

1,899,828 | Scott’s Law of Rebrands | Over time, the probability of a rebrand starting in the midst of a large web project approaches... | 0 | 2024-06-25T09:14:23 | https://measured.co/blog/scotts-law-of-rebrands | design, brand, designsystem, designtokens | > Over time, the probability of a rebrand starting in the midst of a large web project approaches 1.

—Scott Boyle, Measured Co-Founder

Like any good axiom, Scott’s Law of Rebrands was born of experience. We’ve seen it happen enough that we know it to be universally truthy. (We call it Scott’s Law with tongue lodged ... | anglepoised |

1,899,827 | Sporting Goods Market: Growth Trends, Forecast 2023-2033 | The global sporting goods market is poised for substantial growth, with projections indicating a... | 0 | 2024-06-25T09:13:36 | https://dev.to/swara_353df25d291824ff9ee/sporting-goods-market-growth-trends-forecast-2023-2033-1oi8 |

The global [sporting goods market](https://www.persistencemarketresearch.com/market-research/sporting-goods-market.asp) is poised for substantial growth, with projections indicating a robust expansion from US$ 722.2... | swara_353df25d291824ff9ee | |

1,899,822 | Top 11 Cold Email Services of 2024: Tools for Successful Outreach | Cold Emailing remains a powerful tool for businesses and professionals looking to generate leads,... | 0 | 2024-06-25T09:08:08 | https://dev.to/otismilburnn/top-11-cold-email-services-of-2024-tools-for-successful-outreach-omk | coldemailservices, smtp, webdev, devops | [Cold Emailing](https://smtpget.com/cold-email-marketing-services/) remains a powerful tool for businesses and professionals looking to generate leads, build connections, or promote products. Unlike spam, a well-crafted cold email is targeted, personalized, and relevant, leading to higher engagement rates. However, the... | otismilburnn |

1,899,821 | Practical Guide to Unity Performance Optimization | 1. Introduction Unity is a widely used cross-platform engine for game development.... | 0 | 2024-06-25T09:07:58 | https://dev.to/happyer/practical-guide-to-unity-performance-optimization-oo0 | unity3d, mobile, development, developer | ## 1. Introduction

Unity is a widely used cross-platform engine for game development. However, during the development process, performance issues can become a bottleneck that restricts the gaming experience. This article will detail practical tips for optimizing Unity performance from multiple aspects, helping develope... | happyer |

1,899,820 | How Sichuan DeepFast Maintains Quality and Safety Standards | Sichuan DeepFast is a business that creates meals items, like hot pot warm manners. They are ... | 0 | 2024-06-25T09:07:46 | https://dev.to/tfhcv_ghjkl_ccf0ec139c40a/how-sichuan-deepfast-maintains-quality-and-safety-standards-192c | design | Sichuan DeepFast is a business that creates meals items, like hot pot warm manners. They are incredibly popular in China are understood for their high top premium. We'll discuss exactly how Sichuan DeepFast preserves their high top premium security requirements.

Benefits of Sichuan DeepFast

Sichuan DeepFast has ... | tfhcv_ghjkl_ccf0ec139c40a |

1,899,819 | The Role of UI UX Design Companies in the Digital Age | In our fast-paced digital world, the importance of user experience (UX) and user interface (UI)... | 0 | 2024-06-25T09:05:30 | https://dev.to/stevemax237/the-role-of-ui-ux-design-companies-in-the-digital-age-k7l | webdev | In our fast-paced digital world, the importance of user experience (UX) and user interface (UI) design cannot be overstated. These days, **[UX design companies](https://www.mobileappdaily.com/directory/design-companies/ui-ux?utm_source=dev&utm_medium=hc&utm_campaign=mad)** are leading the charge in technological innova... | stevemax237 |

1,899,818 | Digital Marketing Course In Kerala | At Zypher, we believe in the power of education to shape lives and drive positive change.. As a... | 0 | 2024-06-25T09:05:08 | https://dev.to/aswathy_zypherlearning_dd/digital-marketing-course-in-kerala-i7i | At Zypher, we believe in the power of education to shape lives and drive positive change.. As a Fastest growing vernacular upskilling platform, we are committed to providing accessible, high-quality learning experiences that empower individuals to reach their full potential.

[Digital marketing course in Kerala](https:/... | aswathy_zypherlearning_dd | |

1,899,817 | Responsive Design Best Practices: Tips and Tricks for Making Websites Look Great on All Devices | Getting your website designs to fit and adjust perfectly on all devices can be a big headache,... | 0 | 2024-06-25T09:05:03 | https://dev.to/kevin_asogwa/responsive-design-best-practices-tips-and-tricks-for-making-websites-look-great-on-all-devices-2g70 | Getting your website designs to fit and adjust perfectly on all devices can be a big headache, especially for beginners. I remember the frustration of seeing my beautifully crafted desktop site look like a jumbled mess on a smartphone. But fear not! With some best practices and a bit of patience, you can create a respo... | kevin_asogwa | |

1,899,816 | Afghanistan Clinch Thrilling Semi-Final Spot After Dramatic Win Over Bangladesh | Two days after their upset victory against Australia, Afghanistan continued their dream run in the... | 0 | 2024-06-25T09:04:58 | https://dev.to/wilson_wilson_f0065d51cd9/afghanistan-clinch-thrilling-semi-final-spot-after-dramatic-win-over-bangladesh-25de | Two days after their upset victory against Australia, Afghanistan continued their dream run in the T20 World Cup with a heart-stopping win over Bangladesh, booking their place in the semi-finals.

**India's morning win set... | wilson_wilson_f0065d51cd9 | |

1,899,815 | Sliding Doors: Modern Solutions for Contemporary Homes | door.jpg Have you ever seen a door is hinged slides open and close, without the necessity for any... | 0 | 2024-06-25T09:04:35 | https://dev.to/hdjf_ghjvb_884813560fdd5a/sliding-doors-modern-solutions-for-contemporary-homes-il1 | door | door.jpg

Have you ever seen a door that slides open and closed, without the need for any hinges or knobs? These are known as sliding doors, and they're revolutionizing the way we think about home entrances. Sliding doors are the perfect blend of innovation, security, and convenience, mak... | hdjf_ghjvb_884813560fdd5a |

1,899,814 | Hellstar Hoodie || Hellstar Clothing || New Collection | Hellstar Hoodie The Hellstar Hoodie is a distinctive and stylish piece of streetwear that has... | 0 | 2024-06-25T09:00:25 | https://dev.to/ano_jack_354bfeb6011c9b2d/hellstar-hoodie-hellstar-clothing-new-collection-34l9 | hellstar |

Hellstar Hoodie

The [Hellstar Hoodie](https://hellstarcloth.us/hellstar-hoodie/) is a distinctive and stylish piece of streetwear that has garnered significant attention in the fashion community. Known for its unique design, high-quality materials, and cultural significance, the Hellstar Hoodie is more than just a p... | ano_jack_354bfeb6011c9b2d |

1,899,813 | Building a Full-Stack Web Application with MERN Stack: A Beginner's Guide | Opening: Building a web application from scratch can be a daunting task, especially for... | 0 | 2024-06-25T08:57:49 | https://dev.to/princenzmw/building-a-full-stack-web-application-with-mern-stack-a-beginners-guide-19m0 | mernstack, react, mongodb, node | ## Opening:

Building a web application from scratch can be a daunting task, especially for beginners. However, with the MERN stack, the process becomes more manageable and even enjoyable. In this blog post, I will walk you through the process of creating a full-stack web application using MongoDB, Express.js, React, a... | princenzmw |

1,899,811 | Software Product Development | Definition and Stages | I am excited to see how leading businesses are embracing innovative approaches to software product... | 0 | 2024-06-25T08:56:33 | https://dev.to/igor_ag_aaa2341e64b1f4cb4/software-product-development-1kal | softwaredevelopment, product, beginners, community | I am excited to see how leading businesses are embracing innovative approaches to software product development. The shift towards viewing software development as a part of product development has opened up new possibilities for creating successful products. One key aspect that I find crucial in this process is the conc... | igor_ag_aaa2341e64b1f4cb4 |

1,899,807 | Understanding and Resolving Infinite Consumer Lag Growth on Compacted Kafka Topics | an article by André Charton Kleinanzeigen has been using Kafka since 2016 as a distributed streaming... | 0 | 2024-06-25T08:55:42 | https://dev.to/berlin-tech-blog/understanding-and-resolving-infinite-consumer-lag-growth-on-compacted-kafka-topics-787 | kafka, compaction | _an article by [André Charton](https://www.linkedin.com/in/andrecharton/)_

_Kleinanzeigen has been using Kafka since 2016 as a distributed streaming platform of choice. We have many real-time data pipelines and streaming applications running on top. Some of our topics are compacted..._

**What is a compacted topic?*... | sophel |

1,899,809 | Understanding JWT and Validating Tokens with Expiry Dates | JSON Web Tokens (JWT) are widely used for secure data transmission and authentication in modern web... | 0 | 2024-06-25T08:54:04 | https://dev.to/aamirkhancr7/understanding-jwt-and-validating-tokens-with-expiry-dates-232 | javascript, azure, jwt, node | JSON Web Tokens (JWT) are widely used for secure data transmission and authentication in modern web applications. This guide will provide an overview of JWT and demonstrate how to validate tokens with expiry dates, including examples with Microsoft Azure AD and Azure AD B2C tokens.

#### What is JWT?

JWT stands for JS... | aamirkhancr7 |

1,899,808 | AI Revolution in Oil and Gas | In the dynamic and high-stakes world of the oil and gas industry, maintaining the integrity of... | 27,673 | 2024-06-25T08:53:12 | https://dev.to/rapidinnovation/ai-revolution-in-oil-and-gas-3aoj | In the dynamic and high-stakes world of the oil and gas industry, maintaining the integrity of pipelines is not merely a matter of operational efficiency; it is a crucial necessity for environmental stewardship and safety. Traditional methods of monitoring pipeline conditions, such as manual inspections and routine mai... | rapidinnovation | |

1,899,892 | Kubernetes: monitoring Events with kubectl and Grafana Loki | In Kubernetes, in addition to metrics and logs from containers, we can get information about the... | 0 | 2024-07-07T11:08:03 | https://rtfm.co.ua/en/kubernetes-monitoring-events-with-kubectl-and-grafana-loki/ | kubernetes, devops, monitoring | ---

title: Kubernetes: monitoring Events with kubectl and Grafana Loki

published: true

date: 2024-06-25 08:52:51 UTC

tags: kubernetes,devops,monitoring

canonical_url: https://rtfm.co.ua/en/kubernetes-monitoring-events-with-kubectl-and-grafana-loki/

---

| nadiyashaikh | |

1,899,804 | Must-Have Measuring Tools for DIY Projects | Must-Have Measuring Tools for DIY Projects Being fully a enthusiast which was DIY you recognize just... | 0 | 2024-06-25T08:46:59 | https://dev.to/hdjf_ghjvb_884813560fdd5a/must-have-measuring-tools-for-diy-projects-2o5 | tools |

Must-Have Measuring Tools for DIY Projects

As a DIY enthusiast, you know just how crucial it is to have the right tools for the task. The most essential equipment you need to have readily available is measuring instruments. Measuring equipmen... | hdjf_ghjvb_884813560fdd5a |

1,888,208 | Building a subscription tracker Desktop and iOS app with compose multiplatform - Configuring Notion | Photo by Carl Tronders on Unsplash If you want to check out the code, here's the... | 27,528 | 2024-06-25T08:46:45 | https://dev.to/kuroski/building-a-subscription-tracker-desktop-and-ios-app-with-compose-multiplatform-configuring-notion-3a1e | kotlin, kmp, compose, tutorial | > Photo by <a href="https://unsplash.com/@allvar?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Carl Tronders</a> on <a href="https://unsplash.com/photos/a-dog-running-through-a-field-of-tall-grass-tJnG4sgZL6k?utm_content=creditCopyText&utm_medium=referral&utm_source=unsplash">Unsplash</a>

>

> If y... | kuroski |

1,899,801 | Increasing Throughput: The Scalability of a Secondary Packing System | Enhancing Throughput: The Scalability of a Secondary Packaging System Are you sick of reduced... | 0 | 2024-06-25T08:45:42 | https://dev.to/tyuio_dgfhf_25b0358b0fc63/increasing-throughput-the-scalability-of-a-secondary-packing-system-36di | design | Enhancing Throughput: The Scalability of a Secondary Packaging System

Are you tired of reduced efficiency caused by lengthy product packing? If so, consider upgrading to a secondary packaging system. This filling system is designed to enhance throughput, offer ... | tyuio_dgfhf_25b0358b0fc63 |

1,899,799 | Indulge in Tranquility: Discover the Best Spas in Bopal | Bopal, a fast-growing suburban area in Ahmedabad, is known for its serene environment and modern... | 0 | 2024-06-25T08:44:11 | https://dev.to/abitamim_patel_7a906eb289/indulge-in-tranquility-discover-the-best-spas-in-bopal-5gok | Bopal, a fast-growing suburban area in Ahmedabad, is known for its serene environment and modern amenities. Amidst its bustling development, Bopal houses some of the most luxurious spas where you can unwind and rejuvenate. Whether you need a soothing massage, a refreshing facial, or holistic wellness treatments, the sp... | abitamim_patel_7a906eb289 | |

1,899,798 | How to develop full stack software platform like Trello/Jira? | Developing a full-stack software platform like Trello or Jira involves several key steps and... | 0 | 2024-06-25T08:43:51 | https://dev.to/nadim_ch0wdhury/how-to-develop-full-stack-software-platform-like-trellojira-25bo | Developing a full-stack software platform like Trello or Jira involves several key steps and technologies across both the front-end and back-end layers. Here’s a structured approach to get you started:

### 1. **Define Requirements and Features**

- **Market Research:** Understand user needs and preferences by studying... | nadim_ch0wdhury | |

1,899,797 | How to develop full stack software platform like Zoom/Google Meet? | Developing a full-stack application like Zoom or Google Meet involves several components and... | 0 | 2024-06-25T08:39:48 | https://dev.to/nadim_ch0wdhury/how-to-develop-full-stack-software-platform-like-zoomgoogle-meet-2n5i | Developing a full-stack application like Zoom or Google Meet involves several components and technologies. Here’s a high-level overview of how you can approach building such an application using Next.js for the frontend and Nest.js for the backend:

### Frontend (Next.js)

1. **Setup Next.js Project:**

- Initialize ... | nadim_ch0wdhury | |

1,899,796 | Top Garden Forks for Effective Soil Aeration | Top Garden Forks for Effective Soil Aeration Have you been tired plus ill of struggling with... | 0 | 2024-06-25T08:34:28 | https://dev.to/tyuio_dgfhf_25b0358b0fc63/top-garden-forks-for-effective-soil-aeration-n0o | design |

Top Garden Forks for Effective Soil Aeration

Are you sick and tired of struggling with compacted soil in your garden? Do you want to improve the ongoing health and growth of your plants? Look no further than the top garden forks for effective soil aeration.

Great things abo... | tyuio_dgfhf_25b0358b0fc63 |

1,899,795 | Revolutionize Your Logistics Business with Digital Transformation: 3 Key Areas You Can't Ignore! | In the ever-evolving world of logistics, staying ahead of the curve is essential. Digital... | 0 | 2024-06-25T08:33:27 | https://dev.to/seoqcstechs_a09da5d24db26/revolutionize-your-logistics-business-with-digital-transformation-3-key-areas-you-cant-ignore-3i7m | digital, logistics, ai | In the ever-evolving world of logistics, staying ahead of the curve is essential. Digital transformation isn't just a buzzword; it's a necessity for enhancing efficiency, cutting costs, and staying competitive. But where should you focus your efforts? Let's dive into three niche areas that can truly revolutionize your ... | seoqcstechs_a09da5d24db26 |

1,899,794 | Top Qualities to Look for When Hiring Angular Developers | Find the key to hiring top-tier Angular developers in our latest blog post, "Top Qualities to Look... | 0 | 2024-06-25T08:31:54 | https://dev.to/talentonlease01/top-qualities-to-look-for-when-hiring-angular-developers-422j | angular | Find the key to hiring top-tier Angular developers in our latest blog post, "**[Top Qualities to Look for When Hiring Angular Developers](https://talentonlease.com/blogs/qualities-for-angular-developers/)**." Understand the crucial skills and characteristics that set a successful Angular developer apart. Learn how to a... | talentonlease01 |

1,899,793 | How to develop full stack software platform like Discord/Slack? | Creating a software platform like Discord or Slack with all their features is a complex task that... | 0 | 2024-06-25T08:31:22 | https://dev.to/nadim_ch0wdhury/how-to-develop-full-stack-software-platform-like-discordslack-5105 | Creating a software platform like Discord or Slack with all their features is a complex task that involves multiple areas of expertise including software development, backend infrastructure, database management, real-time communication protocols, user interface design, and more. Here’s a high-level overview of steps yo... | nadim_ch0wdhury | |

1,899,792 | 🤖 Supervised vs. Unsupervised Learning: A Fun Comparison! 🎉 | Hey there, tech enthusiasts! 👋 Today, we're diving into the fascinating world of Machine Learning 🌟,... | 0 | 2024-06-25T08:31:17 | https://dev.to/aviralgarg05/supervised-vs-unsupervised-learning-a-fun-comparison-19pg | machinelearning, datascience, python, computerscience | Hey there, tech enthusiasts! 👋 Today, we're diving into the fascinating world of Machine Learning 🌟, specifically comparing **Supervised Learning** and **Unsupervised Learning**. Let's break it down with some emojis to make it more exciting and digestible!

## Supervised Learning 📚👨🏫

### What is it? 🤔

Supervise... | aviralgarg05 |

1,899,791 | Role Of Blended Learning In Providing Equitable Quality Education Across The Globe | Blended learning solutions are a new set of teaching techniques blending conventional classroom... | 0 | 2024-06-25T08:30:01 | https://dev.to/abhishek022/role-of-blended-learning-in-providing-equitable-quality-education-across-the-globe-3p87 | blendedlearningsolutions, blendedlearningplatform | Blended learning solutions are a new set of teaching techniques blending conventional classroom lectures and eLearning systems that have facilitated easy access to education globally. This method of learning employs technology’s power to bring about adjustable, private, and scalable instructional experiences, thus ensu... | abhishek022 |

1,899,790 | My Journey Begins: From Coding Bootcamp to 100 Days of Code | This week marks a significant milestone in my journey— in two days, I’ll officially be a full stack... | 0 | 2024-06-25T08:27:02 | https://dev.to/clare_codes/my-journey-begins-from-coding-bootcamp-to-100-days-of-code-4227 | 100daysofcode, webdev, beginners, html | This week marks a significant milestone in my journey— in two days, I’ll officially be a full stack developer, having graduated from [Moringa School](https://moringaschool.com/)! The experience has been intense, often feeling like a race against time. But the sense of accomplishment I feel makes every project and late ... | clare_codes |

1,899,789 | Introduction TO Word Embeddings | Introduction Word embedding is a technique in which words and sentences are converted into... | 0 | 2024-06-25T08:26:33 | https://dev.to/muhammad_saim_7/introduction-to-word-embeddings-4m86 | ai, llm, nlp, datascience | ## Introduction

Word embedding is a technique in which words and sentences are converted into numbers. Our computer can understand only numbers, so representing this text as numbers is necessary for model training. Another thing is that using word embedding reduces the dimensionality, which is more efficient for the pr... | muhammad_saim_7 |

1,899,788 | 4 Ways to Extract Images from Websites | Original source: 4 Methods for Extracting Images from Web Pages Here are various... | 0 | 2024-06-25T08:26:03 | https://dev.to/emilia/4-methoden-zum-extrahieren-der-bilder-aus-webseiten-30m2 | programming, beginners, python, career | Original source: [4 Methods for Extracting Images from Web Pages](https://bit.ly/4bkgeMf)

Here are several ways to download web images, from browser extensions to professional tools. These methods help you **save the images you need from a web page efficiently. ... | emilia |

1,899,787 | We are good recovery experts when it comes to cryptocurrency recovery. | For those, it may concern....I am using this opportunity to thank those who have been giving good... | 0 | 2024-06-25T08:24:37 | https://dev.to/isabella_rosemary_53fb630/we-are-good-recovery-experts-when-it-comes-to-cryptocurrency-recovery-5gp7 | To whom it may concern: I am using this opportunity to thank those who have been giving good reviews about me and my team. It means a lot to me because it makes me realize we are doing a satisfying job for you all, and we promise always to serve you better. And for those of you who do not know about us but would li... | isabella_rosemary_53fb630 | |

1,899,786 | MySQL syntaxes | What 👎 JSON_UNQUOTE + JSON_EXTRACT vs 👍 ->> SELECT... | 0 | 2024-06-25T08:23:44 | https://dev.to/deko39/mysql-syntaxes-1moi | syntaxes, mysql | # What

👎 `JSON_UNQUOTE + JSON_EXTRACT` vs 👍 `->>`

```
SELECT JSON_UNQUOTE(JSON_EXTRACT(metadata, '$.field')) -- 👎
SELECT metadata->>'$.field' -- 👍
```
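To make the difference between the quoted and unquoted forms concrete, here is a rough Python analogy rather than MySQL itself. The helper names `json_extract` and `json_extract_unquoted` are hypothetical, and the sketch assumes a plain top-level key lookup instead of a real JSON path such as `'$.field'`.

```python
import json


def json_extract(doc: str, key: str) -> str:
    """Rough stand-in for JSON_EXTRACT / ->: return the value as JSON text."""
    return json.dumps(json.loads(doc)[key])


def json_extract_unquoted(doc: str, key: str) -> str:
    """Rough stand-in for JSON_UNQUOTE(JSON_EXTRACT(...)) / ->>.

    String values lose their surrounding double quotes; other values
    are returned as their JSON text.
    """
    value = json.loads(doc)[key]
    return value if isinstance(value, str) else json.dumps(value)


# Hypothetical sample document standing in for the `metadata` column.
metadata = '{"field": "hello", "count": 3}'

print(json_extract(metadata, "field"))           # "hello" (quotes kept)
print(json_extract_unquoted(metadata, "field"))  # hello
```

The quoted form is awkward to compare against ordinary strings, which is presumably why the shorter `->>` operator is preferred here.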

# Why it matters?

`JSON_EXTRACT` and `->` return the raw JSON value, so a string result comes back still wrapped in double quotes

`JSON_UNQUOTE + JSON_EXTRACT` vs `->>` return the `parsed` result (you can think of the JSON.parse met... | deko39 |

1,899,785 | TOP 5 Best Angular libraries for Gantt charts | Creating an extensive guide on the top Angular libraries for Gantt charts can significantly help... | 0 | 2024-06-25T08:22:33 | https://dev.to/lenormor/top-5-best-angular-libraries-for-gantt-charts-20o0 | angular, javascript, webdev, programming | Creating an extensive guide on the top Angular libraries for Gantt charts can significantly help developers make informed decisions when integrating these components into their applications. Here, I will provide an in-depth look at the top five Angular libraries for Gantt charts, starting with ScheduleJS, and ensure th... | lenormor |

1,899,784 | Essential Fastening Tools for Engineers | Essential Tools for Engineers: producing work Safe plus Easy with Fastening device Introduction Do... | 0 | 2024-06-25T08:21:53 | https://dev.to/tyuig_dgch_ec9b8fba1975d2/essential-fastening-tools-for-engineers-2fc | tools |

Essential Tools for Engineers: Making Work Safe and Easy with Fastening Devices

Introduction

Are you an aspiring engineer? Or are you someone who is already active in the engineering business? Whatever the case, you must understand which equipment is likely invo... | tyuig_dgch_ec9b8fba1975d2 |

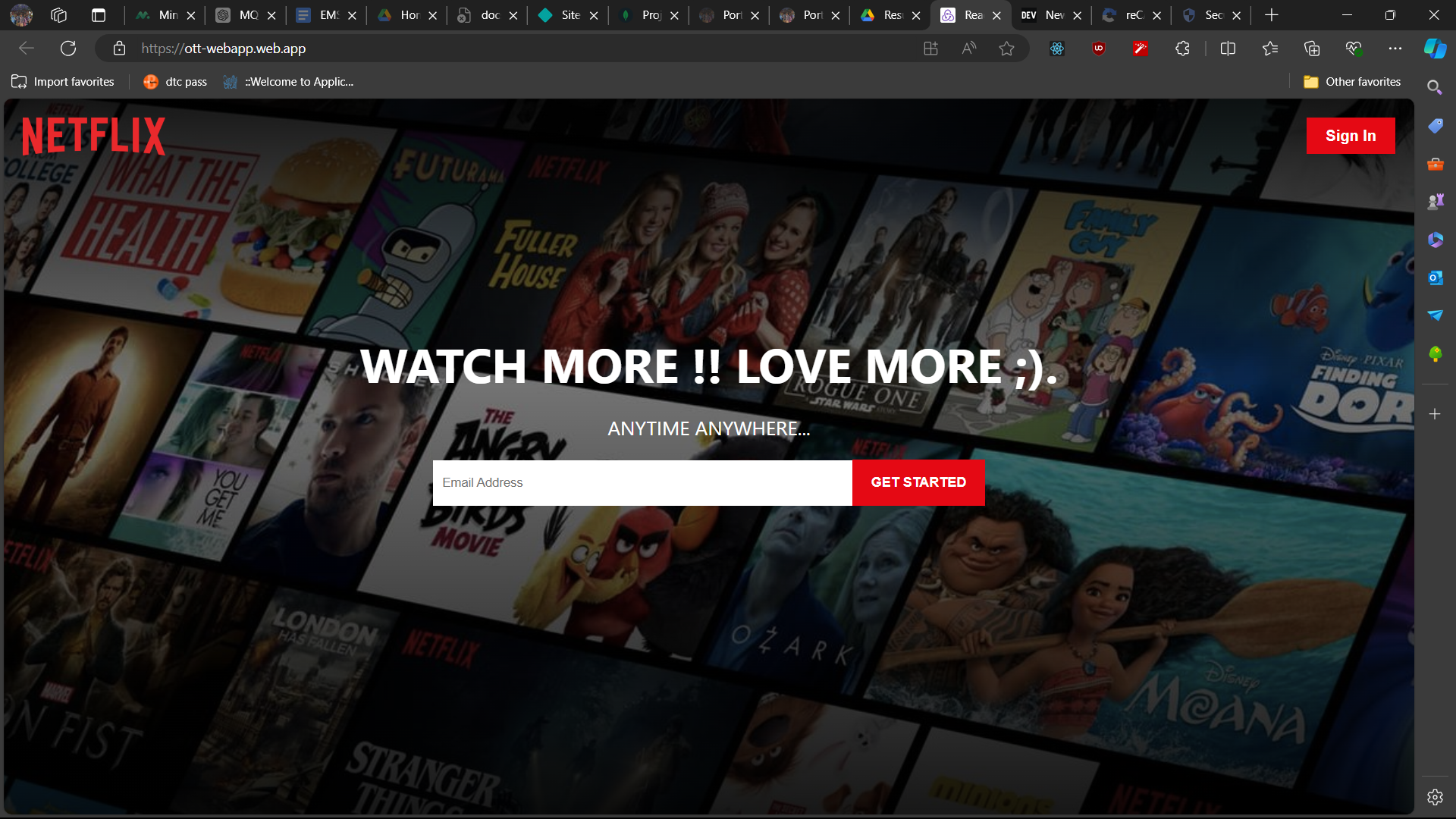

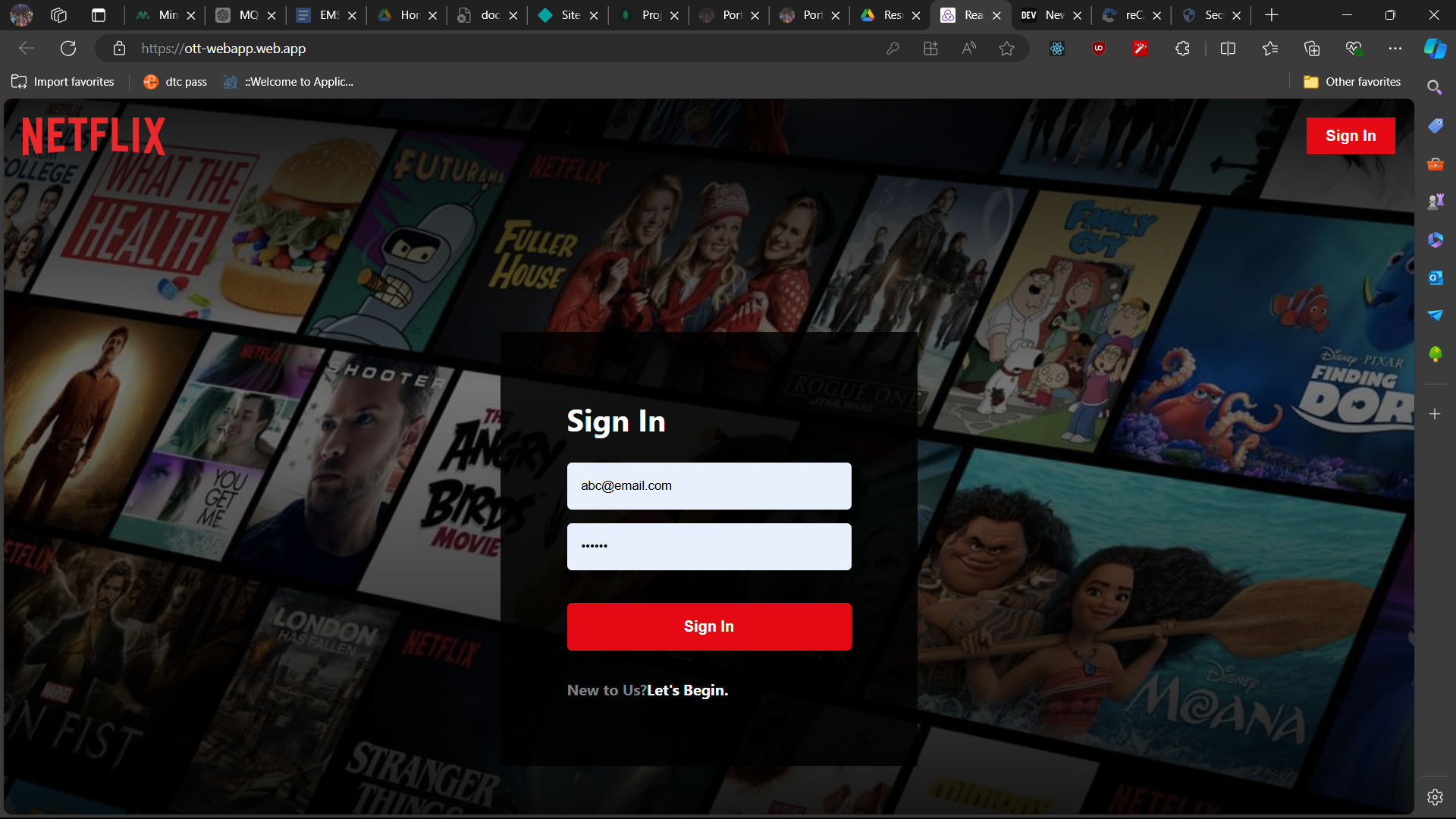

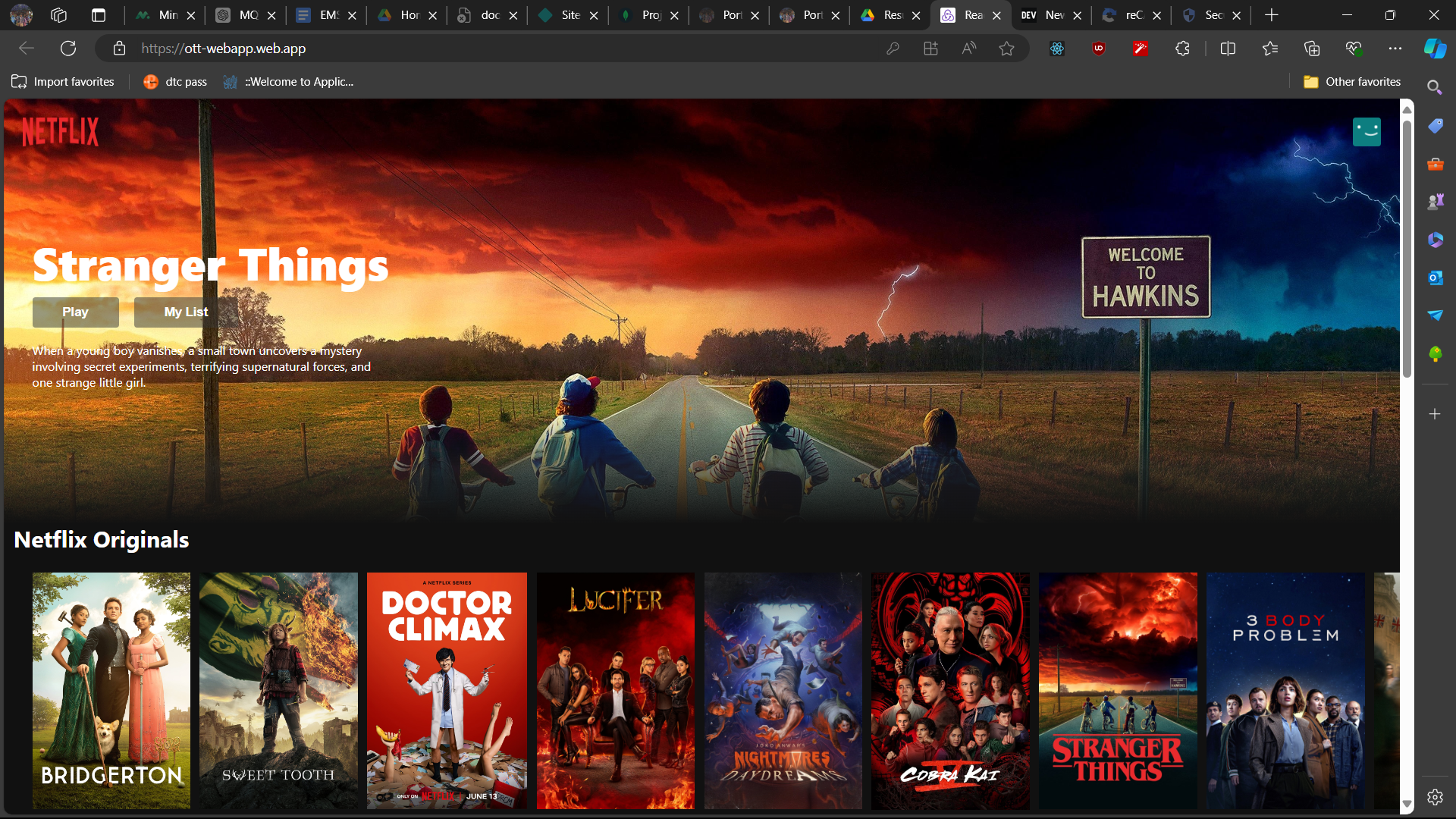

1,899,783 | Netflix Clone | Created a Netflix UI clone project with a focus on user authentication and login/signup... | 0 | 2024-06-25T08:21:01 | https://dev.to/pranav-29/netflix-clone-4jee |

---

## Introducing Designfast Template - Minimal template designed for Service business

---

Looking for a simple yet powerful website template to jumpstart your service business?

This template is designed with ... | darkinventor |

1,899,780 | Kid-Friendly Comfort: Cartoon Children's Coats for Rainy Day Activities | Kid-Friendly Benefit: Computer animation Children's Levels for Damp jobs opportunity Every one of... | 0 | 2024-06-25T08:18:16 | https://dev.to/dydh_hxfhbx_7d3e1fec75665/kid-friendly-comfort-cartoon-childrens-coats-for-rainy-day-activities-4109 | design | Kid-Friendly Benefit: Computer animation Children's Levels for Damp jobs opportunity

As a parent, nothing beats watching your child all dressed up and ready for a rainy day adventure. However, with the... | dydh_hxfhbx_7d3e1fec75665 |

343,033 | Fun with Amplify Default Permissions | How to use someone else's AWS account to create a platform to share and store an unlimited amount of data | 0 | 2020-05-24T20:22:03 | https://dev.to/rosswilliams/fun-with-amplify-default-permissions-4dgg | amplify, awsamplify | ---

title: Fun with Amplify Default Permissions

published: true

description: How to use someone else's AWS account to create a platform to share and store an unlimited amount of data

tags: amplify, awsamplify

---

Note: This is an exercise in understanding AWS Amplify API KEY Auth and Storage configuration in order pro... | rosswilliams |

1,899,779 | Client Portfolio | Developed a highly responsive and animated project using React, BOOTSTRAP, CSS, SAAS,... | 0 | 2024-06-25T08:16:27 | https://dev.to/pranav-29/client-portfolio-3he |

- Built with React, HTML5, CSS, and JSX

- Implemented the functionality in JavaScript, using mock data for the products.

[Live Demo](https://product-filter.pages.dev/)

| pranav-29 | |

1,899,774 | Building Legacies Through Innovation and Tradition Since 1997 | Established in 1997, Salina Group has become a premier Construction Chemicals & Building... | 0 | 2024-06-25T08:11:38 | https://dev.to/marrij_rana_8bb58e836288c/building-legacies-through-innovation-and-tradition-since-1997-eof | Established in 1997, Salina Group has become a premier [Construction Chemicals & Building Materials Manufacturer in UAE](https://www.salinagroup.com/), seamlessly blending time-honored traditions with cutting-edge technology. Beyond construction, we are dedicated to shaping legacies through our commitment to innovation... | marrij_rana_8bb58e836288c | |

1,899,773 | Microfrontends vs. Monorepos in React.js ⚡️ | In the dynamic world of web development, React.js has emerged as a leading framework for building... | 0 | 2024-06-25T08:09:53 | https://dev.to/alisamirali/microfrontends-vs-monorepos-in-reactjs-41a8 | react, webdev, javascript, frontend | In the dynamic world of web development, React.js has emerged as a leading framework for building user interfaces, offering a robust platform for developing single-page applications.

As applications grow in complexity, developers face the challenge of managing large codebases efficiently. Two architectural patterns t... | alisamirali |

1,899,772 | Metal Cabinets for Efficient Storage Solutions | Advertising Short post around Steel Cupboards for Effective Storing Services Perform you ever before... | 0 | 2024-06-25T08:08:49 | https://dev.to/dydh_hxfhbx_7d3e1fec75665/metal-cabinets-for-efficient-storage-solutions-5fo2 | design | Advertising Short post around Steel Cupboards for Effective Storing Services

Do you ever find yourself struggling to organize and store your belongings? Do you want a solution that is efficient, safe, and of high quality? Metal cabinets might be the explanati... | dydh_hxfhbx_7d3e1fec75665 |

1,899,770 | Custom Metal Furniture: Tailoring to Your Taste | Customized Steel Furnishings: Tailoring towards Your Preference Perform you wish to embellish your... | 0 | 2024-06-25T08:06:22 | https://dev.to/tyuig_dgch_ec9b8fba1975d2/custom-metal-furniture-tailoring-to-your-taste-4dac | furniture |

Custom Metal Furniture: Tailoring to Your Taste

Do you want to decorate your home with a unique furniture style? Then custom metal furniture is the best choice for you. It offers various benefits that other kinds of furniture do not. We'll talk... | tyuig_dgch_ec9b8fba1975d2 |

1,899,208 | Starter guide to understand Sections on Shopify Themes. | Shopify is awesome, and one of the things that I like the most is that it allows merchants to update... | 0 | 2024-06-25T08:06:11 | https://dev.to/ricardotree/starter-guide-to-understand-sections-on-shopify-themes-3c88 | Shopify is awesome, and one of the things that I like the most is that it allows merchants to update the UI of their e-commerce sites in a very seamless way, something similar to using no code, and your job as developer is to make a theme as customizable as possible for your clients, so they never will not come to you ... | ricardotree |
