id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,899,168 | Learning Resource Hub | Bookmarks for tech professionals and enthusiasts at Tech Trendsetters. Constantly updated and curated... | 25,762 | 2024-06-24T16:56:34 | https://dev.to/iwooky/learning-resource-hub-2h0d | learning, beginners, datascience | Bookmarks for tech professionals and enthusiasts at Tech Trendsetters. Constantly updated and curated collection of literature, courses, and resources covers the latest trends and essential skills.

**Machine Learning / AI related**

Courses below require Python, Fundamentals of Machine Learning, Basic Probability and ... | iwooky |

1,898,790 | 12 Open Source tools that Developers would give up Pizza for👋🍕 | It's Open Source tool time! There is more to open source tools than the top 3 that everyone knows... | 0 | 2024-06-24T17:21:08 | https://dev.to/middleware/13-foss-tools-that-developers-would-give-up-pizza-for-4a6g | tooling, webdev, opensource, productivity | It's Open Source tool time!

There is more to open source tools than the top 3 that everyone knows about.

Heck, you might already know all 12 tools on this list (in which case: "slow clap"), but most of us don't.

Introduction

Functional testing is a critical phase in the software development lifecycle. It focuses on verifying that the software system operates according to the specified requirements and meets the intended fun... | keploy |

1,899,180 | A forensic analysis of the Claude Sonnet 3.5 system prompt leak | A forensic analysis of the Claude Sonnet 3.5 system prompt Originally published in the... | 0 | 2024-06-24T17:15:46 | https://dev.to/ejb503/a-forensic-analysis-of-the-claude-sonnet-35-system-prompt-leak-58h7 | machinelearning, programming, ai, webdev |

## A forensic analysis of the Claude Sonnet 3.5 system prompt

Originally published in the [Tying Shoelaces Blog](https://tyingshoelaces.com/blog/forensic-analysis-sonnet-prompt)

### Introducing Artifacts

A step forward in structured output generation.

This is an analysis of the system prompt generation for [Cl... | ejb503 |

1,899,177 | DAY 2 PROJECT : HOVER EFFECTS | Creating Engaging Web Interactions with Hover Effects Using HTML, CSS, and JavaScript In the digital... | 0 | 2024-06-24T17:14:36 | https://dev.to/shrishti_srivastava_/day-2-project-1de1 | webdev, javascript, beginners, programming | **Creating Engaging Web Interactions with Hover Effects Using HTML, CSS, and JavaScript**

In the digital age, the user experience on websites has become a pivotal element in retaining and engaging visitors. One of the most effective ways to enhance user interaction is through hover effects.

**What Are Hover Effects?*... | shrishti_srivastava_ |

1,895,160 | The Hardest Problem in RAG... Handling 'NOT FOUND' Answers 🔍🤔 | First of All... What is RAG? 🕵️♂️ Retrieval-Augmented Generation (RAG) is an approach to... | 0 | 2024-06-24T17:04:03 | https://dev.to/llmware/the-hardest-problem-in-rag-handling-not-found-answers-7md | rag, ai, python, programming | ## First of All... What is RAG? 🕵️♂️

Retrieval-Augmented Generation (RAG) is an approach to natural language processing that references external documents to provide more accurate and contextually relevant answers. Despite its advantages, RAG faces some challenges, one of which is handling 'NOT FOUND' answers. Addr... | will_taner |
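The post's own approach is cut off here. As a hedged illustration only, one common pattern is to make the retriever return an explicit "NOT FOUND" when no passage is similar enough to the question, so the model is not asked to answer without evidence. This sketch is not llmware's implementation. It uses a toy bag-of-words cosine similarity as a stand-in for real embeddings and a hypothetical threshold of 0.5, and all function names are illustrative.

```python
import math
import re
from collections import Counter

NOT_FOUND = "NOT FOUND"


def _vectorize(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve_or_not_found(question: str, passages: list, threshold: float = 0.5) -> str:
    """Return the best-matching passage, or NOT_FOUND if none is similar enough."""
    q = _vectorize(question)
    best_score, best_passage = 0.0, None
    for passage in passages:
        score = _cosine(q, _vectorize(passage))
        if score > best_score:
            best_score, best_passage = score, passage
    if best_passage is None or best_score < threshold:
        # Abstain instead of letting the generator answer without supporting evidence.
        return NOT_FOUND
    return best_passage
```

In practice the threshold would need tuning on real questions. If it is too high, answerable questions get refused. If it is too low, the model receives irrelevant context and may answer anyway.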

1,899,172 | Introduction to Docker: Revolutionizing Software Development and Deployment | In the fast-paced world of software development, efficiency and consistency are key. Docker, a... | 0 | 2024-06-24T17:02:25 | https://dev.to/gimkelum/introduction-to-docker-revolutionizing-software-development-and-deployment-42mf | docker, webdev | In the fast-paced world of software development, efficiency and consistency are key. Docker, a powerful platform for developing, shipping, and running applications, has emerged as a game-changer. This blog article will provide an introduction to Docker, exploring its benefits, core concepts, and how it revolutionizes t... | gimkelum |

1,899,142 | Build AI powered projects *for free* | In this ever-evolving world, AI has become a must-have on your resume, and using AI in our side... | 0 | 2024-06-24T17:00:47 | https://dev.to/lemmecode/build-ai-powered-projects-for-free-e50 | In this ever-evolving world, AI has become a must-have on your resume, and using AI in your side projects can give you an advantage over those who don’t. But isn’t AI expensive? Or is it?

First and foremost, let me clarify that by side projects I don’t necessarily mean weird, dumb projects made just for fun, but pro... | lemmecode | |

1,899,171 | Docker: Introduction step-by-step guide for beginners DevOps Engineers. | What is a Docker? Docker is a popular open-source project written in Go and developed by Dot Cloud (A... | 0 | 2024-06-24T16:58:56 | https://dev.to/oncloud7/docker-introduction-step-by-step-guide-for-beginners-devops-engineers-5fee | docker, devops, awschallenge | **What is a Docker?**

Docker is a popular open-source project written in Go and developed by dotCloud (a PaaS company). It is a container engine that uses Linux kernel features such as namespaces and control groups (cgroups) to create containers on top of an operating system.

Docker is a container management service. It is an Open P... | oncloud7 |

1,899,170 | Do senior devs use terminal/VIM? Do they type faster? | A reply to this great post about productivity of console proved to be quite popular, so I decided to... | 0 | 2024-06-24T16:58:27 | https://dev.to/latobibor/do-senior-devs-use-terminalvim-do-they-type-faster-glf | programming, console, terminal | A reply to this [great post about productivity of console](https://dev.to/sotergreco/improve-your-productivity-by-using-more-terminal-and-less-mouse--2o7b) proved to be quite popular, so I decided to turn it into an article and add a bit of extra.

## (Skippable) Little Story About DOS and `config.sys`

First a little ... | latobibor |

1,899,169 | golang: Understanding the difference between nil pointers and nil interfaces | An explanation of the difference between nil interfaces and nil pointers in go | 0 | 2024-06-24T16:56:35 | https://dev.to/goodevilgenius/golang-understanding-the-difference-between-nil-pointers-and-nil-interfaces-31ka | programming, webdev, go | ---

title: "golang: Understanding the difference between nil pointers and nil interfaces"

published: true

description: An explanation of the difference between nil interfaces and nil pointers in go

tags:

- programming

- webdev

- go

- golang

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/ar... | goodevilgenius |

1,899,166 | Text-based language processing enhanced with AI/ML | TL;DR: On this family summer trip to Asia, I've admittedly been relying heavily on Google... | 0 | 2024-06-24T16:53:22 | https://dev.to/wescpy/text-based-language-processing-enhanced-with-aiml-1b1h | python, node, machinelearning, ai | <!-- Text-based language processing enhanced with AI/ML: Intro to the Google Cloud Natural Language & Translation APIs -->

<!-- How to use the Google Cloud Natural Language & Translation APIs -->

<!-- Intro to AI-backed text-based language processing with Google Cloud -->

<!-- Text-based language processing with Google... | wescpy |

1,899,167 | Optimizing Business Operations with Call Center Services | Call center services are indispensable for modern businesses seeking to streamline operations and... | 0 | 2024-06-24T16:52:01 | https://dev.to/ijtecjnologies8977/optimizing-business-operations-with-call-center-services-40c0 | Call center services are indispensable for modern businesses seeking to streamline operations and elevate customer satisfaction. These services encompass both inbound and [outbound](https://ijtechnologz.com/outbound-service/) capabilities, offering crucial support for customer inquiries, sales, technical assista... | ijtecjnologies8977 | |

1,899,148 | Remove unwanted partition data in Azure Synapse (SQL DW) | Introduction to Partition Switching? Azure Synapse Dedicated SQL pool or SQL Server or... | 0 | 2024-06-24T16:48:21 | https://dev.to/ayush9892/remove-unwanted-partition-data-in-azure-synapse-sql-dw-5clp | dataengineering, sqlserver, azure | ## Introduction to Partition Switching?

Azure Synapse dedicated SQL pool, SQL Server, and Azure SQL Database allow you to create partitions on a target table. Table partitions let you divide your data into multiple chunks, or partitions. Partitioning improves query performance by eliminating partitions that are not necessa... | ayush9892 |

1,899,165 | Aikido’s 2024 SaaS CTO Security Checklist | SaaS companies have a huge target painted on their backs when it comes to security. Aikido's 2024 SaaS CTO Security Checklist gives you over 40 items to enhance security 💪 Download it now and make your company and code 10x more secure. #cybersecurity #SaaSCTO #securitychecklist | 0 | 2024-06-24T16:45:13 | http://www.aikido.dev/blog/saas-cto-security-checklist | SaaS companies have a huge target painted on their backs when it comes to security, and that’s something that keeps their CTOs awake at night. The Cloud Security Alliance released its [State of SaaS Security: 2023 Survey Report](https://cloudsecurityalliance.org/artifacts/state-of-saas-security-2023-survey-report/) ear... | flxg | |

1,899,164 | DreamMachineAI - free luma ai video generator | Dream Machine AI is an exciting new AI-powered video generation tool that's transforming the way we... | 0 | 2024-06-24T16:36:37 | https://dev.to/runningdogg/dreammachineai-free-luma-ai-video-generator-3d0h | luma, lumaai, dreammachineai |

Dream Machine AI is an exciting new AI-powered video generation tool that's transforming the way we create video content. This powerful platform leverages advanced artificial intelligence technology to turn simple text prompts or images into high-quality, realistic videos.

## What is Dream Machine AI?

Dream Machine ... | runningdogg |

1,899,163 | The Best Foot and Ankle Care Near Me: What Sets Top Clinics Apart | When it comes to maintaining mobility and overall well-being, finding the best foot and ankle care is... | 0 | 2024-06-24T16:36:28 | https://dev.to/mikecartell710/the-best-foot-and-ankle-care-near-me-what-sets-top-clinics-apart-17i5 | When it comes to maintaining mobility and overall well-being, finding the best foot and ankle care is crucial. Whether you're dealing with a minor issue or a complex condition, choosing the right clinic can make all the difference. Let's explore what sets top foot and ankle clinics apart and why their advanced care is ... | mikecartell710 | |

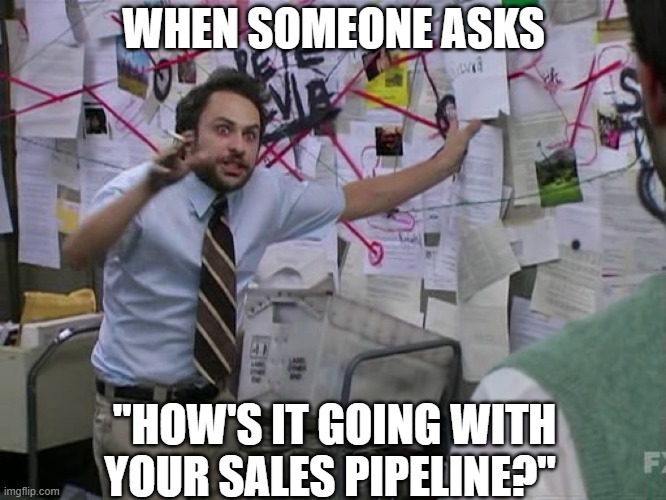

1,899,162 | Meme Monday | No One Can Get Your Sales Pipeline Like You Do! Source | 0 | 2024-06-24T16:36:28 | https://dev.to/td_inc/meme-monday-nf7 | sales, meme | **No One Can Get Your Sales Pipeline Like You Do!**

[Source](https://imgflip.com/i/873wb1) | td_inc |

1,899,161 | Debugging React Native with Reactotron: A Step-by-Step Guide | Debugging a React Native application can sometimes feel like walking through a maze. But what if... | 0 | 2024-06-24T16:36:22 | https://dev.to/rohanrajgautam/debugging-react-native-with-reactotron-a-step-by-step-guide-2f02 | reactnative, debug, reactotron | Debugging a React Native application can sometimes feel like walking through a maze. But what if there were a tool that could streamline the process and give you real-time insights into your app’s behavior? Enter Reactotron: a powerful desktop application for inspecting React Native apps. In this blog, I’ll walk you through ... | rohanrajgautam |

1,899,341 | Free Data Analysis Masterclass from Hashtag Treinamentos | The Data Analysis Masterclass is an opportunity to learn how to use data analysis... | 0 | 2024-06-28T13:38:19 | https://guiadeti.com.br/masterclass-analise-de-dados-gratuita/ | cursogratuito, analisededados, cursosgratuitos, dados | ---

title: Free Data Analysis Masterclass from Hashtag Treinamentos

published: true

date: 2024-06-24 16:33:43 UTC

tags: CursoGratuito,analisededados,cursosgratuitos,dados

canonical_url: https://guiadeti.com.br/masterclass-analise-de-dados-gratuita/

---

The Data Analysis Masterclass is an opportunity to ... | guiadeti |

1,899,159 | Introducing Laravel Usage Limiter Package: Track and restrict usage limits for users or accounts. | GitHub Repo: https://github.com/nabilhassen/laravel-usage-limiter Description A Laravel package to... | 0 | 2024-06-24T16:24:18 | https://dev.to/nabilhassen/introducing-laravel-usage-limiter-package-track-and-restrict-usage-limits-for-users-or-accounts-243l | php, laravel, opensource, webdev | **GitHub Repo**: https://github.com/nabilhassen/laravel-usage-limiter

**Description**

A Laravel package to track, limit, and restrict usage limits of users, accounts, or any other model.

With this package, you will be able to set limits for your users, track their usage, and restrict users when they hit their maxim... | nabilhassen |
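The track / limit / restrict cycle described above can be sketched in a few lines. This is a hypothetical, in-memory Python illustration of the idea only. The class and method names are assumptions and are not the package's actual Laravel API, which persists limits and usage in the database.

```python
from collections import defaultdict


class UsageLimitExceeded(Exception):
    """Raised when recording usage would push an owner past its limit."""


class UsageLimiter:
    """Hypothetical in-memory limiter keyed by (owner, resource)."""

    def __init__(self) -> None:
        self._limits = {}               # (owner, resource) -> maximum allowed
        self._usage = defaultdict(int)  # (owner, resource) -> amount used so far

    def set_limit(self, owner: str, resource: str, maximum: int) -> None:
        self._limits[(owner, resource)] = maximum

    def remaining(self, owner: str, resource: str) -> int:
        # A resource with no configured limit is treated as "nothing allowed".
        key = (owner, resource)
        return self._limits.get(key, 0) - self._usage[key]

    def use(self, owner: str, resource: str, amount: int = 1) -> None:
        # Restrict: refuse the request rather than letting usage overshoot the limit.
        if amount > self.remaining(owner, resource):
            raise UsageLimitExceeded(f"{owner} has insufficient {resource} quota")
        self._usage[(owner, resource)] += amount

    def release(self, owner: str, resource: str, amount: int = 1) -> None:
        # Give usage back (e.g. a deleted project); never drop below zero.
        key = (owner, resource)
        self._usage[key] = max(self._usage[key] - amount, 0)
```

Checking the remaining quota before incrementing means a rejected request leaves the counter unchanged. The `release` method covers resources that can be freed again, such as projects, as opposed to one-way consumption like API calls.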

1,899,158 | The Evolution and Future of Online Advertising: Balancing Innovation with Privacy | (9 min read) In the early 2000s, I remember browsing the internet as something very top-notch and... | 0 | 2024-06-24T16:21:59 | https://dev.to/jestevesv/the-evolution-and-future-of-online-advertising-balancing-innovation-with-privacy-3p5m | onlineadvertising, privacyconcerns, ai, targetingaudiences | (9 min read)

In the early 2000s, I remember browsing the internet as something very top-notch and advanced. The internet was transitioning from Web 1.0 to Web 2.0, and this period was crucial in defining the business model behind the revenue. Should users pay to access content, or should content be free and supported ... | jestevesv |

1,899,157 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-06-24T16:21:16 | https://dev.to/cokawav672/buy-verified-paxful-account-5gp5 | tutorial, react, python, ai | "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\n \n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | cokawav672 |

1,899,150 | 995. Minimum Number of K Consecutive Bit Flips | 995. Minimum Number of K Consecutive Bit Flips Hard You are given a binary array nums and an... | 27,523 | 2024-06-24T16:20:22 | https://dev.to/mdarifulhaque/995-minimum-number-of-k-consecutive-bit-flips-5gpp | php, leetcode, algorithms, programming | 995\. Minimum Number of K Consecutive Bit Flips

Hard

You are given a binary array `nums` and an integer `k`.

A k-bit flip is choosing a subarray of length `k` from `nums` and simultaneously changing every `0` in the subarray to `1`, and every `1` in the subarray to `0`.

Return _the minimum number of **k-bit flips**... | mdarifulhaque |
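The post's own solution is in PHP and is truncated here. A common greedy approach, which may or may not match the author's, scans left to right. Whenever the current bit is effectively 0 after earlier flips, the only remaining way to fix it is to start a flip at that index. If fewer than `k` elements remain at that point, the answer is -1. The Python sketch below tracks how many active flips cover the current index using an "ended" counter array, so each flip costs O(1) instead of O(k). The function name `min_k_bit_flips` is our own.

```python
def min_k_bit_flips(nums: list, k: int) -> int:
    n = len(nums)
    ended = [0] * (n + 1)  # ended[j]: number of flips whose window stops just before index j
    active = 0             # number of flips whose window covers the current index
    flips = 0
    for i in range(n):
        active -= ended[i]  # drop flips that no longer reach index i
        # The effective bit is nums[i] toggled `active` times; it is 0 when the sum is even.
        if (nums[i] + active) % 2 == 0:
            if i + k > n:
                return -1  # a window starting here would run past the end of the array
            flips += 1
            active += 1
            ended[i + k] += 1
    return flips
```

This runs in O(n) time with O(n) extra space. The `ended` array can be replaced by marking flip start positions in `nums` itself if O(1) extra space is required.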

1,899,149 | Implementing Apache Kafka with Docker and nodejs: A Step-by-Step Guide for Beginners | Apache Kafka with Docker and nodejs Introduction In the modern world of technology, where data is generated and... | 0 | 2024-06-24T16:17:05 | https://dev.to/warmachine13/implementando-apache-kafka-com-docker-e-nodejs-passo-a-passo-para-iniciantes-3jf6 | docker, node, javascript, kafka | Apache Kafka with Docker and nodejs

Introduction

In the modern world of technology, where data is generated and consumed in unprecedented volumes, the need for systems that can handle large flows of information is becoming increasingly crucial. Apache Kafka is one of those revolutionary tools that has been gaining po... | warmachine13 |

1,899,147 | Developing a Paycheck Calculator from Scratch and Integrating it into Your Website | Providing employees with easy access to their payroll information is essential for maintaining... | 0 | 2024-06-24T16:15:55 | https://dev.to/elainecbennet/developing-a-paycheck-calculator-from-scratch-and-integrating-it-into-your-website-4gdk | calculatorintegration, development, paycheckcalculator | Providing employees with easy access to their payroll information is essential for maintaining satisfaction and transparency. A paycheck calculator is a valuable tool that allows employees to estimate their take-home pay after deductions. Developing this tool from scratch and integrating it into your website can enhanc... | elainecbennet |
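The core of such a calculator is a short computation from gross pay to net pay. The Python sketch below is illustrative only, and the names are our own rather than the article's. The 6.2% Social Security and 1.45% Medicare rates are the standard US employee FICA rates. The flat federal and state rates are hypothetical placeholders for real bracket tables. The sketch also ignores the Social Security wage-base cap and treats every pre-tax deduction as reducing every tax, which is a simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rates:
    # Hypothetical flat rates for illustration only. Real withholding depends on
    # progressive brackets, filing status, wage-base caps, and local rules.
    federal: float = 0.12
    state: float = 0.05
    social_security: float = 0.062
    medicare: float = 0.0145


def take_home_pay(gross: float, pre_tax_deductions: float = 0.0,
                  rates: Optional[Rates] = None) -> dict:
    """Estimate one paycheck's taxes and net pay under flat rates."""
    rates = rates or Rates()
    # Simplification: pre-tax deductions (e.g. retirement contributions) reduce
    # the base for every tax below.
    taxable = max(gross - pre_tax_deductions, 0.0)
    breakdown = {
        "federal": round(taxable * rates.federal, 2),
        "state": round(taxable * rates.state, 2),
        "social_security": round(taxable * rates.social_security, 2),
        "medicare": round(taxable * rates.medicare, 2),
    }
    # Net pay = gross - pre-tax deductions - taxes (the sum is taken before "net" is added).
    breakdown["net"] = round(taxable - sum(breakdown.values()), 2)
    return breakdown
```

Keeping the rates in a separate immutable `Rates` object makes it straightforward to load real per-state values from configuration later, without changing the calculation itself.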

1,899,121 | Secure AWS Resource Deployment Using GitHub Actions | Setting up a GitHub pipeline often involves initiating resource deployment on cloud platforms like... | 0 | 2024-06-24T16:15:38 | https://dev.to/sepiyush/efficiently-managing-multi-directory-repositories-with-github-actions-cicd-pipeline-2hn | idp, aws, githubactions | Setting up a GitHub pipeline often involves initiating resource deployment on cloud platforms like AWS. To accomplish this, a secure authentication mechanism between GitHub Actions and your AWS account is necessary. This blog explores the use of OpenID Connect (OIDC) for secure authentication and provides a detailed ex... | sepiyush |
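The article's detailed example is truncated here. As a hedged sketch of the AWS side of an OIDC setup, an IAM role's trust policy can limit `sts:AssumeRoleWithWebIdentity` to tokens that GitHub's OIDC provider issued for a single repository and branch. The helper below builds such a policy as a Python dict. The function name, account ID, and repository are placeholders. The sketch also assumes that an IAM OIDC identity provider for `token.actions.githubusercontent.com` already exists in the account.

```python
import json

GITHUB_OIDC_HOST = "token.actions.githubusercontent.com"


def github_oidc_trust_policy(account_id: str, repo: str,
                             ref: str = "refs/heads/main") -> dict:
    """Build an IAM role trust policy that only GitHub Actions runs from
    `repo` on `ref` can assume via OIDC."""
    provider_arn = f"arn:aws:iam::{account_id}:oidc-provider/{GITHUB_OIDC_HOST}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": provider_arn},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # The token must be issued for AWS STS...
                        f"{GITHUB_OIDC_HOST}:aud": "sts.amazonaws.com",
                        # ...and come from this repository and branch only.
                        f"{GITHUB_OIDC_HOST}:sub": f"repo:{repo}:ref:{ref}",
                    }
                },
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder account ID and repository; substitute your own.
    print(json.dumps(github_oidc_trust_policy("123456789012", "my-org/my-repo"), indent=2))
```

On the workflow side, the job also needs `permissions: id-token: write` so that GitHub issues it an OIDC token, which can then be exchanged for short-lived AWS credentials. Because the trust policy scopes access to a specific repository and ref, no long-lived access keys have to be stored as repository secrets.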

1,899,145 | Serverless on GCP using Cloud Functions | Introduction: This Post Introduce and Demonstrate how to deploy a stateless code/script on Google... | 0 | 2024-06-24T16:11:58 | https://dev.to/iamgauravpande/serverless-on-gcp-using-cloud-functions-ekp | serverless, githubactions, googlecloud, security | **Introduction:**

This post introduces and demonstrates how to deploy stateless code or a script to Cloud Functions, the serverless environment on Google Cloud Platform.

**GCP Resources Used:**

1. Cloud Scheduler Job
2. Pub/Sub Topic
3. Cloud Function (1st Gen)
4. Two Service Accounts (for Infra and Cloud Function Runtime Se... | iamgauravpande |

1,899,143 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-24T16:08:00 | https://dev.to/cokawav672/buy-verified-cash-app-account-abc | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n• Genuine and activated email verified\n• Registered phone number (USA)\n• Selfie verified\n• SSN (social security number) verified\n• Driving license\n• BTC enable or not enable (BTC enable best)\n• 100% replacement guaranteed\n• 100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n \nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n \nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. How To Buy Verified Cash App Accounts.\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. 
This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n \nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. 
With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n \nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. Buy verified cash app account.\n \nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. 
It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. 
Buy verified cash app account.\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n\n" | cokawav672 |

1,899,140 | Stay Updated with PHP/Laravel: Weekly News Summary (17/06/2024–23/06/2024) | Dive into the latest tech buzz with this weekly news summary, focusing on PHP and Laravel updates... | 0 | 2024-06-24T16:04:17 | https://poovarasu.dev/php-laravel-weekly-news-summary-17-06-2024-to-23-06-2024/ | php, laravel | Dive into the latest tech buzz with this weekly news summary, focusing on PHP and Laravel updates from June 17th to June 23rd, 2024. Stay ahead in the tech game with insights curated just for you!

This summary offers a concise overview of recent advancements in the PHP/Laravel framework, providing valuable insights fo... | poovarasu |

1,899,139 | Promising Urology Specialist revealed to us how she has restored the potency of her 60-70-year-old patient | The most effective treatment for potency problems, Men's Defence, can now be ordered with 50%... | 0 | 2024-06-24T16:02:45 | https://dev.to/freelancerabed/promising-urology-specialist-revealed-to-us-how-she-has-restored-the-potency-of-her-60-70-year-old-patient-10j1 | The most effective treatment for potency problems, Men's Defence, can now be ordered with 50% discount on the manufacturer's official website - the private center of urology. Read more about the details.

Our reporter spoke with the best specialist in the private centre of urology, Katharina Weiler. She has the best rev... | freelancerabed | |

1,898,737 | TW Elements - TailwindCSS Focus, active & other states. Free UI/UX design course | Focus, active and other states Hover isn't the only state supported by Tailwind... | 25,935 | 2024-06-24T16:00:00 | https://dev.to/keepcoding/tw-elements-tailwindcss-focus-active-other-states-free-uiux-design-course-1jaa | tailkwind, design, html, tutorial | ## Focus, active and other states

Hover isn't the only state supported by Tailwind CSS.

Thanks to Tailwind, we can use any state available in regular CSS directly in our HTML.

Below are examples of several pseudo-class states supported in Tailwind CSS.

_You don't have to memorize them now; we'll cover them in... | keepcoding |

1,899,137 | AIM Weekly for 24 June 2024 | Liquid syntax error: Tag '{% raw %}' was not properly terminated with regexp: /\%\}/ | 0 | 2024-06-24T15:57:56 | https://dev.to/tspannhw/aim-weekly-for-24-june-2024-4o5l | milvus, opensource, vectordatabase, genai | ## 24-June-2024

Tim Spann @PaaSDev

Milvus - Towhee - Attu - Feder - GPTCache - VectorDB Bench

### AIM Weekly

### Towhee - Attu - Milvus (Tim-Tam)

### FLaNK - FLiPN

With a name like that, I'm not sure how I couldn't add it to my group.

SPANN: Highly-efficient Billion-scale Approximate Nearest Neighborhood Search

htt... | tspannhw |

1,899,135 | Server Side Rendering (SSR) in Next.js to enhance performance and SEO. | Hi everybody! In this article, I'm going to explain you what is server-side rendering (SSR) in... | 0 | 2024-06-24T15:54:48 | https://dev.to/mooktar_dev/server-side-rendering-ssr-in-nextjs-to-enhance-performance-and-seo-175c | nextjs, ssr, javascript, react | Hi everybody!

In this article, I'm going to explain what server-side rendering (SSR) is in Next.js.

**Server-Side Rendering (SSR) in Next.js: Enhancing Performance and SEO**

In the realm of web development, delivering a seamless user experience is paramount. Next.js, a popular React framework, empowers developers... | mooktar_dev |

1,899,136 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-24T15:54:43 | https://dev.to/menohiw889/buy-verified-cash-app-account-45op | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying their status as an indispensable asset for individuals navigating the digital marketplace.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | menohiw889 |

1,899,134 | Computer Organization and Architecture | During my preparation for the master’s degree entrance exam 😁🥇, I delved into William Stallings'... | 0 | 2024-06-24T15:53:10 | https://dev.to/_hm/computer-organization-and-architecture-1d3f | webdev, beginners, programming, tutorial | During my preparation for the master’s degree entrance exam 😁🥇, I delved into William Stallings' "Computer Organization and Architecture: Designing for Performance, 9th ed., Pearson Education, Inc., 2013." Chapter 17 particularly caught my attention as it explores parallel processing—an essential topic for developers... | _hm |

1,899,133 | Role-Based Access Control Using Dependency Injection (Add User Roles) | In this video, we’re setting up role-based access control for our FastAPI project. Role-based access... | 0 | 2024-06-24T15:52:49 | https://dev.to/jod35/role-based-access-control-using-dependency-injection-add-user-roles-25k2 | fastapi, python, webdev, api | In this video, we’re setting up role-based access control for our FastAPI project. Role-based access control allows users to perform actions in an application based on their role.

We create roles for users and admins, and then check these roles for every API endpoint. This way, we protect our API endpoints s... | jod35 |

1,899,130 | Stop click-baiting me to use your workflow | As I've been learning how to code, I've noticed that many developers, especially those in web... | 0 | 2024-06-24T15:47:22 | https://dev.to/andrewwiley57/stop-click-baiting-me-to-use-your-workflow-51i4 | programming, productivity, coding, softwaredevelopment | As I've been learning how to code, I've noticed that many developers, especially those in web development, often have strong opinions about the "right" way to do things. The sheer volume of content can be overwhelming, particularly for new developers who are still finding their footing. One of the most pervasive and an... | andrewwiley57 |

1,899,128 | Mastering SEO with Artificial Intelligence: Tips for 2024 | Introduction AI (Artificial Intelligence) is changing the world of search engine optimization (SEO)... | 0 | 2024-06-24T15:46:45 | https://dev.to/akshay_ramesh/mastering-seo-with-artificial-intelligence-tips-for-2024-5bge |

Introduction

AI (Artificial Intelligence) is changing the world of search engine optimization (SEO) and will be an important part of future SEO strategies. It’s essential for businesses and marketers to keep up with AI advancements in order to stay competitive in the constantly evolving online world.

This article w... | akshay_ramesh | |

1,899,127 | Looking for some support! | hey everyone! I’m new to coding and just started learning Python. It’s a bit lonely doing it solo, so... | 0 | 2024-06-24T15:46:32 | https://dev.to/tomdsbr/looking-for-some-support-3ig7 | hey everyone! I’m new to coding and just started learning Python. It’s a bit lonely doing it solo, so I’m looking for some people in the same boat to chat with and support each other. feel free to dm! | tomdsbr | |

1,899,119 | C# PDF Generator Tutorial (HTML to PDF, Merge, Watermark, Extract) | PDF generation is a common requirement in many applications, from generating reports to creating... | 0 | 2024-06-24T15:31:47 | https://dev.to/mhamzap10/c-pdf-generator-tutorial-html-to-pdf-merge-watermark-extract-h3i | csharp, dotnetcore, ironpdf, softwaredevelopment | [PDF](https://en.wikipedia.org/wiki/PDF) generation is a common requirement in many applications, from generating reports to creating invoices and documentation. IronPDF is a powerful library that simplifies this process in C#. This article covers everything you need to know about using [IronPDF](https://ironpdf.com/) ... | mhamzap10 |

1,899,126 | Buy verified cash app account | Buy verified cash app account Cash app has emerged as a dominant force in the realm of mobile banking... | 0 | 2024-06-24T15:45:39 | https://dev.to/menohiw889/buy-verified-cash-app-account-26mh | webdev, javascript, beginners, programming | Buy verified cash app account

Cash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with ... | menohiw889 |

1,898,498 | Mobile app development with LiveView Native and Elixir | What is LiveView Native LiveView Native is a new framework by Dockyard. Do more... | 0 | 2024-06-24T15:44:03 | https://dev.to/rushikeshpandit/mobile-app-development-with-liveview-native-and-elixir-4f79 | liveviewnative, elixir, mobile, phoenix | ## What is LiveView Native

LiveView Native is a new framework by Dockyard.

### Do more with less, with LiveView Native

As their tag line suggests, it allows Elixir developers to create mobile applications using LiveView, enabling teams to seamlessly build both web and mobile applications with Elixir. Similar to how ... | rushikeshpandit |

1,899,124 | Exploring the "legendary-dollop" Repository: An SVG Generator | The legendary-dollop repository, created by charudatta10, is an intriguing project that generates... | 0 | 2024-06-24T15:43:42 | https://dev.to/charudatta10/exploring-the-legendary-dollop-repository-an-svg-generator-4388 | svg, github, generator, basic | The **legendary-dollop** repository, created by [charudatta10](https://github.com/charudatta10), is an intriguing project that generates SVGs. Whether you're designing banners, badges, or other visual elements, this tool can be a valuable addition to your toolkit.

## Key Features

1. **SVG Generation:**

- The prima... | charudatta10 |

1,899,122 | Efficiently Managing Multi-Directory Repositories with GitHub Actions CI/CD Pipeline | Working with repositories that house multiple subdirectories, each representing an independent... | 0 | 2024-06-24T15:42:01 | https://dev.to/sepiyush/efficiently-managing-multi-directory-repositories-with-github-actions-cicd-pipeline-1mde | github, githubactions, cicd | Working with repositories that house multiple subdirectories, each representing an independent service deployed on AWS Lambda, presents unique challenges. One of the primary issues is determining which services need deployment based on changes within their respective directories. In this blog post, I will share how I tackle... | sepiyush |

1,899,120 | Como Transformar-se em uma Máquina de Aprender: Um Guia Pragmático | Disclaimer This post was created by Generative AI based on the transcript of the original episode on the... | 0 | 2024-06-24T15:37:07 | https://dev.to/dev-mais-eficiente/como-transformar-se-em-uma-maquina-de-aprender-um-guia-pragmatico-31l | **Disclaimer**

This post was created by Generative AI based on the transcript of the original episode on the Dev Eficiente channel. It was given a quick review to adapt the content. [You can watch the full video on the channel itself.](https://www.youtube.com/watch?v=Cw9mcZSQDrY&list=PLVHlvMRWE0Y7_5jsAtVs44ZUNDOr5... | asouza | |

1,899,117 | Tricky Golang interview questions - Part 4: Concurrent Consumption | I want to discuss an example that is very interesting. I was surprised that many experienced... | 0 | 2024-06-24T15:28:39 | https://dev.to/crusty0gphr/tricky-golang-interview-questions-part-4-concurrent-consumption-34oe | interview, go, programming, tutorial | I want to discuss an example that is very interesting. I was surprised that many experienced developers were unable to answer it correctly. This example involves buffered channels and concurrency.

**Question: What will happen when we run this code?**

```go
package main

import "fmt"

func main() {
	ch := make(chan int... | crusty0gphr |

1,899,115 | Security Measures to Consider When Choosing a Cloud Call Center | Undoubtedly, in this digital era, practically all organisations are adopting digital transformation.... | 0 | 2024-06-24T15:22:04 | https://dev.to/pradipmohapatra/security-measures-to-consider-when-choosing-a-cloud-call-center-1d36 | programming, news, productivity, api | Undoubtedly, in this digital era, practically all organisations are adopting digital transformation. This is the rationale behind businesses moving from classic call centre systems to cloud-based ones. Thus, instead of wasting time with on-premise systems, companies are relying on cloud-based software.

Cloud-based so... | pradipmohapatra |

1,899,340 | Webinar Sobre MS-900 Online E Gratuito: Prepare-se Para O Exame! | Ka Solution offers a course that lets you learn more about cloud service offerings... | 0 | 2024-06-28T13:38:23 | https://guiadeti.com.br/webinar-ms-900-online-gratuito-preparo-para-exame/ | cursogratuito, cloud, cursosgratuitos, microsoft | ---
title: Webinar Sobre MS-900 Online E Gratuito: Prepare-se Para O Exame!
published: true
date: 2024-06-24 15:21:44 UTC
tags: CursoGratuito,cloud,cursosgratuitos,microsoft
canonical_url: https://guiadeti.com.br/webinar-ms-900-online-gratuito-preparo-para-exame/
---

Ka Solution offers a course that lets you... | guiadeti |