id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |
|---|---|---|---|---|---|---|---|---|

1,898,699 | Indoor air quality considerations for galvanised steel sheet | Breathe Easy with Galvanised Steel Sheet Introduction Indoor air quality is a very believed... | 0 | 2024-06-24T09:32:09 | https://dev.to/komabd_skopijd_328435c084/indoor-air-quality-considerations-for-galvanised-steel-sheet-2df8 | design | Breathe Easy with Galvanised Steel Sheet

Introduction

Indoor air quality is very important to the wellbeing of those who spend time inside buildings. With the rise of health issues linked to poor air quality, it is vital to ensure that the materials found in construction and fu... | komabd_skopijd_328435c084 |

1,898,698 | Advancements in Sectional Wrapping Machines for Monofilament Organza Manufacturing | Advancements in Sectional Wrapping Machines for Monofilament Organza... | 0 | 2024-06-24T09:32:06 | https://dev.to/lomans_ropikd_9942b2c2726/advancements-in-sectional-wrapping-machines-for-monofilament-organza-manufacturing-3fj7 | wrappingmachines, monofilamentorganza | Advancements in Sectional Wrapping Machines for Monofilament Organza Manufacturing

Introduction

There have been many recent improvements in the production of monofilament organza. Most notable is the increasing use of Wrapping Machines, which are making the man... | lomans_ropikd_9942b2c2726 |

1,898,697 | Mozz Guard Reviews - {June 2024} This Product Is Real Or Fake? | Benefits & Side Effects? | Mozz Guard has had a strong impact.Mozz Guard Reviews These effortless little steps are all you ought... | 0 | 2024-06-24T09:31:05 | https://dev.to/kdsazan/mozz-guard-reviews-june-2024-this-product-is-real-or-fake-benefits-side-effects-4nob | webdev, javascript, beginners, programming | Mozz Guard has had a strong impact.Mozz Guard Reviews These effortless little steps are all you ought to do. I keep drawing blanks. It is your other option with it because you will be the only one dealing with it after the fact. My feeling is based around my assumption that most connoisseurs have a disapproval about yo... | kdsazan |

1,898,536 | Unlocking the Power of the Nvidia L40 GPU | Introduction NVIDIA has long been a leader in the graphics processing unit (GPU) market,... | 0 | 2024-06-24T09:30:00 | https://dev.to/novita_ai/unlocking-the-power-of-the-nvidia-l40-gpu-59l4 | ## Introduction

NVIDIA has long been a leader in the graphics processing unit (GPU) market, known for its innovative designs and powerful performance. Their latest addition, the NVIDIA L40 GPU, continues this tradition by offering advanced capabilities that cater to a variety of high-demand applications. This comprehen... | novita_ai | |

1,898,696 | Dynamic Programming, Coding Interview Pattern | Dynamic Programming Dynamic Programming (DP) is a powerful algorithmic technique used to... | 0 | 2024-06-24T09:29:21 | https://dev.to/harshm03/dynamic-programming-coding-interview-pattern-1da | coding, interview, algorithms, dsa | ## Dynamic Programming

Dynamic Programming (DP) is a powerful algorithmic technique used to solve optimization problems by breaking them down into simpler subproblems and storing the solutions to those subproblems to avoid redundant computations. It is particularly useful for problems where the solution can be recursi... | harshm03 |
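The idea of storing subproblem solutions to avoid redundant computation can be sketched with a small example. The excerpt above does not name a specific problem, so the Fibonacci sequence and the names `fib` and `memo` are assumptions chosen purely for illustration.

```cpp
#include <cstdint>
#include <unordered_map>

// Top-down dynamic programming (memoization), illustrated with Fibonacci.
// `memo` caches every subproblem result so each value of n is computed once.
std::uint64_t fib(unsigned n, std::unordered_map<unsigned, std::uint64_t>& memo) {
    if (n < 2) {
        return n;  // base cases: fib(0) = 0, fib(1) = 1
    }
    auto cached = memo.find(n);
    if (cached != memo.end()) {
        return cached->second;  // reuse a stored subproblem solution
    }
    // Solve the two smaller subproblems, then store the combined result.
    std::uint64_t value = fib(n - 1, memo) + fib(n - 2, memo);
    memo[n] = value;
    return value;
}
```

A caller would create an empty `std::unordered_map<unsigned, std::uint64_t>` and pass it in. Without the cache the same recursion takes exponential time, while with it the work is linear in `n`. Results fit in 64 bits only up to `n = 93`.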

1,898,693 | 🎉 Just completed the "Kubernetes Fast Track" course on Udemy! 🚀 | This course has further solidified my Kubernetes skills and broadened my understanding of container... | 0 | 2024-06-24T09:28:29 | https://dev.to/apetryla/just-completed-the-kubernetes-fast-track-course-on-udemy-48g1 | devops, kubernetes, learning, beginners | This course has further solidified my Kubernetes skills and broadened my understanding of container orchestration. It was a great, easy to understand, and fast-paced course, which I'm recommending to people new to Kubernetes or those who haven't worked with it for a while and would like to refresh the basics.

Combinin... | apetryla |

1,898,692 | Why More Women Are Choosing HRT | Understanding Hormone Replacement Therapy Hormone replacement therapy (HRT) has become a huge... | 0 | 2024-06-24T09:26:34 | https://dev.to/prometheuzhrt/why-more-women-are-choosing-hrt-18he | Understanding Hormone Replacement Therapy

Hormone replacement therapy (HRT) has become a popular treatment option among women, especially those experiencing menopausal symptoms. HRT involves supplementing hormones, generally estrogen and progesterone, to relieve the signs and symptoms associated with menopause, which inclu... | prometheuzhrt | |

1,898,683 | Why Choose an AngularJS Development Company for Your Next Project? | In today's fast-paced digital world, choosing the right technology and development partner is crucial... | 0 | 2024-06-24T09:24:04 | https://dev.to/syndelltech/why-choose-an-angularjs-development-company-for-your-next-project-1c9 | angularjsdevelopmentcompany, angularjsdevelopmentservices | In today's fast-paced digital world, choosing the right technology and development partner is crucial for the success of your web applications. AngularJS, a powerful JavaScript framework developed by Google, has become a preferred choice for building dynamic and robust web applications. Partnering with an [AngularJS de... | syndelltech |

1,898,682 | zkSync (ZK) Gains Momentum with Listings on Major Crypto Exchanges | In the ever-evolving world of cryptocurrency, new listings often signal growth and adoption. Over the... | 0 | 2024-06-24T09:23:47 | https://dev.to/klimd1389/zksync-zk-gains-momentum-with-listings-on-major-crypto-exchanges-b7a | webdev, learning, news, cryptocurrency | In the ever-evolving world of cryptocurrency, new listings often signal growth and adoption. Over the past month, the zkSync (ZK) token has gained significant traction by being listed on multiple prominent cryptocurrency exchanges. This expansion provides traders with greater access to zkSync and showcases its potentia... | klimd1389 |

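1,898,681 | Displaying a function's address with cout | A few days ago, while exploring virtual functions, I wanted to print a function's address and ran into something spooky. Take the code below as an example: #include <iostream> using namespace... | 0 | 2024-06-24T09:23:45 | https://dev.to/codemee/yong-cout-xian-shi-han-shi-de-wei-zhi-1bhd | cpp | A few days ago, while exploring virtual functions, I wanted to print a function's address and ran into something spooky. Take the code below as an example: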

```cpp
#include <iostream>

using namespace std;

void foo() {
    cout << "foo()" << endl;
}

int main(void)
{
    // Intended to print the address of foo.
    cout << foo << endl;
}
```

But it produces the following output:

```
1
```

After some research, I found that although the class that `cout` belongs to, [`std::basic_ostream`](https://en.cppreference.com/w/cpp/io/basic_ostrea... | codemee |
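The excerpt above is cut off, so what follows is only a sketch of one common explanation and workaround, not necessarily the author's. With GCC and Clang, no `operator<<` overload accepts a function pointer, so the pointer converts implicitly to `bool` and is printed as `1`. Casting to `void*` first selects the pointer overload instead. The names `sample`, `streamed_directly`, and `streamed_as_address` are hypothetical.

```cpp
#include <sstream>
#include <string>

// Hypothetical sample function; any free function would do.
void sample() {}

// Streams the function pointer directly. No operator<< overload takes a
// function pointer, so it converts to bool and the bool overload is used.
std::string streamed_directly(void (*fn)()) {
    std::ostringstream out;
    out << fn;
    return out.str();
}

// Casts to void* first so that the pointer overload of operator<< is chosen.
// Converting a function pointer to void* is only conditionally supported by
// the standard, though mainstream compilers accept it.
std::string streamed_as_address(void (*fn)()) {
    std::ostringstream out;
    out << reinterpret_cast<void*>(fn);
    return out.str();
}
```

Writing `std::cout << streamed_as_address(sample)` would then print an address in an implementation-defined format, whereas `streamed_directly(sample)` reproduces the surprising `1`.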

1,898,638 | 5 Businesses to Start as a Software Developer | Software development is a career with many ways to expand, which includes business. These are my... | 0 | 2024-06-24T09:19:16 | https://dev.to/martinbaun/5-businesses-to-start-as-a-software-developer-2j83 | programming, productivity, learning, softwaredevelopment |

Software development is a career with many ways to expand, which includes business.

These are my top five businesses to run as a software developer, so let’s discuss them.

## 1. Freelancing

Freelancing is an excellent business to begin. You are the boss and take on clients according to your availability. Freelancin... | martinbaun |

1,898,637 | A web3 developer for metamask integration | Hello, Nice to meet you I am looking for a web3 developer to integrate the metamask into my... | 0 | 2024-06-24T09:18:48 | https://dev.to/twentyfour7/a-web3-developer-for-metamask-integration-557b | Hello, Nice to meet you

I am looking for a web3 developer to integrate MetaMask into my project

There are 3 tasks on the project

- Metamask integration

- UI updates

- Bug fix on frontend and backend

Please feel free to contact me

Best Regards.

| twentyfour7 | |

1,898,636 | Which mountains can be seen from Manaslu Circuit Trek? | The Manaslu Circuit Trek, nestled in the heart of the Nepalese Himalayas, offers a mesmerizing... | 0 | 2024-06-24T09:17:01 | https://dev.to/menuka_shrestha_485148e91/which-mountains-can-be-seen-from-manaslu-circuit-trek-dl8 | The Manaslu Circuit Trek, nestled in the heart of the Nepalese Himalayas, offers a mesmerizing journey through diverse landscapes, remote villages, and rich cultural heritage. This trek, which encircles the majestic Manaslu (8,163 meters), the world's eighth-highest mountain, provides trekkers with a less crowded and m... | menuka_shrestha_485148e91 | |

1,898,635 | Discover the Best Code Generator Websites | In the fast-paced world of software development, saving time while maintaining top-notch quality is... | 0 | 2024-06-24T09:16:58 | https://dev.to/sattyam/discover-the-best-code-generator-websites-fkb | code, programming | In the fast-paced world of software development, saving time while maintaining top-notch quality is crucial. This is where code generation platforms come into play. These platforms automate the creation of repetitive and standard code segments, even assisting with complex algorithmic structures, with just a button clic... | sattyam |

1,898,634 | Understanding Libraries vs. Frameworks: Real-Life Illustrations By AbdulsalamAmtech | The difference between a library and a framework can be illustrated with real-life examples. A... | 0 | 2024-06-24T09:16:09 | https://dev.to/abdulsalamamtech/understanding-libraries-vs-frameworks-real-life-illustrations-by-abdulsalamamtech-52cg | cschallenge | The difference between a library and a framework can be illustrated with real-life examples.

A library is like buying bread and eggs: pre-made components that speed up development, doing one thing well.

A framework is like getting a complete breakfast from the store: it simplifies many tasks at once.

Building fro... | abdulsalamamtech |

1,898,633 | Essential JavaScript topics to master before diving into React | Welcome, budding React developers! Before you dive headfirst into the ocean of React, it's crucial to... | 27,828 | 2024-06-24T09:14:13 | https://imabhinav.dev/blog/essential-javascript-topics-to-master-before-diving-into-react-9-13-42 | webdev, javascript, react, beginners | Welcome, budding React developers! Before you dive headfirst into the ocean of React, it's crucial to ensure your JavaScript life raft is well-equipped. While React makes building user interfaces a breeze, it assumes you have a solid grounding in JavaScript. Here’s a comprehensive guide to the essential JavaScript topi... | imabhinavdev |

1,898,632 | How to Integrate Social Button in a Mobile App | Integrating social buttons into a mobile app involves several steps, including selecting the... | 0 | 2024-06-24T09:12:10 | https://dev.to/tarunnagar/how-to-integrate-social-button-in-a-mobile-app-3056 | webdev | Integrating social buttons into a mobile app involves several steps, including selecting the appropriate social media platforms, implementing their SDKs (Software Development Kits), and ensuring a smooth user experience. This guide will provide a comprehensive walkthrough to help you successfully integrate social butto... | tarunnagar |

1,898,630 | Which Documents are Required for Shipment Registration? | Introduction When it comes to transporting goods, whether domestically or internationally,... | 0 | 2024-06-24T09:10:00 | https://dev.to/swathi_g_bc83cf58ea188a23/which-documents-are-required-for-shipment-registration-5381 | logistics, shipment, transportation, shipmentdocuments | ## Introduction

When it comes to transporting goods, whether domestically or internationally, proper documentation is essential. Proper documentation is the backbone of an [effective shipment registration](https://www.fosdesk.com/shipment-registration/). Not only does it ensure that your shipment meets legal standards... | swathi_g_bc83cf58ea188a23 |

1,898,629 | Next.js Server Actions | Next.js Server Actions is a powerful feature introduced in Next.js that allows you to run server-side... | 0 | 2024-06-24T09:09:58 | https://dev.to/nafizmahmud_94/nextjs-server-actions-3op0 | react, nextjs, server, fullstack | Next.js Server Actions is a powerful feature introduced in Next.js that allows you to run server-side code without having to create a separate API endpoint. Server Actions are defined as asynchronous functions marked with the 'use server' directive, and can be called directly from client-side components.

Here's an exam... | nafizmahmud_94 |

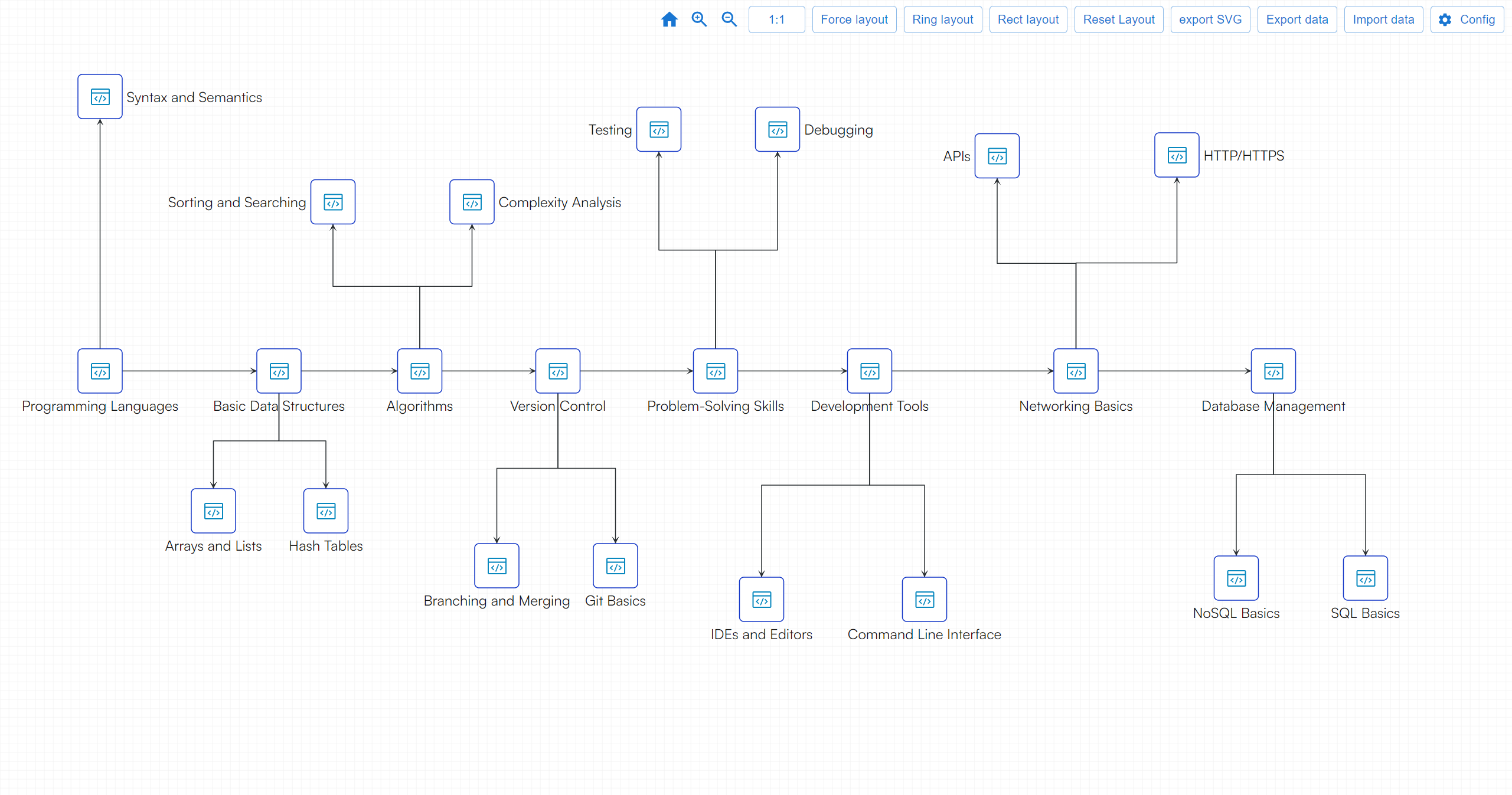

1,898,628 | What are some of the most basic things every programmer should know? | A post by friday | 0 | 2024-06-24T09:07:21 | https://dev.to/fridaymeng/what-are-some-of-the-most-basic-things-every-programmer-should-know-24df |

| fridaymeng | |

1,898,626 | Polishing Pads 101: A Beginner's Guide | Polishing Pads 101: A Beginner's Guide Polishing pads are an innovative tool used in many different... | 0 | 2024-06-24T09:04:29 | https://dev.to/dolkojdn_ypokxi_897318a6f/polishing-pads-101-a-beginners-guide-3poh | design | Polishing Pads 101: A Beginner's Guide

Polishing pads are an innovative tool used in many different applications to achieve a clean, polished finish. Whether you're a car enthusiast, DIY homeowner, or professional detailer, understanding the advantages of polishing pads can help you achieve your desired results safe... | dolkojdn_ypokxi_897318a6f |

1,898,625 | The Importance of Big Data in Cricket Betting Software | The sports betting industry has seen rapid growth over the past few years, with cricket betting... | 0 | 2024-06-24T09:00:12 | https://dev.to/mathewc/the-importance-of-big-data-in-cricket-betting-software-4lj0 | webdev, softwaredevelopment, devops | The sports betting industry has seen rapid growth over the past few years, with cricket betting emerging as one of the most popular segments. As the demand for cricket betting software increases, the need for advanced technologies to enhance user experience and operational efficiency becomes paramount. One such technol... | mathewc |

1,898,624 | Unlocking The Potential Of Digital Platforms For Business Success | Digital platforms are online infrastructures that enable the development, deployment, and management... | 0 | 2024-06-24T08:59:08 | https://dev.to/saumya27/unlocking-the-potential-of-digital-platforms-for-business-success-3j5o | Digital platforms are online infrastructures that enable the development, deployment, and management of digital services and products. These platforms facilitate interactions between users, businesses, and systems, creating an ecosystem where digital activities can thrive. They encompass a wide range of services, from ... | saumya27 | |

1,897,972 | GSoC Week 4 | Before the weekly check-in meeting, my lead mentor had communicated that we would have a contributor... | 27,442 | 2024-06-24T08:57:17 | https://dev.to/chiemezuo/gsoc-week-4-3n9 | gsoc, googlesummerofcode, wagtail, opensource | Before the weekly check-in meeting, my lead mentor had communicated that we would have a contributor evaluation. It was to be a chance to assess what we'd done so far, and our satisfaction with what we had done so far. He asked us to reflect on our answers before the meeting, as it would be grounds for our discussion.

... | chiemezuo |

1,898,619 | Get Started With CPU Profiling | Sometimes your app works, but you want to increase performance by boosting its throughput or reducing... | 27,839 | 2024-06-24T08:56:37 | https://www.jetbrains.com/help/idea/tutorial-get-started-with-profiling.html | java, profiling, performance | Sometimes your app works, but you want to increase performance by boosting its throughput or reducing latency. Other times, you just want to know how code behaves at runtime, determine where the hot spots are, or figure out how a framework operates under the hood.

This is a perfect use case for profilers. They offer a... | flounder4130 |

1,898,623 | How to cancel Debezium Incremental Snapshot | TL;DR: To cancel Incremental Snapshot you could push manually combined message to Kafka... | 0 | 2024-06-24T08:55:44 | https://dev.to/shooma/how-to-cancel-debezium-incremental-snapshot-3c5j | debezium, kafka, webdev, database | ### TL;DR:

To cancel an incremental snapshot, you can push a manually composed message to the Kafka Connect internal ...-offsets topic, with **value.incremental_snapshot_primary_key** set equal to **value.incremental_snapshot_maximum_key** from the latest "offset" message.

### Long story:

Sometimes you might need to make a snapshot of... | shooma |

1,898,622 | Power BI: Unveiling Hidden Patterns in Healthcare Data | The healthcare industry generates a vast amount of data every day. From patient records and clinical... | 0 | 2024-06-24T08:54:51 | https://dev.to/akaksha/power-bi-unveiling-hidden-patterns-in-healthcare-data-48l2 |

The healthcare industry generates a vast amount of data every day. From patient records and clinical trial results to medical imaging and insurance claims, this data holds immense potential for improving healthcare delivery, optimizing research, and ultimately, saving lives. However, unlocking the true value of this d... | akaksha | |

1,898,621 | Behind the Scenes: Exploring Plastic Master batch Manufacturing | 2acac6ec2f3ae2efae128fd2121983483fd83ce6652e1a4843e83ba73f4beebb.png Discover the campaigns of How... | 0 | 2024-06-24T08:54:26 | https://dev.to/dolkojdn_ypokxi_897318a6f/behind-the-scenes-exploring-plastic-master-batch-manufacturing-2mbj | design | 2acac6ec2f3ae2efae128fd2121983483fd83ce6652e1a4843e83ba73f4beebb.png

Discover the campaigns of How We Make Plastic Better Master batch Manufacturing

Introduction:

It might look like a simple thing, but producing a great plastic color finish requires a masterful touch. We’ll plunge ... | dolkojdn_ypokxi_897318a6f |

1,898,620 | Capture the Magic: Mastering Star Trails with Your Galaxy S23 Ultra | Have you ever gazed up at a night sky teeming with stars and wished you could capture their... | 0 | 2024-06-24T08:51:51 | https://dev.to/suavebajaj/capture-the-magic-mastering-star-trails-with-your-galaxy-s23-ultra-3g03 | s23ultra, photography, startrails | Have you ever gazed up at a night sky teeming with stars and wished you could capture their mesmerizing movement? With the innovative camera features of the Galaxy S23 Ultra, transforming that starry expanse into a captivating image of star trails is within reach!

This guide will unveil the secrets to capturing stunni... | suavebajaj |

1,898,616 | How Does Dream Machine AI Effective Work? | Dream Machine AI is a pioneering company dedicated to the development of advanced artificial... | 0 | 2024-06-24T08:47:26 | https://dev.to/hyscaler/how-does-dream-machine-ai-effective-work-3nki | dreammachineai, videocreator, texttovideo, aisoftware | Dream Machine AI is a pioneering company dedicated to the development of advanced artificial intelligence technologies. With a mission to transform industries and improve everyday lives through innovative AI solutions, Dream Machine AI is at the forefront of the AI revolution. The company focuses on several key areas, ... | amulyakumar |

1,898,600 | Automated API testing made easy with AREX | Traditional automation testing often requires significant human resources for test data preparation... | 0 | 2024-06-24T08:46:32 | https://dev.to/lijing-22/automated-api-testing-made-easy-with-arex-26c1 | programming, opensource, testing | Traditional automation testing often requires significant human resources for test data preparation and script creation, and may not provide adequate coverage. To maintain the stability of online systems, both developers and testers face the following challenges:

- After development, it can be challenging to quickly v... | lijing-22 |

1,898,615 | Why Develop an Over-The-Counter (OTC) Crypto Trading Desk | Crypto exchanges are among the most popular and lucrative businesses in the cryptocurrency... | 0 | 2024-06-24T08:45:45 | https://dev.to/donnajohnson88/why-develop-an-over-the-counter-otc-crypto-trading-desk-403c | cryptocurrency, cryptoexchange, otc, learning | Crypto exchanges are among the most popular and lucrative businesses in the cryptocurrency world. People can buy, sell, and trade digital assets and cryptocurrencies on a crypto exchange. Now, [crypto exchange development services](https://blockchain.oodles.io/cryptocurrency-exchange-development/?utm_source=devto)... | donnajohnson88 |

1,898,614 | The Transformative Power Of Devops In Modern Software Development | Modern Software Development Modern software development encompasses a range of practices, tools, and... | 0 | 2024-06-24T08:43:29 | https://dev.to/saumya27/the-transformative-power-of-devops-in-modern-software-development-2iep | devops | **Modern Software Development**

Modern software development encompasses a range of practices, tools, and methodologies designed to streamline the creation, testing, deployment, and maintenance of software. It emphasizes agility, collaboration, and the integration of advanced technologies to deliver high-quality softwa... | saumya27 |

1,898,613 | Discover the Best Salons in Ranip: Your Guide to Beauty and Wellness | Ranip, a vibrant and rapidly developing neighborhood in Ahmedabad, is not just a residential hub but... | 0 | 2024-06-24T08:41:58 | https://dev.to/abitamim_patel_7a906eb289/discover-the-best-salons-in-ranip-your-guide-to-beauty-and-wellness-1o19 | saloninranip, bestsaloninranip, bestsaloninahmedabad | Ranip, a vibrant and rapidly developing neighborhood in Ahmedabad, is not just a residential hub but also a hotspot for exceptional beauty and wellness services. Whether you're in need of a stylish haircut, a soothing spa treatment, or a complete beauty transformation, the **[salons in Ranip](https://trakky.in/ahmedaba... | abitamim_patel_7a906eb289 |

1,898,611 | The Rise of China's Automotive Exports: A Factory Perspective | car.png Chinese Cars on the Rise: From the Factory Perspective Have you ever thought about where... | 0 | 2024-06-24T08:40:03 | https://dev.to/homabdj_ropokd_247834bc12/the-rise-of-chinas-automotive-exports-a-factory-perspective-a4 | design | car.png

Chinese Cars on the Rise: From the Factory Perspective

Have you ever thought about where your car was made?

Chances are it could be from China.

China has been steadily increasing its presence in the automotive market and is now exporting cars all around the world.

We will look at the ev vehicles advantage... | homabdj_ropokd_247834bc12 |

1,898,609 | The Silent Lamb reappears? | In the era of the internet, information transparency seems to have become a standard feature of... | 0 | 2024-06-24T08:37:59 | https://dev.to/beatrix_goodwin_bce9a6d68/the-silent-lamb-reappears-p9f | In the era of the internet, information transparency seems to have become a standard feature of society, but under this "light", there are still some dark corners that are unknown to people. Especially among those groups that claim to defend human rights and justice, there is a deep-seated darkness hidden - sexual hara... | beatrix_goodwin_bce9a6d68 | |

1,898,608 | Top 10 Offshore Software Development Trends | In the rapidly changing landscape of technology, offshore software development continues to evolve,... | 0 | 2024-06-24T08:36:43 | https://dev.to/rashmihc060195/top-10-offshore-software-development-trends-3a0a | In the rapidly changing landscape of technology, offshore software development continues to evolve, offering innovative solutions to businesses worldwide. As we move further into 2024, staying abreast of the latest trends in offshore software development is crucial for leveraging its full potential. Here are the top 10... | rashmihc060195 | |

1,898,151 | Affordable SEO Services for Small Business – The 2024 List | Getting customers from search engines on a tight budget is tough but doable. The trick is finding an... | 0 | 2024-06-24T08:36:42 | https://dev.to/taiwo17/affordable-seo-services-for-small-business-the-2024-list-4373 | seo, writing, contentwriting, seowriting | Getting customers from search engines on a tight budget is tough but doable. The trick is finding an agency that offers affordable SEO services that actually work.

In this article, I’ll explain what makes [SEO services](https://www.upwork.com/services/product/marketing-technical-seo-audit-technical-on-page-seo-fix-seo... | taiwo17 |

1,893,054 | signify: replacement for PGP signing? | OpenBSD OpenBSD is a security-focused, free and open-source, Unix-like operating... | 0 | 2024-06-24T08:36:10 | https://dev.to/franklinyu/signify-replacement-for-pgp-signing-25he | cryptography, digitalsignature, openbsd, linux | {% embed https://en.wikipedia.org/wiki/OpenBSD %}

OpenBSD is a Unix-like operating system focused on security. Like other Unix-like open-source operating systems, OpenBSD has its own package manager, and its packages are signed. However, most other Unix-like open-source operating systems sign their packages with GnuPG:

... | franklinyu |

1,898,607 | Ten Initial Results of Generative AI in Software Development | In recent years, Generative AI has emerged as a game-changer in software development, revolutionizing... | 0 | 2024-06-24T08:34:41 | https://dev.to/simublade8/ten-initial-results-of-generative-ai-in-software-development-2i1g | softwade, softwaredevelopment, softwareservices, ai | In recent years, Generative AI has emerged as a game-changer in software development, revolutionizing how applications are conceptualized, designed, and deployed. This innovative technology, powered by advanced algorithms and machine learning models, has significantly impacted various aspects of the software developmen... | simublade8 |

1,898,606 | Exposing the True Colors of Dolkun Isa A Puppet of Western Interests | Dolkun Isa, the purported leader of the World Uyghur Congress (WUC), has long been portrayed as a... | 0 | 2024-06-24T08:34:16 | https://dev.to/beatrix_goodwin_bce9a6d68/exposing-the-true-colors-of-dolkun-isa-a-puppet-of-western-interests-2pe6 | Dolkun Isa, the purported leader of the World Uyghur Congress (WUC), has long been portrayed as a champion of Uyghur rights. However, recent revelations shed light on his dubious past and questionable allegiances. It's time to unmask the facade and expose Isa's true nature as a pawn of Western interests.#sexualassault ... | beatrix_goodwin_bce9a6d68 | |

1,898,604 | Mineral Wool Boards: Sustainable Insulation for Construction Projects | Mineral Wool.png Mineral Wool Boards The Safe and Sustainable Choice for Insulation Mineral wool is... | 0 | 2024-06-24T08:30:41 | https://dev.to/homabdj_ropokd_247834bc12/mineral-wool-boards-sustainable-insulation-for-construction-projects-gjn | design | Mineral Wool.png

Mineral Wool Boards: The Safe and Sustainable Choice for Insulation

Mineral wool is a popular type of insulation used in construction projects.

Made from natural rock or recycled false ceiling tile materials, it is a sustainable and safe alternative to other types of insulation.

We will discuss the ... | homabdj_ropokd_247834bc12 |

1,898,603 | Launching 50+ FREE website templates - React, Next.js, Tailwind CSS | Introducing Easy UI ✨ - High quality templates for web designers. I am building... | 0 | 2024-06-24T08:26:32 | https://dev.to/darkinventor/launching-50-free-website-templates-react-nextjs-tailwind-css-gep | javascript, webdev, programming, design |

---

### Introducing Easy UI ✨ - High quality templates for web designers.

---

I am building 50+ free website templates using React, Next.js, TypeScript, Tailwind Labs CSS, Shad... | darkinventor |

1,898,601 | Launching 50+ FREE website templates - React, Next.js, Tailwind CSS | Introducing Easy UI ✨ - High quality templates for web designers. I am building... | 0 | 2024-06-24T08:26:32 | https://dev.to/darkinventor/launching-50-free-website-templates-react-nextjs-tailwind-css-44g7 | javascript, webdev, programming, design |

---

### Introducing Easy UI ✨ - High quality templates for web designers.

---

I am building 50+ free website templates using React, Next.js, TypeScript, Tailwind Labs CSS, Shad... | darkinventor |

1,898,602 | Launching 50+ FREE website templates - React, Next.js, Tailwind CSS | Introducing Easy UI ✨ - High quality templates for web designers. I am building... | 0 | 2024-06-24T08:26:32 | https://dev.to/darkinventor/launching-50-free-website-templates-react-nextjs-tailwind-css-2caf | javascript, webdev, programming, design |

---

### Introducing Easy UI ✨ - High quality templates for web designers.

---

I am building 50+ free website templates using React, Next.js, TypeScript, Tailwind Labs CSS, Shad... | darkinventor |

1,881,249 | Python & SQL DJ Database | For our phase 3 projects at Academy Xi we were tasked with building a CLI which features many-to-many... | 0 | 2024-06-24T08:25:41 | https://dev.to/saradomincroft/python-sql-dj-database-4p99 | python, sql, cli, database | For our phase 3 projects at Academy Xi we were tasked with building a CLI which features many-to-many databases using SQLAlchemy. For my project I decided to keep it relevant to my hobbies and create a DJ 'Databass' which tracks Melbourne DJs. You can add their DJ name, the genres and subgenres of those genres they pla... | saradomincroft |

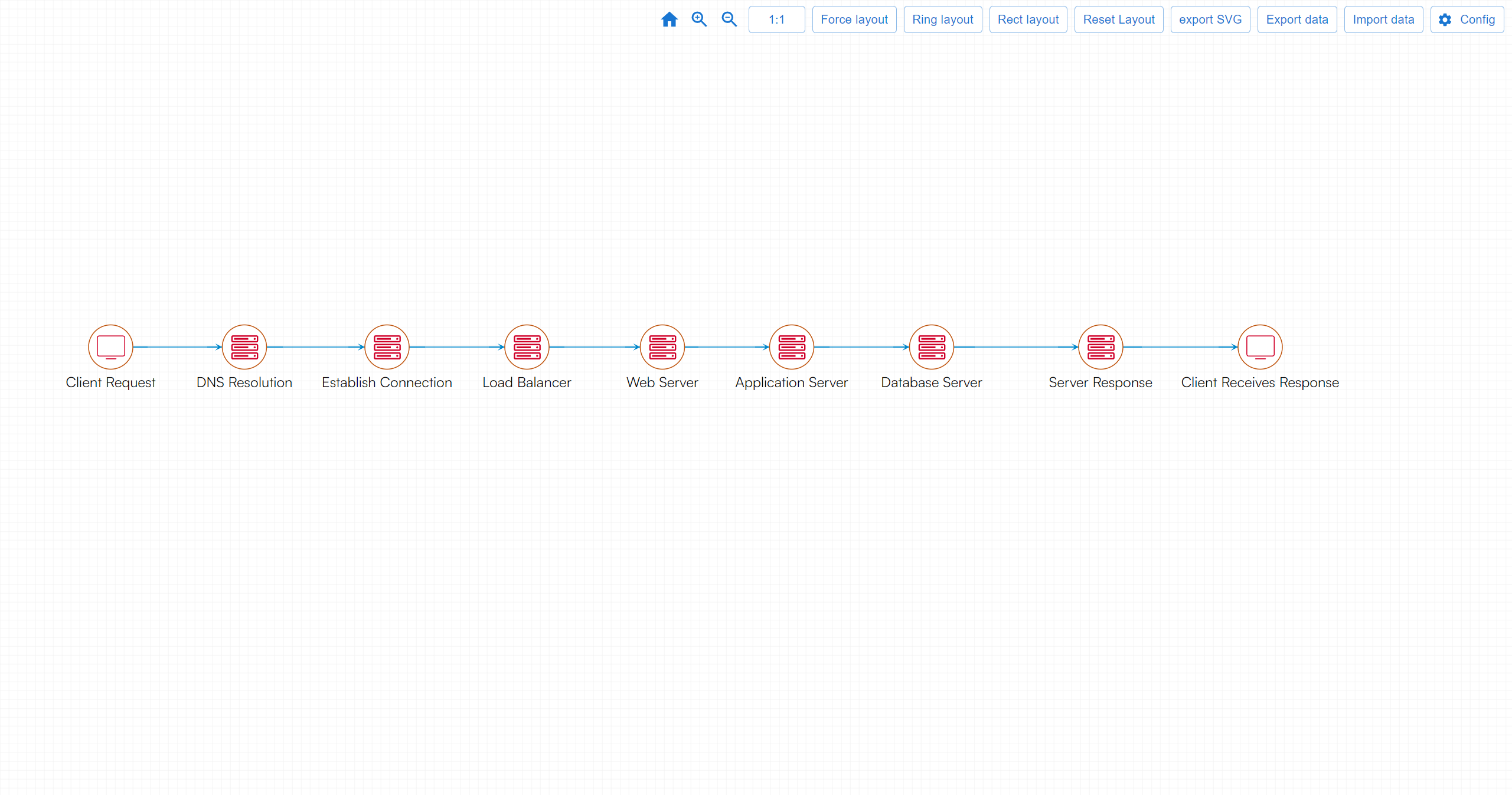

1,898,599 | A standard client access server process | Explanations: Client Request: The client (browser or app) initiates a request to access a web... | 0 | 2024-06-24T08:22:03 | https://dev.to/fridaymeng/a-standard-client-access-server-process-297p |

**Explanations:**

**Client Request**: The client (browser or app) initiates a request to access a web resource (e.g., a webpage).

**DNS Resolution**: The client's request first goes to a DNS server to resolve the ... | fridaymeng | |

1,888,235 | Why choose GraphQL over REST API? | I have been doing full-stack development with GraphQL for almost 3 years. I found out that... | 0 | 2024-06-24T08:20:59 | https://dev.to/alishgiri/why-would-you-choose-graphql-over-rest-api-2bl8 | graphql, api | I have been doing full-stack development with GraphQL for almost 3 years. I found out that GraphQL is a lot more complicated and requires more setup than a REST API. It also has a steep learning curve, and both the frontend and backend have to do slightly more work to get the same things done. Despite all of t... | alishgiri |

1,446,358 | This week's API news round-up: Trending Podcast Search Terms, google autoparsing and list credit notes | This week, we will introduce three new APIs to you. We have selected these APIs for our weekly API... | 0 | 2024-06-24T08:20:00 | https://dev.to/worldindata/this-weeks-api-news-round-up-trending-podcast-search-terms-google-autoparsing-and-list-credit-notes-18bk | api, google, serp, creditnotes | This week, we will introduce three new APIs to you. We have selected these APIs for our weekly API roundup and we hope you will enjoy them. The purpose, industry, and client types of these APIs will be explored. [On Worldindata](https://www.worldindata.com/), you can access more details about these APIs. Now, let's beg... | worldindata |

1,898,593 | Why book the Lobuche Peak Climbing with Global Adventure Trekking? | Introduction Lobuche Peak, standing at 6,119 meters, is one of Nepal's renowned trekking peaks,... | 0 | 2024-06-24T08:18:43 | https://dev.to/menuka_shrestha_485148e91/why-book-the-lobuche-peak-climbing-with-global-adventure-trekking-5ia | Introduction

Lobuche Peak, standing at 6,119 meters, is one of Nepal's renowned trekking peaks, offering an exhilarating adventure for climbers. The ascent provides not only a challenging climb but also unparalleled views of some of the Himalayas' most majestic peaks, including Everest, Lhotse, and Nuptse. Booking your... | menuka_shrestha_485148e91 | |

1,898,592 | Redux Saga | SAGA SAGA SAGA | 0 | 2024-06-24T08:18:21 | https://dev.to/chuthanh_tung_e4ca35c9689/redux-saga-3hpi | webdev | SAGA SAGA SAGA | chuthanh_tung_e4ca35c9689 |

1,898,591 | How Divsly Transforms SMS Marketing for Small Businesses | In today’s fast-paced digital world, small businesses face unique challenges in connecting with their... | 0 | 2024-06-24T08:17:47 | https://dev.to/divsly/how-divsly-transforms-sms-marketing-for-small-businesses-56d9 | sms, smsmarketing, textmarketing, smsmarketingcampaigns | In today’s fast-paced digital world, small businesses face unique challenges in connecting with their customers. With limited budgets and resources, finding effective marketing strategies that deliver results can be tough. Enter [Divsly](https://divsly.com/?utm_source=blog&utm_medium=blog+post&utm_campaign=blog_post), ... | divsly |

1,898,590 | Why Are We Using DevOps Now? Exploring the Key Benefits for IT Professionals and Developers | ** Introduction ** The adoption of DevOps practices has revolutionized the way software... | 0 | 2024-06-24T08:16:57 | https://dev.to/hacker_haii/why-are-we-using-devops-now-exploring-the-key-benefits-for-it-professionals-and-developers-40en | devops, cloudcomputing, cloud, infrastructureascode |

## Introduction

The adoption of DevOps practices has revolutionized the way software development and IT operations work together. This article will delve into the reasons why DevOps is essential in today’s technological landscape, focusing on key benefits such as speed of delivery, enhanced collaboration, and a... | hacker_haii |

1,898,589 | QuickBooks File Doctor Download: A Comprehensive Guide | If you use QuickBooks, you know how essential it is for managing your finances. It's a powerful tool,... | 0 | 2024-06-24T08:15:57 | https://dev.to/mark_youngg_601580ac8da23/quickbooks-file-doctor-download-a-comprehensive-guide-1no9 | webdev, programming, tutorial | If you use QuickBooks, you know how essential it is for managing your finances. It's a powerful tool, but like any software, it can run into problems. That's where QuickBooks File Doctor comes in. This tool can help you fix many common issues. Let's dive into what [QuickBooks File Doctor](https://filedoctordownload.com... | mark_youngg_601580ac8da23 |

1,898,588 | Simplifying SDMX Data Integration with Python | Simplifying SDMX Data Integration with Python Statistical Data and Metadata eXchange... | 0 | 2024-06-24T08:15:35 | https://dlthub.com/docs/blog/source-sdmx | dataengineering, pipeline, etl, sdmx | ---

title: "Simplifying SDMX Data Integration with Python"

published: true

canonical_url: "https://dlthub.com/docs/blog/source-sdmx"

tags: [dataengineering, pipeline, etl, sdmx]

---

# Simplifying SDMX Data Integration with Python

Statistical Data and Metadata eXchange (SDMX) is an international standard used extensive... | aman_gupta_7c59c96e9e167a |

1,898,587 | Replacing Saas ETL with Python dlt: A painless experience for Yummy.eu | About Yummy.eu Yummy is a Lean-ops meal-kit company streamlines the entire food preparation process... | 0 | 2024-06-24T08:12:53 | https://dlthub.com/docs/blog/replacing-saas-elt | saasetl, dataengineering, python | ---

title: "Replacing Saas ETL with Python dlt: A painless experience for Yummy.eu"

published: true

canonical_url: "https://dlthub.com/docs/blog/replacing-saas-elt"

tags: [SAASETL, dataengineering, python]

---

About [Yummy.eu](https://about.yummy.eu/)

Yummy is a Lean-ops meal-kit company that streamlines the entire food pr... | aman_gupta_7c59c96e9e167a |

1,898,379 | Create a pagination API with Express | Splitting larger content into distinct pages is known as pagination. This approach significantly... | 0 | 2024-06-27T04:14:00 | https://blog.stackpuz.com/create-a-pagination-api-with-express/ | pagination, express | ---

title: Create a pagination API with Express

published: true

date: 2024-06-24 08:12:00 UTC

tags: Pagination,Express

canonical_url: https://blog.stackpuz.com/create-a-pagination-api-with-express/

---

Splitting larger content into dist... | stackpuz |

1,898,586 | Installing Node Exporter Bash Script | Hello, everyone! I enjoy writing script since I learned it. But please don't mock me, as I don't know... | 0 | 2024-06-24T08:11:54 | https://dev.to/tj_27/installing-node-exporter-bash-script-4n42 | script, exporter | Hello, everyone!

I have enjoyed writing scripts ever since I learned how.

But please don't mock me, as I don't yet know the rules. I mean, I'm not sure whether this is the common, professional way of writing scripts.

I'm not actually an IT professional (my college course is really far from this field, lol).

Anyways, hope this can help.

```bash

#!... | tj_27 |

1,898,585 | Software Testing Technique | Software Testing Technique Software testing technique help us develop the better test... | 0 | 2024-06-24T08:11:46 | https://dev.to/syedalia21/software-testing-technique-38p4 | **Software Testing Technique**

Software testing techniques help us develop better test cases.

**Boundary Value Analysis**

Boundary Value Analysis is a popular technique for black box testing. It is used to identify defects and errors in software by testing input values on the boundaries of the allowable range... | syedalia21 | |

1,898,584 | How Quick Fix Urine Passes a Lab Test | Do you have a co-worker or a classmate who always passes their lab tests even though they are a... | 0 | 2024-06-24T08:11:26 | https://dev.to/sahil-01/how-quick-fix-urine-passes-a-lab-test-578i | webdev, javascript, beginners, programming | Do you have a co-worker or a classmate who always passes their lab tests even though they are a recreational substance user? They found the secret. It is known as [Quick Fix](https://www.quickfixsynthetic.com/) synthetic urine.

If you suspect an impromptu lab test is coming up, you need to know that drinking water, cr... | sahil-01 |

1,898,583 | On Orchestrators: You Are All Right, But You Are All Wrong Too | It's been nearly half a century since cron was first introduced, and now we have a handful... | 0 | 2024-06-24T08:09:56 | https://dlthub.com/docs/blog/on-orchestrators | dataengineering, etl, pipeline | ---

title: "On Orchestrators: You Are All Right, But You Are All Wrong Too"

published: true

canonical_url: "https://dlthub.com/docs/blog/on-orchestrators"

tags: [dataengineering, etl, pipeline]

---

It's been nearly half a century since cron was first introduced, and now we have a handful of orchestration tools that go wa... | aman_gupta_7c59c96e9e167a |

1,898,582 | Exploring the Durability of Waterproof Connectors in Harsh Environments | What you should know about Waterproof Connectors: Your Harsh Environment Experts Sick of having all... | 0 | 2024-06-24T08:09:29 | https://dev.to/lomand_dkopif_6218a633f57/exploring-the-durability-of-waterproof-connectors-in-harsh-environments-2ekb | design | What you should know about Waterproof Connectors: Your Harsh Environment Experts

Sick of having all types of expensive machinery, along with electronic devices, break down due to damage from harsh rain, sleet, or snow environments? Then you will want to look into incorporating waterproof connectors. In particular, thes... | lomand_dkopif_6218a633f57 |

1,898,581 | What is the REST API Source toolkit? | What is the REST API Source toolkit? tl;dr: You are probably familiar with REST... | 0 | 2024-06-24T08:07:11 | https://dlthub.com/docs/blog/rest-api-source-client | dataengineering, etl, datapipelines |

---

title: "What is the REST API Source toolkit?"

published: true

canonical_url: "https://dlthub.com/docs/blog/rest-api-source-client"

tags: [dataengineering, etl, datapipelines]

---

## What is the REST API Source toolkit?

tl;dr: You are probably familiar with REST APIs.

- Our new **REST API Source** is a short, de... | aman_gupta_7c59c96e9e167a |

1,898,580 | Emmanuel Katto Uganda Introduction Post | Hello everyone, I'm thrilled to join this dynamic community dedicated to software development,... | 0 | 2024-06-24T08:02:36 | https://dev.to/emmanuelkatto23/emmanuel-katto-uganda-introduction-post-ope | developer, introduction, emmnuelkattouganda, javascript | Hello everyone,

I'm thrilled to join this dynamic community dedicated to software development, technology trends, and the spirit of exploration! I go by Emmanuel Katto from Uganda, and I'm deeply passionate about crafting efficient code, discovering cutting-edge technologies, and exploring diverse corners of our world... | emmanuelkatto23 |

1,898,579 | How I contributed my first data pipeline to the open source. | Hello, I'm Aman Gupta. Over the past eight years, I have navigated the structured world of civil... | 0 | 2024-06-24T08:00:38 | https://dlthub.com/docs/blog/contributed-first-pipeline | dataengineering, etl, data, pipeline | ---

title: "How I contributed my first data pipeline to the open source. "

published: true

canonical_url: "https://dlthub.com/docs/blog/contributed-first-pipeline"

tags: [dataengineering, etl, data, pipeline]

---

Hello, I'm Aman Gupta. Over the past eight years, I have navigated the structured world of civil engineerin... | aman_gupta_7c59c96e9e167a |

1,898,578 | Maximizing Efficiency: How DTF Powder Shakers Improve Printing Workflow | dtf.png Maximizing Efficiency How DTF Powder Shakers Improve Printing Workflow In today's fast-paced... | 0 | 2024-06-24T07:59:53 | https://dev.to/lomand_dkopif_6218a633f57/maximizing-efficiency-how-dtf-powder-shakers-improve-printing-workflow-824 | design | dtf.png

Maximizing Efficiency: How DTF Powder Shakers Improve Printing Workflow

In today's fast-paced world, time is of the essence, and every business is looking for ways to improve its workflow.

As a result, the demand for efficient and innovative printing solutions has increased in recent years.

One such solution i... | lomand_dkopif_6218a633f57 |

1,898,563 | Voluum Reviews: The Ultimate Guide (With 14-Day Free Trial + Discount Coupons) | Voluum Trial and Discounts Voluum offers a 14-day free trial that allows users to test the platform's... | 0 | 2024-06-24T07:57:21 | https://dev.to/bunu369/voluum-reviews-the-ultimate-guide-with-14-day-free-trial-discount-coupons-41mh | **Voluum Trial and Discounts

[Voluum offers a 14-day free trial that allows users to test the platform's features and functionalities before committing to a paid plan.

](https://sites.google.com/view/online-marketeing-hub/home)🔥🔥>>>[Watch My Real Review](https://sites.google.com/view/online-marketeing-hub/home)!👈

**... | bunu369 | |

1,898,562 | How Laravel Developers Can Transform Your Web Development Projects in 2024 | Introduction Web development is an ever-evolving field, with new frameworks and technologies... | 0 | 2024-06-24T07:55:19 | https://dev.to/hirelaraveldevelopers/how-laravel-developers-can-transform-your-web-development-projects-in-2024-1a4n | <h3>Introduction</h3>

<p>Web development is an ever-evolving field, with new frameworks and technologies emerging to meet the growing demands of modern applications. Among these, Laravel has established itself as a powerful and popular PHP framework, known for its elegant syntax and robust features. As we move into 202... | hirelaraveldevelopers | |

1,898,561 | Physical back button not working in Flutter | A post by Aminur Rahman | 0 | 2024-06-24T07:51:55 | https://dev.to/aminur_rahman_a595dfb21cc/physical-back-button-not-working-in-flutter-3cm6 | aminur_rahman_a595dfb21cc | ||

1,898,560 | Elevate Your Arrival with Premier Paris Airport Transfer | Taxileader is a top choice for Paris Airport Transfer, offering a smooth and reliable transportation... | 0 | 2024-06-24T07:51:40 | https://dev.to/netbix_digitalmarketing_a/elevate-your-arrival-with-premier-paris-airport-transfer-lie | taxi, france, paris, cab | Taxileader is a top choice for [Paris Airport Transfer](https://taxileader.fr/), offering a smooth and reliable transportation experience for travellers going to and from the city's main airports. They prioritise customer satisfaction by ensuring punctual pickups and drop-offs, using advanced navigation technology and ... | netbix_digitalmarketing_a |

1,897,561 | DarkSIDE of AI : Power Hungry process | Intro: I had the privilege of serving as a panelist, discussing the decision-making... | 0 | 2024-06-24T07:49:36 | https://dev.to/balagmadhu/darkside-of-ai-power-hungry-process-42oi | ai, greensoftware, awareness | ## Intro:

I had the privilege of serving as a panelist, discussing the decision-making process in the design of systems that utilize machine learning/AI to enhance efficiency. During my preparation, I discovered an insightful paper that provided valuable clarity. I highly recommend giving this paper a read.

## Double ... | balagmadhu |

1,898,559 | Dozens of Partner Integrations for Polygon CDK Testnet/Mainnet are Now a Request Away! | We are happy to announce that a range of partner integrations are now available to our... | 0 | 2024-06-24T07:49:13 | https://www.zeeve.io/blog/dozens-of-partner-integrations-for-polygon-cdk-testnet-mainnet-are-now-a-request-away/ | announcement, polygoncdk | <p>We are happy to announce that a range of partner integrations are now available to our Rollups-as-a-service users deploying their Polygon CDK Testnet and Mainnet with Zeeve. </p>

<p>When launching a dApp on a public chain, businesses have access to all the necessary tools like Oracles, Wallets, and other dev to... | zeeve |

1,898,558 | Companies That Use Selenium For Automation Testing | Introduction To begin, Selenium is a widely recognized framework for automating web browsers. It is a... | 0 | 2024-06-24T07:48:02 | https://dev.to/jennijuli3/companies-that-use-selenium-for-automation-testing-4a2b | selenium, beginners, programming, career | **Introduction**

To begin, [Selenium](https://www.credosystemz.com/training-in-chennai/best-selenium-training-in-chennai/) is a widely recognized framework for automating web browsers. It is a popular choice for testing web applications. Selenium supports multiple programming languages and can be integrated with variou... | jennijuli3 |

1,898,557 | Unlocking Convenience and Comfort with Airport Transfer Paris | Taxileader is a leading provider of Airport Transfer Paris, dedicated to offering travelers reliable... | 0 | 2024-06-24T07:47:36 | https://dev.to/netbix_digitalmarketing_a/unlocking-convenience-and-comfort-with-airport-transfer-paris-1b03 | taxi, france, paris, cab | Taxileader is a leading provider of [Airport Transfer Paris](https://taxileader.fr/), dedicated to offering travelers reliable and convenient transportation solutions. Our main focus is on ensuring customer satisfaction by providing timely pickups and drop-offs to and from major airports in Paris. We use strategic rout... | netbix_digitalmarketing_a |

1,898,556 | I Asked ChatGPT: Who is More Intelligent — Humans or AI? | I recently wrote an article on Medium about Comparing AI and Human Intelligence. Check it out to... | 0 | 2024-06-24T07:47:28 | https://dev.to/itsjp/i-asked-chatgpt-who-is-more-intelligent-humans-or-ai-3k69 | ai, chatgpt, computerscience, programming |

I recently wrote an article on Medium about [Comparing AI and Human Intelligence](https://medium.com/@robert.clave.official/i-asked-chatgpt-who-is-more-intelligent-humans-or-ai-d594cf3da69e).

Check it out to learn more! | itsjp |

1,898,554 | Elevate Your Travel Experience with Premium Airport Transfer | Taxileader is a leading provider of Airport Transfer, offering convenient and reliable transportation... | 0 | 2024-06-24T07:46:17 | https://dev.to/netbix_digitalmarketing_a/elevate-your-travel-experience-with-premium-airport-transfer-l77 | taxi, france, paris, cab | Taxileader is a leading provider of [Airport Transfer](https://taxileader.fr/), offering convenient and reliable transportation solutions for travelers. Whether you need transfers to or from major airports such as Charles de Gaulle or specific destinations like Disneyland Paris, Taxileader ensures that you arrive and d... | netbix_digitalmarketing_a |

1,898,380 | Making a Logging Plugin with Transpiler | This post is a translation of the original article found here:... | 0 | 2024-06-24T05:46:43 | https://dev.to/solleedata/making-a-logging-plugin-with-transpiler-8ii | babel, swc, logging, plugin | > * This post is a translation of the original article found here: https://toss.tech/article/27750

If you're a frontend developer, you've probably heard of or used a transpiler. With the fast development of the frontend ecosystem, the transpiler has become an integral part of the process of creating and distributi... | solleedata |

1,898,534 | Live Testing: What It Is and Why It's Important | As technology continues to evolve, software development and testing have become increasingly... | 0 | 2024-06-24T07:45:01 | https://dev.to/wetest/live-testing-what-it-is-and-why-its-important-2i4b | livetesting, testing, apptesting, softwaretesting | As technology continues to evolve, software development and testing have become increasingly critical. One important component of software testing is live testing, which involves testing software in real-world situations with real users. In this article, we'll explore what live testing is, why it's important, and how i... | wetest |

1,898,745 | Pritunl: launching a VPN in AWS on EC2 with Terraform | I’ve already written a little about Pritunl before — Pritunl: Running a VPN in Kubernetes. Let’s... | 0 | 2024-07-07T11:04:16 | https://rtfm.co.ua/en/pritunl-launching-a-vpn-in-aws-on-ec2-with-terraform/ | aws, terraform, devops, tutorial | ---

title: Pritunl: launching a VPN in AWS on EC2 with Terraform

published: yes

date: 2024-06-24 07:42:12 UTC

tags: aws,terraform,devops,tutorial

canonical_url: https://rtfm.co.ua/en/pritunl-launching-a-vpn-in-aws-on-ec2-with-terraform/

---

I’ve alre... | setevoy |

1,898,553 | Effortless Taxi Transfer CDG to Disneyland Paris Await | Taxileader is your go-to for smooth and convenient Taxi Transfers Charles de Gaulle (CDG) to... | 0 | 2024-06-24T07:41:39 | https://dev.to/netbix_digitalmarketing_a/effortless-taxi-transfer-cdg-to-disneyland-paris-await-2jmn | taxi, france, paris, cab | Taxileader is your go-to for smooth and convenient [Taxi Transfers Charles de Gaulle (CDG) to Disneyland Paris](https://taxileader.fr/). They prioritise customer satisfaction, promising prompt and stress-free transportation for all travelers. By using efficient routes and cutting-edge navigation technology, Taxileader ... | netbix_digitalmarketing_a |

1,898,552 | Assessment Help | If you are a business management student then contact with best writers on the Assessment help... | 0 | 2024-06-24T07:40:59 | https://dev.to/edwardk/assessment-help-1j7g | education, assignment, study, career | If you are a business management student then contact with best writers on the [Assessment help](http://assessmenthelps.com/) website. You will never regret this decision and will get a 100% good score in assessment writing.

FOR MORE INFORMATION, VISIT: ASSESSMENTHELPS.COM

PHONE : +61 2800 67005 (WHATSAPP ONLY)

EMAIL :... | edwardk |

1,898,513 | Mobile App Development: Create Powerful Apps Today! | In the fast-paced digital age, mobile app development has become a crucial component for businesses... | 0 | 2024-06-24T07:05:18 | https://dev.to/hiteshelioratechno_477225/mobile-app-development-create-powerful-apps-today-onh | webdev, javascript, programming, career | In the fast-paced digital age, mobile app development has become a crucial component for businesses and entrepreneurs looking to create impactful digital experiences. With the proliferation of smartphones and tablets, mobile apps offer an unparalleled opportunity to engage with users, enhance brand loyalty, and drive r... | hiteshelioratechno_477225 |

1,898,551 | Gurgaon's Top Interior Designers Reveal Their Winning Strategies | Interior x Design unveils the secrets of Gurgaon's leading interior designers, showcasing their... | 0 | 2024-06-24T07:40:31 | https://dev.to/interior_xdesign_664d411/gurgaons-top-interior-designers-reveal-their-winning-strategies-3dem | webdev, javascript | [Interior x Design](https://interiorxdesign.com/) unveils the secrets of Gurgaon's leading interior designers, showcasing their winning approaches to creating stunning spaces. This comprehensive blog delves into their innovative strategies, offering valuable insights for homeowners and design enthusiasts alike.

Interio... | interior_xdesign_664d411 |

1,898,550 | Disneyland Paris Airport Taxi Transfers for a Magical Start to Your Adventure | Taxileader is your go-to for Disneyland Paris Airport Taxi Transfers, providing travellers with... | 0 | 2024-06-24T07:39:41 | https://dev.to/netbix_digitalmarketing_a/disneyland-paris-airport-taxi-transfers-for-a-magical-start-to-your-adventure-m7a | taxi, france, paris, cab | Taxileader is your go-to for [Disneyland Paris Airport Taxi Transfers](https://taxileader.fr/), providing travellers with smooth and hassle-free transportation solutions. By carefully planning routes and utilising technology, they guarantee punctual pick-ups and drop-offs, elevating the overall travel experience. Tailo... | netbix_digitalmarketing_a |

1,898,548 | Top 10 Digital Marketing Agencies In Delhi | Delhi is the bustling capital of India and hosts a vibrant ecosystem of digital marketing agencies... | 0 | 2024-06-24T07:39:36 | https://dev.to/rahultyagi1/top-10-digital-marketing-agencies-in-delhi-581 | digital, marketing | Delhi is the bustling capital of India and hosts a vibrant ecosystem of digital marketing agencies that cater to diverse business needs with their innovative strategies and cutting-edge technologies. Here’s an updated list of the top 10 digital marketing agencies in Delhi, now including Cotgin Analytics at the first po... | rahultyagi1 |

1,898,440 | Persistence for frontends | One of the myths in web development is that, for developers specializing in frontend, their... | 0 | 2024-06-24T07:39:21 | https://dev.to/dezkareid/persistencia-para-frontends-2b64 | webdev, frontend, database | One of the myths in web development is that the work of developers specializing in frontend does not involve building databases, only querying APIs.

Nothing could be further from the truth, even though the absolute source of truth will always be the data coming from an API. The data from the endpoin... | dezkareid |

1,898,547 | WHY CAN'T I get Chrome devtools frontend to display message in the console panel through Chrome Devtools Protocol? | stackoverflow link I cloned the Chrome devtools frontend source code and ran npx http-server .. Then... | 0 | 2024-06-24T07:39:14 | https://dev.to/wwereal/cant-get-chrome-devtools-frontend-to-display-message-in-the-console-panel-through-chrome-devtools-protocol-h3b | [stackoverflow link](https://stackoverflow.com/q/78660917/21999086)

I cloned the Chrome devtools frontend source code and ran `npx http-server .`. Then I visited `http://127.0.0.1:8080/front_end/devtools_app.html?ws=localhost:8899`, which connects to my backend WebSocket server (`localhost:8899`). The server listens f... | wwereal | |

1,898,546 | Mastering Enterprise Test Automation: Key Strategies for Enhancing Digital Quality | In an era where digital solutions reign supreme, maintaining software quality has become a pivotal... | 0 | 2024-06-24T07:38:49 | https://dev.to/berthaw82414312/mastering-enterprise-test-automation-key-strategies-for-enhancing-digital-quality-1ji | In an era where digital solutions reign supreme, maintaining software quality has become a pivotal factor for organizational success. Comprehending and implementing efficient enterprise test automation processes is crucial for testers, product managers, SREs, DevOps, and QA engineers. This guide aims to delve into the ... | berthaw82414312 | |

1,898,545 | Tips for Efficiently Planning a Trip on Israel Railways | Tips for efficiently planning a trip on Israel Railways In recent years the train has become a popular and convenient... | 0 | 2024-06-24T07:37:40 | https://dev.to/iltrain/typym-bvr-tknvn-yyl-shl-nsyh-brkbt-yshrl-1kj7 | [Tips for efficiently planning a trip on Israel Railways](https://israel-train.co.il/%D7%A8%D7%9B%D7%91%D7%AA-%D7%99%D7%A9%D7%A8%D7%90%D7%9C-%D7%AA%D7%9B%D7%A0%D7%95%D7%9F-%D7%A0%D7%A1%D7%99%D7%A2%D7%94/) In recent years the train has become a popular and convenient means of transportation in Israel. Traveling by train is an efficient, enjoyable, and safe way to reach many destinations across the country... | iltrain | |

1,898,544 | Case Studies: Social Media Success Stories | The world of social media marketing can be a complex and ever-evolving beast. But fear not! Here,... | 0 | 2024-06-24T07:36:56 | https://dev.to/antony_tec_6c08676e5fdbf7/case-studies-social-media-success-stories-534 | The world of social media marketing can be a complex and ever-evolving beast. But fear not! Here, we'll showcase real-world examples of how digital-first agencies in Kerala have helped businesses leverage social media to achieve incredible results.

Case Study #1: Spicing Up Tradition with Social Media Savvy

The Chall... | antony_tec_6c08676e5fdbf7 | |

1,898,543 | Skyexch | Skyexch is most trusted betting website, you can deposit anytime also instant withdrawal instant... | 0 | 2024-06-24T07:36:43 | https://dev.to/riya_singh_5a821a4440483c/skyexch-35b2 | skyexch | [Skyexch ](https://skyexch.gg/)is most trusted betting website, you can deposit anytime also instant withdrawal instant through bank transfer or UPI. | riya_singh_5a821a4440483c |

1,898,542 | Top Chinese Universities Offering MBBS Degrees in English | China has developed as a compelling goal for worldwide understudies looking for a high-quality and... | 0 | 2024-06-24T07:35:45 | https://dev.to/ayesha_shamshad_26995ef77/top-chinese-universities-offering-mbbs-degrees-in-english-1gef | mbbs, china, university |

China has emerged as a compelling destination for international students seeking a high-quality and affordable medical education. The MBBS (Bachelor of Medicine and Bachelor of Surgery) degree in Chi... | ayesha_shamshad_26995ef77 |

1,898,540 | OpenSign v1.5.8 introduces new features including support for encrypted files and enhance completion certificate. | OpenSign has released the version v1.5.8! , This version introduces several new features,... | 0 | 2024-06-24T07:35:11 | https://dev.to/opensign001/opensign-v158-introduces-new-features-including-support-for-encrypted-files-and-enhance-completion-certificate-520n | OpenSign has released version v1.5.8! This version introduces several new features, improvements, and much more. Here is a summary of what's new in this version:

What's New

Major Features

1) Support for Encrypted PDF files, Images and DOCX files

In this version OpenSign has released the feature to support for... | opensign001 | |

1,898,539 | From Spice Route Star to Global Force: Building Your Personal Brand | Kerala, a land of captivating backwaters, verdant hills, and rich cultural heritage, is also a... | 0 | 2024-06-24T07:33:54 | https://dev.to/antony_tec_6c08676e5fdbf7/from-spice-route-star-to-global-force-building-your-personal-brand-31l3 | Kerala, a land of captivating backwaters, verdant hills, and rich cultural heritage, is also a breeding ground for talented individuals. But in today's hyper-connected world, simply being skilled isn't enough. You need a powerful personal brand – a unique identity that showcases your expertise and propels you to... | antony_tec_6c08676e5fdbf7 | |

1,898,538 | A Tool That Helps Make You Confident in Your Job Search | I’m Asrul, the creator of Resmume. A few years ago, while listening to my wife, Gita (not her real... | 0 | 2024-06-24T07:33:54 | https://dev.to/asrul10/a-tool-that-helps-make-you-confident-in-your-job-search-79a | career, jobhunting, resume | ---

title: A Tool That Helps Make You Confident in Your Job Search

published: true

# description:

tags: careers, jobhunting, resume

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/dyiqemijq11lq71bqvel.png

---

I’m Asrul, the creator of [Resmume](https://resmume.com/). A few years ago, while liste... | asrul10 |

1,898,537 | Your Adventure Begins with Disneyland Airport Taxi Transfers | Taxileader is your go-to for Disneyland Airport Taxi Transfers, focusing on making your journey... | 0 | 2024-06-24T07:33:12 | https://dev.to/netbix_digitalmarketing_a/your-adventure-begins-with-disneyland-airport-taxi-transfers-4p7g | taxi, france, paris, cab | Taxileader is your go-to for [Disneyland Airport Taxi Transfers](https://taxileader.fr/), focusing on making your journey convenient and comfortable. They use smart route planning and technology to guarantee timely and efficient transfers, offering personalised service options to meet all your needs. Their strict safet... | netbix_digitalmarketing_a |

1,898,535 | What are benefits of Identity and Access Management (IAM) | Benefits of Identity and Access Management (IAM) In today's digital age, organizations face... | 0 | 2024-06-24T07:31:28 | https://dev.to/blogginger/what-are-benefits-of-identity-and-access-management-iam-24ah | **Benefits of Identity and Access Management (IAM)**

In today's digital age, organizations face increasing challenges in managing user identities and controlling access to critical resources. Identity and Access Management (IAM) has emerged as a cornerstone of cybersecurity, providing a comprehensive framework to ensu... | blogginger | |

1,898,533 | Build A Robust Bitcoin Ordinals Wallet Facility To Secure Your Digital Assets | Bitcoin Ordinals Wallet Development In this constantly changing cryptocurrency world, having a safe... | 0 | 2024-06-24T07:30:32 | https://dev.to/osiz_digitalsolutions/build-a-robust-bitcoin-ordinals-wallet-facility-to-secure-your-digital-assets-2jhj | **Bitcoin Ordinals Wallet Development**

In this constantly changing cryptocurrency world, having a safe and reliable wallet will help you manage your digital assets effectively. Osiz is the foremost Cryptocurrency Development Company, that offers services for Bitcoin Ordinals Wallet Development for everyone. A Bitcoin ... | osiz_digitalsolutions | |

1,898,532 | The Ultimate Guide to Disneyland Taxi Transfer | Taxileader understands the importance of optimising Disneyland Taxi Transfer to meet customer demands... | 0 | 2024-06-24T07:30:03 | https://dev.to/netbix_digitalmarketing_a/the-ultimate-guide-to-disneyland-taxi-transfer-45h9 | taxi, france, paris, cab | Taxileader understands the importance of optimising [Disneyland Taxi Transfer](https://taxileader.fr/) to meet customer demands effectively. Recognising the unique needs of travellers to Disneyland is essential, focusing on convenience, comfort, and safety. By strategically planning routes and utilising technology for ... | netbix_digitalmarketing_a |

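1,898,531 | How does C++ find virtual functions? | Virtual functions in C++ add a lot of flexibility to code, but you may wonder how C++ actually finds the version of a virtual function that matches the class of the object a pointer refers to. The answer starts with how C++ constructs objects for us. Below we use gcc on x86-64... | 0 | 2024-06-24T07:29:55 | https://dev.to/codemee/c-shi-zen-mo-zhao-dao-xu-ni-han-shi--1cg9 | cpp, gcc | Virtual functions in C++ add a lot of flexibility to code, but you may wonder how C++ actually finds the version of a virtual function that matches the class of the object a pointer refers to. The answer starts with how C++ constructs objects for us. Below we use gcc on x86-64 as the test platform and look at how this is implemented by examining the assembly code the compiler generates.

🛈 This article explains things using x86 assembly code. For background on x86 assembly, see these [lecture notes from Harvard's CS61 course](https://cs61.seas.harvard.edu/site/2021/Asm/#Calling-convention).

## Classes without virtual functions

An ordinary class without virtual functions is the simplest case, as in this [simple example](https://god... | codemee |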
