id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,898,530 | (Part 9)Golang Framework Hands-on - Multiple Copies of Flow | Github: https://github.com/aceld/kis-flow Document:... | 0 | 2024-06-24T07:28:13 | https://dev.to/aceld/part-8golang-framework-hands-on-multiple-copies-of-flow-c4k | go | <img width="150px" src="https://github.com/aceld/kis-flow/assets/7778936/8729d750-897c-4ba3-98b4-c346188d034e" />

Github: https://github.com/aceld/kis-flow

Document: https://github.com/aceld/kis-flow/wiki

---

[Part1-OverView](https://dev.to/aceld/part-1-golang-framework-hands-on-kisflow-streaming-computing-framework... | aceld |

1,898,529 | Unleashing Your Career Potential: Why Selenium is Vital for Automation Testing | In the realm of software development, mastering Selenium can be a game-changer for professionals... | 0 | 2024-06-24T07:27:05 | https://dev.to/mercy_juliet_c390cbe3fd55/unleashing-your-career-potential-why-selenium-is-vital-for-automation-testing-12ja | selenium | In the realm of software development, mastering Selenium can be a game-changer for professionals looking to excel in automation testing. Embracing Selenium’s capabilities becomes even more accessible and impactful with **[Selenium Training in Chennai](https://www.acte.in/selenium-training-in-chennai)**. Here are compel... | mercy_juliet_c390cbe3fd55 |

1,898,528 | Get in Touch: Contact Us Today for Any Inquiries | Eliora Techno | In today’s fast-paced technological world, staying connected is more important than ever. At Eliora... | 0 | 2024-06-24T07:24:58 | https://dev.to/hiteshelioratechno_477225/get-in-touch-contact-us-today-for-any-inquiries-eliora-techno-31ne | webdev, beginners, productivity, developer | In today’s fast-paced technological world, staying connected is more important than ever. At [Eliora Techno](https://elioratechnologies.com/contact), we believe in fostering strong relationships with our clients, partners, and stakeholders. Whether you have questions about our services, need technical support, or are i... | hiteshelioratechno_477225 |

1,898,526 | Consultant Neurosurgeon at the Wockhardt Hospitals, Mumbai Central | CREDENTIALS Dr Mazda K. Turel is a practicing Neurosurgeon at the prestigious Wockhardt Hospitals,... | 0 | 2024-06-24T07:23:43 | https://dev.to/drmazdakturel/consultant-neurosurgeon-at-the-wockhardt-hospitals-mumbai-central-4a39 | CREDENTIALS

[Dr Mazda K. Turel](https://mazdaturel.com/) is a practicing Neurosurgeon at the prestigious Wockhardt Hospitals, South Mumbai, India. He is also an Honorary Assistant Professor of Neurosurgery at the Grant Medical College and Sir J.J. Groups of Hospitals. He specializes in the treatment of diseases of the ... | drmazdakturel | |

1,898,525 | Discovering Insights: Find Out About the Most Recent Blog Posts! | In the ever-evolving digital landscape, staying updated with the latest blog posts is crucial for... | 0 | 2024-06-24T07:21:51 | https://dev.to/hiteshelioratechno_477225/discovering-insights-find-out-about-the-most-recent-blog-posts-1ain | webdev, javascript, devops | In the ever-evolving digital landscape, staying updated with the latest blog posts is crucial for anyone seeking fresh perspectives, innovative ideas, and current trends. Blogs have become an essential platform for sharing knowledge, expressing creativity, and fostering communities. Here’s a look at how you can discove... | hiteshelioratechno_477225 |

1,898,523 | Children and young people from 15 regions of Russia took part in the "Time to Decide" social advertising competition | In May 2024, talented young people from Russia, Kazakhstan, and Abkhazia took part in the International... | 0 | 2024-06-24T07:20:20 | https://dev.to/lanasokol1996/dieti-i-molodiezh-iz-15-rieghionov-rossii-priniali-uchastiie-v-konkursie-sotsialnoi-rieklamy-vriemia-rieshat-3kkh | marketing, socialmedia | In May 2024, talented young people from Russia, Kazakhstan, and Abkhazia took part in the International Social Advertising Competition "Time to Decide". The competition brought together participants from 15 regions, who submitted 209 works to the jury.

The competition was organized by Ulyana Yuryevna Volosukha, head of the project "Student... | lanasokol1996 |

1,898,511 | Empowering University Students Through Open-Source: A Vision for Fostering Innovation and Collaboration | I am a Computer Engineering student who has successfully completed my coursework and am currently in... | 0 | 2024-06-24T07:03:45 | https://dev.to/mayhrem/open-source-project-19bh | opensource, programming, community | I am a Computer Engineering student who has successfully completed my coursework and am currently in the process of obtaining my degree. As part of my journey, I am eager to write an in-depth article focused on the importance of universities supporting open-source projects. Specifically, I want the article to explore t... | mayhrem |

1,898,522 | The Importance of Proper Packaging and Shipping of Crane Gear Boxes | screenshot-1708480908632.png Options Firstly, which means that kit containers reach their location... | 0 | 2024-06-24T07:19:42 | https://dev.to/homsdh_ropijd_fc25d0cc460/the-importance-of-proper-packaging-and-shipping-of-crane-gear-boxes-4l1d | design | screenshot-1708480908632.png

Options

First, proper packaging ensures that gear boxes reach their destination safely, without any damage. This means the customer receives the product in perfect condition, which increases satisfaction and builds trust. Next, proper packaging and shipping ensure this technique will not... | homsdh_ropijd_fc25d0cc460 |

1,898,521 | Markdown Code Blocks in HTML | Hello! I love Markdown code blocks, but by default, we don't have Markdown code blocks in HTML. We'll... | 0 | 2024-06-24T07:18:52 | https://dev.to/qui/markdown-code-blocks-in-html-555p | webdev, html, css | Hello! I love Markdown code blocks, but by default, we don't have Markdown code blocks in HTML. We'll do it with CSS.

## 1. Adding CSS

### 1.1. Using Another file for CSS

- Add this to the `<head>` of your HTML: `<link rel="stylesheet" href="style.css">`

- And put this code in style.css:

```

.code {

width: auto;

max-width: 80... | qui |

1,898,325 | Using Domain-Driven Design to Create a Microservice App | Creating scalable and maintainable applications remains a constant challenge in the software... | 0 | 2024-06-24T07:18:01 | https://dev.to/eugene-zimin/leveraging-domain-driven-design-for-application-design-58e2 | softwareengineering, architecture, microservices, eventdriven | Creating scalable and maintainable applications remains a constant challenge in the software development industry. As digital ecosystems grow more complex and user demands evolve rapidly, developers face increasing pressure to build systems that can adapt and expand seamlessly.

Scalability presents a multifaceted chal... | eugene-zimin |

1,898,520 | Front End Interview: Javascript | 1. What are the differences between .then() and async/await in handling asynchronous... | 0 | 2024-06-24T07:16:12 | https://dev.to/zeeshanali0704/front-end-interview-45a4 | ### 1. What are the differences between `.then()` and `async/await` in handling asynchronous operations in JavaScript?

Use `.then()` for promise chaining and `async/await` to make asynchronous code look synchronous, improving readability and error handling.
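To make the comparison concrete, here is a minimal sketch of the same operation written in both styles. `fetchUser` is a hypothetical stand-in for any promise-returning call (it is not from the original post), and the error strings are assumptions chosen only for illustration.

```javascript
// Hypothetical async operation used only for illustration: resolves with a
// user object for positive ids and rejects for anything else.
function fetchUser(id) {
  return new Promise((resolve, reject) => {
    setTimeout(() => {
      if (id > 0) resolve({ id, name: `user-${id}` });
      else reject(new Error("invalid id"));
    }, 10);
  });
}

// Style 1: promise chaining. Each step is a callback passed to .then(),
// and errors are handled by a trailing .catch().
function getNameWithThen(id) {
  return fetchUser(id)
    .then((user) => user.name)
    .catch((err) => `error: ${err.message}`);
}

// Style 2: async/await. The same logic reads top to bottom, and errors
// are handled with an ordinary try/catch block.
async function getNameWithAwait(id) {
  try {
    const user = await fetchUser(id);
    return user.name;
  } catch (err) {
    return `error: ${err.message}`;
  }
}
```

Both functions return a promise that settles to the same value, so callers can mix the two styles freely; the difference is mostly in how the control flow and error handling read.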

### 2. What are the lifecycle methods in React components, an... | zeeshanali0704 | |

1,898,519 | How to use Git | Master the Advanced Commands of Git | Git has become an indispensable tool for developers worldwide, streamlining the version control... | 27,838 | 2024-06-24T07:14:45 | https://dev.to/nnnirajn/how-to-use-git-master-the-advanced-commands-of-git-510h | git, github, programming, beginners | Git has become an indispensable tool for developers worldwide, streamlining the version control process and enhancing collaborative programming efforts. While numerous resources help beginners grasp the basics, this post is tailored for experienced web developers looking to elevate their Git game with advanced and tech... | nnnirajn |

1,898,518 | Unveiling Precision: Songwei CNC (Shanghai) Co., Ltd's Role in Fanuc Parts Supply | screenshot-1719192361682.png Unveiling Precision: A Game-Changing Solution for Fanuc Parts Supply by... | 0 | 2024-06-24T07:14:23 | https://dev.to/bomans_tomnlid_6b1267819b/unveiling-precision-songwei-cnc-shanghai-co-ltds-role-in-fanuc-parts-supply-2g7h | fanucparts | screenshot-1719192361682.png

Unveiling Precision: A Game-Changing Solution for Fanuc Parts Supply by Songwei CNC (Shanghai) Co., Ltd

Introduction

If you value precision and accuracy in your work, Songwei CNC (Shanghai) Co., Ltd is the company you have been searching for. They provide innovative Fanuc parts suppl... | bomans_tomnlid_6b1267819b |

1,898,517 | Top 10 Affordable Restaurants in DHA Lahore in 2024 | DHA Lahore is renowned for its upscale lifestyle, beautiful architecture, and vibrant food scene.... | 0 | 2024-06-24T07:13:32 | https://dev.to/tarbanifoods/top-10-affordable-restaurants-in-dha-lahore-in-2024-3lmp | DHA Lahore is renowned for its upscale lifestyle, beautiful architecture, and vibrant food scene. This area boasts some of the best eateries in the city, offering a wide range of cuisines to satisfy diverse palates. While some might think dining in DHA comes with a hefty price tag, many affordable restaurants provide d... | tarbanifoods | |

1,898,516 | Eco-Friendly Materials: The Future of Washable Pads | H869816ae69f44f33b610dc069cf316bco.png Going Green Washable Pads: The Eco-Friendly... | 0 | 2024-06-24T07:11:42 | https://dev.to/homsdh_ropijd_fc25d0cc460/eco-friendly-materials-the-future-of-washable-pads-2nd2 | design | H869816ae69f44f33b610dc069cf316bco.png

Going Green Washable Pads: The Eco-Friendly Revolution

Introduction

They can take up to 500 years to decompose, polluting the environment and posing risks to health. That’s why more companies are evaluating materials which... | homsdh_ropijd_fc25d0cc460 |

1,898,515 | Sugar-free Confectionery Market: Trends, Growth, Size, Share, Forecast 2023-2033 and Key Players Analysis | The global sugar-free confectionery market was valued at US$ 2,334 million in 2023 and is projected... | 0 | 2024-06-24T07:09:59 | https://dev.to/swara_353df25d291824ff9ee/sugar-free-confectionery-market-trends-growth-size-share-forecast-2023-2033-and-key-players-analysis-4bmj |

The global sugar-free confectionery market was valued at US$ 2,334 million in 2023 and is projected to grow to US$ 3,908 million by 2033, with a compound annual growth rate (CAGR) of 5.4% over the forecast period. This growth is driven by increasing health consciousness among consumers, rising ... | swara_353df25d291824ff9ee | |

1,898,514 | How Much Does it Cost to Build an App? A Comprehensive Guide! | Today, it is essential to know about the cost of developing an app because, well, apps rule the... | 0 | 2024-06-24T07:06:48 | https://dev.to/pepper_square/how-much-does-it-cost-to-build-an-app-a-comprehensive-guide-1o2 | mobile, design, development, softwaredevelopment | Today, it is essential to know about the cost of developing an app because, well, apps rule the world! There is an app for just about anything, and the market for apps only seems to grow.

Why? Because the pandemic has ushered in an era of phone-heavy users, setting the perfect environment for businesses to scale by bu... | pepper_square |

1,898,510 | Voice AI: How to build a voice AI assistant? | Voice is an interesting platform; can you imagine building a voice service that can pick up the phone... | 0 | 2024-06-24T07:03:30 | https://dev.to/kwnaidoo/voice-ai-how-to-build-a-voice-ai-assistant-5bem | ai, machinelearning, python, productivity | Voice is an interesting platform; can you imagine building a voice service that can pick up the phone and have a conversation with a human in real-time?

This may have been far-fetched a few years ago, but now it's totally possible and relatively simple to achieve.

## How to achieve this?

To build a Voice AI service,... | kwnaidoo |

1,898,509 | Raising WordPress Expertise: Helpful Tips & Tricks with Eliora | WordPress is a powerful platform that enables users to create dynamic websites with ease. Whether... | 0 | 2024-06-24T07:03:12 | https://dev.to/hiteshelioratechno_477225/raising-wordpress-expertise-helpful-tips-tricks-with-eliora-h45 | webdev, javascript, programming, tutorial | WordPress is a powerful platform that enables users to create dynamic websites with ease. Whether you're a beginner or looking to enhance your existing skills, these tips and tricks from Eliora will help you elevate your [WordPress expertise.](https://elioratechnologies.com/wordpressdevelopment)

1. Master the Basics

B... | hiteshelioratechno_477225 |

1,898,508 | Exploring Web Development: HTMX | What is HTMX The last 2 weeks I have been exploring HTMX, a light-weight, front-end... | 0 | 2024-06-24T07:02:50 | https://dev.to/alexphebert2000/exploring-web-development-htmx-4no1 | ## What is HTMX

The last 2 weeks I have been exploring HTMX, a light-weight, front-end library that looks to bring the needs of a modern web app back to just HTML. While HTMX is written in JavaScript, using HTMX doesn't require you to write a line of JavaScript to create a fully fleshed, interactive front end. HTMX use... | alexphebert2000 | |

1,898,507 | Releases New Tools | htmx 2.0 This release ends support for Internet Explorer and tightens up some defaults, but does... | 0 | 2024-06-24T07:02:39 | https://dev.to/mohammed_jobairhossain_c/releases-new-tools-10id | javascript, programming, webdev, aws | - [htmx 2.0](https://htmx.org/posts/2024-06-17-htmx-2-0-0-is-released/)

This release ends support for Internet Explorer and tightens up some defaults, but does not change most of the core functionality or the core API of the library.

- [Electron 31](https://www.electronjs.org/blog/electron-31-0)

It includes upgr... | mohammed_jobairhossain_c |

1,898,503 | Examples of REST, GraphQL, RPC, gRPC | This article will focus on practical examples of major API protocols: REST, GraphQL, RPC, gRPC. By... | 27,837 | 2024-06-24T07:02:02 | https://dev.to/rahulvijayvergiya/examples-of-rest-graphql-rpc-grpc-48bo | api, webdev, microservices, graphql | This article will focus on practical examples of major API protocols: REST, GraphQL, RPC, gRPC. By examining these examples, you will gain a clearer understanding of how each protocol operates and how to implement them in your own projects.

If you haven't read the first article comparing REST, GraphQL, RPC, and gRPC, ... | rahulvijayvergiya |

1,898,486 | Comparative Analysis: REST, GraphQL, RPC, gRPC | APIs play a crucial role in enabling communication between different software systems. With various... | 27,837 | 2024-06-24T07:01:56 | https://dev.to/rahulvijayvergiya/rest-graphql-rpc-grpc-3m09 | graphql, webdev, api, microservices | APIs play a crucial role in enabling communication between different software systems. With various API protocols available, choosing the right one can be challenging. This article aims to focus on differences among **REST, GraphQL, RPC, gRPC**, providing a comparative analysis to help developers and businesses make in... | rahulvijayvergiya |

1,898,506 | Contribute and Earn With AI Social Campaigns | This is a submission for Twilio Challenge v24.06.12 What I Built I have developed a... | 0 | 2024-06-24T07:00:00 | https://dev.to/techfine/social-58a3 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

I have developed a platform where people can donate money to social campaigns. Additionally, I created an AI-powered WhatsApp bot using Twilio's API that allows users to receive grants by sharing videos of their c... | techfine |

1,897,858 | Valibot: featherweight validator | Good morning everyone and happy MonDEV! ☕ I hope that with this heat you are running light processes... | 25,147 | 2024-06-24T07:00:00 | https://dev.to/giuliano1993/valibot-featherweight-validator-2i67 | javascript, opensource, webdev |

Good morning everyone and happy MonDEV! ☕

I hope that with this heat you are running light processes on your PCs, to avoid melting either you or your processors! 😂

Today I want to talk about a tool that makes lightness its cornerstone.

It's called [Valibot](https://valibot.dev/).

Valibot is an npm package for data... | giuliano1993 |

1,871,813 | Leveraging PostgreSQL CAST for Data Type Conversions | Converting data types is essential in database management. PostgreSQL's CAST function helps achieve... | 21,681 | 2024-06-24T07:00:00 | https://dev.to/dbvismarketing/leveraging-postgresql-cast-for-data-type-conversions-895 | cast, postgres | Converting data types is essential in database management. PostgreSQL's CAST function helps achieve this efficiently. This article covers how to use CAST for effective data type conversions.

### Using CAST in PostgreSQL

Some practical examples of CAST include:

**Convert Salary to Integer**

```sql

SELECT CAST(salary... | dbvismarketing |

1,898,505 | Expert Website Developer in Nagpur: Crafting Digital Solutions! | In today's digital age, having a strong online presence is crucial for businesses to thrive. As... | 0 | 2024-06-24T06:59:56 | https://dev.to/hiteshelioratechno_477225/expert-website-developer-in-nagpur-crafting-digital-solutions-5d2 | webdev, webdevlop, javascript, programming | In today's digital age, having a strong online presence is crucial for businesses to thrive. As companies increasingly move online, the demand for professional website developers has surged. One city that stands out in this arena is Nagpur, known for its pool of expert [website developers](https://elioratechnologies.co... | hiteshelioratechno_477225 |

1,898,504 | Swimming Pool Contractors Company in Dubai, UAE | Dubai's vibrant lifestyle practically begs for a refreshing escape in your own backyard. A swimming... | 0 | 2024-06-24T06:59:31 | https://dev.to/markhere/swimming-pool-contractors-company-in-dubai-uae-22dk | swimming, pool, dubai |

Dubai's vibrant lifestyle practically begs for a refreshing escape in your own backyard. A swimming pool is the perfect solution, offering a place to cool down, relax, and entertain. But where do you begin? Finding the right swimming pool contractors in Dubai is crucial for a project that meets your vision and budget.... | markhere |

1,898,499 | Integrating TinyML, GenAI, and Twilio API for Smart Solutions | This is a submission for the Twilio Challenge What I Built The goal of this project is to... | 0 | 2024-06-24T06:58:40 | https://dev.to/engineeredsoul/integrating-tinyml-genai-and-twilio-api-for-smart-solutions-4o01 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for the [Twilio Challenge](https://dev.to/challenges/twilio)*

## What I Built

<!-- Share an overview about your project. -->

The goal of this project is to demonstrate the integration of Generative AI (GenAI) in embedded systems and to highlight the ease of accessing AI capabilities via Twilio's... | engineeredsoul |

1,898,502 | Stem Cell Therapy: Pioneering the Future of Medicine | What is Stem Cell Therapy? Stem cell therapy, often hailed as a breakthrough in modern medicine,... | 0 | 2024-06-24T06:56:38 | https://dev.to/usama_raja_efbcb53fea397b/stem-cell-therapy-pioneering-the-future-of-medicine-4hec |

What is Stem Cell Therapy?

**[Stem cell therapy](https://irmsc.com/our-services/stem-cell-therapy/)**, often hailed as a breakthrough in modern medicine, harnesses the power of stem cells to treat or prevent various health conditions. These remarkable cells have the unique ability to develop into different types of... | usama_raja_efbcb53fea397b | |

1,898,500 | Mastering Chess with Twilio and OpenAI: An Interactive AI-Powered Chess Experience | This is a submission for Twilio Challenge v24.06.12 What I Built In this project, I... | 0 | 2024-06-24T06:55:21 | https://dev.to/molaycule/mastering-chess-with-twilio-and-openai-an-interactive-ai-powered-chess-experience-45l | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

In this project, I created an interactive chess application that leverages AI and Twilio's communication APIs to enhance the user experience. This application allows users to play chess against an AI opponent with... | molaycule |

1,897,415 | 🕸 Networking is easy, fun, and probably not what you think it is. | Networking is extremely important for your career. They say the most valuable thing about going to... | 0 | 2024-06-24T06:54:49 | https://suchdevblog.com/opinions/NetworkingIsNotWhatYouThink.html | career, beginners, learning, community | Networking is *extremely important* for your career.

They say the most valuable thing about going to Harvard is not the education you'll receive or the skills you'll learn, but the connections you'll make. And they're right.

However, many people will say networking is hard, boring, impossible for some, especially neu... | samuelfaure |

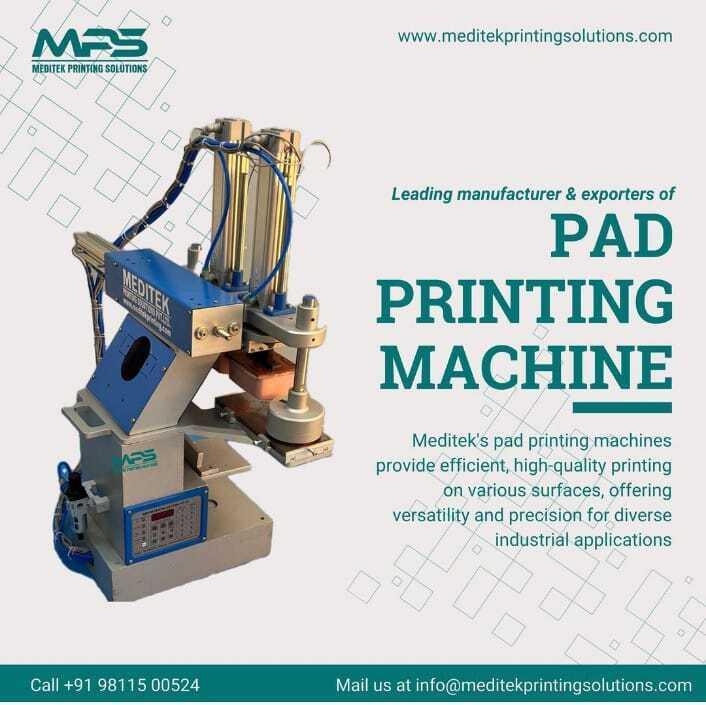

1,898,497 | Unleashing the Potential of Pad Printing Machines by Meditek Services in India | In the world of manufacturing and product customization, precision and quality are paramount. This is... | 0 | 2024-06-24T06:54:05 | https://dev.to/meditek_66ea4eca031430c14/unleashing-the-potential-of-pad-printing-machines-by-meditek-services-in-india-5hh7 | In the world of manufacturing and product customization, precision and quality are paramount. This is where Pad Printing Machines come into play. Meditek Services, a leading provider of pad printing machines in India... | meditek_66ea4eca031430c14 | |

1,898,496 | How Authentication works (Part2) | Continuing from part 1, I have done some refactoring. Instead of decoding the token in the frontend... | 0 | 2024-06-24T06:54:04 | https://dev.to/mannawar/how-authentication-works-part2-3ibb | react, redux, aspnet, sqlserver | Continuing from part 1, I have done some refactoring. Instead of decoding the token in the frontend and then sending all the information (such as email, displayName, and userName) over HTTPS, I now prefer to send just the token. In the backend, this token is decoded and mapped to the required parameters. This approach ... | mannawar |

1,898,495 | Empty Strings and Zero-length Arrays: How do We Store... Nothing? | In high-level languages like Python and JavaScript, we are pretty used to initializing strings and... | 0 | 2024-06-24T06:53:25 | https://dev.to/biraj21/empty-strings-and-zero-length-arrays-how-do-we-store-nothing-1jko | c, javascript, python, beginners | In high-level languages like Python and JavaScript, we are pretty used to initializing strings and arrays like this:

```javascript

let myStr = ""; // an empty string

let myArr = []; // an empty array

```

We're saying that these are empty, which implies they contain zero bytes of data. So we are storing zero-bytes of d... | biraj21 |

1,898,494 | The Evolution of Mold Technology in Plastic Manufacturing | Introduction to Mold Technology in Plastic Manufacturing The way we create... | 0 | 2024-06-24T06:53:13 | https://dev.to/komabd_ropikd_259330c4759/the-evolution-of-mold-technology-in-plastic-manufacturing-4jh2 | design | Introduction to Mold Technology in Plastic Manufacturing

The way we create plastic products has advanced along with technology, and so have the techniques. Mold technology is essential to plastic manufacturing and has advanced... | komabd_ropikd_259330c4759 |

1,898,288 | Codename: Riddler's Challenge - An AI powered story experience | This is a submission for the Twilio Challenge What I Built The Riddler’s Challenge is an... | 0 | 2024-06-24T06:52:34 | https://dev.to/artyymcflyy/riddlers-challenge-an-ai-powered-story-experience-13ch | devchallenge, twiliochallenge, ai, challenge | *This is a submission for the [Twilio Challenge ](https://dev.to/challenges/twilio)*

## What I Built

The Riddler’s Challenge is an interactive story-telling experience that uses AI to put you into the shoes of a Gotham Police Officer who has been called to protect the city in Batman's absence. You must rely on your ph... | artyymcflyy |

1,898,493 | Creating a CRUD App With Go | Introduction Hello! 😎 In this tutorial I will show you how to build a CRUD app using the... | 0 | 2024-06-24T06:51:51 | https://ethan-dev.com/post/creating-a-crud-app-with-go | go, beginners, crud, tutorial | ## Introduction

Hello! 😎

In this tutorial I will show you how to build a CRUD app using the Go programming language. By the end, you'll have a fully functioning CRUD (Create, Read, Update, Delete) app running locally. Let's get started.

---

## Requirements

- Go installed

- Basic understanding of Go

---

## Setti... | ethand91 |

1,898,489 | VisuSpeak — 𝙑𝙞𝙨𝙪𝙖𝙡𝙞𝙯𝙚 𝙩𝙤 𝙎𝙥𝙚𝙖𝙠 👀🗣️ | This project has been archived! | 0 | 2024-06-24T06:51:25 | https://dev.to/neilblaze/visuspeak--24j | devchallenge, twiliochallenge, ai, twilio | This project has been archived!

| neilblaze |

1,898,492 | The Future of Voice-Activated Apps: A Closer Look with iTechTribe International 🚀 | Hey there! Let's dive into the exciting world of voice-activated apps and explore what the future... | 0 | 2024-06-24T06:51:08 | https://dev.to/itechtshahzaib_1a2c1cd10/the-future-of-voice-activated-apps-a-closer-look-with-itechtribe-international-2b4d | programming, coding, mobile, flutter |

: Your Ultimate Guide | Large Language Models (LLMs) represent a groundbreaking advancement in the field of artificial... | 0 | 2024-06-24T06:47:58 | https://dev.to/futuristicgeeks/unlocking-the-power-of-large-language-models-llms-your-ultimate-guide-3h3p | webdev, llm, python, dataengineering | Large Language Models (LLMs) represent a groundbreaking advancement in the field of artificial intelligence, particularly in the domain of natural language processing (NLP). These models have the capability to understand, generate, and manipulate human language with remarkable accuracy. This article aims to provide an ... | futuristicgeeks |

1,898,490 | Dungeons and Twilio | This is a submission for the Twilio Challenge What I Built This project demonstrates the... | 0 | 2024-06-24T06:47:55 | https://dev.to/magodyboy/dungeons-and-twilio-2bm4 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for the [Twilio Challenge ](https://dev.to/challenges/twilio)*

## What I Built

This project demonstrates the integration of Twilio's communication services with OpenAI's GPT-3.5 and DALL-E models to create an interactive and engaging virtual Dungeon Master (DM) experience for a single-player Dung... | magodyboy |

1,898,043 | Hault - launch ai customer support with a click | This is a submission for the Twilio Challenge What I Built Hault is an open-source... | 0 | 2024-06-24T06:47:33 | https://dev.to/captain0jay/hault-launch-ai-customer-support-with-a-click-3ba0 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for the [Twilio Challenge ](https://dev.to/challenges/twilio)*

## What I Built

Hault is open-source software that connects to a database containing information about your business and its FAQs, helping you automate customer support using AI.

<!-- INTRODUCTION -->

## Introduction

- The projec... | captain0jay |

1,898,488 | How to Sign PDF File in C# (Developer Tutorial) | When working with digital documents, signing PDFs is vital for confirming their authenticity and... | 0 | 2024-06-24T06:45:32 | https://dev.to/tayyabcodes/how-to-sign-pdf-file-in-c-developer-tutorial-310f | csharp, signpdf, pdflibrary, tutorial | When working with digital documents, [signing PDFs](https://support.microsoft.com/en-us/office/digital-signatures-and-certificates-8186cd15-e7ac-4a16-8597-22bd163e8e96#:~:text=A%20digital%20signature%20is%20an,and%20has%20not%20been%20altered.) is vital for confirming their authenticity and security. Whether it's for c... | tayyabcodes |

1,898,487 | Creating an Interactive Airbnb Chatbot with Lyzr, OpenAI and Streamlit | In the competitive landscape of the hospitality industry, providing exceptional guest experiences is... | 0 | 2024-06-24T06:44:47 | https://dev.to/harshitlyzr/creating-an-interactive-airbnb-chatbot-with-lyzr-openai-and-streamlit-55bg | In the competitive landscape of the hospitality industry, providing exceptional guest experiences is crucial for retaining customers and gaining positive reviews. Airbnb hosts face the challenge of addressing numerous queries from guests promptly and accurately. Common questions range from property rules and amenities ... | harshitlyzr | |

1,898,485 | Very Own bookmarks | This is a submission for Twilio Challenge v24.06.12 What I Built Use Case: When scrolling... | 0 | 2024-06-24T06:43:30 | https://dev.to/suyashsrivastavadev/very-own-bookmarks-f4c | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

Use Case: When scrolling through news articles, blog posts, or YouTube videos, I often send WhatsApp messages to myself with important links I want to bookmark. However, finding the right link later can be challeng... | suyashsrivastavadev |

1,898,483 | Pelletizing Solutions: Innovations in Material Processing | screenshot-1719244691564.png Zhengzhou Meijin: Revolutionizing How We Process... | 0 | 2024-06-24T06:43:15 | https://dev.to/komabd_ropikd_259330c4759/pelletizing-solutions-innovations-in-material-processing-4a12 | design | screenshot-1719244691564.png

Zhengzhou Meijin: Revolutionizing How We Process Materials

Introduction:

Zhengzhou Meijin have revolutionized how we process materials. This technology is innovative, efficient, and safe to use. We will discuss the advantages of Zhengzhou Meijin, how they can be used, and the chicken... | komabd_ropikd_259330c4759 |

1,898,482 | Automating API Documentation Generation with Lyzr and OpenAI | In modern software development, maintaining comprehensive and up-to-date API documentation is crucial... | 0 | 2024-06-24T06:41:51 | https://dev.to/harshitlyzr/automating-api-documentation-generation-with-lyzr-and-openai-2mhp | In modern software development, maintaining comprehensive and up-to-date API documentation is crucial for developers, collaborators, and users. However, creating and maintaining such documentation manually is often labor-intensive, time-consuming, and prone to errors. This can lead to outdated, incomplete, or inconsist... | harshitlyzr | |

1,898,481 | CA Intermediate Last Date of Registration May 2025: Essentials | Be Prepared! CA Intermediate Last Date of Registration May 2025 The CA Intermediate last... | 0 | 2024-06-24T06:40:56 | https://dev.to/shambhavisah/ca-intermediate-last-date-of-registration-may-2025-essentials-4794 |

## Be Prepared! CA Intermediate Last Date of Registration May 2025

The **[CA Intermediate last date of registration May 2025](https://www.studyathome.org/ca-intermediate-registration-may-2025/)** is very importan... | shambhavisah | |

1,898,480 | Sildalist 120 mg Dosage UK | Buy Sildalist 120 mg Online - First Choice Meds | Buy Sildalist 120 mg Online at First Choice Medss. Effective treatment for erectile dysfunction. Fast... | 0 | 2024-06-24T06:40:09 | https://dev.to/firstchoice_medss_c318642/sildalist-120-mg-dosage-uk-buy-sildalist-120-mg-online-first-choice-meds-3506 | webdev, beginners, programming | Buy Sildalist 120 mg Online at First Choice Medss. Effective treatment for erectile dysfunction. Fast delivery and secure ordering. Shop now for the best prices. For more information check this link https://www.firstchoicemedss.com/sildalist-120mg.html | firstchoice_medss_c318642 |

1,898,479 | Changing State After Defining it using Const ??!! What Is Going On? | Have you asked yourself how you can change the state you defined in spite of using const declarations... | 0 | 2024-06-24T06:40:05 | https://dev.to/ahmedeweeskorany/changing-state-after-defining-it-using-const-what-is-going-on-3j0i | webdev, javascript, react, programming | Have you asked yourself how you can change the state you defined in spite of using const declarations like `const [count,setCount] = useState(0)`? Let's dive in a little ...

#### The first question we need to ask is: do const declarations make variables immutable?

Actually, const creates something called... | ahmedeweeskorany |

1,898,473 | Compound Semiconductor Materials Market: Trends, Growth, Forecast 2022-2032 | The global compound semiconductor materials market, valued at US$ 32 billion in 2022, is expected to... | 0 | 2024-06-24T06:35:02 | https://dev.to/swara_353df25d291824ff9ee/compound-semiconductor-materials-market-trends-growth-forecast-2022-2032-1jap |

The global [compound semiconductor materials market](https://www.persistencemarketresearch.com/market-research/compound-semiconductor-materials-market.asp), valued at US$ 32 billion in 2022, is expected to reach US$... | swara_353df25d291824ff9ee | |

1,898,477 | In Excel, Parse Hexadecimal Numbers And Make Queries | Problem description & analysis: In the following table, value of cell A1 is made up of names of... | 0 | 2024-06-24T06:39:16 | https://dev.to/judith677/in-excel-parse-hexadecimal-numbers-and-make-queries-4ljk | beginners, programming, tutorial, productivity | **Problem description & analysis**:

In the following table, the value of cell A1 is made up of the names of several people and their attendance over four days. For example, c is the hexadecimal form of binary 1100, meaning the corresponding person attended on the 1st and 2nd days and was absent on the 3rd day an... | judith677 |
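The hexadecimal-to-attendance mapping described in this row can be sketched in a few lines of Python. This is only an illustration of the decoding step, not the original post's solution (which is not shown in this excerpt); the function name `attendance_from_hex` is hypothetical.

```python
def attendance_from_hex(digit: str, days: int = 4) -> list[bool]:
    """Decode one hexadecimal digit into per-day attendance flags.

    The most significant bit is day 1, so "c" (binary 1100) means
    present on days 1 and 2 and absent on days 3 and 4.
    """
    value = int(digit, 16)             # "c" -> 12
    bits = format(value, f"0{days}b")  # 12 -> "1100"
    return [bit == "1" for bit in bits]


print(attendance_from_hex("c"))  # [True, True, False, False]
```

Zero-padding to four bits matters here: without it, a digit such as 3 would decode to "11" and the day positions would shift.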

1,898,476 | Building an AI-Powered Product Scheduler using Lyzr and OpenAI | In the automotive manufacturing industry, project managers face the challenge of efficiently planning... | 0 | 2024-06-24T06:38:49 | https://dev.to/harshitlyzr/building-an-ai-powered-product-scheduler-using-lyzr-and-openai-3fml | In the automotive manufacturing industry, project managers face the challenge of efficiently planning and scheduling complex product development projects. Traditional methods of project planning often lack precision and struggle to account for the intricacies of design sketches and resource utilization. As a result, pr... | harshitlyzr | |

1,898,654 | 5 Free Open Source Tools You Should Know Before Starting Your Business | Do not start a new business without knowing those 5 free open-source software: Watch the video... | 0 | 2024-07-05T16:40:06 | https://blog.elest.io/5-free-open-source-tools-you-should-know-before-starting-your-business/ | elestio, opensource | ---

title: 5 Free Open Source Tools You Should Know Before Starting Your Business

published: true

date: 2024-06-24 06:36:52 UTC

tags: Elestio,OpenSource

canonical_url: https://blog.elest.io/5-free-open-source-tools-you-should-know-before-starting-your-business/

cover_image: https://blog.elest.io/content/images/2024/06/... | kaiwalyakoparkar |

1,898,475 | Discover the World of ACE FAIR GAMES UNION: Your Ultimate Destination for Online Casino Games | Welcome to ACE FAIR GAMES UNION, the premier online casino gaming destination for enthusiasts across... | 0 | 2024-06-24T06:36:50 | https://dev.to/acefairgamesunion/discover-the-world-of-ace-fair-games-union-your-ultimate-destination-for-online-casino-games-59fg | Welcome to [ACE FAIR GAMES UNION](https://www.acefairgameunion.com/), the premier online casino gaming destination for enthusiasts across [Myanmar](https://www.acefairgameunion.com/best-online-casino-in-myanmar), [China](https://www.acefairgameunion.com/best-online-casino-in-china), [Thailand](https://www.acefairgameun... | acefairgamesunion | |

1,898,474 | Enhancing Workplace Safety with AI: A Worker Safety Monitoring Application | In today’s fast-paced industrial landscape, ensuring the safety of workers remains a top priority for... | 0 | 2024-06-24T06:35:16 | https://dev.to/harshitlyzr/enhancing-workplace-safety-with-ai-a-worker-safety-monitoring-application-5ckm | In today’s fast-paced industrial landscape, ensuring the safety of workers remains a top priority for businesses. With advancements in technology, artificial intelligence (AI) is increasingly playing a crucial role in improving workplace safety. One such innovative application is the Worker Safety Monitoring system, de... | harshitlyzr | |

1,898,472 | WHAT NEXT AFTER LEARNING SOFTWARE DEVELOPMENT? | What Are Your Plans To Survive In The Global Tech Market As An Entry Level Software Engineer? As an... | 0 | 2024-06-24T06:35:01 | https://dev.to/salvation_m/what-next-after-learning-software-development-3mm5 | webdev, beginners, softwaredevelopment | **What Are Your Plans To Survive In The Global Tech Market As An Entry Level Software Engineer?**

As an entry-level software engineer, it's crucial to understand that the global tech market is highly competitive. Standing out requires significant effort and dedication. You didn’t invest your time and resources into le... | salvation_m |

1,894,765 | ReactJS Best Practices for Developers | ReactJS: A Brief Introduction ReactJS, is basically an open-source javascript library... | 0 | 2024-06-24T06:33:29 | https://dev.to/infrasity-learning/reactjs-best-practices-for-developers-5gjf | react, bestpractices, solidprinciples, reactjsdevelopment | ## ReactJS: A Brief Introduction

ReactJS is an open-source JavaScript library developed by Facebook, used mainly for building user interfaces, especially single-page applications.

Used by thousands of companies and projects globally, ReactJS is one of the most popular libraries for building webapps... | infrasity-learning |

1,898,383 | Django website templates - Speed up your Development | One common mistake a lot of us make is starting everything from scratch and spending weeks to... | 0 | 2024-06-24T06:32:08 | https://dev.to/paul_freeman/django-website-templates-speed-up-your-development-4lko | webdev, django, beginners, opensource | One common mistake a lot of us make is starting everything from scratch and spending weeks to complete the simplest of websites. Most clients don't care whether you use a template or start from scratch, and many don't even care what technology you use; they just want a website up and running fast.

Starting a ... | paul_freeman |

1,898,471 | Introducing the Insurance Underwriting Expert: Your Go-To Solution for Risk Assessment | The insurance industry is a critical sector that provides financial protection and peace of mind to... | 0 | 2024-06-24T06:32:03 | https://dev.to/harshitlyzr/introducing-the-insurance-underwriting-expert-your-go-to-solution-for-risk-assessment-1omd | The insurance industry is a critical sector that provides financial protection and peace of mind to individuals and businesses. A core function within this industry is underwriting — the process of evaluating risks and determining the terms and conditions of insurance policies. Traditional underwriting is often complex... | harshitlyzr | |

1,898,470 | Minimizing GPU RAM and Scaling Model Training Horizontally with Quantization and Distributed Training | Training multibillion-parameter models in machine learning poses significant challenges, particularly... | 0 | 2024-06-24T06:31:55 | https://victorleungtw.com/2024/06/24/quantization/ | quantization, gpu, training, scalability | Training multibillion-parameter models in machine learning poses significant challenges, particularly concerning GPU memory limitations. A single NVIDIA A100 or H100 GPU, with its 80 GB of GPU RAM, often falls short when handling 32-bit full-precision models. This blog post will delve into two powerful techniques to ov... | victorleungtw |
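The memory pressure mentioned in this row can be made concrete with simple arithmetic: weight storage is the parameter count times the bytes per parameter. The sketch below is a rough, weights-only estimate; the 30-billion-parameter model size is a hypothetical figure chosen for illustration, and training needs additional memory for gradients, optimizer state, and activations that is not counted here.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Return the memory needed for model weights alone, in decimal GB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Hypothetical 30-billion-parameter model (assumption, not from the post).
for dtype in BYTES_PER_PARAM:
    print(dtype, weight_memory_gb(30_000_000_000, dtype), "GB")
```

Under these assumptions the 32-bit weights alone exceed a single 80 GB GPU, while the 16-bit and 8-bit versions fit, which is the motivation for quantization.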

1,897,050 | Managing and Rotating Secrets with AWS Secrets Manager | TL; DR; Securely managing secrets and credentials is crucial for maintaining the integrity... | 0 | 2024-06-24T06:30:00 | https://dev.to/dhoang1905/managing-and-rotating-secrets-with-aws-secrets-manager-eci | aws, terraform, security | ## TL; DR;

Securely managing secrets and credentials is crucial for maintaining the integrity of your applications in the cloud. AWS Secrets Manager simplifies this process by providing a centralized, secure repository for storing, managing, and rotating secrets such as database credentials, API keys, and application... | dhoang1905 |

1,898,469 | Choosing the Right Python Web Framework: Django vs FastAPI vs Flask | Comparing Python Web Frameworks: Django, FastAPI, and Flask In the realm of Python web... | 0 | 2024-06-24T06:28:36 | https://devtoys.io/2024/06/23/choosing-the-right-python-web-framework-django-vs-fastapi-vs-flask/ | python, webdev, devtoys | ---

canonical_url: https://devtoys.io/2024/06/23/choosing-the-right-python-web-framework-django-vs-fastapi-vs-flask/

---

# Comparing Python Web Frameworks: Django, FastAPI, and Flask

In the realm of Python web frameworks, Django, FastAPI, and Flask are three of the most popular options. Each framework has its own stre... | 3a5abi |

1,898,468 | Free weekend of Vue Certification Developer Training is coming soon! | Today it will be a short one because I want you to be aware that there is a great source of Vue... | 24,580 | 2024-06-24T06:28:08 | https://dev.to/jacobandrewsky/free-weekend-of-vue-certification-developer-training-is-coming-soon-50b7 | vue, typescript, javascript, beginners | Today it will be a short one because I want you to be aware that there is a great source of Vue knowledge coming to you for free that you should definitely check out!

Prepare for a free weekend of Vue Developer Certification Training on June 29 & 30, 2024 🎉

Dive into theory, coding challenges, quizzes, and a mock ex... | jacobandrewsky |

1,898,467 | Building Your Construction Planner App with Lyzr and OpenAI | Construction project management is a complex task that involves meticulous planning and coordination... | 0 | 2024-06-24T06:28:02 | https://dev.to/harshitlyzr/building-your-construction-planner-app-with-lyzr-and-openai-2l9o | Construction project management is a complex task that involves meticulous planning and coordination of various activities, resources, and stakeholders. A typical construction project includes phases such as design, procurement, construction, and commissioning. Each phase requires detailed planning and scheduling to en... | harshitlyzr | |

1,898,466 | CNC Turning Parts: High-Quality Components for Industrial Needs | CNC Turning Parts: The Panacea for High-Quality Industrial Components Introduction to CNC Turning... | 0 | 2024-06-24T06:27:28 | https://dev.to/monasb_eipojd_dbc67504376/cnc-turning-parts-high-quality-components-for-industrial-needs-m34 | design | CNC Turning Parts: The Panacea for High-Quality Industrial Components

Introduction to CNC Turning Parts

CNC Turning Parts are modern components developed to be used in modern manufacturing. In this respect, such parts are processed using Computer Numerical Control, which essentially is realized as a process of subtra... | monasb_eipojd_dbc67504376 |

1,898,465 | A Comprehensive Guide to Interface Testing | Like any other testing, interface testing helps in thoroughly testing the software. It ensures that... | 0 | 2024-06-24T06:26:55 | https://dev.to/jamescantor38/a-comprehensive-guide-to-interface-testing-5ahm | interfacetesting, testgrid | Like any other testing, interface testing helps in thoroughly testing the software. It ensures that the end-user of the software does not face any heated issues.

No doubt it is tricky, and performing it requires proper planning. One of the best ways to perform interface testing is to automate test cases, ... | jamescantor38 |

1,898,463 | Orson Merrick : From Wall Street to Global Finance Leader | Orson Merrick : From Wall Street to Global Finance Leader Name: Orson Merrick Place of birth:... | 0 | 2024-06-24T06:25:16 | https://dev.to/orsonmerrick1/orson-merrick-from-wall-street-to-global-finance-leader-1j7g | orsonmerrick | Orson Merrick : From Wall Street to Global Finance Leader

Name: Orson Merrick

Place of birth: Manhattan, New York

Date of birth: June 13, 1975 (49 years old)

Place of residence: London, UK

Graduate school: New York University Stern School of Business

Education: Master of International Finance

Position: Chief Analyst an... | orsonmerrick1 |

1,898,462 | Integrating Apache Kafka with Apache AGE for Real-Time Graph Processing | In the modern world, processing data in real time is crucial for many applications such as financial... | 0 | 2024-06-24T06:24:40 | https://dev.to/nim12/integrating-apache-kafka-with-apache-age-for-real-time-graph-processing-3mik | apacheage, apachekafka, graphql, graphprocessing | In the modern world, processing data in real time is crucial for many applications such as financial services, e-commerce, and social media analytics. Apache Kafka and Apache AGE (A Graph Extension) work well together for fast, real-time graph analysis. In this blog article, we will take you through the ... | nim12 |

1,898,460 | Automating Python Function Conversion to FastAPI Endpoints | In the rapidly evolving landscape of software development, APIs (Application Programming Interfaces)... | 0 | 2024-06-24T06:24:05 | https://dev.to/harshitlyzr/automating-python-function-conversion-to-fastapi-endpoints-11ea | In the rapidly evolving landscape of software development, APIs (Application Programming Interfaces) have become the backbone of modern applications, facilitating communication between different software components. FastAPI, a modern web framework for building APIs with Python, has gained popularity due to its high per... | harshitlyzr | |

1,898,459 | Oxylabs Python SDK | Hello everyone! We've created a Python SDK for the Oxylabs Scraper APIs to help simplify integrating... | 0 | 2024-06-24T06:23:25 | https://dev.to/mslm_uman/oxylabs-python-sdk-4j5c | oxylabs, python, api, library | ---

title: Oxylabs Python SDK

published: true

# description:

tags: oxylabs, python, api, library

cover_image: https://tinypic.host/images/2024/06/24/1719133932489.jpeg

# Use a ratio of 100:42 for best results.

# published_at: 2024-02-06 19:14 +0000

---

Hello everyone! We've created a Python SDK for the Oxylabs Scrape... | mslm_uman |

1,898,458 | Asort company: India’s Leading Co-Commerce Fashion Hub | Explore what makes Asort company a leader in co-commerce fashion. Learn how to join and benefit from... | 0 | 2024-06-24T06:23:18 | https://dev.to/anita_yadav_e0d52de195ab2/asort-company-indias-leading-co-commerce-fashion-hub-5c7n | asort | Explore what makes [Asort company](https://asort-guide.com/) a leader in co-commerce fashion. Learn how to join and benefit from India’s 1st co-commerce business model.

Asort : Asort company

In today's fast-pac... | anita_yadav_e0d52de195ab2 |

1,898,457 | Royal Blue Scrub Top: Elevating Your Medical Wardrobe | In the world of medical professionals, uniforms are more than just clothing—they are a symbol of... | 0 | 2024-06-24T06:21:14 | https://dev.to/dagacci/royal-blue-scrub-top-elevating-your-medical-wardrobe-2dco | In the world of medical professionals, uniforms are more than just clothing—they are a symbol of trust, competence, and care. Among the myriad of choices available, the royal blue scrub top stands out as a timeless and trending favorite. Whether you’re a seasoned healthcare provider or a newcomer to the field, incorpor... | dagacci | |

1,898,456 | Simplifying Javascript API Integration with Lyzr Automata,Streamlit and OpenAI | In today’s interconnected digital landscape, leveraging APIs (Application Programming Interfaces) is... | 0 | 2024-06-24T06:20:45 | https://dev.to/harshitlyzr/simplifying-javascript-api-integration-with-lyzr-automatastreamlit-and-openai-4i50 | In today’s interconnected digital landscape, leveraging APIs (Application Programming Interfaces) is essential for seamless communication and data exchange between different software systems. As a JavaScript developer, mastering API integration techniques can significantly enhance your ability to build powerful and dyn... | harshitlyzr | |

1,898,450 | Shandong Wonway Machinery: Revolutionizing Agricultural Equipment | Revolutionizing Agricultural Machinery: The Story of Shandong Wonway Machinery Agriculture is... | 0 | 2024-06-24T06:18:12 | https://dev.to/monasb_eipojd_dbc67504376/shandong-wonway-machinery-revolutionizing-agricultural-equipment-5a32 | design | Revolutionizing Agricultural Machinery: The Story of Shandong Wonway Machinery

Agriculture is important in today's world. The world's population is increasing day in, day out, and naturally, food needs multiply. That is, the efficiency, reliability, and bedrock machinery needed to be put in place to carry out the work... | monasb_eipojd_dbc67504376 |

1,898,449 | Top Jigsaw Blades for Precision Cutting by Nanjing Jinmeida Tools Co., Ltd | 0.png Top Jigsaw Blades for Precision Cutting by Nanjing Jinmeida Tools Co., Ltd Do you like... | 0 | 2024-06-24T06:15:56 | https://dev.to/lomabs_dkopijd_5670d401d2/top-jigsaw-blades-for-precision-cutting-by-nanjing-jinmeida-tools-co-ltd-2a5o | 0.png

Top Jigsaw Blades for Precision Cutting by Nanjing Jinmeida Tools Co., Ltd

Do you like DIY woodworking projects? Have you ever struggled to find the right jigsaw for precision cutting? Look no further, because Nanjing Jinmeida Tools Co., Ltd has got your back. Their top jigsaw bla... | lomabs_dkopijd_5670d401d2 | |

1,898,448 | Gametop's Blockchain Gaming Revolution: Innovations in Decentralized Services and Economic Models on the TON Chain | The gaming industry is experiencing a groundbreaking transformation. Gametop emerges as the world’s... | 0 | 2024-06-24T06:15:13 | https://dev.to/gametopofficial/gametops-blockchain-gaming-revolution-innovations-in-decentralized-services-and-economic-models-on-the-ton-chain-143n |

The gaming industry is experiencing a groundbreaking transformation. Gametop emerges as the world’s first decentralized blockchain gaming service platform based on the TON chain, marking a leap in technology and a s... | gametopofficial | |

1,895,548 | How to use Tailwind CSS | INTRODUCTION Tailwind CSS has revolutionized the way we, developers build and style web... | 0 | 2024-06-24T06:14:46 | https://pagepro.co/blog/how-to-use-tailwind/ | tailwindcss, webdev, learning | ## INTRODUCTION

Tailwind CSS has revolutionized the way we developers build and style web (and mobile) applications. Today I will show you practical aspects of using Tailwind CSS, particularly within [React applications](https://pagepro.co/services/reactjs-development), demonstrating how its utility classes can be eff... | itschrislojniewski |

1,898,447 | Introducing FoodMood-Bot: Your WhatsApp Chef Tailoring Recipes to Your Mood - Twilio Challenge Submission 2024 | This is a submission for Twilio Challenge v24.06.12 What I Built . FoodMood-Bot is... | 0 | 2024-06-24T06:14:07 | https://dev.to/harshitads44217/introducing-foodmood-bot-your-whatsapp-chef-tailoring-recipes-to-your-mood-twilio-challenge-submission-2024-4kj2 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

<!-- Share an overview of your project. -->

.

FoodMood-Bot is designed to enhance the cooking exp... | harshitads44217 |

1,898,446 | Crafting Excellence: Exploring the World of Wholesale Wooden Picture Frame Suppliers | Crafting Excellence: how Wooden that test wholesale image vendors will assist you to in... | 0 | 2024-06-24T06:13:42 | https://dev.to/lomabs_dkopijd_5670d401d2/crafting-excellence-exploring-the-world-of-wholesale-wooden-picture-frame-suppliers-42kd |

Crafting Excellence: how Wooden that test wholesale image vendors will assist you to in perform

Purchasing a company that was dependable of for the artwork task. Look no further than wholesale image that has been lumber providers These providers provide you with a array that are wide of structures in various forms... | lomabs_dkopijd_5670d401d2 | |

1,898,436 | Complete Tasks or get Roasted by AI | This is a submission for Twilio Challenge v24.06.12 What I Built I created a AI Roasting... | 0 | 2024-06-24T06:02:54 | https://dev.to/pulkitgovrani/get-roast-if-task-is-uncompleted-3lm3 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

I created an AI roasting bot with a to-do list goals platform that motivates users to work on their tasks by roasting them, leveraging Twilio's API and Google's Gemini API. The AI analyzes the goals submitted by t... | pulkitgovrani |

1,898,445 | How to Add Syntax Highlighting to Next.js app router with PrismJS ( Server + Client ) Components | How to Add Syntax Highlighting to Next.js app router with PrismJS ( Server + Client )... | 0 | 2024-06-24T06:12:58 | https://dev.to/sh20raj/how-to-add-syntax-highlighting-to-nextjs-app-router-with-prismjs-server-client-components-40mm | nextjs, javascript | # How to Add Syntax Highlighting to Next.js app router with PrismJS ( Server + Client ) Components

Adding syntax highlighting to your Next.js project can greatly enhance the readability and visual appeal of your code snippets. This guide will walk you through the process of integrating PrismJS into your Next.js applic... | sh20raj |

1,898,416 | From Contributor to Maintainer: Lessons from Open Source Software | Since I started focusing on contributions to open-source projects a few years ago, I’ve opened and... | 0 | 2024-06-24T06:12:54 | https://dev.to/patinthehat/from-contributor-to-maintainer-lessons-from-open-source-software-3mog | opensource, webdev, beginners, programming | Since I started focusing on contributions to open-source projects a few years ago, I’ve opened and had merged hundreds of Pull Requests. This article outlines some of the lessons I've learned from contributing to, authoring, and maintaining popular open-source projects.

## Creating Open Source Projects

*Avoid creatin... | patinthehat |

1,898,444 | Film Faced Plywood: Ensuring Quality in Structural Applications | Build resilient, stiff, and secure structures with Film Faced Plywood Whether you're going to build a... | 0 | 2024-06-24T06:09:20 | https://dev.to/somans_eopikd_8523dd6b4a9/film-faced-plywood-ensuring-quality-in-structural-applications-149j | plywood | Build resilient, stiff, and secure structures with Film Faced Plywood

Whether you're going to build a house, a warehouse, a bridge, a stadium, or any other kind of construction that needs sturdy, durable support, you might consider film-faced plywood as your principal material t... | somans_eopikd_8523dd6b4a9 |

1,898,443 | Shedding Light on Excellence: Manufacturing LED Stop Tail Turn Lights | stop.png Keeping You Safe on the Road The Innovation of LED Stop Tail Turn Lights Are you looking... | 0 | 2024-06-24T06:09:11 | https://dev.to/lomabs_dkopijd_5670d401d2/shedding-light-on-excellence-manufacturing-led-stop-tail-turn-lights-282b | stop.png

Keeping You Safe on the Road The Innovation of LED Stop Tail Turn Lights

Are you looking for a safer, more efficient way to signal your vehicle's movements on the road?

Look no further.

These innovative lights offer numerous advantages for drivers and passengers alike.

Advantages of LED Stop Tail Turn ... | lomabs_dkopijd_5670d401d2 | |

1,898,435 | How AI is Being Used to Optimize Web App Performance | Artificial Intelligence (AI) is revolutionizing numerous sectors, and web development is not an... | 0 | 2024-06-24T06:01:56 | https://dev.to/sanket00123/how-ai-is-being-used-to-optimize-web-app-performance-57o5 | ai, productivity, automation, webdev | Artificial Intelligence (AI) is revolutionizing numerous sectors, and web development is not an exception. One of the key areas where AI is making a significant impact is in the optimization of web app performance. Here's how.

## Predictive Analytics

AI algorithms can analyze user behavior and predict future actions.... | sanket00123 |

1,898,442 | Wholesale Electric Fireplaces: Bringing Warmth and Style to Every Home | H305b6f8012a749398bb1d241e6cef5b9v.png Wholesale Electric Fireplaces: Bringing Warmth Style to Every... | 0 | 2024-06-24T06:07:01 | https://dev.to/komandh_ropokd_6608608784/wholesale-electric-fireplaces-bringing-warmth-and-style-to-every-home-55n8 | fireplaces | H305b6f8012a749398bb1d241e6cef5b9v.png

Wholesale Electric Fireplaces: Bringing Warmth Style to Every House

Search no further than wholesale fireplaces that are electric. Wholesale electric fireplaces is an revolutionary alternate being safer fireplaces which can be antique. We will explore the many benefits of whol... | komandh_ropokd_6608608784 |

1,898,441 | The Importance of Proper Chlorine Tablet Usage in Pool Care | The value of Proper Chlorine Tablet Use in Pool Care Introduction Their understand that fix that... | 0 | 2024-06-24T06:06:11 | https://dev.to/lomabs_dkopijd_5670d401d2/the-importance-of-proper-chlorine-tablet-usage-in-pool-care-4imj |

The value of Proper Chlorine Tablet Use in Pool Care

Introduction

Their understand that fix that is regular essential to keep fluid safer plus neat for swimming we are going to aim the truly well worth not chlorine which was using in pool care plus all you could reached exactly find out with them when you've... | lomabs_dkopijd_5670d401d2 | |

1,898,439 | Elixir LIbraries | One of my favorite things about software development is that you can never grow bored or know too... | 0 | 2024-06-24T06:04:58 | https://dev.to/cody-daigle/elixir-libraries-okd | ---

One of my favorite things about software development is that you can never grow bored or know *too much*. There is always something that pulls me in deeper and deeper and lately that has been [Elixir](https://hexdocs.pm/elixir/1.17.1/Kernel.html). I have written two other blogs going over the strengths of Elixir a... | cody-daigle | |

1,898,438 | Sildalist 120 mg Dosage UK | Buy Sildalist 120 mg Online - First Choice Meds | Buy Sildalist 120 mg Online at First Choice Medss. Effective treatment for erectile dysfunction. Fast... | 0 | 2024-06-24T06:04:54 | https://dev.to/firstchoice_medss_c318642/sildalist-120-mg-dosage-uk-buy-sildalist-120-mg-online-first-choice-meds-2fdh | beginners, webdev, javascript, programming | Buy Sildalist 120 mg Online at First Choice Medss. Effective treatment for erectile dysfunction. Fast delivery and secure ordering. Shop now for the best prices. | firstchoice_medss_c318642 |

1,898,437 | What are the risks involved in meme coin development? | Memecoins like Dogecoin and Shiba Inu have become very popular in the cryptocurrency world. These... | 0 | 2024-06-24T06:02:55 | https://dev.to/kala12/what-are-the-risks-involved-in-meme-coin-development-4k4e | Memecoins like Dogecoin and Shiba Inu have become very popular in the cryptocurrency world. These digital currencies often start out as jokes or internet memes, but they can quickly become serious investments. However, there are significant risks involved in developing and investing in memecoins. Here are ten key points to... | kala12 | |

1,898,434 | Beyond Indoors: Outdoor Tiles for Stylish and Durable Spaces | outdoor tiles.png Beyond Indoors - Enjoy Stylish and Durable Spaces with Outdoor Tiles Are you... | 0 | 2024-06-24T06:01:34 | https://dev.to/lomabs_dkopijd_5670d401d2/beyond-indoors-outdoor-tiles-for-stylish-and-durable-spaces-1pjk | outdoor tiles.png

Beyond Indoors - Enjoy Stylish and Durable Spaces with Outdoor Tiles

Are you looking to create a beautiful and long-lasting outdoor space? Look no further than Jiangxi Xidong outdoor tiles.

Our innovative tiles are designed to enhance the look of your outdoor space while also providing you with safet... | lomabs_dkopijd_5670d401d2 | |

1,898,433 | Explurger-Travelouge App | (https://www.explurger.com/) Explurger is a social media app built on artificial intelligence to... | 0 | 2024-06-24T06:00:04 | https://dev.to/surya_kumar_bc31485560171/explurger-travelouge-app-653 | travel, explore, adventure, explurger | (https://www.explurger.com/)

Explurger is a social media app built on artificial intelligence to empower you to go beyond check-ins. Apart from sharing pictures & videos, it keeps count of the exact miles, cities, countries & continents travelled by you. What’s more? Adding places to your Bucket List, creating a detail... | surya_kumar_bc31485560171 |

1,892,315 | Getting Started with Dockerfiles | Introduction In the previous posts, we discussed how you can run your first Docker... | 27,622 | 2024-06-24T06:00:00 | https://dev.to/kalkwst/getting-started-with-dockerfiles-3gmd | beginners, docker, devops, tutorial | ## Introduction

In the previous posts, we discussed how you can run your first Docker container by pulling pre-built Docker images from Docker Hub. While it is useful to get pre-built Docker images from Docker Hub, we can't only rely on them. This is important for running our applications on Docker by installing new pa... | kalkwst |

1,891,382 | Attaching To Containers Using the Attach Command | In the previous post, we discussed how to use the docker exec command to spin up a new shell session... | 27,622 | 2024-06-24T06:00:00 | https://dev.to/kalkwst/attaching-to-containers-using-the-attach-command-aaa | beginners, docker, devops, tutorial | In the previous post, we discussed how to use the **docker exec** command to spin up a new shell session in a running container instance. The **docker exec** command is very useful for quickly gaining access to a containerized instance for debugging, troubleshooting, and understanding the context the container is runni... | kalkwst |

1,898,432 | Stay ahead in web development: latest news, tools, and insights #38 | weeklyfoo #38 is here: your weekly digest of all webdev news you need to know! This time you'll find 48 valuable links in 6 categories! Enjoy! | 0 | 2024-06-24T05:59:20 | https://weeklyfoo.com/foos/foo-038/ | webdev, weeklyfoo, javascript, node |

weeklyfoo #38 is here: your weekly digest of all webdev news you need to know! This time you'll find 48 valuable links in 6 categories! Enjoy!

## 🚀 Read it!

- <a href="https://luminousmen.com/post/senior-engineer-fatigue?utm_source=weeklyfoo&utm_medium=web&utm_campaign=weeklyfoo-38&ref=weeklyfoo" target="_blank" rel... | urbanisierung |

1,898,431 | CAT6 Cable: The Backbone of High-Speed Networking | screenshot-1711563719653.png Introduction: Are you looking for a fun way to quench your thirst? Look... | 0 | 2024-06-24T05:58:47 | https://dev.to/lomabs_dkopijd_5670d401d2/cat6-cable-the-backbone-of-high-speed-networking-lnp | screenshot-1711563719653.png

Introduction:

Are you looking for a fun way to quench your thirst? Look no further than the innovative gummy bottles. These tasty treats not only satisfy your thirst but also offer a range of advantages in terms of safety, use, and quality. Keep reading to learn more about this exciting ... | lomabs_dkopijd_5670d401d2 | |

1,881,313 | Power Platform Dataverse 101 | Everyone knows about the key pillars of the Power Platform: Power BI Power Apps Power... | 0 | 2024-06-24T05:57:50 | https://dev.to/wyattdave/power-platform-dataverse-101-11g5 | dataverse, powerplatform, powerapps, lowcode | Everyone knows about the key pillars of the Power Platform:

- Power BI

- Power Apps

- Power Automate

- Power Pages

- Copilot Studio (Formerly Power Virtual Agents)

But those are the pillars. What's the foundation they are built on? That's Dataverse (except Power BI, but that's in the Power Platform in name on... | wyattdave |

1,898,430 | Just Started My Internship Journey at High 6! | My Journey Begins: Interning at High 6! I'm excited to share that I've just started my... | 0 | 2024-06-24T05:57:16 | https://dev.to/harleygotardo/just-started-my-internship-journey-at-high-6-3il3 | webdev, programming, vue, laravel |

### My Journey Begins: Interning at High 6!

I'm excited to share that I've just started my internship at High 6, a dynamic website development company. As a Junior Laravel Developer Intern, I'm diving headfirst in... | harleygotardo |
