id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,897,973 | Naples Golf Cart | Naples Golf Cart Address: 901 Airport-Pulling Rd S, Naples, FL 34104 Phone: (239) 224-3464 Email:... | 0 | 2024-06-23T18:08:36 | https://dev.to/naplesgolfcart/naples-golf-cart-3agn | golf, cart | **Naples Golf Cart

Address: 901 Airport-Pulling Rd S, Naples, FL 34104

Phone: (239) 224-3464

Email: media@naplesgolfcart.com

Website: https://naplesgolfcart.com/

GMB Profile: https://www.google.com/maps?cid=6886198017560260772**

Naples Golf Cart, situated at 901 Airport-Pulling Rd S, Naples, FL 34104, United States, i... | naplesgolfcart |

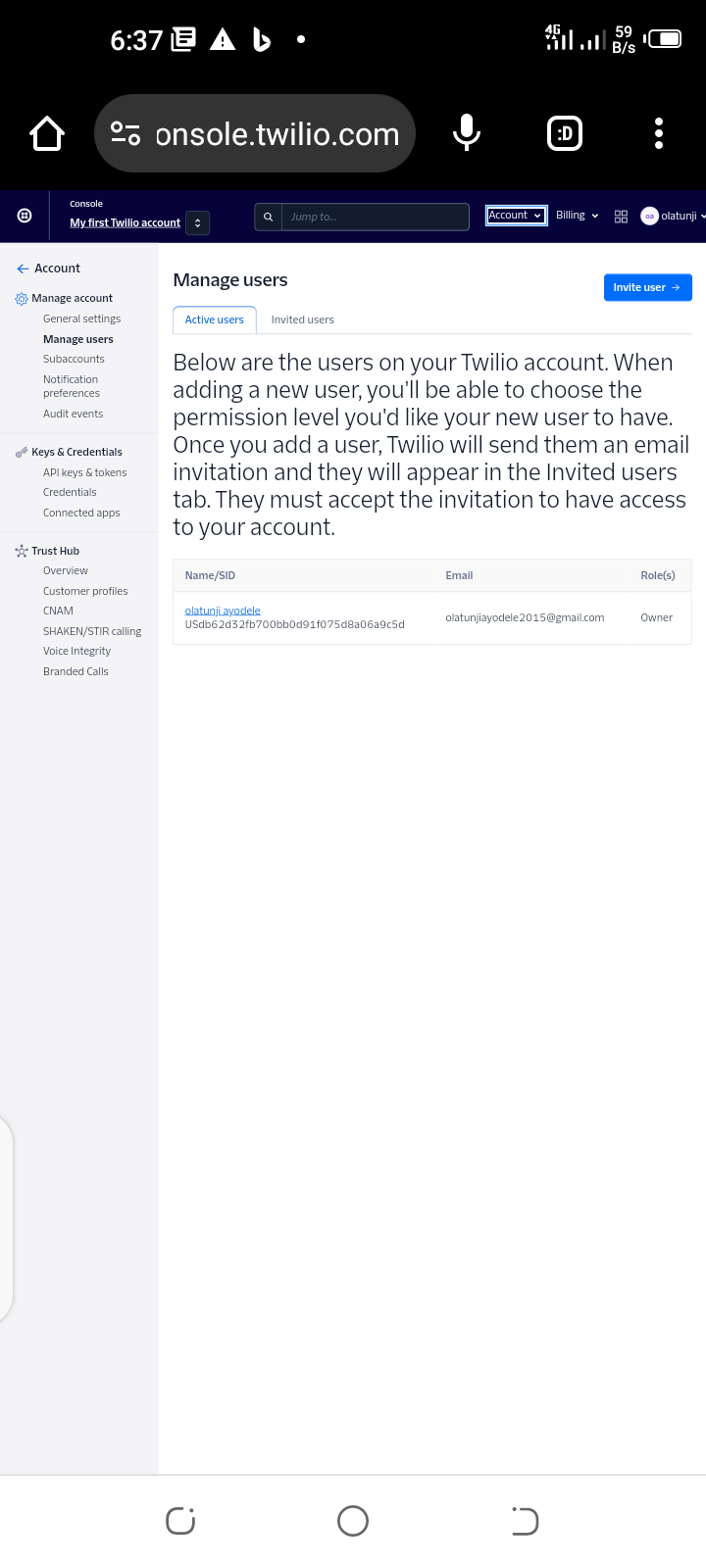

1,897,971 | To build Twilio AI Assistants | introduced Twilio AI Assistants, a platform to build customer-aware autonomous agents. These... | 0 | 2024-06-23T17:43:42 | https://dev.to/olatunjiayodel9/to-build-twilio-ai-assistants-hhp | twiliochallenge, ai, twilio |

introduced Twilio AI Assistants, a platform to build customer-aware autonomous agents. These Assistants can handle complex conversations, answer questions, and perform tasks without rigid decision trees or intent-b... | olatunjiayodel9 |

1,897,970 | KuyhAa | Get all the latest PC software cracks for free. Download PC software, version... | 0 | 2024-06-23T17:41:53 | https://dev.to/softwaresde/kuyhaa-2d17 | Get all the latest PC software cracks for free. Download full-version PC software that is 100% working, including serial key generators, patches, and activators.

[KuyhAa](https://kuyhaapro.com/) | softwaresde | |

1,897,969 | How to Integrate Embedded MongoDB for Unit Testing in a Spring Application | Unit testing is a crucial part of software development, ensuring that individual components of an... | 0 | 2024-06-23T17:41:25 | https://dev.to/fullstackjava/how-to-integrate-embedded-mongodb-for-unit-testing-in-a-spring-application-49pi | springboot, java, webdev, mongodb | Unit testing is a crucial part of software development, ensuring that individual components of an application work as expected. When it comes to testing data persistence layers in a Spring application, MongoDB is a popular choice. To facilitate unit testing with MongoDB, we can use an embedded MongoDB instance. This el... | fullstackjava |

1,897,968 | The new and shiny | Every once in a while I come across something new (to me) and interesting, and it makes me wonder...... | 0 | 2024-06-23T17:39:08 | https://dev.to/armen138/the-new-and-shiny-4eil | gamedev, typescript, miniscript | Every once in a while I come across something new (to me) and interesting, and it makes me wonder... could I build a game engine on this? (spoiler alert: the answer is always yes). Recently I came across MiniScript, an embeddable scripting language that has apparently been around for years, but somehow escaped my atten... | armen138 |

1,897,967 | 🚀 Exploring Predictive Analysis of Breast Tumor Diagnosis with Streamlit and SVM! 🚀 | Hey Devs! 👋 I'm excited to share my latest project where I've combined the power of Python,... | 0 | 2024-06-23T17:27:31 | https://dev.to/amna200123/exploring-predictive-analysis-of-breast-tumor-diagnosis-with-streamlit-and-svm-dh3 | machinelearning, datascience, healthtech, python | Hey Devs! 👋 I'm excited to share my latest project where I've combined the power of Python, Streamlit, and Support Vector Machines (SVM) to build an interactive app for predicting breast tumor diagnoses. Here’s a glimpse into what I’ve created:

🔍 Project Overview:

Breast cancer is a significant health concern, and e... | amna200123 |

1,897,961 | Introduction to PHP Development | Hey DEV Community! 👋 Are you ready to dive into PHP development? In this post, we'll explore the... | 0 | 2024-06-23T17:15:36 | https://dev.to/amna200123/introduction-to-php-development-2agh | php, webdev, backend, programming | Hey DEV Community! 👋 Are you ready to dive into PHP development? In this post, we'll explore the fundamentals of PHP, its syntax, variables, control structures, functions, and more. Whether you're a beginner or looking to refresh your PHP skills, this guide will help you get started on building dynamic web application... | amna200123 |

1,897,959 | AWS Cloud Practitioner Essentials | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:13:16 | https://dev.to/vidheyb/aws-cloud-practitioner-essentials-525k | vidheyb | ||

1,897,958 | Solutions Architect Learning Plan Badge Assessment | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:12:45 | https://dev.to/vidheyb/solutions-architect-learning-plan-badge-assessment-35n2 | vidheyb | ||

1,897,957 | Kubernetes hack | NOTE: this is an updated copy of my post in medium, where I'm not writing anymore. Have you lost... | 0 | 2024-06-23T17:08:52 | https://dev.to/caruccio/kubernetes-hack-1d0p | kubernetes, shell | > NOTE: this is an updated copy of my [post in medium](https://medium.com/@mateus.caruccio/kubernetes-hack-lost-ssh-access-to-node-5dd36d35c74c), where I'm not writing anymore.

Have you lost ssh access to one of your Kubernetes nodes? Why do you even need ssh access to nodes in the first place? Well, maybe something i... | caruccio |

1,897,956 | Mastering Visual Hierarchy in UI Design: Key Principles and Techniques | Day 5: Learning UI/UX Design 👋 Hello, Dev Community! I'm Prince Chouhan, a B.Tech CSE student... | 0 | 2024-06-23T17:08:37 | https://dev.to/prince_chouhan/mastering-visual-hierarchy-in-ui-design-key-principles-and-techniques-11l7 | ui, uidesign, ux, uxdesign | Day 5: Learning UI/UX Design

---

👋 Hello, Dev Community!

I'm Prince Chouhan, a B.Tech CSE student with a passion for UI/UX design. Today, I'm excited to share my learnings on visual hierarchy, a fundamental principle in UI design that enhances usability and guides user attention.

---

🗓️ Day 5 Topic: Visual Hiera... | prince_chouhan |

1,897,955 | Aws Certified Cloud Practitioner | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:07:53 | https://dev.to/vidheyb/aws-certified-cloud-practitioner-5boa | vidheyb | ||

1,897,954 | AWS Well-Architected | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:07:11 | https://dev.to/vidheyb/aws-well-architected-5104 | vidheyb | ||

1,897,953 | A Beginner’s Guide to Machine Learning: Everything You Need to Know to Get Started | Machine learning (ML) is an interesting area of study that utilises computational methods,... | 0 | 2024-06-23T17:06:17 | https://dev.to/abhinav_yadav_554cab962bb/a-beginners-guide-to-machine-learning-everything-you-need-to-know-to-get-started-4oe6 | machinelearning, ai, beggine, beginners |

Machine learning (ML) is an area of study that uses computational methods, statistical analysis, and domain knowledge to build systems that learn from data and make predictions or decisions based on it. People of every age and background, from students to professionals a... | abhinav_yadav_554cab962bb |

1,897,952 | **Understanding Scalar and Vector in Front-End Web Development with ReactInnt-end web developnt, scalar an pl Vectities, however | In front-end web development, scalar and vector quantities play a crucial role, especially when... | 0 | 2024-06-23T17:04:14 | https://dev.to/godblessed/understanding-scalar-and-vector-in-front-end-web-development-with-reactinnt-end-web-developnt-scalar-an-plvectities-however-3li6 |

In front-end web development, scalar and vector quantities play a crucial role, especially when dealing with graphics, animations, and layout designs. Let's explore these concepts using React as our framework of choice.

**Scalar Quantities in React:**

Scalar quantities in React are used to represent singular values... | godblessed | |

1,897,951 | Arbitrary code execution with pickle | Here's why pickle is unsafe if you don't know the origin of the pickled data: import pickle import... | 0 | 2024-06-23T17:03:50 | https://dev.to/tallesl/arbitrary-code-execution-with-pickle-2407 | python | Here's why pickle is unsafe if you don't know the origin of the pickled data:

```py

import pickle

import os

# Create a malicious class

class Malicious:

    def __reduce__(self):

        # os.system will execute the command

        return (os.system, ('echo "This is malicious code!"',))

# Serialize the malicious obje... | tallesl |

1,897,950 | Understanding Scalar and Vector in Front-End Web Development with React | In front-end web development, scalar and vector quantities play a crucial role, especially when... | 0 | 2024-06-23T17:03:25 | https://dev.to/godblessed/understanding-scalar-and-vector-in-front-end-web-development-with-react-igc |

In front-end web development, scalar and vector quantities play a crucial role, especially when dealing with graphics, animations, and layout designs. Let's explore these concepts using React as our framework of choice.

**Scalar Quantities in React:**

Scalar quantities in React are used to represent singular values... | godblessed | |

1,897,949 | [WIP] ChatGPT API for Web Developers | This post contains notes from the Frontend Masters course by Maximiliano Firtman. | 0 | 2024-06-23T17:03:05 | https://dev.to/petrussola/wip-chatgpt-api-for-web-developers-9bc | This post contains notes from the Frontend Masters [course](https://frontendmasters.com/courses/chatgpt-api/) by Maximiliano Firtman. | petrussola | |

1,897,948 | Getting Started with Aws Cloud Essentials | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:02:10 | https://dev.to/vidheyb/getting-started-with-aws-cloud-essentials-2ih8 | vidheyb | ||

1,897,947 | Configure and Deploy AWS PrivateLink | A post by Bhogadi Vidhey | 0 | 2024-06-23T17:01:09 | https://dev.to/vidheyb/configure-and-deploy-aws-privatelink-2cle | vidheyb | ||

1,897,945 | DAY 1 PROJECT : PASSWORD GENERATOR | Generate Random Password: A Simple and Efficient Password Generator Creating secure and... | 0 | 2024-06-23T16:57:40 | https://dev.to/shrishti_srivastava_/day-1-project-2hg9 | webdev, javascript, beginners, programming | ## **Generate Random Password: A Simple and Efficient Password Generator**

Creating secure and strong passwords is crucial in today's digital age. To help users generate robust passwords effortlessly, I've built a simple yet effective Password Generator. Let's dive into the core features and structure of this project.... | shrishti_srivastava_ |

1,897,752 | Converting px in SCSS | A lot of the time in designs they give the font-size, line-height and letter-spacing in px. Which... | 0 | 2024-06-23T12:50:50 | https://blog.nicm42.co.uk/converting-px-in-scss | scss | ---

title: Converting px in SCSS

published: true

date: 2024-06-23 12:47:17 UTC

tags: scss

canonical_url: https://blog.nicm42.co.uk/converting-px-in-scss

---

A lot of the time in designs they give the font-size, line-height and letter-spacing in px. Which isn't helpful as they mean having to get the calculator out to c... | nicm42 |

1,897,944 | 🚀 My Journey into the Software Dev Space: The Real Story. | 🚀 My Journey into the Software Dev Space: The Real Story. Transitioning into the software Dev space... | 0 | 2024-06-23T16:57:38 | https://dev.to/chinwuba_okafor_fed1ed88f/my-journey-into-the-software-dev-space-the-real-story-3132 | techlife, 100daysofcode, techbro, webdev | 🚀 My Journey into the Software Dev Space: The Real Story.

Transitioning into the software dev space has been nothing short of daunting. The road to becoming proficient in software development is often painted as a smooth, glamorous path. But let’s be real: it’s far from that.

🔧 The Hurdles:

Learning Curves: F... | chinwuba_okafor_fed1ed88f |

1,897,943 | Understanding Deadlock: When Computers Play Musical Chairs | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T16:50:53 | https://dev.to/vidyarathna/understanding-deadlock-when-computers-play-musical-chairs-28c | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Deadlock is like musical chairs for processes: each process waits indefinitely for resources held by others, and no one can proceed. It occurs in concurrent systems, where resources ar... | vidyarathna |

1,897,941 | How to set up code preview in VS Code IDE? | Hello kind people of the internet, I'm following a beginning level tutorial on Vueschool.io, which... | 0 | 2024-06-23T16:46:25 | https://dev.to/whuteva_bf240d1bf5/how-to-set-up-code-preview-in-vs-code-ide-4l1a | Hello kind people of the internet,

I'm following a beginner-level tutorial on Vueschool.io, which includes an overview of using Volar for interactive code previews within the VS Code IDE. This preview capability is a feature that I really want to have.

But alas, I've gone down a rabbit hole of trying to figure out ju... | whuteva_bf240d1bf5 | |

1,891,659 | Potential Companies I'd Like To Work At | While coming to the end of my bootcamp journey, it is now time to think about potential companies I'd... | 0 | 2024-06-23T16:41:04 | https://dev.to/uhrinuh/potential-companies-id-like-to-work-at-44jh | While coming to the end of my bootcamp journey, it is now time to think about potential companies I'd like to work at as a full-stack software developer.

As an Operation Spark grad, I have advanced knowledge of JavaScript, TypeScript, Node.js, MySQL, MongoDB, React, Sequelize, Mongoose, Prisma, and many other technol... | uhrinuh | |

1,897,940 | GCP for beginners 2024: Build a simple web app with Cloud Run and Cloud Build through terminal | What is Cloud Run? Cloud run is a fully managed compute platform managed by Google Cloud that... | 0 | 2024-06-23T16:37:34 | https://dev.to/robertasaservice/gcp-for-beginners-2024-build-a-simple-web-app-with-cloud-run-and-cloud-build-through-terminal-2kop | **What is Cloud Run?**

Cloud Run is a fully managed compute platform from Google Cloud that automatically scales containers and lets developers deploy containerized applications in minutes.

**What are the key features of Cloud Run?**

1. Auto scaling = Scales up or down depending on traffic

2. Containeri... | robertasaservice | |

1,892,833 | The Art of Falling | If you fall just right, you can use the energy to roll and spring back up. Don't ask me how, I'd hurt... | 0 | 2024-06-23T16:36:02 | https://dev.to/tacodes/the-art-of-falling-2aoa | hhgtg, motivation, meme | If you fall just right, you can use the energy to roll and spring back up. Don't ask me how, I'd hurt myself. Willy Wonka did it once though.

Folks in aikido understand the idea well, too -- these martial practiti... | tacodes |

1,897,938 | Delicious Breakfast Classics: Waffles, Sandwiches, and Bowls | A popular breakfast choice for many people, waffles are a delicious and versatile option that can be... | 0 | 2024-06-23T16:32:26 | https://dev.to/abduljabbar4533/delicious-breakfast-classics-waffles-sandwiches-and-bowls-768 | crepeccino | A popular breakfast choice for many people, waffles are a delicious and versatile option that can be enjoyed in a variety of ways. Whether you prefer them topped with fresh fruit, a dollop of whipped cream, or drizzled with maple syrup, waffles are a classic morning staple that never fails to satisfy. From crispy Belgi... | abduljabbar4533 |

1,897,937 | 1438. Longest Continuous Subarray With Absolute Diff Less Than or Equal to Limit | 1438. Longest Continuous Subarray With Absolute Diff Less Than or Equal to Limit Medium Given an... | 27,523 | 2024-06-23T16:27:33 | https://dev.to/mdarifulhaque/1438-longest-continuous-subarray-with-absolute-diff-less-than-or-equal-to-limit-4j9j | php, leetcode, algorithms, programming | 1438\. Longest Continuous Subarray With Absolute Diff Less Than or Equal to Limit

Medium

Given an array of integers `nums` and an integer `limit`, return the size of the longest **non-empty** subarray such that the absolute difference between any two elements of this subarray is less than or equal to `limit`.
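One common way to approach a problem of this shape is a sliding window that tracks the window's maximum and minimum with two monotonic deques. The sketch below is in Python rather than the PHP this post is tagged with, and it is not necessarily the approach the post itself takes. The function name `longest_subarray` is made up for illustration, and the code assumes `limit` is non-negative.

```python
from collections import deque


def longest_subarray(nums, limit):
    """Return the length of the longest contiguous window with max - min <= limit.

    Assumes limit >= 0 (hypothetical helper, not taken from the post).
    """
    max_dq = deque()  # window values in non-increasing order; front is the max
    min_dq = deque()  # window values in non-decreasing order; front is the min
    left = 0
    best = 0

    for right, value in enumerate(nums):
        # Keep max_dq non-increasing by dropping smaller values from the back.
        while max_dq and max_dq[-1] < value:
            max_dq.pop()
        max_dq.append(value)

        # Keep min_dq non-decreasing by dropping larger values from the back.
        while min_dq and min_dq[-1] > value:
            min_dq.pop()
        min_dq.append(value)

        # Shrink the window from the left until it satisfies the limit again.
        while max_dq[0] - min_dq[0] > limit:
            if nums[left] == max_dq[0]:
                max_dq.popleft()
            if nums[left] == min_dq[0]:
                min_dq.popleft()
            left += 1

        best = max(best, right - left + 1)

    return best
```

Each element is pushed and popped at most once per deque, so the whole pass is linear in the length of `nums`. Calling `longest_subarray([8, 2, 4, 7], 4)` returns `2`.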

**Exam... | mdarifulhaque |

1,897,936 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-06-23T16:25:44 | https://dev.to/povahe7690/buy-verified-paxful-account-1fbm | tutorial, react, python, ai | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\n \n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | povahe7690 |

1,897,935 | Twilio Challenge: LOL Loops: Your Developer Rant Responder | This is a submission for Twilio Challenge v24.06.12 What I Built Built an WhatsApp bot... | 0 | 2024-06-23T16:23:24 | https://dev.to/gowtham758550/twilio-challenge-lol-loops-your-developer-rant-responder-51nj | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

<!-- Share an overview about your project. -->

Built a WhatsApp bot powered by Gemini AI.

**LOL Loops: Your Developer Rant Responder**

Entertaining Endeavors: Whenever a developer rants to it, it will push the... | gowtham758550 |

1,897,933 | Understanding Psycopg2: Bridging Python and PostgreSQL | What is Psycopg2: Psycopg2 is a PostgreSQL database adapter for the Python programming... | 0 | 2024-06-23T16:22:41 | https://dev.to/mahendrap1512/understanding-psycopg2-bridging-python-and-postgresql-1cpj | python, psycopg2, database, postgres | ### What is Psycopg2:

Psycopg2 is a PostgreSQL database adapter for the Python programming language. It allows developers to interact with PostgreSQL databases using Python. It provides functionality to connect to a PostgreSQL database, execute SQL commands, fetch results from executed SQL commands, manage database ... | mahendrap1512 |

1,897,734 | Next-Gen AI Interview: Multilingual and Real-Time Analysis | This is a submission for the Twilio Challenge What I Built The motivation behind this... | 0 | 2024-06-23T16:22:30 | https://dev.to/bilal1718/next-gen-ai-interview-multilingual-and-real-time-analysis-56g8 | devchallenge, twiliochallenge, ai, twilio |

This is a submission for the [Twilio Challenge](https://dev.to/challenges/twilio)

## What I Built

The motivation behind this project stems from a deep desire to democratize the interview process and make it more accessible, personalized, and fair. Job interviews can be incredibly stressful, and traditional methods o... | bilal1718 |

1,897,906 | Scontreeno - Your AI-empowered expense manager companion | This is a submission for Twilio Challenge v24.06.12 What I Built Scontreeno - whose name... | 0 | 2024-06-23T16:21:38 | https://dev.to/marconline/scontreeno-your-ai-empowered-expense-manager-companion-16e2 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

Scontreeno - whose name is taken from "Scontrino", which is the Italian term for "Receipt" - is a Twilio and AI powered expense manager companion.

Scontreeno is offered as a WhatsApp bot in order to simplify the use... | marconline |

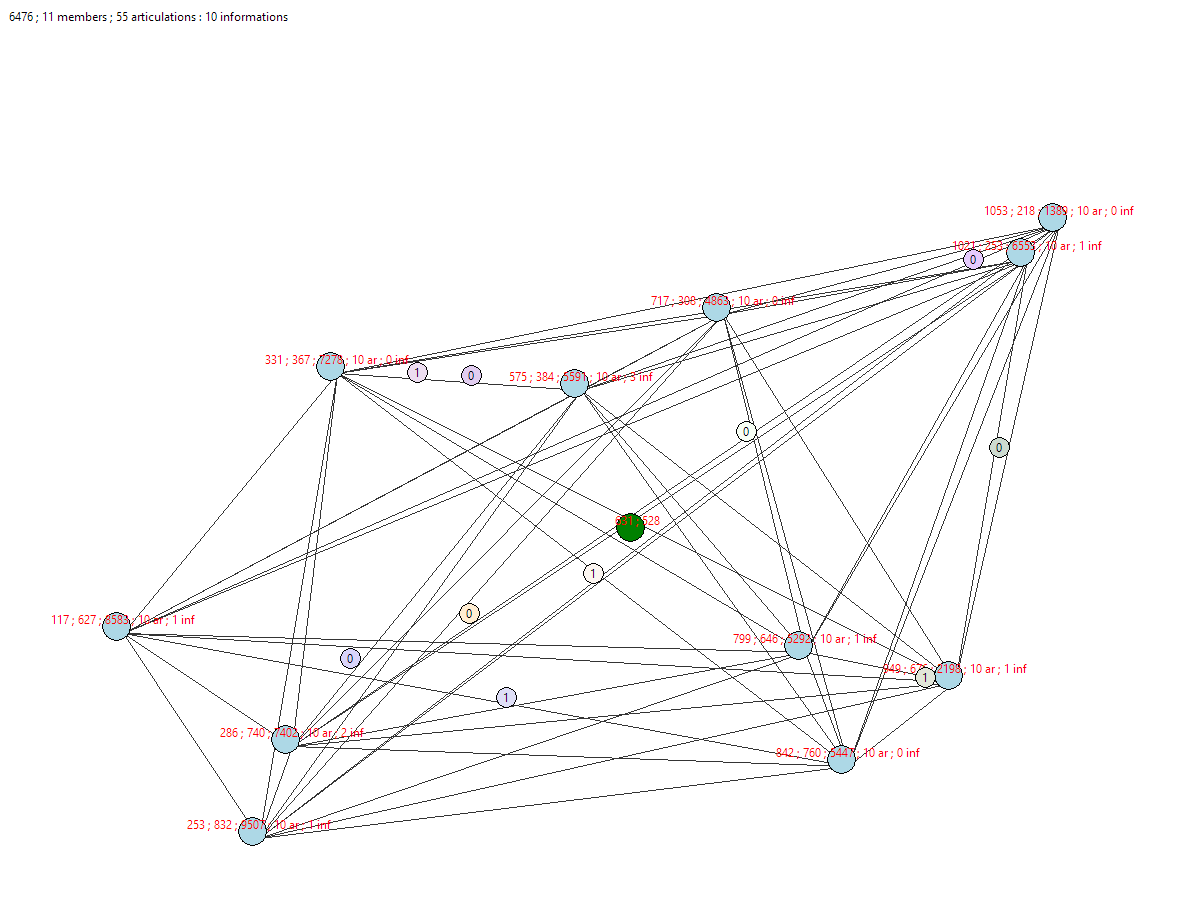

1,897,917 | Entity Simulation with Member and Articulations in Python | EntRAVE is a Python tool to visualize an entity made of members 🔹 and articulations 🔗. Convinced... | 0 | 2024-06-23T16:18:47 | https://dev.to/genius_um/entity-simulation-with-member-and-articulations-in-python-561l | programming, python, ai, opensource | **EntRAVE** is a Python tool to visualize an entity made of members 🔹 and articulations 🔗.

Convinced? Check it out on [GitHub](https://github.com/Geniusum/EntRAVE) | genius_um |

1,897,916 | FRONTEND DEVELOPMENT | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T16:12:35 | https://dev.to/jamesbraun12/frontend-development-4jkm | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Frontend development is the practice of building the parts of a website that users interact with. Its core technologies are HTML, CSS and JavaScript.

## Additional Context

HTML involves structure, CSS provides styling and l... | jamesbraun12 |

1,897,914 | YUQTAM | Best Corporate Gifting Company in India | Streamline gifting with our one-stop bulk solutions. Explore branded personalized & corporate... | 0 | 2024-06-23T16:11:11 | https://dev.to/yuqtam/yuqtam-best-corporate-gifting-company-in-india-4lj4 | corporate, gifting, merchandise, branding | Streamline gifting with our one-stop bulk solutions. Explore branded personalized & [corporate gifting](https://yuqtam.in/) solution for unforgettable moments.

corporate gift, corporate gift giving, corporate gift supplier, corporate gifting, corporate gifting companies in bangalore, corporate gifting companies in mum... | yuqtam |

1,897,913 | What' New in Angular 18 | 1. TypeScript 4.7 Support Angular 18 will fully support TypeScript 4.7, which includes several new... | 0 | 2024-06-23T16:09:00 | https://dev.to/pathan_najim_1a1eef23584f/what-new-in-angular-18-48em | **1. TypeScript 4.7 Support**

Angular 18 will fully support TypeScript 4.7, which includes several new features and improvements. This means that Angular developers will be able to take advantage of the latest TypeScript features, such as:

**i. Template Literal Types:** Allow developers to define the types of templat... | pathan_najim_1a1eef23584f | |

1,897,912 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-23T16:08:17 | https://dev.to/povahe7690/buy-verified-cash-app-account-228f | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | povahe7690 |

1,897,909 | Beginners Python Decision Making: Understanding If-else statements effectively. | If-Else statements and its significance: "If-else" statements are a type of conditional statements... | 0 | 2024-06-23T16:07:18 | https://dev.to/davidbosah/beginners-python-decision-making-understanding-if-else-statements-effectively-40n4 | webdev, beginners, python, tutorial |

**If-Else statements and their significance:**

"If-else" statements are a type of conditional statement in programming that lets different blocks of code run depending on whether a condition holds. In effect, they attach a condition to a code block. The benefits range from making decisions to controllin... | davidbosah |

1,897,911 | Day 27 of my progress as a vue dev | About today Today was another solid day. I ended up getting I lot of work done as I intended and it... | 0 | 2024-06-23T16:06:28 | https://dev.to/zain725342/day-27-of-my-progress-as-a-vue-dev-48mo | webdev, vue, typescript, tailwindcss | **About today**

Today was another solid day. I got a lot of work done, as I intended, and it was quite fulfilling. I completed my landing page and practiced my refactoring skills on the code to make it cleaner and more modular, which had been bugging me since I started working on it. I'm glad I did that and I ... | zain725342 |

1,897,910 | Accessibility in Frontend Development: Best Practices and Tools | Have you ever wondered why some websites lack accessibility? Many front-end developers build products... | 0 | 2024-06-23T16:02:19 | https://dev.to/abdulquadri_akosile_efe07/accessibility-in-frontend-development-best-practices-and-tools-3he0 | webdev, frontend, softwaredevelopment | Have you ever wondered why some websites lack accessibility? Many front-end developers build products without considering accessibility or testing them for impaired users. However, accessibility is vital because different end users need to access these products. Let's shed more light on web accessibility to understand ... | abdulquadri_akosile_efe07 |

1,897,908 | Launching GitLoop AI codebase Assistant and code reviewer for GitHub PRs, commits, issues and more... | I'm excited to announce new features for https://gitloop.com, a project I've been working on for... | 0 | 2024-06-23T15:55:06 | https://dev.to/akirasato/launching-gitloop-ai-codebase-assistant-and-code-reviewer-for-github-prs-commits-issues-and-more-431j | webdev, github, chatgpt, ai | I'm excited to announce new features for [GitLoop](https://gitloop.com), a project I've been working on for months.

GitLoop now not only scans and understands your entire codebase but also helps with new issues and reviews your changes directly on GitHub. Here’s what you can expect:

Deep Analysis: Personalized AI ass... | akirasato |

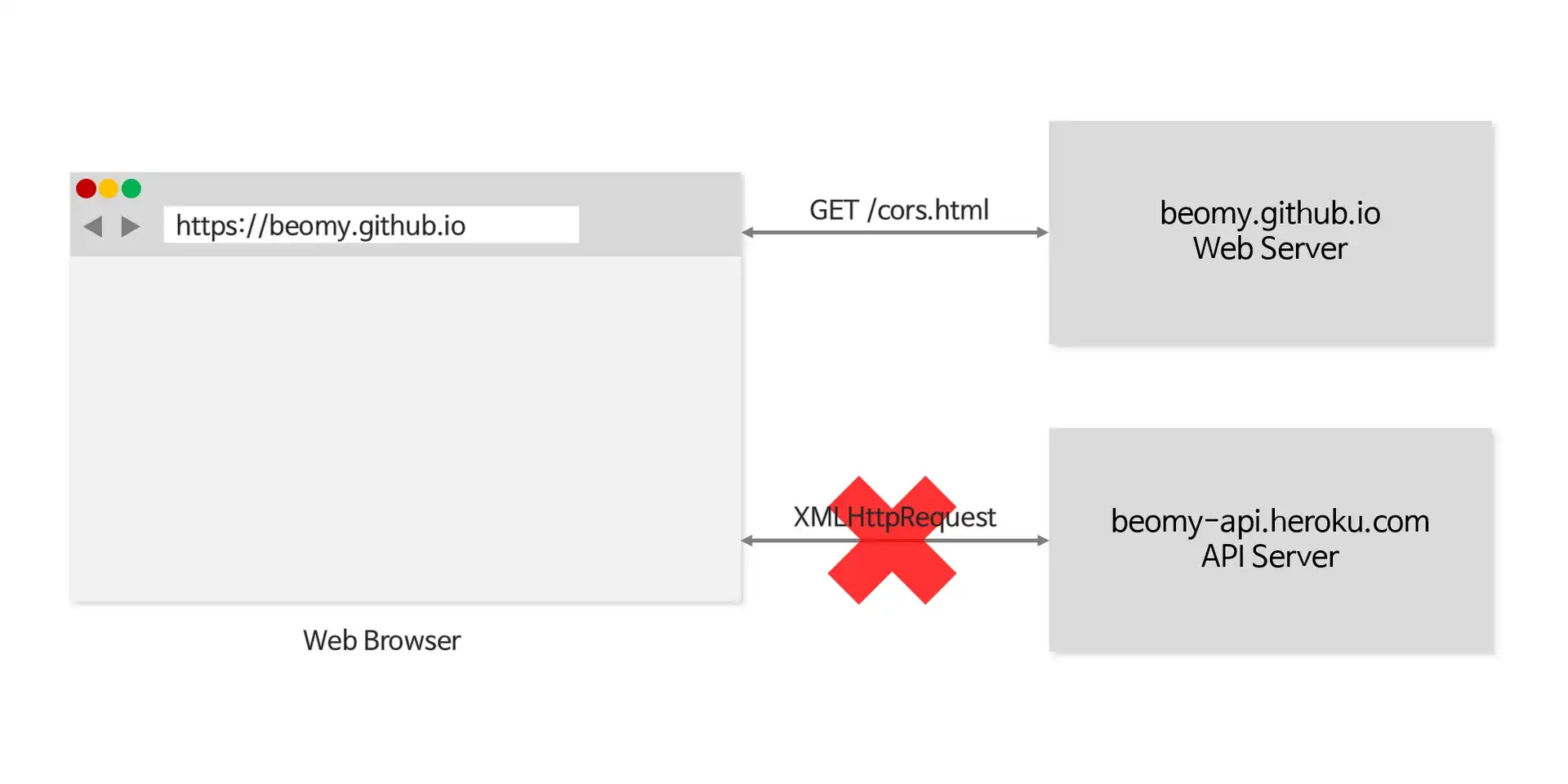

1,895,709 | How to used CORS? | CORS? A web application requests data and receives responses over the HTTP protocol. In other words, a website makes requests from an origin identified by its URL,... | 0 | 2024-06-23T15:45:28 | https://dev.to/hxxtae/how-to-used-cors-4ld | 

## CORS?

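A web application requests data and receives responses over the HTTP protocol.

In other words, a website makes requests from an origin identified by its URL. The security mechanism that restricts a document or script loaded from the `same origin` from interacting with resources fetched from a different origin is called the **SOP (Same-Origin Policy)**.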
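
As a rough illustration of what "same origin" means, the following Python sketch compares the scheme, host, and port of two URLs. It is a simplification under stated assumptions, not how any browser implements the policy: the helper names `origin` and `is_same_origin` and the example URLs are hypothetical, and only the default ports for `http` and `https` are filled in.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit

# Assumption: only these two schemes get an implicit default port.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url: str) -> Tuple[str, str, Optional[int]]:
    """Return the (scheme, host, port) triple for a URL (hypothetical helper)."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    # Fall back to the scheme's default port when the URL does not name one.
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(scheme)
    return scheme, host, port


def is_same_origin(a: str, b: str) -> bool:
    """Two URLs share an origin only if scheme, host, and port all match."""
    return origin(a) == origin(b)


if __name__ == "__main__":
    page = "https://example.com/index.html"  # hypothetical page URL
    for target in (
        "https://example.com:443/api",   # same origin (default HTTPS port)
        "http://example.com/api",        # different scheme
        "https://api.example.com/data",  # different host
        "https://example.com:8443/api",  # different port
    ):
        print(target, is_same_origin(page, target))
```

Under these assumptions, a path change alone never alters the origin, while any change to the scheme, the host (including a subdomain), or the port does, which is the situation the policy described above restricts.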

This concerns a malicious user stealing information or ... | hxxtae | |

1,897,904 | Stylish Accordion Animation | Check out this Pen I made! | 0 | 2024-06-23T15:31:52 | https://dev.to/alcu1n/stylish-accordion-animation-233f | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Alcu1n/pen/eYaryOb %} | alcu1n |

1,897,891 | Concept of computer science | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T15:16:30 | https://dev.to/milesonerd/concept-of-computer-science-4g31 | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Computer science is the study of algorithms, data structures, programming, and computing theory. It involves designing and analyzing software and hardware to solve problems, automate t... | milesonerd |

1,897,890 | Weaving Your Enterprise Together: Implementing Integration Patterns with AWS Messaging Services | Weaving Your Enterprise Together: Implementing Integration Patterns with AWS Messaging... | 0 | 2024-06-23T15:14:54 | https://dev.to/virajlakshitha/weaving-your-enterprise-together-implementing-integration-patterns-with-aws-messaging-services-14o6 |

# Weaving Your Enterprise Together: Implementing Integration Patterns with AWS Messaging Services

In today's dynamic digital landscape, enterprises rely on a complex interplay of applications, services, and systems. Effectively conn... | virajlakshitha | |

1,897,887 | Why is Everyone into Indie Development? - FAV0 Weekly Issue 004 | website: fav0.com These are the shells I collected with friends at the beach last week. It was... | 0 | 2024-06-23T15:12:37 | https://dev.to/justin3go/why-is-everyone-into-indie-development-fav0-weekly-issue-004-1maj | website: [fav0.com](https://fav0.com/en/posts/2024/004)

These are the shells I collected with friends at the beach last week. It was quite a haul! For someone who has lived inland all their life, it was very exciting.

## \>\>Topics to Discuss

**Why are more and more... | justin3go | |

1,897,886 | HERE IS NEW LANGUAGE TRANSLATOR | Check out this Pen I made! | 0 | 2024-06-23T15:12:21 | https://dev.to/jonse_ketela_b13c463d2acf/here-is-new-crime-locator-app-el0 | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Jonse-ketela/pen/abrGVPM %} | jonse_ketela_b13c463d2acf |

1,897,885 | Issue 49 and 50 of AWS Cloud Security Weekly | (This is just the highlight of Issue 49 and 50 of AWS Cloud Security weekly @... | 0 | 2024-06-23T15:11:04 | https://aws-cloudsec.com/p/issue-49-and-50 | (This is just the highlight of Issue 49 and 50 of AWS Cloud Security weekly @ https://aws-cloudsec.com/p/issue-49-and-50 << Subscribe to receive the full version in your inbox weekly for free!!).

**What happened in AWS CloudSecurity & CyberSecurity last week June 10-June 20, 2024?**

- IAM Access Analyzer now provides... | aws-cloudsec | |

1,897,883 | Triangle : summarize,ask, tweet,note ? | This is a submission for Twilio Challenge v24.06.12 What I Built I built a Twilio chatbot... | 0 | 2024-06-23T15:07:50 | https://dev.to/ppkshashi/triangle-summarizeask-tweetnote--3337 | devchallenge, twiliochallenge, ai, twilio | *This is a submission for [Twilio Challenge v24.06.12](https://dev.to/challenges/twilio)*

## What I Built

I built a Twilio chatbot with several features:

1. **URL Content Summarization**: Summarizes the content of URLs using the Google Gemini API.

2. **Text Summarization**: Provides summaries of text inputs.

3. **Twe... | ppkshashi |

1,897,882 | Frog Eaters | How disgusting they are They guzzle slime glizz Yes we hunted all the Good meat And maybe all... | 0 | 2024-06-23T15:07:50 | https://dev.to/tacodes/frog-eaters-4a8p | ats, autoreject, jobhunt | How disgusting they are

They guzzle slime glizz

Yes we hunted all the

Good meat

And maybe all that's left

Is bugs and frogs and snakes and things

Nevertheless

Those disgusting frog eaters | tacodes |

1,897,881 | How Cyber Security Services Can Protect Your Business From Threats | Wanna become a data scientist within 3 months, and get a job? Then you need to check this out !... | 0 | 2024-06-23T15:07:39 | https://thedatascientist.com/how-cyber-security-services-can-protect-your-business-from-threats/ | cybersecurity, database, productivity, ai | Wanna become a data scientist within 3 months, and get a job? Then you need to [check this out ! ](https://go.beyond-machine.com/)

Businesses contend with a wide variety of cyber threats, including those that can compromise data, finance, and reputation. Cyber security services, therefore, become crucial in ensuring ... | ecaterinateodo3 |

1,897,879 | Shell Script for DevOps | Hi Everyone Guys, I have an announcement for DevOps and Cloud Engineers, From today I will try to... | 0 | 2024-06-23T15:06:15 | https://dev.to/dev_roy/shell-script-for-devops-pjl | devops, aws, shell | Hi Everyone

I have an announcement for DevOps and Cloud Engineers. Starting today, I will share some points related to DevOps. We are starting with shell scripting, which is essential for every DevOps engineer. I have written documentation for it and am sharing the link here.

Overview:-

* Basic Shell Scr... | dev_roy |

1,897,875 | GitOps Argo CD Setup On EKS | Argo CD is a GitOps continuous delivery tool for Kubernetes, enabling automatic synchronization of... | 0 | 2024-06-23T15:01:34 | https://dev.to/vikash_kumar_06/gitops-argo-cd-setup-on-eks-15io | Argo CD is a GitOps continuous delivery tool for Kubernetes, enabling automatic synchronization of application state with Git repositories, rollbacks, health checks, RBAC integration, multi-environment support, and seamless integration with CI/CD systems for streamlined deployments. Here are some points about Argo ... | vikash_kumar_06 | |

1,897,877 | Getting Started with MongoDB: A Beginner's Guide; | Introduce: what MongoDB is, its key features, and why it's a popular choice for developers. What is... | 0 | 2024-06-23T15:01:34 | https://dev.to/muhammedshamal/getting-started-with-mongodb-a-beginners-guide-4l62 | mongodb, database, programming, webdev | Introduce: what MongoDB is, its key features, and why it's a popular choice for developers.

1. What is MongoDB?

Definition: Explain MongoDB as a NoSQL database designed for scalability and flexibility.

Key Features: Highlight features like document-oriented storage, scalability, high performance, and flexibility in ... | muhammedshamal |

1,897,876 | KIP Protocol: Pioneer in Decentralized AI | 📚 TinTinLand's #TinTinLandWeb3LearningMonth has successfully entered its fourth week! 📅 In Week 4... | 0 | 2024-06-23T15:01:10 | https://dev.to/ourtintinland/kip-protocol-pioneer-in-decentralized-ai-4jei | webdev, beginners, ai, devops | 📚 TinTinLand's #TinTinLandWeb3LearningMonth has successfully entered its fourth week!

📅 In Week 4 (June 24th to June 30th), @KIPprotocol will bring a series of enriching online AMA sessions, workshops, and Zealy learning tasks.

🎨 #KIPProtocol is a decentralized underlying protocol designed for #AI app developers,... | ourtintinland |

1,897,328 | Implement custom endpoint with pagination in Strapi | Strapi has to be one of the most popular open source CMS platforms currently available. I used it in... | 0 | 2024-06-23T15:00:04 | https://dev.to/dellboyan/implement-custom-endpoint-with-pagination-in-strapi-41i1 | webdev, javascript, strapi, opensource | [Strapi](https://strapi.io/) has to be one of the most popular open source CMS platforms currently available. I used it in several projects and loved its features and what you get for free. It's very easy to host on popular platforms like Digital Ocean or Netlify, very easy to use, and offers a bunch of features out ... | dellboyan |

1,897,859 | Idempotency in 256 characters or less | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T14:52:48 | https://dev.to/derlin/idempotency-in-256-characters-or-less-118c | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Borrowed from Mathematics, an **idempotent** operation can run multiple times without changing the result. Writing to a file is idempotent, but appending to a file is not. A script usi... | derlin |

1,897,871 | What is NewAge Nations DAO | NewAge Nations DAO. is a community-driven project for the building of investment DAOs using Web3, AI,... | 0 | 2024-06-23T14:52:01 | https://dev.to/newagenations_053691fa72e/what-is-newage-nations-dao-phm | NewAge Nations DAO is a community-driven project for building investment DAOs using Web3, AI, NFTs, and blockchain technologies.

Solution: www.hvts.network

Protocol: www.newagecoin.cash | newagenations_053691fa72e | |

1,897,870 | Job Adventures - PDF generation | Jun 2024 | Well, here we are with a new series. This one is called Job Adventures where I will talk about some... | 27,829 | 2024-06-23T14:51:47 | https://medium.com/@goamaral/job-adventures-pdf-generation-jun-2024-93e468ce60dc | pdf, programming, newsletter, webdev | Well, here we are with a new series. This one is called *Job Adventures*, where I will talk about some challenges I have encountered in my day-to-day job.

In this article, we will explore PDF generation. It is one of those classic tasks you rarely need to do, but when it eventually arrives, I get PTSD.

My first conta... | goamaral |

1,897,869 | How we declare one dimensional array by using JavaScript and Python language | Declaring One Dimensional Array In programming, particularly in languages like JavaScript,... | 0 | 2024-06-23T14:51:13 | https://dev.to/wasifali/how-we-declare-one-dimensional-array-by-using-javascript-and-python-language-3dp5 | webdev, javascript, python, programming | ## **Declaring One Dimensional Array**

In programming, particularly in languages like JavaScript, Python, Java, C++, and others, arrays are fundamental data structures used to store collections of elements, typically of the same type. Here, I'll explain how to declare and use a one-dimensional array, which is the simplest form of... | wasifali |

1,897,868 | ⏰ Introducing TickWatch: A Versatile jQuery Plugin for Realistic Time and Number Displays | As developers, we often need a reliable, customizable way to display time or numbers on our web... | 0 | 2024-06-23T14:49:54 | https://dev.to/hichemtab-tech/introducing-tickwatch-a-versatile-jquery-plugin-for-realistic-time-and-number-displays-548f | webdev, javascript, jquery, programming | As developers, we often need a reliable, customizable way to display time or numbers on our web pages. Whether it's for a digital clock, a countdown timer, or simply showcasing a number, having the right tool can make all the difference. Enter [**TickWatch**](https://github.com/HichemTab-tech/TickWatch-js), a lightweig... | hichemtab-tech |

1,897,867 | onions and recursion | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte... | 0 | 2024-06-23T14:47:23 | https://dev.to/urjacodes/onions-and-recursion-5hac | devchallenge, cschallenge, computerscience, beginners | **This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).**

**_Explainer_**

Recursion is a method where a function calls itself until it satisfies a base case. It simplifies complex problems. It is like peeling an onion's layers until you reach its core- ... | urjacodes |

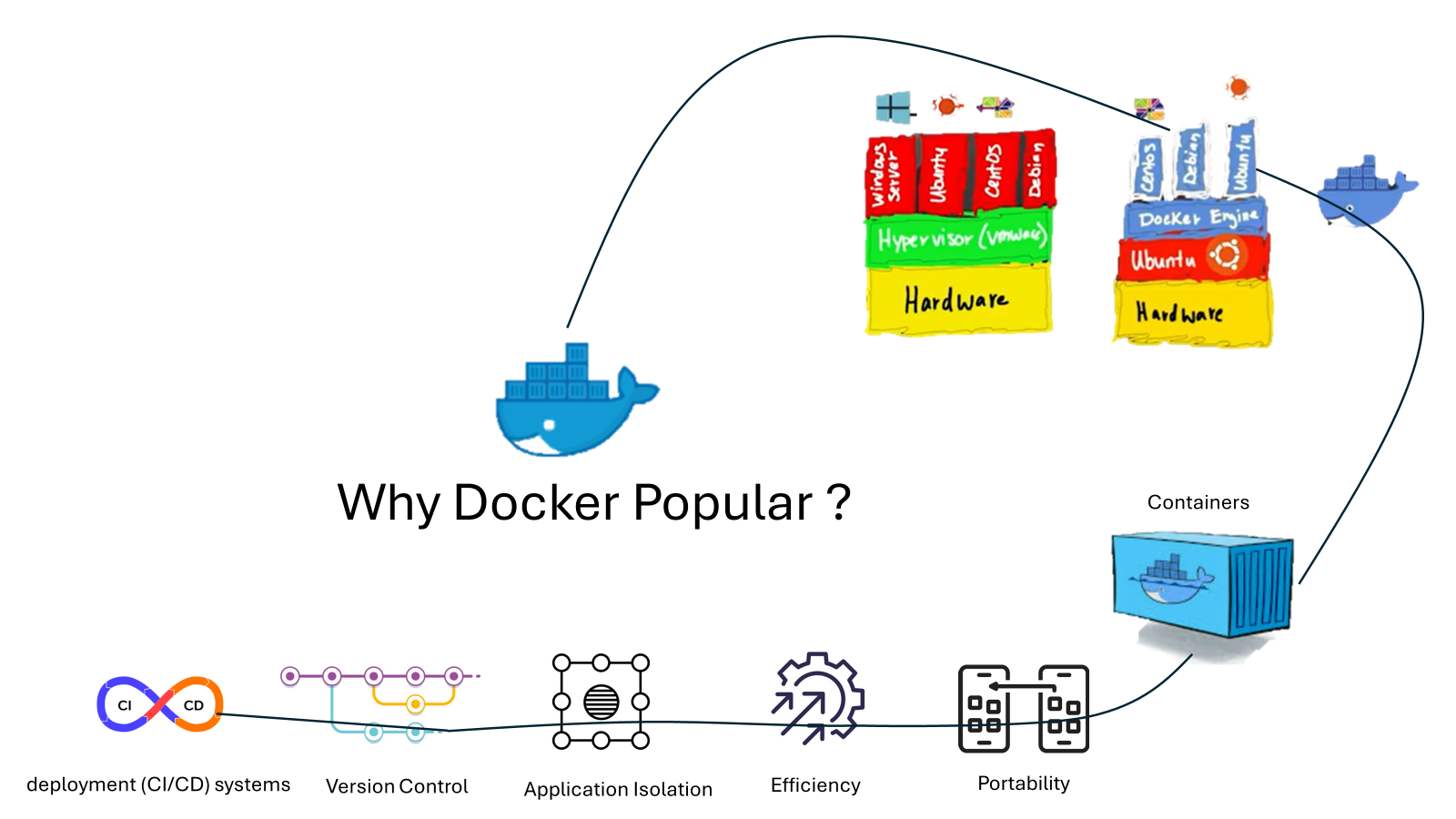

1,897,866 | Why Docker Popular ? | Why Docker Popular- Portability: Docker ensures that applications run consistently across any... | 0 | 2024-06-23T14:45:03 | https://dev.to/rahulcolud2023/why-docker-popular--5dek | Why Docker is popular:

1. **Portability:** Docker ensures that applications run consistently across any environment, from a developer's personal laptop to the production server, avoiding the common pitfall of enco... | rahulcolud2023 | |

1,897,865 | Leveraging GitHub Copilot Chat syntax: chat participants, chat variables, slash commands | GitHub Copilot Chat is an incredibly powerful and useful feature that allows you to chat with or about your code. Even though it’s 100% natural language-friendly (i.e., you can send your messages without using any specific syntax), leveraging some special chat capabilities can unlock new AI-assisted development scenari... | 0 | 2024-06-23T14:44:42 | https://dev.to/webmaxru/leveraging-github-copilot-chat-syntax-chat-participants-chat-variables-slash-commands-34c9 | github, githubcopilot, aideveloper, aiassistant | ---

title: Leveraging GitHub Copilot Chat syntax: chat participants, chat variables, slash commands

published: true

description: GitHub Copilot Chat is an incredibly powerful and useful feature that allows you to chat with or about your code. Even though it’s 100% natural language-friendly (i.e., you can send your mess... | webmaxru |

1,897,864 | Creating a Kubernetes Cluster with Kubeadm and Containerd: A Comprehensive Step-by-Step Guide | Kubeadm is a tool designed to simplify the process of creating Kubernetes clusters by providing... | 27,750 | 2024-06-23T14:44:07 | https://psj.codes/creating-a-kubernetes-cluster-with-kubeadm-and-containerd-a-comprehensive-step-by-step-guide | kubernetes, cka, devops, tutorial | Kubeadm is a tool designed to simplify the process of creating Kubernetes clusters by providing `kubeadm init` and `kubeadm join` commands as best-practice "fast paths." - Kubernetes documentation

In this blog, we'll go through the step-by-step process of installing a Kubernetes cluster using Kubeadm.

## Prerequisite... | pratikjagrut |

1,897,754 | Check used fonts on a webpage | Circumstances As I am currently learning next.j. I am going through the course created by... | 0 | 2024-06-23T14:42:22 | https://dev.to/machy44/check-used-fonts-on-a-webpage-29cn | webdev, css, frontend, webdesign | ---

title: Check used fonts on a webpage

published: true

description:

tags: #webdev #css #frontend #webdesign

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-23 12:46 +0000

---

## Circumstances

As I am currently learning Next.js, I am going through the c... | machy44 |

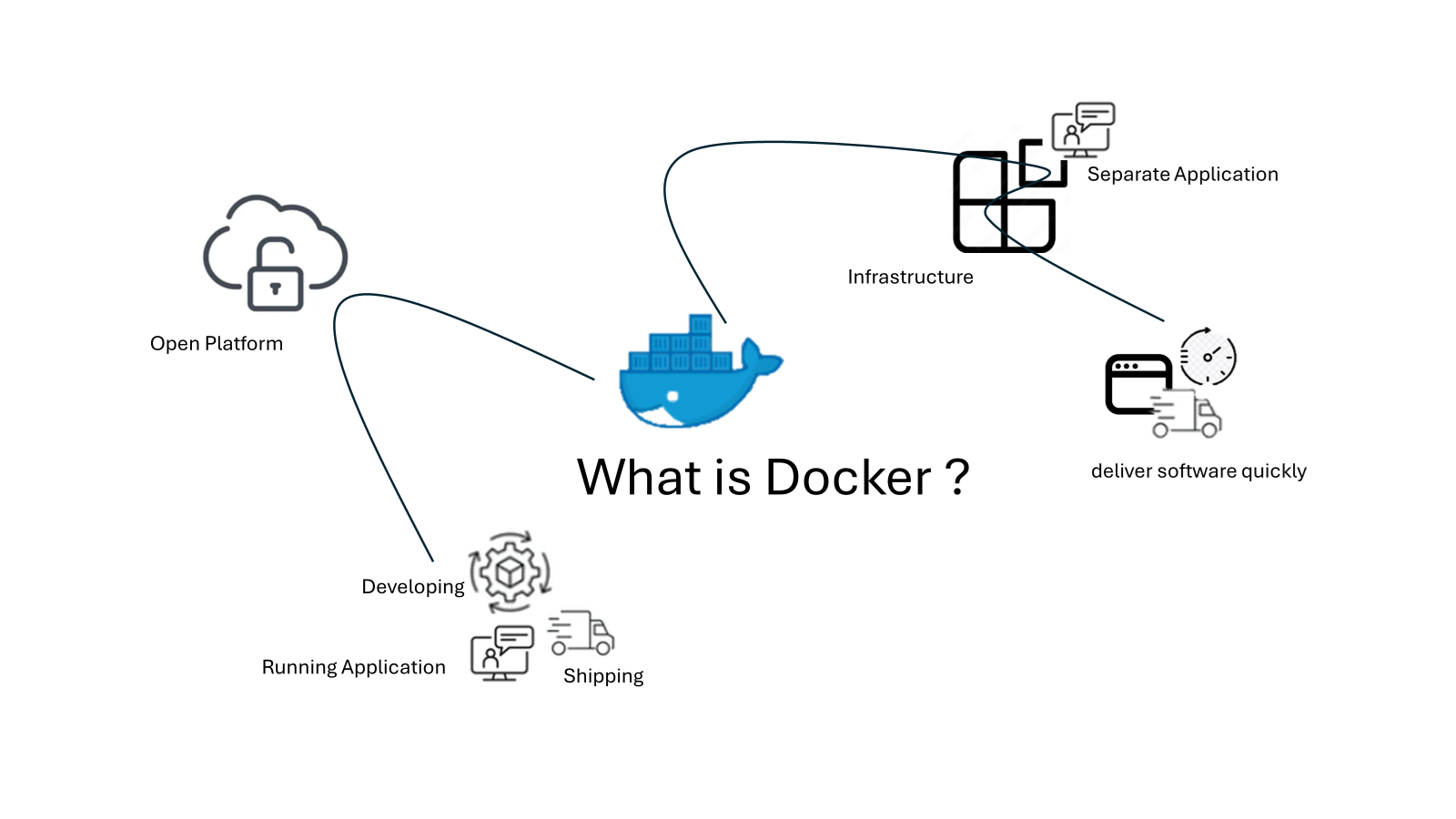

1,897,863 | What is Docker | Visual thinking |2024 | What is Docker ? Docker is an open platform for developing, shipping, and running applications.... | 0 | 2024-06-23T14:38:43 | https://dev.to/rahulcolud2023/what-is-docker-visual-thinking-2024-4e62 | What is Docker?

Docker is an open platform for developing, shipping, and running applications. Docker enables you to separate your applications from your infrastructure so you can deliver software quickly.

With... | rahulcolud2023 | |

1,897,862 | Concurrency and Parallelism | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T14:36:59 | https://dev.to/mr_destructive/concurrency-and-parallelism-37hl | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

### Concurrency

Concurrency is like having one person doing multiple household tasks by switching between them. Imagine someone who starts washing dishes, then pauses to vacuum the livi... | mr_destructive |

1,897,861 | AWS: Karpenter and SSH for Kubernetes WorkerNodes | Setting up SSH access to EC2 created by Karpenter in EKS from AWS Session Manager, AWS EC2 Instance Connect, and via EC2 User Data manually and from Terraform | 0 | 2024-06-23T14:36:36 | https://rtfm.co.ua/en/aws-karpenter-and-ssh-for-kubernetes-workernodes/ | security, aws, devops, kubernetes | ---

title: AWS: Karpenter and SSH for Kubernetes WorkerNodes

published: true

tags: security,aws,devops,kubernetes

description: Setting up SSH access to EC2 created by Karpenter in EKS from AWS Session Manager, AWS EC2 Instance Connect, and via EC2 User Data manually and from Terraform

canonical_url: https://rtfm.co.ua/... | setevoy |

1,894,838 | Master Java Programming: Comprehensive Guide Part 1 | Since its debut, Java, a flexible and potent programming language, has played a significant role in... | 0 | 2024-06-23T14:32:35 | https://dev.to/bishop_bhaumik/master-java-programming-comprehensive-guide-part-1-1m7g | java, devops, development | Since its debut, Java, a flexible and potent programming language, has played a significant role in the software development industry. Java, which is well-known for its power and usability, has applications in many other domains, including commercial solutions and the creation of mobile applications. We shall examine J... | bishop_bhaumik |

1,897,857 | Still earning money with code? 😇 | Claude 3.5 Sonnet just coded a full-featured file upload (link to demo mp4) component for me: 1) + a... | 0 | 2024-06-23T14:32:25 | https://dev.to/alexanderisora/still-earning-money-with-code-1ofb | webdev, javascript, node, chatgpt | Claude 3.5 Sonnet just coded a full-featured file upload ([link to demo mp4](https://temp.paracast.io/ai_code.mp4)) component for me:

1) + a spinner

2) size restriction

3) file extension restriction

4) + a preview

5) + state management

6) + upload to a Cloudflare R2 bucket

If you still sell code, you definite... | alexanderisora |

1,897,840 | update jdk8 with apt in ubuntu18 | Following steps are running on a ubuntu18 server vm in pve. And jdk8 was installed with apt... | 0 | 2024-06-23T14:28:29 | https://dev.to/masonycl/update-jdk8-in-ubuntu18-15lg | The following steps were run on an Ubuntu 18 server VM in PVE.

JDK 8 had been installed with apt a few years earlier.

1. check current version in use

run: `java -version`

got: `openjdk version "1.8.0_312"`

This version is outdated.

2. check apt source

run:

`sudo apt update`

then:

run: `apt list | grep jdk`

this wil... | masonycl | |

1,897,855 | How to use the oobe\bypassnro command on Windows | Have you ever needed to set up a new computer, but were blocked by the process of... | 0 | 2024-06-23T14:24:09 | https://dev.to/kbdemiranda/como-usar-o-comando-oobeypassnro-no-windows-5c35 | windows, oobe, configuração, tecnologia | ---

title: How to use the oobe\bypassnro command on Windows

published: true

description:

tags:

- Windows

- OOBE

- Configuração

- Tecnologia

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/cvxd0sagildprt4z74tj.png

---

Have you ever needed to set up a new computer, but were... | kbdemiranda |

1,897,851 | Database Integration with Spring Boot : Best Practices and Tools | Integrating a database with a Spring Boot application is a common task that many developers do.... | 0 | 2024-06-23T14:19:24 | https://dev.to/abhishek999/database-integration-with-spring-boot-best-practices-and-tools-5doh | java, springboot, jpa, mysql | Integrating a database with a Spring Boot application is a common task that many developers do. Spring Boot, combined with Spring Data JPA, provides a robust framework for working with relational databases like MySQL. Additionally, tools like Flyway and Liquibase help manage database migrations efficiently. This blog w... | abhishek999 |

1,894,729 | Creating an XRP Ledger Account with Web3Auth (Next.js): A Step-by-Step Guide | Introduction The XRP Ledger is a decentralized blockchain focused on cross-border payments; it is used to record financial transactions and is fast, low-cost, and efficient. XRP... | 0 | 2024-06-23T14:19:20 | https://dev.to/amity808/web3authci-nojswoshi-yong-sitaxrp-ledgerakauntonozuo-cheng-sutetupubaisutetupugaido-1l31 | web3, web3auth, javascript, authjs | ## Introduction

The **XRP Ledger** is a decentralized blockchain focused on cross-border payments. It is used to record financial transactions and is fast, low-cost, and efficient. The XRP Ledger was created by Chris Larsen, co-founder and CEO of Ripple. The XRP Ledger uses XRP as its native cryptocurrency.

In this tutorial, we integrate the XRP Ledger using Web3Auth to generate a new account. This account can be used to execute transactions, such as sending tokens to any address on the XRP Ledger. The user's XRP account... | amity808 |

1,897,850 | Clinical Decision Support Software: Transforming Modern Healthcare | Introduction Clinical Decision Support Software (CDSS) represents a significant... | 27,673 | 2024-06-23T14:18:22 | https://dev.to/rapidinnovation/clinical-decision-support-software-transforming-modern-healthcare-274i | ## Introduction

Clinical Decision Support Software (CDSS) represents a significant advancement in the medical field, integrating information technology and healthcare to improve patient outcomes. As healthcare systems become more complex and data-driven, the role of sophisticated tools to aid medical professionals in... | rapidinnovation | |

1,897,849 | The Magic of Binary Search: Finding Needles in Haystacks | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T14:17:34 | https://dev.to/vidyarathna/the-magic-of-binary-search-finding-needles-in-haystacks-44bg | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

Binary search is like finding a word in a dictionary by opening it in the middle, then narrowing down based on alphabetical order. It works on sorted data, halving the search space wit... | vidyarathna |

1,897,847 | Understanding Cache Memory: The Librarian's Efficiency Secret | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-23T14:15:28 | https://dev.to/vidyarathna/understanding-cache-memory-the-librarians-efficiency-secret-1j6i | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer