code stringlengths 2.5k 150k | kind stringclasses 1 value |

|---|---|

```

import exmp

import qiime2

import tempfile

import os.path

import pandas as pd

from qiime2.plugins.feature_table.methods import filter_samples

from qiime2.plugins.taxa.methods import collapse

```

# EXMP 1

```

taxonomy = exmp.load_taxonomy()

sample_metadata = exmp.load_sample_metadata()

data_dir = exmp.cm_path

rarefied_table = qiime2.Artifact.load(os.path.join(data_dir, "rarefied_table.qza"))

uu_dm = qiime2.Artifact.load(os.path.join(data_dir, "unweighted_unifrac_distance_matrix.qza"))

wu_dm = qiime2.Artifact.load(os.path.join(data_dir, "weighted_unifrac_distance_matrix.qza"))

faith_pd = qiime2.Artifact.load(os.path.join(data_dir, "faith_pd_vector.qza"))

shannon = qiime2.Artifact.load(os.path.join(data_dir, "shannon_vector.qza"))

evenness = qiime2.Artifact.load(os.path.join(data_dir, "evenness_vector.qza"))

with tempfile.TemporaryDirectory() as output_dir:
    _, _, _, sample_metadata = exmp.ols_and_anova('RER_change', 'exmp1', '1.0',
                                                  output_dir, 'week',
                                                  sample_metadata, uu_dm, wu_dm,
                                                  faith_pd, shannon, evenness)

rarefied_table = filter_samples(table=rarefied_table, metadata=sample_metadata).filtered_table

taxa_table = collapse(table=rarefied_table, taxonomy=taxonomy, level=6).collapsed_table.view(pd.DataFrame)

sample_metadata = sample_metadata.to_dataframe()

sorted_wu_pc3_correlations = pd.DataFrame(taxa_table.corrwith(sample_metadata['Weighted_UniFrac_PC3'], method='spearman').sort_values(), columns=['Spearman rho'])

sorted_wu_pc3_correlations['25th percentile rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.25)

sorted_wu_pc3_correlations['Median rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.50)

sorted_wu_pc3_correlations['75th percentile rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.75)

```

The data are most easily interpreted if the ordination axes are positively correlated with the RER change. Since the direction of the PCs is arbitrary, I generally just rerun this a few times until I get a positive correlation.

```

sample_metadata['Weighted_UniFrac_PC3'].corr(sample_metadata['RER_change'])

output_dir = os.path.join(exmp.cm_path, 'ols-and-anova', 'exmp1-RER_change-week1.0')

# pass the path directly so pandas opens and closes the file itself
sorted_wu_pc3_correlations.to_csv(os.path.join(output_dir, 'wu-pcoa3-genus-correlations.csv'))

```
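Because the sign of a principal coordinate axis is arbitrary, an alternative to rerunning the analysis is to negate the axis whenever it correlates negatively with the variable of interest. The sketch below shows this on a hand-made toy table; `orient_axis` is a hypothetical helper (not part of `exmp` or QIIME 2), and the numbers are invented purely for illustration. Note that negating the axis also flips the sign of every taxon's correlation with it, so this would need to happen before the genus correlations are computed.

```python
import pandas as pd


def orient_axis(metadata, axis_col, target_col):
    """Return a copy of metadata in which axis_col is negated if it
    correlates negatively (Pearson) with target_col."""
    oriented = metadata.copy()
    if oriented[axis_col].corr(oriented[target_col]) < 0:
        oriented[axis_col] = -oriented[axis_col]
    return oriented


# Toy values, invented for illustration only.
toy = pd.DataFrame({
    'Weighted_UniFrac_PC3': [0.3, 0.1, -0.2, -0.4],
    'RER_change': [-1.0, -0.5, 0.4, 0.9],
})

oriented = orient_axis(toy, 'Weighted_UniFrac_PC3', 'RER_change')
print(oriented['Weighted_UniFrac_PC3'].corr(oriented['RER_change']))
```

The original table is left untouched, so the unflipped coordinates remain available if they are needed elsewhere.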

# EXMP 2

```

taxonomy = exmp.load_taxonomy()

sample_metadata = exmp.load_sample_metadata()

data_dir = exmp.cm_path

rarefied_table = qiime2.Artifact.load(os.path.join(data_dir, "rarefied_table.qza"))

uu_dm = qiime2.Artifact.load(os.path.join(data_dir, "unweighted_unifrac_distance_matrix.qza"))

wu_dm = qiime2.Artifact.load(os.path.join(data_dir, "weighted_unifrac_distance_matrix.qza"))

faith_pd = qiime2.Artifact.load(os.path.join(data_dir, "faith_pd_vector.qza"))

shannon = qiime2.Artifact.load(os.path.join(data_dir, "shannon_vector.qza"))

evenness = qiime2.Artifact.load(os.path.join(data_dir, "evenness_vector.qza"))

with tempfile.TemporaryDirectory() as output_dir:
    _, _, _, sample_metadata = exmp.ols_and_anova('three_rep_max_squat_change', 'exmp2', '1.0',
                                                  output_dir, 'week',
                                                  sample_metadata, uu_dm, wu_dm,
                                                  faith_pd, shannon, evenness)

rarefied_table = filter_samples(table=rarefied_table, metadata=sample_metadata).filtered_table

taxa_table = collapse(table=rarefied_table, taxonomy=taxonomy, level=6).collapsed_table.view(pd.DataFrame)

sample_metadata = sample_metadata.to_dataframe()

sorted_wu_pc2_correlations = pd.DataFrame(taxa_table.corrwith(sample_metadata['Weighted_UniFrac_PC2'], method='spearman').sort_values(), columns=['Spearman rho'])

sorted_wu_pc2_correlations['25th percentile rarefied count'] = taxa_table[sorted_wu_pc2_correlations.index].quantile(0.25)

sorted_wu_pc2_correlations['Median rarefied count'] = taxa_table[sorted_wu_pc2_correlations.index].quantile(0.50)

sorted_wu_pc2_correlations['75th percentile rarefied count'] = taxa_table[sorted_wu_pc2_correlations.index].quantile(0.75)

sorted_wu_pc3_correlations = pd.DataFrame(taxa_table.corrwith(sample_metadata['Weighted_UniFrac_PC3'], method='spearman').sort_values(), columns=['Spearman rho'])

sorted_wu_pc3_correlations['25th percentile rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.25)

sorted_wu_pc3_correlations['Median rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.50)

sorted_wu_pc3_correlations['75th percentile rarefied count'] = taxa_table[sorted_wu_pc3_correlations.index].quantile(0.75)

sample_metadata['Weighted_UniFrac_PC2'].corr(sample_metadata['three_rep_max_squat_change'])

sample_metadata['Weighted_UniFrac_PC3'].corr(sample_metadata['three_rep_max_squat_change'])

output_dir = os.path.join(exmp.cm_path, 'ols-and-anova', 'exmp2-three_rep_max_squat_change-week1.0')

# pass the paths directly so pandas opens and closes the files itself
sorted_wu_pc2_correlations.to_csv(os.path.join(output_dir, 'wu-pcoa2-genus-correlations.csv'))

sorted_wu_pc3_correlations.to_csv(os.path.join(output_dir, 'wu-pcoa3-genus-correlations.csv'))

```

| github_jupyter |

```

import numpy

import urllib

import scipy.optimize

import random

from math import *

def parseData(fname):

for l in urllib.urlopen(fname):

yield eval(l)

print "Reading data..."

data = list(parseData("file:beer_50000.json"))

print "done"

def feature(datum):

text = datum['review/text'].lower().replace(',',' ').replace('?',' ')\

.replace('!',' ').replace(':',' ').replace('"',' ').replace('.',' ')\

.replace('(',' ').replace(')',' ').split()

num_lactic = 0

num_tart = 0

num_sour = 0

num_citric = 0

num_sweet = 0

num_acid = 0

num_hop = 0

num_fruit = 0

num_salt = 0

num_spicy = 0

for word in text:

if word == 'lactic': num_lactic += 1

if word == 'tart': num_tart += 1

if word == 'sour': num_sour += 1

if word == 'citric': num_citric += 1

if word == 'sweet': num_sweet += 1

if word == 'acid': num_acid += 1

if word == 'hop': num_hop += 1

if word == 'fruit': num_fruit += 1

if word == 'salt': num_salt += 1

if word == 'spicy': num_spicy += 1

feat = [1, num_lactic, num_tart, num_sour, \

num_citric, num_sweet, num_acid, num_hop, \

num_fruit, num_salt, num_spicy]

return feat

X = [feature(d) for d in data]

y = [d['beer/ABV'] >= 6.5 for d in data]

def inner(x,y):

return sum([x[i]*y[i] for i in range(len(x))])

def sigmoid(x):

res = 1.0 / (1 + exp(-x))

return res

length = int(len(data)/3)

X_train = X[:length]

y_train = y[:length]

X_validation = X[length:2*length]

y_validation = y[length:2*length]

X_test = X[2*length:]

y_test = y[2*length:]

# Count for number of total data, y=0 and y=1

num_total = len(y_train)

num_y0 = y_train.count(0)

num_y1 = y_train.count(1)

# NEGATIVE Log-likelihood

def f(theta, X, y, lam):

loglikelihood = 0

for i in range(len(X)):

logit = inner(X[i], theta)

if y[i]:

loglikelihood -= log(1 + exp(-logit)) * num_total / (2 * num_y1)

if not y[i]:

loglikelihood -= (log(1 + exp(-logit)) + logit ) * num_total / (2 * num_y0)

for k in range(len(theta)):

loglikelihood -= lam * theta[k]*theta[k]

# for debugging

# print("ll =" + str(loglikelihood))

return -loglikelihood

# NEGATIVE Derivative of log-likelihood

def fprime(theta, X, y, lam):
    dl = [0]*len(theta)
    for i in range(len(X)):
        logit = inner(X[i], theta)
        for k in range(len(theta)):
            if y[i]:
                dl[k] += X[i][k] * (1 - sigmoid(logit)) * num_total / (2 * num_y1)
            if not y[i]:
                # the derivative of -(log(1 + exp(-logit)) + logit) is -x * sigmoid(logit),
                # which matches the unweighted version further down: x*(1 - sigmoid) - x
                dl[k] -= X[i][k] * sigmoid(logit) * num_total / (2 * num_y0)
    for k in range(len(theta)):
        dl[k] -= lam*2*theta[k]
    return numpy.array([-x for x in dl])

def train(lam):

theta,_,_ = scipy.optimize.fmin_l_bfgs_b(f, [0]*len(X[0]), fprime, pgtol = 10, args = (X_train, y_train, lam))

return theta

lam = 1.0

theta = train(lam)

print theta

X_data = [X_train, X_validation, X_test]

y_data = [y_train, y_validation, y_test]

symbol = ['train', 'valid', 'test']

print 'λ\tDataset\t\tTruePositive\tFalsePositive\tTrueNegative\tFalseNegative\tAccuracy\tBER'

for i in range(3):

def TP(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==1) and (b==1)) for (a,b) in zip(predictions,y_data[i])]

tp = sum(correct) * 1.0

return tp

def TN(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==0) and (b==0)) for (a,b) in zip(predictions,y_data[i])]

tn = sum(correct) * 1.0

return tn

def FP(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==1) and (b==0)) for (a,b) in zip(predictions,y_data[i])]

fp = sum(correct) * 1.0

return fp

def FN(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==0) and (b==1)) for (a,b) in zip(predictions,y_data[i])]

fn = sum(correct) * 1.0

return fn

tp = TP(theta)

fp = FP(theta)

tn = TN(theta)

fn = FN(theta)

TPR = tp / (tp + fn)

TNR = tn / (tn + fp)

BER = 1 - 0.5 * (TPR + TNR)

accuracy = (tp+tn)/(tp+tn+fp+fn)

print str(lam)+'\t'+symbol[i]+'\t\t'+str(tp)+'\t\t'+str(fp)+'\t\t'+str(tn)+'\t\t'+str(fn)+'\t\t'+str(accuracy)+'\t'+str(BER)

# Original Algorithm

# NEGATIVE Log-likelihood

def f(theta, X, y, lam):

loglikelihood = 0

for i in range(len(X)):

logit = inner(X[i], theta)

loglikelihood -= log(1 + exp(-logit))

if not y[i]:

loglikelihood -= logit

for k in range(len(theta)):

loglikelihood -= lam * theta[k]*theta[k]

# for debugging

# print("ll =" + str(loglikelihood))

return -loglikelihood

# NEGATIVE Derivative of log-likelihood

def fprime(theta, X, y, lam):

dl = [0]*len(theta)

for i in range(len(X)):

logit = inner(X[i], theta)

for k in range(len(theta)):

dl[k] += X[i][k] * (1 - sigmoid(logit))

if not y[i]:

dl[k] -= X[i][k]

for k in range(len(theta)):

dl[k] -= lam*2*theta[k]

return numpy.array([-x for x in dl])

def train(lam):

theta,_,_ = scipy.optimize.fmin_l_bfgs_b(f, [0]*len(X[0]), fprime, pgtol = 10, args = (X_train, y_train, lam))

return theta

lam = 1.0

theta = train(lam)

X_data = [X_train, X_validation, X_test]

y_data = [y_train, y_validation, y_test]

symbol = ['train', 'valid', 'test']

print 'λ\tDataset\t\tTruePositive\tFalsePositive\tTrueNegative\tFalseNegative\tAccuracy\tBER'

for i in range(3):

def TP(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==1) and (b==1)) for (a,b) in zip(predictions,y_data[i])]

tp = sum(correct) * 1.0

return tp

def TN(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==0) and (b==0)) for (a,b) in zip(predictions,y_data[i])]

tn = sum(correct) * 1.0

return tn

def FP(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==1) and (b==0)) for (a,b) in zip(predictions,y_data[i])]

fp = sum(correct) * 1.0

return fp

def FN(theta):

scores = [inner(theta,x) for x in X_data[i]]

predictions = [s > 0 for s in scores]

correct = [((a==0) and (b==1)) for (a,b) in zip(predictions,y_data[i])]

fn = sum(correct) * 1.0

return fn

tp = TP(theta)

fp = FP(theta)

tn = TN(theta)

fn = FN(theta)

TPR = tp / (tp + fn)

TNR = tn / (tn + fp)

BER = 1 - 0.5 * (TPR + TNR)

accuracy = (tp+tn)/(tp+tn+fp+fn)

print str(lam)+'\t'+symbol[i]+'\t\t'+str(tp)+'\t\t'+str(fp)+'\t\t'+str(tn)+'\t\t'+str(fn)+'\t\t'+str(accuracy)+'\t'+str(BER)

```
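A hand-written gradient such as `fprime` is easy to get subtly wrong, so it can be worth comparing it against finite differences before passing it to an optimizer. The following is a minimal sketch in Python 3 under stated assumptions: it re-implements a class-weighted negative log-likelihood of the same form as the one above on a tiny invented dataset, and the names `weighted_nll`, `weighted_nll_grad` and `numeric_grad` are illustrative, not taken from the notebook.

```python
from math import exp, log


def inner(x, y):
    return sum(a * b for a, b in zip(x, y))


def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))


def class_weights(y):
    """Weights that make both classes contribute equally in total."""
    n, n1 = len(y), sum(1 for label in y if label)
    return n / (2.0 * n1), n / (2.0 * (n - n1))


def weighted_nll(theta, X, y, lam):
    """Class-weighted negative log-likelihood plus an L2 penalty."""
    w1, w0 = class_weights(y)
    total = lam * inner(theta, theta)
    for x_i, label in zip(X, y):
        z = inner(x_i, theta)
        if label:
            total += w1 * log(1 + exp(-z))
        else:
            total += w0 * (log(1 + exp(-z)) + z)
    return total


def weighted_nll_grad(theta, X, y, lam):
    """Analytic gradient of weighted_nll with respect to theta."""
    w1, w0 = class_weights(y)
    grad = [2 * lam * t for t in theta]
    for x_i, label in zip(X, y):
        z = inner(x_i, theta)
        # d/dz log(1 + e^-z) = -(1 - sigmoid(z)); adding z gives +sigmoid(z)
        coeff = -w1 * (1 - sigmoid(z)) if label else w0 * sigmoid(z)
        for k, x_ik in enumerate(x_i):
            grad[k] += coeff * x_ik
    return grad


def numeric_grad(f, theta, eps=1e-6):
    """Central finite-difference approximation of the gradient of f."""
    grad = []
    for k in range(len(theta)):
        up, down = list(theta), list(theta)
        up[k] += eps
        down[k] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


# Tiny invented dataset: a constant feature plus two counts.
X = [[1, 0, 2], [1, 1, 0], [1, 3, 1], [1, 0, 0]]
y = [True, False, False, True]
theta = [0.1, -0.2, 0.3]
lam = 1.0

analytic = weighted_nll_grad(theta, X, y, lam)
numeric = numeric_grad(lambda t: weighted_nll(t, X, y, lam), theta)
max_gap = max(abs(a - b) for a, b in zip(analytic, numeric))
print(max_gap)
```

If the two gradients disagree by much more than rounding error, the analytic derivative (or the objective) most likely contains a mistake.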

| github_jupyter |

# Strategies

High-performance solvers, such as Z3, contain many tightly integrated, handcrafted heuristic combinations of algorithmic proof methods. While these heuristic combinations tend to be highly tuned for known classes of problems, they may easily perform very badly on new classes of problems. This issue is becoming increasingly pressing as solvers begin to gain the attention of practitioners in diverse areas of science and engineering. In many cases, changes to the solver heuristics can make a tremendous difference.

More information on Z3 is available from https://github.com/z3prover/z3.git

## Introduction

Z3 implements a methodology for orchestrating reasoning engines where "big" symbolic reasoning steps are represented as functions known as tactics, and tactics are composed using combinators known as tacticals. Tactics process sets of formulas called Goals.

When a tactic is applied to some goal G, four different outcomes are possible. The tactic succeeds in showing G to be satisfiable (i.e., feasible); succeeds in showing G to be unsatisfiable (i.e., infeasible); produces a sequence of subgoals; or fails. When reducing a goal G to a sequence of subgoals G1, ..., Gn, we face the problem of model conversion. A model converter constructs a model for G using a model for some subgoal Gi.

In the following example, we create a goal g consisting of three formulas, and a tactic t composed of two built-in tactics: simplify and solve-eqs. The tactic simplify applies transformations equivalent to the ones found in the command simplify. The tactic solve-eqs eliminates variables using Gaussian elimination. Actually, solve-eqs is not restricted to linear arithmetic; it can also eliminate arbitrary variables. The combinator Then applies simplify to the input goal and solve-eqs to each subgoal produced by simplify. In this example, only one subgoal is produced.

```

!pip install "z3-solver"

from z3 import *

x, y = Reals('x y')

g = Goal()

g.add(x > 0, y > 0, x == y + 2)

print(g)

t1 = Tactic('simplify')

t2 = Tactic('solve-eqs')

t = Then(t1, t2)

print(t(g))

```

In the example above, variable x is eliminated, and is not present in the resultant goal.

In Z3, we say a clause is any constraint of the form Or(f_1, ..., f_n). The tactic split-clause will select a clause Or(f_1, ..., f_n) in the input goal, and split it into n subgoals, one for each subformula f_i.

```

x, y = Reals('x y')

g = Goal()

g.add(Or(x < 0, x > 0), x == y + 1, y < 0)

t = Tactic('split-clause')

r = t(g)

for g in r:
    print(g)

```

## Tactics

Z3 comes equipped with many built-in tactics. The command describe_tactics() provides a short description of all built-in tactics.

describe_tactics()

Z3Py comes equipped with the following tactic combinators (aka tacticals):

* Then(t, s) applies t to the input goal and s to every subgoal produced by t.

* OrElse(t, s) first applies t to the given goal, if it fails then returns the result of s applied to the given goal.

* Repeat(t) Keep applying the given tactic until no subgoal is modified by it.

* Repeat(t, n) Keep applying the given tactic until no subgoal is modified by it, or the number of iterations is greater than n.

* TryFor(t, ms) Apply tactic t to the input goal, if it does not return in ms milliseconds, it fails.

* With(t, params) Apply the given tactic using the given parameters.

The following example demonstrates how to use these combinators.

```

x, y, z = Reals('x y z')

g = Goal()

g.add(Or(x == 0, x == 1),

Or(y == 0, y == 1),

Or(z == 0, z == 1),

x + y + z > 2)

# Split all clauses

split_all = Repeat(OrElse(Tactic('split-clause'),

Tactic('skip')))

print(split_all(g))

split_at_most_2 = Repeat(OrElse(Tactic('split-clause'),

Tactic('skip')),

1)

print(split_at_most_2(g))

# Split all clauses and solve equations

split_solve = Then(Repeat(OrElse(Tactic('split-clause'),

Tactic('skip'))),

Tactic('solve-eqs'))

print(split_solve(g))

```

In the tactic split_solve, the tactic solve-eqs discharges all but one goal. Note that this tactic generates one goal: the empty goal, which is trivially satisfiable (i.e., feasible).
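To make the behaviour of Then, OrElse and Repeat more concrete, here is a plain-Python toy model of the three combinators. It is only a sketch of the semantics described in the list above, not how Z3 implements them: a goal is modelled as a tuple of clauses, a clause as a tuple of literal strings, and every name in the block (`then`, `or_else`, `repeat`, `split_clause`, `skip`, `TacticFailed`) is hypothetical.

```python
class TacticFailed(Exception):
    """Raised by a toy tactic that cannot be applied to a goal."""


def then(t, s):
    """Apply t to the goal, then s to every subgoal produced by t."""
    def run(goal):
        return [sub for mid in t(goal) for sub in s(mid)]
    return run


def or_else(t, s):
    """Apply t; if it fails, return the result of s on the same goal."""
    def run(goal):
        try:
            return t(goal)
        except TacticFailed:
            return s(goal)
    return run


def repeat(t):
    """Keep applying t until no subgoal is modified by it."""
    def run(goal):
        goals = [goal]
        while True:
            new_goals = [sub for g in goals for sub in t(g)]
            if new_goals == goals:
                return goals
            goals = new_goals
    return run


def skip(goal):
    """Return the input goal unchanged."""
    return [goal]


def split_clause(goal):
    """Split the first clause with several literals into one subgoal each."""
    for i, clause in enumerate(goal):
        if len(clause) > 1:
            return [goal[:i] + ((lit,),) + goal[i + 1:] for lit in clause]
    raise TacticFailed("no clause left to split")


goal = (("x == 0", "x == 1"), ("y == 0", "y == 1"))
split_all = repeat(or_else(split_clause, skip))
subgoals = split_all(goal)
for sub in subgoals:
    print(sub)
```

Wrapping `split_clause` in `or_else(..., skip)` matters here for the same reason as in the Z3 example: once nothing is left to split, the tactic would otherwise fail instead of leaving the goal unchanged, and `repeat` would never reach its fixed point.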

The list of subgoals can be easily traversed using the Python for statement.

```

x, y, z = Reals('x y z')

g = Goal()

g.add(Or(x == 0, x == 1),

Or(y == 0, y == 1),

Or(z == 0, z == 1),

x + y + z > 2)

# Split all clauses

split_all = Repeat(OrElse(Tactic('split-clause'),

Tactic('skip')))

for s in split_all(g):
    print(s)

```

A tactic can be converted into a solver object using the method solver(). If the tactic produces the empty goal, then the associated solver returns sat. If the tactic produces a single goal containing False, then the solver returns unsat. Otherwise, it returns unknown.

```

bv_solver = Then('simplify',

'solve-eqs',

'bit-blast',

'sat').solver()

x, y = BitVecs('x y', 16)

solve_using(bv_solver, x | y == 13, x > y)

```

In the example above, the tactic bv_solver implements a basic bit-vector solver using equation solving, bit-blasting, and a propositional SAT solver. Note that the Tactic command is omitted: all Z3Py combinators automatically invoke the Tactic command if an argument is a string. Finally, the command solve_using is a variant of the solve command where the first argument specifies the solver to be used.

In the following example, we use the solver API directly instead of the command solve_using. We use the combinator With to configure our little solver. We also include the tactic aig which tries to compress Boolean formulas using And-Inverted Graphs.

```

bv_solver = Then(With('simplify', mul2concat=True),

'solve-eqs',

'bit-blast',

'aig',

'sat').solver()

x, y = BitVecs('x y', 16)

bv_solver.add(x*32 + y == 13, x & y < 10, y > -100)

print(bv_solver.check())

m = bv_solver.model()

print(m)

print(x*32 + y, "==", m.evaluate(x*32 + y))

print(x & y, "==", m.evaluate(x & y))

```

The tactic smt wraps the main solver in Z3 as a tactic.

```

x, y = Ints('x y')

s = Tactic('smt').solver()

s.add(x > y + 1)

print(s.check())

print(s.model())

```

Now, we show how to implement a solver for integer arithmetic using SAT. The solver is complete only for problems where every variable has a lower and upper bound.

```

s = Then(With('simplify', arith_lhs=True, som=True),

'normalize-bounds', 'lia2pb', 'pb2bv',

'bit-blast', 'sat').solver()

x, y, z = Ints('x y z')

solve_using(s,

x > 0, x < 10,

y > 0, y < 10,

z > 0, z < 10,

3*y + 2*x == z)

# It fails on the next example (it is unbounded)

s.reset()

solve_using(s, 3*y + 2*x == z)

```

Tactics can be combined with solvers. For example, we can apply a tactic to a goal, produce a set of subgoals, then select one of the subgoals and solve it using a solver. The next example demonstrates how to do that, and how to use model converters to convert a model for a subgoal into a model for the original goal.

```

t = Then('simplify',

'normalize-bounds',

'solve-eqs')

x, y, z = Ints('x y z')

g = Goal()

g.add(x > 10, y == x + 3, z > y)

r = t(g)

# r contains only one subgoal

print(r)

s = Solver()

s.add(r[0])

print(s.check())

# Model for the subgoal

print(s.model())

# Model for the original goal

print(r[0].convert_model(s.model()))

```

## Probes

Probes (aka formula measures) are evaluated over goals. Boolean expressions over them can be built using relational operators and Boolean connectives. The tactic FailIf(cond) fails if the given goal does not satisfy the condition cond. Many numeric and Boolean measures are available in Z3Py. The command describe_probes() provides the list of all built-in probes.

```

describe_probes()

```

In the following example, we build a simple tactic using FailIf. It also shows that a probe can be applied directly to a goal.

```

x, y, z = Reals('x y z')

g = Goal()

g.add(x + y + z > 0)

p = Probe('num-consts')

print("num-consts:", p(g))

t = FailIf(p > 2)

try:
    t(g)
except Z3Exception:
    print("tactic failed")

print("trying again...")

g = Goal()

g.add(x + y > 0)

print(t(g))

```

Z3Py also provides the combinator (tactical) If(p, t1, t2) which is a shorthand for:

OrElse(Then(FailIf(Not(p)), t1), t2)

The combinator When(p, t) is a shorthand for:

If(p, t, 'skip')

The tactic skip just returns the input goal. The following example demonstrates how to use the If combinator.

```

x, y, z = Reals('x y z')

g = Goal()

g.add(x**2 - y**2 >= 0)

p = Probe('num-consts')

t = If(p > 2, 'simplify', 'factor')

print(t(g))

g = Goal()

g.add(x + x + y + z >= 0, x**2 - y**2 >= 0)

print(t(g))

```

| github_jupyter |

### Specify a text string to examine with NEMO

```

# specify query string

payload = 'The World Health Organization on Sunday reported the largest single-day increase in coronavirus cases by its count, at more than 183,000 new cases in the latest 24 hours. The UN health agency said Brazil led the way with 54,771 cases tallied and the U.S. next at 36,617. Over 15,400 came in in India.'

payload = 'is strongly affected by large ground-water withdrawals at or near Tupelo, Aberdeen, and West Point.'

# payload = 'Overall design: Teliospores of pathogenic races T-1, T-5 and T-16 of T. caries provided by a collection in Aberdeen, ID, USA'

payload = 'The results provide evidence of substantial population structure in C. posadasii and demonstrate presence of distinct geographic clades in Central and Southern Arizona as well as dispersed populations in Texas, Mexico and South and Central America'

payload = 'Most frequent numerical abnormalities in B-NHL were gains of chromosomes 3 and 18, although gains of chromosome 3 were less prominent in FL.'

```

### Load functions

```

# import credentials file

import yaml

with open("config.yml", 'r') as ymlfile:

cfg = yaml.safe_load(ymlfile)

# general way to extract values for a given key. Returns an array. Used to parse Nemo response and extract wikipedia id

# from https://hackersandslackers.com/extract-data-from-complex-json-python/

def extract_values(obj, key):

"""Pull all values of specified key from nested JSON."""

arr = []

def extract(obj, arr, key):

"""Recursively search for values of key in JSON tree."""

if isinstance(obj, dict):

for k, v in obj.items():

if isinstance(v, (dict, list)):

extract(v, arr, key)

elif k == key:

arr.append(v)

elif isinstance(obj, list):

for item in obj:

extract(item, arr, key)

return arr

results = extract(obj, arr, key)

return results

# getting wikipedia ID

# see the API at https://www.mediawiki.org/wiki/API:Query#Example_5:_Batchcomplete

# also, https://stackoverflow.com/questions/37024807/how-to-get-wikidata-id-for-an-wikipedia-article-by-api

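def get_WPID(name):
    # requests is what this function actually uses; import it here so the
    # function works even if it is called before the request cell below is run
    import requests
    url = 'https://en.wikipedia.org/w/api.php?action=query&prop=pageprops&ppprop=wikibase_item&redirects=1&format=json&titles=' + name
    r = requests.get(url).json()
    return extract_values(r, 'wikibase_item')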

```
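To see what `extract_values` returns, it helps to run the same recursive search on a small hand-written response. The sketch below restates the helper compactly so that it runs on its own; the sample dictionaries are assumptions that only resemble the shape of a `pageprops` query result, and the page title and the identifier `Q0` are placeholders, not real data.

```python
def extract_values(obj, key):
    """Collect every scalar value stored under `key` anywhere in nested JSON."""
    found = []

    def walk(node):
        if isinstance(node, dict):
            for k, v in node.items():
                if isinstance(v, (dict, list)):
                    walk(v)          # descend into nested containers
                elif k == key:
                    found.append(v)  # scalar stored under the wanted key
        elif isinstance(node, list):
            for item in node:
                walk(item)

    walk(obj)
    return found


# Hand-written stand-ins for API responses (placeholder values).
sample_response = {
    "batchcomplete": "",
    "query": {
        "pages": {
            "1": {
                "pageid": 1,
                "title": "Example page",
                "pageprops": {"wikibase_item": "Q0"},
            }
        }
    },
}
missing_response = {
    "query": {"pages": {"-1": {"title": "No such page", "missing": ""}}}
}

ids = extract_values(sample_response, "wikibase_item")
no_ids = extract_values(missing_response, "wikibase_item")
print(ids, no_ids)
```

The second call returns an empty list, so code that indexes the result with `[0]` should first check that something was found.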

### Send a request to NEMO, and get a response

```

# make a service request

import requests

# payloadutf = payload.encode('utf-8')

url = "https://nemoservice.azurewebsites.net/nemo?appid=" + cfg['api_creds']['nmo1']

newHeaders = {'Content-type': 'application/json', 'Accept': 'text/plain'}

response = requests.post(url,

data='"{' + payload + '}"',

headers=newHeaders)

# display the results as string (remove json braces)

a = response.content.decode()

resp_full = a[a.find('{')+1 : a.find('}')]

resp_full

```

### Parse the response and load all found elements into a dataframe

```

# create a dataframe with entities, remove duplicates, then add wikipedia/wikidata concept IDs

import pandas as pd

import re

import xml.etree.ElementTree as ET

df = pd.DataFrame(columns=["Type","Ref","EntityType","Name","Form","WP","Value","Alt","WP_ID"])

# note that the last column is to be populated later, via Wikipedia API

# all previous columns are from Nemo: based on "e" (entity) and "d" (data) elements. "c" (concept) to be explored

# get starting and ending positions of xml fragments in the Nemo output

pattern_start = r"<(e|d|c)\s"

iter = re.finditer(pattern_start,resp_full)

indices1 = [m.start(0) for m in iter]

pattern_end = "</(e|d|c)>"

iter = re.finditer(pattern_end,resp_full)

indices2 = [m.start(0) for m in iter]

# iterate over xml fragments returned by Nemo, extracting attributes from each and adding to dataframe

for i, entity in enumerate(indices1):
    # each closing tag ("</e>", "</d>", "</c>") is 4 characters long
    a = resp_full[indices1[i] : indices2[i]+4]
    root = ET.fromstring(a)
    attributes = root.attrib
    # DataFrame.append was removed in pandas 2.0, so concatenate a one-row frame instead
    new_row = pd.DataFrame([{"Type":root.tag,
                             "Ref":attributes.get('ref'),
                             "EntityType":attributes.get('type'),
                             "Name":attributes.get('name'),
                             "Form":attributes.get('form'),
                             "WP":attributes.get('wp'),
                             "Value":attributes.get('value'),
                             "Alt":attributes.get('alt')}])
    df = pd.concat([df, new_row], ignore_index=True)

```
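The start/end index bookkeeping above can also be expressed with a single regular expression that matches a whole fragment at once. The following is a sketch under assumptions: the sample string is invented to resemble NEMO output (real responses may look different), only the attribute names `ref`, `type`, `name`, `wp` and `value` come from the notebook, and the pattern would not cope with fragments nested inside a fragment of the same tag.

```python
import re
import xml.etree.ElementTree as ET

# Invented NEMO-style output, for illustration only.
sample = (
    'ground-water withdrawals at or near '
    '<e ref="Tupelo,_Mississippi" type="G" name="Tupelo" wp="y">Tupelo</e>, '
    '<e ref="Aberdeen,_Mississippi" type="G" name="Aberdeen" wp="y">Aberdeen</e> in '
    '<d type="date" value="1990">1990</d>.'
)

# An opening <e ...>, <d ...> or <c ...> tag, its content, and the matching close.
fragment = re.compile(r"<(e|d|c)\s[^>]*>.*?</\1>", re.DOTALL)

rows = []
for match in fragment.finditer(sample):
    node = ET.fromstring(match.group(0))
    rows.append({
        "Type": node.tag,
        "Ref": node.attrib.get("ref"),
        "EntityType": node.attrib.get("type"),
        "Name": node.attrib.get("name"),
        "WP": node.attrib.get("wp"),
        "Value": node.attrib.get("value"),
    })

for row in rows:
    print(row)
```

Collecting plain dictionaries first and building the dataframe once at the end (for example with `pd.DataFrame(rows)`) also avoids growing a dataframe row by row.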

E stands for entity;

the attribute ref gives you the title of the corresponding Wikipedia page when the attribute wp has the value “y”;

the attribute type gives you the type of entity for known entities; the types of interest for you are G, which is geo-political entity, L – geographic form/location (such as a mountain), and F, which is facility (such as an airport).

D stands for datafield, which comprises dates, NUMEX, email addresses and URLs, tracking numbers, and so on.

C stands for concept; these appear in Wikipedia and are deemed as relevant for the input text, but they do not get disambiguated

```

# remove duplicate records from the df

df = df.drop_duplicates(keep='first')

# for each found entity, add wikidata unique identifiers to the dataframe

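for index, row in df.iterrows():
    if row['WP'] == 'y':
        # rows yielded by iterrows are copies, so write the result back through df.at
        wp_ids = get_WPID(row['Name'])
        if wp_ids:
            df.at[index, 'WP_ID'] = wp_ids[0]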

df

```

| github_jupyter |

# Audiobooks business case

## Preprocessing exercise

It makes sense to shuffle the indices prior to balancing the dataset.

Using the code from the lesson (below), shuffle the indices and then balance the dataset.

At the end of the course, you will have an exercise to create the same machine learning algorithm, with preprocessing done in this way.

Note: This is more of a programming exercise rather than a machine learning one. Being able to complete it successfully will ensure you understand the preprocessing.

Good luck!

**Solution:**

Scroll down to the 'Exercise Solution' section

### Extract the data from the csv

```

import numpy as np

# We will use the sklearn preprocessing library, as it will be easier to standardize the data.

from sklearn import preprocessing

# Load the data

raw_csv_data = np.loadtxt('Audiobooks_data.csv',delimiter=',')

# The inputs are all columns in the csv, except for the first one [:,0]

# (which is just the arbitrary customer IDs that bear no useful information),

# and the last one [:,-1] (which is our targets)

unscaled_inputs_all = raw_csv_data[:,1:-1]

# The targets are in the last column. That's how datasets are conventionally organized.

targets_all = raw_csv_data[:,-1]

```

### EXERCISE SOLUTION

We shuffle the indices before balancing (to remove any day effects, etc.)

However, we still have to shuffle them AFTER we balance the dataset as otherwise, all targets that are 1s will be contained in the train_targets.

This code is suboptimal, but is the easiest way to complete the exercise. Still, as we do the preprocessing only once, speed is not something we are aiming for.

We store the shuffled data back in the same variables, so we don't have to amend the code that follows.

```

# When the data was collected it was actually arranged by date

# Shuffle the indices of the data, so the data is not arranged in any way when we feed it.

# Since we will be batching, we want the data to be as randomly spread out as possible

shuffled_indices = np.arange(unscaled_inputs_all.shape[0])

np.random.shuffle(shuffled_indices)

# Use the shuffled indices to shuffle the inputs and targets.

unscaled_inputs_all = unscaled_inputs_all[shuffled_indices]

targets_all = targets_all[shuffled_indices]

```

### Balance the dataset

```

# Count how many targets are 1 (meaning that the customer did convert)

num_one_targets = int(np.sum(targets_all))

# Set a counter for targets that are 0 (meaning that the customer did not convert)

zero_targets_counter = 0

# We want to create a "balanced" dataset, so we will have to remove some input/target pairs.

# Declare a variable that will do that:

indices_to_remove = []

# Count the number of targets that are 0.

# Once there are as many 0s as 1s, mark entries where the target is 0.

for i in range(targets_all.shape[0]):
    if targets_all[i] == 0:
        zero_targets_counter += 1
        if zero_targets_counter > num_one_targets:
            indices_to_remove.append(i)

# Create two new variables, one that will contain the inputs, and one that will contain the targets.

# We delete all indices that we marked "to remove" in the loop above.

unscaled_inputs_equal_priors = np.delete(unscaled_inputs_all, indices_to_remove, axis=0)

targets_equal_priors = np.delete(targets_all, indices_to_remove, axis=0)

```
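The same balancing step can be written without an explicit loop. This is a minimal sketch, assuming binary targets of 0 and 1; `balance_by_undersampling` is a hypothetical helper name and the arrays are toy data. Like the loop above, it keeps every 1 and only the first zeros encountered, up to the number of ones, and preserves the original row order.

```python
import numpy as np


def balance_by_undersampling(inputs, targets):
    """Keep all rows with target 1 and the first equally many rows with target 0."""
    one_idx = np.flatnonzero(targets == 1)
    zero_idx = np.flatnonzero(targets == 0)
    keep = np.sort(np.concatenate([one_idx, zero_idx[:len(one_idx)]]))
    return inputs[keep], targets[keep]


# Toy data: six customers, two of whom converted.
toy_inputs = np.arange(12).reshape(6, 2)
toy_targets = np.array([0, 1, 0, 0, 1, 0])

balanced_inputs, balanced_targets = balance_by_undersampling(toy_inputs, toy_targets)
print(balanced_targets)
```

Because the first zeros are the ones that survive, shuffling before balancing (as in the exercise solution) is what keeps the retained zeros from all coming from the earliest dates.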

### Standardize the inputs

```

# That's the only place we use sklearn functionality. We will take advantage of its preprocessing capabilities

# It's a simple line of code, which standardizes the inputs, as we explained in one of the lectures.

# At the end of the business case, you can try to run the algorithm WITHOUT this line of code.

# The result will be interesting.

scaled_inputs = preprocessing.scale(unscaled_inputs_equal_priors)

```
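For intuition, standardizing means subtracting each column's mean and dividing by its standard deviation. The sketch below does this by hand in NumPy on toy numbers; it assumes no column is constant (which would lead to a division by zero) and uses the population standard deviation (`ddof=0`). The helper name `standardize` is illustrative only.

```python
import numpy as np


def standardize(columns):
    """Center each column at 0 and rescale it to unit standard deviation."""
    mean = columns.mean(axis=0)
    std = columns.std(axis=0)  # population standard deviation (ddof=0)
    return (columns - mean) / std


# Toy inputs on very different scales.
raw = np.array([[1.0, 200.0],
                [2.0, 400.0],
                [3.0, 600.0]])

scaled = standardize(raw)
print(scaled)
```

After this step both columns vary over the same range, so no input dominates simply because of its units.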

### Shuffle the data

```

# When the data was collected it was actually arranged by date

# Shuffle the indices of the data, so the data is not arranged in any way when we feed it.

# Since we will be batching, we want the data to be as randomly spread out as possible

shuffled_indices = np.arange(scaled_inputs.shape[0])

np.random.shuffle(shuffled_indices)

# Use the shuffled indices to shuffle the inputs and targets.

shuffled_inputs = scaled_inputs[shuffled_indices]

shuffled_targets = targets_equal_priors[shuffled_indices]

```

### Split the dataset into train, validation, and test

```

# Count the total number of samples

samples_count = shuffled_inputs.shape[0]

# Count the samples in each subset, assuming we want 80-10-10 distribution of training, validation, and test.

# Naturally, the numbers are integers.

train_samples_count = int(0.8 * samples_count)

validation_samples_count = int(0.1 * samples_count)

# The 'test' dataset contains all remaining data.

test_samples_count = samples_count - train_samples_count - validation_samples_count

# Create variables that record the inputs and targets for training

# In our shuffled dataset, they are the first "train_samples_count" observations

train_inputs = shuffled_inputs[:train_samples_count]

train_targets = shuffled_targets[:train_samples_count]

# Create variables that record the inputs and targets for validation.

# They are the next "validation_samples_count" observations, following the "train_samples_count" we already assigned

validation_inputs = shuffled_inputs[train_samples_count:train_samples_count+validation_samples_count]

validation_targets = shuffled_targets[train_samples_count:train_samples_count+validation_samples_count]

# Create variables that record the inputs and targets for test.

# They are everything that is remaining.

test_inputs = shuffled_inputs[train_samples_count+validation_samples_count:]

test_targets = shuffled_targets[train_samples_count+validation_samples_count:]

# We balanced our dataset to be 50-50 (for targets 0 and 1), but the training, validation, and test were

# taken from a shuffled dataset. Check if they are balanced, too. Note that each time you rerun this code,

# you will get different values, as each time they are shuffled randomly.

# Normally you preprocess ONCE, so you need not rerun this code once it is done.

# If you rerun this whole sheet, the npzs will be overwritten with your newly preprocessed data.

# Print the number of targets that are 1s, the total number of samples, and the proportion for training, validation, and test.

print(np.sum(train_targets), train_samples_count, np.sum(train_targets) / train_samples_count)

print(np.sum(validation_targets), validation_samples_count, np.sum(validation_targets) / validation_samples_count)

print(np.sum(test_targets), test_samples_count, np.sum(test_targets) / test_samples_count)

```

### Save the three datasets in *.npz

```

# Save the three datasets in *.npz.

# In the next lesson, you will see that it is extremely valuable to name them in such a coherent way!

np.savez('Audiobooks_data_train', inputs=train_inputs, targets=train_targets)

np.savez('Audiobooks_data_validation', inputs=validation_inputs, targets=validation_targets)

np.savez('Audiobooks_data_test', inputs=test_inputs, targets=test_targets)

```
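As a quick check that the saved files can be read back, here is a minimal sketch. It uses small stand-in arrays and a hypothetical file name (`Audiobooks_data_demo`) in a temporary directory rather than the real datasets. Note that `np.savez` appends the `.npz` extension and that the arrays are retrieved by the keyword names passed when saving.

```python
import os
import tempfile

import numpy as np

# Hypothetical stand-in data; the real arrays come from the preprocessing above.
inputs = np.arange(12, dtype=float).reshape(4, 3)
targets = np.array([0, 1, 1, 0])

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, 'Audiobooks_data_demo')
    np.savez(path, inputs=inputs, targets=targets)  # writes Audiobooks_data_demo.npz

    # np.load returns a lazy NpzFile; index it by the keyword names used in savez.
    with np.load(path + '.npz') as npz:
        loaded_inputs = npz['inputs'].astype(float)
        loaded_targets = npz['targets'].astype(int)

print(loaded_inputs.shape, loaded_targets.shape)
```

Using the same `inputs` and `targets` keys for all three files means the loading code does not change between train, validation, and test.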

| github_jupyter |

This notebook will cover the assumed knowledge of pandas.

Here are a few questions to check whether you already know the material in this notebook.

1. Does a NumPy array have a single dtype or multiple dtypes?

2. Why is broadcasting useful?

3. How do you slice a DataFrame by row label?

4. How do you select a column of a DataFrame?

5. Is the Index a column in the DataFrame?

If you feel pretty comfortable with those, go ahead and skip this notebook.

[Answers](#Answers) are at the end. We'll meet up at the next notebook.

# Aside: IPython Notebook

- two modes: command and edit

- command -> edit: `Enter`

- edit -> command: `Esc`

- `h` : Keyboard Shortcuts: (from command mode)

- `j` / `k` : navigate cells

- `shift+Enter` executes a cell

Outline:

- [NumPy Foundation](#NumPy-Foundation)

- [Pandas](#Pandas)

- [Data Structures](#Data-Structures)

## NumPy Foundation

pandas is built atop NumPy, historically and in the actual library.

It's helpful to have a good understanding of some NumPyisms. [Speak the vernacular](https://www.youtube.com/watch?v=u2yvNw49AX4).

### ndarray

The core of NumPy is the `ndarray`, an N-dimensional array. These are homogeneously typed, fixed-length data containers.

NumPy also provides many convenient and fast methods implemented on the `ndarray`.

```

from __future__ import print_function

import numpy as np

import pandas as pd

x = np.array([1, 2, 3])

x

x.dtype

y = np.array([[True, False], [False, True]])

y

y.shape

```

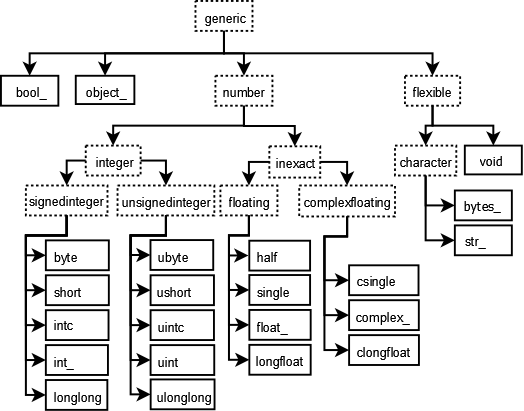

### dtypes

Unlike Python lists, NumPy arrays care about the type of data stored within.

The full list of NumPy dtypes can be found in the [NumPy documentation](http://docs.scipy.org/doc/numpy/user/basics.types.html).

We sacrifice the convenience of mixing bools and ints and floats within an array for much better performance.

However, an unexpected `dtype` change will probably bite you at some point in the future.

The two biggest things to remember are

- Missing values (NaN) cast integer or boolean arrays to floats

- the object dtype is the fallback

You'll want to avoid object dtypes; they're typically slow.
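Here is a small sketch of those two dtype changes using pandas `Series` (the names `ints`, `with_missing`, and `mixed` are made up for illustration). It only covers the integer case of the NaN rule.

```python
import pandas as pd

ints = pd.Series([1, 2, 3], index=['a', 'b', 'c'])

# Reindexing introduces a label with no value, so pandas fills it with NaN
# and the integer data is upcast to float.
with_missing = ints.reindex(['a', 'b', 'c', 'd'])

# Mixing unrelated Python objects forces the generic object dtype.
mixed = pd.Series([1, 'two', 3.0])

print(ints.dtype.kind)          # integer kind
print(with_missing.dtype.kind)  # float kind
print(mixed.dtype)              # object
```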

### Broadcasting

It's super cool and super useful. The one-line explanation is that when doing elementwise operations, things expand to the "correct" shape.

```

# add a scalar to a 1-d array

x = np.arange(5)

print('x: ', x)

print('x+1:', x + 1, end='\n\n')

y = np.random.uniform(size=(2, 5))

print('y: ', y, sep='\n')

print('y+1:', y + 1, sep='\n')

```

Since `x` is shaped `(5,)` and `y` is shaped `(2,5)` we can do operations between them.

```

x * y

```

Without broadcasting we'd have to manually reshape our arrays, which quickly gets annoying.

```

x.reshape(1, 5).repeat(2, axis=0) * y

```
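To see the shape rule a bit more explicitly, here is a short sketch with a row and a column (the arrays `row` and `col` are illustrative, not part of the notebook). Shapes are compared from the right, and a dimension of size 1, or a missing one, is stretched to match.

```python
import numpy as np

row = np.arange(5)             # shape (5,)
col = np.array([[10], [20]])   # shape (2, 1)

# (5,) combined with (2, 1) broadcasts to (2, 5)
result = row + col
print(result.shape)
print(result)

# Incompatible trailing dimensions raise instead of guessing.
try:
    np.arange(3) + np.arange(5)
    broadcast_failed = False
except ValueError as err:
    broadcast_failed = True
    print('cannot broadcast:', err)
```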

# Pandas

We'll breeze through the basics here, and get onto some interesting applications in a bit. I want to provide the *barest* of intuition so things stick down the road.

## Why pandas

NumPy is great. But it lacks a few things that are conducive to doing statistical analysis. By building on top of NumPy, pandas provides

- labeled arrays

- heterogeneous data types within a table

- better missing data handling

- convenient methods

- more data types (Categorical, Datetime)

## Data Structures

This is the typical starting point for any intro to pandas.

We'll follow suit.

### The DataFrame

Here we have the workhorse data structure for pandas.

It's an in-memory table holding your data, and provides a few conveniences over lists of lists or NumPy arrays.

```

import numpy as np

import pandas as pd

# Many ways to construct a DataFrame

# We pass a dict of {column name: column values}

np.random.seed(42)

df = pd.DataFrame({'A': [1, 2, 3], 'B': [True, True, False],

'C': np.random.randn(3)},

index=['a', 'b', 'c']) # also this weird index thing

df

from IPython.display import Image

Image('dataframe.png')

```

### Selecting

Our first improvement over numpy arrays is labeled indexing. We can select subsets by column, row, or both. Column selection uses the regular python `__getitem__` machinery. Pass in a single column label `'A'` or a list of labels `['A', 'C']` to select subsets of the original `DataFrame`.

```

# Single column, reduces to a Series

df['A']

cols = ['A', 'C']

df[cols]

```

For row-wise selection, use the special `.loc` accessor.

```

df.loc[['a', 'b']]

```

When your index labels are ordered, you can use ranges to select rows or columns.

```

df.loc['a':'b']

```

Notice that the slice is *inclusive* on both sides, unlike your typical slicing of a list. Sometimes, you'd rather slice by *position* instead of label. `.iloc` has you covered:

```

df.iloc[0:2]

```

This follows the usual python slicing rules: closed on the left, open on the right.

As I mentioned, you can slice both rows and columns. Use `.loc` for label or `.iloc` for position indexing.

```

df.loc['a', 'B']

```

Pandas, like NumPy, will reduce dimensions when possible. Select a single column and you get back a `Series` (see below). Select a single row and single column, you get a scalar.

You can get pretty fancy:

```

df.loc['a':'b', ['A', 'C']]

```

#### Summary

- Use `[]` for selecting columns

- Use `.loc[row_labels, column_labels]` for label-based indexing

- Use `.iloc[row_positions, column_positions]` for positional indexing

I've left out boolean and hierarchical indexing, which we'll see later.
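As a compact recap of the label versus position distinction, here is a sketch on a small throwaway frame (`demo` is a made-up name so it doesn't clobber `df`).

```python
import pandas as pd

demo = pd.DataFrame({'A': [1, 2, 3], 'B': [True, True, False]},
                    index=['a', 'b', 'c'])

by_label = demo.loc['a':'b']     # label slice: includes both 'a' and 'b'
by_position = demo.iloc[0:1]     # positional slice: the stop is excluded
scalar = demo.loc['c', 'A']      # one row label + one column label -> scalar

print(len(by_label), len(by_position), scalar)  # 2 1 3
```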

## Series

You've already seen some `Series` up above. It's the 1-dimensional analog of the DataFrame. Each column in a `DataFrame` is in some sense a `Series`. You can select a `Series` from a DataFrame in a few ways:

```

# __getitem__ like before

df['A']

# .loc, like before

df.loc[:, 'A']

# using `.` attribute lookup

df.A

```

You'll have to be careful with the last one. It won't work if your column name isn't a valid Python identifier (say it has a space) or if it conflicts with one of the (many) methods on `DataFrame`. The `.` accessor is extremely convenient for interactive use though.

You should never *assign* a column with `.` e.g. don't do

```python

# bad

df.A = [1, 2, 3]

```

It's unclear whether you're attaching the list `[1, 2, 3]` as an attribute of `df`, or whether you want it as a column. It's better to just say

```python

df['A'] = [1, 2, 3]

# or

df.loc[:, 'A'] = [1, 2, 3]

```
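To see why the ambiguity matters, here is a small sketch with a column name that doesn't exist yet (`demo` and `B` are made up for illustration). In the pandas versions I'm aware of, attribute assignment with a new name silently creates a plain Python attribute and only emits a warning.

```python
import warnings

import pandas as pd

demo = pd.DataFrame({'A': [1, 2, 3]})

with warnings.catch_warnings():
    warnings.simplefilter('ignore')   # pandas warns about exactly this mistake
    demo.B = [4, 5, 6]                # sets a plain attribute, not a column

columns_after_attribute = list(demo.columns)
print(columns_after_attribute)        # ['A']

demo['B'] = [4, 5, 6]                 # explicit column assignment
columns_after_getitem = list(demo.columns)
print(columns_after_getitem)          # ['A', 'B']
```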

`Series` share many of the same methods as `DataFrame`s.

## Index

`Index`es are something of a peculiarity to pandas.

First off, they are not the kind of indexes you'll find in SQL, which are used to help the engine speed up certain queries.

In pandas, `Index`es are about labels. This helps with selection (like we did above) and automatic alignment when performing operations between two `DataFrame`s or `Series`.

R does have row labels, but they're nowhere near as powerful (or complicated) as in pandas. You can access the index of a `DataFrame` or `Series` with the `.index` attribute.

```

df.index

```
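The automatic alignment mentioned above is easiest to see with two `Series` whose labels are in a different order and only partly overlap. This is a minimal sketch with made-up data.

```python
import pandas as pd

s1 = pd.Series([1, 2, 3], index=['a', 'b', 'c'])
s2 = pd.Series([10, 20, 30], index=['c', 'b', 'd'])

# Values are matched by label, not by position; labels present on only
# one side produce NaN in the result.
total = s1 + s2
print(total)
```

Labels `b` and `c` line up regardless of order, while `a` and `d` have no partner and come back as missing.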

There are special kinds of `Index`es that you'll come across. Some of these are

- `MultiIndex` for multidimensional (Hierarchical) labels

- `DatetimeIndex` for datetimes

- `Float64Index` for floats

- `CategoricalIndex` for, you guessed it, `Categorical`s

We'll talk *a lot* more about indexes. They're a complex topic and can introduce headaches.

<blockquote class="twitter-tweet" lang="en"><p lang="en" dir="ltr"><a href="https://twitter.com/gjreda">@gjreda</a> <a href="https://twitter.com/treycausey">@treycausey</a> in some cases row indexes are the best thing since sliced bread, in others they simply get in the way. Hard problem</p>— Wes McKinney (@wesmckinn) <a href="https://twitter.com/wesmckinn/status/547177248768659457">December 22, 2014</a></blockquote>

Pandas, for better or for worse, does usually provide ways around row indexes being obstacles. The problem is knowing *when* they are just getting in the way, which mostly comes by experience. Sorry.

# Answers

1. Does a NumPy array have a single dtype or multiple dtypes?

- NumPy arrays are homogeneous: they only have a single dtype (unlike DataFrames).

You can have an array that holds mixed types by requesting the `object` dtype, e.g. `np.array(['a', 1], dtype=object)`,

but you probably want to avoid that. (Without `dtype=object`, NumPy coerces both elements to strings.)

2. Why is broadcasting useful?

- It lets you perform operations between arrays that are compatible, but not necessarily identical,

in shape. This makes your code cleaner.

3. How do you slice a DataFrame by row label?

- Use `.loc[label]` (or `.loc[start:stop]` for a label slice). For position-based selection use `.iloc[integer]`.

4. How do you select a column of a DataFrame?

- Standard `__getitem__`: `df[column_name]`

5. Is the Index a column in the DataFrame?

- No. It isn't included in any operations (`mean`, etc). It can be inserted as a regular

column with `df.reset_index()`.
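A quick sketch of answer 5, using a throwaway frame with a named index (`demo` and `key` are made-up names):

```python
import pandas as pd

demo = pd.DataFrame({'A': [1, 2, 3]},
                    index=pd.Index(['a', 'b', 'c'], name='key'))

means = demo.mean()          # only column A is averaged; the index is ignored
flat = demo.reset_index()    # 'key' becomes an ordinary column

print(list(means.index))     # ['A']
print(list(flat.columns))    # ['key', 'A']
```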

| github_jupyter |

```

import sympy

import numpy as np

import matplotlib.pyplot as plt

from matplotlib import cm

from sympy import sin, cos, pi, Function

from sympy import Symbol, symbols, Matrix, Transpose, init_session, Array, tensorproduct

from sympy.physics.vector import ReferenceFrame, outer, dynamicsymbols, Point

```

## Defining the functions for h and psi

```

# Defining h

def h(t, L, w, e_mais, e_cruzado, A):
    h_mais = A*cos(w*t-w*L)
    h_cruzado = A*sin(w*t-w*L)
    return h_mais*e_mais + h_cruzado*e_cruzado

```

\begin{equation}

h = h_+ e_+ + h_\times e_\times

\end{equation}

```

# PSI(t) function

def PSIj(j, k, L, N, A, w, T, ep, ec):
    H = h(T, L[j-1], w, ep, ec, A)
    phij = N[j-1].dot(H.dot(N[j-1]))/2
    return phij/(1-(k.dot(N[j-1]))**2)  # expand here

```

\begin{equation}

\Psi (t) = \frac{n^i h_{ij} n^j}{2(1 - (\hat{k}\cdot \hat{n})^2)}

\end{equation}

## Symbols

```

phi, theta, t, w, L, A , psi, sigma= symbols('ϕ θ t ω L A ψ σ')

```

## Coordinate systems and vectors using SymPy

```

DetFrame = ReferenceFrame("Det")

WaveFrame = ReferenceFrame("Wave")

WaveFrame.orient(DetFrame, "body", (phi, theta, psi), 'zxz')

vx = WaveFrame.x

vy = WaveFrame.y

vz = WaveFrame.z

dbaseii = outer(vx, vx)

dbaseij = outer(vx, vy)

dbaseik = outer(vx, vz)

dbaseji = outer(vy, vx)

dbasejj = outer(vy, vy)

dbasejk = outer(vy, vz)

dbaseki = outer(vz, vx)

dbasekj = outer(vz, vy)

dbasekk = outer(vz, vz)

e_plus = dbaseii - dbasejj

e_cross = dbaseij + dbaseji

# n in the detector reference frame

n2 = cos(sigma)*DetFrame.x + sin(sigma)*DetFrame.y

n3 = cos(sigma)*DetFrame.x - sin(sigma)*DetFrame.y

k = WaveFrame.z

```
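As a numerical sanity check of the polarization tensors, here is a minimal NumPy sketch. It assumes an unrotated wave frame (all Euler angles zero), so the basis vectors are just the Cartesian axes. It mirrors the dyadic construction above with `np.outer`, not the SymPy objects themselves.

```python
import numpy as np

x_hat = np.array([1.0, 0.0, 0.0])
y_hat = np.array([0.0, 1.0, 0.0])
k_hat = np.array([0.0, 0.0, 1.0])   # propagation direction

# Same construction as e_plus and e_cross above, with plain matrices.
e_plus = np.outer(x_hat, x_hat) - np.outer(y_hat, y_hat)
e_cross = np.outer(x_hat, y_hat) + np.outer(y_hat, x_hat)

# Both tensors should be traceless and transverse to the propagation direction.
print(np.trace(e_plus), np.trace(e_cross))
print(e_plus @ k_hat, e_cross @ k_hat)

# Full contractions: the two polarizations are orthogonal, and each has norm squared 2.
print(np.sum(e_plus * e_cross), np.sum(e_plus * e_plus), np.sum(e_cross * e_cross))
```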

## Defining the satellite positions

```

O = Point('O') #origin

O.set_vel(DetFrame, 0)

# setting p1, p2, p3

p1 = Point(r'P_1')

p2 = Point(r'P_2')

p3 = Point(r'P_3')

#r1, r2, r3, gamma1, gamma2, gamma3 = symbols(r'r_1 r_2 r_3 \gamma_1 \gamma_2 \gamma_3') #dist from org & phase angle

l = Symbol('l')

p1.set_pos(O, l*cos(0 )*DetFrame.x + l*sin(0 )*DetFrame.y + 0*DetFrame.z)

p2.set_pos(O, l*cos(2*pi/3)*DetFrame.x + l*sin(2*pi/3)*DetFrame.y + 0*DetFrame.z)

p3.set_pos(O, l*cos(4*pi/3)*DetFrame.x + l*sin(4*pi/3)*DetFrame.y + 0*DetFrame.z)

P1 = p1.pos_from(O)

P2 = p2.pos_from(O)

P3 = p3.pos_from(O)

P = [P1, P2, P3]

#setting n's, according to KTV notation

n1 = p2.pos_from(p3)

n2 = p3.pos_from(p1)

n3 = p1.pos_from(p2)

L1 = n1.magnitude()

L2 = n2.magnitude()

L3 = n3.magnitude()

N = [n1, n2, n3]

L = [L1, L2, L3]

```

## Start of the interferometer calculation

```

PARAMETERS = (k,L,N,P,A,w,t, e_plus, e_cross)

def delay(func, D):
    return func.subs(w*t, w*t - L[D-1])

def ygw(i, j, k, L, N, P, A, w, T, ep, ec):
    m = abs(6-i-j)-1
    return (1 + k.dot(N[m])) * (
        PSIj(m, k, L, N, A, w, T + k.dot(P[i-1]) - L[m], ep, ec)
        - PSIj(m, k, L, N, A, w, T + k.dot(P[j-1]), ep, ec)
    )  # T + k.dot(P[i]) - L[m], T + k.dot(P[j])

def ygwD(i, j, k, L, N, P, A, w, T, ep, ec, D):  # ygw with delay
    # delay = L[D]
    return delay(ygw(i, j, k, L, N, P, A, w, T, ep, ec), D)

def yij(i, j, parms=PARAMETERS):
    k, L, N, P, A, w, T, ep, ec = parms
    return ygw(i, j, k, L, N, P, A, w, T, ep, ec)

def yijD(i, j, D):
    return delay(yij(i, j), D)

def yijDD(i, j, D, E):
    return delay(delay(yij(i, j), D), E)

f = A*cos(w*t)

f

delay(f, 2)

X = ((yij(3,1) + yijD(1,3,2))
     + delay(delay((yij(2,1) + yijD(1,2,3)),2),2)
     - (yij(2,1) + yijD(1,2,3))
     - delay(delay(yij(3,1)+yijD(1,3,2),2),2)
     - delay(delay(delay(delay(
         (yij(3,1) + yijD(1,3,2))
         + delay(delay((yij(2,1) + yijD(1,2,3)),2),2)
         - (yij(2,1) + yijD(1,2,3))
         - delay(delay(yij(3,1)+yijD(1,3,2),2),2)
     ,2),2),3),3))

#X = sympy.trigsimp(X)

y1 = yijD(3,1,2) - yij(2,3)

# computing M

X=sympy.trigsimp(y1)

X=sympy.expand(X)

X

#M=sympy.trigsimp(M)

F_mais=X.coeff(cos(w*t))

F_cruzado=X.coeff(sin(w*t))

F_cruzado

f_mais = sympy.lambdify([ phi, theta, w, A, l], F_mais)

f_cruzado = sympy.lambdify([phi, theta, w, A, l], F_cruzado)

# M_eval = sympy.lambdify([phi, theta, w, A, l], M)  # disabled: M is never defined in this notebook

f_mais

#defining parameters

phi_value, theta_value = np.mgrid[-np.pi:np.pi:100j, 0:np.pi:100j]

arm=5e9/3e8 # seconds

f=10**-3 #Hz

freq=2*np.pi*f

a=1

# assigning the values above to the functions

# [phi , theta , w , A, r1, r2, r3, gamma1, gamma2, gamma3]

f_mais_data = f_mais((phi_value), (theta_value), freq, a, arm)

f_cruzado_data = f_cruzado((phi_value),(theta_value), freq, a, arm)

f_mais_data

# plot phi, theta and F

fig = plt.figure()

ax = fig.add_subplot(projection='3d')  # fig.gca(projection=...) no longer works in recent Matplotlib

ax.plot_surface(phi_value, theta_value,(f_mais_data),color='b')

#ax.plot_surface(phi_value, theta_value,(f_cruzado_data),color='g')

#ax.plot_surface(phi_value, theta_value,(f_cruzado_data-f_mais_data),color='g')

ax.set_xlabel('phi')

ax.set_ylabel('theta')

ax.set_zlabel('F+')

plt.show()

#plot x,y,z

fig = plt.figure()

ax = fig.add_subplot(projection='3d')  # fig.gca(projection=...) no longer works in recent Matplotlib

x_mais=(f_mais_data)*np.sin(theta_value)*np.sin(phi_value)

y_mais=-(f_mais_data)*np.sin(theta_value)*np.cos(phi_value)

z_mais=(f_mais_data)*np.cos(theta_value)

x_cruzado=(f_cruzado_data)*np.sin(theta_value)*np.sin(phi_value)

y_cruzado=-(f_cruzado_data)*np.sin(theta_value)*np.cos(phi_value)

z_cruzado=(f_cruzado_data)*np.cos(theta_value)

ax.plot_surface(x_mais,y_mais,z_mais,color='b')

#ax.plot_surface(x_cruzado,y_cruzado,z_cruzado,color='g')

#ax.plot_surface((x_cruzado-x_mais),(y_cruzado-y_mais),(z_cruzado-z_mais),color='g', label = 'F_cruzado')

ax.set_xlabel('x')

ax.set_ylabel('y')

ax.set_zlabel('z')

plt.show()

```
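The delay operator used in the calculation above can be illustrated on its own. This is a minimal sketch, not the notebook's `delay`: it substitutes the time variable `t` directly (with c = 1) instead of replacing the product `w*t`, and the names `delay_in_time` and `L_arm` are made up for illustration.

```python
import sympy
from sympy import cos, symbols

t, w, A, L_arm = symbols('t omega A L', real=True)

def delay_in_time(expr, arm_length):
    """Return expr evaluated at the retarded time t - arm_length (units with c = 1)."""
    return expr.subs(t, t - arm_length)

signal = A * cos(w * t)
delayed = delay_in_time(signal, L_arm)        # A*cos(omega*(t - L))
twice = delay_in_time(delayed, L_arm)         # delays compose: t - 2*L

print(delayed)
print(twice)

# Numerical spot check at t=2, omega=3, A=1, L=0.5
values = {t: 2, w: 3, A: 1, L_arm: 0.5}
print(float(delayed.subs(values)), float(twice.subs(values)))
```

Applying the operator twice shifts the argument by two arm lengths, which is the behaviour the nested `delay(delay(...))` calls in the `X` combination rely on.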

| github_jupyter |

## LND REST API example

### scenario

* multi-hop payment (Alice -> Bob -> Charlie)

### setup

```

# load libraries

import json

from threading import Thread

from base64 import b64decode

from time import sleep

from client import LndClient, BtcClient

from util import p, dump, generate_blocks

import requests, codecs

from configs import *

bitcoin = BtcClient(RPC_USER, RPC_PASS, BITCOIN_IP, RPC_PORT)

# initialize mainchain

bitcoin.generate(101)

p('block height = {}'.format(bitcoin.getblockcount()))

# node

alice = LndClient("alice", LND_IP, LND_REST_PORT)

bob = LndClient("bob", LND_IP_BOB, LND_REST_PORT)

charlie = LndClient("charlie", LND_IP_CHARLIE, LND_REST_PORT)

```

### 1. fund Alice, Bob

* Alice: 0.09 btc

* Bob: 0.08 btc

```

p('[wallet balance before funding]')

p(" alice = {}".format(alice.get('/balance/blockchain')))

p(" bob = {}".format( bob.get('/balance/blockchain')))

addr_a = alice.get('/newaddress', {'type': 'np2wkh'})['address']

addr_b = bob.get('/newaddress', {'type': 'np2wkh'})['address']

bitcoin.sendtoaddress(addr_a, 0.09)

bitcoin.sendtoaddress(addr_b, 0.08)

bitcoin.generate(6)

p('[wallet balance after funding]')

p(" alice = {}".format(alice.get('/balance/blockchain')))

p(" bob = {}".format( bob.get('/balance/blockchain')))

```

### 2. connect nodes

* Alice -> Bob

* Bob -> Charlie

```

# Alice -> Bob

pubkey_b = bob.get('/getinfo')['identity_pubkey']

host_b = f'{LND_IP_BOB}:9735'

alice.post('/peers', { 'addr': { 'pubkey': pubkey_b, 'host': host_b }, 'perm': True })

# Bob -> Charlie

pubkey_c = charlie.get('/getinfo')['identity_pubkey']

host_c = f'{LND_IP_CHARLIE}:9735'

bob.post('/peers', { 'addr': { 'pubkey': pubkey_c, 'host': host_c }, 'perm': True })

p('[identity pubkey]')

p(" bob = {}".format(pubkey_b))

p(" charlie = {}".format(pubkey_c))

p('[peer]')

p(' alice <-> ', end=''); dump( alice.get('/peers'))

p(' bob <-> ', end=''); dump( bob.get('/peers'))

p(' charlie <-> ', end=''); dump(charlie.get('/peers'))

```

### 3. open the channel

```

# Alice to Bob

point_a = alice.post('/channels', {

"node_pubkey_string": pubkey_b, "local_funding_amount": "7000000", "push_sat": "0"

})

# Bob to Charlie

point_b = bob.post('/channels', {

"node_pubkey_string": pubkey_c, "local_funding_amount": "6000000", "push_sat": "0"

})

# open the channel

sleep(2)

bitcoin.generate(6)

# check the channel state

p('[channels: alice]'); dump(alice.get('/channels'))

p('[channels: bob]' ); dump( bob.get('/channels'))

p('[channel: Alice <-> Bob]')

funding_tx_id_a = b64decode(point_a['funding_txid_bytes'].encode())[::-1].hex()

output_index_a = point_a['output_index']

p(' funding tx txid = {}'.format(funding_tx_id_a))

p(' funding tx vout n = {}'.format(output_index_a))

p('[channel: Bob <-> Charlie]')

funding_tx_id_b = b64decode(point_b['funding_txid_bytes'].encode())[::-1].hex()

output_index_b = point_b['output_index']

p(' funding tx txid = {}'.format(funding_tx_id_b))

p(' funding tx vout n = {}'.format(output_index_b))

```
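The txid conversion used twice above can be pulled into a small helper. This is a sketch with a hypothetical function name (`txid_from_funding_bytes`) and a made-up 32-byte value. The REST response carries the txid as base64-encoded bytes in internal byte order, so it is decoded, reversed, and hex-encoded to get the txid as block explorers show it.

```python
from base64 import b64decode, b64encode

def txid_from_funding_bytes(funding_txid_b64):
    """Convert base64 txid bytes (internal byte order) to the usual hex txid string."""
    return b64decode(funding_txid_b64)[::-1].hex()

# Hypothetical 32-byte value standing in for a real funding_txid_bytes field.
raw = bytes(range(32))
encoded = b64encode(raw).decode()

txid = txid_from_funding_bytes(encoded)
print(txid)
```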

### 4. create invoice

* Charlie charges Alice 40,000 satoshi

```

# add an invoice to the invoice database

invoice = charlie.post('/invoices', { "value": "40000" })

# check the invoice

invoice_info = charlie.get('/invoice/' + b64decode(invoice['r_hash'].encode()).hex())

p('[invoice]'); dump(invoice_info)

# check the payment request

payreq = charlie.get('/payreq/' + invoice['payment_request'])

p('[payment request]'); dump(payreq)

```

### 5. send the payment

* Alice pays 40,000 satoshi to Charlie

* If you have the error "unable to find a path to destination", please wait a little while and then try again.

```

# check the channel balance

p('[channel balance before paying]')

p(' alice = {}'.format( alice.get('/balance/channels')))

p(' charlie = {}'.format(charlie.get('/balance/channels')))

# send the payment

payment = alice.post('/channels/transactions', { 'payment_request': invoice['payment_request'] })

p('[payment]'); dump(payment)

# check the payment

# p('[payment]'); dump(alice.get('/payments'))

# wait

sleep(2)

# check the channel balance

p('[channel balance after paying]')

p(' alice = {}'.format( alice.get('/balance/channels')))

p(' charlie = {}'.format(charlie.get('/balance/channels')))

```
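The "wait a little while and then try again" advice for the path-finding error can be automated with a small retry loop. This is only a sketch: `retry` is a hypothetical helper, and the `payment_error` key is an assumption about the shape of the response returned by the client wrapper, so adjust the success check to whatever your client actually returns.

```python
import time

def retry(action, is_ok, attempts=5, wait_seconds=2.0):
    """Call action() until is_ok(result) is true or attempts run out; return the last result."""
    result = None
    for attempt in range(attempts):
        result = action()
        if is_ok(result):
            break
        if attempt < attempts - 1:
            time.sleep(wait_seconds)
    return result

# Stand-in for a payment call that only succeeds on the third try.
responses = iter([
    {'payment_error': 'unable to find a path to destination'},
    {'payment_error': 'unable to find a path to destination'},
    {'payment_error': ''},
])

final = retry(lambda: next(responses),
              lambda r: not r.get('payment_error'),
              wait_seconds=0.0)
print(final)
```

With the notebook's client, `action` would wrap the `alice.post('/channels/transactions', ...)` call, assuming it returns a dict.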

### 6. close the channel

```

# check the balance

p('[channel balance]')

p(' alice = {}'.format( alice.get('/balance/channels')))

p(' bob = {}'.format( bob.get('/balance/channels')))

p(' charlie = {}'.format(charlie.get('/balance/channels')))

p('[wallet balance]')

p(' alice = ', end=''); dump( alice.get('/balance/blockchain')['confirmed_balance'])

p(' bob = ', end=''); dump( bob.get('/balance/blockchain')['confirmed_balance'])

p(' charlie = ', end=''); dump(charlie.get('/balance/blockchain')['confirmed_balance'])

p('[channel: Alice <-> Bob]')

# mine mainchain 1 block after 3 sec

Thread(target=generate_blocks, args=(bitcoin, 1, 3)).start()

# check the channel state before closing

p(' number of channels : {}'.format(len(alice.get('/channels')['channels'])))

# close the channel

res = alice.delete('/channels/' + funding_tx_id_a + '/' + str(output_index_a)).text.split("\n")[1]

closing_txid_a = json.loads(res)['result']['chan_close']['closing_txid']

p(' closing_txid = {}'.format(closing_txid_a))

sleep(5)

# check the channel state after closing

p(' number of channels : {}'.format(len(alice.get('/channels')['channels'])))

p('[channel: Bob <-> Charlie]')

# mine mainchain 1 block after 3 sec

Thread(target=generate_blocks, args=(bitcoin, 1, 3)).start()

# close the channel

res = bob.delete('/channels/' + funding_tx_id_b + '/' + str(output_index_b)).text.split("\n")[1]

closing_txid_b = json.loads(res)['result']['chan_close']['closing_txid']

p(' closing_txid = {}'.format(closing_txid_b))

sleep(5)

# check the balance

p('[channel balance]')

p(' alice = {}'.format( alice.get('/balance/channels')))

p(' bob = {}'.format( bob.get('/balance/channels')))

p(' charlie = {}'.format(charlie.get('/balance/channels')))

p('[wallet balance]')

p(' alice = ', end=''); dump( alice.get('/balance/blockchain')['confirmed_balance'])

p(' bob = ', end=''); dump( bob.get('/balance/blockchain')['confirmed_balance'])

p(' charlie = ', end=''); dump(charlie.get('/balance/blockchain')['confirmed_balance'])

```

| github_jupyter |

# Table Visualization

This section demonstrates visualization of tabular data using the [Styler][styler] class. For information on visualization with charting please see [Chart Visualization][viz]. This document is written as a Jupyter Notebook, and can be viewed or downloaded [here][download].

[styler]: ../reference/api/pandas.io.formats.style.Styler.rst

[viz]: visualization.rst

[download]: https://nbviewer.ipython.org/github/pandas-dev/pandas/blob/master/doc/source/user_guide/style.ipynb

## Styler Object and HTML

Styling should be performed after the data in a DataFrame has been processed. The [Styler][styler] creates an HTML `<table>` and leverages CSS styling language to manipulate many parameters including colors, fonts, borders, background, etc. See [here][w3schools] for more information on styling HTML tables. This allows a lot of flexibility out of the box, and even enables web developers to integrate DataFrames into their existing user interface designs.

The `DataFrame.style` attribute is a property that returns a [Styler][styler] object. It has a `_repr_html_` method defined on it, so it is rendered automatically in Jupyter Notebook.

[styler]: ../reference/api/pandas.io.formats.style.Styler.rst

[w3schools]: https://www.w3schools.com/html/html_tables.asp

```

import matplotlib.pyplot

# We have this here to trigger matplotlib's font cache stuff.

# This cell is hidden from the output

import pandas as pd

import numpy as np

df = pd.DataFrame([[38.0, 2.0, 18.0, 22.0, 21, np.nan],[19, 439, 6, 452, 226,232]],

index=pd.Index(['Tumour (Positive)', 'Non-Tumour (Negative)'], name='Actual Label:'),

columns=pd.MultiIndex.from_product([['Decision Tree', 'Regression', 'Random'],['Tumour', 'Non-Tumour']], names=['Model:', 'Predicted:']))

df.style

```

The above output looks very similar to the standard DataFrame HTML representation. But the HTML here has already attached some CSS classes to each cell, even if we haven't yet created any styles. We can view these by calling the [.render()][render] method, which returns the raw HTML as a string, which is useful for further processing or adding to a file - read on in [More about CSS and HTML](#More-About-CSS-and-HTML). Below we will show how we can use these to format the DataFrame to be more communicative. For example, here is how we can build `s`:

[render]: ../reference/api/pandas.io.formats.style.Styler.render.rst

```

# Hidden cell to just create the below example: code is covered throughout the guide.

s = df.style\

.hide_columns([('Random', 'Tumour'), ('Random', 'Non-Tumour')])\

.format('{:.0f}')\

.set_table_styles([{

'selector': '',

'props': 'border-collapse: separate;'

},{

'selector': 'caption',

'props': 'caption-side: bottom; font-size:1.3em;'

},{

'selector': '.index_name',

'props': 'font-style: italic; color: darkgrey; font-weight:normal;'

},{

'selector': 'th:not(.index_name)',

'props': 'background-color: #000066; color: white;'

},{

'selector': 'th.col_heading',

'props': 'text-align: center;'

},{

'selector': 'th.col_heading.level0',

'props': 'font-size: 1.5em;'

},{

'selector': 'th.col2',

'props': 'border-left: 1px solid white;'

},{

'selector': '.col2',

'props': 'border-left: 1px solid #000066;'

},{

'selector': 'td',

'props': 'text-align: center; font-weight:bold;'

},{

'selector': '.true',

'props': 'background-color: #e6ffe6;'

},{

'selector': '.false',

'props': 'background-color: #ffe6e6;'

},{

'selector': '.border-red',

'props': 'border: 2px dashed red;'

},{

'selector': '.border-green',

'props': 'border: 2px dashed green;'

},{

'selector': 'td:hover',

'props': 'background-color: #ffffb3;'

}])\

.set_td_classes(pd.DataFrame([['true border-green', 'false', 'true', 'false border-red', '', ''],

['false', 'true', 'false', 'true', '', '']],

index=df.index, columns=df.columns))\

.set_caption("Confusion matrix for multiple cancer prediction models.")\

.set_tooltips(pd.DataFrame([['This model has a very strong true positive rate', '', '', "This model's total number of false negatives is too high", '', ''],

['', '', '', '', '', '']],

index=df.index, columns=df.columns),

css_class='pd-tt', props=

'visibility: hidden; position: absolute; z-index: 1; border: 1px solid #000066;'

'background-color: white; color: #000066; font-size: 0.8em;'

'transform: translate(0px, -24px); padding: 0.6em; border-radius: 0.5em;')

s

```

## Formatting the Display

### Formatting Values

Before adding styles it is useful to show that the [Styler][styler] can distinguish the *display* value from the *actual* value. To control the display value, the text is printed in each cell, and we can use the [.format()][formatfunc] method to manipulate this according to a [format spec string][format] or a callable that takes a single value and returns a string. It is possible to define this for the whole table or for individual columns.

Additionally, the format function has a **precision** argument to specifically help formatting floats, as well as **decimal** and **thousands** separators to support other locales, an **na_rep** argument to display missing data, and an **escape** argument to help displaying safe-HTML or safe-LaTeX. The default formatter is configured to adopt pandas' regular `display.precision` option, controllable using `with pd.option_context('display.precision', 2):`

Here is an example of using the multiple options to control the formatting generally and with specific column formatters.

[styler]: ../reference/api/pandas.io.formats.style.Styler.rst

[format]: https://docs.python.org/3/library/string.html#format-specification-mini-language

[formatfunc]: ../reference/api/pandas.io.formats.style.Styler.format.rst

```

df.style.format(precision=0, na_rep='MISSING', thousands=" ",

formatter={('Decision Tree', 'Tumour'): "{:.2f}",

('Regression', 'Non-Tumour'): lambda x: "$ {:,.1f}".format(x*-1e6)

})

```

### Hiding Data

The index and column headers can be completely hidden, as well as subselecting rows or columns that one wishes to exclude. Both these options are performed using the same methods.

The index can be hidden from rendering by calling [.hide_index()][hideidx] without any arguments, which might be useful if your index is integer based. Similarly column headers can be hidden by calling [.hide_columns()][hidecols] without any arguments.

Specific rows or columns can be hidden from rendering by calling the same [.hide_index()][hideidx] or [.hide_columns()][hidecols] methods and passing in a row/column label, a list-like or a slice of row/column labels for the ``subset`` argument.

Hiding does not change the integer arrangement of CSS classes, e.g. hiding the first two columns of a DataFrame means the column class indexing will start at `col2`, since `col0` and `col1` are simply ignored.

We can update our `Styler` object to hide some data and format the values.

[hideidx]: ../reference/api/pandas.io.formats.style.Styler.hide_index.rst

[hidecols]: ../reference/api/pandas.io.formats.style.Styler.hide_columns.rst

```

s = df.style.format('{:.0f}').hide_columns([('Random', 'Tumour'), ('Random', 'Non-Tumour')])

s

# Hidden cell to avoid CSS clashes and later code overriding previous formatting

s.set_uuid('after_hide')

```

## Methods to Add Styles

There are **3 primary methods of adding custom CSS styles** to [Styler][styler]:

- Using [.set_table_styles()][table] to control broader areas of the table with specified internal CSS. Although table styles allow the flexibility to add CSS selectors and properties controlling all individual parts of the table, they are unwieldy for individual cell specifications. Also, note that table styles cannot be exported to Excel.

- Using [.set_td_classes()][td_class] to directly link either external CSS classes to your data cells or link the internal CSS classes created by [.set_table_styles()][table]. See [here](#Setting-Classes-and-Linking-to-External-CSS). These cannot be used on column header rows or indexes, and also won't export to Excel.

- Using the [.apply()][apply] and [.applymap()][applymap] functions to add direct internal CSS to specific data cells. See [here](#Styler-Functions). These cannot be used on column header rows or indexes, but only these methods add styles that will export to Excel. These methods work in a similar way to [DataFrame.apply()][dfapply] and [DataFrame.applymap()][dfapplymap].

[table]: ../reference/api/pandas.io.formats.style.Styler.set_table_styles.rst

[styler]: ../reference/api/pandas.io.formats.style.Styler.rst

[td_class]: ../reference/api/pandas.io.formats.style.Styler.set_td_classes.rst

[apply]: ../reference/api/pandas.io.formats.style.Styler.apply.rst

[applymap]: ../reference/api/pandas.io.formats.style.Styler.applymap.rst

[dfapply]: ../reference/api/pandas.DataFrame.apply.rst

[dfapplymap]: ../reference/api/pandas.DataFrame.applymap.rst

## Table Styles

Table styles are flexible enough to control all individual parts of the table, including column headers and indexes.

However, they can be unwieldy to type for individual data cells or for any kind of conditional formatting, so we recommend that table styles are used for broad styling, such as entire rows or columns at a time.

Table styles are also used to control features which can apply to the whole table at once such as creating a generic hover functionality. The `:hover` pseudo-selector, as well as other pseudo-selectors, can only be used this way.

To replicate the normal format of CSS selectors and properties (attribute value pairs), e.g.

```

tr:hover {

background-color: #ffff99;

}

```

the necessary format to pass styles to [.set_table_styles()][table] is as a list of dicts, each with a CSS-selector tag and CSS-properties. Properties can either be a list of 2-tuples, or a regular CSS-string, for example:

[table]: ../reference/api/pandas.io.formats.style.Styler.set_table_styles.rst

```

cell_hover = { # for row hover use <tr> instead of <td>

'selector': 'td:hover',

'props': [('background-color', '#ffffb3')]

}

index_names = {

'selector': '.index_name',

'props': 'font-style: italic; color: darkgrey; font-weight:normal;'

}

headers = {

'selector': 'th:not(.index_name)',

'props': 'background-color: #000066; color: white;'

}

s.set_table_styles([cell_hover, index_names, headers])

# Hidden cell to avoid CSS clashes and later code overriding previous formatting

s.set_uuid('after_tab_styles1')

```

Next we just add a couple more styling artifacts targeting specific parts of the table. Be careful here, since we are *chaining methods* we need to explicitly instruct the method **not to** ``overwrite`` the existing styles.

```

s.set_table_styles([

{'selector': 'th.col_heading', 'props': 'text-align: center;'},

{'selector': 'th.col_heading.level0', 'props': 'font-size: 1.5em;'},

{'selector': 'td', 'props': 'text-align: center; font-weight: bold;'},

], overwrite=False)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting

s.set_uuid('after_tab_styles2')

```

As a convenience method (*since version 1.2.0*) we can also pass a **dict** to [.set_table_styles()][table] which contains row or column keys. Behind the scenes Styler just indexes the keys and adds relevant `.col<m>` or `.row<n>` classes as necessary to the given CSS selectors.

[table]: ../reference/api/pandas.io.formats.style.Styler.set_table_styles.rst

```

s.set_table_styles({
    ('Regression', 'Tumour'): [{'selector': 'th', 'props': 'border-left: 1px solid white'},
                               {'selector': 'td', 'props': 'border-left: 1px solid #000066'}]
}, overwrite=False, axis=0)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s.set_uuid('xyz01')

```

## Setting Classes and Linking to External CSS

If you have designed a website then it is likely you will already have an external CSS file that controls the styling of table and cell objects within it. You may want to use these native files rather than duplicate all the CSS in python (and duplicate any maintenance work).

### Table Attributes

It is very easy to add a `class` to the main `<table>` using [.set_table_attributes()][tableatt]. This method can also attach inline styles - read more in [CSS Hierarchies](#CSS-Hierarchies).

[tableatt]: ../reference/api/pandas.io.formats.style.Styler.set_table_attributes.rst

```

# Note: .render() was deprecated in pandas 1.4 in favour of .to_html()
out = s.set_table_attributes('class="my-table-cls"').render()
print(out[out.find('<table'):][:109])

```

### Data Cell CSS Classes

*New in version 1.2.0*

The [.set_td_classes()][tdclass] method accepts a DataFrame whose index and columns match those of the underlying [Styler][styler]'s DataFrame. That DataFrame contains strings naming the CSS classes to add to individual data cells: the `<td>` elements of the `<table>`. Rather than use external CSS, we will create our classes internally and add them to the table styles. We will save adding the borders until the [section on tooltips](#Tooltips).

[tdclass]: ../reference/api/pandas.io.formats.style.Styler.set_td_classes.rst

[styler]: ../reference/api/pandas.io.formats.style.Styler.rst

```

s.set_table_styles([  # create internal CSS classes
    {'selector': '.true', 'props': 'background-color: #e6ffe6;'},
    {'selector': '.false', 'props': 'background-color: #ffe6e6;'},
], overwrite=False)
cell_color = pd.DataFrame([['true ', 'false ', 'true ', 'false '],
                           ['false ', 'true ', 'false ', 'true ']],
                          index=df.index,
                          columns=df.columns[:4])
s.set_td_classes(cell_color)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s.set_uuid('after_classes')

```

## Styler Functions

Use the following methods to pass your style functions. Both methods take a function (and some other keyword arguments) and apply it to the DataFrame in a certain way, rendering CSS styles.

- [.applymap()][applymap] (elementwise): accepts a function that takes a single value and returns a string with the CSS attribute-value pair.

- [.apply()][apply] (column-/row-/table-wise): accepts a function that takes a Series or DataFrame and returns a Series, DataFrame, or numpy array with an identical shape where each element is a string with a CSS attribute-value pair. This method passes each column or row of your DataFrame one-at-a-time or the entire table at once, depending on the `axis` keyword argument. For columnwise use `axis=0`, rowwise use `axis=1`, and for the entire table at once use `axis=None`.

This method is powerful for applying multiple, complex logic to data cells. We create a new DataFrame to demonstrate this.

[apply]: ../reference/api/pandas.io.formats.style.Styler.apply.rst

[applymap]: ../reference/api/pandas.io.formats.style.Styler.applymap.rst

```

np.random.seed(0)
df2 = pd.DataFrame(np.random.randn(10,4), columns=['A','B','C','D'])
df2.style

```

For example we can build a function that colors text if it is negative, and chain this with a function that partially fades cells of negligible value. Since this looks at each element in turn we use ``applymap``.

```

def style_negative(v, props=''):
    # Return the CSS string for negative values, otherwise no style
    return props if v < 0 else None

s2 = df2.style.applymap(style_negative, props='color:red;')\
              .applymap(lambda v: 'opacity: 20%;' if (v < 0.3) and (v > -0.3) else None)
s2

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s2.set_uuid('after_applymap')

```

We can also build a function that highlights the maximum value across rows, columns, and the DataFrame all at once. In this case we use ``apply``. Below we highlight the maximum in a column.

```

def highlight_max(s, props=''):
    # Mark every element equal to the (NaN-ignoring) maximum with `props`
    return np.where(s == np.nanmax(s.values), props, '')

s2.apply(highlight_max, props='color:white;background-color:darkblue', axis=0)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s2.set_uuid('after_apply')

```

We can use the same function across the different axes, highlighting here the DataFrame maximum in purple, and row maximums in pink.

```

s2.apply(highlight_max, props='color:white;background-color:pink;', axis=1)\
  .apply(highlight_max, props='color:white;background-color:purple', axis=None)

```

This last example shows how some styles have been overwritten by others. In general the most recent style applied is active, but you can read more in the [section on CSS hierarchies](#CSS-Hierarchies). You can also apply these styles to more granular parts of the DataFrame; read more in the section on [subset slicing](#Finer-Control-with-Slicing).

It is possible to replicate some of this functionality using just classes, but it can be more cumbersome. See [item 3) of Optimization](#Optimization).

<div class="alert alert-info">

*Debugging Tip*: If you're having trouble writing your style function, try just passing it into ``DataFrame.apply``. Internally, ``Styler.apply`` uses ``DataFrame.apply`` so the result should be the same, and with ``DataFrame.apply`` you will be able to inspect the CSS string output of your intended function in each cell.

</div>
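
The debugging tip can be tried on a small example. In this sketch `demo_df` is hypothetical, and the style function is a variant of `highlight_max` that returns a labelled Series (an assumption made here so the result of ``DataFrame.apply`` keeps the original index and columns).

```python
import numpy as np
import pandas as pd

demo_df = pd.DataFrame({"A": [1.0, 3.0], "B": [4.0, 2.0]})  # hypothetical data


def highlight_max(s, props=""):
    # Return a Series so the labels survive DataFrame.apply unchanged.
    return pd.Series(np.where(s == np.nanmax(s.values), props, ""), index=s.index)


# Apply column-wise and inspect the CSS string produced for every cell.
css_strings = demo_df.apply(highlight_max, props="color:red;", axis=0)
print(css_strings)
```

Once the strings look right, the same function and keyword arguments can be passed to ``Styler.apply``.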

## Tooltips and Captions

Table captions can be added with the [.set_caption()][caption] method. You can use table styles to control the CSS relevant to the caption.

[caption]: ../reference/api/pandas.io.formats.style.Styler.set_caption.rst

```

s.set_caption("Confusion matrix for multiple cancer prediction models.")\
 .set_table_styles([{
     'selector': 'caption',
     'props': 'caption-side: bottom; font-size:1.25em;'
 }], overwrite=False)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s.set_uuid('after_caption')

```

Tooltips (*since version 1.3.0*) can be added using the [.set_tooltips()][tooltips] method, in the same way you add CSS classes to data cells: by providing a string-based DataFrame with intersecting indices and columns. You don't have to specify a `css_class` name or any CSS `props` for the tooltips, since there are standard defaults, but the option is there if you want more visual control.

[tooltips]: ../reference/api/pandas.io.formats.style.Styler.set_tooltips.rst

```

tt = pd.DataFrame([['This model has a very strong true positive rate',
                    "This model's total number of false negatives is too high"]],
                  index=['Tumour (Positive)'], columns=df.columns[[0,3]])
s.set_tooltips(tt, props='visibility: hidden; position: absolute; z-index: 1; border: 1px solid #000066;'
                         'background-color: white; color: #000066; font-size: 0.8em;'
                         'transform: translate(0px, -24px); padding: 0.6em; border-radius: 0.5em;')

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s.set_uuid('after_tooltips')

```

The only thing left to do for our table is to add highlighting borders that draw the audience's attention to the tooltips. We will create internal CSS classes as before using table styles. **Setting classes always overwrites**, so we need to make sure we add the previous classes.

```

s.set_table_styles([  # create internal CSS classes
    {'selector': '.border-red', 'props': 'border: 2px dashed red;'},
    {'selector': '.border-green', 'props': 'border: 2px dashed green;'},
], overwrite=False)
cell_border = pd.DataFrame([['border-green ', ' ', ' ', 'border-red '],
                            [' ', ' ', ' ', ' ']],
                           index=df.index,
                           columns=df.columns[:4])
s.set_td_classes(cell_color + cell_border)

# Hidden cell to avoid CSS clashes and later code overriding previous formatting
s.set_uuid('after_borders')

```

## Finer Control with Slicing