
```

# default_exp eval

#hide

%load_ext autoreload

%autoreload 2

%matplotlib inline

```

# Eval

> This module contains all the necessary functions for evaluating different video duplication detection techniques.

```

#export

import cv2

import ffmpeg

import pickle

import numpy as np

from fastprogress.fastprogress import progress_bar

from matplotlib import pyplot as plt

from pathlib import Path

from tango.prep import *

from sklearn.cluster import KMeans

# hide

from nbdev.showdoc import *

#hide

path = Path("<path>")

video_paths = sorted(path.glob("**/*.mp4")); video_paths[:6]

#export

def calc_tf_idf(tfs, dfs):
    tf_idf = np.array([])
    for tf, df in zip(tfs, dfs):
        tf = tf / np.sum(tfs)
        idf = np.log(len(tfs) / (df + 1))
        tf_idf = np.append(tf_idf, tf * idf)

    return tf_idf

#export
def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

# export
def hit_rate_at_k(rs, k):
    hits = 0
    for r in rs:
        if np.sum(r[:k]) > 0: hits += 1

    return hits / len(rs)

```
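A quick sanity check of the helpers above, as a minimal sketch. The vectors and relevance lists are made-up toy values, and `cosine_similarity` and `hit_rate_at_k` are repeated here only so the cell runs on its own.

```python
import numpy as np

def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))

def hit_rate_at_k(rs, k):
    hits = 0
    for r in rs:
        if np.sum(r[:k]) > 0: hits += 1
    return hits / len(rs)

# Toy feature vectors (hypothetical values, not taken from any video)
a = np.array([1.0, 0.0, 2.0])
b = np.array([2.0, 0.0, 4.0])   # same direction as a
c = np.array([0.0, 3.0, 0.0])   # orthogonal to a

sim_parallel = cosine_similarity(a, b)     # close to 1: same direction
sim_orthogonal = cosine_similarity(a, c)   # 0: no shared components

# One relevance list per query, in rank order (1 = correct duplicate)
rs = [[0, 0, 1], [0, 1, 0], [1, 0, 0]]
hit_rates = {k: hit_rate_at_k(rs, k) for k in (1, 2, 3)}
print(sim_parallel, sim_orthogonal, hit_rates)
```

Hit@k counts a query as a hit when at least one relevant item appears in its top k, so the rate can only grow as k increases.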

## The following methods are from: https://gist.github.com/bwhite/3726239

```

# export

def mean_reciprocal_rank(rs):
    """Score is reciprocal of the rank of the first relevant item

    First element is 'rank 1'. Relevance is binary (nonzero is relevant).

    Example from http://en.wikipedia.org/wiki/Mean_reciprocal_rank
    >>> rs = [[0, 0, 1], [0, 1, 0], [1, 0, 0]]
    >>> mean_reciprocal_rank(rs)
    0.61111111111111105
    >>> rs = np.array([[0, 0, 0], [0, 1, 0], [1, 0, 0]])
    >>> mean_reciprocal_rank(rs)
    0.5
    >>> rs = [[0, 0, 0, 1], [1, 0, 0], [1, 0, 0]]
    >>> mean_reciprocal_rank(rs)
    0.75

    Args:
        rs: Iterator of relevance scores (list or numpy) in rank order
            (first element is the first item)

    Returns:
        Mean reciprocal rank
    """
    rs = (np.asarray(r).nonzero()[0] for r in rs)
    return np.mean([1. / (r[0] + 1) if r.size else 0. for r in rs])

def r_precision(r):
    """Score is precision after all relevant documents have been retrieved

    Relevance is binary (nonzero is relevant).

    >>> r = [0, 0, 1]
    >>> r_precision(r)
    0.33333333333333331
    >>> r = [0, 1, 0]
    >>> r_precision(r)
    0.5
    >>> r = [1, 0, 0]
    >>> r_precision(r)
    1.0

    Args:
        r: Relevance scores (list or numpy) in rank order
            (first element is the first item)

    Returns:
        R Precision
    """
    r = np.asarray(r) != 0
    z = r.nonzero()[0]
    if not z.size:
        return 0.
    return np.mean(r[:z[-1] + 1])

def precision_at_k(r, k):
    """Score is precision @ k

    Relevance is binary (nonzero is relevant).

    >>> r = [0, 0, 1]
    >>> precision_at_k(r, 1)
    0.0
    >>> precision_at_k(r, 2)
    0.0
    >>> precision_at_k(r, 3)
    0.33333333333333331
    >>> precision_at_k(r, 4)
    Traceback (most recent call last):
        File "<stdin>", line 1, in ?
    ValueError: Relevance score length < k

    Args:
        r: Relevance scores (list or numpy) in rank order
            (first element is the first item)

    Returns:
        Precision @ k

    Raises:
        ValueError: len(r) must be >= k
    """
    assert k >= 1
    r = np.asarray(r)[:k] != 0
    if r.size != k:
        raise ValueError('Relevance score length < k')
    return np.mean(r)

def average_precision(r):
    """Score is average precision (area under PR curve)

    Relevance is binary (nonzero is relevant).

    >>> r = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]
    >>> delta_r = 1. / sum(r)
    >>> sum([sum(r[:x + 1]) / (x + 1.) * delta_r for x, y in enumerate(r) if y])
    0.7833333333333333
    >>> average_precision(r)
    0.78333333333333333

    Args:
        r: Relevance scores (list or numpy) in rank order
            (first element is the first item)

    Returns:
        Average precision
    """
    r = np.asarray(r) != 0
    out = [precision_at_k(r, k + 1) for k in range(r.size) if r[k]]
    if not out:
        return 0.
    return np.mean(out)

def mean_average_precision(rs):
    """Score is mean average precision

    Relevance is binary (nonzero is relevant).

    >>> rs = [[1, 1, 0, 1, 0, 1, 0, 0, 0, 1]]
    >>> mean_average_precision(rs)
    0.78333333333333333
    >>> rs = [[1, 1, 0, 1, 0, 1, 0, 0, 0, 1], [0]]
    >>> mean_average_precision(rs)
    0.39166666666666666

    Args:
        rs: Iterator of relevance scores (list or numpy) in rank order
            (first element is the first item)

    Returns:
        Mean average precision
    """
    return np.mean([average_precision(r) for r in rs])

def recall_at_k(r, k, l):
    """Score is recall @ k

    Relevance is binary (nonzero is relevant).

    >>> r = [0, 0, 1]
    >>> recall_at_k(r, 1, 2)
    0.0
    >>> recall_at_k(r, 2, 2)
    0.0
    >>> recall_at_k(r, 3, 2)
    0.5
    >>> recall_at_k(r, 4, 2)
    Traceback (most recent call last):
        File "<stdin>", line 1, in ?
    ValueError: Relevance score length < k

    Args:
        r: Relevance scores (list or numpy) in rank order
            (first element is the first item)
        k: Rank cutoff; only the top k items are considered
        l: Total number of relevant items for the query

    Returns:
        Recall @ k

    Raises:
        ValueError: len(r) must be >= k
    """
    assert k >= 1
    assert l >= 1
    r2 = np.asarray(r)[:k] != 0
    if r2.size != k:
        raise ValueError('Relevance score length < k')
    return np.sum(r2)/l

rs = [[1, 0, 0], [0, 1, 0], [0, 0, 0]]

mean_reciprocal_rank(rs)

r = [1, 1, 0, 1, 0, 1, 0, 0, 0, 1]

average_precision(r)

mean_average_precision(rs)

# export

def rank_stats(rs):
    ranks = []
    for r in rs:
        # 1-based rank of the first relevant item (assumes each r has one)
        ranks.append(r.nonzero()[0][0] + 1)
    ranks = np.asarray(ranks)
    reciprocal_ranks = 1 / ranks

    return np.std(ranks), np.mean(ranks), np.median(ranks), np.mean(reciprocal_ranks)

# export

def evaluate(rankings, top_k = [1, 5, 10]):
    output = {}
    for app in rankings:
        output[app] = {}
        app_rs = []
        for bug in rankings[app]:
            if bug == 'elapsed_time': continue
            output[app][bug] = {}
            bug_rs = []
            for report in rankings[app][bug]:
                output[app][bug][report] = {'ranks': []}
                r = []
                for labels, score in rankings[app][bug][report].items():
                    output[app][bug][report]['ranks'].append((labels, score))
                    if labels[0] == bug: r.append(1)
                    else: r.append(0)
                r = np.asarray(r)
                output[app][bug][report]['rank'] = r.nonzero()[0][0] + 1
                output[app][bug][report]['average_precision'] = average_precision(r)
                bug_rs.append(r)

            bug_rs_std, bug_rs_mean, bug_rs_med, bug_mRR = rank_stats(bug_rs)
            bug_mAP = mean_average_precision(bug_rs)

            output[app][bug]['Bug std rank'] = bug_rs_std
            output[app][bug]['Bug mean rank'] = bug_rs_mean
            output[app][bug]['Bug median rank'] = bug_rs_med
            output[app][bug]['Bug mRR'] = bug_mRR
            output[app][bug]['Bug mAP'] = bug_mAP

            for k in top_k:
                bug_hit_rate = hit_rate_at_k(bug_rs, k)
                # Stored per bug; writing to output[app] would let each bug
                # overwrite the previous bug's hit rate.
                output[app][bug][f'Bug Hit@{k}'] = bug_hit_rate
            app_rs.extend(bug_rs)

        app_rs_std, app_rs_mean, app_rs_med, app_mRR = rank_stats(app_rs)
        app_mAP = mean_average_precision(app_rs)

        output[app]['App std rank'] = app_rs_std
        output[app]['App mean rank'] = app_rs_mean
        output[app]['App median rank'] = app_rs_med
        output[app]['App mRR'] = app_mRR
        output[app]['App mAP'] = app_mAP

        print(f'{app} Elapsed Time in Seconds', rankings[app]['elapsed_time'])
        print(f'{app} σ Rank', app_rs_std)
        print(f'{app} μ Rank', app_rs_mean)
        print(f'{app} Median Rank', app_rs_med)
        print(f'{app} mRR:', app_mRR)
        print(f'{app} mAP:', app_mAP)
        for k in top_k:
            app_hit_rate = hit_rate_at_k(app_rs, k)
            output[app][f'App Hit@{k}'] = app_hit_rate
            print(f'{app} Hit@{k}:', app_hit_rate)

    return output

# export

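def get_eval_results(evals, app, item):
    for bug, reports in evals[app].items():
        # Skip timing and app-level aggregates, which are not per-bug dicts
        if bug == 'elapsed_time' or not isinstance(reports, dict): continue
        for vid, stats in reports.items():
            # Bug-level aggregates (e.g. 'Bug mRR') are scalars, not dicts
            if isinstance(stats, dict) and item in stats:
                print(stats[item])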

# export

def evaluate_ranking(ranking, ground_truth):
    relevance = []
    for doc in ranking:
        if doc in ground_truth:
            relevance.append(1)
        else:
            relevance.append(0)

    r = np.asarray(relevance)
    first_rank = int(r.nonzero()[0][0] + 1)
    avg_precision = average_precision(r)
    recip_rank = 1 / first_rank

    ranks = []
    precisions = []
    recalls = []
    limit = 10
    for k in range(1, limit + 1):
        ranks.append(1 if first_rank <= k else 0)
        precisions.append(precision_at_k(r, k))
        recalls.append(recall_at_k(r, k, len(ground_truth)))

    results = {
        'first_rank': first_rank,
        'recip_rank': recip_rank,
        'avg_precision': avg_precision
    }
    for i in range(limit):
        k = i + 1
        results["rr@" + str(k)] = ranks[i]
        results["p@" + str(k)] = precisions[i]
        results["r@" + str(k)] = recalls[i]

    return results

from nbdev.export import notebook2script

notebook2script()

```
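To make the per-query metrics concrete, here is a small worked example. It is a sketch only: the video ids and ground-truth set are invented, and the metrics are recomputed inline with NumPy rather than by calling the module's functions, so the cell is self-contained.

```python
import numpy as np

# Hypothetical ranked result list (best match first) and its true duplicates
ranking = ["v7", "v2", "v9", "v4", "v1"]
ground_truth = {"v2", "v4"}

# Binary relevance in rank order: [0, 1, 0, 1, 0]
relevance = np.array([1 if doc in ground_truth else 0 for doc in ranking])

# Rank of the first relevant item (1-based) and its reciprocal
first_rank = int(relevance.nonzero()[0][0]) + 1
recip_rank = 1 / first_rank

def precision_at(k):
    # Fraction of the top k that is relevant
    return relevance[:k].mean()

def recall_at(k):
    # Fraction of all relevant items found in the top k
    return relevance[:k].sum() / len(ground_truth)

# Average precision: mean of precision@k taken at each relevant position
hit_positions = relevance.nonzero()[0]
avg_precision = np.mean([precision_at(i + 1) for i in hit_positions])

print(first_rank, recip_rank, precision_at(2), recall_at(4), avg_precision)
```

Here the first duplicate appears at rank 2, so the reciprocal rank is 0.5, and both duplicates are found by rank 4, so recall@4 is 1.0.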

| github_jupyter |

<a href="https://colab.research.google.com/github/NeuromatchAcademy/course-content/blob/master/tutorials/W1D5_DimensionalityReduction/student/W1D5_Tutorial1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# Neuromatch Academy: Week 1, Day 5, Tutorial 1

# Dimensionality Reduction: Geometric view of data

---

# Tutorial objectives

In this notebook we'll explore how multivariate data can be represented in different orthonormal bases. This will help us build intuition that will be helpful in understanding PCA in the following tutorial.

Steps:

1. Generate correlated multivariate data.

2. Define an arbitrary orthonormal basis.

3. Project data onto new basis.

---

```

#@title Video: Geometric view of data

from IPython.display import YouTubeVideo

video = YouTubeVideo(id="emLW0F-VUag", width=854, height=480, fs=1)

print("Video available at https://youtube.com/watch?v=" + video.id)

video

```

# Setup

Run these cells to get the tutorial started.

```

#library imports

import time # import time

import numpy as np # import numpy

import scipy as sp # import scipy

import math # import basic math functions

import random # import basic random number generator functions

import matplotlib.pyplot as plt # import matplotlib

from IPython import display

#@title Figure Settings

%matplotlib inline

fig_w, fig_h = (8, 8)

plt.rcParams.update({'figure.figsize': (fig_w, fig_h)})

plt.style.use('ggplot')

%config InlineBackend.figure_format = 'retina'

#@title Helper functions

def get_data(cov_matrix):
    """
    Returns a matrix of 1000 samples from a bivariate, zero-mean Gaussian

    Note that samples are sorted in ascending order for the first random variable.

    Args:
        cov_matrix (numpy array of floats): desired covariance matrix

    Returns:
        (numpy array of floats) : samples from the bivariate Gaussian, with
                                  each column corresponding to a different random variable
    """
    mean = np.array([0,0])
    X = np.random.multivariate_normal(mean,cov_matrix,size = 1000)
    indices_for_sorting = np.argsort(X[:,0])
    X = X[indices_for_sorting,:]
    return X

def plot_data(X):
    """
    Plots bivariate data. Includes a plot of each random variable, and a scatter
    plot of their joint activity. The title indicates the sample correlation
    calculated from the data.

    Args:
        X (numpy array of floats): Data matrix
                                   each column corresponds to a different random variable

    Returns:
        Nothing.
    """
    fig = plt.figure(figsize=[8,4])
    gs = fig.add_gridspec(2,2)
    ax1 = fig.add_subplot(gs[0,0])
    ax1.plot(X[:,0],color='k')
    plt.ylabel('Neuron 1')
    plt.title('Sample var 1: {:.1f}'.format(np.var(X[:,0])))
    ax1.set_xticklabels([])
    ax2 = fig.add_subplot(gs[1,0])
    ax2.plot(X[:,1],color='k')
    plt.xlabel('Sample Number')
    plt.ylabel('Neuron 2')
    plt.title('Sample var 2: {:.1f}'.format(np.var(X[:,1])))
    ax3 = fig.add_subplot(gs[:, 1])
    ax3.plot(X[:,0],X[:,1],'.',markerfacecolor=[.5,.5,.5], markeredgewidth=0)
    ax3.axis('equal')
    plt.xlabel('Neuron 1 activity')
    plt.ylabel('Neuron 2 activity')
    plt.title('Sample corr: {:.1f}'.format(np.corrcoef(X[:,0],X[:,1])[0,1]))

def plot_basis_vectors(X,W):
    """
    Plots bivariate data as well as new basis vectors.

    Args:
        X (numpy array of floats): Data matrix
                                   each column corresponds to a different random variable
        W (numpy array of floats): Square matrix representing new orthonormal basis
                                   each column represents a basis vector

    Returns:
        Nothing.
    """
    plt.figure(figsize=[4,4])
    plt.plot(X[:,0],X[:,1],'.',color=[.5,.5,.5],label='Data')
    plt.axis('equal')
    plt.xlabel('Neuron 1 activity')
    plt.ylabel('Neuron 2 activity')
    plt.plot([0,W[0,0]],[0,W[1,0]],color='r',linewidth=3,label = 'Basis vector 1')
    plt.plot([0,W[0,1]],[0,W[1,1]],color='b',linewidth=3,label = 'Basis vector 2')
    plt.legend()

def plot_data_new_basis(Y):
    """
    Plots bivariate data after transformation to new bases. Similar to plot_data but
    with colors corresponding to projections onto basis 1 (red) and basis 2 (blue).
    The title indicates the sample correlation calculated from the data.

    Note that samples are re-sorted in ascending order for the first random variable.

    Args:
        Y (numpy array of floats): Data matrix in new basis
                                   each column corresponds to a different random variable

    Returns:
        Nothing.
    """
    fig = plt.figure(figsize=[8,4])
    gs = fig.add_gridspec(2,2)
    ax1 = fig.add_subplot(gs[0,0])
    ax1.plot(Y[:,0],'r')
    plt.ylabel('Projection \n basis vector 1')
    plt.title('Sample var 1: {:.1f}'.format(np.var(Y[:,0])))
    ax1.set_xticklabels([])
    ax2 = fig.add_subplot(gs[1,0])
    ax2.plot(Y[:,1],'b')
    plt.xlabel('Sample number')
    plt.ylabel('Projection \n basis vector 2')
    plt.title('Sample var 2: {:.1f}'.format(np.var(Y[:,1])))
    ax3 = fig.add_subplot(gs[:, 1])
    ax3.plot(Y[:,0],Y[:,1],'.',color=[.5,.5,.5])
    ax3.axis('equal')
    plt.xlabel('Projection basis vector 1')
    plt.ylabel('Projection basis vector 2')
    plt.title('Sample corr: {:.1f}'.format(np.corrcoef(Y[:,0],Y[:,1])[0,1]))

```

# Generate correlated multivariate data

```

#@title Video: Multivariate data

from IPython.display import YouTubeVideo

video = YouTubeVideo(id="YOan2BQVzTQ", width=854, height=480, fs=1)

print("Video available at https://youtube.com/watch?v=" + video.id)

video

```

To study multivariate data, first we generate it. In this exercise we generate data from a *bivariate normal distribution*. This is an extension of the one-dimensional normal distribution to two dimensions, in which each $x_i$ is marginally normal with mean $\mu_i$ and variance $\sigma_i^2$:

\begin{align}

x_i \sim \mathcal{N}(\mu_i,\sigma_i^2)

\end{align}

Additionally, the joint distribution for $x_1$ and $x_2$ has a specified correlation coefficient $\rho$. Recall that the correlation coefficient is a normalized version of the covariance, and ranges between -1 and +1.

\begin{align}

\rho = \frac{\text{cov}(x_1,x_2)}{\sqrt{\sigma_1^2 \sigma_2^2}}

\end{align}

For simplicity, we will assume that the mean of each variable has already been subtracted, so that $\mu_i=0$. The remaining parameters can be summarized in the covariance matrix:

\begin{equation*}

{\bf \Sigma} =

\begin{pmatrix}

\text{var}(x_1) & \text{cov}(x_1,x_2) \\

\text{cov}(x_1,x_2) &\text{var}(x_2)

\end{pmatrix}

\end{equation*}

Note that this is a symmetric matrix with the variances $\text{var}(x_i) = \sigma_i^2$ on the diagonal, and the covariance on the off-diagonal.
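As a numerical illustration of these definitions, the sketch below goes in the reverse direction: it starts from a covariance matrix and recovers $\rho$. The matrix entries, sample size, and random seed are arbitrary choices for illustration and are not part of the tutorial.

```python
import numpy as np

# Hypothetical covariance matrix: var(x1) = 1, var(x2) = 4, cov(x1, x2) = 1
Sigma = np.array([[1.0, 1.0],
                  [1.0, 4.0]])

# Correlation coefficient = covariance normalized by both standard deviations
rho = Sigma[0, 1] / np.sqrt(Sigma[0, 0] * Sigma[1, 1])

# Draw zero-mean samples and compare the sample correlation with rho
rng = np.random.default_rng(0)
X = rng.multivariate_normal(mean=[0, 0], cov=Sigma, size=5000)
sample_rho = np.corrcoef(X[:, 0], X[:, 1])[0, 1]

print(rho, sample_rho)
```

With $\sigma_1 = 1$ and $\sigma_2 = 2$, the covariance of 1 corresponds to $\rho = 0.5$, and the sample correlation should land close to that value.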

### Exercise

We have provided code to draw random samples from a zero-mean bivariate normal distribution. These samples could be used to simulate changes in firing rates for two neurons. Fill in the function below to calculate the covariance matrix given the desired variances and correlation coefficient. The covariance can be found by rearranging the equation above:

\begin{align}

\text{cov}(x_1,x_2) = \rho \sqrt{\sigma_1^2 \sigma_2^2}

\end{align}

Use these functions to generate and plot data while varying the parameters. You should get a feel for how changing the correlation coefficient affects the geometry of the simulated data.

**Suggestions**

* Fill in the function `calculate_cov_matrix` to calculate the covariance.

* Generate and plot the data for $\sigma_1^2 = 1$, $\sigma_2^2 = 1$, and $\rho = .8$. Try plotting the data for different values of the correlation coefficient: $\rho = -1, -.5, 0, .5, 1$.

```

help(plot_data)

help(get_data)

def calculate_cov_matrix(var_1,var_2,corr_coef):
    """
    Calculates the covariance matrix based on the variances and correlation coefficient.

    Args:
        var_1 (scalar): variance of the first random variable
        var_2 (scalar): variance of the second random variable
        corr_coef (scalar): correlation coefficient

    Returns:
        (numpy array of floats) : covariance matrix
    """
    ###################################################################
    ## Insert your code here to:
    ##      calculate the covariance from the variances and correlation
    # comment out the line below once you've filled in the function
    raise NotImplementedError("Student exercise: calculate the covariance matrix!")
    # cov = ...
    cov_matrix = np.array([[var_1,cov],[cov,var_2]])
    ###################################################################
    return cov_matrix

###################################################################

## Insert your code here to:

## generate and plot bivariate Gaussian data with variances of 1

## and a correlation coefficient of 0.8

## repeat while varying the correlation coefficient from -1 to 1

###################################################################

variance_1 = 1

variance_2 = 1

corr_coef = 0.8

#uncomment to test your code and plot

#cov_matrix = calculate_cov_matrix(variance_1,variance_2,corr_coef)

#X = get_data(cov_matrix)

#plot_data(X)

```

[*Click for solution*](https://github.com/NeuromatchAcademy/course-content/tree/master//tutorials/W1D5_DimensionalityReduction/solutions/W1D5_Tutorial1_Solution_62df7ae6.py)

*Example output:*

<img alt='Solution hint' align='left' width=510 height=303 src=https://raw.githubusercontent.com/NeuromatchAcademy/course-content/master/tutorials/W1D5_DimensionalityReduction/static/W1D5_Tutorial1_Solution_62df7ae6_0.png>

# Define a new orthonormal basis

```

#@title Video: Orthonormal bases

from IPython.display import YouTubeVideo

video = YouTubeVideo(id="dK526Nbn2Xo", width=854, height=480, fs=1)

print("Video available at https://youtube.com/watch?v=" + video.id)

video

```

Next, we will define a new orthonormal basis of vectors ${\bf u} = [u_1,u_2]$ and ${\bf w} = [w_1,w_2]$. As we learned in the video, two vectors are orthonormal if:

1. They are orthogonal (i.e., their dot product is zero):

\begin{equation}

{\bf u\cdot w} = u_1 w_1 + u_2 w_2 = 0

\end{equation}

2. They have unit length:

\begin{equation}

||{\bf u} || = ||{\bf w} || = 1

\end{equation}

In two dimensions, it is easy to make an arbitrary orthonormal basis. All we need is a random vector ${\bf u}$, which we have normalized. If we now define the second basis vector to be ${\bf w} = [-u_2,u_1]$, we can check that both conditions are satisfied:

\begin{equation}

{\bf u\cdot w} = - u_1 u_2 + u_2 u_1 = 0

\end{equation}

and

\begin{equation}

{|| {\bf w} ||} = \sqrt{(-u_2)^2 + u_1^2} = \sqrt{u_1^2 + u_2^2} = 1,

\end{equation}

where we used the fact that ${\bf u}$ is normalized. So, with an arbitrary input vector, we can define an orthonormal basis, which we will write in matrix form by stacking the basis vectors horizontally:

\begin{equation}

{{\bf W} } =

\begin{pmatrix}

u_1 & w_1 \\

u_2 & w_2

\end{pmatrix}.

\end{equation}
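Both conditions can also be checked numerically. The sketch below builds $\bf u$ from an arbitrary angle (30 degrees is just an illustrative choice), so it is unit length by construction, and then verifies that the columns of $\bf W$ are orthonormal, which is equivalent to ${\bf W}^T {\bf W} = {\bf I}$.

```python
import numpy as np

theta = np.deg2rad(30.0)                       # illustrative angle, not from the tutorial
u = np.array([np.cos(theta), np.sin(theta)])   # unit length since cos^2 + sin^2 = 1
w = np.array([-u[1], u[0]])                    # u rotated by 90 degrees

# Stack the basis vectors as columns
W = np.column_stack((u, w))

dot_uw = u @ w                      # orthogonality: should be 0
norms = np.linalg.norm(W, axis=0)   # unit length: both should be 1
gram = W.T @ W                      # both conditions at once: identity matrix

print(dot_uw, norms)
print(gram)
```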

### Exercise

In this exercise you will fill in the function below to define an orthonormal basis, given a single arbitrary 2-dimensional vector as an input.

**Suggestions**

* Modify the function `define_orthonormal_basis` to first normalize the first basis vector $\bf u$.

* Then complete the function by finding a basis vector $\bf w$ that is orthogonal to $\bf u$.

* Test the function using initial basis vector ${\bf u} = [3,1]$. Plot the resulting basis vectors on top of the data scatter plot using the function `plot_basis_vectors`. (For the data, use $\sigma_1^2 = 1$, $\sigma_2^2 = 1$, and $\rho = .8$).

```

help(plot_basis_vectors)

def define_orthonormal_basis(u):
    """
    Calculates an orthonormal basis given an arbitrary vector u.

    Args:
        u (numpy array of floats): arbitrary 2-dimensional vector used for new basis

    Returns:
        (numpy array of floats) : new orthonormal basis
                                  columns correspond to basis vectors
    """
    ###################################################################
    ## Insert your code here to:
    ##      normalize vector u
    ##      calculate vector w that is orthogonal to u
    #u = ....
    #w = ...
    #W = np.column_stack((u,w))
    #comment this once you've filled the function
    raise NotImplementedError("Student exercise: implement the orthonormal basis function")
    ###################################################################
    return W

variance_1 = 1

variance_2 = 1

corr_coef = 0.8

cov_matrix = calculate_cov_matrix(variance_1,variance_2,corr_coef)

X = get_data(cov_matrix)

u = np.array([3,1])

#uncomment and run below to plot the basis vectors
#W = define_orthonormal_basis(u)
#plot_basis_vectors(X,W)

```

[*Click for solution*](https://github.com/NeuromatchAcademy/course-content/tree/master//tutorials/W1D5_DimensionalityReduction/solutions/W1D5_Tutorial1_Solution_c9ca4afa.py)

*Example output:*

<img alt='Solution hint' align='left' width=286 height=281 src=https://raw.githubusercontent.com/NeuromatchAcademy/course-content/master/tutorials/W1D5_DimensionalityReduction/static/W1D5_Tutorial1_Solution_c9ca4afa_0.png>

# Project data onto new basis

```

#@title Video: Change of basis

from IPython.display import YouTubeVideo

video = YouTubeVideo(id="5MWSUtpbSt0", width=854, height=480, fs=1)

print("Video available at https://youtube.com/watch?v=" + video.id)

video

```

Finally, we will express our data in the new basis that we have just found. Since $\bf W$ is orthonormal, we can project the data into our new basis using simple matrix multiplication:

\begin{equation}

{\bf Y = X W}.

\end{equation}

We will explore the geometry of the transformed data $\bf Y$ as we vary the choice of basis.
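One property worth keeping in mind while exploring: an orthonormal change of basis only rotates the data, so the total variance is unchanged and the original data can be recovered with ${\bf X} = {\bf Y W}^T$. The sketch below checks this on simulated data; the covariance values, rotation angle, and seed are arbitrary illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(0)
cov_matrix = np.array([[1.0, 0.8],
                       [0.8, 1.0]])
X = rng.multivariate_normal(mean=[0, 0], cov=cov_matrix, size=1000)

# Orthonormal basis from an illustrative angle; columns are the basis vectors
theta = np.deg2rad(20.0)
W = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])

# Each row of Y holds one sample's coordinates in the new basis
Y = X @ W

# The variance is redistributed between the axes, but the total is preserved
total_var_X = np.var(X, axis=0).sum()
total_var_Y = np.var(Y, axis=0).sum()

# Because W is orthonormal, its transpose undoes the projection
X_recovered = Y @ W.T

print(total_var_X, total_var_Y)
```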

#### Exercise

In this exercise you will fill in the function below to project the data onto the new orthonormal basis defined in the previous exercise.

**Suggestions**

* Complete the function `change_of_basis` to project the data onto the new basis.

* Plot the projected data using the function `plot_data_new_basis`.

* What happens to the correlation coefficient in the new basis? Does it increase or decrease?

* What happens to variance?

```

def change_of_basis(X,W):
    """
    Projects data onto new basis W.

    Args:
        X (numpy array of floats) : Data matrix
                                    each column corresponding to a different random variable
        W (numpy array of floats): new orthonormal basis
                                   columns correspond to basis vectors

    Returns:
        (numpy array of floats) : Data matrix expressed in new basis
    """
    ###################################################################
    ## Insert your code here to:
    ##      project data onto new basis described by W
    #Y = ...
    #comment this once you've filled the function
    raise NotImplementedError("Student exercise: implement change of basis")
    ###################################################################
    return Y

## Uncomment below to transform the data by projecting it into the new basis

## Plot the projected data

# Y = change_of_basis(X,W)

# plot_data_new_basis(Y)

# disp(...)

```

[*Click for solution*](https://github.com/NeuromatchAcademy/course-content/tree/master//tutorials/W1D5_DimensionalityReduction/solutions/W1D5_Tutorial1_Solution_b434bc0d.py)

*Example output:*

<img alt='Solution hint' align='left' width=544 height=303 src=https://raw.githubusercontent.com/NeuromatchAcademy/course-content/master/tutorials/W1D5_DimensionalityReduction/static/W1D5_Tutorial1_Solution_b434bc0d_0.png>

#### Exercise

To see what happens to the correlation as we change the basis vectors, run the cell below. The parameter $\theta$ controls the angle of $\bf u$ in degrees. Use the slider to rotate the basis vectors.

**Questions**

* What happens to the projected data as you rotate the basis?

* How does the correlation coefficient change? How does the variance of the projection onto each basis vector change?

* Are you able to find a basis in which the projected data is uncorrelated?

```

###### MAKE SURE TO RUN THIS CELL VIA THE PLAY BUTTON TO ENABLE SLIDERS ########

import ipywidgets as widgets

def refresh(theta = 0):
    u = [1,np.tan(theta * np.pi/180.)]
    W = define_orthonormal_basis(u)
    Y = change_of_basis(X,W)
    plot_basis_vectors(X,W)
    plot_data_new_basis(Y)

_ = widgets.interact(refresh,
                     theta = (0, 90, 5))

```

| github_jupyter |

<a href="https://colab.research.google.com/github/Tharushal/Automated-diagnosis-system-using-A.I/blob/master/Final_Project.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

Install Faiss, TensorFlow, and the remaining dependencies.

```

#To use CPU FAISS use

!wget https://anaconda.org/pytorch/faiss-cpu/1.2.1/download/linux-64/faiss-cpu-1.2.1-py36_cuda9.0.176_1.tar.bz2

#To use GPU FAISS use

# !wget https://anaconda.org/pytorch/faiss-gpu/1.2.1/download/linux-64/faiss-gpu-1.2.1-py36_cuda9.0.176_1.tar.bz2

!tar xvjf faiss-cpu-1.2.1-py36_cuda9.0.176_1.tar.bz2

!cp -r lib/python3.6/site-packages/* /usr/local/lib/python3.6/dist-packages/

!pip install mkl

!pip install tensorflow

!pip install tensorflow-gpu==2.0.0-alpha0

!pip install https://github.com/re-search/DocProduct/archive/v0.2.0_dev.zip

!pip install gpt2-estimator

!pip install pyarrow

import tensorflow as tf

!pip install flask-ngrok

!pip install Flask-JSON

```

Download all model checkpoints and the question/answer data.

```

import os
import tarfile
import urllib.request
import zipfile

import requests

def download_file_from_google_drive(id, destination):
    URL = "https://docs.google.com/uc?export=download"
    session = requests.Session()
    response = session.get(URL, params = { 'id' : id }, stream = True)
    token = get_confirm_token(response)
    if token:
        params = { 'id' : id, 'confirm' : token }
        response = session.get(URL, params = params, stream = True)
    save_response_content(response, destination)

def get_confirm_token(response):
    for key, value in response.cookies.items():
        if key.startswith('download_warning'):
            return value
    return None

def save_response_content(response, destination):
    CHUNK_SIZE = 32768
    with open(destination, "wb") as f:
        for chunk in response.iter_content(CHUNK_SIZE):
            if chunk: # filter out keep-alive new chunks
                f.write(chunk)

# Download the BioBERT weights and save them locally as BioBert.tar.gz:
urllib.request.urlretrieve('https://github.com/naver/biobert-pretrained/releases/download/v1.0-pubmed-pmc/biobert_v1.0_pubmed_pmc.tar.gz', 'BioBert.tar.gz')
if not os.path.exists('BioBertFolder'):
    os.makedirs('BioBertFolder')
tar = tarfile.open("BioBert.tar.gz")
tar.extractall(path='BioBertFolder/')
tar.close()

# Precomputed embeddings, hosted on Google Drive
file_id = '1uCXv6mQkFfpw5txGnVCsl93Db7t5Z2mp'
download_file_from_google_drive(file_id, 'Float16EmbeddingsExpanded5-27-19.pkl')

# Q&A data and model checkpoint, hosted on OneDrive
data_url = 'https://onedrive.live.com/download?cid=9DEDF3C1E2D7E77F&resid=9DEDF3C1E2D7E77F%2132792&authkey=AEQ8GtkcDbe3K98'
urllib.request.urlretrieve(data_url, 'DataAndCheckpoint.zip')
if not os.path.exists('newFolder'):
    os.makedirs('newFolder')
zip_ref = zipfile.ZipFile('DataAndCheckpoint.zip', 'r')
zip_ref.extractall('newFolder')
zip_ref.close()

```

Load model weights and Q&A data.

```

from docproduct.predictor import RetreiveQADoc

pretrained_path = 'BioBertFolder/biobert_v1.0_pubmed_pmc/'

# ffn_weight_file = None

bert_ffn_weight_file = 'newFolder/models/bertffn_crossentropy/bertffn'

embedding_file = 'Float16EmbeddingsExpanded5-27-19.pkl'

doc = RetreiveQADoc(pretrained_path=pretrained_path,

ffn_weight_file=None,

bert_ffn_weight_file=bert_ffn_weight_file,

embedding_file=embedding_file)

```

Start the server, then send your question as the URL path.

```

from flask_ngrok import run_with_ngrok
from flask import Flask
from flask import jsonify

app = Flask(__name__)
run_with_ngrok(app)  # starts ngrok when the app is run

@app.route('/<string:question_text>', methods=['GET'])
def get_answer(question_text):
    search_similarity_by = 'answer' #@param ['answer', "question"]
    number_results_toReturn=10 #@param {type:"number"}
    answer_only=True #@param ["False", "True"] {type:"raw"}

    returned_results = doc.predict(question_text,
                                   search_by=search_similarity_by,
                                   topk=number_results_toReturn,
                                   answer_only=answer_only)

    # Copy the results into a plain list so they can be returned as JSON
    result = [returned_results[jk] for jk in range(len(returned_results))]
    return jsonify({"result": result})

app.run()

```
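Because the route above reads the question from the URL path, the text has to be percent-encoded before it is sent; otherwise a `?` would start a query string and cut the question short. The sketch below builds such a URL with the standard library. The base URL is a placeholder, since ngrok prints the real address when the server starts, and the question is an invented example.

```python
from urllib.parse import quote

# Placeholder address; replace with the URL that ngrok prints at startup
base_url = "http://example.ngrok.io"

question = "What causes a persistent dry cough?"

# safe='' also encodes characters that would otherwise be kept as-is
encoded_question = quote(question, safe='')
request_url = f"{base_url}/{encoded_question}"

print(request_url)
```

Opening the resulting URL in a browser, or fetching it with any HTTP client, should return a JSON object whose `result` key holds the retrieved answers.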

| github_jupyter |

# Django UnChained

<img src="images/django.jpg">

# View

<img src="https://mdn.mozillademos.org/files/13931/basic-django.png">

# EXP1

# URLs

```

from django.conf.urls import url

from . import views

app_name = 'polls'

urlpatterns = [

url(r'^$', views.index, name='index'),

url(r'^(?P<question_id>[0-9]+)/$', views.detail, name='detail'),

url(r'^(?P<question_id>[0-9]+)/results/$', views.results, name='results'),

url(r'^(?P<question_id>[0-9]+)/vote/$', views.vote, name='vote'),

]

```
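The named groups in these patterns are what Django passes to the view as keyword arguments. The following sketch uses Python's `re` module directly to show what each pattern captures; it only imitates the matching step and is not how Django is invoked. The path `34/results/` is an invented example of what remains after the `polls/` prefix has been stripped.

```python
import re

# Same regular expressions as in urlpatterns above
detail = re.compile(r'^(?P<question_id>[0-9]+)/$')
results = re.compile(r'^(?P<question_id>[0-9]+)/results/$')

path = '34/results/'

# The results pattern matches and captures the id as a string
captured = results.match(path).groupdict()

# The detail pattern requires the path to end right after the id
no_match = detail.match(path)

print(captured, no_match)
```

Note that regex captures are always strings, so a view receives `question_id='34'` rather than the integer 34.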

# models

```

from django.db import models

class Question(models.Model):
    question_text = models.CharField(max_length=200)
    pub_date = models.DateTimeField('date published')


class Choice(models.Model):
    question = models.ForeignKey(Question, on_delete=models.CASCADE)
    choice_text = models.CharField(max_length=200)
    votes = models.IntegerField(default=0)

```

# HTTP Request, HTTP Response

```

from django.http import HttpResponse

def index(request):
    return HttpResponse("Hello, world. You're at the polls index.")

def detail(request, question_id):
    return HttpResponse("You're looking at question %s." % question_id)

def results(request, question_id):
    response = "You're looking at the results of question %s."
    return HttpResponse(response % question_id)

def vote(request, question_id):
    return HttpResponse("You're voting on question %s." % question_id)

```

# views and models

```

# ModelName is a placeholder for any model class
def list_view(request):
    objs = ModelName.objects.all()
    return HttpResponse("You're looking at list_view: %s" % objs)

from django.shortcuts import get_object_or_404

def detail_view(request, pk):
    obj = get_object_or_404(ModelName, pk=pk)
    return HttpResponse("You're looking at detail_view %s: %s" % (pk, obj))

def index(request):
    latest_question_list = Question.objects.order_by('-pub_date')[:5]
    output = ', '.join([q.question_text for q in latest_question_list])
    return HttpResponse(output)

```

# views and templates

```

from django.shortcuts import render
from django.template import loader

# Version 1: load and render the template explicitly
def index(request):
    latest_question_list = Question.objects.order_by('-pub_date')[:5]
    template = loader.get_template('polls/index.html')
    context = {
        'latest_question_list': latest_question_list,
    }
    return HttpResponse(template.render(context, request))

# Version 2: the render() shortcut does the same in one call
def index(request):
    latest_question_list = Question.objects.order_by('-pub_date')[:5]
    context = {'latest_question_list': latest_question_list}
    return render(request, 'polls/index.html', context)

{# polls/index.html: the three links show a hardcoded URL, the url tag, and the namespaced url tag #}
{% if latest_question_list %}
    <ul>
    {% for question in latest_question_list %}
        <li><a href="/polls/{{ question.id }}/">{{ question.question_text }}</a></li>
        <li><a href="{% url 'detail' question.id %}">{{ question.question_text }}</a></li>
        <li><a href="{% url 'polls:detail' question.id %}">{{ question.question_text }}</a></li>
    {% endfor %}
    </ul>
{% else %}
    <p>No polls are available.</p>
{% endif %}

```

# detail_view

```

from django.shortcuts import get_object_or_404, render

# Generic pattern; ModelName and the template path are placeholders
def detail_view(request, pk):
    obj = get_object_or_404(ModelName, pk=pk)
    return render(request, 'app/template.html', {'obj': obj})

def detail(request, question_id):
    question = get_object_or_404(Question, pk=question_id)
    return render(request, 'polls/detail.html', {'question': question})

{# polls/detail.html #}
<h1>{{ question.question_text }}</h1>
<ul>
{% for choice in question.choice_set.all %}
    <li>{{ choice.choice_text }}</li>
{% endfor %}
</ul>

```

# Forms

```

{# polls/detail.html #}
<h1>{{ question.question_text }}</h1>

{% if error_message %}<p><strong>{{ error_message }}</strong></p>{% endif %}

<form action="{% url 'polls:vote' question.id %}" method="post">
{% csrf_token %}
{% for choice in question.choice_set.all %}
    <input type="radio" name="choice" id="choice{{ forloop.counter }}" value="{{ choice.id }}" />
    <label for="choice{{ forloop.counter }}">{{ choice.choice_text }}</label><br />
{% endfor %}
<input type="submit" value="Vote" />
</form>

from django.http import HttpResponseRedirect
from django.shortcuts import get_object_or_404, render
from django.urls import reverse

def vote(request, question_id):
    question = get_object_or_404(Question, pk=question_id)
    try:
        selected_choice = question.choice_set.get(pk=request.POST['choice'])
    except (KeyError, Choice.DoesNotExist):
        # Redisplay the question voting form.
        return render(request, 'polls/detail.html', {
            'question': question,
            'error_message': "You didn't select a choice.",
        })
    else:
        selected_choice.votes += 1
        selected_choice.save()
        # Always return an HttpResponseRedirect after successfully dealing
        # with POST data. This prevents data from being posted twice if a
        # user hits the Back button.
        return HttpResponseRedirect(reverse('polls:results', args=(question.id,)))

```

# Final Step

```

def results(request, question_id):

    question = get_object_or_404(Question, pk=question_id)

    return render(request, 'polls/results.html', {'question': question})

<h1>{{ question.question_text }}</h1>

<ul>

{% for choice in question.choice_set.all %}

<li>{{ choice.choice_text }} -- {{ choice.votes }} vote{{ choice.votes|pluralize }}</li>

{% endfor %}

</ul>

<a href="{% url 'polls:detail' question.id %}">Vote again?</a>

```

# Built-in class-based views API

### Base vs Generic views

Base class-based views can be thought of as parent views, which can be used by themselves or inherited from. They may not provide all the capabilities required for projects, in which case there are Mixins which extend what base views can do.

Django’s generic views are built off of those base views, and were developed as a shortcut for common usage patterns such as displaying the details of an object. They take certain common idioms and patterns found in view development and abstract them so that you can quickly write common views of data without having to repeat yourself.

https://docs.djangoproject.com/en/2.0/ref/class-based-views/

# Generic display views

```

class IndexView(generic.ListView):

    template_name = 'polls/index.html'

    context_object_name = 'latest_question_list'

    def get_queryset(self):

        """Return the last five published questions."""

        return Question.objects.order_by('-pub_date')[:5]

class DetailView(generic.DetailView):

    model = Question

    template_name = 'polls/detail.html'

class ResultsView(generic.DetailView):

    model = Question

    template_name = 'polls/results.html'

app_name = 'polls'

urlpatterns = [

    url(r'^$', views.IndexView.as_view(), name='index'),

    url(r'^(?P<pk>[0-9]+)/$', views.DetailView.as_view(), name='detail'),

    url(r'^(?P<pk>[0-9]+)/results/$', views.ResultsView.as_view(), name='results'),

    url(r'^(?P<question_id>[0-9]+)/vote/$', views.vote, name='vote'),

]

```

| github_jupyter |

# Introduction to C++

## Hello world

There are many lessons in writing a simple "Hello world" program

- C++ programs are normally written using a text editor or integrated development environment (IDE) - we use the %%file magic to simulate this

- The #include statement literally pulls in and prepends the source code from the `iostream` header file

- Types must be declared - note the function return type is `int`

- There is a single function called `main` - every program has `main` as the entry point although you can write libraries without a `main` function

- Notice the use of braces to delimit blocks

- Notice the use of semi-colons to terminate statements

- Unlike Python, white space is not used to delimit blocks or statements; it only separates tokens

- Note the use of the `std` *namespace* - this is similar to Python except C++ uses `::` rather than `.` (like R)

- The I/O shown here uses *streaming* via the `<<` operator to send output to `cout`, which is the name for the standard output

- `std::endl` provides a line break and flushes the output buffer

```

%%file hello.cpp

#include <iostream>

int main() {

std::cout << "Hello, world!" << std::endl;

}

```

### Compilation

- The source file must be compiled to machine code before it can be executed

- Compilation is done with a C++ compiler - here we use one called `g++`

- By default, the output of compilation is called `a.out` - we use `-o` to change the output executable filename to `hello.exe`

- Note that the `.exe` extension is a Windows convention; Unix executables typically have no extension - for example, just the name `hello`

```

%%bash

g++ hello.cpp -o hello.exe

```

### Execution

```

%%bash

./hello.exe

```

### C equivalent

Before we move on, we briefly show the similar `Hello world` program in C. C is a precursor to C++ that is still widely used. While C++ is derived from C, it is a much richer and more complex language. We focus on C++ because the intent is to show how to wrap C++ code using `pybind11` and take advantage of C++ numerical libraries that do not exist in C.

```

%%file hello01.c

#include <stdio.h>

int main() {

printf("Hello, world from C!\n");

}

%%bash

gcc hello01.c

%%bash

./a.out

```

## Namespaces

Just like Python, C++ has namespaces that allow us to build large libraries without worrying about name collisions. In the `Hello world` program, we used the explicit name `std::cout`, indicating that `cout` is a member of the standard namespace. We can also use the `using` keyword to import selected functions or classes from a namespace.

```c++

using std::cout;

int main()

{

cout << "Hello, world!\n";

}

```

For small programs, we sometimes import the entire namespace for convenience, but this may cause namespace collisions in larger programs.

```c++

using namespace std;

int main()

{

cout << "Hello, world!\n";

}

```

You can easily create your own namespace.

```c++

namespace sta_663 {

const double pi=3.14159;

void greet(string name) {

cout << "\nTraditional first program\n";

cout << "Hello, " << name << "\n";

}

}

int main()

{

cout << "\nUsing namespaces\n";

string name = "Tom";

cout << sta_663::pi << "\n";

sta_663::greet(name);

}

```

#### Using qualified imports

```

%%file hello02.cpp

#include <iostream>

using std::cout;

using std::endl;

int main() {

cout << "Hello, world!" << endl;

}

%%bash

g++ hello02.cpp -o hello02

%%bash

./hello02

```

#### Global imports of a namespace

Wholesale import of a namespace is generally frowned upon, similar to how `from X import *` is frowned upon in Python.

```

%%file hello03.cpp

#include <iostream>

using namespace std;

int main() {

cout << "Hello, world!" << endl;

}

%%bash

g++ hello03.cpp -o hello03

%%bash

./hello03

```

## Types

```

%%file dtypes.cpp

#include <iostream>

#include <complex>

using std::cout;

int main() {

// Boolean

bool a = true, b = false;

cout << "and " << (a and b) << "\n";

cout << "&& " << (a && b) << "\n";

cout << "or " << (a or b) << "\n";

cout << "|| " << (a || b) << "\n";

cout << "not " << not (a or b) << "\n";

cout << "! " << !(a or b) << "\n";

// Integral numbers

cout << "char " << sizeof(char) << "\n";

cout << "short int " << sizeof(short int) << "\n";

cout << "int " << sizeof(int) << "\n";

cout << "long " << sizeof(long) << "\n";

// Floating point numbers

cout << "float " << sizeof(float) << "\n";

cout << "double " << sizeof(double) << "\n";

cout << "long double " << sizeof(long double) << "\n";

cout << "complex double " << sizeof(std::complex<double>) << "\n";

// Characters and strings

char c = 'a'; // Note single quotes

char word[] = "hello"; // C char arrays

std::string s = "hello"; // C++ string

cout << c << "\n";

cout << word << "\n";

cout << s << "\n";

}

%%bash

g++ dtypes.cpp -o dtypes.exe

./dtypes.exe

```

## Type conversions

Converting between types can get pretty complicated in C++. We will show some simple versions.

```

%%file type.cpp

#include <iostream>

using std::cout;

using std::string;

using std::stoi;

int main() {

char c = '3'; // A char is an integer type

string s = "3"; // A string is not an integer type

int i = 3;

float f = 3.1;

double d = 3.2;

cout << c << "\n";

cout << i << "\n";

cout << f << "\n";

cout << d << "\n";

cout << "c + i is " << c + i << "\n";

cout << "c + i is " << c - '0' + i << "\n";

// Casting string to number

cout << "s + i is " << stoi(s) + i << "\n"; // Use std::stod to convert to double

// No cast (i is promoted to float), followed by two ways to cast float to int

cout << "f + i is " << f + i << "\n";

cout << "f + i is " << int(f) + i << "\n";

cout << "f + i is " << static_cast<int>(f) + i << "\n";

}

%%bash

g++ -o type.exe type.cpp -std=c++14

%%bash

./type.exe

```
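As a small additional sketch of the conversions used in `type.cpp`, the helpers below wrap the `c - '0'` idiom, `std::stod` and `static_cast<int>` in functions. The names `digit_value`, `add_text` and `to_int` are hypothetical and are not part of the original notebook.

```cpp
#include <string>

// Hypothetical helper: convert a digit character such as '3' to the integer 3.
// Assumes c is one of '0'..'9'; other characters give meaningless results.
int digit_value(char c) {
    return c - '0';
}

// Hypothetical helper: parse a numeric string and add it to a double.
// std::stod throws std::invalid_argument if s does not start with a number.
double add_text(const std::string &s, double x) {
    return std::stod(s) + x;
}

// Explicit conversion from double to int; the fractional part is discarded
// (truncation toward zero), it is not rounded.
int to_int(double d) {
    return static_cast<int>(d);
}
```

Calling `to_int(3.9)` gives `3` and `to_int(-3.9)` gives `-3`, which is worth keeping in mind when a rounded value is wanted instead.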

## Header, source, and driver files

C++ allows separate compilation of functions and of the programs that use those functions. Functions are written in *source* files that can be compiled on their own. To use these compiled functions, the calling program includes *header* files that contain the function signatures - this gives the compiler enough information to check each call, and lets the linker connect the call to the compiled machine code when the executable is built.

- Here we show a toy example of typical C++ program organization

- We build a library of math functions in `my_math.cpp`

- We add a header file for the math functions in `my_math.hpp`

- We build a library of stats functions in `my_stats.cpp`

- We add a header file for the stats functions in `my_stats.hpp`

- We write a program that uses math and stats functions called `my_driver.cpp`

- We pull in the function signatures with `#include` for the header files

- Once you understand the code, move on to see how compilation is done

- Note that it is customary to include the header file in the source file itself to let the compiler catch any mistakes in the function signatures

```

%%file my_math.hpp

#pragma once

int add(int a, int b);

int multiply(int a, int b);

%%file my_math.cpp

#include "my_math.hpp"

int add(int a, int b) {

return a + b;

}

int multiply(int a, int b) {

return a * b;

}

%%file my_stats.hpp

#pragma once

int mean(int xs[], int n);

%%file my_stats.cpp

#include "my_stats.hpp"

int mean(int xs[], int n) {

double s = 0;

for (int i=0; i<n; i++) {

s += xs[i];

}

return s/n;

}

%%file my_driver.cpp

#include <iostream>

#include "my_math.hpp"

#include "my_stats.hpp"

int main() {

int xs[] = {1,2,3,4,5};

int n = 5;

int a = 3, b= 4;

std::cout << "sum = " << add(a, b) << "\n";

std::cout << "prod = " << multiply(a, b) << "\n";

std::cout << "mean = " << mean(xs, n) << "\n";

}

```

Compilation

- Notice that in the first 2 compile statements, the source files are compiled to *object* files with default extension `.o` by using the flag `-c`

- The 3rd compile statement builds an *executable* by linking the `main` file with the recently created object files

- The function signatures in the included header files tell the compiler how to match the function calls `add`, `multiply` and `mean` with the matching compiled functions

```

%%bash

g++ -c my_math.cpp

g++ -c my_stats.cpp

g++ my_driver.cpp my_math.o my_stats.o

%%bash

./a.out

```

### Using `make`

As building C++ programs can quickly become quite complicated, there are *builder* programs that help simplify this task. One of the most widely used is `make`, which uses a file normally called `Makefile` to coordinate the instructions for building a program.

- Note that `make` can be used for more than compiling programs; for example, you can use it to automatically rebuild tables and figures for a manuscript whenever the data is changed

- Another advantage of `make` is that it keeps track of dependencies, and only re-compiles files that have changed or depend on another changed file since the last compilation

We will build a simple `Makefile` to build the `my_driver` executable:

- Each section consists of a make target denoted by `<target>:` followed by files the target depends on

- The next line is the command given to build the target. This must begin with a TAB character (it MUST be a TAB and not spaces)

- If a target has dependencies that are not met, `make` will see if each dependency itself is a target and build that first

- It uses timestamps to decide whether to rebuild a target (not actual changes in file content)

- By default, `make` builds the first target, but can also build named targets

To get a TAB character, copy and paste the blank space between `a` and `b`.

```

! echo "a\tb"

%%file Makefile

driver: my_math.o my_stats.o

	g++ my_driver.cpp my_math.o my_stats.o -o my_driver

my_math.o: my_math.cpp my_math.hpp

	g++ -c my_math.cpp

my_stats.o: my_stats.cpp my_stats.hpp

	g++ -c my_stats.cpp

```

- We first start with a clean slate

```

%%capture logfile

%%bash

rm *\.o

rm my_driver

%%bash

make

%%bash

./my_driver

```

- Re-building does not trigger re-compilation of source files since the timestamps have not changed

```

%%bash

make

%%bash

touch my_stats.hpp

```

- As `my_stats.hpp` was listed as a dependency of the target `my_stats.o`, `touch`, which updates the timestamp, forces a recompilation of `my_stats.o`

```

%%bash

make

```

#### Use of variables in Makefile

```

%%file Makefile2

CC=g++

CFLAGS=-Wall -std=c++14

driver: my_math.o my_stats.o

	$(CC) $(CFLAGS) my_driver.cpp my_math.o my_stats.o -o my_driver2

my_math.o: my_math.cpp my_math.hpp

	$(CC) $(CFLAGS) -c my_math.cpp

my_stats.o: my_stats.cpp my_stats.hpp

	$(CC) $(CFLAGS) -c my_stats.cpp

```

### Compilation

Note that no re-compilation occurs!

```

%%bash

make -f Makefile2

```

### Execution

```

%%bash

./my_driver2

```

## Input and output

#### Arguments to main

```

%%file main_args.cpp

#include <iostream>

using std::cout;

int main(int argc, char* argv[]) {

for (int i=0; i<argc; i++) {

cout << i << ": " << argv[i] << "\n";

}

}

%%bash

g++ main_args.cpp -o main_args

%%bash

./main_args hello 1 2 3

```

**Exercise**

Write, compile and execute a program called `greet` that when called on the command line with

```bash

greet Santa 3

```

gives the output

```

Hello Santa!

Hello Santa!

Hello Santa!

```

#### Reading from files

```

%%file data.txt

9 6

%%file io.cpp

#include <fstream>

#include "my_math.hpp"

int main() {

std::ifstream fin("data.txt");

std::ofstream fout("result.txt");

double a, b;

fin >> a >> b;

fin.close();

fout << add(a, b) << std::endl;

fout << multiply(a, b) << std::endl;

fout.close();

}

%%bash

g++ io.cpp -o io.exe my_math.cpp

%%bash

./io.exe

! cat result.txt

```

## Arrays

```

%%file array.cpp

#include <iostream>

using std::cout;

using std::endl;

int main() {

const int N = 3; // array size must be a compile-time constant in standard C++

double counts[N];

counts[0] = 1;

counts[1] = 3;

counts[2] = 3;

double avg = (counts[0] + counts[1] + counts[2])/3;

cout << avg << endl;

}

%%bash

g++ -o array.exe array.cpp

%%bash

./array.exe

```

## Loops

```

%%file loop.cpp

#include <iostream>

using std::cout;

using std::endl;

using std::begin;

using std::end;

int main()

{

int x[] = {1, 2, 3, 4, 5};

cout << "\nTraditional for loop\n";

for (int i=0; i < sizeof(x)/sizeof(x[0]); i++) {

cout << x[i] << endl;

}

cout << "\nUsing iterators\n";

for (auto it=begin(x); it != end(x); it++) {

cout << *it << endl;

}

cout << "\nRanged for loop\n\n";

for (auto const &i : x) {

cout << i << endl;

}

}

%%bash

g++ -o loop.exe loop.cpp -std=c++14

%%bash

./loop.exe

```

## Function arguments

- A value argument means that the argument is copied into the function, so changes made inside the function do not affect the caller

- A reference argument means that the address of the value is used in the function, so the function works on the caller's object. Reference or pointer arguments are used to avoid copying large objects.

```

%%file func_arg.cpp

#include <iostream>

using std::cout;

using std::endl;

// Value parameter

void f1(int x) {

x *= 2;

cout << "In f1 : x=" << x << endl;

}

// Reference parameter

void f2(int &x) {

x *= 2;

cout << "In f2 : x=" << x << endl;

}

/* Note

If you want to avoid side effects

but still use references to avoid a copy operation

use a const reference like this to indicate that x cannot be changed

void f2(const int &x)

*/

/* Note

Raw pointers are prone to error and

generally avoided in modern C++

See unique_ptr and shared_ptr

*/

// Raw pointer parameter

void f3(int *x) {

*x *= 2;

cout << "In f3 : x=" << *x << endl;

}

int main() {

int x = 1;

cout << "Before f1: x=" << x << "\n";

f1(x);

cout << "After f1 : x=" << x << "\n";

cout << "Before f2: x=" << x << "\n";

f2(x);

cout << "After f2 : x=" << x << "\n";

cout << "Before f3: x=" << x << "\n";

f3(&x);

cout << "After f3 : x=" << x << "\n";

}

%%bash

c++ -o func_arg.exe func_arg.cpp --std=c++14

%%bash

./func_arg.exe

```
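The `const` reference mentioned in the comment in `func_arg.cpp` can be sketched as follows. The function name `total_length` is hypothetical; the point is only that the argument is neither copied nor modifiable inside the function.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical example: the vector is passed by const reference, so no copy
// is made and the function cannot modify the caller's data.
std::size_t total_length(const std::vector<std::string> &words) {
    std::size_t total = 0;
    for (const auto &w : words) {   // const reference again: no copy per string
        total += w.size();
    }
    // words.push_back("x");        // would not compile: words is const
    return total;
}
```

For a small type such as `int`, passing by value is usually just as cheap; the const reference pays off for containers, strings and other large objects.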

## Arrays, pointers and dynamic memory

A pointer is a number that represents an address in computer memory. What is stored at the address is a sequence of bits. How those bits are interpreted depends on the type of the pointer. To get the value at the pointer address, we *dereference* the pointer using `*ptr`. Pointers are often used to indicate the start of a block of values - the name of a plain C-style array is essentially a pointer to the start of the array.

For example, the argument `char** argv` means that `argv` has type pointer to pointer to `char`. The pointer to `char` can be thought of as an array of `char`, hence the argument is also sometimes written as `char* argv[]` to indicate pointer to `char` array. So conceptually, it refers to an array of `char` arrays - or a collection of strings.
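To make the "collection of strings" reading concrete, here is a minimal sketch that copies the C-style argument array into C++ strings. The helper name `collect_args` is hypothetical, and skipping `argv[0]` assumes the usual convention that it holds the program name.

```cpp
#include <string>
#include <vector>

// Hypothetical helper: copy the C-style argument array into C++ strings.
// argv[0] is conventionally the program name, so it is skipped here.
std::vector<std::string> collect_args(int argc, char **argv) {
    std::vector<std::string> args;
    for (int i = 1; i < argc; i++) {
        // each argv[i] is a char* pointing to a null-terminated char array
        args.push_back(argv[i]);
    }
    return args;
}
```

A `main(int argc, char* argv[])` function could call `collect_args(argc, argv)` once and then work only with `std::string` values.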

We generally avoid using raw pointers in C++, but this is standard in C and you should at least understand what is going on.

In C++, we typically use smart pointers, STL containers or convenient array constructs provided by libraries such as Eigen and Armadillo.
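As a rough illustration of that advice, the sketch below uses `std::unique_ptr` for a single heap value and `std::vector` for a dynamic array, so no explicit `delete` is required. The function names `boxed_value` and `multiples_of_ten` are hypothetical.

```cpp
#include <memory>
#include <vector>

// Hypothetical example: a single heap-allocated int owned by a unique_ptr.
// The memory is released automatically when z goes out of scope.
int boxed_value() {
    std::unique_ptr<int> z(new int(23));
    return *z;   // dereference works just like a raw pointer
}                // no delete needed

// Hypothetical example: std::vector owns and frees a dynamic array of ints.
std::vector<int> multiples_of_ten(int n) {
    std::vector<int> zs(n);
    for (int i = 0; i < n; i++) {
        zs[i] = 10 * i;
    }
    return zs;
}
```

With C++14 or later, `std::make_unique<int>(23)` is the more common way to create the `unique_ptr`.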

### Pointers and addresses

```

%%file p01.cpp

#include <iostream>

using std::cout;

int main() {

int x = 23;

int *xp;

xp = &x;

cout << "x " << x << "\n";

cout << "Address of x " << &x << "\n";

cout << "Pointer to x " << xp << "\n";

cout << "Value at pointer to x " << *xp << "\n";

}

%%bash

g++ -o p01.exe p01.cpp -std=c++14

./p01.exe

```

### Arrays

```

%%file p02.cpp

#include <iostream>

using std::cout;

using std::begin;

using std::end;

int main() {

int xs[] = {1,2,3,4,5};

int ys[5]; // must hold all 5 values written by the loop below

for (int i=0; i<5; i++) {

ys[i] = i*i;

}

for (auto x=begin(xs); x!=end(xs); x++) {

cout << *x << " ";

}

cout << "\n";

for (auto x=begin(ys); x!=end(ys); x++) {

cout << *x << " ";

}

cout << "\n";

}

%%bash

g++ -o p02.exe p02.cpp -std=c++14

./p02.exe

```

### Dynamic memory

- Use `new` and `delete` for dynamic memory allocation in C++.

- Do not use the C style `malloc`, `calloc` and `free`

- Absolutely never mix the C++ and C styles of dynamic memory allocation

```

%%file p03.cpp

#include <iostream>

using std::cout;

using std::begin;

using std::end;

int main() {

// declare memory

int *z = new int; // single integer

*z = 23;

// Allocate on heap

int *zs = new int[3]; // array of 3 integers

for (int i=0; i<3; i++) {

zs[i] = 10*i;

}

cout << *z << "\n";

for (int i=0; i < 3; i++) {

cout << zs[i] << " ";

}

cout << "\n";

// need for manual management of dynamically assigned memory

delete z;

delete[] zs;

}

%%bash

g++ -o p03.exe p03.cpp -std=c++14

./p03.exe

```

### Pointer arithmetic

When you increment or decrement a pointer, it moves to the next or preceding location in memory as appropriate for the pointer type. You can also add or subtract a number, since that is equivalent to multiple increments/decrements. This is known as pointer arithmetic.

```

%%file p04.cpp

#include <iostream>

using std::cout;

using std::begin;

using std::end;

int main() {

int xs[] = {100,200,300,400,500,600,700,800,900,1000};

cout << xs << ": " << *xs << "\n";

cout << &xs << ": " << *xs << "\n";

cout << &xs[3] << ": " << xs[3] << "\n";

cout << xs+3 << ": " << *(xs+3) << "\n";

}

%%bash

g++ -std=c++11 -o p04.exe p04.cpp

./p04.exe

```
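The same idea can be sketched with a pointer that walks through an array. The helper names `sum_with_pointer` and `elements_between` are hypothetical and only illustrate increments and pointer differences.

```cpp
#include <cstddef>

// Hypothetical helper: sum n ints by advancing a pointer through the block.
int sum_with_pointer(const int *first, std::size_t n) {
    int total = 0;
    const int *last = first + n;   // one past the final element
    for (const int *p = first; p != last; ++p) {
        total += *p;               // dereference the current position
    }
    return total;
}

// Subtracting two pointers into the same array gives the number of elements
// between them, not the number of bytes.
std::ptrdiff_t elements_between(const int *a, const int *b) {
    return b - a;
}
```

Both functions are only meaningful when the pointers refer to the same array; arithmetic across unrelated blocks of memory is undefined behavior.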

### C style dynamic memory for jagged array ("matrix")

```

%%file p05.cpp

#include <iostream>

using std::cout;

using std::begin;

using std::end;

int main() {

int m = 3;

int n = 4;

int **xss = new int*[m]; // assign memory for m pointers to int

for (int i=0; i<m; i++) {

xss[i] = new int[n]; // assign memory for array of n ints

for (int j=0; j<n; j++) {

xss[i][j] = i*10 + j;

}

}

for (int i=0; i<m; i++) {

for (int j=0; j<n; j++) {

cout << xss[i][j] << "\t";

}

cout << "\n";

}

// Free memory

for (int i=0; i<m; i++) {

delete[] xss[i];

}

delete[] xss;

}

%%bash

g++ -std=c++11 -o p05.exe p05.cpp

./p05.exe

```

## Functions

```

%%file func01.cpp

#include <iostream>

double add(double x, double y) {

return x + y;

}

double mult(double x, double y) {

return x * y;

}

int main() {

double a = 3;

double b = 4;

std::cout << add(a, b) << std::endl;

std::cout << mult(a, b) << std::endl;

}

%%bash

g++ -o func01.exe func01.cpp -std=c++14

./func01.exe

```

### Function parameters

In the example below, the space allocated *inside* a function is deleted *outside* the function. Such code in practice will almost certainly lead to memory leakage. This is why C++ functions often put the *output* as an argument to the function, so that all memory allocation can be controlled outside the function.

```

void add(double *x, double *y, double *res, int n);

```

```

%%file func02.cpp

#include <iostream>

double* add(double *x, double *y, int n) {

double *res = new double[n];

for (int i=0; i<n; i++) {

res[i] = x[i] + y[i];

}

return res;

}

int main() {

double a[] = {1,2,3};

double b[] = {4,5,6};

int n = 3;

double *c = add(a, b, n);

for (int i=0; i<n; i++) {

std::cout << c[i] << " ";

}

std::cout << "\n";

delete[] c; // Note difficulty of book-keeping when using raw pointers!

}

%%bash

g++ -o func02.exe func02.cpp -std=c++14

./func02.exe

%%file func03.cpp

#include <iostream>

using std::cout;

// Using value

void foo1(int x) {

x = x + 1;

}

// Using pointer

void foo2(int *x) {

*x = *x + 1;

}

// Using ref

void foo3(int &x) {

x = x + 1;

}

int main() {

int x = 0;

cout << x << "\n";

foo1(x);

cout << x << "\n";

foo2(&x);

cout << x << "\n";

foo3(x);

cout << x << "\n";

}

%%bash

g++ -o func03.exe func03.cpp -std=c++14

./func03.exe

```
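The output-parameter signature `add(x, y, res, n)` described at the start of this section could be implemented along the following lines. This is a sketch rather than the notebook's own code; the `const` qualifiers on the inputs are an addition made here.

```cpp
// Sketch of the output-parameter style: the caller owns all three arrays,
// so this function neither allocates nor frees memory.
void add(const double *x, const double *y, double *res, int n) {
    for (int i = 0; i < n; i++) {
        res[i] = x[i] + y[i];
    }
}
```

The caller can then pass a stack array or a container's storage as `res`, and there is no `delete[]` to remember.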

## Generic programming with templates

In C, you need to write a *different* function for each input type - hence resulting in duplicated code like

```C

int iadd(int a, int b)

float fadd(float a, float b)

```

In C++, you can make functions *generic* by using *templates*.

Note: When you have a template function, the entire function must be written in the header file, and not the source file, because the compiler needs the full definition wherever the template is instantiated. Hence, heavily templated libraries are often "header-only".

```

%%file template.cpp

#include <iostream>

template<typename T>

T add(T a, T b) {

return a + b;

}

int main() {

int m =2, n =3;

double u = 2.5, v = 4.5;

std::cout << add(m, n) << std::endl;

std::cout << add(u, v) << std::endl;

}

%%bash

g++ -o template.exe template.cpp

%%bash

./template.exe

```
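One detail that `template.cpp` does not exercise is what happens when the two arguments have different types. The sketch below repeats the `add` template so it is self-contained; the wrapper name `mixed_sum` is hypothetical.

```cpp
// Same generic add as in template.cpp, repeated so the sketch stands alone.
template<typename T>
T add(T a, T b) {
    return a + b;
}

// add(m, u) would not compile here: T cannot be deduced as both int and double.
// Naming the type explicitly converts both arguments to double.
double mixed_sum(int m, double u) {
    return add<double>(m, u);
}
```

An alternative design is a template with two type parameters, at the cost of having to decide what the return type should be.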

## Anonymous functions

```

%%file lambda.cpp

#include <iostream>

using std::cout;

using std::endl;

int main() {

int a = 3, b = 4;

int c = 0;

// Lambda function with no capture

auto add1 = [] (int a, int b) { return a + b; };

// Lambda function with value capture

auto add2 = [c] (int a, int b) { return c * (a + b); };

// Lambda function with reference capture

auto add3 = [&c] (int a, int b) { return c * (a + b); };

// Change value of c after function definition

c += 5;

cout << "Lambda function\n";

cout << add1(a, b) << endl;

cout << "Lambda function with value capture\n";

cout << add2(a, b) << endl;

cout << "Lambda function with reference capture\n";

cout << add3(a, b) << endl;

}

%%bash

c++ -o lambda.exe lambda.cpp --std=c++14

%%bash

./lambda.exe

```

## Function pointers

```

%%file func_pointer.cpp

#include <iostream>

#include <vector>

#include <functional>

using std::cout;

using std::endl;

using std::function;

using std::vector;

int main()

{

cout << "\nUsing generalized function pointers\n";

using func = function<double(double, double)>;

auto f1 = [](double x, double y) { return x + y; };

auto f2 = [](double x, double y) { return x * y; };

auto f3 = [](double x, double y) { return x + y*y; };

double x = 3, y = 4;

vector<func> funcs = {f1, f2, f3,};

for (auto& f : funcs) {

cout << f(x, y) << "\n";

}

}

%%bash

g++ -o func_pointer.exe func_pointer.cpp -std=c++14

%%bash

./func_pointer.exe

```

## Standard template library (STL)

The STL provides templated containers and generic algorithms acting on these containers with a consistent API.

```

%%file stl.cpp

#include <iostream>

#include <vector>

#include <map>

#include <unordered_map>

using std::vector;

using std::map;

using std::unordered_map;

using std::string;

using std::cout;

using std::endl;

struct Point{

int x;

int y;

Point(int x_, int y_) :

x(x_), y(y_) {};

};

int main() {

vector<int> v1 = {1,2,3};

v1.push_back(4);

v1.push_back(5);

cout << "Vector<int>" << endl;

for (auto n: v1) {

cout << n << endl;

}

cout << endl;

vector<Point> v2;

v2.push_back(Point(1, 2));

v2.emplace_back(3,4);

cout << "Vector<Point>" << endl;

for (auto p: v2) {

cout << "(" << p.x << ", " << p.y << ")" << endl;

}

cout << endl;

map<string, int> v3 = {{"foo", 1}, {"bar", 2}};

v3["hello"] = 3;

v3.insert({"goodbye", 4});

// Note that a C++ map is ordered by key

// Note using (traditional) iterators instead of ranged for loop

cout << "Map<string, int>" << endl;

for (auto iter=v3.begin(); iter != v3.end(); iter++) {

cout << iter->first << ": " << iter->second << endl;

}

cout << endl;

unordered_map<string, int> v4 = {{"foo", 1}, {"bar", 2}};

v4["hello"] = 3;

v4.insert({"goodbye", 4});

// Note the unordered_map is similar to Python's dict

// Note using ranged for loop with const ref to avoid copying or mutation

cout << "Unordered_map<string, int>" << endl;

for (const auto& i: v4) {

cout << i.first << ": " << i.second << endl;

}

cout << endl;

}

%%bash

g++ -o stl.exe stl.cpp -std=c++14

%%bash

./stl.exe

```

## STL algorithms

```

%%file stl_algorithm.cpp

#include <vector>

#include <iostream>

#include <numeric>

using std::cout;

using std::endl;

using std::vector;

using std::begin;

using std::end;

int main() {

vector<int> v(10);

// iota is somewhat like range

std::iota(v.begin(), v.end(), 1);

for (auto i: v) {

cout << i << " ";

}

cout << endl;

// C++ version of reduce

cout << std::accumulate(begin(v), end(v), 0) << endl;

// Accumulate with lambda

cout << std::accumulate(begin(v), end(v), 1, [](int a, int b){return a * b; }) << endl;

}

%%bash

g++ -o stl_algorithm.exe stl_algorithm.cpp -std=c++14

%%bash

./stl_algorithm.exe

```
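Beyond `iota` and `accumulate`, the `<algorithm>` header follows the same iterator-range pattern. The sketch below shows `std::sort`, `std::transform` and `std::count_if`; the wrapper names `sorted_copy`, `squares` and `count_even` are hypothetical.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical helper: return a sorted copy (v is taken by value on purpose).
std::vector<int> sorted_copy(std::vector<int> v) {
    std::sort(v.begin(), v.end());
    return v;
}

// Hypothetical helper: square each element with std::transform and a lambda,
// roughly like map() in Python.
std::vector<int> squares(const std::vector<int> &v) {
    std::vector<int> out(v.size());
    std::transform(v.begin(), v.end(), out.begin(), [](int x) { return x * x; });
    return out;
}

// Hypothetical helper: count the elements that satisfy a predicate.
int count_even(const std::vector<int> &v) {
    return static_cast<int>(
        std::count_if(v.begin(), v.end(), [](int x) { return x % 2 == 0; }));
}
```

Each algorithm takes a begin/end pair rather than the container itself, which is why the same calls also work on plain arrays via `std::begin` and `std::end`.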

## Random numbers

```

%%file random.cpp

#include <iostream>

#include <random>

#include <functional>

using std::cout;

using std::random_device;

using std::mt19937;

using std::default_random_engine;

using std::uniform_int_distribution;

using std::poisson_distribution;

using std::student_t_distribution;

using std::bind;

// start random number engine with fixed seed

// Note default_random_engine may give different values on different platforms

// default_random_engine re(1234);

// or

// Using a named engine will work the same on different platforms

// mt19937 re(1234);

// start random number generator with random seed

random_device rd;

mt19937 re(rd());

uniform_int_distribution<int> uniform(1,6); // lower and upper bounds

poisson_distribution<int> poisson(30); // rate

student_t_distribution<double> t(10); // degrees of freedom

int main()

{

cout << "\nGenerating random numbers\n";

auto runif = bind (uniform, re);

auto rpois = bind(poisson, re);

auto rt = bind(t, re);

for (int i=0; i<10; i++) {

cout << runif() << ", " << rpois() << ", " << rt() << "\n";

}

}

%%bash

g++ -o random.exe random.cpp -std=c++14

%%bash

./random.exe

```
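The fixed-seed option that is commented out in `random.cpp` can be sketched as below. The function name `roll_dice` is hypothetical. Two calls with the same seed return the same sequence within one build; across standard libraries the `mt19937` engine output is specified, but the mapping done by the distribution objects may differ.

```cpp
#include <random>
#include <vector>

// Hypothetical helper: draw n die rolls from an engine seeded with `seed`.
std::vector<int> roll_dice(unsigned seed, int n) {
    std::mt19937 re(seed);                         // named engine, fixed seed
    std::uniform_int_distribution<int> die(1, 6);  // inclusive lower and upper bounds
    std::vector<int> rolls;
    for (int i = 0; i < n; i++) {
        rolls.push_back(die(re));                  // engine passed by reference, so its state advances
    }
    return rolls;
}
```

Here the distribution is called directly with the engine instead of going through `bind`, so the engine's state is shared rather than copied.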

## Numerics

### Using Armadillo

```

%%file test_arma.cpp

#include <iostream>

#include <armadillo>

using std::cout;

using std::endl;

int main()

{

using namespace arma;

vec u = linspace<vec>(0,1,5);

vec v = ones<vec>(5);

mat A = randu<mat>(4,5); // uniform random deviates

mat B = randn<mat>(4,5); // normal random deviates

cout << "\nVectors in Armadillo\n";

cout << u << endl;

cout << v << endl;

cout << u.t() * v << endl;

cout << "\nRandom matrices in Armadillo\n";

cout << A << endl;

cout << B << endl;

cout << A * B.t() << endl;

cout << A * v << endl;

cout << "\nQR in Armadillo\n";

mat Q, R;

qr(Q, R, A.t() * A);

cout << Q << endl;

cout << R << endl;

}

%%bash

g++ -o test_arma.exe test_arma.cpp -std=c++14 -larmadillo

%%bash

./test_arma.exe

```

### Using Eigen

```

%%file test_eigen.cpp

#include <iostream>

#include <fstream>

#include <random>

#include <Eigen/Dense>

#include <functional>

using std::cout;

using std::endl;

using std::ofstream;

using std::default_random_engine;

using std::normal_distribution;

using std::bind;

// start random number engine with fixed seed

default_random_engine re{12345};

normal_distribution<double> norm(5,2); // mean and standard deviation

auto rnorm = bind(norm, re);

int main()

{

using namespace Eigen;

VectorXd x1(6);

x1 << 1, 2, 3, 4, 5, 6;

VectorXd x2 = VectorXd::LinSpaced(6, 1, 2);

VectorXd x3 = VectorXd::Zero(6);

VectorXd x4 = VectorXd::Ones(6);

VectorXd x5 = VectorXd::Constant(6, 3);

VectorXd x6 = VectorXd::Random(6);

double data[] = {6,5,4,3,2,1};

Map<VectorXd> x7(data, 6);

VectorXd x8 = x6 + x7;

MatrixXd A1(3,3);

A1 << 1 ,2, 3,

4, 5, 6,

7, 8, 9;

MatrixXd A2 = MatrixXd::Constant(3, 4, 1);

MatrixXd A3 = MatrixXd::Identity(3, 3);

Map<MatrixXd> A4(data, 3, 2);

MatrixXd A5 = A4.transpose() * A4;

MatrixXd A6 = x7 * x7.transpose();

MatrixXd A7 = A4.array() * A4.array();

MatrixXd A8 = A7.array().log();

MatrixXd A9 = A8.unaryExpr([](double x) { return exp(x); });

MatrixXd A10 = MatrixXd::Zero(3,4).unaryExpr([](double x) { return rnorm(); });

VectorXd x9 = A1.colwise().norm();

VectorXd x10 = A1.rowwise().sum();

MatrixXd A11(x1.size(), 3);

A11 << x1, x2, x3;

MatrixXd A12(3, x1.size());

A12 << x1.transpose(),

x2.transpose(),

x3.transpose();

JacobiSVD<MatrixXd> svd(A10, ComputeThinU | ComputeThinV);

cout << "x1: comma initializer\n" << x1.transpose() << "\n\n";

cout << "x2: linspace\n" << x2.transpose() << "\n\n";

cout << "x3: zeros\n" << x3.transpose() << "\n\n";

cout << "x4: ones\n" << x4.transpose() << "\n\n";

cout << "x5: constant\n" << x5.transpose() << "\n\n";

cout << "x6: rand\n" << x6.transpose() << "\n\n";

cout << "x7: mapping\n" << x7.transpose() << "\n\n";

cout << "x8: element-wise addition\n" << x8.transpose() << "\n\n";

cout << "max of A1\n";

cout << A1.maxCoeff() << "\n\n";

cout << "x9: norm of columns of A1\n" << x9.transpose() << "\n\n";

cout << "x10: sum of rows of A1\n" << x10.transpose() << "\n\n";

cout << "head\n";

cout << x1.head(3).transpose() << "\n\n";

cout << "tail\n";

cout << x1.tail(3).transpose() << "\n\n";

cout << "slice\n";

cout << x1.segment(2, 3).transpose() << "\n\n";

cout << "Reverse\n";

cout << x1.reverse().transpose() << "\n\n";

cout << "Indexing vector\n";

cout << x1(0);

cout << "\n\n";

cout << "A1: comma initializer\n";

cout << A1 << "\n\n";

cout << "A2: constant\n";

cout << A2 << "\n\n";

cout << "A3: eye\n";

cout << A3 << "\n\n";

cout << "A4: mapping\n";

cout << A4 << "\n\n";

cout << "A5: matrix multiplication\n";

cout << A5 << "\n\n";

cout << "A6: outer product\n";

cout << A6 << "\n\n";

cout << "A7: element-wise multiplication\n";

cout << A7 << "\n\n";

cout << "A8: ufunc log\n";

cout << A8 << "\n\n";

cout << "A9: custom ufunc\n";

cout << A9 << "\n\n";

cout << "A10: custom ufunc for normal deviates\n";

cout << A10 << "\n\n";

cout << "A11: np.c_\n";

cout << A11 << "\n\n";

cout << "A12: np.r_\n";

cout << A12 << "\n\n";

cout << "2x2 block starting at (0,1)\n";

cout << A1.block(0,1,2,2) << "\n\n";

cout << "top 2 rows of A1\n";

cout << A1.topRows(2) << "\n\n";

cout << "bottom 2 rows of A1\n";

cout << A1.bottomRows(2) << "\n\n";

cout << "leftmost 2 cols of A1\n";

cout << A1.leftCols(2) << "\n\n";

cout << "rightmost 2 cols of A1\n";

cout << A1.rightCols(2) << "\n\n";

cout << "Diagonal elements of A1\n";

cout << A1.diagonal() << "\n\n";

A1.diagonal() = A1.diagonal().array().square();

cout << "Transforming diagonal elements of A1\n";

cout << A1 << "\n\n";

cout << "Indexing matrix\n";

cout << A1(0,0) << "\n\n";

cout << "singular values\n";

cout << svd.singularValues() << "\n\n";

cout << "U\n";

cout << svd.matrixU() << "\n\n";

cout << "V\n";

cout << svd.matrixV() << "\n\n";

}

import os

if not os.path.exists('./eigen'):

    ! git clone https://gitlab.com/libeigen/eigen.git

%%bash

g++ -o test_eigen.exe test_eigen.cpp -std=c++11 -I./eigen

%%bash

./test_eigen.exe

```
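For readers coming from NumPy, the slicing calls in the C++ listing above map onto ordinary index ranges. The sketch below uses a made-up 3x4 matrix and 6-element vector (hypothetical data, not the values from `test_eigen.cpp`) to show the index arithmetic. Eigen's `block(i, j, p, q)` takes a start position and a size, whereas NumPy slices take start and stop indices.

```python
import numpy as np

# Hypothetical data, chosen only so the slices are easy to read.
A = np.arange(12).reshape(3, 4)
x = np.arange(6)

block = A[0:2, 1:3]      # A.block(0, 1, 2, 2): 2x2 block starting at (0, 1)
top = A[:2, :]           # A.topRows(2)
bottom = A[-2:, :]       # A.bottomRows(2)
left = A[:, :2]          # A.leftCols(2)
right = A[:, -2:]        # A.rightCols(2)
diag = np.diag(A)        # A.diagonal()

head = x[:3]             # x.head(3)
tail = x[-3:]            # x.tail(3)
segment = x[2:2 + 3]     # x.segment(2, 3): 3 elements starting at index 2
rev = x[::-1]            # x.reverse()

# Eigen's diagonal() is writable in place; in NumPy the closest
# equivalent is np.fill_diagonal on the array itself (here, on a copy).
A_sq = A.copy()
np.fill_diagonal(A_sq, np.diag(A) ** 2)
```

The basic slices here are views into `A` and `x`, much as Eigen block expressions refer to the original matrix rather than copying it.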

### Check SVD

```

import numpy as np

A10 = np.array([

[5.17237, 3.73572, 6.29422, 6.55268],

[5.33713, 3.88883, 1.93637, 4.39812],

[8.22086, 6.94502, 6.36617, 6.5961]

])

U, s, Vt = np.linalg.svd(A10, full_matrices=False)

s

U

Vt.T

```
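Singular vectors are only determined up to a sign flip per column, so comparing `U` and `Vt.T` with the Eigen output by eye can mislead. The sketch below checks the properties that do not depend on sign, and compares matrices column by column while ignoring sign. `same_up_to_sign` is a hypothetical helper written for this illustration, and its tolerance is an assumption meant to absorb the limited precision of printed values.

```python
import numpy as np

A10 = np.array([
    [5.17237, 3.73572, 6.29422, 6.55268],
    [5.33713, 3.88883, 1.93637, 4.39812],
    [8.22086, 6.94502, 6.36617, 6.5961],
])

U, s, Vt = np.linalg.svd(A10, full_matrices=False)

# The thin SVD should reproduce the input: A = U @ diag(s) @ V^T.
reconstruction_ok = np.allclose(U @ np.diag(s) @ Vt, A10)

# Columns of U are orthonormal; singular values come back in descending order.
orthonormal_ok = np.allclose(U.T @ U, np.eye(U.shape[1]))
sorted_ok = bool(np.all(np.diff(s) <= 0))


def same_up_to_sign(M1, M2, tol=1e-4):
    """Hypothetical helper: compare columns, allowing a sign flip per column."""
    M1, M2 = np.asarray(M1), np.asarray(M2)
    return all(
        np.allclose(c1, c2, atol=tol) or np.allclose(c1, -c2, atol=tol)
        for c1, c2 in zip(M1.T, M2.T)
    )


print(reconstruction_ok, orthonormal_ok, sorted_ok)
```

To compare against the C++ run, paste the printed `svd.matrixU()` values into an array and call `same_up_to_sign(U, U_from_eigen)`. This assumes both decompositions are the thin form, so the shapes match.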

## Probability distributions and statistics

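The header-only `stats` library (which depends on `gcem`) offers a nicer interface for working with probability distributions, with density, CDF, quantile, and random-draw functions named after their R counterparts (`dnorm`, `pnorm`, `qlaplace`, `rnorm`). The example below shows its integration with Armadillo; integration with Eigen is also possible.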

```

import os

if not os.path.exists('./stats'):

    ! git clone https://github.com/kthohr/stats.git

if not os.path.exists('./gcem'):

    ! git clone https://github.com/kthohr/gcem.git

%%file stats.cpp

#define STATS_ENABLE_STDVEC_WRAPPERS

#define STATS_ENABLE_ARMA_WRAPPERS

// #define STATS_ENABLE_EIGEN_WRAPPERS

#include <iostream>

#include <vector>

#include "stats.hpp"

using std::cout;

using std::endl;

using std::vector;

// set seed for random engine to 1776

std::mt19937_64 engine(1776);

int main() {

// evaluate the normal PDF at x = 1, mu = 0, sigma = 1

double dval_1 = stats::dnorm(1.0,0.0,1.0);

// evaluate the normal PDF at x = 1, mu = 0, sigma = 1, and return the log value

double dval_2 = stats::dnorm(1.0,0.0,1.0,true);

// evaluate the normal CDF at x = 1, mu = 0, sigma = 1

double pval = stats::pnorm(1.0,0.0,1.0);

// evaluate the Laplacian quantile at p = 0.1, mu = 0, sigma = 1

double qval = stats::qlaplace(0.1,0.0,1.0);

// draw from a normal distribution with mean 100 and sd 15

double rval = stats::rnorm(100, 15);

// Use with std::vectors

vector<int> pois_rvs = stats::rpois<vector<int> >(1, 10, 3);

cout << "Poisson draws with rate=3 into std::vector" << endl;

for (auto &x : pois_rvs) {

cout << x << ", ";

}

cout << endl;

// Example of Armadillo usage: only one matrix library can be used at a time

arma::mat beta_rvs = stats::rbeta<arma::mat>(5,5,3.0,2.0);

// matrix input

arma::mat beta_cdf_vals = stats::pbeta(beta_rvs,3.0,2.0);

/* Example of Eigen usage: only one matrix library can be used at a time

Eigen::MatrixXd gamma_rvs = stats::rgamma<Eigen::MatrixXd>(10, 5,3.0,2.0);

*/

cout << "evaluate the normal PDF at x = 1, mu = 0, sigma = 1" << endl;

cout << dval_1 << endl;

cout << "evaluate the normal PDF at x = 1, mu = 0, sigma = 1, and return the log value" << endl;

cout << dval_2 << endl;

cout << "evaluate the normal CDF at x = 1, mu = 0, sigma = 1" << endl;

cout << pval << endl;

cout << "evaluate the Laplacian quantile at p = 0.1, mu = 0, sigma = 1" << endl;

cout << qval << endl;

cout << "draw from a normal distribution with mean 100 and sd 15" << endl;

cout << rval << endl;

cout << "draws from a beta distribution to populate Armadillo matrix" << endl;

cout << beta_rvs << endl;

cout << "evaluate CDF for beta draws from Armadillo inputs" << endl;

cout << beta_cdf_vals << endl;

/* If using Eigen

cout << "draws from a Gamma distribution to populate Eigen matrix" << endl;

cout << gamma_rvs << endl;

*/

}

%%bash

g++ -std=c++11 -I./stats/include -I./gcem/include -I./eigen stats.cpp -o stats.exe

%%bash

./stats.exe

```
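In the same spirit as the SVD check, the deterministic values printed by `stats.exe` can be cross-checked from closed-form expressions using only Python's standard library. This is a sketch under one assumption: that the third argument of `stats::qlaplace` is the Laplace scale parameter. The source comment calls it sigma, and with a value of 1 the distinction does not change the result. The random draws (`rval`, the Poisson and beta samples) depend on the generator and are not reproduced here.

```python
import math


def norm_pdf(x, mu=0.0, sigma=1.0):
    """Density of the normal distribution."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def norm_cdf(x, mu=0.0, sigma=1.0):
    """CDF of the normal distribution via the error function."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def laplace_quantile(p, mu=0.0, scale=1.0):
    """Inverse CDF of the Laplace distribution (scale is assumed, see above)."""
    if p < 0.5:
        return mu + scale * math.log(2.0 * p)
    return mu - scale * math.log(2.0 * (1.0 - p))


dval_1 = norm_pdf(1.0)          # compare with stats::dnorm(1.0, 0.0, 1.0)
dval_2 = math.log(dval_1)       # compare with stats::dnorm(1.0, 0.0, 1.0, true)
pval = norm_cdf(1.0)            # compare with stats::pnorm(1.0, 0.0, 1.0)
qval = laplace_quantile(0.1)    # compare with stats::qlaplace(0.1, 0.0, 1.0)

print(dval_1, dval_2, pval, qval)
```

The C++ program prints with `cout`'s default precision, so agreement to about six significant digits is what to look for.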

**Solution to exercise**

```

%%file greet.cpp

#include <iostream>

#include <string>

using std::string;

using std::cout;

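int main(int argc, char* argv[]) {

// Guard against missing arguments before indexing into argv

if (argc < 3) {

std::cerr << "Usage: " << argv[0] << " NAME COUNT\n";

return 1;

}

string name = argv[1];

int n = std::stoi(argv[2]);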

for (int i=0; i<n; i++) {

cout << "Hello " << name << "!" << "\n";

}

}

%%bash

g++ -std=c++11 greet.cpp -o greet

%%bash

./greet Santa 3

```

| github_jupyter |

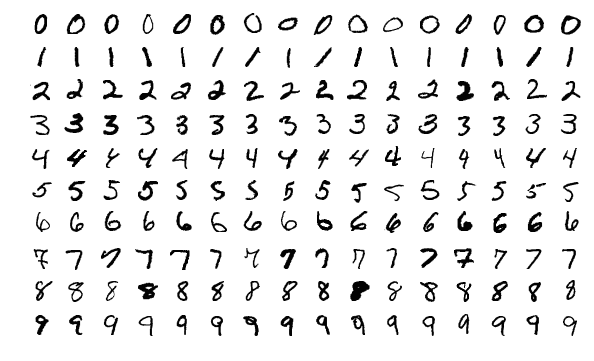

```

import numpy as np

import pandas as pd

from matplotlib import pyplot as plt

from tqdm import tqdm

%matplotlib inline

import torch

import torchvision

import torchvision.transforms as transforms

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import random

# Convert images to tensors in [0, 1], then rescale each RGB channel to [-1, 1]

transform = transforms.Compose(

    [transforms.ToTensor(),

     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform)

testset = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform)