
# **Build Linear Regression Model in Python**

Chanin Nantasenamat

[*'Data Professor' YouTube channel*](http://youtube.com/dataprofessor)

In this Jupyter notebook, I will be showing you how to build a linear regression model in Python using the scikit-learn package.

Inspired by [scikit-learn's Linear Regression Example](https://scikit-learn.org/stable/auto_examples/linear_model/plot_ols.html)

---

## **Load the Diabetes dataset** (via scikit-learn)

### **Import library**

```

from sklearn import datasets

```

### **Load dataset**

```

diabetes = datasets.load_diabetes()

diabetes

```

### **Description of the Diabetes dataset**

```

print(diabetes.DESCR)

```

### **Feature names**

```

print(diabetes.feature_names)

```

### **Create X and Y data matrices**

```

X = diabetes.data

Y = diabetes.target

X.shape, Y.shape

```

### **Load dataset + Create X and Y data matrices (in 1 step)**

```

X, Y = datasets.load_diabetes(return_X_y=True)

X.shape, Y.shape

```

## **Load the Boston Housing dataset (via GitHub)**

The Boston Housing dataset was obtained from the mlbench R package, which was loaded using the following commands:

```

library(mlbench)

data(BostonHousing)

```

For your convenience, I have also shared the [Boston Housing dataset](https://github.com/dataprofessor/data/blob/master/BostonHousing.csv) in the Data Professor GitHub repository.

### **Import library**

```

import pandas as pd

```

### **Download CSV from GitHub**

```

! wget https://github.com/dataprofessor/data/raw/master/BostonHousing.csv

```

### **Read in CSV file**

```

BostonHousing = pd.read_csv("BostonHousing.csv")

BostonHousing

```

### **Split dataset to X and Y variables**

```

Y = BostonHousing.medv

Y

X = BostonHousing.drop(['medv'], axis=1)

X

```

## **Data split**

### **Import library**

```

from sklearn.model_selection import train_test_split

```

### **Perform 80/20 Data split**

80% of the data is used to train the model; the remaining 20% is used to test it.

```

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, test_size=0.2)

```
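Because `train_test_split` shuffles the rows, every run of the cell above yields a different split. A minimal sketch of a reproducible split follows, assuming the Diabetes data loaded earlier; the seed value `42` is an arbitrary choice made for this illustration, not something the original notebook uses.

```python
from sklearn.datasets import load_diabetes
from sklearn.model_selection import train_test_split

# Load the same Diabetes data used earlier in the notebook
X, Y = load_diabetes(return_X_y=True)

# random_state fixes the shuffle so the split is identical on every run
# (42 is an arbitrary seed chosen for this sketch)
X_train, X_test, Y_train, Y_test = train_test_split(
    X, Y, test_size=0.2, random_state=42
)

# Repeating the call with the same seed gives the same test rows
X_train2, X_test2, _, _ = train_test_split(X, Y, test_size=0.2, random_state=42)
same_split = (X_test == X_test2).all()

print(X_train.shape, X_test.shape, same_split)
```

Fixing the seed is mainly useful when you want the reported MSE and R^2 to stay the same between runs.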

### **Data dimension**

```

X_train.shape, Y_train.shape

X_test.shape, Y_test.shape

```

## **Linear Regression Model**

### **Import library**

```

from sklearn import linear_model

from sklearn.metrics import mean_squared_error, r2_score

```

### **Build linear regression**

#### Defines the regression model

```

model = linear_model.LinearRegression()

```

#### Build training model

```

model.fit(X_train, Y_train)

```

#### Apply trained model to make prediction (on test set)

```

Y_pred = model.predict(X_test)

```

## **Prediction results**

### **Print model performance**

```

print('Coefficients:', model.coef_)

print('Intercept:', model.intercept_)

print('Mean squared error (MSE): %.2f'

% mean_squared_error(Y_test, Y_pred))

print('Coefficient of determination (R^2): %.2f'

% r2_score(Y_test, Y_pred))

```

The coefficients represent the weight values of each of the features.
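To see which weight belongs to which feature, the coefficients can be paired with the feature names. The sketch below assumes the Diabetes dataset and, to stay short, fits on the full data rather than on the training split; the variable name `weights` is introduced only for this illustration.

```python
from sklearn import datasets, linear_model

# Fit on the full Diabetes data to keep the sketch short
diabetes = datasets.load_diabetes()
model = linear_model.LinearRegression()
model.fit(diabetes.data, diabetes.target)

# Pair each feature name with its learned coefficient
weights = dict(zip(diabetes.feature_names, model.coef_))

# Print features ordered by the magnitude of their weight
for name, weight in sorted(weights.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name:>4}: {weight:10.2f}")
```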

### **String formatting**

By default, `r2_score` returns a floating-point number ([more details](https://docs.scipy.org/doc/numpy-1.13.0/user/basics.types.html)).

```

r2_score(Y_test, Y_pred)

r2_score(Y_test, Y_pred).dtype

```

We will use the `%` string-formatting operator to round the number when printing it.

```

'%f' % 0.523810833536016

```

We will now round it off to 3 decimal places.

```

'%.3f' % 0.523810833536016

```

We will now round it off to 2 decimal places.

```

'%.2f' % 0.523810833536016

```
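The same rounding can be written with `str.format` or an f-string, which are the more common styles in recent Python code. This is a small sketch for comparison; the variable names are chosen only for this illustration.

```python
value = 0.523810833536016

# Three equivalent ways to format a float with 2 decimal places
old_style = '%.2f' % value           # % operator, as used above
new_style = '{:.2f}'.format(value)   # str.format
f_string = f'{value:.2f}'            # f-string (Python 3.6+)

# round() returns a number instead of a string
rounded = round(value, 2)

print(old_style, new_style, f_string, rounded)
```

Formatting produces a string for display, whereas `round` keeps a numeric value that can be used in further calculations.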

## **Scatter plots**

### **Import library**

```

import seaborn as sns

```

### **Make scatter plot**

#### The Data

```

Y_test

import numpy as np

np.array(Y_test)

Y_pred

```

#### Making the scatter plot

```

sns.scatterplot(x=Y_test, y=Y_pred)

sns.scatterplot(x=Y_test, y=Y_pred, marker="+")

sns.scatterplot(x=Y_test, y=Y_pred, alpha=0.5)

```

| github_jupyter |

<a href="https://colab.research.google.com/github/cltl/python-for-text-analysis/blob/colab/Chapters-colab/Chapter_13_Working_with_Python_files.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

```

%%capture

!wget https://github.com/cltl/python-for-text-analysis/raw/master/zips/Data.zip

!wget https://github.com/cltl/python-for-text-analysis/raw/master/zips/images.zip

!wget https://github.com/cltl/python-for-text-analysis/raw/master/zips/Extra_Material.zip

!unzip Data.zip -d ../

!unzip images.zip -d ./

!unzip Extra_Material.zip -d ../

!rm Data.zip

!rm Extra_Material.zip

!rm images.zip

```

# Chapter 13 - Working with Python files

In the previous blocks, we've mainly used notebooks to develop and run our Python code. In this chapter, we'll introduce how to create Python modules (.py files) and how to run them. The most common way to work with Python is actually to use .py files, which is why it is important that you know how to work with them. You can think of a Python file as a single notebook cell without any markdown.

Before we write actual code in a .py file, we will explain the basics you need to know for doing this:

* choosing an editor

* starting the terminal (from which you will run your .py files)

**At the end of this chapter, you will be able to**

* create python modules, i.e., .py files

* run python modules from the command line

If you have **questions** about this chapter, please contact us **(cltl.python.course@gmail.com)**.

# 1. Editor

We first need to choose which editor we will use to develop our Python code.

There are two options.

1. You create the python modules in your browser. After opening Jupyter notebook, you can click `File` -> `New` and then `Text file` to start developing Python modules.

2. You install an editor.

Please take a look [here](https://wiki.python.org/moin/PythonEditors) to get an impression of which ones are out there.

We can highly recommend [Atom](https://atom.io/) (for macOS, Windows, Linux). Other options are [BBEdit](https://www.barebones.com/products/bbedit/download.html) (for macOS) and [Notepad++](https://notepad-plus-plus.org/) (for Windows). A simple way to create a new .py file usually is to open a new file and save it as name_of_your_program.py (make sure to use indicative names).

Please choose between options 1 and 2.

# 2. Starting the terminal

To run a .py file we wrote in an editor, we need to start the terminal. This works differently on Windows and macOS:

1. On Windows, please look at [Anaconda Prompt](https://docs.conda.io/projects/conda/en/latest/user-guide/getting-started.html)

2. On OS X/macOS (Mac computer), please type **terminal** in [Spotlight](https://support.apple.com/nl-nl/HT204014) and start the terminal

It's a useful skill to know how to navigate through your computer (i.e., go from one directory to another, list all the files and subdirectories in a directory, etc.) using the terminal.

For Windows users, [this](https://www.computerhope.com/issues/chusedos.htm) is a good tutorial.

For OS X/macOS/Linux/Ubuntu users, [this](https://www.digitalocean.com/community/tutorials/basic-linux-navigation-and-file-management) is a good tutorial.

# 3. Running your first program

Here, we'll show you how to run your first program (hello_world.py).

In the same folder as this notebook, you will find a file called **hello_world.py**.

Running it works differently on Windows and Mac. Below, instructions for both can be found:

## A.) Running the program on OS X/macOS

Please use the terminal to navigate to the folder in which this notebook is placed by copying the **output** of the following cell into your terminal

```

import os

cwd = os.getcwd()

cwd_escaped_spaces = cwd.replace(' ', '\\ ')  # escape spaces for the shell

print('cd', cwd_escaped_spaces)

```

`cd` means 'change directory'. Here, you are using it to go to the directory we are currently working in. We use the os module to print the path to this directory (`os.getcwd`).

Please run the following command in the terminal:

**python hello_world.py**

You've successfully run your first Python program!

## B.) Running the program on Windows

Please use the terminal to navigate to the folder in which this notebook is placed by copying the **output** of the following cell into your terminal

```

import os

cwd = os.getcwd()

cwd_escaped_spaces = cwd.replace(' ', '^ ')

print('cd', cwd_escaped_spaces)

```

Please run the **output** of the following command in the terminal:

```

import sys

print(sys.executable + ' hello_world.py')

```

You've successfully run your first Python program!
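Running a file from the terminal can also be tried out from Python itself. The sketch below writes a tiny script to a temporary folder and runs it with the current interpreter. The file name `hello_demo.py` and its contents are hypothetical stand-ins, not the course's `hello_world.py`.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Create a small stand-in script (hypothetical; not the course's hello_world.py)
script = Path(tempfile.mkdtemp()) / "hello_demo.py"
script.write_text('print("Hello, world!")\n')

# Equivalent to typing "python hello_demo.py" in the terminal,
# using the same interpreter that runs this code
result = subprocess.run(
    [sys.executable, str(script)],
    capture_output=True,
    text=True,
    check=True,
)

print(result.stdout.strip())
```

Using `sys.executable` avoids ambiguity when several Python installations are present, which is the same reason the Windows instructions above print the full interpreter path.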

# 4. Import your own functions

In Chapter 12, you've been introduced to **importing** modules and functions/methods.

You can see any python program that you create (so any .py file) as a module, which means that you can import it into another python program. Let's see how this works.

Please note that the following examples only work if all your python files are in the same directory. There are ways of importing python modules from other directories, but we will not discuss them here.

## 4.1 Importing your entire module

When importing your own functions from your own modules, several things are important. We have created two example scripts to illustrate them, called **the_program.py** and **utils.py**. We recommend opening them to check the following things:

* The extension .py is not used when importing modules. **import utils** will import the file **utils.py** [line 1 the_program.py]

* We can use any function from the file. We can call the count_words function by typing **utils.count_words** [the_program.py line 6]

* We can use any global variable declared in the imported module. E.g. **utils.x** and **utils.python** declared in utils.py can be used in the_program.py

## 4.2 Importing functions and variables individually

We can import specific functions using the syntax **from MODULE import FUNCTION/VARIABLE**

This can be seen in the file **the_program_v2.py** (lines 1-3). (Open the files **the_program_v2.py** and **utils.py** in an editor to check this).
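Both import styles can be tried without the course files. The sketch below writes a small module to a temporary folder and imports it in both ways. The module name `demo_utils` and its contents are hypothetical and only mimic what a file such as **utils.py** might contain.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Source of a hypothetical module (a stand-in for utils.py)
module_source = '''
x = 42

def count_words(words):
    """Return a dict mapping each word to its frequency."""
    freq = {}
    for word in words:
        freq[word] = freq.get(word, 0) + 1
    return freq
'''

# Write the module to a temporary folder and make that folder importable
tmp_dir = tempfile.mkdtemp()
Path(tmp_dir, "demo_utils.py").write_text(module_source)
sys.path.insert(0, tmp_dir)
importlib.invalidate_caches()

# Style 1: import the entire module and use the module name as a prefix
import demo_utils
print(demo_utils.count_words(["a", "b", "a"]), demo_utils.x)

# Style 2: import individual names and use them directly
from demo_utils import count_words, x
print(count_words(["a", "b", "a"]), x)
```

In the course setup no `sys.path` change is needed, because the files live in the same directory as the program that imports them.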

## 4.3 Importing functions and variables to python notebooks

Please note that you can also import functions and variables from a python program while using notebooks. In this case, simply treat the notebook as the python files the_program.py and the_program_v2.py.

```

from utils import count_words

words = ['how', 'often', 'does', 'each', 'string', 'occur', 'in', 'this', 'list', '?']

word2freq = count_words(words)

print('word2freq', word2freq)

```

# Exercises

**Exercise 1**:

Please create and run your own program using an editor and the terminal. Please copy your beersong into your first program. Tip: simply open a new file in the editor and save it as `beersong.py`.

**Exercise 2**:

Please create two files:

* **my_second_program.py**

* **my_utils.py**

Please create a helper function and store it in **my_utils.py**, import it into **my_second_program.py** and call it from there.


| github_jupyter |

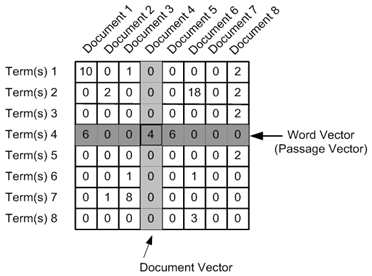

## Exercise 4: Tensors and Tensor Factorization Techniques

This exercise is an introduction to latent representation and tensors. First we will understand latent representation using SVD. Later we will extend this idea to tensors.

## Exercise-4.1-a

# Latent representation using SVD

Suppose we have to design a movie recommendation system. We are given data on viewers and the movies they have seen in the past. Using this information, we have to design a learning model that can recommend new movies based on the patterns in the data.

The data is structured as a matrix where rows represent viewers and columns represent movies. An entry is 1 if the viewer has seen the movie. The missing values are what we want to predict.

In this part we will study the following:

(a) SVD as a matrix factorization.

(b) Singular vectors as a latent representation.

(c) Finding the latent representation cutoff using a knee plot.

(d) Predicting missing values and using them as recommendations.

$$

Data = \left( \begin{array}{ccccccccccc}

& Movie-1 & Movie-2 & Movie-3 & Movie-4 & Movie-5 & Movie-6 & Movie-7 & Movie-8 & Movie-9 & Movie-10 \\

Viewer-1 & \_ & \_ & 1 & 1 & 1 & \_ & \_ & \_ & \_ & \_\\

Viewer-2 & 1 & \_ & 1 & 1 & \_ & 1 & \_ & \_ & 1 & \_\\

Viewer-3 & \_ & \_ & \_ & \_ & \_ & \_ & 1 & 1 & \_ & 1\\

Viewer-4 & 1 & 1 & 1 & \_ & 1 & \_ & \_ & \_ & \_ & \_\\

Viewer-5 & 1 & 1 & 1 & 1 & 1 & \_ & \_ & \_ & \_ & \_\\

Viewer-6 & \_ & \_ & 1 & 1 & 1 & \_ & \_ & \_ & 1 & \_ \\

Viewer-7 & 1 & 1 & 1 & 1 & \_ & \_ & \_ & \_ & \_ & \_ \\

Viewer-8 & \_ & \_ & \_ & 1 & \_ & 1 & \_ & 1 & 1 & 1\\

\end{array}

\right)

$$

```
import numpy as np
from scipy.linalg import svd, diagsvd
import matplotlib.pyplot as plt

# Generate the data (rows: viewers, columns: movies)
X = np.array([[0,0,1,1,1,0,0,0,0,0],
              [1,0,1,1,0,1,0,0,1,0],
              [0,0,0,0,0,0,1,1,0,1],
              [1,1,1,0,1,0,0,0,0,0],
              [1,1,1,1,1,0,0,0,0,0],
              [0,0,1,1,1,0,0,0,1,0],
              [1,1,1,1,0,0,0,0,0,0],
              [0,0,0,1,0,1,0,1,1,1]])

# SVD calculation
U, s, Vh = svd(X)
print(np.shape(U))
print(np.shape(Vh))
print(np.shape(s))

# Elbow plot: to decide which singular values are significant
plt.plot(s**2)
plt.xlabel('N')
plt.ylabel('Squared singular value')
plt.title('Elbow plot for SVD')
plt.show()

# Latent representation of the viewers (first two left singular vectors)
plt.scatter(U[:,0],U[:,1])
for i,name in enumerate(['Viewer-1','Viewer-2','Viewer-3','Viewer-4','Viewer-5','Viewer-6','Viewer-7','Viewer-8']):
    plt.annotate(name,(U[i,0],U[i,1]))
plt.xlabel('Singular Vector-1')
plt.ylabel('Singular Vector-2')
plt.title('Latent Representation')
plt.show()

# Truncate to only the first few singular values
s[4:]=0

# Calculate the estimate using the truncated s
out_score = np.dot(np.dot(U,diagsvd(s,8,10)),Vh)

# Show results
print("X=\n.{}".format(X))
print("U=\n.{}".format((U[:1,:].round(1))))
print("V=\n.{}".format(Vh[:,:1].round(1)))
print("Score=\n.{}".format(out_score))
print("Reconstructed-X=\n.{}".format(np.round(out_score)))

```
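The reconstructed scores can be turned into a recommendation by ranking, for one viewer, the movies that viewer has not seen yet. The following is a minimal sketch of that step under stated assumptions: the cutoff `k = 4` mirrors the truncation used above, and the helper names (`scores`, `ranked`, `explained`) are introduced only for this illustration.

```python
import numpy as np

# Same viewer-by-movie matrix as above
X = np.array([[0,0,1,1,1,0,0,0,0,0],
              [1,0,1,1,0,1,0,0,1,0],
              [0,0,0,0,0,0,1,1,0,1],
              [1,1,1,0,1,0,0,0,0,0],
              [1,1,1,1,1,0,0,0,0,0],
              [0,0,1,1,1,0,0,0,1,0],
              [1,1,1,1,0,0,0,0,0,0],
              [0,0,0,1,0,1,0,1,1,1]], dtype=float)

# Thin SVD: U is 8x8, s has 8 entries, Vh is 8x10
U, s, Vh = np.linalg.svd(X, full_matrices=False)

# Fraction of the squared singular values captured by the first k components
k = 4
explained = np.sum(s[:k] ** 2) / np.sum(s ** 2)

# Rank-k reconstruction of the score matrix
scores = (U[:, :k] * s[:k]) @ Vh[:k, :]

# Rank the unseen movies of one viewer by reconstructed score (highest first)
viewer = 0  # Viewer-1
unseen = np.where(X[viewer] == 0)[0]
ranked = unseen[np.argsort(scores[viewer, unseen])[::-1]]

print("Explained fraction:", round(float(explained), 3))
print("Top suggestions:", ["Movie-%d" % (j + 1) for j in ranked[:3]])
```

Treating unseen movies as zeros is a simplification carried over from the exercise; it makes the SVD well defined but biases the scores of unseen movies toward zero.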

## Exercise-4.1-b

In the previous part we used a binary matrix to model viewer-movie recommendation. Now suppose we are given new data where each entry is a rating on a scale from 1 to 10. Use SVD to learn a latent representation and recommend 3 movies for Viewer-8.

Sample data:

$$

Data = \left( \begin{array}{ccccccccccc}

& Movie-1 & Movie-2 & Movie-3 & Movie-4 & Movie-5 & Movie-6 & Movie-7 & Movie-8 & Movie-9 & Movie-10 \\

Viewer-1 & \_ & \_ & 5 & 4 & 6 & \_ & \_ & \_ & \_ & \_\\

Viewer-2 & 8 & \_ & 2 & 3 & \_ & 6 & \_ & \_ & 7 & \_\\

Viewer-3 & \_ & \_ & \_ & \_ & \_ & \_ & 4 & 8 & \_ & 1\\

Viewer-4 & 2 & 5 & 9 & \_ & 9 & \_ & \_ & \_ & \_ & \_\\

Viewer-5 & 3 & 3 & 3 & 7 & 8 & \_ & \_ & \_ & \_ & \_\\

Viewer-6 & \_ & \_ & 4 & 3 & 8 & \_ & \_ & \_ & 6 & \_ \\

Viewer-7 & 2 & 5 & 7 & 6 & \_ & \_ & \_ & \_ & \_ & \_ \\

Viewer-8 & \_ & \_ & \_ & 5 & \_ & 1 & \_ & 8 & 3 & 7\\

\end{array}

\right)

$$

```

########################################

####### Your Code Here ################

########################################

```

## Exercise-4.2

# Introduction To Tensor

This part is an introduction to tensors. We will do the following tasks:

(a) Storing tensor using numpy array.

(b) Slicing operations on tensors.

(c) Folding and Unfolding tensors.

```

data = np.arange(36).reshape((3,4,3))

print('Data= \n{}'.format(data))

tensor = np.array([data.T[i].T for i in range(len(data))])

print('Tensor= \n{}'.format(tensor))

# To view frontal slice 1

print(data[:,:,0])

# To view frontal slice 2

print(data[:,:,1])

# To view frontal slice 3

print(data[:,:,2])

```

## Unfolding and folding Tensors

```
from IPython.display import Image
Image(filename='Unfolding-of-third-order-of-a-tensor.png')

def unfold(X, mode):
    # Mode-n unfolding; modes are 1-indexed
    return np.reshape(np.moveaxis(X, mode-1, 0), (X.shape[mode-1], -1))

def fold(X, mode, shape):
    # Inverse of unfold: restore a tensor of the given shape
    new_shape = list(shape)
    mode_dim = new_shape.pop(mode-1)
    new_shape.insert(0, mode_dim)
    return np.moveaxis(np.reshape(X, new_shape), 0, mode-1)

unfold(data,mode=1)
unfold(data,mode=2)
unfold(data,mode=3)

# Round trip: folding an unfolded tensor recovers the original tensor
unfold_tensor = unfold(data,mode=1)
fold(unfold_tensor, mode=1, shape=data.shape)

```

## Tensor Decomposition

Now we will extend the idea of matrix factorization to tensors. In the following part we will learn two methods for tensor factorization: CP decomposition and RESCAL. We will then see how to use them with an RDF dataset for link prediction.

For the experiments we will use the kinship (Alyawarra) dataset.

The Alyawarra dataset used in this exercise has 26 relations (brother, sister, father, ...) between 104 people. Using tensor factorization we will predict missing relations and evaluate the performance using the area under the curve (AUC).

## Exercise-4.3

**CP decomposition** is a generalization of the matrix SVD to tensors.

The CP decomposition factorizes a tensor into a sum of outer products of vectors. For a 3-way tensor, the CP decomposition is written as:

$ \mathbf{T} = \sum_{r=1}^{R} \lambda_r \, {a_r}^1 \odot {a_r}^2 \odot {a_r}^3 + \epsilon$

where $\odot$ denotes the outer product, the sum on the right-hand side is the approximated tensor $Y_f$, and $\epsilon$ is the approximation error.

To approximate the factorization above, we define a loss based on the squared Frobenius norm and minimize it over the factor vectors ${a_r}^1, {a_r}^2, {a_r}^3$:

$L = \left\| T-\sum_{r=1}^{R} \lambda_r \, {a_r}^1 \odot {a_r}^2 \odot {a_r}^3 \right\|_F^2 $

To minimize this loss we will use the alternating least squares (ALS) method, similar to the least squares method used in the last exercise. (Proof as an exercise!)
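The alternating least squares idea can be sketched in plain NumPy for a 3-way tensor: fix two factor matrices, solve a linear least squares problem for the third, and cycle through the modes. This is an illustrative sketch, not the `sktensor` implementation. The weights $\lambda_r$ are absorbed into the factor matrices, and all function names are chosen for this example only.

```python
import numpy as np

def khatri_rao(B, C):
    """Column-wise Kronecker product: (J x R) and (K x R) give (J*K x R)."""
    rank = B.shape[1]
    return np.einsum('jr,kr->jkr', B, C).reshape(-1, rank)

def unfold(T, mode):
    """Mode unfolding with 0-indexed modes; remaining axes are flattened in C order."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)

def reconstruct(factors):
    """Sum of R outer products built from the columns of the factor matrices."""
    A, B, C = factors
    return np.einsum('ir,jr,kr->ijk', A, B, C)

def cp_als(T, rank, n_iter=200, seed=0):
    """Minimal CP-ALS for a 3-way tensor (no normalization, no stopping rule)."""
    rng = np.random.default_rng(seed)
    factors = [rng.standard_normal((dim, rank)) for dim in T.shape]
    for _ in range(n_iter):
        for mode in range(3):
            # Keep the other two factors fixed, in increasing mode order
            others = [factors[m] for m in range(3) if m != mode]
            kr = khatri_rao(others[0], others[1])
            # Gram matrix of the Khatri-Rao product via Hadamard product
            gram = (others[0].T @ others[0]) * (others[1].T @ others[1])
            # Least squares solution for the current factor matrix
            factors[mode] = unfold(T, mode) @ kr @ np.linalg.pinv(gram)
    return factors

# Build an exact rank-2 tensor and try to recover it
rng = np.random.default_rng(1)
true_factors = [rng.standard_normal((dim, 2)) for dim in (4, 5, 6)]
T = reconstruct(true_factors)

estimated = cp_als(T, rank=2)
rel_err = np.linalg.norm(T - reconstruct(estimated)) / np.linalg.norm(T)
print("relative reconstruction error:", rel_err)
```

Each inner update is an ordinary least squares problem, which is why the loss cannot increase from one update to the next. Convergence to the global minimum is not guaranteed and depends on the random initialization.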

```

from IPython.display import Image

Image(filename='CP.png')

import pandas as pd

import pdb

from sktensor import dtensor, cp_als

from scipy.io.matlab import loadmat

import matplotlib.pyplot as plt

import itertools

mat = loadmat('alyawarradata.mat')

T = mat['Rs']

T = dtensor(T)

trainT = np.zeros_like(T)

p = 0.7

train_mask = np.random.binomial(1, p, T.shape)

trainT[train_mask==1] = T[train_mask==1]

test_mask = np.ones_like(T)

test_mask[train_mask==1] = 0

print('training size %d' % np.sum(trainT))

print('test size %d' % np.sum(T[test_mask==1]))

# Decompose tensor using CP-ALS

P, fit, itr, exectimes = cp_als(trainT, 3, init='random')

reconstructed_tensor = P.totensor()

from sklearn.metrics import roc_auc_score

print(roc_auc_score(T[test_mask==1], reconstructed_tensor[test_mask==1]))

```

## CP Entity Embedding Visualization

```

subject_emb = P.U[0]

plt.scatter(subject_emb[:,0],subject_emb[:,1])

plt.xlabel('Latent Value-1')

plt.ylabel('Latent Value-2')

plt.title('Latent Representation Subject')

plt.show()

object_emb = P.U[1]

plt.scatter(object_emb[:,0],object_emb[:,1])

plt.xlabel('Latent Value-1')

plt.ylabel('Latent Value-2')

plt.title('Latent Representation Object')

plt.show()

```

## Exercise-4.4

## Rescal Decomposition

RESCAL factorization corresponds to a Tucker2 decomposition with the constraint that the two factor matrices have to be identical:

$ \mathbf{T = R \times_1 A \times_2 A} + \epsilon$

$ \mathbf{T_{:,:,k} = A R_{:,:,k} A^T} + \epsilon$

where $\textbf{A}\in \mathbb{R}^{|V|\times r} $ represents the entity-latent-component space and $\textbf{R}_{:,:,k}\in \mathbb{R}^{r\times r} $ is an asymmetric matrix that specifies the interaction of the latent components for the k-th relation. The approximated tensor $Y_f$ has the slices $\mathbf{A R_{:,:,k} A^T}$, and $\epsilon$ is a noise term.

To approximate the factorization above, we define a loss based on the squared Frobenius norm and minimize it over $A$ and $R$:

$L = \sum_k \left\|T_{:,:,k}-A R_{:,:,k} A^T\right\|_F^2 $

To minimize this loss we will again use the alternating least squares method. (Proof as an exercise!)
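The slice-wise form of the model and its loss can be written down directly in NumPy. The sketch below only evaluates the reconstruction and the loss for given factors; it does not perform the ALS updates. The sizes and the names `A`, `R`, `T_hat` and `rescal_loss` are assumptions made for this illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_entities, rank, n_relations = 6, 3, 2

# Entity embeddings (shared by subjects and objects) and one r x r matrix per relation
A = rng.standard_normal((n_entities, rank))
R = rng.standard_normal((n_relations, rank, rank))

# Reconstruct every frontal slice: T_hat[:, :, k] = A @ R[k] @ A.T
T_hat = np.einsum('ir,krs,js->ijk', A, R, A)

def rescal_loss(T, A, R):
    """Sum over relations of the squared Frobenius norm of the slice residual."""
    return sum(
        np.linalg.norm(T[:, :, k] - A @ R[k] @ A.T) ** 2
        for k in range(R.shape[0])
    )

print(T_hat.shape)
print(rescal_loss(T_hat, A, R))             # residual of an exact model
print(rescal_loss(T_hat + 1.0, A, R) > 0)   # a perturbed tensor has a positive loss
```

Because the same matrix `A` is used on both sides of each slice, an entity has a single embedding regardless of whether it appears as subject or object.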

```
from IPython.display import Image
Image(filename='rescal.png')

from numpy.linalg import norm
from numpy.random import shuffle
from scipy.sparse import lil_matrix
from sklearn.metrics import precision_recall_curve, auc
from rescal import rescal_als

def normalize_predictions(P, nm_entities, nm_relations):
    for a in range(nm_entities):
        for b in range(nm_entities):
            nrm = norm(P[a, b, :nm_relations])
            if nrm != 0:
                # round values for faster computation of AUC-PR
                P[a, b, :nm_relations] = np.round(P[a, b, :nm_relations] / nrm, decimals=3)
    return P

def rescal_fact(train_tensor, n_dim, nm_entities, nm_relations):
    entity_embedding, R, _, _, _ = rescal_als(train_tensor, n_dim, init='nvecs', conv=1e-3, lambda_A=10, lambda_R=10)
    n = entity_embedding.shape[0]
    reconstructed_tensor = np.zeros((n, n, len(R)))
    for k in range(len(R)):
        reconstructed_tensor[:, :, k] = np.dot(entity_embedding, np.dot(R[k], entity_embedding.T))
    reconstructed_tensor = normalize_predictions(reconstructed_tensor, nm_entities, nm_relations)
    return entity_embedding, reconstructed_tensor

def load_data(filename, train_fraction=0.7):
    mat = loadmat(filename)
    K = np.array(mat['Rs'], np.float32)
    nm_entities, nm_relations = K.shape[0], K.shape[2]

    # construct array for rescal
    T = [lil_matrix(K[:, :, i]) for i in range(nm_relations)]

    # Train/test split
    triples = nm_entities * nm_entities * nm_relations
    IDX = list(range(triples))
    shuffle(IDX)
    train = int(train_fraction*len(IDX))
    idx_test = IDX[train:]

    train_tensor = [Ti.copy() for Ti in T]
    mask_idx = np.unravel_index(idx_test, (nm_entities, nm_entities, nm_relations))

    # set values to be predicted to zero
    for i in range(len(mask_idx[0])):
        train_tensor[mask_idx[2][i]][mask_idx[0][i], mask_idx[1][i]] = 0
    return K, train_tensor, mask_idx, nm_entities, nm_relations

n_dim = 100
filename='alyawarradata.mat'
K, train_tensor, target_idx, nm_entities, nm_relations = load_data(filename, train_fraction=0.7)

# Train Rescal
entity_embedding, reconstructed_tensor = rescal_fact(train_tensor, n_dim, nm_entities, nm_relations)

#prec, recall, _ = precision_recall_curve(K[target_idx], reconstructed_tensor[target_idx])
#entities = mat['names']

print('AUC\n{}'.format(roc_auc_score(K[target_idx], reconstructed_tensor[target_idx])))

```

## Rescal Entity Embedding Visualization

```

plt.scatter(entity_embedding[:,0],entity_embedding[:,1])

plt.xlabel('Latent Value-1')

plt.ylabel('Latent Value-2')

plt.title('Latent Representation')

plt.show()

```

## Exercise-4.5

In the last part we used RESCAL to find latent representations of the entities in a knowledge graph. RESCAL requires us to specify certain parameters. In this exercise we will tune these parameters for optimum performance. We will divide the dataset into three parts: training, validation and test. First we find the latent representation using the training set, then we use the latent features to compute the performance on the validation set. Finally, we select the parameters with the best performance on the validation set and report the performance on the test set.
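The three-way split described above amounts to shuffling the flat indices of all tensor cells and cutting the shuffled list in two places. A minimal sketch on a small toy tensor follows; the function name `split_indices`, the toy shape and the seed are assumptions made for this illustration.

```python
import numpy as np

def split_indices(shape, train_fraction=0.6, val_fraction=0.2, seed=0):
    """Shuffle all cell positions of a tensor and split them into three index sets."""
    rng = np.random.default_rng(seed)
    n_cells = int(np.prod(shape))
    order = rng.permutation(n_cells)

    n_train = int(train_fraction * n_cells)
    n_val = int(val_fraction * n_cells)

    def to_index(flat):
        # Convert flat positions to (entity, entity, relation) index arrays
        return np.unravel_index(flat, shape)

    train_idx = to_index(order[:n_train])
    val_idx = to_index(order[n_train:n_train + n_val])
    test_idx = to_index(order[n_train + n_val:])
    return train_idx, val_idx, test_idx

# Toy tensor whose entries are unique, so overlaps would be easy to detect
shape = (5, 5, 3)
K = np.arange(np.prod(shape)).reshape(shape)
train_idx, val_idx, test_idx = split_indices(shape)

train_cells = set(K[train_idx].tolist())
val_cells = set(K[val_idx].tolist())
test_cells = set(K[test_idx].tolist())

print(len(train_cells), len(val_cells), len(test_cells))
```

Validation and test cells are both hidden (set to zero) during training; the validation cells are used only to choose the parameters, and the test cells only for the final score.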

```
def load_train_val_test(filename, train_fraction=0.6, val_fraction=0.2):
    mat = loadmat(filename)
    K = np.array(mat['Rs'], np.float32)
    nm_entities, nm_relations = K.shape[0], K.shape[2]

    # construct array for rescal
    T = [lil_matrix(K[:, :, i]) for i in range(nm_relations)]

    # Train/validation/test split
    triples = nm_entities * nm_entities * nm_relations
    IDX = list(range(triples))
    shuffle(IDX)
    train = int(train_fraction*len(IDX))
    val = int(val_fraction*len(IDX))
    idx_val = IDX[train:train+val]
    idx_test = IDX[train+val:]

    train_tensor = [Ti.copy() for Ti in T]
    mask_idx = np.unravel_index(idx_test+idx_val, (nm_entities, nm_entities, nm_relations))
    val_idx = np.unravel_index(idx_val, (nm_entities, nm_entities, nm_relations))
    test_idx = np.unravel_index(idx_test, (nm_entities, nm_entities, nm_relations))

    # set values to be predicted to zero
    for i in range(len(mask_idx[0])):
        train_tensor[mask_idx[2][i]][mask_idx[0][i], mask_idx[1][i]] = 0
    return K, train_tensor, val_idx, test_idx

n_dim = 10
filename='alyawarradata.mat'

# Mask test and validation triples
K, train_tensor, val_idx, test_idx = load_train_val_test(filename, train_fraction=0.6, val_fraction=0.2)

var_list = [0.001, 0.1, 1., 10., 100.]
best_roc = 0

# Train on the training set and evaluate on the validation set
for (var_x, var_e, var_r) in itertools.product(var_list, repeat=3):
    A, R, f, itr, exectimes = rescal_als(train_tensor, n_dim, lambda_A=var_x, lambda_R=var_e, lambda_V=var_r)
    n = A.shape[0]
    reconstructed_tensor = np.zeros((n, n, len(R)))
    for k in range(len(R)):
        reconstructed_tensor[:, :, k] = np.dot(A, np.dot(R[k], A.T))
    reconstructed_tensor = normalize_predictions(reconstructed_tensor, nm_entities, nm_relations)
    score = roc_auc_score(K[val_idx], reconstructed_tensor[val_idx])
    print('var_x:{0:3.3f}, var_e:{1:3.3f}, var_r:{2:3.3f}, AUC-ROC:{3:.3f}'.format(var_x, var_e, var_r, score))
    if score > best_roc:
        best_vars = (var_x, var_e, var_r)
        best_roc = score

print(best_vars, best_roc)

# Retrain with the parameters that were best on the validation set and test on the hold-out set
lambda_a, lambda_r, lambda_v = best_vars
A, R, f, itr, exectimes = rescal_als(train_tensor, n_dim, lambda_A=lambda_a, lambda_R=lambda_r, lambda_V=lambda_v)
n = A.shape[0]
reconstructed_tensor = np.zeros((n, n, len(R)))
for k in range(len(R)):
    reconstructed_tensor[:, :, k] = np.dot(A, np.dot(R[k], A.T))
reconstructed_tensor = normalize_predictions(reconstructed_tensor, nm_entities, nm_relations)
score = roc_auc_score(K[test_idx], reconstructed_tensor[test_idx])
print('AUC On Test Set with Optimum Parameters\n{}'.format(score))

```

## References

1) https://github.com/mnick/scikit-tensor

2) https://github.com/mnick/rescal.py

3) http://www.bsp.brain.riken.jp/~zhougx/tensor.html

4) https://edoc.ub.uni-muenchen.de/16056/1/Nickel_Maximilian.pdf

5) http://epubs.siam.org/doi/abs/10.1137/S0895479896305696

| github_jupyter |

# Random search and hyperparameter scaling with SageMaker XGBoost and Automatic Model Tuning

---

## Contents

1. [Introduction](#Introduction)

1. [Preparation](#Preparation)

1. [Download and prepare the data](#Download-and-prepare-the-data)

1. [Setup hyperparameter tuning](#Setup-hyperparameter-tuning)

1. [Logarithmic scaling](#Logarithmic-scaling)

1. [Random search](#Random-search)

1. [Linear scaling](#Linear-scaling)

---

## Introduction

This notebook showcases the use of two hyperparameter tuning features: **random search** and **hyperparameter scaling**.

We will use SageMaker Python SDK, a high level SDK, to simplify the way we interact with SageMaker Hyperparameter Tuning.

---

## Preparation

Let's start by specifying:

- The S3 bucket and prefix that you want to use for training and model data. This should be within the same region as SageMaker training.

- The IAM role used to give training access to your data. See SageMaker documentation for how to create these.

```

import sagemaker

import boto3

from sagemaker.tuner import (

IntegerParameter,

CategoricalParameter,

ContinuousParameter,

HyperparameterTuner,

)

import numpy as np # For matrix operations and numerical processing

import pandas as pd # For munging tabular data

import os

region = boto3.Session().region_name

smclient = boto3.Session().client("sagemaker")

role = sagemaker.get_execution_role()

bucket = sagemaker.Session().default_bucket()

prefix = "sagemaker/DEMO-hpo-xgboost-dm"

```

---

## Download and prepare the data

Here we download the [direct marketing dataset](https://archive.ics.uci.edu/ml/datasets/bank+marketing) from UCI's ML Repository.

```

!wget -N https://archive.ics.uci.edu/ml/machine-learning-databases/00222/bank-additional.zip

!unzip -o bank-additional.zip

```

Now let us load the data, apply some preprocessing, and upload the processed data to S3.

```

# Load data

data = pd.read_csv("./bank-additional/bank-additional-full.csv", sep=";")

pd.set_option("display.max_columns", 500) # Make sure we can see all of the columns

pd.set_option("display.max_rows", 50) # Keep the output on one page

# Apply some feature processing

data["no_previous_contact"] = np.where(

data["pdays"] == 999, 1, 0

) # Indicator variable to capture when pdays takes a value of 999

data["not_working"] = np.where(

np.isin(data["job"], ["student", "retired", "unemployed"]), 1, 0

) # Indicator for individuals not actively employed

model_data = pd.get_dummies(data) # Convert categorical variables to sets of indicators

# columns that should not be included in the input

model_data = model_data.drop(

["duration", "emp.var.rate", "cons.price.idx", "cons.conf.idx", "euribor3m", "nr.employed"],

axis=1,

)

# split data

train_data, validation_data, test_data = np.split(

model_data.sample(frac=1, random_state=1729),

[int(0.7 * len(model_data)), int(0.9 * len(model_data))],

)

# save preprocessed file to s3

pd.concat([train_data["y_yes"], train_data.drop(["y_no", "y_yes"], axis=1)], axis=1).to_csv(

"train.csv", index=False, header=False

)

pd.concat(

[validation_data["y_yes"], validation_data.drop(["y_no", "y_yes"], axis=1)], axis=1

).to_csv("validation.csv", index=False, header=False)

pd.concat([test_data["y_yes"], test_data.drop(["y_no", "y_yes"], axis=1)], axis=1).to_csv(

"test.csv", index=False, header=False

)

boto3.Session().resource("s3").Bucket(bucket).Object(

os.path.join(prefix, "train/train.csv")

).upload_file("train.csv")

boto3.Session().resource("s3").Bucket(bucket).Object(

os.path.join(prefix, "validation/validation.csv")

).upload_file("validation.csv")

s3_input_train = sagemaker.s3_input(

s3_data="s3://{}/{}/train".format(bucket, prefix), content_type="csv"

)

s3_input_validation = sagemaker.s3_input(

s3_data="s3://{}/{}/validation/".format(bucket, prefix), content_type="csv"

)

```

---

## Setup hyperparameter tuning

In this example, we are using the SageMaker Python SDK to set up and manage the hyperparameter tuning job. We first configure the training jobs that the hyperparameter tuning job will launch by instantiating an estimator, and then define the static hyperparameters and the objective metric.

```

from sagemaker.amazon.amazon_estimator import get_image_uri

sess = sagemaker.Session()

container = get_image_uri(region, "xgboost", repo_version="latest")

xgb = sagemaker.estimator.Estimator(

container,

role,

train_instance_count=1,

train_instance_type="ml.m4.xlarge",

output_path="s3://{}/{}/output".format(bucket, prefix),

sagemaker_session=sess,

)

xgb.set_hyperparameters(

eval_metric="auc",

objective="binary:logistic",

num_round=100,

rate_drop=0.3,

tweedie_variance_power=1.4,

)

objective_metric_name = "validation:auc"

```

## Logarithmic scaling

For both hyperparameters (`alpha` and `lambda`) we use logarithmic scaling, which is the scaling type that should be used whenever the order of magnitude is more important than the absolute value. It should be used if a change from, say, 1 to 2 is expected to have a much bigger impact than a change from 100 to 101, because the hyperparameter doubles in the first case but barely changes in relative terms in the second.

```

hyperparameter_ranges = {

"alpha": ContinuousParameter(0.01, 10, scaling_type="Logarithmic"),

"lambda": ContinuousParameter(0.01, 10, scaling_type="Logarithmic"),

}

```
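The effect of the scaling type can be illustrated without SageMaker by drawing random values from the same range in two ways. The sketch below shows the general idea of log-uniform versus uniform sampling; it is not SageMaker's internal sampler, and the variable names and sample size are chosen only for this illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
low, high, n_samples = 0.01, 10.0, 10_000

# Linear scaling: uniform over the raw values
linear_samples = rng.uniform(low, high, n_samples)

# Logarithmic scaling: uniform over log(value), then mapped back
log_samples = np.exp(rng.uniform(np.log(low), np.log(high), n_samples))

# Share of samples that fall below 1.0 under each scheme
frac_linear = np.mean(linear_samples < 1.0)
frac_log = np.mean(log_samples < 1.0)

print("below 1.0 with linear scaling:", round(float(frac_linear), 3))
print("below 1.0 with logarithmic scaling:", round(float(frac_log), 3))
```

With the range 0.01 to 10, the interval below 1.0 covers two of the three orders of magnitude, so logarithmic sampling spends roughly two thirds of its draws there, while linear sampling spends only about a tenth.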

## Random search

We now start a tuning job using random search. The main advantage of random search is that each configuration is chosen independently of the results of the others, which allows us to run the training jobs with a high level of parallelism.

```

tuner_log = HyperparameterTuner(

xgb,

objective_metric_name,

hyperparameter_ranges,

max_jobs=20,

max_parallel_jobs=10,

strategy="Random",

)

tuner_log.fit(

{"train": s3_input_train, "validation": s3_input_validation}, include_cls_metadata=False

)

```

Let's run a quick check of the hyperparameter tuning job's status to make sure it started successfully.

```

boto3.client("sagemaker").describe_hyper_parameter_tuning_job(

HyperParameterTuningJobName=tuner_log.latest_tuning_job.job_name

)["HyperParameterTuningJobStatus"]

```

## Linear scaling

For comparison, let us run a second tuning job that uses linear scaling.

```

hyperparameter_ranges_linear = {

"alpha": ContinuousParameter(0.01, 10, scaling_type="Linear"),

"lambda": ContinuousParameter(0.01, 10, scaling_type="Linear"),

}

tuner_linear = HyperparameterTuner(

xgb,

objective_metric_name,

hyperparameter_ranges_linear,

max_jobs=20,

max_parallel_jobs=10,

strategy="Random",

)

# custom job name to avoid a duplicate name

job_name = tuner_log.latest_tuning_job.job_name + "linear"

tuner_linear.fit(

{"train": s3_input_train, "validation": s3_input_validation},

include_cls_metadata=False,

job_name=job_name,

)

```

Check the status of the hyperparameter tuning job:

```

boto3.client("sagemaker").describe_hyper_parameter_tuning_job(

HyperParameterTuningJobName=tuner_linear.latest_tuning_job.job_name

)["HyperParameterTuningJobStatus"]

```

## Analyze tuning job results - after tuning job is completed

**Once the tuning jobs have completed**, we can compare the distribution of the hyperparameter configurations chosen in the two cases.

Please refer to "HPO_Analyze_TuningJob_Results.ipynb" to see more example code to analyze the tuning job results.

```

import seaborn as sns

import pandas as pd

import matplotlib.pyplot as plt

# check jobs have finished

status_log = boto3.client("sagemaker").describe_hyper_parameter_tuning_job(

HyperParameterTuningJobName=tuner_log.latest_tuning_job.job_name

)["HyperParameterTuningJobStatus"]

status_linear = boto3.client("sagemaker").describe_hyper_parameter_tuning_job(

HyperParameterTuningJobName=tuner_linear.latest_tuning_job.job_name

)["HyperParameterTuningJobStatus"]

assert status_log == "Completed", "First must be completed, was {}".format(status_log)

assert status_linear == "Completed", "Second must be completed, was {}".format(status_linear)

df_log = sagemaker.HyperparameterTuningJobAnalytics(

tuner_log.latest_tuning_job.job_name

).dataframe()

df_linear = sagemaker.HyperparameterTuningJobAnalytics(

tuner_linear.latest_tuning_job.job_name

).dataframe()

df_log["scaling"] = "log"

df_linear["scaling"] = "linear"

df = pd.concat([df_log, df_linear], ignore_index=True)

g = sns.FacetGrid(df, col="scaling", palette="viridis")

g = g.map(plt.scatter, "alpha", "lambda", alpha=0.6)

```

## Deploy the best model

Now that we have got the best model, we can deploy it to an endpoint. Please refer to other SageMaker sample notebooks or SageMaker documentation to see how to deploy a model.

| github_jupyter |

# [Predict Future Sales - Kaggle](https://www.kaggle.com/c/competitive-data-science-predict-future-sales)

* [How to Win a Data Science Competition: Learn from Top Kagglers - Coursera](https://www.coursera.org/learn/competitive-data-science)

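* This competition accompanies the Coursera course linked above, "How to Win a Data Science Competition".

* It deals with time-series analysis of daily sales data.

* The data comes from [1C Company](http://1c.ru/eng/title.htm), a large Russian software company.

* 1C Company covers a range of business areas such as management accounting, financial accounting, HR management, CRM, SRM, and MRP.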

```

import pandas as pd

import matplotlib.pyplot as plt

from plotnine import *

%matplotlib inline

%ls data

sales_train = pd.read_csv('data/sales_train.csv.gz', compression='gzip')

test = pd.read_csv('data/test.csv.gz', compression='gzip')

item_categories = pd.read_csv('data/item_categories.csv')

items = pd.read_csv('data/items.csv')

shops = pd.read_csv('data/shops.csv')

submissions = pd.read_csv('data/sample_submission.csv.gz', compression='gzip')

print(sales_train.shape)

print(test.shape)

print(item_categories.shape)

print(items.shape)

print(shops.shape)

print(submissions.shape)

sales_train.head()

test.head()

submissions.head()

sales_train.isnull().sum()

```

The train data covers January 2013 through October 2015.<br>

For the test data, we have to predict sales for each shop and product in November 2015.<br>

The `sample_submission.csv.gz` file shows that the value to predict is item_cnt_month.

```

[c for c in sales_train.columns if c not in test.columns]

shops.head()

items.head()

%%time

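# Select the columns with a list: recent pandas versions reject tuple-style selection on a groupby

monthly_sales = sales_train.groupby([

"date_block_num","shop_id","item_id"])[[

"date","item_price","item_cnt_day"]].agg(

{"date":["min",'max'], "item_price":"mean", "item_cnt_day":"sum"})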

monthly_sales.head(20)

items.item_category_id.head()

item_categories.head()

sales_train.item_cnt_day.plot()

plt.title("Number of products sold per day")

sales_train.item_price.hist()

plt.title("Item Price Distribution")

# Number of items per category

x = items.groupby(['item_category_id']).count()

x = x.sort_values(by='item_id', ascending=False)

x = x.iloc[0:10].reset_index()

x

```

* Category 40 has the most items (5,035).

```

x['item_category_id'] = x['item_category_id'].astype(str)

x['item_name'] = x['item_name'].astype(str)

(ggplot(x)

+ aes(x='item_category_id', y='item_id', fill='item_name')

+ geom_bar(stat = "identity")

+ ggtitle('Number of items per category')

+ theme(text=element_text(family='NanumBarunGothic'))

)

```

* Based on https://www.kaggle.com/jagangupta/time-series-basics-exploring-traditional-ts

```

ts = sales_train.groupby(["date_block_num"])["item_cnt_day"].sum()

ts = ts.astype('float')

ts = pd.DataFrame(ts).reset_index()

```

* The number of items sold spikes at regular intervals, so the series tends to follow a periodic pattern.

```

(ggplot(ts)

+ aes(x='date_block_num', y='item_cnt_day')

+ geom_point()

+ geom_line(color='blue')

+ labs(x='Time', y='Number of items sold', title='Number of items sold')

+ theme(text=element_text(family='NanumBarunGothic'))

)

```

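### Moving average and standard deviation

* Reference: [Moving average](https://docs.tibco.com/pub/spotfire_web_player/6.0.0-november-2013/ko-KR/WebHelp/GUID-5A18B4F1-8465-4200-881A-8721BF1A48B1.html)

* Also called moving average, rolling average, rolling mean, or running average

* Used to compute the mean of the nodes within a specified interval

* With an interval size of 3, the mean is computed from the current node and the two preceding nodes

A moving average is generally used to smooth out short-term fluctuations and reveal long-term trends.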

* [pandas.DataFrame.rolling — pandas 0.23.4 documentation](https://pandas.pydata.org/pandas-docs/stable/generated/pandas.DataFrame.rolling.html)

```

# Rolling mean and standard deviation of the sales column only

plt.figure(figsize=(16,6))

plt.plot(ts['item_cnt_day'].rolling(window=12,center=False).mean(),label='Rolling Mean')

plt.plot(ts['item_cnt_day'].rolling(window=12,center=False).std(),label='Rolling sd')

plt.legend()

```
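
As a minimal sketch of the rule described above (the current node plus the preceding ones, for a window of 3), the following plain-Python function computes a trailing mean. The name `rolling_mean` is hypothetical and is not part of pandas; like the pandas default, it yields no value until a full window is available, although it uses `None` where pandas would use NaN.

```python
from collections import deque

def rolling_mean(values, window):
    """Trailing mean; None until `window` values are available."""
    buf = deque(maxlen=window)   # keeps only the last `window` values
    out = []
    for v in values:
        buf.append(v)
        out.append(sum(buf) / window if len(buf) == window else None)
    return out

result = rolling_mean([1, 2, 3, 4, 5], window=3)
print(result)
```

The third entry is the mean of 1, 2, 3, the fourth the mean of 2, 3, 4, and so on.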

| github_jupyter |

# Collections

The `collections` module in Python provides several specialized container types. A container is an object that stores other objects and provides a way to access and iterate over them. Built-in containers include tuple, list, and dict. This notebook walks through the containers provided by the `collections` module.

```

import collections

help(collections)

```

### Counter

```

# A Python program to show different

# ways to create Counter

from collections import Counter

# With sequence of items

print(Counter(['B','B','A','B','C','A','B','B','A','C']))

# with dictionary

print(Counter({'A':3, 'B':5, 'C':2}))

# with keyword arguments

print(Counter(A=3, B=5, C=2))

```

### OrderedDict

```

# A Python program to demonstrate working
# of OrderedDict
from collections import OrderedDict

# Note: since Python 3.7, a plain dict also preserves insertion order
print("This is a Dict:\n")
d = {}
d['a'] = 1
d['b'] = 2
d['c'] = 3
d['d'] = 4

for key, value in d.items():
    print(key, value)

print("\nThis is an Ordered Dict:\n")
od = OrderedDict()
od['a'] = 1
od['b'] = 2
od['c'] = 3
od['d'] = 4

for key, value in od.items():
    print(key, value)

# Deleting a key and re-inserting it moves it to the end
od = OrderedDict()
od['a'] = 1
od['b'] = 2
od['c'] = 3
od['d'] = 4

print('Before Deleting')
for key, value in od.items():
    print(key, value)

# deleting element
od.pop('a')

# Re-inserting the same
od['a'] = 1

print('\nAfter re-inserting')
for key, value in od.items():
    print(key, value)

```

### DefaultDict

```

# Python program to demonstrate
# defaultdict
from collections import defaultdict

# Defining the dict
d = defaultdict(int)

L = [1, 2, 3, 4, 2, 4, 1, 2]

# Iterate through the list
# for keeping the count
for i in L:
    # The default value is 0
    # so there is no need to
    # enter the key first
    d[i] += 1

print(d)

# Defining a dict whose missing keys default to an empty list
d = defaultdict(list)

for i in range(5):
    d[i].append(i)

print("Dictionary with values as list:")
print(d)

```

### ChainMap

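A ChainMap groups several dictionaries into a single mapping. Lookups search the underlying dictionaries in order and return the first match; the dictionaries themselves are available as a list through the `maps` attribute.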

```

# Python program to demonstrate

# ChainMap

from collections import ChainMap

d1 = {'a': 1, 'b': 2}

d2 = {'c': 3, 'd': 4}

d3 = {'e': 5, 'f': 6}

# Defining the chainmap

c = ChainMap(d1, d2, d3)

print(c)

# Accessing value from chainmap

# Python program to demonstrate

# ChainMap

from collections import ChainMap

d1 = {'a': 1, 'b': 2}

d2 = {'c': 3, 'd': 4}

d3 = {'e': 5, 'a': 6}

# Defining the chainmap

c = ChainMap(d1, d2, d3)

# Accessing Values using key name

print(c['a'])

# Accessing values using values()

# method

print(c.values())

# Accessing keys using keys()

# method

print(c.keys())

# Adding new dictionary

# A new dictionary can be added by using the new_child() method. The newly added dictionary is added at the beginning of the ChainMap.

# Python code to demonstrate ChainMap and

# new_child()

import collections

# initializing dictionaries

dic1 = { 'a' : 1, 'b' : 2 }

dic2 = { 'b' : 3, 'c' : 4 }

dic3 = { 'f' : 5 }

# initializing ChainMap

chain = collections.ChainMap(dic1, dic2)

# printing chainMap

print ("All the ChainMap contents are : ")

print (chain)

# using new_child() to add new dictionary

chain1 = chain.new_child(dic3)

# printing chainMap

print ("Displaying new ChainMap : ")

print (chain1)

```
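
The lookup order matters when the same key appears in more than one dictionary. The following sketch, with made-up dictionaries `defaults` and `overrides`, shows that the first mapping wins on reads and that writes only touch the first mapping.

```python
from collections import ChainMap

defaults = {'color': 'red', 'size': 'M'}
overrides = {'color': 'blue'}

# `overrides` is searched before `defaults`
settings = ChainMap(overrides, defaults)

first_match = settings['color']   # found in `overrides`
fallback = settings['size']       # missing in `overrides`, found in `defaults`

# Writes only affect the first mapping in the chain
settings['size'] = 'L'

print(first_match, fallback)
print(settings.maps)
```

After the assignment, `defaults` is unchanged and `overrides` holds the new `size` entry, which is why a ChainMap is convenient for layered configuration.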

### NamedTuple

A namedtuple is a tuple subclass whose positions also have names, which ordinary tuples lack. For example, consider a tuple named student whose first element is fname, the second lname, and the third the DOB. Instead of remembering the index of fname, you can access the element by its name, which makes tuple elements much easier to work with. This functionality is provided by namedtuple.

```

from collections import namedtuple

# Declaring namedtuple()

Student = namedtuple('Student',['name','age','DOB'])

# Adding values

S = Student('Nandini','19','2541997')

# Access using index

print ("The Student age using index is : ",end ="")

print (S[1])

# Access using name

print ("The Student name using keyname is : ",end ="")

print (S.name)

```

### Conversion Operations

1. _make(): This class method returns a namedtuple instance built from the iterable passed as an argument.

2. _asdict(): This method returns a dictionary mapping field names to their values. It returns a regular dict in Python 3.8 and later, and an OrderedDict in earlier Python 3 versions.

```

# Python code to demonstrate namedtuple() and

# _make(), _asdict()

from collections import namedtuple

# Declaring namedtuple()

Student = namedtuple('Student',['name','age','DOB'])

# Adding values

S = Student('Nandini','19','2541997')

# initializing iterable

li = ['Manjeet', '19', '411997' ]

# initializing dict

di = { 'name' : "Nikhil", 'age' : 19 , 'DOB' : '1391997' }

# using _make() to return namedtuple()

print ("The namedtuple instance using iterable is : ")

print (Student._make(li))

# using _asdict() to return a dict (an OrderedDict before Python 3.8)

print ("The dict instance using namedtuple is : ")

print (S._asdict())

```

### Deque

A deque (double-ended queue) is a list-like container optimized for fast appends and pops at both ends. Appending or popping at either end of a deque takes O(1) time, whereas inserting or removing at the front of a list takes O(n) time.

```

# Python code to demonstrate deque

from collections import deque

# Declaring deque

queue = deque(['name','age','DOB'])

print(queue)

```

### Inserting Elements

Elements can be inserted at both ends of a deque. Use the append() method to insert at the right end and the appendleft() method to insert at the left end.

```

from collections import deque

# initializing deque

de = deque([1,2,3])

# using append() to insert element at right end

# inserts 4 at the end of deque

de.append(4)

# printing modified deque

print ("The deque after appending at right is : ")

print (de)

# using appendleft() to insert element at left end

# inserts 6 at the beginning of deque

de.appendleft(6)

# printing modified deque

print ("The deque after appending at left is : ")

print (de)

```

### Removing Elements

Elements can also be removed from the deque from both the ends. To remove elements from right use pop() method and to remove elements from the left use popleft() method.

```

# Python code to demonstrate working of

# pop(), and popleft()

from collections import deque

# initializing deque

de = deque([6, 1, 2, 3, 4])

# using pop() to delete element from right end

# deletes 4 from the right end of deque

de.pop()

# printing modified deque

print ("The deque after deleting from right is : ")

print (de)

# using popleft() to delete element from left end

# deletes 6 from the left end of deque

de.popleft()

# printing modified deque

print ("The deque after deleting from left is : ")

print (de)

```
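
The complexity difference between a list and a deque shows up most clearly in a first-in, first-out queue. The sketch below drains the same made-up `tasks` with both containers. The results are identical, but each `list.pop(0)` shifts all remaining elements, while `deque.popleft()` does constant work per call.

```python
from collections import deque

tasks = ['a', 'b', 'c']

# FIFO with a list: pop(0) shifts the remaining elements, O(n) per call
list_queue = list(tasks)
list_order = [list_queue.pop(0) for _ in range(len(tasks))]

# FIFO with a deque: popleft() is O(1) per call
deque_queue = deque(tasks)
deque_order = [deque_queue.popleft() for _ in range(len(tasks))]

print(list_order, deque_order)
```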

### UserDict

UserDict is a dictionary-like container that acts as a wrapper around the dictionary objects. This container is used when someone wants to create their own dictionary with some modified or new functionality.

```

from collections import UserDict

# Creating a dictionary where
# deletion is not allowed
class MyDict(UserDict):

    # Function to stop deletion
    # from dictionary (called by `del d[key]`)
    def __delitem__(self, key):
        raise RuntimeError("Deletion not allowed")

    # Function to stop pop from
    # dictionary
    def pop(self, s=None):
        raise RuntimeError("Deletion not allowed")

    # Function to stop popitem
    # from dictionary
    def popitem(self, s=None):
        raise RuntimeError("Deletion not allowed")

# Driver's code
d = MyDict({'a': 1, 'b': 2, 'c': 3})

# Raises RuntimeError: Deletion not allowed
d.pop(1)

```

### UserList

UserList is a list like container that acts as a wrapper around the list objects. This is useful when someone wants to create their own list with some modified or additional functionality.

```

# Python program to demonstrate
# userlist
from collections import UserList

# Creating a list where
# deletion is not allowed
class MyList(UserList):

    # Function to stop deletion
    # from list
    def remove(self, s=None):
        raise RuntimeError("Deletion not allowed")

    # Function to stop pop from
    # list
    def pop(self, s=None):
        raise RuntimeError("Deletion not allowed")

# Driver's code
L = MyList([1, 2, 3, 4])
print("Original List")
print(L)

# Inserting to list
L.append(5)
print("After Insertion")
print(L)

# Deleting from list (raises RuntimeError: Deletion not allowed)
L.remove()

```

### UserString

UserString is a string like container and just like UserDict and UserList it acts as a wrapper around string objects. It is used when someone wants to create their own strings with some modified or additional functionality.

```

# Python program to demonstrate
# userstring
from collections import UserString

# Creating a mutable string
class Mystring(UserString):

    # Function to append to
    # string
    def append(self, s):
        self.data += s

    # Function to remove from
    # string
    def remove(self, s):
        self.data = self.data.replace(s, "")

# Driver's code
s1 = Mystring("Geeks")
print("Original String:", s1.data)

# Appending to string
s1.append("s")
print("String After Appending:", s1.data)

# Removing from string
s1.remove("e")
print("String after Removing:", s1.data)

```

| github_jupyter |

#### Jupyter notebooks

This is a [Jupyter](http://jupyter.org/) notebook using Python. You can install Jupyter locally to edit and interact with this notebook.

# Finite Difference methods in 2 dimensions

Let's start by generalizing the 1D Laplacian,

\begin{align} - u''(x) &= f(x) \text{ on } \Omega = (a,b) & u(a) &= g_0(a) & u'(b) &= g_1(b) \end{align}

to two dimensions

\begin{align} -\nabla\cdot \big( \nabla u(x,y) \big) &= f(x,y) \text{ on } \Omega \subset \mathbb R^2

& u|_{\Gamma_D} &= g_0(x,y) & \nabla u \cdot \hat n|_{\Gamma_N} &= g_1(x,y)

\end{align}

where $\Omega$ is some well-connected open set (we will assume simply connected) and the Dirichlet boundary $\Gamma_D \subset \partial \Omega$ is nonempty.

We need to choose a system for specifying the domain $\Omega$ and ordering degrees of freedom. Perhaps the most significant limitation of finite difference methods is that this specification is messy for complicated domains. We will choose

$$ \Omega = (0, 1) \times (0, 1) $$

and

\begin{align} (x, y)_{im+j} &= (i h, j h) & h &= 1/(m-1) & i,j \in \{0, 1, \dotsc, m-1 \} .

\end{align}

The Laplacian $-\nabla\cdot\nabla u = -u_{xx} - u_{yy}$ decomposes into directional derivatives, leading to the 2D stencil

$$ \frac 1 {h^2} \begin{pmatrix} & -1 & \\ -1 & 4 & -1 \\ & -1 & \end{pmatrix} . $$
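
As a quick sanity check of the stencil, here is a small sketch in plain Python that applies it to a quadratic, for which second differences are exact. The helper names `apply_stencil` and `u_quadratic` are made up for this illustration. Since the Laplacian of $x^2 + y^2$ is 4, the stencil for $-\nabla\cdot\nabla u$ should return $-4$ up to rounding.

```python
def apply_stencil(u, x, y, h):
    """Apply the 5-point stencil for -Laplacian at the point (x, y)."""
    return (4*u(x, y) - u(x - h, y) - u(x + h, y)
            - u(x, y - h) - u(x, y + h)) / h**2

def u_quadratic(x, y):
    return x**2 + y**2     # Laplacian is 4 everywhere

value = apply_stencil(u_quadratic, 0.3, 0.6, h=0.1)
print(value)               # close to -4, up to rounding
```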

```

%matplotlib inline

import numpy

from matplotlib import pyplot

pyplot.style.use('ggplot')

def laplacian2d_dense(h, f, g0):
    """Solve -Laplacian(u) = f on [0,1]^2 with u=g0 on the boundary.

    Use a discretization of nominal size h.
    """
    m = int(1/h + 1)  # Number of grid points per direction for nominal grid spacing h
    h = 1 / (m-1)     # Actual grid spacing
    c = numpy.linspace(0, 1, m)
    y, x = numpy.meshgrid(c, c)
    u0 = g0(x, y).flatten()
    rhs = f(x, y).flatten()
    A = numpy.zeros((m*m, m*m))
    def idx(i, j):
        return i*m + j
    for i in range(m):
        for j in range(m):
            row = idx(i, j)
            if i in (0, m-1) or j in (0, m-1):
                # Boundary node: identity row enforcing u = g0
                A[row, row] = 1
                rhs[row] = u0[row]
            else:
                # Interior node: 5-point stencil
                cols = [idx(*pair) for pair in
                        [(i-1, j), (i, j-1), (i, j), (i, j+1), (i+1, j)]]
                stencil = 1/h**2 * numpy.array([-1, -1, 4, -1, -1])
                A[row, cols] = stencil
    return x, y, A, rhs

x, y, A, rhs = laplacian2d_dense(.1,

lambda x,y: 0*x+1,

lambda x,y: 0*x)

pyplot.spy(A);

u = numpy.linalg.solve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u)

pyplot.colorbar();

import cProfile, pstats

prof = cProfile.Profile()

prof.enable()

x, y, A, rhs = laplacian2d_dense(.02, lambda x,y: 0*x+1, lambda x,y: 0*x)

u = numpy.linalg.solve(A, rhs).reshape(x.shape)

prof.disable()

pstats.Stats(prof).sort_stats(pstats.SortKey.TIME).print_stats(10);

import scipy.sparse as sp

import scipy.sparse.linalg

def laplacian2d(h, f, g0):

m = int(1/h + 1) # Number of elements in terms of nominal grid spacing h

h = 1 / (m-1) # Actual grid spacing

c = numpy.linspace(0, 1, m)

y, x = numpy.meshgrid(c, c)

u0 = g0(x, y).flatten()

rhs = f(x, y).flatten()

A = sp.lil_matrix((m*m, m*m))

def idx(i, j):

return i*m + j

mask = numpy.zeros_like(x, dtype=int)

mask[1:-1,1:-1] = 1

mask = mask.flatten()

for i in range(m):

for j in range(m):

row = idx(i, j)

stencili = numpy.array([idx(*pair) for pair in

[(i-1, j), (i, j-1),

(i, j),

(i, j+1), (i+1, j)]])

stencilw = 1/h**2 * numpy.array([-1, -1, 4, -1, -1])

if mask[row] == 0: # Dirichlet boundary

A[row, row] = 1

rhs[row] = u0[row]

else:

smask = mask[stencili]

cols = stencili[smask == 1]

A[row, cols] = stencilw[smask == 1]

bdycols = stencili[smask == 0]

rhs[row] -= stencilw[smask == 0] @ u0[bdycols]

return x, y, A.tocsr(), rhs

x, y, A, rhs = laplacian2d(.1, lambda x,y: 0*x+1, lambda x,y: 0*x)

pyplot.spy(A.todense());

sp.linalg.norm(A - A.T)

prof = cProfile.Profile()

prof.enable()

x, y, A, rhs = laplacian2d(.01, lambda x,y: 0*x+1, lambda x,y: 0*x)

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

prof.disable()

pstats.Stats(prof).sort_stats(pstats.SortKey.TIME).print_stats(10);

```

## A manufactured solution

```

class mms0:
    @staticmethod
    def u(x, y):
        return x*numpy.exp(-x)*numpy.tanh(y)

    @staticmethod
    def grad_u(x, y):
        return numpy.array([(1 - x)*numpy.exp(-x)*numpy.tanh(y),
                            x*numpy.exp(-x)*(1 - numpy.tanh(y)**2)])

    @staticmethod
    def laplacian_u(x, y):
        # Despite its name, this evaluates -Laplacian(u), the forcing f in -Laplacian(u) = f
        return ((2 - x)*numpy.exp(-x)*numpy.tanh(y)
                - 2*x*numpy.exp(-x)*(numpy.tanh(y)**2 - 1)*numpy.tanh(y))

    @staticmethod
    def grad_u_dot_normal(x, y, n):
        return mms0.grad_u(x, y) @ n

x, y, A, rhs = laplacian2d(.02, mms0.laplacian_u, mms0.u)

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

print(u.shape, numpy.linalg.norm((u - mms0.u(x,y)).flatten(), numpy.inf))

pyplot.contourf(x, y, u)

pyplot.colorbar()

pyplot.title('Numeric solution')

pyplot.figure()

pyplot.contourf(x, y, u - mms0.u(x, y))

pyplot.colorbar()

pyplot.title('Error');

hs = numpy.geomspace(.01, .25, 12)

def mms_error(h):

x, y, A, rhs = laplacian2d(h, mms0.laplacian_u, mms0.u)

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

return numpy.linalg.norm((u - mms0.u(x, y)).flatten(), numpy.inf)

pyplot.loglog(hs, [mms_error(h) for h in hs], 'o', label='numeric error')

pyplot.loglog(hs, hs**1/100, label='$h^1/100$')

pyplot.loglog(hs, hs**2/100, label='$h^2/100$')

pyplot.legend();

```
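
A manufactured solution is only useful if the forcing really matches the chosen $u$. The sketch below is a standalone check in plain Python, with the two expressions copied from `mms0` and a hypothetical helper `minus_laplacian_fd`. It compares the forcing expression against a centered finite-difference estimate of $-\nabla\cdot\nabla u$ at one arbitrarily chosen point.

```python
import math

def u(x, y):
    return x * math.exp(-x) * math.tanh(y)

def forcing(x, y):
    # Same expression as mms0.laplacian_u
    t = math.tanh(y)
    return ((2 - x) * math.exp(-x) * t
            - 2 * x * math.exp(-x) * (t**2 - 1) * t)

def minus_laplacian_fd(x, y, h=1e-3):
    # Centered second differences in x and y
    return (4*u(x, y) - u(x - h, y) - u(x + h, y)
            - u(x, y - h) - u(x, y + h)) / h**2

x0, y0 = 0.4, 0.7
difference = abs(minus_laplacian_fd(x0, y0) - forcing(x0, y0))
print(difference)
```

The small difference indicates that the expression is the negative Laplacian of $u$, which is the right-hand side the solver expects.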

# Neumann boundary conditions

Recall that in 1D, we would reflect the solution into ghost points according to

$$ u_{-i} = u_i - (x_i - x_{-i}) g_1(x_0, y) $$

and similarly for the right boundary and in the $y$ direction. After this, we (optionally) scale the row in the matrix for symmetry and shift the known parts to the right hand side. Below, we implement the reflected symmetry, but not the inhomogeneous contribution or rescaling of the matrix row.
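
The reflection can be expressed as a pure index map. The following sketch isolates the formula used inside the `idx` helper of the code in this section, under the hypothetical name `reflect`, and shows where indices just outside the grid land.

```python
def reflect(i, m):
    """Reflect an index just outside 0..m-1 back into the grid."""
    return (m - 1) - abs(m - 1 - abs(i))

m = 5
mapped = [reflect(i, m) for i in range(-1, m + 1)]
print(mapped)
```

Index $-1$ maps to $1$ and index $m$ maps to $m-2$, so a stencil centered on a boundary node sees the mirrored interior neighbor, which corresponds to a homogeneous Neumann condition.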

```

def laplacian2d_bc(h, f, g0, dirichlet=((),())):

m = int(1/h + 1) # Number of elements in terms of nominal grid spacing h

h = 1 / (m-1) # Actual grid spacing

c = numpy.linspace(0, 1, m)

y, x = numpy.meshgrid(c, c)

u0 = g0(x, y).flatten()

rhs = f(x, y).flatten()

ai = []

aj = []

av = []

def idx(i, j):

i = (m-1) - abs(m-1 - abs(i))

j = (m-1) - abs(m-1 - abs(j))

return i*m + j

mask = numpy.ones_like(x, dtype=int)

mask[dirichlet[0],:] = 0

mask[:,dirichlet[1]] = 0

mask = mask.flatten()

for i in range(m):

for j in range(m):

row = idx(i, j)

stencili = numpy.array([idx(*pair) for pair in [(i-1, j), (i, j-1), (i, j), (i, j+1), (i+1, j)]])

stencilw = 1/h**2 * numpy.array([-1, -1, 4, -1, -1])

if mask[row] == 0: # Dirichlet boundary

ai.append(row)

aj.append(row)

av.append(1)

rhs[row] = u0[row]

else:

smask = mask[stencili]

ai += [row]*sum(smask)

aj += stencili[smask == 1].tolist()

av += stencilw[smask == 1].tolist()

bdycols = stencili[smask == 0]

rhs[row] -= stencilw[smask == 0] @ u0[bdycols]

A = sp.csr_matrix((av, (ai, aj)), shape=(m*m,m*m))

return x, y, A, rhs

x, y, A, rhs = laplacian2d_bc(.05, lambda x,y: 0*x+1,

lambda x,y: 0*x, dirichlet=((0,),()))

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

print(numpy.real_if_close(sp.linalg.eigs(A, 10, which='SM')[0]))

pyplot.contourf(x, y, u)

pyplot.colorbar();

# We used a different technique for assembling the sparse matrix.

# This is faster with scipy.sparse, but may be worse for other sparse matrix packages, such as PETSc.

prof = cProfile.Profile()

prof.enable()

x, y, A, rhs = laplacian2d_bc(.02, lambda x,y: 0*x+1, lambda x,y: 0*x)

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

prof.disable()

pstats.Stats(prof).sort_stats(pstats.SortKey.TIME).print_stats(10);

```

# Variable coefficients

In physical systems, it is common for equations to be given in **divergence form** (sometimes called **conservative form**),

$$ -\nabla\cdot \Big( \kappa(x,y) \nabla u \Big) = f(x,y) . $$

This can be converted to **non-divergence form**,

$$ - \kappa(x,y) \nabla\cdot \nabla u - \nabla \kappa(x,y) \cdot \nabla u = f(x,y) . $$

* What assumptions did we just make on $\kappa(x,y)$?
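
The conversion relies on the product rule, which needs $\kappa$ to be differentiable. For a smooth $\kappa$ the two forms can be compared numerically. The sketch below is a 1D analogue in plain Python; the choices $\kappa(x) = 1 + x^2$ and $u(x) = \sin x$ and the helper names are assumptions made for illustration. It evaluates a conservative (staggered) discretization and a non-divergence discretization at one point.

```python
import math

def kappa(x):
    return 1 + x**2

def dkappa(x):
    return 2 * x

def u(x):
    return math.sin(x)

def exact(x):
    # -(kappa u')' = -(kappa' u' + kappa u'')
    return -(dkappa(x) * math.cos(x) - kappa(x) * math.sin(x))

def div_form(x, h):
    # Fluxes at the staggered points x + h/2 and x - h/2
    flux_right = kappa(x + h/2) * (u(x + h) - u(x)) / h
    flux_left = kappa(x - h/2) * (u(x) - u(x - h)) / h
    return -(flux_right - flux_left) / h

def nondiv_form(x, h):
    lap = (u(x + h) - 2*u(x) + u(x - h)) / h**2
    grad = (u(x + h) - u(x - h)) / (2*h)
    return -kappa(x) * lap - dkappa(x) * grad

x0, h = 0.3, 1e-2
err_div = abs(div_form(x0, h) - exact(x0))
err_nondiv = abs(nondiv_form(x0, h) - exact(x0))
print(err_div, err_nondiv)
```

Both errors are small for this smooth coefficient. The two forms differ more visibly when $\kappa$ varies rapidly or is discontinuous.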

```

def laplacian2d_nondiv(h, f, kappa, grad_kappa, g0, dirichlet=((),())):

m = int(1/h + 1) # Number of elements in terms of nominal grid spacing h

h = 1 / (m-1) # Actual grid spacing

c = numpy.linspace(0, 1, m)

y, x = numpy.meshgrid(c, c)

u0 = g0(x, y).flatten()

rhs = f(x, y).flatten()

ai = []

aj = []

av = []

def idx(i, j):

i = (m-1) - abs(m-1 - abs(i))

j = (m-1) - abs(m-1 - abs(j))

return i*m + j

mask = numpy.ones_like(x, dtype=int)

mask[dirichlet[0],:] = 0

mask[:,dirichlet[1]] = 0

mask = mask.flatten()

for i in range(m):

for j in range(m):

row = idx(i, j)

stencili = numpy.array([idx(*pair) for pair in [(i-1, j), (i, j-1), (i, j), (i, j+1), (i+1, j)]])

stencilw = kappa(i*h, j*h)/h**2 * numpy.array([-1, -1, 4, -1, -1])

if grad_kappa is None:

gk = 1/h * numpy.array([kappa((i+.5)*h,j*h) - kappa((i-.5)*h,j*h),

kappa(i*h,(j+.5)*h) - kappa(i*h,(j-.5)*h)])

else:

gk = grad_kappa(i*h, j*h)

stencilw -= gk[0] / (2*h) * numpy.array([-1, 0, 0, 0, 1])

stencilw -= gk[1] / (2*h) * numpy.array([0, -1, 0, 1, 0])

if mask[row] == 0: # Dirichlet boundary

ai.append(row)

aj.append(row)

av.append(1)

rhs[row] = u0[row]

else:

smask = mask[stencili]

ai += [row]*sum(smask)

aj += stencili[smask == 1].tolist()

av += stencilw[smask == 1].tolist()

bdycols = stencili[smask == 0]

rhs[row] -= stencilw[smask == 0] @ u0[bdycols]

A = sp.csr_matrix((av, (ai, aj)), shape=(m*m,m*m))

return x, y, A, rhs

def kappa(x, y):

#return 1 - 2*(x-.5)**2 - 2*(y-.5)**2

return 1e-2 + 2*(x-.5)**2 + 2*(y-.5)**2

def grad_kappa(x, y):

#return -4*(x-.5), -4*(y-.5)

return 4*(x-.5), 4*(y-.5)

pyplot.contourf(x, y, kappa(x,y))

pyplot.colorbar();

x, y, A, rhs = laplacian2d_nondiv(.05, lambda x,y: 0*x+1,

kappa, grad_kappa,

lambda x,y: 0*x, dirichlet=((0,-1),()))

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u)

pyplot.colorbar();

x, y, A, rhs = laplacian2d_nondiv(.05, lambda x,y: 0*x,

kappa, grad_kappa,

lambda x,y: x, dirichlet=((0,-1),()))

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u)

pyplot.colorbar();

def laplacian2d_div(h, f, kappa, g0, dirichlet=((),())):

m = int(1/h + 1) # Number of elements in terms of nominal grid spacing h

h = 1 / (m-1) # Actual grid spacing

c = numpy.linspace(0, 1, m)

y, x = numpy.meshgrid(c, c)

u0 = g0(x, y).flatten()

rhs = f(x, y).flatten()

ai = []

aj = []

av = []

def idx(i, j):

i = (m-1) - abs(m-1 - abs(i))

j = (m-1) - abs(m-1 - abs(j))

return i*m + j

mask = numpy.ones_like(x, dtype=int)

mask[dirichlet[0],:] = 0

mask[:,dirichlet[1]] = 0

mask = mask.flatten()

for i in range(m):

for j in range(m):

row = idx(i, j)

stencili = numpy.array([idx(*pair) for pair in [(i-1, j),

(i, j-1),

(i, j),

(i, j+1),

(i+1, j)]])

stencilw = 1/h**2 * ( kappa((i-.5)*h, j*h) * numpy.array([-1, 0, 1, 0, 0])

+ kappa(i*h, (j-.5)*h) * numpy.array([0, -1, 1, 0, 0])

- kappa(i*h, (j+.5)*h) * numpy.array([0, 0, -1, 1, 0])

- kappa((i+.5)*h, j*h) * numpy.array([0, 0, -1, 0, 1]))

if mask[row] == 0: # Dirichlet boundary

ai.append(row)

aj.append(row)

av.append(1)

rhs[row] = u0[row]

else:

smask = mask[stencili]

ai += [row]*sum(smask)

aj += stencili[smask == 1].tolist()

av += stencilw[smask == 1].tolist()

bdycols = stencili[smask == 0]

rhs[row] -= stencilw[smask == 0] @ u0[bdycols]

A = sp.csr_matrix((av, (ai, aj)), shape=(m*m,m*m))

return x, y, A, rhs

x, y, A, rhs = laplacian2d_div(.05, lambda x,y: 0*x+1,

kappa,

lambda x,y: 0*x, dirichlet=((0,-1),()))

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u)

pyplot.colorbar();

x, y, A, rhs = laplacian2d_div(.05, lambda x,y: 0*x,

kappa,

lambda x,y: x, dirichlet=((0,-1),()))

u = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u)

pyplot.colorbar();

x, y, A, rhs = laplacian2d_nondiv(.05, lambda x,y: 0*x+1,

kappa, grad_kappa,

lambda x,y: 0*x, dirichlet=((0,-1),()))

u_nondiv = sp.linalg.spsolve(A, rhs).reshape(x.shape)

x, y, A, rhs = laplacian2d_div(.05, lambda x,y: 0*x+1,

kappa,

lambda x,y: 0*x, dirichlet=((0,-1),()))

u_div = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u_nondiv - u_div)

pyplot.colorbar();

class mms1:

def __init__(self):

import sympy

x, y = sympy.symbols('x y')

uexpr = x*sympy.exp(-2*x) * sympy.tanh(1.2*y+.1)

kexpr = 1e-2 + 2*(x-.42)**2 + 2*(y-.51)**2

self.u = sympy.lambdify((x,y), uexpr)

self.kappa = sympy.lambdify((x,y), kexpr)

def grad_kappa(xx, yy):

kx = sympy.lambdify((x,y), sympy.diff(kexpr, x))

ky = sympy.lambdify((x,y), sympy.diff(kexpr, y))

return kx(xx, yy), ky(xx, yy)

self.grad_kappa = grad_kappa

self.div_kappa_grad_u = sympy.lambdify((x,y),

-( sympy.diff(kexpr * sympy.diff(uexpr, x), x)

+ sympy.diff(kexpr * sympy.diff(uexpr, y), y)))

mms = mms1()

x, y, A, rhs = laplacian2d_nondiv(.05, mms.div_kappa_grad_u,

mms.kappa, mms.grad_kappa,

mms.u, dirichlet=((0,-1),(0,-1)))

u_nondiv = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u_nondiv)

pyplot.colorbar()

numpy.linalg.norm((u_nondiv - mms.u(x, y)).flatten(), numpy.inf)

x, y, A, rhs = laplacian2d_div(.05, mms.div_kappa_grad_u,

mms.kappa,

mms.u, dirichlet=((0,-1),(0,-1)))

u_div = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u_div)

pyplot.colorbar()

numpy.linalg.norm((u_div - mms.u(x, y)).flatten(), numpy.inf)

def mms_error(h):

x, y, A, rhs = laplacian2d_nondiv(h, mms.div_kappa_grad_u,

mms.kappa, mms.grad_kappa,

mms.u, dirichlet=((0,-1),(0,-1)))

u_nondiv = sp.linalg.spsolve(A, rhs).flatten()

x, y, A, rhs = laplacian2d_div(h, mms.div_kappa_grad_u,

mms.kappa, mms.u, dirichlet=((0,-1),(0,-1)))

u_div = sp.linalg.spsolve(A, rhs).flatten()

u_exact = mms.u(x, y).flatten()

return numpy.linalg.norm(u_nondiv - u_exact, numpy.inf), numpy.linalg.norm(u_div - u_exact, numpy.inf)

hs = numpy.logspace(-1.5, -.5, 10)

errors = numpy.array([mms_error(h) for h in hs])

pyplot.loglog(hs, errors[:,0], 'o', label='nondiv')

pyplot.loglog(hs, errors[:,1], 's', label='div')

pyplot.plot(hs, hs**2, label='$h^2$')

pyplot.legend();

float(False)

def kappablob(x, y):

#return .01 + ((x-.5)**2 + (y-.5)**2 < .125)

return .01 + (numpy.abs(x-.505) < .25) # + (numpy.abs(y-.5) < .25)

x, y, A, rhs = laplacian2d_div(.05, lambda x,y: 0*x, kappablob,

lambda x,y:x, dirichlet=((0,-1),()))

u_div = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, kappablob(x, y))

pyplot.colorbar();

pyplot.figure()

pyplot.contourf(x, y, u_div, 10)

pyplot.colorbar();

x, y, A, rhs = laplacian2d_nondiv(.05, lambda x,y: 0*x, kappablob, None,

lambda x,y:x, dirichlet=((0,-1),()))

u_nondiv = sp.linalg.spsolve(A, rhs).reshape(x.shape)

pyplot.contourf(x, y, u_nondiv, 10)

pyplot.colorbar();

```

## Weak forms

When we write

$$ {\huge "} - \nabla\cdot \big( \kappa \nabla u \big) = 0 {\huge "} \text{ on } \Omega $$

where $\kappa$ is a discontinuous function, that's not exactly what we mean, because the derivative of that discontinuous function doesn't exist. Formally, however, let us multiply by a "test function" $v$ and integrate,

\begin{split}

- \int_\Omega v \nabla\cdot \big( \kappa \nabla u \big) = 0 & \text{ for all } v \\

\int_\Omega \nabla v \cdot \kappa \nabla u = \int_{\partial \Omega} v \kappa \nabla u \cdot \hat n & \text{ for all } v

\end{split}

where we have used integration by parts. This is called the **weak form** of the PDE and will be what we actually discretize using finite element methods. All the terms make sense when $\kappa$ is discontinuous. Now suppose our domain is decomposed into two disjoint sub domains $$\overline{\Omega_1 \cup \Omega_2} = \overline\Omega $$

with interface $$\Gamma = \overline\Omega_1 \cap \overline\Omega_2$$ and $\kappa_1$ is continuous on $\Omega_1$ and $\kappa_2$ is continuous on $\Omega_2$, but possibly $\kappa_1(x) \ne \kappa_2(x)$ for $x \in \Gamma$,

\begin{split}

\int_\Omega \nabla v \cdot \kappa \nabla u &= \int_{\Omega_1} \nabla v \cdot \kappa_1\nabla u + \int_{\Omega_2} \nabla v \cdot \kappa_2 \nabla u \\

&= -\int_{\Omega_1} v \nabla\cdot \big(\kappa_1 \nabla u \big) + \int_{\partial \Omega_1} v \kappa_1 \nabla u \cdot \hat n \\

&\qquad -\int_{\Omega_2} v \nabla\cdot \big(\kappa_2 \nabla u \big) + \int_{\partial \Omega_2} v \kappa_2 \nabla u \cdot \hat n \\

&= -\int_{\Omega} v \nabla\cdot \big(\kappa \nabla u \big) + \int_{\partial \Omega} v \kappa \nabla u \cdot \hat n + \int_{\Gamma} v (\kappa_1 - \kappa_2) \nabla u\cdot \hat n .

\end{split}

* Which direction is $\hat n$ for the integral over $\Gamma$?

* Does it matter what we choose for the value of $\kappa$ on $\Gamma$ in the volume integral?

When $\kappa$ is continuous, the jump term vanishes and we recover the **strong form**

$$ - \nabla\cdot \big( \kappa \nabla u \big) = 0 \text{ on } \Omega . $$

But if $\kappa$ is discontinuous, we would need to augment this with a jump condition ensuring that the flux $-\kappa \nabla u$ is continuous. We could go add this condition to our FD code to recover convergence in case of discontinuous $\kappa$, but it is messy.
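
To see flux continuity in the simplest setting, the sketch below solves a 1D analogue, $-(\kappa u')' = 0$ with $u(0)=0$, $u(1)=1$, using a divergence-form discretization with $\kappa$ sampled at staggered points. The piecewise-constant $\kappa$, the grid size, and the helper `solve_tridiagonal` are all assumptions for this illustration, and the jump is placed on a grid node so that no staggered point falls on it.

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm for a tridiagonal system (no pivoting)."""
    n = len(diag)
    d, r = diag[:], rhs[:]
    for k in range(1, n):
        w = lower[k] / d[k - 1]
        d[k] -= w * upper[k - 1]
        r[k] -= w * r[k - 1]
    x = [0.0] * n
    x[-1] = r[-1] / d[-1]
    for k in range(n - 2, -1, -1):
        x[k] = (r[k] - upper[k] * x[k + 1]) / d[k]
    return x

def kappa(x):
    return 0.01 if x < 0.5 else 1.0   # jump at x = 0.5

m = 11                                # grid points, so x = 0.5 is a node
h = 1 / (m - 1)
# kappa at the staggered points x_{i+1/2}
k_half = [kappa((i + 0.5) * h) for i in range(m - 1)]

# Unknowns are the interior values u_1..u_{m-2}; u_0 = 0 and u_{m-1} = 1
n = m - 2
lower = [0.0] + [-k_half[k] for k in range(1, n)]
diag = [k_half[k] + k_half[k + 1] for k in range(n)]
upper = [-k_half[k + 1] for k in range(n - 1)] + [0.0]
rhs = [0.0] * (n - 1) + [k_half[n]]   # contribution of u_{m-1} = 1

u = [0.0] + solve_tridiagonal(lower, diag, upper, rhs) + [1.0]

# Discrete flux -kappa u' on every cell
flux = [-k_half[i] * (u[i + 1] - u[i]) / h for i in range(m - 1)]
spread = max(flux) - min(flux)
print(flux[0], spread)
```

The flux comes out the same on every cell even though $\kappa$ jumps by a factor of 100, while the slope of $u$ changes abruptly at the interface. This only illustrates the aligned 1D case; it does not address interfaces that cut between grid points.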

## Nonlinear problems

Let's consider the nonlinear problem

$$ -\nabla \cdot \big(\underbrace{(1 + u^2)}_{\kappa(u)} \nabla u \big) = f \text{ on } (0,1)^2 $$

subject to Dirichlet boundary conditions. We will discretize the divergence form and thus will need $\kappa(u)$ evaluated at staggered points $(i-1/2,j)$, $(i,j-1/2)$, etc. We will calculate these by averaging $u$ to the staggered points,

$$ u_{i-1/2,j} = \frac{u_{i-1,j} + u_{i,j}}{2} $$

and similarly for the other staggered directions.

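To use a Newton method, we also need the derivatives. Since $u_{i-1/2,j}$ is an average, $\partial u_{i-1/2,j}/\partial u_{i,j} = 1/2$, and the chain rule applied to $\kappa = 1 + u^2$ gives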

$$ \frac{\partial \kappa_{i-1/2,j}}{\partial u_{i,j}} = 2 u_{i-1/2,j} \frac{\partial u_{i-1/2,j}}{\partial u_{i,j}} = u_{i-1/2,j} . $$

In the function below, we compute both the residual

$$F(u) = -\nabla\cdot \kappa(u) \nabla u - f(x,y)$$

and its Jacobian

$$J(u) = \frac{\partial F}{\partial u} . $$

```

def hgrid(h):
    m = int(1/h + 1)  # Number of grid points for nominal grid spacing h
    h = 1 / (m-1)     # Actual grid spacing
    c = numpy.linspace(0, 1, m)
    y, x = numpy.meshgrid(c, c)
    return x, y

def nonlinear2d_div(h, x, y, u, forcing, g0, dirichlet=((),())):
    m = x.shape[0]
    u0 = g0(x, y).flatten()
    F = -forcing(x, y).flatten()
    ai = []
    aj = []
    av = []
    def idx(i, j):
        # Reflect out-of-range indices back into the grid
        i = (m-1) - abs(m-1 - abs(i))
        j = (m-1) - abs(m-1 - abs(j))
        return i*m + j
    mask = numpy.ones_like(x, dtype=bool)
    mask[dirichlet[0],:] = False
    mask[:,dirichlet[1]] = False
    mask = mask.flatten()
    u = u.flatten()
    F[mask == False] = u[mask == False] - u0[mask == False]
    u[mask == False] = u0[mask == False]
    for i in range(m):
        for j in range(m):
            row = idx(i, j)
            stencili = numpy.array([idx(*pair) for pair in [(i-1, j), (i, j-1), (i, j), (i, j+1), (i+1, j)]])
            # Stencil to evaluate gradient at four staggered points
            grad = numpy.array([[-1, 0, 1, 0, 0],
                                [0, -1, 1, 0, 0],
                                [0, 0, -1, 1, 0],
                                [0, 0, -1, 0, 1]]) / h
            # Stencil to average at four staggered points
            avg  = numpy.array([[1, 0, 1, 0, 0],
                                [0, 1, 1, 0, 0],
                                [0, 0, 1, 1, 0],
                                [0, 0, 1, 0, 1]]) / 2
            # Stencil to compute divergence at cell centers from fluxes at four staggered points
            div = numpy.array([-1, -1, 1, 1]) / h
            ustencil = u[stencili]
            ustag = avg @ ustencil
            # kappa(u) at staggered points; note the constant here is 0.1,
            # whereas the text above writes kappa(u) = 1 + u^2
            kappa = .1 + ustag**2
            if mask[row] == 0: # Dirichlet boundary
                ai.append(row)
                aj.append(row)
                av.append(1)
            else:
                F[row] -= div @ (kappa[:,None] * grad @ ustencil)
                # Product rule: kappa * grad + (dkappa/du) * (grad u) * avg
                Jstencil = -div @ (kappa[:,None] * grad
                                   + 2*(ustag*(grad @ ustencil))[:,None] * avg)
                smask = mask[stencili]
                ai += [row]*sum(smask)
                aj += stencili[smask].tolist()
                av += Jstencil[smask].tolist()
    J = sp.csr_matrix((av, (ai, aj)), shape=(m*m,m*m))
    return F, J

h = .1
x, y = hgrid(h)
u = 0*x
# u += deltau # Uncomment to iterate
F, J = nonlinear2d_div(h, x, y, u, lambda x,y: 0*x+1,
                       lambda x,y: 0*x, dirichlet=((0,-1),(0,-1)))
deltau = sp.linalg.spsolve(J, -F).reshape(x.shape)
pyplot.contourf(x, y, deltau)
pyplot.colorbar();

def solve_nonlinear(h, g0, dirichlet, atol=1e-8, verbose=False):
    x, y = hgrid(h)
    u = 0*x
    for i in range(50):
        F, J = nonlinear2d_div(h, x, y, u, lambda x,y: 0*x+1,
                               g0=g0, dirichlet=dirichlet)
        anorm = numpy.linalg.norm(F, numpy.inf)
        if verbose:
            print('{:2d}: anorm {:8e}'.format(i,anorm))
        if anorm < atol:
            break
        deltau = sp.linalg.spsolve(J, -F)
        u += deltau.reshape(x.shape)
    return x, y, u, i

x, y, u, i = solve_nonlinear(.1, lambda x,y: 0*x,
                             dirichlet=((0,-1),(0,-1)),
                             verbose=True)
pyplot.contourf(x, y, u)
pyplot.colorbar();

```
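A hand-coded Jacobian is easy to get subtly wrong, so it is worth comparing it against finite differences of the residual. The sketch below does this for a 1D analog of the problem above, using $\kappa(u) = 1 + u^2$ as in the text, homogeneous Dirichlet conditions, and $f = 1$. The helpers `residual_and_jacobian_1d` and `fd_jacobian` are hypothetical names introduced here for illustration, and the dense matrices are only sensible for such a small test.

```python
import numpy

def residual_and_jacobian_1d(u, h, f=1.0):
    """Residual and dense Jacobian of -(kappa(u) u')' - f with kappa = 1 + u^2."""
    m = len(u)
    F = numpy.zeros(m)
    J = numpy.zeros((m, m))
    F[0], F[-1] = u[0], u[-1]               # homogeneous Dirichlet rows
    J[0, 0] = J[-1, -1] = 1
    ustag = (u[:-1] + u[1:]) / 2            # averages at i+1/2
    du = numpy.diff(u) / h                  # gradients at i+1/2
    kappa = 1 + ustag**2
    flux = kappa * du
    # Derivatives of flux_{i+1/2} with respect to u_i and u_{i+1}
    dflux_left = -kappa / h + ustag * du
    dflux_right = kappa / h + ustag * du
    for i in range(1, m-1):
        F[i] = -(flux[i] - flux[i-1]) / h - f
        J[i, i-1] = dflux_left[i-1] / h
        J[i, i] = -(dflux_left[i] - dflux_right[i-1]) / h
        J[i, i+1] = -dflux_right[i] / h
    return F, J

def fd_jacobian(u, h, eps=1e-6):
    """Column-by-column forward-difference approximation of the Jacobian."""
    F0, _ = residual_and_jacobian_1d(u, h)
    Jfd = numpy.zeros((len(u), len(u)))
    for j in range(len(u)):
        up = u.copy()
        up[j] += eps
        Jfd[:, j] = (residual_and_jacobian_1d(up, h)[0] - F0) / eps
    return Jfd

m = 21
h = 1 / (m - 1)
x = numpy.linspace(0, 1, m)

# Compare analytic and finite-difference Jacobians at an arbitrary state
ucheck = numpy.sin(numpy.pi * x)
_, J = residual_and_jacobian_1d(ucheck, h)
rel_error = numpy.abs(J - fd_jacobian(ucheck, h)).max() / numpy.abs(J).max()
print('relative Jacobian mismatch:', rel_error)

# Newton iteration from a zero initial guess
u = numpy.zeros(m)
norms = []
for k in range(20):
    F, J = residual_and_jacobian_1d(u, h)
    norms.append(numpy.linalg.norm(F, numpy.inf))
    if norms[-1] < 1e-10:
        break
    u -= numpy.linalg.solve(J, F)
print(norms)
```

If the Jacobian is correct, the mismatch should be on the order of the differencing parameter, and the residual norms should roughly square from one iteration to the next once the iterate is close to the solution.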

## Homework 3: Wednesday, 2018-10-24

Write a solver for the regularized $p$-Laplacian,

$$ -\nabla\cdot\big( \kappa(\nabla u) \nabla u \big) = 0 $$

where

$$ \kappa(\nabla u) = \big(\frac 1 2 \epsilon^2 + \frac 1 2 \nabla u \cdot \nabla u \big)^{\frac{p-2}{2}}, $$

$ \epsilon > 0$, and $1 < p < \infty$. The case $p=2$ is the conventional Laplacian. This problem gets more strongly nonlinear when $p$ is far from 2 and when $\epsilon$ approaches zero. The $p \to 1$ limit is related to plasticity and has applications in non-Newtonian flows and structural mechanics.
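To get a feel for how $p$ and $\epsilon$ control the nonlinearity, it helps to evaluate the coefficient directly. The helper below is a sketch: the name `kappa_plaplace` and the sample gradients are assumptions made for illustration, and the formula is simply the definition of $\kappa(\nabla u)$ above.

```python
import numpy

def kappa_plaplace(gradu, p, eps):
    """Regularized p-Laplacian coefficient for gradient vector(s) gradu.

    gradu has shape (..., d); the result has shape (...).
    """
    gradu = numpy.asarray(gradu, dtype=float)
    gamma = 0.5 * eps**2 + 0.5 * numpy.sum(gradu**2, axis=-1)
    return gamma ** ((p - 2) / 2)

# Sample gradients: zero, small, and order one
grads = numpy.array([[0.0, 0.0], [0.1, 0.0], [1.0, 1.0]])
for p in [1.2, 2.0, 4.0]:
    for eps in [1.0, 1e-3]:
        print('p={:3.1f} eps={:5.0e}'.format(p, eps), kappa_plaplace(grads, p, eps))
```

For $p = 2$ the coefficient is identically 1. For $p < 2$ it blows up where the gradient vanishes as $\epsilon \to 0$, and for $p > 2$ it degenerates toward zero there, so the range of $\kappa$ across the domain widens in both cases.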

1. Implement a "Picard" solver, which is like a Newton solver except that the Jacobian is replaced by the linear system

$$ J_{\text{Picard}}(u) \delta u \sim -\nabla\cdot\big( \kappa(\nabla u) \nabla \delta u \big) . $$

This is much easier to implement than the full Newton linearization. How fast does this method converge for values of $p < 2$ and $p > 2$?

2. Use the linearization above as a preconditioner to a Newton-Krylov method. That is, use [`scipy.sparse.linalg.LinearOperator`](https://docs.scipy.org/doc/scipy/reference/generated/scipy.sparse.linalg.LinearOperator.html) to apply the Jacobian to a vector

$$ \tilde J(u) v = \frac{F(u + h v) - F(u)}{h} , $$

where $h$ here is a small differencing parameter (not the grid spacing). Then for each linear solve, use [`scipy.sparse.linalg.gmres`](https://docs.scipy.org/doc/scipy/reference/generated/scipy.sparse.linalg.gmres.html) and pass a direct solve with the Picard linearization above as the preconditioner. (You might find [`scipy.sparse.linalg.factorized`](https://docs.scipy.org/doc/scipy/reference/generated/scipy.sparse.linalg.factorized.html) to be useful.) Compare algebraic convergence to that of the Picard method.

3. Can you directly implement a Newton linearization? Either do it or explain what is involved. How will its nonlinear convergence compare to that of the Newton-Krylov method?

# Wave equations and multi-component systems

The acoustic wave equation with constant wave speed $c$ can be written

$$ \ddot u - c^2 \nabla\cdot \nabla u = 0 $$

where $u$ is typically a pressure.

We can convert to a first order system by introducing $v = \dot u$,

$$ \begin{bmatrix} \dot u \\ \dot v \end{bmatrix} = \begin{bmatrix} 0 & I \\ c^2 \nabla\cdot \nabla & 0 \end{bmatrix} \begin{bmatrix} u \\ v \end{bmatrix} . $$

We will choose a zero-penetration boundary condition $\nabla u \cdot \hat n = 0$, which will cause waves to reflect.

```

%run fdtools.py

x, y, L, _ = laplacian2d_bc(.1, lambda x,y: 0*x,
                            lambda x,y: 0*x, dirichlet=((),()))
# Block operator for [u; v] with c = 1, assuming L discretizes -div grad
# so that -L approximates div grad
A = sp.bmat([[None, sp.eye(*L.shape)],
             [-L, None]])
eigs = sp.linalg.eigs(A, 10, which='LM')[0]
print(eigs)
maxeig = max(eigs.imag)
# Gaussian pulse in u, zero initial velocity
u0 = numpy.concatenate([numpy.exp(-8*(x**2 + y**2)), 0*x], axis=None)
# Time step chosen from the largest imaginary eigenvalue
hist = ode_rkexplicit(lambda t, u: A @ u, u0, tfinal=2, h=2/maxeig)

def plot_wave(x, y, time, U):
    u = U[:x.size].reshape(x.shape)
    pyplot.contourf(x, y, u)
    pyplot.colorbar()
    pyplot.title('Wave solution t={:f}'.format(time));

for step in numpy.linspace(0, len(hist)-1, 6, dtype=int):
    pyplot.figure()
    plot_wave(x, y, *hist[step]);

```

* This was a second order discretization, but we could extend it to higher order.

* The largest eigenvalues of this operator are proportional to $c/h$.

* Formally, we can write this equation in conservative form

$$ \begin{bmatrix} \dot u \\ \dot{\mathbf v} \end{bmatrix} = \begin{bmatrix} 0 & c\nabla\cdot \\ c \nabla & 0 \end{bmatrix} \begin{bmatrix} u \\ \mathbf v \end{bmatrix} $$

where $\mathbf{v}$ is now a momentum vector and $\nabla u = \nabla\cdot (u I)$. This formulation could produce an anti-symmetric ($A^T = -A$) discretization. Discretizations with this property are sometimes called "mimetic".
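Both the $c/h$ eigenvalue scaling and the anti-symmetry can be checked on a small 1D staggered discretization of the conservative form. This is a sketch under stated assumptions: $u$ lives at cell centers, $v$ at interior faces, and the zero-penetration condition is imposed by omitting the boundary faces. The name `staggered_wave_operator` is hypothetical and the dense matrices are only meant for this small test.

```python
import numpy

def staggered_wave_operator(m, c=1.0):
    """Dense first-order wave operator on (0,1) in 1D (illustrative).

    u lives at m cell centers, v at the m-1 interior faces; v = 0 at the
    walls is built in by omitting the boundary faces.
    """
    h = 1 / m
    # Gradient maps cell centers to interior faces
    G = (numpy.eye(m - 1, m, k=1) - numpy.eye(m - 1, m)) / h
    # Divergence maps faces to cell centers and is the negative transpose
    D = -G.T
    A = numpy.block([[numpy.zeros((m, m)), c * D],
                     [c * G, numpy.zeros((m - 1, m - 1))]])
    return A, h

for m in [20, 40]:
    A, h = staggered_wave_operator(m)
    eigs = numpy.linalg.eigvals(A)
    print('h={:.3f}  antisymmetric={}  max|Re|={:.1e}  h*max|Im|={:.4f}'.format(
        h, numpy.allclose(A.T, -A), abs(eigs.real).max(), h * abs(eigs.imag).max()))
```

Because $A^T = -A$, the eigenvalues are purely imaginary up to rounding, so the semi-discrete system neither grows nor decays. The product $h \max|\operatorname{Im} \lambda|$ stays just below $2c$ as the grid is refined, which is the $c/h$ scaling noted above.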