
#### Tissue-specific RV eGenes

```

library(data.table)

library(dplyr)

load.data <- function(tissue) {

filename <- paste("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v8/result_summary/qvals/", tissue, ".lrt.q", sep="")

return(fread(filename, data.table=F))

}

get.egenes <- function(qvals) {

egenes = qvals$Gene_ID[apply(qvals, 1, function(x) {any(as.numeric(x[-1]) < 0.05)})]

return(egenes)

}

get.tissue.specific.genes <- function(egenes.list) {

res = vector("list", length(egenes.list))

names(res) = names(egenes.list)

for (i in 1:length(egenes.list)) {

res[[i]] = egenes.list[[i]][!egenes.list[[i]] %in% unique(unlist(egenes.list[-i]))]

}

return(res)

}

sample.info = fread("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v8/result_summary/tissue.name.match.csv")

tissues = sample.info$tissue

q.data = lapply(tissues, load.data)

names(q.data) = tissues

egenes = lapply(q.data, get.egenes)

res = get.tissue.specific.genes(egenes)

# write one file per tissue of interest, one gene ID per line
for (tissue in c("Lung", "Liver", "Whole_Blood", "Skin_Sun_Exposed_Lower_leg",
                 "Skin_Not_Sun_Exposed_Suprapubic", "Heart_Atrial_Appendage",
                 "Heart_Left_Ventricle")) {
  fwrite(as.list(res[[tissue]]),
         paste0("../tissue_specific_egenes_by_tissue/", tissue, ".tissue.specifc.rv.egenes.tsv"),
         sep="\n")
}

```
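The two helpers above reduce to set operations. A gene is an eGene in a tissue if any of its q-values is below 0.05. It is tissue-specific if it is absent from the union of all other tissues' eGene sets. Below is a minimal Python sketch of the same logic, mirroring `get.egenes` and `get.tissue.specific.genes`. The gene IDs and q-values are hypothetical toy data, not GTEx results.

```python
def get_egenes(qvals, alpha=0.05):
    """Genes with at least one q-value below alpha (same rule as get.egenes)."""
    return {gene for gene, qs in qvals.items() if any(q < alpha for q in qs)}

def tissue_specific(gene_sets):
    """For each tissue, keep only the genes absent from every other tissue's set."""
    result = {}
    for tissue, genes in gene_sets.items():
        others = set().union(*(g for t, g in gene_sets.items() if t != tissue))
        result[tissue] = genes - others
    return result

# Hypothetical toy q-values: {tissue: {gene_id: [q-value per test]}}
q_data = {
    "Lung":  {"G1": [0.01, 0.50], "G2": [0.20, 0.30], "G3": [0.04, 0.90]},
    "Liver": {"G1": [0.60, 0.02], "G2": [0.03, 0.70], "G4": [0.01, 0.01]},
}
egenes = {tissue: get_egenes(q) for tissue, q in q_data.items()}
specific = tissue_specific(egenes)
print(sorted(specific["Lung"]), sorted(specific["Liver"]))  # ['G3'] ['G2', 'G4']
```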

#### Tissue-specific non-RV eGenes

```

get.non.egenes <- function(qvals) {

egenes = qvals$Gene_ID[apply(qvals, 1, function(x) {all(as.numeric(x[-1]) >= 0.05)})]

return(egenes)

}

non.egenes = lapply(q.data, get.non.egenes)

res = get.tissue.specific.genes(non.egenes)

# write one file per tissue of interest, one gene ID per line
for (tissue in c("Lung", "Liver", "Whole_Blood", "Skin_Sun_Exposed_Lower_leg",
                 "Skin_Not_Sun_Exposed_Suprapubic", "Heart_Atrial_Appendage",
                 "Heart_Left_Ventricle")) {
  fwrite(as.list(res[[tissue]]),
         paste0("../tissue_specific_egenes_by_tissue/", tissue, ".tissue.specifc.non.rv.egenes.tsv"),
         sep="\n")
}

length(non.egenes$Lung)

```

#### RV eGenes example outlier

```

library(data.table)

library(dplyr)

target.snp = "chr20_57598808_G_A_b38"

geno = fread("/u/project/eeskin2/k8688933/rare_var/genotypes/v8/all_eur_samples_matrix_maf0.05/chr.20.genotypes.matrix.tsv")

indiv = colnames(geno)[which(geno %>% filter(ID == target.snp) != 0)][-1]

print(indiv)

z.heart.lv = fread("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v7/sungoohw/result_summary/log2.standardized.corrected.tpm.egenes.only/log2.standardized.corrected.lrt.tpm.Heart_Left_Ventricle")

z.heart.aa = fread("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v7/sungoohw/result_summary/log2.standardized.corrected.tpm.egenes.only/log2.standardized.corrected.lrt.tpm.Heart_Atrial_Appendage")

z.skin.sun = fread("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v7/sungoohw/result_summary/log2.standardized.corrected.tpm.egenes.only/log2.standardized.corrected.lrt.tpm.Skin_Sun_Exposed_Lower_leg")

z.skin.not.sun = fread("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v7/sungoohw/result_summary/log2.standardized.corrected.tpm.egenes.only/log2.standardized.corrected.lrt.tpm.Skin_Not_Sun_Exposed_Suprapubic")

print(indiv %in% colnames(z.heart.lv)) # the carriers of this SNP are not among the heart left ventricle samples

print(indiv %in% colnames(z.heart.aa))

print(indiv %in% colnames(z.skin.not.sun))

print(indiv %in% colnames(z.skin.sun))

print("ENSG00000101162" %in% z.heart.lv$gene)

print("ENSG00000101162" %in% z.heart.aa$gene)

print("ENSG00000101162" %in% z.skin.not.sun$gene)

print("ENSG00000101162" %in% z.skin.sun$gene)

z.heart.lv %>% filter(gene == "ENSG00000101162") %>% select(indiv)

z.heart.aa %>% filter(gene == "ENSG00000101162") %>% select(indiv)

idx = which(z.skin.sun$gene == "ENSG00000101162")

z.skin.sun[idx, -1]

scaled.z.skin.sun = scale(t(as.data.frame(z.skin.sun)[idx, -1]))

colnames(scaled.z.skin.sun) = c("z")

as.data.frame(scaled.z.skin.sun)[indiv, ] #%>% filter(abs(z) > 2)

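# which() indexes z.skin.sun[idx, -1] (gene column dropped), so look the
# sample IDs up in colnames(z.skin.sun)[-1] to avoid an off-by-one shift
colnames(z.skin.sun)[-1][which(abs(unlist(z.skin.sun[idx, -1])) > 2)]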

z.skin.not.sun %>% filter(gene == "ENSG00000101162") %>% select(indiv)

```
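The cells above re-standardize one gene's values across individuals with `scale()` and look for |z| > 2. Below is a minimal Python sketch of that rule. `flag_outliers` is a hypothetical helper, the values are invented toy data, and the threshold of 2 is the one used above.

```python
import numpy as np

def flag_outliers(values, sample_ids, threshold=2.0):
    """Standardize one gene's values across samples; return IDs with |z| > threshold and the z-scores."""
    values = np.asarray(values, dtype=float)
    # R's scale() divides by the sample standard deviation (denominator n - 1), hence ddof=1
    z = (values - values.mean()) / values.std(ddof=1)
    flagged = [sid for sid, score in zip(sample_ids, z) if abs(score) > threshold]
    return flagged, z

# Hypothetical toy data: nine samples at 0 and one at 10
ids = [f"S{i}" for i in range(10)]
flagged, z = flag_outliers([0] * 9 + [10], ids)
print(flagged)  # ['S9']
```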

#### RV eGenes example outliers in all tissues

```

z.scores = lapply(dir("/u/project/eeskin2/k8688933/rare_var/results/tss_20k_v7/sungoohw/result_summary/log2.standardized.corrected.tpm.egenes.only/",

pattern="log2.standardized.corrected.lrt.tpm", full.names=T), function(x) {if(file.size(x) > 1) {fread(x, data.table=F)}})

names(z.scores) = fread("../egene.counts.csv")$tissue

z.scores[[17]]

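for (i in seq_along(z.scores)) {
  z = z.scores[[i]]
  # skip empty files, tissues without any carrier, and tissues where the gene was not tested
  if (is.null(z)) next
  if (!any(indiv %in% colnames(z))) next
  if (!"ENSG00000101162" %in% z$gene) next
  # standardize the gene's values across individuals
  idx = which(z$gene == "ENSG00000101162")
  scaled.z = scale(t(as.data.frame(z)[idx, -1]))
  colnames(scaled.z) = c("z")
  print(names(z.scores)[[i]])
  print(as.data.frame(scaled.z)[intersect(indiv, rownames(scaled.z)), ])
}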

```


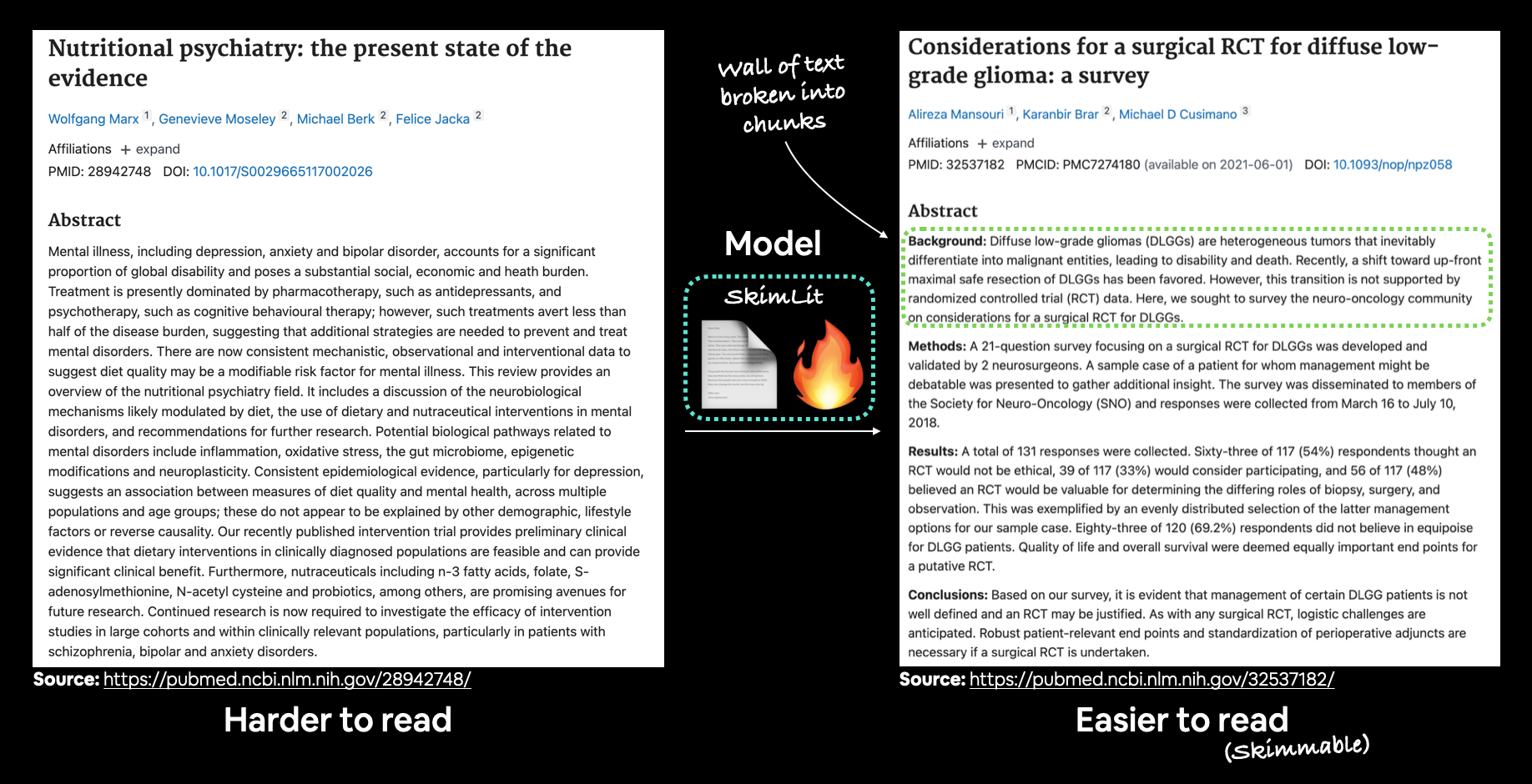

# Machine learning - Part 1

_Machine learning_ (ML) is a subfield of artificial intelligence whose goal is to let computers _learn from data_ without being explicitly programmed. Broadly speaking, machine learning builds algorithms that read data, learn from that "experience", and draw inferences from the knowledge they acquire. The field has been valuable to many sectors because, by recognizing meaningful patterns, it can turn seemingly disconnected data into crucial information for decision-making.

## Modeling and the subdivisions of the field

The fundamental problems of ML can generally be explained through _models_. A mathematical (or probabilistic) model is simply a relationship between variables. The two largest classes of ML problems are the following.

- **Supervised learning**, applicable when we want to predict values. Here, algorithms learn from a labeled training set (_labels_ or _exemplars_) and seek _generalizations_ that hold for all possible inputs. Supervised problems require knowing which data provide the "fundamental truth" against which other data can be compared. This is commonly called the _ground truth_. Examples of algorithms in this class are logistic regression, support vector machines, and random forests.

- **Unsupervised learning**, applicable when we want to explore data in order to explain it. Here, algorithms learn from an unlabeled training set and seek _explanations_ based on some statistical, geometric, or similarity criterion. Examples of algorithms in this class are _k-means_ clustering and kernel density estimation.

There is also a third class, not covered in this course: **reinforcement learning**. Its algorithms learn from reinforcement signals and improve the quality of a response by iteratively exploring the solution space.

As {numref}`overview-ml` summarizes, supervised learning problems can be:

- _classification_, if the desired answer is discrete, that is, there are only a few possible values to assign (e.g., classifying a family as low, middle, or high income from economic data);

- _regression_, if the desired answer is continuous, that is, it can take any value in a range (e.g., estimating the income of a family's members from their occupations).

Unsupervised learning problems, on the other hand, can be:

- _clustering_, if the answer should be organized into several groups. Clustering resembles classification, except that the number of classes is not known _a priori_;

- _density estimation_, if the desired answer explains the underlying processes responsible for the distribution of the data.

```{figure} ../figs/13/visao-geral-ml.png

---

width: 600px

name: overview-ml

---

Main classes and fundamental problems of _machine learning_. Source: adapted from Chah.

```

## Case study: classifying bank loans

The problem we will study is predicting whether a person's loan request will be partially or fully funded by a lending company. The company's available database covers the years 2007 to 2011.

Approval is based on a risk analysis that uses several pieces of information, such as the person's annual income, debt level, past defaults, and the loan's interest rate.

Mathematically, the person's request is successful if

$$\alpha = \frac{F}{E} \ge 0.95,$$

where $E$ is the requested loan amount and $F$ is the amount actually funded. The binary classifier can be written as the function

$$h({\bf X}): \mathbb{M}_{n \, \times \, d} \to \mathbb{K},$$

with $\mathbb{K} = \{+1,-1\}$, where ${\bf X}$ is a matrix of $n$ samples and $d$ _features_ belonging to the abstract set $\mathbb{M}_{n \, \times \, d}$.

```{note}

In a classification problem, if the answer admits only two values (two classes), such as "yes" and "no", the classifier is called **binary**. If more values are admissible, the classifier is called **multiclass**.

```

```

import pickle

import numpy as np

import matplotlib.pyplot as plt

```

Let's load the dataset.

```

import pickle

# encoding='latin1' is required to load this pickle
with open('../database/dataset_small.pkl', 'rb') as f:
    (x, y) = pickle.load(f, encoding='latin1')

```

Here, `x` is our feature matrix.

```

# 4140 samples
# 15 features

x.shape

```

`y` is the vector of _labels_.

```

# 4140 targets, +1 or -1

y,y.shape

```

Comments:

- _Features_ (attributes) are characteristics that let us distinguish one item from another. In this example, they are all the information collected about the person or about the loan. There are 15 of them, each with 4140 real values (one per sample).

- In general, a sample can be a document, an image, an audio file, or a row of a spreadsheet.

- _Features_ are usually real-valued, but they can also be boolean, discrete, or categorical.

- The _target_ vector contains values indicating whether past loans in the company's records were approved or not.

### `scikit-learn` interfaces

We will use the `scikit-learn` module to solve the problem. It relies on three interfaces:

- `fit()` (estimator), to fit models to data;

- `predict()` (predictor), to make predictions;

- `transform()` (transformer), to convert data.

The goal is to predict unsuccessful loans, that is, those whose $\alpha$ falls below the 0.95 (95%) threshold.

```

from sklearn import neighbors

# create a classifier instance
# with 11 nearest neighbors
nn = 11
knn = neighbors.KNeighborsClassifier(n_neighbors=nn)

# train the classifier
knn.fit(x,y)

# compute the predictions
yh = knn.predict(x)

# true labels, predictions
y,yh

# change nn and check the differences
#from numpy import size, where
#size(where(y - yh == 0))  # number of correct predictions

```

```{note}

The _K_ nearest neighbors classification algorithm goes back to Fix and Hodges (1951) and was analyzed by Cover and Hart (1967). It assigns a sample the label held by the majority of its _K_ nearest samples in a training set. Learn more [here](http://computacaointeligente.com.br/algoritmos/k-vizinhos-mais-proximos/) (in Portuguese).

```
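Below is a minimal from-scratch sketch of K-nearest-neighbors classification, using a majority vote and Euclidean distance on a hypothetical toy dataset. It is not `scikit-learn`'s implementation, which adds efficient neighbor search, distance-weighting options and its own tie handling. `knn_predict` is an illustrative name.

```python
from collections import Counter

import numpy as np

def knn_predict(X_train, y_train, X_query, k=3):
    """Label each query point by majority vote among its k nearest training points."""
    X_train = np.asarray(X_train, dtype=float)
    X_query = np.asarray(X_query, dtype=float)
    predictions = []
    for q in X_query:
        dists = np.linalg.norm(X_train - q, axis=1)         # Euclidean distance to every training point
        nearest = np.argsort(dists, kind="stable")[:k]      # indices of the k closest points
        votes = Counter(y_train[i] for i in nearest)
        predictions.append(votes.most_common(1)[0][0])      # most frequent label among them
    return np.array(predictions)

# Hypothetical toy data: two well-separated clusters labeled -1 and +1
X_toy = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [5.0, 5.0], [5.0, 6.0], [6.0, 5.0]])
y_toy = np.array([-1, -1, -1, 1, 1, 1])
pred = knn_predict(X_toy, y_toy, [[0.5, 0.5], [5.5, 5.5]], k=3)
```

The first query falls in the -1 cluster and the second in the +1 cluster, so `pred` holds -1 and then +1.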

#### Accuracy

We can measure the classifier's performance with metrics. The default metric returned by `score` for `scikit-learn` classifiers such as _KNN_ is _accuracy_, given by:

$$\text{acc} = 1 - \text{error} = \frac{\text{no. of correct predictions}}{n}.$$

```

knn.score(x,y)

```

This _score_ looks good, but there is more to analyze. Let's plot the distribution of the labels.

```

# pie chart of the label distribution
plt.pie([np.sum(y == 1), np.sum(y == -1)],
        labels=['partial E', 'full E'], colors=['r', 'g'],
        shadow=False, autopct='%.2f')
plt.gcf().set_size_inches((6, 6))

```

The chart shows that the dataset is imbalanced, since 81.57% of the loans were fully funded. As a result, the classifier may tend to simply predict the majority class.
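One way to quantify this risk is to compare the classifier's accuracy with that of a trivial baseline that always predicts the most frequent label. Below is a minimal sketch using a hypothetical `majority_baseline_accuracy` helper and toy labels. On the loan labels, the proportions in the pie chart suggest this baseline alone would score about 0.8157.

```python
import numpy as np

def majority_baseline_accuracy(y):
    """Accuracy obtained by always predicting the most frequent label in y."""
    _, counts = np.unique(y, return_counts=True)
    return counts.max() / counts.sum()

# Hypothetical labels: 8 of -1 and 2 of +1
y_toy = np.array([-1] * 8 + [1] * 2)
print(majority_baseline_accuracy(y_toy))  # 0.8
```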

#### Confusion matrix

Accuracy is not always a good performance metric. When a more detailed analysis is needed, we can use the _confusion matrix_.

With the confusion matrix, we can define metrics for different scenarios. These metrics take into account both the values produced by the classifier and the values regarded as correct (the _ground truth_, i.e., the _gold standard_).

In a binary classifier, there are four cases to consider, illustrated in {numref}`matriz-confusao`:

- _True positive_ (TP). The classifier predicts a sample as positive, and it is in fact positive.

- _False positive_ (FP). The classifier predicts a sample as positive, but it is actually negative.

- _True negative_ (TN). The classifier predicts a sample as negative, and it is in fact negative.

- _False negative_ (FN). The classifier predicts a sample as negative, but it is actually positive.

```{figure} ../figs/13/matriz-confusao.png

---

width: 600px

name: matriz-confusao

---

Confusion matrix. Source: the author.

```

Combining these four concepts, we can define _accuracy_, _recall_ (or _sensitivity_), _specificity_, _precision_ (or _positive predictive value_), and _negative predictive value_, in that order, as follows:

$$\text{acc} = \dfrac{TP + TN}{TP + TN + FP + FN}$$

$$\text{rec} = \dfrac{TP}{TP + FN}$$

$$\text{spec} = \dfrac{TN}{TN + FP}$$

$$\text{prec} = \dfrac{TP}{TP + FP}$$

$$\text{npv} = \dfrac{TN}{TN + FN}$$

```{note}

For an illustrated interpretation of these metrics, see this [post](https://medium.com/swlh/explaining-accuracy-precision-recall-and-f1-score-f29d370caaa8).

```
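As a quick numerical check of the formulas above, the sketch below computes all five metrics from a set of counts. `binary_metrics` is an illustrative helper, and the counts are invented.

```python
def binary_metrics(tp, tn, fp, fn):
    """Accuracy, recall, specificity, precision and NPV from confusion-matrix counts."""
    return {
        "acc": (tp + tn) / (tp + tn + fp + fn),
        "rec": tp / (tp + fn),    # share of actual positives that were found
        "spec": tn / (tn + fp),   # share of actual negatives that were rejected
        "prec": tp / (tp + fp),   # share of positive predictions that are correct
        "npv": tn / (tn + fn),    # share of negative predictions that are correct
    }

# Hypothetical counts
m = binary_metrics(tp=40, tn=45, fp=5, fn=10)
print(m["acc"], m["rec"], m["spec"])  # 0.85 0.8 0.9
```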

We can compute the confusion matrix with

```

# yh: predictions, y: true labels; here -1 plays the role of the positive class
conf = lambda a,b: np.sum(np.logical_and(yh == a, y == b))

TP, TN, FP, FN = conf(-1,-1), conf(1,1), conf(-1,1), conf(1,-1)

np.array([[TP,FP],[FN,TN]])

```

or, using `scikit-learn`, with

```

from sklearn import metrics

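# arguments swapped (prediction, target): sklearn expects (y_true, y_pred),
# so this transposes its usual layout to match [[TP, FP], [FN, TN]] above
metrics.confusion_matrix(yh,y)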

```

#### Training and test sets

Let's look at an example with `nn=1`.

```

knn = neighbors.KNeighborsClassifier(n_neighbors=1)

knn.fit(x,y)

yh = knn.predict(x)

metrics.accuracy_score(yh,y), metrics.confusion_matrix(yh,y)

```

This case has 100% accuracy and a diagonal confusion matrix. With `nn=1`, each training sample is its own nearest neighbor, and in this example we did not separate the data used for training from the data used for prediction.

In real problems, however, such perfection is very unlikely. Moreover, the classifier will usually be applied to previously unseen data. This forces us to split the data into two sets: one used for learning (the _training set_) and another used to measure accuracy (the _test set_).

Let's look at a more realistic simulation.

```

# Shuffle and split the data
# PRC*100% for training
# (1-PRC)*100% for testing

PRC = 0.7

perm = np.random.permutation(y.size)

split_point = int(np.ceil(y.shape[0]*PRC))

X_train = x[perm[:split_point].ravel(),:]

y_train = y[perm[:split_point].ravel()]

X_test = x[perm[split_point:].ravel(),:]

y_test = y[perm[split_point:].ravel()]

aux = {'training': X_train,

'training target':y_train,

'test':X_test,

'test target':y_test}

for k,v in aux.items():
    print(k,v.shape,sep=': ')

```
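The split above is purely random, so with imbalanced labels the class proportions may differ slightly between the two parts. Below is a minimal NumPy sketch of a *stratified* split, which shuffles and splits each class separately. `stratified_split` is a hypothetical helper, and it rounds per-class sizes rather than using `np.ceil` as above. In `scikit-learn`, `train_test_split(..., stratify=y)` serves the same purpose.

```python
import numpy as np

def stratified_split(y, train_fraction=0.7, seed=0):
    """Return (train_idx, test_idx), shuffling and splitting each class separately
    so that both parts keep the original class proportions."""
    y = np.asarray(y)
    rng = np.random.default_rng(seed)
    train_idx, test_idx = [], []
    for label in np.unique(y):
        idx = np.flatnonzero(y == label)   # positions of this class
        rng.shuffle(idx)
        cut = int(round(idx.size * train_fraction))
        train_idx.extend(idx[:cut])
        test_idx.extend(idx[cut:])
    return np.array(train_idx), np.array(test_idx)

# Hypothetical labels: 20% of +1, 80% of -1
y_toy = np.array([1] * 20 + [-1] * 80)
tr, te = stratified_split(y_toy)
print(np.mean(y_toy[tr] == 1), np.mean(y_toy[te] == 1))  # 0.2 0.2
```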

Now we will train the model on this new partition.

```

knn = neighbors.KNeighborsClassifier(n_neighbors = 1)

knn.fit(X_train, y_train)

yht = knn.predict(X_train)

# (prediction, target) order, as in the other cells, so the matrix layout stays consistent
for k,v in {'acc': str(metrics.accuracy_score(yht, y_train)),
            'conf. matrix': '\n' + str(metrics.confusion_matrix(yht, y_train))}.items():
    print(k,v,sep=': ')

```

With `nn = 1`, training accuracy is 100%. Let's see what happens in this simulation with data the model has not seen yet.

```

yht2 = knn.predict(X_test)

for k,v in {'acc': str(metrics.accuracy_score(yht2, y_test)),
            'conf. matrix': '\n' + str(metrics.confusion_matrix(yht2, y_test))}.items():
    print(k,v,sep=': ')

```

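In this case, accuracy naturally drops. A 1-NN classifier memorizes the training set, so its training accuracy says little about its performance on new data.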


```

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

import tensorflow as tf

print(tf.__version__)

import numpy as np

import matplotlib.pyplot as plt

def plot_series(time, series, format="-", start=0, end=None):
    plt.plot(time[start:end], series[start:end], format)
    plt.xlabel("Time")
    plt.ylabel("Value")
    plt.grid(True)

!wget --no-check-certificate \
    https://storage.googleapis.com/laurencemoroney-blog.appspot.com/Sunspots.csv \
    -O /tmp/sunspots.csv

import csv

time_step = []

sunspots = []

with open('/tmp/sunspots.csv') as csvfile:
    reader = csv.reader(csvfile, delimiter=',')
    next(reader)  # skip the header row
    for row in reader:
        sunspots.append(float(row[2]))
        time_step.append(int(row[0]))

series = np.array(sunspots)

time = np.array(time_step)

plt.figure(figsize=(10, 6))

plot_series(time, series)

split_time = 3000

time_train = time[:split_time]

x_train = series[:split_time]

time_valid = time[split_time:]

x_valid = series[split_time:]

window_size = 60

batch_size = 32

shuffle_buffer_size = 1000

def windowed_dataset(series, window_size, batch_size, shuffle_buffer):
    dataset = tf.data.Dataset.from_tensor_slices(series)
    # overlapping windows of window_size + 1 values, shifted by one step
    dataset = dataset.window(window_size + 1, shift=1, drop_remainder=True)
    dataset = dataset.flat_map(lambda window: window.batch(window_size + 1))
    # shuffle, then split each window into (inputs, next value)
    dataset = dataset.shuffle(shuffle_buffer).map(lambda window: (window[:-1], window[-1]))
    dataset = dataset.batch(batch_size).prefetch(1)
    return dataset

dataset = windowed_dataset(x_train, window_size, batch_size, shuffle_buffer_size)

model = tf.keras.models.Sequential([

tf.keras.layers.Dense(20, input_shape=[window_size], activation="relu"),

tf.keras.layers.Dense(10, activation="relu"),

tf.keras.layers.Dense(1)

])

model.compile(loss="mse", optimizer=tf.keras.optimizers.SGD(learning_rate=1e-7, momentum=0.9))

model.fit(dataset,epochs=100,verbose=0)

forecast=[]

# loop variable renamed from `time` so it no longer overwrites the `time` array
for t in range(len(series) - window_size):
    forecast.append(model.predict(series[t:t + window_size][np.newaxis]))

forecast = forecast[split_time-window_size:]

results = np.array(forecast)[:, 0, 0]

plt.figure(figsize=(10, 6))

plot_series(time_valid, x_valid)

plot_series(time_valid, results)

tf.keras.metrics.mean_absolute_error(x_valid, results).numpy()

```
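`windowed_dataset` turns the series into pairs made of `window_size` consecutive values and the value that follows them. Below is a minimal NumPy sketch of that pairing, without the shuffling, batching and prefetching that `tf.data` adds. `make_windows` is a hypothetical helper, and `sliding_window_view` requires NumPy 1.20 or later.

```python
import numpy as np

def make_windows(series, window_size):
    """Split a series into (inputs, targets): each input holds window_size consecutive
    values and its target is the value that immediately follows them."""
    series = np.asarray(series)
    # all windows of length window_size + 1, sliding one step at a time
    windows = np.lib.stride_tricks.sliding_window_view(series, window_size + 1)
    return windows[:, :-1], windows[:, -1]

inputs, targets = make_windows(np.arange(6), window_size=3)
print(inputs.tolist())   # [[0, 1, 2], [1, 2, 3], [2, 3, 4]]
print(targets.tolist())  # [3, 4, 5]
```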


# Classification Problem: Credit Card Offer

### Importing the libraries

```

from imblearn.over_sampling import SMOTE

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import pickle

import seaborn as sns

from sklearn.preprocessing import Normalizer

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import confusion_matrix

from sklearn.neighbors import KNeighborsClassifier

from sklearn.metrics import accuracy_score

from sklearn.metrics import classification_report


from scipy.stats import chi2_contingency

from scipy import stats

import warnings

warnings.filterwarnings('ignore')

```

## 1. Cleaning the CSV file to be uploaded to MySQL Workbench

```

df = pd.read_csv('creditcardmarketing.csv')

df.head()

```

**We will clean the headers only.**

```

def renaming(df):
    # remove special characters and convert headers to snake_case (modifies df in place)
    df.columns = df.columns.str.replace(' ', '_').str.lower()
    df.columns = df.columns.str.replace('#_', '')
    return df.info()

renaming(df)

```

There are 24 NaN values in the last 5 columns. The 24 rows with missing values will not be uploaded to MySQL Workbench.

```

# saving the dataframe into a CSV file for SQL

df.to_csv('credit_card_data.csv')

```

## 2. Cleaning and EDA

```

# customer_number are unique values so we pass this variable as the index

df = df.set_index('customer_number')

df = df.drop_duplicates()

df.info()

```

### 2.1 - Starting with categorical data

```

def categorical_information(df):
    # number of distinct values and frequency table for each categorical column
    for col in df.select_dtypes('object'):
        print(df[col].nunique(), '\n')
        print(df[col].value_counts(), '\n')

# get information on categorical data

categorical_information(df)

```

Create visuals for all categorical columns. We can see that the target variable 'offer_accepted' is highly imbalanced.

```

def count_plot_cat(df):
    for col in df.select_dtypes('object'):
        sns.countplot(x=df[col])
        plt.show()

count_plot_cat(df)

```

### 2.2 Then numerical variables

**Dealing with NaN values**

```

df.isna().sum()

# checking the rows that are null

df[df['q1_balance'].isna()]

# since there are only 24 rows with NaN values in the whole dataframe, i.e. 0.13% of the data, I will drop them.

df = df.dropna()

df = df.reset_index(drop=True)

```

**Exploring data**

```

df.describe()

```

**Create a function to plot graphs of the continuous and discrete variables**

```

def numerical_plotting(df):
    decimaux = df.select_dtypes('float64')  # continuous columns
    entiers = df.select_dtypes('int64')     # discrete columns
    for col in decimaux:
        sns.histplot(decimaux[col], kde=True)  # histplot replaces the deprecated distplot
        plt.show()
    for col in entiers:
        sns.countplot(x=entiers[col])
        plt.show()

numerical_plotting(df)

```

**Correlation analysis**

Distribution visualization: we only work with the continuous variables here, since the discrete variables are treated as categorical variables.

```

def distribution_distplot(df):
    # checking the distribution of the continuous variables
    for col in df.select_dtypes('float64'):
        sns.histplot(df[col], kde=True)  # histplot replaces the deprecated distplot
        # save the figure
        # plt.savefig('covariance_account_balance.png', dpi=100, bbox_inches='tight')
        plt.show()

distribution_distplot(df)

def corr_matrix(df):
    # numeric_only=True skips the object columns (needed since pandas 2.0)
    corr_matrix = df.corr(method='pearson', numeric_only=True)
    fig, ax = plt.subplots(figsize=(10, 8))
    ax = sns.heatmap(corr_matrix, annot=True)
    plt.show()

corr_matrix(df)

```

**Checking on outliers**

```

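def vizualizing_outliers(df):
    # one boxplot per numerical column (public select_dtypes instead of the private _get_numeric_data)
    for col in df.select_dtypes(np.number):
        sns.boxplot(x=df[col])
        plt.show()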

vizualizing_outliers(df)

```

**The outliers should not have a relevant impact on our analysis, so we will not remove them.**

## 2.3 - Exploration of the target variable: "offer_accepted"

We have already seen that the target variable is highly imbalanced. We will need to deal with this issue.

First, we will explore how the other variables relate to the target.

```

# average_balance vs offer_accepted

plt.figure(figsize=(6,4))

sns.barplot(data=df, y="average_balance", x="offer_accepted")

plt.show()

# Did the customers with the highest average balance accept the offer?

df.nlargest(20,columns="average_balance")[["average_balance","offer_accepted","mailer_type","income_level","own_your_home", "credit_rating","bank_accounts_open","credit_cards_held"]]

# Same question but with the customers with the lowest average balance?

df.nsmallest(20,columns="average_balance")[["average_balance","offer_accepted","mailer_type","income_level","own_your_home", "credit_rating","bank_accounts_open","credit_cards_held"]]

def count_plot_hue_target(df, columns=[], target=''):
    # one count plot per column, split by the target classes
    for col in columns:
        plt.figure(figsize=(6,4))
        sns.countplot(x=col, hue=target, data=df)
        plt.show()

count_plot_hue_target(df, columns=df.select_dtypes('object'), target='offer_accepted')

```

**Checking correlation between categorical variables**

The p-value is used for hypothesis testing. Here, it tells us whether two categorical variables are dependent.

The null hypothesis of the chi-square test is that the two variables are independent (not associated).

Our analysis uses a significance level of 0.05 for the p-value, which corresponds to a 95% confidence level.

A p-value below 0.05 leads us to reject the null hypothesis, meaning the two variables are likely associated. The p-value does not measure how strong that association is. If two *features* are strongly associated, one of them may be redundant and could be dropped without decreasing the model's metrics. An association between a feature and the target, on the other hand, suggests that the feature is informative.
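`chi2_contingency` compares the observed contingency table with the counts expected if the two variables were independent (row total × column total / grand total). Below is a minimal sketch for the 2×2 case, where the one-degree-of-freedom p-value can be written with the complementary error function. `chi_square_2x2` is a hypothetical helper. For 2×2 tables it should agree with `chi2_contingency(table, correction=False)`, as used below.

```python
import math

import numpy as np

def chi_square_2x2(table):
    """Pearson chi-square test of independence for a 2x2 table (no continuity correction)."""
    observed = np.asarray(table, dtype=float)
    row_totals = observed.sum(axis=1, keepdims=True)
    col_totals = observed.sum(axis=0, keepdims=True)
    # expected counts under independence: row total * column total / grand total
    expected = row_totals @ col_totals / observed.sum()
    stat = float(((observed - expected) ** 2 / expected).sum())
    # with 1 degree of freedom, P(chi2 > stat) = erfc(sqrt(stat / 2))
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value, expected

# Hypothetical crosstab with a clear association
stat, p, _ = chi_square_2x2([[30, 10], [10, 30]])
print(stat)  # 20.0
```

Here p is far below 0.05, so independence would be rejected. A table whose rows are proportional, such as `[[10, 20], [20, 40]]`, gives a statistic of 0 and a p-value of 1.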

```

# function to perform the chi-square test for categorical variables
def chi_square_test(df, columns=[]):
    """Print the chi-square test results for every ordered pair of distinct columns:
    (chi-square statistic, p-value, degrees of freedom, expected frequencies matrix).
    """
    for i in columns:
        for j in columns:
            if i != j:
                data_crosstab = pd.crosstab(df[i], df[j], margins=False)
                print('ChiSquare test for ', i, 'and ', j, ': ')
                print(chi2_contingency(data_crosstab, correction=False), '\n')

# 'object' instead of np.object, which was removed in NumPy 1.24
chi_square_test(df, columns=df.select_dtypes('object'))

```

Based on the p-values obtained, we can say that:

- the columns 'reward', 'mailer_type', 'income_level' and 'credit_rating' are associated (p < 0.05) with our target variable, offer_accepted;

- no significant association was found between the other variables.

Therefore, we can keep all the variables to run our machine learning algorithms.

## 3. Preprocessing and Modeling

We will train different models and compare them to find the one that best fits our data.

Based on the data we have, we need to encode the categorical features.

For the numerical features, a Box-Cox transformation could help improve the model.

As an alternative, we will normalize the whole dataframe (after the encoding).

Finally, we will try two techniques to deal with the imbalance of the target variable: SMOTE (equal numbers of Yes and No) and upsampling (to reweight the numbers of Yes and No).

To train the models, we will apply two algorithms: Logistic Regression and KNN Classifier.

```

# create copies for the different runs

df1 = df.copy()

df2 = df.copy()

```

### 3.1 - Preprocessing - using boxcox and SMOTE

1. Boxcox transformation on numerical variables

2. Encoding - get dummies

3. Dealing with imbalanced - SMOTE

4. Modeling

#### Boxcox transformation

We apply it to bring the distributions of our features closer to a normal distribution.
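For a strictly positive value $x$ and a parameter $\lambda$, the Box-Cox transform is $(x^\lambda - 1)/\lambda$ when $\lambda \neq 0$ and $\ln x$ when $\lambda = 0$. `scipy.stats.boxcox`, used below, estimates $\lambda$ by maximum likelihood when none is given and returns it alongside the transformed data. Below is a minimal sketch of the transform itself for a fixed $\lambda$. `box_cox` is a hypothetical helper.

```python
import numpy as np

def box_cox(x, lam):
    """Box-Cox transform of strictly positive data for a given lambda."""
    x = np.asarray(x, dtype=float)
    if np.any(x <= 0):
        raise ValueError("Box-Cox requires strictly positive values")
    if lam == 0:
        return np.log(x)          # limit of (x**lam - 1) / lam as lam -> 0
    return (x ** lam - 1) / lam

print(box_cox([1.0, 4.0], 0.5))  # [0. 2.]
```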

```

# Box-Cox transformation
def boxcox_transform(df):
    numeric_cols = df.select_dtypes(np.number).columns
    _ci = {column: None for column in numeric_cols}
    for column in numeric_cols:
        # Box-Cox requires strictly positive values: replace values <= 0 with NaN,
        # then impute the column mean (avoids -inf in df)
        df[column] = np.where(df[column] <= 0, np.nan, df[column])
        df[column] = df[column].fillna(df[column].mean())
        # stats.boxcox returns the transformed data and the fitted lambda
        transformed_data, ci = stats.boxcox(df[column])
        df[column] = transformed_data
        _ci[column] = [ci]
    return df, _ci

df, _ci = boxcox_transform(df)

df

```

**Checking the distribution of the features after the boxcox transformation**

```

distribution_distplot(df)

```

**The Box-Cox transformation brings the features closer to a normal distribution, especially the q2_balance and q4_balance columns.**

```

#drop the target

X = df.drop('offer_accepted', axis=1)

y = df['offer_accepted']

```

#### Encoding

```

X= pd.get_dummies(X)

X

```

#### Dealing with imbalanced data

```

# SMOTE
# Creates synthetic minority-class rows by interpolating between a minority sample
# and one of its k nearest minority-class neighbors.

smote = SMOTE()

X_sm, y_sm = smote.fit_resample(X, y)

y_sm.value_counts()

```
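The interpolation idea behind SMOTE is shown below as a minimal NumPy sketch. Each synthetic row lies on the segment between a random minority sample and one of its *k* nearest minority neighbors. `smote_like` is a hypothetical helper, not imbalanced-learn's implementation, whose `SMOTE` uses `k_neighbors=5` by default.

```python
import numpy as np

def smote_like(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic rows, each on the segment between a random minority
    sample and one of its k nearest minority-class neighbors (Euclidean distance)."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    # pairwise distances within the minority class
    d = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)               # a sample is not its own neighbor
    neighbors = np.argsort(d, axis=1)[:, :k]  # indices of the k nearest neighbors
    synthetic = np.empty((n_new, X_min.shape[1]))
    for row in range(n_new):
        i = rng.integers(len(X_min))          # random minority sample
        j = neighbors[i, rng.integers(k)]     # one of its k nearest neighbors
        gap = rng.random()                    # random position along the segment, in [0, 1)
        synthetic[row] = X_min[i] + gap * (X_min[j] - X_min[i])
    return synthetic

# Hypothetical minority class: the four corners of the unit square
X_minority = [[0, 0], [1, 0], [0, 1], [1, 1]]
synthetic = smote_like(X_minority, n_new=50, k=2)
```

Synthetic rows are built from neighboring original rows. Resampling before `train_test_split` therefore lets information about test rows leak into training. A common practice is to split first and call `fit_resample` on the training portion only.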

#### Modeling

```

X_train, X_test, y_train, y_test = train_test_split(X_sm, y_sm, test_size=0.2, random_state=42)

```

**Logistic Regression**

```

def logistic_regression_model(X_train, X_test, y_train, y_test):
    # fit a logistic regression model
    classification = LogisticRegression(random_state=42, max_iter=10000)
    classification.fit(X_train, y_train)
    # and evaluate it on the test set
    score = classification.score(X_test, y_test)
    print('The accuracy score is: ', score, '\n')
    predictions = classification.predict(X_test)
    # rows = true class, columns = predicted class, classes sorted ('No', 'Yes'),
    # so the flattened order is [TN, FP, FN, TP]
    cf_matrix = confusion_matrix(y_test, predictions)
    group_names = ['True NO', 'False YES',
                   'False NO', 'True YES']
    group_counts = ["{0:0.0f}".format(value) for value in cf_matrix.flatten()]
    group_percentages = ["{0:.2%}".format(value) for value in cf_matrix.flatten()/np.sum(cf_matrix)]
    labels = [f"{v1}\n{v2}\n{v3}" for v1, v2, v3 in zip(group_names, group_counts, group_percentages)]
    labels = np.asarray(labels).reshape(2,2)
    sns.heatmap(cf_matrix, annot=labels, fmt='', cmap='Blues')
    print(cf_matrix)

logistic_regression_model(X_train, X_test, y_train, y_test)

```

**KNN-Classifier**

```

# choose the best value of K
def best_K(X_train, y_train, X_test, y_test, r):
    scores = []
    for i in r:
        model = KNeighborsClassifier(n_neighbors=i)
        model.fit(X_train, y_train)
        scores.append(model.score(X_test, y_test))
    plt.figure(figsize=(10,6))
    plt.plot(r, scores, color='blue', linestyle='dashed',
             marker='*', markerfacecolor='red', markersize=10)
    plt.title('accuracy scores vs. K Value')
    plt.xlabel('K')
    plt.ylabel('Accuracy')

best_K(X_train, y_train, X_test, y_test, r=range(2,10))

def KNN_classifier_model(X_train, y_train, X_test, y_test, n):
    # fit a KNN classifier with n neighbors
    knn = KNeighborsClassifier(n_neighbors=n)
    knn.fit(X_train, y_train)
    # and evaluate it on the test set
    print('Accuracy of K-NN classifier on test set: {:.2f}'
          .format(knn.score(X_test, y_test)))
    y_pred = knn.predict(X_test)
    # rows = true class, columns = predicted class, classes sorted ('No', 'Yes'),
    # so the flattened order is [TN, FP, FN, TP]
    cf_matrix = confusion_matrix(y_test, y_pred)
    group_names = ['True NO', 'False YES',
                   'False NO', 'True YES']
    group_counts = ["{0:0.0f}".format(value) for value in cf_matrix.flatten()]
    group_percentages = ["{0:.2%}".format(value) for value in cf_matrix.flatten()/np.sum(cf_matrix)]
    labels = [f"{v1}\n{v2}\n{v3}" for v1, v2, v3 in zip(group_names, group_counts, group_percentages)]
    labels = np.asarray(labels).reshape(2,2)
    sns.heatmap(cf_matrix, annot=labels, fmt='', cmap='Blues')
    print(cf_matrix)

KNN_classifier_model(X_train,y_train,X_test,y_test,2)

```

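The accuracy scores obtained in this first round are high, and both models predict both outcomes (offer accepted and offer rejected) well. However, SMOTE was applied before the train/test split. Synthetic rows interpolated from the same original rows therefore end up on both sides of the split, which leaks test information into training, so these scores are likely optimistic.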

### 3.2 - Preprocessing using BoxCox and UpSampling

1. Boxcox transformation on numerical variables

2. UpSampling (minority class upsampled to 60% of the majority class size)

3. Encoding - get dummies

4. Modeling

In this second round, we only change the method used to deal with the imbalanced data. SMOTE produced a dataframe with equal numbers of Yes and No. Here, we instead use random upsampling (drawing rows with replacement) so that the minority class reaches 60% of the size of the majority class.

#### Boxcox transformation

As in the first round, we transform only the continuous variables.

```

# Box-Cox transformation of the continuous variables

df1, _ci = boxcox_transform(df1)

df1

```
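The `boxcox_transform` helper comes from the first round and is not shown in this section. As a reminder of what such a step typically does, here is a minimal sketch built on `scipy.stats.boxcox`; the helper name `boxcox_columns`, the shift applied to non-positive columns, and the toy data are assumptions, not the original implementation.

```python
import numpy as np
import pandas as pd
from scipy import stats

def boxcox_columns(df, columns):
    """Hypothetical helper: Box-Cox transform each listed column.

    Box-Cox is only defined for strictly positive values, so a column whose
    minimum is <= 0 is first shifted to start at 1 (an assumption).
    Returns the transformed copy and the fitted lambda for each column.
    """
    out = df.copy()
    lambdas = {}
    for col in columns:
        values = out[col].astype(float)
        if values.min() <= 0:
            values = values - values.min() + 1
        transformed, lmbda = stats.boxcox(values)
        out[col] = transformed
        lambdas[col] = lmbda
    return out, lambdas

# toy, right-skewed data (assumption)
rng = np.random.default_rng(0)
toy = pd.DataFrame({"average_balance": rng.lognormal(6, 1, 200),
                    "household_size": rng.integers(0, 6, 200)})
toy_bc, fitted_lambdas = boxcox_columns(toy, ["average_balance", "household_size"])
print(fitted_lambdas)
```

Keeping the fitted lambdas makes it possible to apply the same transformation to new data later.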

#### Upsampling

```

df1.offer_accepted.value_counts()

# Manual upsampling: resample both classes with replacement so that
# the Yes class has 60% as many rows as the No class (0.6 * 16955 = 10173)

Yes = df1[df1['offer_accepted'] == 'Yes'].sample(10173, replace=True)

No = df1[df1['offer_accepted'] == 'No'].sample(16955, replace=True)

upsampled1 = pd.concat([Yes,No]).sample(frac=1) # .sample(frac=1) here is just to shuffle the dataframe

upsampled1

upsampled1.offer_accepted.value_counts()

```
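The sample sizes above are hard-coded. A small helper that derives them from the class counts makes the 60% ratio explicit and reusable. `upsample_minority` and its `ratio` parameter are hypothetical names, and, unlike the cell above, this sketch keeps the majority class as-is instead of bootstrap-resampling it.

```python
import pandas as pd

def upsample_minority(df, target, minority, ratio=0.6, random_state=0):
    """Hypothetical helper: resample the minority class with replacement so
    that it has `ratio` times as many rows as the majority class, then shuffle."""
    minority_rows = df[df[target] == minority]
    majority_rows = df[df[target] != minority]
    n_minority = int(round(ratio * len(majority_rows)))
    upsampled = minority_rows.sample(n_minority, replace=True, random_state=random_state)
    combined = pd.concat([upsampled, majority_rows])
    # .sample(frac=1) shuffles the rows
    return combined.sample(frac=1, random_state=random_state)

toy = pd.DataFrame({"offer_accepted": ["Yes"] * 10 + ["No"] * 90,
                    "x": range(100)})
balanced = upsample_minority(toy, "offer_accepted", "Yes", ratio=0.6)
print(balanced["offer_accepted"].value_counts())
```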

#### Encoding

```

X1 = upsampled1.drop('offer_accepted', axis=1)

y1 = upsampled1['offer_accepted']

X1 = pd.get_dummies(X1)  # encode the features only: upsampled1 still contains the target

X1

```

#### Modeling

**Logistic Regression**

```

X1_train, X1_test, y1_train, y1_test = train_test_split(X1, y1, test_size=0.2, random_state=42)

logistic_regression_model(X1_train, X1_test, y1_train, y1_test)

```

This model seems to be overfitted, so it would probably not perform as well on future data.

**KNN Classifier**

```

KNN_classifier_model(X1_train,y1_train,X1_test,y1_test,5)

```

Almost 100% of the accepted offers are predicted correctly, which suggests that the model may not generalize well to new data.

### 3.3 - Preprocessing using Upsampling and normalization

1. Numerical columns in a list

2. Dealing with imbalanced data - UpSampling

3. Encoding - get dummies

4. train-test split

5. Normalization of the numerical columns

6. Modeling

```

numerical = df2.select_dtypes(np.number)
numerical.columns

num_col = ['bank_accounts_open', 'credit_cards_held', 'homes_owned',
           'household_size', 'average_balance', 'q1_balance', 'q2_balance',
           'q3_balance', 'q4_balance']

```

#### Upsampling

```

df2['offer_accepted'].value_counts()

# Manual upsampling: resample both classes with replacement so that
# the Yes class has 60% as many rows as the No class (0.6 * 16955 = 10173)

Yes = df2[df2['offer_accepted'] == 'Yes'].sample(10173, replace=True)

No = df2[df2['offer_accepted'] == 'No'].sample(16955, replace=True)

upsampled2 = pd.concat([Yes,No]).sample(frac=1) # .sample(frac=1) here is just to shuffle the dataframe

upsampled2

```

#### Encoding

```

X2 = upsampled2.drop('offer_accepted', axis=1)

y2 = upsampled2['offer_accepted']

X2 = pd.get_dummies(X2)  # encode the features only: upsampled2 still contains the target

X2

```

#### Normalizing

We normalize only the numerical variables, leaving out the encoded categorical variables.

We therefore extract the numerical columns from the training and test sets, normalize them, and concatenate them back with the encoded columns to build the final dataframes used by the models.

```

import pickle
from sklearn.preprocessing import Normalizer

# train-test split
X2_train, X2_test, y2_train, y2_test = train_test_split(X2, y2, random_state=0)

# keep only the numerical variables (not the encoded categorical ones) for normalization
X_train_n = X2_train.filter(num_col, axis=1)
X_test_n = X2_test.filter(num_col, axis=1)

# normalization: fit on the training set only
transformer = Normalizer()
transformer.fit(X_train_n)

# saving in a pickle
with open('std_transformer.pickle', 'wb') as file:
    pickle.dump(transformer, file)

# loading from a pickle
with open('std_transformer.pickle', 'rb') as file:
    loaded_normalizer = pickle.load(file)

X_train_ = loaded_normalizer.transform(X_train_n)
X_test_ = loaded_normalizer.transform(X_test_n)

# getting the final dataframes with the normalized variables and the encoded variables
num_train = pd.DataFrame(X_train_, columns=num_col)
num_test = pd.DataFrame(X_test_, columns=num_col)

X2_train.columns

cat_col = ['reward_Air Miles', 'reward_Cash Back', 'reward_Points',
           'mailer_type_Letter', 'mailer_type_Postcard', 'income_level_High',
           'income_level_Low', 'income_level_Medium', 'overdraft_protection_No',
           'overdraft_protection_Yes', 'credit_rating_High', 'credit_rating_Low',
           'credit_rating_Medium', 'own_your_home_No', 'own_your_home_Yes']

X_train_c = X2_train.filter(cat_col, axis=1)
X_train_final = pd.concat([num_train.reset_index(drop=True), X_train_c.reset_index(drop=True)],
                          axis=1, ignore_index=True)
X_train_final.info()  # checking for NaN values

X_test_c = X2_test.filter(cat_col, axis=1)
X_test_final = pd.concat([num_test.reset_index(drop=True), X_test_c.reset_index(drop=True)],
                         axis=1, ignore_index=True)
X_test_final.info()

```
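A note on the scaler: scikit-learn's `Normalizer` rescales each *row* (sample) to unit norm, so all numerical values of one customer are divided by the same per-row factor; it does not rescale each column. If per-column normalization is what is intended, `MinMaxScaler` (each column to [0, 1]) or `StandardScaler` are the usual choices. The toy matrix below is an assumption used only to show the difference.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, Normalizer

# toy data: two columns on very different scales (assumption)
X_demo = np.array([[1.0, 1000.0],
                   [2.0, 3000.0],
                   [3.0, 2000.0]])

row_scaled = Normalizer().fit_transform(X_demo)    # each row scaled to unit L2 norm
col_scaled = MinMaxScaler().fit_transform(X_demo)  # each column scaled to [0, 1]

print(np.linalg.norm(row_scaled, axis=1))  # every row now has norm 1
print(col_scaled.min(axis=0), col_scaled.max(axis=0))
```

With `Normalizer`, the large balance columns dominate each row's norm, which is one reason the results of this round may differ from a column-wise scaling.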

#### Modeling

**Logistic Regression**

```

logistic_regression_model(X_train_final, X_test_final, y2_train, y2_test)

```

This model does not seem good at predicting whether the offer is accepted.

**KNN Classifier**

```

KNN_classifier_model(X_train_final,y2_train,X_test_final,y2_test,2)

```

## Conclusion

**Comparison of accuracy scores**

| Preprocessing | Logistic Regression | KNN Classifier |
| :--- | :---: | :---: |
| BoxCox & SMOTE | 0.94 | 0.86 |
| BoxCox & upsampling | 1 | 0.90 |
| Normalization & upsampling | 0.70 | 0.97 |

We obtained high accuracy scores in most of our runs. However, we should not necessarily be confident about how our models will perform on future data: such high results suggest that the models may be overfitted.
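One likely contributor to inflated scores is that upsampling happens before the train-test split: rows resampled with replacement can land in both sets, so the test set may contain exact copies of training rows. A minimal sketch of the safer order, splitting first and upsampling only the training set, is shown below on a toy dataframe (an assumption); only the `offer_accepted` column name comes from the notebook.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# toy imbalanced dataset (assumption); row_id lets us track duplicated rows
rng = np.random.default_rng(0)
demo = pd.DataFrame({
    "feature": rng.normal(size=1000),
    "offer_accepted": np.where(rng.random(1000) < 0.1, "Yes", "No"),
})
demo["row_id"] = np.arange(len(demo))

# 1. split first, stratifying on the target
demo_train, demo_test = train_test_split(
    demo, test_size=0.2, random_state=42, stratify=demo["offer_accepted"])

# 2. upsample the minority class inside the training set only
yes_rows = demo_train[demo_train["offer_accepted"] == "Yes"]
no_rows = demo_train[demo_train["offer_accepted"] == "No"]
yes_up = yes_rows.sample(int(0.6 * len(no_rows)), replace=True, random_state=0)
train_up = pd.concat([yes_up, no_rows]).sample(frac=1, random_state=0)

# no test row can appear among the (duplicated) training rows
overlap = set(train_up["row_id"]) & set(demo_test["row_id"])
print(len(overlap))  # 0
```

The test set then keeps the original class balance, so its accuracy reflects the data the model would actually face.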


# Kernel selection

In this notebook we illustrate how to select a kernel for a Gaussian process.

The kernel models the similarity between two points in the input space and, for Gaussian processes, it can make or break the algorithm.

```

from fastai.tabular.all import *

from tabularGP import tabularGP_learner

from tabularGP.kernel import *

```

## Data

We build a regression problem (predicting `age`) on a random 1,000-row subset of the Adult dataset:

```

path = untar_data(URLs.ADULT_SAMPLE)

df = pd.read_csv(path/'adult.csv').sample(1000)

procs = [FillMissing, Normalize, Categorify]

cat_names = ['workclass', 'education', 'marital-status', 'occupation', 'relationship', 'race']

cont_names = ['education-num', 'fnlwgt']

dep_var = 'age'

data = TabularDataLoaders.from_df(df, path, procs=procs, cat_names=cat_names, cont_names=cont_names, y_names=dep_var)

```

## Tabular kernels

By default, tabularGP uses one kernel type for each continuous feature (a [Gaussian kernel](https://en.wikipedia.org/wiki/Radial_basis_function_kernel)) and one kernel type for each categorical feature (an [index kernel](https://gpytorch.readthedocs.io/en/latest/kernels.html#indexkernel)).

Using those kernels, we can compute the similarity between the individual coordinates of two points; these similarities are then combined by what we call a tabular kernel.

The simplest kernel is `WeightedSumKernel`, which computes a weighted sum of the feature similarities.

It is equivalent to an `OR` type of relation: if two points have at least one feature that is similar, then they will be considered close in the input space (even if all the other features are very dissimilar).

```

learn = tabularGP_learner(data, kernel=WeightedSumKernel)

learn.fit_one_cycle(5, max_lr=1e-3)

```

Then there is the `WeightedProductKernel` kernel which computes a weighted geometric mean (weighted product) of the feature similarities.

It is equivalent to an `AND` type of relation: all features need to be similar for two points to be considered similar in the input space.

It is a good kernel to use when the features are all continuous and of a similar nature (e.g. the `x, y` coordinates of a function defined on a plane).

```

learn = tabularGP_learner(data, kernel=WeightedProductKernel)

learn.fit_one_cycle(5, max_lr=1e-3)

```

The default tabular kernel is `ProductOfSumsKernel`, which models a combination of the form: $$s = \prod_i{\Big(\sum_j{\beta_{i,j} \, s_j}\Big)^{\alpha_i}}$$

It is equivalent to a `WeightedProductKernel` put on top of weighted sums such as the one computed by `WeightedSumKernel`.

This kernel is extremely flexible and recommended when you have a mix of continuous and categorical features.

```

learn = tabularGP_learner(data, kernel=ProductOfSumsKernel)

learn.fit_one_cycle(5, max_lr=1e-3)

```
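The three combination rules can be written out in a few lines of NumPy for two points whose per-feature similarities are already known. This is an illustrative sketch, not tabularGP's implementation: normalizing the weights to sum to one, and giving each factor of the product of sums its own weight vector `beta[i]`, are assumptions (see `kernel.py` in the tabularGP repository for the actual code).

```python
import numpy as np

def weighted_sum(s, beta):
    """'OR'-like: high if any heavily weighted feature is similar.
    Weights are normalized to sum to 1 (assumption) so the result stays in [0, 1]."""
    beta = beta / beta.sum()
    return float(np.dot(beta, s))

def weighted_product(s, alpha):
    """'AND'-like weighted geometric mean: low if any weighted feature is dissimilar."""
    alpha = alpha / alpha.sum()
    return float(np.prod(s ** alpha))

def product_of_sums(s, beta, alpha):
    """Product over factors i of (sum_j beta[i, j] * s[j]) ** alpha[i]."""
    beta = beta / beta.sum(axis=1, keepdims=True)
    alpha = alpha / alpha.sum()
    sums = beta @ s
    return float(np.prod(sums ** alpha))

# per-feature similarities of two points: feature 0 identical, feature 1 very different
s = np.array([1.0, 0.01])
print(weighted_sum(s, np.array([1.0, 1.0])))       # ≈ 0.505
print(weighted_product(s, np.array([1.0, 1.0])))   # ≈ 0.1
print(product_of_sums(s, np.array([[1.0, 1.0], [3.0, 1.0]]), np.array([1.0, 1.0])))
```

The weighted sum still finds the two points fairly similar, while the weighted product is pulled down by the single dissimilar feature; the product of sums sits in between.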

The choice of tabular kernel can have a drastic impact on your loss, so you should probably always test all available kernels to find the one most suited to your particular problem.

Note that it is fairly easy to design your own `TabularKernel`, following the examples in the [kernel.py](https://github.com/nestordemeure/tabularGP/blob/master/tabularGP/kernel.py) file (while the `feature importance` property is useful, it is optional), in order to better accommodate the particular structure of your problem.

## Feature kernels

```

from tabularGP.loss_functions import *

from tabularGP import *

```

Four continuous kernels are provided:

- `ExponentialKernel`, which is zero times differentiable

- `Matern1Kernel`, which is once differentiable

- `Matern2Kernel`, which is twice differentiable

- `GaussianKernel` (the default), which is infinitely differentiable

The more differentiable the kernel, the smoother the modeled function.
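For reference, the textbook forms of these four kernels, as functions of the scaled distance r = |x - x'| / lengthscale, are sketched below. Mapping `Matern1Kernel` to the Matérn ν = 3/2 kernel and `Matern2Kernel` to ν = 5/2 is an assumption based on the differentiability listed above; tabularGP's exact parameterization may differ.

```python
import numpy as np

def exponential(r):     # Matern nu=1/2: continuous but not differentiable
    return np.exp(-r)

def matern32(r):        # Matern nu=3/2: once differentiable
    a = np.sqrt(3.0) * r
    return (1.0 + a) * np.exp(-a)

def matern52(r):        # Matern nu=5/2: twice differentiable
    a = np.sqrt(5.0) * r
    return (1.0 + a + a**2 / 3.0) * np.exp(-a)

def gaussian(r):        # squared exponential: infinitely differentiable
    return np.exp(-0.5 * r**2)

dist = np.linspace(0.0, 3.0, 7)  # scaled distances |x - x'| / lengthscale
for kernel in (exponential, matern32, matern52, gaussian):
    print(f"{kernel.__name__:12s}", np.round(kernel(dist), 3))
```

Near r = 0 the smoother kernels stay flatter (closer to 1), which is what produces smoother fitted functions.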

Two categorical kernels are provided:

- `HammingKernel`, which considers different values of a category to have a similarity of zero

- `IndexKernel` (the default), which allows different values to still be similar
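For a single categorical feature with a handful of levels, the two categorical kernels can be pictured as similarity matrices. The sketch below builds a Hamming-style matrix (the identity) and an index-style matrix of the form $BB^\top + \operatorname{diag}(v)$, the form used by GPyTorch's `IndexKernel`; tabularGP's own parameterization may differ, and `B` and `v` are random or fixed stand-ins for parameters that would normally be learned.

```python
import numpy as np

n_levels = 4  # number of values the categorical feature can take (assumption)

# Hamming-style kernel: similarity 1 for identical values, 0 otherwise
hamming = np.eye(n_levels)

# Index-style kernel: low-rank factor B plus positive per-level variances v
rng = np.random.default_rng(0)
B = rng.normal(size=(n_levels, 2))   # would be learned in practice
v = np.full(n_levels, 0.1)
index = B @ B.T + np.diag(v)

print(hamming)
print(np.round(index, 2))  # off-diagonal entries can be non-zero
```

Because of the positive diagonal term, the index-style matrix is always a valid (positive definite) covariance, while still letting distinct levels share similarity.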

While the choice of feature kernel tends to be less impactful, you can select the feature kernels manually if you build your model yourself:

```

model = TabularGPModel(data, kernel=WeightedProductKernel, cont_kernel=ExponentialKernel, cat_kernel=HammingKernel)

loss_func = gp_gaussian_marginal_log_likelihood # would have used `gp_is_greater_log_likelihood` for classification

learn = TabularGPLearner(data, model, loss_func=loss_func)

learn.fit_one_cycle(5, max_lr=1e-3)

```

It is also fairly easy to provide your own feature kernel to model behavior specific to your data (periodicity, trends, etc.).

To learn more about designing kernels adapted to a particular problem, we recommend chapters two (*Expressing Structure with Kernels*) and three (*Automatic Model Construction*) of the excellent [Automatic Model Construction with Gaussian Processes](http://www.cs.toronto.edu/~duvenaud/thesis.pdf).
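As an example of a behavior-specific kernel, the standard periodic (exp-sine-squared) kernel is sketched below in NumPy. Only the similarity function is shown; wrapping it as a tabularGP feature kernel would follow the library's own kernel classes, which are not reproduced here. The `period` and `lengthscale` values are illustrative assumptions.

```python
import numpy as np

def periodic_kernel(x1, x2, period=12.0, lengthscale=1.0):
    """Exp-sine-squared kernel: points exactly one period apart are fully similar."""
    d = np.abs(x1[:, None] - x2[None, :])
    return np.exp(-2.0 * np.sin(np.pi * d / period) ** 2 / lengthscale**2)

months = np.arange(0.0, 25.0)
K = periodic_kernel(months, months)
print(np.round(K[0, [0, 6, 12, 24]], 3))  # month 0 vs months 0, 6, 12, 24
```

Month 0 is maximally similar to months 12 and 24 and least similar to month 6, half a period away.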

## Transfer learning

Kernels model the input space, so they can be reused from one output type to another in order to transfer domain knowledge and speed up training.

Here is a classification problem using the same input features (different features would lead to a crash as the input space would be different):

```

cat_names = ['workclass', 'education', 'marital-status', 'occupation', 'relationship', 'race']

cont_names = ['education-num', 'fnlwgt']

dep_var = 'salary'

data_classification = TabularDataLoaders.from_df(df, path, procs=procs, cat_names=cat_names, cont_names=cont_names, y_names=dep_var, bs=64)

```

We can reuse the kernel from our regression task by passing the learner, model or trained kernel to the `kernel` argument of our builder:

```

learn_classification = tabularGP_learner(data_classification, kernel=learn)

learn_classification.fit_one_cycle(5, max_lr=1e-3)

```

Note that, by default, the kernel is frozen when transferring knowledge. Let's unfreeze it now that the rest of the Gaussian process is trained:

```

learn_classification.unfreeze(kernel=True)

learn_classification.fit_one_cycle(5, max_lr=1e-3)

```


```

import os
from shutil import copyfile

import matplotlib.pyplot as plt
import numpy as np
import tifffile
from sklearn.model_selection import train_test_split
from tqdm import tqdm_notebook as tqdm

working_dir = '/media/jswaney/SSD EVO 860/organoid_phenotyping/ventricle_segmentation'

def load_tiff_bioformats(path):
    data = tifffile.imread(path)[0, 0]  # Bioformats 5D stack
    # first channel = image, second channel = segmentation
    return data[0], data[1]

```

# Normalize and make train and test sets

```

data_dir = 'eF20_B3_2'
files = os.listdir(os.path.join(working_dir, data_dir))
len(files)

# accumulate a 256-bin intensity histogram over all (16-bit) images
x = np.linspace(0, 2**16 - 1, 256)
h = np.zeros(256)
for file in tqdm(files):
    img, seg = load_tiff_bioformats(os.path.join(working_dir, data_dir, file))
    h += np.histogram(img.ravel(), bins=256, range=(x[0], x[-1]))[0]

# upper bound = intensity where the cumulative histogram is closest to 99.7%
cdf = np.cumsum(h)
cdf = cdf / cdf.max()
diff = np.abs(cdf - 0.997)
idx = np.where(diff == diff.min())[0]
min_value = 0
max_value = x[idx][0]
print(max_value)

# plot the histogram and its CDF with the 99.7% threshold
plt.subplot(211)
plt.plot(x, h)
plt.ylim([0, 1e7])
plt.subplot(212)
plt.plot(x, cdf)
plt.plot([x[0], x[-1]], [0.997, 0.997])
plt.show()

files_train, files_test = train_test_split(files, test_size=0.10, random_state=123)
len(files_train), len(files_test)

train_dir = 'train'
test_dir = 'test'
class_dir = 'class_0'  # DatasetFolder expects one sub-folder per class
os.makedirs(os.path.join(working_dir, train_dir, class_dir), exist_ok=True)
os.makedirs(os.path.join(working_dir, test_dir, class_dir), exist_ok=True)

for file in tqdm(files_train):
    input_path = os.path.join(working_dir, data_dir, file)
    output_path = os.path.join(working_dir, train_dir, class_dir, file)
    img, seg = load_tiff_bioformats(input_path)
    # rescale to [0, 255], clipping intensities above max_value
    img_normalized = (img - min_value) / (max_value - min_value)
    img_normalized = np.clip(img_normalized * 255, 0, 255)
    # save image and mask (multiplied by 255) as a 2-channel uint8 stack
    data = np.stack([img_normalized.astype(np.uint8),
                     (seg * 255).astype(np.uint8)], axis=0)
    tifffile.imsave(output_path, data, compress=1)

for file in tqdm(files_test):
    input_path = os.path.join(working_dir, data_dir, file)
    output_path = os.path.join(working_dir, test_dir, class_dir, file)
    img, seg = load_tiff_bioformats(input_path)
    img_normalized = np.clip((img - min_value) / (max_value - min_value) * 255, 0, 255)
    data = np.stack([img_normalized.astype(np.uint8),
                     (seg * 255).astype(np.uint8)], axis=0)
    tifffile.imsave(output_path, data, compress=1)

```
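The `max_value` above is the intensity at which the pooled histogram's CDF is closest to 0.997, i.e. an approximation of the 99.7th percentile of all pixel values, computed without holding every image in memory at once. The sketch below checks that equivalence against `np.percentile` on synthetic 16-bit data (an assumption); the histogram estimate is only accurate to within one or two bin widths (about 256 intensity levels each).

```python
import numpy as np

rng = np.random.default_rng(0)
# synthetic 16-bit "images" (assumption): mostly dim pixels with a bright tail
images = [rng.gamma(2.0, 800.0, size=(64, 64)).clip(0, 2**16 - 1) for _ in range(20)]

# streaming estimate, as in the notebook: accumulate one 256-bin histogram
bins_x = np.linspace(0, 2**16 - 1, 256)
hist = np.zeros(256)
for im in images:
    hist += np.histogram(im.ravel(), bins=256, range=(bins_x[0], bins_x[-1]))[0]
cdf_demo = np.cumsum(hist) / hist.sum()
max_value_hist = bins_x[np.argmin(np.abs(cdf_demo - 0.997))]  # first closest bin

# exact 99.7th percentile over all pixels, for comparison
max_value_exact = np.percentile(np.concatenate([im.ravel() for im in images]), 99.7)
print(max_value_hist, max_value_exact)

# rescale one image to [0, 255] with clipping, then cast to uint8
normalized = np.clip(images[0] / max_value_hist * 255, 0, 255).astype(np.uint8)
```

Clipping at a high percentile rather than at the maximum keeps a few very bright pixels from compressing the rest of the intensity range.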

# Make Dataloaders for segmentations

```

def load_tiff_seg(path):
    # read the 2-channel (image, mask) stack and add an empty third channel,
    # returning an H x W x 3 array that ToPILImage can treat as RGB
    data = tifffile.imread(path)
    data = np.stack([data[0], data[1], np.zeros(data[0].shape, data.dtype)])
    return data.transpose((1, 2, 0))

import sys

import matplotlib.pyplot as plt
import torch
from torch import nn
from torch.optim import Adam
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision.datasets import DatasetFolder

sys.path.append('/home/jswaney/Pytorch-UNet/')
from unet.unet_model import UNet

data = load_tiff_seg(output_path)
data[0].shape, data[0].dtype

print(img.min())
plt.imshow(img)
plt.show()

# augmentation parameters
degrees = 45
scale = (0.8, 1.2)
size = 256

dataset_train = DatasetFolder(os.path.join(working_dir, train_dir),
                              loader=load_tiff_seg,
                              extensions=('.tif',),
                              transform=transforms.Compose([
                                  transforms.ToPILImage(),
                                  transforms.RandomHorizontalFlip(),
                                  transforms.RandomAffine(degrees, scale=scale),
                                  transforms.RandomCrop(size),
                                  transforms.ToTensor()]))
dataset_test = DatasetFolder(os.path.join(working_dir, test_dir),
                             loader=load_tiff_seg,
                             extensions=('.tif',),
                             transform=transforms.ToTensor())
print(dataset_train)
print(dataset_test)

use_cuda = torch.cuda.is_available()
device = torch.device("cuda" if use_cuda else "cpu")
device

batch_size = 1
n_workers = 1 if use_cuda else 0
pin_memory = True if use_cuda else False

dataloader_train = DataLoader(dataset_train, batch_size, shuffle=True,
                              num_workers=n_workers, pin_memory=pin_memory)
dataloader_test = DataLoader(dataset_test, batch_size,
                             num_workers=n_workers, pin_memory=pin_memory)

x, _ = next(iter(dataloader_train))
x.shape, x.dtype, x.max()
plt.imshow(x.numpy()[0, 1])
plt.show()

model = UNet(n_channels=1, n_classes=1)

model

model = model.to(device)

optimizer = Adam(model.parameters(), lr=0.001)

model.train()

def train_epoch(model, epoch, dataloader_train, device, optimizer, criterion, log_interval=100):
    model.train()
    n_batch = len(dataloader_train)
    epoch_loss = 0
    count = 0
    for batch_idx, (x, _) in enumerate(dataloader_train):
        # channel 0 is the image: apply a random brightness gain in [1, 2)
        img = x[:, 0].unsqueeze(1)
        img = img * (1 + np.random.random(1)[0])
        img = img.to(device)
        # channel 1 is the mask: binarize it
        seg = x[:, 1].unsqueeze(1)
        seg = (seg > 0).type(torch.float).to(device)
        # up-weight the loss for batches that contain any foreground pixels
        if seg.max() > 0:
            weight = torch.tensor([10], dtype=torch.float32).to(device)
        else:
            weight = torch.tensor([1], dtype=torch.float32).to(device)

        optimizer.zero_grad()
        output = model(img)
        output_flat = output.view(-1)
        seg_flat = seg.view(-1)
        criterion.weight = weight
        loss = criterion(output_flat, seg_flat)
        loss.backward()
        optimizer.step()

        count += len(x)
        epoch_loss += loss.item()
        ave_loss = epoch_loss / count
        if batch_idx % log_interval == 0:
            print(f'Train Epoch: {epoch} [{batch_idx * len(x)}/{len(dataloader_train.dataset)} '
                  f'({100 * batch_idx / n_batch:.0f}%)]\tLoss: {ave_loss}')

def test_epoch(model, dataloader_test, criterion):
    model.eval()
    test_loss = 0
    with torch.no_grad():
        for x, _ in dataloader_test:
            img = x[:, 0].unsqueeze(1).to(device)
            seg = x[:, 1].unsqueeze(1)
            seg = (seg > 0).type(torch.float).to(device)
            output = model(img)
            output_flat = output.view(-1)
            seg_flat = seg.view(-1)
            test_loss += criterion(output_flat, seg_flat).item()
    test_loss /= len(dataloader_test.dataset)
    print(f'Test set: Average loss: {test_loss:.4f}')

criterion = nn.BCELoss()
# separate unweighted loss for evaluation: train_epoch overwrites criterion.weight
eval_criterion = nn.BCELoss()
n_epochs = 200
for epoch in range(n_epochs):
    train_epoch(model, epoch, dataloader_train, device, optimizer, criterion)
    test_epoch(model, dataloader_test, eval_criterion)

%matplotlib inline

# visualize the prediction for one (augmented) training sample
x, _ = next(iter(dataloader_train))
img = x[:, 0].unsqueeze(1).to(device)
seg = x[:, 1].unsqueeze(1)
seg = (seg > 0).type(torch.float).to(device)
output = model(img)

img = img.detach().cpu().numpy()[0, 0]
seg = seg.detach().cpu().numpy()[0, 0]
output = output.detach().cpu().numpy()[0, 0]

plt.figure(figsize=(4, 8))
plt.subplot(311)
plt.imshow(img, clim=[0, 1])
plt.title('Syto 16')
plt.subplot(312)
plt.imshow(seg, clim=[0, 1])
# plt.hist(img.ravel(), bins=256, range=(0, 1))  # intensity histogram, useful for debugging
plt.title('Ground Truth')
plt.subplot(313)
plt.imshow(output, clim=[0, 0.5])
plt.title('Predicted')
plt.show()
# print(seg.max(), img.max())

torch.save(model.state_dict(), os.path.join(working_dir, 'unet_d35_d60_200.pt'))

```
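`train_epoch` scales the whole loss by 10 whenever an image contains any foreground. A common alternative is to weight individual pixels, giving foreground pixels a larger weight, which is what PyTorch's `BCEWithLogitsLoss(pos_weight=...)` does (it expects logits rather than probabilities). The NumPy sketch below writes out both weighting schemes on probabilities so they can be compared; the toy mask and predictions are assumptions.

```python
import numpy as np

def bce(p, y, eps=1e-7):
    """Per-pixel binary cross-entropy for probabilities p and 0/1 targets y."""
    p = np.clip(p, eps, 1 - eps)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

def image_weighted_bce(p, y, w_fg=10.0):
    """Scheme used in train_epoch: scale the mean loss by w_fg if any pixel is foreground."""
    weight = w_fg if y.max() > 0 else 1.0
    return weight * bce(p, y).mean()

def pixel_weighted_bce(p, y, pos_weight=10.0, eps=1e-7):
    """Alternative: weight only the positive (foreground) term by pos_weight."""
    p = np.clip(p, eps, 1 - eps)
    return (-(pos_weight * y * np.log(p) + (1 - y) * np.log(1 - p))).mean()

# toy 8x8 mask with a small foreground square; the model predicts 0.1 everywhere
y = np.zeros((8, 8))
y[3:5, 3:5] = 1.0
p = np.full((8, 8), 0.1)
print(image_weighted_bce(p, y), pixel_weighted_bce(p, y))
```

The image-level scheme also multiplies the loss of background pixels in those images, whereas the pixel-level scheme only emphasizes missed foreground.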


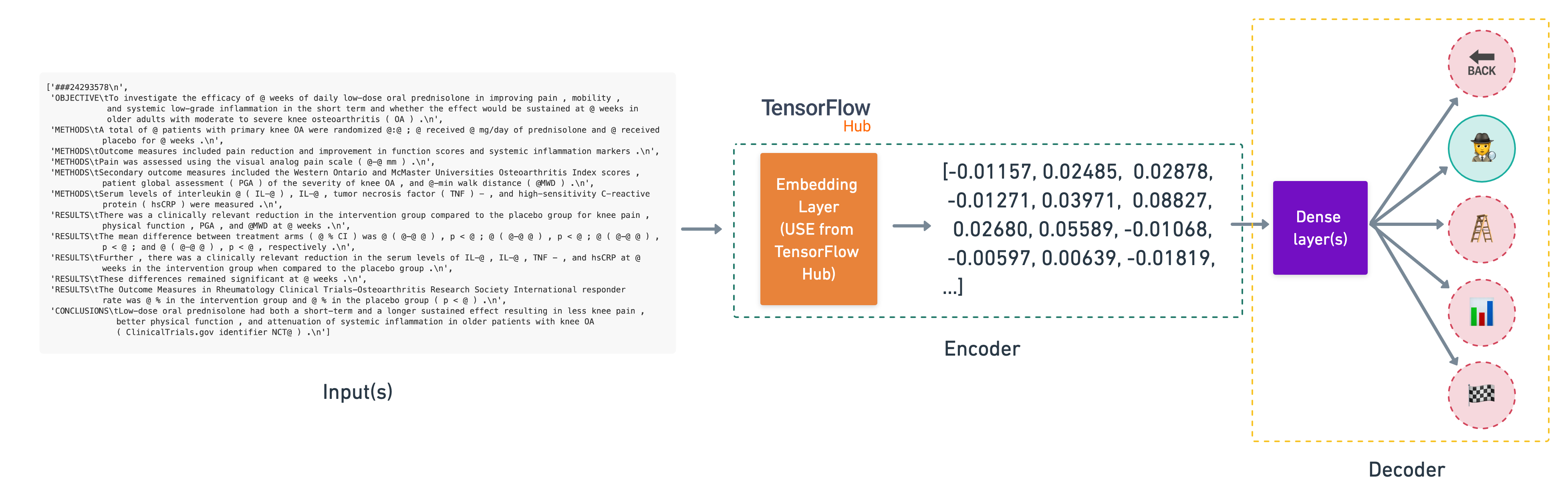

# Fast Bayesian estimation of SARIMAX models

## Introduction

This notebook will show how to use fast Bayesian methods to estimate SARIMAX (Seasonal AutoRegressive Integrated Moving Average with eXogenous regressors) models. These methods can also be parallelized across multiple cores.

Here, "fast methods" means a version of Hamiltonian Monte Carlo called the No-U-Turn Sampler (NUTS) developed by Hoffman and Gelman: see [Hoffman, M. D., & Gelman, A. (2014). The No-U-Turn sampler: adaptively setting path lengths in Hamiltonian Monte Carlo. Journal of Machine Learning Research, 15(1), 1593-1623.](https://arxiv.org/abs/1111.4246). As they say, "the cost of HMC per independent sample from a target distribution of dimension $D$ is roughly $\mathcal{O}(D^{5/4})$, which stands in sharp contrast with the $\mathcal{O}(D^{2})$ cost of random-walk Metropolis". So for problems of larger dimension, the time saving with HMC is significant. However, it does require the gradient, or Jacobian, of the model to be provided.

This notebook will combine the Python libraries [statsmodels](https://www.statsmodels.org/stable/index.html), which does econometrics, and [PyMC3](https://docs.pymc.io/), which is for Bayesian estimation, to perform fast Bayesian estimation of a simple SARIMAX model, in this case an ARMA(1, 1) model for US CPI.

Note that, for simple models like AR(p), base PyMC3 is a quicker way to fit a model; there's an [example here](https://docs.pymc.io/notebooks/AR.html). The advantage of using statsmodels is that it gives access to methods that can solve a vast range of statespace models.

The model we'll solve is given by

$$
y_t = \phi y_{t-1} + \varepsilon_t + \theta_1 \varepsilon_{t-1}, \qquad \varepsilon_t \sim N(0, \sigma^2)
$$

with 1 auto-regressive term and 1 moving average term. In statespace form it is written as:

$$
\begin{align}
y_t & = \underbrace{\begin{bmatrix} 1 & \theta_1 \end{bmatrix}}_{Z} \underbrace{\begin{bmatrix} \alpha_{1,t} \\ \alpha_{2,t} \end{bmatrix}}_{\alpha_t} \\
\begin{bmatrix} \alpha_{1,t+1} \\ \alpha_{2,t+1} \end{bmatrix} & = \underbrace{\begin{bmatrix}
\phi & 0 \\
1 & 0 \\
\end{bmatrix}}_{T} \begin{bmatrix} \alpha_{1,t} \\ \alpha_{2,t} \end{bmatrix} +
\underbrace{\begin{bmatrix} 1 \\ 0 \end{bmatrix}}_{R} \underbrace{\varepsilon_{t+1}}_{\eta_t} \\
\end{align}
$$
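To make the state-space form concrete, the NumPy sketch below simulates the ARMA(1, 1) recursion directly and through the $Z$, $T$, $R$ matrices above, and checks that the two paths coincide. The parameter values and the initialization (state starting at $(\varepsilon_0, 0)$) are assumptions chosen for illustration.

```python
import numpy as np

phi, theta1, sigma = 0.7, 0.3, 1.0   # illustrative parameter values (assumption)
n = 200
rng = np.random.default_rng(0)
eps = rng.normal(0.0, sigma, size=n)

# direct ARMA(1, 1) recursion, starting from y_0 = eps_0
y_direct = np.zeros(n)
y_direct[0] = eps[0]
for t in range(1, n):
    y_direct[t] = phi * y_direct[t - 1] + eps[t] + theta1 * eps[t - 1]

# state-space recursion: y_t = Z alpha_t, alpha_{t+1} = T alpha_t + R eps_{t+1}
Z = np.array([1.0, theta1])
T = np.array([[phi, 0.0],
              [1.0, 0.0]])
R = np.array([1.0, 0.0])
alpha = R * eps[0]                   # alpha_0 = (eps_0, 0)
y_state = np.zeros(n)
for t in range(n):
    y_state[t] = Z @ alpha
    if t + 1 < n:
        alpha = T @ alpha + R * eps[t + 1]

print(np.max(np.abs(y_direct - y_state)))  # zero up to floating-point error
```

The first state element is an AR(1) process and the second stores its previous value, so $Z$ adds the moving-average term $\theta_1$ times that lag.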

The code will follow these steps:

1. Import external dependencies

2. Download and plot the data on US CPI

3. Simple maximum likelihood estimation (MLE) as an example

4. Definitions of helper functions to provide tensors to the library doing Bayesian estimation

5. Bayesian estimation via NUTS

6. Application to US CPI series

Finally, Appendix A shows how to re-use the helper functions from step (4) to estimate a different state space model, `UnobservedComponents`, using the same Bayesian methods.

### 1. Import external dependencies

```

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import pymc3 as pm

import statsmodels.api as sm

import theano

import theano.tensor as tt

from pandas.plotting import register_matplotlib_converters

from pandas_datareader.data import DataReader

plt.style.use("seaborn")

register_matplotlib_converters()

```

### 2. Download and plot the data on US CPI

We'll get the data from FRED:

```

cpi = DataReader("CPIAUCNS", "fred", start="1971-01", end="2018-12")

cpi.index = pd.DatetimeIndex(cpi.index, freq="MS")

# Define the inflation series that we'll use in analysis

inf = np.log(cpi).resample("QS").mean().diff()[1:] * 400

inf = inf.dropna()

print(inf.head())

# Plot the series

fig, ax = plt.subplots(figsize=(9, 4), dpi=300)

ax.plot(inf.index, inf, label=r"$\Delta \log CPI$", lw=2)

ax.legend(loc="lower left")

plt.show()

```

### 3. Fit the model with maximum likelihood

Statsmodels does all of the hard work for us: creating and fitting the model takes just two lines of code. The `order` argument gives the autoregressive, differencing, and moving average orders, respectively.

```

# Create an SARIMAX model instance - here we use it to estimate

# the parameters via MLE using the `fit` method, but we can

# also re-use it below for the Bayesian estimation

mod = sm.tsa.statespace.SARIMAX(inf, order=(1, 0, 1))

res_mle = mod.fit(disp=False)

print(res_mle.summary())

```

It's a good fit. We can also get the series of one-step ahead predictions and plot it next to the actual data, along with a confidence band.

```

predict_mle = res_mle.get_prediction()

predict_mle_ci = predict_mle.conf_int()

lower = predict_mle_ci["lower CPIAUCNS"]

upper = predict_mle_ci["upper CPIAUCNS"]

# Graph

fig, ax = plt.subplots(figsize=(9, 4), dpi=300)

# Plot data points

inf.plot(ax=ax, style="-", label="Observed")

# Plot predictions

predict_mle.predicted_mean.plot(ax=ax, style="r.", label="One-step-ahead forecast")

ax.fill_between(predict_mle_ci.index, lower, upper, color="r", alpha=0.1)

ax.legend(loc="lower left")

plt.show()

```

### 4. Helper functions to provide tensors to the library doing Bayesian estimation

We're almost on to the magic, but there are a few preliminaries first. Feel free to skip this section if you're not interested in the technical details.

#### Technical Details

PyMC3 is a Bayesian estimation library ("Probabilistic Programming in Python: Bayesian Modeling and Probabilistic Machine Learning with Theano") that is a) fast and b) optimized for Bayesian machine learning, for instance [Bayesian neural networks](https://docs.pymc.io/notebooks/bayesian_neural_network_advi.html). To do all of this, it is built on top of Theano, a library that aims to evaluate tensors very efficiently and provide symbolic differentiation (necessary for any kind of deep learning). It is this symbolic differentiation that allows PyMC3 to use NUTS on any problem formulated within PyMC3.

We are not formulating a problem directly in PyMC3; we're using statsmodels to specify the statespace model and solve it with the Kalman filter. So we need to put the plumbing of statsmodels and PyMC3 together, which means wrapping the statsmodels SARIMAX model object in a Theano-flavored wrapper before passing information to PyMC3 for estimation.

Because of this, we can't use the Theano auto-differentiation directly. Happily, statsmodels SARIMAX objects have a method to return the Jacobian evaluated at the parameter values. We'll be making use of this to provide gradients so that we can use NUTS.

#### Defining helper functions to translate models into a PyMC3 friendly form

First, we'll create the Theano wrappers. They will be in the form of 'Ops', operation objects, that 'perform' particular tasks. They are initialized with a statsmodels `model` instance.

Although this code may look somewhat opaque, it is generic for any state space model in statsmodels.

```

class Loglike(tt.Op):

    itypes = [tt.dvector]  # expects a vector of parameter values when called
    otypes = [tt.dscalar]  # outputs a single scalar value (the log likelihood)

    def __init__(self, model):
        self.model = model
        self.score = Score(self.model)

    def perform(self, node, inputs, outputs):
        (theta,) = inputs  # contains the vector of parameters
        llf = self.model.loglike(theta)
        outputs[0][0] = np.array(llf)  # output the log-likelihood

    def grad(self, inputs, g):
        # the method that calculates the gradients: it actually returns the
        # vector-Jacobian product, where g[0] is the gradient of the final
        # cost with respect to this Op's (scalar) output
        (theta,) = inputs  # our parameters
        out = [g[0] * self.score(theta)]
        return out


class Score(tt.Op):

    itypes = [tt.dvector]
    otypes = [tt.dvector]

    def __init__(self, model):
        self.model = model

    def perform(self, node, inputs, outputs):
        (theta,) = inputs
        outputs[0][0] = self.model.score(theta)

```
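NUTS relies on the gradient supplied by `Loglike.grad`, so it is worth checking that `model.score` really is the derivative of `model.loglike` before sampling. The NumPy sketch below shows such a central finite-difference check on a hypothetical stand-in, the conditional Gaussian log-likelihood of an AR(1) model with parameters (φ, σ²), whose gradient is known in closed form; with statsmodels, the same comparison could be made between `mod.score(params)` and finite differences of `mod.loglike`.

```python
import numpy as np

# simulated AR(1) data (assumption)
rng = np.random.default_rng(0)
y_sim = np.zeros(300)
for t in range(1, len(y_sim)):
    y_sim[t] = 0.6 * y_sim[t - 1] + rng.normal()

def ar1_loglike(params):
    """Conditional Gaussian AR(1) log-likelihood (stand-in for model.loglike)."""
    phi, sigma2 = params
    resid = y_sim[1:] - phi * y_sim[:-1]
    n = resid.size
    return -0.5 * n * np.log(2 * np.pi * sigma2) - 0.5 * np.sum(resid**2) / sigma2

def ar1_score(params):
    """Analytic gradient of ar1_loglike (stand-in for model.score)."""
    phi, sigma2 = params
    resid = y_sim[1:] - phi * y_sim[:-1]
    n = resid.size
    d_phi = np.sum(resid * y_sim[:-1]) / sigma2
    d_sigma2 = -0.5 * n / sigma2 + 0.5 * np.sum(resid**2) / sigma2**2
    return np.array([d_phi, d_sigma2])

def finite_diff_grad(f, params, h=1e-6):
    """Central finite differences, one parameter at a time."""
    params = np.asarray(params, dtype=float)
    grad = np.zeros_like(params)
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = h
        grad[i] = (f(params + step) - f(params - step)) / (2 * h)
    return grad

theta_check = np.array([0.5, 1.2])
print(ar1_score(theta_check), finite_diff_grad(ar1_loglike, theta_check))
```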

### 5. Bayesian estimation with NUTS

The next step is to set the parameters for the Bayesian estimation, specify our priors, and run it.

```

# Set sampling params

ndraws = 3000 # number of draws from the distribution

nburn = 600 # number of "burn-in points" (which will be discarded)

```

Now for the fun part! There are three parameters to estimate: $\phi$, $\theta_1$, and $\sigma^2$. We'll use uninformative uniform priors for the first two and an inverse gamma prior for the last one. Then we'll run the inference; the `cores` argument of `pm.sample` sets how many CPU cores are used (here just one).

```

# Construct an instance of the Theano wrapper defined above, which

# will allow PyMC3 to compute the likelihood and Jacobian in a way

# that it can make use of. Here we are using the same model instance

# created earlier for MLE analysis (we could also create a new model

# instance if we preferred)

loglike = Loglike(mod)

with pm.Model() as m:
    # Priors
    arL1 = pm.Uniform("ar.L1", -0.99, 0.99)
    maL1 = pm.Uniform("ma.L1", -0.99, 0.99)
    sigma2 = pm.InverseGamma("sigma2", 2, 4)

    # convert variables to tensor vectors
    theta = tt.as_tensor_variable([arL1, maL1, sigma2])

    # use a DensityDist, passing the Op as the log-likelihood function
    pm.DensityDist("likelihood", loglike, observed=theta)

    # Draw samples
    trace = pm.sample(
        ndraws,
        tune=nburn,
        return_inferencedata=True,
        cores=1,
        compute_convergence_checks=False,
    )

```

Note that the NUTS sampler is auto-assigned because we provided gradients. PyMC3 will use Metropolis or Slice samplers if it does not find that gradients are available. The number of draws per second is impressive for a "black box" style computation! However, note that if the model can be represented directly by PyMC3 (like the AR(p) models mentioned above), then computation can be substantially faster.

Inference is complete, but are the results any good? There are a number of ways to check. The first is to look at the posterior distributions (with lines showing the MLE values):

```

plt.tight_layout()

# Note: the syntax here for the lines argument is required for

# PyMC3 versions >= 3.7

# For version <= 3.6 you can use lines=dict(res_mle.params) instead

_ = pm.plot_trace(

trace,

lines=[(k, {}, [v]) for k, v in dict(res_mle.params).items()],

combined=True,

figsize=(12, 12),

)

```

The estimated posteriors clearly peak close to the parameters found by MLE. We can also see a summary of the estimated values:

```

pm.summary(trace)

```

Here $\hat{R}$ is the Gelman-Rubin statistic. It tests for lack of convergence by comparing the variance between multiple chains to the variance within each chain. If convergence has been achieved, the between-chain and within-chain variances should be approximately equal. If $\hat{R}<1.2$ for all model parameters, we can have some confidence that convergence has been reached (more recent guidance recommends the stricter threshold $\hat{R}<1.01$).
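For intuition, the classic (non-split) Gelman-Rubin statistic can be computed directly from the chains. ArviZ, which `pm.summary` relies on, reports a rank-normalized split $\hat{R}$ by default, so its numbers will differ slightly; the simulated chains below are assumptions used only to show the behavior.

```python
import numpy as np

def gelman_rubin(chains):
    """Classic (non-split) Gelman-Rubin R-hat for an array of shape (m chains, n draws)."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    chain_means = chains.mean(axis=1)
    B = n * chain_means.var(ddof=1)          # between-chain variance
    W = chains.var(axis=1, ddof=1).mean()    # mean within-chain variance
    var_plus = (n - 1) / n * W + B / n       # pooled estimate of the posterior variance
    return np.sqrt(var_plus / W)

rng = np.random.default_rng(0)
mixed = rng.normal(0.0, 1.0, size=(2, 3000))   # two chains sampling the same distribution
stuck = mixed + np.array([[0.0], [2.0]])       # second chain stuck around a different value
print(gelman_rubin(mixed), gelman_rubin(stuck))
```

Well-mixed chains give a value very close to 1, while the offset chains push $\hat{R}$ far above the 1.2 threshold.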

Additionally, the highest posterior density interval (the gap between the two values of HPD in the table) is small for each of the variables.

### 6. Application of Bayesian estimates of parameters

We'll now rebuild the model results using the parameters from the Bayesian estimation, and again plot the one-step-ahead forecasts.

```

# Retrieve the posterior means

params = pm.summary(trace)["mean"].values

# Construct results using these posterior means as parameter values

res_bayes = mod.smooth(params)

predict_bayes = res_bayes.get_prediction()

predict_bayes_ci = predict_bayes.conf_int()

lower = predict_bayes_ci["lower CPIAUCNS"]

upper = predict_bayes_ci["upper CPIAUCNS"]

# Graph

fig, ax = plt.subplots(figsize=(9, 4), dpi=300)

# Plot data points

inf.plot(ax=ax, style="-", label="Observed")

# Plot predictions

predict_bayes.predicted_mean.plot(ax=ax, style="r.", label="One-step-ahead forecast")

ax.fill_between(predict_bayes_ci.index, lower, upper, color="r", alpha=0.1)

ax.legend(loc="lower left")

plt.show()

```

## Appendix A. Application to `UnobservedComponents` models

We can reuse the `Loglike` and `Score` wrappers defined above to consider a different state space model. For example, we might want to model inflation as the combination of a random walk trend and autoregressive error term:

$$
\begin{aligned}
y_t & = \mu_t + \varepsilon_t \\
\mu_t & = \mu_{t-1} + \eta_t \\
\varepsilon_t & = \phi \varepsilon_{t-1} + \zeta_t
\end{aligned}
$$
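The NumPy sketch below simulates this model directly: a random-walk level plus an AR(1) deviation from it. The parameter values are illustrative assumptions; in the statsmodels model, the corresponding parameters appear under the names `sigma2.level`, `sigma2.ar` (the variances of $\eta_t$ and $\zeta_t$) and `ar.L1` (that is, $\phi$) used in the priors below.

```python
import numpy as np

rng = np.random.default_rng(1)
n_obs = 200
phi_uc = 0.5                      # AR coefficient (illustrative assumption)
sd_level, sd_ar = 0.3, 1.0        # std. devs of eta_t and zeta_t (assumption)

eta = rng.normal(0.0, sd_level, n_obs)
zeta = rng.normal(0.0, sd_ar, n_obs)

# level: random walk mu_t = mu_{t-1} + eta_t, starting from mu_0 = eta_0
mu_sim = np.cumsum(eta)

# deviation from the level: AR(1) eps_t = phi * eps_{t-1} + zeta_t
eps_sim = np.zeros(n_obs)
eps_sim[0] = zeta[0]
for t in range(1, n_obs):
    eps_sim[t] = phi_uc * eps_sim[t - 1] + zeta[t]

y_uc = mu_sim + eps_sim           # observed series
```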

This model can be constructed in Statsmodels with the `UnobservedComponents` class using the `rwalk` and `autoregressive` specifications. As before, we can fit the model using maximum likelihood via the `fit` method.

```

# Construct the model instance

mod_uc = sm.tsa.UnobservedComponents(inf, "rwalk", autoregressive=1)

# Fit the model via maximum likelihood

res_uc_mle = mod_uc.fit()

print(res_uc_mle.summary())

```

As noted earlier, the Theano wrappers (`Loglike` and `Score`) that we created above are generic, so we can re-use essentially the same code to explore the model with Bayesian methods.

```

# Set sampling params

ndraws = 3000 # number of draws from the distribution

nburn = 600 # number of "burn-in points" (which will be discarded)

# Here we follow the same procedure as above, but now we instantiate the

# Theano wrapper `Loglike` with the UC model instance instead of the

# SARIMAX model instance

loglike_uc = Loglike(mod_uc)

with pm.Model():
    # Priors
    sigma2level = pm.InverseGamma("sigma2.level", 1, 1)
    sigma2ar = pm.InverseGamma("sigma2.ar", 1, 1)
    arL1 = pm.Uniform("ar.L1", -0.99, 0.99)

    # convert variables to tensor vectors
    theta_uc = tt.as_tensor_variable([sigma2level, sigma2ar, arL1])

    # use a DensityDist, passing the Op as the log-likelihood function
    pm.DensityDist("likelihood", loglike_uc, observed=theta_uc)

    # Draw samples
    trace_uc = pm.sample(
        ndraws,
        tune=nburn,
        return_inferencedata=True,
        cores=1,
        compute_convergence_checks=False,
    )

```

And as before we can plot the marginal posteriors. In contrast to the SARIMAX example, here the posterior modes are somewhat different from the MLE estimates.

```

# Note: the syntax here for the lines argument is required for
# PyMC3 versions >= 3.7
# For version <= 3.6 you can use lines=dict(res_uc_mle.params) instead
_ = pm.plot_trace(
    trace_uc,
    lines=[(k, {}, [v]) for k, v in dict(res_uc_mle.params).items()],
    combined=True,
    figsize=(12, 12),
)
plt.tight_layout()

pm.summary(trace_uc)

# Retrieve the posterior means

params = pm.summary(trace_uc)["mean"].values

# Construct results using these posterior means as parameter values

res_uc_bayes = mod_uc.smooth(params)

```

One benefit of this model is that it gives us an estimate of the underlying "level" of inflation, using the smoothed estimate of $\mu_t$, which we can access as the "level" column in the results objects' `states.smoothed` attribute. In this case, because the Bayesian posterior mean of the level's variance is larger than the MLE estimate, the Bayesian estimate of the level is a little more volatile.

```

# Graph

fig, ax = plt.subplots(figsize=(9, 4), dpi=300)

# Plot data points

inf["CPIAUCNS"].plot(ax=ax, style="-", label="Observed data")

# Plot estimate of the level term

res_uc_mle.states.smoothed["level"].plot(ax=ax, label="Smoothed level (MLE)")

res_uc_bayes.states.smoothed["level"].plot(ax=ax, label="Smoothed level (Bayesian)")

ax.legend(loc="lower left");

```
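The link between a larger level variance and a more volatile smoothed level can be checked on simulated data. The sketch below simplifies the model to a local level with white-noise errors rather than AR(1) errors. It runs a Kalman filter followed by a Rauch-Tung-Striebel smoother and measures volatility as the sum of squared first differences. The function names and the `prior_var` default are assumptions of this sketch, not statsmodels API.

```python
import numpy as np


def smoothed_local_level(y, q, h, prior_var=1e7):
    """Kalman filter plus Rauch-Tung-Striebel smoother for a local level model.

    Model (a simplification of mod_uc): y_t = mu_t + e_t, mu_t = mu_{t-1} + eta_t,
    with Var(eta_t) = q (level variance) and Var(e_t) = h (white noise, not AR(1)).
    """
    n = len(y)
    a_pred, P_pred = np.empty(n), np.empty(n)   # one-step-ahead predictions
    a_filt, P_filt = np.empty(n), np.empty(n)   # filtered estimates
    a, P = 0.0, prior_var                       # vague prior on the first level
    for t in range(n):
        a_pred[t], P_pred[t] = a, P
        K = P / (P + h)                         # Kalman gain
        a_filt[t] = a + K * (y[t] - a)
        P_filt[t] = P * h / (P + h)             # equals P * (1 - K), numerically stabler
        a, P = a_filt[t], P_filt[t] + q         # random-walk prediction step
    a_smooth = a_filt.copy()
    for t in range(n - 2, -1, -1):              # backward smoothing pass
        J = P_filt[t] / P_pred[t + 1]
        a_smooth[t] = a_filt[t] + J * (a_smooth[t + 1] - a_pred[t + 1])
    return a_smooth


def roughness(x):
    """Sum of squared first differences; larger values mean a more volatile path."""
    return float(np.sum(np.diff(x) ** 2))
```

Holding the noise variance `h` fixed, raising `q` lets the smoothed level follow the data more closely, so `roughness` increases. In the real comparison above, the AR parameters also differ between the MLE and Bayesian estimates, so this is only a partial explanation.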


## Set Up

We have again provided code to do the basic loading, review and model-building. Run the cell below to set everything up:

```

import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
import shap

# Environment Set-Up for feedback system.
from learntools.core import binder
binder.bind(globals())
from learntools.ml_explainability.ex5 import *
print("Setup Complete")

data = pd.read_csv('../input/hospital-readmissions/train.csv')
y = data.readmitted
base_features = ['number_inpatient', 'num_medications', 'number_diagnoses', 'num_lab_procedures',
                 'num_procedures', 'time_in_hospital', 'number_outpatient', 'number_emergency',
                 'gender_Female', 'payer_code_?', 'medical_specialty_?', 'diag_1_428', 'diag_1_414',
                 'diabetesMed_Yes', 'A1Cresult_None']

# Some versions of shap package error when mixing bools and numerics
X = data[base_features].astype(float)
train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=1)

# For speed, we will calculate shap values on smaller subset of the validation data
small_val_X = val_X.iloc[:150]
my_model = RandomForestClassifier(n_estimators=30, random_state=1).fit(train_X, train_y)

data.describe()

```

The first few questions require examining the distribution of effects for each feature, rather than just an average effect for each feature. Run the following cell for a summary plot of the shap_values for readmission. It will take about 20 seconds to run.

```

explainer = shap.TreeExplainer(my_model)

shap_values = explainer.shap_values(small_val_X)

shap.summary_plot(shap_values[1], small_val_X)

```

## Question 1

Which of the following features has a bigger range of effects on predictions (i.e. a larger difference between the most positive and most negative effect)?

- `diag_1_428` or

- `payer_code_?`
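The "range of effects" for a feature is its largest SHAP value minus its smallest. Here is a small NumPy sketch with a hypothetical helper, `shap_effect_range`. It assumes, as in the summary-plot cell above, that `shap_values[1]` holds the positive-class values as an `(n_rows, n_features)` array. Under that assumption, it would be called as `shap_effect_range(shap_values[1], small_val_X.columns)`.

```python
import numpy as np


def shap_effect_range(shap_matrix, feature_names):
    """Return {feature: max SHAP value - min SHAP value} for each column.

    shap_matrix: array of shape (n_samples, n_features).
    """
    shap_matrix = np.asarray(shap_matrix)
    ranges = shap_matrix.max(axis=0) - shap_matrix.min(axis=0)
    return dict(zip(feature_names, ranges.tolist()))
```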

```

# set following variable to 'diag_1_428' or 'payer_code_?'

feature_with_bigger_range_of_effects = ____

q_1.check()

```

Uncomment the line below to see the solution and explanation.

```

# q_1.solution()

```

## Question 2

Do you believe the range of effect sizes (distance between smallest effect and largest effect) is a good indication of which feature will have a higher permutation importance? Why or why not?

If the **range of effect sizes** measures something different from **permutation importance**, which is the better answer to the question "Which of these two features does the model say is more important for us to understand when discussing readmission risks in the population?"
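A toy example shows how range and overall influence can disagree. A feature that matters a lot for a single row but not at all elsewhere has a large range. A feature that nudges every prediction slightly has a small range but moves predictions more on average. The numbers below are invented for illustration. Mean absolute SHAP value is used here only as a stand-in for "overall influence"; it is not the same quantity as permutation importance.

```python
import numpy as np

# Hypothetical SHAP values for two features over 100 rows (made-up numbers).
# "rare": no effect on 99 rows, a large effect on one row.
# "steady": a small push up or down on every row.
rare = np.zeros(100)
rare[0] = 0.5
steady = np.where(np.arange(100) % 2 == 0, 0.05, -0.05)
toy_shap = np.column_stack([rare, steady])

# Range of effects: largest minus smallest SHAP value per feature.
effect_range = toy_shap.max(axis=0) - toy_shap.min(axis=0)   # rare: 0.5, steady: 0.1

# Mean absolute SHAP value: how much the feature moves a typical prediction.
mean_abs_effect = np.abs(toy_shap).mean(axis=0)              # rare: 0.005, steady: 0.05
```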

Uncomment the following line after you've decided your answer.

```

# q_2.solution()

```

## Question 3

Both `diag_1_428` and `payer_code_?` are binary variables, taking values of 0 or 1.

From the graph, which do you think would typically have a bigger impact on predicted readmission risk:

- Changing `diag_1_428` from 0 to 1

- Changing `payer_code_?` from 0 to 1
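For a 0/1 feature, one rough numeric summary of the plot is the average SHAP value among rows where the feature is 0 versus rows where it is 1. The helper name `mean_shap_by_binary_value` is hypothetical. Under the same assumption about `shap_values[1]` as above, it could be called as `mean_shap_by_binary_value(small_val_X["diag_1_428"], shap_values[1][:, small_val_X.columns.get_loc("diag_1_428")])`. The gap is descriptive only. It is not a causal effect, and it is noisy when few rows take the value 1.

```python
import numpy as np


def mean_shap_by_binary_value(feature_values, shap_column):
    """Average SHAP value for rows where a 0/1 feature is 0, and where it is 1."""
    x = np.asarray(feature_values)
    s = np.asarray(shap_column)
    return s[x == 0].mean(), s[x == 1].mean()
```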

To save you scrolling, we have included a cell below to plot the graph again (this one runs quickly).

```

shap.summary_plot(shap_values[1], small_val_X)

# Set following var to "diag_1_428" if changing it to 1 has bigger effect. Else set it to 'payer_code_?'

bigger_effect_when_changed = ____

q_3.check()

```

For a solution and explanation, uncomment the line below.

```

# q_3.solution()

```

## Question 4

Some features (like `number_inpatient`) have reasonably clear separation between the blue and pink dots. Other variables like `num_lab_procedures` have blue and pink dots jumbled together, even though the SHAP values (or impacts on prediction) aren't all 0.

What do you think you learn from the fact that `num_lab_procedures` has blue and pink dots jumbled together? Once you have your answer, uncomment the line below to verify your solution.

```

# q_4.solution()

```
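One way to put a number on how cleanly the colors separate is a Spearman rank correlation between a feature's values and its SHAP values. A value near +1 or -1 indicates a consistently monotonic relationship, while a value near 0 means the relationship is not monotonic. The sketch uses NumPy only and averages ranks within ties, as `scipy.stats.spearmanr` does. The helper names are hypothetical. For the exercise data it could be applied as `spearman(small_val_X["num_lab_procedures"], shap_values[1][:, small_val_X.columns.get_loc("num_lab_procedures")])`.

```python
import numpy as np


def average_ranks(values):
    """Ranks starting at 0, with tied values sharing the average of their ranks."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, kind="mergesort")
    ranks = np.empty(len(values))
    ranks[order] = np.arange(len(values))
    _, tie_group = np.unique(values, return_inverse=True)
    group_sums = np.bincount(tie_group, weights=ranks)
    group_sizes = np.bincount(tie_group)
    return (group_sums / group_sizes)[tie_group]


def spearman(feature_values, shap_column):
    """Spearman rank correlation between a feature and its SHAP values."""
    return float(np.corrcoef(average_ranks(feature_values), average_ranks(shap_column))[0, 1])
```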

## Question 5

Consider the following SHAP contribution dependence plot.

The x-axis shows `feature_of_interest` and the points are colored based on `other_feature`.

Is there an interaction between `feature_of_interest` and `other_feature`?

If so, does `feature_of_interest` have a more positive impact on predictions when `other_feature` is high or when `other_feature` is low?

Uncomment the following code when you are ready for the answer.

```

# q_5.solution()

```

## Question 6

Review the summary plot for the readmission data by running the following cell:

```

shap.summary_plot(shap_values[1], small_val_X)

```

Both **num_medications** and **num_lab_procedures** share that jumbling of pink and blue dots.

Aside from `num_medications` having effects of greater magnitude (both more positive and more negative), it's hard to see a meaningful difference between how these two features affect readmission risk. Create the SHAP dependence contribution plots for each variable, and describe what you think is different between how these two variables affect predictions.

As a reminder, here is the code you previously saw to create this type of plot:

`shap.dependence_plot(feature_of_interest, shap_values[1], val_X)`

And recall that your validation data is called `small_val_X`.

```

# Your code here

____

```

Then uncomment the following line to compare your observations from this graph to the solution.

```

# q_6.solution()

```

## Congrats

That's it! Machine learning models should not feel like black boxes anymore, because you have the tools to inspect them and understand what they learn about the world.

This is an excellent skill for debugging models, building trust, and learning insights to make better decisions. These techniques have revolutionized how I do data science, and I hope they do the same for you.

Real data science involves an element of exploration. I hope you find an interesting dataset to try these techniques on (Kaggle has a lot of [free datasets](https://www.kaggle.com/datasets) to try out). If you learn something interesting about the world, share your work [in this forum](https://www.kaggle.com/learn-forum/66354). I'm excited to see what you do with your new skills.


# High-level Caffe2 Example

```

import os

import sys

import caffe2

import numpy as np

from caffe2.python import core, model_helper, workspace, visualize, brew, optimizer, utils

from caffe2.proto import caffe2_pb2

from common.params import *

from common.utils import *

# Force one-gpu

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

print("OS: ", sys.platform)

print("Python: ", sys.version)

print("Numpy: ", np.__version__)

print("GPU: ", get_gpu_name())

print(get_cuda_version())

print("CuDNN Version ", get_cudnn_version())