text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

# Building and training your first TensorFlow graph from the ground up.

- https://www.oreilly.com/learning/hello-tensorflow?imm_mid=0e50a2&cmp=em-data-na-na-newsltr_20160622#

The [TensorFlow](https://www.tensorflow.org/) project is bigger than you might realize. The fact that it's a library for deep learning, and its connection to Google, has helped TensorFlow attract a lot of attention. But beyond the hype, there are unique elements to the project that are worthy of closer inspection:

- The core library is suited to a broad family of machine learning techniques, not “just” deep learning.

- Linear algebra and other internals are prominently exposed.

- In addition to the core machine learning functionality, TensorFlow also includes its own logging system, its own interactive log visualizer, and even its own heavily engineered serving architecture.

- The execution model for TensorFlow differs from Python's scikit-learn, or most tools in R.

Cool stuff, but—especially for someone hoping to explore machine learning for the first time—TensorFlow can be a lot to take in.

How does TensorFlow work? Let's break it down so we can see and understand every moving part. We'll explore the data flow [graph](https://en.wikipedia.org/wiki/Graph_(abstract_data_type)) that defines the computations your data will undergo, how to train models with[gradient descent](https://en.wikipedia.org/wiki/Gradient_descent) using TensorFlow, and how [TensorBoard](https://www.tensorflow.org/versions/r0.8/how_tos/summaries_and_tensorboard/) can visualize your TensorFlow work. The examples here won't solve industrial machine learning problems, but they'll help you understand the components underlying everything built with TensorFlow, including whatever you build next!

### Names and execution in Python and TensorFlow

The way TensorFlow manages computation is not totally different from the way Python usually does. With both, it's important to remember, to paraphrase [Hadley Wickham](https://twitter.com/hadleywickham/status/732288980549390336), that an object has no name (see Figure 1). In order to see the similarities (and differences) between how Python and TensorFlow work, let’s look at how they refer to objects and handle evaluation.

<img src="images/image1.jpg"/>

The variable names in Python code aren't what they represent; they're just pointing at objects. So, when you say in Python that foo = [] and bar = foo, it isn't just that foo equals bar; foo is bar, in the sense that they both point at the same list object.

```

foo = []

bar = foo

foo == bar

## True

foo is bar

## True

```

You can also see that id(foo) and id(bar) are the same. This identity, especially with mutable data structures like lists, can lead to surprising bugs when it's misunderstood.

Internally, Python manages all your objects and keeps track of your variable names and which objects they refer to. The TensorFlow graph represents another layer of this kind of management; as we’ll see, Python names will refer to objects that connect to more granular and managed TensorFlow graph operations.

When you enter a Python expression, for example at an interactive interpreter or Read Evaluate Print Loop (REPL), whatever is read is almost always evaluated right away. Python is eager to do what you tell it. So, if I tell Python to foo.append(bar), it appends right away, even if I never use foo again.

A lazier alternative would be to just remember that I said foo.append(bar), and if I ever evaluate foo at some point in the future, Python could do the append then. This would be closer to how TensorFlow behaves, where defining relationships is entirely separate from evaluating what the results are.

TensorFlow separates the definition of computations from their execution even further by having them happen in separate places: a graph defines the operations, but the operations only happen within a session. Graphs and sessions are created independently. A graph is like a blueprint, and a session is like a construction site.

Back to our plain Python example, recall that foo and bar refer to the same list. By appending bar into foo, we've put a list inside itself. You could think of this structure as a graph with one node, pointing to itself. Nesting lists is one way to represent a graph structure like a TensorFlow computation graph.

```

foo.append(bar)

foo

## [[...]]

```

Real TensorFlow graphs will be more interesting than this!

## The simplest TensorFlow graph

To start getting our hands dirty, let’s create the simplest TensorFlow graph we can, from the ground up. TensorFlow is admirably easier to install than some other frameworks. The examples here work with either Python 2.7 or 3.3+, and the TensorFlow version used is 0.8.

```

import tensorflow as tf

```

At this point TensorFlow has already started managing a lot of state for us. There's already an implicit default graph, for example. Internally, the default graph lives in the **_default_graph_stack**, but we don't have access to that directly. We use **tf.get_default_graph()**.

```

graph = tf.get_default_graph()

```

The nodes of the TensorFlow graph are called “operations,” or “ops.” We can see what operations are in the graph with graph.get_operations().

```

graph.get_operations()

```

Currently, there isn't anything in the graph. We’ll need to put everything we want TensorFlow to compute into that graph. Let's start with a simple constant input value of one.

```

input_value = tf.constant(1.0)

```

That constant now lives as a node, an operation, in the graph. The Python variable name input_value refers indirectly to that operation, but we can also find the operation in the default graph.

```

operations = graph.get_operations()

operations

operations[0].node_def

```

TensorFlow uses protocol buffers internally. (Protocol buffers are sort of like a Google-strength JSON.) Printing the node_def for the constant operation above shows what's in TensorFlow's protocol buffer representation for the number one.

People new to TensorFlow sometimes wonder why there's all this fuss about making “TensorFlow versions” of things. Why can't we just use a normal Python variable without also defining a TensorFlow object? One of the TensorFlow tutorials has an explanation:

> To do efficient numerical computing in Python, we typically use libraries like NumPy that do expensive operations such as matrix multiplication outside Python, using highly efficient code implemented in another language. Unfortunately, there can still be a lot of overhead from switching back to Python every operation. This overhead is especially bad if you want to run computations on GPUs or in a distributed manner, where there can be a high cost to transferring data.

> TensorFlow also does its heavy lifting outside Python, but it takes things a step further to avoid this overhead. Instead of running a single expensive operation independently from Python, TensorFlow lets us describe a graph of interacting operations that run entirely outside Python. This approach is similar to that used in Theano or Torch.

TensorFlow can do a lot of great things, but it can only work with what's been explicitly given to it. This is true even for a single constant.

If we inspect our input_value, we see it is a constant 32-bit float tensor of no dimension: just one number.

```

input_value

```

Note that this doesn't tell us what that number is. To evaluate input_value and get a numerical value out, we need to create a “session” where graph operations can be evaluated and then explicitly ask to evaluate or “run” input_value. (The session picks up the default graph by default.)

```

sess = tf.Session()

sess.run(input_value)

```

It may feel a little strange to “run” a constant. But it isn't so different from evaluating an expression as usual in Python; it's just that TensorFlow is managing its own space of things—the computational graph—and it has its own method of evaluation.

## The simplest TensorFlow neuron

Now that we have a session with a simple graph, let's build a neuron with just one parameter, or weight. Often, even simple neurons also have a bias term and a non-identity activation function, but we'll leave these out.

The neuron's weight isn't going to be constant; we expect it to change in order to learn based on the “true” input and output we use for training. The weight will be a TensorFlow variable. We'll give that variable a starting value of 0.8.

```

weight = tf.Variable(0.8)

```

You might expect that adding a variable would add one operation to the graph, but in fact that one line adds four operations. We can check all the operation names:

```

for op in graph.get_operations(): print op.name

```

We won't want to follow every operation individually for long, but it will be nice to see at least one that feels like a real computation.

```

output_value = weight * input_value

```

Now there are six operations in the graph, and the last one is that multiplication.

```

op = graph.get_operations()[-1]

op.name

for op_input in op.inputs: print op_input

```

This shows how the multiplication operation tracks where its inputs come from: they come from other operations in the graph. To understand a whole graph, following references this way quickly becomes tedious for humans. [TensorBoard graph visualization](https://www.tensorflow.org/versions/r0.8/how_tos/graph_viz/) is designed to help.

How do we find out what the product is? We have to “run” the **output_value** operation. But that operation depends on a variable: **weight**. We told TensorFlow that the initial value of weight should be 0.8, but the value hasn't yet been set in the current session. The **tf.initialize_all_variables()** function generates an operation which will initialize all our variables (in this case just one) and then we can run that operation.

```

init = tf.initialize_all_variables()

sess.run(init)

```

The result of tf.initialize_all_variables() will include initializers for all the variables currently in the graph, so if you add more variables you'll want to use tf.initialize_all_variables() again; a stale init wouldn't include the new variables.

Now we're ready to run the output_value operation.

```

sess.run(output_value)

```

Recall that's 0.8 * 1.0 with 32-bit floats, and 32-bit floats have a hard time with 0.8; 0.80000001 is as close as they can get.

## See your graph in TensorBoard

Up to this point, the graph has been simple, but it would already be nice to see it represented in a diagram. We'll use TensorBoard to generate that diagram. TensorBoard reads the name field that is stored inside each operation (quite distinct from Python variable names). We can use these TensorFlow names and switch to more conventional Python variable names. Using tf.mul here is equivalent to our earlier use of just * for multiplication, but it lets us set the name for the operation.

```

x = tf.constant(1.0, name='input')

w = tf.Variable(0.8, name='weight')

y = tf.mul(w, x, name='output')

```

TensorBoard works by looking at a directory of output created from TensorFlow sessions. We can write this output with a SummaryWriter, and if we do nothing aside from creating one with a graph, it will just write out that graph.

The first argument when creating the SummaryWriter is an output directory name, which will be created if it doesn't exist.

```

summary_writer = tf.train.SummaryWriter('log_simple_graph', sess.graph)

```

Now, at the command line, we can start up TensorBoard.

```

!tensorboard --logdir=log_simple_graph

```

TensorBoard runs as a local web app, on port 6006. (“6006” is “goog” upside-down.) If you go in a browser to localhost:6006/#graphs you should see a diagram of the graph you created in TensorFlow, which looks something like Figure 2.

## Making the neuron learn

Now that we’ve built our neuron, how does it learn? We set up an input value of 1.0. Let's say the correct output value is zero. That is, we have a very simple “training set” of just one example with one feature, which has the value one, and one label, which is zero. We want the neuron to learn the function taking one to zero.

Currently, the system takes the input one and returns 0.8, which is not correct. We need a way to measure how wrong the system is. We'll call that measure of wrongness the “loss” and give our system the goal of minimizing the loss. If the loss can be negative, then minimizing it could be silly, so let's make the loss the square of the difference between the current output and the desired output.

```

y_ = tf.constant(0.0)

loss = (y - y_)**2

```

So far, nothing in the graph does any learning. For that, we need an optimizer. We'll use a gradient descent optimizer so that we can update the weight based on the derivative of the loss. The optimizer takes a learning rate to moderate the size of the updates, which we'll set at 0.025.

```

optim = tf.train.GradientDescentOptimizer(learning_rate=0.025)

```

The optimizer is remarkably clever. It can automatically work out and apply the appropriate gradients through a whole network, carrying out the backward step for learning.

Let's see what the gradient looks like for our simple example.

```

grads_and_vars = optim.compute_gradients(loss)

sess.run(tf.initialize_all_variables())

sess.run(grads_and_vars[1][0])

```

Why is the value of the gradient 1.6? Our loss is error squared, and the derivative of that is two times the error. Currently the system says 0.8 instead of 0, so the error is 0.8, and two times 0.8 is 1.6. It's working!

For more complex systems, it will be very nice indeed that TensorFlow calculates and then applies these gradients for us automatically.

Let's apply the gradient, finishing the backpropagation.

```

sess.run(optim.apply_gradients(grads_and_vars))

sess.run(w)

## 0.75999999 # about 0.76

```

The weight decreased by 0.04 because the optimizer subtracted the gradient times the learning rate, 1.6 * 0.025, pushing the weight in the right direction.

Instead of hand-holding the optimizer like this, we can make one operation that calculates and applies the gradients: the train_step.

```

train_step = tf.train.GradientDescentOptimizer(0.025).minimize(loss)

for i in range(100):

sess.run(train_step)

sess.run(y)

## 0.0044996012

```

Running the training step many times, the weight and the output value are now very close to zero. The neuron has learned!

## Training diagnostics in TensorBoard

We may be interested in what's happening during training. Say we want to follow what our system is predicting at every training step. We could print from inside the training loop.

```

sess.run(tf.initialize_all_variables())

for i in range(100):

print('before step {}, y is {}'.format(i, sess.run(y)))

sess.run(train_step)

```

This works, but there are some problems. It's hard to understand a list of numbers. A plot would be better. And even with only one value to monitor, there's too much output to read. We're likely to want to monitor many things. It would be nice to record everything in some organized way.

Luckily, the same system that we used earlier to visualize the graph also has just the mechanisms we need.

We instrument the computation graph by adding operations that summarize its state. Here, we'll create an operation that reports the current value of y, the neuron's current output.

```

summary_y = tf.scalar_summary('output', y)

```

When you run a summary operation, it returns a string of protocol buffer text that can be written to a log directory with a SummaryWriter.

```

summary_writer = tf.train.SummaryWriter('log_simple_stat')

sess.run(tf.initialize_all_variables())

for i in range(100):

summary_str = sess.run(summary_y)

summary_writer.add_summary(summary_str, i)

sess.run(train_step)

```

Now after running

```

tensorboard --logdir=log_simple_stat

```

you get an interactive plot at localhost:6006/#events (Figure 3).

<img src="images/image2.jpg"/>

## Flowing onward

Here's a final version of the code. It's fairly minimal, with every part showing useful (and understandable) TensorFlow functionality.

```

import tensorflow as tf

x = tf.constant(1.0, name='input')

w = tf.Variable(0.8, name='weight')

y = tf.mul(w, x, name='output')

y_ = tf.constant(0.0, name='correct_value')

loss = tf.pow(y - y_, 2, name='loss')

train_step = tf.train.GradientDescentOptimizer(0.025).minimize(loss)

for value in [x, w, y, y_, loss]:

tf.scalar_summary(value.op.name, value)

summaries = tf.merge_all_summaries()

sess = tf.Session()

summary_writer = tf.train.SummaryWriter('log_simple_stats', sess.graph)

sess.run(tf.initialize_all_variables())

for i in range(100):

summary_writer.add_summary(sess.run(summaries), i)

sess.run(train_step)

```

The example we just ran through is even simpler than the ones that inspired it in Michael Nielsen's [Neural Networks and Deep Learning](http://neuralnetworksanddeeplearning.com/). For myself, seeing details like these helps with understanding and building more complex systems that use and extend from simple building blocks. Part of the beauty of TensorFlow is how flexibly you can build complex systems from simpler components.

If you want to continue experimenting with TensorFlow, it might be fun to start making more interesting neurons, perhaps with different [activation functions](https://en.wikipedia.org/wiki/Activation_function#Comparison_of_activation_functions). You could train with more interesting data. You could add more neurons. You could add more layers. You could dive into more complex[pre-built models](https://github.com/tensorflow/tensorflow/tree/master/tensorflow/models), or spend more time with TensorFlow's own [tutorials](https://www.tensorflow.org/versions/r0.8/tutorials/) and [how-to guides](https://www.tensorflow.org/versions/r0.8/how_tos/). Go for it!

| github_jupyter |

# Example of optimizing a convex function

# Goal is to test the objective values found by Mango

- Search space size: Uniform

- Number of iterations to try: 40

- domain size: 5000

- Initial Random: 5

# Benchmarking test with different iterations for serial executions

```

from mango.tuner import Tuner

from scipy.stats import uniform

def get_param_dict():

param_dict = {

'x': uniform(-5000, 10000)

}

return param_dict

def objfunc(args_list):

results = []

for hyper_par in args_list:

x = hyper_par['x']

result = -(x**2)

results.append(result)

return results

def get_conf_batch_5():

conf = dict()

conf['batch_size'] = 5

conf['initial_random'] = 5

conf['num_iteration'] = 100

conf['domain_size'] = 5000

return conf

def get_optimal_x():

param_dict = get_param_dict()

conf_5 = get_conf_batch_5()

tuner_5 = Tuner(param_dict, objfunc,conf_5)

results_5 = tuner_5.maximize()

return results_5

Store_Optimal_X = []

Store_Results = []

num_of_tries = 100

for i in range(num_of_tries):

results_5 = get_optimal_x()

Store_Results.append([results_5])

print(i,":",results_5['best_params']['x'])

#len(Store_Results)

#Store_Results[0]

```

# Sandeep we need to process the results

# Extract from the results returned the true optimal values for each iteration

```

import numpy as np

total_experiments = 66

initial_random = 5

plotting_itr =[10, 20,30,40,50,60,70,80,90,100]

plotting_list = []

for exp in range(total_experiments): #for all exp

local_list = []

for itr in plotting_itr: # for all points to plot

# find the value of optimal parameters in itr+ initial_random

max_value = np.array(Store_Results[exp][0]['objective_values'][:itr*5+initial_random]).max()

local_list.append(max_value)

plotting_list.append(local_list)

plotting_array = np.array(plotting_list)

plotting_array.shape

#plotting_array

Y = []

#count range between -1 and 1 and show it

for i in range(len(plotting_itr)):

y_value = np.count_nonzero((plotting_array[:,i] > -1))/plotting_array[:,i].size

Y.append(y_value*100)

Y

#np.count_nonzero((plotting_array[:,0] > -1) & (plotting_array[:,0] < 1))/plotting_array[:,0].size

import numpy as np

import matplotlib.pyplot as plt

fig = plt.figure(figsize=(10,10))

plt.plot(plotting_itr,Y,label = 'Mango Serial',linewidth=3.0) #x, y

plt.xlabel('Number of Iterations',fontsize=25)

plt.ylabel(' % of $Fun_{value}$ in (-1,0)',fontsize=25)

plt.title('Variation of Optimal Value of X with iterations',fontsize=20)

plt.xticks(fontsize=20)

plt.yticks(fontsize=20)

plt.yticks(np.arange(60, 110, step=10))

plt.grid(True)

plt.legend(fontsize=20)

plt.show()

```

| github_jupyter |

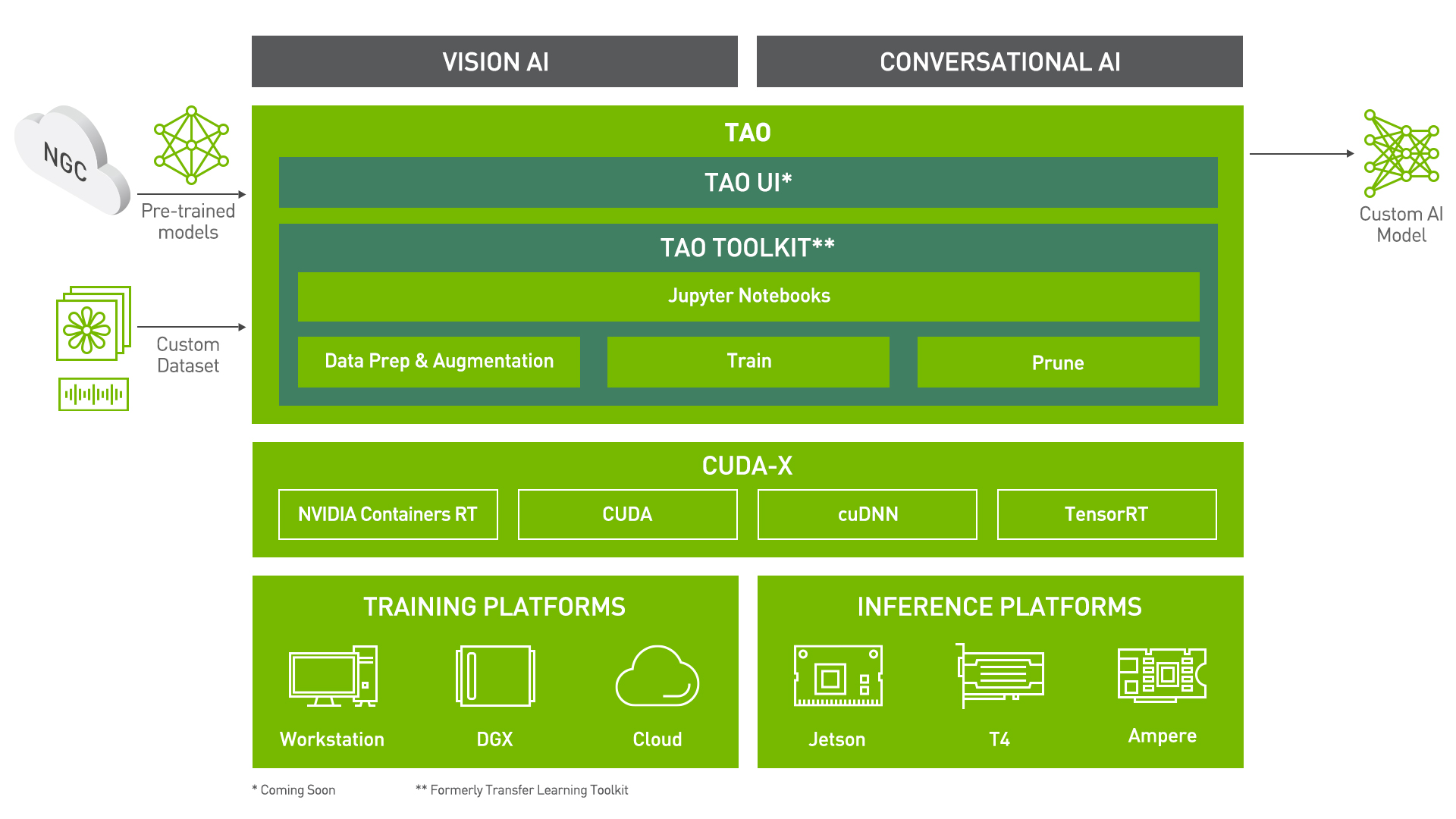

# Punctuation And Capitalization using Transfer Learning Toolkit

Transfer Learning Toolkit (TLT) is a python based AI toolkit for taking purpose-built pre-trained AI models and customizing them with your own data.

Transfer learning extracts learned features from an existing neural network to a new one. Transfer learning is often used when creating a large training dataset is not feasible.

Developers, researchers and software partners building intelligent vision AI apps and services, can bring their own data to fine-tune pre-trained models instead of going through the hassle of training from scratch.

The goal of this toolkit is to reduce that 80 hour workload to an 8 hour workload, which can enable data scientist to have considerably more train-test iterations in the same time frame.

In this notebook, you will learn how to leverage the simplicity and convenience of TLT to:

- Take a BERT model and __Train/Finetune__ it on a dataset for Punctuation and Capitalization task

- Run __Inference__

- __Export__ the model to the ONNX format, or export in the format that is suitable for deployment in Jarvis

The earlier section in this notebook gives a brief introduction to the Punctuation and Capitalization task and the dataset being used.

## Prerequisites

For ease of use, please install TLT inside a python virtual environment. Steps to install the virtual environment are mentioned [here](https://packaging.python.org/guides/installing-using-pip-and-virtual-environments/). We recommend performing this step first and then launching the notebook from the virtual environment.

In addition to installing TLT package, please make sure of the following software requirements:

- python 3.6.9

- docker-ce > 19.03.5

- docker-API 1.40

- nvidia-container-toolkit > 1.3.0-1

- nvidia-container-runtime > 3.4.0-1

- nvidia-docker2 > 2.5.0-1

- nvidia-driver >= 455.23

Let's install TLT. It is a simple pip install!

```

! pip install nvidia-pyindex

! pip install nvidia-tlt

!tlt info --verbose

```

Check if the GPU device(s) are available.

```

!nvidia-smi

```

## Punctuation And Capitalization using TLT

### Task Description

Automatic Speech Recognition (ASR) systems typically generate text with no punctuation and capitalization of the words. This tutorial explains how to implement a model that will predict punctuation and capitalization for each word in a sentence to make ASR output more readable and to boost performance of the named entity recognition, machine translation or text-to-speech models. We'll show how to train a model for this task using a pre-trained BERT model. For every word in our training dataset we’re going to predict:

- punctuation mark that should follow the word

- whether the word should be capitalized

---

### TLT Workflow

### Setting TLT Mounts

Once the TLT gets installed, the next step is to set up the directory mounts that is the ``source`` and ``destination``. The ``source_mount`` will store all the data, pre-trained models and scripts pertaining to Punctuation and Capitalization task. Please select an empty directory for ``source_mount``.

The ``destination_mount`` is the directory to which ``source_mount`` will be mapped to, inside the container.

The TLT launcher uses docker container under the hood, and for our data and results directory to be visible to the docker, they must be mapped.

The launcher can be configured using the config file ``.tlt_mounts.json``. Apart from the mounts you can also configure additional options like the Environmental Variables and amount of Shared Memory available to the TLT launcher.

``Important Note:`` The code below creates a sample ``.tlt_mounts.json`` file. Here, we can map directories in which we save the data, specs, results and cache. You must configure it for your specific case to make sure both your data and results are correctly mapped to the docker. **Please also ensure that the source directories exist on your machine!**

```

%%bash

tee ~/.tlt_mounts.json <<'EOF'

{

"Mounts":[

{

"source": "<YOUR_PATH_TO_DATA_DIR>",

"destination": "/data"

},

{

"source": "<YOUR_PATH_TO_SPECS_DIR>",

"destination": "/specs"

},

{

"source": "<YOUR_PATH_TO_RESULTS_DIR>",

"destination": "/results"

},

{

"source": "<YOUR_PATH_TO_CACHE_DIR>",

"destination": "/root/.cache"

}

]

}

EOF

# Make sure the source directories exist, if not, create them

! mkdir <YOUR_PATH_TO_SPECS_DIR>

! mkdir <YOUR_PATH_TO_RESULTS_DIR>

! mkdir <YOUR_PATH_TO_CACHE_DIR>

```

The rest of the notebook exemplifies the simplicity of the TLT workflow.

Users with any level of Deep Learning knowledge can get started building their own custom models using a simple specification file. It's essentially just one command each to run data download and preprocessing, training, fine-tuning, evaluation, inference, and export.

All configurations happen through YAML specification files

---

### Configuration/Specification Files

All commands in TLT lies in the YAML specification files. There are sample specification files already available for you to use directly or as reference to create your own YAML specification files.

Through these specification files, you can tune many a lot of things like the model, dataset, hyperparameters, optimizer etc.

Each command (like download_and_convert, train, finetune, evaluate etc.) should have a dedicated specification file with configurations pertinent to it.

Here is an example of the training spec file:

```python

save_to: trained-model.tlt

trainer:

max_epochs: 5

model:

punct_label_ids:

O: 0

',': 1

'.': 2

'?': 3

capit_label_ids:

O: 0

U: 1

tokenizer:

tokenizer_name: ${model.language_model.pretrained_model_name} # or sentencepiece =

vocab_file: null # path to vocab file

tokenizer_model: null # only used if tokenizer is sentencepiece

special_tokens: null

language_model:

pretrained_model_name: bert-base-uncased

lm_checkpoint: null

config_file: null # json file, precedence over config

config: null

punct_head:

punct_num_fc_layers: 1

fc_dropout: 0.1

activation: 'relu'

use_transformer_init: true

capit_head:

capit_num_fc_layers: 1

fc_dropout: 0.1

activation: 'relu'

use_transformer_init: true

# Data dir containing dataset.

data_dir: ???

training_ds:

text_file: text_train.txt

labels_file: labels_train.txt

shuffle: true

num_samples: -1 # number of samples to be considered, -1 means the whole the dataset

batch_size: 64

validation_ds:

text_file: text_dev.txt

labels_file: labels_dev.txt

shuffle: false

num_samples: -1 # number of samples to be considered, -1 means the whole the dataset

batch_size: 64

optim:

name: adam

lr: 1e-5

weight_decay: 0.00

sched:

name: WarmupAnnealing

# Scheduler params

warmup_steps: null

warmup_ratio: 0.1

last_epoch: -1

# pytorch lightning args

monitor: val_loss

reduce_on_plateau: false

```

---

### Set Relevant Paths

Please set these paths according to your environment.

```

# NOTE: The following paths are set from the perspective of the TLT Docker.

# The data is saved here

DATA_DIR='/data'

# The configuration files are stored here

SPECS_DIR='/specs/punctuation_and_capitalization'

# The results are saved at this path

RESULTS_DIR='/results/punctuation_and_capitalization'

# Set your encryption key, and use the same key for all commands

KEY='tlt_encode'

```

---

### Downloading Specs

We can proceed to downloading the spec files. The user may choose to modify/rewrite these specs, or even individually override them through the launcher. You can download the default spec files by using the `download_specs` command. <br>

The -o argument indicating the folder where the default specification files will be downloaded, and -r that instructs the script where to save the logs. **Make sure the -o points to an empty folder!**

```

!tlt punctuation_and_capitalization download_specs \

-r $RESULTS_DIR/ \

-o $SPECS_DIR/

```

---

### Dataset

This model can work with any dataset as long as it follows the format specified below. The training and evaluation data is divided into _2 files: text.txt and labels.txt_. Each line of the __text.txt__ file contains text sequences, where words are separated with spaces: [WORD] [SPACE] [WORD] [SPACE] [WORD], for example:

when is the next flight to new york<br>

the next flight is ...<br>

...<br>

The __labels.txt__ file contains corresponding labels for each word in text.txt, the labels are separated with spaces. Each label in labels.txt file consists of 2 symbols:

- the first symbol of the label indicates what punctuation mark should follow the word (where O means no punctuation needed);

- the second symbol determines if a word needs to be capitalized or not (where U indicates that the word should be upper cased, and O - no capitalization needed.)

In this tutorial, we are considering only commas, periods, and question marks the rest punctuation marks were removed. To use more punctuation marks, update the dataset to include desired labels, no changes to the model needed.

Each line of the __labels.txt__ should follow the format: [LABEL] [SPACE] [LABEL] [SPACE] [LABEL] (for labels.txt). For example, labels for the above text.txt file should be:

OU OO OO OO OO OO OU ?U<br>

OU OO OO OO ...<br>

...

The complete list of all possible labels for this task used in this tutorial is: OO, ,O, .O, ?O, OU, ,U, .U, ?U.

### Download and preprocess the data

In this notebook we are going to use a subset of English examples from the [Tatoeba collection of sentences](https://tatoeba.org/eng).

Downloading and preprocessing the data using TLT is as simple as configuring YAML specification file and running the ``download_and_convert_tatoeba`` command. The code cell below uses the default `download_and_convert_tatoeba.yaml` available for the users as a reference.

The configurations in the specification file can be easily overridden using the tlt-launcher CLI as shown below. For instance, we override the ``source_data_dir`` and ``target_data_dir`` configurations.

We encourage you to take a look at the YAML files we have provided.

After executing the cell below, your data folder will contain the following 4 files:

- labels_dev.txt

- labels_train.txt

- text_dev.txt

- text_train.txt

```

### To download and convert the dataset

!tlt punctuation_and_capitalization download_and_convert_tatoeba \

-e $SPECS_DIR/download_and_convert_tatoeba.yaml \

source_data_dir=$DATA_DIR \

target_data_dir=$DATA_DIR

```

---

### Training

In the Punctuation and Capitalization Model, we are jointly training two token-level classifiers on top of the pretrained [BERT](https://arxiv.org/pdf/1810.04805.pdf) model:

- one classifier to predict punctuation and

- the other one - capitalization.

Training a model using TLT is as simple as configuring your spec file and running the train command. The code cell below uses the default train.yaml available for users as reference. It is configured by default to use the ``bert-base-uncased`` pretrained model. Additionally, these configurations could easily be overridden using the tlt-launcher CLI as shown below. For instance, below we override the trainer.max_epochs, training_ds.num_samples and validation_ds.num_samples configurations to suit our needs. We encourage you to take a look at the .yaml spec files we provide!

The command for training is very similar to the of ``download_and_convert_tatoeba``. Instead of ``tlt punctuation_and_capitalization download_and_convert_tatoeba``, we use ``tlt punctuation_and_capitalization train`` instead. The ``tlt punctuation_and_capitalization train`` command has the following arguments:

- ``-e`` : Path to the spec file

- ``-g`` : Number of GPUs to use

- ``-k`` : User specified encryption key to use while saving/loading the model

- ``-r`` : Path to the folder where the outputs should be written. Make sure this is mapped in the tlt_mounts.json

- Any overrides to the spec file eg. trainer.max_epochs

More details about these arguments are present in the [TLT Getting Started Guide](https://docs.nvidia.com/metropolis/TLT/tlt-getting-started-guide/index.html).

``NOTE:`` All file paths corresponds to the destination mounted directory that is visible in the TLT docker container used in backend.

```

### To train the dataset with BERT-base-uncased model

!tlt punctuation_and_capitalization train \

-e $SPECS_DIR/train.yaml \

-g 4 \

-r $RESULTS_DIR/train \

data_dir=$DATA_DIR \

trainer.max_epochs=2 \

training_ds.num_samples=-1 \

validation_ds.num_samples=-1 \

-k $KEY

```

The train command produces a .tlt file called ``trained-model.tlt`` saved at ``$RESULTS_DIR/checkpoints/trained-model.tlt``

### Other tips and tricks:

- To accelerate the training without loss of quality, it is possible to train with these parameters: ``trainer.amp_level="O1"`` and ``trainer.precision=16`` for reduced precision.

- The batch size ``training_ds.batch_size`` may influence the validation accuracy. Larger batch sizes are faster to train with, however, you may get slighly better results with smaller batches.

- You can also change the optimizer parameter ``optim.name`` and can see its effect on the punctuation and capitalization task by the change in accuracy.

- You can specify the number of layers in the head of the model ``model.punct_head.punct_num_fc_layers`` and ``model.capit_head.capit_num_fc_layers``.

---

### Finetuning

As stated above the command for all the tasks are very similar but have different YAML specification files that can be tweaked.

Note: If you wish to proceed with a trained dataset for better inference results, you can find a .nemo model [here](

https://ngc.nvidia.com/catalog/collections/nvidia:nemotrainingframework).

Simply re-name the .nemo file to .tlt and pass it through the finetune pipeline.

```

### To finetune on the dataset

!tlt punctuation_and_capitalization finetune \

-e $SPECS_DIR/finetune.yaml \

-g 4 \

-m $RESULTS_DIR/train/checkpoints/trained-model.tlt \

-r $RESULTS_DIR/finetune \

data_dir=$DATA_DIR \

trainer.max_epochs=3 \

-k $KEY

```

The train command produces a .tlt file called ``finetuned-model.tlt`` saved in the results directory.

---

### Evaluation

To evaluate our TLT model we will run the command below. It is always advisable to look at the YAML file for evaluate to understand the command in a better way.

```

### For evaluation

!tlt punctuation_and_capitalization evaluate \

-e $SPECS_DIR/evaluate.yaml \

-g 4 \

-m $RESULTS_DIR/finetune/checkpoints/finetuned-model.tlt \

data_dir=$DATA_DIR \

-r $RESULTS_DIR/evaluate \

-k $KEY

```

On evaluating the model you will get some results and based on that we can either retrain the model for more epochs or continue with the inference.

---

### Inference

Inference using a TLT trained and fine-tuned model can be done by ``tlt punctuation_and_capitalization infer`` command. It is again advisable to look at the infer.yaml file.

```

### For inference

!tlt punctuation_and_capitalization infer \

-e $SPECS_DIR/infer.yaml \

-g 4 \

-m $RESULTS_DIR/finetune/checkpoints/finetuned-model.tlt \

-r $RESULTS_DIR/infer \

-k $KEY

```

---

### Export to ONNX

[ONNX](https://onnx.ai/) is a popular open format for machine learning models. It enables interoperability between different frameworks, making the path to production much easier.

TLT provides commands to export the .tlt model to the ONNX format in an .eonnx archive. The `export_format` configuration can be set to `ONNX` to achieve this.

The tlt export command for ``punctuation_and_capitalization`` is shown in the cell below.

```

### For export to ONNX

!tlt punctuation_and_capitalization export \

-e $SPECS_DIR/export.yaml \

-g 1 \

-m $RESULTS_DIR/finetune/checkpoints/finetuned-model.tlt \

-r $RESULTS_DIR/export \

-k $KEY \

export_format=ONNX

```

This command exports the model as ``exported-model.eonnx`` which is essentially an archive containing the .onnx model.

---

### Inference using ONNX

TLT provides the capability to use the exported .eonnx model for inference. The command ``tlt punctuation_and_capitalization infer_onnx`` is very similar to the inference command for .tlt models. Again, the input file used is just for demo purposes, you may choose to try out your own custom input.

```

### For inference using ONNX

!tlt punctuation_and_capitalization infer_onnx \

-e $SPECS_DIR/infer_onnx.yaml \

-g 1 \

-m $RESULTS_DIR/export/exported-model.eonnx \

-r $RESULTS_DIR/infer_onnx \

-k $KEY

```

---

### Export to JARVIS

With TLT, you can also export your model in a format that can deployed using [NVIDIA JARVIS](https://developer.nvidia.com/nvidia-jarvis), a highly performant application framework for multi-modal conversational AI services using GPUs. The same command for exporting to ONNX can be used here. The only small variation is the configuration for ``export_format`` in the spec file.

```

### For export to JARVIS

!tlt punctuation_and_capitalization export \

-e $SPECS_DIR/export.yaml \

-g 1 \

-m $RESULTS_DIR/finetune/checkpoints/finetuned-model.tlt \

-r $RESULTS_DIR/export_jarvis \

export_format=JARVIS \

export_to=punct-capit-model.ejrvs \

-k $KEY

```

The model is exported as ``exported-model.ejrvs`` which is in a format suited for deployment in Jarvis.

---

### What next?

You can use TLT to build custom models for your own NLP applications.

| github_jupyter |

# Face Detection using SSD and the Caffe pre-trained model

*by Georgios K. Ouzounis, June 21st, 2021*

This notebook demonstrates face detection in still images using the SSD detector configured with the Caffe pretrained model

## Copy the model files

We need the configuration file and the pre-trained weights

```

%mkdir model/

!wget https://raw.githubusercontent.com/georgiosouzounis/face-detection-ssd-caffe/main/model/deploy.prototxt.txt -O model/deploy.prototxt.txt

!wget https://github.com/georgiosouzounis/face-detection-ssd-caffe/raw/main/model/res10_300x300_ssd_iter_140000.caffemodel -O model/res10_300x300_ssd_iter_140000.caffemodel

```

## Import the libraries

```

# import the relevant libraries

import numpy as np

import cv2 # openCV

# check the opencv version

if cv2.__version__ < '4.5.2':

print("opencv version: ", cv2.__version__)

print("please upgrade your opencv installation to the latest")

# if the openCV version is < 4.4.0 update to the latest otherwise skip this step

!pip install opencv-python==4.5.2.52

```

## Read the model and initialize the detector

```

# load the serialized model from the local copy in model/

model_cfg = "model/deploy.prototxt.txt"

model_weights = "model/res10_300x300_ssd_iter_140000.caffemodel"

# read the model

detector = cv2.dnn.readNetFromCaffe(model_cfg, model_weights)

```

## Get a test image

Set the path to an image containing a face in your own Google Drive or use the example as shown:

```

!wget https://github.com/georgiosouzounis/face-detection-ssd-caffe/raw/main/data/macron.jpg

test_img = "macron.jpg"

# load the test image and create an image blob

image = cv2.imread(test_img)

(h, w) = image.shape[:2]

# display the image

from google.colab.patches import cv2_imshow

cv2_imshow(image)

```

## Deploy the detector

```

# set the intensity scaling factor; 1 in this case, i.e. original image intensities

scalefactor = 1.0

# set the new dimensions for image resizing to match the network requirements

new_size = (300, 300)

# create a blob using OpenCV's DNN functionality and by performing mean subtraction

# to normalize the input

blob = cv2.dnn.blobFromImage(image, scalefactor, new_size, (127.5, 127.5, 127.5), swapRB=True, crop=False)

# set the blob as input to the network

detector.setInput(blob)

# compute the forward pass - detect faces if any

detections = detector.forward()

detections.shape

```

## Analyze the results

Let us review the detections. The shape of the detections is expected to be in the following format: ```[1, 1, N, 7]```, where N is the number of detected bounding boxes. For each detection, the description has the format: ```[image_id, label, conf, x_min, y_min, x_max, y_max]```.

```

detections[0][0][0]

len(detections[0][0])

# set the confidence threshold

confidence_threshold = 0.5

# loop over the detections

for i in range(0, detections.shape[2]):

# extract the confidence (i.e., probability) associated with the prediction

confidence = detections[0, 0, i, 2]

# ignore weak detections

if confidence > confidence_threshold:

# compute the (x, y)-coordinates of the bounding box for the detected object

box = detections[0, 0, i, 3:7] * np.array([w, h, w, h])

(startX, startY, endX, endY) = box.astype("int")

# draw the bounding box of the detected face

cv2.rectangle(image, (startX, startY), (endX, endY), (0, 0, 255), 2)

# print the probability of this detection

text = "confidence: {:.2f}%".format(confidence * 100)

y = startY - 10 if startY - 10 > 10 else startY + 10

cv2.putText(image, text, (startX, y), cv2.FONT_HERSHEY_SIMPLEX, 0.45, (0, 0, 255), 2)

# show the output image

cv2_imshow(image)

```

| github_jupyter |

# Computer Vision Nanodegree

## Project: Image Captioning

---

In this notebook, you will learn how to load and pre-process data from the [COCO dataset](http://cocodataset.org/#home). You will also design a CNN-RNN model for automatically generating image captions.

Note that **any amendments that you make to this notebook will not be graded**. However, you will use the instructions provided in **Step 3** and **Step 4** to implement your own CNN encoder and RNN decoder by making amendments to the **models.py** file provided as part of this project. Your **models.py** file **will be graded**.

Feel free to use the links below to navigate the notebook:

- [Step 1](#step1): Explore the Data Loader

- [Step 2](#step2): Use the Data Loader to Obtain Batches

- [Step 3](#step3): Experiment with the CNN Encoder

- [Step 4](#step4): Implement the RNN Decoder

<a id='step1'></a>

## Step 1: Explore the Data Loader

We have already written a [data loader](http://pytorch.org/docs/master/data.html#torch.utils.data.DataLoader) that you can use to load the COCO dataset in batches.

In the code cell below, you will initialize the data loader by using the `get_loader` function in **data_loader.py**.

> For this project, you are not permitted to change the **data_loader.py** file, which must be used as-is.

The `get_loader` function takes as input a number of arguments that can be explored in **data_loader.py**. Take the time to explore these arguments now by opening **data_loader.py** in a new window. Most of the arguments must be left at their default values, and you are only allowed to amend the values of the arguments below:

1. **`transform`** - an [image transform](http://pytorch.org/docs/master/torchvision/transforms.html) specifying how to pre-process the images and convert them to PyTorch tensors before using them as input to the CNN encoder. For now, you are encouraged to keep the transform as provided in `transform_train`. You will have the opportunity later to choose your own image transform to pre-process the COCO images.

2. **`mode`** - one of `'train'` (loads the training data in batches) or `'test'` (for the test data). We will say that the data loader is in training or test mode, respectively. While following the instructions in this notebook, please keep the data loader in training mode by setting `mode='train'`.

3. **`batch_size`** - determines the batch size. When training the model, this is number of image-caption pairs used to amend the model weights in each training step.

4. **`vocab_threshold`** - the total number of times that a word must appear in the in the training captions before it is used as part of the vocabulary. Words that have fewer than `vocab_threshold` occurrences in the training captions are considered unknown words.

5. **`vocab_from_file`** - a Boolean that decides whether to load the vocabulary from file.

We will describe the `vocab_threshold` and `vocab_from_file` arguments in more detail soon. For now, run the code cell below. Be patient - it may take a couple of minutes to run!

```

import sys

sys.path.append('/opt/cocoapi/PythonAPI')

from pycocotools.coco import COCO

!pip install nltk

import nltk

nltk.download('punkt')

from data_loader import get_loader

from torchvision import transforms

# Define a transform to pre-process the training images.

transform_train = transforms.Compose([

transforms.Resize(256), # smaller edge of image resized to 256

transforms.RandomCrop(224), # get 224x224 crop from random location

transforms.RandomHorizontalFlip(), # horizontally flip image with probability=0.5

transforms.ToTensor(), # convert the PIL Image to a tensor

transforms.Normalize((0.485, 0.456, 0.406), # normalize image for pre-trained model

(0.229, 0.224, 0.225))])

# Set the minimum word count threshold.

vocab_threshold = 5

# Specify the batch size.

batch_size = 10

# Obtain the data loader.

# data_loader = get_loader(transform=transform_train,

# mode='train',

# batch_size=batch_size,

# vocab_threshold=vocab_threshold,

# vocab_from_file=False)

```

When you ran the code cell above, the data loader was stored in the variable `data_loader`.

You can access the corresponding dataset as `data_loader.dataset`. This dataset is an instance of the `CoCoDataset` class in **data_loader.py**. If you are unfamiliar with data loaders and datasets, you are encouraged to review [this PyTorch tutorial](http://pytorch.org/tutorials/beginner/data_loading_tutorial.html).

### Exploring the `__getitem__` Method

The `__getitem__` method in the `CoCoDataset` class determines how an image-caption pair is pre-processed before being incorporated into a batch. This is true for all `Dataset` classes in PyTorch; if this is unfamiliar to you, please review [the tutorial linked above](http://pytorch.org/tutorials/beginner/data_loading_tutorial.html).

When the data loader is in training mode, this method begins by first obtaining the filename (`path`) of a training image and its corresponding caption (`caption`).

#### Image Pre-Processing

Image pre-processing is relatively straightforward (from the `__getitem__` method in the `CoCoDataset` class):

```python

# Convert image to tensor and pre-process using transform

image = Image.open(os.path.join(self.img_folder, path)).convert('RGB')

image = self.transform(image)

```

After loading the image in the training folder with name `path`, the image is pre-processed using the same transform (`transform_train`) that was supplied when instantiating the data loader.

#### Caption Pre-Processing

The captions also need to be pre-processed and prepped for training. In this example, for generating captions, we are aiming to create a model that predicts the next token of a sentence from previous tokens, so we turn the caption associated with any image into a list of tokenized words, before casting it to a PyTorch tensor that we can use to train the network.

To understand in more detail how COCO captions are pre-processed, we'll first need to take a look at the `vocab` instance variable of the `CoCoDataset` class. The code snippet below is pulled from the `__init__` method of the `CoCoDataset` class:

```python

def __init__(self, transform, mode, batch_size, vocab_threshold, vocab_file, start_word,

end_word, unk_word, annotations_file, vocab_from_file, img_folder):

...

self.vocab = Vocabulary(vocab_threshold, vocab_file, start_word,

end_word, unk_word, annotations_file, vocab_from_file)

...

```

From the code snippet above, you can see that `data_loader.dataset.vocab` is an instance of the `Vocabulary` class from **vocabulary.py**. Take the time now to verify this for yourself by looking at the full code in **data_loader.py**.

We use this instance to pre-process the COCO captions (from the `__getitem__` method in the `CoCoDataset` class):

```python

# Convert caption to tensor of word ids.

tokens = nltk.tokenize.word_tokenize(str(caption).lower()) # line 1

caption = [] # line 2

caption.append(self.vocab(self.vocab.start_word)) # line 3

caption.extend([self.vocab(token) for token in tokens]) # line 4

caption.append(self.vocab(self.vocab.end_word)) # line 5

caption = torch.Tensor(caption).long() # line 6

```

As you will see soon, this code converts any string-valued caption to a list of integers, before casting it to a PyTorch tensor. To see how this code works, we'll apply it to the sample caption in the next code cell.

```

sample_caption = 'A person doing a trick on a rail while riding a skateboard.'

```

In **`line 1`** of the code snippet, every letter in the caption is converted to lowercase, and the [`nltk.tokenize.word_tokenize`](http://www.nltk.org/) function is used to obtain a list of string-valued tokens. Run the next code cell to visualize the effect on `sample_caption`.

```

import nltk

sample_tokens = nltk.tokenize.word_tokenize(str(sample_caption).lower())

print(sample_tokens)

```

In **`line 2`** and **`line 3`** we initialize an empty list and append an integer to mark the start of a caption. The [paper](https://arxiv.org/pdf/1411.4555.pdf) that you are encouraged to implement uses a special start word (and a special end word, which we'll examine below) to mark the beginning (and end) of a caption.

This special start word (`"<start>"`) is decided when instantiating the data loader and is passed as a parameter (`start_word`). You are **required** to keep this parameter at its default value (`start_word="<start>"`).

As you will see below, the integer `0` is always used to mark the start of a caption.

```

sample_caption = []

start_word = data_loader.dataset.vocab.start_word

print('Special start word:', start_word)

sample_caption.append(data_loader.dataset.vocab(start_word))

print(sample_caption)

```

In **`line 4`**, we continue the list by adding integers that correspond to each of the tokens in the caption.

```

sample_caption.extend([data_loader.dataset.vocab(token) for token in sample_tokens])

print(sample_caption)

```

In **`line 5`**, we append a final integer to mark the end of the caption.

Identical to the case of the special start word (above), the special end word (`"<end>"`) is decided when instantiating the data loader and is passed as a parameter (`end_word`). You are **required** to keep this parameter at its default value (`end_word="<end>"`).

As you will see below, the integer `1` is always used to mark the end of a caption.

```

end_word = data_loader.dataset.vocab.end_word

print('Special end word:', end_word)

sample_caption.append(data_loader.dataset.vocab(end_word))

print(sample_caption)

```

Finally, in **`line 6`**, we convert the list of integers to a PyTorch tensor and cast it to [long type](http://pytorch.org/docs/master/tensors.html#torch.Tensor.long). You can read more about the different types of PyTorch tensors on the [website](http://pytorch.org/docs/master/tensors.html).

```

import torch

sample_caption = torch.Tensor(sample_caption).long()

print(sample_caption)

```

And that's it! In summary, any caption is converted to a list of tokens, with _special_ start and end tokens marking the beginning and end of the sentence:

```

[<start>, 'a', 'person', 'doing', 'a', 'trick', 'while', 'riding', 'a', 'skateboard', '.', <end>]

```

This list of tokens is then turned into a list of integers, where every distinct word in the vocabulary has an associated integer value:

```

[0, 3, 98, 754, 3, 396, 207, 139, 3, 753, 18, 1]

```

Finally, this list is converted to a PyTorch tensor. All of the captions in the COCO dataset are pre-processed using this same procedure from **`lines 1-6`** described above.

As you saw, in order to convert a token to its corresponding integer, we call `data_loader.dataset.vocab` as a function. The details of how this call works can be explored in the `__call__` method in the `Vocabulary` class in **vocabulary.py**.

```python

def __call__(self, word):

if not word in self.word2idx:

return self.word2idx[self.unk_word]

return self.word2idx[word]

```

The `word2idx` instance variable is a Python [dictionary](https://docs.python.org/3/tutorial/datastructures.html#dictionaries) that is indexed by string-valued keys (mostly tokens obtained from training captions). For each key, the corresponding value is the integer that the token is mapped to in the pre-processing step.

Use the code cell below to view a subset of this dictionary.

```

# Preview the word2idx dictionary.

dict(list(data_loader.dataset.vocab.word2idx.items())[:10])

```

We also print the total number of keys.

```

# Print the total number of keys in the word2idx dictionary.

print('Total number of tokens in vocabulary:', len(data_loader.dataset.vocab))

```

As you will see if you examine the code in **vocabulary.py**, the `word2idx` dictionary is created by looping over the captions in the training dataset. If a token appears no less than `vocab_threshold` times in the training set, then it is added as a key to the dictionary and assigned a corresponding unique integer. You will have the option later to amend the `vocab_threshold` argument when instantiating your data loader. Note that in general, **smaller** values for `vocab_threshold` yield a **larger** number of tokens in the vocabulary. You are encouraged to check this for yourself in the next code cell by decreasing the value of `vocab_threshold` before creating a new data loader.

```

# Modify the minimum word count threshold.

vocab_threshold = 5

# Obtain the data loader.

data_loader = get_loader(transform=transform_train,

mode='train',

batch_size=batch_size,

vocab_threshold=vocab_threshold,

vocab_from_file=False)

# Print the total number of keys in the word2idx dictionary.

print('Total number of tokens in vocabulary:', len(data_loader.dataset.vocab))

```

There are also a few special keys in the `word2idx` dictionary. You are already familiar with the special start word (`"<start>"`) and special end word (`"<end>"`). There is one more special token, corresponding to unknown words (`"<unk>"`). All tokens that don't appear anywhere in the `word2idx` dictionary are considered unknown words. In the pre-processing step, any unknown tokens are mapped to the integer `2`.

```

unk_word = data_loader.dataset.vocab.unk_word

print('Special unknown word:', unk_word)

print('All unknown words are mapped to this integer:', data_loader.dataset.vocab(unk_word))

```

Check this for yourself below, by pre-processing the provided nonsense words that never appear in the training captions.

```

print(data_loader.dataset.vocab('jfkafejw'))

print(data_loader.dataset.vocab('ieowoqjf'))

```

The final thing to mention is the `vocab_from_file` argument that is supplied when creating a data loader. To understand this argument, note that when you create a new data loader, the vocabulary (`data_loader.dataset.vocab`) is saved as a [pickle](https://docs.python.org/3/library/pickle.html) file in the project folder, with filename `vocab.pkl`.

If you are still tweaking the value of the `vocab_threshold` argument, you **must** set `vocab_from_file=False` to have your changes take effect.

But once you are happy with the value that you have chosen for the `vocab_threshold` argument, you need only run the data loader *one more time* with your chosen `vocab_threshold` to save the new vocabulary to file. Then, you can henceforth set `vocab_from_file=True` to load the vocabulary from file and speed the instantiation of the data loader. Note that building the vocabulary from scratch is the most time-consuming part of instantiating the data loader, and so you are strongly encouraged to set `vocab_from_file=True` as soon as you are able.

Note that if `vocab_from_file=True`, then any supplied argument for `vocab_threshold` when instantiating the data loader is completely ignored.

```

# Obtain the data loader (from file). Note that it runs much faster than before!

data_loader = get_loader(transform=transform_train,

mode='train',

batch_size=batch_size,

vocab_from_file=True)

```

In the next section, you will learn how to use the data loader to obtain batches of training data.

<a id='step2'></a>

## Step 2: Use the Data Loader to Obtain Batches

The captions in the dataset vary greatly in length. You can see this by examining `data_loader.dataset.caption_lengths`, a Python list with one entry for each training caption (where the value stores the length of the corresponding caption).

In the code cell below, we use this list to print the total number of captions in the training data with each length. As you will see below, the majority of captions have length 10. Likewise, very short and very long captions are quite rare.

```

from collections import Counter

# Tally the total number of training captions with each length.

counter = Counter(data_loader.dataset.caption_lengths)

lengths = sorted(counter.items(), key=lambda pair: pair[1], reverse=True)

for value, count in lengths:

print('value: %2d --- count: %5d' % (value, count))

```

To generate batches of training data, we begin by first sampling a caption length (where the probability that any length is drawn is proportional to the number of captions with that length in the dataset). Then, we retrieve a batch of size `batch_size` of image-caption pairs, where all captions have the sampled length. This approach for assembling batches matches the procedure in [this paper](https://arxiv.org/pdf/1502.03044.pdf) and has been shown to be computationally efficient without degrading performance.

Run the code cell below to generate a batch. The `get_train_indices` method in the `CoCoDataset` class first samples a caption length, and then samples `batch_size` indices corresponding to training data points with captions of that length. These indices are stored below in `indices`.

These indices are supplied to the data loader, which then is used to retrieve the corresponding data points. The pre-processed images and captions in the batch are stored in `images` and `captions`.

```

import numpy as np

import torch.utils.data as data

# Randomly sample a caption length, and sample indices with that length.

indices = data_loader.dataset.get_train_indices()

print('sampled indices:', indices)

# Create and assign a batch sampler to retrieve a batch with the sampled indices.

new_sampler = data.sampler.SubsetRandomSampler(indices=indices)

data_loader.batch_sampler.sampler = new_sampler

# Obtain the batch.

images, captions = next(iter(data_loader))

print('images.shape:', images.shape)

print('captions.shape:', captions.shape)

# (Optional) Uncomment the lines of code below to print the pre-processed images and captions.

print('images:', images)

print('captions:', captions)

```

Each time you run the code cell above, a different caption length is sampled, and a different batch of training data is returned. Run the code cell multiple times to check this out!

You will train your model in the next notebook in this sequence (**2_Training.ipynb**). This code for generating training batches will be provided to you.

> Before moving to the next notebook in the sequence (**2_Training.ipynb**), you are strongly encouraged to take the time to become very familiar with the code in **data_loader.py** and **vocabulary.py**. **Step 1** and **Step 2** of this notebook are designed to help facilitate a basic introduction and guide your understanding. However, our description is not exhaustive, and it is up to you (as part of the project) to learn how to best utilize these files to complete the project. __You should NOT amend any of the code in either *data_loader.py* or *vocabulary.py*.__

In the next steps, we focus on learning how to specify a CNN-RNN architecture in PyTorch, towards the goal of image captioning.

<a id='step3'></a>

## Step 3: Experiment with the CNN Encoder

Run the code cell below to import `EncoderCNN` and `DecoderRNN` from **model.py**.

```

# Watch for any changes in model.py, and re-load it automatically.

% load_ext autoreload

% autoreload 2

# Import EncoderCNN and DecoderRNN.

from model import EncoderCNN, DecoderRNN

```

In the next code cell we define a `device` that you will use move PyTorch tensors to GPU (if CUDA is available). Run this code cell before continuing.

```

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

```

Run the code cell below to instantiate the CNN encoder in `encoder`.

The pre-processed images from the batch in **Step 2** of this notebook are then passed through the encoder, and the output is stored in `features`.

```

# Specify the dimensionality of the image embedding.

embed_size = 256

#-#-#-# Do NOT modify the code below this line. #-#-#-#

# Initialize the encoder. (Optional: Add additional arguments if necessary.)

encoder = EncoderCNN(embed_size)

# Move the encoder to GPU if CUDA is available.

encoder.to(device)

# Move last batch of images (from Step 2) to GPU if CUDA is available.

images = images.to(device)

# Pass the images through the encoder.

features = encoder(images)

print('type(features):', type(features))

print('features.shape:', features.shape)

# Check that your encoder satisfies some requirements of the project! :D

assert type(features)==torch.Tensor, "Encoder output needs to be a PyTorch Tensor."

assert (features.shape[0]==batch_size) & (features.shape[1]==embed_size), "The shape of the encoder output is incorrect."

```

The encoder that we provide to you uses the pre-trained ResNet-50 architecture (with the final fully-connected layer removed) to extract features from a batch of pre-processed images. The output is then flattened to a vector, before being passed through a `Linear` layer to transform the feature vector to have the same size as the word embedding.

You are welcome (and encouraged) to amend the encoder in **model.py**, to experiment with other architectures. In particular, consider using a [different pre-trained model architecture](http://pytorch.org/docs/master/torchvision/models.html). You may also like to [add batch normalization](http://pytorch.org/docs/master/nn.html#normalization-layers).

> You are **not** required to change anything about the encoder.

For this project, you **must** incorporate a pre-trained CNN into your encoder. Your `EncoderCNN` class must take `embed_size` as an input argument, which will also correspond to the dimensionality of the input to the RNN decoder that you will implement in Step 4. When you train your model in the next notebook in this sequence (**2_Training.ipynb**), you are welcome to tweak the value of `embed_size`.

If you decide to modify the `EncoderCNN` class, save **model.py** and re-execute the code cell above. If the code cell returns an assertion error, then please follow the instructions to modify your code before proceeding. The assert statements ensure that `features` is a PyTorch tensor with shape `[batch_size, embed_size]`.

<a id='step4'></a>

## Step 4: Implement the RNN Decoder

Before executing the next code cell, you must write `__init__` and `forward` methods in the `DecoderRNN` class in **model.py**. (Do **not** write the `sample` method yet - you will work with this method when you reach **3_Inference.ipynb**.)

> The `__init__` and `forward` methods in the `DecoderRNN` class are the only things that you **need** to modify as part of this notebook. You will write more implementations in the notebooks that appear later in the sequence.

Your decoder will be an instance of the `DecoderRNN` class and must accept as input:

- the PyTorch tensor `features` containing the embedded image features (outputted in Step 3, when the last batch of images from Step 2 was passed through `encoder`), along with

- a PyTorch tensor corresponding to the last batch of captions (`captions`) from Step 2.

Note that the way we have written the data loader should simplify your code a bit. In particular, every training batch will contain pre-processed captions where all have the same length (`captions.shape[1]`), so **you do not need to worry about padding**.

> While you are encouraged to implement the decoder described in [this paper](https://arxiv.org/pdf/1411.4555.pdf), you are welcome to implement any architecture of your choosing, as long as it uses at least one RNN layer, with hidden dimension `hidden_size`.

Although you will test the decoder using the last batch that is currently stored in the notebook, your decoder should be written to accept an arbitrary batch (of embedded image features and pre-processed captions [where all captions have the same length]) as input.

In the code cell below, `outputs` should be a PyTorch tensor with size `[batch_size, captions.shape[1], vocab_size]`. Your output should be designed such that `outputs[i,j,k]` contains the model's predicted score, indicating how likely the `j`-th token in the `i`-th caption in the batch is the `k`-th token in the vocabulary. In the next notebook of the sequence (**2_Training.ipynb**), we provide code to supply these scores to the [`torch.nn.CrossEntropyLoss`](http://pytorch.org/docs/master/nn.html#torch.nn.CrossEntropyLoss) optimizer in PyTorch.

```

# Specify the number of features in the hidden state of the RNN decoder.

hidden_size = 512

#-#-#-# Do NOT modify the code below this line. #-#-#-#

# Store the size of the vocabulary.

vocab_size = len(data_loader.dataset.vocab)

# Initialize the decoder.

decoder = DecoderRNN(embed_size, hidden_size, vocab_size)

# Move the decoder to GPU if CUDA is available.

decoder.to(device)

# Move last batch of captions (from Step 1) to GPU if CUDA is available

captions = captions.to(device)

# Pass the encoder output and captions through the decoder.

outputs = decoder(features, captions)

print('type(outputs):', type(outputs))

print('outputs.shape:', outputs.shape)

# Check that your decoder satisfies some requirements of the project! :D

assert type(outputs)==torch.Tensor, "Decoder output needs to be a PyTorch Tensor."

assert (outputs.shape[0]==batch_size) & (outputs.shape[1]==captions.shape[1]) & (outputs.shape[2]==vocab_size), "The shape of the decoder output is incorrect."

```

When you train your model in the next notebook in this sequence (**2_Training.ipynb**), you are welcome to tweak the value of `hidden_size`.

| github_jupyter |

```

import pandas as pd

from datetime import date, timedelta, datetime

from bs4 import BeautifulSoup

import requests

import re

df_vix_base = pd.read_csv('http://www.cboe.com/publish/scheduledtask/mktdata/datahouse/vixpc.csv',

names=['Date', 'VIX P/C Ratio', 'Puts', 'Calls', 'VIX Options Volume'],

index_col='Date',

parse_dates=['Date'],

skiprows=3)

df_vix_base = df_vix_base[['VIX P/C Ratio', 'VIX Options Volume']]

df_spx_base = pd.read_csv('http://www.cboe.com/publish/scheduledtask/mktdata/datahouse/spxpc.csv',

names=['Date', 'SPX P/C Ratio', 'Puts', 'Calls', 'SPX Options Volume'],

index_col='Date',

parse_dates=['Date'],

skiprows=3)

df_spx_base = df_spx_base[['SPX P/C Ratio', 'SPX Options Volume']]

df_vix_base

df_spx_base

df_cboe_base = df_vix_base.join(df_spx_base, how='inner')

df_cboe_base

cboe_data_dict = {}

n = len(df_cboe_base)

last_known_date = df_cboe_base.index[n-1]

curr_date = last_known_date

end = datetime.today()

delta = timedelta(days=1)

while curr_date <= end:

y, m, d = curr_date.year, curr_date.month, curr_date.day

print(f'Scraping date: {y}-{m:02}-{d:02}')

url = f'https://markets.cboe.com/us/options/market_statistics/daily/?mkt=cone&dt={y}-{m}-{d}'

html = requests.get(url).content

soup = BeautifulSoup(html, 'html.parser')

date_id = 'stats-date-header'

date_string = soup.find(id=date_id).get_text()

date_string = ' '.join(date_string.split(' ')[-3:])

page_date = datetime.strptime(date_string, "%B %d, %Y")

table_class = 'bats-table bats-table--left'

tables = soup.findAll('table', {'class' : table_class})

# to find the table indices

# Summary: 0, VIX: 5, SPX + SPXW: 6

summary_index, vix_index, spx_index = -1, -1, -1

for i,t in enumerate(tables):

s = str(t)

if 'RATIOS' in s:

summary_index = i

elif 'CBOE VOLATILITY INDEX (VIX)' in s:

vix_index = i

elif 'SPX + SPXW' in s:

spx_index = i

if min([summary_index, vix_index, spx_index]) >= 0:

break

table_summary = tables[summary_index]

table_vix = tables[vix_index]

table_spx = tables[spx_index]

df_summary = pd.read_html(str(table_summary))[0]

df_vix = pd.read_html(str(table_vix), skiprows=1)[0]

df_spx = pd.read_html(str(table_spx), skiprows=1)[0]

vix_pc_ratio = df_summary.iloc[4,1]

spx_pc_ratio = df_summary.iloc[5,1]

vix_volume = df_vix.iloc[0,3]

spx_volume = df_spx.iloc[0,3]

cboe_data_dict[page_date] = [

vix_pc_ratio,

vix_volume,

spx_pc_ratio,

spx_volume

]

curr_date += delta

cboe_data_dict

df_cboe_new = pd.DataFrame.from_dict(

cboe_data_dict,

orient='index',

columns=['VIX P/C Ratio', 'VIX Options Volume',

'SPX P/C Ratio', 'SPX Options Volume'])

df_cboe_new.index.rename('Date', inplace=True)

df_cboe_new.tail(20)

df_cboe_full = pd.concat([df_cboe_base, df_cboe_new]).reset_index()

df_cboe_full.tail(20)

df_cboe_full = df_cboe_full.drop_duplicates(subset='Date').set_index('Date').sort_index()

df_cboe_full

df_cboe_full.to_csv('CBOE-data.csv')

```

| github_jupyter |

# This notebook processes CAFE f1 ocean daily data for building climatologies. Only the first 2 years of the forecasts from the period 2003-2015 are used.

Temporary files are written to `tmp_fldr`

```

# Import packages -----

import pandas as pd

import xarray as xr

import numpy as np

from pylatte import utils

from ipywidgets import FloatProgress

```

#### Initialise

```

# Location of forecast data -----

fcst_folder = '/OSM/CBR/OA_DCFP/data/model_output/CAFE/forecasts/v1/'

fcst_filename = 'ocean_daily*'

fields = pd.DataFrame( \

{'name_CAFE': ['sst', 'patm_t', 'eta_t', 'sss', 'u_surf', 'v_surf'],

'name_std' : ['sst', 'patm', 'eta', 'sss', 'u_s', 'v_s']}

)

name_dict = fields.set_index('name_CAFE').to_dict()['name_std']

fields

```

#### Save each init month and variable separately due to memory considerations (this is only necessary for multi-level variables, but all are dealt with in the same way for simplicity)

```

# Temporary folder location -----

tmp_fldr = '/OSM/CBR/OA_DCFP/data/intermediate_products/pylatte_climatologies/tmp/'

# Loop over forecasts, saving numerator and denominator of mean -----

years = range(2003,2016)

months = range(1,13)

ensembles = range(1,12)

for idx, variable in enumerate(fields['name_CAFE']):

print(variable)

print('----------')

for year in years:

print(year)

for month in months:

print(month)

# First see if file already exists -----

savename = 'cafe.fcst.v1.ocean.' + fields['name_std'][idx] + '.' + str(year) + '.' + str(month) + '.clim.nc'

try:

xr.open_dataset(tmp_fldr + savename, autoclose=True)

except:

ens_list = []

ens = []

empty = True

for ie, ensemble in enumerate(ensembles):

path = fcst_folder + '/yr' + str(year) + '/mn' + str(month) + \

'/OUTPUT.' + str(ensemble) + '/' + fcst_filename + '.nc'

# Try to stack ensembles into a list -----

try:

dataset = xr.open_mfdataset(path, autoclose=True)[variable]

# Truncate to 2 year forecasts -----

n_trunc = min([731, len(dataset.time)])

dataset = dataset.isel(time=range(n_trunc))

if 'xu_ocean' in dataset.dims:

dataset = dataset.rename({'xu_ocean':'lon_u','yu_ocean':'lat_u'})

if 'xt_ocean' in dataset.dims:

dataset = dataset.rename({'xt_ocean':'lon_t','yt_ocean':'lat_t'})

ens_list.append(dataset.rename(fields['name_std'][idx]))

ens.append(ie+1)

empty = False

except OSError:

# File does not exist -----

pass

# Concatenate ensembles -----

if empty == False:

ds = xr.concat(ens_list, dim='ensemble')

ds['ensemble'] = ens

# Make month_day array of month-day -----

m = np.array([str(i).zfill(2) + '-' for i in ds.time.dt.month.values])

d = np.array([str(i).zfill(2) for i in ds.time.dt.day.values])

md = np.core.defchararray.add(m, d)

# Replace time array with month_day array and groupby -----

ds['time'] = md

ds_clim = ds.groupby('time').sum(dim='time',keep_attrs=True).to_dataset(name='sum').mean(dim='ensemble')

get_len = lambda ds, dim : ds.count(dim=dim)

ds_clim['count'] = ds['time'].groupby('time').apply(get_len, dim='time')

ds_clim.to_netcdf(path = tmp_fldr + savename, mode = 'w')

del ds, ds_clim

```

#### Combine and write into single climatology file

```

# Use year 2016 as time -----

path = fcst_folder + '/yr2016/mn1/OUTPUT.1/' + fcst_filename + '.nc'

dataset = xr.open_mfdataset(path, autoclose=True)

time_use = dataset.time[:366]

# Loop over all variables -----

for idx, variable in enumerate(fields['name_std']):

print(variable)

print('----------')

name = 'cafe.fcst.v1.ocean.' + fields['name_std'][idx] + '*' + '.clim.nc'

ds = xr.open_mfdataset(tmp_fldr + name, autoclose=True, concat_dim='run')

numer = ds['sum'].sum(dim='run')

denom = ds['count'].sum(dim='run')

if idx == 0:

clim = (numer / denom).to_dataset(name=variable)

else:

clim[variable] = (numer / denom).rename(variable)

clim['time'] = time_use

# Save the climatology -----

save_fldr = '/OSM/CBR/OA_DCFP/data/intermediate_products/pylatte_climatologies/'