
```

import neuroglancer

import numpy as np

```

Create a new (initially empty) viewer. This starts a webserver in a background thread, which serves a copy of the Neuroglancer client, and which also can serve local volume data and handles sending and receiving Neuroglancer state updates.

```

viewer = neuroglancer.Viewer()

```

Print a link to the viewer (only valid while the notebook kernel is running). Note that while the Viewer is running, anyone with the link can obtain any authentication credentials that the neuroglancer Python module obtains. Therefore, be very careful about sharing the link, and keep in mind that sharing the notebook will likely also share viewer links.

```

viewer

```

Add some example layers using the precomputed data source (HHMI Janelia FlyEM FIB-25 dataset).

```

with viewer.txn() as s:
    s.layers['image'] = neuroglancer.ImageLayer(source='precomputed://gs://neuroglancer-public-data/flyem_fib-25/image')
    s.layers['segmentation'] = neuroglancer.SegmentationLayer(source='precomputed://gs://neuroglancer-public-data/flyem_fib-25/ground_truth', selected_alpha=0.3)

```

Display a numpy array as an additional layer. A reference to the numpy array is kept only as long as the layer remains in the viewer.

```
import cloudvolume
image_vol = cloudvolume.CloudVolume('https://storage.googleapis.com/neuroglancer-public-data/flyem_fib-25/image', mip=0, bounded=True, progress=False)
a = np.zeros((200,200,200), np.uint8)
def make_thresholded(threshold):
    a[...] = np.transpose(image_vol[3000:3200,3000:3200,3000:3200][...,0], (2,1,0)) > threshold
make_thresholded(110)
# This volume handle can be used to notify the viewer that the data has changed.
volume = neuroglancer.LocalVolume(a, voxel_size=[8, 8, 8], voxel_offset=[3000, 3000, 3000])
with viewer.txn() as s:
    s.layers['overlay'] = neuroglancer.ImageLayer(
        source=volume,
        # Define a custom shader to display this mask array as red+alpha.
        shader="""
void main() {
  float v = toNormalized(getDataValue(0)) * 255.0;
  emitRGBA(vec4(v, 0.0, 0.0, v));
}
""",
    )
```

Move the viewer position.

```
with viewer.txn() as s:
    s.voxel_coordinates = [3000, 3000, 3000]
```

Hide the segmentation layer.

```
with viewer.txn() as s:
    s.layers['segmentation'].visible = False
```

Modify the overlay volume, and call `invalidate()` to notify the Neuroglancer client.

```

make_thresholded(100)

volume.invalidate()

```

Select a couple of segments.

```

with viewer.txn() as s:
    s.layers['segmentation'].segments.update([1752, 88847])
    s.layers['segmentation'].visible = True

```

Print the neuroglancer viewer state. The Neuroglancer Python library provides a set of Python objects that wrap the JSON-encoded viewer state. `viewer.state` returns a read-only snapshot of the state. To modify the state, use the `viewer.txn()` function, or `viewer.set_state`.

```

viewer.state

```

Print the set of selected segments.

```

viewer.state.layers['segmentation'].segments

```

Update the state by calling `set_state` directly.

```

import copy

new_state = copy.deepcopy(viewer.state)

new_state.layers['segmentation'].segments.add(10625)

viewer.set_state(new_state)

```

Bind the 't' key in neuroglancer to a Python action.

```

num_actions = 0

def my_action(s):
    global num_actions
    num_actions += 1
    with viewer.config_state.txn() as st:
        st.status_messages['hello'] = ('Got action %d: mouse position = %r' %
                                       (num_actions, s.mouse_voxel_coordinates))
    print('Got my-action')
    print(' Mouse position: %s' % (s.mouse_voxel_coordinates,))
    print(' Layer selected values: %s' % (s.selected_values,))
viewer.actions.add('my-action', my_action)
with viewer.config_state.txn() as s:
    s.input_event_bindings.viewer['keyt'] = 'my-action'
    s.status_messages['hello'] = 'Welcome to this example'

```

Change the view layout to 3-d.

```

with viewer.txn() as s:
    s.layout = '3d'
    s.perspective_zoom = 300

```

Take a screenshot (useful for creating publication figures, or for generating videos). While capturing the screenshot, we hide the UI and specify the viewer size so that we get a result independent of the browser size.

```

with viewer.config_state.txn() as s:
    s.show_ui_controls = False
    s.show_panel_borders = False
    s.viewer_size = [1000, 1000]
from ipywidgets import Image
screenshot_image = Image(value=viewer.screenshot().screenshot.image)
with viewer.config_state.txn() as s:
    s.show_ui_controls = True
    s.show_panel_borders = True
    s.viewer_size = None
screenshot_image

```

Change the view layout to show the segmentation side by side with the image, rather than overlaid. This can also be done from the UI by dragging and dropping. By default the side-by-side views have synchronized position, orientation, and zoom level, but this can be changed.

```

with viewer.txn() as s:
    s.layout = neuroglancer.row_layout(
        [neuroglancer.LayerGroupViewer(layers=['image', 'overlay']),
         neuroglancer.LayerGroupViewer(layers=['segmentation'])])

```

Remove the overlay layer.

```

with viewer.txn() as s:
    s.layout = neuroglancer.row_layout(
        [neuroglancer.LayerGroupViewer(layers=['image']),
         neuroglancer.LayerGroupViewer(layers=['segmentation'])])

```

Create a publicly sharable URL to the viewer state (only works for external data sources, not layers served from Python). The Python objects for representing the viewer state (`neuroglancer.ViewerState` and friends) can also be used independently from the interactive Python-tied viewer to create Neuroglancer links.

```

print(neuroglancer.to_url(viewer.state))

```

Stop the Neuroglancer web server, which invalidates any existing links to the Python-tied viewer.

```

neuroglancer.stop()

```


# k-Nearest Neighbor (kNN) exercise

*Complete and hand in this completed worksheet (including its outputs and any supporting code outside of the worksheet) with your assignment submission. For more details see the [assignments page](http://vision.stanford.edu/teaching/cs231n/assignments.html) on the course website.*

The kNN classifier consists of two stages:

- During training, the classifier takes the training data and simply remembers it

- During testing, kNN classifies every test image by comparing it to all training images and transferring the labels of the k most similar training examples

- The value of k is cross-validated

In this exercise you will implement these steps, learn the basic image classification pipeline and cross-validation, and gain proficiency in writing efficient, vectorized code.

```

# Run some setup code for this notebook.

from __future__ import print_function

import random

import numpy as np

from cs231n.data_utils import load_CIFAR10

import matplotlib.pyplot as plt

# This is a bit of magic to make matplotlib figures appear inline in the notebook

# rather than in a new window.

%matplotlib inline

plt.rcParams['figure.figsize'] = (10.0, 8.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

# Some more magic so that the notebook will reload external python modules;

# see http://stackoverflow.com/questions/1907993/autoreload-of-modules-in-ipython

%load_ext autoreload

%autoreload 2

# Load the raw CIFAR-10 data.

cifar10_dir = 'cs231n/datasets/cifar-10-batches-py'

X_train, y_train, X_test, y_test = load_CIFAR10(cifar10_dir)

# As a sanity check, we print out the size of the training and test data.

print('Training data shape: ', X_train.shape)

print('Training labels shape: ', y_train.shape)

print('Test data shape: ', X_test.shape)

print('Test labels shape: ', y_test.shape)

# Visualize some examples from the dataset.

# We show a few examples of training images from each class.

classes = ['plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck']

num_classes = len(classes)

samples_per_class = 7

for y, cls in enumerate(classes):
    idxs = np.flatnonzero(y_train == y)
    idxs = np.random.choice(idxs, samples_per_class, replace=False)
    for i, idx in enumerate(idxs):
        plt_idx = i * num_classes + y + 1
        plt.subplot(samples_per_class, num_classes, plt_idx)
        plt.imshow(X_train[idx].astype('uint8'))
        plt.axis('off')
        if i == 0:
            plt.title(cls)
plt.show()

# Subsample the data for more efficient code execution in this exercise

num_training = 5000

mask = list(range(num_training))

X_train = X_train[mask]

y_train = y_train[mask]

num_test = 500

mask = list(range(num_test))

X_test = X_test[mask]

y_test = y_test[mask]

# Reshape the image data into rows

X_train = np.reshape(X_train, (X_train.shape[0], -1))

X_test = np.reshape(X_test, (X_test.shape[0], -1))

print(X_train.shape, X_test.shape)

from cs231n.classifiers import KNearestNeighbor

# Create a kNN classifier instance.

# Remember that training a kNN classifier is a noop:

# the Classifier simply remembers the data and does no further processing

classifier = KNearestNeighbor()

classifier.train(X_train, y_train)

```

We would now like to classify the test data with the kNN classifier. Recall that we can break down this process into two steps:

1. First we must compute the distances between all test examples and all train examples.

2. Given these distances, for each test example we find the k nearest examples and have them vote for the label

Let's begin by computing the distance matrix between all training and test examples. For example, if there are **Ntr** training examples and **Nte** test examples, this stage should result in an **Nte x Ntr** matrix where each element (i,j) is the distance between the i-th test and j-th train example.

First, open `cs231n/classifiers/k_nearest_neighbor.py` and implement the function `compute_distances_two_loops` that uses a (very inefficient) double loop over all pairs of (test, train) examples and computes the distance matrix one element at a time.
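As a rough illustration of the shape of this computation (not the assignment's reference solution), the sketch below fills an Nte x Ntr matrix with a double loop on toy data. The helper name `pairwise_l2_two_loops`, the toy arrays, and the choice of Euclidean (L2) distance are all assumptions made for this example.

```python
import numpy as np

def pairwise_l2_two_loops(X_test, X_train):
    """Hypothetical helper: dists[i, j] is the L2 distance between
    test row i and train row j, computed one element at a time."""
    num_test, num_train = X_test.shape[0], X_train.shape[0]
    dists = np.zeros((num_test, num_train))
    for i in range(num_test):
        for j in range(num_train):
            diff = X_test[i] - X_train[j]
            dists[i, j] = np.sqrt(np.sum(diff ** 2))
    return dists

toy_train = np.array([[0.0, 0.0], [3.0, 4.0], [1.0, 1.0]])  # Ntr = 3
toy_test = np.array([[0.0, 0.0], [3.0, 0.0]])               # Nte = 2
toy_dists = pairwise_l2_two_loops(toy_test, toy_train)
print(toy_dists.shape)  # one row per test example, one column per train example
```

Each row of `toy_dists` can then be scanned for its smallest entries to find the nearest training examples for that test example.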

```

# Open cs231n/classifiers/k_nearest_neighbor.py and implement

# compute_distances_two_loops.

# Test your implementation:

dists = classifier.compute_distances_two_loops(X_test)

print(dists.shape)

# We can visualize the distance matrix: each row is a single test example and

# its distances to training examples

plt.imshow(dists, interpolation='none')

plt.show()

```

**Inline Question #1:** Notice the structured patterns in the distance matrix, where some rows or columns are visibly brighter. (Note that with the default color scheme black indicates low distances while white indicates high distances.)

- What in the data is the cause behind the distinctly bright rows?

- What causes the columns?

**Your Answer**: *fill this in.*

```

# Now implement the function predict_labels and run the code below:

# We use k = 1 (which is Nearest Neighbor).

y_test_pred = classifier.predict_labels(dists, k=1)

# Compute and print the fraction of correctly predicted examples

num_correct = np.sum(y_test_pred == y_test)

accuracy = float(num_correct) / num_test

print('Got %d / %d correct => accuracy: %f' % (num_correct, num_test, accuracy))

```

You should expect to see approximately `27%` accuracy. Now let's try out a larger `k`, say `k = 5`:

```

y_test_pred = classifier.predict_labels(dists, k=5)

num_correct = np.sum(y_test_pred == y_test)

accuracy = float(num_correct) / num_test

print('Got %d / %d correct => accuracy: %f' % (num_correct, num_test, accuracy))

```

You should expect to see a slightly better performance than with `k = 1`.

```

# Now let's speed up distance matrix computation by using partial vectorization

# with one loop. Implement the function compute_distances_one_loop and run the

# code below:

dists_one = classifier.compute_distances_one_loop(X_test)

# To ensure that our vectorized implementation is correct, we make sure that it
# agrees with the naive implementation. There are many ways to decide whether
# two matrices are similar; one of the simplest is the Frobenius norm of their
# difference. In case you haven't seen it before, this is the square root of
# the sum of squared differences of all elements; in other words, reshape
# the matrices into vectors and compute the Euclidean distance between them.
difference = np.linalg.norm(dists - dists_one, ord='fro')
print('Difference was: %f' % (difference, ))
if difference < 0.001:
    print('Good! The distance matrices are the same')
else:
    print('Uh-oh! The distance matrices are different')

# Now implement the fully vectorized version inside compute_distances_no_loops

# and run the code

dists_two = classifier.compute_distances_no_loops(X_test)

# check that the distance matrix agrees with the one we computed before:

difference = np.linalg.norm(dists - dists_two, ord='fro')

print('Difference was: %f' % (difference, ))

if difference < 0.001:
    print('Good! The distance matrices are the same')
else:
    print('Uh-oh! The distance matrices are different')

# Let's compare how fast the implementations are

def time_function(f, *args):
    """
    Call a function f with args and return the time (in seconds) that it took to execute.
    """
    import time
    tic = time.time()
    f(*args)
    toc = time.time()
    return toc - tic

two_loop_time = time_function(classifier.compute_distances_two_loops, X_test)

print('Two loop version took %f seconds' % two_loop_time)

one_loop_time = time_function(classifier.compute_distances_one_loop, X_test)

print('One loop version took %f seconds' % one_loop_time)

no_loop_time = time_function(classifier.compute_distances_no_loops, X_test)

print('No loop version took %f seconds' % no_loop_time)

# you should see significantly faster performance with the fully vectorized implementation

```
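The Frobenius-norm check used in the cell above can be tried on a small example. The sketch below, with made-up matrices `A` and `B`, compares NumPy's `ord='fro'` result with the same quantity computed by flattening both matrices and taking the Euclidean distance between them.

```python
import numpy as np

A = np.array([[1.0, 2.0], [3.0, 4.0]])
B = np.array([[1.0, 0.0], [0.0, 4.0]])

# Frobenius norm of the difference, as computed by NumPy.
fro = np.linalg.norm(A - B, ord='fro')

# Same quantity by hand: flatten both matrices, then Euclidean distance.
by_hand = np.sqrt(np.sum((A.ravel() - B.ravel()) ** 2))

print(fro, by_hand)
```

Because the elementwise differences here are 0, 2, 3 and 0, both values equal the square root of 13.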

### Cross-validation

We have implemented the k-Nearest Neighbor classifier but we set the value k = 5 arbitrarily. We will now determine the best value of this hyperparameter with cross-validation.
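To make the fold bookkeeping concrete, here is a minimal sketch on toy data, assuming `np.array_split` is used to cut the data into folds (as the hint in the next cell suggests). The names `toy_X`, `toy_y`, `toy_num_folds` and `splits` are invented for this example; it shows only how each fold takes a turn as the validation set, not the full exercise solution.

```python
import numpy as np

toy_X = np.arange(20).reshape(10, 2)   # 10 toy examples, 2 features each
toy_y = np.arange(10) % 2              # toy labels
toy_num_folds = 5

X_folds = np.array_split(toy_X, toy_num_folds)
y_folds = np.array_split(toy_y, toy_num_folds)

splits = []
for held_out in range(toy_num_folds):
    # The held-out fold is the validation set; the rest is training data.
    X_val, y_val = X_folds[held_out], y_folds[held_out]
    X_tr = np.concatenate([f for i, f in enumerate(X_folds) if i != held_out])
    y_tr = np.concatenate([f for i, f in enumerate(y_folds) if i != held_out])
    splits.append((X_tr, y_tr, X_val, y_val))
    print(held_out, X_tr.shape, X_val.shape)
```

In the real exercise, a classifier would be trained on `X_tr, y_tr` and scored on `X_val, y_val` for every candidate `k`.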

```

num_folds = 5

k_choices = [1, 3, 5, 8, 10, 12, 15, 20, 50, 100]

X_train_folds = []

y_train_folds = []

################################################################################

# TODO: #

# Split up the training data into folds. After splitting, X_train_folds and #

# y_train_folds should each be lists of length num_folds, where #

# y_train_folds[i] is the label vector for the points in X_train_folds[i]. #

# Hint: Look up the numpy array_split function. #

################################################################################

pass

################################################################################

# END OF YOUR CODE #

################################################################################

# A dictionary holding the accuracies for different values of k that we find

# when running cross-validation. After running cross-validation,

# k_to_accuracies[k] should be a list of length num_folds giving the different

# accuracy values that we found when using that value of k.

k_to_accuracies = {}

################################################################################

# TODO: #

# Perform k-fold cross validation to find the best value of k. For each #

# possible value of k, run the k-nearest-neighbor algorithm num_folds times, #

# where in each case you use all but one of the folds as training data and the #

# last fold as a validation set. Store the accuracies for all folds and all #

# values of k in the k_to_accuracies dictionary. #

################################################################################

pass

################################################################################

# END OF YOUR CODE #

################################################################################

# Print out the computed accuracies

for k in sorted(k_to_accuracies):
    for accuracy in k_to_accuracies[k]:
        print('k = %d, accuracy = %f' % (k, accuracy))

# plot the raw observations
for k in k_choices:
    accuracies = k_to_accuracies[k]
    plt.scatter([k] * len(accuracies), accuracies)

# plot the trend line with error bars that correspond to standard deviation

accuracies_mean = np.array([np.mean(v) for k,v in sorted(k_to_accuracies.items())])

accuracies_std = np.array([np.std(v) for k,v in sorted(k_to_accuracies.items())])

plt.errorbar(k_choices, accuracies_mean, yerr=accuracies_std)

plt.title('Cross-validation on k')

plt.xlabel('k')

plt.ylabel('Cross-validation accuracy')

plt.show()

# Based on the cross-validation results above, choose the best value for k,

# retrain the classifier using all the training data, and test it on the test

# data. You should be able to get above 28% accuracy on the test data.

best_k = 1

classifier = KNearestNeighbor()

classifier.train(X_train, y_train)

y_test_pred = classifier.predict(X_test, k=best_k)

# Compute and display the accuracy

num_correct = np.sum(y_test_pred == y_test)

accuracy = float(num_correct) / num_test

print('Got %d / %d correct => accuracy: %f' % (num_correct, num_test, accuracy))

```


# Time series prediction with neural networks.

The problem we are going to look at in this post is the international airline passengers prediction problem.

Given a year and a month, the task is to predict the number of international airline passengers in units of 1,000. The data ranges from January 1949 to December 1960, or 12 years, with 144 observations.

```

import keras

print('keras:', keras.__version__)

# Multilayer Perceptron to Predict International Airline Passengers (t+1, given t)

import numpy

import matplotlib.pyplot as plt

import pandas

import math

%matplotlib inline

from keras.models import Sequential

from keras.layers import Dense

# fix random seed for reproducibility

numpy.random.seed(7)

# load the dataset

dataframe = pandas.read_csv('files/international-airline-passengers.csv',

usecols=[1],

engine='python',

skipfooter=3)

dataset = dataframe.values

dataset = dataset.astype('float32')

# split into train and test sets

train_size = int(len(dataset) * 0.67)

test_size = len(dataset) - train_size

train, test = dataset[0:train_size,:], dataset[train_size:len(dataset),:]

print(len(train), len(test))

# convert an array of values into a dataset matrix

def create_dataset(dataset, look_back=1):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back-1):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return numpy.array(dataX), numpy.array(dataY)

# reshape into X=t and Y=t+1

look_back = 1

trainX, trainY = create_dataset(train, look_back)

testX, testY = create_dataset(test, look_back)

print(trainX[0], trainY[0])

# create and fit Multilayer Perceptron model

model = Sequential()

model.add(Dense(8, input_dim=look_back, activation='relu'))

model.add(Dense(8, activation='relu'))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

model.fit(trainX, trainY, nb_epoch=200, batch_size=2, verbose=0)

# Estimate model performance

trainScore = model.evaluate(trainX, trainY, verbose=0)

print('Train Score: %.2f MSE (%.2f RMSE)' % (trainScore, math.sqrt(trainScore)))

testScore = model.evaluate(testX, testY, verbose=0)

print('Test Score: %.2f MSE (%.2f RMSE)' % (testScore, math.sqrt(testScore)))

# generate predictions for training

trainPredict = model.predict(trainX)

testPredict = model.predict(testX)

# shift train predictions for plotting

trainPredictPlot = numpy.empty_like(dataset)

trainPredictPlot[:, :] = numpy.nan

trainPredictPlot[look_back:len(trainPredict)+look_back, :] = trainPredict

# shift test predictions for plotting

testPredictPlot = numpy.empty_like(dataset)

testPredictPlot[:, :] = numpy.nan

testPredictPlot[len(trainPredict)+(look_back*2)+1:len(dataset)-1, :] = testPredict

# plot baseline and predictions

plt.plot(dataset)

plt.plot(trainPredictPlot)

plt.plot(testPredictPlot)

plt.plot(abs(dataset-testPredictPlot))

plt.show()

import math

print("The average error on the training dataset was {:.0f} passengers "
      "(in thousands per month) and the average error on the unseen "
      "test set was {:.0f} passengers (in thousands per month).".format(
          math.sqrt(trainScore), math.sqrt(testScore)))

```
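The split sizes and the plotting offsets used in the cell above can be checked with a little arithmetic. The sketch below assumes the loaded series really has the 144 monthly observations mentioned earlier; the variable names are chosen for this example only.

```python
n_obs = 12 * 12                      # assumed: monthly data, Jan 1949 to Dec 1960
train_size = int(n_obs * 0.67)
test_size = n_obs - train_size
look_back = 1

# create_dataset loops over range(len(data) - look_back - 1),
# so each split yields this many (input, target) pairs.
n_train_samples = train_size - look_back - 1
n_test_samples = test_size - look_back - 1

# Slice bounds used when the test predictions are shifted for plotting.
start = n_train_samples + (look_back * 2) + 1
stop = n_obs - 1

print(train_size, test_size)
print(n_train_samples, n_test_samples)
print(stop - start == n_test_samples)  # the slice has room for every test prediction
```

The last check explains the slightly odd-looking offset `len(trainPredict)+(look_back*2)+1`: it compensates for the samples that `create_dataset` drops from each split.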

## The Window Method

We can also phrase the problem so that multiple recent time steps can be used to make the prediction for the next time step.

This is called the window method, and the size of the window is a parameter that can be tuned for each problem.

For example, to predict the value at the next time in the sequence ($t + 1$), we can use the value at the current time ($t$) as well as the values at the two prior times ($t-1$ and $t-2$).

When phrased as a regression problem the input variables are $t-2$, $t-1$, $t$ and the output variable is $t+1$.
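A small sketch of this framing on an illustrative series: each input is a window of three consecutive values and the target is the value that follows. The helper `make_windows` and the sample values are assumptions for this example. Unlike the notebook's `create_dataset`, it keeps the final window as well.

```python
def make_windows(series, look_back):
    """Hypothetical helper: pair each run of `look_back` values with the next value."""
    inputs, targets = [], []
    for i in range(len(series) - look_back):
        inputs.append(series[i:i + look_back])   # values at t-2, t-1, t
        targets.append(series[i + look_back])    # value at t+1
    return inputs, targets

toy_series = [112, 118, 132, 129, 121, 135]
win_X, win_y = make_windows(toy_series, look_back=3)
for x, y in zip(win_X, win_y):
    print(x, '->', y)
```

A series of six values with a window of three gives three training pairs; a larger window gives the model more context but fewer pairs.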

```

# Multilayer Perceptron to Predict International Airline Passengers (t+1, given t, t-1, t-2)

import numpy

import matplotlib.pyplot as plt

import pandas

import math

from keras.models import Sequential

from keras.layers import Dense

# convert an array of values into a dataset matrix

def create_dataset(dataset, look_back=1):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back-1):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return numpy.array(dataX), numpy.array(dataY)

# fix random seed for reproducibility

numpy.random.seed(7)

# load the dataset

dataframe = pandas.read_csv('files/international-airline-passengers.csv', usecols=[1], engine='python', skipfooter=3)

dataset = dataframe.values

dataset = dataset.astype('float32')

# split into train and test sets

train_size = int(len(dataset) * 0.67)

test_size = len(dataset) - train_size

train, test = dataset[0:train_size,:], dataset[train_size:len(dataset),:]

print(len(train), len(test))

# reshape dataset

look_back = 3

trainX, trainY = create_dataset(train, look_back)

testX, testY = create_dataset(test, look_back)

print(trainX[0], trainY[0])

# create and fit Multilayer Perceptron model

model = Sequential()

model.add(Dense(8, input_dim=look_back, activation='relu'))

model.add(Dense(8, activation='relu'))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

model.fit(trainX, trainY, nb_epoch=200, batch_size=20, verbose=0)

# Estimate model performance

trainScore = model.evaluate(trainX, trainY, verbose=0)

print('Train Score: %.2f MSE (%.2f RMSE)' % (trainScore, math.sqrt(trainScore)))

testScore = model.evaluate(testX, testY, verbose=0)

print('Test Score: %.2f MSE (%.2f RMSE)' % (testScore, math.sqrt(testScore)))

# generate predictions for training

trainPredict = model.predict(trainX)

testPredict = model.predict(testX)

# shift train predictions for plotting

trainPredictPlot = numpy.empty_like(dataset)

trainPredictPlot[:, :] = numpy.nan

trainPredictPlot[look_back:len(trainPredict)+look_back, :] = trainPredict

# shift test predictions for plotting

testPredictPlot = numpy.empty_like(dataset)

testPredictPlot[:, :] = numpy.nan

testPredictPlot[len(trainPredict)+(look_back*2)+1:len(dataset)-1, :] = testPredict

# plot baseline and predictions

plt.plot(dataset)

plt.plot(trainPredictPlot)

plt.plot(testPredictPlot)

plt.plot(abs(dataset-testPredictPlot))

plt.show()

import math

print("The average error on the training dataset was {:.0f} passengers "
      "(in thousands per month) and the average error on the unseen "
      "test set was {:.0f} passengers (in thousands per month).".format(
          math.sqrt(trainScore), math.sqrt(testScore)))

```

## Exercise

Get better performance by changing parameters: network architecture, look-back, etc.

```

import numpy

import matplotlib.pyplot as plt

import pandas

import math

from keras.models import Sequential

from keras.layers import Dense

# convert an array of values into a dataset matrix

def create_dataset(dataset, look_back=1):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back-1):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return numpy.array(dataX), numpy.array(dataY)

# fix random seed for reproducibility

numpy.random.seed(7)

# load the dataset

dataframe = pandas.read_csv('files/international-airline-passengers.csv', usecols=[1], engine='python', skipfooter=3)

dataset = dataframe.values

dataset = dataset.astype('float32')

# split into train and test sets

train_size = int(len(dataset) * 0.67)

test_size = len(dataset) - train_size

train, test = dataset[0:train_size,:], dataset[train_size:len(dataset),:]

print(len(train), len(test))

# your code here

# Estimate model performance

trainScore = model.evaluate(trainX, trainY, verbose=0)

print('Train Score: %.2f MSE (%.2f RMSE)' % (trainScore, math.sqrt(trainScore)))

testScore = model.evaluate(testX, testY, verbose=0)

print('Test Score: %.2f MSE (%.2f RMSE)' % (testScore, math.sqrt(testScore)))

# generate predictions for training

trainPredict = model.predict(trainX)

testPredict = model.predict(testX)

# shift train predictions for plotting

trainPredictPlot = numpy.empty_like(dataset)

trainPredictPlot[:, :] = numpy.nan

trainPredictPlot[look_back:len(trainPredict)+look_back, :] = trainPredict

# shift test predictions for plotting

testPredictPlot = numpy.empty_like(dataset)

testPredictPlot[:, :] = numpy.nan

testPredictPlot[len(trainPredict)+(look_back*2)+1:len(dataset)-1, :] = testPredict

# plot baseline and predictions

plt.plot(dataset)

plt.plot(trainPredictPlot)

plt.plot(testPredictPlot)

plt.plot(abs(dataset-testPredictPlot))

plt.show()

import math

print("The average error on the training dataset was {:.0f} passengers "
      "(in thousands per month) and the average error on the unseen "
      "test set was {:.0f} passengers (in thousands per month).".format(
          math.sqrt(trainScore), math.sqrt(testScore)))

```

## LSTM

``keras.layers.recurrent.LSTM(output_dim, init='glorot_uniform', inner_init='orthogonal', forget_bias_init='one', activation='tanh', inner_activation='hard_sigmoid', W_regularizer=None, U_regularizer=None, b_regularizer=None, dropout_W=0.0, dropout_U=0.0)``

Note: Making an RNN stateful means that the states for the samples of each batch will be reused as initial states for the samples in the next batch.
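The idea of carrying state across batches can be illustrated without Keras. The sketch below is a toy single-unit recurrence with made-up weights `w` and `u` and one sample per batch; it is not how Keras implements LSTM layers, only a picture of what reusing the final state as the next initial state means.

```python
import math

def run_batch(inputs, h0, w=0.5, u=0.8):
    """Toy recurrence h_t = tanh(w * x_t + u * h_{t-1}); returns the final state."""
    h = h0
    for x in inputs:
        h = math.tanh(w * x + u * h)
    return h

batch_1 = [1.0, 0.5, -0.2]
batch_2 = [0.3, 0.9, 0.1]

# Stateless: every batch starts again from a zero state.
stateless_final = run_batch(batch_2, h0=0.0)

# Stateful: the final state of batch 1 seeds batch 2.
carried = run_batch(batch_1, h0=0.0)
stateful_final = run_batch(batch_2, h0=carried)

# The stateful result matches processing both batches as one long sequence.
one_long = run_batch(batch_1 + batch_2, h0=0.0)
print(stateless_final, stateful_final, one_long)
```

This is also why the notebook code below trains with `shuffle=False` and calls `reset_states()` between passes: the carried state is only meaningful when batches arrive in temporal order.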

```

# Stacked LSTM for international airline passengers problem with stateful LSTM

import numpy

import matplotlib.pyplot as plt

import pandas

import math

from keras.models import Sequential

from keras.layers import Dense

from keras.layers import LSTM

from sklearn.preprocessing import MinMaxScaler

from sklearn.metrics import mean_squared_error

import tqdm

# convert an array of values into a dataset matrix

def create_dataset(dataset, look_back=1):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back-1):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return numpy.array(dataX), numpy.array(dataY)

# fix random seed for reproducibility

numpy.random.seed(7)

# load the dataset

dataframe = pandas.read_csv('files/international-airline-passengers.csv',

usecols=[1],

engine='python',

skipfooter=3)

dataset = dataframe.values

dataset = dataset.astype('float32')

# normalize the dataset

scaler = MinMaxScaler(feature_range=(0, 1))

dataset = scaler.fit_transform(dataset)

# split into train and test sets

train_size = int(len(dataset) * 0.67)

test_size = len(dataset) - train_size

train, test = dataset[0:train_size,:], dataset[train_size:len(dataset),:]

# reshape

look_back = 10

trainX, trainY = create_dataset(train, look_back)

testX, testY = create_dataset(test, look_back)

# reshape input to be [samples, time steps, features]

trainX = numpy.reshape(trainX, (trainX.shape[0], trainX.shape[1], 1))

testX = numpy.reshape(testX, (testX.shape[0], testX.shape[1], 1))

# create and fit the LSTM network

batch_size = 1

model = Sequential()

model.add(LSTM(64,

batch_input_shape=(batch_size, look_back, 1),

stateful=True,

return_sequences=True))

model.add(LSTM(64,

stateful=True))

model.add(Dense(16, activation='relu'))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

print(trainX.shape, trainY.shape)
for i in tqdm.tqdm(range(20)):
    model.fit(trainX, trainY, nb_epoch=1, batch_size=batch_size, verbose=0, shuffle=False)
    model.reset_states()

# make predictions

trainPredict = model.predict(trainX, batch_size=batch_size)

model.reset_states()

testPredict = model.predict(testX, batch_size=batch_size)

# invert predictions

trainPredict = scaler.inverse_transform(trainPredict)

trainY = scaler.inverse_transform([trainY])

testPredict = scaler.inverse_transform(testPredict)

testY = scaler.inverse_transform([testY])

# calculate root mean squared error

trainScore = math.sqrt(mean_squared_error(trainY[0], trainPredict[:,0]))

print('Train Score: %.2f RMSE' % (trainScore))

testScore = math.sqrt(mean_squared_error(testY[0], testPredict[:,0]))

print('Test Score: %.2f RMSE' % (testScore))

# shift train predictions for plotting

trainPredictPlot = numpy.empty_like(dataset)

trainPredictPlot[:, :] = numpy.nan

trainPredictPlot[look_back:len(trainPredict)+look_back, :] = trainPredict

# shift test predictions for plotting

testPredictPlot = numpy.empty_like(dataset)

testPredictPlot[:, :] = numpy.nan

testPredictPlot[len(trainPredict)+(look_back*2)+1:len(dataset)-1, :] = testPredict

# plot baseline and predictions

plt.plot(scaler.inverse_transform(dataset))

plt.plot(trainPredictPlot)

plt.plot(testPredictPlot)

plt.show()

# Stacked GRU for international airline passengers problem

import numpy

import matplotlib.pyplot as plt

import pandas

import math

from keras.models import Sequential

from keras.layers import Dense

from keras.layers import GRU

from sklearn.preprocessing import MinMaxScaler, StandardScaler

from sklearn.metrics import mean_squared_error

import tqdm

# convert an array of values into a dataset matrix

def create_dataset(dataset, look_back=1):
    dataX, dataY = [], []
    for i in range(len(dataset)-look_back-1):
        a = dataset[i:(i+look_back), 0]
        dataX.append(a)
        dataY.append(dataset[i + look_back, 0])
    return numpy.array(dataX), numpy.array(dataY)

# fix random seed for reproducibility

numpy.random.seed(7)

# load the dataset

dataframe = pandas.read_csv('files/international-airline-passengers.csv',

usecols=[1],

engine='python',

skipfooter=3)

dataset = dataframe.values

dataset = dataset.astype('float32')

# normalize the dataset

# scaler = MinMaxScaler(feature_range=(0, 1))

scaler = StandardScaler()

dataset = scaler.fit_transform(dataset)

# split into train and test sets

train_size = int(len(dataset) * 0.67)

test_size = len(dataset) - train_size

train, test = dataset[0:train_size,:], dataset[train_size:len(dataset),:]

# reshape

look_back = 10

trainX, trainY = create_dataset(train, look_back)

testX, testY = create_dataset(test, look_back)

# reshape input to be [samples, time steps, features]

trainX = numpy.reshape(trainX, (trainX.shape[0], trainX.shape[1], 1))

testX = numpy.reshape(testX, (testX.shape[0], testX.shape[1], 1))

# create and fit the GRU network

batch_size = 1

model = Sequential()

model.add(GRU(32,

batch_input_shape=(batch_size, look_back, 1),

stateful=True,

return_sequences=True))

model.add(GRU(32,

stateful=True))

model.add(Dense(8, activation='relu'))

model.add(Dense(8, activation='relu'))

model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='adam')

print(trainX.shape, trainY.shape)
for i in tqdm.tqdm(range(50)):
    model.fit(trainX, trainY, nb_epoch=1, batch_size=batch_size, verbose=0, shuffle=False)
    model.reset_states()

# make predictions

trainPredict = model.predict(trainX, batch_size=batch_size)

model.reset_states()

testPredict = model.predict(testX, batch_size=batch_size)

# invert predictions

trainPredict = scaler.inverse_transform(trainPredict)

trainY = scaler.inverse_transform([trainY])

testPredict = scaler.inverse_transform(testPredict)

testY = scaler.inverse_transform([testY])

# calculate root mean squared error

trainScore = math.sqrt(mean_squared_error(trainY[0], trainPredict[:,0]))

print('Train Score: %.2f RMSE' % (trainScore))

testScore = math.sqrt(mean_squared_error(testY[0], testPredict[:,0]))

print('Test Score: %.2f RMSE' % (testScore))

# shift train predictions for plotting

trainPredictPlot = numpy.empty_like(dataset)

trainPredictPlot[:, :] = numpy.nan

trainPredictPlot[look_back:len(trainPredict)+look_back, :] = trainPredict

# shift test predictions for plotting

testPredictPlot = numpy.empty_like(dataset)

testPredictPlot[:, :] = numpy.nan

testPredictPlot[len(trainPredict)+(look_back*2)+1:len(dataset)-1, :] = testPredict

# plot baseline and predictions

plt.plot(scaler.inverse_transform(dataset))

plt.plot(trainPredictPlot)

plt.plot(testPredictPlot)

plt.show()

```

## Power Consumption.

The task here is to predict values of a time series: the minute-by-minute history of a household's power consumption, roughly 2 million points. We are going to use a multi-layered LSTM recurrent neural network to predict the last value of a sequence of values. Put another way, given 49 timesteps of consumption, what will be the 50th value?

The initial file contains several different pieces of data. Here we focus on a single value: the house's Global_active_power history, recorded minute by minute for almost 4 years. This amounts to roughly 2 million points.
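To make the "49 steps in, 50th step out" framing concrete, here is a minimal sketch of how a series can be cut into overlapping windows whose last element is the prediction target. The function name `make_windows` and the toy series are assumptions made for illustration, and plain Python lists are used so the sketch runs without dependencies; the notebook's own loader below does the equivalent with NumPy.

```python
def make_windows(series, sequence_length):
    """Cut a series into overlapping windows of `sequence_length` values.

    Each window is split into inputs (all but the last value) and a
    target (the last value), mirroring the "49 steps in, 50th out" setup.
    """
    inputs, targets = [], []
    # Same loop bound as the loader below: len(series) - sequence_length windows.
    for start in range(len(series) - sequence_length):
        window = series[start:start + sequence_length]
        inputs.append(window[:-1])
        targets.append(window[-1])
    return inputs, targets


# Toy series standing in for the power readings (hypothetical values).
toy_series = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
X, y = make_windows(toy_series, sequence_length=4)
print(X)  # inputs: the first three values of each window
print(y)  # targets: the value that follows each input sequence
```

Consecutive windows overlap in all but one value, which is why a series of about 2 million points yields about 2 million training sequences.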

Notes:

+ Neural networks usually learn much better when the data is pre-processed. For time series, however, we do not want the network to learn on data that is too far from the real-world values, so here we keep it simple and only center the data to have zero mean.
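As a small illustration of the centering step, the sketch below subtracts the mean and shows that adding the same shift back recovers the original scale. The helper names `center` and `uncenter` and the sample readings are assumptions made for this example only.

```python
def center(values):
    """Return the zero-mean version of `values` together with the shift."""
    shift = sum(values) / len(values)
    return [v - shift for v in values], shift


def uncenter(values, shift):
    """Map centered values (for example predictions) back to original units."""
    return [v + shift for v in values]


readings = [1.0, 2.0, 3.0, 6.0]   # hypothetical power readings
centered, shift = center(readings)
restored = uncenter(centered, shift)
print(shift)      # the mean that was removed
print(centered)   # values now average to zero
```

Predictions made on centered data need the shift added back before they are compared with raw measurements.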

```

import matplotlib.pyplot as plt
import numpy as np
import time
import csv
from keras.layers.core import Dense, Activation, Dropout
from keras.layers.recurrent import LSTM
from keras.models import Sequential

np.random.seed(1234)


def data_power_consumption(path_to_dataset,
                           sequence_length=50,
                           ratio=1.0):
    max_values = ratio * 2049280

    # read the Global_active_power column, skipping rows that fail to parse
    with open(path_to_dataset) as f:
        data = csv.reader(f, delimiter=";")
        power = []
        nb_of_values = 0
        for line in data:
            try:
                power.append(float(line[2]))
                nb_of_values += 1
            except ValueError:
                pass
            # 2049280.0 is the total number of valid values, i.e. ratio = 1.0
            if nb_of_values >= max_values:
                break

    print("Data loaded from csv. Formatting...")

    # build overlapping windows of sequence_length values
    result = []
    for index in range(len(power) - sequence_length):
        result.append(power[index: index + sequence_length])
    result = np.array(result)  # shape (2049230, 50)

    # center the data
    result_mean = result.mean()
    result -= result_mean
    print("Shift : ", result_mean)
    print("Data : ", result.shape)

    # 90% train / 10% test; the last value of each window is the target
    row = int(round(0.9 * result.shape[0]))
    train = result[:row, :]
    np.random.shuffle(train)
    X_train = train[:, :-1]
    y_train = train[:, -1]
    X_test = result[row:, :-1]
    y_test = result[row:, -1]

    X_train = np.reshape(X_train, (X_train.shape[0], X_train.shape[1], 1))
    X_test = np.reshape(X_test, (X_test.shape[0], X_test.shape[1], 1))

    return [X_train, y_train, X_test, y_test]


def build_model():
    model = Sequential()
    layers = [1, 50, 100, 1]

    model.add(LSTM(
        input_dim=layers[0],
        output_dim=layers[1],
        return_sequences=True))
    model.add(Dropout(0.2))

    model.add(LSTM(
        layers[2],
        return_sequences=False))
    model.add(Dropout(0.2))

    model.add(Dense(
        output_dim=layers[3]))
    model.add(Activation("linear"))

    start = time.time()
    model.compile(loss="mse", optimizer="rmsprop")
    print("Compilation Time : ", time.time() - start)
    return model


def run_network(model=None, data=None):
    global_start_time = time.time()
    epochs = 1
    ratio = 0.5
    sequence_length = 50
    path_to_dataset = 'files/household_power_consumption.txt'

    if data is None:
        print('Loading data... ')
        X_train, y_train, X_test, y_test = data_power_consumption(
            path_to_dataset, sequence_length, ratio)
    else:
        X_train, y_train, X_test, y_test = data

    print('\nData Loaded. Compiling...\n')

    if model is None:
        model = build_model()

    try:
        model.fit(
            X_train, y_train,
            batch_size=512, nb_epoch=epochs, validation_split=0.05)
        predicted = model.predict(X_test)
        predicted = np.reshape(predicted, (predicted.size,))
    except KeyboardInterrupt:
        print('Training duration (s) : ', time.time() - global_start_time)
        return model, y_test, 0

    try:
        fig = plt.figure()
        ax = fig.add_subplot(111)
        ax.plot(y_test[:100])
        plt.plot(predicted[:100])
        plt.show()
    except Exception as e:
        print(str(e))
    print('Training duration (s) : ', time.time() - global_start_time)

    return model, y_test, predicted


run_network()

```

### Exercise

Train a stateful LSTM to learn an absolute cosine time series with the amplitude exponentially decreasing.
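For reference, the series in this exercise is a cosine multiplied by a decaying exponential envelope. The sketch below evaluates that formula in plain Python for a few time steps; the function name `damped_cosine` is an assumption, and the default values simply copy those of `gen_cosine_amp` in the cell that follows.

```python
import math


def damped_cosine(t, amp=100.0, period=1000.0, k=0.0001):
    """Value at time `t` of a cosine whose amplitude decays as exp(-k * t)."""
    return amp * math.cos(2 * math.pi * t / period) * math.exp(-k * t)


# The envelope amp * exp(-k * t) bounds the signal at every step.
for t in (0, 250, 500, 1000):
    print(t, damped_cosine(t))
```

The signal starts at the full amplitude, crosses zero a quarter period later, and each successive peak is slightly lower than the one before.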

```

import numpy as np
import matplotlib.pyplot as plt
from keras.models import Sequential
from keras.layers import Dense, LSTM

%matplotlib inline


def gen_cosine_amp(amp=100, period=1000, x0=0, xn=50000, step=1, k=0.0001):
    """Generates an absolute cosine time series with the amplitude
    exponentially decreasing

    Arguments:
        amp: amplitude of the cosine function
        period: period of the cosine function
        x0: initial x of the time series
        xn: final x of the time series
        step: step of the time series discretization
        k: exponential rate
    """
    cos = np.zeros(((xn - x0) * step, 1, 1))
    for i in range(len(cos)):
        idx = x0 + i * step
        cos[i, 0, 0] = amp * np.cos(2 * np.pi * idx / period)
        cos[i, 0, 0] = cos[i, 0, 0] * np.exp(-k * idx)
    return cos


print('Generating Data')
cos = gen_cosine_amp()
print('Input shape:', cos.shape)
plt.plot(cos[:, 0, 0])

# since we are using stateful rnn tsteps can be set to 1
time_steps = 1
batch_size = 250
epochs = 100
# number of elements ahead that are used to make the prediction
look_head = 100

# the target at step i is the mean of the next look_head values
expected_output = np.zeros((len(cos), 1))
for i in range(len(cos) - look_head):
    expected_output[i, 0] = np.mean(cos[i + 1:i + look_head + 1])

print('Output shape')
print(expected_output.shape)

print('Creating Model')
# model = Sequential()
# YOUR CODE HERE
# The objective is a MSE < 0.05
# model.compile(loss='mse', optimizer='rmsprop')

print('Training')
for i in range(epochs):
    if i % 10 == 0:
        print('Epoch', i, '/', epochs)
    model.fit(cos,
              expected_output,
              batch_size=batch_size,
              verbose=0,
              nb_epoch=1,
              shuffle=False)
    model.reset_states()

print('Predicting')
predicted_output = model.predict(cos, batch_size=batch_size)

import math
# Note: the value printed here is sqrt(sum of squared errors) / N, which is not
# the textbook mean squared error; the 0.05 target above is presumably
# measured with this value.
print('MSE: ', math.sqrt(((expected_output - predicted_output) ** 2).sum()) / len(expected_output))

print('Plotting Results')
plt.subplot(2, 1, 1)
plt.plot(expected_output)
plt.title('Expected')
plt.subplot(2, 1, 2)
plt.plot(predicted_output)
plt.title('Predicted')
plt.show()

```

| github_jupyter |

[View in Colaboratory](https://colab.research.google.com/github/ckbjimmy/2018_mlw/blob/master/nb2_clustering.ipynb)

# Machine Learning for Clinical Predictive Analytics

We would like to introduce basic machine learning techniques and toolkits for clinical knowledge discovery in the workshop.

The material will cover common useful algorithms for clinical prediction tasks, as well as the diagnostic workflow of applying machine learning to real-world problems.

We will use [Google colab](https://colab.research.google.com/) / python jupyter notebook and two datasets:

- Breast Cancer Wisconsin (Diagnostic) Database, and

- pre-extracted ICU data from PhysioNet Database

to build predictive models.

The learning objectives of this workshop tutorial are:

- Learn how to use Google colab / jupyter notebook

- Learn how to build machine learning models for clinical classification and/or clustering tasks

To accelerate the progress without obstacles, we hope that the readers fulfill the following prerequisites:

- [Skillset] basic python syntax

- [Requirements] Google account OR [anaconda](https://anaconda.org/anaconda/python)

In part 1, we will go through the basics of machine learning for classification problems.

In part 2, we will investigate more on unsupervised learning methods for clustering and visualization.

In part 3, we will play with neural networks.

# Part II – Unsupervised learning algorithms

In part 2, we look more closely at unsupervised learning algorithms for clustering and dimensionality reduction.

In the first part of the workshop, we introduced many algorithms for classification tasks.

Those tasks belong to the scenario of **supervised learning**, which means that the labels/annotations of your training dataset are given.

For example, you already know which tumor samples are malignant and which are benign.

Now we will look at the other scenario, called **unsupervised learning**, which is used for finding patterns (hidden representations) in the data.

In this scenario, the data do not need to be labelled; we only need the input variables/features, without any outcome variables.

Unsupervised learning algorithms try to discover the patterns and inner structure of the data by themselves, either grouping **similar** data points together to form clusters or compressing high-dimensional data into a lower-dimensional representation.

The difference between supervised learning (classification and regression problems) and unsupervised learning can be roughly shown in the following picture.

[Source] Andrew Ng's Machine Learning Coursera Course Lecture 1

After going through this tutorial, we hope that you will understand how to use scikit-learn to design and build models for clustering and dimensionality reduction, and how to evaluate them.

Again, we start with the breast cancer dataset from the UCI data repository to get a quick view of how to do the analysis and build models using well-structured data.

We load the breast cancer dataset from `sklearn.datasets`, and preprocess the dataset as we did in Part I.

We visualize the data in the vector space using only the first two columns, and color the points with the provided labels.

We can see that even these two features alone separate the two classes to some degree.

```

from sklearn import datasets

import matplotlib.pyplot as plt

df_bc = datasets.load_breast_cancer()

print(df_bc.feature_names)

print(df_bc.target_names)

X = df_bc['data']

y = df_bc['target']

label = {0: 'malignant', 1: 'benign'}

x_axis = X[:, 0] # mean radius

y_axis = X[:, 1] # mean texture

plt.scatter(x_axis, y_axis, c=y)

plt.show()

```

## Clustering

We are now going to use clustering algorithms to cluster data points into several groups just using predictors/features.

### K-means clustering

K-means clustering is an iterative algorithm that alternates between assigning each point to its nearest centroid and recomputing the centroids, reducing the within-cluster sum of squared errors at every iteration until it converges to a local minimum.

In k-means, we need to choose the number of clusters, $k$, beforehand.

There are many methods for deciding the value of $k$ if it is unknown.

The simplest approach is the elbow (bend) method: plot the sum of squared errors against $k$ (a scree plot) and look for the bend.

The elbow point can be taken as the suggested number of clusters for k-means.
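To show what the elbow looks like numerically, here is a minimal from-scratch sketch of the assign/update loop on one-dimensional toy data. It is not the scikit-learn implementation: the function name `kmeans_1d`, the evenly spread initialisation, and the data are all assumptions chosen to keep the example deterministic. Note that scikit-learn's `KMeans.score` returns the negative of the sum of squared errors computed here.

```python
def kmeans_1d(points, k, n_iter=20):
    """Lloyd's algorithm on 1-D data; returns (centroids, sse)."""
    # Deterministic initialisation (an assumption of this sketch): spread the
    # starting centroids evenly over the sorted data.
    ordered = sorted(points)
    if k == 1:
        centroids = [ordered[0]]
    else:
        centroids = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    for _ in range(n_iter):
        # Assignment step: each point goes to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda j: (p - centroids[j]) ** 2)
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [sum(c) / len(c) if c else centroids[j]
                     for j, c in enumerate(clusters)]
    # Sum of squared distances from each point to its nearest centroid.
    sse = sum(min((p - c) ** 2 for c in centroids) for p in points)
    return centroids, sse


# Hypothetical data with three well-separated groups.
points = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8, 9.0, 9.2, 8.8]
sse_by_k = {k: kmeans_1d(points, k)[1] for k in range(1, 5)}
for k, sse in sse_by_k.items():
    print(k, round(sse, 2))
```

On this toy data the error drops sharply up to three clusters and barely changes afterwards, which is the bend the elbow method looks for.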

```

from sklearn.cluster import KMeans

from sklearn.metrics import confusion_matrix

from sklearn.decomposition import PCA

# decide k value

Nc = range(1, 5)

kmeans = [KMeans(n_clusters=i) for i in Nc]

kmeans

score = [kmeans[i].fit(X).score(X) for i in range(len(kmeans))]

score

plt.plot(Nc, score)

plt.xlabel('Number of Clusters')

plt.ylabel('Score')

plt.title('Elbow Curve')

plt.show()

```

Since we already know that there are two classes in our dataset, we set the parameter `n_clusters` of our model to $k = 2$.

Each data point is assigned to the cluster whose centroid is closest to it, and any new input is assigned to a cluster in the same way.

Each centroid of a cluster is a collection of feature values which define the resulting groups.

Examining the centroid feature weights can be used to qualitatively interpret what kind of group each cluster represents.

Now we use all features (`X`) for clustering (`km`).

We use a confusion matrix to demonstrate the performance of k-means clustering.

Because the cluster indices are arbitrary, the accuracy of the model is the sum of the diagonal (or anti-diagonal) elements, whichever matches the true classes, divided by the sample size.

In our case, $\frac{(356+130)}{(82+356+130+1)} = 0.85$.
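The arithmetic above can be sketched as a small helper. The function name `clustering_accuracy` is an assumption, and so is the matrix layout: the four counts come from the text, arranged so that the rows sum to the dataset's 212 malignant and 357 benign samples.

```python
def clustering_accuracy(cm):
    """Accuracy for a 2x2 confusion matrix when cluster ids are arbitrary."""
    total = sum(sum(row) for row in cm)
    diagonal = cm[0][0] + cm[1][1]
    anti_diagonal = cm[0][1] + cm[1][0]
    # Cluster 0 may correspond to either class, so keep the better matching.
    return max(diagonal, anti_diagonal) / total


# Counts quoted in the text; the row/column layout is an assumption.
cm = [[82, 130],
      [356, 1]]
accuracy = clustering_accuracy(cm)
print(round(accuracy, 2))
```

Because k-means numbers its clusters arbitrarily, a rerun may swap the two columns; taking the larger of the two sums gives the same accuracy either way.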

For visualization, we use principal component analysis (PCA) to project the higher-dimensional data onto two dimensions, since the raw data cannot be drawn directly on a 2D plot.

We will introduce PCA later in the section on dimensionality reduction.

We can see that the two clusters are well separated with the given features.

For the details of k-means algorithm, please check the [wikipedia page](https://en.wikipedia.org/wiki/K-means_clustering).

```

# k-means

k = 2

km = KMeans(n_clusters=k)

km.fit(X)

print(km.labels_)

# performance

cm = confusion_matrix(y, km.labels_)

print(cm)

# visualization

pca = PCA(n_components=2).fit(X)

pca_2d = pca.transform(X)

for i in range(0, pca_2d.shape[0]):
    if km.labels_[i] == 0:
        c1 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='r', marker='+')
    elif km.labels_[i] == 1:
        c2 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='g', marker='o')

plt.legend([c1, c2], ['Cluster 1', 'Cluster 2'])

plt.title('K-means finds 2 clusters')

plt.show()

```

### DBSCAN clustering

DBSCAN (Density-Based Spatial Clustering of Applications with Noise) is another clustering algorithm, one for which you don't need to decide the value of $k$ beforehand.

However, the tradeoff is that you need to decide the values of two parameters:

- `eps` (maximum distance between two data points to be considered in the same neighborhood) and

- `min_samples` (minimum number of data points in a neighborhood for it to be considered part of a cluster by DBSCAN).

We can see that some samples are not clustered in the correct groups.

You may try different values of the two parameters for better clustering.
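To give a feel for how the two parameters interact, the sketch below flags which points have a dense enough neighborhood to seed a cluster (DBSCAN calls these core points). It is only the first step of the algorithm, not a full DBSCAN; the function name `core_points` and the toy data are assumptions, and the point itself is counted among its neighbours, as in scikit-learn.

```python
import math


def core_points(points, eps, min_samples):
    """Flag which points are DBSCAN core points.

    A point is a core point when at least `min_samples` points (itself
    included) lie within distance `eps` of it.
    """
    flags = []
    for p in points:
        neighbours = sum(1 for q in points if math.dist(p, q) <= eps)
        flags.append(neighbours >= min_samples)
    return flags


# Hypothetical 2-D data: a tight group of four points and one far-away point.
points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (10.0, 10.0)]
dense = core_points(points, eps=1.5, min_samples=4)
sparse = core_points(points, eps=0.5, min_samples=2)
print(dense)
print(sparse)
```

With a generous `eps` the four grouped points qualify and the isolated point is left as noise; with an `eps` that is too small nothing qualifies, so every point would be labelled noise.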

```

from sklearn.cluster import DBSCAN

# DBSCAN

dbscan = DBSCAN(eps=100, min_samples=10)

dbscan.fit(X)

print(dbscan.labels_)

# performance

cm = confusion_matrix(y, dbscan.labels_)

print(cm)

# visualization

pca = PCA(n_components=2).fit(X)

pca_2d = pca.transform(X)

for i in range(0, pca_2d.shape[0]):
    if dbscan.labels_[i] == 0:
        c1 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='r', marker='+')
    elif dbscan.labels_[i] == 1:
        c2 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='g', marker='o')
    elif dbscan.labels_[i] == -1:
        c3 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='b', marker='*')

plt.legend([c1, c2, c3], ['Cluster 1', 'Cluster 2', 'Noise'])

plt.title('DBSCAN finds 2 clusters and Noise')

plt.show()

```

There are also other clustering algorithms provided in `scikit-learn`.

You may check the [scikit-learn documentation on clustering](http://scikit-learn.org/stable/modules/clustering.html#clustering) and play with them!

## Dimensionality reduction

Dimensionality reduction methods reduce the number of features and represent the data with a much smaller, compressed representation.

The technique is helpful for analyzing sparse, high-dimensional data that may suffer from the ["curse of dimensionality"](https://en.wikipedia.org/wiki/Curse_of_dimensionality).

Here we will introduce two commonly used algorithms for dimensionality reduction: principal component analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE).

### Principal component analysis (PCA)

PCA finds the linear transformation that reduces the number of dimensions with the minimum loss of information, where information is measured as the variance retained in the data.

[Source] Courtesy of Prof. HY Lee (NTU)

Sometimes the information that was lost is regarded as noise---information that does not represent the phenomena we are trying to model, but is rather a side effect of some usually unknown processes.

In the example, we keep the first two principal components (PC1 and PC2) and visualize the data after the PCA transformation.

The figure shows that PCA compresses the data from 30 dimensions to 2 dimensions without losing too much of the information needed for clustering the data points.
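One way to quantify "without losing too much information" is the share of total variance that the kept components explain. The sketch below works this out by hand for two-dimensional data, where the eigenvalues of the covariance matrix have a closed form; the function name and the toy points are assumptions. For real data, a fitted scikit-learn `PCA` object exposes the same quantity as `explained_variance_ratio_`.

```python
import math


def explained_variance_ratio_2d(points):
    """Share of total variance captured by the first principal component.

    Works on 2-D data only: the covariance matrix [[a, b], [b, c]] is
    symmetric, so its eigenvalues have a closed form.
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    a = sum((x - mean_x) ** 2 for x, _ in points) / (n - 1)
    c = sum((y - mean_y) ** 2 for _, y in points) / (n - 1)
    b = sum((x - mean_x) * (y - mean_y) for x, y in points) / (n - 1)
    half_gap = math.sqrt(((a - c) / 2) ** 2 + b ** 2)
    largest = (a + c) / 2 + half_gap
    smallest = (a + c) / 2 - half_gap
    return largest / (largest + smallest)


# Points lying exactly on a line are fully described by one component.
on_a_line = [(0.0, 0.0), (1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
# Points spread equally in both directions need both components.
symmetric = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]
print(explained_variance_ratio_2d(on_a_line))
print(explained_variance_ratio_2d(symmetric))
```

A ratio close to 1 means the dropped direction carried almost no variance, while a ratio near 0.5 means that discarding one of two components throws away half of it.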

```

from sklearn.decomposition import PCA

# original feature number

print(X.shape[1])

# PCA

pca = PCA(n_components=2).fit(X)

pca_2d = pca.transform(X)

x_axis = pca_2d[:, 0]

y_axis = pca_2d[:, 1]

plt.scatter(x_axis, y_axis, c=y)

plt.show()

```

We can even use the result of the PCA transformation to perform a classification task (using logistic regression as a simple example) with much more compact data.

The results show that using PCA-transformed data for classification does not decrease the classification performance too much.
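The comparison in this section is made with AUROC, which can be read as the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition; the function name `auroc` and the example scores are assumptions, and for real work `sklearn.metrics.roc_auc_score` is the tool to use. Keep in mind that the scores in this notebook are computed on the training set, so they are likely to be optimistic.

```python
def auroc(labels, scores):
    """AUROC as the probability that a positive outranks a negative.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]   # hypothetical predicted probabilities
result = auroc(labels, scores)
print(result)
```

Three of the four positive/negative pairs are ranked correctly here, so the score is three quarters.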

```

from sklearn.linear_model import LogisticRegression

from sklearn import metrics

# use all features

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use PCA transformed features

clf = LogisticRegression(fit_intercept=True)

clf.fit(pca_2d, y)

yhat = clf.predict_proba(pca_2d)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

```

### t-SNE (t-distributed stochastic neighbor embedding)

PCA uses a **linear transformation** of the data.

However, it may be better to consider **non-linearity** for higher-dimensional data.

t-SNE is an unsupervised learning method for visualizing higher-dimensional data.

It adopts the idea of manifold learning, modeling each high-dimensional data point by a lower-dimensional data point in such a way that similar objects are modeled by nearby points with high probability.

Again, we use the result of t-SNE modeling for classification and find that it still preserves most of the information inside the data (but does worse than simple PCA in this case).
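To illustrate what "similar objects are modeled by nearby points with high probability" means, the sketch below computes Gaussian neighbour probabilities for one point, the kind of similarity that SNE-style methods try to preserve in the low-dimensional map. It is a simplification, not the t-SNE algorithm: the function name is an assumption, and the bandwidth `sigma` is fixed here, whereas t-SNE tunes it per point to match a target perplexity.

```python
import math


def neighbour_probabilities(points, i, sigma=1.0):
    """Conditional probabilities p(j | i) with a fixed Gaussian bandwidth."""
    weights = []
    for j, q in enumerate(points):
        if j == i:
            weights.append(0.0)   # a point is not its own neighbour
        else:
            squared_distance = math.dist(points[i], q) ** 2
            weights.append(math.exp(-squared_distance / (2 * sigma ** 2)))
    total = sum(weights)
    return [w / total for w in weights]


points = [(0.0, 0.0), (0.5, 0.0), (3.0, 0.0)]   # hypothetical data
probs = neighbour_probabilities(points, i=0)
print(probs)   # the nearby point receives almost all of the probability
```

The embedding is then chosen so that points with high neighbour probability in the original space also end up close together in the 2D plot.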

```

from sklearn.manifold import TSNE

# t-SNE

ts = TSNE(learning_rate=100)

tsne = ts.fit_transform(X)

x_axis = tsne[:, 0]

y_axis = tsne[:, 1]

plt.scatter(x_axis, y_axis, c=y)

plt.show()

from sklearn.linear_model import LogisticRegression

from sklearn import metrics

# use all features

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use tSNE transformed features

clf = LogisticRegression(fit_intercept=True)

clf.fit(tsne, y)

yhat = clf.predict_proba(tsne)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

```

## Exercise

### Iris dataset

Try the iris dataset!

We show the result of using k-means on the iris dataset.

Please try to modify the code above to see what happens when you apply DBSCAN, PCA and t-SNE to this dataset.

```

df = datasets.load_iris()

print(df.feature_names)

print(df.target_names)

X = df['data']

y = df['target']

label = {0: 'setosa', 1: 'versicolor', 2: 'virginica'}

# simply visualize using two features

x_axis = X[:, 0]

y_axis = X[:, 1]

plt.scatter(x_axis, y_axis, c=y)

plt.show()

# find optimal k value

Nc = range(1, 5)

kmeans = [KMeans(n_clusters=i) for i in Nc]

kmeans

score = [kmeans[i].fit(X).score(X) for i in range(len(kmeans))]

score

plt.plot(Nc, score)

plt.xlabel('Number of Clusters')

plt.ylabel('Score')

plt.title('Elbow Curve')

plt.show()

# k-means

k = 3

km = KMeans(n_clusters=k)

km.fit(X)

# performance

cm = confusion_matrix(y, km.labels_)

print(cm)

# PCA

pca = PCA(n_components=2).fit(X)

pca_2d = pca.transform(X)

for i in range(0, pca_2d.shape[0]):
    if km.labels_[i] == 0:
        c1 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='r', marker='+')
    elif km.labels_[i] == 1:
        c2 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='g', marker='o')
    elif km.labels_[i] == 2:
        c3 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='b', marker='*')

plt.legend([c1, c2, c3], ['Cluster 1', 'Cluster 2', 'Cluster 3'])

plt.title('K-means finds 3 clusters')

plt.show()

```

### PhysioNet dataset

How about the PhysioNet dataset?

It seems that the quality of the unsupervised model is not good enough.

This may be because of the significant reduction in dimension, which leads to a loss of information.

```

import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.preprocessing import StandardScaler

# load data
dataset = pd.read_csv('https://raw.githubusercontent.com/ckbjimmy/2018_mlw/master/data/PhysionetChallenge2012_data.csv')
X = dataset.iloc[:, 1:].values
y = dataset.iloc[:, 0].values

# imputation and normalization
# (SimpleImputer replaces sklearn.preprocessing.Imputer, which was removed in scikit-learn 0.22;
# it imputes column by column, like the old axis=0 setting)
X = SimpleImputer(missing_values=np.nan, strategy='mean').fit(X).transform(X)
X = StandardScaler().fit(X).transform(X)

# find k value

Nc = range(1, 5)

kmeans = [KMeans(n_clusters=i) for i in Nc]

kmeans

score = [kmeans[i].fit(X).score(X) for i in range(len(kmeans))]

score

plt.plot(Nc, score)

plt.xlabel('Number of Clusters')

plt.ylabel('Score')

plt.title('Elbow Curve')

plt.show()

# k-means

k = 2

km = KMeans(n_clusters=k)

km.fit(X)

# performance

cm = confusion_matrix(y, km.labels_)

print(cm)

# visualization

pca = PCA(n_components=2).fit(X)

pca_2d = pca.transform(X)

for i in range(0, pca_2d.shape[0]):
    if km.labels_[i] == 0:
        c1 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='r', marker='+')
    elif km.labels_[i] == 1:
        c2 = plt.scatter(pca_2d[i, 0], pca_2d[i, 1], c='g', marker='o')

plt.legend([c1, c2], ['Cluster 1', 'Cluster 2'])

plt.title('K-means finds 2 clusters')

plt.show()

# use all features

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use PCA transformed features

clf = LogisticRegression(fit_intercept=True)

clf.fit(pca_2d, y)

yhat = clf.predict_proba(pca_2d)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

ts = TSNE(learning_rate=200)

tsne = ts.fit_transform(X)

x_axis = tsne[:, 0]

y_axis = tsne[:, 1]

plt.scatter(x_axis, y_axis, c=y)

plt.show()

# use all

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use tSNE

clf = LogisticRegression(fit_intercept=True)

clf.fit(tsne, y)

yhat = clf.predict_proba(tsne)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

pca_16 = PCA(n_components=16).fit(X).transform(X)

tsne = TSNE(learning_rate=200).fit_transform(pca_16)

x_axis = tsne[:, 0]

y_axis = tsne[:, 1]

plt.scatter(x_axis, y_axis, c=y)

plt.show()

# use all

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use tSNE

clf = LogisticRegression(fit_intercept=True)

clf.fit(tsne, y)

yhat = clf.predict_proba(tsne)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

```

From the above cases, we may guess that two dimensions are enough to represent the breast cancer and Iris data, but not enough for PhysioNet mortality prediction.

After all, the PhysioNet data has more than 180 features.

You can try to increase the number of dimensions kept after reduction from 2 to more (16, 32, 64) and see how the performance improves.

The following code gives an example of using 16 dimensions, although they cannot be visualized in a 2D plot.

```

pca_16 = PCA(n_components=16).fit(X).transform(X)

# use all

clf = LogisticRegression(fit_intercept=True)

clf.fit(X, y)

yhat = clf.predict_proba(X)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

# use PCA transformed features (16 components)

clf = LogisticRegression(fit_intercept=True)

clf.fit(pca_16, y)

yhat = clf.predict_proba(pca_16)[:,1]

auc = metrics.roc_auc_score(y, yhat)

print('{:0.3f} - AUROC of model (training set).'.format(auc))

```

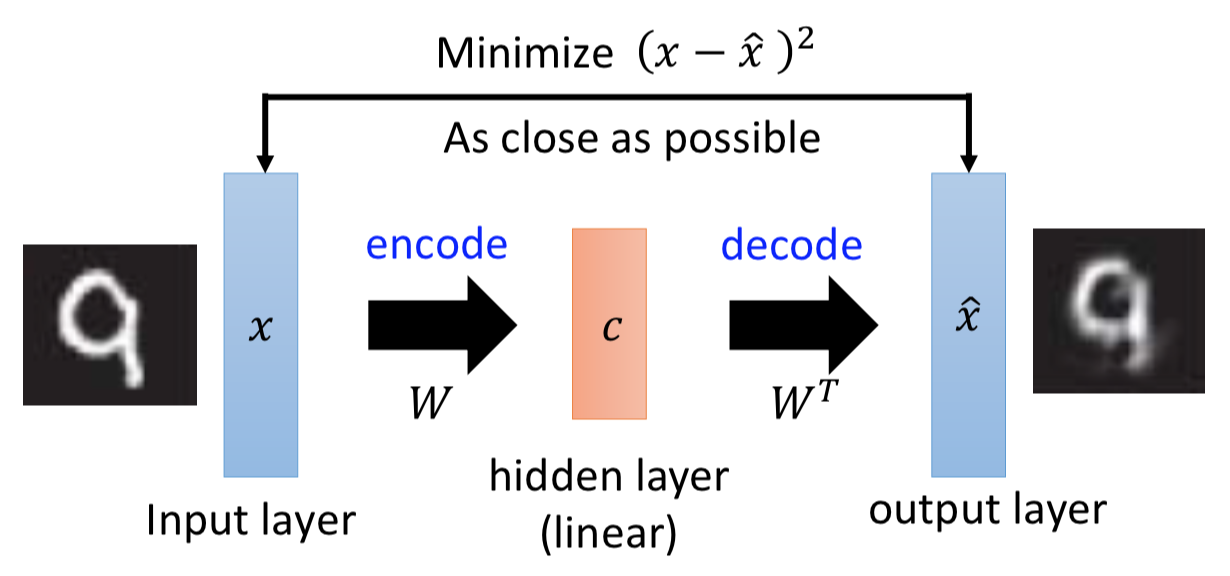

## More unsupervised learning algorithms

There are still many other unsupervised ways to represent the data.

We won't cover the remaining algorithms, but you may check them in the future when you want to dive into this field.

- Anomaly detection

- Autoencoders

- Generative Adversarial Networks (GAN)

- ...more


| github_jupyter |

<h1>Table of Contents<span class="tocSkip"></span></h1>

<div class="toc"><ul class="toc-item"><li><span><a href="#Goal" data-toc-modified-id="Goal-1"><span class="toc-item-num">1 </span>Goal</a></span></li><li><span><a href="#Var" data-toc-modified-id="Var-2"><span class="toc-item-num">2 </span>Var</a></span></li><li><span><a href="#Init" data-toc-modified-id="Init-3"><span class="toc-item-num">3 </span>Init</a></span></li><li><span><a href="#LLMGA" data-toc-modified-id="LLMGA-4"><span class="toc-item-num">4 </span>LLMGA</a></span><ul class="toc-item"><li><span><a href="#Setup" data-toc-modified-id="Setup-4.1"><span class="toc-item-num">4.1 </span>Setup</a></span></li><li><span><a href="#config" data-toc-modified-id="config-4.2"><span class="toc-item-num">4.2 </span>config</a></span></li><li><span><a href="#Run" data-toc-modified-id="Run-4.3"><span class="toc-item-num">4.3 </span>Run</a></span></li></ul></li><li><span><a href="#Summary" data-toc-modified-id="Summary-5"><span class="toc-item-num">5 </span>Summary</a></span><ul class="toc-item"><li><span><a href="#No.-of-genomes" data-toc-modified-id="No.-of-genomes-5.1"><span class="toc-item-num">5.1 </span>No. of genomes</a></span></li><li><span><a href="#CheckM" data-toc-modified-id="CheckM-5.2"><span class="toc-item-num">5.2 </span>CheckM</a></span></li><li><span><a href="#Taxonomy" data-toc-modified-id="Taxonomy-5.3"><span class="toc-item-num">5.3 </span>Taxonomy</a></span><ul class="toc-item"><li><span><a href="#Taxonomic-novelty" data-toc-modified-id="Taxonomic-novelty-5.3.1"><span class="toc-item-num">5.3.1 </span>Taxonomic novelty</a></span></li><li><span><a href="#Quality-~-taxonomy" data-toc-modified-id="Quality-~-taxonomy-5.3.2"><span class="toc-item-num">5.3.2 </span>Quality ~ taxonomy</a></span></li></ul></li></ul></li><li><span><a href="#sessionInfo" data-toc-modified-id="sessionInfo-6"><span class="toc-item-num">6 </span>sessionInfo</a></span></li></ul></div>

# Goal

* running `LLMGA` on metagenome datasets

* studyID = PRJNA485217

* host = primate

# Var

```

work_dir = '/ebio/abt3_projects/Georg_animal_feces/data/metagenome/multi-study/BioProjects/'

tmp_out_dir = '/ebio/abt3_projects/databases_no-backup/animal_gut_metagenomes/multi-study_MG-asmbl/'

pipeline_dir = '/ebio/abt3_projects/methanogen_host_evo/bin/llmga-find-refs/'

studyID = 'PRJNA485217'

threads = 24

```

# Init

```

library(dplyr)

library(tidyr)

library(ggplot2)

library(data.table)

set.seed(8304)

source('/ebio/abt3_projects/Georg_animal_feces/code/misc_r_functions/init.R')

```

# LLMGA

## Setup

```

out_dir = file.path(tmp_out_dir, studyID)

make_dir(out_dir)

out_dir = file.path(out_dir, 'LLMGA')

make_dir(out_dir)

ref_genomes = file.path(work_dir, studyID, 'LLMGA-find-refs/references/ref_genomes.fna')

cat(ref_genomes)

```

## config

```

cat_file(file.path(out_dir, 'config.yaml'))

```

## Run

```

(snakemake_dev) @ rick:/ebio/abt3_projects/methanogen_host_evo/bin/llmga

$ screen -L -S llmga-PRJNA485217 ./snakemake_sge.sh /ebio/abt3_projects/databases_no-backup/animal_gut_metagenomes/multi-study_MG-asmbl/PRJNA485217/LLMGA/config.yaml cluster.json /ebio/abt3_projects/databases_no-backup/animal_gut_metagenomes/multi-study_MG-asmbl/PRJNA485217/LLMGA/SGE_log 24

```

```

pipelineInfo('/ebio/abt3_projects/methanogen_host_evo/bin/llmga')

```

# Summary

```

asmbl_dir = out_dir = file.path(tmp_out_dir, studyID, 'LLMGA')

checkm_markers_file = file.path(asmbl_dir, 'checkm', 'markers_qa_summary.tsv')

gtdbtk_bac_sum_file = file.path(asmbl_dir, 'gtdbtk', 'gtdbtk_bac_summary.tsv')

gtdbtk_arc_sum_file = file.path(asmbl_dir, 'gtdbtk', 'gtdbtk_ar_summary.tsv')

bin_dir = file.path(asmbl_dir, 'bin')

das_tool_dir = file.path(asmbl_dir, 'bin_refine', 'DAS_Tool')

drep_dir = file.path(asmbl_dir, 'drep', 'drep')

# bin genomes

## maxbin2

bin_files = list.files(bin_dir, '*.fasta$', full.names=TRUE, recursive=TRUE)

bin = data.frame(binID = gsub('\\.fasta$', '', basename(bin_files)),

fasta = bin_files,

binner = bin_files %>% dirname %>% basename,

sample = bin_files %>% dirname %>% dirname %>% basename)

## metabat2

bin_files = list.files(bin_dir, '*.fa$', full.names=TRUE, recursive=TRUE)

X = data.frame(binID = gsub('\\.fa$', '', basename(bin_files)),

fasta = bin_files,

binner = bin_files %>% dirname %>% basename,

sample = bin_files %>% dirname %>% dirname %>% basename)

## combine

bin = rbind(bin, X)

X = NULL

bin %>% dfhead

# DAS-tool genomes

dastool_files = list.files(das_tool_dir, '*.fa$', full.names=TRUE, recursive=TRUE)

dastool = data.frame(binID = gsub('\\.fa$', '', basename(dastool_files)),

fasta = dastool_files)

dastool %>% dfhead

# drep genome files

P = file.path(drep_dir, 'dereplicated_genomes')

drep_files = list.files(P, '*.fa$', full.names=TRUE)

drep = data.frame(binID = gsub('\\.fa$', '', basename(drep_files)),

fasta = drep_files)

drep %>% dfhead

# checkm info

markers_sum = read.delim(checkm_markers_file, sep='\t')

markers_sum %>% nrow %>% print

drep_j = drep %>%

inner_join(markers_sum, c('binID'='Bin.Id'))

drep_j %>% dfhead

# gtdb

## bacteria

X = read.delim(gtdbtk_bac_sum_file, sep='\t') %>%

dplyr::select(-other_related_references.genome_id.species_name.radius.ANI.AF.) %>%

separate(classification, c('Domain', 'Phylum', 'Class', 'Order', 'Family', 'Genus', 'Species'), sep=';')

X %>% nrow %>% print

## archaea

# Y = read.delim(gtdbtk_arc_sum_file, sep='\t') %>%

# dplyr::select(-other_related_references.genome_id.species_name.radius.ANI.AF.) %>%

# separate(classification, c('Domain', 'Phylum', 'Class', 'Order', 'Family', 'Genus', 'Species'), sep=';')

# Y %>% nrow %>% print

## combined

drep_j = drep_j %>%

left_join(X, c('binID'='user_genome'))

## status

X = Y = NULL

drep_j %>% dfhead

```

## No. of genomes

```

cat('Number of binned genomes:', bin$fasta %>% unique %>% length)

cat('Number of DAS-Tool passed genomes:', dastool$binID %>% unique %>% length)

cat('Number of 99% ANI de-rep genomes:', drep_j$binID %>% unique %>% length)

```

## CheckM

```

# checkm stats

p = drep_j %>%

dplyr::select(binID, Completeness, Contamination) %>%

gather(Metric, Value, -binID) %>%

ggplot(aes(Value)) +

geom_histogram(bins=30) +

labs(y='No. of MAGs\n(>=99% ANI derep.)') +

facet_grid(Metric ~ ., scales='free_y') +

theme_bw()

dims(4,3)

plot(p)

```

## Taxonomy

```

# summarizing by taxonomy

p = drep_j %>%

unite(Taxonomy, Phylum, Class, sep=';', remove=FALSE) %>%

group_by(Taxonomy, Phylum) %>%

summarize(n = n()) %>%

ungroup() %>%

ggplot(aes(Taxonomy, n, fill=Phylum)) +

geom_bar(stat='identity') +

coord_flip() +

labs(y='No. of MAGs\n(>=99% ANI derep.)') +

theme_bw()

dims(7,4)

plot(p)

# summarizing by taxonomy

p = drep_j %>%

unite(Taxonomy, Phylum, Class, Family, sep=';', remove=FALSE) %>%

group_by(Taxonomy, Phylum) %>%

summarize(n = n()) %>%

ungroup() %>%

ggplot(aes(Taxonomy, n, fill=Phylum)) +

geom_bar(stat='identity') +

coord_flip() +

labs(y='No. of MAGs\n(>=99% ANI derep.)') +

theme_bw()

dims(7,4)

plot(p)

```

### Taxonomic novelty

```

# no close ANI matches

p = drep_j %>%

unite(Taxonomy, Phylum, Class, sep=';', remove=FALSE) %>%

mutate(closest_placement_ani = closest_placement_ani %>% as.character,

closest_placement_ani = ifelse(closest_placement_ani == 'N/A',

0, closest_placement_ani),

closest_placement_ani = ifelse(is.na(closest_placement_ani),

0, closest_placement_ani),

closest_placement_ani = closest_placement_ani %>% as.Num) %>%

mutate(has_species_placement = ifelse(closest_placement_ani >= 95,

'ANI >= 95%', 'No match')) %>%

ggplot(aes(Taxonomy, fill=Phylum)) +

geom_bar() +

facet_grid(. ~ has_species_placement) +

coord_flip() +

labs(y='Closest placement ANI') +

theme_bw()

dims(7,4)

plot(p)

p = drep_j %>%

filter(Genus == 'g__') %>%

unite(Taxonomy, Phylum, Class, Order, Family, sep='; ', remove=FALSE) %>%

mutate(Taxonomy = stringr::str_wrap(Taxonomy, 45),

Taxonomy = gsub(' ', '', Taxonomy)) %>%

group_by(Taxonomy, Phylum) %>%

summarize(n = n()) %>%

ungroup() %>%

ggplot(aes(Taxonomy, n, fill=Phylum)) +

geom_bar(stat='identity') +

coord_flip() +

labs(y='No. of MAGs lacking a\ngenus-level classification') +

theme_bw()

dims(6.5,3)

plot(p)

```

### Quality ~ taxonomy

```

p = drep_j %>%

unite(Taxonomy, Phylum, Class, sep='; ', remove=FALSE) %>%

dplyr::select(Taxonomy, Phylum, Completeness, Contamination) %>%

gather(Metric, Value, -Taxonomy, -Phylum) %>%

ggplot(aes(Taxonomy, Value, color=Phylum)) +

geom_boxplot() +

facet_grid(. ~ Metric, scales='free_x') +

coord_flip() +

labs(y='CheckM quality') +

theme_bw()

dims(7,3.5)

plot(p)

# just unclassified at genus/species

p = drep_j %>%

filter(Genus == 'g__' | Species == 's__') %>%

unite(Taxonomy, Phylum, Class, sep='; ', remove=FALSE) %>%

dplyr::select(Taxonomy, Phylum, Completeness, Contamination) %>%

gather(Metric, Value, -Taxonomy, -Phylum) %>%

ggplot(aes(Taxonomy, Value, color=Phylum)) +

geom_boxplot() +

facet_grid(. ~ Metric, scales='free_x') +

coord_flip() +

labs(y='CheckM quality') +

theme_bw()

dims(7,3.5)

plot(p)

```

# sessionInfo

```

sessionInfo()

```

| github_jupyter |

```

import numpy as np

import matplotlib.pyplot as plt

plt.style.use('fivethirtyeight')

%matplotlib inline

```

### The single most important equation in linear systems

$$\mathbf{y} = \mathbf{A}\mathbf{x}$$

### Or

$$\mathbf{Y} = \mathbf{A}\mathbf{X}$$

### Where $\mathbf{x}$ is the input, $\mathbf{y}$ is the output (the observations), and $\mathbf{A}$ is a matrix of coefficients.

--------------

# Linear System of Equations

### Question: Why does it take two points to define a line?

```

# pick any two points, at random, between 0 and 10

# First point - P

px, py = np.random.randint(0, high=11, size=(2,))

# Second point - Q

qx, qy = np.random.randint(0, high=11, size=(2,))

fig, ax = plt.subplots()

ax.scatter([px, qx], [py, qy], s=100)

ax.annotate('P', [px, py], fontsize='xx-large')

ax.annotate('Q', [qx, qy], fontsize='xx-large')

ax.axis([-1, 12, -1, 12])

ax.set_aspect('auto')

```

### Assume that the two points are joined by a line

$$y = mx + c$$

### i.e.

$$p_{y} = mp_{x} + c$$

### and

$$q_{y} = mq_{x} + c$$

### Exercise: Arrange the equations above in the form

$$\mathbf{d} = \mathbf{A}\mathbf{b}$$

### What are $\mathbf{A}$, $\mathbf{b}$ and $\mathbf{d}$?
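### One possible arrangement, assuming the two unknowns (slope and intercept) are collected in $\mathbf{b}$:

$$\underbrace{\begin{bmatrix} p_{y} \\ q_{y} \end{bmatrix}}_{\mathbf{d}} = \underbrace{\begin{bmatrix} p_{x} & 1 \\ q_{x} & 1 \end{bmatrix}}_{\mathbf{A}} \underbrace{\begin{bmatrix} m \\ c \end{bmatrix}}_{\mathbf{b}}$$

### Each row is one of the two line equations above: the first column of $\mathbf{A}$ multiplies $m$, and the column of ones multiplies $c$.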

```

np.linalg.solve?

```

### Exercise: Construct the matrices $\mathbf{A}$, $\mathbf{b}$ and $\mathbf{d}$ with NumPy and solve for the slope and the intercept of the line

```

### Put the slope in the variable `m` and the intercept in a variable `c`.

### Then run the next cell to check your solution

# enter code here

xx = np.linspace(0, 10, 100)

yy = m * xx + c

fig, ax = plt.subplots()

ax.scatter([px, qx], [py, qy], s=100)

ax.annotate('P', [px, py], fontsize='xx-large')

ax.annotate('Q', [qx, qy], fontsize='xx-large')

ax.plot(xx, yy)

```
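### A minimal sketch of one way to solve the exercise, assuming two fixed, hypothetical points in place of the random P and Q, and assuming the unknowns are ordered as slope then intercept:

```python
import numpy as np

# Hypothetical fixed points, used here instead of the random P and Q above
px, py = 2.0, 3.0
qx, qy = 6.0, 11.0

# d = A b, with the unknowns b = [m, c]
A = np.array([[px, 1.0],
              [qx, 1.0]])
d = np.array([py, qy])

# np.linalg.solve raises LinAlgError when A is singular,
# which happens here if px == qx (a vertical line has no finite slope)
m, c = np.linalg.solve(A, d)
print(m, c)
```

### With random integer points, P and Q can share the same x-coordinate; in that case the system has no unique solution and the points need to be redrawn.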

# What you just solved was a trivial form of linear regression!

-----------------

# Types of Linear Systems

* ## Ideal system:

- ### number of equations = number of unknowns

- ### A unique solution (when the equations are independent)

* ## Underdetermined system:

- ### number of equations < number of unknowns

- ### Infinitely many solutions! (Or no solution)

* ## Overdetermined system:

- ### number of equations > number of unknowns

- ### Usually no exact solution, so we look for the best approximate one
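### The three cases can be sketched with NumPy as follows. The small matrices are hypothetical examples chosen for illustration, and `np.linalg.lstsq` is used here as one reasonable way to handle the non-square cases:

```python
import numpy as np

# Ideal: 2 equations, 2 unknowns -> one exact solution
A_ideal = np.array([[1.0, 1.0],
                    [1.0, -1.0]])
y_ideal = np.array([3.0, 1.0])
x_ideal = np.linalg.solve(A_ideal, y_ideal)

# Underdetermined: 1 equation, 2 unknowns -> infinitely many solutions;
# lstsq returns the one with the smallest norm
A_under = np.array([[1.0, 1.0]])
y_under = np.array([2.0])
x_under, *_ = np.linalg.lstsq(A_under, y_under, rcond=None)

# Overdetermined: 3 equations, 2 unknowns -> no exact solution here;
# lstsq returns the least-squares fit and the leftover squared error
A_over = np.array([[1.0, 0.0],
                   [0.0, 1.0],
                   [1.0, 1.0]])
y_over = np.array([1.0, 1.0, 3.0])
x_over, residual, rank, _ = np.linalg.lstsq(A_over, y_over, rcond=None)

print(x_ideal, x_under, x_over, residual)
```

### A nonzero `residual` in the last case signals that no choice of unknowns satisfies all three equations at once.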

# Application: Linear Regression

## We want to fit a straight line through the following dataset:

```

import pandas as pd

df = pd.read_csv('data/hwg.csv')

fig, ax = plt.subplots(figsize=(10, 8))

ax.scatter(df['Height'], df['Weight'], alpha=0.2)

```

### Question: What type of a system of equations is this? Ideal, underdetermined or overdetermined?

### Each y-coordinate, $y_{i}$, can be defined as:

### $$y_{i} = x_{i}\beta + \epsilon_{i}$$

## Ordinary Least Squares solution

### Optimal solution: Find the $\beta$ which minimizes:

### $$S(\beta) = \|\mathbf{y} -\mathbf{x}\beta\|^2$$

### The optimal $\beta$ is:

### $$\hat{\beta} = (\mathbf{x}^{T}\mathbf{x})^{-1}\mathbf{x}^{T}\mathbf{y}$$
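### A short sketch of where this formula comes from, assuming $\mathbf{x}^{T}\mathbf{x}$ is invertible. Expand the squared norm:

$$S(\beta) = (\mathbf{y} - \mathbf{x}\beta)^{T}(\mathbf{y} - \mathbf{x}\beta) = \mathbf{y}^{T}\mathbf{y} - 2\beta^{T}\mathbf{x}^{T}\mathbf{y} + \beta^{T}\mathbf{x}^{T}\mathbf{x}\beta$$

### Set the gradient with respect to $\beta$ to zero:

$$\frac{\partial S}{\partial \beta} = -2\mathbf{x}^{T}\mathbf{y} + 2\mathbf{x}^{T}\mathbf{x}\beta = 0$$

### which gives the normal equations and, after inverting $\mathbf{x}^{T}\mathbf{x}$, the expression above:

$$\mathbf{x}^{T}\mathbf{x}\hat{\beta} = \mathbf{x}^{T}\mathbf{y} \quad\Rightarrow\quad \hat{\beta} = (\mathbf{x}^{T}\mathbf{x})^{-1}\mathbf{x}^{T}\mathbf{y}$$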

```

np.transpose?

np.linalg.inv?

np.dot?

X = np.c_[np.ones((df.shape[0],)), df['Height'].values]

Y = df['Weight'].values.reshape(-1, 1)

```

### Exercise: use the formula above to find the optimal beta, given the X and Y as defined.

### Place your solution in a variable named `beta`,

### then run the cell below to check your solution

```

# enter code here

fig, ax = plt.subplots(figsize=(10, 8))

ax.scatter(df['Height'], df['Weight'], alpha=0.2)

ax.plot(X[:, 1], np.dot(X, beta).ravel(), 'g')

```
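### A minimal sketch of the OLS formula in NumPy. It assumes synthetic height and weight values as a stand-in for `data/hwg.csv` (the slope, intercept and noise level below are made up), and it cross-checks the result against `np.linalg.lstsq`:

```python
import numpy as np

# Hypothetical stand-in data: weight = -100 + 1.0 * height + noise
rng = np.random.default_rng(0)
height = rng.uniform(150, 200, size=500)
weight = -100.0 + 1.0 * height + rng.normal(0, 5, size=500)

# Same layout as in the notebook: a column of ones, then the predictor
X = np.c_[np.ones(height.shape[0]), height]
Y = weight.reshape(-1, 1)

# beta = (X^T X)^{-1} X^T Y
beta = np.dot(np.linalg.inv(np.dot(X.T, X)), np.dot(X.T, Y))

# Cross-check: lstsq solves the same least-squares problem
# without forming the inverse explicitly
beta_lstsq, *_ = np.linalg.lstsq(X, Y, rcond=None)

print(beta.ravel(), beta_lstsq.ravel())
```

### The explicit inverse mirrors the formula, but `np.linalg.lstsq` is usually the safer choice when the columns of `X` are nearly collinear.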

| github_jupyter |

```

import bs4

import taxon

import gui_widgets

from wikidataintegrator import wdi_core

import bibtexparser

import requests

import pandas as pd

import json

import ipywidgets as widgets

from IPython.display import IFrame, clear_output, HTML, Image

from ipywidgets import interact, interactive, fixed, interact_manual

import math

def fetch_missing_wikipedia_articles(url):
    photos = json.loads(requests.get(url).text)
    # Collect observations whose taxon has no Wikipedia URL
    temp_results = []
    for obs in photos["results"]:
        if obs["taxon"]["wikipedia_url"] is None:
            result = dict()
            result["inat_obs_id"] = obs["id"]
            result["inat_taxon_id"] = obs["taxon"]["id"]
            result["taxon_name"] = obs["taxon"]["name"]
            temp_results.append(result)
    # Build the list of unique taxon names to check
    to_verify = []
    for temp in temp_results:
        if temp["taxon_name"] not in to_verify:
            to_verify.append(temp["taxon_name"])
    verified = verify_wikidata(to_verify)
    # Keep only the observations whose taxon name passed verification
    results = []
    for temp in temp_results:
        if temp["taxon_name"] in verified:
            results.append(temp)
    return results

def verify_wikidata(taxon_names):
    # One progress step per batch of 50 taxon names
    progress = widgets.IntProgress(
        value=1,
        min=0,
        max=math.ceil(len(taxon_names) / 50),
        description='Wikidata:',
        bar_style='',  # 'success', 'info', 'warning', 'danger' or ''
        style={'bar_color': 'blue'},
        orientation='horizontal')
    display(progress)
    verified = []
    i = 1
    # Query in batches of 50 names
    for chunks in [taxon_names[start:start + 50] for start in range(0, len(taxon_names), 50)]:
        query = """
        SELECT DISTINCT ?taxon_name (COUNT(?item) AS ?item_count) (COUNT(?article) AS ?article_count) WHERE {{
          VALUES ?taxon_name {{{names}}}
          {{?item wdt:P225 ?taxon_name .}}
          UNION
          {{?item wdt:P225 ?taxon_name .
            ?article schema:about ?item ;
                     schema:isPartOf <https://en.wikipedia.org/> .}}
          UNION
          {{?basionym wdt:P566 ?item ;
                      wdt:P225 ?taxon_name .
            ?article schema:about ?item ;
                     schema:isPartOf <https://en.wikipedia.org/> .}}
          UNION
          {{?basionym wdt:P566 ?item .
            ?item wdt:P225 ?taxon_name .
            ?article schema:about ?basionym ;
                     schema:isPartOf <https://en.wikipedia.org/> .}}
        }} GROUP BY ?taxon_name
        """.format(names=" ".join('"{0}"'.format(w) for w in chunks))
        url = "https://query.wikidata.org/sparql?format=json&query="+query
        #print(url)
        progress.value = i
        i+=1
        try:
            results = json.loads(requests.get(url).text)
        except Exception:
            # Skip this batch if the request fails or the response is not valid JSON
            continue
        for result in results["results"]["bindings"]: