
<a href="https://colab.research.google.com/github/satyajitghana/TSAI-DeepNLP-END2.0/blob/main/08_TorchText/pytorch-seq2seq-modern/4_Packed_Padded_Sequences%2C_Masking%2C_Inference_and_BLEU.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# 4 - Packed Padded Sequences, Masking, Inference and BLEU

## Introduction

In this notebook we will be adding a few improvements - packed padded sequences and masking - to the model from the previous notebook. Packed padded sequences are used to tell our RNN to skip over padding tokens in our encoder. Masking explicitly forces the model to ignore certain values, such as attention over padded elements. Both of these techniques are commonly used in NLP.

We will also look at how to use our model for inference, by giving it a sentence, seeing what it translates it as and seeing where exactly it pays attention to when translating each word.

Finally, we'll use the BLEU metric to measure the quality of our translations.

## Preparing Data

First, we'll import all the modules as before, with the addition of the `matplotlib` modules used for viewing the attention.

```

! pip install spacy==3.0.6 --quiet

```

You might need to restart the runtime after installing the spaCy models.

```

! python -m spacy download en_core_web_sm --quiet

! python -m spacy download de_core_news_sm --quiet

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

# from torchtext.legacy.datasets import Multi30k

# from torchtext.legacy.data import Field, BucketIterator

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

import spacy

import numpy as np

import random

import math

import time

from typing import *

```

Next, we'll set the random seed for reproducibility.

```

SEED = 1234

random.seed(SEED)

np.random.seed(SEED)

torch.manual_seed(SEED)

torch.cuda.manual_seed(SEED)

torch.backends.cudnn.deterministic = True

```

As before, we'll import spaCy and define the German and English tokenizers.

```

from torchtext.data.utils import get_tokenizer

from torchtext.vocab import build_vocab_from_iterator

from torchtext.datasets import Multi30k

SRC_LANGUAGE = 'de'

TGT_LANGUAGE = 'en'

# Place-holders

token_transform = {}

vocab_transform = {}

# Create source and target language tokenizer. Make sure to install the dependencies.

# the 'language' should be a full qualified name, since shortcuts like `de` and `en` are deprecated in spaCy 3.0+

token_transform[SRC_LANGUAGE] = get_tokenizer('spacy', language='de_core_news_sm')

token_transform[TGT_LANGUAGE] = get_tokenizer('spacy', language='en_core_web_sm')

# Training, Validation and Test data Iterator

train_iter, val_iter, test_iter = Multi30k(split=('train', 'valid', 'test'), language_pair=(SRC_LANGUAGE, TGT_LANGUAGE))

train_list, val_list, test_list = list(train_iter), list(val_iter), list(test_iter)

train_list[0]

```

Build the vocabulary.

```

# helper function to yield list of tokens
def yield_tokens(data_iter: Iterable, language: str) -> List[str]:
    language_index = {SRC_LANGUAGE: 0, TGT_LANGUAGE: 1}

    for data_sample in data_iter:
        yield token_transform[language](data_sample[language_index[language]])

# Define special symbols and indices
UNK_IDX, PAD_IDX, BOS_IDX, EOS_IDX = 0, 1, 2, 3
# Make sure the tokens are in order of their indices to properly insert them in vocab
special_symbols = ['<unk>', '<pad>', '<bos>', '<eos>']

for ln in [SRC_LANGUAGE, TGT_LANGUAGE]:
    # Create torchtext's Vocab object
    vocab_transform[ln] = build_vocab_from_iterator(yield_tokens(train_list, ln),
                                                    min_freq=1,
                                                    specials=special_symbols,
                                                    special_first=True)

# Set UNK_IDX as the default index. This index is returned when the token is not found.
# If not set, it throws RuntimeError when the queried token is not found in the Vocabulary.
for ln in [SRC_LANGUAGE, TGT_LANGUAGE]:
    vocab_transform[ln].set_default_index(UNK_IDX)

```

Define the device.

```

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

```

Create the iterators.

```

from torch.nn.utils.rnn import pad_sequence

# helper function to club together sequential operations
def sequential_transforms(*transforms):
    def func(txt_input):
        for transform in transforms:
            txt_input = transform(txt_input)
        return txt_input
    return func

# function to add BOS/EOS and create tensor for input sequence indices
def tensor_transform(token_ids: List[int]):
    return torch.cat((torch.tensor([BOS_IDX]),
                      torch.tensor(token_ids),
                      torch.tensor([EOS_IDX])))

# src and tgt language text transforms to convert raw strings into tensors indices
text_transform = {}
for ln in [SRC_LANGUAGE, TGT_LANGUAGE]:
    text_transform[ln] = sequential_transforms(token_transform[ln], # Tokenization
                                               vocab_transform[ln], # Numericalization
                                               tensor_transform) # Add BOS/EOS and create tensor

# function to collate data samples into batch tensors
def collate_fn(batch):
    src_batch, src_len, tgt_batch = [], [], []
    for src_sample, tgt_sample in batch:
        src_batch.append(text_transform[SRC_LANGUAGE](src_sample.rstrip("\n")))
        # record the non-padded length of each source sentence
        src_len.append(len(src_batch[-1]))
        tgt_batch.append(text_transform[TGT_LANGUAGE](tgt_sample.rstrip("\n")))

    src_batch = pad_sequence(src_batch, padding_value=PAD_IDX)
    tgt_batch = pad_sequence(tgt_batch, padding_value=PAD_IDX)
    return src_batch, torch.LongTensor(src_len), tgt_batch

from torch.utils.data import DataLoader

BATCH_SIZE = 128

train_dataloader = DataLoader(train_list, batch_size=BATCH_SIZE, collate_fn=collate_fn)
val_dataloader = DataLoader(val_list, batch_size=BATCH_SIZE, collate_fn=collate_fn)
test_dataloader = DataLoader(test_list, batch_size=BATCH_SIZE, collate_fn=collate_fn)

```

When using packed padded sequences, we need to tell PyTorch how long the actual (non-padded) sequences are. In the legacy TorchText API this was handled by the `include_lengths` argument of `Field`, which turned `batch.src` into a tuple. Here, our `collate_fn` does the same job. It records the length of each source sentence before padding and returns three items per batch: the padded, numericalized source sentences as a tensor, the non-padded length of each source sentence, and the padded target sentences.

One quirk about packed padded sequences is that, by default, all elements in the batch need to be sorted by their non-padded lengths in descending order, i.e. the first sentence in the batch needs to be the longest. The legacy iterators handled this with two arguments: `sort_within_batch`, which tells the iterator that the contents of the batch need to be sorted, and `sort_key`, a function which tells the iterator how to sort the elements in the batch. As our `DataLoader` does not sort the batches, we instead pass `enforce_sorted=False` to `pack_padded_sequence` in the encoder, which lets PyTorch sort the sequences internally.
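As a minimal sketch of the bookkeeping involved, the plain-Python snippet below pads a toy batch, records the true lengths, and computes the descending-length order that a packed sequence would need if sorting were enforced. The helper name `pad_batch` and the toy token ids are illustrative assumptions and not part of the notebook, where `pad_sequence` inside `collate_fn` does the real work.

```python
PAD_IDX = 1  # same pad index as used in this notebook

def pad_batch(sequences, pad_idx=PAD_IDX):
    """Hypothetical helper: pad lists of token ids to a common length.

    Returns the padded batch (batch-major, for readability) and the
    original, non-padded length of every sequence.
    """
    lengths = [len(seq) for seq in sequences]
    max_len = max(lengths)
    padded = [seq + [pad_idx] * (max_len - len(seq)) for seq in sequences]
    return padded, lengths

batch = [[2, 7, 8, 3], [2, 5, 3], [2, 9, 4, 6, 3]]
padded, lengths = pad_batch(batch)
print(padded)
print(lengths)

# indices of the batch elements, longest sequence first
order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
print(order)
```

Note that the notebook's tensors are time-major (`[src len, batch size]`), whereas this sketch keeps one row per sentence purely to make the padding easy to read.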

## Building the Model

### Encoder

Next up, we define the encoder.

The changes here are all within the `forward` method. It now accepts the lengths of the source sentences as well as the sentences themselves.

After the source sentence (padded within `collate_fn`) has been embedded, we can then use `pack_padded_sequence` on it with the lengths of the sentences. Note that the tensor containing the lengths of the sequences must be a CPU tensor in recent versions of PyTorch, which we ensure explicitly with `to('cpu')`. `packed_embedded` will then be our packed padded sequence. This can then be fed to our RNN as normal, which will return `packed_outputs`, a packed tensor containing all of the hidden states from the sequence, and `hidden`, which is simply the final hidden state from our sequence. `hidden` is a standard tensor and not packed in any way. The only difference is that, as the input was a packed sequence, this tensor is from the final **non-padded element** in the sequence.

We then unpack our `packed_outputs` using `pad_packed_sequence` which returns the `outputs` and the lengths of each, which we don't need.

The first dimension of `outputs` is the padded sequence length. However, because we used a packed padded sequence, the values of `outputs` at positions where the input was a padding token will be all zeros.
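To see why packing lets the RNN skip padding, it helps to count how many sequences are still "alive" at each time step. The sketch below does this in plain Python; the helper name `packed_batch_sizes` is a hypothetical illustration, and the list it produces corresponds to what PyTorch stores in the `batch_sizes` attribute of a `PackedSequence`.

```python
def packed_batch_sizes(lengths):
    """Hypothetical helper: number of non-padded sequences at each time step."""
    max_len = max(lengths)
    return [sum(1 for n in lengths if n > t) for t in range(max_len)]

lengths = [5, 3, 2]  # non-padded lengths of three sentences
sizes = packed_batch_sizes(lengths)
padded_steps = max(lengths) * len(lengths)

print(sizes)
print(f'{sum(sizes)} RNN steps when packed vs {padded_steps} when padded')
```

The RNN only processes `sum(sizes)` elements in total, so the more padding a batch contains, the more work packing saves.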

```

class Encoder(nn.Module):
    def __init__(self, input_dim, emb_dim, enc_hid_dim, dec_hid_dim, dropout):
        super().__init__()

        self.embedding = nn.Embedding(input_dim, emb_dim)

        self.rnn = nn.GRU(emb_dim, enc_hid_dim, bidirectional = True)

        self.fc = nn.Linear(enc_hid_dim * 2, dec_hid_dim)

        self.dropout = nn.Dropout(dropout)

    def forward(self, src, src_len):

        #src = [src len, batch size]
        #src_len = [batch size]

        embedded = self.dropout(self.embedding(src))

        #embedded = [src len, batch size, emb dim]

        #need to explicitly put lengths on cpu!
        packed_embedded = nn.utils.rnn.pack_padded_sequence(embedded, src_len.to('cpu'), enforce_sorted=False)

        packed_outputs, hidden = self.rnn(packed_embedded)

        #packed_outputs is a packed sequence containing all hidden states
        #hidden is now from the final non-padded element in the batch

        outputs, _ = nn.utils.rnn.pad_packed_sequence(packed_outputs)

        #outputs is now a non-packed sequence, all hidden states obtained
        #  when the input is a pad token are all zeros

        #outputs = [src len, batch size, hid dim * num directions]
        #hidden = [n layers * num directions, batch size, hid dim]

        #hidden is stacked [forward_1, backward_1, forward_2, backward_2, ...]
        #outputs are always from the last layer

        #hidden [-2, :, : ] is the last of the forwards RNN
        #hidden [-1, :, : ] is the last of the backwards RNN

        #initial decoder hidden is final hidden state of the forwards and backwards
        #  encoder RNNs fed through a linear layer
        hidden = torch.tanh(self.fc(torch.cat((hidden[-2,:,:], hidden[-1,:,:]), dim = 1)))

        #outputs = [src len, batch size, enc hid dim * 2]
        #hidden = [batch size, dec hid dim]

        return outputs, hidden

```

### Attention

The attention module is where we calculate the attention values over the source sentence.

Previously, we allowed this module to "pay attention" to padding tokens within the source sentence. However, using *masking*, we can force the attention to only be over non-padding elements.

The `forward` method now takes a `mask` input. This is a **[batch size, source sentence length]** tensor that is 1 when the source sentence token is not a padding token, and 0 when it is a padding token. For example, if the source sentence is: ["hello", "how", "are", "you", "?", `<pad>`, `<pad>`], then the mask would be [1, 1, 1, 1, 1, 0, 0].

We apply the mask after the attention has been calculated, but before it has been normalized by the `softmax` function. It is applied using `masked_fill`. This fills the tensor at each element where the first argument (`mask == 0`) is true, with the value given by the second argument (`-1e10`). In other words, it will take the un-normalized attention values, and change the attention values over padded elements to be `-1e10`. As these numbers are hugely negative compared to the other values, they will become zero when passed through the `softmax` layer, ensuring no attention is paid to padding tokens in the source sentence.
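The effect of filling before the softmax can be checked with a small plain-Python sketch. The function name `masked_softmax` and the toy scores are assumptions made for illustration; the notebook itself uses `masked_fill` followed by `F.softmax`.

```python
import math

def masked_softmax(scores, mask, fill_value=-1e10):
    """Softmax over `scores`, ignoring positions where `mask` is 0."""
    # replace masked scores with a very large negative number
    filled = [s if m else fill_value for s, m in zip(scores, mask)]
    top = max(filled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in filled]
    total = sum(exps)
    return [e / total for e in exps]

scores = [0.5, 1.2, -0.3, 0.8, 0.1, 2.0, 2.0]  # un-normalized attention
mask = [1, 1, 1, 1, 1, 0, 0]                   # last two positions are <pad>

weights = masked_softmax(scores, mask)
print([round(w, 3) for w in weights])
```

Even though the two padded positions had the largest raw scores, they receive zero weight, and the remaining weights still sum to one.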

```

class Attention(nn.Module):
    def __init__(self, enc_hid_dim, dec_hid_dim):
        super().__init__()

        self.attn = nn.Linear((enc_hid_dim * 2) + dec_hid_dim, dec_hid_dim)
        self.v = nn.Linear(dec_hid_dim, 1, bias = False)

    def forward(self, hidden, encoder_outputs, mask):

        #hidden = [batch size, dec hid dim]
        #encoder_outputs = [src len, batch size, enc hid dim * 2]

        batch_size = encoder_outputs.shape[1]
        src_len = encoder_outputs.shape[0]

        #repeat decoder hidden state src_len times
        hidden = hidden.unsqueeze(1).repeat(1, src_len, 1)

        encoder_outputs = encoder_outputs.permute(1, 0, 2)

        #hidden = [batch size, src len, dec hid dim]
        #encoder_outputs = [batch size, src len, enc hid dim * 2]

        energy = torch.tanh(self.attn(torch.cat((hidden, encoder_outputs), dim = 2)))

        #energy = [batch size, src len, dec hid dim]

        attention = self.v(energy).squeeze(2)

        #attention = [batch size, src len]

        attention = attention.masked_fill(mask == 0, -1e10)

        return F.softmax(attention, dim = 1)

```

### Decoder

The decoder only needs a few small changes. It needs to accept a mask over the source sentence and pass this to the attention module. As we want to view the values of attention during inference, we also return the attention tensor.

```

class Decoder(nn.Module):
    def __init__(self, output_dim, emb_dim, enc_hid_dim, dec_hid_dim, dropout, attention):
        super().__init__()

        self.output_dim = output_dim
        self.attention = attention

        self.embedding = nn.Embedding(output_dim, emb_dim)

        self.rnn = nn.GRU((enc_hid_dim * 2) + emb_dim, dec_hid_dim)

        self.fc_out = nn.Linear((enc_hid_dim * 2) + dec_hid_dim + emb_dim, output_dim)

        self.dropout = nn.Dropout(dropout)

    def forward(self, input, hidden, encoder_outputs, mask):

        #input = [batch size]
        #hidden = [batch size, dec hid dim]
        #encoder_outputs = [src len, batch size, enc hid dim * 2]
        #mask = [batch size, src len]

        input = input.unsqueeze(0)

        #input = [1, batch size]

        embedded = self.dropout(self.embedding(input))

        #embedded = [1, batch size, emb dim]

        a = self.attention(hidden, encoder_outputs, mask)

        #a = [batch size, src len]

        a = a.unsqueeze(1)

        #a = [batch size, 1, src len]

        encoder_outputs = encoder_outputs.permute(1, 0, 2)

        #encoder_outputs = [batch size, src len, enc hid dim * 2]

        weighted = torch.bmm(a, encoder_outputs)

        #weighted = [batch size, 1, enc hid dim * 2]

        weighted = weighted.permute(1, 0, 2)

        #weighted = [1, batch size, enc hid dim * 2]

        rnn_input = torch.cat((embedded, weighted), dim = 2)

        #rnn_input = [1, batch size, (enc hid dim * 2) + emb dim]

        output, hidden = self.rnn(rnn_input, hidden.unsqueeze(0))

        #output = [seq len, batch size, dec hid dim * n directions]
        #hidden = [n layers * n directions, batch size, dec hid dim]

        #seq len, n layers and n directions will always be 1 in this decoder, therefore:
        #output = [1, batch size, dec hid dim]
        #hidden = [1, batch size, dec hid dim]
        #this also means that output == hidden
        assert (output == hidden).all()

        embedded = embedded.squeeze(0)
        output = output.squeeze(0)
        weighted = weighted.squeeze(0)

        prediction = self.fc_out(torch.cat((output, weighted, embedded), dim = 1))

        #prediction = [batch size, output dim]

        return prediction, hidden.squeeze(0), a.squeeze(1)

```

### Seq2Seq

The overarching seq2seq model also needs a few changes for packed padded sequences, masking and inference.

We need to tell it what the indexes are for the pad token and also pass the source sentence lengths as input to the `forward` method.

We use the pad token index to create the masks, by creating a mask tensor that is 1 wherever the source sentence is not equal to the pad token. This is all done within the `create_mask` function.

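The sequence lengths are needed by the encoder in order to use packed padded sequences.

During training, the attention returned by the decoder at each time-step is discarded. During inference, it is stored in the `attentions` tensor inside `translate_sentence`.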
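The mask construction can be sketched in plain Python using nested lists in place of tensors. The name `create_mask_lists` and the toy batch are assumptions for illustration; the model's `create_mask` method does the same thing with a tensor comparison followed by `permute(1, 0)`.

```python
PAD_IDX = 1  # same pad index as used in this notebook

def create_mask_lists(src, pad_idx=PAD_IDX):
    """Plain-Python stand-in for the mask creation.

    `src` is time-major, i.e. src[t][b], matching [src len, batch size].
    The returned mask is batch-major, i.e. mask[b][t], matching
    [batch size, src len].
    """
    src_len, batch_size = len(src), len(src[0])
    return [[src[t][b] != pad_idx for t in range(src_len)]
            for b in range(batch_size)]

# two sentences: the first has 4 real tokens, the second has 2 plus padding
src = [[2, 2],
       [7, 3],
       [8, 1],
       [3, 1]]

mask = create_mask_lists(src)
print(mask)
```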

```

class Seq2Seq(nn.Module):
    def __init__(self, encoder, decoder, src_pad_idx, device):
        super().__init__()

        self.encoder = encoder
        self.decoder = decoder
        self.src_pad_idx = src_pad_idx
        self.device = device

    def create_mask(self, src):
        mask = (src != self.src_pad_idx).permute(1, 0)
        return mask

    def forward(self, src, src_len, trg, teacher_forcing_ratio = 0.5):

        #src = [src len, batch size]
        #src_len = [batch size]
        #trg = [trg len, batch size]
        #teacher_forcing_ratio is probability to use teacher forcing
        #e.g. if teacher_forcing_ratio is 0.75 we use teacher forcing 75% of the time

        batch_size = src.shape[1]
        trg_len = trg.shape[0]
        trg_vocab_size = self.decoder.output_dim

        #tensor to store decoder outputs
        outputs = torch.zeros(trg_len, batch_size, trg_vocab_size).to(self.device)

        #encoder_outputs is all hidden states of the input sequence, back and forwards
        #hidden is the final forward and backward hidden states, passed through a linear layer
        encoder_outputs, hidden = self.encoder(src, src_len)

        #first input to the decoder is the <sos> tokens
        input = trg[0,:]

        mask = self.create_mask(src)

        #mask = [batch size, src len]

        for t in range(1, trg_len):

            #insert input token embedding, previous hidden state, all encoder hidden states
            #  and mask
            #receive output tensor (predictions) and new hidden state
            output, hidden, _ = self.decoder(input, hidden, encoder_outputs, mask)

            #place predictions in a tensor holding predictions for each token
            outputs[t] = output

            #decide if we are going to use teacher forcing or not
            teacher_force = random.random() < teacher_forcing_ratio

            #get the highest predicted token from our predictions
            top1 = output.argmax(1)

            #if teacher forcing, use actual next token as next input
            #if not, use predicted token
            input = trg[t] if teacher_force else top1

        return outputs

```

## Training the Seq2Seq Model

Next up, initializing the model and placing it on the GPU.

```

INPUT_DIM = len(vocab_transform[SRC_LANGUAGE])

OUTPUT_DIM = len(vocab_transform[TGT_LANGUAGE])

ENC_EMB_DIM = 256

DEC_EMB_DIM = 256

ENC_HID_DIM = 512

DEC_HID_DIM = 512

ENC_DROPOUT = 0.5

DEC_DROPOUT = 0.5

SRC_PAD_IDX = PAD_IDX

attn = Attention(ENC_HID_DIM, DEC_HID_DIM)

enc = Encoder(INPUT_DIM, ENC_EMB_DIM, ENC_HID_DIM, DEC_HID_DIM, ENC_DROPOUT)

dec = Decoder(OUTPUT_DIM, DEC_EMB_DIM, ENC_HID_DIM, DEC_HID_DIM, DEC_DROPOUT, attn)

model = Seq2Seq(enc, dec, SRC_PAD_IDX, device).to(device)

```

Then, we initialize the model parameters.

```

def init_weights(m):
    for name, param in m.named_parameters():
        if 'weight' in name:
            nn.init.normal_(param.data, mean=0, std=0.01)
        else:
            nn.init.constant_(param.data, 0)

model.apply(init_weights)

```

We'll print out the number of trainable parameters in the model, noticing that it has exactly the same number of parameters as the model without these improvements.

```

def count_parameters(model):
    return sum(p.numel() for p in model.parameters() if p.requires_grad)

print(f'The model has {count_parameters(model):,} trainable parameters')

```

Then we define our optimizer and criterion.

The `ignore_index` for the criterion needs to be the index of the pad token for the target language, not the source language.
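What `ignore_index` does to the loss can be illustrated with a small plain-Python sketch: positions whose target is the pad index contribute nothing, and the mean is taken over the remaining positions only. The function name `cross_entropy_ignore` and the toy probabilities are assumptions for illustration, not the PyTorch implementation.

```python
import math

def cross_entropy_ignore(log_probs, targets, ignore_index):
    """Mean negative log-likelihood over targets that are not `ignore_index`."""
    kept = [-lp[t] for lp, t in zip(log_probs, targets) if t != ignore_index]
    return sum(kept) / len(kept)

PAD_IDX = 1

# predicted probabilities over a toy 3-word vocabulary, one row per position
probs = [[0.7, 0.1, 0.2],
         [0.2, 0.2, 0.6],
         [0.1, 0.8, 0.1]]
log_probs = [[math.log(p) for p in row] for row in probs]
targets = [0, 2, PAD_IDX]  # the final position is padding

loss = cross_entropy_ignore(log_probs, targets, PAD_IDX)
print(f'{loss:.4f}')
```

The third position is dropped entirely, so whatever the model predicts over padding neither helps nor hurts the loss.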

```

optimizer = optim.Adam(model.parameters())

TRG_PAD_IDX = PAD_IDX

criterion = nn.CrossEntropyLoss(ignore_index = TRG_PAD_IDX)

```

Next, we'll define our training and evaluation loops.

As our `collate_fn` returns the source lengths, each batch is now a tuple of three elements: the numericalized tensor representing the source sentences, the lengths of each source sentence within the batch, and the numericalized tensor representing the target sentences.

Our decoder also returns the attention vectors over the batch of source sentences for each decoding time-step. We won't use these during training/evaluation, but we will later for inference.

```

def train(model, iterator, optimizer, criterion, clip):

    model.train()

    epoch_loss = 0

    for i, batch in enumerate(iterator):

        src, src_len, trg = batch
        src, src_len, trg = src.to(device), src_len.to(device), trg.to(device)

        optimizer.zero_grad()

        output = model(src, src_len, trg)

        #trg = [trg len, batch size]
        #output = [trg len, batch size, output dim]

        output_dim = output.shape[-1]

        output = output[1:].view(-1, output_dim)
        trg = trg[1:].view(-1)

        #trg = [(trg len - 1) * batch size]
        #output = [(trg len - 1) * batch size, output dim]

        loss = criterion(output, trg)

        loss.backward()

        torch.nn.utils.clip_grad_norm_(model.parameters(), clip)

        optimizer.step()

        epoch_loss += loss.item()

    return epoch_loss / len(iterator)

def evaluate(model, iterator, criterion):

    model.eval()

    epoch_loss = 0

    with torch.no_grad():

        for i, batch in enumerate(iterator):

            src, src_len, trg = batch
            src, src_len, trg = src.to(device), src_len.to(device), trg.to(device)

            output = model(src, src_len, trg, 0) #turn off teacher forcing

            #trg = [trg len, batch size]
            #output = [trg len, batch size, output dim]

            output_dim = output.shape[-1]

            output = output[1:].view(-1, output_dim)
            trg = trg[1:].view(-1)

            #trg = [(trg len - 1) * batch size]
            #output = [(trg len - 1) * batch size, output dim]

            loss = criterion(output, trg)

            epoch_loss += loss.item()

    return epoch_loss / len(iterator)

```

Then, we'll define a useful function for timing how long epochs take.

```

def epoch_time(start_time, end_time):
    elapsed_time = end_time - start_time
    elapsed_mins = int(elapsed_time / 60)
    elapsed_secs = int(elapsed_time - (elapsed_mins * 60))
    return elapsed_mins, elapsed_secs

```

The penultimate step is to train our model. Notice how it takes almost half the time of our model without the improvements added in this notebook.

```

N_EPOCHS = 10
CLIP = 1

best_valid_loss = float('inf')

for epoch in range(N_EPOCHS):

    start_time = time.time()

    train_loss = train(model, train_dataloader, optimizer, criterion, CLIP)
    valid_loss = evaluate(model, val_dataloader, criterion)

    end_time = time.time()

    epoch_mins, epoch_secs = epoch_time(start_time, end_time)

    if valid_loss < best_valid_loss:
        best_valid_loss = valid_loss
        torch.save(model.state_dict(), 'tut4-model.pt')

    print(f'Epoch: {epoch+1:02} | Time: {epoch_mins}m {epoch_secs}s')
    print(f'\tTrain Loss: {train_loss:.3f} | Train PPL: {math.exp(train_loss):7.3f}')
    print(f'\t Val. Loss: {valid_loss:.3f} | Val. PPL: {math.exp(valid_loss):7.3f}')

```

Finally, we load the parameters from our best validation loss and get our results on the test set.

We get an improved test perplexity while also being almost twice as fast!

```

model.load_state_dict(torch.load('tut4-model.pt'))

test_loss = evaluate(model, test_dataloader, criterion)

print(f'| Test Loss: {test_loss:.3f} | Test PPL: {math.exp(test_loss):7.3f} |')

```

## Inference

Now we can use our trained model to generate translations.

**Note:** these translations will be poor compared to the examples shown in the paper, as the authors use hidden dimension sizes of 1000 and train for 4 days! The paper's examples have been cherry-picked in order to show off what attention should look like on a sufficiently sized model.

Our `translate_sentence` will do the following:

- ensure our model is in evaluation mode, which it should always be for inference

- tokenize the source sentence if it has not been tokenized (is a string)

- numericalize the source sentence

- convert it to a tensor and add a batch dimension

- get the length of the source sentence and convert to a tensor

- feed the source sentence into the encoder

- create the mask for the source sentence

- create a list to hold the output sentence, initialized with an `<sos>` token

- create a tensor to hold the attention values

- while we have not hit a maximum length

  - get the input tensor, which should be either `<sos>` or the last predicted token

  - feed the input, all encoder outputs, hidden state and mask into the decoder

  - store attention values

  - get the predicted next token

  - add prediction to current output sentence prediction

  - break if the prediction was an `<eos>` token

- convert the output sentence from indexes to tokens

- return the output sentence (with the `<sos>` token removed) and the attention values over the sequence
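The core of the steps above is a greedy decoding loop. The sketch below isolates that loop in plain Python, with a hypothetical `step_fn` callback standing in for one call to the decoder (which, in the notebook, also receives the encoder outputs and the mask and returns attention). The names `greedy_decode`, `step_fn` and the toy token ids are assumptions for illustration only.

```python
def greedy_decode(step_fn, sos_idx, eos_idx, max_len=50):
    """Feed the last predicted token back in until <eos> or max_len.

    `step_fn(prev_token, state)` is a hypothetical stand-in for one decoder
    step; it returns (next_token, new_state).
    """
    tokens, state = [sos_idx], None
    for _ in range(max_len):
        next_token, state = step_fn(tokens[-1], state)
        tokens.append(next_token)
        if next_token == eos_idx:
            break
    return tokens[1:]  # drop the initial <sos> token

# toy "decoder": a fixed lookup from the previous token to the next one
SOS, EOS = 2, 3
canned = {SOS: 10, 10: 11, 11: EOS}

def toy_step(prev_token, state):
    return canned[prev_token], state

decoded = greedy_decode(toy_step, SOS, EOS)
print(decoded)

# a decoder that never emits <eos> is cut off at max_len
never_stops = greedy_decode(lambda prev, state: (5, state), SOS, EOS, max_len=4)
print(never_stops)
```

The `max_len` cap matters because nothing guarantees that a trained model will ever produce an `<eos>` token.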

```

def translate_sentence(sentence, vocabs, init_token, eos_token, model, device, max_len = 50):

    model.eval()

    if isinstance(sentence, str):
        nlp = spacy.load('de_core_news_sm')
        tokens = [token.text.lower() for token in nlp(sentence)]
    else:
        tokens = [token.lower() for token in sentence]

    tokens = [init_token] + tokens + [eos_token]

    src_indexes = [vocabs['de'][token] for token in tokens]

    src_tensor = torch.LongTensor(src_indexes).unsqueeze(1).to(device)

    src_len = torch.LongTensor([len(src_indexes)])

    with torch.no_grad():
        encoder_outputs, hidden = model.encoder(src_tensor, src_len)

    mask = model.create_mask(src_tensor)

    trg_indexes = [vocabs['en'][init_token]]

    attentions = torch.zeros(max_len, 1, len(src_indexes)).to(device)

    for i in range(max_len):

        trg_tensor = torch.LongTensor([trg_indexes[-1]]).to(device)

        with torch.no_grad():
            output, hidden, attention = model.decoder(trg_tensor, hidden, encoder_outputs, mask)

        attentions[i] = attention

        pred_token = output.argmax(1).item()

        trg_indexes.append(pred_token)

        if pred_token == vocabs['en'][eos_token]:
            break

    trg_tokens = [vocabs['en'].vocab.get_itos()[i] for i in trg_indexes]

    return trg_tokens[1:], attentions[:len(trg_tokens)-1]

```

Next, we'll make a function that displays the model's attention over the source sentence for each target token generated.

```

def display_attention(sentence, translation, attention):

    fig = plt.figure(figsize=(10,10))
    ax = fig.add_subplot(111)

    attention = attention.squeeze(1).cpu().detach().numpy()

    cax = ax.matshow(attention, cmap='bone')

    ax.tick_params(labelsize=15)

    if isinstance(sentence, str):
        nlp = spacy.load('de_core_news_sm')
        x_ticks = [''] + ['<sos>'] + [t.text.lower() for t in nlp(sentence)] + ['<eos>']
    else:
        x_ticks = [''] + ['<sos>'] + [t.lower() for t in sentence] + ['<eos>']

    y_ticks = [''] + translation

    ax.set_xticklabels(x_ticks, rotation=45)
    ax.set_yticklabels(y_ticks)

    ax.xaxis.set_major_locator(ticker.MultipleLocator(1))
    ax.yaxis.set_major_locator(ticker.MultipleLocator(1))

    plt.show()
    plt.close()

```

Now, we'll grab some translations from our dataset and see how well our model did. Note, we're going to cherry pick examples here so it gives us something interesting to look at, but feel free to change the `example_idx` value to look at different examples.

First, we'll get a source and target from our dataset.

```

example_idx = 12

src, trg = train_list[example_idx]

print(f'src = {src}')

print(f'trg = {trg}')

```

Then we'll use our `translate_sentence` function to get our predicted translation and attention. We show this graphically by having the source sentence on the x-axis and the predicted translation on the y-axis. The lighter the square at the intersection between two words, the more attention the model gave to that source word when translating that target word.

Below is an example the model attempted to translate. It gets the translation correct, except that it changes *are fighting* to just *fighting*.

```

translation, attention = translate_sentence(src, vocab_transform, '<bos>', '<eos>', model, device)

print(f'predicted trg = {translation}')

display_attention(src, translation, attention)

```

Translations from the training set could simply be memorized by the model, so it's only fair that we look at translations from the validation and test sets too.

Starting with the validation set, let's get an example.

```

example_idx = 14

src, trg = val_list[example_idx]

print(f'src = {src}')

print(f'trg = {trg}')

```

Then let's generate our translation and view the attention.

Here, we can see the translation is the same except for swapping *female* with *woman*.

```

translation, attention = translate_sentence(src, vocab_transform, '<bos>', '<eos>', model, device)

print(f'predicted trg = {translation}')

display_attention(src, translation, attention)

```

Finally, let's get an example from the test set.

```

example_idx = 18

src, trg = test_list[example_idx]

print(f'src = {src}')

print(f'trg = {trg}')

```

Again, it produces a slightly different translation than the target, a more literal version of the source sentence. It swaps *mountain climbing* for *climbing a mountain*.

```

translation, attention = translate_sentence(src, vocab_transform, '<bos>', '<eos>', model, device)

print(f'predicted trg = {translation}')

display_attention(src, translation, attention)

```

## BLEU

Previously we have only cared about the loss/perplexity of the model. However, there are metrics that are specifically designed for measuring the quality of a translation, the most popular of which is *BLEU*. Without going into too much detail, BLEU looks at the overlap between the predicted and actual target sequences in terms of their n-grams. It gives us a number between 0 and 1, where 1 means there is perfect overlap, i.e. a perfect translation, although it is usually shown between 0 and 100. BLEU was designed for multiple reference translations per source sequence, however in this dataset we only have one reference per source.
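To make the n-gram overlap idea concrete, here is a simplified plain-Python sketch for a single candidate and a single reference: clipped n-gram precisions combined by a geometric mean and multiplied by a brevity penalty. The function names `ngrams` and `simple_bleu` are hypothetical, there is no smoothing, and this is not the corpus-level implementation in `torchtext`, so its numbers should not be expected to match `bleu_score`.

```python
import math
from collections import Counter

def ngrams(tokens, n):
    """Count all n-grams of length `n` in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def simple_bleu(candidate, reference, max_n=4):
    """Simplified single-sentence, single-reference BLEU (no smoothing)."""
    log_precisions = []
    for n in range(1, max_n + 1):
        cand, ref = ngrams(candidate, n), ngrams(reference, n)
        # clip each candidate n-gram count by its count in the reference
        overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
        total = sum(cand.values())
        if overlap == 0 or total == 0:
            return 0.0
        log_precisions.append(math.log(overlap / total))
    # the brevity penalty punishes candidates shorter than the reference
    if len(candidate) >= len(reference):
        bp = 1.0
    else:
        bp = math.exp(1 - len(reference) / len(candidate))
    return bp * math.exp(sum(log_precisions) / max_n)

reference = 'a man is climbing a mountain'.split()
candidate = 'a man climbing a mountain'.split()

perfect = simple_bleu(reference, reference)
partial = simple_bleu(candidate, reference, max_n=2)
print(perfect, round(partial, 4))
```

With the default `max_n=4` this candidate would score 0, because it shares no 4-gram with the reference. This is one reason BLEU is normally computed over a whole corpus rather than sentence by sentence.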

We define a `calculate_bleu` function which calculates the BLEU score over a provided TorchText dataset. This function creates a corpus of the actual and predicted translation for each source sentence and then calculates the BLEU score.

```

from torchtext.data.metrics import bleu_score
from tqdm.auto import tqdm

def calculate_bleu(data, vocabs, init_token, eos_token, model, device, max_len = 50):

    nlp = spacy.load('en_core_web_sm')

    trgs = []
    pred_trgs = []

    for datum in tqdm(data):

        src, trg = datum

        if isinstance(trg, str):
            trg = [t.text.lower() for t in nlp(trg)]

        pred_trg, _ = translate_sentence(src, vocabs, init_token, eos_token, model, device, max_len)

        #cut off <eos> token
        pred_trg = pred_trg[:-1]

        pred_trgs.append(pred_trg)
        trgs.append([trg])

    return bleu_score(pred_trgs, trgs)

```

We get a BLEU of around 28. If we compare it to the paper that the attention model is attempting to replicate, they achieve a BLEU score of 26.75. This is similar to our score, however they are using a completely different dataset and their model size is much larger - 1000 hidden dimensions which takes 4 days to train! - so we cannot really compare against that either.

This number isn't really interpretable on its own, so we can't say much about it. The most useful part of a BLEU score is that it can be used to compare different models on the same dataset, where the one with the **higher** BLEU score is "better".

```

bleu_score_this = calculate_bleu(test_list, vocab_transform, '<bos>', '<eos>', model, device)

print(f'BLEU score = {bleu_score_this*100:.2f}')

```

In the next tutorials we will be moving away from using recurrent neural networks and start looking at other ways to construct sequence-to-sequence models. Specifically, in the next tutorial we will be using convolutional neural networks.


<a href="https://colab.research.google.com/github/SummerLife/EmbeddedSystem/blob/master/MachineLearning/gist/visualization_of_the_filters_of_VGG16.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

```

"""

#Visualization of the filters of VGG16, via gradient ascent in input space.

This script can run on CPU in a few minutes.

Results example:

"""

from __future__ import print_function

import time

import numpy as np

from PIL import Image as pil_image

from keras.preprocessing.image import save_img

from keras import layers

from keras.applications import vgg16

from keras import backend as K

def normalize(x):
    """utility function to normalize a tensor.

    # Arguments
        x: An input tensor.

    # Returns
        The normalized input tensor.
    """
    return x / (K.sqrt(K.mean(K.square(x))) + K.epsilon())

def deprocess_image(x):
    """utility function to convert a float array into a valid uint8 image.

    # Arguments
        x: A numpy-array representing the generated image.

    # Returns
        A processed numpy-array, which could be used in e.g. imshow.
    """
    # normalize tensor: center on 0., ensure std is 0.25
    x -= x.mean()
    x /= (x.std() + K.epsilon())
    x *= 0.25

    # clip to [0, 1]
    x += 0.5
    x = np.clip(x, 0, 1)

    # convert to RGB array
    x *= 255
    if K.image_data_format() == 'channels_first':
        x = x.transpose((1, 2, 0))
    x = np.clip(x, 0, 255).astype('uint8')
    return x

def process_image(x, former):
    """utility function to convert a valid uint8 image back into a float array.
       Reverses `deprocess_image`.

    # Arguments
        x: A numpy-array, which could be used in e.g. imshow.
        former: The former numpy-array.
                Need to determine the former mean and variance.

    # Returns
        A processed numpy-array representing the generated image.
    """
    if K.image_data_format() == 'channels_first':
        x = x.transpose((2, 0, 1))
    return (x / 255 - 0.5) * 4 * former.std() + former.mean()

def visualize_layer(model,

layer_name,

step=1.,

epochs=15,

upscaling_steps=9,

upscaling_factor=1.2,

output_dim=(412, 412),

filter_range=(0, None)):

"""Visualizes the most relevant filters of one conv-layer in a certain model.

# Arguments

model: The model containing layer_name.

layer_name: The name of the layer to be visualized.

Has to be a part of model.

step: step size for gradient ascent.

epochs: Number of iterations for gradient ascent.

upscaling_steps: Number of upscaling steps.

Starting image is in this case (80, 80).

upscaling_factor: Factor to which to slowly upgrade

the image towards output_dim.

output_dim: [img_width, img_height] The output image dimensions.

filter_range: Tuple[lower, upper]

Determines the to be computed filter numbers.

If the second value is `None`,

the last filter will be inferred as the upper boundary.

"""

def _generate_filter_image(input_img,

layer_output,

filter_index):

"""Generates image for one particular filter.

# Arguments

input_img: The input-image Tensor.

layer_output: The output-image Tensor.

filter_index: The to be processed filter number.

Assumed to be valid.

#Returns

Either None if no image could be generated.

or a tuple of the image (array) itself and the last loss.

"""

s_time = time.time()

# we build a loss function that maximizes the activation

# of the nth filter of the layer considered

if K.image_data_format() == 'channels_first':

loss = K.mean(layer_output[:, filter_index, :, :])

else:

loss = K.mean(layer_output[:, :, :, filter_index])

# we compute the gradient of the input picture wrt this loss

grads = K.gradients(loss, input_img)[0]

# normalization trick: we normalize the gradient

grads = normalize(grads)

# this function returns the loss and grads given the input picture

iterate = K.function([input_img], [loss, grads])

# we start from a gray image with some random noise

intermediate_dim = tuple(

int(x / (upscaling_factor ** upscaling_steps)) for x in output_dim)

if K.image_data_format() == 'channels_first':

input_img_data = np.random.random(

(1, 3, intermediate_dim[0], intermediate_dim[1]))

else:

input_img_data = np.random.random(

(1, intermediate_dim[0], intermediate_dim[1], 3))

input_img_data = (input_img_data - 0.5) * 20 + 128

# Slowly upscaling towards the original size prevents

# high-frequency patterns from dominating the visualized structure,

# as would happen if we computed the 412-pixel image directly.

# Each size acts as a better starting point for the next one

# and therefore helps avoid poor local optima

for up in reversed(range(upscaling_steps)):

# we run gradient ascent for `epochs` steps (15 by default)

for _ in range(epochs):

loss_value, grads_value = iterate([input_img_data])

input_img_data += grads_value * step

# some filters get stuck to 0, we can skip them

if loss_value <= K.epsilon():

return None

# Calculate upscaled dimension

intermediate_dim = tuple(

int(x / (upscaling_factor ** up)) for x in output_dim)

# Upscale

img = deprocess_image(input_img_data[0])

img = np.array(pil_image.fromarray(img).resize(intermediate_dim,

pil_image.BICUBIC))

input_img_data = np.expand_dims(

process_image(img, input_img_data[0]), 0)

# decode the resulting input image

img = deprocess_image(input_img_data[0])

e_time = time.time()

print('Costs of filter {:3}: {:5.0f} ( {:4.2f}s )'.format(filter_index,

loss_value,

e_time - s_time))

return img, loss_value

def _draw_filters(filters, n=None):

"""Draw the best filters in a nxn grid.

# Arguments

filters: A List of generated images and their corresponding losses

for each processed filter.

n: dimension of the grid.

If none, the largest possible square will be used

"""

if n is None:

n = int(np.floor(np.sqrt(len(filters))))

# the filters that have the highest loss are assumed to be better-looking.

# we will only keep the top n*n filters.

filters.sort(key=lambda x: x[1], reverse=True)

filters = filters[:n * n]

# build a black picture with enough space for

# e.g. our 8 x 8 filters of size 412 x 412, with a 5px margin in between

MARGIN = 5

width = n * output_dim[0] + (n - 1) * MARGIN

height = n * output_dim[1] + (n - 1) * MARGIN

stitched_filters = np.zeros((width, height, 3), dtype='uint8')

# fill the picture with our saved filters

for i in range(n):

for j in range(n):

img, _ = filters[i * n + j]

width_margin = (output_dim[0] + MARGIN) * i

height_margin = (output_dim[1] + MARGIN) * j

stitched_filters[

width_margin: width_margin + output_dim[0],

height_margin: height_margin + output_dim[1], :] = img

# save the result to disk

save_img('vgg_{0:}_{1:}x{1:}.png'.format(layer_name, n), stitched_filters)

# this is the placeholder for the input images

assert len(model.inputs) == 1

input_img = model.inputs[0]

# get the symbolic outputs of each "key" layer (we gave them unique names).

layer_dict = dict([(layer.name, layer) for layer in model.layers[1:]])

output_layer = layer_dict[layer_name]

assert isinstance(output_layer, layers.Conv2D)

# Compute to be processed filter range

filter_lower = filter_range[0]

filter_upper = (filter_range[1]

if filter_range[1] is not None

else len(output_layer.get_weights()[1]))

assert(filter_lower >= 0

and filter_upper <= len(output_layer.get_weights()[1])

and filter_upper > filter_lower)

print('Compute filters {:} to {:}'.format(filter_lower, filter_upper))

# iterate through each filter and generate its corresponding image

processed_filters = []

for f in range(filter_lower, filter_upper):

img_loss = _generate_filter_image(input_img, output_layer.output, f)

if img_loss is not None:

processed_filters.append(img_loss)

print('{} filter processed.'.format(len(processed_filters)))

# Finally draw and store the best filters to disk

_draw_filters(processed_filters)

if __name__ == '__main__':

# the name of the layer we want to visualize

# (see model definition at keras/applications/vgg16.py)

LAYER_NAME = 'block5_conv1'

# build the VGG16 network with ImageNet weights

vgg = vgg16.VGG16(weights='imagenet', include_top=False)

print('Model loaded.')

vgg.summary()

# example function call

# visualize_layer(vgg, LAYER_NAME)

visualize_layer(vgg, "block1_conv1")

visualize_layer(vgg, "block2_conv1")

```
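The coarse-to-fine schedule used inside `_generate_filter_image` can be traced without Keras. The sketch below is a stand-alone illustration that reproduces only the `intermediate_dim` arithmetic with the script's default arguments; the helper name `upscaling_schedule` is hypothetical and not part of the script.

```python
def upscaling_schedule(output_dim=(412, 412), upscaling_factor=1.2, upscaling_steps=9):
    """Return the image sizes visited, from the starting size up to output_dim."""
    # Starting size, computed the same way as in the script before the loop.
    sizes = [tuple(int(x / (upscaling_factor ** upscaling_steps)) for x in output_dim)]
    # After each round of gradient ascent the image is resized to the next size.
    for up in reversed(range(upscaling_steps)):
        sizes.append(tuple(int(x / (upscaling_factor ** up)) for x in output_dim))
    return sizes


schedule = upscaling_schedule()
for size in schedule:
    print(size)
```

With the defaults, the image starts at roughly 80 pixels per side and grows by a factor of about 1.2 after each round until it reaches `output_dim`.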

| github_jupyter |

# Neural Machine Translation

Welcome to your first programming assignment for this week!

* You will build a Neural Machine Translation (NMT) model to translate human-readable dates ("25th of June, 2009") into machine-readable dates ("2009-06-25").

* You will do this using an attention model, one of the most sophisticated sequence-to-sequence models.

This notebook was produced together with NVIDIA's Deep Learning Institute.

## <font color='darkblue'>Updates</font>

#### If you were working on the notebook before this update...

* The current notebook is version "4a".

* You can find your original work saved in the notebook with the previous version name ("v4")

* To view the file directory, go to the menu "File->Open", and this will open a new tab that shows the file directory.

#### List of updates

* Clarified names of variables to be consistent with the lectures and consistent within the assignment

- pre-attention bi-directional LSTM: the first LSTM that processes the input data.

- 'a': the hidden state of the pre-attention LSTM.

- post-attention LSTM: the LSTM that outputs the translation.

- 's': the hidden state of the post-attention LSTM.

- energies "e". The output of the dense function that takes "a" and "s" as inputs.

- All references to "output activation" are updated to "hidden state".

- "post-activation" sequence model is updated to "post-attention sequence model".

- 3.1: "Getting the activations from the Network" renamed to "Getting the attention weights from the network."

- Appropriate mentions of "activation" replaced with "attention weights."

- Sequence of alphas corrected to be a sequence of "a" hidden states.

* one_step_attention:

- Provides sample code for each Keras layer, to show how to call the functions.

- Reminds students to provide the list of hidden states in a specific order, in order to pass the autograder.

* model

- Provides sample code for each Keras layer, to show how to call the functions.

- Added a troubleshooting note about handling errors.

- Fixed typo: outputs should be of length 10 and not 11.

* define optimizer and compile model

- Provides sample code for each Keras layer, to show how to call the functions.

* Spelling, grammar and wording corrections.

Let's load all the packages you will need for this assignment.

```

from keras.layers import Bidirectional, Concatenate, Permute, Dot, Input, LSTM, Multiply

from keras.layers import RepeatVector, Dense, Activation, Lambda

from keras.optimizers import Adam

from keras.utils import to_categorical

from keras.models import load_model, Model

import keras.backend as K

import numpy as np

from faker import Faker

import random

from tqdm import tqdm

from babel.dates import format_date

from nmt_utils import *

import matplotlib.pyplot as plt

%matplotlib inline

```

## 1 - Translating human readable dates into machine readable dates

* The model you will build here could be used to translate from one language to another, such as translating from English to Hindi.

* However, language translation requires massive datasets and usually takes days of training on GPUs.

* To give you a place to experiment with these models without using massive datasets, we will perform a simpler "date translation" task.

* The network will input a date written in a variety of possible formats (*e.g. "the 29th of August 1958", "03/30/1968", "24 JUNE 1987"*)

* The network will translate them into standardized, machine readable dates (*e.g. "1958-08-29", "1968-03-30", "1987-06-24"*).

* We will have the network learn to output dates in the common machine-readable format YYYY-MM-DD.

<!--

Take a look at [nmt_utils.py](./nmt_utils.py) to see all the formatting. Count and figure out how the formats work, you will need this knowledge later. !-->

### 1.1 - Dataset

We will train the model on a dataset of 10,000 human readable dates and their equivalent, standardized, machine readable dates. Let's run the following cells to load the dataset and print some examples.

```

m = 10000

dataset, human_vocab, machine_vocab, inv_machine_vocab = load_dataset(m)

dataset[:10]

```

You've loaded:

- `dataset`: a list of tuples of (human readable date, machine readable date).

- `human_vocab`: a python dictionary mapping all characters used in the human readable dates to an integer-valued index.

- `machine_vocab`: a python dictionary mapping all characters used in machine readable dates to an integer-valued index.

- **Note**: These indices are not necessarily consistent with `human_vocab`.

- `inv_machine_vocab`: the inverse dictionary of `machine_vocab`, mapping from indices back to characters.

Let's preprocess the data and map the raw text data into the index values.

- We will set Tx=30

- We assume Tx is the maximum length of the human readable date.

- If we get a longer input, we would have to truncate it.

- We will set Ty=10

- "YYYY-MM-DD" is 10 characters long.

```

Tx = 30

Ty = 10

X, Y, Xoh, Yoh = preprocess_data(dataset, human_vocab, machine_vocab, Tx, Ty)

print("X.shape:", X.shape)

print("Y.shape:", Y.shape)

print("Xoh.shape:", Xoh.shape)

print("Yoh.shape:", Yoh.shape)

```

You now have:

- `X`: a processed version of the human readable dates in the training set.

- Each character in X is replaced by an index (integer) mapped to the character using `human_vocab`.

- Each date is padded to ensure a length of $T_x$ using a special character (< pad >).

- `X.shape = (m, Tx)` where m is the number of training examples in a batch.

- `Y`: a processed version of the machine readable dates in the training set.

- Each character is replaced by the index (integer) it is mapped to in `machine_vocab`.

- `Y.shape = (m, Ty)`.

- `Xoh`: one-hot version of `X`

- Each index in `X` is converted to the one-hot representation (if the index is 2, the one-hot version has the index position 2 set to 1, and the remaining positions are 0).

- `Xoh.shape = (m, Tx, len(human_vocab))`

- `Yoh`: one-hot version of `Y`

- Each index in `Y` is converted to the one-hot representation.

- `Yoh.shape = (m, Ty, len(machine_vocab))`.

- `len(machine_vocab) = 11` since there are 10 numeric digits (0 to 9) and the `-` symbol.
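The index mapping, padding and one-hot steps described above can be sketched on a toy vocabulary. This is a simplified illustration, not the `preprocess_data` implementation from `nmt_utils`; `toy_vocab`, `encode` and `one_hot` are hypothetical names, and the real `human_vocab` contains more characters.

```python
import numpy as np

# Toy vocabulary for illustration only; the real `human_vocab` comes from load_dataset.
toy_vocab = {ch: i for i, ch in enumerate("0123456789/ ")}
toy_vocab["<pad>"] = len(toy_vocab)


def encode(text, length, vocab):
    """Map characters to indices, truncate to `length`, then pad with <pad>."""
    indices = [vocab[ch] for ch in text[:length]]
    indices += [vocab["<pad>"]] * (length - len(indices))
    return np.array(indices)


def one_hot(indices, num_classes):
    """Turn a vector of indices into a (len(indices), num_classes) one-hot matrix."""
    return np.eye(num_classes)[indices]


x = encode("03/30/1968", length=30, vocab=toy_vocab)   # shape (Tx,)
x_oh = one_hot(x, len(toy_vocab))                      # shape (Tx, vocabulary size)
print(x.shape, x_oh.shape)
```

Stacking `m` such examples gives the `(m, Tx)` and `(m, Tx, len(human_vocab))` shapes listed above.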

* Let's also look at some examples of preprocessed training examples.

* Feel free to play with `index` in the cell below to navigate the dataset and see how source/target dates are preprocessed.

```

index = 0

print("Source date:", dataset[index][0])

print("Target date:", dataset[index][1])

print()

print("Source after preprocessing (indices):", X[index])

print("Target after preprocessing (indices):", Y[index])

print()

print("Source after preprocessing (one-hot):", Xoh[index])

print("Target after preprocessing (one-hot):", Yoh[index])

```

## 2 - Neural machine translation with attention

* If you had to translate a book's paragraph from French to English, you would not read the whole paragraph, then close the book and translate.

* Even during the translation process, you would read/re-read and focus on the parts of the French paragraph corresponding to the parts of the English you are writing down.

* The attention mechanism tells a Neural Machine Translation model where it should pay attention to at any step.

### 2.1 - Attention mechanism

In this part, you will implement the attention mechanism presented in the lecture videos.

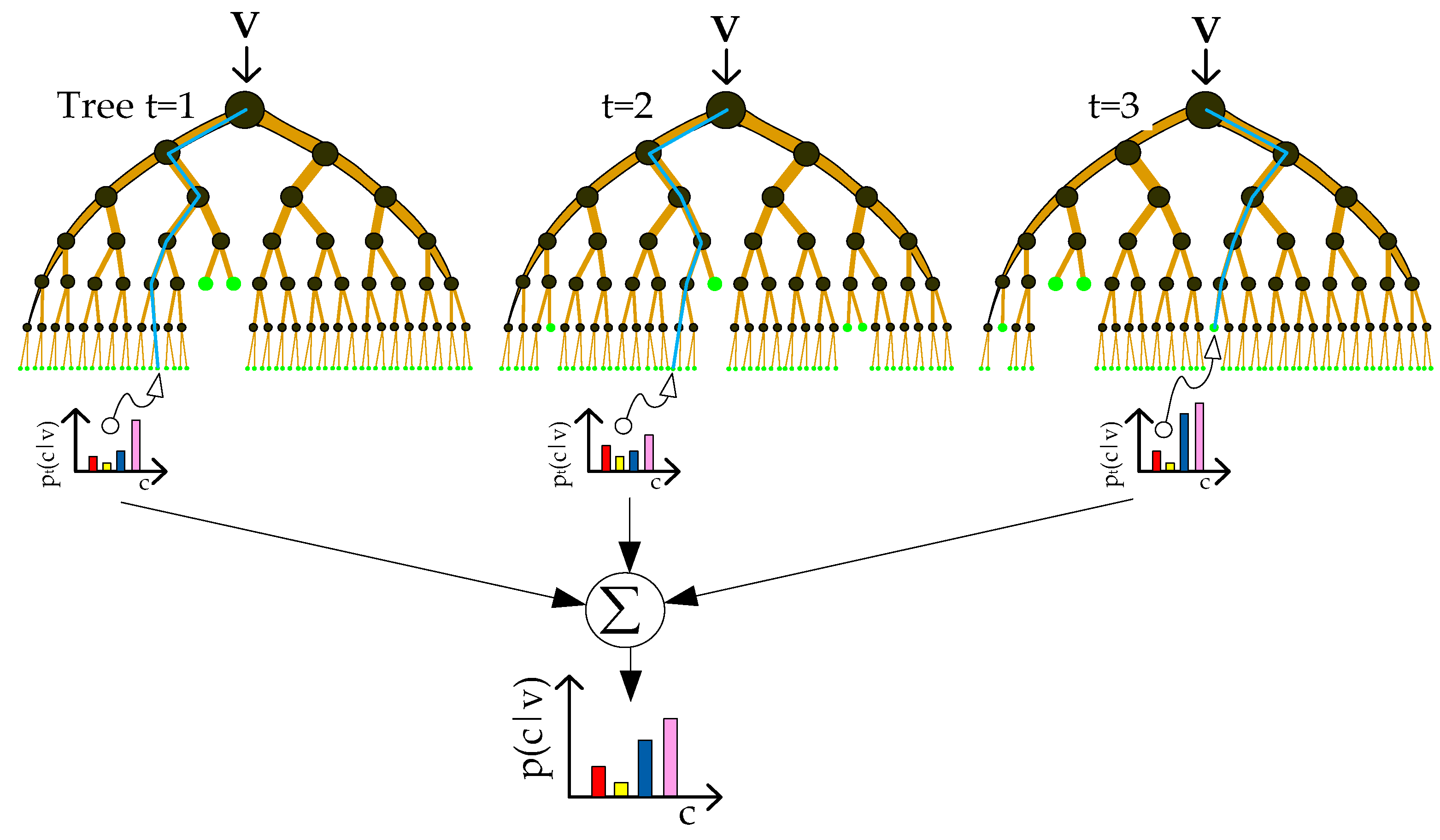

* Here is a figure to remind you how the model works.

* The diagram on the left shows the attention model.

* The diagram on the right shows what one "attention" step does to calculate the attention variables $\alpha^{\langle t, t' \rangle}$.

* The attention variables $\alpha^{\langle t, t' \rangle}$ are used to compute the context variable $context^{\langle t \rangle}$ for each timestep in the output ($t=1, \ldots, T_y$).

<table>

<td>

<img src="images/attn_model.png" style="width:500;height:500px;"> <br>

</td>

<td>

<img src="images/attn_mechanism.png" style="width:500;height:500px;"> <br>

</td>

</table>

<caption><center> **Figure 1**: Neural machine translation with attention</center></caption>

Here are some properties of the model that you may notice:

#### Pre-attention and Post-attention LSTMs on both sides of the attention mechanism

- There are two separate LSTMs in this model (see diagram on the left): pre-attention and post-attention LSTMs.

- *Pre-attention* Bi-LSTM: the one at the bottom of the diagram is a bi-directional LSTM and comes *before* the attention mechanism.

- The attention mechanism is shown in the middle of the left-hand diagram.

- The pre-attention Bi-LSTM goes through $T_x$ time steps.

- *Post-attention* LSTM: the one at the top of the diagram comes *after* the attention mechanism.

- The post-attention LSTM goes through $T_y$ time steps.

- The post-attention LSTM passes the hidden state $s^{\langle t \rangle}$ and cell state $c^{\langle t \rangle}$ from one time step to the next.

#### An LSTM has both a hidden state and cell state

* In the lecture videos, we were using only a basic RNN for the post-attention sequence model

* This means that the only state carried by the RNN was the hidden state $s^{\langle t\rangle}$.

* In this assignment, we are using an LSTM instead of a basic RNN.

* So the LSTM has both the hidden state $s^{\langle t\rangle}$ and the cell state $c^{\langle t\rangle}$.

#### Each time step does not use predictions from the previous time step

* Unlike previous text generation examples earlier in the course, in this model, the post-attention LSTM at time $t$ does not take the previous time step's prediction $y^{\langle t-1 \rangle}$ as input.

* The post-attention LSTM at time $t$ takes only the previous hidden state $s^{\langle t-1\rangle}$, the previous cell state $c^{\langle t-1\rangle}$, and the context $context^{\langle t \rangle}$ as input.

* We have designed the model this way because unlike language generation (where adjacent characters are highly correlated) there isn't as strong a dependency between the previous character and the next character in a YYYY-MM-DD date.

#### Concatenation of hidden states from the forward and backward pre-attention LSTMs

- $\overrightarrow{a}^{\langle t \rangle}$: hidden state of the forward-direction, pre-attention LSTM.

- $\overleftarrow{a}^{\langle t \rangle}$: hidden state of the backward-direction, pre-attention LSTM.

- $a^{\langle t \rangle} = [\overrightarrow{a}^{\langle t \rangle}, \overleftarrow{a}^{\langle t \rangle}]$: the concatenation of the activations of both the forward-direction $\overrightarrow{a}^{\langle t \rangle}$ and backward-directions $\overleftarrow{a}^{\langle t \rangle}$ of the pre-attention Bi-LSTM.

#### Computing "energies" $e^{\langle t, t' \rangle}$ as a function of $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$

- Recall from the lecture video "Attention Model", at time 6:45 to 8:16, the definition of "e" as a function of $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$.

- "e" is called the "energies" variable.

- $s^{\langle t-1 \rangle}$ is the hidden state of the post-attention LSTM

- $a^{\langle t' \rangle}$ is the hidden state of the pre-attention LSTM.

- $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$ are fed into a simple neural network, which learns the function to output $e^{\langle t, t' \rangle}$.

- $e^{\langle t, t' \rangle}$ is then used when computing the attention $\alpha^{\langle t, t' \rangle}$ that $y^{\langle t \rangle}$ should pay to $a^{\langle t' \rangle}$.

- The diagram on the right of figure 1 uses a `RepeatVector` node to copy $s^{\langle t-1 \rangle}$'s value $T_x$ times.

- Then it uses `Concatenation` to concatenate $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$.

- The concatenation of $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$ is fed into a "Dense" layer, which computes $e^{\langle t, t' \rangle}$.

- $e^{\langle t, t' \rangle}$ is then passed through a softmax to compute $\alpha^{\langle t, t' \rangle}$.

- Note that the diagram doesn't explicitly show variable $e^{\langle t, t' \rangle}$, but $e^{\langle t, t' \rangle}$ is above the Dense layer and below the Softmax layer in the diagram in the right half of figure 1.

- We'll explain how to use `RepeatVector` and `Concatenation` in Keras below.
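The path from $s^{\langle t-1 \rangle}$ and $a^{\langle t' \rangle}$ to $\alpha^{\langle t, t' \rangle}$ can be traced in plain NumPy. This is only a shape-level sketch, not the Keras implementation: the weights `W1`, `b1`, `W2`, `b2` are random stand-ins for what the Dense layers would learn, and the tanh and ReLU activations are assumed from the layer definitions used later in this notebook.

```python
import numpy as np

rng = np.random.default_rng(0)
m, Tx, n_a, n_s = 2, 30, 32, 64             # assignment sizes, with a toy batch of 2

a = rng.standard_normal((m, Tx, 2 * n_a))   # pre-attention Bi-LSTM hidden states
s_prev = rng.standard_normal((m, n_s))      # previous post-attention hidden state

# Random stand-ins for the weights the two Dense layers would learn.
W1, b1 = rng.standard_normal((2 * n_a + n_s, 10)) * 0.1, np.zeros(10)
W2, b2 = rng.standard_normal((10, 1)) * 0.1, np.zeros(1)

s_rep = np.repeat(s_prev[:, None, :], Tx, axis=1)   # like RepeatVector(Tx): (m, Tx, n_s)
concat = np.concatenate([a, s_rep], axis=-1)        # (m, Tx, 2*n_a + n_s)
e = np.tanh(concat @ W1 + b1)                       # intermediate energies: (m, Tx, 10)
energies = np.maximum(e @ W2 + b2, 0.0)             # energies: (m, Tx, 1)

# Softmax over the Tx axis (axis=1), so each example's weights sum to 1.
exp = np.exp(energies - energies.max(axis=1, keepdims=True))
alphas = exp / exp.sum(axis=1, keepdims=True)       # attention weights: (m, Tx, 1)

print(alphas.shape, alphas.sum(axis=1).ravel())
```

The softmax has to run over axis 1 because that is the axis indexing the input positions $t'$; a softmax over the last axis, which has size 1, would return all ones.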

### Implementation Details

Let's implement this neural translator. You will start by implementing two functions: `one_step_attention()` and `model()`.

#### one_step_attention

* The inputs to the one_step_attention at time step $t$ are:

- $[a^{<1>},a^{<2>}, ..., a^{<T_x>}]$: all hidden states of the pre-attention Bi-LSTM.

- $s^{<t-1>}$: the previous hidden state of the post-attention LSTM

* one_step_attention computes:

- $[\alpha^{<t,1>},\alpha^{<t,2>}, ..., \alpha^{<t,T_x>}]$: the attention weights

- $context^{ \langle t \rangle }$: the context vector:

$$context^{<t>} = \sum_{t' = 1}^{T_x} \alpha^{<t,t'>}a^{<t'>}\tag{1}$$
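Equation (1) is a weighted sum over the input positions. The NumPy sketch below, using random stand-in values, checks that the explicit sum agrees with a contraction over axis 1 of both tensors, which is the role the `Dot(axes = 1)` layer plays in this notebook.

```python
import numpy as np

rng = np.random.default_rng(1)
m, Tx, n_a = 2, 30, 32

a = rng.standard_normal((m, Tx, 2 * n_a))      # hidden states a^{<t'>}
alphas = rng.random((m, Tx, 1))
alphas /= alphas.sum(axis=1, keepdims=True)    # make each example's weights sum to 1

# Equation (1): weighted sum over t'.
context_sum = (alphas * a).sum(axis=1, keepdims=True)   # (m, 1, 2*n_a)

# The same contraction written as a batched dot product over axis 1.
context_dot = np.einsum("mti,mtj->mij", alphas, a)      # (m, 1, 2*n_a)

print(context_sum.shape, np.allclose(context_sum, context_dot))
```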

##### Clarifying 'context' and 'c'

- In the lecture videos, the context was denoted $c^{\langle t \rangle}$

- In the assignment, we are calling the context $context^{\langle t \rangle}$.

- This is to avoid confusion with the post-attention LSTM's internal memory cell variable, which is also denoted $c^{\langle t \rangle}$.

#### Implement `one_step_attention`

**Exercise**: Implement `one_step_attention()`.

* The function `model()` will call the layers in `one_step_attention()` $T_y$ times using a for-loop.

* It is important that all $T_y$ copies have the same weights.

* It should not reinitialize the weights every time.

* In other words, all $T_y$ steps should have shared weights.

* Here's how you can implement layers with shareable weights in Keras:

1. Define the layer objects in a variable scope that is outside of the `one_step_attention` function. For example, defining the objects as global variables would work.

- Note that defining these variables inside the scope of the function `model` would technically work, since `model` will then call the `one_step_attention` function. For the purposes of making grading and troubleshooting easier, we are defining these as global variables. Note that the automatic grader will expect these to be global variables as well.

2. Call these objects when propagating the input.

* We have defined the layers you need as global variables.

* Please run the following cells to create them.

* Please note that the automatic grader expects these global variables with the given variable names. For grading purposes, please do not rename the global variables.

* Please check the Keras documentation to learn more about these layers. The layers are functions. Below are examples of how to call these functions.

* [RepeatVector()](https://keras.io/layers/core/#repeatvector)

```Python

var_repeated = repeat_layer(var1)

```

* [Concatenate()](https://keras.io/layers/merge/#concatenate)

```Python

concatenated_vars = concatenate_layer([var1,var2,var3])

```

* [Dense()](https://keras.io/layers/core/#dense)

```Python

var_out = dense_layer(var_in)

```

* [Activation()](https://keras.io/layers/core/#activation)

```Python

activation = activation_layer(var_in)

```

* [Dot()](https://keras.io/layers/merge/#dot)

```Python

dot_product = dot_layer([var1,var2])

```
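To see why the layer objects must be created once and then reused, consider the toy sketch below. `TinyDense` is a hypothetical stand-in for a Keras layer, not a Keras class: like a Keras layer, it owns its weights, so calling the same object repeatedly reuses the same weights, while constructing a new object at each step creates new ones.

```python
import numpy as np


class TinyDense:
    """Hypothetical stand-in for a Keras layer: weights are created once, in __init__."""

    def __init__(self, n_in, n_out, seed):
        self.W = np.random.default_rng(seed).standard_normal((n_in, n_out))

    def __call__(self, x):
        return x @ self.W


shared = TinyDense(4, 3, seed=0)   # defined once, outside the loop
x = np.ones((1, 4))

# Every step reuses the same object, so every step uses the same weights.
shared_outputs = [shared(x) for _ in range(3)]

# Constructing a layer inside the loop creates fresh weights on each step instead.
fresh_outputs = [TinyDense(4, 3, seed=step)(x) for step in range(3)]

print(all(np.array_equal(shared_outputs[0], out) for out in shared_outputs))
print(all(np.array_equal(fresh_outputs[0], out) for out in fresh_outputs))
```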

```

# Defined shared layers as global variables

repeator = RepeatVector(Tx)

concatenator = Concatenate(axis=-1)

densor1 = Dense(10, activation = "tanh")

densor2 = Dense(1, activation = "relu")

activator = Activation(softmax, name='attention_weights') # We are using a custom softmax(axis = 1) loaded in this notebook

dotor = Dot(axes = 1)

# GRADED FUNCTION: one_step_attention

def one_step_attention(a, s_prev):

"""

Performs one step of attention: Outputs a context vector computed as a dot product of the attention weights

"alphas" and the hidden states "a" of the Bi-LSTM.

Arguments:

a -- hidden state output of the Bi-LSTM, numpy-array of shape (m, Tx, 2*n_a)

s_prev -- previous hidden state of the (post-attention) LSTM, numpy-array of shape (m, n_s)

Returns:

context -- context vector, input of the next (post-attention) LSTM cell

"""

### START CODE HERE ###

# Use repeator to repeat s_prev to be of shape (m, Tx, n_s) so that you can concatenate it with all hidden states "a" (≈ 1 line)

s_prev =repeator(s_prev)

# Use concatenator to concatenate a and s_prev on the last axis (≈ 1 line)

# For grading purposes, please list 'a' first and 's_prev' second, in this order.

concat = concatenator([a,s_prev])

# Use densor1 to propagate concat through a small fully-connected neural network to compute the "intermediate energies" variable e. (≈1 lines)

e = densor1(concat)

# Use densor2 to propagate e through a small fully-connected neural network to compute the "energies" variable energies. (≈1 lines)

energies = densor2(e)

# Use "activator" on "energies" to compute the attention weights "alphas" (≈ 1 line)

alphas = activator(energies)

# Use dotor together with "alphas" and "a" to compute the context vector to be given to the next (post-attention) LSTM-cell (≈ 1 line)

context = dotor([alphas,a])

### END CODE HERE ###

return context

```

You will be able to check the expected output of `one_step_attention()` after you've coded the `model()` function.

#### model

* `model` first runs the input through a Bi-LSTM to get $[a^{<1>},a^{<2>}, ..., a^{<T_x>}]$.

* Then, `model` calls `one_step_attention()` $T_y$ times using a `for` loop. At each iteration of this loop:

- It gives the computed context vector $context^{<t>}$ to the post-attention LSTM.

- It runs the output of the post-attention LSTM through a dense layer with softmax activation.

- The softmax generates a prediction $\hat{y}^{<t>}$.

**Exercise**: Implement `model()` as explained in figure 1 and the text above. Again, we have defined global layers that will share weights to be used in `model()`.

```

n_a = 32 # number of units for the pre-attention, bi-directional LSTM's hidden state 'a'

n_s = 64 # number of units for the post-attention LSTM's hidden state "s"

# Please note, this is the post attention LSTM cell.

# For the purposes of passing the automatic grader

# please do not modify this global variable. This will be corrected once the automatic grader is also updated.

post_activation_LSTM_cell = LSTM(n_s, return_state = True) # post-attention LSTM

output_layer = Dense(len(machine_vocab), activation=softmax)

```

Now you can use these layers $T_y$ times in a `for` loop to generate the outputs, and their parameters will not be reinitialized. You will have to carry out the following steps:

1. Propagate the input `X` into a bi-directional LSTM.

* [Bidirectional](https://keras.io/layers/wrappers/#bidirectional)

* [LSTM](https://keras.io/layers/recurrent/#lstm)

* Remember that we want the LSTM to return a full sequence instead of just the last hidden state.

Sample code:

```Python

sequence_of_hidden_states = Bidirectional(LSTM(units=..., return_sequences=...))(the_input_X)

```

2. Iterate for $t = 0, \cdots, T_y-1$:

1. Call `one_step_attention()`, passing in the sequence of hidden states $[a^{\langle 1 \rangle},a^{\langle 2 \rangle}, ..., a^{ \langle T_x \rangle}]$ from the pre-attention bi-directional LSTM, and the previous hidden state $s^{<t-1>}$ from the post-attention LSTM to calculate the context vector $context^{<t>}$.

2. Give $context^{<t>}$ to the post-attention LSTM cell.

- Remember to pass in the previous hidden-state $s^{\langle t-1\rangle}$ and cell-states $c^{\langle t-1\rangle}$ of this LSTM

* This outputs the new hidden state $s^{<t>}$ and the new cell state $c^{<t>}$.

Sample code:

```Python

next_hidden_state, _, next_cell_state = post_activation_LSTM_cell(inputs=..., initial_state=[prev_hidden_state, prev_cell_state])

```

Please note that the layer is actually the "post attention LSTM cell". For the purposes of passing the automatic grader, please do not modify the naming of this global variable. This will be fixed when we deploy updates to the automatic grader.

3. Apply a dense, softmax layer to $s^{<t>}$, get the output.

Sample code:

```Python

output = output_layer(inputs=...)

```

4. Save the output by adding it to the list of outputs.

3. Create your Keras model instance.

* It should have three inputs:

* `X`, the one-hot encoded inputs to the model, of shape $(T_{x}, humanVocabSize)$

* $s^{\langle 0 \rangle}$, the initial hidden state of the post-attention LSTM

* $c^{\langle 0 \rangle}$, the initial cell state of the post-attention LSTM

* The output is the list of outputs.

Sample code

```Python

model = Model(inputs=[...,...,...], outputs=...)

```

```

# GRADED FUNCTION: model

def model(Tx, Ty, n_a, n_s, human_vocab_size, machine_vocab_size):

"""

Arguments:

Tx -- length of the input sequence

Ty -- length of the output sequence

n_a -- hidden state size of the Bi-LSTM

n_s -- hidden state size of the post-attention LSTM

human_vocab_size -- size of the python dictionary "human_vocab"

machine_vocab_size -- size of the python dictionary "machine_vocab"

Returns:

model -- Keras model instance

"""

# Define the inputs of your model with a shape (Tx, human_vocab_size)

# Define s0 (initial hidden state) and c0 (initial cell state)

# for the decoder LSTM with shape (n_s,)

X = Input(shape=(Tx, human_vocab_size))

s0 = Input(shape=(n_s,), name='s0')

c0 = Input(shape=(n_s,), name='c0')

s = s0

c = c0

# Initialize empty list of outputs

outputs = []

### START CODE HERE ###

# Step 1: Define your pre-attention Bi-LSTM. (≈ 1 line)

a = Bidirectional(LSTM(n_a,return_sequences=True))(X)

# Step 2: Iterate for Ty steps

for t in range(Ty):

# Step 2.A: Perform one step of the attention mechanism to get back the context vector at step t (≈ 1 line)

context = one_step_attention(a,s)

# Step 2.B: Apply the post-attention LSTM cell to the "context" vector.

# Don't forget to pass: initial_state = [hidden state, cell state] (≈ 1 line)

s, _, c = post_activation_LSTM_cell(context,initial_state=[s,c])

# Step 2.C: Apply Dense layer to the hidden state output of the post-attention LSTM (≈ 1 line)

out = output_layer(s)

# Step 2.D: Append "out" to the "outputs" list (≈ 1 line)

outputs.append(out)

# Step 3: Create model instance taking three inputs and returning the list of outputs. (≈ 1 line)

model = Model(inputs=[X,s0,c0],outputs=outputs)

### END CODE HERE ###

return model

```

Run the following cell to create your model.

```

model = model(Tx, Ty, n_a, n_s, len(human_vocab), len(machine_vocab))

```

#### Troubleshooting Note

* If you are getting repeated errors after an initially incorrect implementation of "model", but believe that you have corrected the error, you may still see error messages when building your model.

* A solution is to save and restart your kernel (or shutdown then restart your notebook), and re-run the cells.

Let's get a summary of the model to check if it matches the expected output.

```

model.summary()

```

**Expected Output**:

Here is the summary you should see

<table>

<tr>

<td>

**Total params:**

</td>

<td>

52,960

</td>

</tr>

<tr>

<td>

**Trainable params:**

</td>

<td>

52,960

</td>

</tr>

<tr>

<td>

**Non-trainable params:**

</td>

<td>

0

</td>

</tr>

<tr>

<td>

**bidirectional_1's output shape **

</td>

<td>

(None, 30, 64)

</td>

</tr>

<tr>

<td>

**repeat_vector_1's output shape **

</td>

<td>

(None, 30, 64)

</td>

</tr>

<tr>

<td>

**concatenate_1's output shape **

</td>

<td>

(None, 30, 128)

</td>

</tr>

<tr>

<td>

**attention_weights's output shape **

</td>

<td>

(None, 30, 1)

</td>

</tr>

<tr>

<td>

**dot_1's output shape **

</td>

<td>

(None, 1, 64)

</td>

</tr>

<tr>

<td>

**dense_3's output shape **

</td>

<td>

(None, 11)

</td>

</tr>

</table>
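The total parameter count can be cross-checked by hand with the standard LSTM and Dense parameter formulas. The sketch below assumes `len(human_vocab)` is 37, a value the text above does not state; the helper names `lstm_params` and `dense_params` are hypothetical. With that assumption the sum matches the 52,960 shown in the table.

```python
def lstm_params(n_in, n_units):
    # Four gates, each with input weights, recurrent weights and a bias.
    return 4 * (n_units * (n_in + n_units) + n_units)


def dense_params(n_in, n_out):
    # Weight matrix plus bias vector.
    return n_in * n_out + n_out


human_vocab_size = 37      # assumption: not stated in the text above
machine_vocab_size = 11    # stated above: digits 0-9 plus "-"
n_a, n_s = 32, 64

total = (
    2 * lstm_params(human_vocab_size, n_a)    # Bi-LSTM: forward + backward
    + dense_params(2 * n_a + n_s, 10)         # densor1
    + dense_params(10, 1)                     # densor2
    + lstm_params(2 * n_a, n_s)               # post-attention LSTM, fed the context vector
    + dense_params(n_s, machine_vocab_size)   # output layer
)
print(total)
```

Each shared layer is counted once, however many of the $T_y$ steps call it.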

#### Compile the model

* After creating your model in Keras, you need to compile it and define the loss function, optimizer and metrics you want to use.

* Loss function: 'categorical_crossentropy'.

* Optimizer: [Adam](https://keras.io/optimizers/#adam) [optimizer](https://keras.io/optimizers/#usage-of-optimizers)

- learning rate = 0.005

- $\beta_1 = 0.9$

- $\beta_2 = 0.999$

- decay = 0.01

* metric: 'accuracy'

Sample code

```Python

optimizer = Adam(lr=..., beta_1=..., beta_2=..., decay=...)

model.compile(optimizer=..., loss=..., metrics=[...])

```

```

### START CODE HERE ### (≈2 lines)

opt = Adam(lr=0.005, beta_1=0.9, beta_2=0.999, decay=0.01)

model.compile(optimizer=opt,metrics=['accuracy'],loss='categorical_crossentropy')

### END CODE HERE ###

```
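The `decay` argument shrinks the step size as training proceeds. The sketch below assumes the inverse-time rule that the legacy Keras 2.x optimizers are understood to apply per update step; if your Keras version handles `decay` differently, treat this only as an illustration of the idea. The helper name `decayed_lr` is hypothetical.

```python
def decayed_lr(initial_lr, decay, iteration):
    """Inverse-time decay: the step size shrinks as the update count grows."""
    return initial_lr / (1.0 + decay * iteration)


for iteration in (0, 100, 1000):
    print(iteration, decayed_lr(0.005, 0.01, iteration))
```

With 10,000 examples and a batch size of 100, one epoch is 100 updates, so under this rule the step size would be halved by the end of the first epoch.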

#### Define inputs and outputs, and fit the model

The last step is to define all your inputs and outputs to fit the model:

- You have input X of shape $(m = 10000, T_x = 30)$ containing the training examples.

- You need to create `s0` and `c0` to initialize your `post_activation_LSTM_cell` with zeros.

- Given the `model()` you coded, you need the "outputs" to be a list of 10 elements, each of shape (m, len(machine_vocab)).

- The list `outputs[0][i], ..., outputs[Ty-1][i]` represents the true labels (characters) corresponding to the $i^{th}$ training example (`X[i]`).

- `outputs[j][i]` is the true (one-hot) label of the $j^{th}$ character in the $i^{th}$ training example.

```

s0 = np.zeros((m, n_s))

c0 = np.zeros((m, n_s))

outputs = list(Yoh.swapaxes(0,1))

```
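The effect of `swapaxes(0,1)` followed by `list(...)` is easiest to see on a small array. In this sketch `Yoh_toy` is a zero-filled stand-in for `Yoh` with a toy batch of 4 examples; the names are hypothetical.

```python
import numpy as np

m, Ty, vocab_size = 4, 10, 11               # toy batch of 4; Ty and vocabulary size as above
Yoh_toy = np.zeros((m, Ty, vocab_size))     # stand-in for Yoh
Yoh_toy[2, 5, 7] = 1                        # example 2, output position 5, class 7

# Swap to (Ty, m, vocab), then split along the first axis into Ty arrays.
outputs_toy = list(Yoh_toy.swapaxes(0, 1))

print(len(outputs_toy), outputs_toy[0].shape)
print(outputs_toy[5][2][7])
```

The list holds one array per output position, which matches the model's list of `Ty` softmax outputs.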

Let's now fit the model and run it for one epoch.

```

model.fit([Xoh, s0, c0], outputs, epochs=1, batch_size=100)

```

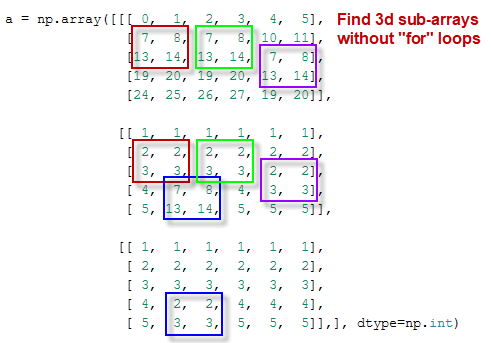

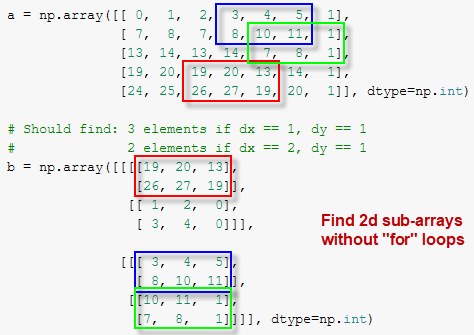

While training you can see the loss as well as the accuracy on each of the 10 positions of the output. The table below gives you an example of what the accuracies could be if the batch had 2 examples:

<img src="images/table.png" style="width:700;height:200px;"> <br>

<caption><center>Thus, `dense_2_acc_8: 0.89` means that you are predicting the 7th character of the output correctly 89% of the time in the current batch of data. </center></caption>

We have run this model for longer, and saved the weights. Run the next cell to load our weights. (By training a model for several minutes, you should be able to obtain a model of similar accuracy, but loading our model will save you time.)

```

model.load_weights('models/model.h5')

```

You can now see the results on new examples.

```

EXAMPLES = ['3 May 1979', '5 April 09', '21th of August 2016', 'Tue 10 Jul 2007', 'Saturday May 9 2018', 'March 3 2001', 'March 3rd 2001', '1 March 2001']

for example in EXAMPLES:
    source = string_to_int(example, Tx, human_vocab)
    source = np.array(list(map(lambda x: to_categorical(x, num_classes=len(human_vocab)), source))).swapaxes(0,1)
    prediction = model.predict([source, s0, c0])
    prediction = np.argmax(prediction, axis = -1)
    output = [inv_machine_vocab[int(i)] for i in prediction]

    print("source:", example)
    print("output:", ''.join(output),"\n")

```
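The decoding step inside the loop (an `argmax` per output position followed by a vocabulary lookup) can be sketched without Keras. The three-symbol vocabulary and the probabilities below are invented for illustration; the real `inv_machine_vocab` comes from the assignment's data utilities.

```python
# Hypothetical inverse vocabulary: class index -> character.
inv_vocab = {0: "-", 1: "0", 2: "9"}

# One probability vector per output position (each row sums to 1).
probs = [
    [0.1, 0.7, 0.2],
    [0.2, 0.1, 0.7],
    [0.8, 0.1, 0.1],
]


def argmax(values):
    """Index of the largest entry."""
    return max(range(len(values)), key=values.__getitem__)


# Pick the most likely class at each position and map it back to a character.
decoded = "".join(inv_vocab[argmax(row)] for row in probs)
print(decoded)
```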

You can also change these examples to test with your own examples. The next part will give you a better sense of what the attention mechanism is doing--i.e., what part of the input the network is paying attention to when generating a particular output character.

## 3 - Visualizing Attention (Optional / Ungraded)

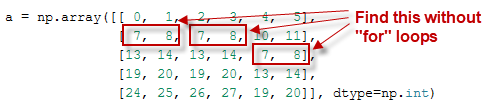

Since the problem has a fixed output length of 10, it is also possible to carry out this task using 10 different softmax units to generate the 10 characters of the output. But one advantage of the attention model is that each part of the output (such as the month) knows it needs to depend only on a small part of the input (the characters in the input giving the month). We can visualize which part of the input each part of the output is looking at.

Consider the task of translating "Saturday 9 May 2018" to "2018-05-09". If we visualize the computed $\alpha^{\langle t, t' \rangle}$ we get this:

<img src="images/date_attention.png" style="width:600;height:300px;"> <br>

<caption><center> **Figure 8**: Full Attention Map</center></caption>

Notice how the output ignores the "Saturday" portion of the input. None of the output timesteps are paying much attention to that portion of the input. We also see that 9 has been translated as 09 and May has been correctly translated into 05, with the output paying attention to the parts of the input it needs in order to make the translation. The year mostly requires it to pay attention to the input's "18" in order to generate "2018".

### 3.1 - Getting the attention weights from the network

Let's now visualize the attention values in your network. We'll propagate an example through the network, then visualize the values of $\alpha^{\langle t, t' \rangle}$.

To figure out where the attention values are located, let's start by printing a summary of the model.

```

model.summary()

```

Navigate through the output of `model.summary()` above. You can see that the layer named `attention_weights` outputs the `alphas` of shape (m, 30, 1) before `dot_2` computes the context vector for every time step $t = 0, \ldots, T_y-1$. Let's get the attention weights from this layer.
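To connect the `attention_weights` and `dot_2` layers to the underlying arithmetic, here is a minimal sketch for a single output step: a softmax turns scores into weights $\alpha$ that sum to 1, and the context vector is the $\alpha$-weighted sum of the encoder activations. The scores and activations are toy values, and the function names are ours rather than anything defined in the assignment.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def context_vector(alphas, activations):
    """Weighted sum of activation vectors, one weight per input position."""
    dim = len(activations[0])
    return [sum(a * h[k] for a, h in zip(alphas, activations)) for k in range(dim)]


scores = [0.0, 2.0, 0.0]                            # toy scores for 3 input positions
activations = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy encoder states
alphas = softmax(scores)
context = context_vector(alphas, activations)
print(alphas)
print(context)
```

The input position with the largest score receives the largest weight, so its activation dominates the context vector; this is the pattern the attention map makes visible.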

The function `plot_attention_map()` pulls out the attention values from your model and plots them.

```

attention_map = plot_attention_map(model, human_vocab, inv_machine_vocab, "Tuesday 09 Oct 1993", num = 7, n_s = 64);

```

On the generated plot you can observe the values of the attention weights for each character of the predicted output. Examine this plot and check that the places where the network is paying attention make sense to you.

In the date translation application, you will observe that most of the time attention helps predict the year, and doesn't have much impact on predicting the day or month.

### Congratulations!

You have come to the end of this assignment.

## Here's what you should remember

- Machine translation models can be used to map from one sequence to another. They are useful not just for translating human languages (like French->English) but also for tasks like date format translation.

- An attention mechanism allows a network to focus on the most relevant parts of the input when producing a specific part of the output.

- A network using an attention mechanism can translate from inputs of length $T_x$ to outputs of length $T_y$, where $T_x$ and $T_y$ can be different.

- You can visualize attention weights $\alpha^{\langle t,t' \rangle}$ to see what the network is paying attention to while generating each output.

Congratulations on finishing this assignment! You are now able to implement an attention model and use it to learn complex mappings from one sequence to another.

| github_jupyter |

##### Copyright 2020 The TensorFlow Authors.

```

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

```

# Calculate gradients

<table class="tfo-notebook-buttons" align="left">

<td>

<a target="_blank" href="https://www.tensorflow.org/quantum/tutorials/gradients"><img src="https://www.tensorflow.org/images/tf_logo_32px.png" />View on TensorFlow.org</a>

</td>

<td>

<a target="_blank" href="https://colab.research.google.com/github/tensorflow/quantum/blob/master/docs/tutorials/gradients.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

</td>

<td>

<a target="_blank" href="https://github.com/tensorflow/quantum/blob/master/docs/tutorials/gradients.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

</td>

<td>

<a href="https://storage.googleapis.com/tensorflow_docs/quantum/docs/tutorials/gradients.ipynb"><img src="https://www.tensorflow.org/images/download_logo_32px.png" />Download notebook</a>

</td>

</table>

This tutorial explores gradient calculation algorithms for the expectation values of quantum circuits.

Calculating the gradient of the expectation value of a certain observable in a quantum circuit is an involved process. Traditional machine learning transformations such as matrix multiplication or vector addition have analytic gradient formulas that are easy to write down; expectation values of observables do not always have that luxury. As a result, there are different quantum gradient calculation methods that come in handy for different scenarios. This tutorial compares and contrasts two different differentiation schemes.

## Setup

```

try:
    %tensorflow_version 2.x
except Exception:
    pass

```

Install TensorFlow Quantum:

```

!pip install tensorflow-quantum

```

Now import TensorFlow and the module dependencies:

```

import tensorflow as tf

import tensorflow_quantum as tfq

import cirq

import sympy

import numpy as np

# visualization tools

%matplotlib inline

import matplotlib.pyplot as plt

from cirq.contrib.svg import SVGCircuit

```

## 1. Preliminary

Let's make the notion of gradient calculation for quantum circuits a little more concrete. Suppose you have a parameterized circuit like this one:

```

qubit = cirq.GridQubit(0, 0)

my_circuit = cirq.Circuit(cirq.Y(qubit)**sympy.Symbol('alpha'))

SVGCircuit(my_circuit)

```

Along with an observable:

```

pauli_x = cirq.X(qubit)

pauli_x

```

Looking at this operator you know that $⟨Y(\alpha)| X | Y(\alpha)⟩ = \sin(\pi \alpha)$

```

def my_expectation(op, alpha):
    """Compute ⟨Y(alpha)| `op` | Y(alpha)⟩"""
    params = {'alpha': alpha}
    sim = cirq.Simulator()
    final_state = sim.simulate(my_circuit, params).final_state
    return op.expectation_from_wavefunction(final_state, {qubit: 0}).real

my_alpha = 0.3

print("Expectation=", my_expectation(pauli_x, my_alpha))

print("Sin Formula=", np.sin(np.pi * my_alpha))

```

and if you define $f_{1}(\alpha) = ⟨Y(\alpha)| X | Y(\alpha)⟩$ then $f_{1}^{'}(\alpha) = \pi \cos(\pi \alpha)$. Let's check this:

```

def my_grad(obs, alpha, eps=0.01):
    """Forward finite-difference estimate of d/d(alpha) of the expectation."""
    f_x = my_expectation(obs, alpha)
    f_x_prime = my_expectation(obs, alpha + eps)
    return ((f_x_prime - f_x) / eps).real

print('Finite difference:', my_grad(pauli_x, my_alpha))

print('Cosine formula: ', np.pi * np.cos(np.pi * my_alpha))

```
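The same check can be reproduced without a simulator by differentiating the closed form $\sin(\pi \alpha)$ directly. The sketch below compares the forward difference used above with a central difference, which is a different estimator added here only for comparison; the function names are ours.

```python
import math


def f(alpha):
    """Closed-form expectation value from the text: sin(pi * alpha)."""
    return math.sin(math.pi * alpha)


def forward_diff(fn, x, eps=0.01):
    """One-sided estimate; error shrinks linearly with eps."""
    return (fn(x + eps) - fn(x)) / eps


def central_diff(fn, x, eps=0.01):
    """Two-sided estimate; error shrinks quadratically with eps."""
    return (fn(x + eps) - fn(x - eps)) / (2 * eps)


alpha = 0.3
exact = math.pi * math.cos(math.pi * alpha)
print("exact:   ", exact)
print("forward: ", forward_diff(f, alpha))
print("central: ", central_diff(f, alpha))
```

For the same `eps`, the central estimate lands noticeably closer to the cosine formula, at the cost of evaluating the function on both sides of `alpha`.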

## 2. The need for a differentiator