text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

```

#r "nuget:Newtonsoft.Json, 12.0.2"

#r "nuget:CsvHelper, 26.1.0"

#r "nuget:DataBoss.DataPackage, 0.0.93"

using DataBoss.Data;

using DataBoss.DataPackage;

```

_visualization functions, you can safely skip the following cell_

```

using System.Data;

using Microsoft.DotNet.Interactive.Formatting;

using static Microsoft.DotNet.Interactive.Formatting.PocketViewTags;

static void Dump(DataPackage dp, int? limit = null) {

var rs = dp.Resources

.Where(x => x.Format == "csv")

.Select(x => (Heading: th(x.Name), Result: td(ReadAsHtmlTable(x.Read(), limit: limit))))

.ToList();

display(table(

thead(rs.Select(x => x.Heading)),

tbody(rs.Select(x => x.Result))

));

}

static object Dump(DataBoss.DataPackage.TabularDataResource xs, int? limit = null) => Dump(xs.Read(), limit: limit);

static object Dump(IDataReader xs, int? limit = null) => display(ReadAsHtmlTable(xs, limit: limit));

static object ReadAsHtmlTable(IDataReader xs, int? limit = null) {

try {

limit ??= int.MaxValue;

var rows = new List<object>();

for(var i = 0;xs.Read() && i < limit; ++i)

rows.Add(Enumerable.Range(0, xs.FieldCount).Select(x => td(xs.GetValue(x))).ToList());

return table(

thead(Enumerable.Range(0, xs.FieldCount).Select(x => th[style:"font-weight:bold"](xs.GetName(x)))),

tbody(rows.Select(x => tr(x))));

} finally {

xs.Dispose();

}

}

```

# Defining a simple resource

```

var dp = new DataPackage();

dp.AddResource(xs => xs.WithName("numbers").WithData(Enumerable.Range(0, 2).Select(x => new { Value = x })));

Dump(dp);

```

**DataPackage.Load** supports directory paths containing a datapackage.json, zip files and http.

```

var countries = DataPackage.Load(@"https://datahub.io/core/country-list/r/country-list_zip.zip");

Dump(countries.GetResource("data_csv"), limit: 10);

```

# Resource (DataReader) Transformation

```

var c2 = new DataPackage();

c2.AddResource(countries.GetResource("data_csv"));

c2.UpdateResource("data_csv", xs => xs

.WithName("countries") //resource can be renamed.

.Transform(x =>

{

var id = 0;

x.Transform("Code", (string value) => value.ToLower()); //typed transform

x.Add(0, "Id", r => ++id); //columns can be added at any existing ordinal

x.Transform("Name", (string value) => value.ToUpper());

x.Add("NameLen", r => r["Name"].ToString().Length); //record based

x.Add("Source", r => $"{r.Source["Name"]} ({r.Source["Code"]})"); //from non transformed source

})

);

Dump(c2, limit: 10);

```

# Creating Resrouces Incrementally

```

var n = 0;

var numbers = Enumerable.Range(0, 3).Select(x => new { Value = ++n });

var myNumbers = new DataPackage();

void AddOrAppend<T>(DataPackage dp, string name, IEnumerable<T> rows) {

dp.AddOrUpdateResource(name, xs => xs.WithData(rows), xs => xs.WithData(() => xs.Read().Concat(rows)));

}

AddOrAppend(myNumbers, "numbers", numbers.ToList());

Dump(myNumbers);

AddOrAppend(myNumbers, "numbers", numbers.ToList());

Dump(myNumbers);

```

| github_jupyter |

# Azure ML Inference Pipeline for HIFIS-RNN-MLP

This notebook defines an Azure machine learning pipeline for batch inference and submits the pipeline as an experiment to be run on an Azure virtual machine. It then publishes the pipeline in the workspace and schedules it to be run at a regular interval.

```

# Import statements

import azureml.core

from azureml.core import Experiment

from azureml.core import Workspace, Datastore

from azureml.data.data_reference import DataReference

from azureml.pipeline.core import PipelineData

from azureml.pipeline.core import Pipeline, PipelineRun

from azureml.pipeline.core.schedule import ScheduleRecurrence, Schedule

from azureml.pipeline.steps import PythonScriptStep, EstimatorStep

from azureml.train.dnn import TensorFlow

from azureml.train.estimator import Estimator

from azureml.core.compute import ComputeTarget, AmlCompute

from azureml.core.compute_target import ComputeTargetException

from azureml.core.environment import Environment

from azureml.core.runconfig import RunConfiguration

import shutil

```

### Register the workspace and configure its Python environment.

```

# Get reference to the workspace

ws = Workspace.from_config("./ws_config.json")

# Set workspace's environment

env = Environment.from_pip_requirements(name = "HIFIS_env", file_path = "./../requirements.txt")

env.python.conda_dependencies.add_pip_package("azureml-core")

env.python.conda_dependencies.add_pip_package("sendgrid")

env.register(workspace=ws)

runconfig = RunConfiguration(conda_dependencies=env.python.conda_dependencies)

print(env.python.conda_dependencies.serialize_to_string())

# Move AML ignore file to root folder

aml_ignore_path = shutil.copy('./.amlignore', './../.amlignore')

```

### Create references to persistent and intermediate data

Create DataReference objects that point to our raw data on the blob. Configure a PipelineData object to point to preprocessed data stored on the blob. Pipeline data is intermediate, meaning that it is produced by a step and will be fed as input to a subsequent step.

```

# Get the blob datastores associated with this workspace

hifis_blob_ds = Datastore(ws, name='hifisrnnmlp_ds')

raw_data_blob_ds = Datastore(ws, name='hifis_raw_ds')

# Create data references to folders on the blobs

raw_data_dr = DataReference(

datastore=raw_data_blob_ds,

data_reference_name="raw_data",

path_on_datastore="hifis/")

inference_dr = DataReference(

datastore=hifis_blob_ds,

data_reference_name="inference",

path_on_datastore="inference/")

# Set up intermediate pipeline data to store preprocessed data and serialized objects needed for inference (e.g. explainer)

preprocess_pd = PipelineData(

"preprocessed_output",

datastore=hifis_blob_ds,

output_name="preprocessed_output",

output_mode="mount")

```

### Compute Target

Specify and configure the compute target for this workspace. If a compute cluster by the name we specified does not exist, create a new compute cluster.

```

# Define some constants

CT_NAME = "ds3v2-infer" # Name of our compute cluster

VM_SIZE = "STANDARD_DS3_V2" # Specify the Azure VM for execution of our pipeline

MIN_NODES = 0 # Min number of compute nodes in cluster

MAX_NODES = 3 # Max number of compute nodes in cluster

# Set up the compute target for this experiment

try:

compute_target = AmlCompute(ws, CT_NAME)

print("Found existing compute target.")

except ComputeTargetException:

print("Creating new compute target")

provisioning_config = AmlCompute.provisioning_configuration(vm_size=VM_SIZE, min_nodes=MIN_NODES, max_nodes=MAX_NODES)

compute_target = ComputeTarget.create(ws, CT_NAME, provisioning_config) # Create the compute cluster

# Wait for cluster to be provisioned

compute_target.wait_for_completion(show_output=True, min_node_count=None, timeout_in_minutes=20)

print("Azure Machine Learning Compute attached")

print("Compute targets: ", ws.compute_targets)

compute_target = ws.compute_targets[CT_NAME]

```

### Define pipeline and submit experiment.

Define the steps of an Azure machine learning pipeline. Create an Azure Experiment that will run our pipeline. Submit the experiment to the execution environment.

```

# Define preprocessing step in the ML pipeline

step1 = PythonScriptStep(name="preprocess_step",

script_name="azure/preprocess_step/preprocess_step.py",

arguments=["--rawdatadir", raw_data_dr, "--inferencedir", inference_dr, "--preprocessedoutputdir",

preprocess_pd],

inputs=[raw_data_dr, inference_dr],

outputs=[preprocess_pd],

compute_target=compute_target,

source_directory="./../",

runconfig=runconfig,

params={"PIPELINE": "inference"},

allow_reuse=False)

# Define batch inference step in the ML pipeline

step2 = PythonScriptStep(name="inference_step",

script_name="azure/inference_step/inference_step.py",

arguments=["--preprocessedoutputdir", preprocess_pd, "--inferencedir", inference_dr],

inputs=[preprocess_pd, inference_dr],

outputs=[],

compute_target=compute_target,

source_directory="./../",

runconfig=runconfig,

allow_reuse=False)

# Construct the ML pipeline from the steps

steps = [step1, step2]

inference_pipeline = Pipeline(workspace=ws, steps=steps)

inference_pipeline.validate()

# Define a new experiment and submit a new pipeline run to the compute target.

experiment = Experiment(workspace=ws, name='BatchInferenceExperiment_v1')

inference_run = experiment.submit(inference_pipeline, regenerate_outputs=False)

print("Pipeline is submitted for execution")

# Move AML ignore file back to original folder

aml_ignore_path = shutil.move(aml_ignore_path, './.amlignore')

```

### Publish the pipeline

Wait for the pipeline run to finish. Then publish the pipeline. The pipeline will be visible as an endpoint in the Pipelines tab in the workspace on Azure Machine Learning studio. Delete the training compute cluster to prevent further cost.

```

# Wait for the pipeline to finish running.

inference_run.wait_for_completion()

# Publish the pipeline.

published_pipeline = inference_run.publish_pipeline(

name="HIFIS-RNN-MLP Inference Pipeline",

description="Azure ML Pipeline that runs model inference on all clients in HIFIS database and computes LIME explanations",

version="1.0")

```

### Schedule the pipeline

Schedule the published pipeline to run weekly.

```

recurrence = ScheduleRecurrence(frequency="Week", interval=1)

pipeline_id = "inference-pipeline"

recurring_schedule = Schedule.create(ws, name="WeeklyInferenceSchedule",

description="Scheduled weekly batch inference",

pipeline_id=published_pipeline.id,

experiment_name=experiment.name,

recurrence=recurrence)

```

### Disable scheduled pipelines

Disable all scheduled pipelines

```

schedules = Schedule.list(ws)

for schedule in schedules:

print(schedule)

schedule.disable()

```

| github_jupyter |

# Gems of the Python Standard Library

### Alex Zharichenko

# Data Types

## enum

---

#### Support for enumerations

```

from enum import Enum, IntEnum

class Color(IntEnum):

RED = 1

GREEN = 2

BLUE = 3

Color.RED

Color.RED == 1

# list(Color)

from enum import Flag, auto

class Color(Flag):

RED = auto()

BLUE = auto()

GREEN = auto()

WHITE = RED | BLUE | GREEN

list(Color)

```

## bisect

```

from bisect import bisect

def grade(score, breakpoints=[60, 70, 80, 90], grades='FDCBA'):

i = bisect(breakpoints, score)

return grades[i]

[grade(score) for score in [33, 99, 77, 70, 89, 90, 100]]

```

# Text Processing Services

## difflib

---

### Helpers for computing deltas

```

import difflib

s1 = ['bacon\n', 'eggs\n', 'ham\n', 'guido\n']

s2 = ['python\n', 'eggy\n', 'hamster\n', 'guido\n']

print(''.join(difflib.context_diff(s1, s2)))

print(''.join(difflib.unified_diff(s1, s2)))

print(''.join(difflib.ndiff(s1, s2)))

diff = list(difflib.ndiff(s1, s2))

print(''.join(difflib.restore(diff, 1)))

print(''.join(difflib.restore(diff, 2)))

difflib.get_close_matches('appel', ['ape', 'apple', 'peach', 'puppy'])

```

# File and Directory Access

## pathlib

---

### Object-oriented filesystem paths

```

from pathlib import Path

Path('.')

Path(r'C:/Users/Alex/Projects/gems-of-python-stdlib')

Path('.').absolute()

output_dir = Path() / 'output'

output_dir

print(output_dir.exists())

output_dir.mkdir()

print(output_dir.exists())

print(output_dir.is_dir())

some_file = output_dir / "test.txt"

with some_file.open("w") as f:

f.write("hello world")

some_file.is_file()

list(output_dir.glob("*.txt"))

```

# Concurrent Execution

## sched

---

### Event scheduler

```

import sched

import time

s = sched.scheduler()

def do_action(action="default"):

print("do_action", action, time.time())

s.enter(5, 1, do_action)

s.enter(10, 2, do_action, argument=("do_nothing",))

s.enter(10, 1, do_action, kwargs={"action": "do_something"})

s.queue

print(time.time())

s.run()

print(time.time())

```

# Internet Protocols and Support

## webbrowser

---

### Convenient Web-browser controller

```

import webbrowser

url = 'http://docs.python.org/'

webbrowser.open(url)

webbrowser.open_new(url)

webbrowser.open_new_tab(url)

webbrowser.get()

```

## UUID

---

### UUID objects according to RFC 4122¶

```

import uuid

# Version 1: date-time and host id

uuid.uuid1()

# Version 3 & 5: namespace, v3 using md5, v5 using sha1

uuid.uuid3(namespace=uuid.NAMESPACE_DNS, name='python.org')

uuid.uuid5(namespace=uuid.NAMESPACE_DNS, name='python.org')

# Version 4: Random

uuid.uuid4()

id = uuid.uuid4()

print(str(id))

print(id.int)

```

| github_jupyter |

---

title: Toggling code cells in Jupyter HTML Outputs

summary: "Or, How I Wasted an Afternoon."

date: 2020-01-11

source: jupyter

---

When writing a blog post in Twitter, I found there was no easy way to

reproduce the rather lovely **code folding** effect you get in

([Rmarkdown documents](https://bookdown.org/yihui/rmarkdown/html-document.html#code-folding)),

so I went and made one myself, illustrated here.

Actually, I've written a few of them, as the code changes slightly depending on where you want to achieve code folding.

First, here's some toggleable code.

```

for i in range(9, -1, -1):

if i > 0:

print('.' * (i) + ('%i' % i) + '.' * (9-i))

else:

print('>Lift Off!')

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

plt.hist(np.random.normal(0, 1, 100));

```

## Jupyter Magic

To activate toggling directly in a live Notebook using

the [%%JavaScript magic](https://jupyter-notebook.readthedocs.io/en/stable/examples/Notebook/JavaScript%20Notebook%20Extensions.html), just nclude the cell below in your notebook

If the notebook is [truster](https://jupyter-notebook.readthedocs.io/en/stable/security.html),

it will automatically run when you load the page.

```

%%javascript

function toggler(){

if(window.already_toggling){

// Don't add multiple buttons.

return 0

}

let btn = $('.input').append('<button>Toggle Code</button>')

.children('button');

btn.on('click', function(e){

let tgt = e.currentTarget;

$(tgt).parent().children('.inner_cell').toggle()

})

window.already_toggling = true;

}

// Since javascript cells are executed as soon as we load

// the notebook (if it's trusted), and this cell might be at the

// top of the notebook (so is executed first), we need to

// allow time for all of the other code cells to load before

// running. Let's give it 5 seconds.

setTimeout(toggler, 5000);

```

Notebooks that have toggling enabled will keep this feature

when you convert them to HTML pages using `jupyter nbconvert`.

I believe that this code could be packaged to create a jupyter extension, but I don't know how, and don't have much interest right now.

## NBConvert Templates

It should be possible to create a

[custom template](https://nbconvert.readthedocs.io/en/latest/customizing.html)

for `jupyter nbconvert` that injects the same code,

or something very like it.

Unfortunately, the documentation on how to do this is not straightforward.

## Hugo

This site is generated using the [Academic](https://github.com/gcushen/hugo-academic) theme for

[Hugo](https://gohugo.io/).

To post Jupyter notebooks, I convert them to `.md` files using the command

`jupyter nbconvert index.ipynb --to markdown --NbConvertApp.output_files_dir=.`,

and following the instructions [here](https://github.com/gcushen/hugo-academic/blob/master/exampleSite/content/post/jupyter/index.md).

I've added the following rule to the site header template for my version of the theme,

located in `/path/to/my_page/themes/academic/layouts/partials/site_head.html`,

just before the `</head>` tag.

```js

{{ if eq $.Params.source "jupyter"}}

<script

src="https://code.jquery.com/jquery-3.4.1.min.js"

crossorigin="anonymous"></script>

<script type="text/javascript" async

src="https://cdnjs.cloudflare.com/ajax/libs/mathjax/2.7.5/MathJax.js?config=TeX-MML-AM_CHTML">

</script>

<script type="text/x-mathjax-config">

MathJax.Hub.Config({

tex2jax: {

inlineMath: [['$','$'], ['\\(','\\)']],

displayMath: [['$$','$$'], ['\[','\]']],

processEscapes: true,

processEnvironments: true,

skipTags: ['script', 'noscript', 'style', 'textarea', 'pre'],

TeX: { equationNumbers: { autoNumber: "AMS" },

extensions: ["AMSmath.js", "AMSsymbols.js"] }

}

});

</script>

<script>

function toggler(){

let btn = $('.language-python').parent()

.prepend('<button>Toggle Code</button>')

.children('button');

btn.on('click', function(e){

let tgt = e.currentTarget;

$(tgt).parent().children('code').toggle()

})

}

$(window).on('load', toggler)

</script>

{{ end }}

```

I then add `source: jupyter` to the metadata of all Jupyter posts.

This means that this code is added to these posts, and only these posts.

Something very similar should work for other static site generators such as Pelican.

# Toggle All

Finally, it would be simple to add a Show/Hide All button at the top of the page, but I haven't got around to doing so yet. Soon, maybe.

| github_jupyter |

# KNN CLASSIFIER

KNN can be used for both classification and regression predictive problems. However, it is more widely used in classification problems in the industry. To evaluate any technique we generally look at 3 important aspects:

1. Ease to interpret output

2. Calculation time

3. Predictive Power

```

import matplotlib.pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

from sklearn import datasets

from sklearn.decomposition import PCA

# import some data to play with

iris = datasets.load_iris()

X = iris.data[:, :2] # we only take the first two features.

y = iris.target

x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5

y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5

plt.figure(2, figsize=(8, 6))

plt.clf()

# Plot the training points

plt.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.Set1,

edgecolor='k')

plt.xlabel('Sepal length')

plt.ylabel('Sepal width')

plt.xlim(x_min, x_max)

plt.ylim(y_min, y_max)

plt.xticks(())

plt.yticks(())

# To getter a better understanding of interaction of the dimensions

# plot the first three PCA dimensions

fig = plt.figure(1, figsize=(8, 6))

ax = Axes3D(fig, elev=-150, azim=110)

X_reduced = PCA(n_components=3).fit_transform(iris.data)

ax.scatter(X_reduced[:, 0], X_reduced[:, 1], X_reduced[:, 2], c=y,

cmap=plt.cm.Set1, edgecolor='k', s=40)

ax.set_title("First three PCA directions")

ax.set_xlabel("1st eigenvector")

ax.w_xaxis.set_ticklabels([])

ax.set_ylabel("2nd eigenvector")

ax.w_yaxis.set_ticklabels([])

ax.set_zlabel("3rd eigenvector")

ax.w_zaxis.set_ticklabels([])

plt.show()

from matplotlib.colors import ListedColormap

import matplotlib.pyplot as plt

def plot_decision_regions(X, y, classifier, test_idx=None, resolution=0.02):

# setup marker generator and color map

markers = ('s', 'x', 'o', '^', 'v')

colors = ('red', 'blue', 'lightgreen', 'gray', 'cyan')

cmap = ListedColormap(colors[:len(np.unique(y))])

# plot the decision surface

x1_min, x1_max = X[:, 0].min() - 1, X[:, 0].max() + 1

x2_min, x2_max = X[:, 1].min() - 1, X[:, 1].max() + 1

xx1, xx2 = np.meshgrid(np.arange(x1_min, x1_max, resolution),

np.arange(x2_min, x2_max, resolution))

Z = classifier.predict(np.array([xx1.ravel(), xx2.ravel()]).T)

Z = Z.reshape(xx1.shape)

plt.contourf(xx1, xx2, Z, alpha=0.3, cmap=cmap)

plt.xlim(xx1.min(), xx1.max())

plt.ylim(xx2.min(), xx2.max())

for idx, cl in enumerate(np.unique(y)):

plt.scatter(x=X[y == cl, 0],

y=X[y == cl, 1],

alpha=0.8,

c=colors[idx],

marker=markers[idx],

label=cl,

edgecolor='black')

# highlight test samples

if test_idx:

# plot all samples

X_test, y_test = X[test_idx, :], y[test_idx]

plt.scatter(X_test[:, 0],

X_test[:, 1],

c='',

edgecolor='black',

alpha=1.0,

linewidth=1,

marker='o',

s=100,

label='test set')

from sklearn.neighbors import KNeighborsClassifier as KNN

import pandas as pd

import numpy as np

for x in range(1,8):

model=KNN(n_neighbors=x)

model.fit(X,y)

plot_decision_regions(X,y,model)

print("number of nearest neighbours")

print(x)

plt.show()

```

| github_jupyter |

# Run a Gene Ontology Enrichment Analysis (GOEA)

We use data from a 2014 Nature paper:

[Computational analysis of cell-to-cell heterogeneity

in single-cell RNA-sequencing data reveals hidden

subpopulations of cells

](http://www.nature.com/nbt/journal/v33/n2/full/nbt.3102.html#methods)

Note: you must have the Python package, **xlrd**, installed to run this example.

Note: To create plots, you must have:

* Python packages: **pyparsing**, **pydot**

* [Graphviz](http://www.graphviz.org/) loaded and your PATH environmental variable pointing to the Graphviz bin directory.

## 1. Download Ontologies and Associations

### 1a. Download Ontologies, if necessary

```

# Get http://geneontology.org/ontology/go-basic.obo

from goatools.base import download_go_basic_obo

obo_fname = download_go_basic_obo()

```

### 1b. Download Associations, if necessary

```

# Get ftp://ftp.ncbi.nlm.nih.gov/gene/DATA/gene2go.gz

from goatools.base import download_ncbi_associations

gene2go = download_ncbi_associations()

```

## 2. Load Ontologies, Associations and Background gene set

### 2a. Load Ontologies

```

from goatools.obo_parser import GODag

obodag = GODag("go-basic.obo")

```

### 2b. Load Associations

```

from __future__ import print_function

from goatools.associations import read_ncbi_gene2go

geneid2gos_mouse = read_ncbi_gene2go("gene2go", taxids=[10090])

print("{N:,} annotated mouse genes".format(N=len(geneid2gos_mouse)))

```

### 2c. Load Background gene set

In this example, the background is all mouse protein-codinge genes

```

from goatools.test_data.genes_NCBI_10090_ProteinCoding import GeneID2nt as GeneID2nt_mus

```

## 3. Initialize a GOEA object

The GOEA object holds the Ontologies, Associations, and background.

Numerous studies can then be run withough needing to re-load the above items.

In this case, we only run one GOEA.

```

from goatools.go_enrichment import GOEnrichmentStudy

goeaobj = GOEnrichmentStudy(

GeneID2nt_mus.keys(), # List of mouse protein-coding genes

geneid2gos_mouse, # geneid/GO associations

obodag, # Ontologies

propagate_counts = False,

alpha = 0.05, # default significance cut-off

methods = ['fdr_bh']) # defult multipletest correction method

```

## 4. Read study genes

~400 genes from the Nature paper supplemental table 4

```

# Data will be stored in this variable

import os

geneid2symbol = {}

# Get xlsx filename where data is stored

ROOT = os.path.dirname(os.getcwd()) # go up 1 level from current working directory

din_xlsx = os.path.join(ROOT, "tests/data/nbt_3102/nbt.3102-S4_GeneIDs.xlsx")

# Read data

if os.path.isfile(din_xlsx):

import xlrd

book = xlrd.open_workbook(din_xlsx)

pg = book.sheet_by_index(0)

for r in range(pg.nrows):

symbol, geneid, pval = [pg.cell_value(r, c) for c in range(pg.ncols)]

if geneid:

geneid2symbol[int(geneid)] = symbol

```

## 5. Run Gene Ontology Enrichment Analysis (GOEA)

You may choose to keep all results or just the significant results. In this example, we choose to keep only the significant results.

```

# 'p_' means "pvalue". 'fdr_bh' is the multipletest method we are currently using.

geneids_study = geneid2symbol.keys()

goea_results_all = goeaobj.run_study(geneids_study)

goea_results_sig = [r for r in goea_results_all if r.p_fdr_bh < 0.05]

```

## 6. Write results to an Excel file and to a text file

```

goeaobj.wr_xlsx("nbt3102.xlsx", goea_results_sig)

goeaobj.wr_txt("nbt3102.txt", goea_results_sig)

```

## 7. Plot all significant GO terms

Plotting all significant GO terms produces a messy spaghetti plot. Such a plot can be useful sometimes because you can open it and zoom and scroll around. But sometimes it is just too messy to be of use.

The **"{NS}"** in **"nbt3102_{NS}.png"** indicates that you will see three plots, one for "biological_process"(BP), "molecular_function"(MF), and "cellular_component"(CC)

```

from goatools.godag_plot import plot_gos, plot_results, plot_goid2goobj

plot_results("nbt3102_{NS}.png", goea_results_sig)

```

### 7a. These plots are likely to messy

The *Cellular Component* plot is the smallest plot...

### 7b. So make a smaller sub-plot

This plot contains GOEA results:

* GO terms colored by P-value:

* pval < 0.005 (light red)

* pval < 0.01 (light orange)

* pval < 0.05 (yellow)

* pval > 0.05 (grey) Study terms that are not statistically significant

* GO terms with study gene counts printed. e.g., "32 genes"

```

# Plot subset starting from these significant GO terms

goid_subset = [

'GO:0003723', # MF D04 RNA binding (32 genes)

'GO:0044822', # MF D05 poly(A) RNA binding (86 genes)

'GO:0003729', # MF D06 mRNA binding (11 genes)

'GO:0019843', # MF D05 rRNA binding (6 genes)

'GO:0003746', # MF D06 translation elongation factor activity (5 genes)

]

plot_gos("nbt3102_MF_RNA_genecnt.png",

goid_subset, # Source GO ids

obodag,

goea_results=goea_results_all) # Use pvals for coloring

```

### 7c. Add study gene Symbols to plot

e.g., *11 genes: Calr, Eef1a1, Pabpc1*

```

plot_gos("nbt3102_MF_RNA_Symbols.png",

goid_subset, # Source GO ids

obodag,

goea_results=goea_results_all, # use pvals for coloring

# We can further configure the plot...

id2symbol=geneid2symbol, # Print study gene Symbols, not Entrez GeneIDs

study_items=6, # Only only 6 gene Symbols max on GO terms

items_p_line=3, # Print 3 genes per line

)

```

Copyright (C) 2016, DV Klopfenstein, H Tang. All rights reserved.

| github_jupyter |

# Flax SST-2 Example

<a href="https://colab.research.google.com/github/google/flax/blob/master/examples/sst2/sst2.ipynb" ><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

Demonstration notebook for

https://github.com/google/flax/tree/master/examples/sst2

**Before you start:** Select Runtime -> Change runtime type -> GPU.

The **Flax Notebook Workflow**:

1. Run the entire notebook end-to-end and check out the outputs.

- This will open Python files in the right-hand editor!

- You'll be able to interactively explore metrics in TensorBoard.

2. Change `config` and train for different hyperparameters. Check out the

updated TensorBoard plots.

3. Update the code in `train.py`. Thanks to `%autoreload`, any changes you

make in the file will automatically appear in the notebook. Some ideas to

get you started:

- Change the model.

- Log some per-batch metrics during training.

- Add new hyperparameters to `configs/default.py` and use them in

`train.py`.

4. At any time, feel free to paste code from `train.py` into the notebook

and modify it directly there!

## Setup

```

example_directory = 'examples/sst2'

editor_relpaths = ('configs/default.py', 'train.py', 'models.py')

# (If you run this code in Jupyter[lab], then you're already in the

# example directory and nothing needs to be done.)

#@markdown **Fetch newest Flax, copy example code**

#@markdown

#@markdown **If you select no** below, then the files will be stored on the

#@markdown *ephemeral* Colab VM. **After some time of inactivity, this VM will

#@markdown be restarted an any changes are lost**.

#@markdown

#@markdown **If you select yes** below, then you will be asked for your

#@markdown credentials to mount your personal Google Drive. In this case, all

#@markdown changes you make will be *persisted*, and even if you re-run the

#@markdown Colab later on, the files will still be the same (you can of course

#@markdown remove directories inside your Drive's `flax/` root if you want to

#@markdown manually revert these files).

if 'google.colab' in str(get_ipython()):

import os

os.chdir('/content')

# Download Flax repo from Github.

if not os.path.isdir('flaxrepo'):

!git clone --depth=1 https://github.com/google/flax flaxrepo

# Copy example files & change directory.

mount_gdrive = 'no' #@param ['yes', 'no']

if mount_gdrive == 'yes':

DISCLAIMER = 'Note: Editing in your Google Drive, changes will persist.'

from google.colab import drive

drive.mount('/content/gdrive')

example_root_path = f'/content/gdrive/My Drive/flax/{example_directory}'

else:

DISCLAIMER = 'WARNING: Editing in VM - changes lost after reboot!!'

example_root_path = f'/content/{example_directory}'

from IPython import display

display.display(display.HTML(

f'<h1 style="color:red;" class="blink">{DISCLAIMER}</h1>'))

if not os.path.isdir(example_root_path):

os.makedirs(example_root_path)

!cp -r flaxrepo/$example_directory/* "$example_root_path"

os.chdir(example_root_path)

from google.colab import files

for relpath in editor_relpaths:

s = open(f'{example_root_path}/{relpath}').read()

open(f'{example_root_path}/{relpath}', 'w').write(

f'## {DISCLAIMER}\n' + '#' * (len(DISCLAIMER) + 3) + '\n\n' + s)

files.view(f'{example_root_path}/{relpath}')

# Note: In Colab, above cell changed the working directory.

!pwd

# Install SST-2 dependencies.

!pip install -q -r requirements.txt

```

## Imports / Helpers

```

# If you want to use TPU instead of GPU, you need to run this to make it work.

try:

import jax.tools.colab_tpu

jax.tools.colab_tpu.setup_tpu()

except KeyError:

print('\n### NO TPU CONNECTED - USING CPU or GPU ###\n')

import os

os.environ['XLA_FLAGS'] = '--xla_force_host_platform_device_count=8'

jax.devices()

from absl import logging

import flax

import jax.numpy as jnp

import numpy as np

import tensorflow as tf

import tensorflow_datasets as tfds

import time

logging.set_verbosity(logging.INFO)

# Make sure the GPU is for JAX, not for TF.

tf.config.experimental.set_visible_devices([], 'GPU')

# Local imports from current directory - auto reload.

# Any changes you make to train.py will appear automatically.

%load_ext autoreload

%autoreload 2

import train

import models

import vocabulary

import input_pipeline

from configs import default as config_lib

config = config_lib.get_config()

```

## Dataset

```

# Get datasets.

# If you get an error you need to install tensorflow_datasets from Github.

train_dataset = input_pipeline.TextDataset(split='train')

eval_dataset = input_pipeline.TextDataset(split='validation')

```

## Training

```

# Get a live update during training - use the "refresh" button!

# (In Jupyter[lab] start "tensorboard" in the local directory instead.)

if 'google.colab' in str(get_ipython()):

%load_ext tensorboard

%tensorboard --logdir=.

config.num_epochs = 10

model_name = 'bilstm'

start_time = time.time()

optimizer = train.train_and_evaluate(config, workdir=f'./models/{model_name}')

logging.info('Walltime: %f s', time.time() - start_time)

if 'google.colab' in str(get_ipython()):

#@markdown You can upload the training results directly to https://tensorboard.dev

#@markdown

#@markdown Note that everbody with the link will be able to see the data.

upload_data = 'yes' #@param ['yes', 'no']

if upload_data == 'yes':

!tensorboard dev upload --one_shot --logdir ./models --name 'Flax examples/mnist'

```

| github_jupyter |

# Character level language model - Dinosaurus land

Welcome to Dinosaurus Island! 65 million years ago, dinosaurs existed, and in this assignment they are back. You are in charge of a special task. Leading biology researchers are creating new breeds of dinosaurs and bringing them to life on earth, and your job is to give names to these dinosaurs. If a dinosaur does not like its name, it might go beserk, so choose wisely!

<table>

<td>

<img src="images/dino.jpg" style="width:250;height:300px;">

</td>

</table>

Luckily you have learned some deep learning and you will use it to save the day. Your assistant has collected a list of all the dinosaur names they could find, and compiled them into this [dataset](dinos.txt). (Feel free to take a look by clicking the previous link.) To create new dinosaur names, you will build a character level language model to generate new names. Your algorithm will learn the different name patterns, and randomly generate new names. Hopefully this algorithm will keep you and your team safe from the dinosaurs' wrath!

By completing this assignment you will learn:

- How to store text data for processing using an RNN

- How to synthesize data, by sampling predictions at each time step and passing it to the next RNN-cell unit

- How to build a character-level text generation recurrent neural network

- Why clipping the gradients is important

We will begin by loading in some functions that we have provided for you in `rnn_utils`. Specifically, you have access to functions such as `rnn_forward` and `rnn_backward` which are equivalent to those you've implemented in the previous assignment.

```

import numpy as np

from utils import *

import random

```

## 1 - Problem Statement

### 1.1 - Dataset and Preprocessing

Run the following cell to read the dataset of dinosaur names, create a list of unique characters (such as a-z), and compute the dataset and vocabulary size.

```

data = open('dinos.txt', 'r').read()

data= data.lower()

chars = list(set(data))

data_size, vocab_size = len(data), len(chars)

print('There are %d total characters and %d unique characters in your data.' % (data_size, vocab_size))

```

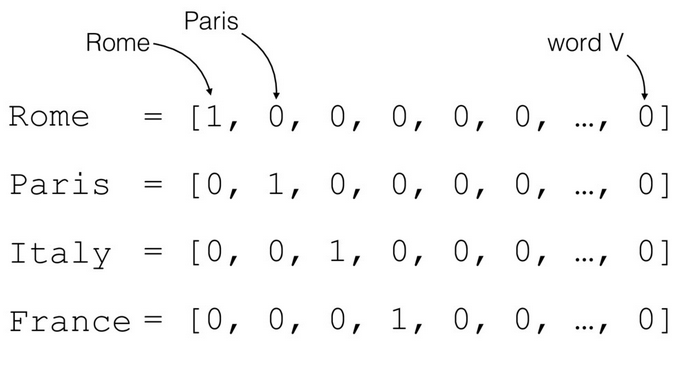

The characters are a-z (26 characters) plus the "\n" (or newline character), which in this assignment plays a role similar to the `<EOS>` (or "End of sentence") token we had discussed in lecture, only here it indicates the end of the dinosaur name rather than the end of a sentence. In the cell below, we create a python dictionary (i.e., a hash table) to map each character to an index from 0-26. We also create a second python dictionary that maps each index back to the corresponding character character. This will help you figure out what index corresponds to what character in the probability distribution output of the softmax layer. Below, `char_to_ix` and `ix_to_char` are the python dictionaries.

```

char_to_ix = { ch:i for i,ch in enumerate(sorted(chars)) }

ix_to_char = { i:ch for i,ch in enumerate(sorted(chars)) }

print(ix_to_char)

```

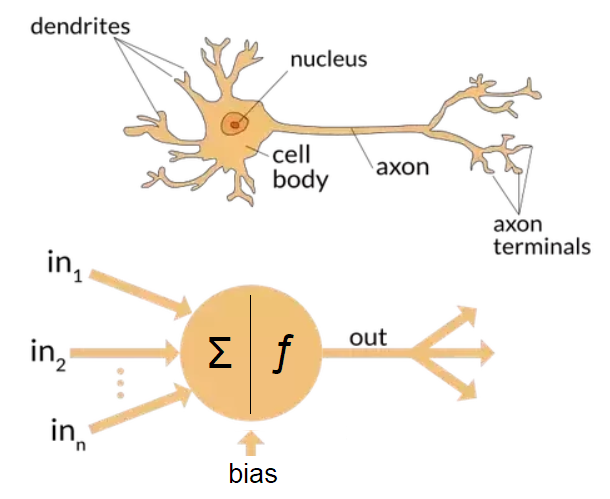

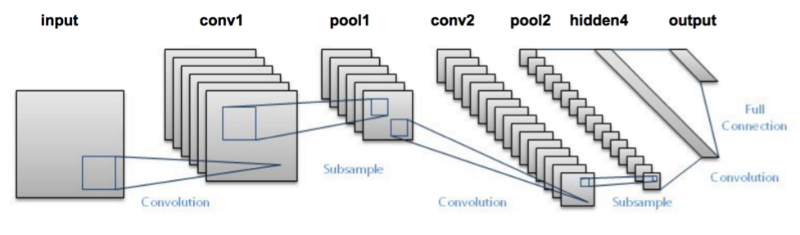

### 1.2 - Overview of the model

Your model will have the following structure:

- Initialize parameters

- Run the optimization loop

- Forward propagation to compute the loss function

- Backward propagation to compute the gradients with respect to the loss function

- Clip the gradients to avoid exploding gradients

- Using the gradients, update your parameter with the gradient descent update rule.

- Return the learned parameters

<img src="images/rnn.png" style="width:450;height:300px;">

<caption><center> **Figure 1**: Recurrent Neural Network, similar to what you had built in the previous notebook "Building a RNN - Step by Step". </center></caption>

At each time-step, the RNN tries to predict what is the next character given the previous characters. The dataset $X = (x^{\langle 1 \rangle}, x^{\langle 2 \rangle}, ..., x^{\langle T_x \rangle})$ is a list of characters in the training set, while $Y = (y^{\langle 1 \rangle}, y^{\langle 2 \rangle}, ..., y^{\langle T_x \rangle})$ is such that at every time-step $t$, we have $y^{\langle t \rangle} = x^{\langle t+1 \rangle}$.

## 2 - Building blocks of the model

In this part, you will build two important blocks of the overall model:

- Gradient clipping: to avoid exploding gradients

- Sampling: a technique used to generate characters

You will then apply these two functions to build the model.

### 2.1 - Clipping the gradients in the optimization loop

In this section you will implement the `clip` function that you will call inside of your optimization loop. Recall that your overall loop structure usually consists of a forward pass, a cost computation, a backward pass, and a parameter update. Before updating the parameters, you will perform gradient clipping when needed to make sure that your gradients are not "exploding," meaning taking on overly large values.

In the exercise below, you will implement a function `clip` that takes in a dictionary of gradients and returns a clipped version of gradients if needed. There are different ways to clip gradients; we will use a simple element-wise clipping procedure, in which every element of the gradient vector is clipped to lie between some range [-N, N]. More generally, you will provide a `maxValue` (say 10). In this example, if any component of the gradient vector is greater than 10, it would be set to 10; and if any component of the gradient vector is less than -10, it would be set to -10. If it is between -10 and 10, it is left alone.

<img src="images/clip.png" style="width:400;height:150px;">

<caption><center> **Figure 2**: Visualization of gradient descent with and without gradient clipping, in a case where the network is running into slight "exploding gradient" problems. </center></caption>

**Exercise**: Implement the function below to return the clipped gradients of your dictionary `gradients`. Your function takes in a maximum threshold and returns the clipped versions of your gradients. You can check out this [hint](https://docs.scipy.org/doc/numpy-1.13.0/reference/generated/numpy.clip.html) for examples of how to clip in numpy. You will need to use the argument `out = ...`.

```

### GRADED FUNCTION: clip

def clip(gradients, maxValue):

'''

Clips the gradients' values between minimum and maximum.

Arguments:

gradients -- a dictionary containing the gradients "dWaa", "dWax", "dWya", "db", "dby"

maxValue -- everything above this number is set to this number, and everything less than -maxValue is set to -maxValue

Returns:

gradients -- a dictionary with the clipped gradients.

'''

dWaa, dWax, dWya, db, dby = gradients['dWaa'], gradients['dWax'], gradients['dWya'], gradients['db'], gradients['dby']

### START CODE HERE ###

# clip to mitigate exploding gradients, loop over [dWax, dWaa, dWya, db, dby]. (≈2 lines)

for gradient in [dWax, dWaa, dWya, db, dby]:

gradient = np.clip(gradient, -maxValue, maxValue, out = gradient)

### END CODE HERE ###

gradients = {"dWaa": dWaa, "dWax": dWax, "dWya": dWya, "db": db, "dby": dby}

return gradients

np.random.seed(3)

dWax = np.random.randn(5,3)*10

dWaa = np.random.randn(5,5)*10

dWya = np.random.randn(2,5)*10

db = np.random.randn(5,1)*10

dby = np.random.randn(2,1)*10

gradients = {"dWax": dWax, "dWaa": dWaa, "dWya": dWya, "db": db, "dby": dby}

gradients = clip(gradients, 10)

print("gradients[\"dWaa\"][1][2] =", gradients["dWaa"][1][2])

print("gradients[\"dWax\"][3][1] =", gradients["dWax"][3][1])

print("gradients[\"dWya\"][1][2] =", gradients["dWya"][1][2])

print("gradients[\"db\"][4] =", gradients["db"][4])

print("gradients[\"dby\"][1] =", gradients["dby"][1])

```

** Expected output:**

<table>

<tr>

<td>

**gradients["dWaa"][1][2] **

</td>

<td>

10.0

</td>

</tr>

<tr>

<td>

**gradients["dWax"][3][1]**

</td>

<td>

-10.0

</td>

</td>

</tr>

<tr>

<td>

**gradients["dWya"][1][2]**

</td>

<td>

0.29713815361

</td>

</tr>

<tr>

<td>

**gradients["db"][4]**

</td>

<td>

[ 10.]

</td>

</tr>

<tr>

<td>

**gradients["dby"][1]**

</td>

<td>

[ 8.45833407]

</td>

</tr>

</table>

### 2.2 - Sampling

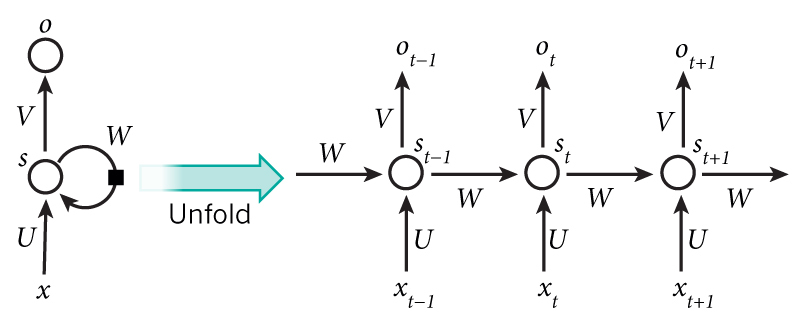

Now assume that your model is trained. You would like to generate new text (characters). The process of generation is explained in the picture below:

<img src="images/dinos3.png" style="width:500;height:300px;">

<caption><center> **Figure 3**: In this picture, we assume the model is already trained. We pass in $x^{\langle 1\rangle} = \vec{0}$ at the first time step, and have the network then sample one character at a time. </center></caption>

**Exercise**: Implement the `sample` function below to sample characters. You need to carry out 4 steps:

- **Step 1**: Pass the network the first "dummy" input $x^{\langle 1 \rangle} = \vec{0}$ (the vector of zeros). This is the default input before we've generated any characters. We also set $a^{\langle 0 \rangle} = \vec{0}$

- **Step 2**: Run one step of forward propagation to get $a^{\langle 1 \rangle}$ and $\hat{y}^{\langle 1 \rangle}$. Here are the equations:

$$ a^{\langle t+1 \rangle} = \tanh(W_{ax} x^{\langle t \rangle } + W_{aa} a^{\langle t \rangle } + b)\tag{1}$$

$$ z^{\langle t + 1 \rangle } = W_{ya} a^{\langle t + 1 \rangle } + b_y \tag{2}$$

$$ \hat{y}^{\langle t+1 \rangle } = softmax(z^{\langle t + 1 \rangle })\tag{3}$$

Note that $\hat{y}^{\langle t+1 \rangle }$ is a (softmax) probability vector (its entries are between 0 and 1 and sum to 1). $\hat{y}^{\langle t+1 \rangle}_i$ represents the probability that the character indexed by "i" is the next character. We have provided a `softmax()` function that you can use.

- **Step 3**: Carry out sampling: Pick the next character's index according to the probability distribution specified by $\hat{y}^{\langle t+1 \rangle }$. This means that if $\hat{y}^{\langle t+1 \rangle }_i = 0.16$, you will pick the index "i" with 16% probability. To implement it, you can use [`np.random.choice`](https://docs.scipy.org/doc/numpy-1.13.0/reference/generated/numpy.random.choice.html).

Here is an example of how to use `np.random.choice()`:

```python

np.random.seed(0)

p = np.array([0.1, 0.0, 0.7, 0.2])

index = np.random.choice([0, 1, 2, 3], p = p.ravel())

```

This means that you will pick the `index` according to the distribution:

$P(index = 0) = 0.1, P(index = 1) = 0.0, P(index = 2) = 0.7, P(index = 3) = 0.2$.

- **Step 4**: The last step to implement in `sample()` is to overwrite the variable `x`, which currently stores $x^{\langle t \rangle }$, with the value of $x^{\langle t + 1 \rangle }$. You will represent $x^{\langle t + 1 \rangle }$ by creating a one-hot vector corresponding to the character you've chosen as your prediction. You will then forward propagate $x^{\langle t + 1 \rangle }$ in Step 1 and keep repeating the process until you get a "\n" character, indicating you've reached the end of the dinosaur name.

```

# GRADED FUNCTION: sample

def sample(parameters, char_to_ix, seed):

"""

Sample a sequence of characters according to a sequence of probability distributions output of the RNN

Arguments:

parameters -- python dictionary containing the parameters Waa, Wax, Wya, by, and b.

char_to_ix -- python dictionary mapping each character to an index.

seed -- used for grading purposes. Do not worry about it.

Returns:

indices -- a list of length n containing the indices of the sampled characters.

"""

# Retrieve parameters and relevant shapes from "parameters" dictionary

Waa, Wax, Wya, by, b = parameters['Waa'], parameters['Wax'], parameters['Wya'], parameters['by'], parameters['b']

vocab_size = by.shape[0]

n_a = Waa.shape[1]

### START CODE HERE ###

# Step 1: Create the one-hot vector x for the first character (initializing the sequence generation). (≈1 line)

x = np.zeros((vocab_size,1))

# Step 1': Initialize a_prev as zeros (≈1 line)

a_prev = np.zeros((n_a,1))

# Create an empty list of indices, this is the list which will contain the list of indices of the characters to generate (≈1 line)

indices = []

# Idx is a flag to detect a newline character, we initialize it to -1

idx = -1

# Loop over time-steps t. At each time-step, sample a character from a probability distribution and append

# its index to "indices". We'll stop if we reach 50 characters (which should be very unlikely with a well

# trained model), which helps debugging and prevents entering an infinite loop.

counter = 0

newline_character = char_to_ix['\n']

while (idx != newline_character and counter != 50):

# Step 2: Forward propagate x using the equations (1), (2) and (3)

a = np.tanh(np.dot(Wax,x) + np.dot(Waa,a_prev) + b)

z = np.dot(Wya,a) + by

y = softmax(z)

# for grading purposes

np.random.seed(counter+seed)

# Step 3: Sample the index of a character within the vocabulary from the probability distribution y

idx = np.random.choice(list(range(vocab_size)), p = y.ravel())

# Append the index to "indices"

indices.append(idx)

# Step 4: Overwrite the input character as the one corresponding to the sampled index.

x = np.zeros((vocab_size, 1))

x[idx] = 1

# Update "a_prev" to be "a"

a_prev = a

# for grading purposes

seed += 1

counter +=1

### END CODE HERE ###

if (counter == 50):

indices.append(char_to_ix['\n'])

return indices

np.random.seed(2)

_, n_a = 20, 100

Wax, Waa, Wya = np.random.randn(n_a, vocab_size), np.random.randn(n_a, n_a), np.random.randn(vocab_size, n_a)

b, by = np.random.randn(n_a, 1), np.random.randn(vocab_size, 1)

parameters = {"Wax": Wax, "Waa": Waa, "Wya": Wya, "b": b, "by": by}

indices = sample(parameters, char_to_ix, 0)

print("Sampling:")

print("list of sampled indices:", indices)

print("list of sampled characters:", [ix_to_char[i] for i in indices])

```

** Expected output:**

<table>

<tr>

<td>

**list of sampled indices:**

</td>

<td>

[12, 17, 24, 14, 13, 9, 10, 22, 24, 6, 13, 11, 12, 6, 21, 15, 21, 14, 3, 2, 1, 21, 18, 24, <br>

7, 25, 6, 25, 18, 10, 16, 2, 3, 8, 15, 12, 11, 7, 1, 12, 10, 2, 7, 7, 11, 5, 6, 12, 25, 0, 0]

</td>

</tr><tr>

<td>

**list of sampled characters:**

</td>

<td>

['l', 'q', 'x', 'n', 'm', 'i', 'j', 'v', 'x', 'f', 'm', 'k', 'l', 'f', 'u', 'o', <br>

'u', 'n', 'c', 'b', 'a', 'u', 'r', 'x', 'g', 'y', 'f', 'y', 'r', 'j', 'p', 'b', 'c', 'h', 'o', <br>

'l', 'k', 'g', 'a', 'l', 'j', 'b', 'g', 'g', 'k', 'e', 'f', 'l', 'y', '\n', '\n']

</td>

</tr>

</table>

## 3 - Building the language model

It is time to build the character-level language model for text generation.

### 3.1 - Gradient descent

In this section you will implement a function performing one step of stochastic gradient descent (with clipped gradients). You will go through the training examples one at a time, so the optimization algorithm will be stochastic gradient descent. As a reminder, here are the steps of a common optimization loop for an RNN:

- Forward propagate through the RNN to compute the loss

- Backward propagate through time to compute the gradients of the loss with respect to the parameters

- Clip the gradients if necessary

- Update your parameters using gradient descent

**Exercise**: Implement this optimization process (one step of stochastic gradient descent).

We provide you with the following functions:

```python

def rnn_forward(X, Y, a_prev, parameters):

""" Performs the forward propagation through the RNN and computes the cross-entropy loss.

It returns the loss' value as well as a "cache" storing values to be used in the backpropagation."""

....

return loss, cache

def rnn_backward(X, Y, parameters, cache):

""" Performs the backward propagation through time to compute the gradients of the loss with respect

to the parameters. It returns also all the hidden states."""

...

return gradients, a

def update_parameters(parameters, gradients, learning_rate):

""" Updates parameters using the Gradient Descent Update Rule."""

...

return parameters

```

```

# GRADED FUNCTION: optimize

def optimize(X, Y, a_prev, parameters, learning_rate = 0.01):

"""

Execute one step of the optimization to train the model.

Arguments:

X -- list of integers, where each integer is a number that maps to a character in the vocabulary.

Y -- list of integers, exactly the same as X but shifted one index to the left.

a_prev -- previous hidden state.

parameters -- python dictionary containing:

Wax -- Weight matrix multiplying the input, numpy array of shape (n_a, n_x)

Waa -- Weight matrix multiplying the hidden state, numpy array of shape (n_a, n_a)

Wya -- Weight matrix relating the hidden-state to the output, numpy array of shape (n_y, n_a)

b -- Bias, numpy array of shape (n_a, 1)

by -- Bias relating the hidden-state to the output, numpy array of shape (n_y, 1)

learning_rate -- learning rate for the model.

Returns:

loss -- value of the loss function (cross-entropy)

gradients -- python dictionary containing:

dWax -- Gradients of input-to-hidden weights, of shape (n_a, n_x)

dWaa -- Gradients of hidden-to-hidden weights, of shape (n_a, n_a)

dWya -- Gradients of hidden-to-output weights, of shape (n_y, n_a)

db -- Gradients of bias vector, of shape (n_a, 1)

dby -- Gradients of output bias vector, of shape (n_y, 1)

a[len(X)-1] -- the last hidden state, of shape (n_a, 1)

"""

### START CODE HERE ###

# Forward propagate through time (≈1 line)

loss, cache = rnn_forward(X,Y,a_prev,parameters)

# Backpropagate through time (≈1 line)

gradients, a = rnn_backward(X,Y,parameters, cache)

# Clip your gradients between -5 (min) and 5 (max) (≈1 line)

gradients = clip(gradients,5)

# Update parameters (≈1 line)

parameters = update_parameters(parameters,gradients,learning_rate)

### END CODE HERE ###

return loss, gradients, a[len(X)-1]

np.random.seed(1)

vocab_size, n_a = 27, 100

a_prev = np.random.randn(n_a, 1)

Wax, Waa, Wya = np.random.randn(n_a, vocab_size), np.random.randn(n_a, n_a), np.random.randn(vocab_size, n_a)

b, by = np.random.randn(n_a, 1), np.random.randn(vocab_size, 1)

parameters = {"Wax": Wax, "Waa": Waa, "Wya": Wya, "b": b, "by": by}

X = [12,3,5,11,22,3]

Y = [4,14,11,22,25, 26]

loss, gradients, a_last = optimize(X, Y, a_prev, parameters, learning_rate = 0.01)

print("Loss =", loss)

print("gradients[\"dWaa\"][1][2] =", gradients["dWaa"][1][2])

print("np.argmax(gradients[\"dWax\"]) =", np.argmax(gradients["dWax"]))

print("gradients[\"dWya\"][1][2] =", gradients["dWya"][1][2])

print("gradients[\"db\"][4] =", gradients["db"][4])

print("gradients[\"dby\"][1] =", gradients["dby"][1])

print("a_last[4] =", a_last[4])

```

** Expected output:**

<table>

<tr>

<td>

**Loss **

</td>

<td>

126.503975722

</td>

</tr>

<tr>

<td>

**gradients["dWaa"][1][2]**

</td>

<td>

0.194709315347

</td>

<tr>

<td>

**np.argmax(gradients["dWax"])**

</td>

<td> 93

</td>

</tr>

<tr>

<td>

**gradients["dWya"][1][2]**

</td>

<td> -0.007773876032

</td>

</tr>

<tr>

<td>

**gradients["db"][4]**

</td>

<td> [-0.06809825]

</td>

</tr>

<tr>

<td>

**gradients["dby"][1]**

</td>

<td>[ 0.01538192]

</td>

</tr>

<tr>

<td>

**a_last[4]**

</td>

<td> [-1.]

</td>

</tr>

</table>

### 3.2 - Training the model

Given the dataset of dinosaur names, we use each line of the dataset (one name) as one training example. Every 100 steps of stochastic gradient descent, you will sample 10 randomly chosen names to see how the algorithm is doing. Remember to shuffle the dataset, so that stochastic gradient descent visits the examples in random order.

**Exercise**: Follow the instructions and implement `model()`. When `examples[index]` contains one dinosaur name (string), to create an example (X, Y), you can use this:

```python

index = j % len(examples)

X = [None] + [char_to_ix[ch] for ch in examples[index]]

Y = X[1:] + [char_to_ix["\n"]]

```

Note that we use: `index= j % len(examples)`, where `j = 1....num_iterations`, to make sure that `examples[index]` is always a valid statement (`index` is smaller than `len(examples)`).

The first entry of `X` being `None` will be interpreted by `rnn_forward()` as setting $x^{\langle 0 \rangle} = \vec{0}$. Further, this ensures that `Y` is equal to `X` but shifted one step to the left, and with an additional "\n" appended to signify the end of the dinosaur name.

```

# GRADED FUNCTION: model

def model(data, ix_to_char, char_to_ix, num_iterations = 35000, n_a = 50, dino_names = 7, vocab_size = 27):

"""

Trains the model and generates dinosaur names.

Arguments:

data -- text corpus

ix_to_char -- dictionary that maps the index to a character

char_to_ix -- dictionary that maps a character to an index

num_iterations -- number of iterations to train the model for

n_a -- number of units of the RNN cell

dino_names -- number of dinosaur names you want to sample at each iteration.

vocab_size -- number of unique characters found in the text, size of the vocabulary

Returns:

parameters -- learned parameters

"""

# Retrieve n_x and n_y from vocab_size

n_x, n_y = vocab_size, vocab_size

# Initialize parameters

parameters = initialize_parameters(n_a, n_x, n_y)

# Initialize loss (this is required because we want to smooth our loss, don't worry about it)

loss = get_initial_loss(vocab_size, dino_names)

# Build list of all dinosaur names (training examples).

with open("dinos.txt") as f:

examples = f.readlines()

examples = [x.lower().strip() for x in examples]

# Shuffle list of all dinosaur names

np.random.seed(0)

np.random.shuffle(examples)

# Initialize the hidden state of your LSTM

a_prev = np.zeros((n_a, 1))

# Optimization loop

for j in range(num_iterations):

### START CODE HERE ###

# Use the hint above to define one training example (X,Y) (≈ 2 lines)

index = j % len(examples)

X = [None] + [char_to_ix[ch] for ch in examples[index]]

Y = X[1:] + [char_to_ix['\n']]

# Perform one optimization step: Forward-prop -> Backward-prop -> Clip -> Update parameters

# Choose a learning rate of 0.01

curr_loss, gradients, a_prev = optimize(X,Y,a_prev,parameters)

### END CODE HERE ###

# Use a latency trick to keep the loss smooth. It happens here to accelerate the training.

loss = smooth(loss, curr_loss)

# Every 2000 Iteration, generate "n" characters thanks to sample() to check if the model is learning properly

if j % 2000 == 0:

print('Iteration: %d, Loss: %f' % (j, loss) + '\n')

# The number of dinosaur names to print

seed = 0

for name in range(dino_names):

# Sample indices and print them

sampled_indices = sample(parameters, char_to_ix, seed)

print_sample(sampled_indices, ix_to_char)

seed += 1 # To get the same result for grading purposed, increment the seed by one.

print('\n')

return parameters

```

Run the following cell, you should observe your model outputting random-looking characters at the first iteration. After a few thousand iterations, your model should learn to generate reasonable-looking names.

```

parameters = model(data, ix_to_char, char_to_ix)

```

## Conclusion

You can see that your algorithm has started to generate plausible dinosaur names towards the end of the training. At first, it was generating random characters, but towards the end you could see dinosaur names with cool endings. Feel free to run the algorithm even longer and play with hyperparameters to see if you can get even better results. Our implemetation generated some really cool names like `maconucon`, `marloralus` and `macingsersaurus`. Your model hopefully also learned that dinosaur names tend to end in `saurus`, `don`, `aura`, `tor`, etc.

If your model generates some non-cool names, don't blame the model entirely--not all actual dinosaur names sound cool. (For example, `dromaeosauroides` is an actual dinosaur name and is in the training set.) But this model should give you a set of candidates from which you can pick the coolest!

This assignment had used a relatively small dataset, so that you could train an RNN quickly on a CPU. Training a model of the english language requires a much bigger dataset, and usually needs much more computation, and could run for many hours on GPUs. We ran our dinosaur name for quite some time, and so far our favoriate name is the great, undefeatable, and fierce: Mangosaurus!

<img src="images/mangosaurus.jpeg" style="width:250;height:300px;">

## 4 - Writing like Shakespeare

The rest of this notebook is optional and is not graded, but we hope you'll do it anyway since it's quite fun and informative.

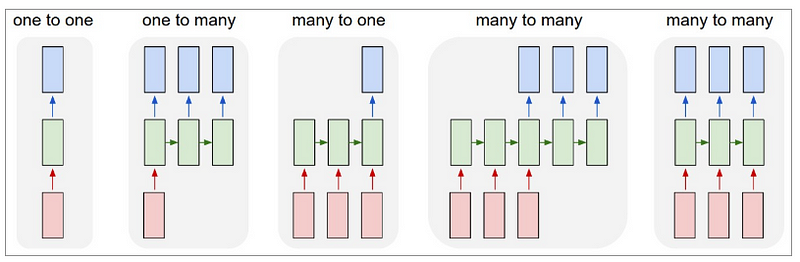

A similar (but more complicated) task is to generate Shakespeare poems. Instead of learning from a dataset of Dinosaur names you can use a collection of Shakespearian poems. Using LSTM cells, you can learn longer term dependencies that span many characters in the text--e.g., where a character appearing somewhere a sequence can influence what should be a different character much much later in ths sequence. These long term dependencies were less important with dinosaur names, since the names were quite short.

<img src="images/shakespeare.jpg" style="width:500;height:400px;">

<caption><center> Let's become poets! </center></caption>

We have implemented a Shakespeare poem generator with Keras. Run the following cell to load the required packages and models. This may take a few minutes.

```

from __future__ import print_function

from keras.callbacks import LambdaCallback

from keras.models import Model, load_model, Sequential

from keras.layers import Dense, Activation, Dropout, Input, Masking

from keras.layers import LSTM

from keras.utils.data_utils import get_file

from keras.preprocessing.sequence import pad_sequences

from shakespeare_utils import *

import sys

import io

```

To save you some time, we have already trained a model for ~1000 epochs on a collection of Shakespearian poems called [*"The Sonnets"*](shakespeare.txt).

Let's train the model for one more epoch. When it finishes training for an epoch---this will also take a few minutes---you can run `generate_output`, which will prompt asking you for an input (`<`40 characters). The poem will start with your sentence, and our RNN-Shakespeare will complete the rest of the poem for you! For example, try "Forsooth this maketh no sense " (don't enter the quotation marks). Depending on whether you include the space at the end, your results might also differ--try it both ways, and try other inputs as well.

```

print_callback = LambdaCallback(on_epoch_end=on_epoch_end)

model.fit(x, y, batch_size=128, epochs=1, callbacks=[print_callback])

# Run this cell to try with different inputs without having to re-train the model

generate_output()

```

The RNN-Shakespeare model is very similar to the one you have built for dinosaur names. The only major differences are:

- LSTMs instead of the basic RNN to capture longer-range dependencies

- The model is a deeper, stacked LSTM model (2 layer)

- Using Keras instead of python to simplify the code

If you want to learn more, you can also check out the Keras Team's text generation implementation on GitHub: https://github.com/keras-team/keras/blob/master/examples/lstm_text_generation.py.

Congratulations on finishing this notebook!

**References**:

- This exercise took inspiration from Andrej Karpathy's implementation: https://gist.github.com/karpathy/d4dee566867f8291f086. To learn more about text generation, also check out Karpathy's [blog post](http://karpathy.github.io/2015/05/21/rnn-effectiveness/).

- For the Shakespearian poem generator, our implementation was based on the implementation of an LSTM text generator by the Keras team: https://github.com/keras-team/keras/blob/master/examples/lstm_text_generation.py

| github_jupyter |

### *IPCC SR15 scenario assessment*

<img style="float: right; height: 80px; padding-left: 20px;" src="../_static/IIASA_logo.png">

<img style="float: right; height: 80px;" src="../_static/IAMC_logo.jpg">

# Descriptive statistics of the primary energy development

This notebook computes indicators and diagnostics of the primary-energy timeseries by fuel

for **Table 2.6** in the IPCC's _"Special Report on Global Warming of 1.5°C"_.

The scenario data used in this analysis can be accessed and downloaded at [https://data.ene.iiasa.ac.at/iamc-1.5c-explorer](https://data.ene.iiasa.ac.at/iamc-1.5c-explorer).

## Load `pyam` package and other dependencies

```

import pandas as pd

import numpy as np

import warnings

import io

import itertools

import yaml

import math

import matplotlib.pyplot as plt

plt.style.use('style_sr15.mplstyle')

%matplotlib inline

import pyam

```

## Import scenario data, categorization and specifications files

The metadata file must be generated from the notebook `sr15_2.0_categories_indicators` included in this repository.

If the snapshot file has been updated, make sure that you rerun the categorization notebook.

The last cell of this section loads and assigns a number of auxiliary lists as defined in the categorization notebook.

```

sr1p5 = pyam.IamDataFrame(data='../data/iamc15_scenario_data_world_r1.1.xlsx')

sr1p5.load_metadata('sr15_metadata_indicators.xlsx')

with open("sr15_specs.yaml", 'r') as stream:

specs = yaml.load(stream, Loader=yaml.FullLoader)

rc = pyam.run_control()

for item in specs.pop('run_control').items():

rc.update({item[0]: item[1]})

cats = specs.pop('cats')

cats_15 = specs.pop('cats_15')

cats_15_no_lo = specs.pop('cats_15_no_lo')

marker= specs.pop('marker')

```

## Downselect scenario ensemble to categories of interest for this assessment

```

years = [2020, 2030, 2050]

df = sr1p5.filter(category=cats_15, year=years)

```

## Initialize a `pyam.Statistics` instance

```

stats = pyam.Statistics(df=df,

filters=[('all 1.5', {}),

('no & lo os 1.5', {'category': cats_15_no_lo}),

('hi os 1.5', {'category': ['1.5C high overshoot']})

], rows=True)

header='Primary energy supply (EJ)'

header_share='Share in primary energy (%)'

header_growth='Growth (factor)'

statistics_settings = dict(

header=header,

header_share=header_share,

header_growth= header_growth,

growth_year=2050,

base_year=2020

)

def add_statistics(data, base, row, growth_year, base_year,

header, header_share, header_growth):

stats.add(data, header=header, row=row)

if base is not None:

stats.add(data / base * 100, header=header_share, row=row)

stats.add(data[growth_year] / data[base_year] - 1,

header=header_growth, row=row,

subheader='{}-{}'.format(base_year, growth_year))

```

## Extract primary energy timeseries data and add summary statistics

```

pe = df.filter(variable='Primary Energy').timeseries()

pe.index = pe.index.droplevel([2, 3, 4])

add_statistics(pe, None, 'total primary', **statistics_settings)

```

## Compute share of renewables by various types in primary energy

Only use scenarios for this indicator that report both biomass and the aggregate non-biomass timeseries - otherwise, the share would be distorted.

### All renewables (biomass and non-biomass)

```

df_pe_res = df.filter()

df_pe_res.require_variable('Primary Energy|Non-Biomass Renewables', exclude_on_fail=True)

df_pe_res.require_variable('Primary Energy|Biomass', exclude_on_fail=True)

df_pe_res.filter(exclude=False, inplace=True)

res = (

df_pe_res.filter(variable=['Primary Energy|Biomass', 'Primary Energy|Non-Biomass Renewables'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(res, pe, 'renewables', **statistics_settings)

```

### Biomass

```

res_bio = (

df.filter(variable=['Primary Energy|Biomass'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(res_bio, pe, 'biomass', **statistics_settings)

```

### Non-biomass renewables

```

res_non_bio = (

df.filter(variable=['Primary Energy|Non-Biomass Renewables'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(res_non_bio, pe, 'non-biomass', **statistics_settings)

```

### Renewable energy from wind and solar

As above, verify that scenarios report values for both 'Wind' and 'Solar'

```

df_win_sol = df.filter()

df_win_sol.require_variable('Primary Energy|Wind', exclude_on_fail=True)

df_win_sol.require_variable('Primary Energy|Solar', exclude_on_fail=True)

df_win_sol.filter(exclude=False, inplace=True)

win_sol = (

df_win_sol.filter(variable=['Primary Energy|Wind',

'Primary Energy|Solar'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(win_sol, pe, 'wind & solar', **statistics_settings)

```

## Compute share of nuclear in primary energy

```

nuc = (

df.filter(variable=['Primary Energy|Nuclear'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(nuc, pe, 'nuclear', **statistics_settings)

```

## Compute share of fossil in primary energy

```

fossil = (

df.filter(variable=['Primary Energy|Coal', 'Primary Energy|Gas', 'Primary Energy|Oil'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(fossil, pe, 'fossil', **statistics_settings)

coal = (

df.filter(variable=['Primary Energy|Coal'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(coal, pe, 'coal', **statistics_settings)

gas = (

df.filter(variable=['Primary Energy|Gas'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(gas, pe, 'gas', **statistics_settings)

oil = (

df.filter(variable=['Primary Energy|Oil'])

.timeseries()

.groupby(['model', 'scenario']).sum()

)

add_statistics(oil, pe, 'oil', **statistics_settings)

```

## Display and export summary statistics for all 1.5C scenarios to `xlsx`

```

summary = (

stats.summarize(center='median', fullrange=True)

.reindex(columns=['count', header, header_share, header_growth], level=0)

)

summary

summary.to_excel('output/table_2.6_primary_energy_supply.xlsx')

```

| github_jupyter |

# Caffe2 Basic Concepts - Operators & Nets

In this tutorial we will go through a set of Caffe2 basics: the basic concepts including how operators and nets are being written.

First, let's import caffe2. `core` and `workspace` are usually the two that you need most. If you want to manipulate protocol buffers generated by caffe2, you probably also want to import `caffe2_pb2` from `caffe2.proto`.

```

# We'll also import a few standard python libraries

from matplotlib import pyplot

import numpy as np

import time

# These are the droids you are looking for.

from caffe2.python import core, workspace

from caffe2.proto import caffe2_pb2

```

You might see a warning saying that caffe2 does not have GPU support. That means you are running a CPU-only build. Don't be alarmed - anything CPU is still runnable without problem.

## Workspaces

Let's cover workspaces first, where all the data reside.

If you are familiar with Matlab, workspace consists of blobs you create and store in memory. For now, consider a blob to be a N-dimensional Tensor similar to numpy's ndarray, but is contiguous. Down the road, we will show you that a blob is actually a typed pointer that can store any type of C++ objects, but Tensor is the most common type stored in a blob. Let's show what the interface looks like.

`Blobs()` prints out all existing blobs in the workspace.

`HasBlob()` queries if a blob exists in the workspace. For now, we don't have anything yet.

```

print("Current blobs in the workspace: {}".format(workspace.Blobs()))

print("Workspace has blob 'X'? {}".format(workspace.HasBlob("X")))

```

We can feed blobs into the workspace using `FeedBlob()`.

```

X = np.random.randn(2, 3).astype(np.float32)

print("Generated X from numpy:\n{}".format(X))

workspace.FeedBlob("X", X)

```

Now, let's take a look what blobs there are in the workspace.

```

print("Current blobs in the workspace: {}".format(workspace.Blobs()))

print("Workspace has blob 'X'? {}".format(workspace.HasBlob("X")))

print("Fetched X:\n{}".format(workspace.FetchBlob("X")))

```

Let's verify that the arrays are equal.

```

np.testing.assert_array_equal(X, workspace.FetchBlob("X"))

```

Also, if you are trying to access a blob that does not exist, an error will be thrown:

```

try:

workspace.FetchBlob("invincible_pink_unicorn")

except RuntimeError as err:

print(err)

```

One thing that you might not use immediately: you can have multiple workspaces in Python using different names, and switch between them. Blobs in different workspaces are separate from each other. You can query the current workspace using `CurrentWorkspace`. Let's try switching the workspace by name (gutentag) and creating a new one if it doesn't exist.

```

print("Current workspace: {}".format(workspace.CurrentWorkspace()))

print("Current blobs in the workspace: {}".format(workspace.Blobs()))

# Switch the workspace. The second argument "True" means creating

# the workspace if it is missing.

workspace.SwitchWorkspace("gutentag", True)

# Let's print the current workspace. Note that there is nothing in the

# workspace yet.

print("Current workspace: {}".format(workspace.CurrentWorkspace()))

print("Current blobs in the workspace: {}".format(workspace.Blobs()))

```

Let's switch back to the default workspace.

```

workspace.SwitchWorkspace("default")

print("Current workspace: {}".format(workspace.CurrentWorkspace()))

print("Current blobs in the workspace: {}".format(workspace.Blobs()))

```

Finally, `ResetWorkspace()` clears anything that is in the current workspace.

```

workspace.ResetWorkspace()

```

## Operators

Operators in Caffe2 are kind of like functions. From the C++ side, they all derive from a common interface, and are registered by type, so that we can call different operators during runtime. The interface of operators is defined in `caffe2/proto/caffe2.proto`. Basically, it takes in a bunch of inputs, and produces a bunch of outputs.

Remember, when we say "create an operator" in Caffe2 Python, nothing gets run yet. All it does is to create the protocol buffere that specifies what the operator should be. At a later time it will be sent to the C++ backend for execution. If you are not familiar with protobuf, it is a json-like serialization tool for structured data. Find more about protocol buffers [here](https://developers.google.com/protocol-buffers/).

Let's see an actual example.

```

# Create an operator.

op = core.CreateOperator(

"Relu", # The type of operator that we want to run

["X"], # A list of input blobs by their names

["Y"], # A list of output blobs by their names

)

# and we are done!

```

As we mentioned, the created op is actually a protobuf object. Let's show the content.

```

print("Type of the created op is: {}".format(type(op)))

print("Content:\n")

print(str(op))

```

OK, let's run the operator. We first feed in the input X to the workspace.

Then the simplest way to run an operator is to do `workspace.RunOperatorOnce(operator)`

```

workspace.FeedBlob("X", np.random.randn(2, 3).astype(np.float32))

workspace.RunOperatorOnce(op)

```

After execution, let's see if the operator is doing the right thing, which is our neural network's activation function ([Relu](https://en.wikipedia.org/wiki/Rectifier_(neural_networks))) in this case.

```

print("Current blobs in the workspace: {}\n".format(workspace.Blobs()))

print("X:\n{}\n".format(workspace.FetchBlob("X")))

print("Y:\n{}\n".format(workspace.FetchBlob("Y")))

print("Expected:\n{}\n".format(np.maximum(workspace.FetchBlob("X"), 0)))

```

This is working if you're expected output matches your Y output in this example.

Operators also take optional arguments if needed. They are specified as key-value pairs. Let's take a look at one simple example, which is to create a tensor and fills it with Gaussian random variables.

```

op = core.CreateOperator(

"GaussianFill",

[], # GaussianFill does not need any parameters.

["Z"],

shape=[100, 100], # shape argument as a list of ints.

mean=1.0, # mean as a single float

std=1.0, # std as a single float

)

print("Content of op:\n")

print(str(op))

```

Let's run it and see if things are as intended.

```

workspace.RunOperatorOnce(op)

temp = workspace.FetchBlob("Z")

pyplot.hist(temp.flatten(), bins=50)

pyplot.title("Distribution of Z")

```

If you see a bell shaped curve then it worked!

## Nets

Nets are essentially computation graphs. We keep the name `Net` for backward consistency (and also to pay tribute to neural nets). A Net is composed of multiple operators just like a program written as a sequence of commands. Let's take a look.

When we talk about nets, we will also talk about BlobReference, which is an object that wraps around a string so we can do easy chaining of operators.

Let's create a network that is essentially the equivalent of the following python math:

```

X = np.random.randn(2, 3)