text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

# Example Model Explanations with Seldon

Seldon core supports various out-of-the-box explainers that leverage the [Alibi ML Expalinability](https://github.com/SeldonIO/alibi) open source library.

In this notebook we show how you can use the pre-packaged explainer functionality that simplifies the creation of advanced AI model explainers.

Seldon provides the following out-of-the-box pre-packaged explainers:

* Anchor Tabular Explainer

* AI Explainer that uses the [anchor technique](https://docs.seldon.io/projects/alibi/en/latest/methods/Anchors.html) for tabular data

* It basically answers the question of what are the most "powerul" or "important" features in a tabular prediction

* Anchor Image Explainer

* AI Explainer that uses the [anchor technique](https://docs.seldon.io/projects/alibi/en/latest/methods/Anchors.html) for image data

* It basically answers the question of what are the most "powerul" or "important" pixels in an image prediction

* Anchor Text Explainer

* AI Explainer that uses the [anchor technique](https://docs.seldon.io/projects/alibi/en/latest/methods/Anchors.html) for text data

* It basically answers the question of what are the most "powerul" or "important" tokens in a text prediction

* Counterfactual Explainer

* AI Explainer that uses the [counterfactual technique](https://docs.seldon.io/projects/alibi/en/latest/methods/CF.html) for any type of data

* It basically provides insight of what are the minimum changes you can do to an input to change the prediction to a different class

* Contrastive Explainer

* AI explainer that uses the [Contrastive Explanations](https://docs.seldon.io/projects/alibi/en/latest/methods/CEM.html) technique for any type of data

* It basically provides insights of what are the minimum changes you can do to an input to change the prediction to change the prediction or the minimum components of the input to make it the same prediction

## Running this notebook

For the [ImageNet Model](#Imagenet-Model) you will need:

- [alibi package](https://pypi.org/project/alibi/) (```pip install alibi```)

This should install the required package dependencies, if not please also install:

- [Pillow package](https://pypi.org/project/Pillow/) (```pip install Pillow```)

- [matplotlib package](https://pypi.org/project/matplotlib/) (```pip install matplotlib```)

- [tensorflow package](https://pypi.org/project/tensorflow/) (```pip install tensorflow```)

You will also need to start Jupyter with settings to allow for large payloads, for example:

```

jupyter notebook --NotebookApp.iopub_data_rate_limit=1000000000

```

## Setup Seldon Core

Follow the instructions to [Setup Cluster](seldon_core_setup.ipynb#Setup-Cluster) with [Ambassador Ingress](seldon_core_setup.ipynb#Ambassador) and [Install Seldon Core](seldon_core_setup.ipynb#Install-Seldon-Core).

Then port-forward to that ingress on localhost:8003 in a separate terminal either with:

* Ambassador: `kubectl port-forward $(kubectl get pods -n seldon -l app.kubernetes.io/name=ambassador -o jsonpath='{.items[0].metadata.name}') -n seldon 8003:8080`

* Istio: `kubectl port-forward $(kubectl get pods -l istio=ingressgateway -n istio-system -o jsonpath='{.items[0].metadata.name}') -n istio-system 8003:80`

### Create Namespace for experimentation

We will first set up the namespace of Seldon where we will be deploying all our models

```

!kubectl create namespace seldon

```

And then we will set the current workspace to use the seldon namespace so all our commands are run there by default (instead of running everything in the default namespace.)

```

!kubectl config set-context $(kubectl config current-context) --namespace=seldon

```

## Income Prediction Model

```

%%writefile resources/income_explainer.yaml

apiVersion: machinelearning.seldon.io/v1

kind: SeldonDeployment

metadata:

name: income

spec:

name: income

annotations:

seldon.io/rest-timeout: "100000"

predictors:

- graph:

children: []

implementation: SKLEARN_SERVER

modelUri: gs://seldon-models/sklearn/income/model

name: classifier

explainer:

type: AnchorTabular

modelUri: gs://seldon-models/sklearn/income/explainer

name: default

replicas: 1

!kubectl apply -f resources/income_explainer.yaml

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=income -o jsonpath='{.items[0].metadata.name}')

!kubectl rollout status deploy/income-default-explainer

from seldon_core.seldon_client import SeldonClient

import numpy as np

sc = SeldonClient(deployment_name="income",namespace="seldon", gateway="ambassador", gateway_endpoint="localhost:8003")

```

Use python client library to get a prediction.

```

data = np.array([[39, 7, 1, 1, 1, 1, 4, 1, 2174, 0, 40, 9]])

r = sc.predict(data=data)

print(r.response)

```

Use curl to get a prediction.

```

!curl -d '{"data": {"ndarray":[[39, 7, 1, 1, 1, 1, 4, 1, 2174, 0, 40, 9]]}}' \

-X POST http://localhost:8003/seldon/seldon/income/api/v1.0/predictions \

-H "Content-Type: application/json"

```

Use python client library to get an explanation.

```

data = np.array([[39, 7, 1, 1, 1, 1, 4, 1, 2174, 0, 40, 9]])

explanation = sc.explain(deployment_name="income", predictor="default", data=data)

print(explanation.response["names"])

```

Using curl to get an explanation.

```

!curl -X POST -H 'Content-Type: application/json' \

-d '{"data": {"names": ["text"], "ndarray": [[52, 4, 0, 2, 8, 4, 2, 0, 0, 0, 60, 9]]}}' \

http://localhost:8003/seldon/seldon/income-explainer/default/api/v1.0/explain | jq ".names"

!kubectl delete -f resources/income_explainer.yaml

```

## Movie Sentiment Model

```

%%writefile resources/moviesentiment_explainer.yaml

apiVersion: machinelearning.seldon.io/v1

kind: SeldonDeployment

metadata:

name: movie

spec:

name: movie

annotations:

seldon.io/rest-timeout: "100000"

predictors:

- graph:

children: []

implementation: SKLEARN_SERVER

modelUri: gs://seldon-models/sklearn/moviesentiment

name: classifier

explainer:

type: AnchorText

name: default

replicas: 1

!kubectl apply -f resources/moviesentiment_explainer.yaml

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=movie -o jsonpath='{.items[0].metadata.name}')

!kubectl rollout status deploy/movie-default-explainer

from seldon_core.seldon_client import SeldonClient

import numpy as np

sc = SeldonClient(deployment_name="movie", namespace="seldon", gateway_endpoint="localhost:8003", payload_type='ndarray')

!curl -d '{"data": {"ndarray":["This film has great actors"]}}' \

-X POST http://localhost:8003/seldon/seldon/movie/api/v1.0/predictions \

-H "Content-Type: application/json"

data = np.array(['this film has great actors'])

r = sc.predict(data=data)

print(r)

assert(r.success==True)

!curl -s -d '{"data": {"ndarray":["This movie has great actors"]}}' \

-X POST http://localhost:8003/seldon/seldon/movie-explainer/default/api/v1.0/explain \

-H "Content-Type: application/json" | jq ".names"

data = np.array(['this film has great actors'])

explanation = sc.explain(predictor="default", data=data)

print(explanation.response["names"])

!kubectl delete -f resources/moviesentiment_explainer.yaml

```

## Imagenet Model

```

%%writefile resources/imagenet_explainer_grpc.yaml

apiVersion: machinelearning.seldon.io/v1

kind: SeldonDeployment

metadata:

name: image

spec:

annotations:

seldon.io/rest-timeout: "10000000"

seldon.io/grpc-timeout: "10000000"

seldon.io/grpc-max-message-size: "1000000000"

name: image

predictors:

- componentSpecs:

- spec:

containers:

- image: docker.io/seldonio/imagenet-transformer:0.1

name: transformer

graph:

name: transformer

type: TRANSFORMER

endpoint:

type: GRPC

children:

- implementation: TENSORFLOW_SERVER

modelUri: gs://seldon-models/tfserving/imagenet/model

name: classifier

endpoint:

type: GRPC

parameters:

- name: model_name

type: STRING

value: classifier

- name: model_input

type: STRING

value: input_image

- name: model_output

type: STRING

value: predictions/Softmax:0

svcOrchSpec:

resources:

requests:

memory: 10Gi

limits:

memory: 10Gi

env:

- name: SELDON_LOG_LEVEL

value: DEBUG

explainer:

type: AnchorImages

modelUri: gs://seldon-models/tfserving/imagenet/explainer

config:

batch_size: "100"

endpoint:

type: GRPC

name: default

replicas: 1

!kubectl apply -f resources/imagenet_explainer_grpc.yaml

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=image -o jsonpath='{.items[0].metadata.name}')

!kubectl rollout status deploy/image-default-explainer

from PIL import Image

import matplotlib

%matplotlib inline

import matplotlib.pyplot as plt

from tensorflow.keras.applications.inception_v3 import InceptionV3, decode_predictions

import alibi

from alibi.datasets import fetch_imagenet

import numpy as np

def get_image_data():

data = []

image_shape = (299, 299, 3)

target_size = image_shape[:2]

image = Image.open("cat-raw.jpg").convert('RGB')

image = np.expand_dims(image.resize(target_size), axis=0)

data.append(image)

data = np.concatenate(data, axis=0)

return data

data = get_image_data()

from seldon_core.seldon_client import SeldonClient

import numpy as np

sc = SeldonClient(

deployment_name="image",

namespace="seldon",

grpc_max_send_message_length= 27 * 1024 * 1024,

grpc_max_receive_message_length= 27 * 1024 * 1024,

gateway="ambassador",

transport="grpc",

gateway_endpoint="localhost:8003",

client_return_type='proto')

import tensorflow as tf

data = get_image_data()

req = data[0:1]

r = sc.predict(data=req, payload_type='tftensor')

preds = tf.make_ndarray(r.response.data.tftensor)

label = decode_predictions(preds, top=1)

plt.title(label[0])

plt.imshow(data[0])

req = np.expand_dims(data[0], axis=0)

r = sc.explain(data=req, predictor="default", transport="rest", payload_type='ndarray', client_return_type="dict")

exp_arr = np.array(r.response['anchor'])

f, axarr = plt.subplots(1, 2)

axarr[0].imshow(data[0])

axarr[1].imshow(r.response['anchor'])

plt.show()

!kubectl delete -f resources/imagenet_explainer_grpc.yaml

```

| github_jupyter |

# Constructing linear model for OER adsorption energies

---

### Import Modules

```

import os

print(os.getcwd())

import sys

import time; ti = time.time()

import copy

import numpy as np

import pandas as pd

pd.set_option("display.max_columns", None)

# pd.set_option('display.max_rows', None)

# pd.options.display.max_colwidth = 100

import plotly.graph_objs as go

from IPython.display import display

# #########################################################

from proj_data import (

scatter_marker_props,

layout_shared,

layout_shared,

stoich_color_dict,

font_axis_title_size__pub,

font_tick_labels_size__pub,

scatter_shared_props,

)

# #########################################################

from methods import (

get_df_features_targets,

get_df_slab,

get_df_features_targets_seoin,

)

# #########################################################

from methods_models import run_gp_workflow

sys.path.insert(0,

os.path.join(

os.environ["PROJ_irox_oer"],

"workflow/model_building"))

from methods_model_building import (

simplify_df_features_targets,

run_kfold_cv_wf,

process_feature_targets_df,

process_pca_analysis,

pca_analysis,

run_regression_wf,

)

from methods import isnotebook

isnotebook_i = isnotebook()

if isnotebook_i:

from tqdm.notebook import tqdm

verbose = True

show_plot = True

else:

from tqdm import tqdm

verbose = False

show_plot = False

root_dir = os.path.join(

os.environ["PROJ_irox_oer"],

"workflow/model_building/gaussian_process")

```

### Script Inputs

```

# target_ads_i = "o"

target_ads_i = "oh"

feature_ads_i = "o"

# feature_ads_i = "oh"

use_seoin_data = False

if use_seoin_data:

feature_ads_i = "o"

```

### Read Data

```

df_features_targets = get_df_features_targets()

df_i = df_features_targets

# #########################################################

df_slab = get_df_slab()

# Getting phase > 1 slab ids

df_slab_i = df_slab[df_slab.phase > 1]

phase_2_slab_ids = df_slab_i.slab_id.tolist()

# #########################################################

df_seoin = get_df_features_targets_seoin()

indices_str = []

for index_i in df_i.index.tolist():

index_str_i = "__".join([str(i) for i in index_i])

indices_str.append(index_str_i)

df_i["index_str"] = indices_str

indices_str = []

for index_i in df_seoin.index.tolist():

index_str_i = "__".join([str(i) for i in index_i])

indices_str.append(index_str_i)

df_seoin["index_str"] = indices_str

```

### Combining My data with Seoin's

```

df_i = df_i.reset_index()

df_seoin.index = pd.RangeIndex(

start=df_i.index.max() + 1,

stop=df_i.index.max() + df_seoin.shape[0] + 1,

)

df_i["source"] = "mine"

df_seoin["source"] = "seoin"

if use_seoin_data:

df_comb = pd.concat([

# df_i,

df_seoin,

], axis=0)

else:

df_comb = pd.concat([

df_i,

# df_seoin,

], axis=0)

df_comb = df_comb[[

("index_str", "", ""),

("data", "stoich", ""),

# ("compenv", "", ""),

# ("slab_id", "", ""),

# ("active_site", "", ""),

("targets", "g_o", ""),

("targets", "g_oh", ""),

# ("targets", "e_o", ""),

# ("targets", "e_oh", ""),

# ("targets", "g_o_m_oh", ""),

# ("targets", "e_o_m_oh", ""),

# ("features", "oh", "O_magmom"),

# ("features", "oh", "Ir_magmom"),

# ("features", "oh", "active_o_metal_dist"),

# ("features", "oh", "angle_O_Ir_surf_norm"),

# ("features", "oh", "ir_o_mean"),

# ("features", "oh", "ir_o_std"),

# ("features", "oh", "octa_vol"),

("features", "o", "O_magmom"),

("features", "o", "Ir_magmom"),

("features", "o", "Ir_bader"),

("features", "o", "O_bader"),

("features", "o", "active_o_metal_dist"),

("features", "o", "angle_O_Ir_surf_norm"),

("features", "o", "ir_o_mean"),

("features", "o", "ir_o_std"),

("features", "o", "octa_vol"),

("features", "o", "p_band_center"),

("features", "dH_bulk", ""),

("features", "volume_pa", ""),

("features", "bulk_oxid_state", ""),

("features", "effective_ox_state", ""),

# ("features_pre_dft", "active_o_metal_dist__pre", ""),

# ("features_pre_dft", "ir_o_mean__pre", ""),

# ("features_pre_dft", "ir_o_std__pre", ""),

# ("features_pre_dft", "octa_vol__pre", ""),

# ("source", "", ""),

]]

df_comb

df_j = simplify_df_features_targets(

df_comb,

target_ads=target_ads_i,

feature_ads=feature_ads_i,

)

df_format = df_features_targets[("format", "color", "stoich", )]

```

### Single feature models

```

gp_settings = {

"noise": 0.02542,

# "noise": 0.12542,

}

# Length scale parameter

# sigma_l_default = 0.8 # Original

sigma_l_default = 1.8 # Length scale parameter

sigma_f_default = 0.2337970892240513 # Scaling parameter.

kdict = [

# Guassian Kernel (RBF)

{

'type': 'gaussian',

'dimension': 'single',

'width': sigma_l_default,

'scaling': sigma_f_default,

'scaling_bounds': ((0.0001, 10.),),

},

]

df_j = df_j.set_index("index_str")

cols_to_use = df_j["features"].columns.tolist()

if True:

data_dict = dict()

# for num_pca_i in range(1, len(cols_to_use) + 1, 1):

for num_pca_i in range(3, len(cols_to_use) + 1, 2):

if verbose:

print("")

print(40 * "*")

print(num_pca_i)

# #####################################################

out_dict = run_kfold_cv_wf(

df_features_targets=df_j,

cols_to_use=cols_to_use,

run_pca=True,

num_pca_comp=num_pca_i,

# k_fold_partition_size=30,

k_fold_partition_size=10,

model_workflow=run_gp_workflow,

model_settings=dict(

gp_settings=gp_settings,

kdict=kdict,

),

)

# #####################################################

df_target_pred = out_dict["df_target_pred"]

MAE = out_dict["MAE"]

R2 = out_dict["R2"]

PCA = out_dict["pca"]

regression_model_list = out_dict["regression_model_list"]

df_target_pred_on_train = out_dict["df_target_pred_on_train"]

MAE_pred_on_train = out_dict["MAE_pred_on_train"]

RM_2 = out_dict["RM_2"]

# #####################################################

if verbose:

print(

"MAE: ",

np.round(MAE, 5),

" eV",

sep="")

print(

"R2: ",

np.round(R2, 5),

sep="")

print(

"MAE (predicting on train set): ",

np.round(MAE_pred_on_train, 5),

sep="")

# #####################################################

data_dict_i = dict()

# #####################################################

data_dict_i["df_target_pred"] = df_target_pred

data_dict_i["MAE"] = MAE

data_dict_i["R2"] = R2

data_dict_i["PCA"] = PCA

# #####################################################

data_dict[num_pca_i] = data_dict_i

# #####################################################

data_dict[7].keys()

df_target_pred_i = data_dict[7]["df_target_pred"]

# df_target_pred_i["diff_abs"]

df_target_pred_i.sort_values("diff_abs")

df_target_pred_i.diff_abs.mean()

df_target_pred_i

df_target_pred_i.sort_values("diff_abs", ascending=False).iloc[10:].diff_abs.mean()

df_target_pred_i.sort_values("diff_abs", ascending=False).iloc[0:20]

0.18735 - 0.15694500865106495

# ('sherlock', 'kobehubu_94', 52.0)

# ('sherlock', 'kobehubu_94', 60.0)

# ('sherlock', 'vipikema_98', 47.0)

# ('sherlock', 'vipikema_98', 53.0)

# ('sherlock', 'vipikema_98', 60.0)

# ('slac', 'dotivela_46', 26.0)

# ('slac', 'dotivela_46', 32.0)

# ('slac', 'ladarane_77', 15.0)

df_target_pred_i.loc[[

"sherlock__kobehubu_94__52.0",

"sherlock__kobehubu_94__60.0",

"sherlock__vipikema_98__47.0",

"sherlock__vipikema_98__53.0",

"sherlock__vipikema_98__60.0",

"slac__dotivela_46__26.0",

"slac__dotivela_46__32.0",

"slac__ladarane_77__15.0",

]]

# df_target_pred_i

df_target_pred_i.sort_values("diff_abs", ascending=False).iloc[0:20]

df_target_pred_i.loc[[

"slac__tonipibo_76__23.0",

"slac__votafefa_68__35.0",

"slac__foligage_07__32.0",

"slac__votafefa_68__38.0",

"sherlock__wafitemi_24__33.0",

"sherlock__novoloko_50__20.0",

"sherlock__kamevuse_75__49.0",

"sherlock__novoloko_50__21.0",

"sherlock__mibumime_94__60.0",

"sherlock__kobehubu_94__60.0",

]]

import plotly.graph_objs as go

data = []

trace = go.Scatter(

mode="markers",

y=np.abs(df_target_pred_i["diff"]),

x=np.abs(df_target_pred_i["err_pred"]),

)

data.append(trace)

trace = go.Scatter(

# mode="markers",

y=np.arange(0, 2, 0.1),

x=np.arange(0, 2, 0.1),

)

data.append(trace)

# data = [trace]

fig = go.Figure(data=data)

fig.show()

import plotly.graph_objs as go

trace = go.Scatter(

y=df_target_pred_i.sort_values("diff_abs", ascending=False).diff_abs,

)

data = [trace]

fig = go.Figure(data=data)

fig.show()

assert False

# regression_model_list[3].gp_model

```

### Plotting in-fold predictions

```

# data_dict_i = data_dict[

# num_pca_best

# ]

# df_target_pred = data_dict_i["df_target_pred"]

df_target_pred = df_target_pred_on_train

# data_dict_i["df_target_pred"]

max_val = df_target_pred[["y", "y_pred"]].max().max()

min_val = df_target_pred[["y", "y_pred"]].min().min()

# #########################################################

color_list = []

# #########################################################

for ind_i, row_i in df_target_pred.iterrows():

# #####################################################

row_data_i = df_comb.loc[ind_i]

# #####################################################

stoich_i = row_data_i[("data", "stoich", "", )]

# #####################################################

color_i = stoich_color_dict.get(stoich_i, "red")

color_list.append(color_i)

# #########################################################

df_target_pred["color"] = color_list

# #########################################################

dd = 0.1

trace_parity = go.Scatter(

y=[min_val - 2 * dd, max_val + 2 * dd],

x=[min_val - 2 * dd, max_val + 2 * dd],

mode="lines",

name="Parity line",

line_color="black",

)

trace_i = go.Scatter(

y=df_target_pred["y"],

x=df_target_pred["y_pred"],

mode="markers",

name="CV Regression",

# opacity=0.8,

opacity=1.,

marker=dict(

color=df_target_pred["color"],

**scatter_marker_props.to_plotly_json(),

),

)

max_val = df_target_pred[["y", "y_pred"]].max().max()

min_val = df_target_pred[["y", "y_pred"]].min().min()

dd = 0.1

layout_mine = go.Layout(

showlegend=True,

yaxis=go.layout.YAxis(

range=[min_val - dd, max_val + dd],

title=dict(

text="Simulated ΔG<sub>*{}</sub>".format(feature_ads_i.upper()),

),

),

xaxis=go.layout.XAxis(

range=[min_val - dd, max_val + dd],

title=dict(

text="Predicted ΔG<sub>*{}</sub>".format(feature_ads_i.upper()),

),

),

)

# #########################################################

layout_shared_i = copy.deepcopy(layout_shared)

layout_shared_i = layout_shared_i.update(layout_mine)

# data = [trace_parity, trace_i, trace_j]

data = [trace_parity, trace_i, ]

fig = go.Figure(data=data, layout=layout_shared_i)

if show_plot:

fig.show()

```

## Breaking down PCA stats

```

# PCA = data_dict[len(cols_to_use)]["PCA"]

PCA = data_dict[3]["PCA"]

if verbose:

print("Explained variance percentage")

print(40 * "-")

tmp = [print(100 * i) for i in PCA.explained_variance_ratio_]

print("")

df_pca_comp = pd.DataFrame(

abs(PCA.components_),

# columns=list(df_j["features"].columns),

columns=cols_to_use,

)

if verbose:

display(df_pca_comp)

if verbose:

for i in range(df_pca_comp.shape[0]):

print(40 * "-")

print(i)

print(40 * "-")

df_pca_comp_i = df_pca_comp.loc[i].sort_values(ascending=False)

print(df_pca_comp_i.iloc[0:4].to_string())

print("")

data_dict_list = []

for num_pca_i, dict_i in data_dict.items():

MAE_i = dict_i["MAE"]

R2_i = dict_i["R2"]

# #####################################################

data_dict_i = dict()

# #####################################################

data_dict_i["num_pca"] = num_pca_i

data_dict_i["MAE"] = MAE_i

data_dict_i["R2"] = R2_i

# #####################################################

data_dict_list.append(data_dict_i)

# #####################################################

# #########################################################

df = pd.DataFrame(data_dict_list)

df = df.set_index("num_pca")

# #########################################################

layout_mine = go.Layout(

showlegend=False,

yaxis=go.layout.YAxis(

title=dict(

text="K-Fold Cross Validated MAE",

),

),

xaxis=go.layout.XAxis(

title=dict(

text="Num PCA Components",

),

),

)

# #########################################################

layout_shared_i = layout_shared.update(layout_mine)

trace_i = go.Scatter(

x=df.index,

y=df.MAE,

mode="markers",

marker=dict(

**scatter_marker_props.to_plotly_json(),

),

)

data = [trace_i, ]

fig = go.Figure(

data=data,

layout=layout_shared_i,

)

if show_plot:

fig.show()

from plotting.my_plotly import my_plotly_plot

my_plotly_plot(

figure=fig,

plot_name="MAE_vs_PCA_comp",

save_dir=root_dir,

write_html=True,

write_pdf=True,

try_orca_write=True,

)

```

## Plotting the best model (optimal num PCA components)

```

num_pca_best = 3

# num_pca_best = 1

# num_pca_best = 11

data_dict_i = data_dict[

num_pca_best

]

df_target_pred = data_dict_i["df_target_pred"]

max_val = df_target_pred[["y", "y_pred"]].max().max()

min_val = df_target_pred[["y", "y_pred"]].min().min()

color_list = []

for ind_i, row_i in df_target_pred.iterrows():

row_data_i = df_comb.loc[ind_i]

stoich_i = row_data_i[("data", "stoich", "", )]

color_i = stoich_color_dict.get(stoich_i, "red")

color_list.append(color_i)

df_target_pred["color"] = color_list

dd = 0.1

trace_parity = go.Scatter(

y=[min_val - 2 * dd, max_val + 2 * dd],

x=[min_val - 2 * dd, max_val + 2 * dd],

mode="lines",

name="Parity line",

line_color="black",

)

trace_i = go.Scatter(

y=df_target_pred["y"],

x=df_target_pred["y_pred"],

mode="markers",

name="CV Regression",

# opacity=0.8,

opacity=1.,

marker=dict(

color=df_target_pred["color"],

# color="grey",

**scatter_marker_props.to_plotly_json(),

),

)

```

# In-fold model (trained on all data, no test/train split)

```

# df_j = df_j.dropna()

out_dict = run_regression_wf(

df_features_targets=df_j,

cols_to_use=cols_to_use,

df_format=df_format,

run_pca=True,

num_pca_comp=num_pca_best,

model_workflow=run_gp_workflow,

# model_settings=None,

model_settings=dict(

gp_settings=gp_settings,

kdict=kdict,

),

)

df_target_pred = out_dict["df_target_pred"]

MAE = out_dict["MAE"]

R2 = out_dict["R2"]

if verbose:

print("MAE:", MAE)

print("R2:", R2)

max_val = df_target_pred[["y", "y_pred"]].max().max()

min_val = df_target_pred[["y", "y_pred"]].min().min()

dd = 0.1

layout_mine = go.Layout(

showlegend=True,

yaxis=go.layout.YAxis(

range=[min_val - dd, max_val + dd],

title=dict(

text="Simulated ΔG<sub>*{}</sub>".format(target_ads_i.upper()),

),

),

xaxis=go.layout.XAxis(

range=[min_val - dd, max_val + dd],

title=dict(

text="Predicted ΔG<sub>*{}</sub>".format(target_ads_i.upper()),

),

),

)

# #########################################################

layout_shared = layout_shared.update(layout_mine)

color_list = []

for ind_i, row_i in df_target_pred.iterrows():

row_data_i = df_comb.loc[ind_i]

stoich_i = row_data_i[("data", "stoich", "", )]

color_i = stoich_color_dict.get(stoich_i, "red")

color_list.append(color_i)

df_target_pred["color"] = color_list

trace_j = go.Scatter(

y=df_target_pred["y"],

x=df_target_pred["y_pred"],

mode="markers",

opacity=0.8,

name="In-fold Regression",

marker=dict(

color=df_target_pred["color"],

**scatter_marker_props.to_plotly_json(),

),

)

data = [trace_parity, trace_i, trace_j]

fig = go.Figure(data=data, layout=layout_shared)

if show_plot:

fig.show()

tmp = fig.layout.update(

go.Layout(

showlegend=False,

width=12 * 37.795275591,

height=12 / 1.61803398875 * 37.795275591,

margin=go.layout.Margin(

b=10, l=10,

r=10, t=10,

),

xaxis=go.layout.XAxis(

tickfont=go.layout.xaxis.Tickfont(

size=font_tick_labels_size__pub,

),

title=dict(

# text="Ir Effective Oxidation State",

font=dict(

size=font_axis_title_size__pub,

),

)

),

yaxis=go.layout.YAxis(

tickfont=go.layout.yaxis.Tickfont(

size=font_tick_labels_size__pub,

),

title=dict(

# text="ΔG<sub>OH</sub> (eV)",

font=dict(

size=font_axis_title_size__pub,

),

)

),

)

)

fig_cpy = copy.deepcopy(fig)

data = [trace_parity, trace_i, ]

fig_2 = go.Figure(data=data, layout=fig.layout)

# fig_2

scatter_shared_props_cpy = copy.deepcopy(scatter_shared_props)

tmp = scatter_shared_props_cpy.update(

marker=dict(

size=8,

)

)

tmp = fig_2.update_traces(patch=dict(

scatter_shared_props_cpy.to_plotly_json()

))

fig_2

from plotting.my_plotly import my_plotly_plot

my_plotly_plot(

figure=fig_2,

plot_name="GP_model",

save_dir=root_dir,

write_html=True,

write_pdf=True,

try_orca_write=True,

)

# #########################################################

print(20 * "# # ")

print("All done!")

print("Run time:", np.round((time.time() - ti) / 60, 3), "min")

print("gaussian_proc.ipynb")

print(20 * "# # ")

# #########################################################

```

```

# # TEMP

# print(222 * "TEMP | ")

# df_comb = pd.concat([

# # df_i,

# df_seoin,

# ], axis=0)

# feature_ads_i = "o"

# data_dict_list = []

# for col_i in df_comb.columns:

# num_nan_i = sum(

# df_comb[col_i].isna())

# ads_i = None

# if col_i[1] in ["o", "oh", "ooh", ]:

# tmp = 42

# ads_i = col_i[1]

# data_dict_i = dict()

# data_dict_i["col"] = col_i

# data_dict_i["num_nan"] = num_nan_i

# data_dict_i["col_type"] = col_i[0]

# data_dict_i["ads"] = ads_i

# data_dict_list.append(data_dict_i)

# df_nan = pd.DataFrame(data_dict_list)

# df_nan = df_nan[df_nan.col_type == "features"]

# df_nan = df_nan[df_nan.ads == "o"]

# df_nan.sort_values("num_nan", ascending=False)

# df_comb = df_comb.drop(columns=[

# # ('features', 'o', 'Ir_bader'),

# # ('features', 'o', 'O_bader'),

# # ('features', 'o', 'p_band_center'),

# ('features', 'o', 'Ir*O_bader/ir_o_mean'),

# ('features', 'o', 'Ir*O_bader'),

# # ('features', 'o', 'Ir_magmom'),

# # ('features', 'o', 'O_magmom'),

# # ('features', 'o', 'ir_o_std'),

# # ('features', 'o', 'octa_vol'),

# # ('features', 'o', 'ir_o_mean'),

# # ('features', 'o', 'active_o_metal_dist'),

# # ('features', 'o', 'angle_O_Ir_surf_norm'),

# # ('dH_bulk', ''),

# # ('volume_pa', ''),

# # ('bulk_oxid_state', ''),

# # ('effective_ox_state', ''),

# ],

# errors='ignore',

# )

# df_comb["features"].columns.tolist()

# df_comb.columns.tolist()

# assert False

```

| github_jupyter |

```

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

import os

#for dirname, _, filenames in os.walk('/kaggle/input'):

#for filename in filenames:

#print(os.path.join(dirname, filename))

import matplotlib.pyplot as plt

import seaborn as sns

import tensorflow as tf

import keras

from keras.preprocessing import image

from keras.models import Sequential

from keras.layers import Conv2D, MaxPool2D, Flatten,Dense,Dropout,BatchNormalization

from tensorflow.keras.preprocessing.image import ImageDataGenerator

import cv2

from tensorflow.keras.applications import VGG16, InceptionResNetV2

from keras import regularizers

from tensorflow.keras.optimizers import Adam,RMSprop,SGD,Adamax

train_dir = "../input/emotion-detection-fer/train" #passing the path with training images

test_dir = "../input/emotion-detection-fer/test" #passing the path with testing images

img_size = 48 #original size of the image

"""

Data Augmentation

--------------------------

rotation_range = rotates the image with the amount of degrees we provide

width_shift_range = shifts the image randomly to the right or left along the width of the image

height_shift range = shifts image randomly to up or below along the height of the image

horizontal_flip = flips the image horizontally

rescale = to scale down the pizel values in our image between 0 and 1

zoom_range = applies random zoom to our object

validation_split = reserves some images to be used for validation purpose

"""

train_datagen = ImageDataGenerator(#rotation_range = 180,

width_shift_range = 0.1,

height_shift_range = 0.1,

horizontal_flip = True,

rescale = 1./255,

#zoom_range = 0.2,

validation_split = 0.2

)

validation_datagen = ImageDataGenerator(rescale = 1./255,

validation_split = 0.2)

"""

Applying data augmentation to the images as we read

them from their respectivve directories

"""

train_generator = train_datagen.flow_from_directory(directory = train_dir,

target_size = (img_size,img_size),

batch_size = 64,

color_mode = "grayscale",

class_mode = "categorical",

subset = "training"

)

validation_generator = validation_datagen.flow_from_directory( directory = test_dir,

target_size = (img_size,img_size),

batch_size = 64,

color_mode = "grayscale",

class_mode = "categorical",

subset = "validation"

)

"""

Modeling

model = Sequential()

model.add(Conv2D(filters = 64,kernel_size = (3,3),padding = 'same',activation = 'relu',input_shape=(img_size,img_size,1)))

model.add(MaxPool2D(pool_size = 2,strides = 2))

model.add(BatchNormalization())

model.add(Conv2D(filters = 128,kernel_size = (3,3),padding = 'same',activation = 'relu'))

model.add(MaxPool2D(pool_size = 2,strides = 2))

model.add(BatchNormalization())

model.add(Dropout(0.25))

model.add(Conv2D(filters = 128,kernel_size = (3,3),padding = 'same',activation = 'relu'))

model.add(MaxPool2D(pool_size = 2,strides = 2))

model.add(BatchNormalization())

model.add(Dropout(0.25))

model.add(Conv2D(filters = 256,kernel_size = (3,3),padding = 'same',activation = 'relu'))

model.add(MaxPool2D(pool_size = 2,strides = 2))

model.add(BatchNormalization())

model.add(Flatten())

model.add(Dense(units = 128,activation = 'relu',kernel_initializer='he_normal'))

model.add(Dropout(0.25))

model.add(Dense(units = 64,activation = 'relu',kernel_initializer='he_normal'))

model.add(BatchNormalization())

model.add(Dropout(0.25))

model.add(Dense(units = 32,activation = 'relu',kernel_initializer='he_normal'))

model.add(Dense(7,activation = 'softmax'))

"""

model= tf.keras.models.Sequential()

model.add(Conv2D(32, kernel_size=(3, 3), padding='same', activation='relu', input_shape=(48, 48,1)))

model.add(Conv2D(64,(3,3), padding='same', activation='relu' ))

model.add(BatchNormalization())

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Conv2D(128,(5,5), padding='same', activation='relu'))

model.add(BatchNormalization())

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Conv2D(512,(3,3), padding='same', activation='relu', kernel_regularizer=regularizers.l2(0.01)))

model.add(BatchNormalization())

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Conv2D(512,(3,3), padding='same', activation='relu', kernel_regularizer=regularizers.l2(0.01)))

model.add(BatchNormalization())

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

model.add(Flatten())

model.add(Dense(256,activation = 'relu'))

model.add(BatchNormalization())

model.add(Dropout(0.25))

model.add(Dense(512,activation = 'relu'))

model.add(BatchNormalization())

model.add(Dropout(0.25))

model.add(Dense(7, activation='softmax'))

model.compile(

optimizer = Adam(lr=0.0001),

loss='categorical_crossentropy',

metrics=['accuracy']

)

epochs = 60

batch_size = 64

model.summary()

history = model.fit(x = train_generator,epochs = epochs,validation_data = validation_generator)

fig , ax = plt.subplots(1,2)

train_acc = history.history['accuracy']

train_loss = history.history['loss']

fig.set_size_inches(12,4)

ax[0].plot(history.history['accuracy'])

ax[0].plot(history.history['val_accuracy'])

ax[0].set_title('Training Accuracy vs Validation Accuracy')

ax[0].set_ylabel('Accuracy')

ax[0].set_xlabel('Epoch')

ax[0].legend(['Train', 'Validation'], loc='upper left')

ax[1].plot(history.history['loss'])

ax[1].plot(history.history['val_loss'])

ax[1].set_title('Training Loss vs Validation Loss')

ax[1].set_ylabel('Loss')

ax[1].set_xlabel('Epoch')

ax[1].legend(['Train', 'Validation'], loc='upper left')

plt.show()

model.save('model_optimal.h5')

img = image.load_img("../input/emotion-detection-fer/test/happy/im1021.png",target_size = (48,48),color_mode = "grayscale")

img = np.array(img)

plt.imshow(img)

print(img.shape) #prints (48,48) that is the shape of our image

label_dict = {0:'Angry',1:'Disgust',2:'Fear',3:'Happy',4:'Neutral',5:'Sad',6:'Surprise'}

img = np.expand_dims(img,axis = 0) #makes image shape (1,48,48)

img = img.reshape(1,48,48,1)

result = model.predict(img)

result = list(result[0])

print(result)

img_index = result.index(max(result))

print(label_dict[img_index])

plt.show()

train_loss, train_acc = model.evaluate(train_generator)

test_loss, test_acc = model.evaluate(validation_generator)

print("final train accuracy = {:.2f} , validation accuracy = {:.2f}".format(train_acc*100, test_acc*100))

```

| github_jupyter |

```

from google.colab import drive

drive.mount('/content/drive')

```

# Part 0

## Import modules and utilities

```

import json

import pandas as pd

import pprint

import matplotlib as mpl

import matplotlib.pyplot as plt

from matplotlib.colors import LinearSegmentedColormap

import numpy as np

# !pip install -Iv seaborn==0.11.0

import seaborn as sns

print(sns.__version__)

sns.set_style()

# from scipy.stats import pearsonr

# from scipy.stats import iqr

%cd '/content/drive/My Drive/Colab Notebooks/media-agenda'

%pwd

import util

from util import DocType, Source, OptimalKClustersConfig

import senti_util

from termcolor import colored, cprint

%cd '/content/drive/My Drive/Colab Notebooks/media-agenda/plot'

%pwd

```

## Load dataframe for analysis

```

start_year = 2009

end_year = 2017

start_datetime, end_datetime, start_datetime_str, end_datetime_str = util.get_start_end_datetime(start_year, end_year)

# df = senti_util.get_sentence_cluster_sentiment_df(start_year = start_year, end_year = end_year,

# path = '/content/drive/My Drive/Colab Notebooks/media-agenda/data/sentence_cluster_sentiment_dict.json', verbose = True)

# load the dataframe

df = pd.read_csv('/content/drive/My Drive/Colab Notebooks/media-agenda/data/df_2009_to_2017_with_time_interval_indexing.csv')

# add 'bin_of_every_1_day' column

date_list = senti_util.get_date_list(start_datetime, end_datetime, freq = '1D')

date_df = pd.DataFrame({'bin_of_every_1_day': range(len(date_list)),

'date': date_list})

date_df['date'] = date_df['date'].dt.strftime('%Y-%m-%d')

df = df.merge(date_df, how = 'left', on = 'date')

df.head()

```

# Part 1

## plot distribution of sentiment in different time windows

```

def plot(source = Source, is_textblob = False, bin = 'bin_of_every_14_days', by = 'mean'):

if source == Source.SPIEGEL:

senti_choice = 'Textblob-de' if is_textblob else 'SentiWS'

else:

senti_choice = 'Textblob' if is_textblob else 'SentiWordNet'

article_df = df[(df.source == source) & (df.is_comment == False)]

comment_df = df[(df.source == source) & (df.is_comment == True)]

article_stat_df = senti_util.get_sentiment_stat(article_df, is_textblob, bin)

comment_stat_df = senti_util.get_sentiment_stat(comment_df, is_textblob, bin)

plt.figure(figsize = (24, 8))

plt.style.use('seaborn-whitegrid')

plt.rcParams.update({'font.size': 14})

if by == 'hotness':

g = sns.lineplot(data = article_stat_df, x = bin, y = 'hotness', label = 'Article Hotness')

sns.lineplot(data = comment_stat_df, x = bin, y = 'hotness', label = 'Comment Hotness')

elif by == 'mean':

g = sns.lineplot(data = article_stat_df, x = bin, y = 'mean', label = 'Article Mean')

sns.lineplot(data = comment_stat_df, x = bin, y = 'mean', label = 'Comment Mean')

elif by == 'median':

g = sns.lineplot(data = article_stat_df, x = bin, y = 'median', label = 'Article Median')

sns.lineplot(data = comment_stat_df, x = bin, y = 'median', label = 'Comment Median')

elif by == 'groupby_count':

g = sns.lineplot(data = article_stat_df, x = bin, y = 'groupby_count', label = 'Article Count')

sns.lineplot(data = comment_stat_df, x = bin, y = 'groupby_count', label = 'Comment Count')

elif by == 'dominance':

g = sns.lineplot(data = article_stat_df, x = bin, y = 'dominance', label = 'Article Dominance')

sns.lineplot(data = comment_stat_df, x = bin, y = 'dominance', label = 'Comment Dominance')

if by == 'hotness':

g.set(xlabel = bin, ylabel = 'Hotness (IQR * Dominance)')

g.set_title('{} Hotness Distribution ({} - {} with {})'.format(str.upper(source), start_year, end_year, senti_choice))

plt.ylim(0, 3)

elif by == 'groupby_count':

g.set(xlabel = bin, ylabel = 'Count')

g.set_title('{} Sentiment Distribution ({} - {} with {})'.format(str.upper(source), start_year, end_year, senti_choice))

elif by == 'dominance':

g.set(xlabel = bin, ylabel = 'Dominance (%)')

g.set_title('{} Dominance Distribution ({} - {} with {})'.format(str.upper(source), start_year, end_year, senti_choice))

else:

g.fill_between(x = article_stat_df[bin], y1 = article_stat_df.third_quantile, y2 = article_stat_df.first_quantile, alpha = 0.2, label = 'Article IQR')

g.fill_between(x = comment_stat_df[bin], y1 = comment_stat_df.third_quantile, y2 = comment_stat_df.first_quantile, alpha = 0.2, label = 'Comment IQR')

g.set(xlabel = bin, ylabel = 'Sentiment')

g.set_title('{} Sentiment Distribution ({} - {} with {})'.format(str.upper(source), start_year, end_year, senti_choice))

plt.ylim(-0.5, 0.5)

plt.legend()

plt.show()

bin = 'bin_of_every_1_day'

# bin = 'bin_of_every_3_days'

# bin = 'bin_of_every_4_days'

# bin = 'bin_of_every_7_days'

# bin = 'bin_of_every_14_days'

# bin = 'bin_of_every_28_days'

# bin = 'bin_of_every_56_days'

plot(source = Source.NYTIMES, is_textblob = False, bin = bin, by = 'mean')

plot(source = Source.NYTIMES, is_textblob = False, bin = bin, by = 'median')

plot(source = Source.NYTIMES, is_textblob = False, bin = bin, by = 'hotness')

plot(source = Source.NYTIMES, is_textblob = False, bin = bin, by = 'dominance')

plot(source = Source.NYTIMES, is_textblob = False, bin = bin, by = 'groupby_count')

# plot(source = Source.NYTIMES, is_textblob = True, bin = bin, by = 'mean')

# plot(source = Source.NYTIMES, is_textblob = True, bin = bin, by = 'median')

# plot(source = Source.NYTIMES, is_textblob = True, bin = bin, by = 'hotness')

# plot(source = Source.NYTIMES, is_textblob = True, bin = bin, by = 'dominance')

# plot(source = Source.NYTIMES, is_textblob = True, bin = bin, by = 'groupby_count')

```

| github_jupyter |

<a href="https://colab.research.google.com/github/shangeth/Google-ML-Academy/blob/master/2-Deep-Neural-Networks2_8_ANN_Computer_Vision.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

<hr>

<h1 align="center"><a href='https://shangeth.com/courses/'>Deep Learning - Beginners Track</a></h1>

<h3 align="center">Instructor: <a href='https://shangeth.com/'>Shangeth Rajaa</a></h3>

<hr>

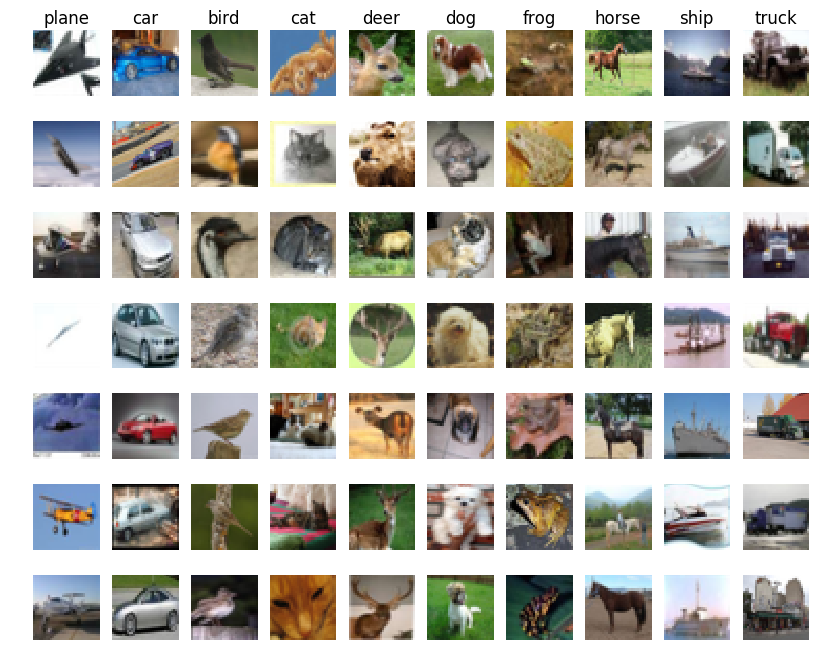

We will use ANNs for a basic computer vision application of image classification on CIFAR10 Dataset

# Dataset

## CIFAR-10

The CIFAR-10 dataset consists of 60000 32x32 colour images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images.

## Download the Dataset

Tensorflow has inbuilt dataset which makes it easy to get training and testing data.

```

from tensorflow.keras.datasets import cifar10

import tensorflow as tf

(x_train, y_train), (x_test, y_test) = cifar10.load_data()

class_name = {

0: 'airplane',

1: 'automobile',

2: 'bird',

3: 'cat',

4: 'deer',

5: 'dog',

6: 'frog',

7: 'horse',

8: 'ship',

9: 'truck',

}

```

## Visualize the Dataset

```

import matplotlib.pyplot as plt

num_imgs = 10

plt.figure(figsize=(num_imgs*2,3))

for i in range(1,num_imgs):

plt.subplot(1,num_imgs,i).set_title('{}'.format(class_name[y_train[i][0]]))

plt.imshow(x_train[i])

plt.axis('off')

plt.show()

```

## Scaling Features

```

import numpy as np

np.max(x_train), np.min(x_train)

```

The range of values in the data is 0-255,

- we can to scale it to [0,1], dividing it by 255 will do.

- or we can standardize it by subtracting mean and dividing std.

```

mean = np.mean(x_train)

std = np.std(x_train)

x_train = (x_train-mean)/std

x_test = (x_test-mean)/std

np.max(x_train), np.min(x_train), np.max(x_test), np.min(x_test)

```

## Labels to One-Hot

```

print(y_train[:5])

num_classes = 10

y_train = tf.keras.utils.to_categorical(y_train, num_classes)

y_test = tf.keras.utils.to_categorical(y_test, num_classes)

print(y_train[:5])

```

# Colour Channels

Lets check the shape of the arrays

```

x_train.shape, y_train.shape, x_test.shape, y_test.shape

```

The shape of each image is (32 x 32 x 3), previously we saw MNIST dataset had a shape of (28 x 28), why is it so?

MNIST is a gray-scale image, it has a single channel of gray scale. But CIFAR-10 is a colour image, every colour pixel has 3 channels RGB, all these 3 channels contribute to what colour you see.

Any colour image is made of 3 channels RGB.

## Visualize colour channels

```

import matplotlib.pyplot as plt

plt.figure(figsize=(9,3))

plt.subplot(1,3,1)

plt.imshow(x_train[1][:,:,0], cmap='Reds')

plt.subplot(1,3,2)

plt.imshow(x_train[1][:,:,1], cmap='Greens')

plt.subplot(1,3,3)

plt.imshow(x_train[1][:,:,2], cmap='Blues')

plt.show()

```

So when we flatten the CIFAR-10 image, it will give 32x32x3 = 3072.

# Model

```

import tensorflow as tf

from tensorflow import keras

tf.keras.backend.clear_session()

input_shape = (32,32,3) # 3072

nclasses = 10

model = tf.keras.Sequential([

tf.keras.layers.Flatten(input_shape=input_shape),

tf.keras.layers.Dense(units=1024),

tf.keras.layers.Activation('tanh'),

tf.keras.layers.Dropout(0.5),

tf.keras.layers.Dense(units=512),

tf.keras.layers.Activation('tanh'),

tf.keras.layers.Dropout(0.5),

tf.keras.layers.Dense(units=nclasses),

tf.keras.layers.Activation('softmax')

])

model.summary()

```

## Training

```

optimizer = tf.keras.optimizers.Adam(lr=0.001)

model.compile(optimizer=optimizer, loss='categorical_crossentropy', metrics=['accuracy'])

tf_history_dp = model.fit(x_train, y_train, batch_size=500, epochs=100, verbose=True, validation_data=(x_test, y_test))

import matplotlib.pyplot as plt

plt.figure(figsize=(20,7))

plt.subplot(1,2,1)

plt.plot(tf_history_dp.history['loss'], label='Training Loss')

plt.plot(tf_history_dp.history['val_loss'], label='Validation Loss')

plt.legend()

plt.subplot(1,2,2)

plt.plot(tf_history_dp.history['acc'], label='Training Accuracy')

plt.plot(tf_history_dp.history['val_acc'], label='Validation Accuracy')

plt.legend()

plt.show()

```

Model is clearly overfitting.

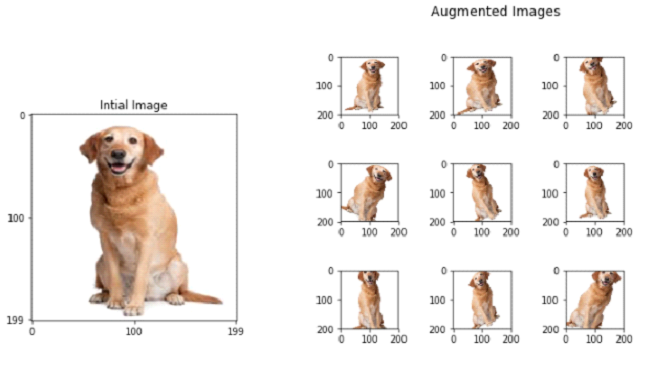

# Image Augmentation

We have discussed that, more images/data improves the model performance and avoid overfitting. But its not always possible to get new data, so we can augment the old data to create new data.

Augmentation can be:

- random crop

- rotation

- horizontal and vertical flips

- x-y shift

- colour jitter

- etc.

## Image Augmentation in Tensorflow

```

from tensorflow.keras.datasets import cifar10

import tensorflow as tf

(x_train, y_train), (x_test, y_test) = cifar10.load_data()

x_train = (x_train-mean)/std

x_test = (x_test-mean)/std

num_classes = 10

y_train = tf.keras.utils.to_categorical(y_train, num_classes)

y_test = tf.keras.utils.to_categorical(y_test, num_classes)

import tensorflow as tf

from tensorflow import keras

tf.keras.backend.clear_session()

input_shape = (32,32,3) # 3072

nclasses = 10

model = tf.keras.Sequential([

tf.keras.layers.Flatten(input_shape=input_shape),

tf.keras.layers.Dense(units=1024),

tf.keras.layers.Activation('tanh'),

tf.keras.layers.Dropout(0.2),

tf.keras.layers.Dense(units=512),

tf.keras.layers.Activation('tanh'),

tf.keras.layers.Dropout(0.2),

tf.keras.layers.Dense(units=nclasses),

tf.keras.layers.Activation('softmax')

])

model.summary()

from tensorflow.keras.preprocessing.image import ImageDataGenerator

train_datagen = ImageDataGenerator(

shear_range=0.1,

zoom_range=0.1,

horizontal_flip=True,

rotation_range=20)

test_datagen = ImageDataGenerator()

train_generator = train_datagen.flow(

x_train, y_train,

batch_size=200)

validation_generator = test_datagen.flow(

x_test, y_test,

batch_size=200)

import matplotlib.pyplot as plt

i = 1

plt.figure(figsize=(20,2))

for x_batch, y_batch in train_datagen.flow(x_train, y_train, batch_size=1):

plt.subplot(1,10,i)

plt.imshow(x_batch[0])

i += 1

if i>10:break

```

You can see the some of the images are zoomed, some are rotated...etc. SO these images are now different that the original image and for the model these are new images.

```

optimizer = tf.keras.optimizers.SGD(lr=0.001, momentum=0.9)

model.compile(optimizer=optimizer, loss='categorical_crossentropy', metrics=['accuracy'])

model.fit_generator(

train_generator,

steps_per_epoch=100,

epochs=200,

validation_data=validation_generator)

import matplotlib.pyplot as plt

tf_history_aug = model.history

plt.figure(figsize=(20,7))

plt.subplot(1,2,1)

plt.plot(tf_history_aug.history['loss'], label='Training Loss')

plt.plot(tf_history_aug.history['val_loss'], label='Validation Loss')

plt.legend()

plt.subplot(1,2,2)

plt.plot(tf_history_aug.history['acc'], label='Training Accuracy')

plt.plot(tf_history_aug.history['val_acc'], label='Validation Accuracy')

plt.legend()

plt.show()

```

The model is not overfitting and the performance is still increasing, so training for more epoch can give a good performance, but it will take more time, so we will stop here. Try to improve the model.

- Train the model longer.

- Use different architecture with aug

- use different activation

- different optimizer

There are other model architectures which work good for images, we will discuss that in the intermediate track.

# Saving a Trained Model

Saving a trained model is very important, hours of training should not be wasted and we need the trained model to be deployed in some other device. Its very simple in tf.keras

```

model_path = 'cifar10_trained_model.h5'

model.save(model_path)

!ls

```

# Loading a saved model

```

from tensorflow.keras.models import load_model

model = load_model(model_path)

model.summary()

```

| github_jupyter |

# Welter issue #9

## Generate synthetic, noised-up two-temperature model spectra, then naively fit a single temperature model to it.

### Part 2- Prepare.

Michael Gully-Santiago

Friday, January 8, 2015

See the previous notebook for the theory and background.

Steps:

1. Modify all the config and phi files to have the values from the MCMC run.

```

import warnings

warnings.filterwarnings("ignore")

import numpy as np

from astropy.io import fits

import matplotlib.pyplot as plt

% matplotlib inline

% config InlineBackend.figure_format = 'retina'

import seaborn as sns

sns.set_context('paper', font_scale=1.4)

sns.set_style('ticks')

import os

import json

import pandas as pd

import yaml

import h5py

sf_dat = pd.read_csv('../data/analysis/IGRINS_ESPaDOnS_run01_last10kMCMC.csv')

sf_dat.rename(columns={"m_val_x":"m_val"}, inplace=True)

del sf_dat['m_val_y']

```

## Set the value of the config files to all the same values.

```

ms = sf_dat.m_val

for m in ms:

index = sf_dat.index[sf_dat.m_val == m]

mdir = 'eo{:03d}'.format(m)

sf_out = '../sf/eo{:03d}/config.yaml'.format(m)

f2 = open(sf_out)

config = yaml.load(f2)

f2.close()

ii = index.values[0]

config['Theta']['grid'] = [4100.0, 3.5, 0.0]

config['Theta']['vsini'] = float(28.5)

config['Theta']['vz'] = float(15.6)

config['Theta']['vz'] = float(15.6)

config['Theta']['logOmega'] = float(-0.07)

if (sf_dat.logO_50p[ii] == sf_dat.logO_50p[ii]):

config['Theta']['logOmega'] = float(sf_dat.logO_50p[ii])

with open(sf_out, mode='w') as outfile:

outfile.write(yaml.dump(config))

for m in ms:

index = sf_dat.index[sf_dat.m_val == m]

mdir = 'eo{:03d}'.format(m)

phi_out = '../sf/eo{:03d}/s0_o0phi.json'.format(m)

jf = open(phi_out)

phi = json.load(jf)

jf.close()

ii = index.values[0]

c1, c2, c3 = sf_dat.c1_50p[ii], sf_dat.c2_50p[ii], sf_dat.c3_50p[ii]

if c1 != c1:

print("default: {}".format(m))

phi['cheb'] = [0.0,0,0]

phi['logAmp']= -1.6

phi['sigAmp']= 1.0

phi['l']= 30.0

if c1 == c1:

print("actual: {}".format(m))

phi['cheb'] = [c1, c2, c3]

if sf_dat.LA_50p[ii] > -1.4:

phi['logAmp']= -1.4

else:

phi['logAmp']= sf_dat.LA_50p[ii]

phi['sigAmp']= sf_dat.SA_50p[ii]

phi['l']= sf_dat.ll_50p[ii]

phi['fix_c0'] = True

with open(phi_out, mode='w') as outfile:

json.dump(phi, outfile, indent=2)

```

The end.

| github_jupyter |

##### Copyright 2018 Google LLC.

Licensed under the Apache License, Version 2.0 (the "License");

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

https://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

# Training a Simple Neural Network, with tensorflow/datasets Data Loading

_Forked from_ `neural_network_and_data_loading.ipynb`

_Dougal Maclaurin, Peter Hawkins, Matthew Johnson, Roy Frostig, Alex Wiltschko, Chris Leary_

Let's combine everything we showed in the [quickstart notebook](https://colab.research.google.com/github/google/jax/blob/master/notebooks/quickstart.ipynb) to train a simple neural network. We will first specify and train a simple MLP on MNIST using JAX for the computation. We will use `tensorflow/datasets` data loading API to load images and labels (because it's pretty great, and the world doesn't need yet another data loading library :P).

Of course, you can use JAX with any API that is compatible with NumPy to make specifying the model a bit more plug-and-play. Here, just for explanatory purposes, we won't use any neural network libraries or special APIs for builidng our model.

```

!pip install --upgrade -q https://storage.googleapis.com/jax-releases/cuda$(echo $CUDA_VERSION | sed -e 's/\.//' -e 's/\..*//')/jaxlib-0.1.23-cp36-none-linux_x86_64.whl

!pip install --upgrade -q jax

from __future__ import print_function, division, absolute_import

import jax.numpy as np

from jax import grad, jit, vmap

from jax import random

```

### Hyperparameters

Let's get a few bookkeeping items out of the way.

```

# A helper function to randomly initialize weights and biases

# for a dense neural network layer

def random_layer_params(m, n, key, scale=1e-2):

w_key, b_key = random.split(key)

return scale * random.normal(w_key, (n, m)), scale * random.normal(b_key, (n,))

# Initialize all layers for a fully-connected neural network with sizes "sizes"

def init_network_params(sizes, key):

keys = random.split(key, len(sizes))

return [random_layer_params(m, n, k) for m, n, k in zip(sizes[:-1], sizes[1:], keys)]

layer_sizes = [784, 512, 512, 10]

param_scale = 0.1

step_size = 0.0001

num_epochs = 10

batch_size = 128

n_targets = 10

params = init_network_params(layer_sizes, random.PRNGKey(0))

```

### Auto-batching predictions

Let us first define our prediction function. Note that we're defining this for a _single_ image example. We're going to use JAX's `vmap` function to automatically handle mini-batches, with no performance penalty.

```

from jax.scipy.special import logsumexp

def relu(x):

return np.maximum(0, x)

def predict(params, image):

# per-example predictions

activations = image

for w, b in params[:-1]:

outputs = np.dot(w, activations) + b

activations = relu(outputs)

final_w, final_b = params[-1]

logits = np.dot(final_w, activations) + final_b

return logits - logsumexp(logits)

```

Let's check that our prediction function only works on single images.

```

# This works on single examples

random_flattened_image = random.normal(random.PRNGKey(1), (28 * 28,))

preds = predict(params, random_flattened_image)

print(preds.shape)

# Doesn't work with a batch

random_flattened_images = random.normal(random.PRNGKey(1), (10, 28 * 28))

try:

preds = predict(params, random_flattened_images)

except TypeError:

print('Invalid shapes!')

# Let's upgrade it to handle batches using `vmap`

# Make a batched version of the `predict` function

batched_predict = vmap(predict, in_axes=(None, 0))

# `batched_predict` has the same call signature as `predict`

batched_preds = batched_predict(params, random_flattened_images)

print(batched_preds.shape)

```

At this point, we have all the ingredients we need to define our neural network and train it. We've built an auto-batched version of `predict`, which we should be able to use in a loss function. We should be able to use `grad` to take the derivative of the loss with respect to the neural network parameters. Last, we should be able to use `jit` to speed up everything.

### Utility and loss functions

```

def one_hot(x, k, dtype=np.float32):

"""Create a one-hot encoding of x of size k."""

return np.array(x[:, None] == np.arange(k), dtype)

def accuracy(params, images, targets):

target_class = np.argmax(targets, axis=1)

predicted_class = np.argmax(batched_predict(params, images), axis=1)

return np.mean(predicted_class == target_class)

def loss(params, images, targets):

preds = batched_predict(params, images)

return -np.sum(preds * targets)

@jit

def update(params, x, y):

grads = grad(loss)(params, x, y)

return [(w - step_size * dw, b - step_size * db)

for (w, b), (dw, db) in zip(params, grads)]

```

### Data Loading with `tensorflow/datasets`

JAX is laser-focused on program transformations and accelerator-backed NumPy, so we don't include data loading or munging in the JAX library. There are already a lot of great data loaders out there, so let's just use them instead of reinventing anything. We'll use the `tensorflow/datasets` data loader.

```

# Install tensorflow-datasets

# TODO(rsepassi): Switch to stable version on release

!pip install -q --upgrade tfds-nightly tf-nightly

import tensorflow_datasets as tfds

data_dir = '/tmp/tfds'

# Fetch full datasets for evaluation

# tfds.load returns tf.Tensors (or tf.data.Datasets if batch_size != -1)

# You can convert them to NumPy arrays (or iterables of NumPy arrays) with tfds.dataset_as_numpy

mnist_data, info = tfds.load(name="mnist", batch_size=-1, data_dir=data_dir, with_info=True)

mnist_data = tfds.as_numpy(mnist_data)

train_data, test_data = mnist_data['train'], mnist_data['test']

num_labels = info.features['label'].num_classes

h, w, c = info.features['image'].shape

num_pixels = h * w * c

# Full train set

train_images, train_labels = train_data['image'], train_data['label']

train_images = np.reshape(train_images, (len(train_images), num_pixels))

train_labels = one_hot(train_labels, num_labels)

# Full test set

test_images, test_labels = test_data['image'], test_data['label']

test_images = np.reshape(test_images, (len(test_images), num_pixels))

test_labels = one_hot(test_labels, num_labels)

print('Train:', train_images.shape, train_labels.shape)

print('Test:', test_images.shape, test_labels.shape)

```

### Training Loop

```

import time

def get_train_batches():

# as_supervised=True gives us the (image, label) as a tuple instead of a dict

ds = tfds.load(name='mnist', split='train', as_supervised=True, data_dir=data_dir)

# You can build up an arbitrary tf.data input pipeline

ds = ds.batch(128).prefetch(1)

# tfds.dataset_as_numpy converts the tf.data.Dataset into an iterable of NumPy arrays

return tfds.as_numpy(ds)

for epoch in range(num_epochs):

start_time = time.time()

for x, y in get_train_batches():

x = np.reshape(x, (len(x), num_pixels))

y = one_hot(y, num_labels)

params = update(params, x, y)

epoch_time = time.time() - start_time

train_acc = accuracy(params, train_images, train_labels)

test_acc = accuracy(params, test_images, test_labels)

print("Epoch {} in {:0.2f} sec".format(epoch, epoch_time))

print("Training set accuracy {}".format(train_acc))

print("Test set accuracy {}".format(test_acc))

```

We've now used the whole of the JAX API: `grad` for derivatives, `jit` for speedups and `vmap` for auto-vectorization.

We used NumPy to specify all of our computation, and borrowed the great data loaders from `tensorflow/datasets`, and ran the whole thing on the GPU.

| github_jupyter |

# IMPORTS

## Libraries

```

import math

import warnings

import numpy as np

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as plt

from IPython.display import Image

from IPython.core.display import HTML

```

## Helper Functions

```

def jupyter_settings():

%matplotlib inline

%pylab inline

plt.style.use( 'bmh' )

plt.rcParams['figure.figsize'] = [25, 12]

plt.rcParams['font.size'] = 24

display( HTML( '<style>.container { width:100% !important; }</style>') )

pd.options.display.max_columns = None

pd.options.display.max_rows = None

pd.set_option( 'display.expand_frame_repr', False )

sns.set()

jupyter_settings()

```

## Loading Data

```

salesRaw = pd.read_csv('../../01-Data/train.csv', low_memory=False)

storeRaw = pd.read_csv('../../01-Data/store.csv', low_memory=False)

```

### Merge Datasets

```

dfRaw = salesRaw.merge(storeRaw, how='left', on='Store')

```

# DESCRIPTION OF THE DATA

## Columns

```

dfRaw1 = dfRaw.copy()

dfRaw1.columns

```

## Data Dimensions

```

print(f'Number of Rows: {dfRaw1.shape[0]}')

print(f'Number of Columns: {dfRaw1.shape[1]}')

```

## Data Types

```

dfRaw1.dtypes

dfRaw1['Date'] = pd.to_datetime(dfRaw1['Date'])

```

## Not a Number

### Sum

```

dfRaw1.isnull().sum()

```

### Mean

```

dfRaw1.isnull().mean()

```

## Fillout NA

```

maxValueCompetitionDistance = dfRaw1['CompetitionDistance'].max()

dfRaw1.sample(5)

# CompetitionDistance

#distance in meters to the nearest competitor store

#maxValueCompetitionDistance = dfRaw1['CompetitionDistance'].max()

dfRaw1['CompetitionDistance'] = dfRaw1['CompetitionDistance'].apply(lambda row: 200000.0 if math.isnan(row) else row)

# CompetitionOpenSinceMonth

#gives the approximate month of the time the nearest competitor was opened

dfRaw1['CompetitionOpenSinceMonth'] = dfRaw1.apply(lambda row: row['Date'].month if math.isnan(row['CompetitionOpenSinceMonth']) else row['CompetitionOpenSinceMonth'], axis=1)

# CompetitionOpenSinceYear

# gives the approximate year of the time the nearest competitor was opened

dfRaw1['CompetitionOpenSinceYear'] = dfRaw1.apply(lambda row: row['Date'].year if math.isnan(row['CompetitionOpenSinceYear']) else row['CompetitionOpenSinceYear'], axis=1)

# Promo2SinceWeek

#describes the calendar week when the store started participating in Promo2

dfRaw1['Promo2SinceWeek'] = dfRaw1.apply(lambda row: row['Date'].week if math.isnan(row['Promo2SinceWeek']) else row['Promo2SinceWeek'], axis=1)

# Promo2SinceYear

#describes the year when the store started participating in Promo2

dfRaw1['Promo2SinceYear'] = dfRaw1.apply(lambda row: row['Date'].year if math.isnan(row['Promo2SinceYear']) else row['Promo2SinceYear'], axis=1)

# PromoInterval

#describes the consecutive intervals Promo2 is started, naming the months the promotion is started anew.\

#E.g. "Feb,May,Aug,Nov" means each round starts in February, May, August, November of any given year for that store

monthMap = {

1: 'Jan', 2: 'Feb', 3: 'Mar', 4: 'Apr', 5: 'May', 6: 'Jun', 7: 'Jul', 8: 'Aug', 9: 'Sep', 10: 'Oct', 11: 'Nov', 12: 'Dec'

}

dfRaw1['PromoInterval'].fillna(0, inplace=True)

dfRaw1['MonthMap'] = dfRaw1['Date'].dt.month.map(monthMap)

dfRaw1['IsPromo'] = dfRaw1[['PromoInterval', 'MonthMap']].apply(lambda row: 0 if row['PromoInterval'] == 0 else 1 if row['MonthMap'] in row['PromoInterval'].split(',') else 0, axis=1)

dfRaw1.isnull().sum()

```

## Change Types

```

dfRaw1.dtypes

# competiton

dfRaw1['CompetitionOpenSinceMonth'] = dfRaw1['CompetitionOpenSinceMonth'].astype(int)

dfRaw1['CompetitionOpenSinceYear'] = dfRaw1['CompetitionOpenSinceYear'].astype(int)

# promo2

dfRaw1['Promo2SinceWeek'] = dfRaw1['Promo2SinceWeek'].astype(int)

dfRaw1['Promo2SinceYear'] = dfRaw1['Promo2SinceYear'].astype(int)

```

## Descriptive Statistical

```

numAttributes = dfRaw1.select_dtypes(include=['int64', 'float64'])

catAttributes = dfRaw1.select_dtypes(exclude=['int64', 'float64', 'datetime64[ns]'])

```

### Numerical Attributes

```

### Central Tendency -> Mean, Median

ct1 = pd.DataFrame(numAttributes.apply(np.mean)).T

ct2 = pd.DataFrame(numAttributes.apply(np.median)).T

### Dispersion -> std, min, max, range, skew, kurtosis

d1 = pd.DataFrame(numAttributes.apply(np.std)).T

d2 = pd.DataFrame(numAttributes.apply(min)).T

d3 = pd.DataFrame(numAttributes.apply(max)).T

d4 = pd.DataFrame(numAttributes.apply(lambda x: x.max() - x.min())).T

d5 = pd.DataFrame(numAttributes.apply(lambda x: x.skew())).T

d6 = pd.DataFrame(numAttributes.apply(lambda x: x.kurtosis())).T

# Concatenate

m = pd.concat([d2, d3, d4, ct1, ct2, d1, d5, d6]).T.reset_index()

m.columns = ['Attributes', 'Min', 'Max', 'Range', 'Mean', 'Median', 'Std', 'Skew', 'Kurtosis']

m

sns.displot(dfRaw1['CompetitionDistance'], kde=False, height=12, aspect=2)

```

### Categorical Atributes

```

catAttributes.apply(lambda x: x.unique().shape[0])

aux = dfRaw1[(dfRaw1['StateHoliday'] != '0') & (dfRaw1['Sales'] > 0)]

plt.subplot( 1, 3, 1 )

sns.boxplot( x='StateHoliday', y='Sales', data=aux )

plt.subplot( 1, 3, 2 )

sns.boxplot( x='StoreType', y='Sales', data=aux )

plt.subplot( 1, 3, 3 )

sns.boxplot( x='Assortment', y='Sales', data=aux )

```

## Convert DataFrame to .csv

```

dfRaw1.to_csv('../../01-Data/Results/01-FirstRoundCRISP/dfDescriptionData.csv', index=False)

```

| github_jupyter |

# Working with R

* **Difficulty level**: easy

* **Time need to lean**: 15 minutes or less

* **Key points**:

* There are intuitive corresponding data types between most Python (SoS) and R datatypes

## Installation

There are several options to install `R` and its jupyter kernel [irjernel](https://github.com/IRkernel/IRkernel), the easiest of which might be using `conda` but it could be tricky to install third-party libraries of R to conda, and mixing R packages from the `base` and `r` channels can lead to devastating results.

Anyway, after you have a working R installation with `irkernel` installed, you will need to install

* The `sos-r` language module,

* The `arrow` library of R, and

* The `feather-format` module of Python

The feature modules are needed to exchange dataframe between Python and R

## Overview

SoS transfers Python variables in the following types to R as follows:

| Python | condition | R |

| --- | --- |---|

| `None` | | `NULL` |

| `integer` | | `integer` |

| `integer` | `large` | `numeric` |

| `float` | | `numeric` |

| `boolean` | | `logical` |

| `complex` | | `complex` |

| `str` | | `character` |

| Sequence (`list`, `tuple`, ...) | homogenous type | `c()` |

| Sequence (`list`, `tuple`, ...) | multiple types | `list` |

| `set` | | `list` |

| `dict` | | `list` with names |

| `numpy.ndarray` | | array |

| `numpy.matrix` | | `matrix` |

| `pandas.DataFrame` | | R `data.frame` |

SoS gets variables in the following types to SoS as follows (`n` in `condition` column is the length of R datatype):

| R | condition | Python |

| --- | --- |---|

| `NULL` | | `None` |

| `logical` | `n == 1` | `boolean` |

| `integer` | `n == 1` | `integer` |

| `numeric` | `n == 1` | `double` |

| `character` | `n == 1` | `string` |

| `complex` | `n == 1` | `complex` |

| `logical` | `n > 1` | `list` |

| `integer` | `n > 1` | `list` |

| `complex` | `n > 1` | `list` |

| `numeric` | `n > 1` | `list` |

| `character` | `n > 1` | `list` |

| `list` without names | | `list` |

| `list` with names | | `dict` (with ordered keys)|

| `matrix` | | `numpy.array` |

| `data.frame` | | `DataFrame` |

| `array` | | `numpy.array` |

One of the key problems in mapping R datatypes to Python is that R does not have scalar types and all scalar variables are actually array of size 1. That is to say, in theory, variable `a=1` should be represented in Python as `a=[1]`. However, because Python does differentiate scalar and array values, we chose to represent R arraies of size 1 as scalar types in Python.

```

%put a b

a = c(1)

b = c(1, 2)

print(f'a={a} with type {type(a)}')

print(f'b={b} with type {type(b)}')

```

## Simple data types

Most simple Python data types can be converted to R types easily,

```

null_var = None

int_var = 123

float_var = 3.1415925

logic_var = True

char_var = '1"23'

comp_var = 1+2j

%get null_var int_var float_var logic_var char_var comp_var

%preview -n null_var int_var float_var logic_var char_var comp_var

```

The variables can be sent back to SoS without losing information

```

%get null_var int_var float_var logic_var char_var comp_var --from R

%preview -n null_var int_var float_var logic_var char_var comp_var

```

However, because Python allows integers of arbitrary precision which is not supported by R, large integers would be presented in R as float point numbers, which might not be able to keep the precision of the original number.

For example, if we put a large integer with 18 significant digits to R

```

%put large_int --to R

large_int = 123456789123456789

```

The last digit would be different because of floating point presentation

```

%put large_int

large_int

```

This is not a problem with SoS because you would get the same result if you enter this number in R

```

123456789123456789

```

Consequently, if you send `large_int` back to `SoS`, the number would be different

```

%get large_int --from R

large_int

```

## Array, matrix, and dataframe

The one-dimension (vector) data is converted from SoS to R as follows:

```

import numpy

import pandas

char_arr_var = ['1', '2', '3']

list_var = [1, 2, '3']

dict_var = dict(a=1, b=2, c='3')

set_var = {1, 2, '3'}

recursive_var = {'a': {'b': 123}, 'c': True}

logic_arr_var = [True, False, True]

seri_var = pandas.Series([1,2,3,3,3,3])

%get char_arr_var list_var dict_var set_var recursive_var logic_arr_var seri_var

%preview -n char_arr_var list_var dict_var set_var recursive_var logic_arr_var seri_var

```

The multi-dimension data is converted from SoS to R as follows:

```

num_arr_var = numpy.array([1, 2, 3, 4]).reshape(2,2)

mat_var = numpy.matrix([[1,2],[3,4]])

%get num_arr_var mat_var

%preview -n num_arr_var mat_var

```

The scalar data is converted from R to SoS as follows:

```

null_var = NULL

num_var = 123

logic_var = TRUE

char_var = '1\"23'

comp_var = 1+2i

%get null_var num_var logic_var char_var comp_var --from R

%preview -n null_var num_var logic_var char_var comp_var

```

The one-dimension (vector) data is converted from R to SoS as follows:

```

num_vector_var = c(1, 2, 3)

logic_vector_var = c(TRUE, FALSE, TRUE)

char_vector_var = c(1, 2, '3')

list_var = list(1, 2, '3')

named_list_var = list(a=1, b=2, c='3')

recursive_var = list(a=1, b=list(c=3, d='whatever'))

seri_var = setNames(c(1,2,3,3,3,3),c(0:5))

%get num_vector_var logic_vector_var char_vector_var list_var named_list_var recursive_var seri_var --from R

%preview -n num_vector_var logic_vector_var char_vector_var list_var named_list_var recursive_var seri_var

```

The multi-dimension data is converted from R to SoS as follows:

```

mat_var = matrix(c(1,2,3,4), nrow=2)

arr_var = array(c(1:16),dim=c(2,2,2,2))