
_by Max Schröder$^{1,2}$ and Frank Krüger$^1$_

$^1$ Institute of Communications Engineering, University of Rostock, Rostock <br>

$^2$ University Library, University of Rostock, Rostock

**Abstract**:

This Jupyter notebook aims at providing a very simple data analysis example by employing the IMU data inside the `_data` folder. The data in this directory has been converted from the ARFF files of the following publication of IMU data:

Frank Krüger, Albert Hein, Kristina Yordanova and Thomas Kirste<br>

Recognising user actions during cooking task (Cooking task dataset) – IMU Data<br>

Rostock : Universität Rostock , 2017<br>

https://doi.org/10.18453/rosdok_id00000154

## Python Dependencies

First of all, we will import several useful libraries:

```

import os

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

```

While re-using existing software libraries instead of programming the functionality yourself is very good programming style, portability and reproducibility require that the exact version of each library is documented and that the same version is used to (re-)run your code.

Otherwise, the result might be different due to e.g. changes to the interface or the implementation of a specific method.

These software libraries are also called requirements.

In Python programming, two popular systems are used for managing the computing environment, i.e. the installation and maintenance of third-party software libraries:

1. pip and

2. (Ana)conda

In this workshop we will consider the pip environment.

In order to easily install software requirements using pip, one has to provide a `requirements.txt` file containing both the name and the version of each library.

As discussed earlier, the version is crucial for the reproducibility of the code.

A sample file looks as follows:

**Task 1:** Create a `requirements.txt` file that contains all imported libraries and their corresponding versions.

The previous output is already formatted in the style of a `requirements.txt` file and can thus easily be copied.

In addition to the `requirements.txt`, this kind of information / code cell can be kept inside the notebook, too.

This is convenient for other researchers, who can then easily check their installed versions.
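A code cell of this kind could look roughly like the following. It is only a sketch: it assumes Python 3.8 or newer (for `importlib.metadata`), and the helper name `requirement_lines` is made up for this illustration.

```python
from importlib import metadata


def requirement_lines(package_names):
    """Return one 'name==version' line per installed package (hypothetical helper)."""
    lines = []
    for name in package_names:
        try:
            lines.append("{}=={}".format(name, metadata.version(name)))
        except metadata.PackageNotFoundError:
            # Keep a visible marker instead of failing on a missing package.
            lines.append("# {} is not installed".format(name))
    return lines


# The third-party libraries imported at the top of this notebook.
print("\n".join(requirement_lines(["numpy", "pandas", "matplotlib"])))
```

Each printed line has the `name==version` form that pip accepts in a `requirements.txt` file, so the output can be pasted into that file directly.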

## Reading Data

**Task 2:** Write a function `read_data` that takes a file name of a CSV file and reads the corresponding file from a folder called `_data` in order to return a Pandas dataframe.
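One possible solution is sketched below. It assumes that the files are comma-separated and contain a header row; the `data_dir` parameter is an addition for this sketch and is not required by the task.

```python
import os

import pandas as pd

DATA_DIR = "_data"  # folder name taken from the task description


def read_data(file_name, data_dir=DATA_DIR):
    """Read a CSV file from the data folder and return it as a DataFrame."""
    path = os.path.join(data_dir, file_name)
    return pd.read_csv(path)
```

Building the path with `os.path.join` keeps the function independent of the path separator of the operating system.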

Let's actually try our function by reading the file `_data/raw_1.csv`:

```

raw1 = read_data('raw_1.csv')

```

In order to check the result, we want to display the content of the variable `raw1`:

```

raw1

```

## Plotting Data

During research, we often want to plot several measurements in order to integrate them into an article.

Next, we will make a very basic plot of the x-axis of the acceleration sensor:

```

plt.plot(raw1['Sensor_T8_Acceleration_X'])

```

**Task 3:** Write code that displays the X-, Y-, and Z-axis in the same plot and makes the plot a little more useful, e.g. by adding a title as well as axis labels.
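A possible solution is sketched below. It assumes that the Y and Z columns follow the same naming pattern as `Sensor_T8_Acceleration_X`; the function name `plot_acceleration`, the title and the axis labels are choices made for this sketch. Synthetic data is used so that the cell runs without the data set.

```python
import numpy as np
import matplotlib.pyplot as plt


def plot_acceleration(data, prefix="Sensor_T8_Acceleration_", ax=None):
    """Plot the X, Y and Z columns of one sensor into a single axes."""
    if ax is None:
        _, ax = plt.subplots(figsize=(10, 4))
    for axis in ("X", "Y", "Z"):
        ax.plot(data[prefix + axis], label=axis)
    ax.set_title("Acceleration of sensor T8")
    ax.set_xlabel("Sample index")
    ax.set_ylabel("Acceleration (unit as recorded)")
    ax.legend(title="Axis")
    return ax


# Synthetic stand-in data (assumption); in the notebook one would pass `raw1`.
rng = np.random.default_rng(0)
demo = {"Sensor_T8_Acceleration_" + a: rng.normal(size=200) for a in "XYZ"}
ax = plot_acceleration(demo)
```

Returning the axes object makes it possible to adjust the figure further, e.g. before saving it for an article.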


```

%load_ext autoreload

%autoreload 2

import os

import sys

sys.path.append("../src/")

import matplotsoccer as mps

import pandas as pd

from tqdm import tqdm

import matplotlib.pyplot as plt

data = "../data/spadl-v2.hdf"

games = pd.read_hdf(data,key="games")

epl16 = games[(games.competition_id == 8) & (games.season_id == 2016)]

epl16[:5]

def get_actions(games, hdf_url):
    actions = []
    for game in tqdm(list(games.itertuples())):
        a = pd.read_hdf(hdf_url, key="actions/" + str(game.id))
        a["left_to_right"] = a["team_id"] == game.home_team_id
        actions.append(a)
    actions = pd.concat(actions)
    #actions = always_ltr(actions)
    return actions


def always_ltr(actions):
    away_idx = ~actions.left_to_right
    actions.loc[away_idx, "start_x"] = 105 - actions[away_idx].start_x.values
    actions.loc[away_idx, "start_y"] = 68 - actions[away_idx].start_y.values
    actions.loc[away_idx, "end_x"] = 105 - actions[away_idx].end_x.values
    actions.loc[away_idx, "end_y"] = 68 - actions[away_idx].end_y.values
    return actions

actions = get_actions(epl16,data)

actiontypes = pd.read_hdf(data, key="actiontypes")

actiontypes.columns = ["type_id","type_name"]

actions = actions.merge(actiontypes, on="type_id")

players = pd.read_hdf(data,key="players")

actions = actions.merge(players,left_on="player_id",right_on="id")

teams = pd.read_hdf(data,key="teams")

actions = actions.merge(teams,left_on="team_id",right_on="id")

actions = actions.sort_values(["game_id","period_id","time_seconds","timestamp"])

actions.columns

player_actions = actions[actions.last_name.str.contains("Kompany")].copy()

set(player_actions.soccer_name)

player_actions = always_ltr(player_actions)

x,y = player_actions.start_x, player_actions.start_y

```

# Field

```

f = mps.field()

f = mps.field(color="green",figsize=8)

```

# Heatmap

```

ax = mps.field(show=False)

ax.scatter(x,y,s=2); plt.show()

matrix = mps.count(x,y,n=20,m=20)

hm = mps.heatmap(matrix)

```
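To illustrate the idea behind such a count matrix, the sketch below bins positions into a grid with NumPy. It is not the implementation of `mps.count`: the function `count_grid`, the orientation of the returned matrix and the assumption that `n` counts cells along the pitch length and `m` along the pitch width are all made up for this example.

```python
import numpy as np

PITCH_LENGTH, PITCH_WIDTH = 105.0, 68.0  # metres, as used elsewhere in this notebook


def count_grid(x, y, n=20, m=20):
    """Count positions per cell of an n-by-m grid laid over the pitch."""
    counts, _, _ = np.histogram2d(
        x, y, bins=(n, m), range=((0, PITCH_LENGTH), (0, PITCH_WIDTH))
    )
    # Transpose so that rows run along the pitch width and columns along its length.
    return counts.T


# Three made-up positions: two close together, one near the opposite corner.
demo_x = np.array([10.0, 9.0, 100.0])
demo_y = np.array([5.0, 5.5, 60.0])
grid = count_grid(demo_x, demo_y)
```

With the real data, `count_grid(x, y)` would give a matrix in which frequently visited cells have high counts, which is the kind of input a heatmap is drawn from.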

# Actions

```

start = 29411

delta = 19

for i in range(1):
    phase = actions[start+i*delta:start+delta+i*delta].copy()
    phase["team"] = phase.full_name
    phase["player"] = phase.soccer_name
    phase = phase[["team","player","time_seconds","type_name","result","start_x","start_y","end_x","end_y"]]

    # Full field
    mps.actions(phase,figsize = 8)

    ## Zoomed in
    mps.actions(phase,color="green",zoom=True,figsize=8)

# Note: shot_chart is defined further below; run that definition first.
shot_chart(x,y,kind="kde")

import numpy as np

from scipy.stats import binned_statistic_2d

import matplotlib.pyplot as plt

from matplotlib.patches import Circle, Rectangle, Arc

import seaborn as sns

# from bokeh.plotting import figure, ColumnDataSource

# from bokeh.models import HoverTool

from math import pi

sns.set_style('white')

sns.set_color_codes()

# In meters

PITCH_WIDTH = 68.0

PITCH_LENGTH = 105.0

def draw_pitch(ax=None, color='gray', lw=1, despine=False):
    """Returns an axes with a soccer pitch drawn onto it.

    The pitch is drawn in metres: the x-axis runs along the pitch length
    (0 to PITCH_LENGTH) and the y-axis along the pitch width
    (0 to PITCH_WIDTH), with the origin in the bottom-left corner.

    Parameters
    ----------
    ax : Axes, optional
        The Axes object to plot the pitch onto.
    color : matplotlib color, optional
        The color of the pitch lines.
    lw : float, optional
        The linewidth of the pitch lines.
    despine : boolean, optional
        If ``True``, removes the spines.

    Returns
    -------
    ax : Axes
        The Axes object with the pitch on it.
    """
    # Create figure
    if ax is None:
        ax = plt.gca()

    ax.tick_params(labelbottom=False, labelleft=False)
    ax.set_xlim(-5, PITCH_LENGTH + 5)
    ax.set_ylim(-5, PITCH_WIDTH + 5)

    # Create an empty array of strings with the same shape as the meshgrid, and
    # populate it with two colors in a checkerboard pattern.
    # ylen = int(PITCH_LENGTH/10)
    # xlen = int(PITCH_WIDTH/10)
    # colortuple = ((86,176,0, 100), (99,201,0, 100))
    # colors = np.empty((xlen,ylen,4), dtype=int)
    # for y in range(ylen):
    #     for x in range(xlen):
    #         colors[x, y] = colortuple[(x + y) % len(colortuple)]
    # x0, x1 = ax.get_xlim()
    # y0, y1 = ax.get_ylim()
    # ax.imshow(colors, extent=[x0, x1, y0, y1], aspect='auto')

    # size of the pitch is PITCH_LENGTH x PITCH_WIDTH (105 x 68)

    # Pitch Outline & Centre Line
    outerLinesLeftHalf = Rectangle((0, 0), PITCH_LENGTH/2, PITCH_WIDTH, linewidth=lw, color=color, fill=False)
    ax.add_patch(outerLinesLeftHalf)
    outerLinesRightHalf = Rectangle((PITCH_LENGTH/2, 0), PITCH_LENGTH/2, PITCH_WIDTH, linewidth=lw, color=color, fill=False)
    ax.add_patch(outerLinesRightHalf)

    # Left Penalty Area
    leftPenaltyArea = Rectangle((0, PITCH_WIDTH/2 - 20.16), 16.5, 40.32, linewidth=lw, color=color, fill=False)
    ax.add_patch(leftPenaltyArea)

    # Right Penalty Area
    rightPenaltyArea = Rectangle((PITCH_LENGTH-16.5, PITCH_WIDTH/2 - 20.16), 16.5, 40.32, linewidth=lw, color=color, fill=False)
    ax.add_patch(rightPenaltyArea)

    # Left 6-yard Box
    leftSixYardBox = Rectangle((0, PITCH_WIDTH/2 - 9.16), 5.5, 18.32, linewidth=lw, color=color, fill=False)
    ax.add_patch(leftSixYardBox)

    # Right 6-yard Box
    rightSixYardBox = Rectangle((PITCH_LENGTH-5.5, PITCH_WIDTH/2 - 9.16), 5.5, 18.32, linewidth=lw, color=color, fill=False)
    ax.add_patch(rightSixYardBox)

    # Left goal
    leftGoal = Rectangle((-1, PITCH_WIDTH/2 - 3.66), 2, 7.32, linewidth=lw, color=color, fill=True)
    ax.add_patch(leftGoal)

    # Right goal
    rightGoal = Rectangle((PITCH_LENGTH-1, PITCH_WIDTH/2 - 3.66), 2, 7.32, linewidth=lw, color=color, fill=True)
    ax.add_patch(rightGoal)

    # Prepare Circles
    centreCircle = Circle((PITCH_LENGTH/2, PITCH_WIDTH/2), 8.1, color=color, fill=False)
    ax.add_patch(centreCircle)
    centreSpot = Circle((PITCH_LENGTH/2, PITCH_WIDTH/2), 0.3, color=color)
    ax.add_patch(centreSpot)
    leftPenSpot = Circle((11, PITCH_WIDTH/2), 0.1, color=color)
    ax.add_patch(leftPenSpot)
    rightPenSpot = Circle((PITCH_LENGTH-11, PITCH_WIDTH/2), 0.1, color=color)
    ax.add_patch(rightPenSpot)

    # Prepare Arcs
    # arguments for arc
    # x, y coordinate of centerpoint of arc
    # width, height as arc might not be circle, but oval
    # angle: degree of rotation of the shape, anti-clockwise
    # theta1, theta2, start and end location of arc in degree
    leftArc = Arc((11,PITCH_WIDTH/2), height=18.3, width=18.3, angle=0, theta1=310, theta2=50, color=color)
    ax.add_patch(leftArc)
    rightArc = Arc((PITCH_LENGTH-11, PITCH_WIDTH/2), height=18.3, width=18.3, angle=0, theta1=130, theta2=230, color=color)
    ax.add_patch(rightArc)

    # Set the spines to match the rest of the pitch lines, makes outer_lines
    # somewhat unnecessary
    for spine in ax.spines:
        ax.spines[spine].set_lw(lw)
        ax.spines[spine].set_color(color)

    if despine:
        ax.spines["top"].set_visible(False)
        ax.spines["bottom"].set_visible(False)
        ax.spines["right"].set_visible(False)
        ax.spines["left"].set_visible(False)

    return ax

def shot_chart(x, y, kind="scatter", title="", color="b", cmap=None,
               xlim=(0,120), ylim=(0,80),
               court_color="gray", court_lw=1, outer_lines=False,
               flip_court=False, kde_shade=True, gridsize=None, ax=None,
               despine=False, **kwargs):
    """
    Returns an Axes object with player shots plotted on a soccer pitch.

    Parameters
    ----------
    x, y : vectors
        The x and y coordinates of the shots taken, e.g. as pandas Series.
    kind : { "scatter", "kde", "hex" }, optional
        The kind of shot chart to create.
    title : str, optional
        The title for the plot.
    color : matplotlib color, optional
        Color used to plot the shots.
    cmap : matplotlib Colormap object or name, optional
        Colormap for the range of data values. If one isn't provided, the
        colormap is derived from the value passed to ``color``. Used for KDE
        and Hexbin plots.
    {x, y}lim : two-tuples, optional
        The axis limits of the plot. Note that ``draw_pitch`` is called
        afterwards and sets its own axis limits.
    court_color : matplotlib color, optional
        The color of the pitch lines.
    court_lw : float, optional
        The linewidth of the pitch lines.
    outer_lines : boolean, optional
        Intended to draw the out-of-bounds lines as a matplotlib Rectangle;
        currently not used in the function body.
    flip_court : boolean, optional
        If ``True``, reverses both axis limits. Default is ``False``.
    kde_shade : boolean, optional
        Default is ``True``, which shades in the KDE contours.
    gridsize : int, optional
        Number of hexagons in the x-direction. The default is calculated using
        the Freedman-Diaconis method.
    ax : Axes, optional
        The Axes object to plot the pitch onto.
    despine : boolean, optional
        If ``True``, removes the spines.
    kwargs : key, value pairs
        Keyword arguments for matplotlib Collection properties or seaborn plots.

    Returns
    -------
    ax : Axes
        The Axes object with the shot chart plotted on it.
    """
    if ax is None:
        ax = plt.gca()

    if cmap is None:
        cmap = sns.light_palette(color, as_cmap=True)

    if not flip_court:
        ax.set_xlim(xlim)
        ax.set_ylim(ylim)
    else:
        ax.set_xlim(xlim[::-1])
        ax.set_ylim(ylim[::-1])

    ax.tick_params(labelbottom=False, labelleft=False)
    ax.set_title(title, fontsize=18)

    draw_pitch(ax, color=court_color, lw=court_lw)

    if kind == "scatter":
        ax.scatter(x, y, c=color, **kwargs)

    elif kind == "kde":
        # Older seaborn call style; newer seaborn releases expect keyword
        # arguments (x=..., y=...) and ``fill`` instead of ``shade``.
        sns.kdeplot(x, y, shade=kde_shade, cmap=cmap, ax=ax, **kwargs)
        ax.set_xlabel('')
        ax.set_ylabel('')

    elif kind == "hex":
        if gridsize is None:
            # Get the number of bins for hexbin using Freedman-Diaconis rule
            # This idea was taken from seaborn, which got the calculation
            # from http://stats.stackexchange.com/questions/798/
            from seaborn.distributions import _freedman_diaconis_bins
            x_bin = _freedman_diaconis_bins(x)
            y_bin = _freedman_diaconis_bins(y)
            gridsize = int(np.mean([x_bin, y_bin]))

        ax.hexbin(x, y, gridsize=gridsize, cmap=cmap, **kwargs)

    else:
        raise ValueError("kind must be 'scatter', 'kde', or 'hex'.")

    # Set the spines to match the rest of the pitch lines, makes outer_lines
    # somewhat unnecessary
    for spine in ax.spines:
        ax.spines[spine].set_lw(court_lw)
        ax.spines[spine].set_color(court_color)

    if despine:
        ax.spines["top"].set_visible(False)
        ax.spines["bottom"].set_visible(False)
        ax.spines["right"].set_visible(False)
        ax.spines["left"].set_visible(False)

    return ax

def pass_chart(x, y, kind="scatter", title="", color="b", cmap=None,
               xlim=(0,120), ylim=(0,80),
               court_color="gray", court_lw=1, outer_lines=False,
               flip_court=False, kde_shade=True, gridsize=None, ax=None,
               despine=False, **kwargs):
    """
    Returns an Axes object with player passes plotted on a soccer pitch.

    Parameters
    ----------
    x, y : DataFrames or vectors
        For ``kind="scatter"``, ``x`` holds the start points and ``y`` the
        end points of the passes, each as a two-column DataFrame
        (x coordinate, y coordinate). For "kde" and "hex", both are passed
        on as coordinate vectors.
    kind : { "scatter", "kde", "hex" }, optional
        The kind of pass chart to create.
    title : str, optional
        The title for the plot.
    color : matplotlib color, optional
        Color used to plot the passes.
    cmap : matplotlib Colormap object or name, optional
        Colormap for the range of data values. If one isn't provided, the
        colormap is derived from the value passed to ``color``. Used for KDE
        and Hexbin plots.
    {x, y}lim : two-tuples, optional
        The axis limits of the plot. Note that ``draw_pitch`` is called
        afterwards and sets its own axis limits.
    court_color : matplotlib color, optional
        The color of the pitch lines.
    court_lw : float, optional
        The linewidth of the pitch lines.
    outer_lines : boolean, optional
        Intended to draw the out-of-bounds lines as a matplotlib Rectangle;
        currently not used in the function body.
    flip_court : boolean, optional
        If ``True``, reverses both axis limits. Default is ``False``.
    kde_shade : boolean, optional
        Default is ``True``, which shades in the KDE contours.
    gridsize : int, optional
        Number of hexagons in the x-direction. The default is calculated using
        the Freedman-Diaconis method.
    ax : Axes, optional
        The Axes object to plot the pitch onto.
    despine : boolean, optional
        If ``True``, removes the spines.
    kwargs : key, value pairs
        Keyword arguments for matplotlib Collection properties or seaborn plots.

    Returns
    -------
    ax : Axes
        The Axes object with the pass chart plotted on it.
    """
    if ax is None:
        ax = plt.gca()

    if cmap is None:
        cmap = sns.light_palette(color, as_cmap=True)

    if not flip_court:
        ax.set_xlim(xlim)
        ax.set_ylim(ylim)
    else:
        ax.set_xlim(xlim[::-1])
        ax.set_ylim(ylim[::-1])

    ax.tick_params(labelbottom=False, labelleft=False)
    ax.set_title(title, fontsize=18)

    draw_pitch(ax, color=court_color, lw=court_lw)

    if kind == "scatter":
        for i in range(len(x)):
            # Line from the start point to the end point of pass i
            ax.plot((x.values[i,0],y.values[i,0]), (x.values[i,1],y.values[i,1]), c=color, **kwargs)
            # Marker at the start point of pass i
            ax.plot(x.values[i, 0], x.values[i, 1],"o", color=color)

    elif kind == "kde":
        # Older seaborn call style; newer seaborn releases expect keyword
        # arguments (x=..., y=...) and ``fill`` instead of ``shade``.
        sns.kdeplot(x, y, shade=kde_shade, cmap=cmap, ax=ax, **kwargs)
        ax.set_xlabel('')
        ax.set_ylabel('')

    elif kind == "hex":
        if gridsize is None:
            # Get the number of bins for hexbin using Freedman-Diaconis rule
            # This idea was taken from seaborn, which got the calculation
            # from http://stats.stackexchange.com/questions/798/
            from seaborn.distributions import _freedman_diaconis_bins
            x_bin = _freedman_diaconis_bins(x)
            y_bin = _freedman_diaconis_bins(y)
            gridsize = int(np.mean([x_bin, y_bin]))

        ax.hexbin(x, y, gridsize=gridsize, cmap=cmap, **kwargs)

    else:
        raise ValueError("kind must be 'scatter', 'kde', or 'hex'.")

    # Set the spines to match the rest of the pitch lines, makes outer_lines
    # somewhat unnecessary
    for spine in ax.spines:
        ax.spines[spine].set_lw(court_lw)
        ax.spines[spine].set_color(court_color)

    if despine:
        ax.spines["top"].set_visible(False)
        ax.spines["bottom"].set_visible(False)
        ax.spines["right"].set_visible(False)
        ax.spines["left"].set_visible(False)

    return ax

```


# Exploring Ensemble Methods

In this assignment, we will explore the use of boosting. We will use the pre-implemented gradient boosted trees in GraphLab Create. You will:

* Use SFrames to do some feature engineering.

* Train a boosted ensemble of decision-trees (gradient boosted trees) on the LendingClub dataset.

* Predict whether a loan will default along with prediction probabilities (on a validation set).

* Evaluate the trained model and compare it with a baseline.

* Find the most positive and negative loans using the learned model.

* Explore how the number of trees influences classification performance.

Let's get started!

## Fire up GraphLab Create

```

import graphlab

```

# Load LendingClub dataset

We will be using the [LendingClub](https://www.lendingclub.com/) data. As discussed earlier, [LendingClub](https://www.lendingclub.com/) is a peer-to-peer lending company that directly connects borrowers and potential lenders/investors.

Just like we did in previous assignments, we will build a classification model to predict whether or not a loan provided by lending club is likely to default.

Let us start by loading the data.

```

loans = graphlab.SFrame('lending-club-data.gl/')

```

Let's quickly explore what the dataset looks like. First, let's print out the column names to see what features we have in this dataset. We have done this in previous assignments, so we won't belabor this here.

```

loans.column_names()

```

## Modifying the target column

The target column (label column) of the dataset that we are interested in is called `bad_loans`. In this column, **1** means a risky (bad) loan and **0** means a safe loan.

As in past assignments, in order to make this more intuitive and consistent with the lectures, we reassign the target to be:

* **+1** as a safe loan,

* **-1** as a risky (bad) loan.

We put this in a new column called `safe_loans`.

```

loans['safe_loans'] = loans['bad_loans'].apply(lambda x : +1 if x==0 else -1)

loans = loans.remove_column('bad_loans')

```

## Selecting features

In this assignment, we will be using a subset of features (categorical and numeric). If you are a finance geek, the [LendingClub](https://www.lendingclub.com/) website has a lot more details about these features.

The features we will be using are described in the code comments below:

```

target = 'safe_loans'

features = ['grade',                     # grade of the loan (categorical)
            'sub_grade_num',             # sub-grade of the loan as a number from 0 to 1
            'short_emp',                 # one year or less of employment
            'emp_length_num',            # number of years of employment
            'home_ownership',            # home_ownership status: own, mortgage or rent
            'dti',                       # debt to income ratio
            'purpose',                   # the purpose of the loan
            'payment_inc_ratio',         # ratio of the monthly payment to income
            'delinq_2yrs',               # number of delinquencies
            'delinq_2yrs_zero',          # no delinquencies in last 2 years
            'inq_last_6mths',            # number of creditor inquiries in last 6 months
            'last_delinq_none',          # has borrower had a delinquency
            'last_major_derog_none',     # has borrower had 90 day or worse rating
            'open_acc',                  # number of open credit accounts
            'pub_rec',                   # number of derogatory public records
            'pub_rec_zero',              # no derogatory public records
            'revol_util',                # percent of available credit being used
            'total_rec_late_fee',        # total late fees received to date
            'int_rate',                  # interest rate of the loan
            'total_rec_int',             # interest received to date
            'annual_inc',                # annual income of borrower
            'funded_amnt',               # amount committed to the loan
            'funded_amnt_inv',           # amount committed by investors for the loan
            'installment',               # monthly payment owed by the borrower
           ]

```

## Skipping observations with missing values

Recall from the lectures that one common approach to coping with missing values is to **skip** observations that contain missing values.

We run the following code to do so:

```

loans, loans_with_na = loans[[target] + features].dropna_split()

# Count the number of rows with missing data

num_rows_with_na = loans_with_na.num_rows()

num_rows = loans.num_rows()

print 'Dropping %s observations; keeping %s ' % (num_rows_with_na, num_rows)

```

Fortunately, there are not too many missing values. We are retaining most of the data.

## Make sure the classes are balanced

We saw in an earlier assignment that this dataset is also imbalanced. We will undersample the larger class (safe loans) in order to balance out our dataset. We used `seed=1` to make sure everyone gets the same results.

```

safe_loans_raw = loans[loans[target] == 1]

risky_loans_raw = loans[loans[target] == -1]

# Undersample the safe loans.

percentage = len(risky_loans_raw)/float(len(safe_loans_raw))

safe_loans = safe_loans_raw.sample(percentage, seed = 1)

risky_loans = risky_loans_raw

loans_data = risky_loans.append(safe_loans)

print "Percentage of safe loans :", len(safe_loans) / float(len(loans_data))

print "Percentage of risky loans :", len(risky_loans) / float(len(loans_data))

print "Total number of loans in our new dataset :", len(loans_data)

```

**Checkpoint:** You should now see that the dataset is balanced (approximately 50-50 safe vs risky loans).
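To make the undersampling idea concrete outside of GraphLab, here is a minimal sketch in plain Python. The helper `undersample` and the toy labels are made up for this illustration. Unlike the cell above, which (to our understanding of `sample`) keeps roughly the requested fraction of rows, this sketch draws exactly as many majority rows as there are minority rows.

```python
import random


def undersample(labels, majority=+1, minority=-1, seed=1):
    """Return row indices that keep every minority row and an equally
    sized random subset of the majority rows (hypothetical helper)."""
    majority_idx = [i for i, label in enumerate(labels) if label == majority]
    minority_idx = [i for i, label in enumerate(labels) if label == minority]
    rng = random.Random(seed)  # fixed seed for repeatable results
    # Assumes the majority class has at least as many rows as the minority class.
    kept_majority = rng.sample(majority_idx, len(minority_idx))
    return sorted(minority_idx + kept_majority)


toy_labels = [+1] * 8 + [-1] * 2  # made-up, imbalanced labels
kept = undersample(toy_labels)
balanced = [toy_labels[i] for i in kept]
```

After this step, `balanced` contains the same number of `+1` and `-1` labels, which mirrors the 50-50 split the checkpoint asks for.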

**Note:** There are many approaches for dealing with imbalanced data, including some where we modify the learning algorithm. These approaches are beyond the scope of this course, but some of them are reviewed in this [paper](http://ieeexplore.ieee.org/xpl/login.jsp?tp=&arnumber=5128907&url=http%3A%2F%2Fieeexplore.ieee.org%2Fiel5%2F69%2F5173046%2F05128907.pdf%3Farnumber%3D5128907). For this assignment, we use the simplest possible approach, where we subsample the over-represented class to get a more balanced dataset. In general, and especially when the data is highly imbalanced, we recommend using more advanced methods.

## Split data into training and validation sets

We split the data into training data and validation data. We used `seed=1` to make sure everyone gets the same results. We will use the validation data to help us select model parameters.

```

train_data, validation_data = loans_data.random_split(.8, seed=1)

```

# Gradient boosted tree classifier

Gradient boosted trees are a powerful variant of boosting methods; they have been used to win many [Kaggle](https://www.kaggle.com/) competitions, and have been widely used in industry. We will explore the predictive power of multiple decision trees as opposed to a single decision tree.

**Additional reading:** If you are interested in gradient boosted trees, here is some additional reading material:

* [GraphLab Create user guide](https://dato.com/learn/userguide/supervised-learning/boosted_trees_classifier.html)

* [Advanced material on boosted trees](http://homes.cs.washington.edu/~tqchen/pdf/BoostedTree.pdf)

We will now train models to predict `safe_loans` using the features above. In this section, we will experiment with training an ensemble of 5 trees. To cap the ensemble classifier at 5 trees, we call the function with **max_iterations=5** (recall that each iteration corresponds to adding a tree). We set `validation_set=None` to make sure everyone gets the same results.

```

model_5 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 5)

```

# Making predictions

Just like we did in previous sections, let us consider a few positive and negative examples **from the validation set**. We will do the following:

* Predict whether or not a loan is likely to default.

* Predict the probability with which the loan is likely to default.

```

# Select all positive and negative examples.

validation_safe_loans = validation_data[validation_data[target] == 1]

validation_risky_loans = validation_data[validation_data[target] == -1]

# Select 2 examples from the validation set for positive & negative loans

sample_validation_data_risky = validation_risky_loans[0:2]

sample_validation_data_safe = validation_safe_loans[0:2]

# Append the 4 examples into a single dataset

sample_validation_data = sample_validation_data_safe.append(sample_validation_data_risky)

sample_validation_data

```

### Predicting on sample validation data

For each row in the **sample_validation_data**, write code to make **model_5** predict whether or not the loan is classified as a **safe loan**.

**Hint:** Use the `predict` method in `model_5` for this.

```

predictions = model_5.predict(sample_validation_data)

#len(sample_validation_data[sample_validation_data["safe_loans"] != predictions])

count = 0
for i in xrange(len(sample_validation_data)):
    if sample_validation_data["safe_loans"][i] != predictions[i]:
        count = count + 1

print 1 - (count/float(len(predictions)))

```

**Quiz question:** What percentage of the predictions on `sample_validation_data` did `model_5` get correct?

### Prediction probabilities

For each row in the **sample_validation_data**, what is the probability (according to **model_5**) of a loan being classified as **safe**?

**Hint:** Set `output_type='probability'` to make **probability** predictions using `model_5` on `sample_validation_data`:

```

model_5.predict(sample_validation_data, output_type='probability')

```

**Quiz Question:** According to **model_5**, which loan is the least likely to be a safe loan?

**Checkpoint:** Can you verify that for all the predictions with `probability >= 0.5`, the model predicted the label **+1**?
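The relationship in this checkpoint can be written down as a small rule. The sketch below is plain Python and independent of GraphLab; the function name and the probabilities are made up for illustration and are not output of **model_5**.

```python
def labels_from_probabilities(probabilities, threshold=0.5):
    """Map P(safe) to +1 (safe) when it reaches the threshold, else -1 (risky)."""
    return [+1 if p >= threshold else -1 for p in probabilities]


# Made-up probabilities, not model output.
example_probs = [0.71, 0.50, 0.49, 0.12]
example_labels = labels_from_probabilities(example_probs)
```

In the notebook, one could apply the same rule to the probability predictions and compare the result with the class predictions returned by `predict`.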

## Evaluating the model on the validation data

Recall that the accuracy is defined as follows:

$$

\mbox{accuracy} = \frac{\mbox{# correctly classified examples}}{\mbox{# total examples}}

$$

Evaluate the accuracy of the **model_5** on the **validation_data**.

**Hint**: Use the `.evaluate()` method in the model.

```

model_5.evaluate(validation_data)

```
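For reference, the accuracy formula above can also be computed by hand. The following is a minimal sketch in plain Python with made-up labels; the function name `accuracy` is chosen for this illustration and is not part of GraphLab.

```python
def accuracy(true_labels, predicted_labels):
    """Fraction of examples whose predicted label equals the true label."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("both label lists must have the same length")
    correct = sum(1 for t, p in zip(true_labels, predicted_labels) if t == p)
    return correct / float(len(true_labels))


# Made-up labels: three of the four predictions are correct.
toy_accuracy = accuracy([+1, -1, +1, -1], [+1, +1, +1, -1])
```

Applied to the true labels and the predictions on the validation data, this should agree with the accuracy reported by `.evaluate()`.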

Calculate the number of **false positives** made by the model.

```

predictions = model_5.predict(validation_data)

fp = 0
for i in xrange(len(validation_data)):
    if validation_data["safe_loans"][i] != predictions[i]:
        if predictions[i] == 1 and validation_data["safe_loans"][i] == -1:
            fp = fp + 1

print fp

```

**Quiz question**: What is the number of **false positives** on the **validation_data**?

Calculate the number of **false negatives** made by the model.

```

fn = 0
for i in xrange(len(validation_data)):
    if validation_data["safe_loans"][i] != predictions[i]:
        if predictions[i] == -1 and validation_data["safe_loans"][i] == 1:
            fn = fn + 1

print fn

```
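Both counts can also be obtained in a single pass over the labels. The sketch below uses plain Python lists and made-up labels; `count_mistakes` is a hypothetical helper, not a GraphLab function.

```python
def count_mistakes(true_labels, predicted_labels):
    """Return (false_positives, false_negatives) for +1/-1 labels."""
    false_positives = 0
    false_negatives = 0
    for truth, predicted in zip(true_labels, predicted_labels):
        if predicted == +1 and truth == -1:
            false_positives += 1  # risky loan predicted as safe
        elif predicted == -1 and truth == +1:
            false_negatives += 1  # safe loan predicted as risky
    return false_positives, false_negatives


# Made-up labels: two false positives and one false negative.
toy_fp, toy_fn = count_mistakes([+1, -1, -1, +1, +1], [+1, +1, +1, -1, +1])
```

Keeping the two cases in one loop makes it harder to mix up which kind of mistake is which.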

## Comparison with decision trees

In the earlier assignment, we saw that the prediction accuracy of the decision trees was around **0.64** (rounded). In this assignment, we saw that **model_5** has an accuracy of **0.67** (rounded).

Here, we quantify the benefit of the extra 3% increase in accuracy of **model_5** in comparison with a single decision tree from the original decision tree assignment.

As we explored in the earlier assignment, we calculated the cost of the mistakes made by the model. We again consider the same costs as follows:

* **False negatives**: Assume a cost of \$10,000 per false negative.

* **False positives**: Assume a cost of \$20,000 per false positive.

Assume that the number of false positives and false negatives for the learned decision tree was

* **False negatives**: 1936

* **False positives**: 1503

Using the costs defined above and the number of false positives and false negatives for the decision tree, we can calculate the total cost of the mistakes made by the decision tree model as follows:

```

cost = $10,000 * 1936 + $20,000 * 1503 = $49,420,000

```

The total cost of the mistakes of the model is \$49.42M. That is a **lot of money**!

**Quiz Question**: Using the same costs of the false positives and false negatives, what is the cost of the mistakes made by the boosted tree model (**model_5**) as evaluated on the **validation_data**?

```

1618 * 20000 + 1463 * 10000

```
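The two cost calculations can be put side by side. The sketch below uses the costs and the decision tree counts stated above, together with the counts that appear in the previous cell (1618 false positives and 1463 false negatives); the function name `mistake_cost` is made up for this illustration.

```python
COST_FALSE_NEGATIVE = 10000  # dollars per false negative, as assumed above
COST_FALSE_POSITIVE = 20000  # dollars per false positive, as assumed above


def mistake_cost(num_false_negatives, num_false_positives):
    """Total cost in dollars of a model's false negatives and false positives."""
    return (COST_FALSE_NEGATIVE * num_false_negatives
            + COST_FALSE_POSITIVE * num_false_positives)


decision_tree_cost = mistake_cost(1936, 1503)  # counts given in the text
boosted_cost = mistake_cost(1463, 1618)        # counts from the previous cell
savings = decision_tree_cost - boosted_cost
```

With these numbers, the decision tree costs \$49,420,000, the boosted model \$46,990,000, and the difference is \$2,430,000.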

**Reminder**: Compare the cost of the mistakes made by the boosted trees model with the decision tree model. The extra 3% improvement in prediction accuracy can translate to several million dollars! And, it was so easy to get by simply boosting our decision trees.

## Most positive & negative loans.

In this section, we will find the loans that are most likely to be predicted **safe**. We can do this in a few steps:

* **Step 1**: Use the **model_5** (the model with 5 trees) and make **probability predictions** for all the loans in the **validation_data**.

* **Step 2**: Similar to what we did in the very first assignment, add the probability predictions as a column called **predictions** into the validation_data.

* **Step 3**: Sort the data (in decreasing order) by the probability predictions.

Start here with **Step 1** & **Step 2**. Make predictions using **model_5** for examples in the **validation_data**. Use `output_type='probability'`.

```

probs = model_5.predict(validation_data, output_type='probability')

validation_data["predictions"] = probs

```

**Checkpoint:** For each row, the probability should be a number in the range **[0, 1]**. We have provided a simple check here to make sure your answers are correct.

```

print "Your loans : %s\n" % validation_data['predictions'].head(4)

print "Expected answer : %s" % [0.4492515948736132, 0.6119100103640573,

0.3835981314851436, 0.3693306705994325]

```

Now, we are ready to go to **Step 3**. You can now use the `predictions` column to sort the loans in **validation_data** (in descending order) by prediction probability. Find the top 5 loans with the highest probability of being predicted as a **safe loan**.

```

validation_data.sort("predictions", ascending=False).head(5)

```

**Quiz question**: What grades are the top 5 loans?

Let us repeat this exercise to find the top 5 loans (in the **validation_data**) with the **lowest probability** of being predicted as a **safe loan**:

```

validation_data.sort("predictions", ascending=True).head(5)

```

**Checkpoint:** You should expect to see 5 loans with the grade ['**D**', '**C**', '**C**', '**C**', '**B**'].

## Effect of adding more trees

In this assignment, we will train 5 different ensemble classifiers in the form of gradient boosted trees. We will train models with 10, 50, 100, 200, and 500 trees. We use the **max_iterations** parameter in the boosted tree module.

Let's get started with a model with **max_iterations = 10**:

```

model_10 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 10, verbose=False)

```

Now, train 4 models with **max_iterations** set to:

* `max_iterations = 50`

* `max_iterations = 100`

* `max_iterations = 200`

* `max_iterations = 500`

Let us call these models **model_50**, **model_100**, **model_200**, and **model_500**. You can pass in `verbose=False` in order to suppress the printed output.

**Warning:** This could take a couple of minutes to run.

```

model_50 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 50, verbose=False)

model_100 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 100, verbose=False)

model_200 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 200, verbose=False)

model_500 = graphlab.boosted_trees_classifier.create(train_data, validation_set=None,

target = target, features = features, max_iterations = 500, verbose=False)

```

## Compare accuracy on entire validation set

Now we will compare the predictive accuracy of our models on the validation set. Evaluate the **accuracy** of the 10, 50, 100, 200, and 500 tree models on the **validation_data**. Use the `.evaluate` method.

```

print model_10.evaluate(validation_data)['accuracy']

print model_50.evaluate(validation_data)['accuracy']

print model_100.evaluate(validation_data)['accuracy']

print model_200.evaluate(validation_data)['accuracy']

print model_500.evaluate(validation_data)['accuracy']

```

**Quiz Question:** Which model has the **best** accuracy on the **validation_data**?

**Quiz Question:** Is it always true that the model with the most trees will perform best on test data?

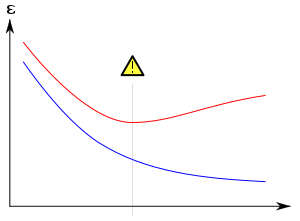

## Plot the training and validation error vs. number of trees

Recall from the lecture that the classification error is defined as

$$

\mbox{classification error} = 1 - \mbox{accuracy}

$$
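As a quick numeric illustration of this definition, the sketch below computes accuracy and classification error with NumPy. The labels and predictions are made up for illustration and are not taken from the loan data.

```python
import numpy as np

# Hypothetical true labels and predictions (+1 = safe loan, -1 = risky loan).
y_true = np.array([1, -1, 1, 1, -1, -1, 1, -1])
y_pred = np.array([1, -1, -1, 1, -1, 1, 1, -1])

accuracy = np.mean(y_true == y_pred)       # fraction of correct predictions
classification_error = 1.0 - accuracy      # fraction of mistakes

print(accuracy, classification_error)
```

Six of the eight hypothetical predictions are correct, so the accuracy is 0.75 and the classification error is 0.25.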

In this section, we will plot the **training and validation errors versus the number of trees** to get a sense of how these models are performing. We will compare the 10, 50, 100, 200, and 500 tree models. You will need [matplotlib](http://matplotlib.org/downloads.html) in order to visualize the plots.

First, make sure this block of code runs on your computer.

```

import matplotlib.pyplot as plt

%matplotlib inline

def make_figure(dim, title, xlabel, ylabel, legend):
    plt.rcParams['figure.figsize'] = dim
    plt.title(title)
    plt.xlabel(xlabel)
    plt.ylabel(ylabel)
    if legend is not None:
        plt.legend(loc=legend, prop={'size':15})
    plt.rcParams.update({'font.size': 16})
    plt.tight_layout()

```

In order to plot the classification errors (on the **train_data** and **validation_data**) versus the number of trees, we will need lists of these errors, which we compute from the accuracy returned by the `.evaluate` method.

**Steps to follow:**

* **Step 1:** Calculate the classification error of each model on the training data (**train_data**).

* **Step 2:** Store the training errors into a list (called `training_errors`) that looks like this:

```

[train_err_10, train_err_50, ..., train_err_500]

```

* **Step 3:** Calculate the classification error of each model on the validation data (**validation_data**).

* **Step 4:** Store the validation classification error into a list (called `validation_errors`) that looks like this:

```

[validation_err_10, validation_err_50, ..., validation_err_500]

```

Once that has been completed, the rest of the code should be able to evaluate correctly and generate the plot.

Let us start with **Step 1**. Write code to compute the classification error on the **train_data** for models **model_10**, **model_50**, **model_100**, **model_200**, and **model_500**.

```

train_err_10 = 1 - model_10.evaluate(train_data)['accuracy']

train_err_50 = 1 - model_50.evaluate(train_data)['accuracy']

train_err_100 = 1 - model_100.evaluate(train_data)['accuracy']

train_err_200 = 1 - model_200.evaluate(train_data)['accuracy']

train_err_500 = 1 - model_500.evaluate(train_data)['accuracy']

```

Now, let us run **Step 2**. Save the training errors into a list called **training_errors**

```

training_errors = [train_err_10, train_err_50, train_err_100,

train_err_200, train_err_500]

```

Now, onto **Step 3**. Write code to compute the classification error on the **validation_data** for models **model_10**, **model_50**, **model_100**, **model_200**, and **model_500**.

```

validation_err_10 = 1 - model_10.evaluate(validation_data)['accuracy']

validation_err_50 = 1 - model_50.evaluate(validation_data)['accuracy']

validation_err_100 = 1 - model_100.evaluate(validation_data)['accuracy']

validation_err_200 = 1 - model_200.evaluate(validation_data)['accuracy']

validation_err_500 = 1 - model_500.evaluate(validation_data)['accuracy']

```

Now, let us run **Step 4**. Save the validation errors into a list called **validation_errors**

```

validation_errors = [validation_err_10, validation_err_50, validation_err_100,

validation_err_200, validation_err_500]

```
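The four steps above repeat the same computation for every model, so they can also be written as a loop. The sketch below shows the pattern with a stand-in class: `StubModel` and its accuracies are hypothetical and only mimic the `.evaluate(...)['accuracy']` access used in this notebook, so that the example runs without GraphLab.

```python
class StubModel:
    """Hypothetical stand-in for a trained classifier; only mimics `.evaluate`."""

    def __init__(self, accuracy):
        self._accuracy = accuracy

    def evaluate(self, data):
        # A real model would score `data`; the stub returns a fixed accuracy.
        return {'accuracy': self._accuracy}


# Made-up accuracies, keyed by the number of trees.
stub_models = {10: StubModel(0.66), 50: StubModel(0.68), 100: StubModel(0.69)}

tree_counts = sorted(stub_models)
errors = [1 - stub_models[n].evaluate(None)['accuracy'] for n in tree_counts]

print(list(zip(tree_counts, errors)))
```

With real models, the same comprehension would be run once on the training data and once on the validation data.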

Now, we will plot the **training_errors** and **validation_errors** versus the number of trees. We will compare the 10, 50, 100, 200, and 500 tree models. We provide some plotting code to visualize the plots within this notebook.

Run the following code to visualize the plots.

```

plt.plot([10, 50, 100, 200, 500], training_errors, linewidth=4.0, label='Training error')

plt.plot([10, 50, 100, 200, 500], validation_errors, linewidth=4.0, label='Validation error')

make_figure(dim=(10,5), title='Error vs number of trees',

xlabel='Number of trees',

ylabel='Classification error',

legend='best')

```

**Quiz question**: Does the training error reduce as the number of trees increases?

**Quiz question**: Is it always true that the validation error will reduce as the number of trees increases?

| github_jupyter |

# Electric power consumption prediction model

Let's first start by importing the needed libraries

```

from pyspark.sql import SparkSession

```

And create a local parallel spark session

```

spark = SparkSession.builder \

.master("local[*]") \

.appName("Power electricity prediction") \

.getOrCreate()

```

Then we read the dataframes we need to apply the prediction on

```

train = spark.read.csv('../data/engineered/powerelectricity_train.csv', header=True, inferSchema=True)

test = spark.read.csv('../data/engineered/powerelectricity_test.csv', header=True, inferSchema=True)

```

Now we will test some regression models and evaluate them using rmse

```

from pyspark.ml import Pipeline

from pyspark.ml.regression import RandomForestRegressor, GBTRegressor, LinearRegression

from pyspark.ml.feature import VectorIndexer

from pyspark.ml.evaluation import RegressionEvaluator

from pyspark.ml.feature import VectorAssembler

train_ep = train.drop('rms_current')

train_ep = train_ep.drop('electric_power')

train_ep = train_ep.drop('srtDate')

test_ep = test.drop('rms_current')

test_ep = test_ep.drop('electric_power')

test_ep = test_ep.drop('srtDate')

features_ep = train_ep.columns.copy()

features_ep.remove('delta_y')

assembler_pe_train = VectorAssembler(inputCols=features_ep,outputCol="features")

train_ep = assembler_pe_train.transform(train_ep)

assembler_pe_test = VectorAssembler(inputCols=features_ep,outputCol="features")

test_ep = assembler_pe_test.transform(test_ep)

```

Let's start by predicting electric_power

```

rf_pe = GBTRegressor(featuresCol="features",labelCol='delta_y',maxIter=50,seed=12345)

%%time

model_pe = rf_pe.fit(train_ep)

predictions = model_pe.transform(train_ep)

print('################################ TRAIN ################################')

evaluator_rmse = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="rmse")

rmse = evaluator_rmse.evaluate(predictions)

print("Root Mean Squared Error (RMSE) on train data = %g" % rmse)

evaluator_r2 = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="r2")

r2 = evaluator_r2.evaluate(predictions)

print("R squared (r2) on train data = %g" % r2)

evaluator_mae = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="mae")

mae = evaluator_mae.evaluate(predictions)

print("Mean Absolute Error (MAE) on train data = %g" % mae)

predictions = model_pe.transform(test_ep)

print('################################ TEST ################################')

evaluator_rmse = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="rmse")

rmse = evaluator_rmse.evaluate(predictions)

print("Root Mean Squared Error (RMSE) on test data = %g" % rmse)

evaluator_r2 = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="r2")

r2 = evaluator_r2.evaluate(predictions)

print("R squared (r2) on test data = %g" % r2)

evaluator_mae = RegressionEvaluator(

labelCol="delta_y", predictionCol="prediction", metricName="mae")

mae = evaluator_mae.evaluate(predictions)

print("Mean Absolute Error (MAE) on test data = %g" % mae)

```
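For reference, the three metrics reported above (RMSE, R squared and MAE) can be computed by hand. The sketch below uses NumPy on a few made-up values; it illustrates the standard definitions and is not a replacement for Spark's `RegressionEvaluator`.

```python
import numpy as np

# Hypothetical labels and predictions.
y_true = np.array([3.0, -0.5, 2.0, 7.0])
y_pred = np.array([2.5, 0.0, 2.0, 8.0])

residuals = y_true - y_pred

rmse = np.sqrt(np.mean(residuals ** 2))      # root mean squared error
mae = np.mean(np.abs(residuals))             # mean absolute error
ss_res = np.sum(residuals ** 2)              # residual sum of squares
ss_tot = np.sum((y_true - y_true.mean()) ** 2)
r2 = 1.0 - ss_res / ss_tot                   # coefficient of determination

print(rmse, mae, r2)
```

RMSE penalizes large errors more heavily than MAE, which is why the two can rank models differently.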

Finally, save the predictions to a file

```

import os

# Create the output directory if it does not exist yet
os.makedirs('../predictions/', exist_ok=True)

preds = predictions.select(["prediction"])

preds.toPandas().to_csv('../predictions/preds.csv')

```

Let's take a look at the result by converting back the values to electric power

```

from sklearn.metrics import r2_score, explained_variance_score, mean_squared_error

import pandas as pd

import numpy as np

test_data = pd.read_csv('../data/engineered/powerelectricity_test.csv')

preds = pd.read_csv('../predictions/preds.csv')

test_data['pred_y'] = preds['prediction']

test_data['pred_y'] = test_data['pred_y'] + test_data['electric_power_t-X']

test_data['electric_power'] = np.exp(test_data['electric_power'])

test_data['pred_y'] = np.exp(test_data['pred_y'])

r2 = r2_score(test_data['electric_power'], test_data['pred_y'])

rmse = np.sqrt(mean_squared_error(test_data['electric_power'], test_data['pred_y']))

evs = explained_variance_score(test_data['electric_power'], test_data['pred_y'])

print(f'############## SCALED BACK #############\nr2:\t {r2}\nrmse:\t {rmse}\nevs:\t {evs}')

```
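The back-conversion above adds the lagged value to the predicted difference and then exponentiates. The sketch below reproduces that round trip on made-up numbers, under the assumption (not stated in the notebook) that `delta_y` is the difference between the log of the current power and the log of the lagged power.

```python
import numpy as np

# Hypothetical raw power values at time t-X and at time t.
power_prev = np.array([100.0, 120.0, 90.0])
power_now = np.array([110.0, 114.0, 99.0])

# Assumed feature engineering: work in log space and predict the difference.
log_prev = np.log(power_prev)
delta_y = np.log(power_now) - log_prev

# Reverting: add the lagged log value back, then undo the log.
recovered = np.exp(delta_y + log_prev)

print(recovered)
```

Metrics computed after this step are expressed in the original units of electric power, which makes them easier to interpret than metrics on the log differences.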

Let's save the result for visualization

```

test_data[['srtDate', 'electric_power', 'pred_y']].to_csv('../predictions/pred_reverted.csv', index=False)

```

| github_jupyter |

# Time series forecasting with ARIMA

In this notebook, we demonstrate how to:

- prepare time series data for training an ARIMA time series forecasting model

- implement a simple ARIMA model to forecast the next HORIZON steps ahead (time *t+1* through *t+HORIZON*) in the time series

- evaluate the model

The data in this example is taken from the GEFCom2014 forecasting competition<sup>1</sup>. It consists of 3 years of hourly electricity load and temperature values between 2012 and 2014. The task is to forecast future values of electricity load. In this example, we show how to forecast one time step ahead, using historical load data only.

<sup>1</sup>Tao Hong, Pierre Pinson, Shu Fan, Hamidreza Zareipour, Alberto Troccoli and Rob J. Hyndman, "Probabilistic energy forecasting: Global Energy Forecasting Competition 2014 and beyond", International Journal of Forecasting, vol.32, no.3, pp 896-913, July-September, 2016.

```

import os

import warnings

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import datetime as dt

import math

from pandas.plotting import autocorrelation_plot

# from pyramid.arima import auto_arima

from statsmodels.tsa.statespace.sarimax import SARIMAX

from sklearn.preprocessing import MinMaxScaler

from common.utils import load_data, mape

from IPython.display import Image

%matplotlib inline

pd.options.display.float_format = '{:,.2f}'.format

np.set_printoptions(precision=2)

warnings.filterwarnings("ignore") # specify to ignore warning messages

```

Load the data from csv into a Pandas dataframe

```

energy = load_data('./data')[['load']]

energy.head(10)

```

Plot all available load data (January 2012 to Dec 2014)

```

energy.plot(y='load', subplots=True, figsize=(15, 8), fontsize=12)

plt.xlabel('timestamp', fontsize=12)

plt.ylabel('load', fontsize=12)

plt.show()

```

## Create training and testing data sets

We separate our dataset into train and test sets. We train the model on the train set. After the model has finished training, we evaluate the model on the test set. We must ensure that the test set covers a later period in time than the training set, so that the model does not gain information from future time periods.

We will allocate the period from 1st November 2014 to 29th December 2014 to the training set (about 2 months) and the period from 30th December 2014 to the end of the data to the test set, matching the start dates set in the code. Energy consumption follows a strong daily seasonal pattern, but it is most similar to the consumption in recent days. Therefore, using a relatively small window of time for training the data should be sufficient.

> NOTE: Since the function we use to fit the ARIMA model uses in-sample validation during fitting, we will omit the validation data from this notebook.

```

train_start_dt = '2014-11-01 00:00:00'

test_start_dt = '2014-12-30 00:00:00'

energy[(energy.index < test_start_dt) & (energy.index >= train_start_dt)][['load']].rename(columns={'load':'train'}) \

.join(energy[test_start_dt:][['load']].rename(columns={'load':'test'}), how='outer') \

.plot(y=['train', 'test'], figsize=(15, 8), fontsize=12)

plt.xlabel('timestamp', fontsize=12)

plt.ylabel('load', fontsize=12)

plt.show()

```

## Data preparation

Our data preparation for the training set will involve the following steps:

1. Filter the original dataset to include only that time period reserved for the training set

2. Scale the time series such that the values fall within the interval (0, 1)

Create training set containing only the model features

```

train = energy.copy()[(energy.index >= train_start_dt) & (energy.index < test_start_dt)][['load']]

test = energy.copy()[energy.index >= test_start_dt][['load']]

print('Training data shape: ', train.shape)

print('Test data shape: ', test.shape)

```

Scale data to be in range (0, 1). This transformation should be calibrated on the training set only. This is to prevent information from the validation or test sets leaking into the training data.

```

scaler = MinMaxScaler()

train['load'] = scaler.fit_transform(train)

train.head(10)

```

Original vs scaled data:

```

energy[(energy.index >= train_start_dt) & (energy.index < test_start_dt)][['load']].rename(columns={'load':'original load'}).plot.hist(bins=100, fontsize=12)

train.rename(columns={'load':'scaled load'}).plot.hist(bins=100, fontsize=12)

plt.show()

```

Let's also scale the test data

```

test['load'] = scaler.transform(test)

test.head()

```
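To make the scaling step concrete, the following sketch reimplements min-max scaling by hand with NumPy. The numbers are made up; the point is that the minimum and maximum come from the training values only, so test values may land slightly outside the training range.

```python
import numpy as np

# Hypothetical load values.
train_values = np.array([2000.0, 2500.0, 3000.0, 4000.0])
test_values = np.array([1800.0, 3500.0, 4200.0])

# Calibrate on the training set only.
lo, hi = train_values.min(), train_values.max()

def scale(values):
    """Map values so that the training minimum is 0 and the maximum is 1."""
    return (values - lo) / (hi - lo)

def unscale(scaled):
    """Inverse of `scale`, used to report forecasts in original units."""
    return scaled * (hi - lo) + lo

train_scaled = scale(train_values)
test_scaled = scale(test_values)

print(train_scaled)
print(test_scaled)
```

Here the test values 1800 and 4200 fall outside the training range, so they map to -0.1 and 1.1. This is expected and is the price of not leaking test information into the scaler.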

## Implement ARIMA method

An ARIMA, which stands for **A**uto**R**egressive **I**ntegrated **M**oving **A**verage, model can be created using the statsmodels library. In the next section, we perform the following steps:

1. Define the model by calling SARIMAX() and passing in the model parameters: p, d, and q parameters, and P, D, and Q parameters.

2. The model is prepared on the training data by calling the fit() function.

3. Predictions can be made by calling the forecast() function and specifying the number of steps (horizon) to forecast.

In an ARIMA model there are 3 parameters that are used to help model the major aspects of a time series: seasonality, trend, and noise. These parameters are:

- **p** is the parameter associated with the auto-regressive aspect of the model, which incorporates past values.

- **d** is the parameter associated with the integrated part of the model, which affects the amount of differencing to apply to a time series.

- **q** is the parameter associated with the moving average part of the model.

If our model has a seasonal component, we use a seasonal ARIMA model (SARIMA). In that case we have another set of parameters: P, D, and Q which describe the same associations as p,d, and q, but correspond with the seasonal components of the model.
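The differencing controlled by **d** and **D** can be illustrated directly. The sketch below builds a synthetic hourly series with a linear trend and a 24-hour cycle (made-up data, not the GEFCom2014 load) and applies one ordinary difference and one seasonal difference with NumPy.

```python
import numpy as np

hours = np.arange(96)  # four synthetic days of hourly data

# Linear trend plus a daily (24-hour) cycle.
series = 0.5 * hours + 10 * np.sin(2 * np.pi * hours / 24)

# d = 1: difference each value with the previous one.
first_diff = series[1:] - series[:-1]

# D = 1 with a seasonal period of 24: difference with the same hour yesterday.
seasonal_diff = series[24:] - series[:-24]

print(first_diff[:3])
print(seasonal_diff[:3])
```

The seasonal difference removes the daily cycle entirely and leaves the constant 12.0, which is the trend accumulated over 24 hours.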

```

# Specify the number of steps to forecast ahead

HORIZON = 3

print('Forecasting horizon:', HORIZON, 'hours')

```

Selecting the best parameters for an ARIMA model can be challenging, somewhat subjective and time intensive, so we'll leave it as an exercise to the user. We used an **auto_arima()** function and some additional manual selection to find a decent model.

>NOTE: For more info on selecting an ARIMA model, please refer to the ARIMA notebook in the /ReferenceNotebook directory.

```

order = (4, 1, 0)

seasonal_order = (1, 1, 0, 24)

model = SARIMAX(endog=train, order=order, seasonal_order=seasonal_order)

results = model.fit()

print(results.summary())

```

Next we display the distribution of residuals. A zero mean in the residuals may indicate that there is no bias in the prediction.
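As an illustration of what such a check looks for, the sketch below compares the residuals of an unbiased and a biased forecast on synthetic data. It does not use the fitted SARIMAX model; for the model above, the in-sample residuals should be available as `results.resid`.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic "actual" values and forecast noise.
actual = rng.normal(loc=50.0, scale=5.0, size=1000)
noise = rng.normal(loc=0.0, scale=1.0, size=1000)

unbiased_pred = actual + noise        # errors centered on zero
biased_pred = actual + noise + 2.0    # systematically too high by 2

unbiased_resid = actual - unbiased_pred
biased_resid = actual - biased_pred

# Histogram counts describe the shape of the residual distribution.
counts, bin_edges = np.histogram(unbiased_resid, bins=20)

print(unbiased_resid.mean(), biased_resid.mean())
```

The unbiased forecast has a residual mean close to 0, while the biased one has a residual mean close to -2, which exposes the systematic over-prediction.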

## Evaluate the model

We will perform the so-called **walk forward validation**. In practice, time series models are re-trained each time new data becomes available. This allows the model to make the best forecast at each time step.

Starting at the beginning of the time series, we train the model on the train data set. Then we make a prediction on the next time step. The prediction is then evaluated against the known value. The training set is then expanded to include the known value and the process is repeated. (Note that we keep the training set window fixed, for more efficient training, so every time we add a new observation to the training set, we remove the observation from the beginning of the set.)

This process provides a more robust estimation of how the model will perform in practice. However, it comes at the computation cost of creating so many models. This is acceptable if the data is small or if the model is simple, but could be an issue at scale.

Walk-forward validation is the gold standard of time series model evaluation and is recommended for your own projects.
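The sliding-window procedure described above can be sketched in a few lines. To keep the example fast and dependency-free, the model is replaced by a naive forecaster that repeats the last observed value; `walk_forward_naive` is a hypothetical helper, not part of this notebook.

```python
import numpy as np

def walk_forward_naive(train, test, window):
    """Walk-forward validation with a fixed-size window and a naive model."""
    history = list(train[-window:])
    predictions = []
    for observed in test:
        # "Fit and forecast": the naive model repeats the last known value.
        predictions.append(history[-1])
        # Move the window: add the new observation, drop the oldest one.
        history.append(observed)
        history.pop(0)
    return np.array(predictions)

train = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
test = np.array([6.0, 7.0, 8.0])

preds = walk_forward_naive(train, test, window=3)
print(preds)
```

Each forecast only uses observations that precede it in time, which is the property that makes the evaluation realistic.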

```

Image('./images/ts_cross_validation.png')

```

Create a test data point for each HORIZON step.

```

test_shifted = test.copy()

for t in range(1, HORIZON):
    test_shifted['load+'+str(t)] = test_shifted['load'].shift(-t, freq='H')

test_shifted = test_shifted.dropna(how='any')

test_shifted.head(5)

```

Make predictions on the test data

```

%%time

training_window = 720 # dedicate 30 days (720 hours) for training

train_ts = train['load']
test_ts = test_shifted

history = [x for x in train_ts]
history = history[(-training_window):]

predictions = list()

# let's use a simpler model for demonstration
order = (2, 1, 0)
seasonal_order = (1, 1, 0, 24)

for t in range(test_ts.shape[0]):
    model = SARIMAX(endog=history, order=order, seasonal_order=seasonal_order)
    model_fit = model.fit()
    yhat = model_fit.forecast(steps = HORIZON)
    predictions.append(yhat)
    obs = list(test_ts.iloc[t])
    # move the training window
    history.append(obs[0])
    history.pop(0)
    print(test_ts.index[t])
    print(t+1, ': predicted =', yhat, 'expected =', obs)

```

Compare predictions to actual load

```

eval_df = pd.DataFrame(predictions, columns=['t+'+str(t) for t in range(1, HORIZON+1)])

eval_df['timestamp'] = test.index[0:len(test.index)-HORIZON+1]

eval_df = pd.melt(eval_df, id_vars='timestamp', value_name='prediction', var_name='h')

eval_df['actual'] = np.array(np.transpose(test_ts)).ravel()

eval_df[['prediction', 'actual']] = scaler.inverse_transform(eval_df[['prediction', 'actual']])

eval_df.head()

```

Compute the **mean absolute percentage error (MAPE)** over all predictions

$$MAPE = \frac{1}{n} \sum_{t=1}^{n}|\frac{actual_t - predicted_t}{actual_t}|$$
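The formula translates directly into NumPy. The function below is a sketch named `mape_sketch` to avoid confusion with the `mape` helper imported from `common.utils`, whose implementation is not shown in this notebook.

```python
import numpy as np

def mape_sketch(predictions, actuals):
    """Mean absolute percentage error, returned as a fraction (not in %)."""
    predictions = np.asarray(predictions, dtype=float)
    actuals = np.asarray(actuals, dtype=float)
    return np.mean(np.abs((actuals - predictions) / actuals))

# Hypothetical forecasts: off by 10%, 5% and 0%.
value = mape_sketch([110.0, 190.0, 300.0], [100.0, 200.0, 300.0])
print(value)
```

Note that MAPE is undefined when an actual value is zero, so it suits strictly positive quantities such as electricity load.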

```

if(HORIZON > 1):
    eval_df['APE'] = (eval_df['prediction'] - eval_df['actual']).abs() / eval_df['actual']
    print(eval_df.groupby('h')['APE'].mean())

print('One step forecast MAPE: ', (mape(eval_df[eval_df['h'] == 't+1']['prediction'], eval_df[eval_df['h'] == 't+1']['actual']))*100, '%')

print('Multi-step forecast MAPE: ', mape(eval_df['prediction'], eval_df['actual'])*100, '%')

```

Plot the predictions vs the actuals over the test set

```

if(HORIZON == 1):
    ## Plotting single step forecast
    eval_df.plot(x='timestamp', y=['actual', 'prediction'], style=['r', 'b'], figsize=(15, 8))

else:
    ## Plotting multi step forecast
    plot_df = eval_df[(eval_df.h=='t+1')][['timestamp', 'actual']]
    for t in range(1, HORIZON+1):
        plot_df['t+'+str(t)] = eval_df[(eval_df.h=='t+'+str(t))]['prediction'].values

    fig = plt.figure(figsize=(15, 8))
    ax = plt.plot(plot_df['timestamp'], plot_df['actual'], color='red', linewidth=4.0)
    ax = fig.add_subplot(111)
    for t in range(1, HORIZON+1):
        x = plot_df['timestamp'][(t-1):]
        y = plot_df['t+'+str(t)][0:len(x)]
        ax.plot(x, y, color='blue', linewidth=4*math.pow(.9,t), alpha=math.pow(0.8,t))

    ax.legend(loc='best')

plt.xlabel('timestamp', fontsize=12)
plt.ylabel('load', fontsize=12)
plt.show()

```

| github_jupyter |

# CS229: Problem Set 2

## Problem 1: Logistic Regression - Training Stability

**C. Combier**

This iPython Notebook provides solutions to Stanford's CS229 (Machine Learning, Fall 2017) graduate course problem set 2, taught by Andrew Ng.

The problem set can be found here: [./ps2.pdf](ps2.pdf)

I chose to write the solutions to the coding questions in Python, whereas the Stanford class is taught with Matlab/Octave.

## Notation

- $x^i$ is the $i^{th}$ feature vector

- $y^i$ is the expected outcome for the $i^{th}$ training example

- $m$ is the number of training examples

- $n$ is the number of features

Let's load the libraries:

```

%matplotlib inline

from __future__ import division

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import pandas as pd

sns.set_context('notebook')

plt.rcParams['figure.figsize']=(20,20)

```

I've imported the functions from `lr_debug.py` directly into the notebook:

```

try:
    xrange
except NameError:
    xrange = range

def add_intercept(X_):
    m, n = X_.shape
    X = np.zeros((m, n + 1))
    X[:, 0] = 1
    X[:, 1:] = X_
    return X

def load_data(filename):
    D = np.loadtxt(filename)
    Y = D[:, 0]
    X = D[:, 1:]
    return add_intercept(X), Y

def calc_grad(X, Y, theta):
    m, n = X.shape
    grad = np.zeros(theta.shape)

    margins = Y * X.dot(theta)
    probs = 1. / (1 + np.exp(margins))
    grad = -(1./m) * (X.T.dot(probs * Y))

    return grad

def logistic_regression(X, Y):
    m, n = X.shape
    theta = np.zeros(n)
    learning_rate = 10

    i = 0
    while True:
        i += 1
        prev_theta = theta
        grad = calc_grad(X, Y, theta)
        theta = theta - learning_rate * (grad)
        if i % 10000 == 0:
            print('Finished %d iterations' % i)
            print("DeltaT = {0}".format(prev_theta - theta))
        if np.linalg.norm(prev_theta - theta) < 1e-15:
            print('Converged in %d iterations' % i)
            break
    return

def main():
    print('==== Training model on data set A ====')
    Xa, Ya = load_data('./data/data_a.txt')
    logistic_regression(Xa, Ya)

    print('\n==== Training model on data set B ====')
    Xb, Yb = load_data('./data/data_b.txt')
    logistic_regression(Xb, Yb)

    return

```

### Question 1.a)

Now we can run the `main()` function from `lr_debug.py`:

```

main()

```

Here's the trace of this execution:

```

==== Training model on data set A ====

Finished 10000 iterations

DeltaT = [ 4.15154545e-07 -4.27822247e-07 -4.08456454e-07]

Finished 20000 iterations

DeltaT = [ 3.06350501e-11 -3.15729665e-11 -3.01447756e-11]

Finished 30000 iterations

DeltaT = [ 3.55271368e-15 -3.55271368e-15 -3.55271368e-15]

Converged in 30372 iterations

==== Training model on data set B ====

Finished 10000 iterations

DeltaT = [ 0.00193989 -0.00193552 -0.00194607]

Finished 20000 iterations

DeltaT = [ 0.00125412 -0.00125294 -0.00125704]

Finished 30000 iterations

DeltaT = [ 0.00096045 -0.00096055 -0.00096198]

Finished 40000 iterations

DeltaT = [ 0.00079065 -0.00079152 -0.00079144]

Finished 50000 iterations

DeltaT = [ 0.00067833 -0.0006797 -0.00067868]

Finished 60000 iterations

DeltaT = [ 0.00059791 -0.00059962 -0.00059798]

Finished 70000 iterations

DeltaT = [ 0.00053719 -0.00053915 -0.00053709]

Finished 80000 iterations

DeltaT = [ 0.00048957 -0.00049171 -0.00048935]

Finished 90000 iterations

DeltaT = [ 0.00045112 -0.00045338 -0.00045082]

Finished 100000 iterations

DeltaT = [ 0.00041935 -0.0004217 -0.00041899]

...

```

- When training on dataset A, gradient descent converges in 30372 iterations

- When training on dataset B, gradient descent fails to converge

Let's take a look at the data:

```

columns = ['y','x1','x2']

dfA = pd.read_csv('./data/data_a.txt', sep="\s+", header=None)

dfA.columns = columns

dfA.y.astype('category')

dfA.head()

dfB = pd.read_csv('./data/data_b.txt', sep="\s+", header=None)

dfB.columns = columns

dfB.y.astype('category')

dfB.head()

dfA.describe()

dfB.describe()

sns.lmplot(x="x1", y="x2", hue="y", data=dfA, fit_reg=False);

sns.pairplot(dfA,vars=["x1", "x2"], hue="y", size=4, aspect=1.5, diag_kind="kde");

sns.lmplot(x="x1", y="x2", hue="y", data=dfB, fit_reg=False);

sns.pairplot(dfB,vars=["x1", "x2"], hue="y", size=4, aspect=1.5, diag_kind="kde");

```

### Question 1.b)

Let's rotate the datapoints by 45° and show a scatterplot, so we can see the boundaries more clearly:

```

dfC = dfB.copy()

dfC['x12'] = dfC['x1']+dfC['x2']

dfC['x21'] = -dfC['x1']+dfC['x2']

sns.lmplot(x="x21", y="x12", hue="y", data=dfC, fit_reg=False);

```
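The two new columns correspond to a 45° rotation up to a constant factor. The sketch below checks on random points (not on dataset B) that $(x_1 + x_2, -x_1 + x_2)$ equals $\sqrt{2}$ times the point rotated by $-45°$, so the transformation changes the scale of the axes but not the shape of the point cloud.

```python
import numpy as np

rng = np.random.default_rng(0)
points = rng.normal(size=(5, 2))   # hypothetical (x1, x2) pairs

# Standard 2D rotation matrix for an angle of -45 degrees.
angle = -np.pi / 4
rotation = np.array([[np.cos(angle), -np.sin(angle)],
                     [np.sin(angle),  np.cos(angle)]])
rotated = points @ rotation.T

x12 = points[:, 0] + points[:, 1]
x21 = -points[:, 0] + points[:, 1]

print(np.allclose(np.sqrt(2) * rotated[:, 0], x12))
print(np.allclose(np.sqrt(2) * rotated[:, 1], x21))
```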

It seems that the data is perfectly linearly separable in the case of dataset B, whereas this is not the case for dataset A.

Logistic regression attempts to maximize the log-likelihood:

$$

\ell (\theta) = \sum_{i=1}^m y^i \log h_{\theta}(x^i)+(1-y^i) \log (1-h_{\theta}(x^i))

$$

Where $h_{\theta}(x) = \frac{1}{1+\exp(-\theta^T x)}$ is the sigmoid function.

The problem is a scaling issue: since the data is linearly separable, $||\theta||$ can be made arbitrarily large, and doing so keeps increasing the log-likelihood towards its supremum of $0$ without ever reaching it, as $\forall i, h_{\theta} (x^i) \to y^i$ when $||\theta|| \to \infty$. This happens so long as $\theta$ describes a hyperplane that separates the data perfectly.

Another way of saying this is that the gradient $\nabla_{\theta} \ell(\theta) = X^T (Y-h_{\theta} (X))$ can be made arbitrarily close to $0$ by increasing $||\theta||$, so long as $\theta$ describes a hyperplane that separates the data perfectly.

This does not happen when the data is not linearly separable: in this case, the log-likelihood would start decreasing due to the misclassified data points, i.e. $\exists! \theta$ such that $X^T (Y-h_{\theta} (X)) = 0$
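This behaviour is easy to reproduce numerically. The sketch below uses a tiny, made-up separable dataset with labels in $\{0, 1\}$ (not dataset B) and scales a separating $\theta$ by increasing factors: the log-likelihood keeps rising towards $0$ while the gradient norm shrinks towards $0$.

```python
import numpy as np

# Toy separable data: intercept column plus one feature, classes split at x = 0.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0])
direction = np.array([0.0, 1.0])   # a theta that separates the data

def log_likelihood(theta):
    z = X @ theta
    # log h(z) = -log(1 + exp(-z)) and log(1 - h(z)) = -log(1 + exp(z));
    # np.logaddexp(0, .) evaluates these terms in a numerically stable way.
    return np.sum(-y * np.logaddexp(0.0, -z) - (1 - y) * np.logaddexp(0.0, z))

def gradient(theta):
    h = 1.0 / (1.0 + np.exp(-X @ theta))
    return X.T @ (y - h)

scales = [1.0, 10.0, 100.0]
lls = [log_likelihood(c * direction) for c in scales]
grad_norms = [np.linalg.norm(gradient(c * direction)) for c in scales]

print(lls)
print(grad_norms)
```

Because there is always a slightly better $\theta$ with a larger norm, gradient ascent on this objective has no finite maximizer to converge to.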

### Question 1.c)

- **Using a different constant learning rate**

This is a scaling issue, so changing the learning rate would not solve the problem.

- **Decreasing the learning rate over time**

Same as above: this is a scaling issue, so changing the learning rate would not solve the problem.

- **Adding a regularization term $||\theta||^2$ to the loss function**

This would solve the problem, as we would be actively penalizing large values of $\theta$ by including a term proportional to $||\theta||^2$ in the objective function.

- **Linear scaling of the input features**

This would not solve the problem, as any linear transformation would still keep the data linearly separable

- **Adding zero-mean Gaussian noise to the training data or labels**

This could work, as the data could be made to be linearly inseparable with the addition of noise. However, this is not a very robust solution as convergence of the algorithm depends on the set of noise samples: there is no guarantee the algorithm would converge *in general* for all possible sets of noise samples.
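The regularization answer can be checked on a toy example. The sketch below runs gradient ascent on a small, made-up separable dataset with labels in $\{0, 1\}$, once with a penalty $\frac{\lambda}{2}||\theta||^2$ and once without. The values of `lam`, `learning_rate`, `tol` and `max_iter` are arbitrary choices for illustration, not settings from `lr_debug.py`.

```python
import numpy as np

# Toy separable data: intercept column plus one feature.
X = np.array([[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0])

def fit(lam, learning_rate=0.1, tol=1e-10, max_iter=50000):
    """Gradient ascent on the log-likelihood minus (lam / 2) * ||theta||^2."""
    theta = np.zeros(X.shape[1])
    for i in range(1, max_iter + 1):
        h = 1.0 / (1.0 + np.exp(-X @ theta))
        grad = X.T @ (y - h) - lam * theta   # penalty pulls theta towards 0
        step = learning_rate * grad
        theta = theta + step
        if np.linalg.norm(step) < tol:
            return theta, i, True
    return theta, max_iter, False

theta_reg, iters_reg, converged_reg = fit(lam=0.1)
theta_plain, iters_plain, converged_plain = fit(lam=0.0)

print(converged_reg, iters_reg, theta_reg)
print(converged_plain, iters_plain, theta_plain)
```

With the penalty, the updates shrink below the tolerance and $\theta$ settles at a finite value. Without it, $||\theta||$ is still growing when the iteration budget runs out.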

### Question 1.d)

Support Vector Machines maximize the geometric margin. In the case of a linearly separable dataset, an SVM would maximize the distance between the separator and the closest data point, such that all data points are correctly classified. An SVM would therefore be immune to datasets such as B, as the geometric margin is independent of $||\theta||$.
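The scale invariance of the geometric margin can be verified directly. The sketch below uses a made-up separable dataset with labels in $\{-1, +1\}$ and a separator through the origin: rescaling the weight vector multiplies the functional margin but leaves the geometric margin unchanged.

```python
import numpy as np

# Hypothetical separable points and labels.
X = np.array([[-2.0, 0.5], [-1.0, -1.0], [1.0, 1.0], [2.0, -0.5]])
y = np.array([-1.0, -1.0, 1.0, 1.0])
w = np.array([1.0, 0.0])   # separates the classes along the first feature

def functional_margin(w):
    """Smallest value of y * (w . x) over the dataset."""
    return np.min(y * (X @ w))

def geometric_margin(w):
    """Functional margin normalized by the norm of w (a true distance)."""
    return functional_margin(w) / np.linalg.norm(w)

print(functional_margin(w), functional_margin(10 * w))
print(geometric_margin(w), geometric_margin(10 * w))
```

Since inflating the weights gains nothing in terms of geometric margin, the objective gives no incentive to let the norm grow without bound.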

| github_jupyter |

This notebook is an optional tutorial (contains no graded exercises) with a short guide on the text-based interface to the computer known as the "command line" which many programmers use almost exclusively when coding.

## Using Data Science JupyterHub

The default environment for the course will be a web-based environment (Jupyter) hosted by the Data Science Initiative (datahub.berkeley.edu). Log in with your @berkeley credentials, or contact the instructors to create an account.

## The Terminal / Command Line / Shell

You will find it useful to know some basics about the command line (aka terminal or shell) on your computer. The command line lets you navigate around your computer and perform tasks without any graphical user interface---just text. You don't need a mouse to use the command line, just your keyboard. With experience, some people find it faster to use command line tools than graphical tools to do certain things on their computer.

So let's dive in! If you're on a Mac or Linux, find and open the Terminal. If you're on Windows, go to your start menu and type "cmd" (no quotes), then run it.

You'll be faced with a prompt where you can type things in. Press `Enter` to execute whatever you've typed. Try typing these commands (in this order if you'd like!) to get a feel for your terminal. Type `ls` or `dir` occasionally as you change things to see how the contents of your folder have changed.

See where you are

- On Mac/Linux: pwd

- On Windows: cd

See what's in the current directory

- On Mac/Linux: ls

- On Windows: dir

Change directories (into the `Documents` directory, for instance)

- On Mac/Linux: cd Documents

- On Windows: cd Documents

Go back to the "parent directory"

- On Mac/Linux: cd ..

- On Windows: cd ..

Make a directory (the directory `fooDirectory`, for instance)

- On Mac/Linux: mkdir fooDirectory

- On Windows: mkdir fooDirectory

Make another directory!

- mkdir barDirectory

Move something (move `barDirectory` into `fooDirectory` directory, for instance)

- On Mac/Linux: mv barDirectory fooDirectory

- On Windows: move barDirectory fooDirectory

Check the contents of a different directory

- On Mac/Linux: ls fooDirectory

- On Windows: dir fooDirectory

Change through multiple directories at once

- On Mac/Linux: cd fooDirectory/barDirectory

- On Windows: cd fooDirectory\barDirectory

(notice different slash directions!)

Go back to your "home" directory

- On Mac/Linux: cd

or: cd ~

- On Windows: cd %userprofile%

Rename something (rename `fooDirectory` into `RenamedDir`, for instance)

- On Mac/Linux: mv fooDirectory RenamedDir

- On Windows: move fooDirectory RenamedDir

Remove an empty directory (the directory `barDirectory` inside `RenamedDir`, for instance)

- On Mac/Linux: rmdir RenamedDir/barDirectory

- On Windows: rmdir RenamedDir\barDirectory

Learn more about a command (the `cd` command, for instance)

- On Mac/Linux: man cd

- On Windows: cd /?

Print the contents of a file to the terminal (`file.txt`, for instance)

- On Mac/Linux: cat file.txt

- On Windows: type file.txt
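Since the course itself uses Python, it may help to see that the same directory operations can be scripted. The sketch below replays the make, move, list, rename and remove steps with the standard library inside a temporary scratch directory, so nothing in your real folders is touched. The directory names mirror the examples above.

```python
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as scratch:
    base = Path(scratch)
    foo = base / "fooDirectory"
    bar = base / "barDirectory"

    foo.mkdir()                                       # mkdir fooDirectory
    bar.mkdir()                                       # mkdir barDirectory

    bar.rename(foo / "barDirectory")                  # mv barDirectory fooDirectory
    listing = sorted(p.name for p in foo.iterdir())   # ls fooDirectory

    renamed = base / "RenamedDir"
    foo.rename(renamed)                               # mv fooDirectory RenamedDir

    (renamed / "barDirectory").rmdir()                # rmdir RenamedDir/barDirectory
    remaining = sorted(p.name for p in base.iterdir())

print(listing, remaining)
```

The scratch directory and everything in it are deleted automatically when the `with` block ends.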

| github_jupyter |

```

# !pip install funcy

# %env OPTIMUS_CHECKPOINT_DIR=../pretrained_models/optimus_snli10/checkpoint-31250/

"""

Import our dependencies

"""

import pandas as pd

import numpy as np

import buckets as b

plurals_filename = b.get_file("s3://scored/plurals_data_scored.csv")

opposites_filename = b.get_file("s3://scored/opposite_data_scored.csv")

comparatives_filename = b.get_file("s3://scored/comparative_data_scored.csv")

plurals = pd.read_csv(plurals_filename)

opposites = pd.read_csv(opposites_filename)

comparatives = pd.read_csv(comparatives_filename)

```

## Plurals

```

print("Counts of each type of value within the plurals dataset")

plural_type_counts = plurals.subcategory.value_counts()

plural_type_counts

print("Percentage of each type which were found to be exact matches")

(plurals.groupby(by="subcategory")['score_0_exact'].agg("sum") / plural_type_counts) * 100

print("Evaluating means of bleu scores")

plurals.groupby(by="subcategory")['score_0_bleu'].agg("mean").round(4)

print("Median bleu score of each subcategory")

plurals.groupby(by="subcategory")['score_0_bleu'].agg("median").round(6)

print("Percent of exact values from entire plural set")

(plurals['score_0_exact'].agg("sum") / len(plurals.index)) * 100

print("Average bleu score for plurals")

plurals['score_0_bleu'].agg("mean").round(4)

print("Median bleu score for plurals")

plurals['score_0_bleu'].agg("median").round(6)

print("Percent of exact values from plurals where subcategory is not from-single")

(plurals[plurals['subcategory'] != 'plural|from-single']['score_0_exact'].agg("sum") / len(plurals[plurals['subcategory'] != 'plural|from-single'].index)) * 100

print("Average bleu score for plurals where subcategory is not from-single")

plurals[plurals['subcategory'] != 'plural|from-single']['score_0_bleu'].agg("mean").round(4)

print("Median bleu score for plurals where subcategory is not from-single")

plurals[plurals['subcategory'] != 'plural|from-single']['score_0_bleu'].agg("median").round(6)

print("Examples of exact matches where not \"from-single\"")

plurals.query('score_0_exact == 1 & subcategory != \'plural|from-single\'').sample(n=20)

print("Examples of exact matches where is \"from-single\"")

plurals.query('score_0_exact == 1 & subcategory == \'plural|from-single\'').sample(n=20)

print("Examples from top 10% matches where not \"from-single\"")

plurals.query('score_0_exact == 0 & subcategory != \'plural|from-single\'').sort_values(by="score_0_bleu", ascending=False).head(int(len(plurals)*0.10)).sample(n=20)

print("Examples from top 10% matches where is \"from-single\"")

plurals.query('score_0_exact == 0 & subcategory == \'plural|from-single\'').sort_values(by="score_0_bleu", ascending=False).head(int(len(plurals)*0.10)).sample(n=20)

print("Examples from bottom 25% matches where not \"from-single\"")

plurals.query('score_0_exact == 0 & subcategory != \'plural|from-single\'').sort_values(by="score_0_bleu", ascending=False).tail(int(len(plurals)*0.25)).sample(n=20)

print("Examples from bottom 25% matches where is \"from-single\"")

plurals.query('score_0_exact == 0 & subcategory == \'plural|from-single\'').sort_values(by="score_0_bleu", ascending=False).tail(int(len(plurals)*0.25)).sample(n=20)

```
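
The per-subcategory statistics above (count, percentage of exact matches, mean and median BLEU) can also be computed in a single pass. The sketch below uses pandas named aggregation on a small made-up frame; only the column names `subcategory`, `score_0_exact` and `score_0_bleu` come from the notebook, while the helper name `summarize_scores` and the toy values are hypothetical. It assumes `score_0_exact` holds 0/1 flags with no missing values, so that its mean times 100 equals the percentage of exact matches.

```python
import pandas as pd

# Hypothetical toy data; only the column names mirror the scored CSV files.
toy = pd.DataFrame({
    "subcategory": ["a", "a", "b", "b"],
    "score_0_exact": [1, 0, 0, 0],
    "score_0_bleu": [1.0, 0.5, 0.2, 0.4],
})


def summarize_scores(df: pd.DataFrame) -> pd.DataFrame:
    """Return one row per subcategory with count, exact-match % and BLEU stats."""
    summary = df.groupby("subcategory").agg(
        count=("score_0_exact", "size"),
        exact_pct=("score_0_exact", "mean"),   # mean of 0/1 flags = share of exact matches
        bleu_mean=("score_0_bleu", "mean"),
        bleu_median=("score_0_bleu", "median"),
    )
    summary["exact_pct"] = summary["exact_pct"] * 100
    return summary.round(4)


summary = summarize_scores(toy)
print(summary)
```

The same helper could then be applied unchanged to `plurals`, `opposites` and `comparatives`, which keeps the three sections from drifting apart.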

## Opposites

```

opposite_type_counts = opposites.subcategory.value_counts()

opposite_type_counts

(opposites.groupby(by="subcategory")['score_0_exact'].agg("sum") / opposite_type_counts) * 100

opposites.groupby(by="subcategory")['score_0_bleu'].agg("mean").round(4)

opposites.groupby(by="subcategory")['score_0_bleu'].agg("median").round(4)

print("Percent of exact values from entire opposite set")

(opposites['score_0_exact'].agg("sum") / len(opposites.index)) * 100

print("Average bleu score for opposites")

opposites['score_0_bleu'].agg("mean").round(4)

print("Median bleu score for opposites")

opposites['score_0_bleu'].agg("median").round(6)

print("Examples of exact matches")

opposites[opposites['score_0_exact'] == 1]

print("Top 10% of bleu scores in opposites")

opposites[opposites['score_0_exact'] == 0].sort_values(by="score_0_bleu", ascending=False).head(int(len(opposites)*0.10)).sample(n=20)

print("Top 10% of bleu scores in opposites where OPTIMUS didn't generate the value in c")

opposites.query("score_0_exact == 0 & pred_0 != c.str.lower()").sort_values(by="score_0_bleu", ascending=False).head(int(len(opposites)*0.10)).sample(n=20)

print("Bottom 25% of bleu scores in opposites")

opposites[opposites['score_0_exact'] == 0].sort_values(by="score_0_bleu", ascending=False).tail(int(len(opposites)*0.25)).sample(n=20)

```

## Comparatives

```

comparative_type_counts = comparatives.subcategory.value_counts()

comparative_type_counts

(comparatives.groupby(by="subcategory")['score_0_exact'].agg("sum") / comparative_type_counts) * 100

comparatives.groupby(by="subcategory")['score_0_bleu'].agg("mean").round(4)

comparatives.groupby(by="subcategory")['score_0_bleu'].agg("median").round(4)

print("Examples of exact matches")

comparatives[comparatives['score_0_exact'] == 1].sample(n=20)

print("Top 10% of bleu scores in comparatives")

comparatives[comparatives['score_0_exact'] == 0].sort_values(by="score_0_bleu", ascending=False).head(int(len(comparatives)*0.10)).sample(n=20)

print("Bottom 25% of bleu scores in comparatives")

comparatives[comparatives['score_0_exact'] == 0].sort_values(by="score_0_bleu", ascending=False).tail(int(len(comparatives)*0.25)).sample(n=20)

```
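
The "top" and "bottom" selections repeat the same filter, sort, slice and sample chain for every dataset, which makes it easy to mix up fractions or frame names. A possible way to factor this out is sketched below. The helper name `sample_by_bleu` and the toy data are hypothetical; note that, unlike the cells above, this sketch takes the fraction of the non-exact rows (not of the whole dataset) and caps the sample size so that small datasets do not raise an error.

```python
import pandas as pd


def sample_by_bleu(df, fraction, n=20, top=True, seed=None):
    """Sample up to `n` non-exact rows from the top (or bottom) `fraction` by BLEU score."""
    # Keep only rows that were not exact matches, best BLEU score first
    misses = df[df["score_0_exact"] == 0].sort_values(by="score_0_bleu", ascending=False)
    # Size the slice from the filtered frame itself (at least one row)
    k = max(1, int(len(misses) * fraction))
    chunk = misses.head(k) if top else misses.tail(k)
    # Never ask for more rows than the slice contains
    return chunk.sample(n=min(n, len(chunk)), random_state=seed)


# Hypothetical toy data: one exact match plus ten non-exact rows scored 0.0 to 0.9
toy = pd.DataFrame({
    "score_0_exact": [1] + [0] * 10,
    "score_0_bleu": [1.0] + [i / 10 for i in range(10)],
})

top = sample_by_bleu(toy, fraction=0.10, seed=0)
bottom = sample_by_bleu(toy, fraction=0.25, top=False, seed=0)
print(top)
print(bottom)
```

Passing the frame as an argument means the slice size always comes from the dataset being inspected.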

| github_jupyter |

```

import requests

s = requests.Session()

s.headers

s.headers = {

'User-Agent': 'okhttp/3.14.2',

'Accept-Encoding': 'gzip',

'Accept': '*/*',

'Connection': 'keep-alive',

}

r = s.get("https://static.meijer.com/mobileassets/info/mma_config.json")

r.json()

import base64

import json

import os

with open(os.path.expanduser("~/.ssh/meijer5"), "r") as fid:

    meijer_email, meijer_pass = [n.strip() for n in fid.readlines()[0:2]]

s = requests.Session()

s.headers = {

'User-Agent': 'okhttp/3.14.2',

'Accept-Encoding': 'gzip',

'Accept': '*/*',

'Connection': 'keep-alive',

}

s

login_url = "https://login.meijer.com/as/token.oauth2"

account_services_client_id = "mma"

account_services_secret = "drAqas76Re7RekeBanaMaNEMah7paDE5"

auth_string_decoded = f"{account_services_client_id}:{account_services_secret}".encode("UTF-8")

auth_string_decoded

basic_authorization = base64.b64encode(auth_string_decoded).decode("UTF-8")

f"Basic {basic_authorization}"

request=dict()

request["url"] = login_url

request["headers"] = {

'Authorization': f"Basic {basic_authorization}",

'Platform': 'Android',

'Version': '5.20.1',

'Build': '52001000',

'Content-Type': 'application/x-www-form-urlencoded',

'Connection': "Keep-Alive",

'Accept-Encoding': 'gzip',

'User-Agent': 'okhttp/3.8.0'

}

request["params"] = {

'grant_type': 'password',

'scope': 'openid',

"username": meijer_email,

"password": meijer_pass,

}

r = s.post(**request)

assert r.status_code==200

r

auth = r.json()

access_token = auth["access_token"].strip()

base64.decodebytes(b"eyJhbGciOiJSUzI1NiIsImtpZCI6ImtleTAxIn0=")

access_token.split(".")

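for field in access_token.split("."):

    # JWT segments are base64url-encoded without padding, so restore the padding first

    print(base64.urlsafe_b64decode(field + "=" * (-len(field) % 4)))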

access_token = auth["access_token"].strip()

alg64, meijer_info64, token64 = access_token.split(".")

# The payload is base64url-encoded without padding; add the missing "=" before decoding

payload_bytes = base64.urlsafe_b64decode(meijer_info64 + "=" * (-len(meijer_info64) % 4))

payload_bytes.decode()

meijer_info = json.loads(payload_bytes)

meijer_info["digital_id"]

request=dict()

request["url"] = "https://mservices.meijer.com/dgtlmma/accounts/getAccount?id="+meijer_info["digital_id"]

request["headers"] = {

'Accept': 'application/vnd.meijer.account.account-v1.0+json',

'Authorization': f"Bearer {access_token}",

'Platform': 'Android',

'Version': '5.20.1',

'Build': '52001000',

'Content-Type': 'application/json',

'Connection': "Keep-Alive",

'Accept-Encoding': 'gzip',

'User-Agent': 'okhttp/3.8.0'

}

s = requests.Session()

r = s.get(**request)

assert r.status_code==200

r.text

request=dict()

request["url"] = "https://mservices.meijer.com/dgtlmma/accounts/getAccount?id="+meijer_info["digital_id"]

request["headers"] = {

'Accept': 'application/vnd.meijer.account.account-v1.0+json',