
# Analyze game tree with position evaluation

## Import

```

# Game graph library

import igraph

import chess

import math

class Game():
    def __init__(self, game):
        self.game = game

    @property
    def moves_uci(self):
        res = []
        node = self.game
        while not node.is_end():
            node = node.next()
            res.append(node.uci())
        return res

    @property
    def moves_nodes(self):
        res = []
        node = self.game
        while not node.is_end():
            node = node.next()
            res.append(node)
        return res

class GamesGraph():
    def __init__(self):
        self.graph = igraph.Graph(directed=True)

    def add_move(self, start_fen, end_fen, uci):
        vs = self._ensure_vertex(start_fen)
        vt = self._ensure_vertex(end_fen)
        try:
            e = self.graph.es.find(_source=vs.index, _target=vt.index)
            e["count"] += 1
        except:
            e = self.graph.add_edge(vs, vt)
            e["uci"] = uci
            e["count"] = 1

    @property
    def start_node(self):
        return self.graph.vs.find(chess.STARTING_FEN)

    def _ensure_vertex(self, fen):
        try:
            return self.graph.vs.find(fen)
        except:
            v = self.graph.add_vertex(name=fen)
            v["fen"] = fen
            v["turn"] = chess.Board(fen).turn
            return v

def games_graph(games, max_moves):
    gr = GamesGraph()
    for game in games:
        start_fen = game.game.board().fen()
        for move in game.moves_nodes[:max_moves]:
            fen = move.board().fen()
            uci = move.uci()
            gr.add_move(start_fen, fen, uci)
            start_fen = fen
    return gr

def compute_edge_weights_uniform(vertex):
    all_count = vertex.degree(mode="out")
    for edge in vertex.out_edges():
        edge["prob"] = 1.0
        edge["weight"] = 0.0

def compute_edge_weights_counting(vertex):
    all_count = sum(map(lambda x: x["count"], vertex.out_edges()))
    for edge in vertex.out_edges():
        # Certainty doesn't exist... Let's put a 90% ceiling.
        prob = min(edge["count"] / all_count, 0.9)
        edge["prob"] = prob
        edge["weight"] = -math.log(prob)

def compute_graph_weights(graph, black_uniform=False, white_uniform=False):
    # Compute the graph weights such that:
    # * The distance is the negative log of the probability to go from source to destination.
    # * Summing two weights is the same as multiplying the two probabilities.
    #
    # count: ranges from 1 to max; max = sum(out_edges["count"]).
    # prob: count / max; [0; 1]
    # weight: -log(prob); [0; +inf] ~ [very_likely; unlikely]
    for vertex in graph.graph.vs:
        if vertex["turn"] == chess.WHITE and white_uniform:
            compute_edge_weights_uniform(vertex)
        elif vertex["turn"] == chess.BLACK and black_uniform:
            compute_edge_weights_uniform(vertex)
        else:
            compute_edge_weights_counting(vertex)

import chess.pgn

import io

import json

with open('playerx-games.json') as f:
    data = json.load(f)

games = []
for game in data:
    pgn = io.StringIO(game)
    games.append(Game(chess.pgn.read_game(pgn)))

white_games = [g for g in games if g.game.headers["White"] == "playerx"]

black_games = [g for g in games if g.game.headers["Black"] == "playerx"]

len(games), len(white_games), len(black_games)

# Load the evaluations

import chess

def load_evals_json(path):
    with open(path) as f:
        evaljs = json.load(f)
    evals = {}
    for pos in evaljs:
        evals[pos["fen"]] = pos["eval"]
    # add the initial position
    evals[chess.STARTING_FEN] = {
        "type": "cp",
        "value": 0,
    }
    return evals

# Returns [-1;1]
def rating(ev, fen):
    val = ev["value"]
    if ev["type"] == "cp":
        # Clamp to -300, +300. Winning a piece is enough.
        val = max(-300, min(300, val))
        return val / 300.0
    if val > 0: return 1.0
    if val < 0: return -1.0
    # This is mate, but is it white or black?
    b = chess.Board(fen)
    return 1.0 if b.turn == chess.WHITE else -1.0

# Returns [0;1], where 0 is min advantage, 1 is max for black.
def rating_black(ev, fen):
    return -rating(ev, fen) * 0.5 + 0.5

# Returns [0;1], where 0 is min advantage, 1 is max for white.
def rating_white(ev, fen):
    return rating(ev, fen) * 0.5 + 0.5

def compute_rating(evals, rating_fn):
    for fen in evals.keys():
        ev = evals[fen]
        evals[fen]["rating"] = rating_fn(ev, fen)

def update_graph_rating(g, evals):
    for v in g.graph.vs:
        v["rating"] = evals[v["fen"]]["rating"]

import chess

from functools import reduce

class Line():
    def __init__(self, end_node, moves, cost):
        self.end_node = end_node
        self.moves = moves
        self.cost = cost

    @property
    def moves_uci(self):
        return [e["uci"] for e in self.moves]

    @property
    def rating(self):
        return self.end_node["rating"]

    @property
    def probability(self):
        return reduce(lambda x, y: x*y, [e["prob"] for e in self.moves])

    @property
    def end_board(self):
        return chess.Board(self.end_node["fen"])

    @property
    def end_fen(self):
        return self.end_node["fen"]

    def __str__(self):
        return "{}: {} (prob={} rating={})".format(
            self.end_node["fen"],
            self.moves_uci,
            self.probability,
            self.rating,
        )

    def __repr__(self):
        return self.__str__()

def compute_line(graph, path):
    # Skip empty paths.
    if len(path) < 2:
        return None
    end_node = graph.graph.vs.find(path[-1])
    cost = 0
    moves = []
    for i in range(len(path) - 1):
        edge = graph.graph.es.find(_source=path[i], _target=path[i+1])
        cost += edge["weight"]
        moves.append(edge)
    return Line(end_node, moves, cost)

def best_lines(lines, min_rating=0.5):
    lines = filter(lambda x: x is not None, lines)
    return [
        l for l in sorted(lines, key=lambda x: x.cost)
        if l.rating > min_rating
    ]

```
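The weighting scheme in `compute_graph_weights` relies on the fact that adding `-log(prob)` values is the same as multiplying probabilities. Here is a minimal sketch of that identity; the probabilities are made-up illustrative values, not taken from the games.

```python
import math

# Hypothetical move probabilities along one opening line (illustrative values).
probs = [0.5, 0.25, 0.8]

# Edge weights as in compute_edge_weights_counting: weight = -log(prob).
weights = [-math.log(p) for p in probs]

# Summing the weights is equivalent to multiplying the probabilities.
line_cost = sum(weights)
line_prob = math.prod(probs)

print(line_cost, -math.log(line_prob))
print(math.exp(-line_cost), line_prob)
```

This is why the line with the lowest total cost is also the line with the highest probability, so a shortest-path search can be used to find the most likely lines.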

## Graph test

```

import igraph

g = igraph.Graph(directed=True)

g.add_vertex(name="a")

g.add_vertex(name="b")

a = g.vs.find("a")

b = g.vs.find("b")

print("a: ", a, "b: ", b)

print(a.index)

g.add_edge(a, b, name="foo")

print("edge 0, 1: ", g.es.find(_source=a.index, _target=b.index) != None)

igraph.plot(g, bbox=(200, 200))

import igraph

import chess

g = games_graph(black_games[:8], 4)

fen_evals = load_evals_json('eval-black.json')

compute_rating(fen_evals, rating_white)

update_graph_rating(g, fen_evals)

style = {

"edge_label": g.graph.es["count"],

"edge_label_color": "blue",

"vertex_label": ["{:.2}".format(x) for x in g.graph.vs["rating"]],

"vertex_label_size": 8,

"vertex_label_color": "red",

"vertex_color": [("white" if x == chess.WHITE else "black") for x in g.graph.vs["turn"]],

"vertex_shape": ["rectangle"] + ["circle" for _ in g.graph.vs][1:]

}

igraph.plot(g.graph, **style)

# Test the weights without the uniform-probability correction for my own moves.

compute_graph_weights(g)

style = {

"edge_label": ["{:.2}".format(x) for x in g.graph.es["weight"]],

"edge_label_color": "blue",

"vertex_color": [("white" if x == chess.WHITE else "black") for x in g.graph.vs["turn"]],

"vertex_shape": ["rectangle"] + ["circle" for _ in g.graph.vs][1:]

}

igraph.plot(g.graph, **style)

# Test the weights.

compute_graph_weights(g, white_uniform=True)

style = {

"edge_label": ["{:.2}".format(x) for x in g.graph.es["weight"]],

"edge_label_color": "blue",

"vertex_color": [("white" if x == chess.WHITE else "black") for x in g.graph.vs["turn"]],

"vertex_shape": ["rectangle"] + ["circle" for _ in g.graph.vs][1:]

}

igraph.plot(g.graph, **style)

# Shortest paths from initial to every other position.

sp = g.graph.get_shortest_paths(g.start_node, weights=g.graph.es["weight"])

sp

lines = [compute_line(g, p) for p in sp]

lines = best_lines(lines)

lines

print(lines[8])

lines[8].end_board

```
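To make the shortest-path step less of a black box, here is a small pure-Python sketch of the same idea: Dijkstra's algorithm over `-log(prob)` weights on a toy graph. The node names and probabilities are hypothetical, and this is not how `igraph` implements `get_shortest_paths`; it only illustrates what the call computes.

```python
import heapq
import math

def most_probable_paths(edges, start):
    """Dijkstra over -log(prob) weights; returns {node: (cost, path)}."""
    best = {start: (0.0, [start])}
    queue = [(0.0, start, [start])]
    while queue:
        cost, node, path = heapq.heappop(queue)
        if cost > best[node][0]:
            continue  # stale queue entry, a cheaper path was already found
        for target, prob in edges.get(node, []):
            new_cost = cost - math.log(prob)
            if target not in best or new_cost < best[target][0]:
                best[target] = (new_cost, path + [target])
                heapq.heappush(queue, (new_cost, target, path + [target]))
    return best

# Toy position graph (hypothetical names and probabilities).
edges = {
    "start": [("A", 0.6), ("B", 0.4)],
    "A": [("C", 0.5)],
    "B": [("C", 0.9)],
}
paths = most_probable_paths(edges, "start")
cost, path = paths["C"]
print(path, math.exp(-cost))
```

Although the first move towards `A` is more likely, the line through `B` reaches `C` with the higher overall probability (0.4 * 0.9 versus 0.6 * 0.5), and that is the one the search returns.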

## Black games

```

# Load the games

graph = games_graph(black_games, 10)

len(graph.graph.vs["name"])

# Load the evaluations

fen_evals = load_evals_json('eval-black.json')

compute_rating(fen_evals, rating_white)

len(fen_evals)

update_graph_rating(graph, fen_evals)

compute_graph_weights(graph, white_uniform=False)

# Shortest paths from initial to every other position.

sp = graph.graph.get_shortest_paths(graph.start_node, weights=graph.graph.es["weight"])

all_lines = [compute_line(graph, p) for p in sp]

lines = best_lines(all_lines, 0.6)

lines[:10]

import pandas

data = pandas.Series([

x.probability

for x in all_lines

if x is not None and x.probability < 0.02

])

df = pandas.DataFrame({"prob": data}, columns=["prob"])

df.plot.hist(bins=40)

high_adv = [x for x in lines if x.rating > 0.7][:10]

print(high_adv[0])

high_adv[0].end_board

```

Not good. This line moves the queen out early, hoping for a mistake by Black.

```

print(high_adv[1])

high_adv[1].end_board

```

Evaluated at +1.4, but he played the wrong move in only 3 of 16 games.

```

print(high_adv[2])

high_adv[2].end_board

not_e4 = [l for l in lines if l.moves_uci[0] != "e2e4" and l.rating > 0.7]

print(not_e4[1])

not_e4[1].end_board

```

Interesting position. He seems to often get it wrong. The main last moves are:

* Nc6 (2) +0.8

* e6 (2) +0.1

* c5 +0.3

* a6 +0.2

* e5 +1.5

| github_jupyter |

# Research Problem

Last year, I read a paper titled "Feature Selection Methods for Identifying Genetic Determinants of Host Species in RNA Viruses". This year, I read another paper titled "Predicting host tropism of influenza A virus proteins using random forest". The essence of both papers was to predict influenza virus host tropism from sequence features. The feature engineering steps differed: the former encoded amino acid sequences as binary 1/0s, while the latter used physicochemical characteristics of the amino acid sequences instead. However, the core problem was essentially identical: predict a host classification from influenza protein sequence features. Both papers used random forest classifiers, which are a powerful method for identifying non-linear mappings from features to class labels. My question here was whether I could get comparable performance using a simple neural network.

# Data

I downloaded influenza HA sequences from the Influenza Research Database. Sequences dated from 1980 to 2015. Lab strains were excluded, duplicates allowed (captures host tropism of certain sequences). All viral subtypes were included.

Below, let's take a deep dive into what it takes to construct an artificial neural network!

The imports necessary for running this notebook.

```

! echo $PATH

! echo $CUDA_ROOT

import pandas as pd

import numpy as np

from Bio import SeqIO

from Bio import AlignIO

from Bio.Align import MultipleSeqAlignment

from collections import Counter

from sklearn.preprocessing import LabelBinarizer

from sklearn.model_selection import train_test_split  # sklearn.cross_validation was removed in newer scikit-learn

from sklearn.ensemble import RandomForestClassifier, ExtraTreesClassifier, GradientBoostingClassifier

from sklearn.metrics import mutual_info_score as mi

from lasagne import layers

from lasagne.updates import nesterov_momentum

from nolearn.lasagne import NeuralNet

import theano

```

Read in the viral sequences.

```

sequences = SeqIO.to_dict(SeqIO.parse('20150902_nnet_ha.fasta', 'fasta'))

# sequences

```

The sequences are going to be of variable length. To avoid the problem of doing multiple sequence alignments, filter to just the most common length (i.e. 566 amino acids).

```

lengths = Counter()

for accession, seqrecord in sequences.items():
    lengths[len(seqrecord.seq)] += 1

lengths.most_common(1)[0][0]

```
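The length filter is easy to check on toy data. The sketch below uses a handful of made-up strings in place of the real HA records (the names and sequences are hypothetical) and keeps only those with the most common length.

```python
from collections import Counter

# Toy sequences standing in for the HA records (hypothetical data).
toy_sequences = {"s1": "MKTII", "s2": "MKAIL", "s3": "MKT", "s4": "MKTLL"}

# Count how often each sequence length occurs.
toy_lengths = Counter(len(seq) for seq in toy_sequences.values())
most_common_length = toy_lengths.most_common(1)[0][0]

# Keep only the sequences that have the most common length.
kept = {name: seq for name, seq in toy_sequences.items()
        if len(seq) == most_common_length}
print(most_common_length, sorted(kept))
```

Because every kept sequence has the same length, position `i` can be treated as the same column in all of them, which is the shortcut that avoids a real multiple sequence alignment.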

There are sequences that are ambiguously labeled. For example, "Environment" and "Avian" samples. We would like to give a more detailed prediction as to which hosts it likely came from. Therefore, take out the "Environment" and "Avian" samples.

```

# For convenience, we will only work with amino acid sequences of length 566.
final_sequences = dict()
for accession, seqrecord in sequences.items():
    host = seqrecord.id.split('|')[1]
    if len(seqrecord.seq) == lengths.most_common(1)[0][0]:
        final_sequences[accession] = seqrecord

```

Create a `numpy` array to store the alignment.

```

alignment = MultipleSeqAlignment(final_sequences.values())

alignment_array = np.array([list(rec) for rec in alignment])

```

The first piece of meat in the code begins here. In the cell below, we convert the sequence matrix into a series of binary `1`s and `0`s, to encode the features as numbers. This is important - AFAIK, almost all machine learning algorithms require numerical inputs.

```

# Create an empty dataframe.

# df = pd.DataFrame()

# # Create a dictionary of position + label binarizer objects.

# pos_lb = dict()

# for pos in range(lengths.most_common(1)[0][0]):

# # Convert position 0 by binarization.

# lb = LabelBinarizer()

# # Fit to the alignment at that position.

# lb.fit(alignment_array[:,pos])

# # Add the label binarizer to the dictionary.

# pos_lb[pos] = lb

# # Create a dataframe.

# pos = pd.DataFrame(lb.transform(alignment_array[:,pos]))

# # Append the columns to the dataframe.

# for col in pos.columns:

# maxcol = len(df.columns)

# df[maxcol + 1] = pos[col]

from isoelectric_point import isoelectric_points

df = pd.DataFrame(alignment_array).replace(isoelectric_points)

# Add in host data

df['host'] = [s.id.split('|')[1] for s in final_sequences.values()]

df = df.replace({'X':np.nan, 'J':np.nan, 'B':np.nan, 'Z':np.nan})

df.dropna(inplace=True)

df.to_csv('isoelectric_point_data.csv')

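# Standardize each feature to zero mean and unit variance.
from sklearn.preprocessing import StandardScaler

# Keep df's index so that the host labels line up with the right rows after dropna.
df_std = pd.DataFrame(StandardScaler().fit_transform(df.iloc[:, :-1]), index=df.index)
df_std['host'] = df['host']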

ambiguous_hosts = ['Environment', 'Avian', 'Unknown', 'NA', 'Bird', 'Sea_Mammal', 'Aquatic_Bird']

unknowns = df_std[df_std['host'].isin(ambiguous_hosts)]

train_test_df = df_std[df_std['host'].isin(ambiguous_hosts) == False]

train_test_df.dropna(inplace=True)

```

With the cell above, we now have a sequence feature matrix, in which the 566 amino acids positions have been expanded to 6750 columns of binary sequence features.
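To make the binary expansion concrete, here is a small pure-Python sketch on a toy alignment. It is only an illustration under my own assumptions: the sequences are made up, and the column layout differs in detail from what `LabelBinarizer` produces (for instance, it handles two-class positions differently).

```python
# Toy alignment: three sequences, four positions (hypothetical data).
toy_alignment = ["MKTA", "MRTA", "MKSA"]

def one_hot_columns(alignment):
    """Return (names, rows) with one binary column per (position, letter)."""
    n_positions = len(alignment[0])
    names = []
    for pos in range(n_positions):
        # Every letter observed at this position gets its own column.
        letters = sorted({seq[pos] for seq in alignment})
        names.extend((pos, letter) for letter in letters)
    rows = [[1 if seq[pos] == letter else 0 for pos, letter in names]
            for seq in alignment]
    return names, rows

names, rows = one_hot_columns(toy_alignment)
print(names)
for row in rows:
    print(row)
```

Four positions expand to six columns here, because positions 1 and 2 each show two different letters. The same mechanism is what turns a few hundred alignment positions into several thousand binary features.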

The next step is to grab out the host species labels, and encode them as 1s and 0s as well.

```

set([i for i in train_test_df['host'].values])

# Grab out the labels.

output_lb = LabelBinarizer()

output_lb.fit(train_test_df['host'])

Y = output_lb.fit_transform(train_test_df['host'])

Y = Y.astype(np.float32) # Necessary for passing the data into nolearn.

Y.shape

X = train_test_df.iloc[:,:-1].values  # .ix is no longer available in recent pandas

X = X.astype(np.float32) # Necessary for passing the data into nolearn.

X.shape

```

Next up, we do the train/test split.

```

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, test_size=0.25, random_state=42)

```

For comparison, let's train a random forest classifier, and see what the concordance is between the predicted labels and the actual labels.

```

rf = RandomForestClassifier()

rf.fit(X_train, Y_train)

predictions = rf.predict(X_test)

predicted_labels = output_lb.inverse_transform(predictions)

# Compute the mutual information between the predicted labels and the actual labels.

mi(predicted_labels, output_lb.inverse_transform(Y_test))

```

By the majority-consensus rule, and using mutual information as the metric for scoring, things look not so bad! As mentioned above, the `RandomForestClassifier` is a pretty powerful method for finding non-linear patterns between features and class labels.
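Since mutual information is the scoring metric throughout, a short pure-Python sketch of how it is computed may help. As far as I know this mirrors the definition behind `mutual_info_score` (natural logarithm, no normalization), but treat it as an illustration with made-up labels rather than a drop-in replacement.

```python
import math
from collections import Counter

def mutual_information(labels_a, labels_b):
    """Mutual information (in nats) between two equal-length label lists."""
    n = len(labels_a)
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    count_ab = Counter(zip(labels_a, labels_b))
    total = 0.0
    for (a, b), n_ab in count_ab.items():
        # p(a, b) * log( p(a, b) / (p(a) * p(b)) )
        total += (n_ab / n) * math.log(n_ab * n / (count_a[a] * count_b[b]))
    return total

truth = ["Human", "Human", "Swine", "Swine"]
mi_perfect = mutual_information(truth, truth)
mi_unrelated = mutual_information(truth, ["Human", "Swine", "Human", "Swine"])
print(mi_perfect, mi_unrelated)
```

Note that the score is not bounded by 1: a perfect prediction scores the entropy of the labels (here `log(2)`), so the value should be read relative to that ceiling rather than as an accuracy.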

Uncomment the cell below if you want to try the `scikit-learn`'s `ExtraTreesClassifier`.

```

# et = ExtraTreesClassifier()

# et.fit(X_train, Y_train)

# predictions = et.predict(X_test)

# predicted_labels = output_lb.inverse_transform(predictions)

# mi(predicted_labels, output_lb.inverse_transform(Y_test))

```

As a demonstration of how this model can be used, let's look at the ambiguously labeled sequences, i.e. those from "Environment" and "Avian", to see whether we can make a prediction as to which host they likely came from.

```

# unknown_hosts = unknowns.ix[:,:-1].values

# preds = rf.predict(unknown_hosts)

# output_lb.inverse_transform(preds)

```

Alrighty - we're now ready to try out a neural network! For this try, we will use `lasagne` and `nolearn`, two packages which have made things pretty easy for building neural networks. In this segment, I'm not going to show experiments with multiple architectures, activations and the like. The goal is to illustrate how easy the specification of a neural network is.

The network architecture that we'll try is as such:

- 1 input layer, of shape 6750 (i.e. taking in the columns as data).

- 1 hidden layer, with 300 units.

- 1 output layer, of shape 140 (i.e. each of the class labels).

```

from lasagne import nonlinearities as nl

net1 = NeuralNet(layers=[

('input', layers.InputLayer),

('hidden1', layers.DenseLayer),

#('dropout', layers.DropoutLayer),

#('hidden2', layers.DenseLayer),

#('dropout2', layers.DropoutLayer),

('output', layers.DenseLayer),

],

# Layer parameters:

input_shape=(None, X.shape[1]),

hidden1_num_units=300,

#dropout_p=0.3,

#hidden2_num_units=500,

#dropout2_p=0.3,

output_nonlinearity=nl.softmax,

output_num_units=Y.shape[1],

#allow_input_downcast=True,

# Optimization Method:

update=nesterov_momentum,

update_learning_rate=0.01,

update_momentum=0.9,

regression=True,

max_epochs=100,

verbose=1

)

```
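The output layer uses a softmax nonlinearity, which turns the raw scores of the last layer into probabilities over the class labels. Here is a minimal pure-Python sketch of that function; the input scores are arbitrary illustrative numbers, and this is not `lasagne`'s implementation.

```python
import math

def softmax(scores):
    """Convert raw output scores into probabilities that sum to 1."""
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

probs = softmax([2.0, 1.0, 0.1])
print(probs, sum(probs))
```

The ordering of the scores is preserved, so the class with the largest raw score also gets the largest probability.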

Training a simple neural network on my MacBook Air takes quite a bit of time :). But the function call for fitting it is a simple `net1.fit(X_train, Y_train)`.

```

net1.fit(X_train, Y_train)

```

Let's grab out the predictions!

```

preds = net1.predict(X_test)

preds.shape

```

We're going to see how well the classifier did by examining the class labels. The way to visualize this is to have, say, the class labels on the X-axis, and the probability of prediction on the Y-axis. We can do this sample by sample. Here's a simple example with no frills in the matplotlib interface.

```

import matplotlib.pyplot as plt

%matplotlib inline

plt.bar(np.arange(len(preds[0])), preds[0])

```

Alrighty, let's add some frills - the class labels, the probability of each class label, and the original class label.

```

# NOTE: change the value of i to any valid row index of preds.

i = 111

plt.figure(figsize=(20,5))

plt.bar(np.arange(len(output_lb.classes_)), preds[i])

plt.xticks(np.arange(len(output_lb.classes_)) + 0.5, output_lb.classes_, rotation='vertical')

plt.title('Original Label: ' + output_lb.inverse_transform(Y_test)[i])

plt.show()

# print(output_lb.inverse_transform(Y_test)[i])

```

Let's do a majority-consensus rule applied to the labels, and then compute the mutual information score again.

```

preds_labels = []

for i in range(preds.shape[0]):
    maxval = max(preds[i])
    pos = list(preds[i]).index(maxval)
    preds_labels.append(output_lb.classes_[pos])

mi(preds_labels, output_lb.inverse_transform(Y_test))

```

With a score of 0.73, that's not bad either! It certainly didn't outperform the `RandomForestClassifier`, but the default parameters on the RFC were probably pretty good to begin with. Notice how little tweaking on the neural network we had to do as well.
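The majority-consensus step boils down to picking, for each sample, the class with the highest predicted probability. A compact sketch of that step, using hypothetical class names and probabilities:

```python
# Hypothetical class names and per-sample probabilities.
toy_classes = ["Chicken", "Human", "Swine"]
toy_preds = [
    [0.1, 0.7, 0.2],
    [0.6, 0.3, 0.1],
]

# For each row, take the index of the largest probability and look up its label.
toy_labels = [toy_classes[max(range(len(row)), key=row.__getitem__)]
              for row in toy_preds]
print(toy_labels)
```

With `numpy` arrays the same thing could be written with `argmax` along the class axis; the loop in the notebook does the equivalent by hand.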

For good measure, these were the class labels. Notice how successful influenza has been in replicating across the many different species!

```

output_lb.classes_

```

# The biology behind this dataset.

A bit more about the biology of influenza.

If you made it this far, thank you for hanging on! How does this mini project relate to the biology of flu?

As the flu evolves and moves between viral hosts, it gradually adapts to that host. This allows it to successfully establish an infection in the host population.

We can observe the viral host as we sample viruses from it. Sometimes, we don't catch the virus in its adapted state but in its un-adapted state, as if it had freshly arrived from another host population. That is likely why some of the class labels are mis-identified.

Also, there are environmentally sampled isolates. They obviously aren't simply replicating in the environment (i.e. bodies of water), but in some host, and were shed into the water. For these guys, the host labels won't necessarily match up, as there'll be a stronger signal with particular hosts - whether it be from ducks, pigs or even humans.

# Next steps?

There are a few obvious things that can be done.

1. Latin hypercube sampling for Random Forest parameters.

2. Experimenting with adding more layers, tweaking the layer types etc.

What else might be done? Ping me at ericmajinglong@gmail.com with the subject "neural nets and HA". :)

| github_jupyter |

```

import matplotlib.pyplot as plt

import numpy as np

# if using a jupyter notebook

%matplotlib inline

theta = np.arange(0,np.pi,0.001) # start,stop,step

scale = 1

cos_theta = np.cos(theta)

phi_theta = 3*np.cos(0.5*theta-3/2*np.pi)+1

phi_linear = -(1+2 * np.cos(0.5))/np.pi * theta + np.cos(0.5)

phi_large = -0.876996 * theta + 0.5

phi_zero = -0.876996 * theta

phi_log = -np.log(theta+1)+1

sphereFace = np.cos(1.35 * theta)

arcFace = np.cos(theta+0.5)

cosFace = np.cos(theta)-0.35

cm1 = np.cos(theta+0.3)-0.2

cm2 = np.cos(0.9*theta +0.4) - 0.15

y = 340/(19*np.pi**2) * theta**2 - 397/(19*np.pi) * theta+1

linear_arcface = (np.pi- 2 * theta)/np.pi

li_margin_arcface = (np.pi- 2 * (theta+0.45))/np.pi

plt.plot(theta, cos_theta, label='Softmax $(1.0, 1.0, 0.0, 0.00)$')

#plt.plot(theta, phi_theta, label='Phi_theta $(3.0, 0.5, -4.71, -1)$')

plt.plot(theta, phi_linear, label=r'Phi_linear $\frac{-(1+2*cos(0.5))}{\pi}\theta+cos(0.5)$')

#plt.plot(theta, phi_large, label=r'large_linear')

#plt.plot(theta, phi_zero, label=r'Phi_zero')

#plt.plot(theta, sphereFace, label='SphereFace $(1.0, 1.35, 0.0, 0.00)$')

plt.plot(theta, arcFace, label='ArcFace $(1.0, 1.0, 0.5, 0.00)$')

#plt.plot(theta, cosFace, label='CosFace $(1.0, 1.0, 0.0, 0.35)$')

plt.plot(theta, linear_arcface, label='li-arcface')

plt.plot(theta, li_margin_arcface, label='li_margin_arcface 0.45')

#plt.plot(theta, cm1, label='CM1 (1.0, 1.0, 0.3, 0.20)')

#plt.plot(theta, cm2, label='CM2 (1.0, 0.9, 0.4, 0.15)' )

#plt.plot(theta, y, label='y theta 10')

plt.legend(loc='center left', bbox_to_anchor=(1, 0.5))

plt.grid(linestyle='--')

plt.show()

start = -4

stop = 4

step = 0.01

def compute_smooth_x(sigma):
    sigma_y = 1.0/(sigma**2)
    x_neg_1 = np.arange(start, -1*sigma_y, step) # start,stop,step
    x_neg_2 = np.arange(-1*sigma_y, 0, step) # start,stop,step
    x_pos_1 = np.arange(0, sigma_y, step) # start,stop,step
    x_pos_2 = np.arange(sigma_y, stop, step) # start,stop,step
    x_center = np.concatenate((x_neg_2, x_pos_1))
    smooth_x_center = (sigma * x_center)**2 / 2
    smooth_x_left = np.abs(x_neg_1) - 0.5/ (sigma**2)
    smooth_x_right = np.abs(x_pos_2) - 0.5/ (sigma**2)
    smooth_x = np.concatenate((smooth_x_left, smooth_x_center, smooth_x_right))
    x = np.concatenate((x_neg_1, x_neg_2, x_pos_1, x_pos_2))
    return x, smooth_x

x = np.arange(start, stop, step) # start,stop,step

square_x = x**2

abs_x = np.abs(x)

x_sigma_1, smooth_x_sigma_1 = compute_smooth_x(1.0)

x_sigma_3, smooth_x_sigma_3 = compute_smooth_x(3.0)

plt.plot(x, square_x, label='$x^2$')

plt.plot(x, abs_x, label='$abs(x)$')

plt.plot(x_sigma_1, smooth_x_sigma_1, label='smooth(x)')

plt.plot(x_sigma_3, smooth_x_sigma_3, label='smooth(x) sigma 3')

plt.plot(x_sigma_3, 4.0*smooth_x_sigma_3, label='smooth(x) sigma 3 scale 4')

plt.legend(loc='center left', bbox_to_anchor=(1, 0.5))

plt.grid(linestyle='--')

plt.show()

x = np.arange(np.exp(-1), np.exp(1), 0.01) # start,stop,step

y_2 = x**2

y_4 = x**4

y_8 = x**8

y_16 = x**16

y_32 = x**32

y_64 = x**64

plt.plot(x, y_2, label='$x^{2}$')

plt.plot(x, y_4, label='$x^{4}$')

plt.legend(loc='center left', bbox_to_anchor=(1, 0.5))

plt.grid(linestyle='--')

plt.show()

plt.plot(x, y_8, label='$x^{8}$')

plt.plot(x, y_16, label='$x^{16}$')

plt.plot(x, y_32, label='$x^{32}$')

plt.plot(x, y_64, label='$x^{64}$')

plt.legend(loc='center left', bbox_to_anchor=(1, 0.5))

plt.grid(linestyle='--')

plt.show()

```
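The piecewise curve built by `compute_smooth_x` can also be written as a scalar function, which makes the two regimes easier to see. This is a sketch that follows the same formulas as the cell above; the function name `smooth_l1` is my own label for it.

```python
def smooth_l1(x, sigma=1.0):
    """Quadratic for |x| < 1/sigma**2, linear outside that interval."""
    threshold = 1.0 / sigma**2
    if abs(x) < threshold:
        return (sigma * x) ** 2 / 2
    return abs(x) - 0.5 / sigma**2

for value in (0.0, 0.5, 2.0):
    print(value, smooth_l1(value), smooth_l1(value, sigma=3.0))
```

Both branches give `0.5 / sigma**2` at the threshold, so the curve is continuous; a larger `sigma` shrinks the quadratic region and makes the function behave like `abs(x)` almost everywhere.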

| github_jupyter |

This is a companion notebook for the book [Deep Learning with Python, Second Edition](https://www.manning.com/books/deep-learning-with-python-second-edition?a_aid=keras&a_bid=76564dff). For readability, it only contains runnable code blocks and section titles, and omits everything else in the book: text paragraphs, figures, and pseudocode.

**If you want to be able to follow what's going on, I recommend reading the notebook side by side with your copy of the book.**

This notebook was generated for TensorFlow 2.6.

# Deep learning for text

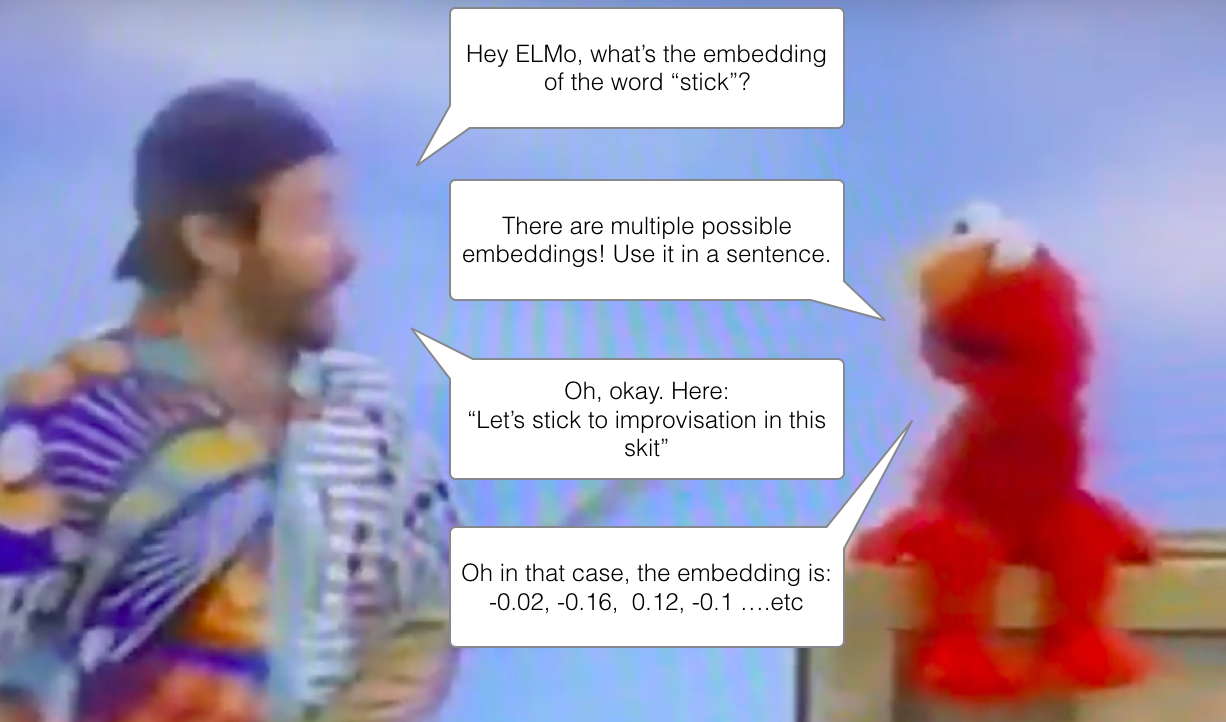

## Natural Language Processing: the bird's eye view

## Preparing text data

### Text standardization

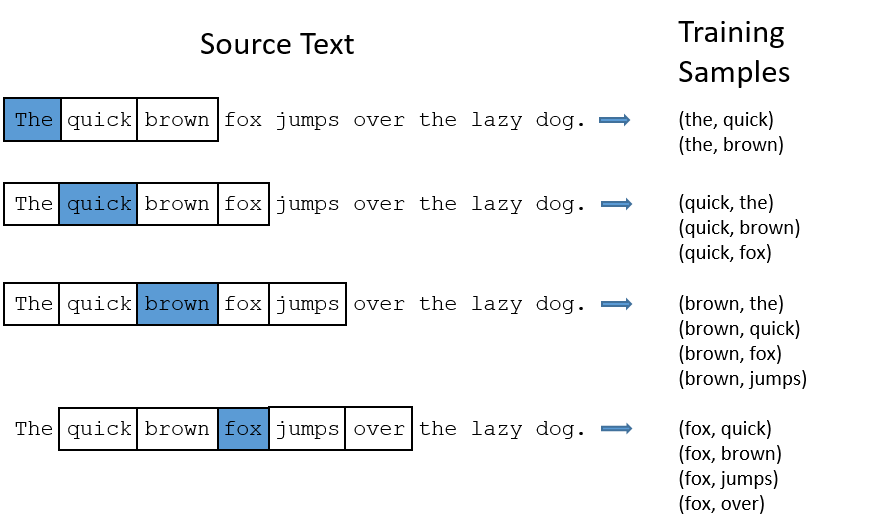

### Text splitting (tokenization)

### Vocabulary indexing

### Using the `TextVectorization` layer

```

import string

class Vectorizer:
    def standardize(self, text):
        text = text.lower()
        return "".join(char for char in text if char not in string.punctuation)

    def tokenize(self, text):
        text = self.standardize(text)
        return text.split()

    def make_vocabulary(self, dataset):
        self.vocabulary = {"": 0, "[UNK]": 1}
        for text in dataset:
            text = self.standardize(text)
            tokens = self.tokenize(text)
            for token in tokens:
                if token not in self.vocabulary:
                    self.vocabulary[token] = len(self.vocabulary)
        self.inverse_vocabulary = dict(
            (v, k) for k, v in self.vocabulary.items())

    def encode(self, text):
        text = self.standardize(text)
        tokens = self.tokenize(text)
        return [self.vocabulary.get(token, 1) for token in tokens]

    def decode(self, int_sequence):
        return " ".join(
            self.inverse_vocabulary.get(i, "[UNK]") for i in int_sequence)

vectorizer = Vectorizer()

dataset = [

"I write, erase, rewrite",

"Erase again, and then",

"A poppy blooms.",

]

vectorizer.make_vocabulary(dataset)

test_sentence = "I write, rewrite, and still rewrite again"

encoded_sentence = vectorizer.encode(test_sentence)

print(encoded_sentence)

decoded_sentence = vectorizer.decode(encoded_sentence)

print(decoded_sentence)

from tensorflow.keras.layers import TextVectorization

text_vectorization = TextVectorization(

output_mode="int",

)

import re

import string

import tensorflow as tf

def custom_standardization_fn(string_tensor):
    lowercase_string = tf.strings.lower(string_tensor)
    return tf.strings.regex_replace(
        lowercase_string, f"[{re.escape(string.punctuation)}]", "")

def custom_split_fn(string_tensor):
    return tf.strings.split(string_tensor)

text_vectorization = TextVectorization(

output_mode="int",

standardize=custom_standardization_fn,

split=custom_split_fn,

)

dataset = [

"I write, erase, rewrite",

"Erase again, and then",

"A poppy blooms.",

]

text_vectorization.adapt(dataset)

```

**Displaying the vocabulary**

```

text_vectorization.get_vocabulary()

vocabulary = text_vectorization.get_vocabulary()

test_sentence = "I write, rewrite, and still rewrite again"

encoded_sentence = text_vectorization(test_sentence)

print(encoded_sentence)

inverse_vocab = dict(enumerate(vocabulary))

decoded_sentence = " ".join(inverse_vocab[int(i)] for i in encoded_sentence)

print(decoded_sentence)

```

## Two approaches for representing groups of words: sets and sequences

### Preparing the IMDB movie reviews data

```

!curl -O https://ai.stanford.edu/~amaas/data/sentiment/aclImdb_v1.tar.gz

!tar -xf aclImdb_v1.tar.gz

!rm -r aclImdb/train/unsup

!cat aclImdb/train/pos/4077_10.txt

import os, pathlib, shutil, random

base_dir = pathlib.Path("aclImdb")

val_dir = base_dir / "val"

train_dir = base_dir / "train"

for category in ("neg", "pos"):
    os.makedirs(val_dir / category)
    files = os.listdir(train_dir / category)
    random.Random(1337).shuffle(files)
    num_val_samples = int(0.2 * len(files))
    val_files = files[-num_val_samples:]
    for fname in val_files:
        shutil.move(train_dir / category / fname,
                    val_dir / category / fname)

from tensorflow import keras

batch_size = 32

train_ds = keras.utils.text_dataset_from_directory(

"aclImdb/train", batch_size=batch_size

)

val_ds = keras.utils.text_dataset_from_directory(

"aclImdb/val", batch_size=batch_size

)

test_ds = keras.utils.text_dataset_from_directory(

"aclImdb/test", batch_size=batch_size

)

```

**Displaying the shapes and dtypes of the first batch**

```

for inputs, targets in train_ds:
    print("inputs.shape:", inputs.shape)
    print("inputs.dtype:", inputs.dtype)
    print("targets.shape:", targets.shape)
    print("targets.dtype:", targets.dtype)
    print("inputs[0]:", inputs[0])
    print("targets[0]:", targets[0])
    break

```

### Processing words as a set: the bag-of-words approach

#### Single words (unigrams) with binary encoding

**Preprocessing our datasets with a `TextVectorization` layer**

```

text_vectorization = TextVectorization(

max_tokens=20000,

output_mode="binary",

)

text_only_train_ds = train_ds.map(lambda x, y: x)

text_vectorization.adapt(text_only_train_ds)

binary_1gram_train_ds = train_ds.map(lambda x, y: (text_vectorization(x), y))

binary_1gram_val_ds = val_ds.map(lambda x, y: (text_vectorization(x), y))

binary_1gram_test_ds = test_ds.map(lambda x, y: (text_vectorization(x), y))

```
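As a rough illustration of what binary (multi-hot) encoding produces, here is a pure-Python sketch. The vocabulary and sentence are made up, and the index layout is an assumption for the example rather than a statement about the layer's internals.

```python
def multi_hot(tokens, vocabulary):
    """Binary vector with a 1 at the index of every vocabulary token present."""
    index = {token: i for i, token in enumerate(vocabulary)}
    vector = [0] * len(vocabulary)
    for token in tokens:
        # Tokens outside the vocabulary share index 0, the "[UNK]" slot here.
        vector[index.get(token, 0)] = 1
    return vector

toy_vocabulary = ["[UNK]", "the", "movie", "was", "great", "bad"]
encoded = multi_hot("the movie was really great".split(), toy_vocabulary)
print(encoded)
```

Word order and repetition are discarded: the vector only records which vocabulary entries occur, which is what makes this a bag-of-words representation.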

**Inspecting the output of our binary unigram dataset**

```

for inputs, targets in binary_1gram_train_ds:
    print("inputs.shape:", inputs.shape)
    print("inputs.dtype:", inputs.dtype)
    print("targets.shape:", targets.shape)
    print("targets.dtype:", targets.dtype)
    print("inputs[0]:", inputs[0])
    print("targets[0]:", targets[0])
    break

```

**Our model-building utility**

```

from tensorflow import keras

from tensorflow.keras import layers

def get_model(max_tokens=20000, hidden_dim=16):
    inputs = keras.Input(shape=(max_tokens,))
    x = layers.Dense(hidden_dim, activation="relu")(inputs)
    x = layers.Dropout(0.5)(x)
    outputs = layers.Dense(1, activation="sigmoid")(x)
    model = keras.Model(inputs, outputs)
    model.compile(optimizer="rmsprop",
                  loss="binary_crossentropy",
                  metrics=["accuracy"])
    return model

```

**Training and testing the binary unigram model**

```

model = get_model()

model.summary()

callbacks = [

keras.callbacks.ModelCheckpoint("binary_1gram.keras",

save_best_only=True)

]

model.fit(binary_1gram_train_ds.cache(),

validation_data=binary_1gram_val_ds.cache(),

epochs=10,

callbacks=callbacks)

model = keras.models.load_model("binary_1gram.keras")

print(f"Test acc: {model.evaluate(binary_1gram_test_ds)[1]:.3f}")

```

#### Bigrams with binary encoding

**Configuring the `TextVectorization` layer to return bigrams**

```

text_vectorization = TextVectorization(

ngrams=2,

max_tokens=20000,

output_mode="binary",

)

```
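For intuition about what `ngrams=2` adds to the token stream, here is a pure-Python sketch that generates all n-grams up to a given size from a token list. The helper name and example sentence are hypothetical; the layer itself does this internally.

```python
def ngrams_up_to(tokens, n):
    """Return all 1..n-grams of a token list, each joined with spaces."""
    grams = []
    for size in range(1, n + 1):
        for i in range(len(tokens) - size + 1):
            grams.append(" ".join(tokens[i:i + size]))
    return grams

grams = ngrams_up_to("the cat sat".split(), 2)
print(grams)
```

Bigrams such as "the cat" carry a little local word-order information that single tokens cannot express.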

**Training and testing the binary bigram model**

```

text_vectorization.adapt(text_only_train_ds)

binary_2gram_train_ds = train_ds.map(lambda x, y: (text_vectorization(x), y))

binary_2gram_val_ds = val_ds.map(lambda x, y: (text_vectorization(x), y))

binary_2gram_test_ds = test_ds.map(lambda x, y: (text_vectorization(x), y))

model = get_model()

model.summary()

callbacks = [

keras.callbacks.ModelCheckpoint("binary_2gram.keras",

save_best_only=True)

]

model.fit(binary_2gram_train_ds.cache(),

validation_data=binary_2gram_val_ds.cache(),

epochs=10,

callbacks=callbacks)

model = keras.models.load_model("binary_2gram.keras")

print(f"Test acc: {model.evaluate(binary_2gram_test_ds)[1]:.3f}")

```

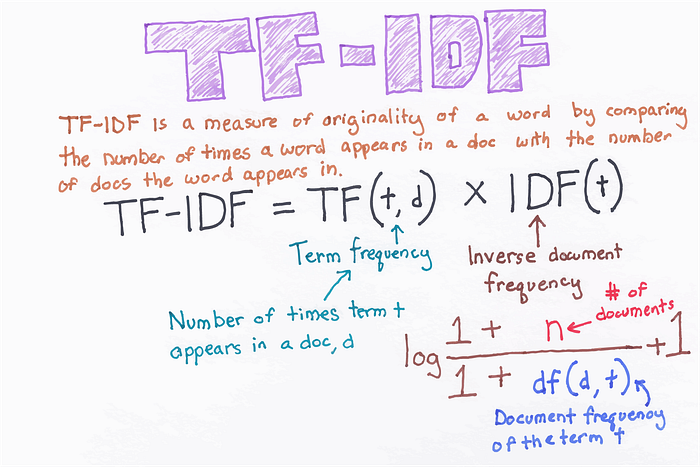

#### Bigrams with TF-IDF encoding

**Configuring the `TextVectorization` layer to return token counts**

```

text_vectorization = TextVectorization(

ngrams=2,

max_tokens=20000,

output_mode="count"

)

```

**Configuring the `TextVectorization` layer to return TF-IDF-weighted outputs**

```

text_vectorization = TextVectorization(

ngrams=2,

max_tokens=20000,

output_mode="tf_idf",

)

```
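The idea behind TF-IDF weighting can be sketched in a few lines of plain Python. This uses the textbook formula (term count times `log(N / document frequency)`) on made-up documents; it is an assumption-laden illustration, and the exact formula used by `TextVectorization` may differ.

```python
import math
from collections import Counter

def tf_idf(docs):
    """Textbook TF-IDF: term count times log(N / document frequency)."""
    n_docs = len(docs)
    # In how many documents does each token appear at least once?
    doc_freq = Counter(token for doc in docs for token in set(doc))
    weighted = []
    for doc in docs:
        counts = Counter(doc)
        weighted.append({t: c * math.log(n_docs / doc_freq[t])
                         for t, c in counts.items()})
    return weighted

toy_docs = [["good", "movie"], ["bad", "movie"], ["good", "good", "plot"]]
toy_weights = tf_idf(toy_docs)
for doc_weights in toy_weights:
    print(doc_weights)
```

Tokens that appear in few documents ("plot", "bad") receive a larger weight per occurrence than tokens spread across many documents ("movie", "good").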

**Training and testing the TF-IDF bigram model**

```

text_vectorization.adapt(text_only_train_ds)

tfidf_2gram_train_ds = train_ds.map(lambda x, y: (text_vectorization(x), y))

tfidf_2gram_val_ds = val_ds.map(lambda x, y: (text_vectorization(x), y))

tfidf_2gram_test_ds = test_ds.map(lambda x, y: (text_vectorization(x), y))

model = get_model()

model.summary()

callbacks = [

keras.callbacks.ModelCheckpoint("tfidf_2gram.keras",

save_best_only=True)

]

model.fit(tfidf_2gram_train_ds.cache(),

validation_data=tfidf_2gram_val_ds.cache(),

epochs=10,

callbacks=callbacks)

model = keras.models.load_model("tfidf_2gram.keras")

print(f"Test acc: {model.evaluate(tfidf_2gram_test_ds)[1]:.3f}")

inputs = keras.Input(shape=(1,), dtype="string")

processed_inputs = text_vectorization(inputs)

outputs = model(processed_inputs)

inference_model = keras.Model(inputs, outputs)

import tensorflow as tf

raw_text_data = tf.convert_to_tensor([

["That was an excellent movie, I loved it."],

])

predictions = inference_model(raw_text_data)

print(f"{float(predictions[0] * 100):.2f} percent positive")

```

| github_jupyter |

# 2.1.6 Obtaining data via an API

Use the Yahoo API to retrieve shopping review comments.

```

# Listing 2.1.20
# Fetch the list of Yahoo Shopping category IDs
import requests
import json
import time
import csv

# Endpoint
url_cat = 'https://shopping.yahooapis.jp/ShoppingWebService/V1/json/categorySearch'

# Application ID (set the value obtained with the method on p. 34)
appid = 'xxxx'

# File that will hold all categories
all_categories_file = './all_categories.csv'

# Helper that issues an API request
def r_get(url, dct):
    time.sleep(1)  # wait one second between requests
    return requests.get(url, params=dct)

# Generator that yields the child categories of cat_id
def get_cats(cat_id):
    try:
        result = r_get(url_cat, {'appid': appid, 'category_id': cat_id})
        cats = result.json()['ResultSet']['0']['Result']['Categories']['Children']
        for i, cat in cats.items():
            if i != '_container':
                yield cat['Id'], {'short': cat['Title']['Short'], 'medium': cat['Title']['Medium'], 'long': cat['Title']['Long']}
    except Exception:
        # Ignore request or parsing errors and yield nothing for this category
        pass

# Listing 2.1.21
# Generate the category list CSV file

# Header (column names are kept in Japanese because later listings query them by name)
output_buffer = [['カテゴリコードlv1', 'カテゴリコードlv2', 'カテゴリコードlv3',
                  'カテゴリ名lv1', 'カテゴリ名lv2', 'カテゴリ名lv3', 'カテゴリ名lv3_long']]

with open(all_categories_file, 'w') as f:
    writer = csv.writer(f, lineterminator='\n')
    writer.writerows(output_buffer)
    output_buffer = []

# Category level 1
for id1, title1 in get_cats(1):
    print('Category level 1:', title1['short'])
    try:
        # Category level 2
        for id2, title2 in get_cats(id1):
            # Category level 3
            for id3, title3 in get_cats(id2):
                wk = [id1, id2, id3, title1['short'], title2['short'], title3['short'], title3['long']]
                output_buffer.append(wk)
        # Append the rows collected for this level-1 category to the file
        with open(all_categories_file, 'a') as f:
            writer = csv.writer(f, lineterminator='\n')
            writer.writerows(output_buffer)
            output_buffer = []
    except KeyError:
        continue

# Listing 2.1.22

# Check the contents of the CSV file

import pandas as pd

from IPython.display import display

df = pd.read_csv(all_categories_file)

display(df.head())

# Listing 2.1.23

# Look up the category code for smartphones

df1 = df.query("カテゴリコードlv3 == '49331'")

display(df1)

# Listing 2.1.24
# Fetch review comments
import requests
import time

url_review = 'https://shopping.yahooapis.jp/ShoppingWebService/V1/json/reviewSearch'

# Application ID
# (the real appid is masked here; set your own value)
appid = 'xxxx'

# Number of reviews per request. The API allows at most 50.
num_results = 50
num_reviews_per_cat = 99999999

# Maximum and minimum text length. Reviews whose body is longer or shorter than these are skipped.
max_len = 10000
min_len = 50

def r_get(url, dct):
    time.sleep(1)  # wait one second between requests
    return requests.get(url, params=dct)

# Return the reviews for the given category ID
def get_reviews(cat_id, max_items):
    # Number of reviews actually collected
    items = 0
    # Result list
    results = []
    # Start position
    start = 1

    while (items < max_items):
        result = r_get(url_review, {'appid': appid, 'category_id': cat_id, 'results': num_results, 'start': start})
        if result.ok:
            rs = result.json()['ResultSet']
        else:
            print('An error was returned: [cat id] {} [reason] {}-{}'.format(cat_id, result.status_code, result.reason))
            if result.status_code == 400:
                print('Status code 400 (bad request): skipping instead of aborting')
                break
            else:
                exit(True)

        avl = int(rs['totalResultsAvailable'])
        pos = int(rs['firstResultPosition'])
        ret = int(rs['totalResultsReturned'])
        #print('total hits: %d  start position: %d  returned: %d' % (avl, pos, ret))
        if ret == 0:
            # No more reviews: stop instead of requesting the same page forever
            break

        reviews = result.json()['ResultSet']['Result']
        for rev in reviews:
            desc_len = len(rev['Description'])
            if min_len > desc_len or max_len < desc_len:
                continue
            items += 1
            buff = {}
            buff['id'] = items
            buff['title'] = rev['ReviewTitle'].replace('\n', '').replace(',', '、')
            buff['rate'] = int(float(rev['Ratings']['Rate']))
            buff['comment'] = rev['Description'].replace('\n', '').replace(',', '、')
            buff['name'] = rev['Target']['Name']
            buff['code'] = rev['Target']['Code']
            results.append(buff)
            if items >= max_items:
                break
        start += ret
        #print('valid reviews: %d' % items)
    return results

# Listing 2.1.25
# Fetch and save the list of comments
import json
import pickle

# get_reviews(code, count): fetch review comments
# code: category code (as listed in all_categories.csv)
# count: number of reviews to fetch

result = get_reviews(49331,5)

print(json.dumps(result, indent=2,ensure_ascii=False))

```
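The paging logic in `get_reviews` (request a page, keep what is needed, advance `start` by the number of items returned) can be tried offline. The sketch below is an illustration only: `fetch_page` is a hypothetical stand-in for the API call and simply serves the integers 1 to 7, and `collect` is a hypothetical name.

```python
def fetch_page(start, page_size, total=7):
    """Hypothetical stand-in for one API call: items start..start+page_size-1."""
    last = min(start + page_size - 1, total)
    return list(range(start, last + 1))

def collect(max_items, page_size=3):
    """Page through the fake API until max_items are collected or data runs out."""
    results = []
    start = 1
    while len(results) < max_items:
        page = fetch_page(start, page_size)
        if not page:
            # An empty page means there is nothing left; stop instead of looping forever.
            break
        results.extend(page[:max_items - len(results)])
        start += len(page)  # advance by the number of items actually returned
    return results

first_five = collect(5)
everything = collect(100)
```

Asking for more items than exist ends cleanly because the empty page breaks the loop.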

| github_jupyter |


# IBM intro to spark lab, part 3 and DataCamp intro to Pyspark, lessons 3 and 4.

**This is a very comprehensive notebook on pyspark. It's a sequel to the notebook pyspark_fundamentals_1. This notebook contains tutorials from IBM and DataCamp combined.<br>

This notebook holds the IBM intro to spark tutorials part 3 and the DataCamp intro to pyspark course lessons 3 and 4.<br> A continuation link is provided at the end of this notebook**

First let's load spark dependencies to run in colab

```

!apt-get install openjdk-8-jdk-headless -qq > /dev/null

!wget -q http://apache.osuosl.org/spark/spark-2.4.5/spark-2.4.5-bin-hadoop2.7.tgz

!tar xf spark-2.4.5-bin-hadoop2.7.tgz

!pip install -q findspark

!pip install pyspark

# Set up required environment variables

import os

os.environ["JAVA_HOME"] = "/usr/lib/jvm/java-8-openjdk-amd64"

os.environ["SPARK_HOME"] = "/content/spark-2.4.5-bin-hadoop2.7"

```

Next let's instantiate a SparkContext object to connect to the spark cluster if none exists

```

from pyspark import SparkConf, SparkContext

try:
    conf = SparkConf().setMaster('local').setAppName('myApp')
    sc = SparkContext(conf=conf)
    print('SparkContext Initialised successfully!')
except Exception as e:
    print(e)

# Let's see the SparkContext Object

sc

```

Next let's create a SparkSession as our interface to the SparkContext we created above

```

from pyspark.sql import SparkSession

spark = SparkSession.builder.appName('myApp').getOrCreate()

# Let's see the SparkSession

spark

# Let's import other possible libraries we may use

import pandas as pd

import numpy as np

```

## DataCamp, Course 1 Contd..:

**Lesson 3: Getting started with machine learning pipelines**

________________________________________________________________________________

At the core of the pyspark.ml module are the Transformer and Estimator classes. Almost every other class in the module behaves similarly to these two basic classes.

Transformer classes have a .transform() method that takes a DataFrame and returns a new DataFrame; usually the original one with a new column appended. For example, you might use the class Bucketizer to create discrete bins from a continuous feature or the class PCA to reduce the dimensionality of your dataset using principal component analysis.

Estimator classes all implement a .fit() method. These methods also take a DataFrame, but instead of returning another DataFrame they return a model object. This can be something like a StringIndexerModel for including categorical data saved as strings in your models, or a RandomForestModel that uses the random forest algorithm for classification or regression.
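The fit/transform split described above can be mimicked in plain Python, without Spark. This is a sketch under stated assumptions: `TinyIndexer` and `TinyIndexerModel` are hypothetical names, rows are plain dictionaries rather than DataFrame rows, and the alphabetical tie-breaking is an assumption made here for determinism.

```python
from collections import Counter

class TinyIndexerModel:
    """Plays the role of a Transformer: holds a fitted mapping and applies it."""
    def __init__(self, mapping):
        self.mapping = mapping

    def transform(self, rows):
        # Return new rows with an extra "index" field; the input is left untouched.
        return [dict(row, index=self.mapping[row["carrier"]]) for row in rows]

class TinyIndexer:
    """Plays the role of an Estimator: fit() learns from data and returns a model."""
    def fit(self, rows):
        counts = Counter(row["carrier"] for row in rows)
        # Most frequent label gets index 0; ties are broken alphabetically (assumption).
        ordered = sorted(counts, key=lambda label: (-counts[label], label))
        return TinyIndexerModel({label: i for i, label in enumerate(ordered)})

rows = [{"carrier": "AS"}, {"carrier": "VX"}, {"carrier": "AS"}]
model = TinyIndexer().fit(rows)    # Estimator -> model
indexed = model.transform(rows)    # model (a Transformer) -> new data
```

The Estimator itself never changes the data; only the model it returns does.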

First let's grab our Flights, Airports and Planes data sets using Wget and then read these into Spark DataFrames

```

! wget 'https://assets.datacamp.com/production/repositories/1237/datasets/fa47bb54e83abd422831cbd4f441bd30fd18bd15/flights_small.csv'

! wget 'https://assets.datacamp.com/production/repositories/1237/datasets/6e5c4ac2a4799338ba7e13d54ce1fa918da644ba/airports.csv'

! wget 'https://assets.datacamp.com/production/repositories/1237/datasets/231480a2696c55fde829ce76d936596123f12c0c/planes.csv'

flights = spark.read.csv('flights_small.csv', header=True)

flights.show(5)

airports = spark.read.csv('airports.csv',header=True)

airports.show(5)

planes = spark.read.csv('planes.csv',header=True)

planes.show(5)

```

**Join the DataFrames**

In the next two chapters you'll be working to build a model that predicts whether or not a flight will be delayed based on the flights data. This model will also include information about the plane that flew the route, so the first step is to join the two tables: flights and planes!

```

# First, rename the year column of planes to plane_year to avoid duplicate column names.

planes = planes.withColumnRenamed('year','plane_year')

planes.show(5)

# Create a new DataFrame called model_data by joining the flights table with planes using the tailnum column as the key.

model_data = flights.join(planes, on='tailnum', how='left_outer')

model_data.show(5)

# Let's see how many rows exist

model_data.count()

# Let's print the schema to see what it looks like

model_data.printSchema()

```

**Data types**

Good work! Before you get started modeling, it's important to know that Spark's machine learning routines only handle numeric data. That means all of the columns you feed to a model must be either integers or decimals (called 'doubles' in Spark).

When we imported our data, we let Spark guess what kind of information each column held. Unfortunately, Spark doesn't always guess right and you can see that some of the columns in our DataFrame are strings containing numbers as opposed to actual numeric values.

To remedy this, you can use the .cast() method in combination with the .withColumn() method. It's important to note that .cast() works on columns, while .withColumn() works on DataFrames.

The only argument you need to pass to .cast() is the kind of value you want to create, in string form. For example, to create integers, you'll pass the argument "integer" and for decimal numbers you'll use "double".

You can put this call to .cast() inside a call to .withColumn() to overwrite the already existing column, just like you did in the previous chapter!

**String to integer**

Now you'll use the .cast() method you learned in the previous exercise to convert all the appropriate columns from your DataFrame model_data to integers!

To convert the type of a column using the .cast() method, you can write code like this:

```

dataframe = dataframe.withColumn("col", dataframe.col.cast("new_type"))

```

```

# Use the method .withColumn() to .cast() the following columns to type "integer". Access the columns using the df.col notation:

# model_data.arr_delay

# model_data.air_time

# model_data.month

# model_data.plane_year

model_data = model_data.withColumn("arr_delay", model_data.arr_delay.cast('integer'))

model_data = model_data.withColumn("air_time", model_data.air_time.cast('integer'))

model_data = model_data.withColumn("month", model_data.month.cast('integer'))

model_data = model_data.withColumn("plane_year", model_data.plane_year.cast('integer'))

# Let's see the schema data types again

model_data.printSchema()

```

**Create a new column**

In the last exercise, you converted the column plane_year to an integer. This column holds the year each plane was manufactured. However, your model will use the planes' age, which is slightly different from the year it was made!

Create the column plane_age using the .withColumn() method and subtracting the year of manufacture (column plane_year) from the year (column year) of the flight.

```

model_data = model_data.withColumn('plane_age', model_data.year - model_data.plane_year)

model_data.show(5)

```

**Making a Boolean**

Consider that you're modeling a yes or no question: is the flight late? However, your data contains the arrival delay in minutes for each flight. Thus, you'll need to create a boolean column which indicates whether the flight was late or not!

```

# Use the .withColumn() method to create the column is_arrival_late. This column is equal to model_data.arr_delay > 0.

model_data = model_data.withColumn('is_arrival_late', model_data.arr_delay > 0)

model_data.show(3)

# Convert this column to an integer column so that you can use it in your model and name it label

# (this is the default name for the response variable in Spark's machine learning routines).

model_data = model_data.withColumn('label', model_data.is_arrival_late.cast('integer'))

model_data.show(3)

```

**Remove missing values**

```

cols = model_data.columns

for col_name in cols:
    model_data = model_data.filter(col_name + ' is not Null')

# Let's see how many rows are left from the filter exercise above

model_data.count()

```

**Strings and factors**

As you know, Spark requires numeric data for modeling. So far this hasn't been an issue; even boolean columns can easily be converted to integers without any trouble. But you'll also be using the airline and the plane's destination as features in your model. These are coded as strings and there isn't any obvious way to convert them to a numeric data type.

Fortunately, PySpark has functions for handling this built into the pyspark.ml.feature submodule. You can create what are called 'one-hot vectors' to represent the carrier and the destination of each flight. A one-hot vector is a way of representing a categorical feature where every observation has a vector in which all elements are zero except for at most one element, which has a value of one (1).

Each element in the vector corresponds to a level of the feature, so it's possible to tell what the right level is by seeing which element of the vector is equal to one (1).

The first step to encoding your categorical feature is to create a StringIndexer. Members of this class are Estimators that take a DataFrame with a column of strings and map each unique string to a number. Then, the Estimator returns a Transformer that takes a DataFrame, attaches the mapping to it as metadata, and returns a new DataFrame with a numeric column corresponding to the string column.

The second step is to encode this numeric column as a one-hot vector using a OneHotEncoder. This works exactly the same way as the StringIndexer by creating an Estimator and then a Transformer. The end result is a column that encodes your categorical feature as a vector that's suitable for machine learning routines!

This may seem complicated, but don't worry! All you have to remember is that you need to create a StringIndexer and a OneHotEncoder, and the Pipeline will take care of the rest.
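To make the "at most one element" wording concrete, here is a plain-Python sketch of one-hot encoding an index produced by a string indexer. The function name `one_hot` is hypothetical, and the dense lists are used for readability (Spark stores these as sparse vectors). The `drop_last` flag mirrors the `dropLast=True` default of Spark's OneHotEncoder, under which the last category is represented by an all-zero vector.

```python
def one_hot(index, num_categories, drop_last=True):
    """Encode a category index as a list of 0.0/1.0 values."""
    size = num_categories - 1 if drop_last else num_categories
    vector = [0.0] * size
    if index < size:
        vector[index] = 1.0  # the dropped last category stays all zeros
    return vector

first = one_hot(0, 3)                     # first of three categories
last = one_hot(2, 3)                      # last category, dropped by default
last_kept = one_hot(2, 3, drop_last=False)
```

Dropping one level avoids a redundant column, since the last category is implied when every other element is zero.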

**Carrier column**

In this exercise you'll create a StringIndexer and a OneHotEncoder to code the carrier column. To do this, you'll call the class constructors with the arguments inputCol and outputCol.

The inputCol is the name of the column you want to index or encode, and the outputCol is the name of the new column that the Transformer should create.

```

# Create a StringIndexer called carr_indexer by calling StringIndexer() with inputCol="carrier" and outputCol="carrier_index".

from pyspark.ml.feature import StringIndexer

carr_indexer = StringIndexer(inputCol='carrier', outputCol='carrier_index')

# to immediately see the effect of the StringIndexer, lets say...

indexed = carr_indexer.fit(model_data).transform(model_data) # This creates a new data frame

indexed.show(3)

# Create a OneHotEncoder called carr_encoder by calling OneHotEncoder() with inputCol="carrier_index" and outputCol="carrier_fact".

from pyspark.ml.feature import OneHotEncoder

carr_encoder = OneHotEncoder(inputCol='carrier_index', outputCol='carrier_fact')

# to immediately see the effect of the OneHotEncoder, lets say...

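# Note: in Spark 2.4, OneHotEncoder is a Transformer with no .fit() method, so .transform() is called directly.
# In Spark 3.x it is an Estimator; there, use carr_encoder.fit(indexed).transform(indexed) instead.
encoded = carr_encoder.transform(indexed) # This creates a new data frame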

encoded.show(3)

```

**Destination Column**

Now you'll encode the dest column just like we did the carrier column.

```

# Create a StringIndexer called dest_indexer by calling StringIndexer() with inputCol="dest" and outputCol="dest_index".

dest_indexer = StringIndexer(inputCol='dest', outputCol='dest_index')

# Create a OneHotEncoder called dest_encoder by calling OneHotEncoder() with inputCol="dest_index" and outputCol="dest_fact".

dest_encoder = OneHotEncoder(inputCol='dest_index', outputCol='dest_fact')

```

**Assemble a vector**

The last step in the Pipeline is to combine all of the columns containing our features into a single column. This has to be done before modeling can take place because every Spark modeling routine expects the data to be in this form. You can do this by storing each of the values from a column as an entry in a vector. Then, from the model's point of view, every observation is a vector that contains all of the information about it and a label that tells the modeler what value that observation corresponds to.

Because of this, the pyspark.ml.feature submodule contains a class called VectorAssembler. This Transformer takes all of the columns you specify and combines them into a new vector column.

```

# Create a VectorAssembler by calling VectorAssembler() with the inputCols names as a list and the outputCol name "features".

# The list of columns should be ["month", "air_time", "carrier_fact", "dest_fact", "plane_age"].

from pyspark.ml.feature import VectorAssembler

vec_assembler = VectorAssembler(inputCols=["month", "air_time", "carrier_fact", "dest_fact", "plane_age"], outputCol='features')

```

**Create the pipeline**

You're finally ready to create a Pipeline!

Pipeline is a class in the pyspark.ml module that combines all the Estimators and Transformers that you've already created. This lets you reuse the same modeling process over and over again by wrapping it up in one simple object. Neat, right?

_Import Pipeline from pyspark.ml._

Call the Pipeline() constructor with the keyword argument stages to create a Pipeline called flights_pipe.<br>

stages should be a list holding all the stages you want your data to go through in the pipeline. Here this is just:

```

[dest_indexer, dest_encoder, carr_indexer, carr_encoder, vec_assembler]

```

```

from pyspark.ml import Pipeline

flights_pipe = Pipeline(stages=[dest_indexer, dest_encoder, carr_indexer, carr_encoder, vec_assembler])

```

**Test vs Train**

After you've cleaned your data and gotten it ready for modeling, one of the most important steps is to split the data into a test set and a train set. After that, don't touch your test data until you think you have a good model! As you're building models and forming hypotheses, you can test them on your training data to get an idea of their performance.

Once you've got your favorite model, you can see how well it predicts the new data in your test set. This never-before-seen data will give you a much more realistic idea of your model's performance in the real world when you're trying to predict or classify new data.

In Spark it's important to make sure you split the data after all the transformations. This is because operations like StringIndexer don't always produce the same index even when given the same list of strings.

**Transform the data:**

Hooray, now you're finally ready to pass your data through the Pipeline you created!

```

# Create the DataFrame piped_data by calling the Pipeline methods .fit() and .transform() in a chain.

# Both of these methods take model_data as their only argument.

piped_data = flights_pipe.fit(model_data).transform(model_data)

piped_data.show(3)

```

**Split the data**

Now that you've done all your manipulations, the last step before modeling is to split the data!

Use the DataFrame method .randomSplit() to split piped_data into two pieces, training with 75% of the data, and test with 25% of the data by passing the list [.75, .25] to the .randomSplit() method.

```

training, testing = piped_data.randomSplit([0.75, 0.25])

```

Let's see how many rows are in the training and testing sets

```

print('Training set has {}, while testing set has {} observations. Total is {} observations.'.format(training.count(), testing.count(), (training.count() + testing.count())))

```

## DataCamp, Course 1 Contd...:

**Lesson 4: Model tuning and selection**

________________________________________________________________________________

**What is logistic regression?**

The model you'll be fitting in this chapter is called a logistic regression. This model is very similar to a linear regression, but instead of predicting a numeric variable, it predicts the probability (between 0 and 1) of an event.

To use this as a classification algorithm, all you have to do is assign a cutoff point to these probabilities. If the predicted probability is above the cutoff point, you classify that observation as a 'yes' (in this case, the flight being late), if it's below, you classify it as a 'no'!
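A minimal sketch of that two-step process in plain Python: a logistic function turns a weighted sum into a probability, and a cutoff turns the probability into a class. The function names, coefficients, and the 0.5 cutoff are illustrative assumptions, not values from the flights model.

```python
import math

def predict_probability(features, coefficients, intercept):
    """Logistic regression: squash a linear combination into the range (0, 1)."""
    z = intercept + sum(w * x for w, x in zip(coefficients, features))
    return 1.0 / (1.0 + math.exp(-z))

def classify(probability, cutoff=0.5):
    """Return 1 ('yes', e.g. the flight is late) above the cutoff, else 0."""
    return 1 if probability > cutoff else 0

p = predict_probability([2.0], [1.5], -1.0)  # z = -1.0 + 1.5 * 2.0 = 2.0
label = classify(p)
```

Raising the cutoff makes the classifier more reluctant to predict "late"; lowering it does the opposite.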

You'll tune this model by testing different values for several hyperparameters. A hyperparameter is just a value in the model that's not estimated from the data, but rather is supplied by the user to maximize performance. For this course it's not necessary to understand the mathematics behind all of these values - what's important is that you'll try out a few different choices and pick the best one.

**Create the modeler**

The Estimator you'll be using is a LogisticRegression from the pyspark.ml.classification submodule.

```

# Import the LogisticRegression class from pyspark.ml.classification.

# Create a LogisticRegression called lr by calling LogisticRegression() with no arguments.

from pyspark.ml.classification import LogisticRegression

lr = LogisticRegression()

print(lr)

```

**Cross validation**

In the next few exercises you'll be tuning your logistic regression model using a procedure called k-fold cross validation. This is a method of estimating the model's performance on unseen data (like your test DataFrame).

It works by splitting the training data into a few different partitions. The exact number is up to you, but in this course you'll be using PySpark's default value of three. Once the data is split up, one of the partitions is set aside, and the model is fit to the others. Then the error is measured against the held out partition. This is repeated for each of the partitions, so that every block of data is held out and used as a test set exactly once. Then the error on each of the partitions is averaged. This is called the cross validation error of the model, and is a good estimate of the actual error on the held out data.

You'll be using cross validation to choose the hyperparameters by creating a grid of the possible pairs of values for the two hyperparameters, elasticNetParam and regParam, and using the cross validation error to compare all the different models so you can choose the best one!

Cross validation helps us to estimate the model error on the held-out testing data set
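The procedure can be sketched in a few lines of plain Python. This is an illustration under assumptions: the names `k_fold_indices` and `cross_validation_error` are hypothetical, rows are assigned to folds round-robin for determinism (Spark assigns them randomly), and `fold_error` stands in for "fit on the training rows, measure error on the held-out rows".

```python
def k_fold_indices(n_rows, k=3):
    """Yield (train_indices, held_out_indices) once for each of the k folds."""
    indices = list(range(n_rows))
    folds = [indices[i::k] for i in range(k)]  # round-robin assignment
    for i, held_out in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, held_out

def cross_validation_error(n_rows, fold_error, k=3):
    """Average the error measured on each held-out fold."""
    errors = [fold_error(train, held_out)
              for train, held_out in k_fold_indices(n_rows, k)]
    return sum(errors) / len(errors)

splits = list(k_fold_indices(6, k=3))
# Toy error function: pretend the error equals the size of the held-out fold.
cv_error = cross_validation_error(6, lambda train, held_out: len(held_out))
```

Every row lands in exactly one held-out fold, so each row is used for validation exactly once.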

**Create the evaluator**

The first thing you need when doing cross validation for model selection is a way to compare different models. Luckily, the pyspark.ml.evaluation submodule has classes for evaluating different kinds of models. Your model is a binary classification model, so you'll be using the BinaryClassificationEvaluator from the pyspark.ml.evaluation module.

This evaluator calculates the area under the ROC. This is a metric that combines the two kinds of errors a binary classifier can make (false positives and false negatives) into a simple number. You'll learn more about this towards the end of the chapter!

```

# Import the submodule pyspark.ml.evaluation as evals.

# Create evaluator by calling evals.BinaryClassificationEvaluator() with the argument metricName="areaUnderROC".

import pyspark.ml.evaluation as evals

evaluator = evals.BinaryClassificationEvaluator(metricName='areaUnderROC')

```

**Make a grid**

Next, you need to create a grid of values to search over when looking for the optimal hyperparameters. The submodule pyspark.ml.tuning includes a class called ParamGridBuilder that does just that (maybe you're starting to notice a pattern here; PySpark has a submodule for just about everything!).

You'll need to use the .addGrid() and .build() methods to create a grid that you can use for cross validation. The .addGrid() method takes a model parameter (an attribute of the model Estimator, lr, that you created a few exercises ago) and a list of values that you want to try. The .build() method takes no arguments, it just returns the grid that you'll use later.

```

# Import the submodule pyspark.ml.tuning under the alias tune.

import pyspark.ml.tuning as tune

# Call the class constructor ParamGridBuilder() with no arguments. Save this as grid.

grid = tune.ParamGridBuilder()

# Call the .addGrid() method on grid with lr.regParam as the first argument and np.arange(0, .1, .01) as the second argument.

# This second argument is a call to a function from the numpy module (imported as np) that creates an array of numbers from 0 up to (but not including) .1, incrementing by .01. Overwrite grid with the result.

# Add the hyperparameter

grid = grid.addGrid(lr.regParam, np.arange(0, .1, .01))

# Update grid again by calling the .addGrid() method a second time to create a grid for lr.elasticNetParam that includes only the values [0, 1].

grid = grid.addGrid(lr.elasticNetParam, [0,1])

# Build the grid

grid = grid.build()

```
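Conceptually, the built grid is the Cartesian product of the value lists. The plain-Python sketch below shows that idea, assuming ten `regParam` values (0.00 through 0.09) and the two `elasticNetParam` values; the dictionaries are a stand-in for illustration, not the objects that `ParamGridBuilder.build()` actually returns.

```python
from itertools import product

reg_params = [round(0.01 * i, 2) for i in range(10)]  # 0.0, 0.01, ..., 0.09
elastic_net_params = [0, 1]

# One candidate model per (regParam, elasticNetParam) pair.
param_grid = [
    {"regParam": reg, "elasticNetParam": enet}
    for reg, enet in product(reg_params, elastic_net_params)
]

num_candidates = len(param_grid)
num_fits_with_3_folds = num_candidates * 3  # each candidate is fit once per fold
```

Grid size grows multiplicatively with every hyperparameter added, which is worth keeping in mind before widening the search.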

**Make the validator**

The submodule pyspark.ml.tuning also has a class called CrossValidator for performing cross validation. This Estimator takes the modeler you want to fit, the grid of hyperparameters you created, and the evaluator you want to use to compare your models.

The submodule pyspark.ml.tuning has already been imported as tune. You'll create the CrossValidator by passing it the logistic regression Estimator lr, the parameter grid, and the evaluator you created in the previous exercises.

<br>Name this object cv.

```

# Create the CrossValidator

cv = tune.CrossValidator(estimator=lr,

estimatorParamMaps=grid,

evaluator=evaluator)

```

**Fit the model(s)**

You're finally ready to fit the models and select the best one!

```

# Fit cross validation models

models = cv.fit(training)

# Extract the best model

best_lr = models.bestModel

print(best_lr)

# We can also print the coefficient and intercept of the Logistic Regression model by using the following command:

#coefficient of the regression model

coeff = best_lr.coefficients

# intercept of the regression model

intrcpt = best_lr.intercept

print ("The coefficient of the model is : %a" %coeff)

print ("The Intercept of the model is : %f" %intrcpt)

```

**Evaluating binary classifiers**

For this course we'll be using a common metric for binary classification algorithms called the AUC, or area under the curve. In this case, the curve is the ROC, or receiver operating characteristic curve. The details of what these things actually measure aren't important for this course. All you need to know is that for our purposes, the closer the AUC is to one (1), the better the model is!
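For readers who do want some intuition: the AUC equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The plain-Python sketch below computes it by comparing every positive/negative pair; the function name `auc` and the sample scores are illustrative, and this quadratic approach is only practical for small inputs.

```python
def auc(labels, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))

example_auc = auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
perfect_auc = auc([0, 1], [0.2, 0.9])
```

A value of 0.5 corresponds to random ranking, and 1.0 to a model that ranks every positive above every negative.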

**Evaluate the model**

Remember the test data that you set aside waaaaaay back in chapter 3? It's finally time to test your model on it! You can use the same evaluator you made to fit the model.

```

# Use your model to generate predictions by applying best_lr.transform() to the test data. Save this as test_results.

test_results = best_lr.transform(testing)

# Call evaluator.evaluate() on test_results to compute the AUC. Print the output.

print(evaluator.evaluate(test_results))

```

# DataCamp Course 2: Big Data Fundamentals with PySpark.

### Lesson 1: Introduction to Big Data analysis with Spark

**Inspecting The SparkContext**

SparkContext (sc) is the entry point to Spark functionality. An entry point is where control is transferred from the operating system (OS) to the provided program. In simpler terms, it's like the key to your house: without it there is no entry.

<br>Let's inspect some of the attributes of the SparkContext.

```

# Let's print the SparkContext Version

sc.version

# Let's print the Python Version that sc runs on.

sc.pythonVer

# Let's print the Master. Master is the URL of the Cluster, or 'local' string to run in local mode.

# sc.master returns 'local', meaning Spark runs in local mode on this machine with a single worker thread (a master of 'local[*]' would use all available cores).

sc.master

```

**Loading Data in Pyspark:**

We can load raw data in pyspark using SparkContext by two distinct means:

<br>1. SparkContext parallelize() method on a list:

```

rdd = sc.parallelize(range(10))

```

<br>2. SparkContext textFile() method on a file:

```

rdd2 = sc.textFile('text.txt')

```

**Loading data in PySpark shell**

In PySpark, we express our computation through operations on distributed collections that are automatically parallelized across the cluster. In the previous exercise, you have seen an example of loading a list as parallelized collections and in this exercise, you'll load the data from a local file in PySpark shell.

```

# Load a local file into PySpark shell

lines = sc.textFile('movie_quotes.txt')

```

**Use of lambda() with map()**

The map() function in Python applies a given function to each item of an iterable (list, tuple, etc.). In Python 3 it returns a lazy iterator over the results, which is why the code below wraps it in list(). The general syntax of the map() function is map(fun, iter). We can also use lambda functions with map(); the general syntax is map(lambda <argument>:<expression>, iter). Refer to slide 5 of video 1.7 for general help on using map() with lambda.

```

my_list = list(range(1,11))

print(my_list)

# Square each item in my_list using map() and lambda().

squrd_list = list(map(lambda x: x**2, my_list))

print(squrd_list)

```

**Use of lambda() with filter():**

Another function that is used extensively in Python is the filter() function. The filter() function takes a function and an iterable as arguments and returns an iterator over the items for which the function returns a true value. The general syntax of the filter() function is filter(function, list_of_input). Like map(), filter() can be used with a lambda function; the general syntax is filter(lambda <argument>:<expression>, list). Refer to slide 6 of video 1.7 for general help on using filter() with lambda.

```

my_list2 = [10, 21, 31, 40, 51, 60, 72, 80, 93, 101]

# Filter the numbers divisible by 10 from my_list2 using filter() and lambda().

filtered_list2 = list(filter(lambda x: x % 10 == 0, my_list2))

print(filtered_list2)

```

### Lesson 2: Programming in PySpark RDDs

**RDD** stands for _Resilient Distributed Dataset_. RDDs are first-class citizens of Apache Spark.<br>An RDD is simply a collection of data distributed across the cluster. It is the fundamental, backbone data type in PySpark.

**Decomposing RDDs:**

Let's look at the different features of RDDs:

1. **_Resilient :_** Means the ability to withstand failures and recompute missing or damaged partitions.

2. **_Distributed :_** Means spanning the jobs across multiple nodes in the cluster for efficient computation.

3. **_Datasets :_** These are collections of partitioned data, e.g. arrays, tuples, tables, etc.

**Creating RDDs:**

RDDs are created in 3 different ways:

1. Using the Spark Context .parallelize() method on an iterable like a list or range

```

rdd = sc.parallelize(range(100))

rdd2 = sc.parallelize('hello world')

```

2. Creating RDDs from external files such as a text file or CSV file. This is by far the most common way to create RDDs in PySpark. This method uses the Spark Context's textFile() method. Here we can read a README.md file stored locally on our computer.

```

rdd2 = sc.textFile('README.md')

```

3. Creating RDDs from other RDDs

**Understanding Partitioning in Pyspark**

Understanding how Spark deals with partitions allows one to control parallelism. A partition in Spark is one chunk of a large dataset, and the chunks are distributed across the nodes of the cluster. By default Spark partitions the data at the time an RDD is created, based on several factors such as the available resources and the external dataset being read.

However, this behavior can be controlled when we create an RDD via textFile() by passing a second argument called minPartitions, which specifies the minimum number of partitions to be created for the RDD.

With the parallelize() method there is no need to use a keyword; just pass the number of partitions you want as the second argument.

```

rdd1 = sc.parallelize(range(100), 6)

# Let's see the type of rdd1

type(rdd1)

# let's see the number of partitions in rdd1

rdd1.getNumPartitions()

```
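To build intuition for what a partition is, here is a minimal plain-Python sketch that splits a collection into contiguous, near-equal chunks. It is only an illustration: the helper `split_into_partitions` is hypothetical, and the boundaries Spark actually chooses may differ.

```python
def split_into_partitions(data, num_partitions):
    """Split `data` into `num_partitions` contiguous, near-equal chunks.

    Hypothetical helper for illustration only; this is not Spark's code.
    """
    items = list(data)
    n = len(items)
    # Chunk i covers items[i*n//k : (i+1)*n//k], so sizes differ by at most one.
    return [
        items[i * n // num_partitions:(i + 1) * n // num_partitions]
        for i in range(num_partitions)
    ]


partitions = split_into_partitions(range(100), 6)
partition_sizes = [len(p) for p in partitions]

print(len(partitions))   # number of chunks
print(partition_sizes)   # how many elements landed in each chunk
```

In PySpark itself, `rdd1.glom().collect()` returns the elements grouped by partition, which is a handy way to inspect how an RDD was actually split.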

**RDDs from Parallelized collections**

Resilient Distributed Dataset (RDD) is the basic abstraction in Spark. It is an immutable distributed collection of objects. Since the RDD is the fundamental data type in Spark, it is important that you understand how to create one. In this exercise, you'll create your first RDD in PySpark from a collection of words.

```

RDD = sc.parallelize(["Spark", "is", "a", "framework", "for", "Big Data processing"])

RDD.collect()

type(RDD)

```

**RDDs from External Datasets**

PySpark can easily create RDDs from files that are stored in external storage systems such as HDFS (Hadoop Distributed File System), Amazon S3 buckets, etc. However, the most common method of creating RDDs is from files stored in your local file system. This method takes a file path and reads it as a collection of lines. In this exercise, you'll create an RDD from a file path.

```

from google.colab import files

uploaded = files.upload()

file_rdd = sc.textFile('movie_quotes.txt')

# Let's see the first 6 items

file_rdd.take(6)

type(file_rdd)

file_rdd.getNumPartitions()

```

**Partitions in your data**

SparkContext's textFile() method takes an optional second argument called minPartitions for specifying the minimum number of partitions. In this exercise, you'll create an RDD named fileRDD_part with a minimum of 5 partitions and then compare that with file_rdd, which you created in the previous exercise.

```

fileRDD_part = sc.textFile('movie_quotes.txt', minPartitions=5)

type(fileRDD_part)

fileRDD_part.getNumPartitions()

```

**Overview of PySpark Operations**

RDDs in PySpark support two different types of operations:

1. **Transformations :** Operations on an RDD that return a new RDD.

2. **Actions :** Operations that run a computation on an RDD and return a result.

**RDD Transformations:**

Transformations follow lazy evaluation.

<br>Lazy evaluation means that Spark builds a graph of all the operations we apply to an RDD, and execution of that graph only starts when an action is performed on the RDD. This is called **_lazy evaluation_** in Spark.
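The idea of deferring work until a result is requested can be sketched in plain Python, where the built-in `map()` and `filter()` are also lazy. This is only an analogy, not Spark code: the `calls` list and the `square` function are made up for the illustration.

```python
calls = []  # records every time the mapped function actually runs


def square(x):
    calls.append(x)
    return x * x


numbers = [1, 2, 3, 4]

# "Transformations": building the pipeline runs nothing yet.
squared = map(square, numbers)
even_squares = filter(lambda v: v % 2 == 0, squared)
calls_before_action = len(calls)

# "Action": consuming the pipeline triggers the whole computation.
result = list(even_squares)
calls_after_action = len(calls)

print(calls_before_action, calls_after_action, result)
```

Spark follows the same pattern at cluster scale: transformations only record what should be done, and an action such as collect() or count() makes the recorded graph run.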

**The RDD Transformations we will look at are :**

1. map()

2. filter()

3. flatMap()

4. union()

_map() transformation :_ Takes in a function and applies it to each element in an RDD; the result of the function becomes the new value of that element in the resulting RDD.

_filter() transformation :_ Takes in a function and returns an RDD that only has elements that pass the filter condition.

_flatMap() transformation :_ This is like map(), except that the function can return multiple values (zero or more) for each element in the source RDD, and the results are flattened into a single RDD.

_union() transformation :_ Returns an RDD containing the elements of one RDD together with those of another RDD (duplicates are kept).
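The difference between these four transformations is easiest to see on ordinary Python lists. The sketch below mimics their semantics only; it runs locally on lists and is not distributed or lazy like the real RDD methods.

```python
from itertools import chain

lines = ["Hello world", "How are you?"]

# map(): exactly one output element per input element (here, a list of words).
mapped = [line.split(" ") for line in lines]

# flatMap(): apply the same function, then flatten the results one level.
flat_mapped = list(chain.from_iterable(line.split(" ") for line in lines))

# filter(): keep only the elements for which the predicate is true.
filtered = [word for word in flat_mapped if "o" in word]

# union(): put two collections together; duplicates are kept.
unioned = flat_mapped + filtered

print(mapped)
print(flat_mapped)
print(filtered)
print(len(unioned))
```

Note that map() gives a list of lists (one list per line), while flatMap() gives a single flat list of words.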

```

# Example of flatMap() transformation

rdd = sc.parallelize(['Hello world','How are you?'])

flat_map_rdd = rdd.flatMap(lambda x: x.split(' '))

flat_map_rdd.collect()

# Example of union() transformation...

# Let's define an input RDD to filter other RDDs from

inputRDD = sc.textFile('movie_quotes.txt')

# Next, let's derive 2 filtered RDDs from it

fuckRDD = inputRDD.filter(lambda x: 'fuck' in x)

youRDD = inputRDD.filter(lambda x: 'you' in x)

fuckRDD.collect()

youRDD.collect()

# Next, let's union the two filtered RDDs

combinedRDD = fuckRDD.union(youRDD)

# Let's see it

combinedRDD.collect()

```

**RDD Actions:**

After transformations, we usually need to perform some action on the RDD. Remember that RDDs are inherently lazy: an action must be called to produce any output.

<br>The actions we will use here are:

1. **_collect() :_** returns all elements in the RDD

2. **_take(N) :_** returns the first N elements of the RDD

3. **_first() :_** returns the first element and is similar to take(1), which returns a one-element list

4. **_count() :_** returns the total number of elements in the RDD

**Map and Collect :**

The main method by which you can manipulate data in PySpark is map(). The map() transformation takes in a function and applies it to each element in the RDD. It can be used to do any number of things, from fetching the website associated with each URL in our collection to just squaring the numbers. In this simple exercise, you'll use the map() transformation to cube each element in an RDD.

```

rdd = sc.parallelize(range(0,101,10))

# use map to cube each element in rdd

cubed = rdd.map(lambda x: x**3)

cubed.collect()

```

**Filter and Count:**

The RDD transformation filter() returns a new RDD containing only the elements that satisfy a particular function. It is useful for filtering large datasets based on a keyword. For this exercise, you'll select the lines containing the keyword Spark from file_rdd, which consists of lines of text from the README.md file. Next, you'll count the total number of lines containing the keyword Spark and finally print the first 4 lines of the filtered RDD.

```

# Let's grab the spark README.md file using wget

!rm README.md* -f

!wget https://raw.githubusercontent.com/carloapp2/SparkPOT/master/README.md

file_rdd = sc.textFile('README.md')

file_rdd.take(5)

# Create filter() transformation to select only the lines containing the keyword Spark.

fileRDD_filter = file_rdd.filter(lambda x: 'Spark' in x)

fileRDD_filter.collect()

print("The total number of lines with the keyword Spark is", fileRDD_filter.count())

# Print the first 4 lines of the filtered RDD

for line in fileRDD_filter.take(4):

    print(line)

```

### Pair-RDDs in PySpark

This section covers working with RDDs of key/value pairs, which are a common data type for many operations in Spark.

<br>Many real-life datasets consist of key/value pairs: each row has a key that maps to one or more values.

<br>To deal with this kind of dataset, PySpark provides a special kind of data structure called _Pair-RDDs._ In Pair-RDDs, the key refers to the identifier, while the value refers to the data.

**Creating Pair-RDDs:**

There are two common ways to create Pair-RDDs:

1. From a list of Key/Value tuples

2. From a regular RDD

Irrespective of the method, the first step to create Pair-RDD is getting the data into Key/Value form.

Example 1: Creating a Pair-RDD from a list of tuples

```

# A list of tuples

my_tuple = [('Sam',23),('Tim',46),('May',33),('John', 54)]

# Creating a Pair RDD

pairRDD_tuple = sc.parallelize(my_tuple)

# Let's see the first element

pairRDD_tuple.first()

type(pairRDD_tuple)

```

Example 2: Creating a Pair-RDD from a regular RDD

```

my_list = ['Sam 23','Tim 46','May 33','John 54']

regular_rdd = sc.parallelize(my_list)

regular_rdd.take(1)

# Now let's create a pair RDD from regular_rdd

pairRDD_regular = regular_rdd.map(lambda x: (x.split(' ')[0], int(x.split(' ')[1])))

pairRDD_regular.collect()

```

**Transformations on Pair-RDDs:**

Pair-RDDs are RDDs, so all transformations applicable to regular RDDs are applicable to them.

<br>Since Pair-RDDs contain tuples, we need to pass functions that operate on key/value pairs.

<br>A few special operations are available for Pair-RDDs, such as:

1. reduceByKey(func): Combines values with the same key.

2. groupByKey(): Group values with the same key.

3. sortByKey(): Return an RDD sorted by the key.

4. join(): Join two Pair-RDDs based on their key.
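To make the semantics of these operations concrete, here is a rough plain-Python sketch of what groupByKey(), sortByKey() and join() compute on small lists of tuples. The helper functions and the `cities` data are hypothetical and exist only for this illustration; the real transformations work on distributed RDDs.

```python
from collections import defaultdict


def group_by_key(pairs):
    """Collect all values that share a key into one list per key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return dict(grouped)


def sort_by_key(pairs):
    """Return the pairs ordered by their key."""
    return sorted(pairs, key=lambda kv: kv[0])


def join(left, right):
    """Inner join: one (key, (left_value, right_value)) per matching pair."""
    right_grouped = group_by_key(right)
    return [(k, (lv, rv)) for k, lv in left for rv in right_grouped.get(k, [])]


ages = [('Sam', 23), ('Tim', 46), ('May', 33)]
cities = [('Sam', 'Lagos'), ('May', 'Accra'), ('May', 'Paris')]  # made-up data

grouped_cities = group_by_key(cities)
sorted_ages = sort_by_key(ages)
joined = join(ages, cities)

print(grouped_cities)
print(sorted_ages)
print(joined)
```

Keys that appear in only one of the two inputs (here 'Tim') are dropped by the join, and a key with several matches (here 'May') produces one output pair per match.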

**reduceByKey() Transformation:**

This is the most commonly used Pair-RDD transformation. It combines the values of the same key using a function.

<br>reduceByKey() runs several parallel operations, one for each key in the dataset. reduceByKey() returns a new RDD consisting of each key and the reduced value for that key.

For example, let's add up the goals scored by each player in the list of tuples below:

```

tup_list = [('Messi',23), ('Ronaldo',34), ('Neymar',22), ('Messi',24)]

# Next we create a regular RDD and chain it to the reduceByKey() transformation

# In one line of code, creating an RDD with total goals scored per player.

tup_list_reduceByKey = sc.parallelize(tup_list).reduceByKey(lambda x,y: x + y)

# Finally display all key-value pairs of the new RDD

tup_list_reduceByKey.collect()

```
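What reduceByKey() computes can be sketched in plain Python as follows. The `reduce_by_key` helper is hypothetical and runs locally; the second half imitates, in a simplified way, how values could be combined within each partition first and then merged across partitions.

```python
def reduce_by_key(pairs, func):
    """Combine all values that share a key using the binary function `func`."""
    combined = {}
    for key, value in pairs:
        if key in combined:
            combined[key] = func(combined[key], value)
        else:
            combined[key] = value
    return combined


tup_list = [('Messi', 23), ('Ronaldo', 34), ('Neymar', 22), ('Messi', 24)]
add = lambda x, y: x + y

# Reduce everything in one pass.
totals = reduce_by_key(tup_list, add)

# Imitate two partitions: reduce each one, then merge the partial results.
partition_a, partition_b = tup_list[:2], tup_list[2:]
partials = [reduce_by_key(p, add) for p in (partition_a, partition_b)]
merged = reduce_by_key(
    [item for partial in partials for item in partial.items()], add
)

print(totals)
print(merged)
```

Both routes give the same totals because addition is associative and commutative, which is why the function passed to reduceByKey() should have those properties.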

**sortByKey() Transformation:**

Sorting of data is important for many applications. We can sort Pair-RDDs as long as there's an order defined in the keys.